The Unseen Variable: How Sample Preparation Directly Dictates Spectroscopic Data Quality and Reliability



Abstract

This article provides a comprehensive examination of the critical, yet often underestimated, role of sample preparation in spectroscopic analysis. Tailored for researchers, scientists, and drug development professionals, we explore the fundamental principles linking preparation to spectral integrity, detail technique-specific methodologies for XRF, ICP-MS, and FT-IR, and present advanced troubleshooting and optimization strategies. Drawing on recent studies and validation protocols, the article synthesizes foundational knowledge with practical applications, offering a systematic framework to minimize analytical errors, enhance reproducibility, and ensure data validity in biomedical and clinical research settings.

The Critical Link: Why Sample Preparation is the Foundation of Reliable Spectroscopy

In analytical science, the quality of results is largely determined by the steps taken before the sample ever reaches the instrument. Inadequate sample preparation is reported to cause as much as 60% of all spectroscopic analytical errors [1]. This statistic exposes a critical vulnerability in analytical workflows: unless samples are properly prepared, researchers risk collecting misleading data that can compromise research projects, quality control practices, and analytical conclusions [1]. Sample preparation for spectroscopic analysis requires a high degree of care and technique-specific methods, whether the technique is XRF, ICP-MS, FT-IR, or Raman spectroscopy [1]. The journey from raw material to analyzable specimen directly determines the quality of the final data, making sample preparation not merely a preliminary step but the foundation on which analytical accuracy is built.

This technical guide examines sample preparation as the dominant source of error in analytical results, as part of a broader thesis on how sample preparation affects spectroscopic results. For researchers, scientists, and drug development professionals, understanding these principles is not optional; it is essential for producing valid, reliable, and meaningful analytical data.

The Foundation: Why Sample Preparation Introduces Error

Fundamental Principles of Analytical Error

Sample preparation directly relates to the quality and integrity of spectroscopic data, and not even the most advanced instrumentation can compensate for badly prepared samples [1]. Preparation problems affect results through several fundamental mechanisms that originate from the material's inherent characteristics and its interaction with preparation equipment and processes.

The physical and chemical properties of a sample directly influence how radiation behaves during analysis. Rough surfaces scatter light randomly, whereas a uniform (monodisperse) particle size promotes uniform interaction with radiation [1]. Excessive variation in particle size also creates sampling error that compromises quantitative analysis. These issues are compounded by matrix effects, in which matrix constituents absorb radiation or contribute their own spectral signals, suppressing or enhancing the apparent analyte response [1]. Proper preparation techniques reduce such interferences through dilution, extraction, or matrix matching.

Homogeneity is equally crucial for representative sampling. Heterogeneous samples yield non-reproducible results because the examined portion may not represent the whole sample [1]. Grinding, milling, and mixing techniques prepare homogeneous samples that yield reproducible, reliable data. Perhaps most insidiously, contamination introduces unwanted material that generates spurious spectral signals. Cross-contamination between samples or from preparation equipment can render results worthless, making proper cleaning techniques essential throughout the preparation process [1].

Classification of Errors in the Analytical Workflow

Within the complete analytical pathway, errors can be systematically classified according to their nature and origin. The Theory of Sampling (TOS) identifies that sampling errors originate from only three fundamental sources: the material (which is always heterogeneous to some degree), the sampling equipment design, and the sampling process execution [2]. Traditional analytical chemistry further categorizes errors into three major types, as detailed in the table below.

Table: Classification of Analytical Error Types

| Error Type | Effect on Results | Common Sources in Sample Preparation | Corrective Approaches |
|---|---|---|---|
| Systematic (Determinate) Errors | Affect accuracy; cause all results to be consistently too high or too low | Contaminated reagents, incorrect calibration standards, improper dilution techniques, method limitations [2] [3] | Use high-purity reagents, implement matrix-matched calibration, employ internal standardization [3] [4] |
| Random (Indeterminate) Errors | Affect precision; cause scatter around the mean value | Inhomogeneous samples, particle size variations, inconsistent weighing or pipetting, environmental fluctuations [2] | Improve homogenization, control environmental conditions, use appropriate measurement tools, replicate measurements [1] [3] |
| Gross Errors | Large deviations from true value; often obvious outliers | Sample mix-ups, incorrect calculations, complete method failure, transcription errors [2] [3] | Implement rigorous documentation, use automated systems where possible, establish quality control protocols |
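The distinction between systematic and random error in the table can be made concrete with replicate measurements of a reference material. The sketch below is illustrative only: the function name `bias_and_rsd`, the replicate values, and the certified value of 50.0 mg/kg are hypothetical and do not come from the cited sources. Relative bias is used as an indicator of systematic error, and relative standard deviation (RSD) as an indicator of random error.

```python
from statistics import mean, stdev

def bias_and_rsd(replicates, reference_value):
    """Return (relative bias in %, relative standard deviation in %).

    Relative bias reflects systematic error (accuracy);
    RSD reflects random error (precision).
    """
    avg = mean(replicates)
    bias_pct = (avg - reference_value) / reference_value * 100.0
    rsd_pct = stdev(replicates) / avg * 100.0
    return bias_pct, rsd_pct

# Hypothetical replicate results (mg/kg) for a reference material
# with an assumed certified value of 50.0 mg/kg.
replicates = [52.1, 51.8, 52.3, 52.0, 51.9]
bias, rsd = bias_and_rsd(replicates, 50.0)
print(f"relative bias = {bias:+.2f} %, RSD = {rsd:.2f} %")
```

In this made-up data set the replicates agree closely with each other but sit consistently above the reference value, which is the signature of a systematic rather than a random error.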

A crucial distinction exists between error and uncertainty in the context of sampling. It is not possible to ascertain the representativity status of a specific sample or analytical aliquot from any observable feature of the sample itself [2]. Representativity can only be defined and documented as a characteristic of the sampling process—everything depends on the sampling equipment, how it is designed, used, and maintained [2]. This is where representativity can be forfeited through sampling errors that have not been suitably eliminated or reduced.

Technical Deep Dive: Preparation Techniques and Their Associated Errors

Solid Sample Preparation Techniques

Solid sample preparation remains the foundation for producing repeatable spectroscopic data, as physical characteristics directly influence spectral quality [1]. Several specialized techniques transform raw materials into analyzable specimens, each with specific protocols to minimize associated errors.

Grinding and Milling: Grinding reduces particle size and generates homogeneous samples through mechanical friction, which improves spectral quality by promoting uniform interaction with radiation [1]. When selecting grinding equipment, technicians must consider material hardness, final particle size requirements (typically <75 μm for XRF), and contamination hazards [1]. Swing grinding machines are particularly effective for tough samples like ceramics and ferrous metals because they use oscillating motion rather than direct pressure, reducing heat formation that might alter sample chemistry [1]. For optimum results, grind every sample set under identical conditions and clean thoroughly between samples to prevent cross-contamination.

Milling provides more particle size reduction control than grinding, with fine-surface milling machines producing higher surface quality, particularly with non-ferrous materials [1]. The even, flat surfaces from milling enhance spectral quality by minimizing light scattering effects, offering consistent density across the sample surface, and exposing internal material structure for more representative analysis [1]. Modern spectroscopic milling machines feature programmable parameters like rotational speed, feed rate, and cutting depth, with dedicated cooling systems to reduce thermal degradation during processing.

Pelletizing and Fusion: Pelletizing transforms powdered samples into solid disks of uniform surface properties and density for XRF analysis, yielding samples with uniform X-ray absorption properties essential for accurate quantitative analysis [1]. The process typically involves blending the ground sample with a binder (e.g., wax or cellulose), pressing using hydraulic or pneumatic presses (typically 10-30 tons), and producing pellets with flat, smooth surfaces and equal thickness [1]. Proper pellet preparation dramatically affects analytical accuracy through improved sample stability and reduced matrix effects.

Fusion is the most rigorous preparation technique: it completely dissolves refractory materials into homogeneous glass disks, eliminating the particle size and mineralogical effects that affect other preparation techniques [1]. The fusion process involves blending the ground sample with a flux (typically lithium tetraborate), melting at temperatures between 950 and 1200°C in platinum crucibles, and casting the molten charge as a disk for analysis [1]. Fusion is superior for silicate materials, minerals, and ceramics because it completely breaks down crystal structures and standardizes the sample matrix, eliminating effects that hinder quantitative analysis.

Liquid and Gas Sample Preparation Techniques

Liquid and gaseous samples present unique analytical challenges that require specialized preparation methods. Their physical state affects everything from container selection to handling protocols, and understanding these nuances is essential for delivering precise, reproducible results across diverse spectroscopic techniques.

Dilution and Filtration for ICP-MS: Inductively Coupled Plasma Mass Spectrometry (ICP-MS) demands stringent liquid sample preparation because of its high sensitivity: even subtle preparation errors can substantially skew analytical results [1]. Dilution brings analyte concentrations into the optimal instrument detection range, reduces matrix effects that disrupt accurate measurement, and protects sensitive instrument components from damage by high salt levels [1]. Samples with high dissolved solids content generally require greater dilution, sometimes exceeding 1:1000 for highly concentrated solutions.

Filtration then removes suspended material that could contaminate nebulizers or hinder ionization [1]. Filtration using 0.45 μm membrane filters is adequate for most ICP-MS applications, though ultratrace analysis might necessitate 0.2 μm filtration [1]. Technicians must select filter materials that will not introduce contamination or adsorb the analyte of interest; PTFE membranes typically provide the best balance of chemical resistance and low background. Acidification with high-purity nitric acid (typically to 2% v/v) keeps metal ions in solution by preventing precipitation and adsorption onto vessel walls [1].
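The dilution and acidification arithmetic described above can be sketched in a few lines. This is a minimal illustration under stated assumptions: the function names, the estimated concentration, and the upper calibration limit are hypothetical, and "2% v/v" is interpreted here as the volume of concentrated acid divided by the final volume, which may differ from a given laboratory's convention.

```python
import math

def required_dilution_factor(estimated_conc, upper_cal_limit):
    """Smallest whole-number dilution factor that brings the estimated
    concentration to or below the highest calibration standard."""
    if estimated_conc <= 0 or upper_cal_limit <= 0:
        raise ValueError("concentrations must be positive")
    if estimated_conc <= upper_cal_limit:
        return 1  # already within range, no dilution needed
    return math.ceil(estimated_conc / upper_cal_limit)

def acid_volume_ml(final_volume_ml, target_pct_vv=2.0):
    """Volume of concentrated acid (mL) needed so that it makes up
    target_pct_vv percent of the final volume (assumed convention)."""
    return final_volume_ml * target_pct_vv / 100.0

# Hypothetical case: about 25,000 ug/L estimated, calibration up to 100 ug/L.
factor = required_dilution_factor(25000.0, 100.0)
acid = acid_volume_ml(50.0)  # 50 mL final volume at 2% v/v
print(f"dilute 1:{factor}; add {acid:.1f} mL acid per 50 mL final volume")
```

Rounding the dilution factor up rather than to the nearest value is a deliberate choice in this sketch, so that the diluted sample never exceeds the top calibration standard.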

Solvent Selection Principles: The choice of solvent significantly influences spectral quality for both UV-Visible and FT-IR spectroscopy [1]. The optimum solvent dissolves the sample completely without being spectroscopically active in the analytical region of interest. For UV-Vis, key solvent properties include cutoff wavelength (below which the solvent absorbs strongly), polarity (affecting solubility of target compounds), and purity grade (with spectroscopic-grade solvents minimizing background interference) [1]. For FT-IR, solvent selection is even more critical because solvent absorption bands can overlap with significant analyte features [1].

Table: Sample Preparation Techniques and Associated Error Mitigation Strategies

| Preparation Technique | Primary Applications | Common Errors | Error Mitigation Strategies |
|---|---|---|---|
| Grinding & Milling | XRF, Solid sampling techniques | Contamination from equipment, particle size inconsistencies, heat degradation [1] | Use specialized grinding surfaces, control grinding time and pressure, employ cooling systems, clean thoroughly between samples [1] |
| Pelletizing | XRF analysis | Inhomogeneous binding, inconsistent density, surface irregularities [1] | Use appropriate binders, apply consistent pressure, ensure homogeneous powder mixing [1] |
| Fusion | Refractory materials, minerals, ceramics | Incomplete fusion, flux contamination, loss of volatile elements [1] | Optimize temperature and time, use high-purity flux, employ proper crucible materials [1] |
| SPE (Solid-Phase Extraction) | LC-MS, GC-MS, sample cleanup | Inconsistent sample loading, inadequate washing, incomplete elution [3] [4] | Condition sorbent properly, use internal standards, optimize loading/elution solvents [3] |
| Liquid-Liquid Extraction | Soluble analytes, matrix separation | Incomplete phase separation, emulsion formation, inefficient partitioning [4] | Adjust solvent polarity, use centrifugation, add salts to improve separation [4] |
| Nitrogen Blowdown Evaporation | Sample concentration, solvent exchange | Sample loss, degradation of volatile compounds, contamination [4] | Control temperature (30-40°C), optimize gas flow rate, use appropriate vessels [4] |

Methodologies: Experimental Protocols for Error Reduction

Quantitative Sample Preparation Workflow

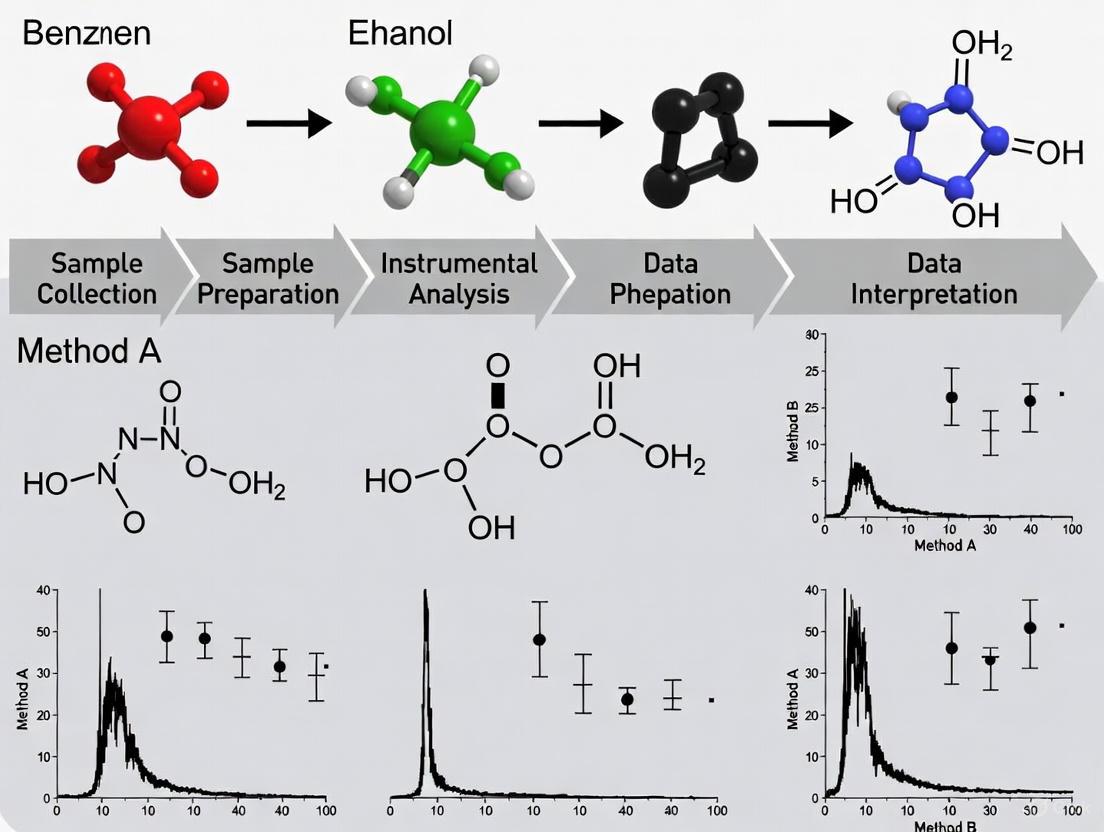

The diagram above illustrates the comprehensive workflow for quantitative sample preparation, highlighting critical control points where accuracy must be verified to minimize systematic and random errors.

Internal Standardization Protocol

For precise quantitative analysis, particularly in chromatography-mass spectrometry applications, internal standardization provides a powerful method to compensate for preparation inconsistencies. The following protocol details the optimal approach:

Materials Required:

- High-purity analytical standards (analyte and internal standard)

- Precision micropipettes (calibrated regularly)

- MS-grade solvents

- Appropriate volumetric glassware

Procedure:

- Add a known amount of internal standard to the sample immediately after weighing/volume measurement, ensuring it is present before any extraction steps [3].

- Process the sample through all preparation steps (extraction, cleanup, concentration).

- The internal standard will undergo the same proportional losses as the analyte during preparation.

- Calculate analyte concentration using the response ratio relative to the internal standard, which corrects for preparation inconsistencies [3].

Calculation Formula: When an internal standard is used, the concentration is calculated with a modified formula in which the final sample extract volume is not a factor, which removes a significant source of potential error [3]:

C = (AS × CIS × D) / (AIS × RF × VS)

Where:

- C = Concentration of the analyte

- AS = Area or height count of the sample compound peak

- CIS = Concentration of internal standard

- D = Dilution factor, if applicable

- AIS = Area or height count of the internal standard peak

- RF = Average calibration response factor from the calibration curve

- VS = Total volume of the sample used

This approach significantly improves quantitative accuracy compared to external standardization methods where a 5% error in sample volume leads directly to a 5% change in the calculated amount [3].
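The internal standard calculation can be sketched as a small function using the variables defined above (C, AS, CIS, D, AIS, RF, VS). The arrangement of terms follows the common response-factor form and is an assumption here, as are the function name and all numeric values, which are purely illustrative. The second call shows why the approach is robust: a proportional loss that affects analyte and internal standard equally cancels in the ratio.

```python
import math

def analyte_concentration(a_s, a_is, c_is, rf, v_s, d=1.0):
    """Analyte concentration from an internal-standard response ratio.

    a_s  : peak area (or height) of the analyte (AS)
    a_is : peak area (or height) of the internal standard (AIS)
    c_is : concentration of the internal standard (CIS)
    rf   : average calibration response factor (RF)
    v_s  : total volume of sample used (VS)
    d    : dilution factor, 1.0 if none (D)
    Units must be chosen consistently by the caller.
    """
    if a_is <= 0 or rf <= 0 or v_s <= 0:
        raise ValueError("a_is, rf and v_s must be positive")
    return (a_s * c_is * d) / (a_is * rf * v_s)

# Hypothetical peak areas and calibration values.
c_full = analyte_concentration(12000.0, 10000.0, 50.0, 1.2, 1.0)

# Same sample after an assumed 5% loss affecting both peaks equally.
c_loss = analyte_concentration(12000.0 * 0.95, 10000.0 * 0.95, 50.0, 1.2, 1.0)

print(c_full, math.isclose(c_full, c_loss))
```

With external standardization the same 5% loss would carry straight through to the reported amount, which is the contrast the preceding paragraph draws.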

The Scientist's Toolkit: Essential Research Reagent Solutions

Proper sample preparation requires specific high-quality materials and reagents to maintain sample integrity and prevent introduction of errors. The following table details essential items for reliable sample preparation workflows.

Table: Essential Research Reagent Solutions for Sample Preparation

| Item | Function | Application Notes |
|---|---|---|
| MS-Grade Solvents | High-purity solvents minimize background interference and contamination [4] | Essential for LC-MS/MS and GC-MS applications; lower UV cutoff for UV-Vis |
| Stable Isotope-Labeled Internal Standards | Correct for matrix effects and preparation inconsistencies [3] [4] | Should be added as early as possible in the preparation process |
| High-Purity Acids (HNO₃, HCl) | Sample digestion and preservation without introducing trace metal contamination [1] | Trace metal grade or better for elemental analysis |
| SPE Cartridges (Various Phases) | Selective extraction and cleanup of samples to remove interfering matrix components [3] [4] | C18 for reversed-phase, silica for normal-phase, specialized for specific compound classes |
| PTFE Membrane Filters (0.2 μm, 0.45 μm) | Remove particulate matter that could damage instrumentation or cause interference [1] | 0.45 μm for general use; 0.2 μm for ultratrace analysis or UHPLC applications |
| Inert Sample Vials (Amber Glass) | Prevent sample degradation and adsorption; protect light-sensitive compounds [4] | Amber glass protects against UV light; silanized glass reduces adsorption |
| Certified Reference Materials | Method validation and quality control to ensure accuracy [2] | Should match sample matrix as closely as possible |
| High-Purity Fusion Fluxes (Li₂B₄O₇) | Complete dissolution of refractory materials for XRF analysis [1] | Platinum crucibles required for high-temperature fusion |

The evidence is consistent: sample preparation is the dominant source of error in analytical results, accounting for as much as 60% of spectroscopic analytical errors [1]. This technical examination has shown that, regardless of the sophistication of analytical instrumentation, proper sample preparation remains non-negotiable for generating valid, reliable data. From solid sample techniques like grinding and fusion to liquid sample preparation through dilution and filtration, each step introduces potential error sources that must be systematically controlled.

The path to superior analytical outcomes requires treating sample preparation with the same rigor as instrumental analysis itself. This includes implementing robust methodologies like internal standardization, using appropriate high-purity reagents, understanding and controlling for matrix effects, and maintaining scrupulous attention to potential contamination sources. For researchers, scientists, and drug development professionals, embracing these principles transforms sample preparation from a mundane preliminary task to a critical scientific process that ultimately determines the validity of analytical results.

The fidelity of spectroscopic data is paramount across scientific disciplines, from pharmaceutical development to environmental analysis. The journey from a raw sample to a reliable spectral reading is fraught with potential variables that can compromise data integrity. This whitepaper delineates the core principles governing how particle size, homogeneity, and matrix effects directly influence spectral outcomes. Framed within a broader thesis on how sample preparation affects spectroscopic results, this guide provides researchers and drug development professionals with the foundational knowledge and practical methodologies needed to mitigate these pervasive challenges. As emphasized in liquid chromatography troubleshooting, developing a solid understanding of matrix effects and mitigation strategies is crucial both during new method development and when troubleshooting existing methods [5].

Fundamental Concepts and Definitions

Particle Size

Particle size refers to the dimensions of the solid particulates within a sample. It is not a mere physical attribute but a critical parameter that governs light interaction. The size of particles relative to the wavelength of incident light fundamentally affects scattering efficiency, absorption depth, and overall spectral intensity. In spectroscopic practice, particle size controls the effective path length and the amount of material sampled, making it a primary variable in quantitative analysis.

Homogeneity

Homogeneity describes the uniformity of a sample's composition and physical properties throughout its volume. A perfectly homogeneous sample exhibits identical spectroscopic properties at any sampled location. In reality, most samples possess some degree of heterogeneity, which introduces sampling variance and reduces the reproducibility of spectral measurements. The goal of sample preparation is often to maximize homogeneity to ensure that a small, analyzed aliquot is representative of the whole.

Matrix Effects

The matrix is defined as all components of a sample other than the analyte of interest [5]. Matrix effects, therefore, refer to the phenomenon where these co-existing components alter the detector's response to the analyte, leading to either signal suppression or enhancement. The fundamental problem is that the matrix the analyte is detected in can interfere with the detection principle itself, compromising quantitative accuracy [5]. These effects are particularly pronounced in complex samples such as biological fluids, environmental extracts, and pharmaceutical formulations, where numerous interfering compounds may co-elute or interact with the analyte.

The Impact of Particle Size on Spectral Data

Mechanisms of Influence

Particle size affects spectral data through multiple physical mechanisms. As particle dimensions change, so do the relative contributions of absorption and scattering. Smaller particles exhibit greater surface area-to-volume ratios, potentially enhancing surface-related spectral features but also increasing opportunities for light scattering. The interplay between particle size and wavelength determines whether scattering dominates (large particles relative to wavelength) or absorption features are emphasized (small particles).

Experimental Evidence Across Techniques

Transmission Low-Frequency Raman Spectroscopy (TLRS): Research on pharmaceutical tablets containing crystalline carbamazepine Form III demonstrated that particle size significantly impacts both signal intensity and reproducibility. Larger particle sizes (>212 μm) yielded higher Raman signal intensities at 37 cm⁻¹ but exhibited greater variability, while smaller particles (≤100 μm) provided more reproducible spectra and improved semi-quantitative accuracy for detecting crystalline content in amorphous matrices [6].

Attenuated Total Reflection Fourier Transform Infrared (ATR FT-IR) Spectroscopy: Systematic studies with mineral powders revealed explicit dependencies between particle size and spectral band characteristics. The most intense IR spectra for minerals were observed in the 2-4 μm particle size fraction; as particle size increases beyond this range, the intensity and area of IR bands typically decrease while band width increases [7]. Band positions also often shift to higher wavenumbers with decreasing particle size [7].

Optical Property Measurements: Spectral deconvolution methods applied to multi-wavelength aerosol extinction, absorption, and scattering measurements can extract particle-size-related information, including the fraction of extinction produced by fine-mode particles and their effective radius [8]. This approach validates that particle size distributions directly govern spectral patterns in optical data.

Quantitative Relationships

Table 1: Particle Size Effects on Spectral Parameters Across Techniques

| Analytical Technique | Particle Size Range | Observed Effect on Spectral Features | Quantitative Impact |
|---|---|---|---|
| ATR FT-IR [7] | <2 μm | Decreased band intensity | Underestimation compared to coarser phases |
| ATR FT-IR [7] | 2-4 μm | Maximum band intensity and area | Optimal for quantification |
| ATR FT-IR [7] | >4 μm | Progressive decrease in intensity and area; band broadening | Nonlinear reduction in sensitivity |
| TLRS [6] | ≤100 μm | Lower intensity but high reproducibility | Improved semi-quantitative accuracy |
| TLRS [6] | >212 μm | Higher intensity but greater variability | Reduced quantification reliability |

Homogeneity and Its Spectral Consequences

Defining Spectral Homogeneity

Spectral homogeneity refers to the consistency of spectral features when measuring different aliquots or locations of the same sample. A homogeneous sample produces nearly identical spectra regardless of sampling position, while a heterogeneous sample exhibits significant spectral variations. This property is crucial for ensuring that analytical results are representative and reproducible.

Assessment Through Multivariate Analysis

Principal Component Analysis (PCA) has proven invaluable for objectively assessing sample homogeneity from spectral data. In studies of asteroid Ryugu returned samples, FTIR spectroscopy combined with PCA demonstrated that 97% of individual grains belonged to a single spectral group, indicating high homogeneity [9]. The remaining 3% exhibited unique spectral features, revealing subtle heterogeneity. In this analysis, PC1 (accounting for >99.7% of variance) correlated with reflectance values, while PC2 (0.2% of variance) related to spectral slope, providing a quantitative measure of homogeneity [9].

Practical Implications for Analysis

The transmission low-frequency Raman spectroscopy study further highlighted homogeneity's importance, finding that tablets prepared with smaller particles (≤100 μm) exhibited more distinct clustering in PCA score plots based on crystalline-to-amorphous ratios [6]. This enhanced differentiation stems from increased homogeneity and better spectral averaging in finer powders. When the spectral analysis region was expanded to include multiple features (10-200 cm⁻¹), improved clustering occurred even for larger particle sizes, demonstrating how analytical parameters can partially compensate for heterogeneity [6].

Origins of Matrix Effects

Matrix effects arise from the physical and chemical interactions between analytes and co-existing sample components throughout the analytical process. In liquid chromatography, the "matrix" includes both components of the sample other than the analyte and the mobile phase components [5]. These effects are particularly problematic when matrix components have retention properties similar to the analyte, causing them to co-elute and enter the detector simultaneously [5].

Manifestations Across Detection Techniques

Mass Spectrometric (MS) Detection: The most well-known matrix effects occur in electrospray ionization, where analytes compete with matrix components for available charge during desolvation, leading to ion suppression or enhancement [5].

Fluorescence Detection: Matrix components can affect the quantum yield of the fluorescence process through quenching phenomena, leading to signal suppression [5].

UV/Vis Absorbance Detection: Solvatochromism effects can occur, where the absorptivity of analytes is affected by mobile phase solvents, leading to increases or decreases in observed absorption [5].

Evaporative Light Scattering (ELSD) and Charged Aerosol Detection (CAD): Mobile phase additives can influence aerosol formation processes, resulting in significant response enhancement or suppression [5].

Quantifying Matrix Effects

Matrix effects can be quantified by comparing the analyte response in a matrix-matched solution with that in pure solvent [10]. The calculation is straightforward:

Matrix Effect (%) = (Signal in matrix / Signal in neat standard) × 100%

For example, if the signal in the matrix solution is 70% of the signal for the neat standard, this indicates 30% signal loss due to matrix effects [10]. A value of 100% indicates no matrix effect, while values below 100% indicate suppression and values above 100% indicate enhancement.
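The matrix effect calculation and its interpretation can be expressed directly in code. This is a minimal sketch: the function names and the signal values are illustrative, and treating exactly 100% as "no matrix effect" ignores measurement uncertainty, which a real method would account for with an acceptance window.

```python
def matrix_effect_percent(signal_in_matrix, signal_in_neat_standard):
    """Matrix effect as a percentage of the neat-standard response."""
    if signal_in_neat_standard <= 0:
        raise ValueError("neat standard signal must be positive")
    return signal_in_matrix * 100.0 / signal_in_neat_standard

def interpret(me_percent):
    """Classify a matrix effect value as suppression, enhancement, or none."""
    if me_percent < 100.0:
        return "suppression"
    if me_percent > 100.0:
        return "enhancement"
    return "no matrix effect"

# Illustrative signals reproducing the 70% example in the text.
me = matrix_effect_percent(7000.0, 10000.0)
print(f"ME = {me:.0f} % ({interpret(me)}, {100.0 - me:.0f} % signal loss)")
```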

Experimental Protocols for Investigation

Protocol 1: Assessing Particle Size Effects in Transmission Raman Spectroscopy

Objective: To systematically evaluate how particle size influences signal intensity and reproducibility in transmission low-frequency Raman spectroscopy of pharmaceutical tablets.

Materials:

- API (e.g., carbamazepine)

- Excipients for tablet formulation

- Sieve sets for particle size separation (e.g., ≤100 μm, 100-212 μm, >212 μm)

- Tablet press

- Transmission Raman spectrometer

Methodology:

- Prepare sample materials with distinct crystalline/amorphous ratios.

- Sieve powders into defined size fractions (≤100 μm, 100-212 μm, >212 μm).

- Compress tablets at controlled pressures (e.g., 10-30 MPa) using constant mass.

- Acquire transmission Raman spectra with fixed laser power and exposure time.

- Measure peak intensity (e.g., at 37 cm⁻¹ for carbamazepine Form III) and calculate coefficient of variation across replicates.

- Perform principal component analysis on spectral datasets (21-52 cm⁻¹ and 10-200 cm⁻¹ ranges) to assess clustering by composition.

Expected Outcomes: Smaller particles (≤100 μm) will yield lower absolute intensity but superior reproducibility and clearer multivariate clustering, enabling more reliable quantification of crystalline content in amorphous matrices [6].

Protocol 2: Evaluating Matrix Effects in LC-MS

Objective: To quantify matrix-induced suppression/enhancement for analytes in complex samples using liquid chromatography-mass spectrometry.

Materials:

- Blank matrix (e.g., biological fluid, tissue extract)

- Analyte standards

- Appropriate solvents for preparation of neat standards

- LC-MS system with electrospray ionization

Methodology:

- Prepare post-extraction blank matrix samples (n ≥ 6 from different sources).

- Spike blank matrices with target analyte at low, mid, and high concentrations.

- Prepare neat standard solutions in mobile phase at identical concentrations.

- Inject all samples in randomized sequence and record peak areas.

- Calculate matrix effect (ME) for each concentration: ME% = (Mean peak area of post-extraction spiked samples / Mean peak area of neat standards) × 100

- Determine IS-normalized ME if using internal standards.

Expected Outcomes: Signal suppression (ME% < 100%) is commonly observed in electrospray ionization MS due to competition for charge during ionization [5] [10]. High variability in ME% across different matrix sources indicates a significant, source-dependent matrix effect.
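The data reduction for this protocol can be sketched as follows. Everything numeric here is hypothetical. Two details are assumptions rather than part of the protocol text: the IS-normalized value is taken as the ratio of analyte ME% to internal standard ME%, and lot-to-lot variability is summarized as a coefficient of variation (CV) computed per lot instead of from mean areas only.

```python
from statistics import mean, stdev

def matrix_effect_pct(area_spiked, area_neat):
    """ME% for one post-extraction spiked sample against the neat standard."""
    return area_spiked * 100.0 / area_neat

def cv_pct(values):
    """Coefficient of variation in percent."""
    return stdev(values) * 100.0 / mean(values)

# Hypothetical peak areas at one concentration level, six matrix lots.
neat_analyte, neat_is = 10000.0, 20000.0
spiked_analyte = [7200.0, 6900.0, 7500.0, 7100.0, 6800.0, 7300.0]
spiked_is = [14600.0, 13900.0, 15100.0, 14300.0, 13700.0, 14500.0]

me_analyte = [matrix_effect_pct(a, neat_analyte) for a in spiked_analyte]
me_is = [matrix_effect_pct(a, neat_is) for a in spiked_is]

# IS-normalized ME: analyte ME relative to internal-standard ME (assumed form).
me_norm = [a * 100.0 / i for a, i in zip(me_analyte, me_is)]

print(f"mean ME = {mean(me_analyte):.1f} %, CV = {cv_pct(me_analyte):.1f} %")
print(f"mean IS-normalized ME = {mean(me_norm):.1f} %, CV = {cv_pct(me_norm):.1f} %")
```

In this invented data set the internal standard is suppressed to nearly the same degree as the analyte in every lot, so the normalized values cluster near 100% with a much smaller spread than the raw values.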

Protocol 3: Investigating Homogeneity via FTIR Spectroscopy

Objective: To assess sample homogeneity using Fourier Transform Infrared spectroscopy with principal component analysis.

Materials:

- Sample set (e.g., multiple grains or powder aliquots)

- FTIR spectrometer with reflectance accessory

- Diffuse gold reflectance standard (background reference)

Methodology:

- Acquire background spectrum using diffuse gold reflectance standard.

- Collect FTIR spectra (e.g., 2.65-4.1 μm range) from multiple sample positions/locations.

- Pre-process spectra (vector normalization, baseline correction).

- Perform principal component analysis on spectral dataset.

- Examine score plots for clustering patterns and identify outliers.

- Calculate percentage of samples falling within main spectral group.

Expected Outcomes: Highly homogeneous samples will cluster tightly in PCA score plots, with PC1 (>99% variance) primarily reflecting reflectance intensity rather than spectral shape differences [9].
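The PCA step of this protocol can be illustrated with a dependency-free sketch that estimates how much of the total variance lies on the first principal component. It uses power iteration on mean-centred spectra, which is a simple stand-in for a full PCA routine and not the method used in the cited study. The function name and the synthetic spectra are assumptions, and pre-processing steps such as normalization and baseline correction are omitted.

```python
import math

def pc1_variance_fraction(spectra, iterations=200):
    """Return (fraction of total variance on PC1, PC1 scores)."""
    n, m = len(spectra), len(spectra[0])
    # Mean-centre each spectral channel (column).
    means = [sum(row[j] for row in spectra) / n for j in range(m)]
    centred = [[row[j] - means[j] for j in range(m)] for row in spectra]
    total = sum(x * x for row in centred for x in row)
    if total == 0.0:
        raise ValueError("all spectra are identical; variance is zero")
    # Power iteration for the leading eigenvector of X^T X, started from
    # the centred spectrum with the largest norm.
    start = max(centred, key=lambda row: sum(x * x for x in row))
    norm = math.sqrt(sum(x * x for x in start))
    v = [x / norm for x in start]
    for _ in range(iterations):
        scores = [sum(r[j] * v[j] for j in range(m)) for r in centred]
        w = [sum(scores[i] * centred[i][j] for i in range(n)) for j in range(m)]
        norm = math.sqrt(sum(x * x for x in w))
        v = [x / norm for x in w]
    scores = [sum(r[j] * v[j] for j in range(m)) for r in centred]
    return sum(s * s for s in scores) / total, scores

# Synthetic reflectance spectra: same shape, different overall brightness.
base = [0.020, 0.021, 0.019, 0.022, 0.020]
homogeneous = [[s * b for b in base] for s in (1.00, 1.10, 0.90, 1.05, 0.95)]
# Add one spectrum with a different shape to mimic a heterogeneous grain.
mixed = homogeneous + [[0.030, 0.015, 0.025, 0.010, 0.028]]

frac_h, _ = pc1_variance_fraction(homogeneous)
frac_m, scores_m = pc1_variance_fraction(mixed)
print(f"PC1 fraction: homogeneous = {frac_h:.4f}, mixed = {frac_m:.4f}")
```

When the spectra differ only in overall reflectance level, nearly all variance falls on PC1; adding a spectrally distinct sample moves variance onto higher components, which is the behaviour the expected outcomes describe.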

Mitigation Strategies and Best Practices

Addressing Particle Size Issues

- Standardized Milling and Sieving: Implement controlled size reduction followed by separation into defined size fractions to ensure batch-to-batch consistency [6].

- Geometric Dilution: For powder mixtures, employ progressive dilution with excipients to enhance homogeneity of low-concentration components.

- Laser Power Optimization: Balance signal intensity against potential sample degradation, particularly for heat-sensitive materials [6].
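The geometric dilution step in the list above can be planned with a short calculation. The sketch assumes the conventional equal-parts scheme, in which each step adds a mass of diluent equal to the mass already in the mixture until the target mass is reached. The function name and masses are hypothetical, and the source does not prescribe a specific scheme.

```python
def geometric_dilution_steps(active_mass_g, total_mass_g):
    """Return a list of (diluent_added_g, cumulative_mass_g) per mixing step.

    Each step adds diluent equal to the current mixture mass, or whatever
    diluent remains if that is less (assumed equal-parts scheme).
    """
    if active_mass_g <= 0 or total_mass_g < active_mass_g:
        raise ValueError("need 0 < active_mass_g <= total_mass_g")
    steps = []
    current = active_mass_g
    remaining = total_mass_g - active_mass_g
    while remaining > 0:
        add = min(current, remaining)
        current += add
        remaining -= add
        steps.append((add, current))
    return steps

# Hypothetical blend: 1 g of active ingredient in a 20 g final mixture.
plan = geometric_dilution_steps(1.0, 20.0)
for i, (added, cumulative) in enumerate(plan, start=1):
    print(f"step {i}: add {added:g} g excipient -> {cumulative:g} g total")
```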

Enhancing Sample Homogeneity

- Appropriate Mixing Techniques: Utilize Turbula mixing or similar three-dimensional motion that provides gentle but effective blending without particle segregation.

- Multiple Sampling Points: In backscattering Raman measurements, implement multipoint acquisition, rotation, or wide-area sampling to average heterogeneous distributions [6].

- Transmission Mode Selection: For depth penetration in solid formulations, prefer transmission-mode Raman which probes the entire sample volume rather than just surface features [6].

Counteracting Matrix Effects

Table 2: Strategies for Mitigating Matrix Effects in Analytical Separations

| Strategy Category | Specific Approaches | Mechanism of Action | Applicability |
|---|---|---|---|
| Sample Clean-up | Solid-phase extraction (SPE), Liquid-liquid extraction, Protein precipitation | Removes interfering matrix components prior to analysis | Broad applicability across sample types |
| Chromatographic Optimization | Improved separation, Gradient elution, Alternative stationary phases | Increases temporal separation of analyte from matrix interferences | LC-MS, GC-MS |
| Internal Standardization | Stable isotope-labeled analogs, Structural analogues | Compensates for variable ionization efficiency | Primarily MS detection |
| Calibration Approaches | Matrix-matched standards, Standard addition method | Matches calibration environment to sample environment | All detection techniques |
| Ionization Source Selection | Switching ESI to APCI, or vice versa | Alters ionization mechanism to reduce interference | MS detection |

The internal standard method is particularly effective when practical, especially when using stable isotope-labeled internal standards that behave nearly identically to the analyte yet are detectable separately [5]. As with many troubleshooting topics, developing a solid understanding of matrix effects on quantitation and potential mitigation strategies is helpful for both developing new methods and troubleshooting problems with existing methods [5].
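To show why a co-behaving internal standard compensates for suppression, the sketch below calibrates on the analyte-to-internal-standard response ratio. It assumes a linear calibration and that suppression affects analyte and internal standard equally; all peak areas and function names are hypothetical.

```python
def fit_line(x, y):
    """Ordinary least-squares slope and intercept."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, my - slope * mx

def quantify_with_internal_standard(cal_conc, cal_analyte, cal_is,
                                    sample_analyte, sample_is):
    """Quantify a sample from a calibration built on analyte/IS area ratios."""
    ratios = [a / i for a, i in zip(cal_analyte, cal_is)]
    slope, intercept = fit_line(cal_conc, ratios)
    return (sample_analyte / sample_is - intercept) / slope

# Hypothetical calibration in solvent: analyte area = 100 per ng/mL, IS area = 1000
cal_conc = [1.0, 5.0, 10.0, 50.0]
cal_analyte = [100.0, 500.0, 1000.0, 5000.0]
cal_is = [1000.0, 1000.0, 1000.0, 1000.0]

# Sample at 20 ng/mL with 40% suppression acting on both analyte and IS
result = quantify_with_internal_standard(cal_conc, cal_analyte, cal_is,
                                         sample_analyte=1200.0, sample_is=600.0)
print(f"estimated concentration: {result:.1f} ng/mL")
```

Without the ratio, the suppressed analyte area alone would read as 12 ng/mL on the same calibration.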

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Materials for Investigating Spectral Influences

| Item | Primary Function | Application Notes |
|---|---|---|
| Standard Sieve Sets | Particle size separation and classification | Essential for creating defined size fractions; ASTM certified provides best reproducibility |
| Stable Isotope-Labeled Standards | Internal standards for quantification | Ideally ¹³C-, ¹⁵N-, or ²H-labeled analogs of target analytes for MS applications |
| Matrix-Matched Blank Materials | Preparation of calibration standards | Should be free of target analytes but otherwise compositionally similar to samples |
| Solid-Phase Extraction (SPE) Cartridges | Sample clean-up and concentration | Select chemistries (C18, ion exchange, mixed-mode) based on target analyte properties |
| Reference Standard Materials | Method validation and quality control | Certified reference materials for verifying method accuracy and precision |
| ATR Crystals (Diamond, ZnSe) | FT-IR spectroscopy sampling | Different crystal materials offer varying hardness, chemical resistance, and wavelength ranges |

Particle size, homogeneity, and matrix effects represent three interconnected pillars that fundamentally govern spectral data quality. Particle size dictates light-matter interaction efficiency, homogeneity ensures representative sampling, while matrix effects directly modulate detector response. Through systematic investigation using the described protocols and implementation of appropriate mitigation strategies, researchers can significantly enhance the reliability of their spectroscopic analyses. As analytical challenges grow increasingly complex with novel pharmaceutical formulations and complex biological samples, adherence to these core principles will remain essential for generating meaningful, reproducible spectral data that advances scientific understanding and product development.

The accuracy and reliability of spectroscopic analysis are fundamentally rooted in the steps taken before a sample ever reaches the instrument. Sample preparation represents the most significant source of potential error in quantitative analysis, directly influencing the validity of experimental results and subsequent conclusions [11]. For researchers and drug development professionals, understanding these technique-specific requirements is not merely procedural but foundational to generating scientifically defensible data. This guide details the unique preparation paradigms for X-Ray Fluorescence (XRF), Inductively Coupled Plasma Mass Spectrometry (ICP-MS), and Fourier-Transform Infrared (FT-IR) spectroscopy, framing them within the critical context of how preparation choices directly affect analytical outcomes. The overarching thesis is that inappropriate sample preparation can systematically bias results, leading to inaccurate concentrations, misidentified compounds, and flawed scientific interpretations, whereas rigorous, technique-specific preparation ensures data quality and analytical integrity.

X-Ray Fluorescence (XRF) Spectroscopy

Core Principles and Preparation Philosophy

XRF spectroscopy determines elemental composition by measuring the characteristic X-rays emitted from a sample following excitation by a primary X-ray source. The fundamental premise of XRF sample preparation is achieving a homogeneous, representative, and flat surface to ensure accurate and reproducible results [11] [12]. The intense focus on physical state and surface characteristics stems from the shallow depth of analysis; for light elements like sodium, the effective layer thickness from which 99% of the signal originates is a mere 4 µm [11], far thinner than a typical human hair (roughly 50-100 µm in diameter). Consequently, any lack of homogeneity or surface imperfection disproportionately impacts the analytical signal.

Detailed Experimental Protocols

Pressed Powder Pellet Method

This common approach is ideal for powdered samples like soils, ores, and heterogeneous biological materials [13] [12].

- Grinding: The bulk sample must be ground to a fine, consistent particle size. The optimal grain size is typically <75 µm to minimize particle size effects and ensure homogeneity [12]. A grinding curve analysis can determine the optimal grinding duration [11].

- Mixing with Binder: The powdered sample is mixed with a binder, such as powdered wax or cellulose, at a specified ratio (e.g., 20-30% binder). The binder provides structural integrity to the pellet. Studies show that the binder ratio is a critical parameter, as variations can introduce systematic errors, underestimating light elements (Mg, Al) and overestimating heavier elements (Mn, Fe) [13].

- Pressing: The mixture is poured into a die and pressed under high pressure (typically 15-25 tons) for 60-120 seconds to form a solid, stable pellet [13]. The pellet must have an infinite thickness for the wavelengths of interest, meaning its thickness is sufficient that further increases do not affect the measured intensity [11].
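The "infinite thickness" criterion in the pressing step can be estimated from attenuation data. The sketch below assumes the standard exponential attenuation model, in which the fraction of the infinitely-thick signal reached at thickness t is 1 - exp(-chi * rho * t), with chi combining attenuation of the incoming and outgoing beams. The mass attenuation coefficients, density, and angles are hypothetical placeholders and should be replaced with tabulated values for a real matrix.

```python
import math

def critical_thickness_um(mu_primary, mu_fluor, density,
                          incidence_deg=45.0, takeoff_deg=45.0, fraction=0.99):
    """Thickness (um) from which `fraction` of the infinitely-thick signal originates.

    mu_primary, mu_fluor: mass attenuation coefficients (cm^2/g) of the matrix
    for the exciting and the fluorescent radiation (hypothetical inputs here).
    density: pellet density in g/cm^3.
    """
    chi = (mu_primary / math.sin(math.radians(incidence_deg))
           + mu_fluor / math.sin(math.radians(takeoff_deg)))
    t_cm = -math.log(1.0 - fraction) / (chi * density)
    return t_cm * 1e4

# Hypothetical comparison: strongly absorbed light-element line vs. weakly absorbed heavy-element line
light = critical_thickness_um(mu_primary=400.0, mu_fluor=3000.0, density=2.0)
heavy = critical_thickness_um(mu_primary=20.0, mu_fluor=40.0, density=2.0)
print(f"light-element line: {light:.1f} um, heavy-element line: {heavy:.0f} um")
```

A pellet is effectively infinitely thick for a given line when its physical thickness exceeds this value, which is why the requirement is most demanding for the highest-energy lines of interest.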

Fusion Method

For achieving the highest accuracy, particularly with complex mineralogical samples, the fusion method is preferred.

- Weighing: A precise amount of powdered sample (e.g., 1.000 g) is weighed.

- Flux Addition: A flux, such as lithium metaborate or tetraborate (e.g., 5.000 g), is added to the sample. The flux acts as a solvent during the melting process.

- Fusion: The sample-flux mixture is heated in a platinum crucible to high temperatures (often above 1000°C) until it completely melts and homogenizes.

- Casting: The molten mixture is poured into a pre-heated mold to create a stable, homogeneous glass bead. This method effectively destroys all original mineral structures, eliminating mineralogical and particle size effects [11].

The Scientist's Toolkit: Essential Reagents for XRF

Table 1: Key Research Reagent Solutions for XRF Sample Preparation.

| Reagent/Material | Function | Technical Notes |
|---|---|---|
| Powdered Wax/Cellulose Binder | Provides structural integrity to pressed powder pellets. | The ratio to sample is critical; higher ratios can cause systematic errors for light and heavy elements [13]. |
| Lithium Metaborate/Tetraborate | High-temperature flux for fusion method. | Creates a homogeneous glass bead, eliminating mineralogical effects [11]. |
| Polyester/Polypropylene Film | Supports loose powders or liquids in sample cups. | Prevents contamination; type must be selected based on the sample (e.g., polyester for oil products) [12]. |
| Grinding Mill & Vials | Reduces particle size for homogeneity. | Achieves optimal particle size of <75 µm; materials (e.g., WC, Cr-steel) must avoid contaminating analytes [12]. |

Impact of Preparation on Analytical Results

The choice of preparation method directly dictates analytical accuracy. The fusion method consistently yields high accuracy and precision by creating a perfectly homogeneous sample, as illustrated by the bull's-eye on the right in Figure 2 of the source material [11]. In contrast, the pressed pellet method, while faster, can yield high precision but poor accuracy if standards and unknowns differ in mineralogy, particle size, or density—a phenomenon known as the "mineralogical effect" [11]. For example, analyzing polymorphs of Al₂SiO₅ (kyanite, sillimanite) using one as a standard for another can result in analysis totals ranging from 75% to 125% despite identical chemical composition [11].

Inductively Coupled Plasma Mass Spectrometry (ICP-MS)

Core Principles and Preparation Philosophy

ICP-MS is a powerful technique for trace and ultra-trace elemental (and isotopic) analysis. Its sample preparation philosophy centers on achieving complete dissolution of the analyte into a stable, liquid form while managing the Total Dissolved Solids (TDS) content and mitigating spectral and non-spectral interferences [14] [15]. The high-temperature plasma (6000-8000°C) efficiently atomizes and ionizes samples, but the sample introduction system (nebulizer, spray chamber) is highly susceptible to clogging from particulates or high dissolved solids [14] [15].

Detailed Experimental Protocols

Acid Digestion of Solid Samples

For solid samples like tissues, soils, or pharmaceuticals, acid digestion is often mandatory.

- Weighing: Accurately weigh 0.1 - 0.5 g of sample into a digestion vessel.

- Acid Addition: Add a suitable acid or acid mixture. Common choices include:

  - Nitric Acid (HNO₃): A primary choice for its oxidizing power and low interference in the plasma [15] [16].

  - Aqua Regia (3:1 HCl:HNO₃): Effective for dissolving metallic materials and stabilizing mercury and platinum group elements [15].

  - Hydrofluoric Acid (HF): Essential for digesting silicates but requires specialized inert sample introduction systems (e.g., fluoropolymer nebulizers) due to its corrosiveness [15] [17].

- Digestion: Heat the vessels using a hot block or, preferably, a closed-vessel microwave digestion system. Microwave digestion allows for higher temperatures and pressures, ensuring complete decomposition while minimizing contamination and the loss of volatile elements [15] [16]. A clear, particle-free solution indicates complete digestion.
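After digestion, the concentration measured in solution has to be converted back to the solid sample. A minimal sketch of that arithmetic, assuming a blank-subtracted reading in µg/L, a known final digest volume, and an optional further dilution; the numbers and the function name are hypothetical.

```python
def solid_concentration_mg_per_kg(measured_ug_per_l, blank_ug_per_l,
                                  final_volume_ml, sample_mass_g,
                                  extra_dilution=1.0):
    """Convert a solution reading (ug/L) to content in the solid (ug/g = mg/kg)."""
    net_ug_per_l = measured_ug_per_l - blank_ug_per_l        # subtract the reagent blank
    total_ug = net_ug_per_l * (final_volume_ml / 1000.0) * extra_dilution
    return total_ug / sample_mass_g

# Hypothetical: 0.25 g digested, made up to 50 mL, then diluted 10x before measurement
content = solid_concentration_mg_per_kg(measured_ug_per_l=12.0, blank_ug_per_l=0.5,
                                        final_volume_ml=50.0, sample_mass_g=0.25,
                                        extra_dilution=10.0)
print(f"{content:.1f} mg/kg")
```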

Dilution of Liquid Samples

Biological fluids like blood and urine often require simple dilution.

- Aliquot: Pipette a precise aliquot of the liquid sample (e.g., 100 µL of blood).

- Diluent Addition: Add a diluent to a defined volume (e.g., 10 mL total volume for a 1:100 dilution). Common diluents include:

  - Dilute Nitric Acid (1-5%): Stabilizes metals but can precipitate proteins in blood [16] [17].

  - Alkaline Diluents with Chelators: A solution of Tetramethylammonium Hydroxide (TMAH) and a chelating agent like EDTA or APDC is better tolerated by proteinaceous blood samples, preventing precipitation and stabilizing elements like mercury [14] [17].

- Detergent Addition: Reagents like Triton X-100 are added to solubilize and disperse lipids and membrane proteins, ensuring homogeneity [14] [17].

The Scientist's Toolkit: Essential Reagents for ICP-MS

Table 2: Key Research Reagent Solutions for ICP-MS Sample Preparation.

| Reagent/Material | Function | Technical Notes |
|---|---|---|
| High-Purity Nitric Acid (HNO₃) | Primary oxidant for digestion; dilute diluent for liquids. | Must be high-purity grade to prevent contamination; unsuitable for blood alone due to protein precipitation [15] [17]. |
| Tetramethylammonium Hydroxide (TMAH) | Alkaline diluent for biological fluids. | Solubilizes tissues and is better tolerated by proteinaceous samples than acid [14] [17]. |
| Triton X-100 | Non-ionic surfactant. | Disperses lipids and membrane proteins, preventing nebulizer clogging and ensuring homogeneity [14] [17]. |
| Ammonium Pyrrolidinedithiocarbamate (APDC) | Chelating agent for soft metals. | Forms stable, water-soluble complexes with elements like mercury, eliminating memory effects in the introduction system [17]. |
| Internal Standard Mixture | Corrects for non-spectral matrix effects and instrument drift. | Elements like Sc, Y, In, Tb, or Bi are added online to all samples and standards to monitor and correct signal suppression/enhancement [16]. |

Impact of Preparation on Analytical Results

Inadequate ICP-MS preparation directly causes analytical failures. A TDS content exceeding 0.2 - 0.5% (m/v) can lead to signal drift and suppression from matrix effects and nebulizer blockage [15]. The choice of acid is also critical; sulfuric acid should be avoided as it creates polyatomic interferences and damages Teflon digestion vessels [15]. Furthermore, the aqueous chemistry of the analyte must be considered. For example, uranium in pure water will adhere to the introduction system tubing, yielding a false negative, while acidification with 1% HNO₃ stabilizes it in solution and provides the correct result [17]. Memory effects from elements like thorium and mercury can be mitigated only by using specific chelating agents (e.g., fluoride for Th, APDC for Hg) in the rinse solution [17].
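The TDS threshold above lends itself to a quick pre-run check. This sketch assumes the whole sample mass ends up dissolved and ignores the contribution of the acid matrix; the 0.2% default limit is the lower bound quoted above, and the function names are hypothetical.

```python
def tds_percent_mv(dissolved_mass_g, final_volume_ml):
    """Total dissolved solids as % (m/v): grams of solids per 100 mL of solution."""
    return 100.0 * dissolved_mass_g / final_volume_ml

def extra_dilution_needed(tds_percent, limit_percent=0.2):
    """Minimum further dilution factor to bring TDS down to the limit (1.0 if already below)."""
    return max(1.0, tds_percent / limit_percent)

# Hypothetical digest: 0.5 g of sample fully dissolved and made up to 50 mL
tds = tds_percent_mv(0.5, 50.0)
print(f"TDS = {tds:.2f}% m/v, dilute at least {extra_dilution_needed(tds):.1f}x")
```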

Fourier-Transform Infrared (FT-IR) Spectroscopy

Core Principles and Preparation Philosophy

FT-IR spectroscopy probes molecular structure by measuring the absorption of infrared light by molecular bonds, which vibrate at characteristic frequencies. The resulting spectrum is a "molecular fingerprint." The core preparation philosophy for FT-IR is to present the sample in a form that allows for efficient and reproducible infrared light interaction without introducing artifacts or obscuring the spectral regions of interest [18] [19]. A paramount concern is the elimination of water, as it has strong, broad absorptions that can dominate the spectrum and mask important sample peaks [19].

Detailed Experimental Protocols

Attenuated Total Reflectance (ATR) Sampling

ATR is a nearly universal sampling mode that requires minimal preparation.

- Sample Presentation: For solids, the sample is placed in direct, firm contact with the ATR crystal (e.g., Diamond, ZnSe). For liquids, a few drops are placed on the crystal.

- Applying Pressure: A pressure clamp is used to ensure good optical contact between the sample and the crystal. Intimate contact is critical for a quality spectrum.

- Drying: For biological samples (tissues, cells, biofluids), it is essential to remove all water. This is typically done by air-drying or under a gentle stream of nitrogen gas while monitoring the spectrum until the broad water bands diminish [19].

- Data Collection: The infrared beam is directed into the crystal, where it undergoes total internal reflection, generating an evanescent wave that interacts with the sample in contact with the crystal.
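The reach of the evanescent wave explains why intimate contact matters. The sketch below uses the standard penetration-depth expression d_p = λ / (2π n₁ √(sin²θ − (n₂/n₁)²)); the refractive indices (about 2.4 for diamond, about 1.5 for an organic sample) and the 45° angle are typical approximate values assumed for illustration.

```python
import math

def atr_penetration_depth_um(wavenumber_cm1, n_crystal=2.4, n_sample=1.5,
                             angle_deg=45.0):
    """Evanescent-wave penetration depth (um) at a given wavenumber (cm^-1)."""
    ratio = n_sample / n_crystal
    sin_theta = math.sin(math.radians(angle_deg))
    if sin_theta <= ratio:
        raise ValueError("angle is below the critical angle; no total internal reflection")
    wavelength_um = 1e4 / wavenumber_cm1
    return wavelength_um / (2.0 * math.pi * n_crystal
                            * math.sqrt(sin_theta**2 - ratio**2))

for wn in (3000.0, 1000.0):
    print(f"{wn:.0f} cm^-1: d_p = {atr_penetration_depth_um(wn):.2f} um")
```

Because the depth scales with wavelength, bands at low wavenumber appear relatively stronger in ATR than in transmission, and only the first micrometre or two of the sample contributes to the spectrum.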

Transmission Sampling

This traditional method is used for samples that can be prepared as thin films or diluted in IR-transparent matrices.

- Solid Preparation (KBr Pellet):

  - Grind 1-2 mg of the sample with 100-200 mg of dry potassium bromide (KBr) powder.

  - Press the mixture under high pressure in a die, typically while the die is evacuated to remove trapped air and moisture, to form a transparent pellet. This method dilutes the sample in an IR-transparent medium.

- Liquid Preparation: The liquid sample is sandwiched between two IR-transparent windows (e.g., KBr, NaCl) separated by a thin spacer to define the pathlength.

Impact of Preparation on Analytical Results

Sample preparation directly affects the quality and interpretability of FT-IR spectra. The choice of sampling mode influences spectral features; for instance, transflection measurements can produce distorted band intensities due to the electric field standing wave effect [19]. For biological tissues, analyzing formalin-fixed paraffin-embedded (FFPE) samples requires a rigorous dewaxing procedure with xylol to remove the paraffin, whose strong IR bands would otherwise obscure the biological signal [19]. Inadequate drying leaves residual water vapor, which contributes sharp, overlapping peaks that complicate baseline correction and data interpretation [19]. Proper preparation is essential for revealing the true "molecular fingerprint" of the sample.

The unique preparation requirements for XRF, ICP-MS, and FT-IR stem from their fundamental physical principles: XRF probes elemental composition via X-ray excitation of a solid surface, ICP-MS requires a liquid solution for atomization/ionization, and FT-IR investigates molecular structure through IR absorption. The experimental workflow for each technique, as detailed in the preceding sections, follows directly from these principles.

The path from a raw sample to a reliable analytical result is paved with technique-specific preparation. The overarching conclusion is that sample preparation is not a peripheral concern but a foundational component of spectroscopic analysis. The "Golden Rule for Accuracy in XRF"—that standards and unknowns must be nearly identical in physical and mineralogical characteristics—holds true across all techniques [11]. For ICP-MS, it translates to matrix-matching and controlling TDS; for FT-IR, it means ensuring proper physical form and removing interferents like water. Neglecting these specific requirements introduces systematic errors that no advanced instrument or sophisticated software can later correct. Therefore, a deep understanding and meticulous application of these technique-specific foundations are indispensable for any researcher committed to data integrity in scientific and drug development endeavors.

In analytical spectroscopy, the sophistication of modern instrumentation can create an illusion of inherent accuracy. However, even the most advanced spectrometer cannot compensate for a poorly prepared sample. Sample preparation serves as the critical bridge between a raw, complex material and a reliable analytical result, forming the foundation upon which all subsequent data is built. The preparation phase is therefore not merely a preliminary step but a pivotal determinant of analytical success. Inadequate preparation is the source of as much as 60% of all spectroscopic analytical errors, embedding inherent weaknesses into the study before data acquisition even begins [1]. This article examines the direct consequences of inadequate sample preparation on both quantitative and qualitative analysis, detailing the mechanisms of failure and providing validated protocols to safeguard data integrity for researchers, scientists, and drug development professionals.

The necessity of rigorous sample preparation stems from several fundamental requirements. Firstly, it aims to remove or reduce matrix effects, where co-eluting components can suppress or enhance the analyte signal, particularly in techniques like mass spectrometry [20]. Secondly, it ensures homogeneity, guaranteeing that the analyzed aliquot is representative of the entire sample, which is crucial for obtaining reproducible results [1]. Thirdly, it brings the analyte concentration within the detectable range of the instrument, often through pre-concentration, thereby improving sensitivity and achieving lower limits of detection (LOD) and quantification (LOQ) [20]. Finally, proper preparation protects costly and sensitive instrumentation from contamination by particulates, salts, or other interfering substances that can cause instrumental drift, clogging, or long-term damage [20].

Consequences of Inadequate Preparation

The pitfalls of inadequate sample preparation manifest across various spectroscopic techniques, directly impacting the validity of both quantitative measurements and qualitative identification. The following sections dissect these consequences, which range from introducing substantial analytical errors to completely misleading the analytical interpretation.

Impacts on Quantitative Analysis

Quantitative analysis relies on the precise correlation between the measured signal and the analyte concentration, most famously described by the Beer-Lambert law in absorption spectroscopy [21]. Inadequate preparation directly undermines the assumptions of this relationship, leading to significant errors in concentration determination as shown in the table below.

Table 1: Impact of Inadequate Sample Preparation on Quantitative Analysis

| Preparation Failure | Consequence on Quantitative Analysis | Underlying Mechanism | Typical Resulting Error |
|---|---|---|---|
| Insufficient Homogenization | Non-representative sampling and high result variance [1]. | Heterogeneous distribution of analyte; measured portion does not reflect the whole. | High standard deviation between replicates; inaccurate mean concentration. |
| Incomplete Digestion/Dissolution | Low analyte recovery and signal suppression [22]. | Analyte trapped in solid matrix or undissolved particles; not available for detection. | Negatively biased results; reported concentration lower than true value. |
| Improper pH Adjustment | Altered extraction efficiency and chemical stability [20]. | Ionizable analytes change form; recovery during extraction is pH-dependent. | Inconsistent and unpredictable recovery, either low or high. |
| Contamination Introduction | Positively biased results and elevated baselines [1]. | Contaminants from equipment or reagents introduce additional signals. | Falsely high concentration readings; impossible to distinguish from true analyte. |
| Inadequate Cleanup (Matrix Effects) | Signal suppression or enhancement in MS and OES [20]. | Co-eluting matrix components interfere with ionization efficiency in the source. | Inaccurate concentration, often not corrected by internal standard. |

For instance, in the quantitative analysis of trace metals in drinking water using ICP-MS, failure to employ filtration and acidification can lead to suspended particulates or microbial growth, producing artificially elevated readings or unstable baselines [20]. Similarly, in pharmaceutical testing, if excipients like binders and fillers are not removed through solid-phase extraction (SPE) or liquid-liquid extraction (LLE), the chromatogram may display overlapping peaks, making reliable quantification of the active pharmaceutical ingredient (API) impossible [20].
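The Beer-Lambert relationship mentioned at the start of this section makes the effect of a preparation artefact easy to quantify. A minimal sketch, assuming a linear response A = ε·l·c and treating light scattering from unfiltered particulates as a constant absorbance offset; the molar absorptivity and absorbance values are hypothetical.

```python
def concentration_from_absorbance(absorbance, molar_absorptivity, path_cm=1.0):
    """Beer-Lambert law solved for concentration (mol/L): c = A / (epsilon * l)."""
    return absorbance / (molar_absorptivity * path_cm)

EPSILON = 12500.0  # L mol^-1 cm^-1, hypothetical analyte

true_c = concentration_from_absorbance(0.50, EPSILON)
# Same sample with an extra 0.05 absorbance units from scattering particulates
biased_c = concentration_from_absorbance(0.50 + 0.05, EPSILON)
bias_percent = 100.0 * (biased_c - true_c) / true_c
print(f"true: {true_c:.2e} mol/L, apparent: {biased_c:.2e} mol/L, bias: {bias_percent:.0f}%")
```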

Impacts on Qualitative and Structural Analysis

Qualitative analysis depends on the integrity of the spectral "fingerprint" for accurate identification. Compromised sample preparation introduces artefacts and obscures critical spectral features, leading to misidentification and a fundamental failure of the analysis as shown in the table below.

Table 2: Impact of Inadequate Sample Preparation on Qualitative Analysis

| Preparation Failure | Consequence on Qualitative Analysis | Underlying Mechanism | Typical Resulting Error |
|---|---|---|---|
| Particle Size/Surface Irregularity | Increased light scattering and distorted spectral baselines [1]. | Rough surfaces and variable particle size cause non-uniform interaction with radiation. | Obscured absorption bands; incorrect functional group identification (IR/Raman). |
| Contamination Introduction | Appearance of spurious spectral peaks [1]. | Foreign substances from equipment or environment produce their own spectral signals. | False positives; misidentification of contaminants as sample components. |
| Inadequate Sample Form | Incorrect elemental composition results [1]. | XRF analysis requires flat, homogeneous pellets; otherwise, X-ray absorption varies. | Erroneous elemental identification and concentration ratios. |
| Solvent Interference | Masking of analyte peaks [1]. | Solvent absorption bands (e.g., in FT-IR) overlap with critical analyte features. | Key molecular vibrations are hidden, preventing accurate structural elucidation. |
| Sample Degradation | Loss of genuine spectral features and formation of new ones. | Improper storage or harsh preparation alters the native chemical structure. | Identification of degradation products instead of the original analyte. |

A prominent example is found in Surface-Enhanced Raman Spectroscopy (SERS) for environmental analysis. The presence of Natural Organic Matter (NOM), such as humic substances, in water samples can cause a microheterogeneous distribution of the target analytes on the nanoparticle substrate. This matrix effect degrades SERS performance and introduces spectral artefacts, complicating or preventing the accurate identification of pollutants [23]. Furthermore, for techniques like FT-IR, the choice of solvent is critical; an inappropriate solvent with strong absorption bands in the mid-IR region can completely obscure the characteristic peaks of the analyte, rendering the spectrum useless for identification [1].

Experimental Protocols: Optimized Methodologies

To mitigate the consequences described above, the implementation of rigorously optimized and technically robust sample preparation protocols is essential. The following sections detail specific methodologies that have been experimentally validated to ensure data quality.

Central Composite Design for Multi-Residue Analysis in Waters

The optimization of a sample preparation protocol for the determination of 172 emerging contaminants (ECs)—including pharmaceuticals, personal care products, illicit drugs, and flame retardants—in wastewater and tap water serves as a prime example of a systematic approach. The method employed solid-phase extraction (SPE) followed by analysis with liquid chromatography-high-resolution mass spectrometry (LC-Orbitrap MS/MS) [24].

- Objective: To develop a single, generic SPE protocol capable of efficiently extracting a wide range of compounds with diverse physicochemical properties (logP from -3.6 to 10.2).

- Experimental Design: A Central Composite Design (CCD) was used to optimize the critical SPE parameters beyond the traditional one-variable-at-a-time approach. This response surface methodology allowed for the empirical modeling of polynomial relationships between factors and extraction efficiency.

- Factors Optimized:

  - Water Sample pH: Tested across a range to account for acidic, neutral, and basic analytes.

  - Elution Solvent Composition: Evaluated different ratios and types of organic solvents (e.g., MeOH, Acetonitrile, Ethyl Acetate).

  - Elution Volume: Optimized to ensure complete analyte desorption while minimizing dilution.

- Outcome: The CCD approach successfully identified the optimal set of conditions that provided a compromise for the high-throughput analysis of the entire suite of 172 ECs, demonstrating that meticulous, statistically guided optimization is possible even for highly complex sample matrices.
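The structure of a central composite design for the three factors above can be sketched as follows. This is a generic rotatable CCD in coded units, not the cited study's actual design: the factor ranges, the number of center points, and the function names are hypothetical, since the source does not report them here.

```python
from itertools import product

def central_composite_design(k, n_center=3, alpha=None):
    """Coded CCD points: 2^k factorial corners, 2k axial points, n_center center points."""
    if alpha is None:
        alpha = (2 ** k) ** 0.25  # rotatable design
    factorial = [list(p) for p in product((-1.0, 1.0), repeat=k)]
    axial = []
    for i in range(k):
        for sign in (-1.0, 1.0):
            point = [0.0] * k
            point[i] = sign * alpha
            axial.append(point)
    center = [[0.0] * k for _ in range(n_center)]
    return factorial + axial + center

def decode(point, lows, highs):
    """Map coded levels (-1 -> low, +1 -> high) onto real factor settings."""
    return [(lo + hi) / 2.0 + c * (hi - lo) / 2.0
            for c, lo, hi in zip(point, lows, highs)]

# Hypothetical factor ranges: sample pH, % MeOH in eluent, elution volume (mL)
lows, highs = [4.0, 40.0, 4.0], [8.0, 80.0, 8.0]
design = central_composite_design(3)
for run, coded in enumerate(design, start=1):
    ph, meoh, volume = decode(coded, lows, highs)
    print(f"run {run:2d}: pH {ph:.2f}, MeOH {meoh:.1f}%, volume {volume:.2f} mL")
```

The axial points extend beyond the nominal low and high levels, so ranges should be chosen so that those settings remain physically meaningful (for example, solvent fractions that stay between 0 and 100%).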

Solid Sample Preparation Workflow for Elemental Analysis

The analysis of solid samples for elemental content requires a dedicated workflow to ensure homogeneity and representative analysis. The workflow outlined below integrates multiple techniques to achieve an analyzable specimen.

Solid Sample Preparation Workflow

- Grinding and Milling: The initial step involves reducing particle size and creating homogeneity. The choice between grinding (for brittle materials) and milling (for more controlled surface finishing) depends on the sample's hardness and the required final particle size (typically <75 μm for XRF). This step is critical to minimize sampling error and ensure uniform interaction with radiation [1].

- Pelletizing (for XRF): The homogenized powder is mixed with a binder (e.g., wax or cellulose) and pressed under high pressure (10-30 tons) into a solid, flat pellet. This process creates a sample with uniform density and surface properties, which is essential for accurate X-ray fluorescence intensity measurements [1].

- Fusion (for refractory materials): For difficult-to-dissolve materials like silicates and ceramics, fusion is the preferred method. The ground sample is mixed with a flux (e.g., lithium tetraborate) and melted at high temperatures (950-1200°C) to form a homogeneous glass disk. This technique completely destroys the original crystal structure, eliminating mineralogical effects and standardizing the matrix [1].

- Acid Digestion (for ICP-MS): For elemental analysis via ICP-MS, total dissolution of the solid sample is required. This is typically achieved using microwave-assisted acid digestion with a mixture of high-purity acids (e.g., HNO₃, HCl) under controlled temperature and pressure. This process ensures the analyte is brought into solution for subsequent nebulization and ionization in the plasma [22].

The Scientist's Toolkit: Essential Reagents and Materials

The following table details key reagents and materials critical for successful sample preparation in spectroscopic analysis.

Table 3: Essential Research Reagent Solutions for Spectroscopic Sample Preparation

| Item | Function | Key Application Example |
|---|---|---|
| High-Purity Acids (e.g., HNO₃, HCl) | Digest and dissolve samples for elemental analysis; minimize background contamination. | Microwave digestion for ICP-MS analysis of metals in biological tissues [22]. |
| Solid-Phase Extraction (SPE) Cartridges (e.g., Oasis HLB) | Extract, clean up, and pre-concentrate analytes from liquid samples. | Multi-residue extraction of emerging contaminants from water samples for LC-MS analysis [24]. |
| Spectroscopic Grinding/Milling Equipment | Reduce particle size and homogenize solid samples. | Preparing a uniform powder from soil samples prior to pelletizing for XRF [1]. |
| Binders (e.g., Boric Acid, Cellulose) | Provide structural integrity to powdered samples during pellet formation. | Producing mechanically stable pellets for XRF analysis that will not fracture under vacuum [1]. |
| Fluxes (e.g., Lithium Tetraborate) | Dissolve refractory materials at high temperatures to form homogeneous glass disks. | Fusion preparation of mineral ores for accurate and matrix-effect-free XRF analysis [1]. |
| Internal Standards | Correct for variability in sample preparation and instrument response. | Added to all samples and calibrants in ICP-MS to account for signal drift and matrix effects [1]. |

The evidence is unequivocal: inadequate sample preparation is the primary source of error in spectroscopic analysis, with the capacity to invalidate both quantitative and qualitative results. The consequences—from erroneous concentration data and false positives to compromised structural identification and instrumental damage—highlight that preparation is not a mere preliminary step but an integral part of the analytical method itself.

To mitigate these risks, laboratories must adopt a "total workflow" approach [22] [25]. This philosophy looks beyond the core digestion or extraction step to encompass every stage of the process, including: automated reagent dosing for consistency and safety; in-house acid purification to control cost and ensure supply; and automated labware cleaning to prevent cross-contamination and free up technician time. By systematically optimizing the entire workflow, rather than isolated components, laboratories can overcome daily challenges, avoid disruptive reruns, and consistently produce high-quality, reliable spectroscopic data. In doing so, researchers and drug development professionals can ensure that their conclusions are built upon a foundation of analytical integrity, not compromised by preventable preparation failures.

From Solid to Solution: Technique-Specific Preparation Protocols for Accurate Analysis

In modern analytical laboratories, techniques like X-ray Fluorescence (XRF) and Fourier Transform Infrared (FT-IR) spectroscopy are indispensable for determining elemental composition and identifying molecular structures. However, the accuracy and precision of these powerful analytical tools are profoundly dependent on the quality of sample preparation. In fact, inadequate sample preparation accounts for approximately 60% of all spectroscopic analytical errors [1]. This guide details standardized protocols for solid sample preparation, focusing on grinding, milling, and pelletizing methods, to ensure that analytical results are both accurate and reproducible.

The physical state of a sample—including its particle size, homogeneity, and surface characteristics—directly influences how it interacts with electromagnetic radiation [1]. For XRF, improper preparation can lead to effects such as particle size heterogeneity and mineralogical variation, which significantly impact the intensity of the emitted X-rays [11]. For FT-IR, issues like poor contact with crystals or sample thickness can cause scattering, saturation, and ultimately, uninterpretable spectra [26]. Therefore, meticulous sample preparation is not merely a preliminary step but a critical component of the analytical process that validates the entire experimental outcome.

Core Principles of Sample Preparation

The Impact of Preparation on Analytical Results

The primary goal of sample preparation is to present a specimen to the spectrometer that is representative of the entire bulk material. Several fundamental principles must be adhered to for all spectroscopic techniques.

- Homogeneity: The sample must be uniform in composition and particle size throughout. Heterogeneous samples yield non-reproducible results because the analyzed portion may not represent the whole [1].

- Optimal Particle Size: Fine and consistent particle size is crucial. For XRF, the general requirement is a particle size of <75 μm, with <50 μm being ideal for creating high-quality pellets [27] [12]. For FT-IR, fine grinding is essential for making clear KBr pellets that produce spectra with sharp peaks [26].

- Surface Quality: The surface presented for analysis must be flat and clean. For XRF, this ensures consistent X-ray penetration and emission [11]. For FT-IR using Attenuated Total Reflectance (ATR), a flat surface ensures proper contact with the crystal [28].

- Avoidance of Contamination: Cross-contamination from equipment or between samples can introduce spurious signals, rendering results worthless. Proper cleaning of all apparatus between samples is mandatory [1].

Accuracy vs. Precision in Sample Preparation

It is vital to distinguish between accuracy and precision. Precision refers to the closeness of agreement between replicate measurements, while accuracy refers to the closeness of a measured value to the true value [11]. Sample preparation methods can yield highly precise results (repeatable), but if a systematic error like contamination exists, the results will be inaccurate. The choice of preparation method directly influences accuracy; for instance, the fusion method for XRF often provides higher accuracy than pressed powders for complex matrices because it eliminates mineralogical effects [11].
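The distinction can be made concrete with a short calculation. The sketch below uses hypothetical replicate results for a reference material with a hypothetical certified value; relative standard deviation (RSD) stands in for precision and relative bias for accuracy.

```python
from statistics import mean, stdev


def precision_and_bias(replicates, certified_value):
    """Return (RSD %, relative bias %) for replicate measurements."""
    avg = mean(replicates)
    rsd_percent = 100.0 * stdev(replicates) / avg          # spread: precision
    bias_percent = 100.0 * (avg - certified_value) / certified_value  # offset: accuracy
    return rsd_percent, bias_percent


# Hypothetical replicate results (wt%) from one preparation batch,
# measured against a hypothetical certified value of 10.0 wt%.
replicates = [10.4, 10.5, 10.6, 10.5, 10.5]
rsd, bias = precision_and_bias(replicates, certified_value=10.0)
print(f"RSD = {rsd:.2f}%  bias = {bias:+.1f}%")
```

In this made-up data set the RSD is below 1% while the bias is about +5%: the preparation is repeatable but systematically wrong, the pattern expected from a constant contamination source.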

Sample Preparation for X-Ray Fluorescence (XRF) Analysis

Grinding and Milling Protocols for XRF

The initial step for solid XRF samples is particle size reduction to achieve a homogeneous and fine powder.

- Grinding Hard Materials: For hard, brittle materials like ores, ceramics, and cements, use a swing grinding mill with an oscillating motion. This method reduces heat generation, which can alter sample chemistry. Grind for a consistent, predetermined time to achieve the target particle size of <75 μm [1].

- Milling Metallic Alloys: For metals, particularly soft, non-ferrous alloys like aluminum and copper, a milling machine is preferred. Milling provides a controlled, flat surface by using a cutting head to remove the top layer, exposing a fresh, representative surface for analysis. This minimizes scattering from surface irregularities and ensures consistent density [29] [1].

- Contamination Control: Select grinding and milling surfaces that will not contaminate the sample. For example, use tungsten carbide rather than steel components if iron is an analyte of interest [30]. Clean equipment thoroughly between samples to prevent cross-contamination [27].

Pelletizing / Briquetting Techniques

Transforming the powdered sample into a solid pellet ensures a flat, uniform surface of consistent density, which is critical for quantitative XRF analysis.

Step-by-Step Protocol:

- Grinding: Finely grind the sample to a particle size of <50 μm [27].

- Mixing with Binder: Combine the powdered sample with a binding agent. A typical addition is 20-30% binder to sample [27]. Common binders include cellulose, wax, or boric acid. The binder must be homogenized with the sample to ensure the pellet holds together and to reduce matrix effects [1]. Use the same proportion for every sample and calibrant, because the binder dilutes the measured concentrations.

- Pressing: Place the mixture into a clean die, typically 32 mm or 40 mm in diameter [30]. Use a hydraulic press to apply a load between 15 and 40 tons, with many samples requiring 25-35 tons for 1-2 minutes. This pressure is sufficient to recrystallize the binder and fully compress the sample without void spaces [27].

- Ejection and Storage: Eject the finished pellet from the die. For protection and ease of handling, the powder can be pressed within a supportive aluminum cap or a metal ring [30] [11].

Table 1: Key Parameters for XRF Pellet Preparation

| Parameter | Recommended Specification | Purpose and Rationale |
|---|---|---|
| Final Particle Size | < 75 μm (optimal < 50 μm) [27] [12] | Ensures homogeneity and minimizes particle size effects on X-ray intensity. |
| Binder Ratio | 20-30% binder to sample [27] | Binds powder for handling; over-dilution affects measured concentrations. |
| Pressing Pressure | 15-40 tons (typical 25-35 tons) [30] [27] | Creates a robust, void-free pellet of consistent density. |
| Pressing Time | 1-2 minutes under full pressure [27] | Allows for binder recrystallization and pellet stabilization. |
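The pellet parameters lend themselves to a quick recipe calculation. The sketch below rests on two assumptions that the cited figures leave open: the binder percentage is read as a fraction of the total mixture mass, and "tons" is read as metric tonnes-force. The function names and the 8 g sample mass are illustrative only.

```python
import math

STANDARD_GRAVITY = 9.80665  # m/s^2


def binder_mass_g(sample_g, binder_fraction):
    """Binder mass so that binder makes up `binder_fraction` of the mixture.

    Assumption: the 20-30% figure is a fraction of total mixture mass.
    """
    if not 0.0 < binder_fraction < 1.0:
        raise ValueError("binder_fraction must be between 0 and 1")
    return sample_g * binder_fraction / (1.0 - binder_fraction)


def pellet_pressure_mpa(load_tonnes, die_diameter_mm):
    """Pressure on the pellet face for a given press load and die diameter.

    Assumption: load is in metric tonnes-force. N/mm^2 equals MPa.
    """
    force_n = load_tonnes * 1000.0 * STANDARD_GRAVITY
    area_mm2 = math.pi * (die_diameter_mm / 2.0) ** 2
    return force_n / area_mm2


binder_g = binder_mass_g(sample_g=8.0, binder_fraction=0.20)
p32 = pellet_pressure_mpa(load_tonnes=25, die_diameter_mm=32)
p40 = pellet_pressure_mpa(load_tonnes=25, die_diameter_mm=40)
print(f"binder: {binder_g:.2f} g for 8 g sample")
print(f"25 t on 32 mm die: {p32:.0f} MPa; on 40 mm die: {p40:.0f} MPa")
```

Under these assumptions the same 25-tonne load produces roughly 305 MPa on a 32 mm die but only about 195 MPa on a 40 mm die, so a load setting should be recorded together with the die size when a pressing method is transferred between laboratories.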

Advanced Technique: Fusion Method

For the highest accuracy, particularly with refractory materials or complex mineralogies, the fusion method is the gold standard. It involves:

- Mixing the ground sample with a flux (e.g., lithium tetraborate) [1].

- Melting the mixture in a platinum crucible at high temperatures (950-1200°C) to form a homogeneous glass bead or disk [29] [1].

- Casting and cooling the melt into a solid disk.

Fusion completely destroys the original crystal structure of the sample, eliminating mineralogical and particle size effects, thereby providing unparalleled accuracy for quantitative analysis of materials like cement, slags, and minerals [11] [1].

In summary, there are two primary XRF solid sample preparation paths: pressed pellets (grind, mix with binder, press) and fused disks (grind, mix with flux, melt, cast).

Sample Preparation for FT-IR Analysis

Grinding for Transmission FT-IR (KBr Pellet Method)

The traditional transmission FT-IR method requires the solid sample to be transparent to IR light, achieved by dispersing it in an IR-transparent matrix.

Step-by-Step Protocol (KBr Pellet Method):

- Grinding: Finely grind 1-2 mg of the solid sample using an agate mortar and pestle [26].

- Mixing with Matrix: Mix the ground sample with 100-200 mg of dry, powdered potassium bromide (KBr). KBr is hygroscopic, so working quickly is advised to minimize moisture absorption [26].

- Pressing: Transfer the mixture into a pellet die. Apply pressure using a hydraulic press to form a clear, transparent pellet. The required pressure is typically lower than for XRF pellets.

- Analysis: Place the resulting pellet directly into the FT-IR spectrometer's sample holder for transmission analysis [26].

This method produces high-quality spectra suitable for library matching but requires consistency in preparation for reproducibility [28].
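The mass ranges in the protocol above imply a fairly narrow sample loading in the pellet, which a short calculation makes explicit. The helper names below are hypothetical, and the range check simply encodes the 1-2 mg and 100-200 mg figures quoted in the protocol.

```python
def kbr_sample_fraction_percent(sample_mg, kbr_mg):
    """Sample loading in the pellet as weight percent of the total mixture."""
    return 100.0 * sample_mg / (sample_mg + kbr_mg)


def within_protocol(sample_mg, kbr_mg):
    """True if both masses fall inside the ranges quoted in the protocol."""
    return 1.0 <= sample_mg <= 2.0 and 100.0 <= kbr_mg <= 200.0


# Extremes of the quoted ranges: most dilute and most concentrated pellet.
lowest = kbr_sample_fraction_percent(sample_mg=1.0, kbr_mg=200.0)
highest = kbr_sample_fraction_percent(sample_mg=2.0, kbr_mg=100.0)
print(f"sample loading spans {lowest:.2f}% to {highest:.2f}% w/w")
print(within_protocol(1.5, 150.0), within_protocol(5.0, 150.0))
```

The quoted ranges correspond to roughly 0.5% to 2% sample by weight. Recording the actual masses for each pellet is a simple way to support the consistency that the method depends on.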

Minimal-Preparation Techniques: ATR and DRIFTS

Modern FT-IR accessories have simplified sample preparation significantly.

Attenuated Total Reflectance (ATR): This is the most common FT-IR technique today due to its ease of use.

- Place the solid sample (powder, film, or bulk) directly onto the ATR crystal.

- Apply pressure to ensure intimate contact between the sample and the crystal.

- Run the analysis directly [26] [28].

ATR requires little to no sample preparation, as fine grinding is often unnecessary. The choice of crystal material (e.g., diamond for robustness, germanium for high refractive index) is important for optimal performance [28].

Diffuse Reflectance (DRIFTS): This technique is suitable for fine powders that are easily ground.

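Selecting among these FT-IR preparation methods comes down to the sample form and the data required: KBr pellets for high-quality transmission spectra suited to library matching, ATR for powders, films, or bulk solids measured with minimal preparation, and DRIFTS for fine, easily ground powders.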

Quantitative Aspects of FT-IR Preparation

The quality of FT-IR sample preparation directly impacts spectral data. A key study evaluating FT-IR performance found that, for well-resolved, non-saturated peaks, the wavenumber accuracy is within 1.1 cm⁻¹ when using spectral resolutions of 4 cm⁻¹ or higher [31]. This level of wavenumber accuracy is critical for identifying subtle spectral shifts that indicate phenomena like crystal polymorphism in pharmaceuticals [31]. The study also demonstrated that instrument-to-instrument variation is small, below 2.2 cm⁻¹ for resolutions of 8 cm⁻¹ or better, which is an order of magnitude better than some historical guidelines suggested [31]. Because the instrument itself contributes so little uncertainty, sample preparation remains the dominant controllable factor in achieving accurate results.
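One way to put the 1.1 cm⁻¹ figure to work is as a screening threshold for peak shifts. The sketch below is an illustration only: treating the reported accuracy as a hard cutoff is an assumption, and the peak positions are hypothetical.

```python
WAVENUMBER_ACCURACY_CM1 = 1.1  # accuracy reported for well-resolved, non-saturated peaks [31]


def shift_exceeds_instrument_error(reference_cm1, observed_cm1,
                                   tolerance_cm1=WAVENUMBER_ACCURACY_CM1):
    """True if a peak shift is larger than the quoted wavenumber accuracy.

    Assumption: the accuracy figure is used as a simple threshold; a real
    method would set the limit from its own validation data.
    """
    return abs(observed_cm1 - reference_cm1) > tolerance_cm1


# Hypothetical peak positions for a reference form and two test batches.
reference_peak = 1715.0
batch_a_peak = 1715.6   # shift of 0.6 cm^-1: within instrument accuracy
batch_b_peak = 1718.5   # shift of 3.5 cm^-1: larger than instrument accuracy

print(shift_exceeds_instrument_error(reference_peak, batch_a_peak))
print(shift_exceeds_instrument_error(reference_peak, batch_b_peak))
```

A shift flagged this way is only a prompt for further investigation. A saturated or scattering-distorted peak from a poorly prepared pellet can move an apparent peak maximum just as a genuine change of form can.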

Table 2: FT-IR Sample Preparation Methods and Data Quality

| Method | Typical Sample Prep Time | Key Quality Metric | Effect of Poor Preparation |
|---|---|---|---|
| KBr Pellet (Transmission) | Moderate to High | Pellet clarity and thickness | Saturated peaks, scattering, poor reproducibility [26]. |
| ATR | Very Low | Quality of sample-crystal contact | Weak, distorted signals due to poor contact [28]. |
| DRIFTS | Low | Consistency of particle size and mixing | Non-representative spectra, poor reproducibility [28]. |

The Scientist's Toolkit: Essential Materials and Reagents

Successful sample preparation relies on the use of appropriate, high-quality consumables and equipment.

Table 3: Research Reagent Solutions for Spectroscopic Sample Preparation

| Item | Function | Application Notes |
|---|---|---|
| Hydraulic Pellet Press | Applies high pressure (15-40T) to powder samples to form solid pellets. | Essential for XRF pelletizing and FT-IR KBr pellets; available as manual or programmable presses [30] [27]. |
| Cellulose or Wax Binder | Binds powdered samples together to form a coherent pellet for handling and analysis. | Critical for creating robust XRF pellets; typical dilution ratio is 20-30% binder to sample [30] [27]. |
| Potassium Bromide (KBr) | IR-transparent matrix used to dilute and support solid samples for FT-IR analysis. | High-purity, dry KBr is essential for creating clear pellets for transmission FT-IR [26]. |
| Agate Mortar and Pestle | Manually grinds samples to a fine, homogeneous powder. | Used for both XRF and FT-IR preparation; agate is hard and resistant to contamination [26]. |
| Lithium Tetraborate Flux | Fluxing agent that dissolves solid samples at high temperatures to form homogeneous glass disks. | Used in the fusion method for XRF to eliminate mineralogical effects for ultimate accuracy [1]. |
| ATR Crystal (Diamond/ZnSe/Ge) | Enables direct measurement of solids and liquids with minimal sample preparation for FT-IR. | Diamond is robust for most samples; Germanium (Ge) offers a shallow penetration depth for highly absorbent materials [28]. |

The path to reliable and accurate spectroscopic data is paved long before the sample is placed in the spectrometer. As demonstrated, protocols for grinding, milling, and pelletizing are not mere preliminaries but are integral to the analytical methodology itself. For XRF analysis, consistent pelletizing and the strategic use of fusion are paramount for quantitative elemental accuracy. For FT-IR spectroscopy, the choice between traditional KBr pellets and modern ATR techniques dictates the balance between spectral quality and preparation efficiency. By adhering to the detailed protocols and principles outlined in this guide, researchers and scientists can systematically eliminate sample preparation as a major source of error, thereby ensuring that their XRF and FT-IR results truly reflect the composition and structure of the materials under investigation.

In modern analytical science, the accuracy of any spectroscopic result is contingent upon the steps taken before the sample even reaches the instrument. Inadequate sample preparation is the root cause of an estimated 60% of all spectroscopic analytical errors [1]. This guide details the core liquid handling techniques—dilution, filtration, and solvent selection—for two powerful analytical techniques: Inductively Coupled Plasma Mass Spectrometry (ICP-MS) and UV-Visible (UV-Vis) Spectroscopy. The central thesis is that rigorous, technique-specific sample preparation is not a mere preliminary step but a critical determinant of data validity, affecting everything from detection limits and signal stability to the very truth of the analytical conclusion [1].