A Modern Guide to Validation Protocols for Quantitative Spectroscopic Measurements


Abstract

This article provides a comprehensive guide for researchers, scientists, and drug development professionals on validating quantitative spectroscopic methods. It covers foundational regulatory principles from ICH Q2(R2) and other guidelines, detailing essential performance parameters like accuracy, precision, and specificity. The content explores modern methodological applications, including hyphenated techniques and Quality-by-Design (QbD) approaches, and addresses persistent challenges such as sample heterogeneity and calibration transfer. A dedicated section on validation protocols outlines lifecycle management and comparative strategies to ensure data integrity and regulatory compliance, synthesizing traditional requirements with emerging trends like AI and real-time release testing.

The Principles and Regulatory Landscape of Spectroscopic Method Validation

In the pharmaceutical industry, the reliability of analytical data forms the bedrock of quality control, regulatory submissions, and ultimately, patient safety. The concept of "fitness for purpose" is the cornerstone principle of analytical method validation as defined by both the International Council for Harmonisation (ICH) and the U.S. Food and Drug Administration (FDA). This principle asserts that an analytical procedure must be scientifically demonstrated to be reliable and consistent for its intended application [1]. Rather than being a one-time checklist, validation is a continuous process that ensures a method can consistently produce results that accurately reflect the quality of the drug substance or product throughout its lifecycle [1].

The ICH provides a harmonized global framework for these requirements, which the FDA, as a key member, adopts and implements [1]. The recent simultaneous issuance of the revised ICH Q2(R2) on the validation of analytical procedures and the new ICH Q14 on analytical procedure development marks a significant evolution in regulatory thinking [1] [2]. This modernized approach shifts the focus from a prescriptive, "check-the-box" activity to a more scientific, risk-based, and lifecycle-oriented model. For researchers and drug development professionals, this means that proving a method's "fitness for purpose" now requires a deeper understanding of the method's capabilities and limitations from development through to routine use, supported by a structured control strategy [3] [2].

Core Validation Parameters: The Pillars of "Fitness for Purpose"

To objectively demonstrate that a method is fit for its purpose, ICH Q2(R2) outlines a set of fundamental performance characteristics that must be evaluated [1] [3]. The specific parameters tested depend on the type of method (e.g., identification, quantitative assay, or impurity test). The table below summarizes the core parameters and their role in establishing reliability.

Table 1: Core Analytical Method Validation Parameters per ICH Q2(R2)

| Validation Parameter | Definition | Role in Establishing "Fitness for Purpose" |

|---|---|---|

| Accuracy | The closeness of agreement between the measured value and a true or accepted reference value [1]. | Demonstrates that the method yields the correct result, ensuring product quality and patient safety [3]. |

| Precision | The degree of agreement among individual test results when the procedure is applied repeatedly to multiple samplings of a homogeneous sample. Includes repeatability and intermediate precision [1]. | Ensures the method produces consistent results over time, across analysts, and between instruments [3]. |

| Specificity | The ability to assess the analyte unequivocally in the presence of components that may be expected to be present, such as impurities, degradation products, or matrix components [1] [3]. | Proves the method can measure the target analyte without interference, which is critical for stability-indicating methods [3]. |

| Linearity | The ability of the method to obtain test results that are directly proportional to the concentration of the analyte within a given range [1]. | Establishes that the method's response is predictable and reliable across the intended operating range. |

| Range | The interval between the upper and lower concentrations of the analyte for which the method has demonstrated suitable levels of linearity, accuracy, and precision [1]. | Defines the concentrations over which the method is proven to be applicable. |

| Limit of Detection (LOD) | The lowest amount of analyte in a sample that can be detected, but not necessarily quantitated, as an exact value [1]. | Critical for impurity identification methods, ensuring the detection of trace-level components. |

| Limit of Quantitation (LOQ) | The lowest amount of analyte in a sample that can be quantitatively determined with suitable precision and accuracy [1]. | Essential for quantifying low-level impurities or degradation products. |

| Robustness | A measure of the method's capacity to remain unaffected by small, deliberate variations in method parameters (e.g., pH, temperature, flow rate) [1]. | Evaluates the method's reliability during normal use and identifies critical parameters that must be controlled [3]. |

Experimental Protocols and Data in Spectroscopic Measurement Validation

The theoretical framework of "fitness for purpose" is substantiated through rigorous experimental protocols. The following case studies from peer-reviewed research illustrate how these core validation parameters are tested in practice for spectroscopic methods, with data summarized for direct comparison.

Case Study 1: Validation of a Spectral Method for Protein Solution Color

A study developed a quantitative spectral method to replace subjective visual assessment of protein drug solution color, converting visible absorption spectra into quantitative CIE L\*a\*b\* color values [4].

Table 2: Key Experimental Details for Protein Solution Color Method

| Aspect | Protocol Detail |

|---|---|

| Analytical Technique | Spectrophotometry (UV-Vis) |

| Measured Output | Visible absorption spectrum converted to CIE L\*a\*b\* values |

| Validation Goal | Qualify the instrument and assay for clinical quality control |

| Precision Assessment | Compared different instruments, cuvettes, protein solutions, and analysts; employed a unique statistical method for 3D precision |

The method's validation demonstrated that the spectral assay was suitable for assessing the color of drug substances and products, providing a precise and objective alternative to the European Pharmacopeia's visual method [4].

Case Study 2: Validation of ICP-OES for Quality Assessment of ⁶⁷Cu

In the production of the radiometal ⁶⁷Cu for targeted therapy, researchers validated an Inductively Coupled Plasma Optical Emission Spectrometry (ICP-OES) method to assess chemical purity by quantifying non-radioactive metal impurities [5].

Table 3: Validation Data for ICP-OES Method from ⁶⁷Cu Study

| Validation Parameter | Experimental Protocol & Findings |

|---|---|

| Accuracy & Linearity | Calibration standards (CRM) for elements like Ag, Ca, Co, Cu, Fe, Mg, Zn, Al, Cr, Ni, Sn, and Pb were prepared in a defined concentration range (e.g., 2.5-20 µg/L for most elements). Criteria were met for most elements, though Al and Ca suffered from matrix effects [5]. |

| Specificity | The method was shown to be effective for detecting trace metal impurities, though spectral and solvent matrix effects required careful consideration for accurate quantification [5]. |

| Intended Purpose | To ensure the molar activity of ⁶⁷Cu and to confirm that metallic impurities do not interfere with the radiolabeling efficiency or safety of the final radiopharmaceutical [5]. |

The study followed ICH guidelines as a benchmark, validating the method for accuracy, precision, specificity, linearity, and sensitivity to ensure the safety and efficacy of the radiopharmaceutical product [5].

Case Study 3: Quantitative Evaluation of Nanoplastics using UV-Vis Spectroscopy

A 2025 study evaluated UV-Visible spectroscopy as a practical tool for quantifying environmentally relevant nanoplastics, comparing it against established mass-based techniques [6].

Table 4: Comparative Analytical Techniques for Nanoplastic Quantification

| Analytical Technique | Technique Category | Key Findings in Comparison |

|---|---|---|

| UV-Visible Spectroscopy | Optical Spectroscopy | Provided a rapid, accessible, and effective means of quantification, especially with limited sample volumes. Results were consistent in order of magnitude with other methods, despite some concentration underestimation [6]. |

| Pyrolysis GC-MS (Py-GC-MS) | Mass-Based | An established benchmark technique for polymer identification and quantification. |

| Thermogravimetric Analysis (TGA) | Mass-Based | Another established mass-based technique used for comparison. |

| Nanoparticle Tracking Analysis (NTA) | Number-Based | Provides particle concentration and size distribution information. |

The experimental protocol involved generating true-to-life polystyrene nanoplastics via mechanical fragmentation and isolating them through sequential centrifugations [6]. The validation demonstrated that UV-Vis spectroscopy could serve as a reliable, non-destructive tool for rapid quantification, expanding the analytical toolkit for complex materials.

The Modernized Lifecycle Approach: ICH Q2(R2) and ICH Q14

The implementation of the revised ICH Q2(R2) and the new ICH Q14 guideline represents a fundamental shift in the regulatory landscape, moving from validation as a one-time event to Analytical Procedure Lifecycle Management [1] [2]. This modernized approach is built on two key concepts:

- The Analytical Target Profile (ATP): Introduced in ICH Q14, the ATP is a prospective summary that defines the intended purpose of the analytical procedure and its required performance criteria [1] [3]. It is the foundational document that drives the entire lifecycle, ensuring the method is designed to be fit-for-purpose from the very beginning.

- Science- and Risk-Based Approach: The enhanced approach encouraged by these guidelines relies on using prior knowledge and risk management (e.g., per ICH Q9) to gain a deeper understanding of the method. This scientific justification provides flexibility, particularly for managing post-approval changes more efficiently [1] [2].
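Because the ATP states performance criteria prospectively, it can be useful to think of it as a small, fixed specification against which validation results are later checked. The following is a minimal sketch of that idea, not a format prescribed by ICH Q14: the class name, field names, and threshold values are all illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AnalyticalTargetProfile:
    """Hypothetical, simplified ATP: intended purpose plus required performance criteria."""
    intended_purpose: str
    recovery_low_pct: float           # lower bound on acceptable mean recovery
    recovery_high_pct: float          # upper bound on acceptable mean recovery
    max_repeatability_rsd_pct: float  # largest acceptable repeatability RSD
    min_correlation_r: float          # smallest acceptable correlation coefficient


def evaluate(atp, results):
    """Compare validation results (a dict) with the ATP; return pass/fail per criterion."""
    return {
        "accuracy": atp.recovery_low_pct <= results["mean_recovery_pct"] <= atp.recovery_high_pct,
        "precision": results["repeatability_rsd_pct"] <= atp.max_repeatability_rsd_pct,
        "linearity": results["correlation_r"] >= atp.min_correlation_r,
    }


# Illustrative criteria only; real criteria come from the method's intended use.
atp = AnalyticalTargetProfile(
    intended_purpose="Assay of drug substance by UV-Vis (hypothetical)",
    recovery_low_pct=98.0,
    recovery_high_pct=102.0,
    max_repeatability_rsd_pct=2.0,
    min_correlation_r=0.99,
)

# Hypothetical validation results
results = {"mean_recovery_pct": 99.9, "repeatability_rsd_pct": 0.35, "correlation_r": 0.9999}
outcome = evaluate(atp, results)
print(outcome)
```

Freezing the dataclass mirrors the prospective nature of the ATP: the criteria are set before the data are generated and are not adjusted afterwards.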

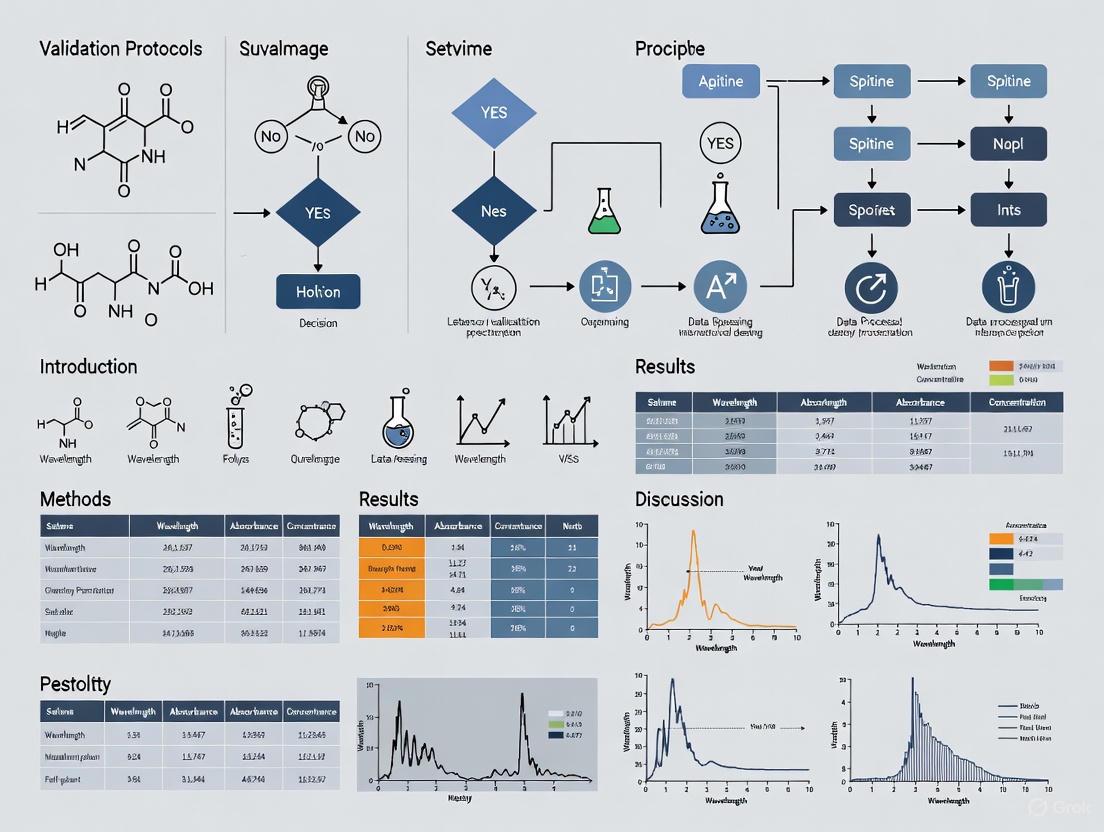

The following workflow diagram illustrates how these elements integrate throughout the analytical procedure lifecycle.

The Scientist's Toolkit: Essential Research Reagent Solutions

The successful execution of validation protocols, especially for sensitive spectroscopic methods, depends on the use of high-quality reagents and materials. The following table details key solutions used in the featured research.

Table 5: Essential Research Reagent Solutions for Analytical Validation

| Reagent / Material | Function in Validation | Example from Research Context |

|---|---|---|

| Certified Reference Materials (CRMs) | Used to prepare calibration standards to establish accuracy, linearity, and range of a method [5]. | A TraceCERT multielement standard solution was used for ICP-OES calibration in the ⁶⁷Cu study [5]. |

| High-Purity Solvents | Act as diluents and blanks to minimize background interference and ensure specificity. | 1% HNO₃ prepared in high-purity water was used as a diluent and blank for ICP-OES [5]. Ultra-trace grade water was used for molar activity determination [5]. |

| Surrogate/Placebo Matrix | Used to evaluate accuracy and specificity in the absence of the actual sample matrix, which may contain interfering endogenous components. | PBS-0.1% BSA served as a surrogate matrix for preparing validation samples in the oxytocin LC-MS/MS assay [7]. A placebo spike was mentioned as a method for assessing accuracy [1]. |

| Chromatographic Resins | Used in sample preparation and purification to isolate the analyte from impurities, directly impacting specificity and accuracy. | CU-resin and TK200 resin were used for the chromatographic purification of ⁶⁷Cu, crucial for achieving radionuclidic and chemical purity [5]. |

| Characterized Test Materials | Realistic, well-defined test materials are essential for evaluating and validating methods intended for complex samples. | True-to-life nanoplastics, generated from fragmented polystyrene items, were used as controlled test materials to validate the UV-Vis quantification method [6]. |

"Fitness for purpose," as defined by ICH and FDA guidelines, is a dynamic and multi-faceted principle that governs the lifecycle of an analytical procedure. It is demonstrated not by a single study, but through the rigorous and documented evaluation of core validation parameters like accuracy, precision, and specificity, with acceptance criteria tailored to the method's intended use. The modernized framework established by ICH Q2(R2) and ICH Q14 reinforces this by championing a proactive, science- and risk-based approach, centered on the Analytical Target Profile. For researchers and scientists, mastering this framework is essential. It ensures that the analytical data underpinning drug development and quality control are not only regulatorily compliant but are fundamentally reliable, reproducible, and scientifically sound, thereby safeguarding product quality and public health.

In pharmaceutical development and quality control, robust analytical methods are paramount for ensuring the identity, strength, quality, purity, and potency of drug substances and products. The analytical method lifecycle, encompassing development, validation, and continual verification, is governed by key international regulatory guidelines. The International Council for Harmonisation (ICH) provides two complementary guidelines: ICH Q2(R2), which covers validation of analytical procedures, and ICH Q14, which covers analytical procedure development. For bioanalytical methods used in nonclinical and clinical studies to support regulatory submissions, the ICH M10 Bioanalytical Method Validation guideline applies; it has been adopted by the FDA and is referred to here as FDA M10. These documents provide a structured, science- and risk-based framework that ensures analytical data are reliable, reproducible, and fit for their intended purpose, thereby supporting the availability, safety, and efficacy of medications. [8] [9] [10]

The evolution of these guidelines reflects advances in analytical technology and a growing recognition of the importance of a holistic lifecycle approach. ICH Q2 was recently revised as Q2(R2), and ICH Q14 was newly issued alongside it, to provide more detailed guidance, including specific examples for advanced techniques like spectroscopic methods and mass spectrometry, and to clarify the relationship between development and validation activities. [9] Similarly, the FDA M10 guideline, finalized in November 2022, harmonizes regulatory expectations for bioanalytical methods used to generate pharmacokinetic data, replacing the previous draft guidance. [11] Understanding the scope, requirements, and interrelationships of these guidelines is crucial for researchers, scientists, and drug development professionals designing validation protocols, especially for quantitative spectroscopic measurements.

The following table summarizes the core focus, scope, and key concepts of the three primary guidelines governing analytical and bioanalytical methods.

Table 1: Comparison of Key Regulatory Guidelines for Analytical Methods

| Guideline | Core Focus & Purpose | Regulatory Status & Date | Primary Scope & Application | Key Concepts & Approaches |

|---|---|---|---|---|

| ICH Q2(R2) [8] | Validation of analytical procedures to demonstrate fitness for intended purpose. | Final version; represents current regulatory expectations. | Drug substances & products (chemical/biological) for release/stability testing. Can be applied to other procedures following a risk-based approach. [8] | Validation parameters (Accuracy, Precision, Specificity, LOD, LOQ, Linearity, Range); Lifecycle management. [8] [12] |

| ICH Q14 [10] | Science- and risk-based development of analytical procedures. | Finalized scientific guideline. | Drug substances & products (chemical/biological) for release/stability testing. Can be applied to other procedures following a risk-based approach. [10] | Enhanced vs. Minimal development approaches; Analytical Target Profile (ATP); Robustness; Lifecycle management. [9] [10] |

| FDA M10 [11] | Validation of bioanalytical methods for nonclinical/clinical studies supporting regulatory submissions. | Finalized in November 2022. | Chromatographic & ligand-binding assays for drugs & active metabolites in biological matrices. [11] | Method validation & study sample analysis for pharmacokinetic data; addresses endogenous compounds. [11] [13] |

Inter-guideline Relationships and Nuances

A critical understanding for implementation is how these guidelines interact. ICH Q14 and ICH Q2(R2) are designed to be used together, with Q14 covering the development phase and Q2(R2) covering the validation phase of the same analytical procedure lifecycle. [9] The FDA M10 guideline, however, operates in a distinct but related space. It is specifically intended for bioanalytical methods generating data to support regulatory submissions for human and veterinary medicines. [11] A notable nuance involves biomarker bioanalysis, where the FDA's finalized 2025 guidance for biomarkers directs users to ICH M10, despite M10 explicitly stating it does not apply to biomarkers. This creates a complex landscape where the context of use (COU) becomes paramount for appropriate application. [13]

Experimental Validation Protocols and Data Presentation

The practical application of these guidelines is demonstrated through structured experimental validation protocols. The following workflow illustrates the typical analytical procedure lifecycle governed by ICH Q14 and Q2(R2).

Diagram 1: Analytical Procedure Lifecycle Workflow

Core Validation Parameters and Acceptance Criteria

Adherence to ICH Q2(R2) requires experimental testing of key validation parameters. The table below outlines the typical experiments, protocols, and illustrative data for a quantitative spectroscopic method, drawing from principles applied in X-ray fluorescence and quantitative NMR studies. [14] [15]

Table 2: Key Validation Experiments, Protocols, and Representative Data

| Validation Parameter | Experimental Protocol Summary | Exemplary Quantitative Data / Outcome |

|---|---|---|

| Accuracy [12] | Analysis of samples with known concentrations (e.g., spiked placebo or reference standards) in replicate. Comparison of measured vs. true value. | Recovery: 98.5% - 101.2%. Confidence interval: combined uncertainty of 1.5% at the 95% confidence level (as demonstrated in validated qNMR) [15] |

| Precision [12] | Repeatability: multiple measurements of homogeneous samples by the same analyst under the same conditions. Intermediate precision: different days, analysts, or equipment. | Repeatability RSD: ≤ 1.0%. Intermediate precision RSD: ≤ 2.0% |

| Specificity [12] | Demonstrate that the signal is from the analyte alone, free from interference from excipients, impurities, or matrix. | Peak purity: passes (e.g., in HPLC-DAD or spectroscopy). Resolution: no co-elution or spectral overlap with interfering components. |

| Linearity & Range [12] | Analyze a series of standard solutions at different concentration levels (e.g., 5-8 levels). Plot response vs. concentration. | Linear range: 50% - 150% of target concentration. Correlation coefficient (r): ≥ 0.998. Y-intercept: not significantly different from zero. |

| LOD & LOQ [14] [12] | LOD: signal-to-noise ratio of 3:1 or based on standard deviation of response. LOQ: signal-to-noise ratio of 10:1 or based on standard deviation of response and slope. | LOD: 0.05 µg/mL (for a given analyte). LOQ: 0.15 µg/mL (for a given analyte). Values vary significantly with matrix and instrument. [14] |

| Robustness [9] [12] | Deliberate, small variations in method parameters (e.g., temperature, flow rate, pH, excitation voltage) to evaluate method resilience. | All results meet system suitability criteria despite variations, demonstrating the method is robust under normal operational fluctuations. |
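For the LOD and LOQ row, the approach based on the standard deviation of the response and the calibration slope is commonly written as LOD = 3.3σ/S and LOQ = 10σ/S, where σ is the standard deviation of the response and S is the slope of the calibration curve. The sketch below applies those two expressions; the blank readings, the slope, and the function name are hypothetical, and σ is estimated here from blank replicates, which is only one of several accepted ways to obtain it.

```python
from statistics import stdev


def lod_loq(sigma, slope):
    """Return (LOD, LOQ) from the response standard deviation and the calibration slope."""
    if slope <= 0:
        raise ValueError("slope must be positive")
    return 3.3 * sigma / slope, 10.0 * sigma / slope


# Hypothetical blank readings (absorbance units, AU)
blank_responses = [0.0021, 0.0018, 0.0025, 0.0019, 0.0023,
                   0.0020, 0.0022, 0.0017, 0.0024, 0.0021]
# Hypothetical calibration slope (AU per µg/mL)
calibration_slope = 0.050

sigma = stdev(blank_responses)  # sample standard deviation (n - 1)
lod, loq = lod_loq(sigma, calibration_slope)
print(f"sigma = {sigma:.5f} AU, LOD = {lod:.4f} µg/mL, LOQ = {loq:.4f} µg/mL")
```

Values estimated this way would normally be confirmed experimentally by analyzing samples prepared at or near the calculated limits.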

Application in Spectroscopic Measurements: An XRF Case Study

Research on the validation of spectroscopic methods for Ag-Cu alloys provides a concrete example of applying these principles. The study utilized both Energy Dispersive (ED-XRF) and Wavelength Dispersive (WD-XRF) spectrometers to analyze alloy compositions. [14] The experimental protocol involved:

- Sample Preparation: Certified reference materials and commercial alloys with known compositions (AgₓCu₁₋ₓ) were prepared as discs.

- Instrumentation: ED-XRF measurements were performed with an Rh anode spectrometer, while WD-XRF used a system with an Rh tube and LiF analyzer crystal.

- Data Acquisition & Analysis: K-X-ray spectra were measured, and intensities were used to estimate concentrations, which were compared against reference values.

- Determination of Detection Limits: Various detection limits (LLD, ILD, CMDL, LOD, LOQ) were calculated to define the method's sensitivity, demonstrating how the sample matrix significantly influences these values. [14]

This study underscores the importance of a thorough, method-specific validation protocol, as the performance characteristics are highly dependent on both the instrumental technique and the sample matrix.

The Scientist's Toolkit: Essential Reagents and Materials

The successful development and validation of a quantitative spectroscopic method rely on several key materials and solutions. The following table details these essential components.

Table 3: Key Research Reagent Solutions for Quantitative Spectroscopic Analysis

| Item / Solution | Function & Purpose in Analysis |

|---|---|

| Certified Reference Materials (CRMs) [14] | Provides a traceable standard with known composition and uncertainty, essential for calibrating instruments, determining accuracy, and establishing method linearity. |

| High-Purity Solvents & Reagents | Ensures that the sample matrix and preparation do not introduce contamination or interference, which is critical for achieving low detection limits and high specificity. |

| Stable Isotope-Labeled Internal Standards | Used in techniques like MS to correct for sample loss during preparation and instrument variability, significantly improving the precision and accuracy of quantitation. |

| System Suitability Test Solutions | A mixture of analytes used to verify that the total analytical system (instrument, reagents, and settings) is performing adequately before and during the analysis. |

| Quality Control (QC) Samples | Samples with known concentrations (low, mid, high) analyzed alongside unknown samples to monitor the ongoing performance and reliability of the analytical method. |

The regulatory landscape for analytical method validation is clearly defined by ICH Q2(R2), ICH Q14, and FDA M10, each with a distinct yet complementary scope. ICH Q14 and Q2(R2) promote a robust, science-based lifecycle approach for the quality control of drug substances and products, while FDA M10 provides specific, harmonized expectations for bioanalytical methods supporting pharmacokinetic studies. For researchers, particularly in spectroscopic fields, the successful application of these guidelines requires a deep understanding of their requirements. This involves designing comprehensive experimental validation protocols to characterize all relevant performance parameters, from specificity and linearity to LOD/LOQ and robustness. As demonstrated by the XRF case study, a rigorously validated method, supported by high-quality reagents and standards, is fundamental to generating reliable data that ensures product quality and patient safety.

In pharmaceutical development and quality control, the integrity of analytical data is the bedrock of product quality, regulatory compliance, and ultimately, patient safety [1]. Analytical method validation provides documented evidence that a testing procedure is fit for its intended purpose, ensuring that results are reliable, consistent, and universally acceptable [16]. This process confirms that a method will consistently yield results that accurately reflect the quality of the drug substance or product being tested. For quantitative spectroscopic measurements and other analytical techniques, validation is not a one-time event but a continuous lifecycle commitment, beginning with method development and extending through all phases of a product's market life [17] [1]. This guide focuses on the five core parameters—Accuracy, Precision, Specificity, Linearity, and Range—providing a structured comparison and detailed experimental protocols for researchers and drug development professionals.

Comparative Analysis of Core Validation Parameters

The following table summarizes the definitions, experimental methodologies, and acceptance criteria for the five core validation parameters, offering a direct comparison of their roles in demonstrating method validity.

| Parameter | Core Definition & Purpose | Typical Experimental Approach | Common Acceptance Criteria |

|---|---|---|---|

| Accuracy [16] [18] | The closeness of agreement between the test result and an accepted reference value (true value). It measures methodological trueness. | 1. Analysis of a known standard: compare the result with the true value of a reference material. 2. Spiking (recovery): spike a placebo or blank matrix with a known analyte amount and compare the measured value to the expected value. 3. Standard addition: add a known analyte quantity to a sample and re-analyze; recovery of the added amount demonstrates accuracy [16] [19]. | Recovery of 98–102% for drug substance and drug product (depending on concentration) [1] [16]. |

| Precision [16] [18] | The closeness of agreement between a series of measurements obtained from multiple sampling of the same homogeneous sample. | 1. Repeatability: multiple measurements of the same sample under identical, short-time conditions (same analyst, day, equipment). 2. Intermediate precision: measurements under varied conditions within the same lab (different days, analysts, equipment). 3. Reproducibility: measurements between different laboratories [1] [19]. | Relative Standard Deviation (RSD) < 2% for repeatability. Higher RSD may be acceptable for intermediate precision depending on method complexity [20] [18]. |

| Specificity [1] [18] | The ability to assess the analyte unequivocally in the presence of other components that may be expected to be present. | Demonstrate that the analytical response (e.g., spectral peak) is due solely to the analyte by analyzing: 1. Blank matrix (placebo). 2. Samples spiked with potential interferents (impurities, degradants, matrix components) [17] [16]. | The method should be unaffected by interferents (e.g., no peak overlap in spectroscopy). The analyte response is resolved from all other responses [17] [1]. |

| Linearity [16] [18] | The ability of the method to produce results that are directly proportional to analyte concentration. | Analyze a minimum of 5-6 standard solutions over a range, typically 50-150% of the target concentration. Plot response vs. concentration and apply statistical analysis (linear regression) [20] [16]. | Correlation coefficient (r) ≥ 0.99 [20] [16]. A y-intercept not significantly different from zero is also evaluated. |

| Range [1] [16] | The interval between the upper and lower analyte concentrations for which suitable levels of linearity, accuracy, and precision have been demonstrated. | The range is established from linearity and accuracy studies. It is the concentration region where the method operates reliably. | The specific range is defined by the intended application. It must encompass the full span of expected sample concentrations [1] [16]. |

Experimental Protocols for Validation

Protocol for Accuracy and Precision Assessment

A combined protocol for assessing accuracy and precision, as demonstrated in a spectroscopic assay of ceftriaxone sodium, is outlined below [20].

- Materials: Drug substance (e.g., ceftriaxone sodium), placebo matrix (excipients for a formulation), appropriate solvent (e.g., distilled water), volumetric glassware, and a validated spectrophotometer or spectrometer [20].

- Procedure:

  - Preparation of Solutions: Prepare a standard stock solution of the analyte at a known concentration (e.g., 1 mg/mL). From this, prepare solutions for accuracy at three levels (e.g., 80%, 100%, 120% of the target concentration) by spiking the analyte into a placebo or blank matrix [20] [16].

  - Analysis:

    - For accuracy, analyze each level in triplicate. Calculate the mean recovery for each level and the overall mean recovery.

    - For precision (repeatability), prepare six independent samples at 100% of the test concentration and analyze under the same conditions. Calculate the mean, standard deviation (SD), and relative standard deviation (RSD) [20].

  - Intermediate Precision: Repeat the precision study on a different day, with a different analyst, or using a different instrument. The combined data from both sets provides a measure of intermediate precision [1] [19].
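The calculations in this protocol can be sketched in a few lines. All concentrations below are hypothetical illustration data rather than results from the ceftriaxone study, and the function names are assumptions. Intermediate precision is estimated here by simply pooling both sets; an ANOVA-based separation of variance components would be a more rigorous alternative.

```python
from statistics import mean, stdev


def percent_recovery(measured, nominal):
    """Recovery (%) of a spiked sample: measured amount relative to the amount added."""
    return 100.0 * measured / nominal


def rsd_percent(values):
    """Relative standard deviation (%), using the sample standard deviation (n - 1)."""
    return 100.0 * stdev(values) / mean(values)


# Hypothetical triplicate results (µg/mL) at 80%, 100% and 120% of a 10 µg/mL target
accuracy_data = {
    8.0: [7.95, 8.02, 7.98],
    10.0: [10.05, 9.97, 10.01],
    12.0: [11.90, 12.06, 12.01],
}

# Mean recovery per level, then the overall mean across levels
level_recovery = {
    nominal: mean([percent_recovery(m, nominal) for m in measured])
    for nominal, measured in accuracy_data.items()
}
overall_recovery = mean(list(level_recovery.values()))

# Hypothetical repeatability set: six independent preparations at 100%
day1 = [10.02, 9.98, 10.05, 9.95, 10.01, 9.99]
# Hypothetical second set (different day or analyst) for intermediate precision
day2 = [10.08, 9.94, 10.03, 10.10, 9.97, 10.00]

repeatability_rsd = rsd_percent(day1)
intermediate_rsd = rsd_percent(day1 + day2)  # simple pooling of both sets

for nominal, rec in level_recovery.items():
    print(f"{nominal:5.1f} µg/mL: mean recovery {rec:.2f}%")
print(f"Overall mean recovery: {overall_recovery:.2f}%")
print(f"Repeatability RSD (n=6): {repeatability_rsd:.2f}%")
print(f"Intermediate precision RSD (n=12): {intermediate_rsd:.2f}%")
```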

Protocol for Specificity

This protocol is critical for demonstrating that the method is free from interference, especially from degradation products.

- Materials: Drug substance, drug product (formulation with excipients), forced degradation samples (see below), and potential known interferents.

- Procedure:

  - Forced Degradation: Stress the drug substance and product under various conditions:

    - Acid/Basic Hydrolysis: Treat with 0.1 N HCl or 0.1 N NaOH for 1 hour at room temperature [20].

    - Oxidative Degradation: Treat with 3% hydrogen peroxide for 1 hour at room temperature [20].

    - Photolytic Degradation: Expose drug solution to UV light (e.g., 254 nm) for 24 hours [20].

    - Thermal Degradation: Heat solid drug in an oven at 100°C for 24 hours [20].

  - Analysis: Analyze the stressed samples, an unstressed standard, and a blank (placebo). For spectroscopic methods, compare the spectra or chromatograms to confirm that the analyte peak is pure and unaffected by peaks from degradants or excipients [17] [20].

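One simple way to put numbers on the spectral comparison is sketched below, under stated assumptions: the placebo response at the analytical wavelength is expressed as a percentage of the standard response, and the shape of a stressed-sample spectrum is compared with the unstressed standard using a Pearson correlation. Both metrics, the function names, and all absorbance values are illustrative choices, not procedures taken from the cited study, and any acceptance threshold would need its own justification.

```python
import math


def interference_percent(blank_response, standard_response):
    """Blank (placebo) response as a percentage of the standard response."""
    return 100.0 * abs(blank_response) / standard_response


def pearson(x, y):
    """Pearson correlation between two spectra sampled at the same wavelengths."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


# Hypothetical absorbances at the analytical wavelength
standard_abs = 0.500
placebo_abs = 0.003

# Hypothetical spectra (absorbance at five wavelengths around the analyte band)
standard_spectrum = [0.120, 0.310, 0.500, 0.305, 0.118]
acid_stressed_spectrum = [0.150, 0.300, 0.430, 0.290, 0.190]

placebo_interference = interference_percent(placebo_abs, standard_abs)
shape_similarity = pearson(standard_spectrum, acid_stressed_spectrum)

print(f"Placebo interference: {placebo_interference:.2f}% of standard response")
print(f"Spectral similarity (stressed vs. standard): r = {shape_similarity:.4f}")
```

A drop in similarity after stress suggests that degradants contribute to the measured band, which is a prompt for closer inspection rather than a pass/fail result on its own.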

Protocol for Linearity and Range

This protocol establishes the concentration range over which the method is valid.

- Materials: Standard stock solution, serial volumetric flasks, and solvent.

- Procedure:

  - Preparation of Calibration Standards: From the stock solution, prepare a minimum of five standard solutions covering the intended range (e.g., 50%, 80%, 100%, 120%, 150% of the target concentration) [20] [16].

  - Analysis: Analyze each standard solution in a randomized order. Measure the analytical response (e.g., absorbance in spectroscopy).

  - Data Analysis: Plot the mean response against the concentration for each standard. Perform linear regression analysis to calculate the slope, y-intercept, and correlation coefficient (r) [20]. The range is then defined as the concentration interval between the lowest and highest standards for which acceptable linearity, accuracy, and precision are confirmed [1].
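The regression step can be sketched with an ordinary least-squares fit written out explicitly. The calibration data are hypothetical, the function name is an assumption, and the intercept check uses a two-sided t-test at the 95% level with the tabulated critical value for n - 2 = 3 degrees of freedom (3.182). This is one common way to evaluate the y-intercept, not the only acceptable one.

```python
import math


def linear_fit(x, y):
    """Least-squares fit y = slope * x + intercept.

    Returns slope, intercept, correlation coefficient r, residual standard
    deviation, and the standard error of the intercept.
    """
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    syy = sum((yi - my) ** 2 for yi in y)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = my - slope * mx
    r = sxy / math.sqrt(sxx * syy)
    # Residual standard deviation with n - 2 degrees of freedom
    sse = sum((yi - (slope * xi + intercept)) ** 2 for xi, yi in zip(x, y))
    s_res = math.sqrt(sse / (n - 2))
    se_intercept = s_res * math.sqrt(1.0 / n + mx ** 2 / sxx)
    return slope, intercept, r, s_res, se_intercept


# Hypothetical standards at 50, 80, 100, 120 and 150% of a 10 µg/mL target
conc = [5.0, 8.0, 10.0, 12.0, 15.0]
absorbance = [0.251, 0.403, 0.499, 0.602, 0.748]

slope, intercept, r, s_res, se_b = linear_fit(conc, absorbance)
t_intercept = intercept / se_b
T_CRIT_95_DF3 = 3.182  # tabulated two-sided 95% critical value for 3 degrees of freedom

print(f"slope = {slope:.5f} AU per µg/mL, intercept = {intercept:.5f} AU, r = {r:.5f}")
print(f"intercept t = {t_intercept:.2f}; "
      f"differs from zero at 95%: {abs(t_intercept) > T_CRIT_95_DF3}")
```

A high r alone does not prove linearity, so a residual plot is usually inspected alongside these statistics.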

Validation Parameter Workflow

The following diagram illustrates the logical relationship and workflow between the core validation parameters, showing how they collectively contribute to a validated analytical method.

The Scientist's Toolkit: Key Research Reagent Solutions

The table below details essential materials and reagents commonly required for executing the validation protocols for quantitative spectroscopic measurements.

| Item | Function in Validation |

|---|---|

| Drug Substance (Analyte) Reference Standard | Serves as the primary benchmark with a known purity and identity for preparing calibration standards and accuracy/spiking studies [20]. |

| Placebo/Blank Matrix | Contains all formulation components except the analyte. Critical for specificity testing to rule out excipient interference and for accuracy/recovery studies [1] [16]. |

| High-Purity Solvents | Used for dissolving samples and standards. Consistency in solvent grade is vital for robustness and reproducibility of the spectroscopic measurement [20]. |

| Forced Degradation Reagents | Chemicals like hydrochloric acid (HCl), sodium hydroxide (NaOH), and hydrogen peroxide (H₂O₂) are used to intentionally degrade the sample, validating method specificity [20]. |

| Certified Volumetric Glassware | Essential for accurate and precise preparation of standard solutions, sample dilutions, and spiking experiments, directly impacting accuracy and linearity results [20] [16]. |

A rigorous understanding and implementation of accuracy, precision, specificity, linearity, and range form the non-negotiable foundation of any reliable analytical method in pharmaceutical research and development. As per modern ICH Q2(R2) and other international guidelines, a science- and risk-based approach is paramount [1]. By following the structured comparison and detailed experimental protocols provided in this guide, scientists can ensure their quantitative spectroscopic methods are not only compliant with global regulatory standards but also robust and reliable enough to safeguard product quality and patient well-being.

Distinguishing Between Qualification, Verification, and Full Validation

In quantitative spectroscopic measurements, ensuring the reliability and accuracy of data is paramount. The terms qualification, verification, and validation represent distinct but interconnected processes within a quality assurance framework. Validation provides comprehensive evidence that a method is suitable for its intended purpose, verification confirms that a previously validated method works in a new specific context, and qualification demonstrates that equipment is properly installed and functions correctly [21] [22] [23]. For researchers and drug development professionals, understanding these distinctions is critical for regulatory compliance and generating scientifically defensible data, particularly when using sophisticated analytical techniques like spectroscopy [24].

The analytical lifecycle begins with qualified instruments, proceeds through validated methods, and incorporates verification when applying established methods to new conditions. This structured approach forms the foundation of the Data Quality Triangle, where instrument qualification supports method validation, which in turn ensures reliable analytical results [24]. This guide compares these critical processes through detailed definitions, practical applications in spectroscopic contexts, and supporting experimental data.

Defining the Core Concepts

Qualification

Qualification is the process of demonstrating that instruments or equipment are properly installed, function correctly, and perform according to predefined specifications [23] [24]. It focuses on the instrument itself rather than the analytical method. The commonly used "4Qs model" includes Design Qualification (DQ), Installation Qualification (IQ), Operational Qualification (OQ), and Performance Qualification (PQ) [24]. For spectroscopic systems, qualification ensures that the spectrometer's key parameters - such as wavelength accuracy, photometric linearity, and signal-to-noise ratio - meet manufacturer specifications and user requirements before being released for analytical use [24].

Verification

Verification is the confirmation, through provision of objective evidence, that specified requirements have been fulfilled [23]. In pharmaceutical testing, verification specifically confirms that a compendial procedure (such as a USP method) performs satisfactorily under actual conditions of use [25] [22]. It is not a re-validation, but rather a demonstration that the method works as expected in a new laboratory with different analysts, equipment, and reagents [22]. The United States Pharmacopeia (USP) states in General Chapter <1226> that verification involves assessing a subset of validation characteristics to generate appropriate, relevant data rather than repeating the entire validation process [25].

Validation

Validation is the comprehensive process of establishing, through laboratory studies, that the performance characteristics of an analytical procedure meet the requirements for its intended analytical applications [21]. The International Council for Harmonisation (ICH) defines it as "demonstrating that the procedure is suitable for its intended purpose" [21]. Method validation provides documented evidence that the procedure consistently produces a result meeting predetermined specifications and quality attributes [21]. For quantitative spectroscopic methods, this involves systematically evaluating multiple performance characteristics including accuracy, precision, specificity, linearity, range, detection limit (LOD), quantitation limit (LOQ), and robustness [26] [22].

Conceptual Relationships

The relationship between qualification, verification, and validation can be visualized as a hierarchical framework where each process builds upon the previous one to ensure overall data quality.

Comparative Analysis: Application in Spectroscopic Measurements

When to Use Each Approach

The decision to perform qualification, verification, or validation depends on multiple factors including regulatory requirements, the nature of the method, and its stage in the product lifecycle.

Table 1: Appropriate Application of Qualification, Verification, and Validation

| Process | When Applied | Typical Scenarios in Spectroscopy | Regulatory Basis |

|---|---|---|---|

| Qualification | When installing new instruments or when equipment is relocated or repaired | Spectrometer installation; Periodic performance checks; After major repairs or maintenance | USP <1058> [24]; WHO TRS 1019 Annex 3, Appendix 6 [24] |

| Verification | When implementing a compendial or previously validated method in a new laboratory setting | Adopting USP method for drug substance testing; Transferring methods between laboratories | USP <1226> [25]; ICH Guidelines [22] |

| Validation | When developing new analytical methods or significantly modifying existing ones | New spectroscopic method for API quantification; Method for novel drug formulation | ICH Q2(R1) [22]; FDA Guidance [21] |

Scope and Documentation Requirements

The extent of work and documentation differs significantly among qualification, verification, and validation. Understanding these differences helps organizations allocate appropriate resources and maintain regulatory compliance.

Table 2: Comparison of Scope and Documentation Requirements

| Aspect | Qualification | Verification | Full Validation |

|---|---|---|---|

| Primary Focus | Instrument performance and specifications | Method performance in new environment | Overall method reliability for intended use |

| Key Parameters | Wavelength accuracy, photometric linearity, signal-to-noise, baseline stability [24] | Accuracy, precision, specificity [25] | Accuracy, precision, specificity, LOD, LOQ, linearity, range, robustness [26] [22] |

| Documentation Level | Instrument-specific protocols and results | Limited assessment against acceptance criteria | Comprehensive validation protocol and report |

| Resource Intensity | Moderate | Low to Moderate | High |

| Personnel Involvement | Technical staff, engineers | Analysts, quality control | Multidisciplinary team (R&D, QA, analysts) |

Experimental Evidence: Comparative Method Performance

A 2022 study comparing UV spectroscopy and HPLC-UV for piperine quantification in black pepper provides illustrative experimental data on method validation parameters [26]. This comparison demonstrates how different analytical techniques based on spectroscopy yield distinct validation characteristics, informing method selection for specific applications.

Table 3: Validation Parameters for Spectroscopic Methods in Piperine Quantification [26]

| Validation Parameter | UV Spectroscopy Method | HPLC-UV Method |

|---|---|---|

| Specificity | Good | Good |

| Linearity | Good | Good |

| Limit of Detection (LOD) | 0.65 | 0.23 |

| Accuracy Range | 96.7–101.5% | 98.2–100.6% |

| Precision (RSD) | 0.59–2.12% | 0.83–1.58% |

| Measurement Uncertainty | 4.29% at 49.481 g/kg | 2.47% at 34.819 g/kg |

| Key Conclusion | - | More sensitive and accurate |

Both methods showed acceptable performance for piperine quantification, but HPLC-UV achieved a lower LOD (0.23 vs. 0.65) and lower measurement uncertainty (2.47% vs. 4.29%), making it the better choice where sensitivity and tight uncertainty matter [26]. Validation made these differences explicit, so method selection can rest on measured performance against the analytical requirements.

Methodologies and Protocols

Qualification Protocol for Spectroscopic Instruments

The qualification process for spectroscopic instruments follows a structured approach to ensure fitness for purpose [24]. This includes:

Design Qualification (DQ): Documenting instrument specifications and intended use. For commercial spectrometers, this is often replaced by a selection report confirming the chosen system meets user requirements [24].

Installation Qualification (IQ): Verifying proper installation in the intended environment, including:

- Verification of components against shipping list

- Confirmation of proper installation environment (power, temperature, humidity)

- Documentation of firmware and software versions

Operational Qualification (OQ): Testing to ensure the instrument operates according to specifications in the user's environment [24]. For spectrometers, this includes:

- Wavelength accuracy verification using certified reference materials

- Photometric accuracy testing

- Linearity assessment across the measurable range

- Signal-to-noise ratio measurement at specified levels

Performance Qualification (PQ): Ongoing verification that the instrument continues to perform appropriately for its intended use under actual operating conditions [24]. This involves:

- Periodic testing using system suitability protocols

- Documentation of performance trends over time

Verification Protocol for Compendial Methods

For verification of compendial spectroscopic methods, the United States Pharmacopeia recommends a focused assessment of critical performance characteristics [25]:

Specificity: Demonstrate that the method can unequivocally identify and quantify the analyte in the presence of potential interferents present in the sample matrix.

Accuracy: Conduct spike recovery studies using a minimum of three concentration levels with multiple replicates. Acceptance criteria typically require mean recovery between 98-102% with RSD ≤2%.

Precision: Perform repeatability testing using six independent samples at 100% of test concentration. The RSD should not exceed 2% for drug substances.

Comparison of Results: Compare obtained results with established acceptance criteria documented in the verification protocol [25].

The verification process must be documented in an approved protocol that describes the procedure to be verified, establishes the number and identity of batches used in verification, details the analytical performance characteristics evaluated, and specifies acceptable result ranges [25].
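The accuracy and precision checks above reduce to two calculations: percent recovery and relative standard deviation (RSD). The sketch below uses only the Python standard library. The spike data and replicate values are invented, the helper names are hypothetical, and the limits simply mirror the typical criteria quoted above (mean recovery 98–102%, RSD ≤2%). An approved verification protocol would define its own criteria.

```python
import statistics


def percent_recovery(measured, spiked):
    """Recovery of a spiked amount, as a percentage."""
    return 100.0 * measured / spiked


def rsd_percent(values):
    """Relative standard deviation (sample SD / mean), as a percentage."""
    return 100.0 * statistics.stdev(values) / statistics.mean(values)


# Hypothetical spike-recovery data: spiked amount -> measured replicates.
spike_levels = {
    80.0: [79.4, 80.3, 79.9],
    100.0: [99.6, 100.5, 100.1],
    120.0: [119.2, 120.6, 120.1],
}
recoveries = [
    percent_recovery(m, spiked)
    for spiked, measured in spike_levels.items()
    for m in measured
]
mean_recovery = statistics.mean(recoveries)
recovery_rsd = rsd_percent(recoveries)
accuracy_ok = 98.0 <= mean_recovery <= 102.0 and recovery_rsd <= 2.0

# Hypothetical repeatability data: six independent samples at 100%.
repeatability = [99.8, 100.4, 99.5, 100.9, 100.2, 99.7]
repeatability_rsd = rsd_percent(repeatability)
precision_ok = repeatability_rsd <= 2.0

print(f"mean recovery = {mean_recovery:.2f}%  (RSD {recovery_rsd:.2f}%)")
print(f"repeatability RSD = {repeatability_rsd:.2f}%")
print("accuracy pass:", accuracy_ok, "| precision pass:", precision_ok)
```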

Comprehensive Validation Protocol

For full method validation, a more extensive protocol is required [26] [22]:

Specificity: Demonstrate resolution from potentially interfering components using forced degradation studies.

Linearity and Range: Prepare and analyze a minimum of five concentration levels across the specified range. The correlation coefficient should be ≥0.999 for HPLC methods [26].

Accuracy: Conduct recovery studies at three concentration levels (80%, 100%, 120%) with triplicate determinations at each level.

Precision:

- Repeatability: Multiple measurements (or injections) of a homogeneous sample

- Intermediate precision: Different days, analysts, or instruments

Detection and Quantitation Limits: Determine using signal-to-noise ratio (typically 3:1 for LOD, 10:1 for LOQ) or based on standard deviation of response and slope [26].

Robustness: Evaluate effects of deliberate variations in method parameters (wavelength, mobile phase composition, etc.).
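The detection and quantitation limit step in the protocol above can be illustrated with a short calculation. The sketch below assumes the widely used ICH-style factors, LOD = 3.3σ/S and LOQ = 10σ/S, where σ is the standard deviation of the response and S is the calibration slope. The blank readings and slope are invented, and the function names are hypothetical.

```python
import statistics


def lod_loq_from_slope(blank_responses, slope):
    """LOD and LOQ from the SD of the response and the calibration slope.

    Assumes the ICH-style factors 3.3 (LOD) and 10 (LOQ).
    """
    sigma = statistics.stdev(blank_responses)
    return 3.3 * sigma / slope, 10.0 * sigma / slope


def meets_signal_to_noise(signal, noise, required_ratio):
    """True if signal/noise reaches the required ratio (e.g. 3 or 10)."""
    return signal / noise >= required_ratio


# Hypothetical blank absorbance readings and calibration slope
# (absorbance per concentration unit).
blank_responses = [0.0021, 0.0018, 0.0025, 0.0019, 0.0022, 0.0020]
slope = 0.0050

lod, loq = lod_loq_from_slope(blank_responses, slope)
print(f"LOD = {lod:.3f}, LOQ = {loq:.3f} (concentration units)")

# Signal-to-noise alternative: 3:1 for LOD, 10:1 for LOQ.
print("S/N >= 3: ", meets_signal_to_noise(0.0040, 0.0010, 3))
print("S/N >= 10:", meets_signal_to_noise(0.0040, 0.0010, 10))
```

The two approaches need not give identical limits, so the validation report should state which one was used.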

The workflow for establishing a fully validated analytical method progresses systematically from initial requirements through continuous monitoring.

Essential Research Reagents and Materials

Successful implementation of qualification, verification, and validation protocols requires specific high-quality materials. The following table details essential research reagent solutions for spectroscopic method validation.

Table 4: Essential Research Reagent Solutions for Spectroscopic Method Validation

| Material/Reagent | Function in Validation Process | Application Examples |

|---|---|---|

| Certified Reference Materials | Establish traceability and accuracy for qualification and calibration | Wavelength calibration; Qualification of spectroscopic instruments [24] |

| Phantom Materials | Calibrate instrumental response in diffuse reflectance spectroscopy | Intralipid phantoms for calibration in spatially resolved DRS [27] |

| High-Purity Analytical Standards | Method development and validation for quantitative analysis | Piperine standard for validation of analytical methods [26] |

| Characterized Samples | Evaluation of method precision, accuracy, and robustness | Black pepper samples with varying piperine content [26] |

| System Suitability Test Materials | Verify chromatographic system performance before sample analysis | USP system suitability reference standards [21] |

Qualification, verification, and validation represent distinct but complementary processes in the lifecycle of analytical spectroscopic methods. Qualification ensures instruments are fit for purpose, validation provides comprehensive evidence that methods are suitable for their intended use, and verification confirms that validated methods perform appropriately in new settings [22] [23] [24].

The experimental data presented demonstrates that the rigorous application of these processes enables objective comparison of analytical methods and informs selection based on performance characteristics rather than presumption [26]. For researchers and drug development professionals, understanding these distinctions is essential for designing efficient yet compliant analytical workflows that generate reliable spectroscopic data while optimizing resource allocation.

The Role of Risk Management (ICH Q9) in Scoping Validation Activities

In the pharmaceutical industry, validation activities are fundamental to demonstrating that processes, equipment, and analytical methods consistently produce results meeting predetermined specifications and quality attributes. ICH Q9 (Quality Risk Management) provides a systematic framework for assessing, controlling, communicating, and reviewing risks to product quality, making it indispensable for defining the scope and extent of validation activities [28] [29]. The 2023 revision (Q9(R1)) further clarifies concepts like risk-based decision-making and formality in quality risk management processes, offering enhanced guidance for their application across the product lifecycle [30].

For researchers and scientists developing validation protocols for quantitative spectroscopic measurements, ICH Q9 principles enable a science-based approach to prioritize efforts. Instead of applying uniform validation intensity to all aspects, a risk-based approach focuses resources on parameters and system components with the greatest potential impact on data integrity, product quality, and patient safety [29]. This guide explores the practical application of ICH Q9 in scoping validation activities, supported by experimental data and structured methodologies relevant to analytical development.

The ICH Q9 Risk Management Process in Validation

The Structured QRM Process

ICH Q9 outlines a structured, iterative process for quality risk management comprising four core phases [28]:

- Risk Assessment: The initial step involving the identification of potential hazards, followed by the analysis and evaluation of risks associated with those hazards. For validation, this includes identifying critical process parameters and quality attributes [28].

- Risk Control: The process of deciding whether to accept, reduce, or eliminate identified risks. This includes establishing risk mitigation actions and determining the extent of validation required to demonstrate control [29].

- Risk Communication: The sharing of risk management outcomes across appropriate organizational levels and to relevant stakeholders to ensure informed decision-making [28].

- Risk Review: The ongoing monitoring and re-evaluation of risks in light of new data or process changes, ensuring the validation state remains current throughout the system or method lifecycle [28].

Determining Formality in Risk Assessment

The ICH Q9(R1) revision provides crucial clarification on determining the appropriate level of formality for risk assessments, which directly influences validation strategy. The level of formality should be commensurate with the level of risk—higher-risk scenarios typically warrant more formal, team-based, and documented assessments [30].

Table: Determining Risk Assessment Formality for Validation Activities

| Risk Level | Assessment Approach | Documentation Level | Team Involvement | Validation Response |

|---|---|---|---|---|

| High | Formal, structured method (e.g., FMEA, HAZOP) | Comprehensive documentation with detailed rationale | Cross-functional team review | Extensive validation with rigorous testing protocols |

| Medium | Semi-formal approach | Documented procedure with summary rationale | Key stakeholders from relevant departments | Targeted validation based on risk prioritization |

| Low | Informal assessment | Brief documentation within validation protocols | Individual expert assessment with supervisor review | Basic verification or reliance on existing data |

Practical Application in Scoping Validation Activities

Defining Validation Strategy and Priorities

Quality risk management provides a systematic approach to determine which systems, processes, or methods require validation and the appropriate extent of that validation [29]. By evaluating the potential impact on product quality, safety, and efficacy, organizations can establish scientifically defensible validation priorities.

For quantitative spectroscopic measurements, this means focusing validation efforts on aspects most likely to affect the accuracy, precision, and reliability of results. A risk-based approach might reveal that wavelength accuracy and photometric linearity require more rigorous testing than aspects like instrument footprint or data storage capacity.

Table: Risk-Based Prioritization for Spectroscopic System Validation

| System Component/Parameter | Impact on Product Quality | Risk Level | Validation Priority | Recommended Validation Approach |

|---|---|---|---|---|

| Detector Linearity | Direct impact on quantitative results | High | Critical | Full validation with statistical analysis of multiple concentration levels |

| Wavelength Accuracy | Affects method specificity | High | Critical | Validation against certified reference materials |

| Sample Temperature Control | May affect spectral characteristics | Medium | Moderate | Limited verification under expected operating ranges |

| Software Data Integrity | Potential impact on result reliability | High | Critical | Audit trail functionality testing and data security verification |

| System Suitability Checks | Ensures ongoing method validity | High | Critical | Incorporation into routine operational procedures |

Methodologies and Tools for Risk Assessment

ICH Q9 does not mandate specific methodologies but suggests various tools that can be applied to validation activities [28]:

- FMEA (Failure Mode and Effects Analysis): A systematic method that identifies potential failure modes, their causes, and effects on performance. It assesses severity, occurrence, and detection to calculate a Risk Priority Number (RPN) [28].

- FTA (Fault Tree Analysis): A top-down, deductive approach that identifies the causal chain leading to a predefined undesired event [28].

- HAZOP (Hazard and Operability Study): A structured technique that identifies potential deviations from intended design and their consequences [28].

- HACCP (Hazard Analysis and Critical Control Points): A systematic, preventive approach that identifies physical, chemical, and biological hazards [28].

Experimental Protocol: FMEA for a Spectroscopic Method

Objective: To conduct a Failure Mode and Effects Analysis for a quantitative UV-Vis spectroscopic method to determine critical validation parameters.

Materials and Methods:

- System: UV-Vis spectrophotometer with automated sampling

- Method: Quantitative analysis of active pharmaceutical ingredient (API) in solution

- Team: Cross-functional team including analytical chemist, quality representative, and laboratory manager

- Tool: FMEA worksheet with scoring matrix (1-10 for severity, occurrence, detection)

Procedure:

- Define the scope and boundaries of the spectroscopic method

- Identify all potential failure modes for each method component/step

- For each failure mode, determine:

  - Potential effect on analytical results

  - Severity rating (1=no effect to 10=hazardous to patient)

  - Potential causes and occurrence rating (1=very unlikely to 10=inevitable)

  - Existing controls and detection rating (1=certain detection to 10=uncertain detection)

- Calculate Risk Priority Number (RPN = Severity × Occurrence × Detection)

- Prioritize failure modes with highest RPN for validation controls

- Define mitigation strategies and re-calculate RPN after implementation

Expected Output: A prioritized list of failure modes informing the validation protocol design, focusing testing on highest-risk areas.
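The scoring and ranking steps of this FMEA procedure can be sketched as a small worksheet in Python. The failure modes and their scores below are invented for illustration, and `FailureMode` is a hypothetical class. In practice the cross-functional team assigns the ratings.

```python
from dataclasses import dataclass


@dataclass
class FailureMode:
    """One row of an FMEA worksheet, each factor scored 1-10."""
    description: str
    severity: int
    occurrence: int
    detection: int

    def __post_init__(self):
        # Reject scores outside the 1-10 scale used in the worksheet.
        for name in ("severity", "occurrence", "detection"):
            score = getattr(self, name)
            if not 1 <= score <= 10:
                raise ValueError(f"{name} must be 1-10, got {score}")

    @property
    def rpn(self):
        """Risk Priority Number = Severity x Occurrence x Detection."""
        return self.severity * self.occurrence * self.detection


# Hypothetical failure modes for a UV-Vis assay (scores are illustrative).
failure_modes = [
    FailureMode("Wavelength drift", 8, 3, 4),
    FailureMode("Cuvette contamination", 6, 5, 3),
    FailureMode("Stray light at high absorbance", 7, 2, 6),
    FailureMode("Autosampler carryover", 5, 4, 7),
]

# Highest RPN first: these drive the validation controls.
ranked = sorted(failure_modes, key=lambda fm: fm.rpn, reverse=True)
for fm in ranked:
    print(f"RPN {fm.rpn:4d}  {fm.description}")
```

After mitigation, the same rows can be re-scored and re-ranked to document the change in RPN.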

Risk-Based Decision Making in Validation Scope

Determining Extent of Validation

The risk assessment output directly determines the scope and rigor of validation activities. Higher-risk elements typically require more extensive testing, stricter acceptance criteria, and more comprehensive documentation [29].

For quantitative spectroscopic methods, this risk-based approach translates to:

- High-risk parameters: Full validation following ICH Q2(R2) guidelines with extensive testing for accuracy, precision, linearity, range, specificity, and robustness

- Medium-risk parameters: Partial validation or verification focusing on key performance indicators

- Low-risk parameters: Qualification or simple verification sufficient to demonstrate fitness for purpose

The Scientist's Toolkit: Essential Materials for Risk-Based Validation

Table: Key Research Reagent Solutions for Spectroscopic Method Validation

| Reagent/Material | Function in Validation | Risk Management Application |

|---|---|---|

| Certified Reference Materials | Establish accuracy and traceability | Mitigates risk of systematic error in quantitative measurements |

| Stability-Indicating Standards | Demonstrate method specificity | Controls risk of degraded product interference |

| System Suitability Standards | Verify ongoing method performance | Addresses risk of system drift over time |

| Forced Degradation Samples | Establish method robustness | Identifies risk factors affecting method performance |

| Placebo/Matrix Blanks | Assess interference and selectivity | Controls risk of excipient interference in formulation analysis |

Case Study: Implementation with Quantitative Data

Experimental Data from Risk-Based Validation Approach

A comparative study was conducted to evaluate the efficiency of risk-based versus conventional comprehensive validation approaches for a quantitative HPLC-UV method for API assay.

Table: Comparative Validation Metrics - Risk-Based vs. Conventional Approach

| Validation Parameter | Conventional Approach | Risk-Based Approach | Efficiency Improvement |

|---|---|---|---|

| Validation Timeline | 28 days | 18 days | 35.7% reduction |

| Number of Experimental Runs | 30 | 18 | 40% reduction |

| Documentation Pages | 145 | 92 | 36.6% reduction |

| Critical Issues Identified | 3 | 3 | No difference in problem detection |

| Method Robustness | Established across full operating range | Focused on high-risk parameters | Equivalent control of critical factors |

| Regulatory Compliance | Full compliance | Full compliance | Equivalent outcome |

The experimental data demonstrates that applying ICH Q9 principles to validation scoping can achieve significant efficiency gains without compromising quality or compliance. By focusing resources on high-risk areas identified through systematic risk assessment, the validation process became more targeted and cost-effective while maintaining scientific rigor.
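The efficiency figures in the table follow from a simple percent-reduction calculation, shown here as a quick arithmetic check. The input values are taken from the table above, and the function name is hypothetical.

```python
def percent_reduction(conventional, risk_based):
    """Reduction relative to the conventional approach, as a percentage."""
    return 100.0 * (conventional - risk_based) / conventional


# (conventional, risk-based) pairs from the comparison table.
metrics = {
    "Validation timeline (days)": (28, 18),
    "Experimental runs": (30, 18),
    "Documentation pages": (145, 92),
}

reductions = {
    name: round(percent_reduction(conv, risk), 1)
    for name, (conv, risk) in metrics.items()
}
for name, value in reductions.items():
    print(f"{name}: {value}% reduction")
```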

Integration with Pharmaceutical Quality System

ICH Q9 does not operate in isolation but integrates with other ICH quality guidelines to form a comprehensive pharmaceutical quality system [31]:

- ICH Q8 (Pharmaceutical Development) provides the foundation for risk-based development through Quality by Design (QbD) principles [31] [32]

- ICH Q10 (Pharmaceutical Quality System) establishes the model for an effective quality system that incorporates quality risk management as a key enabler [31] [32]

- ICH Q7 (GMP for APIs) sets the quality requirements for active pharmaceutical ingredients, which include validation based on risk assessment [31]

This integration ensures that risk-based decisions made during validation align with the overall product lifecycle management strategy, promoting consistency and facilitating continuous improvement.

The application of ICH Q9 principles to scoping validation activities represents a paradigm shift from uniformly intensive validation to a scientific, risk-based approach that prioritizes resources based on potential impact to product quality and patient safety. For researchers developing validation protocols for quantitative spectroscopic measurements, this framework offers a systematic methodology to:

- Identify critical method parameters requiring rigorous validation

- Determine the appropriate level of validation effort based on risk assessment

- Document the scientific rationale for validation decisions

- Communicate risk control strategies to stakeholders

- Establish a foundation for ongoing risk review throughout the method lifecycle

By adopting this approach, scientific professionals can design more efficient, focused, and defensible validation protocols that maintain the highest quality standards while optimizing resource utilization.

Implementing and Applying Validated Spectroscopic Methods in the Laboratory

This guide outlines a systematic framework for developing and validating quantitative spectroscopic methods, providing a direct comparison of established and emerging techniques to support robust analytical protocols in pharmaceutical and material science research.

Phase 1: Feasibility Assessment and Technique Selection

The initial phase determines whether an analytical technique is scientifically suitable for the intended application, establishing the foundational parameters for method development.

Core Considerations for Feasibility:

- Analyte and Matrix Characterization: The first requirement for a valid measurement is a representative sample with understood concomitants, characterized interferences, and documented thermal and chemical history. Measurements that work for pure samples in distilled water may not perform well with real-world samples [33].

- Spectral Feature Identification: Determine if the analyte has selective, measurable features. Atomic absorption lines are typically below 0.05 nm wide, while molecular absorption in UV-Vis spans 10-50 nm. Near-infrared features are broader and highly overlapped, often requiring pattern recognition for quantification [33].

- Dynamic Range and Expected Concentration: Apply Beer-Lambert Law considerations with awareness of its limitations. For an absorptivity (ε) of 50,000 L mol⁻¹ cm⁻¹ in a 1 cm cuvette, 0.2 µM analyte yields A~0.01. High concentrations (e.g., 1 mM yielding A=50) are impractical due to photon limitations, requiring dilution to maintain A<3 [33].

- Photonic Requirements and Noise: The ultimate precision is limited by photon statistics, where the highest possible signal-to-noise ratio is N¹/² for N detected photons. Precision of 1% requires 10⁴ photons, dictating detector and digitization specifications [33].
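The dynamic-range and photon-statistics figures quoted above can be reproduced with a short calculation. This sketch applies the Beer-Lambert law (A = εbc) and the shot-noise limit (SNR = √N). The function names and the choice of A = 3 as the working ceiling are illustrative assumptions drawn from the numbers in the text.

```python
import math


def absorbance(epsilon, path_cm, conc_molar):
    """Beer-Lambert law: A = epsilon * b * c."""
    return epsilon * path_cm * conc_molar


def shot_noise_snr(n_photons):
    """Best-case signal-to-noise ratio for N detected photons."""
    return math.sqrt(n_photons)


def photons_for_precision(relative_precision):
    """Photons needed so that 1/SNR equals the target relative precision."""
    return (1.0 / relative_precision) ** 2


def min_dilution_factor(a_undiluted, a_max=3.0):
    """Smallest dilution factor that brings absorbance down to a_max."""
    return max(1.0, a_undiluted / a_max)


epsilon = 50_000.0  # L mol^-1 cm^-1, value used in the text
a_low = absorbance(epsilon, 1.0, 0.2e-6)   # 0.2 uM in a 1 cm cuvette
a_high = absorbance(epsilon, 1.0, 1.0e-3)  # 1 mM in a 1 cm cuvette

print(f"A at 0.2 uM: {a_low:.3f}")
print(f"A at 1 mM:   {a_high:.1f}")
print(f"dilution needed for A <= 3: at least {min_dilution_factor(a_high):.1f}x")
print(f"photons for 1% precision: {photons_for_precision(0.01):.0f}")
print(f"SNR at 10^4 photons: {shot_noise_snr(1e4):.0f}")
```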

Table 1: Comparative Analysis of Spectroscopic Techniques for Quantification

| Technique | Optimal Application Scope | Key Strengths | Critical Limitations | Reported Performance Metrics |

|---|---|---|---|---|

| UV-Vis Spectroscopy | Quantification of nanoplastics, proteins in solution [6] | Rapid, accessible, non-destructive; requires small sample volumes (microvolume systems) [6] | Underestimation of concentration vs. mass-based methods; pigment interference [6] | Consistent order-of-magnitude accuracy vs. Py-GC-MS/TGA; reliable trend identification [6] |

| Pyrolysis GC-MS | Mass-based nanoplastic quantification [6] | High specificity for polymer identification | Destructive; requires µg-scale sample; no size/shape information [6] | Used as benchmark mass-based technique [6] |

| Thermogravimetric Analysis (TGA) | Mass-based quantification [6] | Direct mass measurement | Destructive; no structural information [6] | Used as benchmark mass-based technique [6] |

| Energy-Dispersive XRF | Elemental analysis in alloys (e.g., Ag-Cu) [14] | Multi-element analysis; minimal sample prep | Matrix effects influence detection limits [14] | Detection limits significantly influenced by sample matrix [14] |

| Wavelength-Dispersive XRF | Elemental analysis in alloys [14] | Higher resolution than ED-XRF | Matrix effects influence detection limits [14] | Better detection limits for Ag in Ag-Cu alloys than ED-XRF [14] |

| Quantitative NMR | Determination of molar ratios, purity assessment [15] | Inherently quantitative; structural information | Requires rigorous protocol for accuracy [15] | Maximum combined measurement uncertainty of 1.5% (95% confidence) [15] |

Phase 2: Protocol Development and Experimental Design

Once feasibility is established, develop a detailed, controlled protocol that ensures reliability and reproducibility.

Establishing a Validation Protocol for Quantitative NMR

A validated protocol for quantitative ¹H-NMR using single pulse excitation has been confirmed through round-robin tests, considering linearity, robustness, specificity, selectivity, accuracy, instrument parameters, and data processing. This approach yields a maximum combined measurement uncertainty of 1.5% for a 95% confidence interval for both molar ratios and amount fractions [15].

Designing a Comparative Validation Study

Research on nanoplastic quantification demonstrates effective validation by benchmarking new methods against established techniques:

- Multi-Technique Comparison: UV-Vis spectroscopy was compared with mass-based techniques (Py-GC-MS, TGA) and a number-based technique, nanoparticle tracking analysis (NTA), under well-defined conditions [6].

- Controlled Material Generation: True-to-life nanoplastics were generated from fragmented polystyrene items under controlled laboratory conditions to produce environmentally relevant test materials [6].

- Specific Protocol: White, unpigmented polystyrene materials were selected to avoid pigment interference in UV-visible extinction spectra. Mechanical fragmentation under cryogenic conditions produced micropowder, followed by suspension in MilliQ water and sequential centrifugation to separate nanoplastics [6].

Instrument Qualification and Validation Framework

For regulated environments, spectrometer qualification must be integrated with computerized system validation:

- Integrated Approach: You cannot qualify the instrument without the software, nor validate the software without the instrument. USP <1058> provides an umbrella framework connecting Analytical Instrument Qualification (AIQ) and Computerized System Validation (CSV) [24].

- User Requirements Specification (URS): Develop a current URS defining intended use before selection. It must cover instrument/software requirements, GxP, data integrity, and pharmacopoeial requirements—not merely copy supplier specifications [24].

- Lifecycle Management: Specifications are living documents that require version control throughout the project lifecycle. An initial generic URS is used to identify gaps against requirements, and these gaps are addressed before system implementation [24].

Spectroscopic Method Validation Parameters

Critical validation parameters must be experimentally demonstrated to ensure confidence in analytical results [14].

Table 2: Detection Limit Definitions and Calculations in Spectroscopic Validation

| Detection Limit Parameter | Definition | Calculation Method | Confidence Level |

|---|---|---|---|

| Lower Limit of Detection (LLD) | Smallest amount detectable with 95% confidence | Equivalent to two standard errors (σB) of measured background | 95% [14] |

| Instrumental Limit of Detection (ILD) | Minimum net peak intensity detectable by instrument | Defined for given analyte in given sample | 99.95% [14] |

| Limit of Detection (LOD) | Minimum concentration distinguishable from background | Peak is counted as detected when it is 3× larger than the background | Not specified [14] |

| Limit of Quantification (LOQ) | Lowest concentration quantifiable with confidence | Defined with specified confidence level | Specified confidence level [14] |
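
A minimal sketch of how background-based limits of this kind could be computed is shown below. It assumes that σB is estimated as the sample standard deviation of replicate background readings and that a net-intensity limit is converted to concentration by dividing by the calibration sensitivity (slope). Both choices, the function name, and the data are illustrative assumptions. The multiplier k = 2 follows the LLD row of the table, while k = 3 is only one possible reading of the LOD criterion.

```python
import statistics


def detection_limit(background_counts, k, sensitivity):
    """Concentration equivalent of k * sigma_B.

    background_counts: replicate background intensities (hypothetical data)
    k: multiplier on the background standard deviation
    sensitivity: calibration slope, intensity per unit concentration (assumed)
    """
    sigma_b = statistics.stdev(background_counts)  # sample standard deviation
    return k * sigma_b / sensitivity


# Hypothetical replicate background readings (counts) and sensitivity
# (counts per wt %).
background = [100.0, 102.0, 98.0, 101.0, 99.0]
sensitivity = 50.0

lld = detection_limit(background, 2.0, sensitivity)  # two sigma_B, as in the table
lod = detection_limit(background, 3.0, sensitivity)  # assumed 3-sigma convention

print(f"LLD = {lld:.4f} wt %, LOD = {lod:.4f} wt %")
```

Because the matrix changes both the background and the sensitivity, the same calculation would need to be repeated for each matrix of interest.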

Phase 3: Protocol Execution and Data Quality Assurance

The final phase focuses on rigorous implementation, verification, and ongoing quality assessment.

Experimental Execution: Nanoplastic Quantification Case Study

In the nanoplastic study, the execution phase involved:

- Microvolume UV-Vis Measurement: Using a microvolume spectrophotometer to accommodate limited sample volumes, which also enables sample recovery for subsequent analyses [6].

- Parallel Analysis with Reference Techniques: Simultaneous quantification using Py-GC-MS, TGA, and NTA for comparative assessment [6].

- Data Correlation Analysis: Evaluating consistency across the different methods, which showed that UV-Vis provided reliable trends despite some underestimation of concentration [6].

Photometer Validation Methods for Process Analytics

For inline process monitoring, photometer validation can employ several approaches:

- Process Concentration Variation: Varying process concentration between maxima/minima while comparing photometer readings with laboratory data [34].

- Verified Sample Introduction: Removing inline measurement cell and introducing verified samples/standard solutions [34].

- Certified Reference Materials: Using NIST-specified glass filters for non-intrusive traceable validation of photometric accuracy and linearity [34].
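
The first approach, comparing photometer readings with laboratory data across a concentration range, can be summarised with a few simple statistics. The sketch below is one way to do this, assuming paired readings in the same units. The function name, the returned fields, and the data are hypothetical, and acceptance limits would come from the site's own validation protocol.

```python
def compare_to_reference(photometer, laboratory):
    """Least-squares fit of photometer readings against laboratory values,
    plus mean bias and largest absolute deviation."""
    n = len(laboratory)
    mean_lab = sum(laboratory) / n
    mean_pho = sum(photometer) / n
    sxx = sum((x - mean_lab) ** 2 for x in laboratory)
    sxy = sum((x - mean_lab) * (y - mean_pho)
              for x, y in zip(laboratory, photometer))
    slope = sxy / sxx
    return {
        "slope": slope,
        "intercept": mean_pho - slope * mean_lab,
        "mean_bias": mean_pho - mean_lab,
        "max_abs_deviation": max(abs(y - x)
                                 for x, y in zip(laboratory, photometer)),
    }


# Hypothetical paired readings taken while the process concentration was varied.
lab_values = [1.0, 2.0, 3.0, 4.0, 5.0]
photometer_values = [1.1, 2.1, 3.1, 4.1, 5.1]

summary = compare_to_reference(photometer_values, lab_values)
print(summary)
```

A slope near 1 with a non-zero intercept, as in this made-up data set, would point to a constant offset rather than a linearity problem.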

Spectral Quality Assessment Implementation

For Raman spectroscopy in surgical applications, a quantitative quality factor (QF) metric was developed and validated:

- Objective Quality Threshold: QF based on variance from stochastic noise in key tissue bands (C-C stretch, CH₂/CH₃ deformation, amide bands) [35].

- Performance Validation: Receiver operating characteristic (ROC) analysis showed 89% sensitivity and 90% specificity for separating high-quality from low-quality spectra [35].

- Impact Assessment: Implementation increased cancer detection sensitivity by 20% and specificity by 12% [35].
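
To make the sensitivity and specificity figures concrete, the sketch below shows how they could be computed for a single quality-factor threshold. The QF values, the reference labels, and the threshold of 0.5 are invented for illustration and are not taken from [35]. A full ROC analysis would repeat the calculation over a range of thresholds.

```python
def sensitivity_specificity(qf_values, is_high_quality, threshold):
    """Classify a spectrum as high quality when QF >= threshold and compare
    the result with reference labels."""
    tp = fn = fp = tn = 0
    for qf, truth in zip(qf_values, is_high_quality):
        predicted_high = qf >= threshold
        if truth and predicted_high:
            tp += 1
        elif truth and not predicted_high:
            fn += 1
        elif not truth and predicted_high:
            fp += 1
        else:
            tn += 1
    return tp / (tp + fn), tn / (tn + fp)


# Hypothetical QF values with expert labels (True = high-quality spectrum).
qf = [0.9, 0.8, 0.7, 0.4, 0.3, 0.2, 0.6, 0.1]
labels = [True, True, True, True, False, False, False, False]

sens, spec = sensitivity_specificity(qf, labels, threshold=0.5)
print(sens, spec)
```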

Essential Research Reagent Solutions

Table 3: Key Materials and Reagents for Spectroscopic Method Development

| Reagent/Material | Function/Purpose | Application Example | Critical Considerations |

|---|---|---|---|

| Unpigmented Polystyrene Materials | Generation of true-to-life nanoplastic test materials | Nanoplastic quantification studies [6] | Avoids pigment interference in UV-Vis spectra [6] |

| Certified Reference Materials (Ag-Cu Alloys) | Validation of elemental analysis methods | XRF spectroscopy method development [14] | Enables detection limit determination across matrices [14] |

| NIST-Specified Glass Filters | Validation of photometric accuracy and linearity | Process photometer validation [34] | Provides traceable reference standard; long-term stability [34] |

| Holmium Perchlorate Solution | Wavelength validation for spectrophotometers | Wavelength accuracy verification [34] | Contains distinct peaks for wavelength calibration [34] |

| Ultrapure Water (Milli-Q System) | Sample preparation and dilution | General spectroscopic applications [6] [34] | Ensures minimal background contamination [34] |

Applying Quality-by-Design (QbD) and Design of Experiments (DoE) for Robust Methods

The paradigm for developing analytical methods has decisively shifted from traditional, empirical approaches to systematic, science-based frameworks. Within validation protocols for quantitative spectroscopic measurements and chromatographic analyses, Quality by Design (QbD) and Design of Experiments (DoE) have emerged as pivotal methodologies for ensuring robust, reliable, and regulatory-compliant methods. QbD is a systematic approach to development that begins with predefined objectives and emphasizes product and process understanding and process control, based on sound science and quality risk management [36]. It represents a holistic system for building quality into products and processes from the outset, rather than relying solely on end-product testing [37]. DoE, in contrast, is a statistical technique used within the QbD framework to systematically investigate and optimize process variables by deliberately varying multiple factors simultaneously to understand their individual and combined effects on the output [37]. The synergy between these approaches enables researchers to efficiently identify critical method parameters, establish a robust "design space" for operation, and develop effective control strategies, thereby significantly reducing the risk of method failure and enhancing operational flexibility [38] [36].

Table 1: Core Comparison of QbD and DoE

| Feature | Quality by Design (QbD) | Design of Experiments (DoE) |

|---|---|---|

| Primary Nature | Holistic, systematic philosophy for development [37] | Statistical tool for experimentation and optimization [37] |

| Core Objective | Build quality in from the beginning; understand and control sources of variability [36] [37] | Systematically explore factor effects and optimize process performance [37] |

| Key Components | QTPP, CQAs, Risk Assessment, Design Space, Control Strategy [36] | Factors, Responses, Experimental Runs, Mathematical Models [39] |

| Role in Development | Overarching framework that defines the development roadmap [38] | Technique used within QbD for experimentation and modeling [37] |

| Regulatory Impact | Provides regulatory flexibility (e.g., changes within design space not considered a change) [36] | Provides scientific evidence and data rigor to support regulatory submissions [40] |

Core Principles and Workflow

The implementation of QbD and DoE follows a structured, sequential workflow designed to translate predefined objectives into a well-understood and controlled analytical method. The process begins with the definition of the Analytical Target Profile (ATP), which outlines the method's purpose and the performance requirements it must fulfill [41] [39]. For a quantitative spectroscopic method, the ATP would specify critical analytical attributes (CAAs) such as accuracy, precision, specificity, and linearity.

A risk assessment is then conducted to identify which method parameters (e.g., pH, temperature, sample preparation time) potentially influence the CAAs. Tools like Ishikawa (fishbone) diagrams and Failure Mode and Effects Analysis (FMEA) are typically employed for this purpose [36] [42]. High-risk parameters, termed Critical Method Parameters (CMPs), are selected for further investigation through DoE [39] [42].

The DoE phase involves a screening stage to identify the most influential factors, followed by an optimization stage. During optimization, response surface methodologies like Central Composite Design (CCD) or Box-Behnken Design (BBD) are used to explore factor interactions and build mathematical models that predict CAA behavior across a range of CMP values [40] [39] [42]. The output of this modeling is the establishment of the Method Operable Design Region (MODR), also known as the design space. The MODR is the multidimensional combination of CMPs where the method performs robustly, meeting all quality criteria defined in the ATP [39]. A control strategy is then developed to ensure the method remains within the MODR during routine use.

Experimental Protocols and Data

Case Study 1: QbD-Driven LC-MS-MS Method for Fluoxetine Quantification

This study developed a robust bioanalytical method for quantifying fluoxetine in human plasma [40].

- Methodology: An LC-MS-MS system with a C18 column was used. Fluoxetine-D5 served as the internal standard. Sample preparation was performed via solid-phase extraction.

- DoE Application: A Box-Behnken Design (BBD) was employed to optimize three critical method parameters: mobile phase flow rate (X1), pH (X2), and mobile phase composition (X3). The responses measured were retention time (Y1) and peak area (Y2) [40].

- Results and Outcomes: The design space was established, revealing that the method variables could be controlled to improve robustness. The validated method showed excellent linearity (2–30 ng/mL), accuracy, precision, and sensitivity. Stability studies confirmed no significant degradation under various conditions [40].
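
For readers unfamiliar with the layout of a three-factor Box-Behnken Design, the sketch below generates one in coded units (-1, 0, +1) and maps a run back to real settings. The factor ranges are hypothetical placeholders, since the levels used in [40] are not given here, and the number of centre points is likewise an assumption.

```python
from itertools import combinations, product


def box_behnken_3(n_center=3):
    """Three-factor Box-Behnken design in coded units.

    Each pair of factors is run at all four (+/-1, +/-1) combinations while
    the third factor is held at its centre level; centre points are appended.
    """
    runs = []
    for i, j in combinations(range(3), 2):
        for a, b in product((-1, 1), repeat=2):
            run = [0, 0, 0]
            run[i], run[j] = a, b
            runs.append(run)
    runs.extend([0, 0, 0] for _ in range(n_center))
    return runs


def decode(run, ranges):
    """Map coded levels to real settings given (low, high) per factor."""
    return [low + (c + 1) / 2 * (high - low)
            for c, (low, high) in zip(run, ranges)]


# Hypothetical ranges: flow rate (mL/min), pH, organic phase (%).
ranges = [(0.4, 0.6), (3.0, 5.0), (60.0, 80.0)]

design = box_behnken_3(n_center=3)
example_settings = decode([-1, 0, 1], ranges)

print(len(design), example_settings)
```

The 12 edge runs plus centre points avoid the extreme corners of the factor space, which is one reason this design is often chosen when corner conditions are impractical.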

Case Study 2: AQbD for an In-line UV-Vis Spectroscopic Method

This research exemplifies the application of AQbD for a quantitative spectroscopic method, using in-line UV-Vis to monitor piroxicam content during hot melt extrusion [41].

- Methodology: A UV-Vis spectrophotometer with optical fiber probes was installed in the extruder die in a transmission configuration. Transmittance data (230–816 nm) was collected and used to calculate API content and CIELAB color parameters [41].

- DoE Application: The method's robustness was tested by evaluating the effects of screw speed (150–250 rpm) and feed rate (5–9 g/min) on the prediction of piroxicam content.

- Results and Outcomes: Validation was based on the accuracy profile strategy. The results showed that 95% β-expectation tolerance limits for all concentration levels were within the acceptance limits of ±5%. The method was demonstrated to be a robust PAT tool for real-time monitoring [41].
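
The idea behind the accuracy profile can be sketched for a single concentration level. The example below uses the simplest case, one series of replicates, in which the β-expectation tolerance interval reduces to a prediction interval for one future result. This is a simplification and not the calculation used in [41], which is not reproduced here; accuracy profiles usually also account for between-series variance. The relative errors are invented, and the Student t quantile is passed in as a parameter (2.776 for 4 degrees of freedom at the two-sided 95% level).

```python
import math
import statistics


def beta_expectation_interval(relative_errors, t_quantile):
    """Single-series beta-expectation tolerance interval:
    mean +/- t * s * sqrt(1 + 1/n).

    relative_errors: per-replicate relative errors in percent (hypothetical)
    t_quantile: Student t quantile for n - 1 degrees of freedom, supplied by
                the caller at the level (1 + beta) / 2
    """
    n = len(relative_errors)
    mean = statistics.mean(relative_errors)
    s = statistics.stdev(relative_errors)
    half_width = t_quantile * s * math.sqrt(1 + 1 / n)
    return mean - half_width, mean + half_width


# Hypothetical relative errors (%) at one concentration level, n = 5.
errors = [-1.0, 0.5, 1.0, -0.5, 0.0]

lower, upper = beta_expectation_interval(errors, t_quantile=2.776)
acceptable = -5.0 <= lower and upper <= 5.0  # +/- 5 % acceptance limits

print(f"[{lower:.2f} %, {upper:.2f} %] acceptable={acceptable}")
```

Repeating this at each concentration level and plotting the limits against the acceptance limits gives the accuracy profile itself.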

Case Study 3: AQbD for Robust RP-HPLC Method for Buserelin Acetate

This study developed an RP-HPLC method for analyzing buserelin acetate in polymeric nanoparticles using AQbD [42].

- Methodology: The ATP was defined, and risk assessment was performed using a fishbone diagram and Risk Assessment Method (RAM). The flow rate and pH of the buffer were identified as CMPs impacting retention time and peak area (CAAs) [42].

- DoE Application: Optimization was performed using a Central Composite Design (CCD). The chromatographic separation used a water:acetonitrile mobile phase and a C18 column, with detection at 220 nm.

- Results and Outcomes: The method showed a linear range of 10–60 μg/mL (R² = 0.9991). The LOD and LOQ were 0.051 μg/mL and 0.254 μg/mL, respectively. The method was successfully applied to analyze drug release from nanoparticles over 48 hours [42].

Table 2: Summary of DoE Designs and Outcomes from Case Studies

| Case Study / Analyte | DoE Design Used | Critical Method Parameters (CMPs) | Critical Analytical Attributes (CAAs) | Established Method Performance |

|---|---|---|---|---|

| Fluoxetine in Plasma [40] | Box-Behnken Design (BBD) | Flow rate, pH, Mobile phase composition | Retention time, Peak area | Linearity: 2-30 ng/mL; Validated per ICH guidelines |

| Piroxicam in HME [41] | Robustness Test (2 factors) | Screw speed, Feed rate | API Content Prediction Accuracy | Accuracy profile tolerance limits within ±5% |

| Buserelin Acetate [42] | Central Composite Design (CCD) | Flow rate, pH of buffer | Retention time, Peak area | Linearity: 10-60 μg/mL (R²=0.9991); LOD: 0.051 μg/mL |

The Scientist's Toolkit: Essential Research Reagent Solutions

Successful implementation of QbD and DoE requires not only a statistical framework but also the use of high-quality reagents, software, and instrumentation. The table below details key materials and tools referenced in the cited studies.

Table 3: Key Research Reagent Solutions for QbD/DoE Experiments

| Item Name / Category | Specification / Example | Primary Function in QbD/DoE |

|---|---|---|

| Chromatography Columns | Ascentis Express C18 (75 × 4.6 mm, 2.7 μm); Zorbax Eclipse Plus C18 (150 × 4.6 mm, 5 μm) [40] [42] | Stationary phase for chromatographic separation; a critical material attribute (CMA) that can be screened in early DoE stages. |