A Practical Guide to Method Comparison Experiments: Designing Robust Studies for Systematic Error Assessment in Biomedical Research

Abstract

This article provides a comprehensive framework for designing and executing method comparison experiments to accurately assess systematic error (bias) in analytical measurements. Tailored for researchers, scientists, and drug development professionals, it covers foundational principles, advanced methodological applications, troubleshooting strategies, and validation techniques. Readers will learn to select appropriate comparative methods, determine optimal sample size and stability parameters, apply statistical tools like linear regression and Bland-Altman analysis, and implement quality control measures to ensure reliable, actionable results that meet regulatory standards and enhance research credibility.

Understanding Systematic Error and the Pillars of a Robust Comparison Experiment

In scientific research, particularly in fields like drug development and clinical measurement, the validity of any conclusion is fundamentally dependent on the quality of the data. Measurement error—the difference between an observed value and the true value—is an unavoidable reality in scientific investigation [1]. However, not all errors are created equal. Systematic error, or bias, represents a consistent, predictable deviation from the true value and poses a far greater threat to data integrity than random variability [1] [2]. While random error introduces imprecision or "noise," systematic error introduces inaccuracy, consistently skewing results in one direction and potentially leading to false conclusions and invalid research outcomes [2]. The design of robust method-comparison experiments is therefore not merely a technical exercise but a critical safeguard for research integrity, enabling scientists to quantify, understand, and mitigate systematic errors before they compromise scientific or clinical decisions.

Theoretical Foundation: Distinguishing Systematic and Random Error

Definitions and Core Characteristics

Understanding the distinct nature of systematic and random error is the first step in controlling their impact.

Systematic Error (Bias): This is a consistent or proportional difference between the observed and true values of a measured quantity [1]. For example, a miscalibrated scale that consistently registers weights as higher than they truly are introduces a systematic error. The key characteristic of systematic error is its consistency; it affects measurements in a predictable direction and often by a similar magnitude [3]. It cannot be reduced by simply repeating measurements [4].

Random Error: This is a chance difference between the observed and true values caused by unknown and unpredictable changes in the experiment [1] [3]. Examples include electronic noise in an instrument or natural variations in experimental contexts. Random error affects measurements in unpredictable ways, making them equally likely to be higher or lower than the true values [1]. Unlike systematic error, its effect can be reduced by taking repeated measurements and averaging them [1].

Impact on Accuracy and Precision: A Visual Analogy

The concepts of accuracy and precision provide a useful framework for understanding the impact of these errors, often explained through the analogy of a dartboard [1]:

- Random error mainly affects precision, which is how reproducible the same measurement is under equivalent circumstances. A high level of random error means measurements are scattered widely.

- Systematic error affects the accuracy of a measurement, or how close the observed value is to the true value. It shifts the entire set of measurements away from the true value in a specific direction.

The table below summarizes the key differences for quick reference.

Table 1: Fundamental Differences Between Systematic and Random Error

| Characteristic | Systematic Error (Bias) | Random Error |

|---|---|---|

| Definition | Consistent, predictable deviation | Unpredictable, chance fluctuation |

| Impact | Reduces accuracy | Reduces precision |

| Direction | Consistently in one direction | Varies randomly |

| Elimination by Averaging | No | Yes |

| Cause | Faulty calibration, biased procedure | Environmental noise, instrument limitations |

| Ease of Detection | Difficult, may require reference standard | Evident from scatter in repeated measures |
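
The contrast in the "Elimination by Averaging" row can be illustrated with a small simulation. This is a minimal sketch, not part of any cited protocol: the true value, bias, and noise level are hypothetical numbers, and the noise is assumed to be Gaussian.

```python
import random
import statistics

def simulate_readings(true_value, bias, noise_sd, n, seed=0):
    """Return n simulated readings: true value + fixed bias + Gaussian noise."""
    rng = random.Random(seed)
    return [true_value + bias + rng.gauss(0.0, noise_sd) for _ in range(n)]

true_value = 100.0  # hypothetical true concentration
bias = 5.0          # hypothetical systematic error (e.g., miscalibration)
noise_sd = 2.0      # hypothetical random error (SD of the noise)

few = simulate_readings(true_value, bias, noise_sd, n=5)
many = simulate_readings(true_value, bias, noise_sd, n=5000)

# Averaging more readings shrinks the random part of the error of the mean,
# but the mean still sits roughly `bias` units away from the true value.
error_of_mean_few = statistics.mean(few) - true_value
error_of_mean_many = statistics.mean(many) - true_value
scatter_many = statistics.stdev(many)

print(f"error of mean, n=5:    {error_of_mean_few:.2f}")
print(f"error of mean, n=5000: {error_of_mean_many:.2f}")
print(f"scatter (SD), n=5000:  {scatter_many:.2f}")
```

With many readings the error of the mean settles near the bias rather than near zero, which is why a comparison against a trusted method is needed to expose it.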

A Visual Representation of Error Types

The following diagram illustrates the relationship between random and systematic error and their combined effect on accuracy and precision.

Designing a Method-Comparison Experiment

The comparison of methods experiment is a critical study design specifically intended to estimate the systematic error, or inaccuracy, of a new measurement method (the test method) relative to an established one [5] [6]. Such experiments are foundational when clinicians or researchers need to determine if a new technique can validly substitute for a current method in practice.

Core Design Considerations

A well-designed method-comparison experiment requires careful planning across several dimensions to ensure its conclusions are valid.

Table 2: Key Design Factors for a Method-Comparison Experiment

| Design Factor | Considerations & Recommendations |

|---|---|

| Selection of Methods | The established "comparative method" should ideally be a reference method with documented correctness. If a routine method is used, large discrepancies require further investigation to identify which method is inaccurate [5]. |

| Number of Specimens | A minimum of 40 different patient specimens is recommended. Specimens should cover the entire working range of the method and represent the expected spectrum of diseases. Larger samples (100-200) help assess method specificity [5] [6]. |

| Measurement Replication | While single measurements are common, duplicate analyses of each specimen are advantageous. They provide a check for sample mix-ups, transposition errors, and confirm whether large differences are repeatable [5]. |

| Time Period | The experiment should span multiple analytical runs over a minimum of 5 days to minimize systematic errors unique to a single run. Extending the study over a longer period (e.g., 20 days) improves robustness [5]. |

| Timing & Stability | Measurements must be taken simultaneously, or as close as possible, to ensure the underlying quantity being measured has not changed. Specimen handling must be systematized to prevent differences due to instability [5] [6]. |
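
The numeric recommendations in Table 2 can be turned into a simple pre-study checklist. The sketch below is only an illustration: the `StudyDesign` class, its field names, and the `check_design` helper are hypothetical, and the thresholds are the minimums quoted in the table (40 specimens, 5 days, duplicates preferred).

```python
from dataclasses import dataclass

@dataclass
class StudyDesign:
    n_specimens: int      # distinct patient specimens
    n_days: int           # days over which analytical runs are spread
    replicates: int       # measurements per specimen per method
    lowest_conc: float    # lowest specimen concentration planned
    highest_conc: float   # highest specimen concentration planned

def check_design(design, working_range):
    """Return a list of warnings for a planned comparison study."""
    low, high = working_range
    warnings = []
    if design.n_specimens < 40:
        warnings.append("fewer than 40 patient specimens")
    if design.n_days < 5:
        warnings.append("fewer than 5 days of analytical runs")
    if design.replicates < 2:
        warnings.append("single measurements only; duplicates help catch mix-ups")
    if design.lowest_conc > low or design.highest_conc < high:
        warnings.append("specimens do not span the full working range")
    return warnings

working_range = (40.0, 400.0)  # hypothetical working range in mg/dL
planned = StudyDesign(n_specimens=30, n_days=3, replicates=1,
                      lowest_conc=60.0, highest_conc=300.0)
revised = StudyDesign(n_specimens=40, n_days=5, replicates=2,
                      lowest_conc=40.0, highest_conc=400.0)

planned_warnings = check_design(planned, working_range)
revised_warnings = check_design(revised, working_range)
for message in planned_warnings:
    print("WARNING:", message)
```

Single measurements are treated here as a warning rather than an error, since the table describes duplicates as advantageous rather than mandatory.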

Experimental Protocol for Method Comparison

The following workflow outlines the standardized protocol for conducting a method-comparison study, from design to data readiness.

Data Analysis and Interpretation

Graphical Analysis: The Bland-Altman Plot

The first and most fundamental step in analyzing method-comparison data is visual inspection. Bland and Altman recommended a specific type of plot, now widely known as the Bland-Altman plot, to assess agreement between methods [6]. This plot provides an intuitive visual representation of the bias and its pattern across the measurement range.

- Construction: The plot displays the average of the paired values from the test and comparative methods on the x-axis [(Test + Comparative)/2]. The difference between the paired values (Test - Comparative) is plotted on the y-axis [6].

- Interpretation: The plot allows for the direct visualization of the bias (the mean of all the differences) and the limits of agreement (bias ± 1.96 standard deviations of the differences) [6]. A consistent spread of points above and below the zero line suggests only random error, while a clear trend or shift indicates systematic error.
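
The construction and interpretation steps above can be sketched in a few lines. This is an illustrative example with made-up paired results; the function name `bland_altman` and the returned dictionary keys are assumptions, and only the plotted quantities are computed (no chart is drawn).

```python
import statistics

def bland_altman(test, comparative):
    """Return plot coordinates, bias, and 95% limits of agreement."""
    if len(test) != len(comparative):
        raise ValueError("test and comparative must be paired")
    means = [(t + c) / 2 for t, c in zip(test, comparative)]  # x-axis
    diffs = [t - c for t, c in zip(test, comparative)]        # y-axis
    bias = statistics.mean(diffs)
    sd = statistics.stdev(diffs)  # sample SD of the differences
    return {
        "means": means,
        "diffs": diffs,
        "bias": bias,
        "sd": sd,
        "loa_lower": bias - 1.96 * sd,
        "loa_upper": bias + 1.96 * sd,
    }

# Hypothetical paired results for five specimens
test = [102.0, 98.0, 105.0, 110.0, 95.0]
comparative = [100.0, 97.0, 101.0, 108.0, 96.0]

result = bland_altman(test, comparative)
print(f"bias = {result['bias']:.2f}")
print(f"limits of agreement = {result['loa_lower']:.2f} to {result['loa_upper']:.2f}")
```

For these five pairs the differences are 2, 1, 4, 2, and -1, so the bias is 1.6. A real study would use far more specimens than this toy data set.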

Statistical Analysis: Quantifying Systematic Error

After graphical inspection, statistical calculations provide numerical estimates of the error.

For a Wide Analytical Range (e.g., glucose, cholesterol): Linear regression statistics are preferred [5]. The regression line (Y = a + bX, where Y is the test method and X is the comparative method) provides estimates of:

- Slope (b): A slope different from 1.0 indicates a proportional systematic error.

- Y-intercept (a): A non-zero intercept indicates a constant systematic error. The systematic error (SE) at any critical medical decision concentration (Xc) can be calculated as: SE = (a + bXc) - Xc [5].
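
As a minimal sketch of the regression approach, the example below fits an ordinary least-squares line by hand and evaluates SE = (a + bXc) - Xc. The data are hypothetical and deliberately noise-free so the fitted coefficients are easy to check; the function names are illustrative. Ordinary least squares treats the comparative method (X) as error-free, which is an assumption of this sketch.

```python
import statistics

def fit_line(x, y):
    """Ordinary least-squares fit of y = a + b*x; returns (a, b)."""
    mx, my = statistics.mean(x), statistics.mean(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = my - slope * mx
    return intercept, slope

def systematic_error(intercept, slope, xc):
    """Systematic error at decision concentration xc: (a + b*xc) - xc."""
    return (intercept + slope * xc) - xc

# Hypothetical comparative (x) and test (y) results, generated from
# y = 2.0 + 1.03x without noise for clarity
x = [50.0, 100.0, 150.0, 200.0, 250.0, 300.0]
y = [2.0 + 1.03 * xi for xi in x]

a, b = fit_line(x, y)
se_200 = systematic_error(a, b, 200.0)
print(f"intercept a = {a:.2f}, slope b = {b:.3f}")
print(f"systematic error at Xc = 200: {se_200:.2f}")
```

Here the intercept of 2.0 contributes the constant part of the error and the slope of 1.03 contributes a proportional part that grows with concentration.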

For a Narrow Analytical Range (e.g., sodium, calcium): It is often best to simply calculate the average difference between the methods, also known as the bias [5] [6]. This is typically derived from a paired t-test analysis and represents the overall systematic shift between the two methods.
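
A narrow-range bias estimate can be sketched as follows. The sodium-like values are invented for illustration, the function name is hypothetical, and the code stops at the t statistic; turning it into a p-value requires a t distribution table or a statistics package.

```python
import math
import statistics

def paired_bias(test, comparative):
    """Return (bias, sd_of_differences, t_statistic, degrees_of_freedom)."""
    diffs = [t - c for t, c in zip(test, comparative)]
    n = len(diffs)
    bias = statistics.mean(diffs)
    sd = statistics.stdev(diffs)
    standard_error = sd / math.sqrt(n)
    t_stat = bias / standard_error  # tests H0: mean difference = 0
    return bias, sd, t_stat, n - 1

# Hypothetical sodium results (mmol/L) from six specimens
test = [141.0, 139.0, 143.0, 138.0, 142.0, 140.0]
comparative = [140.0, 138.0, 141.0, 138.0, 140.0, 139.0]

bias, sd, t_stat, df = paired_bias(test, comparative)
print(f"bias = {bias:.2f} mmol/L, SD of differences = {sd:.2f}")
print(f"t = {t_stat:.2f} with {df} degrees of freedom")
```

The t statistic is compared with the critical value for the given degrees of freedom (2.571 for a two-sided test at the 0.05 level with 5 degrees of freedom). Statistical significance alone does not say whether a bias of this size matters clinically.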

Table 3: Statistical Methods for Quantifying Systematic Error

| Analysis Method | Application Context | Key Outputs | Interpretation |

|---|---|---|---|

| Linear Regression | Wide analytical range of data | Slope (b), Y-intercept (a) | Proportional (slope ≠ 1) and constant (intercept ≠ 0) error. |

| Bias & Limits of Agreement | Any range, provides clinical context | Mean Difference (Bias), Standard Deviation of differences, Limits of Agreement (Bias ± 1.96SD) | Estimates the average systematic error and the range within which approximately 95% of differences between methods are expected to lie, assuming the differences are roughly normally distributed. |

| Paired t-test | Compares means of paired measurements | Mean difference (Bias), p-value | Determines whether the observed systematic error (bias) differs significantly from zero. |

Success in method-comparison studies relies on both physical materials and statistical tools.

Table 4: Essential Reagents and Resources for Method-Comparison Studies

| Item / Resource | Function & Importance |

|---|---|

| Well-Characterized Comparative Method | Serves as the benchmark for comparison. A reference method provides the highest quality comparison, while a routine method requires careful interpretation of differences [5]. |

| Patient Specimens Covering Full Analytic Range | Provides the matrix for testing across all clinically relevant concentrations. Crucial for detecting proportional systematic error [5] [6]. |

| Reference Materials / Calibrators | Used to verify the calibration and linearity of both the test and comparative methods, helping to isolate error to the test method itself [1]. |

| Statistical Software (e.g., MedCalc, R) | Automates the calculation of bias, linear regression, and creation of Bland-Altman plots, ensuring accurate and reproducible data analysis [6]. |

| Data Dictionary | A pre-defined document that explains all variable names, coding, and units. This ensures interpretability and prevents errors during data processing and analysis [7]. |

Systematic error represents a fundamental challenge to data integrity, capable of skewing results and leading to invalid scientific and clinical conclusions. Unlike random error, it cannot be mitigated by increasing sample size and is often subtle and difficult to detect. Through a rigorously designed method-comparison experiment—incorporating a sufficient number of specimens across the analytical range, replicated measurements over time, and careful data analysis using both graphical (Bland-Altman plots) and statistical tools (regression, bias calculations)—researchers can effectively quantify systematic error. This process is not merely a validation technique but a cornerstone of responsible research, ensuring that new methods and the decisions based on them are founded on accurate and reliable data.

In laboratory medicine and clinical research, the accuracy of measurement methods is paramount. The core purpose of a Comparison of Methods experiment is to estimate inaccuracy or systematic error when introducing a new analytical method or test procedure [5]. This experimental approach systematically quantifies the differences between a test method and a comparative method using real patient specimens across clinically relevant concentrations [5]. The resulting systematic error estimates at critical medical decision concentrations provide essential data for evaluating whether a method is clinically acceptable for patient testing and diagnostic applications. Understanding both the magnitude and nature (constant or proportional) of these systematic errors helps researchers and clinicians interpret test results accurately and make informed decisions about method implementation.

Experimental Protocols for Systematic Error Assessment

Key Design Considerations

A rigorously designed Comparison of Methods experiment requires careful attention to multiple methodological factors to ensure reliable systematic error estimation [5].

Table 1: Key Experimental Design Factors for Method Comparison Studies

| Design Factor | Protocol Specification | Rationale |

|---|---|---|

| Comparative Method | Select reference method when possible; otherwise use routine method with careful interpretation [5] | Determines whether errors can be attributed solely to test method |

| Sample Size | Minimum 40 patient specimens; 100-200 recommended for specificity assessment [5] | Ensures adequate statistical power and interference detection |

| Sample Characteristics | Cover entire working range; represent spectrum of diseases [5] | Evaluates performance across clinically relevant conditions |

| Measurements | Single or duplicate analysis per specimen [5] | Duplicates provide validity checks for discrepant results |

| Time Period | Minimum 5 days; ideally 20 days with 2-5 specimens daily [5] | Minimizes systematic errors from single analytical run |

| Specimen Stability | Analyze by both methods within 2 hours of each other unless preservatives/refrigeration are used [5] | Prevents differences due to specimen handling variables |

Practical Application Protocol

The practical implementation follows a structured approach. Researchers should select patient specimens to cover the entire analytical measurement range of interest, not just randomly available samples [5]. Each specimen is analyzed by both the test method (new method under evaluation) and the comparative method (established reference or routine method) within a short time frame to maintain specimen integrity [5]. The experiment should span multiple days (minimum 5 days, ideally extending to 20 days) to account for day-to-day analytical variation [5]. When possible, duplicate measurements rather than single analyses provide valuable quality checks by identifying potential sample mix-ups, transposition errors, or other mistakes that could disproportionately impact conclusions [5].
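
One way to check that selected specimens cover the range, rather than clustering where samples happen to be available, is to count them per concentration band. The sketch below is an assumption-laden illustration: the equal-width bins, the bin count of five, and the example concentrations are all hypothetical choices, not part of the cited protocol.

```python
def range_coverage(concentrations, low, high, n_bins=5):
    """Count specimens in each equal-width bin across [low, high]."""
    width = (high - low) / n_bins
    counts = [0] * n_bins
    for c in concentrations:
        if low <= c <= high:
            # The top edge of the range falls into the last bin
            index = min(int((c - low) / width), n_bins - 1)
            counts[index] += 1
    return counts

# Hypothetical specimen concentrations (mg/dL) and working range
concentrations = [45.0, 60.0, 85.0, 90.0, 110.0, 120.0, 130.0, 150.0, 155.0, 390.0]
counts = range_coverage(concentrations, low=40.0, high=400.0)
empty_bins = [i for i, count in enumerate(counts) if count == 0]

print("specimens per bin:", counts)
print("bins with no specimens:", empty_bins)
```

In this example two of the five bands contain no specimens, which would prompt a search for additional mid-to-high concentration samples before the study proceeds.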

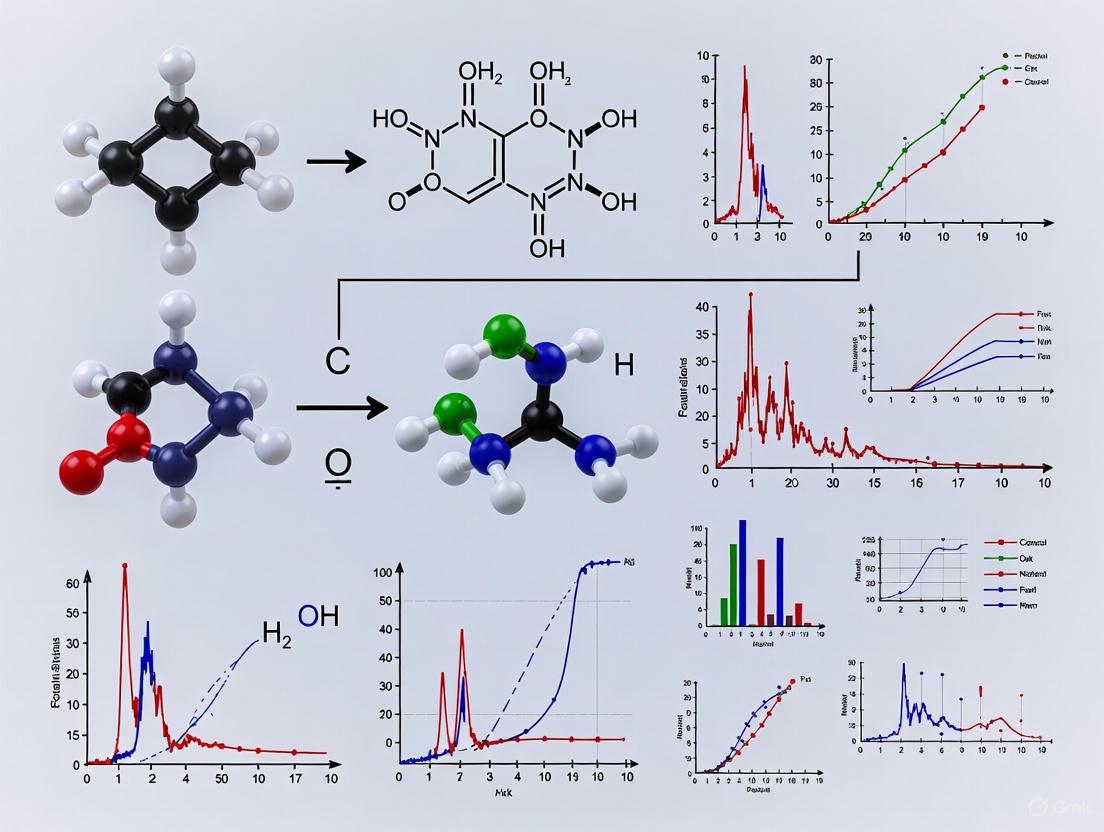

Figure 1: Experimental workflow for comparison of methods studies showing key stages from objective definition through clinical significance assessment.

Data Analysis and Statistical Approaches

Graphical Data Assessment

The initial analysis involves visual inspection of data relationships through graphing. For methods expected to show one-to-one agreement, a difference plot displays the difference between test and comparative results (test minus comparative) on the y-axis versus the comparative result on the x-axis [5]. These differences should scatter randomly around the zero line, with approximately half above and half below. For methods not expected to show exact agreement (e.g., enzyme analyses with different reaction conditions), a comparison plot displaying test results on the y-axis versus comparative results on the x-axis is more appropriate [5]. Graphical analysis helps identify discrepant results, outliers, and potential constant or proportional systematic errors based on visual patterns.
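
The coordinates of the difference plot described above, and a quick count of points on each side of the zero line, can be computed as in this sketch. The paired values are hypothetical and the helper names are illustrative; drawing the plot itself is left to whatever charting tool is in use.

```python
def difference_plot_points(test, comparative):
    """Return (x, y) pairs: comparative result vs. test minus comparative."""
    return [(c, t - c) for t, c in zip(test, comparative)]

def sign_counts(points):
    """Count points above and below the zero line."""
    above = sum(1 for _, diff in points if diff > 0)
    below = sum(1 for _, diff in points if diff < 0)
    return above, below

# Hypothetical paired results
comparative = [50.0, 100.0, 150.0, 200.0, 250.0]
test = [51.0, 103.0, 154.0, 207.0, 258.0]

points = difference_plot_points(test, comparative)
above, below = sign_counts(points)
print("plot points:", points)
print(f"{above} above zero, {below} below zero")
```

All five differences here are positive and grow with concentration, the kind of pattern that suggests a proportional systematic error rather than random scatter around zero.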

Statistical Analysis Methods

Table 2: Statistical Methods for Systematic Error Quantification

| Statistical Method | Application Context | Output Metrics | Clinical Interpretation |

|---|---|---|---|

| Linear Regression | Wide analytical range (e.g., glucose, cholesterol) [5] | Slope (b), y-intercept (a), standard error of estimate (sy/x) [5] | Yc = a + bXc; Systematic Error = Yc - Xc at decision level Xc [5] |

| Bland-Altman Analysis | Repeatability studies, narrow analytical ranges [8] | Mean difference (bias), limits of agreement, fixed and proportional bias [8] | Identifies systematic trends in retesting; establishes minimal detectable change (MDC) [8] |

| Paired t-test | Narrow analytical range (e.g., sodium, calcium) [5] | Mean difference (bias), standard deviation of differences, t-value [5] | Average systematic error across measured range with statistical significance |

| Correlation Analysis | Assessment of data range adequacy [5] | Correlation coefficient (r) [5] | r ≥ 0.99 indicates sufficient range for reliable regression estimates [5] |

Practical Statistical Application

For data spanning a wide analytical range, linear regression statistics are preferred as they enable estimation of systematic error at multiple medical decision concentrations [5]. The regression equation (Yc = a + bXc) calculates the systematic error (SE = Yc - Xc) at critical decision levels [5]. For example, with a regression line Y = 2.0 + 1.03X, at a clinical decision level of 200 mg/dL, the calculated Y value would be 208 mg/dL, indicating a systematic error of 8 mg/dL [5]. The correlation coefficient (r) primarily indicates whether the data range is sufficient for reliable regression estimates, with values of 0.99 or greater indicating adequate range [5]. For narrower analytical ranges, the average difference (bias) between methods with standard deviation of differences provides the most meaningful error estimation [5].
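
The worked example and the role of the correlation coefficient can be reproduced with a short sketch. The regression coefficients come from the example above; the two data sets are hypothetical, chosen so that one spans a wide range and the other a narrow one, and `pearson_r` is an illustrative helper.

```python
import math

def pearson_r(x, y):
    """Pearson correlation coefficient of paired values."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

# Worked example: Y = 2.0 + 1.03X at a decision level of 200 mg/dL
y_c = 2.0 + 1.03 * 200.0
se_at_200 = y_c - 200.0
print(f"Yc = {y_c:.1f} mg/dL, systematic error = {se_at_200:.1f} mg/dL")

# Hypothetical wide-range data (glucose-like, mg/dL)
x_wide = [50.0, 100.0, 150.0, 200.0, 250.0, 300.0]
y_wide = [54.0, 104.0, 157.0, 208.0, 259.0, 312.0]

# Hypothetical narrow-range data (sodium-like, mmol/L)
x_narrow = [136.0, 138.0, 140.0, 142.0, 144.0]
y_narrow = [137.0, 137.0, 141.0, 141.0, 145.0]

r_wide = pearson_r(x_wide, y_wide)
r_narrow = pearson_r(x_narrow, y_narrow)
print(f"r (wide range)   = {r_wide:.4f}")
print(f"r (narrow range) = {r_narrow:.4f}")
```

The narrow-range set gives a lower r even though the two methods differ by only about a unit or so, which illustrates why r speaks to the adequacy of the data range and not to method acceptability.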

Figure 2: Data analysis decision pathway for method comparison studies showing graphical and statistical approaches for systematic error estimation.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Research Materials for Method Comparison Experiments

| Item Category | Specific Examples | Function in Experiment |

|---|---|---|

| Patient Specimens | Carefully selected to cover working range, represent disease spectrum [5] | Provides biologically relevant matrix for comparing method performance across clinical conditions |

| Reference Materials | Certified reference materials, calibration standards [5] | Establishes traceability and enables accuracy assessment against recognized standards |

| Quality Controls | Commercial quality control materials at multiple levels [5] | Monitors analytical performance stability throughout comparison study |

| Comparative Method | Reference method or established routine method [5] | Serves as benchmark for evaluating test method performance |

| Data Analysis Tools | Statistical software with regression, Bland-Altman capabilities [5] [8] | Enables systematic error quantification and statistical significance determination |

| Specimen Handling | Preservatives, refrigeration equipment, aliquot containers [5] | Maintains specimen stability between paired analyses |

Clinical Significance and Error Interpretation

The ultimate value of method comparison data lies in its interpretation for clinical decision-making. Systematic errors must be evaluated against medically allowable error specifications at critical decision concentrations [5]. For example, in a two-step test for locomotive syndrome assessment, Bland-Altman analysis revealed fixed bias in young adults with a minimal detectable change (MDC) of 0.17 cm/height for test value, providing a clinically useful indicator for interpreting intervention effects [8]. This systematic error assessment directly impacts how test results are interpreted in clinical practice—whether a measured change represents true physiological change or falls within expected method variation [8]. By quantifying systematic errors at decision points and establishing thresholds for clinically significant change, method comparison experiments bridge analytical performance with clinical utility, ensuring that measurement methods provide reliable data for patient care decisions.
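
Evaluating systematic error against allowable error at each decision concentration can be sketched as below. The regression coefficients reuse the earlier Y = 2.0 + 1.03X example; the allowable-error limits are placeholder numbers invented for illustration and are not taken from any guideline.

```python
def judge_decision_levels(intercept, slope, allowable_error):
    """Map each decision level to (systematic_error, acceptable flag).

    allowable_error: dict of decision concentration -> allowable error,
    both in the same units as the measurements.
    """
    results = {}
    for xc, limit in allowable_error.items():
        se = (intercept + slope * xc) - xc
        results[xc] = (se, abs(se) <= limit)
    return results

# Hypothetical allowable errors (mg/dL) at two decision levels
allowable_error = {100.0: 10.0, 200.0: 7.0}

results = judge_decision_levels(intercept=2.0, slope=1.03,
                                allowable_error=allowable_error)
for xc, (se, acceptable) in results.items():
    verdict = "acceptable" if acceptable else "exceeds allowable error"
    print(f"Xc = {xc:.0f}: SE = {se:.1f} ({verdict})")
```

Because the error has a proportional component, the same method can pass at a low decision level and fail at a higher one, which is why each critical concentration is judged separately.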

In method comparison experiments, the selection of a comparative method is the cornerstone for reliably estimating systematic error (inaccuracy). This choice directly determines whether observed differences are correctly attributed to the test method or are artifacts of an imperfect comparator [5]. The fundamental distinction lies between reference methods, which provide a higher-order benchmark for accuracy, and routine methods, which offer a practical but less definitive standard [5] [9]. Reference methods are characterized by their established traceability to definitive methods or international standards, often listed by organizations like the Joint Committee for Traceability in Laboratory Medicine (JCTLM) [10]. Their use allows any significant difference to be assigned as an error of the test method. In contrast, routine methods are standard laboratory techniques whose correctness is not fully documented. When a routine method is used as a comparator, large differences must be interpreted with caution, as it may be unclear which method is the source of inaccuracy [5]. This guide provides an objective comparison for researchers and scientists, detailing the implications of this critical choice within the framework of method validation and drug development.

Core Comparison: Reference Methods vs. Routine Methods

The table below summarizes the key characteristics, implications, and optimal use cases for reference and routine comparative methods.

Table 1: Core Comparison Between Reference Methods and Routine Methods as Comparators

| Aspect | Reference Method | Routine Method |

|---|---|---|

| Definition & Traceability | A method with high quality and documented correctness, traceable to a "definitive method" or higher-order reference materials [5] [10]. | A general term for a standard laboratory method without documented traceability or proven correctness [5]. |

| Primary Implication | Differences from the test method are assigned to the test method, providing a definitive assessment of inaccuracy [5]. | Differences must be carefully interpreted; it may not be clear which method is the source of the error [5]. |

| Key Utility | Assessing the trueness (bias) of a new test method; establishing traceability chains [10]. | Assessing the relative accuracy and agreement between two established or similar methods in a specific laboratory setting. |

| Availability & Cost | Often limited, expensive, and require specialized reference laboratories [5] [10]. | Widely available, cost-effective, and familiar to laboratory personnel. |

| Result Standardization | Enables standardization of results across different laboratories and manufacturers [10]. | Promotes internal consistency but does not ensure standardization across different platforms. |

| Experimental Follow-up | Typically not required if the difference is significant, as the error is assigned to the test method. | Required if differences are large; may involve additional experiments (e.g., recovery, interference) to identify the inaccurate method [5]. |

Experimental Protocols for Method Comparison

A robust method comparison experiment, whether using a reference or routine method, requires a carefully controlled design to generate reliable data for systematic error assessment.

Key Experimental Design Factors

The following factors are critical for a valid comparison of methods experiment, regardless of the comparator chosen [5]:

- Number of Specimens: A minimum of 40 different patient specimens is recommended. The quality and range of concentrations are more critical than the absolute number. Specimens should cover the entire working range of the method and represent the expected spectrum of diseases [5].

- Measurement Replication: While single measurements are common, duplicate analyses are advantageous. Duplicates should be run on two separate aliquots of the same specimen, analyzed in different runs or at least in a different order, so that they provide a check for sample mix-ups or transposition errors [5].

- Time Period: The experiment should be conducted over several different analytical runs on different days (minimum of 5 days) to minimize systematic errors specific to a single run. Extending the study over a longer period, such as 20 days, while analyzing 2-5 specimens per day, is preferable [5].

- Specimen Handling: Specimens should be analyzed by both methods within two hours of each other to avoid stability issues; analytes known to be less stable need a shorter interval. Handling procedures must be systematized so that observed differences reflect analytical error rather than specimen degradation [5].
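
The validity check that duplicates provide can be sketched as a simple screen for specimens whose two results disagree by more than a chosen tolerance. The specimen IDs, values, and the tolerance of 5 units are hypothetical; a suitable tolerance would depend on the method's known imprecision.

```python
def flag_discrepant_duplicates(duplicates, max_abs_diff):
    """Return IDs of specimens whose duplicate results differ too much.

    duplicates: dict of specimen ID -> (first_result, second_result)
    """
    return [
        specimen_id
        for specimen_id, (first, second) in duplicates.items()
        if abs(first - second) > max_abs_diff
    ]

# Hypothetical duplicate results from one method
duplicates = {
    "S01": (101.0, 102.0),
    "S02": (150.0, 105.0),  # looks like a digit transposition
    "S03": (88.0, 87.5),
}

flagged = flag_discrepant_duplicates(duplicates, max_abs_diff=5.0)
print("re-analyze:", flagged)
```

Flagged specimens are candidates for re-analysis while the specimen is still available, before they can distort the bias estimate.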

Data Analysis and Statistical Evaluation

After data collection, a two-phase approach to analysis is recommended:

- Graphical Inspection: The data should be graphed and visually inspected at the time of collection. For methods expected to show a 1:1 agreement, a difference plot (test result minus comparative result vs. comparative result) is used. For methods not expected to agree exactly (e.g., different enzyme methodologies), a comparison plot (test result vs. comparative result) is used. This initial inspection helps identify discrepant results that need immediate re-analysis [5].

- Statistical Calculations: Statistical analysis provides numerical estimates of systematic error.

- For a wide analytical range: Linear regression analysis is preferred. It provides a slope (b) and y-intercept (a) that describe the proportional and constant systematic error, respectively. The systematic error (SE) at any critical medical decision concentration (Xc) can be calculated as Yc = a + bXc, then SE = Yc - Xc [5].

- For a narrow analytical range: The average difference (bias) between the two methods, often derived from a paired t-test, is a suitable estimate of constant systematic error [5].

- The correlation coefficient (r) is more useful for assessing whether the data range is wide enough for reliable regression (r ≥ 0.99) than for judging method acceptability [5].

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table details key materials required for conducting a rigorous method comparison study.

Table 2: Essential Research Reagents and Materials for Method Comparison Experiments

| Item | Function & Importance | Key Considerations |

|---|---|---|

| Patient Specimens | The primary sample for analysis, providing the real-world matrix for evaluating method performance [5]. | Must cover the entire reportable range and represent the spectrum of diseases. Fresh or appropriately stabilized specimens are crucial [5]. |

| Certified Reference Material (CRM) | A high-quality reference material accompanied by a certificate, used to assess the trueness of the test or reference method [11]. | The certified value has a stated uncertainty. It is the best reference for assessing accuracy when a reference method is not available [11]. |

| Commutable Control Material | A quality control material that behaves like a native patient sample across different measurement procedures [12] [13]. | Commutability is critical. Non-commutable materials can introduce matrix-related bias, leading to incorrect conclusions about method agreement [12] [13]. |

| Calibrators | Substances used to calibrate the measurement procedures, establishing the relationship between signal and analyte concentration [10]. | The traceability of calibrator values to a higher-order reference system is fundamental for achieving accurate and standardized results [10]. |

Visualizing Hierarchical Relationships and Experimental Workflow

The Traceability Chain in Laboratory Medicine

The diagram below illustrates the hierarchical model of traceability, from the patient sample to the highest metrological level, as defined by standards such as ISO 17511 [10].

Experimental Workflow for Method Comparison

This flowchart outlines the key steps and decision points in a method comparison experiment, from selection of the comparator to the final interpretation.

The selection between a reference method and a routine method as a comparator is a pivotal decision that dictates the interpretative power of a method comparison study. A reference method provides a higher-order benchmark, allowing differences to be assigned to the test method and facilitating standardization. Its use is ideal for formal validation and establishing traceability. Conversely, a routine method offers a practical solution for verifying relative accuracy within a laboratory, but requires cautious interpretation of discrepancies and may necessitate further experimentation to pinpoint the source of error. By adhering to rigorous experimental protocols, including appropriate sample selection, replication, and statistical analysis, researchers can ensure their comparison yields reliable data, ultimately supporting robust method validation and informed decision-making in both drug development and clinical practice.

In the assessment of systematic error, the validity of a method comparison experiment hinges on two critical, pre-planned elements: a sufficiently large sample size and a strategic selection of patient specimens that adequately cover the analytical working range. An underpowered study, due to an insufficient number of specimens, risks failing to detect clinically significant biases, while a poorly selected sample set may misrepresent the method's performance across the spectrum of concentrations encountered in real-world practice [14] [15] [5]. This guide objectively compares established approaches to these challenges, providing researchers and drug development professionals with the experimental data and protocols necessary to design definitive method comparison studies. The ensuing sections will dissect the core components of sample size calculation, detail protocols for specimen selection, and present a comparative analysis of methodological strategies, all framed within the broader objective of rigorous systematic error assessment.

Core Concepts and Key Terminology

Before delving into strategies, it is essential to define the key parameters that govern sample size and selection.

Table 1: Key Components of Sample Size Calculation

| Component | Description | Role in Sample Size Calculation |

|---|---|---|

| Effect Size | The minimum difference or bias considered clinically or practically significant [14] [15]. | The primary driver; a smaller effect size requires a larger sample size for detection. |

| Statistical Power | The probability that the study will detect an effect (e.g., a bias) if one truly exists [15]. | Typically set at 80% or 90%; higher power requires a larger sample size. |

| Significance Level (α) | The probability of rejecting a true null hypothesis (Type I error, or false positive) [15]. | Conventionally set at 0.05; a lower α requires a larger sample size. |

| Precision (Margin of Error) | The acceptable width of the confidence interval for an estimate [14] [16]. | Used in descriptive studies; a narrower margin of error requires a larger sample size. |
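
How effect size, power, and significance level interact can be shown with a common normal-approximation formula for detecting a mean paired difference, n = ((z for α/2 + z for power) × SD / effect size)². This formula is a standard textbook approximation brought in for illustration, not something prescribed by the cited sources, and the SD and bias values are hypothetical.

```python
import math
from statistics import NormalDist

def n_for_bias_detection(sd_diff, min_bias, alpha=0.05, power=0.80):
    """Approximate number of paired specimens needed to detect min_bias.

    Uses the two-sided normal approximation for a paired design.
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_power = NormalDist().inv_cdf(power)
    return math.ceil(((z_alpha + z_power) * sd_diff / min_bias) ** 2)

# Hypothetical inputs: SD of differences = 4 units
n_80 = n_for_bias_detection(sd_diff=4.0, min_bias=2.0, power=0.80)
n_90 = n_for_bias_detection(sd_diff=4.0, min_bias=2.0, power=0.90)
n_small_effect = n_for_bias_detection(sd_diff=4.0, min_bias=1.0, power=0.80)

print(f"bias of 2 units, 80% power: n = {n_80}")
print(f"bias of 2 units, 90% power: n = {n_90}")
print(f"bias of 1 unit,  80% power: n = {n_small_effect}")
```

Halving the bias to be detected roughly quadruples the required number of specimens, which matches the table's point that effect size is the primary driver.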

The following workflow outlines the decision process for determining specimen selection and sample size in a method comparison study.

Diagram 1: Experimental design workflow for method comparison studies.

Quantitative Sample Size Recommendations

The required sample size varies significantly based on the study's primary objective. The table below summarizes evidence-based recommendations.

Table 2: Sample Size Recommendations by Study Objective

| Study Objective | Recommended Sample Size | Key Rationale & Supporting Data |

|---|---|---|

| Method Comparison (Bias Detection) | Minimum of 40 specimens [5]. 100-200 may be needed for interference assessment [5]. | A minimum of 40 specimens is needed for a reliable estimate of systematic error using linear regression. Larger samples (100-200) are recommended to investigate method specificity and identify matrix-related interferences [5]. |

| Descriptive Studies (Precision) | ~200 specimens for cost outcomes [16]. | For a continuous outcome like cost with a coefficient of variation (cv) of 0.72, a sample of 200 yields a 95% CI precise to within ±10% of the mean [16]. |

| Identifying Treatment Patterns | 200 specimens to observe treatments with ≥1% frequency [16]. | For a treatment given to 5% of the population, a sample of 200 yields a 95% CI with a precision of ±3% [16]. |

| Pilot Studies | No formal calculation required [14]. | Primary purpose is feasibility testing and estimating parameters (e.g., SD, effect size) for a larger, definitive study [14] [15]. |
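The precision figures quoted in Table 2 for descriptive studies can be reproduced with standard confidence-interval formulas. The sketch below is illustrative only: it assumes a normal-approximation interval for the mean (relative half-width = z × cv / √n) and a simple Wald interval for a proportion, and the function names are hypothetical.

```python
import math
from statistics import NormalDist

Z95 = NormalDist().inv_cdf(0.975)  # about 1.96 for a two-sided 95% interval


def relative_margin_for_mean(cv, n, z=Z95):
    """Half-width of the CI for a mean, expressed as a fraction of the mean."""
    return z * cv / math.sqrt(n)


def margin_for_proportion(p, n, z=Z95):
    """Half-width of a Wald confidence interval for a proportion."""
    return z * math.sqrt(p * (1 - p) / n)


# Values taken from Table 2: cv = 0.72 for cost, 5% treatment frequency, n = 200.
cost_margin = relative_margin_for_mean(cv=0.72, n=200)
treatment_margin = margin_for_proportion(p=0.05, n=200)
print(f"cost: +/-{cost_margin:.1%} of the mean, treatment: +/-{treatment_margin:.1%}")
```

With n = 200 these formulas give roughly ±10% of the mean for cost and roughly ±3 percentage points for a 5% treatment frequency, in line with the table.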

Comparative Analysis of Specimen Selection Strategies

The quality of a method comparison experiment is as dependent on specimen selection as it is on sample size. Different strategies offer distinct advantages.

Table 3: Comparison of Specimen Selection and Handling Protocols

| Strategy | Protocol Description | Advantages | Limitations / Considerations |

|---|---|---|---|

| Covering the Working Range | Select 40+ patient specimens to cover the entire analytical range of the method [5]. | Allows evaluation of constant and proportional error via regression analysis [5] [17]. | Requires prior knowledge of analyte concentrations. Obtaining rare, high-value specimens can be challenging. |

| Single vs. Duplicate Measurements | Analyze each specimen singly by test and comparative methods. Duplicates involve two different aliquots analyzed in different runs [5]. | Duplicates act as a validity check for sample mix-ups and transcription errors [5]. Singles are more resource-efficient. | Duplicate analysis increases analytical time and cost. With single measurements, discrepant results must be reanalyzed immediately [5]. |

| Stability & Handling | Analyze test and comparative methods within 2 hours of each other [5]. | Minimizes differences due to specimen deterioration rather than analytical error. | For unstable analytes, strict handling protocols (e.g., centrifugation, freezing) are mandatory [5]. |

| Probability Sampling | Using random selection from a defined population (e.g., simple random, stratified) [18]. | Ensures generalizability of the findings to the target population. | Can be logistically complex and costly, especially for rare conditions or specific concentration ranges. |

The Scientist's Toolkit: Essential Reagents and Materials

Table 4: Key Research Reagent Solutions for Method Comparison

| Item | Function in Experiment |

|---|---|

| Certified Reference Material | A sample with a known quantity of the analyte, used as a gold standard to assess the accuracy (trueness) of a new method and identify systematic error [17]. |

| Patient Specimens | Real clinical samples that represent the spectrum of diseases and matrices the method will encounter, used for the primary comparison of methods [5]. |

| Quality Control (QC) Samples | Materials with known, stable characteristics run at regular intervals to monitor the precision and stability of the analytical method throughout the study period [17]. |

| Appropriate Collection Tubes | Specimen containers with the correct additives and preservatives (e.g., EDTA for hematology, citrate for coagulation) to ensure sample integrity and prevent pre-analytical errors like clotting [19] [20]. |

The experimental comparison of methods for systematic error assessment is a foundational activity in laboratory medicine and drug development. The evidence presented demonstrates that a one-size-fits-all approach is ineffective. For a standard method comparison aiming to characterize inaccuracy, a minimum of 40 carefully selected patient specimens covering the entire working range is a scientifically defensible and widely accepted standard [5]. However, researchers must be prepared to increase this number to 100-200 if the goal includes a thorough investigation of methodological specificity or interference [5]. For descriptive studies, such as those characterizing treatment patterns or costs, sample sizes should be calculated based on the desired precision of the estimate, with ~200 specimens often providing a robust practical target [16].

The most robust studies will combine a sufficient sample size with a rigorous specimen selection strategy that includes a wide concentration range, relevant pathological states, and strict handling protocols. By adhering to these principles and leveraging the detailed protocols and comparative data herein, researchers can design method comparison experiments that yield credible, reproducible, and clinically relevant conclusions about systematic error.

In method comparison studies, the goal is to estimate the systematic error or inaccuracy between a new test method and a comparative method [5]. The reliability of this estimation hinges on the integrity of the pre-experimental phase. Factors such as specimen stability, the time period over which data is collected, and the choice between single or duplicate measurements are not merely logistical details; they are critical determinants of the study's internal validity [5] [21]. Missteps in these areas can introduce systematic error that confounds the results, leading to incorrect conclusions about a method's performance [21] [22]. This guide objectively compares the impact of different approaches to these pre-experimental factors, providing researchers with the data and protocols needed to design robust experiments.

The Impact of Pre-Experimental Factors on Data Validity

The core relationship between pre-experimental factors and the ultimate validity of study data is conceptualized in the flowchart below. It illustrates how decisions regarding timing, replication, and specimen handling directly influence the risk of bias, thereby determining the reliability of the systematic error assessment.

Comparative Analysis of Pre-Experimental Factors

The table below provides a detailed comparison of the three core pre-experimental factors, summarizing key considerations, experimental recommendations, and the associated impacts on data quality.

Table 1: Comprehensive Comparison of Critical Pre-Experimental Factors

| Factor | Key Considerations & Recommendations | Impact on Data Quality & Experimental Outcome |

|---|---|---|

| Specimen Stability [5] | Recommended Protocol: Analyze test and comparative method specimens within 2 hours of each other. Use preservatives, centrifugation, refrigeration, or freezing for unstable analytes (e.g., ammonia, lactate). Key Consideration: Pre-study definition and systematization of specimen handling procedures is critical. | High Risk: Differences observed may be due to specimen handling variables rather than true systematic analytical error, leading to inaccurate bias estimates. |

| Time Period [5] | Recommended Protocol: Conduct analysis over a minimum of 5 days, ideally extending over a longer period (e.g., 20 days) with 2-5 patient specimens per day. Key Consideration: Using multiple analytical runs on different days helps minimize systematic errors that could occur in a single run. | Medium Risk: A single-run study may over- or under-estimate systematic error due to day-to-day analytical variation, threatening the generalizability of the results. |

| Single vs. Duplicate Measurements [5] | Recommended Protocol: Perform duplicate measurements on different sample cups, analyzed in different runs or at least in different order. Alternative (if no duplicates): Closely inspect data as it is collected and immediately repeat analyses on specimens with large differences. Key Consideration: Duplicates act as a validity check for individual method measurements. | High Risk: Without duplicates, mistakes (sample mix-ups, transposition errors, random outliers) can disproportionately impact conclusions and cause uncertainty about whether discrepancies are real. |

Detailed Experimental Protocols for Assessing Pre-Experimental Factors

Protocol for Validating Specimen Stability

1. Objective: To determine the maximum allowable time interval between sample collection and analysis for a specific analyte without significant degradation.

2. Materials:

- Fresh patient specimens (n ≥ 10) covering the analytical range (low, medium, high).

- Appropriate sample collection tubes.

- Equipment for processing (centrifuge, aliquoting tubes).

- Storage facilities (refrigerator, freezer).

3. Procedure:

- Step 1: Collect a sufficient volume of each patient specimen and split it into multiple aliquots immediately after processing.

- Step 2: Analyze one set of aliquots immediately (T=0 baseline).

- Step 3: Store the remaining aliquots under defined conditions (e.g., room temperature, 4°C).

- Step 4: Analyze stored aliquots at pre-defined time points (e.g., T=1 hour, 2 hours, 4 hours, 8 hours).

- Step 5: For each time point, calculate the percentage difference or bias from the T=0 baseline measurement for each specimen.

4. Data Analysis:

- Use a Bland-Altman plot to visualize the mean bias and limits of agreement against the T=0 values at each time point [6].

- The stability threshold is the longest time point before the mean bias and its confidence interval exceed a pre-defined, clinically acceptable limit.
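The data analysis steps of the stability protocol can be sketched as follows. This is a minimal example under stated assumptions: the confidence interval uses a normal approximation (a t-based interval would be somewhat wider for small n), the function names are hypothetical, and the measurements are invented purely for illustration.

```python
import math
from statistics import NormalDist, mean, stdev


def percent_bias_summary(baseline, stored, confidence=0.95):
    """Mean percent difference from T=0 with an approximate confidence interval."""
    pct = [100.0 * (s - b) / b for b, s in zip(baseline, stored)]
    z = NormalDist().inv_cdf(0.5 + confidence / 2)
    half_width = z * stdev(pct) / math.sqrt(len(pct))
    m = mean(pct)
    return m, m - half_width, m + half_width


def stability_threshold(baseline, by_time, limit_pct):
    """Longest time point reached before the bias CI first leaves +/- limit_pct.

    Returns None if even the earliest time point exceeds the limit.
    """
    last_ok = None
    for t in sorted(by_time):
        _, low, high = percent_bias_summary(baseline, by_time[t])
        if low < -limit_pct or high > limit_pct:
            break
        last_ok = t
    return last_ok


# Invented example data: five specimens measured at T=0 and after 1, 2, 4, 8 hours.
baseline = [50.0, 100.0, 150.0, 200.0, 250.0]
by_time = {
    1: [49.8, 99.5, 149.6, 199.0, 249.2],
    2: [49.5, 98.8, 148.5, 197.6, 247.5],
    4: [48.0, 96.5, 144.0, 192.0, 241.0],
    8: [46.0, 92.5, 138.0, 185.0, 230.0],
}
stable_until = stability_threshold(baseline, by_time, limit_pct=5.0)
print(f"Stable up to {stable_until} h for a 5% acceptance limit")
```

The acceptance limit (`limit_pct`) stands in for the pre-defined, clinically acceptable limit mentioned in the protocol and must be chosen before the study.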

Protocol for Implementing the Time Period Factor

1. Objective: To integrate a multi-day experimental timeline into a method comparison study.

2. Materials:

- Scheduled access to both the test and comparative method instruments.

- A pool of available patient specimens.

3. Procedure:

- Step 1: In the study protocol, schedule a minimum of 5 different days for analysis over a 2-to-4-week period [5].

- Step 2: Each day, select 2-5 patient specimens that are representative of the laboratory's workload.

- Step 3: Analyze the selected specimens by both the test and comparative methods on the same day, following the established stability guidelines.

- Step 4: Repeat this process until the target number of specimens (e.g., 40) is accumulated across all days.

4. Data Analysis:

- The data set will inherently include variance from different calibration events, operators, and environmental conditions, providing a more realistic estimate of long-term systematic error.

Protocol for Implementing Duplicate Measurements

1. Objective: To verify the repeatability of measurements and identify procedural errors.

2. Materials:

- Patient specimens (aliquoted into separate cups for duplicates).

- Data recording system (LIMS or spreadsheet).

3. Procedure:

- Step 1: For each patient specimen, prepare two separate aliquots (different cups).

- Step 2: At a minimum, analyze the two aliquots in the same run but in a different, randomized order. Ideally, analyze them in two different analytical runs [5].

- Step 3: For both the test and comparative methods, record the results from the first and second measurements separately.

4. Data Analysis:

- Calculate the difference between duplicate measurements for each method and specimen.

- Establish acceptability criteria for within-duplicate difference (e.g., based on within-run precision).

- Flag any duplicate pair that exceeds these criteria for investigation. This process helps confirm that large differences between methods are real and not due to a single erroneous measurement.
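The duplicate check described above can be sketched in a few lines. The acceptance limit used here, 1.96 × √2 × within-run SD (about 2.77 SD), is one common way to derive a limit from within-run precision and is an assumption of this example rather than a requirement of the protocol; the function name and data are hypothetical.

```python
import math


def flag_discrepant_duplicates(first, second, within_run_sd, z=1.96):
    """Return indices of duplicate pairs whose absolute difference exceeds
    z * sqrt(2) * within_run_sd (about 2.77 SD when z = 1.96)."""
    limit = z * math.sqrt(2) * within_run_sd
    return [
        i
        for i, (a, b) in enumerate(zip(first, second))
        if abs(a - b) > limit
    ]


# Invented duplicate results for one method; the third pair looks like a mix-up.
first_run = [4.1, 5.6, 7.2, 9.8, 12.4]
second_run = [4.2, 5.5, 8.9, 9.9, 12.3]
flagged = flag_discrepant_duplicates(first_run, second_run, within_run_sd=0.15)
print("Specimens to investigate (0-based index):", flagged)
```

The same function would be applied separately to the test method and the comparative method so that a discrepant pair can be traced to the method that produced it.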

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 2: Key Materials and Reagents for Method Comparison Studies

| Item | Function in Pre-Experimental Context |

|---|---|

| Characterized Patient Pools | Pre-tested, well-mixed patient serum/plasma pools with target values assigned. Used for verifying method performance and as quality controls during the comparison study. |

| Stabilizing Reagents | Preservatives (e.g., sodium azide), protease inhibitors, or anticoagulants (e.g., EDTA, heparin) added to specimens to maintain analyte stability throughout the testing period [5]. |

| Standard Reference Materials (SRMs) | Materials certified by a standards body (e.g., NIST). Used to validate the accuracy of the comparative method and to establish traceability, strengthening the assumption of its correctness [5]. |

| Aliquoting Tubes | Low-adsorption, barcoded tubes for partitioning patient specimens into multiple identical aliquots. Essential for stability studies and for creating true duplicate samples for analysis. |

The pre-experimental phase of a method comparison study is a foundational element that cannot be separated from the analytical results. As demonstrated, rigorous control of specimen stability, a sufficiently long time period, and the use of duplicate measurements are not optional best practices but are essential requirements for minimizing bias and producing a reliable estimate of systematic error [5] [21] [22]. By adhering to the detailed protocols and comparisons provided in this guide, researchers and drug development professionals can design studies whose conclusions are valid, defensible, and fit for informing critical decisions in laboratory medicine and product development.

Executing the Experiment: From Data Collection to Statistical Analysis

Method comparison studies are fundamental to assessing systematic error (bias) when introducing new measurement procedures in research and clinical practice. Before complex statistical analyses, graphical data inspection provides an intuitive, powerful first step for identifying patterns, outliers, and potential biases between methods. Visual examination of difference plots and comparison plots enables researchers to quickly assess the degree of agreement between an established method and a new method, forming the critical initial phase of systematic error assessment [6] [23].

These visualization techniques transform abstract numerical data into accessible visual patterns, allowing immediate detection of problematic measurements that might otherwise obscure analysis. When properly implemented within a rigorous method comparison framework, graphical inspection serves as both a quality control checkpoint during data collection and a foundational analytical tool that guides subsequent statistical evaluation [5] [6]. This guide examines the complementary roles of difference plots and comparison plots, providing detailed methodologies for their implementation in systematic error assessment research.

Understanding Comparison Plots

Definition and Purpose

A comparison plot (also known as a scatter plot or correlation plot) displays paired measurements obtained from two methods simultaneously, with the reference method values on the x-axis and the test method values on the y-axis [23]. This visualization provides a comprehensive overview of the analytical range covered by the data, reveals the linearity of response across this range, and illustrates the general relationship between methods through the angle and position of the data cluster [5].

The primary strength of comparison plots lies in their ability to visualize the overall agreement pattern across the entire measurement spectrum. Each point on the plot represents a single paired measurement, creating an immediate visual impression of method concordance [23]. When the two methods agree perfectly, all points fall along the line of identity (a 45-degree line through the origin). Deviations from this line indicate potential disagreements that warrant further investigation [23].

Construction Methodology

Step-by-Step Protocol:

- Data Preparation: Collect a minimum of 40 paired measurements from patient samples covering the entire clinically meaningful measurement range [6] [23]. For duplicate measurements, use the mean value for plotting [23].

- Axis Configuration: Plot the values from the reference or established method on the x-axis and values from the new test method on the y-axis [23].

- Reference Line: Add the line of identity (y = x) as a visual reference for perfect agreement [23].

- Visual Inspection: Examine the scatter of points for gaps in the measurement range, outliers, and systematic patterns in the discrepancies [23].

Table 1: Key Components of a Comparison Plot

| Component | Description | Purpose |

|---|---|---|

| X-axis Values | Measurements from reference method | Serves as comparison baseline |

| Y-axis Values | Measurements from test method | Represents new method performance |

| Line of Identity | Straight line with slope = 1, intercept = 0 | Visual reference for perfect agreement |

| Data Points | Paired measurements from both methods | Reveals agreement patterns and outliers |

Interpretation Guidelines

When interpreting comparison plots, researchers should assess:

- Data Distribution: Check whether points adequately cover the analytical range without significant gaps [23].

- Overall Pattern: Determine if points scatter randomly around the line of identity or show systematic deviations [5].

- Proportional Effects: Observe if discrepancies between methods widen or narrow as the measurement value increases [5].

- Outliers: Identify points that fall far from the main data cluster that may represent measurement errors or special cases [23].

A well-constructed comparison plot immediately reveals whether two methods show one-to-one agreement or exhibit systematic differences that require further quantification [5].

Understanding Difference Plots

Definition and Purpose

Difference plots (specifically Bland-Altman plots) visualize the agreement between two methods by plotting the differences between paired measurements against their averages [6] [23]. This approach shifts focus from the actual measured values to the discrepancies between methods, making it particularly effective for identifying systematic biases and their behavior across the measurement range [6].

In this visualization, the x-axis represents the average of the two measurements, (Method A + Method B)/2, while the y-axis shows the difference between them, Method B - Method A [6]. The plot includes horizontal lines representing the mean difference (bias) and limits of agreement (bias ± 1.96 × standard deviation of the differences), which estimate the range where most differences between the two methods lie [6].

Construction Methodology

Step-by-Step Protocol:

- Calculate Averages: For each pair of measurements, compute the average of the two methods' values.

- Compute Differences: For each pair, subtract the reference method value from the test method value.

- Plot Configuration: Place averages on the x-axis and differences on the y-axis.

- Reference Lines: Add horizontal lines for the mean difference (bias) and the upper and lower limits of agreement (bias ± 1.96SD) [6].

- Zero Line: Include a horizontal line at zero for visual reference.

Table 2: Key Components of a Difference Plot

| Component | Description | Purpose |

|---|---|---|

| X-axis Values | Average of paired measurements, (Test + Reference)/2 | Represents magnitude of measurement |

| Y-axis Values | Difference between methods, Test - Reference | Quantifies disagreement between methods |

| Mean Difference | Average of all differences (bias) | Estimates systematic error |

| Limits of Agreement | Bias ± 1.96 × SD of differences | Range expected to contain about 95% of differences, assuming the differences are approximately normally distributed |

| Zero Reference Line | Horizontal line at y=0 | Visual reference for no difference |
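The quantities listed in the construction steps and in Table 2 can be computed directly from the paired results. The following is a minimal sketch using only the Python standard library; the function name `bland_altman` and the example data are hypothetical, and plotting is left to whichever graphics library is in use.

```python
from statistics import mean, stdev


def bland_altman(reference, test):
    """Compute the coordinates and reference lines of a difference plot."""
    averages = [(t + r) / 2 for r, t in zip(reference, test)]
    differences = [t - r for r, t in zip(reference, test)]  # test minus reference
    bias = mean(differences)
    sd = stdev(differences)  # sample standard deviation of the differences
    return {
        "averages": averages,        # x-axis values
        "differences": differences,  # y-axis values
        "bias": bias,                # mean difference line
        "lower_loa": bias - 1.96 * sd,
        "upper_loa": bias + 1.96 * sd,
    }


# Invented paired results for ten specimens.
reference = [10.0, 20.0, 30.0, 40.0, 50.0, 60.0, 70.0, 80.0, 90.0, 100.0]
test = [11.0, 22.0, 33.0, 42.0, 51.0, 62.0, 73.0, 82.0, 91.0, 103.0]
ba = bland_altman(reference, test)
print(f"bias = {ba['bias']:.2f}, "
      f"limits of agreement = {ba['lower_loa']:.2f} to {ba['upper_loa']:.2f}")
```

For this invented data set the bias is 2.00 units and the limits of agreement are roughly 0.40 to 3.60 units.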

Interpretation Guidelines

When interpreting difference plots, researchers should assess:

- Bias Direction and Magnitude: Determine if the mean difference line lies above or below zero and how far it deviates [6].

- Uniform Variance: Check if the spread of differences remains consistent across the measurement range (homoscedasticity).

- Relationship with Magnitude: Identify if differences increase or decrease as the measurement average increases.

- Outliers: Identify points outside the limits of agreement that may represent special cases or errors [6].

- Clinical Significance: Evaluate whether the observed bias and agreement limits are clinically acceptable for the intended use.
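One way to support the "Relationship with Magnitude" check numerically is to regress the differences on the averages: a slope near zero is consistent with a constant bias, while a clearly non-zero slope suggests that the bias changes with concentration. The sketch below assumes ordinary least squares and hypothetical names; it reports the slope only and does not test its statistical significance.

```python
from statistics import mean


def difference_trend(reference, test):
    """Least-squares slope and intercept of (test - reference) versus pair averages."""
    avg = [(t + r) / 2 for r, t in zip(reference, test)]
    diff = [t - r for r, t in zip(reference, test)]
    x_bar, y_bar = mean(avg), mean(diff)
    sxx = sum((x - x_bar) ** 2 for x in avg)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(avg, diff))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    return slope, intercept


reference = [10.0, 20.0, 40.0, 60.0, 80.0, 100.0]

# Invented case 1: test reads a constant 2 units high (constant bias).
slope_const, intercept_const = difference_trend(reference, [r + 2.0 for r in reference])

# Invented case 2: test reads 10% high at every level (proportional bias).
slope_prop, intercept_prop = difference_trend(reference, [1.1 * r for r in reference])

print(f"constant case: slope = {slope_const:.3f}, intercept = {intercept_const:.3f}")
print(f"proportional case: slope = {slope_prop:.3f}, intercept = {intercept_prop:.3f}")
```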

The following workflow diagram illustrates the decision process for interpreting difference plots in method comparison studies:

Direct Comparison: Difference Plots vs. Comparison Plots

Side-by-Side Comparison

Table 3: Comprehensive Comparison of Difference Plots and Comparison Plots

| Characteristic | Difference Plots | Comparison Plots |

|---|---|---|

| Primary Purpose | Visualize agreement and bias between methods [6] | Display relationship and correlation between methods [5] |

| Variables Plotted | Differences vs. averages of paired measurements [6] | Test method vs. reference method values [23] |

| Bias Detection | Direct visualization of mean difference and its pattern [6] | Indirect assessment through deviation from identity line [5] |

| Range Assessment | Shows how agreement varies with measurement magnitude [6] | Reveals coverage of analytical measurement range [23] |

| Statistical Measures | Mean difference (bias), limits of agreement [6] | Correlation coefficient, visual linearity [23] |

| Outlier Detection | Identifies points outside agreement limits [6] | Reveals points distant from main data cluster [23] |

| Interpretation Focus | Magnitude and pattern of disagreements [6] | Overall relationship and proportional effects [5] |

| Common Applications | Clinical method comparison, bias assessment [6] [23] | Initial data exploration, range verification [5] |

Complementary Applications in Research

Difference plots and comparison plots serve complementary roles in method comparison studies:

Comparison plots excel during initial data collection by revealing whether the sample adequately covers the analytical range and highlighting gross discrepancies that may require immediate re-measurement [5] [23]. They are particularly valuable for identifying gaps in the measurement range that might limit the reliability of subsequent statistical analyses [23].

Difference plots provide more nuanced information about the nature and magnitude of systematic error, distinguishing between constant and proportional bias [6]. The visualization of differences against averages directly reveals whether the disagreement between methods remains consistent or changes across the measurement spectrum [6].

The following diagram illustrates the integrated workflow for utilizing both visualization types in a complete method comparison study:

Experimental Protocols for Systematic Error Assessment

Method Comparison Study Design

Robust graphical analysis requires a properly designed method comparison experiment. Key design considerations include:

Sample Selection: Use 40-100 patient specimens carefully selected to cover the entire working range of the method and represent the spectrum of diseases expected in routine application [5] [23]. Specimen quality and range coverage are more critical than simply maximizing sample size [5].

Measurement Timing: Analyze specimens simultaneously by both methods whenever possible, with randomization of measurement order to minimize time-dependent biases [6]. For stable analytes, measurements within 2 hours may be acceptable [6].

Replication Strategy: Perform duplicate measurements to minimize random variation effects and identify measurement errors [5] [23]. Use mean values from replicates for plotting and analysis [23].

Study Duration: Conduct measurements over multiple days (minimum 5 days) to capture typical between-run variation and minimize the impact of single-day anomalies [5] [6].

Data Collection and Quality Control

Implement rigorous quality control procedures during data collection:

- Sample Stability: Establish and adhere to strict specimen handling protocols to prevent artifacts from improper processing or storage [5].

- Blinding: Measure samples without knowledge of paired results to prevent conscious or unconscious bias in measurement or recording.

- Real-time Visualization: Create preliminary plots during data collection to identify discrepant results while specimens are still available for re-analysis [5] [23].

Essential Research Reagent Solutions

Table 4: Key Materials and Reagents for Method Comparison Studies

| Reagent/Material | Function | Application Notes |

|---|---|---|

| Certified Reference Materials | Provides samples with known analyte concentrations for bias estimation [17] | Essential for establishing trueness and calibrating measurements |

| Quality Control Samples | Monitors precision and detects systematic errors over time [17] | Use at multiple concentration levels; plot via Levey-Jennings charts |

| Patient Specimens | Source of biologically relevant matrix for method comparison [5] [6] | Select to cover clinical range with various disease states |

| Calibrators | Establishes quantitative relationship between signal and concentration | Matrix-matched to patient samples when possible |

| Statistical Software | Performs complex calculations and generates standardized plots [6] | Specialized packages (MedCalc) or programming (R) with visualization libraries |

Difference plots and comparison plots serve as fundamental, complementary tools in the initial assessment of systematic error during method comparison studies. While comparison plots provide an excellent overview of the measurement range and general relationship between methods, difference plots offer superior visualization of the magnitude, pattern, and clinical significance of systematic biases [5] [6] [23].

Used together within a rigorously designed method comparison experiment, these graphical techniques form an essential first step in systematic error assessment, guiding researchers toward appropriate statistical analyses and evidence-based decisions about method interchangeability. Their visual nature makes complex data patterns accessible, facilitating immediate quality assessment during data collection and providing intuitive summaries for research reporting and publication [6] [23].

In systematic error assessment research, selecting the appropriate statistical methodology is paramount for drawing valid conclusions about method comparability. The paired t-test and linear regression represent two fundamental analytical approaches with distinct applications in method-comparison studies. While the paired t-test evaluates whether the mean difference between paired measurements equals zero, linear regression characterizes the relationship between two methods across a concentration range, quantifying both constant and proportional errors [24] [6]. This guide provides an objective comparison of these statistical tools based on data characteristics and analytical requirements, supported by experimental data and implementation protocols.

Statistical Tool Comparison: Paired t-test vs. Linear Regression

Fundamental Definitions and Applications

Paired t-test (also known as dependent samples t-test) assesses whether the mean difference between paired measurements is statistically significantly different from zero [24] [25]. This method is best suited to focused comparisons at a single medical decision level, where one average bias estimate is sufficient.

Linear regression in method-comparison studies establishes a functional relationship between measurements from two methods, providing estimates of systematic error at multiple decision levels through the regression equation Y = a + bX, where the y-intercept 'a' estimates constant bias and the deviation of the slope 'b' from 1 estimates proportional bias [6] [26].

Table 1: Core Applications and Outputs of Each Statistical Method

| Feature | Paired t-test | Linear Regression |

|---|---|---|

| Primary Purpose | Tests if mean paired difference equals zero | Models relationship between two methods across concentrations |

| Error Components | Provides single estimate of average bias (systematic error) | Separates constant error (y-intercept) and proportional error (slope) |

| Data Range Utility | Single medical decision level | Multiple medical decision levels across analytical range |

| Key Assumptions | Normally distributed differences; paired measurements; independent subjects [24] [25] | Linear relationship; normally distributed residuals; homoscedasticity |

| Interpretation Focus | Statistical significance of mean difference | Systematic error estimation at critical decision concentrations |

Decision Framework: Data Range and Medical Decision Levels

The choice between paired t-test and linear regression hinges principally on the number of medically relevant decision concentrations and the data range covered in the study.

Single Medical Decision Level: When method comparison focuses on a single critical medical decision concentration, the paired t-test provides a straightforward, appropriate analysis [26]. Specimens should be collected around this decision level, and the estimate of systematic error (bias) is derived from the average difference between paired measurements.

Multiple Medical Decision Levels: When clinical interpretation occurs at multiple decision concentrations across an analytical range, linear regression becomes necessary [26]. This approach requires specimens covering the entire expected physiological range, enabling estimation of systematic error at each medical decision level through the regression equation.

The correlation coefficient (r) serves as a practical indicator for assessing whether the data range is sufficient for reliable regression analysis. When r ≥ 0.99, ordinary linear regression typically provides reliable estimates of slope and intercept. When r < 0.975, the data range may be insufficient, necessitating data improvement or alternative statistical approaches [26].
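The range check based on r can be expressed as a small helper. This is a sketch only: the thresholds 0.99 and 0.975 come from the text above, while the wording returned for values between the two thresholds is an assumption of this example, since the text does not say how that zone should be handled. Function names are hypothetical.

```python
import math
from statistics import mean


def pearson_r(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    x_bar, y_bar = mean(x), mean(y)
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    syy = sum((yi - y_bar) ** 2 for yi in y)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    return sxy / math.sqrt(sxx * syy)


def regression_range_check(r):
    """Map r to the guidance given in the text (middle zone is an assumption)."""
    if r >= 0.99:
        return "ordinary linear regression"
    if r < 0.975:
        return "improve data range or use an alternative approach"
    return "borderline: review the data range before relying on regression"


# Invented paired results covering a wide range.
comparative = [20.0, 55.0, 90.0, 140.0, 200.0, 260.0]
test = [22.0, 54.0, 95.0, 141.0, 207.0, 263.0]
r = pearson_r(comparative, test)
print(f"r = {r:.4f}: {regression_range_check(r)}")
```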

Experimental Protocols for Method-Comparison Studies

Paired t-Test Methodology

Experimental Design Considerations:

- Sample Size: A minimum of 40 patient specimens is recommended, though carefully selected specimens based on concentration may provide better information than randomly selected specimens [5].

- Paired Measurements: Each subject or specimen is measured by both methods being compared, with simultaneous sampling to ensure the same underlying value is being measured [6].

- Data Collection: Record paired results for each specimen, ensuring proper blinding and randomization of measurement order to minimize systematic bias.

Analysis Protocol:

- Calculate differences between paired measurements (Method B - Method A)

- Verify normality of differences using histograms, Q-Q plots, or formal normality tests [24]

- Compute mean difference (bias) and standard deviation of differences

- Calculate t-statistic: t = (mean difference) / (standard error of the mean difference), where the standard error is the standard deviation of the differences divided by √n

- Compare calculated t-value to critical t-value with n-1 degrees of freedom

- Interpret results: Significant p-value (typically <0.05) indicates mean difference ≠ 0
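The analysis protocol above can be sketched with the standard library alone. Because the standard library has no t-distribution, the critical value is passed in by the caller; 2.262 is the two-sided 5% critical value for 9 degrees of freedom, matching the ten invented pairs used here. The function name and data are hypothetical, and the normality check from the protocol is not included.

```python
import math
from statistics import mean, stdev


def paired_t(method_a, method_b, t_crit):
    """Paired t-test summary: bias, SD of differences, t statistic, and CI."""
    diffs = [b - a for a, b in zip(method_a, method_b)]  # Method B - Method A
    n = len(diffs)
    bias = mean(diffs)
    sd = stdev(diffs)
    se = sd / math.sqrt(n)  # standard error of the mean difference
    t_stat = bias / se
    return {
        "bias": bias,
        "sd": sd,
        "t": t_stat,
        "df": n - 1,
        "ci": (bias - t_crit * se, bias + t_crit * se),
        "significant": abs(t_stat) > t_crit,
    }


# Invented paired results for ten specimens near one decision level.
method_a = [10.0, 20.0, 30.0, 40.0, 50.0, 60.0, 70.0, 80.0, 90.0, 100.0]
method_b = [11.0, 22.0, 33.0, 42.0, 51.0, 62.0, 73.0, 82.0, 91.0, 103.0]
result = paired_t(method_a, method_b, t_crit=2.262)
print(f"bias = {result['bias']:.2f}, t = {result['t']:.2f}, df = {result['df']}")
print(f"95% CI for bias: {result['ci'][0]:.2f} to {result['ci'][1]:.2f}")
```

A statistically significant result only indicates that the mean difference is unlikely to be zero; whether the bias matters still depends on the clinically acceptable limit.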

Interpretation Guidelines: The calculated bias represents the average systematic error between methods. The standard deviation of differences reflects random variation between methods. The 95% confidence interval for the mean difference provides a range of plausible values for the population bias [25].

Linear Regression Methodology

Experimental Design Considerations:

- Sample Size: 40-100 specimens minimum, with 100-200 recommended when assessing method specificity with different measurement principles [5]

- Concentration Range: Specimens should cover the entire working range of the method, representing the spectrum of diseases expected in routine application [5] [6]

- Measurement Protocol: Analyze specimens in random order across multiple days (minimum 5 days) to incorporate routine analytical variation [5]

Analysis Protocol:

- Plot test method results (Y-axis) versus comparative method results (X-axis)

- Visually inspect for linearity, outliers, and constant variance

- Calculate regression statistics: slope (b), y-intercept (a), and standard error of estimate (s_y/x)

- Assess correlation coefficient (r) to evaluate data range adequacy

- Estimate systematic error at medical decision concentrations (Xc) using: SE = (a + bXc) - Xc [26]

- Evaluate residuals to verify model assumptions

Interpretation Guidelines: The y-intercept (a) estimates constant systematic error, while the slope (b) estimates proportional systematic error. The standard error of estimate (s_y/x) quantifies random error around the regression line. When the correlation coefficient is at least 0.99, regression parameters are generally reliable; below 0.975, consider data improvement or alternative regression techniques [26].
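
The regression statistics in this protocol can be computed directly from their definitions. The sketch below uses ordinary least squares with the test method on Y and the comparative method on X. The function names `ols_comparison` and `systematic_error` and the sample data are assumptions made for illustration, and plotting and residual inspection are left out.

```python
import math

def ols_comparison(x, y):
    """Ordinary least-squares statistics for a comparison plot.

    x: comparative method results, y: test method results.
    Returns (a, b, s_yx, r): intercept, slope, standard error of
    estimate, and correlation coefficient.
    """
    n = len(x)
    if n != len(y) or n < 3:
        raise ValueError("need at least 3 paired results of equal length")
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    syy = sum((yi - my) ** 2 for yi in y)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    if sxx == 0 or syy == 0:
        raise ValueError("results must vary for regression to be defined")
    b = sxy / sxx                      # slope: proportional error if != 1
    a = my - b * mx                    # intercept: constant error if != 0
    resid_ss = sum((yi - (a + b * xi)) ** 2 for xi, yi in zip(x, y))
    s_yx = math.sqrt(resid_ss / (n - 2))  # scatter about the regression line
    r = sxy / math.sqrt(sxx * syy)
    return a, b, s_yx, r

def systematic_error(a, b, xc):
    """SE = (a + b*Xc) - Xc at a medical decision concentration Xc."""
    return (a + b * xc) - xc

# Hypothetical paired results in mg/dL
x = [52, 75, 98, 120, 148, 180, 210, 245, 280, 310]
y = [55, 78, 104, 125, 155, 187, 219, 254, 291, 320]
a, b, s_yx, r = ols_comparison(x, y)
print(f"a = {a:.2f}, b = {b:.3f}, s_y/x = {s_yx:.2f}, r = {r:.4f}")
print(f"SE at Xc = 200: {systematic_error(a, b, 200):.2f}")
```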

Quantitative Comparison of Performance

Table 2: Statistical Performance Metrics Under Different Data Conditions

| Data Characteristic | Paired t-test Performance | Linear Regression Performance |
|---|---|---|
| Narrow concentration range (r < 0.975) | Reliable bias estimate at mean concentration | Unreliable slope and intercept estimates |
| Wide concentration range (r ≥ 0.99) | Limited to average bias across range | Excellent characterization of concentration-dependent errors |
| Single decision level | Optimal efficiency and interpretation | Unnecessarily complex; provides no advantage |
| Multiple decision levels | Inadequate; cannot estimate errors at different concentrations | Essential for comprehensive error assessment |
| Presence of proportional error | Detects net bias but cannot characterize error type | Explicitly quantifies proportional error through slope deviation from 1 |

Research demonstrates that when the medical decision level coincides with the mean of the comparison data, both paired t-test and linear regression provide identical estimates of systematic error [26]. This equivalence occurs because the regression line must pass through the mean of both methods' data, making the systematic error estimate at the mean concentration equal to the simple average difference between methods.
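
The equivalence follows in one step from the least-squares intercept. As a short derivation, with X̄ and Ȳ denoting the means of the comparative and test method results and d̄ the mean paired difference (symbols introduced here for illustration):

```latex
\begin{aligned}
a &= \bar{Y} - b\,\bar{X} \\
SE(\bar{X}) &= (a + b\,\bar{X}) - \bar{X}
             = \bar{Y} - \bar{X}
             = \frac{1}{n}\sum_{i=1}^{n} (Y_i - X_i)
             = \bar{d}
\end{aligned}
```

The slope b cancels, so the result holds whatever proportional error is present. Away from the mean, the two estimates diverge by (b - 1)(Xc - X̄).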

Visual Decision Framework and Analytical Workflows

Figure 1: Statistical Method Selection Based on Medical Decision Requirements

Figure 2: Analytical Workflows for Paired t-Test and Linear Regression

Research Reagent Solutions for Method-Comparison Studies

Table 3: Essential Materials and Analytical Requirements

| Reagent/Resource | Function in Method Comparison | Specification Guidelines |
|---|---|---|
| Certified Reference Materials | Provides true value for accuracy assessment | Traceable to international standards; covers medical decision levels |
| Patient Specimens | Natural matrix for realistic performance evaluation | 40-200 specimens; covers analytical measurement range |
| Quality Control Materials | Monitors precision and stability during study | At least two concentration levels (normal and abnormal) |
| Statistical Software | Calculates bias, regression parameters, and confidence intervals | Capable of paired t-tests, linear regression, and Bland-Altman analysis |
| Calibrators | Establishes measurement traceability and scale | Commutable with patient samples; value-assigned by reference method |

The selection between paired t-test and linear regression in method-comparison studies depends fundamentally on the study objectives related to data range and medical decision levels. For studies focused on a single medical decision concentration, the paired t-test provides a statistically powerful, straightforward approach to assess average systematic error. For comprehensive evaluation across multiple decision levels covering the analytical measurement range, linear regression is indispensable for characterizing both constant and proportional errors. Researchers should align their statistical approach with these methodological considerations to ensure appropriate quantification of systematic error in method-comparison experiments.

Calculating Systematic Error at Critical Medical Decision Concentrations using Regression Statistics

In the field of clinical laboratory science and drug development, the verification of analytical method accuracy is paramount. The comparison of methods experiment serves as a critical procedure for estimating inaccuracy or systematic error when introducing a new measurement technique [5]. This process involves analyzing patient samples using both a new test method and an established comparative method, then calculating the systematic differences observed between them. The core objective is to quantify the systematic errors that occur at critical medical decision concentrations: those specific analyte levels at which clinical interpretation directly impacts patient diagnosis, treatment, or monitoring [5] [26].

Understanding the nature and magnitude of systematic error is essential for ensuring that laboratory results remain clinically reliable. Systematic error, often referred to as bias, represents a consistent deviation of test results from the true value [26]. This error can manifest in different forms: constant systematic error, which remains the same regardless of analyte concentration, and proportional systematic error, which changes in proportion to the concentration level [27]. Through appropriate experimental design and statistical analysis, particularly regression techniques, researchers can not only quantify the total systematic error but also discern its constant and proportional components, providing valuable insights for method improvement and calibration [5] [27].

Theoretical Foundation of Regression Analysis for Error Quantification

The Regression Model in Method Comparison

Regression analysis provides a mathematical framework for modeling the relationship between measurements obtained by two different methods. When comparing a test method (Y) to a comparative method (X), linear regression generates an equation of the form Y = a + bX, where 'b' represents the slope and 'a' represents the y-intercept [5] [27]. This equation creates a predictive line that characterizes the systematic relationship between the methods across the analytical range.

The slope (b) of the regression line primarily indicates the presence of proportional systematic error. An ideal slope of 1.00 signifies perfect proportionality between the methods, while deviations from this value indicate proportional error that increases with concentration [27]. The y-intercept (a) reveals constant systematic error, representing a fixed difference between methods that persists even at zero concentration [27]. Ideally, the intercept should be zero, indicating no constant error component.

Estimating Systematic Error at Medical Decision Concentrations

The critical application of regression statistics in method validation lies in estimating systematic error at medically important decision levels. For a given medical decision concentration (Xc), the corresponding value from the test method (Yc) is calculated using the regression equation: Yc = a + bXc [5]. The systematic error (SE) at that decision level is then determined by: SE = Yc - Xc [5] [27].

This approach is particularly valuable when multiple medical decision concentrations exist within the analytical range, as it allows researchers to evaluate method performance at each critical level rather than relying solely on an average bias estimate that might mask concentration-dependent errors [27]. For example, a glucose method might be assessed at hypoglycemic (50 mg/dL), fasting (110 mg/dL), and post-prandial (150 mg/dL) decision levels, with potentially different systematic errors at each point [27].

Experimental Protocol for Method Comparison Studies

Specimen Selection and Handling

A well-designed method comparison experiment requires careful attention to specimen selection, handling, and analysis protocols. The following table outlines key experimental considerations:

Table 1: Experimental Design Specifications for Method Comparison Studies

| Experimental Factor | Recommendation | Rationale |
|---|---|---|
| Number of Specimens | Minimum of 40 patient specimens [5] | Provides sufficient data points for reliable statistical analysis |
| Specimen Characteristics | Cover entire working range; represent spectrum of diseases [5] | Ensures evaluation across clinically relevant concentrations and conditions |
| Measurement Replication | Single or duplicate measurements per specimen [5] | Duplicates help identify sample mix-ups or transposition errors |
| Time Period | Minimum of 5 days, ideally 20 days [5] | Minimizes systematic errors that might occur in a single run |
| Specimen Stability | Analyze by both methods within 2 hours of each other [5] | Prevents differences due to specimen deterioration rather than method performance |

Comparative Method Selection

The choice of comparative method significantly influences the interpretation of results. A reference method with documented correctness through definitive method comparisons or traceable reference materials is ideal, as any differences can be attributed to the test method [5]. When using a routine method as the comparative method, differences must be interpreted more cautiously, as it may be unclear whether discrepancies originate from the test or comparative method [5]. In such cases, additional experiments like recovery and interference studies may be necessary to identify the source of inaccuracy.

Statistical Analysis and Data Interpretation

Graphical Data Analysis

Visual inspection of method comparison data represents a fundamental first step in analysis. Two primary graphing approaches are recommended:

- Comparison Plot: Displays test method results (Y) on the vertical axis versus comparative method results (X) on the horizontal axis [5]. This plot helps visualize the analytical range, linearity of response, and the general relationship between methods as shown by the angle of the regression line and its intercept with the y-axis [5].

- Difference Plot (Bland-Altman Plot): Shows the difference between test and comparative results (Y-X) on the y-axis versus the comparative result (or average of both methods) on the x-axis [5] [26]. This visualization helps identify patterns in differences across concentrations and reveals constant or proportional systematic errors [5].

Graphical analysis should be performed during data collection to immediately identify discrepant results that might require repeat analysis while specimens are still available [5].
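
The points for a difference plot can be prepared before any plotting library is involved. The sketch below computes the x and y coordinates, the mean difference, and the conventional 95% limits of agreement (bias ± 1.96 SD). The limits are a standard Bland-Altman convention not spelled out in the text above, and the function name `bland_altman` and the sample data are assumptions made for illustration.

```python
import statistics

def bland_altman(test, comp):
    """Coordinates and summary lines for a difference (Bland-Altman) plot.

    Returns (means, diffs, bias, limits) where means are the x-values
    (average of both methods), diffs are the y-values (test - comparative),
    and limits are the conventional 95% limits of agreement.
    """
    if len(test) != len(comp) or len(test) < 2:
        raise ValueError("need at least 2 paired results of equal length")
    diffs = [t - c for t, c in zip(test, comp)]
    means = [(t + c) / 2 for t, c in zip(test, comp)]
    bias = statistics.mean(diffs)
    sd = statistics.stdev(diffs)
    limits = (bias - 1.96 * sd, bias + 1.96 * sd)
    return means, diffs, bias, limits

# Hypothetical paired results in mg/dL
comp = [52, 75, 98, 120, 148, 180, 210, 245, 280, 310]
test = [55, 78, 104, 125, 155, 187, 219, 254, 291, 320]
means, diffs, bias, limits = bland_altman(test, comp)
print(f"bias = {bias:.2f}, limits of agreement = ({limits[0]:.2f}, {limits[1]:.2f})")
```

In this hypothetical data set the differences grow with concentration, which is the pattern a difference plot would reveal as proportional error.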

Regression Statistics and Error Estimation

Figure: Statistical Analysis Workflow for Method Comparison (statistical analysis and systematic error estimation in method comparison studies)

The correlation coefficient (r) serves as an important indicator for determining the appropriate statistical approach. When r ≥ 0.99, the data range is typically sufficient for reliable ordinary linear regression analysis [26]. When r < 0.99, the data range may be too narrow, and alternatives such as improving the data range, using paired t-test statistics, or employing more sophisticated regression techniques (Deming or Passing-Bablok) should be considered [26].
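
As one illustration of the alternatives named above, Deming regression allows for measurement error in both methods. The sketch below uses the standard closed-form slope. The function name `deming` and the default `delta = 1.0` (equal error variances in the two methods) are assumptions made here. In practice, delta would be estimated from replicate measurements.

```python
import math

def deming(x, y, delta=1.0):
    """Deming regression of test method (y) on comparative method (x).

    delta: ratio of the y-method error variance to the x-method error
           variance (assumption: 1.0, i.e. equal imprecision).
    Returns (a, b): intercept and slope.
    """
    n = len(x)
    if n != len(y) or n < 3:
        raise ValueError("need at least 3 paired results of equal length")
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x) / (n - 1)
    syy = sum((yi - my) ** 2 for yi in y) / (n - 1)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y)) / (n - 1)
    if sxy == 0:
        raise ValueError("zero covariance; slope is undefined")
    diff = syy - delta * sxx
    b = (diff + math.sqrt(diff ** 2 + 4 * delta * sxy ** 2)) / (2 * sxy)
    a = my - b * mx
    return a, b

# Hypothetical paired results in mg/dL
x = [52, 75, 98, 120, 148, 180, 210, 245, 280, 310]
y = [55, 78, 104, 125, 155, 187, 219, 254, 291, 320]
a, b = deming(x, y)
print(f"Deming: a = {a:.2f}, b = {b:.3f}")
```

The systematic error at a decision concentration is then estimated from a and b exactly as with ordinary regression.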

Practical Application Example

Consider a cholesterol method comparison where regression analysis yields the equation: Y = 2.0 + 1.03X (y-intercept = 2.0 mg/dL, slope = 1.03) [5]. To estimate systematic error at the critical decision level of 200 mg/dL:

Yc = 2.0 + 1.03 × 200 = 208 mg/dL

Systematic Error = 208 - 200 = 8 mg/dL

This indicates that at the decision concentration of 200 mg/dL, the test method demonstrates a positive systematic error of 8 mg/dL [5]. The following table illustrates how to calculate and present systematic errors at multiple medical decision concentrations:

Table 2: Systematic Error Calculation at Medical Decision Concentrations

| Medical Decision Concentration (Xc) | Regression Equation | Calculated Yc | Systematic Error (SE) |
|---|---|---|---|
| 50 mg/dL | Y = 2.0 + 1.03X | 53.5 mg/dL | +3.5 mg/dL |
| 110 mg/dL | Y = 2.0 + 1.03X | 115.3 mg/dL | +5.3 mg/dL |
| 150 mg/dL | Y = 2.0 + 1.03X | 156.5 mg/dL | +6.5 mg/dL |
| 200 mg/dL | Y = 2.0 + 1.03X | 208.0 mg/dL | +8.0 mg/dL |
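
The table values can be reproduced with a few lines of Python. This is a minimal sketch using the regression equation from the example. The function name `systematic_error_table` is hypothetical.

```python
def systematic_error_table(a, b, decision_levels):
    """Return (Xc, Yc, SE) rows for each medical decision concentration."""
    rows = []
    for xc in decision_levels:
        yc = a + b * xc                # value predicted for the test method
        rows.append((xc, round(yc, 1), round(yc - xc, 1)))
    return rows

# Regression equation from the example: Y = 2.0 + 1.03X
rows = systematic_error_table(a=2.0, b=1.03, decision_levels=[50, 110, 150, 200])
for xc, yc, se in rows:
    print(f"Xc = {xc} mg/dL  Yc = {yc} mg/dL  SE = {se:+.1f} mg/dL")
```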

Error Components and Method Performance Characterization

Deconstructing Systematic Error

Regression analysis enables researchers to deconstruct systematic error into its fundamental components, providing insights into potential sources of inaccuracy:

- Constant Systematic Error (CE): Represented by the y-intercept (a) in the regression equation, this error remains consistent across all concentration levels [27]. Potential causes include methodological interferences, inadequate blank correction, or miscalibrated zero points [27].

- Proportional Systematic Error (PE): Indicated by deviations of the slope (b) from the ideal value of 1.00, this error changes in proportion to analyte concentration [27]. This often stems from calibration inaccuracies or matrix effects that vary with concentration [27].

The standard error of the estimate (s_y/x) quantifies random error between methods, incorporating imprecision from both methods plus any sample-specific variations [27].
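
The two components can be written out explicitly by rearranging the systematic error formula. As a brief worked example using the cholesterol regression from the practical application (a = 2.0 mg/dL, b = 1.03, Xc = 200 mg/dL), with CE and PE as the labels used in the list above:

```latex
\begin{aligned}
SE(X_c) &= (a + bX_c) - X_c = \underbrace{a}_{CE} + \underbrace{(b - 1)\,X_c}_{PE} \\
CE &= 2.0~\text{mg/dL} \\
PE &= (1.03 - 1) \times 200 = 6.0~\text{mg/dL} \\
SE(200) &= 2.0 + 6.0 = 8.0~\text{mg/dL}
\end{aligned}
```

At this decision level, three quarters of the total systematic error comes from the proportional component.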

Assessing Statistical Significance of Error Components