Absorption, Emission, and Scattering in Spectroscopy: A Guide for Pharmaceutical Scientists

Abstract

This article provides a comprehensive guide to the fundamental principles and practical applications of absorption, emission, and scattering phenomena in spectroscopy. Tailored for researchers and drug development professionals, it explores the theoretical underpinnings of light-matter interactions, details methodological approaches for pharmaceutical analysis, addresses common troubleshooting scenarios, and offers a comparative framework for technique selection. By integrating foundational science with real-world applications, this resource aims to enhance analytical capabilities in drug discovery, formulation, and quality control, supporting the advancement of both small-molecule and biologic therapies.

Core Principles: How Light Interacts with Matter in Pharmaceutical Analysis

Electromagnetic radiation is a form of energy that exhibits properties of both waves and particles, and its behavior is fundamental to spectroscopic analysis [1]. It consists of oscillating electric and magnetic fields that propagate through space, characterized by key properties such as velocity, amplitude, frequency, and wavelength [1]. The entire electromagnetic spectrum is organized by frequency or wavelength, divided into separate bands including radio waves, microwaves, infrared, visible light, ultraviolet, X-rays, and gamma rays [2]. Throughout most of this spectrum, spectroscopy serves as the primary technique to separate waves of different frequencies, measuring radiation intensity as a function of frequency or wavelength to study interactions between electromagnetic waves and matter [2].

The energy of a photon is directly proportional to its frequency and inversely proportional to its wavelength, as described by the equation \( E = hf = \frac{hc}{\lambda} \), where \( h \) is Planck's constant, \( c \) is the speed of light, \( f \) is frequency, and \( \lambda \) is wavelength [1] [2]. This relationship is crucial for understanding how different regions of the spectrum probe various molecular processes. When electromagnetic radiation interacts with single atoms and molecules, its effect depends significantly on the amount of energy per photon it carries [2]. The fundamental principle underlying all organic spectroscopy is that different compounds absorb and emit electromagnetic radiation at specific wavelengths characteristic of their molecular structure and chemical environment [3].
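
To make the energy relationship concrete, the following minimal Python sketch converts a wavelength into a photon frequency and a photon energy in electronvolts. The function names and the example wavelengths are illustrative assumptions, not part of the cited sources; the constants are the exact SI values.

```python
# Physical constants (exact SI values since the 2019 redefinition).
PLANCK_J_S = 6.62607015e-34       # Planck's constant h, in J*s
LIGHT_SPEED_M_S = 299_792_458.0   # speed of light c, in m/s
JOULE_PER_EV = 1.602176634e-19    # joules per electronvolt


def photon_frequency_hz(wavelength_nm: float) -> float:
    """Frequency f = c / lambda for a wavelength given in nanometres."""
    return LIGHT_SPEED_M_S / (wavelength_nm * 1e-9)


def photon_energy_ev(wavelength_nm: float) -> float:
    """Photon energy E = h*c / lambda, converted from joules to eV."""
    energy_joule = PLANCK_J_S * LIGHT_SPEED_M_S / (wavelength_nm * 1e-9)
    return energy_joule / JOULE_PER_EV


if __name__ == "__main__":
    # Illustrative wavelengths only (one UV and two visible-edge values).
    for nm in (254.0, 400.0, 700.0):
        f = photon_frequency_hz(nm)
        e = photon_energy_ev(nm)
        print(f"{nm:6.1f} nm  f = {f:.3e} Hz  E = {e:.2f} eV")
```

Shorter wavelengths give higher photon energies, which is why ultraviolet light can drive electronic transitions while infrared light typically excites only vibrations.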

Fundamental Interaction Mechanisms: Absorption, Emission, and Scattering

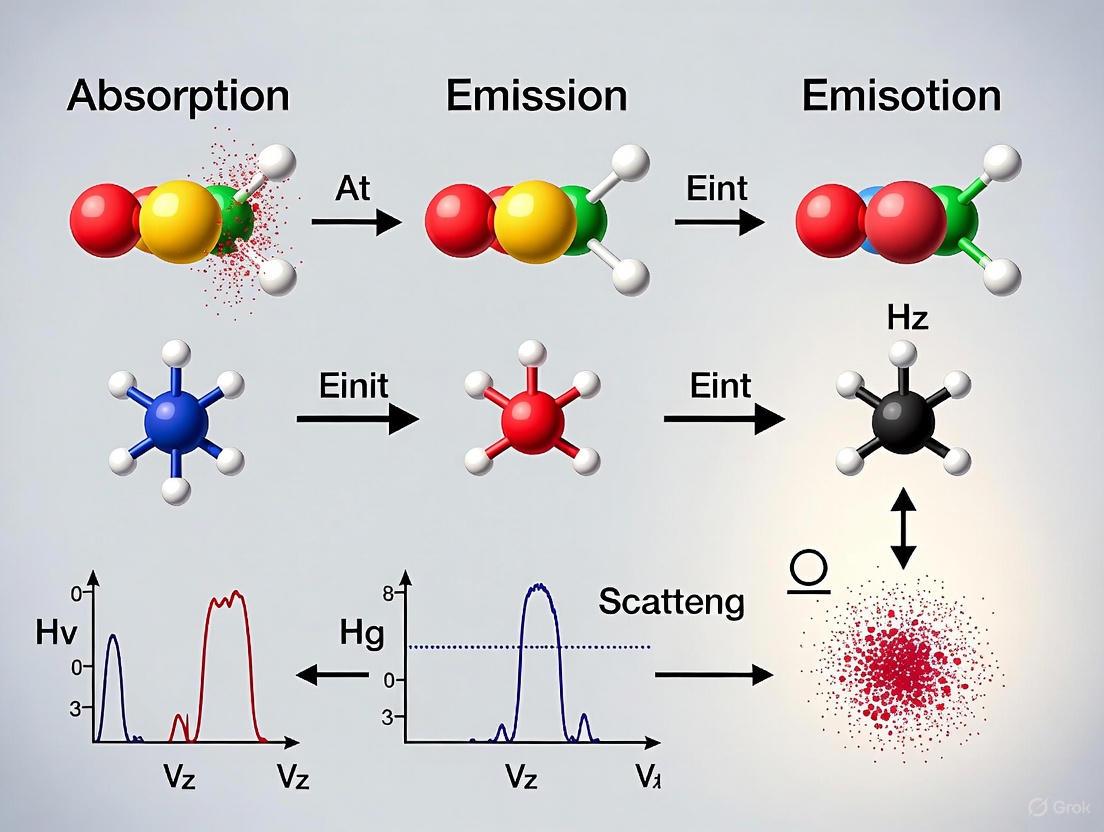

The interaction between matter and electromagnetic radiation occurs through three primary mechanisms: absorption, emission, and scattering. These processes reveal crucial information about molecular structure and energy states [4].

Absorption Processes

Absorption occurs when a molecule takes in energy from electromagnetic radiation, causing it to transition from a lower energy state to a higher energy state [4]. This process happens when the energy of the incident radiation matches the exact energy difference between two molecular energy states [4]. The probability of absorption is determined by the transition dipole moment, which depends on the change in the electronic, vibrational, or rotational state of the molecule [4]. The intensity of the absorbed radiation is proportional to the population of molecules in the lower energy state, as described by the Boltzmann distribution [4]. In absorption spectroscopy, the amount of light absorbed by a sample at different wavelengths is measured, providing critical data for identifying and quantifying substances [5].
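
The role of the Boltzmann distribution can be illustrated with a short sketch that estimates the relative thermal population of an upper state. The function name, the default temperature, and the example energy gaps are assumptions chosen for illustration; equal degeneracies are assumed unless stated otherwise.

```python
import math

PLANCK_J_S = 6.62607015e-34        # Planck's constant, J*s
LIGHT_SPEED_CM_S = 2.99792458e10   # speed of light, cm/s (pairs with cm^-1)
BOLTZMANN_J_K = 1.380649e-23       # Boltzmann constant, J/K


def boltzmann_ratio(gap_cm1: float, temperature_k: float = 298.15,
                    g_upper: int = 1, g_lower: int = 1) -> float:
    """Return N_upper / N_lower for an energy gap given in wavenumbers."""
    gap_joule = PLANCK_J_S * LIGHT_SPEED_CM_S * gap_cm1
    return (g_upper / g_lower) * math.exp(-gap_joule / (BOLTZMANN_J_K * temperature_k))


if __name__ == "__main__":
    # Illustrative gaps: a vibrational spacing and an electronic spacing.
    print(f"1000 cm^-1 gap:  {boltzmann_ratio(1000.0):.4f}")
    print(f"30000 cm^-1 gap: {boltzmann_ratio(30000.0):.3e}")
```

Under these assumptions, less than 1% of molecules occupy a vibrational level 1000 cm⁻¹ above the ground state at room temperature, and excited electronic states are essentially unpopulated, so absorption normally starts from the ground state.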

Emission Processes

Emission occurs when a molecule releases energy in the form of electromagnetic radiation as it transitions from a higher energy state to a lower energy state [4]. This process can occur through two distinct mechanisms:

- Spontaneous emission: A molecule in an excited state spontaneously decays to a lower energy state, releasing a photon with energy corresponding to the difference between the initial and final states [4].

- Stimulated emission: An incident photon interacts with a molecule in an excited state, causing it to emit an additional photon with the same energy, phase, and direction as the incident photon [4].

The intensity of emitted radiation is proportional to the population of molecules in the higher energy state [4]. Emission spectroscopy studies the light emitted by a substance when excited by an energy source, analyzing the characteristic emission spectrum to identify elements and compounds based on their unique emission lines [5].

Scattering Processes

Scattering is the process where electromagnetic radiation interacts with a molecule and is deflected or redirected without being absorbed or emitted [4]. In elastic scattering there is no net energy transfer between the molecule and the radiation, whereas inelastic scattering exchanges a small amount of energy with the molecule or material [4]. Several types of scattering are significant in spectroscopic analysis:

Rayleigh scattering: An elastic scattering process where incident radiation interacts with a molecule, causing it to oscillate and re-emit radiation at the same frequency [4]. The intensity of Rayleigh scattering is proportional to the square of the polarizability and inversely proportional to the fourth power of the wavelength [4]. This wavelength dependence explains why shorter wavelengths (blue light) are more strongly scattered in the atmosphere, resulting in the blue color of the sky [4].
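
As a quick worked check of the inverse fourth-power dependence, the ratio of Rayleigh scattering intensities at two wavelengths can be estimated as follows. The wavelengths 450 nm (blue) and 650 nm (red) are illustrative choices rather than values from the cited source, and equal polarizability and incident intensity are assumed at both wavelengths.

```latex
\[
\frac{I(450\,\text{nm})}{I(650\,\text{nm})}
  = \left( \frac{650}{450} \right)^{4}
  \approx 4.4
\]
```

Under these assumptions, blue light is scattered roughly four times more strongly than red light.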

Raman scattering: An inelastic scattering process where the incident radiation interacts with a molecule, causing it to transition to a different vibrational or rotational energy state and re-emit radiation at a different frequency [4]. Stokes Raman scattering occurs when the scattered radiation has a lower frequency than the incident radiation, while anti-Stokes Raman scattering occurs when the scattered radiation has a higher frequency [4]. The frequency shifts observed in Raman scattering provide valuable information about the vibrational and rotational energy levels of molecules [4].
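
The relationship between a Raman shift and the wavelength at which the scattered light appears can be sketched in a few lines of Python. The function name, the 785 nm excitation wavelength, and the 1000 cm⁻¹ shift are illustrative assumptions.

```python
def raman_scattered_wavelengths_nm(excitation_nm: float, shift_cm1: float):
    """Return (stokes_nm, anti_stokes_nm) for a given Raman shift.

    Wavenumbers in cm^-1 relate to wavelengths in nm via 1e7 / lambda_nm.
    Stokes light loses the shift energy; anti-Stokes light gains it.
    """
    excitation_cm1 = 1.0e7 / excitation_nm
    if shift_cm1 <= 0 or shift_cm1 >= excitation_cm1:
        raise ValueError("shift must be positive and smaller than the excitation wavenumber")
    stokes_nm = 1.0e7 / (excitation_cm1 - shift_cm1)
    anti_stokes_nm = 1.0e7 / (excitation_cm1 + shift_cm1)
    return stokes_nm, anti_stokes_nm


if __name__ == "__main__":
    stokes, anti_stokes = raman_scattered_wavelengths_nm(785.0, 1000.0)
    print(f"Stokes: {stokes:.2f} nm, anti-Stokes: {anti_stokes:.2f} nm")
```

The Stokes line appears at a longer wavelength (lower frequency) than the excitation and the anti-Stokes line at a shorter wavelength, matching the definitions above.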

Brillouin scattering: An inelastic scattering process involving the interaction of electromagnetic radiation with acoustic phonons (collective vibrational modes) in a material, resulting in a small frequency shift determined by the velocity of acoustic phonons and the wavelength of the incident radiation [4].

Electromagnetic Spectrum Regions and Their Analytical Applications

The electromagnetic spectrum spans a tremendous range of frequencies and wavelengths, with different regions providing distinct information about molecular structure and composition. The following table summarizes the primary regions, their characteristics, and their key applications in analytical spectroscopy.

Table 1: Electromagnetic Spectrum Regions and Analytical Applications

| Region | Wavelength Range | Frequency Range | Photon Energy | Molecular Process Probed | Primary Analytical Applications |
|---|---|---|---|---|---|
| Gamma Rays | < 10 pm | > 30 EHz | > 124 keV | Nuclear transitions | Nuclear structure analysis, PET scanning, radiation therapy |
| X-Rays | 10 pm - 10 nm | 30 EHz - 30 PHz | 124 eV - 124 keV | Inner electron transitions | Crystal structure determination (XRD), medical imaging, elemental analysis |
| Ultraviolet (UV) | 10 - 400 nm | 30 PHz - 750 THz | 3.1 - 124 eV | Electronic transitions | Quantification of nucleic acids, proteins, drug purity analysis |
| Visible | 400 - 700 nm | 750 - 430 THz | 1.8 - 3.1 eV | Electronic transitions | Colorimetric assays, concentration measurements, pH indicators |
| Infrared (IR) | 700 nm - 1 mm | 430 THz - 300 GHz | 1.24 meV - 1.8 eV | Molecular vibrations | Functional group identification, molecular structure elucidation |
| Microwaves | 1 mm - 1 m | 300 GHz - 300 MHz | 1.24 μeV - 1.24 meV | Molecular rotations | Rotational spectroscopy, microwave-assisted synthesis |
| Radio Waves | > 1 m | < 300 MHz | < 1.24 μeV | Nuclear spin transitions | Nuclear Magnetic Resonance (NMR), Magnetic Resonance Imaging (MRI) |

Data compiled from [1], [2], and [3]
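
The region boundaries in Table 1 can be turned into a small lookup, sketched below. The function name and the choice of half-open intervals (a boundary value belongs to the longer-wavelength region) are assumptions; the boundaries themselves are conventions and differ slightly between references.

```python
# Upper wavelength bound (in metres) of each region, taken from Table 1.
REGION_UPPER_BOUNDS_M = [
    (10e-12, "Gamma rays"),
    (10e-9, "X-rays"),
    (400e-9, "Ultraviolet"),
    (700e-9, "Visible"),
    (1e-3, "Infrared"),
    (1.0, "Microwaves"),
]


def classify_wavelength(wavelength_m: float) -> str:
    """Return the spectral region name for a wavelength given in metres."""
    if wavelength_m <= 0:
        raise ValueError("wavelength must be positive")
    for upper_bound, name in REGION_UPPER_BOUNDS_M:
        if wavelength_m < upper_bound:
            return name
    return "Radio waves"


if __name__ == "__main__":
    for wl in (254e-9, 550e-9, 1e-5, 3.0):
        print(f"{wl:.3e} m -> {classify_wavelength(wl)}")
```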

Earth's atmosphere transmits some wavelengths while blocking others [6]. Regions of the spectrum that pass through the atmosphere are referred to as atmospheric windows, while other regions are largely absorbed or reflected by atmospheric gases such as water vapor, carbon dioxide, and ozone [6]. Some microwaves can even pass through clouds, making them ideal for transmitting satellite communication signals [6]. This atmospheric filtering effect is particularly important for astronomical observations, as instruments often need to be positioned above Earth's energy-absorbing atmosphere to "see" higher energy and even some lower energy light sources such as quasars [6].

High-Energy Regions: Gamma Rays and X-Rays

The high-frequency end of the spectrum includes gamma rays, X-rays, and extreme ultraviolet rays, collectively known as ionizing radiation because their photon energy is high enough to knock electrons out of atoms, which can trigger chemical reactions [6] [2]. This ionizing capability can alter atoms and molecules and damage cells in organic matter, with effects that can be both harmful (sunburn) and beneficial (cancer treatment) [6].

In spectroscopic analysis, X-ray spectroscopy uses X-rays to probe materials' electronic structure and chemical composition, with techniques like X-ray diffraction (XRD) and X-ray fluorescence (XRF) used to study crystalline structures and elemental composition, respectively [5]. These methods are essential for materials science, geology, and environmental analysis [5].

UV-Visible Region: Probing Electronic Transitions

The ultraviolet-visible (UV-Vis) region of the spectrum involves the study of the absorption of ultraviolet and visible light by organic compounds [3]. This technique measures the amount of light absorbed by a sample as a function of wavelength, providing information about the electronic structure of molecules [3]. It is particularly useful for determining the presence of conjugated systems, aromatic compounds, and chromophores [3].

UV-Visible spectroscopy is widely used in analyzing organic compounds in solution and finds applications in pharmaceuticals, environmental monitoring, and materials science [3]. It helps quantify compounds, monitor reactions, and study the kinetics of photochemical processes [3]. The technique typically employs cuvettes - small, transparent containers that hold liquid samples - selected based on the wavelength range being studied to ensure accurate absorbance measurements by minimizing interference and maximizing light transmission [5].

Infrared Region: Molecular Fingerprinting

Infrared spectroscopy involves studying the absorption, reflection, or transmission of infrared radiation by organic molecules [3]. This technique provides valuable information about the functional groups present in a compound, as each functional group has a characteristic absorption pattern in the infrared region, allowing chemists to identify and characterize compounds based on their IR spectra [3].

IR spectroscopy is highly effective in determining the presence of various functional groups such as alcohols, carbonyl compounds, amines, and acids [3]. It is extensively used in analyzing organic compounds, including drug discovery, forensic analysis, and quality control in industries [3]. Fourier Transform Infrared (FT-IR) spectroscopy, which involves simultaneously measuring a broad range of wavelengths, enhances the resolution and speed of spectral data collection, allowing for detailed analysis of complex samples [5].

Microwave and Radio Wave Regions: Structural Elucidation

The low-energy end of the spectrum includes microwaves and radio waves, which have the lowest photon energies and longest wavelengths [2]. These regions are particularly important for studying molecular rotations and nuclear spin transitions.

Nuclear Magnetic Resonance (NMR) spectroscopy uses radio waves and magnetic fields to study the interactions of atomic nuclei, providing detailed information about molecular structure, dynamics, and environment [5]. NMR is widely used in organic chemistry, biochemistry, and materials science for determining the connectivity of atoms, confirming the structure of organic compounds, analyzing reaction mechanisms, and studying molecular dynamics [3]. It has applications in drug development, natural product isolation, and metabolomics [3].

Experimental Methodologies in Spectroscopy

General Spectroscopic Workflow

Diagram: Core logical workflow of a spectroscopic experiment, from sample preparation to data interpretation

Key Experimental Protocols

UV-Visible Absorption Spectroscopy Protocol

Purpose: To determine the concentration and electronic properties of a compound in solution.

Materials and Equipment:

- UV-Vis spectrophotometer

- Matching quartz or glass cuvettes

- Analytical balance

- Volumetric flasks

- Pipettes and micropipettes

- Appropriate solvent (e.g., water, methanol, hexane)

- Standard reference materials

Procedure:

- Instrument Calibration: Warm up the spectrophotometer for 15-30 minutes. Perform baseline correction with the solvent blank.

- Sample Preparation: Precisely weigh the analyte and dissolve in appropriate solvent to prepare stock solution. Prepare serial dilutions for calibration standards.

- Spectrum Acquisition: Fill cuvette with sample, ensuring no air bubbles. Place in sample holder and acquire spectrum over desired wavelength range (typically 200-800 nm).

- Data Collection: Record absorbance at characteristic wavelength (λmax). Measure all standards and unknown samples in triplicate.

- Data Analysis: Construct calibration curve from standards. Determine unknown concentration using Beer-Lambert law: A = εlc, where A is absorbance, ε is molar absorptivity, l is path length, and c is concentration.

Quality Control: Verify instrument performance using standard reference materials. Ensure absorbance values remain within linear range (typically 0.1-1.0 AU).
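
The data analysis step of this protocol can be sketched as a least-squares calibration line followed by interpolation of an unknown. The standard concentrations, absorbance readings, and function names below are hypothetical and serve only to illustrate the calculation; a real method would also check linearity and residuals.

```python
def fit_line(x, y):
    """Ordinary least-squares fit y = slope * x + intercept."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def concentration_from_absorbance(absorbance, slope, intercept):
    """Invert the calibration line to estimate an unknown concentration."""
    return (absorbance - intercept) / slope


# Hypothetical calibration standards (mg/L) and mean absorbances at lambda-max.
STANDARDS_MG_L = [2.0, 4.0, 6.0, 8.0, 10.0]
ABSORBANCES = [0.101, 0.199, 0.302, 0.398, 0.501]

if __name__ == "__main__":
    slope, intercept = fit_line(STANDARDS_MG_L, ABSORBANCES)
    unknown = concentration_from_absorbance(0.250, slope, intercept)
    print(f"slope = {slope:.5f} AU per mg/L, intercept = {intercept:.4f} AU")
    print(f"unknown sample: {unknown:.2f} mg/L")
```

For a 1 cm cuvette the fitted slope plays the role of the product εl in the Beer-Lambert law, expressed in the concentration units of the standards.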

Fourier Transform Infrared (FT-IR) Spectroscopy Protocol

Purpose: To identify functional groups and characterize molecular structure through vibrational spectroscopy.

Materials and Equipment:

- FT-IR spectrometer with DTGS or MCT detector

- ATR accessory or KBr pellets

- Hydraulic press for pellet preparation

- Anhydrous potassium bromide (KBr)

- Mortar and pestle

- Desiccator

Procedure:

- Sample Preparation:

  - ATR Method: Place solid sample directly on ATR crystal. Apply consistent pressure to ensure good contact.

  - KBr Pellet Method: Grind 1-2 mg sample with 200 mg dry KBr. Press mixture into transparent pellet using hydraulic press.

- Background Collection: Acquire background spectrum with clean ATR crystal or empty sample holder.

- Sample Measurement: Place prepared sample in spectrometer and acquire spectrum (typically 4000-400 cm⁻¹).

- Data Acquisition: Collect minimum of 16 scans at 4 cm⁻¹ resolution to ensure adequate signal-to-noise ratio.

- Data Processing: Subtract background, apply atmospheric suppression (CO₂ and H₂O), and perform baseline correction.

Quality Control: Verify wavenumber accuracy using a polystyrene standard. Ensure peaks are sharp and well-resolved.
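
Two numbers in this protocol can be related to more familiar quantities with a short sketch: the scan range in wavenumbers converts to wavelengths in micrometres, and co-adding scans improves signal-to-noise roughly with the square root of the scan count. The function names are illustrative, and the square-root rule assumes random, uncorrelated noise between scans.

```python
import math


def wavenumber_to_wavelength_um(wavenumber_cm1: float) -> float:
    """Convert a wavenumber in cm^-1 to a wavelength in micrometres."""
    if wavenumber_cm1 <= 0:
        raise ValueError("wavenumber must be positive")
    return 1.0e4 / wavenumber_cm1


def snr_gain_from_averaging(n_scans: int) -> float:
    """Relative SNR gain from co-adding n scans (uncorrelated noise assumed)."""
    if n_scans < 1:
        raise ValueError("at least one scan is required")
    return math.sqrt(n_scans)


if __name__ == "__main__":
    print(f"4000 cm^-1 = {wavenumber_to_wavelength_um(4000.0):.1f} um")
    print(f" 400 cm^-1 = {wavenumber_to_wavelength_um(400.0):.1f} um")
    print(f"16 scans give about {snr_gain_from_averaging(16):.0f}x the SNR of one scan")
```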

Advanced Spectroscopic Techniques

Recent advances in spectroscopic instrumentation have significantly enhanced analytical capabilities. The 2025 review of spectroscopic instrumentation highlights several cutting-edge developments [7]:

Quantum Cascade Laser (QCL) Microscopy: Systems like the LUMOS II ILIM use QCL technology operating from 1800 to 950 cm⁻¹ to create high-resolution chemical images in transmission or reflection at rates of 4.5 mm² per second [7]. These systems incorporate patented spatial coherence reduction features to reduce speckle or fringing in images [7].

Specialized Biopharmaceutical Analyzers: Instruments like the Horiba Veloci A-TEEM Biopharma Analyzer simultaneously collect absorbance, transmittance, and fluorescence excitation emission matrix (A-TEEM) data, providing an alternative to traditional separation methods for analyzing monoclonal antibodies, vaccine characterization, and protein stability [7].

Broadband Chirped Pulse Microwave Spectrometry: BrightSpec has introduced the first commercial product using broadband chirped pulse microwave spectrometry to measure the microwave rotational spectrum of small molecules and unambiguously determine structure and configuration in the gas phase [7].

Research Reagent Solutions and Essential Materials

Successful spectroscopic analysis requires specific reagents and materials tailored to each technique. The following table details essential research reagent solutions used in spectroscopic experiments.

Table 2: Essential Research Reagents and Materials for Spectroscopic Analysis

| Reagent/Material | Application Area | Function/Purpose | Technical Specifications |
|---|---|---|---|
| Anhydrous KBr | IR Spectroscopy | Matrix for pellet preparation | FT-IR grade, <0.001% moisture, optical purity |
| Deuterated Solvents | NMR Spectroscopy | Solvent for NMR samples | 99.8% D atom minimum, NMR-grade with TMS reference |
| Spectrophotometric Cuvettes | UV-Vis Spectroscopy | Sample containment | Quartz (UV), glass (Vis), path length 10 mm, matched pairs |
| ATR Crystals | FT-IR Spectroscopy | Internal reflection element | Diamond, ZnSe, or Ge crystals, specific refractive indices |
| NMR Reference Standards | NMR Spectroscopy | Chemical shift calibration | Tetramethylsilane (TMS) or DSS for aqueous solutions |
| Fluorescence Dyes | Fluorescence Spectroscopy | Molecular tagging and detection | High quantum yield, photostability, specific excitation/emission |
| Mass Spec Standards | Mass Spectrometry | Mass calibration and quantification | Certified reference materials, specific to mass range |
| Ultrapure Water | General Spectroscopy | Solvent and sample preparation | 18.2 MΩ·cm resistivity, TOC <5 ppb, filtered 0.2 μm |

Data compiled from [7], [5], and [8]

Instrumentation and Technological Advances

Spectroscopic instrumentation continues to evolve with significant advancements in sensitivity, resolution, and portability. The core components of spectroscopic instruments include:

- Spectrometers: Measure light properties over specific portions of the electromagnetic spectrum, analyzing spectral content to identify substance composition [5].

- Spectrophotometers: A type of spectrometer that measures light intensity as a function of wavelength, commonly used for quantitative analysis through absorbance and transmittance measurements [5].

- Spectrographs: Instruments that record spectra, capturing light emitted, absorbed, or scattered by samples [5].

Key instrumental components include the light source, which provides necessary illumination (lamps, lasers, or LEDs depending on spectroscopy type), and diffraction gratings, which disperse light into component wavelengths for precise spectrum measurement [5].

Recent market trends show a dramatic division between laboratory and field/portable/handheld instrumentation [7]. Portable spectrometers are increasingly used for on-site analysis in agriculture, geochemistry, pharmaceutical quality control, and hazardous materials response [7]. For example, the 2025 review highlights the TacticID-1064 ST handheld Raman spectrometer aimed at hazardous materials response teams, featuring an on-board camera and note-taking capability for documentation [7].

The integration of artificial intelligence and machine learning with spectroscopy software solutions represents another significant advancement, enhancing data gathering, analysis, and interpretation processes [8]. These technologies enable faster processing of spectral data, pattern detection, and predictive analytics [8]. The global spectroscopy software market, valued at approximately USD 1.1 billion in 2024 and estimated to grow at 9.1% CAGR through 2034, reflects the increasing importance of computational methods in spectroscopic analysis [8].

The electromagnetic spectrum provides a fundamental framework for understanding and utilizing different energy regions to probe molecular structure and interactions. From high-energy gamma rays that reveal nuclear structure to low-energy radio waves that illuminate molecular dynamics through NMR, each region offers unique analytical capabilities. The continued advancement of spectroscopic technologies, particularly the development of portable instruments and integration of artificial intelligence for data analysis, ensures that electromagnetic spectroscopy remains at the forefront of analytical science. These developments are particularly crucial for pharmaceutical applications, where spectroscopic techniques play an indispensable role in drug discovery, quality control, and the characterization of complex biologics, supporting the growing market for molecular spectroscopy projected to reach USD 9.04 billion by 2034 [9].

Absorption spectroscopy stands as a fundamental analytical technique across scientific disciplines, from drug development to materials science. At its core lies the interaction between matter and electromagnetic radiation, governed by quantum mechanical principles including the photoelectric effect and electron transitions [10]. This technical guide examines the fundamental mechanisms of absorption processes, detailing how the photoelectric effect provides the theoretical foundation for understanding electron transitions during light-matter interactions. We explore the intricate relationship between absorption, emission, and scattering phenomena, with particular emphasis on their applications in spectroscopic research and analytical methodology. The precise quantification of these interactions enables researchers to extract detailed information about molecular structure, composition, and dynamics across diverse scientific domains from pharmaceutical development to astronomical spectroscopy.

Theoretical Foundations

The Photoelectric Effect

The photoelectric effect describes the emission of electrons from a material surface when illuminated by light of sufficient frequency [11]. This phenomenon provided crucial evidence for the quantum nature of light, demonstrating that electromagnetic energy transfers in discrete packets or photons rather than as a continuous wave.

The fundamental relationship governing the photoelectric effect establishes that the maximum kinetic energy \( K_{\max} \) of emitted photoelectrons depends linearly on the frequency \( f \) of incident radiation:

\[ K_{\max} = hf - W \]

where \( h \) represents Planck's constant and \( W \) denotes the work function, defined as the minimum energy required to eject an electron from a specific metal surface [11]. This work function corresponds to a threshold frequency \( f_0 = W/h \), below which no electron emission occurs regardless of radiation intensity [12].

Experimental observations critical to understanding the photoelectric effect include:

- Threshold Frequency: Electron emission only occurs when incident light exceeds a material-specific frequency threshold [11]

- Instantaneous Emission: The photoelectric effect occurs virtually instantaneously (<10⁻⁹ seconds) after illumination [11]

- Kinetic Energy Independence: For a given material, the maximum kinetic energy of the emitted electrons depends on light frequency, not intensity [12]

- Current Proportionality: Photoelectric current correlates directly with incident light intensity at frequencies above threshold [12]

Table 1: Photoelectric Effect Parameters and Relationships

| Parameter | Symbol | Relationship | Experimental Observation |
|---|---|---|---|
| Photon Energy | \( E_{\text{photon}} \) | \( E = hf \) | Determines if electron emission can occur |
| Work Function | \( W \) | \( W = hf_0 \) | Material-specific property |
| Threshold Frequency | \( f_0 \) | Minimum for emission | No emission below this frequency |
| Electron Kinetic Energy | \( K_{\max} \) | \( K_{\max} = hf - W \) | Independent of light intensity |
| Photoelectric Current | \( I \) | Proportional to intensity | Increases with brighter light at fixed frequency |
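
The relationships in this table can be exercised with a short sketch that computes the threshold frequency and the maximum photoelectron kinetic energy. The function names are illustrative, and the work function of 2.1 eV is an assumed example value (of the order often quoted for cesium), not a figure from the cited sources.

```python
from typing import Optional

PLANCK_EV_S = 4.135667696e-15  # Planck's constant h, in eV*s


def threshold_frequency_hz(work_function_ev: float) -> float:
    """Threshold frequency f0 = W / h."""
    return work_function_ev / PLANCK_EV_S


def max_kinetic_energy_ev(frequency_hz: float,
                          work_function_ev: float) -> Optional[float]:
    """K_max = h*f - W, or None when the photon energy is below threshold."""
    k_max = PLANCK_EV_S * frequency_hz - work_function_ev
    return k_max if k_max > 0 else None


if __name__ == "__main__":
    work_function = 2.1  # eV, assumed illustrative value
    print(f"f0 = {threshold_frequency_hz(work_function):.3e} Hz")
    for f in (4.0e14, 1.0e15):
        print(f"f = {f:.1e} Hz -> K_max = {max_kinetic_energy_ev(f, work_function)}")
```

Returning `None` below threshold mirrors the observation that no electrons are emitted there, however intense the light.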

Atomic and Molecular Energy Levels

The absorption and emission of radiation fundamentally involve transitions between discrete energy states within atoms and molecules. Electrons occupy specific energy levels characterized by quantum mechanical constraints, where each element possesses a unique configuration of these levels serving as an atomic "fingerprint" [13].

Three primary transition types occur in spectroscopic processes:

- Electronic Transitions: Involve electrons moving between energy levels in atoms or molecules, typically occurring in visible and ultraviolet regions [14]

- Vibrational Transitions: Correspond to changes in molecular vibrational states, primarily in infrared regions [14]

- Rotational Transitions: Involve changes in molecular rotational states, typically in microwave regions [14]

These quantized energy states explain why absorption and emission spectra consist of discrete lines rather than continuous distributions. The energy difference between initial and final states precisely matches the energy of absorbed or emitted photons according to the relationship:

\[ \Delta E_{\text{electron}} = E_f - E_i = hf \]

where \( E_f \) and \( E_i \) represent the final and initial energy states, respectively, \( h \) denotes Planck's constant, and \( f \) indicates the photon frequency [13].

Spectroscopic Processes and Relationships

Absorption, Emission, and Scattering

The interaction between electromagnetic radiation and matter manifests through three primary processes, each providing distinct information about molecular structure and composition.

Absorption occurs when a photon's energy matches the difference between two molecular energy states, causing the molecule to transition from a lower to a higher energy state [4]. The probability of absorption depends on the transition dipole moment, which reflects changes in the electronic, vibrational, or rotational state [4]. Absorption intensity correlates directly with the population of molecules in the lower energy state, following Boltzmann distribution principles.

Emission represents the reverse process, where molecules in excited states release energy as photons when transitioning to lower energy states. Two distinct emission mechanisms operate:

- Spontaneous Emission: Excited state molecules spontaneously decay to lower energy states, emitting photons with energy corresponding to the state difference [4]

- Stimulated Emission: Incident photons interact with excited-state molecules, triggering additional photon emission with identical energy, phase, and direction [4]

Scattering processes involve photon redirection without energy transfer (elastic) or with energy modification (inelastic):

- Rayleigh Scattering: Elastic process where radiation re-emits at the incident frequency [4]

- Raman Scattering: Inelastic process involving molecular transitions to different vibrational/rotational states, emitting radiation at modified frequencies [4]

- Brillouin Scattering: Inelastic process involving interactions with acoustic phonons in materials [4]

Diagram 1: Absorption spectroscopy process and electron transitions

Complementary Nature of Absorption and Emission Spectra

Absorption and emission spectra represent complementary manifestations of the same quantum mechanical transitions between energy states. The absorption spectrum appears as dark lines superimposed on a continuous spectrum, corresponding precisely to the bright lines observed in the emission spectrum of the same element [13]. This inverse relationship occurs because:

- Absorption lines correspond to photon energies that match energy differences between electronic states

- Emission lines correspond to photons released when electrons transition from higher to lower energy states

- The specific wavelengths absorbed or emitted are identical for a given element, as both processes involve the same energy level differences [13]

Table 2: Comparative Analysis of Spectroscopic Processes

| Process | Energy Transfer | Spectral Characteristics | Key Applications |
|---|---|---|---|
| Absorption | Photon energy transferred to molecule | Discrete lines at specific wavelengths | Chemical identification, concentration measurement |
| Emission | Molecular energy released as photon | Discrete lines at specific wavelengths | Elemental analysis, astronomical spectroscopy |
| Rayleigh Scattering | No net energy transfer | Unshifted; same frequency as source | Atmospheric phenomena, structural analysis |
| Raman Scattering | Energy exchange with molecule | Frequency-shifted lines | Molecular vibration studies, structural analysis |

Experimental Methodologies

Photoelectron Spectroscopy (PES)

Photoelectron spectroscopy represents a direct application of the photoelectric effect for investigating electronic structures of atoms, molecules, and solids. PES quantitatively measures kinetic energies of photoelectrons ejected by photon irradiation, enabling determination of binding energies, intensities, and angular distributions of these electrons [15].

The technique divides into two primary categories based on ionization energy sources:

- X-ray Photoelectron Spectroscopy (XPS): Utilizes high-energy X-rays (1000-1500 eV) to eject electrons from core atomic orbitals, providing information about elemental composition and chemical states [15]

- Ultraviolet Photoelectron Spectroscopy (UPS): Employs ultraviolet radiation (<41 eV) to eject electrons from valence orbitals, revealing details about molecular orbital energy levels [15]

The fundamental equation governing PES derives from the photoelectric effect:

\[ E_k = h\nu - E_B \]

where \( E_k \) represents the measured photoelectron kinetic energy, \( h\nu \) denotes the known photon energy, and \( E_B \) indicates the electron binding energy [15].
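
Rearranged for the binding energy, the PES equation lets measured kinetic energies be converted directly, as in the sketch below. The function name and the kinetic energy values are hypothetical; the default photon energy is that of the commonly used Al Kα line (about 1486.6 eV). Real instruments also correct for the spectrometer work function, which this simplified form omits.

```python
AL_K_ALPHA_EV = 1486.6  # photon energy of the Al K-alpha X-ray line, in eV


def binding_energy_ev(kinetic_energy_ev: float,
                      photon_energy_ev: float = AL_K_ALPHA_EV) -> float:
    """E_B = h*nu - E_k, ignoring the spectrometer work function."""
    if kinetic_energy_ev > photon_energy_ev:
        raise ValueError("kinetic energy cannot exceed the photon energy")
    return photon_energy_ev - kinetic_energy_ev


if __name__ == "__main__":
    # Hypothetical measured photoelectron kinetic energies, in eV.
    for e_k in (1201.8, 954.6):
        print(f"E_k = {e_k:7.1f} eV -> E_B = {binding_energy_ev(e_k):6.1f} eV")
```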

The phenomenological three-step model describes photoemission from solids:

- Photoabsorption: Photon absorption causes direct optical transition between occupied and unoccupied electronic states [11]

- Travel to Surface: Excited electron propagates toward material surface, potentially scattering with other constituents [11]

- Escape into Vacuum: Electron transmits through surface barrier into vacuum for detection [11]

Absorption Spectroscopy Techniques

Absorption spectroscopy methodologies measure the attenuation of electromagnetic radiation as it passes through a sample material. The basic experimental arrangement involves directing a generated radiation beam through a sample and detecting transmitted intensity [14].

Key measurement considerations include:

- Reference Spectra: Measuring radiation spectrum without sample establishes baseline characteristics [14]

- Sample Spectra: Remeasuring with sample in place identifies absorption features [14]

- Spectral Combination: Comparing reference and sample spectra yields material-specific absorption spectrum [14]

The Beer-Lambert law provides a quantitative relationship between absorbance and concentration:

\[ A = \varepsilon l c \]

where \( A \) represents absorbance, \( \varepsilon \) denotes molar absorptivity, \( l \) indicates path length, and \( c \) signifies concentration [14].
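
The reference and sample measurements described above combine into an absorbance through the standard definition A = log10(I0/I), after which the Beer-Lambert law gives a concentration. The sketch below illustrates both steps; the function names, intensity readings, and molar absorptivity are hypothetical values chosen for illustration.

```python
import math


def absorbance(reference_intensity: float, sample_intensity: float) -> float:
    """A = log10(I0 / I) from reference (I0) and sample (I) intensities."""
    if reference_intensity <= 0 or sample_intensity <= 0:
        raise ValueError("intensities must be positive")
    return math.log10(reference_intensity / sample_intensity)


def absorbance_spectrum(reference, sample):
    """Point-by-point absorbance for two equally sampled intensity spectra."""
    if len(reference) != len(sample):
        raise ValueError("spectra must have the same number of points")
    return [absorbance(i0, i) for i0, i in zip(reference, sample)]


def concentration_mol_l(a: float, molar_absorptivity: float,
                        path_length_cm: float = 1.0) -> float:
    """Beer-Lambert law rearranged: c = A / (epsilon * l)."""
    return a / (molar_absorptivity * path_length_cm)


if __name__ == "__main__":
    reference = [1000.0, 1000.0, 1000.0]   # hypothetical detector counts
    sample = [900.0, 355.0, 800.0]
    print([round(a, 3) for a in absorbance_spectrum(reference, sample)])
    # Hypothetical molar absorptivity of 15000 L mol^-1 cm^-1.
    print(f"c = {concentration_mol_l(0.45, 15000.0):.2e} mol/L")
```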

Table 3: Absorption Spectroscopy Techniques Across Electromagnetic Spectrum

| Technique | Radiation Type | Energy Transition | Typical Applications |
|---|---|---|---|
| X-ray Absorption Spectroscopy | X-rays | Inner shell electrons | Elemental analysis, material characterization |
| UV-Vis Absorption Spectroscopy | Ultraviolet-Visible | Valence electrons | Concentration measurement, chemical kinetics |
| IR Absorption Spectroscopy | Infrared | Molecular vibrations | Functional group identification, compound verification |
| Microwave Absorption Spectroscopy | Microwave | Molecular rotations | Molecular structure determination |

Advanced Spectroscopic Applications

Contemporary research employs sophisticated spectroscopic techniques to investigate complex molecular interactions:

Vibrational Stark Effect: This method utilizes vibrational probes (typically nitriles) to measure electric fields within molecular environments. The nitrile vibrational frequency shifts linearly with applied electric field in aprotic environments, enabling quantification of electrostatic contributions to non-covalent interactions [16]. This approach has been implemented within metal-organic frameworks (MOFs) to systematically build and characterize non-covalent interactions with precise geometrical control [16].

Infrared Spectroscopy with DFT Calculations: Combining experimental IR spectroscopy with density functional theory (DFT) computations enables detailed investigation of molecule-surface interactions. This methodology has revealed pronounced vibrational blue shifts, such as CO adsorption on UO₂(111) surfaces shifting from 2143 cm⁻¹ (gas phase) to 2160 cm⁻¹, providing insights into surface chemical bonding and relativistic effects [17].

Research Reagents and Materials

Table 4: Essential Research Reagents and Materials for Spectroscopic Experiments

| Reagent/Material | Function | Application Example |

|---|---|---|

| Monochromatic Light Source | Provides precise wavelength photons | Determining threshold frequencies in photoelectric effect |

| Metal Electrodes (e.g., Cs, K, Ca) | Low work function surfaces | Enhancing photoelectron emission efficiency |

| Vacuum Systems | Eliminates electron-gas molecule collisions | Photoelectron spectroscopy measurements |

| Nitrile Vibrational Probes | Electric field sensing via Stark effect | Quantifying non-covalent interactions in MOFs |

| Reference Compounds | Spectral calibration and quantification | Beer-Lambert law concentration determinations |

| Metal-Organic Frameworks (MOFs) | Precise molecular scaffolding | Systematic study of non-covalent interactions |

| UV-Transparent Containers | Sample housing for spectroscopy | Absorption measurements in ultraviolet region |

Applications in Scientific Research

Pharmaceutical and Biomedical Applications

Spectroscopic techniques provide critical analytical capabilities throughout drug development pipelines:

- Metabolite Screening: Analyzing metabolic profiles and pathways for pharmaceutical compounds [18]

- Protein Characterization: Determining protein structure, folding, and interaction dynamics [18]

- Drug Structure Optimization: Guiding molecular design through precise measurement of chemical properties [10]

Magnetic Resonance Spectroscopy (MRS), often coupled with MRI technology, enables non-invasive diagnosis and monitoring of chemical changes in tissues, facilitating detection of conditions ranging from depression to tumors through metabolic profiling [18].

Environmental and Astronomical Spectroscopy

Absorption spectroscopy enables remote sensing applications with particular significance for environmental monitoring and astronomical investigation:

- Pollutant Identification: Infrared gas analyzers distinguish atmospheric pollutants from expected constituents through characteristic absorption signatures [14]

- Greenhouse Effect Studies: Analyzing atmospheric absorption of infrared radiation reveals mechanisms underlying global warming [13]

- Exoplanet Characterization: Measuring absorption spectra during planetary transits determines atmospheric composition, temperature, pressure, and mass of extrasolar planets [14]

Astronomical spectroscopy leverages the Doppler effect (redshift/blueshift) in spectral lines to determine celestial object velocities relative to Earth, enabling measurements of galactic motion and universe expansion [13].

Diagram 2: Relationship between fundamental processes and applications

The photoelectric effect and electron transitions constitute the fundamental physical mechanisms underlying absorption spectroscopy and related analytical techniques. The quantized nature of energy transfers between matter and electromagnetic radiation enables precise determination of molecular composition, structure, and dynamics across scientific disciplines. Contemporary research continues to refine spectroscopic methodologies, enhancing sensitivity and expanding applications from single-molecule investigations to astronomical observations. The integration of theoretical frameworks with experimental innovation ensures spectroscopy remains an indispensable tool for scientific advancement, particularly in pharmaceutical development where molecular-level understanding drives therapeutic progress.

Following the absorption of energy, an atom in an excited state must return to a lower energy state through processes collectively known as atomic relaxation. A critical pathway for this energy release is fluorescence, which involves the emission of a photon. The probability that an excited atom will de-excite through this radiative pathway, rather than a non-radiative one, is quantified by its fluorescence yield [4] [19]. This parameter is fundamental across spectroscopic techniques, from X-Ray Fluorescence (XRF) to Atomic Fluorescence Spectrometry (AFS), as it directly influences the intensity of the measured signal and the ultimate sensitivity of an analytical method [20] [19]. Understanding these mechanisms is therefore essential for optimizing spectroscopic instrumentation and interpreting experimental data, particularly in research and drug development where precise elemental detection is crucial.

Fundamental Atomic Relaxation Mechanisms

When an inner-shell electron is ejected, typically by an incident X-ray photon, the atom is left in a highly unstable, excited state. The subsequent return to stability involves a cascade of possible electronic transitions, which can be categorized into radiative and non-radiative processes.

The following diagram illustrates the core atomic relaxation pathways that compete to de-excite an atom following the initial ionization event.

Radiative Relaxation: Fluorescence

Fluorescence is a radiative process where the energy released during an electron transitioning from a higher to a lower energy state is emitted as a photon [4] [19]. The emitted photon, known as characteristic X-ray radiation, has an energy specific to the element and the electronic orbitals involved, forming the basis for elemental identification in techniques like XRF [20].

Non-Radiative Relaxation: The Auger Effect

In the Auger effect, the energy released when an electron fills an inner-shell vacancy is transferred to another electron within the same atom (e.g., one in the L-shell), which is then ejected as an Auger electron [20]. This is a non-radiative process that competes directly with fluorescence emission. The kinetic energy of the ejected Auger electron is characteristic of the element.

Quantitative Parameters: Fluorescence Yield and Transition Probabilities

The relaxation process is governed by well-defined atomic parameters. The key quantitative factors that determine the probability and nature of the emitted radiation are summarized below.

Table 1: Key Atomic Parameters Governing X-ray Fluorescence Intensity [20]

| Parameter | Symbol | Description | Impact on Fluorescence |

|---|---|---|---|

| Fluorescence Yield | ωK | Probability of radiative (vs. Auger) relaxation after a core-hole creation. | Directly proportional; higher yield means stronger signal. |

| Absorption Jump Ratio | JK | Ratio of mass absorption coefficients across an absorption edge; probability that a photoelectric interaction ejects a K-shell electron. | Determines the fraction of absorbed photons that create a specific core-hole. |

| Transition Probability | gKα | Relative probability that a specific transition (e.g., Kα) occurs among all possible transitions from a given shell. | Determines the intensity distribution of spectral lines (e.g., Kα vs Kβ). |

The overall probability for producing a specific fluorescent line, known as the excitation factor (Q), is the product of these individual probabilities [20]: Q = (Absorption Jump Ratio) × (Transition Probability) × (Fluorescence Yield)

The fluorescence yield varies significantly across the periodic table, as shown in the table below.

Table 2: Experimentally Determined K-Shell Fluorescence Yields (ωK) and Transition Probabilities (gKα) for Selected Elements [20]

| Element | Atomic Number | K-Shell Fluorescence Yield (ωK) | Kα / Kβ Intensity Ratio | Kα Transition Probability (gKα) |

|---|---|---|---|---|

| Fe (Iron) | 26 | 0.347 | 6.78 | 0.882 |

| Cu (Copper) | 29 | 0.440 | 7.65 | 0.884 |

| Mo (Molybdenum) | 42 | 0.765 | 5.39 | 0.843 |

| W (Tungsten) | 74 | 0.958 | - | - |
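The relationship between the tabulated columns, and the product that defines Q, can be sketched as follows. The sketch assumes that only the Kα and Kβ lines contribute, so that gKα = R / (1 + R) with R the Kα/Kβ intensity ratio; this assumption is not stated in the source. Under it, the Cu and Mo rows agree with the tabulated gKα to within about 0.001, while the Fe row comes out lower (about 0.871 against 0.882), which may indicate that the two columns come from different data sets. The absorption jump value is a placeholder, not a measured number.

```python
def k_alpha_fraction(k_alpha_to_k_beta_ratio: float) -> float:
    """Fraction of K-line intensity in K-alpha, assuming only K-alpha and K-beta contribute."""
    return k_alpha_to_k_beta_ratio / (1.0 + k_alpha_to_k_beta_ratio)


def excitation_factor(jump_term: float, transition_probability: float, fluorescence_yield: float) -> float:
    """Q as the product of the three probabilities listed in the text."""
    return jump_term * transition_probability * fluorescence_yield


# (omega_K, K-alpha/K-beta ratio, tabulated g_K-alpha) copied from the table above.
TABLE_ROWS = {
    "Fe": (0.347, 6.78, 0.882),
    "Cu": (0.440, 7.65, 0.884),
    "Mo": (0.765, 5.39, 0.843),
}

for element, (omega_k, ratio, g_tabulated) in TABLE_ROWS.items():
    print(f"{element}: g from ratio = {k_alpha_fraction(ratio):.3f}, tabulated = {g_tabulated:.3f}")

ASSUMED_JUMP_TERM = 0.88  # placeholder value for illustration only
q_cu = excitation_factor(ASSUMED_JUMP_TERM, 0.884, 0.440)
print(f"Q (Cu K-alpha, with assumed jump term) = {q_cu:.3f}")
```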

Experimental Methodology in Atomic Fluorescence Spectrometry

Atomic Fluorescence Spectrometry (AFS) leverages the principles of fluorescence yield for ultra-trace elemental analysis. The following diagram outlines the core workflow for an AFS experiment.

Core Experimental Protocols

Sample Atomization and Vapor Generation

- Objective: Convert the elemental analyte in a liquid or solid sample into a cloud of free, gaseous atoms in the instrument's observation zone (atom cell) [19].

- Protocol for Vapor-Forming Elements (As, Se, and Sb as hydrides; Hg as cold vapor):

- Liquid Sample Introduction: An acidified liquid sample is introduced into a vapor generation system.

- Chemical Reduction: For elements like As and Se, a reducing agent (e.g., sodium tetrahydroborate, NaBH₄) is added to convert the element into a volatile hydride (e.g., AsH₃, H₂Se) [19].

- Mercury Cold Vapor: Mercury is reduced to elemental mercury vapor directly.

- Gas-Liquid Separation: The generated gaseous hydrides or vapor are separated from the liquid matrix and transported by an inert gas (e.g., Argon) into the atom cell.

- Alternative Atom Cells: Electrothermal atomizers (graphite furnaces) or inductively coupled plasmas (ICP) can also be used to produce ground-state atoms from a wider range of elements [19].

Excitation and Fluorescence Detection

- Objective: Selectively excite the analyte atoms and detect the resulting fluorescence photons.

- Excitation Protocol:

- A high-intensity, tuned light source (e.g., a hollow cathode lamp or tunable laser) is directed at the atom cloud [19].

- The wavelength of the source is set to match a strong absorption line of the analyte element, promoting ground-state atoms to an excited electronic state via stimulated absorption [19].

- Detection Protocol:

- The resulting fluorescence is collected at an angle (often 90°) to the excitation beam to minimize scattered background radiation.

- For non-resonance fluorescence, the detected wavelength is different from the excitation wavelength, further reducing scatter [19].

- A wavelength-selective detector (monochromator or polychromator with photomultiplier tube or CCD) measures the intensity of the specific fluorescence line.

- At low analyte concentrations, the signal intensity is proportional to the concentration of the analyte in the original sample.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Reagents and Materials for Atomic Fluorescence Spectrometry [19]

| Item | Function / Description |

|---|---|

| Sodium Tetrahydroborate (NaBH₄) | A strong reducing agent used to generate volatile hydrides from elements like As, Se, and Sb for introduction into the atom cell. |

| High-Purity Acids (HNO₃, HCl) | Used for sample digestion, preservation, and acidification to enable the vapor generation reaction. |

| Tunable Laser Systems | High-radiance excitation sources that can saturate the atomic transition, maximizing the excited state population and fluorescence signal. |

| Electrothermal Atomizer (Graphite Furnace) | An electrically heated graphite tube that thermally decomposes a liquid sample to produce a cloud of free atoms. |

| Hydride Generation System | A specialized accessory consisting of a gas-liquid separator and pumps to generate and introduce analyte hydrides into the atom cell. |

| Hollow Cathode Lamps (HCLs) | Line sources that emit light characteristic of a specific element, used for selective excitation in AFS. |

Advanced Considerations and Analytical Figures of Merit

Sensitivity and Quantum Yield

The sensitivity in AFS depends directly on the excitation source intensity, because a more intense source increases the population of the excited state. This relationship holds only until the transition saturates, at which point the rates of stimulated absorption and stimulated emission equalize and further increases in source intensity no longer raise the signal [19]. In practical atom reservoirs like atmospheric-pressure flames, collisions with other molecules can deactivate excited states without photon emission, a process known as quenching. The fraction of atoms that emit a fluorescence photon after absorption is the quantum yield (Φ), which can be very low in high-collision environments but close to 1 in low-pressure cells [19].
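A minimal sketch of these two effects is given below, under a simplified two-level model with equal statistical weights. Neither the model nor the rate constants come from the source; the function names, the radiative rate, and the quenching rates are hypothetical. The quantum yield is taken as the radiative rate divided by the total decay rate, and the excited-state fraction is written in terms of a dimensionless saturation parameter s (source intensity relative to a saturation intensity).

```python
def quantum_yield(radiative_rate: float, quenching_rate: float) -> float:
    """Fraction of excited atoms that decay by emitting a photon."""
    return radiative_rate / (radiative_rate + quenching_rate)


def excited_fraction(saturation_parameter: float) -> float:
    """Steady-state excited fraction of a two-level atom with equal statistical weights.

    Rises linearly for small s and levels off at 0.5 for large s (saturation).
    """
    return 0.5 * saturation_parameter / (1.0 + saturation_parameter)


# Hypothetical rates in s^-1: the same transition with and without collisional quenching.
RADIATIVE_RATE = 1.0e8
phi_flame = quantum_yield(RADIATIVE_RATE, quenching_rate=1.0e9)
phi_low_pressure = quantum_yield(RADIATIVE_RATE, quenching_rate=0.0)
print(f"quantum yield: quenched = {phi_flame:.3f}, unquenched = {phi_low_pressure:.3f}")

for s in (0.01, 1.0, 100.0):
    print(f"s = {s:>6}: excited fraction = {excited_fraction(s):.4f}")
```

Because fluorescence scales with the excited-state population, the flattening of `excited_fraction` at large s is what limits the gain from ever brighter sources.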

Calibration and Interferences

At low concentrations, fluorescence intensity is linear with analyte concentration. At elevated concentrations, however, self-absorption can occur: emitted fluorescence photons are re-absorbed by ground-state atoms of the same element within the atom cell. This leads to curvature in the calibration graph and, in extreme cases, can cause the calibration curve to roll over [19]. For hydride-generation AFS, different species of an element (e.g., methylated vs. inorganic arsenic) can form hydrides at different rates and efficiencies, potentially requiring species-specific calibration [19].
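The calibration behaviour described here can be sketched as a straight-line fit over the low-concentration standards, followed by a check on whether a high standard falls below the extrapolated line. The standards, signal values, and units are invented for illustration; real calibrations would also include replicates and blanks.

```python
def fit_line(x: list[float], y: list[float]) -> tuple[float, float]:
    """Ordinary least-squares slope and intercept for y = slope * x + intercept."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


# Hypothetical low-concentration standards (ug/L) and fluorescence signals (arbitrary units).
low_conc = [0.0, 1.0, 2.0, 5.0]
low_signal = [2.0, 102.0, 202.0, 502.0]
slope, intercept = fit_line(low_conc, low_signal)


def concentration_from_signal(signal: float) -> float:
    """Invert the linear calibration; valid only inside the linear range."""
    return (signal - intercept) / slope


# Hypothetical high standard whose signal falls short of the line (self-absorption).
high_conc, high_signal = 100.0, 7000.0
predicted_signal = slope * high_conc + intercept
relative_shortfall = (predicted_signal - high_signal) / predicted_signal
print(f"slope = {slope:.1f}, intercept = {intercept:.1f}")
print(f"shortfall at {high_conc} ug/L = {relative_shortfall:.1%}")
```

A large shortfall at the top standard is a signal to dilute the sample or restrict the working range rather than to extrapolate the line.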

Fluorescence yield is a fundamental atomic property that dictates the efficiency of the radiative relaxation pathway. Its precise understanding, coupled with the detailed mechanisms of competing processes like the Auger effect, forms the theoretical foundation for powerful analytical techniques like XRF and AFS. By optimizing experimental parameters such as excitation source intensity and atomization conditions, and by accounting for factors like quantum yield and self-absorption, researchers can leverage these principles to achieve exceptional analytical sensitivity. This enables applications ranging from the quality control of metal alloys to the detection of trace elements and species in biological and environmental matrices, providing invaluable data for scientific research and drug development.

In spectroscopy research, the interaction of light with matter is foundational. While absorption and emission processes involve the direct exchange of energy between photons and a material, scattering describes processes where light is deflected from its original path, often undergoing changes in direction, polarization, or energy in the process [21]. Understanding the distinct mechanisms of Rayleigh, Raman, and Mie scattering is crucial for interpreting spectroscopic data, designing experiments, and developing analytical applications across scientific disciplines, including drug development. These phenomena are not merely sources of noise or loss; they are powerful probes of molecular structure, particle size, and material composition. This guide provides an in-depth examination of these core scattering processes, framed within the broader context of how light interacts with matter in spectroscopic research.

Theoretical Foundations of Light Scattering

Fundamental Definitions and Processes

Light scattering encompasses a range of phenomena governed by the interaction between an incident electromagnetic wave and the electrons within a molecule or particle. The fundamental process can be conceptualized as the oscillating electric field of a photon inducing a polarization in the molecular electron cloud [22] [23]. This transiently forms a higher-energy "virtual state," from which a photon is almost immediately re-emitted as scattered light [23]. Scattering processes are broadly categorized as either elastic or inelastic. In elastic scattering, the energy (and thus wavelength) of the scattered photon is unchanged from the incident photon. In inelastic scattering, energy is exchanged between the photon and the molecule, resulting in a shift in the wavelength of the scattered light [21]. The following table summarizes the core characteristics of the primary scattering types.

Table 1: Fundamental Types of Light Scattering

| Scattering Type | Energy Change | Particle Size (relative to λ) | Key Characteristic | Typical Application |

|---|---|---|---|---|

| Rayleigh | Elastic (No change) | Much smaller than λ [24] | ~λ⁻⁴ wavelength dependence [21] | Determining molecular polarizability [22] |

| Raman | Inelastic (Change in ν) | Much smaller than λ | Molecular "fingerprint" spectra [23] | Chemical identification and structure |

| Mie | Elastic (No change) | Similar to or larger than λ [25] | Strong forward scattering [21] [26] | Particle sizing, cloud physics |

The Interaction Cross-Section

A critical quantitative parameter in scattering theory is the scattering cross-section, denoted as σs. It represents the effective area that a particle presents to the incident radiation for scattering and has units of area (e.g., m² or cm²). The cross-section quantifies the probability that a scattering event will occur. For Rayleigh scattering by a gas, the cross-section can be calculated using the refractive index and the King correction factor [27]: $$\sigma_\nu = \frac{24\pi^3 \nu^4}{N^2} \left( \frac{n_\nu^2 - 1}{n_\nu^2 + 2} \right)^2 F_k(\nu)$$ where (\nu) is the wavenumber, (N) is the gas number density, (n_\nu) is the refractive index at that wavenumber, and (F_k(\nu)) is the King correction factor accounting for molecular non-sphericity [27]. Accurate knowledge of these cross-sections is essential for applications ranging from atmospheric radiative transfer models to the calibration of high-finesse optical cavities used in trace gas detection [27].
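To make the formula concrete, the sketch below evaluates it in CGS-style units (wavenumber in cm⁻¹, number density in molecules per cm³, result in cm²). The input values for nitrogen at 532 nm are rough illustrative figures chosen for this example, not data from the source. The refractive index and number density must refer to the same temperature and pressure.

```python
import math


def rayleigh_cross_section_cm2(
    wavenumber_cm: float,
    refractive_index: float,
    number_density_cm3: float,
    king_factor: float = 1.0,
) -> float:
    """Rayleigh scattering cross-section per molecule, in cm^2."""
    n_squared = refractive_index ** 2
    lorentz_term = (n_squared - 1.0) / (n_squared + 2.0)
    prefactor = 24.0 * math.pi ** 3 * wavenumber_cm ** 4 / number_density_cm3 ** 2
    return prefactor * lorentz_term ** 2 * king_factor


# Approximate, illustrative inputs for N2 near 288 K and 1 atm at 532 nm.
wavelength_nm = 532.0
wavenumber_cm = 1.0e7 / wavelength_nm  # convert nm to cm^-1
sigma_n2 = rayleigh_cross_section_cm2(
    wavenumber_cm,
    refractive_index=1.000282,
    number_density_cm3=2.547e19,
    king_factor=1.034,
)
print(f"sigma(N2, 532 nm) ~ {sigma_n2:.2e} cm^2")
```

The result is of order 10⁻²⁷ cm², which illustrates why long effective path lengths are needed to measure such cross-sections directly.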

Rayleigh Scattering

Physical Mechanism and Theory

Rayleigh scattering is the elastic scattering of light by particles much smaller than the wavelength of the radiation [21] [24]. The physical mechanism involves the electric field of the incident light inducing an oscillating electric dipole in the molecules or small particles [24] [22]. This oscillating dipole then radiates light at the same frequency in all directions, acting as the source of the scattered light. Because the process is coherent and elastic, the internal energy of the scattering particles remains unchanged [21]. The intensity of Rayleigh-scattered light depends very strongly on wavelength, scaling with the inverse fourth power of the wavelength (~λ⁻⁴) [21] [24]. This means that shorter-wavelength blue light is scattered much more efficiently than longer-wavelength red light.
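The size of this effect is easy to check numerically. The short sketch below compares two wavelengths using only the λ⁻⁴ scaling; the specific wavelengths chosen for "blue" and "red" are illustrative assumptions.

```python
def rayleigh_relative_intensity(wavelength_nm: float, reference_wavelength_nm: float) -> float:
    """Scattered intensity at wavelength_nm relative to the reference, from the lambda^-4 law."""
    return (reference_wavelength_nm / wavelength_nm) ** 4


# Illustrative choice: 450 nm for blue and 650 nm for red light.
blue_vs_red = rayleigh_relative_intensity(450.0, 650.0)
print(f"450 nm light is scattered about {blue_vs_red:.1f} times more strongly than 650 nm light")
```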

Key Applications and Experimental Manifestations

The most familiar manifestation of Rayleigh scattering is the blue color of the daytime sky [24]. As sunlight passes through the atmosphere, its blue component is scattered far more strongly by oxygen and nitrogen molecules than other colors, giving the sky its characteristic hue. Conversely, at sunrise and sunset, sunlight travels through a thicker layer of atmosphere, scattering away most of the blue light and leaving the direct light from the sun appearing reddish [24]. In optical technology, Rayleigh scattering is the dominant source of propagation loss in high-quality optical glass fibers at shorter wavelengths (e.g., in the visible and ultraviolet ranges) [21]. This scattering occurs at microscopic, unavoidable density fluctuations in the glass. Consequently, the lowest propagation losses in silica fibers are achieved at longer, infrared wavelengths around 1.5-1.6 μm [21]. Rayleigh scattering is also routinely used to create image contrast in microscopy and in display screens [21].

Quantitative Measurement in Gases

Advanced spectroscopic techniques like Broadband Cavity-Enhanced Spectroscopy (BBCES) are used for precise, direct measurement of Rayleigh scattering cross-sections in gases. The following table details key reagents and materials used in such experiments.

Table 2: Research Reagent Solutions for Rayleigh Scattering Cross-Section Measurement

| Reagent/Material | Function in Experiment |

|---|---|

| Calibration Gases (He, N₂) | Used to calibrate the path length of the optical cavity; their well-known cross-sections provide a reference [27]. |

| Sample Gases (CO₂, N₂O, SF₆, O₂, CH₄) | Gases whose Rayleigh scattering and absorption cross-sections are being measured [27]. |

| High-Finesse Optical Cavity | Two high-reflectivity mirrors between which light is reflected thousands of times, creating a very long effective path length for sensitive extinction measurement [27]. |

| Broadband Light Source | A broadband source (e.g., a laser-driven light source or xenon arc lamp) that provides light across a continuous wavelength range (e.g., 307–725 nm) [27]. |

| High-Resolution Spectrometer | Analyzes the intensity of light transmitted through the optical cavity as a function of wavelength [27]. |

The experimental protocol involves filling the BBCES cavity with a sample gas at a specific pressure. The decay rate of light intensity (or its reciprocal, the ring-down time) within the cavity is measured with and without the sample gas. The difference in these decay rates is directly related to the extinction coefficient of the gas, from which the scattering cross-section is derived [27]. The workflow for this measurement is outlined in the diagram below.
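The final step of this protocol can be sketched with the standard ring-down relation, in which the extinction coefficient equals the difference in decay rates divided by the speed of light. The sketch assumes the sample gas fills the whole cavity and that the gas does not absorb at the chosen wavelength, so that all extinction is attributed to scattering. The ring-down times and number density are invented values.

```python
SPEED_OF_LIGHT_CM_PER_S = 2.99792458e10


def extinction_coefficient_per_cm(tau_reference_s: float, tau_sample_s: float) -> float:
    """Extinction (cm^-1) from ring-down times without and with the sample gas."""
    decay_rate_difference = 1.0 / tau_sample_s - 1.0 / tau_reference_s
    return decay_rate_difference / SPEED_OF_LIGHT_CM_PER_S


def cross_section_cm2(extinction_per_cm: float, number_density_cm3: float) -> float:
    """Cross-section per molecule, assuming extinction is due to the sample gas alone."""
    return extinction_per_cm / number_density_cm3


# Hypothetical measurement: ring-down time drops from 20.0 us to 19.5 us with the gas present.
alpha_example = extinction_coefficient_per_cm(tau_reference_s=20.0e-6, tau_sample_s=19.5e-6)
sigma_example = cross_section_cm2(alpha_example, number_density_cm3=2.5e19)
print(f"extinction = {alpha_example:.2e} cm^-1, cross-section = {sigma_example:.2e} cm^2")
```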

Raman Scattering

Physical Mechanism and Theory

Raman scattering is an inelastic scattering process where there is an exchange of energy between the incident photon and the scattering molecule [28] [23]. This results in the scattered photon having a different energy, and therefore a different wavelength, than the incident photon. The process is mediated by a short-lived virtual state and involves a change in the molecular polarizability during vibration [23]. There are two types of Raman scattering:

- Stokes Raman scattering: The molecule gains energy, and the scattered photon has a lower energy (longer wavelength) than the incident photon.

- Anti-Stokes Raman scattering: The molecule loses energy, and the scattered photon has a higher energy (shorter wavelength) than the incident photon [28] [23].

At thermal equilibrium, the majority of molecules reside in the ground vibrational state, making Stokes scattering statistically more probable and thus more intense than anti-Stokes scattering [23]. The energy difference between the incident and scattered light is called the Raman shift, and it corresponds directly to the vibrational energy levels of the molecule, providing a unique spectroscopic "fingerprint" [23].
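The thermal argument can be made quantitative with a commonly used classical expression, in which the anti-Stokes to Stokes intensity ratio is a frequency factor to the fourth power multiplied by a Boltzmann factor. This expression is an assumption of the sketch rather than something stated in the source, and the exponent of the frequency factor depends on how the detector response is defined. The excitation wavelength, Raman shift, and temperature are illustrative.

```python
import math

HC_OVER_K_CM_K = 1.438777  # second radiation constant hc/k, in cm*K


def anti_stokes_to_stokes_ratio(
    excitation_wavenumber_cm: float,
    raman_shift_cm: float,
    temperature_k: float,
) -> float:
    """Classical anti-Stokes/Stokes intensity ratio for one vibrational mode."""
    frequency_factor = (
        (excitation_wavenumber_cm + raman_shift_cm)
        / (excitation_wavenumber_cm - raman_shift_cm)
    ) ** 4
    boltzmann_factor = math.exp(-HC_OVER_K_CM_K * raman_shift_cm / temperature_k)
    return frequency_factor * boltzmann_factor


# Illustrative case: 785 nm excitation, 1000 cm^-1 mode, room temperature.
excitation_cm = 1.0e7 / 785.0
ratio_room_temp = anti_stokes_to_stokes_ratio(excitation_cm, 1000.0, 298.15)
print(f"anti-Stokes / Stokes ~ {ratio_room_temp:.4f}")
```

Because the ratio depends on temperature through the Boltzmann factor, the same relation is sometimes inverted to estimate sample temperature from measured band intensities.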

Instrumentation and Selection Rules

Modern Raman spectroscopy almost exclusively uses lasers as excitation sources due to their high intensity and monochromaticity, which are necessary to observe the weak Raman effect [28]. The scattered light is typically detected with high-sensitivity charge-coupled devices (CCDs) [28]. The selection rule for a vibration to be Raman active is that the molecular polarizability must change during the vibration (∂α/∂Q ≠ 0) [28]. This is in contrast to infrared (IR) absorption spectroscopy, which requires a change in the molecular dipole moment during the vibration. This difference in selection rules makes Raman and IR spectroscopy complementary techniques; some vibrational modes may be active in one but not the other. For a non-linear molecule with N atoms, the number of fundamental vibrational modes is 3N-6 (3N-5 for a linear molecule); whether a given mode is Raman-active depends on its symmetry [23].

Advanced Application: Determining the Standard Chemical Potential

Raman scattering, particularly when combined with advanced techniques like femtosecond two-dimensional (2D) spectroscopy, can be used to probe fundamental thermodynamic properties of materials. For quantum-confined systems like PbS quantum dots, which have inherent structural heterogeneity, ensemble absorption and emission spectra are broadened by static inhomogeneities. 2D spectroscopy can separate this static inhomogeneity from the dynamic linewidth. By applying single-molecule generalized Einstein relations to the dynamical absorption and emission spectra obtained from 2D fits, researchers can determine the standard chemical potential difference ((Δμ^o_{j,0→X})) between the lowest excited and ground electronic states [29]. This potential defines the maximum photovoltage the excited state can generate, a critical parameter for energy applications. Furthermore, the ensemble Stokes' shift, in conjunction with these relations, can be used to determine the average single-molecule dynamical linewidth [29].

Mie Scattering

Physical Mechanism and Theory

Mie scattering describes the elastic scattering of light by spherical particles whose diameter is comparable to or larger than the wavelength of the incident light [25] [26]. Unlike Rayleigh scattering, which can be treated as a simple oscillating dipole, the Mie solution requires solving Maxwell's electromagnetic equations for the interaction of a plane wave with a homogeneous sphere, taking into account phase variations and contributions from multiple electric and magnetic multipoles [25] [30]. The full Mie solution is expressed as an infinite series of spherical multipole partial waves [25]. Key features that distinguish Mie scattering from Rayleigh scattering include:

- A much weaker dependence on wavelength.

- A pronounced asymmetry in angular distribution, with a strong preference for forward scattering [21] [26].

- The appearance of Mie resonances, where the scattering efficiency oscillates as a function of the size parameter (x = 2πr/λ) [26].
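A quick way to decide which description applies to a given sample is to compute the size parameter directly. In the sketch below the regime boundaries (0.1 and 10) are arbitrary illustrative thresholds, not values from the source, and the particle radii are hypothetical examples.

```python
import math


def size_parameter(radius_nm: float, wavelength_nm: float) -> float:
    """Dimensionless size parameter x = 2*pi*r / lambda."""
    return 2.0 * math.pi * radius_nm / wavelength_nm


def scattering_regime(x: float, small_limit: float = 0.1, large_limit: float = 10.0) -> str:
    """Rough regime label; the two limits are illustrative, not sharp physical boundaries."""
    if x < small_limit:
        return "Rayleigh"
    if x > large_limit:
        return "large-particle"
    return "Mie"


# Hypothetical particles illuminated at 532 nm.
for label, radius_nm in [("small molecule", 0.5), ("nanoparticle", 100.0), ("droplet", 5000.0)]:
    x = size_parameter(radius_nm, 532.0)
    print(f"{label}: x = {x:.3g} -> {scattering_regime(x)}")
```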

Applications and Observational Evidence

Mie scattering is responsible for the white appearance of clouds [21] [25]. The water droplets in clouds are comparable to or larger than the wavelength of visible light (typically several to tens of micrometers in diameter), so all visible wavelengths are scattered approximately equally, resulting in the white or gray color we observe. This is in stark contrast to the blue sky caused by Rayleigh scattering. Mie theory is essential in a wide range of fields, including meteorological optics, biomedical optics (e.g., light scattering by cells), and the characterization of colloidal suspensions and aerosols [21] [26]. It is the theoretical foundation for laser diffraction particle sizing instruments, which recover particle size distributions by inverting measured scattering patterns [26]. The following diagram illustrates the core logic of Mie theory and its application to particle characterization.

Table 3: Key Characteristics of Mie Scattering for Different Particle Sizes

| Particle Size Regime | Wavelength Dependence | Angular Distribution | Scattering Efficiency (σ/πa²) |

|---|---|---|---|

| Rayleigh (x << 1) | Strong (~λ⁻⁴) | Symmetric (forward = backward) | Proportional to (a/λ)⁴ [26] |

| Mie Resonance (x ≈ 1) | Oscillatory and complex | Multiple maxima/minima, stronger forward lobe | Oscillates with size parameter [26] |

| Large Particle (x >> 1) | Nearly independent of λ | Very strong forward lobe, diffraction pattern | Approaches 2 (extinction paradox) [26] |

Comparative Analysis and Research Implications

Integrated Framework for Spectroscopy Research

In practical spectroscopy research, Rayleigh, Raman, and Mie scattering phenomena are not isolated events but represent different facets of light-matter interaction that must be considered holistically when designing and interpreting experiments. The choice of excitation wavelength in a spectroscopic application is often a compromise between these processes. For instance, in fluorescence spectroscopy, a common technique in drug development, Rayleigh scattering can overlap with and obscure weak emission signals, while Raman scattering from the solvent can create interfering peaks. Selecting a longer excitation wavelength can reduce Rayleigh scattering (due to its λ⁻⁴ dependence) and minimize this interference, but it must be balanced against the absorption profile of the fluorophore. Similarly, the development of sensors based on elastic (Rayleigh or Mie) scattering must account for the particle size of the analyte and the resulting wavelength dependence and angular distribution of the scattered signal [22].
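When planning a fluorescence measurement it helps to predict where the solvent Raman band will fall for a candidate excitation wavelength. The sketch below does this for water, assuming an O-H stretching shift of roughly 3400 cm⁻¹; that figure and the excitation wavelengths are approximate, illustrative choices rather than values from the source.

```python
WATER_OH_STRETCH_SHIFT_CM = 3400.0  # approximate Raman shift of the water O-H stretch


def stokes_wavelength_nm(excitation_nm: float, raman_shift_cm: float) -> float:
    """Wavelength of the Stokes Raman band for a given excitation wavelength and shift."""
    excitation_wavenumber_cm = 1.0e7 / excitation_nm
    return 1.0e7 / (excitation_wavenumber_cm - raman_shift_cm)


# The Rayleigh line sits at the excitation wavelength; the water Raman band is red-shifted.
for excitation_nm in (280.0, 350.0, 488.0):
    raman_nm = stokes_wavelength_nm(excitation_nm, WATER_OH_STRETCH_SHIFT_CM)
    print(f"excitation {excitation_nm:.0f} nm -> water Raman band near {raman_nm:.0f} nm")
```

Because the shift is fixed in wavenumber, the Raman band moves when the excitation wavelength changes while true fluorescence emission does not, which offers a simple way to tell the two apart.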

Technological and Methodological Synergies

The distinctions between scattering mechanisms are leveraged in advanced instrumentation. Broadband Cavity-Enhanced Spectroscopy (BBCES), as described for Rayleigh cross-section measurement, fundamentally relies on the wavelength-dependent nature of the scattering loss within the cavity to determine gas concentrations and properties [27]. Confocal Raman microscopy spatially filters light to suppress out-of-focus Rayleigh and Mie scattered light, allowing for the clear detection of the weaker inelastically Raman-scattered photons used for chemical identification. Furthermore, the combination of scattering techniques with absorption and emission measurements, as demonstrated by 2D spectroscopy, provides a more complete picture of a material's electronic and vibrational structure, enabling the determination of key parameters like the standard chemical potential of excited states [29]. Understanding these core scattering phenomena is therefore not merely an academic exercise but a practical necessity for pushing the boundaries of analytical science, materials characterization, and pharmaceutical development.

Spectroscopy and spectrometry are foundational techniques in analytical science, yet the terms are often used interchangeably, leading to conceptual ambiguity. For researchers and drug development professionals, a precise understanding of the distinction is crucial for both methodological design and data interpretation. Spectroscopy constitutes the theoretical science investigating the interactions between radiated energy and matter [18] [31]. It is the study of how matter absorbs, emits, or scatters electromagnetic radiation to reveal information about its structure and dynamics [4]. In contrast, spectrometry represents the practical application used to acquire quantitative measurements of a spectrum [18] [32]. It is the methodological process of generating and measuring spectral data, often using instruments called spectrometers [33].

This distinction frames a critical partnership: spectroscopy provides the theoretical models for understanding energy-matter interactions, while spectrometry supplies the empirical data that validates and refines those models. Within drug development, this synergy enables everything from elucidating molecular structures to quantifying analyte concentrations in complex biological matrices.

Core Principles: Absorption, Emission, and Scattering

The theoretical framework of spectroscopy is built upon three fundamental molecular processes: absorption, emission, and scattering. These interactions between molecules and electromagnetic radiation reveal crucial information about molecular energy states, composition, and dynamics [4].

Absorption and Emission Processes

Absorption occurs when a molecule takes in energy from incident electromagnetic radiation, causing a transition from a lower energy state to a higher, excited state. The probability of absorption is determined by the transition dipole moment, which depends on the change in the molecule's electronic, vibrational, or rotational state [4].

Emission is the reverse process, where an excited molecule releases energy as electromagnetic radiation when returning to a lower energy state. This occurs through two primary mechanisms:

- Spontaneous emission: An excited molecule spontaneously decays to a lower energy state, emitting a photon with energy corresponding to the difference between states.

- Stimulated emission: An incident photon interacts with an excited molecule, inducing the emission of a second photon with identical energy, phase, and direction [4].

The intensity of absorbed or emitted radiation depends on the population of molecules in the initial and final energy states, which at thermal equilibrium is governed by the Boltzmann distribution [4].
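
To make the role of level populations concrete, the following minimal sketch estimates the equilibrium population ratio between two levels from the Boltzmann distribution. The function name, the 1000 cm⁻¹ energy gap, and the default degeneracies of 1 are illustrative assumptions, not values taken from the cited sources.

```python
import math

K_B = 1.380649e-23     # Boltzmann constant, J/K
H = 6.62607015e-34     # Planck constant, J*s
C_CM = 2.99792458e10   # speed of light, cm/s

def boltzmann_ratio(wavenumber_cm, temperature_k, g_upper=1, g_lower=1):
    """Return N_upper / N_lower for two levels separated by wavenumber_cm (cm^-1).

    g_upper and g_lower are the level degeneracies (assumed 1 by default).
    """
    delta_e = H * C_CM * wavenumber_cm  # energy gap in joules
    return (g_upper / g_lower) * math.exp(-delta_e / (K_B * temperature_k))

# Illustrative case: a vibrational gap of 1000 cm^-1 at 25 degrees C
ratio = boltzmann_ratio(1000.0, 298.15)
print(f"N_upper / N_lower = {ratio:.4f}")  # well below 1 percent
```

Under these assumptions, fewer than 1% of molecules occupy the upper level at room temperature, which is why absorption from the ground state usually dominates vibrational spectra.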

Scattering Phenomena

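Scattering redirects electromagnetic radiation without the photon being absorbed into a real excited state. The process can be elastic, with no net energy transfer to the molecule, or inelastic, with a small amount of energy exchanged between the photon and the molecule or material. Several scattering processes provide distinct spectroscopic information: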

- Rayleigh scattering: An elastic process where radiation interacts with a molecule and is re-emitted at the same frequency. The intensity of Rayleigh scattering is proportional to the square of the molecular polarizability and inversely proportional to the fourth power of the radiation wavelength [4].

- Raman scattering: An inelastic process where the scattered radiation frequency shifts due to transitions between vibrational or rotational energy states. Stokes Raman scattering occurs at lower frequency (longer wavelength) when molecules transition to higher energy states, while anti-Stokes Raman scattering occurs at higher frequency (shorter wavelength) when molecules transition from higher to lower states [4].

- Brillouin scattering: An inelastic process involving interaction with acoustic phonons (collective vibrational modes) in materials, resulting in small frequency shifts determined by acoustic phonon velocity and incident radiation wavelength [4].
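
The wavelength dependence of Rayleigh scattering and the direction of Stokes and anti-Stokes shifts can be checked numerically. The sketch below is illustrative only: the function names, the 785 nm excitation wavelength, and the 1000 cm⁻¹ Raman shift are assumptions chosen for the example.

```python
def rayleigh_relative_intensity(wavelength_nm, reference_nm):
    """Scattered intensity at wavelength_nm relative to reference_nm (I proportional to 1/lambda^4)."""
    return (reference_nm / wavelength_nm) ** 4

def raman_scattered_wavelength(excitation_nm, shift_cm, stokes=True):
    """Wavelength (nm) of Raman-scattered light for a shift given in cm^-1."""
    excitation_wavenumber = 1e7 / excitation_nm  # convert nm to cm^-1
    # Stokes lines lose energy (lower wavenumber); anti-Stokes lines gain energy
    sign = -1 if stokes else 1
    scattered_wavenumber = excitation_wavenumber + sign * shift_cm
    return 1e7 / scattered_wavenumber

# Blue light (400 nm) versus red light (700 nm)
blue_vs_red = rayleigh_relative_intensity(400.0, 700.0)

# Assumed 785 nm laser and a 1000 cm^-1 vibrational band
stokes_nm = raman_scattered_wavelength(785.0, 1000.0, stokes=True)
anti_stokes_nm = raman_scattered_wavelength(785.0, 1000.0, stokes=False)

print(f"Rayleigh intensity ratio, 400 nm vs 700 nm: {blue_vs_red:.2f}")
print(f"Stokes line: {stokes_nm:.1f} nm, anti-Stokes line: {anti_stokes_nm:.1f} nm")
```

With these inputs the Stokes line falls at a longer wavelength than the laser and the anti-Stokes line at a shorter one, consistent with the definitions above.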

Table 1: Characteristics of Molecular Spectroscopy Processes

| Process | Energy Transfer | Spectral Characteristics | Key Applications |
|---|---|---|---|
| Absorption | Molecule gains energy | Discrete peaks at specific wavelengths | Quantitative analysis, concentration determination [14] |
| Emission | Molecule releases energy | Discrete peaks at specific wavelengths | Elemental analysis, fluorescence spectroscopy |
| Rayleigh Scattering | No net transfer (elastic) | Same frequency as incident radiation | Atmospheric science, particle size analysis [4] |
| Raman Scattering | Small transfer to the molecule (Stokes) or from it (anti-Stokes) | Frequency-shifted peaks | Molecular fingerprinting, bond vibration analysis [4] |

Spectroscopic and Spectrometric Techniques: A Comparative Analysis

The principles of absorption, emission, and scattering form the basis for diverse analytical techniques. The distinction between spectroscopy (theoretical framework) and spectrometry (measurement application) manifests across multiple methodological domains.

Major Spectroscopic Techniques

Absorption Spectroscopy encompasses techniques that measure radiation absorption as a function of frequency or wavelength [14]. The absorption spectrum reveals electronic, vibrational, and rotational energy level structures. Major subtypes include:

- Ultraviolet-Visible (UV-Vis) Spectroscopy: Measures electronic transitions in molecules; widely used for concentration determination via the Beer-Lambert law [32].

- Infrared (IR) Absorption Spectroscopy: Probes vibrational transitions in molecular bonds; essential for functional group identification and molecular fingerprinting [18].

- X-ray Absorption Spectroscopy: Investigates inner-shell electron excitations; provides information about atomic environment and oxidation states [14].

Emission Techniques include:

- Optical Emission Spectroscopy (OES): Excites atoms to higher energy states, then analyzes characteristic wavelengths emitted as they return to ground states; extensively used for elemental analysis in metallurgy [31].

- Atomic Emission Spectroscopy (AES): Closely related to OES, and the two names are often used interchangeably; the term emphasizes emission from atoms rather than molecules.

Scattering-Based Techniques include:

- Raman Spectroscopy: Exploits inelastic scattering to provide vibrational fingerprints complementary to IR spectroscopy [4].

- Brillouin Spectroscopy: Measures frequency shifts from acoustic phonon interactions; used to determine elastic properties of materials [4].

Spectrometric Implementation

Spectrometry implements spectroscopic theory to generate quantitative measurements. Key spectrometric methods include:

- Mass Spectrometry (MS): Measures mass-to-charge ratio of ions; identifies molecular weights and structures [18] [31].

- Ion-Mobility Spectrometry (IMS): Separates ions based on size, shape, and charge in a carrier gas under an electric field [31].

- Rutherford Backscattering Spectrometry (RBS): Analyzes backscattered ions from a sample to determine composition and layer thickness [31].

Table 2: Spectroscopy vs. Spectrometry Comparative Analysis

| Aspect | Spectroscopy | Spectrometry |
|---|---|---|
| Primary Focus | Theoretical study of energy-matter interactions [18] [31] | Quantitative measurement of spectral data [18] [32] |
| Nature | Conceptual framework | Practical application and measurement |
| Output | Understanding of interaction mechanisms | Numerical data, spectra, quantifiable results [33] |
| Key Question | How does matter interact with radiation? | What is the intensity at specific wavelengths/mass-to-charge ratios? |
| Example Techniques | Absorption theory, Emission theory, Scattering theory | Mass spectrometry, Ion-mobility spectrometry [31] |

Experimental Protocols and Methodologies

Protocol for Absorption Spectroscopy Measurements

Principle: Determine the presence and concentration of a substance by measuring its absorption of electromagnetic radiation at characteristic wavelengths [14].

Materials and Equipment:

- Light source (e.g., deuterium lamp for UV, tungsten lamp for visible)

- Monochromator or wavelength selector

- Sample holder (cuvette with defined path length)

- Photodetector

- Data acquisition system

Procedure:

- Instrument Calibration: Power on the spectrophotometer and allow it to warm up for 15-30 minutes. Calibrate using a blank/reference sample containing only the solvent [33].

- Sample Preparation: Prepare analyte solutions at appropriate concentrations. For solid samples, ensure proper mounting to minimize light scattering.

- Baseline Correction: Measure the blank solution to establish a baseline, accounting for solvent absorption and reflection losses.

- Spectral Acquisition: Introduce the sample and measure absorbance across the desired wavelength range (e.g., 200-800 nm for UV-Vis).

- Quantitative Analysis: Apply the Beer-Lambert law (A = εlc, where A is absorbance, ε is molar absorptivity, l is path length, and c is concentration) to determine unknown concentrations [14].
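
The quantitative step of this protocol can be sketched as a linear calibration based on the Beer-Lambert law. The example below is a minimal illustration, not a validated assay: the standard concentrations and absorbances are hypothetical, and the helper names `fit_calibration` and `concentration_from_absorbance` are introduced here only for the example. The fitted slope estimates the product εl.

```python
def fit_calibration(concentrations, absorbances):
    """Least-squares line A = slope * c + intercept; the slope estimates epsilon * l."""
    n = len(concentrations)
    mean_c = sum(concentrations) / n
    mean_a = sum(absorbances) / n
    sxx = sum((c - mean_c) ** 2 for c in concentrations)
    sxy = sum((c - mean_c) * (a - mean_a)
              for c, a in zip(concentrations, absorbances))
    slope = sxy / sxx
    intercept = mean_a - slope * mean_c
    return slope, intercept

def concentration_from_absorbance(absorbance, slope, intercept):
    """Invert the calibration line to estimate an unknown concentration."""
    return (absorbance - intercept) / slope

# Hypothetical calibration standards (mol/L) measured in a 1 cm cuvette
standards_c = [1e-5, 2e-5, 4e-5, 8e-5]
standards_a = [0.12, 0.24, 0.48, 0.96]

slope, intercept = fit_calibration(standards_c, standards_a)
unknown_c = concentration_from_absorbance(0.60, slope, intercept)

print(f"epsilon * l = {slope:.0f} L/mol, intercept = {intercept:.4f}")
print(f"Estimated concentration of unknown: {unknown_c:.2e} mol/L")
```

Fitting several standards rather than relying on a single ε value also exposes deviations from linearity, which typically appear at high absorbance.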

Data Interpretation: Absorption peaks indicate electronic or vibrational transitions characteristic of specific functional groups or molecular structures. Peak intensity correlates with concentration, while peak position provides structural information.

Protocol for Mass Spectrometric Analysis

Principle: Identify and quantify compounds by measuring mass-to-charge ratios of gas-phase ions [18].

Materials and Equipment:

- Ionization source (e.g., electron ionization (EI, historically called electron impact), electrospray ionization (ESI))

- Mass analyzer (e.g., quadrupole, time-of-flight, orbitrap)

- Ion detector (e.g., electron multiplier)

- High vacuum system

- Data processing software

Procedure:

- Sample Introduction: Introduce the sample via direct probe, liquid chromatography, or gas chromatography interface.

- Ionization: Convert sample molecules to gas-phase ions using an appropriate ionization technique (e.g., ESI for biomolecules, EI for small molecules).

- Mass Analysis: Separate ions based on mass-to-charge ratio (m/z) using electric and/or magnetic fields.

- Detection: Measure abundance of separated ions at the detector.

- Data Analysis: Interpret the mass spectrum to identify compounds based on molecular ions, fragment patterns, and accurate mass measurements.
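
As a small worked example of the mass analysis and data analysis steps, the sketch below computes the m/z values expected for a protonated molecule at several charge states, as commonly observed with ESI, and recovers the neutral mass from one peak. The 5000 Da neutral mass and the function names are hypothetical; adducts other than protons are not considered.

```python
PROTON_MASS = 1.007276  # mass of a proton in Da (charge carrier in positive-mode ESI)

def mz_protonated(neutral_mass, charge):
    """m/z of the ion [M + zH]^z+ for a neutral mass in Da."""
    return (neutral_mass + charge * PROTON_MASS) / charge

def neutral_mass_from_mz(mz, charge):
    """Recover the neutral mass from an observed m/z and an assigned charge."""
    return charge * (mz - PROTON_MASS)

# Hypothetical analyte with a neutral mass of 5000 Da
neutral = 5000.0
charge_series = {z: mz_protonated(neutral, z) for z in range(1, 6)}

for z, mz in charge_series.items():
    print(f"z = {z}: m/z = {mz:.4f}")

recovered = neutral_mass_from_mz(charge_series[5], 5)
print(f"Neutral mass recovered from the z = 5 peak: {recovered:.4f} Da")
```

The same relationship underlies charge-state deconvolution of protein spectra, where adjacent peaks in the series are used to assign the charge before the mass is calculated.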

Applications in Drug Development: MS is indispensable for metabolite identification, pharmacokinetic studies, protein characterization, and quality control of pharmaceutical compounds [18].

Visualizing Spectroscopic and Spectrometric Relationships

The following diagrams illustrate the conceptual relationship between spectroscopy and spectrometry, along with the fundamental processes underlying spectroscopic analysis.

Diagram 1: Theoretical-practical relationship in spectral analysis.

Diagram 2: Fundamental processes in molecular spectroscopy.

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful spectroscopic analysis requires appropriate selection of reagents, reference materials, and instrumentation components. The following table details essential items for a comprehensive spectroscopy laboratory.

Table 3: Essential Research Reagents and Materials for Spectroscopic Analysis

| Item | Function | Application Examples |
|---|---|---|
| Reference Standards | Calibration and quantification | Certified elemental standards for OES, pharmaceutical reference standards for UV-Vis assay development [14] |
| Solvents (HPLC/UV-Vis Grade) | Sample preparation and dilution | Methanol, acetonitrile, and water for preparing samples for UV-Vis, IR, or MS analysis [33] |
| Cuvettes/Sample Cells | Sample containment for measurement | Quartz cuvettes for UV-Vis, NaCl plates for IR spectroscopy, NMR tubes [33] |
| Matrix Compounds | Sample preparation for mass spectrometry imaging (MSI) | Matrix-assisted laser desorption/ionization (MALDI) matrices like α-cyano-4-hydroxycinnamic acid for MS imaging [34] |
| Deuterated Solvents | NMR spectroscopy | Deuterated chloroform (CDCl₃) and dimethyl sulfoxide (DMSO-d₆), used to minimize solvent proton signals and provide a deuterium lock signal |
| Ionization Reagents | Facilitating ion formation in MS | Acidic mobile-phase additives such as trifluoroacetic acid (TFA) for ESI-MS, chemical ionization reagent gases (e.g., methane) for GC-MS |
| Calibration Mixtures | Instrument performance verification | Polystyrene standards for molecular weight determination, wavelength calibration standards [33] |

Emerging Trends: AI and Advanced Data Analysis in Spectroscopy

The integration of artificial intelligence (AI) and chemometrics represents a paradigm shift in spectroscopic analysis. While classical methods like principal component analysis (PCA) and partial least squares (PLS) regression remain vital, they are now complemented by advanced AI frameworks that automate feature extraction, nonlinear calibration, and data fusion [35].

Machine Learning Applications:

- Supervised Learning: Algorithms including Support Vector Machines (SVMs) and Random Forests are trained on labeled spectral data to perform regression or classification tasks, enabling automated spectral quantification and compositional analysis [35].

- Unsupervised Learning: Techniques like PCA and clustering discover latent structures in unlabeled spectral data, valuable for exploratory analysis and outlier detection [35].

- Deep Learning: Convolutional Neural Networks (CNNs) and other deep neural architectures automatically extract hierarchical features from raw spectral data, particularly effective for processing unstructured data sources like hyperspectral images [35].
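
As a small illustration of unsupervised analysis, the sketch below extracts the first principal component from a handful of synthetic spectra using power iteration, written in plain Python so that it is self-contained. The spectra, band positions, and function names are all invented for the example; in practice an established implementation such as the PCA class in scikit-learn would normally be used.

```python
import math

def gaussian_band(x, center, width, height):
    """Simple Gaussian band used to build synthetic spectra."""
    return height * math.exp(-((x - center) ** 2) / (2 * width ** 2))

def first_principal_component(spectra, iterations=200):
    """Return (loadings, scores) for PC1 via power iteration on X^T X."""
    n, p = len(spectra), len(spectra[0])
    means = [sum(row[j] for row in spectra) / n for j in range(p)]
    centered = [[row[j] - means[j] for j in range(p)] for row in spectra]
    # Start from a ramp so the initial guess is not orthogonal to PC1 here
    vec = [float(j + 1) for j in range(p)]
    for _ in range(iterations):
        proj = [sum(r[j] * vec[j] for j in range(p)) for r in centered]      # X v
        new = [sum(centered[i][j] * proj[i] for i in range(n))
               for j in range(p)]                                           # X^T (X v)
        norm = math.sqrt(sum(v * v for v in new))
        vec = [v / norm for v in new]
    scores = [sum(r[j] * vec[j] for j in range(p)) for r in centered]
    return vec, scores

# Synthetic data: two hypothetical sample groups with bands at different positions
channels = range(80)
heights = [1.0, 1.1, 0.9]
group_a = [[gaussian_band(x, 20, 4, h) for x in channels] for h in heights]
group_b = [[gaussian_band(x, 60, 4, h) for x in channels] for h in heights]

loadings, scores = first_principal_component(group_a + group_b)
for label, score in zip("AAABBB", scores):
    print(f"group {label}: PC1 score = {score:+.3f}")
```

In this synthetic case the PC1 scores of the two groups have opposite signs, which is the kind of latent structure that exploratory analysis aims to reveal. The overall sign of a principal component is arbitrary.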

In pharmaceutical applications, AI-enhanced spectroscopy enables rapid, non-destructive analysis for drug authentication, quality control, and biomedical diagnostics. These approaches improve classification accuracy and feature selection while providing interpretable insights into spectral-chemical relationships [35].

The distinction between spectroscopy as a theoretical framework and spectrometry as practical measurement remains fundamental to analytical science. Spectroscopy provides the conceptual models for understanding absorption, emission, and scattering processes, while spectrometry delivers the quantitative measurements that validate these models and extract chemically relevant information. For drug development professionals, this synergy enables sophisticated molecular characterization, quantitative analysis, and structural elucidation essential to modern pharmaceutical research. As spectroscopic technologies continue to evolve, particularly with the integration of artificial intelligence and advanced data analysis methods, this foundational partnership between theory and measurement will continue to drive innovation in analytical methodology.

Element selectivity is a foundational principle of modern X-ray spectroscopy, allowing researchers to probe the specific chemical state and local environment of a chosen element within complex, multi-component materials. This capability is primarily enabled by the existence of unique, element-specific absorption edges—sharp increases in X-ray absorption that occur when the incident photon energy matches the binding energy of a core-level electron. This technical guide details the fundamental mechanisms of absorption edges, their critical role in advanced analytical techniques like X-ray Absorption Near Edge Structure (XANES) and Extended X-ray Absorption Fine Structure (EXAFS), and their application through contemporary, AI-enhanced workflows in fields ranging from battery research to pharmaceutical development.

In the realm of materials characterization, the ability to interrogate a specific element without interference from the surrounding matrix is a powerful analytical advantage. Element selectivity is this very capability, and in X-ray spectroscopy, it is achieved through the exploitation of absorption edges [36]. An absorption edge is a sharp discontinuity in the absorption spectrum of a substance that occurs at a wavelength where the energy of an incident photon corresponds precisely to the binding energy of a specific core-level electron (e.g., 1s, 2p) in a particular element [37]. When the photon energy surpasses this threshold, a photoelectron is ejected, resulting in a significant increase in the absorption probability.
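
The threshold behavior described here follows from the photon energy relation ( E = \frac{hc}{\lambda} ). The short sketch below converts an edge energy to its corresponding wavelength and checks whether a given photon can excite that core level. The iron K-edge value of roughly 7112 eV is a commonly tabulated figure used purely as an illustration; the function names are assumptions made for the example.

```python
HC_EV_ANGSTROM = 12398.42  # h * c expressed in eV * angstrom (rounded)

def edge_wavelength_angstrom(edge_energy_ev):
    """Wavelength (angstrom) of a photon whose energy equals the edge energy."""
    return HC_EV_ANGSTROM / edge_energy_ev

def is_above_edge(photon_energy_ev, edge_energy_ev):
    """True if the photon carries enough energy to eject the core electron."""
    return photon_energy_ev >= edge_energy_ev

FE_K_EDGE_EV = 7112.0  # approximate Fe K-edge (1s binding energy), illustrative

wavelength = edge_wavelength_angstrom(FE_K_EDGE_EV)
print(f"Fe K-edge wavelength: {wavelength:.4f} angstrom")

for energy in (7000.0, 7112.0, 7200.0):
    print(f"{energy:.0f} eV above edge: {is_above_edge(energy, FE_K_EDGE_EV)}")
```

Because each element has its own set of core-level binding energies, tuning the photon energy across one such threshold selects that element within a mixed sample.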