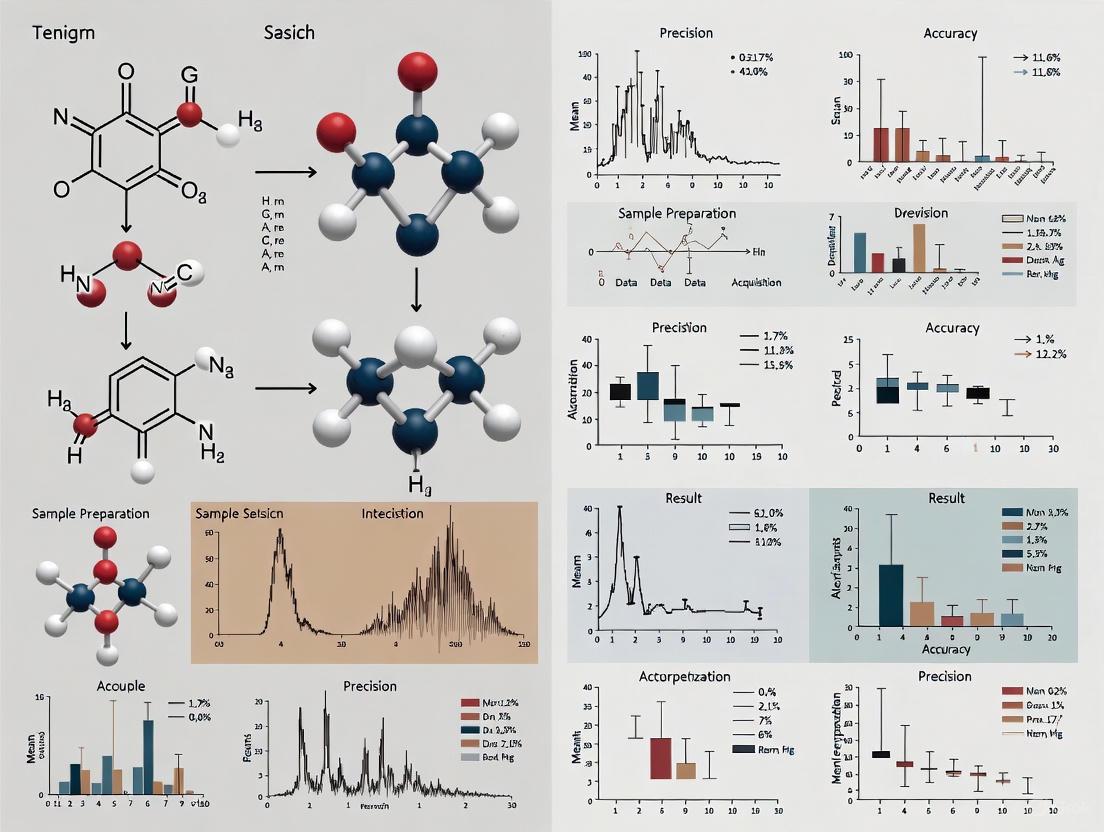

Accuracy and Precision in Spectroscopic Methods: A 2025 Guide for Pharmaceutical Research and QA/QC

This article provides a comprehensive 2025 evaluation of accuracy and precision in spectroscopic methods, tailored for researchers and drug development professionals.

Abstract

This article provides a comprehensive 2025 evaluation of accuracy and precision in spectroscopic methods, tailored for researchers and drug development professionals. It explores foundational principles, including definitions of precision (reproducibility) and accuracy (closeness to truth), and the role of standards from bodies like NIST. The review covers methodological advances in UV-Vis, IR, NMR, and mass spectrometry for pharmaceutical QA/QC, process analytical technology (PAT), and food authentication. It details systematic troubleshooting for sample preparation, instrumentation, and data analysis, and concludes with rigorous validation protocols, regulatory compliance, and comparative analyses of techniques to guide method selection for ensuring drug quality and safety.

Core Principles and Standards: Defining Accuracy and Precision in Modern Spectroscopy

Distinguishing Precision (Reproducibility) from Accuracy (Closeness to Truth) in Quantitative Analysis

Core Conceptual Framework

In quantitative analysis, particularly in spectroscopic methods, accuracy and precision are fundamental, yet distinct, concepts describing measurement quality [1] [2].

Accuracy refers to the closeness of agreement between a measured result and a true or accepted reference value [3] [1]. It is a qualitative measure of correctness, often compromised by systematic error (bias), which consistently shifts measurements in one direction from the true value [2] [4]. In the context of the International Organization for Standardization (ISO) standards, the term trueness describes the closeness to the true value, while accuracy encompasses both trueness and precision [1].

Precision, also described as reproducibility, refers to the closeness of agreement between independent measurement results obtained under specified conditions [5] [6]. It describes the scatter or dispersion of repeated measurements and is a function of random error [2] [4]. High precision means that repeated measurements under unchanged conditions will show very little variation [1]. Precision can be categorized into:

- Repeatability: Precision under the same operating conditions over a short period of time (smallest possible variation) [5].

- Intermediate Precision: Precision within a single laboratory over a longer period, incorporating variations like different analysts or equipment [5].

- Reproducibility: Precision between different laboratories, under changed conditions [5] [6].

The relationship between these concepts is crucial for validating any analytical method, as a measurement system can be precise but not accurate, accurate but not precise, neither, or both [1].

Quantitative Comparison of Metrics

Table 1: Key Characteristics and Assessment of Accuracy vs. Precision

| Feature | Accuracy (Trueness) | Precision (Reproducibility) |

|---|---|---|

| Core Definition | Closeness to the true value [3] [1] | Closeness of repeated measurements to each other [1] [4] |

| Type of Error | Systematic Error (Bias) [2] | Random Error [2] |

| Primary Assessment Method | Comparison to Certified Reference Materials (CRMs) [3] | Standard deviation of repeated measurements [6] |

| Quantitative Expression | Percent Difference, Percent Recovery [3] | Standard Deviation, Variance, Range [6] |

| Dependence | Independent of precision [2] | Independent of accuracy [2] |

Table 2: Guidelines for Acceptable Analytical Bias (Accuracy) at Different Concentration Levels [3]

| Analytical Classification | Analyte Concentration | Acceptable Deviation from Certified Value |

|---|---|---|

| Quantitative | Major (>1%) | < 3% Relative |

| Quantitative | Minor (0.1 - 1%) | < 5% Relative |

| Quantitative | Trace (<0.1%) | < 12% Relative |

Experimental Protocols for Assessment

Protocol for Assessing Accuracy

The following methodology is used to determine the accuracy of a quantitative analytical method, such as spectrochemical analysis [3].

- Select Certified Reference Materials (CRMs): Obtain one or more CRMs whose matrix matches the sample of interest as closely as possible. The certified values of these materials, determined through inter-laboratory studies, serve as the best estimate of the "true value" [3].

- Perform Measurement: Analyze the CRM using the established analytical method (e.g., spectrometer). The measurement should be replicated multiple times (e.g., n=5 or more) to account for random variation [2].

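- Quantify Bias: Calculate the agreement between the mean measured value and the certified value. Common calculations include the relative percent difference, RPD = (measured − certified) / certified × 100, and the percent recovery, Recovery = measured / certified × 100.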

- Evaluate Results: Compare the calculated RPD or Percent Recovery against acceptable criteria, such as those outlined in Table 2. A correlation curve plotting certified values (x-axis) against measured values (y-axis) can also be used; a slope of 1.0 and a high correlation coefficient (R² > 0.98) indicate high accuracy [3].
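The accuracy protocol above can be sketched in a few lines of Python. This is a minimal illustration, not a validated procedure: the function names and replicate values are hypothetical, the RPD is assumed to be taken relative to the certified value, and the limits simply restate Table 2.

```python
from statistics import mean

def bias_metrics(measured, certified):
    """Return (relative percent difference, percent recovery) for CRM replicates."""
    m = mean(measured)
    rpd = (m - certified) / certified * 100.0
    recovery = m / certified * 100.0
    return rpd, recovery

def acceptable_bias_limit(concentration_pct):
    """Acceptable relative deviation (%) from Table 2, by analyte concentration in %."""
    if concentration_pct > 1.0:
        return 3.0   # major constituent
    if concentration_pct >= 0.1:
        return 5.0   # minor constituent
    return 12.0      # trace constituent

# Hypothetical data: five replicate results for a CRM certified at 2.50 %
replicates = [2.46, 2.52, 2.49, 2.55, 2.53]
rpd, recovery = bias_metrics(replicates, certified=2.50)
passes = abs(rpd) < acceptable_bias_limit(2.50)
print(f"RPD = {rpd:+.2f} %, recovery = {recovery:.2f} %, within limit: {passes}")
```

Averaging the replicates before computing the bias keeps random scatter from being mistaken for systematic error.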

Protocol for Assessing Precision (Reproducibility)

This protocol, based on ISO standards, evaluates reproducibility as a standard deviation by altering one key condition of measurement at a time [6].

- Design the Experiment: Use a one-factor balanced, fully nested experimental design with three levels:

    - Level 1: Define the measurement function and value (e.g., measure 1.0 kg mass with a specific balance).

    - Level 2: Select the single reproducibility condition to evaluate (e.g., different operators, different days, different instruments).

    - Level 3: Define the number of replicate measurements under each condition (e.g., 10 repeated measurements) [6].

- Execute Measurements: Have two or more independent operators (or on different days, etc.) perform the test, each collecting the defined number of replicate measurements under their respective conditions.

- Calculate Reproducibility Standard Deviation: Evaluate the results by calculating the standard deviation across the different conditions. This standard deviation represents the reproducibility of the measurement for the changed factor [6].
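As a rough sketch of the final step, the snippet below takes the standard deviation of the per-condition means as the reproducibility estimate and, for comparison, pools the within-condition scatter as a repeatability estimate. The readings, operator labels, and function names are hypothetical, and a full ISO-style analysis of variance may partition the variance differently.

```python
from statistics import mean, stdev

# Hypothetical replicate readings of the same sample, grouped by operator
readings = {
    "operator_A": [10.02, 10.01, 10.03, 10.02, 10.02],
    "operator_B": [10.06, 10.05, 10.07, 10.06, 10.06],
    "operator_C": [10.04, 10.03, 10.05, 10.04, 10.04],
}

def reproducibility_sd(groups):
    """Standard deviation of the per-condition means (needs at least 2 conditions)."""
    condition_means = [mean(values) for values in groups.values()]
    return stdev(condition_means)

def repeatability_sd(groups):
    """Pooled within-condition standard deviation for a balanced design."""
    variances = [stdev(values) ** 2 for values in groups.values()]
    return mean(variances) ** 0.5

print(f"reproducibility SD = {reproducibility_sd(readings):.4f}")
print(f"repeatability SD   = {repeatability_sd(readings):.4f}")
```

In this made-up data set the operator-to-operator spread is larger than the scatter within any one operator, which is the pattern that points to the changed condition as the dominant source of variation.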

Diagram: Relationship Between Measurement, Precision, and Accuracy

The Scientist's Toolkit: Essential Reagents & Materials

Table 3: Key Research Reagent Solutions for Accurate and Precise Spectroscopy

| Item | Primary Function in Analysis |

|---|---|

| Certified Reference Materials (CRMs) | Provide an accepted reference value with documented uncertainty; essential for method validation, calibration, and determining accuracy (trueness) [3]. |

| High-Purity Fluxes (e.g., Lithium Tetraborate) | Used in fusion techniques to dissolve refractory samples completely into a homogeneous glass disk, eliminating mineral and particle size effects for accurate XRF analysis [7]. |

| Spectroscopic Grinding/Milling Equipment | Creates homogeneous samples with consistent particle size, which is critical for minimizing light scattering and ensuring reproducible interaction with radiation in techniques like XRF [7]. |

| Hydraulic Pellet Presses | Transform powdered samples into solid, uniform-density pellets for XRF analysis, ensuring consistent X-ray absorption properties and enabling quantitative accuracy [7]. |

| High-Purity Solvents & Acids | Used for sample dissolution, dilution, and acidification in techniques like ICP-MS; their purity is critical to prevent contamination that causes systematic errors and undermines accuracy [7]. |

| Microporous Filters (0.45 μm, 0.2 μm) | Remove suspended particles from liquid samples for ICP-MS, preventing nebulizer clogging and spectral interferences, thereby protecting both accuracy and precision [7]. |

The Role of NIST Standard Reference Materials (SRMs) for Instrument Calibration and Method Validation

Within the landscape of analytical science, Standard Reference Materials (SRMs) from the National Institute of Standards and Technology (NIST) represent the highest order of measurement certainty, serving as the bedrock for instrument calibration and method validation in spectroscopic research. These materials are disseminated with a Certificate of Analysis (COA) that provides certified values for specific properties, with measured uncertainties that are traceable to the International System of Units (SI) [8]. This traceability is fundamental for establishing the accuracy and precision of analytical methods, as it directly links laboratory measurements to internationally recognized standards. In the context of spectroscopic methods research, where the validity of results hinges on the instrument's performance, SRMs provide an objective "ground truth" to ensure that instruments are reporting correct spectral intensities and wavelengths.

NIST offers a tiered system of reference materials to meet diverse research and quality assurance needs, as detailed in Table 1. This hierarchy allows researchers to select the appropriate material based on the required level of measurement certainty, intended application, and regulatory constraints. Standard Reference Materials (SRMs) sit at the apex as NIST's "best assertion of truth," followed by Reference Materials (RMs) for non-certified best measurements, and Research Grade Test Materials (RGTMs) for interlaboratory comparisons and early-stage research [8]. Understanding this hierarchy is the first step in selecting the proper tool for validating the accuracy and precision of any spectroscopic method.

Table 1: Hierarchy of NIST Reference Materials for Metrology

| Material Type | Traceability & Certification | Primary Documentation | Typical Use Cases | Example |

|---|---|---|---|---|

| Standard Reference Material (SRM) | Certified values with uncertainties; traceable to SI | Certificate of Analysis (COA) | Critical measurements, instrument calibration, method validation for regulatory compliance | SRM 965c Glucose in Frozen Human Serum [9] |

| Reference Material (RM) | Non-certified values; may be traceable to a standard method | Reference Material Information Sheet (RMIS) | Quality assurance, method development when an SRM is not available | NIST Monoclonal Antibody Standard (NISTmAb) [8] |

| Research Grade Test Material (RGTM) | Not traceable; "fit for purpose" | Information Sheet for intended use | Interlaboratory comparisons, early-stage research | Mpox, H5N1 (Bird Flu) materials [8] |

SRMs in Spectroscopy: From Relative Intensity Correction to Pharmaceutical Validation

The application of NIST SRMs in spectroscopy is diverse, addressing critical needs for both qualitative and quantitative analysis. A prime example is the suite of standards developed for relative intensity correction in fluorescence and Raman spectroscopy.

The Critical Need for Relative Intensity Standards

In fluorescence and Raman spectroscopy, the measured signal is not an absolute quantity: it is shaped by each instrument's unique spectral responsivity [10]. As a result, the spectral shape and intensity of an identical sample appear different on every instrument and can even vary on a single instrument over time. Without correction, this compromises the accuracy, reproducibility, and transferability of spectral data, which is particularly problematic for quantitative assays and database matching. To combat this, NIST developed fluorescent glass SRMs, such as SRM 2940 (Orange Emission) and SRM 2941 (Green Emission) [11]. These are metal-ion-doped glasses chosen for their high photostability, making them resistant to photodegradation and suitable for repeated use as performance validation standards [10] [11].

SRMs for Regulated Pharmaceutical Development

The impact of SRMs extends deeply into the pharmaceutical industry, where regulatory requirements from bodies like the U.S. Food and Drug Administration (FDA) demand rigorous instrument qualification and method validation. The use of SRMs aids in satisfying these quality assurance mandates [10]. For instance, NIST has developed SRMs to support the genomic analysis of biomarkers like HER2 in breast cancer, which is critical for determining patient eligibility for targeted therapies [12]. Furthermore, a newer "living reference material" called NISTCHO is designed to accelerate the research and development of biological drugs by providing a consistent standard for ensuring they are safe and effective [12]. This application highlights the role of SRMs in moving beyond instrument calibration to the broader validation of entire analytical methodologies in life-saving contexts.

Table 2: Select NIST SRMs for Spectroscopic Calibration and Validation

| NIST SRM | Technical Description | Application in Spectroscopy | Key Certified Parameters |

|---|---|---|---|

| SRM 2940 [11] | Mn-ion-doped glass, photostable (orange emission) | Relative intensity correction for fluorescence spectroscopy | Emission intensities (500-800 nm) when excited at 412 nm |

| SRM 2941 [11] | Uranyl-ion-doped glass, photostable (green emission) | Relative intensity correction for fluorescence spectroscopy | Emission intensities (450-650 nm) when excited at 427 nm |

| SRM 2241-2243 [10] | Suite of relative intensity standards | Calibration of Raman spectrometers | Relative intensity for excitations at 488, 515, 532, 633, 785, and 1064 nm |

| SRM 2373 [12] | Genomic DNA standard | Validation of spectroscopic assays for HER2 gene amplification | Certified values for HER2 gene copy number |

Experimental Protocols for SRM-Based Instrument Calibration

Workflow for Validating a Fluorescence Spectrometer

The process of using an SRM to validate and correct a fluorescence spectrometer follows a systematic workflow to ensure the accuracy of the instrument's spectral response. The following diagram outlines the key stages of this protocol.

Diagram: SRM Calibration Workflow for Fluorescence Spectrometers

Step 1: SRM Measurement. The researcher places the appropriate SRM (e.g., SRM 2941 for green emission) into the spectrometer and excites it using the certified excitation wavelength specified on the COA (427 nm for SRM 2941) [11]. The emission spectrum is collected across the certified wavelength range (450 nm to 650 nm for SRM 2941).

Step 2: Data Comparison and Correction Factor Calculation. The measured intensity values at each wavelength are compared against the certified values provided by NIST. The ratio of the certified intensity to the measured intensity at each wavelength generates a set of wavelength-dependent correction factors for the instrument [11].

Step 3: Application to Unknown Samples. The fluorescence spectrum of any unknown sample analyzed on the now-characterized instrument is multiplied by the derived correction factors. This mathematical operation yields the "true" spectral shape of the unknown, compensating for the instrument's inherent responsivity function [11].
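Steps 2 and 3 amount to a per-wavelength division followed by a per-wavelength multiplication. The sketch below illustrates this with invented numbers; real certified intensities come from the SRM certificate, and the function names are hypothetical.

```python
def correction_factors(certified, measured):
    """Wavelength-dependent factors: certified intensity / measured intensity."""
    return {wl: certified[wl] / measured[wl] for wl in certified}

def apply_correction(spectrum, factors):
    """Multiply each measured intensity by the factor for its wavelength."""
    return {wl: intensity * factors[wl] for wl, intensity in spectrum.items()}

# Hypothetical relative intensities keyed by emission wavelength in nm
certified = {450: 0.20, 500: 0.80, 550: 1.00, 600: 0.50, 650: 0.10}
measured_srm = {450: 0.25, 500: 0.80, 550: 0.80, 600: 0.40, 650: 0.05}

factors = correction_factors(certified, measured_srm)

# Spectrum of an unknown sample recorded on the same instrument and settings
unknown = {450: 50.0, 500: 120.0, 550: 200.0, 600: 90.0, 650: 10.0}
corrected = apply_correction(unknown, factors)
print(corrected)
```

A quick sanity check is to apply the factors to the SRM measurement itself, which should reproduce the certified values. The factors are only valid for spectra recorded with the same instrument configuration used for the SRM measurement.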

Protocol for Raman Spectrometer Calibration

The calibration of a Raman spectrometer using SRMs 2241-2243 follows a similar conceptual framework but is adapted for Raman shifts. The instrument is configured for a specific excitation wavelength (e.g., 532 nm, 785 nm), and the SRM is measured. The resulting Raman spectrum is compared to the certified relative intensities provided by NIST for that specific excitation wavelength across the Raman shift range (e.g., 150 cm⁻¹ to 3500 cm⁻¹) [10]. The calculated correction curve is then applied to all subsequent sample measurements to ensure that the relative peak intensities in the Raman spectrum are accurate, which is crucial for both chemical identification and quantitative analysis.

Comparative Analysis: SRMs versus Alternative Calibration Approaches

To fully evaluate the role of NIST SRMs, it is essential to compare them against other common calibration and validation approaches used in spectroscopic laboratories. The following table provides an objective comparison based on key metrological criteria.

Table 3: Comparison of NIST SRMs with Alternative Calibration Methods

| Calibration Method | Metrological Traceability | Documented Uncertainty | Primary Application | Key Advantage | Inherent Limitation |

|---|---|---|---|---|---|

| NIST SRM [8] [13] | Yes, to SI | Yes, certified with uncertainties | Instrument calibration, primary method validation | Highest available accuracy; provides legal defensibility | Cost; potential lead times (e.g., shipping delays [12]) |

| Commercial RM | Varies (often not to SI) | Sometimes | Routine quality control, secondary calibration | Readily available; often more affordable | Lack of guaranteed SI traceability and full uncertainty budget |

| In-House Standard | No | No (or estimated) | Daily performance checks, system suitability | Low cost; highly customizable | No external validation; prone to same lab biases |

| Theoretical/Software Correction | No | No | Initial instrument setup | No physical material required | Relies on ideal instrument models; may not capture all real-world variables |

As illustrated in Table 3, NIST SRMs are distinguished by their SI traceability and fully characterized uncertainties, making them the optimal choice for establishing fundamental accuracy and defending measurement results in regulatory submissions or scientific publications. Commercial RMs or in-house standards serve important roles for routine quality control but lack the same level of metrological rigor.

The Scientist's Toolkit: Essential Research Reagent Solutions

A well-equipped spectroscopy laboratory leverages a combination of materials to ensure data integrity across different stages of work. The following toolkit details essential reagents and their functions.

Table 4: Essential Research Reagent Solutions for Spectroscopic Calibration and Validation

| Tool/Reagent | Function/Purpose | Example in Use |

|---|---|---|

| NIST SRM for Intensity Correction | Calibrates the spectral responsivity (wavelength-dependent sensitivity) of fluorescence and Raman spectrometers. | SRM 2941 used to correct emission spectra from 450-650 nm, ensuring quantitative accuracy [11]. |

| NIST SRM for Clinical Assays | Validates the accuracy of spectroscopic methods used in clinical diagnostics; provides traceability for regulated measurements. | SRM 965c used to validate clinical assays for blood glucose levels in human serum [9]. |

| NIST HER2 Genomic DNA Standard | Serves as a quantitative standard for validating spectroscopic assays that measure gene amplification, crucial for personalized medicine. | SRM 2373 used to ensure accurate measurement of the HER2 gene in breast cancer diagnostics [12]. |

| Photostability-Tested Materials | Provides a stable, non-degrading standard for long-term performance validation (PQ) of instrumentation. | Metal-ion-doped glass SRMs (e.g., SRM 2940) are irradiated to confirm resistance to photodegradation [10]. |

| Research Grade Test Material (RGTM) | Enables interlaboratory comparison studies to assess measurement reproducibility before method standardization. | Mpox or H5N1 materials distributed to multiple labs to compare and harmonize spectroscopic results [8]. |

NIST Standard Reference Materials are indispensable for anchoring the accuracy and precision of spectroscopic methods in an unbroken chain of traceability to the SI. While alternative materials like commercial RMs are suitable for routine checks, SRMs provide the highest order of measurement certainty for critical calibration and validation tasks. Their application, from correcting the relative intensity of fluorescence spectrometers to validating clinical diagnostic assays, directly supports the development of reliable, defensible, and reproducible scientific data. As spectroscopic techniques evolve towards greater quantitative rigor, particularly in regulated industries like pharmaceuticals and clinical diagnostics, the role of NIST SRMs as the definitive "truth in a bottle" will only become more pronounced.

In spectroscopic measurements, the reliable detection and quantification of analytes at low concentrations are paramount for method validation and data integrity. The Limit of Detection (LOD) and Limit of Quantitation (LOQ) are critical performance characteristics that define the fundamental boundaries of analytical methods. This guide provides a comprehensive comparison of frameworks and calculation approaches for LOD and LOQ, contextualized within spectroscopic applications. Through evaluation of experimental protocols and comparative data analysis, we objectively assess the performance, applicability, and limitations of different methodological approaches to empower researchers in selecting optimal strategies for their specific analytical requirements.

In analytical chemistry, particularly in spectroscopy, the Limit of Detection (LOD) represents the lowest concentration of an analyte that can be reliably distinguished from the analytical background noise, but not necessarily quantified as an exact value [14] [15]. It signifies the threshold at which detection is feasible, answering the fundamental question: "Is the analyte present or not?" In contrast, the Limit of Quantitation (LOQ) defines the lowest concentration at which the analyte can not only be detected but also quantified with acceptable accuracy and precision, meeting predefined goals for bias and imprecision [16] [17]. The LOQ establishes the lower boundary for reliable quantitative analysis, ensuring measurements fall within an acceptable uncertainty range [14].

These parameters are essential for characterizing the sensitivity and reliability of spectroscopic methods across diverse applications, from pharmaceutical analysis to environmental monitoring and material science [18] [19]. Proper determination of LOD and LOQ provides researchers with crucial information about the capabilities and limitations of their analytical methods, ensuring they are "fit for purpose" for specific applications [16].

Theoretical Frameworks and Definitions

The Relationship Between Blank, Detection, and Quantification

A comprehensive understanding of LOD and LOQ requires consideration of the Limit of Blank (LoB), which represents the highest apparent analyte concentration expected when replicates of a blank sample (containing no analyte) are tested [16] [15]. The LoB characterizes the background signal or "analytical noise" of the method [16].

These concepts exist in a hierarchical relationship: LoB < LOD < LOQ [16]. The LoB establishes the baseline noise level; the LOD represents a concentration sufficiently high to be reliably distinguished from this noise with statistical confidence; and the LOQ represents a yet higher concentration where quantification with acceptable precision becomes feasible [15]. This progression is illustrated in Figure 1, which shows the probability density functions for measurements at each of these critical levels.

Statistical Foundations and Error Considerations

The determination of LOD and LOQ is inherently statistical, addressing two types of potential errors [16]. Type I errors (false positives) occur when a blank sample produces a signal above the detection limit, suggesting the analyte is present when it is not. Type II errors (false negatives) occur when a sample containing the analyte at a low concentration produces a signal below the detection limit, failing to detect its presence [16].

If the LOD itself is used as the decision threshold, the probability of a false positive (α error) is small (typically 1-5%), but the probability of a false negative (β error) can be as high as 50% [20]. Measurements of a sample containing the analyte exactly at the LOD scatter symmetrically around that value, so about half of them fall below the threshold. Defining the LOD relative to the LoB, and using the LoB as the decision threshold, reduces this risk to about 5% [16]. At the LOQ, both α and β errors are minimized, allowing for reliable quantification [20].
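The error rates above can be checked numerically with a simple model. The sketch assumes normally distributed results with the same standard deviation for the blank and for a sample at the LOD; the SD value and all variable names are illustrative assumptions.

```python
from statistics import NormalDist

sd = 1.0                          # hypothetical SD, arbitrary units
z = NormalDist().inv_cdf(0.95)    # one-sided 95 % quantile, about 1.645

blank = NormalDist(mu=0.0, sigma=sd)
lob = blank.inv_cdf(0.95)         # 95 % of blank results fall below the LoB
lod = lob + z * sd                # LOD placed 1.645 SD above the LoB

sample_at_lod = NormalDist(mu=lod, sigma=sd)

alpha = 1.0 - blank.cdf(lob)                  # false-positive rate for blanks
beta_if_lod_is_threshold = sample_at_lod.cdf(lod)   # half of results fall below
beta_if_lob_is_threshold = sample_at_lod.cdf(lob)   # about 5 % fall below

print(f"alpha = {alpha:.3f}")
print(f"beta with LOD as threshold = {beta_if_lod_is_threshold:.3f}")
print(f"beta with LoB as threshold = {beta_if_lob_is_threshold:.3f}")
```

The comparison shows why the choice of decision threshold matters as much as the LOD value itself.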

Calculation Methodologies: A Comparative Analysis

Multiple approaches exist for determining LOD and LOQ, each with distinct advantages, limitations, and applicability to different spectroscopic contexts.

Standard Deviation of the Blank and Slope-Based Methods

This approach utilizes the statistical characteristics of blank measurements and the calibration curve. The Limit of Blank (LoB) is calculated as the mean blank signal plus 1.645 times its standard deviation (assuming a one-sided 95% confidence interval): LoB = mean(blank) + 1.645 × SD(blank) [16]. The LOD is then derived from both the LoB and replicate measurements of a low-concentration sample: LOD = LoB + 1.645 × SD(low-concentration sample) [16].

Alternatively, based on the standard deviation of the response and the slope of the calibration curve, LOD and LOQ can be calculated as [17] [15]:

- LOD = 3.3 × σ / S

- LOQ = 10 × σ / S

Where 'σ' represents the standard deviation of the response (often the residual standard deviation of the regression line) and 'S' is the slope of the calibration curve [17] [15]. The slope converts the variation in the instrumental response back to the concentration domain [15].
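A minimal sketch of the slope-based calculation follows, assuming σ is taken as the residual standard deviation of an ordinary least-squares calibration line. The calibration data and the function name are hypothetical.

```python
from math import sqrt

def calibration_lod_loq(conc, response):
    """Return (LOD, LOQ, slope, sigma) from a straight-line calibration."""
    n = len(conc)
    x_bar = sum(conc) / n
    y_bar = sum(response) / n
    sxx = sum((x - x_bar) ** 2 for x in conc)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(conc, response))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    # Residual standard deviation with n - 2 degrees of freedom
    residual_ss = sum((y - (intercept + slope * x)) ** 2
                      for x, y in zip(conc, response))
    sigma = sqrt(residual_ss / (n - 2))
    return 3.3 * sigma / slope, 10 * sigma / slope, slope, sigma

# Hypothetical calibration: concentration (µg/mL) vs. absorbance
conc = [1.0, 2.0, 3.0, 4.0, 5.0]
absorbance = [0.11, 0.19, 0.31, 0.39, 0.50]

lod, loq, slope, sigma = calibration_lod_loq(conc, absorbance)
print(f"slope = {slope:.4f}, sigma = {sigma:.5f}")
print(f"LOD = {lod:.3f} µg/mL, LOQ = {loq:.3f} µg/mL")
```

Because both limits scale with σ / S, a steeper calibration slope or a tighter fit lowers them proportionally, and the LOQ is always about three times the LOD under this definition.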

Signal-to-Noise Ratio Approach

The signal-to-noise (S/N) method is widely used for instrumental techniques that exhibit baseline noise, such as chromatography and spectroscopy [17] [15]. This approach directly compares the magnitude of the analyte signal to the background noise of the measurement system.

For instrumental techniques like HPLC with spectroscopic detection, the following ratios are generally accepted [17] [14]:

- LOD: S/N ratio of 2:1 or 3:1

- LOQ: S/N ratio of 10:1

This method is particularly intuitive for techniques where visual inspection of chromatograms or spectra is possible, as it corresponds directly to the observable characteristics of the signal [15].

Visual Evaluation Method

For non-instrumental methods or those requiring subjective interpretation, visual evaluation may be appropriate [17] [15]. This empirical approach involves analyzing samples with known concentrations of analyte and establishing the minimum level at which the analyte can be reliably detected or quantified [15].

In practice, samples at progressively lower concentrations are tested, and detection/quantitation capabilities are assessed by analysts or automated systems. The results are often analyzed using logistic regression, with LOD typically set at 99% detection probability and LOQ at 99.95% detection probability [15].

Table 1: Comparison of LOD and LOQ Calculation Methodologies

| Method | Basis | Typical Applications | Key Advantages | Key Limitations |

|---|---|---|---|---|

| Standard Deviation of Blank & Slope | Statistical parameters of blank samples and calibration curve | General spectroscopic methods, quantitative assays without background noise | Objectively based on statistical principles; accounts for method-specific variance | Requires substantial replication; assumes normal distribution of errors [16] |

| Signal-to-Noise Ratio | Direct comparison of analyte signal to background noise | HPLC, chromatography, techniques with observable baseline noise [17] | Intuitive; directly observable in many instruments; minimal calculations [15] | More subjective; dependent on specific instrument conditions [15] |

| Visual Evaluation | Empirical assessment of detection capability | Non-instrumental methods, qualitative assays, particle detection [17] | Practical for methods without instrumental output; reflects real-world use | Subjective; requires multiple analysts and replicates for statistical validity [15] |

Experimental Protocols for Determination

Protocol for Blank and Calibration-Based Methods

For reliable determination of LOD and LOQ using blank and calibration-based approaches, the following protocol is recommended based on Clinical and Laboratory Standards Institute (CLSI) guidelines [16]:

Sample Preparation: Prepare a minimum of 20 replicates of blank samples (containing no analyte) and 20 replicates of a sample containing a low concentration of analyte near the expected LOD [16]. For manufacturer establishment, 60 replicates of each are recommended [16].

Instrumental Analysis: Analyze all samples using the validated spectroscopic method under identical conditions to minimize extraneous variability.

Data Analysis:

- Calculate the mean and standard deviation (SD) of the blank measurements

- Compute LoB = mean(blank) + 1.645 × SD(blank)

- Calculate the mean and SD of the low-concentration sample

- Compute LOD = LoB + 1.645 × SD(low-concentration sample) [16]

Verification: Confirm the calculated LOD by analyzing samples prepared at the LOD concentration. No more than 5% of values should fall below the LoB [16].
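The data analysis and verification steps of this protocol could be scripted roughly as follows. All replicate values are invented for illustration, results are assumed to be expressed in concentration units, and the function names are hypothetical.

```python
from statistics import mean, stdev

def limit_of_blank(blank_results):
    """LoB = mean(blank) + 1.645 * SD(blank)."""
    return mean(blank_results) + 1.645 * stdev(blank_results)

def limit_of_detection(blank_results, low_results):
    """LOD = LoB + 1.645 * SD(low-concentration sample)."""
    return limit_of_blank(blank_results) + 1.645 * stdev(low_results)

def verify_lod(results_at_lod, lob, max_fraction_below=0.05):
    """True if no more than 5 % of results at the LOD fall below the LoB."""
    below = sum(1 for r in results_at_lod if r < lob)
    return below / len(results_at_lod) <= max_fraction_below

# Hypothetical results (µg/mL): 20 blank and 20 low-concentration replicates
blanks = [0.02, 0.05, 0.01, 0.03, 0.04, 0.00, 0.02, 0.06, 0.03, 0.01,
          0.04, 0.02, 0.05, 0.03, 0.02, 0.01, 0.03, 0.04, 0.02, 0.03]
low = [0.12, 0.15, 0.10, 0.14, 0.11, 0.16, 0.13, 0.12, 0.09, 0.14,
       0.13, 0.15, 0.11, 0.12, 0.14, 0.10, 0.13, 0.16, 0.12, 0.13]

lob = limit_of_blank(blanks)
lod = limit_of_detection(blanks, low)

# Hypothetical results for samples prepared at the calculated LOD
results_at_lod = [0.09, 0.11, 0.08, 0.10, 0.07, 0.09, 0.12, 0.08, 0.10, 0.09,
                  0.06, 0.11, 0.09, 0.08, 0.10, 0.05, 0.09, 0.10, 0.08, 0.09]
print(f"LoB = {lob:.3f}, LOD = {lod:.3f}, verified: {verify_lod(results_at_lod, lob)}")
```

The 1.645 multiplier assumes approximately normal results; strongly skewed blank distributions may call for a nonparametric estimate instead.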

Protocol for Signal-to-Noise Ratio Determination

For S/N-based determinations in techniques like HPLC-UV [21]:

Sample Preparation: Prepare 5-7 concentrations in the expected low concentration range, with 6 or more determinations for each concentration [15].

Instrumental Analysis: Analyze all samples and appropriate blank controls under consistent chromatographic or spectroscopic conditions.

Signal Measurement: For each concentration, measure the signal height (or area) of the analyte peak and the noise magnitude from the blank control, typically measured as the peak-to-peak noise in a representative region of the baseline [15].

Calculation and Modeling:

- Calculate S/N ratio for each determination

- Use nonlinear modeling (e.g., 4-parameter logistic regression) of S/N versus log concentration

- Determine LOD as the concentration corresponding to S/N = 2:1 or 3:1

- Determine LOQ as the concentration corresponding to S/N = 10:1 [15]
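The sketch below illustrates the S/N calculation with peak-to-peak baseline noise. Two simplifications are assumed: S/N is taken as peak height divided by peak-to-peak noise (some conventions use twice the peak height instead), and plain linear interpolation between measured points stands in for the 4-parameter logistic model named above. Data and function names are hypothetical.

```python
def peak_to_peak_noise(baseline):
    """Noise as the max-min span of a representative baseline region."""
    return max(baseline) - min(baseline)

def signal_to_noise(peak_height, baseline):
    return peak_height / peak_to_peak_noise(baseline)

def concentration_at_sn(concs, sn_values, target):
    """Linearly interpolate the concentration giving the target S/N.

    Assumes concentrations are sorted and S/N increases with concentration.
    """
    points = list(zip(concs, sn_values))
    for (c0, s0), (c1, s1) in zip(points, points[1:]):
        if s0 <= target <= s1:
            return c0 + (target - s0) * (c1 - c0) / (s1 - s0)
    raise ValueError("target S/N lies outside the measured range")

# Hypothetical blank baseline and mean peak heights (same detector units)
baseline = [2.0, -2.0, 1.0, -1.0, 0.0]
concs = [0.5, 1.0, 2.0, 5.0]            # µg/mL
peak_heights = [6.0, 12.0, 24.0, 60.0]

sn = [signal_to_noise(h, baseline) for h in peak_heights]
lod = concentration_at_sn(concs, sn, target=3.0)
loq = concentration_at_sn(concs, sn, target=10.0)
print(f"S/N values: {sn}")
print(f"LOD = {lod:.2f} µg/mL, LOQ = {loq:.2f} µg/mL")
```

Whatever S/N convention is chosen, it should be stated alongside the reported limits, because the factor-of-two difference between conventions shifts both values.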

Protocol for Visual Evaluation

For methods requiring visual assessment [15]:

Sample Preparation: Prepare 5-7 concentrations from a known reference standard, covering the range from non-detectable to clearly detectable.

Blinded Assessment: Present samples to multiple trained analysts in a blinded fashion, with 6-10 determinations for each concentration.

Response Recording: For each presentation, record whether the analyte was detected (binary response).

Statistical Analysis:

- Use logistic regression to model the probability of detection as a function of concentration

- Set LOD at the concentration corresponding to 99% detection probability

- Set LOQ at the concentration corresponding to 99.95% detection probability [15]
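The statistical analysis can be illustrated with a small logistic-regression fit written against the standard library only. This is a sketch under stated assumptions: detection counts are invented, the model is a two-parameter logistic in concentration fitted by Newton-Raphson, and the function names are hypothetical. A statistics package would normally be used in practice.

```python
from math import exp, log

def fit_logistic(x, detected, trials, iterations=25):
    """Fit P(detect) = 1 / (1 + exp(-(b0 + b1 * x))) by Newton-Raphson."""
    b0, b1 = 0.0, 0.0
    for _ in range(iterations):
        g0 = g1 = h00 = h01 = h11 = 0.0
        for xi, di, ni in zip(x, detected, trials):
            p = 1.0 / (1.0 + exp(-(b0 + b1 * xi)))
            w = ni * p * (1.0 - p)
            g0 += di - ni * p            # score for the intercept
            g1 += (di - ni * p) * xi     # score for the slope
            h00 += w
            h01 += w * xi
            h11 += w * xi * xi
        det = h00 * h11 - h01 * h01
        b0 += (h11 * g0 - h01 * g1) / det
        b1 += (h00 * g1 - h01 * g0) / det
    return b0, b1

def concentration_at_probability(b0, b1, prob):
    """Invert the fitted curve: concentration giving the requested probability."""
    return (log(prob / (1.0 - prob)) - b0) / b1

# Hypothetical blinded assessment: detections out of 10 presentations per level
conc = [1.0, 2.0, 3.0, 4.0, 5.0]
detected = [1, 3, 5, 8, 10]
trials = [10, 10, 10, 10, 10]

b0, b1 = fit_logistic(conc, detected, trials)
lod = concentration_at_probability(b0, b1, 0.99)
loq = concentration_at_probability(b0, b1, 0.9995)
print(f"b0 = {b0:.3f}, b1 = {b1:.3f}, LOD = {lod:.2f}, LOQ = {loq:.2f}")
```

With detection probabilities this close to 1, the estimated limits can lie above the highest tested concentration, so the concentration series should extend far enough that the limits are interpolated rather than extrapolated.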

Comparative Performance in Spectroscopic Applications

Method-Dependent Variations in Sensitivity

Recent comparative studies highlight significant variations in LOD and LOQ values depending on the calculation method employed. Research comparing approaches for HPLC analysis of pharmaceuticals found that the signal-to-noise ratio method typically provided the lowest LOD and LOQ values, while the standard deviation of response and slope method yielded the highest values [21]. This variability underscores the importance of consistent methodology when comparing analytical techniques or establishing standardized protocols.

A comprehensive study evaluating bioanalytical methods using HPLC for sotalol in plasma compared classical statistical approaches with graphical tools (uncertainty profile and accuracy profile) [19]. The classical strategy based on statistical concepts provided underestimated values of LOD and LOQ, while the graphical approaches offered more relevant and realistic assessments of these critical parameters [19].

Matrix Effects in Spectroscopic Analysis

The sample matrix significantly influences detection and quantification capabilities in spectroscopic measurements. Research on Ag-Cu alloys using Energy Dispersive X-ray Fluorescence (ED-XRF) and Wavelength Dispersive X-ray Fluorescence (WD-XRF) demonstrated that detection limits are markedly affected by the composition of the sample matrix [18]. This matrix effect necessitates method validation in representative matrices rather than pure standard solutions, particularly for complex samples like biological fluids, environmental samples, or alloy systems [18].

Table 2: LOD and LOQ Values for Different Spectroscopic Applications

| Analytical Technique | Analyte/Matrix | LOD Value | LOQ Value | Determination Method | Reference |

|---|---|---|---|---|---|

| HPLC-UV | Carbamazepine | Variable by method | Variable by method | Comparison of S/N vs. SDR methods | [21] |

| HPLC-UV | Phenytoin | Variable by method | Variable by method | Comparison of S/N vs. SDR methods | [21] |

| HPLC | Sotalol in plasma | Realistic estimates | Realistic estimates | Uncertainty profile approach | [19] |

| ED-XRF/WD-XRF | Ag-Cu alloys | Matrix-dependent | Matrix-dependent | Multiple definitions (LLD, ILD, CMDL) | [18] |

| Circular Dichroism | Antibody drugs | - | - | Spectral distance methods | [22] |

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Research Reagents and Materials for LOD/LOQ Studies

| Item | Function in LOD/LOQ Studies | Application Examples |

|---|---|---|

| High-Purity Analytical Standards | Provides known concentration reference materials for calibration and validation | Pharmaceutical compounds (carbamazepine, sotalol) [19] [21] |

| Matrix-Matched Blank Materials | Serves as negative controls for determining background signals and LoB | Biological fluids (plasma), alloy samples [16] [18] |

| Appropriate Solvent Systems | Dissolves analytes and standards without interfering signals | Mobile phases for HPLC, solvents for sample preparation [21] |

| Certified Reference Materials | Validates method accuracy against standardized materials | Certified alloy compositions (Ag-Cu alloys) [18] |

| Quality Control Samples | Monitors method performance and stability over time | Low-concentration samples near LOD/LOQ [16] |

Visualization of Method Selection and Relationships

Figure 1: LOD/LOQ Determination Workflow - A decision flow for selecting appropriate methodology based on analytical technique and application requirements.

The accurate determination of Limit of Detection and Limit of Quantitation is fundamental to validating spectroscopic methods and ensuring reliable analytical data. As demonstrated through comparative analysis, different calculation approaches yield varying sensitivity parameters, necessitating careful method selection aligned with analytical requirements and regulatory guidelines.

Blank-based methods provide statistical rigor for quantitative assays, while signal-to-noise approaches offer practical solutions for techniques with observable baseline noise. Emerging graphical strategies like uncertainty profiles present promising alternatives that integrate measurement uncertainty directly into the validation process [19]. The influence of matrix effects further underscores the importance of context-specific method validation rather than relying on universal thresholds.

For researchers and drug development professionals, establishing appropriately determined LOD and LOQ values remains crucial for method credibility, regulatory compliance, and scientific accuracy in spectroscopic measurements. The continued refinement of detection and quantification limit methodologies will further enhance the reliability of analytical data across scientific disciplines.

Doppler-free laser spectroscopy represents a pinnacle of precision in experimental physics, enabling researchers to probe atomic and molecular energy levels with unparalleled accuracy. This technique effectively eliminates the Doppler broadening that occurs when particles moving at different velocities absorb light at slightly different frequencies, thereby obscuring the natural linewidth of a transition. The resulting dramatic increase in spectral resolution is not merely an academic achievement; it provides a critical tool for testing fundamental physical theories, such as quantum electrodynamics (QED), and for determining the values of fundamental physical constants with extraordinary precision. These constants, such as the proton-to-electron mass ratio, form the bedrock of our understanding of the physical universe, and refining their values can reveal subtle hints of physics beyond the Standard Model.

The core principle of Doppler-free spectroscopy often involves nonlinear optical techniques such as saturated absorption spectroscopy. In this method, a pump laser beam saturates a transition for a specific velocity class of atoms or molecules, while a counter-propagating probe beam interrogates the same velocity class. This configuration yields a sharp, Doppler-free dip in the absorption profile at the center of the broader Doppler-broadened line, revealing the transition's true resonance frequency. Other advanced implementations, such as Noise-Immune Cavity-Enhanced Optical Heterodyne Molecular Spectroscopy (NICE-OHMS), combine cavity enhancement with frequency modulation to achieve exceptional sensitivity and frequency resolution, pushing measurement uncertainties into the kHz regime and below [23].
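A short calculation shows the scale of the problem that Doppler-free techniques address. The sketch below evaluates the standard Doppler full width at half maximum, Δν = ν₀·√(8·k_B·T·ln2 / (m·c²)), for a water line. The line position (7000 cm⁻¹) and temperature (296 K) are illustrative assumptions rather than values from the cited experiments.

```python
import math

K_B = 1.380649e-23        # Boltzmann constant, J/K
C = 299_792_458.0         # speed of light, m/s
AMU = 1.66053906660e-27   # atomic mass unit, kg

def doppler_fwhm_hz(center_frequency_hz, temperature_k, mass_amu):
    """Doppler-broadened FWHM of a line for a thermal gas."""
    mass_kg = mass_amu * AMU
    return center_frequency_hz * math.sqrt(
        8.0 * K_B * temperature_k * math.log(2.0) / (mass_kg * C ** 2)
    )

# Assumed example: H2(16)O line near 7000 cm^-1 at room temperature
nu0_hz = 7000.0 * 100.0 * C   # convert cm^-1 to Hz
fwhm_hz = doppler_fwhm_hz(nu0_hz, 296.0, 18.011)
print(f"Doppler FWHM = {fwhm_hz / 1e6:.0f} MHz")
```

Under these assumptions the Doppler width is several hundred MHz, many orders of magnitude larger than the kHz-level uncertainties quoted for saturation techniques.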

Comparative Analysis of Precision Achievements

The application of Doppler-free spectroscopy to simple quantum systems has yielded a series of groundbreaking measurements. The table below summarizes key recent achievements, highlighting the exceptional precision enabled by these techniques.

Table 1: Benchmark Precision Measurements of Fundamental Constants Using Doppler-Free Spectroscopy

| Physical System | Fundamental Constant | Achieved Precision | Technique | Key Reference |

|---|---|---|---|---|

| Molecular Hydrogen Ion (H₂⁺) | Proton-to-electron mass ratio (mₚ/mₑ) | 26 parts per trillion | Doppler-free laser spectroscopy on sympathetically cooled ions | [24] |

| Water Molecule (H₂¹⁶O) | Ortho-water ground state energy | 2.5 × 10⁻⁸ cm⁻¹ | NICE-OHMS saturation spectroscopy | [23] |

| Helium-4 Atom (⁴He) | 2³P fine structure splitting | 130 Hz (for 2³P₀-2³P₂) | Atomic beam spectroscopy with laser cooling | [25] |

The data in Table 1 underscores the transformative impact of Doppler-free methods. The measurement of the proton-to-electron mass ratio at HHU Düsseldorf, for instance, improved accuracy by three orders of magnitude compared to previous measurements [24]. This was achieved by performing spectroscopy on the simple H₂⁺ molecular ion and employing a sympathetic cooling technique to minimize Doppler effects. Similarly, the application of the SNAPS (Spectroscopic-Network-Assisted Precision Spectroscopy) approach to water vapor has allowed for the determination of energy levels with kHz accuracy, which in turn enables the prediction of thousands of other transition frequencies with calibration-quality precision [23]. These are not incremental gains but leapfrog advancements that open new frontiers in precision metrology.

Detailed Experimental Protocols and Workflows

Protocol 1: High-Accuracy Spectroscopy on Trapped Ions

This protocol, used for the record-breaking measurement of mₚ/mₑ, involves several critical stages to control and probe the molecular ion [24].

- Ion Trapping and Sympathetic Cooling: H₂⁺ ions are co-trapped with laser-coolable atomic ions (e.g., beryllium or calcium) in a radiofrequency (RF) ion trap. The atomic ions are laser-cooled to millikelvin temperatures, and through Coulomb interactions, they sympathetically cool the H₂⁺ ions, drastically reducing their thermal motion and the associated Doppler broadening.

- Doppler-Free Spectroscopy Geometry: A carefully aligned spectroscopy laser interrogates the trapped ions. To completely eliminate the residual first-order Doppler shift, a special geometry is used, often involving a standing wave or a careful selection of the laser's propagation direction relative to the ion crystal's modes of motion.

- Systematic Error Control: External electric and magnetic fields, which can shift energy levels via Stark and Zeeman effects, are actively shielded or their strengths are precisely mapped. The transition frequency is measured at different field strengths and extrapolated to zero field.

- Frequency Metrology and Data Analysis: The spectroscopy laser is referenced to an optical frequency comb, providing an absolute frequency measurement traceable to the primary time standard. The measured vibration frequency is then compared with ab initio theoretical calculations to extract the value of the proton-to-electron mass ratio.

Protocol 2: Spectroscopic-Network-Assisted Precision Spectroscopy (SNAPS)

The SNAPS methodology is a powerful, intelligent framework for maximizing the information gained from a limited set of measurements [23].

- Target Line Selection: Using network theory, the spectroscopic network of the molecule (e.g., H₂¹⁶O) is analyzed. Transitions are selected for measurement based on their utility in connecting previously isolated "islands" of energy levels and in improving the accuracy of highly connected "hub" levels. This selection considers experimental constraints like laser frequency range and transition strength.

- Doppler-Free Measurement: The selected transitions are measured using a high-finesse optical cavity enhanced technique like NICE-OHMS. This allows for the detection of saturated absorption signals with kHz-level uncertainty.

- Pressure Shift Extrapolation: Line center frequencies are measured at various gas pressures. The data is extrapolated to zero pressure to remove the systematic error introduced by pressure shifts.

- Network Validation and Energy Level Determination: The newly measured, high-accuracy transitions are integrated into the spectroscopic network. The generalized Ritz principle is used to form cycles and paths, which validate the internal consistency of the data. A global least-squares adjustment then yields the most precise values for the underlying energy levels.

- Line Prediction: The newly determined, highly accurate energy levels allow for the calculation of a vast number of "calibration-quality" transition frequencies throughout the spectrum that have not been directly measured, thereby enriching spectroscopic databases.
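Two steps of this protocol lend themselves to a compact numerical sketch: the extrapolation of line centers to zero pressure, and the Ritz-type consistency check on a closed cycle of transitions. The example below uses an ordinary least-squares line and a simple signed sum. All frequencies, pressures, uncertainties, and function names are hypothetical, and the code is not the SNAPS implementation itself.

```python
import math

def linear_fit(x, y):
    """Ordinary least-squares line; returns (intercept, slope)."""
    n = len(x)
    x_mean = sum(x) / n
    y_mean = sum(y) / n
    sxx = sum((xi - x_mean) ** 2 for xi in x)
    sxy = sum((xi - x_mean) * (yi - y_mean) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return y_mean - slope * x_mean, slope

def cycle_discrepancy(signed_lines):
    """Signed sum of transition frequencies around a closed cycle.

    signed_lines: list of (sign, frequency, uncertainty). For consistent
    data the sum should be zero within the combined uncertainty.
    """
    total = sum(sign * freq for sign, freq, _ in signed_lines)
    combined = math.sqrt(sum(unc ** 2 for _, _, unc in signed_lines))
    return total, combined

# Pressure-shift extrapolation (hypothetical line-center offsets in kHz)
pressures_pa = [0.05, 0.10, 0.20, 0.40]
offsets_khz = [10.0 + 20.0 * p for p in pressures_pa]
zero_pressure_khz, shift_khz_per_pa = linear_fit(pressures_pa, offsets_khz)

# Ritz check on a three-level cycle A->B, B->C, A->C (hypothetical, in MHz)
cycle = [
    (+1, 215_000_000.120, 0.003),   # A -> B
    (+1, 3_500_000.450, 0.003),     # B -> C
    (-1, 218_500_000.565, 0.003),   # A -> C, traversed backwards
]
discrepancy_mhz, combined_unc_mhz = cycle_discrepancy(cycle)
print(f"zero-pressure offset = {zero_pressure_khz:.3f} kHz")
print(f"cycle discrepancy = {discrepancy_mhz * 1e3:.1f} kHz "
      f"(combined uncertainty {combined_unc_mhz * 1e3:.1f} kHz)")
```

A discrepancy within the combined uncertainty supports the internal consistency of the three lines; a larger value would flag at least one of them for re-measurement.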

The following diagram illustrates the logical workflow of the SNAPS approach, from target selection to the expansion of accurate spectroscopic data.

Diagram 1: The SNAPS Workflow for Precision Spectroscopy

The Scientist's Toolkit: Essential Research Reagents and Solutions

The experimental realization of ultra-precise spectroscopy relies on a suite of sophisticated tools and methodologies. The table below details the key "research reagent solutions" essential for work in this field.

Table 2: Essential Toolkit for Ultra-Precise Doppler-Free Spectroscopy

| Tool/Technique | Function in Research | Specific Example/Implementation |

|---|---|---|

| Optical Frequency Comb | Serves as an absolute frequency ruler; provides direct traceability to the SI second, enabling frequency measurements with ultra-low uncertainty. | Used to reference the spectroscopy laser in both H₂⁺ [24] and H₂¹⁶O [23] experiments. |

| High-Finesse Optical Cavity | Dramatically increases the effective path length of light interaction with the sample, boosting sensitivity and enabling the observation of very weak transitions. | Core component of the NICE-OHMS technique used for water spectroscopy [23]. |

| (Sympathetic) Laser Cooling | Reduces the thermal motion of atoms or molecules, minimizing Doppler broadening and facilitating Doppler-free detection. | Used to cool H₂⁺ ions via co-trapped atomic ions [24]. |

| Ion/Atom Traps | Confines particles in a small volume for prolonged interrogation times, allows for excellent control over particle kinematics, and enables sympathetic cooling. | RF ion trap for H₂⁺ [24]; atomic beam apparatus for helium spectroscopy [25]. |

| Spectroscopic Networks & SNAPS | An intellectual and computational framework for designing efficient experiments and validating the consistency of measured data via the Ritz principle. | Applied to H₂¹⁶O to connect 156 new measurements with 28 literature lines, determining 160 energy levels with high accuracy [23]. |

| Advanced Laser Systems | Provide the highly stable, monochromatic, and tunable light source required to probe narrow resonances. Includes techniques like dual-frequency lasers for improved stability. | NICE-OHMS laser system [23]; dual-frequency laser for Doppler-free spectroscopy on Cesium [26]. |

Doppler-free laser spectroscopy has firmly established itself as an indispensable tool for fundamental physics, enabling determinations of physical constants at the part-per-trillion level of precision. Techniques like sympathetic cooling of ions and network-assisted experiment design are pushing the boundaries of what is measurable. The resulting data provides stringent tests for fundamental theories like QED and can reveal subtle discrepancies that may point toward new physics.

Future directions in this field are clear and compelling. Researchers aim to further improve measurement accuracy by developing new cavity-enhanced spectroscopic technologies and by combining them with cryogenic environments and molecular beam techniques [25]. A paramount goal is the precision comparison of matter and antimatter—specifically, comparing the spectroscopy of H₂⁺ with its antimatter counterpart, anti-H₂⁺, to test CPT invariance with unprecedented sensitivity [24]. As these tools and methodologies continue to evolve, they will undoubtedly continue to refine our understanding of the universe's most fundamental building blocks and the laws that govern them.

Advanced Applications and Techniques for Enhanced Spectroscopic Analysis

In the highly regulated pharmaceutical industry, ensuring the identity, purity, and potency of drug substances and products is paramount for patient safety and therapeutic efficacy. Quality Assurance and Quality Control (QA/QC) workflows rely on robust analytical techniques to verify critical quality attributes, with spectroscopic methods forming the technological backbone of these assessments. Ultraviolet-Visible (UV-Vis), Fourier-Transform Infrared (FT-IR), and Nuclear Magnetic Resonance (NMR) spectroscopy represent three cornerstone techniques that provide complementary chemical information essential for comprehensive pharmaceutical analysis [27].

These methods operate on different physical principles, yielding distinct yet interconnected data profiles for pharmaceutical compounds. UV-Vis spectroscopy measures electronic transitions, FT-IR spectroscopy probes molecular vibrations, and NMR spectroscopy provides detailed insights into atomic-level structure and environment [27] [28]. When applied individually or in concert, they create a powerful analytical framework for confirming drug identity, detecting impurities, and quantifying active ingredients throughout the pharmaceutical development and manufacturing lifecycle.

This guide objectively compares the performance characteristics, applications, and experimental considerations for these three spectroscopic techniques within the context of modern pharmaceutical QA/QC workflows. The evaluation is framed by the broader thesis of assessing accuracy and precision in spectroscopic methods research, with supporting experimental data and protocols drawn from current literature and practice standards.

Fundamental Principles and Instrumentation

Each spectroscopic technique interrogates molecules through distinct energy-matter interactions, providing unique analytical information valuable for pharmaceutical assessment.

UV-Vis Spectroscopy measures the absorption of ultraviolet or visible light by a molecule, resulting from electronic transitions between energy states. When molecules absorb UV or visible radiation, electrons are promoted from ground state orbitals to higher energy excited states. This technique is particularly sensitive to molecules containing chromophores, that is, functional groups with conjugated π-bond systems that absorb in the UV-Vis region (typically 200-700 nm) [27] [29]. The resulting absorption spectrum provides information about electronic properties and concentration, with specific absorption peaks corresponding to the energies required for π→π*, n→π*, and σ→σ* transitions [27].

FT-IR Spectroscopy probes the vibrational motions of chemical bonds within molecules. When infrared radiation (typically 4000-400 cm⁻¹) is passed through a sample, chemical bonds absorb energy at characteristic frequencies corresponding to their vibrational modes [27] [30]. These absorption patterns create a "molecular fingerprint" that reveals specific functional groups present in the molecule and their molecular environment [30]. Fourier-transform instruments improve on dispersive IR spectrometers by measuring all wavelengths simultaneously with an interferometer and recovering the spectrum mathematically, which significantly improves speed, sensitivity, and signal-to-noise ratio [27] [30].

NMR Spectroscopy exploits the magnetic properties of certain atomic nuclei (such as ¹H and ¹³C) when placed in a strong magnetic field. These nuclei absorb and re-emit electromagnetic radiation at characteristic frequencies that are highly sensitive to their local electronic environment [31] [28]. NMR parameters including chemical shifts, coupling constants, and signal intensities provide detailed information about the number and types of atoms in a molecule, their connectivity, and spatial arrangement [31]. This atomic-level resolution makes NMR particularly powerful for structural elucidation and studying molecular interactions [28].

Key Instrumentation Components

The instrumentation for each technique comprises specialized components optimized for specific measurement principles:

UV-Vis spectrophotometers typically include: a light source (deuterium lamp for UV, tungsten/halogen lamp for visible regions); a monochromator (diffraction grating or prism) for wavelength selection; sample holders (quartz cuvettes for UV, glass/plastic for visible); and detectors (photomultiplier tubes or photodiode arrays) to measure transmitted light intensity [27] [29]. Modern instruments often incorporate double-beam optics to compensate for source fluctuations and xenon lamps for broad wavelength coverage [29].

FT-IR spectrometers center around a Michelson interferometer containing a beam splitter, fixed mirror, and moving mirror. The interferometer generates an interferogram signal that contains information about all infrared frequencies, which is subsequently converted to a conventional spectrum via Fourier transformation [27] [30]. Sampling accessories vary by application and include transmission cells, attenuated total reflection (ATR) crystals, and diffuse reflectance accessories. ATR-FTIR has gained significant popularity in pharmaceutical analysis due to minimal sample preparation requirements [30].
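The conversion from interferogram to spectrum can be illustrated with a toy example. The sketch below builds a synthetic interferogram from two assumed absorption-free emission lines and recovers their wavenumbers with a plain discrete Fourier transform. The sampling step, line positions, and function name are illustrative assumptions; real instruments additionally apply apodization, phase correction, and zero filling.

```python
import cmath
import math

def spectrum_from_interferogram(interferogram, step_cm):
    """Magnitude spectrum of an interferogram sampled every step_cm of
    optical path difference. Returns (wavenumbers in cm^-1, magnitudes)."""
    n = len(interferogram)
    half = n // 2
    wavenumbers = [k / (n * step_cm) for k in range(half)]
    magnitudes = []
    for k in range(half):
        acc = sum(
            value * cmath.exp(-2j * math.pi * k * j / n)
            for j, value in enumerate(interferogram)
        )
        magnitudes.append(abs(acc) / n)
    return wavenumbers, magnitudes

# Synthetic source with two lines (hypothetical positions and intensities)
N_POINTS = 256
STEP_CM = 1.0 / 4096.0            # gives a 16 cm^-1 point spacing
lines = {1120.0: 1.0, 1600.0: 0.5}
interferogram = [
    sum(a * math.cos(2.0 * math.pi * wn * j * STEP_CM) for wn, a in lines.items())
    for j in range(N_POINTS)
]

wavenumbers, magnitudes = spectrum_from_interferogram(interferogram, STEP_CM)
top_two = sorted(range(len(magnitudes)), key=lambda k: magnitudes[k], reverse=True)[:2]
recovered = sorted(wavenumbers[k] for k in top_two)
print(recovered)
```

Every sampled point of the interferogram contributes to every spectral element, which is the origin of the multiplex advantage over dispersive instruments.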

NMR spectrometers consist of: a powerful superconducting magnet to create the static magnetic field; a radiofrequency transmitter to excite nuclei; a sensitive receiver coil to detect the NMR signal; and sophisticated computer systems for data processing [28]. Modern high-field NMR instruments (≥400 MHz for ¹H) provide unprecedented resolution and sensitivity for pharmaceutical applications, with cryoprobes further enhancing detection capabilities [31]. Automated sample changers and flow-injection systems enable high-throughput analysis for QA/QC applications.

Experimental Protocols and Methodologies

Standard Sample Preparation and Analysis Procedures

Proper sample preparation is critical for obtaining accurate and reproducible spectroscopic results in pharmaceutical analysis.

UV-Vis Spectroscopy Protocols: For quantitative analysis, samples are typically dissolved in a suitable solvent that does not absorb significantly in the spectral region of interest. Common pharmaceutical solvents include water, buffered solutions, methanol, or ethanol. The solution concentration should be adjusted to ensure absorbance values fall within the instrument's linear range (generally 0.1-1.0 AU) [29]. Quartz cuvettes with 1 cm path length are standard for UV measurements, while glass or plastic may be used for visible region analysis only. The reference cell should contain pure solvent or blank matrix. For solid dosage forms, extraction into appropriate solvent is typically required prior to analysis [32].

FT-IR Spectroscopy Protocols: Sample preparation methods vary significantly based on physical form and sampling technique:

- ATR-FTIR: Solid powders can be directly placed on the crystal and compressed with a pressure clamp; liquids can be applied neat. This technique requires minimal preparation and is particularly valuable for rapid identity testing [30].

- Transmission FTIR: Solid samples may be mixed with potassium bromide (KBr) and compressed into pellets; liquids can be analyzed as thin films between salt plates.

- Diffuse Reflectance (DRIFTS): Powdered samples are mixed with non-absorbing matrix (KBr) and placed in a sample cup.

For pharmaceutical solids, ATR-FTIR has become the preferred method for routine analysis due to its simplicity and minimal sample requirements [30]. Samples must be thoroughly dried, as water absorbs strongly in the mid-IR region and can interfere with spectral interpretation [30].

NMR Spectroscopy Protocols: Samples are typically dissolved in deuterated solvents (e.g., D₂O, CDCl₃, DMSO-d₆) to provide a lock signal for field stability and to keep solvent proton signals from overwhelming those of the analyte. Concentration requirements depend on instrument sensitivity and the nucleus being observed, but typically range from 0.1-10 mM for ¹H NMR on modern spectrometers [28]. Sample volumes of 500-600 μL are standard for conventional NMR tubes, while smaller-volume systems are available for limited samples. For quantitative NMR (qNMR), relaxation delays must be sufficiently long (typically >5×T₁ of the slowest-relaxing nucleus) to ensure essentially complete relaxation between scans [28]. Reference standards (e.g., TMS at 0 ppm) or internal quantitative standards are added for chemical shift referencing and concentration determination.
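The 5×T₁ guideline follows from exponential longitudinal relaxation. The sketch below assumes a 90° excitation pulse and mono-exponential recovery, M(t)/M₀ = 1 − exp(−t/T₁); the T₁ value and function names are illustrative.

```python
import math

def recovered_fraction(delay_s, t1_s):
    """Fraction of equilibrium magnetization recovered after a 90 degree pulse."""
    return 1.0 - math.exp(-delay_s / t1_s)

def delay_for_recovery(target_fraction, t1_s):
    """Relaxation delay needed to reach a target recovered fraction."""
    return -t1_s * math.log(1.0 - target_fraction)

t1 = 3.0  # hypothetical T1 of the slowest-relaxing nucleus, in seconds
for multiple in (1, 3, 5, 7):
    fraction = recovered_fraction(multiple * t1, t1)
    print(f"{multiple} x T1: {100.0 * fraction:.2f}% recovered")

print(f"delay for 99.9% recovery: {delay_for_recovery(0.999, t1):.1f} s")
```

At 5×T₁ about 99.3% of the signal has recovered, so the residual saturation error is below 1%; tighter accuracy targets call for longer delays.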

Quantitative Analysis Methodologies

UV-Vis Quantification primarily employs the Beer-Lambert law, which states that absorbance (A) is proportional to concentration (c): A = εlc, where ε is the molar absorptivity and l is the path length [29]. Quantitative methods include:

- Direct Comparison: Using known ε values from reference standards

- Calibration Curve: Measuring absorbance of standard solutions at known concentrations

- Standard Addition: Adding known quantities of analyte to the sample to correct for matrix effects
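The calibration-curve and standard-addition approaches can be sketched with a simple least-squares line. In the first case the unknown is read from the fitted line; in the second, the sample concentration is the intercept divided by the slope of absorbance against added concentration. All absorbance values below are hypothetical, and dilution on spiking is assumed to be negligible.

```python
def linear_fit(x, y):
    """Ordinary least-squares line; returns (intercept, slope)."""
    n = len(x)
    x_mean = sum(x) / n
    y_mean = sum(y) / n
    sxx = sum((xi - x_mean) ** 2 for xi in x)
    sxy = sum((xi - x_mean) * (yi - y_mean) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return y_mean - slope * x_mean, slope

# Calibration curve: standards in ug/mL, absorbance in AU (hypothetical)
std_conc = [2.0, 4.0, 6.0, 8.0, 10.0]
std_abs = [0.101, 0.199, 0.302, 0.398, 0.500]
intercept, slope = linear_fit(std_conc, std_abs)
unknown_conc = (0.250 - intercept) / slope   # sample absorbance 0.250 AU

# Standard addition: absorbance after spiking the sample (hypothetical)
added_conc = [0.0, 2.0, 4.0, 6.0]
spiked_abs = [0.150, 0.250, 0.350, 0.450]
sa_intercept, sa_slope = linear_fit(added_conc, spiked_abs)
sample_conc = sa_intercept / sa_slope        # magnitude of the x-intercept

print(f"calibration curve: {unknown_conc:.2f} ug/mL")
print(f"standard addition: {sample_conc:.2f} ug/mL")
```

Because standard addition calibrates in the sample itself, its slope already includes any matrix-induced change in sensitivity.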

FT-IR Quantification typically uses baseline-corrected peak heights or areas of specific absorption bands, with calibration against standard reference materials [30]. Multivariate calibration methods (e.g., PLS regression) are often employed for complex mixtures where bands may overlap [30]. ATR-FTIR requires special consideration for quantitative work, as penetration depth is wavelength-dependent, necessitating specialized calibration models or correction algorithms.
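The wavelength dependence of ATR sampling can be quantified with the standard penetration-depth expression, d_p = λ / (2π·n₁·√(sin²θ − (n₂/n₁)²)). The sketch below assumes a diamond crystal (n₁ ≈ 2.4), an organic sample (n₂ ≈ 1.5), and a 45° angle of incidence; these values and the function name are illustrative.

```python
import math

def penetration_depth_um(wavenumber_cm, n_crystal, n_sample, angle_deg):
    """Evanescent-wave penetration depth in micrometres."""
    wavelength_um = 1.0e4 / wavenumber_cm
    sin_sq = math.sin(math.radians(angle_deg)) ** 2
    ratio_sq = (n_sample / n_crystal) ** 2
    if sin_sq <= ratio_sq:
        raise ValueError("angle is below the critical angle: no total reflection")
    return wavelength_um / (2.0 * math.pi * n_crystal * math.sqrt(sin_sq - ratio_sq))

for wavenumber in (4000.0, 2000.0, 1000.0, 500.0):
    depth = penetration_depth_um(wavenumber, 2.4, 1.5, 45.0)
    print(f"{wavenumber:6.0f} cm^-1: d_p = {depth:.2f} um")
```

Under these assumptions the depth is roughly 0.5 µm at 4000 cm⁻¹ and 2 µm at 1000 cm⁻¹, so low-wavenumber bands appear relatively stronger than in transmission spectra unless a correction is applied.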

NMR Quantification leverages the direct proportionality between signal intensity and number of nuclei, making it inherently quantitative without need for compound-specific response factors [28]. qNMR methods include:

- Internal Standard: Using a known amount of reference compound with well-resolved signals

- External Standard: Comparison to a separate standard sample

- Electronic Reference: Using proprietary electronic signal referencing technologies

Pulse sequences with sufficient relaxation delay and proper calibration are essential for accurate quantification [28].
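For the internal-standard approach, purity is commonly calculated from the ratio of integrals, corrected for the number of contributing nuclei, the molar masses, and the weighed masses. The sketch below implements that relation; the analyte, weights, and integrals are hypothetical, and the parameter names are chosen for illustration only.

```python
def qnmr_purity(integral_analyte, integral_std,
                nuclei_analyte, nuclei_std,
                molar_mass_analyte, molar_mass_std,
                weighed_analyte_mg, weighed_std_mg,
                purity_std):
    """Mass-fraction purity of the analyte from an internal-standard qNMR run."""
    return (
        (integral_analyte / integral_std)
        * (nuclei_std / nuclei_analyte)
        * (molar_mass_analyte / molar_mass_std)
        * (weighed_std_mg / weighed_analyte_mg)
        * purity_std
    )

# Hypothetical run: analyte (M = 151.16 g/mol, 2H signal) against
# maleic acid (M = 116.07 g/mol, 2H signal, certified purity 99.9%)
purity = qnmr_purity(
    integral_analyte=0.95, integral_std=1.00,
    nuclei_analyte=2, nuclei_std=2,
    molar_mass_analyte=151.16, molar_mass_std=116.07,
    weighed_analyte_mg=13.0, weighed_std_mg=10.0,
    purity_std=0.999,
)
print(f"analyte purity = {100.0 * purity:.1f}%")
```

No compound-specific response factor appears in the expression, which is the practical meaning of NMR being inherently quantitative.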

Performance Comparison and Applications

Technique Capabilities for QA/QC Parameters

Table 1: Performance Characteristics of Spectroscopic Techniques in Pharmaceutical QA/QC

| Parameter | UV-Vis Spectroscopy | FT-IR Spectroscopy | NMR Spectroscopy |

|---|---|---|---|

| Primary QA/QC Applications | Quantitative analysis of chromophores, dissolution testing, content uniformity [32] | Identity testing, polymorph screening, raw material verification [30] | Structural confirmation, impurity profiling, stereochemistry determination [31] [28] |

| Identity Testing | Limited to chromophore verification | Excellent (molecular fingerprint) [30] | Excellent (atomic-level structure) [28] |

| Purity Assessment | Detects impurities with different chromophores | Good for known impurities | Excellent (detects impurities structurally related to API) [28] |

| Potency/Assay | Excellent for compounds with strong chromophores | Good with multivariate calibration | Excellent (qNMR as primary method) [28] |

| Detection Limits | ~10⁻⁶ - 10⁻⁷ M | ~0.1% for major components | ~0.1% for ¹H NMR (400 MHz) [28] |

| Quantitative Precision | 1-2% RSD | 2-5% RSD | 0.5-2% RSD (qNMR) [28] |

| Sample Throughput | High (minutes) | High (minutes) | Moderate to Low (minutes to hours) |

| Regulatory Status | Well-established for specific assays | Accepted for identity testing | Pharmacopoeial methods emerging [28] |

Complementary Roles in Pharmaceutical Workflows

These spectroscopic techniques play complementary rather than competitive roles in comprehensive pharmaceutical QA/QC systems:

UV-Vis Spectroscopy serves as the workhorse for quantitative analysis of active pharmaceutical ingredients (APIs) in dissolution testing, content uniformity, and assay determinations [32]. Its simplicity, speed, and established regulatory status make it ideal for high-throughput quality control laboratories. UV-Vis is particularly valuable for verifying that drug compounds are present within specified concentration ranges, a critical aspect of potency assessment [32] [29].

FT-IR Spectroscopy excels in identity testing and raw material verification due to its unique "molecular fingerprinting" capability [30]. The technique can distinguish between different polymorphic forms—a critical quality attribute for many pharmaceutical solids—and detect counterfeit or mislabeled materials [30]. Its minimal sample preparation and rapid analysis (especially ATR-FTIR) enable quick release decisions for incoming materials. FT-IR also finds application in monitoring chemical reactions and process analytical technology (PAT) [30].

NMR Spectroscopy provides the most comprehensive structural information, making it invaluable for confirming complex molecular structures and elucidating impurity profiles [31] [28]. As a "gold standard" technique, NMR can definitively identify and quantify structurally similar impurities that may be challenging to distinguish by other methods [28]. Quantitative NMR (qNMR) is increasingly recognized as a primary analytical method for purity assessment of reference standards [28]. NMR's ability to study molecular interactions also provides insights into drug formulation behavior and stability.

Workflow Integration and Visual Guide

Strategic Implementation in Pharmaceutical Development

The integration of these spectroscopic techniques across the pharmaceutical development lifecycle follows a strategic progression:

Early Development: NMR dominates for structural confirmation of synthetic compounds and impurity identification. FT-IR assists in polymorph screening and raw material qualification. UV-Vis establishes preliminary analytical methods for API quantification.

Formulation Development: FT-IR monitors API-excipient interactions and polymorphic transitions. UV-Vis develops dissolution and content uniformity methods. NMR may investigate formulation compatibility and degradation pathways.

Quality Control: UV-Vis becomes the primary tool for routine potency and dissolution testing. FT-IR serves as the first-line identity test for raw materials and finished products. NMR provides referee methods for dispute resolution and complex impurity profiling.

Experimental Workflow Visualization

The following diagram illustrates the complementary relationships and decision pathways for applying these techniques in pharmaceutical identity, purity, and potency assessment:

Pharmaceutical QA/QC Spectroscopic Workflow - This diagram illustrates the complementary application of FT-IR, NMR, and UV-Vis spectroscopy for identity, purity, and potency testing in pharmaceutical quality systems.

Essential Research Reagents and Materials

Table 2: Key Research Reagent Solutions for Pharmaceutical Spectroscopy

| Reagent/Material | Function/Purpose | Application Notes |

|---|---|---|

| UV-Vis Grade Solvents (HPLC-grade water, acetonitrile, methanol) | Sample dissolution and reference blanks | Low UV absorbance; spectroscopically pure to minimize background interference [29] |

| Quartz Cuvettes (1 cm path length) | UV-Vis sample containment | Required for UV range; transparent down to ~190 nm; matched pairs for reference and sample [29] |

| ATR Crystals (diamond, ZnSe, Ge) | FT-IR sample interface | Diamond: durable, universal use; ZnSe: mid-range cost, avoid strong bases; Ge: high refractive index [30] |

| Deuterated Solvents (D₂O, CDCl₃, DMSO-d₆) | NMR sample preparation | Provides field frequency lock; minimizes solvent proton interference; specific choice depends on analyte solubility [28] |

| NMR Reference Standards (TMS, DSS) | Chemical shift calibration | TMS: 0 ppm reference in organic solvents; DSS: water-soluble reference for aqueous samples [28] |

| qNMR Standards (maleic acid, dimethyl terephthalate) | Quantitative NMR calibration | High purity compounds with well-characterized purity; stable, non-hygroscopic, with simple NMR spectra [28] |

| KBr Powder (FT-IR grade) | FT-IR pellet preparation | Transparent to IR radiation; used for transmission measurements with solid samples [30] |

| NMR Tubes (5 mm standard) | NMR sample containment | High-quality tubes ensure optimal spectral resolution; matched to instrument specifications [28] |

UV-Vis, FT-IR, and NMR spectroscopy represent a complementary triad of analytical techniques that collectively address the fundamental QA/QC requirements of identity, purity, and potency testing in pharmaceutical development and manufacturing. Each method brings unique capabilities and performance characteristics that make it particularly suited for specific aspects of pharmaceutical analysis.

The ongoing evolution of these technologies—including improved detector sensitivity, enhanced data processing algorithms, and increased automation—continues to expand their utility in pharmaceutical quality systems. Furthermore, the integration of multivariate analysis and artificial intelligence with spectroscopic data promises to further enhance the accuracy, precision, and efficiency of pharmaceutical QA/QC workflows.

When strategically implemented within a quality by design (QbD) framework, these spectroscopic methods provide comprehensive chemical characterization that ensures drug products meet their predefined quality attributes, ultimately safeguarding patient health and therapeutic outcomes.

Real-Time Bioprocess Monitoring with In-line Vibrational and Fluorescence Spectroscopy as Part of PAT

Process Analytical Technology (PAT) has emerged as a systematic framework for designing, analyzing, and controlling pharmaceutical manufacturing through timely measurements of critical quality and performance attributes [33]. The paradigm has shifted from traditional end-product testing (Quality by Testing) to a more proactive Quality by Design (QbD) approach, where quality is built into the product through thorough process understanding and control [33]. Within this framework, real-time monitoring using advanced spectroscopic techniques enables manufacturers to maintain processes within a defined design space, ensuring consistent product quality while reducing production failures and costs [33].

The implementation of PAT relies heavily on real-time monitoring technologies that can be deployed directly within bioprocess streams. These are categorized based on their integration method: in-line (measurement within the process stream without removal), on-line (measurement through a bypass or flow cell), or at-line (measurement near the process after sample removal) [34]. For bioprocesses characterized by complex matrices and dynamic conditions, vibrational spectroscopy (including Raman and infrared techniques) and fluorescence spectroscopy have proven particularly valuable as they provide non-invasive, label-free molecular fingerprinting capabilities suitable for real-time decision-making [34] [35].

Fundamental Principles and Comparison of Spectroscopic Techniques

Theoretical Foundations

Vibrational spectroscopy encompasses techniques that probe molecular vibrations to generate chemical fingerprints of samples. The underlying principle states that molecular energy is quantized into levels corresponding to vibrational modes, allowing molecules to absorb infrared radiation at frequencies specific to their molecular bonds [34]. The fundamental relationship governing these vibrations is expressed as:

ν = 1/(2π) * √(k/μ)

where ν represents vibrational frequency, k is the bond force constant, and μ is the reduced mass of the vibrating atoms [34]. This relationship differentiates the capabilities of various vibrational techniques based on their interaction with molecular vibrations.
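As a worked illustration of the relationship above, the following minimal sketch converts a force constant and two atomic masses into a harmonic vibrational wavenumber. The force constant of roughly 516 N/m for HCl is an approximate textbook value assumed here for illustration, not a figure from the cited studies, and the function names are hypothetical.

```python
import math

C_CM_PER_S = 2.99792458e10   # speed of light in cm/s
AMU_KG = 1.66053906660e-27   # atomic mass unit in kg


def reduced_mass_kg(m1_u, m2_u):
    """Reduced mass of a diatomic oscillator, from atomic masses in u."""
    return (m1_u * m2_u) / (m1_u + m2_u) * AMU_KG


def harmonic_wavenumber(k_n_per_m, m1_u, m2_u):
    """Harmonic wavenumber in cm^-1 from nu = 1/(2*pi) * sqrt(k/mu)."""
    mu = reduced_mass_kg(m1_u, m2_u)
    freq_hz = math.sqrt(k_n_per_m / mu) / (2 * math.pi)
    return freq_hz / C_CM_PER_S


# Assumed illustrative inputs: k ~ 516 N/m, masses of 1H, 2H and 35Cl in u.
K_HCL = 516.0
hcl = harmonic_wavenumber(K_HCL, 1.00783, 34.96885)
dcl = harmonic_wavenumber(K_HCL, 2.01410, 34.96885)

print(f"H-Cl stretch: {hcl:.0f} cm^-1")
print(f"D-Cl stretch: {dcl:.0f} cm^-1 (heavier reduced mass, lower frequency)")
```

Replacing hydrogen with deuterium leaves k unchanged in this approximation but raises μ, so the band shifts to lower wavenumber. The same reasoning explains why bonds with different masses and stiffness appear at different positions in a vibrational spectrum.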

Fluorescence spectroscopy operates on fundamentally different principles, measuring the emission of light from molecules that have been excited by specific wavelengths. When molecules absorb photons, they transition to excited electronic states, then return to ground states by emitting photons of lower energy (longer wavelength) [34]. This technique is exceptionally sensitive for detecting fluorescent compounds but limited to molecules with appropriate fluorophores.
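The statement that emitted photons carry lower energy than absorbed ones can be checked with a short calculation using E = hc/λ. The excitation and emission wavelengths below (280 nm and 350 nm) are assumed illustrative values that fall within the ranges listed for 2D-fluorescence in this section; they are not measurements from the cited work.

```python
H = 6.62607015e-34      # Planck constant, J*s
C = 2.99792458e8        # speed of light, m/s
EV = 1.602176634e-19    # joules per electronvolt


def photon_energy_ev(wavelength_nm):
    """Photon energy in eV for a wavelength given in nm."""
    return H * C / (wavelength_nm * 1e-9) / EV


# Assumed illustrative wavelengths (not taken from the cited studies).
excitation_nm = 280.0
emission_nm = 350.0

stokes_shift_ev = photon_energy_ev(excitation_nm) - photon_energy_ev(emission_nm)
# The same shift expressed in wavenumbers (1e7 / nm gives cm^-1).
stokes_shift_cm1 = 1e7 / excitation_nm - 1e7 / emission_nm

print(f"Excitation photon: {photon_energy_ev(excitation_nm):.2f} eV")
print(f"Emission photon:   {photon_energy_ev(emission_nm):.2f} eV")
print(f"Energy lost before emission: {stokes_shift_ev:.2f} eV "
      f"({stokes_shift_cm1:.0f} cm^-1)")
```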

Direct Technique Comparison

Table 1: Comparative analysis of spectroscopic PAT techniques

| Parameter | Raman Spectroscopy | NIR Spectroscopy | MIR Spectroscopy | 2D-Fluorescence Spectroscopy |

|---|---|---|---|---|

| Principle | Inelastic scattering of monochromatic light | Molecular overtone and combination vibrations | Fundamental molecular vibrations | Emission from excited fluorophores |

| Spectral Range | 0-1900 cm⁻¹ [36] | 4000-10,000 cm⁻¹ [36] | 400-4000 cm⁻¹ | Excitation: 270-550 nm; Emission: 310-590 nm [36] |

| Water Interference | Low (weak scatterer) | High | Very high | Moderate |

| Measurement Depth | Surface-weighted (~µm) | Deep (mm-cm) | Shallow (µm) | Depth-dependent |

| Key Strengths | Minimal sample preparation; suitable for aqueous solutions; specific molecular information [35] | Deep penetration; fiber-optic compatible | Rich chemical information; fundamental vibrations | High sensitivity; specific to fluorescent compounds |

| Primary Limitations | Fluorescence interference; weak signal [34] | Overlapping bands; complex calibration [34] | Strong water absorption; requires specialized accessories [34] | Limited to fluorescent analytes; affected by quenching [34] |

Experimental Performance Data and Analytical Capabilities

Metabolite Monitoring in Bioreactors

Comparative studies have systematically evaluated the performance of spectroscopic techniques for monitoring key metabolites in cell culture processes. One comprehensive investigation used a Design of Experiments (DoE) approach to assess NIR, Raman, and 2D-fluorescence for measuring glucose, lactate, and ammonium under operational constraints relevant to miniature bioreactor systems (sample volume <50 µL, analysis time <1 hour for 48 vessels) [36].

Table 2: Performance comparison for metabolite monitoring in cell culture [36]

| Analyte | Technique | Accuracy (RMSECV) | Robustness | Suitability for Low Volumes |

|---|---|---|---|---|

| Glucose | Raman | 0.92 g·L⁻¹ | Most robust | Excellent |

| Glucose | NIR | Not reported | Less robust | Excellent |

| Glucose | 2D-Fluorescence | Not reported | Least robust | Excellent |

| Lactate | Raman | 1.11 g·L⁻¹ | Most robust | Excellent |

| Lactate | NIR | Not reported | Less robust | Excellent |

| Lactate | 2D-Fluorescence | Not reported | Least robust | Excellent |

| Ammonium | Raman | Not reported | Less robust | Excellent |

| Ammonium | NIR | Not reported | Less robust | Excellent |

| Ammonium | 2D-Fluorescence | 0.031 g·L⁻¹ | Most robust | Excellent |

The study concluded that Raman spectroscopy was most suitable for this application, particularly for glucose and lactate monitoring, while 2D-fluorescence excelled specifically for ammonium detection [36].
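For readers less familiar with the RMSECV metric reported in the table, the sketch below shows how a root mean square error of cross-validation can be computed with leave-one-out resampling. It is deliberately simplified: a univariate straight-line calibration stands in for the multivariate models used in practice, and the intensity and concentration values are hypothetical numbers made up for illustration, not data from the cited study.

```python
import math


def fit_line(x, y):
    """Ordinary least-squares straight line; returns (slope, intercept)."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def rmsecv_loo(signal, conc):
    """Leave-one-out RMSECV: each sample is predicted by a model built without it."""
    squared_errors = []
    for i in range(len(signal)):
        train_x = signal[:i] + signal[i + 1:]
        train_y = conc[:i] + conc[i + 1:]
        slope, intercept = fit_line(train_x, train_y)
        predicted = slope * signal[i] + intercept
        squared_errors.append((predicted - conc[i]) ** 2)
    return math.sqrt(sum(squared_errors) / len(squared_errors))


# Hypothetical band intensities (arbitrary units) and reference glucose values (g/L).
intensity = [0.9, 2.1, 2.9, 4.2, 5.0, 6.1]
glucose = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]

rmsecv = rmsecv_loo(intensity, glucose)
print(f"RMSECV = {rmsecv:.3f} g/L")
```

Because every prediction comes from a model that never saw the sample being predicted, RMSECV is a more honest estimate of calibration error than the fit residuals. It is still not a substitute for an independent test set.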

Low-Concentration Applications and Pharmaceutical Quality Control

For low-concentration applications where NIR spectroscopy reaches its detection limits, Light-Induced Fluorescence (LIF) spectroscopy has proven capable. One investigation measured tryptophan in dynamic powder flow at concentrations as low as 0.10 w/w% with an RMSEP of 0.008 w/w% [37]. The study found that Support Vector Machines (SVM) regression outperformed partial least squares (PLS) regression in handling non-linearities between calibration tests and in-line measurements [37].
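To put the reported prediction error in context, the short sketch below defines RMSEP and relates the published value to the lowest concentration level. Only the two figures 0.008 w/w% and 0.10 w/w% come from the text; the helper function and its name are illustrative assumptions.

```python
import math


def rmsep(predicted, reference):
    """Root mean square error of prediction over an independent test set."""
    squared = [(p - r) ** 2 for p, r in zip(predicted, reference)]
    return math.sqrt(sum(squared) / len(squared))


# Figures quoted in the text for tryptophan in powder flow [37].
reported_rmsep = 0.008   # w/w%
lowest_level = 0.10      # w/w%

# Error relative to the lowest concentration level measured.
relative_error = reported_rmsep / lowest_level
print(f"RMSEP relative to the 0.10 w/w% level: {relative_error:.0%}")
```

Expressed this way, the error is a single-digit percentage of the target level, which is a more interpretable statement than the absolute RMSEP alone.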

In pharmaceutical quality control settings, vibrational spectroscopy has been successfully applied to chemotherapeutic drug analysis. Research demonstrated that both Raman and ATR-IR spectroscopy can discriminate between chemotherapeutic agents like doxorubicin, daunorubicin, ifosfamide, and methotrexate, with Raman proving particularly effective for quantifying concentrations in therapeutic ranges despite matrix effects from NaCl or glucose solutions [35].

Experimental Protocols and Methodologies

Standardized Workflow for Spectroscopic PAT Implementation

The implementation of spectroscopic PAT follows a systematic workflow encompassing experimental design, spectral acquisition, data processing, and model development. The following diagram illustrates this standardized methodology:

Diagram 1: PAT implementation workflow. CQAs: Critical Quality Attributes; CPPs: Critical Process Parameters; DoE: Design of Experiments

Detailed Methodological Protocols

Raman Spectroscopy Protocol for Metabolite Monitoring

Based on the miniature bioreactor study [36], the experimental protocol for Raman spectroscopy includes:

- Sample Presentation: 20 µL of cell culture supernatant placed in plastic disposable 96-well plates (Nunc)

- Instrumentation: Raman WorkStation microscope (Kaiser Optical Systems) with 785 nm excitation laser

- Acquisition Parameters: Laser power of 400 mW at source (~100 mW at sample), 10× magnification objective, 10 s exposure with 2 co-added scans (20 s total per acquisition)

- Spectral Processing: Cosmic ray removal applied; total processing time of 40 s per sample

- Spectral Range: 0-1900 cm⁻¹ at 0.3 cm⁻¹ resolution

- Calibration: Reference metabolite concentrations determined using Nova BioProfile 400 bioanalyzer
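The timing and volume figures in this protocol can be checked against the operational constraints stated earlier (sample volume <50 µL, analysis time <1 hour for 48 vessels). The sketch below is a simple arithmetic check. Because the text does not say whether the 40 s per sample already includes the 20 s acquisition, both readings are computed; that ambiguity is an assumption made explicit here, not something resolved by the source.

```python
# Figures quoted in the protocol and constraints [36].
EXPOSURE_S = 10
SCANS = 2
PROCESSING_S = 40
VESSELS = 48
SAMPLE_UL = 20
MAX_SAMPLE_UL = 50
MAX_BATCH_MIN = 60

acquisition_s = EXPOSURE_S * SCANS  # 20 s per acquisition


def batch_minutes(seconds_per_sample, n_samples):
    """Total time for a plate of samples measured one after another."""
    return seconds_per_sample * n_samples / 60


# Reading 1 (assumption): the 40 s per sample already includes acquisition.
lower_min = batch_minutes(PROCESSING_S, VESSELS)
# Reading 2 (assumption): 40 s of processing is added to 20 s of acquisition.
upper_min = batch_minutes(acquisition_s + PROCESSING_S, VESSELS)

print(f"Batch time: {lower_min:.0f} to {upper_min:.0f} min for {VESSELS} vessels")
print(f"Within time limit: {upper_min < MAX_BATCH_MIN}")
print(f"Within volume limit: {SAMPLE_UL < MAX_SAMPLE_UL}")
```

Under either reading the protocol stays inside the one-hour window, and the 20 µL sample is well below the 50 µL ceiling.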

Fluorescence Spectroscopy Protocol for Low-Concentration API Quantification

For quantification of low-concentration tryptophan in powder flow [37]:

- Setup: In-line implementation for dynamic powder flow measurement

- Instrumentation: Light-Induced Fluorescence (LIF) spectroscopy system

- Concentration Level: As low as 0.10 w/w% tryptophan in powder formulation

- Chemometrics: Support Vector Machines (SVM) regression to handle non-linearities

- Validation: Root Mean Square Error of Prediction (RMSEP) of 0.008 w/w%

Chemotherapeutic Drug Analysis Protocol

For quality control of chemotherapeutic preparations [35]:

- Sample Preparation: Drug solutions prepared in relevant clinical matrices (0.9% NaCl or 5% glucose)

- Concentration Range: Across therapeutic ranges for each drug

- Techniques: Parallel analysis by ATR-IR and Raman spectroscopy

- Spectral Analysis: Focus on fingerprint region (500-1800 cm⁻¹) for both techniques

- Quantification: Partial Least Squares (PLS) regression for concentration prediction

- Discrimination: Principal Component Analysis (PCA) for drug identification

Essential Research Reagents and Materials

Table 3: Essential research reagents and materials for spectroscopic PAT applications

| Category | Specific Items | Function/Application | Experimental Considerations |

|---|---|---|---|

| Consumables | Disposable 96-well plates (Greiner Bio-One, Nunc) | Sample holding for spectral acquisition | Material compatibility (minimal background interference) |

| Consumables | Cell culture supernatant samples | Analysis matrix | Historical samples can be used for DoE approaches [36] |

| Calibration Standards | Glucose, lactate, ammonium standards | Model calibration and validation | Cover expected concentration ranges in bioprocess [36] |

| Calibration Standards | Chemotherapeutic drug standards (doxorubicin, daunorubicin, etc.) | Pharmaceutical quality control | Prepare in relevant clinical matrices [35] |

| Reference Analytics | Nova BioProfile 400 | Reference metabolite concentration measurement | Requires larger sample volume (600 µL) [36] |

| Reference Analytics | HPLC-UV systems | Gold standard for pharmaceutical QC [35] | Longer analysis time but high accuracy |

| Data Processing Tools | Multivariate analysis software (PLS, PCA algorithms) | Spectral data processing and model development | Critical for extracting meaningful information [34] |

| Data Processing Tools | Machine learning platforms (SVM, ANN) | Handling non-linear spectral responses | Particularly valuable for complex matrices [37] |

Integration with Chemometrics and Data Analysis

The effective implementation of spectroscopic PAT relies heavily on advanced chemometric methods for extracting meaningful information from complex spectral data. Because raw spectra typically contain noise, baseline drift, and scatter effects, preprocessing is usually applied before modeling; common choices include Savitzky-Golay smoothing, multiplicative scatter correction, and adaptive iteratively reweighted penalized least squares (airPLS) for baseline correction [38] [34]. The strong collinearity between neighboring spectral variables is then handled by the latent-variable models described below rather than by preprocessing itself.
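Two of the preprocessing steps named above can be sketched in a few lines of plain Python. The example is a minimal illustration, assuming a fixed 5-point quadratic Savitzky-Golay window and a mean-spectrum reference for multiplicative scatter correction (MSC). The spectra are hypothetical, the function names are illustrative, and the airPLS baseline step is not covered.

```python
def savgol5(y):
    """5-point quadratic Savitzky-Golay smoothing.

    Uses the standard coefficients (-3, 12, 17, 12, -3) / 35.
    The two points at each edge are left unchanged.
    """
    coeffs = (-3, 12, 17, 12, -3)
    out = list(y)
    for i in range(2, len(y) - 2):
        window = y[i - 2:i + 3]
        out[i] = sum(c * v for c, v in zip(coeffs, window)) / 35
    return out


def msc(spectra):
    """Multiplicative scatter correction against the mean spectrum.

    Each spectrum s is regressed on the reference r (s ~ a + b * r)
    and then corrected as (s - a) / b.
    """
    n_pts = len(spectra[0])
    ref = [sum(s[j] for s in spectra) / len(spectra) for j in range(n_pts)]
    ref_mean = sum(ref) / n_pts
    ref_ss = sum((r - ref_mean) ** 2 for r in ref)
    corrected = []
    for s in spectra:
        s_mean = sum(s) / n_pts
        slope = sum((r - ref_mean) * (v - s_mean) for r, v in zip(ref, s)) / ref_ss
        intercept = s_mean - slope * ref_mean
        corrected.append([(v - intercept) / slope for v in s])
    return corrected


# Hypothetical spectra: one band shape with different gain and offset,
# mimicking scatter differences between otherwise identical samples.
base = [0.1, 0.4, 1.0, 0.6, 0.2, 0.1]
raw = [base, [2 * v + 1 for v in base], [0.5 * v - 0.2 for v in base]]

corrected = msc(raw)
smoothed = [savgol5(s) for s in corrected]
print("After MSC the three spectra coincide:",
      all(abs(a - b) < 1e-9 for a, b in zip(corrected[0], corrected[1])))
```

In production work a tested library routine would normally be preferred over hand-written filters. The point here is only to show what each step does to the data.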

For model development, both traditional multivariate and modern machine learning approaches have proven valuable:

- Principal Component Analysis (PCA): Unsupervised pattern recognition for spectral discrimination [35]

- Partial Least Squares (PLS) Regression: Primary method for quantitative spectral analysis [36]

- Support Vector Machines (SVM): Effective for handling non-linear responses; optimized variants such as Grey Wolf Optimized SVM have reported 98.25% classification accuracy for saliva FTIR spectra [38]

- Artificial Neural Networks (ANN): Increasingly applied for intricate nonlinear pattern recognition in complex bioprocess datasets [34]
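To make the PLS entry in the list above more concrete, the following sketch implements single-response PLS regression (PLS1) with the NIPALS deflation scheme in plain Python. It is an illustration under stated assumptions, not the implementation used in the cited studies. The two "pure component" spectra and all concentrations are hypothetical, the mixtures are noise-free, and the function names are invented for this example.

```python
import math


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def pls1_fit(X, y, n_components):
    """Fit PLS1 by NIPALS; returns column means, y mean, and (w, p, q) per component."""
    n, m = len(X), len(X[0])
    x_mean = [sum(row[j] for row in X) / n for j in range(m)]
    y_mean = sum(y) / n
    Xr = [[row[j] - x_mean[j] for j in range(m)] for row in X]
    yr = [v - y_mean for v in y]
    comps = []
    for _ in range(n_components):
        # Weight vector: direction in spectral space most covariant with y.
        w = [dot([row[j] for row in Xr], yr) for j in range(m)]
        norm = math.sqrt(dot(w, w))
        if norm < 1e-12:   # nothing left to explain
            break
        w = [v / norm for v in w]
        t = [dot(row, w) for row in Xr]                      # scores
        tt = dot(t, t)
        p = [dot([row[j] for row in Xr], t) / tt for j in range(m)]  # loadings
        q = dot(yr, t) / tt                                  # y loading
        # Deflate X and y before extracting the next component.
        Xr = [[row[j] - t[i] * p[j] for j in range(m)] for i, row in enumerate(Xr)]
        yr = [yr[i] - q * t[i] for i in range(n)]
        comps.append((w, p, q))
    return x_mean, y_mean, comps


def pls1_predict(model, x):
    """Predict y for one spectrum by repeating the same deflation steps."""
    x_mean, y_mean, comps = model
    xr = [a - b for a, b in zip(x, x_mean)]
    y_hat = y_mean
    for w, p, q in comps:
        t = dot(xr, w)
        y_hat += q * t
        xr = [a - t * b for a, b in zip(xr, p)]
    return y_hat


# Hypothetical pure spectra of an analyte and an overlapping interferent.
analyte = [0.0, 1.0, 0.5, 0.1, 0.0]
interferent = [0.2, 0.1, 0.6, 1.0, 0.3]


def mixture(c, d):
    return [c * a + d * b for a, b in zip(analyte, interferent)]


conc_analyte = [1.0, 2.0, 3.0, 4.0, 5.0, 2.5]
conc_interf = [2.0, 1.0, 3.0, 1.0, 2.0, 0.5]
X = [mixture(c, d) for c, d in zip(conc_analyte, conc_interf)]

model = pls1_fit(X, conc_analyte, n_components=2)
prediction = pls1_predict(model, mixture(3.5, 1.5))
print(f"Predicted analyte concentration: {prediction:.3f} (true value 3.5)")
```

With two independent sources of spectral variation and no noise, two latent variables are enough to recover the analyte concentration despite the overlapping interferent. Real spectra contain noise and more sources of variation, so the number of components is normally chosen by cross-validation.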

The integration of swarm intelligence optimization algorithms, such as Grey Wolf Optimization, with traditional chemometric methods has demonstrated enhanced classification performance for spectroscopic data, particularly for complex biological samples [38].

The comparative analysis of vibrational and fluorescence spectroscopy techniques reveals distinct application domains where each technology excels. Raman spectroscopy emerges as the most versatile technique for general bioprocess monitoring, particularly for metabolite quantification in miniature bioreactor systems and pharmaceutical quality control where minimal sample preparation and compatibility with aqueous solutions are paramount [36] [35]. Fluorescence spectroscopy offers superior sensitivity for specific applications involving fluorescent compounds or low-concentration analytes where other techniques approach detection limits [37]. NIR spectroscopy provides practical advantages for deep penetration measurements but requires more complex calibration models due to overlapping spectral features [34].

Selection criteria should prioritize:

- Target analytes and their inherent spectroscopic properties

- Matrix complexity and potential interferences

- Required sensitivity and detection limits

- Operational constraints including sample volume and analysis time

- Implementation costs and expertise requirements

The convergence of spectroscopic PAT with advanced machine learning algorithms and optimization techniques continues to expand the capabilities of real-time bioprocess monitoring, enabling more robust control strategies and facilitating the transition toward continuous manufacturing paradigms in the pharmaceutical industry [33] [34].

Performance Validation of Low-Field NMR for Quantitative Analysis of Finished Medicinal Products

Quantitative Nuclear Magnetic Resonance (qNMR) spectroscopy is a well-established technique for determining the absolute quantity of compounds in complex mixtures. While high-field NMR (HF NMR) has been the traditional standard for such analyses, the advent of modern, affordable low-field NMR (LF NMR) spectrometers (typically operating at 40–100 MHz) has prompted rigorous evaluation of their performance for pharmaceutical applications [39]. This section compares the quantitative accuracy and precision of LF NMR against high-field alternatives and other spectroscopic methods, providing researchers with experimental data and methodologies for informed analytical decisions. The comparison sits within this article's broader evaluation of accuracy and precision in spectroscopic methods and focuses on finished medicinal products, where dosage accuracy is paramount.

Performance Comparison: Low-Field vs. High-Field NMR

Table 1: Quantitative Performance Comparison Between Low-Field and High-Field NMR

| Performance Metric | Low-Field NMR (80 MHz) | High-Field NMR (500-600 MHz) |

|---|---|---|

| Typical Recovery Rates (Deuterated Solvents) | 97% - 103% [39] | >99.9% (theoretical ideal) [40] |

| Typical Recovery Rates (Non-deuterated Solvents) | 95% - 105% [39] | Less commonly used |

| Average Bias (vs. Reference HF NMR) | 1.4% (deuterated), 2.6% (non-deuterated) [39] | Reference Method |

| Key Application Shown | Analysis of 33 finished medicinal products (tablets, capsules, creams) [39] | Lipoprotein analysis (25 markers) [41] |