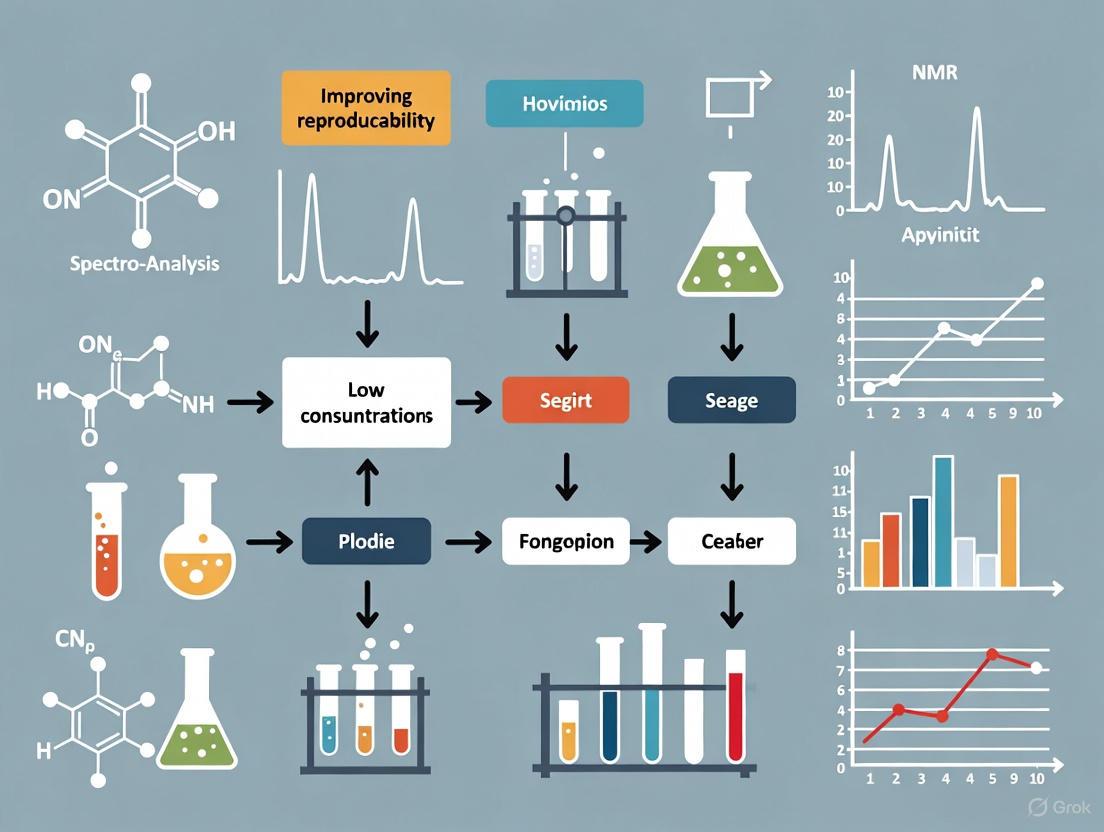

Achieving Reliable Results: A Comprehensive Guide to Improving Reproducibility in Low Concentration Measurements

Abstract

This article provides a systematic framework for researchers, scientists, and drug development professionals to overcome the critical challenge of poor reproducibility in low-concentration measurements. Covering the journey from foundational concepts to advanced validation, we explore the core principles of measurement variation, practical methodological optimizations for sample handling and analysis, targeted troubleshooting for common pitfalls, and the rigorous statistical and metrological practices required for method validation. By integrating perspectives from metrology, statistics, and practical laboratory science, this guide delivers actionable strategies to enhance data reliability, ensure regulatory compliance, and build confidence in scientific findings at the limits of detection.

The Reproducibility Challenge: Understanding Variation and Uncertainty at Low Concentrations

Defining Reproducibility, Repeatability, and Precision in a Metrology Context

FAQs on Core Metrology Concepts

What is the difference between repeatability and reproducibility?

Repeatability is the measurement precision under a set of identical conditions: the same operator, same measuring instrument, same conditions, same location, and repeated over a short period of time. It represents the smallest possible variation in results [1]. Reproducibility, however, is the measurement precision under changed conditions, such as different operators, different measuring systems, or different laboratories [1] [2]. In essence, repeatability is the variation observed when one person measures the same item multiple times with the same tool, while reproducibility is the variation observed when different people measure the same item with the same tool [3] [4].

Why is reproducibility a major concern in drug development research?

Failures in research reproducibility have significant economic and translational consequences. Studies indicate that a substantial portion of preclinical research is not reproducible, which risks the validity of conclusions and wastes critical resources [5]. One analysis found that in the United States alone, approximately $50 billion is spent annually on irreproducible life science research [5]. Another study noted that for every 100 drugs that enter Phase 1 trials, only about 10 receive final approval, a 90% failure rate often linked to difficulties in translating promising preclinical findings [6]. This irreproducibility creates a "valley of death" that prevents discoveries from moving from the lab to patients [6].

How can I assess the repeatability and reproducibility of my measurement system?

A common method is to conduct a Measurement System Analysis (MSA) using a Gage Repeatability and Reproducibility (Gage R&R) study [3]. This typically involves a designed experiment where multiple operators measure the same set of parts multiple times in a randomized order. The resulting data is analyzed to quantify how much of the total measurement variation is due to the measurement device itself (repeatability) and how much is due to differences between operators (reproducibility) [2] [3]. This helps pinpoint sources of error and guides improvements.

Troubleshooting Guides

Problem: High variation in low-concentration measurements between different analysts.

This is a classic issue of poor reproducibility.

- Potential Cause 1: Insufficiently detailed protocols. Small, unrecorded differences in technique between analysts can lead to large discrepancies in results, especially with sensitive, low-concentration assays.

- Solution:

- Create and validate highly detailed Standard Operating Procedures (SOPs). Document every critical step, including reagent preparation (e.g., gravimetric vs. volumetric method [2]), mixing times and speeds, incubation times, and equipment calibration schedules [4].

- Implement rigorous operator training and ensure all analysts are certified on the SOP before generating data.

- Potential Cause 2: Uncontrolled reagent and material variability.

- Solution:

Problem: Inconsistent results for the same sample when measured over several days.

This indicates an issue with intermediate precision, which is the precision obtained within a single laboratory over a longer period [1].

- Potential Cause 1: Environmental drift.

- Solution:

- Potential Cause 2: Changes in analytical components. Factors like different reagent batches, columns (in LC-MS), or calibrants that are constant within a day but change over time can behave as random variables and increase long-term variation [1].

- Solution:

- Where possible, use a single, large batch of critical reagents for a long-term study.

- If new batches must be used, perform a cross-validation against the old batch to ensure consistency.

- Document all changes in components as part of the data metadata.

Quantitative Data on Reproducibility

Table 1: Documented Rates of Irreproducibility in Preclinical Research

| Field of Study | Reproducibility Rate | Context and Source |

|---|---|---|

| Psychology | 36% - 47% | Only 36% of 100 re-studied experiments had statistically significant findings; fewer than half were subjectively judged successful [7]. |

| Oncology (Haematology/Oncology) | ~11% (6 of 53 studies) | Scientists at Amgen could only confirm findings in 6 out of 53 landmark published papers [8]. |

| Oncology Drug Development | 20% - 25% | Only 20-25% of validation studies were "completely in line" with original reports [7]. |

Table 2: Economic Impact of Irreproducible Biomedical Research

| Scope | Estimated Annual Cost | Key Findings |

|---|---|---|

| United States (Life Sciences) | $50 Billion | About 50% of research in drug discovery and life sciences is not reproducible, wasting time and resources [5]. |

| United States (Preclinical Research) | $28 Billion | A study focused on the cost of irreproducible preclinical research [9]. |

Experimental Protocols for Assessment

Protocol 1: One-Factor Balanced Experiment for Reproducibility

This protocol, based on ISO 5725-3, evaluates the impact of a single changing condition (e.g., different operators) on measurement reproducibility [2].

- Select Test Function: Choose a specific, well-defined measurement (e.g., "concentration of analyte X in standard solution Y").

- Determine Requirements: Identify all equipment, reagents, and procedural steps from the validated SOP.

- Select Reproducibility Condition: Choose one factor to evaluate. For most labs, different operators is the most impactful starting point [2].

- Perform the Test:

- Condition A: Operator 1 performs the measurement on 10 identical samples [2].

- Condition B: Operator 2 performs the same measurement on 10 identical samples from the same batch.

- (Additional conditions, such as Operator 3, can be added).

- Evaluate Results: Calculate the standard deviation of the results across all operators. This standard deviation is a quantitative measure of the measurement's reproducibility under the changed condition [2].
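The evaluation step can be sketched in Python as follows. This is a minimal illustration rather than the ISO 5725-3 procedure itself: the operator labels and results are hypothetical, and the sketch assumes an equal number of measurements per operator. It reports the pooled within-operator standard deviation (a repeatability estimate) and the standard deviation of the operator means (one common way to express the spread introduced by changing operators).

```python
import math
import statistics


def summarize_one_factor(results):
    """Summarize a balanced one-factor study.

    `results` maps a condition label (e.g. an operator) to its list of
    measurements; every list is assumed to have the same length.
    """
    means = {label: statistics.mean(values) for label, values in results.items()}
    # Pooled within-condition variance: average of the per-condition variances.
    within_variance = statistics.mean(
        [statistics.variance(values) for values in results.values()]
    )
    return {
        "condition_means": means,
        "repeatability_sd": math.sqrt(within_variance),
        # Spread of the condition means reflects the changed condition.
        "sd_of_condition_means": statistics.stdev(list(means.values())),
    }


# Hypothetical results (ng/mL) for one standard solution; illustrative only.
example = {
    "operator_1": [1.02, 0.98, 1.01, 0.99, 1.00, 1.03, 0.97, 1.01, 1.00, 0.99],
    "operator_2": [1.06, 1.04, 1.07, 1.03, 1.05, 1.08, 1.04, 1.06, 1.05, 1.02],
}
print(summarize_one_factor(example))
```

The standard deviation of the operator means still contains a small contribution from repeatability (the within-operator variance divided by the number of measurements per operator), so with only two or three operators it should be read as a rough indicator.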

Protocol 2: Distinguishing Repeatability and Reproducibility in a Gage R&R Study

This standard industrial protocol helps isolate different sources of variation in a measurement system [3].

- Select Parts and Operators: Choose a set of 5-10 parts that represent the entire expected range of variation. Select 2-3 operators who normally perform the measurement.

- Design the Experiment: Create a randomized run order where each operator measures each part multiple times (e.g., 2-3 times). The operators should be blind to the part identity and previous results.

- Execute Measurements: Operators measure the parts in the randomized order, following the standard procedure.

- Statistical Analysis: Analyze the data using ANOVA or similar methods to decompose the total variation into:

- Repeatability: The variation due to the measurement device (consistency of a single operator).

- Reproducibility: The variation due to differences between operators.

- Part-to-Part: The actual variation between the parts.
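The ANOVA decomposition can be sketched as below. This is a minimal example for a balanced, fully crossed design (every operator measures every part the same number of times), using NumPy. The simulated data, the function name `gage_rr`, and the choice to fold the operator-by-part interaction into reproducibility are assumptions made for illustration; negative variance estimates are truncated to zero.

```python
import numpy as np


def gage_rr(data):
    """Variance components from a crossed Gage R&R study.

    `data` has shape (parts, operators, replicates).
    """
    data = np.asarray(data, dtype=float)
    p, o, r = data.shape
    grand = data.mean()
    part_means = data.mean(axis=(1, 2))
    op_means = data.mean(axis=(0, 2))
    cell_means = data.mean(axis=2)

    # Sums of squares for the two-factor model with interaction.
    ss_part = o * r * np.sum((part_means - grand) ** 2)
    ss_op = p * r * np.sum((op_means - grand) ** 2)
    ss_cells = r * np.sum((cell_means - grand) ** 2)
    ss_int = ss_cells - ss_part - ss_op
    ss_err = np.sum((data - cell_means[:, :, None]) ** 2)

    # Mean squares.
    ms_part = ss_part / (p - 1)
    ms_op = ss_op / (o - 1)
    ms_int = ss_int / ((p - 1) * (o - 1))
    ms_err = ss_err / (p * o * (r - 1))

    # Variance components from expected mean squares (truncated at zero).
    var_repeat = ms_err
    var_int = max(0.0, (ms_int - ms_err) / r)
    var_op = max(0.0, (ms_op - ms_int) / (p * r))
    var_part = max(0.0, (ms_part - ms_int) / (o * r))
    return {
        "repeatability": float(var_repeat),
        "reproducibility": float(var_op + var_int),
        "part_to_part": float(var_part),
    }


# Simulated study: 5 parts, 3 operators, 2 replicates (illustrative values).
rng = np.random.default_rng(0)
parts = np.array([1.0, 2.0, 3.0, 4.0, 5.0])[:, None, None]
operators = np.array([0.00, 0.05, -0.05])[None, :, None]
measurements = parts + operators + rng.normal(0.0, 0.05, size=(5, 3, 2))

components = gage_rr(measurements)
total = sum(components.values())
for name, value in components.items():
    print(f"{name}: variance={value:.5f} ({100 * value / total:.1f}% of total)")
```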

Visualizing Key Concepts and Workflows

Precision Concept Decision Tree

Reproducibility Test Workflow

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Materials for Robust Low-Concentration Measurements

| Item | Function | Considerations for Reproducibility |

|---|---|---|

| Validated Reagents | High-purity chemicals, antibodies, and assay kits with provided certificates of analysis. | Using validated reagents from trusted vendors minimizes batch-to-batch variability and provides traceability [5]. |

| Certified Reference Materials (CRMs) | Substances with one or more specified property values that are certified by a valid procedure. | CRMs are essential for calibrating instruments and validating methods, providing a foundation for metrological traceability [10]. |

| Electronic Lab Notebook (ELN) | Software for recording protocols, raw data, and observations in a structured, searchable format. | ELNs facilitate detailed protocol documentation, audit trails for data changes, and sharing of methods, which is crucial for reproducibility Types A and B [7]. |

| Standard Operating Procedures (SOPs) | Documents that provide step-by-step instructions for a specific, repetitive task. | Highly detailed SOPs are critical for communicating the intricacies of complex biological experiments and ensuring consistency across different operators and time [5]. |

| Quality Controlled Cell Lines | Cell lines that have been tested for identity, sterility, and freedom from contaminants. | Using contaminated or misidentified cell lines is a major source of irreproducible data. Regular quality control is essential [5]. |

FAQs on Core Concepts

What are the key sources of measurement uncertainty in trace-level analysis? At trace levels, measurements are susceptible to numerous uncertainty sources that can be grouped into several categories [11]:

- Sample Preparation: Incomplete extraction, incomplete digestion, contamination, volatilization, and adsorption to container walls.

- Instrumental Analysis: Variations in detector response, column performance (for chromatography), temperature fluctuations, and electronic noise.

- Calibration: Uncertainty in the preparation of calibration standards and the fit of the calibration curve.

- Data Processing: Integration errors for chromatographic peaks and background subtraction.

- The Sampling Process: The sample measured may not represent the defined measurand (inadequate homogeneity) [12].

How does metrological traceability improve confidence in low-concentration measurements? Metrological traceability is the "property of a measurement result whereby the result can be related to a reference through a documented unbroken chain of calibrations, each contributing to the measurement uncertainty" [13] [14]. Establishing traceability to international or national standards (e.g., SI units) ensures that measurements are accurate and comparable across different laboratories and over time, which is a fundamental requirement for reproducible research [13] [15].

What is the difference between repeatability and reproducibility, and why are they critical for trace analysis?

- Repeatability: Precision under conditions where the same measurement procedure, same operators, same measuring system, same operating conditions, and same location are used over a short period of time. It represents the best-case precision of your method [12].

- Reproducibility: Precision under conditions that may involve different locations, operators, measuring systems, and replicate measurements. It assesses the reliability of a result across different laboratory environments [12].

In trace analysis, the relative standard deviations (RSDs) indicating reproducibility among different laboratories are often larger than the RSDs indicating repeatability in a single laboratory, highlighting the challenge of obtaining consistent results across different setups [16].

How is measurement uncertainty calculated for a trace-level measurement procedure?

Measurement uncertainty is typically calculated by identifying and quantifying all known contributors to error, then combining them. A common method is the root sum square [13]:

U = k × √(u₁² + u₂² + u₃² + ...)

where u₁, u₂, u₃, ... are the standard uncertainties of each component (assumed independent and expressed in the same units), and k is a coverage factor (often k = 2, corresponding to approximately 95% coverage). This "bottom-up" approach is outlined in the Guide to the Expression of Uncertainty in Measurement (GUM) [15].

Troubleshooting Guides

Symptom: High Background Noise or Signal Drift

| Potential Cause | Investigation | Solution |

|---|---|---|

| Contaminated Solvents/Reagents | Run a procedural blank. | Use high-purity solvents and reagents. Ensure clean glassware [17]. |

| Carryover from Previous Samples | Inject a solvent blank after a high-concentration sample. | Increase wash/equilibration times in the autosampler. Optimize the injection program or use a dedicated needle wash [11]. |

| Unstable Instrument Baseline | Monitor the baseline signal over time without injection. | Ensure the instrument is properly warmed up and conditioned. Check for dirty source components (e.g., mass spec ion source) or aging detector lamps [17] [11]. |

| Environmental Fluctuations | Record laboratory temperature and humidity. | Maintain a stable operating environment for sensitive instrumentation [17]. |

Symptom: Poor Recovery of Analyte

| Potential Cause | Investigation | Solution |

|---|---|---|

| Incomplete Extraction | Spike a sample with a known amount of analyte and measure recovery. | Optimize extraction time, temperature, and solvent composition. Use a different extraction technique [16]. |

| Adsorption to Labware | Test different vial types (e.g., silanized glass, polypropylene). | Use low-adsorption, certified labware. Add a carrier protein or modify the solution to compete for binding sites [17]. |

| Analyte Degradation | Analyze sample stability over time in the preparation solvent and matrix. | Control temperature during preparation, protect from light, and reduce processing time [15]. |

| Improper Internal Standard Use | Check if the internal standard is behaving similarly to the analyte. | Use a stable isotope-labeled internal standard, which most closely mimics the analyte's chemical behavior [15]. |
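The spike-recovery check in the first row of the table reduces to a short calculation. The sketch below assumes the usual definition of percent recovery (the measured increase divided by the amount added); the function name and the numbers are illustrative.

```python
def percent_recovery(spiked_result, unspiked_result, spike_added):
    """Percent recovery of a known spike.

    All three arguments must be in the same concentration units.
    """
    if spike_added <= 0:
        raise ValueError("spike_added must be positive")
    return 100.0 * (spiked_result - unspiked_result) / spike_added


# Hypothetical example: the sample reads 1.0 ng/mL before spiking and
# 9.0 ng/mL after adding 10.0 ng/mL of analyte.
print(f"Recovery: {percent_recovery(9.0, 1.0, 10.0):.1f}%")
```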

Symptom: Inconsistent Replicate Measurements

| Potential Cause | Investigation | Solution |

|---|---|---|

| Inhomogeneous Sample | Prepare and measure replicates from different parts of the sample. | Ensure thorough mixing or homogenization before aliquoting [12]. |

| Variation in Derivatization | If a derivatization step is used, check the reaction consistency. | Strictly control reaction time and temperature. Use fresh derivatization reagents [11]. |

| Pipetting Inaccuracy | Calibrate pipettes gravimetrically with water. | Use calibrated, high-quality pipettes and train operators on proper technique. Use positive displacement pipettes for viscous solvents [15]. |

| Integration Variability | Re-integrate the same chromatographic peak using different parameters. | Establish and consistently apply a clear integration rule for all data [11]. |
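For the gravimetric pipette check in the table above, a minimal sketch might look like this. The conversion factor from mass to volume (`z_factor_ul_per_mg`) is an assumption: the default is an approximate value for water near 20 °C, and the appropriate factor for the actual temperature and air pressure should be looked up. The function name and the weighings are hypothetical.

```python
import statistics


def pipette_check(masses_mg, nominal_ul, z_factor_ul_per_mg=1.0029):
    """Estimate systematic and random error from replicate water weighings."""
    # Convert each weighed mass (mg) to a dispensed volume (µL).
    volumes = [mass * z_factor_ul_per_mg for mass in masses_mg]
    mean_volume = statistics.mean(volumes)
    sd = statistics.stdev(volumes)
    return {
        "mean_volume_ul": mean_volume,
        "systematic_error_percent": 100.0 * (mean_volume - nominal_ul) / nominal_ul,
        "cv_percent": 100.0 * sd / mean_volume,
    }


# Hypothetical weighings (mg) for a pipette set to 100 µL.
weighings = [99.6, 99.9, 100.1, 99.7, 99.8, 100.0, 99.5, 99.9, 100.2, 99.8]
print(pipette_check(weighings, nominal_ul=100.0))
```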

Experimental Protocol: A Bottom-Up Approach to Quantifying Uncertainty

This protocol outlines a detailed methodology for estimating the measurement uncertainty of a mass spectrometry-based procedure for quantifying a protein biomarker at trace levels, following the GUM framework [15].

1. Specification of the Measurand

Clearly define what is being measured. Example: "The mass concentration of albumin (in µg/L) in frozen human urine."

2. Identification of Uncertainty Components using a Cause-and-Effect Diagram

Construct a diagram (see visualization below) that maps all potential sources of uncertainty. Major components for an ID-LC-MS/MS protein quantification typically include [15]:

- Purity of the primary calibrator (u_char)

- Weighing operations (u_weigh)

- Volume/pipetting operations (u_vol)

- Digestion efficiency (u_dig)

- Ionization efficiency in the MS source (u_ion)

- Instrument response/calibration curve fit (u_cal)

- Within-run precision (u_prec)

Uncertainty Cause-and-Effect Diagram

3. Quantifying the Uncertainty Components

- Type A Evaluation: Estimate components by statistical analysis of a series of observations. Example: The standard deviation of repeated measurements of a quality control sample determines u_prec [15] [12].

- Type B Evaluation: Estimate components by means other than statistical analysis. Examples:

- u_weigh: Calculated from the balance's calibration certificate.

- u_vol: Calculated from the manufacturer's tolerance for the pipette and the laboratory's temperature range.

- u_dig: Estimated from a Design of Experiments (DOE) study optimizing digestion parameters [15].

4. Calculating the Combined Standard Uncertainty

The individual standard uncertainties are combined as a root sum of squares [13] [15]:

u_c = √(u_char² + u_weigh² + u_vol² + u_dig² + u_ion² + u_cal² + u_prec²)

5. Calculating the Expanded Uncertainty

The combined standard uncertainty is multiplied by a coverage factor (k), typically k=2, to obtain an expanded uncertainty (U) that defines an interval expected to encompass a large fraction of the value distribution [13] [15].

U = k × u_c
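Steps 4 and 5 can be sketched as a small uncertainty budget. The component values below are hypothetical relative standard uncertainties (in percent), and the sketch assumes the components are independent and already expressed in the same units, so that they can be combined directly as a root sum of squares.

```python
import math


def combined_standard_uncertainty(components):
    """Root sum of squares of independent standard uncertainties."""
    return math.sqrt(sum(u ** 2 for u in components.values()))


def expanded_uncertainty(components, k=2.0):
    """Expanded uncertainty U = k * u_c."""
    return k * combined_standard_uncertainty(components)


def variance_shares(components):
    """Fraction of the combined variance contributed by each component."""
    total = sum(u ** 2 for u in components.values())
    return {name: u ** 2 / total for name, u in components.items()}


# Hypothetical relative standard uncertainties (%), for illustration only.
budget = {
    "u_char": 0.5,
    "u_weigh": 0.2,
    "u_vol": 0.8,
    "u_dig": 2.0,
    "u_ion": 1.5,
    "u_cal": 1.0,
    "u_prec": 2.5,
}

print(f"u_c = {combined_standard_uncertainty(budget):.2f}%")
print(f"U (k=2) = {expanded_uncertainty(budget):.2f}%")
for name, share in sorted(variance_shares(budget).items(), key=lambda kv: -kv[1]):
    print(f"{name}: {100 * share:.1f}% of combined variance")
```

Ranking the components by their share of the combined variance shows where method improvement is likely to pay off most.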

The Scientist's Toolkit: Essential Research Reagent Solutions

| Reagent / Material | Function in Trace-Level Analysis |

|---|---|

| Certified Reference Materials (CRMs) | Provides a metrological traceability link to higher-order standards. CRMs have certified property values with associated uncertainties and are essential for method validation and calibration [14] [17]. |

| Stable Isotope-Labeled Internal Standards | Mimics the analyte's chemical behavior during sample preparation and analysis. Corrects for losses during extraction, digestion, and ionization, thereby reducing uncertainty [15]. |

| High-Purity Solvents & Acids | Minimizes background contamination and signal interference, which is critical for achieving low detection limits and ensuring accurate quantification of the target analyte [17]. |

| Certified Low-Background Labware | Prevents adsorptive losses of the trace analyte to container walls. Using low-binding, certified vials and tubes improves recovery and reduces a significant source of bias and uncertainty [17]. |

Trace-Level Analysis Workflow

Distinguishing Between Analytical Variation and Biological Variation

FAQ: Core Concepts and Definitions

What is the fundamental difference between analytical and biological variation?

Biological Variation (BV) refers to the natural fluctuation of a measurand (the quantity being measured) around an individual's homeostatic set point over time. These innate physiological variations can be random or follow daily, monthly, or seasonal rhythms [18]. It has two components:

- Within-individual biological variation (CVI): The variation observed in repeated measurements from a single individual around their own homeostatic set point [18].

- Between-individual biological variation (CVG): The variation due to differences in the homeostatic set points among different individuals in a population [18].

Analytical Variation (CVA) is the variability introduced by the measurement system itself—the imprecision of the equipment, reagents, and procedures used to perform the assay. It represents the variation observed among replicate measurements of the same specimen [18].

Why is it crucial to distinguish between these variations in low-concentration research? Accurately distinguishing between CVA and CVI is fundamental for improving reproducibility. It allows researchers to determine whether a change in a serial measurement is due to a true physiological shift in the subject or merely a result of measurement imprecision. This is especially critical when measurements are near an assay's detection limit, where analytical "noise" can easily obscure biological "signal," leading to false positives or negatives in data interpretation [18] [19].

How do the concepts of repeatability and reproducibility relate to these variations? While these terms have specific definitions in fields like MRI research, their principles are universally applicable [20]:

- Repeatability (same team, same setup) is primarily concerned with minimizing analytical variation.

- Reproducibility (different team, same setup) and Replicability (different team, different setup) are challenged by both analytical and biological variations. A reproducible method must have low analytical variation, and the study must account for the biological variation present in the sample population.

FAQ: Impact on Data Interpretation and Quality Control

How can biological variation data be used to set analytical performance goals?

Biological variation data provide a framework for setting rational, analyte-specific quality goals for your analytical methods. The following table summarizes the formulas for calculating desirable performance specifications based on the within-individual (CVI) and between-individual (CVG) biological variation coefficients [21].

Table 1: Formulas for Setting Analytical Quality Goals Based on Biological Variation

| Performance Goal | Calculation Formula | Basis of the Goal |

|---|---|---|

| Desirable Imprecision (I) | \( I < 0.5 \times CVI \) | Ensures analytical "noise" adds minimally to the true biological signal [21]. |

| Desirable Bias (B) | \( B < 0.25 \times \sqrt{CVI^2 + CVG^2} \) | Limits the systematic error to minimize misclassification of individuals relative to population reference intervals [21]. |

| Total Allowable Error (TEa) | \( TEa < 1.65 \times I + B \) | Combines imprecision and bias into a single total error budget at a 95% confidence level [21]. |
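The three formulas in the table can be evaluated together. The sketch below takes CVI and CVG as percentages; the function name and the example values are illustrative and do not refer to any particular analyte.

```python
import math


def desirable_specifications(cvi, cvg):
    """Desirable analytical goals from biological variation (all in %)."""
    imprecision = 0.5 * cvi
    bias = 0.25 * math.sqrt(cvi ** 2 + cvg ** 2)
    total_allowable_error = 1.65 * imprecision + bias
    return {
        "imprecision": imprecision,
        "bias": bias,
        "total_allowable_error": total_allowable_error,
    }


# Hypothetical analyte with CVI = 6% and CVG = 8%.
for goal, limit in desirable_specifications(6.0, 8.0).items():
    print(f"{goal}: < {limit:.2f}%")
```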

What is the Reference Change Value (RCV) and how is it used? The Reference Change Value (RCV), also known as the Critical Difference, is a crucial tool for interpreting serial results from a single patient or research subject. It calculates the minimum difference between two consecutive measurements required to be statistically significant, considering both the analytical and within-subject biological variation [18]. The formula is:

\( RCV = Z \times \sqrt{2} \times \sqrt{CVA^2 + CVI^2} \)

where \( Z \) is the Z-score for the desired level of statistical confidence (e.g., 1.96 for 95% confidence). A change between two serial results that exceeds the RCV is likely to reflect a true biological change rather than random variation [18].
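As a minimal sketch of how the RCV might be applied, the code below computes it from CVA and CVI and compares it with the change between two serial results. It assumes both CVs are given as percentages, so the RCV is a percentage change relative to the first result; the function names and numbers are illustrative.

```python
import math


def reference_change_value(cva, cvi, z=1.96):
    """RCV (%) from analytical and within-individual CVs (both in %)."""
    return z * math.sqrt(2) * math.sqrt(cva ** 2 + cvi ** 2)


def exceeds_rcv(first_result, second_result, rcv_percent):
    """True if the percentage change between two results exceeds the RCV."""
    percent_change = 100.0 * abs(second_result - first_result) / first_result
    return percent_change > rcv_percent


# Hypothetical assay: CVA = 3%, CVI = 4%.
rcv = reference_change_value(3.0, 4.0)
print(f"RCV = {rcv:.1f}%")
print(exceeds_rcv(10.0, 12.0, rcv))  # a 20% change
print(exceeds_rcv(10.0, 11.0, rcv))  # a 10% change
```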

What is the Index of Individuality (II) and what does it tell us? The Index of Individuality is the ratio of within-subject to between-subject variation, calculated as \( II = \sqrt{CVI^2 + CVA^2} / CVG \) [18]. It indicates the usefulness of population-based reference intervals (pRIs):

- Low II (< 0.6): Suggests that an individual's values fluctuate within a relatively narrow range compared to the population spread. For such analytes, population-based reference intervals are less useful, and monitoring change relative to the individual's baseline (using RCV) is more powerful [18].

- High II (> 1.4): Indicates that an individual's variation is large compared to the differences between individuals. For these analytes, population-based reference intervals are more effective [18].
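The index and its interpretation thresholds can be sketched as follows. The thresholds are the ones quoted above; the "intermediate" label for values between them, the function names, and the example CVs are assumptions made for illustration.

```python
import math


def index_of_individuality(cvi, cva, cvg):
    """II = sqrt(CVI^2 + CVA^2) / CVG, with all CVs in the same units."""
    return math.sqrt(cvi ** 2 + cva ** 2) / cvg


def interpret_ii(ii):
    """Map an II value onto the categories described in the text."""
    if ii < 0.6:
        return "low: prefer individual baseline and RCV"
    if ii > 1.4:
        return "high: population reference intervals are more effective"
    return "intermediate"


# Hypothetical analyte: CVI = 3%, CVA = 4%, CVG = 10%.
ii = index_of_individuality(3.0, 4.0, 10.0)
print(f"II = {ii:.2f} ({interpret_ii(ii)})")
```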

Troubleshooting Guide: Common Scenarios and Solutions

Problem: Inconsistent results when measuring low-concentration biomarkers across different research sites.

- Potential Cause: High analytical variation (CVA) due to a lack of method standardization. Differences in equipment, reagent lots, or operator techniques between sites can introduce significant, uncontrolled variability.

- Solution:

- Standardize Protocols: Develop and document a single, detailed standard operating procedure (SOP) for sample preparation, measurement, and data analysis for all sites [20].

- Harmonize Methods: Use the same make and model of analytical instruments and the same batch of critical reagents whenever possible.

- Implement QC: Use identical quality control materials (QCMs) and pooled patient specimens across all sites to monitor and control for analytical variation [18].

Problem: Unable to determine if a small change in a low-concentration analyte over time is real or due to noise.

- Potential Cause: The observed change is smaller than the combined analytical and biological variability. Without knowing the RCV, you cannot assess the significance of the change.

- Solution:

- Calculate the RCV: Use the formula above. You will need a robust estimate of CVA from your own method validation data (preferably from patient sample replicates) and a published CVI estimate for your analyte from a reputable biological variation database (e.g., the EFLM Biological Variation Database) [18] [21].

- Compare Data to RCV: When CVA and CVI are expressed as percentages, the RCV is also a percentage. If the percentage difference between the two results is greater than the RCV, you can be confident (e.g., 95% confident if Z=1.96) that a significant change has occurred.

Problem: High imprecision (CVA) is obscuring the biological signal.

- Potential Cause: The analytical method's imprecision is too high relative to the inherent biological variation (CVI) of the measurand. This is a common challenge with low-concentration analytes.

- Solution:

- Benchmark Performance: Check your method's CVA against the "desirable" imprecision goal from Table 1 (\( 0.5 \times CVI \)) [21]. If your CVA is higher, the method may not be fit for monitoring individual changes.

- Technical Replicates: Increase the number of replicate measurements per sample and use the mean value. For n independent replicates, the effective CVA of the mean falls by a factor of √n.

- Method Improvement: If possible, transition to a more precise analytical method or optimize the current method to reduce noise.
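The benchmarking and replicate steps above can be combined into a quick calculation. This sketch assumes replicate errors are independent, so that averaging n replicates divides the CVA by √n; the function names and values are illustrative.

```python
import math


def effective_cva(cva, n_replicates):
    """CVA of the mean of n independent replicate measurements."""
    return cva / math.sqrt(n_replicates)


def replicates_needed(cva, cvi):
    """Smallest n for which the effective CVA is at or below 0.5 * CVI."""
    goal = 0.5 * cvi
    return max(1, math.ceil((cva / goal) ** 2))


# Hypothetical method: CVA = 10% for an analyte with CVI = 10%.
n = replicates_needed(10.0, 10.0)
print(f"Replicates needed: {n}")
print(f"Effective CVA with {n} replicates: {effective_cva(10.0, n):.1f}%")
```

If the required number of replicates is impractically large, that points toward improving or replacing the method instead.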

The following workflow diagram summarizes the strategic approach to managing and interpreting variation in your research.

The Scientist's Toolkit: Essential Reagents and Materials

Table 2: Key Research Reagent Solutions for Variation Studies

| Item | Function in Variation Analysis |

|---|---|

| Stable Quality Control (QC) Materials | Used to longitudinally monitor and estimate the analytical variation (CVA) of the measurement platform over time [18]. |

| Pooled Patient Specimens | Often provide a more accurate matrix-matched estimate of CVA compared to commercial QC materials, as they better reflect the behavior of real clinical samples [18]. |

| Reference Materials | Used to evaluate and correct for method bias, a key component of total analytical error [21]. |

| Calibrators | Substances of known concentration used to calibrate analytical instruments, ensuring measurement accuracy and traceability [21]. |

The Impact of Low Concentrations on Signal-to-Noise and Data Dispersion

Frequently Asked Questions (FAQs)

Q1: Why are my low-concentration measurements so inconsistent and difficult to reproduce? At low concentrations, the signal from the target analyte becomes very weak. The primary challenge is a low Signal-to-Noise Ratio (SNR), where the meaningful signal is of similar magnitude or even drowned out by background noise. This noise can come from electronic instrument fluctuations, environmental interference, or inherent molecular variability. A low SNR makes it difficult to distinguish the true signal, leading to high data dispersion and poor reproducibility between experiments [22] [23].

Q2: What is Signal-to-Noise Ratio and why is it critical for my measurements? Signal-to-Noise Ratio (SNR) is a measure that compares the level of a desired signal to the level of background noise. It is defined as the ratio of signal power to noise power and is often expressed in decibels (dB) [23].

- High SNR: Indicates a clear, strong signal that is easy to detect and interpret, leading to reliable and reproducible data [23].

- Low SNR: The signal is obscured by noise, making it difficult to distinguish, which increases the risk of false positives/negatives and significantly reduces measurement precision and reproducibility [22] [23].
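The decibel form of the SNR can be sketched as below. The power-ratio definition follows the text; the amplitude version uses the standard relation that power scales with the square of amplitude. The function names and inputs are illustrative.

```python
import math


def snr_db_from_power(signal_power, noise_power):
    """SNR in dB from a ratio of powers."""
    return 10.0 * math.log10(signal_power / noise_power)


def snr_db_from_amplitude(signal_amplitude, noise_amplitude):
    """SNR in dB from a ratio of amplitudes (e.g. RMS voltages)."""
    return 20.0 * math.log10(signal_amplitude / noise_amplitude)


# A signal with 100 times the noise power, i.e. 10 times the noise amplitude.
print(snr_db_from_power(100.0, 1.0))
print(snr_db_from_amplitude(10.0, 1.0))
```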

Q3: My results are inconsistent even when I follow the protocol exactly. What could be wrong? This often points to challenges with reproducibility versus replicability, which are distinct concepts:

- Reproducibility is obtaining consistent results when the same team uses the same data and methods to reanalyze the original experiment [24].

- Replicability is obtaining consistent results when a different team uses new data and independent methods to confirm the original findings [24].

At low concentrations, minor, unaccounted-for variations in sample handling, reagent batches, or environmental conditions that are negligible at high concentrations can become major sources of noise, undermining both reproducibility and replicability [24].

Q4: How can I accurately quantify nucleic acids at low concentrations when my spectrophotometer gives unreliable readings? UV spectrophotometers can overestimate nucleic acid concentration at low levels due to interference from contaminants that also absorb at 260 nm. For more accurate low-concentration quantification, use a fluorometric assay (e.g., Qubit assays). These assays use dyes specific to intact nucleic acids and are less affected by common contaminants, providing a more reliable signal [25].

Troubleshooting Guides

Problem 1: Low Signal-to-Noise Ratio in Spectrofluorometry

This problem manifests as a weak signal from your sample that is difficult to distinguish from the system's background noise.

Possible Causes & Solutions:

| Possible Cause | Solution |

|---|---|

| Suboptimal instrument settings | Use a longer integration time to collect more signal and narrow bandwidth slits to reduce background light, but be aware this can reduce throughput [26]. |

| Inappropriate detector | Ensure you are using a photomultiplier tube (PMT) suitable for your wavelength range. Cooled PMT housings can reduce dark noise [26]. |

| High background from buffer or components | Run a blank and ensure your sample matrix is pure. Use optical filters to reduce stray light reaching the detector [26]. |

| Incorrect calculation method | Use the appropriate SNR formula for your detector type. The FSD method (SNR = (Peak Signal - Background) / √(Background)) is for photon-counting systems, while the RMS method is better for analog detectors [26]. |

Experimental Protocol: Water Raman Test for System SNR This industry-standard test assesses the baseline sensitivity of a spectrofluorometer [26].

- Sample: Use ultrapure water.

- Excitation: Set to 350 nm.

- Emission Scan: Scan from 365 nm to 450 nm.

- Parameters: Use 5 nm excitation/emission slit widths and a 1-second integration time.

- Calculation:

- Peak Signal: Intensity at the water Raman peak (~397 nm).

- Background Noise: Intensity at a non-emissive region (e.g., 450 nm).

- Apply the FSD formula to calculate the SNR [26].
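The calculation step can be sketched using the FSD formula given earlier, SNR = (Peak Signal - Background) / √(Background). The count values below are hypothetical photon counts and the function name is illustrative.

```python
import math


def snr_fsd(peak_signal, background):
    """FSD signal-to-noise ratio for a photon-counting detector."""
    if background <= 0:
        raise ValueError("background must be positive")
    return (peak_signal - background) / math.sqrt(background)


# Hypothetical counts: Raman peak near 397 nm and background at 450 nm.
peak_counts = 10400.0
background_counts = 400.0
print(f"Water Raman SNR (FSD): {snr_fsd(peak_counts, background_counts):.0f}")
```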

Problem 2: Low Yield and DNA Degradation in HMW DNA Extraction

This problem occurs when extracting High Molecular Weight (HMW) DNA, resulting in insufficient quantity or quality for downstream applications.

Possible Causes & Solutions:

| Possible Cause | Solution |

|---|---|

| Input amount too low | Use the recommended input amount of cells or tissue. Recovery efficiency drops drastically below a certain threshold [27]. |

| DNA shearing from handling | Always use wide-bore pipette tips for HMW DNA. Avoid vortexing and extended heating above 56°C [27]. |

| Nuclease activity | Process fresh tissue immediately. For frozen samples, add lysis buffer directly to the frozen tissue to inhibit nucleases. Homogenize samples quickly and place them in the thermal mixer immediately after [27]. |

| Incomplete binding to beads | For high-input samples, increase the binding time with the beads to 8 minutes to ensure complete and tight DNA attachment [27]. |

Problem 3: Inaccurate Quantification of Low-Concentration Nucleic Acids

This problem involves significant discrepancies between different quantification methods (e.g., spectrophotometry vs. fluorometry) and high variability between replicates.

Possible Causes & Solutions:

| Possible Cause | Solution |

|---|---|

| Contaminant interference | Contaminants such as salts or proteins can absorb UV light and inflate spectrophotometric readings. Fluorometric assays (e.g., Qubit) are more specific and less susceptible to these contaminants [25]. |

| Sample out of assay range | The sample concentration may be too low or too high for the assay's dynamic range. Dilute a concentrated sample or use a more sensitive assay kit (e.g., switch from BR to HS assay) [25]. |

| Improper pipetting of viscous samples | Pipetting errors are magnified with low volumes. Dilute viscous samples and use a larger volume for measurement to increase accuracy [25]. |

| Temperature fluctuations | The fluorescent signal is temperature-sensitive. Ensure the assay buffer, dye, and samples are all at stable room temperature before measurement [25]. |
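
When a sample must be diluted to fall within an assay's range, the volumes follow from C1·V1 = C2·V2. The sketch below is a generic illustration; the function name and the example concentrations are hypothetical and are not taken from any kit's documentation.

```python
def dilution_volumes(stock_conc, target_conc, final_volume):
    """Return (stock_volume, diluent_volume) from C1*V1 = C2*V2.

    Concentrations must share one unit and volumes another; the
    function does not convert units.
    """
    if not 0 < target_conc <= stock_conc:
        raise ValueError("target must be positive and no higher than the stock")
    stock_volume = target_conc * final_volume / stock_conc
    return stock_volume, final_volume - stock_volume


# Hypothetical example: dilute a 500 ng/uL sample to 50 ng/uL in 100 uL.
stock_ul, diluent_ul = dilution_volumes(500.0, 50.0, 100.0)
```

Choosing a final volume large enough that the stock volume is comfortably pipettable helps limit the low-volume pipetting error noted in the table.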

The Scientist's Toolkit: Essential Reagents and Materials

| Item | Function |

|---|---|

| Fluorometric Assay Kits (e.g., Qubit) | Provides highly specific and sensitive quantification of nucleic acids or proteins at low concentrations, minimizing contaminant interference [25]. |

| Wide-Bore Pipette Tips | Prevents shearing and fragmentation of high molecular weight DNA during pipetting, preserving sample integrity [27]. |

| Proteinase K | An enzyme that rapidly inactivates nucleases during cell lysis and tissue homogenization, protecting nucleic acids from degradation [27]. |

| Optical Filters | Used in spectroscopic instruments to block specific wavelengths of stray light, thereby reducing background noise and improving SNR [26]. |

| Cooled PMT Housing | A detector accessory that reduces dark noise (thermally generated electrons) in spectrofluorometers, which is crucial for detecting weak signals at low concentrations [26]. |

Experimental Workflows and Signaling Pathways

Workflow for Reproducible Low-Concentration Analysis

Relationship Between Signal, Noise, and Concentration

Signal-to-Noise Ratio Calculation Methods

| Calculation Method | Formula | Best For | Key Considerations |

|---|---|---|---|

| Power Ratio (dB) | $\mathrm{SNR}_{\mathrm{dB}} = 10 \log_{10}(P_{\mathrm{signal}} / P_{\mathrm{noise}})$ | General system comparison [23] | Standard logarithmic scale for wide dynamic ranges. |

| Amplitude Ratio (dB) | $\mathrm{SNR}_{\mathrm{dB}} = 20 \log_{10}(A_{\mathrm{signal}} / A_{\mathrm{noise}})$ | Voltage or current measurements [23] | Assumes signal and noise measured across same impedance. |

| First Standard Deviation (FSD) | $\mathrm{SNR} = (S_{\mathrm{peak}} - S_{\mathrm{bg}}) / \sqrt{S_{\mathrm{bg}}}$ | Photon-counting detectors [26] | Assumes noise follows Poisson statistics. |

| Root Mean Square (RMS) | $\mathrm{SNR} = (S_{\mathrm{peak}} - S_{\mathrm{bg}}) / \mathrm{RMS}_{\mathrm{noise}}$ | Analog detection systems [26] | Requires kinetic scan to measure noise over time. |
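
The decibel and RMS rows of the table above translate directly into code. The following is a sketch under one stated assumption: RMS noise is taken as the root-mean-square deviation of a kinetic (time-based) trace about its own mean, which is a common reading of the RMS method but may differ from a given instrument vendor's definition. Function names are illustrative.

```python
import math
import statistics


def snr_db_from_power(p_signal, p_noise):
    """Power ratio expressed in decibels."""
    return 10.0 * math.log10(p_signal / p_noise)


def snr_db_from_amplitude(a_signal, a_noise):
    """Amplitude (voltage or current) ratio in decibels, same impedance assumed."""
    return 20.0 * math.log10(a_signal / a_noise)


def rms_snr(peak, background, noise_trace):
    """RMS method: (peak - background) / RMS noise of a kinetic scan.

    Assumption: RMS noise is the RMS deviation of the trace about its mean.
    """
    mean = statistics.fmean(noise_trace)
    rms_noise = math.sqrt(statistics.fmean([(x - mean) ** 2 for x in noise_trace]))
    if rms_noise == 0:
        raise ValueError("noise trace has no variation")
    return (peak - background) / rms_noise
```

Because a tenfold amplitude ratio corresponds to a hundredfold power ratio, both decibel functions return the same value for the same physical signal, which is why the table lists different multipliers.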

Reproducibility is a fundamental principle of the scientific method, defined as the ability to duplicate the results of a prior study using the same materials and procedures as the original investigator [28]. In recent years, many scientific fields have faced what has been termed a "reproducibility crisis," in which published findings across disciplines have proven difficult to reproduce [29] [10] [28]. This challenge is particularly pronounced in fields involving low-concentration measurements and computational analysis, where minor variations in methodology can significantly impact results.

The framework presented in this technical support center addresses five specific types of reproducibility, providing researchers with practical guidance, troubleshooting advice, and methodological support to enhance the reliability and verifiability of their scientific work, especially in demanding research areas such as low-concentration analyte measurements.

The Five-Type Reproducibility Framework

Based on established frameworks for evaluating reproducibility in scientific research [30], we identify five distinct types of reproducibility, each with specific assessment criteria and methodological requirements.

Table 1: The Five Types of Reproducibility

| Type of Reproducibility | Definition | Key Assessment Indicators | Primary Applications |

|---|---|---|---|

| Computational Reproducibility | Ability to reproduce results using the same data, code, and computational methods [30] [31] | Same code produces identical outputs; shared scripts and datasets | Bioinformatics, AI/ML, data-intensive research |

| Recreate Reproducibility | Reproducing results by repeating the experimental procedure with the same methodology [30] | Protocol adherence; same equipment and materials; consistent results | Wet lab experiments, clinical studies |

| Robustness Reproducibility | Testing if results hold when varying analytical choices (e.g., statistical methods, parameters) [30] | Results persist across methodological variations; sensitivity analysis | Method validation, assay development |

| Direct Replicability | Testing if results hold in new data collected using identical procedures [30] | Same experimental protocol with new samples; consistent findings | Multi-center trials, longitudinal studies |

| Conceptual Replicability | Testing if the fundamental concept holds using different methods or experimental conditions [30] | Core hypothesis supported across different approaches; generalizable principles | Basic science, mechanistic studies |

Technical Support Center: Troubleshooting Guides and FAQs

FAQ 1: What constitutes successful computational reproducibility?

Answer: Successful computational reproducibility requires that an independent researcher can use the same raw data to build the same analysis files and implement the same statistical analysis to obtain the same results [28]. This extends beyond just shared code to include the complete computational environment.

Common Issues and Solutions:

- Problem: Code produces different results when run on different systems

- Solution: Use containerization technologies (Docker) to encapsulate the complete computational environment [32]

- Problem: Missing or version-mismatched dependencies

- Solution: Use package management tools that capture specific dependency versions (e.g., pip freeze, conda env export) [29]

- Problem: Non-deterministic algorithms producing variable results

- Solution: Set random seeds explicitly and document all stochastic elements [29]
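
Two of the solutions above, explicit seeding and recording dependency versions, can be illustrated with the Python standard library alone. This is a minimal sketch; the function names and the choice of fields in the snapshot are assumptions, and a real project would typically pair it with `pip freeze` or `conda env export` output.

```python
import platform
import random
import sys
from importlib import metadata


def seeded_draws(seed, n=3):
    """Draw n pseudo-random numbers from a generator with an explicit seed."""
    rng = random.Random(seed)  # local generator, so global state is untouched
    return [rng.random() for _ in range(n)]


def environment_snapshot(packages=()):
    """Collect interpreter, platform, and package versions for a run log."""
    versions = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None  # record that the package is absent
    return {
        "python": sys.version.split()[0],
        "platform": platform.platform(),
        "packages": versions,
    }
```

Storing the seed and the snapshot next to each result file lets a later reader check whether a discrepancy comes from the data or from the environment.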

FAQ 2: How do we address variability in low-concentration measurements?

Answer: Low-concentration measurements present particular challenges for recreate reproducibility. A study on dissolved radiocesium concentrations in freshwater (0.001-0.1 Bq L⁻¹) demonstrated that reproducibility standard deviations among different laboratories were consistently larger than repeatability standard deviations within individual laboratories [16].

Troubleshooting Guide:

- Issue: High inter-laboratory variability

- Root Cause: Subtle differences in pre-concentration methods, calibration procedures, or sample handling

- Solution: Implement standardized protocols with detailed documentation of all procedural steps [16]

- Prevention: Use certified reference materials and participate in inter-laboratory comparison studies

Table 2: Measurement Performance in Low-Concentration Radiocesium Study

| Pre-concentration Method | Number of Laboratories | Repeatability (Within Lab) | Reproducibility (Between Lab) | Key Variables |

|---|---|---|---|---|

| Prussian Blue Cartridges | 8 | Lower RSD | Higher RSD | Flow rate, cartridge type, geometric correction |

| AMP Coprecipitation | 5 | Lower RSD | Higher RSD | pH adjustment, filtration technique |

| Evaporation | 3 | Lower RSD | Higher RSD | Evaporation temperature, container type |

| Solid-Phase Extraction | 3 | Lower RSD | Higher RSD | Disk type, pressure filtration settings |

| Ion-Exchange Resin | 2 | Lower RSD | Higher RSD | Column preparation, flow control |
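
The relationship between the two columns in Table 2 can be made concrete with a balanced one-way analysis in the style of ISO 5725: the reproducibility variance is the repeatability variance plus a non-negative between-laboratory component, so it can never be smaller. The sketch below assumes every laboratory reports the same number of replicates. The example values are hypothetical and are not data from the radiocesium study [16].

```python
import math
import statistics


def precision_sds(results_by_lab):
    """Return (s_r, s_R) for a balanced design of replicate results per lab.

    s_r: repeatability SD (pooled within-lab).
    s_R: reproducibility SD (within-lab plus between-lab components).
    """
    labs = list(results_by_lab.values())
    n = len(labs[0])
    if len(labs) < 2 or n < 2 or any(len(lab) != n for lab in labs):
        raise ValueError("need >= 2 labs with the same number (>= 2) of replicates")
    s_r2 = statistics.fmean([statistics.variance(lab) for lab in labs])
    lab_means = [statistics.fmean(lab) for lab in labs]
    # Between-lab variance, truncated at zero when within-lab noise dominates.
    s_l2 = max(0.0, statistics.variance(lab_means) - s_r2 / n)
    return math.sqrt(s_r2), math.sqrt(s_r2 + s_l2)


# Hypothetical activity concentrations (mBq/L), two replicates per lab.
example = {"lab_a": [9.0, 11.0], "lab_b": [19.0, 21.0]}
s_r, s_R = precision_sds(example)
```

Dividing each standard deviation by the grand mean gives the corresponding relative standard deviations.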

FAQ 3: What documentation is essential for robustness reproducibility?

Answer: Robustness reproducibility requires comprehensive documentation of all analytical choices and parameters that could influence results. This includes the complete range of hyperparameters considered, the method for selecting optimal parameters, and explicit reporting of the statistical measures used [28].

Essential Documentation Checklist:

- Complete description of algorithms with complexity analysis

- Range of hyperparameters considered and selection methodology

- Clear definitions of statistical measures including central tendency and variance

- Number of evaluation runs and measures of variability

- Computing infrastructure specifications [28]

FAQ 4: How can we improve direct replicability in experimental studies?

Answer: Direct replicability requires that the entire experimental procedure can be repeated with new samples or data while obtaining consistent results. Barriers include insufficient methodological detail, undocumented procedural nuances, and environmental factors.

Troubleshooting Guide:

- Problem: Cannot replicate published experimental results

- Diagnosis: Check for undocumented "tacit knowledge" or procedural details omitted from methods section

- Solution: Implement detailed protocols with video demonstrations where possible

- Verification: Conduct internal pre-replication studies before full-scale replication attempts

FAQ 5: What validates conceptual replicability?

Answer: Conceptual replicability is demonstrated when the fundamental finding or relationship holds across different methodological approaches, experimental conditions, or model systems. This represents the strongest evidence for a scientific claim as it demonstrates generalizability beyond specific experimental conditions.

Assessment Framework:

- Test the core hypothesis using different measurement techniques

- Vary key experimental parameters beyond the original range

- Apply to related but distinct biological systems or conditions

- Use complementary approaches (e.g., pharmacological and genetic interventions)

Experimental Protocols for Reproducibility Assessment

Protocol 1: Computational Reproducibility Checklist

Based on established frameworks for computational research [29] [28], implement the following protocol:

Code Version Control

- Use Git with descriptive commit messages

- Tag specific versions used for publication

- Maintain a changelog of significant modifications

Environment Specification

- Capture package versions (e.g., requirements.txt, environment.yml)

- Document operating system and critical software versions

- Use containerization (Docker) for complex dependencies

Data and Code Linkage

- Implement automated data retrieval where possible

- Ensure code contains proper paths to data assets

- Verify that data inputs match code expectations

Execution Automation

- Create master scripts that reproduce complete analyses

- Implement one-command execution where feasible

- Document any manual steps required
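
One way to act on "verify that data inputs match code expectations" is to record a checksum for each input file and check it before an analysis runs. The sketch below uses SHA-256 from the Python standard library. The manifest format (a dictionary of path to expected digest) and the function names are assumptions made for illustration, not a prescribed standard.

```python
import hashlib
from pathlib import Path


def sha256_of(path, chunk_size=1 << 20):
    """Return the SHA-256 hex digest of a file, read in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_inputs(manifest):
    """Return the paths that are missing or whose digest differs.

    manifest: dict mapping file path -> expected SHA-256 hex digest.
    An empty list means every input matched.
    """
    problems = []
    for path, expected in manifest.items():
        file_path = Path(path)
        if not file_path.is_file() or sha256_of(file_path) != expected:
            problems.append(str(path))
    return problems
```

A master script can call `verify_inputs` first and stop with a clear message if the returned list is not empty.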

Protocol 2: Low-Concentration Measurement Validation

Adapted from radiocesium measurement methodology [16], this protocol ensures measurement reproducibility:

Method Details:

- Sample Collection: Collect samples using standardized containers and procedures. For water samples, use pre-cleaned polyethylene containers and maintain consistent collection conditions [16].

- Filtration: Immediately filter samples through 0.45 μm membrane filters to separate dissolved and particulate fractions [16].

- Pre-concentration: Apply one of five validated methods:

  - Prussian-blue-impregnated filter cartridges

  - Coprecipitation with ammonium phosphomolybdate (AMP)

  - Controlled evaporation

  - Solid-phase extraction disks

  - Ion-exchange resin columns

- Measurement: Analyze using calibrated germanium semiconductor detectors with appropriate geometry corrections [16].

- Statistical Analysis: Calculate z-scores to compare results across laboratories and determine relative standard deviations (RSD) for repeatability and reproducibility assessment [16].
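
The statistical analysis step can be sketched as follows. The z-score here uses the usual proficiency-testing form, z = (x − assigned value) / σ, where the assigned value and σ must be supplied by the study organizer. How study [16] chose them is not stated in this guide, so both appear as explicit parameters. The example numbers are hypothetical.

```python
import statistics


def rsd_percent(values):
    """Relative standard deviation (sample SD / mean) in percent."""
    return 100.0 * statistics.stdev(values) / statistics.fmean(values)


def z_scores(lab_results, assigned_value, sigma):
    """Map each laboratory to z = (result - assigned_value) / sigma."""
    if sigma <= 0:
        raise ValueError("sigma must be positive")
    return {lab: (value - assigned_value) / sigma
            for lab, value in lab_results.items()}


# Hypothetical results in Bq/L, for illustration only.
replicates = [0.009, 0.010, 0.011]
repeatability_rsd = rsd_percent(replicates)
scores = z_scores({"lab_a": 0.012, "lab_b": 0.010},
                  assigned_value=0.010, sigma=0.001)
```

A common convention in inter-laboratory comparisons treats |z| ≤ 2 as satisfactory and |z| ≥ 3 as unsatisfactory, with values in between flagged for review.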

Table 3: Research Reagent Solutions for Reproducibility

| Tool/Category | Specific Examples | Function in Reproducibility | Application Context |

|---|---|---|---|

| Version Control Systems | Git, GitHub, GitLab | Track code changes and enable collaboration | Computational reproducibility |

| Containerization Platforms | Docker, Singularity | Encapsulate complete computational environments | Computational reproducibility |

| Workflow Management Systems | Snakemake, Nextflow, CWL | Automate multi-step computational analyses | Computational reproducibility |

| Electronic Lab Notebooks | Benchling, LabArchives | Document experimental procedures and parameters | Recreate reproducibility |

| Reference Materials | Certified reference materials, internal controls | Calibrate instruments and validate methods | Robustness reproducibility |

| Pre-concentration Materials | PB cartridges, AMP reagents, ion-exchange resins | Enable low-concentration analyte detection | Low-concentration measurements |

| Statistical Software | R, Python (scipy), JMP | Implement consistent analytical approaches | All reproducibility types |

| Data Repositories | Zenodo, Figshare, Dryad | Share research data for verification | Direct replicability |

Implementation Framework

Assessment Protocol for Research Programs

To implement this reproducibility framework in your research program:

- Categorize your research activities according to the five reproducibility types

- Select appropriate assessment metrics from the provided tables

- Implement relevant troubleshooting guides for your specific challenges

- Document using the provided protocols and checklists

- Validate through internal reproducibility testing before publication

The consistent application of this framework will enhance the reliability and credibility of research findings, particularly in challenging domains such as low-concentration measurements where methodological rigor is paramount for generating trustworthy results.

Robust Method Development: Best Practices for Low-Concentration Assays

Optimal Sample Preparation and Storage to Prevent Degradation

Troubleshooting Guides

Guide 1: Addressing Poor Reproducibility in Quantitative Analysis

Problem: Significant, unexplained variations in quantitative results between sample replicates, including failure to detect target compounds.

| Problem Area | Specific Issue | Recommended Solution |

|---|---|---|

| Sample Preparation & Storage | Inadequate sample size for heterogeneous solids; improper storage leading to cross-contamination. | Increase sample size for heterogeneous solids [33]. Use designated, separate storage containers/bags for different sample types and clean storage areas regularly [33]. |

| Compound Stability | Degradation of light/heat-sensitive or oxidation-prone compounds (e.g., penicillin, vitamin A). | Minimize light/heat exposure. Add antioxidants (e.g., vitamin C, sodium sulfite). Adjust pH or use buffered mobile phases to maintain stability [33]. |

| Extraction & Cleanup | Incomplete sample dispersion; suboptimal extraction time/temperature; target compound loss during cleanup. | Ensure thorough sample dispersion via vortexing/shaking. Optimize extraction time and temperature. Analyze compound concentration at each cleanup step to identify loss points [33]. |

| Contamination | Background contamination from ubiquitous compounds (e.g., phthalates, bisphenol A). | Perform background screening of solvents. Designate clean solvents for specific analyses. Identify and replace contaminated laboratory instruments [33]. |

Guide 2: Controlling Contamination to Preserve Sample Integrity

Problem: Contaminants are leading to false positives, altered results, and reduced analytical sensitivity [34].

| Problem Area | Specific Issue | Recommended Solution |

|---|---|---|

| Laboratory Tools | Cross-contamination from improperly cleaned or reusable homogenizer probes and tools [34]. | Use disposable probes (e.g., Omni Tips) for sensitive assays. For reusable stainless steel probes, implement rigorous cleaning and run a blank solution to test for residual analytes [34]. |

| Reagents | Impurities in chemicals used for sample preparation [34]. | Verify reagent purity and use high-grade standards. Regularly test reagents for contaminants before use [34]. |

| Laboratory Environment | Airborne particles, surface residues, and human-sourced contaminants (skin, hair, clothing) [34]. | Use laminar flow hoods or cleanrooms. Decontaminate surfaces with 70% ethanol, 10% bleach, or specific solutions like DNA Away. Manage airflow with HEPA filtration and pressure differentials [35]. |

| Sample Handling | Well-to-well contamination in 96-well plates during seal removal [34]. | Spin down sealed plates to remove liquid from seals. Remove seals slowly and carefully to prevent aerosol generation [34]. |

Guide 3: Managing Sample Storage to Prevent Analyte Degradation

Problem: Sample degradation during storage, leading to inaccurate or unreliable data [36].

| Problem Area | Specific Issue | Recommended Solution |

|---|---|---|

| Temperature Control | Temperature excursions or fluctuations that degrade sensitive samples [35] [36]. | Use continuous temperature monitoring systems (CTMS) with deviation alarms [35]. Ensure freezers have backup power and dual compressors. Map thermal gradients within storage units [35] [36]. |

| Storage Duration & Conditions | Time-related degradation; improper container materials causing leaching or adsorption [37]. | Minimize storage time; analyze samples promptly [37]. Use inert container materials that do not interact with sample constituents [37]. For light-sensitive samples, use amber or opaque vials [35]. |

| Freeze/Thaw Cycles | Sample damage from repeated freezing and thawing [36]. | Store samples in smaller single-use aliquots to avoid repeated freeze-thaw cycles [36]. Thaw samples slowly on ice [36]. |

| Humidity Control | Desiccation (low humidity) or condensation and microbial growth (high humidity) [35]. | Maintain relative humidity between 30% and 60% using humidification/dehumidification systems. Use sealed containers to prevent moisture transfer [35]. |
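
Continuous monitoring data is only useful if excursions are actually extracted from it. The sketch below scans a time-ordered log and groups consecutive out-of-range readings into excursions. The log format, the function name, and the example limits for an ultra-low freezer are assumptions for illustration. Real acceptance limits should come from the sample's stability data.

```python
def find_excursions(readings, low_c, high_c):
    """Group consecutive out-of-range readings into excursions.

    readings: iterable of (timestamp, temperature_c), sorted by time.
    Returns a list of (start, end, worst_temperature) tuples, where the
    worst temperature is the reading farthest outside [low_c, high_c].
    """
    def distance_outside(temp):
        # Positive only when temp lies outside the allowed range.
        return max(low_c - temp, temp - high_c)

    excursions = []
    current = None  # [start, end, worst_temperature] while an excursion is open
    for timestamp, temp in readings:
        if distance_outside(temp) > 0:
            if current is None:
                current = [timestamp, timestamp, temp]
            else:
                current[1] = timestamp
                if distance_outside(temp) > distance_outside(current[2]):
                    current[2] = temp
        elif current is not None:
            excursions.append(tuple(current))
            current = None
    if current is not None:
        excursions.append(tuple(current))
    return excursions


# Hypothetical log: (minutes since start, temperature in degrees C).
freezer_log = [(0, -80.0), (10, -79.0), (20, -65.0), (30, -60.0), (40, -78.0)]
events = find_excursions(freezer_log, low_c=-86.0, high_c=-70.0)
```

Each returned tuple can be attached to the affected sample records so that later analyses can account for the excursion.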

Frequently Asked Questions (FAQs)

Q1: What is the overarching goal of sample preparation? The primary goal is to ensure the sample is in the right form, free from contaminants, and at a suitable concentration for analysis [38]. This process isolates target analytes from the sample matrix, removes interfering substances, and enhances the sensitivity, accuracy, and reliability of your results [39].

Q2: What are the critical steps in the sample preparation workflow? The workflow generally involves six key steps [38]:

- Collection: Meticulous gathering of samples under controlled conditions.

- Storage: Preserving samples to prevent alteration or degradation.

- Enrichment: Concentrating analytes and removing the sample matrix.

- Extraction: Isolating the analyte, often involving chemical modification.

- Quantification: Confirming analyte levels are within the detection limits of your instrument.

- Concentration or Dilution: Adjusting the final analyte level for optimal analysis.

Q3: How do I choose the correct storage temperature for my biological samples? The optimal temperature depends on the sample type and required storage duration [36]. See the table below for guidance.

| Storage Temperature | Suitable For |

|---|---|

| Room Temp (15°C - 27°C) | Formalin-fixed or paraffin-embedded samples; some DNA/RNA in stabilizing solutions [38] [36]. |

| Refrigerated (2°C - 8°C) | Short-term storage of reagents, buffers, and freshly collected tissues or blood [36]. |

| Freezer (-20°C) | DNA/RNA (short-term); samples and reagents used routinely that do not require ultra-low temps [36]. |

| ULT Freezer (-80°C) | Long-term storage of tissues, cells, and samples for retrospective studies [36]. |

| Cryogenic (-150°C or lower) | Cells and complex tissues such as stem cells, embryos, and bone marrow, where all biological activity must be suspended [36]. |

Q4: My samples are prone to cross-contamination. What are the best practices to prevent this? To minimize cross-contamination:

- Use Disposable Tools: Employ single-use plastic homogenizer probes or pipette tips where possible [34].

- Validate Cleaning: For reusable tools, clean thoroughly and run a blank solution to check for residual analytes [34].

- Manage Workflow: Segregate laboratory spaces for different activities (e.g., reagent prep, sample analysis). Use physical barriers and controlled airflow (positive/negative pressure) to contain contaminants [35].

- Handle Plates with Care: Centrifuge sealed well-plates and remove seals slowly to prevent well-to-well contamination [34].

Q5: How can I stabilize compounds that are sensitive to light, heat, or oxidation?

- Light-Sensitive Compounds: Use amber or opaque vials and work under low-light conditions [35].

- Heat-Sensitive Compounds: Keep samples on ice during processing and store at recommended low temperatures [36] [33].

- Oxidation-Prone Compounds: Add antioxidants like vitamin C or sodium sulfite to your samples [33].

Workflows and Visual Guides

Sample Preparation Workflow

This diagram outlines the core steps for preparing samples to ensure analytical integrity.

Sample Storage Decision Tree

Use this flowchart to determine the appropriate storage conditions for your biological samples.

The Scientist's Toolkit: Essential Research Reagent Solutions

| Item | Function |

|---|---|

| Disposable Homogenizer Probes | Single-use probes (e.g., Omni Tips) eliminate cross-contamination between samples during homogenization [34]. |

| Solid-Phase Extraction (SPE) Columns | Used to isolate and purify compounds from liquid samples based on their physical and chemical properties, removing interfering matrix components [38]. |

| Antioxidants (e.g., Vitamin C, Sodium Sulfite) | Added to samples to prevent oxidative degradation of sensitive compounds [33]. |

| pH Buffers | Maintain a stable pH environment in solutions, which is critical for the stability of pH-sensitive compounds and consistent analytical performance [33]. |

| Stabilizing Solutions for DNA/RNA | Chemical solutions that allow for the safe short-term storage of nucleic acids at room temperature or -20°C, preventing degradation [36]. |

| Inert Storage Vials | Amber or opaque vials made of non-reactive materials prevent light-induced degradation and chemical leaching or adsorption [35] [37]. |

| Digital Data Logger (DDL) | A continuous temperature monitoring device that provides detailed records and alarms for storage units, essential for audit trails and sample integrity [36]. |

Stabilizing Light-Sensitive and Heat-Sensitive Compounds

Troubleshooting Guides

Troubleshooting Light Sensitivity

| Problem | Possible Cause | Solution |

|---|---|---|

| Sample degradation during analysis (e.g., TLC) | Compound is sensitive to ambient light or UV light used for visualization [40]. | Work under amber or red safelight conditions; use UV light only briefly for TLC analysis [41]. |

| Unwanted color change or precipitation in biologic drug solutions | Exposure to UV or visible light during storage, preparation, or administration induces photodegradation [42]. | Store in original container, often an amber vial or one overwrapped in opaque material; protect from light during all handling steps [42]. |

| Loss of potency or increased immunogenicity in therapeutic proteins | Photodegradation leads to breakage of polymer chains and formation of free radicals [43]. | Use formulations with excipients that act as UV absorbers or radical scavengers; ensure proper primary packaging [42]. |

Troubleshooting Heat Sensitivity

| Problem | Possible Cause | Solution |

|---|---|---|

| Reaction mixture decomposes upon heating | The target compound, starting material, or a byproduct is thermally unstable [44]. | Determine the thermal decomposition temperature of all chemicals; avoid heating pyrophoric compounds, strong oxidizers, and peroxides [44]. |

| Biologic drug forms aggregates or loses efficacy | Exceeded the recommended storage temperature range, leading to denaturation [42]. | Store at the recommended temperature (often 2-8°C for biologics); do not freeze unless explicitly instructed [42]. |

| Over-pressurization or explosion during a heated reaction | The system is closed and vapors or gases are being produced [44]. | Use a condensing apparatus and continuously vent the system through a bubbler; never heat a closed system [44]. |

| Uneven heating causes localized decomposition | Inefficient stirring or heat transfer in the reaction vessel [44]. | Use an appropriately sized magnetic stir bar or overhead mechanical stirrer to ensure even mixing and heating [44]. |

Frequently Asked Questions (FAQs)

Q: Why is improving the stability of light- and heat-sensitive compounds critical for reproducibility in research? A: Inconsistent handling and storage of these compounds introduce a major, uncontrolled variable. If a reagent degrades unpredictably between experiments due to improper temperature or light exposure, the concentration and quality of the starting material change, making it impossible to replicate results accurately [42] [45]. Proper stabilization is a foundational practice for rigorous, reproducible science.

Q: For a solution-based biologic, when are sensitivity indications most critical? A: Sensitivity is always critical, but specific instructions become paramount when the formulation is reconstituted or diluted. A survey of therapeutic proteins showed that while 82% of as-supplied formulations had light protection instructions, this dropped to only 39% for reconstituted and 32% for diluted solutions, indicating a potential gap in labeling that researchers must proactively manage [42].

Q: What are the primary engineering controls for safely heating a reaction? A: Key controls include using a thermocouple or thermostat-controlled heat source (like an oil bath), securely clamping a temperature probe directly in the heating medium, using a lab jack for quick removal of heat, and employing a condensing apparatus with secure tubing for reactions near a solvent's boiling point [44].

Q: Are some container types better for protecting light-sensitive formulations? A: Yes, product surveys indicate that sensitivity is often better defined for products in autoinjectors, prefilled syringes, and pens than for those in standard vials, likely due to integrated design features [42]. When in doubt, use amber glass or apply opaque covers.

Q: What is a fundamental administrative control for a heated reaction? A: Do not leave heated reactions unattended. If it is absolutely necessary, you must post an "Unattended Operation" sign with the date, reaction details, and emergency contact information. Furthermore, you should set the equipment's adjustable over-temperature control to a safe maximum limit [44].

Prevalence of Sensitivity in Therapeutic Proteins

The following data is derived from a comprehensive survey of 557 unique formulations of licensed, biotechnology-derived therapeutic proteins [42].

| Sensitivity Indication | As-Supplied Formulations | Reconstituted Formulations | Diluted Formulations |

|---|---|---|---|

| Protect From Light | 82% (459 formulations) | 39% (63 formulations) | 32% (47 formulations) |

| Do Not Freeze | 81% (450 formulations) | Data not specified | Data not specified |

| Both Indications | 73% (407 formulations) | Data not specified | Data not specified |

Common Heating Bath Media and Their Properties

| Bath Material | Flash Point (°C) | Useful Range (°C) |

|---|---|---|

| Water | N/A | 0 to 70 |

| Mineral Oil | 113 | 25 to 100 |

| Silicone Oil | 300 | 25 to 230 |

| Eutectic Salt Mixtures | N/A | 142 to 500 |

| Sand | N/A | 25 to 500+ |

Source: PennEHRS Fact Sheet on Heating Reactions [44]. Note: A bath medium should never be heated above its flash point.
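
The table above can be encoded as a simple lookup that lists which media cover a target temperature while staying below the flash point. This is an illustrative sketch only: the function name is hypothetical, sand's "500+" upper limit is treated as open-ended, and the result does not replace the hazard assessment for a specific reaction.

```python
# (name, flash_point_c or None, low_c, high_c or None), copied from the table.
BATH_MEDIA = [
    ("Water", None, 0, 70),
    ("Mineral Oil", 113, 25, 100),
    ("Silicone Oil", 300, 25, 230),
    ("Eutectic Salt Mixtures", None, 142, 500),
    ("Sand", None, 25, None),  # "500+" treated as no upper bound (assumption)
]


def suitable_baths(target_c):
    """Return bath media whose useful range covers target_c below flash point."""
    matches = []
    for name, flash_point, low, high in BATH_MEDIA:
        in_range = low <= target_c and (high is None or target_c <= high)
        below_flash = flash_point is None or target_c < flash_point
        if in_range and below_flash:
            matches.append(name)
    return matches
```

For example, `suitable_baths(150)` excludes water and mineral oil because 150 °C lies outside their useful ranges.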

Experimental Protocols

Protocol 1: Safe Setup for a Heated Reaction

This protocol outlines the steps for setting up a reaction at elevated temperature to minimize risks of thermal degradation, explosion, and fire.

- Hazard Assessment: Prior to setup, consult Safety Data Sheets (SDS) for all chemicals to identify boiling points, flashpoints, and thermal decomposition temperatures. Evaluate the potential for gas evolution or runaway reactions [44].

- Equipment Assembly:

  - Select a heat source with thermocouple/thermostat control (e.g., thermostatted hot plate, heating mantle).

  - Place the reaction vessel on a lab jack for quick lowering.

  - Clamp all glassware securely. For reactions near or above a solvent's boiling point, assemble a condensing apparatus.

  - Ensure the system is continuously vented to the atmosphere through a bubbler; never heat a closed system [44].

- Heating Bath Setup:

  - Choose a bath medium (see Table above) suitable for your target temperature.

  - Fill the bath container no more than halfway.

  - Securely clamp the thermometer or thermocouple probe in the bath medium.

  - For precise control, also use a thermometer adapter to monitor the temperature of the reaction mixture directly [44].

- Initiation and Monitoring:

  - Begin stirring to ensure even heating.

  - Apply heat incrementally until the target temperature is reached and stabilized.

  - Do not leave the reaction unattended until it has equilibrated. Use an audible timer as a reminder to check the reaction [44].

Protocol 2: Evaluating Photostability by Thin Layer Chromatography (TLC)

This protocol uses TLC to quickly assess if a compound is degrading under standard laboratory lighting.

- Sample Preparation: Prepare two identical, dilute solutions of the compound in a suitable solvent.

- Control Setup:

  - Spot two TLC plates identically with the sample in several lanes.

  - Immediately place one of the spotted plates in a sealed container wrapped in aluminum foil to block all light.

- Light Exposure:

  - Place the second, identically spotted TLC plate under the normal laboratory lighting conditions (or a specific light source you are testing) for a predetermined period (e.g., 1 hour).

- Analysis:

  - Develop both TLC plates simultaneously in the same developing chamber.

  - Visualize the plates using an appropriate method (e.g., UV light, chemical stain).

- Interpretation: Compare the TLC plates. The appearance of new, unexpected spots (degradants) or the smearing of the primary spot on the light-exposed plate, but not on the light-protected control plate, indicates that the compound is light-sensitive under the tested conditions. This method is adapted from common TLC troubleshooting practices [40].

Visual Workflows and Diagrams

Experimental Workflow for Handling Sensitive Compounds

Decision Tree for Heating Bath Selection

The Scientist's Toolkit: Essential Research Reagent Solutions

| Item | Function | Application Notes |
|---|---|---|
| Amber Glassware | Blocks visible and UV light to prevent photodegradation during storage and handling. | The standard for storing light-sensitive stock solutions and reagents. |
| Temperature-Controlled Storage | Maintains a consistent, low temperature (e.g., 2–8 °C) to slow thermal degradation. | Essential for biologics, enzymes, and many labile chemicals. |
| Thermostatted Heating Bath | Provides precise and uniform heating for reactions, minimizing hot spots and localized decomposition. | Preferable to open flames. Oil baths offer excellent heat transfer [44]. |
| UV Absorbers (e.g., in packaging) | Excipients or packaging materials that absorb harmful UV radiation, protecting the core compound. | A common strategy in the formulation of therapeutic proteins [42] [43]. |
| Radical Scavengers / Antioxidants | Compounds that interrupt the free-radical chain reactions of oxidation. | Can be added to formulations to mitigate both thermal and photo-oxidative degradation [42] [46]. |
| Stabilizer Mixtures (e.g., Ca/Zn salts) | Act as heat stabilizers by trapping HCl or decomposing peroxides that catalyze degradation. | Widely used in polymers like PVC; the principles inform biochemical stabilization [47] [46]. |

Improving Extraction Efficiency and Sample Cleanup Techniques

This technical support center provides troubleshooting guides and FAQs to help researchers address common challenges in sample preparation, with a focus on enhancing reproducibility in low-concentration measurements.

Sample Preparation Fundamentals

What is the primary goal of sample preparation?

The core aim is to isolate target analytes from the sample matrix while removing interfering substances [48]. This process ensures the sample is clean, concentrated appropriately, and in the right form for accurate analysis, which directly enhances sensitivity, reduces errors, and improves data reliability [48].

Why is sample preparation particularly critical for low-concentration measurements and reproducibility?

In low-concentration research, contaminants can mask target analytes or produce false positives, severely compromising data integrity [34]. Consistent sample preparation is the foundation for reproducible results; without it, minor variations in extraction or cleanup can lead to significant data inconsistencies, making it difficult to validate findings across experiments and labs [34].

Troubleshooting Guides

Problem: Low Extraction Efficiency

Symptoms: Lower-than-expected yield of target analytes, reduced biological activity in extracts, poor sensitivity in downstream analysis.

Solutions:

- Optimize Extraction Parameters: Systematically optimize key parameters such as extraction temperature, duration, and ethanol-to-water ratio. In the cited study, an Artificial Neural Network–Genetic Algorithm (ANN-GA) approach yielded extracts with higher concentrations of phenolic compounds and greater antioxidant activity than the classical Response Surface Methodology (RSM) [49].

- Evaluate Solvent Composition: The ethanol-to-water ratio is a critical variable. Test a range of polarities (e.g., 0%, 50%, 100% ethanol) to determine the optimal solvent for your specific analytes, as the ideal composition depends on the target compounds' properties [49].

- Consider Advanced Extraction Techniques: Modern techniques like Microwave-Assisted Extraction (MAE) use microwave energy to rapidly heat solvents, reducing processing times and solvent volumes while improving analyte recovery and reproducibility [48].

Problem: Inconsistent Results Between Batches or Operators

Symptoms: High variability in measurements from identical sample types, inability to replicate previous findings.

Solutions:

- Standardize Protocols with a Structured Framework: Implement a schema-driven framework for standardizing data collection protocols. Tools like ReproSchema help define and manage survey components (like questionnaire data) to ensure consistency across studies, research teams, and over time, directly supporting reproducibility [50].

- Implement Rigorous Contamination Control: Up to 75% of laboratory errors occur during the pre-analytical phase [34]. Use disposable tools like plastic homogenizer probes to eliminate cross-contamination [34]. For reusable tools, validate cleaning procedures by running a blank solution to check for residual analytes [34].

- Adhere to Detailed Reporting Checklists: For experimental protocols, use comprehensive checklists like PECANS (for cognitive and neuropsychological studies) to ensure all critical methodological information is reported. This provides the necessary detail for direct replication of experiments [51].

Problem: Sample Matrix Interference

Symptoms: High background noise, suppression or enhancement of analyte signal (especially in MS), coelution of peaks in chromatography.

Solutions:

- Implement Effective Cleanup Techniques:

- Solid-Phase Extraction (SPE): Uses a cartridge to retain either the target analytes or impurities, effectively cleaning and preconcentrating the sample [52] [48].

- Solid-Phase Microextraction (SPME): A solvent-free technique that uses a coated fiber to extract analytes from liquid or gas matrices, ideal for on-site sampling and complex matrices [48].

- Use Appropriate Internal Standards: To correct for matrix effects during mass spectrometric analysis, use stable isotopically labeled internal standards (e.g., 13C or 15N labeled). Because they co-elute with the analyte, they experience the same ionization suppression or enhancement, so the analyte-to-standard response ratio stays accurate. Note that deuterated standards can exhibit chromatographic isotope effects: they may elute slightly apart from the unlabeled analyte and then no longer see identical matrix effects [52].
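
The internal-standard correction described above can be sketched as a calibration on the analyte-to-internal-standard peak-area ratio. This is a minimal illustration under stated assumptions: the peak areas, concentrations, and function names are hypothetical, and a simple unweighted linear fit is used where a validated method might call for weighting.

```python
def fit_line(x, y):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def area_ratio(analyte_area, istd_area):
    """Response ratio: analyte peak area over internal-standard peak area."""
    return analyte_area / istd_area


# Hypothetical calibrators: (concentration in ng/mL, analyte area, IS area).
calibrators = [
    (1.0, 1050, 10000),
    (5.0, 4900, 9800),
    (10.0, 10200, 10200),
    (50.0, 47500, 9500),
]
conc = [c for c, _, _ in calibrators]
ratios = [area_ratio(a, i) for _, a, i in calibrators]
slope, intercept = fit_line(conc, ratios)


def quantify(analyte_area, istd_area):
    """Back-calculate concentration from the area ratio."""
    return (area_ratio(analyte_area, istd_area) - intercept) / slope


# Same sample injected twice; the second injection suffers 40% ion
# suppression that affects analyte and internal standard equally.
clean = quantify(20000, 10000)
suppressed = quantify(12000, 6000)
print(clean == suppressed)  # the ratio, and so the result, is unchanged
```

The raw analyte area drops by 40% in the suppressed injection, yet the calculated concentration is the same, which is the behavior a co-eluting labeled standard is meant to provide.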

Experimental Protocols for Optimization

Protocol 1: Optimizing Extraction Parameters using RSM and ANN-GA

This methodology is adapted from a study optimizing the extraction of bioactive compounds from Phylloporia ribis [49].

1. Define Parameters and Levels:

- Independent Variables: Extraction temperature (e.g., 45°C, 55°C, 65°C), extraction duration (e.g., 5, 10, 15 hours), and ethanol-to-water ratio (e.g., 0%, 50%, 100%) [49].

- Response Variable: A quantifiable measure of success, such as Total Antioxidant Status (TAS), total phenolic content, or target analyte concentration [49].

2. Perform Experimental Trials:

- Run a full factorial design (e.g., 3 factors × 3 levels = 27 trials) using a system such as a Soxhlet apparatus. Keep the solvent-to-solid ratio constant across all runs [49].

3. Model and Optimize:

- Response Surface Methodology (RSM): Use software to fit the data to a second-order polynomial model and generate 3D surface plots to find optimal conditions [49].

- Artificial Neural Network–Genetic Algorithm (ANN-GA):

- ANN Modeling: Develop a model using extraction parameters as inputs and your response variable (e.g., TAS) as the output. Divide data into training (80%), validation (10%), and testing (10%) sets. Use algorithms like Levenberg–Marquardt for training [49].

- GA Optimization: Use a genetic algorithm to search for the input parameters that maximize the predicted response from the ANN model [49].

4. Validate and Compare: Experimentally confirm the optimal conditions predicted by both RSM and ANN-GA, then compare the resulting extracts. In the cited study, the ANN-GA conditions produced extracts with superior biological activity [49].
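
The design and data-split steps above can be sketched in a few lines. The factor levels are the example values from step 1; the fixed random seed, the shuffled run order, and the rounding rule used for the split are assumptions made for illustration, not details taken from [49].

```python
import itertools
import random

# Example factor levels from the protocol.
temperatures_c = [45, 55, 65]
durations_h = [5, 10, 15]
ethanol_pct = [0, 50, 100]

# Full factorial design: every combination of the three factors.
design = list(itertools.product(temperatures_c, durations_h, ethanol_pct))

# Shuffle with a fixed seed (assumed) so the split is random but repeatable.
rng = random.Random(42)
runs = design[:]
rng.shuffle(runs)


def split_runs(runs, train_frac=0.8, val_frac=0.1):
    """Split runs into training, validation, and testing subsets."""
    n_train = round(len(runs) * train_frac)
    n_val = round(len(runs) * val_frac)
    train = runs[:n_train]
    val = runs[n_train:n_train + n_val]
    test = runs[n_train + n_val:]
    return train, val, test


train, val, test = split_runs(runs)
print(len(design), len(train), len(val), len(test))  # prints: 27 22 3 2
```

With only 27 runs, an exact 80/10/10 split is not possible, so the validation and testing sets end up very small. That is worth keeping in mind when judging how well an ANN fitted to such a design generalizes.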

Protocol 2: Selecting a Sample Cleanup Method

Table 1: Comparison of Extraction Optimization Techniques [49]

| Optimization Method | Key Features | Reported Advantages | Considerations |
|---|---|---|---|
| Response Surface Methodology (RSM) | Uses a second-order polynomial model and 3D surface plots. | Established statistical framework, good for understanding factor interactions. | May be less accurate for highly complex, non-linear systems. |
| Artificial Neural Network–Genetic Algorithm (ANN-GA) | AI-based; ANN models the process, GA finds global optimum. | Superior predictive accuracy for complex systems; produced extracts with higher antioxidant activity and phenolic content. | Requires larger datasets; computationally intensive. |
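
For reference, the second-order polynomial that RSM fits has the general form below for k factors. The notation is generic rather than taken from [49]: y is the response, x_i are the (usually coded) factor levels, the β terms are fitted coefficients, and ε is the residual error.

```latex
y = \beta_0
  + \sum_{i=1}^{k} \beta_i x_i
  + \sum_{i=1}^{k} \beta_{ii} x_i^{2}
  + \sum_{i=1}^{k-1} \sum_{j=i+1}^{k} \beta_{ij} x_i x_j
  + \varepsilon
```

For k = 3 factors this model has 1 + 3 + 3 + 3 = 10 coefficients, so a 27-run full factorial design leaves 17 degrees of freedom for estimating residual error.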

Table 2: Common Sample Cleanup Techniques and Characteristics [52] [48]

| Technique | Principle | Best For | Advantages | Disadvantages |
|---|---|---|---|---|
| Solid-Phase Extraction (SPE) | Analyte retention/impurity removal on a cartridge. | Preconcentrating analytes from large aqueous volumes; desalting. | High selectivity, customizable phases. | Can be labor-intensive; cartridge cost. |
| Solid-Phase Microextraction (SPME) | Equilibrium distribution onto a coated fiber. | Volatile/non-volatile analysis from liquid/gas matrices; on-site sampling. | Solvent-free, minimal sample volume. | Fiber can be fragile; limited coating phases. |
| Liquid-Liquid Extraction (LLE) | Partitioning between two immiscible liquids. | Separating and concentrating compounds, including thermolabile ones. | Cost-effective, simple setup. | Emulsion formation; large solvent volumes. |
| Stir-Bar Sorptive Extraction (SBSE) | Equilibrium distribution onto a stir-bar coating. | Trace analysis in environmental, food, and biological samples. | High recovery, good reproducibility. | Limited availability of coatings. |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Extraction and Cleanup

| Item | Function/Description | Application Example |
|---|---|---|
| Stable Isotopically Labeled Internal Standards (e.g., 13C, 15N) [52] | Corrects for matrix effects and fluctuations during sample preparation and MS ionization. | Quantitation of low-concentration analytes in complex biological matrices. |
| Molecularly Imprinted Polymers (MIPs) [48] | Synthetic materials with high selectivity and affinity for specific target molecules. | Sample pretreatment for selective extraction of a specific compound class from complex samples. |
| One-Pot/One-Step Sample Prep Kits [53] | Simplified, automated sample preparation protocols for proteomics. | Preparing protein samples for low-cost, high-throughput proteomic analysis (e.g., the "$10 proteome"). |
| SPME Fibers (various coatings) [48] | A needle with a retractable fiber for solvent-free extraction of volatiles and non-volatiles. | Headspace sampling for volatile organic compounds (VOCs) in blood or environmental samples. |
| SPE Cartridges (various sorbents) [52] [48] | Small columns containing a stationary phase for separation and purification. | Isolating nonsteroidal anti-inflammatory drugs (NSAIDs) from wastewater. |

Frequently Asked Questions (FAQs)

Q1: What are the most critical steps to prevent contamination during sample preparation?

- Use disposable tools like plastic homogenizer probes to eliminate cross-contamination between samples [34].

- Regularly clean and disinfect lab surfaces with appropriate solutions (e.g., 70% ethanol, DNA Away for genomics work) [34].

- Always run blank samples after cleaning reusable tools and as controls in your analytical batch to detect any residual contamination [34].
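
A blank check like the one in the last point can be turned into a simple pass/fail rule. The sketch below is an assumption-laden illustration: the 20% of LLOQ response limit is an assumed acceptance criterion (use the one in your own validation plan), and the probe names and signal values are hypothetical.

```python
def blank_passes(blank_signal, lloq_signal, max_fraction=0.20):
    """Return True if the blank response is within the allowed
    fraction of the response at the lower limit of quantitation (LLOQ).

    max_fraction is an assumed acceptance limit, not a value from [34].
    """
    if lloq_signal <= 0:
        raise ValueError("LLOQ response must be positive")
    return blank_signal <= max_fraction * lloq_signal


# Hypothetical blank responses measured after cleaning two reusable probes.
blanks = {
    "probe_A_after_cleaning": 35.0,
    "probe_B_after_cleaning": 410.0,
}
lloq_response = 1200.0

failed = [
    name for name, signal in blanks.items()
    if not blank_passes(signal, lloq_response)
]
print(failed)  # prints: ['probe_B_after_cleaning']
```

A failed blank means the cleaning procedure for that tool should be repeated or revised before it is used on low-concentration samples.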

Q2: How can I improve the reproducibility of my sample preparation protocol for a multi-site study?

- Utilize a structured, schema-driven framework like ReproSchema to define and version your data collection and survey protocols, ensuring every site uses identical, validated methods [50].

- Provide detailed, step-by-step Standard Operating Procedures (SOPs) and use checklists like PECANS to ensure all critical methodological information is documented and reported [51].

Q3: My analytical method sensitivity is insufficient for low-concentration analytes. What sample prep adjustments can help?

- Preconcentrate your sample: Use techniques like SPE or LLE to transfer your analyte into a smaller final volume [52] [48].

- Minimize sample dilution: Choose sample prep methods that avoid excessive dilution steps.

- Ensure efficient extraction: Optimize your extraction protocol (e.g., using ANN-GA) to maximize the release of target analytes from the matrix [49].
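
The benefit of preconcentration can be estimated before running the experiment. The sketch below assumes a simple model in which the enrichment factor is the volume ratio scaled by fractional recovery; the volumes, recovery, and starting concentration are hypothetical example values.

```python
def enrichment_factor(initial_volume_ml, final_volume_ml, recovery=1.0):
    """Concentration gain from reducing sample volume.

    recovery is the fraction of analyte retained through the
    extraction (1.0 means no losses).
    """
    if final_volume_ml <= 0 or initial_volume_ml <= 0:
        raise ValueError("volumes must be positive")
    if not 0.0 < recovery <= 1.0:
        raise ValueError("recovery must be in (0, 1]")
    return recovery * initial_volume_ml / final_volume_ml


# Hypothetical SPE step: 100 mL loaded, eluted and reconstituted in 1 mL,
# with 85% recovery.
factor = enrichment_factor(100.0, 1.0, recovery=0.85)

sample_conc_ng_ml = 0.02            # below a hypothetical instrument limit
extract_conc_ng_ml = sample_conc_ng_ml * factor
print(round(factor, 1), round(extract_conc_ng_ml, 2))
```

Comparing the extract concentration with the instrument's limit of quantitation shows whether the chosen volume reduction is enough, or whether a larger sample volume or better recovery is needed.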

Q4: Are there automated alternatives to manual, time-consuming sample prep methods like LLE?

Yes, several techniques offer higher efficiency:

- Solid-Phase Microextraction (SPME) is solvent-free and lends itself to automated sampling [48].

- Microwave-Assisted Extraction (MAE) significantly reduces processing times [48].

- For proteomics, automated one-pot preparation workflows can process thousands of samples per day at low cost [53].

Selecting and Validating Sensitive Analytical Systems (e.g., SPR, LC-MS)

Reproducibility forms the cornerstone of scientific integrity, distinguishing robust research from pseudoscience. In low-concentration measurements, reproducible results are particularly hard to achieve because of variability in data collection, small sample sizes, and incomplete methodological reporting [20]. For techniques such as Surface Plasmon Resonance (SPR) and Liquid Chromatography-Tandem Mass Spectrometry (LC-MS/MS), dedicated quality assurance and control procedures are essential to quantify experimental stability, detect outliers, and minimize variability in outcome measures [20]. This technical support center provides targeted troubleshooting guides and FAQs to help researchers overcome common challenges in selecting and validating these sensitive analytical systems, thereby enhancing the reliability of their data.

Foundational Concepts: Reproducibility in Analytical Science

Defining Key Terms

Understanding the terminology is crucial for implementing reproducible practices:

- Repeatability: Same team, same experimental setup [20].

- Reproducibility: Different team, same experimental setup [20].