Advanced Strategies for Managing Spectral Interference in Quantitative Analysis: From Fundamentals to Cutting-Edge Applications in Drug Development


Abstract

This comprehensive review addresses the critical challenge of spectral interference in quantitative analysis, a pervasive issue affecting accuracy in pharmaceutical and biomedical research. We explore foundational principles of spectral artifacts, including environmental noise, instrumental factors, and scattering effects. The article details a spectrum of methodological approaches—from traditional preprocessing to advanced machine learning techniques—for interference correction and avoidance. A strong emphasis is placed on troubleshooting, optimization strategies, and rigorous validation frameworks to ensure analytical reliability. By synthesizing insights from spectroscopic and chromatographic techniques, this work provides researchers and drug development professionals with practical, validated strategies to enhance measurement precision, support robust quality control, and accelerate therapeutic discovery.

Understanding Spectral Interference: Origins, Types, and Impact on Analytical Accuracy

# FAQ: Fundamental Concepts

◎ What is spectral interference?

Spectral interference is a phenomenon in spectroscopic analysis that occurs when a signal from an interfering species (which can be an atom, ion, or molecule) overlaps with, obscures, or distorts the analytical signal of the element or compound you are trying to measure. This overlap leads to inaccuracies in both qualitative identification and, most critically, in quantitative analysis by causing positive or negative errors in the measured concentration of your target analyte. [1] [2] [3]

◎ What are the main types of spectral interference?

The main types of spectral interference can be categorized based on the nature of the interfering species and the type of overlap. The table below summarizes the primary types.

Table 1: Primary Types of Spectral Interference

| Type of Interference | Description | Common Occurrence |

|---|---|---|

| Direct Spectral Overlap | An emission or absorption line of an interferent completely or nearly completely overlaps with the analyte's line. [1] [4] | ICP-OES, AAS |

| Wing Overlap | The broad wing of a high-intensity line from an interferent overlaps with a nearby analyte line. [1] [4] | ICP-OES |

| Background Interference | A broad signal from molecular absorption, light scattering, or background radiation elevates the baseline around the analyte signal. [1] [2] [3] | ICP-OES, AAS |

| Molecular Band Overlap | Broad absorption bands from molecules (e.g., PO, OH) overlap with narrow atomic absorption lines. [3] | AAS |

# Troubleshooting Guide: Resolving Spectral Interference

◉ Symptom: Inaccurate quantitative results, elevated detection limits, or non-linear calibration curves despite proper calibration.

When your quantitative results are consistently off, the signal is noisier than expected, or your calibration curve is not linear, spectral interference is a likely culprit. The following workflow provides a systematic approach to diagnosing and resolving these issues.

◉ Step 1: Confirm the Presence of Interference

Before attempting corrections, confirm that interference is the root cause.

- Compare Sample and Blank Spectra: Collect a spectrum of your sample and a procedural blank. A significant difference in the baseline or the presence of unexpected peaks in the sample spectrum indicates potential interference. [1] [5]

- Check for Baseline Abnormalities: Look for a sloping, curved, or elevated baseline in the region of your analytical line, which suggests background interference. [1] [6]

- Analyze a Standard with and without Matrix: Measure a known concentration of your analyte in a simple solvent and then in the presence of your sample matrix. A significant difference in the measured concentration suggests matrix-induced interference. [3]

◉ Steps 2 and 3: Identify the Type and Apply Correction Strategies

Once confirmed, use the table below to identify the specific interference type and the appropriate methodological correction.

Table 2: Spectral Interference Identification and Correction Methods

| Interference Type | Key Identifying Feature | Primary Correction Methodology | Example & Notes |

|---|---|---|---|

| Direct Spectral Overlap | Unusually high signal for analyte at a line known to have a potential interferent. [1] [4] | Avoidance: Select an alternative, interference-free analytical line. This is the most robust solution. [1] [4] | As on Cd: The As 228.812 nm line directly overlaps with the Cd 228.802 nm line. Switching to another Cd line avoids the issue. [1] |

| Wing Overlap | High background from a nearby, very intense emission line of a matrix element. [1] | Mathematical Correction: Use an interference correction factor (K-factor) provided by instrument software to subtract the interferent's contribution. [1] [4] | Requires precise measurement of the interferent's concentration and its contribution to the analyte signal. |

| Background Interference (Flat/Sloping) | Consistent elevation or a steady slope of the baseline under the analyte peak. [1] | Background Subtraction: Measure background intensity on one or both sides of the analyte peak and subtract it from the peak intensity. [1] [7] | For a flat background, points on either side are averaged. For a sloping background, points must be equidistant from the peak. [1] |

| Background Interference (Complex/Drift) | Curved or irregularly shifting baseline, often from scattering or molecular absorption. [1] [6] | Advanced Algorithms: Use techniques like penalized least squares for baseline drift correction. [8] [6] | Common in FTIR of solids and gas analysis. Corrects for complex, non-linear baseline shapes. [6] |

| Molecular Absorption | Broad absorption bands in atomic spectrometry, often from matrix components. [2] [3] | Background Correction Systems: Use instrumental methods like Deuterium (D₂) lamp background correction or Zeeman effect correction. [2] [3] | The D₂ lamp measures broad background, which is subtracted from the total absorption (analyte + background). [2] |
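
The background-subtraction row above can be illustrated with a minimal sketch. It assumes a background that is linear between two off-peak points; the function names and the intensity values are hypothetical and are not taken from any instrument software.

```python
def two_point_background(x_left, i_left, x_right, i_right, x_peak):
    """Linearly interpolate the background under the peak from two off-peak points."""
    if x_right == x_left:
        raise ValueError("background points must be at different wavelengths")
    slope = (i_right - i_left) / (x_right - x_left)
    return i_left + slope * (x_peak - x_left)


def net_intensity(i_peak, x_peak, left, right):
    """Peak intensity minus the interpolated background.

    `left` and `right` are (wavelength_nm, intensity) pairs on either side of the peak.
    """
    background = two_point_background(left[0], left[1], right[0], right[1], x_peak)
    return i_peak - background


# Hypothetical readings: sloping background, points equidistant from the peak.
net = net_intensity(i_peak=1500.0, x_peak=228.802,
                    left=(228.782, 200.0), right=(228.822, 300.0))
print(round(net, 1))
```

For a flat background the two side intensities are equal and the interpolation reduces to their average. A curved background breaks the linear assumption, which is where the algorithmic baseline methods in the table become necessary.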

◉ Symptom: Peaks are missing, suppressed, or show unexpected shapes.

This issue is common in molecular spectroscopy (e.g., FTIR, Raman) and is often related to sample preparation.

- Cause 1: Poor Sample Homogeneity or Particle Size. Large or irregular particles scatter light, leading to distorted peaks and reststrahlen bands. [9] [10]

- Solution: Grind solid samples to a fine, uniform particle size (< 40 µm). Dilute samples in a non-absorbing matrix like KBr and ensure consistent packing density. [10]

- Cause 2: Specular Reflection. In Diffuse Reflectance Infrared Fourier Transform Spectroscopy (DRIFTS), glossy surfaces can cause mirror-like reflection, which distorts the spectrum. [10]

- Solution: Use a dilution method and ensure the sample surface is matte and not overly compressed. [10]

- Cause 3: High Moisture Content. Water vapor can absorb IR radiation, leading to broad peaks that obscure analyte signals. [10] [6]

- Solution: Oven-dry samples and reference materials before analysis and store them in a desiccator. [10]

# Experimental Protocol: Baseline Correction for Complex Spectra

This protocol details the use of the Adaptive Smoothness Parameter Penalized Least Squares method, an effective approach for correcting complex baseline drift, as applied in FTIR analysis of gases. [6]

Principle: The method models the baseline, z, by minimizing a cost function that balances the fidelity to the original spectrum, y, with the smoothness of the baseline. The function is: Q = Σ(y_i - z_i)² + λ Σ(Δ²z_i)², where λ is a smoothing parameter that controls the trade-off. [6]

Procedure:

- Acquire Spectrum: Collect the IR spectrum of your sample, noting any obvious baseline curvature or drift.

- Select Initial Smoothing Parameter (λ): Choose an initial value for λ. A higher value produces a smoother baseline.

- Iterative Optimization: Use an algorithm to adaptively adjust λ based on the local characteristics of the spectrum. This allows the baseline to fit the curved drift without fitting the analytical peaks.

- Calculate Corrected Spectrum: Subtract the calculated baseline, z, from the original spectrum, y, to obtain the corrected spectrum: y_corrected = y - z.

- Validation: Validate the correction by analyzing a standard sample with a known, flat baseline to ensure the algorithm does not introduce artifacts.
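
The cost function in this protocol can be sketched in pure Python. This is an illustration under stated assumptions, not the published adaptive method: it keeps λ fixed and uses the simpler asymmetric re-weighting scheme (often called asymmetric least squares) to keep peaks out of the fit. The names `pls_baseline`, `lam`, and `p`, and the synthetic spectrum, are all hypothetical.

```python
import math


def solve_linear(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting (dense, small n)."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            if f != 0.0:
                for c in range(col, n + 1):
                    m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        s = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - s) / m[r][r]
    return x


def pls_baseline(y, lam=1e5, p=0.01, n_iter=10):
    """Minimise sum(w_i (y_i - z_i)^2) + lam * sum((second difference of z)^2)."""
    n = len(y)
    # Penalty matrix D^T D, where each row of D is the second-difference stencil [1, -2, 1].
    dtd = [[0.0] * n for _ in range(n)]
    for i in range(n - 2):
        stencil = ((i, 1.0), (i + 1, -2.0), (i + 2, 1.0))
        for r, cr in stencil:
            for c, cc in stencil:
                dtd[r][c] += cr * cc
    w = [1.0] * n
    z = list(y)
    for _ in range(n_iter):
        a = [[lam * dtd[r][c] + (w[r] if r == c else 0.0) for c in range(n)]
             for r in range(n)]
        z = solve_linear(a, [w[i] * y[i] for i in range(n)])
        # Points above the current baseline are probably peaks: give them a small weight.
        w = [p if y[i] > z[i] else 1.0 - p for i in range(n)]
    return z


# Synthetic spectrum: linear drift plus one Gaussian peak of height 10 at index 30.
y = [1.0 + 0.05 * i + 10.0 * math.exp(-0.5 * ((i - 30) / 3.0) ** 2) for i in range(60)]
baseline = pls_baseline(y)
corrected = [yi - zi for yi, zi in zip(y, baseline)]
```

The dense solver keeps the sketch dependency-free but scales poorly; for real spectra with thousands of points a sparse banded solver would be the usual choice.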

# The Scientist's Toolkit: Essential Reagents for Mitigating Interference

The following reagents and materials are critical for sample preparation and methodological strategies to prevent or minimize spectral interference.

Table 3: Key Research Reagents and Materials for Spectral Interference Management

| Reagent/Material | Function | Application Technique |

|---|---|---|

| High-Purity Acids (e.g., HNO₃) | Sample digestion and stabilization; minimizes introduction of contaminant metals that cause interference. [9] | ICP-MS, ICP-OES |

| Lithium Tetraborate | Flux for fusion techniques; creates homogeneous glass disks that eliminate particle size and mineralogy effects. [9] | XRF |

| Potassium Bromide (KBr) | Non-absorbing matrix for dilution; reduces scattering and specular reflection for solid samples. [10] | FTIR, DRIFTS |

| Certified Standard Gases | Used for calibration and creating interference correction models in gas analysis. [6] | FTIR Gas Analysis |

| Deuterated Solvents (e.g., CDCl₃) | Solvents with minimal IR absorption in key spectral regions to avoid solvent peak overlap with analytes. [9] | FTIR, NMR |

| Boric Acid / Cellulose | Binders for pelletizing powdered samples to create a uniform, flat surface for analysis. [9] | XRF |

| Internal Standard Solutions | Added in known concentration to all samples and standards to correct for instrument drift and matrix effects. [9] | ICP-MS, ICP-OES |

Troubleshooting Guides and FAQs

Spectral interferences in quantitative analysis generally originate from three primary sources: the instrument itself, the sample being analyzed, and the environment in which the analysis is conducted. These interferences can manifest as baseline drift, spurious peaks, elevated background signals, or distorted spectral features, ultimately compromising the accuracy and reliability of your quantitative results [11] [12].

How can I correct for strong fluorescence background in Raman spectroscopy?

A strong fluorescence background from samples is a common sample-derived artifact that can swamp the weaker Raman signal.

- Preventive Experimental Techniques: The simplest approach is to use a laser with a longer wavelength (e.g., 785 nm or 1064 nm instead of 532 nm) to reduce the energy that excites fluorescent transitions [11].

- Computational Correction Methods: If changing the laser is not feasible, several numerical methods can be applied during data processing. These include polynomial fitting algorithms to model and subtract the fluorescent baseline [11] [8]. Advanced deep learning (DL) approaches are also emerging as powerful tools for automatically isolating the Raman signal from complex backgrounds [11].

Our FTIR spectra show significant baseline drift. How can we address this?

Baseline drift is a frequent instrumental or environmental artifact, often caused by instrumental instability or temperature fluctuations [12].

- Correction Protocol: The adaptive smoothness parameter penalized least squares (asPLS) method is an effective computational technique for correcting baseline drift. The workflow involves an iterative process of fitting a baseline to the spectrum, comparing it to the original data, and re-weighting the fit to minimize the influence of true spectral peaks, resulting in a drift-corrected spectrum [12].

What should I do when I encounter direct spectral overlap from multiple elements?

Direct spectral overlap occurs when an emission or absorption line from an interfering species lies too close to the analyte's line to be resolved, a common issue in atomic spectroscopy techniques such as ICP-OES [1].

- Avoidance Strategy: The most straightforward solution is to select an alternative, interference-free analytical line for your quantitation [1].

- Correction Strategy: If you must use an interfered line, you can use an interference correction algorithm. This requires measuring the intensity of the interfering element at the analysis line in a separate standard and determining a "correction coefficient" (counts/ppm). This coefficient is then used to mathematically subtract the interferent's contribution from the total signal in unknown samples [1].

How does moisture in samples affect NIR quantitative models and how can this be mitigated?

Water has strong, broad absorption bands in the Near-Infrared (NIR) region, which can obscure the signals of target analytes and introduce significant variance unrelated to the analyte's concentration, leading to inaccurate models [13].

- Mitigation Protocol: A Spectral Decomposition Optimization Algorithm (SDOA) can be used to reduce moisture interference. This method involves constructing a spectral dataset of samples at varying moisture levels, performing singular value decomposition (SVD) on the difference spectra to identify primary interference factors, and then applying projection transformations to remove these moisture-related spectral components, resulting in spectra that are more consistent and directly related to the analyte of interest [13].
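
The projection step can be sketched as follows. This is a simplified illustration rather than the SDOA as published: it assumes a single dominant moisture factor, estimates it by power iteration on the difference spectra instead of a full SVD, and projects it out. All names and the toy five-point spectra are hypothetical.

```python
import math


def dominant_direction(diff_spectra, n_iter=100):
    """First right singular vector of the difference-spectra matrix, by power iteration."""
    n_wl = len(diff_spectra[0])
    v = [1.0 / math.sqrt(n_wl)] * n_wl
    for _ in range(n_iter):
        u = [sum(row[j] * v[j] for j in range(n_wl)) for row in diff_spectra]
        v_new = [sum(diff_spectra[i][j] * u[i] for i in range(len(diff_spectra)))
                 for j in range(n_wl)]
        norm = math.sqrt(sum(c * c for c in v_new))
        if norm == 0.0:
            raise ValueError("difference spectra carry no variance along the start vector")
        v = [c / norm for c in v_new]
    return v


def project_out(spectrum, direction):
    """Remove the component of `spectrum` along the unit vector `direction`."""
    dot = sum(s * d for s, d in zip(spectrum, direction))
    return [s - dot * d for s, d in zip(spectrum, direction)]


# Toy data: one analyte signature measured at three moisture levels.
analyte = [1.0, 2.0, 0.0, 0.0, 0.0]
water = [0.0, 0.0, 1.0, 2.0, 1.0]
spectra = [[a + k * w for a, w in zip(analyte, water)] for k in (0, 1, 2)]

# Difference spectra relative to the driest sample isolate the moisture contribution.
diffs = [[s - s0 for s, s0 in zip(spec, spectra[0])] for spec in spectra[1:]]
moisture_axis = dominant_direction(diffs)
cleaned = [project_out(spec, moisture_axis) for spec in spectra]
```

In this toy case the analyte and water signatures are orthogonal, so the projection recovers the analyte spectrum exactly. With real spectra the signatures usually overlap, and projecting out the moisture axis also removes part of the analyte signal, so the calibration model must be built on the projected spectra.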

Table 1: Common artifacts, their origins, and how they appear in spectra.

| Interference Type | Primary Origin | Manifestation in Spectrum | Quantitative Impact |

|---|---|---|---|

| Fluorescence | Sample-Derived | Broad, sloping background that can obscure Raman signals [11] | High baseline reduces signal-to-noise ratio and detection limits [11] |

| Spectral Overlap | Sample-Derived / Instrumental | Incomplete resolution of analyte and interferent peaks [1] [3] | Positive bias in concentration measurements [1] |

| Baseline Drift | Instrumental / Environmental | Vertical shift of the entire spectrum [12] | Inaccurate absorbance/intensity readings, leading to concentration errors [12] |

| Cosmic Rays | Environmental | Sharp, intense, random spikes [8] | Causes spurious peaks that can be mistaken for real signals [8] |

| Moisture Interference | Sample-Derived / Environmental | Strong, broad absorption bands in NIR region [13] | Obscures analyte signals, reduces model accuracy and reliability [13] |

Table 2: Overview of correction methods for different interference types.

| Interference Type | Preventive/Experimental Strategies | Computational/Numerical Corrections |

|---|---|---|

| Fluorescence | Use longer wavelength laser (e.g., 785 nm, 1064 nm) [11] | Polynomial baseline fitting, Deep Learning (DL) algorithms [11] [8] |

| Spectral Overlap | Select an alternative analytical line [1] | Interference correction coefficients, Advanced peak deconvolution [1] |

| Baseline Drift | Ensure instrument warm-up and stable temperature control [12] | Adaptive penalized least squares (e.g., asPLS) [12] |

| Cosmic Rays | Use spectrometer with cosmic ray mitigation hardware | Spike removal algorithms, median filtering [8] |

| Moisture Interference | Control sample environment, use dry gas purges | Spectral Decomposition Optimization Algorithm (SDOA) [13] |
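
As an illustration of the spike-removal row above, here is a minimal sketch of cosmic ray rejection based on a running median. The window size, the threshold, and the function names are assumptions chosen for the example, not recommended settings.

```python
from statistics import median


def median_filter(values, window=5):
    """Running median; the window is truncated at the ends of the spectrum."""
    half = window // 2
    n = len(values)
    return [median(values[max(0, i - half):min(n, i + half + 1)]) for i in range(n)]


def remove_spikes(values, window=5, threshold=6.0):
    """Replace points that deviate strongly from the running median."""
    smooth = median_filter(values, window)
    residuals = [v - s for v, s in zip(values, smooth)]
    # Robust noise estimate: median absolute deviation scaled to a Gaussian sigma.
    sigma = 1.4826 * median(abs(r) for r in residuals)
    limit = threshold * sigma
    return [s if abs(r) > limit else v for v, s, r in zip(values, smooth, residuals)]


# Hypothetical Raman intensities with one cosmic ray hit at index 5.
spectrum = [10, 11, 10, 12, 11, 500, 10, 11, 12, 10, 11]
cleaned = remove_spikes(spectrum)
```

Only the flagged points are replaced, so the rest of the spectrum keeps its original values. A genuine Raman band narrower than the window could also be flagged, so the window should stay well below the narrowest expected peak width.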

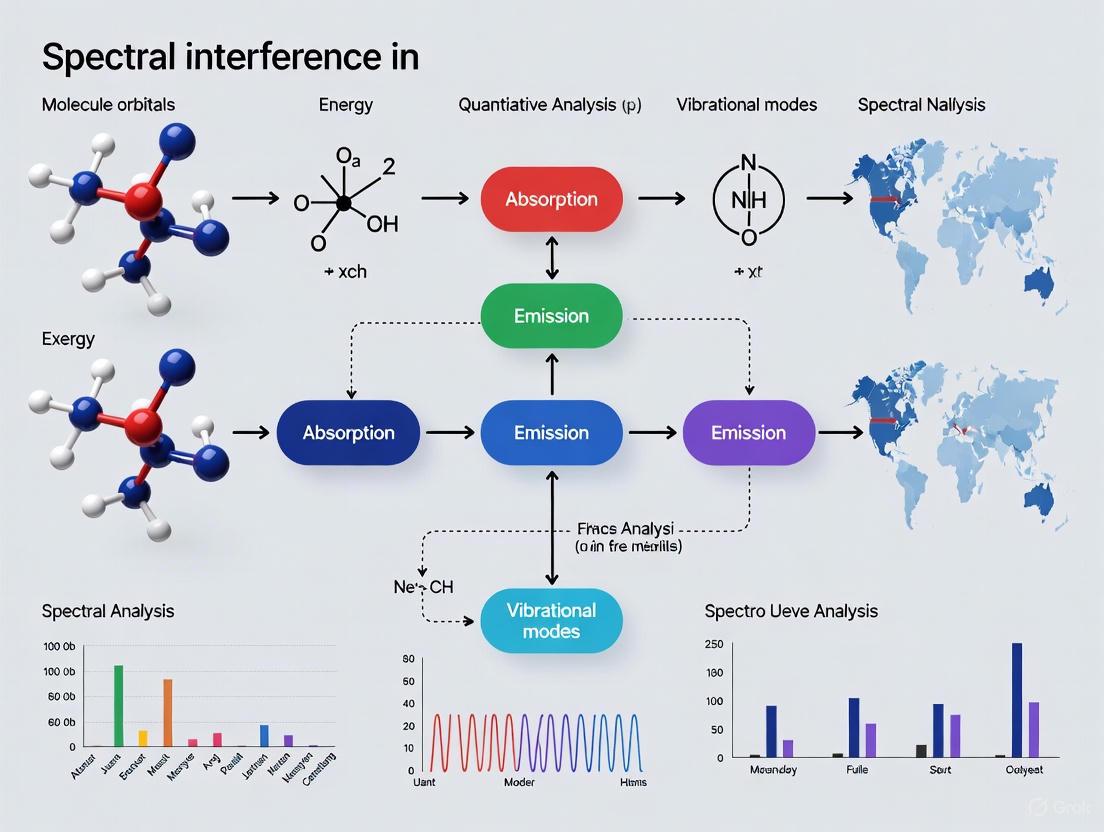

Workflow for Identifying and Correcting Spectral Interference

The following diagram illustrates a systematic workflow for troubleshooting spectral interference.

The Scientist's Toolkit: Key Reagents and Materials

Table 3: Essential research reagents and materials for managing spectral interference.

| Item | Function / Application |

|---|---|

| Certified Standard Gas Mixtures | Used for calibration and validation of quantitative models in gas analysis (e.g., FTIR). Certified concentrations are traceable to national standards [12]. |

| High-Purity Nitrogen (Balance Gas) | An inert gas used as the balance or diluent in preparing standard gas mixtures for spectroscopy to prevent unwanted reactions or absorption [12]. |

| Stable Isotope Tracers | Used in ICP-MS to overcome spectral overlaps via isotope dilution analysis, an internal standardization technique. |

| Matrix-Matched Standards | Calibration standards that closely mimic the sample's chemical and physical matrix, helping to correct for matrix-induced interferences [1]. |

| Chemical Modifiers (e.g., Phosphate) | Used in atomic absorption spectroscopy to alter the volatility of the analyte or interferent, thereby minimizing chemical interferences during atomization [3]. |

Technical Support Center

Troubleshooting Guides & FAQs

FAQ: Spectral Interferences in Quantitative Analysis

Q1: What are the primary types of spectral interference encountered in spectroscopic analysis?

Spectral interferences are typically categorized into three main types, each with distinct characteristics and origins [1] [2]:

- Spectral Overlap (or Direct Overlap): This occurs when an analyte's emission, absorption, or mass line directly overlaps with a line from an interfering element or molecule. In atomic spectroscopy, this can be an isobaric interference in ICP-MS or an emission line overlap in ICP-OES [1] [14]. In molecular spectroscopy, vibrational/rotational bands of different species can interfere, a phenomenon sometimes called "spectral cross-talk" [15].

- Background Interference (Background Radiation): This is a broadband signal originating from sources other than the analyte. In flame and plasma techniques, this can be caused by molecular species or particulates scattering or emitting radiation [1] [2]. In FTIR, baseline drift due to environmental factors or instrumental effects is a common form of background interference [12] [5].

- Scattering Effects: This is observed when radiation from the source is scattered by undissociated particles or matrix components in the sample, leading to a reduction in the transmitted light and a falsely high apparent absorbance [2]. This is a significant concern at wavelengths below 300 nm [2].

Q2: My calibration curve shows poor linearity. What could be the cause?

Poor linearity in a calibration curve, especially at lower concentrations, can result from several issues [14]:

- Contamination: Check your blank sample for a signal. Contamination during sample preparation or from the sample introduction system can cause non-linear responses at low concentrations [14].

- Insufficient Sensitivity: The analyte concentration might be near or below the instrument's detection limit, leading to random variance [14].

- Sample Introduction Problems: Gradual increases in signal can indicate that measurements began before the sample introduction system stabilized [14].

- Incorrect Calibration Weighting: For wide concentration ranges, using an unweighted least squares regression can magnify errors at the low end. Applying a weighting factor (e.g., 1/I) can improve accuracy in the low concentration region [14].
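
The weighting point can be made concrete with a short sketch of a weighted straight-line fit. The function name and the calibration data are hypothetical; the 1/I weights follow the example given above, and other schemes such as 1/x² are used in the same way.

```python
def weighted_linear_fit(conc, intensity, weights):
    """Weighted least-squares fit of intensity = slope * conc + intercept."""
    sw = sum(weights)
    x_mean = sum(w * x for w, x in zip(weights, conc)) / sw
    y_mean = sum(w * y for w, y in zip(weights, intensity)) / sw
    sxx = sum(w * (x - x_mean) ** 2 for w, x in zip(weights, conc))
    sxy = sum(w * (x - x_mean) * (y - y_mean)
              for w, x, y in zip(weights, conc, intensity))
    slope = sxy / sxx
    return slope, y_mean - slope * x_mean


# Hypothetical standards spanning three orders of magnitude (ppm, counts).
conc = [0.1, 1.0, 10.0, 100.0]
intensity = [10.0, 55.0, 505.0, 5005.0]

# 1/I weights keep the highest standard from dominating the fit.
weights = [1.0 / i for i in intensity]
slope, intercept = weighted_linear_fit(conc, intensity, weights)
```

With unit weights the same function gives the ordinary unweighted fit, which makes it easy to compare back-calculated concentrations of the lowest standard under both schemes.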

Q3: The baseline in my FTIR spectrum is unstable and drifting. How can I fix this?

Baseline drift in FTIR can be attributed to instrumental or sample-related factors [12] [5]:

- Instrumental Causes: Fluctuations in the infrared light source temperature or mechanical disturbances that misalign the interferometer are common culprits [5].

- Sample-Related Causes: The sample itself can cause matrix effects, or contamination may have been introduced during preparation [5].

- Troubleshooting Steps:

  - Record a fresh blank spectrum under identical conditions.

  - If the blank also exhibits drift, the issue is likely instrumental. Ensure the instrument has warmed up sufficiently and check for environmental disturbances like air conditioning cycles or vibrations [5].

  - If the blank is stable, the problem is likely sample-related. Re-prepare your sample, ensuring proper technique to avoid contamination [5].

  - Apply a baseline correction algorithm, such as the adaptive smoothness parameter penalized least squares (asPLS) method, to correct drifted spectra post-acquisition [12].

Q4: How can I correct for a direct spectral overlap between two elements in ICP-OES?

Correcting for a direct spectral overlap is challenging and avoidance is often preferred. If correction is necessary, a quantitative approach involves [1]:

- Measure the Interferent's Contribution: Precisely measure the concentration of the interfering element (e.g., As) using another, non-interfered line.

- Determine a Correction Coefficient: In a separate measurement, analyze a standard containing only the interfering element at a known concentration. Measure the intensity of this standard at the analyte's wavelength (e.g., Cd line) to calculate a correction coefficient (counts/ppm interferent).

- Apply the Correction: For your sample, subtract the calculated intensity contribution of the interferent (interferent's concentration × correction coefficient) from the total measured intensity at the analyte's wavelength to obtain the corrected analyte intensity [1]. This approach assumes that instrumental conditions affect the analyte and interferent equally, which may not always hold true [1].

Troubleshooting Common Spectral Anomalies

Symptom: Missing or Suppressed Peaks

- Potential Causes:

  - Insufficient Laser Power (Raman): The laser power may be too low to generate a detectable signal [5].

  - Detector Malfunction: An aging or faulty detector can lead to a significant loss of sensitivity [5].

  - Inconsistent Sample Preparation: Variations in concentration or a lack of homogeneity can result in analyte levels below the detection threshold [5].

  - Paramagnetic Species (NMR): These can broaden lines or shift peaks outside the detection window [5].

- Resolution Protocol:

  - Verify instrument calibration and detector performance.

  - Check and optimize source power (e.g., laser, lamp).

  - Re-prepare the sample, ensuring consistency and correct concentration [5].

Symptom: Excessive Spectral Noise

- Potential Causes:

  - Electronic Interference from nearby equipment.

  - Temperature Fluctuations or mechanical vibrations.

  - Inadequate Purging in FTIR, leading to interference from atmospheric water vapor and CO₂ [5].

- Resolution Protocol:

  - Isolate the instrument from sources of vibration and electrical noise.

  - Ensure stable temperature control in the lab.

  - Check and maintain purge gas flow rates and sample compartment seals [5].

Quantitative Data on Spectral Interferences

The tables below summarize quantitative data related to detection limits and spectral interference effects.

Table 1: Quantitative Analysis Performance for Coal Mine Gases by FTIR

This table shows the detection and quantification limits achievable for various gases using a validated FTIR method, demonstrating the technique's sensitivity for quantitative multi-component analysis [12].

| Gas Species | Detection Limit (ppm) | Quantification Limit (ppm) |

|---|---|---|

| CH₄ | 0.5 | <10 |

| C₂H₆ | 1 | <10 |

| C₃H₈ | 0.5 | <10 |

| n-C₄H₁₀ | 0.5 | <10 |

| i-C₄H₁₀ | 0.5 | <10 |

| C₂H₄ | 0.5 | <10 |

| C₂H₂ | 0.2 | <10 |

| C₃H₆ | 0.5 | <10 |

| CO | 1 | <10 |

| CO₂ | 0.5 | <10 |

| SF₆ | 0.1 | <10 |

Table 2: Impact of Spectral Overlap on Analytical Figures of Merit

This table shows how sharply the relative error for cadmium (Cd) grows when it is measured at a line overlapped by arsenic (As), and how much of that error remains even after a best-case correction, highlighting the critical impact of spectral interferences [1] [16].

| Cd Conc. (ppm) | As/Cd Ratio | Uncorrected Relative Error (%) | Best-Case Corrected Relative Error (%) |

|---|---|---|---|

| 0.1 | 1000 | 5100 | 51.0 |

| 1 | 100 | 541 | 5.5 |

| 10 | 10 | 54 | 1.1 |

| 100 | 1 | 6 | 1.0 |

Experimental Protocols

Protocol 1: Baseline Drift Correction for FTIR Spectra

Objective: To correct for baseline drift in FTIR spectra using the adaptive smoothness parameter penalized least squares (asPLS) method [12].

Materials:

- FTIR spectrometer

- Software capable of implementing the asPLS algorithm (e.g., MATLAB, Python with appropriate libraries)

Procedure:

- Acquire Spectral Data: Collect the infrared absorption spectrum of your sample.

- Implement the asPLS Algorithm:

  - The algorithm iteratively fits a baseline, z, to the original spectrum, y, by minimizing the following function [12]: Q = Σ (y_i - z_i)² + λ Σ (Δ²z_i)², where λ is a smoothness parameter.

  - A weight vector, w, is updated in each iteration to reduce the influence of peak regions on the baseline fit.

- Apply Correction: Subtract the fitted baseline, z, from the original spectrum, y, to obtain the baseline-corrected spectrum.

- Validation: Validate the method using standard gases with known concentrations to ensure quantitative accuracy is maintained [12].

Protocol 2: Quantitative Correction for Spectral Overlap in ICP-OES

Objective: To quantitatively correct for the interference of Arsenic (As) on the Cadmium (Cd) 228.802 nm line [1].

Materials:

- ICP-OES instrument

- High-purity calibration standards for Cd and As

Procedure:

- Characterize the Interference:

  - Run a high-purity standard containing 100 µg/mL As.

  - Record the net intensity at the Cd 228.802 nm wavelength. This intensity divided by the As concentration gives the correction coefficient (counts/ppm As) [1].

- Analyze the Sample:

  - Measure the concentration of As in the sample using a separate, non-interfered As line.

  - Measure the total intensity at the Cd 228.802 nm line.

- Perform the Correction:

  - Calculate the corrected Cd intensity using the formula: I_Cd(corrected) = I_Total - (C_As × Correction Coefficient)

  - Convert the corrected intensity to Cd concentration using the Cd calibration curve [1].
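
The arithmetic of this protocol can be sketched in a few lines. Every number below is hypothetical and chosen only to make the steps easy to follow; the function names are likewise illustrative, and the Cd calibration is assumed to be linear.

```python
def correction_coefficient(intensity_at_analyte_line, interferent_conc_ppm):
    """Counts contributed at the analyte line per ppm of interferent."""
    return intensity_at_analyte_line / interferent_conc_ppm


def corrected_intensity(total_intensity, interferent_conc_ppm, coefficient):
    """I_Cd(corrected) = I_Total - (C_As x correction coefficient)."""
    return total_intensity - interferent_conc_ppm * coefficient


def intensity_to_concentration(intensity, slope, intercept=0.0):
    """Invert a linear calibration, intensity = slope * conc + intercept."""
    return (intensity - intercept) / slope


# Step 1: a 100 ppm As standard reads 5100 counts at the Cd 228.802 nm line (hypothetical).
k_as = correction_coefficient(5100.0, 100.0)

# Step 2: the sample holds 20 ppm As (from a clean As line) and reads 3020 counts at Cd 228.802 nm.
i_cd = corrected_intensity(3020.0, 20.0, k_as)

# Step 3: convert with a hypothetical Cd calibration slope of 1000 counts/ppm.
cd_ppm = intensity_to_concentration(i_cd, slope=1000.0)
```

Because the subtraction propagates the uncertainty of both the As concentration and the coefficient, the corrected result becomes less reliable as the As/Cd ratio grows.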

Workflow and Relationship Diagrams

[Diagram not reproduced: Spectral Anomaly Diagnosis Path]

[Diagram not reproduced: Spectral Interference Correction Workflow]

The Scientist's Toolkit: Key Reagents & Materials

Table 3: Essential Research Reagents and Materials for Spectroscopic Analysis

| Item | Function | Application Example |

|---|---|---|

| High-Purity Calibration Standards | Used to establish accurate calibration curves and determine interference correction coefficients. | ICP-OES, ICP-MS, FTIR quantification [1] [12]. |

| Deuterated Solvents (e.g., CDCl₃) | Solvents with minimal interfering absorption bands in the mid-IR region. | FT-IR sample preparation to avoid solvent peak overlap [9]. |

| Lithium Tetraborate Flux | Used to fuse and dissolve refractory materials into homogeneous glass disks for analysis. | XRF sample preparation to eliminate mineral and particle size effects [9]. |

| Collision/Reaction Gases (He, H₂) | Gases used in ICP-MS collision/reaction cells to remove polyatomic and doubly charged ion interferences. | ICP-MS interference removal (e.g., H₂ for Ar-Ar interference on Se) [14]. |

| Internal Standard Elements | Elements added in known amounts to samples and standards to correct for instrument drift and matrix effects. | ICP-MS quantitative analysis to improve precision and accuracy [14]. |

| Certified Reference Materials (CRMs) | Materials with certified composition and concentration, used for method validation and quality control. | Verifying the accuracy of quantitative analyses and interference corrections across techniques [17]. |

Technical Support Center

Troubleshooting Guides

Guide 1: Troubleshooting Degraded Measurement Accuracy in Spectral Analysis

Reported Issue: Inconsistent or inaccurate quantitative results from spectroscopic data (e.g., NIRS, XRF).

Primary Symptom: High prediction error (RMSE) or poor model fit (low R²) even after standard preprocessing.

| Investigation Step | Diagnostic Procedure | Expected Outcome | Potential Faulty Component |

|---|---|---|---|

| 1. Signal Quality Check | Visually inspect raw spectra for abnormal baseline, noise level, or obscured peaks. | Smooth baseline with clear, distinct peaks. | Sample impurities, instrument noise, scattering effects [8]. |

| 2. Background Interference | Apply Spectral Feature Extraction Module (SFEM) to enhance peaks and suppress background [18]. | Meaningful peaks are adaptively weighted and enhanced. | Uncorrected background interference from sample matrix or instrument. |

| 3. Preprocessing Impact | Compare model performance (R², RMSE) with and without preprocessing on a validation set [19]. | Minimal performance difference with a robust model like MBML Net. | Inappropriate preprocessing methods (e.g., incorrect scattering correction) [8] [19]. |

| 4. Model Robustness | Test the MBML Net model, which is designed to operate on raw data without preprocessing [19]. | High prediction accuracy (RPD > 7.5) on validation datasets [18]. | Traditional linear models (PLS) with poor handling of nonlinear features [19]. |
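
The figures of merit named in this table can be computed as in the sketch below. It uses one common definition of RPD, the sample standard deviation of the reference values divided by the RMSE of prediction; other conventions exist, so treat the definition and the toy values as assumptions.

```python
import math
from statistics import mean, stdev


def rmse(y_true, y_pred):
    """Root mean squared error of prediction."""
    return math.sqrt(mean((t - p) ** 2 for t, p in zip(y_true, y_pred)))


def r_squared(y_true, y_pred):
    """Coefficient of determination, 1 - SS_res / SS_tot."""
    centre = mean(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - centre) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot


def rpd(y_true, y_pred):
    """Ratio of performance to deviation: SD of the reference values over RMSE."""
    return stdev(y_true) / rmse(y_true, y_pred)


# Hypothetical validation set: reference values and model predictions.
reference = [1.0, 2.0, 3.0, 4.0, 5.0]
predicted = [1.1, 1.9, 3.2, 3.8, 5.0]
metrics = (rmse(reference, predicted), r_squared(reference, predicted),
           rpd(reference, predicted))
```

Computing all three on the same held-out validation set, once with and once without preprocessing, gives the comparison described in investigation step 3.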

Resolution Protocol:

- Data Acquisition: Ensure system-level QA is performed. For DCE-MRI, use a reference region (e.g., cerebellum) to establish accuracy and repeatability coefficients [20].

- Feature Extraction: Implement a deep learning fusion network (e.g., MSAF-Net) that uses adaptive weighting and multi-energy state fusion to prevent important peaks from being obscured by noise [18].

- Model Selection: Employ a multi-branch, multi-level CNN model (MBML Net) that fuses shallow and deep spectral features from raw data, eliminating the need for cumbersome preprocessing and its associated errors [19].

Guide 2: Troubleshooting Biased Feature Extraction in Text Mining & NLP

Reported Issue: Text mining model fails to capture relevant patterns, leading to poor classification or topic extraction.

Primary Symptom: Low accuracy and recall across different datasets or text domains.

| Investigation Step | Diagnostic Procedure | Expected Outcome | Potential Faulty Component |

|---|---|---|---|

| 1. Text Preprocessing | Analyze text after tokenization and stopword removal. Check for consistent token/lemma forms. | Text size reduction of 35-45% after stopword removal; consistent word roots [21]. | Improper tokenization, incomplete stopword lists, or failure to use lemmatization for morphologically rich languages [21]. |

| 2. Feature Space Analysis | Calculate the dimensionality of the feature set before and after feature selection. | A reduced feature set retaining only the most informative elements [21]. | High-dimensional feature space with many irrelevant or noisy terms. |

| 3. Method Suitability | Evaluate the choice of feature extraction technique (e.g., traditional vs. deep learning-based). | Ability to capture complex, non-linear patterns in text data [21]. | Use of simplistic feature extraction methods (e.g., BOW) for complex tasks. |

Resolution Protocol:

- Data Cleaning: Implement a rigorous preprocessing pipeline: tokenization, stopword removal, and lemmatization to maintain linguistic validity [21].

- Dimensionality Reduction: Apply feature selection to retain crucial information and remove noise [21].

- Advanced Feature Extraction: Transition from traditional statistical methods to deep learning-based feature extraction, which can decipher complex patterns and underlying semantics in text [21].

Frequently Asked Questions (FAQs)

Q1: My NIRS quantitative model's performance is highly unstable and depends heavily on the preprocessing method I choose. How can I make my analysis more robust?

A1: Model instability often stems from preprocessing steps that inadvertently remove important signal information or introduce artifacts. The solution is to reduce dependency on these steps.

- Strategy 1: Adopt a Robust Model Architecture. Use a model specifically designed for robustness against raw spectral variations. The MBML Net (Multi-branch and Multi-level feature extraction Network) has been shown to achieve higher prediction accuracy on raw NIRS data than traditional models (PLS, SVR) that require preprocessing. On the Tablets 655 dataset, it achieved an RMSE as low as 0.0056 without any preprocessing [19].

- Strategy 2: Implement Intelligent Spectral Enhancement. For techniques like XRF, employ a Spectral Feature Extraction Module (SFEM) that uses adaptive weighting to enhance meaningful peaks while suppressing background interference, preventing crucial information from being obscured [18].

Q2: How can I quantitatively assess and account for systematic errors (bias) in my observational research, rather than just mentioning them as limitations?

A2: You can use Quantitative Bias Analysis (QBA), a set of methods developed to estimate the direction and magnitude of systematic error [22].

- Methodology:

- Identify Bias: Use Directed Acyclic Graphs (DAGs) to pinpoint potential sources like unmeasured confounding, selection bias, or information bias (measurement error) [22].

- Select QBA Method:

- Simple Bias Analysis: Uses single values for bias parameters (e.g., sensitivity/specificity of a measurement) to adjust the observed effect. Best for initial assessment [22].

- Probabilistic Bias Analysis: The most robust approach. It specifies probability distributions for bias parameters, runs multiple simulations to account for uncertainty, and produces a distribution of bias-adjusted estimates [22].

- Inform Parameters: Use data from internal validation studies or the literature to estimate bias parameters (e.g., prevalence of unmeasured confounders, measurement sensitivity) [22].

Q3: In a clinical trial setting using quantitative imaging (QI) metrics, how can I ensure the accuracy and precision of each measurement in real-time before making a critical decision?

A3: Implement a framework for real-time quantitative assessment using a stable reference region [20].

- Protocol:

- Establish a Baseline: From a sample of patients (n_patients), calculate the mean value and Repeatability Coefficient (RC) of the QI metric (e.g., Blood Volume) in a reference region unaffected by therapy (e.g., cerebellum) [20].

- Set Decision Rules: For a new patient, the QI map is considered accurate and precise if the values in the reference region agree with the established baseline mean and the difference between repeated scans (e.g., pre-therapy and 2-weeks post-therapy) is within the RC with 95% confidence [20].

- Flag and Review: Data that fail these criteria are flagged for further evaluation before being used in the trial, preventing decisions based on inaccurate data [20].

Q4: What are the most critical steps in text preprocessing to minimize bias in feature extraction for text mining?

A4: The goal is to reduce noise while preserving semantic meaning. Critical steps include [21]:

- Tokenization: Breaking text into meaningful units (words, sub-words) appropriate for the language (e.g., character-level for Chinese, white-space for English).

- Stopword Removal: Eliminating high-frequency, low-information words (e.g., "the," "and"), which can reduce text size by 35-45% and help the model focus on meaningful content.

- Lemmatization (Preferred over Stemming): Reducing words to their base dictionary form (lemma) using vocabulary and morphological analysis, which preserves valid language and is more accurate than heuristic stemming.
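The first two steps above can be sketched with the Python standard library alone. This is a minimal illustration, not the pipeline from [21]: the stopword list, the regular-expression tokenizer, and the helper names are assumptions made for the example, and lemmatization is left out because it needs a vocabulary resource.

```python
import re

# Tiny illustrative stopword list (an assumption; real pipelines use a curated list).
STOPWORDS = {"the", "and", "a", "an", "of", "to", "in", "is", "are",
             "was", "were", "for", "on", "with"}

def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphabetic word tokens."""
    return re.findall(r"[a-z]+", text.lower())

def remove_stopwords(tokens: list[str]) -> list[str]:
    """Drop high-frequency, low-information words."""
    return [t for t in tokens if t not in STOPWORDS]

def reduction_percent(before: list[str], after: list[str]) -> float:
    """Percentage of tokens removed by a filtering step."""
    return 100.0 * (len(before) - len(after)) / len(before)

text = ("The spectra of the tablets were measured and the baseline "
        "was corrected in the laboratory.")
tokens = tokenize(text)
content = remove_stopwords(tokens)
print(content)
print(f"token reduction: {reduction_percent(tokens, content):.0f}%")
```

Tracking the reduction percentage on a real corpus gives a quick check against the 35-45% range quoted above; a single short sentence such as this one can fall well outside it.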

Experimental Protocols for Cited Methodologies

Objective: To ensure the accuracy and precision of Quantitative Imaging (QI) maps in individual patients during a clinical trial.

Materials:

- Medical imaging scanner (e.g., 3T MRI scanner)

- Phantom for system-level QA (e.g., ACR water phantom)

- Quantitative analysis software (e.g., in-house FIAT package for DCE-MRI)

Workflow:

- System-Level QA: Perform daily, weekly, and yearly QA of the hardware and software using phantoms following established protocols (e.g., ACR protocol). Record signal-to-noise ratio variations [20].

- Establish Reference Values: In a cohort of n_patients patients, define a Volume of Interest (VOI) in a normal reference region unaffected by the therapy (e.g., cerebellum). Calculate the mean value and Repeatability Coefficient (RC) of the QI metric (e.g., Blood Volume) within this VOI for all patients [20].

- Acquire Patient Data: For each new patient, perform the QI scan according to the trial's protocol.

- Real-Time Assessment: Extract the QI values from the reference region VOI in the new patient's data.

- Check Accuracy: The mean value should agree with the pre-established reference mean.

- Check Precision: The difference between repeated scans (if available) should agree with the RC.

- Decision Point: If the data agree with the reference values and RC with 95% confidence, the QI map is cleared for use in the trial. Otherwise, the data is flagged for investigation [20].
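The reference-region checks in this workflow can be sketched as follows. The repeatability coefficient uses the common definition RC = 1.96 × √2 × wSD, with the within-subject standard deviation estimated from paired scans. The accuracy rule (values within the cohort mean ± 1.96 SD), the function names, and the numbers are assumptions for illustration; the source does not spell out how agreement with the baseline mean is tested.

```python
import math

def repeatability_coefficient(scan1, scan2):
    """RC = 1.96 * sqrt(2) * wSD, with wSD estimated from paired test-retest values."""
    diffs = [a - b for a, b in zip(scan1, scan2)]
    # Within-subject variance from paired differences: wSD^2 = mean(d^2) / 2
    wsd = math.sqrt(sum(d * d for d in diffs) / (2 * len(diffs)))
    return 1.96 * math.sqrt(2) * wsd

def assess_reference_region(pre, post, baseline_mean, baseline_sd, rc):
    """Return (accurate, precise) flags for one patient's reference-region values."""
    # Accuracy (assumed rule): both values lie within mean +/- 1.96 SD of the cohort.
    accurate = all(abs(v - baseline_mean) <= 1.96 * baseline_sd for v in (pre, post))
    # Precision: the repeated-scan difference stays within the repeatability coefficient.
    precise = abs(post - pre) <= rc
    return accurate, precise

# Hypothetical reference-region blood-volume values for a four-patient cohort.
cohort_scan1 = [4.0, 4.2, 3.9, 4.1]
cohort_scan2 = [4.1, 4.0, 4.0, 4.2]
rc = repeatability_coefficient(cohort_scan1, cohort_scan2)

print(assess_reference_region(4.05, 4.15, baseline_mean=4.06, baseline_sd=0.1, rc=rc))
print(assess_reference_region(4.00, 4.60, baseline_mean=4.06, baseline_sd=0.1, rc=rc))
```

A patient whose map fails either flag would be routed to the "flagged for investigation" branch rather than into the trial analysis.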

Objective: To quantitatively account for the impact of systematic error (e.g., from unmeasured confounding or measurement error) on an observed effect estimate.

Materials:

- Summary-level (2x2 table) or individual-level observational data.

- Statistical software capable of running simulations (e.g., R, Python).

- Prior information on bias parameters from validation studies or literature.

Workflow:

- Define the Bias Structure: Create a Directed Acyclic Graph (DAG) to illustrate the hypothesized relationships between exposure, outcome, confounders, and sources of bias [22].

- Specify Bias Parameters and Distributions:

- For unmeasured confounding: Define distributions for the prevalence of the confounder among exposed/unexposed and the strength of its association with the outcome.

- For information bias: Define distributions for the sensitivity and specificity of exposure/outcome measurement.

- Set up the Probabilistic Model:

- Program a model that relates the bias parameters to the observed data.

- Randomly sample values for the bias parameters from their specified distributions over a large number of iterations (e.g., 10,000).

- Run Analysis and Summarize:

- For each set of sampled parameters, compute a bias-adjusted estimate.

- Summarize the distribution of all bias-adjusted estimates (e.g., with a mean and percentiles) to provide a final estimate that incorporates uncertainty about the bias [22].
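As a rough sketch of this workflow for one bias type, the example below adjusts a 2x2 table for non-differential exposure misclassification. The table counts, the uniform priors on sensitivity and specificity, and the function names are illustrative assumptions; a real analysis would take the priors from validation data as described above.

```python
import numpy as np

def adjust_odds_ratio(a, b, c, d, se, sp):
    """Correct a 2x2 table for non-differential exposure misclassification.

    a, b: exposed / unexposed cases; c, d: exposed / unexposed controls.
    Returns the bias-adjusted odds ratio, or NaN if a corrected cell is not positive.
    """
    n_cases, n_controls = a + b, c + d
    # Observed exposed = se * true_exposed + (1 - sp) * true_unexposed, solved for true_exposed
    a_true = (a - (1 - sp) * n_cases) / (se + sp - 1)
    c_true = (c - (1 - sp) * n_controls) / (se + sp - 1)
    b_true, d_true = n_cases - a_true, n_controls - c_true
    if min(a_true, b_true, c_true, d_true) <= 0:
        return float("nan")
    return (a_true * d_true) / (b_true * c_true)

def probabilistic_bias_analysis(a, b, c, d, n_iter=10_000, seed=0):
    """Sample bias parameters, adjust the estimate each time, summarize the distribution."""
    rng = np.random.default_rng(seed)
    se = rng.uniform(0.80, 0.95, n_iter)   # assumed prior for sensitivity
    sp = rng.uniform(0.90, 0.99, n_iter)   # assumed prior for specificity
    ors = np.array([adjust_odds_ratio(a, b, c, d, s, p) for s, p in zip(se, sp)])
    ors = ors[~np.isnan(ors)]              # drop draws that give impossible tables
    return np.percentile(ors, [2.5, 50, 97.5])

observed_or = (45 * 945) / (94 * 257)      # hypothetical observed table
lo, med, hi = probabilistic_bias_analysis(45, 94, 257, 945)
print(f"observed OR = {observed_or:.2f}")
print(f"bias-adjusted OR: median {med:.2f} (2.5th-97.5th percentile {lo:.2f}-{hi:.2f})")
```

Calling `adjust_odds_ratio` once with fixed `se` and `sp` corresponds to the simple bias analysis described earlier; the sampling loop is what makes the analysis probabilistic.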

Research Workflow and Signaling Pathways

Diagram: Spectral QI & Text Data Robust Analysis Workflow

The Scientist's Toolkit: Research Reagent Solutions

| Item Name | Function & Application | Key Characteristic |

|---|---|---|

| MBML Net (Multi-branch Multi-level Network) | A CNN model for NIRS quantitative analysis; fuses shallow/deep features from raw spectra, eliminating need for preprocessing [19]. | Enables high prediction accuracy (e.g., RMSE = 0.0056 on Tablets 655) on raw data [19]. |

| MSAF-Net (Multi-energy State Attention Fusion Network) | A deep learning model for XRF spectroscopy; integrates data from multiple energy states for enhanced elemental analysis [18]. | Achieves high coefficients of determination (R² > 0.96) for elements like Si, Al, Fe [18]. |

| Spectral Feature Extraction Module (SFEM) | A module within MSAF-Net that adaptively weights spectral data to enhance peaks and suppress background noise [18]. | Prevents important spectral peaks from being obscured by noise or interference [18]. |

| CEE Critical Appraisal Tool | A domain-based tool for assessing risk of bias in primary environmental research; helps systematize evaluation of confounding, selection, and measurement biases [23]. | Facilitates structured identification of systematic errors in observational studies [23]. |

| andi-datasets Python Package [24] | A software library to simulate realistic single-particle tracking data for benchmarking analysis methods [24]. | Provides ground truth data for objectively evaluating method performance in detecting motion changes [24]. |

Methodological Arsenal: Techniques for Spectral Interference Correction and Avoidance

Troubleshooting Guides and FAQs

Frequently Asked Questions

1. How do I choose between first and second derivative spectroscopy for my quantitative analysis? The choice depends on the nature of your baseline interference and the analytical information you require. Use first derivative spectra primarily to eliminate linear, sloping baselines. Use second derivative spectra to remove baseline curvature that can be fitted to a quadratic equation and to resolve overlapping spectral features. The second derivative also provides sharper-appearing bands, which can enhance resolution, but be aware that it creates artifact peaks (positive-going peaks flanking the main negative-going peak) that must be correctly identified. [25]

2. My derivative spectrum is very noisy. What are the key parameters to optimize? A noisy derivative indicates that the computational parameters are not optimized for your data. You must optimize two key instrumental and computational parameters:

- Data Interval: The wavelength interval used to record the original spectrum. For derivative work, a relatively small data interval is best to capture high-frequency features. [25]

- Derivative Interval: The interval used to calculate the derivative itself. Widening it smooths the result and reduces noise, but some trial and error is necessary. Algorithms designed for noisy data, such as a regularized inverse-problem approach or Savitzky-Golay smoothing, outperform simple finite-difference methods. [26] [25]
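To make the two parameters concrete, here is a minimal NumPy-only sketch of a Savitzky-Golay derivative. `delta` plays the role of the data interval and `window` the derivative interval; the function names and the synthetic spectrum are assumptions for the example. It also shows that a linear, sloping baseline drops out of the second derivative.

```python
import math
import numpy as np

def savgol_coefficients(window: int, polyorder: int, deriv: int, delta: float) -> np.ndarray:
    """Least-squares polynomial (Savitzky-Golay) weights for the centre point of a window."""
    half = window // 2
    offsets = np.arange(-half, half + 1, dtype=float)
    # Design matrix columns: offsets**0, offsets**1, ..., offsets**polyorder
    design = np.vander(offsets, polyorder + 1, increasing=True)
    # Row `deriv` of the pseudo-inverse yields the fitted coefficient c_deriv;
    # the derivative at the window centre is deriv! * c_deriv / delta**deriv.
    return math.factorial(deriv) * np.linalg.pinv(design)[deriv] / delta**deriv

def savgol_derivative(y, window=11, polyorder=3, deriv=2, delta=1.0):
    """Smoothed derivative of an evenly spaced spectrum (edge points are unreliable)."""
    weights = savgol_coefficients(window, polyorder, deriv, delta)
    # np.convolve flips its kernel, so reverse the weights to apply them as a correlation.
    return np.convolve(y, weights[::-1], mode="same")

wavelengths = np.linspace(400.0, 500.0, 501)              # data interval: 0.2 nm
peak = np.exp(-0.5 * ((wavelengths - 450.0) / 5.0) ** 2)  # synthetic absorption band
baseline = 0.002 * wavelengths + 0.3                      # linear, sloping baseline

d2_clean = savgol_derivative(peak, delta=0.2)
d2_drift = savgol_derivative(peak + baseline, delta=0.2)
inner = slice(5, -5)                                      # skip half a window at each edge
print(np.max(np.abs(d2_clean[inner] - d2_drift[inner])))  # difference is at rounding level
```

Increasing `window` smooths more at the cost of broadening sharp features. `scipy.signal.savgol_filter` provides the same filter with proper edge handling.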

3. When should I use scattering correction versus baseline correction? These techniques address different physical phenomena:

- Scattering Correction: Apply when variations in sample physical properties (e.g., particle size, shape, texture) cause multiplicative and additive effects on the spectrum. Techniques like Multiplicative Scatter Correction (MSC) and Standard Normal Variate (SNV) are designed to correct for these scattering-induced deviations. [27]

- Baseline Correction: Apply when the interference is due to instrumental or environmental factors (e.g., light source variations, temperature, humidity) that cause a slow, smooth drift in the spectral baseline. [28] The RA-ICA algorithm is particularly effective when absorption peaks are severely overlapping and lack reference baseline points. [28]
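The two scattering corrections named above are short enough to sketch directly. This is an illustrative NumPy version under the usual textbook definitions (SNV scales each spectrum by its own mean and standard deviation; MSC regresses each spectrum on a reference, here the mean spectrum). The function names and the synthetic data are assumptions.

```python
import numpy as np

def snv(spectra: np.ndarray) -> np.ndarray:
    """Standard Normal Variate: centre and scale each spectrum (row) individually."""
    mean = spectra.mean(axis=1, keepdims=True)
    std = spectra.std(axis=1, ddof=1, keepdims=True)
    return (spectra - mean) / std

def msc(spectra: np.ndarray, reference=None) -> np.ndarray:
    """Multiplicative Scatter Correction against a reference (default: mean spectrum)."""
    ref = spectra.mean(axis=0) if reference is None else reference
    corrected = np.empty_like(spectra, dtype=float)
    for i, row in enumerate(spectra):
        slope, intercept = np.polyfit(ref, row, 1)   # row ~ intercept + slope * ref
        corrected[i] = (row - intercept) / slope
    return corrected

# Three copies of one band, distorted by additive offsets and multiplicative gains.
x = np.linspace(0.0, 1.0, 50)
base = np.exp(-0.5 * ((x - 0.5) / 0.1) ** 2)
offsets = np.array([0.0, 0.2, -0.1])
gains = np.array([1.0, 1.5, 0.7])
raw = offsets[:, None] + gains[:, None] * base

msc_out = msc(raw)
snv_out = snv(raw)
print(np.ptp(msc_out, axis=0).max(), np.ptp(snv_out, axis=0).max())  # spread between spectra
```

In this idealized case both corrections collapse the three spectra onto a single curve. Real spectra also carry chemical differences, which is why MSC needs a representative reference spectrum.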

4. Can derivative spectroscopy be used for analyzing mixtures? Yes, derivative spectroscopy is particularly useful for analyzing mixtures where components have different spectral bandwidths. It emphasizes sharp spectral features at the expense of broad features. This makes it possible to quantify a component with sharp bands even in the presence of another component with broad, overlapping spectral features. [25] For dual-component analysis, the zero intercept method can be applied using second derivative spectra. [29]

Troubleshooting Common Experimental Issues

Problem: Inaccurate quantification due to severe baseline drift in long-term experiments.

- Issue: Baseline drift alters characteristic peak positions and intensities, leading to systematic errors in quantitative results.

- Solution: Implement the Relative Absorbance-based Independent Component Analysis (RA-ICA) algorithm. [28]

- Procedure:

- Collect a time-series of single-beam spectra (I₁, I₂, ..., Iₙ) from your mixture.

- Select a reference spectrum (e.g., I₁) and calculate the relative absorbance (Aᵣᵢ) for all other spectra: Aᵣᵢ = lg(I₁/Iᵢ). This step eliminates the unknown true baseline.

- Use the FastICA algorithm to decompose the relative absorbance matrix (Aᵣ) into independent components (S), assuming the number of components (m) is known or can be estimated: Aᵣ = M × S, where M is the mixing matrix.

- Use the extracted independent components to fit the spectra requiring correction and reconstruct a high-fidelity baseline.

- Advantage: This method performs exceptionally well even when absorption peaks of different components severely overlap and no reference baseline points are available in the absorption bands. [28]
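The relative-absorbance step of this procedure can be illustrated in a few lines. The sketch below covers only that step, on synthetic data, and shows that the unknown source envelope cancels in the ratio. The ICA decomposition and baseline reconstruction are not shown (scikit-learn's `FastICA` is one possible implementation of the next step), and all names and values are assumptions.

```python
import numpy as np

def relative_absorbance(single_beam: np.ndarray, ref_index: int = 0) -> np.ndarray:
    """A_r,i = log10(I_ref / I_i) for each single-beam spectrum (rows of `single_beam`)."""
    return np.log10(single_beam[ref_index] / single_beam)

wl = np.linspace(0.0, 1.0, 100)                      # arbitrary wavelength axis
band = np.exp(-0.5 * ((wl - 0.5) / 0.05) ** 2)
true_abs = np.outer([0.0, 0.1, 0.3], band)           # absorbance grows over time

# Two different, "unknown" source envelopes stand in for the true baseline.
envelope_a = 1000.0 * (1.0 + 0.5 * wl)
envelope_b = 400.0 * np.exp(-wl)

ar_a = relative_absorbance(envelope_a * 10.0 ** (-true_abs))
ar_b = relative_absorbance(envelope_b * 10.0 ** (-true_abs))
print(np.max(np.abs(ar_a - ar_b)))                   # the envelope does not matter
```

Each row of the result equals the absorbance change relative to the reference spectrum. In practice the envelope also drifts between scans, and that drift is the part the ICA stage is meant to separate.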

Problem: Low signal-to-noise ratio and poor contrast in interferometric scattering microscopy (iSCAT) for tracking small molecules.

- Issue: The inherent trade-off between suppressing reference light (to reduce background noise) and preserving the weak scattering signal from the target.

- Solution: Apply a Spatial-Frequency Domain Deconvolution (SF) algorithm to individual image frames. [30]

- Procedure:

- Acquire raw iSCAT images.

- Preprocess to remove static background using a median image.

- Apply the SF deconvolution, which combines Wiener deconvolution and Richardson-Lucy (RL) deconvolution, followed by a low-pass filter in the frequency domain.

- Use a Gaussian function as the convolution kernel, with its size determined by the optical parameters (wavelength, numerical aperture). [30]

- Outcome: This processing can achieve an approximately 3-fold improvement in contrast and reduce particle localization error by 20% without the need for hardware modification or frame-averaging that sacrifices temporal resolution. [30]
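The sketch below covers only the Wiener component of such a pipeline, applied to a synthetic point scatterer blurred by a Gaussian kernel. It is not the SF algorithm of [30]: the Richardson-Lucy stage and the low-pass filter are omitted, and the kernel width, the noise-to-signal constant, and the function names are assumptions.

```python
import numpy as np

def gaussian_psf(shape, sigma):
    """Unit-sum Gaussian kernel centred on pixel (0, 0) with wrap-around, ready for FFT use."""
    ys = np.fft.ifftshift(np.arange(shape[0]) - shape[0] // 2)
    xs = np.fft.ifftshift(np.arange(shape[1]) - shape[1] // 2)
    yy, xx = np.meshgrid(ys, xs, indexing="ij")
    psf = np.exp(-(yy**2 + xx**2) / (2.0 * sigma**2))
    return psf / psf.sum()

def wiener_deconvolve(image, psf, noise_to_signal=1e-3):
    """Frequency-domain Wiener filter: F = conj(H) * G / (|H|^2 + K)."""
    H = np.fft.fft2(psf)
    G = np.fft.fft2(image)
    F = np.conj(H) * G / (np.abs(H) ** 2 + noise_to_signal)
    return np.real(np.fft.ifft2(F))

# Synthetic frame: one point scatterer blurred by the optical response.
truth = np.zeros((64, 64))
truth[32, 32] = 1.0
psf = gaussian_psf(truth.shape, sigma=2.0)   # in practice set from wavelength and NA
blurred = np.real(np.fft.ifft2(np.fft.fft2(truth) * np.fft.fft2(psf)))
restored = wiener_deconvolve(blurred, psf)

peak_pos = np.unravel_index(np.argmax(restored), restored.shape)
print(peak_pos, blurred.max(), restored.max())
```

The constant `noise_to_signal` trades sharpening against noise amplification. On real frames it has to be tuned, which is one reason the cited method adds further stages.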

Problem: Scattering effects in NIR spectra of complex mixtures (e.g., food) impairing model performance.

- Issue: Multiplicative scattering effects caused by physical variations in samples skew the spectral data, violating the assumptions of linear regression models like PLS.

- Solution: Utilize a Spectral Ratio (SR) fusion method to correct for multiplicative effects. [27]

- Procedure:

- Calculate a ratio matrix from the raw spectral data through simple division.

- Fuse this SR algorithm with a conventional preprocessing technique such as SNV (the SR-SNV combination listed in Table 1). [27]

- Input the preprocessed data into your quantitative model (e.g., PLS, Random Forest).

- Advantage: The SR method does not rely on an "ideal spectrum" and can be generalized to process multispectral data, effectively correcting for dominant multiplicative scattering. [27]

The table below summarizes key quantitative findings from the cited research on the performance of various preprocessing techniques.

Table 1: Performance Metrics of Advanced Preprocessing Techniques

| Technique | Application Context | Key Performance Metrics | Reference |

|---|---|---|---|

| 2nd Derivative Spectroscopy (Rainbow R6) | Dissolution testing | Enables readings every 3 seconds; no sample filtration required; capable of dual-component analysis. | [29] |

| Iterative Shift Difference (ISDF) | SCGD-AES for metal elements (Zn, Fe, Mg, Cu, Ca) | Achieved calibration curve fitting accuracy R² > 0.995; reduced measurement error to ~5%. | [31] |

| Spectral Ratio Fusion (SR-SNV) | NIR analysis of meat | PLS models for moisture (R²=0.992), protein (R²=0.970), and fat (R²=0.994) in test sets. | [27] |

| Spatial-Frequency Deconvolution | iSCAT microscopy | Improved signal contrast by ~3-fold; reduced localization error by 20%. | [30] |

Detailed Experimental Protocols

Protocol 1: Applying the ISDF Algorithm for Baseline Correction in SCGD-AES

This protocol is adapted from Zheng et al. for correcting spectral interference and continuum background in Solution Cathode Glow Discharge Atomic Emission Spectroscopy (SCGD-AES). [31]

- Spectral Acquisition: Acquire raw emission spectra from the SCGD-AES system for your analyte (e.g., Zn, Fe, Mg, Cu, Ca) in aqueous solution.

- Windowing: Apply a windowing function to the raw spectrum, confining the analysis region to ±20 data points (±1.4 nm for an instrument resolution of 0.07 nm) around the target analyte wavelength.

- Iterative Shift and Difference:

- Apply a forward wavelength shift (Δλ) to the original spectrum (O) to create a shifted spectrum (S).

- Subtract the shifted spectrum from the original to generate a difference spectrum (D): D = O - S.

- This step minimizes background fluctuations.

- Profile Restoration: Restore the original spectral profile using a deconvolution operation on the difference spectrum (D).

- Iterative Optimization: Repeat the Iterative Shift and Difference and Profile Restoration steps, optimizing the shift step (Δλ) based on the accuracy of the calibration curve fit (e.g., highest R² value). This adaptive approach accommodates spectral differences across elements.
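The windowing and shift-and-difference steps can be sketched as below. This is only an illustration of D = O - S on a synthetic emission line: the deconvolution-based profile restoration and the optimization of the shift are not shown, and the peak shape, background level, and shift value are assumptions.

```python
import numpy as np

def shift_difference(spectrum: np.ndarray, shift: int) -> np.ndarray:
    """D = O - S, where S is the original spectrum O shifted forward by `shift` points."""
    original = spectrum[shift:]
    shifted = spectrum[:-shift]          # S[i] = O[i - shift]
    return original - shifted

# Analysis window of +/-20 data points around the analyte line (0.07 nm per point).
offsets = np.arange(-20, 21)
peak = 100.0 * np.exp(-0.5 * (offsets / 3.0) ** 2)   # synthetic emission line
background = 50.0                                    # flat continuum background

d_with_background = shift_difference(peak + background, shift=2)
d_peak_only = shift_difference(peak, shift=2)
print(np.max(np.abs(d_with_background - d_peak_only)))   # the flat background cancels
```

A background that varies slowly across the window is strongly suppressed for the same reason. The difference spectrum then has a derivative-like shape, which is why a restoration step follows in the protocol.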

Protocol 2: Implementing Robust Derivative Spectroscopy for Noisy Data

This protocol is based on the robust algorithm proposed for computing first and second-order derivative spectra from noisy data, formalized as an inverse problem. [26]

- Data Preparation: Begin with evenly spaced, noisy spectral data (e.g., absorbance or reflectance).

- Matrix Formulation: Using the fundamental theorem of calculus, formalize the relationship between the spectrum and its derivative via a Volterra-type integral equation. Convert this into a matrix equation.

- Regularization: Solve this inverse problem using a regularization technique (e.g., Tikhonov regularization) to obtain a stable, robust estimate of the first-order derivative spectrum. The "balancing principle" is used to select an optimal regularization parameter.

- Higher-Order Derivatives: To obtain the second-order derivative spectrum, use the algorithm in sequence on the estimated first-order derivative spectrum.

- Validation: This method has been tested successfully on synthetic spectral data contaminated with additive white Gaussian noise and real absorbance/reflectance data from water samples, showing superiority over finite-difference and Fourier-transform-based techniques. [26]
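A compact way to see the idea is to discretize the integral equation and solve it with Tikhonov regularization. The sketch below is an illustration, not the algorithm of [26]: the midpoint discretization and the first-difference smoothness penalty are assumptions, and `alpha` is fixed by hand instead of being chosen by the balancing principle.

```python
import numpy as np

def tikhonov_derivative(y: np.ndarray, h: float, alpha: float) -> np.ndarray:
    """Estimate dy/dx at interval midpoints from a regularized integral equation.

    Model: y[i] - y[0] = h * sum(u[0..i-1])  (discretized Volterra equation).
    Penalty: alpha * ||first difference of u||^2  (an assumed smoothness term).
    """
    n = len(y) - 1
    A = h * np.tril(np.ones((n, n)))       # cumulative integration operator
    D = np.diff(np.eye(n), axis=0)         # first-difference matrix, shape (n-1, n)
    rhs = A.T @ (y[1:] - y[0])
    return np.linalg.solve(A.T @ A + alpha * (D.T @ D), rhs)

def rmse(estimate, truth):
    return float(np.sqrt(np.mean((estimate - truth) ** 2)))

rng = np.random.default_rng(0)
x = np.linspace(0.0, 2.0 * np.pi, 201)
h = x[1] - x[0]
y = np.sin(x) + 0.01 * rng.normal(size=x.size)   # noisy synthetic "spectrum"
truth = np.cos(x[:-1] + h / 2)                   # exact derivative at midpoints

finite_diff = np.diff(y) / h
regularized = tikhonov_derivative(y, h, alpha=1.0)
rmse_fd, rmse_reg = rmse(finite_diff, truth), rmse(regularized, truth)
print(f"finite difference RMSE: {rmse_fd:.3f}, regularized RMSE: {rmse_reg:.3f}")
```

Applying `tikhonov_derivative` to its own output gives a second-derivative estimate, mirroring the sequential use described in the protocol.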

Signaling Pathways and Workflows

Derivative Spectrum Calculation Workflow

Diagram 1: Robust derivative estimation from noisy spectra.

RA-ICA Baseline Correction Logic

Diagram 2: RA-ICA baseline correction process.

Research Reagent Solutions

The table below lists key instruments and platforms mentioned in the research, which are essential for implementing the described techniques.

Table 2: Key Research Instruments and Platforms

| Item / Platform | Function / Application | Key Feature |

|---|---|---|

| Rainbow R6 System (Pion) | Real-time dissolution testing using 2nd Derivative UV-Vis spectroscopy. [29] | Allows up to 8 simultaneous experiments; no sample filtration; measures concentration as frequently as every 3 seconds. [29] |

| Dianthus Platform (NanoTemper) | High-throughput analysis of macromolecular interactions (protein-ligand, protein-protein). | Uses Spectral Shift (SpS) and Temperature-Related Intensity Change (TRIC); immobilization-free and mass-independent. [32] |

| SCGD-AES Setup | Liquid-phase elemental analysis for trace metals. | Enables direct transport of analytes from liquid to plasma without auxiliary gas; simple configuration for aqueous samples. [31] |

| iSCAT Microscopy Setup | Label-free tracking of nanoparticles and single molecules. | Overcomes limitations of fluorescence-based techniques (e.g., photo-bleaching); enables nanoscale visualization. [30] |

FAQs on Core Principles and Applications

Q1: What is the fundamental difference between high-resolution continuum-source AAS (HR-CS AAS) and Zeeman-effect background correction?

Both techniques combat spectral interferences in atomic absorption spectrometry, but they are implemented on different instrument designs and work differently.

- High-Resolution Continuum-Source AAS (HR-CS AAS): This technique utilizes a high-resolution double echelle monochromator with a spectral resolution (λ/Δλ) of approximately 110,000 or greater, coupled with a CCD array detector. It allows the analyst to view the entire spectral environment around the analytical line (e.g., over a range of ~0.3 nm). This capability directly reveals and helps avoid fine-structured molecular absorption interferences, such as those from SO2 molecules or nearby atomic lines from iron, which are indistinguishable on lower-resolution systems [33].

- Zeeman-Effect Background Correction (Zeeman AAS): This technique is used in Atomic Absorption Spectrometry. It applies a magnetic field to the atomizer, which splits the atomic absorption line. The background absorption is measured at the original wavelength while the analyte absorption is altered by the magnetic field. This allows for the specific subtraction of broad-band background, including that from certain molecules, without requiring high spectral resolution. It is particularly effective for controlling spectral interferences in complex matrices like marine sediments [33] [34].

Q2: When should a D2 lamp not be trusted for background correction in AAS?

A Deuterium (D2) lamp should be used with caution when the background absorption has a fine rotational structure. The D2 lamp technique measures total absorbance (analyte + background) with the hollow cathode lamp and then background absorbance with the D2 lamp at a slightly broader bandwidth. If the background is a fine-structured molecular band, the measurement with the D2 lamp's broader bandwidth may not accurately capture the precise background absorbance at the very narrow analyte line, leading to over- or under-correction [34]. In such cases, Zeeman-effect background correction, which measures background at the exact analytical wavelength, is more reliable [33] [34].

Q3: How can polyatomic interferences in ICP-MS be characterized and overcome?

Polyatomic interferences can be addressed through several strategies:

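- Collision/Reaction Cell (CRC) Technology: Gases like helium or hydrogen are introduced into the cell. Helium, an inert collision gas, slows the larger polyatomic ions more than the analyte ions so that they can be rejected by kinetic energy discrimination. Reactive gases such as hydrogen remove interferents by chemical reaction or shift the analyte or interferent to a different mass, thereby freeing the analyte signal [35].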

- High-Resolution Mass Spectrometry: Using a high-resolution sector-field ICP-MS can physically separate the analyte ion from the interfering polyatomic ion if their masses differ sufficiently [36].

- Interference Modeling: As demonstrated in the analysis of Selenium, potential polyatomic interferences can be quantitatively characterized through modeling, which informs the selection of the best analyte isotope (e.g., 77Se or 82Se) and CRC conditions for interference-free detection [35].

- Alternative Polyatomic Species: In some cases, deuterium analysis can be performed by monitoring heavier, deuterium-containing polyatomic species like ArD+ (mass 42) to avoid the low-mass range and isobaric interferences associated with D+ itself [36].
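Whether high resolution alone can separate an analyte from a polyatomic ion comes down to the resolving power m/Δm. The short calculation below illustrates this for two well-known argon-based overlaps, using approximate isotope masses. The chosen pairs and the helper name are illustrative and are not taken from [35] or [36].

```python
def required_resolution(analyte_mass: float, interferent_mass: float) -> float:
    """Resolving power m / delta_m needed to separate two ions of nearly equal mass."""
    return analyte_mass / abs(interferent_mass - analyte_mass)

# Approximate isotope masses in unified atomic mass units (u).
MASS = {"40Ar": 39.96238, "16O": 15.99491, "56Fe": 55.93494, "80Se": 79.91652}

r_fe = required_resolution(MASS["56Fe"], MASS["40Ar"] + MASS["16O"])   # 56Fe+ vs 40Ar16O+
r_se = required_resolution(MASS["80Se"], 2 * MASS["40Ar"])             # 80Se+ vs 40Ar2+

print(f"56Fe vs ArO: m/dm = {r_fe:.0f}")
print(f"80Se vs Ar2: m/dm = {r_se:.0f}")
```

The argon dimer on 80Se demands a far higher resolving power than ArO on 56Fe, which helps explain why selenium methods often rely on alternative isotopes and cell gases instead of mass resolution alone.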

Troubleshooting Guides

Troubleshooting Signal Drift in ICP-MS

Signal drift can significantly impact quantitative accuracy. The following table outlines common symptoms and solutions.

| Symptom | Probable Cause | Corrective Action |

|---|---|---|

| Drift Upwards | Poor conditioning of new or cleaned sampler/skimmer cones [37]. | Condition cones by aspirating a conditioning solution (e.g., 1% HNO3-0.5% HCl-5% Ethanol) before analysis [37] [38]. |

| Drift Downwards | Build-up of matrix (e.g., high total dissolved solids) on sample introduction components (nebulizer, torch injector, cones) [37]. | Perform maintenance: clean or replace the nebulizer, torch, and cones. Dilute samples or use a matrix-matching modifier [37]. |

| Unstable Drift (Up/Down) | Poor grounding, leading to static charge effects; or a loose gas connection [37]. | Inspect and ensure proper connection of the ground clip on the peristaltic pump. Check all gas connections for tightness [37]. |

| Drift in specific gas modes | Improperly purged cell gas lines or insufficient stabilization time [37]. | Purge the collision/reaction gas lines thoroughly and confirm the stabilization time in the method is adequate [37]. |

Troubleshooting Spectral Interferences in ETAAS

Electrothermal AAS is highly susceptible to spectral interferences in complex matrices.

| Symptom | Probable Cause | Corrective Action |

|---|---|---|

| High/Erratic Background | Fine-structured molecular absorption (e.g., from SO2 molecules near 280 nm) [33]. | Use Zeeman-effect background correction instead of D2 lamp. Utilize a high-resolution CS AAS to identify the interference and adjust the temperature program [33]. |

| Inaccurate Tl measurement near 276.8 nm | Strong absorption from a nearby Iron line at 276.752 nm at high atomization temperatures [33]. | Use a high-resolution spectrometer to resolve the lines, or implement a chemical modifier to separate the volatilization of analyte and interferent. |

| Persistent interference after chemical modification | Complex, unresolved molecular spectra or chloride interference [33]. | Employ a sophisticated algorithm (e.g., least squares fitting) to subtract a model spectrum of the interferent. Use a permanent modifier like Ruthenium with Ammonium Nitrate [33]. |

Detailed Experimental Protocols

Protocol: Determination of Thallium in Marine Sediments using HR-CS ETAAS

This protocol is adapted from the investigation of spectral interferences in marine sediment reference materials [33].

1. Instrumentation and Conditions:

- Spectrometer: High-resolution continuum-source AAS with a xenon short-arc lamp, a double echelle monochromator (resolution λ/Δλ >100,000), and a CCD array detector.

- Atomizer: Transversely heated graphite furnace.

- Wavelength: Thallium primary resonance line at 276.787 nm.

- Sample Introduction: Slurry sampling.

2. Reagents and Modifiers:

- Chemical Modifiers: Ammonium nitrate solution, or Ruthenium as a permanent modifier.

- Calibration Standards: Aqueous thallium standard solutions.

- Model Interferent Solution: Potassium hydrogen sulfate (KHSO4) solution for recording the SO2 model spectrum.

3. Procedure:

- Sample Preparation: Prepare a homogeneous slurry of the marine sediment reference material in a suitable diluent.

- Furnace Temperature Program:

- Drying Stage: Ramp to ~110°C to dry the sample.

- Pyrolysis Stage: Optimize temperature (e.g., ~400°C) to remove organic matter and some salts without losing volatile thallium species. The use of NH4NO3 modifier helps to volatilize NaCl as NH4Cl and NOCl.

- Atomization Stage: Atomize at >2000°C and record the absorption spectrum.

- Spectral Interference Correction:

- Atomize a pure KHSO4 solution to record the characteristic electron excitation spectrum of the SO2 molecule.

- Use a least-squares algorithm in the instrument software to subtract this model spectrum from the sample spectrum obtained during atomization.

- Quantification: Use a calibration curve established with aqueous standards. The characteristic mass for Tl should be approximately 15-16 pg.
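The least-squares subtraction in the interference-correction step can be sketched outside the instrument software as follows. The spectra are synthetic, and the fit window, the constant offset term, and the function name are assumptions. The idea is to fit the recorded model spectrum to pixels away from the analyte line and subtract the scaled model everywhere.

```python
import numpy as np

def subtract_model_spectrum(sample, model, fit_mask):
    """Fit sample ~ k * model + offset on `fit_mask` pixels, then subtract the fit everywhere."""
    design = np.column_stack([model, np.ones_like(model)])
    coeffs, *_ = np.linalg.lstsq(design[fit_mask], sample[fit_mask], rcond=None)
    return sample - design @ coeffs, coeffs

line = 276.787                                            # Tl line position in nm
wl = line + np.linspace(-0.15, 0.15, 301)                 # ~0.3 nm spectral window
model = 0.05 * (1 + np.sin(2 * np.pi * (wl - line) / 0.04))   # stand-in for the SO2 structure
analyte = 0.2 * np.exp(-0.5 * ((wl - line) / 0.003) ** 2)     # synthetic Tl absorption line
sample = 1.7 * model + 0.01 + analyte                     # interferent + offset + analyte

fit_mask = np.abs(wl - line) > 0.02                       # exclude pixels near the analyte line
corrected, coeffs = subtract_model_spectrum(sample, model, fit_mask)
print(coeffs, corrected.max())
```

Excluding the analyte pixels from the fit keeps the analyte signal from being absorbed into the scaling factor. With real data the model spectrum must be recorded under the same furnace conditions as the sample.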

Protocol: Quantitative Analysis of Selenium and Mercury in Biological Samples using LA-ICP-MS

This protocol summarizes the methodology for simultaneous, spatially resolved quantification [35].

1. Instrumentation:

- ICP-MS: Instrument equipped with a collision/reaction cell (CRC), coupled to a laser ablation system for solid sample introduction.

- Operating Mode: Either standard LA-ICP-MS or LA-ICP-MS/MS.

2. Method Optimization:

- CRC Optimization: Optimize the type and flow rate of the cell gas (e.g., He, H2) to suppress polyatomic interferences on Selenium isotopes.

- Isotope Selection: Monitor 77Se and 82Se after CRC optimization to achieve interference-free detection.

- Interference Modeling: Characterize the polyatomic interferences quantitatively through modeling and use the results to inform the CRC and isotope choices above [35].

- Calibration Strategy: Use matrix-matched solid standards. Note that signal behavior is matrix-dependent (organic-rich liver vs. protein-dominated gelatin), requiring a calibration strategy specific to the tissue type.

3. Procedure:

- Sample Preparation: Cryo-section biological tissues to obtain thin slices. Mount on slides without further treatment.

- Laser Ablation: Ablate the sample surface using a laser beam with micrometer-scale spatial resolution.

- ICP-MS Analysis: Transport the ablated material to the ICP plasma with argon carrier gas.

- Data Acquisition and Processing: Acquire data for 77Se and 82Se, and relevant Hg isotopes. Use the established calibration curve to quantify elements and generate bioimages showing Se/Hg biodistribution.

Research Reagent Solutions

The following table details key reagents used in the advanced methodologies discussed in this guide.

| Reagent Name | Function/Application | Technical Explanation |

|---|---|---|

| Ammonium Nitrate Modifier | Used in ETAAS for chloride removal [33]. | Volatilizes NaCl in samples (e.g., marine sediments) as NH4Cl and NOCl during the pyrolysis stage, preventing the formation of stable TlCl and its subsequent loss or interference. |

| Ruthenium Permanent Modifier | Enhances thermal stability of analytes in graphite furnace [33]. | Coated onto the graphite tube, it forms a refractory surface or intermetallic compounds with volatile analytes like Thallium, allowing for higher pyrolysis temperatures and better separation from the matrix. |

| Ethanol (as Matrix Markup) | Used in Matrix Overcompensation Calibration (MOC) for ICP-MS [38]. | Added at 5% (v/v) to both samples and standards to overwhelm and dominate the carbon-based matrix effects from organic samples, creating a consistent and correctable environment for quantification. |

| KHSO4 (Potassium Hydrogen Sulfate) | Used to model SO2 spectral interference [33]. | When atomized, it generates a predictable and reproducible SO2 molecular spectrum, which can be recorded and subtracted from sample spectra using algorithms to correct for this specific interference. |

| Collision/Reaction Cell Gases (He/H2) | Mitigation of polyatomic interferences in ICP-MS [35]. | In the CRC, helium collisions reduce the kinetic energy of the larger polyatomic ions so that they can be filtered out by kinetic energy discrimination, while hydrogen removes interferents by chemical reaction, thereby removing the interference on the analyte ion. |

Frequently Asked Questions

What is the main challenge when using neural networks for spectral data, and how can it be overcome? A primary challenge is that neural networks are often seen as "black boxes," making it difficult to interpret the relative influence of different input variables on the model's prediction [39]. This can be addressed by employing variable selection methods specifically designed for neural networks. Techniques like weight saliency estimation and variance-based approaches can prune redundant input variables, which leads to better model generalization, improved prediction ability, and more stable results [39] [40].

My spectral data shows baseline drift. What can I do before building a model? Baseline drift is a common issue caused by environmental noise and instrumental artifacts [8]. It is crucial to correct this in the preprocessing stage. One effective method is the adaptive smoothness parameter penalized least squares (asPLS) algorithm, which can automatically correct baseline drift in absorption spectra, thereby ensuring the reliability of subsequent quantitative analysis [12].

How do I handle severely overlapping spectral peaks from multiple components? For gases or components with severely overlapping absorption peaks, traditional peak detection methods fail [41]. A robust solution is to use a Convolutional Neural Network (CNN). A 1D-CNN can be trained to identify the central wavelengths of overlapped spectra directly. Research has demonstrated this approach achieves high-precision demodulation with a root-mean-square error as low as 1.819 pm [41].

What is the difference between Filter, Wrapper, and Embedded feature selection methods?

- Filter Methods: Use statistical measures (e.g., correlation, mutual information) to select features independently of the machine learning model. They are fast and model-agnostic but may miss interactions between features [42] [43].

- Wrapper Methods: Use the performance of a specific predictive model to evaluate feature subsets. They are computationally expensive but can yield high-performing feature sets tuned to that model. Recursive Feature Elimination is a common example [42] [43].

- Embedded Methods: Perform feature selection as an integral part of the model training process. Examples include LASSO and the feature importance scores in tree-based models. They offer a good balance between efficiency and effectiveness [42] [43].
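
As a small illustration of the filter category described above, the sketch below ranks spectral variables by the absolute Pearson correlation with the target and keeps the top `k`. The function names and the toy data are hypothetical. The example scores each variable on its own, so it has the limitation noted for filter methods: interactions between variables are not considered.

```python
from statistics import mean

def pearson(a, b):
    """Pearson correlation of two equal-length sequences (0.0 if either is constant)."""
    ma, mb = mean(a), mean(b)
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    var_a = sum((x - ma) ** 2 for x in a)
    var_b = sum((y - mb) ** 2 for y in b)
    if var_a == 0 or var_b == 0:
        return 0.0
    return cov / (var_a * var_b) ** 0.5

def filter_select(spectra, target, k):
    """Return the indices of the k variables most correlated with the target."""
    n_vars = len(spectra[0])
    scores = []
    for j in range(n_vars):
        column = [row[j] for row in spectra]
        scores.append((abs(pearson(column, target)), j))
    scores.sort(reverse=True)
    return sorted(j for _, j in scores[:k])

# Toy data: 5 samples x 4 wavelengths; columns 1 and 3 track the concentration
spectra = [
    [0.9, 0.10, 0.5, 1.0],
    [0.2, 0.21, 0.5, 2.1],
    [0.7, 0.29, 0.5, 2.9],
    [0.1, 0.42, 0.5, 4.2],
    [0.8, 0.50, 0.5, 5.0],
]
concentration = [1.0, 2.0, 3.0, 4.0, 5.0]
selected = filter_select(spectra, concentration, k=2)
```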

Troubleshooting Guides

Problem: Poor Model Generalization and Overfitting

Symptoms: Your model performs well on training data but poorly on validation or new, unseen data.

| Possible Cause | Solution |
|---|---|
| Too many redundant input variables. | Apply variable selection pruning algorithms for neural networks. Start with a deliberately large number of inputs, then prune the least relevant ones to remove redundancies and reduce the risk of chance correlation [39]. |
| Insufficient or low-quality training data. | For overlapping spectra, use innovative data set construction. For serial FBG networks, inscribe superimposed FBGs where one grating creates the overlapped data and the other marks the central wavelength, providing a reliable training set [41]. |
| Suboptimal preprocessing. | Implement a context-aware adaptive preprocessing pipeline. This includes cosmic ray removal, baseline correction, and scattering correction to enhance data quality before model training [8]. |

Problem: Inaccurate Quantitative Analysis with Overlapping Signals

Symptoms: Inability to accurately identify or quantify individual components in a mixture due to spectral interference.

| Possible Cause | Solution |
|---|---|
| Failure to distinguish between different types of spectral overlaps. | Categorize the problem first. For distinct absorption peaks, use curve-fitting methods on the peak and adjacent troughs. For severely overlapping peaks, use a wavelength selection strategy based on variable impact, then model with a backpropagation (BP) neural network [12]. |
| Limited integration of multi-source data. | Employ an advanced deep learning fusion architecture such as the Multi-energy State Attention Fusion Network (MSAF-Net). This network adaptively weights data from different energy states, enhancing meaningful peaks and suppressing background for superior quantitative analysis [18]. |
| Using a calibration method whose components ignore the property of interest (e.g., PCR). | Use a Partial Least Squares (PLS) regression model. PLS finds components that maximize the covariance between spectral data (X) and the property of interest (y), making it more powerful than PCR for modeling subtle, overlapping spectral changes [44]. |
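
The PLS row above can be illustrated with a compact single-response PLS (PLS1) fitted by the NIPALS procedure. This is a sketch under stated assumptions rather than a production implementation: the function names `pls1_fit` and `pls1_predict`, the synthetic two-band mixture, and the noise level are all invented for the example. Each weight vector is taken along `X.T @ y`, which is the direction in X space of maximum covariance with y.

```python
import numpy as np

def pls1_fit(X, y, n_components):
    """Fit PLS1 by NIPALS; returns (coef, x_mean, y_mean)."""
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float)
    x_mean, y_mean = X.mean(axis=0), y.mean()
    Xk, yk = X - x_mean, y - y_mean
    weights, loadings, y_loadings = [], [], []
    for _ in range(n_components):
        w = Xk.T @ yk                  # direction of maximum covariance with y
        w = w / np.linalg.norm(w)
        t = Xk @ w                     # scores for this component
        tt = t @ t
        p = Xk.T @ t / tt              # X loadings
        c = (yk @ t) / tt              # y loading
        Xk = Xk - np.outer(t, p)       # deflate X
        yk = yk - c * t                # deflate y
        weights.append(w)
        loadings.append(p)
        y_loadings.append(c)
    W, P, q = np.array(weights).T, np.array(loadings).T, np.array(y_loadings)
    coef = W @ np.linalg.solve(P.T @ W, q)
    return coef, x_mean, y_mean

def pls1_predict(X, coef, x_mean, y_mean):
    return (np.asarray(X, dtype=float) - x_mean) @ coef + y_mean

# Synthetic mixture of two strongly overlapping Gaussian bands (hypothetical data)
rng = np.random.default_rng(0)
axis = np.linspace(0.0, 1.0, 50)
band_a = np.exp(-0.5 * ((axis - 0.45) / 0.1) ** 2)
band_b = np.exp(-0.5 * ((axis - 0.55) / 0.1) ** 2)
conc = rng.uniform(0.0, 1.0, size=(25, 2))
X = conc @ np.vstack([band_a, band_b]) + rng.normal(0.0, 1e-3, size=(25, 50))
y = conc[:, 0]                         # quantify component A only

model = pls1_fit(X[:20], y[:20], n_components=2)
y_pred = pls1_predict(X[20:], *model)
max_error = float(np.max(np.abs(y_pred - y[20:])))
```

In practice the number of components would be chosen by cross-validation. It is fixed at 2 here only because the synthetic mixture has two components.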

Experimental Protocols & Data

Protocol 1: Variable Selection for a Neural Network using Pruning

This methodology is adapted from studies on variable selection in QSAR and multivariate calibration [39].

- Initial Model Setup: Begin by training your neural network with a deliberately large set of input variables (e.g., all spectral wavelengths or principal component scores).

- Pruning Algorithm Application: Apply one or more pruning algorithms to estimate the importance of each input variable. The "Optimal Brain Surgeon" saliency estimation or variance-based methods are recommended over simple Hinton diagrams for more stable and reliable results [39].

- Variable Removal: Remove the input variables identified as least relevant or redundant.

- Model Retraining: Retrain the neural network using the pruned subset of variables.

- Performance Validation: Validate the new model's prediction ability on an independent test set. Improved generalization is typically observed after pruning redundant inputs [39] [40].
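
The prune, retrain, and validate loop in this protocol can be sketched as follows. To keep the example short and runnable, an ordinary least squares model stands in for the neural network, and the saliency is a simple proxy (absolute coefficient times input standard deviation). This is not the Optimal Brain Surgeon or variance-based estimator from [39]; the function names and synthetic data are hypothetical.

```python
import numpy as np

def fit_linear(X, y):
    """Least squares fit with intercept (stand-in for network training)."""
    A = np.column_stack([X, np.ones(len(X))])
    coef, *_ = np.linalg.lstsq(A, y, rcond=None)
    return coef

def rmse(X, y, coef):
    A = np.column_stack([X, np.ones(len(X))])
    return float(np.sqrt(np.mean((A @ coef - y) ** 2)))

def prune_inputs(X_train, y_train, X_val, y_val, min_inputs=1):
    """Drop the least salient input while the validation error does not get worse."""
    active = list(range(X_train.shape[1]))
    coef = fit_linear(X_train, y_train)
    best = rmse(X_val, y_val, coef)
    while len(active) > min_inputs:
        # Proxy saliency: |coefficient| scaled by the spread of the input
        saliency = np.abs(coef[:-1]) * X_train[:, active].std(axis=0)
        weakest = int(np.argmin(saliency))
        candidate = [v for i, v in enumerate(active) if i != weakest]
        cand_coef = fit_linear(X_train[:, candidate], y_train)   # retrain
        cand_err = rmse(X_val[:, candidate], y_val, cand_coef)   # validate
        if cand_err > best:
            break                                                # pruning hurt; stop
        active, coef, best = candidate, cand_coef, cand_err
    return active, best

# Synthetic data: only inputs 0 and 2 carry signal, the rest are noise
rng = np.random.default_rng(1)
X = rng.normal(size=(80, 6))
y = 2.0 * X[:, 0] - 1.5 * X[:, 2] + rng.normal(0.0, 0.1, size=80)
kept, val_rmse = prune_inputs(X[:40], y[:40], X[40:], y[40:])
```

The validation set here guides pruning, so a separate independent test set would still be needed for the final performance check described in the last step.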

Protocol 2: Demodulating Overlapping Spectra with a 1D-CNN

This protocol is based on work for demodulating overlapping Fiber Bragg Grating (FBG) spectra [41].

- Data Set Construction: For serial sensor networks where obtaining separate spectra is impossible, create a training set using superimposed FBGs. One FBG generates the overlapped spectral data, while a second, co-located FBG serves as a precise marker for the central wavelength shift.

- Data Preprocessing: Normalize the spectral data. The central wavelength of the marker FBG is used as the label for the overlapped spectrum.

- Model Architecture: Construct a one-dimensional Convolutional Neural Network (1D-CNN). The architecture should include:

- Input layer for the spectral signal.

- Several 1D convolutional layers with ReLU activation to extract local features.

- Pooling layers for down-sampling.

- Fully connected layers at the end for regression.

- Model Training & Evaluation: Train the network to predict the central wavelength directly from the overlapped spectrum. Evaluate performance using Root Mean Square Error (RMSE). The cited study reports an RMSE of 1.819 pm with a computation time under 53.86 ms [41].
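
The layer sequence listed above can be sketched as a plain NumPy forward pass. This only illustrates how data flows through convolution, ReLU, pooling, and fully connected layers. The weights are random and untrained, training is omitted, and the layer sizes (64-point input, 4 and 8 filters, kernel size 5, 16 hidden units) are assumptions rather than the architecture used in [41]. A real model would normally be built and trained in a deep learning framework.

```python
import numpy as np

def conv1d(x, kernels, bias):
    """Valid 1D convolution. x: (c_in, n); kernels: (c_out, c_in, k)."""
    c_out, _, k = kernels.shape
    n_out = x.shape[1] - k + 1
    out = np.empty((c_out, n_out))
    for i in range(n_out):
        window = x[:, i:i + k]                                   # (c_in, k)
        out[:, i] = np.tensordot(kernels, window, axes=([1, 2], [0, 1])) + bias
    return out

def relu(x):
    return np.maximum(x, 0.0)

def max_pool(x, size=2):
    """Non-overlapping max pooling along the spectral axis."""
    n = (x.shape[1] // size) * size
    return x[:, :n].reshape(x.shape[0], -1, size).max(axis=2)

def forward(spectrum, params):
    h = spectrum[np.newaxis, :]                                  # (1, n) single channel
    for kernels, bias in params["conv"]:
        h = max_pool(relu(conv1d(h, kernels, bias)))             # conv -> ReLU -> pool
    h = h.ravel()
    w_hidden, b_hidden = params["hidden"]
    h = relu(w_hidden @ h + b_hidden)                            # fully connected
    w_out, b_out = params["out"]
    return float(w_out @ h + b_out)                              # regression output

def rmse(predicted, actual):
    predicted = np.asarray(predicted, dtype=float)
    actual = np.asarray(actual, dtype=float)
    return float(np.sqrt(np.mean((predicted - actual) ** 2)))

# Random (untrained) weights: 64 -> conv 60 -> pool 30 -> conv 26 -> pool 13
rng = np.random.default_rng(0)
params = {
    "conv": [
        (rng.normal(0.0, 0.1, (4, 1, 5)), np.zeros(4)),
        (rng.normal(0.0, 0.1, (8, 4, 5)), np.zeros(8)),
    ],
    "hidden": (rng.normal(0.0, 0.1, (16, 8 * 13)), np.zeros(16)),
    "out": (rng.normal(0.0, 0.1, 16), 0.0),
}
spectrum = rng.random(64)            # stand-in for a normalized overlapped spectrum
prediction = forward(spectrum, params)
```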

Performance Comparison of Advanced Modeling Techniques

The table below summarizes quantitative data from various studies for easy comparison.

| Model / Technique | Application Context | Key Performance Metric (Value) | Reference |
|---|---|---|---|
| Multi-energy State Attention Fusion Network (MSAF-Net) | Quantitative XRF Elemental Analysis | R²: 0.9832 (Si), 0.9844 (Al), 0.9891 (Fe); Mean R² for heavy metals: >0.98 | [18] |
| 1D Convolutional Neural Network (1D-CNN) | Demodulating Overlapping FBG Spectra | Root-Mean-Square Error: 1.819 pm | [41] |
| BP Neural Network with Variable Selection | FTIR Analysis of Coal Mine Gases | Detection Limits: 0.5 ppm (CH₄), 1 ppm (CO), 0.2 ppm (C₂H₂) | [12] |
| PLS Discriminant Analysis (PLS-DA) | Supervised Discrimination & Classification | -- (Widely regarded as more performant than PCR for calibration) | [44] |

Research Reagent Solutions

This table lists key computational tools and analytical techniques essential for experiments in this field.

| Item | Function in Research |
|---|---|
| Partial Least Squares (PLS) Regression | A core chemometric method for building multivariate calibration models, especially when variables (X) are correlated with the target property (y). It is the most used method in chemometrics [44]. |
| Pruning Algorithms (e.g., Optimal Brain Surgeon) | Algorithms used to identify and remove unimportant weights or input variables in a neural network, improving model interpretability and generalization ability [39]. |
| Superimposed Fiber Bragg Gratings (FBGs) | A physical data construction technique where multiple gratings are inscribed at the same location to generate reliable labeled data for training models on severely overlapping spectra [41]. |
| Adaptive Smoothness Parameter Penalized Least Squares (asPLS) | A preprocessing algorithm used to correct for baseline drift in spectral data, which is critical for ensuring accurate quantitative analysis [12]. |
| Multi-energy State Attention Fusion Network (MSAF-Net) | An advanced deep learning architecture designed to integrate spectral data from multiple energy states, enhancing peaks and suppressing background for superior quantitative analysis [18]. |

Workflow for Spectral Analysis

[Figure: Spectral Analysis Workflow. A high-level workflow for tackling spectral analysis problems, integrating the concepts discussed in this guide.]

NN Variable Selection Process

[Figure: NN Variable Selection Process. The process for selecting the most relevant variables in a neural network model.]


Troubleshooting Guides & FAQs

How can I identify and minimize matrix effects in LC-ESI-MS bioanalysis?

Matrix effects occur when co-eluting compounds from the biological sample suppress or enhance the ionization of your analyte, compromising quantitative accuracy [45] [46].

Troubleshooting Steps:

- Post-column infusion test: Continuously infuse your analyte into the MS detector while injecting a blank, extracted matrix sample. A deviation from the stable baseline indicates regions of ionization suppression or enhancement [45].

- Post-extraction spike assay: Spike the analyte into a blank matrix extract (that is, after extraction) and compare its MS response with that of a pure standard solution at the same concentration. The difference indicates the relative matrix effect [45] [46].

- Investigate sample preparation: Improve sample clean-up by switching techniques (e.g., from protein precipitation to solid-phase extraction or liquid-liquid extraction) to remove more phospholipids and other interfering matrix components [45] [47].
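
The post-extraction spike comparison above is often summarized as a matrix factor: the response in spiked blank extract divided by the response in pure solvent at the same concentration. The sketch below computes it for six matrix lots, along with the lot-to-lot coefficient of variation. The peak areas and function names are hypothetical, and no acceptance limits are implied.

```python
from statistics import mean, stdev

def matrix_factor(post_spike_area, neat_area):
    """Ratio below 1 suggests ion suppression; above 1 suggests enhancement."""
    return post_spike_area / neat_area

def summarize(post_spike_areas, neat_area):
    factors = [matrix_factor(a, neat_area) for a in post_spike_areas]
    mean_mf = mean(factors)
    cv_percent = 100.0 * stdev(factors) / mean_mf   # lot-to-lot variability
    return factors, mean_mf, cv_percent

# Hypothetical peak areas: analyte spiked into extracts of six blank plasma lots
post_spike_areas = [8200, 7900, 8500, 8100, 7700, 8300]
neat_area = 10000                                   # same concentration in pure solvent

factors, mean_mf, cv_percent = summarize(post_spike_areas, neat_area)
```

When an internal standard is used, the same ratio can be computed for the internal standard, and the analyte factor divided by it gives an internal-standard-normalized value.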

Preventive Measures:

- Use stable isotope-labeled internal standards (SIL-IS): These internal standards co-elute with the analyte and experience nearly identical matrix effects, providing robust correction [46] [47].

- Optimize chromatography: Improve the separation of the analyte from the region of ion suppression by adjusting the mobile phase, gradient, or column to shift the analyte's retention time [46].

- Dilute the sample: A simple dilution of the final sample extract can reduce the concentration of interfering matrix components, mitigating their impact, provided the method's sensitivity allows for it [46].

What strategies resolve signal interference between a drug and its metabolite?

Structural similarities often cause a drug and its metabolite to co-elute and interfere with each other's ionization, leading to inaccurate quantification. This is a prevalent yet frequently overlooked issue [46].

Diagnosis: Perform a stepwise dilution assay. Prepare mixed standards of the drug and metabolite at expected concentrations and serially dilute them. A non-linear response in peak area versus concentration indicates the presence of ionization interference [46].
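
One way to read a stepwise dilution series is to compare the response factor (peak area per unit concentration) at each level with the most dilute level, where interference should be weakest. The sketch below flags a series when any level deviates by more than a chosen tolerance. The 15% tolerance, the function names, and the data are assumptions for illustration only.

```python
def response_factors(concentrations, areas):
    """Peak area per unit concentration at each dilution level."""
    return [area / conc for conc, area in zip(concentrations, areas)]

def shows_interference(concentrations, areas, tolerance=0.15):
    """True if any response factor drifts from that of the most dilute level."""
    factors = response_factors(concentrations, areas)
    lowest = min(range(len(concentrations)), key=lambda i: concentrations[i])
    reference = factors[lowest]
    return any(abs(f / reference - 1.0) > tolerance for f in factors)

# Hypothetical twofold serial dilution of a drug in the presence of its metabolite
concentrations = [100.0, 50.0, 25.0, 12.5, 6.25]
suppressed_areas = [7000.0, 4200.0, 2350.0, 1230.0, 625.0]   # response sags at high levels
linear_areas = [10000.0, 5000.0, 2500.0, 1250.0, 625.0]      # proportional response

flag_suppressed = shows_interference(concentrations, suppressed_areas)
flag_linear = shows_interference(concentrations, linear_areas)
```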

Resolution Methods:

- Chromatographic separation: The most effective approach is to optimize the LC method to achieve baseline separation of the drug and its metabolite, preventing them from entering the ion source simultaneously [46].

- Sample dilution: If sensitivity permits, diluting the sample can reduce the absolute amount of the interfering species entering the MS, minimizing the competition for charge [46].

- SIL-IS correction: Using a stable isotope-labeled internal standard for the drug and, if available, for the metabolite, can correct for the interference, as the internal standard will be affected in the same way as the analyte [46].

My calibration curve is non-linear. What could be the cause?

Non-linearity can stem from the detector being saturated at high concentrations or from ionization effects.

Troubleshooting Steps:

- Check for detector saturation: Dilute a high-concentration standard. If the response becomes linear after dilution, the detector was saturated. Reduce the injection volume or dilute samples in the upper calibration range [46].

- Assess for ionization interference: As described above, signal interference from a metabolite or other co-administered drug can induce non-linearity. Perform the dilution assay to investigate this [46].

- Review internal standard selection: Ensure the internal standard is appropriate. It should be chemically similar to the analyte so that it experiences the same matrix effects, and its own signal should be free of interference from other sample components. A stable isotope-labeled standard is often the best choice [45] [47].

Experimental Protocol for Assessing Matrix Effects & Ionization Interference

This protocol provides a detailed methodology for evaluating two critical challenges in bioanalytical LC-MS/MS.

Objective: To quantitatively assess matrix effects (ion suppression/enhancement) and specific ionization interference between a drug and its metabolite.

Materials:

- LC-ESI-MS/MS System: Triple quadrupole or similar mass spectrometer.

- Analytes: Drug and metabolite reference standards.

- Internal Standard: Stable isotope-labeled analog of the drug (recommended) or a structurally similar compound.

- Biological Matrix: Blank plasma (or other relevant matrix) from at least 6 different sources [45].

- Solvents: HPLC-grade methanol, acetonitrile, and water.

Procedure:

Part A: Post-Column Infusion for Matrix Effect Visualization

- Prepare analyte solution: Create a solution of the analyte at a concentration that gives a steady signal.

- Set up infusion: Use a T-union to combine the LC effluent with the analyte solution, which is infused post-column at a constant flow rate via a syringe pump, before the combined flow enters the ion source.

- Run LC-MS method: Inject an extracted blank plasma sample and monitor the analyte's signal. Any dip (suppression) or peak (enhancement) in the baseline indicates a matrix effect and its chromatographic location [45].
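
Once the infusion trace has been exported, the dips and peaks described in Part A can be located programmatically. The sketch below takes the median of the trace as the steady baseline and reports contiguous time windows where the signal deviates by more than a chosen fraction. The 20% threshold, the function name, and the trace values are hypothetical.

```python
from statistics import median

def find_matrix_effect_regions(times, signal, threshold=0.2):
    """Return (kind, start_time, end_time) for each contiguous deviating window."""
    baseline = median(signal)
    regions = []
    current = None                      # [kind, start_time, end_time] while inside a window
    for t, s in zip(times, signal):
        deviation = (s - baseline) / baseline
        if deviation < -threshold:
            kind = "suppression"
        elif deviation > threshold:
            kind = "enhancement"
        else:
            kind = None
        if current and current[0] == kind:
            current[2] = t              # extend the open window
        else:
            if current:
                regions.append(tuple(current))
            current = [kind, t, t] if kind else None
    if current:
        regions.append(tuple(current))
    return regions

# Hypothetical infusion trace sampled every 0.5 min after injecting blank extract
times = [i * 0.5 for i in range(10)]
signal = [1000, 1010, 600, 550, 990, 1005, 1300, 1000, 995, 1002]
regions = find_matrix_effect_regions(times, signal)
```

Comparing the reported windows with the analyte's retention time shows whether the analyte elutes inside a suppression or enhancement zone.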

Part B: Quantitative Assessment via Post-Extraction Spiking

- Prepare samples:

- Set A (Extracted Samples): Spike the analyte and IS into blank plasma (n=6 from different sources). Process the samples through the entire sample preparation method.

- Set B (Post-Extraction Spikes): Process blank plasma samples from the same 6 sources without the analyte or IS. After processing, reconstitute the dried extracts with a solution containing the analyte and IS at the same concentration as Set A.