Analytical vs. Functional Sensitivity: A Guide for Researchers and Drug Developers

Abstract

This article clarifies the critical distinction between analytical sensitivity and functional sensitivity, two fundamental but often confused performance parameters in assay development and validation. Written for researchers, scientists, and drug development professionals, it explores the foundational definitions, methodological approaches for determination, common pitfalls in troubleshooting, and current standards for validation. By synthesizing these concepts, the article provides a comprehensive framework for selecting and optimizing assays so that they are fit for purpose in both research and clinical applications, ultimately improving the reliability of the data that support therapeutic development.

Core Concepts: Defining Analytical and Functional Sensitivity

What is Analytical Sensitivity? Understanding the Detection Limit

Analytical sensitivity is the smallest amount of an analyte that can be reliably distinguished from a blank sample. It is a fundamental performance parameter for any quantitative analytical method. The term is often used interchangeably with the Limit of Detection (LoD), but it is distinct from functional sensitivity, which describes the lowest analyte concentration measurable with the precision and accuracy needed for clinical use. This guide covers definitions, calculation methods, and experimental protocols for determining analytical sensitivity. It also explains how analytical sensitivity differs from functional sensitivity, so that data generated in drug development and scientific research remain reliable.

Analytical sensitivity, in its most common and practical usage, is defined as the lowest concentration of an analyte that can be consistently distinguished from a sample containing none of the analyte (a blank) [1] [2]. This concept is central to characterizing the performance of analytical procedures, from clinical chemistry to molecular diagnostics and environmental monitoring. The term is often used synonymously with the Limit of Detection (LoD) or Detection Limit [3] [4]. The LoD is formally described as the lowest signal, or the corresponding quantity to be determined, that can be observed with a sufficient degree of confidence or statistical significance [5]. It represents a threshold for reliable detection, though not necessarily for precise quantification.

It is vital to differentiate this concept from calibration sensitivity. Pure calibration sensitivity refers simply to the slope of the analytical calibration curve (S = dy/dx), indicating how strongly the measurement signal responds to a change in analyte concentration [6] [2]. A steeper slope signifies a more sensitive method. However, this definition does not account for the scatter of data points around the calibration curve. A method can have a very steep slope (high calibration sensitivity) but also high imprecision (noise), making it poor at detecting low analyte levels. Therefore, the more robust definition of analytical sensitivity incorporates this element of uncertainty, defined as the ratio of the calibration curve's slope to the standard deviation of the measured signal at a given concentration [2]. This provides a measure of the method's ability to distinguish between two different concentration values.
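
The difference between the two definitions can be made concrete with a small numerical sketch. The calibration points and replicate signals below are hypothetical values chosen for illustration. The slope is estimated by ordinary least squares (an assumption; other curve fits are possible). The helper names `calibration_slope` and `analytical_sensitivity` are illustrative and are not taken from any guideline.

```python
from statistics import stdev


def calibration_slope(concentrations, signals):
    """Ordinary least-squares slope S = dy/dx (calibration sensitivity)."""
    n = len(concentrations)
    mean_x = sum(concentrations) / n
    mean_y = sum(signals) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(concentrations, signals))
    sxx = sum((x - mean_x) ** 2 for x in concentrations)
    return sxy / sxx


def analytical_sensitivity(slope, replicate_signals):
    """Slope divided by the SD of replicate signals measured at one concentration."""
    return slope / stdev(replicate_signals)


# Hypothetical calibration data (concentration in arbitrary units).
conc = [0, 1, 2, 3, 4]
method_a = [0.1, 2.1, 4.1, 6.1, 8.1]     # shallower slope (2.0 signal units per unit)
method_b = [0.5, 4.5, 8.5, 12.5, 16.5]   # steeper slope (4.0 signal units per unit)

# Hypothetical replicate signals measured near the middle of each curve.
reps_a = [3.9, 4.0, 4.1]   # SD = 0.1
reps_b = [7.0, 8.0, 9.0]   # SD = 1.0

slope_a = calibration_slope(conc, method_a)
slope_b = calibration_slope(conc, method_b)
gamma_a = analytical_sensitivity(slope_a, reps_a)
gamma_b = analytical_sensitivity(slope_b, reps_b)

print(f"Method A: slope={slope_a:.2f}, analytical sensitivity={gamma_a:.1f}")
print(f"Method B: slope={slope_b:.2f}, analytical sensitivity={gamma_b:.1f}")
```

With these hypothetical numbers, method B has twice the calibration sensitivity of method A. However, it has only one-fifth of A's analytical sensitivity, because its replicate signals are ten times noisier.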

Confusion in terminology is common, particularly between analytical sensitivity and diagnostic sensitivity. Diagnostic sensitivity is a clinical performance characteristic that measures a test's ability to correctly identify individuals who have a disease (true positive rate) [2]. This guide focuses exclusively on the analytical performance parameters relevant to method validation.

Distinguishing Analytical Sensitivity from Functional Sensitivity

A critical understanding in assay performance characterization is the difference between the capability to merely detect an analyte and the ability to reliably measure it at low concentrations. This distinction is captured by comparing the Limit of Detection (LoD), representing analytical sensitivity, with the Limit of Quantitation (LoQ), for which functional sensitivity is a common, specific application.

Table 1: Comparison of Analytical Sensitivity (LoD) and Functional Sensitivity

| Feature | Analytical Sensitivity (Limit of Detection) | Functional Sensitivity |
|---|---|---|
| Core Definition | Lowest analyte concentration distinguishable from a blank [1] | Lowest concentration measurable with clinically acceptable precision (e.g., CV ≤ 20%) [1] [7] |
| Primary Focus | Signal vs. noise; detection certainty [5] | Measurement precision and accuracy [1] |
| Statistical Basis | Based on mean and standard deviation of blank and low-concentration samples (e.g., LoB + 1.645 * SD_low) [3] [7] | Based on long-term imprecision (CV) profiles at low concentrations [1] |
| Typical Use Case | Determining if an analyte is present or absent [3] | Providing a quantitative result reliable enough for clinical or research decision-making [1] [2] |
| Relationship | The LoD is typically lower than the functional sensitivity/LoQ [7] | The functional sensitivity/LoQ is at a higher concentration than the LoD [7] |

Functional sensitivity was developed to address the real-world limitation of analytical sensitivity. While an assay can signal the presence of a substance at the LoD, the imprecision at this concentration is often so great that the result lacks clinical or research utility [1]. For example, a result at the LoD may not be reproducible. Functional sensitivity is therefore defined as the lowest concentration at which an assay can report clinically useful results, typically specified by an acceptable inter-assay coefficient of variation (CV), most commonly 20% [1] [2] [7]. This concept emphasizes that reproducibility, not just detectability, determines the practical lower limit of an assay's reporting range.

Statistical Definitions and Calculation Methods

The accurate determination of analytical sensitivity (LoD) relies on a structured statistical framework that accounts for the distribution of signals from blank and low-concentration samples. Key concepts in this framework include the Limit of Blank (LoB) and the Limit of Detection (LoD) itself.

Table 2: Key Statistical Parameters for Determining LoD

| Parameter | Description | Statistical Formula |
|---|---|---|
| Limit of Blank (LoB) | The highest apparent analyte concentration expected to be found when replicates of a blank sample are tested. It represents the 95th percentile of blank measurements [7]. | LoB = mean_blank + 1.645 * SD_blank (assumes a Gaussian distribution of blank results) [3] [7] |
| Limit of Detection (LoD) | The lowest analyte concentration likely to be reliably distinguished from the LoB. It is the concentration at which a measured result has a 95% probability of exceeding the LoB [7] [8]. | LoD = LoB + 1.645 * SD_low (SD of a low-concentration sample) [3] [7] |
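
The following is a minimal sketch of the parametric calculations in Table 2. It assumes Gaussian results and replicate values already expressed in concentration units. The replicate lists are hypothetical and deliberately short, whereas the protocols discussed in this article call for 20 to 60 replicates per sample. The function names are illustrative.

```python
from statistics import mean, stdev

Z_95 = 1.645  # one-sided 95th percentile of the standard normal distribution


def limit_of_blank(blank_results):
    """LoB = mean_blank + 1.645 * SD_blank (parametric, Gaussian assumption)."""
    return mean(blank_results) + Z_95 * stdev(blank_results)


def limit_of_detection(lob, low_sample_results):
    """LoD = LoB + 1.645 * SD_low, where SD_low comes from a low-concentration sample."""
    return lob + Z_95 * stdev(low_sample_results)


# Hypothetical replicate results (concentration units).
blank = [0.0, 0.1, 0.2]          # mean 0.1, SD 0.1
low_sample = [0.4, 0.5, 0.6]     # SD 0.1

lob = limit_of_blank(blank)
lod = limit_of_detection(lob, low_sample)
print(f"LoB = {lob:.4f}, LoD = {lod:.4f}")
```

When blank results are clearly non-Gaussian (for example, many results reported as exactly zero), a percentile-based LoB would be more appropriate than the mean + 1.645 SD formula assumed here.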

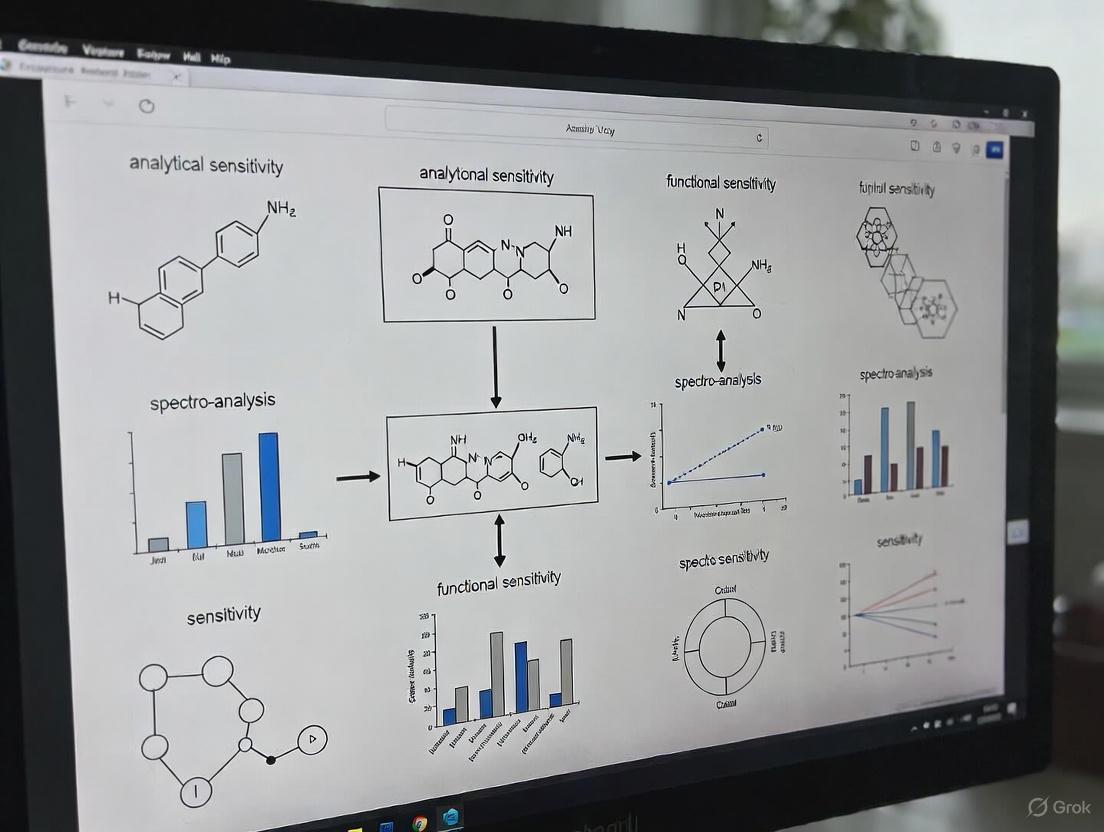

The following diagram illustrates the statistical relationship and decision process involving the Blank, LoB, and LoD.

The calculation process accounts for two types of statistical errors:

- Type I Error (α - False Positive): The probability that a blank sample produces a signal above the LoB. By definition, this is 5% [7].

- Type II Error (β - False Negative): The probability that a sample containing analyte at the LoD produces a signal below the LoB. The formulas above also set this probability at 5% [7].
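
Under Gaussian assumptions, both error rates can be checked directly. The sketch below uses Python's `statistics.NormalDist` and rests on three further assumptions:

- results from a sample at the LoD are unbiased (centred on the LoD);
- both SDs are known exactly, which real replicate data only approximate;
- the inputs are hypothetical: blank mean 0.1, blank SD 0.1, low-sample SD 0.1.

```python
from statistics import NormalDist

Z_95 = 1.645


def false_positive_rate(lob, mean_blank, sd_blank):
    """Type I error: probability that a blank result exceeds the LoB."""
    return 1.0 - NormalDist(mean_blank, sd_blank).cdf(lob)


def false_negative_rate(lob, lod, sd_low):
    """Type II error: probability that a result from a sample at the LoD falls below the LoB.

    Assumes results at the LoD are Gaussian and centred on the LoD itself.
    """
    return NormalDist(lod, sd_low).cdf(lob)


# Hypothetical inputs (concentration units).
mean_blank, sd_blank, sd_low = 0.1, 0.1, 0.1
lob = mean_blank + Z_95 * sd_blank
lod = lob + Z_95 * sd_low

alpha = false_positive_rate(lob, mean_blank, sd_blank)
beta = false_negative_rate(lob, lod, sd_low)
print(f"alpha = {alpha:.4f}, beta = {beta:.4f}")  # both close to 0.05
```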

For techniques with non-linear or non-Gaussian responses, such as qPCR, alternative statistical approaches like logistic regression are employed. These models fit a curve to the binary detection data (positive/negative) across a dilution series to determine the concentration at which detection becomes reliable [3].

Experimental Protocols for Determining LoD and Functional Sensitivity

Determining Limit of Detection (LoD)

The CLSI EP17-A2 guideline provides a standardized protocol for determining LoD [7]. The process requires two sets of samples: a blank sample containing no analyte, and a low-concentration sample known to contain an analyte concentration near the expected LoD.

Table 3: Research Reagent Solutions for LoD Experiments

| Reagent / Material | Function and Specification | Experimental Role |
|---|---|---|
| Blank Sample | A sample with a matrix matching real specimens but containing no analyte [1]. | Serves as the baseline for establishing the background noise (LoB). |
| Low-Concentration Sample | A sample with a known, low concentration of analyte, ideally close to the expected LoD [7]. | Used to determine the imprecision at a detectable level for LoD calculation. |
| Calibrators | A series of samples with known analyte concentrations for constructing the calibration curve [6]. | Essential for converting the raw analytical signal (e.g., counts, absorbance) into a concentration value. |
| Control Materials | Commutable controls, such as whole bacteria or viruses for molecular assays, that challenge the entire analytical process [4]. | Used to verify the performance of the assay during the LoD validation. |

A detailed workflow for this experiment is as follows:

Procedure:

- Sample Preparation: Prepare a blank sample and a low-concentration sample. The matrix should be commutable with real patient or test specimens [7].

- Replicate Analysis: Analyze a sufficient number of replicates of each sample. For a robust establishment of LoD, 60 replicates of each are recommended, ideally across multiple instruments and reagent lots. For verification of a manufacturer's claim, 20 replicates may suffice [7].

- Data Calculation:

  - Calculate the mean and standard deviation (SD_blank) of the results from the blank sample.

  - Calculate the LoB using the formula: LoB = mean_blank + 1.645 * SD_blank.

  - Calculate the mean and standard deviation (SD_low) of the results from the low-concentration sample.

  - Calculate the provisional LoD: LoD = LoB + 1.645 * SD_low [7].

- Verification: Test multiple replicates of a sample at the provisional LoD concentration. No more than 5% of the results (approximately 1 in 20) should fall below the LoB. If this condition is not met, the LoD must be re-estimated using a sample with a slightly higher concentration [7].
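
The verification rule in the final step amounts to counting the results that fall below the LoB. The sketch below uses a hypothetical function name and hypothetical data. The 5% threshold follows the procedure above.

```python
def verify_provisional_lod(results_at_lod, lob, max_fraction_below=0.05):
    """Return (passes, fraction_below) for replicate results measured at the provisional LoD.

    The provisional LoD is accepted when no more than max_fraction_below of the
    results fall below the LoB; otherwise it should be re-estimated with a
    slightly higher concentration.
    """
    if not results_at_lod:
        raise ValueError("at least one result is required")
    below = sum(1 for r in results_at_lod if r < lob)
    fraction = below / len(results_at_lod)
    return fraction <= max_fraction_below, fraction


# Hypothetical example: 20 replicates at the provisional LoD, LoB = 0.26.
lob = 0.26
run_1 = [0.25] + [0.45] * 19          # 1 of 20 below the LoB
run_2 = [0.25, 0.24] + [0.45] * 18    # 2 of 20 below the LoB
print(verify_provisional_lod(run_1, lob))  # (True, 0.05)
print(verify_provisional_lod(run_2, lob))  # (False, 0.1)
```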

Determining Functional Sensitivity

Functional sensitivity is determined by assessing the long-term imprecision (CV) of an assay at low analyte concentrations. The original application was for TSH assays, where a CV of 20% was deemed the maximum tolerable imprecision for clinical usefulness [1]. This concept has since been applied to other assays.

Procedure:

- Sample Selection: Obtain multiple patient samples or pools with analyte concentrations in the low range. Undiluted patient samples are ideal, but carefully diluted samples or control materials are acceptable alternatives [1].

- Long-Term Replication: Analyze these samples in replicate over an extended period (days or weeks) to capture true day-to-day (inter-assay) imprecision. A single run of 20 replicates is not sufficient [1].

- CV Calculation and Interpolation: For each sample, calculate the mean, standard deviation, and CV. Plot the CV against the concentration. The functional sensitivity is the concentration at which the CV intersects the predetermined goal (e.g., 20%). This can be estimated by interpolation if the exact CV goal was not directly measured at a specific concentration [1].
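
The CV calculation and interpolation step can be sketched as follows. The sketch assumes linear interpolation, on a linear concentration scale, between the two tested levels that bracket the CV goal. Interpolating on a log scale or fitting a smooth precision-profile curve are common alternatives. The function names and profile values are hypothetical.

```python
from statistics import mean, stdev


def cv_percent(results):
    """Coefficient of variation (%) of replicate results collected across runs."""
    return 100.0 * stdev(results) / mean(results)


def functional_sensitivity(profile, cv_goal=20.0):
    """Interpolate the concentration at which the CV falls to cv_goal.

    profile: iterable of (mean_concentration, cv_percent) pairs.
    Returns None when the tested levels do not bracket the goal.
    """
    points = sorted(profile)
    for (c_lo, cv_lo), (c_hi, cv_hi) in zip(points, points[1:]):
        if cv_lo > cv_goal >= cv_hi:
            # Linear interpolation between the two bracketing levels.
            return c_lo + (cv_lo - cv_goal) * (c_hi - c_lo) / (cv_lo - cv_hi)
    return None


# Hypothetical long-term results for one low pool (e.g. one value per day).
pool_results = [0.9, 1.0, 1.1]
print(f"CV = {cv_percent(pool_results):.1f}%")

# Hypothetical precision profile: (mean concentration, inter-assay CV %).
profile = [(0.5, 35.0), (1.0, 25.0), (2.0, 15.0), (4.0, 8.0)]
print(functional_sensitivity(profile))  # 1.5
```

Returning `None` when the goal is not bracketed signals that further levels need to be tested, rather than extrapolating beyond the data.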

Advanced Considerations and Method-Specific Challenges

The determination of analytical sensitivity can be complicated by the specific nature of the analytical technique. A prime example is quantitative Real-Time PCR (qPCR). The measured output, the quantification cycle (Cq), is linearly and inversely related to the logarithm of the starting target concentration; it is not proportional to the concentration itself. Furthermore, negative samples do not yield a Cq value, making it impossible to calculate a standard deviation for the blank on a linear scale [3]. Consequently, the standard CLSI approach for determining LoD must be modified.

For qPCR, a logistic regression approach is recommended. This involves running a high number of replicates (e.g., 64-128) across a serial dilution of the target nucleic acid [3]. The results are recorded as a binary outcome (detected/not detected) at a predefined Cq cut-off. A logistic regression curve is then fitted to the binary data, modeling the probability of detection as a function of the logarithm of the concentration. The LoD can be defined as the concentration at which detection reaches a certain probability, such as 95% [3] [9].
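
Below is a minimal, standard-library-only sketch of this approach:

- it fits a two-parameter logistic model of detection probability against log10 concentration by maximum likelihood (Newton-Raphson);
- it reads off the LoD at 95% detection probability.

The dilution levels and hit counts are hypothetical, and the function names are illustrative. In practice a dedicated statistics package would typically be used, and some laboratories use a probit rather than a logit link.

```python
import math


def fit_logistic(log_conc, detected, tested, max_iter=100, tol=1e-10):
    """Maximum-likelihood fit of P(detect) = 1 / (1 + exp(-(b0 + b1 * x))).

    log_conc: log10 concentration of each dilution level.
    detected / tested: positive replicates and total replicates per level.
    """
    b0, b1 = 0.0, 0.0
    for _ in range(max_iter):
        g0 = g1 = a = b = c = 0.0
        for x, k, n in zip(log_conc, detected, tested):
            p = 1.0 / (1.0 + math.exp(-(b0 + b1 * x)))
            resid = k - n * p          # observed minus expected detections
            w = n * p * (1.0 - p)      # binomial variance at this level
            g0 += resid                # score (gradient) components
            g1 += resid * x
            a += w                     # information matrix [[a, b], [b, c]]
            b += w * x
            c += w * x * x
        det = a * c - b * b
        step0 = (c * g0 - b * g1) / det   # Newton step: inverse information times score
        step1 = (a * g1 - b * g0) / det
        b0 += step0
        b1 += step1
        if abs(step0) < tol and abs(step1) < tol:
            break
    return b0, b1


def lod_at_probability(b0, b1, prob=0.95):
    """Concentration (linear units) at which the fitted detection probability equals prob."""
    x = (math.log(prob / (1.0 - prob)) - b0) / b1
    return 10.0 ** x


# Hypothetical dilution series: copies per reaction, 64 replicates per level.
copies = [1, 2, 5, 10, 20, 50]
tested = [64] * len(copies)
detected = [17, 34, 54, 61, 63, 64]

b0, b1 = fit_logistic([math.log10(c) for c in copies], detected, tested)
print(f"LoD (95% detection) = {lod_at_probability(b0, b1):.1f} copies/reaction")
```

Newton-Raphson converges quickly for data like these. It fails when detection is perfectly separated (all replicates negative below some level and all positive above it), because no finite maximum-likelihood estimate then exists.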

Another critical consideration is the difference between Instrument Detection Limit (IDL) and Method Detection Limit (MDL). The IDL is the detection capability of the instrument alone, typically measured by analyzing a standard in a clean solvent. The MDL, which is more comprehensive and practically relevant, includes all sample preparation steps (e.g., digestion, extraction, concentration) and therefore accounts for additional sources of error and variability introduced prior to instrumental analysis. The MDL is invariably higher than the IDL [5].

A clear and statistically rigorous understanding of analytical sensitivity is indispensable for researchers, scientists, and drug development professionals. It is the cornerstone for defining the detection capabilities of an analytical method, most commonly expressed as the Limit of Detection (LoD). However, it is crucial to recognize that the mere ability to detect an analyte at the LoD does not guarantee that a measurement at this level is reproducible or fit for a specific purpose.

This guide has framed analytical sensitivity within the critical distinction between detection and reliable quantification. Functional sensitivity, a practical reflection of the Limit of Quantitation (LoQ), provides the concentration level at which an assay delivers clinically or research-useful results with defined precision. By employing the standardized experimental protocols outlined—such as those from CLSI guidelines—scientists can rigorously characterize their assays, ensure the validity of data at low concentrations, and make informed decisions about the appropriate reporting ranges for their specific applications. Ultimately, recognizing and applying these concepts ensures the generation of high-quality, reliable data that underpins robust scientific and clinical conclusions.

What is Functional Sensitivity? Defining Clinically Useful Precision

Functional sensitivity represents a critical performance characteristic in clinical laboratory science, defining the lowest analyte concentration that can be measured with clinically acceptable precision. This technical guide explores the concept of functional sensitivity, contrasting it with analytical sensitivity and other detection limit metrics, with particular emphasis on its foundational role in ensuring reliable patient results in diagnostic testing. Developed initially for thyroid-stimulating hormone (TSH) assays, functional sensitivity has expanded to become a cornerstone for assay validation across diverse clinical applications, providing a pragmatic threshold for clinical decision-making that transcends mere detectability.

In clinical diagnostics, the ability to detect an analyte at low concentrations represents only part of the analytical challenge. While analytical sensitivity (or detection limit) defines the lowest concentration that can be distinguished from background noise, this metric fails to address whether measurements at this level provide sufficient precision for clinical utility [1]. The fundamental limitation of analytical sensitivity lies in its disregard for precision – at concentrations near the detection limit, imprecision increases rapidly, potentially rendering results clinically unreliable despite being technically detectable [1].

Functional sensitivity emerged as a solution to this limitation, shifting focus from what is merely detectable to what is clinically usable. Originally developed by researchers evaluating TSH assays in the 1990s, this concept established a precision-based threshold for the lowest reportable result [1] [2]. The researchers defined functional sensitivity as "the lowest concentration at which an assay can report clinically useful results," specifically operationalized as the concentration corresponding to a day-to-day coefficient of variation (CV) of 20% for TSH assays [1]. This specification of acceptable imprecision marked a significant advancement in assay characterization, creating a direct link between analytical performance and clinical requirements.

Defining Key Concepts and Terminology

Analytical Sensitivity Versus Functional Sensitivity

Analytical sensitivity (detection limit) represents the lowest concentration distinguishable from zero. Typically determined by measuring replicates of a blank sample, it is calculated as the mean blank measurement plus 2 standard deviations (for immunometric assays) or minus 2 standard deviations (for competitive assays) [1]. This parameter answers the question: "Can the assay detect the presence of analyte above background noise?"
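
The direction of the 2 SD adjustment depends on how the signal responds to concentration. The following is a minimal sketch that assumes replicate signals from a zero calibrator and uses a hypothetical `assay_format` flag. Converting the resulting signal threshold to a concentration requires the assay's own calibration curve, so that step is not shown.

```python
from statistics import mean, stdev


def detection_limit_signal(zero_signals, assay_format, k=2.0):
    """Signal threshold for analytical sensitivity from replicate zero-sample signals.

    immunometric (sandwich) assays: signal rises with concentration -> mean + k*SD
    competitive assays: signal falls as concentration rises -> mean - k*SD
    """
    m, s = mean(zero_signals), stdev(zero_signals)
    if assay_format == "immunometric":
        return m + k * s
    if assay_format == "competitive":
        return m - k * s
    raise ValueError("assay_format must be 'immunometric' or 'competitive'")


# Hypothetical zero-calibrator signals (e.g. relative light units).
zero_signals = [98.0, 100.0, 102.0]   # mean 100, SD 2
print(detection_limit_signal(zero_signals, "immunometric"))  # 104.0
print(detection_limit_signal(zero_signals, "competitive"))   # 96.0
```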

In contrast, functional sensitivity establishes the lowest concentration measurable with defined precision requirements, typically a CV ≤ 20% [1] [2]. This parameter answers the more clinically relevant question: "Can the assay provide reproducible results at this concentration that support reliable clinical decisions?"

The relationship between these parameters follows a consistent pattern: functional sensitivity occurs at a higher concentration than analytical sensitivity, with the magnitude of difference dependent on the assay's precision profile [1].

The Conceptual Hierarchy of Detection and Quantification

The landscape of assay sensitivity includes multiple parameters that form a continuum from detection to reliable quantification:

Limit of Blank (LoB): The highest apparent analyte concentration expected when replicates of a blank sample are tested [7]. Calculated as mean_blank + 1.645 * SD_blank, it represents the 95th percentile of blank measurements [7].

Limit of Detection (LoD): The lowest analyte concentration likely to be reliably distinguished from the LoB [7]. It is determined using both blank samples and low-concentration samples and is calculated as LoB + 1.645 * SD_low concentration sample [7].

Functional Sensitivity: The concentration at which predetermined precision goals (typically CV ≤ 20%) are met [7]. It is positioned between the LoD and the LoQ in the capability spectrum.

Limit of Quantitation (LoQ): The lowest concentration at which the analyte can be quantified with predefined goals for both bias and imprecision [7]. Represents the threshold for reliable quantification.
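
A short sketch can illustrate the difference between a precision-only criterion and the LoQ's combined bias and imprecision criterion. The function name, assigned concentrations, measured means, CVs, and performance goals below are all hypothetical. Real goals are set from the intended use of the assay.

```python
def lowest_level_meeting_goals(levels, max_cv_pct, max_abs_bias_pct=None):
    """Return the lowest assigned concentration meeting the goals, or None.

    levels: iterable of (assigned_conc, mean_measured, cv_pct).
    With max_abs_bias_pct=None only the CV goal is applied (a discrete,
    functional-sensitivity-style criterion); otherwise bias is also checked (LoQ-style).
    """
    for assigned, measured, cv in sorted(levels):
        bias_pct = 100.0 * (measured - assigned) / assigned
        cv_ok = cv <= max_cv_pct
        bias_ok = max_abs_bias_pct is None or abs(bias_pct) <= max_abs_bias_pct
        if cv_ok and bias_ok:
            return assigned
    return None


# Hypothetical low-level data: (assigned, mean measured, inter-assay CV %).
levels = [(0.5, 0.62, 18.0), (1.0, 1.08, 12.0), (2.0, 2.04, 8.0)]
print(lowest_level_meeting_goals(levels, max_cv_pct=20.0))                         # 0.5
print(lowest_level_meeting_goals(levels, max_cv_pct=20.0, max_abs_bias_pct=10.0))  # 1.0
```

With these hypothetical values, the CV-only criterion is met at 0.5. The 24% bias at that level, however, pushes the LoQ-style result up to 1.0.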

Table 1: Comparative Analysis of Sensitivity Metrics

| Parameter | Definition | Typical Determination | Clinical Utility |
|---|---|---|---|
| Analytical Sensitivity | Lowest concentration distinguishable from background | Mean blank ± 2 SD | Limited; indicates detectability only |
| Functional Sensitivity | Lowest concentration with ≤20% CV | Interassay precision profile | High; defines clinically reportable range |
| Limit of Blank (LoB) | Highest apparent concentration in blank samples | mean_blank + 1.645 * SD_blank | Establishes background noise level |
| Limit of Detection (LoD) | Lowest concentration distinguished from LoB | LoB + 1.645 * SD_low concentration | Better than analytical sensitivity but still limited clinical utility |
| Limit of Quantitation (LoQ) | Lowest concentration meeting bias and imprecision goals | Variable based on performance specifications | Highest; suitable for precise quantification |

The Critical Need for Functional Sensitivity in Clinical Practice

Limitations of Analytical Sensitivity

The precision profile of an immunoassay typically shows that imprecision increases rapidly as analyte concentration decreases [1]. As a result, even at concentrations well above the analytical sensitivity, imprecision may be high enough to compromise result reproducibility and clinical utility [1]. Consequently, analytical sensitivity rarely represents the lowest measurable concentration that is clinically useful.

This limitation manifests practically when comparing serial results from the same patient. For example, consider an assay with an analytical sensitivity of 0.3 µg/dL but a functional sensitivity of 1.0 µg/dL. Values of 0.4 µg/dL and 0.7 µg/dL might not represent a clinically meaningful difference, even though both are above the detection limit [1]. Reporting such results as specific values rather than as "<1.0 µg/dL" risks misinterpretation by clinicians, who may attribute significance to what is essentially analytical noise [1].
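
The reporting practice described here can be expressed as a simple censoring rule. Below is a minimal sketch with a hypothetical function name. The cut-off and unit are parameters, and the values in the usage lines mirror the example above.

```python
def format_result(value, functional_sensitivity, unit):
    """Report values below the functional sensitivity as '<cut-off' rather than as numbers."""
    if value < functional_sensitivity:
        return f"<{functional_sensitivity} {unit}"
    return f"{value} {unit}"


# Two low values are both censored, so no spurious difference is reported.
for measured in (0.4, 0.7, 2.3):
    print(format_result(measured, 1.0, "µg/dL"))
```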

Clinical Consequences and Applications

Functional sensitivity emerged from very specific clinical needs in thyroid testing. For "third generation" TSH assays, the definition explicitly required functional sensitivity in the 0.01-0.02 µIU/mL region [1]. This precision at low concentrations enabled reliable distinction between euthyroid and hyperthyroid patients; the latter typically have TSH values below the reference range [10].

The concept has since expanded to other clinical domains where precise low-end measurement carries diagnostic significance, including:

- Tumor markers monitoring residual disease after treatment

- Cardiac biomarkers for early myocardial infarction detection

- Infectious disease markers for early infection identification

- Therapeutic drug monitoring at low concentrations

Quantitative Assessment of Functional Sensitivity

Establishing the 20% CV Threshold

The selection of 20% CV as the benchmark for functional sensitivity, while somewhat arbitrary in its origins, reflected the clinical consensus regarding the maximum tolerable imprecision for TSH measurements [1]. This threshold represents a practical compromise between analytical achievability and clinical requirements.

The implications of this CV threshold are substantial for result interpretation. At a concentration of 0.1 µIU/mL with 20% CV, the range encompassing 95% of expected results from repeat analysis would be ±40% (±2 SD), or 0.06 µIU/mL to 0.14 µIU/mL [1]. Understanding this inherent variability is essential for appropriate clinical interpretation of serial measurements.
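
The arithmetic in this example generalises to any concentration and CV. The following is a minimal sketch with a hypothetical function name. It assumes Gaussian repeat results, so that about 95% fall within ±2 SD (1.96 SD is the exact two-sided 95% multiplier).

```python
def repeat_measurement_range(concentration, cv_percent, k=2.0):
    """Approximate range containing ~95% of repeat results (mean ± k*SD, SD = CV * mean)."""
    sd = concentration * cv_percent / 100.0
    return concentration - k * sd, concentration + k * sd


low, high = repeat_measurement_range(0.1, 20.0)
print(f"{low:.2f} to {high:.2f} µIU/mL")  # 0.06 to 0.14 µIU/mL
```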

Comparative Performance Data

Substantial variability exists in functional sensitivity performance across analytical platforms, even when claiming the same "generation" of performance. A study evaluating seven automated TSH immunoassays demonstrated this disparity clearly [10].

Table 2: Functional Sensitivity Performance Across TSH Immunoassay Platforms

| Analytical Platform | Functional Sensitivity (mIU/L) | Third Generation Claim |
|---|---|---|
| Dimension ExL | 0.003 | Yes |
| Immulite 2000 | 0.003 | Yes |
| Dimension Vista 1500 | 0.003 | Yes |
| ADVIA Centaur | 0.006 | Yes |
| ARCHITECT i2000 | 0.007 | Yes |
| Modular Analytics E170 | 0.008 | Yes |
| Access 2 | 0.039 | No |

This comparative data, derived from testing serum pools over six weeks using two reagent lots and two calibrations, highlights the need for harmonization, particularly at low concentrations where clinical decisions are most sensitive to analytical performance [10].

Experimental Protocols for Determination

Sample Preparation and Matrix Considerations

Determining functional sensitivity requires appropriate samples spanning the low concentration range of interest. The ideal approach utilizes undiluted patient samples or pools of patient samples with concentrations bracketing the target range [1]. When such samples are unavailable, reasonable alternatives include:

- Patient samples diluted to concentrations spanning the target range

- Control materials with concentrations in or near the target range

- Dilutions of the lowest non-zero calibrator

Diluent selection is critical when sample dilution is necessary. Routine sample diluents intended for high-concentration samples may contain low apparent analyte concentrations that could bias functional sensitivity determination [1].

Testing Protocol and Data Analysis

A robust functional sensitivity study should incorporate these key elements:

- Testing duration: Analysis over multiple different runs, ideally spanning days or weeks to capture day-to-day (interassay) precision [1]

- Replication: Sufficient replicates at each concentration level to reliably estimate CV

- Concentration levels: Multiple samples spanning the expected functional sensitivity range

- Instrumentation and reagents: Inclusion of multiple instrument units and reagent lots to capture expected performance variability

The experimental workflow for determining functional sensitivity follows a systematic process:

Following data collection, CV values are calculated for each concentration level tested. The functional sensitivity is determined as the concentration at which the CV reaches the predetermined limit, estimated by interpolation if necessary [1]. This approach differs fundamentally from analytical sensitivity determination, which typically involves only 20 replicates of a zero sample in a single run [1].

The Researcher's Toolkit: Essential Materials and Reagents

Successful determination of functional sensitivity requires careful selection of materials and reagents to ensure clinically relevant results.

Table 3: Essential Research Reagents and Materials for Functional Sensitivity Determination

| Reagent/Material | Specifications | Function in Protocol |
|---|---|---|
| Patient Samples | Undiluted, with concentrations spanning target range; commutable with clinical specimens | Provides biologically relevant matrix for testing; gold standard when available |
| Control Materials | Third-party or manufacturer controls with concentrations near expected functional sensitivity | Alternative to patient samples; must demonstrate commutability |
| Calibrators | Manufacturer-provided, traceable to reference standards | Ensures accurate concentration assignment throughout measurement range |
| Sample Diluent | Matrix-appropriate, demonstrated low analyte content | Critical for preparing diluted samples when needed; avoids bias from analyte in diluent |
| Quality Control | Materials at multiple concentration levels, including low QC | Monitors assay performance stability throughout extended testing period |

Integration with Regulatory and Laboratory Standards

CLIA '88 and Reportable Range Verification

For laboratories in the United States operating under CLIA '88 regulations, the only sensitivity-related performance characteristic requiring verification is the lower limit of the reportable range [1]. Functional sensitivity determination, while not explicitly mandated, provides the scientific foundation for establishing this reportable range.

The reporting range implemented in automated immunoassay system software typically represents the manufacturer's recommendation for the clinically valid performance range, often set above the analytical sensitivity based on comprehensive assessment of functional performance [1].

CLSI Guidelines and Standardized Protocols

The Clinical and Laboratory Standards Institute (CLSI) has contributed to standardizing sensitivity terminology through guidelines such as EP17-A2, which distinguishes between Limit of Blank (LoB), Limit of Detection (LoD), and Limit of Quantitation (LoQ) [2] [7]. These guidelines help resolve historical confusion in terminology and methodology.

The relationship between these CLSI-defined parameters and functional sensitivity can be visualized as follows:

Advanced Applications and Future Directions

Expanding Beyond Clinical Chemistry

While functional sensitivity originated in clinical chemistry, particularly for endocrine testing, the underlying principle has applications across diagnostic disciplines. In molecular diagnostics, similar concepts apply to determining the lower limit of quantification for viral load testing or minimal residual disease detection.

In novel sensor technologies, such as graphene-based gas sensors, comparable optimization challenges exist; for example, sensitivity can show a non-monotonic relationship with defect density [11]. Although these domains differ, they face similar challenges in balancing detection capability with measurement reliability.

Precision Oncology and Therapeutic Monitoring

Functional sensitivity concepts are increasingly relevant in precision medicine applications, particularly for biomarker-guided therapies. In oncology, accurate quantification of low-abundance biomarkers can guide targeted therapy selection [12]. Similarly, therapeutic drug monitoring requires precise measurement at low concentrations to optimize dosing while minimizing toxicity.

The integration of functional sensitivity principles into these advanced applications represents the evolving recognition that reliable quantification at low concentrations is fundamental to personalized medicine.

Functional sensitivity represents a pivotal concept in clinical assay validation, bridging the gap between what is analytically detectable and what is clinically usable. By establishing precision-based thresholds for reportable results, functional sensitivity ensures that laboratory measurements support rather than mislead clinical decision-making, particularly at critical low concentrations.

The determination of functional sensitivity through rigorous, extended precision profiling provides laboratories with an objective and clinically meaningful indication of an assay's practical lower limit. As diagnostic technologies evolve and clinical applications demand increasingly sensitive measurements, the principles of functional sensitivity remain essential for defining clinically useful precision.

Core Conceptual Distinctions

In analytical chemistry and clinical diagnostics, the terms "analytical sensitivity" and "functional sensitivity" describe fundamentally different performance characteristics of an assay. Their confusion can lead to significant errors in method selection and data interpretation [2].

Analytical sensitivity (often synonymous with the detection limit) is formally defined as the lowest concentration of an analyte that can be distinguished from a blank sample containing no analyte [1]. It describes the fundamental detection capability of an assay.

Functional sensitivity, a concept developed in the early 1990s for thyrotropin (TSH) assays, is defined as the lowest analyte concentration that can be measured with a specified imprecision, typically a coefficient of variation (CV) of 20% [2] [1]. It describes the concentration at which an assay can report clinically useful results [1].

The table below summarizes their key differentiating features.

| Feature | Analytical Sensitivity | Functional Sensitivity |
|---|---|---|
| Definition | Lowest concentration distinguishable from background noise [1] | Lowest concentration measurable with a defined imprecision (e.g., CV ≤ 20%) [2] [1] |
| Primary Focus | Detection capability; signal-to-noise ratio [1] | Clinical utility and reproducibility of results [1] |
| Determining Factor | Slope of the calibration curve and standard deviation of the blank [2] | Long-term imprecision (CV) at low analyte concentrations [2] [1] |
| Relation to LOD/LOQ | Often used interchangeably with Limit of Detection (LOD) [7] | Aligns more closely with the Limit of Quantitation (LOQ), but is not identical [2] [7] |
| Clinical Utility | Limited; indicates presence of analyte but not necessarily reliable quantification [1] | High; defines the lower limit for reporting clinically reliable results [1] |
| Typical Imprecision | Not defined; the measurement is often highly imprecise at this level [13] | Defined by a precision goal, most commonly a CV of 20% [2] [7] |

Relationship to Other Detection Limits

Analytical and functional sensitivity exist within a hierarchy of performance characteristics for low-level analytes, which also includes the Limit of Blank (LoB) and Limit of Quantitation (LoQ) [7].

- Limit of Blank (LoB): The highest apparent analyte concentration expected to be found when replicates of a blank sample are tested. It is calculated as $\text{LoB} = \text{mean}_{\text{blank}} + 1.645 \times \text{SD}_{\text{blank}}$ and represents the 95th percentile of blank measurements [7].

- Limit of Detection (LoD): The lowest analyte concentration likely to be reliably distinguished from the LoB. It is determined using both the LoB and a low-concentration sample: $\text{LoD} = \text{LoB} + 1.645 \times \text{SD}_{\text{low}}$, where $\text{SD}_{\text{low}}$ is the standard deviation of replicate measurements of the low-concentration sample. The analytical sensitivity is often equated with the LoD [7].

- Functional Sensitivity: Defines a concentration where the total imprecision (CV) meets a specific clinical requirement (e.g., 20%), ensuring results are reproducible enough for medical decision-making [2] [1].

- Limit of Quantitation (LoQ): The lowest concentration at which the analyte can be quantified with acceptable precision and trueness (bias). The LoQ may be equivalent to the functional sensitivity or set at a higher concentration with more stringent performance goals [7].
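The two parametric formulas for LoB and LoD translate directly into code. The sketch below assumes normally distributed results and uses a handful of hypothetical replicate values purely for illustration; a real study would use the replicate numbers discussed in the protocols that follow.

```python
from statistics import mean, stdev


def limit_of_blank(blank_results):
    """Parametric LoB: mean_blank + 1.645 * SD_blank (assumes normally distributed blanks)."""
    return mean(blank_results) + 1.645 * stdev(blank_results)


def limit_of_detection(lob, low_sample_results):
    """Parametric LoD: LoB + 1.645 * SD of replicates of a low-concentration sample."""
    return lob + 1.645 * stdev(low_sample_results)


# Hypothetical replicate results in concentration units (illustrative only, not real data)
blank_results = [0.00, 0.02, 0.01, 0.03, 0.00, 0.01, 0.02, 0.01]
low_results = [0.10, 0.14, 0.12, 0.09, 0.13, 0.11, 0.12, 0.10]

lob = limit_of_blank(blank_results)
lod = limit_of_detection(lob, low_results)
print(f"LoB = {lob:.3f}, LoD = {lod:.3f}")
```

Because the LoD adds a second noise term on top of the LoB, it always sits above the LoB, which is what places it one step higher in the hierarchy.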

Detailed Experimental Protocols

Protocol for Determining Analytical Sensitivity

The following workflow outlines the standard procedure for establishing a method's analytical sensitivity, which focuses on distinguishing a signal from background noise [1] [13].

- Sample Preparation: A true "blank" sample is required. The ideal sample has the same matrix as patient specimens (e.g., serum, plasma) but contains no analyte. A zero-concentration calibrator is often used [1] [13].

- Replicate Measurement: The blank sample is assayed repeatedly in a single run. A minimum of 20 replicate measurements is standard to obtain a reliable estimate of the mean and standard deviation [1] [13].

- Data Calculation: The mean value and standard deviation (SD) of the measured results (which could be in counts, absorbance, or concentration units) are calculated [1].

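- Result Determination: The analytical sensitivity is calculated as the concentration corresponding to the mean blank signal plus 2 SD for immunometric assays, or minus 2 SD for competitive assays, in which the signal decreases as analyte concentration increases [1].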
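The calculation above works in signal units and then converts the cut-off to a concentration. The following sketch assumes, for simplicity, a linear calibration (signal = intercept + slope × concentration); real immunoassays usually need a nonlinear curve fit, and the blank signals, slopes, and intercepts here are hypothetical.

```python
from statistics import mean, stdev


def analytical_sensitivity(blank_signals, slope, intercept, competitive=False):
    """Concentration equivalent of mean_blank +/- 2 SD_blank.

    blank_signals are raw responses (e.g. counts or absorbance) from replicate blanks.
    The calibration is ASSUMED linear: signal = intercept + slope * concentration,
    with slope > 0 for immunometric assays and slope < 0 for competitive assays.
    """
    m, sd = mean(blank_signals), stdev(blank_signals)
    # Immunometric: signal rises with analyte, so the cut-off sits above the blank mean.
    # Competitive: signal falls with analyte, so the cut-off sits below the blank mean.
    cutoff = m - 2 * sd if competitive else m + 2 * sd
    return (cutoff - intercept) / slope


# Hypothetical blank replicates (a real study would use at least 20)
immuno_blanks = [100, 104, 98, 102, 96, 100]        # counts
comp_blanks = [5000, 5040, 4980, 5020, 4960, 5000]  # counts

immuno = analytical_sensitivity(immuno_blanks, slope=50.0, intercept=100.0)
comp = analytical_sensitivity(comp_blanks, slope=-1000.0, intercept=5000.0, competitive=True)
print(f"immunometric: {immuno:.4f}, competitive: {comp:.4f}")
```

In both cases the result is a small positive concentration: flipping the sign of the 2 SD term and of the slope for competitive assays keeps the cut-off on the "analyte present" side of the blank.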

Protocol for Determining Functional Sensitivity

Determining functional sensitivity requires a more extensive experiment focused on long-term precision at low analyte concentrations, as outlined in the steps below [1].

- Sample Preparation: Obtain several samples with analyte concentrations in the low range near the expected functional sensitivity. Undiluted patient samples or pools are ideal. If dilutions are necessary, the diluent must be carefully chosen to avoid bias [1].

- Set Precision Goal: Define the maximum acceptable imprecision (CV) for clinically useful results. While a CV of 20% is historically common (from TSH assays), this goal should be based on the assay's intended clinical application and may be stricter [1].

- Long-Term Replicate Analysis: The samples are analyzed repeatedly over multiple separate runs, ideally over a period of days or weeks. This assesses the day-to-day (inter-assay) imprecision, which is critical for functional sensitivity. A single run with multiple replicates is not sufficient [1].

- Data Calculation: For each sample, the mean concentration and standard deviation are calculated, from which the CV is derived [1].

- Result Determination: The functional sensitivity is identified as the lowest analyte concentration at which the CV is less than or equal to the predefined precision goal (e.g., 20%). This can be estimated by interpolation if the tested concentrations do not exactly match the goal [1].
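The data calculation and interpolation steps can be sketched as follows. This assumes linear interpolation between the two tested levels that bracket the CV goal; the precision profile values are hypothetical, and other interpolation schemes (for example, on a log concentration scale) are equally defensible.

```python
from statistics import mean, stdev


def cv_percent(results):
    """Coefficient of variation (%) of replicate results from separate runs."""
    return 100 * stdev(results) / mean(results)


def functional_sensitivity(profile, cv_goal=20.0):
    """Lowest concentration whose CV meets cv_goal, from (mean_conc, cv%) pairs.

    Linearly interpolates between the last level above the goal and the first
    level at or below it. Returns None if no tested level meets the goal.
    """
    points = sorted(profile)
    if points[0][1] <= cv_goal:
        return points[0][0]  # goal met at the lowest level tested; cannot extrapolate lower
    for (c_lo, cv_lo), (c_hi, cv_hi) in zip(points, points[1:]):
        if cv_lo > cv_goal >= cv_hi:
            return c_lo + (cv_lo - cv_goal) * (c_hi - c_lo) / (cv_lo - cv_hi)
    return None


# Hypothetical interassay precision profile: (mean concentration, CV %)
profile = [(0.02, 45.0), (0.05, 25.0), (0.10, 15.0), (0.20, 8.0)]
print(functional_sensitivity(profile))  # interpolated between 0.05 and 0.10
```

Here the 20% goal falls halfway between the 25% and 15% CVs, so the estimate lands halfway between 0.05 and 0.10.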

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table details key materials required for conducting the experiments to characterize analytical and functional sensitivity.

| Item | Function & Importance |
|---|---|
| Matrix-Matched Blank Sample | A sample with the same base material as patient specimens (e.g., serum, plasma) but containing no analyte. Critical for obtaining a realistic LoB and analytical sensitivity [1] [13]. |
| Low-Level Patient Pools | Undiluted patient samples with endogenous analyte at low concentrations. The preferred material for functional sensitivity studies due to commutability, ensuring they behave like real patient samples [1]. |
| Precision Controls | Commercially available control materials with assigned values at low concentrations. Used as an alternative to patient pools for imprecision testing [1]. |
| Appropriate Diluent | A solution used to dilute high-concentration samples to the low range required for study. Must be validated to ensure it does not contain the analyte or cause matrix effects that bias results [1]. |
| Calibrators | A set of standards with known analyte concentrations, used to construct the calibration curve that converts instrument signal into concentration values. The lowest calibrator is often used as a "spiked sample" in LoD experiments [13]. |

Navigating Common Confusions in Research and Development

A primary point of confusion is the conflation of analytical sensitivity with the Limit of Detection (LOD) and functional sensitivity with the Limit of Quantitation (LOQ). While these concepts are related, they are not identical [2].

- Analytical Sensitivity vs. LOD: Although the two terms are often used interchangeably, "analytical sensitivity" formally refers to the calibration sensitivity (the slope of the calibration curve), whereas the LOD is the lowest analyte concentration that can be detected. In practice, "analytical sensitivity" has become a synonym for LOD, defined as the mean of the blank plus 2 standard deviations [2] [6].

- Functional Sensitivity vs. LOQ: Functional sensitivity is a specific type of LOQ. The LOQ is a broader term defined as the lowest concentration at which an analyte can be quantified with defined levels of both imprecision and bias. Functional sensitivity typically only sets a goal for imprecision (e.g., CV ≤ 20%), not necessarily for bias [2] [7].

For researchers in drug development, understanding this distinction is critical. Analytical sensitivity determines whether a biomarker or drug metabolite can be seen at all in early-phase pharmacokinetic studies. In contrast, functional sensitivity defines the threshold for obtaining reproducible data that is reliable enough to make critical decisions, such as determining a drug's half-life or establishing a target engagement biomarker profile. Relying solely on the manufacturer's stated analytical sensitivity for these purposes can lead to reporting non-reproducible, low-level results that undermine research validity [1].
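The practical difference between a functional sensitivity goal (imprecision only) and an LOQ goal (imprecision and bias) can be illustrated with a small check. In this sketch the 15% bias goal, the assigned value, and the replicate results are all hypothetical choices for illustration.

```python
from statistics import mean, stdev


def meets_goals(results, cv_goal=20.0, target=None, bias_goal=None):
    """Check one low-level sample against performance goals.

    Functional sensitivity style: imprecision goal only (leave bias_goal as None).
    LOQ style: imprecision goal AND a bias goal relative to an assigned target value.
    """
    m = mean(results)
    cv = 100 * stdev(results) / m
    if cv > cv_goal:
        return False
    if bias_goal is None:
        return True
    bias = 100 * abs(m - target) / target
    return bias <= bias_goal


# Hypothetical interassay results for a pool with an assigned value of 0.10 units
results = [0.125, 0.130, 0.120, 0.135, 0.130]
print(meets_goals(results))                               # True: CV is about 4.5%
print(meets_goals(results, target=0.10, bias_goal=15.0))  # False: bias is about 28%
```

A level can therefore satisfy a functional sensitivity criterion while failing an LOQ criterion, which is why the LOQ may sit at a higher concentration.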

The Clinical and Laboratory Standards Institute (CLSI) Viewpoint

In the field of clinical laboratory science, the term "sensitivity" carries distinct meanings that are frequently confused, potentially leading to misinterpretation of test capabilities and results. The Clinical and Laboratory Standards Institute (CLSI), a globally recognized standards-developing organization, provides critical guidance to harmonize terminology and methodologies across laboratory medicine [14]. Within this context, analytical sensitivity and functional sensitivity represent two fundamentally different performance characteristics, each with unique definitions, measurement approaches, and clinical applications. CLSI's standards serve to resolve longstanding ambiguities by establishing precise definitions and validation protocols that enable laboratories to accurately characterize the detection capabilities of their measurement procedures [2] [15]. This whitepaper examines the CLSI viewpoint on these distinct concepts, providing researchers and drug development professionals with a technical framework for proper evaluation and implementation of clinical laboratory tests.

Distinguishing Between Analytical and Functional Sensitivity

Analytical Sensitivity: Traditional Definition and Limitations

Analytical sensitivity has traditionally been defined as the smallest amount of a substance in a sample that can be accurately measured by an assay [16] [4]. In quantitative terms, it represents the lowest concentration that can be distinguished from background noise [1]. The conventional method for determining analytical sensitivity involves repeatedly measuring a blank sample (containing no analyte), calculating the mean signal and standard deviation (SD), and then determining the concentration equivalent to the mean blank signal plus 2 SD (for immunometric assays) or minus 2 SD (for competitive assays) [1]. Mathematically, for immunometric assays, this is expressed as:

$\text{Analytical Sensitivity} = \text{mean}_{\text{blank}} + 2 \times \text{SD}_{\text{blank}}$

Despite its historical use, analytical sensitivity has significant limitations in clinical practice. The primary issue is that imprecision increases substantially as analyte concentration decreases, meaning that even at concentrations above the stated analytical sensitivity, results may lack sufficient reproducibility for clinical utility [1]. This limitation prompted the development of a more clinically relevant concept—functional sensitivity.

Functional Sensitivity: A Clinically Relevant Approach

Functional sensitivity emerged in the early 1990s when researchers evaluating thyroid-stimulating hormone (TSH) assays recognized the need for a more practical measure of low-end performance [2] [1]. They defined functional sensitivity as "the lowest concentration at which an assay can report clinically useful results" with a maximum coefficient of variation (CV) of 20% [2]. This concept acknowledges that clinical usefulness requires not just detectability but also acceptable precision at low concentrations.

Unlike analytical sensitivity, which focuses solely on detectability, functional sensitivity incorporates precision requirements that reflect real-world clinical needs. The 20% CV threshold, while initially established for TSH assays, has been widely adopted for other biomarkers despite its somewhat arbitrary origins [1]. CLSI guidelines provide the methodological framework for properly determining functional sensitivity through rigorous multi-day precision testing at low analyte concentrations.

The CLSI Framework: EP17-A2 and Terminology Harmonization

CLSI addresses the confusion surrounding sensitivity terminology through the EP17-A2 guideline ("Evaluation of Detection Capability for Clinical Laboratory Measurement Procedures") [2] [17]. This document provides standardized approaches for evaluating and documenting the detection capability of clinical laboratory measurement procedures, including limits of blank (LOB), detection (LOD), and quantitation (LOQ) [17].

Notably, CLSI deliberately distances itself from the terms "analytical sensitivity" and "functional sensitivity" because of their history of incorrect usage and confusion with LOD and LOQ [2]. Instead, EP17-A2 promotes a standardized framework based on:

- Limit of Blank (LOB): The highest apparent analyte concentration expected to be found when replicates of a blank sample containing no analyte are tested [2] [15]

- Limit of Detection (LOD): The lowest true analyte concentration likely to be reliably distinguished from the LOB and at which detection is feasible [15]

- Limit of Quantitation (LOQ): The lowest concentration at which an analyte can not only be reliably detected but also measured with acceptable precision and trueness [15]

Table 1: Comparison of Key Concepts in Measurement Procedure Capability

| Term | Definition | Key Feature | Clinical Utility |
|---|---|---|---|
| Limit of Blank (LOB) | Highest measurement result likely to be observed for a blank sample [2] | $\text{mean}_{\text{blank}} + 1.645 \times \text{SD}_{\text{blank}}$ [2] | Defines the threshold above which a signal is distinguishable from background noise |
| Limit of Detection (LOD) | Lowest concentration that can be distinguished from the LOB with high probability [15] | Typically $\text{LOB} + 1.645 \times \text{SD}_{\text{low}}$ [15] | Indicates detectability but not necessarily quantitative reliability |
| Limit of Quantitation (LOQ) | Lowest concentration that can be quantified with acceptable precision and trueness [15] | Concentration where CV meets predefined goal (e.g., 20%) [15] | Defines the lower limit for clinically reportable quantitative results |
| Functional Sensitivity | Lowest concentration measurable with ≤20% CV [2] [1] | Based on long-term precision profiles | Determines clinically useful lower reporting limit |

Experimental Protocols for Detection Capability Evaluation

Protocol for Determining Limit of Blank (LOB) and Limit of Detection (LOD)

CLSI EP17-A2 provides detailed methodologies for establishing the fundamental detection capabilities of measurement procedures. For LOB determination, the protocol requires testing multiple blank samples (containing no analyte) in duplicate over multiple days (typically 3-5 days) using at least two different reagent lots [15]. This design captures both within-run and between-day variability. The resulting data set should include at least 60 measurements, which are used to calculate the mean and standard deviation of the blank responses. The LOB is then determined as:

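$\text{LOB} = \text{mean}_{\text{blank}} + 1.645 \times \text{SD}_{\text{blank}}$ (the one-sided 95th percentile of the blank results, assuming a normal distribution) [2]

For LOD determination, samples with low concentrations of analyte (near the expected detection limit) are similarly tested over multiple days with multiple reagent lots. The LOD is calculated as:

$\text{LOD} = \text{LOB} + 1.645 \times \text{SD}_{\text{low}}$, where $\text{SD}_{\text{low}}$ is the standard deviation of the low-concentration sample results [15]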

This protocol was applied, for example, in a recent SARS-CoV-2 serology assay validation study, where LOB was determined using five negative plasma samples collected prior to December 2019, tested in duplicate over three days by one operator using two reagent lots [15].
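Multi-day, multi-sample designs are often analyzed with a rank-based (nonparametric) LoB and a pooled SD across several low-level samples. The sketch below reflects our reading of the EP17-A2 approach (rank position 0.5 + 0.95 × B for the LoB, and a small-sample multiplier Cp = 1.645 / (1 − 1/(4(L − J))) for the LoD); treat these constants as assumptions to check against the guideline itself. The data are synthetic and represent a single reagent lot.

```python
import math
from statistics import stdev


def lob_nonparametric(blank_results, percentile=0.95):
    """Rank-based LoB: the result at rank 0.5 + B * 0.95 (1-based) of the sorted
    blank results, interpolating between the two neighbouring ranks."""
    values = sorted(blank_results)
    rank = 0.5 + len(values) * percentile
    lower = math.floor(rank)
    if lower >= len(values):
        return values[-1]
    frac = rank - lower
    return values[lower - 1] + frac * (values[lower] - values[lower - 1])


def pooled_sd(groups):
    """Pooled SD across several low-level samples (one list of results per sample)."""
    num = sum((len(g) - 1) * stdev(g) ** 2 for g in groups)
    den = sum(len(g) - 1 for g in groups)
    return math.sqrt(num / den)


def lod_parametric(lob, low_groups):
    """LoD = LoB + Cp * SD_L, with Cp an assumed small-sample correction to 1.645."""
    total = sum(len(g) for g in low_groups)  # L: total number of low-level results
    n_samples = len(low_groups)              # J: number of low-level samples
    cp = 1.645 / (1 - 1 / (4 * (total - n_samples)))
    return lob + cp * pooled_sd(low_groups)


# Synthetic data for one reagent lot (illustrative only): 60 blank results, 3 low pools
blanks = [0.001 * (i % 12) for i in range(60)]
low_pools = [
    [0.030, 0.034, 0.028, 0.036, 0.032],
    [0.041, 0.045, 0.039, 0.047, 0.043],
    [0.052, 0.049, 0.055, 0.050, 0.054],
]
lob = lob_nonparametric(blanks)
lod = lod_parametric(lob, low_pools)
print(f"LoB = {lob:.4f}, LoD = {lod:.4f}")
```

The rank-based LoB avoids assuming normality for blank results, which are often skewed or truncated at zero.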

Protocol for Determining Functional Sensitivity

The determination of functional sensitivity requires a precision-based approach that evaluates the assay's performance at progressively lower analyte concentrations. The CLSI-recommended protocol involves:

- Sample Selection: Obtain or prepare samples (e.g., patient pools, control materials) with concentrations spanning the expected low-end quantitative range. Ideally, use undiluted patient samples, though diluted samples or appropriate control materials are acceptable alternatives [1].

- Study Design: Analyze samples repeatedly over an extended period (typically 10-20 days) using multiple reagent lots and operators to capture total imprecision [1].

- Data Analysis: Calculate the mean, standard deviation, and coefficient of variation (CV = [SD/Mean] × 100%) for each concentration level.

- Result Interpretation: Plot CV against concentration and determine the lowest concentration where the CV meets the predefined precision goal (traditionally 20% for many assays) [2] [1].

This methodology was implemented in a COVID-19 serology study where samples diluted to various concentrations in negative matrix were tested extensively to establish functional sensitivity for anti-Spike and anti-Nucleocapsid IgG and IgM assays [15].
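Capturing total imprecision means separating within-run from between-run variability. One standard way to do this for a balanced design is a one-way ANOVA variance-components analysis; the sketch below assumes every run has the same number of replicates, and the run data are hypothetical.

```python
import math
from statistics import mean


def imprecision(runs):
    """Within-run and total CV (%) from a balanced design: k runs x n replicates.

    Uses one-way ANOVA variance components:
      within-run variance  = MS_within
      between-run variance = max(0, (MS_between - MS_within) / n)
      total variance       = within-run + between-run
    """
    k, n = len(runs), len(runs[0])
    grand = mean(x for run in runs for x in run)
    run_means = [mean(run) for run in runs]
    ss_within = sum((x - m) ** 2 for run, m in zip(runs, run_means) for x in run)
    ss_between = n * sum((m - grand) ** 2 for m in run_means)
    ms_within = ss_within / (k * (n - 1))
    ms_between = ss_between / (k - 1)
    var_between = max(0.0, (ms_between - ms_within) / n)
    within_cv = 100 * math.sqrt(ms_within) / grand
    total_cv = 100 * math.sqrt(ms_within + var_between) / grand
    return within_cv, total_cv


# Hypothetical low-level pool: 5 runs on different days, 2 replicates per run
runs = [[0.048, 0.052], [0.061, 0.058], [0.041, 0.044], [0.055, 0.057], [0.046, 0.049]]
within, total = imprecision(runs)
print(f"within-run CV = {within:.1f}%, total CV = {total:.1f}%")
```

With these data the within-run CV is far smaller than the total CV, which is why a single run with many replicates can make an assay look more precise at low concentrations than it really is.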

Protocol for Verification of Manufacturer Claims

For laboratories verifying manufacturer claims for detection capabilities, CLSI provides specific verification protocols. These typically require testing a smaller number of replicates than full characterization studies but maintain the principles of multi-day testing with appropriate materials. The verification experiment should include:

- Testing of blank samples for LOB verification

- Low-concentration samples for LOD verification

- Samples across the low concentration range for LOQ/functional sensitivity verification

The laboratory compares its results against manufacturer claims using predefined acceptance criteria, often based on statistical confidence intervals [17].
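As one example of a statistically based acceptance rule, the sketch below checks a claimed LoB. If the claim is correct, each blank result should fall at or below it with probability of about 0.95, so a one-sided binomial test flags the claim only when too few results do. This is an illustrative rule under that assumption, not the specific acceptance criterion prescribed by CLSI; the claimed LoB and results are hypothetical.

```python
from math import comb


def binom_cdf(k, n, p):
    """P(X <= k) for X ~ Binomial(n, p)."""
    return sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k + 1))


def verify_lob_claim(blank_results, claimed_lob, expected=0.95, alpha=0.05):
    """If the claimed LoB is right, each blank result is <= LoB with probability ~0.95.
    Flag the claim only when the observed count is improbably low (one-sided binomial test)."""
    n = len(blank_results)
    at_or_below = sum(1 for x in blank_results if x <= claimed_lob)
    p_value = binom_cdf(at_or_below, n, expected)
    return at_or_below / n, p_value, p_value >= alpha


# Hypothetical verification runs: 20 blank results against a claimed LoB of 0.05 units
ok_run = [0.01] * 18 + [0.07] * 2
bad_run = [0.01] * 16 + [0.07] * 4
print(verify_lob_claim(ok_run, 0.05))
print(verify_lob_claim(bad_run, 0.05))
```

With 20 results, 18 at or below the claim is consistent with a 95% expectation, whereas 16 is unlikely enough (p ≈ 0.016) to warrant investigation.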

Relationships and Applications in Clinical Practice

The Relationship Between Different Detection Capability Metrics

The various detection capability metrics exist in a hierarchical relationship, with each serving a distinct purpose in characterizing assay performance. This progression from detection to quantitation represents increasing levels of performance requirement, with functional sensitivity (conceptually similar to LOQ) representing the most stringent criterion for clinically useful measurement.

Figure: Detection capability relationship

Impact on Clinical Decision Making and Research Applications

The distinction between detection capability metrics has direct implications for clinical practice and research. Functional sensitivity determines the lower limit of the reportable range—the concentration below which results should be reported as "less than" rather than as numeric values [1]. This prevents clinicians from interpreting numerically different but imprecise low values as clinically significant changes.
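A minimal sketch of this reporting rule is shown below. The lower reporting limit of 0.01 mIU/L is a hypothetical value chosen for illustration, not a recommendation for any particular assay.

```python
def report(value, lower_limit, units):
    """Return a numeric result only at or above the lower reporting limit;
    below it, report "< limit" so imprecise low values are not compared numerically."""
    if value < lower_limit:
        return f"< {lower_limit:g} {units}"
    return f"{value:g} {units}"


# Hypothetical lower reporting limit of 0.01 mIU/L (e.g. set at a TSH assay's functional sensitivity)
for v in (0.004, 0.007, 0.25):
    print(report(v, 0.01, "mIU/L"))
```

The two low results (0.004 and 0.007) are both reported as "< 0.01 mIU/L", so a clinician cannot mistake their numeric difference for a real change.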

In research settings, particularly in drug development and biomarker discovery, understanding these distinctions is crucial for:

- Assay Selection: Choosing tests with appropriate functional sensitivity for monitoring treatment response

- Protocol Development: Defining inclusion criteria and endpoints based on reliably measurable analyte levels

- Data Interpretation: Recognizing the limitations of values near the detection limit

- Method Comparison: Ensuring equitable comparison of results from different measurement procedures

Application in Specific Testing Scenarios

The proper application of detection capability concepts varies by clinical context:

- Infectious Disease Testing: For quantitative molecular tests (e.g., viral load monitoring), functional sensitivity determines the threshold for reliable detection of treatment response or disease progression. The low-end precision is critical for distinguishing biologically significant changes from analytical variation [4].

- Endocrinology: In hormone testing (e.g., TSH, cortisol), functional sensitivity establishes the concentration below which results cannot reliably distinguish between hypofunction and normal variation [1].

- Serology Testing: For antibody quantification (e.g., SARS-CoV-2 serology), functional sensitivity defines the minimum antibody level that can be reliably tracked over time to monitor immune response [15].

Table 2: Research Reagent Solutions for Detection Capability Studies

| Reagent Type | Function in Experiments | Application Example | Considerations |
|---|---|---|---|
| International Standards | Calibration to reference materials for result harmonization [15] | WHO International Standard for anti-SARS-CoV-2 immunoglobulin [15] | Enables comparability across different laboratories and platforms |
| Negative Matrix Samples | Determination of LOB and background signal [15] | Pre-pandemic plasma/serum for infectious disease assays [15] | Must be truly analyte-free with appropriate matrix composition |
| Low-Level Controls | Evaluation of LOD and functional sensitivity [1] | Diluted patient samples or commercial controls near detection limit [1] | Should mimic patient sample matrix; avoid artificial diluents |
| Linearity Panels | Assessment of reportable range and LOQ [15] | Serially diluted clinical samples in negative matrix [15] | Must cover concentration range from below LOD to upper limit |
| Multiplex Validation Materials | Verification of analytical specificity [4] | Panels of related organisms for cross-reactivity testing [4] | Should include common cross-reactants and interfering substances |

The CLSI viewpoint provides a crucial framework for understanding and applying detection capability concepts in clinical laboratory medicine. By distinguishing between fundamental detection limits (LOD) and clinically useful quantification limits (functional sensitivity/LOQ), the EP17-A2 guideline enables researchers and drug development professionals to properly validate and implement measurement procedures. The adoption of standardized terminology and methodologies ensures that laboratory results are both reliable and clinically applicable, ultimately supporting better patient care and robust research outcomes. As laboratory medicine continues to evolve with new technologies and biomarkers, adherence to these consensus standards will remain essential for generating comparable and trustworthy data across the healthcare continuum.

In both clinical diagnostics and preclinical drug development, the accurate characterization of assay performance at low analyte concentrations is paramount. Two distinct but often conflated concepts—analytical sensitivity (the lowest concentration distinguishable from background noise) and functional sensitivity (the lowest concentration measurable with clinically usable precision)—govern this space. While analytical sensitivity defines the theoretical detection limit, functional sensitivity determines the practical utility of an assay in real-world applications. This whitepaper elucidates the critical differences between these performance characteristics, their experimental determination protocols, and their profound implications for research validity, diagnostic accuracy, and drug development efficacy. Understanding this distinction enables researchers to select appropriate assays, interpret data correctly, and avoid costly misinterpretations in critical decision-making processes.

In analytical chemistry and clinical diagnostics, "sensitivity" is an overloaded term that requires careful disambiguation. The distinction between analytical and functional sensitivity represents a fundamental divide between theoretical detection capability and practical measurement utility. Analytical sensitivity, formally defined as the lowest concentration that can be distinguished from background noise, represents the theoretical detection limit of an assay [1]. In practice, this is typically determined by measuring replicates of a blank sample and calculating the concentration equivalent to the mean of the blank plus 2 standard deviations (for immunometric assays) or minus 2 standard deviations (for competitive assays) [1]. This parameter, often termed the Limit of Detection (LoD), answers the question: "What is the lowest concentration this assay can theoretically detect?"

In contrast, functional sensitivity addresses a more pragmatic concern: "What is the lowest concentration at which this assay can report clinically useful results?" [1] Developed originally for thyroid-stimulating hormone (TSH) assays in the 1990s, functional sensitivity is defined as the lowest analyte concentration that can be measured with a specified precision, typically a coefficient of variation (CV) of ≤20% [1] [2]. This parameter acknowledges that even well above the analytical sensitivity, imprecision may be so substantial that results lack clinical or research utility due to poor reproducibility.

Table 1: Fundamental Definitions and Distinctions

| Characteristic | Analytical Sensitivity | Functional Sensitivity |
|---|---|---|
| Definition | Lowest concentration distinguishable from background noise | Lowest concentration measurable with clinically usable precision |
| Common Terminology | Limit of Detection (LoD), Detection Limit | Practical Quantitation Limit |
| Primary Focus | Signal-to-noise separation | Measurement reproducibility |
| Typical CV Requirement | None specified | ≤20% (or other predefined precision goal) |
| Determining Factors | Blank variability, assay signal strength | Overall assay imprecision at low concentrations |

Theoretical Foundations and Statistical Underpinnings

The Statistical Basis of Detection and Quantification

The conceptual framework for understanding analytical and functional sensitivity rests on statistical principles governing measurement uncertainty. The Limit of Blank (LoB) establishes the baseline, defined as the highest apparent analyte concentration expected when replicates of a blank sample are tested [7]. Calculated as $\text{LoB} = \text{mean}_{\text{blank}} + 1.645 \times \text{SD}_{\text{blank}}$, it corresponds to the 95th percentile of blank results (assuming a normal distribution) and represents the threshold above which a signal is unlikely to come from a blank sample [7].

Building on this foundation, the Limit of Detection (LoD), synonymous with analytical sensitivity, represents the lowest concentration that can be reliably distinguished from the LoB. According to CLSI guidelines, LoD is determined using both the measured LoB and test replicates of a sample with low analyte concentration: $\text{LoD} = \text{LoB} + 1.645 \times \text{SD}_{\text{low}}$, where $\text{SD}_{\text{low}}$ is the standard deviation of the low-concentration sample [7]. This calculation ensures that 95% of measurements from a sample at the LoD will exceed the LoB, limiting false negatives to about 5%.
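The 5% error rates implied by the 1.645 multiplier can be checked directly from the normal distribution. The sketch below assumes normally distributed results and hypothetical SDs that stay constant near zero; it also computes, for comparison, the fraction of blanks that exceed the traditional mean + 2 SD cut-off.

```python
from statistics import NormalDist

# Hypothetical SDs (concentration units), assumed constant near zero
mean_blank, sd_blank, sd_low = 0.0, 0.010, 0.015

lob = mean_blank + 1.645 * sd_blank
lod = lob + 1.645 * sd_low

# Blank results expected above the LoB (false positives)
false_positive = 1 - NormalDist(mean_blank, sd_blank).cdf(lob)
# Results from a sample truly at the LoD expected at or below the LoB (false negatives)
false_negative = NormalDist(lod, sd_low).cdf(lob)
# For comparison: fraction of blanks above the traditional mean + 2 SD cut-off
two_sd_tail = 1 - NormalDist().cdf(2)

print(f"false positives {false_positive:.3f}, false negatives {false_negative:.3f}, "
      f"2 SD tail {two_sd_tail:.4f}")
```

Both error rates come out at about 5%, while the mean + 2 SD rule leaves roughly 2.3% of blank results above its cut-off.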

Functional sensitivity operates in a different statistical realm, focusing not merely on detection but on reliable quantification. At concentrations near the LoD, the relative imprecision (CV) increases dramatically, compromising result reliability. Functional sensitivity establishes a precision threshold—typically a CV of 20% or less—that defines the lowest concentration suitable for practical application [1] [7]. This aligns with the concept of Limit of Quantitation (LoQ), though functional sensitivity specifically emphasizes clinical or research utility rather than purely analytical performance.

Mathematical Representations

The relationship between concentration and precision follows a predictable pattern captured in precision profiles, which graphically represent how assay imprecision changes with analyte concentration [1]. These profiles typically show high CV values at very low concentrations, with improving precision as concentration increases. The functional sensitivity is identified as the point where the precision profile crosses the predetermined CV threshold (e.g., 20%).
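A precision profile can also be summarized by a fitted model instead of interpolation. The sketch below assumes a commonly used two-component model, SD(x) = √(a² + (b·x)²), where a is a constant SD near zero and b a proportional CV. The document does not prescribe this model, and the parameter values are hypothetical. Setting CV(x) equal to the goal g and solving gives x = a / √(g² − b²).

```python
import math


def cv_model(x, a, b):
    """Assumed two-component model: SD(x) = sqrt(a**2 + (b*x)**2); returns CV as a fraction."""
    return math.sqrt(a**2 + (b * x) ** 2) / x


def fs_from_model(a, b, cv_goal=0.20):
    """Solve a**2 + b**2 x**2 = cv_goal**2 x**2 for x (needs cv_goal > b)."""
    if cv_goal <= b:
        raise ValueError("the proportional CV component alone exceeds the goal")
    return a / math.sqrt(cv_goal**2 - b**2)


# Hypothetical parameters: constant SD 0.01 units near zero, 5% proportional CV
a, b = 0.01, 0.05
fs = fs_from_model(a, b)
for x in (0.02, 0.05, fs, 0.10, 0.50):
    print(f"{x:.4f}: CV = {100 * cv_model(x, a, b):.1f}%")
```

The printed profile shows CV falling steeply with concentration and crossing 20% exactly at the computed functional sensitivity.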

For calibration sensitivity, which differs from both analytical and functional sensitivity, the relationship is defined as the slope of the calibration curve (S = dy/dx), where a steeper slope indicates greater responsivity to concentration changes [2] [6]. However, this responsivity alone does not indicate the lowest measurable concentration, as it lacks information about measurement variability.

Figure 1: Relationship between blank assessment, detection limits, and functional sensitivity

Experimental Protocols for Determination

Determining Analytical Sensitivity (Limit of Detection)

Establishing the analytical sensitivity requires a systematic approach focusing on signal distinction from background noise. According to CLSI guidelines and industry best practices, the following protocol is recommended:

Sample Preparation and Testing:

- Utilize a true blank sample with an appropriate matrix (e.g., zero calibrator or analyte-free serum) [1]

- Prepare 20-60 replicates (20 for verification; 60 for establishment) of the blank sample [7]

- For molecular diagnostics, include controls for nucleic acid extraction to detect process errors [4]

- Test replicates in multiple analytical runs to capture system variability

Calculation and Interpretation:

- Calculate the mean and standard deviation (SD) of the measured signals (e.g., counts per second, optical density) from blank replicates

- For immunometric assays: $\text{Analytical Sensitivity} = \text{mean}_{\text{blank}} + 2 \times \text{SD}_{\text{blank}}$ [1]

- For competitive assays: $\text{Analytical Sensitivity} = \text{mean}_{\text{blank}} - 2 \times \text{SD}_{\text{blank}}$ [1]

- Express the result as the concentration equivalent to the calculated signal value

Assuming blank measurements are normally distributed, a 2 SD cut-off lies beyond about 97.7% of blank results (one-sided), so a sample reading past it can be distinguished from the blank with roughly 95% or greater confidence. However, this approach primarily verifies the ability to detect presence versus absence of analyte without regard to measurement precision at low concentrations.

Determining Functional Sensitivity

Establishing functional sensitivity requires a more comprehensive approach that evaluates assay precision across a low concentration range. The recommended protocol, adapted from clinical laboratory guidelines and molecular diagnostics best practices, involves:

Sample Selection and Preparation:

- Ideally, use undiluted patient samples or pools with concentrations spanning the expected functional sensitivity range [1]

- When natural low-concentration samples are unavailable, prepare dilutions of known positive samples in appropriate matrix [1]

- Avoid using routine sample diluents for creating low concentrations, as they may contain detectable analyte levels that bias results [1]

- Include 3-5 different concentration levels bracketing the expected functional sensitivity

Testing Protocol:

- Analyze replicates at each concentration level across multiple different runs (at least 5-10 separate runs) [1]

- Space testing over days or weeks to capture true day-to-day (interassay) variability [1]

- A single run with multiple replicates does not adequately assess functional sensitivity

- Include 20 measurements at, above, and below the likely functional sensitivity for robust determination [4]

Data Analysis and Interpretation:

- For each concentration level, calculate the mean, standard deviation, and coefficient of variation (CV = SD/mean × 100%)

- Plot CV against concentration to generate a precision profile

- Identify the lowest concentration where the CV meets the predefined precision goal (typically ≤20%)

- If no tested concentration coincides exactly with the CV threshold, interpolate from the precision profile

Table 2: Comparison of Experimental Protocols

| Protocol Aspect | Analytical Sensitivity | Functional Sensitivity |
|---|---|---|
| Sample Type | True blank/zero sample | Low-concentration patient samples or pools |
| Replicates | 20-60 replicates | Multiple concentrations tested over multiple runs |
| Timeframe | Single experiment possible | Requires multiple days/weeks |
| Key Calculations | $\text{mean}_{\text{blank}} \pm 2 \times \text{SD}_{\text{blank}}$ | CV = (SD/mean) × 100% |
| Acceptance Criterion | Distinguishable from blank | CV ≤ 20% (or other predefined precision goal) |
| Primary Outcome | Concentration distinguishable from zero | Lowest clinically/research-useful concentration |

Applications in Research and Drug Development

Diagnostic Assay Development and Validation

In clinical diagnostics, the distinction between analytical and functional sensitivity directly impacts patient care decisions. For example, in thyroid function testing, distinguishing euthyroid from hyperthyroid patients requires precise measurement of very low TSH concentrations [1] [7]. An assay with excellent analytical sensitivity (low LoD) but poor functional sensitivity (high CV at low concentrations) might detect TSH but fail to reliably monitor suppression therapy. This explains why package inserts for immunoassays typically specify both parameters, with the lower reporting limit often set at or above the functional sensitivity rather than the analytical sensitivity [1].

In molecular diagnostics, particularly for infectious diseases like SARS-CoV-2, analytical sensitivity determines the lowest viral load detectable, while functional sensitivity ensures consistent detection near the clinical decision threshold [4] [18]. During the COVID-19 pandemic, RT-qPCR protocols were rigorously validated for both characteristics to ensure reliable detection of infected individuals, particularly those with low viral loads [18]. The modified RdRP and E gene assays in one evaluation demonstrated adequate analytical sensitivity but were ultimately replaced by the N1 assay due to better functional performance with clinical samples [18].

Preclinical Drug Development Models

In preclinical toxicology, sensitivity and specificity take on related but distinct meanings. Sensitivity in this context is a classification measure (closer to diagnostic than to analytical sensitivity): a model's ability to correctly identify toxic compounds (true positive rate), while specificity indicates the ability to correctly identify safe compounds (true negative rate) [19]. The two characteristics involve a fundamental trade-off: increasing sensitivity typically decreases specificity, and vice versa.

Advanced models like liver-chips demonstrate how this balance impacts drug development decisions. In one study, researchers set a threshold to achieve 100% specificity (no false positives), meaning no safe drugs would be incorrectly flagged as toxic [19]. At this threshold, the model maintained 87% sensitivity, correctly identifying most toxic compounds without sacrificing good drugs [19]. This balance is critical in early drug development, where discarding a promising compound due to false toxicity signals can waste billions in development costs and deprive patients of potential treatments.
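The threshold choice described above can be sketched as follows, assuming each compound receives a numeric toxicity score and is flagged as toxic when its score exceeds the threshold. The scoring scheme and the example values are hypothetical; the cited liver-chip study's actual scoring metric is not described here.

```python
from typing import Sequence, Tuple


def threshold_for_full_specificity(toxic_scores: Sequence[float],
                                   safe_scores: Sequence[float]) -> Tuple[float, float]:
    """Set the threshold so that no safe compound is flagged, then report sensitivity.

    A compound is flagged toxic when its score is strictly greater than the
    threshold, so using the highest safe-compound score gives 100% specificity.
    """
    threshold = max(safe_scores)
    flagged = sum(1 for s in toxic_scores if s > threshold)
    sensitivity = flagged / len(toxic_scores)
    return threshold, sensitivity


# Hypothetical scores: three of four toxic compounds score above every safe one.
threshold, sensitivity = threshold_for_full_specificity(
    toxic_scores=[0.9, 0.8, 0.4, 0.95], safe_scores=[0.1, 0.3, 0.5])
```

Lowering the threshold below 0.5 would catch the remaining toxic compound only by also flagging a safe one, which is the trade-off the text describes.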

Figure 2: Impact of sensitivity-specificity balance on drug development decisions

Signaling Pathway Analysis and Drug Target Identification

Sensitivity analysis in systems biology employs related but distinct concepts to identify potential drug targets in signaling pathways. Local sensitivity analysis examines how changes in model parameters (e.g., kinetic rates) affect system responses, helping identify processes whose modulation would significantly alter pathway behavior [20].

In a p53/Mdm2 regulatory module study, sensitivity analysis identified parameters whose reduction would prolong elevated p53 levels, potentially promoting apoptosis in cancer cells [20]. This approach differs from classical analytical sensitivity but shares the fundamental principle of quantifying how system outputs respond to input changes. The highest-ranking parameters from such analyses indicate processes that represent promising drug targets, guiding subsequent searches for active compounds that modulate these targets [20].
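Local sensitivity analysis can be sketched with normalized sensitivity coefficients, S_i = (p_i / y) · ∂y/∂p_i, estimated by central finite differences. The model below is a hypothetical toy, not the published p53/Mdm2 module: it uses a steady-state p53 level rather than the duration of p53 elevation, and all parameter names and values are assumptions for illustration.

```python
from typing import Callable, Dict, List

Params = Dict[str, float]


def local_sensitivities(model: Callable[[Params], float], params: Params,
                        rel_step: float = 1e-4) -> Params:
    """Normalized local sensitivity coefficients S_i = (p_i / y) * dy/dp_i.

    Each derivative is estimated by a central finite difference that perturbs
    one parameter at a time by +/- rel_step (relative) around its nominal value.
    """
    y0 = model(params)
    coefficients: Params = {}
    for name, value in params.items():
        h = value * rel_step
        y_up = model({**params, name: value + h})
        y_down = model({**params, name: value - h})
        dy_dp = (y_up - y_down) / (2.0 * h)
        coefficients[name] = dy_dp * value / y0
    return coefficients


def toy_p53_steady_state(p: Params) -> float:
    """HYPOTHETICAL toy model: p53 is synthesized at k_syn and degraded basally
    (k_deg) and via Mdm2 (k_mdm2 * mdm2), giving k_syn / (k_deg + k_mdm2 * mdm2)."""
    return p["k_syn"] / (p["k_deg"] + p["k_mdm2"] * p["mdm2"])


nominal = {"k_syn": 1.0, "k_deg": 0.1, "k_mdm2": 0.9, "mdm2": 1.0}
S = local_sensitivities(toy_p53_steady_state, nominal)

# Rank parameters by the magnitude of their effect on the output.
ranking: List[str] = sorted(S, key=lambda name: abs(S[name]), reverse=True)
```

In this toy case the Mdm2-dependent degradation terms have coefficients near -0.9, so reducing them raises p53 almost proportionally, while basal degradation (about -0.1) matters little. A negative coefficient of large magnitude is the kind of signal that would flag a process as a candidate target for inhibition.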

Essential Research Reagents and Materials

Successful determination of analytical and functional sensitivity requires appropriate research materials and controls. The following table summarizes key reagents and their applications in sensitivity characterization:

Table 3: Essential Research Reagent Solutions for Sensitivity Determination

| Reagent/Control Type | Function/Purpose | Key Considerations |
|---|---|---|
| Matrix-Matched Blank | Establishing baseline signal and determining LoB | Must use true zero analyte material in appropriate sample matrix [1] |
| ACCURUN Molecular Controls | Challenging entire assay process from extraction through detection | Whole-organism controls appropriate for molecular assays [4] |
| Linearity/Performance Panels | Evaluating precision across concentration range | AccuSeries and similar panels expedite functional sensitivity determination [4] |
| Low-Positive Patient Pools | Assessing functional sensitivity with real-world samples | Undiluted patient samples preferred over artificial dilutions [1] |
| Appropriate Diluents | Preparing low-concentration samples | Avoid routine sample diluents that may contain detectable analyte [1] |
| Multiplex Microsphere Sets | Simultaneously evaluating multiple biomarkers | Color-coded beads allow multiple analyses in single sample [21] |

The distinction between analytical and functional sensitivity is far more than semantic pedantry: it marks the divide between theoretical detection capability and practical measurement utility. In research and drug development, overlooking this distinction risks costly misinterpretations. An assay with exemplary analytical sensitivity may prove inadequate for monitoring treatment response, while a model optimized for sensitivity without regard to specificity may prematurely eliminate promising drug candidates.

Understanding these concepts enables researchers to make informed decisions about assay selection, experimental design, and data interpretation. By rigorously determining both analytical and functional sensitivity during assay validation, and by carefully considering the sensitivity-specificity balance in preclinical models, researchers can enhance the reliability of their findings, improve development efficiency, and ultimately contribute to better health outcomes. As analytical technologies advance and therapeutic targets become increasingly challenging, this distinction will only grow in importance for extracting meaningful signals from biological complexity.

Measurement and Application: How to Determine and Use Sensitivity Metrics

Methodology for Determining Analytical Sensitivity

In clinical and analytical chemistry, accurately determining the sensitivity of an assay is fundamental to reliable diagnostic and research outcomes. The methodology for establishing analytical sensitivity is best understood alongside the related but distinct concept of functional sensitivity. Analytical sensitivity is the lowest concentration of an analyte that an assay can reliably differentiate from zero, typically expressed as the limit of detection (LOD); functional sensitivity is the lowest concentration at which the assay can measure the analyte with acceptable precision, usually defined by a coefficient of variation (CV) of 20% [22]. This distinction is critical for researchers and drug development professionals who validate assays for clinical or research use, because measurements must be not merely detectable but also reproducible and precise at clinically relevant decision thresholds.

This guide provides an in-depth technical examination of the established methodologies for determining analytical sensitivity, supported by contemporary experimental data and protocols. It further explores the practical implications of this differentiation through case studies in thyroid cancer monitoring and infectious disease testing.

Core Methodological Frameworks

Establishing the Limit of Detection (LOD)

The Limit of Detection (LOD) is the foundational metric for analytical sensitivity. It is defined as the lowest concentration of an analyte that can be detected, but not necessarily quantified, under stated experimental conditions. The most common methodologies for its determination are based on statistical analysis of blank and low-concentration samples.

- Procedure Using Blank and Low-Concentration Samples: A recommended protocol involves repeatedly measuring (e.g., n=20) a blank sample (containing no analyte) and a series of low-concentration samples. The simplest estimate takes the mean signal of the blank plus three standard deviations (SD) of the blank measurements as the detection threshold, which is then converted to a concentration through the calibration curve. Alternatively, the approach described in CLSI EP17 first sets the limit of blank (LoB = mean of the blank + 1.645 SD of the blank) and then defines LoD = LoB + 1.645 SD of a low-concentration sample. Either way, the LOD estimates the concentration at which the signal can be distinguished from noise with high confidence.

- Signal-to-Noise Ratio: In techniques like chromatography or spectroscopy, the LOD is often determined as the concentration that yields a signal-to-noise ratio of 2:1 or 3:1. This approach is practical for instrumental analysis where background noise is readily measurable.
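Both blank-based calculations from the list above can be sketched in a few lines. The linear calibration (signal = intercept + slope × concentration), the function names, and the example numbers are assumptions for illustration. The EP17-style version is simplified: it uses the Gaussian 1.645 multiplier and omits the guideline's small-sample correction factors.

```python
import statistics
from typing import Sequence, Tuple


def lod_from_blank(blank_signals: Sequence[float], slope: float, intercept: float,
                   k: float = 3.0) -> float:
    """LOD as mean(blank) + k*SD(blank), converted from signal to concentration.

    Assumes a linear calibration: signal = intercept + slope * concentration.
    """
    threshold = statistics.mean(blank_signals) + k * statistics.stdev(blank_signals)
    return (threshold - intercept) / slope


def lob_lod_parametric(blank_conc: Sequence[float],
                       low_conc: Sequence[float]) -> Tuple[float, float]:
    """Simplified parametric LoB/LoD (CLSI EP17 style), in concentration units.

    LoB = mean(blank) + 1.645*SD(blank); LoD = LoB + 1.645*SD(low sample).
    """
    lob = statistics.mean(blank_conc) + 1.645 * statistics.stdev(blank_conc)
    lod = lob + 1.645 * statistics.stdev(low_conc)
    return lob, lod
```

With blank signals of 1.0, 2.0, and 3.0 (mean 2.0, SD 1.0), the signal threshold is 5.0; with a calibration intercept of 2.0 and slope of 2.0, that corresponds to an LOD of 1.5 concentration units. In practice each input would hold about 20 replicates rather than three.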

Table 1: Key Definitions in Sensitivity Assessment

| Term | Definition | Typical Determination Criterion |
|---|---|---|
| Analytical Sensitivity (LOD) | The lowest concentration an assay can reliably distinguish from a blank. | Mean signal of blank + 2 or 3 Standard Deviations. |
| Functional Sensitivity | The lowest concentration an assay can measure with acceptable precision. | Concentration at which the CV is 20%. |
| Limit of Quantification (LOQ) | The lowest concentration that can be quantitatively measured with acceptable precision and accuracy. | Often defined as a CV of 10% or 15%. |

Determining Functional Sensitivity

While the LOD answers "Can I see it?", functional sensitivity answers "Can I measure it reliably?". The standard methodology involves a precision-profile experiment.

- Experimental Protocol: Prepare and analyze a dilution series of the analyte across a wide concentration range, including very low levels near the expected LOD. Each concentration level should be tested in multiple replicates (e.g., 20 replicates) over multiple days to capture both within-run and total imprecision.

- Data Analysis: Calculate the CV for each concentration level. Plot the CV against the analyte concentration. The point where the precision profile curve crosses the pre-defined CV threshold (e.g., 20%) is the functional sensitivity. This represents the practical lower limit of the assay's useful working range.
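The data-analysis step can be sketched as a precision profile with interpolation to the CV threshold. The helper names are hypothetical, and two choices are assumptions rather than part of the protocol: the CV is taken to fall as concentration rises, and the crossing is interpolated linearly on a log10 concentration axis.

```python
import math
import statistics
from typing import Dict, List, Optional


def cv_percent(replicates: List[float]) -> float:
    """Coefficient of variation (%) = 100 * SD / mean."""
    return 100.0 * statistics.stdev(replicates) / statistics.mean(replicates)


def functional_sensitivity(levels: Dict[float, List[float]],
                           cv_limit: float = 20.0) -> Optional[float]:
    """Concentration at which the precision profile crosses cv_limit.

    Interpolates between the two bracketing levels on a log10 concentration
    axis. Returns None if the profile does not cross the limit in the tested range.
    """
    profile = sorted((conc, cv_percent(vals)) for conc, vals in levels.items())
    for (c_lo, cv_lo), (c_hi, cv_hi) in zip(profile, profile[1:]):
        if cv_lo > cv_limit >= cv_hi:
            frac = (cv_lo - cv_limit) / (cv_lo - cv_hi)
            log_c = math.log10(c_lo) + frac * (math.log10(c_hi) - math.log10(c_lo))
            return 10 ** log_c
    return None


# Hypothetical replicate results (ng/mL); a real study would use ~20 per level.
levels = {
    0.05: [0.03, 0.05, 0.07],    # CV 40%
    0.1: [0.07, 0.10, 0.13],     # CV 30%
    0.2: [0.18, 0.20, 0.22],     # CV 10%
    0.5: [0.475, 0.500, 0.525],  # CV 5%
}
fs = functional_sensitivity(levels)  # ≈ 0.141 ng/mL (log-midpoint of 0.1 and 0.2)
```

Interpolating on a log axis reflects the usual practice of plotting precision profiles against log concentration; linear interpolation on the raw axis would give a slightly higher estimate (0.15 ng/mL here).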

Case Study: Ultrasensitive vs. Highly Sensitive Thyroglobulin Assays

A 2025 study on differentiated thyroid cancer (DTC) monitoring provides a clear, real-world application of these methodologies, directly comparing a third-generation (ultrasensitive) and a second-generation (highly sensitive) thyroglobulin (Tg) assay [22].

Experimental Protocol and Materials

- Assays Compared: The highly sensitive Tg (hsTg) assay was the BRAHMS Dynotest Tg-plus (functional sensitivity: 0.2 ng/mL). The ultrasensitive Tg (ultraTg) assay was the RIAKEY Tg immunoradiometric assay (functional sensitivity: 0.06 ng/mL) [22].

- Subject Cohort: 268 DTC patients who had undergone total thyroidectomy and radioiodine treatment.

- Sample Collection: Both unstimulated and TSH-stimulated serum samples were collected. Stimulation was achieved via levothyroxine withdrawal or recombinant human TSH injection.

- Measurement: Serum samples were stored at -20°C until evaluation. Tg levels were measured using both IRMA kits. For values below the analytical sensitivity, the sensitivity threshold value itself was used as a substitute for statistical analysis.

- Statistical Analysis: Correlation between assays was assessed using Pearson correlation. Diagnostic performance to predict a stimulated Tg level of ≥1 ng/mL was evaluated using Receiver Operating Characteristic (ROC) curve analysis to determine optimal cut-off values, sensitivity, and specificity.
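The substitution rule and the ROC cut-off search described in this protocol can be sketched as follows. Selecting the cut-off by maximizing Youden's J (sensitivity + specificity − 1) is an assumption: the text does not say which optimality criterion the study used. The data are hypothetical, and only observed values are tried as cut-offs (some software uses midpoints between values instead).

```python
from typing import List, Optional, Sequence, Tuple


def substitute_below_lod(values: Sequence[Optional[float]], lod: float) -> List[float]:
    """Replace results below the analytical sensitivity (None or < lod) by lod itself."""
    return [lod if v is None or v < lod else v for v in values]


def youden_cutoff(scores: Sequence[float],
                  positive: Sequence[bool]) -> Tuple[float, float, float]:
    """Return (cut-off, sensitivity, specificity) maximizing Youden's J.

    A sample is test-positive when its score is >= the cut-off; every observed
    score is tried, and ties keep the lowest cut-off.
    """
    n_pos = sum(positive)
    n_neg = len(positive) - n_pos
    best = None
    for cutoff in sorted(set(scores)):
        tp = sum(1 for s, y in zip(scores, positive) if y and s >= cutoff)
        tn = sum(1 for s, y in zip(scores, positive) if not y and s < cutoff)
        sens, spec = tp / n_pos, tn / n_neg
        if best is None or sens + spec - 1 > best[1] + best[2] - 1:
            best = (cutoff, sens, spec)
    return best


# Hypothetical unstimulated Tg values (ng/mL); None marks a result below the LOD.
unstim = substitute_below_lod([None, 0.03, 0.06, 0.09, 0.11, 0.15, 0.2, 0.4], lod=0.01)
# Whether the matching stimulated Tg was >= 1 ng/mL.
stim_ge_1 = [False, False, False, True, False, False, True, True]
cutoff, sens, spec = youden_cutoff(unstim, stim_ge_1)
```

With these invented values the best cut-off is 0.2 ng/mL (sensitivity 2/3, specificity 1.0). Lowering it to 0.09 ng/mL would catch every positive but misclassify two negatives, which is the same kind of trade-off seen between the two assays in Table 2.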

Key Findings and Quantitative Data

The study's results quantitatively demonstrate the impact of differing analytical sensitivities on clinical performance.

Table 2: Performance Comparison of hsTg and ultraTg Assays [22]

| Assay Parameter | Highly Sensitive Tg (hsTg) | Ultrasensitive Tg (ultraTg) |
|---|---|---|
| Functional Sensitivity | 0.2 ng/mL | 0.06 ng/mL |
| Analytical Sensitivity (LOD) | 0.1 ng/mL | 0.01 ng/mL |
| Correlation with Stimulated Tg | R=0.79 (P<0.01) | R=0.79 (P<0.01) |
| Optimal Cut-off for Predicting Stimulated Tg ≥1 ng/mL | 0.105 ng/mL | 0.12 ng/mL |
| Sensitivity at Optimal Cut-off | 39.8% | 72.0% |
| Specificity at Optimal Cut-off | 91.5% | 67.2% |

The data show that the ultraTg assay, with its superior analytical and functional sensitivity, offered substantially higher clinical sensitivity (72.0% vs. 39.8%) for predicting a stimulated Tg of ≥1 ng/mL, albeit with lower specificity (67.2% vs. 91.5%). This trade-off is a critical consideration in clinical decision-making. The study also identified discordant cases in which hsTg was low but ultraTg was elevated; some of these patients later developed structural recurrence, highlighting the potential clinical benefit of the more sensitive assay [22].

Advanced Applications and Protocol Optimization

Pooled Testing for SARS-CoV-2

The methodology for determining sensitivity is also crucial for optimizing testing strategies, such as sample pooling during the SARS-CoV-2 pandemic. A 2025 study developed a mathematical model to balance reagent efficiency with analytical sensitivity in pool-based RT-qPCR testing [23].

- Experimental Protocol: Thirty samples were tested both individually and in pools of 2 to 12 samples. Passing-Bablok regression was used to estimate the shift in cycle threshold (Ct) values for each pool size, and this Ct shift was then used to project sensitivity loss from the Ct distribution of 1,030 individually tested positive samples.

- Findings: The study demonstrated that sensitivity is inversely related to pool size. A 4-sample pool maximized reagent efficiency with only a modest drop in sensitivity (to 87.18%-92.52%). In contrast, a 12-sample pool led to a significant sensitivity loss (77.09%-80.87%), making it unreliable for detection. This highlights how understanding an assay's inherent analytical sensitivity is critical for designing effective and reliable large-scale testing protocols.
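The two steps of this protocol, estimating the pooled-versus-individual Ct relationship and projecting it onto a distribution of positive Ct values, can be sketched as below. This is a simplified Passing-Bablok point estimate (pairs with identical x are skipped rather than treated as infinite slopes, and no confidence intervals are computed). The Ct detection cut-off of 40 and all example values are hypothetical, not taken from the study.

```python
import statistics
from typing import List, Sequence, Tuple


def passing_bablok(x: Sequence[float], y: Sequence[float]) -> Tuple[float, float]:
    """Passing-Bablok intercept and slope for method comparison (point estimates only)."""
    slopes: List[float] = []
    for i in range(len(x)):
        for j in range(i + 1, len(x)):
            dx, dy = x[j] - x[i], y[j] - y[i]
            if dx == 0:
                continue  # simplification: skip pairs with identical x
            s = dy / dx
            if s != -1.0:  # slopes of exactly -1 are excluded by the method
                slopes.append(s)
    slopes.sort()
    m = len(slopes)
    k = sum(1 for s in slopes if s < -1.0)  # offset applied to the median position
    if m % 2 == 1:
        slope = slopes[(m + 1) // 2 + k - 1]
    else:
        slope = 0.5 * (slopes[m // 2 + k - 1] + slopes[m // 2 + k])
    intercept = statistics.median([yi - slope * xi for xi, yi in zip(x, y)])
    return intercept, slope


def projected_sensitivity(individual_ct: Sequence[float], intercept: float,
                          slope: float, ct_cutoff: float = 40.0) -> float:
    """Fraction of individually positive samples still detected after pooling.

    Each individual Ct is mapped to a predicted pooled Ct with the regression
    line; a sample counts as detected if the predicted Ct is <= ct_cutoff.
    """
    detected = sum(1 for ct in individual_ct if intercept + slope * ct <= ct_cutoff)
    return detected / len(individual_ct)


# Hypothetical paired Ct values: pooling adds a constant shift of 2 cycles.
a, b = passing_bablok([20, 22, 25, 28, 30], [22, 24, 27, 30, 32])
sens = projected_sensitivity([30, 35, 38, 39, 41], a, b)  # 3 of 5 stay <= 40
```

Because the projection is driven by the Ct distribution of real positives, a larger pool (bigger shift) pushes more weak positives past the cut-off, which is why sensitivity falls as pool size grows.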

Comparative Sensitivity of Commercial Assays

A comparative study of seven common commercial SARS-CoV-2 molecular assays illustrates the methodology for directly evaluating analytical sensitivity (LOD) across different platforms [24].

- Experimental Protocol: A single positive clinical specimen was serially diluted in viral transport media and quantified using a droplet digital PCR (ddPCR) assay as a gold standard. Replicate samples at various concentrations were then tested on all seven platforms to establish the LOD for each.

- Findings: All seven assays demonstrated 100% detection at a concentration of approximately 1,300 copies/mL (for N1 and N2 genes). However, at a tenfold lower concentration (approximately 130 copies/mL), only the Abbott Molecular, Roche, and Xpert Xpress assays maintained 100% detection of replicates. This protocol provides a robust framework for the head-to-head comparison of assay LODs, which is essential for laboratory selection and validation.
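The replicate-detection comparison above can be sketched as a hit-rate LOD: the lowest tested concentration at which the required fraction of replicates is detected, provided every higher level also meets it. The function name and data are hypothetical. The study's criterion was 100% replicate detection; a 95% detection rule is also commonly used for molecular assays, so the rate is left as a parameter.

```python
from typing import Dict, List, Optional


def hit_rate_lod(calls_by_conc: Dict[float, List[bool]],
                 required_rate: float = 1.0) -> Optional[float]:
    """Lowest tested concentration meeting required_rate, scanning downward.

    calls_by_conc maps a concentration (e.g. copies/mL) to replicate detection
    calls; scanning stops at the first level that fails the required rate.
    """
    lod = None
    for conc in sorted(calls_by_conc, reverse=True):
        calls = calls_by_conc[conc]
        if sum(calls) / len(calls) >= required_rate:
            lod = conc
        else:
            break
    return lod


# Hypothetical replicate calls for one platform across a tenfold dilution series.
calls = {
    1300.0: [True] * 5,
    130.0: [True, True, False, True, True],
    13.0: [False, True, False, False, False],
}
strict_lod = hit_rate_lod(calls)        # 1300.0 under the all-replicates rule
looser_lod = hit_rate_lod(calls, 0.8)   # 130.0 under an 80% rule
```

Scanning downward and stopping at the first failure avoids reporting an isolated lucky detection at a very low level as the LOD.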

The Scientist's Toolkit: Research Reagent Solutions

The following table details key reagents and materials essential for experiments determining analytical and functional sensitivity, based on the cited studies.

Table 3: Essential Reagents and Materials for Sensitivity Determination

| Item | Function / Description | Example from Literature |
|---|---|---|