Benchtop vs. Portable Spectrometers: A Performance and Application Guide for Scientists


Abstract

This article provides a comprehensive comparison of benchtop and portable spectrometer performance characteristics, tailored for researchers, scientists, and drug development professionals. It covers foundational principles, including key performance metrics like accuracy, resolution, and wavelength range. The scope extends to methodological applications in pharmaceutical quality control and counterfeit drug screening, troubleshooting common operational challenges, and validation protocols for ensuring data reliability and regulatory compliance. The goal is to offer a definitive guide for selecting the optimal spectrometer technology based on specific research, quality control, and field application needs.

Core Principles and Performance Specifications of Benchtop and Portable Spectrometers

For researchers and drug development professionals, selecting the appropriate spectrometer is a critical decision that balances analytical performance with operational flexibility. This guide provides an objective comparison of benchtop and portable spectrometers, underpinned by experimental data and performance metrics, to inform your strategic instrument selection.

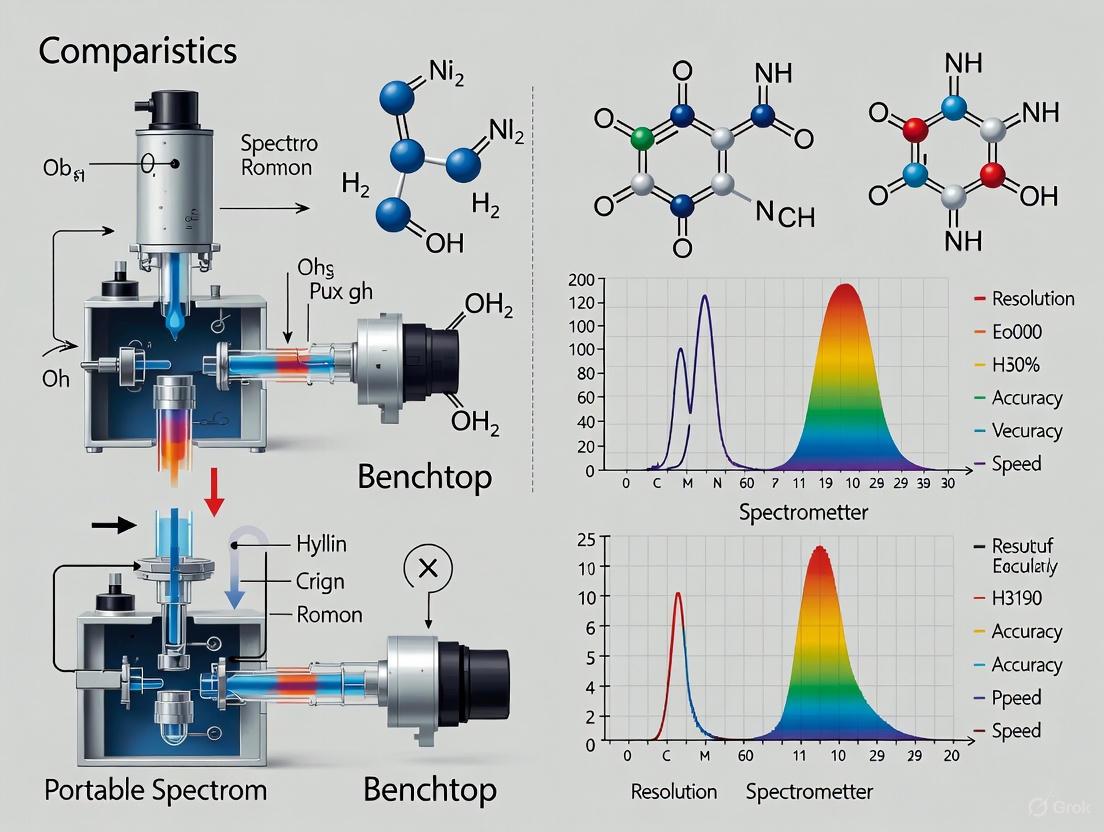

Performance Characteristics: A Quantitative Comparison

The core distinction between benchtop and portable systems lies in their performance profiles. Benchtop instruments are engineered for maximum precision in controlled environments, while portable devices prioritize mobility for on-site analysis. The following table summarizes key performance differences based on published studies and manufacturer specifications.

Table 1: Comparative Performance of Benchtop and Portable Spectrometers

| Performance Characteristic | Benchtop Spectrometers | Portable Spectrometers |

|---|---|---|

| Measurement Precision | High precision and repeatability; superior for research and regulatory compliance [1] [2]. | Acceptable for most industrial applications; subject to user technique and environment [1] [3]. |

| Spectral Resolution & Range | Expanded wavelength capabilities (UV, Visible, IR); higher spectral resolution [1]. | Often limited to specific ranges (e.g., Visible with limited UV); narrower detection capabilities [1]. |

| Sample Throughput & Automation | High throughput with automated sample handling and robotic interfaces [2]. | Rapid single measurements; manual operation limits overall throughput [2]. |

| Quantitative Analysis (Example: Cell Culture Metabolites) | RMSECV for Glucose: ~0.92 g·L⁻¹ (Raman) [4]. | Not reported for portable Raman under the miniature bioreactor (MB) culture constraints. |

| Limit of Detection (Example: Cocaine HCl in Adulterants) | Not evaluated in the cited field study [5]. | 25% concentration (Portable IR); 25% concentration (Portable Raman); for comparison, 10% concentration (Color Test) [5]. |

| Key Advantages | Maximum accuracy, extensive feature sets, superior reproducibility [1] [2]. | Operational mobility, cost-effectiveness, rapid on-site measurement [1] [2]. |

Experimental Protocols: Methodologies for Performance Evaluation

The data presented in Table 1 are derived from rigorous experimental protocols. The following sections detail key methodologies used to evaluate spectrometer performance in real-world research scenarios.

Protocol for Cell Culture Metabolite Monitoring

A study comparing spectroscopy technologies for monitoring metabolites in miniature bioreactor (MB) cultures established a standard protocol to assess performance under constraints relevant to drug development (sample volume <50 µL, analysis of 48 vessels within 1 hour) [4].

- Sample Preparation: A library of historical GS-CHO cell culture supernatant samples was compiled. A design-of-experiment (DOE) approach using an IV-optimal algorithm selected 20 samples that best represented the design space for glucose (0–10 g·L⁻¹), lactate (0–15 g·L⁻¹), and ammonium (0–0.3 g·L⁻¹) [4].

- Spectra Acquisition:

  - FT-NIR: 20 µL of supernatant was placed in a 96-well plate. Spectra were recorded in transmission mode (4000–10,000 cm⁻¹, 4 cm⁻¹ resolution, 64 scans) [4].

  - Raman: 20 µL of supernatant was analyzed in a 96-well plate with a 785 nm laser. Spectra were collected (0–1900 cm⁻¹, 0.3 cm⁻¹ resolution, 20 s exposure) with cosmic ray removal [4].

- Data Analysis: Multivariate data analysis (MVDA) techniques, including Partial Least Squares (PLS), were used to develop predictive models for each analyte. Model performance was compared based on Root Mean Square Error of Cross-Validation (RMSECV) [4].
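The RMSECV figure used to compare models above can be illustrated with a minimal sketch. It assumes leave-one-out cross-validation and, to stay dependency-free, swaps the PLS model for a single-predictor least-squares fit; the function names and the data are hypothetical and are not taken from the cited study.

```python
from math import sqrt

def fit_line(x, y):
    """Ordinary least-squares fit y = a + b*x for a single predictor."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return intercept, slope

def rmsecv_loo(x, y):
    """Leave-one-out RMSECV: each sample is predicted by a model fitted without it."""
    squared_errors = []
    for i in range(len(x)):
        x_train = x[:i] + x[i + 1:]
        y_train = y[:i] + y[i + 1:]
        intercept, slope = fit_line(x_train, y_train)
        prediction = intercept + slope * x[i]
        squared_errors.append((prediction - y[i]) ** 2)
    return sqrt(sum(squared_errors) / len(squared_errors))

# Hypothetical data: one band intensity per sample vs. reference glucose (g/L)
intensity = [0.10, 0.21, 0.29, 0.42, 0.50, 0.61]
glucose = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]
print(round(rmsecv_loo(intensity, glucose), 3))
```

In a real workflow the `fit_line` step would be replaced by a PLS model built on the full spectrum; the cross-validation loop and the error formula stay the same.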

Protocol for Substance Identification in Field Applications

Research comparing field-based methods for cocaine analysis evaluated the limit of detection (LOD) and specificity of portable spectrometers against color-based tests [5].

- Sample Preparation: Two-component mixtures were created using pure cocaine HCl and common adulterants (lidocaine, mannitol, caffeine, artificial sweetener, baby formula). Samples with cocaine concentrations from 0.1% to 50% by mass were prepared [5].

- Analysis: Samples were tested with:

  - Color-based Test: A Narcotics Identification Kit (NIK), where a specific color sequence indicates a positive result [5].

  - Portable IR Spectroscopy: Using a Smiths Detection HazMatID Elite with a diamond ATR element. A "hit" from the onboard library defined a positive result [5].

  - Portable Raman Spectroscopy: Using a Smiths Detection ACE-ID. A library "hit" defined a positive result [5].

- LOD Determination: The LOD was established as the lowest concentration at which a positive result was consistently obtained for each method and adulterant mixture [5].
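The LOD rule described above can be sketched as a small function. This is one possible reading, assuming that "consistently" means every replicate is positive at that concentration and at all higher ones; the replicate outcomes are hypothetical and do not reproduce the study's data.

```python
def limit_of_detection(results):
    """Return the lowest concentration from which every replicate is positive.

    `results` maps concentration (% by mass) to a list of booleans, one per
    replicate (True = library hit or positive color sequence).
    Returns None if even the highest concentration is not consistently positive.
    """
    lod = None
    # Walk from the highest concentration downward and stop at the first miss.
    for concentration in sorted(results, reverse=True):
        replicates = results[concentration]
        if replicates and all(replicates):
            lod = concentration
        else:
            break
    return lod

# Hypothetical replicate outcomes for one method and one adulterant mixture
hypothetical = {
    0.1: [False, False, False],
    1: [False, False, False],
    10: [True, False, True],
    25: [True, True, True],
    50: [True, True, True],
}
print(limit_of_detection(hypothetical))  # 25
```

Stopping at the first inconsistent concentration keeps an isolated hit at a low concentration from being reported as the LOD.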

Protocol for Translating Lipoprotein Analysis to Benchtop NMR

A 2025 multi-site study demonstrated the translation of quantitative lipoprotein analysis from high-field NMR to benchtop systems, highlighting the capabilities of modern benchtop technology [6].

- Sample Preparation: Serum samples from three independent cohorts (total N = 358) were prepared identically across all sites. Each 300 µL serum aliquot was mixed with 300 µL of phosphate buffer (75 mM Na₂HPO₄, 2 mM NaN₃, 4:1 H₂O/D₂O, pH 7.4) [6].

- Data Acquisition: Each prepared sample was analyzed in parallel on a 600 MHz NMR system and an 80 MHz benchtop NMR system. The high-field data were processed using an established lipoprotein subclass analysis (B.I.-LISA) method to generate quantitative reference data [6].

- Model Building & Validation: A joint calibration model was built by regressing the 80 MHz spectral data from all three sites against the reference lipoprotein parameters from the 600 MHz systems. The model's accuracy was assessed by its ability to recover the known parameters [6].

Workflow and Decision Pathways

The choice between spectrometer types is often dictated by the primary goal of the analysis, balancing the need for definitive results against operational constraints. The following diagrams illustrate a typical analytical workflow and a decision-making pathway for instrument selection.

Essential Research Reagent Solutions

The execution of reliable spectroscopic analyses, as detailed in the experimental protocols, depends on the use of specific, high-quality reagents and consumables.

Table 2: Key Reagents and Materials for Spectroscopic Analysis

| Reagent/Material | Function in Experimental Protocol |

|---|---|

| Phosphate Buffer (e.g., 75 mM Na₂HPO₄, with preservatives in H₂O/D₂O) | Provides a stable pH matrix for serum and biofluid analysis, crucial for reproducible NMR results [6]. |

| Internal Standard (e.g., TSP - trimethylsilylpropanoic acid) | Serves as a chemical shift reference (0.0 ppm) and quantitative standard for NMR spectroscopy [6]. |

| External Calibration Standards (e.g., QuantRefC for NMR; white reference for reflectance) | Enables instrument calibration and quantitative concentration analysis across different systems and sites [6] [1]. |

| Cell Culture Media & Supplements | Supports the growth of cells (e.g., GS-CHO) for metabolomic studies and biomarker discovery [4]. |

| Metabolite Standards (e.g., Glucose, Lactate, Ammonium) | Used for calibrating predictive models in multivariate analysis for cell culture monitoring [4]. |

| ATR Crystal (e.g., Diamond) | Enables sample measurement in portable IR spectrometers with minimal preparation for solid and liquid samples [5]. |

For researchers, scientists, and drug development professionals, selecting the appropriate spectrometer is a critical decision that directly impacts the reliability and scope of analytical data. The choice between benchtop and portable configurations goes beyond convenience: it is a core trade-off between analytical performance and operational flexibility. This guide provides an objective comparison grounded in experimental data, focusing on the three pivotal metrics that define instrument capability: accuracy, precision, and resolution. These parameters are fundamentally influenced by the instrument's design. Benchtop systems leverage larger, more stable optical components and sophisticated environmental controls, while portable devices achieve miniaturization through advanced micro-electromechanical systems (MEMS) and solid-state technologies, often at the cost of some performance [1] [2] [7]. Understanding these engineering trade-offs is essential for aligning instrument selection with specific application requirements, whether in a controlled laboratory setting or in the field.

Performance Metric Comparison: Benchtop vs. Portable Spectrometers

The following table summarizes the key performance characteristics of benchtop and portable spectrometers, providing a clear comparison of their typical capabilities.

| Performance Metric | Benchtop Spectrometers | Portable Spectrometers |

|---|---|---|

| Typical Wavelength Range | Expanded capabilities across UV, Visible, and IR ranges [1] | Often limited to Visible range; some with limited UV [1] |

| Spectral Resolution | Higher, due to superior optical components and stable environment [2] [8] | Lower, constrained by miniaturized optics [2] |

| Measurement Accuracy | Maximum accuracy for applications with very tight tolerances [1] | Accurate, but may not match benchtop precision for trace analysis [1] [9] |

| Measurement Repeatability | Exceptional reproducibility and repeatability; instrumental variance as low as 0.06 [3] | Good for field use; higher instrumental variance, e.g., around 0.13 [3] |

| Signal-to-Noise Ratio | Generally higher, due to robust optics and stable power [8] | Can be lower, affected by battery power and environmental factors [10] |

| Key Influencing Factors | Stable magnet homogeneity, temperature-controlled components, sophisticated calibration [2] [8] | Operator technique, environmental conditions, backing requirements [1] [3] |

Experimental Data: A Case Study in Food Authentication

A 2024 study on Iberian ham provides a direct, quantitative comparison of performance between benchtop and portable spectrometers in a real-world application. The research aimed to discriminate between "100% Iberian" (Black Label) and "Iberian x Duroc cross" (Red Label) hams to prevent labeling fraud, a task requiring high analytical precision [11].

Experimental Protocol and Methodology

- Sample Preparation: A total of 60 ham samples (24 purebred, 36 crossbred) were used. Spectra were recorded on three sample types: fat tissue only, lean muscle only, and a whole slice, with no prior sample preparation, simulating a rapid screening scenario [11].

- Instrumentation: The study employed two benchtop NIR spectrometers (Büchi NIRFlex N-500 and Foss NIRSystem 5000) and five portable devices. The portables included four NIR units (VIAVI MicroNIR 1700 ES, TellSpec Enterprise Sensor, Thermo Fisher Scientific microPHAZIR, and Consumer Physics SCiO Sensor) and one Raman device (BRAVO handheld) [11].

- Data Acquisition & Analysis: Spectra from all devices were collected and evaluated using discriminant algorithms based on partial least squares (PLS) regression. Different mathematical pre-treatments were applied to the spectra to optimize the classification models [11].

- Performance Measurement: The primary metric for success was the percentage of correctly classified samples in both calibration and validation sets, directly measuring the accuracy and robustness of each instrument [11].
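The success metric above, the percentage of correctly classified samples, is simple enough to sketch directly. The function name and the labels below are hypothetical and only illustrate the calculation; they are not the study's data.

```python
def percent_correct(true_labels, predicted_labels):
    """Percentage of samples whose predicted class matches the true class."""
    if len(true_labels) != len(predicted_labels):
        raise ValueError("label lists must have the same length")
    hits = sum(t == p for t, p in zip(true_labels, predicted_labels))
    return 100.0 * hits / len(true_labels)

# Hypothetical validation set: "black" = 100% Iberian, "red" = Iberian x Duroc
truth = ["black", "black", "red", "red", "red", "black", "red", "red"]
predicted = ["black", "red", "red", "red", "red", "black", "red", "black"]
print(percent_correct(truth, predicted))  # 75.0
```

Reporting this figure separately for the calibration and validation sets, as the study does, shows whether a model generalizes or merely fits the samples it was built on.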

Key Experimental Findings and Performance Data

The results demonstrated that portable devices could, in this specific application, outperform benchtop units. The table below summarizes the classification success rates for the top-performing portable devices.

| Spectrometer Device | Type | Sample Type | Correctly Classified (Calibration) | Correctly Classified (Validation) |

|---|---|---|---|---|

| SCiO Sensor | Portable NIR | Whole Slice | 97% | 92% |

| SCiO Sensor | Portable NIR | Lean Meat | 97% | 83% |

| TellSpec Enterprise | Portable NIR | Whole Slice | 100% | 81% |

| microPHAZIR | Portable NIR | Lean Meat | 84% | 81% |

| BRAVO Handheld | Portable Raman | Fat Tissue | 96% | 78% |

Source: Adapted from [11]

The study concluded that portable devices showed better discrimination results than benchtop spectrometers for this application, with the SCiO sensor delivering the highest overall accuracy [11]. This highlights that the choice of technology must be application-specific.

Experimental Workflow and Performance Relationship

The diagram below illustrates the general experimental workflow for a comparative spectrometer study and how core performance metrics influence the final results.

Essential Research Reagent Solutions for Spectroscopic Analysis

The table below details key materials and their functions as commonly used in spectroscopic experiments, such as the food authentication study cited.

| Item | Function in Experiment |

|---|---|

| Calibration Standards | White reference tiles and wavelength standards are essential for calibrating the instrument, ensuring measurement accuracy and repeatability over time [3]. |

| Reference Samples | Certified samples with known composition (e.g., purebred vs. crossbred ham) are used to build and validate the classification models [11]. |

| Chemometric Software | Software packages employing algorithms like PLS regression are critical for analyzing complex spectral data and building predictive models [11] [12]. |

| Specialized Sample Holders | Benchtop models often include built-in backing and holders to ensure consistent presentation, which must be manually controlled for portables [1] [3]. |

| UV Calibration Plaque | For applications involving optical brighteners, a UV calibration plaque is necessary to maintain consistency in UV-enabled instruments [3]. |

Key Takeaways for Instrument Selection

- Prioritize Benchtop Spectrometers for applications demanding the highest accuracy, precision, and resolution, such as color formulation, creating primary standards, detecting trace elements, and regulatory compliance work where measurement traceability is critical [1] [3] [9].

- Opt for Portable Spectrometers when the application requires mobility, speed, and on-site analysis, and where the performance is proven to be sufficient, as in the case of food authenticity screening, raw material identification, and production line spot-checks [1] [11] [13].

- Base the Final Decision on Application-Specific Data. As the Iberian ham study demonstrates, portable technology can sometimes deliver superior results. Validating the technology against your specific samples and analytical question is the most reliable path to a successful investment [11].

In modern scientific research and industrial quality control, spectroscopic techniques including Ultraviolet-Visible (UV-Vis), Near-Infrared (NIR), and Raman spectroscopy provide critical analytical capabilities for material characterization. As spectrometer technology evolves, a significant trend has emerged toward miniaturization and portability, enabling analytical capabilities to move from controlled laboratory environments directly to sample locations. The global portable spectrometer market, valued at $1,675.7 million in 2020, is projected to reach $4,065.7 million by 2030, reflecting a compound annual growth rate of 9.1% [14].

This comparison guide objectively evaluates the performance characteristics of benchtop versus portable spectrometers across UV-Vis, NIR, and Raman techniques, providing researchers and drug development professionals with experimental data and methodological frameworks for informed instrument selection. We examine wavelength coverage, detection capabilities, and analytical performance through comparative experimental data, with particular focus on applications relevant to pharmaceutical and materials science research.

Fundamental Principles and Wavelength Ranges

UV-Vis spectroscopy analyzes electronic transitions in molecules, typically covering 175-1100 nm, with extended systems reaching 3300 nm [15] [16] [17]. It provides quantitative analysis of chromophores through absorption, transmission, and reflectance measurements.

NIR spectroscopy operates primarily in the 780-2500 nm range (4000-12,821 cm⁻¹), measuring molecular overtone and combination vibrations, particularly of -CH, -OH, -SH, and -NH bonds [18]. This technique excels at rapid, non-destructive quantification of organic compounds.

Raman spectroscopy detects inelastically scattered light to probe molecular vibrational fingerprints, typically measuring in the 50-1800 cm⁻¹ Raman shift range (788-914 nm with 785 nm excitation) [18]. As a complementary technique to IR spectroscopy, Raman provides enhanced information about symmetric vibrations and IR-inactive functional groups.
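The unit conversions quoted above (nanometers to wavenumbers, and Raman shift to absolute wavelength) can be checked with a short sketch. The function names are illustrative; the formulas are the standard relations between wavelength in nm and wavenumber in cm⁻¹.

```python
def nm_to_wavenumber(wavelength_nm):
    """Convert a wavelength in nm to a wavenumber in cm^-1 (1 cm = 1e7 nm)."""
    return 1e7 / wavelength_nm

def raman_shift_to_nm(excitation_nm, shift_cm1):
    """Absolute wavelength (nm) of Stokes-scattered light for a given Raman shift."""
    scattered_wavenumber = 1e7 / excitation_nm - shift_cm1
    return 1e7 / scattered_wavenumber

# NIR range limits expressed in wavenumbers
print(round(nm_to_wavenumber(780)))    # 12821
print(round(nm_to_wavenumber(2500)))   # 4000

# Raman shift window with 785 nm excitation
print(round(raman_shift_to_nm(785, 50)))    # 788
print(round(raman_shift_to_nm(785, 1800)))  # 914
```

The results reproduce the 4000-12,821 cm⁻¹ and 788-914 nm figures given in the text.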

Comparative Performance: Benchtop vs. Portable Systems

Table 1: Wavelength Coverage and Performance Characteristics

| Spectrometer Type | Typical Wavelength Range | Spectral Resolution | Key Applications | Portability Considerations |

|---|---|---|---|---|

| Benchtop UV-Vis-NIR | 175-3300 nm [15] | High (variable with configuration) | Absorption/transmission/reflectance studies, material characterization [17] [19] | Requires laboratory setting, large sample compartment [15] |

| Portable UV-Vis-NIR | 190-1100 nm [16] | ~1-20 nm [16] | Field analysis, OEM applications, biomedical sensing [16] | Compact (162×105×60 mm³), 800g, USB-powered [16] |

| Benchtop NIR | 1100-2498 nm [18] | High (2 nm increment [18]) | Quantitative analysis of organic compounds, quality control | Laboratory setting with controlled conditions |

| Portable NIR | 780-2500 nm [14] | Moderate | Food quality control, pharmaceutical analysis, agricultural products [18] [14] | Handheld designs, battery operation, field-deployable |

| Benchtop Raman | 50-1800 cm⁻¹ [18] | High (configurable) | Material identification, molecular structure analysis [20] [19] | Laboratory setting, often with microscope integration [18] |

| Portable Raman | 400-2300 cm⁻¹ [21] | 16-19 cm⁻¹ [21] | Hazardous material identification, pharmaceutical verification, forensic analysis [22] [21] | Miniaturized designs (as small as 6.3×3.9×1.7 cm), smartphone integration [21] |

Table 2: Analytical Performance Comparison in Quantitative Analysis

| Analysis Type | Spectrometer Platform | Performance Metrics | Application Context |

|---|---|---|---|

| Curcuminoid quantification [18] | Benchtop Raman | RMSEP: 0.44% w/w [18] | Turmeric quality control |

| Curcuminoid quantification [18] | Portable Raman | Comparable to benchtop [18] | Turmeric quality control |

| Curcuminoid quantification [18] | Benchtop NIR | RMSEP: 0.41% w/w [18] | Turmeric quality control |

| Curcuminoid quantification [18] | Portable NIR | No significant difference from benchtop [18] | Turmeric quality control |

| Material Identification [21] | Benchtop Raman | High S/N, full spectral range | Laboratory research |

| Material Identification [21] | Portable Raman | S/N improved 10x over earlier generations [21] | Field analysis |

Performance differences between benchtop and portable instruments have notably diminished with technological advances. While benchtop systems traditionally offered superior sensitivity and measurement range, modern portable instruments demonstrate comparable performance for many applications. Portable Raman spectrometers in particular have improved: their signal-to-noise ratios are approximately 10-fold higher than those of earlier portable generations, owing to transmission grating designs and better component integration [21].

Experimental Evidence: Benchtop vs. Portable Performance

Methodology for Comparative Performance Assessment

A rigorous 2022 study directly compared benchtop and portable spectrometer performance for quantifying active compounds in natural products [18]. The experimental protocol employed:

Sample Preparation: Researchers prepared 55 turmeric powder samples with varying curcuminoid concentrations (6-13% w/w) through geometric dilution of certified standards. Turmeric was selected for its well-characterized composition and relevance to quality control applications [18].

Reference Analysis: HPLC with UV detection at 425 nm served as the reference method, validated for specificity, linearity, accuracy, and precision according to Eurachem guidelines [18].

Spectroscopic Measurements:

- Benchtop NIR: FOSS NIR System Model 5000 (1100-2498 nm, 2 nm resolution)

- Portable NIR: Viavi Solutions MicroNIR

- Benchtop Raman: Horiba LabRAM HR Evolution (785 nm laser, 50-1800 cm⁻¹ range)

- Portable Raman: Not specified, but comparable parameters

- FT-IR: Nicolet iS5 with ATR accessory (400-4000 cm⁻¹) [18]

Chemometric Analysis: Partial least squares regression (PLSR) models were developed for each technique using 40 calibration samples, with 15 independent validation samples assessing model performance via root mean square error of prediction (RMSEP) [18].
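As a minimal sketch of the validation metric named above, RMSEP compares model predictions for the independent validation samples against the reference (here HPLC) values. The function name and the numbers are hypothetical and are not the study's data.

```python
from math import sqrt

def rmsep(reference, predicted):
    """Root mean square error of prediction over an independent validation set."""
    if len(reference) != len(predicted):
        raise ValueError("inputs must have the same length")
    squared = [(p - r) ** 2 for r, p in zip(reference, predicted)]
    return sqrt(sum(squared) / len(squared))

# Hypothetical curcuminoid contents (% w/w): HPLC reference vs. model prediction
hplc_reference = [6.2, 7.5, 8.9, 10.1, 11.4, 12.8]
model_prediction = [6.6, 7.1, 9.3, 9.8, 11.9, 12.5]
print(round(rmsep(hplc_reference, model_prediction), 2))
```

Because the validation samples play no part in building the model, RMSEP is usually a more conservative estimate of real-world error than a cross-validation figure computed on the calibration set.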

Figure 1: Experimental workflow for comparative assessment of benchtop versus portable spectrometer performance.

Key Findings and Analytical Performance

The comparative study demonstrated that portable spectrometers can achieve analytical performance comparable to benchtop systems for quantitative analysis [18]. For turmeric quality control:

- Raman: the benchtop method achieved an RMSEP of 0.44% w/w, and the portable method gave comparable results [18]

- NIR: the benchtop method achieved an RMSEP of 0.41% w/w, and the portable method gave comparable results [18]

- Statistical analysis revealed no significant differences between benchtop and portable methods in terms of precision and accuracy [18]

These findings substantiate the suitability of portable devices for food and pharmaceutical quality control in situ, offering speed, minimal sample preparation, and field deployment capabilities without sacrificing analytical rigor [18].

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Reagents for Spectroscopic Analysis

| Item | Function | Application Context |

|---|---|---|

| Curcuminoid standards [18] | Reference materials for calibration | Quantification of active compounds in turmeric and similar matrices |

| Silica gel GF254 [18] | Stationary phase for TLC fingerprinting | Herbal material authentication according to pharmacopeia standards |

| C18 column [18] | HPLC separation | Compound separation prior to spectroscopic validation |

| Methanol and acetonitrile [18] | Extraction and mobile phase solvents | Sample preparation for reference analysis |

| SERS substrates [21] | Signal enhancement and fluorescence quenching | Trace detection in portable Raman applications |

| Custom spectral libraries [21] | Material identification and verification | Narcotics detection, pharmaceutical quality control |

| Holographic VPG gratings [16] | Spectral dispersion | Compact spectrometer design for portable applications |

Selection Guidelines for Research Applications

Application-Specific Considerations

Pharmaceutical Development: Benchtop UV-Vis-NIR systems (e.g., JASCO V-700 series) offer comprehensive characterization from far-UV (187 nm) to NIR (3200 nm) with regulatory compliance features for GxP environments [17]. Portable NIR instruments provide rapid raw material identity testing and moisture analysis.

Field Analysis and Point-of-Need Testing: Portable Raman spectrometers with spatially offset Raman spectroscopy (SORS) capabilities, such as the Agilent Resolve, enable analysis through sealed containers and barriers—particularly valuable for forensic and security applications [22].

Material Science Research: Benchtop systems with high-resolution capabilities (e.g., Andor Shamrock spectrographs) provide detailed characterization of nanomaterials, thin films, and quantum dots through Raman, photoluminescence, and absorption techniques [19].

Decision Framework

When selecting between benchtop and portable spectrometers:

- Prioritize benchtop systems when requiring ultimate sensitivity, broadest spectral range, accessory versatility, or regulatory compliance in controlled environments

- Choose portable systems for field deployment, point-of-need testing, rapid screening, or when sample transport to laboratories is impractical

- Consider hybrid approaches using portable instruments for initial screening followed by benchtop confirmation for complex analyses

The convergence of performance between portable and benchtop systems continues as miniaturization technologies advance. Modern portable Raman spectrometers are more than 1000-fold smaller than first-generation portable instruments while also offering improved signal-to-noise ratios [21].

The comparative analysis of UV-Vis, NIR, and Raman spectroscopic techniques reveals a dynamic landscape where traditional performance gaps between benchtop and portable instruments are rapidly narrowing. While benchtop systems maintain advantages in ultimate sensitivity and measurement versatility, portable instruments now deliver sufficient performance for an expanding range of quantitative applications, particularly when combined with robust chemometric models.

The choice between benchtop and portable platforms ultimately depends on specific application requirements, with portable systems offering transformative potential through field deployment capabilities. As miniaturization technologies continue advancing, alongside improvements in spectral libraries and identification algorithms, portable spectrometers are positioned to become increasingly ubiquitous in research and quality control environments beyond traditional laboratory settings.

Market Landscape and Key Innovations Driving Adoption

The analytical instrumentation landscape is increasingly defined by a choice between benchtop and portable spectrometers, a decision that critically impacts research efficiency, data quality, and operational flexibility. In pharmaceutical research and drug development, selecting the appropriate spectrometer type involves balancing precision, portability, and application-specific requirements. Benchtop spectrometers, characterized by their stationary laboratory setup and superior analytical performance, are valued for high-sensitivity applications including quantitative analysis, regulatory compliance, and detailed structural elucidation [23] [9]. Conversely, portable spectrometers sacrifice some analytical precision for unparalleled mobility, enabling real-time, on-site analysis in manufacturing, field studies, and point-of-care diagnostics [24] [25]. The market for both segments demonstrates robust growth, driven by technological advancements and expanding application areas. The global benchtop spectrometer market is anticipated to advance at a CAGR of 7.56% (2026-2033), reaching USD 23.3 billion by 2033 [23], while the mobile spectrometer market is projected to grow at a CAGR of 7.7%, reaching USD 2.46 billion by 2034 [24]. This article provides a comparative analysis of their performance characteristics, supported by experimental data and structured to guide researchers and drug development professionals in strategic instrument selection.

Performance Comparison: Quantitative Data Analysis

The core distinction between benchtop and portable spectrometers lies in their measurable performance metrics. The following tables synthesize quantitative data across critical parameters to facilitate objective comparison.

Table 1: Overall Performance and Operational Characteristics Comparison

| Feature | Benchtop Spectrometers | Portable/Handheld Spectrometers |

|---|---|---|

| Primary Use Case | Laboratory-based, high-precision analysis [9] | On-site, real-time screening and analysis [24] |

| Typical Accuracy & Sensitivity | High sensitivity and precision; ideal for trace element detection [9] | Moderate accuracy; sufficient for most industrial screening [9] [2] |

| Spectral Resolution | Superior resolution capabilities [2] [26] | Lower resolution and more spectral noise [26] |

| Sample Throughput | High, often with automation options [2] | Rapid, single-sample measurement [9] |

| Analysis Time | Longer, includes sample preparation [9] | Seconds to minutes, minimal preparation [9] |

| Portability | Stationary, requires lab setup [9] | Highly portable for field use [24] [9] |

| Sample Type Versatility | High; handles liquids, powders, solids [9] | Limited; typically small, solid, surface-level samples [9] |

| Initial Investment | Higher cost [9] [27] | More affordable, lower initial investment [9] [2] |

| Skill Requirement | Requires specialized expertise [23] [27] | Simplified operation, reduced training needs [2] |

Table 2: Market and Application Landscape (2025-2033 Forecast)

| Parameter | Benchtop Spectrometers | Portable/Handheld Spectrometers |

|---|---|---|

| Market Size (2025) | USD 15.05 Billion (2025) [23] | USD 1.18 Billion (2025) [28] |

| Projected Market Size | USD 23.3 Billion (2033) [23] | USD 1.91 Billion (2029) [28] |

| Compound Annual Growth Rate (CAGR) | 7.56% (2026-2033) [23] | 12.8% (2025-2029) [28] |

| Dominant Regional Market | North America [23] | North America [24] [28] |

| Fastest Growing Region | Asia-Pacific [23] [27] | Asia-Pacific [24] [25] [28] |

| Key Application Sectors | Pharmaceuticals, Biotechnology, Environmental Monitoring, Academic Research [23] [27] | Food Safety, Environmental Monitoring, Agriculture, Point-of-Care Diagnostics [24] [25] [29] |
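For readers who want to check how a compound annual growth rate relates to the start and end values in a forecast, the following sketch applies the standard CAGR formula to the portable/handheld figures in the table above. The function name is illustrative, and the choice of compounding period (2025 to 2029, four years) is an assumption about how the cited report counts years.

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate as a fraction (0.10 means 10% per year)."""
    return (end_value / start_value) ** (1 / years) - 1

# Portable/handheld market: USD 1.18 billion (2025) to USD 1.91 billion (2029)
portable_rate = cagr(1.18, 1.91, 2029 - 2025)
print(f"{portable_rate:.1%}")  # 12.8%
```

Market reports differ in their base year and in how they count periods, so recomputed rates may not always match the published figure exactly.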

Experimental Protocols for Performance Validation

Protocol 1: Soil Phosphorus Sorption Capacity Analysis

A direct comparative study between benchtop (Bruker) and handheld (Agilent) mid-infrared (MIR) spectrometers evaluated their efficacy in predicting the soil phosphorus sorption maximum (Smax), a key parameter in agricultural and environmental science [26].

- Objective: To predict the Langmuir parameter of soil P sorption maximum capacity (Smax, mg·kg⁻¹) using MIR spectroscopy and compare the predictive accuracy of benchtop versus handheld instruments.

- Sample Preparation: Soil samples were prepared in two particle size ranges: <0.100 mm (ball-milled) and <2 mm (crushed) to assess the impact of sample homogeneity [26].

- Instrumentation:

  - Benchtop Spectrometer: Bruker FTIR spectrometer.

  - Handheld Spectrometer: Agilent handheld FTIR spectrometer.

- Methodology:

  - Spectral Library Creation: Four separate spectral libraries were built using the two spectrometers and the two sample types [26].

  - Chemometric Modeling: Multiple regression models were developed and validated for each library, including Partial Least Squares (PLS), Cubist, Support Vector Machine (SVM) regression, and Random Forest (RF) [26].

  - Model Validation: Model performance was evaluated using the Ratio of Performance to Inter-Quartile Distance (RPIQV). Models were classified as 'excellent' (RPIQV > 3.0), 'approximate quantitative' (RPIQV ~2.7), or 'fair' (RPIQV ~2.2) [26].

- Key Findings:

  - The benchtop (Bruker) spectrometer produced 'excellent' models for both ball-milled and <2 mm samples, with SVM achieving RPIQV values of 4.50 and 4.25, respectively. This indicates high prediction accuracy without the need for extensive sample preparation [26].

  - The handheld (Agilent) spectrometer performed best with homogeneous, ball-milled samples, yielding an 'approximate quantitative' model (RPIQV = 2.74). With <2 mm samples, its performance decreased to a 'fair' model (RPIQV = 2.23), suitable only for classifying 'low' and 'high' sorption capacities [26].

- Conclusion: Benchtop MIR spectrometers provide superior analytical accuracy and are less sensitive to sample preparation, whereas handheld devices require more homogeneous samples for approximate quantitative analysis and are better suited for classification tasks in field settings [26].
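
To make the validation metric in this protocol concrete, the sketch below computes RPIQ using its usual definition: the interquartile range of the observed values divided by the RMSE of prediction. Several parts are assumptions made for illustration: the quartile method (`inclusive`), the exact cut-offs in `classify` (the study quotes only approximate values of ~2.7 and ~2.2), and the Smax numbers, which are invented. The "V" in RPIQV is taken here to mean that the metric is computed on the validation set.

```python
import math
import statistics


def rpiq(observed, predicted):
    """RPIQ = interquartile range of the observed values / RMSE of prediction."""
    if len(observed) != len(predicted) or len(observed) < 2:
        raise ValueError("need two equal-length sequences with at least 2 values")
    q1, _, q3 = statistics.quantiles(observed, n=4, method="inclusive")
    rmse = math.sqrt(
        sum((o - p) ** 2 for o, p in zip(observed, predicted)) / len(observed)
    )
    if rmse == 0:
        raise ValueError("RMSE is zero; RPIQ is undefined for perfect predictions")
    return (q3 - q1) / rmse


def classify(rpiq_value):
    """Hypothetical cut-offs loosely based on the labels quoted in the protocol."""
    if rpiq_value > 3.0:
        return "excellent"
    if rpiq_value >= 2.7:
        return "approximate quantitative"
    if rpiq_value >= 2.2:
        return "fair"
    return "below fair"


# Invented validation-set Smax values (mg/kg) and predictions, for illustration only.
smax_observed = [100.0, 200.0, 300.0, 400.0, 500.0, 600.0, 700.0, 800.0, 900.0]
smax_predicted = [v + 100.0 for v in smax_observed]
value = rpiq(smax_observed, smax_predicted)
print(f"RPIQ = {value:.2f} ({classify(value)})")
```

Because RPIQ scales the error by the spread of the reference data, it lets models built on different sample sets be compared on a common footing, which is how the four spectral libraries are ranked here.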

Key Research Reagent Solutions for Spectroscopic Analysis

Table 3: Essential Materials and Their Functions in Spectroscopic Analysis

| Research Reagent / Material | Function in Analysis |

|---|---|

| Ball-Milled Homogeneous Samples | Creates fine, consistent particle size to reduce scattering and improve spectral signal-to-noise ratio, crucial for handheld devices [26]. |

| Chemometric Software (PLS, SVM, RF) | Applies statistical and machine learning models to correlate spectral data with quantitative parameters of interest (e.g., concentration, sorption capacity) [26]. |

| Calibration Standards | Provides known reference materials for instrument calibration, ensuring measurement traceability and accuracy over time [9] [2]. |

| Specialized Spectral Libraries | Application-specific databases of chemical spectra that enable rapid identification and verification of unknown samples [25]. |

Decision Framework and Workflow Integration

Selecting between benchtop and portable spectrometers is a multi-faceted decision. The following workflow diagram outlines the key considerations for researchers.

The choice between benchtop and portable spectrometers is not a matter of superiority but of alignment with research objectives and operational constraints. Benchtop spectrometers remain the gold standard for applications demanding the highest levels of accuracy, sensitivity, and quantitative results, such as drug development, regulatory compliance, and detailed research and development [23] [9] [27]. Their higher initial cost and fixed location are the price of that performance.

Portable spectrometers bring the laboratory to the sample, enabling rapid decision-making in quality control, environmental monitoring, and point-of-care diagnostics [24] [25] [29]. While they may not match the ultimate precision of their benchtop counterparts, continued innovation in miniaturization, battery life, and data connectivity is narrowing the performance gap and expanding their application scope [24] [28].

For modern research laboratories and drug development professionals, a hybrid approach often represents the most efficient strategy: benchtop systems for core research, and portable units for rapid screening and field application.

For researchers and drug development professionals, selecting between benchtop and portable spectrometers is a strategic decision that directly impacts data quality, workflow efficiency, and project outcomes. This guide provides an objective comparison of their performance characteristics to inform your selection process.

Performance at a Glance: Key Quantitative Comparisons

The choice between benchtop and portable instruments often involves a trade-off between analytical performance and operational flexibility. The following tables summarize core performance metrics and functional characteristics across common spectrometer types.

Table 1: Core Performance Metrics for Spectrometer Types

| Feature | Benchtop Spectrometer | Portable Spectrometer |

|---|---|---|

| Typical Accuracy & Precision | Very high to exceptional accuracy, repeatability, and reproducibility [2] [30]. | Satisfactory, but generally lower than benchtop models due to smaller size and environmental susceptibility [30]. |

| Spectral Resolution | Superior resolution; research-grade models significantly exceed portable alternatives [2]. | Lower resolution; limited by compact optical design [31]. |

| Signal-to-Noise Ratio | Higher, due to robust components and stable environment (e.g., a benchtop FT-IR with a TE-MCT detector doubles SNR versus a portable unit with a DTGS detector) [32]. | Lower, as miniaturization can compromise signal quality [32]. |

| Measurement Reproducibility | Excellent reproducibility across multiple instruments, crucial for multi-site studies [3]. | Good for a single device, but higher variance between different units [3]. |

| Sensitivity (e.g., Trace Elements) | High sensitivity, ideal for trace element detection (e.g., Benchtop XRF) [9]. | Less sensitive, particularly for light elements; suited for bulk analysis [9]. |

Table 2: Functional Characteristics and Application Fit

| Characteristic | Benchtop Spectrometer | Portable Spectrometer |

|---|---|---|

| Sample Versatility | High versatility for solids, liquids, powders; measures reflectance & transmittance [30]. | Often designed for a specific application or sample type (e.g., solid surfaces) [30]. |

| Measurement Spot Size | Larger spot size, averaging out surface imperfections [3]. | Smaller spot size, potentially influenced by minor surface defects [3]. |

| Typical Wavelength Range | Expanded capabilities across UV, visible, and IR ranges [1]. | Often limited to visible light, with some UV capabilities [1]. |

| Operational Environment | Requires a controlled laboratory environment [2] [30]. | Designed for fieldwork; resistant to dust, shocks, and temperature variations [2] [32]. |

| Data & Connectivity | Sophisticated interconnectivity with LIMS, SPC, and other data systems [1]. | Basic connectivity; some offer Bluetooth or cloud transfer for immediate analysis [31] [32]. |

Experimental Protocols: Validating Performance in Application

Objective comparison requires data from controlled experiments. The following protocol, adapted from a study on chlorophyll content prediction, illustrates a methodology for evaluating spectrometer performance.

Experimental Objective

To assess the performance of a portable spectrophotometer in predicting the chlorophyll content of Hami melon leaves through non-destructive spectral measurement and regression modeling [31].

Methodology and Workflow

The experiment involves simultaneous collection of spectral data and reference measurements, followed by data processing and model building to correlate spectral signals with analyte concentration.

Detailed Experimental Steps:

- Sample Preparation: Collect 100 leaf samples from Hami melon plants at different growth stages and nutrient states. Ensure samples are free of physical damage [31].

- Reference Measurement: Using a calibrated chlorophyll meter (e.g., Top Cloud-agri TYS-4N), measure the SPAD value of each leaf sample. Avoid the thick veins of the leaf to ensure accuracy. This provides the ground-truth data for model training [31].

- Spectral Measurement: Using the portable spectrophotometer (e.g., a device with an AS7341 sensor), collect spectral data from the same location on the leaf where the SPAD value was taken. The device should be equipped with a leaf-fixing plate to ensure a consistent and equidistant measurement for every sample [31].

- Data Preprocessing: Apply preprocessing algorithms to the raw spectral data to improve model performance. The referenced experiment tested eight preprocessing methods for denoising. Use algorithms like Principal Component Analysis (PCA) and Isolation Forest to detect and remove spectral outliers from the dataset [31].

- Model Training and Validation: Split the paired dataset (spectral data vs. SPAD values) into training and prediction sets. Train multiple regression models (e.g., Linear Regression, Decision Tree, Support Vector Regression, Extra Trees Regressor (ETR), Random Forest Regressor (RFR)) on the training set. Validate the model's predictive performance on the separate prediction set [31].

- Performance Metrics: Evaluate model performance using standard metrics. The key metrics are:

  - R² (Coefficient of Determination): Closer to 1.0 indicates a better fit.

  - RMSE (Root Mean Square Error): Lower values indicate higher prediction accuracy.

- Example Outcome: In the cited study, the ETR model on original data yielded a training R² (Rc²) of 0.9905 and a prediction R² (Rp²) of 0.8035. After outlier removal, the RFR model improved prediction R² (Rp²) to 0.8683 [31].
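
The two metrics named in the steps above can be computed directly from paired reference and predicted values. The following is a minimal sketch using the textbook definitions; the SPAD numbers are invented for illustration and are not data from the cited study.

```python
import math


def rmse(y_true, y_pred):
    """Root mean square error between reference and predicted values."""
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("need two non-empty sequences of equal length")
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("need two non-empty sequences of equal length")
    mean_true = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    if ss_tot == 0:
        raise ValueError("reference values are constant; R^2 is undefined")
    return 1.0 - ss_res / ss_tot


# Hypothetical SPAD reference values and model predictions (illustrative only).
spad_reference = [40.0, 45.0, 50.0, 55.0]
spad_predicted = [42.0, 44.0, 51.0, 53.0]
print(f"Rp2   = {r_squared(spad_reference, spad_predicted):.3f}")
print(f"RMSEP = {rmse(spad_reference, spad_predicted):.3f}")
```

Computing both metrics separately on the training and prediction sets is what exposes a gap such as the one reported above (Rc² of 0.9905 versus Rp² of 0.8035), which is a typical sign that a model fits its training data more closely than it generalizes.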

Essential Research Reagent Solutions

The table below lists key materials and software used in the featured experiment and related spectroscopic fields.

Table 3: Essential Research Reagents and Materials

| Item | Function/Application |

|---|---|

| Chlorophyll Meter (e.g., TYS-4N) | Provides reference measurements (SPAD values) for model calibration and validation in agricultural studies [31]. |

| Standard Reference Materials | Essential for instrument calibration across all fields (e.g., polymer samples for NMR, color plaques for spectrophotometers) [3] [33]. |

| Deuterated Solvents (e.g., CDCl₃) | Used in NMR spectroscopy to provide a lock signal without interfering with the sample's proton spectrum [34]. |

| NMR Sample Tubes | Standard 5mm O.D. tubes for benchtop NMR analysis; compatible with automation [34]. |

| Data Analysis Software (e.g., TopSpin, OPUS) | Specialized software for instrument control, data acquisition, processing, and analysis (e.g., NMR, FT-IR) [34] [32]. |

Decision Workflow: Aligning Instrument with Project Goals

Selecting the right instrument requires a structured assessment of your primary project requirements. The following workflow diagram outlines the key decision points.

In summary, benchtop spectrometers are the unequivocal choice for applications where the highest standards of data quality, regulatory compliance, and process automation are required, such as in pharmaceutical R&D and quality control labs [34] [2]. Portable spectrometers offer unparalleled strategic value for applications demanding rapid, on-site decision-making, field-based research, and point-of-use testing, despite a measured trade-off in ultimate analytical precision [32] [35]. By applying the structured comparisons and workflows outlined in this guide, researchers can make an objective, strategic selection that best aligns with their specific project goals and operational constraints.

Strategic Deployment in Research and Industry: From Lab to Field

The accurate analysis of Active Pharmaceutical Ingredients (APIs) and formulations is a critical pillar of pharmaceutical quality control (QC), ensuring drug safety and efficacy. Selecting the appropriate analytical instrumentation is fundamental to this process. This guide provides an objective comparison between benchtop and portable spectrometers, two prominent classes of instruments, focusing on their performance characteristics for API purity and formulation analysis. Framed within broader research on spectrometer performance, this article synthesizes experimental data to help researchers, scientists, and drug development professionals make informed decisions tailored to their specific operational needs, whether in controlled laboratories or in the field.

Benchtop spectrometers are stationary instruments designed for use in laboratory environments. They are characterized by their high performance, stability, and comprehensive features, often supporting complex sample handling and advanced data analysis [1]. Portable spectrometers, in contrast, are compact, handheld, or mobile devices designed for on-site analysis. Their primary advantages are mobility, ease of use, and the ability to provide rapid results at the point of need, such as on a production floor or at a raw material receiving bay [36] [37].

The core technologies employed in pharmaceutical analysis include:

- Nuclear Magnetic Resonance (NMR) Spectroscopy: Exploits the magnetic properties of atomic nuclei to provide detailed information on molecular structure, identity, and quantity [38] [39].

- Near-Infrared (NIR) Spectroscopy: Measures the absorption of light in the near-infrared region, which is sensitive to molecular vibrations (e.g., C-H, O-H, N-H bonds), allowing for rapid, non-destructive quantification of components [40] [41].

- X-Ray Fluorescence (XRF): Used primarily for elemental analysis, XRF analyzers determine the elemental composition of a material by measuring the fluorescent X-rays emitted when the material is excited by a primary X-ray source [36] [42].

The following tables summarize key performance characteristics of benchtop and portable spectrometers, based on head-to-head comparative studies and manufacturer specifications for QC applications.

Table 1: Quantitative Performance Comparison in Analytical Applications

| Application | Instrument Type & Technology | Key Performance Metric | Result | Reference Experiment |

|---|---|---|---|---|

| Impurity Detection | Benchtop NMR (400 MHz) | Limit of Detection (LOD) for a choline impurity | 0.01% | Analysis of choline and O-(2-hydroxyethyl)choline [43] |

| Impurity Detection | Benchtop NMR (60 MHz) | Limit of Detection (LOD) for a choline impurity | 2% | Analysis of choline and O-(2-hydroxyethyl)choline [43] |

| Drug Quantification | Benchtop NMR (60 MHz) with QMM | RMSE for methamphetamine HCl purity | 1.3 mg/100 mg | Analysis of binary/ternary mixtures [39] |

| Drug Quantification | HPLC-UV (Reference Method) | RMSE for methamphetamine HCl purity | 1.1 mg/100 mg | Analysis of binary/ternary mixtures [39] |

| Age Grading of Mosquitoes | Benchtop NIR (Labspec 4i) | Predictive Accuracy (ANN Model) | 94% | Classification into < or ≥ 10 days old [40] |

| Age Grading of Mosquitoes | Portable NIR (NIRvascan) | Predictive Accuracy (ANN Model) | 90% | Classification into < or ≥ 10 days old [40] |

| Juice Adulteration | Benchtop FT-NIRS | PLS-DA Model Accuracy | 94% | Discrimination of genuine vs. adulterated lime juice [41] |

| Juice Adulteration | Portable SW-NIRS | PLS-DA Model Accuracy | 94% | Discrimination of genuine vs. adulterated lime juice [41] |

Table 2: General Operational Characteristics for Pharmaceutical QC

| Characteristic | Benchtop Spectrometers | Portable Spectrometers |

|---|---|---|

| Primary Use Case | High-precision specification, formulation, and impurity profiling in a lab [38] [1] | On-site raw material identification, production line spot-checks [36] [37] |

| Accuracy & Precision | Maximum accuracy and repeatability; essential for setting standards [1] [3] | High accuracy, but can be affected by operator technique and environment [1] |

| Sensitivity | High sensitivity and resolution (e.g., high-field NMR) [43] | Generally lower sensitivity and spectral resolution [40] [39] |

| Measurement Capabilities | Often includes reflectance, transmittance, and haze measurements [1] [3] | Primarily designed for reflectance measurements [1] |

| Operational Cost & Maintenance | Higher initial cost; may require cryogens (high-field NMR); maintenance agreements available [38] | Lower initial cost; no cryogens; minimal maintenance; ruggedized design [38] [36] |

| Throughput & Automation | Supports full automation and 24/7 operation with sample-to-report workflows [38] | Rapid measurement for spot-checks; workflow depends on manual operation [36] |

Detailed Experimental Protocols

Protocol 1: Quantitative Analysis of API Purity via Benchtop NMR

This protocol, adapted from a study on quantifying methamphetamine hydrochloride (MA), demonstrates the application of benchtop NMR with advanced modeling for precise API purity analysis [39].

- Objective: To accurately quantify the purity of an API (e.g., MA) in the presence of cutting agents and impurities in binary and ternary mixtures.

- Instrumentation: 60 MHz benchtop NMR spectrometer equipped with quantum mechanical modeling (QMM) software (e.g., Q2NMR) [39].

- Sample Preparation:

  - Prepare mixtures of the API with common excipients or known impurities (e.g., methylsulfonylmethane, caffeine, phenethylamine hydrochloride) across a purity range of 10-90 mg API per 100 mg sample.

  - Weigh samples accurately and dissolve in a suitable deuterated solvent (e.g., D₂O) to ensure a homogeneous solution for analysis.

- Data Acquisition:

  - Acquire ¹H NMR spectra for each sample mixture.

  - Maintain consistent parameters (e.g., number of scans, pulse angle, relaxation delay) across all samples.

- Data Analysis:

  - Process the spectral data using a QMM algorithm. The model uses known chemical shifts and coupling constants of the pure compounds to deconvolute overlapping signals in the mixture spectra.

  - The QMM fits a theoretical spectrum to the experimental data, allowing for the simultaneous quantification of the API and other components without the need for identical physical standards [39].

- Performance: This method achieved an RMSE of 1.3 mg/100 mg for API purity, a performance comparable to HPLC-UV (RMSE 1.1 mg/100 mg) but with the advantage of simultaneous multi-component analysis and reduced solvent use [39].

Protocol 2: Raw Material Identification via Portable NIRS

This protocol, based on studies comparing portable and benchtop NIRS, outlines the use of handheld devices for rapid, non-destructive identification of incoming raw materials [40] [41].

- Objective: To rapidly verify the identity of a raw material (e.g., an excipient or API) at the point of receipt, minimizing the risk of using incorrect materials.

- Instrumentation: Portable NIR spectrometer (e.g., operating range 900-1700 nm) with an integrated touchscreen and connectivity options [40] [37].

- Sample Preparation:

  - Minimal preparation is required. Present the material in its original container if the packaging is NIR-transparent (e.g., polyethylene bags).

  - For opaque packaging, place a small, representative sample in a suitable glass vial. Ensure a consistent sample presentation for all measurements.

- Data Acquisition:

  - Calibrate the instrument according to the manufacturer's instructions before use.

  - Press the handheld probe firmly against the sample or packaging.

  - Trigger a measurement. Data acquisition is typically completed within seconds.

- Data Analysis:

- Performance: Portable NIRS provides a rapid and reliable identification method. While its absolute predictive accuracy may be slightly lower than a benchtop equivalent in some complex classification tasks (e.g., 90% vs 94% for mosquito age grading [40]), it is highly effective for identity verification, a critical first step in QC.

Selection Workflow

The following diagram illustrates the decision-making workflow for selecting between benchtop and portable spectrometers in a pharmaceutical QC context.

Diagram 1: Spectrometer Selection Workflow for Pharmaceutical QC. This flowchart outlines key decision points for choosing between benchtop and portable instruments based on analytical requirements.

The Scientist's Toolkit: Essential Research Reagent Solutions

The following table details key reagents, materials, and software solutions essential for conducting the experiments described in this guide.

Table 3: Key Reagents and Materials for Spectroscopic Analysis in Pharma QC

| Item | Function / Application | Specific Example / Note |

|---|---|---|

| Deuterated Solvents | Provides a field-frequency lock and does not produce interfering signals in NMR spectroscopy. | D₂O, Deuterated Chloroform (CDCl₃), Deuterated DMSO (DMSO-d6) [39] |

| Quantum Mechanical Modeling (QMM) Software | Advanced software for deconvoluting overlapping signals in low-field NMR spectra for accurate quantification. | Enables quantification in complex mixtures with RMSE comparable to HPLC [39] |

| Certified Reference Materials | Provides known, traceable standards for instrument calibration and method validation. | Critical for building accurate spectral libraries in NIRS and for HPLC-UV calibration [39] |

| Spectralon Calibration Panel | A diffuse reflectance standard used for calibrating NIR and spectrophotometer instruments. | Ensures measurement accuracy and repeatability over time [40] |

| Chemometrics Software | Uses statistical models to extract meaningful information from spectral data (e.g., PCA, PLS-DA, ANN). | Essential for developing classification and quantification models from NIR spectra [40] [41] |

| HPLC-UV System | A gold-standard reference method for quantifying API purity, used for cross-validation. | Provides high precision (RMSE ~1.1 mg/100 mg) but requires analyte-specific standards [39] |

The choice between benchtop and portable spectrometers is not a matter of one technology being superior to the other, but rather of selecting the right tool for the specific analytical challenge and context. Benchtop spectrometers are unequivocally the choice for applications demanding the highest levels of precision, sensitivity, and automation, such as quantifying low-level impurities to meet ICH guidelines, developing color formulations, or establishing master quality standards [1] [43]. In contrast, portable spectrometers offer a powerful solution for accelerating QC processes through rapid, on-site analysis, enabling tasks like raw material identification and production line spot-checks with minimal sample preparation and operational cost [38] [37].

Emerging research shows that with advanced data processing techniques like QMM and machine learning, the performance gap for certain quantitative applications is narrowing. However, the fundamental trade-offs in sensitivity, measurement capabilities, and operational flexibility remain. The optimal modern QC laboratory may therefore leverage both technologies in a complementary manner, using benchtop systems for central lab precision and portable devices to decentralize and expedite testing where appropriate.

The global pharmaceutical supply chain faces an escalating threat from counterfeit drugs, which may contain incorrect ingredients, improper dosages, or toxic contaminants. Conventional laboratory techniques like chromatography, while highly accurate, require transporting samples to centralized facilities, involve extensive preparation, and introduce significant delays before results are available. Portable Raman spectroscopy has emerged as a powerful solution for rapid, on-site screening, enabling field deployment at border crossings, in law enforcement operations, and at supply chain checkpoints. This technology provides molecular fingerprinting capabilities through a non-destructive, point-and-shoot interface that can analyze substances even through transparent packaging.

This guide objectively compares the performance characteristics of portable Raman spectrometers against traditional benchtop systems specifically for counterfeit drug detection. We present experimental data and validation protocols to help researchers and drug development professionals understand the capabilities, limitations, and optimal implementation of portable Raman for pharmaceutical screening applications.

Technical Comparison: Benchtop vs. Portable Raman Spectrometers

Performance Specifications and Analytical Capabilities

The fundamental operating principle of Raman spectroscopy—inelastic light scattering providing molecular fingerprint information—remains consistent across instrument platforms. However, key differences in design priorities create distinct performance characteristics between benchtop and portable systems.

Table 1: Technical Specification Comparison Between Benchtop and Portable Raman Instruments

| Feature | Benchtop FT-Raman | Portable Raman |

|---|---|---|

| Laser Wavelength | 1064 nm [44] | 785 nm [44] |

| Spectral Range | 150-1500 cm⁻¹ [44] | 250-1500 cm⁻¹ [44] |

| Laser Power | Higher-powered [44] | Lower-powered [44] |

| Portability | Laboratory-bound [44] | Field-deployable [44] |

| Analysis Speed | Longer cycle times [44] | Rapid, real-time results [44] |

| Fluorescence Interference | Less interference due to longer excitation wavelength [44] | More susceptible with shorter excitation wavelength [44] |

| Sample Throughput | Lower due to lab requirements [44] | Higher for field screening [44] |
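
The spectral ranges in the table above are Raman shifts, measured relative to the laser line, so the same shift window falls at different absolute wavelengths for the two excitation sources. The sketch below converts a Stokes shift to an absolute wavelength using the standard wavenumber relation; the function name is introduced here for illustration only.

```python
def stokes_wavelength_nm(laser_nm: float, shift_cm1: float) -> float:
    """Absolute wavelength (nm) of Stokes-scattered light for a given Raman shift."""
    laser_wavenumber = 1e7 / laser_nm  # cm^-1, since 1 cm = 1e7 nm
    scattered_wavenumber = laser_wavenumber - shift_cm1
    if scattered_wavenumber <= 0:
        raise ValueError("shift exceeds the laser photon energy")
    return 1e7 / scattered_wavenumber


# Laser lines and shift ranges taken from the table above.
ranges = {785.0: (250.0, 1500.0), 1064.0: (150.0, 1500.0)}
for laser_nm, (low_shift, high_shift) in ranges.items():
    low_nm = stokes_wavelength_nm(laser_nm, low_shift)
    high_nm = stokes_wavelength_nm(laser_nm, high_shift)
    print(f"{laser_nm:.0f} nm laser: detector must cover {low_nm:.1f}-{high_nm:.1f} nm")
```

With 1064 nm excitation the whole window lies above roughly 1080 nm, beyond the range where silicon detectors respond well, which is consistent with the remark later in this guide that 1064 nm systems need different detector technology.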

Experimental Performance Data in Counterfeit Detection

Controlled studies demonstrate how these technical differences translate to practical performance in detecting counterfeit pharmaceuticals. One comprehensive investigation compared both systems for screening fourteen counterfeit tablets representing four distinct counterfeit groups against authentic reference products [44].

The benchtop FT-Raman instrument successfully identified all counterfeit tablets by detecting absent API peaks (particularly at 720 cm⁻¹, a selective marker for the authentic API) and identifying formulation discrepancies, such as the presence of titanium dioxide in Group 3 counterfeits not found in genuine products [44]. The portable instrument achieved equivalent discrimination outcomes, successfully flagging all counterfeits with a failing result (p-value < 0.05) and authentic products with a passing result (p-value ≥ 0.05) in a qualified screening method [44]. This demonstrates that despite its smaller size and lower power, the portable instrument provided equivalent decision-making capability for the samples tested.

For real-world detection limits, a validation study on a handheld Raman spectrometer for cocaine detection found that the limit of detection (LOD) varied significantly with sample composition, ranging from 10 to 40 wt% cocaine in binary mixtures with common cutting agents [45]. This dependence highlights the importance of testing portable instruments with realistic mixtures rather than pure standards alone. In a retrospective analysis of 3,168 case samples, the same handheld instrument demonstrated a 97.5% true positive rate for cocaine detection with no false positives, confirming its reliability for screening street samples where the average cocaine content typically exceeds these detection limits [45].

Experimental Protocols for Method Validation

Instrument Qualification and Method Development

Proper validation is essential before deploying portable Raman for critical screening applications. The following protocol outlines a standardized approach for instrument qualification and method development:

Spectral Validation and Calibration: Perform wavelength/Raman shift calibration using standard reference materials according to established guides (ASTM E1840), with common standards including naphthalene, sulfur, cyclohexane, or acetaminophen [46]. Verify spectral resolution using a standard with narrow, well-defined peaks such as the 1,712 cm⁻¹ (cocaine base) or 1,716 cm⁻¹ (cocaine HCl) peaks for forensic applications [45].

Library Development: Compile comprehensive spectral libraries of authentic pharmaceutical products using the portable instrument itself to ensure consistency. Include multiple lots of genuine products to account for natural variation [44].

Method Creation and Threshold Setting: Develop a screening method within the instrument's software by acquiring spectra of authentic products and storing them as spectral references. Set pass/fail thresholds statistically, typically using a p-value of 0.05, where a p-value ≥ 0.05 generates a "Pass" and < 0.05 generates a "Fail" [44].

Method Qualification: Confirm method performance by testing that authentic samples generate passing results and known counterfeit samples generate failing results. The method should successfully differentiate between authentic and counterfeit pharmaceuticals before deployment [44].
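
The threshold and qualification steps above reduce to a simple rule that can be written down explicitly. The sketch below is an illustration only: the class, function names, and sample p-values are hypothetical, and real instruments compute the p-value internally with vendor-specific algorithms that are not reproduced here.

```python
from dataclasses import dataclass

ALPHA = 0.05  # pass/fail threshold quoted in the protocol


@dataclass
class QualificationSample:
    name: str
    p_value: float      # similarity p-value reported by the instrument
    is_authentic: bool  # ground truth established by a reference method


def screen(p_value: float, alpha: float = ALPHA) -> str:
    """Return 'Pass' when p >= alpha, otherwise 'Fail'."""
    return "Pass" if p_value >= alpha else "Fail"


def method_is_qualified(samples, alpha: float = ALPHA) -> bool:
    """True only if every authentic sample passes and every counterfeit fails."""
    return all(
        screen(s.p_value, alpha) == ("Pass" if s.is_authentic else "Fail")
        for s in samples
    )


# Hypothetical qualification set (values invented for illustration).
qualification_set = [
    QualificationSample("authentic lot A", 0.62, True),
    QualificationSample("authentic lot B", 0.18, True),
    QualificationSample("counterfeit group 1", 0.001, False),
    QualificationSample("counterfeit group 3", 0.02, False),
]
print("Method qualified:", method_is_qualified(qualification_set))
```

Requiring every sample in the qualification set to be classified correctly mirrors the text above, where the method must differentiate authentic from counterfeit products before deployment.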

Sample Analysis Workflow

The analytical workflow for counterfeit screening with portable Raman follows a systematic sequence to ensure reliable results, from initial setup through final confirmation of suspect samples.

Optimization Strategies for Portable Raman Performance

Parameter Optimization for Field Conditions

Maximizing signal quality while minimizing fluorescence interference and false readings requires careful parameter optimization specific to pharmaceutical samples:

Laser Power: Begin with full laser power to maximize Raman signal strength, then reduce power exponentially if sample burning occurs, particularly for dark-colored samples or those with absorption bands near the excitation wavelength [47]. For sensitive samples, accurate measurement and fine control of laser power at the level of tenths of a milliwatt are desirable [47].

Aperture Selection: Use the largest aperture (e.g., 50-100 μm) whenever possible to maximize signal intensity, with only minor spectral resolution loss [47]. Reserve smaller apertures (10-25 μm) for applications requiring highest resolution, such as distinguishing between polymorphs [47].

Signal Acquisition: Maximize exposure time rather than the number of exposures for weak Raman scatterers, as longer exposure times yield lower noise for a given total measurement time [47]. For fluorescent samples, the difference between long exposures and multiple averaged scans becomes less pronounced due to shot noise dominance [47].
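
The signal acquisition guidance above can be illustrated with a simplified detector noise model. The sketch assumes only photon shot noise plus a fixed read noise added once per exposure; dark current, detector saturation, and cosmic-ray rejection (all practical reasons to split a measurement into several exposures) are deliberately ignored. All rates and noise values are invented for illustration.

```python
import math


def snr(raman_rate, background_rate, read_noise, exposure_s, n_exposures):
    """SNR of the co-added Raman signal under a shot noise + read noise model.

    raman_rate, background_rate: detected electrons per second
    read_noise: electrons RMS, added once per exposure
    """
    total_time = exposure_s * n_exposures
    signal = raman_rate * total_time
    shot_variance = (raman_rate + background_rate) * total_time
    read_variance = n_exposures * read_noise ** 2
    return signal / math.sqrt(shot_variance + read_variance)


# Same 60 s total measurement time, split two different ways.
for label, background in (("weak scatterer", 0.0), ("fluorescent sample", 5000.0)):
    one_long = snr(5.0, background, 10.0, exposure_s=60.0, n_exposures=1)
    many_short = snr(5.0, background, 10.0, exposure_s=1.0, n_exposures=60)
    print(f"{label}: 1 x 60 s / 60 x 1 s SNR ratio = {one_long / many_short:.2f}")
```

In this model the read noise term grows with the number of exposures, so a single long exposure wins clearly for a weak scatterer. When a large fluorescence background dominates the shot noise, the read noise contribution becomes negligible and the two strategies converge, as the text describes.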

Advanced Data Analysis Techniques

Portable instruments increasingly incorporate sophisticated algorithms to overcome limitations in mixture analysis and detection thresholds:

Chemometric Modeling: Implement partial least squares regression (PLS-R) and discriminant analysis (PLS-DA) models to improve detection limits and classification accuracy in complex mixtures [45]. Studies demonstrate these models can successfully quantify cocaine in binary mixtures from 10-100 wt% and further improve instrument performance beyond built-in algorithms [45].

Fluorescence Mitigation: Employ mathematical techniques including baseline correction, sequentially shifted excitation (SSE), and time-gating approaches to reduce fluorescence interference, a common challenge with real-world samples [21]. Longer wavelength lasers (1064 nm) also reduce fluorescence but require different detector technology and result in larger instruments [21].

The Research Toolkit: Essential Materials and Reagents

Table 2: Key Research Reagent Solutions for Pharmaceutical Raman Analysis

| Item | Function | Application Example |

|---|---|---|

| ASTM Calibration Standards | Wavenumber calibration and verification | Naphthalene, sulfur, cyclohexane, acetaminophen for instrument calibration [46] |

| Authentic Pharmaceutical Reference Standards | Method development and validation | Genuine API and finished dosage forms for spectral library creation [44] |

| Common Excipient Materials | Interference assessment and mixture analysis | Lactose, cellulose, titanium dioxide, magnesium stearate to model real formulations [45] [44] |

| Surface-Enhanced Raman Scattering (SERS) Substrates | Signal enhancement for trace detection | Metal nanoparticle substrates to boost sensitivity for low-concentration analytes [21] |

| Validated Counterfeit Samples | Method qualification and performance testing | Known counterfeit specimens with documented composition for testing screening algorithms [44] |

Portable Raman spectrometers provide a scientifically valid solution for rapid, on-site screening of counterfeit pharmaceuticals, delivering performance sufficient for field decision-making while maintaining minimal operational footprints. Although benchtop systems retain advantages in ultimate resolution, sensitivity, and fluorescence avoidance, portable instruments have demonstrated remarkable capability in authenticating pharmaceutical products and detecting counterfeits across diverse real-world scenarios.

The convergence of improved spectrometer miniaturization, enhanced spectral libraries, sophisticated mixture algorithms, and standardized validation protocols positions portable Raman technology as an indispensable tool for securing the global pharmaceutical supply chain. As this technology continues evolving toward even smaller form factors and greater analytical capabilities, researchers and regulatory professionals can confidently deploy these systems for frontline defense against pharmaceutical crime.

Near-Infrared Spectroscopy (NIRS) has emerged as a transformative analytical technique in the ongoing battle to ensure food safety and authenticity. This non-destructive method operates on the principle of measuring molecular overtone and combination vibrations, primarily from C-H, O-H, and N-H bonds, when matter interacts with light in the 780–2500 nm spectral region [48] [49]. The food industry faces persistent challenges from adulteration—the deliberate addition of inferior substances to increase volume or reduce costs—which compromises quality, safety, and consumer trust. Traditional analytical methods like high-performance liquid chromatography (HPLC) and gas chromatography-mass spectrometry (GC-MS), while accurate, are destructive, time-consuming, and require extensive sample preparation and skilled personnel [50] [49].

NIRS addresses these limitations by providing rapid, non-destructive analysis without requiring chemicals or complex preparation [48] [51]. The integration of NIRS with chemometrics—statistical tools that extract meaningful information from spectral data—enables both qualitative authentication and quantitative prediction of food composition [48] [49]. This technical capability is particularly valuable for detecting adulterants in high-value products like honey, spices, dairy powders, and olive oil, where fraudulent practices generate significant economic losses and potential health risks [52] [53]. The evolution of NIRS technology from benchtop to portable and handheld devices has further expanded its application, allowing for on-site screening at various points in the food supply chain [50] [51].

Fundamental Principles of NIRS Operation

Spectroscopic Foundations

NIRS technology functions within the electromagnetic radiation range of 12,500–4000 cm⁻¹ (800–2500 nm), where the energy level is sufficient to induce rotational and vibrational molecular transitions but not electronic excitation [48]. Unlike mid-infrared spectroscopy, which measures fundamental vibrations, NIRS detects broader, overlapping overtone and combination bands. This overlap is why NIR spectra are complex and require advanced chemometric analysis [48] [49]. The primary molecular bonds analyzed (C-H, O-H, and N-H) are characteristic components of organic compounds found in foods, making NIRS particularly suitable for assessing food composition and detecting foreign substances [48].
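Because NIR instruments report their range in either wavenumbers or wavelengths, it helps to convert between the two. The two are reciprocal: wavelength in nm equals 10⁷ divided by wavenumber in cm⁻¹. The helper names below are illustrative.

```python
def wavenumber_to_nm(wavenumber_cm1: float) -> float:
    """Convert a wavenumber in cm^-1 to a wavelength in nm."""
    return 1.0e7 / wavenumber_cm1

def nm_to_wavenumber(wavelength_nm: float) -> float:
    """Convert a wavelength in nm to a wavenumber in cm^-1."""
    return 1.0e7 / wavelength_nm

# Limits of an 800-2500 nm window expressed in wavenumbers.
for nm in (800.0, 2500.0):
    print(f"{nm:.0f} nm = {nm_to_wavenumber(nm):.0f} cm^-1")
```

Note that a constant resolution in cm⁻¹ (typical of FT-NIR benchtop systems) does not correspond to a constant resolution in nm, so resolution figures quoted in different units are not directly comparable without this conversion.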

The analytical process follows the Beer-Lambert law, where absorbance is proportional to both concentration and optical path length [53]. When NIR radiation interacts with a sample, specific wavelengths are absorbed while others are reflected or transmitted, creating a unique spectral fingerprint that corresponds to the sample's chemical composition [49]. Infrared-active molecules and molecular groups, meaning those whose dipole moment changes in response to electromagnetic radiation, can be studied in this range, enabling the identification of specific compounds and their concentrations within complex food matrices [48].
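As a worked illustration of the Beer-Lambert relationship, A = ε·l·c, the sketch below converts a transmittance reading to absorbance and solves for concentration. The molar absorptivity and path length are hypothetical values chosen only to make the arithmetic easy to follow. In diffuse reflectance work, log(1/R) is commonly used as an apparent absorbance in place of the transmittance-based value.

```python
import math

def absorbance_from_transmittance(transmittance: float) -> float:
    """A = -log10(T), with T expressed as a fraction between 0 and 1."""
    return -math.log10(transmittance)

def concentration(absorbance: float, molar_absorptivity: float, path_length_cm: float) -> float:
    """Solve A = epsilon * l * c for c (mol/L)."""
    return absorbance / (molar_absorptivity * path_length_cm)

# Hypothetical values: 10% transmittance, epsilon = 50 L mol^-1 cm^-1, 1 mm cell.
A = absorbance_from_transmittance(0.10)
c = concentration(A, molar_absorptivity=50.0, path_length_cm=0.1)
print(f"A = {A:.2f}, c = {c:.2f} mol/L")
```

Real food matrices scatter light and contain many overlapping absorbers, so this single-component form serves as a conceptual basis for calibration rather than a direct quantification method.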

Spectral Acquisition Modes

The method of spectral acquisition varies depending on sample characteristics and instrument type. For solid samples like powdered foods or grains, the diffuse reflection method is typically employed, where photons penetrate a few millimeters into the sample and the reflected light is measured [48]. For liquids or colloidal samples, the transmission technique is applied, where light passes through the sample held in a cell with a precisely defined path length (typically 0.5–2 mm) [48]. A hybrid approach called transflection combines both principles and is particularly useful for analyzing problematic colloids or heterogeneous samples [48].

Each acquisition mode presents specific considerations. In diffuse reflection, particle size distribution must be carefully controlled to avoid detrimental scattering phenomena [48]. In transmission measurements, sample homogeneity is crucial, and signal loss may occur with improper layer thickness [48]. For challenging samples, rotation during scanning can provide a more representative "average" spectral image [48]. Understanding these operational parameters is essential for obtaining reliable, reproducible results across different food matrices and instrument platforms.

Benchtop vs. Portable NIRS Systems: A Technical Comparison

Design and Operational Characteristics

The fundamental distinction between benchtop and portable NIRS systems lies in their design philosophy and operational environments. Benchtop systems are stationary instruments designed for laboratory settings where precision, stability, and comprehensive data analysis are paramount [2]. These systems typically incorporate larger optical components, sophisticated light path designs, and temperature-controlled environments that maintain stable optical alignment and consistent measurement performance [2]. They often feature advanced calibration procedures with multiple calibration standards and automated verification protocols to ensure measurement traceability and long-term stability [2].

Portable NIRS systems prioritize mobility, ruggedness, and operational convenience for field applications [50] [2]. These compact devices employ miniaturized technologies such as Micro-Electro-Mechanical Systems (MEMS), Micro-Opto-Electro-Mechanical Systems (MOEMS), and Linear Variable Filters (LVFs) to reduce size, weight, and power consumption while maintaining analytical capability [50]. They incorporate shock-absorbing designs to maintain optical alignment despite transportation stresses and weather-resistant housings to protect sensitive components from environmental challenges [2]. While portable instruments traditionally sacrificed some performance for mobility, technological advances have significantly narrowed this gap in recent years [2].

Performance Comparison Data

The following tables synthesize performance characteristics derived from comparative studies, including a direct assessment of three NIRS instruments for coriander seed authentication [50] and general specifications from technical comparisons [2].

Table 1: Technical Specifications of Representative NIRS Instruments

| Parameter | Benchtop (Thermo Fisher iS50) | Portable (Ocean Insight Flame-NIR) | Handheld (Consumer Physics SCiO) |
|---|---|---|---|
| Spectral Range | Not specified in study | Not specified in study | 740–1070 nm |
| Spectral Resolution | Higher resolution | Moderate resolution | ~28 nm average |
| Detector Type | Not specified | Not specified | Silicon photodiode array |
| Measurement Modes | Multiple (reflection, transmission) | Diffuse reflection | Diffuse reflection |
| Portability | Stationary, lab-based | Portable, field-deployable | Handheld, on-site use |
| Analysis Time | Moderate with preparation | Rapid results | Seconds |
| Sample Throughput | High with automation | Moderate | Lower |

Table 2: Performance Metrics in Adulteration Detection Studies

| Performance Metric | Benchtop (iS50) | Portable (Flame-NIR) | Handheld (SCiO) |
|---|---|---|---|
| Correct Prediction of Adulterated Samples | 100% | 100% | 100% |
| Correct Prediction of Authentic Samples | 100% | 98.5% | 95.6% |
| Quantitative Analysis Capability | Excellent | Limited | Limited |
| Suitability for Screening | Reference method | Good | Acceptable |
| Application Scope | Regulatory compliance, research | Field verification, quality control | Rapid screening, supply chain |

Table 3: Economic and Operational Considerations

| Consideration | Benchtop Systems | Portable Systems |
|---|---|---|
| Initial Investment | High ($15,000-$50,000+) | Lower ($2,000-$20,000) |
| Infrastructure Requirements | Dedicated lab space, environmental controls | Minimal, battery operation |
| Maintenance Complexity | Higher, requires specialized service | Lower, user-replaceable components |
| Operator Skill Requirements | Technical expertise needed | Simplified operation |
| Analysis Cost Per Sample | Lower at high volumes | Competitive for field use |
| Return on Investment | Justified by precision and throughput | Justified by mobility and speed |

The comparative study on coriander seed authentication demonstrates that while all instrument types successfully identified adulterated samples (100% detection), performance diverged for authentic sample prediction, with benchtop systems achieving perfect classification (100%) compared to portable (98.5%) and handheld (95.6%) devices [50]. Additionally, the development of regression models highlighted the limitations of portable and handheld devices for precise quantitative analysis compared to benchtop systems, suggesting their primary value as screening rather than reference tools [50].

Experimental Protocols for NIRS Adulteration Detection

Standardized Workflow for Method Development

Implementing NIRS for adulteration detection follows a systematic workflow encompassing sample preparation, spectral acquisition, data preprocessing, model development, and validation. The subsections below describe each stage of this generalized experimental protocol in turn.

Sample Preparation and Spectral Acquisition

Sample preparation protocols vary based on the food matrix but typically include homogenization to ensure representative sampling, particle size control through milling or sieving (particularly important for powdered foods), and moisture equilibration to minimize spectral variability [49] [53]. For honey analysis, samples are typically warmed to dissolve crystals, mixed thoroughly to eliminate air bubbles, and scanned at a consistent temperature (typically 25 °C) using transmission or transflectance cells [49]. For powdered foods like spices or milk powder, samples are often sieved to a specific particle size (e.g., <500 µm) and packed uniformly in sample cups to ensure consistent light penetration and minimize scattering effects [53].

Spectral acquisition parameters must be optimized for each application. Benchtop systems typically employ higher resolution (4-16 cm⁻¹) across broader wavelength ranges (1000-2500 nm) with InGaAs detectors for superior sensitivity [49]. Portable systems like the Viavi MicroNIR 1700ES cover 950-1650 nm with 12.5 nm resolution, while handheld devices like the SCiO operate in a more limited range (740-1070 nm) with ~28 nm resolution [50]. Appropriate measurement geometry (reflectance for solids, transmission for liquids, transflectance for colloids) must be selected, and replicate scans averaged to improve signal-to-noise ratio [48].
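The benefit of averaging replicate scans can be checked with a small simulation. For uncorrelated random noise, averaging n scans is expected to reduce noise by roughly a factor of √n. The spectrum shape, noise level, and scan count below are assumed values for illustration, not instrument specifications.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical noise-free spectrum on a 950-1650 nm axis.
wavelengths = np.linspace(950.0, 1650.0, 2000)
true_spectrum = 0.5 + 0.2 * np.exp(-0.5 * ((wavelengths - 1450.0) / 40.0) ** 2)

n_scans = 16
noise_sd = 0.01  # assumed per-scan detector noise (absorbance units)
scans = true_spectrum + rng.normal(0.0, noise_sd, size=(n_scans, wavelengths.size))

# Compare residual noise in a single scan against the averaged spectrum.
single_noise = np.std(scans[0] - true_spectrum)
averaged_noise = np.std(scans.mean(axis=0) - true_spectrum)
reduction = single_noise / averaged_noise

print(f"Noise reduction: {reduction:.2f}x (sqrt(n) = {np.sqrt(n_scans):.2f})")
```

The √n gain applies only to random noise. Systematic effects such as drift, stray light, or sample repositioning error are not reduced by averaging.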

Data Preprocessing and Chemometric Modeling

Raw NIR spectra contain both relevant chemical information and unwanted variation from physical effects like light scattering, particle size differences, and instrumental noise. Data preprocessing is therefore critical to enhance spectral features and remove artifacts [48] [53]. Common techniques include:

- Multiplicative Scatter Correction (MSC) and Standard Normal Variate (SNV) to correct for scattering effects and path length differences [48] [49]

- Savitzky-Golay smoothing and derivatives (first or second derivative) to enhance spectral resolution and remove baseline offsets [48]

- Detrending to eliminate linear baseline trends [53]

- Standardization or normalization to make spectra comparable across instruments [53]
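The first three techniques in this list can be sketched with NumPy and SciPy as follows. This is a minimal illustration on synthetic spectra that differ only by an additive offset and a multiplicative gain. The function names, window size, and polynomial order are assumptions, and real pipelines usually tune these settings per application.

```python
import numpy as np
from scipy.signal import detrend, savgol_filter

def snv(spectra):
    """Standard Normal Variate: center and scale each spectrum (row) individually."""
    mean = spectra.mean(axis=1, keepdims=True)
    std = spectra.std(axis=1, ddof=1, keepdims=True)
    return (spectra - mean) / std

def msc(spectra, reference=None):
    """Multiplicative Scatter Correction against a reference (default: mean spectrum)."""
    ref = spectra.mean(axis=0) if reference is None else reference
    corrected = np.empty_like(spectra, dtype=float)
    for i, row in enumerate(spectra):
        # Regress each spectrum on the reference, then remove offset and gain.
        slope, intercept = np.polyfit(ref, row, 1)
        corrected[i] = (row - intercept) / slope
    return corrected

def sg_derivative(spectra, window=11, polyorder=2, deriv=1):
    """Savitzky-Golay smoothed derivative along the wavelength axis."""
    return savgol_filter(spectra, window_length=window, polyorder=polyorder,
                         deriv=deriv, axis=1)

def linear_detrend(spectra):
    """Remove a least-squares straight line from each spectrum."""
    return detrend(spectra, axis=1, type="linear")

# Synthetic spectra: same band, different scatter-like offsets and gains.
wavelengths = np.linspace(1000.0, 2500.0, 300)
base = np.exp(-0.5 * ((wavelengths - 1940.0) / 60.0) ** 2)
offsets = np.array([0.00, 0.05, 0.10])[:, None]
gains = np.array([0.8, 1.0, 1.2])[:, None]
spectra = offsets + gains * base

snv_spectra = snv(spectra)
msc_spectra = msc(spectra)
d1_spectra = sg_derivative(spectra)
detrended = linear_detrend(spectra)
print("Max spread after MSC:", np.ptp(msc_spectra, axis=0).max())
```

In this idealized case both SNV and MSC collapse the three spectra onto a single curve, because the only differences between them are the offset and gain that these methods are designed to remove.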

Following preprocessing, chemometric modeling extracts meaningful relationships between spectral data and sample properties. For qualitative authentication (e.g., pure vs. adulterated), classification algorithms like Principal Component Analysis (PCA) coupled with Linear Discriminant Analysis (LDA) or Soft Independent Modeling of Class Analogy (SIMCA) are employed [49]. For quantitative analysis (e.g., determining adulteration percentage), regression methods like Partial Least Squares Regression (PLSR) and Principal Component Regression (PCR) are most common [48] [49]. More recently, non-linear methods including Support Vector Machines (SVM), Artificial Neural Networks (ANN), and deep learning approaches have shown enhanced performance for complex classification tasks [48] [53].

Model Validation and Performance Metrics

Robust validation is essential to ensure model reliability and prevent overfitting. The recommended approach involves cross-validation (typically leave-one-out or k-fold) during model development followed by external validation using an independent sample set not included in model calibration [49]. For classification models, performance is assessed using metrics calculated from confusion matrices:

- Sensitivity = TP/(TP+FN) - ability to correctly identify adulterated samples [48]

- Specificity = TN/(TN+FP) - ability to correctly identify authentic samples [48]

- Precision = TP/(TP+FP) - proportion of correctly identified adulterants among all samples flagged as adulterated [48]

- Accuracy = (TP+TN)/(TP+TN+FP+FN) - overall correct classification rate [48]
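These four metrics follow directly from the confusion-matrix counts. The short sketch below treats "adulterated" as the positive class, matching the definitions above. The counts are made-up numbers for illustration and do not come from any cited study.

```python
def classification_metrics(tp: int, fn: int, tn: int, fp: int) -> dict:
    """Compute screening metrics with 'adulterated' as the positive class."""
    return {
        "sensitivity": tp / (tp + fn),   # adulterated samples correctly flagged
        "specificity": tn / (tn + fp),   # authentic samples correctly passed
        "precision": tp / (tp + fp),     # flagged samples that are truly adulterated
        "accuracy": (tp + tn) / (tp + tn + fp + fn),
    }

# Hypothetical validation set: 50 adulterated and 50 authentic samples.
metrics = classification_metrics(tp=45, fn=5, tn=40, fp=10)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```

For screening applications, sensitivity is often prioritized, since a missed adulterated sample (false negative) is usually costlier than sending an authentic sample for confirmatory testing.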