Beyond the Instrument: Why Sample Preparation is the Unseen Foundation of Spectroscopic Accuracy in Biomedical Research



Abstract

This article addresses the critical, yet often overlooked, role of sample preparation in achieving reliable spectroscopic results for researchers and drug development professionals. It explores the foundational principles linking preparation to data validity, covers methodological advances for complex biological samples, provides troubleshooting and optimization strategies for common pitfalls, and establishes frameworks for method validation and comparative analysis. By synthesizing current best practices and emerging trends, this guide aims to equip scientists with the knowledge to transform sample preparation from a bottleneck into a strategic asset, thereby enhancing the reproducibility and accuracy of spectroscopic data in biomedical research.

The Unseen Foundation: How Sample Preparation Dictates Spectroscopic Data Integrity

{# Introduction}

In analytical chemistry, the precision of multi-million-dollar instrumentation can be rendered useless by a process that occurs before the sample even reaches the detector: sample preparation. By one estimate, inadequate sample preparation causes as much as 60% of all spectroscopic analytical errors [1]. If that estimate holds, sample preparation is not a mere preliminary step but one of the most critical variables in analytical accuracy. For researchers and drug development professionals, this "60% problem" represents a significant risk to research validity, quality control, and product development.

This whitepaper details the quantitative evidence behind this problem, breaks down the specific types of errors encountered, and provides structured methodologies and visual guides to mitigate these errors, thereby safeguarding the integrity of analytical data.

{# The Quantitative Evidence: Error Distribution in the Analytical Process}

The predominance of pre-analytical and sample preparation errors is consistently demonstrated across various analytical fields, from clinical laboratories to materials science.

{Table: Quantifying Error Sources in Analytical Processes}

| Analytical Phase | Sub-category | Reported Error Rate | Technique / Context | Primary Data Source |

|---|---|---|---|---|

| Overall Pre-Analytical | Specimen Integrity (e.g., Hemolysis) | 69.6% (of all errors) | Clinical Laboratory Testing | [2] |

| Overall Pre-Analytical | All Non-Hemolysis Errors | 94.6% (of non-hemolysis errors) | Clinical Laboratory Testing | [2] |

| Sample Preparation | Inadequate Preparation | ~60% of all errors | General Spectroscopy | [1] |

| Analytical | Instrument/Measurement | 0.5% (of all errors) | Clinical Laboratory Testing | [2] |

| Analytical | Instrument/Measurement | 1.7% (of non-hemolysis errors) | Clinical Laboratory Testing | [2] |

| Post-Analytical | Data Processing/Reporting | 1.1% (of all errors) | Clinical Laboratory Testing | [2] |

The clinical laboratory data show that pre-analytical errors constitute the vast majority of all errors [2]: hemolysis alone accounts for 69.6%, and pre-analytical causes account for 94.6% of the remaining 30.4%, which brings the pre-analytical total to over 98%. In spectroscopy the reported share is lower but still dominant, with sample preparation estimated to be the single largest contributor to analytical inaccuracy [1]. As instrument stability and data processing software have improved, sample preparation has become the largest remaining source of error and the limiting factor for accuracy in techniques like X-ray fluorescence (XRF) spectroscopy [3].
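The table mixes two denominators ("of all errors" and "of non-hemolysis errors"), so the shares cannot be added directly. The short sketch below converts the conditional shares into shares of all errors. It assumes, as the table's first row suggests, that hemolysis is counted entirely as a pre-analytical error; the variable names are illustrative and do not come from [2].

```python
# Shares taken from the table above (clinical laboratory data, ref. [2]).
hemolysis_share = 0.696            # hemolysis as a fraction of ALL errors
preanalytical_given_other = 0.946  # pre-analytical fraction of NON-hemolysis errors
analytical_given_other = 0.017     # analytical fraction of NON-hemolysis errors

# Everything that is not hemolysis.
non_hemolysis_share = 1.0 - hemolysis_share

# Convert conditional shares into shares of all errors.
# Assumption: hemolysis is treated as wholly pre-analytical.
preanalytical_total = hemolysis_share + preanalytical_given_other * non_hemolysis_share
analytical_total = analytical_given_other * non_hemolysis_share

print(f"Pre-analytical share of all errors: {preanalytical_total:.1%}")  # 98.4%
print(f"Analytical share of all errors:     {analytical_total:.1%}")     # 0.5%
```

The recomputed analytical share matches the 0.5% reported in the table, which is a useful consistency check on how the two denominators relate.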

{# A Taxonomy of Sample Preparation Errors}

Understanding the "60% problem" requires a breakdown of the specific error types. These can be categorized as follows:

Solid Sample Preparation Errors

The physical preparation of solid samples introduces multiple error vectors.

- Particle Size and Homogeneity: Rough surfaces scatter light randomly, and a broad or inconsistent particle size distribution creates sampling error, compromising quantitative analysis [1]. The "mineralogical effect" in XRF, where different mineral forms of the same chemical composition yield different intensities, is a prime example that can only be resolved by fusion techniques [3].

- Surface and Density Irregularities: Techniques like XRF require flat, homogeneous surfaces with consistent density. Variations affect X-ray absorption and emission, leading to inaccurate intensity measurements [1] [3].

Liquid Sample and Standard Preparation Errors

The preparation of solutions and standards is fraught with opportunities for error, particularly in highly sensitive techniques like ICP-MS and HPLC.

- Adsorption to Containers: Target components can adsorb onto the walls of containers, reducing measured concentration. This depends on the component, solvent, and container material (e.g., cations adsorbing to glass) [4].

- Component Instability: Analytes can oxidize or decompose during storage. For example, ascorbic acid concentration decreases over time due to oxidation by dissolved oxygen [4].

- Inaccurate Dilution and Volumetrics: Errors in weight measurement, operator error during dilution, and inadequate dissolution directly impact concentration accuracy [4].

- Matrix Effects: In LC-MS and ICP-MS, co-eluting compounds from the sample matrix can suppress or enhance ionization, leading to inaccurate quantification [5].

Contamination and Carry-Over

The introduction of external contaminants or analytes from previous samples can invalidate results.

- Cross-Contamination: Grinding equipment, containers, and labware can introduce contaminants or cause carry-over between samples if not cleaned thoroughly [1] [5].

- Reagent Purity: The use of solvents and reagents that are not MS-grade can introduce interfering compounds that cause false positives or negatives [5].

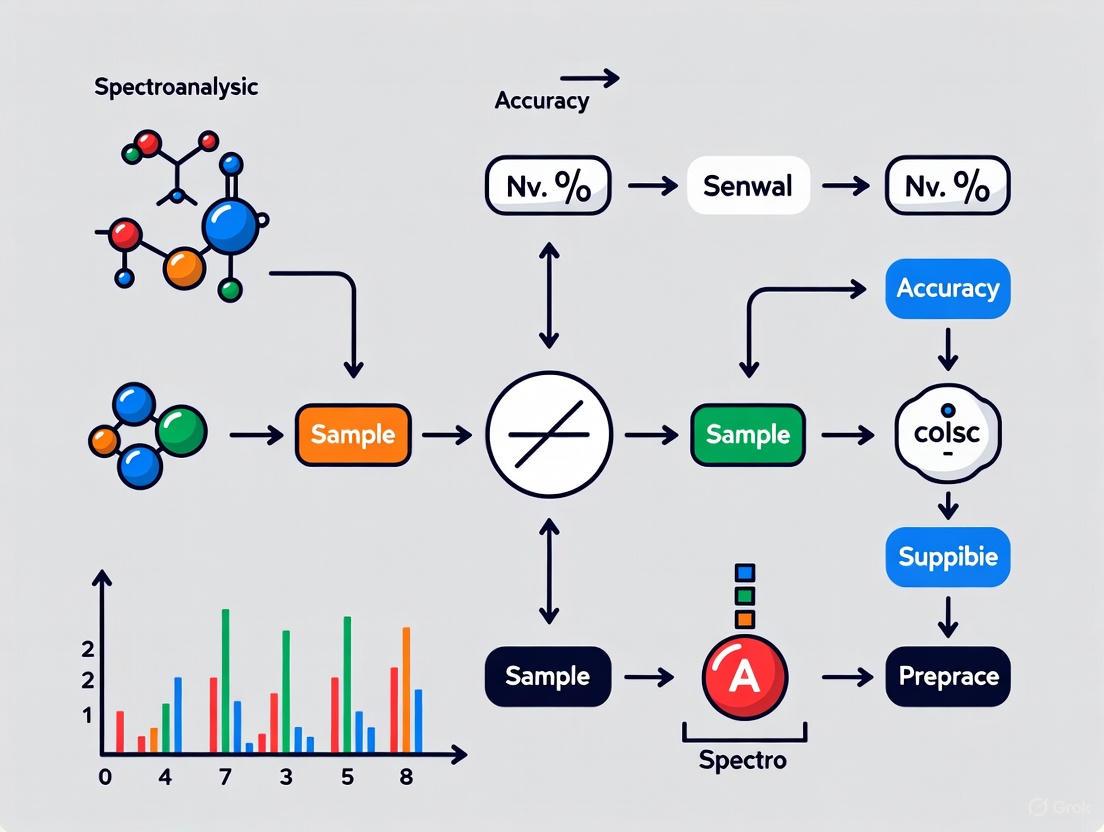

The following diagram illustrates how these errors propagate through a standard analytical workflow and their impact on the final result.

{# Experimental Protocols for Mitigating Key Errors}

To combat the errors detailed above, robust and standardized experimental protocols are essential. The following section provides detailed methodologies for critical preparation techniques.

Protocol 1: Pressed Pellet Preparation for XRF Spectroscopy

This protocol is designed to produce homogeneous, stable pellets for quantitative XRF analysis, minimizing particle size effects [1] [3]. Pressing alone does not remove mineralogical effects, which require fusion [3].

- Principle: Powdered samples are mixed with a binder and pressed into a solid pellet of uniform density and surface characteristics, creating a consistent matrix for X-ray interaction.

- Materials:

  - Spectroscopic grinding machine (e.g., swing mill)

  - Binder (e.g., cellulose, boric acid, wax)

  - Hydraulic or pneumatic press (10-30 ton capacity)

  - Powder die set (typically 30-40 mm diameter)

  - Aluminum caps or backing for stability

- Step-by-Step Procedure:

  - Grinding: Place a representative subsample of the bulk material into the grinding machine. Grind to a particle size of typically <75 μm. The optimal time should be determined by a "grinding curve analysis" [3].

  - Mixing: Precisely weigh the ground sample and mix it thoroughly with a binder (e.g., a 5:1 sample-to-binder ratio is common) to ensure homogeneity and provide structural integrity.

  - Pressing: Transfer the mixture into a die set. Press at a controlled force (e.g., 20 tons) for a specified time (e.g., 60 seconds) to form a solid, flat pellet.

  - Storage: Store the pellet in a desiccator to prevent moisture absorption or surface degradation before analysis. The surface must not be touched or contaminated.

- Troubleshooting:

  - Pellet crumbles: Increase binder concentration or pressing force/time.

  - Poor analytical precision: Verify grinding consistency and mixing homogeneity. Ensure the pellet surface is flat and free of defects.
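The mixing and pressing steps above can be sanity-checked numerically. The sketch below splits a target pellet mass into sample and binder for a given mass ratio and converts press load into pressure on the pellet face. The function names are hypothetical, "tons" is assumed to mean metric tonne-force, and the 6 g pellet mass and 32 mm die are illustrative values only (the die size sits within the 30-40 mm range listed under Materials).

```python
import math

# Assumption: "tons" in the protocol means metric tonne-force.
TONNE_FORCE_N = 9806.65  # newtons per metric tonne-force


def pellet_recipe(total_mass_g, sample_to_binder=5.0):
    """Split a target pellet mass into (sample_g, binder_g) for a mass ratio."""
    binder_g = total_mass_g / (sample_to_binder + 1.0)
    sample_g = total_mass_g - binder_g
    return sample_g, binder_g


def die_pressure_mpa(load_tonnes, die_diameter_mm):
    """Pressure on the pellet face: press force divided by circular die area."""
    force_n = load_tonnes * TONNE_FORCE_N
    area_mm2 = math.pi * (die_diameter_mm / 2.0) ** 2
    return force_n / area_mm2  # N/mm^2 is numerically equal to MPa


# Illustrative values, not prescribed by the protocol.
sample_g, binder_g = pellet_recipe(6.0, sample_to_binder=5.0)
print(f"Weigh {sample_g:.2f} g sample and {binder_g:.2f} g binder")
print(f"20 t on a 32 mm die: {die_pressure_mpa(20, 32):.0f} MPa")
print(f"20 t on a 40 mm die: {die_pressure_mpa(20, 40):.0f} MPa")
```

Because pressure scales with the inverse square of die diameter, the same press load produces noticeably different compaction on different die sets, which is one reason to record both load and die size in the method.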

Protocol 2: Standard Solution Preparation for HPLC/ICP-MS

This protocol minimizes errors related to adsorption, decomposition, and inaccurate dilution during standard and sample solution preparation [4] [5].

- Principle: To create accurate and stable standard solutions through precise weighing, dilution, and chemical stabilization, using techniques that mitigate analyte loss.

- Materials:

  - High-purity analytical balance

  - Class A volumetric glassware or calibrated pipettes

  - High-purity solvents (MS-grade)

  - Appropriate container materials (e.g., glass, specific polymers)

  - Stable isotope-labeled internal standards (for ICP-MS/LC-MS)

- Step-by-Step Procedure:

  - Weighing: Accurately weigh the primary standard substance. Account for hygroscopicity or hydrate water content [4].

  - Primary Stock Solution: Dissolve the standard in an appropriate solvent in a volumetric flask. Ensure complete dissolution, potentially with sonication or mild heating.

  - Stabilization:

  - Serial Dilution: Perform serial dilutions to prepare working standards. Use consistent technique and allow solutions to equilibrate to room temperature before final volume adjustment.

  - Add Internal Standard: Add a stable isotope-labeled internal standard to all samples and standards to correct for matrix effects and instrument drift [5].

- Troubleshooting:

  - Non-linear calibration curves: Suspect adsorption if the curve does not pass through the origin. Change container material (e.g., from glass to polymer) or solvent pH to inhibit adsorption [4].

  - Decreasing peak areas over time: Indicates analyte decomposition. Improve stabilization measures (e.g., lower temperature storage, nitrogen atmosphere).
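The weighing and serial dilution steps above reduce to two small calculations: correcting the weighed mass for purity and hydrate water, and applying C1·V1 = C2·V2 for each dilution. The following is a minimal sketch under those assumptions; the function names and all numeric values (purity, molar masses, volumes) are hypothetical and chosen only to illustrate the arithmetic.

```python
def corrected_stock_conc_mg_per_l(weighed_mg, purity, anhydrous_mw, hydrate_mw, volume_ml):
    """Stock concentration of the anhydrous analyte, corrected for purity
    and for the water carried by a hydrate form of the standard."""
    active_mg = weighed_mg * purity * (anhydrous_mw / hydrate_mw)
    return active_mg / (volume_ml / 1000.0)


def aliquot_volume_ml(stock_conc, target_conc, final_volume_ml):
    """Volume of stock to dilute to final_volume_ml (C1*V1 = C2*V2)."""
    if target_conc > stock_conc:
        raise ValueError("target concentration exceeds stock concentration")
    return target_conc * final_volume_ml / stock_conc


# Hypothetical standard: 99% pure monohydrate (200.0 vs 218.0 g/mol),
# 100 mg weighed and made up to 100 mL.
stock = corrected_stock_conc_mg_per_l(100.0, 0.99, 200.0, 218.0, 100.0)
print(f"Stock: {stock:.1f} mg/L (nominal would be 1000 mg/L)")

# Working standards prepared in 50 mL flasks from that stock.
for target in (1.0, 5.0, 10.0, 50.0):
    v = aliquot_volume_ml(stock, target, 50.0)
    print(f"{target:5.1f} mg/L -> pipette {v:.3f} mL of stock into 50 mL")
```

In this hypothetical case, ignoring the hydrate and purity corrections would overstate every working standard by roughly 9%, a bias that no amount of instrument precision can recover.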

{# The Scientist's Toolkit: Essential Reagents and Materials}

Selecting the correct tools and reagents is fundamental to successful sample preparation. The table below lists key items and their functions.

{Table: Key Research Reagent Solutions for Sample Preparation}

| Item / Reagent | Primary Function | Key Consideration |

|---|---|---|

| Lithium Tetraborate (Flux) | Fuses silicate materials into homogeneous glass disks for XRF, eliminating mineralogical effects [1]. | Platinum crucibles are required due to high (950-1200°C) temperatures [1]. |

| Cellulose / Boric Acid (Binder) | Binds powdered samples into cohesive pellets for analysis by XRF or FT-IR [1] [3]. | The binder must not contain elements that interfere with the analytes of interest. |

| Stable Isotope-Labeled Internal Standard | Compensates for matrix effects and instrument drift in mass spectrometry (ICP-MS, LC-MS) [5]. | The standard should be chemically identical to the analyte but with a different mass. |

| MS-Grade Solvents | High-purity solvents for LC-MS/HPLC to minimize background interference and ion suppression [5]. | Check the solvent's UV cutoff wavelength for compatibility with UV-Vis detection [1]. |

| Solid-Phase Extraction (SPE) Cartridges | Cleanup of complex samples to remove interfering compounds and concentrate analytes [5]. | Select sorbent phase based on the chemical properties of the target analyte. |

| PTFE Membrane Filters (0.45/0.2 μm) | Remove suspended particles from liquid samples to protect instrument nebulizers (ICP-MS) [1]. | Ensure the filter material does not adsorb the target analytes. |

{# Conclusion}

The evidence points in one direction: sample preparation is the dominant source of error in the analytical workflow, accounting for the majority of inaccuracies in spectroscopic and chromatographic data. The "60% problem" cannot be solved by instrumental advancements alone. It demands a disciplined, systematic approach grounded in a thorough understanding of error sources, from particle heterogeneity and mineralogical effects to analyte adsorption and matrix interference.

By adopting the rigorous protocols and best practices outlined in this whitepaper, researchers and drug development professionals can directly address this critical bottleneck. Mastering sample preparation is not merely a technical skill but a strategic imperative for ensuring data integrity, accelerating research, and upholding quality standards in the pharmaceutical industry and beyond.

In modern spectroscopic analysis, the precision of an instrument can be rendered meaningless by inadequate sample preparation. It is estimated that inadequate sample preparation is the cause of as much as 60% of all spectroscopic analytical errors [1]. The core principles of homogeneity, contamination control, and managing matrix effects therefore form the bedrock of reliable analytical data, directly influencing the validity of research outcomes in drug development and other scientific fields. This technical guide examines these foundational principles, providing a detailed framework for researchers and scientists to optimize sample preparation protocols, thereby ensuring data integrity and supporting robust spectroscopic accuracy in complex matrices.

Core Principle 1: Homogeneity

The Critical Role of Homogeneity

Sample homogeneity is a prerequisite for representative and reproducible spectroscopic results. Heterogeneous samples introduce significant sampling error, as the analyzed portion may not reflect the overall composition of the material, leading to non-reproducible results [1]. The physical characteristics of a sample, particularly particle size and surface uniformity, directly govern how radiation interacts with the material. Inconsistent particle sizes cause uneven scattering and absorption of light, compromising quantitative analysis [1]. Heterogeneity is an especially acute concern for spatially resolved techniques like mass spectrometry imaging, where spatial information must be preserved and inherent chemical complexity, such as the distinct microstructures found in brain tissue, can lead to significant analytical variability [6].

Techniques for Achieving Homogeneity

Several mechanical and processing techniques are employed to transform raw, heterogeneous materials into homogeneous, analyzable specimens.

Grinding and Milling: Grinding reduces particle size through mechanical friction, creating homogeneous samples. The choice of equipment depends on material properties; for instance, swing grinding machines are ideal for tough samples like ceramics and ferrous metals as their oscillating motion minimizes heat generation that could alter sample chemistry [1]. Milling offers greater control over particle size reduction and produces superior surface quality for non-ferrous materials. The resulting flat, uniform surfaces minimize light scattering, thereby enhancing signal-to-noise ratios [1].

Pelletizing for XRF: This technique involves transforming powdered samples into solid disks using a hydraulic press (typically at 10-30 tons pressure), often with a binder. This process creates samples with uniform density and surface properties, which is essential for consistent X-ray absorption and accurate quantitative XRF analysis [1].

Fusion Techniques: For refractory materials like silicates and ceramics, fusion is the most stringent method. It involves mixing the ground sample with a flux (e.g., lithium tetraborate) and melting it at high temperatures (950-1200°C) to create a homogeneous glass disk. This process completely destroys crystal structures and standardizes the sample matrix, effectively eliminating mineralogical and particle size effects [1].

Table 1: Homogenization Techniques for Different Spectroscopic Methods

| Technique | Primary Use | Key Parameters | Target Particle Size |

|---|---|---|---|

| Grinding | General purpose homogenization | Material hardness, grinding time | <75 μm for XRF [1] |

| Milling | Creating flat surfaces for solids | Rotational speed, feed rate, cutting depth | N/A (Surface finish focused) |

| Pelletizing | XRF Sample Preparation | Pressure (10-30 tons), binder type | Prior grinding to <75 μm [1] |

| Fusion | Difficult-to-dissolve materials | Flux type, temperature (950-1200°C) | Total dissolution into glass disk |

Core Principle 2: Contamination Control

Contamination introduces extraneous material that generates spurious spectral signals, which can render analytical results worthless [1]. Sources are ubiquitous throughout the sample preparation workflow, including cross-contamination between samples, impurities from reagents and solvents, and leaching from equipment. The impact is particularly severe in trace-level analysis, such as ICP-MS, where the technique's high sensitivity makes it vulnerable to skewed results from even minute contaminant introductions [1]. For example, in the analysis of toxic metals, the reliability of results is entirely dependent on stringent contamination control from reagents and labware [7].

Strategies for Effective Contamination Control

A proactive and multi-faceted approach is essential for mitigating contamination risks.

Equipment Selection and Cleaning: Using grinding and milling surfaces constructed from materials that will not introduce interfering elements is crucial. Furthermore, intensive cleaning between samples is mandatory to prevent cross-contamination [1]. For liquid samples, using high-purity grade solvents and acids is non-negotiable for trace metal analysis [1].

Process Controls: For liquid samples in ICP-MS, filtration (typically with 0.45 μm or 0.2 μm membranes) removes suspended particles that could clog nebulizers or contribute to spectral interference [1]. Employing silanized glass vials is an effective strategy to prevent the adsorption of target analytes (like ochratoxin A) onto container walls, which would lead to biased low results [8].

Core Principle 3: Managing Matrix Effects

Understanding Matrix Effects

Matrix effects (MEs) are a paramount challenge in mass spectrometry, particularly when using electrospray ionization (ESI). They occur when co-eluting matrix components from a complex sample suppress or enhance the ionization of target analytes, thereby biasing quantitative results [9] [8]. These effects are especially pronounced in heterogeneous samples like urban runoff or biological tissues, where the matrix composition can vary dramatically between samples [6] [9]. For instance, in MALDI-MSI of brain tissue, the chemical differences between gray matter (densely packed neurons) and white matter (myelinated axons) lead to uneven lateral matrix effects and local suppression, posing a significant quantitation challenge [6].
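Suppression and enhancement are often expressed as a single percentage. One common convention, which is not taken from the cited references and is stated here as an assumption, compares the analyte response in a post-extraction spiked matrix with the response of a neat solvent standard at the same concentration. The sketch below implements that convention; the function names and the peak areas are hypothetical.

```python
def matrix_effect_percent(response_in_matrix, response_in_solvent):
    """Matrix effect as a signed percentage.

    Negative values indicate ion suppression, positive values enhancement.
    Assumes both responses were measured at the same spiked concentration.
    """
    if response_in_solvent <= 0:
        raise ValueError("solvent response must be positive")
    return (response_in_matrix / response_in_solvent - 1.0) * 100.0


def describe(me_percent):
    """Label the direction of the effect for reporting."""
    if me_percent < 0:
        return "suppression"
    if me_percent > 0:
        return "enhancement"
    return "no matrix effect"


# Hypothetical peak areas for the same spiked concentration.
me = matrix_effect_percent(response_in_matrix=7.0e5, response_in_solvent=1.0e6)
print(f"Matrix effect: {me:+.1f}% ({describe(me)})")
```

Reporting the signed value rather than only its magnitude keeps suppression and enhancement distinguishable when results from many samples are compared.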

Advanced Strategies for Mitigating Matrix Effects

Several sophisticated methodological strategies can be employed to correct or compensate for matrix effects.

Stable Isotope-Labeled Internal Standards (SIL-IS): This is considered one of the most effective approaches. A SIL-IS is a structurally identical version of the analyte labeled with stable isotopes (e.g., deuterium, carbon-13). It is added to the sample prior to extraction and co-elutes with the native analyte, so it undergoes essentially the same ionization suppression or enhancement. The analyte signal is then normalized to the IS signal, correcting for the matrix effect [8]. Isotope dilution mass spectrometry (IDMS) with additional calibration steps, such as double (ID2MS) or quintuple (ID5MS) isotope dilution, can yield results with high accuracy. This was demonstrated in the quantitation of ochratoxin A in flour, where external calibration underestimated values by 18-38% relative to the certified value [8].

Standard Addition Method: This technique involves spiking the sample with known, varying concentrations of the native analyte. The signal intensity is plotted against the added concentration, and the absolute value of the x-intercept gives the original analyte concentration in the sample. This method accounts for the specific matrix of the sample. A novel application for MALDI-MSI involves homogeneously spraying standard solutions onto consecutive tissue sections instead of manual spotting, which minimizes variations caused by tissue heterogeneity and provides a spot-free calibration [6].
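The x-intercept calculation described for standard addition can be sketched with an ordinary least-squares line. The helper below is a minimal illustration, not the data treatment used in [6]; the function name and the example data are hypothetical, and a linear response over the spiked range is assumed.

```python
def standard_addition(added, signal):
    """Fit signal = slope * added + intercept by least squares and return
    (original_concentration, slope, intercept).

    The original concentration is the absolute value of the x-intercept.
    """
    n = len(added)
    if n < 2 or n != len(signal):
        raise ValueError("need at least two paired points")
    mean_x = sum(added) / n
    mean_y = sum(signal) / n
    sxx = sum((x - mean_x) ** 2 for x in added)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(added, signal))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    x_intercept = -intercept / slope
    return abs(x_intercept), slope, intercept


# Hypothetical spike levels (arbitrary concentration units) and signals.
added = [0.0, 1.0, 2.0, 3.0]
signal = [2.0, 4.0, 6.0, 8.0]
conc, slope, intercept = standard_addition(added, signal)
print(f"Estimated original concentration: {conc:.2f}")
```

Because the calibration line is built inside the sample's own matrix, the slope already reflects any suppression or enhancement, which is why the extrapolated intercept remains meaningful.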

Individual Sample-Matched Internal Standard (IS-MIS): A recent innovation for non-target screening, this strategy involves analyzing each individual sample at multiple dilution levels to match internal standards based on the specific behavior of that sample. Although it requires 59% more analysis runs, it significantly outperforms methods using a pooled sample for correction, achieving <20% RSD for 80% of features in highly variable urban runoff samples [9].
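A criterion such as "<20% RSD for 80% of features" can be checked with a few lines of code. The sketch below computes the percent relative standard deviation per feature and the fraction of features under a limit. The exact set of measurements over which RSD was computed in [9] is not specified here, so the data layout (a dictionary of replicate intensities per feature) and the function names are assumptions for illustration.

```python
from statistics import mean, stdev


def percent_rsd(values):
    """Relative standard deviation (sample standard deviation / mean) in percent."""
    m = mean(values)
    if m == 0:
        raise ValueError("mean is zero; RSD undefined")
    return stdev(values) / m * 100.0


def fraction_within(features, limit=20.0):
    """Fraction of features whose %RSD is below the limit.

    features maps a feature name to its replicate intensities.
    """
    passing = [name for name, vals in features.items() if percent_rsd(vals) < limit]
    return len(passing) / len(features)


# Hypothetical corrected intensities for two features.
features = {
    "feature_a": [100.0, 102.0, 98.0],   # tight replicates
    "feature_b": [100.0, 150.0, 50.0],   # poorly corrected
}
for name, vals in features.items():
    print(f"{name}: {percent_rsd(vals):.1f}% RSD")
print(f"Fraction under 20% RSD: {fraction_within(features):.0%}")
```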

Table 2: Comparison of Matrix Effect Mitigation Strategies

| Strategy | Mechanism | Best For | Advantages | Limitations |

|---|---|---|---|---|

| Stable Isotope-Labeled IS [8] | Signal normalization using a co-eluting analogue | Targeted analysis | High accuracy and precision; compensates for losses | Limited availability; can be costly |

| Standard Addition [6] | Calibration within the sample's own matrix | Complex, unique, or variable matrices | Accounts for specific sample matrix | Reduces throughput; requires more sample |

| Sample Dilution [9] | Reduces concentration of interfering compounds | Non-targeted screening; high-sensitivity instruments | Simple and effective | Can dilute analyte below LOQ |

| IS-MIS [9] | Matches IS to features in each individual sample | Non-target screening of highly variable samples | Unmatched accuracy for heterogeneous sets | Increases analytical time and cost |

Experimental Protocols for Core Principles

Protocol: Standard Addition for Quantitative MALDI-MSI

This protocol details the use of a standard addition approach with homogeneous spraying for the accurate quantitation of neurotransmitters in rodent brain tissue, effectively managing spatial matrix effects [6].

- Step 1: Tissue Sectioning. Cut sagittal brain tissue sections (12 μm thickness) and mount them centrally on ITO-coated slides with sufficient distance to avoid cross-contamination.

- Step 2: Internal Standard Application. Use a robotic sprayer (e.g., TM-sprayer) to homogeneously apply a SIL internal standard (e.g., DA-d4 for dopamine) over all tissue sections in six passes. Parameters: nozzle temperature 90°C, flow rate 70 μL/min, velocity 1100 mm/min, track spacing 2.0 mm.

- Step 3: Calibration Standard Application. Quantitatively spray different concentrations of calibration standards (e.g., dopamine, norepinephrine) over the tissue sections in four passes. Cover non-target sections with a coverslip during spraying.

- Step 4: Matrix Application. Apply a derivatizing MALDI matrix (e.g., FMP-10) in 20 passes with optimized spraying parameters.

- Step 5: Data Acquisition & Analysis. Perform MALDI-MSI analysis. Extract signal intensities from the region of interest, plot against the amount of added analyte, and calculate the endogenous concentration from the x-intercept of the trend line.
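For the x-axis of the calibration in Step 5, the amount of standard deposited per unit tissue area has to be known. Under the simplifying assumptions that the sprayer covers the slide uniformly and that no solution is lost in transit, the volume per area per pass is the flow rate divided by the product of nozzle velocity and track spacing. The sketch below applies this to the spray parameters in Step 2; the function names and the 1.0 pmol/μL standard concentration are hypothetical.

```python
def sprayed_volume_per_area(flow_ul_min, velocity_mm_min, track_spacing_mm, passes):
    """Volume deposited per unit area (uL/mm^2), assuming uniform coverage.

    Each minute the nozzle sweeps velocity * track_spacing mm^2 while
    delivering flow uL, so one pass deposits flow / (velocity * spacing).
    """
    per_pass = flow_ul_min / (velocity_mm_min * track_spacing_mm)
    return per_pass * passes


def deposited_amount_per_area(conc_per_ul, volume_per_area_ul_mm2):
    """Amount of standard per mm^2, in the amount unit used for conc_per_ul."""
    return conc_per_ul * volume_per_area_ul_mm2


# Spray parameters from Step 2: 70 uL/min, 1100 mm/min, 2.0 mm spacing, six passes.
vol = sprayed_volume_per_area(70.0, 1100.0, 2.0, passes=6)
print(f"Deposited volume: {vol:.4f} uL/mm^2")

# Hypothetical internal standard concentration of 1.0 pmol/uL.
print(f"Deposited amount: {deposited_amount_per_area(1.0, vol):.4f} pmol/mm^2")
```

Real deposition efficiency is typically below this ideal figure, so the result is best read as an upper bound unless the sprayer has been calibrated.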

[Figure: Standard Addition MALDI-MSI Quantitation Workflow]

Protocol: Isotope Dilution MS for Mycotoxin Quantification

This protocol compares ID1MS, ID2MS, and ID5MS for the accurate quantification of ochratoxin A (OTA) in flour, overcoming ionization suppression [8].

- Step 1: Sample Extraction. Weigh 5 g of flour into an extraction vessel. Gravimetrically add a known amount of isotopically labelled internal standard solution ([13C6]-OTA) and 11.1 g of 85% acetonitrile/water (v/v). Vortex, shake on an orbital shaker (450-475 RPM) for 1 hour, and centrifuge.

- Step 2: Calibration Solution Preparation (for ID2MS/ID5MS). For ID5MS, gravimetrically prepare multiple calibration solutions that bracket the expected analyte concentration by mixing different masses of the native OTA standard solution with a fixed mass of the [13C6]-OTA internal standard solution.

- Step 3: LC-MS Analysis. Inject sample extracts and calibration solutions. Use a C18 column with gradient elution (water/acetonitrile with 0.05% acetic acid). Operate the mass spectrometer in positive ESI mode.

- Step 4: Quantification.

- ID1MS: Calculate concentration from the known amount of internal standard and the measured analyte/IS signal ratio.

- ID2MS/ID5MS: Use the calibration solutions to establish the relationship between the measured ratio and the amount of native analyte, then calculate the unknown sample concentration.
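The two quantification routes in Step 4 differ mainly in whether the response of native versus labelled analyte is assumed or measured. The sketch below is a deliberately simplified illustration, not the full isotope dilution equations used in [8]: the single-point route assumes equal response for native and labelled OTA, while the calibrated route fits a line of area ratio against native/IS mass ratio from the gravimetric blends. Function names and all numeric values are hypothetical.

```python
def id1ms_mass_fraction(area_ratio, is_amount_ng, sample_mass_g):
    """Single-point estimate: analyte amount = (analyte/IS area ratio) * IS amount.
    Assumes native and labelled analyte respond identically."""
    return area_ratio * is_amount_ng / sample_mass_g


def fit_line(x, y):
    """Ordinary least-squares slope and intercept."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, my - slope * mx


def calibrated_mass_fraction(area_ratio, cal_mass_ratios, cal_area_ratios,
                             is_amount_ng, sample_mass_g):
    """Calibrated estimate: invert the measured ratio through a calibration
    line built from blends of native standard and internal standard."""
    slope, intercept = fit_line(cal_mass_ratios, cal_area_ratios)
    mass_ratio = (area_ratio - intercept) / slope
    return mass_ratio * is_amount_ng / sample_mass_g


# Hypothetical blends bracketing the sample (native/IS mass ratio vs area ratio).
cal_mass_ratios = [0.5, 1.0, 1.5]
cal_area_ratios = [0.55, 1.10, 1.65]

single = id1ms_mass_fraction(0.88, is_amount_ng=20.0, sample_mass_g=5.0)
calibrated = calibrated_mass_fraction(0.88, cal_mass_ratios, cal_area_ratios,
                                      is_amount_ng=20.0, sample_mass_g=5.0)
print(f"Single-point: {single:.2f} ng/g, calibrated: {calibrated:.2f} ng/g")
```

In this made-up example the labelled and native forms respond slightly differently, so the single-point estimate is biased while the calibrated estimate is not, which illustrates why the higher-order schemes exist.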

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Reagents for Managing Homogeneity and Matrix Effects

| Reagent / Material | Function | Application Example |

|---|---|---|

| Stable Isotope-Labelled (SIL) Analogues [6] [8] | Internal standard for normalization and calibration; corrects for matrix effects and analyte loss. | Quantification of dopamine in brain tissue using DA-d4 [6]; accurate quantitation of ochratoxin A using [13C6]-OTA [8]. |

| Lithium Tetraborate Flux [1] | Fusion agent for creating homogeneous glass disks from refractory materials; eliminates mineralogical effects. | Sample preparation for cement, slag, and minerals prior to XRF analysis [1]. |

| Cellulose or Boric Acid Binders [1] | Binding agent for powder pelletization; provides structural integrity and uniform density for analysis. | Forming stable pellets from powdered geological samples for XRF [1]. |

| Deuterated Solvents (e.g., CDCl3) [1] | Spectroscopically transparent solvent for FT-IR; minimizes interfering absorption bands in the mid-IR region. | Dissolving organic compounds for FT-IR analysis without solvent peaks obscuring analyte signals [1]. |

| High-Purity Acids (e.g., HNO3) [1] | Acidification agent for trace metal analysis; prevents adsorption and precipitation of metals, controls contamination. | Sample preservation and preparation for ultratrace analysis by ICP-MS [1]. |

| Specialized MALDI Matrices (e.g., FMP-10) [6] | Derivatizing matrix for MALDI-MS; enhances ionization efficiency of specific analyte classes (e.g., neurotransmitters). | Spatial quantitation of small molecules like catecholamines in brain tissue sections [6]. |

[Figure: Matrix Effects Causes and Mitigation Strategies]

In analytical spectroscopy, the quality of the final spectral fingerprint is fundamentally determined long before instrumental analysis begins. Inadequate sample preparation is responsible for approximately 60% of all spectroscopic analytical errors, making it the most significant source of inaccuracy in spectroscopic analysis [1]. Unless samples are properly prepared, researchers risk collecting misleading data that can compromise research projects, quality control practices, and analytical conclusions [1]. This technical guide examines the fundamental relationships between preparation methodologies and spectral quality, providing researchers with evidence-based protocols to optimize their analytical workflows.

The concept of a "spectroscopic fingerprint" relies on the unique interaction between electromagnetic radiation and a sample's molecular structure. However, these interactions are highly sensitive to physical and chemical properties altered during preparation—including particle size, homogeneity, surface characteristics, and matrix composition [1]. Even the most advanced instrumentation cannot compensate for poorly prepared samples, as preparation-induced artifacts directly affect the spectral baseline, peak positions, intensities, and widths, potentially obscuring critical analytical information [10].

Fundamental Mechanisms: How Preparation Affects Spectral Data

Sample preparation influences spectral quality through multiple interconnected mechanisms that affect how radiation interacts with the analytical sample. Understanding these core principles enables researchers to select appropriate preparation strategies for their specific analytical challenges.

Key Physical and Chemical Factors

- Surface and Particle Characteristics: Rough surfaces scatter light randomly, while monodisperse particle sizes ensure uniform interaction with radiation. Excessive variation in particle size creates sampling error that compromises quantitative analysis [1].

- Matrix Effects: Sample matrix constituents can absorb or augment spectral signals, obscuring or enhancing the analyte response. Proper preparation techniques remove such interferences through dilution, extraction, or matrix matching [1].

- Homogeneity Requirements: Heterogeneous samples yield non-reproducible results because the analyzed portion may not represent the whole sample. Grinding, milling, and mixing techniques create homogeneous samples that yield reliable, reproducible data [1].

- Contamination Risks: Contamination introduces extraneous material that generates spurious spectral signals. Cross-contamination between samples or from preparation equipment can render results worthless, necessitating rigorous cleaning protocols throughout the preparation process [1].

Spectral Data Integrity Challenges

Modern spectroscopic analysis faces significant data interpretation challenges, some of which originate from preparation artifacts. Spectroscopic signals remain highly prone to interference from environmental noise, instrumental artifacts, sample impurities, scattering effects, and radiation-based distortions such as fluorescence and cosmic rays [10]. These perturbations not only degrade measurement accuracy but also impair machine learning-based spectral analysis by introducing artifacts and biasing feature extraction [10]. Three recent innovations are reported to address them: context-aware adaptive processing, physics-constrained data fusion, and intelligent spectral enhancement [10]. According to [10], these approaches can reach sub-ppm detection sensitivity while maintaining >99% classification accuracy, with applications in pharmaceutical quality control, environmental monitoring, and remote sensing diagnostics.

Technique-Specific Preparation Methodologies

Different spectroscopic techniques have distinct preparation requirements optimized for their specific measurement principles and analytical challenges. The table below summarizes optimal preparation techniques for major spectroscopic methods:

Table: Technique-Specific Sample Preparation Requirements

| Technique | Primary Preparation Methods | Critical Parameters | Optimal Sample Form |

|---|---|---|---|

| XRF | Grinding, milling, pelletizing, fusion | Particle size <75 μm, flat homogeneous surfaces, uniform density | Pressed pellets or fused beads |

| ICP-MS | Total dissolution, filtration, dilution, acidification | Complete dissolution, accurate dilution, particle removal | Liquid, filtered, acidified |

| FT-IR | Grinding with KBr, pellet preparation, solvent selection | Appropriate solvents, concentration optimization, minimal moisture | KBr pellets, liquid cells |

| Raman | Surface enhancement, fluorescence mitigation | Low fluorescence substrates, quenching methods | SERS-active surfaces, dry powders |

| NIR | Minimal preparation often sufficient | Particle size control, homogeneity | Intact or lightly processed solids |

Solid Sample Preparation Techniques

Solid samples require careful processing to ensure representative analysis and proper interaction with incident radiation:

- Grinding and Milling: Mechanical reduction of particle size creates homogeneous samples with consistent radiation interaction properties. Swing grinding machines use oscillating motion rather than direct pressure, reducing heat formation that might alter sample chemistry. For optimal results, grind samples under identical time parameters and clean intensively between samples to prevent cross-contamination [1].

- Pelletizing for XRF Analysis: Pelletizing transforms powdered samples into solid disks with uniform surface properties and density essential for quantitative XRF analysis. The process typically involves blending ground samples with a binder (e.g., wax or cellulose) and pressing using hydraulic or pneumatic presses at 10-30 tons to produce pellets with flat, smooth surfaces and consistent thickness [1].

- Fusion Techniques: Fusion is the most rigorous preparation technique, completely dissolving refractory materials into homogeneous glass disks. The process involves blending the ground sample with a flux (typically lithium tetraborate), melting at 950-1200°C in platinum crucibles, and casting the melt as disks for analysis. Fusion eliminates the particle size and mineralogical effects that plague other preparation techniques, making it ideal for silicate materials, minerals, and ceramics [1].

Liquid and Gas Sample Preparation

Liquid and gaseous samples present unique analytical challenges requiring specialized preparation approaches:

- Dilution and Filtration for ICP-MS: Because ICP-MS is highly sensitive, it demands stringent liquid sample preparation. Dilution brings analyte concentrations into the optimal detection range while reducing matrix effects. Filtration (typically 0.45 μm, or 0.2 μm for ultratrace analysis) removes suspended material that could clog nebulizers or hinder ionization. Acidification with high-purity nitric acid (typically to 2% v/v) keeps metal ions in solution by preventing precipitation and adsorption to vessel walls [1].

- Solvent Selection for Molecular Spectroscopy: Solvent choice significantly influences spectral quality in both UV-Visible and FT-IR spectroscopy. An optimal solvent dissolves the sample completely without absorbing in the analytical region of interest. For UV-Vis, consider the cutoff wavelength (below which the solvent absorbs strongly), polarity, and purity grade. For FT-IR, solvent absorption bands must not overlap with significant analyte features; deuterated solvents such as CDCl₃ can be useful alternatives because isotopic substitution shifts the solvent's C-H bands to lower wavenumbers, away from the analyte's C-H features [1].
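
The UV-Vis cutoff criterion described above can be sketched as a simple lookup. This is a minimal illustration, not a validated selection tool: the cutoff values are approximate textbook figures, and the `margin_nm` safety margin and the function name are assumptions introduced here. The supplier's specification for the actual solvent grade should take precedence.

```python
# Approximate UV cutoff wavelengths in nm. These are illustrative values only;
# the real cutoff depends on solvent grade and path length.
UV_CUTOFF_NM = {
    "water": 190,
    "acetonitrile": 190,
    "n-hexane": 195,
    "methanol": 205,
    "ethanol": 210,
    "dichloromethane": 233,
    "chloroform": 245,
    "acetone": 330,
}


def usable_solvents(lowest_wavelength_nm, margin_nm=10):
    """Return solvents whose cutoff lies at least margin_nm below the
    shortest wavelength of interest, ordered by cutoff then name."""
    limit = lowest_wavelength_nm - margin_nm
    candidates = [
        (cutoff, name) for name, cutoff in UV_CUTOFF_NM.items() if cutoff <= limit
    ]
    return [name for _, name in sorted(candidates)]


if __name__ == "__main__":
    # Hypothetical method measuring down to 220 nm.
    print(usable_solvents(220))
```

Solubility, polarity, and purity still have to be checked separately; the lookup only screens out solvents that would absorb in the measurement window.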

Quantitative Impacts: Preparation-Induced Errors and Solutions

The quantitative effect of sample preparation on analytical results can be systematically evaluated through specific error mechanisms and their corresponding mitigation strategies:

Table: Quantitative Impact of Sample Preparation on Analytical Results

| Error Mechanism | Effect on Spectral Data | Quantitative Impact | Corrective Strategy |
|---|---|---|---|
| Particle Size Variation | Increased light scattering, reduced signal-to-noise | >30% variance in reflectance measurements | Standardized grinding to <75 μm |
| Surface Irregularity | Spectral baseline distortion, peak broadening | 15-25% accuracy reduction in XRF | Precision milling/polishing |
| Matrix Interference | Signal suppression/enhancement, false peaks | 40-60% concentration error | Fusion, matrix matching |
| Moisture Contamination | IR absorption obscures analyte signals | Complete masking of fingerprint region | Controlled drying, desiccants |
| Spectral Mixing | Poor chromatographic separation | >20% co-elution errors | SPE, selective enrichment |

Data Preprocessing and Statistical Enhancement

Advanced statistical preprocessing techniques can partially compensate for preparation-induced artifacts, though they cannot replace proper physical preparation:

- Spectral Standardization: Transforming raw data to distributions with mean 0 and variance 1 preserves the features of the original distribution while enhancing comparability between samples [11].

- Affine Transformation Min-Max Normalization (MMN): This approach fits data within a standardized range (typically [0,1]), highlighting spectral shapes while preserving local maxima, minima, and underlying trends [11].

- Scattering Correction: Multiplicative scatter correction (MSC) and standard normal variate (SNV) transformations compensate for scattering effects and baseline shifts introduced by variations in particle size, texture, and surface morphology [12].
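
The three transformations listed above can be sketched in a few lines of standard-library Python. This is a minimal illustration under stated assumptions: spectra are plain lists of intensities on a shared wavelength grid, SNV uses the sample standard deviation, and MSC uses the mean spectrum as its reference. The function names are chosen here for illustration and do not come from the cited works.

```python
from statistics import mean, stdev


def min_max(spectrum):
    """Min-max normalization: rescale one spectrum to the range [0, 1]."""
    lo, hi = min(spectrum), max(spectrum)
    return [(x - lo) / (hi - lo) for x in spectrum]


def snv(spectrum):
    """Standard normal variate: centre one spectrum on its own mean and
    scale by its own standard deviation (mean 0, variance 1)."""
    m, s = mean(spectrum), stdev(spectrum)
    return [(x - m) / s for x in spectrum]


def msc(spectra):
    """Multiplicative scatter correction against the mean spectrum.

    Each spectrum is regressed on the reference (x = intercept + slope * ref)
    by ordinary least squares, then corrected as (x - intercept) / slope.
    """
    n = len(spectra)
    ref = [sum(col) / n for col in zip(*spectra)]
    ref_mean = mean(ref)
    sxx = sum((r - ref_mean) ** 2 for r in ref)
    corrected = []
    for spec in spectra:
        spec_mean = mean(spec)
        sxy = sum((r - ref_mean) * (x - spec_mean) for r, x in zip(ref, spec))
        slope = sxy / sxx
        intercept = spec_mean - slope * ref_mean
        corrected.append([(x - intercept) / slope for x in spec])
    return corrected


if __name__ == "__main__":
    base = [1.0, 2.0, 4.0, 3.0, 1.0]           # hypothetical spectrum
    scattered = [2.0 * x + 0.5 for x in base]  # same shape, scaled and offset
    print(snv(base))
    print(snv(scattered))  # matches the line above up to rounding
    print(msc([base, scattered]))
```

In the toy example the second spectrum differs from the first only by a scale factor and an offset, which is the kind of variation these corrections are designed to remove; real scatter effects are rarely this clean.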

Statistical preprocessing functions applied to raw spectroscopic data are essential for obtaining reliable results, as the interaction between light and matter is a complex process distorted by noise produced by optical interference or instrument electronics [11]. These techniques preserve the relationships of initial raw data and the graphical representation of spectral signatures while accentuating peaks, valleys, and trends, thereby improving multivariate statistical analysis and classification outcomes [11].

Advanced Preparation Strategies for Enhanced Performance

Contemporary research has developed sophisticated preparation methodologies that significantly enhance analytical performance across multiple parameters:

High-Performance Preparation Approaches

- Functional Material-Based Strategies: Employing advanced materials including magnetic nanoparticles, porous carbon materials, metal-organic frameworks (MOFs), covalent organic frameworks (COFs), and ionic liquids that act as additional phases to disrupt sample preparation system equilibrium, enabling efficient enrichment and selective separation of target analytes [13].

- Reaction-Based Processes: Utilizing chemical or biological reactions to transform analytes into more detectable forms, significantly enhancing detection sensitivity while biological recognition mechanisms greatly increase selectivity [13].

- Energy Field-Assisted Techniques: Applying external energy fields (thermal, ultrasonic, microwave, electric, magnetic) to accelerate mass transfer and reduce phase separation duration, significantly improving extraction efficiency and separation performance [13].

- Device-Based Integration: Implementing specialized devices including miniaturized, arrayed, or online configurations to enhance automation, precision, accuracy, and environmental compatibility while reducing preparation time [13].

Targeted Enrichment Strategies

Targeted enrichment methods have emerged as particularly powerful approaches for analyzing specific small molecules in complex matrices:

- Chemical Functional Group Targeting: Designing materials with specific chemical functionalities (e.g., boric acid for cis-diol compounds) that achieve selective adsorption of target molecules through covalent interactions [14].

- Metal Coordination Approaches: Utilizing noble metals and metal oxides that coordinate with specific analytes such as glutathione and insulin, achieving exceptional sensitivity with detection limits as low as 150 amol [14].

- Hydrophobic Interaction Strategies: Employing modified surfaces including silane monolayer-modified porous silicon and 3D monolithic SiO₂ for enrichment of hydrophobic compounds including antidepressant drugs and lipids [14].

- Electrostatic Adsorption Methods: Developing charged materials including modified MXenes and porous organic frameworks that efficiently capture ionic species through electrostatic interactions [14].

Experimental Protocols: Detailed Methodologies

XRF Pellet Preparation Protocol

For quantitative X-ray fluorescence analysis, proper pellet preparation is essential for obtaining accurate results:

- Sample Grinding: Grind representative sample to particle size <75 μm using spectroscopic grinding equipment with contamination-resistant surfaces.

- Binder Addition: Blend ground sample with binder (typically cellulose or wax) at 10-30% w/w ratio to provide structural integrity during pressing.

- Homogeneous Mixing: Mix sample and binder thoroughly using mechanical mixer for 5-10 minutes to ensure uniform distribution.

- Pressing Procedure: Transfer mixture to die set and press using hydraulic press at 15-25 tons pressure for 2-5 minutes.

- Pellet Storage: Store finished pellets in desiccator to prevent moisture absorption before analysis.

This methodology yields samples with uniform X-ray absorption properties essential for quantitative analysis [1].
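
The binder step above reduces to simple mass arithmetic, sketched below. The 10-30% w/w window is taken from the protocol; the 10 g total pellet mass in the usage example and the function name are hypothetical, and the binder percentage is assumed to be expressed relative to the total mixture.

```python
def pellet_recipe(total_mass_g, binder_fraction):
    """Split a target pellet mass into sample and binder masses.

    binder_fraction is the binder share of the total mixture (w/w) and must
    fall inside the 10-30% window given in the protocol above.
    """
    if not 0.10 <= binder_fraction <= 0.30:
        raise ValueError("binder fraction outside the 10-30% w/w window")
    binder_g = total_mass_g * binder_fraction
    return {
        "sample_g": round(total_mass_g - binder_g, 3),
        "binder_g": round(binder_g, 3),
    }


if __name__ == "__main__":
    # Hypothetical 10 g pellet with 20% binder.
    print(pellet_recipe(10.0, 0.20))
```

Keeping the fraction identical across samples and calibration standards matters more than the exact value, since the binder dilutes every analyte by the same factor.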

ICP-MS Liquid Sample Preparation

For trace element analysis by ICP-MS, meticulous liquid sample preparation is critical:

- Sample Digestion: For solid samples, achieve complete dissolution using appropriate acid mixtures (typically HNO₃:HCl for metals) with heating as needed.

- Dilution Optimization: Dilute sample to bring analyte concentrations within instrumental linear range while minimizing matrix effects; typical dilution factors range from 1:10 to 1:1000 for complex matrices.

- Filtration: Pass sample through 0.45 μm membrane filter (0.2 μm for ultratrace analysis) to remove particulate matter.

- Acidification: Add high-purity nitric acid to final concentration of 2% v/v to maintain analyte stability in solution.

- Internal Standard Addition: Incorporate appropriate internal standards (e.g., Sc, Y, In, Bi) to correct for matrix effects and instrument drift.

This protocol ensures accurate quantification while protecting sensitive instrument components [1].
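
The dilution, acidification, and internal standard steps above involve three small calculations, sketched here. This is a simplified illustration under assumptions introduced for the example: dilution factors are restricted to powers of ten, the 2% v/v target is computed as a plain volume fraction of concentrated acid (ignoring acid already present from digestion), and the internal standard correction is a single-point ratio. All function names and numbers are hypothetical.

```python
def dilution_factor(expected_conc, upper_limit):
    """Smallest power-of-ten dilution that brings expected_conc to or below
    the upper limit of the instrument's linear range (same units)."""
    factor = 1
    while expected_conc / factor > upper_limit:
        factor *= 10
    return factor


def acid_volume_ml(final_volume_ml, target_fraction=0.02):
    """Volume of concentrated HNO3 for a given v/v fraction of the final volume."""
    return final_volume_ml * target_fraction


def internal_standard_correct(analyte_counts, is_counts_sample, is_counts_calibration):
    """Rescale the analyte signal by the internal standard response ratio to
    compensate for drift and matrix suppression or enhancement."""
    return analyte_counts * is_counts_calibration / is_counts_sample


if __name__ == "__main__":
    print(dilution_factor(5000.0, 100.0))   # e.g. 5000 ug/L expected, 100 ug/L limit
    print(acid_volume_ml(50.0))             # mL of acid for a 50 mL final volume
    print(internal_standard_correct(8000.0, 90000.0, 100000.0))
```

In the usage example the internal standard signal is 10% lower in the sample than in the calibration standards, so the analyte signal is scaled up by the same ratio.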

The Scientist's Toolkit: Essential Research Reagents

Table: Essential Research Reagents for Spectroscopic Sample Preparation

| Reagent/Material | Function | Application Examples |
|---|---|---|
| Lithium Tetraborate | Flux for fusion preparations | XRF analysis of minerals, ceramics |
| High-Purity Nitric Acid | Digestion and preservation agent | ICP-MS metal analysis |
| Potassium Bromide (KBr) | IR-transparent matrix material | FT-IR pellet preparation |
| Erythrosine Dye | Ion-pair complexation agent | RRS-based drug quantification [15] |
| Boric Acid-Functionalized Materials | Selective cis-diol enrichment | SALDI-TOF MS of sugars, nucleosides [14] |
| Covalent Organic Frameworks | Selective enrichment matrices | Trace contaminant analysis [14] |

Sample preparation is not merely a preliminary step but an integral component of the analytical process that directly determines the quality and reliability of spectroscopic fingerprints. The relationship between preparation methodology and spectral quality follows fundamental principles of radiation-matter interaction that cannot be circumvented by instrumental sophistication alone. As spectroscopic applications expand into increasingly complex matrices and lower detection limits, advanced preparation strategies employing functional materials, energy fields, and specialized devices will become increasingly essential. By understanding and implementing the principles and protocols outlined in this guide, researchers can ensure their spectroscopic fingerprints accurately represent the true chemical composition of their samples, thereby validating their analytical conclusions and supporting scientific advancement across diverse fields from pharmaceutical development to environmental monitoring.

In the rigorous fields of analytical chemistry and pharmaceutical research, the generation of reliable, reproducible data forms the very foundation upon which scientific and commercial decisions are built. Spectroscopic accuracy is paramount, yet its achievement is critically dependent on a frequently undervalued initial step: sample preparation. It is estimated that inadequate sample preparation is the cause of as much as 60% of all spectroscopic analytical errors [1]. This neglect creates a cascade of consequences, compromising research validity, stalling drug development pipelines, and inflating costs. The pursuit of reproducibility—defined as the ability to reproduce results using the same data and analysis as the original study—is a central challenge in modern science [16]. This whitepaper details the profound technical and economic costs of neglecting sample preparation, framed within the context of spectroscopic research and its pivotal role in drug development.

The Foundational Role of Sample Preparation in Spectroscopic Analysis

Spectroscopic methods, including X-ray Fluorescence (XRF), Inductively Coupled Plasma-Mass Spectrometry (ICP-MS), and Fourier Transform Infrared Spectroscopy (FT-IR), are indispensable for determining material composition and molecular structure. These techniques measure the interaction of electromagnetic radiation with matter, producing unique spectral "fingerprints" [1]. The fidelity of these fingerprints is directly governed by the quality of the sample presented to the instrument.

How Preparation Directly Influences Analytical Accuracy

Sample preparation is not merely a preliminary step but a critical determinant of data quality. Its impact manifests through several key physical and chemical principles [1]:

- Surface and Particle Characteristics: Rough surfaces scatter light randomly, while uniform particle size ensures consistent interaction with radiation. Significant variation in particle size introduces sampling error that cripples quantitative analysis.

- Matrix Effects: Constituents in the sample matrix can suppress or enhance spectral signals, obscuring the true analyte response. Proper preparation techniques, such as dilution or extraction, reduce or remove these interferences.

- Homogeneity: Heterogeneous samples yield non-reproducible results because the analyzed portion may not represent the whole. Grinding, milling, and mixing create homogeneous samples essential for reliable data.

- Contamination: The introduction of unwanted material from equipment or the environment generates spurious spectral signals, rendering results worthless.

Table 1: Sample Preparation Requirements for Common Spectroscopic Techniques

| Technique | Primary Function | Critical Preparation Requirements |
|---|---|---|
| XRF (X-Ray Fluorescence) | Elemental composition | Flat, homogeneous surfaces; particle size <75 μm; pressed pellets or fused beads for uniform density [1]. |
| ICP-MS (Inductively Coupled Plasma-Mass Spectrometry) | Sensitive elemental/isotopic analysis | Total dissolution of solid samples; accurate dilution; filtration to remove particles; high-purity reagents to prevent contamination [1]. |
| FT-IR (Fourier Transform Infrared Spectroscopy) | Molecular structure identification | Grinding solids with KBr for pellet production; use of appropriate solvents and cells for liquids; specialized gas cells [1]. |

Quantitative Costs: The Impact of Irreproducibility on Drug Development

The failure to ensure reproducibility at the analytical level has magnified consequences throughout the drug development lifecycle. The root cause often traces back to unreliable foundational data.

The High Stakes of Pharmaceutical Research and Development

The pharmaceutical industry invests immense resources into research and development (R&D). In 2024, the average cost to develop a single asset reached $2.23 billion, with the average forecast peak sales per product at $510 million [17]. The average internal rate of return (IRR) for the top 20 biopharma companies, while improving, remains at a delicate 5.9% [17]. In this high-stakes environment, the efficiency of R&D is critical. Decisions based on irreproducible data can lead to the pursuit of false leads, failure to identify promising compounds, and ultimately, a dilution of returns.

A 2025 RAND study on drug development costs provides a more nuanced view, suggesting that the typical cost may not be as high as generally believed, but is skewed by a few ultra-costly outliers. The study found the median direct R&D cost was $150 million, compared to an average (mean) of $369 million [18]. After adjusting for the cost of capital and failures, the median cost was $708 million, with the average rising to $1.3 billion [18]. The study noted that excluding just two high-cost outliers reduced the average cost by 26%, to $950 million [18]. This highlights the immense financial variability and risk inherent in drug development, where inefficiencies and inaccuracies in foundational research can create catastrophic cost overruns.

Table 2: Key Findings from RAND Study on Drug Development Costs (2025)

| Metric | Cost (Million USD) | Notes |
|---|---|---|
| Median Direct R&D Cost | $150 | For 38 FDA-approved drugs [18] |
| Mean (Average) Direct R&D Cost | $369 | Skewed by high-cost outliers [18] |
| Median Full Cost (Capital & Failures) | $708 | Across the 38 drugs examined [18] |
| Mean (Average) Full Cost | $1,300 | Driven by a small number of ultra-costly medications [18] |
| Mean Full Cost (Excluding 2 Outliers) | $950 | Demonstrates the impact of outliers on averages [18] |

Documented Reproducibility Challenges Across Scientific Fields

The challenge of reproducibility is widespread. In mass spectrometry-based proteomics, a multi-laboratory study demonstrated that while data-independent acquisition (SWATH-MS) could consistently detect and quantify over 4,000 proteins across 11 labs, this high level of reproducibility required stringent standardization of protocols [19]. Similarly, a 2017 study on quantitative MRI found that while many structural measurements showed excellent reproducibility, others—like fractional anisotropy in specific white matter tracts and regional blood flow—demonstrated moderate-to-low reproducibility, defining the inherent variability that must be accounted for in longitudinal studies [20].

In laser-induced breakdown spectroscopy (LIBS), a 2025 study explicitly identified "unsatisfactory" long-term reproducibility due to laser energy fluctuation, instrument drift, and environmental changes. This necessitates frequent re-calibration, undermining the technique's advantage of fast analysis and impeding its commercial development [21]. These examples underscore that reproducibility is an active and ongoing challenge, the neglect of which directly compromises analytical utility.

Experimental Protocols for Ensuring Spectroscopic Reproducibility

To mitigate the costs of neglect, researchers must adopt rigorous, standardized sample preparation and data validation protocols. The following methodologies, drawn from current research and practice, provide a framework for enhancing reproducibility.

Protocol 1: Solid Sample Preparation for XRF Analysis

Objective: To produce a homogeneous, contamination-free pellet for quantitative elemental analysis [1].

Materials & Equipment:

- Spectroscopic grinding or milling machine (e.g., swing mill for hard materials)

- Hydraulic or pneumatic press (10-30 ton capacity)

- Binder (e.g., boric acid, cellulose, wax)

- Powder sample

- Grinding vessels and balls (material selected to avoid contamination)

Procedure:

- Coarse Crushing: If the sample is bulk solid, pre-crush to a size of <5 mm.

- Fine Grinding/Milling: Load the sample into the grinding machine. Grind for a predetermined, consistent time to achieve a final particle size of <75 μm. Clean equipment thoroughly between samples to prevent cross-contamination.

- Mixing with Binder: Weigh the ground powder and mix thoroughly with an appropriate binder (e.g., 1:10 binder-to-sample ratio). The binder ensures the pellet coheres and provides a consistent matrix.

- Pelletizing: Transfer the mixture into a pellet die. Press at a defined pressure (e.g., 20 tons) for a set duration (e.g., 60 seconds) to form a solid, flat disk with a smooth surface.

- Storage: Store the pellet in a desiccator to prevent moisture absorption before analysis.

Quality Control: The surface of the pellet must be smooth and free of cracks. Repeatability can be assessed by preparing and analyzing multiple pellets from the same homogenized powder.
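
The repeatability check mentioned above is commonly summarized as a percent relative standard deviation (%RSD) across replicate pellets. The sketch below assumes one intensity or concentration value per pellet; the numbers are hypothetical, and no acceptance limit is implied, since that depends on the method and analyte.

```python
from statistics import mean, stdev


def percent_rsd(values):
    """Percent relative standard deviation (sample standard deviation / mean)."""
    return 100.0 * stdev(values) / mean(values)


if __name__ == "__main__":
    # Hypothetical results for three pellets pressed from the same powder.
    replicate_results = [100.0, 102.0, 98.0]
    print(f"%RSD = {percent_rsd(replicate_results):.2f}")
```

Comparing this value with the %RSD of repeated measurements on a single pellet helps separate preparation variability from instrument variability.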

Protocol 2: Liquid Sample Preparation for ICP-MS

Objective: To achieve complete dissolution and stabilization of a solid sample for ultra-trace elemental analysis, while minimizing matrix effects and contamination [1].

Materials & Equipment:

- High-purity acids (e.g., nitric acid, trace metal grade)

- Ultrapure water (e.g., from a system like Milli-Q)

- Teflon (PTFE) digestion vessels

- Hotblock or microwave digester

- Class 100 laminar flow hood

- Syringe filters (0.45 μm or 0.2 μm pore size, PTFE membrane)

Procedure:

- Digestion: Accurately weigh approximately 0.1 g of the solid sample into a clean Teflon vessel. Add the digestion acid (e.g., 5 mL concentrated HNO₃) in a laminar flow hood to minimize airborne contamination.

- Heating: Heat the vessels in a hotblock or microwave digester according to a stepped temperature program (e.g., ramp to 180°C over 30 mins, hold for 60 mins) to ensure complete dissolution.

- Cooling and Dilution: Allow vessels to cool. Quantitatively transfer the digestate to a volumetric flask. Dilute to volume with ultrapure water. For samples with high dissolved solid content, a further dilution (e.g., 1:100 or 1:1000) may be required to bring analyte concentrations into the optimal instrument range and reduce matrix effects.

- Filtration: Filter the diluted solution through a 0.45 μm PTFE syringe filter to remove any remaining particulate matter that could clog the nebulizer.

- Acidification and Internal Standardization: Acidify the final solution to 2% v/v with high-purity nitric acid to keep metals in solution. Add a known concentration of an internal standard (e.g., Indium, Rhodium) to correct for instrument drift and matrix suppression/enhancement.

Quality Control: Process reagent blanks (all reagents, no sample) simultaneously to correct for background contamination. Use certified reference materials (CRMs) to validate the entire preparation and analytical method.
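
The blank correction and CRM check above can be expressed as a short back-calculation from the measured solution concentration to the concentration in the original solid. This is a minimal sketch with hypothetical numbers; the function names, units (µg/L in solution, mg/kg in the solid), and the single-blank subtraction are assumptions made for the example.

```python
def solid_concentration_mg_per_kg(solution_ug_per_l, blank_ug_per_l,
                                  final_volume_ml, dilution_factor,
                                  sample_mass_g):
    """Back-calculate the analyte concentration in the solid sample.

    The reagent blank is subtracted first, then the result is scaled by the
    flask volume, any further dilution, and the digested sample mass.
    """
    net_ug_per_l = solution_ug_per_l - blank_ug_per_l
    analyte_ug = net_ug_per_l * (final_volume_ml / 1000.0) * dilution_factor
    return analyte_ug / sample_mass_g  # ug/g is numerically equal to mg/kg


def recovery_percent(measured, certified):
    """Recovery of a certified reference material, in percent."""
    return 100.0 * measured / certified


if __name__ == "__main__":
    found = solid_concentration_mg_per_kg(
        solution_ug_per_l=5.2, blank_ug_per_l=0.2,
        final_volume_ml=50.0, dilution_factor=100, sample_mass_g=0.1,
    )
    print(found)
    print(recovery_percent(found, certified=260.0))  # hypothetical CRM value
```

A recovery far from 100% on the CRM points to a problem somewhere in the preparation or measurement chain, even when the blanks look clean.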

Protocol 3: Assessing Reproducibility in MS-Based Metabolomics

Objective: To statistically identify reproducible metabolite features across replicate experiments, distinguishing them from irreproducible signals [22].

Materials & Equipment:

- Processed metabolomics data set (e.g., from GC-MS or LC-MS) with peak-aligned and quantified features.

- Statistical computing environment (e.g., R).

Procedure:

- Data Preparation: For a set of replicate samples (technical or biological), compile a data matrix where rows represent metabolite features and columns represent replicate runs.

- Rank Calculation: For each replicate run, rank all metabolite features based on a chosen metric (e.g., abundance, p-value, fold-change).

- Apply MaRR Procedure: Use the MaRR package in R (Bioconductor) to analyze the rank pairs. The algorithm calculates a maximal rank statistic to identify the point at which the correlation between replicate ranks drops, indicating a transition from reproducible to irreproducible signals.

- Estimate Reproducible Proportion: The procedure outputs the estimated proportion of reproducible metabolites and a statistical cutoff. Metabolites with ranks above this cutoff are considered reproducible.

Quality Control: The method effectively controls the False Discovery Rate (FDR). It is recommended to apply this to both technical replicates (to assess analytical reproducibility) and biological replicates (to separate technical noise from biological variation) [22].
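
To make the ranking and maximal rank steps of the procedure concrete, the sketch below computes per-feature ranks in two replicates and takes the larger of the two. It is only an illustration of that statistic in Python, not the MaRR package or its algorithm: cutoff estimation and FDR control are left to the R package and are not reproduced here. The rank convention (1 = highest abundance) and the data are assumptions.

```python
def ranks(values):
    """Rank features within one replicate; rank 1 is the largest value.
    Ties are broken by original order."""
    order = sorted(range(len(values)), key=lambda i: -values[i])
    result = [0] * len(values)
    for rank, index in enumerate(order, start=1):
        result[index] = rank
    return result


def max_rank(replicate_1, replicate_2):
    """Per-feature maximum of the two replicate ranks.

    A feature that ranks highly in both replicates gets a small value;
    disagreement between replicates pushes the value up.
    """
    return [max(a, b) for a, b in zip(ranks(replicate_1), ranks(replicate_2))]


if __name__ == "__main__":
    # Hypothetical abundances for five metabolite features in two runs.
    run_1 = [90.0, 80.0, 70.0, 10.0, 20.0]
    run_2 = [85.0, 88.0, 60.0, 25.0, 5.0]
    print(max_rank(run_1, run_2))
```

In the toy data the first three features rank near the top in both runs, while the last two swap places at the bottom, so their maximum ranks are the largest.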

The Scientist's Toolkit: Essential Reagents and Materials

Table 3: Key Research Reagent Solutions for Spectroscopic Sample Preparation

| Item | Function | Application Examples |
|---|---|---|
| Swing Mill Grinder | Reduces particle size of hard, brittle samples via impact and friction. Minimizes heat generation. | Preparation of ceramics, ferrous metals, and minerals for XRF [1]. |
| Hydraulic Pellet Press | Compresses powdered samples with a binder into solid, uniform-density disks. | Creating stable pellets with flat surfaces for quantitative XRF analysis [1]. |
| High-Purity Acid (e.g., HNO₃) | Digests and dissolves solid samples in a controlled manner. Purity is critical to prevent contamination. | Sample dissolution for ICP-MS and ICP-OES [1]. |
| PTFE Membrane Filter (0.45/0.2 μm) | Removes suspended particles from liquid samples to protect instrumentation. | Filtration of digested samples prior to ICP-MS/ICP-OES analysis to prevent nebulizer clogging [1]. |
| Microwave Digestion System | Uses controlled high temperature and pressure to rapidly and completely digest refractory materials. | Total dissolution of complex matrices like soils, tissues, and polymers for ICP-MS [1]. |
| Certified Reference Material (CRM) | Provides a known matrix and analyte composition to validate method accuracy and precision. | Quality control for all quantitative spectroscopic methods (XRF, ICP-MS, LIBS) [23]. |
| Stable Isotope-Labeled Standards (SIS) | Acts as an internal standard for mass spectrometry to correct for sample loss and matrix effects. | Quantitative targeted proteomics (SRM) and SWATH-MS for precise protein quantification [19]. |

Visualizing the Workflow and Impact of Sample Preparation

Sample Preparation Workflow

The following diagram outlines the critical decision points and pathways in a generalized spectroscopic sample preparation workflow, highlighting steps where negligence introduces error.

Consequences of Neglect on Drug Development

This diagram maps the cascading impact of poor sample preparation through the drug development pipeline, ultimately affecting financial returns and patient outcomes.

The evidence is clear: neglect of rigorous sample preparation imposes a severe and multi-faceted cost on scientific research and drug development. It is the primary source of spectroscopic error, leading directly to irreproducible data that undermines target validation, candidate selection, and clinical success. The financial repercussions are quantifiable, contributing to soaring R&D costs that now average over $2 billion per asset and threaten the sustainability of pharmaceutical innovation [17].

To mitigate this cost, the research community must elevate sample preparation from a routine task to a core scientific discipline. This requires:

- Standardization: Implementing and adhering to detailed, validated protocols like those outlined in this guide.

- Investment: Allocating appropriate resources—both in equipment and trained personnel—to sample preparation laboratories.

- Validation: Routinely using certified reference materials and statistical tools like the MaRR procedure to quantitatively assess and ensure reproducibility [22].

By embracing these practices, researchers and drug developers can build a foundation of reliable data, enhance the efficiency of R&D pipelines, reduce financial waste, and accelerate the delivery of effective therapies to patients. The cost of neglect is simply too high to bear.

From Theory to Bench: Advanced Sample Preparation Strategies for Complex Bioanalytical Matrices

In spectroscopic analysis, the quality of the final data is inextricably linked to the initial steps of sample preparation. Inadequate sample preparation is, in fact, the cause of as much as 60% of all spectroscopic analytical errors [1]. For techniques like X-Ray Fluorescence (XRF) and Fourier-Transform Infrared (FT-IR) spectroscopy, which are widely used for elemental and molecular structure analysis in material science and pharmaceutical development, proper solid sample preparation is not merely a preliminary step but a critical determinant of analytical success [24] [1]. This guide details the core protocols—grinding, milling, and pelletizing—to ensure that the prepared sample is representative, homogeneous, and physically optimized to interact consistently with X-ray or infrared radiation, thereby guaranteeing data that is both accurate and reproducible [25].

Fundamentals of Sample-Technique Interaction

The physical state of a sample directly influences its interaction with electromagnetic radiation, making tailored preparation essential for different spectroscopic methods.

X-Ray Fluorescence (XRF): This technique measures the secondary X-rays emitted from a material when irradiated with high-energy X-rays. Preparation focuses on creating a flat, homogeneous surface with a consistent particle size (typically <75 μm, ideally <50 μm) and uniform density to ensure accurate and reproducible quantification of elemental composition [1] [25]. The sample must be "infinitely thick" to the X-rays to ensure the emitted radiation reaching the detector is representative of the entire sample matrix [25].

Fourier-Transform Infrared (FT-IR) Spectroscopy: FT-IR identifies molecular structures by analyzing the absorption of infrared light, which excites specific vibrational modes in chemical bonds [24]. The resulting spectrum acts as a unique molecular "fingerprint." For solid samples, preparation aims to ensure the correct path length and particle size to avoid excessive scattering of the IR beam, which can lead to distorted baselines and inaccurate data [1]. A common method involves grinding the sample with potassium bromide (KBr) to create a transparent pellet [1].
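
For the XRF "infinitely thick" criterion described above, a common textbook approximation treats the fluorescence signal from a layer of thickness t as a fraction 1 - exp(-χρt) of the signal from an infinitely thick sample, where χ combines the mass attenuation coefficients for the incoming and outgoing X-rays. The sketch below solves this for the thickness that delivers 99% of the signal. All numeric inputs are hypothetical placeholders, not tabulated values, and the 99% threshold and function name are assumptions.

```python
import math


def critical_thickness_cm(mu_in, mu_out, density_g_cm3,
                          angle_in_deg=45.0, angle_out_deg=45.0,
                          fraction=0.99):
    """Thickness at which the pellet yields `fraction` of the infinite-thickness signal.

    mu_in, mu_out: mass attenuation coefficients (cm^2/g) of the pellet matrix
    for the exciting and the fluorescent radiation, respectively.
    Angles are measured between the beam and the sample surface.
    """
    chi = (mu_in / math.sin(math.radians(angle_in_deg))
           + mu_out / math.sin(math.radians(angle_out_deg)))
    return -math.log(1.0 - fraction) / (chi * density_g_cm3)


if __name__ == "__main__":
    # Hypothetical matrix: 50 cm^2/g for both energies, 2 g/cm^3 pressed density.
    t = critical_thickness_cm(50.0, 50.0, 2.0)
    print(f"{t * 10:.3f} mm")
```

Because attenuation falls steeply with increasing X-ray energy, heavy-element lines in light matrices require much thicker pellets than this toy example suggests.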

Solid Sample Preparation Techniques

Transforming a raw solid sample into an analyzable specimen requires specific mechanical techniques to achieve the necessary homogeneity and surface properties.

Grinding and Milling

Grinding and milling are foundational processes for particle size reduction and homogenization.

Grinding: This process uses mechanical friction to reduce particle size. Swing grinding machines are particularly effective for tough samples like ceramics and ferrous metals, as their oscillating motion minimizes heat generation that could alter sample chemistry [1]. The primary goal is to achieve a fine, consistent powder to ensure the sample interacts uniformly with radiation [1].

Milling: Milling offers greater control over particle size and produces a fine, flat surface, which is crucial for quantitative XRF analysis. Spectroscopic milling machines can be programmed for parameters like rotational speed and feed rate, and often include cooling systems to prevent thermal degradation [1]. This is the preferred method for soft, non-ferrous metals like aluminum and copper alloys, as it creates a clean, flat analytical surface without cross-contamination [26].

Table 1: Comparison of Grinding and Milling Techniques

| Feature | Grinding | Milling |
|---|---|---|
| Primary Mechanism | Mechanical friction | Cutting and shearing |
| Ideal For | Tough samples (ceramics, ferrous metals) [1] | Soft alloys (Al, Cu) and hard materials [1] [26] |
| Surface Result | Fine powder | Flat, smooth surface |
| Heat Generation | Moderate (minimized by swing mills) [1] | Low (controlled by cooling systems) [1] |
| Key Advantage | Effective homogenization | Superior surface quality for quantitative analysis [1] |

Pelletizing for XRF Analysis

Pelletizing involves compressing a powdered sample into a solid, stable disk with a uniform surface.

Process Overview: The ground sample is mixed with a binding agent and pressed in a die under high pressure (15-40 tons) using a hydraulic press [27] [25]. This process creates a pellet with consistent density and surface properties, which is critical for reliable XRF results [1].

The Role of Binders: Binders, such as cellulose or wax mixtures, are essential for holding the powder together during handling and analysis. They prevent loose powder from contaminating the spectrometer [25]. A binder proportion of 20-30% of the mixture is typically recommended to ensure pellet integrity without excessively diluting the analyte [25].

Die Types: The choice of die depends on the spectrometer's sample holder.

Pelletizing for FT-IR Analysis

For FT-IR, the most common method for solid samples is the KBr pellet technique. A small quantity of the finely ground sample is mixed with purified potassium bromide powder and then pressed under high pressure in a die. The pressure forms a transparent pellet through which the IR beam can pass, allowing for the collection of a clear absorption spectrum [1].

Detailed Experimental Protocols

Protocol: Preparing a Pressed Pellet for XRF Analysis

This protocol provides a step-by-step methodology for creating high-quality pressed pellets for XRF analysis.

Step 1: Grinding/Milling. Use a spectroscopic grinder or mill to reduce the sample to a fine powder with a particle size of <75 μm (targeting <50 μm for optimal results) [25]. Clean equipment thoroughly between samples to prevent cross-contamination [1].

Step 2: Mixing with Binder. Weigh the ground powder and mix it with an appropriate binder (e.g., cellulose/wax) in a ~30% binder to 70% sample ratio. Ensure thorough homogenization [25].

Step 3: Loading the Die. Transfer the mixture into a clean, high-quality XRF pellet die. For standard dies, a crushable aluminium cup may be used to support the pellet [27].

Step 4: Pressing. Place the die in a hydraulic press and apply a load of 25-35 tons for 1-2 minutes [25]. Using a press with a programmable cycle, including a "step function" that gradually increases the load, can help trapped gases escape and prevent pellet capping [27].

Step 5: Ejection and Storage. Eject the finished pellet carefully. If a ring die was used, the pellet is already protected. Otherwise, store the pellet in a desiccator to prevent moisture absorption.
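The arithmetic behind Steps 2 and 4 can be sketched in a few lines of Python. This is an illustrative sketch only: the function names are hypothetical, "tons" is assumed to mean metric tonne-force, and the binder percentage is assumed to be a fraction of the total mixture mass. The 32 mm and 40 mm die diameters are the examples quoted in the equipment table of this guide.

```python
import math

# Assumption: "tons" in the protocol are metric tonne-force (1 tf = 9806.65 N).
TONNE_FORCE_N = 9806.65


def binder_mass_g(sample_mass_g: float, binder_fraction: float = 0.30) -> float:
    """Binder mass needed so that binder is `binder_fraction` of the total mixture."""
    if not 0.0 < binder_fraction < 1.0:
        raise ValueError("binder_fraction must be between 0 and 1")
    return sample_mass_g * binder_fraction / (1.0 - binder_fraction)


def die_pressure_mpa(load_tonnes: float, die_diameter_mm: float) -> float:
    """Pressure on the pellet face for a given press load and die diameter."""
    area_mm2 = math.pi * (die_diameter_mm / 2.0) ** 2
    # N / mm^2 is numerically equal to MPa.
    return load_tonnes * TONNE_FORCE_N / area_mm2


if __name__ == "__main__":
    # 7 g of sample at a 30:70 binder-to-sample ratio needs about 3 g of binder.
    print(f"binder: {binder_mass_g(7.0):.2f} g")
    # The same 25 t load produces a higher pressure in a smaller die.
    for diameter in (32.0, 40.0):
        print(f"{diameter:.0f} mm die: {die_pressure_mpa(25.0, diameter):.0f} MPa")
```

Because the same load gives a noticeably higher pressure in a smaller die, it can be useful to record the die diameter alongside the press load when comparing pellets between laboratories.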

Protocol: Preparing a KBr Pellet for FT-IR Analysis

This protocol outlines the procedure for creating a transparent KBr pellet for FT-IR spectroscopy.

Step 1: Grinding. Finely grind a small amount of the solid sample (1-2 mg) with approximately 200 mg of anhydrous KBr powder in a mortar and pestle or a vibratory mill. The goal is a fine, homogeneous mixture.

Step 2: Loading the Die. Transfer the mixture into a dedicated KBr pellet die, ensuring it is spread evenly.

Step 3: Pressing under Vacuum. Place the die in a press and apply pressure (typically 8-10 tons) while under vacuum. The vacuum is crucial for removing air and moisture, which can cause scattering and obscure IR bands.

Step 4: Ejection and Analysis. Eject the transparent pellet and immediately place it in the FT-IR spectrometer's sample holder for analysis.
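As a quick check on Step 1, the sample loading implied by 1-2 mg of sample in approximately 200 mg of KBr can be computed directly. The helper below is a hypothetical illustration, not part of any instrument software.

```python
def sample_weight_percent(sample_mg: float, kbr_mg: float = 200.0) -> float:
    """Sample content of the KBr mixture as a weight percentage of the total."""
    if sample_mg < 0 or kbr_mg <= 0:
        raise ValueError("masses must be positive")
    return 100.0 * sample_mg / (sample_mg + kbr_mg)


if __name__ == "__main__":
    for sample_mg in (1.0, 2.0):
        pct = sample_weight_percent(sample_mg)
        print(f"{sample_mg:.0f} mg sample in 200 mg KBr: {pct:.2f} % w/w")
```

For the quantities given in the protocol, the sample makes up roughly 0.5-1% of the pellet by weight.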

Comparative Analysis and Technical Specifications

Table 2: Key Parameters for XRF and FT-IR Pellet Preparation

| Parameter | XRF Pelletizing | FT-IR (KBr) Pelletizing |
|---|---|---|
| Sample Form | Fine powder (<75 μm) [25] | Fine powder mixed with KBr |
| Binder / Matrix | Cellulose, wax (20-30% ratio) [25] | Potassium Bromide (KBr) |
| Typical Pressure | 25-35 tons [25] | 8-10 tons |
| Pressing Time | 1-2 minutes [25] | 1-2 minutes (under vacuum) |
| Critical Consideration | Infinite thickness to X-rays [25] | Transparency to IR light |
| Primary Purpose | Quantitative elemental analysis | Molecular structure identification |

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Essential Materials and Equipment for Sample Preparation

| Item | Function |
|---|---|
| Hydraulic Pellet Press | Applies high pressure (15-40 ton range) to compress powdered samples into solid pellets [27] [25]. |
| XRF/FT-IR Pellet Die | A high-quality stainless steel mold; creates pellets of specific diameters (e.g., 32 mm or 40 mm) [27] [1]. |
| Cellulose or Wax Binder | Binding agent that recrystallizes under pressure to hold XRF sample powders together [25]. |
| Potassium Bromide (KBr) | High-purity salt used as a matrix for FT-IR pellets; it is transparent to infrared radiation [1]. |
| Swing Grinding Mill | Reduces particle size and homogenizes tough samples via oscillating motion, minimizing heat [1]. |
| Spectroscopic Milling Machine | Creates a flat, high-quality surface on metal samples for quantitative analysis [1] [26]. |

In the rigorous fields of pharmaceutical development and material science, the path to definitive spectroscopic results begins long before the instrument initiates a scan. As this guide has detailed, the meticulous processes of grinding, milling, and pelletizing are not ancillary tasks but are integral to the analytical workflow. By adhering to these standardized protocols for XRF and FT-IR—controlling for particle size, homogeneity, pressure, and binder use—researchers and scientists can transform variable solid samples into reliable analytical specimens. This disciplined approach to sample preparation is the definitive strategy for mitigating error, unlocking the full potential of spectroscopic instrumentation, and ensuring the integrity of the data that underpins critical research and quality control decisions.

Inadequate sample preparation is a primary source of error in spectroscopic analysis, estimated to account for as much as 60% of all analytical errors [1]. For Inductively Coupled Plasma Mass Spectrometry (ICP-MS), a technique renowned for its ultra-trace elemental detection capabilities, proper liquid handling is not merely a preliminary step but a fundamental determinant of data integrity. The technique's extreme sensitivity, capable of detecting elements at parts-per-trillion levels, makes it vulnerable to inaccuracies introduced during sample preparation [28]. This guide details the core liquid handling techniques (dilution, filtration, and acidification) that help ICP-MS deliver the precise and accurate results required for advanced research and drug development.

The evolution of ICP-MS from a specialized technique to a more accessible analytical tool has intensified the need for robust sample preparation protocols. With single quadrupole ICP-MS systems now comprising approximately 80% of the market and instrument costs decreasing significantly, the technique has expanded into diverse applications from environmental monitoring to pharmaceutical development [28]. This broadening user base necessitates comprehensive understanding of sample preparation principles to maintain data quality across varying sample matrices and expertise levels.

Foundational Principles of ICP-MS Sample Preparation

The Impact of Sample Quality on Analytical Results

Sample preparation directly influences ICP-MS performance through multiple mechanisms. The physical and chemical characteristics of prepared samples affect ionization efficiency, signal stability, and background levels, ultimately determining the validity of analytical findings [1]. Three fundamental principles govern this relationship:

- Matrix Effects: Sample matrix constituents can suppress or enhance analyte signals, leading to inaccurate quantification. Proper preparation techniques mitigate these interferences through dilution, matrix matching, or selective extraction [1].

- Physical Interferences: Suspended particles or high dissolved solid content can clog nebulizers and cones, reducing sensitivity and increasing downtime. Filtration and appropriate dilution prevent these issues [28].

- Contamination Control: The exceptional sensitivity of ICP-MS means that trace-level contamination from reagents, containers, or the laboratory environment can significantly skew results. Meticulous technique and high-purity reagents are essential [29].

Essential Sample Preparation Workflow

The following diagram illustrates the core decision-making pathway for preparing liquid samples for ICP-MS analysis, integrating the three key techniques of dilution, filtration, and acidification:

Sample Preparation Workflow for ICP-MS Analysis. This diagram outlines the logical sequence for preparing liquid samples, highlighting critical decision points for filtration, dilution, and acidification based on sample characteristics [1] [30].
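One possible reading of such a decision pathway is sketched below in Python. Everything in it is an assumption made for illustration: the `LiquidSample` fields, the order of the checks, and the default 0.2% total dissolved solids limit (a commonly quoted rule of thumb for ICP-MS rather than a value taken from the cited sources). Some matrices call for a different order, for example acidifying water samples before any other handling.

```python
import math
from dataclasses import dataclass


@dataclass
class LiquidSample:
    """Hypothetical description of a liquid sample prior to ICP-MS analysis."""
    has_particulates: bool
    total_dissolved_solids_percent: float
    is_acidified: bool


def plan_preparation(sample: LiquidSample, max_tds_percent: float = 0.2) -> list[str]:
    """Return an ordered list of preparation steps for the sample."""
    steps = []
    if sample.has_particulates:
        steps.append("filter through a 0.45 µm membrane")
    if sample.total_dissolved_solids_percent > max_tds_percent:
        # Smallest whole-number dilution that brings TDS under the limit.
        factor = math.ceil(sample.total_dissolved_solids_percent / max_tds_percent)
        steps.append(f"dilute at least {factor}-fold")
    if not sample.is_acidified:
        steps.append("acidify with high-purity HNO3")
    steps.append("analyse by ICP-MS")
    return steps


if __name__ == "__main__":
    turbid_brine = LiquidSample(True, 1.0, False)
    for step in plan_preparation(turbid_brine):
        print("-", step)
```

A clean, already acidified, low-solids sample passes straight through to analysis, while a turbid, high-salt sample picks up all three preparation steps.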

Core Liquid Handling Techniques: Methodologies and Protocols

Dilution: Principles and Quantitative Strategies

Dilution serves multiple purposes in ICP-MS sample preparation: bringing analyte concentrations within the instrument's linear dynamic range, reducing matrix effects, and minimizing damage to instrumental components from high dissolved solids [1]. The appropriate dilution factor depends on both the expected analyte concentration and matrix complexity.

Table 1: ICP-MS Dilution Strategies for Different Sample Types

| Sample Type | Typical Dilution Factor | Primary Purpose | Technical Considerations |
|---|---|---|---|
| Biological Fluids (Blood, Serum) | 1:50 - 1:100 [30] | Reduce organic matrix complexity | Use diluent containing acid and surfactant (Triton X-100) to maintain stability |
| Environmental Waters | 1:10 - 1:20 | Bring analytes within calibration range | Acidification first to prevent adsorption to container walls |
| Digested Solid Samples | 1:100 - 1:1000 [1] | Reduce acid concentration and total dissolved solids | May require serial dilution to achieve accurate pipetting |
| High-Purity Chemicals | Minimal (1:5 - 1:10) | Maintain detectability while reducing contamination risk | Use high-purity acids in clean lab environment |

The development of a lithium quantification method for postmortem whole blood demonstrates a meticulous dilution strategy. Researchers implemented a 100-fold dilution using a diluent containing 2% nitric acid, germanium internal standard, and 0.1% Triton X-100 to adequately reduce the blood matrix complexity while maintaining representative lithium concentrations [30]. This approach enabled accurate measurement using only 40 μL of whole blood, which is crucial when sample volume is limited.
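The volumes implied by such a dilution are easy to get wrong at the bench, so a short Python sketch may help. It assumes that an "N-fold" dilution means the final volume is N times the sample volume, and the function names are hypothetical. The serial-dilution helper illustrates one simple way to split a large factor into steps of at most 10-fold each; the step limit is an arbitrary illustrative choice.

```python
def diluent_volume_ul(sample_ul: float, dilution_factor: float) -> float:
    """Diluent volume so that final volume = sample volume x dilution factor."""
    if dilution_factor < 1:
        raise ValueError("dilution_factor must be >= 1")
    return sample_ul * (dilution_factor - 1)


def original_concentration(measured: float, dilution_factor: float) -> float:
    """Back-calculate the undiluted concentration from the measured value."""
    return measured * dilution_factor


def serial_dilution_steps(total_factor: float, max_step_factor: float = 10) -> list:
    """Split a large dilution into successive steps no larger than max_step_factor."""
    steps = []
    remaining = total_factor
    while remaining > max_step_factor:
        steps.append(max_step_factor)
        remaining /= max_step_factor
    steps.append(remaining)
    return steps


if __name__ == "__main__":
    # 40 µL of whole blood diluted 100-fold, as in the lithium method.
    print(diluent_volume_ul(40, 100), "µL diluent")
    print(serial_dilution_steps(1000), "step factors for a 1:1000 dilution")
```

For the lithium example, 40 μL of blood requires 3960 μL of diluent for a final volume of 4 mL, and any concentration read from the diluted solution must be multiplied by 100 to report the value in blood.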

Filtration: Techniques for Particulate Management

Filtration removes suspended particles that could clog nebulizers, disrupt plasma stability, or introduce elemental contaminants [1]. For most ICP-MS applications, 0.45 μm membrane filters provide sufficient particulate removal, though ultratrace analysis may require 0.2 μm filtration [1]. Filter material selection is critical to avoid contamination or analyte adsorption.

Automated filtration systems like the FiltrationStation streamline this process by integrating filtration with dilution and acidification capabilities. These systems automatically draw samples through a Luer-adapting probe and dispense them through a Luer filter, offering significant time savings while reducing contamination risks [31]. This automation is particularly valuable in high-throughput laboratories processing diverse sample matrices.

For complex matrices such as environmental samples or digests containing even small particulates and high salt levels, innovative nebulizer designs with robust non-concentric configurations and larger sample channel diameters can provide improved resistance to clogging [28]. This design enhancement maintains analytical throughput by eliminating frequent interruptions for nebulizer maintenance.

Acidification: Protocols for Sample Preservation

Acidification serves dual purposes in ICP-MS sample preparation: preventing adsorption of trace elements to container walls and digesting organic components in the sample matrix. High-purity nitric acid is the acidification agent of choice due to its compatibility with ICP-MS and effectiveness in keeping metals in solution.
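The volume of concentrated acid needed to reach a target concentration follows from the simple proportion C1·V1 = C2·V2. The Python sketch below is a simplification: it assumes the stock and target percentages are expressed on the same basis and ignores density differences. Laboratories differ in how they define a "2% HNO₃" solution, so the local convention should take precedence. The function name and default stock strength are illustrative.

```python
def stock_acid_volume_ml(final_volume_ml: float,
                         target_percent: float,
                         stock_percent: float = 65.0) -> float:
    """Volume of stock acid to add, using C1*V1 = C2*V2.

    Assumes both percentages share the same basis (a simplification).
    """
    if not 0 < target_percent <= stock_percent:
        raise ValueError("target_percent must be positive and not exceed stock_percent")
    return final_volume_ml * target_percent / stock_percent


if __name__ == "__main__":
    volume = stock_acid_volume_ml(final_volume_ml=100.0, target_percent=2.0)
    print(f"Add {volume:.2f} mL of 65% HNO3 and make up to 100 mL")
```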

Table 2: Acidification Protocols for Different Sample Matrices

| Matrix Type | Acid Type & Concentration | Purpose | Special Considerations |
|---|---|---|---|
| Aqueous Samples | 1-2% HNO₃ [30] | Prevent analyte adsorption to container walls | Use high-purity acids (e.g., Suprapur) to minimize blank contamination |
| Biological Tissues | 65% HNO₃ (digestion) [29] | Organic matter destruction | Closed-vessel digestion at 220°C for 8 hours ensures complete dissolution |
| Oils & Lipids | 65% HNO₃ + 30% H₂O₂ [32] | Oxidative destruction of organic matrix | Gradual heating to prevent violent reactions; may require specialized vessels |
| Blood & Serum | 1-2% HNO₃ [30] | Protein precipitation & stabilization | Combined with dilution; centrifugation removes precipitated proteins |

The analysis of metal(loid)s in fish tissue exemplifies rigorous acid digestion protocols. Researchers digested 25 mg of dried tissue in 8 mL of 65% nitric acid and 1 mL of 30% hydrogen peroxide using closed heat-resistant vessels at 220°C for 8 hours [29]. This exhaustive digestion ensured complete dissolution of the biological matrix and supported accurate quantification of trace metals, with all calibration curves showing coefficients of determination (R²) above 0.999, indicating excellent linearity.
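After digestion, the concentration measured in solution has to be converted back to a concentration in the original tissue. The Python sketch below shows that unit conversion. The final make-up volume, the measured concentration, and the function name are hypothetical placeholders, since the source does not state them; only the 25 mg tissue mass comes from the study described above.

```python
def tissue_concentration_mg_per_kg(solution_ug_per_l: float,
                                   final_volume_ml: float,
                                   tissue_mass_mg: float,
                                   dilution_factor: float = 1.0,
                                   blank_ug_per_l: float = 0.0) -> float:
    """Convert a measured solution concentration to mg analyte per kg dry tissue."""
    net_ug_per_l = solution_ug_per_l - blank_ug_per_l
    # Mass of analyte in the digest: (µg/L) x (L), scaled by any further dilution.
    analyte_ug = net_ug_per_l * dilution_factor * final_volume_ml / 1000.0
    tissue_g = tissue_mass_mg / 1000.0
    # µg/g is numerically identical to mg/kg.
    return analyte_ug / tissue_g


if __name__ == "__main__":
    # Hypothetical reading: 5 µg/L in a digest made up to 50 mL from 25 mg tissue.
    result = tissue_concentration_mg_per_kg(5.0, 50.0, 25.0)
    print(f"{result:.1f} mg/kg dry weight")
```

Subtracting a reagent blank before the conversion matters at trace levels, because any contamination from the acids is scaled up by the same factor as the analyte.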

Integrated Workflow: Application in Experimental Practice

Case Study: Lithium Quantification in Postmortem Whole Blood

A comprehensive method development study for lithium quantification in postmortem whole blood demonstrates how dilution and acidification can be combined with a dedicated rinse protocol. The researchers optimized a sample preparation protocol consisting of:

- Dilution: 100-fold dilution with acidified diluent

- Acidification: 2% nitric acid final concentration

- Specialized Rinse Protocol: Between injections, a systematic rinse with 2% nitric acid, 5% hydrochloric acid, and 0.05% Triton X-100 in 2% nitric acid minimized carry-over effects [30]

This method demonstrated exceptional precision and accuracy, with total coefficient of variation ≤2.3% and accuracies ranging from 105 to 108% at all concentrations in quality control samples [30]. The success of this protocol highlights how tailored liquid handling techniques can overcome challenging matrices like whole blood.
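The two figures of merit quoted here can be computed from replicate quality control measurements as sketched below. The replicate values and nominal concentration are invented purely for illustration, and the sketch computes a simple coefficient of variation for a single set of replicates; the "total" coefficient of variation reported in a validation study typically also includes between-run variability.

```python
import statistics


def cv_percent(values: list) -> float:
    """Coefficient of variation: sample standard deviation as a % of the mean."""
    return 100.0 * statistics.stdev(values) / statistics.mean(values)


def accuracy_percent(values: list, nominal: float) -> float:
    """Mean measured value as a percentage of the nominal concentration."""
    return 100.0 * statistics.mean(values) / nominal


if __name__ == "__main__":
    # Hypothetical QC replicates (mmol/L) for a nominal 1.00 mmol/L control.
    replicates = [1.04, 1.06, 1.05, 1.07, 1.05]
    print(f"CV: {cv_percent(replicates):.1f} %")
    print(f"Accuracy: {accuracy_percent(replicates, 1.00):.1f} %")
```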

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Essential Reagents for ICP-MS Sample Preparation

| Reagent / Material | Function | Purity Requirements | Application Example |
|---|---|---|---|
| Nitric Acid (HNO₃) | Sample acidification; organic matter digestion | High-purity (e.g., Suprapur) [30] | Digestion of fish tissue for metal analysis [29] |
| Hydrogen Peroxide (H₂O₂) | Oxidative digestion aid | Trace metal grade (e.g., 30% Merck) [29] | Combined with HNO₃ for complete organic matrix destruction |
| Hydrochloric Acid (HCl) | Rinse solution component; specialized digestions | High-purity (e.g., Suprapur) [30] | 5% solution for rinse protocol to reduce carry-over [30] |
| Triton X-100 | Surfactant to improve sample homogeneity | Analytical grade | 0.05-0.1% in diluent for blood samples [30] |
| Internal Standards (Ge, In, Sc, Bi) | Correction for matrix effects & instrument drift | ICP-MS grade standard solutions | Germanium as internal standard for lithium quantification [30] |
| PTFE Membrane Filters | Particulate removal | Low trace metal background | 0.45 μm filtration for environmental waters [1] |