Chemometrics in Multivariate Spectral Analysis: From Foundational PCA to AI-Driven Applications in Biomedical Research


Abstract

This article provides a comprehensive overview of chemometric methods for multivariate spectral analysis, tailored for researchers and professionals in drug development and biomedical sciences. It covers the foundational principles of exploratory data analysis using techniques like Principal Component Analysis (PCA) and progresses to advanced methodological applications, including calibration with Partial Least Squares (PLS) and classification with Linear Discriminant Analysis (LDA). The content further addresses critical troubleshooting and optimization strategies for model robustness, and concludes with rigorous validation protocols to ensure reliability and regulatory compliance. By integrating traditional chemometrics with cutting-edge artificial intelligence (AI) and explainable AI (XAI), this guide serves as an essential resource for developing accurate, interpretable, and actionable spectroscopic models in pharmaceutical quality control and clinical diagnostics.

Uncovering Hidden Patterns: Exploratory Chemometrics for Spectral Data

The Role of Exploratory Data Analysis in Spectroscopy

Exploratory Data Analysis (EDA) serves as the critical first step in the analysis of spectroscopic data, transforming raw spectral measurements into actionable chemical insights. Within the field of chemometrics, which is defined as the mathematical extraction of relevant chemical information from measured analytical data, EDA provides the foundational understanding necessary for building robust multivariate models [1]. Modern process analytical technologies, such as near-infrared (NIR) and Raman spectroscopy, generate massive volumes of complex spectral data containing hidden chemical and physical information about pharmaceutical formulations, food products, and other complex materials [2]. The role of EDA is to navigate this complexity through visual and statistical techniques that uncover patterns, detect anomalies, and inform subsequent modeling decisions.

The integration of EDA with chemometrics is particularly valuable in pharmaceutical analysis, where it helps researchers understand complex data sets produced by analytical technologies [2]. By promoting a thorough initial investigation of spectral data, EDA enables researchers to understand data structure, identify outliers, recognize key variables, and establish relationships between variables prior to applying more advanced multivariate algorithms like Principal Component Analysis (PCA) or Partial Least Squares (PLS) regression [2] [1]. This systematic approach to data exploration has become increasingly important as spectroscopic techniques continue to generate larger and more complex datasets in applications ranging from pharmaceutical formulations to nuclear materials analysis [2] [3].

Theoretical Foundations

Key EDA Concepts in Spectral Analysis

Exploratory Data Analysis in spectroscopy encompasses several distinct types of investigation, each serving a specific purpose in understanding spectral data. Univariate analysis focuses on the distribution and properties of single variables or spectral intensities at individual wavelengths, providing insights into central tendency, spread, and presence of outliers within specific spectral regions [4]. Bivariate analysis examines relationships between two variables, such as spectral intensities at two different wavelengths, or between a spectral feature and a sample property [5]. Multivariate analysis extends these concepts to multiple variables simultaneously, essential for handling the high-dimensional nature of spectral data where thousands of correlated wavelength intensities are measured for each sample [4] [5].

The fundamental statistical descriptors used in spectral EDA include measures of central tendency (mean, median spectra), spread (standard deviation, variance across spectra), and shape (skewness, kurtosis of spectral feature distributions) [4]. For spectral data, understanding these characteristics across wavelengths rather than just within individual wavelengths is crucial, as the relationships between spectral regions often contain the most valuable chemical information. Outlier detection forms another critical component of spectral EDA, identifying spectra that deviate significantly from expected patterns due to measurement artifacts, sample abnormalities, or other unusual conditions [4].
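As a rough illustration of these descriptors, the sketch below computes them per wavelength with NumPy. The array name `spectra` and the synthetic data are assumptions made for the example (samples as rows, wavelengths as columns), not part of any cited workflow.

```python
import numpy as np

rng = np.random.default_rng(0)
# Synthetic stand-in for a spectral matrix: 20 samples x 50 wavelengths (assumed layout)
spectra = rng.normal(loc=1.0, scale=0.1, size=(20, 50))

# Central tendency and spread, computed across samples at each wavelength
mean_spectrum = spectra.mean(axis=0)
median_spectrum = np.median(spectra, axis=0)
std_spectrum = spectra.std(axis=0, ddof=1)

# Shape descriptors from central moments (population form)
centered = spectra - mean_spectrum
m2 = (centered ** 2).mean(axis=0)
m3 = (centered ** 3).mean(axis=0)
m4 = (centered ** 4).mean(axis=0)
skewness = m3 / m2 ** 1.5
excess_kurtosis = m4 / m2 ** 2 - 3.0
```

Plotting `mean_spectrum` with a band of plus or minus `std_spectrum` is a common first look at where the samples differ most.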

The Chemometrics Workflow

EDA serves as the essential gateway in the comprehensive chemometrics workflow for spectral analysis. The process begins with raw spectral data acquisition from analytical techniques such as NIR, Raman, or UV-Vis spectroscopy [2] [6]. The EDA phase that follows encompasses data preprocessing, quality assessment, and initial pattern recognition, which collectively inform the selection of appropriate multivariate models [2] [1]. Based on EDA findings, researchers proceed to model development using techniques such as PCA for exploratory analysis or PLS for quantitative calibration [2] [1]. The final stage involves model validation and interpretation, where insights gained during EDA help contextualize and verify model results [2].

This workflow is particularly crucial in pharmaceutical applications, where EDA helps researchers understand how formulation variables affect final products. For example, in analyzing freeze-dried pharmaceutical formulations, EDA can reveal how increasing levels of excipients like sucrose and arginine influence spectral clustering and regression results [2]. Furthermore, EDA can uncover subtler patterns, such as the impact of the operator performing the analysis and the session in which data were collected, highlighting the method's sensitivity to both sample composition and procedural variability [2].

Experimental Protocols

Protocol 1: Comprehensive EDA for Spectral Data

Principle: This protocol provides a systematic approach for conducting exploratory data analysis on spectral datasets, enabling researchers to assess data quality, identify patterns, and detect anomalies prior to multivariate modeling [2] [7] [4].

Materials and Reagents:

- Spectral data set (e.g., from NIR, Raman, or UV-Vis spectrophotometer)

- Software tools (Python with Pandas, Scikit-learn, and Matplotlib/Seaborn libraries OR MATLAB with PLS Toolbox) [7] [8]

- Standard normal variate (SNV) or multiplicative scatter correction (MSC) algorithms for scatter correction

- Savitzky-Golay filters for spectral smoothing and derivative calculations

Procedure:

- Data Acquisition and Import

- Acquire spectral measurements using appropriate spectroscopic technique (NIR, Raman, UV-Vis)

- Import spectral data into analysis software (e.g., using Pandas read_csv() in Python) [7]

- Verify data structure: samples as rows, wavelengths/wavenumbers as columns

- Check metadata integrity (sample identifiers, class labels, experimental conditions)

Initial Data Assessment

Data Preprocessing

- Apply necessary preprocessing: scatter correction (SNV, MSC), smoothing, derivatives

- Visualize preprocessed spectra to verify improvement without introducing artifacts

- For multivariate analysis, consider mean-centering or auto-scaling as needed

Univariate Analysis

- Select key wavelengths of chemical interest based on prior knowledge

- Create histograms and boxplots for intensities at these key wavelengths [5]

- Identify potential outliers (>3 standard deviations from mean)

- Examine distributions for normality using Q-Q plots if applicable

Bivariate and Multivariate Analysis

- Generate correlation heatmaps between wavelengths using sns.heatmap(df.corr()) [7]

- Create scatter plots of intensities at key wavelength pairs

- Perform PCA on preprocessed data to visualize sample clustering

- Interpret loadings to identify influential wavelengths

Documentation and Reporting

- Compile key visualizations and statistical summaries

- Document any outliers or anomalies detected and actions taken

- Formulate hypotheses for subsequent modeling phase

Notes: The entire EDA process should be documented thoroughly, as insights gained will directly inform subsequent chemometric modeling decisions. Particular attention should be paid to detecting and understanding outliers rather than automatically removing them, as they may contain valuable information about unusual samples or measurement artifacts.
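The univariate screening and wavelength-correlation steps of this protocol can be sketched as follows. This is a minimal example on synthetic data; the helper name `flag_outliers`, the chosen key wavelength index, and the injected anomaly are all hypothetical and only serve to show the mechanics of the 3-standard-deviation rule.

```python
import numpy as np

rng = np.random.default_rng(1)
n_samples, n_wavelengths = 30, 40
# Synthetic spectra: samples as rows, wavelengths as columns (assumed layout)
spectra = rng.normal(0.5, 0.02, size=(n_samples, n_wavelengths))
spectra[7, 10] += 0.5  # artificial anomaly at sample 7, wavelength index 10


def flag_outliers(values, threshold=3.0):
    """Return indices of values more than `threshold` standard deviations from the mean."""
    z = (values - values.mean()) / values.std(ddof=1)
    return np.flatnonzero(np.abs(z) > threshold)


key_idx = 10  # hypothetical wavelength of chemical interest
outliers = flag_outliers(spectra[:, key_idx])

# Wavelength-by-wavelength correlation matrix (the input to a heatmap)
corr = np.corrcoef(spectra, rowvar=False)
```

Flagged samples are candidates for inspection rather than automatic removal, in line with the note above.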

Protocol 2: EDA for Pharmaceutical Formulation Analysis

Principle: This specialized protocol applies EDA techniques to analyze complex pharmaceutical formulations, with emphasis on detecting formulation variables, process variations, and quality attributes using spectral data [2] [8].

Materials and Reagents:

- Spectral data from pharmaceutical formulations (e.g., NIR spectra of freeze-dried products)

- Reference values for active pharmaceutical ingredients (APIs) and excipients

- Software with multivariate analysis capabilities (MATLAB with PLS Toolbox or Python with Scikit-learn)

- Design of experiment (DoE) information if available

Procedure:

- Data Organization and Preparation

- Organize spectra according to experimental design factors (e.g., API level, excipient ratios, processing parameters)

- Apply appropriate preprocessing to minimize physical effects (particle size, scattering)

- Create sample groupings based on formulation characteristics

Exploratory Analysis of Formulation Effects

- Perform PCA on preprocessed spectral data

- Color-code scores plot by formulation variables (e.g., sucrose concentration, arginine level) [2]

- Examine loadings to identify spectral regions most influenced by formulation changes

- Use biplots to visualize relationship between samples and spectral features

Detection of Process Variations

- Color-code PCA scores by processing parameters (e.g., operator, session, instrument) [2]

- Use ANOVA on principal components to test significance of processing factors

- Create distribution plots of key spectral features across different processing conditions

Quality Attribute Assessment

- Correlate spectral features with reference measurements of critical quality attributes

- Create scatter plots of specific spectral intensities vs. API concentration

- Use clustering techniques to identify natural groupings in formulation space

Multivariate Statistical Process Control

- Establish control limits based on PCA model statistics (Hotelling's T², Q-residuals)

- Plot historical data with control limits to identify atypical formulations

- Monitor batch-to-batch consistency using trajectory plots in scores space

Notes: This pharmaceutical-focused EDA emphasizes understanding both intentional formulation variables and unintentional process variations. The goal is to build comprehensive process knowledge before developing quantitative calibration models for quality control applications.
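The multivariate statistical process control step can be sketched with NumPy alone. The example below assumes a mean-centered PCA model and uses empirical 95th-percentile limits from the calibration set as a simple stand-in; in practice, control limits are often derived from distributional approximations instead. The function names and the synthetic data are illustrative assumptions.

```python
import numpy as np


def fit_pca(X, k):
    """Fit a mean-centered PCA model with k components via SVD."""
    mean = X.mean(axis=0)
    _, s, Vt = np.linalg.svd(X - mean, full_matrices=False)
    loadings = Vt[:k].T                      # variables x k
    eigvals = s[:k] ** 2 / (X.shape[0] - 1)  # variance of each score vector
    return mean, loadings, eigvals


def t2_and_q(X, mean, loadings, eigvals):
    """Hotelling's T2 (distance within the model) and Q (squared residual) per sample."""
    Xc = X - mean
    scores = Xc @ loadings
    t2 = np.sum(scores ** 2 / eigvals, axis=1)
    residual = Xc - scores @ loadings.T
    q = np.sum(residual ** 2, axis=1)
    return t2, q


rng = np.random.default_rng(2)
basis = rng.normal(size=(2, 30))
# Calibration spectra with two underlying sources of variation plus small noise
X_cal = rng.normal(size=(50, 2)) @ basis + 0.01 * rng.normal(size=(50, 30))

mean, loadings, eigvals = fit_pca(X_cal, k=2)
t2_cal, q_cal = t2_and_q(X_cal, mean, loadings, eigvals)
t2_limit = np.percentile(t2_cal, 95)  # empirical limit (assumption)
q_limit = np.percentile(q_cal, 95)    # empirical limit (assumption)

# A new sample that does not follow the calibration structure
x_new = rng.normal(size=(1, 30))
t2_new, q_new = t2_and_q(x_new, mean, loadings, eigvals)
is_atypical = (t2_new > t2_limit) | (q_new > q_limit)
```

A high Q value indicates variation the model has not seen, while a high T² value indicates an extreme sample within the modeled variation.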

Data Presentation

Chemometric Techniques Enabled by EDA

Table 1: Multivariate Chemometric Techniques for Spectral Analysis

| Technique | Type | Primary Application | EDA Prerequisites |

|---|---|---|---|

| Principal Component Analysis (PCA) | Unsupervised | Dimensionality reduction, outlier detection, cluster analysis | Data scaling assessment, missing value treatment, outlier screening [2] [1] |

| Partial Least Squares (PLS) | Supervised | Quantitative calibration, prediction of analyte concentrations | Analysis of X-Y relationships, collinearity assessment, outlier detection [2] [8] |

| Multivariate Curve Resolution-Alternating Least Squares (MCR-ALS) | Supervised/Unsupervised | Resolution of component spectra from mixtures | Evaluation of spectral purity, initial concentration estimates [9] |

| Principal Component Regression (PCR) | Supervised | Quantitative calibration using PCA components | Same as PCA, plus relationship between scores and response variables [8] [9] |

| Artificial Neural Networks (ANN) | Supervised | Nonlinear calibration, complex pattern recognition | Data partitioning assessment, input variable selection, noise evaluation [9] |

Spectral Preprocessing Techniques

Table 2: Common Spectral Preprocessing Methods and Their Applications

| Technique | Purpose | Typical Use Cases | EDA Verification Method |

|---|---|---|---|

| Standard Normal Variate (SNV) | Scatter correction, removal of multiplicative interference | NIR spectra of powdered samples, heterogeneous samples | Examination of baseline variations before/after processing |

| Multiplicative Scatter Correction (MSC) | Scatter correction, compensation for additive and multiplicative effects | Solid samples with particle size effects | Comparison of within-class spectral variability |

| Savitzky-Golay Smoothing | Noise reduction, improvement of signal-to-noise ratio | Noisy spectra, derivative calculations | Analysis of high-frequency components before/after smoothing |

| Savitzky-Golay Derivatives | Enhancement of spectral features, baseline removal | Overlapping bands, small features on large background | Visualization of peak resolution improvement |

| Mean Centering | Emphasis of variations around mean | Preparation for PCA and other multivariate methods | Assessment of data distribution before/after centering |

| Auto-scaling | Equal weighting of all variables | When all wavelengths should contribute equally | Check that each variable has zero mean and unit variance after scaling |
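Two of the scatter-correction methods in Table 2 are simple enough to sketch directly. The functions below are minimal NumPy versions written for illustration (the names `snv` and `msc` and the synthetic offsets and gains are assumptions); MSC here uses the mean spectrum as the reference unless one is supplied.

```python
import numpy as np


def snv(spectra):
    """Standard normal variate: center and scale each spectrum (row) individually."""
    spectra = np.asarray(spectra, dtype=float)
    row_mean = spectra.mean(axis=1, keepdims=True)
    row_std = spectra.std(axis=1, ddof=1, keepdims=True)
    return (spectra - row_mean) / row_std


def msc(spectra, reference=None):
    """Multiplicative scatter correction against a reference spectrum."""
    spectra = np.asarray(spectra, dtype=float)
    ref = spectra.mean(axis=0) if reference is None else np.asarray(reference, dtype=float)
    corrected = np.empty_like(spectra)
    for i, row in enumerate(spectra):
        # Fit row = intercept + slope * ref, then remove both effects
        slope, intercept = np.polyfit(ref, row, 1)
        corrected[i] = (row - intercept) / slope
    return corrected


# Synthetic example: one underlying band shape with different offsets and gains
base = np.sin(np.linspace(0.0, 3.0, 50)) + 2.0
offsets = np.array([0.0, 0.1, 0.2])
gains = np.array([1.0, 1.2, 0.8])
raw = offsets[:, None] + gains[:, None] * base

snv_out = snv(raw)
msc_out = msc(raw)
```

Because the synthetic spectra differ only by offset and gain, both corrections collapse them onto a single curve, which is the before/after comparison suggested in the table.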

Visualization

[Figure: EDA Workflow for Spectral Data]

[Figure: Pharmaceutical Spectral Analysis Pathway]

The Scientist's Toolkit

Essential Research Reagent Solutions

Table 3: Key Materials and Software for Spectral EDA

| Item | Function | Application Example |

|---|---|---|

| Python with Pandas/NumPy | Data manipulation, numerical computations | Basic data inspection, transformation, and statistical calculations [7] |

| Matplotlib/Seaborn | Data visualization and plotting | Creating histograms, scatter plots, and correlation heatmaps [7] [5] |

| Scikit-learn | Machine learning and multivariate analysis | Performing PCA, PLS, and other chemometric techniques [7] |

| MATLAB with PLS Toolbox | Advanced chemometric analysis | Developing PCR, PLS, and MCR-ALS models for spectral data [8] [9] |

| UV-Vis Spectrophotometer | Spectral data acquisition | Generating absorption spectra for pharmaceutical formulations [8] |

| NIR/Raman Spectrometer | Vibrational spectral data acquisition | Non-destructive analysis of pharmaceutical formulations and food products [2] [6] |

| Ethanol (HPLC grade) | Green solvent for sample preparation | Preparing standard solutions for spectrophotometric analysis [8] |

Advanced Applications

EDA in Complex Pharmaceutical Analysis

In complex pharmaceutical formulations containing multiple active ingredients, EDA plays a crucial role in resolving spectral overlaps and identifying critical quality attributes. For example, in the analysis of fixed-dose antihypertensive combinations containing Telmisartan, Chlorthalidone, and Amlodipine, EDA techniques help researchers select appropriate wavelength ranges and preprocessing methods before applying multivariate calibration techniques [8]. The successive spectrophotometric resolution methods, including successive ratio subtraction and successive derivative subtraction coupled with constant multiplication, rely heavily on initial exploratory analysis to identify optimal spectral processing pathways [8].

Advanced chemometric techniques such as Interval-Partial Least Squares (iPLS) and Genetic Algorithm-Partial Least Squares (GA-PLS) build upon foundational EDA to enhance model performance. These variable selection techniques benefit tremendously from initial exploratory analysis that identifies relevant spectral regions and potential interferences [8]. Similarly, the application of artificial neural networks (ANNs) for modeling complex nonlinear relationships in pharmaceutical spectra requires thorough EDA to determine optimal network architecture, learning parameters, and input variable selection [9].

Integration with Green Analytical Chemistry

The role of EDA extends beyond traditional analytical performance to support the implementation of Green Analytical Chemistry principles in spectroscopic analysis. By enabling the development of effective multivariate spectrophotometric methods, EDA helps replace traditional chromatographic techniques that typically consume larger amounts of hazardous solvents and generate more waste [8] [9]. The greenness of these analytical methods can be assessed using metrics such as the Analytical Greenness Metric (AGREE), Blue Applicability Grade Index (BAGI), and White Analytical Chemistry principles, all of which benefit from the method optimization guided by initial exploratory analysis [8].

In one pharmaceutical application, researchers developed green smart multivariate models for analyzing Paracetamol, Chlorpheniramine maleate, Caffeine, and Ascorbic acid in combined formulations. The EDA-guided approach achieved an AGREE score of 0.77 and an eco-scale score of 85, demonstrating excellent environmental performance while maintaining analytical validity [9]. This alignment with United Nations Sustainable Development Goals highlights the broader impact of effective exploratory data analysis in promoting sustainable analytical practices within the pharmaceutical industry.

Exploratory Data Analysis serves as the indispensable foundation for effective spectroscopic analysis within chemometrics applications. By promoting a thorough understanding of spectral data before model development, EDA enables researchers to make informed decisions about preprocessing techniques, variable selection, and multivariate method choice. The structured approach to data exploration outlined in this article provides a framework for extracting meaningful chemical information from complex spectral datasets, particularly in pharmaceutical applications where understanding formulation variables and process effects is critical for quality control. As spectroscopic techniques continue to evolve and generate increasingly complex data, the role of EDA as the critical first step in the chemometrics workflow will only grow in importance for transforming raw spectral measurements into actionable chemical insights.

Principal Component Analysis (PCA) is a foundational dimensionality reduction technique in chemometrics and multivariate spectral analysis, used to simplify complex datasets while preserving critical information [10]. By transforming a large set of variables into a smaller one, PCA allows researchers to identify key patterns, reduce data redundancy, and enhance computational efficiency, which is particularly valuable for analyzing spectral data containing thousands of correlated wavelength intensities [11] [1]. The method works by identifying new, uncorrelated variables known as principal components, which are constructed as linear combinations of the original variables and are designed to capture the maximum possible variance within the data [10]. This process effectively transforms the data into a new coordinate system where the axes (principal components) are orthogonal and ranked by the amount of variance they explain, with the first component (PC1) accounting for the largest possible variance, the second (PC2) for the next largest, and so on [12]. For spectroscopists, this capability is transformative, enabling the distillation of complex spectral signatures into more manageable components for calibration, classification, and exploratory analysis [1].

Theoretical Foundation

Core Mathematical Concepts

The mathematical engine of PCA relies on linear algebra to deconstruct the data structure. The principal components are essentially the eigenvectors of the data's covariance matrix, and their corresponding eigenvalues indicate the amount of variance carried by each component [11] [12]. Geometrically, PCA can be thought of as fitting a p-dimensional ellipsoid to the data, where each axis represents a principal component. The direction of the longest axis of this ellipsoid is the first principal component, the next longest is the second, and so forth [12]. The process ensures that each successive component is uncorrelated with (perpendicular to) the preceding ones, thus capturing orthogonal directions of variance [10].
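These relationships can be written compactly. The notation below is introduced here for illustration: \(\mathbf{X}_c\) is the column-centered data matrix with n samples and p variables, \(\mathbf{C}\) its covariance matrix, and \(\mathbf{w}_i\), \(\lambda_i\) the i-th eigenvector and eigenvalue.

\[
\mathbf{C} = \frac{1}{n-1}\,\mathbf{X}_c^{\top}\mathbf{X}_c, \qquad
\mathbf{C}\,\mathbf{w}_i = \lambda_i\,\mathbf{w}_i, \qquad
\mathbf{w}_i^{\top}\mathbf{w}_j = \delta_{ij}
\]

\[
\text{fraction of variance explained by PC}_i = \frac{\lambda_i}{\sum_{j=1}^{p}\lambda_j}
\]

The orthonormality condition is what makes successive components uncorrelated, and ordering the eigenvalues so that \(\lambda_1 \ge \lambda_2 \ge \dots\) gives the ranking of PC1, PC2, and so on.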

The PCA Workflow

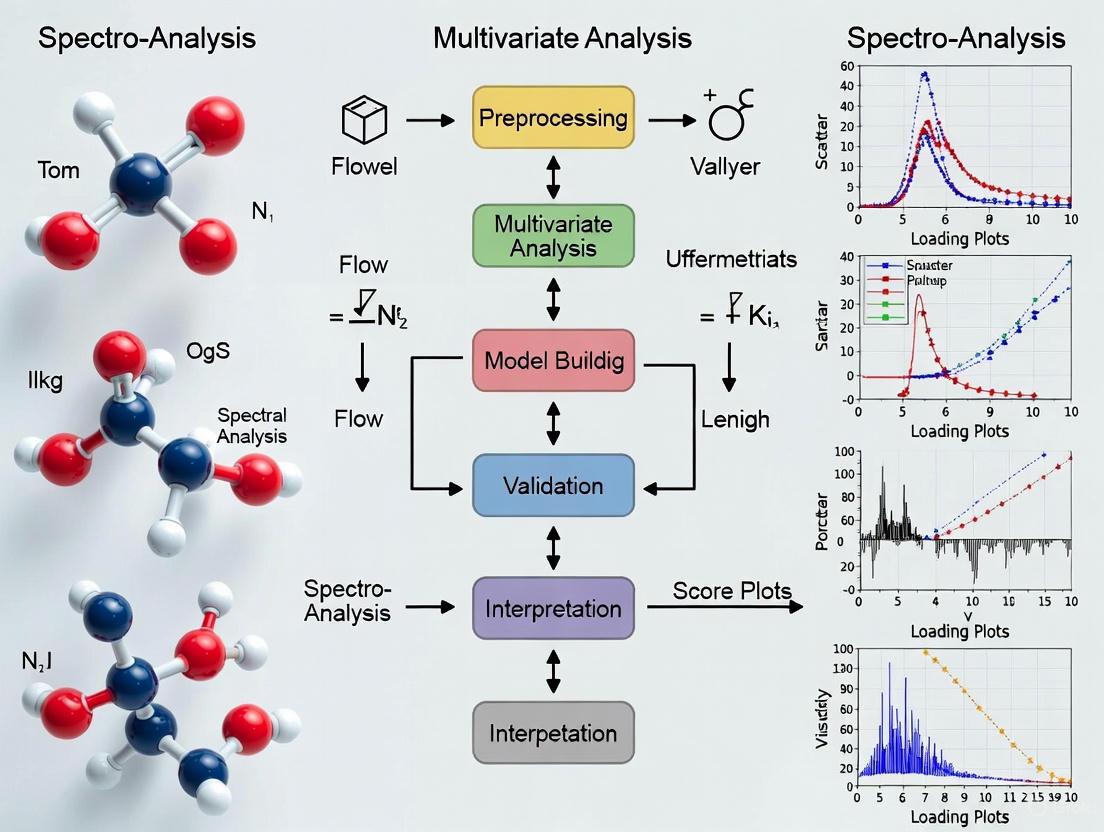

The transformation of raw data into its principal components follows a systematic, five-step workflow. Figure 1 below provides a high-level overview of this process.

Figure 1. The PCA Workflow. This diagram outlines the five key steps for performing Principal Component Analysis, from data preprocessing to the final transformed dataset.

Step-by-Step Protocol for Spectral Data

This protocol details the application of PCA to multivariate spectral data, such as from FTIR or NIR spectroscopy, for exploratory analysis and feature reduction.

Materials and Reagents

Table 1: Essential Research Reagents and Solutions for Spectral Analysis

| Item | Function / Description |

|---|---|

| Blood Serum Samples | Biological fluid for analysis; requires protein precipitation before spectral acquisition [13]. |

| Perchloric Acid (7 M) | Used for protein precipitation in serum samples to reduce interference in spectral reading [13]. |

| Ethanol (70% v/v) & Acetone p.a. | Mixture for cleaning the Attenuated Total Reflection (ATR) crystal before and between sample measurements [13]. |

| ATR-FTIR Spectrometer | Instrument for acquiring infrared spectra; equipped with a diamond crystal reflectance element [13]. |

Procedure

Step 1: Sample Preparation and Spectral Acquisition

- Prepare Serum Samples: Thaw frozen blood serum aliquots at room temperature for 30-40 minutes. Precipitate proteins by adding 1.5 µL of 7 M perchloric acid to a 100 µL aliquot of serum. Vortex the mixture for 15 seconds and centrifuge at 12,000 rpm for 12 minutes at 4°C. Use the supernatant for analysis [13].

- Acquire Spectra: Clean the ATR crystal with a 1:1 mixture of 70% ethanol and acetone, followed by 70% ethanol only before each new sample. Acquire a new background spectrum. Apply a drop (~10 µL) of the prepared supernatant to the crystal. Collect spectra in the range of 4000–600 cm⁻¹ with 32 scans and a resolution of 4 cm⁻¹. Perform all measurements in triplicate to ensure technical reproducibility [13].

Step 2: Data Preprocessing and Standardization

- Format Data: Compile the spectra into a data matrix X (samples × wavenumbers).

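- Preprocess Spectra: Apply preprocessing techniques to remove unwanted artifacts before modeling. The case study later in this section, for example, used Savitzky-Golay smoothing followed by automatic-weighted least squares baseline correction [13].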

- Average Replicates: Average the triplicate pre-processed spectra for each sample to create a single, representative spectrum per sample [13].

- Standardize the Data: This critical step ensures that each wavenumber contributes equally to the analysis. For each wavenumber, subtract the mean absorbance across all samples and divide by the standard deviation [10] [11]. This centers the data and gives it unit variance, preventing variables with larger scales from dominating the model. The standardized value Z is calculated as \( Z = \frac{X - \mu}{\sigma} \), where X is the original absorbance, μ is the mean absorbance for that wavenumber, and σ is its standard deviation [11].

Step 3: Covariance Matrix Computation

- Compute the covariance matrix of the standardized data matrix. This symmetric matrix reveals the relationships between all pairs of wavenumbers, showing how they vary together from the mean [10]. A positive covariance between two wavenumbers indicates they increase or decrease together, while a negative value suggests an inverse relationship [10].

Step 4: Eigen Decomposition and Principal Component Identification

- Perform eigen decomposition on the covariance matrix. This calculation yields eigenvectors and eigenvalues [10].

- Interpret the Output: The eigenvectors represent the principal components (PCs)—the new, orthogonal directions of maximum variance. The corresponding eigenvalues quantify the amount of variance captured by each PC [10] [12].

- Rank the Components: Sort the eigenvectors in descending order of their eigenvalues. The eigenvector with the highest eigenvalue is the first principal component (PC1), the second is PC2, and so on [10].

Step 5: Feature Selection and Data Projection

- Select Principal Components: Decide how many components (k) to retain. This is often done by examining a Scree Plot (eigenvalues vs. component number) and looking for an "elbow," or by calculating the cumulative percentage of total variance explained [10].

- Form Feature Vector: Create a feature vector matrix, which is composed of the first k eigenvectors as its columns [10].

- Project the Data: Transform the original standardized data into the new PCA subspace by multiplying the standardized data matrix by the feature vector matrix: \( \mathbf{T} = \mathbf{X} \mathbf{W} \), where T is the scores matrix, X is the standardized data, and W is the feature vector matrix [12]. The resulting scores matrix contains the coordinates of the original samples in the new PC space and is used for all subsequent analysis and visualization.
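Steps 2 through 5 can be sketched in a few lines of NumPy. This is a minimal illustration on synthetic data, not the code used in any cited study; the function name `pca_eig` and the matrix sizes are assumptions.

```python
import numpy as np


def pca_eig(X, k):
    """PCA by eigen decomposition of the covariance matrix of standardized data."""
    # Step 2: standardize each column (wavenumber) to zero mean and unit variance
    mu = X.mean(axis=0)
    sigma = X.std(axis=0, ddof=1)
    Z = (X - mu) / sigma

    # Step 3: covariance matrix of the standardized data
    C = np.cov(Z, rowvar=False)

    # Step 4: eigen decomposition (eigh is suited to symmetric matrices),
    # then sort components by descending eigenvalue
    eigvals, eigvecs = np.linalg.eigh(C)
    order = np.argsort(eigvals)[::-1]
    eigvals, eigvecs = eigvals[order], eigvecs[:, order]

    # Step 5: keep the first k eigenvectors and project the data, T = Z W
    W = eigvecs[:, :k]
    T = Z @ W
    return T, W, eigvals


rng = np.random.default_rng(4)
X = rng.normal(size=(25, 8))  # 25 synthetic samples x 8 variables
T, W, eigvals = pca_eig(X, k=3)
```

A quick sanity check is that the variance of each score column equals the corresponding eigenvalue, and that the eigenvalues of standardized data sum to the number of variables.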

Data Analysis and Interpretation

- Scores Plot: Plot the scores of different samples against the first few PCs (e.g., PC1 vs. PC2) to visualize sample clustering, trends, and potential outliers [13].

- Loadings Plot: Plot the loadings (weights) of the original wavenumbers for each PC. This helps identify which spectral regions (wavenumbers) contribute most to the variance captured by that PC, providing chemical interpretability [13].

Practical Implementation and Validation

Code Implementation for PCA

The following Python code demonstrates a typical PCA workflow on a sample dataset, including visualization.
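A minimal sketch of such a workflow is given here, assuming scikit-learn and Matplotlib are available. The two synthetic sample groups, the band position near 1650 cm⁻¹, and the helper `plot_scores` are illustrative assumptions rather than data or code from a real experiment.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(42)
wavenumbers = np.linspace(4000, 600, 200)

# Two synthetic groups that differ in the height of one Gaussian band
band = np.exp(-(((wavenumbers - 1650) / 60.0) ** 2))
labels = np.repeat([0, 1], 15)
X = (1.0 + 0.5 * labels)[:, None] * band + rng.normal(0, 0.02, size=(30, 200))

# Standardize each wavenumber, then fit a two-component PCA model
X_std = StandardScaler().fit_transform(X)
pca = PCA(n_components=2)
scores = pca.fit_transform(X_std)
explained = pca.explained_variance_ratio_


def plot_scores(scores, labels, explained):
    """Scatter plot of PC1 vs. PC2, colored by group label."""
    import matplotlib.pyplot as plt  # imported lazily so the analysis runs without it

    fig, ax = plt.subplots()
    for group in np.unique(labels):
        mask = labels == group
        ax.scatter(scores[mask, 0], scores[mask, 1], label=f"group {group}")
    ax.set_xlabel(f"PC1 ({explained[0]:.1%})")
    ax.set_ylabel(f"PC2 ({explained[1]:.1%})")
    ax.legend()
    return fig
```

Calling `plot_scores(scores, labels, explained)` produces the scores plot; `pca.components_` holds the loadings and can be plotted against `wavenumbers` in the same way.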

Experimental Validation: A Case Study in Osteosarcopenia Detection

A 2023 study published in Scientific Reports provides a robust example of PCA applied in spectroscopic chemometrics for disease detection [13]. The research aimed to distinguish older women with osteosarcopenia from healthy controls using ATR-FTIR spectroscopy of blood serum.

Table 2: Key Experimental Parameters and Performance Metrics from Osteosarcopenia Study

| Parameter / Metric | Description / Value |

|---|---|

| Samples | 62 total (30 osteosarcopenia, 32 healthy controls) [13] |

| Spectral Preprocessing | Savitzky-Golay smoothing, automatic-weighted least squares baseline correction, mean-centering [13] |

| Data Splitting | Kennard-Stone algorithm: 70% training, 30% testing [13] |

| PCA Performance | PCA-SVM model achieved 89% accuracy in distinguishing patient samples [13] |

Experimental Workflow: The study followed a meticulous workflow, summarized in Figure 2, which integrated PCA with a classification algorithm.

Figure 2. Chemometric Analysis Workflow for Disease Detection. This diagram outlines the experimental and computational steps used to detect osteosarcopenia from blood serum spectra, culminating in a high-accuracy PCA-SVM model [13].
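The Kennard-Stone split listed in Table 2 selects training samples so that they span the data space evenly. The sketch below is a generic textbook-style version written for illustration, not the implementation used in the study [13]; the function name and the random stand-in data are assumptions.

```python
import numpy as np


def kennard_stone(X, n_select):
    """Return (selected, remaining) sample indices chosen by the Kennard-Stone rule."""
    X = np.asarray(X, dtype=float)
    # Pairwise Euclidean distances between all samples
    diff = X[:, None, :] - X[None, :, :]
    dist = np.sqrt((diff ** 2).sum(axis=2))

    # Start with the two samples that are farthest apart
    i, j = np.unravel_index(np.argmax(dist), dist.shape)
    selected = [int(i), int(j)]
    remaining = [idx for idx in range(len(X)) if idx not in selected]

    # Repeatedly add the sample whose nearest selected neighbor is farthest away
    while len(selected) < n_select:
        min_dist = dist[np.ix_(remaining, selected)].min(axis=1)
        pick = remaining[int(np.argmax(min_dist))]
        selected.append(pick)
        remaining.remove(pick)
    return np.array(selected), np.array(remaining)


rng = np.random.default_rng(6)
X = rng.normal(size=(62, 20))      # stand-in for 62 preprocessed spectra
n_train = int(round(0.7 * len(X)))  # 70% of the samples for training
train_idx, test_idx = kennard_stone(X, n_train)
```

The resulting index arrays can then be used to fit the PCA and classifier on the training spectra only and to evaluate on the held-out test spectra.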

Discussion

Advantages of PCA in Spectral Analysis

PCA offers several key benefits for chemometric applications [11]:

- Handles Multicollinearity: Spectral data often contain highly correlated absorbances across adjacent wavenumbers. PCA creates new, uncorrelated variables that overcome this issue.

- Noise Reduction: By discarding components with low eigenvalues, which often correspond to noise, PCA can enhance the signal-to-noise ratio of the data.

- Data Compression and Visualization: It allows for the representation of complex spectral data in a reduced number of dimensions (e.g., 2D or 3D scores plots), making it easier to visualize sample clusters and trends.

- Outlier Detection: Samples that deviate significantly from the majority in the scores plot can be easily identified as potential outliers.

Limitations and Considerations

Despite its utility, researchers must be aware of PCA's limitations [11]:

- Interpretability Challenge: Principal components are mathematical constructs (linear combinations of all original wavenumbers) and can be difficult to relate back to specific chemical entities.

- Linearity Assumption: PCA is a linear technique and may struggle to capture complex, nonlinear relationships in spectral data.

- Sensitivity to Scaling: The results are heavily dependent on proper data standardization. Without it, variables with larger scales will dominate the model.

- Information Loss: Reducing dimensions inherently discards some information. The key is to retain enough components to preserve the chemically relevant variance.

Interpreting Scores and Loadings Plots for Sample Clustering and Outlier Detection

Within the field of multivariate spectral analysis, Principal Component Analysis (PCA) serves as a foundational chemometric technique for exploring complex data structures. It is primarily used for dimensionality reduction, transforming a large set of interrelated spectral variables into a smaller set of uncorrelated variables called principal components (PCs) while retaining most of the original information [14]. For researchers in pharmaceutical development and analytical chemistry, PCA provides a powerful means to identify patterns, detect sample clusters, and flag potential outliers in spectral datasets, such as those derived from UV-Vis spectrophotometry used in analyzing multi-component pharmaceutical formulations [9]. The interpretation of scores plots and loadings plots is central to extracting meaningful chemical and biological information from these models, enabling scientists to make informed decisions during drug development and quality control processes without requiring preliminary separation steps [9].

Theoretical Foundations: Scores and Loadings

The PCA Model and Component Extraction

The PCA model decomposes the original data matrix X into a product of two matrices: the scores matrix (T) and the loadings matrix (P), plus a residual matrix E, expressed as X = TP' + E [15]. The loadings define the direction of the principal components in the original variable space and represent the contributions of each original variable to the new components. They can be understood as the coefficients linking the original variables to the principal components [15] [16]. The scores are the projections of the original samples onto the new principal components, representing the coordinates of the samples in the reduced-dimensionality PC space [15].
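The decomposition can be checked numerically. The sketch below, on synthetic data, applies it to the mean-centered matrix (an assumption about preprocessing) and obtains the loadings from a singular value decomposition; the variable names follow the T, P, and E of the text.

```python
import numpy as np

rng = np.random.default_rng(3)
X = rng.normal(size=(12, 6))  # 12 synthetic samples x 6 variables
Xc = X - X.mean(axis=0)       # mean-centered data

# Right singular vectors of the centered data are the PCA loadings
_, _, Vt = np.linalg.svd(Xc, full_matrices=False)

k = 2
P = Vt[:k].T      # loadings: variables x k
T = Xc @ P        # scores: samples x k
E = Xc - T @ P.T  # residuals not captured by the k components

reconstruction_ok = np.allclose(T @ P.T + E, Xc)
```

Increasing `k` shrinks `E`, and with all components retained the residual matrix is zero up to floating-point error.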

Each principal component is associated with an eigenvalue that represents the amount of variance explained by that component. The size of the eigenvalue determines the importance of each component, with the first PC capturing the most variance, the second PC (orthogonal to the first) capturing the next largest amount, and so on [15] [14]. The cumulative proportion of variance explained by consecutive components helps determine how many PCs to retain for adequate data representation [15].

Key Quantitative Metrics for Interpretation

Table 1: Key PCA Metrics and Their Interpretation in Spectral Analysis

| Metric | Calculation | Interpretation in Chemometrics |

|---|---|---|

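| Eigenvalue | Variance of the principal component | Determines component significance; according to the Kaiser criterion, retain PCs with eigenvalues >1 (a threshold that is meaningful when PCA is run on standardized variables, each of which has unit variance) [15] |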

| Proportion | Eigenvalue / Total variance | Proportion of total data variability explained by each PC; higher values indicate more important components [15] |

| Cumulative Proportion | Sum of consecutive proportions | Total variance explained by retained PCs; for descriptive purposes, 80% may be adequate, while 90%+ is preferred for further analysis [15] |

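| Loadings | Coefficients linking original variables to PCs; for standardized data, loadings scaled by the square root of the eigenvalue equal the correlations between variables and PCs | Identify which spectral wavelengths or variables contribute most to each pattern; high absolute values indicate important variables [15] [16] |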

| Scores | Linear combinations of original data using loadings as coefficients | Position of each sample in the reduced PC space; used for clustering and outlier detection [15] |

Experimental Protocols for PCA in Spectral Analysis

Protocol 1: Data Preprocessing and PCA Model Construction

Purpose: To properly prepare spectral data and build a robust PCA model for multivariate analysis.

Materials and Reagents:

- UV-Vis Spectrophotometer (e.g., Shimadzu 1605 UV-spectrophotometer): For acquiring spectral data [9]

- MATLAB with PLS Toolbox or R with FactoMineR package: For multivariate data analysis [9] [17]

- Standard solutions of analytes of interest (e.g., Paracetamol, Chlorpheniramine maleate, Caffeine, Ascorbic acid) [9]

- Methanol or appropriate solvent for preparing sample solutions [9]

Procedure:

- Spectral Acquisition: Measure absorption spectra of standards and samples over an appropriate wavelength range (e.g., 200-400 nm) with 1 nm intervals [9].

- Data Matrix Construction: Construct a data matrix where rows represent samples and columns represent absorbance values at different wavelengths.

- Data Normalization: Standardize the data by mean-centering each variable and scaling it to unit variance so that every variable contributes equally to the model [17].

- Correlation Analysis: Explore correlations between variables to identify highly correlated spectral regions; while PCA handles correlated variables, understanding these relationships aids interpretation [17].

- PCA Execution: Perform PCA on the preprocessed data matrix, retaining all components initially for comprehensive evaluation.

- Component Selection: Determine the number of significant components to retain using criteria such as eigenvalues >1 (Kaiser criterion), scree plot analysis, and target cumulative variance (e.g., 80-90%) [15].
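The standardization and component-selection steps of this protocol can be sketched as follows. The helper names `autoscale` and `component_counts`, the 90% target, and the simulated two-factor data are assumptions made for illustration; the scree plot inspection is left out because it is a visual judgment.

```python
import numpy as np

def autoscale(X):
    """Mean-center each column and scale it to unit variance.

    Assumes no column is constant (a zero standard deviation would divide by zero).
    """
    return (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)

def component_counts(X_scaled, target_cumulative=0.90):
    """Return eigenvalues, cumulative variance, and two suggested PC counts."""
    n_samples = X_scaled.shape[0]
    singular_values = np.linalg.svd(X_scaled, compute_uv=False)
    eigenvalues = singular_values**2 / (n_samples - 1)
    cumulative = np.cumsum(eigenvalues) / eigenvalues.sum()
    n_kaiser = int(np.sum(eigenvalues > 1.0))  # Kaiser criterion
    # First component count whose cumulative variance reaches the target.
    n_cumulative = int(np.searchsorted(cumulative, target_cumulative)) + 1
    return eigenvalues, cumulative, n_kaiser, n_cumulative

# Hypothetical data: 30 samples, 10 variables driven by 2 latent factors.
rng = np.random.default_rng(1)
latent = rng.normal(size=(30, 2))
mixing = rng.normal(size=(2, 10))
X_demo = latent @ mixing + 0.1 * rng.normal(size=(30, 10))

X_scaled = autoscale(X_demo)
eigenvalues, cumulative, n_kaiser, n_cumulative = component_counts(X_scaled)
print("Kaiser criterion suggests", n_kaiser, "PCs")
print("90% cumulative variance needs", n_cumulative, "PCs")
```

After autoscaling, the eigenvalues sum to the number of variables, which is what gives the eigenvalue >1 rule its meaning: a retained component explains more variance than a single original variable.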

Protocol 2: Interpretation of Loadings for Spectral Feature Identification

Purpose: To identify which spectral wavelengths or variables contribute most to the observed patterns in the PCA model.

Procedure:

- Loadings Examination: For each retained principal component, examine the loadings values for all original variables (wavelengths).

- Significance Threshold: Establish a correlation threshold for deeming loadings significant (e.g., |r| > 0.5) based on specialized knowledge and data context [16].

- Pattern Identification: Identify variables with large-magnitude loadings (positive or negative) for each component.

- Chemical Interpretation: Interpret the components based on the variables with significant loadings. For example, in pharmaceutical analysis, a component with high loadings at specific wavelengths might represent particular chemical compounds or functional groups [9].

- Loadings Plot Visualization: Create a loadings plot to visualize the contribution of each variable to the first two or three components, highlighting the most influential spectral regions.
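The thresholding step in this protocol can be sketched as below, assuming standardized data so that loadings scaled by the square root of the eigenvalue can be read as correlations between variables and components. The function names, the two simulated absorption bands, and the wavelength grid are hypothetical; the 0.5 threshold mirrors the example value in the procedure.

```python
import numpy as np

def correlation_loadings(X, n_components):
    """Return variable-PC correlations and scores for standardized data."""
    Xs = (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)
    _, S, Vt = np.linalg.svd(Xs, full_matrices=False)
    eigenvalues = S**2 / (Xs.shape[0] - 1)
    P = Vt[:n_components].T
    scores = Xs @ P
    # Scaling each loading vector by sqrt(eigenvalue) gives correlations.
    return P * np.sqrt(eigenvalues[:n_components]), scores

def influential_wavelengths(corr, wavelengths, threshold=0.5):
    """List the wavelengths whose |correlation| exceeds the threshold per PC."""
    return {f"PC{a + 1}": wavelengths[np.abs(corr[:, a]) > threshold]
            for a in range(corr.shape[1])}

# Hypothetical mixture spectra: two bands with independently varying amounts.
rng = np.random.default_rng(2)
wavelengths = np.arange(220, 301, 10)
band_a = np.exp(-0.5 * ((wavelengths - 240) / 10.0) ** 2)
band_b = np.exp(-0.5 * ((wavelengths - 280) / 10.0) ** 2)
conc = rng.uniform(0.5, 1.5, size=(25, 2))
X_demo = conc @ np.vstack([band_a, band_b]) + 0.01 * rng.normal(size=(25, 9))

R, T = correlation_loadings(X_demo, n_components=2)
for pc, selected in influential_wavelengths(R, wavelengths).items():
    print(pc, "influential wavelengths (nm):", selected)
```

For data that are only mean-centered, the scaled loadings are covariances rather than correlations, so a fixed |r| cut-off would need to be reconsidered.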

Table 2: Interpretation Guide for Loadings Patterns in Spectral Analysis

| Loadings Pattern | Chemical Interpretation | Example in Pharmaceutical Analysis |

|---|---|---|

| Multiple variables with high positive loadings on PC1 | These spectral wavelengths vary together; when one increases, others tend to increase | May represent the common spectral profile of the active pharmaceutical ingredient [16] |

| Variables with high negative loadings | These spectral features vary inversely with features having positive loadings | Could indicate spectral regions affected by interfering compounds or excipients [15] |

| Specific wavelengths with dominant loadings | Key spectral signatures for specific chemical compounds | Identification of characteristic absorption bands for paracetamol, caffeine, etc. [9] |

| Different variables loading on different components | Each PC captures distinct sources of variation in the spectra | PC1 might represent API concentration, while PC2 captures baseline variation [16] |

Protocol 3: Sample Clustering Based on PCA Scores

Purpose: To identify natural groupings of samples based on their projected positions in the principal component space.

Materials:

- PCA scores for the retained components

- Visualization software (e.g., R with factoextra package, MATLAB) [17]

Procedure:

- Scores Extraction: Extract the scores for the first 2-3 principal components that explain sufficient cumulative variance.

- Preliminary Visualization: Create a scatter plot of the scores (PC1 vs. PC2) to visually inspect for natural sample groupings.

- Cluster Analysis: Apply formal clustering algorithms (e.g., k-means, hierarchical clustering) directly to the PCA scores to identify sample groups [17].

- Cluster Validation: Validate clusters using statistical measures and chemical knowledge to ensure meaningful groupings.

- Interpretation: Correlate cluster membership with sample characteristics (e.g., formulation type, manufacturing batch, origin) to derive chemical insights.
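A compact sketch of the scores extraction and k-means steps follows. It uses a plain NumPy implementation of Lloyd's algorithm with a farthest-point initialization, which is a simplification chosen here for determinism and is not the routine used in the cited work; the three simulated sample groups are hypothetical.

```python
import numpy as np

def pca_scores(X, n_components=2):
    """Project mean-centered spectra onto the first principal components."""
    Xc = X - X.mean(axis=0)
    _, _, Vt = np.linalg.svd(Xc, full_matrices=False)
    return Xc @ Vt[:n_components].T

def kmeans(points, k, n_iter=100):
    """Lloyd's k-means with a deterministic farthest-point start."""
    centers = [points[0]]
    for _ in range(1, k):
        # Distance of every point to its nearest already-chosen center.
        d = np.min([np.linalg.norm(points - c, axis=1) for c in centers], axis=0)
        centers.append(points[np.argmax(d)])
    centers = np.array(centers, dtype=float)
    for _ in range(n_iter):
        dist = np.linalg.norm(points[:, None, :] - centers[None, :, :], axis=2)
        labels = dist.argmin(axis=1)
        # Keep the old center if a cluster happens to be empty.
        new_centers = np.array([
            points[labels == j].mean(axis=0) if np.any(labels == j) else centers[j]
            for j in range(k)
        ])
        if np.allclose(new_centers, centers):
            break
        centers = new_centers
    return labels, centers

# Hypothetical spectra: three formulation groups, each with its own band.
rng = np.random.default_rng(3)
wavelengths = np.linspace(200, 400, 101)
true_group = np.repeat([0, 1, 2], 10)
band_centers = np.array([250.0, 300.0, 350.0])[true_group]
X_demo = np.exp(-0.5 * ((wavelengths[None, :] - band_centers[:, None]) / 10.0) ** 2)
X_demo += 0.02 * rng.normal(size=X_demo.shape)

scores = pca_scores(X_demo, n_components=2)
labels, centers = kmeans(scores, k=3)
print("Cluster labels:", labels)
```

Cluster numbering is arbitrary, so membership should be compared with known sample characteristics rather than read from the label values themselves.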

Protocol 4: Outlier Detection in Spectral Data

Purpose: To identify unusual or anomalous samples that deviate from the majority of the dataset.

Procedure:

- Visual Inspection: Examine the scores plot for samples that appear separated from the main cluster of points.

- Reconstruction Error Analysis: Calculate the reconstruction error for each sample - the difference between the original data and the data reconstructed using only the retained principal components. Samples with high reconstruction errors are potential outliers [14].

- Component Extreme Analysis: Examine each principal component individually for extreme values, particularly in later components, as points that don't follow the general data patterns tend to be extreme in later components [14].

- Statistical Testing: Apply statistical tests to the scores of each component to identify extreme values, and consider robust PCA variants to mitigate the influence of outliers on the model itself [14].

- Investigation: Investigate the chemical or procedural reasons for outlier status, which may include formulation errors, measurement artifacts, or truly unique samples.
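The reconstruction error step can be sketched as below. The per-sample squared reconstruction error is often called the Q residual; the flagging rule used here (median plus five scaled median absolute deviations) is an assumption for illustration, not a threshold taken from the cited sources, and the simulated spectra with one spurious band are hypothetical.

```python
import numpy as np

def q_residuals(X, n_components):
    """Squared reconstruction error of each sample for a PCA model."""
    Xc = X - X.mean(axis=0)
    _, _, Vt = np.linalg.svd(Xc, full_matrices=False)
    P = Vt[:n_components].T
    E = Xc - (Xc @ P) @ P.T       # part of each spectrum the model cannot rebuild
    return np.sum(E**2, axis=1)

def flag_outliers(q, n_mads=5.0):
    """Flag samples far above the bulk of the Q values (hypothetical rule)."""
    median = np.median(q)
    mad = 1.4826 * np.median(np.abs(q - median))
    return np.where(q > median + n_mads * mad)[0]

# Hypothetical spectra: two-component mixtures plus one contaminated sample.
rng = np.random.default_rng(4)
wavelengths = np.linspace(200, 400, 101)

def band(center, width=15.0):
    return np.exp(-0.5 * ((wavelengths - center) / width) ** 2)

pure = np.vstack([band(260), band(320)])
conc = rng.uniform(0.5, 1.5, size=(30, 2))
X_demo = conc @ pure + 0.005 * rng.normal(size=(30, 101))
X_demo[29] += 0.3 * band(380, width=5.0)   # spurious band in the last sample

q = q_residuals(X_demo, n_components=2)
print("Flagged samples:", flag_outliers(q))
```

Because ordinary PCA is itself sensitive to extreme samples, a very strong outlier can pull a component toward itself and hide in the model; this is the situation in which the robust variants mentioned in the protocol become relevant.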

Workflow Visualization

Case Study: Pharmaceutical Formulation Analysis

In a general multivariate example that shares methodological similarities with spectral analysis, researchers utilized PCA to explore patterns in quality of life data across countries [17]. The analysis began with correlation analysis to identify highly correlated variables, though all variables were retained since PCA naturally handles correlated variables. Following data standardization, PCA was performed, revealing that the first three principal components explained approximately 84.1% of the total variance in the data, indicating that these components captured the majority of the systematic information [15].

The loadings interpretation revealed that the first principal component was strongly associated with Arts, Health, Transportation, Housing, and Recreation, essentially measuring overall quality of life. The scores plot clearly showed Mexico as a significant outlier, positioned far from other countries in the principal component space [17]. After removing this outlier, further analysis using k-means clustering on the PCA scores identified three distinct country clusters based on their well-being characteristics [17]. This approach demonstrates how PCA scores and loadings can be effectively used for both outlier detection and sample clustering in multivariate data.

In a more direct chemometric application, researchers successfully employed PCA-based methods including Principal Component Regression (PCR) for analyzing complex pharmaceutical formulations containing Paracetamol, Chlorpheniramine maleate, Caffeine, and Ascorbic acid [9]. The models enabled resolution of highly overlapping spectra without preliminary separation steps, with the PCR model demonstrating excellent predictive capability for quantifying each component in the formulation. This highlights the practical utility of PCA interpretation in standard pharmaceutical analysis within product testing laboratories [9].

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Research Reagents and Computational Tools for PCA in Spectral Analysis

| Item | Function/Application | Example Specifications |

|---|---|---|

| UV-Vis Spectrophotometer | Acquisition of spectral data from chemical samples | Shimadzu 1605 UV-spectrophotometer with 1.00 cm quartz cells, range 200-400 nm [9] |

| Standard Reference Materials | Calibration and validation of chemometric models | Certified reference standards of active pharmaceutical ingredients (e.g., Paracetamol, Caffeine) [9] |

| MATLAB with Toolboxes | Multivariate data analysis and model development | MATLAB R2014a with PLS Toolbox, MCR-ALS Toolbox, Neural Network Toolbox [9] |

| R Statistical Software | Open-source alternative for multivariate analysis | R with FactoMineR, factoextra, paran packages for PCA and visualization [17] |

| HPLC System | Reference method validation | Comparison of PCR/PCA results with standard chromatographic methods [9] |

| Data Normalization Software | Preprocessing of spectral data | clusterSim package in R for data standardization and normalization [17] |

Best Practices and Troubleshooting

When interpreting scores and loadings plots for sample clustering and outlier detection, several best practices enhance the reliability of conclusions:

- Assess Component Significance: Use multiple criteria (eigenvalues >1, scree plot, cumulative variance) to determine the appropriate number of components to retain, as over-extraction can lead to modeling of noise [15].

- Validate Clusters: Confirm that sample clusters identified in scores plots are chemically meaningful rather than statistical artifacts by correlating with known sample characteristics.

- Investigate Outliers: Before excluding outliers, investigate whether they represent analytical errors, unique formulations, or potentially valuable discoveries [14] [17].

- Consider Robust Methods: When data contains significant outliers, use robust PCA variants to prevent extreme values from unduly influencing the model [14].

- Leverage Domain Knowledge: Interpret loadings in the context of chemical expertise; a wavelength with high loadings should make chemical sense for the system being studied [16].

Common challenges include overinterpretation of minor components, failure to properly preprocess data, and attributing chemical meaning to random variations. These can be mitigated through cross-validation, randomization tests, and validation with known standards.

In the pharmaceutical industry, ensuring product quality and correctly identifying formulations are paramount for patient safety and regulatory compliance. Analytical techniques like Near-Infrared (NIR) and Raman spectroscopy are widely used for their desirable characteristics: they are rapid, non-destructive, and applicable both offline and online [18]. However, these techniques produce complex, high-dimensional data profiles that require advanced statistical tools for interpretation. Chemometrics, the application of mathematical and statistical methods to chemical data, provides the necessary framework to extract meaningful information from this spectral complexity [19].

This application note demonstrates the practical use of Principal Component Analysis (PCA), a foundational chemometric technique, for differentiating pharmaceutical formulations. We present a detailed protocol and case study showing how PCA can uncover hidden patterns in spectral data, distinguish between different drug products, and identify potential outliers, thereby supporting quality control and formulation development.

Theoretical Background: Principal Component Analysis (PCA)

Principal Component Analysis is an unsupervised projection method used for exploratory data analysis. Its primary goal is to reduce the dimensionality of a complex dataset while preserving the most significant sources of variance, allowing for the visualization of underlying data structure [18] [19].

Given a data matrix X (with dimensions N samples × M variables, e.g., spectral wavelengths), PCA performs a bilinear decomposition expressed as X = TP^T + E, where:

- T is the scores matrix, containing the coordinates of the samples in the new principal component (PC) space.

- P is the loadings matrix, defining the directions of the principal components, which are the directions of maximum variance.

- E is the residuals matrix, representing the variance not captured by the PCA model [18].

The scores allow for the visualization of sample patterns, trends, or clusters in a reduced-dimensional space (typically 2D or 3D). The loadings explain which original variables (wavelengths) contribute most to each PC, providing a means of interpreting the chemical or physical meaning behind the observed sample separation [19].

Case Study: Differentiating Ibuprofen and Ketoprofen Tablets Using Mid-IR Spectroscopy

Objective

To apply PCA on Mid-Infrared (IR) spectroscopic data to differentiate tablets containing two different Active Pharmaceutical Ingredients (APIs): Ibuprofen and Ketoprofen.

Experimental Workflow

The following diagram illustrates the complete experimental and data analysis workflow.

Materials and Reagents

Table 1: Essential Research Reagent Solutions and Materials

| Item | Function/Description | Application in Protocol |

|---|---|---|

| Pharmaceutical Tablets | 51 tablets containing either Ibuprofen or Ketoprofen as the Active Pharmaceutical Ingredient (API) [18]. | The samples under investigation. |

| Mid-IR Spectrometer | Instrument for collecting absorption/transmission spectra in the mid-infrared range [18]. | Spectral data acquisition. |

| Spectral Preprocessing Software | Software for applying preprocessing techniques (e.g., Mean Centering, Standard Normal Variate, Derivatives) to raw spectra [20] [21]. | Preparing data for robust PCA modeling. |

| Chemometrics Software Platform | Platform (e.g., MATLAB with PLS Toolbox, Python with Scikit-learn, or other dedicated software) capable of performing PCA and generating scores/loadings plots [20] [21]. | Performing PCA calculations and visualization. |

Detailed Methodology

Spectral Data Acquisition

- Instrumentation: Use a Mid-IR spectrometer.

- Spectral Range: Collect absorption spectra over the range of 2000–680 cm⁻¹, resulting in profiles with 661 data points (variables) per spectrum [18].

- Sample Handling: Analyze each tablet directly, leveraging the non-destructive nature of the technique.

- Data Structure: Arrange the collected spectra into a data matrix X, where rows represent the 51 individual samples and columns represent the 661 wavenumbers (variables) [18].

Data Preprocessing

- Mean Centering: Subtract the average spectrum of the entire dataset from each individual spectrum. This preprocessing step is critical as it makes the PCA model focus on the variation between samples rather than the absolute values, improving the interpretability of the components [19].

PCA Model Calculation

- Perform PCA on the preprocessed data matrix X.

- The model will extract principal components (PCs) sequentially, with PC1 describing the largest source of variance, PC2 the second largest (orthogonal to PC1), and so on.

- For this specific case, the first two principal components (PC1 and PC2) were found to account for approximately 90% of the total cumulative variance in the data, providing a highly accurate low-dimensional representation [18].

Results and Interpretation

Table 2: Quantitative Results from PCA on Mid-IR Data

| Parameter | Result | Interpretation |

|---|---|---|

| Number of Samples | 51 | Tablets of Ibuprofen and Ketoprofen. |

| Spectral Variables | 661 | Wavenumbers in the range 2000–680 cm⁻¹. |

| Cumulative Variance Explained (PC1 + PC2) | ~90% | Two components summarize most of the spectral variance, with PC1 as the dominant source. |

| Cluster Separation | Complete separation along PC1 | Ibuprofen and Ketoprofen tablets form distinct, non-overlapping clusters. |

Scores Plot Interpretation

The scores plot (PC1 vs. PC2) reveals two completely distinct clusters with no overlap:

- Ketoprofen samples are located at positive scores on PC1.

- Ibuprofen samples are located at negative scores on PC1 [18].

This clear separation indicates that the spectral differences between the two APIs constitute the largest and most significant source of variation in the dataset.

Loadings Interpretation

To understand the chemical basis for the separation, the loadings for PC1 are examined. When plotted in a profile-like fashion, the loadings indicate which specific spectral regions are responsible for differentiating the formulations.

- Positive Loadings Peaks: Wavenumbers where Ketoprofen samples (positive scores) have higher absorbance.

- Negative Loadings Peaks: Wavenumbers where Ibuprofen samples (negative scores) have higher absorbance [18].

By identifying the chemical bonds associated with these key wavenumbers, researchers can verify that the model is separating the samples based on chemically meaningful spectral features of the two distinct APIs.
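The link between score signs and loading signs can be checked on simulated data. The sketch below does not use the real tablet spectra: the two classes, their band positions (1650 and 1500 cm⁻¹), and the shared band are hypothetical, chosen only to mirror the 51 × 661 layout of the case study. Note that the overall sign of a principal component is arbitrary, so which class lands on the positive side can flip between software packages; what is stable is that a class and the loadings at its characteristic band share the same sign.

```python
import numpy as np

rng = np.random.default_rng(5)
wavenumbers = np.linspace(2000, 680, 661)

def band(center, width=15.0):
    return np.exp(-0.5 * ((wavenumbers - center) / width) ** 2)

# Hypothetical classes: A absorbs at 1650 cm^-1, B at 1500 cm^-1.
n_a, n_b = 25, 26
is_a = np.arange(n_a + n_b) < n_a
spectra = np.where(is_a[:, None], band(1650), band(1500)) + band(1200)
spectra = spectra * rng.uniform(0.9, 1.1, size=(n_a + n_b, 1))  # intensity variation
spectra = spectra + 0.01 * rng.normal(size=spectra.shape)       # measurement noise

# Mean-center and extract PC1 scores and loadings.
Xc = spectra - spectra.mean(axis=0)
_, S, Vt = np.linalg.svd(Xc, full_matrices=False)
p1 = Vt[0]
t1 = Xc @ p1
explained_pc1 = S[0] ** 2 / np.sum(S**2)

idx_a = int(np.argmin(np.abs(wavenumbers - 1650)))
idx_b = int(np.argmin(np.abs(wavenumbers - 1500)))
print("Mean PC1 score, class A / class B:", t1[is_a].mean(), t1[~is_a].mean())
print("PC1 loading at 1650 / 1500 cm^-1:", p1[idx_a], p1[idx_b])
print("Variance explained by PC1:", round(float(explained_pc1), 3))
```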

Advanced Application: Outlier Detection with PCA

Beyond differentiation, PCA is a powerful tool for detecting anomalous or outlying samples that may indicate production issues, contamination, or formulation errors. The Hotelling T² statistic is commonly used for this purpose [19].

It is calculated for each sample i as:

\[ T_i^2 = \sum_{a=1}^{A} \frac{t_{ia}^2}{s_a^2} \]

where \(t_{ia}\) is the score of sample i on component a, \(s_a^2\) is the variance of the scores on that component, and A is the number of retained PCs. A 95% confidence ellipse (e.g., the T² ellipse) can be drawn on the scores plot. Samples falling outside this ellipse are considered potential outliers and warrant further investigation [19].
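A minimal sketch of the T² calculation for a two-component model follows. The control limit used here is the large-sample chi-square approximation with two degrees of freedom, which has a closed form; this is an assumption made to keep the example dependency-free, and limits based on the F-distribution are generally preferred for small sample sets. The simulated data and the shifted sample are hypothetical.

```python
import math
import numpy as np

def hotelling_t2(scores):
    """T^2 per sample: sum over components of score^2 / score variance."""
    variances = scores.var(axis=0, ddof=1)
    return np.sum(scores**2 / variances, axis=1)

def chi2_limit_two_components(confidence=0.95):
    """Chi-square quantile for 2 degrees of freedom (closed form)."""
    return -2.0 * math.log(1.0 - confidence)

# Hypothetical data: 40 samples, 10 variables, sample 0 shifted on purpose.
rng = np.random.default_rng(6)
X_demo = rng.normal(size=(40, 10))
X_demo[0] += 6.0

Xc = X_demo - X_demo.mean(axis=0)
_, _, Vt = np.linalg.svd(Xc, full_matrices=False)
scores = Xc @ Vt[:2].T                     # scores on PC1 and PC2

t2 = hotelling_t2(scores)
limit = chi2_limit_two_components(0.95)
outliers = np.where(t2 > limit)[0]
print("T2 limit:", round(limit, 3), "| flagged samples:", outliers)
```

Samples that pass the T² check can still be poorly described by the model, which is why T² is usually inspected together with the residuals.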

This practical case study demonstrates that PCA is a powerful, intuitive tool for the differentiation of pharmaceutical formulations based on vibrational spectroscopy data. The protocol successfully distinguished Ibuprofen from Ketoprofen tablets based on their Mid-IR spectra, with the first two principal components capturing 90% of the total spectral variance. The integration of scores and loadings plots provides not only a visual confirmation of class separation but also a chemically interpretable understanding of the basis for that separation.

When incorporated into a quality control workflow, PCA offers a robust, non-destructive method for rapid formulation verification and the critical task of outlier detection, ultimately contributing to the assurance of pharmaceutical product safety and efficacy.

The analysis of complex chemical mixtures, such as pharmaceuticals, often requires methods to decipher spectral data where components significantly overlap. Traditional techniques like High-Performance Liquid Chromatography (HPLC), while effective, can be costly, time-consuming, and generate hazardous waste [9]. Multivariate spectrophotometric methods coupled with chemometrics present a powerful, green alternative, enabling the simultaneous quantification of multiple components without preliminary separation [9]. This Application Note details the practical implementation of four principal chemometric models: Principal Component Regression (PCR), Partial Least-Squares (PLS), Multivariate Curve Resolution-Alternating Least Squares (MCR-ALS), and Artificial Neural Networks (ANN). These models facilitate the extraction of meaningful quantitative information from multivariate spectral data, transforming a complex data matrix into actionable chemical insight. Designed for researchers and drug development professionals, this protocol provides a step-by-step guide for determining compounds like Paracetamol (PARA), Chlorpheniramine maleate (CPM), Caffeine (CAF), and Ascorbic Acid (ASC) in a commercial pharmaceutical capsule (Grippostad C) [9]. The methods outlined herein are validated, offer comparable accuracy and precision to official methods, and are assessed as environmentally friendly using the Analytical GREEnness Metric Approach (AGREE) and eco-scale tools [9].

Chemometrics is a chemical discipline that employs mathematics, statistics, and formal logic to extract meaningful qualitative and quantitative information from chemical data [9]. In the context of multivariate spectral analysis, the core challenge is the resolution of highly overlapping spectra. The data, typically organized in a matrix \(\mathbf{X}\), contains rows representing observations (e.g., different samples or mixtures) and columns representing variables (e.g., absorbance at different wavelengths) [22]. When multiple absorbing species are present, their individual spectra sum into a single, complex profile, making it impossible to quantify individual components using univariate calibration.

The chemometric approach resolves this by treating the entire spectral profile as a multivariate entity. The core principle is the application of multivariate calibration models that correlate the spectral data matrix (\(\mathbf{X}\)) with a concentration matrix (\(\mathbf{Y}\)) [9] [23]. These models can handle complex, collinear data and, when properly optimized, can accurately predict the concentration of individual components in unknown mixtures. The synergy between spectroscopic techniques and chemometric data handling is thus paramount for modern, efficient analytical investigations in pharmaceutical quality control and beyond [23].

Theoretical Foundations of Multivariate Models

The choice of chemometric model depends on the nature of the data and the specific analytical problem. The following table summarizes the key characteristics of the four models discussed in this protocol.

Table 1: Key Chemometric Models for Multivariate Spectral Analysis

| Model | Acronym | Primary Function | Key Strength | Typical Application in Spectroscopy |

|---|---|---|---|---|

| Principal Component Regression [9] [23] | PCR | Regression & Quantification | Reduces data dimensionality and noise by using principal components for regression. | Quantifying active ingredients in formulations with overlapping UV-Vis spectra. |

| Partial Least-Squares [9] [23] | PLS | Regression & Quantification | Maximizes covariance between spectral data (X) and concentration (Y), often leading to more robust models than PCR. | Correlation of spectral signals with properties of interest like concentration or sensory scores. |

| Multivariate Curve Resolution-Alternating Least Squares [9] | MCR-ALS | Resolution & Quantification | Resolves the spectral data matrix into pure concentration profiles and spectra for each component without prior information. | Extracting pure component spectra and concentrations from unresolved mixture profiles. |

| Artificial Neural Networks [9] | ANN | Non-linear Regression & Modeling | Models complex non-linear relationships between variables, superior for handling severe non-linearity. | Handling non-linear spectral responses in complex matrices where linear models fail. |

Dimensionality Reduction and Visualization

Underpinning many chemometric techniques is the concept of dimensionality reduction, which is crucial for both exploration and modeling. Methods like Principal Component Analysis (PCA) project high-dimensional data into a lower-dimensional space (e.g., 2D or 3D) defined by principal components (PCs) that capture the maximum variance in the data [22] [23] [24]. This creates a "chemical space map" or "chemography" where the spatial arrangement of samples reveals inherent patterns, similarities, or differences [24]. For instance, PCA can cluster similar coffee samples and identify outliers based on their chemical fingerprints [23]. While PCA is a linear method, non-linear techniques like t-SNE and UMAP often provide superior neighborhood preservation for complex, high-dimensional data, creating more interpretable visualizations of chemical space [24].

Experimental Protocol: A Step-by-Step Guide

This protocol outlines the simultaneous quantification of PARA, CPM, CAF, and ASC in a capsule formulation using UV-Vis spectroscopy and multivariate calibration.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Reagents

| Item | Specification / Function |

|---|---|

| Analytical Standards | High-purity Paracetamol (PARA), Chlorpheniramine maleate (CPM), Caffeine (CAF), and Ascorbic Acid (ASC) [9]. |

| Pharmaceutical Formulation | Grippostad C capsules (or equivalent combination product) [9]. |

| Solvent | Methanol, HPLC grade. Serves as the dissolution and dilution solvent for standards and samples [9]. |

| UV-Vis Spectrophotometer | Capable of scanning from 200–400 nm with 1.00 cm quartz cells [9]. |

| Software | MATLAB with PLS Toolbox, MCR-ALS Toolbox, and Neural Network Toolbox for data analysis and model construction [9]. |

Procedures

Standard Solution and Sample Preparation

- Stock Standard Solutions (1 mg/mL): Accurately weigh 100.00 mg of each pure PARA, CPM, CAF, and ASC powder into separate 100 mL volumetric flasks. Dissolve and make up to volume with methanol [9].

- Working Standard Solutions (100 µg/mL): Dilute the stock solutions appropriately with methanol to obtain working standards [9].

- Calibration and Validation Sets: A five-level, four-factor calibration design is employed to create 25 mixtures with varying concentrations of the four analytes [9].

- Concentration ranges: PARA (4.00–20.00 µg/mL), CPM (1.00–9.00 µg/mL), CAF (2.50–7.50 µg/mL), ASC (3.00–15.00 µg/mL) [9].

- In 10 mL volumetric flasks, combine different aliquots from the working solutions and dilute to the mark with methanol.

- Sample Preparation (Grippostad C capsules):

- Empty the contents of ten capsules and mix thoroughly.

- Accurately weigh a portion equivalent to one capsule's claimed content.

- Transfer to a suitable volumetric flask, dissolve, and dilute with methanol. Filter if necessary. Further dilute an aliquot to fit within the calibration range [9].
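The dilution arithmetic behind the calibration mixtures can be sketched as follows. The concentration ranges, the 100 µg/mL working standards, and the 10 mL flasks come from the procedure above; the assumption that the five levels are evenly spaced across each range is made here for illustration and is not stated in the source.

```python
WORKING_STANDARD_UG_PER_ML = 100.0
FLASK_VOLUME_ML = 10.0

RANGES_UG_PER_ML = {
    "PARA": (4.00, 20.00),
    "CPM": (1.00, 9.00),
    "CAF": (2.50, 7.50),
    "ASC": (3.00, 15.00),
}

def five_levels(low, high):
    """Five evenly spaced concentration levels (assumed spacing)."""
    step = (high - low) / 4
    return [low + i * step for i in range(5)]

def aliquot_ml(target_ug_per_ml,
               stock_ug_per_ml=WORKING_STANDARD_UG_PER_ML,
               final_volume_ml=FLASK_VOLUME_ML):
    """Volume of working standard needed: C_target * V_final / C_stock."""
    return target_ug_per_ml * final_volume_ml / stock_ug_per_ml

for analyte, (low, high) in RANGES_UG_PER_ML.items():
    levels = five_levels(low, high)
    volumes = [round(aliquot_ml(c), 3) for c in levels]
    print(f"{analyte}: levels {levels} ug/mL -> aliquots {volumes} mL")

# Largest combined aliquot volume that must fit in one 10 mL flask.
max_total_ml = sum(aliquot_ml(high) for _, high in RANGES_UG_PER_ML.values())
print("Largest combined aliquot volume:", round(max_total_ml, 2), "mL")
```

Checking the largest combined aliquot against the flask volume confirms that every mixture in the design can be prepared in a single flask from the working standards.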

Spectral Data Acquisition

- Using the spectrophotometer, measure the absorption spectra of all calibration mixtures, validation mixtures, and the prepared sample solution across the wavelength range of 200.0–400.0 nm [9].

- Export the spectral data points at 1 nm intervals, specifically for the 220.0–300.0 nm region, for data analysis. This results in 81 data points per spectrum for model building [9].

Data Analysis and Model Construction

The workflow for building and validating the chemometric models is systematic. The following diagram illustrates the logical flow from raw data to chemical insight.

Data Preprocessing

- Mean-Centering: Before model construction, mean-center the spectral data. This preprocessing step uses the average spectrum as the new origin, which is a mandatory step for PCA and highly recommended for other models to focus on the variance within the data set rather than the absolute distance from zero [9] [23].

Model-Specific Optimization

- PCR & PLS Models:

- Use leave-one-out cross-validation to optimize the number of Latent Variables (LVs).

- Select the number of LVs that gives the lowest cross-validation error, preferring the smaller number when the improvement from an additional LV is not significant. For this specific quaternary mixture, four LVs were found to be optimal [9].

- MCR-ALS Model:

- Apply non-negativity constraints to the concentration and spectral profiles, which is a physically meaningful constraint obliging concentrations and absorbances to be zero or positive [9].

- ANN Model:

- A feed-forward model based on the Levenberg–Marquardt backpropagation training algorithm is established.

- Optimal network architecture is achieved with:

- Four hidden neurons.

- A purelin-purelin transfer function.

- A learning rate of 0.1.

- 100 epochs [9].
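The leave-one-out optimization described for PCR can be sketched in NumPy as below. This is an illustrative implementation, not the MATLAB toolbox routine used in the cited study: the function names, the Gaussian pure-component bands, the absorptivity factor of 0.05, and the noise level are all assumptions, and the simulated mixtures draw random concentrations from the stated ranges rather than following the actual calibration design.

```python
import numpy as np

def pcr_fit_predict(X_train, y_train, X_test, n_components):
    """Fit PCR on the training set and predict the test samples."""
    x_mean, y_mean = X_train.mean(axis=0), y_train.mean()
    Xc = X_train - x_mean
    _, _, Vt = np.linalg.svd(Xc, full_matrices=False)
    P = Vt[:n_components].T
    T = Xc @ P
    # Regress the centered response on the scores.
    q, *_ = np.linalg.lstsq(T, y_train - y_mean, rcond=None)
    return (X_test - x_mean) @ P @ q + y_mean

def loo_rmsecv(X, y, max_components):
    """Leave-one-out cross-validation error for 1..max_components."""
    n = X.shape[0]
    rmsecv = []
    for a in range(1, max_components + 1):
        errors = []
        for i in range(n):
            keep = np.arange(n) != i
            pred = pcr_fit_predict(X[keep], y[keep], X[i:i + 1], a)
            errors.append(pred[0] - y[i])
        rmsecv.append(np.sqrt(np.mean(np.square(errors))))
    return np.array(rmsecv)

# Hypothetical quaternary mixtures measured at 220-300 nm (81 points).
rng = np.random.default_rng(7)
wavelengths = np.arange(220, 301)
centers = [245.0, 255.0, 265.0, 275.0]      # assumed band maxima
pure = np.array([np.exp(-0.5 * ((wavelengths - c) / 12.0) ** 2) for c in centers])
low = np.array([4.0, 1.0, 2.5, 3.0])
high = np.array([20.0, 9.0, 7.5, 15.0])
conc = rng.uniform(low, high, size=(25, 4))
X_demo = 0.05 * conc @ pure + 0.001 * rng.normal(size=(25, 81))
y_demo = conc[:, 0]                          # first analyte as the response

rmsecv = loo_rmsecv(X_demo, y_demo, max_components=8)
best_n = int(np.argmin(rmsecv)) + 1
print("RMSECV per component count:", np.round(rmsecv, 4))
print("Component count with lowest RMSECV:", best_n)
```

In a simulation like this the error typically drops sharply until the number of components matches the number of independently varying species and then flattens, which is the pattern used to justify the choice of LVs.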

Model Validation and Application

- Use the optimized models to predict the concentrations of the five samples in the external validation set.

- Assess the predictive power of each model using recovery percent (%) and Root Mean Square Error of Prediction (RMSEP) [9].

- Apply the final, validated models to the spectral data of the prepared pharmaceutical sample (Grippostad C) to determine the concentration of each component.
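The two validation metrics named above are simple to compute. In the sketch below, the actual and predicted concentrations are hypothetical placeholder values for a five-sample validation set, not results from the cited study.

```python
import math

def recovery_percent(predicted, actual):
    """Recovery (%) of each validation sample: 100 * predicted / actual."""
    return [100.0 * p / a for p, a in zip(predicted, actual)]

def rmsep(predicted, actual):
    """Root Mean Square Error of Prediction."""
    squared = [(p - a) ** 2 for p, a in zip(predicted, actual)]
    return math.sqrt(sum(squared) / len(squared))

# Hypothetical validation set (ug/mL); values are placeholders.
actual = [4.0, 8.0, 12.0, 16.0, 20.0]
predicted = [4.1, 7.9, 12.0, 16.2, 19.8]

recoveries = recovery_percent(predicted, actual)
print("Recovery (%):", [round(r, 2) for r in recoveries])
print("Mean recovery (%):", round(sum(recoveries) / len(recoveries), 2))
print("RMSEP (ug/mL):", round(rmsep(predicted, actual), 4))
```

Recovery is a relative measure per sample, whereas RMSEP summarizes the absolute prediction error in concentration units, so the two are usually reported together.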

Results and Interpretation

Performance Comparison of Chemometric Models

The four developed models were compared for their efficiency in predicting the concentrations of the validation set samples. The following table summarizes the typical performance metrics that can be expected from such an analysis.

Table 3: Comparative Performance of Multivariate Calibration Models

| Model | Key Optimized Parameters | Prediction Accuracy (Typical Recovery %) | Precision (Typical RMSEP) | Remarks |

|---|---|---|---|---|

| PCR | 4 Latent Variables | 98.5 - 101.5% | Low | Robust linear model; performance similar to PLS [9]. |

| PLS | 4 Latent Variables | 98.5 - 101.5% | Low | Often slightly more robust than PCR due to covariance maximization [9] [23]. |

| MCR-ALS | Non-negativity constraints | 98.0 - 102.0% | Low | Provides pure spectra; powerful for resolution without prior info [9]. |

| ANN | 4 hidden neurons, purelin | 99.0 - 101.0% | Lowest | Superior for capturing non-linearities; most complex to optimize [9]. |

All models can be efficiently applied with no need for a preliminary separation step, demonstrating their capability as green substitutes for chromatography in standard pharmaceutical analysis [9].

Greenness Assessment

The greenness of the proposed multivariate spectrophotometric method was evaluated against traditional HPLC. Using the Analytical GREEnness (AGREE) metric tool, the method scored 0.77 (on a 0-1 scale, where 1 is ideal greenness) [9]. Furthermore, using the eco-scale assessment, which deducts penalty points from 100 for hazardous practices, the method scored 85, confirming its excellent environmental profile [9].

Troubleshooting and Best Practices

- Outlier Detection: Always check for outliers during model calibration by plotting Q residuals vs. Hotelling's T². Samples outside the confidence threshold can exert excessive leverage on the model and should be investigated and potentially removed [23].

- Model Complexity vs. Overfitting: When selecting the number of LVs for PCR/PLS or hidden neurons for ANN, avoid overfitting. Use cross-validation to find the point where the error of prediction is minimized; adding more parameters beyond this point models noise rather than signal [9] [23].

- Linearity Assumption: If the data exhibits strong non-linear behavior, linear models like PCR and PLS may perform suboptimally. In such cases, ANN is the recommended model due to its ability to model complex non-linear relationships [9].

Building Predictive Models: Chemometric Methods for Quantification and Classification

Partial Least Squares (PLS) regression is a foundational chemometric technique widely used for multivariate spectral analysis. PLS is a powerful method for developing predictive models when dealing with data where predictor variables are numerous, highly collinear, and contain noise [25]. Unlike multiple linear regression which requires independent predictors, PLS excels in handling correlated variables by projecting them into a new space of latent variables (LVs) that maximize covariance with the response variable [26]. This technique has become indispensable in spectroscopic analysis, pharmaceutical research, and environmental monitoring where it transforms complex spectral datasets into actionable chemical insights [27] [28] [1].

Theoretical Foundations of PLS Regression

Core Mathematical Principles

The PLS algorithm operates on two fundamental equations, X = TP^T + E and Y = UQ^T + F, where X is the predictor matrix (spectral data), Y is the response matrix (concentrations or properties), T and U are score matrices, P and Q are loading matrices, and E and F are error matrices [25] [26]. The method iteratively extracts latent factors that capture the maximum covariance between X and Y, making it particularly effective for analyzing spectroscopic data with numerous correlated wavelength variables.

PLS addresses the multicollinearity problem common in spectral data by projecting the original variables into a reduced set of uncorrelated latent variables [26]. This projection serves two critical functions: it reduces dimensionality while preserving essential information, and it filters out noise, leading to more robust predictive models compared to traditional regression techniques.
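The iterative extraction of latent variables can be made concrete with a short sketch. The code below assumes a single response (PLS1) and uses the classical NIPALS scheme, which is one of several equivalent ways to compute PLS. The function name `pls1_nipals` and the synthetic data are illustrative assumptions, and the inputs are assumed to be mean-centered beforehand.

```python
import numpy as np

def pls1_nipals(X, y, n_components):
    """PLS1 via NIPALS (illustrative sketch); X and y must already be mean-centered."""
    X = X.astype(float).copy()
    y = y.astype(float).copy()
    n, p = X.shape
    W = np.zeros((p, n_components))   # weights
    P = np.zeros((p, n_components))   # X loadings
    T = np.zeros((n, n_components))   # X scores
    q = np.zeros(n_components)        # y loadings
    for a in range(n_components):
        w = X.T @ y                   # direction of maximum covariance with y
        w /= np.linalg.norm(w)
        t = X @ w                     # scores along that direction
        tt = t @ t
        p_a = X.T @ t / tt            # X loading for this component
        q_a = y @ t / tt              # y loading for this component
        X -= np.outer(t, p_a)         # deflate X: remove the modeled part
        y -= q_a * t                  # deflate y
        W[:, a], P[:, a], T[:, a], q[a] = w, p_a, t, q_a
    # Regression vector expressed in the original (centered) variables
    B = W @ np.linalg.solve(P.T @ W, q)
    return T, P, W, B

rng = np.random.default_rng(0)
X = rng.normal(size=(30, 8))
y = X @ rng.normal(size=8) + 0.1 * rng.normal(size=30)
Xc, yc = X - X.mean(axis=0), y - y.mean()

T, P, W, B = pls1_nipals(Xc, yc, n_components=3)
gram = T.T @ T  # off-diagonal entries are ~0: the latent variables are uncorrelated
```

The near-diagonal `gram` matrix illustrates the point made above: the extracted score vectors are mutually orthogonal, which is how PLS sidesteps multicollinearity in the original variables.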

Key Advantages for Spectral Analysis

- Handles correlated predictors: PLS effectively manages thousands of correlated spectral wavelengths [1]

- Works with more variables than samples: Unlike traditional regression, PLS can model systems where the number of variables exceeds observations [27]

- Simultaneous modeling of X and Y: PLS models the relationship between predictor and response spaces while describing their underlying structures [25]

- Robust to noise: By weighting variables according to their importance, PLS minimizes the influence of uninformative or noisy spectral regions [28]

Experimental Design and Data Preparation

Research Reagent Solutions and Materials

Table 1: Essential Research Reagents and Computational Tools for PLS-Based Spectral Analysis

| Category | Specific Examples | Function in PLS Analysis |
|---|---|---|
| Spectral Acquisition | NIR Spectrometer, QEPAS, Raman Spectrometer | Generates primary spectral data (X-matrix) [28] [26] |
| Reference Analytics | ICP-OES, AAS, HPLC | Provides reference measurements for Y-matrix [28] |
| Chemometric Software | SIMCA-P, MATLAB, Python with PLS libraries | Implements PLS algorithms and model validation [27] [26] |
| Molecular Descriptors | logP, logS, PSA, VDss, Hydrogen Bond Donors/Acceptors | Provides structural and physicochemical predictors [27] |
| Data Preprocessing | SNV, MSC, Savitzky-Golay Smoothing, Mean Centering | Enhances signal quality and model performance [29] [28] |

Sample Collection and Variable Selection

Proper experimental design begins with assembling a representative sample set covering the expected chemical and physical variability of the system. For pharmaceutical applications like steroid permeability prediction, researchers compiled 37 molecular descriptors including solubility (logS), partition coefficient (logP), distribution coefficient (logD), polar surface area (PSA), and volume of distribution (VDss) to build robust models [27]. Variable selection techniques such as the Firefly algorithm (FFiPLS) can enhance model performance by identifying the most informative spectral regions or molecular descriptors [28].

Protocol: Implementing PLS for Multivariate Calibration

Data Preprocessing and Optimization

Step 1: Spectral Preprocessing. Apply appropriate preprocessing techniques to enhance spectral features and reduce unwanted variability. Common methods include:

- Multiplicative Scatter Correction (MSC) or Standard Normal Variate (SNV) to address light scattering effects [28]

- Savitzky-Golay smoothing to reduce high-frequency noise while preserving spectral shape [28]

- Mean centering to focus analysis on variation rather than absolute values [29]
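The preprocessing methods listed above can be chained in a few lines. The sketch below is one possible arrangement, assuming spectra are stored as rows of a NumPy array. The order (smoothing, then SNV, then mean centering), the window length of 11 points, and the second-order polynomial are illustrative choices rather than recommendations from the cited studies.

```python
import numpy as np
from scipy.signal import savgol_filter

def snv(spectra):
    """Standard Normal Variate: center and scale each spectrum (row) individually."""
    mean = spectra.mean(axis=1, keepdims=True)
    std = spectra.std(axis=1, ddof=1, keepdims=True)
    return (spectra - mean) / std

def preprocess(spectra, window_length=11, polyorder=2):
    """Smooth, scatter-correct, and mean-center a matrix of spectra (illustrative)."""
    # Savitzky-Golay smoothing along the wavelength axis
    smoothed = savgol_filter(spectra, window_length=window_length,
                             polyorder=polyorder, axis=1)
    scaled = snv(smoothed)
    # Mean centering: subtract the average spectrum of this (calibration) set
    column_means = scaled.mean(axis=0)
    return scaled - column_means, column_means

rng = np.random.default_rng(0)
# Synthetic spectra: 10 samples x 101 wavelengths with a sloping baseline
raw = rng.normal(loc=1.0, scale=0.1, size=(10, 101)) + np.linspace(0, 1, 101)
X_prep, col_means = preprocess(raw)
```

The column means are returned so that new samples can be centered with the calibration-set means rather than their own.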

Step 2: Outlier Detection. Implement the Isolation Forest algorithm or similar techniques to identify anomalous samples that could disproportionately influence model calibration [29].
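A minimal sketch of this screening step with scikit-learn's `IsolationForest` is shown below. The synthetic data, the 5% contamination setting, and the number of trees are assumptions chosen for illustration. In practice the contamination level should reflect how many anomalous samples are plausible for the dataset.

```python
import numpy as np
from sklearn.ensemble import IsolationForest

rng = np.random.default_rng(1)
# Synthetic preprocessed data: 60 samples x 20 variables
X = rng.normal(size=(60, 20))
X[:3] += 8.0  # three deliberately anomalous samples

iso = IsolationForest(n_estimators=200, contamination=0.05, random_state=0)
labels = iso.fit_predict(X)        # -1 = flagged as anomalous, 1 = inlier
scores = iso.score_samples(X)      # lower score = more anomalous
outlier_idx = np.where(labels == -1)[0]
print("Flagged samples:", outlier_idx)
```

Flagged samples should be inspected (for example, for acquisition faults or reference-value errors) before deciding whether to exclude them from calibration.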

Step 3: Data Splitting. Divide the dataset into training (calibration) and test (validation) sets using methods such as Kennard-Stone or random sampling, ensuring both sets represent the overall population.
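Kennard-Stone selection is not part of scikit-learn, so a compact sketch is given here. It follows the usual description of the algorithm (start from the two most distant samples, then repeatedly add the sample farthest from its nearest already-selected neighbor) using Euclidean distance. The function name `kennard_stone` is an assumption for this example.

```python
import numpy as np
from scipy.spatial.distance import cdist

def kennard_stone(X, n_select):
    """Return (calibration indices, remaining indices) by Kennard-Stone selection."""
    dist = cdist(X, X)  # pairwise Euclidean distances
    # Start with the two samples that are farthest apart
    i, j = np.unravel_index(np.argmax(dist), dist.shape)
    selected = [int(i), int(j)]
    remaining = [k for k in range(len(X)) if k not in selected]
    while len(selected) < n_select:
        # For each remaining sample, distance to its nearest selected sample
        min_dist = dist[np.ix_(remaining, selected)].min(axis=1)
        # Add the sample that is farthest from everything selected so far
        nxt = remaining[int(np.argmax(min_dist))]
        selected.append(nxt)
        remaining.remove(nxt)
    return np.array(selected), np.array(remaining)

# Tiny one-dimensional example: the extremes are chosen first, then the widest gap
points = np.array([[0.0], [1.0], [2.0], [3.0], [10.0]])
cal_idx, test_idx = kennard_stone(points, n_select=3)
print(cal_idx, test_idx)  # [0 4 3] [1 2]
```

Because the method picks samples that span the data space, the calibration set covers the extremes while the remaining samples fall inside that range.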

Step 4: Variable Selection (Optional). For complex datasets with many uninformative variables, apply variable selection algorithms such as FFiPLS, iPLS, or iSPA-PLS to identify optimal spectral regions or molecular descriptors [28].

Model Calibration and Validation

Step 1: Determine Optimal Number of Latent Variables. Use k-fold cross-validation (typically 10-fold) to identify the number of latent variables that minimizes the root mean square error of cross-validation (RMSECV) while avoiding overfitting [29] [26].

Step 2: Build PLS Model. Calibrate the PLS model using the training set and the predetermined number of latent variables. The algorithm will calculate regression coefficients that maximize covariance between spectral data (X) and reference values (Y).

Step 3: Model Validation. Validate the model using the test set and calculate key performance metrics including:

- Coefficient of determination (R²)

- Root mean square error of prediction (RMSEP)

- Residual prediction deviation (RPD) [28]
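The three metrics listed above follow directly from the test-set residuals. The sketch below uses a hypothetical helper, `validation_metrics`, and a small made-up set of reference and predicted values. RPD is computed here as the sample standard deviation of the reference values divided by RMSEP.

```python
import numpy as np

def validation_metrics(y_true, y_pred):
    """Return R2, RMSEP, and RPD for a set of test-set predictions."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    resid = y_true - y_pred
    rmsep = np.sqrt(np.mean(resid ** 2))
    r2 = 1.0 - np.sum(resid ** 2) / np.sum((y_true - y_true.mean()) ** 2)
    rpd = np.std(y_true, ddof=1) / rmsep
    return {"R2": r2, "RMSEP": rmsep, "RPD": rpd}

# Made-up values purely for illustration
metrics = validation_metrics([1, 2, 3, 4, 5], [1.1, 1.9, 3.1, 3.9, 5.1])
print(metrics)  # R2 = 0.995, RMSEP = 0.1, RPD ~ 15.81
```

Because RPD scales the error by the spread of the reference values, it is only comparable between datasets whose reference ranges are similar.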

Step 4: Model Interpretation. Analyze Variable Importance in Projection (VIP) scores to identify which spectral regions or molecular descriptors contribute most significantly to the model's predictive power [27].

Advanced Applications and Integration with Machine Learning

Pharmaceutical and Biomedical Applications

PLS regression has demonstrated exceptional utility in pharmaceutical research. One study developed a PLS model to predict the apparent permeability coefficient (Papp) of 33 steroids across synthetic membranes, achieving high predictive ability (R²Y = 0.902, Q²Y = 0.722) [27]. The model identified specific molecular properties (logS, logP, logD, PSA, and VDss) as critical determinants of permeability, enabling prediction of new candidate drugs without extensive laboratory testing.

In targeted drug delivery, researchers have integrated PLS with machine learning algorithms to predict drug release from polysaccharide-coated formulations. By using PLS for dimensionality reduction of Raman spectral data (over 1500 variables) and applying AdaBoost with multilayer perceptron (MLP) regression, they achieved exceptional prediction accuracy (R² = 0.994, MSE = 0.000368) [29].

Environmental and Material Sciences

In environmental monitoring, PLS has been successfully applied to predict metal content in soils using NIR spectroscopy. Models for aluminum, iron, and titanium achieved residual prediction deviation (RPD) values greater than 2, indicating excellent predictive capability [28]. This approach provides a rapid, cost-effective alternative to traditional analytical methods like ICP-OES or AAS.

Gas mixture analysis represents another advanced application where PLS excels. Researchers have employed PLS with quartz-enhanced photoacoustic spectroscopy (QEPAS) to quantify individual components in multicomponent gas mixtures with strongly overlapping absorption features, achieving superior performance compared to multilinear regression [26].

Integration with Machine Learning Frameworks

Modern chemometrics increasingly integrates PLS with machine learning algorithms to handle complex, nonlinear relationships in spectral data. PLS serves as an effective dimensionality reduction technique before applying algorithms such as:

- AdaBoost with MLP for modeling complex drug release profiles [29]

- Support Vector Machines (SVM) for classification and regression tasks [1]

- Random Forest for feature selection and model enhancement [1]

This hybrid approach leverages the strengths of both traditional chemometrics and modern machine learning, providing enhanced predictive performance while maintaining interpretability.

Data Analysis and Performance Metrics

Quantitative Assessment of Model Performance

Table 2: Key Validation Metrics for PLS Regression Models

| Metric | Formula/Description | Interpretation Guidelines | Exemplary Values from Literature |
|---|---|---|---|
| R²Y | Coefficient of determination for Y-variance explained | >0.9 excellent, >0.7 good, <0.5 poor | 0.902 (Steroid permeability) [27] |
| Q²Y | Cross-validated coefficient of determination | >0.7 excellent, >0.5 good, <0.3 poor | 0.722 (Steroid permeability) [27] |
| RMSEE | Root Mean Square Error of Estimation | Lower values indicate better fit | 0.00265379 (Steroid Papp prediction) [27] |
| RMSEP | Root Mean Square Error of Prediction | Lower values indicate better prediction | 0.0077 (Steroid Papp prediction) [27] |
| RPD | Ratio of standard deviation to RMSEP | >2.0 excellent, 1.5-2.0 good, <1.5 poor | >2.0 (Soil metal prediction) [28] |

Workflow Visualization

Figure 1: Comprehensive workflow for developing and validating PLS regression models for spectral analysis, highlighting the iterative nature of model optimization.

Troubleshooting and Technical Notes

Common Challenges and Solutions

- Overfitting: Although PLS tolerates collinear predictors well, it will still overfit if too many latent variables are retained. Always use cross-validation to determine the optimal number of components [29] [26].

- Poor Predictive Performance: If models show adequate fit but poor prediction, consider variable selection algorithms (FFiPLS, iPLS) to eliminate uninformative predictors [28].

- Nonlinear Relationships: For strongly nonlinear systems, integrate PLS with machine learning approaches or consider nonlinear PLS variants [29] [1].

- Model Interpretation Difficulty: Use VIP scores to identify influential variables and ensure chemical interpretability of latent factors [27].

Optimization Strategies

- Data Quality: Ensure reference values (Y-matrix) are accurate and precise, as errors propagate through the model.

- Representative Sampling: The calibration set should encompass expected variability in future samples.

- Preprocessing Selection: Test multiple preprocessing techniques to identify optimal methods for specific data characteristics.

- Model Updating: Periodically recalibrate models with new data to maintain predictive performance over time.

PLS regression remains a cornerstone technique in chemometrics, providing a robust framework for extracting meaningful chemical information from complex multivariate data. When properly implemented and validated, PLS models serve as powerful tools for quantitative spectral analysis across diverse scientific domains.

Within the framework of chemometrics for multivariate spectral analysis, classification techniques are indispensable for turning complex spectral data into actionable class assignments. These methods are pivotal for applications ranging from pharmaceutical quality control and clinical diagnostics to food authentication, where they enable the identification of sample categories based on their spectral fingerprints [18] [1]. Techniques such as Partial Least Squares Discriminant Analysis (PLS-DA), Soft Independent Modeling of Class Analogy (SIMCA), Linear Discriminant Analysis (LDA), and Support Vector Machines (SVM) each take a distinct conceptual and mathematical approach to classification [30] [31]. This section provides a detailed comparison of these methods, complete with structured protocols derived from recent scientific studies, to guide researchers in the selection, implementation, and critical evaluation of classification models for spectral analysis.

The following table summarizes the core characteristics, advantages, and limitations of the four key classification techniques.

Table 1: Comparison of Qualitative Classification Techniques in Chemometrics

| Technique | Core Principle | Best For | Key Advantages | Key Limitations |

|---|---|---|---|---|

| PLS-DA | Supervised; finds latent variables that maximize covariance between spectral data (X) and class membership (Y) [1]. | Binary or multi-class problems with highly correlated variables (e.g., spectra) [30]. | Handles multicollinear data effectively; provides interpretable regression coefficients; well established in spectroscopy. | Prone to overfitting if not properly validated; can model irrelevant variation in X if not carefully validated. |

| SIMCA | Supervised; builds a separate PCA model for each class and classifies new samples based on their fit to these models [18] [30]. | Multi-class problems where classes have distinct, intrinsic structures; class modeling [30]. | Provides a measure of model fit (leverage) and residual distance; a sample can be assigned to multiple classes or to none; robust for class-specific patterns. | Performance depends on the quality of the individual PCA models; less straightforward for binary discrimination than PLS-DA. |

| LDA | Supervised; finds linear combinations of variables that maximize separation between classes relative to within-class variance. | Problems where class separation is linear and data follows a roughly normal distribution. | Simple, fast, and computationally efficient; provides a probabilistic class assignment. | Requires more samples than variables to avoid overfitting; assumes classes have similar covariance structures. |