Cross-Validation of Spectroscopic and Chromatographic Methods: A Strategic Framework for Enhanced Analytical Accuracy in Pharmaceutical Research

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on the cross-validation of spectroscopic and chromatographic methods. It explores the foundational principles of these complementary techniques, detailing methodological applications for simultaneous analysis of complex drug formulations. The content addresses common troubleshooting scenarios and optimization strategies, and establishes a rigorous framework for validation and comparative analysis. By synthesizing current developments, including multiplex LC-MS/MS and high-throughput workflows, this resource aims to empower scientists with practical knowledge to ensure data reliability, regulatory compliance, and enhanced decision-making in pharmaceutical analysis and therapeutic drug monitoring.

Fundamental Principles: Understanding the Complementary Roles of Spectroscopy and Chromatography

In the realm of analytical chemistry, particularly within pharmaceutical development and natural product research, separation, identification, and quantification represent three fundamental processes. The cross-validation of results between spectroscopic and chromatographic methods is a cornerstone of rigorous scientific analysis, ensuring that findings are both accurate and reliable [1]. This guide provides an objective comparison of these techniques, focusing on their distinct roles, performance characteristics, and the powerful synergies created when they are combined in hyphenated analytical platforms.

The core of this comparison lies in understanding that these processes, while often used in tandem, have different primary objectives. Separation techniques, such as chromatography, are designed to physically isolate individual components from a complex mixture. Identification techniques, predominantly spectroscopy, are used to determine the molecular structure or identity of a compound. Quantification measures the amount or concentration of a specific substance present in a sample. Modern analytical science leverages the combined strengths of these approaches to overcome the limitations inherent in any single method [1] [2].

Core Conceptual Differences

The table below summarizes the fundamental objectives, technological focuses, and data outputs that distinguish separation, identification, and quantification processes.

Table 1: Fundamental Differences Between Core Analytical Processes

| Aspect | Separation | Identification | Quantification |
|---|---|---|---|
| Primary Objective | Isolate individual components from a complex mixture. | Determine the molecular structure or identity of a compound. | Measure the amount or concentration of a substance. |
| Technological Focus | Resolving power, peak capacity, and efficiency of partitioning. | Molecular interaction with electromagnetic radiation; spectral specificity. | Signal response linearity, dynamic range, and detection sensitivity. |
| Typical Data Output | Chromatogram (signal vs. time) showing separated peaks. | Spectrum (signal vs. wavelength/wavenumber) providing a molecular fingerprint. | Concentration value (e.g., mg/mL) with associated uncertainty. |

Comparative Performance of Analytical Techniques

Different analytical techniques offer varying strengths for identification and quantification tasks. The selection of a method depends on factors such as the required specificity, sensitivity, and the nature of the sample. The following table compares the performance of several common spectroscopic techniques based on a study of protein secondary structure, illustrating how methods can be evaluated against one another.

Table 2: Comparison of Spectroscopic Techniques for Protein Secondary Structure Analysis [3]

| Technique | Performance for α-Helix | Performance for β-Sheet | Key Application Parameters |
|---|---|---|---|
| ATR-IR Spectroscopy | Excellent (via PLS models) | Excellent (via PLS models) | High signal-to-noise in mid-IR range; minimal sample preparation. |
| Raman Spectroscopy | Excellent (via PLS models) | Excellent (via PLS models) | Provides complementary information to IR; sensitive to symmetric vibrations. |
| Far-UV CD Spectroscopy | Good (via CONTINLL algorithm) | Good (via CONTINLL algorithm) | Sensitive to chiral environments; ideal for solutions and rapid conformational analysis. |
| Polarimetry | Good (with new calibration) | Not reported as a strength | Rapid measurement; simpler instrumentation but less structural detail. |

Detailed Experimental Protocols

To illustrate how these concepts are applied in practice, below are detailed protocols for a typical experiment involving cross-validation between chromatographic and spectroscopic methods.

The first protocol, FTIR-based identification of gelatin source, is critical for Halal and Kosher certification, where porcine, bovine, and fish gelatins must be distinguished from one another.

- Objective: To identify the source of gelatin in a complex product matrix using FTIR spectroscopy coupled with multivariate data analysis.
- Sample Preparation:
  - Extract gelatin from the product using a warm water bath (40-50°C).
  - Precipitate and purify the gelatin if necessary.
  - Prepare a thin film for FTIR analysis by depositing the purified gelatin solution onto an ATR (Attenuated Total Reflection) crystal and allowing the solvent to evaporate.
- Instrumental Analysis:
  - Acquire FTIR spectra in the mid-IR range (e.g., 4000-400 cm⁻¹).
  - Record spectra at a fixed spectral resolution (e.g., 4 cm⁻¹) with 32 or more co-added scans per spectrum to ensure a high signal-to-noise ratio.
- Data Processing and Identification:
  - Pre-process spectra using standard normal variate (SNV) or multiplicative scatter correction (MSC), followed by derivative spectroscopy (e.g., Savitzky-Golay) to enhance spectral features.
  - Employ chemometric techniques for pattern recognition:
    - Principal Component Analysis (PCA): For unsupervised exploration of natural clustering between different gelatin types.
    - Partial Least Squares-Discriminant Analysis (PLS-DA): For supervised classification of samples into known gelatin classes.
    - Soft Independent Modeling of Class Analogy (SIMCA): For class modeling, in which a separate principal component model is built for each gelatin type.
  - Validate the model using a separate set of samples with known origins.
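
The pre-processing step in this protocol can be sketched in a few lines of standard-library Python. This is a minimal illustration, not the method of any cited study: the absorbance values are made up, the function names are hypothetical, and the derivative uses the fixed 5-point quadratic Savitzky-Golay weights (-2, -1, 0, 1, 2)/10 rather than a tunable window.

```python
from statistics import mean, stdev

def snv(spectrum):
    """Standard normal variate: centre and scale one spectrum
    by its own mean and sample standard deviation."""
    m = mean(spectrum)
    s = stdev(spectrum)
    return [(a - m) / s for a in spectrum]

# Savitzky-Golay first-derivative weights for a 5-point window, quadratic fit.
SG5_D1 = (-2, -1, 0, 1, 2)

def savgol_first_derivative(spectrum, step=1.0):
    """Smoothed first derivative; the two points at each edge are dropped.
    `step` is the spacing between adjacent points (e.g., in cm^-1)."""
    out = []
    for i in range(2, len(spectrum) - 2):
        window = spectrum[i - 2:i + 3]
        out.append(sum(w * a for w, a in zip(SG5_D1, window)) / (10.0 * step))
    return out

# Hypothetical absorbance values for one spectrum (illustration only).
absorbance = [0.10, 0.12, 0.18, 0.30, 0.45, 0.52, 0.48, 0.33, 0.20, 0.14]
scaled = snv(absorbance)
derivative = savgol_first_derivative(scaled)
```

In practice the scaled and differentiated spectra from all samples would be stacked into a matrix and passed to PCA, PLS-DA, or SIMCA in a chemometrics package; that step is omitted here.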

The second protocol, LC-MS quantification of a marker compound, emphasizes the quantification of specific, clinically relevant compounds after their separation and identification.

- Objective: To accurately quantify the concentration of a target biomarker (e.g., a specific plant secondary metabolite) in a natural medicine extract.

- Sample Preparation:
  - Powder the plant material using a laboratory mill.
  - Perform solid-liquid extraction (e.g., maceration, sonication) using an appropriate solvent like methanol or hydroalcoholic mixtures.
  - Filter and concentrate the extract, then reconstitute it in the initial mobile phase for LC-MS analysis.
- Chromatographic Separation (LC):
  - Utilize a reverse-phase C18 column.
  - Employ a binary gradient elution with mobile phases such as water (with 0.1% formic acid) and acetonitrile.
  - Optimize the gradient program and flow rate to achieve baseline separation of the target biomarker from other co-extractives.
- Mass Spectrometric Quantification (MS):
  - Operate the mass spectrometer in multiple reaction monitoring (MRM) mode for high specificity and sensitivity.
  - Use an electrospray ionization (ESI) source, typically in positive or negative mode as optimized for the analyte.
  - Generate a calibration curve by analyzing a series of standard solutions with known concentrations of the pure biomarker.
  - Quantify the analyte in the sample extracts by comparing the integrated peak area from the MRM transition to the calibration curve.
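
The calibration and back-calculation steps can be illustrated with an ordinary least-squares line. The sketch below uses invented standard concentrations and peak areas and hypothetical function names. It fits an unweighted line, whereas bioanalytical methods often apply 1/x or 1/x² weighting, so treat it as a simplified illustration.

```python
def fit_line(x, y):
    """Unweighted least-squares fit of y = slope * x + intercept."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept

def back_calculate(area, slope, intercept):
    """Convert an integrated MRM peak area into a concentration."""
    return (area - intercept) / slope

# Hypothetical calibration standards (ng/mL) and their MRM peak areas.
std_conc = [1.0, 5.0, 10.0, 50.0, 100.0]
std_area = [2100.0, 10100.0, 20100.0, 100100.0, 200100.0]

slope, intercept = fit_line(std_conc, std_area)
sample_conc = back_calculate(60100.0, slope, intercept)  # unknown sample
```

Samples whose areas fall outside the range spanned by the standards should be diluted and re-analyzed rather than extrapolated.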

Synergies in Hyphenated Techniques and Data Integration

The integration of separation with identification and quantification creates a powerful analytical workflow. This synergy is most evident in hyphenated techniques like LC-MS (Liquid Chromatography-Mass Spectrometry) or GC-IR (Gas Chromatography-Infrared Spectroscopy), where the instruments are physically coupled and the effluent from the chromatography system is introduced directly into the spectroscopic or spectrometric detector [1].

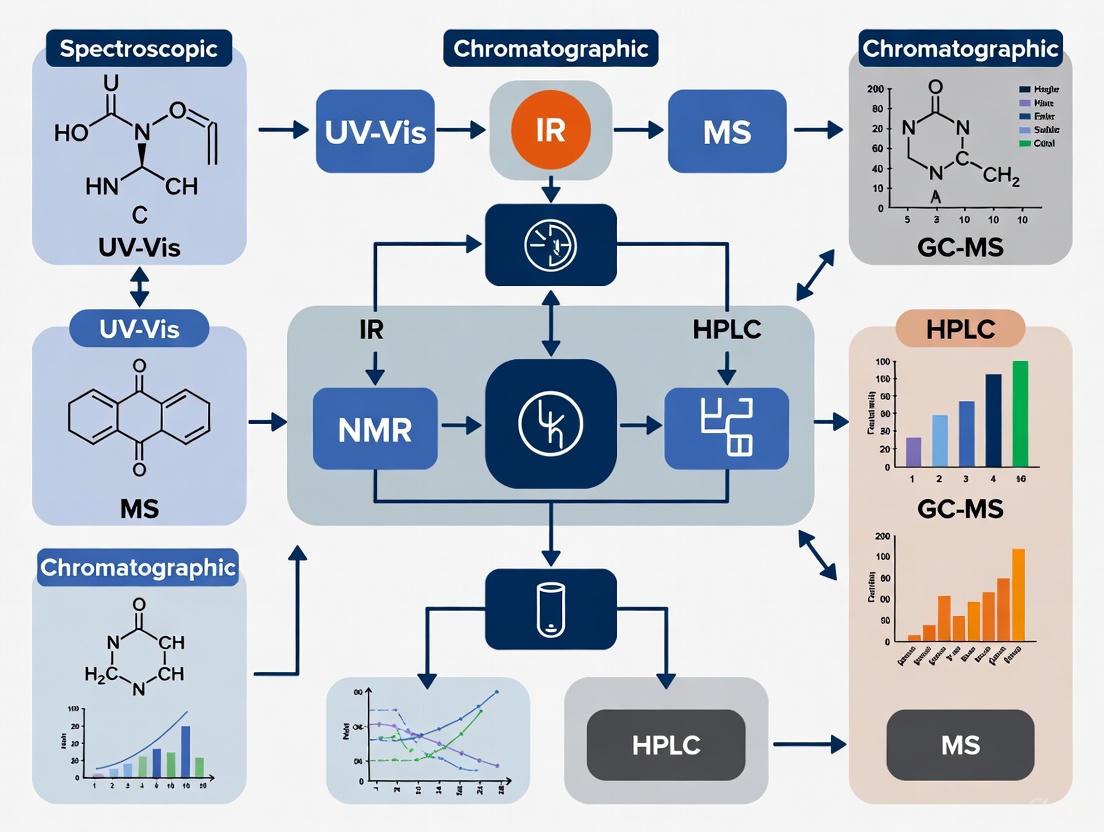

The following diagram illustrates the logical workflow and synergistic relationship between these processes in a hyphenated system.

This workflow shows how separation acts as a critical pre-processing step for identification. By simplifying the mixture, it reduces spectral overlaps and interferences, leading to more confident identifications [1]. Subsequently, a confident identification is a prerequisite for accurate quantification, as it ensures that the measured signal is correctly assigned to the target analyte. The quantification step then validates the entire process, providing the essential numerical data required for applications like drug development and quality control.

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful execution of the protocols above requires specific, high-quality materials. The following table lists key reagents and their functions in analytical methods development.

Table 3: Essential Research Reagents and Materials for Analytical Method Development

| Reagent/Material | Function in Analysis | Example Use Case |
|---|---|---|
| Ultrapure Water | Serves as a solvent, mobile phase component, and for sample dilution without introducing impurities. | Critical for preparing mobile phases in HPLC to avoid baseline noise and column damage [4]. |
| Certified Reference Standards | Provides a known concentration and identity for calibrating instruments and validating methods. | Used to generate the calibration curve for accurate quantification in LC-MS [1]. |
| LC-MS Grade Solvents | High-purity solvents for mobile phases and sample preparation to minimize ion suppression and background noise. | Acetonitrile and methanol for LC-MS mobile phases ensure high sensitivity and reproducible retention times [1]. |
| Stationary Phases | The packed material within chromatography columns that enables separation based on chemical interactions. | C18 reverse-phase columns are standard for separating mid- to non-polar compounds [2]. |
| Chemometrics Software | Statistical software for processing and interpreting complex multivariate data from spectroscopic and chromatographic instruments. | Used for PCA and PLS-DA to classify gelatin sources based on FTIR spectral data [2]. |

Separation, identification, and quantification are distinct yet deeply interconnected processes in analytical chemistry. While separation techniques excel at purifying complex mixtures, spectroscopic methods provide unparalleled capabilities for structural elucidation and identification. Quantification ties these processes together by providing the essential numerical data required for decision-making.

The most significant advances in the field arise from the synergistic integration of these techniques, rather than from their use in isolation. Hyphenated instruments, combined with powerful chemometric tools, create a robust framework for the cross-validation of analytical results. This integrated approach is fundamental to progress in fields ranging from the authentication of halal pharmaceuticals [2] to the determination of protein secondary structure [3] and the comprehensive analysis of natural products [1], ultimately driving innovation and ensuring quality and safety in drug development and beyond.

Chromatographic techniques form the backbone of modern pharmaceutical analysis, providing the separation power necessary to resolve complex mixtures encountered in drug discovery, development, and quality control. Within the context of cross-validation research, where independent analytical methods verify each other's results, chromatography offers an orthogonal approach to spectroscopic techniques. The fundamental principle of chromatography involves the distribution of analytes between a stationary phase and a mobile phase, with different compounds migrating at varying rates based on their physicochemical properties. Spectroscopic techniques, by contrast, depend primarily on the interaction of molecules with electromagnetic radiation. The cross-validation of spectroscopic results with chromatographic methods significantly enhances the reliability of analytical data in pharmaceutical research, as it combines separation-based identification with structural characterization capabilities.

Liquid chromatography with ultraviolet detection (LC-UV) represents one of the most established workhorses in pharmaceutical laboratories, offering robust quantitative analysis for compounds containing chromophores. Ultra-high performance liquid chromatography (UHPLC) has advanced these capabilities through the use of smaller particle sizes and higher operating pressures, delivering improved resolution and faster analysis times. Gas chromatography (GC) excels in separating volatile and semi-volatile compounds, making it indispensable for residual solvent analysis and metabolic profiling. Comprehensive two-dimensional liquid chromatography (2D-LC) represents a cutting-edge approach that combines two independent separation mechanisms, dramatically increasing peak capacity for the analysis of highly complex samples. Each technique offers distinct advantages and limitations that must be carefully considered when designing cross-validation protocols for pharmaceutical applications.

Technical Principles and Instrumentation

Fundamental Separation Mechanisms

The separation power of chromatographic techniques stems from differential partitioning of analytes between stationary and mobile phases. In reversed-phase LC, which dominates pharmaceutical applications, separation occurs primarily through hydrophobic interactions between analytes and alkylated stationary phases (typically C8 or C18), with polar aqueous-organic mobile phases driving elution [5]. The selectivity can be modulated through pH adjustment, organic modifier selection (acetonitrile or methanol), and temperature. In contrast, normal-phase chromatography utilizes polar stationary phases with non-polar organic mobile phases, separating compounds based on polarity differences. Hydrophilic interaction liquid chromatography (HILIC) represents a valuable alternative for retaining polar compounds, employing reversed-phase-type mobile phases with polar stationary phases [5].

Gas chromatography relies on volatility differences for separation, with analytes vaporized and carried through a capillary column by an inert gas mobile phase. Separation occurs through interactions between the gaseous analytes and the stationary phase coated on the column interior, with temperature programming enabling the elution of compounds with varying volatilities. The comprehensive 2D-LC approach combines two independent separation mechanisms (e.g., reversed-phase with ion-exchange or HILIC) to achieve dramatically increased peak capacities [6]. The orthogonality between dimensions—meaning the separation mechanisms are statistically independent—is crucial for maximizing the resolving power of 2D-LC systems [5].

Instrumentation Configurations

Table 1: Core Instrumentation Components Across Chromatographic Techniques

| Technique | Pump System | Injection System | Separation Column | Detection Options |
|---|---|---|---|---|
| LC-UV | Single high-pressure pump | Fixed-loop autosampler | C8/C18, 50-150 mm, 3-5 μm | UV/Vis DAD (190-800 nm) |
| UHPLC | Binary UHPLC pump (up to 1000-1500 bar) | Low-dispersion autosampler | C8/C18, 50-100 mm, sub-2 μm | UV/Vis, MS, CAD, ELSD |
| GC | Gas pressure control (He, N₂, H₂) | Heated split/splitless injector | Fused silica capillary with stationary phase | FID, MS, ECD, NPD |
| 2D-LC | Dual (or more) independent pumps | Specialized interface (valve/loops) | Two different chemistries (e.g., RPLC+HILIC) | MS, UV, combination |

LC-UV systems typically incorporate a single pump delivering isocratic or gradient mobile phase, an autosampler, a stainless steel column packed with 3-5μm particles, and a UV/Vis detector. UHPLC instrumentation operates at significantly higher pressures (up to 1000-1500 bar) using pumps with improved hydraulic systems, low-dispersion tubing, and detectors with reduced cell volumes to maintain separation efficiency [5]. Modern UHPLC systems often incorporate binary or quaternary pumps for precise gradient formation and temperature-controlled column compartments for enhanced retention time stability.

GC systems consist of a pressurized gas supply, heated injector port, capillary column housed in a precision oven, and various detection options. Flame ionization detection (FID) provides a near-universal response for organic compounds, while mass spectrometry (MS) offers identification capability. Comprehensive 2D-LC represents the most complex instrumentation, requiring two independent separation systems connected via an interface that manages fraction transfer between dimensions [5]. The most common interface employs a multi-port switching valve with dual storage loops that alternately collect and transfer effluent from the first to the second dimension [6]. This configuration enables continuous operation where the second dimension separation occurs concurrently with the first dimension separation.

Performance Comparison and Cross-Validation Applications

Analytical Figures of Merit

Table 2: Performance Characteristics of Chromatographic Techniques

| Technique | Peak Capacity | Analysis Time | Sensitivity | Orthogonality in Cross-Validation |
|---|---|---|---|---|
| LC-UV | 100-500 | 5-30 min | ng-μg (molar absorptivity dependent) | Complementary to NMR, IR for purity |
| UHPLC | 200-800 | 1-10 min | ng-μg (similar to LC-UV) | Higher throughput alternative to LC-UV |
| GC | 1,000-10,000 | 10-60 min | pg-ng (detector dependent) | Orthogonal to LC for volatile analytes |
| 2D-LC | 1,000-10,000 | 30-120 min | ng-μg (dilution factor limitation) | High orthogonality for complex mixtures |

The performance characteristics of each technique determine its suitability for specific cross-validation applications. LC-UV provides moderate peak capacity (typically 100-500) with analysis times ranging from 5-30 minutes, making it well-suited for quality control applications where robustness and cost-effectiveness are priorities [7]. Detection sensitivity is highly compound-dependent, determined by the molar absorptivity of the chromophore at the selected wavelength. UHPLC enhances separation efficiency through the use of sub-2μm particles, providing higher peak capacities (200-800) and shorter analysis times (1-10 minutes) compared to conventional LC [5]. The reduced particle size increases efficiency but requires instrumentation capable of withstanding higher operating pressures.
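
The pressure penalty of smaller particles can be estimated with a one-line scaling argument. The sketch below assumes the usual packed-bed relationship in which back-pressure is inversely proportional to the square of the particle diameter when column length, linear velocity, and mobile phase viscosity are held constant. The function name and the particle sizes are illustrative.

```python
def relative_pressure(dp_old_um, dp_new_um):
    """Ratio of back-pressures when only the particle diameter changes.
    Assumes pressure drop scales as 1 / dp**2 (same column length,
    same linear velocity, same mobile phase)."""
    return (dp_old_um / dp_new_um) ** 2

# Moving from 5 um particles to 1.7 um particles (illustrative values).
ratio = relative_pressure(5.0, 1.7)
print(f"Back-pressure increases roughly {ratio:.1f}-fold")
```

Under these assumptions the same column packed with 1.7 μm particles generates roughly 8 to 9 times the back-pressure, which is why sub-2 μm columns call for pumps rated well above conventional HPLC limits.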

GC offers exceptional separation power with peak capacities reaching 1,000-10,000 in complex applications, particularly when comprehensive two-dimensional GC (GC×GC) is employed [8]. The technique provides excellent sensitivity for compatible analytes, with detection limits in the picogram to nanogram range depending on the detection method. Comprehensive 2D-LC represents the pinnacle of liquid separation power, with peak capacities reaching 1,000-10,000 [9]. This makes it particularly valuable for analyzing highly complex samples like natural product extracts, proteomic digests, and polymer mixtures that exceed the separation capacity of one-dimensional techniques.

Cross-Validation Applications in Pharmaceutical Research

Cross-validation of spectroscopic results with chromatographic methods provides enhanced confidence in analytical data through method orthogonality. LC-UV frequently serves as the reference method for potency assays in pharmaceutical quality control, with cross-validation against spectroscopic techniques like NMR or NIR confirming method accuracy [7]. The chromatographic separation preceding detection provides specificity that spectroscopy alone may lack, especially for complex formulations where excipients may interfere. For compounds with weak chromophores, LC with charged aerosol detection (LC-CAD) provides an alternative quantitative approach whose response is largely independent of chemical structure, making it an excellent orthogonal method for cross-validation [7].
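
One common way to compare two orthogonal methods on the same samples is a paired-difference (Bland-Altman style) summary. The source does not prescribe this statistic; it is shown here as one reasonable option. The assay values and the function name are hypothetical.

```python
from statistics import mean, stdev

def agreement(method_a, method_b):
    """Mean paired difference (bias) and approximate 95% limits of
    agreement, computed as bias +/- 1.96 * SD of the differences."""
    diffs = [a - b for a, b in zip(method_a, method_b)]
    bias = mean(diffs)
    sd = stdev(diffs)
    return bias, (bias - 1.96 * sd, bias + 1.96 * sd)

# Hypothetical potency results (% label claim) for five batches.
lc_uv = [99.8, 100.4, 98.9, 101.2, 100.1]
nmr = [99.5, 100.9, 99.2, 100.8, 100.4]

bias, (lower, upper) = agreement(lc_uv, nmr)
print(f"bias = {bias:.2f}, limits of agreement = ({lower:.2f}, {upper:.2f})")
```

A bias close to zero with narrow limits suggests the two methods can be used interchangeably over the tested range; the acceptable width of the limits is a decision for the laboratory, not something this calculation supplies.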

UHPLC enables higher throughput cross-validation studies, with faster analysis times allowing more comprehensive method comparison within practical time constraints. In drug metabolism and pharmacokinetic studies, UHPLC-MS/MS often serves as the primary quantitative method, with cross-validation against LC-UV confirming results for higher concentration samples [10]. This approach combines the sensitivity of MS with the broader dynamic range and linearity of UV detection for comprehensive method verification.

GC and GC×GC provide orthogonal separation mechanisms for cross-validating LC-based methods, particularly for volatile analytes in applications such as residual solvent analysis, metabolic profiling, and essential oil characterization [8]. The different separation principles (volatility versus polarity) and detection options make GC an ideal partner technique for verifying LC results in comprehensive testing schemes. Comprehensive 2D-LC offers unprecedented separation power for characterizing complex mixtures, with cross-validation against spectroscopic techniques confirming peak identity and purity. The structured chromatograms produced by orthogonal 2D-LC separations often group chemically related compounds, facilitating compound identification when combined with spectroscopic detection [5].

Experimental Protocols and Methodologies

LC-UV Method Development for Cleaning Verification

Cleaning verification represents a critical application of LC-UV in pharmaceutical manufacturing, ensuring equipment surfaces are free from API carryover between batches. A typical protocol involves: (1) surface sampling using swabs wetted with an appropriate solvent; (2) sample extraction from the swabs; (3) LC-UV analysis with method-specific conditions [7]. For example, a validated 6-minute UHPLC-UV method for mometasone furoate determination employs a Waters Acquity UPLC HSS T3 column (50 × 2.1 mm, 1.8 μm) at 40°C with acetonitrile and water (1:1, v/v) as the isocratic mobile phase at a flow rate of 0.5 mL/min [7]. Method validation demonstrated linearity from 0.2 to 2.6 μg/mL, with precision and accuracy meeting regulatory requirements.

For multi-component cleaning verification, gradient methods provide simultaneous quantification of multiple APIs. Dong and colleagues developed a 10-minute gradient HPLC-UV method capable of quantifying multiple proprietary APIs at levels of 0.2 to 10 μg/mL [7]. Such universal methods significantly reduce method development time while maintaining the specificity required for reliable cleaning verification. The cross-validation of these LC-UV methods with spectroscopic techniques like FT-IR or direct surface analysis provides comprehensive cleaning verification, with each technique compensating for the limitations of the others.

Comprehensive 2D-LC Method Implementation

Implementing comprehensive 2D-LC requires careful optimization of multiple parameters to achieve successful separations. A standard protocol includes: (1) Selection of orthogonal separation mechanisms based on sample characteristics; (2) Optimization of first dimension conditions to maintain peak capacity with low flow rates (typically 0.1-0.5 mL/min); (3) Development of fast second dimension separations (typically 0.5-2 minutes) to maintain first dimension resolution; (4) Interface configuration ensuring efficient transfer between dimensions [5].
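
The link between first-dimension flow rate, second-dimension cycle time, and first-dimension resolution can be made concrete with a few quick calculations. The sketch below rests on two assumptions that go beyond the text: the idealized product rule for 2D peak capacity (real systems fall short because of undersampling and incomplete orthogonality) and a commonly quoted rule of thumb of roughly three to four fractions per first-dimension peak. All names and numbers are illustrative.

```python
def fractions_per_peak(peak_width_min, modulation_time_min):
    """How many second-dimension runs sample one first-dimension peak."""
    return peak_width_min / modulation_time_min

def fraction_volume_ul(first_dim_flow_ml_min, modulation_time_min):
    """Volume collected per modulation, i.e. the second-dimension
    injection volume the storage loop must hold (in microlitres)."""
    return first_dim_flow_ml_min * modulation_time_min * 1000.0

def ideal_2d_peak_capacity(n1, n2):
    """Idealized product rule: an upper bound, not an achieved value."""
    return n1 * n2

# Illustrative settings within the ranges quoted in the protocol.
samples = fractions_per_peak(peak_width_min=1.5, modulation_time_min=0.5)
volume = fraction_volume_ul(first_dim_flow_ml_min=0.1, modulation_time_min=0.5)
capacity = ideal_2d_peak_capacity(n1=50, n2=20)
```

With these numbers each first-dimension peak is sampled three times, every modulation transfers about 50 μL, and the ideal peak capacity is 1,000. Halving the first-dimension flow rate would halve the transferred volume, which is one reason low first-dimension flow rates are preferred.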

The combination of normal-phase LC in the first dimension with reversed-phase LC in the second dimension provides high orthogonality for natural product analysis, but presents significant mobile phase compatibility challenges [5]. The use of micro-flow rates in the first dimension helps reduce dilution and provides flow rates compatible with second dimension injection volumes. Alternatively, the combination of hydrophilic interaction liquid chromatography (HILIC) and reversed-phase conditions offers improved mobile phase compatibility while maintaining high orthogonality [5].

For complex samples requiring the ultimate in separation power, stop-flow methods can be implemented when the second dimension separation cannot keep up with the first dimension sampling frequency [5]. This approach allows the use of longer second dimension columns, enhancing resolution and peak capacity at the cost of increased analysis time. The implementation of active modulation techniques, such as stationary-phase assisted modulation or active solvent modulation, helps address mobile phase incompatibility issues that may arise when combining orthogonal separation mechanisms [9].

Inter-laboratory Cross-Validation Protocol

Cross-validation across multiple laboratories ensures analytical data comparability in global clinical trials. A representative protocol for lenvatinib determination in human plasma involved: (1) Method validation at each laboratory according to regulatory guidelines; (2) Cross-validation using quality control samples and clinical study samples with blinded concentrations; (3) Statistical comparison of results across laboratories [10]. In this study, seven bioanalytical methods were developed across five laboratories, employing different sample preparation techniques (protein precipitation, liquid-liquid extraction, or solid-phase extraction) but all utilizing LC-MS/MS detection [10].

The cross-validation demonstrated that accuracy of quality control samples was within ±15.3% and percentage bias for clinical study samples was within ±11.6% across all laboratories and methods [10]. This approach confirms that concentration data can be reliably compared across different laboratories and clinical studies, despite methodological differences. Such cross-validation is particularly important for pharmaceutical development, where studies often span multiple continents and years, requiring assurance of data consistency throughout the drug development lifecycle.
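
The statistical comparison in step (3) usually starts from the percentage bias of each laboratory's result against the nominal concentration. The sketch below uses invented laboratory names and results, and the ±15% acceptance limit is an illustrative parameter rather than a value taken from the lenvatinib study.

```python
def percent_bias(measured, nominal):
    """Signed deviation of a measured value from nominal, in percent."""
    return (measured - nominal) / nominal * 100.0

def within_limit(biases, limit_pct):
    """True when every bias lies inside +/- limit_pct."""
    return all(abs(b) <= limit_pct for b in biases)

# Hypothetical QC sample with a nominal concentration of 50 ng/mL.
nominal = 50.0
lab_results = {"lab_A": 52.5, "lab_B": 47.0, "lab_C": 55.0}

biases = {lab: percent_bias(value, nominal) for lab, value in lab_results.items()}
acceptable = within_limit(biases.values(), limit_pct=15.0)  # assumed limit
```

Reporting the signed bias per laboratory, rather than only a pass or fail flag, makes systematic offsets between sites visible.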

Research Reagent Solutions and Essential Materials

Table 3: Essential Research Reagents and Materials for Chromatographic Techniques

| Category | Specific Examples | Function in Analysis | Technical Considerations |
|---|---|---|---|
| Stationary Phases | C8, C18, HILIC, phenyl-hexyl, pentafluorophenyl | Molecular separation based on chemical interactions | Selectivity, pH stability, retention mechanism |
| Mobile Phase Additives | Formic acid, ammonium acetate, ammonium formate, TFA | Modulate retention, improve ionization, control pH | MS-compatibility, volatility, concentration optimization |
| Sample Preparation | Oasis HLB, C18, MCX, WCX cartridges | Extract, clean-up, and concentrate analytes | Recovery, selectivity, automation compatibility |
| Reference Standards | Certified reference materials, stable isotope-labeled internal standards | Quantification, method calibration, recovery determination | Purity, stability, traceability, availability |

The selection of appropriate research reagents and materials significantly impacts chromatographic method performance. Stationary phase chemistry determines the fundamental separation mechanism, with C18 providing reversed-phase retention for moderate to non-polar compounds, while HILIC phases retain polar compounds through a combination of partitioning and electrostatic interactions [5]. The trend toward superficially porous particles (also called core-shell) provides improved efficiency compared to fully porous particles, approaching the performance of sub-2μm particles without the same pressure limitations.

Mobile phase additives play a critical role in separation optimization and detection compatibility. Formic acid and acetic acid (typically 0.05-0.1%) improve protonation in positive ion MS detection, while ammonium acetate or formate (1-10mM) provide buffering capacity for retention time stability [10]. Trifluoroacetic acid (TFA) offers excellent peak shape for peptides and proteins but can cause significant ion suppression in MS detection, making it less suitable for LC-MS applications.

Sample preparation materials, particularly solid-phase extraction (SPE) sorbents, enable analyte extraction, clean-up, and concentration from complex matrices. Mixed-mode sorbents combining reversed-phase and ion-exchange mechanisms provide enhanced selectivity for basic or acidic compounds in biological matrices [10]. The move toward 96-well plate formats has enabled automation and increased sample-preparation throughput in high-volume applications like bioanalysis and metabolomics.

Reference standards ensure method accuracy and precision, with certified reference materials providing traceability to international standards. Stable isotope-labeled internal standards (e.g., ²H, ¹³C, ¹⁵N) correct for variability in sample preparation and matrix effects, particularly in LC-MS bioanalysis [10]. The availability of appropriate reference materials often determines the feasibility of method development and validation for pharmaceutical compounds.
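
The way an internal standard corrects for preparation losses can be shown with a toy calculation. The sketch assumes the analyte and its stable isotope-labeled analogue are lost in the same proportion, which is the idealized case; the peak areas and function name are invented.

```python
def response_ratio(analyte_area, internal_standard_area):
    """Analyte peak area normalized to the internal standard peak area."""
    return analyte_area / internal_standard_area

# Hypothetical peak areas with complete recovery.
full_recovery = response_ratio(80000.0, 40000.0)

# Same sample after a 20% loss during extraction. The labeled standard
# is assumed to be lost in the same proportion as the analyte.
partial_recovery = response_ratio(80000.0 * 0.8, 40000.0 * 0.8)
```

Both ratios equal 2.0, so a calibration built on response ratios is unaffected by the loss, whereas one built on raw analyte areas would read 20% low.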

Workflow Visualization

Chromatographic Analysis Workflow

The workflow for chromatographic analysis begins with sample preparation, where compounds of interest are extracted from the sample matrix and potential interferents are removed. Method selection follows, with technique choice dictated by analyte properties (volatility, polarity, stability), matrix complexity, and required sensitivity. The chromatographic separation then occurs, with compounds differentially partitioning between stationary and mobile phases based on their physicochemical properties. Detection follows separation, with technique selection (UV, MS, CAD, FID) determined by analyte characteristics and information requirements. Data analysis transforms detector signals into quantitative results through peak integration and calibration curves. Cross-validation with spectroscopic techniques confirms method accuracy and identifies potential interferences. The final stage involves scientific interpretation of results and reporting within the context of the study objectives.
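
The peak integration step in the data analysis stage can be approximated with the trapezoidal rule. This is a minimal sketch with a flat, user-supplied baseline and fixed integration limits; commercial data systems use more elaborate baseline and peak-detection logic. The trace and function name are illustrative.

```python
def peak_area(times, signal, start, end, baseline=0.0):
    """Trapezoidal area of the baseline-subtracted signal between
    `start` and `end` (inclusive), using only the sampled points."""
    points = [(t, s - baseline) for t, s in zip(times, signal) if start <= t <= end]
    area = 0.0
    for (t0, s0), (t1, s1) in zip(points, points[1:]):
        area += 0.5 * (s0 + s1) * (t1 - t0)
    return area

# Hypothetical detector trace: a triangular peak centred at t = 2 min.
times = [0.0, 1.0, 2.0, 3.0, 4.0]
signal = [0.0, 0.0, 10.0, 0.0, 0.0]

area = peak_area(times, signal, start=1.0, end=3.0)
```

The resulting area (10 signal units × min for this trace) is the quantity that would be compared against a calibration curve.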

2D-LC Instrument Configuration

The comprehensive 2D-LC system configuration employs two independent separation systems connected via a modulation interface. The first dimension pump delivers mobile phase through the first dimension column, where the initial separation occurs based on one retention mechanism (e.g., reversed-phase). The modulation interface, typically a multi-port switching valve with dual storage loops, continuously collects small-volume fractions of the first dimension effluent. The second dimension pump delivers a separate mobile phase, typically at higher flow rates, through the second dimension column with different selectivity (e.g., HILIC). The modulation interface alternately injects the collected fractions onto the second dimension column for rapid secondary separation. Finally, the detection system monitors the column effluent, with mass spectrometry providing the additional dimensionality needed for compound identification in complex mixtures.

Chromatographic techniques provide powerful separation capabilities that complement spectroscopic methods in comprehensive analytical strategies. LC-UV offers robust, cost-effective quantification for quality control applications, while UHPLC enhances throughput and resolution for demanding separations. GC provides exceptional separation power for volatile compounds, with comprehensive 2D-GC extending these capabilities to extremely complex mixtures. Comprehensive 2D-LC represents the cutting edge in liquid separation science, with orthogonality between dimensions dramatically increasing peak capacity for the most challenging samples.

The cross-validation of chromatographic and spectroscopic results establishes a foundation of data reliability in pharmaceutical research, with each technique compensating for the limitations of the others. As analytical challenges continue to evolve with increasingly complex pharmaceutical formulations and stricter regulatory requirements, the strategic implementation of orthogonal chromatographic techniques will remain essential for comprehensive product characterization. The continued development of stationary phases, instrumentation, and data analysis tools will further enhance the capabilities of these separation techniques, ensuring their central role in pharmaceutical analysis for the foreseeable future.

The integration of spectroscopic and spectrometric techniques with chromatographic methods represents a cornerstone of modern analytical science, particularly in pharmaceutical development and quality control. These hybrid approaches provide a powerful framework for cross-validating analytical results, ensuring data reliability, and meeting stringent regulatory requirements. Ultraviolet-Visible (UV-Vis) spectroscopy, Mass Spectrometry (MS), and Charged Aerosol Detection (CAD) each offer unique capabilities that address specific analytical challenges across the drug development pipeline. Within the framework of analytical cross-validation, these techniques provide complementary data streams that collectively build a comprehensive molecular understanding of drug substances and products.

The contemporary analytical laboratory leverages these techniques not in isolation, but as interconnected components of an integrated workflow. This guide provides a detailed comparison of UV, MS, and CAD technologies, focusing on their operational principles, performance characteristics, and practical application in conjunction with chromatographic separations. Recent instrumentation advances in 2025 have further enhanced the speed, sensitivity, and workflow efficiency of these techniques, making them more accessible and powerful than ever for researchers and drug development professionals tasked with ensuring product quality, safety, and efficacy.

Technique Fundamentals and Instrumentation Trends

The foundational principles of UV, MS, and CAD dictate their specific applications, strengths, and limitations within the analytical laboratory. Understanding these core mechanisms is essential for selecting the appropriate technique for a given analytical challenge.

UV-Vis Spectroscopy operates on the principle of electronic transitions, where molecules absorb light in the ultraviolet and visible regions (typically 190-800 nm), promoting electrons to higher energy states. The resulting absorption spectrum provides information about chromophore presence and concentration. A significant 2025 development in UV-Vis instrumentation focuses on improved optical stability through robust components with fewer moving parts, thermal regulation, and solid-state light sources, which reduces drift over time and increases instrument lifespan [11]. Modern systems also feature smaller footprints without compromising performance, addressing the premium on bench space in shared and mobile laboratories [11]. Furthermore, intuitive user interfaces with guided workflows and real-time visual feedback have become standard, minimizing training time and user error for multidisciplinary teams [11].
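The link between absorption and concentration is the Beer-Lambert law, A = ε·l·c. The sketch below shows the arithmetic; the molar absorptivity and absorbance values are hypothetical, and the relationship is assumed to hold only within the linear range of the instrument.

```python
import math


def absorbance_from_transmittance(transmittance):
    """A = -log10(T), with T expressed as a fraction between 0 and 1."""
    return -math.log10(transmittance)


def concentration_from_absorbance(absorbance, molar_absorptivity, path_cm=1.0):
    """Beer-Lambert law rearranged to c = A / (epsilon * l), in mol/L."""
    return absorbance / (molar_absorptivity * path_cm)


# Hypothetical analyte: epsilon = 15000 L mol^-1 cm^-1, 1 cm cuvette, A = 0.45.
conc = concentration_from_absorbance(0.45, 15000.0)
print(f"concentration = {conc:.2e} mol/L")
```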

Mass Spectrometry separates and detects ionized atoms or molecules based on their mass-to-charge ratio (m/z). Its unparalleled specificity comes from its ability to precisely determine molecular mass and elucidate structural features through fragmentation patterns. The dominant trend in 2025 MS instrumentation is the push toward higher performance in smaller formats. Benchtop systems now deliver capabilities once reserved for floor-standing instruments, with Waters' Xevo MRT MS, for example, providing a resolution of 100,000 with sub-ppm mass accuracy in a compact qTOF format [12]. There is also a significant shift toward top-down proteomic approaches for intact protein analysis, overcoming limitations of bottom-up methods for characterizing proteoforms and post-translational modifications [12]. Instruments like Bruker's timsTOF series and Thermo Fisher's Orbitrap Astral Zoom are designed for this purpose, offering faster scan speeds, higher throughput, and expanded multiplexing capabilities [13] [12].
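Two figures of merit that recur in discussions of high-resolution MS are the mass measurement error in parts per million and the resolving power m/Δm (with Δm taken as the peak width at half maximum). A minimal sketch of both calculations follows; the m/z values are hypothetical.

```python
def mass_error_ppm(measured_mz, theoretical_mz):
    """Relative mass measurement error in parts per million."""
    return (measured_mz - theoretical_mz) / theoretical_mz * 1e6


def resolving_power(mz, fwhm):
    """Resolving power m / delta-m using the FWHM definition."""
    return mz / fwhm


# Hypothetical ion: theoretical m/z 500.0000, measured at 500.0004,
# with a peak width (FWHM) of 0.005 m/z units.
error_ppm = mass_error_ppm(500.0004, 500.0000)
power = resolving_power(500.0, 0.005)
print(f"mass error = {error_ppm:.2f} ppm, resolving power = {power:.0f}")
```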

Charged Aerosol Detection is a universal chromatographic detection technique based on aerosol mass measurement. The effluent from a chromatograph is nebulized into droplets, which are dried to remove volatile mobile phase components, leaving analyte particles. These particles are then charged and detected by a highly sensitive electrometer, generating a signal proportional to the analyte mass [14]. A key characteristic of CAD is its uniform response for non-volatile and semi-volatile analytes, which simplifies quantitation even in the absence of authentic standards [14]. Recent advances focus on optimization and troubleshooting protocols to ensure data quality and instrument reliability. Critical parameters include optimizing the Power Function Value (PFV) to improve dynamic range and linearity, and employing an inverse gradient (admixed post-column solvent) to minimize baseline drift in gradient elution methods [14].
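The purpose of the power function can be illustrated with a simplified model. The sketch below assumes the raw CAD response follows a power law, response = a × mass^b, estimates the exponent b from a log-log least-squares fit, and takes 1/b as a starting PFV that would linearize the signal. This is an illustrative assumption, not the vendor's algorithm; the data are synthetic, and the final PFV should be confirmed empirically on the instrument.

```python
import math


def fit_power_law(masses, responses):
    """Fit response = a * mass**b by linear least squares on log-log data."""
    xs = [math.log(m) for m in masses]
    ys = [math.log(r) for r in responses]
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    b = sxy / sxx
    a = math.exp(my - b * mx)
    return a, b


# Synthetic, noise-free data generated with an exponent of 0.8.
masses_ng = [5.0, 10.0, 50.0, 100.0, 500.0]
responses = [2.0 * m ** 0.8 for m in masses_ng]

a, b = fit_power_law(masses_ng, responses)
pfv_estimate = 1.0 / b                                 # candidate PFV
linearized = [r ** pfv_estimate for r in responses]    # now proportional to mass
print(f"exponent b = {b:.3f}, candidate PFV = {pfv_estimate:.2f}")
```

With real data, residual curvature after applying the candidate PFV indicates that the power-law assumption is only approximate over the chosen range.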

Table 1: Comparative Overview of Fundamental Techniques

| Feature | UV-Vis Spectroscopy | Mass Spectrometry (MS) | Charged Aerosol Detection (CAD) |
|---|---|---|---|
| Fundamental Principle | Electronic energy transitions | Mass-to-charge ratio separation & detection | Aerosol charging & charge measurement |
| Detection Mechanism | Photodetection of absorbed light | Ion detection (e.g., electron multiplier) | Electrometer measurement of particle charge |
| Primary Output | Absorption spectrum (A vs. λ) | Mass spectrum (Intensity vs. m/z) | Chromatogram (Response vs. Time) |
| Key Instrument Trends (2025) | Compact designs, intuitive interfaces, stable optics [11] | Top-down proteomics, benchtop high-resolution, workflow efficiency [13] [12] | Optimized PFV for linearity, inverse gradient for gradient elution [14] |
| Sample Destructiveness | Non-destructive | Destructive | Destructive |

Performance Comparison and Experimental Data

Selecting the appropriate analytical technique requires a clear understanding of performance metrics and how they relate to specific application needs. The following section provides a detailed, data-driven comparison.

Sensitivity and Dynamic Range: MS consistently offers the highest sensitivity, capable of detecting analytes at femtomole to attomole levels, particularly in Selected Reaction Monitoring (SRM) modes on triple quadrupole instruments. The Thermo Orbitrap Excedion Pro MS, for instance, provides enhanced sensitivity for characterizing biotherapeutics like monoclonal antibodies [13]. CAD provides universal detection for non-volatile compounds with sensitivity typically in the low nanogram range [14]. Its response is relatively uniform across chemically diverse analytes, which is a significant advantage over UV for compounds lacking a strong chromophore. UV-Vis sensitivity is highly dependent on the molar absorptivity of the analyte, with typical detection limits in the microgram range for compounds with good chromophores. A 2025 study on pharmaceutical tablets demonstrated that UV-Vis can provide sufficient sensitivity for real-time release testing, with effective sample characterization up to 0.4 mm penetration depth [15].
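Because these detection limits are quoted in different units (moles for MS, mass for CAD and UV-Vis), comparing them requires a molar-mass conversion. The sketch below shows the arithmetic for a hypothetical analyte with a molar mass of 500 g/mol.

```python
def moles_to_grams(amount_mol, molar_mass_g_per_mol):
    """Convert an amount of substance to mass."""
    return amount_mol * molar_mass_g_per_mol


def grams_to_moles(mass_g, molar_mass_g_per_mol):
    """Convert a mass to amount of substance."""
    return mass_g / molar_mass_g_per_mol


MOLAR_MASS = 500.0  # g/mol, hypothetical analyte

one_fmol_in_pg = moles_to_grams(1e-15, MOLAR_MASS) * 1e12   # femtomole -> picograms
one_ng_in_pmol = grams_to_moles(1e-9, MOLAR_MASS) * 1e12    # nanogram -> picomoles
print(f"1 fmol = {one_fmol_in_pg:.2f} pg; 1 ng = {one_ng_in_pmol:.2f} pmol")
```

For this analyte, a 1 fmol limit corresponds to 0.5 pg, while a 1 ng limit corresponds to 2 pmol, a difference of more than three orders of magnitude.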

Specificity and Universality: MS is the unrivaled leader in specificity due to its ability to discriminate by exact mass and generate unique fragmentation patterns. The Orbitrap Astral Zoom MS, for example, enables deeper proteomic coverage and more confident biomarker identification [13]. UV-Vis specificity is low to moderate, as spectra are often broad and overlapping, though diode array detection can aid in peak purity assessment. CAD is a universal technique, providing a response for any non-volatile analyte, but it offers no inherent structural information, relying entirely on chromatographic separation for specificity [14].

Table 2: Quantitative Performance Comparison for Representative Applications

| Performance Parameter | UV-Vis Spectroscopy | Mass Spectrometry (MS) | Charged Aerosol Detection (CAD) |
|---|---|---|---|
| Typical Detection Limit | Microgram range | Femtomole to attomole range | Low nanogram range [14] |
| Dynamic Range | ~2-3 orders of magnitude | 4-6 orders of magnitude | 3-4 orders of magnitude (can be extended with PFV optimization) [14] |
| Universality of Response | Response requires a chromophore | Response depends on ionization efficiency | Universal for non-volatile analytes [14] |
| Quantitative Precision (RSD) | Typically <1% | Typically 1-5% (can be higher with complex samples) | 0.4%-2.1% (demonstrated for sialic acids) [16] |
| Key Quantitative Strength | Well-suited for high-throughput concentration assays | Unmatched sensitivity and specificity for targeted assays | Uniform response enables quantification without pure standards [14] |

Chromatographic Integration and Cross-Validation Workflows

The true power of UV, MS, and CAD is realized when they are coupled with chromatographic separation techniques, primarily High-Performance Liquid Chromatography (HPLC). This combination provides a multidimensional analytical platform where separation and detection are optimized independently.

HPLC-UV/Vis remains the most widely deployed combination for quantitative analysis of chromophoric compounds in quality control laboratories because of its robustness, ease of use, and cost-effectiveness. Its application in impurity profiling is well established.

HPLC-MS is the gold standard for identification, structural elucidation, and trace-level quantification. Its superiority in cross-validation comes from its ability to provide definitive identity confirmation based on molecular mass and fragmentation pattern, orthogonal to retention time. The 2025 introductions of systems like the Thermo Orbitrap Excedion Pro, which combines Orbitrap mass analysis with alternative fragmentation technologies, are particularly impactful for the characterization of complex biomolecules, enabling a deeper understanding of biotherapeutics [13].

HPLC-CAD excels where UV detection is inadequate and MS detection is unnecessary or problematic. It is a premier choice for quantifying compounds lacking chromophores, such as carbohydrates, lipids, inorganic ions, and surfactants. A salient 2025 application from the field of biopharmaceuticals is the determination of total sialic acid in therapeutic proteins using a label-free HPLC-CAD method [16]. This method utilized a mixed-mode HILIC-IEX separation with an in-line pre-column protein trap, successfully validating the method for specificity, linearity (R > 0.999), precision (RSD 0.4-2.1%), and accuracy (recovery 93-102%) [16]. This showcases CAD's role in cross-validating critical quality attributes that are challenging for other techniques.

The diagram below illustrates a generalized workflow for cross-validating analytical results using these complementary techniques.

Figure 1: Workflow for Cross-Validating Chromatographic Results. This diagram illustrates how a single sample, after HPLC separation, can be analyzed in parallel or sequentially by three detection techniques (UV, MS, CAD). The resulting data streams are fused to provide a cross-validated analytical result, leveraging the unique strengths of each detector.

Essential Research Reagents and Materials

Successful implementation of these analytical techniques requires careful selection of supporting reagents and materials. The following table details key components for a standardized workflow, such as the HPLC-CAD method for sialic acid quantification.

Table 3: Key Research Reagent Solutions for Featured HPLC-CAD Workflow

| Reagent/Material | Function/Purpose | Example from Literature |
|---|---|---|
| Mixed-Mode HILIC-IEX Column | Stationary phase for retention and separation of polar, ionizable sialic acids. | Used in HILIC-IEX separation of NANA and NGNA [16]. |
| Pre-column C18 Protein Trap Cartridge | In-line removal of proteins to prevent interference and analytical column contamination. | Replaceable C18 cartridge for direct injection of protein samples [16]. |
| Volatile Mobile Phase Salts | Provides ionic strength for HILIC-IEX separation while maintaining CAD compatibility. | Ammonium formate or ammonium acetate used in mobile phase [16] [17]. |
| Charged Aerosol Detector (CAD) | Universal detection of non-volatile sialic acids post-separation. | Used for quantification of underivatized sialic acids [16] [14]. |
| High-Purity Water | Critical for mobile phase preparation and sample reconstitution to minimize background noise. | Ultrapure water from systems like Milli-Q [16]. |
| Sialidase Enzyme | Enzymatic release of sialic acids from glycoproteins for total sialic acid determination. | SialEXO used to hydrolyze sialic acids from therapeutic proteins [16]. |

Application Protocols: HPLC-CAD for Sialic Acid Quantification

The following detailed protocol is adapted from the 2025 study that developed and validated a label-free HPLC-CAD method for determining total sialic acid in therapeutic proteins [16]. This protocol serves as a concrete example of a modern CAD application addressing a real-world bioanalytical challenge.

The method is a label-free HPLC procedure that couples mixed-mode hydrophilic interaction-ion exchange liquid chromatography (HILIC-IEX) with charged aerosol detection (CAD) to quantify total sialic acid (N-acetylneuraminic acid, NANA, and N-glycolylneuraminic acid, NGNA) in therapeutic protein drug products [16].

Experimental Workflow

Figure 2: HPLC-CAD Workflow for Sialic Acid Analysis. This diagram outlines the key steps in the label-free quantification of total sialic acid in therapeutic proteins, highlighting the integrated protein trapping and HILIC-IEX separation.

Step-by-Step Procedure

Sample Preparation (Desialylation):

- Transfer an aliquot of the therapeutic protein solution (containing approximately 50-200 µg of protein) to a centrifugal filter unit (e.g., 10 kDa MWCO).

- Exchange the formulation buffer with an enzymatic reaction buffer (e.g., 25 mM sodium phosphate, pH 6.0) via centrifugation.

- Add sialidase enzyme (e.g., SialEXO, 2000 units) to the retentate.

- Incubate the mixture at 37°C for 3 hours to quantitatively release sialic acids.

- Filter the hydrolysate through the centrifugal device. The released sialic acids (NANA and NGNA) will be in the filtrate, while the desialylated protein is retained.

Chromatographic Separation and Detection:

- HPLC System: Configure a UHPLC or HPLC system with a mixed-mode HILIC-IEX column (e.g., Acclaim Mixed-Mode WAX-1). The system should be equipped with a six-port valve and a replaceable pre-column C18 cartridge (e.g., 4.6 × 10 mm) installed prior to the injection valve for in-line protein trapping.

- Mobile Phase: Prepare a volatile mobile phase. Mobile Phase A: 90% Acetonitrile (ACN) with 10% 100 mM Ammonium Formate (pH 4.5). Mobile Phase B: 10% ACN with 90% 100 mM Ammonium Formate (pH 4.5).

- Gradient: Use a linear gradient from 100% A to 60% A over 10 minutes, at a flow rate of 0.8 mL/min.

- Detection: Use a Charged Aerosol Detector. Set the evaporator temperature to 40°C and the data collection rate to 10 Hz. Optimize the Power Function Value (PFV) for the desired dynamic range (typically between 1.0 and 2.0).

- Injection: Directly inject the filtered hydrolysate (recommended: 5-10 µL).
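The mobile phase and gradient settings above determine the solvent composition delivered to the column at any time point. The sketch below computes the acetonitrile percentage and the effective ammonium formate concentration along the linear gradient, assuming ideal volume mixing (no volume contraction) and no gradient delay. The function names are hypothetical.

```python
def fraction_a(t_min, start_a=1.0, end_a=0.6, duration_min=10.0):
    """Fraction of mobile phase A at time t for a linear gradient."""
    if t_min <= 0:
        return start_a
    if t_min >= duration_min:
        return end_a
    return start_a + (end_a - start_a) * t_min / duration_min


def composition(t_min):
    """Return (% acetonitrile, mM ammonium formate) at time t.
    A = 90% ACN / 10% of 100 mM buffer; B = 10% ACN / 90% of 100 mM buffer."""
    fa = fraction_a(t_min)
    fb = 1.0 - fa
    acn_percent = fa * 90.0 + fb * 10.0
    buffer_mm = fa * 10.0 + fb * 90.0  # 100 mM stock times its volume fraction
    return acn_percent, buffer_mm


for t in (0.0, 5.0, 10.0):
    acn, buf = composition(t)
    print(f"t = {t:4.1f} min: {acn:.0f}% ACN, {buf:.0f} mM ammonium formate")
```

Under these assumptions the gradient runs from 90% to 58% acetonitrile while the buffer concentration rises from 10 mM to 42 mM, so both HILIC and ion-exchange retention change over the run.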

Quantification:

- Identify NANA and NGNA peaks by comparing their retention times with those of authentic standards.

- Construct a calibration curve (e.g., 1-100 µg/mL) for NANA and NGNA. The method demonstrated excellent linearity (R > 0.999) [16].

- Calculate the total sialic acid content in the original protein sample based on the summed peak areas of NANA and NGNA, using the calibration curve and accounting for dilution factors.
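The quantification steps can be sketched as a linear calibration followed by back-calculation. The example below assumes an unweighted least-squares fit and uses synthetic standard data; the helper names are hypothetical, and the cited study may have used a different regression model or weighting. NGNA would be handled in the same way against its own curve, and the two results summed to give total sialic acid.

```python
import math


def linear_fit(x, y):
    """Ordinary least-squares line; returns (slope, intercept, r)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    syy = sum((yi - my) ** 2 for yi in y)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, my - slope * mx, sxy / math.sqrt(sxx * syy)


def quantify(peak_area, slope, intercept, dilution_factor=1.0):
    """Back-calculate concentration from peak area and correct for dilution."""
    return (peak_area - intercept) / slope * dilution_factor


# Synthetic NANA standards (ug/mL) and peak areas (arbitrary units).
std_conc = [1.0, 5.0, 10.0, 50.0, 100.0]
std_area = [2.0 * c + 0.5 for c in std_conc]

slope, intercept, r = linear_fit(std_conc, std_area)
nana_conc = quantify(40.5, slope, intercept, dilution_factor=2.0)
print(f"r = {r:.4f}, NANA = {nana_conc:.1f} ug/mL in the original sample")
```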

Key Method Parameters and Validation Data

The developed method was rigorously validated, demonstrating satisfactory performance characteristics essential for a robust analytical procedure [16]:

- Specificity: Achieved baseline separation of NANA and NGNA without interference from the protein matrix.

- Linearity: R > 0.999 over the validated range.

- Precision: Repeatability demonstrated by RSD between 0.4% and 2.1%.

- Accuracy: Recovery of sialic acids ranged between 93% and 102%.

- Sensitivity: Quantitation limit (QL) was below 25 ng.
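The precision and accuracy figures listed above are typically derived from replicate measurements as percent relative standard deviation and percent recovery. A minimal sketch of both calculations follows; the replicate values are hypothetical and are not data from the cited study.

```python
import statistics


def percent_rsd(values):
    """Relative standard deviation (sample SD / mean) in percent."""
    return statistics.stdev(values) / statistics.mean(values) * 100.0


def percent_recovery(measured, nominal):
    """Measured amount as a percentage of the spiked (nominal) amount."""
    return measured / nominal * 100.0


# Hypothetical replicate results (ug/mL) for a 50 ug/mL spiked sample.
replicates = [49.6, 50.2, 50.1, 49.8, 50.3]
rsd = percent_rsd(replicates)
recovery = percent_recovery(statistics.mean(replicates), 50.0)
print(f"RSD = {rsd:.2f}%, recovery = {recovery:.1f}%")
```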

The synergistic use of UV-Vis spectroscopy, Mass Spectrometry, and Charged Aerosol Detection provides a powerful, multi-faceted toolkit for modern pharmaceutical analysis. As demonstrated, each technique occupies a distinct niche: UV-Vis for robust, cost-effective quantification of chromophores; MS for unparalleled specificity, sensitivity, and structural elucidation; and CAD for universal detection of non-volatile analytes where UV and MS face limitations. The ongoing evolution of these technologies in 2025—toward greater sensitivity, miniaturization, user-friendliness, and workflow integration—further solidifies their central role. When strategically deployed within a framework of cross-validation, either in parallel or via orthogonal method development, these techniques provide the comprehensive data integrity required to advance drug candidates confidently from discovery through development and into quality-controlled manufacturing, ultimately ensuring the delivery of safe and effective therapeutics.

In the rigorous world of analytical science, particularly in regulated industries like pharmaceutical development and food safety, the reliability of analytical methods is paramount. Cross-validation has emerged as a critical process to ensure that different analytical methods, whether within the same laboratory or across multiple sites, produce comparable, reliable, and accurate data. This process is especially crucial when comparing established methods with emerging technologies or when methods are transferred between laboratories. The International Council for Harmonisation (ICH) M10 guideline explicitly mandates cross-validation to demonstrate data comparability when multiple bioanalytical methods or laboratories are involved in a single study or across studies whose data will be compared [18]. Without proper cross-validation, discrepancies between methods can lead to incorrect conclusions about a drug's pharmacokinetics, efficacy, or safety, ultimately compromising regulatory decisions and public health [18].

The fundamental rationale for cross-validation lies in its ability to provide assurance of method reliability across three essential analytical performance characteristics: specificity, accuracy, and precision. Specificity ensures that the method can distinguish the analyte from interfering components in complex matrices. Accuracy reflects the closeness of measured values to the true value, while precision indicates the agreement between a series of measurements obtained from multiple samplings of the same homogeneous sample. In complex matrices, such as biological samples, food products, or environmental samples, interfering components make these parameters difficult to maintain, which necessitates robust cross-validation protocols. This article examines the application of cross-validation through the lens of spectroscopic and chromatographic method comparison, providing a structured framework for analytical scientists and researchers.

Theoretical Foundations of Cross-Validation

Defining Cross-Validation in Analytical Contexts

Cross-validation in analytical science serves a distinct purpose from its namesake in machine learning. In the context of method validation, cross-validation is defined as a comparison of validation parameters of two bioanalytical methods to ensure data comparability [18]. This process is required when data are obtained from different fully validated methods within a study, from different laboratories using the same bioanalytical method, or from different fully validated methods across studies that will be combined or compared to support special dosing regimens or regulatory decisions regarding safety, efficacy, and labeling [18].

The statistical foundation of cross-validation rests on demonstrating that two methods provide equivalent results within defined acceptance criteria, or that any systematic bias between methods is quantified and accounted for in data interpretation. Unlike traditional validation parameters that often have predefined acceptance criteria (e.g., ±15% for accuracy and precision), cross-validation under ICH M10 deliberately omits specific acceptance criteria, instead emphasizing statistical approaches to assess comparability [18]. This represents a significant shift from previous industry practices where Incurred Sample Reanalysis (ISR) criteria were often applied as a surrogate benchmark for cross-validation acceptance [18].

The Challenge of Complex Matrices

Complex matrices present unique challenges for analytical methods, particularly regarding specificity. Biological samples like plasma, serum, or tissue homogenates contain numerous interfering substances that can co-elute or produce signals that overlap with the target analyte. Similarly, food matrices contain a wide variety of compounds that can interfere with analysis [19] [20]. These matrix effects can disproportionately affect different analytical techniques, making cross-validation between disparate methods especially important.

For instance, chromatographic methods like HPLC separate analytes from matrix components temporally, while spectroscopic techniques like ICP-MS or Raman spectroscopy must rely on spectral resolution or sample preparation to minimize interferences [19]. Without proper cross-validation, a method might appear valid for a simple standard solution but fail to provide accurate results in complex sample matrices. Cross-validation exercises specifically test whether different methods can overcome these matrix effects consistently, ensuring results are comparable regardless of the analytical technique employed.

Cross-Validation Methodologies and Regulatory Frameworks

Regulatory Expectations and Guidelines

The regulatory landscape for cross-validation has evolved significantly with the implementation of ICH M10, which establishes global standards for bioanalytical method validation. According to these guidelines, cross-validation should be performed to demonstrate data comparability when multiple methods or laboratories are involved in generating data for a single study or across studies where comparison will be performed [18]. However, unlike other validation parameters, ICH M10 does not specify acceptance criteria for cross-validation, creating both challenges and opportunities for the industry to develop scientifically sound approaches [18].

Prior to ICH M10, bioanalytical method validation was guided by regional guidelines from the FDA and EMA. The FDA's 2018 Bioanalytical Method Validation Guidance emphasized cross-validation when two or more methods are used to generate data within the same study or across different studies, but similarly did not define specific acceptance criteria [18]. The EMA 2011 Guideline provided more specific guidance: when quality control (QC) samples are used for cross-validation, the mean accuracy obtained by the different methods should agree within 15%, and when study samples are used, the difference between the two values should be within 20% of their mean for at least two-thirds of the samples [18]. The transition to ICH M10 represents a move away from rigid pass/fail criteria toward more nuanced statistical assessment of comparability.
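The study-sample criterion described above can be expressed as a simple check. The sketch below assumes that the percent difference for each sample is calculated relative to the mean of the two results, as is done for incurred sample reanalysis. The paired values and function names are hypothetical.

```python
def percent_difference(result_a, result_b):
    """Absolute difference relative to the mean of the two results, in percent."""
    mean = (result_a + result_b) / 2.0
    return abs(result_a - result_b) / mean * 100.0


def passes_two_thirds_rule(pairs, limit_percent=20.0):
    """True if at least two-thirds of paired results differ by <= limit_percent."""
    within = sum(1 for a, b in pairs if percent_difference(a, b) <= limit_percent)
    return 3 * within >= 2 * len(pairs)  # integer form of within / n >= 2/3


# Hypothetical paired concentrations (method A, method B) for six study samples.
pairs = [(10.0, 11.0), (50.0, 48.0), (100.0, 130.0),
         (5.0, 5.5), (20.0, 26.0), (80.0, 82.0)]
verdict = passes_two_thirds_rule(pairs)
print("criterion met" if verdict else "criterion not met")
```

In this example four of the six pairs fall within 20%, which just satisfies the two-thirds threshold.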

Practical Implementation Approaches

Implementing cross-validation requires careful experimental design and statistical analysis. The fundamental principle involves analyzing the same set of samples using both methods being compared. These samples can include calibration standards, quality control samples, and, most importantly, actual study samples that represent the complete matrix complexity [18]. The resulting data are then subjected to statistical analysis to determine the level of agreement between methods and to identify any consistent bias.

ICH M10 recommends assessing the bias between methods using statistical approaches, with the responsibility for implementing and interpreting statistical analysis often falling on clinical pharmacology or biostatistics departments rather than the bioanalytical laboratory alone [18]. This collaborative approach ensures that the end users of the data understand any nuances in method comparability and can make informed decisions about combining or comparing data from different sources. The outcome of a cross-validation study should be either a confirmation that methods are equivalent or a quantification of bias that can be accounted for in data interpretation [18].
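One widely used way to quantify bias between two methods is a Bland-Altman analysis of paired results. The sketch below computes the mean percent difference and approximate 95% limits of agreement (mean ± 1.96 SD), assuming the percent differences are roughly normally distributed. The paired data are hypothetical, and the choice of statistical approach for a given study remains a matter for the responsible statisticians.

```python
import statistics


def bland_altman_percent(method_a, method_b):
    """Return (bias, lower limit, upper limit) of the percent differences
    between paired results, each difference taken relative to the pair mean."""
    diffs = [(a - b) / ((a + b) / 2.0) * 100.0
             for a, b in zip(method_a, method_b)]
    bias = statistics.mean(diffs)
    sd = statistics.stdev(diffs)
    return bias, bias - 1.96 * sd, bias + 1.96 * sd


# Hypothetical paired concentrations from two methods on the same samples.
method_a = [10.2, 25.1, 49.0, 98.5, 201.0]
method_b = [10.0, 24.0, 50.5, 101.0, 195.0]

bias, lower, upper = bland_altman_percent(method_a, method_b)
print(f"bias = {bias:.2f}%, limits of agreement = {lower:.2f}% to {upper:.2f}%")
```

A bias close to zero with narrow limits supports treating the methods as interchangeable, whereas a consistent non-zero bias can be reported and accounted for when data are combined.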

Experimental Design for Cross-Validation Studies

Sample Selection and Preparation

Proper sample selection is critical for meaningful cross-validation results. The samples used should represent the entire range of concentrations expected in actual study samples and should include the complete biological or material matrix with all its potential complexities. For pharmaceutical applications, this typically means using incurred samples (samples from dosed subjects) rather than just spiked quality control samples, as incurred samples may contain metabolites or matrix effects not present in artificially prepared samples [18].

The number of samples should be sufficient to provide adequate statistical power for comparing methods. While there is no universally prescribed number, regulatory guidelines from other contexts (such as incurred sample reanalysis) often use 5-10% of study samples or a minimum number of samples (e.g., 20) as a benchmark [18]. Samples should be aliquoted appropriately to ensure that each method tests portions from the same original sample, and stability of the analytes during the testing process should be confirmed.

Experimental Workflow for Method Comparison

The following workflow visualization outlines a generalized approach for conducting cross-validation studies between analytical methods:

Figure 1: Cross-Validation Workflow for Analytical Methods

Comparative Analysis of Spectroscopic and Chromatographic Methods

Methodological Principles and Strengths

Chromatographic methods, particularly reversed-phase high-performance liquid chromatography (RP-HPLC), separate compounds based on their differential partitioning between a mobile and stationary phase. When coupled with detection methods like diode array detection (DAD) or mass spectrometry, HPLC provides high specificity, accuracy, and precision for quantifying target analytes in complex matrices [21]. The AQbD (Analytical Quality by Design) approach further enhances method robustness by systematically identifying and controlling factors that impact method performance [21].

Spectroscopic techniques encompass a broad range of technologies that measure the interaction between matter and electromagnetic radiation. These include atomic techniques like inductively coupled plasma optical emission spectrometry (ICP-OES) for elemental analysis [22], molecular techniques like Raman spectroscopy [19], and nuclear magnetic resonance (NMR) spectroscopy for structural information [19]. These methods often offer advantages in speed, minimal sample preparation, and the ability to perform non-destructive analysis.

Quantitative Performance Comparison

The table below summarizes key performance characteristics of representative spectroscopic and chromatographic methods based on recent applications in complex matrices:

Table 1: Performance Comparison of Analytical Techniques in Complex Matrices

| Analytical Technique | Application Context | Specificity Indicators | Accuracy/Recovery | Precision (RSD) | Detection Limits | Reference |
|---|---|---|---|---|---|---|
| RP-HPLC with DAD | Favipiravir quantification in tablets | Peak purity >99%; Resolution from impurities | 98-102% | <2% | Not specified | [21] |
| ICP-OES | Trace element analysis in coffee | Spectral resolution of element-specific emissions | 93.4-103.1% | <10% (relative error <20%) | LOQ: 0.06-7.22 µg/kg | [19] |
| ICP-MS | Heavy metals in plastic food packaging | Mass resolution of target isotopes | 82.6-106% | Not specified | LOD: 0.10-0.85 ng/mL | [19] |
| Raman Spectroscopy | Alcohol measurement in beverages | Unique molecular fingerprint spectra | Comparable to reference methods | Not specified | Non-destructive, through-container | [19] |
| LA-ICP-OES | Solid food material analysis | Spatial and spectral resolution | Relative error <20% for most elements | >10% reproducibility | LOQ: 0.06 µg/g (Sr) to 400 µg/g (S) | [19] |

Comparative Experimental Data

Recent studies provide direct comparisons between methods for specific applications. In pharmaceutical analysis, an RP-HPLC method developed using an AQbD approach for favipiravir quantification demonstrated excellent performance characteristics, with system suitability parameters within United States Pharmacopeia (USP) limits and RSD values <2%, indicating high precision [21]. The method showed excellent linearity, sensitivity, and selectivity. It used a mobile phase of acetonitrile and disodium hydrogen phosphate anhydrous buffer (pH 3.1, 20 mM) in an 18:82 v/v ratio and an Inertsil ODS-3 C18 column (250 mm × 4.6 mm, 5 μm, 100 Å), with DAD detection at 323 nm [21].
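System suitability parameters of the kind referenced here are calculated from a few peak measurements. The sketch below uses the standard USP-style formulas for resolution (tangent baseline widths), theoretical plate count, and tailing factor. The retention times and widths are hypothetical and are not taken from the cited favipiravir method.

```python
def usp_resolution(t1, t2, w1, w2):
    """Rs = 2 (t2 - t1) / (w1 + w2), with widths measured at the baseline."""
    return 2.0 * (t2 - t1) / (w1 + w2)


def usp_plate_count(t_r, w_base):
    """N = 16 (tR / W)^2, with W the tangent baseline peak width."""
    return 16.0 * (t_r / w_base) ** 2


def usp_tailing_factor(w_005, f):
    """T = W0.05 / (2 f): W0.05 is the width at 5% height and f the distance
    from the leading edge to the peak maximum at that height."""
    return w_005 / (2.0 * f)


# Hypothetical peak data (minutes).
rs = usp_resolution(4.0, 5.0, 0.4, 0.4)
plates = usp_plate_count(5.0, 0.4)
tailing = usp_tailing_factor(0.30, 0.14)
print(f"Rs = {rs:.2f}, N = {plates:.0f}, T = {tailing:.2f}")
```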

In elemental analysis, ICP-OES methods have been validated for assessing chemical purity in radiopharmaceutical production. One study established that ICP-OES met validation criteria for most elements, though aluminum and calcium suffered from matrix effects [22]. The apparent molar activity calculated by ICP-OES was congruent with DOTA-titration-based effective molar activity when these problematic elements were excluded, demonstrating how cross-validation can identify specific limitations in analytical methods [22].

Case Studies: Cross-Validation in Practice

Pharmaceutical Quality Control

In a recent study validating methods for quality assessment of ⁶⁷Cu from cyclotron production, researchers performed rigorous cross-validation between ICP-OES and γ-spectrometry methodologies [22]. The ICP-OES method was validated for determining non-radioactive metal impurities, while HPGe γ-spectrometry was validated for assessing radionuclidic purity. This approach recognized that neither technique alone could provide complete quality assessment: ICP-OES quantified chemical impurities but could not distinguish radioactive isotopes, while γ-spectrometry identified radionuclidic impurities but could not quantify stable metal contaminants [22].

The cross-validation revealed that apparent molar activity calculated by ICP-OES aligned with effective molar activity determined by DOTA-titration when specific elements affected by matrix effects (Al and Ca) were excluded from the calculation [22]. This finding demonstrates how cross-validation can identify specific limitations in analytical methods and guide appropriate data interpretation strategies for regulatory submissions.

Food Authentication and Adulteration Detection

In food science, cross-validation between spectroscopic techniques has become increasingly important for detecting adulteration. A systematic review of analytical techniques for yogurt adulteration detection found that infrared, Raman, fluorescence, NMR spectroscopy, and hyperspectral imaging, when combined with chemometric approaches or machine learning models, consistently achieve high sensitivity and specificity [20]. These spectroscopic methods were effective at detecting adulterants such as vegetable oils, non-dairy proteins, and synthetic additives, with performance validated through cross-correlation with multiple techniques [20].

The integration of chemometric and machine learning algorithms such as principal component analysis, partial least squares methods, support vector machines, artificial neural networks, and deep learning approaches has enhanced discrimination accuracy, enabling reliable classification of adulterated samples even at trace concentrations [20]. This represents an advanced form of cross-validation where computational models serve as virtual validation tools against multiple analytical techniques.

The Scientist's Toolkit: Essential Materials for Cross-Validation Studies

Table 2: Essential Research Reagents and Materials for Cross-Validation Experiments

| Item | Function in Cross-Validation | Application Example | Critical Considerations |
|---|---|---|---|
| Certified Reference Materials (CRMs) | Provide traceable standards for method calibration and accuracy assessment | TraceCERT Multielement standard for ICP-OES calibration [22] | Certification according to ISO/IEC 17025 and ISO 17034 ensures reliability |
| Matrix-Matched Quality Controls | Assess method performance in relevant sample matrices | Incurred biological samples for bioanalytical method comparison [18] | Should represent complete matrix complexity, not just spiked standards |
| Internal Standard Solutions | Correct for analytical variability and matrix effects | Stable isotope-labeled analogs in mass spectrometry | Should be absent from native samples and not interfere with analyte detection |
| Chromatographic Columns | Provide separation mechanism for LC-based methods | Inertsil ODS-3 C18 column for RP-HPLC [21] | Column batch-to-batch reproducibility affects method transfer |
| Sample Introduction Systems | Interface samples with analytical instruments | Laser ablation systems for solid sample ICP-OES [19] | Must provide representative sampling of heterogeneous materials |
| Data Analysis Software | Enable statistical comparison of method results | MODDE 13 Pro software for Monte Carlo simulation [21] | Should implement appropriate statistical tests for method comparison |

Advanced Visualization: Cross-Validation Decision Pathway

The following diagram illustrates the decision-making process for interpreting cross-validation results and determining appropriate actions based on statistical outcomes:

Figure 2: Cross-Validation Results Decision Pathway

Cross-validation serves as a critical bridge between analytical techniques, ensuring that data generated from different methods, instruments, or laboratories maintain the specificity, accuracy, and precision required for informed decision-making in pharmaceutical development, food safety, and other regulated fields. The move away from rigid pass/fail criteria toward statistical assessment of comparability, as embodied in ICH M10, represents a maturation of the field—acknowledging that analytical method performance exists on a continuum rather than as a binary outcome [18].

As analytical technologies continue to evolve, with spectroscopic methods offering increasingly rapid and non-destructive analysis and chromatographic methods providing unparalleled separation power, the role of cross-validation will only grow in importance. By implementing robust cross-validation protocols that include appropriate sample selection, statistical analysis, and bias assessment, researchers can confidently combine and compare data across multiple analytical platforms, ultimately accelerating scientific discovery while maintaining the highest standards of data integrity.

Regulatory Framework and Guidelines Governing Analytical Method Cross-Validation

Analytical method cross-validation is a critical process in pharmaceutical development and quality control: it ensures that analytical methods produce comparable and reliable results when transferred between laboratories, instruments, or methodologies. For researchers and drug development professionals working with spectroscopic and chromatographic methods, cross-validation provides scientific evidence that methods perform consistently across analytical platforms, thereby supporting data integrity and regulatory submissions. This section examines the regulatory expectations, experimental protocols, and practical implementation strategies that govern this process.

The modern regulatory landscape has evolved from a prescriptive "check-the-box" approach to a more scientific, risk-based lifecycle model. Recent guidelines, including the simultaneous release of ICH Q2(R2) and ICH Q14, emphasize building quality into analytical methods from the initial development phase rather than treating validation as a one-time event [23]. This paradigm shift recognizes that analytical procedures exist within a dynamic ecosystem where changes in instrumentation, site operations, and technological advancements necessitate robust cross-validation protocols to ensure ongoing data reliability, particularly when correlating results between sophisticated analytical platforms like spectroscopy and chromatography.

Key Regulatory Guidelines and Their Applications

The regulatory framework for analytical method cross-validation is established through several harmonized guidelines from international organizations and regulatory bodies. These guidelines provide the foundation for demonstrating method equivalence and ensuring data integrity during method transfers between laboratories or across different analytical platforms.

Table 1: Key Regulatory Guidelines Governing Analytical Method Cross-Validation

| Regulatory Body | Guideline | Focus Areas | Cross-Validation Requirements |
|---|---|---|---|
| International Council for Harmonisation (ICH) | ICH Q2(R2): Validation of Analytical Procedures [23] | Validation parameters, modernized approach for new technologies | Establishes foundational validation criteria that must be demonstrated across sites |
| International Council for Harmonisation (ICH) | ICH Q14: Analytical Procedure Development [23] | Analytical Target Profile (ATP), risk-based development | Promotes proactive definition of performance criteria for cross-site consistency |
| U.S. Food and Drug Administration (FDA) | M10 Bioanalytical Method Validation [24] | Bioanalytical assays for nonclinical/clinical studies | Harmonized expectations for regulatory submissions |
| U.S. Food and Drug Administration (FDA) | Guidance for Industry on Analytical Procedure Validation [25] | Lifecycle approach, data integrity throughout | Focus on continued method verification rather than one-time validation |
| European Medicines Agency (EMA) | EU GMP Annex 15: Qualification and Validation [25] | Risk-based validation, process validation lifecycle | Requires risk assessment to determine validation extent |
| World Health Organization (WHO) | WHO Technical Report Series [25] | Analytical method validation parameters | Global framework emphasizing method suitability for diverse settings |

The Lifecycle Approach to Method Validation

Contemporary regulatory thinking, as reflected in recent ICH and FDA guidelines, has embraced a lifecycle approach to analytical procedures [23] [25]. This perspective recognizes that method validation is not a single event but an ongoing process that begins with method development and continues throughout the method's operational life. The Analytical Target Profile (ATP), introduced in ICH Q14, serves as a prospective summary of the method's intended purpose and required performance criteria [23]. By defining the ATP at the outset, laboratories establish clear benchmarks for cross-validation activities, ensuring that transferred methods maintain their fitness-for-purpose regardless of where they are implemented.

The FDA specifically emphasizes a risk-based approach to validation, where the rigor of cross-validation should correspond to the method's criticality regarding patient safety and product quality [25]. This principle is echoed in EU GMP Annex 15, which requires pharmaceutical manufacturers to conduct thorough risk assessments to determine the appropriate extent of validation activities [25]. For cross-validation between spectroscopic and chromatographic methods, this means focusing resources on demonstrating equivalence for critical quality attributes that directly impact product performance and patient safety.

Experimental Design for Cross-Validation

Sample Selection and Preparation

Proper experimental design forms the foundation of scientifically sound cross-validation studies. Sample selection requires careful consideration to ensure results are statistically meaningful and representative of real-world conditions. Regulatory guidelines recommend testing a minimum of 40 patient specimens, though 100 specimens are preferable to identify unexpected errors due to interferences or sample matrix effects [26]. These specimens should be deliberately selected to cover the entire clinically meaningful measurement range rather than chosen at random [27].
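One way to check range coverage is to bin candidate specimens by a screening concentration and draw from every bin. The sketch below is a minimal illustration only: the function name `select_across_range`, the equal-width binning, and the example values are assumptions, not part of any cited guideline.

```python
def select_across_range(values, n_bins, per_bin):
    """Pick up to `per_bin` specimens from each of `n_bins` equal-width bins.

    `values` are screening concentrations for the candidate specimens.
    Returns the selected indices and the number of candidates per bin.
    """
    lo, hi = min(values), max(values)
    if hi == lo:
        raise ValueError("all candidates have the same concentration")
    width = (hi - lo) / n_bins
    bins = [[] for _ in range(n_bins)]
    for idx, v in enumerate(values):
        # The maximum value is placed in the last bin instead of overflowing.
        b = min(int((v - lo) / width), n_bins - 1)
        bins[b].append(idx)
    selected = []
    for members in bins:
        selected.extend(members[:per_bin])
    return selected, [len(m) for m in bins]


# Hypothetical screening results (mg/dL) for ten candidate specimens.
candidates = [52, 55, 58, 61, 140, 150, 155, 240, 250, 390]
selected, counts = select_across_range(candidates, n_bins=4, per_bin=2)
print(selected)  # [0, 1, 4, 5, 7, 8, 9]
print(counts)    # [4, 3, 2, 1]
```

The per-bin counts make under-represented regions visible (here, only one candidate in the top quarter of the range), which signals where additional specimens should be sought.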

For cross-validation studies between spectroscopic and chromatographic platforms, establishing appropriate quality control (QC) samples is crucial. Recent research demonstrates that using pooled QC samples containing all chemicals from all samples analyzed provides a reliable basis for normalization across different analytical platforms [28]. In long-term studies, creating a "virtual QC sample" by incorporating chromatographic peaks from all QC results through retention time and mass spectrum verification serves as a meta-reference for analyzing and normalizing test samples [28]. This approach is particularly valuable when sample components may not fully overlap with QC components over extended periods.
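As a generic illustration of QC-based normalization (not the specific algorithm of [28]), the sketch below rescales each batch so that its pooled-QC median matches the median of all QC injections. The function name `qc_normalize`, the data layout, and the numbers are hypothetical.

```python
from statistics import median


def qc_normalize(batches):
    """Scale each batch so its pooled-QC median matches the overall QC median.

    `batches` maps a batch name to {"qc": [...], "samples": [...]},
    where the lists hold instrument responses for one analyte.
    """
    all_qc = [r for batch in batches.values() for r in batch["qc"]]
    reference = median(all_qc)
    normalized = {}
    for name, batch in batches.items():
        factor = reference / median(batch["qc"])
        normalized[name] = [round(s * factor, 2) for s in batch["samples"]]
    return normalized


# Hypothetical responses: day2 shows roughly 20% lower instrument sensitivity.
batches = {
    "day1": {"qc": [100.0, 102.0, 98.0], "samples": [50.0, 200.0]},
    "day2": {"qc": [80.0, 82.0, 78.0], "samples": [40.0, 160.0]},
}
normalized = qc_normalize(batches)
print(normalized)
```

A single multiplicative factor per batch is the simplest possible correction; drift within a batch or analyte-specific effects would need a more detailed model.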

Experimental Protocol and Timeline

Cross-validation experiments should be conducted over multiple analytical runs on different days to minimize systematic errors that might occur in a single run [27]. A minimum of 5 days is recommended, though extending the experiment over a longer period (e.g., 20 days) better mimics real-world conditions [27] [26]. When comparing methods, samples should be analyzed within two hours of each other unless specimen stability requires shorter timeframes [27]. This temporal proximity is particularly important when comparing spectroscopic and chromatographic methods that may have different sample preparation requirements.

Duplicate measurements for both the reference and test method are recommended to minimize random variation effects [26]. Ideally, the duplicates should be two separate aliquots of each specimen, analyzed in different runs or at least in a different order, rather than back-to-back replicates of the same aliquot [27]. Duplicate analyses help identify problems arising from sample mix-ups, transposition errors, and other mistakes that could significantly impact conclusions drawn from the experiment. For cross-validation between different analytical platforms, this replication provides essential data on method precision across different environments.
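A simple screen for duplicate disagreement can catch mix-ups before any regression is run. The following sketch assumes positive results and a 10% relative-difference tolerance; both the threshold and the function name `flag_discrepant_duplicates` are illustrative choices, not values taken from the cited sources.

```python
def flag_discrepant_duplicates(duplicates, max_rel_diff=0.10):
    """Return specimen IDs whose duplicate results disagree by more than
    `max_rel_diff` (relative to the mean of the pair).

    Assumes all results are positive, so the pair mean is never zero.
    """
    flagged = []
    for specimen_id, (first, second) in duplicates.items():
        pair_mean = (first + second) / 2
        rel_diff = abs(first - second) / pair_mean
        if rel_diff > max_rel_diff:
            flagged.append(specimen_id)
    return flagged


# Hypothetical duplicate results from one method (arbitrary units).
duplicates = {
    "S01": (100.0, 102.0),
    "S02": (150.0, 210.0),  # possible mix-up or transposition error
    "S03": (80.0, 79.0),
}
flagged = flag_discrepant_duplicates(duplicates)
print(flagged)  # ['S02']
```

Flagged specimens should be re-analyzed while they are still stable rather than silently dropped from the comparison.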

Diagram 1: Cross-Validation Experimental Workflow

Data Analysis and Statistical Methods

Graphical Data Analysis Techniques

Initial data analysis in cross-validation studies should begin with graphical techniques to visualize the relationship between methods and identify potential outliers or systematic errors. The scatter plot displays the test method results on the y-axis against the reference method results on the x-axis, providing a visual representation of the agreement between methods throughout the measurement range [26]. This graphical method helps identify the linearity of response and reveals any proportional relationships between methods.

The difference plot (Bland-Altman plot) displays the differences between methods on the y-axis against the average of the methods on the x-axis [26]. This visualization technique helps identify systematic biases that may be concentration-dependent and reveals whether differences are consistent across the measurement range. When inspecting these graphs, researchers should look for points that fall outside the general pattern, as these may indicate interferents or matrix effects that differentially affect the spectroscopic and chromatographic methods being compared [27].
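The quantities behind a difference plot can be computed in a few lines. This sketch uses the conventional limits of agreement (mean difference ± 1.96 standard deviations), which the text above does not state explicitly, and the paired results are invented for illustration.

```python
from statistics import mean, stdev


def bland_altman(reference, test):
    """Compute the points and summary lines of a Bland-Altman difference plot."""
    differences = [t - r for r, t in zip(reference, test)]
    averages = [(t + r) / 2 for r, t in zip(reference, test)]
    bias = mean(differences)
    sd = stdev(differences)  # sample standard deviation of the differences
    return {
        "averages": averages,        # x-axis values
        "differences": differences,  # y-axis values
        "bias": bias,
        "lower_loa": bias - 1.96 * sd,
        "upper_loa": bias + 1.96 * sd,
    }


# Hypothetical paired results: chromatographic (reference) vs spectroscopic (test).
reference = [100.0, 150.0, 200.0, 250.0, 300.0]
test = [103.0, 152.0, 206.0, 255.0, 309.0]
result = bland_altman(reference, test)
print(f"bias = {result['bias']:.2f}")
print(f"limits of agreement = {result['lower_loa']:.2f} to {result['upper_loa']:.2f}")
```

Plotting `differences` against `averages` with horizontal lines at the bias and the two limits gives the usual figure. In this invented data set the differences tend to grow with concentration, the kind of concentration-dependent pattern the plot is meant to expose.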

Statistical Approaches for Method Comparison

Appropriate statistical analysis is essential for demonstrating method equivalence in cross-validation studies. Correlation analysis and t-tests are commonly used, but neither is adequate on its own for assessing method comparability [26]. Correlation measures the strength of the linear relationship between methods but cannot detect constant or proportional bias. A t-test may miss clinically meaningful differences when the sample size is small, and may flag statistically significant but clinically irrelevant differences when the dataset is large [26].
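The limitation of correlation is easy to demonstrate with synthetic data. In the sketch below (all values invented), one test method reads a constant 10 units high and another reads 20% high, yet both correlate perfectly with the reference.

```python
from math import sqrt
from statistics import mean


def pearson_r(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


reference = [50.0, 100.0, 150.0, 200.0, 250.0]
constant_bias = [r + 10.0 for r in reference]     # always reads 10 units high
proportional_bias = [r * 1.2 for r in reference]  # always reads 20% high

r_const = pearson_r(reference, constant_bias)
r_prop = pearson_r(reference, proportional_bias)
mean_diff_const = mean(t - r for r, t in zip(reference, constant_bias))
mean_diff_prop = mean(t - r for r, t in zip(reference, proportional_bias))

print(f"constant bias:     r = {r_const:.4f}, mean difference = {mean_diff_const:.1f}")
print(f"proportional bias: r = {r_prop:.4f}, mean difference = {mean_diff_prop:.1f}")
```

Both correlation coefficients equal 1 to within rounding, even though neither test method agrees with the reference, which is why bias has to be estimated directly.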

Linear regression statistics, including Deming regression and Passing-Bablok regression, are preferable for methods comparison studies as they allow estimation of systematic error at multiple medical decision concentrations and provide information about the proportional and constant nature of the error [27] [26]. For a cholesterol comparison study where the regression line is Y = 2.0 + 1.03X, the test method is expected to read Y = 2.0 + 1.03 × 200 = 208 mg/dL at a critical decision level of 200 mg/dL, giving a systematic error of 208 − 200 = 8 mg/dL [27]. For methods with narrow analytical ranges, calculating the average difference (bias) between methods using paired t-test calculations is often more appropriate [27].
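The regression-based estimate of systematic error can be sketched as follows. The code uses the standard closed-form Deming estimator and assumes an error-variance ratio of 1 (equal imprecision in both methods), which would need to be justified from precision data in a real study. The function names and the noise-free data, constructed to lie exactly on Y = 2.0 + 1.03X, are illustrative.

```python
from math import sqrt
from statistics import mean


def deming_regression(x, y, variance_ratio=1.0):
    """Deming regression of y on x.

    `variance_ratio` is var(errors in y) / var(errors in x); 1.0 assumes
    both methods are equally imprecise (orthogonal regression).
    Returns (intercept, slope).
    """
    mx, my = mean(x), mean(y)
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    d = variance_ratio
    slope = (syy - d * sxx + sqrt((syy - d * sxx) ** 2 + 4 * d * sxy ** 2)) / (2 * sxy)
    intercept = my - slope * mx
    return intercept, slope


def systematic_error(intercept, slope, decision_level):
    """Predicted test-method result minus the decision level itself."""
    return (intercept + slope * decision_level) - decision_level


# Noise-free illustration built to lie exactly on Y = 2.0 + 1.03 X.
reference = [100.0, 150.0, 200.0, 250.0, 300.0]
test = [2.0 + 1.03 * r for r in reference]

intercept, slope = deming_regression(reference, test)
se_200 = systematic_error(intercept, slope, 200.0)
print(f"Y = {intercept:.2f} + {slope:.3f} X")
print(f"systematic error at 200 mg/dL = {se_200:.1f} mg/dL")
```

The intercept captures the constant component of the error and the slope the proportional component, so the same fitted line can be evaluated at each decision level of interest.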

Table 2: Statistical Methods for Cross-Validation Data Analysis

| Statistical Method | Application Context | Key Outputs | Interpretation Guidelines |

|---|---|---|---|