Design and Optimize Spectroscopy Systems: A Guide to Optical Software for Biomedical Research

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on leveraging optical software for spectroscopy system design and optimization. It covers foundational principles of light-matter interactions and software selection, details methodologies for building and simulating systems like Raman and absorption spectrometers, offers strategies for troubleshooting and performance optimization, and outlines best practices for validation and comparative analysis to ensure regulatory compliance and robust results in biomedical applications.

Core Principles and Software Selection for Spectroscopy Design

Light-matter interactions form the foundational principles of optical spectroscopy, a critical analytical methodology in scientific research and industrial applications. These interactions (absorption, emission, and scattering) occur when photons encounter atoms or molecules, and the resulting changes in the light reveal essential information about material composition, structure, and dynamics. Optical spectroscopy software transforms raw spectral data into actionable insights by processing and interpreting these signals, enabling researchers to perform precise material characterization and quality control and to demonstrate compliance with regulatory standards across diverse sectors.

The global optical spectroscopy software market, valued at approximately $1.2 billion in 2024, is projected to reach $2.5 billion by 2033, growing at a compound annual growth rate (CAGR) of 9.2% [1]. This growth is propelled by increasing demand for advanced analytical tools in pharmaceuticals, biotechnology, environmental testing, and food and beverage industries. These tools enhance the accuracy, efficiency, and automation of analytical processes, thereby supporting informed decision-making and innovation in research and development [1]. North America currently holds the largest market share, driven by a strong presence of key industry players and advanced research facilities, while the Asia-Pacific region is experiencing rapid growth due to expanding industrial activities and rising investments in research and development [1].

Table 1: Global Optical Spectroscopy Software Market Overview (2024-2033)

| Metric | 2024 Value | 2033 Projected Value | CAGR (2026-2033) |
|---|---|---|---|
| Market Size | USD 1.2 Billion | USD 2.5 Billion | 9.2% |
| Dominant Region | North America | - | - |
| Fastest-Growing Region | - | Asia-Pacific | - |
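Market projections like these can be cross-checked with the standard compound annual growth rate formula, CAGR = (V_end / V_start)^(1/n) - 1. Applied to the rounded 2024 and 2033 endpoints over nine years, it gives about 8.5%, somewhat below the quoted 9.2%. The gap is consistent with the rounding of the endpoints and with Table 1 quoting its CAGR for 2026-2033 rather than 2024-2033. The sketch below is plain illustrative arithmetic, not the cited report's methodology.

```python
def cagr(start_value: float, end_value: float, years: float) -> float:
    """Compound annual growth rate implied by two values `years` apart."""
    if start_value <= 0 or end_value <= 0 or years <= 0:
        raise ValueError("values and period must be positive")
    return (end_value / start_value) ** (1.0 / years) - 1.0


def project(start_value: float, rate: float, years: float) -> float:
    """Value after compounding `rate` annually for `years` years."""
    return start_value * (1.0 + rate) ** years


if __name__ == "__main__":
    # Rounded endpoints from Table 1 (USD billion); 2024 -> 2033 spans 9 years.
    print(f"Implied CAGR 2024-2033: {cagr(1.2, 2.5, 9):.1%}")
    # For comparison: compounding the quoted 9.2% over the same nine years.
    print(f"USD 1.2 B at 9.2% for 9 years: {project(1.2, 0.092, 9):.2f} B")
```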

The synergy between nanophotonics and machine learning is driving significant innovation in this field. Intelligent photonic systems, such as metasurfaces and diffractive optical processors, are changing how optical information is captured and processed, enabling more compact, efficient, and versatile spectroscopic systems [2]. Research also continues to refine our understanding of these interactions; for instance, a recent study demonstrated a novel, eco-friendly method for fabricating optical microcavities that precisely control light-matter coupling, paving the way for more accessible and energy-efficient research into quantum technologies [3].

Technical Background: Core Principles and System Components

Fundamental Interaction Mechanisms

At the heart of spectroscopy lie three primary light-matter interaction phenomena, each providing distinct information about a sample:

- Absorption: This process occurs when a photon's energy is transferred to an atom or molecule, promoting it from a lower-energy (typically ground) state to an excited electronic, vibrational, or rotational state. The wavelengths at which absorption occurs provide a fingerprint for identifying substances, and the magnitude of the absorbance quantifies their concentration through the Beer-Lambert law.

- Emission: After absorbing energy, a system can return to its ground state by emitting a photon. This emission, which can be spontaneous or stimulated, reveals information about the energy levels and dynamics of the system. Fluorescence and phosphorescence are key emission-based techniques.

- Scattering: This involves the redirection of light by a material without the photon being absorbed. Elastic scattering (e.g., Rayleigh scattering) conserves photon energy, while inelastic scattering (e.g., Raman scattering) involves a small energy exchange with the material, providing detailed vibrational information about the sample.
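The Beer-Lambert relation mentioned under absorption, A = εlc, links measured transmittance to concentration. The sketch below assumes a dilute, non-scattering solution measured at a single wavelength; the molar absorptivity in the usage line is a hypothetical value, not a property of any specific compound. For strongly scattering solids such as tablets the relation holds only approximately, which is one reason empirical calibration curves are used in practice.

```python
import math


def absorbance_from_transmittance(transmittance: float) -> float:
    """A = -log10(T), with T = I / I0 expressed as a fraction (0 < T <= 1)."""
    if not 0.0 < transmittance <= 1.0:
        raise ValueError("transmittance must be in (0, 1]")
    return -math.log10(transmittance)


def concentration_from_absorbance(absorbance: float,
                                  molar_absorptivity: float,
                                  path_length_cm: float) -> float:
    """Beer-Lambert law A = epsilon * l * c solved for c (mol/L).

    molar_absorptivity is epsilon in L mol^-1 cm^-1; path_length_cm is l in cm.
    """
    if molar_absorptivity <= 0 or path_length_cm <= 0:
        raise ValueError("epsilon and path length must be positive")
    return absorbance / (molar_absorptivity * path_length_cm)


if __name__ == "__main__":
    # Hypothetical example: 10% transmittance, epsilon = 15,000 L/(mol cm), 1 cm cuvette.
    a = absorbance_from_transmittance(0.10)
    print(a, concentration_from_absorbance(a, 15_000, 1.0))
```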

Advanced studies, such as those on single benzene fluorophores (SBFs), leverage these principles to design materials with exceptional properties. For example, a novel fluorophore designated TGlu achieves close-to-unity fluorescence quantum yields (exceeding 90%) in both solution and solid states by strategically balancing donor-acceptor interactions within its molecular structure to control radiative and non-radiative decay pathways [4].
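The balance between radiative and non-radiative decay described above can be expressed with the standard rate relations Φ = k_r / (k_r + k_nr) and τ = 1 / (k_r + k_nr), where Φ is the fluorescence quantum yield and τ the excited-state lifetime. The sketch below applies these relations; the 10 ns lifetime in the usage lines is a hypothetical value, not a reported property of TGlu.

```python
def quantum_yield(k_r: float, k_nr: float) -> float:
    """Fraction of excited states that decay radiatively: k_r / (k_r + k_nr)."""
    if k_r < 0 or k_nr < 0 or k_r + k_nr == 0:
        raise ValueError("rates must be non-negative and not both zero")
    return k_r / (k_r + k_nr)


def rates_from_yield_and_lifetime(phi: float, tau_s: float) -> tuple[float, float]:
    """Recover (k_r, k_nr) in s^-1 from a measured yield and lifetime,
    using tau = 1 / (k_r + k_nr)."""
    if not 0.0 <= phi <= 1.0 or tau_s <= 0:
        raise ValueError("phi must be in [0, 1] and tau must be positive")
    return phi / tau_s, (1.0 - phi) / tau_s


if __name__ == "__main__":
    # Hypothetical: a 90% yield with an assumed 10 ns lifetime.
    k_r, k_nr = rates_from_yield_and_lifetime(0.90, 10e-9)
    print(f"k_r = {k_r:.2e} s^-1, k_nr = {k_nr:.2e} s^-1")
```

Reaching a near-unity yield therefore means keeping k_nr at least an order of magnitude below k_r.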

Essential Components of a Spectroscopy System

A modern spectroscopy system integrates several key components to control, measure, and interpret these interactions:

- Light Source: Provides photons across a specific wavelength range (e.g., UV, Vis, NIR).

- Sample Interface: Holds and presents the sample to the light beam in a consistent manner.

- Optical Components: Lenses, mirrors, and monochromators that direct and select wavelengths.

- Detector: Captures the light after interaction with the sample and converts it into an electrical signal.

- Spectroscopy Software: The critical element that controls hardware, acquires data, processes signals, and interprets results.

Table 2: Key Functionalities of Modern Spectroscopy Software

| Functionality | Description | Common Techniques |
|---|---|---|
| Data Acquisition | Controls instruments and collects raw spectral data. | UV-Vis, IR, NMR, Mass Spectrometry |
| Data Analysis | Processes spectra (e.g., baseline correction, peak fitting). | Raman, Fluorescence, NIR |
| Data Management | Stores, organizes, and retrieves spectral data. | All techniques |
| Reporting & Visualization | Generates reports and visual representations of data. | All techniques |
| Instrument Control | Automates and manages spectrometer settings. | All techniques |

The software segment is increasingly leveraging artificial intelligence (AI) and machine learning (ML) to enhance data analysis capabilities. AI-driven tools can automate spectral interpretation, identify complex patterns in large datasets, and even assist in inverse design of photonic components [5] [2]. Furthermore, the integration of spectroscopy software with Laboratory Information Management Systems (LIMS) creates streamlined workflows, improving traceability and efficiency in analytical laboratories [5].

Application Protocols in Industry and Research

Optical spectroscopy software enables a wide array of quantitative and qualitative analyses across diverse sectors. The table below summarizes the dominant applications, their key drivers, and relevant spectroscopic techniques.

Table 3: Key Application Areas and Drivers for Spectroscopy Software

| Application Area | Primary Drivers | Common Techniques Used |
|---|---|---|
| Pharmaceutical Quality Assurance | Regulatory compliance (FDA, EMA), need for non-destructive testing, batch consistency [6]. | UV-Vis, NIR, Raman |
| Material Identification | Need for rapid, non-destructive verification of raw material purity in aerospace, automotive, electronics [6]. | XRF, LIBS, OES |
| Environmental Monitoring | Stringent regulations on pollutants and heavy metals in air, water, and soil [6] [7]. | ICP-OES, Absorption Spectroscopy |
| Food Safety & Quality Control | Consumer safety concerns, regulatory requirements, detection of adulterants [6] [5]. | NIR, Fluorescence |
| Academic & Scientific Research | Discovery of new materials, analysis of biological samples, study of chemical reactions [6]. | Fluorescence, NMR, Mass Spec |

Protocol: Pharmaceutical Quality Assurance via UV-Vis Spectroscopy

Application Note: Verifying the composition and concentration of active pharmaceutical ingredients (APIs) in tablet form without destructive testing.

Principle: The API absorbs UV-Vis light at characteristic wavelengths, and the absorbance at those wavelengths scales with the API concentration in the tablet, allowing quantification and verification against manufacturing specifications.

Materials & Equipment:

- UV-Vis spectrophotometer with integrating sphere accessory for solid samples

- Spectroscopy software with quantification and chemometrics modules (e.g., packages from vendors such as Thermo Fisher Scientific, Agilent, or PerkinElmer)

- Certified reference standards of the API

- Intact tablets from the production batch

Procedure:

Instrument Calibration:

- Power on the spectrophotometer and software. Allow the instrument to stabilize for at least 30 minutes.

- Using the software interface, create a new method for "Solid Tablet Analysis."

- Set the wavelength range to cover the API's known absorption maximum (e.g., 250-350 nm).

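- Perform a baseline correction by collecting a reference spectrum of a white diffuse reflectance standard placed at the integrating sphere's sample port, as is usual for solid samples measured with a sphere.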

Standard Curve Generation:

- Prepare a set of calibration tablets with known API concentrations (e.g., 80%, 90%, 100%, 110%, and 120% of label claim), traceable to the certified API reference standard. Present them to the instrument in the same form as the production tablets (intact, in the same holder and orientation) so that calibration and sample spectra share the same optical geometry.

- For each calibration tablet, collect a spectrum in triplicate using the software's data acquisition function.

- Use the software's quantitative analysis tool to plot the mean absorbance at λ_max against the known concentration and fit a linear calibration curve. Accept the calibration only if the reported R² exceeds 0.995.

Sample Analysis:

- Place an intact production tablet into the sample holder.

- Collect the absorption spectrum using the pre-defined method.

- The software will automatically compare the sample's absorbance at λ_max with the calibration curve and calculate the API concentration.

Data Integrity & Reporting:

- The software should assign a unique, time-stamped ID to each acquired spectrum and maintain an audit trail of all actions.

- Generate a compliance report via the software's reporting module, including sample ID, calculated concentration, pass/fail status based on pre-set limits, and spectral graphs.
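The calibration and pass/fail logic in the Standard Curve Generation, Sample Analysis, and reporting steps can be sketched in a few lines. This is a minimal ordinary least-squares illustration, not any vendor's quantification module. The function names are hypothetical, and the acceptance limits (95-105% of label claim) and absorbance values in the usage lines are made up for illustration.

```python
from statistics import fmean


def fit_calibration(conc, absorbance):
    """Least-squares line absorbance = slope * conc + intercept, plus R^2."""
    if len(conc) != len(absorbance) or len(conc) < 3:
        raise ValueError("need at least three paired points")
    mc, ma = fmean(conc), fmean(absorbance)
    sxx = sum((c - mc) ** 2 for c in conc)
    sxy = sum((c - mc) * (a - ma) for c, a in zip(conc, absorbance))
    slope = sxy / sxx
    intercept = ma - slope * mc
    ss_res = sum((a - (slope * c + intercept)) ** 2 for c, a in zip(conc, absorbance))
    ss_tot = sum((a - ma) ** 2 for a in absorbance)
    return slope, intercept, 1.0 - ss_res / ss_tot


def assay(sample_absorbance, slope, intercept, r2, low, high, min_r2=0.995):
    """Invert the calibration line and apply pass/fail limits (% of label claim)."""
    if r2 <= min_r2:
        raise ValueError(f"calibration rejected: R^2 = {r2:.4f}")
    value = (sample_absorbance - intercept) / slope
    return value, low <= value <= high


if __name__ == "__main__":
    # Illustrative (made-up) triplicate means at 80-120% of label claim.
    levels = [80, 90, 100, 110, 120]
    means = [0.402, 0.449, 0.501, 0.552, 0.598]
    m, b, r2 = fit_calibration(levels, means)
    print(assay(0.497, m, b, r2, low=95.0, high=105.0))
```

Rejecting the calibration before any sample is reported mirrors the R² acceptance gate in the protocol.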

Outcome Metrics: This non-destructive method reduces waste and accelerates batch release times by providing real-time feedback on production quality, ensuring consistent drug efficacy and compliance with regulatory standards [6].

Protocol: Studying Strong Light-Matter Coupling with Solution-Processed Microcavities

Application Note: Fabricating and characterizing optical microcavities to study polariton formation, a hybrid state of light and matter.

Principle: Microcavities confine light between two mirrors, enhancing its interaction with excitons in an emissive material. When the coupling is strong enough to overcome cavity and exciton losses, hybrid light-matter states called polaritons form. They appear as two branches that anticross around the exciton energy, separated by the Rabi splitting, which can be observed in emission and reflectance spectra. This protocol uses a novel, low-cost, solution-based fabrication method [3].

Materials & Equipment:

- Spectroscopic software for photoluminescence (PL) and reflectance measurements

- CCD spectrometer or similar detection system

- Spin coater or dip-coating apparatus

- Precursor solutions for dielectric mirrors (e.g., polymer-based or colloidal solutions)

- Solution of the organic emitter under study (e.g., TGlu or similar single benzene fluorophore [4])

- Clean, optically flat substrate (e.g., glass slide, silicon wafer)

Procedure:

Microcavity Fabrication:

- Bottom Mirror Deposition: Using the spin coater, deposit the bottom dielectric mirror onto the substrate, typically as a stack of alternating high- and low-refractive-index layers. Program the software controlling the spin coater with the speed and time required to reach each target layer thickness, and cure between layers if necessary.

- Active Layer Deposition: Spin-coat the solution of the organic emitter directly onto the bottom mirror layer. Optimize parameters to form a uniform, smooth film.

- Top Mirror Deposition: Carefully deposit the top mirror layer using the same solution-process technique (spin or dip coating), completing the microcavity structure.
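Target thicknesses for these spin-coating steps follow from standard thin-film relations: quarter-wave mirror layers (d = λ0 / 4n), an active layer that is an integer number of half waves thick, and the textbook expression for the peak reflectance of a lossless quarter-wave stack. The sketch below assumes normal incidence and non-absorbing layers. The refractive indices (1.4 and 1.7 for the two mirror materials, 1.6 for the emitter layer) and the 550 nm design wavelength are hypothetical; real precursor materials must be characterized first.

```python
def quarter_wave_thickness(design_wavelength_nm: float, n: float) -> float:
    """Physical thickness of a quarter-wave layer: d = lambda0 / (4 n)."""
    return design_wavelength_nm / (4.0 * n)


def half_wave_cavity_thickness(design_wavelength_nm: float, n_active: float,
                               order: int = 1) -> float:
    """Active-layer thickness for an order-m cavity: L = m * lambda0 / (2 n).

    Ignores field penetration into the mirrors, so treat it as a starting value.
    """
    return order * design_wavelength_nm / (2.0 * n_active)


def dbr_peak_reflectance(n0: float, ns: float, n1: float, n2: float, pairs: int) -> float:
    """Normal-incidence peak reflectance of a lossless quarter-wave stack of
    `pairs` (n1, n2) layer pairs between incident medium n0 and substrate ns."""
    a = n0 * n2 ** (2 * pairs)
    b = ns * n1 ** (2 * pairs)
    return ((a - b) / (a + b)) ** 2


if __name__ == "__main__":
    lam = 550.0  # hypothetical design wavelength near the emitter's exciton (nm)
    print(quarter_wave_thickness(lam, 1.4), quarter_wave_thickness(lam, 1.7))
    print(half_wave_cavity_thickness(lam, 1.6))
    for pairs in (5, 10, 15):
        print(pairs, dbr_peak_reflectance(1.0, 1.5, 1.4, 1.7, pairs))
```

The low index contrast typical of polymer pairs is why many layer pairs are needed before the peak reflectance approaches unity.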

Spectral Characterization:

- Photoluminescence (PL) Measurement: Direct a laser at the excitation wavelength onto the microcavity sample. Use the spectroscopy software to acquire the PL spectrum emitted from the sample surface.

- Reflectance Measurement: Using a broadband light source, acquire the reflectance spectrum of the microcavity to identify the cavity mode.

Data Analysis & Polariton Observation:

- In the spectroscopy software, plot the PL and reflectance spectra on the same energy scale.

- Observe the emission peaks in the PL spectrum. Two distinct peaks near the energy at which the uncoupled cavity mode and exciton would cross, with the two branches anticrossing instead of crossing, are the signature of polariton formation (Rabi splitting).

- Use the software's peak fitting tool to measure the energy separation between these two peaks; at zero detuning (cavity mode resonant with the exciton), this separation is the Rabi splitting energy (ħΩ_R).
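Fitted peak positions are often interpreted with a two-coupled-oscillator model, in which the minimum separation of the two polariton branches, reached where the cavity and exciton energies coincide, equals the Rabi splitting. The sketch below is a lossless version of that model with an assumed angle-dependent planar-cavity dispersion. All parameter values in the usage lines are hypothetical. With realistic linewidths, the measured peak separation can differ slightly from ħΩ_R.

```python
import math

HC_EV_NM = 1239.841984  # h*c in eV*nm, converts wavelength (nm) to photon energy (eV)


def nm_to_ev(wavelength_nm: float) -> float:
    """Convert a wavelength to photon energy so PL and reflectance share one axis."""
    return HC_EV_NM / wavelength_nm


def cavity_mode_energy(e0_ev: float, angle_deg: float, n_eff: float) -> float:
    """Planar-cavity photon mode vs. external angle: E0 / sqrt(1 - sin^2(theta) / n_eff^2)."""
    s = math.sin(math.radians(angle_deg)) / n_eff
    return e0_ev / math.sqrt(1.0 - s * s)


def polariton_branches(e_cav_ev: float, e_exc_ev: float,
                       rabi_ev: float) -> tuple[float, float]:
    """Lower and upper polariton energies from a lossless two-coupled-oscillator model."""
    mean = 0.5 * (e_cav_ev + e_exc_ev)
    half_gap = 0.5 * math.sqrt((e_cav_ev - e_exc_ev) ** 2 + rabi_ev ** 2)
    return mean - half_gap, mean + half_gap


if __name__ == "__main__":
    # Hypothetical: exciton 2.30 eV, cavity 2.20 eV at normal incidence,
    # n_eff = 1.7, Rabi splitting 0.15 eV.
    for angle in range(0, 61, 10):
        ec = cavity_mode_energy(2.20, angle, 1.7)
        lp, up = polariton_branches(ec, 2.30, 0.15)
        print(f"{angle:2d} deg  LP {lp:.3f} eV  UP {up:.3f} eV  gap {up - lp:.3f} eV")
```

Fitting this model to peaks measured at several angles, rather than reading one spectrum, separates a genuine anticrossing from two unrelated emission features.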

Outcome Metrics: This protocol provides a low-cost, energy-efficient alternative to vacuum-based fabrication. It enables the observation of key quantum phenomena like polariton-mediated suppression of bimolecular annihilation, which can improve the stability and efficiency of light-emitting devices [3] [4].

Experimental Workflow and System Optimization

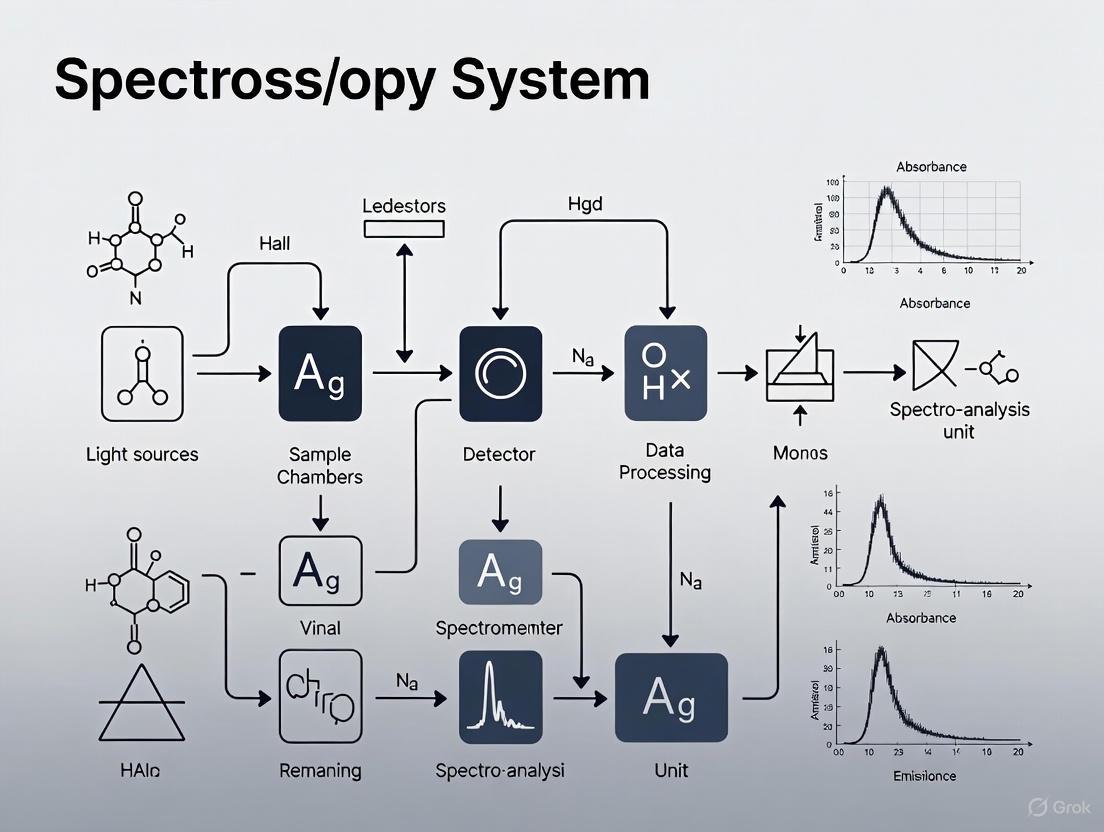

The process of conducting spectroscopic analysis and optimizing the system involves a logical sequence of steps, from sample preparation to data interpretation and system refinement. The workflow below visualizes this integrated process.

Diagram 1: Spectroscopy analysis workflow

The Scientist's Toolkit: Key Research Reagent Solutions

The following table details essential materials and software solutions used in advanced spectroscopic studies, particularly those involving novel fluorophores and microcavities as described in the protocols.

Table 4: Essential Research Reagents and Materials for Advanced Spectroscopic Studies

| Item Name | Function/Description | Application Example |
|---|---|---|
| Single Benzene Fluorophores (SBFs) | Donor-acceptor substituted benzene cores acting as highly emissive organic materials with high quantum yields in both solution and solid states [4]. | TGlu fluorophore for waveguiding and photocatalysis studies. |
| Dielectric Mirror Precursors | Polymer or colloidal solutions used to create highly reflective mirrors via spin-coating or dip-coating for microcavity fabrication [3]. | Building solution-processed optical microcavities. |
| Polariton Microcavity | A structure that confines light, enhancing its interaction with matter to form hybrid light-matter particles (polaritons) for quantum studies [3]. | Studying strong light-matter coupling and quantum phenomena. |
| Spectroscopy Software with AI/ML Modules | Software incorporating machine learning algorithms for automated spectral analysis, peak identification, and data interpretation from large datasets [5] [2]. | High-throughput analysis, spectral pattern recognition. |
| ICP-OES Vertical Plasma Systems | Spectrometers with vertical plasma orientation for enhanced matrix tolerance and lower detection limits for trace metal analysis [7]. | Environmental monitoring of heavy metals; semiconductor purity testing. |

Structural Thermal Optical Performance (STOP) Analysis Workflow

For high-power applications and systems operating in harsh environments (e.g., space telescopes, manufacturing lasers), it is critical to simulate how structural and thermal changes affect optical performance. STOP analysis is an engineering workflow used in the design stage to optimize systems for real-world conditions [8].

Diagram 2: STOP analysis for system robustness

Procedure for STOP Analysis:

- Define Environmental Loads: In the simulation software (e.g., Ansys Mechanical), specify the thermal and structural loads the system will encounter, such as in-orbit temperature variations for a CubeSat or heat generation from a high-power laser [8].

- Perform Structural & Thermal Analysis: Run a Finite Element Analysis (FEA) to calculate the resulting structural deformations and temperature distributions throughout the optical system.

- Map Data to Optical Model: Export the resulting surface deformations and refractive index gradient data from the FEA and map them onto the corresponding components in the optical design software (e.g., Ansys Zemax OpticStudio).

- Ray Tracing & Wavefront Analysis: Perform a ray trace through the deformed optical system. Analyze key metrics such as the wavefront error, spot diagram, and beam profile at the image or focal plane.

- Evaluate System Performance: Assess whether the system still meets performance requirements (e.g., maintaining a specific beam size or focal point) under the applied environmental loads.

- Optimize Design: If performance is degraded, iteratively adjust the mechanical design, material choices, or support structures and repeat the analysis until the system performs robustly in all expected conditions.
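Before committing to a full FEA-to-ray-trace loop, a first-order hand estimate can show whether thermal loads are likely to matter. The sketch below is not the STOP workflow itself. It assumes a thin singlet in air, a uniform temperature change, and a housing that sets the lens-to-detector spacing, then compares the resulting defocus with the common ±2λN² depth-of-focus criterion. The material constants in the usage lines are approximate, illustrative values (a crown-glass-like lens in an aluminium housing); real analyses should use catalog data and the FEA results.

```python
def thermal_defocus_mm(f_mm: float, cte_lens: float, dn_dt_rel: float, n: float,
                       cte_housing: float, delta_t_k: float) -> float:
    """Defocus of a thin singlet imaging onto a detector held by a housing,
    for a uniform temperature change delta_t_k (K).

    The focal length changes by f * (cte_lens - dn_dt_rel / (n - 1)) * dT, while
    the lens-to-detector spacing grows by f * cte_housing * dT.
    """
    focal_shift = f_mm * (cte_lens - dn_dt_rel / (n - 1.0)) * delta_t_k
    spacing_change = f_mm * cte_housing * delta_t_k
    return focal_shift - spacing_change


def depth_of_focus_mm(wavelength_mm: float, f_number: float) -> float:
    """Half-width of the quarter-wave depth of focus: +/- 2 * lambda * N^2."""
    return 2.0 * wavelength_mm * f_number ** 2


if __name__ == "__main__":
    # Illustrative, approximate values only.
    dz = thermal_defocus_mm(f_mm=100.0, cte_lens=7.1e-6, dn_dt_rel=2.4e-6, n=1.5168,
                            cte_housing=23.6e-6, delta_t_k=20.0)
    tol = depth_of_focus_mm(wavelength_mm=550e-6, f_number=5.0)
    print(f"defocus {dz * 1e3:.1f} um, tolerance +/-{tol * 1e3:.1f} um, ok={abs(dz) <= tol}")
```

With these illustrative numbers, the housing expansion rather than the glass dominates the defocus and exceeds the tolerance. This is the kind of result that prompts the material or mount changes described in the Optimize Design step.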

Outcome Metrics: STOP analysis prevents costly redesigns and failures by ensuring optical systems maintain their performance specifications after being exposed to real-world structural and thermal stresses, a critical step for manufacturability and reliability [8].

In modern spectroscopy, the path from conceptual design to a functional, optimized system relies heavily on advanced optical simulation and analysis software. These tools reduce reliance on costly cycles of physical prototyping and testing, accelerating development and improving performance. This section compares three prominent software packages, Ansys Speos, TracePro, and Bruker OPUS, in the context of designing and optimizing spectroscopy systems for drug development and scientific research. Speos and TracePro are simulation tools for the design and virtual prototyping of optical systems, whereas OPUS is the dedicated platform for operating Bruker spectroscopic instruments and analyzing the acquired data [9] [10] [11]. The section outlines their distinct capabilities, provides protocols for their application, and illustrates their roles within the spectroscopy system lifecycle.

The table below summarizes the core attributes, primary strengths, and ideal application contexts for each software package, providing a high-level overview for selection.

| Software | Primary Function | Key Strengths | Typical Application Context in Spectroscopy |
|---|---|---|---|
| Ansys Speos [9] [12] | Optical system design & simulation | Human Vision simulation; GPU acceleration (Live Preview); strong automotive and sensor (LiDAR) focus; stray light analysis | Design of illumination for sample analysis; sensor (camera, LiDAR) integration and layout simulation; assessing human-readable displays in spectroscopic instruments |
| TracePro [10] [13] [14] | Optical & illumination design & simulation | Biomedical-specific features (tissue scattering, fluorescence); strong stray light analysis; non-sequential ray tracing for complex systems | Design and optimization of spectrometer optical trains (gratings, lenses, detectors); modeling light-tissue interaction for medical diagnostics; minimizing noise via stray light analysis in sensitive systems |
| Bruker OPUS [11] [15] | Spectral data acquisition & analysis | Direct instrument control for FT-IR, NIR, Raman; validated for cGMP/GLP/GAMP; multivariate quantification (QUANT3) and library search | Operating Bruker spectrometers; processing and quantifying spectral data (e.g., QUANT3 with SVR/LR algorithms); ensuring regulatory compliance in pharmaceutical labs |

For researchers, the choice between these tools is not mutually exclusive. Speos and TracePro are design and simulation tools used during the R&D and engineering phase to create and model the physical spectrometer. In contrast, OPUS is an operational and data analysis software used to run the instrument and interpret results after the hardware is built. The following visualization maps the logical workflow of a spectroscopy project, showing the complementary roles of simulation and operational software.

Detailed Capabilities for Spectroscopy

Ansys Speos

Speos excels in the design and validation of optical systems, with a strong emphasis on real-world performance and perception. Its capabilities are crucial for developing the optical components and sensor integrations often found in advanced spectroscopic instruments.

- Core Simulation Engine: Speos uses a ray tracing and ray propagation engine to predict light propagation within a 3D model. A key differentiator is its Human Vision capability, which physiologically models the human eye's response to light, allowing for high-fidelity visualization of illumination, crucial for designing user interfaces on spectroscopic devices [9] [12].

- GPU Acceleration: The Speos Live Preview function, powered by NVIDIA GPUs, allows for real-time interactive simulation. This drastically cuts iteration time, enabling designers to see results from the beginning of a simulation and make immediate adjustments to optical properties [9].

- Key Spectroscopy Features:

- Stray Light Analysis: Tools like Light Expert allow users to visualize interactions between light and geometry to identify causes of ghost images, reflections, and stray light, which is critical for maintaining signal-to-noise ratio [9].

- Sensor Simulation: Speos can assess camera and LiDAR raw signals, enabling the virtual validation of sensor layout on vehicles or other platforms, which can be pertinent for field-deployed spectroscopic systems [9] [16].

- Material and Surface Properties: It can model complex surface behaviors, including measured spectral 3D BxDFs, and volume behaviors like spectral absorption and scattering [9].

TracePro

TracePro is a dedicated optical engineering tool renowned for its precision in modeling light propagation in complex systems, making it particularly suited for the intricate optical trains of spectrometers and biomedical devices.

- Core Simulation Engine: Its foundation is a powerful non-sequential ray tracing engine, which is essential for simulating the complex paths of light in spectroscopy systems, including multiple reflections, refraction, scattering, and diffraction [10] [13].

- Biomedical and Spectroscopy Features:

- Material and Tissue Modeling: TracePro can model light scattering within biological tissues, a critical capability for designing medical diagnostic devices like those used in diffuse optical tomography or fluorescence-guided surgery [14].

- Diffractive Optical Elements (DOEs): It includes tools for modeling diffraction gratings, which disperse light in grating-based spectrometers, as well as holographic optical elements (HOEs) and computer-generated holograms (CGHs) [13].

- Comprehensive Stray Light Analysis: The software includes a dedicated Stray Light Analyzer to identify and eliminate ghost reflections and unwanted light paths, directly improving the signal-to-noise ratio in spectral measurements [13].

- Fluorescence and Polarization: It allows for detailed modeling of fluorescence effects (excitation and emission) and polarization effects, which are fundamental to techniques like fluorescence spectroscopy and polarization-sensitive imaging [10] [14].

Bruker OPUS

OPUS is not a system design tool but the operational software for Bruker's spectroscopy instruments. It is the platform for acquiring, processing, and evaluating spectral data, and is a standard in many industrial and research laboratories.

- Core Functionality: OPUS provides measurement, processing, and evaluation of IR, NIR, and Raman spectra. It is validated software designed for operation in regulated environments under cGMP, GLP, and GAMP [11].

- Advanced Data Analysis Features:

- Multivariate Quantification (QUANT3): OPUS 9.2 includes the QUANT3 package for developing multivariate calibrations. It adds algorithms such as Support Vector Regression (SVR) and Local Regression (LR), intended to handle heterogeneous data sets and non-linearities more effectively than traditional PLS [15].

- Project-Based Design: QUANT3 uses a project-based structure, storing all models, spectra, and results in a single file to simplify data management and validation [15].

- Library Search and Identification: It offers advanced library search capabilities for the identification of unknown substances, including a new Autonomous Composition Identifier (A.I.D.) in OPUS-TOUCH for analyzing complex mixtures [11] [15].

Experimental Protocols

Protocol: Designing a Spectrometer Optical Train Using TracePro

This protocol outlines the methodology for designing and optimizing the core optical path of a Raman spectrometer using TracePro's non-sequential ray tracing capabilities [13] [14].

1. Objective: To model the excitation, scattering, and collection pathways of a Raman spectrometer to maximize signal collection efficiency and minimize stray light at the detector.

2. Research Reagent Solutions (Virtual Components):

| Component | Function in Simulation |
|---|---|
| Laser Source | Models the excitation wavelength, divergence, and spatial profile. |
| Sample Volume | Defines optical properties (absorption, scattering) to simulate Raman scattering and tissue interaction. |
| Collection Lenses/Mirrors | Guides scattered light onto the diffraction grating; performance is optimized for minimal aberration. |
| Diffraction Grating | Disperses collected light by wavelength; modeled as a diffractive optical element (DOE). |
| Detector | A virtual sensor that captures the dispersed spectrum and measures irradiance. |

3. Procedure:

- Step 1: Geometry Import/Creation. Import the CAD model of the spectrometer housing and optical components or create the geometry directly within TracePro.

- Step 2: Define Optical Properties. Assign precise surface and material properties to all components. For the diffraction grating, define its groove density and efficiency. For the sample, apply scattering and absorption coefficients representative of the target biological tissue [14].

- Step 3: Configure Light Source. Set up the laser source with the correct wavelength, power, and spatial characteristics. Define the Raman emission from the sample as a secondary, wavelength-shifted source.

- Step 4: Run Non-Sequential Ray Trace. Execute the ray trace with enough rays that the detector irradiance map converges, i.e., repeating the trace with more rays no longer changes the result beyond the required tolerance.

- Step 5: Stray Light Analysis. Use the Stray Light Analyzer tool to identify and trace paths of unwanted light (e.g., from ghost reflections or scatter) that reach the detector. Introduce baffles or apply anti-reflective coatings in the model to mitigate this noise [13].

- Step 6: Analyze and Optimize. Review the irradiance map on the detector. Evaluate signal strength and spectral resolution. Iteratively adjust the positions and curvatures of collection optics to maximize signal collection and uniformity.
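Defining the Raman emission as a wavelength-shifted source (Step 3) and checking spectral resolution (Step 6) both rest on two standard relations: converting a Raman shift in cm⁻¹ to an absolute Stokes wavelength, and the grating equation with its reciprocal linear dispersion. The sketch below is a first-order paper calculation, independent of TracePro's own interface. The excitation wavelength, groove density, incidence angle, and focal length in the usage lines are hypothetical.

```python
import math


def raman_stokes_wavelength_nm(excitation_nm: float, shift_cm1: float) -> float:
    """Absolute Stokes line wavelength: 1/lambda_s = 1/lambda_ex - shift."""
    # 1 cm^-1 equals 1e-7 nm^-1
    return 1.0 / (1.0 / excitation_nm - shift_cm1 * 1e-7)


def diffraction_angle_deg(wavelength_nm: float, grooves_per_mm: float,
                          incidence_deg: float, order: int = 1) -> float:
    """Grating equation m*lambda = d*(sin(alpha) + sin(beta)); angles are measured
    from the grating normal and taken positive on the same side."""
    d_nm = 1e6 / grooves_per_mm
    s = order * wavelength_nm / d_nm - math.sin(math.radians(incidence_deg))
    if abs(s) > 1.0:
        raise ValueError("this diffraction order does not propagate")
    return math.degrees(math.asin(s))


def reciprocal_linear_dispersion_nm_per_mm(wavelength_nm: float, grooves_per_mm: float,
                                           incidence_deg: float, focal_length_mm: float,
                                           order: int = 1) -> float:
    """d(lambda)/dx = d * cos(beta) / (m * f) at the detector plane, in nm per mm."""
    beta = math.radians(diffraction_angle_deg(wavelength_nm, grooves_per_mm,
                                              incidence_deg, order))
    d_nm = 1e6 / grooves_per_mm
    return d_nm * math.cos(beta) / (order * focal_length_mm)


if __name__ == "__main__":
    # Hypothetical design: 785 nm excitation, 600 l/mm grating, 10 deg incidence, 100 mm camera lens.
    for shift in (400, 1000, 1600):
        lam = raman_stokes_wavelength_nm(785.0, shift)
        print(shift, round(lam, 2),
              round(diffraction_angle_deg(lam, 600, 10.0), 2),
              round(reciprocal_linear_dispersion_nm_per_mm(lam, 600, 10.0, 100.0), 2))
```

Multiplying the reciprocal linear dispersion by the detector pixel pitch gives nanometres per pixel, a quick check against the resolution the ray trace reports.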

The workflow for this design and optimization process is illustrated below.

Protocol: Validating Material Optical Properties with Ansys Speos

This protocol describes how to use Ansys Speos to validate the optical properties of a material used within a spectroscopic instrument, such as a housing coating to minimize stray light [9] [12].

1. Objective: To simulate and measure the reflectance and absorption properties of a material sample under controlled illumination to verify its suitability for reducing internal stray light.

2. Research Reagent Solutions (Virtual Components):

| Component | Function in Simulation |
|---|---|
| Standard Illuminant | Provides a controlled, spectrally defined light source (e.g., D65). |
| Material Sample | A virtual sample with assigned surface properties (e.g., BSDF) from a measured database. |
| Imaging Sphere Sensor | A simulated integrating sphere to capture hemispherical reflectance. |
| Luminance Camera Sensor | Provides a human-vision-based view of the material's appearance. |

3. Procedure:

- Step 1: Build Virtual Setup. In the Speos 3D environment, create a simple scene with the standard illuminant, the material sample plate, and the imaging sphere sensor.

- Step 2: Assign Material. Apply the material under test from the Speos custom materials library. This material should have pre-defined optical properties, such as a measured BSDF (Bidirectional Scattering Distribution Function).

- Step 3: Configure Simulation. Set up the simulation to run in the desired spectral range (e.g., Visible, NIR). Activate the Human Vision option if assessing visual appearance is required.

- Step 4: Run GPU-Accelerated Simulation. Execute the simulation using Speos Live Preview on an NVIDIA GPU for rapid iterative results.

- Step 5: Analyze Results. Examine the results in the post-processing tool. Quantify the total hemispherical reflectance from the imaging sphere sensor. Use the Light Expert tool to visualize specific light paths contributing to reflection and identify potential stray light contributors.
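When results are available as spectral reflectance, coatings can be compared with a single figure of merit: the reflectance averaged over the band and weighted by the illuminant's spectral power distribution (SPD). The sketch below assumes the spectral reflectance and the illuminant SPD have already been exported as arrays on a common wavelength grid. It does not show how Speos exports them, and it is plain arithmetic, not a Speos function. The reflectance values and the flat placeholder SPD in the usage lines are hypothetical.

```python
def weighted_mean_reflectance(wavelengths_nm, reflectance, spd):
    """Illuminant-weighted band reflectance:
    R_avg = integral(R * S dlambda) / integral(S dlambda), by the trapezoidal rule.
    """
    if not (len(wavelengths_nm) == len(reflectance) == len(spd)) or len(wavelengths_nm) < 2:
        raise ValueError("need matching arrays with at least two samples")
    num = den = 0.0
    for i in range(len(wavelengths_nm) - 1):
        dl = wavelengths_nm[i + 1] - wavelengths_nm[i]
        num += 0.5 * dl * (reflectance[i] * spd[i] + reflectance[i + 1] * spd[i + 1])
        den += 0.5 * dl * (spd[i] + spd[i + 1])
    return num / den


if __name__ == "__main__":
    wl = [400, 500, 600, 700]             # nm, coarse grid for illustration
    flat_spd = [1.0, 1.0, 1.0, 1.0]       # placeholder; substitute tabulated D65 values
    coating = [0.06, 0.04, 0.035, 0.05]   # hypothetical coating reflectance
    print(weighted_mean_reflectance(wl, coating, flat_spd))
```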

Protocol: Quantitative Analysis of Pharmaceutical Formulations using OPUS

This protocol details the steps for developing and using a multivariate calibration model in Bruker OPUS to quantify active pharmaceutical ingredient (API) concentration in a tablet [11] [15].

1. Objective: To create and validate a quantitative model (QUANT3) using NIR spectra to predict the concentration of an API in a solid dosage form.

2. Research Reagent Solutions:

| Component | Function |
|---|---|
| Bruker FT-NIR Spectrometer | Instrument for acquiring spectral data, controlled by OPUS. |
| Calibration Set Tablets | Tablets with known, varying API concentrations (reference values). |
| Validation Set Tablets | An independent set of tablets for testing the model's predictive accuracy. |

3. Procedure:

- Step 1: Spectral Acquisition. Using the OPUS software, measure the NIR spectra of all tablets in the calibration and validation sets under consistent operational parameters.

- Step 2: Data Preparation and Pre-processing. In the QUANT3 interface, load the spectra of the calibration set. Perform necessary spectral pre-processing (e.g., vector normalization, derivative) to enhance the spectral features related to the API.

- Step 3: Model Development. Assign the known reference values (API concentrations) to the corresponding spectra. Select an algorithm (PLS, SVR, or Local Regression) and allow OPUS to build the calibration model. The software will automatically handle dataset splitting (e.g., using the Kennard-Stone algorithm) to create training and test sets for internal validation [15].

- Step 4: Model Validation. Validate the model's performance using the independent validation set. OPUS will provide figures of merit such as the Root Mean Square Error of Prediction (RMSEP) and the coefficient of determination (R²).

- Step 5: Deploy for Prediction. Once validated, the model can be used within OPUS to predict the API concentration in unknown tablet samples routinely.
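OPUS performs the pre-processing, dataset splitting, and figure-of-merit calculations internally. Readers who want to reproduce or audit those steps outside the software can use the NumPy sketch below. It implements a generic Kennard-Stone split, a mean-centred vector normalization, and the standard RMSEP and R² definitions. It is an independent illustration, not Bruker's implementation; OPUS's exact pre-processing conventions should be checked against its documentation.

```python
import numpy as np


def vector_normalize(spectra: np.ndarray) -> np.ndarray:
    """Mean-centre each spectrum (row) and scale it to unit Euclidean norm."""
    centered = spectra - spectra.mean(axis=1, keepdims=True)
    return centered / np.linalg.norm(centered, axis=1, keepdims=True)


def kennard_stone(X: np.ndarray, n_train: int) -> tuple[list[int], list[int]]:
    """Start from the two most distant samples, then repeatedly add the sample whose
    distance to its nearest already-selected sample is largest."""
    X = np.asarray(X, dtype=float)
    if not 2 <= n_train <= len(X):
        raise ValueError("n_train must be between 2 and the number of samples")
    dist = np.linalg.norm(X[:, None, :] - X[None, :, :], axis=2)
    i, j = np.unravel_index(np.argmax(dist), dist.shape)
    selected = [int(i), int(j)]
    remaining = [k for k in range(len(X)) if k not in selected]
    while len(selected) < n_train:
        nearest = dist[np.ix_(remaining, selected)].min(axis=1)
        pick = remaining[int(np.argmax(nearest))]
        selected.append(pick)
        remaining.remove(pick)
    return selected, remaining


def rmsep(y_true, y_pred) -> float:
    """Root mean square error of prediction."""
    y_true, y_pred = np.asarray(y_true, float), np.asarray(y_pred, float)
    return float(np.sqrt(np.mean((y_true - y_pred) ** 2)))


def r_squared(y_true, y_pred) -> float:
    """Coefficient of determination, 1 - SS_res / SS_tot."""
    y_true, y_pred = np.asarray(y_true, float), np.asarray(y_pred, float)
    ss_res = np.sum((y_true - y_pred) ** 2)
    ss_tot = np.sum((y_true - y_true.mean()) ** 2)
    return float(1.0 - ss_res / ss_tot)
```

Kennard-Stone deliberately places the extremes of the spectral space in the training set, so the remaining test samples lie inside the calibrated range. This is also why an independent validation set (Step 4) is still needed.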

The workflow for this quantitative analysis is methodically outlined in the following diagram.

The effective design and optimization of modern spectroscopy systems require a suite of specialized software tools, each playing a distinct and critical role. Ansys Speos and TracePro are powerful allies in the virtual design and prototyping phase, enabling engineers to simulate optical performance, predict interactions, and catch costly errors before physical manufacturing. Speos brings strengths in human-centric visualization and sensor simulation, while TracePro offers high precision for modeling complex optical paths and biomedical interactions. Once a system is built, Bruker OPUS takes the lead as a robust, compliance-ready platform for instrument control, spectral acquisition, and advanced quantitative analysis, directly supporting research and quality control in demanding fields like pharmaceutical development. By understanding the capabilities of, and synergies between, these platforms, researchers can make informed decisions that streamline the entire spectroscopy system lifecycle, from initial concept to final analytical results.

Optical spectroscopy software is a specialized tool designed to work with spectrometers to collect, analyze, and interpret spectral data. It underpins instrument-based methods for determining material composition, enabling researchers to acquire data, extract information, and produce reports that support decision-making [17]. The global spectroscopy software market was valued at approximately USD 1.1 to 1.2 billion in 2024. It is projected to grow at a compound annual growth rate (CAGR) of 9.1% to 9.2%, reaching around USD 2.5 billion by 2033-2034 [17] [1]. This growth is driven largely by increasing demand from the pharmaceutical industry, stringent environmental and food safety regulations, and continuous technological advances [17].

For researchers, scientists, and drug development professionals, selecting the appropriate spectroscopy software is a critical decision that directly impacts data integrity, workflow efficiency, and regulatory compliance. This application note establishes a structured framework for evaluation based on three pivotal criteria: accuracy, CAD integration, and application-specific features, contextualized within the broader objective of designing and optimizing spectroscopy systems.

Core Selection Criteria and Evaluation Protocols

Accuracy and Performance Validation

Definition and Importance: Accuracy in spectroscopy software refers to how closely the collected, processed, and interpreted results agree with the true values. It is closely tied to precision, meaning the repeatability of those results across measurements. Together, accuracy and precision ensure that spectral data reliably reflects the true composition and properties of the sample. This is non-negotiable in applications like drug development, where decisions directly affect product safety and efficacy [6].

Quantitative Benchmarks: The following table summarizes key quantitative benchmarks for assessing software accuracy.

Table 1: Key Quantitative Benchmarks for Software Accuracy

| Performance Metric | Benchmark Value | Validation Method |
|---|---|---|
| Spectral Resolution | < 0.1 nm (UV-Vis) | Measurement of FWHM (Full Width at Half Maximum) of atomic emission lines [6]. |
| Peak Identification Accuracy | > 99.5% | Analysis of standard reference materials with known spectral peaks [6]. |
| Quantitative Analysis Error | < 1.0% RSD | Repeated measurement of standard concentrations for calibration curve validation [17]. |
| Algorithm Processing Speed | Millions of rays/sec (for optical simulation) | Ray tracing simulations on standardized hardware [18]. |

Experimental Protocol 1: Protocol for Validating Spectral Accuracy and Reproducibility

- Objective: To verify the software's accuracy in identifying known spectral features and its reproducibility across multiple measurements.

- Materials:

- Spectroscopy software under test (e.g., solutions from Thermo Fisher Scientific, Agilent Technologies, Bruker) [17].

- Calibrated spectrometer.

- Standard Reference Materials (SRMs): NIST-traceable holmium oxide filter (for wavelength accuracy) and a certified intensity standard.

- Procedure:

- System Calibration: Follow the software and instrument manufacturer's prescribed calibration procedure.

- Data Acquisition: Acquire spectra from the SRMs using three replicate measurements.

- Peak Analysis: Use the software's automated peak detection function to identify the characteristic peaks of the SRM.

- Data Comparison: Compare the software-identified peak positions (wavelengths) and intensities against the certified values from the SRM documentation.

- Statistical Analysis: Calculate the mean, standard deviation, and relative standard deviation (RSD) for the replicate measurements.

- Data Interpretation: The software is deemed to have passed the validation if the mean peak positions are within ±0.1 nm of the certified values and the RSD for intensity measurements is less than 1.0% [6].
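The acceptance test in the Data Interpretation step reduces to a few lines of arithmetic. This is a minimal sketch. It assumes the software can export replicate peak positions and intensities, but the data layout and the `validate_peaks` name are hypothetical. The ±0.1 nm and 1.0% RSD limits are the ones stated above, and the example numbers are illustrative, not values from a real SRM certificate.

```python
import statistics


def validate_peaks(measured, certified, pos_tol_nm=0.1, max_rsd_pct=1.0):
    """Apply the Protocol 1 acceptance criteria to replicate SRM measurements.

    measured:  {peak_label: [(position_nm, intensity), ...]} from the replicates
    certified: {peak_label: certified_position_nm} from the SRM certificate
    Returns (per-peak results, overall pass flag).
    """
    results = {}
    for label, replicates in measured.items():
        positions = [pos for pos, _ in replicates]
        intensities = [inten for _, inten in replicates]
        mean_pos = statistics.mean(positions)
        mean_int = statistics.mean(intensities)
        # Sample standard deviation (n - 1 denominator), expressed as RSD in %.
        rsd_pct = 100.0 * statistics.stdev(intensities) / mean_int
        offset = mean_pos - certified[label]
        results[label] = {
            "mean_position_nm": mean_pos,
            "offset_nm": offset,
            "intensity_rsd_pct": rsd_pct,
            "passed": abs(offset) <= pos_tol_nm and rsd_pct < max_rsd_pct,
        }
    return results, all(r["passed"] for r in results.values())


# Illustrative numbers only (hypothetical peak label and values).
measured = {"peak_A": [(361.02, 1000.0), (360.98, 1005.0), (361.00, 995.0)]}
certified = {"peak_A": 361.00}
results, passed = validate_peaks(measured, certified)
print(passed, round(results["peak_A"]["intensity_rsd_pct"], 3))
```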

Experimental Protocol 2: Protocol for Benchmarking Computational Performance

- Objective: To assess the software's efficiency in processing large spectral datasets, which is crucial for high-throughput screening in drug discovery [17].

- Materials:

- Spectroscopy software installed on a standardized workstation.

- A benchmark dataset comprising 10,000 spectral files.

- Procedure:

- Task Definition: Design a batch processing task that includes baseline correction, peak picking, and quantitative analysis.

- Execution: Execute the batch job and record the total processing time using the software's internal timer or an external stopwatch.

- Resource Monitoring: Use the operating system's resource monitor to track CPU and memory usage during the task.

- Data Interpretation: Compare the processing time and resource utilization against predefined project requirements or competing software solutions. Faster processing with stable resource usage indicates superior performance for high-throughput environments.
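Protocol 2 times the vendor software's own batch job, so the following is not a substitute for that test. It is a minimal harness that shows the measurement logic on a hypothetical pure-Python pipeline:

- wall-clock time from `time.perf_counter`
- peak Python memory allocation from `tracemalloc`
- a stand-in pipeline made of a two-point baseline, local-maximum peak picking, and a summed intensity

The synthetic Gaussian spectra stand in for the 10,000-file benchmark dataset.

```python
import math
import random
import time
import tracemalloc


def baseline_correct(spectrum):
    """Subtract a straight line drawn through the first and last points."""
    n = len(spectrum)
    slope = (spectrum[-1] - spectrum[0]) / (n - 1)
    return [y - (spectrum[0] + slope * i) for i, y in enumerate(spectrum)]


def pick_peaks(spectrum, threshold):
    """Indices of local maxima whose height exceeds threshold."""
    return [
        i
        for i in range(1, len(spectrum) - 1)
        if spectrum[i] > threshold
        and spectrum[i] >= spectrum[i - 1]
        and spectrum[i] > spectrum[i + 1]
    ]


def process_batch(spectra, threshold=0.5):
    """Baseline correction, peak picking and a crude integrated intensity per spectrum."""
    results = []
    for spectrum in spectra:
        corrected = baseline_correct(spectrum)
        results.append((pick_peaks(corrected, threshold), sum(corrected)))
    return results


def benchmark(spectra):
    """Return (results, wall-clock seconds, peak bytes allocated by Python)."""
    tracemalloc.start()
    start = time.perf_counter()
    results = process_batch(spectra)
    elapsed = time.perf_counter() - start
    _, peak_bytes = tracemalloc.get_traced_memory()
    tracemalloc.stop()
    return results, elapsed, peak_bytes


def synthetic_spectrum(n_points, rng):
    """Gaussian band on a sloping baseline plus noise (stand-in for real files)."""
    centre = n_points // 2
    return [
        0.001 * i + 2.0 * math.exp(-(((i - centre) / 10.0) ** 2)) + rng.gauss(0.0, 0.01)
        for i in range(n_points)
    ]


rng = random.Random(0)
batch = [synthetic_spectrum(500, rng) for _ in range(200)]
results, seconds, peak = benchmark(batch)
print(f"{len(batch)} spectra in {seconds:.3f} s, peak allocation {peak / 1024:.0f} KiB")
```

`tracemalloc` adds its own overhead. For precise figures, measure time and memory in separate runs.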

CAD and Software Ecosystem Integration

Definition and Importance: CAD integration refers to the software's ability to interface directly with Computer-Aided Design (CAD) environments. More broadly, it also covers data exchange with data management systems such as LIMS (Laboratory Information Management Systems). This capability bridges the gap between mechanical design and optical analysis, enabling a cohesive workflow from component design to optical performance simulation [9] [18]. It reduces toolchain hand-offs, minimizes manual errors, and allows early evaluation of manufacturability, thereby accelerating the overall design cycle [19].

Evaluation Metrics: The table below outlines key metrics for evaluating integration capabilities.

Table 2: Metrics for Evaluating CAD and Ecosystem Integration

| Integration Feature | High-Quality Indicator | Application Benefit |
|---|---|---|
| Native CAD Plugin | Availability of a dedicated add-in (e.g., for SOLIDWORKS, Creo) [18]. | Allows application of optical properties and analysis directly within the CAD environment. |
| File Format Support | Robust import/export of STEP, SAT, IGES formats [18]. | Ensures compatibility and collaboration between mechanical and optical design teams. |
| LIMS/Data System Connectivity | Built-in connectors for automated data transfer to systems like LIMS [6]. | Streamlines data management, ensures traceability, and supports regulatory compliance (e.g., 21 CFR Part 11). |
| Scripting & Automation API | Access to a powerful macro or scripting environment (e.g., Scheme, Python) [18]. | Enables automation of repetitive tasks, creation of custom workflows, and extension of software capabilities. |

Experimental Protocol 3: Protocol for Testing CAD-to-Simulation Workflow Fidelity

- Objective: To validate the integrity and efficiency of transferring a CAD model into the spectroscopy or optical simulation software for analysis.

- Materials:

- CAD software (e.g., SOLIDWORKS).

- Spectroscopy/Optical software with claimed CAD integration (e.g., TracePro with its RayViz add-in for SOLIDWORKS) [18].

- Test model: A parametric CAD assembly of a simple optical mount or cuvette holder.

- Procedure:

- Model Preparation: Create or obtain the test model in the CAD environment.

- Export/Transfer: Use the software's integrated plugin or standard file format (e.g., STEP) to transfer the model.

- Fidelity Check: In the optical software, verify that all geometric features, dimensions, and assemblies are preserved and accurately represented.

- Optical Property Assignment: Apply material optical properties (e.g., refractive index, absorption) to the components within the optical software or via the CAD plugin.

- Simulation Execution: Run a basic ray-tracing or spectral simulation.

- Data Interpretation: A successful integration is demonstrated by a seamless transfer without geometry loss, the correct assignment and application of optical properties, and the successful execution of a simulation without errors attributable to the CAD model.
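The fidelity check in Protocol 3 becomes objective when the same scalar properties are compared between the two tools. This is a minimal sketch. It assumes you can obtain per-body bounding-box extents and volumes from both the CAD application and the optical software; how to extract them is tool-specific and not shown. `compare_geometry`, the body names, and the tolerance are hypothetical.

```python
def compare_geometry(reference, imported, rel_tol=1e-4):
    """List discrepancies between CAD-side and optics-side geometry summaries.

    Both arguments map a body name to {property_name: value}, e.g. bounding-box
    extents in mm or volume in mm^3 as reported by each tool. An empty list
    means every listed property survived the transfer within rel_tol.
    """
    problems = []
    for body, props in reference.items():
        if body not in imported:
            problems.append(f"{body}: missing after import")
            continue
        for name, ref_value in props.items():
            new_value = imported[body].get(name)
            if new_value is None:
                problems.append(f"{body}.{name}: not reported after import")
            elif abs(new_value - ref_value) > rel_tol * abs(ref_value):
                problems.append(f"{body}.{name}: {ref_value} -> {new_value}")
    for body in imported:
        if body not in reference:
            problems.append(f"{body}: unexpected extra body after import")
    return problems


# Hypothetical summaries for a cuvette-holder assembly.
cad = {"holder": {"dx_mm": 12.5, "dy_mm": 12.5, "volume_mm3": 1850.0},
       "cuvette": {"dx_mm": 12.5, "dy_mm": 12.5, "volume_mm3": 1560.0}}
optics = {"holder": {"dx_mm": 12.5, "dy_mm": 12.5, "volume_mm3": 1849.0},
          "cuvette": {"dx_mm": 12.5, "dy_mm": 12.5, "volume_mm3": 1560.0}}
print(compare_geometry(cad, optics))
```

A change in volume with unchanged extents, as in the example, often points to a lost fillet, hole, or thin feature rather than a scaling error.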

Application-Specific Features for Drug Development

Definition and Importance: Application-specific features are specialized functionalities that cater directly to the workflows, regulatory requirements, and analytical challenges of a particular sector. For pharmaceutical professionals, this includes capabilities for drug quality assurance, high-throughput screening, and regulatory compliance [17] [6].

Key Feature Analysis: The following table details critical application-specific features for the pharmaceutical industry.

Table 3: Essential Application-Specific Features for Pharmaceutical Drug Development

| Feature Category | Specific Software Capabilities | Impact on Pharmaceutical Workflows |
|---|---|---|
| Quantitative Analysis | Advanced chemometrics, multivariate calibration, and concentration prediction models [6]. | Enables precise determination of active pharmaceutical ingredient (API) concentration and impurity levels. |
| Compliance & Data Integrity | Audit trails, electronic signatures, user access controls, and compliance with 21 CFR Part 11 [6]. | Ensures data is reliable and traceable, meeting strict FDA and EMA regulatory standards for drug approval. |
| High-Throughput Screening | Automated batch processing, data visualization tools, and compatibility with microplate readers [17]. | Accelerates drug discovery by allowing rapid analysis of large compound libraries. |
| Material Identification & Purity | Spectral library searching, principal component analysis (PCA), and conformity tests [6]. | Verifies the identity and purity of raw materials and excipients, preventing defects and ensuring product safety. |

Experimental Protocol 4: Protocol for Evaluating a Pharmaceutical Quality Control Workflow

- Objective: To test the software's end-to-end capability in a simulated pharmaceutical quality control scenario for drug composition verification [6].

- Materials:

- Spectroscopy software with pharmaceutical analysis features.

- Spectrometer.

- Samples: API standard, excipient, and a batch of formulated tablets.

- Procedure:

- Method Development: Create an analytical method in the software. This includes building a calibration curve using the API standard at different concentrations.

- Library Management: Add reference spectra of the API and excipients to the software's spectral library.

- Sample Analysis: Acquire spectra from the tablet samples and run the pre-defined method.

- Automated Reporting: Use the software's reporting module to generate a compliance report that includes the identified components, quantified API concentration, and any spectral anomalies.

- Data Interpretation: The software is evaluated on three abilities: correctly identifying the API and excipients via library search, accurately quantifying the API concentration against the calibration curve, and flagging any outliers or spectral mismatches that could indicate impurities or formulation errors.
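The quantitative part of Protocol 4 can be sketched as a univariate linear calibration: fit the curve, back-calculate each tablet, and flag suspicious results. That choice is an assumption for illustration, because many pharmaceutical methods use multivariate chemometric models instead. The function names, the ±5% tolerance, and the example numbers are hypothetical.

```python
import math


def fit_calibration(concentrations, responses):
    """Ordinary least-squares line: response = slope * concentration + intercept.

    Returns (slope, intercept, residual standard deviation).
    """
    n = len(concentrations)
    mean_x = sum(concentrations) / n
    mean_y = sum(responses) / n
    sxx = sum((x - mean_x) ** 2 for x in concentrations)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(concentrations, responses))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    ss_res = sum((y - (slope * x + intercept)) ** 2 for x, y in zip(concentrations, responses))
    return slope, intercept, math.sqrt(ss_res / (n - 2))


def assess_tablets(responses, slope, intercept, cal_range, label_claim, tol_pct):
    """Predict API content for each tablet and flag suspicious results.

    A tablet is flagged when its prediction falls outside the calibrated range
    (extrapolation) or deviates from the label claim by more than tol_pct.
    """
    low, high = cal_range
    report = []
    for r in responses:
        conc = (r - intercept) / slope
        deviation_pct = 100.0 * (conc - label_claim) / label_claim
        flagged = not (low <= conc <= high) or abs(deviation_pct) > tol_pct
        report.append((conc, deviation_pct, flagged))
    return report


# Made-up calibration standards (mg/mL) and tablet responses.
slope, intercept, s_res = fit_calibration([0.5, 1.0, 1.5, 2.0], [1.1, 2.1, 3.1, 4.1])
report = assess_tablets([2.1, 2.5, 5.0], slope, intercept, (0.5, 2.0), 1.0, 5.0)
for conc, dev, flagged in report:
    print(f"{conc:.3f} mg/mL  {dev:+.1f}%  {'FLAG' if flagged else 'ok'}")
```

The third tablet is flagged for extrapolation beyond the calibrated range. Its prediction should not be trusted even if it happened to fall near the label claim.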

The Scientist's Toolkit: Essential Research Reagent Solutions

The following table lists key materials and software solutions essential for experiments in this field.

Table 4: Key Research Reagent Solutions for Spectroscopy System Optimization

| Item | Function / Application |
|---|---|
| NIST-Traceable Standard Reference Materials (SRMs) | Provides an absolute benchmark for validating the wavelength and photometric accuracy of the spectroscopy system [6]. |
| Optical Spectroscopy Software (e.g., from Thermo Fisher, Agilent, Bruker) | Core platform for data acquisition, processing, analysis, and reporting; enables material characterization and quality control [17] [6]. |
| CAD-Integrated Optical Software (e.g., Ansys Speos, TracePro) | Facilitates the design and virtual validation of optical components and systems within a CAD environment, reducing prototyping needs [9] [18]. |
| Parametric CAD Model of Spectrometer Component | Serves as a digital twin for simulating optical paths, component integration, and performing virtual tolerance analysis [19] [18]. |
| Scripting Macro (e.g., Scheme, Python) | Automates repetitive software tasks, customizes analysis routines, and enhances workflow efficiency and reproducibility [18]. |

Workflow Visualization

The following diagram illustrates the logical workflow for selecting and validating spectroscopy software based on the key criteria discussed.

Software Selection and Validation Workflow

This diagram outlines a systematic, iterative process for selecting optical spectroscopy software. It begins with clearly defining system requirements, followed by concurrent evaluation of the three core criteria: Accuracy, CAD Integration, and Application-Specific Features. Each criterion is assessed through specific experimental protocols. The results feed into a final decision point; if the software fails any criterion, the process loops back to requirement definition, ensuring a rigorous and comprehensive selection process.

Defining System Requirements for Pharmaceutical and Clinical Applications

Core System Requirements for Pharmaceutical Spectroscopy

Designing spectroscopy systems for pharmaceutical and clinical applications requires a meticulous approach to ensure data integrity, regulatory compliance, and analytical precision. The core requirements span hardware, software, and operational protocols.

System Qualification & Compliance Requirements

All spectroscopic instruments intended for regulated environments must undergo a rigorous qualification and validation process. The requirements can be categorized as follows:

Table 1: System Qualification & Compliance Requirements

| Requirement Category | Description | Key Standards & Examples |
|---|---|---|
| System Qualification | A three-tiered process to verify instrument performance [20]. | Design Qualification (DQ): Verifies the instrument's design attributes are acceptable; Operational Qualification (OQ): Confirms adherence to intended use specifications; Performance Qualification (PQ): Defines the instrument's intended performance for specific applications [20]. |
| Software Compliance | Software must ensure data integrity and security [21]. | 21 CFR Part 11 / EU Annex 11: Mandates electronic signatures, audit trails, and unique user log-ins. Software like Vision Air fulfills these technical requirements [21]. |
| Pharmacopoeia Compliance | Instruments must adhere to established testing protocols for their intended use [20]. | Adherence to USP, European, Japanese, or Chinese pharmacopoeias. Automated software tests adhering to NIST standards, such as the polystyrene standard in FTIR, are essential [20]. |
| Operator Training | Formalized processes are required to ensure operators are competent [20]. | Crucial for maintaining standardized operations and data credibility [20]. |

Application-Specific Technical Requirements

The technical specifications of a spectroscopy system are dictated by its specific application within the pharmaceutical workflow, from raw material inspection to final product quality assurance.

Table 2: Application-Specific Technical Requirements

| Application | Technology | Key System Requirements |
|---|---|---|
| Raw Material Identification | NIR Spectroscopy [21], Raman Spectroscopy [22] | Portability for use in warehouses [21]. Spatial resolution and molecular specificity for verifying API and excipient identity and purity [22]. |
| Polymorph & Crystallinity Characterization | Raman Spectroscopy [22] | High sensitivity and spectral resolution to differentiate between polymorphic forms that influence drug solubility and efficacy [22]. |
| Inline/Online Process Control | NIR Spectroscopy [21] | Real-time monitoring capability, fiber optic probes, ruggedized design for manufacturing environments, and software for real-time data analysis [21]. |
| Content Uniformity & Blend Homogeneity | NIR Spectroscopy [21], Raman Chemical Mapping [22] | For NIR: Rapid measurement of multiple tablets simultaneously [21]. For Raman: Confocal microscopy for detailed chemical mapping of API distribution within a tablet [22]. |
| Finished Product Quality Assurance | NIR Spectroscopy [21], FTIR Spectroscopy [20] | Non-destructive analysis, ability to analyze products in blisters, and determine multiple parameters (e.g., content, dissolution profile, hardness) [21]. FTIR must provide data on identity, purity, and quantity [20]. |

Experimental Protocols

Protocol: Raw Material Identity Verification using Handheld NIR Spectroscopy

This protocol outlines the procedure for the rapid, non-destructive identification of incoming raw materials (APIs, excipients) in a pharmaceutical warehouse or weighing area [21].
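Library-based identity checks usually score the sample spectrum against each qualified reference spectrum. The material is accepted only if the best match is the labeled material and the score clears a threshold. This is a minimal sketch. The Pearson-correlation score and the 0.95 threshold are both assumptions; vendor software may use other match metrics, preprocessing such as derivatives or SNV, and validated thresholds. `verify_identity` is hypothetical, and the five-point spectra are toy data.

```python
import math


def pearson(a, b):
    """Pearson correlation coefficient between two equal-length spectra."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    da = [x - mean_a for x in a]
    db = [y - mean_b for y in b]
    num = sum(x * y for x, y in zip(da, db))
    return num / math.sqrt(sum(x * x for x in da) * sum(y * y for y in db))


def verify_identity(sample, library, expected, threshold=0.95):
    """Check that the best library match is the expected material.

    Returns (best_match_name, best_score, passed). passed requires both that the
    top hit is the material named on the container label and that its score
    reaches the acceptance threshold.
    """
    scores = {name: pearson(sample, ref) for name, ref in library.items()}
    best = max(scores, key=scores.get)
    return best, scores[best], best == expected and scores[best] >= threshold


# Toy spectra; a real library holds preprocessed, qualified reference spectra.
library = {"lactose": [0.0, 1.0, 3.0, 1.0, 0.0], "cellulose": [3.0, 1.0, 0.0, 1.0, 3.0]}
sample = [0.1, 1.1, 2.9, 1.0, 0.1]
print(verify_identity(sample, library, expected="lactose"))
```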

Workflow

The following workflow illustrates the procedural and data integrity steps for raw material verification.

Materials and Equipment

Table 3: Research Reagent Solutions & Essential Materials

| Item | Function |
|---|---|
| Handheld or Portable NIR Spectrometer | Allows for rapid, on-site analysis of materials without the need to transport samples to a central lab [21]. |
| 21 CFR Part 11 Compliant Software | Ensures data integrity through user authentication, electronic signatures, and a complete, uneditable audit trail [21]. |
| Qualified Spectral Library | A validated database of reference spectra for all approved raw materials, used by the software to compare and identify unknown samples [21]. |
| Vial or Bag of Raw Material | The sample to be tested, often analyzed directly in its container with minimal or no preparation [21]. |

Protocol: Tablet Content Uniformity and Chemical Mapping using Confocal Raman Microscopy

This protocol uses Confocal Raman Microscopy to non-destructively assess the distribution and identity of the Active Pharmaceutical Ingredient (API) within a solid dosage form, providing critical information for formulation development and quality control [22].

Workflow

The workflow details the steps from sample mounting to image generation for analyzing component distribution within a tablet.

Key Methodology Details

- Spatial Resolution: The confocal design enables chemical analysis with microscale spatial resolution, allowing visualization of individual API particles and excipients [22].

- Non-Destructive Analysis: The sample remains intact after analysis, allowing for further testing or archival, which is crucial for expensive APIs or limited-quantity samples [22].

- Polymorph Identification: The high molecular specificity of Raman spectroscopy allows it to differentiate between different crystalline forms (polymorphs) of the API, which is critical for predicting drug performance [22].

- Regulatory Compliance: Systems should include 21 CFR Part 11 compliant software to support method validation and data integrity requirements for use in regulated environments [22].
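The simplest way to turn a confocal Raman map into an API distribution image is univariate band integration:

1. In every pixel spectrum, integrate a band unique to the API and a band unique to the main excipient.
2. Form the ratio of the two areas.

This is a minimal sketch. It assumes the map is a rows × columns grid of spectra on a shared Raman-shift axis. The band windows, function names, and triangular test bands are hypothetical. Commercial mapping software often uses multivariate methods, such as classical least squares, instead.

```python
def band_area(x, y, lo, hi):
    """Trapezoidal area of a band between lo and hi, above a straight baseline
    drawn between the band's first and last sampled points."""
    pts = [(xi, yi) for xi, yi in zip(x, y) if lo <= xi <= hi]
    if len(pts) < 2:
        raise ValueError("band window must contain at least two points")
    (x0, y0), (x1, y1) = pts[0], pts[-1]

    def baseline(xi):
        return y0 + (y1 - y0) * (xi - x0) / (x1 - x0)

    area = 0.0
    for (xa, ya), (xb, yb) in zip(pts, pts[1:]):
        area += 0.5 * ((ya - baseline(xa)) + (yb - baseline(xb))) * (xb - xa)
    return area


def api_fraction_map(x, cube, api_band, excipient_band):
    """Per-pixel ratio api / (api + excipient) of band areas for a grid of spectra."""
    image = []
    for row in cube:
        image_row = []
        for spectrum in row:
            a = band_area(x, spectrum, *api_band)
            e = band_area(x, spectrum, *excipient_band)
            image_row.append(a / (a + e) if a + e > 0 else float("nan"))
        image.append(image_row)
    return image


# Synthetic triangular bands standing in for an API band and an excipient band.
x = list(range(0, 100))
api = [max(0.0, 5.0 - abs(xi - 20)) for xi in x]
exc = [max(0.0, 5.0 - abs(xi - 70)) for xi in x]
mixed = [0.5 * a + 0.5 * e for a, e in zip(api, exc)]
print(api_fraction_map(x, [[api, mixed, exc]], (10, 30), (60, 80)))
```

The ratio is a relative contrast map, not a calibrated concentration, because Raman cross-sections differ between components.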

Protocol: Inline Process Monitoring of Granulation using NIR Spectroscopy

This protocol describes the use of an inline NIR probe to monitor a granulation process in real-time, enabling proactive control of Critical Process Parameters (CPPs) and supporting Quality by Design (QbD) and PAT initiatives [21].

Workflow

The workflow illustrates the continuous feedback loop of real-time data acquisition and process control.

Key Methodology Details

- Real-Time Monitoring: The NIRS XDS Process Analyzer fitted with a fiber optic probe can monitor parameters like residual solvent and water content in powders and granulates in real-time, reducing material loss and maximizing time efficiency [21].

- Multivariate Modeling: The system relies on pre-calibrated multivariate models (e.g., PLS regression) that correlate spectral data to reference values for moisture and concentration [21].

- PAT Integration: This methodology is a core component of the FDA's Process Analytical Technology (PAT) initiative, which encourages real-time quality control to ensure final product quality [21].
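At run time, a calibrated PLS model reduces to a regression vector applied to each preprocessed spectrum, and process control acts on the resulting trend of predictions. This is a minimal sketch of that loop. It assumes a drying-type endpoint, defined as the predicted moisture staying at or below a target for several consecutive spectra. The endpoint rule, target, function names, and toy two-variable spectra are hypothetical and are not features of the NIRS XDS software.

```python
def predict(spectrum, coefficients, intercept):
    """Apply a linear regression vector (e.g. the final form of a PLS model) to one spectrum.

    The spectrum must be preprocessed exactly as the calibration spectra were.
    """
    return intercept + sum(b * x for b, x in zip(coefficients, spectrum))


def monitor_endpoint(stream, coefficients, intercept, target, n_consecutive=3):
    """Scan incoming spectra until the prediction stays at or below target.

    Returns (index_of_endpoint_or_None, list_of_predictions). Requiring several
    consecutive in-spec predictions guards against a single noisy spectrum
    triggering the endpoint.
    """
    predictions = []
    run = 0
    for i, spectrum in enumerate(stream):
        value = predict(spectrum, coefficients, intercept)
        predictions.append(value)
        run = run + 1 if value <= target else 0
        if run >= n_consecutive:
            return i, predictions
    return None, predictions


# Toy spectra and regression vector; a real model has one coefficient per wavelength.
stream = [[5.0, 0.2], [4.0, 0.2], [2.0, 0.2], [1.5, 0.2], [1.8, 0.2], [1.9, 0.2]]
endpoint, moisture = monitor_endpoint(stream, coefficients=[1.0, 0.0], intercept=0.0, target=2.0)
print(endpoint, moisture)
```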

Building and Simulating Systems: From Raman to Hyperspectral Imaging

The design and optimization of modern spectroscopy systems rely on a tightly coupled workflow between virtual modeling and experimental validation. This integrated approach allows researchers and drug development professionals to predict optical performance, refine system parameters, and significantly reduce prototyping costs and development time. The process typically begins with computational modeling of the molecular system or optical setup, proceeds through virtual simulation of spectroscopic results, and culminates in experimental validation using advanced instrumentation. This methodology is particularly valuable in pharmaceutical applications where precision in material identification and quality assurance is critical [6] [9]. Advanced optical design software like Ansys Speos now enables researchers to simulate a system's optical performance and evaluate final illumination effects based on human vision capabilities, creating a seamless bridge between virtual prototyping and physical realization [9].

Computational Modeling and Molecular Dynamics

Molecular Environment Characterization

The foundation of accurate spectroscopic simulation begins with realistic modeling of molecular environments. Classical molecular dynamics (MD) simulations generate the atomic-level trajectories and configurations that represent the system's behavior over time. For complex molecular systems with nanoscopic heterogeneities—such as drug compounds in multi-component solutions—this step is crucial for capturing the intricate molecular arrangements that arise from diverse interactions among components [23].

The Instantaneous Frequencies of Molecules (IFM) method represents a significant advancement in this area. This parameter-free methodology couples with classical MD simulations to predict vibrational observables, including the Frequency Fluctuation Correlation Function (FFCF) and solvatochromic shifts. When applied to N-methylacetamide (NMA) in seven different chemical environments, the IFM method demonstrated strong agreement with experimental results for both NMA solvatochromism and FFCF dynamics, including characteristic times and amplitudes of fluctuations [23].

Table: Key Components for Computational Modeling

| Research Reagent/Component | Function in Workflow |
|---|---|
| Molecular Dynamics (MD) Simulation Software | Generates atomic trajectories and configurations of the molecular system over time |
| GFN2-xTB Semiempirical Method | Calculates vibrational frequencies with low computational cost while maintaining accuracy |
| Frequency Maps | Transforms molecular coordinates into spectroscopic observables like instantaneous frequencies |
| N-methylacetamide (NMA) | Model compound for validating computational methodologies via its sensitive amide I vibrational mode |
| Solvent Environments (D2O, DMSO, etc.) | Provide varied chemical environments for testing computational method transferability |

Computational Protocol

Protocol 1: Molecular Dynamics with Instantaneous Frequency Calculation

- System Preparation: Construct initial coordinates of the solute molecule (e.g., N-methylacetamide) and solvent boxes representing the chemical environments of interest. Energy-minimize the initial structure to remove steric clashes [23].

- Force Field Parameterization: Apply appropriate classical force fields to all components. Ensure the force field accurately describes non-covalent interactions, which are critical for simulating spectroscopic observables [23].

- Equilibration Phase: Run the MD simulation under the desired thermodynamic conditions (NPT or NVT ensemble) until system properties (density, potential energy) stabilize. This typically requires 1-5 nanoseconds depending on system complexity [23].

- Production Phase: Execute a multi-nanosecond MD simulation, saving molecular coordinate snapshots at regular intervals (e.g., every 100 fs) for subsequent frequency analysis.

- Instantaneous Frequency Calculation: For each saved snapshot, extract the solute molecule and its surrounding solvation shells. Use the GFN2-xTB semiempirical method to compute the vibrational spectrum and extract the frequency of the specific vibrational mode of interest [23].

- Data Analysis: Calculate the average frequency (for linear IR) and construct the frequency-frequency time correlation function to obtain the FFCF for comparison with 2DIR experiments [23].
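The Data Analysis step is a time-correlation calculation over the frequency trajectory. This is a minimal sketch. It assumes the instantaneous frequencies have already been extracted, one value per saved snapshot (for example, with GFN2-xTB as described above). The AR(1) trajectory in the usage example is a hypothetical stand-in for real MD output. It was chosen because its normalized correlation function is known to decay as φ^k.

```python
import random


def ffcf(frequencies, max_lag):
    """Frequency fluctuation correlation function from a frequency trajectory.

    frequencies: instantaneous frequencies (cm^-1), one per saved MD frame.
    Returns (mean frequency, [C(0), ..., C(max_lag)]) with
    C(k) = <dw(t + k) dw(t)> averaged over all available time origins t and
    dw = w - <w>. Multiply k by the frame spacing to get the time axis.
    """
    n = len(frequencies)
    if not 0 <= max_lag < n:
        raise ValueError("max_lag must be smaller than the trajectory length")
    mean = sum(frequencies) / n
    dw = [w - mean for w in frequencies]
    corr = []
    for lag in range(max_lag + 1):
        n_origins = n - lag
        corr.append(sum(dw[t] * dw[t + lag] for t in range(n_origins)) / n_origins)
    return mean, corr


# Hypothetical AR(1) stand-in for an amide I frequency trajectory.
rng = random.Random(42)
phi, w0 = 0.9, 1650.0
traj = [w0]
for _ in range(19999):
    traj.append(w0 + phi * (traj[-1] - w0) + rng.gauss(0.0, 3.0))
mean, c = ffcf(traj, 5)
print(round(mean, 1), [round(ck / c[0], 2) for ck in c])  # decays roughly as 0.9**k
```

In this form, C(0) gives the squared fluctuation amplitude and the decay of C(k)/C(0) gives the characteristic times. The mean frequency, compared across solvents, gives the solvatochromic shift.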

Optical System Simulation

Monte Carlo Methods for Light Transport

For simulating how light propagates through biological samples or optical components, Monte Carlo (MC) methods provide a powerful stochastic approach to solving the radiative transfer equation. These simulations model key light-tissue interaction mechanisms including absorption, elastic scattering, fluorescence, and Raman scattering. A recently developed spectroscopic MC package enables researchers to simulate all these competing phenomena simultaneously, providing a comprehensive platform for predicting depth-resolved spectroscopic signals [24].

MC methods are particularly valuable for designing and optimizing fiber-optic probes used in biomedical Raman spectroscopy. These simulations can establish rigorous relationships between Raman sensing depth and tissue optical properties, which is essential for developing clinically viable systems. For instance, MC simulations have demonstrated that for a realistic Raman probability of 10⁻⁶, the sensing depth ranges between 10 and 600 μm for absorption coefficients of 0.001 to 1.4 mm⁻¹ and reduced scattering coefficients of 0.5 to 30 mm⁻¹ [24].

Table: Quantitative Analysis of Raman Sensing Depth via Monte Carlo Simulation

| Absorption Coefficient (mm⁻¹) | Reduced Scattering Coefficient (mm⁻¹) | Raman Sensing Depth (μm) |
|---|---|---|
| 0.001 | 1 | 105-225 |
| 0.001-1.4 | 0.5-30 | 10-600 |

Values obtained for a realistic Raman probability of 10⁻⁶ [24].

Optical Simulation Protocol

Protocol 2: Monte Carlo Simulation of Spectroscopic Signals

- Optical Property Definition: Specify the absorption coefficient (μₐ), scattering coefficient (μₛ), anisotropy factor (g), and refractive index (n) for all materials in the simulation domain [24].

- Source Configuration: Define the light source parameters including wavelength, beam diameter, numerical aperture, and incident angle. For Raman simulations, incorporate the appropriate Raman probability (typically 10⁻⁶ for biological tissues) [24].

- Geometry Construction: Create a digital model of the sample geometry. This can range from simple layered structures to complex voxel-based representations of heterogeneous tissues [24].

- Photon Launching: Simulate photon propagation through the medium using stochastic sampling of interaction probabilities. Track photon weight, position, and direction after each scattering event [24].

- Interaction Handling: At each step, determine whether absorption, elastic scattering, fluorescence, or Raman scattering occurs based on the relative probabilities of each process [24].

- Signal Collection: Implement virtual detectors to record the desired spectroscopic signals (e.g., Raman photons within a specific wavelength shift) based on their position and trajectory [24].

- Data Analysis: Process the collected photons to generate predicted spectra or spatial maps of the spectroscopic signal, including depth-resolved information [24].
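The protocol above maps onto a compact weighted-photon random walk in the style of classic MCML-type codes. The sketch below is a minimal illustration built on several assumptions, and it is not the spectroscopic MC package cited above. It models:

- a semi-infinite homogeneous medium with an index-matched surface, so no Fresnel reflection
- a normally incident pencil beam
- implicit absorption by weight reduction
- Henyey-Greenstein scattering
- Russian roulette for low-weight photons

Fluorescence and Raman events are omitted. One possible extension would spawn a wavelength-shifted photon with the Raman probability at each interaction, but that is not shown. Units are mm and mm⁻¹.

```python
import math
import random


def sample_hg_cos(g, rng):
    """Sample the scattering-angle cosine from the Henyey-Greenstein phase function."""
    if abs(g) < 1e-6:
        return 2.0 * rng.random() - 1.0
    frac = (1.0 - g * g) / (1.0 - g + 2.0 * g * rng.random())
    return (1.0 + g * g - frac * frac) / (2.0 * g)


def scatter(ux, uy, uz, cos_t, rng):
    """Rotate direction (ux, uy, uz) by polar cosine cos_t and a uniform azimuth."""
    sin_t = math.sqrt(max(0.0, 1.0 - cos_t * cos_t))
    phi = 2.0 * math.pi * rng.random()
    cos_p, sin_p = math.cos(phi), math.sin(phi)
    if abs(uz) > 0.99999:  # nearly parallel to z: use the simple form
        return sin_t * cos_p, sin_t * sin_p, math.copysign(cos_t, uz)
    temp = math.sqrt(1.0 - uz * uz)
    return (
        sin_t * (ux * uz * cos_p - uy * sin_p) / temp + ux * cos_t,
        sin_t * (uy * uz * cos_p + ux * sin_p) / temp + uy * cos_t,
        -sin_t * cos_p * temp + uz * cos_t,
    )


def simulate(mu_a, mu_s, g, n_photons=10000, detector_radius=math.inf, seed=1):
    """Return (diffuse reflectance collected within detector_radius,
    weight-averaged maximum depth in mm reached by the collected photons)."""
    rng = random.Random(seed)
    mu_t = mu_a + mu_s
    albedo = mu_s / mu_t
    collected = depth_sum = 0.0
    for _ in range(n_photons):
        x = y = z = 0.0
        ux, uy, uz = 0.0, 0.0, 1.0
        w, max_depth = 1.0, 0.0
        while True:
            step = -math.log(1.0 - rng.random()) / mu_t  # free path ~ Exp(mu_t)
            x, y, z = x + ux * step, y + uy * step, z + uz * step
            if z < 0.0:  # photon crosses the surface and escapes
                back = z / uz  # overshoot beyond z = 0 (uz < 0 here)
                if math.hypot(x - ux * back, y - uy * back) <= detector_radius:
                    collected += w
                    depth_sum += w * max_depth
                break
            max_depth = max(max_depth, z)
            w *= albedo  # implicit absorption: deposit the fraction mu_a / mu_t
            ux, uy, uz = scatter(ux, uy, uz, sample_hg_cos(g, rng), rng)
            if w < 1e-4:  # Russian roulette keeps the estimate unbiased
                if rng.random() < 0.1:
                    w /= 0.1
                else:
                    break
    mean_depth = depth_sum / collected if collected > 0 else float("nan")
    return collected / n_photons, mean_depth


# Reduced scattering mu_s * (1 - g) = 1 mm^-1 in both runs; only absorption changes.
for mu_a in (0.1, 1.0):
    reflectance, depth = simulate(mu_a=mu_a, mu_s=2.0, g=0.5, n_photons=2000)
    print(f"mu_a={mu_a} mm^-1: R={reflectance:.3f}, mean max depth={depth:.2f} mm")
```

Realistic tissue anisotropy (g ≈ 0.9) at the same reduced scattering needs many more steps per photon, so production studies typically use compiled or GPU codes.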

Experimental Validation and Instrumentation

Spectroscopic Instrumentation for Validation

The final phase of the workflow involves validating computational predictions using advanced spectroscopic instrumentation. Recent innovations in this domain show a distinct trend toward field-portable devices and specialized laboratory systems with enhanced capabilities. A 2025 review of spectroscopic instrumentation highlights several technologies relevant to pharmaceutical and research applications [25].

Fluorescence instrumentation has seen specialized developments like the Veloci A-TEEM Biopharma Analyzer from Horiba, which simultaneously collects absorbance, transmittance, and fluorescence excitation-emission matrix (A-TEEM) data. This provides an alternative to traditional separation methods for characterizing monoclonal antibodies, vaccines, and protein stability [25].

In Raman spectroscopy, new systems include the PoliSpectra rapid Raman plate reader, designed for fully automated measurement of 96-well plates. It targets the pharmaceutical and biopharmaceutical markets' need for high-throughput screening tools. For hazardous materials identification, the TacticID-1064ST handheld Raman spectrometer offers analysis guidance with onboard documentation features [25].

Mid-infrared spectroscopy continues to advance with systems like the Bruker Vertex NEO platform, which incorporates a vacuum ATR accessory that maintains samples at normal pressure while keeping the entire optical path under vacuum. This effectively removes atmospheric interference, which is particularly beneficial for protein studies and far-IR research [25].

Experimental Validation Protocol

Protocol 3: Experimental Validation of Simulated Results

- Sample Preparation: Prepare standardized samples of the material system under investigation. For pharmaceutical applications, this may include drug compounds, excipients, or biological macromolecules in controlled solvent environments [25] [23].

- Instrument Calibration: Perform standard calibration procedures using reference materials specific to the spectroscopic technique being employed (e.g., polystyrene for IR, cyclohexane for Raman) [25].

- Data Acquisition: Collect spectroscopic data using the appropriate instrument configuration. For comparison with dynamics simulations, 2DIR spectroscopy may be employed to measure the FFCF directly [23].

- Spectral Processing: Apply necessary preprocessing steps including baseline correction, normalization, and noise reduction to the experimental spectra [6].

- Quantitative Comparison: Compare experimental results with computational predictions using statistical metrics. For IR spectroscopy, this includes analyzing solvatochromic frequency shifts and FFCF parameters (characteristic times and amplitudes) [23].

- Model Refinement: If discrepancies exist between simulation and experiment, iteratively refine the computational models (e.g., force field parameters, optical properties) to improve agreement [23].

- Validation Reporting: Document the degree of agreement between simulated and experimental results, including uncertainty estimates and limitations of both approaches.
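The Quantitative Comparison step needs agreement metrics that ignore the arbitrary intensity scale of a simulation. This is a minimal sketch. It assumes both spectra are already baseline-corrected and sampled on the same axis. It vector-normalizes each spectrum and then reports three metrics:

- the RMSE of the residual
- the Pearson correlation
- the shift between the two maxima, as a proxy for a solvatochromic frequency shift

The choice of metrics and the function names are assumptions; the protocol does not prescribe them.

```python
import math


def vector_normalize(y):
    """Scale a spectrum to unit Euclidean norm so intensity scales cancel."""
    norm = math.sqrt(sum(v * v for v in y))
    return [v / norm for v in y]


def pearson(a, b):
    """Pearson correlation coefficient between two equal-length spectra."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    da = [p - mean_a for p in a]
    db = [q - mean_b for q in b]
    num = sum(p * q for p, q in zip(da, db))
    return num / math.sqrt(sum(p * p for p in da) * sum(q * q for q in db))


def compare(x, simulated, measured):
    """Agreement metrics between a simulated and a measured spectrum on axis x."""
    s, m = vector_normalize(simulated), vector_normalize(measured)
    rmse = math.sqrt(sum((p - q) ** 2 for p, q in zip(s, m)) / len(s))
    i_sim = max(range(len(s)), key=s.__getitem__)
    i_meas = max(range(len(m)), key=m.__getitem__)
    return {"rmse": rmse, "r": pearson(s, m), "peak_shift": x[i_meas] - x[i_sim]}


# Synthetic Gaussian bands (cm^-1); the "measured" band is shifted and scaled.
x = [1600.0 + i for i in range(101)]
simulated = [math.exp(-(((xi - 1650.0) / 8.0) ** 2)) for xi in x]
measured = [3.0 * math.exp(-(((xi - 1655.0) / 8.0) ** 2)) for xi in x]
print(compare(x, simulated, measured))
```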

Table: Selected Advanced Spectroscopic Instrumentation (2024-2025)

| Instrument | Technique | Key Features | Applications |
|---|---|---|---|
| Veloci A-TEEM Biopharma Analyzer | Fluorescence (A-TEEM) | Simultaneous absorbance, transmittance, fluorescence EEM | Monoclonal antibodies, vaccine characterization |
| Vertex NEO Platform | FT-IR | Vacuum ATR accessory, multiple detector positions | Protein studies, far-IR research |
| PoliSpectra | Raman | Automated 96-well plate reading, liquid handling | High-throughput screening in pharma |
| SignatureSPM | Raman/Photoluminescence | Integrated scanning probe microscope | Semiconductors, nanotechnology |
| BrightSpec MRR | Microwave | Broadband chirped pulse microwave spectrometer | Molecular structure determination |

Non-Sequential Ray Tracing for Complex Optical Paths and Stray Light Analysis

Non-sequential ray tracing is a powerful simulation methodology that allows optical engineers to model the behavior of light without requiring rays to follow a predefined sequence of optical surfaces [26]. Unlike sequential ray tracing, where rays are confined to propagate from one defined surface to the next in a specific order, non-sequential modeling enables rays to interact with optical components in any order, with the capability to hit objects multiple times or not at all [26]. This fundamental characteristic makes it particularly valuable for analyzing complex optical phenomena where light paths are not easily predictable, such as stray light analysis, ghost reflections, and illumination system design.

Within optical design software like Zemax OpticStudio, non-sequential ray tracing operates by modeling optical components as true three-dimensional objects, including both surfaces and solid volumes [26]. Each object is positioned globally with independent x, y, z coordinates and orientation, allowing for accurate representation of real-world optical systems. This approach is essential for modeling complex components that cannot be accurately represented by single surfaces, including prisms, corner cubes, light pipes, and CAD-imported geometries [26]. For spectroscopy system optimization, this capability provides critical insights into light behavior throughout the entire optical path, enabling researchers to identify and mitigate performance-degrading effects before physical prototyping.

Fundamentals of Stray Light Analysis

Stray light refers to unwanted light in an optical system that can significantly degrade performance by introducing noise and reducing contrast [27]. In the context of spectroscopy systems used for drug development, stray light can compromise measurement accuracy, leading to unreliable data and potentially affecting research outcomes. Stray light manifests through several physical mechanisms, each with distinct characteristics and mitigation requirements.

- Reflections: These occur when light bounces off surfaces not intended to contribute to the signal. In spectroscopic instruments, internal surface reflections can create flare and ghosting effects that interfere with accurate spectral measurements, particularly in high-contrast scenarios [27].

- Scattering: This phenomenon results from light interacting with surface imperfections, contaminants, or roughness. Scattering causes a diffuse spread of light, potentially obscuring fine spectral features and reducing the system's overall signal-to-noise ratio [27]. This is particularly problematic in spectroscopy systems requiring high sensitivity.

- Diffraction: Diffraction occurs when light bends around edges or through small apertures, creating unwanted patterns that reduce spectral sharpness and clarity [27]. In spectrometers, diffraction from aperture edges can limit resolution and cause spectral cross-talk between adjacent channels.

The combined effects of these stray light sources can lead to reduced measurement contrast, false spectral signals, and decreased signal-to-noise ratio [27]. For pharmaceutical researchers relying on spectroscopic data for drug development, effective stray light control is not merely an optimization concern but a fundamental requirement for data integrity.

Software Tools and Implementation

Non-Sequential Ray Tracing in OpticStudio

Zemax OpticStudio provides two distinct modes for non-sequential analysis: Pure Non-Sequential Mode and Mixed Sequential/Non-Sequential Mode [26]. In Pure Non-Sequential Mode, all optical components reside in a single non-sequential group where sources and detectors are configured to launch and record rays. This mode offers comprehensive source modeling capabilities, allowing complex three-dimensional source distributions beyond the point sources available in sequential mode [26]. The software's ray tracing engine can handle ray splitting, scattering, and diffraction at phase surfaces, with analysis outputs including radiometric detector data and ray history databases.

Mixed Mode combines sequential and non-sequential capabilities, where non-sequential groups are embedded within a larger sequential system [26]. Sequentially traced rays enter the non-sequential group through an entrance port, interact with the three-dimensional components inside, then exit through an exit port to continue propagating through the sequential system. This approach is particularly valuable for spectroscopy systems that are fundamentally sequential but contain components better modeled as 3D volumes, such as complex sample cells, integrating spheres, or specialized filters.

Table 1: Non-Sequential Ray Tracing Modes in OpticStudio

| Mode Type | Key Features | Best Applications in Spectroscopy |
|---|---|---|
| Pure Non-Sequential | All components in non-sequential group; comprehensive source modeling; ray splitting and scattering | Illumination uniformity studies; complex component analysis; stray light mapping |
| Mixed Mode | Non-sequential groups embedded in sequential system; entrance and exit ports; sequential performance metrics | Systems with both imaging and non-sequential elements; spectrometers with complex sample compartments |

Specialized Stray Light Analysis Tools

TracePro is specialized software for stray light analysis that uses Monte Carlo ray tracing to simulate light paths with high statistical accuracy [27]. Originally developed for NASA, TracePro includes advanced features such as path sorting and ray visualization tools essential for identifying significant stray light contributions in complex systems [27]. The software offers robust CAD integration, allowing users to import and analyze intricate mechanical geometries that might contribute to stray light through scattering or unintended reflections.

For spectroscopy systems, these software tools enable researchers to quantify stray light performance through metrics such as Point Source Normalized Irradiance Transmittance (PSNIT) and to identify critical surfaces contributing to stray light through path analysis. This capability is particularly valuable during the design phase of spectroscopic instruments for pharmaceutical applications, where regulatory requirements often demand rigorous characterization of measurement accuracy.

Table 2: Stray Light Analysis Software Capabilities

| Software Tool | Key Stray Light Features | Strengths for Spectroscopy Applications |
|---|---|---|
| Zemax OpticStudio | Non-sequential ray tracing; detector objects; ray database files; path analysis | Integration with sequential optical design; parametric optimization; sensitivity analysis |
| TracePro | Monte Carlo ray tracing; CAD integration; advanced path sorting; NASA-developed algorithms | Handling complex mechanical assemblies; statistical accuracy; specialized stray light visualization |

Quantitative Analysis and Performance Metrics

Effective stray light analysis requires quantifying performance through standardized metrics that enable objective comparison between design alternatives. A widely used metric is the Point Source Normalized Irradiance Transmittance (PSNIT): for a point source at a given off-axis field angle, it is the irradiance reaching the detector plane divided by the irradiance incident on the entrance aperture. For spectroscopy systems, this is particularly important when measuring weak spectral features in the presence of strong nearby lines, or when the instrument must operate with bright sources near or outside its field of view.
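Once detector-plane irradiance has been extracted for each off-axis field angle, PSNIT is a simple element-wise ratio. The sketch below assumes both irradiances are already available as arrays in W/m^2; the function name `psnit` and all numeric values are hypothetical placeholders chosen for illustration, not results from any real system.

```python
import numpy as np

def psnit(detector_irradiance, input_irradiance):
    """Hypothetical helper: PSNIT per field angle.

    detector_irradiance: irradiance at the detector plane (W/m^2), one per angle
    input_irradiance: irradiance on the entrance aperture (W/m^2), same angles
    """
    e_det = np.asarray(detector_irradiance, dtype=float)
    e_in = np.asarray(input_irradiance, dtype=float)
    if np.any(e_in <= 0):
        raise ValueError("input irradiance must be positive")
    return e_det / e_in

# Placeholder values for illustration only
field_angles_deg = np.array([5.0, 10.0, 20.0, 40.0])
detector_irr = np.array([2e-3, 4e-4, 5e-5, 1e-6])   # W/m^2 at detector plane
input_irr = np.full(field_angles_deg.shape, 0.5)     # W/m^2 on entrance aperture
for angle, value in zip(field_angles_deg, psnit(detector_irr, input_irr)):
    print(f"{angle:5.1f} deg  PSNIT = {value:.1e}  log10 = {np.log10(value):.2f}")
```

Because PSNIT typically spans several orders of magnitude across field angles, it is usually plotted on a logarithmic scale.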

In non-sequential ray tracing software, these quantitative assessments are typically performed using detector objects that capture irradiance distributions [26]. The detectors support various data types including incoherent irradiance, coherent irradiance, radiant intensity, and true color photometric results [26]. For spectroscopic applications, the spectral response can be characterized by configuring sources with specific wavelength distributions and analyzing detector response across wavelength bands.
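One way to characterize spectral response from a ray trace is to bin detected ray power by wavelength and compare the stray contribution to the total in each band. The sketch below assumes ray data (wavelength, power, and a stray/primary label derived from path analysis) have been exported into arrays; the export format differs between tools, and the function name `stray_fraction_by_band` and the example values are hypothetical.

```python
import numpy as np

def stray_fraction_by_band(wavelengths_nm, ray_power_w, is_stray, band_edges_nm):
    """Hypothetical helper: total detected power and stray fraction per band."""
    wl = np.asarray(wavelengths_nm, dtype=float)
    power = np.asarray(ray_power_w, dtype=float)
    stray = np.asarray(is_stray, dtype=bool)
    # Power-weighted histograms over the wavelength bands
    total, _ = np.histogram(wl, bins=band_edges_nm, weights=power)
    stray_power, _ = np.histogram(wl[stray], bins=band_edges_nm, weights=power[stray])
    # Bands with no detected power get NaN instead of a division warning
    with np.errstate(divide="ignore", invalid="ignore"):
        fraction = np.where(total > 0, stray_power / total, np.nan)
    return total, fraction

# Hypothetical detector hits from 532 nm and 785 nm sources
wl = [532.0, 532.0, 532.0, 785.0, 785.0]
power = [1.0, 1.0, 0.02, 0.5, 0.005]
stray = [False, False, True, False, True]
edges = [500.0, 600.0, 700.0, 800.0]
total, frac = stray_fraction_by_band(wl, power, stray, edges)
print(total, frac)
```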

Table 3: Key Stray Light Performance Metrics

| Metric | Definition | Application in Spectroscopy Systems |
|---|---|---|
| PSNIT (Point Source Normalized Irradiance Transmittance) | Ratio of stray light irradiance to input irradiance as a function of field angle | Quantifies susceptibility to off-axis light sources; critical for fluorescence spectroscopy |
| BSDF (Bidirectional Scattering Distribution Function) | Angular distribution of scattered light from a surface | Characterizes scattering from optical components; essential for low-light-level Raman spectroscopy |
| Ghost Reflection Intensity | Relative strength of unwanted reflections compared to primary signal | Important for high-dynamic-range absorption spectroscopy |
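A first-order estimate of ghost reflection intensity follows from the Fresnel equations. At normal incidence a single interface reflects R = ((n1 - n2)/(n1 + n2))^2. For a non-absorbing plate, the primary beam transmits T1·T2, while the first-order ghost (reflected at the second surface, then the first, then transmitted) carries T1·R2·R1·T2, so the ghost-to-primary ratio is R1·R2. The sketch assumes normal incidence, no absorption, and an index of about 1.458 for fused silica near 589 nm; the 0.5% per-surface coated reflectance is an assumed value.

```python
def fresnel_reflectance_normal(n1, n2):
    """Fresnel power reflectance of a single interface at normal incidence."""
    return ((n1 - n2) / (n1 + n2)) ** 2

def two_surface_ghost_ratio(r1, r2):
    """Ghost-to-primary ratio for a non-absorbing plate: (T1*R2*R1*T2)/(T1*T2)."""
    return r1 * r2

# Uncoated fused silica in air (n ~ 1.458 near 589 nm)
r_uncoated = fresnel_reflectance_normal(1.0, 1.458)
print(f"single-surface reflectance, uncoated: {r_uncoated:.4f}")
print(f"ghost/primary, uncoated:              {two_surface_ghost_ratio(r_uncoated, r_uncoated):.2e}")
# Assumed anti-reflection coating with 0.5% reflectance per surface
print(f"ghost/primary, AR-coated:             {two_surface_ghost_ratio(0.005, 0.005):.2e}")
```

The roughly fiftyfold reduction between the uncoated and coated cases shows why anti-reflection coatings are a primary ghost-suppression measure; oblique incidence and polarization require the full Fresnel expressions.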

Experimental Protocols for Stray Light Analysis

Protocol 1: Baseline Stray Light Characterization

This protocol establishes a standardized methodology for quantifying stray light performance in spectroscopy systems using non-sequential ray tracing.

Materials and Equipment:

- Optical modeling software with non-sequential ray tracing capability (OpticStudio or TracePro)

- Complete system optical model including mechanical surfaces

- Computational resources adequate for Monte Carlo simulations

Procedure:

- Model Preparation: Ensure the optical model includes all significant optical and mechanical surfaces with appropriate optical properties assigned. Mechanical surfaces should have measured or estimated scatter properties based on surface finish.

- Source Definition: Configure a collimated source representing the input light to the spectroscopy system. For full system characterization, define multiple field points across the input aperture.

- Detector Placement: Position detector objects at key system locations, including the focal plane where the dispersed spectrum is imaged. Additional detectors may be placed at intermediate image planes and at critical mechanical surfaces to identify scattering sources.

- Ray Tracing: Execute a ray trace with enough rays for the detector results to converge statistically (typically 1,000,000+ rays for initial analysis). Use importance sampling if available to improve efficiency.

- Path Analysis: Utilize path sorting tools to identify the most significant stray light paths contributing to noise at the detector plane. Filter ray databases to isolate paths that involve multiple bounces or scattering events.

- Quantification: Calculate stray light metrics including PSNIT values for critical field angles and the total stray light contribution as a percentage of the primary signal.
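Whether a given ray count is sufficient depends on how rare the stray path is. If each launched ray independently reaches the detector through a particular path with probability p, the hit count is binomial, so the relative standard error of the estimated fraction after N rays is sqrt((1 - p)/(N·p)). The sketch below applies that estimate; it assumes independent rays without ray splitting (splitting correlates the samples), and the function names are hypothetical.

```python
import math

def relative_standard_error(n_rays, p):
    """Binomial relative standard error of an estimated path fraction p."""
    return math.sqrt((1.0 - p) / (n_rays * p))

def rays_needed(p, target_rel_error):
    """Rays required so the relative standard error falls to the target."""
    return math.ceil((1.0 - p) / (p * target_rel_error ** 2))

# A path carrying 1e-4 of the launched rays, traced with one million rays
print(f"relative error: {relative_standard_error(1_000_000, 1e-4):.3f}")
# Rays needed to reach 1% relative error on the same path
print(f"rays needed:    {rays_needed(1e-4, 0.01):,}")
```

Because the required ray count grows as 1/p, weak scatter paths quickly become impractical to resolve by brute force, which is the motivation for importance sampling.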

Interpretation: Paths contributing more than 1% of the primary signal intensity should be flagged for mitigation. The analysis should prioritize paths that directly reach the detector plane over those that terminate elsewhere in the system.
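The path-sorting and flagging logic can be sketched independently of any particular tool. The example below assumes ray records reaching the detector plane have been exported as (path key, power) pairs, where the path key identifies the sequence of surfaces or scatter events, and that the primary signal power is known separately. The record format, surface labels, and function name `rank_stray_paths` are hypothetical.

```python
from collections import defaultdict

def rank_stray_paths(ray_records, primary_signal_w, threshold=0.01):
    """Hypothetical helper: aggregate stray rays by path and flag strong paths.

    ray_records: iterable of (path_key, power_w) for stray rays at the detector
    Returns (path_key, total_power_w, fraction_of_primary, flagged), strongest first.
    """
    totals = defaultdict(float)
    for path_key, power_w in ray_records:
        totals[path_key] += power_w
    ranked = sorted(totals.items(), key=lambda item: item[1], reverse=True)
    return [
        (key, power, power / primary_signal_w, power / primary_signal_w > threshold)
        for key, power in ranked
    ]

# Hypothetical exported records (surface labels are illustrative)
records = [
    (("lens1_surface2", "lens2_surface1"), 0.004),
    (("mount_scatter",), 0.02),
    (("mount_scatter",), 0.001),
    (("grating_order0", "housing_scatter"), 0.0005),
]
paths = rank_stray_paths(records, primary_signal_w=1.0)
for key, power, fraction, flagged in paths:
    print(key, f"{fraction:.2%}", "FLAG" if flagged else "")
```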

Protocol 2: Scattering Surface Analysis

This protocol specifically characterizes the impact of surface roughness and contamination on stray light performance, critical for maintaining spectroscopy system reliability in pharmaceutical research environments.

Materials and Equipment:

- Surface scatter measurement data (if available)

- ABg scatter model parameters for typical surface finishes

- Software supporting bidirectional scattering distribution function (BSDF) models
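The ABg model expresses the BSDF as A / (B + |β − β0|^g), where β and β0 are the sines of the scatter and specular angles (for in-plane scatter), so the value at the specular direction is A/B and g sets how quickly scatter falls off away from it. The sketch below evaluates the in-plane form; the function name and the A, B, and g values are hypothetical and are not recommended parameters for any particular finish.

```python
import numpy as np

def abg_bsdf(theta_scatter_deg, theta_specular_deg, A, B, g):
    """In-plane ABg BSDF (sr^-1): A / (B + |sin(theta_s) - sin(theta_spec)|**g)."""
    beta = np.sin(np.radians(theta_scatter_deg))
    beta0 = np.sin(np.radians(theta_specular_deg))
    return A / (B + np.abs(beta - beta0) ** g)

# Hypothetical parameters for a polished surface, specular direction at 10 deg
angles = np.array([10.0, 12.0, 15.0, 30.0, 60.0])
for angle, value in zip(angles, abg_bsdf(angles, 10.0, A=1e-3, B=1e-4, g=2.0)):
    print(f"{angle:5.1f} deg  BSDF = {value:.3e} sr^-1")
```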

Procedure:

- Surface Property Assignment: Apply appropriate scatter models to optical and mechanical surfaces based on their material properties and manufacturing specifications. Use measured BSDF data when available or industry-standard ABg parameters for typical surface finishes.

- Parametric Analysis: Vary surface roughness parameters across expected manufacturing tolerances to establish the sensitivity of system performance to finish quality.

- Contamination Modeling: Introduce representative contamination (dust particles, fingerprint residue) at critical surfaces with appropriate scattering properties to simulate real-world operating conditions.

- Comparative Ray Tracing: Execute identical ray traces for clean and contaminated states, maintaining constant ray counts for valid comparison.

- Detector Analysis: Quantify the increase in stray light noise at the detector plane attributable to surface scatter and contamination.
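For a quick check before a full parametric ray trace, the smooth-surface approximation for a reflecting surface, TIS ≈ (4πσ·cos θi / λ)^2, shows how total integrated scatter scales with RMS roughness σ and wavelength λ. The sketch assumes σ is much smaller than λ; the roughness range and the 532 nm and 785 nm wavelengths are illustrative choices, and the function name is hypothetical.

```python
import numpy as np

def total_integrated_scatter(rms_roughness_nm, wavelength_nm, incidence_deg=0.0):
    """Smooth-surface TIS for a reflecting surface; valid only for sigma << lambda."""
    sigma = np.asarray(rms_roughness_nm, dtype=float)
    return (4.0 * np.pi * sigma * np.cos(np.radians(incidence_deg)) / wavelength_nm) ** 2

# Hypothetical roughness tolerance range (nm RMS)
roughness = np.array([0.5, 1.0, 2.0, 5.0])
tis_532 = total_integrated_scatter(roughness, 532.0)
tis_785 = total_integrated_scatter(roughness, 785.0)
for sigma, a, b in zip(roughness, tis_532, tis_785):
    print(f"sigma = {sigma:.1f} nm  TIS(532 nm) = {a:.2e}  TIS(785 nm) = {b:.2e}")
```

The quadratic dependence on σ/λ means that surfaces used at shorter wavelengths are the ones whose finish tolerances most strongly affect stray light.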

Interpretation: Surfaces contributing more than 0.1% additional stray light when contaminated should be identified for special handling procedures or design modification. The results inform cleaning protocols and tolerance specifications for critical surfaces.
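The clean-versus-contaminated comparison reduces to a per-surface difference. The sketch below assumes one surface is contaminated per ray trace (so each difference can be attributed to that surface), that both traces use identical ray counts, and that the 0.1% threshold is expressed relative to the primary signal power. The dictionary layout, surface names, and values are hypothetical.

```python
def flag_contamination_sensitive(clean_w, contaminated_w, primary_signal_w, threshold=0.001):
    """Hypothetical helper: surfaces whose contamination adds stray power above threshold.

    clean_w / contaminated_w: dict of surface name -> stray power at detector (W)
    Returns dict of surface name -> added stray power as a fraction of primary signal.
    """
    flagged = {}
    for surface, clean_power in clean_w.items():
        # Missing entries are treated as unchanged by contamination
        added = contaminated_w.get(surface, clean_power) - clean_power
        fraction = added / primary_signal_w
        if fraction > threshold:
            flagged[surface] = fraction
    return flagged

clean = {"fold_mirror": 2e-5, "grating": 1e-4, "window": 5e-6}
contaminated = {"fold_mirror": 3e-4, "grating": 3e-4, "window": 2e-3}
result = flag_contamination_sensitive(clean, contaminated, primary_signal_w=1.0)
print(result)
```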

Mitigation Strategies and System Optimization

Based on the identification of problematic stray light paths through non-sequential analysis, several mitigation strategies can be implemented to optimize spectroscopy system performance.

Optical Design Optimization

Surface treatments and optical design modifications represent the first line of defense against stray light. Anti-reflection coatings can be optimized for specific wavelength ranges used in pharmaceutical spectroscopy applications to reduce surface reflections that might otherwise contribute to ghost images [27]. Baffles and light traps can be strategically placed to intercept stray light paths before they reach critical components, with their effectiveness validated through non-sequential simulation before implementation.