Emission Spectra in Qualitative Chemical Analysis: Principles, Applications, and Innovations for Biomedical Research



Abstract

This article provides a comprehensive overview of the critical role emission spectroscopy plays in qualitative chemical analysis, particularly for researchers and professionals in drug development. It explores the fundamental principles of light-matter interactions that enable elemental and molecular fingerprinting, details cutting-edge methodologies like LIBS imaging and ICP-OES being applied in pharmaceutical quality control and nuclear medicine, addresses key challenges in signal optimization and data processing, and validates techniques against regulatory standards. By synthesizing foundational knowledge with current applications and future trends, this review serves as an essential guide for leveraging emission spectroscopy to advance biomedical research and clinical diagnostics.

The Fundamentals of Emission Spectroscopy: How Light-Matter Interaction Enables Chemical Identification

Theoretical Foundations of Atomic Emission

Atomic emission spectroscopy (AES) is a powerful analytical technique used to determine the elemental composition of a sample. The core principle resides in the quantized nature of atomic energy levels and the interaction between matter and electromagnetic radiation [1] [2].

When atoms absorb energy, their electrons are promoted from the ground state (the lowest energy state) to a higher-energy excited state [2]. This excited state is unstable. Consequently, the electrons spontaneously return to a lower energy level, releasing the excess energy in the form of a photon [1]. The energy of this emitted photon is precisely equal to the energy difference between the two electronic states involved in the transition, as described by the formula \( E_{\text{photon}} = h\nu \), where \( h \) is Planck's constant and \( \nu \) is the frequency of the photon [1].
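As a minimal sketch of this relationship, the snippet below converts an energy gap into the frequency and wavelength of the emitted photon. The function name and the 2.10 eV gap are illustrative assumptions, not values taken from the cited sources.

```python
# Physical constants (exact SI values)
PLANCK_J_S = 6.62607015e-34        # Planck's constant h, in J*s
LIGHT_SPEED_M_S = 299_792_458.0    # speed of light c, in m/s
JOULES_PER_EV = 1.602176634e-19    # one electronvolt in joules


def photon_from_gap(delta_e_ev):
    """Return (frequency in Hz, wavelength in nm) for an energy gap in eV."""
    energy_j = delta_e_ev * JOULES_PER_EV
    frequency_hz = energy_j / PLANCK_J_S              # from E = h * nu
    wavelength_nm = LIGHT_SPEED_M_S / frequency_hz * 1e9
    return frequency_hz, wavelength_nm


# Hypothetical 2.10 eV gap between two electronic states
freq, wl = photon_from_gap(2.10)
print(f"{freq:.3e} Hz, {wl:.1f} nm")
```

A gap of about 2 eV lands in the visible range, which is why many flame emission lines can be seen by eye.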

The wavelength (or frequency) of the emitted light is characteristic of the specific electronic transition within a given element. Since every element has a unique electronic structure, it also possesses a unique set of possible energy transitions. This results in a distinctive atomic emission spectrum—a pattern of discrete spectral lines that serves as a "fingerprint" for the element [1] [2]. Analysis of this emitted light allows for the identification and quantification of elements in a sample of unknown composition, forming the basis for qualitative chemical analysis [1].
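The fingerprint idea can be sketched as a simple lookup: observed peak wavelengths are compared against reference lines within a tolerance. The small line library, the tolerance, the matching threshold, and the measured peaks below are all illustrative assumptions; a real workflow would use a curated spectral database and account for line intensities.

```python
# Small illustrative line library (wavelengths in nm); not a complete database.
REFERENCE_LINES_NM = {
    "H": [410.2, 434.0, 486.1, 656.3],
    "Na": [589.0, 589.6],
    "Li": [670.8],
}


def identify_elements(observed_nm, tolerance_nm=0.5, min_fraction=0.5):
    """Return elements whose reference lines are matched by observed peaks.

    An element is reported when at least `min_fraction` of its reference
    lines lie within `tolerance_nm` of some observed peak.
    """
    found = {}
    for element, lines in REFERENCE_LINES_NM.items():
        matched = [
            ref for ref in lines
            if any(abs(ref - obs) <= tolerance_nm for obs in observed_nm)
        ]
        if len(matched) / len(lines) >= min_fraction:
            found[element] = matched
    return found


# Hypothetical measured peak positions (nm)
peaks = [434.1, 486.0, 589.3, 656.2]
result = identify_elements(peaks)
print(result)
```

Requiring several lines per element, rather than a single coincidence, reduces false identifications when lines of different elements lie close together.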

Modern Instrumentation and Technological Advances

The field of spectroscopic instrumentation is dynamic, with continuous innovations enhancing sensitivity, resolution, and application scope. A review of products introduced from 2024 to 2025 highlights key trends, particularly the divergence between laboratory and field-portable instrumentation [3].

Table 1: Advanced Spectroscopic Instrumentation (2024-2025)

| Technology | Instrument Example | Key Features | Target Applications |
|---|---|---|---|
| Inductively Coupled Plasma Mass Spectrometry (ICP-MS) | Multi-collector ICP-MS [3] | High-resolution, multi-collector capability to resolve isotopes from interferences; flexible analysis customization [3] | High-precision elemental and isotopic analysis [3] [4] |
| Molecular Fluorescence | FS5 v2 Spectrofluorometer (Edinburgh Instruments) [3] | Increased performance and capabilities for detailed fluorescence analysis [3] | Photochemistry and photophysics research [3] |
| Molecular Fluorescence | Veloci A-TEEM Biopharma Analyzer (Horiba) [3] | Simultaneous Absorbance, Transmittance, and Excitation-Emission Matrix (A-TEEM) data collection [3] | Biopharmaceutical analysis (e.g., monoclonal antibodies, vaccine characterization) [3] |
| Quantum Cascade Laser (QCL) Microscopy | LUMOS II ILIM (Bruker) [3] | QCL-based imaging from 1800-950 cm⁻¹; fast image acquisition (4.5 mm²/s); reduces speckle via spatial coherence reduction [3] | High-resolution chemical imaging in materials science [3] |
| Quantum Cascade Laser (QCL) Microscopy | ProteinMentor (Protein Dynamic Solutions) [3] | QCL-based system (1800-1000 cm⁻¹) designed specifically for proteins [3] | Biopharmaceuticals: protein impurity ID, stability, deamidation monitoring [3] |
| Handheld/Raman | TaticID-1064ST (Metrohm) [3] | Handheld device with on-board camera, note-taking, and analysis guidance [3] | Hazardous materials identification and documentation by response teams [3] |
| Microwave Spectroscopy | Broadband Chirped Pulse System (BrightSpec) [3] | First commercial broadband chirped pulse microwave spectrometer [3] | Unambiguous determination of molecular structure and configuration in the gas phase [3] |

A significant market trend is the growing demand for elemental analysis in environmental testing, biotechnology, and clinical applications, propelling the adoption of techniques like ICP-AES [4]. The market is also characterized by innovation in automation, miniaturization for portability, and the expansion of applications into new areas such as food safety and pharmaceutical analysis [3] [4].

Experimental Methodology and Workflows

The practical application of atomic emission spectroscopy involves a sequence of critical steps to convert a sample into a measurable atomic emission signal.

Experimental Protocol: Flame Atomic Emission Spectroscopy

This is a foundational method for elemental analysis, particularly for alkali and alkaline earth metals [5].

- Sample Preparation: The solid or liquid sample is dissolved in a suitable solvent, typically an aqueous acid, to create a homogeneous solution [4].

- Nebulization: The sample solution is drawn into the burner and dispersed as a fine aerosol or spray using a nebulizer [1].

- Desolvation and Atomization: The aerosol is introduced into the flame. The solvent first evaporates, leaving behind finely divided solid particles. These particles then move to the hottest region of the flame, where they vaporize and dissociate into free, gaseous ground-state atoms [1].

- Excitation: In the flame, thermal collisions excite a fraction of the gaseous atoms, promoting their electrons to higher energy levels [1] [2].

- Emission and Detection: The excited atoms spontaneously decay to lower energy states, emitting photons at characteristic wavelengths [2]. The emitted light is collected and passed through a monochromator, which separates the wavelengths. A detector then measures the intensity of the specific spectral line(s) of interest [1].

- Quantification: The intensity of the emitted light at a specific wavelength is proportional to the concentration of the corresponding element in the sample. Quantification is achieved by comparing the signal intensity to those from a series of standard solutions of known concentration [1].
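The quantification step can be illustrated with a least-squares calibration line fitted to standards and then inverted for an unknown. This is only a sketch: the standard concentrations and intensities are invented for illustration, and a linear response is assumed over the whole range.

```python
def fit_line(x, y):
    """Ordinary least-squares slope and intercept for y = slope * x + intercept."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


# Hypothetical standards: concentration (mg/L) vs. emission intensity (a.u.)
std_conc = [0.0, 1.0, 2.0, 4.0, 8.0]
std_intensity = [0.5, 10.5, 20.5, 40.5, 80.5]

slope, intercept = fit_line(std_conc, std_intensity)


def concentration_from_intensity(intensity):
    """Invert the calibration line to estimate an unknown concentration."""
    return (intensity - intercept) / slope


print(round(concentration_from_intensity(30.5), 3))
```

In practice the unknown should fall inside the range spanned by the standards, since emission response can curve at high concentrations.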

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for Atomic Emission Spectroscopy

| Item/Reagent | Function |
|---|---|
| High-Purity Gases (e.g., Acetylene, Nitrous Oxide) | Serve as fuel (acetylene) and oxidant (nitrous oxide) to generate a high-temperature flame for atomization and excitation in flame AES [1]. |
| Certified Reference Materials | Standard solutions with known elemental concentrations used for instrument calibration and quality assurance to ensure analytical accuracy [1]. |
| Ultrapure Water | Used for sample preparation, dilution, and cleaning to prevent contamination from trace elements found in tap or deionized water [3]. |
| High-Purity Acids (e.g., HNO₃, HCl) | Used for sample digestion and dissolution to bring solid samples into solution for analysis [4]. |
| ICP Torches | The core component in ICP-AES where argon plasma is sustained, providing a high-temperature (6000-10000 K) source for efficient atomization and excitation [4]. |

Signaling Pathways and Analytical Workflows

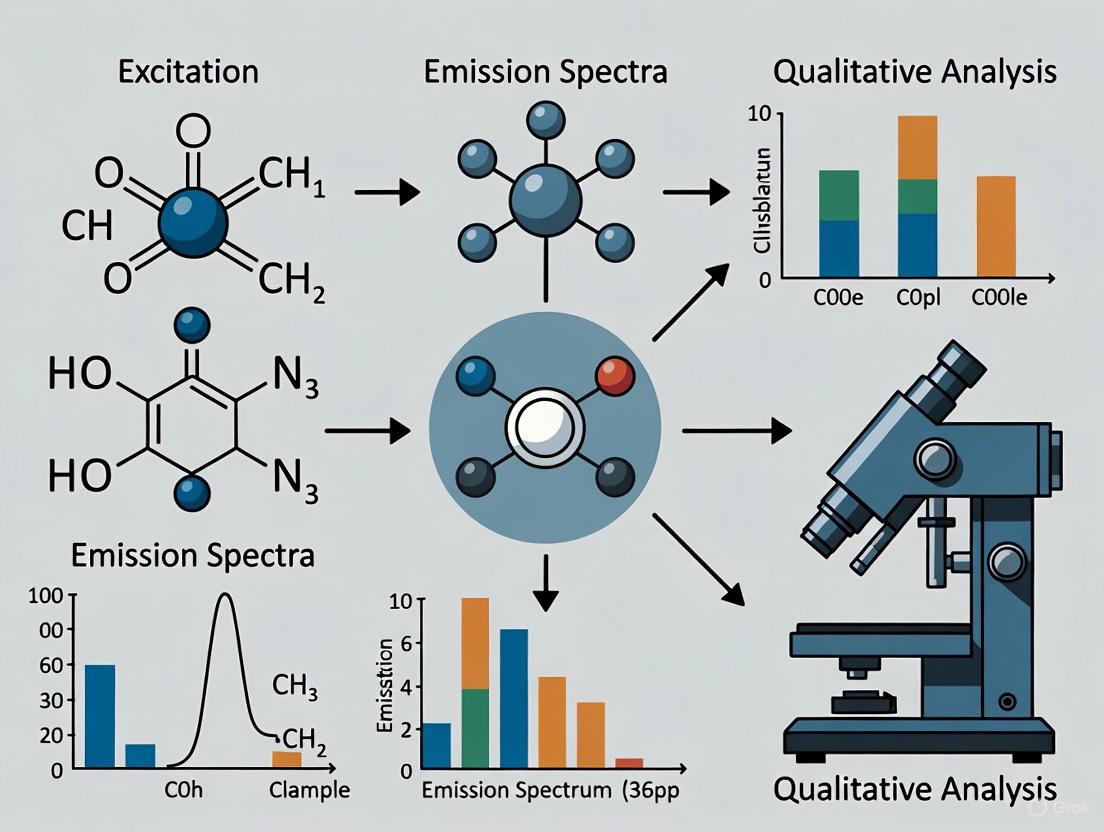

The following diagram illustrates the core logical process from sample introduction to data interpretation in atomic emission spectroscopy.

The Critical Role in Qualitative Chemical Analysis

The unique "fingerprint" nature of atomic emission spectra makes AES an indispensable tool in qualitative chemical analysis research. The technique's power lies in its ability to definitively identify elements based on their characteristic spectral lines [1]. This application has profound implications across numerous scientific disciplines.

In astronomical spectroscopy, the emission (and absorption) spectra of distant stars are analyzed to determine their elemental composition, providing crucial information about the universe's makeup and the nucleosynthesis processes within stars [1] [6]. In environmental and clinical fields, AES is used to detect and quantify trace metals and other elements in complex samples like water, soil, and biological tissues [4]. The historical significance of AES is also notable; the observation that the dark Fraunhofer lines in the solar spectrum coincided with emission lines of known elements led to the groundbreaking conclusion that these lines were caused by absorption in the sun's atmosphere, revealing the composition of the sun itself [1].

The ongoing technological advancements, including the development of more sensitive, portable, and automated instruments, continue to expand the role of emission spectroscopy in research. These innovations enable faster analysis, lower detection limits, and the ability to perform sophisticated chemical analysis directly in the field, thereby solidifying the technique's central role in modern analytical chemistry [3] [4].

The electromagnetic (EM) spectrum represents the entire range of electromagnetic radiation, classified by wavelength and frequency. In chemical analysis, the interaction between matter and specific regions of this spectrum provides a powerful foundation for identifying and quantifying substances. The core principle underlying this analytical approach is that atoms and molecules absorb or emit specific wavelengths of energy, creating characteristic signals that serve as molecular fingerprints [7].

When electromagnetic radiation interacts with matter, it can excite molecules to higher energy states. The measurement of this energy absorption or emission forms the basis of spectroscopic techniques that are indispensable across scientific disciplines, from pharmaceutical development to environmental monitoring [7]. This section examines the major regions of the electromagnetic spectrum utilized in qualitative chemical analysis, with particular emphasis on their operating principles, applications, and methodological considerations for researchers.

Fundamental Regions of the Spectrum for Chemical Analysis

High-Energy Regions: X-Rays and Ultraviolet

X-rays, with wavelengths of approximately 0.01 to 10 nanometers, possess sufficient energy to excite inner-shell electrons in atoms. This excitation enables elemental identification and is particularly valuable for determining atomic structures in crystallography and analyzing inorganic compounds [7].

Ultraviolet (UV) radiation (10-400 nm) promotes valence electrons to higher energy orbitals, making it especially sensitive to conjugated systems and double bonds in organic molecules. UV spectroscopy provides critical information about electronic transitions and is routinely employed for quantifying nucleic acids, proteins, and pharmaceuticals with chromophores [7].

The Infrared Region: Molecular Fingerprinting

The infrared region (approximately 700 nm to 1 mm) is particularly significant for molecular identification. Within this region, the mid-infrared portion (approximately 2.5-25 μm, or 4000-400 cm⁻¹) contains the "fingerprint region" (roughly 1500-500 cm⁻¹), which provides unique absorption patterns specific to individual compounds [7]. When IR radiation interacts with a molecule, the energy absorbed corresponds to specific molecular vibrations, including stretching and bending motions of chemical bonds [8].
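Infrared positions are quoted in wavelength (μm) in some places and in wavenumber (cm⁻¹) in others, so a small conversion helper is useful when comparing ranges. The function names below are illustrative assumptions; the relation itself is simply wavenumber (cm⁻¹) = 10 000 / wavelength (μm).

```python
def wavelength_um_to_wavenumber_cm1(wavelength_um):
    """Convert wavelength in micrometres to wavenumber in cm^-1."""
    return 1e4 / wavelength_um


def wavenumber_cm1_to_wavelength_um(wavenumber_cm1):
    """Convert wavenumber in cm^-1 to wavelength in micrometres."""
    return 1e4 / wavenumber_cm1


# Mid-IR limits quoted in the text: 2.5 um and 25 um
print(wavelength_um_to_wavenumber_cm1(2.5), wavelength_um_to_wavenumber_cm1(25.0))
```

The mid-IR limits of 2.5 and 25 μm therefore correspond to 4000 and 400 cm⁻¹, the range usually scanned by FTIR instruments.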

Fourier Transform Infrared (FTIR) spectroscopy has become the dominant methodology in this region, offering significant advantages through its application of interferometry. FTIR provides the Jacquinot advantage (higher energy throughput), the Fellgett advantage (simultaneous measurement of all frequencies), and the Connes advantage (superior wavelength accuracy) [8]. These technical benefits make FTIR exceptionally suitable for analyzing complex biological samples, as demonstrated by its application in wheat proteome studies where it successfully quantified protein secondary structures and concentrations across different varieties [9].

Microwave and Radio Wave Regions

Microwaves (1 mm to 1 m) are primarily associated with rotational transitions in molecules and are utilized in rotational spectroscopy for studying gas-phase molecules. Meanwhile, radio waves form the basis for Nuclear Magnetic Resonance (NMR) spectroscopy, which exploits the magnetic properties of atomic nuclei to determine molecular structure and dynamics [10].

Table 1: Analytical Regions of the Electromagnetic Spectrum

| Spectral Region | Wavelength Range | Energy Transitions | Primary Applications |
|---|---|---|---|
| X-rays | 0.01-10 nm | Inner-shell electrons | Elemental analysis, crystallography |
| Ultraviolet (UV) | 10-400 nm | Valence electrons | Quantitative analysis of chromophores |
| Visible | 400-700 nm | Valence electrons | Colorimetry, spectrophotometry |
| Near-IR (NIR) | 700 nm-2.5 μm | Overtone & combination vibrations | Process monitoring, food analysis |
| Mid-IR (MIR) | 2.5-25 μm | Fundamental vibrations | Molecular fingerprinting, structure elucidation |
| Microwave | 1 mm-1 m | Molecular rotations | Rotational spectroscopy |
| Radio Waves | 1 m-100 km | Nuclear spin | NMR spectroscopy |
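The table can be read as a lookup from wavelength to analytical region. The sketch below encodes the tabulated boundaries directly; the function and variable names are illustrative assumptions. Wavelengths between 25 μm and 1 mm are not covered by the table and return `None`.

```python
# Region boundaries in metres, copied from the table above: (name, lower, upper)
REGIONS = [
    ("X-rays", 0.01e-9, 10e-9),
    ("Ultraviolet", 10e-9, 400e-9),
    ("Visible", 400e-9, 700e-9),
    ("Near-IR", 700e-9, 2.5e-6),
    ("Mid-IR", 2.5e-6, 25e-6),
    ("Microwave", 1e-3, 1.0),
    ("Radio waves", 1.0, 100e3),
]


def classify_wavelength(wavelength_m):
    """Return the tabulated region containing the wavelength, or None."""
    for name, lower, upper in REGIONS:
        if lower <= wavelength_m < upper:
            return name
    return None


print(classify_wavelength(589e-9))   # a visible emission line
print(classify_wavelength(10e-6))    # a mid-IR vibration
print(classify_wavelength(100e-6))   # falls in the gap not listed in the table
```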

Complementary Vibrational Spectroscopy Techniques

Mid-Infrared (MIR) and Near-Infrared (NIR) Spectroscopy

While both MIR and NIR spectroscopy measure molecular vibrations, they target different types of transitions. MIR spectroscopy probes fundamental vibrations of chemical bonds, producing sharp, well-defined peaks that are highly specific for functional group identification [8]. In contrast, NIR spectroscopy measures overtones and combination bands of CH, NH, and OH vibrations, which are approximately 10-100 times weaker than fundamental absorptions [8].

The practical implication of this difference is that NIR can penetrate deeper into samples and requires minimal sample preparation, making it ideal for process monitoring and analysis of intact samples. However, NIR spectra exhibit broad, overlapping bands that require sophisticated multivariate analysis for interpretation [8]. MIR provides more detailed structural information but often requires specific sampling techniques, particularly for strongly absorbing materials.

Raman Spectroscopy: A Complementary Approach

Raman spectroscopy measures inelastic scattering of monochromatic light, typically from a laser in the visible or near-infrared range [11]. Unlike infrared absorption, which requires a change in dipole moment during vibration, Raman activity requires a change in molecular polarizability [8]. This fundamental difference in selection rules makes Raman and IR complementary techniques: vibrations that are strong in one are often weak in the other, particularly symmetric vibrations and vibrations of nonpolar bonds [11].

Recent innovations have significantly advanced Raman capabilities for quantitative analysis. The multi-laser-power calibration (MLPC) method enables accurate quantification using a single calibration solution by varying applied laser power, reducing reagent use and chemical waste while maintaining analytical precision [12]. This development has proven particularly valuable for distinguishing specific nitrogen species (ammonium, nitrate, urea) and phosphorus forms (phosphate vs. phosphite) in agricultural and environmental samples [12].

Table 2: Comparison of Vibrational Spectroscopy Techniques

| Parameter | Mid-IR (MIR) | Near-IR (NIR) | Raman |
|---|---|---|---|
| Physical Process | Absorption | Absorption | Inelastic scattering |
| Spectral Range | 4000-400 cm⁻¹ | 12500-4000 cm⁻¹ | Dependent on laser |
| Sample Preparation | Often required | Minimal | Minimal |
| Water Compatibility | Poor (strong absorber) | Good | Excellent |
| Information Content | High (fundamentals) | Lower (overtones) | Complementary to MIR |
| Quantitative Capability | Good (ATR-FTIR) | Excellent (with chemometrics) | Good (with MLPC) |

Methodological Framework and Experimental Protocols

Attenuated Total Reflectance Mid-Infrared (ATR-MIR) Analysis of Wheat Proteins

Principle: ATR-MIR spectroscopy enables direct analysis of protein secondary structures in complex biological samples by measuring absorption in the mid-infrared region, particularly the amide I and II bands [9].

Materials and Reagents:

- Spectrometer: FTIR spectrometer with ATR accessory

- Protein Fractions: Albumin, globulin, gliadin, glutenin extracts

- Reference Materials: Potassium bromide (KBr) for background

- Software: Multivariate analysis package (ASCA, PCA)

Experimental Workflow:

- Sample Preparation: Isolate wheat protein fractions using sequential extraction protocol

- Instrument Calibration: Collect background spectrum with clean ATR crystal

- Spectral Acquisition: Apply samples directly to ATR crystal; measure 4000-400 cm⁻¹ range

- Secondary Structure Analysis: Deconvolute amide I region (1600-1700 cm⁻¹) to quantify α-helix, β-sheet, β-turn components

- Statistical Validation: Apply ANOVA simultaneous component analysis (ASCA) to confirm significance of spectral differences
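The secondary structure step reduces, after deconvolution, to comparing the areas of the fitted component bands. The sketch below assumes Gaussian components whose heights and widths have already been fitted; the component values are invented for illustration and are not the results reported in [9].

```python
import math


def gaussian_area(height, sigma):
    """Area under a Gaussian band with the given peak height and width (sigma)."""
    return height * sigma * math.sqrt(2 * math.pi)


def structure_percentages(fitted):
    """Convert fitted amide I components into percentage contributions."""
    areas = {name: gaussian_area(h, s) for name, (h, s) in fitted.items()}
    total = sum(areas.values())
    return {name: 100 * area / total for name, area in areas.items()}


# Hypothetical fitted components: label -> (height in a.u., sigma in cm^-1)
components = {
    "alpha-helix": (0.60, 8.0),
    "beta-sheet": (0.30, 8.0),
    "beta-turn": (0.10, 8.0),
}

percentages = structure_percentages(components)
print({name: round(value, 1) for name, value in percentages.items()})
```

The fitting itself (peak positions, number of components, baseline) is the harder part and is deliberately left out of this sketch.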

Key Findings: Albumin and globulin fractions showed predominant α-helix structures (57.8% and 45.9%, respectively), while gliadins contained 38.3% β-turn and 36.9% α-helix, and glutenins predominantly exhibited β-turn structures (44.8%) [9]. Quantitative analysis revealed protein concentration ranges from 1.7-3.6 g/100g for albumins to 4.0-5.4 g/100g for gliadins, demonstrating the method's utility for comprehensive proteome analysis [9].

Excitation-Emission Matrix (EEM) Fluorescence with a 2DCNN for Emulsified Oil Quantification

Principle: This method combines excitation-emission matrix (EEM) fluorescence with a two-dimensional convolutional neural network (2DCNN) for quantitative analysis of complex mixtures, specifically diesel emulsified oil content in marine environments [13].

Materials and Reagents:

- Spectrometer: FLS1000 spectrometer with temperature control

- Software: Python with TensorFlow/Keras for AR-2DCNN implementation

- Calibration Standards: Diesel emulsified oil samples (0.1-100 mg/L)

- Chemometric Tools: Partial least squares regression (PLSR) with contribution rate analysis

Protocol:

- Sample Presentation: Prepare homogeneous emulsified oil suspensions

- EEM Acquisition: Collect fluorescence spectra across excitation (200-500 nm) and emission (250-550 nm) ranges

- Feature Extraction: Implement attention mechanism and regularization in 2DCNN architecture

- Model Validation: Compare AR-2DCNN + CR-PLSR performance against established baseline models (ResNet-50, ConvNeXt)

- Quantitative Prediction: Apply optimized model to predict oil content in unknown samples

This approach demonstrated superior performance for emulsified oil quantification, highlighting the growing integration of artificial intelligence with spectroscopic methods [13].

The Modern Spectroscopic Toolkit: Advanced Reagents and Materials

Contemporary spectroscopic analysis relies on specialized reagents and materials optimized for specific techniques and applications.

Table 3: Essential Research Reagent Solutions for Spectroscopic Analysis

| Reagent/Material | Technical Function | Application Context |
|---|---|---|
| ATR Crystals (diamond, ZnSe) | Internal reflection element for sample interface | FTIR spectroscopy of liquids, pastes, solids |
| Extended InGaAs Detectors | NIR light detection to 2.5 μm | NIR spectroscopic analysis |
| Calibration Standards | Quantitative reference materials | MLPC-Raman quantification |
| Multivariate Calibration Sets | Chemometric model development | NIR and MIR quantitative analysis |
| FT-Raman Lasers (Nd:YAG, 1064 nm) | Excitation source minimizing fluorescence | FT-Raman spectroscopy |

Integration of Artificial Intelligence in Spectral Analysis

The field of spectroscopy is undergoing a transformation through the integration of artificial intelligence (AI) and chemometrics. Classical methods like principal component analysis (PCA) and partial least squares (PLS) regression remain fundamental but are now complemented by advanced AI frameworks that automate feature extraction, nonlinear calibration, and data fusion [14].

Machine Learning (ML) algorithms excel at identifying structure in spectroscopic data without explicit programming, improving analytical performance as they process more data. Key ML paradigms include:

- Supervised Learning: Trained on labeled data for regression or classification (e.g., PLS, support vector machines, Random Forest)

- Unsupervised Learning: Discovers latent structures in unlabeled data (e.g., PCA, clustering)

- Deep Learning: Employs multi-layered neural networks for hierarchical feature extraction [14]

Specific algorithms finding increasing application in spectroscopy include Random Forest for spectral classification with strong generalization capability; Support Vector Machines (SVM) for optimal separation of classes in high-dimensional spectral space; and Convolutional Neural Networks (CNNs) for automated feature extraction from complex spectral data [14]. The integration of Explainable AI (XAI) frameworks addresses the interpretability challenge of complex models, helping researchers identify diagnostically significant wavelength regions and maintain chemical insight [14].

Workflow Visualization

[Figure: Spectroscopy Analysis Workflow]

[Figure: Molecular Interactions with EM Spectrum]

Electromagnetic spectrum analysis provides an indispensable toolkit for qualitative and quantitative chemical analysis across scientific disciplines. From high-energy X-rays to radio waves, each spectral region offers unique insights into molecular structure and composition. The continuing evolution of these techniques—particularly through integration with artificial intelligence and advanced chemometrics—ensures their growing relevance in research and industrial applications.

The complementary nature of different spectroscopic methods allows researchers to select optimal approaches for specific analytical challenges. As methodological innovations like MLPC-Raman and ATR-FTIR demonstrate, ongoing technical refinements continue to enhance the precision, efficiency, and applicability of electromagnetic techniques for decoding molecular information across the spectrum.

Emission spectroscopy stands as a cornerstone technique in qualitative chemical analysis, enabling researchers to identify elements and compounds based on their unique electromagnetic signatures. The fundamental principle underpinning this methodology is that when atoms or molecules absorb energy, their electrons transition to excited states; upon returning to lower energy states, they emit photons at characteristic wavelengths that serve as unique identifiers [15] [16]. This section examines the core distinctions between atomic and molecular emission phenomena, with particular emphasis on their characteristic lines and fingerprint regions, concepts fundamental to their application in research and industry.

For qualitative chemical analysis, the distinction between atomic and molecular emission patterns is central. Atomic emission manifests as discrete, sharp spectral lines resulting from electronic transitions between well-defined energy levels in isolated atoms [15] [17]. In contrast, molecular emission produces broad, complex bands arising from the combined effects of electronic, vibrational, and rotational transitions [18]. These distinctive signatures provide researchers across pharmaceutical development, environmental monitoring, and materials science with powerful tools for substance identification and characterization.

Fundamental Principles of Emission Spectroscopy

Atomic Emission Mechanisms

Atomic emission occurs when a valence electron in a higher-energy atomic orbital returns to a lower-energy atomic orbital, emitting a photon with energy corresponding to the difference between these discrete energy levels [17]. The energy of emitted photons is precisely determined by the quantum mechanical structure of the atom, following the relationship E = hc/λ, where h is Planck's constant, c is the speed of light, and λ is the wavelength of the emitted radiation [15]. Each element possesses a unique electronic configuration and therefore exhibits a characteristic emission spectrum that serves as its "fingerprint" for identification purposes [16].

The intensity of an atomic emission line, \( I_e \), is quantitatively described by the equation \( I_e = kN \), where \( k \) is a constant accounting for transition efficiency and \( N \) represents the number of atoms populating the excited state [17]. For systems in thermal equilibrium, the population of excited states follows the Boltzmann distribution, establishing a direct relationship between emission intensity and elemental concentration that forms the basis for quantitative analysis [17].
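To show why source temperature matters so much for emission intensity, the sketch below evaluates the Boltzmann ratio of excited-state to ground-state populations, (g*/g0)·exp(-ΔE/k_BT). The 2.10 eV gap, the statistical weight ratio of 3, and the two temperatures are illustrative assumptions standing in for a flame and a plasma.

```python
import math

BOLTZMANN_EV_PER_K = 8.617333262e-5  # Boltzmann constant in eV/K


def excited_to_ground_ratio(delta_e_ev, temperature_k, g_ratio=1.0):
    """Boltzmann population ratio of an excited state to the ground state.

    g_ratio is the ratio of statistical weights (excited / ground).
    """
    return g_ratio * math.exp(-delta_e_ev / (BOLTZMANN_EV_PER_K * temperature_k))


# Hypothetical transition: 2.10 eV gap, statistical weight ratio of 3
flame = excited_to_ground_ratio(2.10, 2500.0, g_ratio=3.0)    # flame-like temperature
plasma = excited_to_ground_ratio(2.10, 6000.0, g_ratio=3.0)   # plasma-like temperature
print(f"flame: {flame:.2e}, plasma: {plasma:.2e}, gain: {plasma / flame:.0f}x")
```

Because the excited-state population scales exponentially with temperature, a hotter source yields a much larger emission signal for the same number of atoms.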

Molecular Emission Mechanisms

Molecular emission spectra exhibit considerably greater complexity than atomic spectra because multiple types of energy transitions are involved. Unlike atoms, molecules possess three distinct categories of energy states: electronic, vibrational, and rotational. The total energy of a molecule can be approximated as the sum of these components: \( E_{\text{total}} = E_{\text{electronic}} + E_{\text{vibrational}} + E_{\text{rotational}} \) [18].

When molecules undergo electronic transitions, they simultaneously experience vibrational and rotational transitions, resulting in emission bands comprising numerous closely-spaced lines rather than discrete lines [18]. These broad, structured bands create characteristic patterns that serve as molecular fingerprints, particularly in the infrared region where vibrational transitions dominate [18]. The specific wavelengths absorbed or emitted depend on factors including bond strength, atomic masses, and molecular geometry, with different functional groups displaying distinct absorption peaks within defined wavelength regions [18].

Characteristic Spectral Features: Comparative Analysis

Atomic Spectral Lines

Atomic emission spectra consist of sharp, well-defined lines at discrete wavelengths corresponding to electronic transitions between quantized energy levels. For example, hydrogen atoms emit at approximately 410 nm (violet), 434 nm (blue), 486 nm (blue-green), and 656 nm (red) in the visible spectrum [16]. These discrete lines correspond to electrons transitioning between different orbital energy levels, with the shortest wavelength (410 nm) representing the highest-energy transition [16].
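These four visible hydrogen lines belong to the Balmer series, in which the electron falls to the n = 2 level. As a hedged illustration, the Rydberg formula 1/λ = R_H (1/2² - 1/n²) reproduces them; the function name is an assumption, and the result is a vacuum wavelength, so it differs slightly from values measured in air.

```python
RYDBERG_H_PER_M = 1.0967758e7  # Rydberg constant for hydrogen, in 1/m


def balmer_wavelength_nm(n_upper):
    """Vacuum wavelength (nm) of the hydrogen transition n_upper -> 2."""
    if n_upper <= 2:
        raise ValueError("n_upper must be greater than 2")
    inverse_wavelength = RYDBERG_H_PER_M * (1 / 2**2 - 1 / n_upper**2)
    return 1e9 / inverse_wavelength


balmer_lines = [round(balmer_wavelength_nm(n)) for n in (3, 4, 5, 6)]
print(balmer_lines)
```

Rounded to the nearest nanometre, the transitions from n = 3, 4, 5, and 6 give 656, 486, 434, and 410 nm, matching the lines listed above.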

The nomenclature for atomic spectral lines often includes Fraunhofer designations for strong visible lines (such as the K-line for singly-ionized calcium at 393.366 nm) or Roman numerals indicating ionization state (Cu I for neutral copper, Cu II for singly-ionized) [15]. The spectral lines' exact positions remain largely unaffected by chemical environment, making atomic emission particularly valuable for elemental identification regardless of molecular composition [15].

Molecular Fingerprint Regions

Molecular spectra feature characteristic "fingerprint regions" where absorption and emission patterns provide unique identifiers for specific compounds. In infrared spectroscopy, for example, the region between approximately 500 and 1500 cm⁻¹ contains complex vibrational patterns highly specific to individual molecules [18]. Unlike atomic lines, these molecular fingerprints arise from the combined contributions of multiple vibrational modes, bond rotations, and molecular symmetries.

The fingerprint region enables unambiguous identification of molecular species, including complex pharmaceuticals and organic compounds. For instance, the presence of specific functional groups like hydroxyl, carbonyl, or amine groups produces characteristic emissions that facilitate structural elucidation [18]. This region is particularly valuable for distinguishing between structurally similar compounds or confirming molecular identity in quality control applications.

Table 1: Comparative Features of Atomic and Molecular Emission Spectra

| Characteristic | Atomic Emission | Molecular Emission |
|---|---|---|
| Spectral Appearance | Discrete, sharp lines | Broad, structured bands |
| Origin | Electronic transitions between atomic orbitals | Combined electronic, vibrational, and rotational transitions |
| Spectral Complexity | Relatively simple, element-specific | Complex, compound-specific |
| Identifying Features | Characteristic line patterns | Fingerprint regions |
| Primary Analytical Use | Elemental identification and quantification | Molecular identification and structural analysis |
| Influencing Factors | Nuclear charge, electron configuration | Molecular structure, functional groups, bond strengths |

Instrumentation and Methodological Approaches

Atomic Emission Spectroscopy Techniques

Atomic emission spectroscopy (AES) employs various excitation sources to atomize and excite samples, with the choice of source significantly influencing analytical performance. Flame AES utilizes combustion flames to excite atoms, particularly effective for alkali metals and other easily-excited elements [19] [17]. Inductively coupled plasma (ICP) sources operate at substantially higher temperatures (6000-10000 K), providing superior atomization efficiency and excitation capability for a wider range of elements [19] [17]. Spark and arc techniques serve primarily for solid conductive samples, especially in metallurgical applications [19].

Modern advancements in plasma-based instrumentation also include multi-collector ICP-MS systems, which detect ions by mass rather than by optical emission and are designed for enhanced flexibility and high-resolution isotope analysis, capable of resolving isotopes from their interferences [3]. Together with ICP-AES, these developments support increasingly sophisticated applications in environmental monitoring, clinical analysis, and pharmaceutical research where precise elemental quantification is required [4].

Molecular Spectroscopy Techniques

Molecular emission analysis employs diverse spectroscopic techniques targeting different regions of the electromagnetic spectrum. Infrared spectroscopy measures vibrational transitions, providing detailed information about functional groups and molecular structure [18]. Fluorescence spectroscopy, including advanced implementations such as A-TEEM (Absorbance-Transmittance and Excitation-Emission Matrix), offers enhanced sensitivity for characterizing complex biological molecules like monoclonal antibodies and vaccines [3].

Recent innovations include quantum cascade laser (QCL) based microscopy systems such as the LUMOS II, which generates infrared images in transmission or reflection modes at rapid acquisition rates of 4.5 mm² per second [3]. Specialized instruments like the ProteinMentor system specifically address the needs of biopharmaceutical research, enabling protein impurity identification, stability assessment, and deamidation process monitoring [3].

Experimental Protocols for Emission Analysis

Atomic Emission Spectroscopy Protocol

Sample Preparation:

- For liquid samples: Introduce via nebulizer to create fine aerosol [19] [17]

- For solid samples: Use spark or arc ablation, or laser vaporization for introduction into excitation source [19]

- Conduct appropriate dilution to remain within instrumental linear range [17]

Instrument Calibration:

- Prepare standard solutions with known concentrations of target elements

- Establish calibration curve by measuring emission intensities at characteristic wavelengths

- Verify calibration with certified reference materials [19]

Measurement Procedure:

- Introduce sample into excitation source (flame, plasma, spark, or arc)

- Monitor emission intensity at element-specific wavelengths

- Record spectrum across relevant wavelength range for qualitative analysis [19] [17]

Data Analysis:

- Identify elements present based on characteristic emission lines

- Quantify concentration using measured intensity and calibration curve [17]

- Apply correction for spectral interferences when necessary
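
The calibration and quantification steps above can be sketched as a straight-line fit followed by inversion. This is a minimal illustration that assumes a linear response over the working range; the standard concentrations and counts are hypothetical values chosen for the example, not data from the cited sources.

```python
def fit_line(x, y):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(x)
    x_bar = sum(x) / n
    y_bar = sum(y) / n
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, y_bar - slope * x_bar


def concentration_from_intensity(intensity, slope, intercept):
    """Invert the calibration line to estimate a concentration."""
    return (intensity - intercept) / slope


# Hypothetical standards: concentration (mg/L) vs. emission intensity (counts).
std_conc = [0.0, 1.0, 2.0, 5.0, 10.0]
std_counts = [12.0, 212.0, 412.0, 1012.0, 2012.0]

slope, intercept = fit_line(std_conc, std_counts)
sample_conc = concentration_from_intensity(812.0, slope, intercept)
print(f"slope={slope:.1f} counts per mg/L, sample={sample_conc:.2f} mg/L")
```

Samples whose intensity falls outside the range spanned by the standards should be diluted and re-measured rather than extrapolated.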

Molecular Emission Spectroscopy Protocol

Sample Preparation:

- Prepare appropriate sample form (solid, liquid, or gas) compatible with technique

- For IR spectroscopy: Use KBr pellets for solids, solution cells for liquids

- Ensure optimal sample thickness/pathlength for measurable signal without saturation [18]

Instrument Configuration:

- Select appropriate spectral range (e.g., mid-IR: 4000-400 cm⁻¹, which includes the fingerprint region below roughly 1500 cm⁻¹)

- Choose suitable detector and source based on analytical requirements

- Optimize resolution settings based on sample complexity [3] [18]

Data Collection:

- Collect background spectrum for reference

- Acquire sample spectrum under identical conditions

- For fluorescence: Scan excitation and emission wavelengths to generate EEM plots [3]

Spectral Interpretation:

- Identify characteristic band patterns in fingerprint region

- Assign vibrational modes to specific functional groups

- Compare with reference spectra for compound identification [18]
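
The band-assignment step can be illustrated with a simple range lookup. The sketch below uses a handful of approximate textbook mid-IR ranges; the table is deliberately short and the name `GROUP_RANGES` is an assumption made for this example, so a real interpretation would rely on a full reference library.

```python
# Approximate textbook mid-IR ranges in cm^-1 (illustrative, not exhaustive).
GROUP_RANGES = [
    ("O-H stretch", 3200.0, 3600.0),
    ("C-H stretch (sp3)", 2850.0, 3000.0),
    ("C≡N stretch", 2210.0, 2260.0),
    ("C=O stretch", 1650.0, 1750.0),
]


def assign_bands(band_positions):
    """Map each observed band to the candidate groups whose range contains it."""
    return {
        pos: [name for name, lo, hi in GROUP_RANGES if lo <= pos <= hi]
        for pos in band_positions
    }


# Hypothetical observed band positions (cm^-1).
assignments = assign_bands([3350.0, 2925.0, 1715.0, 900.0])
for position, groups in assignments.items():
    print(position, groups or "unassigned (compare with reference spectra)")
```

Bands that fall outside every listed range, such as the 900 cm⁻¹ entry here, are left unassigned and are best resolved by comparison with reference spectra.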

Research Reagents and Essential Materials

Table 2: Essential Research Reagents and Materials for Emission Spectroscopy

| Reagent/Material | Function/Application | Technical Specifications |

|---|---|---|

| High-Purity Gases (Argon, Nitrogen) | Plasma generation and nebulization in ICP-AES | High purity (≥99.995%) to minimize spectral interference |

| Certified Reference Materials | Instrument calibration and method validation | Traceable to national standards with certified element concentrations |

| Ultrapure Water Systems | Sample preparation and dilution | Resistivity ≥18.2 MΩ·cm at 25°C to prevent contamination |

| KBr Powder (IR Grade) | Preparation of pellets for IR spectroscopy | Spectral grade, dry, for transparent pellets in fingerprint region analysis |

| Solvents (HPLC Grade) | Sample dissolution and extraction | Low UV cutoff, minimal fluorescent impurities |

| Deuterium Lamps | Wavelength calibration in UV-Vis instruments | Provides sharp emission lines for accurate wavelength verification |

Advanced Applications in Pharmaceutical Research

Emission spectroscopy techniques provide critical analytical capabilities throughout drug development pipelines. Atomic emission methods, particularly ICP-AES, enable precise quantification of metal catalysts and detection of elemental impurities in active pharmaceutical ingredients (APIs) to comply with regulatory requirements [4] [20]. The technique's multi-element capability and wide linear dynamic range make it indispensable for pharmaceutical quality control [19].

Molecular emission spectroscopy facilitates drug characterization and interaction studies. Infrared and Raman spectroscopy reveal structural information about APIs, polymorph forms, and formulation components [3] [18]. Recent advances in protein-specific spectroscopic systems allow researchers to monitor protein stability, identify degradation products, and study drug-biomolecule interactions without extensive sample preparation [3] [20].

Emerging techniques including X-ray emission spectroscopy (XES) offer enhanced capabilities for studying metal-containing pharmaceuticals, providing element-selective probes of local electronic structure and ligand environments [20] [21]. These methods support investigations of catalytic mechanisms, redox processes, and metal speciation in pharmaceutical systems with minimal sample preparation [20].

Current Trends and Future Perspectives

The field of emission spectroscopy continues evolving through technological innovations and expanding applications. Notable trends include the development of miniaturized and portable instruments enabling field-based analysis across environmental, agricultural, and pharmaceutical domains [3] [4]. Automation and high-throughput systems address growing demands for efficiency in drug discovery and quality control environments [3].

Advanced detection systems incorporating focal plane array detectors and quantum cascade lasers enhance spatial resolution and acquisition speeds for spectroscopic imaging [3]. The integration of multivariate analysis and machine learning algorithms with spectral data facilitates more sophisticated pattern recognition in complex samples [3].

The growing emphasis on product quality and safety continues to drive spectroscopic innovation, particularly in regulated industries. Atomic and molecular emission techniques remain foundational to chemical analysis research, with their distinctive capabilities complementing each other in comprehensive material characterization strategies. As instrumental sensitivity and resolution improve, emission spectroscopy applications continue expanding into new domains including nanomaterial characterization, single-cell analysis, and real-time process monitoring.

Diagram 1: Comparative workflows for atomic and molecular emission analysis showing divergent pathways from sample preparation to spectral interpretation.

Modern spectrometers are indispensable instruments in chemical analysis, enabling researchers to decipher the elemental fingerprint of matter through its emission spectra. This technical guide deconstructs the core components of optical spectrometers, detailing the fundamental principles and engineering trade-offs inherent in their design. Framed within the context of qualitative chemical analysis, this whitepaper provides an in-depth examination of how these instruments measure the unique emission lines generated by excited atoms, allowing scientists to identify substances with high specificity. The discussion is supported by structured data tables, experimental protocols, and visualizations of the instrumental workflow, providing a comprehensive resource for researchers and drug development professionals engaged in analytical spectroscopy.

Emission spectrometry is a powerful method for the spectroscopic analysis of sample materials, based on the fundamental principle that excited atoms and ions emit light at characteristic wavelengths [6]. When an atom absorbs energy, its electrons are promoted to higher energy orbits. As these electrons relax back to their ground state, they release photons of specific energies, corresponding to precise wavelengths of light [22]. This collection of wavelengths, known as the emission spectrum, serves as a unique fingerprint for each chemical element, enabling its identification [6].
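
As a quick numerical check of the link between transition energy and wavelength, the relation λ = hc/ΔE can be evaluated directly. The sketch below uses the rounded constant hc ≈ 1239.84 eV·nm and an approximate value of 2.105 eV for the sodium 3p to 3s energy gap; both are stated here as assumptions of the example.

```python
HC_EV_NM = 1239.84  # h*c in eV·nm (rounded)


def emission_wavelength_nm(delta_e_ev):
    """Wavelength of the photon released when an electron drops by delta_e_ev."""
    return HC_EV_NM / delta_e_ev


# Approximate 3p -> 3s gap in sodium; the result lands on the yellow D lines.
sodium_d = emission_wavelength_nm(2.105)
print(f"{sodium_d:.1f} nm")
```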

The foundation of this analytical method dates back to the 17th century with Isaac Newton's light dispersion experiments, but it was the work of Bunsen and Kirchhoff in the 19th century that established the direct link between characteristic spectral lines and specific elements [22]. Qualitative chemical analysis by emission spectra leverages these discrete, element-specific lines to identify the atomic composition of a sample without necessarily quantifying the amounts present [23]. This technique is particularly valuable for its sensitivity and ability to detect multiple elements simultaneously, making it crucial in fields ranging from biomedical research to astrophysics and materials science [6].

Core Components of an Optical Spectrometer

While various spectrometer types exist (e.g., mass, NMR), the optical spectrometer is the most common platform for emission spectroscopy. Its fundamental purpose is to take light generated by a sample, separate it into its constituent wavelengths, and measure the intensity of each [24]. Achieving this requires several key components working in concert, each with distinct design considerations and trade-offs.

Entrance Slit

The optical pathway begins at the entrance slit, which performs the critical function of defining the incoming light beam [24].

- Function: Controls the amount and geometry of light entering the spectrometer system.

- Design Trade-off: A wide slit allows more light to enter, enhancing the system's ability to measure faint sources but reducing the maximum achievable spectral resolution. Conversely, a narrow slit increases spectral resolution at the expense of signal intensity [24].

- Typical Specifications: In compact devices, the slit width is often fixed (e.g., 25 μm), while larger laboratory spectrometers may feature adjustable slits to accommodate varying experimental needs [24].

Table 1: Performance Trade-offs of Entrance Slit Width

| Slit Width | Signal Intensity | Spectral Resolution | Ideal Use Case |

|---|---|---|---|

| Narrow | Lower | Higher | Analysis of bright sources requiring fine detail |

| Wide | Higher | Lower | Analysis of faint light sources or rapid measurements |
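
The trade-off in the table can be made concrete with the common slit-limited approximation, in which the spectral bandpass is the slit width multiplied by the reciprocal linear dispersion of the instrument. The dispersion value of 20 nm/mm below is hypothetical, and the estimate ignores aberrations and detector pixel size.

```python
def bandpass_nm(slit_width_um, reciprocal_dispersion_nm_per_mm):
    """Slit-limited bandpass: slit width (converted to mm) times nm/mm dispersion."""
    return (slit_width_um / 1000.0) * reciprocal_dispersion_nm_per_mm


# Hypothetical spectrometer with 20 nm/mm reciprocal linear dispersion.
narrow = bandpass_nm(25.0, 20.0)
wide = bandpass_nm(100.0, 20.0)
print(f"25 um slit: {narrow:.2f} nm, 100 um slit: {wide:.2f} nm")
```

Under these assumptions, quadrupling the slit width admits more light but also quadruples the bandpass, which is the resolution penalty summarized in the table.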

Dispersive Element: Diffraction Grating or Prism

The heart of the wavelength separation process is the dispersive element, which spatially spreads the light based on its wavelength [24].

- Diffraction Grating: This is the most common component, typically featuring a surface with many parallel, closely spaced grooves. The grating operates on the principle of constructive interference, described by the grating equation: ( m\lambda = d(\sin\theta_m - \sin\theta_i) ) where ( d ) is the grating spacing, ( \theta_m ) is the diffraction angle, ( \theta_i ) is the angle of incidence, ( \lambda ) is the wavelength, and ( m ) is the diffraction order [24].

- Grating Specifications: Gratings are characterized by their groove density (grooves per mm). A higher groove density (smaller ( d )) spreads light over a larger angular range, providing higher resolution but covering a smaller wavelength range per detector segment and reducing signal strength [24].

- Prisms: While less common due to higher cost and generally lower resolution, prisms disperse light via refraction, bending different wavelengths by different amounts [24].
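
To see how groove density affects angular spread, the grating equation quoted above can be solved for the diffraction angle. The sketch follows the same sign convention (the diffracted and incident sines are subtracted); the function name and the example groove densities are assumptions made for illustration.

```python
import math


def diffraction_angle_deg(wavelength_nm, grooves_per_mm, incidence_deg=0.0, order=1):
    """Solve m*lambda = d*(sin(theta_m) - sin(theta_i)) for theta_m in degrees."""
    d_nm = 1e6 / grooves_per_mm  # groove spacing in nm
    s = order * wavelength_nm / d_nm + math.sin(math.radians(incidence_deg))
    if abs(s) > 1.0:
        return None  # no propagating diffracted beam exists for this order
    return math.degrees(math.asin(s))


# Same 500 nm light on two hypothetical gratings at normal incidence.
coarse = diffraction_angle_deg(500.0, 600.0)
fine = diffraction_angle_deg(500.0, 1200.0)
print(f"600 g/mm: {coarse:.2f} deg, 1200 g/mm: {fine:.2f} deg")
```

Doubling the groove density roughly doubles the deflection of the same wavelength, which is why denser gratings spread a given spectral interval over more of the detector.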

Table 2: Comparison of Dispersive Elements

| Feature | Diffraction Grating | Prism |

|---|---|---|

| Dispersion Principle | Constructive interference | Refraction |

| Resolution | Typically higher | Typically lower |

| Cost | Generally lower | Generally higher |

| Light Efficiency | Can be high with optimized coatings | Dependent on material transmittance |

| Spectral Range | Can be very broad | Limited by material absorption |

Detector

The detector translates the optical signal into an electrical one for quantification. Modern optical spectrometers predominantly use Charge-Coupled Devices (CCDs), which are arrays of light-sensitive pixels [24] [6].

- Function: Each pixel corresponds to a specific wavelength band, and it generates an electrical signal proportional to the intensity of the light falling upon it [24].

- Key Characteristics: CCDs are favored for their high dynamic range and uniform pixel response, allowing for precise intensity measurements across the spectrum [24].

- Noise Reduction: To minimize thermal noise (dark current), CCD detectors in scientific-grade instruments are often cooled [24].

Routing Optics and System Configuration

The routing optics, which can be a system of mirrors or lenses, guide the light through the instrument from the slit to the grating and finally onto the detector [24].

- Mirrors vs. Lenses: Curved mirrors are generally preferred over lenses because they introduce fewer chromatic and spatial image aberrations [24].

- Optical Configurations: Several established configurations exist, such as the Czerny-Turner and Fastie-Ebert designs, each with relative advantages and disadvantages concerning optical aberrations, stray light rejection, and physical size [24].

Additional Components: Higher-Order Filters

Instruments with a wide spectral detection range may require higher-order filters. These are necessary because a diffraction grating can produce multiple overlapping spectra (different orders, ( m )) for a single input of white light. A filter blocks these higher-order spectra from reaching the detector, ensuring that the detected signal corresponds only to the desired wavelength range [24].
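
The overlap arises because the product of order and wavelength is what fixes the diffraction angle, so light at λ/2 in second order lands where λ lands in first order. The sketch below lists the shorter wavelengths that would coincide with a given first-order position; `detector_min_nm` is a hypothetical lower limit of detector sensitivity.

```python
def overlapping_wavelengths_nm(first_order_nm, max_order=3, detector_min_nm=200.0):
    """Wavelengths in orders 2..max_order diffracted to the same angle as first_order_nm."""
    return {
        m: first_order_nm / m
        for m in range(2, max_order + 1)
        if first_order_nm / m >= detector_min_nm
    }


overlap_600 = overlapping_wavelengths_nm(600.0)
overlap_500 = overlapping_wavelengths_nm(500.0)
print(overlap_600, overlap_500)
```

In this example a long-pass filter placed over the detector region assigned to 600 nm would need to block 300 nm and 200 nm light to keep the first-order reading clean.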

Experimental Protocol: Qualitative Analysis Using Emission Spectra

The following protocol outlines a general methodology for identifying elements in a solid sample using an optical emission spectrometer with an electrical excitation source.

Sample Preparation

- Solid Samples: For conductive metals, the sample may be used directly as an electrode. For non-conductive powders (e.g., soils, ceramics), mix the sample homogeneously with a high-purity graphite powder and pack it into a graphite electrode cup.

- Liquid Samples: Aspirate the liquid directly into the excitation source if using an inductively coupled plasma (ICP) system. Alternatively, deposit a known volume onto a graphite electrode and dry under an infrared lamp.

- Blanks: Prepare a procedural blank containing all reagents and materials except the analyte to track potential contamination.

Instrument Calibration and Setup

- Wavelength Calibration: Introduce a light source with known emission lines (e.g., a mercury-argon lamp) into the spectrometer. Record the pixel positions of these known lines to create a wavelength-pixel calibration curve.

- Source Conditioning: Ignite the plasma or arc source and allow it to stabilize for the time recommended by the manufacturer (typically 10-30 minutes). Pre-burn any solid electrodes to remove surface contaminants.

- Acquisition Parameters: Set the integration time and detector gain to ensure a strong signal without saturating the detector pixels.
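
The wavelength calibration step can be sketched as a fit of known lamp wavelengths against the pixel positions where they were observed. The example below uses a straight-line fit for brevity (instruments often use a low-order polynomial instead), approximate mercury and argon line wavelengths, and hypothetical pixel centroids.

```python
def fit_line(x, y):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(x)
    x_bar = sum(x) / n
    y_bar = sum(y) / n
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, y_bar - slope * x_bar


# Approximate Hg (first three) and Ar (last) line wavelengths in nm.
known_lines_nm = [253.652, 435.833, 546.074, 763.511]
# Hypothetical pixel centroids at which those lines were recorded.
observed_pixels = [134.13, 589.58, 865.19, 1408.78]

slope, intercept = fit_line(observed_pixels, known_lines_nm)


def pixel_to_nm(pixel):
    """Convert a detector pixel index to wavelength using the fitted line."""
    return slope * pixel + intercept


residuals = [pixel_to_nm(p) - w for p, w in zip(observed_pixels, known_lines_nm)]
print(f"{slope:.4f} nm/pixel, max residual {max(abs(r) for r in residuals):.4f} nm")
```

Large or systematically curved residuals would suggest that a higher-order fit, or more calibration lines, is needed.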

Data Acquisition

- Sample Introduction: Position the electrode or initiate the liquid aspiration to introduce the sample into the excitation source.

- Excitation: The sample is vaporized, atomized, and excited within the high-energy source (e.g., plasma, arc, or spark). The excited atoms and ions will emit light at their characteristic wavelengths.

- Spectral Collection: The emitted light is collected, dispersed by the grating, and recorded by the CCD detector, generating a plot of intensity versus wavelength.

Data Analysis and Qualitative Identification

- Peak Identification: Analyze the acquired spectrum to identify the positions (wavelengths) of all significant emission peaks.

- Database Matching: Compare the identified wavelengths against a database of known elemental emission lines (e.g., the NIST Atomic Spectra Database).

- Validation: Confirm the presence of an element by identifying multiple, non-interfered lines for that element within the spectrum. The relative intensities of these lines should be consistent with their known transition probabilities.
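
The peak identification, database matching, and validation steps can be sketched as follows. The line list is a tiny illustrative subset with approximate wavelengths (a real analysis would query a full database such as the NIST Atomic Spectra Database), and the tolerance and minimum-line settings are assumptions of this example.

```python
# Small illustrative line list in nm (approximate values, not a full database).
LINE_LIST = {
    "Na": [588.995, 589.592],
    "K": [766.490, 769.896],
    "Li": [610.36, 670.78],
    "Ca": [393.366, 396.847, 422.673],
}


def find_peaks(wavelengths, intensities, threshold):
    """Return wavelengths of local maxima whose intensity exceeds threshold."""
    peaks = []
    for i in range(1, len(intensities) - 1):
        is_local_max = intensities[i - 1] < intensities[i] >= intensities[i + 1]
        if intensities[i] > threshold and is_local_max:
            peaks.append(wavelengths[i])
    return peaks


def match_elements(peaks, tolerance_nm=0.1, min_lines=2):
    """Report elements with at least min_lines catalogued lines found in the peaks."""
    found = {}
    for element, lines in LINE_LIST.items():
        hits = [ln for ln in lines if any(abs(ln - p) <= tolerance_nm for p in peaks)]
        if len(hits) >= min_lines:
            found[element] = hits
    return found


# Hypothetical peak positions: both Na D lines plus a single K line.
confirmed = match_elements([588.99, 589.60, 766.50])
print(confirmed)
```

Potassium is not reported in this example because only one of its two listed lines was observed, mirroring the multiple-line confirmation rule in the validation step.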

Figure 1: Experimental workflow for qualitative analysis using emission spectra.

The Scientist's Toolkit: Essential Research Reagent Solutions

The following table details key reagents and materials essential for conducting emission spectrometric analysis, particularly for sample preparation and calibration.

Table 3: Essential Research Reagents and Materials for Emission Spectrometry

| Item Name | Function & Application |

|---|---|

| High-Purity Graphite Powder | Serves as a conductive matrix for diluting and packing non-conductive solid samples (e.g., soils, ceramics) for electrode analysis. |

| Graphite Electrodes | Act as the support and conductor for solid samples during arc/spark excitation. Available in various shapes (cups, rods) for different sample types. |

| Mercury-Argon Calibration Lamp | Provides sharp, well-defined atomic emission lines at known wavelengths for accurate spectrometer wavelength calibration. |

| Certified Reference Materials (CRMs) | Samples with known and certified elemental compositions. Used for method validation and verifying the accuracy of qualitative identification. |

| High-Purity Acids (e.g., HNO₃, HCl) | Used for sample digestion and dissolution to prepare liquid samples for analysis, particularly in ICP-OES. |

| Ultrapure Water | Used for diluting samples, preparing blanks, and rinsing the sample introduction system to prevent cross-contamination. |

The power of modern spectrometers for qualitative chemical analysis is built upon the precise integration of their core components—the entrance slit, diffraction grating, detector, and routing optics. Each component introduces specific performance trade-offs between resolution, sensitivity, and spectral range that researchers must balance for their specific applications. By harnessing the fundamental principles of atomic physics, whereby each element emits light at a unique set of wavelengths, these instruments transform light into definitive chemical information. The rigorous experimental protocols and specialized reagents outlined in this guide ensure that the unique emission spectra of elements can be accurately captured and interpreted, solidifying emission spectrometry's role as a cornerstone technique in research and analytical laboratories worldwide.

Within the framework of research on the role of emission spectra in qualitative chemical analysis, the interpretation of the analytical signal is paramount. Qualitative analysis fundamentally involves identifying the chemical species present in a sample, and emission spectra provide a rich source of information for this purpose. The unique spectral patterns emitted by elements and molecules—their "spectral fingerprints"—serve as the primary basis for identification. This guide details the core spectral patterns used in qualitative analysis, the experimental protocols for acquiring high-quality data, and the advanced techniques that leverage these patterns for material characterization, with a specific focus on the insights that can be gleaned from X-ray, atomic, and molecular emission spectra.

Core Spectral Patterns and Their Analytical Significance

The identification of chemical species relies on recognizing specific, reproducible features within an emission spectrum. These patterns are direct consequences of the electronic structure and chemical environment of the emitting atom or molecule. The table below summarizes the key spectral features and their analytical significance in qualitative analysis.

Table 1: Key Spectral Patterns for Qualitative Chemical Analysis

| Spectral Feature | Spectral Origin | Analytical Significance in Qualitative Analysis | Typical Technique Examples |

|---|---|---|---|

| Spectral Line Energy/Position | Electronic transitions between quantized energy levels of an atom or ion. | Uniquely identifies elements; the most fundamental qualitative measurement. | ICP-OES, Atomic Emission Spectroscopy (AES) |

| Chemical Shift | Changes in the core energy levels of an atom due to its chemical bonding and oxidation state. | Identifies the oxidation state and local chemical environment (e.g., Fe²⁺ vs. Fe³⁺). | XES, XPS |

| Satellite Lines (e.g., Kβ', Kβ₂,₅) | Transitions involving electron orbitals involved in chemical bonding or from multi-electron processes. | Provides information on ligand identity and coordination chemistry. | High-Resolution XES |

| Line Shape & Width | Lifetime broadening, instrumental effects, and chemical environment. | Can indicate specific chemical states or phases, particularly when measured with high resolution. | XES, NMR |

| Vibrational Fine Structure | Vibrational energy levels superimposed on electronic transitions. | Provides a molecular "fingerprint" for identifying specific compounds and functional groups. | Raman Spectroscopy, FT-IR |

As evidenced in recent studies, these patterns are crucial for advanced material characterization. For instance, in X-ray Emission Spectroscopy (XES), the Kβ' satellite structure for elements between magnesium (Z=12) and chlorine (Z=17) exhibits an energy shift that correlates with the atomic number of the ligand atoms, allowing for ligand identification. Furthermore, the intensity ratio of the Kβ₂,₅ to Kβ₁,₃ satellite structures in elements from calcium (Z=20) to iron (Z=26) serves as a reliable indicator of the emitter's valence state [25].

Experimental Protocols for Spectral Analysis

Accurate interpretation of spectral patterns is contingent upon high-quality data acquisition. The following section outlines detailed methodologies for two critical spectroscopic approaches.

Protocol 1: Chemical Speciation Using High-Resolution X-Ray Emission Spectroscopy (XES)

This protocol is designed for determining chemical speciation—identifying specific oxidation states and chemical compounds—in solid materials using a laboratory-scale wavelength-dispersive X-ray spectrometer [25].

- Objective: To extract chemical speciation information (e.g., oxidation state, ligand identity) from the high-resolution Kα and Kβ fluorescence spectra of elements ranging from carbon (Z=6) to manganese (Z=25).

- Materials and Equipment:

- Wavelength-Dispersive XRF Spectrometer: Equipped with a Rhodium (Rh) anode X-ray tube.

- Crystal Analyzer: Suitable for the energy range of interest (e.g., LiF 200 for transition metals).

- Sample Preparation Tools: Pellet press for creating homogeneous solid samples, if applicable.

- Procedure:

- Sample Preparation: For solid powders, homogenize the material and press into a pellet without binding agents if possible to avoid contamination. The sample surface should be flat and representative of the bulk material.

- Spectrometer Optimization:

- Select Analytical Line: Choose the Kα or Kβ emission line group for the element of interest.

- Adjust Beam Optics: Select the appropriate mask size (e.g., 5 mm to 34 mm diameter) to define the target area and optimize intensity and resolution [25].

- Tune the Crystal Analyzer: Precisely set the Bragg angle for the crystal to maximize the intensity and resolution of the emission lines under investigation. This may involve fine-adjustments to the spectrometer's geometry.

- Data Acquisition:

- Set the X-ray tube to operate at optimal power (e.g., 50 kV, 1 kW max).

- Perform a wavelength scan across the spectral region of interest, including the main Kα or Kβ lines and their satellite regions.

- Use sufficient counting time per step to ensure a high signal-to-noise ratio, which is critical for resolving subtle spectral features.

- Data Interpretation:

- Identify Peak Shifts: Compare the centroid energy of the main emission lines (e.g., Kα₁, Kβ₁,₃) with those measured from standard compounds of known oxidation state.

- Analyze Satellite Structures: For 3d transition metals, measure the energy difference between the Kβ₁,₃ main line and the Kβ' satellite to infer ligand atomic number. Calculate the intensity ratio of the Kβ₂,₅ to Kβ₁,₃ features to determine the valence of the emitting atom [25].
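
When tuning the crystal analyzer, the starting Bragg angle can be estimated from Bragg's law, nλ = 2d sin θ. The sketch below assumes an approximate 2d spacing of 0.4027 nm for LiF(200) and an approximate Mn Kα₁ energy of 5898.8 eV; both values are supplied for illustration and should be replaced by the instrument's own crystal data.

```python
import math

HC_EV_NM = 1239.84      # h*c in eV·nm (rounded)
LIF200_2D_NM = 0.4027   # approximate 2d spacing of a LiF(200) crystal


def bragg_angle_deg(energy_ev, two_d_nm=LIF200_2D_NM, order=1):
    """Bragg angle at which a photon of the given energy reflects from the crystal."""
    wavelength_nm = HC_EV_NM / energy_ev
    s = order * wavelength_nm / two_d_nm
    if s > 1.0:
        return None  # wavelength too long for this crystal and order
    return math.degrees(math.asin(s))


theta_mn = bragg_angle_deg(5898.8)  # approximate Mn K-alpha-1 energy
print(f"theta = {theta_mn:.2f} deg, 2*theta = {2 * theta_mn:.2f} deg")
```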

Protocol 2: Signal Processing for Power Spectrum Estimation

This protocol, common in various forms of spectroscopy and signal processing, outlines the steps for transforming a time-domain signal into a frequency-domain power spectrum to identify constituent frequencies, which is analogous to identifying specific emission lines [26].

- Objective: To estimate the power distribution across different frequency components of a digitized signal, revealing periodicities and dominant frequencies.

- Materials and Equipment:

- Digitized Signal: A signal acquired through an analog-to-digital converter (ADC) with a known sampling frequency.

- Spectral Analysis Software: Capable of performing Fast Fourier Transform (FFT) with windowing and averaging functions.

- Procedure:

- Signal Preparation: Ensure the signal is digitized at a sampling frequency ( fₛ ) at least twice the highest frequency component of interest (Nyquist criterion).

- Define FFT Parameters:

- Segment Length: Choose a number of samples that is a power of two (e.g., 1024, 2048) for the FFT algorithm. This determines the frequency resolution, ( \Delta f = fₛ / N ) for a segment of ( N ) samples; more samples yield finer resolution.

- Windowing: Apply a windowing function (e.g., Hamming window) to each data segment to taper the edges and minimize "spectral leakage" artifacts [26].

- Spectral Estimation:

- Averaging: To reduce the uncertainty in the power estimate, average the power spectra from multiple, successive FFT segments.

- Overlap: Employ an overlap (typically 50%) between successive segments to recover the data lost from windowing and improve the efficiency of averaging [26].

- Output and Analysis:

- Generate a plot of power (or its logarithm) versus frequency.

- Identify dominant frequency components by locating peaks in the power spectrum. The frequency of a peak indicates a periodic component in the original signal, and its power indicates the strength of that component.
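
The procedure above corresponds to an averaged, windowed periodogram (often called Welch's method). The following is a minimal standard-library sketch under stated assumptions: a synthetic two-tone test signal, a 256-sample segment, a Hamming window, and 50% overlap. The power values are unnormalized, so only their relative sizes are meaningful.

```python
import cmath
import math


def fft(x):
    """Recursive radix-2 Cooley-Tukey FFT; len(x) must be a power of two."""
    n = len(x)
    if n == 1:
        return list(x)
    even, odd = fft(x[0::2]), fft(x[1::2])
    out = [0j] * n
    for k in range(n // 2):
        t = cmath.exp(-2j * cmath.pi * k / n) * odd[k]
        out[k] = even[k] + t
        out[k + n // 2] = even[k] - t
    return out


def welch_power_spectrum(signal, fs, segment_len=256, overlap=0.5):
    """Average Hamming-windowed periodograms of overlapping segments."""
    window = [0.54 - 0.46 * math.cos(2 * math.pi * i / (segment_len - 1))
              for i in range(segment_len)]
    step = int(segment_len * (1 - overlap))
    n_bins = segment_len // 2 + 1
    power = [0.0] * n_bins
    n_segments = 0
    for start in range(0, len(signal) - segment_len + 1, step):
        segment = signal[start:start + segment_len]
        seg_mean = sum(segment) / segment_len  # remove the DC offset
        spectrum = fft([(s - seg_mean) * w for s, w in zip(segment, window)])
        for k in range(n_bins):
            power[k] += abs(spectrum[k]) ** 2
        n_segments += 1
    if n_segments == 0:
        raise ValueError("signal is shorter than one segment")
    freqs = [k * fs / segment_len for k in range(n_bins)]
    return freqs, [p / n_segments for p in power]


# Synthetic signal: 50 Hz tone plus a weaker 120 Hz tone, sampled at 1 kHz.
fs = 1000.0
signal = [math.sin(2 * math.pi * 50 * n / fs) + 0.3 * math.sin(2 * math.pi * 120 * n / fs)
          for n in range(2048)]
freqs, power = welch_power_spectrum(signal, fs)
peak_freq = freqs[power.index(max(power))]
print(f"dominant component near {peak_freq:.1f} Hz")
```

With these settings the frequency bins are spaced about 3.9 Hz apart, so the reported peak is the bin nearest to 50 Hz rather than exactly 50 Hz.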

Visualization of Workflows

The following diagrams illustrate the logical workflows for the two core methodologies described above.

Workflow diagrams (images not available): XES Chemical Speciation Workflow; Spectral Estimation & Analysis Workflow.

The Scientist's Toolkit: Essential Reagents and Materials

The following table catalogs key reagents, standards, and materials essential for conducting rigorous qualitative analysis via emission spectroscopy.

Table 2: Essential Research Reagent Solutions and Materials

| Item Name | Function/Application | Critical Notes for Use |

|---|---|---|

| Certified Standard Reference Materials (SRMs) | Calibration of spectrometer energy scale and quantitative validation of methods. | Use matrix-matched standards (e.g., NIST reference materials) for accurate results in solid sample analysis [25] [27]. |

| High-Purity Cellulose/Boric Acid | Binding agent for preparing powder pellets in XRF/XES analysis. | Must be spectroscopically pure to avoid introducing trace element contaminants that generate interfering spectral lines. |

| Molecularly Imprinted Polymers (MIPs) | Selective pre-concentration of target analytes in complex matrices for SERS detection. | Enhances sensitivity and mitigates matrix interference by providing specific binding sites for target molecules [27]. |

| Internal Standard Solutions (e.g., Yttrium, Scandium) | Added to liquid samples to correct for instrumental drift and variations in sample introduction efficiency in ICP techniques. | The element chosen should not be present in the sample and should have similar spectroscopic behavior to the analytes. |

| Microfluidic Chips with Trapping Zones | Platform for integrating cell capture, enrichment, and Raman-based detection of pathogens. | Enables point-of-care testing; trapping methods can be optical, electrical, mechanical, or acoustic [27]. |

| Calibration Gas Mixtures | Establishing and verifying the wavelength scale in optical emission spectrometers. | Required for initial instrument calibration and periodic performance checks. |

Advanced Methodologies and Cutting-Edge Applications in Biomedical Research and Pharma

Laser-Induced Breakdown Spectroscopy (LIBS) is an advanced atomic emission spectrochemical technique capable of stand-off and in-situ detection of solid, liquid, gaseous, and colloidal specimens [28]. The core principle of LIBS involves using high-energy laser pulses to ablate a minute amount of material and generate a transient microplasma. The collected light from this plasma is dispersed and detected, yielding an emission spectrum where the wavelengths of characteristic spectral lines provide fingerprint signatures for element identification, and their intensities relate to element concentrations [28] [29]. Elemental mapping by LIBS extends this fundamental capability to two-dimensional spatial analysis, allowing for the visualization of elemental distribution across a sample surface. This is achieved by performing a series of sequential LIBS measurements at predefined points on a raster grid, subsequently converting the intensity of a specific elemental emission line at each point into a pixel in a false-color map [29]. This technique has gained significant traction due to its rapid analysis capability, minimal sample preparation requirements, and capacity to detect both light and heavy elements with micrometer-scale spatial resolution [30] [31].
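
The raster-to-map conversion described above can be sketched in a few lines. The example assumes the spectra were recorded in row-major order (left to right, then top to bottom) and uses a toy 2 x 2 grid with a three-point wavelength axis near the Ca II line at about 393.4 nm; all numerical values are hypothetical.

```python
def line_intensity(wavelengths, spectrum, line_nm, half_width_nm):
    """Sum the counts within half_width_nm of the chosen emission line."""
    return sum(c for w, c in zip(wavelengths, spectrum) if abs(w - line_nm) <= half_width_nm)


def build_element_map(spectra, n_rows, n_cols, wavelengths, line_nm, half_width_nm=0.1):
    """Arrange per-shot line intensities (row-major raster order) into a 2D grid."""
    if len(spectra) != n_rows * n_cols:
        raise ValueError("number of spectra must equal n_rows * n_cols")
    values = [line_intensity(wavelengths, s, line_nm, half_width_nm) for s in spectra]
    return [values[r * n_cols:(r + 1) * n_cols] for r in range(n_rows)]


# Toy data: one spectrum per ablation point, four points in total.
wavelengths = [393.2, 393.4, 393.6]
spectra = [[1, 10, 1], [1, 50, 1], [1, 5, 1], [1, 80, 1]]
ca_map = build_element_map(spectra, 2, 2, wavelengths, line_nm=393.4)
print(ca_map)
```

Each entry of the resulting grid would then be mapped to a color scale to produce the false-color image; a serpentine scan pattern would require reversing every second row first.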

Technical Foundations of LIBS Imaging

Core Physical Principles

The LIBS process initiates when a focused high-power laser pulse reaches the sample surface, causing ablation and plasma formation. Within this laser-induced plasma, the ablated material is atomized and excited. As the plasma cools, the excited atoms and ions emit element-specific radiation during their relaxation to lower energy states. The relationship between spectral line intensity and elemental concentration is governed by the fundamental LIBS equation, which can be simplified for practical use as [32]:

( I = X \, Y \, \frac{g_k \, A_{ki} \, C}{U(T)} \, K(a,x) \, e^{-E_k / (kT)} )

where:

- I is the measured line intensity.

- C is the concentration of the emitting species.

- g_k is the statistical weight of the upper level.

- A_{ki} is the transition probability.

- U(T) is the partition function.

- E_k is the energy of the upper level.

- T is the plasma temperature; the k that multiplies T in the exponent is the Boltzmann constant, not the level index.

- X, Y, K(a,x) are experimental factors related to plasma geometry, collection efficiency, and self-absorption [32].
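
One practical consequence of the simplified intensity expression above is that the ratio of two lines from the same species cancels the shared factors (X, Y, C, and U(T)), leaving only the statistical weights, transition probabilities, and the exponential term. Assuming self-absorption is negligible for both lines, the plasma temperature can then be estimated from the ratio. The line parameters below are hypothetical and serve only to show a round trip from a known temperature to intensities and back.

```python
import math

K_B_EV = 8.617333e-5  # Boltzmann constant in eV/K


def relative_intensity(g_k, a_ki, e_k_ev, temp_k):
    """Line intensity up to the shared factors X, Y, C, K(a,x) and 1/U(T)."""
    return g_k * a_ki * math.exp(-e_k_ev / (K_B_EV * temp_k))


def two_line_temperature(i1, i2, line1, line2):
    """Estimate T from two lines of one species; each line is (g_k, A_ki, E_k in eV)."""
    g1, a1, e1 = line1
    g2, a2, e2 = line2
    return (e2 - e1) / (K_B_EV * math.log((i1 * g2 * a2) / (i2 * g1 * a1)))


# Hypothetical line parameters: (g_k, A_ki in s^-1, E_k in eV).
line_a = (3, 5.0e7, 3.0)
line_b = (5, 2.0e7, 5.0)

i_a = relative_intensity(*line_a, 10000.0)
i_b = relative_intensity(*line_b, 10000.0)
recovered_t = two_line_temperature(i_a, i_b, line_a, line_b)
print(f"recovered temperature: {recovered_t:.1f} K")
```

The estimate is most stable when the two upper-level energies are well separated, since a small energy gap amplifies the effect of intensity noise.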

For accurate qualitative and quantitative analysis, researchers often rely on databases such as the NIST Atomic Spectra Database (ASD), which provides a dedicated LIBS interface for simulating spectra based on plasma composition, electron temperature, density, and spectral resolution [33].

Critical Instrumentation Components

The performance of LIBS imaging systems depends critically on several key components, each with specific technical requirements to achieve high-quality elemental maps.

Table 1: Essential Instrumentation for LIBS Elemental Mapping

| Component | Technical Specifications | Function in Imaging |

|---|---|---|

| Laser Source | Nd:YAG (e.g., 1064 nm, 266 nm), 4 ns pulse width, 9 mJ-100 mJ energy, 1-100 Hz repetition rate [28] [30] | Generates plasma via ablation; repetition rate dictates mapping speed. |

| Spectrometer | Multiple channels (e.g., 240-340 nm, 340-540 nm, 540-850 nm), resolution ~0.07 nm [28] [30] | Disperses collected plasma light into constituent wavelengths. |

| Detector | CMOS, ICCD, or CCD; ICCD offers gating for temporal resolution [31] | Captures emission spectra at each ablation point; type affects spatial resolution. |

| Translation Stage | Micrometer precision, computer-controlled [29] | Moves sample or laser beam to create a predefined measurement grid. |

| Data Acquisition System | Hyperspectral data cube handling (x, y, λ) [29] | Compiles individual spectra into a spatially resolved 3D data cube for mapping. |

Experimental Workflow for LIBS Mapping

The following diagram illustrates the generalized end-to-end workflow for creating quantitative elemental maps using LIBS, incorporating steps for sample preparation, data acquisition, processing, and calibration.

Sample Preparation Protocols

Proper sample preparation is critical for obtaining reliable LIBS mapping results, particularly for heterogeneous or soft materials.

- Solid Geological Materials: Powders are often pressed into pellets using a standardized compression molding process to create a uniform, flat surface for analysis and to reduce variability in plasma temperature [28] [32].

- Biological Tissues: Thin sections (e.g., 30 µm thickness) are prepared using a cryogenic cutting device at temperatures like -70 °C. The sections are then deposited onto carrier materials such as silicon wafers, which provide optimal properties. A gentle vacuum may be applied to remove some water content [30].

- Engineered Materials: Surrogate TRISO fuel particles, for example, can be analyzed directly, with the primary requirement being precise positioning to enable cross-sectional layer analysis [31].

Data Acquisition Parameters

Detailed acquisition parameters are essential for replicating experiments. The following protocols are derived from recent studies.

Table 2: Exemplary Experimental Protocols from Recent LIBS Studies

| Application | Laser & Ablation Parameters | Spectral Acquisition | Spatial Resolution |
|---|---|---|---|
| Multi-distance Geochemical Analysis [28] | Nd:YAG, 1064 nm, 9 mJ, 1-3 Hz, Gate delay: 0 µs, Gate width: 1 ms | Three channels: 240-340 nm, 340-540 nm, 540-850 nm | Varying distances (2.0 m to 5.0 m) |
| Biological Tissue Mapping [30] | Nd:YAG, 266 nm, Ablation gas: Argon (1.0 L/min) | Range: 190-1040 nm, Resolution: 0.07 nm | Micrometer-range (cellular level) |
| TRISO Fuel Particle Analysis [31] | Not specified | Detectors: CMOS and ICCD | CMOS: 4 µm, ICCD: 2 µm |

Data Processing and Quantitative Calibration

Converting raw spectral data into quantitative elemental maps requires robust data processing and calibration strategies.

- Spectral Preprocessing: Raw spectra must undergo dark background subtraction, wavelength calibration, ineffective pixel masking, spectrometer channel splicing, and background baseline removal [28].

- Normalization: To account for pulse-to-pulse laser energy fluctuations and matrix effects, signal normalization is often applied. A common method uses the intensity of a ubiquitous element line, such as the C I 247.8 nm spectral line in organic tissues [30].

- Quantitative Calibration: Achieving accurate quantification requires matrix-matched external calibration. A powerful methodology involves pixel-by-pixel automatic matrix recognition (e.g., using Linear Discriminant Analysis - LDA) on the LIBS hyperspectral data set to identify different tissue types or material phases. A specific, pre-recorded calibration curve is then applied to each pixel based on its identified matrix [30]. This approach has demonstrated high accuracy (98% for distinguishing grey and white matter in swine brain) and yields concentration maps that agree with reference methods like ICP-OES [30].
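The normalization and matrix-matched calibration steps above can be sketched as follows. This is a minimal illustration, assuming that each pixel has already been assigned a matrix label (for example by an LDA classifier, which is not implemented here) and that each matrix has a linear calibration curve. The function names, labels, slopes, and intensity values are hypothetical and are not taken from [30].

```python
def normalize(analyte_map, reference_map):
    """Divide the analyte line intensity by the reference line intensity per pixel."""
    return [[a / r if r > 0 else float("nan") for a, r in zip(a_row, r_row)]
            for a_row, r_row in zip(analyte_map, reference_map)]


def quantify(norm_map, matrix_map, calibrations):
    """Apply the calibration curve that matches each pixel's matrix label.

    calibrations maps a matrix label to (slope, intercept) of a linear curve
    signal = slope * concentration + intercept, so
    concentration = (signal - intercept) / slope.
    """
    out = []
    for s_row, m_row in zip(norm_map, matrix_map):
        row = []
        for signal, label in zip(s_row, m_row):
            slope, intercept = calibrations[label]
            row.append((signal - intercept) / slope)
        out.append(row)
    return out


# Hypothetical inputs: analyte line map and reference line map
# (the reference could be, e.g., the C I 247.8 nm line in organic tissue).
k_map = [[50.0, 30.0], [20.0, 90.0]]
c_map = [[100.0, 100.0], [50.0, 150.0]]
labels = [["grey", "white"], ["white", "grey"]]          # assumed classifier output
curves = {"grey": (0.001, 0.0), "white": (0.002, 0.0)}   # made-up calibration curves

norm = normalize(k_map, c_map)
conc = quantify(norm, labels, curves)
```

Because the two matrices use different slopes, pixels with similar normalized signals can map to quite different concentrations, which is why an intensity map alone can be misleading.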

Advanced Data Analysis and Machine Learning

Handling Analytical Challenges: The Distance Effect

In practical applications like Mars exploration, the LIBS detection distance can vary significantly, inducing the "distance effect." This effect alters laser spot size, plasma conditions, and light collection efficiency, leading to considerable spectral profile discrepancies even for the same sample [28]. Traditional mitigation strategies involve element-specific distance correction functions, which are laborious [28].

A modern approach bypasses correction by directly analyzing multi-distance mixed spectra using machine learning. A Deep Convolutional Neural Network (CNN) can be trained to classify geochemical samples directly from multi-distance LIBS spectra. Performance is further enhanced by employing a spectral sample weight optimization strategy during CNN training, which tailors a specific weight for each training sample based on its detection distance. On an eight-distance LIBS dataset, this method achieved a 92.06% testing accuracy, an improvement of 8.45 percentage points over a standard CNN with equal sample weighting [28].
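To make the idea of per-sample weighting concrete, the sketch below shows how a weight attached to each training spectrum enters a cross-entropy loss. It does not reproduce the network or the weight optimization strategy of [28]: the source does not state how the weights are derived, so the distance-to-weight table, the function names, and the toy logits are placeholders.

```python
import math


def softmax(logits):
    """Numerically stable softmax over one list of class scores."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def weighted_cross_entropy(batch_logits, labels, distances_m, weight_by_distance):
    """Weighted mean of the per-sample cross-entropy losses.

    weight_by_distance maps a detection distance (m) to a sample weight.
    How such weights are chosen is NOT taken from the source; the values
    used below are placeholders.
    """
    total_loss, total_weight = 0.0, 0.0
    for logits, label, dist in zip(batch_logits, labels, distances_m):
        w = weight_by_distance[dist]
        total_loss += -w * math.log(softmax(logits)[label])
        total_weight += w
    return total_loss / total_weight


logits = [[2.0, 0.0], [0.0, 0.0]]   # two spectra, two classes (toy model outputs)
labels = [0, 1]
distances = [2.0, 5.0]              # detection distance of each spectrum, in m
equal = weighted_cross_entropy(logits, labels, distances, {2.0: 1.0, 5.0: 1.0})
tuned = weighted_cross_entropy(logits, labels, distances, {2.0: 1.0, 5.0: 3.0})
```

In this toy case the second spectrum is classified less confidently, so raising its weight increases the loss and would push training to focus more on spectra from that distance.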

The following diagram illustrates the architecture and workflow of this advanced CNN model for handling multi-distance LIBS data.

Key Reagents and Materials for LIBS Research

Table 3: Essential Research Reagent Solutions and Materials

| Item | Function/Application | Exemplary Details |
|---|---|---|
| Certified Reference Materials (CRMs) | Calibration and validation of quantitative analysis | Chinese national reference materials (GBW series) [28]; OREAS Geological Samples [32] |
| Silicon Wafers | Carrier substrate for thin-sectioned samples (e.g., tissues) | Provides inert, flat surface for analysis [30] |
| Ablation Gas (e.g., Argon) | Enhances plasma emission intensity and stability | Used at flow rate of 1.0 L/min in biological LIBS [30] |
| Acids for Digestion (HNO₃, H₂O₂) | Sample preparation for reference analysis (e.g., ICP-OES) | Used for acid digestion of tissue samples for reference concentration measurements [30] |
| Calibration Standards | Creating matrix-matched calibration curves | ICP Multi-element standard solutions, used for external calibration [30] |

Applications and Case Studies

LIBS imaging has demonstrated remarkable versatility across diverse scientific fields.

- Planetary Exploration: LIBS instruments (ChemCam, SuperCam, MarSCoDe) onboard Mars rovers perform remote classification of Martian surface materials. Advanced machine learning models are crucial for interpreting spectra obtained at varying distances [28].

- Biological Tissue Analysis: LIBS enables quantitative mapping of essential elements (Na, K, Mg) in tissues. In swine brain, the element distributions in grey and white matter obtained by LIBS agreed with literature values and reference ICP-OES measurements. The quantified concentration distribution, however, often differed markedly from what the raw LIBS signal intensity map suggested, highlighting the necessity of quantitative conversion [30].

- Nuclear Material Characterization: LIBS mapping rapidly analyzes the spatial dimensions and layer thicknesses of complex engineered materials, such as surrogate TRISO fuel particles. A novel image analysis tool measured ZrO₂ layer thicknesses with a 3.7% relative difference compared to SEM-EDS but with a 95% reduction in measurement time [31].

- Environmental Microplastic Analysis: LIBS is employed for the direct analysis of pristine and environmentally aged microplastics, detecting heavy metals and additives using a PCA-based approach for classification and mapping [34].

Laser-Induced Breakdown Spectroscopy has firmly established itself as a powerful and rapid technique for elemental imaging and mapping. Its minimal sample preparation, capability for both light and heavy element detection, and micrometer-scale spatial resolution make it indispensable in fields ranging from planetary science to biology and materials engineering. The ongoing integration of advanced machine learning methods, such as deep convolutional neural networks with optimized training strategies, is effectively overcoming traditional challenges like the distance effect, pushing the boundaries of quantitative analysis. Furthermore, robust calibration protocols involving matrix recognition and matrix-matched standards are transforming qualitative intensity maps into accurate quantitative concentration distributions. As instrumentation advances and data analysis techniques grow more sophisticated, the role of LIBS-based elemental mapping in qualitative and quantitative chemical analysis research is poised for continued expansion and innovation.

Inductively Coupled Plasma Optical Emission Spectroscopy (ICP-OES) and Inductively Coupled Plasma Mass Spectrometry (ICP-MS) are two of the most powerful analytical techniques for elemental determination. Both rely on the same inductively coupled argon plasma source, but only ICP-OES is an emission technique in the strict sense; ICP-MS detects the ions produced in the plasma rather than the light they emit. These techniques have revolutionized trace element analysis across diverse scientific disciplines, from environmental monitoring to pharmaceutical development. At their core, both methods use an argon plasma reaching temperatures of approximately 9,000-10,000 K to atomize, excite, and ionize sample components [35] [36]. In ICP-OES, the measurement of electromagnetic radiation emitted as excited electrons return to lower energy states provides the basis for qualitative identification and quantitative measurement of elemental composition [36].

This technical guide examines the principles, capabilities, and applications of ICP-OES and ICP-MS, with particular emphasis on their role in advancing emission spectrometry for chemical analysis research. The exceptional sensitivity, multi-element capability, and wide dynamic range of these techniques have established them as indispensable tools for researchers requiring precise elemental characterization at concentrations ranging from major components to ultra-trace levels.

Fundamental Principles and Instrumentation

ICP-OES: Spectroscopic Detection of Photon Emissions

In ICP-OES, the sample is introduced as an aerosol into the high-temperature argon plasma, where it undergoes desiccation, vaporization, and dissociation into atoms that are subsequently excited to higher energy states [37] [36]. As these excited atoms and ions return to ground state, they emit photons at characteristic wavelengths. The emitted light is resolved by a diffraction grating and detected, with intensity proportional to elemental concentration [35] [36]. This wavelength-specific emission enables simultaneous multi-element analysis, with modern instruments capable of measuring up to 70 elements in a single analysis [38] [35].

ICP-MS: Mass-to-Charge Ratio Determination

ICP-MS similarly employs the argon plasma for sample atomization and ionization, but subsequently separates and detects the resulting ions based on their mass-to-charge ratios (m/z) using a mass spectrometer [39]. This fundamental difference in detection principle provides ICP-MS with significantly lower detection limits, typically in the parts-per-trillion range, compared to parts-per-billion for ICP-OES [39]. The technique also provides isotopic information, which is invaluable for tracer studies and geochemical analysis [37].

Table 1: Fundamental comparison of ICP-OES and ICP-MS techniques

| Parameter | ICP-OES | ICP-MS |
|---|---|---|
| Detection Principle | Measurement of photon emissions at characteristic wavelengths [39] | Measurement of mass-to-charge ratio of ions [39] |
| Detection Limits | Parts-per-billion (ppb) range [39] | Parts-per-trillion (ppt) range [39] |
| Linear Dynamic Range | Up to 6 orders of magnitude [39] | Up to 8-9 orders of magnitude [39] |
| Isotopic Analysis | Not available | Available [39] |
| Elemental Coverage | Most elements (73+) [39] | Largest number of elements (82+) [39] |
| Analysis Speed | 1-60 elements per minute [39] | Full elemental analysis in less than 1 minute [39] |
| Primary Applications | Routine multi-element analysis at higher concentrations [39] | Trace and ultra-trace element analysis [39] |

Analytical Performance and Technical Specifications

Detection Capabilities and Sensitivity

The superior sensitivity of ICP-MS translates directly into lower detection limits. The relationship between the two is given by ( \text{DL} = 3\sigma_{\text{bl}} / S ), where ( \sigma_{\text{bl}} ) is the standard deviation of the blank signal in counts per second and ( S ) is the sensitivity, i.e., the signal per unit concentration [40]. Higher sensitivity therefore lowers the detection limit in direct proportion, provided that the blank noise, which is often dominated by background contamination, is kept low [40].
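A short worked example of the detection-limit relationship above is given below. The blank readings and sensitivities are invented numbers, the function name is hypothetical, and the use of the sample standard deviation (rather than the population form) is an assumption, since the text does not specify which estimator is intended.

```python
import statistics


def detection_limit(blank_cps, sensitivity_cps_per_ppb):
    """Return 3 * sigma_blank / sensitivity, in ppb.

    blank_cps               : replicate blank readings in counts per second
    sensitivity_cps_per_ppb : signal produced per ppb of analyte
    """
    sigma_bl = statistics.stdev(blank_cps)  # sample standard deviation (assumed)
    return 3 * sigma_bl / sensitivity_cps_per_ppb


blank = [10.0, 12.0, 11.0, 9.0, 13.0]  # hypothetical replicate blank readings, cps
dl_low = detection_limit(blank, sensitivity_cps_per_ppb=1_000.0)
dl_high = detection_limit(blank, sensitivity_cps_per_ppb=10_000.0)
```

With the same blank noise, a tenfold increase in sensitivity gives a tenfold lower detection limit, which is the proportionality the equation expresses.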

Table 2: Analytical performance characteristics for elemental analysis

| Performance Characteristic | ICP-OES | ICP-MS |
|---|---|---|
| Typical Detection Limits | ~1-100 ppb [39] | ~0.001-0.1 ppb (ppt) [39] |
| Precision (% RSD) | 0.5-2% [41] | 1-3% [41] |
| Sample Throughput | High (simultaneous multi-element) [37] | Very high (rapid sequential multi-element) [39] |
| Spectral Interferences | Moderate (overlapping emission lines) [39] | Moderate (isobaric and polyatomic) [39] |
| Matrix Tolerance | High (TDS up to 10-30%) [39] | Moderate (TDS typically <0.1-0.2%) [39] |

Technique Selection Guidelines

The choice between ICP-OES and ICP-MS depends on specific analytical requirements. ICP-OES is generally preferred for samples with higher elemental concentrations (>100 ppb), complex matrices, and when operational cost is a primary consideration [39]. ICP-MS is indispensable for ultra-trace analysis, isotopic measurements, and when the widest dynamic range is required [39]. For nuclear material characterization and isotope ratio analysis, as demonstrated in the award-winning research of Benjamin T. Manard, ICP-MS coupled with laser ablation provides unparalleled capabilities for nuclear safeguards and forensic applications [42].
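The selection guidance can be condensed into a small rule-of-thumb helper. This is only a simplified sketch: the function `suggest_technique` is hypothetical, and the thresholds (100 ppb, 1 ppb, 0.2% TDS) are taken loosely from the figures quoted in the text and tables above rather than from any formal decision procedure. Real method selection also weighs cost, interferences, and regulatory requirements.

```python
def suggest_technique(conc_ppb, needs_isotopes=False, tds_percent=0.0):
    """Return (technique, note) following the rules of thumb in the text.

    conc_ppb       : expected analyte concentration in ppb
    needs_isotopes : True if isotopic information is required
    tds_percent    : total dissolved solids of the prepared sample, in percent
    """
    if needs_isotopes or conc_ppb < 1:
        # Isotopic data and sub-ppb levels are only listed for ICP-MS.
        note = "dilute sample first" if tds_percent > 0.2 else ""
        return "ICP-MS", note
    if conc_ppb > 100 or tds_percent > 0.2:
        # Higher concentrations or heavy matrices favor ICP-OES.
        return "ICP-OES", ""
    return "either", "decide on cost and required detection limit"


high_conc = suggest_technique(500)
ultra_trace = suggest_technique(0.01, tds_percent=5.0)
isotope_job = suggest_technique(50, needs_isotopes=True)
mid_range = suggest_technique(50)
```

The "either" branch reflects the concentration range in which the quoted detection limits of both techniques overlap.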

Experimental Protocols and Methodologies

Sample Preparation Requirements

Both ICP-OES and ICP-MS typically require sample digestion to transform solid samples into liquid form for analysis. Microwave-assisted acid digestion has emerged as a highly effective approach, utilizing combinations of HNO₃, HCl, HF, or H₂O₂ at temperatures up to 200°C under pressure [36] [41]. For example, the analysis of palladium and platinum in automotive catalysts employs optimized microwave digestion using HNO₃:HCl mixtures, followed by dilution and analysis [41].

A critical step in sample preparation involves filtration through 0.45 μm, 0.22 μm, or 0.1 μm filters to remove undissolved particulates that could clog nebulizers or introduce inaccuracies [36]. Polypropylene filters are preferred over glass fiber due to their minimal adsorption and contamination potential [36]. For trace element analysis, stringent contamination control is essential, requiring high-purity reagents, acid-washed labware, and controlled laboratory environments [40] [36].

Instrument Optimization and Calibration