From Raw Data to Reliable Insights: Modern Data Processing Solutions for Spectroscopic Instrumentation

Abstract

This article addresses the critical challenges and advanced solutions in data processing for modern spectroscopic instrumentation, tailored for researchers and drug development professionals. It explores the foundational shift from targeted to untargeted analysis and the growing integration of AI and machine learning. The content provides a methodological guide to spectral preprocessing, data fusion, and chemometric modeling, alongside practical strategies for troubleshooting common data quality issues. Finally, it outlines robust validation frameworks and comparative analyses of software solutions essential for meeting regulatory standards and ensuring data integrity in biomedical research and quality control.

The Evolving Data Landscape: From Spectra to Smart Analysis

FAQs & Troubleshooting Guides

Frequently Asked Questions (FAQs)

Q1: What is the core difference between targeted and untargeted analysis?

Targeted analysis is designed to identify and quantify a specific, pre-defined set of known compounds. In contrast, untargeted analysis, also called non-targeted analysis (NTA), is a hypothesis-generating approach that aims to profile all measurable analytes in a sample, including unknown compounds, without prior knowledge of the sample's chemical composition [1]. NTA is particularly valuable for discovering unknown impurities, metabolites, and pollutants [1].

Q2: What are the most significant challenges in LC-MS-based untargeted metabolomics?

The main challenges include [1] [2] [3]:

- Chemical Identification: Confidently identifying unknown metabolites from complex data remains a major bottleneck.

- Annotation Consistency: A multi-laboratory study showed that annotation performance varies significantly, with different teams identifying only 24% to 57% of the same analytes in a sample [3].

- Matrix Effects: Complex sample matrices can suppress or enhance ionization, leading to inaccurate data [1] [4].

- Data Complexity: The process generates vast, complex datasets that require advanced bioinformatics tools for interpretation [1].

- False Positives/Negatives: Features from in-source fragmentation, adducts, and redundant ions can lead to false positives, while the lack of analytical standards makes false negatives hard to ascertain [1] [3].

Q3: How can I improve the confidence of metabolite identifications in my untargeted workflows?

To advance from tentative to confident identifications, incorporate multiple lines of evidence [2] [3]:

- Use MS/MS Spectral Libraries: Match fragmentation data against reference spectra.

- Employ Orthogonal Data: Utilize retention time prediction and, if available, ion mobility collision cross section (CCS) values, which are physical properties less variable than retention time [2].

- Validate with Standards: Ultimately, validation using authentic chemical reference standards is nearly always required for the highest confidence level [2].
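The library-matching step above is often scored with a cosine similarity between the query and reference fragment spectra. The following is a minimal sketch of that idea, not the scoring used by any particular library or vendor tool; the function name `cosine_score`, the 0.01 Da tolerance, and the example peak lists are illustrative assumptions.

```python
import math

def cosine_score(query, reference, tol=0.01):
    """Greedy cosine similarity between two centroided MS/MS spectra.

    Each spectrum is a list of (mz, intensity) pairs. `tol` is the m/z
    matching tolerance in Da (an illustrative default, not a recommendation).
    """
    # All peak pairs that agree within the tolerance, strongest products first
    pairs = sorted(
        ((qi * ri, i, j)
         for i, (qm, qi) in enumerate(query)
         for j, (rm, ri) in enumerate(reference)
         if abs(qm - rm) <= tol),
        reverse=True,
    )
    used_q, used_r, dot = set(), set(), 0.0
    for prod, i, j in pairs:
        # Each peak may contribute to at most one matched pair
        if i not in used_q and j not in used_r:
            used_q.add(i)
            used_r.add(j)
            dot += prod
    norm_q = math.sqrt(sum(inten ** 2 for _, inten in query))
    norm_r = math.sqrt(sum(inten ** 2 for _, inten in reference))
    return dot / (norm_q * norm_r) if norm_q and norm_r else 0.0

# Hypothetical peak lists, for illustration only
query = [(85.028, 40.0), (127.039, 100.0), (163.060, 15.0)]
library_entry = [(85.029, 35.0), (127.039, 100.0), (145.050, 10.0)]
print(round(cosine_score(query, library_entry), 3))
```

A high score supports an annotation but does not replace the orthogonal evidence (retention time, CCS, authentic standards) listed above.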

Q4: What is a spectral "fingerprint" and how is it used in pharmaceutical analysis?

In vibrational spectroscopy like Raman analysis, the fingerprint region (300–1900 cm⁻¹) is used to characterize molecules based on their unique vibrational patterns [5]. A specific sub-region from 1550–1900 cm⁻¹, sometimes called the "fingerprint in the fingerprint," is particularly useful for identifying Active Pharmaceutical Ingredients (APIs). This is because common excipients typically show no Raman signals in this region, while APIs display unique vibrations from functional groups like C=O and C=N, enabling excipient-free API identity testing [5].

Q5: What are common sample preparation pitfalls in untargeted analysis and how can I avoid them?

Common mistakes during sample preparation can severely compromise NTA results [1] [4]:

- Inadequate Sample Cleanup: Insufficient cleanup leaves interfering compounds that can cause ion suppression/enhancement. Use appropriate techniques like solid-phase extraction (SPE) or liquid-liquid extraction.

- Ignoring Matrix Effects: Always use matrix-matched calibration standards and stable isotope-labeled internal standards to mitigate quantification errors.

- Improper Sample Storage: This leads to degradation. Store samples at correct temperatures in suitable containers and avoid repeated freeze-thaw cycles.

- Contamination: Use high-quality MS-grade solvents and be aware of contaminants leaching from plasticware.

Troubleshooting Guide for Untargeted Analysis

This guide addresses common experimental issues, their causes, and solutions.

| Problem | Possible Causes | Recommended Solutions |

|---|---|---|

| Unstable/Drifting Readings | Instrument not warmed up; sample too concentrated; air bubbles in sample; environmental vibrations [6] | Allow 15–30 min lamp warm-up; dilute sample to the optimal absorbance range (0.1–1.0 AU); gently tap the cuvette to dislodge bubbles; place the instrument on a stable, vibration-free surface [6] |

| Poor Chromatographic Separation | Incorrect LC column chemistry; suboptimal mobile phase or gradient; column contamination or degradation | Select a column chemistry suited to your analyte properties (e.g., HILIC for polar compounds) [2]; re-optimize the elution gradient; clean or replace the column |

| Low Annotation Confidence | Over-reliance on accurate mass alone; lack of MS/MS spectral data; poor match to database entries [2] [3] | Acquire data-dependent (DDA) or data-independent (DIA) MS/MS spectra [2]; use in-silico fragmentation tools and retention time prediction; confirm identity with an analytical standard where possible [2] |

| High Background/Chemical Noise | Contaminated solvents or labware; sample carry-over; matrix effects [4] | Use high-purity solvents; run blank injections and implement a robust needle wash program [4]; improve sample cleanup procedures (e.g., SPE) [4] |

| Inconsistent Replicate Analyses | Inconsistent sample preparation; sample degradation over time; instrument performance drift | Standardize sample prep protocols meticulously; minimize time between preparation and analysis, and keep samples cool and dark; perform regular system suitability tests |

Experimental Protocol: Untargeted Metabolomics Using LC-HRMS

This protocol provides a general workflow for liquid chromatography-high-resolution mass spectrometry (LC-HRMS) based untargeted metabolomics [1] [2].

1. Sample Collection and Storage

- Collection: Collect biological samples (e.g., urine, plasma, cell extracts) using a standardized protocol to minimize pre-analytical variation.

- Quenching: For cells, immediately quench metabolism using liquid nitrogen or cold methanol.

- Storage: Snap-freeze samples in liquid nitrogen and store at -80°C. Use low-binding tubes and avoid repeated freeze-thaw cycles [4].

2. Sample Preparation and Metabolite Extraction

- Thawing: Thaw samples slowly on ice.

- Protein Precipitation: Add a cold organic solvent (e.g., methanol or acetonitrile, typically at a 2:1 or 3:1 ratio to sample volume) to precipitate proteins. Vortex vigorously.

- Incubation: Incubate at -20°C for at least 1 hour.

- Centrifugation: Centrifuge at high speed (e.g., 14,000–16,000 × g) for 15–20 minutes at 4°C.

- Collection: Transfer the supernatant (containing metabolites) to a new vial.

- Concentration (if needed): Evaporate the solvent under a gentle stream of nitrogen gas and reconstitute the residue in a solvent compatible with the initial LC mobile phase [4]. Note: The choice of extraction solvent will impact the range of metabolites recovered [2].

3. LC-HRMS Data Acquisition

- Liquid Chromatography: Utilize reversed-phase (C18) chromatography for non-polar to medium-polarity metabolites or HILIC for polar metabolites. A quality control (QC) pool, created by combining a small aliquot of every sample, should be run periodically throughout the sequence to monitor instrument stability.

- Mass Spectrometry: Acquire data using a high-resolution mass spectrometer (e.g., Q-TOF, Orbitrap).

  - MS1 (Full Scan): Acquire data in profile mode with a resolution > 30,000 to obtain accurate mass.

  - MS2 (Fragmentation): Use Data-Dependent Acquisition (DDA) to fragment the most abundant ions, or Data-Independent Acquisition (DIA, e.g., SWATH) to fragment all ions within sequential mass windows [2].
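The pooled QC injections described above are commonly used to check feature stability by computing each feature's relative standard deviation (RSD) across the QC runs. The sketch below assumes a simple dictionary of feature intensities; the feature identifiers, intensity values, and the 30% cutoff are illustrative assumptions rather than values taken from this protocol.

```python
from statistics import mean, stdev

def qc_rsd(intensities):
    """Relative standard deviation (%) of one feature across QC injections."""
    m = mean(intensities)
    return 100.0 * stdev(intensities) / m if m > 0 else float("inf")

def filter_stable_features(qc_table, max_rsd=30.0):
    """Keep features whose QC RSD is at or below `max_rsd` percent.

    `max_rsd` is an assumed cutoff; choose it to suit your own study.
    """
    return {fid: vals for fid, vals in qc_table.items() if qc_rsd(vals) <= max_rsd}

# Hypothetical feature IDs (m/z and RT encoded in the name) and QC intensities
qc_table = {
    "M180.063T95": [1000.0, 1040.0, 980.0, 1010.0],   # stable across QC runs
    "M255.232T410": [500.0, 900.0, 200.0, 650.0],     # drifting across QC runs
}
stable = filter_stable_features(qc_table)
print(sorted(stable))
```

Features that fail the check are usually excluded before statistical analysis, since their variation cannot be separated from instrument drift.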

4. Data Processing and Annotation

- Peak Picking and Alignment: Use software (e.g., XCMS, MS-DIAL, OpenMS) for feature detection, retention time alignment, and integration. A "feature" is defined by its mass-to-charge (m/z) and retention time (RT) [2].

- Statistical Analysis: Perform multivariate statistical analysis (e.g., PCA, PLS-DA) to identify features differentiating sample groups.

- Metabolite Annotation: Annotate significant features by:

  - Querying accurate mass against databases (e.g., HMDB, KEGG, ChemSpider).

  - Matching MS/MS spectra to spectral libraries (e.g., MassBank, GNPS).

  - Predicting structures with in-silico tools (e.g., CFM-ID, SIRIUS) [1] [2].

- Reporting: Always report the level of confidence for each identification (e.g., confirmed by standard, putative annotation based on MS/MS, etc.) [2].
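The accurate-mass query in the annotation step can be illustrated with a parts-per-million (ppm) tolerance search. This is a minimal sketch that assumes singly protonated [M+H]+ ions only and uses a tiny in-memory candidate table in place of a real database; the 5 ppm tolerance and the function names are assumptions.

```python
PROTON_MASS = 1.007276  # Da

# Neutral monoisotopic masses (Da) standing in for a database query result
CANDIDATES = {
    "glucose": 180.06339,   # C6H12O6
    "caffeine": 194.08038,  # C8H10N4O2
}

def ppm_error(observed_mz, theoretical_mz):
    """Signed mass error in parts per million."""
    return (observed_mz - theoretical_mz) / theoretical_mz * 1e6

def match_mass(observed_mz, candidates, tol_ppm=5.0):
    """Return (name, ppm error) for candidates whose [M+H]+ m/z is within tolerance."""
    hits = []
    for name, neutral_mass in candidates.items():
        err = ppm_error(observed_mz, neutral_mass + PROTON_MASS)
        if abs(err) <= tol_ppm:
            hits.append((name, err))
    # Smallest absolute error first
    return sorted(hits, key=lambda hit: abs(hit[1]))

for name, err in match_mass(181.0707, CANDIDATES):
    print(f"{name}: {err:+.2f} ppm")
```

A mass match alone cannot separate isomers that share a formula, which is why MS/MS library matching and the confidence levels in the reporting step still matter.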

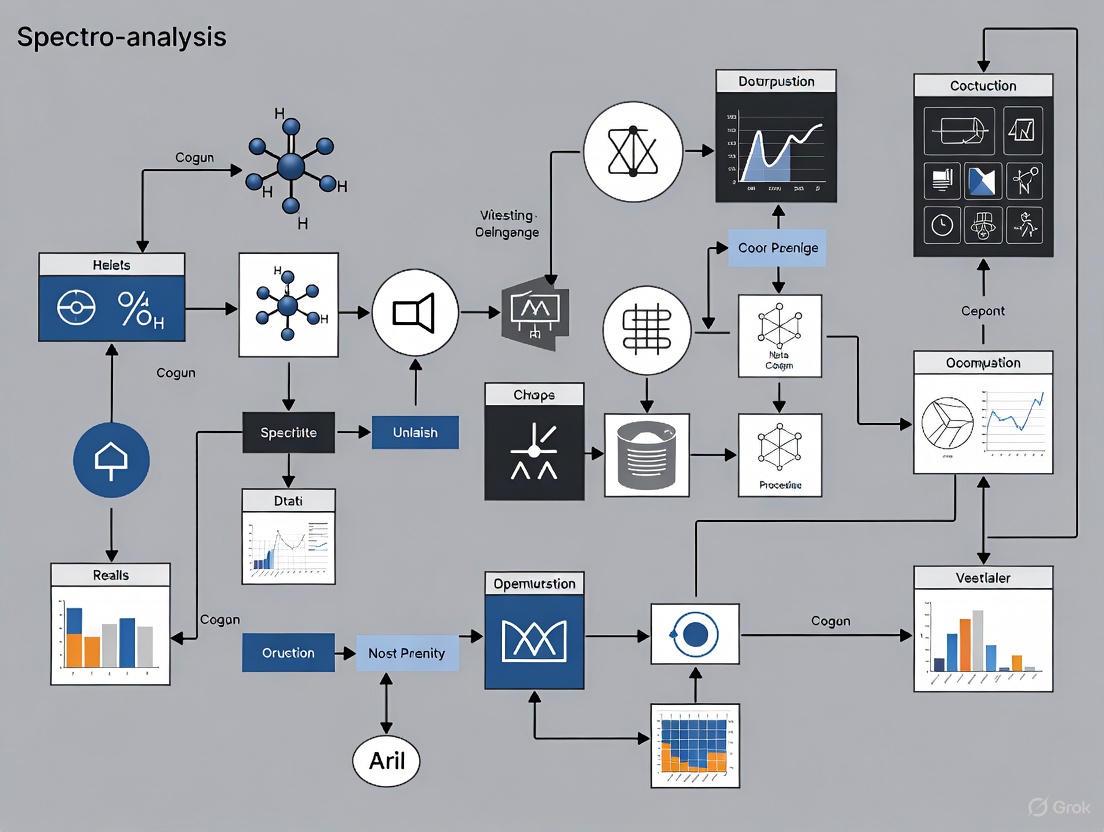

Workflow Visualization

The following diagram illustrates the generalized workflow for an untargeted analysis study.

(Diagram: Untargeted Analysis Workflow)

The confidence in metabolite identification varies significantly. The following diagram maps the common levels of identification confidence.

(Diagram: Levels of Identification Confidence)

The Scientist's Toolkit: Essential Research Reagents & Materials

The table below details key materials and solutions used in untargeted metabolomics to ensure reliable and reproducible results.

| Item | Function & Application |

|---|---|

| Stable Isotope-Labeled Internal Standards | Added to samples to monitor and correct for variability during sample preparation and ionization (matrix effects) [4]. |

| MS-Grade Solvents (Water, Methanol, Acetonitrile) | High-purity solvents are essential to minimize background chemical noise and prevent signal suppression in the mass spectrometer [4]. |

| Solid-Phase Extraction (SPE) Cartridges | Used for sample cleanup to remove interfering compounds and salts from complex matrices, reducing ion suppression and protecting the LC column [1] [4]. |

| Nitrogen Evaporator | Provides a gentle, controlled method for concentrating samples after extraction by using a stream of nitrogen gas, minimizing the loss of volatile analytes [4]. |

| Authentic Chemical Standards | Pure, known compounds used to confirm metabolite identities by matching retention time and fragmentation spectra, providing the highest level of confidence (Level 1 identification) [2]. |

Modern spectroscopic research generates complex data characterized by the Four V's: Volume, Variety, Velocity, and Veracity. These properties present significant challenges for researchers in drug development and materials science who rely on accurate, interpretable data.

Volume refers to the massive datasets generated by modern instruments: spectral imaging and high-throughput screening can produce thousands of spectra in a single session. For context, the global spectroscopy software market was valued at approximately USD 1.1 billion in 2024 and is growing at a 9.1% CAGR [7]. Variety encompasses the diverse data formats produced by techniques such as Raman, FT-IR, NIR, and mass spectrometry. Velocity addresses the demand for real-time analysis, with inline spectral sensors enabling continuous monitoring of chemical composition during manufacturing [8]. Veracity concerns data accuracy and reliability, which are difficult to guarantee because of instrumental artifacts, environmental factors, and processing errors [9] [10].

Volume: Managing Large-Scale Spectral Data

The Data Volume Challenge

High-resolution spectral imaging systems and automated high-throughput screening generate terabytes of spectral data. For example, Bruker's LUMOS II ILIM QCL-based microscope acquires images at 4.5 mm² per second [11], while hyperspectral imaging in pharmaceutical and biomedical applications produces massive multidimensional datasets.

Solutions for Data Volume Management

- Cloud Computing and Storage: Cloud-based spectral data processing supports centralized monitoring, scalability, and collaborative research across geographies [12]. This approach allows researchers to scale storage and computing resources elastically with project demands.

- Data Compression and Efficient Encoding: Novel data formats and compression algorithms specific to spectral characteristics help reduce storage requirements without sacrificing critical information.

- AI-Driven Data Reduction: Machine learning algorithms identify and retain only the most information-rich spectra or regions of interest, dramatically reducing effective data volume while preserving scientific value [7].
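One simple way to see how data reduction can shrink a spectral dataset is low-rank compression with a truncated singular value decomposition (SVD), which is closely related to PCA. The sketch below is a stand-in for the machine-learning approaches mentioned above, not a description of any vendor's algorithm; the synthetic two-band spectra and all names are assumptions.

```python
import numpy as np

def compress_spectra(X, n_components):
    """Truncated SVD of mean-centred spectra X (n_spectra x n_channels)."""
    mean = X.mean(axis=0)
    U, s, Vt = np.linalg.svd(X - mean, full_matrices=False)
    scores = U[:, :n_components] * s[:n_components]   # per-spectrum coordinates
    loadings = Vt[:n_components]                      # shared spectral shapes
    return mean, scores, loadings

def reconstruct(mean, scores, loadings):
    """Approximate the original spectra from the compressed representation."""
    return mean + scores @ loadings

# Synthetic dataset: 200 spectra, each a mixture of two Gaussian bands plus noise
rng = np.random.default_rng(0)
axis = np.linspace(0.0, 1.0, 500)
bands = np.stack([np.exp(-((axis - 0.3) / 0.05) ** 2),
                  np.exp(-((axis - 0.7) / 0.08) ** 2)])
concentrations = rng.uniform(0.2, 1.0, size=(200, 2))
X = concentrations @ bands + rng.normal(0.0, 0.005, size=(200, 500))

mean, scores, loadings = compress_spectra(X, n_components=2)
rel_err = np.linalg.norm(X - reconstruct(mean, scores, loadings)) / np.linalg.norm(X)
stored = mean.size + scores.size + loadings.size
print(f"stored values: {stored} of {X.size}, relative error: {rel_err:.3f}")
```

Real spectra rarely have such a clean low-rank structure, so the number of components should be chosen by checking reconstruction error on held-out data.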

Variety: Handling Diverse Spectral Data

The Data Variety Landscape

Spectral data originates from diverse technologies, each with unique formats and characteristics:

Table: Spectral Techniques and Their Data Characteristics [11]

| Technique | Common Applications | Data Dimensionality | Key Data Features |

|---|---|---|---|

| FT-IR | Polymer analysis, organic compound identification | 1D spectra (wavenumber vs. absorbance) | Fingerprint region for molecular identification |

| Raman Spectroscopy | Pharmaceutical analysis, material science | 1D spectra (Raman shift vs. intensity) | Vibrational modes, minimal water interference |

| NIR Spectroscopy | Food safety, agriculture | 1D spectra (wavelength vs. absorbance) | Overtone and combination bands |

| Spectral Flow Cytometry | Immunology, cell biology | High-dimensional (30+ parameters) | Full emission spectra across multiple lasers |

| UV-Vis Spectroscopy | Concentration analysis, colorimetry | 1D spectra (wavelength vs. absorbance) | Electronic transitions |

Solutions for Data Variety

- Standardized Spectral Libraries: Global efforts focus on standardizing spectral libraries and metadata across vibrational, electronic, and atomic spectroscopies using frameworks like JCAMP-DX, ANDI, and IUPAC recommendations [13].

- FAIR Data Principles: Implementing Findable, Accessible, Interoperable, and Reusable principles ensures data remains usable across different analytical platforms and research institutions.

- Unified Data Platforms: Software solutions with compatibility across multiple spectrometer types and techniques enable consolidated analysis, with leading vendors offering platforms that support various spectroscopic methods [7].
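When spectra from different instruments are consolidated, a common practical step is to resample them onto a shared axis so they can be compared channel by channel. The following sketch uses plain linear interpolation; it is an illustration of that single step under the assumption of strictly increasing x values, not a description of how any unified platform works internally.

```python
from bisect import bisect_left

def resample(x, y, grid):
    """Linearly interpolate a spectrum (x, y) onto `grid`.

    `x` must be strictly increasing. Grid points outside the measured range
    are returned as None rather than extrapolated.
    """
    out = []
    for g in grid:
        if g < x[0] or g > x[-1]:
            out.append(None)
            continue
        k = bisect_left(x, g)          # first index with x[k] >= g
        if x[k] == g:
            out.append(y[k])
        else:
            t = (g - x[k - 1]) / (x[k] - x[k - 1])
            out.append(y[k - 1] + t * (y[k] - y[k - 1]))
    return out

# Two hypothetical instruments sampling the same band on different axes
instrument_a = ([1000.0, 1002.0, 1004.0], [0.10, 0.30, 0.20])
instrument_b = ([1000.5, 1003.5], [0.15, 0.25])
common_grid = [1001.0, 1002.0, 1003.0]
print(resample(*instrument_a, common_grid))
print(resample(*instrument_b, common_grid))
```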

Velocity: Real-Time Data Processing Demands

High-Velocity Data Scenarios

Modern applications require rapid spectral acquisition and processing:

- Process Analytical Technology (PAT): Inline NIR analyzers like the Metrohm 2060 Series provide real-time monitoring of chemical processes, enabling immediate quality control interventions [14].

- High-Throughput Screening: Automated systems like Horiba's PoliSpectra rapid Raman plate reader measure 96-well plates with full automation, generating data at unprecedented speeds [11].

- Field Deployment: Portable instruments like SciAps' vis-NIR and handheld Raman spectrometers provide immediate analytical results in agricultural, environmental, and pharmaceutical settings [11].

Acceleration Solutions

- Edge Computing: Processing data directly at the instrument reduces latency. Nova's spectral sensors incorporate edge-computing capabilities for low-latency insights in manufacturing environments [8].

- FPGA and Hardware Acceleration: Liquid Instruments' Moku Neural Network uses field programmable gate arrays (FPGA) to embed neural networks directly into measurement instruments for enhanced real-time analysis [11].

- Streaming Data Architectures: Implementing pipeline processing where data is analyzed continuously as it's generated, rather than in batch mode, enables immediate feedback for process control.
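The difference between streaming and batch processing can be shown with a generator that evaluates each reading as it arrives. This is a minimal sketch, assuming a single scalar value per spectrum (for example, a predicted concentration); the window size, target, tolerance, and function name are illustrative assumptions.

```python
from collections import deque
from statistics import mean

def monitor(stream, target, window=3, tol=0.05):
    """Yield (index, rolling mean, alarm) for each reading as it arrives.

    The rolling mean over the last `window` readings smooths single-point
    noise; `alarm` is True when it deviates from `target` by more than `tol`.
    """
    recent = deque(maxlen=window)   # old readings fall off automatically
    for i, value in enumerate(stream):
        recent.append(value)
        avg = mean(recent)
        yield i, avg, abs(avg - target) > tol

# Hypothetical inline readings that drift upward at the end of the run
readings = [1.00, 1.01, 0.99, 1.10, 1.20, 1.25]
for i, avg, alarm in monitor(readings, target=1.0):
    print(i, round(avg, 3), "ALARM" if alarm else "ok")
```

Because `monitor` consumes any iterable lazily, the same function can be fed by a live instrument feed instead of a list without waiting for the run to finish.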

Veracity: Ensuring Data Accuracy and Reliability

Common Data Veracity Challenges

Multiple factors threaten spectral data quality. The diagram below outlines key veracity challenges and their relationships.

Troubleshooting Guide: Frequently Asked Questions

Problem: Unexplained negative absorbance peaks or baseline distortion in FT-IR spectra. Solutions:

- Clean ATR Crystals: Contaminated crystals cause negative absorbance peaks. Clean with appropriate solvent and collect a fresh background scan.

- Check for Instrument Vibrations: FT-IR spectrometers are highly sensitive to physical disturbances. Relocate the instrument away from pumps, hoods, or other sources of vibration.

- Verify Data Processing: In diffuse reflection measurements, ensure data is processed in Kubelka-Munk units rather than absorbance for accurate representation.

- Distinguish Surface vs. Bulk Effects: With materials like plastics, collect spectra from both the surface and a freshly cut interior to identify if you're measuring surface oxidation/additives versus bulk material.
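For the diffuse reflection case mentioned above, the Kubelka-Munk function converts reflectance R (relative to a non-absorbing reference, for an optically thick sample) into a quantity proportional to the ratio of absorption to scattering, f(R) = (1 − R)² / (2R). A minimal sketch follows; the function name and example values are illustrative.

```python
def kubelka_munk(reflectance):
    """Kubelka-Munk function f(R) = (1 - R)^2 / (2 R).

    `reflectance` is the diffuse reflectance as a fraction, 0 < R <= 1.
    """
    if not 0.0 < reflectance <= 1.0:
        raise ValueError("reflectance must be in the interval (0, 1]")
    return (1.0 - reflectance) ** 2 / (2.0 * reflectance)

# Convert a hypothetical reflectance spectrum point by point
reflectance_spectrum = [0.95, 0.80, 0.50, 0.80, 0.95]
km_spectrum = [kubelka_munk(r) for r in reflectance_spectrum]
print([round(v, 4) for v in km_spectrum])
```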

Problem: Skewed signals, correlation between channels, and hyper-negative events in flow cytometry data. Solutions:

- Optimize Single-Color Controls: Ensure controls match your samples exactly in terms of fixation, staining conditions, and cell type. "Like-with-like" autofluorescence is critical.

- Validate Control Quality: Check that controls show a single clear positive population without contamination or tandem dye breakdown.

- Use Proper Gating Strategies: Gate on homogeneous populations avoiding doublets and mixed cell types to reduce variation in median fluorescence intensity.

- Address Autofluorescence Properly: For highly autofluorescent samples, use targeted autofluorescence identification rather than fully automated extraction when possible.

- Avoid Manual Matrix Editing: Manually edited compensation matrices often introduce hidden errors; use automated tools like AutoSpill with proper controls instead.
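To see why control quality matters so much, it helps to look at the core of spectral unmixing: the measured signal across detectors is modeled as a weighted sum of reference spectra, and the weights are estimated by least squares. The sketch below uses ordinary (unconstrained) least squares as a simplification; commercial and published unmixing tools may use weighted or constrained variants, and the reference spectra here are made up.

```python
import numpy as np

def unmix(signal, reference_spectra):
    """Estimate fluorophore abundances by ordinary least squares.

    signal:            (n_detectors,) measured intensities for one event
    reference_spectra: (n_fluorophores, n_detectors) single-color references
    """
    abundances, *_ = np.linalg.lstsq(reference_spectra.T, signal, rcond=None)
    return abundances

# Hypothetical normalized reference spectra for two fluorophores, four detectors
references = np.array([[1.0, 0.5, 0.1, 0.0],
                       [0.0, 0.2, 0.8, 1.0]])
true_abundances = np.array([3.0, 2.0])
event = true_abundances @ references

print(unmix(event, references))

# A mismatched reference (e.g., a degraded tandem dye) biases the estimate
bad_references = references.copy()
bad_references[1] = [0.3, 0.4, 0.6, 0.7]
print(unmix(event, bad_references))
```

Because the fit is unconstrained, reference spectra that do not match the sample can distort the estimated abundances and even push them negative, which is one way the skewed and negative populations described above can arise.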

Problem: Ensuring accuracy and compliance of NIR spectroscopic methods. Solutions:

- Regular Calibration: Perform instrument calibration using certified NIST standards after hardware modifications and annually as part of service intervals.

- Systematic Validation: Conduct performance tests regularly based on risk assessment, including wavelength accuracy, photometric linearity, and signal-to-noise ratio.

- Understand Limitations: Recognize that samples with high carbon black content cannot be analyzed by NIR, and most inorganic substances have minimal NIR absorbance.

- Reference Method Accuracy: Remember that NIR method accuracy is limited by the accuracy of the reference (primary) method used for calibration; a good prediction model typically has an error of about 1.1 times that of the primary method.
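The dependence on the reference method can be made concrete by comparing the NIR prediction error with the reference method's own error. The sketch below computes the root mean square error of prediction (RMSEP) on a validation set and its ratio to an assumed standard error of the laboratory (SEL); all numbers are hypothetical.

```python
import math

def rmsep(predicted, reference):
    """Root mean square error of prediction against reference values."""
    squared = [(p - r) ** 2 for p, r in zip(predicted, reference)]
    return math.sqrt(sum(squared) / len(squared))

# Hypothetical validation set: reference lab values vs. NIR predictions (% w/w)
reference_values = [10.2, 11.5, 9.8, 12.1]
nir_predictions = [10.4, 11.3, 9.9, 12.4]
sel = 0.2  # assumed standard error of the reference method, same units

error = rmsep(nir_predictions, reference_values)
print(f"RMSEP = {error:.3f}, RMSEP / SEL = {error / sel:.2f}")
```

A ratio far above 1 suggests the model or spectra are the limiting factor, while a ratio near 1 suggests further gains require a more precise reference method.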

Essential Research Reagent Solutions

Table: Key Reagents and Materials for Spectral Data Quality [15] [14]

| Item | Function | Quality Considerations |

|---|---|---|

| NIST Traceable Standards | Instrument calibration for wavelength and photometric accuracy | Certification documentation, proper storage, expiration monitoring |

| Single-Stain Control Particles | Generating reference spectra for flow cytometry | Lot-to-lot consistency, matching biological matrix to samples |

| Viability Dye Controls | Accurate dead cell identification in spectral flow | Properly matched autofluorescence (heat-killed controls) |

| Certified Reflection Standards | Reflectance calibration for dispersive NIR systems | Ceramic materials with defined reflectance properties |

| Stable Tandem Dyes | Multipanel labeling for high-parameter experiments | Minimal lot-to-lot variation, protection from light and fixation |

| Reference Library Materials | Long-term method transfer and instrument qualification | Stability documentation, proper storage conditions |

Integrated Experimental Protocol for Quality Assurance

The workflow below outlines a comprehensive approach to ensure spectral data quality across the experimental lifecycle.

- Bright is Better: Ensure positive peaks in reference controls are as bright or brighter than in multi-color samples.

- Like-with-Like Autofluorescence: Match autofluorescence between positive and negative controls exactly (e.g., for viability dyes, use heat-killed cells for both stained positive and unstained negative).

- Matched Fluorophore: Use identical fluorophores in controls and experiments (no substitutions like GFP for FITC).

- Same Tandem Lot: Use the same lot of tandem dyes for controls and experiments due to significant lot-to-lot variation.

- Identical Conditions: Expose controls to identical buffers, fixatives, permeabilization, light, and temperature conditions as experimental samples.

- Performance Testing: Conduct regular instrument performance tests including wavelength accuracy, photometric linearity, and signal-to-noise measurements at high and low light fluxes.

- Calibration Schedule: Perform comprehensive calibration using NIST standards after any hardware modification and at least annually.

- Pre-Acquisition Monitoring: Validate critical parameters including event rates, laser stability, and background signal levels before data collection.

- Reference Library Maintenance: Establish and validate reusable reference controls, with monthly validation of spectral consistency for long-term experiments.

Addressing the Four V's of spectral data requires integrated approaches combining technical solutions, standardized protocols, and ongoing validation. The frameworks presented here provide researchers with practical methodologies to enhance data quality across diverse spectroscopic applications. As spectroscopic technologies continue evolving with higher throughput and greater complexity, maintaining focus on these fundamental data challenges will remain essential for research integrity and innovation.

The Rise of AI and Machine Learning in Spectral Interpretation

Technical Support Center

Troubleshooting Guides

Q1: My AI model for spectral classification is overfitting. How can I improve its generalization with limited experimental data?

A: Overfitting occurs when models become overly complex and fit noise in limited training data. Implement these solutions:

- Data Augmentation with Generative AI: Use Generative Adversarial Networks (GANs) or diffusion models to create synthetic spectral data. These tools realistically augment datasets, improving calibration robustness. A 2025 study demonstrated that generative models can simulate spectral profiles to mitigate the effects of small or biased datasets [16].

- Regularization Techniques: Apply L1 (Lasso) or L2 (Ridge) regularization to the loss function during training. This adds a penalty on the magnitude of the model weights, which discourages overly complex solutions and prevents over-reliance on specific spectral features [17].

- Transfer Learning: Leverage pre-trained models from platforms like SpectrumLab or SpectraML. These foundation models trained on millions of spectra provide robust feature extraction layers adaptable to smaller datasets [16].
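The effect of L2 regularization can be seen in the closed-form ridge regression solution, w = (XᵀX + αI)⁻¹Xᵀy, where a larger α shrinks the weights. The sketch below applies it to a synthetic case with more spectral channels than samples, the typical situation in which overfitting occurs; the data, the α values, and the function name are assumptions.

```python
import numpy as np

def ridge_fit(X, y, alpha):
    """Closed-form ridge regression without intercept.

    Solves (X^T X + alpha * I) w = X^T y for the weight vector w.
    """
    n_features = X.shape[1]
    return np.linalg.solve(X.T @ X + alpha * np.eye(n_features), X.T @ y)

# Synthetic data: 20 samples, 100 channels, only channel 10 carries signal
rng = np.random.default_rng(0)
X = rng.normal(size=(20, 100))
y = 2.0 * X[:, 10] + rng.normal(0.0, 0.1, size=20)

w_weak = ridge_fit(X, y, alpha=1e-6)    # almost no penalty
w_strong = ridge_fit(X, y, alpha=10.0)  # stronger penalty

print("weight norm, weak penalty:  ", np.linalg.norm(w_weak))
print("weight norm, strong penalty:", np.linalg.norm(w_strong))
```

In practice α is chosen by cross-validation rather than fixed in advance.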

Table: Solutions for AI Spectral Model Overfitting

| Solution | Mechanism | Suitable Spectral Types |

|---|---|---|

| Generative AI (GANs) | Creates synthetic training spectra from limited data | IR, Raman, X-ray, NIR |

| Regularization (L1/L2) | Penalizes complex model parameters during training | All spectral types |

| Transfer Learning | Uses features from large pre-trained models | UV-Vis, MS, NMR |

| Data Augmentation | Expands dataset with mathematical transformations (e.g., noise addition) | Optical spectroscopy, LIBS |
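The last row of the table, augmentation by mathematical transformations, is the simplest option to try first. The sketch below applies a random channel shift, a small intensity scaling, and additive Gaussian noise; it is not a generative model, and the parameter ranges and function name are illustrative assumptions that should be matched to the variation your instrument actually shows.

```python
import random

def augment(spectrum, rng, noise_sd=0.01, max_shift=2, scale_range=0.05):
    """Return a perturbed copy of `spectrum` (a list of intensities).

    Applies an integer channel shift, a multiplicative intensity scale,
    and additive Gaussian noise, each drawn from `rng`.
    """
    shift = rng.randint(-max_shift, max_shift)           # inclusive bounds
    scale = 1.0 + rng.uniform(-scale_range, scale_range)
    n = len(spectrum)
    augmented = []
    for k in range(n):
        src = min(max(k - shift, 0), n - 1)              # clamp at the edges
        augmented.append(scale * spectrum[src] + rng.gauss(0.0, noise_sd))
    return augmented

# Generate five synthetic variants of one hypothetical spectrum
rng = random.Random(0)  # seeded for reproducibility
spectrum = [0.0, 0.1, 0.8, 0.1, 0.0, 0.0, 0.3, 0.0]
variants = [augment(spectrum, rng) for _ in range(5)]
print(len(variants), len(variants[0]))
```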

Q2: How can I interpret and trust predictions from "black box" deep learning models for critical quality control decisions?

A: Implement Explainable AI (XAI) techniques to reveal which spectral features drive predictions:

- SHAP (SHapley Additive exPlanations): Quantifies the contribution of each wavelength/feature to the final prediction. For example, in edible oil classification using FT-IR, SHAP can identify the specific molecular vibration bands influencing purity decisions [16].

- LIME (Local Interpretable Model-agnostic Explanations): Creates locally faithful explanations around specific predictions. This helps associate diagnostic features with specific vibrational bands, reinforcing chemical interpretability [16].

- XAI-Enhanced Chemometrics: Combine XAI with traditional Partial Least Squares (PLS) methods. This provides interpretable calibrations while maintaining AI's predictive power, essential for pharmaceutical regulatory compliance [16].

Experimental Protocol for XAI Validation:

- Train your classification model (CNN, Random Forest) on spectral data

- Apply SHAP/LIME to prediction instances to identify important wavelengths

- Correlate important features with known chemical assignments via literature

- Validate with domain experts to confirm biochemical plausibility
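The attribution step in this protocol can be illustrated without any external package for the special case of a linear model. Under the assumption of independent features, the SHAP value of feature i reduces to wᵢ(xᵢ − mean(xᵢ)), and the values sum to the difference between the prediction and the average prediction. The sketch below uses that closed form; the weights, wavenumber labels, and data are hypothetical, and nonlinear models such as CNNs or Random Forests require a dedicated SHAP or LIME implementation instead.

```python
from statistics import mean

def linear_shap(weights, x, background):
    """SHAP values for a linear model f(x) = b + sum(w_i * x_i).

    Assumes independent features, so phi_i = w_i * (x_i - mean_i), where
    mean_i is the mean of feature i over the `background` spectra.
    """
    means = [mean(column) for column in zip(*background)]
    return [w * (xi - m) for w, xi, m in zip(weights, x, means)]

# Hypothetical three-channel model; labels are wavenumbers in cm^-1
channels = ["1650", "1745", "2925"]
weights = [0.0, 2.0, -1.0]
background = [[1.0, 1.0, 1.0], [3.0, 3.0, 3.0]]   # reference spectra
sample = [5.0, 4.0, 0.0]

phi = linear_shap(weights, sample, background)
ranked = sorted(zip(channels, phi), key=lambda pair: abs(pair[1]), reverse=True)
print(ranked)
```

The highest-ranked channels are the ones to compare against known band assignments in the third step of the protocol.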

Q3: My AI-predicted spectra don't match subsequent physical measurements. How do I diagnose the discrepancy?

A: This accuracy mismatch often stems from training data issues or domain shift:

- Training Data Evaluation: Verify if training data matches your experimental conditions. Theoretical simulation data often lacks instrumental noise and real-world variability present in experimental spectra [17].

- Domain Adaptation: Use physics-informed neural networks that incorporate domain knowledge and real spectral constraints. This preserves physical plausibility in predictions [16].

- Cross-Modal Validation: For tools like SpectroGen (converting between spectral modalities), validate with known standards. MIT's virtual spectrometer achieved 99% correlation between AI-generated and physically scanned spectra [18].

Table: Spectral Prediction Accuracy Diagnostics

| Issue | Diagnostic Steps | Potential Solutions |

|---|---|---|

| Domain shift | Compare training data statistics with experimental data | Apply domain adaptation techniques, fine-tuning |

| Insufficient features | Analyze error patterns across different sample types | Expand training data diversity, use data augmentation |

| Incorrect preprocessing | Verify preprocessing matches model training protocol | Standardize preprocessing pipelines |

| Model architecture limitations | Test with simpler models first | Use architectures designed for spectral data (1D CNNs) |
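The first diagnostic in the table, comparing training statistics with experimental data, can be sketched as a per-channel check of how far the new data's mean lies from the training mean, in units of the training standard deviation. The threshold of 3 and the tiny example arrays are assumptions for illustration.

```python
from statistics import mean, stdev

def shifted_channels(train, new, threshold=3.0):
    """Return indices of channels whose mean has shifted between datasets.

    `train` and `new` are lists of spectra (equal-length lists of intensities).
    A channel is flagged when the difference in means exceeds `threshold`
    training standard deviations.
    """
    flagged = []
    for k, (train_col, new_col) in enumerate(zip(zip(*train), zip(*new))):
        diff = abs(mean(new_col) - mean(train_col))
        sd = stdev(train_col)
        if sd > 0:
            z = diff / sd
        else:
            z = float("inf") if diff > 0 else 0.0
        if z > threshold:
            flagged.append(k)
    return flagged

# Hypothetical two-channel spectra: channel 1 has shifted in the new data
train = [[1.0, 5.0], [1.1, 5.2], [0.9, 4.8]]
new = [[1.0, 7.0], [1.05, 7.1]]
print(shifted_channels(train, new))
```

Flagged channels indicate where to look for preprocessing mismatches or instrument differences before retraining or fine-tuning.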

Q4: How can I implement AI for real-time spectral analysis in manufacturing quality control?

A: Deploy optimized AI systems for production environments:

- FPGA-Based Neural Networks: Implement field-programmable gate array (FPGA) technology like Liquid Instruments' Moku Neural Network. This provides hardware-accelerated inference for rapid, sub-second spectral analysis [11].

- Edge Computing for Portable Devices: Utilize AI models optimized for handheld spectrometers. For example, Metrohm's TacticID-1064 ST handheld Raman provides analysis guidance with onboard processing [11].

- Streamlined Models: Deploy distilled neural networks with reduced complexity. These maintain accuracy while enabling faster inference suitable for high-throughput screening, such as Horiba's PoliSpectra Raman plate reader analyzing 96-well plates [11].

Frequently Asked Questions (FAQs)

Q1: What are the most promising AI techniques currently for spectral interpretation?

The field is advancing rapidly with several particularly promising approaches:

- Explainable AI (XAI): SHAP and LIME methods provide interpretability to complex models by identifying influential spectral features, essential for scientific validation and regulatory compliance [16].

- Generative AI: GANs and diffusion models create synthetic spectral data for augmentation and enable inverse design—predicting molecular structures from spectral data [16].

- Multimodal Deep Learning: Fusion architectures process data from multiple spectroscopic techniques (e.g., Raman + IR + MS) simultaneously, providing comprehensive sample characterization [16].

- Physics-Informed Neural Networks: These incorporate physical laws and domain knowledge into AI models, preserving real spectral and chemical constraints for more plausible predictions [16].

Q2: How much training data is typically required to develop accurate AI models for spectroscopy?

Data requirements vary significantly by application:

- Theoretical Data: Models trained on quantum chemical simulations typically require thousands to tens of thousands of spectra for robust predictions [17].

- Experimental Data: Fine-tuning with experimental data can be effective with hundreds of well-curated samples, especially using transfer learning approaches [17].

- Challenging Cases: For complex biological samples or mixtures, larger datasets (1000+ samples) are often necessary to capture variability. Generative AI can help mitigate data requirements through synthetic data generation [16].

Q3: What software platforms specifically support AI-enabled spectral analysis?

The landscape includes both commercial and emerging platforms:

- Integrated Commercial Solutions: Major vendors like Thermo Fisher Scientific, Bruker, and Agilent now incorporate AI/ML capabilities into their spectroscopy software suites [7].

- Research Platforms: Unified platforms like SpectrumLab and SpectraML offer standardized benchmarks for deep learning research, integrating multimodal datasets and transformer architectures [16].

- Emerging Tools: SpectroGen from MIT researchers acts as a "virtual spectrometer," generating spectra across modalities with a reported 99% correlation to physically measured spectra [18].

Workflow Visualization

(Diagram: AI Spectral Analysis Workflow)

AI Spectral Analysis Troubleshooting Flowchart

(Diagram: AI Spectral Issue Resolution)

XAI Spectral Interpretation Process

(Diagram: XAI Spectral Interpretation)

Research Reagent Solutions

Table: Essential AI Spectroscopy Research Tools

| Tool/Platform | Function | Application Examples |

|---|---|---|

| SpectroGen (MIT) | Generative AI virtual spectrometer converting between spectral modalities | Converting IR to X-ray spectra; quality control with single instrument [18] |

| SpectrumLab/SpectraML | Standardized deep learning platforms with multimodal datasets | Benchmarking AI models; transfer learning for spectral analysis [16] |

| SHAP/LIME Libraries | Explainable AI packages for model interpretability | Identifying influential wavelengths in classification models [16] |

| FPGA Neural Networks (Liquid Instruments) | Hardware-accelerated AI inference | Real-time spectral analysis in manufacturing environments [11] |

| GAN/Diffusion Models | Synthetic spectral data generation | Data augmentation for limited datasets; inverse molecular design [16] |

| Multimodal Fusion Tools | Integrating multiple spectroscopic techniques | Combined Raman+IR+MS analysis for comprehensive characterization [16] |

Troubleshooting Guide for Modern Spectroscopic Systems

Handheld and Portable Spectrometers

Problem 1: Inconsistent or Noisy Readings in Field Environments

- Question: My handheld spectrometer gives stable readings in the lab, but becomes noisy and inconsistent during field use. What could be causing this and how can I fix it?

- Answer: This is a common challenge when moving from controlled lab environments to the field. The issue is often related to environmental factors or sample presentation.

- Environmental Interference: Variances in temperature and humidity can affect both the instrument's electronics and the sample itself. Check the manufacturer's specified operating range [19].

- Sample Presentation: For techniques like Near-Infrared (NIR), inconsistent pressure, orientation, or distance from the sample probe can cause significant signal variance. Use a consistent method and, if available, a sample clamp.

- Lighting Conditions: For portable Raman or UV-Vis systems, strong, direct sunlight can interfere with the signal. Try to use the instrument in a shaded area.

- Vibration: Physical movement during measurement can introduce noise. Hold the instrument steady, rest it on a stable surface, or mount it on a tripod.

- Troubleshooting Protocol:

- Re-calibrate On-Site: Perform all manufacturer-recommended calibration steps in the field environment immediately before use.

- Check Battery Power: Low battery can lead to voltage drops and inconsistent source lamp or laser performance. Ensure the battery is fully charged [19].

- Use a Reference Standard: Measure a known reference standard in the field to verify instrument performance.

Problem 2: Data Transfer and Connectivity Issues with Cloud Platforms

- Question: The wireless data transfer from my portable spectrometer to the cloud software platform is unreliable, leading to data management bottlenecks.

- Answer: Modern handheld devices often feature cloud connectivity, but this can be a failure point [19].

- Connection Protocol: Ensure the device's firmware and mobile application are up-to-date. Compatibility issues are a common source of connection drops.

- Data Workflow: Verify the integrity of the local network (Wi-Fi or cellular). If the signal is weak, data packets may be lost.

- Troubleshooting Protocol:

- Check Network Strength: Before starting measurements, verify the stability of the internet connection.

- Validate File Format: Ensure the device is exporting data in a format (e.g., .csv, or JCAMP-DX with a .jdx or .dx extension) compatible with your cloud or data analysis software.

- Small Batch Test: Attempt to transfer a small batch of files first to confirm the entire workflow is functional before a full day's data collection.

High-Throughput and Automated Systems

Problem 1: Poor Reproducibility in 96-Well Plate Readers

- Question: My high-throughput Raman plate reader shows high well-to-well variability, making the data from my screening assay unreliable.

- Answer: In high-throughput systems, reproducibility is paramount. Variability often stems from the sample plate or the measurement process itself.

- Plate Quality: Inconsistent well depth, optical clarity, or autofluorescence of the plate material can create significant background differences. Validate plates from different suppliers.

- Liquid Handling: Inaccuracies in automated liquid handling can lead to slight variations in sample volume or meniscus, affecting the signal path.

- Instrument Focus: A misaligned or inconsistent autofocus system can cause the measurement focal point to vary between wells.

- Troubleshooting Protocol:

- Blank Plate Scan: Run a measurement on a plate filled only with your buffer or solvent to establish a background baseline for each well.

- Control Homogeneity: Use a homogeneous control sample distributed across the plate to map out positional variability.

- Calibration Check: Use the instrument's built-in calibration routines to verify the wavelength and intensity axes. For example, systems like the PoliSpectra rapid Raman plate reader should have guided calibration workflows [11].
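
The control-homogeneity check above can be sketched in a few lines of NumPy. This is a minimal illustration, assuming one scalar response per well (for example the area of a control peak) on an 8 x 12 plate; the function name `plate_variability` and the synthetic gradient are hypothetical and not taken from any vendor software.

```python
import numpy as np

def plate_variability(plate):
    """Summarise positional variability for a control plate.

    `plate` holds one scalar per well (rows x columns).
    """
    plate = np.asarray(plate, dtype=float)
    overall_cv = 100.0 * plate.std(ddof=1) / plate.mean()
    # Row/column means relative to the grand mean expose gradients
    # (e.g. edge effects or focus drift along one axis).
    row_effect = plate.mean(axis=1) / plate.mean() - 1.0
    col_effect = plate.mean(axis=0) / plate.mean() - 1.0
    return overall_cv, row_effect, col_effect

# Hypothetical control plate: uniform sample with a 5% left-to-right gradient
# and 0.5% random well-to-well noise.
rng = np.random.default_rng(0)
gradient = np.linspace(0.975, 1.025, 12)
plate = 1000.0 * gradient[np.newaxis, :] * (1 + 0.005 * rng.standard_normal((8, 12)))

cv, row_eff, col_eff = plate_variability(plate)
print(f"well-to-well CV: {cv:.2f}%")
print(f"largest row deviation:    {100 * np.abs(row_eff).max():.2f}%")
print(f"largest column deviation: {100 * np.abs(col_eff).max():.2f}%")
```

A column (or row) deviation that clearly exceeds the random well-to-well scatter points to a positional effect rather than sample variability.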

Problem 2: Integration Failure Between Automated Spectrometers and Data Analysis AI

- Question: The AI/ML model that worked well for analyzing data from our research-grade FT-IR is performing poorly when integrated with our new high-throughput automated system.

- Answer: This is a classic data drift problem. The new system may be introducing subtle, systematic differences in the spectral data that the model was not trained on.

- Spectral Preprocessing: The data preprocessing steps (e.g., normalization, smoothing, baseline correction) must be identical to those used during the model's training. Even small differences can break a model.

- Data Distribution Shift: The high-throughput system may have a different signal-to-noise ratio, optical resolution, or background contribution.

- Troubleshooting Protocol:

- Data Auditing: Collect a set of standard samples on both the old and new instruments. Compare the raw and preprocessed spectra to identify the nature of the shift (e.g., offset, scaling).

- Model Retraining/Fine-Tuning: Use a small subset of data from the new instrument to retrain or fine-tune the existing AI model. Techniques like transfer learning can be effective here [20].

- Consult Vendor: Many vendors of high-throughput systems, like those developing targeted systems for biopharmaceuticals, provide specialized software and models; ensure you are using the recommended data pipeline [11].
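
As a rough sketch of the data-auditing step, the offset and scaling between two instruments can be estimated by regressing the new instrument's spectrum of a standard onto the old one. The spectra below are synthetic and the function name `estimate_shift` is hypothetical; real audits would use several standards and also inspect the residuals.

```python
import numpy as np

def estimate_shift(reference, candidate):
    """Least-squares fit: candidate ~ offset + scale * reference."""
    reference = np.asarray(reference, dtype=float)
    candidate = np.asarray(candidate, dtype=float)
    scale, offset = np.polyfit(reference, candidate, deg=1)
    residual = candidate - (offset + scale * reference)
    return offset, scale, residual.std()

# Synthetic standard measured on the "old" instrument ...
x = np.linspace(0, 1, 400)
old = np.exp(-0.5 * ((x - 0.3) / 0.03) ** 2) + 0.6 * np.exp(-0.5 * ((x - 0.7) / 0.05) ** 2)
# ... and on the "new" one, with an assumed offset of 0.05 and 20% lower gain.
new = 0.05 + 0.8 * old

offset, scale, resid_sd = estimate_shift(old, new)
print(f"offset={offset:.3f}, scale={scale:.3f}, residual SD={resid_sd:.2e}")
```

If the residual standard deviation stays large after the fit, the shift is probably not a simple offset or scaling (for example a resolution or noise difference), and retraining or fine-tuning is the more likely remedy.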

Frequently Asked Questions (FAQs)

FAQ 1: What are the key advantages of using portable spectrometers in drug development? Portable spectrometers enable rapid, on-site analysis, which is invaluable for tasks like raw material identification (RMI) at the receiving dock, in-process checks during manufacturing, and quality control of final products. This reduces the need to send samples to a central lab, drastically cutting down decision-making time from days to minutes and helping to ensure compliance with regulations [19] [21].

FAQ 2: How is AI improving data processing for high-throughput spectroscopy? AI and machine learning automate and enhance the analysis of the large, complex datasets generated by high-throughput systems. Key improvements include:

- Automated Feature Extraction: Deep learning models, like Convolutional Neural Networks (CNNs), can automatically identify relevant spectral features without manual input, saving time and reducing subjectivity [20].

- Nonlinear Calibration: Algorithms like Random Forest and XGBoost can model complex, nonlinear relationships between spectral data and chemical properties, often outperforming traditional linear methods like PLS [20].

- Predictive Analytics: ML models can be used for pattern detection and predictive analytics, flagging potential anomalies or predicting sample properties directly from the spectrum [7].

FAQ 3: What should I consider when validating a portable spectrometer for a GxP environment? The validation process should be rigorous and documented.

- Performance Qualification (PQ): Demonstrate that the instrument consistently performs according to its specifications in your specific environment and for your intended application.

- Data Integrity: Ensure the system has features like audit trails, user access controls, and electronic signatures if it will be used for regulated work. On-premises software solutions are often preferred in these environments for greater data control [7].

- Standard Operating Procedures (SOPs): Develop detailed SOPs for operation, calibration, and maintenance.

- Comparison to Compendial Methods: Run a validation study comparing the results from the portable device to your established laboratory methods.

The Scientist's Toolkit: Research Reagent and Material Solutions

The following table details key materials and software solutions essential for effective experimentation with modern spectroscopic systems.

| Item Name | Function & Explanation |

|---|---|

| Ultrapure Water Purification System (e.g., Milli-Q SQ2) | Provides water free of ionic, organic, and microbial contaminants. Essential for preparing mobile phases, sample dilution, and cleaning to prevent background interference and contamination in sensitive measurements [11]. |

| Stable Reference Standards | Certified materials with known composition and spectral properties. Used for daily instrument performance validation (qualification), wavelength calibration, and ensuring data comparability across different instruments and locations [21]. |

| Specialized Spectral Libraries | Application-specific databases of reference spectra (e.g., for excipients, active ingredients, or common adulterants). Critical for the accurate identification of unknown samples by handheld NIR or Raman instruments, serving as the training basis for AI/ML models [19]. |

| Chemometrics & AI Software | Software platforms (e.g., from Bruker, Thermo Fisher, Agilent) that include machine learning algorithms (PLS, Random Forest, XGBoost). Used to build, train, and deploy quantitative and qualitative calibration models that transform spectral data into actionable chemical insights [7] [20]. |

| Validated Calibration Sets | Carefully characterized sets of samples spanning the expected concentration range of the analyte of interest. The foundation for building robust quantitative models; the quality and breadth of this set directly determine model accuracy and reliability [20]. |

Workflow and Data Analysis Diagrams

(Diagram: High-Throughput Spectral Screening Workflow)

(Diagram: AI-Driven Spectral Data Analysis Pipeline)

Table 1: Portable Handheld Spectrometer Market Forecast (2025-2033)

| Metric | Value | Notes / Context |

|---|---|---|

| 2024 Market Size | ~USD 1.1 Billion (Spectroscopy Software) [7] | Base year for related software market. |

| 2025 Projected Market Size | ~USD 1.5 Billion (Portable Handheld Spectrometers) [19] | Estimated market size for the hardware segment. |

| Forecast Period CAGR | 6.5% (2025-2033) [19] | Compound Annual Growth Rate for the portable spectrometer market. |

| 2034 Projected Market Size | USD 2.5 Billion (Spectroscopy Software) [7] | Projection for the broader software market driving instrument utility. |

Table 2: Key Application Areas for Portable/HTS Systems

| Application Area | Common Technology | Primary Use Case in Drug Development |

|---|---|---|

| Raw Material Identification | Handheld NIR, Handheld Raman [19] | Rapid verification of incoming chemicals and excipients at the warehouse. |

| High-Throughput Screening | Raman Plate Readers (e.g., PoliSpectra) [11] | Automated analysis of 96-well plates for drug candidate screening or formulation stability. |

| Process Analytical Technology (PAT) | In-line NIR probes, Portable Spectrometers [19] | Real-time monitoring of chemical reactions and processes during manufacturing. |

| Quality Control & Counterfeit Detection | Handheld XRF, NIR [19] | On-site checking of final product composition and detection of counterfeit drugs. |

The Critical Link Between Data Quality and Model Performance

For researchers in drug development and materials science, the reliability of spectroscopic data directly dictates the success of machine learning models and analytical outcomes. High-quality data ensures accurate model predictions for critical tasks like protein characterization, contaminant identification, and formulation analysis [11] [22]. This guide provides practical troubleshooting and best practices to help scientists diagnose, resolve, and prevent common data quality issues in spectroscopic analysis.

Troubleshooting Guides

Guide 1: Addressing Spectral Baseline Anomalies

Problem: A drifting or unstable baseline appears in your spectra, compromising quantitative analysis.

- Step 1: Differentiate Source - Record a fresh blank spectrum under identical conditions. If the blank shows similar drift, the issue is instrumental. If the blank is stable, the problem is sample-related [23].

- Step 2: Instrumental Checks -

- UV-Vis Systems: Verify that deuterium or tungsten lamps have reached thermal equilibrium, as intensity fluctuations during warm-up cause drift [23].

- FTIR Systems: Check for interferometer misalignment due to thermal expansion or mechanical disturbances. Ensure proper purging to minimize atmospheric water vapor and CO₂ interference [23].

- Environmental Factors: Monitor for air conditioning cycles or vibrations from adjacent equipment, which can disturb optical components [23].

- Step 3: Sample-Related Checks - Inspect sample preparation for consistency. Look for matrix effects or potential contamination introduced during preparation [23].

Guide 2: Diagnosing Missing or Suppressed Peaks

Problem: Expected peaks are absent, weak, or diminish progressively across measurements.

- Step 1: Verify Detector & Signal - Check detector sensitivity and ensure it has not degraded. Confirm that signal collection parameters (e.g., integration time, laser power in Raman, detector gain) are correctly set for the expected analyte concentration [23].

- Step 2: Review Sample Preparation - Confirm sample concentration, homogeneity, and absence of paramagnetic species (for NMR). Inconsistent preparation is a common cause of insufficient analyte signal [23].

- Step 3: Assess Instrument Tuning - Check for minor drifts in instrument sensitivity or calibration. Use certified reference compounds to verify mass calibration in Mass Spectrometry or wavelength accuracy in optical techniques [23].

Guide 3: Resolving Excessive Spectral Noise and Artifacts

Problem: Random fluctuations or artifacts obscure the true signal, reducing the signal-to-noise ratio.

- Step 1: Identify Noise Sources -

- Step 2: System Maintenance -

- Step 3: Optimize Acquisition - Increase integration time or co-add more scans where feasible. Apply appropriate smoothing functions and ensure correct baseline correction protocols are used [23].
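
To illustrate why co-adding scans helps, the sketch below simulates uncorrelated noise on a flat signal. For such noise, averaging N scans reduces the noise standard deviation by about the square root of N. The signal level, noise level, and scan count are arbitrary assumptions chosen for the demonstration.

```python
import numpy as np

rng = np.random.default_rng(42)
n_points, noise_sd = 5000, 1.0
signal = np.full(n_points, 10.0)          # flat "true" signal for simplicity

def snr(spectrum, truth):
    """SNR as mean signal divided by the SD of the residual noise."""
    return truth.mean() / (spectrum - truth).std()

single = signal + noise_sd * rng.standard_normal(n_points)
scans = signal + noise_sd * rng.standard_normal((16, n_points))
coadded = scans.mean(axis=0)              # co-add (average) 16 scans

gain = snr(coadded, signal) / snr(single, signal)
print(f"SNR gain from 16 co-added scans: {gain:.2f} (theory: 4)")
```

The square-root relationship only holds for random, uncorrelated noise; drift or fixed-pattern artifacts are not reduced by co-adding.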

Frequently Asked Questions (FAQs)

Q1: Our NIR prediction model's performance has degraded over time. What is the most likely cause? A: This is typically caused by model drift. The samples being analyzed have likely changed, for instance, due to a new raw material supplier, alterations in the production process, or seasonal variations in natural products. To fix this, the prediction model must be updated with new sample spectra and corresponding reference values that represent the current product variability [24].

Q2: Why is data integrity especially critical in pharmaceutical spectroscopy? A: Data integrity (ensuring data is accurate, complete, and consistent throughout its lifecycle) is a regulatory cornerstone. It is required by regulations such as FDA's 21 CFR Part 11 for electronic records and electronic signatures. Compromised integrity, such as a missing audit trail or improper access controls, can invalidate pharmacopoeial tests for drug quality and lead to severe regulatory action [22].

Q3: We see broad, overlapping bands in our NIR spectra. Is the data still usable for quantitative analysis? A: Yes. NIR spectra are characterized by broad, overlapping bands due to the nature of the overtone and combination vibrations. This is why NIR is considered a secondary technology. It requires chemometrics to correlate the complex spectral data with reference values from a primary method (like Karl Fischer titration for water content) to build a robust prediction model [24].

Q4: How can I quickly check if my spectrometer is functioning correctly before a critical run? A: Perform a "five-minute quick assessment": 1. Run a blank to check for baseline stability. 2. Measure a standard reference material to verify peak positions and intensities are within expected ranges. 3. Check the signal-to-noise ratio on a standard to confirm instrument sensitivity has not degraded [23].

Q5: What is the minimum number of samples needed to develop a reliable NIR prediction model? A: The number depends on the sample matrix complexity. For a simple matrix (e.g., water in a halogenated solvent), 10-20 samples covering the entire concentration range may suffice. For more complex applications (e.g., active ingredient in a tablet), a minimum of 40-60 samples is recommended to capture product variability reliably [24].

Essential Research Reagent Solutions

Table: Key reagents and materials for ensuring spectroscopic data quality.

| Item | Primary Function in Research |

|---|---|

| Certified Reference Materials (CRMs) | Essential for instrument calibration and method validation, ensuring accuracy and traceability to standards [23]. |

| Ultrapure Water (e.g., Milli-Q SQ2) | Critical for sample preparation, buffer/mobile phase creation, and dilution to prevent contaminant interference [11]. |

| Magnetic Nanoparticles | Used in novel preconcentration techniques to enhance sensitivity in atomic spectroscopy (e.g., FAAS) [25]. |

| Silver/Gold Nanoparticles (SERS Substrates) | Enable surface-enhanced Raman spectroscopy (SERS) for highly sensitive detection of low-concentration pollutants [25]. |

| Deuterated Solvents | Used in NMR spectroscopy to supply the deuterium lock signal that keeps the field stable and to avoid a dominant solvent proton signal that would obscure analyte peaks. |

Building Your Data Processing Toolkit: Preprocessing, Fusion, and Modeling

FAQs and Troubleshooting Guides

Baseline Correction

Q1: Why does my baseline-corrected spectrum show negative values or distorted peaks?

A: This common issue often arises from an improperly fitted baseline that subtracts too much from the signal. The problem frequently stems from incorrect parameter selection in iterative algorithms.

- Root Cause: In penalized least squares methods (like airPLS, AsLS), using default smoothing parameters (λ, τ, p) that are too aggressive can cause the baseline to intersect your spectral peaks [26].

- Troubleshooting Steps:

- For airPLS/AsLS methods: Systematically fine-tune the λ (smoothness penalty) and τ (convergence tolerance) parameters. Research shows that adaptive grid search optimization can reduce mean absolute error (MAE) in baseline estimation by 91-99% compared to using default parameters [26].

- Validate on known regions: For FTIR of hydrocarbon gases, use non-sensitive areas (where absorbance approaches zero) to validate your baseline fit. The NasPLS method uses these regions to automatically optimize parameters [27].

- Consider advanced methods: Newer approaches like Triangular Deep Convolutional Networks automatically learn optimal correction parameters, preserving peak intensity and shape while reducing computation time [28].
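
To make the roles of λ and τ concrete, here is a minimal airPLS-style sketch in plain NumPy. It follows the general published scheme (Whittaker smoothing with iteratively updated asymmetric weights) but is not the reference implementation: it uses dense matrices, so it only suits short spectra, and the synthetic spectrum and parameter values are assumptions for illustration.

```python
import numpy as np

def airpls(y, lam=100.0, tau=1e-3, order=2, max_iter=15):
    """Dense airPLS-style baseline estimate (illustrative sketch only).

    lam   : smoothness penalty (larger = stiffer baseline)
    tau   : stop when the summed negative residual < tau * sum(|y|)
    order : order of the difference penalty
    """
    y = np.asarray(y, dtype=float)
    n = y.size
    D = np.diff(np.eye(n), n=order, axis=0)      # finite-difference operator
    penalty = lam * D.T @ D
    w = np.ones(n)
    for it in range(1, max_iter + 1):
        z = np.linalg.solve(np.diag(w) + penalty, w * y)   # weighted Whittaker smooth
        d = y - z
        neg = d < 0
        dssn = np.abs(d[neg].sum())
        if dssn < tau * np.abs(y).sum():
            break
        w[~neg] = 0.0                                      # ignore points above the baseline
        w[neg] = np.exp(it * np.abs(d[neg]) / dssn)        # emphasise points below it
        w[0] = w[-1] = np.exp(it * d[neg].max() / dssn)
    return z

# Synthetic spectrum: linear baseline plus two Gaussian peaks.
x = np.arange(300)
true_baseline = 1.0 + 0.005 * x
peaks = 10 * np.exp(-0.5 * ((x - 100) / 5) ** 2) + 6 * np.exp(-0.5 * ((x - 200) / 5) ** 2)
y = true_baseline + peaks

z = airpls(y, lam=1e5)
corrected = y - z
print("baseline MAE:", np.abs(z - true_baseline).mean())
print("fraction of clearly negative points:", (corrected < -0.05).mean())
```

Tracking the fraction of clearly negative points after correction is a quick way to spot a baseline that cuts into the signal; rerunning with a much smaller λ usually makes the effect visible.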

Q2: How can I automatically correct baselines without manual parameter tuning for high-throughput applications?

A: Machine learning approaches now enable fully automated baseline correction with minimal user intervention.

- ML-airPLS Framework: This method combines principal component analysis with random forest (PCA-RF) to predict optimal λ and τ parameters directly from spectral features. It processes each spectrum in approximately 0.038 seconds while achieving 90±10% improvement over default parameters [26].

- Deep Learning Solutions: Triangular Deep Convolutional Networks provide greater adaptability and enhance automation by learning appropriate corrections from data, eliminating manual parameter tuning for different spectral datasets [28].

- Automatic Methods: The NasPLS algorithm automatically identifies non-sensitive spectral regions and optimizes parameters based on root mean square error minimization between original and fitted baselines in these regions [27].

Scattering Correction

Q3: When should I use Multiplicative Scatter Correction (MSC) versus Standard Normal Variate (SNV) for scatter effects?

A: The choice depends on your sample characteristics and the nature of the scattering effects in your data.

- MSC is most effective when all samples have similar chemical compositions and you have a representative reference spectrum (often the mean spectrum). It models each spectrum as a linear function (offset plus slope) of that reference and removes the fitted offset and slope [29] [30].

- SNV performs better for heterogeneous samples without requiring a reference spectrum. It centers and scales each spectrum individually, making it robust for samples with varying compositions [31].

- Performance Consideration: Studies show that SNV generally performs better with noisy spectra because it relies on reflectance values across the entire spectrum rather than limited reference points [31].

- Advanced Alternative: Extended Multiplicative Scatter Correction (EMSC) can handle more complex scattering effects and separate them from chemical absorbance, though it requires more computational resources [29].
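
The difference between the two corrections is easiest to see in code. The sketch below uses the common textbook definitions of SNV and MSC; the synthetic spectra (one profile distorted by assumed offsets and gains) are for illustration only.

```python
import numpy as np

def snv(spectra):
    """Standard Normal Variate: centre and scale each spectrum (row) on its own."""
    spectra = np.asarray(spectra, dtype=float)
    mean = spectra.mean(axis=1, keepdims=True)
    sd = spectra.std(axis=1, ddof=1, keepdims=True)
    return (spectra - mean) / sd

def msc(spectra, reference=None):
    """Multiplicative Scatter Correction against a reference (default: mean spectrum)."""
    spectra = np.asarray(spectra, dtype=float)
    ref = spectra.mean(axis=0) if reference is None else np.asarray(reference, dtype=float)
    corrected = np.empty_like(spectra)
    for i, s in enumerate(spectra):
        slope, intercept = np.polyfit(ref, s, deg=1)   # s ~ intercept + slope * ref
        corrected[i] = (s - intercept) / slope
    return corrected

# One chemical profile distorted by additive and multiplicative scatter.
wl = np.linspace(0, 1, 200)
pure = np.exp(-0.5 * ((wl - 0.4) / 0.05) ** 2) + 0.2
offsets = np.array([0.0, 0.1, -0.05])
gains = np.array([1.0, 1.3, 0.8])
raw = offsets[:, None] + gains[:, None] * pure

after_msc = msc(raw, reference=pure)   # needs a reference spectrum
after_snv = snv(raw)                   # works spectrum by spectrum
print(np.abs(after_msc - pure).max(), np.abs(after_snv[0] - after_snv[1]).max())
```

In this idealised case both methods remove the distortion completely; they diverge once the samples differ chemically, which is where the choice of reference for MSC starts to matter.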

Q4: Why do my quantitative results vary after scatter correction, and how can I prevent this?

A: Overly aggressive scatter correction can remove biologically or chemically relevant variance, compromising quantitative accuracy.

- Root Cause: Traditional MSC and SNV assume scattering effects are the dominant source of variance, which may not always be true. When that assumption fails, the correction can over-correct and remove meaningful chemical information [29].

- Prevention Strategies:

- Apply validation tests: Compare the variance explained by treatment groups before and after correction. Effective correction should reduce technical variance while preserving biological variance [32].

- Use EMSC variants: These incorporate chemical knowledge into the correction model, preserving chemically relevant features while removing physical scatter effects [29].

- Evaluate multiple methods: Test different correction approaches on a subset of data with known outcomes to determine which method best preserves your quantitative relationships [30].
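
One simple way to run the validation test described above is to compute, for a feature of interest, the fraction of total variance explained by the treatment groups (eta squared) before and after correction. The numbers below are invented for illustration, and the helper name `variance_explained_by_group` is hypothetical.

```python
import numpy as np

def variance_explained_by_group(values, groups):
    """Fraction of total variance explained by group membership (eta squared)."""
    values = np.asarray(values, dtype=float)
    groups = np.asarray(groups)
    grand_mean = values.mean()
    ss_total = ((values - grand_mean) ** 2).sum()
    ss_between = sum(
        (groups == g).sum() * (values[groups == g].mean() - grand_mean) ** 2
        for g in np.unique(groups)
    )
    return ss_between / ss_total

# Hypothetical peak intensities for two treatment groups.
groups = np.array(["ctrl"] * 4 + ["treated"] * 4)
before = np.array([1.0, 1.6, 0.7, 1.3, 1.9, 2.6, 1.5, 2.2])   # scatter-affected
after = np.array([1.1, 1.2, 1.0, 1.1, 2.0, 2.1, 1.9, 2.0])    # after correction

eta_before = variance_explained_by_group(before, groups)
eta_after = variance_explained_by_group(after, groups)
print(f"variance explained by treatment: {eta_before:.2f} -> {eta_after:.2f}")
```

A correction that lowers this fraction for features known to respond to treatment is a warning sign that relevant variance is being removed along with the scatter.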

Normalization

Q5: How do I choose the right normalization method for my hyperspectral imaging data?

A: Normalization method selection should be guided by your data characteristics and analytical goals, particularly for HSI with its spatial-spectral complexity.

- For noisy spectra: Standard Normal Variate (SNV) and area under the curve (AUC) methods generally perform better because they utilize information across the entire spectrum rather than relying on limited reflectance values [31].

- For preserving relative intensities: Probabilistic Quotient Normalization (PQN) divides each spectrum by the median of its feature-wise quotients against a reference spectrum, which makes it robust to dilution effects and well suited to multi-omics temporal studies [32].

- Validation approach: Systematically evaluate normalization methods by:

- Applying multiple normalization techniques to your HSI data

- Implementing uniform scaling to enable direct comparison

- Quantifying consistency with reference spectra or known standards [31]
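
For orientation, the sketch below implements two of the methods named above, AUC normalization and PQN, using their common definitions. The median spectrum is assumed as the PQN reference, the test spectra are synthetic dilutions of one profile, and PQN as written requires a strictly non-zero reference.

```python
import numpy as np

def auc_normalize(spectra, x):
    """Scale each spectrum so its trapezoidal area over x equals 1."""
    spectra = np.asarray(spectra, dtype=float)
    dx = np.diff(x)
    area = ((spectra[:, 1:] + spectra[:, :-1]) / 2 * dx).sum(axis=1, keepdims=True)
    return spectra / area

def pqn(spectra, reference=None):
    """Probabilistic Quotient Normalization (reference defaults to the median spectrum)."""
    spectra = np.asarray(spectra, dtype=float)
    ref = np.median(spectra, axis=0) if reference is None else np.asarray(reference, dtype=float)
    quotients = spectra / ref                              # feature-wise quotients
    factors = np.median(quotients, axis=1, keepdims=True)  # most probable dilution factor
    return spectra / factors

# Synthetic example: one strictly positive profile at three dilutions.
x = np.linspace(0, 10, 101)
base = 1.0 + np.exp(-0.5 * ((x - 5) / 0.8) ** 2)
dilutions = np.array([1.0, 0.5, 2.0])
spectra = dilutions[:, None] * base

pqn_out = pqn(spectra)
auc_out = auc_normalize(spectra, x)
print(np.abs(pqn_out - base).max())
```

Because every spectrum here is a pure dilution of the same profile, both methods collapse the three spectra onto one curve; real data will differ, which is why the comparison against reference spectra in the validation approach is needed.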

Q6: Which normalization methods work best for temporal studies in multi-omics applications?

A: Temporal studies require methods that reduce technical variation without distorting time-dependent biological patterns.

- Top Performers: Evaluation across metabolomics, lipidomics, and proteomics from the same samples identified [32]:

- Metabolomics and lipidomics: PQN and LOESS

- Proteomics: LOESS and median normalization

- Methods to Use Cautiously: Machine learning-based SERRF normalization, while powerful, may inadvertently mask treatment-related variance in temporal data [32].

- Key Principle: Effective normalization in temporal studies should enhance quality control sample consistency while preserving both time and treatment-related biological variance [32].

Quantitative Data Comparison Tables

Table 1: Performance Comparison of Baseline Correction Methods

| Method | Core Mechanism | Parameter Sensitivity | Computation Speed | Accuracy (MAE Reduction) | Best Application Context |

|---|---|---|---|---|---|

| Triangular Deep Convolutional Networks [28] | Deep learning architecture | Low (automated) | Fast | Superior correction accuracy, preserves peak integrity | Raman spectroscopy with fluorescence distortion |

| OP-airPLS [26] | Optimized penalized least squares | Medium (requires optimization) | Medium (adaptive grid search) | 96±2% improvement over defaults | Complex spectral shapes with varying baselines |

| ML-airPLS [26] | PCA-RF parameter prediction | Low (automated) | Very fast (0.038s/spectrum) | 90±10% improvement | High-throughput processing |

| NasPLS [27] | Reweighted PLS for non-sensitive areas | Low (automatic parameter selection) | Fast | Accurate in noisy conditions | FTIR gas analysis (e.g., methane, ethane) |

| Traditional airPLS [26] | Penalized least squares | High (manual tuning required) | Fast | Variable (depends on parameter tuning) | Simple baselines with expert tuning |

Table 2: Normalization Method Performance Across Spectral Types

| Method | Mathematical Basis | HSI Performance [31] | Multi-omics Performance [32] | Noisy Data Robustness | Key Advantages |

|---|---|---|---|---|---|

| Standard Normal Variate (SNV) | Centering and scaling | Excellent (utilizes full spectrum) | Not assessed | High | No reference needed, handles heterogeneity |

| Area Under Curve (AUC) | Total area scaling | Good | Not assessed | Medium | Maintains relative peak relationships |

| Probabilistic Quotient (PQN) | Reference spectrum ratio | Not assessed | Optimal for metabolomics/lipidomics | Medium | Robust to dilution effects |

| LOESS | Local regression | Not assessed | Optimal for metabolomics/lipidomics/proteomics | Medium | Handles non-linear trends |

| Median Normalization | Median scaling | Not assessed | Excellent for proteomics | High | Robust to outliers |

| Maximum Reflectance | Max value scaling | Poor with noisy spectra | Not assessed | Low | Simple implementation |

Experimental Protocols

Protocol 1: Systematic Evaluation of Normalization Methods for HSI Cameras

Objective: To identify the most robust normalization method for standardizing performance evaluation of hyperspectral imaging cameras under varying conditions [31].

Materials and Equipment:

- High-resolution HSI camera (e.g., 4250 VNIR with Fabry–Perot Interferometer)

- Spectralon wavelength calibration target (WCS-EO-010) with Erbium Oxide spikes

- Two different light sources (Xenon and Tungsten Halogen)

- NIST-traceable white reflectance target (SRT-99-100)

- Dark room environment

Procedure:

- System Setup: Turn on camera and light sources one hour before measurements to stabilize. Conduct all measurements in a dark room.

- Reference Measurements:

- Capture dark signal (Idark) with camera cap in place

- Measure white reference signal (Iw) using SRT-99-100 target

- Use same camera settings for all acquisitions

- Target Measurement:

- Position calibration target in field of view

- Adjust exposure time to achieve high intensity without saturation

- Capture spectral data (I) from 200 × 200-pixel region at image center

- Acquire triplicate measurements for each light source

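- Reflectance Calculation: Compute reflectance from the raw, dark, and white reference signals, typically as $R = (I - I_{\text{dark}}) / (I_{w} - I_{\text{dark}})$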

- Normalization Application: Apply nine different normalization methods to calculated reflectance spectra:

- Area Under Curve (AUC), Standard Normal Variate (SNV), Centering Power, Max, Min, Mean, Median, Vector, and Range Normalization

- Uniform Scaling: Apply consistent scaling to enable cross-method comparison

- Performance Evaluation: Compare normalized spectra to manufacturer reference spectra to quantify method effectiveness

Validation Metric: Consistency with reference spectra across different illumination conditions.
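
The reflectance and normalization steps of this protocol can be sketched as follows. The code assumes the standard flat-field formula R = (I - Idark) / (Iw - Idark) and common definitions for a subset of the listed normalizations; the exact formulas used in [31] may differ, and the five-band signals are made-up numbers.

```python
import numpy as np

def reflectance(raw, dark, white):
    """Flat-field reflectance per band: (I - Idark) / (Iw - Idark)."""
    raw, dark, white = (np.asarray(a, dtype=float) for a in (raw, dark, white))
    return (raw - dark) / (white - dark)

# Common definitions (assumed here) for some of the listed normalizations.
NORMALIZERS = {
    "max": lambda r: r / r.max(),
    "mean": lambda r: r / r.mean(),
    "median": lambda r: r / np.median(r),
    "vector": lambda r: r / np.linalg.norm(r),
    "range": lambda r: (r - r.min()) / (r.max() - r.min()),
    "snv": lambda r: (r - r.mean()) / r.std(ddof=1),
}

# Hypothetical 5-band signals (arbitrary detector counts).
dark = np.array([10.0, 10.0, 10.0, 10.0, 10.0])
white = np.array([210.0, 410.0, 510.0, 410.0, 210.0])
raw = np.array([110.0, 110.0, 260.0, 310.0, 60.0])

R = reflectance(raw, dark, white)          # 0.5, 0.25, 0.5, 0.75, 0.25
normalized = {name: fn(R) for name, fn in NORMALIZERS.items()}
for name, values in normalized.items():
    print(f"{name:>6}: {np.round(values, 3)}")
```

Applying the same dictionary of normalizers to spectra acquired under both light sources, then comparing each result to the reference spectrum, mirrors the performance-evaluation step.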

Protocol 2: Optimized airPLS Baseline Correction for Raman Spectra

Objective: To implement optimized airPLS (OP-airPLS) for superior baseline correction of Raman spectra with complex baselines [26].

Materials:

- Simulated spectral dataset with 12 spectral shapes (3 peak types × 4 baseline variations)

- Workstation with Python 3.11.5 (NumPy, Pandas, SciPy, Scikit-learn)

- Experimental Raman spectra for validation

Procedure:

- Spectral Simulation:

- Generate 500 spectra for each of 12 spectral shapes

- Include three peak types: Broad (B), Convoluted (C), Distinct (D)

- Incorporate four baseline shapes: Exponential (E), Gaussian (G), Polynomial (P), Sigmoidal (S)

- Adaptive Grid Search Optimization:

- Fix smoothness order p = 2

- Systematically vary λ (10^0 to 10^8) and τ (10^-8 to 10^-1)

- For each spectrum, initialize with (λ0, τ0) = (100, 0.001) or optimized parameters from previous similar spectrum

- Compute baseline estimate for each parameter combination

- Calculate Mean Absolute Error (MAE) between estimated and true baseline

- Select parameters (λ, τ) that minimize MAE

- Convergence Determination:

- Refine grid progressively around best-performing combinations

- Stop when MAE improvement < 5% across 5 consecutive refinement steps

- Machine Learning Implementation (Optional):

- Extract spectral features via Principal Component Analysis (PCA)

- Train Random Forest model to predict optimal (λ, τ) from spectral features

- Validate model on holdout dataset

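- Performance Quantification: Compute the percentage improvement, $\mathrm{PI} = \dfrac{\mathrm{MAE}_{\mathrm{DP}} - \mathrm{MAE}_{\mathrm{OP}}}{\mathrm{MAE}_{\mathrm{DP}}} \times 100\%$, where $\mathrm{MAE}_{\mathrm{DP}}$ uses default parameters and $\mathrm{MAE}_{\mathrm{OP}}$ uses optimized parameters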

Validation: Target PI > 90% (equivalent to MAE reduction by one order of magnitude).
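
A simplified version of this protocol is sketched below. It replaces the adaptive, progressively refined search with a single coarse log-spaced grid, takes the percentage improvement as the relative MAE reduction (consistent with the 90% target corresponding to a tenfold MAE reduction), and includes a compact dense airPLS-style routine so the block runs on its own. The simulated spectrum and all function names are assumptions, not the code from [26].

```python
import numpy as np

def airpls(y, lam, tau, order=2, max_iter=15):
    """Compact dense airPLS-style baseline (illustrative, short spectra only)."""
    n = y.size
    D = np.diff(np.eye(n), n=order, axis=0)
    penalty = lam * D.T @ D
    w = np.ones(n)
    for it in range(1, max_iter + 1):
        z = np.linalg.solve(np.diag(w) + penalty, w * y)
        d = y - z
        neg = d < 0
        dssn = np.abs(d[neg].sum())
        if dssn < tau * np.abs(y).sum():
            break
        w[~neg] = 0.0
        w[neg] = np.exp(it * np.abs(d[neg]) / dssn)
        w[0] = w[-1] = np.exp(it * d[neg].max() / dssn)
    return z

def mae(a, b):
    return np.abs(a - b).mean()

def percentage_improvement(mae_dp, mae_op):
    """PI = (MAE_DP - MAE_OP) / MAE_DP * 100."""
    return 100.0 * (mae_dp - mae_op) / mae_dp

# Simulated spectrum with a known exponential-type baseline.
x = np.arange(200)
true_baseline = 2.0 + 3.0 * np.exp(-x / 80.0)
peaks = 8 * np.exp(-0.5 * ((x - 70) / 4) ** 2) + 5 * np.exp(-0.5 * ((x - 140) / 6) ** 2)
y = true_baseline + peaks

default = (100.0, 1e-3)
mae_dp = mae(airpls(y, *default), true_baseline)

# Coarse grid over the protocol's ranges; the default is included as a candidate.
lams = 10.0 ** np.arange(0, 9, 2)
taus = 10.0 ** np.arange(-8, 0, 2)
grid = [(lam, tau) for lam in lams for tau in taus] + [default]
scores = {params: mae(airpls(y, *params), true_baseline) for params in grid}

best = min(scores, key=scores.get)
mae_op = scores[best]
pi = percentage_improvement(mae_dp, mae_op)
print(f"best (lam, tau) = {best}, PI = {pi:.1f}%")
```

A full implementation would refine the grid around `best` and stop once successive refinements no longer improve the MAE, as described in the convergence step.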

Workflow and Signaling Pathway Diagrams

(Diagram: Spectral Preprocessing Hierarchy)

(Diagram: Baseline Correction Troubleshooting)

Research Reagent Solutions

Table 3: Essential Materials for Spectral Preprocessing Validation

| Material/Software | Specification/Version | Function in Preprocessing | Application Context |

|---|---|---|---|

| Spectralon Wavelength Calibration Target [31] | WCS-EO-010 with Erbium Oxide | Provides sharp absorption spikes at 490, 522, 654, 800 nm for validation | HSI camera performance evaluation |

| NIST-traceable White Reflectance Target [31] | SRT-99-100 (99% reflectance) | Reference standard for reflectance calculation | HSI system calibration |

| Python Scientific Stack [26] | Python 3.11.5 with NumPy, Pandas, SciPy, Scikit-learn | Implementation of optimization algorithms and machine learning models | Custom preprocessing development |

| MATLAB [27] | 2022b | Algorithm implementation and validation | NasPLS and related baseline methods |

| Fabry–Perot Interferometer HSI Camera [31] | 4250 VNIR (Hinalea Imaging Corp.) | High-resolution spectral data acquisition | Medical HSI research |

| Multi-collector ICP-MS [11] | Custom configuration | High-resolution isotope analysis | Atomic spectroscopy baseline validation |

In modern spectroscopic instrumentation research, data fusion has emerged as a powerful paradigm for overcoming the inherent limitations of individual analytical techniques. By strategically integrating multiple data sources—from various vibrational and atomic spectroscopies—researchers can achieve a more comprehensive understanding of complex samples, enhancing both predictive accuracy and analytical robustness. This technical support center provides essential guidance for implementing these advanced data fusion strategies within your research workflows.

Core Concepts: Data Fusion Strategies

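Data fusion techniques are generally categorized into three main levels (early, intermediate, and late), each with distinct advantages and implementation requirements [33]. A more recent complex-level fusion scheme [35] is listed alongside them in the table below.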

Table 1: Data Fusion Levels and Characteristics

| Fusion Level | Description | Key Techniques | Best Use Cases |

|---|---|---|---|

| Early Fusion (Low-Level) | Concatenates raw or pre-processed data from multiple sources into a single matrix [33]. | PCA, PLSR [33] | Simple, fast integration of homogeneous data types. |

| Intermediate Fusion (Mid-Level) | Combines features extracted from each data source, often using dimension reduction [33]. | PCA, PLS Latent Variables, Variable Selection [33] [34] | Leveraging complementary information while reducing noise and redundancy. |

| Late Fusion (High-Level) | Builds separate models for each data source and combines the final predictions [33]. | Model Averaging, Weighted Voting [33] | Preserving model interpretability and handling very heterogeneous data. |

| Complex-Level Fusion | A sophisticated, two-layer ensemble method that jointly selects variables and stacks models [35]. | Genetic Algorithm, PLS, XGBoost [35] | Complex industrial and geological applications requiring high predictive accuracy from limited samples. |
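
The first three fusion levels differ mainly in where the blocks are combined, which the following NumPy sketch tries to make explicit. The two synthetic blocks (named `raman` and `icp` purely for illustration), the use of two PCA scores per block, and the in-sample least-squares model are simplifying assumptions; a real workflow would use PLS or similar with proper cross-validation.

```python
import numpy as np

rng = np.random.default_rng(1)
n = 30
latent = rng.standard_normal(n)                      # hidden property driving both blocks
raman = np.outer(latent, rng.standard_normal(50)) + 0.1 * rng.standard_normal((n, 50))
icp = np.outer(latent, rng.standard_normal(5)) + 0.1 * rng.standard_normal((n, 5))
target = 2.0 * latent + 0.05 * rng.standard_normal(n)

def autoscale(X):
    return (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)

def pca_scores(X, k):
    """First k principal-component scores via SVD of the centred matrix."""
    Xc = X - X.mean(axis=0)
    U, s, _ = np.linalg.svd(Xc, full_matrices=False)
    return U[:, :k] * s[:k]

def fit_predict(X, y):
    """Ordinary least squares with intercept; returns in-sample predictions."""
    A = np.column_stack([np.ones(len(X)), X])
    coef, *_ = np.linalg.lstsq(A, y, rcond=None)
    return A @ coef

# Early (low-level): concatenate the pre-processed variables.
early = np.hstack([autoscale(raman), autoscale(icp)])
# Intermediate (mid-level): concatenate features extracted per block.
raman_scores = pca_scores(autoscale(raman), 2)
icp_scores = pca_scores(autoscale(icp), 2)
mid = np.hstack([raman_scores, icp_scores])
mid_pred = fit_predict(mid, target)
# Late (high-level): one model per block, then combine the predictions.
late_pred = 0.5 * (fit_predict(raman_scores, target) + fit_predict(icp_scores, target))

print(early.shape, mid.shape)
print(np.corrcoef(mid_pred, target)[0, 1], np.corrcoef(late_pred, target)[0, 1])
```

The shapes show the practical trade-off: early fusion keeps every variable (55 columns here), while intermediate fusion passes only a handful of features per block to the model.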

Frequently Asked Questions (FAQs)

Q1: What are the primary benefits of using data fusion over single-source spectroscopic analysis?

Data fusion provides enhanced chemical specificity, quantitative robustness, and interpretability by combining complementary information from different techniques [33]. For example, while vibrational spectroscopy (like IR or Raman) probes molecular vibrations and functional groups, atomic spectroscopy (like ICP-AES) reveals elemental composition. Fusing these data sources creates a more complete picture of sample composition, which is particularly valuable in complex applications like pharmaceutical quality control or environmental monitoring [33]. Research shows that in over 80% of studies, fusion methods positively affected results, with only 2% reporting negative effects compared to non-fusion methods [34].

Q2: When should I choose a Complex-Level Fusion (CLF) approach over simpler fusion methods?

Complex-Level Fusion is particularly suited for challenging industrial and geological applications where sample sizes are limited (fewer than one hundred samples) and predictive accuracy is critical [35]. This method is a two-layer chemometric algorithm that jointly selects variables from concatenated spectra (e.g., MIR and Raman) using a genetic algorithm, projects them via partial least squares, and stacks the latent variables into an XGBoost regressor. Benchmarking studies have demonstrated that CLF consistently outperforms single-source models and classical low-, mid-, and high-level fusion schemes by effectively leveraging complementary spectral information [35].

Q3: What are the most common data pre-processing challenges in fusion, and how can I address them?

The primary challenges are data alignment (different resolutions/sampling grids), scaling and normalization (differing dynamic ranges), and redundancy/multicollinearity (overlapping spectral features) [33]. To address these:

- Alignment: Use interpolation or warping functions to match data points [33].

- Scaling: Apply mean-centering and autoscaling so that variables and data blocks with different dynamic ranges contribute on a comparable scale [33] [34].

- Redundancy: Implement regularization methods like Ridge regression or Sparse PLS to mitigate multicollinearity issues [33].
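The three remedies above might look like the following in NumPy. It is a minimal sketch under stated assumptions: the wavenumber grids, the random stand-in spectra, and the helper names `align_to_grid`, `autoscale`, and `ridge_fit` are all hypothetical, and linear interpolation is only one of several possible alignment choices.

```python
import numpy as np

def align_to_grid(axis, spectra, target_axis):
    """Linearly interpolate each spectrum (row) onto target_axis.
    np.interp expects `axis` to be increasing."""
    return np.vstack([np.interp(target_axis, axis, s) for s in spectra])

def autoscale(X):
    """Mean-center and scale every variable to unit variance."""
    std = X.std(axis=0, ddof=1)
    std[std == 0] = 1.0                      # leave constant variables unscaled
    return (X - X.mean(axis=0)) / std

def ridge_fit(X, y, alpha=1.0):
    """Closed-form ridge coefficients for centered X and y."""
    p = X.shape[1]
    return np.linalg.solve(X.T @ X + alpha * np.eye(p), X.T @ y)

# Hypothetical example: instrument B samples every 2 cm^-1, instrument A every 4 cm^-1.
rng = np.random.default_rng(0)
axis_a = np.linspace(400.0, 4000.0, 901)
axis_b = np.linspace(400.0, 4000.0, 1801)
spectra_b = rng.random((10, 1801))           # random stand-in for measured spectra

aligned_b = align_to_grid(axis_b, spectra_b, axis_a)   # now on A's grid
X = autoscale(aligned_b)
y = rng.random(10)
coef = ridge_fit(X, y - y.mean(), alpha=10.0)          # penalty damps collinear variables
```

The ridge penalty `alpha` is a tuning parameter and would normally be chosen by cross-validation.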

Q4: How can I effectively integrate heterogeneous data, such as vibrational and atomic spectra?

Effectively integrating heterogeneous data requires a structured approach:

- Understand the complementary nature: Vibrational methods (IR, NIR, Raman) quantify excipients and molecular structures, while atomic methods (ICP-MS, ICP-AES) track elemental impurities [33].

- Choose the appropriate fusion level: For vastly different data types, late fusion (decision-level integration) often maintains interpretability, while intermediate fusion using shared latent space models (like MB-PLS) can capture deeper relationships [33].

- Apply block scaling: Weight each data block appropriately to prevent bias, especially when dealing with large dimensional differences (e.g., thousands of spectral variables versus a few elemental concentrations) [34].
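One common way to weight blocks of very different size is to autoscale each block and then divide it by the square root of its number of variables, so that every block carries the same total variance. The sketch below assumes this particular weighting; the function name `block_scale` and the synthetic blocks are illustrative only.

```python
import numpy as np

def block_scale(blocks):
    """Autoscale each block, then divide by sqrt(number of variables)
    so that every block has a total variance of 1."""
    scaled = []
    for X in blocks:
        std = X.std(axis=0, ddof=1)
        std[std == 0] = 1.0                  # guard against constant variables
        Z = (X - X.mean(axis=0)) / std
        scaled.append(Z / np.sqrt(X.shape[1]))
    return np.hstack(scaled)

# Hypothetical blocks: 2000 spectral variables versus 5 elemental concentrations.
rng = np.random.default_rng(1)
spectra = rng.normal(size=(30, 2000))
elements = rng.normal(size=(30, 5))

fused = block_scale([spectra, elements])
variances = fused.var(axis=0, ddof=1)
total_spectral = variances[:2000].sum()      # total variance of the spectral block
total_elemental = variances[2000:].sum()     # total variance of the elemental block
```

Without this step the 2000 spectral variables would outweigh the 5 elemental variables by a factor of 400 in total variance after plain autoscaling.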

Troubleshooting Guides

Problem 1: Poor Model Performance After Data Fusion

Symptoms: Decreased prediction accuracy, high error rates, or inconsistent results after implementing data fusion.

Potential Causes and Solutions:

- Cause: Improper data scaling causing one modality to dominate.

- Solution: Apply block scaling so that each data block contributes equally to the model, and normalize spectra within each block, for example with Standard Normal Variate (SNV), to remove intensity differences between individual spectra [34].

- Cause: Incorrect alignment of spectral variables from different instruments.

- Solution: Implement data alignment protocols using interpolation or warping functions to reconcile different resolutions and sampling grids [33].

- Cause: High redundancy or multicollinearity between features from different sources.

- Solution: Use variable selection techniques like interval PLS (iPLS) or regularization methods (Ridge regression, Sparse PLS) to eliminate redundant variables [33].

Validation Protocol: After addressing these issues, validate model performance using k-fold cross-validation and compare the root mean square error of prediction (RMSEP) against single-source baselines [34].
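A comparison of this kind could be set up as follows. The sketch uses a closed-form ridge model as a stand-in for whatever regressor is being validated, and the data are synthetic; `kfold_rmsep`, `X_a`, and `X_b` are names invented for the example.

```python
import numpy as np

def kfold_rmsep(X, y, k=5, alpha=1.0, seed=0):
    """Cross-validated RMSEP of a ridge model (stand-in for any regressor)."""
    rng = np.random.default_rng(seed)
    folds = np.array_split(rng.permutation(len(y)), k)
    sq_err = []
    for test in folds:
        train = np.setdiff1d(np.arange(len(y)), test)
        # Centering statistics come from the training fold only.
        mu_x, mu_y = X[train].mean(axis=0), y[train].mean()
        Xtr = X[train] - mu_x
        coef = np.linalg.solve(Xtr.T @ Xtr + alpha * np.eye(X.shape[1]),
                               Xtr.T @ (y[train] - mu_y))
        pred = (X[test] - mu_x) @ coef + mu_y
        sq_err.append((pred - y[test]) ** 2)
    return float(np.sqrt(np.mean(np.concatenate(sq_err))))

# Synthetic case where the response depends on both data sources.
rng = np.random.default_rng(1)
n = 80
X_a = rng.normal(size=(n, 10))
X_b = rng.normal(size=(n, 10))
y = X_a[:, 0] + X_b[:, 0] + 0.1 * rng.normal(size=n)

rmsep_a = kfold_rmsep(X_a, y)                          # single-source baseline
rmsep_b = kfold_rmsep(X_b, y)                          # single-source baseline
rmsep_fused = kfold_rmsep(np.hstack([X_a, X_b]), y)    # low-level fusion
```

If the fused RMSEP is not lower than both baselines on real data, the fusion step is not adding information and the scaling, alignment, and redundancy checks above are worth revisiting.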

Problem 2: Technical Instrumentation Issues Affecting Data Quality

Symptoms: Noisy spectra, drifting baselines, inconsistent readings between instruments, or negative peaks.

Potential Causes and Solutions:

- Cause: Instrument vibrations or environmental interference.

- Solution: Ensure spectrometers are on vibration-dampening platforms, away from pumps or heavy lab activity [9].

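- Cause: Dirty accessories (e.g., ATR crystals, optical windows).

- Solution: Clean ATR crystals and optical windows thoroughly before measurement, since contaminated crystals cause negative peaks and other data artifacts [9].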

- Cause: Aging or misaligned light sources.

- Solution: Inspect and replace deuterium or tungsten-halogen lamps according to manufacturer intervals. Verify and correct lamp alignment [37].

- Cause: Contaminated argon supply or poor probe contact in OES.

- Solution: Ensure argon purity and check for leaks. Increase argon flow to 60 psi and use custom seals for irregular surfaces to ensure proper probe contact [36].

Problem 3: Implementation Challenges with Complex Fusion Algorithms

Symptoms: Long computational times, difficulty interpreting results, or convergence failures in advanced fusion models.

Potential Causes and Solutions:

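- Cause: High-dimensional data without sufficient variable reduction.

- Solution: Reduce dimensionality before modeling, for example with PCA, PLS latent variables, or variable selection techniques such as interval PLS (iPLS) [33].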

- Cause: Incompatible data structures between different spectroscopic techniques.

- Solution: Use multi-block methods like SO-PLS or PO-PLS that are specifically designed to handle data blocks with different dimensionalities and variances [34].

- Cause: Insufficient sample size for complex models.

- Solution: Consider simpler fusion approaches or incorporate transfer learning techniques to apply models trained on larger datasets to your specific application [33].

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Research Reagent Solutions for Spectroscopic Data Fusion

| Item/Reagent | Function in Data Fusion Workflows |
|---|---|
| Certified Reference Materials | Essential for cross-instrument calibration and validation, ensuring data compatibility from different spectroscopic sources. |
| Ultrapure Water Systems | Critical for sample preparation and dilution to prevent contamination that could introduce artifacts in sensitive spectroscopic measurements [11]. |
| Standardized Solvents | Ensure consistent sample preparation across multiple analytical techniques, reducing variability between data sources. |
| ATR Cleaning Solutions | Maintain crystal integrity in FT-IR spectroscopy; contaminated crystals cause negative peaks and data artifacts [9]. |
| Calibration Gas Mixtures | Required for atomic spectroscopy techniques like ICP-MS/OES to maintain plasma stability and ensure quantitative accuracy [36]. |
| Alignment & Validation Standards | Certified materials with known spectral properties used to verify instrument alignment and data quality before fusion. |

Detailed Experimental Protocol: Implementing Complex-Level Fusion for Spectroscopic Data

This protocol outlines how to implement a Complex-Level Fusion (CLF) approach, based on the method that demonstrated significantly improved predictive accuracy in the analysis of industrial lubricant additives and minerals [35].

Materials and Equipment

- Paired spectroscopic datasets (e.g., Mid-Infrared (MIR) and Raman spectra from the same samples)

- Computational environment with Python/R and necessary libraries (e.g., scikit-learn, XGBoost)

- Chemometrics software package capable of Genetic Algorithms, PLS, and ensemble modeling

Procedure

Data Collection and Preprocessing

- Collect paired MIR and Raman spectra from identical sample spots.

- Apply necessary preprocessing: Savitzky-Golay smoothing, derivatives, multiplicative scatter correction, and Standard Normal Variate (SNV) normalization [34].

- Perform block scaling to ensure equal variance between the MIR and Raman datasets.
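Two of the scatter corrections named above, SNV and multiplicative scatter correction (MSC), are simple enough to write out directly. The sketch below is a plain NumPy version on synthetic spectra; smoothing and derivatives are not shown, and the function names `snv` and `msc` are chosen for the example.

```python
import numpy as np

def snv(spectra):
    """Standard Normal Variate: center and scale each spectrum (row)
    by its own mean and standard deviation."""
    mean = spectra.mean(axis=1, keepdims=True)
    std = spectra.std(axis=1, ddof=1, keepdims=True)
    return (spectra - mean) / std

def msc(spectra, reference=None):
    """Multiplicative scatter correction against a reference spectrum
    (default: the mean spectrum of the set)."""
    ref = spectra.mean(axis=0) if reference is None else reference
    corrected = np.empty_like(spectra, dtype=float)
    for i, s in enumerate(spectra):
        slope, intercept = np.polyfit(ref, s, 1)   # s ~ intercept + slope * ref
        corrected[i] = (s - intercept) / slope
    return corrected

# Synthetic spectra: one underlying shape with sample-specific gain and offset.
base = np.sin(np.linspace(0.0, 3.0, 200)) + 2.0
gains = np.array([0.8, 1.0, 1.3])
offsets = np.array([0.1, -0.2, 0.05])
raw = gains[:, None] * base + offsets[:, None]

snv_out = snv(raw)    # all rows become identical
msc_out = msc(raw)    # all rows collapse onto the mean spectrum
```

Because the synthetic spectra differ only by gain and offset, both corrections remove the differences completely; real spectra will retain the chemically meaningful variation.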

Variable Selection via Genetic Algorithm

- Concatenate the preprocessed MIR and Raman spectra into a single data matrix.

- Implement a genetic algorithm to jointly select informative variables from the concatenated spectral data.

- Optimize the genetic algorithm parameters (population size, generations, crossover/mutation rates) to maximize selection of chemically relevant features.
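As a rough illustration of this step, the toy genetic algorithm below evolves binary variable masks and scores each mask by the cross-validated error of a ridge model. Everything here is an assumption made for the sketch: the fitness function, the operators (tournament selection, uniform crossover, bit-flip mutation), the default parameters, and the synthetic data standing in for concatenated MIR and Raman variables. The cited study may differ in all of these.

```python
import numpy as np

def cv_rmse(X, y, mask, k=4, alpha=1e-2):
    """k-fold RMSE of a ridge model restricted to the variables in mask."""
    if not mask.any():
        return np.inf                         # an empty mask cannot predict
    Xs = X[:, mask]
    err = 0.0
    for test in np.array_split(np.arange(len(y)), k):
        train = np.setdiff1d(np.arange(len(y)), test)
        mu_x, mu_y = Xs[train].mean(axis=0), y[train].mean()
        A = Xs[train] - mu_x
        coef = np.linalg.solve(A.T @ A + alpha * np.eye(A.shape[1]),
                               A.T @ (y[train] - mu_y))
        err += np.sum(((Xs[test] - mu_x) @ coef + mu_y - y[test]) ** 2)
    return np.sqrt(err / len(y))

def ga_select(X, y, pop_size=30, generations=25, p_mut=0.02, seed=0):
    """Toy GA: tournament selection, uniform crossover, bit-flip mutation,
    and elitism of one individual."""
    rng = np.random.default_rng(seed)
    n_var = X.shape[1]
    pop = rng.random((pop_size, n_var)) < 0.2            # random initial masks
    for _ in range(generations):
        fitness = np.array([cv_rmse(X, y, m) for m in pop])
        new_pop = [pop[fitness.argmin()].copy()]         # keep the best mask
        while len(new_pop) < pop_size:
            a = rng.integers(pop_size, size=2)           # tournament 1
            b = rng.integers(pop_size, size=2)           # tournament 2
            p1 = pop[a[fitness[a].argmin()]]
            p2 = pop[b[fitness[b].argmin()]]
            child = np.where(rng.random(n_var) < 0.5, p1, p2)   # uniform crossover
            child ^= rng.random(n_var) < p_mut                  # bit-flip mutation
            new_pop.append(child)
        pop = np.array(new_pop)
    fitness = np.array([cv_rmse(X, y, m) for m in pop])
    return pop[fitness.argmin()], float(fitness.min())

# Synthetic stand-in for a concatenated matrix; only variables 3 and 17 matter.
rng = np.random.default_rng(42)
X = rng.normal(size=(50, 30))
y = 2.0 * X[:, 3] - 1.5 * X[:, 17] + 0.1 * rng.normal(size=50)
best_mask, best_rmse = ga_select(X, y)
selected = np.flatnonzero(best_mask)          # indices of the retained variables
```

Because the search is stochastic, it is worth repeating it with several seeds and keeping variables that are selected consistently.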

Latent Variable Projection

- Project the selected variables using Partial Least Squares (PLS) regression.

- Extract latent variables (LVs) that maximize covariance between the spectral data and the response variable.

- Retain the optimal number of LVs based on cross-validation performance to avoid overfitting.

Model Stacking with XGBoost

- Stack the retained latent variables from both MIR and Raman models into a new dataset.

- Use this stacked dataset as input to an XGBoost regressor.

- Tune XGBoost hyperparameters (learning rate, max depth, number of estimators) using cross-validation.

Model Validation

- Validate the CLF model using k-fold cross-validation or an independent test set.

- Compare performance against single-source models (MIR-only, Raman-only) and traditional fusion schemes (low-, mid-, high-level).

- Evaluate using relevant metrics: Root Mean Square Error of Prediction (RMSEP) and R² values.
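For reference, both metrics can be computed directly from the reference and predicted values. The four data points below are made up purely to show the arithmetic.

```python
import numpy as np

def rmsep(y_true, y_pred):
    """Root mean square error of prediction."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    return float(np.sqrt(np.mean((y_true - y_pred) ** 2)))

def r2(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    ss_res = np.sum((y_true - y_pred) ** 2)
    ss_tot = np.sum((y_true - y_true.mean()) ** 2)
    return float(1.0 - ss_res / ss_tot)

y_ref = [1.0, 2.0, 3.0, 4.0]       # illustrative reference values
y_hat = [1.1, 1.9, 3.2, 3.8]       # illustrative predictions

error = rmsep(y_ref, y_hat)        # sqrt(0.10 / 4), about 0.158
fit = r2(y_ref, y_hat)             # 1 - 0.10 / 5.0 = 0.98
```

RMSEP is expressed in the units of the response, which makes it the more direct number to compare against the required analytical precision.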

Expected Outcomes

When successfully implemented, the CLF technique should demonstrate significantly improved predictive accuracy compared to individual models and traditional fusion methods, effectively leveraging the complementary information in different spectral sources [35].

Selecting the Right Chemometric and Machine Learning Model for Your Goal

Frequently Asked Questions

Q1: What is the fundamental difference between chemometrics and machine learning?

Chemometrics primarily relies on linear relationships within datasets and is used for optimizing methods and extracting information from analytical data [38]. In contrast, machine learning is designed to handle large, non-linear datasets, training algorithms with chemical data to learn by example and deliver intelligent decisions [38].

Q2: My model performance is poor. What are the first things I should check?

Begin by investigating your data quality. In spectroscopy, inadequate sample preparation is a leading cause of analytical errors [39]. Ensure your samples are homogeneous and that you have thoroughly cleaned accessories like ATR crystals to prevent contamination and negative peaks in your spectra [9]. Finally, verify that you have performed appropriate data preprocessing.

Q3: How do I know if I have enough data to train a machine learning model?

Data availability is a recognized challenge in applying machine learning to chemistry [38]. While there is no universal minimum, the complexity of your model and the nature of your problem are key factors. Complex models like deep learning require substantial data, while simpler chemometric methods may yield robust results with smaller, well-curated datasets. It is often better to start with a simpler model and ensure your data is high-quality.

Q4: My spectral data is noisy. Can machine learning still be effective?

Yes, but the source of the noise should be addressed first. Identify and mitigate physical disturbances, such as instrument vibrations, which can introduce false spectral features [9]. Many machine learning and chemometric techniques include inherent noise-handling capabilities. Furthermore, specific preprocessing steps like smoothing or filtering can be applied to the data before model training to improve results.

Troubleshooting Common Model and Data Issues

Problem: Poor Model Generalization and Overfitting

Issue: Your model performs well on training data but poorly on new, unseen validation or test data.

Solutions:

- Action: Simplify your model. For chemometric models, reduce the number of latent variables or principal components. For machine learning, increase regularization parameters or choose a less complex algorithm.

- Action: Increase your dataset size. Augment your data with more samples or use data augmentation techniques specific to spectral data (e.g., adding controlled noise, applying minor shifts).

- Action: Apply cross-validation. Use k-fold cross-validation to ensure your model's performance is consistent across different subsets of your data.
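The augmentation idea mentioned above (controlled noise and minor shifts) could be implemented along these lines. The noise level, shift range, and function name `augment_spectra` are illustrative assumptions and should be matched to the repeatability of the actual instrument.

```python
import numpy as np

def augment_spectra(spectra, n_copies=3, noise_sd=0.01, max_shift=2, seed=0):
    """Return augmented copies of each spectrum: a random integer shift
    along the spectral axis plus additive Gaussian noise."""
    rng = np.random.default_rng(seed)
    out = []
    for _ in range(n_copies):
        for s in spectra:
            shift = int(rng.integers(-max_shift, max_shift + 1))
            shifted = np.roll(s, shift)
            # Repeat the edge value instead of wrapping around.
            if shift > 0:
                shifted[:shift] = s[0]
            elif shift < 0:
                shifted[shift:] = s[-1]
            out.append(shifted + rng.normal(0.0, noise_sd, size=s.shape))
    return np.array(out)

# Illustrative training set: 4 spectra with 50 points each.
spectra = np.tile(np.linspace(0.0, 1.0, 50), (4, 1))
y = np.array([0.2, 0.4, 0.6, 0.8])

aug = augment_spectra(spectra, n_copies=3)
y_aug = np.tile(y, 3)              # labels repeat in the same order as the copies
```

Augmentation should be applied to the training set only, after the train/test split, so that augmented copies of a test sample never appear in training.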

Problem: Incorrect or Unphysical Predictions

Issue: The model generates predictions that violate known chemical principles or are clear outliers.

Solutions:

- Action: Review domain knowledge. Incorporate chemical rules and constraints into the model where possible. Refer to foundational expert systems like DENDRAL and LHASA, which were built on chemical logic and transformation rules [38].

- Action: Clean your training data. Remove or correct outliers and ensure that the data used for training is accurate and representative of the expected chemical space.

- Action: Check for data leakage. Ensure that no information from your validation or test sets was accidentally used during the training process.
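A frequent source of leakage is computing scaling statistics on the full dataset before splitting. The sketch below shows the leak-free order of operations: split first, fit the scaler on the training samples, then apply it unchanged to the test samples. The helper names and synthetic data are assumptions for the example.

```python
import numpy as np

def train_test_split_idx(n, test_fraction=0.25, seed=0):
    """Return disjoint (train, test) index arrays."""
    rng = np.random.default_rng(seed)
    idx = rng.permutation(n)
    n_test = int(round(n * test_fraction))
    return idx[n_test:], idx[:n_test]

def fit_scaler(X_train):
    """Scaling statistics computed from training samples only."""
    mean = X_train.mean(axis=0)
    std = X_train.std(axis=0, ddof=1)
    std[std == 0] = 1.0
    return mean, std

def apply_scaler(X, mean, std):
    """Apply previously fitted statistics without re-estimating them."""
    return (X - mean) / std

rng = np.random.default_rng(3)
X = rng.normal(loc=5.0, scale=2.0, size=(40, 6))

train, test = train_test_split_idx(len(X))
mean, std = fit_scaler(X[train])              # the test set is never seen here
X_train_s = apply_scaler(X[train], mean, std)
X_test_s = apply_scaler(X[test], mean, std)   # reuses the training statistics

overlap = np.intersect1d(train, test)         # should be empty
```

The same rule applies to every fitted preprocessing step, including variable selection, PCA, and PLS projections: fit on training data, apply to test data.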

Problem: Noisy or Unreliable Spectral Data

Issue: The input spectra have a low signal-to-noise ratio, leading to unstable models.

Solutions:

- Action: Improve sample preparation. Ensure samples are homogeneous and have a uniform particle size, as these factors significantly influence how radiation interacts with your sample [39]. For solids, techniques like grinding, milling, and pelletizing are crucial [39].

- Action: Optimize instrument settings. Verify that your spectrometer is properly configured and calibrated. Ensure the instrument is placed in a vibration-free environment [9].

- Action: Apply spectral preprocessing. Use techniques like Savitzky-Golay smoothing, standard normal variate (SNV), or multiplicative scatter correction (MSC) to reduce noise and enhance relevant spectral features.

Chemometric and Machine Learning Model Selection Guide