HPLC Method Validation per ICH Q2(R2): A Complete Guide to Parameters, Procedures, and Lifecycle Management

Abstract

This article provides a comprehensive guide for researchers, scientists, and drug development professionals on validating High-Performance Liquid Chromatography (HPLC) methods in accordance with the modernized ICH Q2(R2) and Q14 guidelines. It covers foundational principles, from defining core validation parameters like specificity, accuracy, and precision to implementing a science- and risk-based lifecycle approach. Readers will find practical methodologies for developing stability-indicating methods, strategies for troubleshooting and optimization, and a clear framework for executing validation studies that meet global regulatory standards for pharmaceutical quality control.

Understanding ICH Q2(R2) and Q14: The New Foundation for HPLC Method Validation

The International Council for Harmonisation (ICH) Q2(R2) guideline, formally adopted in November 2023, represents a significant evolution in the validation of analytical procedures, including High-Performance Liquid Chromatography (HPLC) [1] [2]. This updated guideline expands upon its predecessor, ICH Q2(R1), to address modern analytical technologies and promote a more robust, science-based approach to method validation [3] [4]. For researchers and drug development professionals utilizing HPLC, understanding and implementing Q2(R2) is crucial for ensuring regulatory compliance, data integrity, and the reliability of analytical results throughout the method lifecycle. This guide explores the key changes introduced in Q2(R2), their specific implications for HPLC method validation, and provides practical experimental protocols for compliance.

The Evolution from ICH Q2(R1) to Q2(R2): Key Changes for HPLC

The original ICH Q2(R1) guideline had been in place for nearly two decades before the update to Q2(R2) [4] [5]. This revision reflects the substantial technological advancements in analytical science and addresses gaps in the original guidance [2] [5].

Core Enhancements in Q2(R2):

- Expanded Scope: While Q2(R1) primarily focused on chromatographic techniques, Q2(R2) explicitly includes validation requirements for a broader range of analytical procedures, including spectroscopic methods and bioanalytical assays [4]. This provides a more harmonized framework for laboratories employing multiple technique types.

- Lifecycle Management: Q2(R2) aligns with the principles of ICH Q14 ("Analytical Procedure Development"), promoting an integrated approach to the entire lifecycle of an analytical procedure, from development through validation and ongoing routine use [6] [4]. This encourages continuous monitoring and improvement of HPLC methods.

- Risk-Based Approach: The updated guideline encourages a more dynamic risk assessment during method validation [4]. This allows laboratories to focus validation efforts on the most critical aspects of their HPLC methods, ensuring they are fit for their intended purpose.

- Clarification of Terms: Q2(R2) provides more precise definitions and guidance on validation parameters, such as clearly differentiating between the reportable range (range with established accuracy and precision) and the working range (range where the method generates meaningful data) [5].

Table 1: Core Comparison of ICH Q2(R1) vs. ICH Q2(R2)

| Feature | ICH Q2(R1) | ICH Q2(R2) |
|---|---|---|
| Scope | Primarily chromatographic methods | Includes biological assays, spectroscopic methods (NMR, ICP-MS), and multivariate analyses [4] [5] |
| Lifecycle Approach | Not explicitly covered | Integrated with ICH Q14 for development and lifecycle management [6] [4] |
| Foundation | Primarily prescribed validation | Encourages risk-based validation strategy [4] |
| Range Definition | Single range concept | Distinguishes between reportable range and working range [5] |
| Robustness | General requirements | More specific requirements for different method types [5] |

Critical Validation Parameters for HPLC under ICH Q2(R2)

The fundamental validation parameters for HPLC remain consistent, but Q2(R2) provides enhanced guidance on their application and evaluation [1] [4].

Accuracy, Precision, and Specificity

- Accuracy should demonstrate that the method yields results close to the true value. For HPLC assay of drug substances, recovery rates are typically expected to be within 98-102%, and for drug products, 95-105% [4].

- Precision encompasses repeatability (same operating conditions), intermediate precision (different days, analysts, equipment), and reproducibility (between laboratories). For HPLC assays, Relative Standard Deviation (RSD) is typically ≤2.0% for the drug substance and product, while for impurity testing, it may be ≤5.0% [4].

- Specificity is the ability to assess the analyte unequivocally in the presence of components that may be expected to be present, such as impurities, degradants, and matrix. The guideline emphasizes that when specificity is challenging (e.g., for complex molecules), confirmation by a second, orthogonal method is recommended [5].

Linearity, Range, and Robustness

- Linearity is the ability of the method to obtain test results proportional to the concentration of the analyte. Q2(R2) acknowledges that not all response curves are linear (e.g., immunoassays) and provides guidance for validating such methods [5].

- Range is the interval between the upper and lower concentrations for which suitability has been demonstrated. The new distinction between reportable and working range provides greater clarity for setting system suitability criteria and understanding the method's operational limits [5].

- Robustness evaluates the method's capacity to remain unaffected by small, deliberate variations in method parameters. For HPLC, this includes testing the impact of changes in mobile phase pH (±0.2 units), composition (±2-5%), column temperature (±2-5°C), and flow rate (±10-20%) [4] [5]. The results help establish system suitability tests and control strategies.

Table 2: Summary of Key HPLC Validation Parameters and Typical Acceptance Criteria under ICH Q2(R2)

| Validation Parameter | Experimental Focus for HPLC | Typical Acceptance Criteria (Example) |
|---|---|---|
| Accuracy | Recovery of known amounts of analyte spiked into matrix | Drug Substance: 98-102% [4] |
| Precision (Repeatability) | Multiple injections of a homogeneous sample | RSD ≤ 2.0% for Assay [4] |
| Specificity | Resolution from potential interferents (impurities, degradants) | No interference; Peak purity confirmed |
| Linearity | Response across a range of concentrations (e.g., 50-150% of target) | Correlation coefficient (r) > 0.999 |
| Range | Established from linearity, accuracy, and precision data | Encompasses 80-120% of test concentration (for assay) |
| Robustness | Deliberate variation of chromatographic conditions | Resolution ≥ 2.0; Tailing Factor ≤ 2.0 |
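
The resolution and tailing-factor criteria in the last row can be checked with the standard pharmacopoeial formulas. The sketch below is illustrative only: the function names and the example peak measurements are assumptions, and the limits (resolution ≥ 2.0, tailing factor ≤ 2.0) are simply the example values from the table.

```python
def usp_resolution(t1, t2, w1, w2):
    """Resolution between two adjacent peaks.

    t1, t2: retention times; w1, w2: baseline (tangent) peak widths,
    all in the same time units.
    """
    return 2.0 * (t2 - t1) / (w1 + w2)


def usp_tailing(w005, front_half_width):
    """Tailing factor: width at 5% of peak height divided by twice the
    distance from the peak front to the apex at that height."""
    return w005 / (2.0 * front_half_width)


def plate_count(t_r, w_half):
    """Theoretical plates from retention time and width at half height."""
    return 5.54 * (t_r / w_half) ** 2


def passes_suitability(rs, tf, min_rs=2.0, max_tf=2.0):
    """Apply the example acceptance limits from the table above."""
    return rs >= min_rs and tf <= max_tf


# Hypothetical peak measurements (minutes), for illustration only.
rs = usp_resolution(t1=4.0, t2=5.0, w1=0.4, w2=0.4)
tf = usp_tailing(w005=0.30, front_half_width=0.12)
print(rs, tf, passes_suitability(rs, tf))
```

In practice the chromatography data system reports these values; computing them independently is mainly useful for trending system suitability results across robustness runs.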

Experimental Protocols for HPLC Method Validation

The following protocols outline a standardized approach for validating an HPLC method according to ICH Q2(R2) principles.

Protocol for Specificity and Forced Degradation Studies

This protocol is designed to demonstrate that the HPLC method can accurately measure the analyte in the presence of other components.

Methodology:

- Sample Preparation:

  - Standard Solution: Prepare a high-purity reference standard of the analyte at the target concentration.

  - Placebo/Blank Solution: Prepare a sample of the formulation matrix without the active ingredient.

  - Forced Degradation Samples: Stress the drug substance and product under various conditions:

    - Acidic Hydrolysis: Treat with 0.1M HCl at room temperature for several hours.

    - Basic Hydrolysis: Treat with 0.1M NaOH at room temperature for several hours.

    - Oxidative Degradation: Treat with 3% H₂O₂ at room temperature for several hours.

    - Thermal Degradation: Expose solid sample to 60°C for 1-2 weeks.

    - Photolytic Degradation: Expose to UV/Visible light as per ICH Q1B.

- Chromatographic Analysis:

  - Inject the standard, placebo, and each degradation sample into the HPLC system.

  - Use a photodiode array (PDA) detector to assess peak purity of the main analyte peak in all stressed samples.

- Data Evaluation:

  - Specificity: The chromatogram of the placebo should show no peaks co-eluting with the analyte. The peak purity angle should be less than the purity threshold for the analyte in all stressed samples, indicating no detectable co-eluting degradants.

  - Forced Degradation: The method should be able to separate and resolve degradation products from the main peak, demonstrating stability-indicating properties.

Protocol for Establishing Accuracy and Precision

This protocol combines the assessment of method correctness (accuracy) and variability (precision).

Methodology:

- Sample Preparation:

  - Prepare a minimum of nine determinations across a specified range (e.g., 80%, 100%, 120% of label claim) with at least three concentrations and three replicates each [4].

  - For a drug product, this involves spiking the placebo with known quantities of the drug substance.

- Chromatographic Analysis:

  - Analyze all samples using the HPLC method under validation.

  - For intermediate precision, repeat the entire procedure on a different day, with a different analyst, and on a different HPLC system to assess the impact of these variables.

- Data Evaluation:

  - Accuracy: Calculate the percentage recovery for each sample. The mean recovery across all levels should meet predefined criteria (e.g., 98-102%).

  - Precision: Calculate the Relative Standard Deviation (RSD%) for the replicate measurements at each concentration level (repeatability) and for the total data set from the intermediate precision study. The RSD should typically be ≤2.0% for an assay.
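
The data evaluation step can be sketched in a few lines of Python. Everything here is illustrative: the spiked and found amounts are invented, the function names are assumptions, and the limits (98-102% recovery, RSD ≤ 2.0%) are the example criteria quoted above rather than fixed requirements.

```python
from statistics import mean, stdev


def percent_recovery(found, added):
    """Recovery as a percentage of the amount spiked into the matrix."""
    return 100.0 * found / added


def percent_rsd(values):
    """Relative standard deviation (sample SD / mean) in percent."""
    return 100.0 * stdev(values) / mean(values)


# Hypothetical nine determinations: level (% of label claim) ->
# list of (amount added, amount found), both in mg.
data = {
    80: [(8.0, 7.95), (8.0, 8.02), (8.0, 7.98)],
    100: [(10.0, 10.05), (10.0, 9.96), (10.0, 10.01)],
    120: [(12.0, 11.94), (12.0, 12.06), (12.0, 12.01)],
}


def summarize(data, rec_limits=(98.0, 102.0), max_rsd=2.0):
    """Mean recovery and repeatability RSD per concentration level."""
    summary = {}
    for level, pairs in data.items():
        recoveries = [percent_recovery(found, added) for added, found in pairs]
        mean_rec = mean(recoveries)
        rsd = percent_rsd(recoveries)
        summary[level] = {
            "mean_recovery": mean_rec,
            "rsd": rsd,
            "pass": rec_limits[0] <= mean_rec <= rec_limits[1] and rsd <= max_rsd,
        }
    return summary


for level, result in summarize(data).items():
    print(level, result)
```

For intermediate precision, the same `percent_rsd` helper could be applied to the pooled results from both analysts, days, or instruments.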

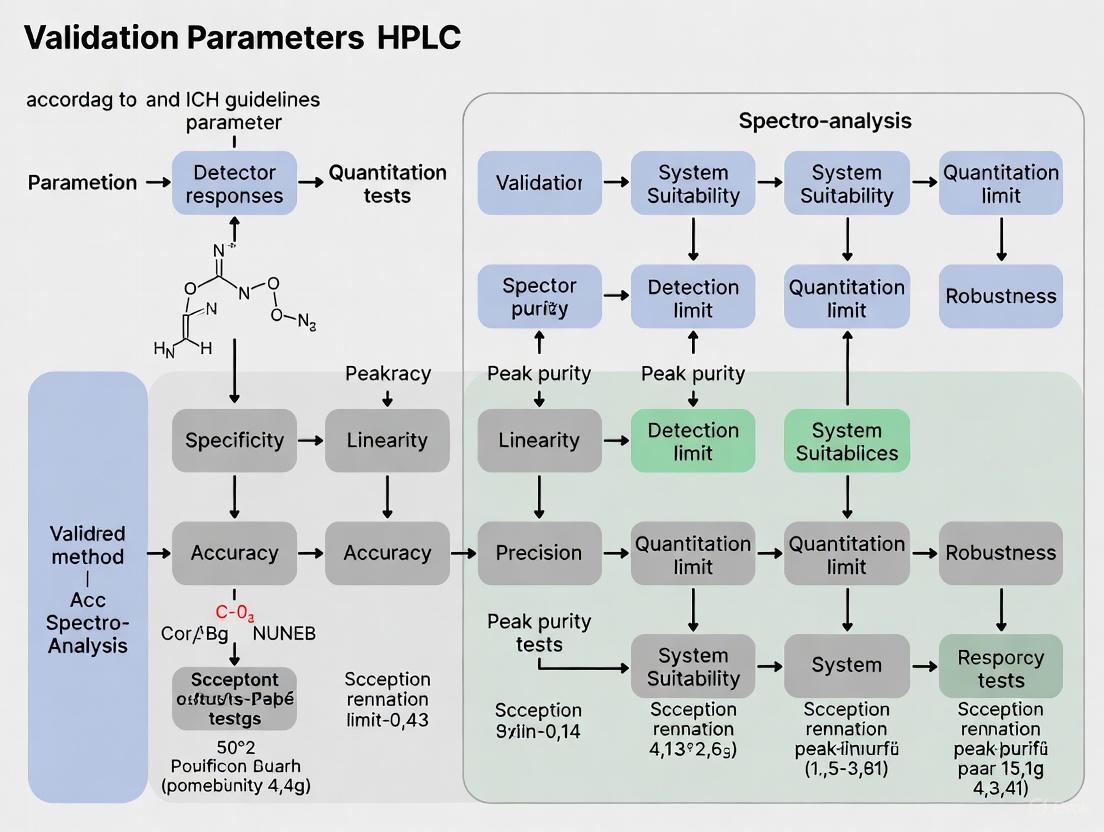

The following workflow diagram visualizes the key stages and decision points in the HPLC method validation lifecycle under ICH Q2(R2).

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful HPLC method validation under ICH Q2(R2) requires high-quality materials and a clear understanding of their function.

Table 3: Essential Research Reagents and Materials for HPLC Validation

| Item | Function & Importance in Validation |
|---|---|
| Certified Reference Standard | High-purity analyte used to prepare calibration standards; critical for establishing method accuracy, linearity, and precision [5]. |
| Placebo Formulation | The drug product matrix without the active ingredient; essential for demonstrating specificity and absence of interference. |
| Forced Degradation Reagents | Acids (HCl), bases (NaOH), oxidants (H₂O₂) used in stress studies to generate degradants and prove method stability-indicating capability [5]. |
| HPLC-Grade Solvents | High-purity mobile phase components (water, acetonitrile, methanol) to minimize baseline noise and prevent system contamination. |
| Buffering Salts | For preparing pH-controlled mobile phases (e.g., phosphate, acetate buffers); crucial for achieving robust and reproducible separation. |
| Characterized Impurities | Isolated or synthesized impurities/degradants; used to confirm specificity, determine relative response factors, and establish quantification limits. |

The adoption of ICH Q2(R2) marks a pivotal shift towards a more holistic, risk-based, and scientifically rigorous framework for analytical method validation. For professionals relying on HPLC, this updated guideline provides a modernized pathway to ensure methods are not only validated for initial use but are also maintained as robust, reliable tools throughout the product lifecycle. By integrating the principles of Q2(R2) with those of Q14, pharmaceutical scientists can develop higher quality methods, facilitate more efficient regulatory reviews, and implement more agile post-approval change management, ultimately contributing to the consistent delivery of safe and effective medicines to patients.

For nearly two decades, ICH Q2(R1) served as the foundational guideline for validating analytical procedures in the pharmaceutical industry, providing a standardized set of parameters to demonstrate that a method is fit for its intended purpose [7]. However, significant advancements in analytical technologies and the increasing complexity of biological products revealed limitations in the original guideline, which was primarily designed around traditional small-molecule drugs and lacked specific guidance for modern analytical challenges [7] [8].

The recently implemented ICH Q2(R2) and the complementary ICH Q14 guideline represent a substantial shift in regulatory philosophy, moving from a prescriptive, "check-the-box" approach to a scientific, risk-based framework that encompasses the entire analytical procedure lifecycle [8] [9]. This evolution aligns with the principles of ICH Q8 (Pharmaceutical Development), Q9 (Quality Risk Management), and Q10 (Pharmaceutical Quality System), creating a more integrated and holistic approach to analytical quality [8]. The update not only includes guidance for contemporary analytical techniques but also formalizes the connection between method development and validation, emphasizing a proactive approach to quality rather than a reactive one [10] [8].

Core Conceptual Shift: From One-Time Validation to Lifecycle Management

The most significant change in the transition from ICH Q2(R1) to Q2(R2) is the fundamental shift in perspective regarding what constitutes analytical procedure validation. The traditional model treated validation as a discrete event—a series of experiments conducted to confirm a method was suitable before its operational use [11]. In contrast, the modern framework introduced in Q2(R2) and detailed in ICH Q14 embraces a continuous lifecycle approach, viewing method validation as an ongoing process that begins with initial development and continues throughout the method's operational use [7] [9].

This lifecycle model consists of three interconnected stages:

- Procedure Design and Development: Derived from an Analytical Target Profile (ATP) and employing enhanced development approaches [11]

- Procedure Performance Qualification: Corresponding to the traditional method validation, but now informed by development knowledge [11]

- Procedure Performance Verification: The ongoing monitoring of the method's performance during routine use to ensure it remains in a state of control [11]

The introduction of the Analytical Target Profile (ATP) is a cornerstone of this new paradigm [9]. The ATP is a prospective summary that defines the intended purpose of the analytical procedure and its required performance criteria [8] [9]. By establishing the ATP at the outset, development efforts are directed toward creating a method that is designed for its specific purpose from the very beginning, rather than having performance criteria applied retrospectively after development is complete [9].

Table 1: Comparison of Traditional vs. Lifecycle Approaches to Analytical Procedures

| Aspect | Traditional Approach (Q2(R1)) | Lifecycle Approach (Q2(R2)/Q14) |
|---|---|---|
| Core Philosophy | Validation as a one-time event | Continuous validation throughout the method's life |
| Starting Point | Method development completion | Analytical Target Profile (ATP) definition |
| Development Approach | Empirical, linear process | Systematic, risk-based, science-driven |
| Regulatory Flexibility | Limited flexibility for changes | Enhanced approach allows more flexible post-approval changes |
| Focus | Verifying performance | Building quality into the method design |
| Documentation | Focus on validation protocol and report | Extensive knowledge management throughout lifecycle |

Detailed Comparison of Key Changes and Additions

Structural and Philosophical Enhancements

The revised guideline introduces several structural changes that reflect its updated philosophical approach. ICH Q2(R2) now explicitly incorporates risk management principles throughout the validation process, aligning with ICH Q9 and encouraging a more scientific justification for validation strategies [8]. This risk-based approach allows laboratories to focus their validation efforts on the most critical aspects of their analytical procedures, potentially reducing unnecessary testing while strengthening control over high-risk parameters [9].

Another significant enhancement is the formal recognition of different analytical technologies. While Q2(R1) was primarily focused on chromatographic methods, Q2(R2) provides guidance for a broader range of techniques, including multivariate methods and other advanced analytical technologies that have emerged since the original guideline was published [8] [9]. This expansion ensures the guideline remains relevant and applicable to modern analytical laboratories working with complex modalities such as biologics [7].

Specific Validation Parameter Updates

While the core validation parameters from Q2(R1) are maintained in Q2(R2), the revised guideline provides enhanced guidance on their application and evaluation, particularly for more complex analytical procedures.

Accuracy and Precision: The updated guideline includes more comprehensive requirements for demonstrating accuracy and precision, with an emphasis on studies that evaluate intra- and inter-laboratory reproducibility to ensure method reliability across different settings [7].

Linearity and Range: The assessment of linearity and range has been refined, with a strengthened requirement to link the method's validated range directly to its ATP, ensuring the range is appropriate for the method's intended use [7].

Detection Limit (LOD) and Quantitation Limit (LOQ): Methodologies for determining LOD and LOQ have been expanded and clarified, with recognition of additional statistical approaches beyond the signal-to-noise ratio method that was commonly (sometimes inappropriately) applied under Q2(R1) [11] [7].

Robustness: Under Q2(R2), robustness testing has evolved from an often informal evaluation to a compulsory, systematic assessment [7]. The guideline now explicitly connects robustness to the lifecycle approach, requiring continuous evaluation to demonstrate a method's stability against expected operational variations [7].

Table 2: Comparison of Validation Parameter Requirements Between Q2(R1) and Q2(R2)

| Validation Parameter | ICH Q2(R1) Requirements | Key Updates in ICH Q2(R2) |
|---|---|---|
| Accuracy | Recovery studies using spiked samples | More comprehensive requirements; inter-laboratory reproducibility emphasized |
| Precision | Repeatability and intermediate precision | Enhanced statistical evaluation; expanded reproducibility expectations |
| Specificity | Ability to measure analyte unequivocally | Enhanced guidance for complex matrices (e.g., biologics) |
| Linearity | Direct proportionality of response to concentration | Statistical methods more explicitly defined; stronger link to ATP |
| Range | Interval where linearity, accuracy, precision are demonstrated | Direct linkage to ATP required; justification more explicitly required |
| LOD/LOQ | Signal-to-noise or standard deviation approaches | Additional statistical approaches recognized and detailed |
| Robustness | Often informal testing of parameter variations | Formalized as compulsory; integrated with lifecycle management |

Implementation Framework: The Enhanced Approach

The Analytical Procedure Lifecycle Workflow

The implementation of the analytical procedure lifecycle involves a systematic workflow that begins with defining requirements and continues through development, validation, and ongoing monitoring. The following diagram illustrates this continuous process:

This workflow demonstrates the continuous nature of the lifecycle approach, with feedback loops enabling method improvement based on operational experience and a foundation of knowledge management supporting all stages [11].

Analytical Quality by Design (AQbD) in Practice

The enhanced approach endorsed by ICH Q14 incorporates Analytical Quality by Design (AQbD) principles, which involve a systematic understanding of the method based on sound science and quality risk management [12] [8]. A practical example of this approach can be seen in the development of an RP-HPLC method for favipiravir, where a risk assessment identified critical factors (solvent ratio, buffer pH, and column type) that significantly impacted method performance [12].

Using a D-optimal experimental design, researchers systematically studied the impact of these factors on multiple responses (peak area, retention time, tailing factor, and theoretical plates) [12]. Through Monte Carlo simulation, they established a Method Operable Design Region (MODR), defining the parameter space where the method consistently meets its performance criteria [12]. This AQbD approach resulted in a more robust and well-understood method compared to what traditional one-factor-at-a-time development could achieve [12].
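
To make the Monte Carlo idea concrete, the sketch below estimates, for each candidate set point, the probability that a response meets its criterion once realistic setting and measurement variability are added, and keeps the set points above a chosen probability. It is not the model from the cited favipiravir study: the response function, its coefficients, the variability estimates, and the 95% threshold are all hypothetical placeholders for a model that would normally be fitted to designed-experiment data.

```python
import random


def predicted_resolution(ph, organic_pct):
    """Hypothetical fitted response model; coefficients are illustrative only."""
    return 2.6 - 1.5 * abs(ph - 3.0) - 0.10 * abs(organic_pct - 30.0)


def probability_of_success(ph, organic_pct, n=5000, ph_sd=0.05,
                           org_sd=0.5, noise_sd=0.1, min_rs=2.0, seed=0):
    """Fraction of simulated runs with resolution >= min_rs.

    Each run perturbs the set point (assumed preparation variability)
    and adds assumed measurement noise to the predicted response.
    """
    rng = random.Random(seed)
    hits = 0
    for _ in range(n):
        rs = predicted_resolution(rng.gauss(ph, ph_sd),
                                  rng.gauss(organic_pct, org_sd))
        rs += rng.gauss(0.0, noise_sd)
        hits += rs >= min_rs
    return hits / n


def modr(grid_ph, grid_org, threshold=0.95):
    """Set points whose estimated probability of success meets the threshold."""
    return [(ph, org) for ph in grid_ph for org in grid_org
            if probability_of_success(ph, org) >= threshold]


region = modr(grid_ph=[2.8, 2.9, 3.0, 3.1, 3.2], grid_org=[28.0, 30.0, 32.0])
print(region)
```

A real MODR would consider several responses at once (for example retention time, tailing factor, and plate count) and require all criteria to be met in each simulated run.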

The Scientist's Toolkit: Essential Elements for HPLC Method Validation

Implementing the enhanced approach for HPLC methods requires specific materials and strategic approaches. The following table details key research reagent solutions and their functions within the context of modern method validation:

Table 3: Essential Research Reagent Solutions for HPLC Method Validation

| Reagent/Material | Function in Validation | Application Example |
|---|---|---|
| Reference Standards | Provide known purity substance for accuracy, linearity, and precision studies | Certified reference material for active pharmaceutical ingredient (API) |
| System Suitability Solutions | Verify chromatographic system performance before and during validation | Mixture of key analytes and degradation products at specified concentrations |
| Forced Degradation Samples | Demonstrate specificity against degradation products | Samples of drug substance subjected to acid, base, oxidative, thermal, and photolytic stress |
| Placebo/Matrix Blanks | Establish specificity against excipients or matrix components | Pharmaceutical formulation without active ingredient or biological matrix |
| Mass Spectrometry Compatible Buffers | Enable hyphenated techniques for structural identification | Volatile buffers like ammonium formate for LC-MS methods |

Experimental Protocols for the Enhanced Approach

Protocol 1: Establishing the Analytical Target Profile

Objective: To define the ATP for an analytical procedure before initiating development activities.

Methodology:

- Define Measurand: Precisely identify the analyte(s) of interest and any required separations from potential interferents [9]

- Establish Performance Requirements: Define the required level of accuracy, precision, range, and other performance characteristics based on the intended use of the method [9]

- Document ATP: Create a formal ATP document containing:

  - The analyte and matrix of interest

  - Required measurement range

  - Target accuracy (as % bias or recovery)

  - Target precision (as %RSD)

  - Specificity requirements

  - Required limits of detection and quantitation, if applicable [9]
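
One way to keep the ATP usable throughout development is to record it as structured data so that later validation results can be compared against it directly. The class below is a minimal sketch; the field names, the analyte "Compound X", and the numeric targets are hypothetical and would be replaced by the values justified for the actual procedure.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class AnalyticalTargetProfile:
    """Hypothetical container mirroring the ATP contents listed above."""
    analyte: str
    matrix: str
    range_low_pct: float      # lower end of required range, % of target
    range_high_pct: float     # upper end of required range, % of target
    max_bias_pct: float       # target accuracy, as absolute % bias
    max_rsd_pct: float        # target precision, as %RSD
    specificity: str
    loq_pct: Optional[float] = None  # only where a quantitation limit applies

    def is_met(self, bias_pct, rsd_pct):
        """Compare observed accuracy and precision with the ATP targets."""
        return abs(bias_pct) <= self.max_bias_pct and rsd_pct <= self.max_rsd_pct


atp = AnalyticalTargetProfile(
    analyte="Compound X",
    matrix="film-coated tablet",
    range_low_pct=80.0,
    range_high_pct=120.0,
    max_bias_pct=2.0,
    max_rsd_pct=2.0,
    specificity="resolved from known degradants and excipients",
)
print(atp.is_met(bias_pct=-0.8, rsd_pct=1.1))
```

Freezing the dataclass reflects the intent that the ATP is fixed before development starts and changed only through a documented revision.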

Protocol 2: Risk-Based Robustness Testing

Objective: To systematically identify and evaluate the impact of critical method parameters on performance.

Methodology:

- Parameter Identification: Identify all method parameters that may impact performance (e.g., mobile phase pH, column temperature, flow rate) [12]

- Risk Assessment: Use a structured tool (e.g., Failure Mode Effects Analysis) to prioritize high-risk parameters [7]

- Experimental Design: Apply a structured design (e.g., fractional factorial or Plackett-Burman) to efficiently evaluate multiple parameters [12]

- Data Analysis: Statistically analyze results to determine which parameters significantly impact method outcomes [12]

- Control Strategy: Define appropriate controls for critical parameters to ensure robust method performance [7]
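
The risk assessment step can be illustrated with the usual FMEA risk priority number (RPN), the product of severity, occurrence, and detectability scores. The scores and the cut-off of 100 below are invented for illustration; each laboratory defines its own scoring scale and threshold.

```python
def rank_parameters(scores, threshold=100):
    """Rank method parameters by risk priority number.

    scores: parameter name -> (severity, occurrence, detectability),
    each scored 1-10. Returns (name, rpn, is_high_risk) tuples sorted
    from highest to lowest RPN. The threshold is an assumed cut-off.
    """
    rpn = {name: s * o * d for name, (s, o, d) in scores.items()}
    ranked = sorted(rpn.items(), key=lambda item: item[1], reverse=True)
    return [(name, value, value >= threshold) for name, value in ranked]


# Hypothetical scores for three HPLC parameters.
scores = {
    "mobile phase pH": (8, 5, 4),
    "column temperature": (5, 3, 3),
    "flow rate": (4, 2, 2),
}

for name, value, high_risk in rank_parameters(scores):
    print(f"{name}: RPN={value}, carry into robustness design={high_risk}")
```

Parameters flagged as high risk would then become the factors in the experimental design, while low-risk parameters are held at their nominal values.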

Protocol 3: Ongoing Procedure Performance Verification

Objective: To continuously monitor method performance during routine use to ensure it remains in a state of control.

Methodology:

- Control Chart Establishment: Implement control charts for critical performance attributes (e.g., system suitability parameters, reference standard results) [11]

- Trend Analysis: Regularly review control data for statistically significant trends that may indicate method deterioration [11]

- Periodic Review: Conduct formal annual reviews of method performance data, out-of-specification results, and deviations [7]

- Continuous Improvement: Use monitoring data to identify opportunities for method refinement and optimization [11]
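
As a sketch of the control chart step, the code below sets individuals-chart limits from a baseline period and flags later results that fall outside them. It assumes a simple Shewhart individuals chart with sigma estimated from the average moving range; the reference standard results are invented, and a real monitoring programme would typically add run rules for trends.

```python
from statistics import mean


def individuals_limits(baseline):
    """Lower limit, center line, and upper limit for an individuals chart.

    Sigma is estimated as the mean moving range divided by 1.128
    (the d2 constant for subgroups of size 2).
    """
    moving_ranges = [abs(b - a) for a, b in zip(baseline, baseline[1:])]
    center = mean(baseline)
    sigma = mean(moving_ranges) / 1.128
    return center - 3 * sigma, center, center + 3 * sigma


def out_of_control(values, limits):
    """Indices of values outside the control limits."""
    lcl, _, ucl = limits
    return [i for i, v in enumerate(values) if v < lcl or v > ucl]


# Hypothetical reference standard assay results (% of nominal).
baseline = [99.8, 100.1, 100.0, 99.9, 100.2, 100.0, 99.7, 100.3]
limits = individuals_limits(baseline)

routine = [100.1, 99.0, 100.4]
print(limits, out_of_control(routine, limits))
```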

The transition from ICH Q2(R1) to Q2(R2) represents a significant evolution in how analytical procedures are developed, validated, and maintained. By embracing the lifecycle approach and implementing the enhanced methodologies outlined in ICH Q14, pharmaceutical companies and analytical laboratories can develop more robust, reliable, and scientifically sound analytical procedures [10] [9].

This shift requires a change in mindset from viewing validation as a regulatory hurdle to approaching it as an integral part of continuous quality assurance [8]. While the initial implementation may require more extensive development activities and documentation, the long-term benefits include more resilient methods, reduced investigations, and more efficient management of post-approval changes [7] [9].

For researchers and drug development professionals, successfully navigating this transition will require investment in training, process reevaluation, and potentially enhanced documentation systems [7]. However, the result will be analytical methods that are not merely validated, but are truly designed for quality throughout their operational life, ultimately contributing to more reliable pharmaceutical quality control and enhanced patient safety [8].

For researchers, scientists, and drug development professionals, high-performance liquid chromatography (HPLC) methods serve as critical tools for analyzing drug substances and products. The reliability of these methods hinges on a rigorous validation process conducted according to international standards. The International Council for Harmonisation (ICH) provides the definitive framework for this process through its ICH Q2(R2) guideline, which defines the core validation parameters essential for demonstrating that an analytical procedure is fit for its intended purpose [1]. This guide explores these parameters—specificity, accuracy, precision, linearity, range, LOD, LOQ, and robustness—within the context of HPLC method validation, providing a comparative analysis of their definitions, experimental protocols, and acceptance criteria.

Core Principles of ICH Analytical Method Validation

The ICH Q2(R2) guideline, officially titled "Validation of Analytical Procedures," outlines a harmonized approach to validation for registration applications submitted to regulatory authorities [1]. It applies to new or revised analytical procedures used for the release and stability testing of commercial drug substances and products, both chemical and biological [1]. The guideline's primary objective is to establish documented evidence that provides a high degree of assurance that a specific analytical process will consistently produce results that meet its predefined criteria and are suitable for their intended use [10].

A fundamental principle in modern analytical development is the integration of Quality by Design (QbD). Framed by ICH Q8, Q9, and Q10, and further supported by the recent ICH Q14 guideline on analytical procedure development, the QbD approach emphasizes a deep, science-based understanding of the method [13] [14]. It begins with defining an Analytical Target Profile (ATP), which outlines the required quality of the analytical data. Through risk assessment and systematic experimentation, Critical Method Attributes (CMAs) and Critical Process Parameters (CPPs) are identified and controlled. This proactive methodology ensures the development of more robust and rugged methods and establishes a Method Operable Design Region (MODR) or design space—a multidimensional combination of parameter ranges within which the method provides reliable results without the need for revalidation [14].

The following workflow outlines the lifecycle of an analytical method, from initial planning to continual improvement, integrating both QbD principles and core validation activities.

Comparative Analysis of Core Validation Parameters

The table below summarizes the purpose, key experimental protocols, and typical acceptance criteria for each of the core validation parameters as defined by ICH Q2(R2) [1] [10] [15].

Table 1: Core HPLC Validation Parameters and Acceptance Criteria

| Validation Parameter | Purpose and Definition | Key Experimental Protocol | Typical Acceptance Criteria |

|---|---|---|---|

| Specificity [15] | Ability to measure the analyte unequivocally in the presence of other components like impurities, degradants, or matrix [10]. | Analyze samples containing the analyte along with potential interferents (degradation products, impurities, excipients). Compare chromatograms to those of blank samples [15]. | The analyte peak is resolved from all other peaks. Peak purity tests confirm a single component [15]. |

| Accuracy [10] [15] | Closeness of agreement between the measured value and a true or accepted reference value [15]. | Analyze a minimum of 9 determinations across at least 3 concentration levels covering the specified range. Compare results to a known reference standard [15]. | Recovery: 98-102% for assay of drug substance [15]. Expressed as percent recovery or bias. |

| Precision [10] [15] | Degree of agreement among individual test results when the procedure is applied repeatedly to multiple samplings. Includes repeatability and intermediate precision. | Repeatability: Multiple measurements of a homogeneous sample under identical conditions.Intermediate Precision: Different days, analysts, or equipment within the same lab [15]. | %RSD ≤ 2.0% for assay of drug substance. Intermediate precision should show no significant statistical difference between sets [15]. |

| Linearity [15] | Ability of the method to produce results directly proportional to analyte concentration in a defined range. | Analyze at least 5 concentration levels spanning the declared range. Plot response vs. concentration and apply linear regression [15]. | Correlation coefficient (r) ≥ 0.995 [15]. Visual inspection of the residual plot for lack of bias. |

| Range [15] | The interval between the upper and lower concentration levels over which linearity, accuracy, and precision are demonstrated. | Established from the linearity study. The range must cover the intended use of the method [15]. | Assay: 80-120% of test concentration.Impurity Quantification: LOQ to 120% of specification [15]. |

| LOD (Detection Limit) [15] | The lowest amount of analyte that can be detected, but not necessarily quantified. | Signal-to-Noise: Typically 3:1 ratio.Standard Deviation: LOD = 3.3σ/S, where σ is SD of response, S is slope of calibration curve [15]. | The analyte peak should be discernible from the baseline noise with the defined ratio or confidence level. |

| LOQ (Quantitation Limit) [15] | The lowest amount of analyte that can be quantified with acceptable accuracy and precision. | Signal-to-Noise: Typically 10:1 ratio.Standard Deviation: LOQ = 10σ/S [15]. | At the LOQ, accuracy and precision (e.g., %RSD) should meet predefined criteria for reliable quantification. |

| Robustness [16] [10] | A measure of the method's capacity to remain unaffected by small, deliberate variations in procedural parameters. | Deliberately vary method parameters (e.g., mobile phase pH, flow rate, column temperature) using a structured design (e.g., factorial design) and measure impact on results [16]. | System suitability criteria are met despite variations. No significant impact on critical quality attributes like resolution or tailing factor. |
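
As a rough illustration of the linearity, LOD, and LOQ rows above, the sketch below fits an ordinary least-squares line to a five-level calibration set and applies LOD = 3.3σ/S and LOQ = 10σ/S. It assumes σ is taken as the residual standard deviation of the regression, which is only one of the accepted choices. The concentrations and peak areas are invented for illustration, and the function name `calibration_summary` is hypothetical.

```python
import math

def calibration_summary(conc, resp):
    """Least-squares line plus r, residual SD, LOD, and LOQ."""
    n = len(conc)
    mean_x = sum(conc) / n
    mean_y = sum(resp) / n
    sxx = sum((x - mean_x) ** 2 for x in conc)
    syy = sum((y - mean_y) ** 2 for y in resp)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(conc, resp))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    r = sxy / math.sqrt(sxx * syy)
    # Assumption: sigma = residual standard deviation (n - 2 degrees of freedom)
    ss_res = sum((y - (intercept + slope * x)) ** 2 for x, y in zip(conc, resp))
    sigma = math.sqrt(ss_res / (n - 2))
    return {
        "slope": slope,
        "intercept": intercept,
        "r": r,
        "sigma": sigma,
        "lod": 3.3 * sigma / slope,
        "loq": 10 * sigma / slope,
    }

# Illustrative data only: concentration (µg/mL) vs. peak area
conc = [80.0, 90.0, 100.0, 110.0, 120.0]
area = [1602.0, 1795.0, 2004.0, 2197.0, 2401.0]

result = calibration_summary(conc, area)
print(f"slope = {result['slope']:.3f}, r = {result['r']:.5f}")
print(f"LOD = {result['lod']:.2f} µg/mL, LOQ = {result['loq']:.2f} µg/mL")
print("Linearity criterion (r >= 0.995) met:", result["r"] >= 0.995)
```

The residual plot mentioned in the table still needs visual review; a high correlation coefficient alone does not rule out curvature.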

Experimental Protocols for Key Parameters

Robustness Testing via Experimental Design

While traditional one-factor-at-a-time (OFAT) approaches are informative, modern robustness testing leverages multivariate experimental designs to efficiently study multiple parameters and their interactions simultaneously [16]. The most common screening designs include:

- Full Factorial Design: Examines all possible combinations of factors at their high and low levels. For k factors, this requires 2^k runs (e.g., 4 factors = 16 runs) [16].

- Fractional Factorial Design: A carefully selected subset of the full factorial design, used when investigating a larger number of factors to save time and resources while still estimating main effects [16].

- Plackett-Burman Design: A highly efficient screening design for identifying the most critical factors from a large set, using a number of runs that is a multiple of four [16].

For an HPLC method, typical factors to vary include mobile phase pH (±0.2 units), organic solvent composition (±2-5%), flow rate (±0.1 mL/min), column temperature (±5°C), and detection wavelength (±2-3 nm) [16]. The outputs (responses) measured are critical quality attributes like resolution, tailing factor, and theoretical plates. A robustness study not only confirms the method's reliability but also helps establish meaningful system suitability test limits [16].
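
To make the run count concrete, the following minimal sketch enumerates a two-level full factorial design for four of the factors listed above, giving 2^4 = 16 runs. The nominal set points (pH 3.0, 1.0 mL/min, 30 °C, 254 nm) are hypothetical placeholders; only the deltas come from the typical ranges in the text.

```python
from itertools import product

# factor name -> (hypothetical nominal value, delta from the typical ranges above)
factors = {
    "mobile_phase_pH": (3.0, 0.2),
    "flow_rate_mL_min": (1.0, 0.1),
    "column_temp_C": (30.0, 5.0),
    "wavelength_nm": (254.0, 2.0),
}

def full_factorial(factors):
    """Return every low/high combination as a list of run dictionaries."""
    names = list(factors)
    runs = []
    for levels in product((-1, +1), repeat=len(names)):
        run = {
            name: round(factors[name][0] + level * factors[name][1], 3)
            for name, level in zip(names, levels)
        }
        runs.append(run)
    return runs

runs = full_factorial(factors)
print(f"{len(runs)} runs for {len(factors)} factors")
for run in runs[:3]:
    print(run)
```

In practice the run order would normally be randomized before execution, and centre-point runs at the nominal conditions are often added.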

Accuracy and Precision Evaluation

The protocol for assessing accuracy involves spiking a blank matrix (or placebo) with known quantities of the analyte at levels covering the range of the method, typically 80%, 100%, and 120% of the target concentration [15]. Each level should be prepared and analyzed in triplicate, for a total of nine determinations. The mean recovery value at each level is calculated and should fall within the predefined acceptance criteria (e.g., 98-102%) [15].
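
The recovery calculation described above can be sketched as follows. The spiked and measured amounts are invented illustrative values, and the 98-102% window is the example criterion quoted in the text rather than a universal limit.

```python
from statistics import mean

# Illustrative data only: spiked amount (mg) per level and three measured replicates
spiked = {80: 8.0, 100: 10.0, 120: 12.0}
found = {
    80: [7.95, 8.03, 7.98],
    100: [10.05, 9.96, 10.02],
    120: [11.92, 12.07, 12.01],
}

def mean_recovery(spiked, found):
    """Mean percent recovery at each level (level = % of target concentration)."""
    return {
        level: mean(100.0 * f / spiked[level] for f in found[level])
        for level in spiked
    }

recoveries = mean_recovery(spiked, found)
passed = {level: 98.0 <= rec <= 102.0 for level, rec in recoveries.items()}

for level, rec in recoveries.items():
    status = "pass" if passed[level] else "fail"
    print(f"{level}% level: mean recovery {rec:.2f}% ({status})")
```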

Precision encompasses both repeatability and intermediate precision. Repeatability is demonstrated by analyzing six independent preparations of a homogeneous sample at 100% of the test concentration and calculating the %RSD of the results [15]. Intermediate precision evaluates the method's performance within the same laboratory under varied conditions, such as a different analyst, instrument, or day. The results from both sets of experiments are compared statistically, and the %RSD for each set and the combined data should meet the acceptance criteria (e.g., %RSD ≤ 2.0%) [15].
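
A minimal sketch of the %RSD calculation for repeatability and intermediate precision is shown below. The assay values are invented for illustration, and the statistical comparison between the two sets (for example an F-test or ANOVA) is deliberately left out.

```python
from statistics import mean, stdev

def percent_rsd(values):
    """Relative standard deviation in percent, using the sample standard deviation."""
    return 100.0 * stdev(values) / mean(values)

# Illustrative assay results (% label claim), six independent preparations each
analyst_1_day_1 = [99.8, 100.4, 99.6, 100.1, 100.7, 99.9]
analyst_2_day_2 = [100.9, 100.2, 99.5, 100.6, 101.0, 100.3]
combined = analyst_1_day_1 + analyst_2_day_2

rsd = {
    "repeatability (set 1)": percent_rsd(analyst_1_day_1),
    "repeatability (set 2)": percent_rsd(analyst_2_day_2),
    "intermediate precision (combined)": percent_rsd(combined),
}
for label, value in rsd.items():
    verdict = "pass" if value <= 2.0 else "fail"
    print(f"{label}: %RSD = {value:.2f} ({verdict})")
```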

Essential Research Reagent Solutions for HPLC Method Validation

The following table details key materials and reagents required for the development and validation of a robust HPLC method.

Table 2: Essential Research Reagent Solutions for HPLC Validation

| Item | Function in HPLC Analysis |
|---|---|
| Reference Standard | A highly characterized substance of known purity and identity used as the benchmark for quantifying the analyte and determining method accuracy [13]. |
| High-Purity Solvents (HPLC Grade) | Used for mobile phase and sample preparation. High purity is critical to minimize baseline noise, ghost peaks, and column contamination [13]. |
| Buffer Salts & pH Modifiers | Used to prepare mobile phase buffers for controlling pH, which is often a critical parameter for achieving adequate separation, peak shape, and robustness [13]. |
| Chemicals for Forced Degradation | Strong acids, bases, oxidants, and exposure to light/heat are used in stress studies to generate degradation products, which are essential for demonstrating method specificity [15]. |
| Characterized Chromatographic Column | The stationary phase is central to the separation. Using a well-characterized column from a reliable supplier and evaluating different column lots is part of robustness testing [16]. |

The core validation parameters defined in ICH Q2(R2)—specificity, accuracy, precision, linearity, range, LOD, LOQ, and robustness—form an interdependent framework that guarantees the quality, reliability, and regulatory acceptability of HPLC methods [1] [10]. Adherence to these parameters is non-negotiable for generating defensible data in drug development. The contemporary shift towards an Analytical Quality by Design (AQbD) approach, supported by ICH Q14, further strengthens this framework by building method understanding and robustness directly into the development phase [13] [14]. This involves systematic risk assessment and experimental design to define a Method Operable Design Region (MODR), providing greater regulatory flexibility and ensuring the method remains fit-for-purpose throughout its entire lifecycle [14]. For scientists, mastering these principles and protocols is not merely about regulatory compliance; it is about instilling confidence in every data point that supports the safety, efficacy, and quality of a pharmaceutical product.

The Role of ICH Q14 in Analytical Procedure Development and the Analytical Target Profile (ATP)

The International Council for Harmonisation (ICH) Q14 guideline, titled "Analytical Procedure Development," represents a significant evolution in the regulatory landscape for pharmaceutical analysis. Finalized and adopted in November 2023, this guideline provides a harmonized, science- and risk-based framework for developing and maintaining analytical procedures used to assess the quality of drug substances and drug products [17] [18]. ICH Q14 works in conjunction with the revised ICH Q2(R2) guideline on "Validation of Analytical Procedures," with both documents designed to be applied together throughout the analytical procedure lifecycle [19] [20]. The primary objectives of ICH Q14 are to enhance the robustness and reliability of analytical methods, facilitate more efficient science-based post-approval change management, and improve regulatory flexibility and efficiency [20] [21].

A fundamental shift introduced by ICH Q14 is its emphasis on the analytical procedure lifecycle, recognizing that methods must evolve in response to new technologies, scientific knowledge, and manufacturing changes [21] [22]. This lifecycle approach helps address the challenge that many quality control laboratories face when using outdated analytical procedures developed decades ago, which often lag behind advances in instrumentation and sample preparation technologies [21]. By providing a structured framework for continual improvement, ICH Q14 enables manufacturers to keep analytical procedures current with state-of-the-art technologies while maintaining regulatory compliance.

Analytical Target Profile (ATP): The Foundation of Analytical Quality

Definition and Purpose of the ATP

The Analytical Target Profile (ATP) is a cornerstone concept of the ICH Q14 enhanced approach, defined as "a prospective summary of the quality characteristics of an analytical procedure" [23]. The ATP articulates what the analytical procedure needs to achieve by defining the intended purpose of the analysis and establishing the required performance characteristics and associated acceptance criteria [24] [23]. Similar to how the Quality Target Product Profile (QTPP) guides drug product development, the ATP serves as the foundation for analytical procedure development, ensuring the method remains fit-for-purpose throughout its lifecycle [23].

The ATP captures the essential requirements that an analytical procedure must fulfill to reliably measure specific product quality attributes, translating analytical needs into measurable performance criteria [24]. By defining these requirements prospectively, the ATP guides the selection of appropriate technologies, establishes the foundation for method validation, and provides the basis for evaluating the impact of future changes to the analytical procedure [23] [18]. A well-constructed ATP ensures that the analytical procedure is designed with the end in mind, focusing on what needs to be measured rather than how to measure it, thereby allowing flexibility in technology selection and method design [18].

Key Components of an Effective ATP

A comprehensive ATP should include several essential components that collectively define the analytical requirements. The core elements of an ATP are summarized in the table below, which provides a structured framework for documenting analytical procedure requirements.

Table 1: Essential Components of an Analytical Target Profile (ATP)

| ATP Component | Description | Example |
|---|---|---|
| Intended Purpose | Clear statement of what the analytical procedure should measure | "Quantitation of the active ingredient in drug product" [23] |
| Technology Selection | Appropriate analytical technique with rationale for selection | HPLC, SDS-PAGE, cell-based assay, with justification [23] [25] |
| Link to CQAs | Connection to relevant Critical Quality Attributes | "Measure of biological potency linked to drug's mechanism of action" [23] |
| Performance Characteristics | Specific metrics for method validation | Accuracy, precision, specificity, range, robustness [19] [23] |
| Acceptance Criteria | Predefined thresholds for performance characteristics | "Acceptable accuracy level based on linearity experiment" [23] |
| Reportable Range | Range over which the method provides reliable results | "Reporting threshold of x% of specification limits" [23] |

In addition to these core components, a robust ATP should prioritize requirements based on their impact on product quality and decision-making [24]. The ATP should initially remain independent of specific techniques to allow for unbiased technology selection, with the rationale for the technology that is finally selected documented based on development studies, prior knowledge, or literature evidence [23]. The performance characteristics and their acceptance criteria should be derived from the intended purpose of the analysis, considering the relevant critical quality attributes and their specification limits [24] [23].
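
One way to keep an ATP technique-independent yet checkable is to record it as structured data. The sketch below is an assumption-laden illustration, not a format prescribed by ICH Q14: the class names, field names, and numeric limits are all hypothetical, with the limits borrowed from the example assay criteria used earlier in this guide.

```python
from dataclasses import dataclass, field

@dataclass(frozen=True)
class Criterion:
    """One performance characteristic with its acceptance window."""
    name: str
    lower: float
    upper: float

    def is_met(self, value: float) -> bool:
        return self.lower <= value <= self.upper

@dataclass
class AnalyticalTargetProfile:
    """Hypothetical container for the ATP components listed above."""
    intended_purpose: str
    linked_cqa: str
    reportable_range: tuple  # (low, high) in % of target concentration
    criteria: list = field(default_factory=list)

    def evaluate(self, results: dict) -> dict:
        """Pass/fail per criterion; a missing result counts as a failure."""
        return {
            c.name: (c.name in results and c.is_met(results[c.name]))
            for c in self.criteria
        }

atp = AnalyticalTargetProfile(
    intended_purpose="Quantitation of the active ingredient in drug product",
    linked_cqa="Assay",
    reportable_range=(80.0, 120.0),
    criteria=[
        Criterion("mean_recovery_pct", 98.0, 102.0),
        Criterion("repeatability_rsd_pct", 0.0, 2.0),
    ],
)

outcome = atp.evaluate({"mean_recovery_pct": 99.7, "repeatability_rsd_pct": 0.8})
print(outcome)
```

Because no technique is named in the profile, the same object could be used to compare candidate technologies against identical criteria.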

Comparison of Minimal and Enhanced Approaches

The Minimal Approach: Traditional Methodology

ICH Q14 describes two distinct approaches to analytical procedure development: the minimal approach and the enhanced approach. The minimal approach represents the traditional methodology that has been standard practice in the pharmaceutical industry [18]. This approach requires identification of the attributes of the drug substance or drug product that need to be tested, selection of appropriate technology and instruments, evaluation of performance characteristics through development studies, and definition of the analytical procedure description including the analytical procedure control strategy [25].

While the minimal approach remains acceptable under ICH Q14, it offers less flexibility for post-approval changes [23] [18]. Changes to analytical procedures developed using the minimal approach typically require prior regulatory approval, as there is limited understanding of the method's design space and the impact of parameter variations on method performance [21] [18]. This can lead to time-consuming and complex regulatory submissions for even minor changes to analytical procedures, potentially delaying improvements and technological updates [21].

The Enhanced Approach: Systematic QbD Principles

The enhanced approach under ICH Q14 incorporates Analytical Quality by Design (AQbD) principles, providing a more systematic framework for analytical procedure development [24] [25]. This approach includes all elements of the minimal approach plus additional components such as defining an ATP, conducting formal risk assessments, performing multivariate experiments to investigate parameter interactions, and establishing a comprehensive control strategy with defined method operable design regions (MODR) or proven acceptable ranges (PAR) [25] [18].

The enhanced approach offers significant advantages in terms of regulatory flexibility, particularly for post-approval changes [21] [22]. When sufficient understanding of the method is demonstrated through enhanced development studies, changes within the defined design space often only require regulatory notification rather than prior approval [21] [18]. This facilitates continual improvement and adaptation of analytical procedures throughout their lifecycle, allowing manufacturers to incorporate new technologies and scientific advancements more efficiently [21].

Table 2: Comparison of Minimal vs. Enhanced Approaches in ICH Q14

| Aspect | Minimal Approach | Enhanced Approach |
|---|---|---|
| Regulatory Requirement | Required | Optional (can include some or all elements) [25] |
| ATP Definition | Not required | Foundation of development [24] [23] |
| Risk Assessment | Informal or not required | Formal, structured process [18] |
| Experimental Design | Typically univariate | Design of Experiments (DoE) encouraged [24] [18] |
| Knowledge Management | Limited documentation | Comprehensive knowledge capture [22] [25] |
| Control Strategy | Fixed parameters | MODR/PAR with defined ranges [25] [18] |
| Post-approval Changes | Typically prior approval required | Reduced reporting categories for some changes [21] [18] |
| Lifecycle Management | Reactive | Proactive with continuous monitoring [22] [18] |

Implementation Tools and Experimental Protocols

Practical Workflow for ICH Q14 Implementation

Implementing ICH Q14 and AQbD principles requires a structured workflow that spans the entire analytical procedure lifecycle. The following diagram illustrates the key steps in this process, from initial method conception through lifecycle management:

Diagram 1: Analytical Procedure Lifecycle Workflow

This workflow begins with a method request that clearly defines the analytical need [24]. The subsequent definition of the Analytical Target Profile (ATP) is critical, as it establishes the foundation for all development activities [24] [23]. A comprehensive risk assessment using tools such as Ishikawa diagrams or Failure Mode and Effects Analysis (FMEA) helps identify critical method parameters that require further investigation [18]. Design of Experiments (DoE) approaches are then employed to systematically evaluate these parameters and their interactions, leading to the establishment of a Method Operable Design Region (MODR) and control strategy [24] [25]. The method is then validated according to ICH Q2(R2) requirements, followed by implementation for routine use [19] [23]. Continuous monitoring and lifecycle management ensure the method remains fit-for-purpose, with data from routine use informing potential improvements [22] [18].

Essential Research Reagent Solutions and Materials

Successful implementation of ICH Q14 requires various specialized reagents, materials, and software tools. The following table details key solutions essential for conducting analytical development studies following ICH Q14 principles:

Table 3: Essential Research Reagent Solutions for ICH Q14 Implementation

| Category | Specific Examples | Function in Analytical Development |
|---|---|---|
| Chromatographic Columns | C18 (USP L1) [21] | Separation mechanism critical for specificity in HPLC methods |
| Electrophoresis Reagents | Dimethyl-β-cyclodextrin, Sulfated γ-cyclodextrin [24] | Background electrolytes for capillary electrophoresis methods |
| Design of Experiments Software | Various statistical packages | Enables efficient multivariate experimentation and MODR definition [24] [25] |
| Method Development Software | AutoChrom and similar platforms [25] | Supports QbD implementation, knowledge management, and robustness testing |
| Reference Standards | Chemical Reference Substances (CRS) [24] | Essential for method qualification and validation |
| System Suitability Test Materials | SST samples with defined characteristics [24] [18] | Verifies method performance before routine use |
| Knowledge Management Systems | Electronic lab notebooks, LIMS [25] [18] | Captures and manages prior knowledge for future development |

Experimental Protocol for ATP Definition and Method Development

Defining a robust ATP requires a systematic experimental approach. The following protocol outlines the key steps for establishing an ATP and developing an analytical method according to ICH Q14 principles:

- Define Analytical Needs: Clearly articulate the purpose of the analysis and its connection to critical quality attributes (CQAs). This includes specifying what needs to be measured, required sensitivity, and the decision context for the results [24] [23].

- Establish Performance Criteria: Based on the analytical needs, define specific performance characteristics (accuracy, precision, specificity, range, robustness) and their acceptance criteria. These should be derived from the product's CQAs and their specification limits [23].

- Technology Selection: Evaluate multiple analytical technologies that could potentially meet the ATP requirements. Based on prior knowledge, literature review, or scouting experiments, select the most appropriate technology and document the rationale for this selection [23] [18].

- Risk Assessment: Conduct a formal risk assessment using tools such as FMEA or Ishikawa diagrams to identify critical method parameters that may impact method performance. This assessment should consider factors such as sample preparation, instrumental parameters, and environmental conditions [18].

- DoE Studies: Design and execute multivariate experiments to investigate the identified critical parameters and their interactions. Response surface methodology or other appropriate DoE approaches should be used to efficiently characterize the method response across the parameter space [24] [18].

- MODR Definition: Based on the DoE results, establish the Method Operable Design Region (MODR), the multidimensional combination of analytical procedure parameter ranges within which the method meets the ATP requirements [25].

- Control Strategy Implementation: Define the analytical procedure control strategy, including system suitability tests, sample suitability criteria, and specific controls to ensure the method performs as expected during routine use [18].

- Knowledge Documentation: Comprehensively document all development studies, decisions, and their rationales in searchable formats to support future method changes and lifecycle management [25].
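
The DoE-to-MODR step in the protocol above can be illustrated with a small grid scan. The sketch assumes a quadratic response-surface model for resolution has already been fitted; the coefficients, the factor centres (pH 3.0, 40% organic), and the ATP requirement of resolution ≥ 2.0 are all invented for illustration. A real MODR would also account for model uncertainty and for every ATP response, not resolution alone.

```python
def predicted_resolution(ph, organic_pct):
    """Hypothetical fitted response-surface model (coefficients are invented)."""
    x1 = (ph - 3.0) / 0.5            # coded pH, centre 3.0, half-range 0.5
    x2 = (organic_pct - 40.0) / 5.0  # coded % organic, centre 40, half-range 5
    return (2.6 - 0.4 * x1 - 0.3 * x2
            - 0.5 * x1 ** 2 - 0.2 * x2 ** 2 + 0.1 * x1 * x2)

def modr_grid(min_resolution=2.0):
    """Scan the studied space and keep points that satisfy the ATP requirement."""
    points = []
    for i in range(11):                    # pH 2.5 to 3.5 in 0.1 steps
        ph = round(2.5 + 0.1 * i, 1)
        for organic in range(35, 46):      # 35% to 45% organic in 1% steps
            if predicted_resolution(ph, organic) >= min_resolution:
                points.append((ph, organic))
    return points

modr = modr_grid()
print(f"{len(modr)} of 121 grid points meet the resolution requirement")
print("Nominal point (pH 3.0, 40%) inside MODR:", (3.0, 40) in modr)
print("Corner (pH 3.5, 45%) inside MODR:", (3.5, 45) in modr)
```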

Lifecycle Management and Change Control

Post-Approval Change Management

ICH Q14 introduces a structured framework for post-approval change management of analytical procedures, leveraging concepts from ICH Q12 on Pharmaceutical Product Lifecycle Management [21] [18]. This framework enables a more efficient approach to implementing changes while maintaining regulatory compliance and ensuring continuous method improvement. The change management process under ICH Q14 involves several key steps:

First, a risk assessment is conducted to evaluate the significance of the proposed change, considering factors such as test complexity, extent of modification, and relevance to product quality [21]. The change is then classified as high-, medium-, or low-risk based on this assessment. Next, analytical performance criteria according to the ATP are confirmed to ensure the modified method remains fit-for-purpose [21]. Appropriate validation studies are conducted, followed by bridging studies designed to compare the new procedure against the existing one [21]. Finally, the regulatory reporting requirements are assessed based on the risk classification and the established conditions defined during method development [21].

This structured approach to change management is particularly valuable for common scenarios such as technology upgrades, reagent or column discontinuation, and continuous improvement initiatives [21] [22]. By defining established conditions and their reporting categories during initial method development and registration, manufacturers can implement many changes with reduced regulatory burden, potentially moving from prior approval requirements to notification-based reporting [21].

Comparability and Equivalency Studies

When modifying analytical procedures, ICH Q14 emphasizes the importance of demonstrating either comparability or equivalency between the original and modified methods [22]. Understanding the distinction between these concepts is essential for proper lifecycle management:

Comparability evaluates whether a modified method yields results sufficiently similar to the original, ensuring consistent product quality decisions. Comparability studies typically confirm that modified procedures produce expected results, and these changes usually do not require regulatory filings or commitments [22].

Equivalency involves a more comprehensive assessment to demonstrate that a replacement method performs equal to or better than the original. Such changes require regulatory approval prior to implementation and typically include side-by-side testing of representative samples using both methods, statistical evaluation using tools such as paired t-tests or ANOVA, and predefined acceptance criteria based on method performance attributes and CQAs [22].

The choice between comparability and equivalency depends on the risk and scope of the change. For low-risk procedural changes with minimal impact on product quality, a comparability evaluation is often sufficient [22]. For high-risk changes such as complete method replacements, a comprehensive equivalency study is required [22].
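
As a sketch of the side-by-side statistical evaluation mentioned above, the following computes a paired t-statistic for six lots tested by both methods. The assay values are invented, and the critical value is the standard two-sided t value for α = 0.05 with 5 degrees of freedom. A non-significant paired t-test is not by itself proof of equivalence; a formal equivalence test with predefined margins may be more appropriate.

```python
import math
from statistics import mean, stdev

def paired_t_statistic(original, replacement):
    """Return the paired t-statistic and its degrees of freedom."""
    diffs = [b - a for a, b in zip(original, replacement)]
    n = len(diffs)
    t = mean(diffs) / (stdev(diffs) / math.sqrt(n))
    return t, n - 1

# Illustrative assay results (% label claim) for six lots, both methods
original_method = [99.6, 100.2, 99.9, 100.5, 99.8, 100.1]
replacement_method = [99.8, 100.1, 100.0, 100.4, 99.9, 100.3]

t_stat, dof = paired_t_statistic(original_method, replacement_method)
T_CRITICAL_DF5 = 2.571  # two-sided critical value, alpha = 0.05, 5 degrees of freedom
no_significant_difference = abs(t_stat) < T_CRITICAL_DF5

print(f"t = {t_stat:.3f} with {dof} degrees of freedom")
print("No significant difference at alpha = 0.05:", no_significant_difference)
```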

ICH Q14, together with ICH Q2(R2), represents a fundamental shift in how analytical procedures are developed, validated, and managed throughout their lifecycle. By emphasizing a systematic, science- and risk-based approach centered on the Analytical Target Profile, these guidelines enable the development of more robust, reliable, and fit-for-purpose analytical methods [19] [24]. The enhanced approach under ICH Q14, while requiring greater initial investment in development studies, offers significant long-term benefits through increased regulatory flexibility, more efficient post-approval change management, and improved method robustness [21] [18].

For researchers, scientists, and drug development professionals, adopting ICH Q14 principles means shifting from a reactive to a proactive approach to analytical development [22]. This involves defining clear analytical targets prospectively, systematically investigating method parameters and their interactions, establishing well-defined control strategies, and implementing continuous monitoring throughout the method lifecycle [22] [18]. As the pharmaceutical industry continues to evolve with increasingly complex molecules and advanced analytical technologies, the framework provided by ICH Q14 will be essential for ensuring that analytical procedures remain capable of reliably assessing product quality while adapting to scientific and technological advancements [21].

Applying a Risk-Based Approach to HPLC Method Validation

The pharmaceutical industry is increasingly adopting systematic, risk-based approaches for analytical method development and validation to ensure drug quality and patient safety. A traditional method development approach, often referred to as Quality by Testing (QbT) or trial-and-error, typically involves varying one factor at a time (OFAT) to establish working conditions [26]. This unstructured approach has significant limitations: it often requires numerous experiments, may lead to false optimum conditions, fails to study interactions between variables, and provides limited knowledge about the method's operational boundaries [26]. Most importantly, QbT does not facilitate a thorough understanding and control of risk throughout the method's life cycle.

In contrast, the risk-based approach to High-Performance Liquid Chromatography (HPLC) method validation embodies the principles of Analytical Quality by Design (AQbD), which emphasizes building quality into the analytical procedure from the outset through method understanding and control based on sound science and quality risk management [26]. Regulatory bodies like the International Council for Harmonisation (ICH) strongly recommend this systematic approach, as defined in guidelines such as ICH Q9 on quality risk management and ICH Q2(R2) on validation of analytical procedures [26] [1]. This paradigm shift moves the focus from merely testing quality at the end of development to designing and understanding the method to consistently deliver reliable performance throughout its entire life cycle.

Core Principles of Risk-Based HPLC Method Validation

Fundamental Concepts and Terminology

The risk-based approach to HPLC method validation is grounded in several key concepts that differentiate it from traditional methods:

Risk: Defined by ICH as "the combination of the probability of occurrence of harm and the severity of that harm" [26]. In the context of HPLC method validation, this translates to the potential for the method to fail in accurately measuring the analyte of interest, potentially compromising product quality decisions.

Analytical Quality by Design (AQbD): A systematic approach to development that begins with predefined objectives and emphasizes method understanding and control based on sound science and quality risk management [26]. AQbD incorporates prior knowledge, risk management, and structured experimentation throughout the analytical method life cycle.

Method Operable Design Region (MODR): A multidimensional region of method parameters where the method can meet its intended purpose with an established probability of success [26]. Operating within this region ensures method robustness despite small, intentional variations in parameters.

Critical Method Attributes (CMAs) and Critical Method Parameters (CMPs): CMAs are the performance characteristics critical for the method to fulfill its intended purpose, while CMPs are the variables that significantly impact these attributes [26].

The Method Life Cycle Perspective

A fundamental principle of the risk-based approach is viewing HPLC method validation as part of a comprehensive method life cycle rather than a one-time event [26]. This life cycle consists of three interconnected stages:

- Method Design and Development: Establishing method requirements based on intended purpose and designing experiments to understand method behavior.

- Method Validation: Qualifying the method for its intended use, demonstrating it meets predefined acceptance criteria.

- Continued Method Performance Verification: Ongoing monitoring to ensure the method remains in a state of control throughout its operational life [27].

This life cycle perspective acknowledges that methods may require adjustments over time and provides a structured framework for managing such changes while maintaining validated status.

Risk-Based Versus Traditional Approach: A Comparative Analysis

The differences between traditional and risk-based approaches to HPLC method validation extend beyond philosophical principles to practical implementation and outcomes. The table below summarizes key distinctions:

Table 1: Comparison of Traditional vs. Risk-Based HPLC Method Validation Approaches

| Aspect | Traditional Approach (QbT) | Risk-Based Approach (AQbD) |
|---|---|---|
| Development Strategy | One Factor At a Time (OFAT) | Multivariate experiments (DoE) |
| Quality Assurance | Quality tested at the end | Quality built into the design |
| Knowledge Building | Limited, focused on working point | Comprehensive, exploring knowledge space |
| Robustness Assessment | Evaluated after development | Built into development process |
| Risk Management | Reactive, often incomplete | Proactive, systematic throughout life cycle |
| Regulatory Flexibility | Working point fixed, changes require approval | Method Operable Design Region allows adjustments |
| Method Understanding | Limited understanding of interactions | Deep understanding of parameter effects |

The risk-based approach fundamentally changes how methods are developed, validated, and managed throughout their life cycle. Where the traditional approach establishes a single working point, the risk-based approach defines an operable region within which method parameters can be adjusted while maintaining validated status [26]. This provides significant operational flexibility while maintaining control.

Experimental data demonstrates the advantages of this approach. One study reported that a systematic strategy using screening experiments followed by optimization studies enabled efficient evaluation of multiple method variables (11 variables studied in 24 runs), identifying the most significant factors for further optimization [27]. This structured approach reduces the risk of missing critical method factors that could affect performance later in the method life cycle.
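
The economy of screening designs can be seen by comparison with a full factorial, which would need 2^11 = 2048 runs for 11 two-level factors. The design used in the cited study is not specified here; as one example, the sketch below builds the 12-run Plackett-Burman design, which accommodates up to 11 factors, by cyclically shifting its standard generator row and appending a row of low levels.

```python
# Standard first row of the 12-run Plackett-Burman design (11 columns)
GENERATOR = [+1, +1, -1, +1, +1, +1, -1, -1, -1, +1, -1]

def plackett_burman_12():
    """Return the 12 x 11 design matrix coded as -1 (low) and +1 (high)."""
    k = len(GENERATOR)
    # Rows 1-11: the generator shifted cyclically by one more position each time
    rows = [[GENERATOR[(j - shift) % k] for j in range(k)] for shift in range(k)]
    rows.append([-1] * k)  # row 12: every factor at its low level
    return rows

design = plackett_burman_12()
for row in design:
    print(" ".join("+" if level > 0 else "-" for level in row))

# Each column is balanced (six highs, six lows) and orthogonal to every other column
columns = list(zip(*design))
balanced = all(sum(col) == 0 for col in columns)
orthogonal = all(
    sum(a * b for a, b in zip(columns[i], columns[j])) == 0
    for i in range(len(columns)) for j in range(i + 1, len(columns))
)
print("Balanced:", balanced, "| Orthogonal:", orthogonal)
```

Such a design estimates main effects only, which is why the significant factors it flags are carried forward into a separate optimization study.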

Implementing the Risk-Based Framework: A Step-by-Step Methodology

Defining the Analytical Target Profile (ATP)

The foundation of risk-based HPLC method validation is establishing a clear Analytical Target Profile (ATP) - a prospective summary of the required quality characteristics of the method [26]. The ATP defines what the method is intended to measure and under what conditions, serving as the foundation for all subsequent development and validation activities. For an HPLC content determination method, the ATP typically includes criteria for specificity, accuracy, precision, linearity, range, detection and quantification limits, and robustness [28].

Risk Assessment and Identification of CMAs/CMPs

A systematic risk assessment is conducted to identify potential factors that could impact the method's ability to meet the ATP. Tools such as Fishbone diagrams and Failure Mode Effects Analysis (FMEA) are commonly employed to structure this assessment. Through risk assessment, Critical Method Attributes (CMAs) such as specificity, accuracy, and precision are linked to Critical Method Parameters (CMPs) that may affect them [26]. For HPLC methods, typical CMPs include mobile phase composition, pH, column temperature, flow rate, and detection wavelength.

Table 2: Common Risk Assessment Factors in HPLC Method Development

| Category | Examples of Factors | Potential Impact on Method Performance |
|---|---|---|
| Chromatographic Parameters | Mobile phase composition, pH, buffer concentration, flow rate | Retention time, resolution, peak shape |
| Column Characteristics | Stationary phase chemistry, column dimensions, particle size, age | Separation efficiency, back pressure, selectivity |
| Sample Preparation | Extraction method, solvent composition, filtration | Recovery, interference, matrix effects |
| Instrumental Factors | Detector wavelength, temperature stability, injection volume | Sensitivity, precision, accuracy |
| Environmental Conditions | Room temperature, humidity | Retention time stability, baseline noise |
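
A common way to prioritize the factors above in an FMEA is the risk priority number (RPN), the product of severity, occurrence, and detectability scores. The sketch below ranks a few failure modes; the 1-10 scores and the action threshold of 100 are hypothetical and would normally be agreed by the method development team.

```python
# failure mode -> (severity, occurrence, detectability), hypothetical 1-10 scores
failure_modes = {
    "mobile phase pH drift": (8, 5, 4),
    "column ageing": (7, 6, 3),
    "flow rate deviation": (5, 3, 2),
    "detector wavelength error": (6, 2, 2),
    "incomplete sample extraction": (9, 4, 6),
}

def rank_by_rpn(failure_modes, threshold=100):
    """Sort failure modes by RPN (highest first) and flag those at or above the threshold."""
    scored = [(s * o * d, name) for name, (s, o, d) in failure_modes.items()]
    scored.sort(reverse=True)
    return [(name, rpn, rpn >= threshold) for rpn, name in scored]

ranking = rank_by_rpn(failure_modes)
for name, rpn, needs_study in ranking:
    flag = "investigate in DoE" if needs_study else "monitor"
    print(f"{name}: RPN = {rpn} ({flag})")
```

Parameters flagged here would be the natural candidates for the screening and optimization experiments described in the next section.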

Structured Experimentation Using Design of Experiments (DoE)

A key differentiator of the risk-based approach is the application of Design of Experiments (DoE) to systematically study the relationship between CMPs and CMAs [26]. Unlike OFAT approaches, DoE allows efficient exploration of multiple factors and their interactions simultaneously. The typical experimentation strategy involves:

- Screening experiments to identify factors with significant effects on method performance [27].

- Optimization experiments to characterize the relationship between critical factors and method responses [27].

- Verification experiments to confirm method performance at the selected operational conditions.

For example, in developing an HPLC method for bromophenols in red algae, researchers tested multiple stationary phases with different mobile phase systems to achieve optimal separation [29]. Similarly, method development for trans-resveratrol quantification involved comparing different columns and mobile phases to identify optimal chromatographic conditions [30].
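To make the screening step concrete, the sketch below builds a two-level full factorial design for three CMPs and estimates main effects from the measured responses. The factor names and levels are hypothetical, and a full factorial is only one of several designs that could be used at this stage.

```python
from itertools import product


def full_factorial(levels):
    """Build every combination of the given factor levels.

    levels: dict mapping factor name -> tuple of levels to test.
    Returns a list of runs, each a dict of factor name -> level.
    """
    names = list(levels)
    return [dict(zip(names, combo))
            for combo in product(*(levels[n] for n in names))]


def main_effect(runs, responses, factor, low, high):
    """Mean response at the high level minus mean response at the low level."""
    hi = [y for run, y in zip(runs, responses) if run[factor] == high]
    lo = [y for run, y in zip(runs, responses) if run[factor] == low]
    return sum(hi) / len(hi) - sum(lo) / len(lo)


# Hypothetical low/high levels around an assumed nominal condition.
levels = {
    "organic_pct": (28, 32),
    "pH": (2.8, 3.2),
    "column_temp_C": (28, 32),
}

design = full_factorial(levels)  # 2**3 = 8 runs
for i, run in enumerate(design, start=1):
    print(i, run)
```

After the runs are performed, a call such as `main_effect(design, resolutions, "pH", 2.8, 3.2)` (with `resolutions` holding the measured response for each run) gives a first indication of which factors deserve optimization experiments.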

Establishing the Method Operable Design Region (MODR)

The culmination of the risk-based development process is the definition of the Method Operable Design Region (MODR): the multidimensional combination and interaction of input variables that have been demonstrated to provide assurance of quality [26]. Operating within the MODR provides flexibility while maintaining method robustness. If method adjustments are needed (e.g., to address column obsolescence or changing sample matrices), changes within the MODR can generally be implemented without full revalidation, and regulatory agencies may accept notification rather than prior approval for such changes [26].

The following diagram illustrates the complete workflow for implementing a risk-based approach to HPLC method validation:

Key Risk Mitigation Tools and Their Applications

Addressing Critical Risks in HPLC Method Validation

The risk-based approach employs specific tools to mitigate common risks throughout the method life cycle. The table below identifies six critical risks and their corresponding mitigation tools:

Table 3: Risk Mitigation Tools for HPLC Method Validation

| Critical Risk | Risk Mitigation Tool | Application in HPLC Method Validation |
|---|---|---|
| Missing important method design factors | Screening + Optimization Experiments | Systematically evaluate multiple chromatographic parameters using DoE |
| Poor quality measurements | Gage Repeatability & Reproducibility (R&R) Studies | Quantify method variation components using multiple analysts, instruments, days |
| Method not robust to deviations | Robustness Studies | Deliberately vary key parameters (e.g., mobile phase ±5%, flow rate ±10%) |
| Performance deterioration over time | Continued Method Performance Verification | Periodic testing of control samples alongside routine analysis |
| Poor sampling performance | Nested Sampling Studies | Evaluate contribution of sampling variation to total measurement variation |
| Lack of management attention | Management Review | Regular review of method performance data as part of quality system |

Gage R&R Studies for Measurement System Analysis

Gage Repeatability and Reproducibility (R&R) studies are essential for quantifying the precision of an HPLC method [27]. These studies typically involve multiple analysts performing repeated measurements of samples covering the method range. The output provides quantitative measures of:

- Repeatability: Variation under identical conditions (same analyst, instrument, day)

- Reproducibility: Variation under different conditions (different analysts, instruments, days)

- Measurement Resolution: The ability of the method to detect meaningful differences

For HPLC content determination methods, precision is typically considered acceptable when the relative standard deviation (RSD) of peak areas is less than 2% [28].
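As a rough illustration of how repeatability and reproducibility can be separated, the sketch below applies a balanced one-way random-effects ANOVA with analysts as groups. This is a simplification of a full crossed Gage R&R study (which would also model samples and interactions), and the function name and data layout are assumptions made for the example.

```python
from statistics import mean


def variance_components(groups):
    """Split variation into repeatability and between-analyst components.

    groups: dict mapping analyst -> list of replicate results.
    Every analyst must have the same number of replicates (balanced design).
    """
    k = len(groups)
    n = len(next(iter(groups.values())))
    if k < 2 or n < 2 or any(len(v) != n for v in groups.values()):
        raise ValueError("need >= 2 analysts with equal replicate counts >= 2")

    grand = mean(x for v in groups.values() for x in v)
    ss_within = sum((x - mean(v)) ** 2 for v in groups.values() for x in v)
    ss_between = n * sum((mean(v) - grand) ** 2 for v in groups.values())

    ms_within = ss_within / (k * (n - 1))
    ms_between = ss_between / (k - 1)

    var_repeat = ms_within
    # A negative estimate is truncated to zero, a common convention.
    var_reprod = max(0.0, (ms_between - ms_within) / n)
    total_sd = (var_repeat + var_reprod) ** 0.5
    return {
        "repeatability_var": var_repeat,
        "reproducibility_var": var_reprod,
        "total_sd": total_sd,
        "total_rsd_pct": 100.0 * total_sd / grand,
    }
```

The `total_rsd_pct` value can then be compared against the acceptance limit chosen for the method, such as the 2% figure quoted above.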

Robustness Testing

Robustness testing evaluates a method's capacity to remain unaffected by small, deliberate variations in method parameters [26]. For HPLC methods, this typically involves varying parameters such as:

- Mobile phase composition (±2-5% absolute)

- pH of aqueous phase (±0.1-0.2 units)

- Column temperature (±2-5°C)

- Flow rate (±10%)

- Detection wavelength (±2-3 nm)

One documented robustness study for a dissolution method examined eight variables including acid concentration, polysorbate concentration, stir speed, temperature, degassing, filter position, operator, and apparatus using a Plackett-Burman design [27]. The method was deemed robust when none of the factors showed statistically significant effects on the results.
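A Plackett-Burman design of the kind used in that study can be generated from a single cyclic generator row. The sketch below builds the standard 12-run design and uses its first eight columns for the eight factors named above. The cited study's actual run layout is not given in the source, so this is an illustrative construction only.

```python
# Standard generator row for the 12-run Plackett-Burman design (+1 = high, -1 = low).
PB12_GENERATOR = [1, 1, -1, 1, 1, 1, -1, -1, -1, 1, -1]


def plackett_burman_12(n_factors):
    """Return a 12-run design (list of rows) for up to 11 two-level factors."""
    if not 1 <= n_factors <= 11:
        raise ValueError("the 12-run design supports 1 to 11 factors")
    rows = []
    for shift in range(11):
        # Each run is a cyclic rotation of the generator row.
        row = PB12_GENERATOR[shift:] + PB12_GENERATOR[:shift]
        rows.append(row[:n_factors])
    rows.append([-1] * n_factors)  # final run: every factor at its low level
    return rows


def estimate_effects(design, responses):
    """Main effect per factor: mean(high runs) - mean(low runs)."""
    half = len(design) / 2
    n_factors = len(design[0])
    return [sum(row[j] * y for row, y in zip(design, responses)) / half
            for j in range(n_factors)]


factors = ["acid conc.", "polysorbate conc.", "stir speed", "temperature",
           "degassing", "filter position", "operator", "apparatus"]
design = plackett_burman_12(len(factors))
for run in design:
    print(" ".join(f"{level:+d}" for level in run))
```

Because the columns are balanced and mutually orthogonal, each main effect is estimated independently of the others. Judging whether an effect is statistically significant still requires an error estimate, for example from the unused columns or from replicate runs.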

Experimental Protocols for Key Validation Parameters

Specificity and Forced Degradation Studies

Specificity is the ability to measure the analyte accurately in the presence of potential interferents [28]. For HPLC methods, this is typically demonstrated through forced degradation studies under various stress conditions:

- Acidic and Basic Hydrolysis: Treatment with 1M HCl or NaOH at elevated temperatures [28] [30]

- Oxidative Degradation: Exposure to 3-10% hydrogen peroxide [28] [30]

- Thermal Degradation: Heating at 40-60°C [30]

- Photolytic Degradation: Exposure to light (4500 lux for 48 hours; lux is a measure of visible illuminance, so UV exposure, if applied, should be reported separately) [28] [30]

An optimal degradation level of 5-15% is recommended to generate meaningful degradation products without excessive destruction of the active compound [28]. Peak purity assessment using photodiode array detection is critical to demonstrate specificity by showing no co-eluting peaks [28] [30].
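The degradation target can be checked with simple arithmetic on main-peak areas. The sketch below assumes the stressed and unstressed (control) samples were prepared at the same nominal concentration and injected under identical conditions. The function names are hypothetical, and the 5-15% window is the one quoted above.

```python
def percent_degradation(control_area, stressed_area):
    """Loss of main-peak area relative to the unstressed control, in percent."""
    if control_area <= 0:
        raise ValueError("control peak area must be positive")
    return (control_area - stressed_area) / control_area * 100.0


def within_target(degradation_pct, low=5.0, high=15.0):
    """True when degradation falls inside the recommended window."""
    return low <= degradation_pct <= high


# Hypothetical peak areas for one stress condition.
deg = percent_degradation(control_area=1250.0, stressed_area=1125.0)
print(f"degradation: {deg:.1f}%  within target: {within_target(deg)}")
```

A result below the window suggests the stress condition should be made harsher or longer. A result above it suggests milder conditions, so that secondary degradation products do not dominate.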

Detection and Quantification Limits

The Limit of Detection (LOD) and Limit of Quantification (LOQ) are determined using the signal-to-noise ratio method:

- LOD: The concentration where signal-to-noise ratio (S/N) ≥ 3 [28] [30]

- LOQ: The concentration where S/N ≥ 10 [28] [30]

For LOQ verification, six injections at the LOQ concentration should demonstrate acceptable precision, with the RSD limit typically set between 2% and 5% [28]. Documentation should include chromatograms of blank solvent, concentrated solution, and diluted solutions [28].
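The signal-to-noise criteria can be expressed as a short calculation. The sketch below assumes the pharmacopeial convention S/N = 2H/h, where H is the peak height and h is the peak-to-peak baseline noise measured in a blank region. Chromatography data systems may compute noise differently, so the function names and this noise definition are assumptions.

```python
def peak_to_peak_noise(baseline):
    """Peak-to-peak noise from baseline detector readings in a blank region."""
    return max(baseline) - min(baseline)


def signal_to_noise(peak_height, noise_peak_to_peak):
    """S/N = 2H / h, with H and h in the same detector units."""
    if noise_peak_to_peak <= 0:
        raise ValueError("noise must be positive")
    return 2.0 * peak_height / noise_peak_to_peak


def classify(sn):
    """Apply the S/N >= 3 (LOD) and S/N >= 10 (LOQ) criteria."""
    if sn >= 10:
        return "quantifiable"
    if sn >= 3:
        return "detectable"
    return "below LOD"


# Hypothetical baseline readings (mAU) and peak height.
baseline = [0.02, -0.03, 0.01, 0.04, -0.01, -0.04, 0.03]
noise = peak_to_peak_noise(baseline)
sn = signal_to_noise(peak_height=0.45, noise_peak_to_peak=noise)
print(f"S/N = {sn:.1f} -> {classify(sn)}")
```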

Linearity and Range

Linearity is demonstrated through 5-7 point calibration curves covering the specified range [28]. For content determination methods, typical ranges extend from LOQ to 160-200% of the target concentration [28]. The correlation coefficient (r) should typically be >0.999 [28] [29]. Critical to this assessment is ensuring that all test concentrations (including recovery studies) fall within the demonstrated linear range [28].
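The linearity assessment reduces to an ordinary least-squares fit of response against concentration. The sketch below computes slope, intercept, and the correlation coefficient r in plain Python. The calibration data are hypothetical, and the r > 0.999 check mirrors the criterion quoted above.

```python
from math import sqrt


def linear_fit(x, y):
    """Ordinary least-squares fit; returns (slope, intercept, r)."""
    if len(x) != len(y) or len(x) < 3:
        raise ValueError("need at least three paired points")
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    slope = sxy / sxx
    intercept = my - slope * mx
    r = sxy / sqrt(sxx * syy)
    return slope, intercept, r


# Hypothetical 5-point calibration: concentration (% of target) vs peak area.
conc = [50.0, 80.0, 100.0, 120.0, 150.0]
area = [1012.0, 1605.0, 2010.0, 2398.0, 3005.0]

slope, intercept, r = linear_fit(conc, area)
print(f"slope={slope:.3f} intercept={intercept:.2f} r={r:.5f}")
print("meets r > 0.999:", r > 0.999)
```

A high r alone does not prove linearity, so inspecting the residuals and the size of the intercept relative to the target-level response is a useful complement.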

Precision and Accuracy

Precision and accuracy are evaluated at multiple levels:

- Injection (System) Precision: Six consecutive injections of the same sample with RSD <2% [28]

- Repeatability: Two reference solutions and six test solutions from the same batch with content RSD <2% [28]

- Intermediate Precision: Different analyst, instrument, and day with combined RSD (repeatability + intermediate precision) <2% [28]

- Accuracy: Recovery testing at 80%, 100%, and 120% levels with recovery range 98-102% and RSD <2% [28]
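The acceptance checks listed above come down to two calculations: relative standard deviation and percent recovery. The sketch below is a minimal version. The function names and the spiked-sample data are hypothetical, and the limits are passed as parameters because they are method-specific.

```python
from statistics import mean, stdev


def rsd_percent(values):
    """Relative standard deviation (sample SD / mean) in percent."""
    return stdev(values) / mean(values) * 100.0


def recovery_percent(found, added):
    """Amount found as a percentage of the amount added (spiked)."""
    return found / added * 100.0


def evaluate_accuracy(pairs, low=98.0, high=102.0, max_rsd=2.0):
    """pairs: list of (found, added). Returns (recoveries, rsd, passed)."""
    recoveries = [recovery_percent(f, a) for f, a in pairs]
    rsd = rsd_percent(recoveries)
    passed = all(low <= r <= high for r in recoveries) and rsd < max_rsd
    return recoveries, rsd, passed


# Hypothetical spiked samples at 80%, 100%, and 120% levels, in triplicate (mg).
spiked = [
    (7.95, 8.0), (8.04, 8.0), (7.98, 8.0),
    (9.93, 10.0), (10.06, 10.0), (10.01, 10.0),
    (11.90, 12.0), (12.08, 12.0), (11.97, 12.0),
]
recoveries, rsd, passed = evaluate_accuracy(spiked)
print(f"mean recovery={mean(recoveries):.2f}% RSD={rsd:.2f}% pass={passed}")
```

The same `rsd_percent` helper applies to the injection precision and repeatability checks, with peak areas or content results as input.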

Solution Stability

Solution stability is critical for methods used in high-throughput environments. Testing should include planned time points (e.g., 0, 4, 6, 8, 10, 12, 18, 24 hours) with RSD of peak areas across time points <2% [28]. Documentation of at least 16-hour stability is typically recommended [28].

The Scientist's Toolkit: Essential Research Reagent Solutions

Successful implementation of risk-based HPLC method validation requires specific reagents, materials, and tools. The following table details essential components of the risk-based validation toolkit:

Table 4: Essential Research Reagent Solutions for Risk-Based HPLC Method Validation

| Tool/Reagent | Function | Application Example |
|---|---|---|
| Design of Experiments Software | Plans efficient experiments and models responses | Identifying critical method parameters and their optimal ranges |
| Quality Reference Standards | Provides known purity materials for method calibration | Establishing method linearity, accuracy, and precision |
| Forced Degradation Reagents | Induces controlled degradation for specificity studies | Acid, base, oxidants, and light sources for stress testing |
| Multiple Column Brands | Evaluates method robustness to column variations | Testing 3 different column brands for separation consistency |
| Mobile Phase Modifiers | Adjusts selectivity and improves separation | Trifluoroacetic acid, ammonium formate, buffer salts |
| Sample Preparation Solvents | Extracts and dissolves analytes with appropriate stability | Selecting solvents that dissolve sample well and are miscible with mobile phase |
| System Suitability Reference | Verifies system performance before validation testing | Reference solution with known characteristics to confirm chromatography |

Regulatory Framework and Life Cycle Management

The risk-based approach to HPLC method validation aligns with regulatory expectations outlined in ICH Q2(R2) for validation of analytical procedures [1] and ICH Q9 for quality risk management [26]. This alignment provides significant benefits in regulatory submissions and post-approval method management.

When methods are developed using AQbD principles with demonstrated MODR, post-approval changes within this region are considered lower risk [26]. Regulatory agencies may permit notification rather than prior approval for such changes, significantly reducing the regulatory burden for method improvements [26].

Continued Method Performance Verification through periodic testing of control samples provides ongoing assurance of method performance throughout its operational life [27]. This aligns with the life cycle approach to method validation and provides data-driven insights into method stability over time.

The risk-based approach to HPLC method validation represents a fundamental shift from traditional compliance-focused validation to a science-based, systematic approach that emphasizes method understanding and control. By implementing risk assessment tools, structured experimentation, and life cycle management, organizations can develop more robust methods, reduce regulatory burden, and maintain data quality throughout the method's operational life.

The initial investment in comprehensive method understanding pays significant dividends in reduced method failures, easier troubleshooting, and more efficient method improvements over time. As regulatory expectations evolve toward these principles, adopting risk-based approaches positions organizations for success in an increasingly complex analytical landscape.

Practical Application: Developing and Validating Stability-Indicating HPLC Methods

Designing an Effective Validation Protocol with Predefined Acceptance Criteria

Analytical method validation is a mandatory process in the pharmaceutical industry, required by law and regulatory guidelines to ensure the reliability, accuracy, and reproducibility of test methods used in quality assessments of drug substances (DS) and drug products (DP) [31]. The fundamental objective of validation is to demonstrate that an analytical procedure is suitable for its intended purpose, providing assurance that the data generated accurately reflects the quality of the material being tested [31] [3]. For High-Performance Liquid Chromatography (HPLC) methods, which are widely used for release and stability testing of commercial drug substances and products, a well-designed validation protocol with predefined acceptance criteria is essential for regulatory compliance and product quality assurance [1] [31].

The International Council for Harmonisation (ICH) Q2(R2) guideline provides a comprehensive framework for the principles of analytical procedure validation, covering analytical uses across pharmaceutical development and manufacturing [3]. This guidance, along with regional regulatory requirements, establishes the foundation for designing validation protocols that demonstrate method robustness, precision, and accuracy throughout the product lifecycle [1] [31] [3]. The validation process confirms that an HPLC method can execute reliably and reproducibly, ensuring accurate data generation for monitoring critical quality attributes of DS and DP [31].

Core Validation Parameters and Regulatory Framework

Essential Validation Elements

Analytical method validation for HPLC procedures encompasses multiple performance characteristics that must be evaluated through structured experimental protocols. According to ICH guidelines and pharmacopeial standards, the core validation parameters include specificity, accuracy, precision, linearity, range, detection limit (LOD), quantitation limit (LOQ), and robustness [1] [31] [32]. Each parameter serves a distinct purpose in establishing the method's suitability for its intended application, whether for assay/potency testing, impurity quantification, identity confirmation, or other quantitative and qualitative measurements [1].

The specific validation requirements vary depending on the type of analytical procedure. As outlined in USP general chapter <1225>, methods are categorized into four types with differing validation expectations [31]. For instance, Category I methods (e.g., assay for drug substance) require testing for accuracy, precision, specificity, linearity, and range, while Category IV (identification tests) primarily need demonstration of specificity [31]. Most modern stability-indicating HPLC methods are "composite" reversed-phase liquid chromatography (RPLC) gradient methods with UV detection that simultaneously determine both potency (active pharmaceutical ingredient) and impurities/degradation products, thus requiring validation elements across multiple categories [31].

Phase-Appropriate Validation Approach