Hyperspectral Imaging in Environmental Monitoring: A Comprehensive Guide for Researchers and Scientists

Abstract

This article provides a comprehensive overview of hyperspectral imaging (HSI) and its transformative role in environmental monitoring. It covers the foundational principles of HSI technology, including how it captures continuous spectral data to create unique material 'fingerprints.' The article details methodological approaches for deploying HSI across various environmental applications—from water quality and pollution tracking to ecosystem health assessment—and explores advanced data processing techniques involving machine learning. It also addresses key operational challenges and optimization strategies for field deployment, and validates HSI performance through comparative analysis with traditional monitoring methods and real-world case studies. This resource is tailored for researchers, scientists, and development professionals seeking to understand and leverage this powerful, non-destructive sensing technology.

What is Hyperspectral Imaging? Unlocking the Science of Spectral Fingerprints

Hyperspectral imaging (HSI) is an advanced analytical technique that combines digital imaging with spectroscopy, enabling the detailed characterization of objects based on their composition. Unlike conventional RGB (Red, Green, Blue) imaging, which replicates human vision by capturing only three broad wavelength bands, hyperspectral imaging collects and processes information across a continuous range of spectral bands—from ultraviolet (UV) to long-wave infrared (LWIR). This process generates a detailed spectrum for each pixel in a spatial image, creating a rich, three-dimensional dataset known as a hyperspectral data cube [1] [2].

This foundational difference in data acquisition translates to a significant leap in analytical capability. While an RGB sensor is limited to the visible spectrum and provides data comparable to a "three-page pamphlet," a hyperspectral sensor can capture spectral responses from hundreds of wavelengths, resulting in a "220-page book" of information about the object being imaged [1]. This fine spectral resolution allows researchers to identify materials, detect subtle changes, and quantify constituents based on their unique spectral signatures—optical "fingerprints" that are impossible to discern with conventional imaging [1] [3]. The transition from RGB to hyperspectral imaging thus represents a paradigm shift from mere visual representation to comprehensive material analysis, making it a powerful tool for environmental monitoring research.

The Core Technology: From Light to Data Cube

Fundamental Principles and Data Acquisition

The core principle of hyperspectral imaging lies in measuring the interaction between light and matter across the electromagnetic spectrum. Every material absorbs, reflects, and emits electromagnetic radiation in a characteristic way, producing a unique spectral signature [1] [2]. A hyperspectral camera, or imaging spectrometer, captures this information by imaging a scene across numerous narrow, contiguous wavelength bands [1].

The result of this acquisition is a three-dimensional hyperspectral data cube. The two spatial dimensions (x, y) define the scene's layout, while the third spectral dimension (λ) contains the full spectrum of light measured at each pixel location [4] [2]. This data structure seamlessly blends spatial and chemical information, allowing researchers to determine not only what materials are present based on their spectrum but also where they are located and in what concentration [1].

Scanning Techniques and Platform Considerations

Hyperspectral data can be acquired using different scanning methodologies, each with distinct advantages for environmental applications:

- Spatial Scanning (Push Broom): This method captures a full slit spectrum (x, λ) for each line of an image. The spatial dimension is built up by moving the sensor relative to the target, making it ideal for airborne and drone-based platforms as well as conveyor-belt monitoring [2].

- Spectral Scanning: In this approach, each sensor output represents a monochromatic, spatial (x, y) map of the scene at a specific wavelength. The system scans through wavelengths by exchanging optical band-pass filters while the platform remains stationary, which is suitable for laboratory or stable tripod setups [2].

- Snapshot Imaging: These advanced systems capture the entire spatial and spectral datacube simultaneously in a single exposure. They offer a significant "snapshot advantage" with higher light throughput and shorter acquisition times, which is beneficial for dynamic environmental phenomena but often involves higher computational costs [2].

For environmental monitoring, the choice of platform—satellite, airborne, drone, or ground-based—directly impacts the spatial resolution and coverage. Satellite imagery can provide information with tens of meters resolution, while airborne data can achieve 1 cm resolution. Drone-based systems can deliver data at a sub-centimeter level, enabling the identification of subtle features missed by other methods [5].
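The dependence of spatial resolution on platform altitude can be approximated with the standard ground sampling distance (GSD) relation for a nadir-looking camera. The sketch below is illustrative only: the pixel pitch, focal length, and altitudes are hypothetical values chosen for the example and are not taken from the cited sources.

```python
def ground_sampling_distance(altitude_m: float, pixel_pitch_um: float,
                             focal_length_mm: float) -> float:
    """Approximate ground footprint of one pixel (in metres) for a nadir view."""
    pixel_pitch_m = pixel_pitch_um * 1e-6
    focal_length_m = focal_length_mm * 1e-3
    return altitude_m * pixel_pitch_m / focal_length_m


# Hypothetical sensor: 5.5 micrometre pixels behind a 16 mm lens
for platform, altitude in [("drone", 20.0), ("aircraft", 3000.0)]:
    gsd = ground_sampling_distance(altitude, 5.5, 16.0)
    print(f"{platform} at {altitude:.0f} m: {gsd * 100:.2f} cm per pixel")
```

The same sensor yields a footprint that grows linearly with altitude, which is why low-flying drones resolve features that higher platforms average out.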

Hyperspectral Data Processing Workflow

The journey from raw sensor data to actionable intelligence involves a multi-stage computational workflow that transforms the hyperspectral data cube into meaningful information for environmental monitoring.

Preprocessing and Dimensionality Reduction

Raw data from hyperspectral sensors is often corrupted by sensor noise, atmospheric effects, and spectral distortions. Preprocessing is crucial to prepare the data for accurate analysis [4] [6].

- Radiometric and Atmospheric Correction: This step converts raw digital numbers (DNs) into physical units of reflectance or radiance, compensating for atmospheric scattering and absorption caused by water vapor and aerosols [4] [6].

- Denoising: Techniques like the non-local meets global (NL-meets-global) approach are used to remove sensor noise while preserving important spectral features [4].

- Image Fusion (Pansharpening): To enhance the often low spatial resolution of hyperspectral data, fusion methods combine it with high-resolution multispectral or panchromatic imagery of the same scene [4].

A critical next step is dimensionality reduction. Hyperspectral data cubes contain hundreds of highly correlated, contiguous bands, leading to significant redundancy and computational burden. Dimensionality reduction alleviates this through:

- Band Selection: This approach identifies and retains the most informative and distinct bands, effectively reducing data volume while preserving the original spectral information. A 2025 study demonstrated that a standard deviation-based band selection method could reduce data size by up to 97.3% while maintaining a classification accuracy of 97.21% [7].

- Orthogonal Transforms: Techniques like Principal Component Analysis (PCA) transform the data to a lower-dimensional space by finding principal components along the maximum variances. The Maximum Noise Fraction (MNF) transform is particularly effective for noisy data, as it maximizes the signal-to-noise ratio in the derived components [4].
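As a rough illustration of the PCA step, the following sketch projects a synthetic data cube onto its leading principal components using NumPy's singular value decomposition. The cube, its dimensions, and the function name `pca_reduce` are assumptions made for this example; real workflows would typically rely on a dedicated library routine.

```python
import numpy as np


def pca_reduce(cube: np.ndarray, n_components: int):
    """Project a (rows, cols, bands) cube onto its first principal components."""
    rows, cols, bands = cube.shape
    pixels = cube.reshape(-1, bands).astype(np.float64)
    centered = pixels - pixels.mean(axis=0)
    # Rows of vt are the principal axes, ordered by decreasing singular value
    _, s, vt = np.linalg.svd(centered, full_matrices=False)
    explained = (s ** 2) / np.sum(s ** 2)
    scores = centered @ vt[:n_components].T
    return scores.reshape(rows, cols, n_components), explained[:n_components]


rng = np.random.default_rng(0)
# Synthetic cube: 3 latent sources mixed into 50 correlated bands, plus noise
latent = rng.random((32, 32, 3))
mixing = rng.random((3, 50))
cube = latent @ mixing + 0.01 * rng.standard_normal((32, 32, 50))

reduced, explained = pca_reduce(cube, 3)
print(reduced.shape, explained.round(4))
```

Because the synthetic bands are strongly correlated, a handful of components carries almost all of the variance, which mirrors the redundancy described above.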

Table 1: Key Hyperspectral Data Preprocessing Techniques

| Technique Category | Specific Methods | Primary Function | Application Note |
|---|---|---|---|
| Noise Reduction | Non-local meets global (NL-meets-global) | Removes sensor noise while preserving spectral features | Particularly important for low-light or high-speed acquisition [4] |
| Resolution Enhancement | Coupled Non-negative Matrix Factorization (CNMF) | Fuses HSI with high-res imagery to improve spatial detail | Also known as pansharpening [4] |
| Dimensionality Reduction | Principal Component Analysis (PCA) | Reduces spectral dimensions by projecting onto axes of max variance | Components are in descending order of explained variance [4] |
| Dimensionality Reduction | Maximum Noise Fraction (MNF) | Derives components that maximize signal-to-noise ratio | Preferable to PCA for noisy data [4] [6] |
| Dimensionality Reduction | Standard Deviation-based Band Selection | Selects a subset of original bands with highest variance | Achieves >97% data reduction with minimal accuracy loss [7] |
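One plausible reading of standard deviation-based band selection is to rank bands by their per-band standard deviation and keep the top few. The sketch below follows that reading on synthetic data; it is not the procedure from [7], and the function name `select_bands_by_std` and the toy cube are assumptions.

```python
import numpy as np


def select_bands_by_std(cube: np.ndarray, n_keep: int):
    """Keep the n_keep bands with the largest standard deviation across pixels."""
    bands = cube.shape[2]
    band_std = cube.reshape(-1, bands).std(axis=0)
    # Take the highest-variance bands, then restore wavelength order
    keep = np.sort(np.argsort(band_std)[::-1][:n_keep])
    return cube[:, :, keep], keep


rng = np.random.default_rng(1)
cube = rng.random((16, 16, 100)) * 0.01              # low-variance bands
cube[:, :, [10, 40, 70]] = rng.random((16, 16, 3))   # three informative bands

subset, kept = select_bands_by_std(cube, 3)
reduction = 1 - subset.size / cube.size
print(kept, f"data reduction: {reduction:.1%}")
```

Unlike PCA, the retained bands are original measurements, so their physical interpretation (wavelength, units) is preserved.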

Spectral Analysis and Classification

Once preprocessed, the data is ready for advanced analysis to identify and quantify materials.

- Spectral Unmixing: Due to the relatively low spatial resolution of many hyperspectral sensors, a single pixel often represents a mixture of different materials (a "mixed pixel"). Spectral unmixing decomposes these pixel spectra into their constituent endmembers (pure spectral signatures) and estimates their relative proportions, which are presented as abundance maps [4]. Endmember extraction algorithms, such as the pixel purity index (PPI), are used to identify the pure signatures.

- Spectral Matching and Target Detection: This process identifies materials by comparing the spectral signatures of image pixels or endmembers against reference spectra from known libraries, such as the ECOSTRESS spectral library [4]. The spectralMatch function is an example of a tool that computes similarity between unknown and reference spectra for classification [4].

- Machine Learning and Deep Learning: Modern hyperspectral analysis increasingly relies on sophisticated models like 3D Convolutional Neural Networks (3DCNN) for classification. A 2025 study on air pollution classification demonstrated that a 3DCNN model using hyperspectral images converted from RGB (cHSI) achieved up to 9% higher accuracy compared to a model using traditional RGB images [8].
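The unmixing idea in the list above can be sketched under the common linear mixing assumption, in which a pixel spectrum is a non-negative weighted sum of endmember spectra. The example below estimates abundances with non-negative least squares from SciPy and then normalizes them to sum to one, which is a simplification of fully constrained unmixing. The endmembers are random synthetic spectra and `unmix_pixel` is a hypothetical helper name.

```python
import numpy as np
from scipy.optimize import nnls


def unmix_pixel(spectrum: np.ndarray, endmembers: np.ndarray) -> np.ndarray:
    """Estimate abundances for one pixel.

    endmembers has shape (bands, n_endmembers); the result is non-negative
    and rescaled to sum to 1 (a simplification, not a strict constraint).
    """
    abundances, _ = nnls(endmembers, spectrum)
    total = abundances.sum()
    return abundances / total if total > 0 else abundances


rng = np.random.default_rng(2)
endmembers = rng.random((60, 3))             # synthetic pure signatures
true_abundances = np.array([0.6, 0.3, 0.1])
pixel = endmembers @ true_abundances         # noise-free mixed pixel

estimated = unmix_pixel(pixel, endmembers)
print(estimated.round(3))
```

Applying the same function to every pixel of a cube yields one abundance map per endmember.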

Applications in Environmental Monitoring

Hyperspectral imaging's ability to provide detailed, non-contact chemical analysis makes it transformative for environmental monitoring. The following table summarizes its key applications, highlighting the specific parameters measured and their significance.

Table 2: Hyperspectral Imaging Applications in Environmental Monitoring

| Application Area | Measured Parameters / Detected Targets | Environmental Significance |
|---|---|---|
| Water Quality Monitoring | Chlorophyll content, turbidity, harmful algal blooms, pollutants, microplastics [5] [3] | Tracks eutrophication, detects pollution sources, assesses ecosystem health and water safety [5] |
| Vegetation & Forest Health | Plant health, disease presence, drought stress, species identification [5] [3] | Enables early detection of biotic/abiotic stress, monitors deforestation, and assesses biodiversity [3] |
| Pollution Detection | Identification and tracking of pollutants in air (PM2.5), water, and soil; mineral-based fluids in SWIR/MWIR/LWIR [5] [8] | Provides data for regulating emissions, tracking spill spread, and assessing soil contamination [5] [8] |
| Land Cover & Land Use (LULC) Mapping | Accurate classification of forests, wetlands, urban areas, and agricultural fields [5] | Essential for urban planning, natural resource management, and monitoring changes over time [5] |
| Climate Change Analysis | Changes in vegetation, glaciers, and other environmental features [5] | Contributes to research on how ecosystems respond to shifting climatic conditions [5] |
| Disaster Management | Monitoring and prevention of wildfires, landslides, and floods; post-disaster impact assessment [5] [3] | Supports early warning systems, risk mapping, and coordination of recovery efforts [5] |

Detailed Experimental Protocol: Air Pollution Monitoring

A pertinent example of a modern HSI application is the classification of air pollution severity, as detailed in a 2025 study [8]. The following protocol outlines the methodology.

Objective: To classify aerial images of different surface types (trees, roofs, roads) as "Good," "Normal," or "Severe" based on PM2.5 pollution levels.

1. Data Acquisition and Dataset Preparation:

- Platform: An aerial camera mounted on a drone, raised to 100 meters above the ground and capturing images at a 90-degree angle.

- Image Size: Images were captured at 1920 × 1080 pixels to match the input size of the subsequent 3DCNN model.

- Dataset Curation: A total of 15,137 images were collected and categorized into 4,916 tree images, 5,132 roof images, 1,791 road images, and 3,298 other images.

- Ground Truth Labeling: Each image was labeled as "Good," "Normal," or "Severe" based on the Air Quality Index (AQI). Actual PM2.5 data for validation was collected using the EdiGreen website and handheld air quality monitors [8].

2. Visible Hyperspectral Imaging (VIS-cHSI) Conversion Algorithm:

- Since dedicated hyperspectral sensors can be costly, this study employed a novel algorithm to convert standard RGB images into hyperspectral images.

- Core Concept: Establish a relationship matrix between a digital camera and a laboratory spectrometer (Ocean Optics, QE65000) using a standard 24-color checker (X-Rite classic) as a reference target.

- Process: The reflectance spectrum data and RGB color patch images are converted to the CIE 1931 XYZ color space. Multiple regression is then used to derive a correction coefficient matrix to calibrate the camera. Principal Component Analysis (PCA) is performed on the spectrometer's reflectance data, and the principal component scores are used in a regression analysis to build a transformation matrix (M) that converts camera outputs to hyperspectral data [8].
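The PCA-plus-regression step described above can be sketched as follows. This is a simplified illustration on synthetic data, not the algorithm from [8]: the CIE XYZ conversion and camera correction steps are omitted, the 24 "patches" and the camera response are invented stand-ins, and all variable names are assumptions.

```python
import numpy as np

rng = np.random.default_rng(3)
n_patches, n_bands, n_pc = 24, 61, 3

# Synthetic stand-ins for the colour-checker measurements
wavelengths = np.linspace(400, 700, n_bands)
basis = np.stack([np.exp(-((wavelengths - c) / 60.0) ** 2)
                  for c in (450, 550, 650)])            # (3, bands)
spectra = rng.random((n_patches, 3)) @ basis             # "spectrometer" data
sensitivity = basis.T / basis.sum(axis=1)                # hypothetical camera response
camera = spectra @ sensitivity                           # (24, 3) "camera" values

# 1) PCA on the spectrometer reflectance data
mean_spec = spectra.mean(axis=0)
_, _, vt = np.linalg.svd(spectra - mean_spec, full_matrices=False)
components = vt[:n_pc]                                   # (3, bands)
scores = (spectra - mean_spec) @ components.T            # (24, 3)

# 2) Regress the principal component scores on camera values (with intercept)
design = np.hstack([camera, np.ones((n_patches, 1))])
M, *_ = np.linalg.lstsq(design, scores, rcond=None)      # transformation matrix


def camera_to_spectrum(values: np.ndarray) -> np.ndarray:
    """Map one camera triplet to an estimated reflectance spectrum."""
    pc_scores = np.append(values, 1.0) @ M
    return mean_spec + pc_scores @ components


reconstructed = camera_to_spectrum(camera[0])
print(np.abs(reconstructed - spectra[0]).max())
```

The reconstruction is near-exact here only because the synthetic spectra are built from three basis curves; real reflectance data would leave a residual error that depends on how many principal components are retained.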

3. Model Training and Evaluation:

- The curated dataset was split into a training set and a test set in an 8:2 ratio.

- Two separate three-dimensional convolutional neural network (3DCNN) models were trained: one (RGB-3DCNN) using traditional RGB images and another (cHSI-3DCNN) using the converted hyperspectral images as inputs.

- The predictive accuracy of both models was evaluated and compared. The cHSI-3DCNN model demonstrated superior performance, improving classification accuracy by up to 9% across the different regions compared to the traditional RGB-based model [8].
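The 8:2 split and the accuracy comparison can be illustrated with a few NumPy helpers. The sketch below does not train a 3DCNN. It only shows how the split indices and evaluation metrics might be computed, with the three pollution classes encoded as 0, 1, and 2 (an assumed encoding) and per-class precision, recall, and F1 included as commonly reported companions to accuracy.

```python
import numpy as np


def train_test_split_indices(n_samples: int, test_fraction: float = 0.2, seed: int = 0):
    """Shuffle sample indices and split them into train and test index arrays."""
    rng = np.random.default_rng(seed)
    order = rng.permutation(n_samples)
    n_test = int(round(n_samples * test_fraction))
    return order[n_test:], order[:n_test]


def accuracy(y_true, y_pred) -> float:
    return float(np.mean(np.asarray(y_true) == np.asarray(y_pred)))


def precision_recall_f1(y_true, y_pred, label):
    """Per-class precision, recall, and F1 for one label."""
    y_true, y_pred = np.asarray(y_true), np.asarray(y_pred)
    tp = np.sum((y_pred == label) & (y_true == label))
    fp = np.sum((y_pred == label) & (y_true != label))
    fn = np.sum((y_pred != label) & (y_true == label))
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return float(precision), float(recall), float(f1)


train_idx, test_idx = train_test_split_indices(15137)   # dataset size from the study
print(len(train_idx), len(test_idx))

# Toy labels: 0 = Good, 1 = Normal, 2 = Severe (assumed encoding)
y_true, y_pred = [0, 1, 2, 2], [0, 1, 1, 2]
print(accuracy(y_true, y_pred), precision_recall_f1(y_true, y_pred, label=1))
```

Using the same split for both models keeps the RGB and cHSI comparisons on identical test images.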

The Scientist's Toolkit

Successful implementation of hyperspectral imaging for environmental research requires a suite of specialized tools, from hardware and software to reference data.

Table 3: Essential Research Tools for Hyperspectral Imaging

| Tool / Material | Category | Function / Purpose |
|---|---|---|
| Spectral Reference Targets (e.g., 24-color checker) | Calibration Equipment | Provides known reflectance standards for empirical calibration of imagery, crucial for converting digital numbers to surface reflectance [8] |
| ECOSTRESS Spectral Library | Reference Data | A library of pure spectral signatures of materials; used for spectral matching to identify unknown substances in a scene [4] |
| Handheld Air Quality Monitors | Ground-Truthing Instrument | Provides in-situ measurements of parameters like PM2.5; used for validating and labeling remote sensing data [8] |
| ENVI, ERDAS IMAGINE | Commercial Software | Industry-standard software platforms offering comprehensive suites for processing, analyzing, and visualizing geospatial imagery [6] |
| MATLAB Hyperspectral Imaging Library | Software Toolbox | Provides a programming environment with specialized functions (e.g., hypercube, hyperpca, ppi) for representing and processing HSI data [4] [6] |
| Spectral Python (SPy), scikit-learn | Open-Source Libraries | Python libraries that provide a wide range of tools for reading, visualizing, processing, and classifying hyperspectral data [6] |

Hyperspectral imaging represents a profound advancement over traditional RGB imaging, equipping environmental researchers with the ability to move beyond superficial visual analysis to detailed compositional assessment. By capturing hundreds of narrow, contiguous spectral bands, HSI reveals the unique spectral "fingerprints" of materials, enabling the identification and quantification of environmental constituents—from harmful algae and air pollutants to stressed vegetation—that are invisible to the human eye and conventional cameras. As processing algorithms become more sophisticated and accessible, and as platforms like drones and next-generation satellites make acquisition more feasible, hyperspectral imaging is poised to become an indispensable tool in the global effort to monitor, understand, and protect our natural environment.

Hyperspectral imaging (HSI) is an advanced analytical technique that integrates spectroscopy with digital imaging, enabling the detailed characterization of materials based on their physical and chemical properties [1]. Unlike conventional color cameras that perceive light in only three broad bands (red, green, and blue), hyperspectral imaging systems divide the spectrum into numerous, contiguous bands, capturing a complete spectrum for each pixel in a scene [2] [9]. This capability to simultaneously capture spatial and spectral information makes HSI a powerful tool for environmental monitoring, allowing researchers to identify and map materials, detect pollutants, and assess ecosystem health with exceptional precision [5] [10].

The core principle of HSI lies in the fact that every material interacts with light in a unique way, creating a distinctive spectral signature or "fingerprint" [2] [1]. By analyzing these signatures across a spatial area, hyperspectral sensors can answer fundamental questions about a scene: what materials are present (based on their spectrum), where they are located (based on their spatial coordinates), and when changes occur over time [1]. This wealth of information is encapsulated in a three-dimensional data structure known as a hyperspectral data cube, which forms the foundation for all subsequent analysis and interpretation [2] [1].

The Hyperspectral Data Cube: Integrating Spatial and Spectral Dimensions

The hyperspectral data cube is the fundamental data structure generated by HSI systems, representing a synthesis of spatial and spectral information [2]. This three-dimensional cube is composed of two spatial dimensions (x, y) representing the scene's geometry, and one spectral dimension (λ) representing the wavelength [2] [11]. Figuratively speaking, a hyperspectral data cube can be visualized as a stack of images, where each layer corresponds to a specific narrow wavelength range across the electromagnetic spectrum [2].

Data Cube Axes and Information Content

- Spatial Dimensions (x, y): These axes provide the two-dimensional spatial layout of the scene, identical to a conventional image. Each spatial coordinate (pixel) contains not a single intensity value, but an entire spectral signature [1].

- Spectral Dimension (λ): This axis represents the wavelength information, with typical HSI systems capturing hundreds of contiguous spectral bands [2]. The spectral resolution, defined as the width of each spectral band, can be as fine as 1 nm or even sub-nanometer in advanced systems [12].

The power of this structure lies in the ability to analyze data from multiple perspectives. Researchers can examine a single wavelength band to view spatial patterns at that wavelength, or they can select a single pixel to analyze the complete spectral signature of a specific location, enabling material identification through spectroscopy [2] [1].
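In array terms, these two perspectives correspond to slicing the cube along different axes. The following minimal sketch uses a synthetic NumPy array with an assumed (y, x, λ) axis order and an assumed 400 to 1000 nm wavelength grid; actual axis conventions vary between file formats and libraries.

```python
import numpy as np

rows, cols, bands = 4, 5, 200
wavelengths = np.linspace(400.0, 1000.0, bands)   # assumed band centres in nm
rng = np.random.default_rng(4)
cube = rng.random((rows, cols, bands))            # synthetic (y, x, wavelength) cube


def band_image(cube, wavelengths, target_nm):
    """Return the 2D image of the band closest to target_nm, and its wavelength."""
    idx = int(np.argmin(np.abs(wavelengths - target_nm)))
    return cube[:, :, idx], float(wavelengths[idx])


def pixel_spectrum(cube, row, col):
    """Return the full spectrum recorded at one spatial location."""
    return cube[row, col, :]


image_650, actual_nm = band_image(cube, wavelengths, 650.0)
spectrum = pixel_spectrum(cube, 2, 3)
print(image_650.shape, round(actual_nm, 1), spectrum.shape)
```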

Table 1: Comparative Analysis of Hyperspectral Data Against Conventional Imaging

| Feature | Conventional RGB Imaging | Hyperspectral Imaging |
|---|---|---|
| Spectral Bands | 3 broad bands (Red, Green, Blue) [1] | Hundreds of narrow, contiguous bands [2] |
| Spectral Information | Approximates human vision; limited to color perception [1] | Provides complete spectral signature for each pixel [1] |
| Data Output | 2D color image | 3D hyperspectral data cube (x, y, λ) [2] |
| Material Identification | Limited to visual differentiation | Precise identification based on spectral fingerprints [2] [1] |
| Application Scope | Primarily visual inspection and documentation | Quantitative analysis, material classification, change detection [1] [10] |

Fundamental Scanning Techniques for Data Acquisition

Acquiring the three-dimensional hyperspectral data cube requires specialized scanning techniques. There are four primary methods for sampling the hyperspectral cube, each with distinct advantages, disadvantages, and suitability for different environmental monitoring applications [2] [9].

Spatial Scanning (Pushbroom and Whiskbroom)

Spatial scanning methods acquire spectral information along a line or point while moving the sensor relative to the target area [2] [9].

- Pushbroom Scanners: These systems capture a complete slit spectrum (x, λ) in a single integration time, with the second spatial dimension (y) collected through sensor platform movement, such as on a satellite, aircraft, or conveyor belt [2] [13]. Pushbroom scanners offer high spectral resolution and are particularly common in remote sensing applications [13] [9]. A prominent example is the "Zhuhai No.1" hyperspectral satellite constellation, which employs pushbroom technology with a 150 km image width and 10 m spatial resolution [13].

- Whiskbroom Scanners: These represent a point scanning approach where a single point on the ground is measured at a time, building up the image through scanning in both the x and y directions [2] [9]. While whiskbroom scanners can offer the highest spectral resolution, the requirement for two-dimensional scanning significantly increases acquisition time [9].
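The difference between the two spatial scanning modes shows up in how the cube is assembled from individual readouts. The sketch below is a simplified illustration with synthetic arrays; the function names are hypothetical, and real systems also need geometric correction for platform motion.

```python
import numpy as np


def assemble_pushbroom(frames):
    """Stack (x, bands) line frames, one per along-track step, into (y, x, bands)."""
    return np.stack(list(frames), axis=0)


def assemble_whiskbroom(spectra, n_rows, n_cols):
    """Reshape point spectra measured in raster order into (y, x, bands)."""
    spectra = np.asarray(spectra)
    return spectra.reshape(n_rows, n_cols, spectra.shape[1])


# Pushbroom: 8 along-track steps, each delivering a 6-pixel line with 10 bands
line_frames = [np.full((6, 10), step, dtype=float) for step in range(8)]
pushbroom_cube = assemble_pushbroom(line_frames)

# Whiskbroom: 2 x 3 ground points measured one at a time, 4 bands each
point_spectra = np.arange(2 * 3 * 4, dtype=float).reshape(6, 4)
whiskbroom_cube = assemble_whiskbroom(point_spectra, n_rows=2, n_cols=3)

print(pushbroom_cube.shape, whiskbroom_cube.shape)
```

A pushbroom sensor needs one readout per image line, whereas a whiskbroom sensor needs one per pixel, which is the source of the longer acquisition time noted above.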

Spectral Scanning (Tunable Filters)

Spectral scanning, also referred to as plane or area scanning, involves capturing a complete two-dimensional spatial image (x, y) of the scene at one specific wavelength at a time [2] [9]. The system sequentially scans through the spectral dimension by exchanging optical band-pass filters (either tunable or fixed) while the platform remains stationary [2]. This method benefits from direct representation of spatial dimensions but is susceptible to spectral smearing if there is movement within the scene during acquisition [2]. For moving platforms like airplanes, sophisticated realignment of images captured at different wavelengths is necessary to correct for spatial offsets [2].

Non-Scanning (Snapshot) Imaging

Snapshot hyperspectral imagers capture the entire three-dimensional data cube (x, y, λ) in a single integration period without any scanning [2] [9]. These systems use a staring array to generate an image instantly, providing significant advantages in light throughput and acquisition speed, making them suitable for dynamic scenes [2] [12]. However, they often come with trade-offs in spatial resolution and require substantial computational effort for data reconstruction [2] [9]. Various technological approaches exist, including Computed Tomographic Imaging Spectrometry (CTIS), Coded Aperture Snapshot Spectral Imaging (CASSI), and Image Mapping Spectrometry (IMS) [2]. Recent advances using compressed sensing (CS) have led to snapshot systems with significantly improved sensitivity and video-rate operation (e.g., 32 fps), enabling applications in drones and other platforms requiring high temporal resolution [12].

Spatiospectral Scanning

Spatiospectral scanning represents a hybrid approach where each two-dimensional sensor output represents a wavelength-coded spatial map of the scene (λ = λ(y)) [2] [12]. A basic implementation involves placing a camera at a non-zero distance behind a slit spectroscope (slit + dispersive element) [2]. This technique unites advantages of both spatial and spectral scanning, alleviating some of their respective disadvantages while maintaining relatively simple optical arrangements [2].

Table 2: Technical Comparison of Hyperspectral Acquisition Methods

| Acquisition Method | Spatial Resolution | Spectral Resolution | Acquisition Speed | Primary Applications |
|---|---|---|---|---|
| Spatial Scanning (Pushbroom) | Moderate [9] | High (can be ≤1 nm) [12] | Moderate (limited by platform movement) [2] | Airborne and satellite remote sensing [2] [13] |
| Spectral Scanning (Tunable Filter) | High (preserves sensor resolution) [9] | Moderate to High [2] | Slow (sequential band capture) [9] | Laboratory analysis, stationary industrial inspection [2] |
| Non-Scanning (Snapshot) | Lower (due to computational reconstruction) [9] | Moderate [12] | Very Fast (single exposure) [2] [12] | Real-time monitoring, drone-based sensing, dynamic process control [12] |
| Spatiospectral Scanning | Moderate to High [2] | Moderate to High [2] | Moderate [2] | Emerging applications, portable field instrumentation [2] |

Instrumentation and Research Toolkit for Environmental Monitoring

Implementing hyperspectral imaging for environmental research requires a suite of specialized hardware and software components designed to capture, process, and analyze the complex three-dimensional datasets.

Essential Hardware Components

- Hyperspectral Sensors/Spectrometers: The core imaging device that captures spectral and spatial information. These can be based on various scanning principles (pushbroom, snapshot, etc.) and cover different spectral ranges (VNIR, SWIR, MWIR) depending on the target applications [2] [9].

- Platforms: The mounting system for the hyperspectral sensor, which can include satellites, aircraft, drones (UAVs), and ground-based setups such as tripods.

- Spectral Calibration Targets: Reference materials with known reflectance properties (e.g., standardized 24-color checker) essential for converting raw sensor data into quantitative spectral reflectance values, enabling accurate comparison across different measurements and timepoints [8] [11].

- Broadband Illumination: Controlled lighting systems that provide consistent, uniform illumination across the spectral range of interest, which is particularly critical for laboratory and indoor HSI applications [11].

- Data Processing Units: High-performance computing hardware, often with Graphics Processing Units (GPU), to handle the computationally intensive tasks of data cube reconstruction, calibration, and analysis, especially for large datasets and real-time applications [12].

Critical Software and Analytical Tools

- Data Processing Platforms: Specialized software for high-throughput hyperspectral data extraction, preprocessing, and analysis. Tools like DEA (Data Extraction and Analysis) provide integrated modules for batch processing and support researchers without extensive programming expertise [14].

- Classification Algorithms: Machine learning and deep learning models (e.g., 3D Convolutional Neural Networks) that automatically identify and map materials based on their spectral signatures [8] [10] [13]. These algorithms are essential for processing large hyperspectral datasets efficiently.

- Spectral Libraries: Databases containing reference spectral signatures of known materials (e.g., minerals, vegetation types, pollutants) which serve as training data for classification algorithms and enable material identification [2] [10].

- Radiometric Calibration Software: Algorithms that convert raw digital numbers from the sensor into physically meaningful reflectance values by accounting for sensor dark current, non-linear response, and illumination irregularities [8] [11].

Experimental Protocol for Environmental Monitoring Using HSI

The application of hyperspectral imaging to environmental monitoring follows a structured workflow encompassing data acquisition, preprocessing, analysis, and interpretation. The following protocol outlines a representative experiment for air pollution monitoring using hyperspectral data, based on current research methodologies [8].

Dataset Preparation and Acquisition

- Platform and Sensor Selection: Employ an aerial platform such as a UAV (drone) equipped with a hyperspectral camera. The drone should be raised to a standard altitude (e.g., 100 meters) and capture images at a nadir (90-degree) angle to ensure consistent spatial resolution across the survey area [8].

- Spatial and Spectral Configuration: Configure the sensor to capture data at a suitable image size (e.g., 1920 × 1080 pixels) and across relevant spectral ranges (e.g., 400-1000 nm VNIR) [8] [13]. The specific image size, ground resolution, and spectral range should be selected based on the target pollutants and spatial scale of interest.

- Ground Truthing: Collect simultaneous in-situ measurements for validation. For air quality studies, this involves using handheld air quality monitors or referencing monitoring station data to measure actual PM2.5 concentrations at the time of image capture [8]. For other applications like vegetation or water monitoring, corresponding field measurements (e.g., chlorophyll content, turbidity) should be collected [5].

- Reference Data Capture: Image a standardized reference target (e.g., 24-color checker) with known reflectance properties under the same illumination conditions. This is crucial for subsequent radiometric calibration [8] [11].

Data Preprocessing and Calibration

- Radiometric Calibration: Convert the raw digital numbers from the sensor to spectral reflectance values using the reference target data. This process accounts for sensor non-linearity, dark current, and varying illumination intensity across wavelengths [8] [11].

- Geometric Correction: Correct for spatial distortions introduced by the sensor optics and platform movement. This is particularly important for pushbroom scanners and airborne platforms [2].

- Atmospheric Correction (for remote sensing): Apply algorithms to remove the scattering and absorption effects of the atmosphere on the spectral signal, isolating the reflectance properties of the ground surface or target materials [10].

- Data Labeling and Segmentation: Manually or automatically classify and segment the acquired images into regions of interest (e.g., trees, roofs, roads, water bodies) based on their spatial and spectral characteristics [8]. Assign appropriate class labels (e.g., "Good," "Normal," "Severe" for pollution levels) according to the ground-truthed data [8].
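A common way to perform the radiometric calibration step above is flat-field correction with a dark frame and a white reference. The sketch below assumes a linear sensor response, so it does not address the non-linearity mentioned in the list, and all values are synthetic. The function name and the `white_reflectance` parameter are illustrative assumptions.

```python
import numpy as np


def to_reflectance(raw, dark, white, white_reflectance=1.0, eps=1e-9):
    """Convert raw digital numbers to reflectance per band.

    raw:   (rows, cols, bands) scene measurement
    dark:  (bands,) dark-current reading (shutter closed)
    white: (bands,) reading from the reference target under scene illumination
    """
    raw = np.asarray(raw, dtype=np.float64)
    dark = np.asarray(dark, dtype=np.float64)
    white = np.asarray(white, dtype=np.float64)
    # Guard against division by zero in bands with no usable signal
    denom = np.maximum(white - dark, eps)
    return np.clip((raw - dark) / denom * white_reflectance, 0.0, None)


dark = np.full(5, 100.0)
white = np.full(5, 4100.0)
raw = np.full((2, 2, 5), 2100.0)

reflectance = to_reflectance(raw, dark, white)
print(reflectance[0, 0])
```

Dividing by the white reference band by band removes the wavelength-dependent illumination intensity, which is what makes spectra from different flights or dates comparable.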

Data Analysis and Model Implementation

- Algorithm Selection: Choose appropriate machine learning models for the analysis task. For hyperspectral cube classification, three-dimensional convolutional neural networks (3DCNN) are particularly effective as they can simultaneously extract spatial and spectral features [8].

- Model Training and Validation: Split the dataset into training and testing sets (e.g., 80:20 ratio). Train the selected model on the training set and evaluate its predictive accuracy on the withheld test set using metrics such as precision, recall, F1-score, and overall accuracy [8].

- Spectral Analysis: Conduct detailed examination of the spectral signatures extracted from different regions and under different environmental conditions. Compare these signatures to established spectral libraries to identify specific materials or pollutants [2] [10].
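Comparing an extracted signature against a spectral library can be illustrated with the spectral angle, one widely used similarity measure that is insensitive to overall brightness. The library entries below are invented toy spectra rather than real reference data, and the function names are assumptions for this sketch.

```python
import numpy as np


def spectral_angle(a, b) -> float:
    """Angle in radians between two spectra; smaller means more similar."""
    a = np.asarray(a, dtype=np.float64)
    b = np.asarray(b, dtype=np.float64)
    cos = np.dot(a, b) / (np.linalg.norm(a) * np.linalg.norm(b))
    return float(np.arccos(np.clip(cos, -1.0, 1.0)))


def best_match(spectrum, library):
    """Return the library entry with the smallest spectral angle to the spectrum."""
    angles = {name: spectral_angle(spectrum, ref) for name, ref in library.items()}
    name = min(angles, key=angles.get)
    return name, angles[name]


# Toy five-band library (values are illustrative, not measured)
library = {
    "vegetation": np.array([0.05, 0.08, 0.04, 0.45, 0.50]),
    "water": np.array([0.06, 0.05, 0.03, 0.01, 0.01]),
    "soil": np.array([0.10, 0.15, 0.20, 0.25, 0.30]),
}

# Same material under brighter illumination: scaled copy of the reference
unknown = 2.0 * library["vegetation"]
print(best_match(unknown, library))
```

Because the angle ignores vector length, a uniformly brighter or darker observation of the same material still matches its reference.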

Hyperspectral imaging stands as a transformative technology that successfully unites spatial and spectral data acquisition into a single, powerful analytical framework. Through the generation of a three-dimensional data cube and the application of specialized scanning techniques—from spatial and spectral scanning to advanced snapshot methods—HSI provides an unparalleled capacity to identify and quantify materials based on their unique spectral fingerprints. For environmental researchers and monitoring professionals, these core principles enable a wide range of critical applications, from pollution detection and ecosystem health assessment to climate change impact analysis. The continued evolution of HSI platforms, sensors, and analytical algorithms promises to further enhance our ability to understand and protect the environment through detailed, data-driven insight into the complex physical and chemical processes shaping our world.

Hyperspectral imaging (HSI) is an advanced technique that captures and processes information from across the electromagnetic spectrum to obtain the spectrum for each pixel in an image of a scene [2]. The core data structure in hyperspectral imaging is the hyperspectral data cube, also known as a hypercube or spectral cube. This three-dimensional (3D) block of data represents a significant advancement over traditional imaging methods by combining spatial information with extensive spectral detail [15] [16]. Unlike traditional color cameras that capture only three broad wavelength bands (red, green, and blue), hyperspectral imaging collects hundreds of narrow, contiguous spectral bands, generating a continuous spectrum for every image pixel [2] [17]. This enables fine-grained identification of materials based on their unique spectral signatures, often described as optical "fingerprints" [2].

The capacity to answer not just where something is located but also what it is composed of makes hyperspectral imaging particularly valuable for environmental monitoring research [15]. In this context, the technology provides researchers with a powerful tool for detecting subtle ecological changes, tracking pollutants, assessing vegetation health, and monitoring various environmental parameters over time [5] [18]. This technical guide explores the fundamental structure of the hyperspectral data cube, its acquisition methodologies, processing workflows, and specific applications within environmental science.

Structural Anatomy of the Hyperspectral Data Cube

The hyperspectral data cube is a three-dimensional array that integrates two spatial dimensions with one spectral dimension. This structure forms the foundational framework for all subsequent analysis in hyperspectral imaging.

Core Dimensions and Components

- Spatial Dimensions (X and Y axes): The X and Y axes represent the two-dimensional spatial coordinates of the scene, similar to a conventional photograph. Each point in this spatial plane corresponds to a specific location on the target or area being imaged [15] [16].

- Spectral Dimension (λ or Z axis): The third dimension (typically denoted by λ, wavelength) represents the spectral information. It consists of numerous contiguous spectral bands, with each "slice" of the cube along this axis representing the entire spatial field of view at a specific wavelength [15] [16].

- Data Values: At each coordinate (X, Y, λ) within the cube, a numerical value is stored representing the signal intensity, reflectance, or radiance at that specific spatial location and wavelength [16]. Instead of a single color value, each pixel contains a vector of reflectance values across the electromagnetic spectrum, forming a complete spectral signature for that location [15].
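The three components above map directly onto array indexing. The following is a minimal sketch using a synthetic NumPy array. The (rows, columns, bands) axis order, the cube size, and the band centers are assumptions chosen for illustration, since real files may store the axes in a different order.

```python
import numpy as np

# Synthetic cube: 4 rows (Y) x 5 columns (X) x 6 bands (lambda).
rows, cols, bands = 4, 5, 6
wavelengths_nm = np.linspace(450, 950, bands)   # hypothetical band centers
cube = np.arange(rows * cols * bands, dtype=np.float32).reshape(rows, cols, bands)

# Spectral signature: every band value at one spatial location.
signature = cube[2, 3, :]        # shape (bands,)

# Band image: the whole spatial scene at a single wavelength.
band_image = cube[:, :, 1]       # shape (rows, cols)

# Single data value at (y=2, x=3, band index 1).
value = cube[2, 3, 1]

print(signature.shape, band_image.shape, float(value))
```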

Table 1: Core Components of a Hyperspectral Data Cube

| Component | Description | Representation |
|---|---|---|
| Spatial Dimensions (X, Y) | Two-dimensional image coordinates | Pixel rows and columns |
| Spectral Dimension (λ) | Wavelength, frequency, or energy channels | Contiguous spectral bands |
| Data Values | Signal intensity or flux at each (X, Y, λ) coordinate | Digital numbers, reflectance, or radiance values |
| Spectral Signature | The spectrum of reflected light at a single pixel | A vector of values across λ for one (X, Y) point |

Metadata and Calibration Information

Beyond the raw data cube, hyperspectral data requires comprehensive metadata for accurate interpretation and processing. This metadata is typically stored in header files (e.g., .hdr files), sidecar XML files, or embedded within modern formats like HDF5 [15]. Critical metadata includes:

- Spectral Parameters: Center wavelength and full width at half maximum (FWHM) for each band [15].

- Acquisition Parameters: Date, time, solar angle, and viewing geometry during capture [15].

- Sensor/Platform Information: Details about the instrument and platform (e.g., satellite, drone) [15].

- Radiometric Calibration Coefficients: Factors to convert digital numbers to physical units like radiance [15].

- Spatial Reference System: Georeferencing and geolocation details for spatial analysis [19].

Data Acquisition and Scanning Methodologies

The process of generating a hyperspectral data cube involves specialized sensors and scanning techniques. There are four primary methods for acquiring the three-dimensional (x, y, λ) dataset, each with distinct advantages and trade-offs [2].

Scanning Techniques

- Spatial Scanning (Push Broom/Whisk Broom): In spatial scanning, each two-dimensional sensor output represents a full slit spectrum (x, λ). Systems using this method project a strip of the scene onto a slit, which is then dispersed by a prism or grating [2]. Push broom scanners capture an entire line of the scene simultaneously, while whisk broom scanners use a point-like aperture to scan across the scene. These methods are common in airborne and satellite remote sensing but require stable mounts or accurate pointing information to reconstruct the complete image [2].

- Spectral Scanning (Tunable Filters): In this approach, each 2D sensor output represents a monochromatic spatial (x, y) map of the scene. The system spectrally scans the scene by exchanging optical band-pass filters (tunable or fixed) while the platform remains stationary [2]. While this "staring" method provides a direct representation of spatial dimensions, it can suffer from spectral smearing if there is movement within the scene [2].

- Non-Scanning (Snapshot Imaging): Snapshot hyperspectral imagers capture the entire datacube simultaneously in a single integration period without any scanning [2]. Techniques include computed tomographic imaging spectrometry (CTIS) and coded aperture snapshot spectral imaging (CASSI). The key benefits are higher light throughput and shorter acquisition times, which are advantageous for dynamic scenes. The trade-offs often include higher computational demands and manufacturing costs [2].

- Spatiospectral Scanning: A more advanced technique where each 2D sensor output represents a wavelength-coded spatial map of the scene. This method unites advantages of both spatial and spectral scanning, alleviating some of their limitations [2].
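The difference between spatial and spectral scanning comes down to which two-dimensional slice the sensor records per exposure. As a hedged sketch, a synthetic "true" scene is sliced both ways and the cube is reassembled from each set of frames. The array sizes and the (y, x, λ) axis order are assumptions, and real systems also need motion compensation and registration, which are omitted here.

```python
import numpy as np

rows, cols, bands = 3, 4, 5
rng = np.random.default_rng(0)
scene = rng.random((rows, cols, bands))          # "true" cube, axes (y, x, lambda)

# Spatial (push broom) scanning: one (x, lambda) frame per scan line y.
line_frames = [scene[y, :, :] for y in range(rows)]
cube_pushbroom = np.stack(line_frames, axis=0)   # stack frames along y

# Spectral scanning: one monochromatic (y, x) frame per wavelength.
band_frames = [scene[:, :, b] for b in range(bands)]
cube_spectral = np.stack(band_frames, axis=2)    # stack frames along lambda

print(cube_pushbroom.shape, cube_spectral.shape)
```

Both routes produce the same cube only when nothing moves between exposures, which is why platform stability matters for push broom systems and scene motion matters for spectral scanning.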

Table 2: Hyperspectral Data Acquisition Techniques

| Technique | Operating Principle | Typical Platforms | Advantages | Limitations |
|---|---|---|---|---|
| Spatial Scanning | Captures a slit spectrum (x, λ) for each scan line | Airborne, satellite, conveyor belts | High spectral resolution, good for mobile platforms | Requires stable platform/pointing data |
| Spectral Scanning | Captures a full 2D image (x, y) at each wavelength | Laboratory, stationary field setups | Direct spatial representation, selectable bands | Spectral smearing with scene movement |
| Non-Scanning (Snapshot) | Captures full (x, y, λ) cube simultaneously | Portable field instruments, dynamic scenes | No moving parts, fast acquisition, high light throughput | High computational cost, complex instrumentation |
| Spatiospectral | Captures a wavelength-coded (x, y) map | Emerging applications | Combines advantages of spatial and spectral scanning | Less established technology |

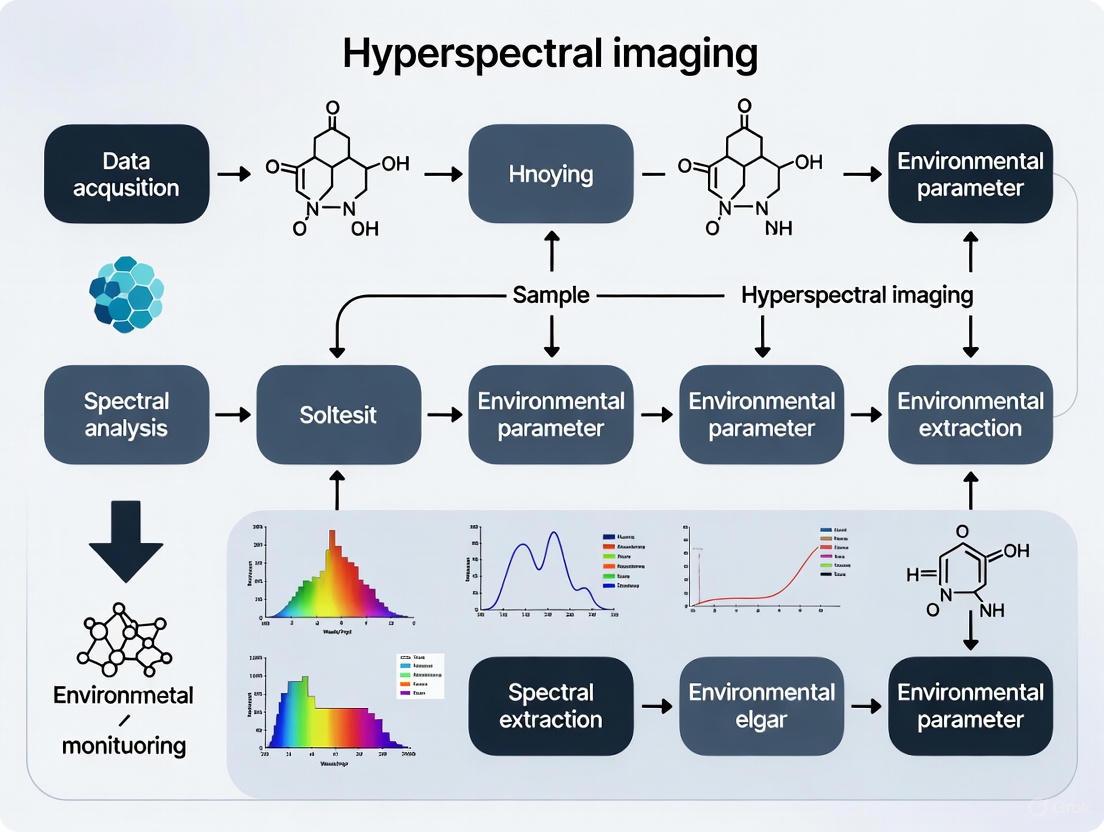

Figure 1: Workflow of hyperspectral data acquisition techniques leading to raw data cube generation.

Sensor Platforms and Resolution Considerations

Hyperspectral cubes are generated from various platforms, including airborne sensors like NASA's Airborne Visible/Infrared Imaging Spectrometer (AVIRIS), satellites like NASA's EO-1 with its Hyperion instrument, and increasingly from drones and handheld sensors [2]. Two critical resolution parameters define sensor performance:

- Spectral Resolution: The width of each spectral band captured by the sensor. Finer resolution (narrower bands) enables better discrimination between materials with similar spectral signatures [2].

- Spatial Resolution: The size of the area on the ground represented by a single pixel. Higher spatial resolution (smaller pixel size) allows for the identification of smaller objects but requires more data storage and processing capacity. If pixels are too large, multiple objects are captured in the same pixel (spectral mixing), making them difficult to identify [2].
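The storage cost of finer spatial resolution can be estimated with simple arithmetic. In this rough sketch, the scene dimensions, the band count, and the 2-byte (16-bit) sample size are assumptions picked for illustration. Halving the pixel size over the same ground area doubles both the row and column counts.

```python
def cube_size_bytes(rows, cols, bands, bytes_per_sample=2):
    """Uncompressed size of a hyperspectral cube in bytes."""
    return rows * cols * bands * bytes_per_sample

coarse = cube_size_bytes(1000, 1000, 200)   # e.g. a coarser pixel size
fine = cube_size_bytes(2000, 2000, 200)     # half the pixel size, same area

print(coarse / 1e6, "MB")
print(fine / 1e6, "MB")
print(fine / coarse, "x larger")
```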

Processing and Analysis Workflow

Transforming raw hyperspectral data into actionable insights requires a multi-step processing workflow. This pipeline involves calibration, preprocessing, and advanced analysis to extract meaningful information.

From Raw Data to Analysis-Ready Imagery

The journey from raw data to analysis involves several critical steps that prepare the data for accurate interpretation. The following workflow outlines this process:

Figure 2: Hyperspectral data preprocessing workflow from raw data to analysis-ready imagery.

- Radiometric Calibration converts raw digital numbers from the sensor to physical units of radiance, correcting for sensor-specific effects and variations [19]. This is a fundamental step for quantitative analysis and for comparing data from different sensors or acquisition dates.

- Atmospheric Correction removes the effects of atmospheric scattering and absorption (e.g., from water vapor and aerosols) to convert at-sensor radiance to surface reflectance [15]. This crucial step enables meaningful comparison of spectral signatures acquired under different atmospheric conditions.

- Geometric Correction addresses spatial distortions in the imagery caused by sensor viewing geometry, platform motion, and terrain relief, ensuring proper alignment with maps or other geospatial data [16].
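The radiometric step above is, at its simplest, a per-band linear transform. The sketch below shows that transform, plus an empirical white/dark reference normalization of the kind often used with a reflectance panel in lab or field setups. The function names, gain and offset values, and reference spectra are hypothetical. The second helper is not a physically based atmospheric correction and should not be read as a substitute for one.

```python
import numpy as np

def dn_to_radiance(dn_cube, gain, offset):
    """Per-band linear calibration: radiance = gain * DN + offset."""
    return dn_cube * gain + offset          # gain/offset broadcast over the band axis

def to_reflectance(raw_cube, white_ref, dark_ref):
    """Empirical reflectance from white-panel and dark-frame reference spectra."""
    denom = np.clip(white_ref - dark_ref, 1e-12, None)   # avoid division by zero
    return (raw_cube - dark_ref) / denom

# Toy 2 x 2 scene with 3 bands, all digital numbers equal to 100.
dn_cube = np.full((2, 2, 3), 100.0)
gain = np.array([0.1, 0.2, 0.5])            # hypothetical calibration coefficients
offset = np.array([1.0, 0.0, -1.0])
radiance = dn_to_radiance(dn_cube, gain, offset)

white_ref = np.array([200.0, 200.0, 200.0])  # hypothetical panel reading
dark_ref = np.array([0.0, 0.0, 0.0])         # hypothetical dark frame
reflectance = to_reflectance(dn_cube, white_ref, dark_ref)

print(radiance[0, 0], reflectance[0, 0])
```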

Analytical Techniques and Feature Extraction

With analysis-ready data, researchers can employ various techniques to extract meaningful environmental information:

- Spectral Signature Analysis: The fundamental analysis involves examining the spectral signature of individual pixels or regions of interest. This allows researchers to identify materials by comparing their spectra to reference spectral libraries [2] [16].

- Spectral Indices: Calculated mathematical combinations of reflectance at specific wavelengths are used to highlight phenomena of interest. For example, the Normalized Difference Vegetation Index (NDVI) uses red and near-infrared bands to assess vegetation health and density [19].

- Machine Learning and Classification: Advanced algorithms, including three-dimensional convolutional neural networks (3DCNN), can automatically classify materials, detect anomalies, and identify patterns within the high-dimensional hyperspectral data [8] [16]. Studies have shown that using hyperspectral data cubes as input to 3DCNN models can improve accuracy in tasks like air pollution classification by as much as 9% compared to traditional RGB images [8].

- Dimensionality Reduction: Techniques like Principal Component Analysis (PCA) are often applied to reduce the computational burden by transforming the data into a lower-dimensional space while preserving most of the relevant information [8].
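Two of the techniques above are compact enough to sketch directly. NDVI is computed as (NIR − Red) / (NIR + Red), and PCA can be done with a singular value decomposition of the mean-centered pixel matrix. The band centers chosen for red and near-infrared (660 nm and 850 nm), the helper names, and the random test cube are assumptions, not values taken from the cited sources.

```python
import numpy as np

def nearest_band(wavelengths_nm, target_nm):
    """Index of the band whose center is closest to target_nm."""
    return int(np.argmin(np.abs(np.asarray(wavelengths_nm) - target_nm)))

def ndvi(cube, wavelengths_nm, red_nm=660.0, nir_nm=850.0):
    """NDVI = (NIR - Red) / (NIR + Red), computed per pixel."""
    red = cube[:, :, nearest_band(wavelengths_nm, red_nm)]
    nir = cube[:, :, nearest_band(wavelengths_nm, nir_nm)]
    return (nir - red) / np.clip(nir + red, 1e-12, None)

def pca_reduce(cube, n_components):
    """Project each pixel spectrum onto the first n principal components."""
    rows, cols, bands = cube.shape
    pixels = cube.reshape(-1, bands)
    centered = pixels - pixels.mean(axis=0)
    # Rows of vt are the principal axes, ordered by decreasing variance.
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    scores = centered @ vt[:n_components].T
    return scores.reshape(rows, cols, n_components)

wavelengths_nm = np.arange(400, 1001, 10)          # 61 bands, 10 nm spacing
rng = np.random.default_rng(0)
cube = rng.random((8, 8, wavelengths_nm.size))     # synthetic reflectance cube

ndvi_map = ndvi(cube, wavelengths_nm)
reduced = pca_reduce(cube, n_components=3)
print(ndvi_map.shape, reduced.shape)
```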

Environmental Monitoring Applications

The rich spectral information contained within hyperspectral data cubes makes them particularly valuable for environmental monitoring, enabling detection and analysis of subtle changes in ecosystems that are invisible to other imaging methods.

Key Application Areas

- Water Quality Assessment: Hyperspectral imaging is used to assess parameters like chlorophyll concentration, turbidity, and the presence of harmful algal blooms and pollutants, including microplastics [5]. The technology can detect specific spectral signatures associated with different water constituents, providing a comprehensive view of water body health.

- Pollution Detection and Monitoring: A significant application is the identification and tracking of pollutants in air, water, and soil [5]. For air quality, researchers have developed algorithms to classify particulate matter (PM2.5) pollution levels as "Good," "Normal," or "Severe" by analyzing hyperspectral images of different surfaces like trees, roofs, and roads [8]. Mineral-based fluids and materials have distinctive spectra that can be used to detect soil contamination even when it is not visible to the naked eye [5].

- Vegetation and Ecosystem Health: Hyperspectral data enables species-level classification, detection of vegetation stress, nutrient deficiencies, and disease outbreaks before they become visible [15] [18]. Shifts in chlorophyll content and other pigments can be detected early, allowing for timely interventions. This is crucial for tracking biodiversity, ecosystem degradation, and climate-driven changes [18].

- Methane Emission Detection: The high spectral fidelity of hyperspectral data allows for the quantification and localization of methane emissions with significant accuracy [18]. This application is becoming increasingly important for monitoring greenhouse gas emissions from various sources, including energy infrastructure and landfills.

- Natural Disaster Prevention and Monitoring: The technology supports monitoring and prevention of disasters like wildfires, landslides, and floods. For instance, it can map the distribution of fire-sensitive materials in nature or around infrastructure, enabling targeted preventive measures [5].

Table 3: Quantitative Examples of Hyperspectral Imaging in Environmental Research

| Application Area | Measured Parameter | Experimental Outcome/Performance | Citation |
|---|---|---|---|
| Air Pollution Classification | PM2.5 levels from surfaces | HSI-3DCNN model showed up to 9% higher accuracy than RGB-based model | [8] |
| Precision Agriculture | Crop health, disease, stress | Early detection of nutrient deficiencies and disease outbreaks | [15] [20] |
| Methane Detection | Atmospheric methane concentrations | Capable of quantifying and localizing emissions with high accuracy | [18] |
| Non-destructive Testing | Potato quality (germination) | Identification of germination sites via spectral differences at 400-1100 nm | [17] |
| Water Quality Monitoring | Chlorophyll, algal blooms, turbidity | Detection of contaminants and assessment of water body health | [5] |

Experimental Protocol: Air Pollution Monitoring Using HSI

The following detailed methodology is based on a published study that classified air pollution levels using hyperspectral imaging and 3DCNN [8]:

Data Acquisition:

- An aerial camera mounted on a drone is raised to 100 meters above ground and captures images at a 90-degree angle.

- Image resolution is set to 1920 × 1080 pixels to match the input requirements of the 3DCNN model.

- Actual PM2.5 data is collected concurrently using reference-grade handheld air quality monitors and official air quality index (AQI) data from validated sources.

Dataset Preparation:

- Captured images are classified and segmented into four categories: trees, roofs, roads, and other surfaces.

- A dataset of 15,137 images is compiled, with each image labeled as "Good," "Normal," or "Severe" according to the AQI, with each category representing approximately one-third of the dataset.

- The dataset is divided into training and testing sets with an 8:2 ratio.

Hyperspectral Image Conversion (if using RGB source):

- A conversion algorithm (cHSI) transforms traditional RGB images into hyperspectral images.

- The relationship matrix between the camera and a spectrometer is established using a standard 24-color checker as a reference target.

- Reflectance spectrum data and color patch images are converted to the CIE 1931 XYZ color space for calibration and correction.
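One way to picture the "relationship matrix" idea is as a least-squares fit between camera responses and spectrometer readings for the 24 checker patches. The sketch below is not the published cHSI algorithm. It skips the CIE 1931 XYZ step, assumes a purely linear relationship, and uses synthetic numbers in place of real measurements. The band count and all variable names are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)
n_patches, n_bands = 24, 31          # 24 checker patches; band count is illustrative

# Synthetic stand-ins for real measurements of the color checker.
true_map = rng.random((3, n_bands))          # hidden linear relation (toy data)
camera_rgb = rng.random((n_patches, 3))      # camera response per patch
measured_spectra = camera_rgb @ true_map     # spectrometer reading per patch

# Least-squares fit of M such that camera_rgb @ M approximates measured_spectra.
M, *_ = np.linalg.lstsq(camera_rgb, measured_spectra, rcond=None)

# Apply the fitted matrix to every pixel of an RGB image.
rgb_image = rng.random((4, 5, 3))
estimated_cube = rgb_image @ M               # shape (4, 5, n_bands)

print(M.shape, estimated_cube.shape)
```

Because three RGB channels cannot uniquely determine a full spectrum, a cube estimated this way is an approximation constrained by the calibration targets, not a measured hyperspectral cube.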

Model Training and Validation:

- Two separate 3DCNN models are trained: one using traditional RGB images (RGB-3DCNN) and another using the converted hyperspectral images (HSI-3DCNN).

- Model performance is evaluated using metrics including precision, recall, F1-score, and accuracy.

- The predictive accuracy of both models is compared to quantify the improvement gained by using hyperspectral data.
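The evaluation metrics named above can all be derived from a confusion matrix. The following minimal sketch uses NumPy only. The function name `per_class_metrics` and the toy predictions are illustrative and do not reproduce the study's results.

```python
import numpy as np

def per_class_metrics(y_true, y_pred, n_classes):
    """Precision, recall and F1 per class, plus overall accuracy."""
    cm = np.zeros((n_classes, n_classes), dtype=int)   # rows: true, cols: predicted
    for t, p in zip(y_true, y_pred):
        cm[t, p] += 1
    tp = np.diag(cm).astype(float)
    predicted = cm.sum(axis=0)      # how often each class was predicted
    actual = cm.sum(axis=1)         # how often each class truly occurred
    precision = np.divide(tp, predicted, out=np.zeros_like(tp), where=predicted > 0)
    recall = np.divide(tp, actual, out=np.zeros_like(tp), where=actual > 0)
    denom = precision + recall
    f1 = np.divide(2 * precision * recall, denom, out=np.zeros_like(tp), where=denom > 0)
    accuracy = tp.sum() / cm.sum()
    return {"precision": precision, "recall": recall, "f1": f1, "accuracy": accuracy}

# Toy example with classes 0 = "Good", 1 = "Normal", 2 = "Severe".
y_true = [0, 0, 1, 1, 2, 2]
y_pred = [0, 1, 1, 1, 2, 0]
metrics = per_class_metrics(y_true, y_pred, n_classes=3)
print(metrics)
```

Running the same function on the predictions of two models evaluated on the same test set gives a like-for-like comparison of their accuracy.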

The Researcher's Toolkit

Working effectively with hyperspectral data cubes requires familiarity with a suite of tools, ranging from physical instruments to software libraries and data formats.

Table 4: Essential Tools for Hyperspectral Environmental Research

| Tool Category | Specific Examples/Formats | Function in Research |
|---|---|---|
| Sensors & Platforms | Airborne (AVIRIS), Satellite (Hyperion, Pixxel's Fireflies), Drone-based systems, Handheld sensors | Data acquisition at various spatial/spectral resolutions and coverage areas |
| Data Formats & Metadata | ENVI format (.hdr headers), HDF5, BSQ, BIL, BIP | Standardized storage of hyperspectral data cubes and associated metadata |
| Software & Programming Tools | MATLAB Hyperspectral Imaging Library, Python (scikit-learn, NumPy, SciPy), specialized ENVI software | Data processing, calibration, analysis, visualization, and algorithm development |
| Spectral Libraries | USGS Spectral Library, NASA/ESA databases | Reference spectra for material identification and classification |
| Calibration Targets | 24-color checker, reflectance standards | Field and lab calibration for converting digital numbers to reflectance |

The hyperspectral data cube, with its integrated spatial (x, y) and spectral (λ) dimensions, represents a powerful paradigm for environmental monitoring. Its ability to capture a continuous spectrum for each pixel in an image transforms how researchers detect, identify, and quantify materials and processes across landscapes. From assessing crop health and water quality to detecting air pollutants and methane emissions, the applications are both diverse and impactful.

The global hyperspectral imaging market, projected to grow from $301.4 million in 2024 to $472.9 million by 2029, reflects the increasing adoption and value of this technology across sectors, including environmental science [20]. As sensors become more compact and affordable, and as data processing algorithms—particularly in machine learning and AI—continue to advance, hyperspectral imaging is poised to become an even more accessible and indispensable tool. It will empower scientists and decision-makers to build a more informed and sustainable future for our planet [15] [18].

Spectral signatures are unique patterns of light absorption, reflection, and emission that serve as definitive fingerprints for materials across various environments. In the realm of hyperspectral imaging for environmental monitoring, the ability to detect and analyze these signatures enables researchers to identify pollutants, assess ecosystem health, and track environmental changes with remarkable precision. This technical guide delves into the core principles, measurement methodologies, and analytical protocols that underpin this powerful technology.

The Fundamental Principles of Spectral Signatures

What is a Spectral Signature?

A spectral signature is a unique pattern of light absorption, reflection, and emission exhibited by a material across a range of electromagnetic wavelengths. Each material interacts with light in a characteristic way based on its molecular composition, structure, and physical state. This interaction creates a distinct spectral profile that acts as a "fingerprint," allowing for precise material identification and classification [21]. For instance, the mineral kaolinite exhibits a specific double absorption feature near 2200 nanometers, which serves as a key identifier in geological analysis [22]. These signatures form the foundational data for hyperspectral imaging analysis, enabling the discrimination and mapping of materials in complex environmental scenes.

Hyperspectral vs. Multispectral Imaging

The detection and utilization of spectral signatures are accomplished through either multispectral (MSI) or hyperspectral imaging (HSI) technologies, which differ significantly in their capabilities as shown in the table below.

Table 1: Comparison Between Hyperspectral and Multispectral Imaging Technologies

| Aspect | Hyperspectral Imaging (HSI) | Multispectral Imaging (MSI) |
|---|---|---|
| Number of Spectral Bands | Hundreds to thousands of narrow bands [21] | 3 to 10 broad bands [21] |
| Spectral Resolution | High (can distinguish very close wavelengths) [21] | Moderate (less spectral detail) [21] |
| Spectral Continuity | Creates a continuous spectrum for each pixel [22] | Covers discrete, separated spectral bands [22] |
| Primary Strength | Identification of materials [22] | Discrimination between materials [22] |
| Data Complexity & Cost | Complex processing; higher cost [21] | Easier processing; more affordable [21] |

Hyperspectral sensors, often called imaging spectrometers, divide the spectrum into many narrow bands (e.g., 10 nm width or less), creating a continuous measurement of the spectrum for every pixel in an image [22]. This high spectral resolution allows HSI to identify materials by detecting subtle features in their spectral signatures that are invisible to broadband multispectral sensors like Landsat, which can only discriminate between general material categories [22].
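The loss of narrow features in broadband data can be illustrated numerically. In the sketch below, a toy spectrum with a single narrow absorption near 2200 nm is sampled at 10 nm and then averaged into one broad band. The spectrum is synthetic (it is not a real kaolinite spectrum, which shows a doublet), and the boxcar band from 2100 to 2300 nm is an assumption, since real sensors have their own spectral response functions.

```python
import numpy as np

wavelengths_nm = np.arange(2000, 2401, 10)          # 10 nm sampling, 41 bands
# Toy reflectance: flat 0.6 continuum with one narrow absorption at 2200 nm.
spectrum = 0.6 - 0.3 * np.exp(-0.5 * ((wavelengths_nm - 2200) / 10.0) ** 2)

# One broad band spanning 2100-2300 nm, modeled as a simple average.
in_band = (wavelengths_nm >= 2100) & (wavelengths_nm <= 2300)
broadband_value = spectrum[in_band].mean()

narrow_depth = 0.6 - spectrum.min()     # feature depth seen at 10 nm sampling
broad_depth = 0.6 - broadband_value     # apparent depth after band averaging

print(round(float(narrow_depth), 3), round(float(broad_depth), 3))
```

The absorption that is obvious in the narrow-band spectrum shrinks to a small offset in the broad band, which is why a broadband sensor can separate general categories but struggles to identify specific materials.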

Experimental Protocols for Hyperspectral Analysis

The process of acquiring and analyzing spectral signatures follows a structured workflow to ensure data quality and analytical rigor.

Data Acquisition and Calibration

Data Acquisition involves capturing raw spectral data using specialized imaging spectrometers mounted on platforms ranging from laboratory microscopes to drones and satellites [21] [23]. For environmental monitoring, airborne or drone-based systems are particularly valuable as they can cover large areas and provide high spatial resolution down to the sub-centimeter level [5]. A critical requirement is to perform Radiometric and Spectral Calibration to convert raw sensor readings into accurate, quantitative reflectance data. This is achieved by measuring standards with known reflectance properties, such as a 24-color checker, and establishing a relationship matrix between the camera's response and a reference spectrometer [8] [11]. This process corrects for sensor errors, illumination variations, and atmospheric effects, ensuring the resulting spectral signatures are reliable and comparable across different measurements and times [11] [22].

Data Preprocessing and Analysis

Following acquisition, Data Preprocessing is performed, which includes background subtraction, correction for the instrument's spectral response, and conversion to apparent surface reflectance [8] [23]. The core of the workflow is Spectral Analysis and Signature Extraction. In a typical analysis, pure spectra of known materials (endmembers) are collected from control samples or reference spectral libraries to create a spectral library [23] [22]. Advanced algorithms, such as linear unmixing, are then used to identify these reference signatures within the hyperspectral data cube, mapping their presence and abundance across the scene [23]. The final stage involves Interpretation and Validation, where the results are compared with ground-truth data to assess accuracy and quantify detection limits, as demonstrated in studies that determine the minimum detectable signal level for a target material such as green fluorescent protein (GFP) in the presence of strong autofluorescence [23].
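Linear unmixing models each pixel spectrum as a weighted sum of endmember spectra and solves for the weights. The sketch below uses ordinary least squares followed by clipping negative values to zero, which is a crude simplification. Practical workflows typically use non-negative or fully constrained solvers. The endmembers and abundances are random toy data, and the function name `unmix` is hypothetical.

```python
import numpy as np

def unmix(pixel_spectra, endmembers):
    """Least-squares abundances.

    pixel_spectra: (n_pixels, n_bands); endmembers: (n_endmembers, n_bands).
    Returns an (n_pixels, n_endmembers) array of abundances.
    """
    abundances, *_ = np.linalg.lstsq(endmembers.T, pixel_spectra.T, rcond=None)
    return np.clip(abundances.T, 0.0, None)   # crude non-negativity, see note above

rng = np.random.default_rng(0)
endmembers = rng.random((3, 20))              # 3 toy endmembers, 20 bands
true_abundances = np.array([[0.7, 0.3, 0.0],
                            [0.2, 0.2, 0.6]])
mixed_pixels = true_abundances @ endmembers   # noise-free linear mixtures

estimated = unmix(mixed_pixels, endmembers)
print(np.round(estimated, 3))
```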

The Researcher's Toolkit: Essential Materials and Equipment

Successful hyperspectral analysis requires a suite of specialized tools and reagents, each serving a distinct function in the workflow.

Table 2: Essential Research Toolkit for Hyperspectral Analysis

| Tool or Material | Function & Application |
|---|---|
| Imaging Spectrometer (Hyperspectral Camera) | The core sensor that captures both spatial and spectral data, dispersing light into numerous narrow bands to create a data cube [21]. |
| Spectralon or 24-Color Checker | A calibrated reflectance target used for radiometric calibration to convert raw digital numbers to physical reflectance values [8]. |
| Spectral Library (e.g., USGS, JPL) | A curated collection of pure spectra from known materials (minerals, chemicals, vegetation) used as a reference for identifying unknown spectra in imagery [22]. |
| Reference Spectrometer | A non-imaging point spectrometer used to establish the ground-truth reflectance of calibration targets and samples [8]. |
| Linear Unmixing Algorithms | Computational methods used to decompose the spectrum of a mixed pixel into its constituent materials and estimate their relative abundances [23]. |
| Region of Interest (ROI) Tools | Software tools for defining specific areas in an image to extract representative mean spectra for analysis and comparison [22]. |

Key Analysis Methodologies and Techniques

Spectral Profile Analysis and Library Matching

A fundamental analytical technique is the direct comparison of spectral profiles derived from image data to spectra from reference libraries. The process involves extracting a spectrum from a single pixel or a region of interest (ROI) within a hyperspectral image and plotting it alongside library spectra of known materials [22]. Researchers then analyze key absorption and reflectance features; for example, in mineralogy, the shape and position of double absorption features near 2200 nm are critical for identifying minerals like kaolinite [22]. This visual and statistical comparison allows for the direct identification of materials present in the scene based on their unique spectral fingerprints.
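The statistical side of library matching needs a similarity measure. One common choice, which is not named in the cited sources and is used here only as an illustration, is the spectral angle between the pixel spectrum and each library spectrum. The library entries below are synthetic curves with placeholder names.

```python
import numpy as np

def spectral_angle(spectrum, reference):
    """Angle in radians between two spectra treated as vectors."""
    cos = np.dot(spectrum, reference) / (
        np.linalg.norm(spectrum) * np.linalg.norm(reference)
    )
    return float(np.arccos(np.clip(cos, -1.0, 1.0)))

def best_match(spectrum, library):
    """Return the library name with the smallest angle, plus all angles."""
    angles = {name: spectral_angle(spectrum, ref) for name, ref in library.items()}
    return min(angles, key=angles.get), angles

wavelengths_nm = np.linspace(400, 2500, 50)
library = {   # synthetic stand-ins for reference spectra
    "material_a": 0.5 + 0.2 * np.sin(wavelengths_nm / 300.0),
    "material_b": np.linspace(0.1, 0.9, 50),
}

# A brighter copy of material_a: same shape, different overall scale.
pixel_spectrum = 1.5 * library["material_a"]
match, angles = best_match(pixel_spectrum, library)
print(match)
```

Because the angle ignores overall brightness, a spectrum that is merely scaled (for example by illumination differences) still matches its reference, which is one reason this measure is popular for library comparison.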

Quantitative Assessment of Detection Limits

A rigorous approach to evaluating the performance of a hyperspectral assay involves quantifying its detection limits. As detailed in biomedical research, this can be achieved by combining experimental image data with a theoretical "what-if" scenario [23]. A pure spectrum of a target material (e.g., Green Fluorescent Protein) is artificially added at varying intensities to a control image that lacks the target (e.g., tissue with autofluorescence). The resulting images are then analyzed with spectral unmixing algorithms. By measuring the unmixed target signal against the background, researchers can determine key outcomes such as the linearity of sensitivity, the minimum detectable limit, the dynamic range, and the rate of false positive events [23]. This method provides a quantitative foundation for setting reliable detection thresholds in environmental monitoring applications.
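The "what-if" procedure described above can be mimicked in a small simulation. In this hedged sketch, a toy target spectrum is added at known intensities to noisy background pixels, each pixel is unmixed by least squares, and the recovered target signal is compared with a threshold derived from target-free pixels. The Gaussian spectra, the noise level, and the three-sigma threshold rule are all assumptions made for illustration and are not taken from the cited study.

```python
import numpy as np

rng = np.random.default_rng(1)
n_bands, n_pixels = 40, 500
axis = np.linspace(0.0, 1.0, n_bands)

target = np.exp(-0.5 * ((axis - 0.3) / 0.05) ** 2)       # toy target spectrum
background = np.exp(-0.5 * ((axis - 0.6) / 0.20) ** 2)   # toy background spectrum
endmembers = np.stack([target, background], axis=1)       # shape (bands, 2)

def unmixed_target(added_intensity):
    """Add the target at a known intensity to noisy background pixels, then unmix."""
    noise = rng.normal(0.0, 0.01, size=(n_pixels, n_bands))
    pixels = background + added_intensity * target + noise
    coeffs, *_ = np.linalg.lstsq(endmembers, pixels.T, rcond=None)
    return coeffs[0]                                      # target abundance per pixel

# Threshold from target-free pixels (illustrative three-sigma rule).
blank = unmixed_target(0.0)
threshold = blank.mean() + 3.0 * blank.std()

intensities = np.array([0.0, 0.01, 0.02, 0.05, 0.1, 0.2])
recovered = np.array([unmixed_target(a).mean() for a in intensities])
detect_rate = np.array([(unmixed_target(a) > threshold).mean() for a in intensities])

print(np.round(recovered, 3))
print(detect_rate)
```

Plotting `recovered` against `intensities` checks linearity, the detection rate at zero intensity estimates the false-positive rate, and the lowest intensity with a near-complete detection rate gives a working estimate of the minimum detectable level.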

Target Detection and Classification with Machine Learning

Advanced classification techniques, including machine learning, are increasingly applied to hyperspectral data for automated material mapping. In a study on air pollution, researchers developed a novel algorithm to convert standard RGB images into hyperspectral images (cHSI) [8]. They then trained two different three-dimensional convolutional neural network (3DCNN) models using both traditional RGB and the synthesized HSI data to classify air pollution levels as "Good," "Normal," or "Severe." The model utilizing hyperspectral data (HSI-3DCNN) demonstrated superior performance, improving classification accuracy by up to 9% across various regions like trees, roofs, and roads compared to the model using only RGB data (RGB-3DCNN) [8]. This demonstrates the tangible value of spectral information for complex classification tasks in environmental science.

Applications in Environmental Monitoring

The application of spectral signatures via hyperspectral imaging has become a cornerstone of modern environmental monitoring, providing critical data for ecosystem management.

Table 3: Key Environmental Monitoring Applications of Spectral Signatures

| Application Area | Specific Use Case | Measurable Parameters / Targets |
|---|---|---|
| Air Quality | Particulate Matter (PM2.5) pollution mapping and classification [8]. | Classification of pollution severity ("Good," "Normal," "Severe") based on spectral analysis of images from trees, roofs, and roads [8]. |
| Water Quality | Assessment of aquatic ecosystems and pollution events [5]. | Chlorophyll content, turbidity, harmful algal blooms, and pollutants such as microplastics [5]. |
| Forestry Management | Early detection of forest stress and disease [5]. | Health assessment, detection of diseases, insect infestations, and other stressors [5]. |
| Mineral & Geological Mapping | Identification of minerals and rock types for exploration and monitoring [5] [22]. | Identification of mineral deposits and rock types based on unique spectral signatures in geological formations [5] [22]. |
| Pollution Detection | Identification and tracking of pollutants in soil and land [5]. | Detection of mineral-based fluids and other contaminants with distinctive spectral features in the SWIR, MWIR, and LWIR ranges [5]. |

Hyperspectral imaging (HSI) represents a paradigm shift in remote sensing and environmental analysis by combining the spatial detail of imaging with the rich chemical information of spectroscopy. This technical guide elucidates how the high spatial and spectral resolution of HSI enables precise material identification and quantification, which is paramount for advanced environmental monitoring. The discussion is framed within the context of its foundational principles, supported by quantitative performance data and detailed methodological protocols, to provide researchers and scientists with a comprehensive understanding of its capabilities and applications.

Hyperspectral imaging (HSI) is an advanced analytical technique that captures and processes information across the electromagnetic spectrum to obtain the spectrum for each pixel in an image of a scene [1]. Unlike traditional cameras that measure only three broad color channels (Red, Green, and Blue), hyperspectral cameras divide the spectrum into hundreds of narrow, contiguous bands [24] [4]. This process generates a complex three-dimensional data structure known as a hypercube, which contains two spatial dimensions (x, y) and one spectral dimension (λ) [3] [4]. The hypercube allows for the detailed characterization of materials based on their unique physical and chemical properties, as determined by their specific spectral signatures or "fingerprints" [1].

The core distinction of HSI lies in its exceptional spectral resolution, which refers to the narrow width of each spectral band, often as fine as 5-10 nanometers (nm), and its spatial resolution, which determines the smallest object detectable in the image [22]. This high spectral resolution allows HSI to detect subtle variations in material composition that are impossible to distinguish with broadband multispectral sensors [22]. In environmental monitoring, this capability translates directly to the accurate identification and mapping of minerals, vegetation species, pollutants, and water constituents, providing a powerful tool for ecosystem assessment and conservation [3] [5].

The Technological Edge in Environmental Monitoring

High Spectral Resolution for Material Identification

The high spectral resolution of HSI is its most defining advantage. Each material interacts with light in a unique way, absorbing and reflecting specific wavelengths to create a characteristic spectral signature [1] [22].

- Discrimination of Similar Materials: HSI can distinguish between materials that appear identical to the human eye or to multispectral sensors. For instance, different mineral types like kaolinite and montmorillonite, which may look similar, have distinct absorption features in the shortwave infrared (SWIR) region that HSI can differentiate [22]. Similarly, it can identify specific algal species in water bodies [3] or detect early-stage plant stress before visible symptoms appear [3] [25].

- Quantitative Analysis: The detailed spectral information enables not just identification but also quantification of material properties. Table 1 summarizes the demonstrated accuracy of HSI in various environmental applications, highlighting its analytical precision.

Table 1: Quantitative Performance of Hyperspectral Imaging in Environmental Monitoring

| Application Area | Specific Metric | Reported Performance | Source |
|---|---|---|---|
| Forest Classification | Classification Accuracy | ~50% improvement over other methods | [24] |
| Soil Analysis | Soil Organic Matter Mapping | R² ≈ 0.6 | [24] |
| Pollution Detection | Marine Plastic Waste Detection | 70-80% accuracy | [24] |
| Crop Disease Detection | Detection Accuracy | 98.09% | [24] |
| Crop Disease Classification | Classification Accuracy | 86.05% | [24] |
| Air Pollution Classification | Image Classification Accuracy | Up to 9% improvement over RGB methods | [8] |

High Spatial Resolution for Precise Localization

While spectral resolution identifies the "what," spatial resolution identifies the "where." Modern airborne and satellite HSI systems, such as those from Pixxel, offer spatial resolutions as fine as 5 meters [26]. Drone-based systems can achieve sub-centimeter resolution [5]. This high spatial resolution allows for:

- Targeted Analysis: Precise mapping of heterogeneous environments. For example, it enables the mapping of individual mangrove species within a dense coastal forest [26] or identifying the exact location of a pollutant discharge into a water body.

- Reduction of Mixed Pixels: In lower resolution imagery, a single pixel often contains spectra from multiple materials (e.g., soil, dry grass, and a rock), making accurate classification difficult. This is known as the mixed-pixel problem, and spectral unmixing is the standard technique for addressing it [4]. Higher spatial resolution reduces the issue by capturing purer pixels, leading to more accurate material identification and abundance estimation [4].

The synergy of high spatial and spectral resolution transforms HSI from a mere mapping tool into a powerful non-destructive technology for compositional analysis of the Earth's surface [3] [1].

Experimental Protocols for Environmental Monitoring

To leverage the advantages of HSI, researchers must follow robust experimental methodologies. The following protocol details a typical workflow for an environmental monitoring task, such as mineral mapping or water quality assessment.

Endmember Extraction and Spectral Analysis Protocol

Objective: To identify and map the distribution of specific materials (e.g., minerals, vegetation types, or pollutants) within a hyperspectral image.

Materials & Equipment:

- A calibrated hyperspectral image (reflectance data) in a supported format (e.g., BSQ, BIL, BIP) [4].

- Hyperspectral analysis software (e.g., ENVI, MATLAB Hyperspectral Imaging Library, or Python with specialized toolkits).

- Reference spectral libraries (e.g., USGS, JPL, ECOSTRESS) for the materials of interest [4] [22].

Methodology:

Data Preprocessing:

- Radiometric & Atmospheric Correction: Convert raw digital numbers (DNs) to surface reflectance to ensure that spectral signatures are comparable to laboratory reference libraries [4] [22].

- Dimensionality Reduction: Apply transforms like Principal Component Analysis (PCA) or Maximum Noise Fraction (MNF) to reduce data volume and noise while preserving essential spectral information [4]. The `hyperpca` or `hypermnf` functions in MATLAB can be used for this purpose [4].
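For readers working in Python, the PCA step can be sketched with plain NumPy as below. This is an illustrative sketch on synthetic data, not an equivalent of the MATLAB function; the helper name `pca_reduce` and the test cube are assumptions introduced here.

```python
import numpy as np

def pca_reduce(cube, n_components):
    """Project a (rows, cols, bands) cube onto its top principal components.

    Returns the reduced cube and the fraction of variance retained.
    """
    rows, cols, bands = cube.shape
    pixels = cube.reshape(-1, bands).astype(float)
    centered = pixels - pixels.mean(axis=0)
    # Band-by-band covariance matrix (bands x bands).
    cov = np.cov(centered, rowvar=False)
    eigvals, eigvecs = np.linalg.eigh(cov)  # eigenvalues in ascending order
    order = np.argsort(eigvals)[::-1][:n_components]
    scores = centered @ eigvecs[:, order]
    explained = eigvals[order].sum() / eigvals.sum()
    return scores.reshape(rows, cols, n_components), explained

# Synthetic cube (assumption): every pixel mixes two latent spectra plus noise.
rng = np.random.default_rng(0)
n_bands = 50
basis = rng.random((2, n_bands))
weights = rng.random((20, 30, 2))
cube = weights @ basis + 0.001 * rng.standard_normal((20, 30, n_bands))

reduced, explained = pca_reduce(cube, n_components=2)
```

Because the synthetic scene is built from only two spectra, two components retain nearly all of the variance; real scenes typically need more, and the retained-variance figure is one way to choose how many.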

Endmember Extraction:

- Use algorithms to find the spectrally pure pixels (endmembers) that represent the dominant materials in the scene.

- Pixel Purity Index (PPI): Use the `ppi` function to project pixel spectra onto random unit vectors and identify the most extreme pixels (endmembers) in the projected space. A large number of iterations (e.g., 10,000) is recommended for better results [4].

- N-FINDR: Alternatively, use the `nfindr` function, which iteratively finds the set of pixels that maximizes the volume of a simplex, thereby identifying the most distinct endmembers [4].
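The idea behind the Pixel Purity Index can be sketched in a few lines of NumPy. This is a simplified illustration under stated assumptions (noise-free synthetic mixtures, a hypothetical helper named `pixel_purity_index`), not a reproduction of any toolbox implementation.

```python
import numpy as np

def pixel_purity_index(pixels, n_projections=1000, seed=0):
    """Count how often each pixel is extreme under random projections.

    pixels: array of shape (n_pixels, n_bands).
    """
    rng = np.random.default_rng(seed)
    n_pixels, n_bands = pixels.shape
    centered = pixels - pixels.mean(axis=0)
    counts = np.zeros(n_pixels, dtype=int)
    for _ in range(n_projections):
        # Random unit vector in spectral space.
        direction = rng.standard_normal(n_bands)
        direction /= np.linalg.norm(direction)
        projection = centered @ direction
        # The two extremes of each projection receive one vote each.
        counts[np.argmin(projection)] += 1
        counts[np.argmax(projection)] += 1
    return counts

# Synthetic scene (assumption): 3 pure pixels followed by 200 linear mixtures.
rng = np.random.default_rng(1)
endmembers = rng.random((3, 20))
abundances = rng.dirichlet(np.ones(3), size=200)
pixels = np.vstack([endmembers, abundances @ endmembers])

counts = pixel_purity_index(pixels, n_projections=500)
top3 = sorted(np.argsort(counts)[-3:].tolist())
```

In this noise-free setting the mixtures lie inside the simplex spanned by the pure pixels, so only the pure pixels collect votes. With real, noisy data the counts are less clear-cut, which is why a large number of projections is recommended.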

Spectral Matching & Identification:

- Plot the spectral profile of the extracted endmembers, focusing on key absorption features (e.g., the 2000-2500 nm range for minerals) [22].

- Use the `spectralMatch` function to compare the unknown image endmember spectra with known reference spectra from a spectral library (e.g., ECOSTRESS). The software calculates a similarity score (e.g., Spectral Angle Mapper) to identify the material [4].
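The spectral angle score mentioned above can be illustrated with a short pure-Python sketch. The library entries and reflectance values are made-up placeholders, and the helper names are assumptions introduced for this example.

```python
import math

def spectral_angle(a, b):
    """Angle in radians between two spectra treated as vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    # Clamp to [-1, 1] to guard against rounding error before acos.
    cosine = max(-1.0, min(1.0, dot / (norm_a * norm_b)))
    return math.acos(cosine)

def best_match(unknown, library):
    """Return the library key whose spectrum has the smallest angle."""
    return min(library, key=lambda name: spectral_angle(unknown, library[name]))

# Hypothetical reference spectra (placeholder values, not real library data).
library = {
    "material_a": [0.10, 0.30, 0.50, 0.20],
    "material_b": [0.40, 0.35, 0.30, 0.45],
}
# The same material observed under dimmer illumination: a scaled copy.
unknown = [0.2 * v for v in library["material_a"]]

match = best_match(unknown, library)
```

The angle depends only on the shape of the spectrum and not on its overall brightness, which is why the scaled copy still matches its source. This insensitivity to illumination scaling is a common reason for choosing the spectral angle as a similarity score.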

Abundance Mapping (Spectral Unmixing):

- Given that many pixels are mixtures of endmembers, use the `estimateAbundanceLS` function to estimate the fractional abundance of each endmember in every pixel [4].

- This generates abundance maps for each material, showing its distribution and concentration across the study area.
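Under a linear mixing assumption, abundance estimation reduces to a least-squares problem per pixel. The sketch below uses NumPy on synthetic data; the helper name `unmix_least_squares` is hypothetical, and the solver is unconstrained, so it does not enforce the non-negativity or sum-to-one conditions that practical unmixing often adds.

```python
import numpy as np

def unmix_least_squares(cube, endmembers):
    """Estimate per-pixel abundances by unconstrained least squares.

    cube: (rows, cols, bands); endmembers: (n_endmembers, bands).
    Returns an array of shape (rows, cols, n_endmembers).
    """
    rows, cols, bands = cube.shape
    pixels = cube.reshape(-1, bands)
    # Solve endmembers.T @ a = pixel for every pixel in a single call.
    solution, *_ = np.linalg.lstsq(endmembers.T, pixels.T, rcond=None)
    return solution.T.reshape(rows, cols, -1)

# Synthetic scene (assumption): noise-free linear mixtures of 3 endmembers.
rng = np.random.default_rng(0)
endmembers = rng.random((3, 30))
true_abundances = rng.dirichlet(np.ones(3), size=(6, 7))
cube = true_abundances @ endmembers

estimated = unmix_least_squares(cube, endmembers)
```

Each slice `estimated[:, :, k]` is the abundance map for endmember `k`. On noisy data the unconstrained estimate can go negative, which is the usual motivation for constrained variants.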

The following workflow diagram illustrates this multi-step analytical process.

Figure 1: This workflow outlines the key computational steps for analyzing hyperspectral data to create material identification maps, from raw data preprocessing to final classification.

Protocol for Air Pollution Monitoring with HSI Conversion

Objective: To classify air pollution levels (e.g., PM2.5) by converting standard RGB images into hyperspectral data.

Materials & Equipment:

- A dataset of RGB images captured from drones or aerial platforms [8].

- A spectrometer (e.g., Ocean Optics QE65000) for calibration [8].

- A standard 24-color checker (e.g., X-Rite classic) for reference [8].

- A 3D Convolutional Neural Network (3DCNN) model for classification [8].

Methodology:

- Dataset Preparation: Capture aerial RGB images at a consistent altitude and angle. Co-locate the capture with ground-truth air quality measurements (e.g., using handheld PM2.5 monitors) to label images as "Good," "Normal," or "Severe" [8].

- Camera Calibration: Establish a relationship matrix between the camera and a spectrometer by imaging the 24-color checker with both devices. This correlates the camera's RGB values with high-fidelity spectral data [8].

- Hyperspectral Conversion (cHSI):

- Convert the sRGB values of the image to the CIE 1931 XYZ color space [8].

- Use a multiple regression-derived correction matrix to calibrate the camera's XYZ values against the spectrometer's values [8].

- Apply Principal Component Analysis (PCA) to the spectrometer's reflection spectrum data. Use multivariate regression with the principal component scores to transform the calibrated XYZ values into a reconstructed hyperspectral data cube [8].

- Model Training & Classification: Train a 3DCNN model using the generated hyperspectral images (cHSI) as input. Studies have shown this approach can improve classification accuracy for air pollution by up to 9% compared to using traditional RGB images alone [8].
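The first conversion step, sRGB to CIE 1931 XYZ, can be sketched with the standard sRGB definition (D65 white point). This is an assumption about the generic color-space transform only; the camera-specific correction matrix and the PCA-based spectral reconstruction described in [8] depend on calibration data and are not reproduced here.

```python
def srgb_to_linear(c):
    """Undo the sRGB transfer curve for one channel value in [0, 1]."""
    return c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4

# Standard linear-sRGB to XYZ matrix for the D65 white point.
SRGB_TO_XYZ_D65 = (
    (0.4124564, 0.3575761, 0.1804375),
    (0.2126729, 0.7151522, 0.0721750),
    (0.0193339, 0.1191920, 0.9503041),
)

def srgb8_to_xyz(r, g, b):
    """Convert 8-bit sRGB values to XYZ, with Y = 1.0 for reference white."""
    linear = [srgb_to_linear(v / 255.0) for v in (r, g, b)]
    return tuple(
        sum(m * c for m, c in zip(row, linear)) for row in SRGB_TO_XYZ_D65
    )

white = srgb8_to_xyz(255, 255, 255)
black = srgb8_to_xyz(0, 0, 0)
```

In a calibrated pipeline, the XYZ values from this step would then be corrected against spectrometer measurements of the color checker before any spectral reconstruction.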

The Researcher's Toolkit for Hyperspectral Imaging

Successful implementation of HSI in research relies on a suite of specialized tools and reagents, spanning from data acquisition hardware to processing software and reference libraries.

Table 2: Essential Tools and Resources for Hyperspectral Research

| Tool Category | Specific Tool/Reagent | Function in Research | Source |
|---|---|---|---|
| Imaging Platforms | Satellite (e.g., Pixxel), Airborne, UAV-mounted, Handheld | Captures hyperspectral data at various spatial scales and resolutions for different monitoring applications. | [26] [5] |
| Spectral Libraries | USGS Spectral Library, JPL Spectral Library, ECOSTRESS Library | Provides reference spectra of pure materials for spectral matching and accurate identification of unknown substances. | [4] [22] |
| Calibration Targets | Standard 24-Color Checker (e.g., X-Rite) | Calibrates and validates the conversion from RGB to hyperspectral imagery; ensures data fidelity. | [8] |
| Data Processing Software | ENVI, MATLAB Hyperspectral Imaging Library, Python (e.g., scikit-learn, HyperSpy) | Provides a suite of algorithms for preprocessing, visualization, endmember extraction, and classification. | [4] [22] |
| Algorithms & Models | Pixel Purity Index (PPI), N-FINDR, 3D Convolutional Neural Network (3DCNN) | Extracts pure spectral signatures and performs advanced classification and analysis of the hyperspectral data cube. | [8] [4] |

Hyperspectral imaging stands as a cornerstone technology for modern environmental science, offering an unparalleled combination of high spatial and spectral resolution. This guide has detailed how these capabilities facilitate the precise identification and quantification of materials—from minerals and vegetation to pollutants and water constituents—through rigorous experimental protocols and advanced analytical tools. The integration of artificial intelligence with increasingly portable and powerful HSI systems is poised to further enhance its accessibility and analytical power. For researchers and scientists, mastering HSI is no longer a niche skill but an essential competency for driving innovation in environmental monitoring, conservation, and the development of sustainable practices for the future.

From Theory to Practice: Deploying Hyperspectral Imaging for Environmental Solutions

Push Broom, Whisk Broom, and Snapshot Imaging Systems

Hyperspectral imaging (HSI) represents a revolutionary advancement over conventional imaging by capturing both spatial and spectral information from a target. Unlike traditional red, green, and blue (RGB) cameras that record only three broad color channels, hyperspectral systems collect hundreds of narrow, contiguous spectral bands for each pixel in an image [24]. This generates a three-dimensional data hypercube, with two spatial dimensions (Sx and Sy) and one spectral dimension (Sλ), enabling the construction of an almost continuous reflectance spectrum for every pixel in a scene [27]. The high spectral resolution of HSI allows for precise identification of objects, biological tissues, and materials that traditional imaging cannot distinguish, making it invaluable for environmental monitoring, agriculture, medical diagnostics, and industrial applications [24].

The core challenge in hyperspectral imaging lies in how different sensor technologies acquire this spatial and spectral data cube. Various instrumental architectures have been developed, each with distinct advantages and limitations for specific applications [27] [28]. The three primary technological approaches—push broom, whisk broom, and snapshot imaging—represent different solutions to the fundamental problem of capturing multidimensional data with two-dimensional sensor arrays. Understanding these different imaging modalities is essential for researchers and scientists selecting appropriate technology for environmental monitoring applications, as each system offers different trade-offs between spatial and spectral resolution, acquisition speed, complexity, and cost [27].

Fundamental Operating Principles

Push Broom Imaging

Push broom hyperspectral sensors, also known as line-scanning imagers, operate by capturing an entire line of spatial pixels with full spectral information in a single exposure [27]. As the sensor platform (such as a drone, aircraft, or satellite) moves forward, successive lines are recorded and stacked to form a complete spectral image cube [28]. This approach utilizes a two-dimensional detector array where one dimension represents spatial information across the scan line and the other dimension represents spectral information dispersed by a grating or prism [29].

Push broom systems are particularly favored for airborne remote sensing applications due to their high spatial and spectral resolution capabilities [27]. Since they capture an entire line of data simultaneously, they offer faster acquisition than whisk broom scanners while providing better spectral consistency across the field of view compared to snapshot systems. One push-broom hyperspectral imager reported in [30] covers all atmospheric windows in the visible/near-infrared/shortwave infrared spectrum (0.45-2.5 µm) and features a wide field of view (42°), making it suitable for various environmental monitoring applications including geological surveys, crop monitoring, and coastal ecosystem research.
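The line-by-line assembly of a push broom cube can be sketched as follows. The frame sizes and the helper name `assemble_pushbroom_cube` are illustrative assumptions; a real pipeline would also apply geometric correction for platform motion, which is omitted here.

```python
import numpy as np

def assemble_pushbroom_cube(line_frames):
    """Stack per-exposure frames into a hypercube.

    Each frame has shape (across_track, bands): one spatial line with a
    full spectrum per pixel. The result has shape
    (along_track, across_track, bands).
    """
    return np.stack(line_frames, axis=0)

# Synthetic acquisition (assumption): 8 exposures as the platform advances.
rng = np.random.default_rng(0)
n_lines, across_track, bands = 8, 16, 32
frames = [rng.random((across_track, bands)) for _ in range(n_lines)]

cube = assemble_pushbroom_cube(frames)
```

The along-track dimension grows by one row per exposure, so the spatial extent in the flight direction is set by platform motion and frame rate rather than by the detector.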

Whisk Broom Imaging

Whisk broom scanners, also referred to as point-scanning systems, represent an earlier approach to hyperspectral imaging that captures data one spatial pixel at a time [27]. These systems employ a rotating mirror that sweeps perpendicular to the platform's flight direction, sequentially scanning across the terrain [27]. For each ground pixel, the complete spectral information is collected before moving to the next pixel position [24].

The fundamental architecture of whisk broom scanners makes them mechanically complex due to the moving mirror assembly [27]. This scanning mechanism can introduce spatial distortions in the image outputs as the optics rotate during acquisition [27]. Additionally, whisk broom sensors provide inherently slower frame rates than push broom units, resulting in lengthier data acquisition periods when all other factors are equal [27]. However, they can offer excellent geometric accuracy when properly calibrated and have been successfully implemented in miniaturized forms suitable for UAV deployment [27].

Snapshot Imaging

Snapshot hyperspectral imaging systems represent the most recent technological advancement, capable of capturing the entire hyperspectral data cube in a single exposure without any scanning mechanism [31]. These systems employ various optical approaches including tunable filters, coded apertures, image replicators, or filter arrays to simultaneously record both spatial and spectral information [27].