Hyperspectral Imaging in Remote Sensing: A Comprehensive Guide for Researchers and Pharmaceutical Professionals

Abstract

This article provides a comprehensive examination of hyperspectral imaging (HSI) and its transformative applications in remote sensing, with a specialized focus on pharmaceutical research and development. It covers the foundational principles of HSI technology, detailing its superior spectral resolution for precise material identification beyond conventional imaging. The content explores diverse methodological applications across environmental, agricultural, and biomedical fields, while addressing key implementation challenges and optimization strategies through AI integration and miniaturization. Through validation case studies and comparative analysis with other spectroscopic techniques, the article demonstrates HSI's proven efficacy in pharmaceutical quality control, counterfeit detection, and process analytical technology (PAT), offering researchers and drug development professionals actionable insights for leveraging this powerful analytical tool.

Understanding Hyperspectral Imaging: Core Principles and Technological Fundamentals

Core Principles and Technical Foundations

Hyperspectral imaging (HSI) is an advanced optical sensing technique that integrates spectroscopy and digital photography into a single system [1]. Unlike conventional imaging, which captures only a few broad spectral bands, HSI simultaneously acquires spatial and spectral data across hundreds of narrow, contiguous wavelength bands for each pixel in an image [1] [2]. This creates a three-dimensional dataset known as a hyperspectral data cube, which combines two spatial dimensions with one spectral dimension [1].
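To make the data cube concrete, the sketch below stores a hypothetical cube as a NumPy array with the spectral axis last. The array sizes, the 400-2500 nm wavelength grid in 10 nm steps, and the random values are illustrative assumptions. Real file formats may store the bands first or last.

```python
import numpy as np

# Hypothetical cube: 100 rows x 120 columns, 211 bands from 400 to 2500 nm in 10 nm steps
wavelengths = np.arange(400, 2501, 10)          # band centres in nm (211 values)
rng = np.random.default_rng(0)
cube = rng.random((100, 120, wavelengths.size)).astype(np.float32)  # (y, x, lambda)

# Spectral axis: the full spectrum ("fingerprint") of one pixel
spectrum = cube[42, 17, :]                      # shape (211,)

# Spatial axes: a single-band image at the band closest to 1450 nm
band_index = int(np.argmin(np.abs(wavelengths - 1450)))
band_image = cube[:, :, band_index]             # shape (100, 120)

print(cube.shape, spectrum.shape, band_image.shape, wavelengths[band_index])
```

Slicing along the last axis returns a spectrum, and slicing at a fixed band returns an ordinary grayscale image. This is the two-spatial-plus-one-spectral structure described above.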

From Broad Bands to Continuous Spectra

The fundamental difference between HSI and other imaging modalities lies in its spectral resolution and continuity.

- Panchromatic imaging records a single broad spectral band, yielding high spatial resolution but minimal spectral detail [1].

- Standard RGB (color) imaging captures only three wide bands (red, green, and blue) [1] [3], providing basic color information but limited material discrimination.

- Multispectral imaging typically acquires fewer than 20 discrete spectral bands [1] [2], offering more spectral information than RGB but with gaps in coverage.

- Hyperspectral imaging typically captures 50 to more than 250 narrow, contiguous bands [2]. This produces a near-continuous spectrum for each pixel that serves as a unique material "fingerprint" [1].

This detailed spectral information enables precise identification and characterization of materials, biological tissues, and environmental surfaces based on their chemical composition [1].

HSI System Architecture and Imaging Modalities

A typical HSI system consists of several key components that work together to capture hyperspectral data [1]:

- Optical Assembly: Comprising lenses, mirrors, or hybrid combinations that collect and focus incident radiation, establishing critical imaging parameters like field of view (FOV) and spatial resolution [1].

- Imaging Spectrometer: The core component that spectrally disperses incoming light into numerous narrow wavelength bands using dispersion optics such as diffraction gratings, prisms, or electronically tunable filters [1].

- Detector Array: Sensors (typically CCD or CMOS) that capture spectrally dispersed optical radiation and convert it into measurable electronic signals [1].

Hyperspectral systems employ different scanning methodologies based on application requirements:

- Point scanning (whiskbroom): Captures the complete spectrum of a single spatial point at a time and sweeps across the scene to build the image.

- Line scanning (pushbroom): Acquires a complete line of spatial pixels with full spectral information simultaneously and builds the image as the platform moves. This approach is well suited to airborne and satellite platforms [4].

- Snapshot HSI: Captures the entire hyperspectral cube in a single exposure, enabling imaging of dynamic scenes.

Table: Comparison of Imaging Modalities

| Imaging Modality | Spectral Bands | Spectral Resolution | Spectral Coverage | Primary Applications |
|---|---|---|---|---|
| Panchromatic | 1 | Very Broad | Visible Spectrum | High-resolution mapping |
| RGB (Color) | 3 | Broad (~100 nm) | Red, Green, Blue | General photography, basic color analysis |
| Multispectral | 3-20 | Discrete, Broad | Selected bands | Vegetation indices, land cover classification |
| Hyperspectral | 50-250+ | Narrow, Contiguous (5-10 nm) | Continuous (e.g., 400-2500 nm) | Material identification, chemical analysis, precise diagnostics |

Current Applications and Quantitative Insights

Hyperspectral imaging has evolved from a research tool to an operational technology with diverse applications across multiple sectors. The global market for HSI in agriculture alone is projected to exceed $400 million by 2025, with over 60% of precision agriculture systems expected to use hyperspectral imaging for crop monitoring [2].

Precision Agriculture and Environmental Monitoring

HSI enables non-destructive, real-time monitoring of plant health, soil conditions, and environmental impacts [2]:

- Crop Health Monitoring: Early detection of fungal, viral, or bacterial infections by revealing biochemical changes invisible to conventional sensors, enabling intervention before yield compromise [2].

- Nutrient and Water Stress Management: Identification of nutrient deficiencies and moisture stress through unique spectral signatures, guiding variable rate fertilizer applications and precision irrigation [2].

- Yield Prediction and Supply Chain Optimization: AI models that use hyperspectral data from entire fields can improve yield prediction, which supports harvest planning and food supply chain traceability [2].

Recent research shows that hyperspectral remote sensing combined with machine learning can predict grassland forage quality and quantity across global biomes. Across diverse climate zones, random forest regression predicted metabolizable energy with nRMSE = 0.108 and R² = 0.68. For aboveground biomass, it achieved nRMSE = 0.145 and R² = 0.53 [5].

Marine Vessel Emission Monitoring

Fast-hyperspectral imaging remote sensing has been successfully deployed for quantifying nitrogen dioxide (NO₂) and sulfur dioxide (SO₂) emissions from marine vessels [6]. This technique addresses limitations of previous monitoring approaches by providing both high quantification accuracy and adequate spatiotemporal resolution, with complete plume scanning processes typically taking under 4 minutes and spatial resolution better than 0.5 m × 0.5 m [6].

Cultural Heritage and Pigment Analysis

Visible spectral imaging technology enables non-invasive pigment identification for colored relics, addressing challenges in cultural heritage preservation [7]. This approach captures both spatial distribution and spectral characteristics of pigments, enabling:

- Boundary extraction of pigment application areas through image segmentation [7]

- Identification of chemical composition through spectral fingerprint matching [7]

- Prediction of pigment mixture proportions for accurate color restoration and facsimile creation [7]

Urban Planning and Land Use Analysis

The OHID-1 dataset exemplifies HSI applications in urban sustainable development and land use analysis [4]. This large-scale hyperspectral imagery dataset comprises 10 images from diverse regions with 32 spectral bands, 512 × 512 pixel spatial dimensions, 10-meter spatial resolution, and 7 land use classes, supporting advanced classification algorithms and urban planning initiatives [4].

Table: Quantitative Market Outlook for Hyperspectral Imaging in Agriculture (2025)

| Application Area | Estimated Market Size 2025 (USD million) | Projected Growth Rate (% YoY) | Main Benefits | Adoption Level |
|---|---|---|---|---|
| Crop Monitoring | 150 | 18% | Real-time plant stress detection, yield forecasts, optimize inputs | High |
| Soil Management | 72 | 17% | Map soil chemistry, guide sustainable amendments, inform irrigation | Medium |
| Disease Detection | 64 | 20% | Early warning, precision pesticide use, reduced crop losses | High |
| Precision Irrigation | 42 | 16% | Water savings, maximize efficiency, maintain crop vigor | Medium |
| Pest/Weed Detection | 32 | 15% | Targeted chemical application, resistance management | Medium |
| Environmental Monitoring | 48 | 19% | Carbon tracking, regulatory compliance, sustainability | Medium |

Experimental Protocols and Methodologies

Protocol: Marine Vessel Emission Quantification

This protocol details the methodology for quantifying NO₂ and SO₂ emissions from marine vessels using fast-hyperspectral imaging remote sensing [6].

Instrumentation and Setup

The fast-hyperspectral imaging remote sensing instrument consists of six major components [6]:

- Visible Camera: Records live images of the imaging area for contextual reference.

- Multi-channel UV Camera System: Comprising a UV camera and filter wheel with pairs of filters centered at specific wavelengths (310/330 nm for SO₂, 405/470 nm for NO₂) to identify plume contours and absorption intensity.

- Hyperspectral Camera System: Consisting of a telescope, fiber, and spectrometer to collect solar scattering spectra with high quantification accuracy.

- 2D Scanning System: Elevation and azimuth motors to control telescope positioning for systematic area coverage.

- Power Control Module: Provides stable power to all components.

- Industrial Control Machine (IPC): Hosts software for instrument control and spectral analysis.

A critical subsystem is the precision temperature control system. It holds the spectrometer at 20 °C ± 0.5 °C using Peltier thermoelectric coolers and Pt100 temperature sensors, which reduces spectral noise and keeps measurements stable [6].

Data Acquisition Procedure

- Pre-scan Calibration: Perform two zenith measurements before the serpentine scan. These serve as reference spectra for the entire observation.

- Automated Scanning: The IPC steers the telescope along an "S"-shaped trajectory across the preset imaging area, with an integration time of 3 seconds per spectrum.

- Plume Identification: Use the multi-wavelength filter images to identify the plume outline and trace gas distribution precisely.

- Aerosol Characterization: Analyze how O₄ differential slant column densities (DSCDs) vary across azimuth angles, and classify each plume as aerosol-present or aerosol-absent. A standard deviation of O₄ DSCDs below 20% indicates that aerosols are absent.
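The "S"-shaped trajectory can be sketched as a boustrophedon ordering of pointing angles. The grid values below are hypothetical. The sketch also shows one piece of arithmetic: with 3 s per spectrum, a scan completed in under 4 minutes allows at most 80 spectra. This estimate ignores motor travel and the zenith references, so it is an upper bound under that assumption and not a figure from the source.

```python
def serpentine_scan(azimuths, elevations):
    """Return (azimuth, elevation) pointings in an S-shaped (boustrophedon) order.

    Consecutive elevation rows are swept in opposite azimuth directions, so the
    telescope steps to the next row instead of flying back across the area.
    """
    path = []
    for row, elevation in enumerate(elevations):
        row_azimuths = azimuths if row % 2 == 0 else list(reversed(azimuths))
        path.extend((az, elevation) for az in row_azimuths)
    return path


# Hypothetical 5 x 3 pointing grid over a plume (degrees)
azimuths = [100.0, 100.5, 101.0, 101.5, 102.0]
elevations = [1.0, 1.5, 2.0]
path = serpentine_scan(azimuths, elevations)

INTEGRATION_TIME_S = 3.0   # per spectrum, from the protocol
MAX_SCAN_TIME_S = 240.0    # "under 4 minutes" per complete plume scan
max_points = int(MAX_SCAN_TIME_S // INTEGRATION_TIME_S)  # ignores motor travel time

print(len(path), len(path) * INTEGRATION_TIME_S, max_points)  # 15 45.0 80
```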

Data Processing and Analysis

- Spectral Processing: Convert raw spectra to NO₂ and SO₂ differential slant column densities (DSCDs) using differential optical absorption spectroscopy (DOAS).

- Air Mass Factor (AMF) Calculation:

- For aerosol-absent plumes: Retrieve aerosol vertical profiles and use them as constraints in a radiative transfer model (RTM).

- For aerosol-present plumes: Simulate and reconstruct the three-dimensional aerosol distribution using a 3D-RTM.

- Vertical Column Density (VCD) Determination: Convert DSCDs to VCDs using the corresponding AMFs to quantify emissions accurately.
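The DSCD-to-VCD step reduces to a division by the air mass factor. In DOAS practice, DSCDs are referenced to a zenith spectrum, so the divisor is strictly a differential AMF: the AMF at the viewing geometry minus the zenith AMF. The sketch below is a minimal illustration under that assumption. The numbers are hypothetical and do not come from [6].

```python
import numpy as np


def dscd_to_vcd(dscd, damf):
    """Convert differential slant column densities to vertical column densities.

    dscd : DSCDs retrieved by DOAS (molecules/cm^2), one value per pointing
    damf : differential air mass factors from the RTM for the same pointings
    """
    dscd = np.asarray(dscd, dtype=float)
    damf = np.asarray(damf, dtype=float)
    if np.any(damf <= 0):
        raise ValueError("air mass factors must be positive")
    return dscd / damf


# Hypothetical SO2 DSCDs across a plume and RTM-derived differential AMFs
dscd = [2.0e16, 6.0e16, 9.0e16]
damf = [2.0, 3.0, 3.0]
print(dscd_to_vcd(dscd, damf))
```

The AMF carries all of the radiative-transfer physics. For this reason, the protocol treats aerosol-present and aerosol-absent plumes with different RTM setups before this division.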

Protocol: Grassland Forage Quality Assessment

This protocol outlines the methodology for predicting grassland forage quality and quantity using hyperspectral remote sensing and machine learning [5].

Field Data Collection

- Site Selection: Compile hyperspectral data across diverse climate zones (temperate, humid tropical, and dry subtropical grasslands) capturing full growing seasons and contrasting management regimes.

- Reference Measurements: Collect ground-truth data for:

- Metabolizable energy (ME)

- Aboveground biomass (AGB)

- Metabolizable energy yield (MEY)

Spectral Data Processing

- Data Preprocessing: Apply radiometric calibration and atmospheric correction to convert raw digital numbers to surface reflectance.

- Feature Extraction: Extract spectral features from hyperspectral data across the visible, near-infrared, and shortwave infrared regions.

Model Development and Validation

- Algorithm Selection: Test multiple machine learning approaches including:

- Random Forest Regression

- Neural Networks

- Partial Least Squares Regression

- Model Training: Train models to predict ME, AGB, and MEY from spectral features.

- Performance Validation: Validate model performance using appropriate cross-validation techniques and independent test datasets, reporting metrics including normalized RMSE (nRMSE) and R² values.
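The validation metrics can be computed as in the sketch below. The protocol does not state how nRMSE is normalized, so the function supports the two common conventions: normalization by the mean or by the range of the observations. The sample values are hypothetical.

```python
import numpy as np


def nrmse(y_true, y_pred, norm="mean"):
    """RMSE divided by the mean (default) or the range of the observed values."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    rmse = np.sqrt(np.mean((y_true - y_pred) ** 2))
    if norm == "mean":
        scale = y_true.mean()
    elif norm == "range":
        scale = y_true.max() - y_true.min()
    else:
        raise ValueError("norm must be 'mean' or 'range'")
    return rmse / scale


def r2(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    ss_res = np.sum((y_true - y_pred) ** 2)
    ss_tot = np.sum((y_true - y_true.mean()) ** 2)
    return 1.0 - ss_res / ss_tot


# Hypothetical held-out metabolizable energy values (MJ/kg DM) and predictions
observed = [10.0, 12.0, 14.0, 16.0]
predicted = [11.0, 12.0, 13.0, 16.0]
print(nrmse(observed, predicted), r2(observed, predicted))
```

Always report which normalization was used. An nRMSE computed against the range is smaller than one computed against the mean whenever the range exceeds the mean.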

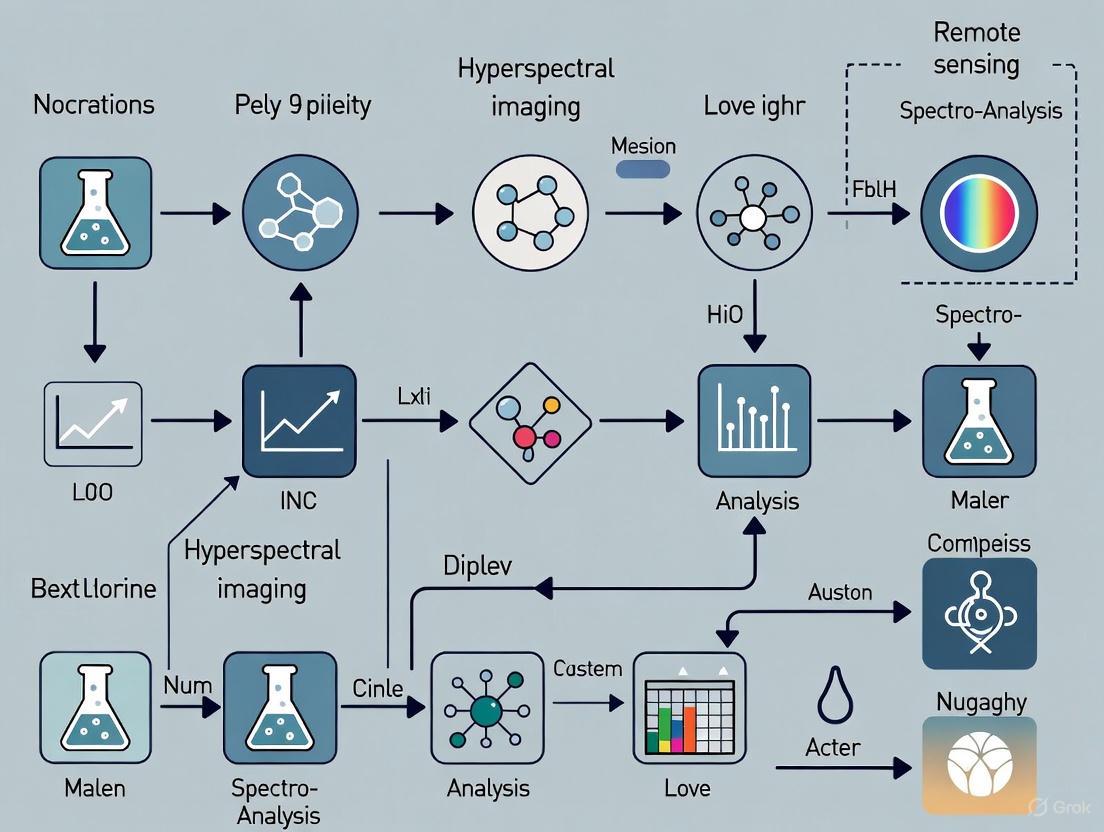

Workflow Visualization

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Research Components for Hyperspectral Imaging Systems

| Component / Solution | Function | Technical Specifications | Application Notes |
|---|---|---|---|
| Imaging Spectrometer | Spectral dispersion of incoming light | Diffraction gratings, prisms, or tunable filters; spectral resolution 5-10 nm | Choice depends on application: grating for airborne systems, tunable filters for laboratory settings [1] |
| Detector Array | Captures spectrally dispersed radiation | CCD or CMOS sensors; high quantum efficiency, dynamic range | Critical for signal-to-noise ratio and overall data quality [1] |
| Temperature Control System | Stabilizes spectrometer temperature | Precision of ±0.5°C; thermoelectric coolers | Reduces spectral noise, essential for quantitative measurements [6] |
| Tunable Filters | Electronically controlled spectral selection | LCTF, AOTF; rapid spectral selection | Enable flexible, rapid spectral scanning without moving parts [1] [7] |
| Radiometric Calibration Targets | Converts raw data to reflectance | Standards with known reflectance properties | Essential for quantitative analysis across different illumination conditions |
| Spectral Libraries | Reference for material identification | Databases of known material spectra | Enable automated identification of chemicals, minerals, and materials |
| Hyperspectral Datasets | Algorithm development and validation | OHID-1, HyTexiLa, others with multiple land cover classes | Support training and testing of classification models [4] |

Hyperspectral Imaging (HSI) is an advanced sensing technique that simultaneously captures spatial and spectral information from a target, enabling non-invasive, label-free analysis of its material, chemical, and biological properties [1]. Unlike standard red, green, blue (RGB) cameras that capture only three broad color channels, HSI systems record hundreds to thousands of contiguous spectral bands, typically spanning wavelengths from 380 nm to 2500 nm, which includes the visible, near-infrared (NIR), and shortwave infrared (SWIR) regions [1] [8]. This capability allows HSI to uncover subtle, sub-visual features for advanced monitoring, diagnostics, and decision-making.

The core analytical principle of HSI is material fingerprinting through spectral signatures. Every material interacts with electromagnetic radiation in a unique way, absorbing, reflecting, and transmitting specific wavelengths based on its molecular composition and structure [9]. The resulting pattern, known as a spectral signature or "fingerprint," enables precise identification and discrimination of materials that may appear identical in conventional imaging [1] [10]. This primer details the fundamental mechanisms of spectral signatures, the technical workflow of HSI, and provides structured protocols for their application in remote sensing research.

The Science of Spectral Signatures

A spectral signature represents the unique pattern of electromagnetic radiation reflected, absorbed, or transmitted by a material across different wavelengths [9]. These signatures are intrinsic physical properties arising from electronic transitions, molecular vibrations, and scattering effects, providing a direct link to the chemical composition of the target material [1].

Optical Properties and Endmembers

Spectral signatures manifest through three primary optical properties [9]:

- Reflectance: The fraction of incident radiation reflected by a material.

- Absorptance: The fraction of incident radiation absorbed by a material.

- Transmittance: The fraction of incident radiation transmitted through a material.
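At any given wavelength, and neglecting emission and fluorescence, these three fractions account for all incident radiation, so they sum to one. For an opaque sample, transmittance is zero, so every dip in reflectance corresponds directly to absorption. The energy balance is as follows, with ρ, α, and τ used here as shorthand for reflectance, absorptance, and transmittance:

```latex
\rho(\lambda) + \alpha(\lambda) + \tau(\lambda) = 1,
\qquad \tau(\lambda) = 0 \;\Rightarrow\; \alpha(\lambda) = 1 - \rho(\lambda)
```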

In HSI data analysis, endmember spectra represent the pure spectral signatures of individual materials within a scene, serving as reference signatures for material identification and quantification [9]. These pure signatures act as fundamental building blocks for analyzing mixed pixels, where multiple materials contribute to the observed spectrum.

Table 1: Key Characteristics of Spectral Signatures in HSI Analysis

| Characteristic | Description | Analytical Importance |
|---|---|---|
| Spectral Resolution | Width of each captured spectral band (typically 5-10 nm) [1] | Determines ability to distinguish subtle spectral features |
| Spectral Range | Total wavelength coverage (e.g., 400-1100 nm) [11] | Defines which materials can be identified based on their active spectral features |
| Absorption Features | Specific wavelengths where material absorbs energy | Directly correlates with chemical bonds and material composition |
| Spectral Mixing | Combination of multiple endmember spectra in a single pixel | Requires mathematical "unmixing" to determine constituent materials [12] |

Spectral Unmixing and Abundance Estimation

In real-world scenarios, the spatial resolution of a hyperspectral sensor often results in mixed pixels, where a single pixel contains multiple distinct materials. Spectral unmixing algorithms decompose these mixed pixel spectra into their constituent endmember contributions [12] [9].

The fill factor describes the proportion of a pixel occupied by a particular material, directly influencing the contribution of that material's spectrum to the overall pixel response [9]. Linear spectral mixing models assume that the observed spectrum results from a weighted combination of endmember spectra, with weights representing the spatial abundance of each material [9]. This approach enables quantitative assessment of material composition even when individual materials cannot be spatially resolved.
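A minimal sketch of linear unmixing for a single pixel follows. It assumes the endmember spectra are already known and estimates non-negative abundances with SciPy's `scipy.optimize.nnls`. The optional sum-to-one condition is approximated by appending a heavily weighted row of ones, a common trick for fully constrained unmixing. The endmembers, abundances, and noise level are synthetic.

```python
import numpy as np
from scipy.optimize import nnls


def unmix_pixel(endmembers, pixel, sum_to_one_weight=None):
    """Estimate abundances a >= 0 such that pixel ~= endmembers @ a.

    endmembers        : (n_bands, n_endmembers) matrix of pure spectra
    pixel             : (n_bands,) observed mixed spectrum
    sum_to_one_weight : if given, append a weighted row of ones so the
                        abundances also approximately sum to one
    """
    E = np.asarray(endmembers, dtype=float)
    y = np.asarray(pixel, dtype=float)
    if sum_to_one_weight is not None:
        E = np.vstack([E, sum_to_one_weight * np.ones(E.shape[1])])
        y = np.append(y, sum_to_one_weight)
    abundances, _residual_norm = nnls(E, y)
    return abundances


rng = np.random.default_rng(1)
n_bands = 50
E = rng.random((n_bands, 3))             # three synthetic endmember spectra
true_a = np.array([0.6, 0.3, 0.1])       # fill fractions within one pixel
pixel = E @ true_a + rng.normal(0.0, 1e-3, n_bands)
est = unmix_pixel(E, pixel, sum_to_one_weight=100.0)
print(np.round(est, 2))
```

A larger weight enforces the closure constraint more strictly at the cost of fitting the spectrum itself less closely.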

HSI Technology and Data Acquisition

HSI System Architecture

A typical HSI system consists of several key components that work together to capture spatially resolved spectral data [1]:

- Optical Assembly: Comprising lenses and/or mirrors that collect and direct incoming radiation from the scene. This assembly establishes critical imaging parameters such as field of view (FOV), spatial resolution, and spectral range.

- Imaging Spectrometer: The core component that spectrally disperses the incident radiation into numerous narrow, contiguous wavelength bands. This is typically achieved using diffraction gratings, prisms, or electronically tunable filters such as Liquid Crystal Tunable Filters (LCTFs) or Acousto-Optic Tunable Filters (AOTFs).

- Detector Array: Usually a charge-coupled device (CCD) or complementary metal-oxide semiconductor (CMOS) sensor that captures the spectrally dispersed signals and converts them into measurable electronic signals.

Scanning Techniques and Data Structure

HSI systems employ different scanning methodologies to acquire the three-dimensional (x, y, λ) dataset known as a hyperspectral cube [11]:

- Spatial Scanning (Push-broom/Whisk-broom): Records either a single point (whisk-broom) or a whole line (push-broom) of spatial pixels with full spectral information, building the image as the sensor or scene moves [11] [8]. Ideal for airborne and conveyor-belt applications.

- Spectral Scanning: Captures full spatial (x,y) images at one wavelength at a time, sequentially scanning through wavelengths using tunable filters [11]. Best for stationary laboratory applications.

- Snapshot Imaging: Captures the entire hyperspectral datacube in a single exposure without scanning [11] [8]. Well suited to dynamic scenes, at the cost of greater computational complexity in reconstructing the cube.

The result of HSI acquisition is a hyperspectral data cube comprising two spatial dimensions (x, y) and one spectral dimension (λ) [1] [11]. Each pixel in this cube contains a complete spectrum, effectively creating a "spectral fingerprint" for that specific location [1].

Experimental Protocol: Linear Unmixing for Material Identification

This protocol outlines the procedure for applying Multivariate Curve Resolution-Alternating Least Squares (MCR-ALS), a powerful linear unmixing method, to identify and quantify materials in hyperspectral images. This method is particularly suitable for analyzing images from different platforms or with varying spatial resolutions [12].

Equipment and Software Requirements

Table 2: Research Reagent Solutions for HSI Analysis

| Item | Function | Application Notes |
|---|---|---|
| Hyperspectral Imager | Captures spatial and spectral data | Push-broom, spectral scanning, or snapshot systems depending on application [11] |
| Spectral Calibration Standards | Validates wavelength accuracy | Certified reflectance standards (e.g., Spectralon) |
| Computational Workstation | Processes hyperspectral data | Minimum 16 GB RAM; GPU acceleration recommended for large datasets |
| MCR-ALS Software | Performs linear unmixing | Available for MATLAB (e.g., MCR-ALS GUI, PLS_Toolbox) and Python (e.g., pyMCR); scikit-learn and HyperSpy offer related non-negative matrix factorization (NMF) tools |
| Spectral Library | Reference endmember spectra | Custom-built or commercial libraries (e.g., USGS Spectral Library) |

Step-by-Step Procedure

Step 1: Data Acquisition and Preprocessing

- Acquire hyperspectral images of the target scene using appropriate imaging parameters (spatial resolution, spectral range, integration time).

- Perform radiometric calibration to convert raw digital numbers to physical units (reflectance or radiance) using calibration standards.

- Apply geometric correction if necessary to align spatial features across different images or platforms [1].
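For close-range and laboratory imaging, radiometric calibration is often a flat-field correction against a white reference standard and a dark (shutter-closed) frame. The sketch below assumes that setup and a spectrally flat white panel of nominal reflectance 0.99. Certified panels come with a per-band reflectance curve, and airborne or satellite data require atmospheric correction instead.

```python
import numpy as np


def to_reflectance(raw, white, dark, white_reflectance=0.99, eps=1e-9):
    """Flat-field reflectance calibration using white and dark references.

    raw, white, dark  : digital numbers, broadcastable to (rows, cols, bands)
    white_reflectance : nominal reflectance of the white standard (assumed flat)
    """
    raw = np.asarray(raw, dtype=float)
    white = np.asarray(white, dtype=float)
    dark = np.asarray(dark, dtype=float)
    denom = np.maximum(white - dark, eps)   # guard against dead or saturated bands
    return white_reflectance * (raw - dark) / denom


cube_dn = np.full((2, 2, 3), 550.0)          # hypothetical raw cube (DN)
white = np.array([1000.0, 1000.0, 1000.0])   # per-band white reference (DN)
dark = np.array([100.0, 100.0, 100.0])       # per-band dark frame (DN)
refl = to_reflectance(cube_dn, white, dark, white_reflectance=1.0)
print(refl.shape, refl[0, 0])
```

Per-band reference vectors broadcast across the spatial axes. A pushbroom system would instead use a per-column reference, which also corrects across-track nonuniformity.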

Step 2: Data Structuring and Organization

- Organize the hyperspectral data into a 2D matrix D (samples × wavelengths), where each row represents the spectrum of a single pixel.

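- For multiplatform fusion, build a row-wise augmented matrix by placing the pixel-registered data blocks from the different spectroscopic techniques side by side, so that all blocks share one concentration matrix [12]:

$$\mathbf{D}_{\text{aug}} = [\,\mathbf{D}_1 \;\; \mathbf{D}_2 \;\; \cdots \;\; \mathbf{D}_n\,] = \mathbf{C}\,[\,\mathbf{S}_1^{\mathsf T} \;\; \mathbf{S}_2^{\mathsf T} \;\; \cdots \;\; \mathbf{S}_n^{\mathsf T}\,] + \mathbf{E}$$

where D₁, D₂, …, Dₙ represent hyperspectral data blocks from different platforms (e.g., Raman, infrared, fluorescence).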
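Unfolding and augmentation are plain array reshapes. The sketch below assumes three images that are already registered to the same 30 x 40 pixel grid. The sizes and random contents are hypothetical. Each cube is unfolded to a pixels × channels matrix, and the blocks are placed side by side.

```python
import numpy as np


def unfold(cube):
    """(rows, cols, bands) cube -> (rows*cols, bands) matrix D, one pixel spectrum per row."""
    rows, cols, bands = cube.shape
    return cube.reshape(rows * cols, bands)


def refold(matrix, rows, cols):
    """Inverse of unfold, e.g. to turn resolved concentration columns back into maps."""
    return matrix.reshape(rows, cols, -1)


# Hypothetical pixel-registered images of the same 30 x 40 area from three platforms
rng = np.random.default_rng(0)
raman = rng.random((30, 40, 500))
ftir = rng.random((30, 40, 300))
fluo = rng.random((30, 40, 20))

# Row-wise augmentation: shared pixels (rows), different spectral channels side by side
D_aug = np.hstack([unfold(raman), unfold(ftir), unfold(fluo)])
print(D_aug.shape)   # (1200, 820)
```

Because the rows must refer to the same pixels in every block, this structure only works after the images are registered and resampled onto a common grid.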

Step 3: Initialization and Constraint Selection

- Initialize the MCR-ALS algorithm with estimated pure spectra or concentration profiles. This can be done using:

- Pure pixel indices from methods like Pixel Purity Index (PPI)

- Simplified Beer-Lambert law estimates [12]

- Random initialization with non-negativity constraints

- Select appropriate constraints based on the analytical context:

- Non-negativity for both concentrations and spectra (physically realistic)

- Closure (sum-to-one) if relative abundances are required

- Spectral shape constraints if prior knowledge exists

Step 4: Alternating Least Squares Optimization

Execute the MCR-ALS algorithm through iterative optimization:

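- Concentration Update: Estimate the concentration profiles C by least squares, holding the spectral profiles Sᵀ fixed:

$$\mathbf{C} = \mathbf{D}\,\mathbf{S}\,(\mathbf{S}^{\mathsf T}\mathbf{S})^{-1}$$

- Apply constraints to the concentration profiles (e.g., non-negativity, closure).

- Spectral Profile Update: Estimate the spectral profiles Sᵀ by least squares, holding the concentration profiles C fixed:

$$\mathbf{S}^{\mathsf T} = (\mathbf{C}^{\mathsf T}\mathbf{C})^{-1}\,\mathbf{C}^{\mathsf T}\mathbf{D}$$

- Apply constraints to the spectral profiles (e.g., non-negativity, spectral shape).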

- Check Convergence: Calculate the percent variance explained (or the lack of fit) and compare it with the previous iteration. Continue until the convergence criterion is met, typically when the change in residuals falls below 0.1-1% [12].
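The sketch below is a minimal MCR-ALS loop that follows Step 4, alternating the concentration and spectral updates, with non-negativity as the only constraint. It imposes non-negativity by clipping the unconstrained least-squares solution at zero. This is a simple approximation; dedicated toolboxes typically use non-negative least squares. Convergence is checked on the absolute change in lack of fit, with a hypothetical tolerance. The synthetic two-component data set and the perturbed initial spectra are illustrative only.

```python
import numpy as np


def mcr_als(D, St_init, max_iter=200, tol=1e-6):
    """Minimal MCR-ALS with non-negativity (by clipping) on C and S^T.

    D       : (n_pixels, n_channels) data matrix
    St_init : (n_components, n_channels) initial pure-spectra estimates
    Returns C (n_pixels, k), St (k, n_channels), and the lack of fit as a fraction.
    """
    St = np.clip(np.asarray(St_init, dtype=float), 0.0, None)
    prev_lof = np.inf
    lof = np.inf
    for _ in range(max_iter):
        # C = D S (S^T S)^-1, solved as the least-squares problem S C^T = D^T
        C = np.linalg.lstsq(St.T, D.T, rcond=None)[0].T
        C = np.clip(C, 0.0, None)
        # S^T = (C^T C)^-1 C^T D, solved as the least-squares problem C S^T = D
        St = np.linalg.lstsq(C, D, rcond=None)[0]
        St = np.clip(St, 0.0, None)
        residual = D - C @ St
        lof = np.sqrt(np.sum(residual ** 2) / np.sum(D ** 2))
        if abs(prev_lof - lof) < tol:
            break
        prev_lof = lof
    return C, St, lof


# Synthetic two-component image: Gaussian "pure spectra" and random concentrations
rng = np.random.default_rng(0)
x = np.linspace(0.0, 1.0, 100)
S_true = np.vstack([np.exp(-((x - 0.3) / 0.05) ** 2),
                    np.exp(-((x - 0.7) / 0.08) ** 2)])
C_true = rng.random((400, 2))
D = C_true @ S_true + rng.normal(0.0, 1e-3, (400, 100))
St0 = S_true + 0.1 * rng.random(S_true.shape)   # rough initial estimates
C, St, lof = mcr_als(D, St0)
print(f"lack of fit: {100 * lof:.2f}%")
```

Because of rotational ambiguity, a low lack of fit alone does not guarantee that the resolved profiles are the true pure spectra. This is why Step 5 compares them with library spectra and independent measurements.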

Step 5: Result Interpretation and Validation

- Examine the resolved pure spectral profiles and compare with reference spectra from spectral libraries for material identification.

- Analyze the concentration maps to understand spatial distribution of identified materials.

- Validate results through comparison with known standards or complementary analytical techniques.

Application Notes

The linear mixing model assumes that the measured spectrum at each pixel equals the sum of the pure component spectra weighted by their concentrations [12]:

$$\mathbf{D} = \mathbf{C}\,\mathbf{S}^{\mathsf T} + \mathbf{E}$$

where D is the measured data matrix, C is the concentration matrix, Sᵀ is the matrix of pure spectra, and E represents residuals.

MCR-ALS is particularly valuable for image fusion scenarios, where it can simultaneously analyze multiple hyperspectral images from different platforms while respecting the distinct characteristics of each technique [12].

For complex biological tissues or environmental samples, the number of components can be estimated using principal component analysis (PCA) or singular value decomposition (SVD) before MCR-ALS analysis.
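One simple way to pick the number of components is to count how many singular values are needed to explain a chosen fraction of the total variance. The 99.9% threshold below is an assumption and should be tuned to the noise level of the data. The test matrix is a synthetic rank-3 matrix with a small amount of noise.

```python
import numpy as np


def estimate_n_components(D, variance_threshold=0.999):
    """Smallest number of SVD components explaining `variance_threshold`
    of the total (uncentered) sum of squares of D."""
    s = np.linalg.svd(D, compute_uv=False)
    explained = np.cumsum(s ** 2) / np.sum(s ** 2)
    # searchsorted returns the first index where explained >= threshold
    return int(np.searchsorted(explained, variance_threshold) + 1)


rng = np.random.default_rng(0)
D = rng.normal(size=(500, 3)) @ rng.normal(size=(3, 80))   # three underlying components
D += rng.normal(0.0, 1e-4, D.shape)                        # small measurement noise
print(estimate_n_components(D))
```

For real images, it is usually worth inspecting the singular-value curve directly, since structured noise or baseline effects can add components that are chemically meaningless.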

Quantitative Performance Across Applications

Studies in diverse fields have applied HSI using the principle of spectral fingerprinting. The following table summarizes key performance metrics from recent studies.

Table 3: HSI Performance Metrics Across Application Domains

| Application Domain | Specific Use Case | Performance Metric | Result |
|---|---|---|---|
| Agriculture | Crop disease detection [8] | Classification accuracy | 98.09% |
| Agriculture | Crop classification [8] | Classification accuracy | 86.05% |
| Environmental Monitoring | Forest classification [13] [8] | Accuracy improvement vs. conventional methods | +50% |
| Environmental Monitoring | Soil organic matter prediction [8] | R² value | 0.6 |
| Environmental Monitoring | Marine plastic waste detection [13] [8] | Classification accuracy | 70-80% |
| Environmental Monitoring | PM2.5 pollution detection [8] | Classification accuracy | 85.93% |
| Medical Diagnostics | Skin cancer detection [13] [8] | Sensitivity / specificity | 87% / 88% |
| Medical Diagnostics | Colorectal cancer detection [13] [8] | Sensitivity / specificity | 86% / 95% |
| Food Quality & Safety | Egg freshness prediction [13] [8] | R² value | 0.91 |
| Food Quality & Safety | Pine nut quality classification [13] [8] | Classification accuracy | 100% |
| Counterfeit Detection | Fake currency detection [8] | Accuracy (400-500 nm range) | High accuracy |
| Counterfeit Detection | Counterfeit alcohol detection [8] | F1-score | 99.03% |

Advanced Protocol: Hyperspectral Image Fusion for Biological Tissues

This protocol provides a specialized methodology for fusing hyperspectral images from multiple spectroscopic platforms to achieve comprehensive characterization of biological tissues, as demonstrated in studies of rice leaf cross-sections [12].

Specialized Equipment

- Multiple HSI Platforms: Synchrotron Radiation Fourier Transform Infrared (SR-FTIR) imaging system, Raman microscope, and fluorescence imaging system.

- Cryostat: For preparing thin tissue sections (e.g., 7 μm thickness).

- Calcium Fluoride Slides: Optimal for multimodal HSI as they are transparent across broad spectral ranges.

- Spatial Registration System: For precise alignment of images from different platforms.

Tissue Preparation and Image Acquisition

Sample Preparation:

- Embed tissue samples (e.g., rice leaves) in agarose for stabilization.

- Section to 7 μm thickness using a cryostat at -20°C.

- Mount sections on calcium fluoride slides and cover with calcium fluoride coverslips.

- Seal with nail polish to prevent dehydration [12].

Multimodal Image Acquisition:

- SR-FTIR Imaging: Collect data at synchrotron facility (e.g., MIRAS beamline at ALBA Synchrotron). Use Fourier transform infrared spectrometer with HgCdTe detector cooled with liquid nitrogen [12].

- Raman Imaging: Acquire using Raman microscope with appropriate laser excitation wavelength and diffraction-limited spatial resolution.

- Fluorescence Imaging: Capture natural autofluorescence from fluorophores like lignin and chlorophyll using appropriate excitation/emission filters [12].

Spatial Alignment:

- Identify common structural features across all image modalities for spatial reference.

- Apply geometric transformation to align all images to a common spatial grid.

- Resample images to balance spatial resolution differences between platforms.
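The geometric transformation in the alignment steps can be estimated from matched landmarks. The sketch below assumes an affine model fitted by least squares. The landmark coordinates are hypothetical and correspond to a scale of 0.5 plus a translation of (10, -4). Resampling onto the target grid would follow, for example with an interpolation routine such as `scipy.ndimage.map_coordinates`.

```python
import numpy as np


def fit_affine(src_pts, dst_pts):
    """Least-squares 2D affine transform mapping src (N, 2) -> dst (N, 2); needs N >= 3."""
    src = np.asarray(src_pts, dtype=float)
    dst = np.asarray(dst_pts, dtype=float)
    A = np.hstack([src, np.ones((src.shape[0], 1))])   # rows [x, y, 1]
    M, *_ = np.linalg.lstsq(A, dst, rcond=None)         # (3, 2) parameter matrix
    return M


def apply_affine(M, pts):
    """Map (N, 2) points with a (3, 2) affine parameter matrix."""
    pts = np.asarray(pts, dtype=float)
    return np.hstack([pts, np.ones((pts.shape[0], 1))]) @ M


# Hypothetical landmarks (e.g., vascular bundle corners) in Raman and FTIR pixel coordinates
raman_pts = np.array([[0, 0], [100, 0], [0, 80], [100, 80], [50, 40]], dtype=float)
ftir_pts = 0.5 * raman_pts + np.array([10.0, -4.0])
M = fit_affine(raman_pts, ftir_pts)
print(np.round(M, 3))
```

With more landmarks than the three required, the least-squares fit averages out small localization errors in the individual landmarks.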

Data Fusion and Analysis

Data Structure Assembly:

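- Organize data from all platforms into a row-wise augmented matrix that shares one concentration matrix across techniques:

$$[\,\mathbf{D}_{\text{Raman}} \;\; \mathbf{D}_{\text{FTIR}} \;\; \mathbf{D}_{\text{Fluo}}\,] = \mathbf{C}\,[\,\mathbf{S}^{\mathsf T}_{\text{Raman}} \;\; \mathbf{S}^{\mathsf T}_{\text{FTIR}} \;\; \mathbf{S}^{\mathsf T}_{\text{Fluo}}\,] + \mathbf{E}$$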

- This structure allows MCR-ALS to resolve components that may be visible in some techniques but not others [12].

Platform-Specific Constraints:

- Apply technique-specific constraints during MCR-ALS optimization:

- Raman data: Non-negativity for both concentrations and spectra

- FTIR data: Non-negativity with possible spectral normalization

- Fluorescence data: Non-negativity with consideration of potential inner filter effects

Integrated Interpretation:

- Analyze resolved components to identify biological structures:

- Lignified tissues (vascular system, sclerenchyma) through Raman and FTIR signatures

- Photosynthetic tissues (mesophyll) through fluorescence and Raman carotenoid signals

- Epidermal protections through FTIR lipid/protein signals [12]

This fusion approach provides a more complete picture of tissue composition and structure than any single technique alone, demonstrating the power of HSI for comprehensive material characterization.

Hyperspectral imaging (HSI) is an advanced optical sensing technique that integrates spectroscopy and digital imaging to simultaneously capture spatial and spectral data [1]. Unlike standard RGB cameras that record only three broad color bands, HSI systems collect hundreds of contiguous spectral bands, providing a unique spectral "fingerprint" for each pixel in a scene [13] [1]. This capability enables precise identification and characterization of materials based on their chemical composition, making it invaluable for remote sensing applications including environmental monitoring, precision agriculture, and defense surveillance [13] [14].

A critical differentiator among HSI systems is their method of data acquisition. The three primary configurations—pushbroom, whiskbroom, and snapshot—represent distinct engineering approaches to solving the fundamental challenge of assembling a three-dimensional hyperspectral data cube (two spatial dimensions plus one spectral dimension) from two-dimensional detector measurements [1]. Each configuration offers unique trade-offs between spatial resolution, spectral resolution, acquisition speed, and system complexity, making them suited for different remote sensing scenarios [15] [13]. This article examines these key system configurations, providing detailed technical comparisons and experimental protocols to guide researchers in selecting appropriate methodologies for their remote sensing applications.

Fundamental Operating Principles

Pushbroom Imaging (also referred to as line-scanning) systems capture spectral data for an entire line of pixels simultaneously. As the system moves relative to the target, it progressively builds up a two-dimensional spatial image with complete spectral information for each pixel [15] [1]. These systems typically employ a diffraction grating or prism to disperse wavelengths across a detector array [1].

Whiskbroom Imaging (point-scanning) systems measure a single pixel's complete spectrum at a time, using a rotating mirror or other scanning mechanism to sweep across the scene [16] [1]. Recent advances incorporate optical switch technology to enable time-division multiplexing, improving acquisition efficiency [16].

Snapshot Imaging systems capture the entire spatial and spectral data cube in a single exposure without scanning [17] [15]. Many of these systems use specialized filter arrays, such as metasurface-based or mosaic filter patterns, to encode spectral information directly onto the sensor [17] [15]. Computational algorithms then reconstruct the complete hyperspectral data cube from this encoded measurement.
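The two cube-building strategies can be contrasted with array operations. The pushbroom part stacks line frames using the 1600-pixel, 369-band figures from the comparison table that follows. The snapshot part assumes a repeating 5 x 5 filter mosaic. That assumption is consistent with the table's example (2045 / 5 = 409, 1085 / 5 = 217, and 5 x 5 = 25 bands) but is not stated in the source. The simple sub-sampling shown here ignores the spatial offsets between filter positions that real reconstruction pipelines correct.

```python
import numpy as np

# Pushbroom (line scan): each detector frame holds one across-track line x all bands.
# Frames are stacked along the direction of motion to build the cube.
n_lines, n_pixels, n_bands = 5, 1600, 369
frames = [np.zeros((n_pixels, n_bands), dtype=np.uint16) for _ in range(n_lines)]
pushbroom_cube = np.stack(frames, axis=0)        # (along-track, across-track, bands)


def demosaic_subsample(raw, period):
    """Split a snapshot mosaic frame into a cube by sub-sampling each filter position.

    Assumes a repeating period x period filter pattern; no interpolation or
    spatial offset correction is applied.
    """
    rows, cols = raw.shape
    r_end, c_end = (rows // period) * period, (cols // period) * period
    planes = [raw[i:r_end:period, j:c_end:period]
              for i in range(period) for j in range(period)]
    return np.stack(planes, axis=-1)             # (rows/period, cols/period, period**2)


raw = np.zeros((1085, 2045), dtype=np.uint16)    # hypothetical sensor frame (rows x cols)
snapshot_cube = demosaic_subsample(raw, period=5)
print(pushbroom_cube.shape, snapshot_cube.shape)  # (5, 1600, 369) (217, 409, 25)
```

The sketch shows the spatial-resolution trade-off directly: every extra band in the mosaic divides the spatial sampling of the sensor.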

Quantitative System Comparison

The table below summarizes key performance characteristics and typical applications for each HSI configuration, compiled from recent research and product analyses.

Table 1: Comparative Analysis of Hyperspectral Imaging Configurations

| Parameter | Pushbroom/Line-Scan | Whiskbroom/Point-Scan | Snapshot |
|---|---|---|---|
| Spatial Resolution | High (e.g., 1600 pixels/line) [15] | Moderate, limited by scanning mechanism [16] | Lower due to spatial multiplexing (e.g., 409×217 from 2045×1085 sensor) [15] |
| Spectral Resolution | High (e.g., 369 bands, 5.8 nm FWHM) [15] | High in SWIR range (900-2500 nm) [16] | Moderate (e.g., 25-151 bands) [17] [15] |
| Acquisition Speed | Moderate (limited by movement) [15] | Slow (point-by-point acquisition) [16] | Very high (single exposure, video rates) [15] [18] |
| Spectral Range | Typically 400-1000 nm (VNIR) [15] | 900-2500 nm (SWIR) [16] | 400-1000 nm or 660-950 nm [17] [15] |
| Key Advantage | High spatial/spectral resolution [15] | Cost-effective for SWIR applications [16] | Real-time imaging of dynamic scenes [15] [18] |
| Primary Limitation | Requires relative motion [15] | Low efficiency, limited integration time [16] | Spatial resolution trade-off [17] [15] |
| Representative Applications | Laboratory analysis, detailed material mapping [15] | Agricultural/forestry remote sensing in SWIR [16] | Medical diagnostics, in vivo imaging, UAV-based sensing [15] [14] |

Performance Metrics in Practical Applications

Recent comparative studies provide quantitative insights into real-world performance. In medical imaging applications, pushbroom systems demonstrated superior spectral resolution with 369 bands between 400-1000 nm compared to snapshot systems capturing 25 bands in the 660-950 nm range [15]. However, snapshot systems achieved acquisition rates compatible with real-time video, while pushbroom systems required seconds to minutes per data cube [15].

In a study comparing both technologies for brain tissue imaging, spectral signatures were highly similar despite the different acquisition methods, with Spectral Angle Mapper (SAM) values below 0.235 for key chromophores across both systems [19]. Advanced snapshot systems have achieved reconstruction quality metrics of MSE 1.21×10⁻⁴, SAM 0.041, PSNR 39.72 dB, and SSIM 0.95 for data cubes with 151 spectral channels [17].

Experimental Protocols

Protocol 1: Pushbroom Imaging for Terrain Mapping

Objective: To acquire high-resolution hyperspectral data of terrestrial environments for material classification and change detection.

Materials and Equipment:

- Pushbroom hyperspectral camera (e.g., Specim, Headwall Photonics) [20]

- Precision translation stage or airborne/spaceborne platform

- Calibration standards (white reference, wavelength standards)

- Data acquisition workstation with specialized software

Procedure:

System Calibration:

- Perform radiometric calibration using a standardized white reference panel

- Conduct wavelength calibration using spectral line sources or certified wavelength standards

- Perform geometric calibration using patterned targets

Data Acquisition:

- Mount the camera on a stable platform with linear motion control

- Align the camera so that the cross-track dimension is perpendicular to the direction of motion

- Set exposure time based on illumination conditions (typically 100-150 ms) [15]

- Initiate platform movement and simultaneous image acquisition

- Capture dark current reference frames periodically

Data Processing:

- Convert raw data to radiance values using calibration coefficients

- Apply geometric correction for platform motion irregularities

- Perform atmospheric correction if applicable

- Generate hyperspectral data cube for analysis
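When a white reference panel and dark frames are recorded, as in the calibration and acquisition steps above, a common way to obtain relative reflectance is flat-field correction. The sketch below is a minimal NumPy version under stated assumptions: dark and white references are averaged per detector element into (samples, bands) arrays, a single nominal panel reflectance is used (`white_reflectance`, a hypothetical parameter), and illumination is assumed not to change between the reference and scene acquisitions. It complements, rather than replaces, radiance conversion with the sensor's calibration coefficients.

```python
import numpy as np

def to_reflectance(raw, dark, white, white_reflectance=1.0, eps=1e-6):
    """Flat-field correction: R = rho_w * (raw - dark) / (white - dark).

    raw:   (lines, samples, bands) scene digital numbers
    dark:  (samples, bands) mean dark frame, same exposure settings
    white: (samples, bands) mean frame of the white reference panel
    white_reflectance: nominal panel reflectance (a single scalar here)
    """
    raw = np.asarray(raw, dtype=np.float64)
    dark = np.asarray(dark, dtype=np.float64)
    white = np.asarray(white, dtype=np.float64)
    # Clamp the denominator so elements where white equals dark (e.g. dead pixels)
    # do not cause division by zero.
    denom = np.maximum(white - dark, eps)
    # Broadcasting applies the per-detector references to every scan line.
    return white_reflectance * (raw - dark) / denom

raw = np.full((4, 3, 2), 600.0)
dark = np.full((3, 2), 100.0)
white = np.full((3, 2), 1100.0)
print(to_reflectance(raw, dark, white)[0, 0])  # [0.5 0.5]
```

Because a pushbroom detector column always images the same cross-track position, per-element references like these also remove column-to-column gain differences that would otherwise appear as along-track striping.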

Quality Control:

- Verify spectral accuracy using known material targets in the scene

- Monitor signal-to-noise ratio (>100:1 recommended)

- Ensure consistent spatial resolution across the field of view
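One simple way to check the SNR guideline above is to image a spatially uniform target (such as a calibration panel) and compute mean over standard deviation per band. The sketch below assumes that approach. Any texture or illumination gradient across the target is counted as noise, so the estimate tends to be pessimistic. The function name and synthetic data are illustrative.

```python
import numpy as np

def band_snr(cube, region):
    """Per-band SNR estimate (mean / std) over a spatially uniform region.

    cube:   (rows, cols, bands) array
    region: (row_slice, col_slice) selecting a homogeneous target
    """
    patch = cube[region].reshape(-1, cube.shape[-1]).astype(np.float64)
    mean = patch.mean(axis=0)
    std = patch.std(axis=0, ddof=1)
    # Bands with zero variance get NaN instead of a division-by-zero warning.
    return mean / np.where(std > 0, std, np.nan)

rng = np.random.default_rng(1)
cube = rng.normal(1000.0, 5.0, size=(60, 60, 10))   # synthetic panel, true SNR 200
snr = band_snr(cube, (slice(0, 50), slice(0, 50)))
low_bands = np.flatnonzero(snr < 100)  # bands below the 100:1 guideline
```

In practice, the bands flagged this way are often at the spectral edges of the detector, where quantum efficiency and illumination are lowest.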

Protocol 2: Snapshot Imaging for Dynamic Processes

Objective: To capture hyperspectral data of rapidly changing scenes for real-time monitoring applications.

Materials and Equipment:

- Snapshot hyperspectral camera (e.g., Ximea, Cubert) with mosaic filter array [15] [20]

- Appropriate illumination system

- Computational reconstruction workstation

- Validation targets with known spectral properties

Procedure:

System Configuration:

- Select appropriate spectral range and spatial resolution for application

- Configure illumination to minimize shadows and specular reflections

- Mount camera in fixed position relative to target

Data Acquisition:

- Set exposure time to freeze motion (typically <100 ms) [15]

- Capture single-frame encoded image containing spatial and spectral information

- Acquire dark frame and white reference under identical settings

Computational Reconstruction:

Validation:

- Verify reconstruction accuracy using validation targets

- Calculate quality metrics (MSE, SAM, PSNR, SSIM) [17]

- Compare with ground truth measurements if available
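The validation metrics listed above can be computed in a few lines of NumPy. The sketch below covers MSE, PSNR (assuming data scaled to [0, `data_range`]), and mean SAM in radians. SSIM is omitted because it involves local windowed statistics; it is usually taken from an existing implementation such as scikit-image's `skimage.metrics.structural_similarity`. Function names are illustrative.

```python
import numpy as np

def mse(ref, rec):
    """Mean squared error over all pixels and bands."""
    diff = np.asarray(ref, dtype=np.float64) - np.asarray(rec, dtype=np.float64)
    return float(np.mean(diff ** 2))

def psnr(ref, rec, data_range=1.0):
    """Peak signal-to-noise ratio in dB, for data scaled to [0, data_range]."""
    err = mse(ref, rec)
    return float("inf") if err == 0 else float(10.0 * np.log10(data_range ** 2 / err))

def mean_sam(ref, rec, eps=1e-12):
    """Mean spectral angle in radians between matching pixel spectra."""
    a = np.asarray(ref, dtype=np.float64)
    b = np.asarray(rec, dtype=np.float64)
    a = a.reshape(-1, a.shape[-1])
    b = b.reshape(-1, b.shape[-1])
    cos = np.sum(a * b, axis=1) / (np.linalg.norm(a, axis=1) * np.linalg.norm(b, axis=1) + eps)
    # Clip guards against values just outside [-1, 1] caused by rounding.
    return float(np.mean(np.arccos(np.clip(cos, -1.0, 1.0))))

# Illustrative use on a synthetic 151-channel cube with small reconstruction noise.
rng = np.random.default_rng(2)
ref = rng.random((32, 32, 151))
rec = np.clip(ref + rng.normal(0.0, 0.01, ref.shape), 0.0, 1.0)
scores = (mse(ref, rec), psnr(ref, rec), mean_sam(ref, rec))
```

SAM ignores overall brightness (scaling a spectrum leaves its angle unchanged), while MSE and PSNR do not. Reporting both types of metric, as in the cited work, separates spectral-shape errors from intensity errors.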

Quality Control:

- Monitor reconstruction consistency across multiple acquisitions

- Validate against standard spectral libraries

- Assess spatial uniformity using homogeneous targets

System Workflows and Operational Principles

Pushbroom Imaging Operational Workflow

The following diagram illustrates the sequential data acquisition process fundamental to pushbroom hyperspectral imaging systems.

Pushbroom Imaging Data Acquisition Workflow

Snapshot Imaging Operational Workflow

The diagram below illustrates the single-shot acquisition and computational reconstruction process unique to snapshot hyperspectral imaging.

Snapshot Imaging and Reconstruction Workflow

The Researcher's Toolkit: Essential Research Reagents and Materials

Table 2: Essential Research Reagents and Materials for Hyperspectral Imaging Research

| Item | Function/Purpose | Application Examples |
|---|---|---|
| Broadband Multispectral Filter Array (BMSFA) | Spectral response encoding; modulates incident light with distinct spectral filters [17] | Core component in snapshot systems; enables single-shot spectral acquisition [17] |
| Metasurface Filters | Advanced spectral filtering using sub-wavelength structures; provides high degree of design freedom [17] | Replacement for traditional thin-film filters in modern snapshot systems [17] |
| Calibration Standards | Radiometric and wavelength calibration; ensures measurement accuracy [1] | Essential for quantitative analysis across all HSI configurations [1] |
| Hyperspectral Data Processing Software | Data cube reconstruction, spectral analysis, and material classification [17] [1] | Critical for extracting meaningful information from raw HSI data [17] |
| Optical Switch Device | Rapid switching of field of view in whiskbroom systems; enables time-division multiplexing [16] | Improves acquisition efficiency in whiskbroom imagers [16] |

Pushbroom, whiskbroom, and snapshot hyperspectral imaging configurations each offer distinct advantages for remote sensing applications. Pushbroom systems provide the highest spatial and spectral resolution, making them ideal for laboratory analysis and detailed terrain mapping. Whiskbroom systems offer cost-effective solutions particularly valuable for SWIR applications in agriculture and forestry. Snapshot systems enable real-time monitoring of dynamic processes, with recent advances in computational reconstruction dramatically improving image quality.

The emerging trend of joint hardware-software optimization, particularly through deep learning, is blurring the traditional boundaries between these configurations. Systems like HSITNet demonstrate that co-designing encoding strategies and reconstruction algorithms can substantially enhance performance [17]. When selecting an HSI configuration, researchers must weigh the trade-offs between spatial resolution, spectral resolution, acquisition speed, and computational requirements inherent to each approach.

As hyperspectral imaging continues to evolve, integration with artificial intelligence and development of more compact, field-deployable systems will further expand applications across scientific, industrial, and defense domains [13] [14]. The protocols and comparisons presented here provide a foundation for researchers to effectively leverage these powerful technologies in remote sensing research.

In hyperspectral imaging (HSI), the selection of spatial and spectral resolution is a fundamental consideration that directly influences the richness of the data and the practicality of its application. HSI is an advanced optical sensing technique that assembles spectroscopy and digital photography into a single system, generating a three-dimensional dataset known as a hypercube, which contains two spatial dimensions and one spectral dimension [1]. This allows each pixel in a captured scene to possess a unique spectral signature, or "fingerprint," enabling the identification of materials based on their specific reflectance characteristics [21].

Spatial resolution refers to the smallest object that can be resolved in the image, while spectral resolution defines the ability to distinguish between adjacent wavelengths, typically reported as the width of each spectral band in nanometers (nm) [22]. A core challenge in system design is the inherent trade-off between these two resolutions; for a given detector pixel density, increasing the number of spectral bands often necessitates a reduction in the number of spatial pixels, and vice versa [17]. Effectively balancing this trade-off is critical for optimizing HSI systems for specific remote sensing applications, from invasive species mapping to mineral exploration.

Fundamental Concepts and Key Trade-offs

Defining Resolution in Hyperspectral Imaging

- Spectral Resolution and Bands: A spectral band represents a group of wavelengths. Hyperspectral data is characterized by many narrow, contiguous bands—often hundreds—that cover a specific portion of the electromagnetic spectrum [22] [1]. For example, the NEON imaging spectrometer collects approximately 426 bands within the 380 nm to 2510 nm range, with bandwidths of approximately 5 nm [22]. This high spectral resolution allows for the detection of subtle spectral features caused by molecular absorption and scattering, which are diagnostic for identifying and characterizing materials [1].

- Spatial Resolution: This refers to the area on the ground represented by a single pixel. Higher spatial resolution allows for the identification of smaller objects but may require a trade-off with spectral resolution or signal-to-noise ratio, especially for spaceborne systems where sensor resources are constrained [23].
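The NEON band count and range quoted above can be cross-checked with simple arithmetic: dividing the covered range by the number of bands gives the average spacing between band centers. This sampling interval is related to, but not the same as, each band's width; here both are about 5 nm.

$$
\Delta\lambda \approx \frac{2510\,\text{nm} - 380\,\text{nm}}{426} = \frac{2130\,\text{nm}}{426} = 5.0\,\text{nm}
$$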

The Spatial-Spectral Trade-off and Information Entropy

The fundamental trade-off arises because an image sensor has a limited number of pixels. Capturing a high number of spectral bands for each spatial location can force a reduction in the total number of spatial pixels acquired, potentially degrading spatial resolution [17]. The core of this issue is the loss of information entropy during the encoding process in snapshot spectral imaging systems, where three-dimensional spectral cube information is compressed into a two-dimensional image [17]. Advanced computational approaches, including deep learning, are being developed to mitigate this loss and achieve higher quality in the reconstructed spectral-spatial data cube [17].

Table 1: Comparison of Imaging Modalities Based on Spectral and Spatial Resolution Characteristics

| Imaging Modality | Typical Number of Spectral Bands | Spectral Bandwidth | Primary Strength | Common Applications |
|---|---|---|---|---|
| Panchromatic | 1 | Very Broad (e.g., entire visible spectrum) | High Spatial Resolution | Basic mapping, high-resolution topography |
| RGB (Color) | 3 | Broad (e.g., Red, Green, Blue) | Human-visual interpretation | Standard photography, basic color analysis |
| Multispectral (MSI) | < 20 | Discrete, often broad bands [1] | Balance of spatial/spectral data | Vegetation indices, land cover classification |
| Hyperspectral (HSI) | Hundreds | Narrow, contiguous (e.g., 5-10 nm) [1] | Detailed material identification & discrimination | Mineral mapping, invasive species detection, medical diagnostics [23] [24] [21] |

Quantitative Comparisons in Practical Applications

Sensor Performance in Environmental Monitoring

A study comparing four freely available sensors for mapping invasive alien trees in South Africa demonstrated the practical implications of resolution trade-offs. The sensors covered a wide range of spatial (0.25–60 m) and spectral (3–285 bands) resolutions [23].

Table 2: Sensor Performance for Invasive Alien Tree Mapping [23]

| Sensor / Technique | Spatial Resolution | Spectral Characteristics | Reported Performance |
|---|---|---|---|
| SPOT6 | Not Specified (Spaceborne) | Multispectral | Highest overall accuracy for discriminating among alien taxa. |
| Sentinel-2 | Not Specified (Spaceborne) | Multispectral | Best accuracy for distinguishing alien taxa from other vegetation classes. |
| Aerial Photography | 0.25 m | Panchromatic/RGB | Performed poorly compared to spaceborne multispectral sensors. |
| EMIT + Sentinel-2 Fusion | Mixed | Hyperspectral + Multispectral | Improved mapping accuracy by ~5% compared to single sensors. |

The key finding was that while spaceborne multispectral sensors (SPOT6, Sentinel-2) performed robustly, the data fusion of a hyperspectral sensor (EMIT, with high spectral resolution) and a multispectral sensor (Sentinel-2, with higher spatial resolution) led to a marked improvement in classification accuracy [23]. This underscores the value of combining datasets to overcome the limitations of individual systems.

Performance Across Diverse Industries

The balance between spatial and spectral resolution is critical beyond traditional remote sensing. The ability of HSI to capture subtle spectral features has led to transformative applications in medicine, agriculture, and industry.

Table 3: Application-Based Performance of Hyperspectral Imaging Systems

| Application Domain | Key Metric | Reported Performance | Relevance of Resolution |
|---|---|---|---|
| Medical Diagnostics | Sensitivity & Specificity for Cancer Detection | 87% & 88% for skin cancer; 86% & 95% for colorectal cancer [13]. | High spectral resolution is critical for differentiating tissue types based on biochemical composition [21]. |
| Precision Agriculture | Disease Detection & Classification Accuracy | 98.09% detection accuracy; 86.05% classification accuracy using HSI-TransUNet model [13]. | Spatial resolution determines the scale at which disease patches can be detected, while spectral resolution enables early identification. |
| Food Safety & Quality | Quality Classification Accuracy | 100% accuracy for pine nut quality classification; R²=0.91 for egg freshness prediction [13]. | Spectral resolution is paramount for quantifying chemical properties related to freshness and contamination. |
| Environmental Monitoring | Forest Classification Accuracy | Up to 50% improvement with hyperspectral data over other methods [13]. | High spectral resolution allows for detailed discrimination of tree species and health status. |

Experimental Protocols for Resolution-Centric Studies

Protocol: Evaluating Sensors for Vegetation Mapping

This protocol is adapted from a study that compared multiple sensors for mapping invasive tree species [23].

1. Objective: To evaluate the performance of different remote sensing sensors, with varying spatial and spectral resolutions, for classifying and mapping specific vegetation taxa.

2. Materials and Reagents:

- Sensor Data: Acquire coregistered imagery from the sensors to be evaluated (e.g., SPOT6, Sentinel-2, aerial photography, EMIT).

- Ground Truth Data: Geo-referenced polygons or points indicating the location of target vegetation classes (e.g., invasive alien trees) and other land cover classes (e.g., native vegetation, soil, water).

- Software: Image processing and classification software (e.g., Python with scikit-learn, R, or commercial packages like ENVI).

3. Experimental Workflow:

4. Procedure:

- Data Preprocessing: Perform atmospheric correction and radiometric calibration on all images to ensure comparability. Spatially co-register all datasets to a common coordinate system and pixel grid.

- Data Fusion (If Applicable): For techniques like the EMIT and Sentinel-2 fusion, employ a data fusion algorithm (e.g., pansharpening, component substitution, or deep learning-based fusion) to combine the high spectral resolution of one sensor with the high spatial resolution of another [23].

- Image Classification: Extract spectral features from the processed images. Train a supervised classification model (e.g., Random Forest, Support Vector Machine) using the ground truth data to map the target vegetation classes.

- Accuracy Assessment: Use a held-back portion of the ground truth data to calculate accuracy metrics, including Overall Accuracy, and User's and Producer's accuracies for each class.

- Performance Comparison: Compare the accuracy metrics and the visual quality of the classified maps generated from each sensor and the fused product.
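The accuracy-assessment step above can be sketched with NumPy alone, once a trained classifier (for example scikit-learn's `RandomForestClassifier`) has produced predictions for the held-back ground truth. The convention assumed here is that confusion-matrix rows are reference classes and columns are mapped classes. The labels in the example are made up for illustration.

```python
import numpy as np

def accuracy_report(y_true, y_pred, n_classes):
    """Confusion matrix plus overall, user's and producer's accuracy.

    Rows are reference (ground-truth) classes, columns are mapped classes.
    """
    y_true = np.asarray(y_true, dtype=np.intp)
    y_pred = np.asarray(y_pred, dtype=np.intp)
    cm = np.zeros((n_classes, n_classes), dtype=np.int64)
    np.add.at(cm, (y_true, y_pred), 1)  # count each (reference, mapped) pair
    correct = np.diag(cm).astype(np.float64)
    with np.errstate(divide="ignore", invalid="ignore"):
        producers = correct / cm.sum(axis=1)  # 1 - omission error (recall)
        users = correct / cm.sum(axis=0)      # 1 - commission error (precision)
    overall = correct.sum() / cm.sum()
    return cm, float(overall), users, producers

cm, oa, ua, pa = accuracy_report([0, 0, 0, 1, 1, 2], [0, 0, 1, 1, 1, 2], n_classes=3)
```

Producer's accuracy equals per-class recall and user's accuracy equals per-class precision. A per-class F1-score, as used in machine-learning evaluations, follows as 2·UA·PA / (UA + PA).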

Protocol: Snapshot HSI with High Spatial Resolution

This protocol is based on a study that developed the HSITNet to achieve snapshot HSI without spatial resolution degradation [17].

1. Objective: To design a snapshot hyperspectral imaging system capable of reconstructing a data cube with high spectral resolution (many channels) without sacrificing the native spatial resolution of the sensor.

2. Materials and Reagents:

- Optical System: Imaging lens set, a Broadband Multispectral Filter Array (BMSFA) fabricated using metasurfaces, and an image sensor.

- Computing Hardware: A high-performance computer or workstation with a GPU for deep learning model training and inference.

- Software: Deep learning framework (e.g., PyTorch, TensorFlow) and the proposed HSITNet architecture [17].

3. Experimental Workflow:

4. Procedure:

- Joint Optimization: Integrate a metasurface forward design neural network with the spectral reconstruction neural network (HSITNet) to jointly optimize the BMSFA's structural parameters and the decoding strategy. This co-design aligns the physical encoding with the computational decoding [17].

- Data Acquisition: The lens projects the target scene onto the custom-designed BMSFA, which encodes the incident spectral cube through the distinct spectral responses of its filters (spectral response encoding, SRE). The image sensor records this encoded signal as a compressed 2D image.

- Spectral Reconstruction: The captured 2D image is fed into the trained HSITNet decoder, whose transformer-based architecture provides a broad receptive field for reconstructing the original 3D spectral data cube from the 2D encoded input.

- Validation: Validate the quality of the reconstructed spectral cube using metrics such as Mean Squared Error (MSE), Spectral Angle Mapper (SAM), Peak Signal-to-Noise Ratio (PSNR), and Structural Similarity Index (SSIM) [17].
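The encoding half of this pipeline can be illustrated with an idealized forward model: each sensor pixel records the scene spectrum weighted by the transmission curve of the filter above it, summed over wavelength. The sketch below assumes a linear, noise-free sensor with no crosstalk, random hypothetical transmission curves, and a tiled 4 × 4 filter layout. It shows only how a 3D cube collapses to a 2D measurement; it is not the HSITNet design, whose learned decoder inverts this kind of mapping.

```python
import numpy as np

def encode_bmsfa(cube, responses, pattern):
    """Idealized spectral response encoding by a filter array.

    cube:      (rows, cols, bands) scene spectral cube
    responses: (n_filters, bands) transmission of each filter type
    pattern:   (rows, cols) integer map of the filter type at each pixel
    Returns the (rows, cols) sensor image y = sum over bands of T * x.
    """
    per_pixel = responses[pattern]            # (rows, cols, bands)
    return np.sum(per_pixel * cube, axis=-1)  # discrete integration over wavelength

rng = np.random.default_rng(3)
bands, n_filters = 151, 16
responses = rng.random((n_filters, bands))    # hypothetical transmission curves
pattern = np.tile(np.arange(n_filters).reshape(4, 4), (16, 16))  # 64 x 64 layout
cube = rng.random((64, 64, bands))
image = encode_bmsfa(cube, responses, pattern)  # 2D measurement, shape (64, 64)
```

A forward model of this kind can also be used to simulate (encoded image, cube) training pairs from existing hyperspectral datasets, which is one practical way to train a reconstruction network before hardware is fabricated.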

The Scientist's Toolkit

Table 4: Essential Research Reagent Solutions for Hyperspectral Remote Sensing Research

| Tool / Material | Function / Description | Example Use Case |
|---|---|---|
| Open-Source HSI (OpenHSI) | A compact, pushbroom hyperspectral imager built from commercial-off-the-shelf (COTS) components, offering a low-cost, customizable alternative [25]. | Deployable on drones for environmental monitoring; spectral range 430–830 nm with 213 bands [25]. |
| Custom Data Acquisition (DAQ) System | A self-contained system using a Raspberry Pi or NVIDIA Jetson, GPS, and IMU to collect timestamped hyperspectral, navigation, and orientation data concurrently for direct georeferencing [25]. | Enables accurate georeferencing of pushbroom HSI data collected from drones or aircraft. |
| Broadband Multispectral Filter Array (BMSFA) | An optical filter array, often fabricated using metasurfaces, placed in front of an image sensor to perform spectral response encoding of the incident light [17]. | Core component in snapshot HSI systems for encoding the 3D spectral cube into a 2D image. |
| HSITNet (Hyperspectral Imaging Transformers Network) | A deep learning model based on a transformer architecture for reconstructing the 3D spectral cube from a 2D encoded image captured by a snapshot HSI system [17]. | Achieves high-fidelity reconstruction of 151 spectral channels without loss of spatial resolution. |
| Georeferencing & Calibration Targets | Panels with known reflectance properties (e.g., Spectralon) and ground control points with precise GPS coordinates. | Used for radiometric calibration of HSI data and for geometric correction and accuracy assessment of the final maps. |

The hyperspectral data cube is a three-dimensional (3D) data structure that fundamentally integrates two-dimensional spatial information with one-dimensional spectral information [26] [1]. This structure combines spatial dimensions (X and Y axes), which represent the two-dimensional image coordinates similar to conventional photography, with a spectral dimension (Z axis or λ) that represents wavelength, frequency, or energy channels [26]. Each spatial pixel in the resulting data cube contains a complete spectrum rather than just a single intensity value, creating a rich dataset where each "layer" or "slice" along the spectral axis represents the image at a specific wavelength [26] [11].
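In code, a data cube is usually held as a 3D array. The NumPy sketch below assumes a (rows, columns, bands) axis order, which matches band-interleaved-by-pixel (BIP) storage; files stored band-sequential (BSQ) or band-interleaved-by-line (BIL) need their axes reordered first. The dimensions and wavelength grid are hypothetical, loosely modeled on a 224-band, 400-2500 nm sensor.

```python
import numpy as np

# Hypothetical cube: 100 x 120 pixels, 224 bands spanning 400-2500 nm.
wavelengths = np.linspace(400.0, 2500.0, 224)
cube = np.zeros((100, 120, wavelengths.size), dtype=np.float32)  # (Y, X, lambda)

spectrum = cube[50, 60, :]                             # full spectrum of one pixel
band_idx = int(np.argmin(np.abs(wavelengths - 850.0)))  # band nearest 850 nm
band_image = cube[:, :, band_idx]                      # one spectral "slice"
pixels = cube.reshape(-1, cube.shape[-1])              # (Y*X, lambda) matrix for analysis
```

The flattened (pixels × bands) view is the form most statistical and machine-learning tools expect, and it can be reshaped back to the image grid after per-pixel processing.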

This architectural framework enables comprehensive analysis of both spatial features and spectral characteristics simultaneously, providing significantly more information than traditional imaging techniques [26]. In remote sensing applications, the data cube serves as the foundational structure for harnessing the information power of Earth observation data, allowing researchers to identify materials, detect processes, and monitor environmental changes by analyzing unique spectral signatures across landscapes [27] [11].

Applications in Remote Sensing

The integration of spatial and spectral information in data cubes has enabled transformative applications across numerous remote sensing domains. The table below summarizes key application areas and their specific implementations:

Table 1: Remote Sensing Applications of Hyperspectral Data Cubes

| Application Domain | Specific Implementation | Key Metrics/Benefits |
|---|---|---|
| Environmental Monitoring | Data Cube on Demand (DCoD) systems in Bolivia and DRC [27] | Lowered complexity barriers; enhanced data sovereignty; large adoption potential |
| Agriculture & Vegetation | Crop health monitoring; vegetation stress detection [11] [1] | Disease identification; nutrient status; yield prediction |
| Geological Mapping | Mineral identification and mapping [28] [11] | Mineral detection; resource exploration; geological analysis |
| Land Cover Classification | Terrestrial mineral mapping; coastal wetland monitoring [28] | Land use classification; change detection; habitat mapping |
| Disaster Assessment | Natural disaster impact evaluation [28] | Damage assessment; recovery monitoring; risk management |

Experimental Protocols and Methodologies

Data Acquisition Workflow

The generation of spectral cubes involves sophisticated instrumentation and processing to ensure data quality and accuracy [26]. A standardized protocol for hyperspectral data acquisition includes the following critical stages:

Table 2: Hyperspectral Data Acquisition Protocol

| Processing Stage | Key Operations | Technical Considerations |
|---|---|---|
| System Setup | Configure HSI microscope; position broadband light source; calibrate motorized sample holder [29] | Illumination spectrum (360-2600 nm); step size (0.5 μm/line); objective magnification (100×) |
| Spectral Dispersion | Disperse incident radiation using diffraction gratings, prisms, or tunable filters [1] | Spectral resolution (5-10 nm); number of bands (hundreds); wavelength range (380-2500 nm) |
| Signal Detection | Capture dispersed signals with CCD or CMOS detector arrays [1] | Signal-to-noise ratio (SNR); dynamic range; quantum efficiency |
| Data Calibration | Apply radiometric calibration; geometric correction; noise reduction [26] [1] | Dark current subtraction; flat-field correction; spectral alignment |
| Cube Formation | Generate 3D hypercube (x, y, λ) from processed data [11] | Spatial registration; spectral calibration; metadata association |

Dimensionality Reduction Protocol

The high dimensionality of hyperspectral data presents substantial computational challenges [29]. The following protocol outlines an efficient standard deviation-based band selection method for dimensionality reduction:

- Data Preparation: Load the hyperspectral data cube and validate data integrity through visual inspection of sample spectral profiles.

- Standard Deviation Calculation: Compute the standard deviation for each spectral band across all pixels in the dataset to quantify information content.

- Band Ranking: Sort spectral bands in descending order based on their calculated standard deviation values.

- Band Selection: Select the top N bands with the highest standard deviation values, where N is determined by the desired reduction ratio (e.g., 2.7% of original bands) [29].

- Validation: Evaluate the reduced dataset with a classification algorithm and confirm that accuracy remains close to the full-spectrum result (e.g., above 97%) [29].

This protocol has demonstrated a data size reduction of up to 97.3% while maintaining classification accuracy of 97.21% compared to 99.30% with full-spectrum data [29].
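The ranking and selection steps of this protocol can be sketched in NumPy as follows. The `fraction` parameter mirrors the 2.7% example; with 224 bands it would keep round(0.027 × 224) = 6 bands. This is an illustrative reimplementation, not the cited authors' code. Because the score is a raw standard deviation, it should be applied after radiometric calibration so that band gains do not dominate. The score also does not penalize redundancy, so adjacent, highly correlated bands may be selected together.

```python
import numpy as np

def select_bands_by_std(cube, fraction=0.027):
    """Keep the bands whose values vary most across all pixels.

    Returns the reduced cube and the indices of the kept bands.
    """
    pixels = cube.reshape(-1, cube.shape[-1]).astype(np.float64)
    stds = pixels.std(axis=0)                    # one score per band
    n_keep = max(1, int(round(fraction * stds.size)))
    ranked = np.argsort(stds)[::-1]              # highest std first
    keep = np.sort(ranked[:n_keep])              # restore spectral order
    return cube[..., keep], keep

rng = np.random.default_rng(4)
scales = np.arange(1, 11)                        # band b has spread b + 1
cube = rng.normal(size=(50, 50, 10)) * scales
reduced, keep = select_bands_by_std(cube, fraction=0.3)
print(keep)  # [7 8 9]
```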

Data Analysis Techniques

Spectral Unmixing

Hyperspectral unmixing (HU) addresses the mixed pixel problem by decomposing observed spectra into constituent endmembers and their corresponding abundances [28]. This technique is particularly valuable in remote sensing where individual pixels often contain multiple materials. The linear mixing model (LMM) serves as the foundational approach, though advanced techniques address spectral variability (SV) in HU to improve accuracy across diverse environments [28]. These methods enable precise identification of sub-pixel components in complex landscapes, supporting applications from mineral mapping to land cover classification [28].
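Under the linear mixing model, each pixel spectrum is approximated as a weighted sum of endmember spectra, with weights (abundances) that should sum to one. The sketch below estimates abundances by least squares and imposes the sum-to-one constraint by appending a heavily weighted row of ones (the weight `delta` is an assumed tuning value). Non-negativity is not enforced here; a common fully constrained variant applies `scipy.optimize.nnls` to the same augmented system. Endmembers and abundances in the example are synthetic.

```python
import numpy as np

def unmix_sum_to_one(spectra, endmembers, delta=1e3):
    """Sum-to-one constrained least-squares abundances under the LMM.

    spectra:    (n_pixels, bands) observed spectra
    endmembers: (n_endmembers, bands) endmember signatures
    The sum-to-one constraint is enforced by appending a heavily weighted
    row of ones; non-negativity is NOT enforced in this sketch.
    """
    E = endmembers.T                                              # (bands, m)
    A = np.vstack([E, delta * np.ones((1, E.shape[1]))])          # (bands + 1, m)
    Y = np.hstack([spectra, delta * np.ones((spectra.shape[0], 1))]).T
    abundances, *_ = np.linalg.lstsq(A, Y, rcond=None)            # (m, n_pixels)
    return abundances.T

rng = np.random.default_rng(5)
endmembers = rng.random((3, 50))                  # hypothetical signatures
true = np.array([[0.2, 0.3, 0.5], [0.6, 0.4, 0.0]])
mixed = true @ endmembers                         # noise-free LMM pixels
est = unmix_sum_to_one(mixed, endmembers)
```

With real data, noise and spectral variability make the fit inexact. Negative or greater-than-one abundances from this unconstrained-sign version are a useful warning that the endmember set may be incomplete.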

Machine Learning Classification

Modern hyperspectral analysis increasingly leverages machine learning for material identification and classification. The protocol below integrates dimensionality reduction with neural network classification:

- Input Preparation: Utilize the reduced band set obtained through standard deviation-based selection.

- Network Architecture: Implement a straightforward convolutional neural network (CNN) optimized for spectral feature extraction.

- Model Training: Train the network using annotated hyperspectral datasets with appropriate validation splits.

- Performance Evaluation: Assess classification accuracy using metrics such as overall accuracy, precision, recall, and F1-score.

This approach has achieved classification accuracies of 97.21% on organ tissue samples with high spectral similarity, demonstrating effectiveness even with complex biological materials [29].

Visualization of Workflows

Figure 1: HSI Data Processing Workflow. This diagram illustrates the sequential stages of hyperspectral data processing from acquisition to application.

Figure 2: Dimensionality Reduction & Classification. This workflow demonstrates the standard deviation-based band selection process for efficient classification.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Research Reagents and Materials for Hyperspectral Imaging Research

| Component | Specifications/Examples | Primary Function |
|---|---|---|
| Hyperspectral Sensors | AVIRIS; Hyperion; pushbroom scanners; snapshot imagers [11] [1] | Capture spatial and spectral data simultaneously across numerous contiguous bands |
| Spectral Libraries | NASA/USGS mineral spectral libraries; vegetation spectral databases [11] | Provide reference spectra for material identification and classification |
| Calibration Targets | Spectralon panels; calibrated light sources [1] | Ensure radiometric accuracy and enable cross-calibration between datasets |
| Data Cube Platforms | Earth Observations Data Cube (EODC); Data Cube on Demand (DCoD) [27] | Manage, process, and analyze hyperspectral data cubes efficiently |
| Processing Algorithms | Spectral unmixing; PCA; standard deviation band selection [28] [29] | Extract meaningful information and reduce data dimensionality |
| Validation Datasets | Ground truth measurements; field spectroscopy [29] | Verify accuracy of hyperspectral analysis results and train machine learning models |

Hyperspectral Imaging (HSI) has fundamentally transformed remote sensing over the past three decades by integrating the disciplines of imaging and spectroscopy into a unified analytical framework. Unlike conventional imaging systems that capture data in several broad spectral bands, HSI acquires contiguous, narrow spectral bands across a wide electromagnetic range, generating a detailed spectral signature for each pixel in a scene [30]. This technological evolution originated in the 1980s, when A. F. H. Goetz and colleagues at NASA's Jet Propulsion Laboratory developed pioneering instruments such as the Airborne Imaging Spectrometer (AIS), which later evolved into the Airborne Visible/Infrared Imaging Spectrometer (AVIRIS) [31]. These early systems established the foundational principle of HSI: constructing a three-dimensional data cube with two spatial dimensions and one spectral dimension, enabling both spatial mapping and material identification through spectral analysis [32].

The progression of HSI technology represents a continuous effort to balance spatial detail, spectral resolution, and signal-to-noise ratio while managing exponentially growing data volumes. Contemporary systems now cover wavelength regions from ultraviolet to short-wave infrared with hundreds of spectral channels at nominal resolutions of 5–10 nm [30] [31]. This advancement has unlocked unprecedented capabilities for quantifying the biochemical and biophysical properties of earth surface materials, transitioning remote sensing from primarily qualitative mapping to quantitative analytical science. The technology's core strength lies in its ability to detect subtle spectral variations invisible to the human eye or conventional sensors, providing what is often described as a "spectral fingerprint" for precise material identification and characterization [33].

Historical Trajectory and Technological Milestones

The evolution of HSI spans three distinct generations of technological maturation, from laboratory concept to operational deployment across diverse platforms. The initial research and development phase (1980s-1990s) was characterized by sensor physics innovations, focusing on demonstrating the scientific feasibility of imaging spectroscopy. This period witnessed the transition from whiskbroom to pushbroom scanning mechanisms, significantly improving sensor stability and data quality. The development of AVIRIS marked a critical milestone, establishing the first operational airborne HSI system with 224 spectral bands in the 400–2500 nm range, which remains a benchmark for scientific research [31].

The second generation (2000-2010) focused on data processing and analysis challenges, addressing the "curse of dimensionality" inherent in hyperspectral datasets. With limited training samples and high feature dimensionality, reliable estimation of statistical class parameters presented significant challenges—a phenomenon known as the Hughes effect [31]. Researchers developed specialized algorithms for spectral unmixing, classification, and target detection to interpret the complex information content. This era saw the emergence of support vector machines (SVMs) [31] and spectral angle mappers as standard analytical techniques, alongside the recognition that simultaneous spatial-spectral processing would be essential for robust information extraction [31].

The contemporary generation (2010-present) embraces multi-sensor fusion, artificial intelligence, and platform integration. Current systems increasingly combine HSI with complementary data sources such as LiDAR (Light Detection and Ranging), pairing the spectral detail of HSI with the three-dimensional structural information captured by LiDAR [34]. The paradigm has shifted from isolated sensor operations to integrated observational systems. HSLiNets is one example: it uses bidirectional reversed convolutional neural networks for efficient HSI and LiDAR data fusion [34]. Concurrently, sensor miniaturization has enabled deployment on unmanned aerial vehicles (UAVs), making HSI accessible beyond traditional airborne and satellite platforms [30]. This evolution reflects a broader trend toward intelligent, automated, and integrated remote sensing systems capable of supporting real-time decision-making across diverse application domains.

Quantitative Applications and Performance Metrics

Hyperspectral imaging has demonstrated quantifiable impacts across multiple application domains, with particularly significant adoption in precision agriculture. By 2025, over 60% of precision agriculture systems are projected to incorporate HSI for crop monitoring, with the hyperspectral imaging agriculture market expected to exceed $400 million globally [2]. This growth is driven by the technology's demonstrated capacity to improve resource efficiency and decision-making accuracy. The table below summarizes key application areas and their documented performance metrics.

Table 1: Quantitative Performance of HSI Applications in Precision Agriculture

| Application Domain | Reported Impact/Benefit | Key Measurable Outcomes |
|---|---|---|
| Crop Health Monitoring | Early stress detection before visible symptoms appear [35] | Reduction in pesticide use by up to 30% through targeted treatments [36] |
| Nutrient Management | Precision fertilization based on soil nutrient mapping [2] [35] | 15% reduction in fertilizer costs while maintaining crop uniformity [36] |
| Water Stress Detection | Identification of water deficiency through plant water content analysis [35] [36] | Significant water and energy savings in irrigation systems [36] |
| Disease Detection | Early identification of fungal, viral, and bacterial infections [2] [35] | Reduced crop losses through pre-symptomatic intervention [2] |
| Yield Prediction | Accurate forecasting through temporal spectral analysis [2] [36] | Improved harvest planning and logistics management [36] |

The transition from research to operational implementation is further evidenced by the diverse computational frameworks now supporting HSI analytics. The table below catalogs representative algorithms and their specific applications, highlighting the progression from general statistical methods to specialized deep learning architectures.

Table 2: Computational Algorithms for HSI Data Processing

| Application Scenario | Algorithm Type | Performance Advantages | Reference |
|---|---|---|---|
| Remote Sensing Classification | Super PCA, CNN + Dual Swin Transformer, Gabor filter + Unsupervised discriminant analysis | Preserves spatial structure; captures local and global features; high classification accuracy | [37] |
| Crop Image Classification | CNN + SVM, 3D CNN (LeNet-5), Feature selection + Folded-PCA | Combines CNN feature extraction with SVM classification; integrates spatial-spectral information; enhances classification accuracy | [37] |
| Soil Analysis | Optimal band selection + Random Forest, HSI + PLSR + RBF neural network | Improves soil salinity estimation accuracy; enables non-destructive detection of silicon and moisture | [37] |
| Data Fusion | HSLiNets (Bidirectional reversed CNN) | Efficient fusion of HSI and LiDAR data; reduces computational burden while maintaining accuracy | [34] |
| General Classification | Support Vector Machines (SVMs) with dedicated kernels | Addresses ill-posed problems with limited training samples; incorporates contextual information | [31] |
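Several entries in Table 2, including Super PCA and Folded-PCA, build on principal component analysis (PCA). PCA compresses hundreds of strongly correlated bands into a few uncorrelated components. The sketch below shows only plain PCA, not those published variants. It estimates the band covariance matrix and extracts the leading eigenvectors by power iteration with deflation, using pure Python to keep it dependency-free. All function names are hypothetical. A production pipeline would normally call an optimized linear-algebra routine instead.

```python
import math
import random


def covariance_matrix(pixels):
    """Band-by-band sample covariance of pixel spectra (rows = pixels, columns = bands)."""
    n, d = len(pixels), len(pixels[0])
    means = [sum(p[j] for p in pixels) / n for j in range(d)]
    centered = [[p[j] - means[j] for j in range(d)] for p in pixels]
    cov = [[sum(c[i] * c[j] for c in centered) / (n - 1) for j in range(d)]
           for i in range(d)]
    return cov, means


def top_components(cov, k, iterations=200, seed=0):
    """Leading k eigenvectors and eigenvalues of a symmetric matrix."""
    rng = random.Random(seed)
    d = len(cov)
    matrix = [row[:] for row in cov]
    components, variances = [], []
    for _ in range(k):
        v = [rng.random() + 0.1 for _ in range(d)]  # positive random start vector
        s = math.sqrt(sum(x * x for x in v))
        v = [x / s for x in v]
        for _ in range(iterations):  # power iteration
            w = [sum(matrix[i][j] * v[j] for j in range(d)) for i in range(d)]
            norm = math.sqrt(sum(x * x for x in w))
            if norm == 0.0:
                break  # no variance left in the deflated matrix
            v = [x / norm for x in w]
        # Rayleigh quotient: variance captured along the unit vector v.
        variance = sum(v[i] * matrix[i][j] * v[j] for i in range(d) for j in range(d))
        components.append(v)
        variances.append(variance)
        # Deflation: remove this component so the next pass finds the next one.
        matrix = [[matrix[i][j] - variance * v[i] * v[j] for j in range(d)]
                  for i in range(d)]
    return components, variances


def project(pixels, means, components):
    """Scores of each mean-centred pixel on the retained components."""
    return [[sum((p[j] - means[j]) * c[j] for j in range(len(p))) for c in components]
            for p in pixels]


# Toy data: three bands that vary together, so one component holds all the variance.
pixels = [[t, 2 * t, 2 * t] for t in (0.1, 0.2, 0.3, 0.4)]
cov, means = covariance_matrix(pixels)
components, variances = top_components(cov, k=2)
scores = project(pixels, means, components[:1])
```

In the toy example, the first component points along (1/3, 2/3, 2/3) and carries all of the variance, while the second component's variance is essentially zero. This mirrors how a few components can summarize highly redundant hyperspectral bands.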

Experimental Protocols and Methodologies

Protocol: Crop Health and Stress Monitoring

Principle: This protocol utilizes hyperspectral imagery to detect biotic and abiotic plant stress through alterations in spectral reflectance profiles before visible symptoms manifest. The fundamental premise is that physiological changes in plants—from disease, nutrient deficiency, or water stress—alter their absorption and reflection properties across specific wavelengths [35]. Stressed vegetation typically shows reduced reflectance in the near-infrared region and altered absorption features in visible ranges due to chlorophyll degradation and cellular structure damage.

Equipment and Data Acquisition:

- Hyperspectral Sensor: Imaging system covering Visible-Near Infrared (VNIR, 400-1000 nm) and/or Short-Wave Infrared (SWIR, 1000-2500 nm) ranges with spectral resolution ≤10 nm [35] [37].

- Platform: UAV-based, airborne, or satellite platform depending on required spatial resolution and coverage area. UAV platforms offer high spatial resolution (cm-level) for field-scale studies [30].

- Calibration Targets: Spectralon reference panels for radiometric calibration.

- Ancillary Data: Concurrent ground truthing including leaf chlorophyll measurements, plant physiological status, and soil parameters.
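Reference panels are commonly used to convert raw digital numbers into reflectance through a white/dark reference correction. The dark signal is subtracted, the result is divided by the panel's dark-corrected signal, and the quotient is scaled by the panel's certified reflectance. The sketch below assumes a linear detector response and assumes that the target and panel are recorded under the same illumination. The function name is hypothetical. For airborne and satellite data, atmospheric correction is needed instead of, or in addition to, this step.

```python
def to_reflectance(raw, white, dark, panel_reflectance):
    """White/dark reference correction, applied band by band.

    raw   -- digital numbers (DN) recorded over the target
    white -- DN recorded over the reference panel
    dark  -- DN recorded with the shutter closed (dark signal)
    panel_reflectance -- the panel's certified reflectance, either a
                         single number or one value per band
    """
    n = len(raw)
    if len(white) != n or len(dark) != n:
        raise ValueError("raw, white and dark must have the same number of bands")
    if isinstance(panel_reflectance, (int, float)):
        panel_reflectance = [float(panel_reflectance)] * n
    if len(panel_reflectance) != n:
        raise ValueError("need one panel reflectance value per band")
    reflectance = []
    for r, w, d, p in zip(raw, white, dark, panel_reflectance):
        span = w - d
        if span <= 0:
            raise ValueError("white reference must exceed the dark signal in every band")
        reflectance.append(p * (r - d) / span)
    return reflectance


# Two-band example with a panel of (assumed) 0.99 reflectance.
print(to_reflectance([550, 480], [1000, 2000], [100, 100], 0.99))
```

Recording the panel before and after each session, as the procedure below recommends, helps detect illumination or sensor drift between the two reference measurements.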

Procedure:

- Experimental Design: Define the sampling strategy with respect to crop growth stage, diurnal timing (10:00-14:00 local time recommended to minimize shadow effects), and atmospheric conditions (cloud-free conditions preferred).

- Sensor Calibration: Perform radiometric calibration using reference panels before and after data acquisition sessions.

- Data Acquisition: Capture hyperspectral imagery over target areas at a spatial resolution adequate for the target features (e.g., a pixel size of 1 m or finer for canopy-level stress, and finer still for individual plant analysis).

- Pre-processing:

  - Apply radiometric correction to convert raw digital numbers to reflectance values.

  - Perform geometric correction to rectify spatial distortions.

  - Conduct atmospheric correction if using airborne/satellite data.

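- Feature Extraction: Calculate vegetation indices (e.g., the Normalized Difference Vegetation Index, NDVI; the Photochemical Reflectance Index, PRI; or the Water Band Index, WBI) or identify specific absorption features related to plant pigments, water content, and cellular structure.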

- Statistical Analysis: Implement classification algorithms (SVM, Random Forest) or regression models to correlate spectral features with stress parameters.

- Validation: Compare HSI-derived stress maps with ground truth data through statistical measures (e.g., accuracy, precision, recall for classification; R², RMSE for regression).
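The feature extraction step can be illustrated with narrow-band versions of the three indices named above. The sketch assumes commonly used band positions: NDVI from 800 and 670 nm, PRI from 531 and 570 nm, and WBI as the ratio of 900 to 970 nm. Published studies vary these wavelengths slightly. The function names and the 10 nm matching tolerance are hypothetical choices. Each index is computed from the band whose centre wavelength is closest to the target.

```python
def nearest_band(wavelengths, target_nm, max_offset_nm=10.0):
    """Index of the band centred closest to target_nm; fail if none is close enough."""
    i = min(range(len(wavelengths)), key=lambda k: abs(wavelengths[k] - target_nm))
    if abs(wavelengths[i] - target_nm) > max_offset_nm:
        raise ValueError(f"no band within {max_offset_nm} nm of {target_nm} nm")
    return i


def normalized_difference(a, b):
    """(a - b) / (a + b), or NaN when the denominator is zero."""
    total = a + b
    return (a - b) / total if total != 0 else float("nan")


def vegetation_indices(spectrum, wavelengths):
    """Narrow-band NDVI, PRI and WBI for one reflectance spectrum.

    Assumed band positions (common choices, not the only ones):
      NDVI = (R800 - R670) / (R800 + R670)
      PRI  = (R531 - R570) / (R531 + R570)
      WBI  = R900 / R970
    """
    def r(nm):
        return spectrum[nearest_band(wavelengths, nm)]

    return {
        "NDVI": normalized_difference(r(800), r(670)),
        "PRI": normalized_difference(r(531), r(570)),
        "WBI": r(900) / r(970),
    }


# Synthetic VNIR spectrum at 10 nm spacing (illustrative values only).
wavelengths = list(range(400, 1001, 10))
spectrum = [0.05 if w < 700 else 0.5 for w in wavelengths]
spectrum[wavelengths.index(530)] = 0.06  # small green-band feature for PRI
spectrum[wavelengths.index(970)] = 0.4   # water absorption dip for WBI
indices = vegetation_indices(spectrum, wavelengths)
```

Raising an error when a sensor lacks a band near a target wavelength avoids quietly computing an index from an unrelated edge band. This check matters, for example, when a VNIR-only sensor stops short of 970 nm.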

Technical Notes: For disease detection, focus on specific spectral regions: chlorophyll absorption features (500-680 nm) for photosynthetic pigment changes, red-edge region (680-750 nm) for early stress, and SWIR (1500-1800 nm, 2000-2300 nm) for water content and cellular structure alterations [35]. The protocol's efficacy depends on establishing robust spectral libraries for different stress conditions under various environmental contexts.
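The spectral regions listed in these notes can be turned into simple per-pixel features. The sketch below computes mean reflectance within each region and estimates the red-edge position as the wavelength of the steepest reflectance rise between 680 and 750 nm. Stress is commonly reported to shift this position toward shorter wavelengths. The region bounds come from the notes above. The function names, the inclusive bounds, and the midpoint convention for the red-edge position are hypothetical choices.

```python
import math

# Spectral regions named in the technical notes (nm, inclusive bounds).
REGIONS_NM = {
    "chlorophyll": (500, 680),
    "red_edge": (680, 750),
    "swir_1": (1500, 1800),
    "swir_2": (2000, 2300),
}


def region_means(spectrum, wavelengths, regions=REGIONS_NM):
    """Mean reflectance inside each region; NaN if the sensor has no band there."""
    features = {}
    for name, (lo, hi) in regions.items():
        values = [s for s, w in zip(spectrum, wavelengths) if lo <= w <= hi]
        features[name] = sum(values) / len(values) if values else float("nan")
    return features


def red_edge_position(spectrum, wavelengths, lo=680, hi=750):
    """Wavelength (nm) of the steepest reflectance rise between lo and hi.

    Uses the largest first difference between adjacent bands and reports it
    at the midpoint of those two bands. Returns None if no band pair lies in range.
    """
    best_slope, best_nm = -math.inf, None
    for i in range(len(wavelengths) - 1):
        w0, w1 = wavelengths[i], wavelengths[i + 1]
        if lo <= w0 and w1 <= hi:
            slope = (spectrum[i + 1] - spectrum[i]) / (w1 - w0)
            if slope > best_slope:
                best_slope, best_nm = slope, (w0 + w1) / 2
    return best_nm


# Synthetic VNIR-SWIR spectrum at 10 nm spacing (illustrative values only).
wavelengths = list(range(400, 2501, 10))
spectrum = [0.05 if w <= 700 else 0.45 for w in wavelengths]
spectrum[wavelengths.index(710)] = 0.3  # steepest rise is between 700 and 710 nm
features = region_means(spectrum, wavelengths)
rep = red_edge_position(spectrum, wavelengths)
```

Features like these can feed the SVM or Random Forest models in the statistical analysis step alongside, or instead of, full spectra.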

Protocol: HSLiNets for HSI and LiDAR Data Fusion

Principle: This protocol details the implementation of HSLiNets (Hyperspectral Image and LiDAR Data Fusion Using Efficient Dual Non-Linear Feature Learning Networks), which integrates complementary information from hyperspectral and LiDAR sensors through a specialized deep learning architecture [34]. The framework combines the spectral discrimination capability of HSI with the vertical structural information from LiDAR to improve land cover classification accuracy while maintaining computational efficiency.

Equipment and Data Requirements:

- Hyperspectral Data: Radiometrically calibrated hyperspectral image cube (two spatial dimensions and one spectral dimension).

- LiDAR Data: Co-registered LiDAR-derived digital surface model (DSM) or canopy height model.

- Computing Environment: High-performance computing system with GPU acceleration recommended for model training.

- Software: Python with deep learning frameworks (PyTorch/TensorFlow) and specialized HSLiNets implementation.

Procedure:

Data Pre-processing:

- Ensure precise co-registration of HSI and LiDAR datasets.

- Normalize both data sources to common spatial resolution and coordinate system.

- For HSI data: apply necessary atmospheric and geometric corrections.

- For LiDAR data: process point cloud to generate digital surface models.
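Once both sources lie on the same pixel grid, a common preparation step is to standardize each source band by band. This keeps elevations in metres and reflectance values on comparable scales. The next step is to cut co-located patches around each labelled pixel for the two network pathways. The sketch below covers only this generic preparation. It does not reproduce HSLiNets itself. The patch size, the edge clamping, and all function names are assumptions made for illustration. The code represents both inputs as nested lists in `[rows][cols][features]` layout.

```python
import math


def standardize(cube):
    """Z-score every band of a [rows][cols][bands] grid over all pixels."""
    rows, cols, bands = len(cube), len(cube[0]), len(cube[0][0])
    n = rows * cols
    out = [[[0.0] * bands for _ in range(cols)] for _ in range(rows)]
    for b in range(bands):
        values = [cube[r][c][b] for r in range(rows) for c in range(cols)]
        mean = sum(values) / n
        std = math.sqrt(sum((v - mean) ** 2 for v in values) / n)
        if std < 1e-12:
            std = 1.0  # (near-)constant band: avoid dividing by ~0
        for r in range(rows):
            for c in range(cols):
                out[r][c][b] = (cube[r][c][b] - mean) / std
    return out


def paired_patches(hsi, lidar, row, col, half=1):
    """Co-located (2*half+1) x (2*half+1) patches from both sources.

    Pixels beyond the image edge are filled by clamping to the nearest edge pixel.
    """
    rows, cols = len(hsi), len(hsi[0])
    if (len(lidar), len(lidar[0])) != (rows, cols):
        raise ValueError("HSI and LiDAR grids must be co-registered to the same shape")

    def clamp(i, n):
        return min(max(i, 0), n - 1)

    coords = [(clamp(r, rows), clamp(c, cols))
              for r in range(row - half, row + half + 1)
              for c in range(col - half, col + half + 1)]
    return [hsi[r][c] for r, c in coords], [lidar[r][c] for r, c in coords]


# Toy 3 x 3 scene: two HSI bands (the second constant) and a DSM in metres.
hsi = [[[0.1 * (r + c), 0.2] for c in range(3)] for r in range(3)]
dsm = [[[10.0 + r] for c in range(3)] for r in range(3)]
hsi_z, dsm_z = standardize(hsi), standardize(dsm)
spec_patch, height_patch = paired_patches(hsi_z, dsm_z, 0, 0)
```

Checking grid shapes before patch extraction catches co-registration mistakes early. A misaligned DSM would otherwise pair spectra with the heights of neighbouring objects.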

Model Architecture Configuration:

- Implement dual network pathway with bidirectional reversed convolutional neural networks (CNNs).

- Configure specialized spatial analysis blocks for joint spectral-spatial feature learning.

- Set parameters for spectral attention mechanisms to capture cross-band dependencies.

Model Training:

- Partition data into training, validation, and test sets (typical ratio: 60/20/20).

- Initialize model with He or Xavier initialization strategy.

- Set hyperparameters: learning rate (0.001-0.01), batch size (16-64), epochs (50-200).

- Employ early stopping based on validation loss to prevent overfitting.
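The data partitioning and early stopping steps are framework-independent and can be sketched in plain Python. The default 60/20/20 split follows the ratio given above. The patience value, the `min_delta` threshold, and the helper names are hypothetical. In practice, a framework callback or training loop would call `step` once per epoch and checkpoint the weights whenever the best epoch changes.

```python
import random


def split_indices(n, ratios=(0.6, 0.2, 0.2), seed=0):
    """Shuffle sample indices and cut them into train/validation/test lists."""
    if abs(sum(ratios) - 1.0) > 1e-9:
        raise ValueError("ratios must sum to 1")
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    n_train = round(ratios[0] * n)
    n_val = round(ratios[1] * n)
    return idx[:n_train], idx[n_train:n_train + n_val], idx[n_train + n_val:]


class EarlyStopping:
    """Signal a stop once validation loss has not improved for `patience` epochs."""

    def __init__(self, patience=10, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best_loss = float("inf")
        self.best_epoch = None
        self.epochs_without_improvement = 0

    def step(self, epoch, val_loss):
        """Record one epoch's validation loss; return True if training should stop."""
        if val_loss < self.best_loss - self.min_delta:
            self.best_loss = val_loss
            self.best_epoch = epoch  # a real loop would checkpoint weights here
            self.epochs_without_improvement = 0
        else:
            self.epochs_without_improvement += 1
        return self.epochs_without_improvement >= self.patience


train, val, test = split_indices(100)
stopper = EarlyStopping(patience=3)
for epoch, loss in enumerate([1.0, 0.8, 0.7, 0.71, 0.72, 0.73, 0.5]):
    if stopper.step(epoch, loss):
        break  # stops at epoch 5; the best weights are from epoch 2
```

A purely random pixel-level split places training and test pixels next to each other. Because neighbouring pixels are spatially correlated, this can inflate reported accuracy. Splitting by spatially disjoint blocks is a common safeguard.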

Model Evaluation:

- Assess classification performance using overall accuracy (OA), average accuracy (AA), and Kappa coefficient.

- Compare against baseline methods (FusAtNet, EndNet) to validate performance advantages.

- Analyze computational efficiency metrics: training time, inference time, and memory usage.
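All three accuracy measures named above can be computed from the test-set confusion matrix. Overall accuracy (OA) is the fraction of correctly labelled pixels. Average accuracy (AA) is the mean of the per-class accuracies. Cohen's kappa corrects OA for the agreement expected by chance, which is derived from the row and column totals. The sketch below is a minimal pure-Python version. The function name and the two-class example matrix are hypothetical.

```python
def accuracy_metrics(confusion):
    """Overall accuracy (OA), average accuracy (AA) and Cohen's kappa.

    confusion[i][j] = number of test pixels of reference class i predicted as class j.
    """
    k = len(confusion)
    total = sum(sum(row) for row in confusion)
    row_sums = [sum(confusion[i]) for i in range(k)]
    col_sums = [sum(confusion[i][j] for i in range(k)) for j in range(k)]
    oa = sum(confusion[i][i] for i in range(k)) / total
    # AA: mean per-class accuracy, skipping classes absent from the test set.
    per_class = [confusion[i][i] / row_sums[i] for i in range(k) if row_sums[i] > 0]
    aa = sum(per_class) / len(per_class)
    # Chance agreement expected from the row and column marginals.
    pe = sum(row_sums[i] * col_sums[i] for i in range(k)) / total ** 2
    kappa = (oa - pe) / (1 - pe) if pe < 1 else float("nan")
    return oa, aa, kappa


oa, aa, kappa = accuracy_metrics([[50, 10], [5, 35]])
```

For this example, OA is 0.85, AA is the mean of 50/60 and 35/40, and the chance agreement is (60 × 55 + 40 × 45) / 100² = 0.51. Kappa is therefore (0.85 − 0.51) / (1 − 0.51) ≈ 0.69. Reporting AA alongside OA exposes weak performance on small classes that a high OA can hide.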

Interpretation and Application:

- Visualize feature maps to interpret learned representations.

- Generate classification maps integrating spectral and structural information.

- Conduct spatial pattern analysis on output products.

Technical Notes: The HSLiNets architecture specifically addresses computational efficiency challenges associated with Transformer models while maintaining high classification accuracy [34]. When applied to benchmark datasets (e.g., Houston 2013), the model has demonstrated superior performance in complex urban and natural environments, particularly for distinguishing classes with similar spectral signatures but different structural characteristics [34]. The protocol is particularly valuable for applications requiring real-time or near-real-time processing of fused remote sensing data.

Visualization and Workflow Architectures

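The HSLiNets architecture for HSI and LiDAR fusion, described in the protocol above, processes the two co-registered data sources through dual pathways built from bidirectional reversed convolutional neural networks. Spatial analysis blocks and spectral attention refine the features in these pathways, and the learned features are then fused to produce a classification map [34].

End-to-end processing of hyperspectral data follows the stages used in both protocols above. These are sensor calibration and data acquisition; pre-processing (radiometric, geometric, and atmospheric correction); feature extraction or representation learning; classification or regression; and validation against ground truth, which yields actionable information.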

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful implementation of hyperspectral remote sensing research requires specialized tools and analytical resources. The following table catalogs essential components of the HSI research toolkit, with particular emphasis on computational resources and validation methodologies necessary for reproducible science.

Table 3: Essential Research Toolkit for HSI Investigations

| Tool/Reagent Category | Specific Examples | Function/Purpose | Technical Specifications |
|---|---|---|---|
| Hyperspectral Sensors | UAV-based systems (HySpex Mjolnir), Portable field sensors, Airborne (AVIRIS-class) [30] [37] | Data acquisition across numerous narrow, contiguous spectral bands | VNIR (400-1000 nm), SWIR (1000-2500 nm); Spectral resolution: 5-10 nm; Spatial resolution: platform-dependent [30] |
| Reference Materials | Spectralon calibration panels, Field spectrometers (ASD FieldSpec) [35] | Radiometric calibration, field validation | >95% reflectance efficiency; calibrated to national standards |
| Computational Libraries | Python (scikit-learn, TensorFlow, PyTorch), ENVI, specialized HSLiNets code [34] [31] | Data preprocessing, algorithm implementation, deep learning | Support for matrix operations, spectral unmixing, spatial-spectral analysis |
| Validation Instruments | Chlorophyll meters, Soil nutrient test kits, GPS receivers, Laboratory spectrometers [35] | Ground truth data collection, model validation | Precision location data (<1 m accuracy); correlation with spectral features |
| Algorithmic Resources | Spectral libraries (USGS, JPL), Pre-trained models, Spectral unmixing tools [30] [31] | Material identification, comparison with known spectra, decomposition of mixed pixels | Continuously expanded libraries with diagnostic spectral features |