Hyperspectral Imaging vs. Spectroscopy: A Guide to Optical Analysis for Biomedical Research

Abstract

This article provides a comparative analysis of hyperspectral imaging (HSI) and spectroscopy for researchers and professionals in drug development and biomedical science. It covers the foundational principles of both techniques, highlighting how HSI captures spatially resolved spectral data to form a 'hypercube,' while conventional spectroscopy provides detailed spectral information from a single point. The scope extends to methodological applications in areas such as cancer diagnostics, pharmaceutical analysis, and tissue characterization, including the use of machine learning for data processing. The article also addresses troubleshooting for data complexity and hardware limitations and offers frameworks for validating and comparing the performance of each technique. Finally, it synthesizes key takeaways to guide the selection of the appropriate analytical method for specific research objectives.

Core Principles: From Spectral Fingerprints to Spatial Maps

In optical analysis, researchers often have to choose between two approaches: point spectroscopy and hyperspectral imaging (HSI). Both rest on the same spectroscopic principles, but they differ fundamentally in how they acquire data and in what they output. Point spectroscopy captures the complete spectrum of light from a single, discrete location on a sample, providing detailed chemical information for that point without inherent spatial context [1]. HSI, in contrast, combines imaging and spectroscopy to capture both spatial and spectral information across an entire sample area [1] [2]. It generates a three-dimensional dataset known as a "hypercube," which contains a full spectrum for each pixel of a two-dimensional spatial plane and thus forms a detailed chemical map of the sample surface [1].

The core distinction lies in data acquisition. When used to map an area, point spectroscopy follows a "point-by-point mapping" or "whiskbroom" approach, acquiring spectra sequentially from an array of points across the region of interest [3]. HSI instead uses either "pushbroom" imaging, which captures the spectra along a line simultaneously, or "direct hyperspectral imaging," which captures a two-dimensional spatial image for each wavelength band [3]. This difference in acquisition strategy has significant implications for research and drug development, affecting analysis time, spatial coverage, and the kinds of scientific questions each technique can address.
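To make the three acquisition strategies concrete, here is a toy Python sketch (NumPy only; the function name `acquire` and all array sizes are hypothetical). It copies an already-known scene into an empty (rows, cols, bands) hypercube and counts how many detector exposures each strategy needs. It deliberately ignores optics, exposure time, and noise.

```python
import numpy as np


def acquire(scene: np.ndarray, mode: str) -> tuple[np.ndarray, int]:
    """Rebuild a (rows, cols, bands) hypercube slice by slice.

    Hypothetical helper: returns the assembled cube and the number of
    detector exposures the chosen acquisition strategy would need.
    """
    rows, cols, bands = scene.shape
    cube = np.zeros_like(scene)
    exposures = 0
    if mode == "whiskbroom":
        # Point mapping: each exposure records one full spectrum at one (x, y).
        for r in range(rows):
            for c in range(cols):
                cube[r, c, :] = scene[r, c, :]
                exposures += 1
    elif mode == "pushbroom":
        # Line scan: each exposure records all spectra along one spatial line.
        for r in range(rows):
            cube[r, :, :] = scene[r, :, :]
            exposures += 1
    elif mode == "direct":
        # Tunable-filter imaging: each exposure records one 2D band image.
        for b in range(bands):
            cube[:, :, b] = scene[:, :, b]
            exposures += 1
    else:
        raise ValueError(f"unknown mode: {mode!r}")
    return cube, exposures


scene = np.random.default_rng(0).random((100, 120, 50))  # hypothetical scene
for mode in ("whiskbroom", "pushbroom", "direct"):
    cube, n = acquire(scene, mode)
    print(mode, n)  # 12000, 100 and 50 exposures respectively
```

Exposure counts are only a proxy for acquisition time, since per-exposure integration times differ between instruments.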

Technical Comparison: Data Acquisition and Performance

The operational distinctions between point spectroscopy and HSI translate directly into measurable differences in performance characteristics, which determine their suitability for specific research scenarios. The following table summarizes the key technical parameters that differentiate these two approaches.

Table 1: Performance comparison between point spectroscopy and hyperspectral imaging

| Performance Characteristic | Point Spectroscopy | Hyperspectral Imaging |
|---|---|---|
| Spatial Coverage | Single point per measurement | Entire field of view (FOV) at once [3] |
| Spectral Resolution | High (instrument dependent) | High (comparable to point spectroscopy) [3] |
| Data Dimensionality | Single spectrum per measurement | Hypercube (x, y spatial dimensions + spectral dimension) [1] [3] |
| Measurement Approach | Whiskbroom (point-by-point) [3] | Direct imaging or pushbroom [3] |
| Laser Power Density at Target | Higher (concentrated on a single point) | Lower (spread across a wider area); 277 times lower in one stand-off Raman comparison [3] |
| Analysis Speed for Large Areas | Slow (requires spatial scanning) | Fast (simultaneous spatial coverage) [3] [4] |
| Sensitivity to Subtle Changes | Limited to spectral changes at the measured point | High (detects both spatial and spectral alterations) [5] |

A comparative study of stand-off Raman configurations highlights these performance trade-offs. With both systems deployed at 15 meters, the direct HSI system achieved better spectral resolution and signal-to-noise ratio and more than doubled the FOV of the point imaging system, while reducing laser power density at the target by a factor of 277 [3]. This combination of wider coverage and lower power density makes HSI particularly useful for analyzing sensitive or valuable samples, where minimal invasiveness is crucial.

The difference in data structure is equally significant. Point spectroscopy generates a single spectrum representing the average composition within the measurement spot, potentially obscuring spatial heterogeneity. HSI preserves the spatial relationships between different chemical components, enabling researchers to visualize distribution patterns and identify minor constituents that might be missed by point sampling [1]. This capability makes HSI exceptionally sensitive for detecting localized alterations, as demonstrated in studies where it identified laser-induced changes in paintings that were undetectable by point Raman spectroscopy [5].
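The averaging effect can be illustrated with a synthetic example in NumPy. The Gaussian "spectra", the scene size, and the dot-product classifier are illustrative assumptions, not methods from the cited studies. A 3 × 3 pixel inclusion of a minor component contributes only 9/2500 of the signal to a spot-averaged spectrum, yet a per-pixel assignment over the hypercube locates it exactly.

```python
import numpy as np

wavelengths = np.linspace(400, 900, 101)  # nm, hypothetical 5 nm grid


def toy_band(center_nm: float, width_nm: float = 15.0) -> np.ndarray:
    """Gaussian feature standing in for a reference spectrum (illustrative only)."""
    return np.exp(-0.5 * ((wavelengths - center_nm) / width_nm) ** 2)


spec_major = toy_band(550)  # hypothetical majority component
spec_minor = toy_band(750)  # hypothetical minor constituent

# 50 x 50 pixel hypercube: majority everywhere, plus a 3 x 3 minor inclusion.
cube = np.tile(spec_major, (50, 50, 1))
cube[20:23, 30:33, :] = spec_minor

# A spot covering the whole field behaves like one averaged spectrum.
mean_spectrum = cube.mean(axis=(0, 1))
minor_signal = mean_spectrum[np.argmin(np.abs(wavelengths - 750))]
print(f"minor band in averaged spectrum: {minor_signal:.4f}")  # 0.0036

# Per-pixel assignment: pick the reference with the largest dot product.
refs = np.stack([spec_major, spec_minor])     # (2, bands)
label_map = (cube @ refs.T).argmax(axis=-1)   # 0 = major, 1 = minor
print("minor pixels found:", int((label_map == 1).sum()))  # 9
```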

Experimental Applications and Protocols

Stand-off Chemical Identification

Objective: To compare the effectiveness of point imaging (PI) and direct hyperspectral Raman imaging (HSRI) for chemical identification at a distance of 15 meters [3].

Methodology:

- PI System: A collimated laser beam created an illuminated point with a diameter of 6 mm, mapped over the target area using a motorized mirror. Backscattered Rayleigh light was filtered, and Raman photons were guided via a fiber optical bundle to a spectrograph coupled with an intensified charge-coupled device (iCCD) camera [3].

- HSRI System: An expanded laser beam illuminated an area approximately 100 mm in diameter. Raman photons were filtered through a liquid crystal tunable filter (LCTF) and imaged directly onto an iCCD camera. The system recorded a spectral snapshot at each selected wavelength, and the hyperspectral image cube was built by stacking these snapshots [3].

- Data Analysis: The output hyperspectral image data cube from the HSRI system was processed with chemometric algorithms like vertex component analysis to generate false-color images representing chemical composition [3].
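Vertex component analysis (VCA) extracts endmember spectra from the data itself. The sketch below covers only the downstream step and assumes the endmember spectra are already known (from VCA or a reference library). It swaps in non-negative least squares (`scipy.optimize.nnls`) to estimate per-pixel abundances and renders up to three abundance maps as a false-color image. The function names are hypothetical, and this is not the processing chain used in the cited study.

```python
import numpy as np
from scipy.optimize import nnls


def abundance_maps(cube: np.ndarray, endmembers: np.ndarray) -> np.ndarray:
    """Per-pixel non-negative abundances of known endmember spectra.

    cube: (rows, cols, bands); endmembers: (n_endmembers, bands).
    Returns an array of shape (rows, cols, n_endmembers).
    """
    rows, cols, bands = cube.shape
    design = endmembers.T  # (bands, n_endmembers): one column per endmember
    pixels = cube.reshape(-1, bands)
    abund = np.array([nnls(design, p)[0] for p in pixels])
    return abund.reshape(rows, cols, -1)


def false_color(abund: np.ndarray) -> np.ndarray:
    """Show up to three abundance maps as R, G, B channels scaled to [0, 1]."""
    rgb = np.zeros(abund.shape[:2] + (3,))
    k = min(3, abund.shape[-1])
    rgb[..., :k] = abund[..., :k]
    peak = rgb.max(axis=(0, 1), keepdims=True)
    return np.divide(rgb, peak, out=np.zeros_like(rgb), where=peak > 0)
```

Typical use would be `rgb = false_color(abundance_maps(cube, endmembers))`, with one reference spectrum per row of `endmembers`.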

Key Findings: The HSRI system demonstrated superior spectral resolution and signal-to-noise ratio while covering more than double the FOV of the PI system with significantly reduced laser power density (factor of 277 lower) [3]. This makes HSRI particularly advantageous for applications requiring analysis of sensitive materials or large areas.
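The reported factor is consistent with simple geometry. Assume, though the source does not state it, that both systems delivered the same laser power and spread it evenly over their spots. Mean power density then scales with 1/d², so the ratio reduces to (100 mm / 6 mm)² ≈ 278. A minimal Python check, with a hypothetical helper name:

```python
import math


def power_density(power_w: float, spot_diameter_mm: float) -> float:
    """Mean irradiance in W/mm^2, assuming power spread evenly over a circular spot."""
    return power_w / (math.pi * (spot_diameter_mm / 2) ** 2)


laser_power_w = 1.0  # hypothetical; the ratio below does not depend on it
ratio = power_density(laser_power_w, 6.0) / power_density(laser_power_w, 100.0)
print(f"{ratio:.1f}")  # 277.8, i.e. (100 / 6) ** 2
```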

Monitoring Laser-Induced Alterations on Cultural Heritage Materials

Objective: To evaluate the sensitivity of VIS-NIR HSI compared to point Raman spectroscopy for detecting subtle laser-induced alterations on paint mock-ups [5].

Methodology:

- Sample Preparation: 15-year-old oil paint mock-ups containing vermilion, realgar, and red lead pigments, applied on a preparation layer of chalk in rabbit skin glue on a plywood substrate [5].

- Irradiation: Three continuous wave lasers (532 nm, 785 nm, 1064 nm) were used with different spot sizes, power levels, and exposure times to induce potential alterations [5].

- Monitoring: Simultaneous monitoring using VIS-NIR reflectance HSI (400-900 nm) and thermal imaging during Raman spectroscopy. Post-irradiation analysis using optical coherence tomography and synchrotron-based micro-X-ray powder diffraction to confirm physical and chemical changes [5].

- Data Analysis: Comparison of pre-irradiation (120 s) and post-irradiation (300 s) reflectance spectra to detect subtle changes [5].
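The comparison of pre- and post-irradiation spectra can be automated in many ways, and the source does not specify a detection rule. One hypothetical approach is to estimate each pixel's noise from repeated pre-irradiation frames, compute the RMS spectral difference after irradiation, and flag pixels exceeding k times that noise. The threshold k = 5, the function name, and the synthetic data below are assumptions.

```python
import numpy as np


def change_map(pre: np.ndarray, post: np.ndarray, k: float = 5.0) -> np.ndarray:
    """Flag pixels whose reflectance spectrum changed by more than k x noise.

    pre:  (n_frames, rows, cols, bands) repeated cubes recorded before irradiation
    post: (rows, cols, bands) cube recorded after irradiation
    k:    hypothetical detection threshold in units of per-pixel noise
    """
    baseline = pre.mean(axis=0)                     # mean pre-irradiation spectrum
    noise = pre.std(axis=0, ddof=1).mean(axis=-1)   # per-pixel noise level
    rms_change = np.sqrt(((post - baseline) ** 2).mean(axis=-1))
    return rms_change > k * np.maximum(noise, 1e-12)


rng = np.random.default_rng(2)
base = np.full((8, 8, 30), 0.40)                    # flat toy reflectance
pre = base + rng.normal(0, 0.001, (10, 8, 8, 30))   # 10 pre-irradiation frames
post = base + rng.normal(0, 0.001, (8, 8, 30))
post[2:4, 5:7, :] -= 0.05                           # simulated darkening at the spot
print(int(change_map(pre, post).sum()))             # 4 flagged pixels
```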

Key Findings: HSI was "orders of magnitude more sensitive" than point Raman spectroscopy and even synchrotron-based micro-X-ray powder diffraction in detecting laser-induced alterations [5]. Transient and reversible reflectance changes detected by HSI served as precursors to permanent damage, enabling the establishment of safety thresholds for laser analysis of sensitive materials.

Microplastics Analysis

Objective: To compare the efficiency of point spectroscopy versus HSI for the identification and characterization of microplastics (MP) in environmental samples [4].

Methodology:

- Sample Preparation: Environmental samples processed through density separation, enzymatic treatment, and wet peroxidation to isolate MP, which are then deposited on filters [4].

- Point Spectroscopy Analysis: Manual or software-assisted selection of particles followed by point measurements using FT-IR or Raman spectroscopy. For automated analysis, particle identification software selects particles based on bright or dark-field imaging, followed by sequential point measurements using a motorized stage [4].

- HSI Analysis: Entire filter imaged using HSI systems, generating a data cube where each pixel contains a full spectrum. Chemical identification performed through multivariate classification algorithms applied to the entire dataset [4].

- Data Analysis: For point spectroscopy, each identified particle is analyzed individually. For HSI, chemometric analysis identifies polymer types across the entire filter surface without prior particle selection [4].
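The source refers to multivariate classification without naming an algorithm. As a stand-in, the sketch below uses a spectral angle mapper (SAM), a simple library-matching classifier that ignores overall brightness, followed by connected-component labeling with `scipy.ndimage.label` to count particles per polymer class. The reference library, angle threshold, and function names are hypothetical.

```python
import numpy as np
from scipy import ndimage


def sam_classify(cube: np.ndarray, library: np.ndarray, max_angle: float = 0.1) -> np.ndarray:
    """Spectral angle mapper against a reference library.

    cube: (rows, cols, bands); library: (n_classes, bands).
    Returns labels (rows, cols): 0 = no match (e.g. bare filter), i + 1 = library[i].
    max_angle (radians) is a hypothetical acceptance threshold.
    """
    pixels = cube.reshape(-1, cube.shape[-1])
    norms = np.linalg.norm(pixels, axis=1, keepdims=True)
    unit_pixels = pixels / np.maximum(norms, 1e-12)
    unit_lib = library / np.linalg.norm(library, axis=1, keepdims=True)
    angles = np.arccos(np.clip(unit_pixels @ unit_lib.T, -1.0, 1.0))
    best = angles.argmin(axis=1)
    labels = np.where(angles.min(axis=1) <= max_angle, best + 1, 0)
    return labels.reshape(cube.shape[:2])


def count_particles(labels: np.ndarray, n_classes: int) -> dict[int, int]:
    """Count connected regions (particles) per class with scipy.ndimage.label."""
    return {i: int(ndimage.label(labels == i)[1]) for i in range(1, n_classes + 1)}
```

Because SAM compares spectral shape rather than intensity, a thick and a thin particle of the same polymer receive the same label.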

Key Findings: HSI significantly reduces analysis time compared to point-by-point mapping, especially for samples with numerous particles [4]. Point techniques achieve substantially finer spatial resolution (detecting particles down to 1 μm with Raman, versus >250 μm for HSI), but HSI provides complete spatial coverage without selection bias, yielding more representative data for heterogeneous samples [4].

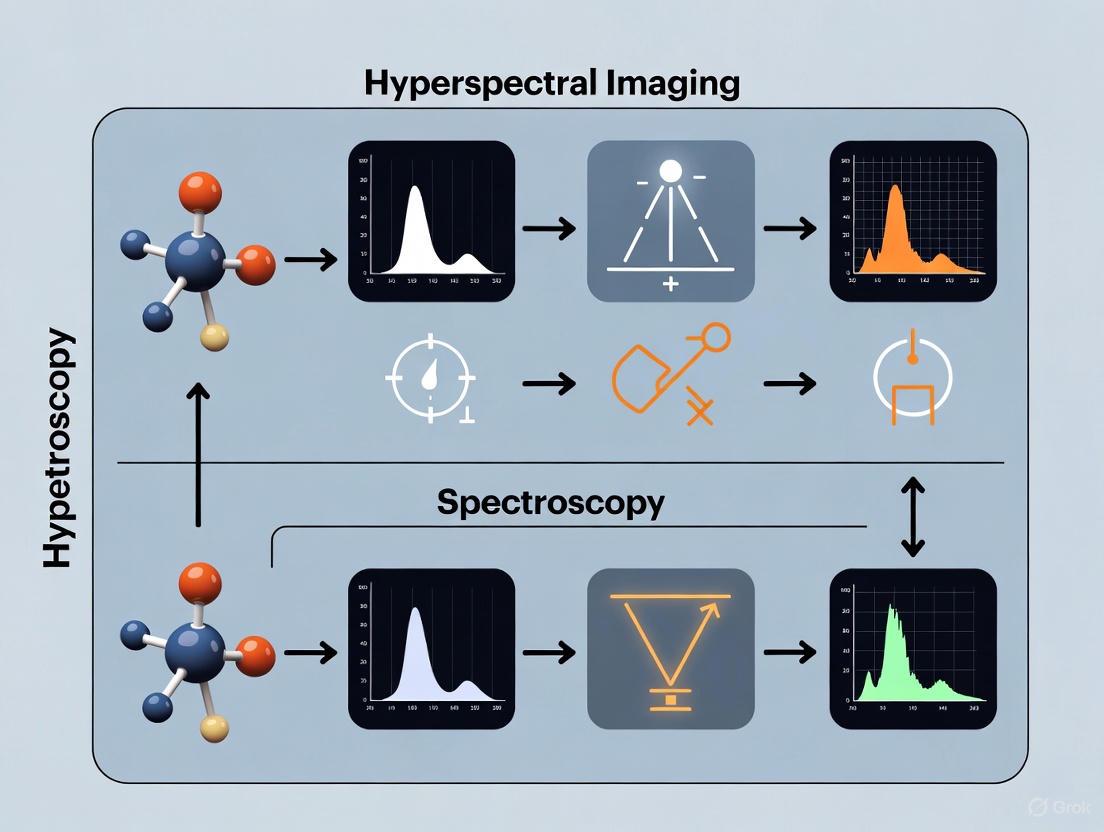

The following diagram illustrates the fundamental workflow differences between these two analytical approaches:

The Scientist's Toolkit: Key Research Reagents and Materials

Table 2: Essential equipment and materials for point spectroscopy and HSI experiments

| Item | Function | Example Applications |
|---|---|---|
| Liquid Crystal Tunable Filter (LCTF) | Electrically tunable optical filter that selects specific wavelength bands for direct HSI [3]. | Stand-off Raman imaging, chemical mapping [3]. |
| Motorized Translation Stage | Precisely moves samples for point-by-point mapping in whiskbroom imaging [3]. | Automated point spectroscopy mapping, spatial scanning [3]. |
| Intensified CCD (iCCD) Camera | High-sensitivity detector capable of gating to suppress ambient light during Raman measurements [3]. | Stand-off spectroscopy, low-light conditions [3]. |
| Fiber Optical Bundle | Transmits collected light from telescope to spectrometer in point imaging systems [3]. | Remote point spectroscopy, flexible light collection [3]. |
| VIS-NIR Hyperspectral Imager | Captures spectral data in the 400-900 nm range for reflectance spectroscopy [5]. | Monitoring laser-induced alterations, pigment analysis [5]. |
| IR-Transparent Filters | Specialized substrates that allow transmission measurements for FT-IR spectroscopy [4]. | Microplastics analysis, transmission spectroscopy [4]. |
| Algorithmically Designed Color Reference Chart | Provides spectral calibration for computational spectrometry methods [6]. | Recovering spectral information from conventional photographs [6]. |

The choice between point spectroscopy and hyperspectral imaging depends fundamentally on the specific research objectives and sample characteristics. Point spectroscopy remains invaluable for applications requiring detailed spectral analysis of specific, known sample locations, particularly when high spectral resolution is paramount and spatial distribution is either known or irrelevant. Its simpler instrumentation and potentially lower cost make it accessible for routine analytical tasks.

Conversely, hyperspectral imaging provides distinct advantages when dealing with heterogeneous samples, unknown composition distribution, or when the research question requires understanding spatial relationships between different chemical components. The ability to survey entire samples rapidly and non-destructively makes HSI particularly valuable for precious or irreplaceable samples, quality control processes requiring comprehensive assessment, and exploratory research where unexpected constituents might be present.

For drug development professionals and researchers, the emerging trend involves integrating both technologies within complementary workflows. Point spectroscopy can provide detailed verification of specific regions identified through initial HSI screening, combining the comprehensive spatial coverage of HSI with the potentially higher spectral detail of point analysis. Furthermore, advancements in computational methods, such as algorithms that extract hyperspectral information from conventional photographs, promise to make spatial-spectral analysis more accessible across various research applications [6].

Hyperspectral imaging (HSI) and spectroscopy represent two powerful paradigms for material analysis across scientific disciplines. While both techniques rely on the interaction of light with matter to generate spectral data, their fundamental output—a single spectrum versus a hypercube—dictates their respective applications, strengths, and limitations. Spectroscopy is a technique that identifies materials by analyzing their spectral signatures based on the absorption, reflection, and scattering characteristics of light at different wavelengths [7]. It typically provides a single spectrum representing the average composition of a measured spot.

In contrast, hyperspectral imaging (HSI) merges spectroscopy with digital imaging [1]. Unlike standard cameras that capture only three color channels (red, green, and blue), HSI systems record hundreds or even thousands of contiguous spectral bands for each pixel in an image [8]. This creates a three-dimensional data structure known as a hypercube or datacube, which contains two spatial dimensions (x, y) and one spectral dimension (λ) [9] [10]. This rich dataset enables researchers to not only identify chemical compositions but also visualize their spatial distribution across a sample.

Within optical analysis research, particularly in pharmaceutical development and life sciences, the choice between these techniques carries significant implications for experimental design, data complexity, and analytical outcomes. This guide provides a structured comparison of their data output fundamentals to inform method selection.

Fundamental Data Structures: From Spectra to Hypercubes

The Spectroscopy Spectrum: One-Dimensional Data Output

A spectroscopic analysis generates a spectrum—a one-dimensional plot representing light intensity as a function of wavelength or frequency. This plot serves as a unique "chemical fingerprint" where peaks and valleys correspond to specific molecular vibrations, bonds, or elements. In a typical experiment, this spectrum represents the average composition within the instrument's measurement spot, lacking inherent spatial context. Each material exhibits distinctive spectral patterns based on its chemical composition and physical properties [7].

Table: Core Characteristics of Spectroscopic Data Output

| Feature | Description | Typical Data Scale |
|---|---|---|
| Dimensionality | 1D (Intensity vs. Wavelength) | - |
| Spatial Context | None (point measurement) | Single spot analysis |
| Data Volume | Low | Kilobytes (KB) per spectrum |
| Primary Output | Spectral curve / graph | - |
| Composition Info | Bulk chemical identification | Average for measured area |

The HSI Hypercube: Three-Dimensional Data Integration

The hypercube is the defining data structure of HSI, integrating spatial and spectral information into a single three-dimensional dataset [9]. Imagine a stack of images, where each layer represents the same scene captured at a different, specific wavelength. Each pixel in this cube contains a full, continuous spectrum, allowing for the precise identification of materials based on their spectral signature while preserving their location information [8] [10].
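In code, a hypercube is simply a 3D array. This NumPy sketch uses random values and hypothetical sizes and assumes a (rows, cols, bands) axis order; some file formats and libraries store bands first instead, so check the convention of the tool in use. It shows the three views used most often: one pixel's spectrum, one band image, and the flattened pixel-by-band table that chemometric and machine learning models consume.

```python
import numpy as np

wavelengths = np.arange(400, 1001, 10)  # nm: 61 hypothetical bands
rows, cols = 4, 5
cube = np.random.default_rng(0).random((rows, cols, wavelengths.size))  # (y, x, wavelength)

pixel_spectrum = cube[2, 3, :]                     # full spectrum of one pixel
band = int(np.argmin(np.abs(wavelengths - 650)))
band_image = cube[:, :, band]                      # 2D image at 650 nm
pixel_table = cube.reshape(-1, wavelengths.size)   # (pixels, bands) for chemometrics

print(pixel_spectrum.shape, band_image.shape, pixel_table.shape)  # (61,) (4, 5) (20, 61)
```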

Table: Core Characteristics of a Hyperspectral Hypercube

| Feature | Description | Typical Data Scale |
|---|---|---|
| Dimensionality | 3D (x, y, λ) | - |
| Spatial Context | High (per-pixel spectrum) | Millions of spatial pixels |
| Data Volume | Very High | Megabytes to Gigabytes (MB-GB) per cube |
| Primary Output | Data cube / hypercube | - |
| Composition Info | Chemical identification & spatial distribution | Maps of component distribution |
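The difference in data volume follows from simple multiplication. The sizes below (a 2048-point spectrum, a 1024 × 1024 pixel, 224-band cube, 16-bit samples, no compression) are hypothetical but representative choices:

```python
def cube_size_bytes(rows: int, cols: int, bands: int, bytes_per_value: int = 2) -> int:
    """Uncompressed size; 2 bytes per value assumes 16-bit raw samples."""
    return rows * cols * bands * bytes_per_value


spectrum_bytes = cube_size_bytes(1, 1, 2048)   # one 2048-point spectrum
cube_bytes = cube_size_bytes(1024, 1024, 224)  # hypothetical 1-megapixel, 224-band cube
print(f"{spectrum_bytes / 1e3:.1f} kB")        # 4.1 kB
print(f"{cube_bytes / 1e9:.2f} GB")            # 0.47 GB
```

Converting to 32-bit floats for processing doubles these figures, and a time series of cubes multiplies them again.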

Diagram: HSI Hypercube Generation. The process begins with optical scanning of a physical sample, resulting in a 3D hypercube containing two spatial dimensions (X, Y) and one spectral dimension (λ). Each pixel within the spatial plane contains a full spectral signature that can be extracted for material identification.

Technical Comparison: Capabilities and Limitations

Direct Performance Comparison in Forensic Science

A 2025 study directly compared HSI and Near-Infrared (NIR) spectroscopy for estimating the age of bloodstains in forensic contexts, providing concrete experimental data on their relative performance [11]. The research aged bloodstains on various substrates over 60 days, analyzing them periodically with both techniques.

Table: Experimental Comparison of HSI vs. NIR Spectroscopy for Bloodstain Age Estimation

| Parameter | Hyperspectral Imaging (HSI) | Near-Infrared (NIR) Spectroscopy |
|---|---|---|
| Application Context | Bloodstain deposition time estimation | Bloodstain deposition time estimation |
| Data Type | Spatial-spectral hypercubes | Point-based spectral measurements |
| Key Advantage | Visualizes spatial distribution of age-related changes | Superior penetration capabilities & high sensitivity |
| Processing Method | Partial Least Squares (PLS) polynomial regression, Multilayer Perceptron (MLP) | Partial Least Squares (PLS) polynomial regression, Multilayer Perceptron (MLP) |
| Prediction Error (RMSEP) | 8.35 days (homologous data) | 8.15 days (homologous data) |
| Optimal Approach | Multimodal data fusion with NIR | Multimodal data fusion with HSI |
| Reference | Liang et al. 2025, Anal. Methods [11] | Liang et al. 2025, Anal. Methods [11] |

The study found that while both techniques achieved similar prediction errors as standalone methods, fusing data from both modalities through multimodal data fusion significantly enhanced the overall model performance and general applicability [11]. This demonstrates the complementary nature of imaging and non-imaging spectroscopic approaches.

Methodological Workflows in Practice

Spectroscopy Workflow Protocol:

- Sample Preparation: Samples are typically homogenized, or representative portions are selected for point measurement.

- Instrument Calibration: Standard reference materials are used to calibrate the spectrometer.

- Spectral Acquisition: The instrument collects a single spectrum from the measurement spot, averaging the signal over the sampled area.

- Spectral Preprocessing: Techniques include smoothing, baseline correction, and normalization to reduce noise and enhance features [7].

- Chemometric Analysis: Multivariate analysis (e.g., PLS regression) correlates spectral features with properties of interest [11].
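A minimal sketch of the preprocessing step with SciPy's `savgol_filter`. The concrete choices here, a linear polynomial baseline and standard normal variate (SNV) normalization, are assumptions; the workflow above names smoothing, baseline correction, and normalization only generically. The usage lines check that a sloping baseline added to a toy band is removed.

```python
import numpy as np
from scipy.signal import savgol_filter


def preprocess_spectrum(spectrum: np.ndarray, window: int = 11, polyorder: int = 2,
                        baseline_degree: int = 1) -> np.ndarray:
    """Smooth, subtract a polynomial baseline, then normalize one 1D spectrum (SNV)."""
    x = np.arange(spectrum.size)
    smoothed = savgol_filter(spectrum, window_length=window, polyorder=polyorder)
    # Crude baseline: a low-order polynomial fitted to the whole spectrum. Real
    # workflows often fit only peak-free regions or use iterative methods.
    baseline = np.polyval(np.polyfit(x, smoothed, baseline_degree), x)
    corrected = smoothed - baseline
    # Standard normal variate: zero mean and unit standard deviation.
    return (corrected - corrected.mean()) / corrected.std()


x = np.arange(200)
peak = np.exp(-0.5 * ((x - 120) / 6.0) ** 2)   # toy absorption band
drifted = peak + 0.5 + 0.01 * x                 # same band on a sloping baseline
print(np.allclose(preprocess_spectrum(drifted), preprocess_spectrum(peak)))  # True
```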

HSI Workflow Protocol:

- System Setup: Select an appropriate HSI system (e.g., pushbroom or snapshot) based on application needs [9].

- Spatial-Spectral Calibration: A white reference image corrects for uneven illumination and system response; a dark reference subtracts the sensor's dark current and offset [9].

- Hypercube Acquisition: Capture of the full spatial-spectral data cube across the desired wavelength range (e.g., 400-2500 nm) [12].

- Data Preprocessing: Includes background masking to isolate regions of interest, normalization of reflectance, and correction of lighting artifacts [9].

- Spectral-Spatial Analysis: Application of machine learning algorithms for segmentation, classification, and quantitative mapping of components [11] [1].
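The calibration and masking steps can be sketched in NumPy as below. The flat-field formula R = (raw - dark) / (white - dark) is the usual white/dark correction; the single-band brightness threshold used for background masking is a deliberately simple, hypothetical choice, as are the function names and synthetic counts.

```python
import numpy as np


def to_reflectance(raw: np.ndarray, white: np.ndarray, dark: np.ndarray) -> np.ndarray:
    """Flat-field correction R = (raw - dark) / (white - dark), per pixel and band."""
    return (raw - dark) / np.maximum(white - dark, 1e-9)


def foreground_mask(reflectance: np.ndarray, band: int, threshold: float) -> np.ndarray:
    """Hypothetical background mask: keep pixels brighter than `threshold` in one band."""
    return reflectance[:, :, band] > threshold


rng = np.random.default_rng(3)
dark = rng.uniform(90, 110, (6, 7, 20))               # dark-reference counts
white = dark + rng.uniform(3000, 4000, (6, 7, 20))    # white-reference counts
true_r = np.full((6, 7, 20), 0.05)                    # dark background
true_r[1:5, 2:6] = 0.6                                # brighter 4 x 4 sample region
raw = dark + true_r * (white - dark)                  # simulated raw image
reflectance = to_reflectance(raw, white, dark)
mask = foreground_mask(reflectance, band=10, threshold=0.3)
print(np.allclose(reflectance, true_r), int(mask.sum()))  # True 16
```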

Diagram: Comparative Workflows: HSI vs. Spectroscopy. The workflows diverge at sample preparation: HSI preserves the sample's spatial structure, whereas spectroscopy typically relies on homogenized or representative portions. The outputs differ fundamentally (chemical maps vs. bulk quantification), though they can be fused for enhanced analysis.

Application-Specific Performance Across Industries

Performance Metrics in Diverse Research Applications

The distinctive data outputs of spectroscopy and HSI make them suitable for different application scenarios across industries. The following table summarizes their documented performance in various scientific domains.

Table: Application Performance Across Scientific Domains

| Field | HSI Performance & Applications | Spectroscopy Performance & Applications |
|---|---|---|
| Pharmaceuticals & Drug Discovery | Label-free drug visualization in cells; monitoring drug metabolism [13] | Bulk chemical analysis of pharmaceutical compounds; quality control |
| Medical Diagnostics | Differentiates cancerous vs. healthy tissue (87-95% accuracy) [8] [10]; intraoperative guidance | Liquid biopsies; serum analysis; limited spatial information |
| Forensic Science | Bloodstain age estimation (RMSEP: ~8 days); species identification with machine learning [11] [1] | Bloodstain age estimation (RMSEP: ~8 days); bulk material identification [11] |
| Agriculture & Food Science | Egg freshness prediction (R²: 0.91); crop disease detection (98% accuracy) [8] [1] | Composition analysis of homogenized food samples; nutrient quantification |
| Environmental Monitoring | Soil property estimation; water quality assessment; plastic waste detection (70-80% accuracy) [8] [14] | Point measurements of soil/water parameters; limited spatial coverage |

Complementary Roles in Pharmaceutical Research

In drug discovery and development, HSI and spectroscopy offer complementary capabilities. Spectroscopic techniques such as spontaneous Raman scattering provide detailed molecular information from specific cellular locations [13]. Building on this, hyperspectral stimulated Raman scattering (SRS) microscopy has enabled rapid, label-free visualization of drug distributions within cells and tissues by generating detailed chemical maps based on molecular vibrations [13].

This capability is particularly valuable for assessing drug uptake, localization, and metabolism in preclinical models—key factors in reducing drug attrition rates [13]. The hypercube data output allows researchers to correlate drug distribution with specific cellular compartments or tissue structures, providing insights that single-point spectroscopy cannot deliver.

Implementation Considerations for Research Applications

The Scientist's Toolkit: Essential Research Materials

Table: Essential Equipment and Analytical Tools

| Item | Function | Relevance to Data Output |
|---|---|---|
| Hyperspectral Imaging Systems (e.g., SPECIM IQ [9]) | Captures spatial-spectral hypercubes across VNIR-SWIR ranges (400-2500 nm) [12] | Generates 3D hypercube data with both spatial and spectral information |
| Spectrometers (NIR, Raman) | Measures point-based spectral signatures of samples | Produces 1D spectral data for bulk composition analysis |
| Standard Reference Panels | Calibrates instruments for accurate reflectance measurements [9] | Ensures data accuracy and comparability across both techniques |
| Chemometrics Software | Applies algorithms (PLS, PCA, MLP) for spectral analysis [11] [7] | Extracts meaningful information from spectral and spatial-spectral data |
| Machine Learning Libraries (e.g., scikit-learn for Python [9]) | Processes high-dimensional data; performs classification & regression | Essential for analyzing complex hypercube data from HSI |

Strategic Selection Guidelines

Choosing between HSI and spectroscopy involves careful consideration of research objectives and practical constraints:

Select Hyperspectral Imaging When:

- Research questions require spatial distribution mapping of chemical components

- Samples are heterogeneous with spatial variations in composition

- The application involves unknown or variable regions of interest

- Objectives include visualizing gradients, boundaries, or localized phenomena

- Applications include tissue classification, crop health mapping, or material heterogeneity studies [8] [10]

Select Spectroscopy When:

- Primary need is high-throughput bulk composition analysis

- Samples are homogeneous or can be effectively homogenized

- Budget, data storage, or computational resources are limited

- Applications focus on quantitative analysis of known, uniform materials

- Rapid, portable measurement is prioritized over spatial information [11] [7]

For complex analytical challenges, a combined approach utilizing both techniques often provides the most comprehensive solution, as demonstrated by the forensic study where data fusion significantly enhanced prediction accuracy [11].

The fundamental distinction between a single spectrum and a hypercube defines the analytical capabilities of spectroscopy and hyperspectral imaging. Spectroscopy excels at providing precise chemical fingerprints from specific locations, while HSI delivers comprehensive spatial-chemical maps, enabling visualization of heterogeneity and distribution patterns.

In optical analysis research, particularly for drug development and life sciences, this distinction directly shapes experimental design and analytical outcomes. HSI's hypercube reveals spatial relationships and heterogeneity that a single spectrum cannot, while spectroscopy provides efficient, high-precision chemical analysis of homogeneous materials. Multimodal integration, which combines both approaches with advanced machine learning, is an emerging direction that leverages the complementary strengths of both data paradigms to advance scientific discovery.

In optical analysis, the electromagnetic spectrum is divided into specific regions based on how light interacts with matter. The ultraviolet (UV), visible (VIS), near-infrared (NIR), and short-wave infrared (SWIR) ranges constitute the fundamental "optical window" for a vast array of analytical techniques, from microscopy to remote sensing [15]. These non-ionizing radiation bands are critical for research because their photon energies correspond to specific molecular transitions: electronic excitations in the UV-VIS, and overtones and combination bands of molecular vibrations in the NIR-SWIR [15] [16]. Probing these interactions forms the basis for identifying materials, assessing chemical composition, and determining physical properties non-destructively, which is particularly valuable in pharmaceutical and biomedical research [17].

The distinction between hyperspectral imaging and spectroscopy often lies in how these wavelength ranges are exploited. While conventional spectroscopy techniques typically measure a single spectrum from a sample point or volume, hyperspectral imaging generates a spatially resolved spectrum for each pixel in a scene, creating a rich three-dimensional dataset known as a hyperspectral cube [15] [18]. This integration of spatial and spectral information enables more comprehensive analysis but requires careful consideration of the operational wavelength ranges to optimize detection for specific applications.

Defining the Spectral Regions

The optical spectrum used in analytical applications is systematically divided into regions defined by specific wavelength boundaries. Each region offers unique insights based on how different materials absorb, reflect, or emit radiation.

Table 3: Standard Wavelength Ranges for Optical Analysis

| Spectral Region | Wavelength Range | Primary Interactions | Key Material Properties Probed |
|---|---|---|---|
| Ultraviolet (UV) | 200 - 400 nm [15] | Electronic transitions | Molecular conjugation, chromophores |
| Visible (VIS) | 400 - 700 nm [15] [19] | Electronic transitions | Color, pigment composition |
| Near-Infrared (NIR) | 700 - 1000/1100 nm [15] [20] | Overtone & combination vibrations | Organic functional groups (O-H, N-H, C-H) |
| Short-Wave Infrared (SWIR) | 1000 - 2500 nm [15] [16] [19] | First overtone & combination vibrations | Moisture, proteins, sugars, mineral composition |
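Converting the band edges to photon energy (E = hc/λ) and wavenumber makes the energy scale explicit. This short Python sketch uses hc ≈ 1239.84 eV·nm; the helper names are hypothetical. Energies fall from about 6.2 eV at 200 nm to about 0.5 eV at 2500 nm, which corresponds to 4000 cm⁻¹. Fundamental vibrations such as O-H and C-H stretches lie below this wavenumber, in the mid-infrared, which is why the NIR and SWIR bands are overtones and combinations.

```python
H_C_EV_NM = 1239.84198  # h * c expressed in eV * nm


def photon_energy_ev(wavelength_nm: float) -> float:
    """Photon energy E = h c / wavelength, in electronvolts."""
    return H_C_EV_NM / wavelength_nm


def wavenumber_cm1(wavelength_nm: float) -> float:
    """Wavenumber in cm^-1 (1 cm = 1e7 nm)."""
    return 1e7 / wavelength_nm


for edge_nm in (200, 400, 700, 1000, 2500):
    print(f"{edge_nm:>5} nm  {photon_energy_ev(edge_nm):5.2f} eV  "
          f"{wavenumber_cm1(edge_nm):8.0f} cm^-1")
```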

Ultraviolet and Visible (UV-VIS) Regions

The UV (200-400 nm) and VIS (400-700 nm) regions involve high-energy photons that cause electronic transitions in molecules [15]. These transitions occur when electrons are promoted to higher energy orbitals, making UV-VIS spectroscopy particularly sensitive to conjugated systems and chromophores. In pharmaceutical research, this region is extensively used for quantifying drug concentrations, studying protein-ligand interactions, and validating the identity of chemical compounds through characteristic absorption profiles [17].

Near-Infrared (NIR) Region

The NIR region (700-1100 nm) captures overtone and combination bands of fundamental molecular vibrations, primarily those involving C-H, O-H, and N-H bonds [15] [20]. While these signals are weaker than fundamental absorptions, they enable deeper penetration into biological tissues and are valuable for non-invasive analysis of intact samples. The NIR region is often called the "NIR-I" window in biomedical contexts, where it facilitates small animal imaging with reduced scattering compared to visible light [20].

Short-Wave Infrared (SWIR) Region

The SWIR region (1000-2500 nm) contains stronger and more specific spectral features than the NIR, arising from first overtones and combination bands of molecular vibrations; the fundamental vibrations themselves lie further out, in the mid-infrared [16]. This region provides distinct spectral fingerprints for many materials, including moisture content, sugars, proteins, and specific mineral types [16]. SWIR imaging is particularly powerful because it can probe chemical composition beyond surface appearance. Advances in sensor technology have extended imaging beyond the traditional SWIR camera range (900-1700 nm) into the extended SWIR (eSWIR), up to 2500 nm, enabling detection of an even broader array of molecular species [19].

Comparative Analysis: Hyperspectral Imaging vs. Spectroscopy

The choice between hyperspectral imaging and point spectroscopy depends on the research objectives, with each approach offering distinct advantages for material characterization and analysis.

Table 4: Hyperspectral Imaging vs. Spectroscopy for Optical Analysis

| Analysis Feature | Hyperspectral Imaging (HSI) | Conventional Spectroscopy |
|---|---|---|
| Data Dimensionality | 3D (x, y, λ): Spatially resolved spectra [15] | 1D (λ): Single point or bulk measurement [21] |
| Spectral Resolution | High (hundreds of contiguous bands) [18] | Very High (can exceed HSI) [21] |
| Spatial Information | Directly provides chemical distribution maps [16] | Requires raster scanning for mapping |
| Measurement Throughput | High for large areas (parallel acquisition) [18] | Lower (sequential point measurements) |
| Data Volume | Large (data cubes require significant processing) [21] [15] | Small (individual spectra) |
| Primary Applications | Tissue heterogeneity, mineral mapping, quality control [22] [16] | Concentration quantification, kinetic studies, pure material analysis [17] |

Data Structure and Information Content

Hyperspectral imaging creates a data cube where two spatial dimensions combine with one spectral dimension, providing a complete spectrum for every pixel [15]. This structure enables researchers to visualize the spatial distribution of chemical components within a sample. For example, in pharmaceutical quality control, HSI can map the homogeneity of active ingredient distribution in a tablet, while also detecting contaminants in specific regions [22] [17]. In contrast, conventional spectroscopy generates a single spectrum representing the average composition of the sampled volume, making it excellent for uniform samples but limited for heterogeneous materials [21].

Application-Specific Performance

The selection between these techniques often depends on the fundamental research question. Hyperspectral imaging excels when spatial distribution is critical, such as in medical diagnostics for identifying tumor margins, in agriculture for mapping crop stress, or in mineralogy for identifying ore distribution [22] [16] [18]. Spectroscopy remains superior for high-precision quantification of analytes in solution, detailed kinetic studies, and when analyzing minute spectral shifts requiring the highest possible spectral resolution [21] [17]. In drug development, this might translate to using spectroscopy for precise concentration measurements in plasma, while employing HSI for characterizing drug distribution in tissue samples [17].

Experimental Protocols for Spectral Analysis

Protocol 1: SWIR Hyperspectral Imaging for Pharmaceutical Quality Control

This protocol details the detection of active pharmaceutical ingredient (API) distribution in solid dosage forms using SWIR hyperspectral imaging [16].

Methodology:

- Sample Preparation: Place tablet samples on a motorized translation stage under the SWIR hyperspectral camera.

- Image Acquisition: Use a push-broom SWIR imaging system (1000-2500 nm range) with spectral resolution ≤ 10 nm. Illuminate samples with halogen lamps at 30° incidence angle to minimize specular reflection.

- Radiometric Calibration: Acquire dark current (lens cap on) and white reference (Spectralon panel) images for radiometric correction.

- Data Collection: Scan each tablet with spatial resolution ≤ 50 μm/pixel. Generate hyperspectral cubes with spatial dimensions (x, y) and spectral dimension (λ).

- Data Analysis:
  - Apply Savitzky-Golay smoothing to reduce noise, followed by Standard Normal Variate (SNV) normalization to correct scatter-induced baseline and intensity variations.
  - Use Principal Component Analysis (PCA) to identify spectral patterns associated with API and excipients.
  - Develop partial least squares (PLS) regression models to quantify API concentration, validated with reference HPLC measurements.
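The SNV and PCA steps of this protocol can be sketched in NumPy as below, under stated assumptions: the cube is already calibrated and unfolded to a pixels-by-bands matrix, and the "API" and "excipient" spectra are made-up Gaussian bands rather than real reference spectra. Savitzky-Golay smoothing is omitted for brevity; SciPy provides it as `scipy.signal.savgol_filter`. The synthetic data add a per-pixel multiplicative scale and additive offset, which SNV removes exactly, so the first principal component should track the API fraction.

```python
import numpy as np

def snv(spectra: np.ndarray) -> np.ndarray:
    """Standard Normal Variate: center and scale each spectrum (row) by its own mean and std."""
    mean = spectra.mean(axis=1, keepdims=True)
    std = spectra.std(axis=1, keepdims=True)
    return (spectra - mean) / std

def pca(x: np.ndarray, n_components: int):
    """PCA via SVD of the column-centered matrix: returns scores, loadings, explained-variance ratios."""
    xc = x - x.mean(axis=0)
    u, s, vt = np.linalg.svd(xc, full_matrices=False)
    scores = u[:, :n_components] * s[:n_components]
    loadings = vt[:n_components]
    explained = (s**2 / np.sum(s**2))[:n_components]
    return scores, loadings, explained

# Hypothetical unfolded tablet cube: 500 pixels x 150 SWIR bands mixing two made-up spectra
rng = np.random.default_rng(1)
bands = np.linspace(1000, 2500, 150)
api = np.exp(-((bands - 1700) / 40) ** 2)        # illustrative "API" absorption feature
excipient = np.exp(-((bands - 2100) / 60) ** 2)  # illustrative "excipient" feature
fraction = rng.uniform(0.0, 1.0, size=(500, 1))  # per-pixel API fraction
scale = rng.uniform(0.8, 1.2, size=(500, 1))     # multiplicative scatter effect
offset = rng.uniform(0.1, 0.3, size=(500, 1))    # additive baseline shift
spectra = scale * (fraction * api + (1 - fraction) * excipient) + offset
spectra = spectra + rng.normal(0.0, 0.005, spectra.shape)

scores, loadings, explained = pca(snv(spectra), n_components=3)
```

In a real workflow the PC1 score image (scores reshaped back to the tablet's rows and columns) is what would be inspected for API heterogeneity before building the PLS model.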

Protocol 2: NIR-II/SWIR Fluorescence Spectroscopy for Preclinical Imaging

This protocol utilizes the NIR-II/SWIR window (1000-1700 nm) for deep-tissue fluorescence imaging in small animals, offering superior resolution compared to conventional NIR-I imaging [20].

Methodology:

- Fluorescent Probe Selection: Administer NIR-II fluorescent probes (e.g., single-walled carbon nanotubes, quantum dots, or rare-earth-doped phosphors) via tail vein injection in mice.

- Instrument Setup: Use a scientific-grade InGaAs camera (640×512 pixel array) with sensitivity in the 900-1700 nm range. Cool detector to -40°C to reduce dark current.

- Excitation Source: Illuminate with 808 nm laser source with appropriate bandpass filters.

- Image Acquisition: Acquire fluorescence images with exposure times 100-500 ms. Use 1300 nm longpass emission filters to isolate NIR-II signal.

- Data Processing:
  - Subtract background autofluorescence using images from pre-injection time points.
  - Apply flat-field correction to compensate for uneven illumination.
  - Calculate signal-to-background ratio (SBR) and spatial resolution using line profile analysis across blood vessels.
  - Compare image quality with parallel NIR-I (700-900 nm) imaging using silicon-based detectors.
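The SBR and line-profile resolution step might look like the sketch below. It assumes a noise-free, Gaussian vessel cross-section on a flat background, takes the mean intensity within one standard deviation of the vessel center as the signal and pixels far from the vessel as the background, and reports the full width at half maximum (FWHM) as the resolution metric. Published studies define SBR and resolution in several ways, so these choices are illustrative.

```python
import numpy as np

def signal_to_background(profile, signal_mask, background_mask):
    """SBR as mean intensity inside the vessel divided by mean intensity of nearby background."""
    return profile[signal_mask].mean() / profile[background_mask].mean()

def fwhm(positions, profile, background):
    """Full width at half maximum of a single peak, linearly interpolating both half-height crossings."""
    signal = profile - background
    half = signal.max() / 2.0
    above = np.flatnonzero(signal >= half)
    left, right = above[0], above[-1]
    # np.interp needs increasing x-values: use (below-half, above-half) pairs on each edge
    x_left = np.interp(half, [signal[left - 1], signal[left]], [positions[left - 1], positions[left]])
    x_right = np.interp(half, [signal[right + 1], signal[right]], [positions[right + 1], positions[right]])
    return x_right - x_left

# Hypothetical noise-free line profile across one vessel: Gaussian cross-section on a flat background
x_um = np.arange(0.0, 500.0, 1.0)  # position along the line, in micrometers
center, sigma, background = 250.0, 20.0, 100.0
profile = background + 400.0 * np.exp(-0.5 * ((x_um - center) / sigma) ** 2)

vessel = np.abs(x_um - center) <= sigma
tissue = np.abs(x_um - center) >= 5 * sigma
sbr = signal_to_background(profile, vessel, tissue)
width = fwhm(x_um, profile, background)  # analytic Gaussian value: 2*sqrt(2 ln 2)*sigma, about 47.1 um
```

With real images, the background level would be estimated from the profile itself (for example, the median of the outer pixels) rather than passed in as a known constant.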

The Scientist's Toolkit: Essential Research Reagent Solutions

Successful implementation of optical analysis techniques requires specific instrumentation and reagents tailored to the wavelength range and application.

Table 3: Essential Research Tools for Optical Analysis Across Spectral Ranges

| Tool Category | Specific Examples | Function & Application |
|---|---|---|
| Detection Systems | InGaAs FPA cameras (640×512) [23] [20], SenSWIR sensors [19], Scientific CCD/CMOS [20] | SWIR/NIR detection (InGaAs), VIS/NIR detection (Si-based sensors) |
| Spectral Separation | Diffraction gratings [15], Prisms [15], Liquid Crystal Tunable Filters (LCTFs) [15] | Wavelength dispersion (gratings, prisms) or electronically tuned band selection (LCTFs) |
| Illumination Sources | Halogen lamps [19], 808 nm lasers [20], Tungsten-filament sources | Broadband (halogen) or specific (laser) excitation |
| Reference Materials | Spectralon panels [16], NIST-traceable standards | Radiometric calibration, wavelength verification |
| Fluorescent Probes | Single-walled carbon nanotubes [20], Rare-earth-doped phosphors [20], Quantum dots [20] | Contrast agents for NIR-II bioimaging |
| Software Tools | The Spectral Assistant/TSSA [21], LightField [20], ENVI | Spectral data acquisition, processing & analysis |

The strategic selection of wavelength ranges (UV, VIS, NIR, and SWIR) forms the foundation of effective optical analysis in research environments. While UV-VIS provides electronic transition information valuable for quantification, the NIR-SWIR regions offer deeper material characterization through vibrational spectroscopy. The choice between hyperspectral imaging and conventional spectroscopy represents a fundamental trade-off: hyperspectral imaging offers spatial mapping capability and high throughput over large areas, while point spectroscopy offers higher spectral resolution and signal-to-noise ratio. Hyperspectral imaging excels in visualizing chemical distribution in complex, heterogeneous samples, while spectroscopy remains powerful for precise quantification of specific analytes. Emerging technologies, including extended-range InGaAs detectors, quantum-enhanced sensors, and miniaturized hyperspectral systems, continue to expand the applications of these techniques across pharmaceutical development, biomedical research, and material science [24] [19]. Researchers can optimize their analytical approaches by understanding the specific information content and technical requirements of each spectral region and measurement modality.

Hyperspectral imaging (HSI) and spectroscopy are foundational technologies in modern optical analysis, each offering distinct approaches to material characterization. Hyperspectral imaging combines imaging and spectroscopy to capture a three-dimensional data cube, providing both spatial and spectral information for every pixel in a scene [1]. This enables researchers to visualize the distribution of chemical compounds across a sample surface. In contrast, conventional spectroscopy typically acquires a single spectrum from a specific spot on a sample, providing detailed chemical information but without inherent spatial context [25]. The core hardware components—spectrometers, detectors, and imaging systems—define the capabilities, limitations, and appropriate applications for each technique, making component selection critical for research and drug development applications.

The technological landscape is evolving rapidly, with the global hyperspectral imaging systems market projected to grow from USD 847 million in 2024 to approximately USD 1,535 million by 2029, representing a compound annual growth rate (CAGR) of 12.6% [26]. This growth is fueled by emerging applications across healthcare, life sciences, and drug development that demand more precise analytical capabilities. This guide provides a detailed comparison of the key hardware components, supported by experimental data, to inform researchers and professionals in selecting the optimal technology for their specific analytical requirements.
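As a quick arithmetic check of the quoted growth figures, the compound annual growth rate over the five years from 2024 to 2029 is (1535 / 847)^(1/5) - 1, which reproduces the stated rate:

```python
def cagr(start_value: float, end_value: float, years: float) -> float:
    """Compound annual growth rate: (end / start) ** (1 / years) - 1."""
    return (end_value / start_value) ** (1.0 / years) - 1.0

# Figures quoted above: USD 847 million (2024) to about USD 1,535 million (2029)
rate = cagr(847.0, 1535.0, years=2029 - 2024)
print(f"{rate:.1%}")  # 12.6%
```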

Core Hardware Components and Technologies

Detector Technologies

The detector is a critical hardware component that converts photons into electrical signals, with its material composition determining sensitivity across specific wavelength ranges.

Table 1: Key Detector Technologies for Hyperspectral Imaging and Spectroscopy

| Detector Type | Material Composition | Wavelength Range | Common Applications | Relative Performance |
|---|---|---|---|---|
| Silicon (Si) | Crystalline Silicon | ~400-1000 nm (VNIR) | Plant phenotyping, color measurement [27] [28] | High quantum efficiency in VNIR; response falls off sharply above ~1000 nm (cutoff near 1100 nm) |
| Indium Gallium Arsenide (InGaAs) | InGaAs photodiode arrays | 900-1700 nm (NIR) [25] | Wood analysis, pharmaceutical authentication, forensic blood detection [11] [25] [8] | Good SNR; typically requires cooling for optimal performance [25] |
| Mercury Cadmium Telluride (MCT) | HgCdTe alloy | 1000-2500 nm (SWIR) [25] | Moisture mapping, polymer analysis, chemical imaging [27] [25] | High sensitivity across SWIR; requires deep cooling (multi-stage thermoelectric or cryogenic) |
| Next-Generation Sensors (e.g., Sony IMX990) | InGaAs photodiodes bonded to a silicon readout circuit | 490-1780 nm [29] | Industrial sorting, document verification, medical diagnostics [29] | Enhanced spectral detail with reduced noise; single-camera solution [29] |

Spectrometer Configurations

Spectrometers form the core of both conventional spectroscopy and hyperspectral imaging systems, with distinct configurations optimized for different measurement scenarios.

Conventional Benchtop Spectrometers typically utilize a point-based approach in which light is collected from a single, defined location on a sample, either through a fiber optic probe or by direct illumination of that spot [25]. These systems provide high spectral resolution and signal-to-noise ratio (SNR) by averaging multiple sub-scans from the same spot, making them ideal for homogeneous sample analysis or when detailed chemical information from specific points is required [25]. The hardware typically includes a high-resolution dispersive element and a sensitive detector array; in one comparative study, scan times averaged 40 seconds per sample [25].
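Why averaging sub-scans raises SNR can be illustrated with a short simulation: for independent white noise, averaging N sub-scans reduces the noise standard deviation by a factor of sqrt(N). The spectrum shape, noise level, and SNR definition below are assumptions for illustration, not parameters of the instruments in [25].

```python
import numpy as np

rng = np.random.default_rng(42)
wavelengths = np.linspace(1100, 2500, 2000)
true_spectrum = 1.0 + 0.5 * np.exp(-((wavelengths - 1940) / 50) ** 2)  # illustrative absorption band
noise_sigma = 0.05  # assumed per-sub-scan noise level (white Gaussian)

def acquire(n_subscans):
    """Average n independent noisy sub-scans of the same sample spot."""
    scans = true_spectrum + rng.normal(0.0, noise_sigma, size=(n_subscans, wavelengths.size))
    return scans.mean(axis=0)

def snr(measured):
    """SNR taken as mean signal divided by RMS deviation from the known true spectrum."""
    return true_spectrum.mean() / np.sqrt(np.mean((measured - true_spectrum) ** 2))

ratio = snr(acquire(16)) / snr(acquire(1))
print(f"SNR gain from 16 sub-scans: {ratio:.2f}")  # close to sqrt(16) = 4
```

The sqrt(N) law is also why acquisition time grows quickly for high-SNR point spectra: doubling SNR costs four times as many sub-scans.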

Hyperspectral Imaging Spectrometers employ various scanning methodologies to capture spatial and spectral information simultaneously:

Pushbroom/Line-Scanning: This dominant HSI technology captures an entire line of spatial data with full spectral information for each pixel simultaneously [27] [26]. The system moves the sample or sensor to build a complete data cube. Owing to their high spatial and spectral fidelity, pushbroom systems are reported to have held the largest market share in 2024 (62%), while another estimate projects a 30% revenue share in 2025; the figures come from different market reports and are not directly comparable [27] [30]. They are particularly valuable for conveyor-based industrial applications and laboratory analysis of solid samples [26].

Snapshot Imaging: These systems capture the entire hyperspectral data cube in a single exposure without scanning, enabling rapid analysis of dynamic processes [27]. While offering advantages for real-time applications, they typically provide lower spatial and spectral resolution compared to pushbroom systems. The snapshot imaging segment is growing rapidly with a CAGR of 16.9% [27].

Tunable-Filter Systems: These systems employ electronically tunable filters (such as acousto-optic or liquid crystal filters) that sequentially scan through wavelengths while a conventional camera captures images at each band [27]. This approach offers flexibility in spectral band selection but involves longer acquisition times due to the sequential capture process.
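The three acquisition strategies differ mainly in which slice of the cube each exposure records. A minimal NumPy sketch, with array sizes chosen arbitrarily and random values standing in for detector frames, shows how pushbroom frames (one spatial line by all bands) and tunable-filter frames (one full image per band) are stacked into the same (y, x, wavelength) cube, and how many exposures each approach needs.

```python
import numpy as np

rng = np.random.default_rng(3)
n_lines, n_cols, n_bands = 100, 64, 120  # scan positions, across-track pixels, bands (assumed)

# Pushbroom: each exposure records one spatial line with a full spectrum per pixel -> (n_cols, n_bands)
pushbroom_frames = [rng.random((n_cols, n_bands)) for _ in range(n_lines)]
cube_pushbroom = np.stack(pushbroom_frames, axis=0)  # stacked along the scan (y) axis -> (y, x, lambda)

# Tunable filter: each exposure records a full 2-D image at one wavelength -> (n_lines, n_cols)
filter_frames = [rng.random((n_lines, n_cols)) for _ in range(n_bands)]
cube_filter = np.stack(filter_frames, axis=-1)  # stacked along the spectral axis -> (y, x, lambda)

# Snapshot: one exposure yields the whole cube, so the exposure count is 1
exposures = {"pushbroom": len(pushbroom_frames), "tunable_filter": len(filter_frames), "snapshot": 1}
```

The exposure counts make the trade-off visible: pushbroom time scales with the number of scan lines, tunable-filter time with the number of bands, and snapshot systems trade resolution for a single exposure.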

Imaging Systems and Platforms

The integration of spectroscopic components into complete imaging systems creates platforms tailored to specific application environments:

Laboratory Imaging Systems include benchtop HSI instruments designed for controlled laboratory settings. These systems typically incorporate stable illumination sources, precision translation stages for sample movement, and environmental controls to ensure measurement consistency. Examples include the Specim FX120, a long-wave infrared pushbroom camera for chemical imaging [26].

Portable and Handheld Devices represent a growing segment enabled by detector miniaturization. These battery-operated instruments allow field-based analysis for applications such as agricultural monitoring, environmental assessment, and pharmaceutical authentication [8] [28]. The development of "snapshot" HSI technology is particularly relevant for smartphone integration and medical diagnostics [27].

Remote Sensing Platforms include UAV-mounted and satellite-based HSI systems for large-area monitoring. The FY 2025 U.S. defense budget earmarks significant funding for space-borne hyperspectral constellations, with companies like Orbital Sidekick already deploying satellites with 468 spectral bands dedicated to methane and pipeline monitoring [27].

Performance Comparison and Experimental Data

Analytical Performance Metrics

Direct comparisons between hyperspectral imaging and conventional spectroscopy reveal distinct performance characteristics across key metrics:

Table 2: Performance Comparison of HSI and Conventional Spectroscopy

| Performance Metric | Hyperspectral Imaging (Pushbroom) | Conventional Spectroscopy (Point-based) |
|---|---|---|
| Spatial Information | Full spatial context (data cube) [1] | Single point measurement [25] |
| Spectral Range | VNIR (400-1000 nm), SWIR (1000-2500 nm), LWIR (7.7-12.3 µm) [27] [26] | Typically limited to specific range per instrument |
| Spectral Resolution | Varies (e.g., 1.43 nm/channel spectral sampling for advanced systems [29]) | Typically higher resolution for benchtop systems |
| Acquisition Speed | 3-5 seconds per sample for lab systems [25] | ~40 seconds per sample for high-quality spectra [25] |
| Signal-to-Noise Ratio | Generally lower due to limited integration time per pixel [25] | Higher due to multiple averaged sub-scans [25] |
| Data Volume | Very high (1+ TB/hour for megapixel cameras) [27] | Small (kilobytes per spectrum) |

Experimental Comparison Studies

Wood Property Analysis

A rigorous comparison of one NIR-HSI (900-1700 nm) and three SWIR-HSI (1000-2500 nm) cameras with a benchtop NIR spectrometer (1100-2500 nm) for analyzing specific gravity (SG) and stiffness (MOE) in Douglas-fir samples revealed important performance differences [25].

Experimental Protocol:

- Sample Preparation: 100 Douglas-fir samples were selected to represent the full range of MOE and SG values

- Spectral Acquisition: All samples were analyzed on four different HSI instruments and a benchtop NIR spectrometer

- Data Processing: Spectral data from all instruments was processed using consistent chemometric methods

- Model Validation: Calibration models for SG and MOE were developed and validated using cross-validation techniques

Key Findings: Despite its narrower wavelength range, the NIR-HSI camera (900-1700 nm) provided the best models for MOE prediction, while the NIR-HSI camera and two of the three SWIR-HSI cameras delivered comparable SG results. The benchtop NIR spectrometer generally provided a superior signal-to-noise ratio but no spatial context [25].
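The chemometric model used in [25] is not specified here beyond consistent processing and cross-validation, so the following is only a hypothetical sketch of one common choice: PLS1 regression (NIPALS algorithm) evaluated by k-fold cross-validation, reporting the root mean square error of cross-validation (RMSECV). The synthetic spectra, the specific-gravity range, and the fold count are assumptions; a library implementation such as scikit-learn's `PLSRegression` would normally be used in practice.

```python
import numpy as np

def pls1_fit(x, y, n_components):
    """PLS1 regression (single response) via NIPALS; returns regression vector and centering terms."""
    x_mean, y_mean = x.mean(axis=0), y.mean()
    xk, yk = x - x_mean, y - y_mean
    weights, loadings, y_loadings = [], [], []
    for _ in range(n_components):
        w = xk.T @ yk
        w = w / np.linalg.norm(w)
        t = xk @ w                # scores for this component
        tt = t @ t
        p = xk.T @ t / tt         # X loadings
        q = (yk @ t) / tt         # y loading
        xk = xk - np.outer(t, p)  # deflate X
        yk = yk - q * t           # deflate y
        weights.append(w)
        loadings.append(p)
        y_loadings.append(q)
    W, P, q_vec = np.array(weights).T, np.array(loadings).T, np.array(y_loadings)
    coef = W @ np.linalg.solve(P.T @ W, q_vec)
    return coef, x_mean, y_mean

def pls1_predict(model, x):
    coef, x_mean, y_mean = model
    return (x - x_mean) @ coef + y_mean

def rmsecv(x, y, n_components, n_folds=5, seed=0):
    """Root mean square error of k-fold cross-validation."""
    idx = np.random.default_rng(seed).permutation(len(y))
    residuals = []
    for test in np.array_split(idx, n_folds):
        train = np.setdiff1d(idx, test)
        model = pls1_fit(x[train], y[train], n_components)
        residuals.append(pls1_predict(model, x[test]) - y[test])
    return float(np.sqrt(np.mean(np.concatenate(residuals) ** 2)))

# Hypothetical data: 100 samples x 200 bands, property-related band plus an unrelated interferent
rng = np.random.default_rng(0)
bands = np.linspace(1100, 2500, 200)
sg = rng.uniform(0.35, 0.65, 100)
interferent = rng.uniform(0.0, 1.0, 100)
s_target = np.exp(-((bands - 1900) / 80) ** 2)
s_other = np.exp(-((bands - 2200) / 100) ** 2)
x = np.outer(sg, s_target) + np.outer(interferent, s_other) + rng.normal(0.0, 0.01, (100, 200))

for a in (1, 2, 3):
    print(f"{a} component(s): RMSECV = {rmsecv(x, sg, a):.4f}")
```

Comparing RMSECV across component counts, as in the loop above, is the usual way to choose model complexity before testing on independent samples.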

Bloodstain Deposition Time Estimation

Forensic research compared HSI and NIR spectroscopy for estimating bloodstain deposition time, highlighting the complementary value of both technologies [11].

Experimental Protocol:

- Sample Preparation: Bloodstains were aged on various substrates over a 60-day period

- Spectral Acquisition: Periodic analyses conducted using both HSI and NIR spectroscopy

- Data Processing: Chemometric analysis following standard normal variate (SNV) preprocessing

- Model Development: Application of PLS regression with polynomial features and multilayer perceptron (MLP) for data fusion

Key Findings: For homologous data fusion, comparable root mean square errors of prediction (RMSEP) were achieved for HSI and NIR spectra (8.35 and 8.15 days, respectively). The integration of both methods through data fusion helped mitigate external influences and enhanced general applicability [11].
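Low-level (concatenation) data fusion, one common fusion strategy, can be sketched as follows: each modality is SNV-preprocessed separately, the blocks are concatenated, a regression model is fitted, and RMSEP is computed on held-out samples. Closed-form ridge regression is swapped in here as a simple stand-in for the PLS-with-polynomial-features and MLP models used in [11], and the spectra are synthetic, so the resulting error says nothing about the published 8.35 and 8.15 day values.

```python
import numpy as np

def snv(spectra):
    """Standard Normal Variate applied to each spectrum (row)."""
    return (spectra - spectra.mean(axis=1, keepdims=True)) / spectra.std(axis=1, keepdims=True)

def ridge_fit(x, y, alpha=1.0):
    """Closed-form ridge regression on mean-centered data."""
    x_mean, y_mean = x.mean(axis=0), y.mean()
    xc = x - x_mean
    coef = np.linalg.solve(xc.T @ xc + alpha * np.eye(x.shape[1]), xc.T @ (y - y_mean))
    return coef, x_mean, y_mean

def ridge_predict(model, x):
    coef, x_mean, y_mean = model
    return (x - x_mean) @ coef + y_mean

def rmsep(y_true, y_pred):
    """Root mean square error of prediction on an independent test set."""
    return float(np.sqrt(np.mean((y_true - y_pred) ** 2)))

def gaussian(bands, center, width):
    return np.exp(-((bands - center) / width) ** 2)

# Hypothetical data: spectral shape drifts linearly with bloodstain age in both modalities
rng = np.random.default_rng(7)
n = 200
age_days = rng.uniform(0.0, 60.0, n)
frac = (age_days / 60.0)[:, None]
hsi_bands, nir_bands = np.linspace(400, 1000, 30), np.linspace(1000, 2500, 40)
hsi = (1 - frac) * gaussian(hsi_bands, 560, 40) + frac * gaussian(hsi_bands, 630, 60)
nir = (1 - frac) * gaussian(nir_bands, 1500, 100) + frac * gaussian(nir_bands, 1700, 120)
hsi = hsi + rng.normal(0.0, 0.01, hsi.shape)
nir = nir + rng.normal(0.0, 0.01, nir.shape)

# Low-level fusion: preprocess each block separately, then concatenate along the feature axis
fused = np.hstack([snv(hsi), snv(nir)])
train, test = slice(0, 150), slice(150, n)
model = ridge_fit(fused[train], age_days[train])
error = rmsep(age_days[test], ridge_predict(model, fused[test]))
```

Preprocessing each block before concatenation keeps one modality's intensity scale from dominating the fused feature vector.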

Research Reagent Solutions and Essential Materials

Table 3: Essential Research Materials for Hyperspectral Imaging and Spectroscopy

| Item | Function | Example Applications |
|---|---|---|
| Spectralon Reference Panels | Provides >99% diffuse reflectance for calibration [25] | Instrument calibration before sample measurement |
| Tungsten Halogen Illumination | Stable, broad-spectrum light source for NIR-SWIR [25] | Consistent sample illumination in laboratory HSI |
| Peltier-Cooled Detector Assemblies | Reduces thermal noise in InGaAs and MCT detectors [25] | Improving SNR in NIR and SWIR spectral regions |
| Linear Translation Stages | Provides precise sample movement for pushbroom scanning [25] | Laboratory HSI of solid samples |
| Hyperspectral Data Processing Software | Analyzes large hyperspectral data cubes [27] | Chemical mapping, classification, quantification |
| Chemometric Software Packages | Develops predictive models from spectral data [11] | PLS regression, PCA, machine learning applications |

Technology Selection Workflow

Technology Selection Workflow for Optical Analysis

Implementation Considerations for Research and Drug Development

Data Management and Computational Requirements

The substantial data volumes generated by hyperspectral imaging systems present significant implementation challenges. A megapixel camera streaming 1,000 spectral channels at 100 frames per second can produce more than 1 TB of data per hour, necessitating robust data management infrastructure [27]. Organizations must invest in high-throughput data links, petabyte-scale storage archives, and advanced computational resources for processing and analysis. Many research facilities are adopting cloud-based analytics platforms and AI-driven data reduction techniques to manage these computational demands effectively [28].
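The data-rate figure depends on how "megapixel" and sample size are interpreted. Assuming a pushbroom frame of 1,000 spatial pixels by 1,000 spectral channels read at 100 frames per second, the arithmetic below gives about 0.72 TB per hour for raw 16-bit samples and about 1.44 TB per hour once the cube is stored as 32-bit floating-point reflectance. These are uncompressed estimates under stated assumptions, not figures taken from [27].

```python
def data_rate_tb_per_hour(spatial_pixels, spectral_channels, frames_per_second, bytes_per_sample):
    """Uncompressed data volume in terabytes (1e12 bytes) per hour of continuous streaming."""
    bytes_per_second = spatial_pixels * spectral_channels * frames_per_second * bytes_per_sample
    return bytes_per_second * 3600 / 1e12

# Assumed pushbroom frame: 1,000 spatial pixels x 1,000 spectral channels (about 1 megapixel), 100 fps
raw_16bit = data_rate_tb_per_hour(1000, 1000, 100, bytes_per_sample=2)    # 0.72 TB/h
float_32bit = data_rate_tb_per_hour(1000, 1000, 100, bytes_per_sample=4)  # 1.44 TB/h
```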

Expertise and Training Requirements

Both hyperspectral imaging and advanced spectroscopy techniques demand specialized expertise for proper implementation. Successful deployment requires knowledge in remote sensing, spectral analysis, image processing, and chemometrics [26]. The shortage of qualified professionals with these interdisciplinary skills can constrain adoption, particularly in rapidly expanding application areas. Organizations should anticipate ongoing training requirements and potentially higher operational costs associated with specialized personnel needs.

Cost Analysis and Budget Considerations

Hardware acquisition represents only one component of the total cost of ownership. Complete hyperspectral imaging solutions for research laboratories frequently exceed USD 500,000, with additional annual costs for data management and specialized software [27]. Conventional spectroscopy systems typically involve lower initial investment but may lack the spatial capabilities needed for heterogeneous sample analysis. The development of more affordable, portable systems (sub-USD 10,000 for some agricultural drone payloads) is increasing accessibility across budget-constrained research environments [27].

Future Trends and Developments

The hyperspectral imaging landscape is evolving rapidly, with several key trends shaping future capabilities:

AI Integration and Automation: The integration of artificial intelligence, particularly deep learning algorithms, is transforming data analysis workflows. Adaptive acquisition algorithms and neural network-based feature extraction are enabling automated interpretation of complex spectral data, reducing dependency on specialized expertise [8]. Edge neural networks now reside directly on detector arrays, pushing real-time throughput beyond 1.2 terabits per second for applications requiring immediate analytical results [27].

Miniaturization and Portability: Ongoing sensor miniaturization is producing increasingly compact and portable systems. The development of thin-film filter stacks from organizations like Imec allows CMOS-level manufacturing, dramatically reducing costs while increasing frame rates [27]. This trend is enabling new application scenarios in field-based analysis, medical diagnostics, and integration with consumer devices.

Cost Reduction Trends: Rapid cost erosion in VNIR/SWIR sensors is significantly improving accessibility. InGaAs wafer yields have doubled since 2024, with shared back-end packaging lines mimicking smartphone camera economics and pushing average selling prices down nearly 40% per year [27]. Cost parity with mid-range CMOS imagers is expected by 2027, potentially transitioning hyperspectral cameras from capital equipment to consumable tools in many production and research environments.

Multimodal Data Fusion: Research increasingly demonstrates the complementary value of combining multiple spectral technologies. Studies show that data fusion of HSI and NIR spectroscopy helps mitigate external influences and enhances methodological generalizability [11]. The implementation of multilayer perceptrons for regression prediction through multimodal data fusion has demonstrated improved overall model performance for analytical applications [11].

Techniques and Transformative Applications in Biomedicine and Pharma

The imperative for precise tumor margin assessment during cancer surgery is a significant challenge in surgical oncology. Up to 39% of patients leave the operating room with positive or close margins, which drastically increases the risk of local recurrence and necessitates further interventions [31] [32]. In breast-conserving surgery (BCS) specifically, re-excision rates due to positive margins can be as high as 19% [33]. Traditional methods for intraoperative margin assessment, including frozen section analysis, present limitations such as processing delays, sampling errors, and prolonged surgical times [34] [32]. These clinical challenges have driven the development of advanced, label-free optical technologies that can provide real-time, objective tissue differentiation without requiring exogenous contrast agents.

Hyperspectral imaging (HSI) and diffuse reflectance spectroscopy (DRS) represent two complementary approaches in this innovative field. Both techniques leverage the interaction between light and biological tissues to extract diagnostically valuable information based on tissue composition, morphology, and physiology [33] [34]. When light illuminates tissue, it undergoes several processes including absorption by chromophores (such as hemoglobin, water, and lipids) and scattering from cellular and subcellular structures [33]. These optical properties alter with disease progression, creating spectral signatures that can differentiate malignant from benign tissue [31] [32]. This review provides a comprehensive comparison between imaging-based (HSI) and point-based (DRS) techniques, examining their respective technologies, performance metrics, experimental protocols, and potential for integration into clinical workflows for cancer diagnostics and surgical guidance.

Technology Comparison: Hyperspectral Imaging vs. Spectroscopy

The fundamental distinction between hyperspectral imaging and spectroscopy lies in their spatial approach to spectral acquisition. Hyperspectral imaging is a whole field-of-view technique that captures both spatial and spectral information simultaneously, creating a three-dimensional dataset called a hypercube (x,y,λ) [33] [34]. This hypercube contains complete spectral information for each pixel within the imaging area, enabling the creation of spatial maps of tissue composition. In contrast, diffuse reflectance spectroscopy is a point-based technique that measures the intensity of diffusely reflected light as a function of wavelength from a single, specific tissue location using fiber optic probes placed in contact with the tissue [33].

Technical Specifications and Data Output: Hyperspectral imaging systems typically acquire data across hundreds of narrow, contiguous spectral bands, ranging from the visible to near-infrared regions (e.g., 450-900 nm) [31] [32]. This fine spectral sampling enables precise identification of materials and tissues that traditional imaging cannot distinguish [8]. The resulting data structure facilitates both spectral analysis and spatial visualization of tissue characteristics. Conversely, diffuse reflectance spectroscopy provides a single spectral curve representing the average optical properties of the probed tissue volume without inherent spatial context. This makes DRS suitable for specific point measurements but limited for assessing large or heterogeneous tissue areas.

Clinical Integration and Practical Considerations: From a clinical implementation perspective, HSI offers non-contact operation, which is particularly advantageous for intraoperative use where maintaining sterile fields is crucial [34] [35]. The ability to rapidly scan entire surgical fields (e.g., tumor beds) without physical contact represents a significant advantage for workflow integration. However, HSI systems generate large, complex datasets that require sophisticated processing algorithms and substantial computational resources [8] [34]. DRS systems, with their simpler data structure and smaller footprint, may offer easier initial implementation and lower computational demands, but their point-sampling nature requires multiple measurements to assess larger areas, potentially increasing procedure time and introducing sampling errors.

Table 1: Fundamental Technical Characteristics of HSI and DRS

| Characteristic | Hyperspectral Imaging (HSI) | Diffuse Reflectance Spectroscopy (DRS) |
|---|---|---|
| Spatial Approach | Whole field-of-view imaging | Point-based measurement |
| Data Structure | 3D hypercube (x,y,λ) | 1D spectral curve |
| Spectral Resolution | Hundreds of contiguous bands | High spectral resolution |
| Tissue Contact | Non-contact | Contact (fiber optic probe) |
| Data Complexity | High (large datasets) | Low to moderate |
| Spatial Coverage | Wide area assessment | Single point assessment |
| Clinical Integration | Non-interference with sterile field | Potential disruption of sterile field |

Performance Comparison and Experimental Data

Multiple clinical studies have demonstrated the efficacy of both hyperspectral imaging and diffuse reflectance spectroscopy for discriminating between cancerous and normal tissues across various cancer types. A systematic review and meta-analysis of 19 studies examining spectrally resolved diffuse reflectance techniques for breast cancer detection revealed that imaging-based techniques (including HSI) achieved pooled sensitivity of 0.90 (CI 0.76–1.03) and specificity of 0.92 (CI 0.78–1.06), outperforming probe-based techniques (sensitivity: 0.84, CI 0.78–0.89; specificity: 0.85, CI 0.79–0.91) [33].

Organ-Specific Performance Metrics: In head and neck cancer applications, a study involving surgical specimens from 16 patients demonstrated that HSI could distinguish between cancer and normal tissue with an average accuracy of 90±8%, sensitivity of 89±9%, and specificity of 91±6% [31] [32]. For brain tumor delineation, an in-vivo study of 61 hyperspectral (HS) images from 34 patients achieved a median macro F1-Score of 70.2±7.9% for classifying tumor tissue, normal tissue, and blood vessels [35]. Other reported results include a sensitivity of 87% and specificity of 88% for skin cancer detection, and a sensitivity of 86% and specificity of 95% for colorectal cancer detection [8].

Breast Cancer Tissue Analysis: Research on breast cancer tissues has shown particularly promising results. One study utilizing HSI and K-means classification for histologic evaluation of ductal carcinoma in situ achieved sensitivity of 85.45% and specificity of 94.64% with a true negative rate of 95.8% and false positive rate of 4.2% [36]. Another investigation using laser-induced fluorescence with hyperspectral detection identified emission at 561 nm as exhibiting the greatest variation in fluorescence signal intensity between tumor and normal tissue, serving as an optical predictive biomarker for breast tumor identification [37].
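For reference, the metrics reported in this section follow from confusion-matrix counts as in the sketch below; the counts are hypothetical and only illustrate the arithmetic. By definition, specificity equals the true negative rate (1 minus the false positive rate), and the macro F1-score used for the three-class brain tumor study is the unweighted mean of the per-class F1 scores.

```python
import numpy as np

def binary_metrics(tp, fp, tn, fn):
    """Sensitivity, specificity and accuracy from binary confusion counts."""
    sensitivity = tp / (tp + fn)  # true positive rate
    specificity = tn / (tn + fp)  # true negative rate, equal to 1 - false positive rate
    accuracy = (tp + tn) / (tp + fp + tn + fn)
    return sensitivity, specificity, accuracy

def macro_f1(confusion):
    """Unweighted mean of per-class F1 scores; rows are true classes, columns are predicted classes."""
    confusion = np.asarray(confusion, dtype=float)
    tp = np.diag(confusion)
    fp = confusion.sum(axis=0) - tp
    fn = confusion.sum(axis=1) - tp
    f1 = 2 * tp / (2 * tp + fp + fn)  # undefined if a class is absent and never predicted
    return float(f1.mean())

# Hypothetical counts, chosen only to illustrate the arithmetic
sens, spec, acc = binary_metrics(tp=89, fp=9, tn=91, fn=11)  # 0.89, 0.91, 0.90
f1 = macro_f1([[50, 5, 5],    # tumor
               [10, 80, 10],  # normal tissue
               [0, 5, 35]])   # blood vessel; macro F1 is about 0.818
```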

Table 2: Performance Metrics Across Cancer Types and Modalities

| Cancer Type | Technique | Accuracy | Sensitivity | Specificity | Study Details |
|---|---|---|---|---|---|
| Breast Cancer | Imaging-based (pooled) | - | 0.90 | 0.92 | Meta-analysis of multiple studies [33] |
| Breast Cancer | Probe-based (pooled) | - | 0.84 | 0.85 | Meta-analysis of multiple studies [33] |
| Head & Neck | HSI | 90±8% | 89±9% | 91±6% | 16 patients, ex-vivo [31] |
| Brain Tumor | HSI | - | - | - | Median macro F1-Score: 70.2±7.9%; 34 patients, in-vivo [35] |
| Ductal Carcinoma | HSI + K-means | - | 85.45% | 94.64% | Histologic evaluation [36] |
| Skin Cancer | HSI | - | 87% | 88% | Clinical study [8] |
| Colorectal Cancer | HSI | - | 86% | 95% | Clinical study [8] |

Experimental Protocols and Methodologies

Hyperspectral Imaging Protocol

The standard experimental workflow for hyperspectral imaging in cancer diagnostics involves several methodical steps from data acquisition to classification. A typical protocol begins with system calibration using white and dark reference images. The white reference is acquired using a standard reflectance board, while the dark reference is captured with the camera shutter closed [31] [32]. These references are essential for subsequent data normalization to account for spectral non-uniformity of the illumination and dark current effects.

Following calibration, hyperspectral images of tissue specimens are acquired across a broad spectral range (typically 450-900 nm) at narrow intervals (e.g., 2-5 nm) [31] [32]. For surgical guidance applications, this imaging is performed in situ or on freshly excised specimens. The acquired raw data undergoes preprocessing, including normalization using the equation $I_{\text{reflect}}(\lambda) = \frac{I_{\text{raw}}(\lambda) - I_{\text{dark}}(\lambda)}{I_{\text{white}}(\lambda) - I_{\text{dark}}(\lambda)}$, where $I_{\text{reflect}}(\lambda)$ is the normalized reflectance value at wavelength $\lambda$, $I_{\text{raw}}(\lambda)$ is the sample pixel intensity, and $I_{\text{white}}(\lambda)$ and $I_{\text{dark}}(\lambda)$ are the white and dark reference intensities [32].
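The reflectance normalization can be applied to a whole cube with NumPy broadcasting. This minimal sketch assumes raw, white, and dark data share the same band axis, treats the white reference as an ideal reflector (a calibrated panel reflectance could be multiplied in), and returns NaN for pixels with a degenerate reference instead of dividing by zero; the array values are made up.

```python
import numpy as np

def calibrate_reflectance(raw, white, dark, eps=1e-9):
    """Flat-field correction (raw - dark) / (white - dark), applied per pixel and per band.

    white and dark may be full cubes or per-line references that broadcast over the scan axis.
    Pixels where white and dark are (nearly) equal are returned as NaN instead of dividing by ~0.
    """
    raw, white, dark = (np.asarray(a, dtype=float) for a in (raw, white, dark))
    denom = white - dark
    denom = np.where(np.abs(denom) < eps, np.nan, denom)
    return (raw - dark) / denom

# Hypothetical pushbroom data: 4 scan lines x 5 pixels x 3 bands, references of shape (5, 3)
raw = np.full((4, 5, 3), 600.0)
white = np.full((5, 3), 1100.0)
dark = np.full((5, 3), 100.0)
white[0, 0] = dark[0, 0]  # simulate a dead pixel in the white reference
reflectance = calibrate_reflectance(raw, white, dark)
```

Here every valid value is (600 - 100) / (1100 - 100) = 0.5, while the dead reference pixel is flagged as NaN for every scan line.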

A critical preprocessing step involves glare detection and removal, as specular reflections from moist tissue surfaces do not contain diagnostically useful information [31] [32]. Glare detection typically involves calculating the standard deviation of spectral derivative curves or total reflectance values, with glare pixels exhibiting higher values than normal tissue pixels. Following glare removal, feature extraction is performed, which may include first- and second-order derivatives of spectral curves, statistical features (mean, standard deviation, total reflectance), and Fourier coefficients [31].
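A minimal sketch of glare masking and feature extraction, assuming a calibrated reflectance cube: pixels whose total reflectance is a robust outlier (above the median plus k times a MAD-based standard deviation) are flagged as glare, and the remaining pixel spectra are converted to derivative, statistical, and Fourier-magnitude features. The threshold rule, k = 5, and the number of Fourier terms are assumptions for illustration, not the criteria used in [31] [32].

```python
import numpy as np

def glare_mask(reflectance, k=5.0):
    """Flag pixels whose total reflectance exceeds median + k * robust std (k is an assumed constant)."""
    total = reflectance.sum(axis=-1)
    median = np.median(total)
    robust_std = 1.4826 * np.median(np.abs(total - median))  # MAD scaled to match a Gaussian std
    return total > median + k * robust_std

def spectral_features(spectra, n_fourier=5):
    """Per-spectrum features: 1st/2nd derivatives, mean, std, total reflectance, low-order |FFT| terms."""
    d1 = np.diff(spectra, n=1, axis=-1)
    d2 = np.diff(spectra, n=2, axis=-1)
    stats = np.stack([spectra.mean(axis=-1), spectra.std(axis=-1), spectra.sum(axis=-1)], axis=-1)
    fourier = np.abs(np.fft.rfft(spectra, axis=-1))[..., :n_fourier]
    return np.concatenate([d1, d2, stats, fourier], axis=-1)

# Hypothetical 20 x 20 pixel tissue image with 50 bands and a 3 x 3 specular patch
rng = np.random.default_rng(5)
reflectance = rng.uniform(0.2, 0.4, size=(20, 20, 50))
reflectance[8:11, 8:11, :] = 0.95

mask = glare_mask(reflectance)
features = spectral_features(reflectance[~mask])  # features for non-glare pixels only
```

A robust (median/MAD) threshold is used here so that the glare pixels themselves barely shift the cutoff; a plain mean and standard deviation would be pulled upward by large glare regions.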

The final stage involves tissue classification using machine learning algorithms such as linear discriminant analysis (LDA) or support vector machines (SVM) [31]. For validation, classified images are compared against histopathological findings from hematoxylin and eosin (H&E) stained sections evaluated by experienced pathologists [31] [36].

HSI Experimental Workflow: The standard protocol for hyperspectral imaging in cancer diagnostics, from system calibration to histopathological validation.
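The classification step can be illustrated with a two-class Fisher LDA written directly in NumPy. This is a hypothetical sketch: the feature vectors are synthetic Gaussian clusters standing in for extracted spectral features, equal class priors are assumed when placing the threshold, and real tissue data would still require validation against histopathology.

```python
import numpy as np

def fit_lda(x, y, ridge=1e-6):
    """Two-class Fisher LDA with pooled within-class covariance; equal class priors assumed."""
    x0, x1 = x[y == 0], x[y == 1]
    m0, m1 = x0.mean(axis=0), x1.mean(axis=0)
    pooled = ((len(x0) - 1) * np.cov(x0, rowvar=False)
              + (len(x1) - 1) * np.cov(x1, rowvar=False)) / (len(x) - 2)
    pooled += ridge * np.eye(x.shape[1])  # small diagonal term for numerical stability
    w = np.linalg.solve(pooled, m1 - m0)  # discriminant direction
    threshold = w @ (m0 + m1) / 2.0       # midpoint between projected class means
    return w, threshold

def predict_lda(model, x):
    w, threshold = model
    return (x @ w > threshold).astype(int)

def make_features(rng, n_per_class, n_features=5):
    """Hypothetical feature vectors: class 1 (e.g. tumor) shifted by +3 in every dimension."""
    x0 = rng.normal(0.0, 1.0, (n_per_class, n_features))
    x1 = rng.normal(3.0, 1.0, (n_per_class, n_features))
    return np.vstack([x0, x1]), np.repeat([0, 1], n_per_class)

rng = np.random.default_rng(11)
x_train, y_train = make_features(rng, 200)
x_test, y_test = make_features(rng, 200)
model = fit_lda(x_train, y_train)
accuracy = np.mean(predict_lda(model, x_test) == y_test)
```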

Spectroscopy Protocol

Diffuse reflectance spectroscopy follows a different methodological approach centered on point measurements. The experimental setup typically involves a fiber optic probe containing multiple fibers, with one fiber connected to a broadband light source that transmits light to the tissue, and other fibers collecting diffusely reflected light for measurement by a spectrometer [33]. The probe is placed in direct contact with the tissue area of interest, and spectral measurements are taken from specific points.

The acquired DRS spectra are typically analyzed using models based on approximations of the radiative transport equation, such as diffusion theory, or through Monte Carlo simulations [33]. These analytical approaches enable the extraction of quantitative information about tissue optical properties, particularly absorption and scattering coefficients. The absorption data provides information about chromophore concentrations (hemoglobin, water, lipids), while scattering properties offer insights into tissue microstructure.
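Once absorption coefficients have been recovered (for example, by diffusion-theory or Monte Carlo inversion, which this sketch does not implement), chromophore concentrations are commonly estimated by modeling mu_a(lambda) as a linear combination of the chromophores' extinction spectra. The sketch below fits that linear model by least squares; the Gaussian basis spectra are hypothetical placeholders for tabulated hemoglobin, water, and lipid spectra, and a non-negativity constraint may be preferable in practice.

```python
import numpy as np

wavelengths = np.arange(500.0, 901.0, 5.0)  # nm

def band(center, width):
    """Gaussian stand-in for a chromophore extinction spectrum (hypothetical shape)."""
    return np.exp(-((wavelengths - center) / width) ** 2)

# Columns: three hypothetical chromophore basis spectra epsilon_i(lambda)
basis = np.column_stack([band(560.0, 30.0), band(760.0, 40.0), band(880.0, 40.0)])
true_conc = np.array([0.8, 0.3, 0.5])

# Linear mixing model: mu_a(lambda) = sum_i c_i * epsilon_i(lambda), plus small measurement noise
mu_a = basis @ true_conc
mu_a_noisy = mu_a + np.random.default_rng(2).normal(0.0, 1e-3, mu_a.shape)

est_conc, *_ = np.linalg.lstsq(basis, mu_a_noisy, rcond=None)
```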

Validation of DRS findings follows a similar approach to HSI, with correlation to histopathological diagnosis from biopsied tissues. The primary distinction is that DRS provides information only from the specific points measured, requiring careful selection of measurement sites to ensure representative sampling of both cancerous and normal tissue regions for model development and validation.

Research Reagent Solutions and Essential Materials

Implementing hyperspectral imaging or spectroscopy for cancer research requires specific instrumentation and computational resources. The core components include specialized cameras, light sources, and analytical tools that vary between the two modalities.

Table 3: Essential Research Materials and Their Functions

| Component | Function | Examples/Specifications |
|---|---|---|
| HSI Camera Systems | Capture spatial and spectral data simultaneously | Maestro system (PerkinElmer), snapshot HSI (Rebellion Photonics) [31] [36] |
| Spectroscopy Systems | Measure point-based spectral data | Fiber optic probes coupled with spectrometers [33] |
| Light Sources | Provide illumination across spectral range | Halogen, xenon, or LED lamps; laser sources (e.g., 450 nm for fluorescence) [34] [37] |
| Reference Standards | Calibrate system response | White reference boards, dark reference measurements [31] [32] |
| Data Processing Software | Analyze spectral data, classify tissues | MATLAB, Python with custom algorithms for feature extraction [31] [35] |
| Machine Learning Tools | Tissue classification | LDA, SVM, K-means clustering algorithms [31] [36] [35] |
| Histopathology Materials | Validation ground truth | H&E staining, digitized slides [31] [36] |

For hyperspectral imaging, the Maestro imaging system is a commercially available solution that combines a wavelength-scanning liquid crystal tunable filter with a CCD detector and can acquire reflectance images from 450-950 nm [31] [32]. Alternatively, snapshot HSI systems such as the Arrow camera capture 31 spectral bands within the 461-641 nm range in a single exposure, which is advantageous for imaging moving tissues or shortening acquisition times [36]. For spectroscopy, fiber optic probes with multiple collection fibers arranged in specific geometries enable depth-resolved sampling of tissue optical properties.

Advanced computational tools are equally crucial for both techniques. Machine learning algorithms including support vector machines, linear discriminant analysis, and K-means clustering have been successfully implemented for tissue classification [31] [36] [35]. For HSI, spatial-spectral classification approaches that incorporate both spectral information and spatial relationships between pixels have demonstrated improved performance over purely spectral classifiers [34] [35].
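One simple way to add spatial context to a purely spectral classifier is to average each pixel's class probabilities over its neighborhood before taking the most likely class. The sketch below assumes a per-pixel probability map of shape (rows, cols, n_classes) from any spectral classifier; the 3×3 window (`radius=1`) and edge padding are arbitrary illustrative choices, and the spatial-spectral methods in [34] [35] are generally more elaborate.

```python
import numpy as np

def spatial_smooth_labels(prob, radius=1):
    """Average class probabilities over a (2r+1)x(2r+1) window, then argmax."""
    rows, cols, _ = prob.shape
    padded = np.pad(prob, ((radius, radius), (radius, radius), (0, 0)), mode="edge")
    acc = np.zeros_like(prob, dtype=float)
    size = 2 * radius + 1
    for dr in range(size):                    # sum the shifted copies of the map
        for dc in range(size):
            acc += padded[dr:dr + rows, dc:dc + cols]
    return (acc / size**2).argmax(axis=-1)

# Usage: an isolated pixel misclassified by the spectral classifier
prob = np.zeros((5, 5, 2))
prob[..., 0] = 0.9                            # mostly class 0 ("normal")
prob[..., 1] = 0.1
prob[2, 2] = [0.4, 0.6]                       # single pixel flips to class 1
labels_spectral = prob.argmax(axis=-1)        # spectral-only labels
labels_smoothed = spatial_smooth_labels(prob) # isolated pixel reassigned to class 0
```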

Clinical Implementation and Future Directions

The translation of hyperspectral imaging and spectroscopy from research settings to clinical practice faces several considerations. For intraoperative guidance, HSI offers the significant advantage of providing wide-field assessment of surgical margins in near real-time, which aligns well with surgical workflow requirements [34]. The non-contact, label-free nature of the technology eliminates the need for contrast administration and associated regulatory hurdles or potential side effects [34] [32]. However, challenges remain regarding the integration of HSI systems into operating room environments, including sterility maintenance, system portability, and intuitive data visualization for surgeons.

The computational demands of HSI data processing represent another implementation challenge, particularly for real-time applications. Current research is addressing this limitation through the development of optimized algorithms, hardware acceleration, and artificial intelligence approaches that can provide rapid tissue classification [8] [34] [35]. The integration of deep learning techniques has shown particular promise for improving classification accuracy while reducing processing time [8] [35].

For spectroscopy, the simpler data structure facilitates faster processing times, but the point-sampling approach presents clinical limitations for comprehensive margin assessment. This technology may find optimal application in guided biopsy procedures or as a complementary tool to confirm suspicious areas identified through other imaging modalities.

Future developments in both fields are likely to focus on miniaturization of hardware, enhanced computational efficiency, and multi-modal integration. The combination of HSI with other imaging techniques, such as fluorescence or ultrasound, could leverage the strengths of each modality to improve diagnostic accuracy [34]. Additionally, the development of standardized protocols and validation across multiple institutions will be crucial for establishing these optical technologies as reliable tools for cancer diagnosis and surgical guidance.

As these technologies continue to evolve, their potential to provide real-time, objective tissue characterization during surgical procedures promises to significantly impact oncologic surgery outcomes. By enabling more complete tumor resections while preserving healthy tissue, both hyperspectral imaging and spectroscopy represent valuable additions to the surgeon's arsenal in the ongoing effort to improve cancer care.

Pharmaceutical Quality Control and Counterfeit Drug Detection

Counterfeit pharmaceuticals represent a critical global public health threat, contributing to hundreds of thousands of deaths annually and sustaining an illicit market estimated at $200 billion [38]. Counterfeit operations have become sophisticated enough that simple visual inspection is no longer sufficient, so advanced analytical technologies are needed for detection. Within this landscape, hyperspectral imaging and spectroscopy have emerged as powerful orthogonal techniques for non-destructive pharmaceutical analysis.

This guide provides a systematic comparison of hyperspectral imaging versus spectroscopic techniques, evaluating their technical capabilities, performance characteristics, and practical implementation for pharmaceutical quality control and counterfeit detection. We examine specific experimental data, methodological protocols, and technological advancements to inform researchers, scientists, and drug development professionals in selecting appropriate analytical approaches for their specific applications.

Fundamental Technical Principles

Hyperspectral imaging combines spatial and spectral information by capturing images across numerous contiguous spectral bands, creating a three-dimensional data cube (x, y, λ) where each pixel contains a complete spectral signature [8]. This enables simultaneous morphological and molecular analysis of pharmaceutical products.
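In practice, the (x, y, λ) cube is usually unfolded into a two-dimensional matrix with one row per pixel and one column per band so that standard chemometric or machine-learning tools can be applied, and per-pixel results are folded back into image shape afterward. A minimal NumPy sketch, with arbitrarily chosen cube dimensions and random data:

```python
import numpy as np

rows, cols, bands = 64, 48, 224               # arbitrary example dimensions
cube = np.random.default_rng(0).random((rows, cols, bands))

pixels = cube.reshape(-1, bands)              # unfold: (rows*cols, bands)
spectrum_at_10_20 = cube[10, 20, :]           # spectral signature of one pixel
band_image = cube[:, :, 100]                  # single-wavelength image

# Any per-pixel result (here, mean reflectance) can be folded back into a map
mean_map = pixels.mean(axis=1).reshape(rows, cols)
```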

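Vibrational spectroscopy techniques, including Raman and Fourier Transform Infrared (FT-IR) spectroscopy, analyze molecular vibrations and, in their conventional point-measurement form, provide no spatial resolution. They yield detailed chemical fingerprints through spectral peaks corresponding to specific functional groups and chemical bonds [39] [40]. Both can also be coupled to microscopes for chemical imaging, as in the Raman and FT-IR imaging work of [40].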

Performance Comparison

The table below summarizes key performance characteristics based on experimental studies:

Table 1: Performance Comparison of Pharmaceutical Analysis Techniques

| Parameter | VNIR HSI (400-1000 nm) | SWIR HSI (1000-2500 nm) | Raman Spectroscopy | FT-IR Spectroscopy |
|---|---|---|---|---|
| Spatial Resolution | 10-30 μm | 10-30 μm | ~2 μm (microscopy) [40] | 5.5 μm (imaging) [40] |
| Penetration Depth | Surface analysis | Deeper penetration [41] | Surface and subsurface | Surface analysis |
| Sensitivity to Low-Dose Compounds | Limited | Moderate | Excellent [40] | Good |
| Detection Capabilities | Surface morphology, color variations [39] | Chemical distribution, coating penetration [41] | Molecular fingerprinting, crystal forms | Organic and inorganic compounds [40] |
| Accuracy in Counterfeit Detection | >90% with machine learning [38] | Nearly 100% with PCA/GLCM [41] | High with library matching | High with multivariate analysis |
| Analysis Time | Rapid (minutes per sample) | Moderate | Moderate to slow | Moderate |

Table 2: Experimental Results from Counterfeit Drug Detection Studies

| Study Focus | Technology Used | Key Findings | Accuracy/Performance |
|---|---|---|---|
| Viagra Counterfeits [41] | SWIR HSI (1000-2500 nm) | Significant reflectance differences at 1619.75 nm; GLCM contrast 16±4% higher in counterfeits | Nearly 100% discrimination using PCA |
| Multiple API Identification [42] | SWIR HSI (900-1700 nm) | Clear distinction between ibuprofen, paracetamol, and acetylsalicylic acid tablets | Complete classification of different APIs |
| API Concentration Variation [42] | SWIR HSI (900-1700 nm) | Identification of different ibuprofen concentrations through blister packaging | 100% accuracy in concentration differentiation |
| Falsified Antimalarials [40] | Raman vs. FT-IR Imaging | Raman superior for low-dose compounds; FT-IR detected both organic and inorganic components | Complete composition elucidation of falsified tablets |
| Adulterated Medicines [38] | VNIR HSI (350-1050 nm) with machine learning | Detection of calcium carbonate adulteration in tablet powders | >90% classification accuracy |

Experimental Protocols and Methodologies

Hyperspectral Imaging Analysis of Solid Dosage Forms

Sample Preparation Protocol:

- Use intact tablets without mechanical alteration

- For coated tablets, ensure uniform surface orientation

- Include authentic reference samples from verified sources

- Analyze multiple tablets from the same batch (recommended n=3) for representativeness [40]

Data Acquisition Parameters (SWIR HSI):

- Spectral range: 900-2500 nm [41] [42]

- Spatial resolution: Dependent on objective lens, typically 10-30 μm

- Calibration: White reference (100% reflectance) and dark reference (0% reflectance)

- Environmental control: Stable illumination, minimal ambient light interference

Data Processing Workflow:

- Calibration: Normalize raw data using white and dark references

- Spectral Preprocessing: Standard Normal Variate (SNV) normalization, Savitzky-Golay smoothing, first derivative processing [41]

- Feature Extraction: Principal Component Analysis (PCA) for dimensionality reduction

- Texture Analysis: Grey-Level Co-Occurrence Matrix (GLCM) to quantify ingredient distribution homogeneity [41]

- Classification: Partial Least Squares Discriminant Analysis (PLS-DA) or machine learning classifiers
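The calibration and preprocessing steps above can be sketched in NumPy as follows, assuming raw, white, and dark frames of identical shape. The Savitzky-Golay first derivative is built directly from its least-squares definition to keep the example dependency-free; the window (11), polynomial order (2), edge padding, and number of principal components are illustrative assumptions, not the settings of [41]. In practice, `scipy.signal.savgol_filter` with `deriv=1` gives the same interior values with more careful edge handling.

```python
import numpy as np

def calibrate(raw, white, dark, eps=1e-12):
    """Relative reflectance R = (raw - dark) / (white - dark)."""
    return (raw - dark) / np.maximum(white - dark, eps)

def snv(spectra):
    """Standard Normal Variate: center and scale each spectrum (row)."""
    mean = spectra.mean(axis=1, keepdims=True)
    std = spectra.std(axis=1, keepdims=True)
    return (spectra - mean) / std

def savgol_first_derivative(spectra, window=11, polyorder=2):
    """Savitzky-Golay first derivative along the band axis (unit band spacing)."""
    half = window // 2
    z = np.arange(-half, half + 1)
    A = np.vander(z, polyorder + 1, increasing=True)  # columns: 1, z, z^2, ...
    coeffs = np.linalg.pinv(A)[1]                     # least-squares slope at center
    padded = np.pad(spectra, ((0, 0), (half, half)), mode="edge")
    # Correlate each spectrum with the coefficients (convolve with reversed kernel)
    return np.array([np.convolve(row, coeffs[::-1], mode="valid") for row in padded])

def pca_scores(X, n_components=3):
    """Project mean-centered data onto its leading principal components via SVD."""
    Xc = X - X.mean(axis=0)
    _, _, Vt = np.linalg.svd(Xc, full_matrices=False)
    return Xc @ Vt[:n_components].T

# Usage on a synthetic 10 x 10 pixel, 80-band acquisition
rng = np.random.default_rng(0)
dark = np.full((10, 10, 80), 100.0)
white = np.full((10, 10, 80), 4000.0)
raw = dark + (white - dark) * rng.uniform(0.2, 0.8, size=(10, 10, 80))
R = calibrate(raw, white, dark).reshape(-1, 80)      # (pixels, bands)
X = savgol_first_derivative(snv(R))
scores = pca_scores(X, n_components=3)
```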

Experimental Findings: SWIR hyperspectral imaging showed clear advantages over VNIR imaging, because longer wavelengths are less sensitive to non-uniform illumination and can acquire spectral information from beneath tablet coatings [41]. The GLCM contrast parameter effectively quantified the homogeneity of ingredient distribution, with falsified drugs showing 16±4% higher contrast than authentic products, attributed to inferior manufacturing processes [41].
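GLCM contrast is the co-occurrence-weighted mean squared grey-level difference between neighboring pixels, Σ P(i,j)(i−j)², so a patchier ingredient distribution yields a larger value. The sketch below computes it in NumPy for a single non-negative pixel offset on an image already quantized to integer grey levels; the offset, the number of levels, and symmetric counting are assumptions for illustration. scikit-image's `graycomatrix` and `graycoprops` provide a fuller implementation.

```python
import numpy as np

def glcm_contrast(img, levels, dx=1, dy=0, symmetric=True):
    """GLCM contrast for one pixel offset (dx columns, dy rows), dx, dy >= 0.

    img: 2-D array of integer grey levels in [0, levels).
    """
    rows, cols = img.shape
    ref = img[:rows - dy, :cols - dx]          # reference pixels
    nbr = img[dy:, dx:]                        # neighbors at the given offset
    glcm = np.zeros((levels, levels))
    np.add.at(glcm, (ref.ravel(), nbr.ravel()), 1)  # count co-occurring pairs
    if symmetric:
        glcm = glcm + glcm.T
    p = glcm / glcm.sum()                      # normalize to joint probabilities
    i, j = np.indices(p.shape)
    return float(np.sum(p * (i - j) ** 2))

# Usage: a homogeneous patch versus a maximally alternating one
uniform = np.full((8, 8), 3)
stripes = np.tile([0, 3], (8, 4))              # alternating grey levels along rows
c_uniform = glcm_contrast(uniform, levels=4)   # 0.0: no change between neighbors
c_stripes = glcm_contrast(stripes, levels=4)   # 9.0: every neighbor differs by 3
```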

Raman and FT-IR Spectroscopy for Composition Elucidation

Sample Presentation:

- Unmodified tablet surfaces or cross-sections for microscopy

- Consistent pressure application for ATR-FT-IR

- Minimal sample exposure to prevent moisture absorption or degradation

Instrumental Parameters (Raman):

- Excitation source: 1064 nm laser [39]

- Laser power: ~1 W

- Spectral resolution: 4 cm⁻¹

- Co-added scans: 256 for optimal signal-to-noise ratio [39]

- Wavenumber range: 150-1800 cm⁻¹
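The rationale for co-adding many scans is that, for random noise uncorrelated between scans, averaging N scans leaves the signal unchanged while reducing the noise standard deviation by √N, so 256 scans give roughly a 16-fold SNR gain over a single scan. A short simulation under that assumption (Gaussian noise, constant signal, arbitrary units) illustrates the scaling:

```python
import numpy as np

rng = np.random.default_rng(42)
signal, noise_sd = 1.0, 1.0                    # arbitrary units
n_scans, n_trials = 256, 4000

# Single-scan measurements versus averages of 256 independent scans
single = signal + noise_sd * rng.standard_normal(n_trials)
coadded = (signal + noise_sd * rng.standard_normal((n_trials, n_scans))).mean(axis=1)

snr_single = signal / single.std()
snr_coadded = signal / coadded.std()
gain = snr_coadded / snr_single                # close to sqrt(256) = 16
```

Real spectra also contain correlated contributions (fluorescence background, drift) that averaging does not remove, so the √N gain is an upper bound.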

Data Analysis Methodology:

- Spectral Preprocessing: SNV normalization, first derivative (Savitzky-Golay), 23-point smoothing [39]

- Multivariate Analysis: Multivariate Curve Resolution-Alternating Least Squares (MCR-ALS) for pure compound extraction [40]

- Semi-Quantification: Direct Classical Least Squares (DCLS) applied to the MCR-ALS pure spectrum matrix
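A bare-bones sketch of the MCR-ALS and DCLS steps, assuming the unfolded Raman image D (pixels × wavenumbers) is modeled as C Sᵀ with non-negative concentrations C and pure spectra S. Non-negativity is imposed here by simple clipping, and the initial spectral estimates are supplied by the caller; dedicated MCR-ALS implementations use constrained solvers, convergence criteria, and purest-variable initialization, so this illustrates the idea rather than the procedure of [40]. The Gaussian bands in the usage lines are synthetic placeholders.

```python
import numpy as np

def mcr_als(D, S_init, n_iter=200):
    """Factor D (pixels x wavenumbers) as C @ S.T with clipped non-negativity."""
    S = S_init.copy()                                   # (wavenumbers, components)
    for _ in range(n_iter):
        C = np.clip(D @ np.linalg.pinv(S.T), 0, None)   # least-squares C given S
        S = np.clip((np.linalg.pinv(C) @ D).T, 0, None) # least-squares S given C
    return C, S

def dcls(D, S):
    """Direct classical least squares: contribution of each pure spectrum."""
    return D @ np.linalg.pinv(S.T)

# Usage on a synthetic two-component mixture series
wn = np.linspace(150, 1800, 400)                        # wavenumber axis, cm^-1

def band(center, width):
    return np.exp(-((wn - center) / width) ** 2)

S_true = np.column_stack([band(600, 30) + 0.5 * band(1200, 40),
                          band(1000, 25) + 0.7 * band(1600, 30)])
frac = np.linspace(0.1, 0.9, 50)
C_true = np.column_stack([1 - frac, frac])              # mixing fractions per pixel
D = C_true @ S_true.T                                   # 50 pixel spectra

C, S = mcr_als(D, S_init=D[[0, -1]].T)                  # start from two measured spectra
residual = np.linalg.norm(D - C @ S.T) / np.linalg.norm(D)
C_dcls = dcls(D, S)                                     # semi-quantitative contributions
```

Because of the rotational ambiguity inherent to MCR, the recovered S matches the pure spectra only up to mixing unless additional constraints or purer initial estimates are used.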

Comparative Performance: Raman hyperspectral imaging demonstrated superior sensitivity for detecting low-dose compounds, attributed to its smaller sampling volume (~2 μm spot size) compared to FT-IR imaging (5.5 μm spatial resolution) [40]. Both techniques successfully identified organic and inorganic components in falsified antimalarial tablets, enabling complete composition elucidation and production fingerprinting.

Advanced Applications and Recent Technological Developments

Artificial Intelligence Integration

The integration of artificial intelligence has significantly enhanced hyperspectral data processing capabilities:

- Deep Learning Architectures: Convolutional Neural Networks (CNNs) enable efficient pixel-wise classification and target detection, automatically extracting nonlinear spectral features without manual preprocessing [43].

- Lightweight CNNs: 1D-CNN models provide effective onboard processing for resource-constrained environments, demonstrating feasibility in satellite missions like Phi-Sat-1 for real-time analysis under limited computational resources [43].

- Multilayer Perceptron Classifiers: Achieve >90% classification accuracy for detecting adulterated medicines, successfully identifying calcium carbonate additives in pharmaceutical powders [38].

Portable and Field-Deployable Systems

Technological miniaturization has enabled field-deployable systems for point-of-need pharmaceutical analysis:

- Mobile Laboratories: Deployment of over 400 van-based labs in China equipped with NIR spectrophotometers for in-field drug verification against spectral libraries [44].

- Handheld Spectrometers: Portable Raman and NIR devices allow rapid screening at supply chain checkpoints, pharmacies, and border controls with analysis times under 30 seconds [45].
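Library-based verification, such as that performed in the mobile laboratories described above, often reduces to finding the reference spectrum most similar to the measured one and checking that the similarity exceeds an acceptance threshold. A minimal sketch using the Pearson correlation coefficient as the similarity score; the library contents, product names, and the 0.95 threshold are hypothetical, and deployed systems use their own validated matching metrics.

```python
import numpy as np

def match_library(spectrum, library, threshold=0.95):
    """Return (best_name, best_score, passed) using Pearson correlation.

    library: dict mapping a reference name to a spectrum on the same axis.
    threshold: hypothetical acceptance level for a positive match.
    """
    scores = {name: float(np.corrcoef(spectrum, ref)[0, 1])
              for name, ref in library.items()}
    best = max(scores, key=scores.get)
    return best, scores[best], scores[best] >= threshold

# Usage with hypothetical reference spectra
x = np.linspace(0, 1, 300)
library = {
    "product_A": np.exp(-((x - 0.3) / 0.05) ** 2),
    "product_B": np.exp(-((x - 0.7) / 0.05) ** 2),
}
rng = np.random.default_rng(3)
measured = 2.0 * library["product_A"] + 0.01 * rng.standard_normal(x.size)
name, score, passed = match_library(measured, library)
```

Correlation is insensitive to overall intensity scaling (the factor of 2 above), which is convenient when sample presentation varies between measurements.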