Light and Matter: Decoding Molecular Secrets with Spectroscopic Principles for Biomedical Research


Abstract

This article provides a comprehensive exploration of the fundamental principles governing the interaction of light and matter, which form the basis of spectroscopic techniques. Tailored for researchers, scientists, and drug development professionals, it details the quantum mechanical foundations of absorption, emission, and scattering phenomena. The scope extends from core theory to the practical application of UV-Vis, Infrared, Raman, and NIR spectroscopy in pharmaceutical and biomedical contexts. It further offers guidance on troubleshooting common analytical challenges, optimizing measurements, and validating findings through method comparison and data correlation, serving as an essential resource for analytical method development and material characterization in research and industry.

The Quantum Dance: How Light and Matter Interact at the Molecular Level

Light, or electromagnetic radiation, is a fundamental phenomenon that exhibits a dual nature, behaving as both a wave and a stream of particles. This wave-particle duality is central to our understanding of how light interacts with matter, forming the underlying principle of spectroscopic techniques used across scientific disciplines. In the context of spectroscopy research, light serves as a primary probe for investigating the composition, structure, and dynamics of physical systems at molecular and atomic levels [1].

Electromagnetic radiation is a self-propagating wave of the electromagnetic field that carries momentum and radiant energy through space, encompassing a broad spectrum classified by frequency and wavelength [2]. The interaction between the oscillating electric and magnetic fields that constitute light enables it to travel through a vacuum at a constant speed of approximately 3 × 10^8 m/s, without requiring a medium for propagation [2] [3].

The Wave Nature of Light and the Electromagnetic Spectrum

Fundamental Wave Properties

Light behaves as a transverse wave, with oscillations of the electric and magnetic fields occurring perpendicular to the direction of energy transfer [2]. These waves are characterized by several fundamental properties:

- Wavelength (λ): The distance between successive peaks of the wave [4] [3]

- Frequency (f): The number of wave cycles that pass a given point per second, measured in Hertz (Hz) [2] [3]

- Amplitude: The maximum magnitude of the oscillating field; the wave's intensity (energy carried per unit area per unit time) scales with the square of the amplitude

- Speed (c): In a vacuum, all electromagnetic waves travel at the speed of light (c ≈ 3 × 10^8 m/s) [2]

These properties are related through the fundamental equation \( c = f\lambda \), where the speed of light equals the product of frequency and wavelength [2]. As waves cross boundaries between different media, their speeds change but their frequencies remain constant [2].
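As a quick worked example of this relation, consider green light with a vacuum wavelength of 500 nm (an illustrative value chosen here, not taken from the cited sources) and the approximate speed of light quoted above:

```latex
\[
f = \frac{c}{\lambda}
  = \frac{3 \times 10^{8}\ \text{m/s}}{500 \times 10^{-9}\ \text{m}}
  = 6 \times 10^{14}\ \text{Hz}
  = 600\ \text{THz}
\]
```

The result lies between 430 THz and 750 THz, which is the frequency range usually assigned to visible light.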

Like other waves, electromagnetic radiation can be polarized, reflected, refracted, and diffracted, and can interfere with other waves [2]. Refraction occurs when a wave crosses from one medium into another with a different refractive index, altering its speed and direction according to Snell's law [2]. Dispersion, the wavelength-dependent refraction that creates spectra when composite light passes through a prism, is particularly crucial for spectroscopy [2] [1].
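Snell's law can be made concrete with a short calculation. The following is a minimal sketch, assuming the standard form n1·sin(θ1) = n2·sin(θ2); the function name `refraction_angle_deg` and the refractive indices for air and water are illustrative assumptions rather than values from the cited sources.

```python
import math

def refraction_angle_deg(n1, n2, incidence_deg):
    """Refraction angle in degrees from Snell's law, n1*sin(t1) = n2*sin(t2).

    Returns None when no refracted ray exists (total internal reflection).
    """
    s = n1 * math.sin(math.radians(incidence_deg)) / n2
    if abs(s) > 1.0:
        return None
    return math.degrees(math.asin(s))

# Assumed illustrative indices: air ~1.000, water ~1.333
angle = refraction_angle_deg(1.000, 1.333, 30.0)
print(f"Air to water at 30 degrees incidence: {angle:.1f} degrees")

# Going from water to air at a steep angle gives no refracted ray
print(refraction_angle_deg(1.333, 1.000, 60.0))
```

Because the refractive index of real materials varies with wavelength, running the same calculation with slightly different indices for each color reproduces the dispersion that a prism exploits.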

The Electromagnetic Spectrum

The electromagnetic spectrum encompasses all types of electromagnetic radiation, classified by frequency and wavelength into several regions [2] [4]. The table below summarizes the key regions, their wavelength and frequency ranges, and common applications:

Table 1: Regions of the Electromagnetic Spectrum

| Region | Wavelength Range | Frequency Range | Relative Photon Energy | Common Applications in Research |
|---|---|---|---|---|
| Gamma Rays | < 0.01 nm | > 30 EHz | Highest | Cancer treatment, nuclear research [5] |
| X-Rays | 0.01 nm - 10 nm | 30 EHz - 30 PHz | Very High | Medical imaging, material inspection [5] |
| Ultraviolet | 10 nm - 400 nm | 30 PHz - 750 THz | High | Sterilization, fluorescence studies [5] |
| Visible Light | 400 nm - 700 nm | 750 THz - 430 THz | Medium | Vision, microscopy, spectroscopy [4] [5] |
| Infrared | 700 nm - 1 mm | 430 THz - 300 GHz | Medium-Low | Thermal imaging, molecular vibrations [5] |
| Microwaves | 1 mm - 1 m | 300 GHz - 300 MHz | Low | Microwave ovens, satellite communications [5] |
| Radio Waves | > 1 m to thousands of km | < 300 MHz to 3 Hz | Lowest | NMR, MRI, broadcasting [5] |

The visible spectrum that human eyes can detect represents only a small portion (400-700 nm) of the entire electromagnetic spectrum [4] [1]. Differences in wavelength within this range are perceived as different colors, with shorter wavelengths appearing bluer and longer wavelengths appearing redder [4].
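The region boundaries summarized in Table 1 can be expressed as a small lookup. This is an illustrative sketch only: `REGIONS` and `classify` are hypothetical names, the boundaries are conventional approximations rather than sharp physical limits, and the code assumes that the radio region begins where the microwave region ends (1 m).

```python
# Upper wavelength bound in metres for each region, in order of increasing wavelength
REGIONS = [
    (0.01e-9, "Gamma Rays"),
    (10e-9, "X-Rays"),
    (400e-9, "Ultraviolet"),
    (700e-9, "Visible Light"),
    (1e-3, "Infrared"),
    (1.0, "Microwaves"),
]

def classify(wavelength_m):
    """Return the name of the spectral region containing the given wavelength."""
    for upper_bound, name in REGIONS:
        if wavelength_m < upper_bound:
            return name
    return "Radio Waves"  # anything longer than the microwave limit

for wavelength in (1e-12, 254e-9, 550e-9, 10e-6, 0.12, 3.0):
    print(f"{wavelength:.3g} m -> {classify(wavelength)}")
```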

Diagram 1: Electromagnetic spectrum showing wavelength and energy relationships

The Particle Nature of Light: Photons

Quantum Theory of Light

Complementing its wave behavior, light also exhibits particle-like properties, particularly when interacting with matter. The quantum theory of light describes electromagnetic radiation as consisting of discrete packets of energy called photons [2] [4]. Each photon carries a specific amount of energy proportional to its frequency, described by the equation:

\[ E = hf \]

where \( E \) is the photon energy, \( h \) is Planck's constant (6.626 × 10^-34 J·s), and \( f \) is the frequency [2]. This relationship explains why higher-frequency radiation (e.g., ultraviolet, X-rays) carries more energy per photon than lower-frequency radiation (e.g., infrared, radio waves) [4].
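To make the photon energy relation tangible, the sketch below combines it with the wave relation c = fλ to compute the energy of a photon from its wavelength. The constants are the rounded values quoted in the text; the function names and the three example wavelengths are illustrative assumptions.

```python
H = 6.626e-34    # Planck's constant, J·s (value quoted in the text)
C = 3.0e8        # speed of light in vacuum, m/s (approximate value from the text)
EV = 1.602e-19   # joules per electronvolt

def photon_energy_joules(wavelength_nm):
    """Photon energy E = h*f, with f = c / wavelength."""
    frequency_hz = C / (wavelength_nm * 1e-9)
    return H * frequency_hz

def photon_energy_ev(wavelength_nm):
    """Same energy expressed in electronvolts."""
    return photon_energy_joules(wavelength_nm) / EV

# Hypothetical example wavelengths spanning three spectral regions
for label, nm in [("UV", 250), ("visible", 550), ("near-IR", 1000)]:
    print(f"{label:8s} {nm:5d} nm  {photon_energy_ev(nm):.2f} eV")
```

The energies fall as the wavelength grows, which is why ultraviolet photons can drive electronic transitions that infrared photons cannot.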

Photons are uncharged elementary particles with zero rest mass that serve as the quanta of the electromagnetic field, responsible for all electromagnetic interactions [2] [3]. The particle nature of light becomes particularly evident when measuring small timescales and distances, or when electromagnetic radiation is absorbed by matter [2].

Wave-Particle Duality

The dual nature of light is not a contradiction but rather a fundamental aspect of quantum mechanics. Whether light shows predominantly wave-like or particle-like behavior depends on the experimental context and measurement technique [2] [3]:

- Wave characteristics are more apparent when electromagnetic radiation is measured over relatively large timescales and over large distances [2]

- Particle characteristics are more evident when measuring small timescales and distances, particularly when the average number of photons in the relevant wavelength cube is much smaller than 1 [2]

This duality is exemplified in experiments such as the self-interference of a single photon, where a low-intensity light source sent through an interferometer is detected along one arm (consistent with particle properties), yet the accumulated effect of many detections produces interference patterns (consistent with wave properties) [2].

Theoretical Framework: Maxwell's Equations and Quantum Electrodynamics

Classical Electromagnetic Theory

The mathematical foundation for understanding electromagnetic waves was established by James Clerk Maxwell in the 1860s and 1870s [2] [3] [5]. Maxwell's equations describe how electric and magnetic fields propagate and interact, mathematically predicting the existence of electromagnetic waves [3] [5]. Maxwell noticed that electrical fields and magnetic fields can couple together to form electromagnetic waves, and he summarized this relationship into four fundamental equations:

- Gauss's law for electricity: Relates electric flux to electric charge

- Gauss's law for magnetism: States that magnetic monopoles do not exist

- Faraday's law of induction: Describes how a changing magnetic field produces an electric field

- Ampère-Maxwell law: Shows how electric currents and changing electric fields produce magnetic fields

Maxwell derived a wave form from these equations, uncovering the wave-like nature of electric and magnetic fields and their symmetry [2]. The speed of EM waves predicted by his wave equation coincided with the measured speed of light, leading Maxwell to conclude that light itself is an electromagnetic wave [2] [3]. Heinrich Hertz later confirmed Maxwell's theories experimentally through his work with radio waves [2] [3].

Quantum Electrodynamics

For interactions at the atomic and molecular level, quantum electrodynamics (QED) provides the theoretical framework for understanding how electromagnetic radiation interacts with matter [2]. QED describes how charged particles interact by emitting and absorbing photons, and how photons interact with these charged particles [2].

In quantum mechanics, the electromagnetic field is quantized, and the interactions between light and matter are mediated by the exchange of photons. This quantum approach explains phenomena such as the photoelectric effect, where photons liberate electrons from materials [3], and atomic transitions, where electrons move between energy levels by absorbing or emitting photons with specific energies [2] [4].

Light-Matter Interactions: Fundamental Mechanisms for Spectroscopy

The interaction between light and matter occurs through several distinct mechanisms that form the basis for spectroscopic techniques:

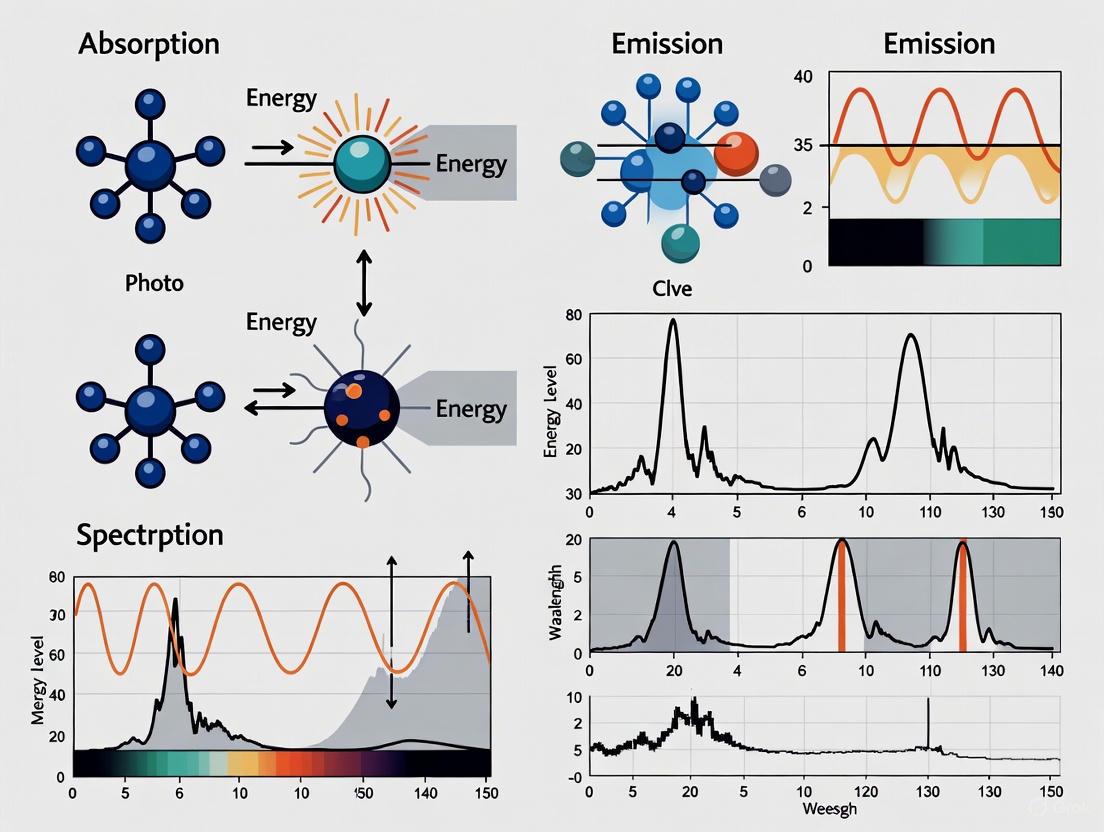

Absorption

Absorption occurs when matter captures photons, converting their energy into other forms such as thermal energy or chemical energy [4]. The specific wavelengths absorbed depend on the electronic, vibrational, and rotational energy levels of atoms and molecules [4] [1]. For example:

- Plants absorb mostly red and blue wavelengths of sunlight for photosynthesis [4]

- Asphalt appears black because it absorbs all colors of visible light very efficiently [4]

- Atoms absorb specific wavelengths that correspond to electronic transitions between discrete energy levels [4]

Emission

Matter can emit light when excited electrons return to lower energy states, releasing photons with energies corresponding to the difference between these states [2] [6]. Every object also emits thermal radiation whose intensity and spectrum depend on its temperature, with cooler objects emitting primarily in the infrared and hotter objects emitting visible light [4].
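The shift of thermal emission from the infrared toward the visible as temperature rises can be estimated with Wien's displacement law, λ_max = b / T. The law is not named in the text above and is brought in here only as an illustration; the function name and the example temperatures are assumptions.

```python
WIEN_B = 2.898e-3  # Wien displacement constant, m·K

def peak_wavelength_nm(temperature_k):
    """Wavelength of maximum blackbody emission, from Wien's displacement law."""
    return WIEN_B / temperature_k * 1e9

# Hypothetical example temperatures
for label, t in [("human body", 310.0), ("incandescent filament", 2800.0), ("Sun-like surface", 5800.0)]:
    print(f"{label:22s} {t:7.0f} K  peak near {peak_wavelength_nm(t):8.0f} nm")
```

Real objects are not perfect blackbodies, so these values indicate where emission is concentrated rather than the exact spectrum.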

Reflection and Scattering

Reflection occurs when light bounces off a surface without being absorbed, while scattering involves the redirection of light in various directions by irregularities or particles in a material [4]. Snow appears white because it reflects all colors of visible light efficiently, while grass appears green because it reflects predominantly green wavelengths [4].

Transmission

Transmission occurs when light passes through a material without significant absorption or reflection [4]. Window glass transmits all colors of visible light, while colored filters selectively transmit specific wavelength ranges [4].

Diagram 2: Primary light-matter interaction mechanisms

Experimental Protocols in Spectroscopy

Basic Spectrometer Setup and Operation

The fundamental components of a spectrometer, dating back to Isaac Newton's experiments with prisms, include [1]:

- Entrance Slit: Shapes and limits incoming light to create a defined beam

- Dispersive Element: Separates light into its constituent wavelengths (prism, diffraction grating)

- Detector: Captures and measures the intensity of separated wavelengths

Table 2: Essential Research Reagents and Materials for Spectroscopic Analysis

| Item | Function | Application Example |
|---|---|---|
| Prism/Diffraction Grating | Disperses light into component wavelengths | Wavelength separation in UV-Vis spectrometers |
| Photomultiplier Tube/CCD | Detects and quantifies light intensity | High-sensitivity detection in fluorescence spectroscopy |
| Monochromator | Selects specific wavelengths from a broadband source | Isolation of excitation wavelengths |
| Reference Standards | Provides calibration for quantitative measurements | Concentration determination in absorption spectroscopy |
| Optical Cells/Cuvettes | Contains liquid samples with defined path lengths | Sample presentation in liquid phase spectroscopy |
| Polarizers | Controls the polarization state of light | Studying anisotropic materials or molecular orientation |

Modern spectroscopic instruments have evolved from these basic principles to include sophisticated components such as monochromators for precise wavelength selection, sensitive detectors like photomultiplier tubes and CCD arrays, and computer interfaces for data acquisition and analysis [1] [7].

Protocol: Near-Infrared Spectroscopy for Material Classification

This protocol outlines the methodology for using Near-Infrared Spectroscopy (NIRS) to classify materials based on their chemical composition, adapted from studies on coffee bean analysis [7]:

Materials and Reagents:

- Near-infrared spectrometer (350-2500 nm range)

- Standard reference materials for calibration

- Sample containers or holders

- Data analysis software with chemometric capabilities

Procedure:

Sample Preparation:

- Ensure samples are uniformly prepared and presented to the spectrometer

- For solid materials, use consistent particle size or physical form

- Record environmental conditions (temperature, humidity)

Instrument Calibration:

- Collect background spectrum without sample

- Measure standard reference materials to establish baseline

- Verify wavelength accuracy using known absorption features

Data Acquisition:

- Illuminate each sample across the instrument's 350-2500 nm range (visible through near-infrared)

- Collect reflectance or transmittance spectra with appropriate resolution

- Perform multiple scans per sample to assess reproducibility

- Maintain consistent measurement geometry and conditions

Chemometric Analysis:

- Pre-process spectra (normalization, baseline correction, derivatives)

- Perform Principal Component Analysis (PCA) to identify patterns

- Develop classification models using Linear Discriminant Analysis (LDA)

- Validate models with independent test sets

Interpretation:

- Correlate spectral features with material properties

- Establish classification criteria based on statistical analysis

- Report classification accuracy and confidence limits

This methodology has demonstrated classification accuracies up to 100% for distinct material categories and 91-95% for more similar groups in validation studies [7].
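The pre-processing step of the chemometric analysis can be sketched in a few lines. The example below is an illustration under stated assumptions: it uses standard normal variate (SNV) scaling as one possible normalization and a plain finite difference as the derivative, whereas practical workflows often use smoothed derivatives such as Savitzky-Golay filters. The spectra are made-up numbers, and the PCA and LDA steps, normally handled by a chemometrics library, are not shown.

```python
from statistics import mean, stdev

def snv(spectrum):
    """Standard normal variate: centre the spectrum and scale it to unit standard deviation."""
    m, s = mean(spectrum), stdev(spectrum)
    return [(x - m) / s for x in spectrum]

def first_derivative(spectrum, step=1.0):
    """Finite-difference first derivative; removes constant baseline offsets."""
    return [(b - a) / step for a, b in zip(spectrum, spectrum[1:])]

# Hypothetical reflectance readings at evenly spaced wavelengths
raw = [0.52, 0.55, 0.61, 0.70, 0.66, 0.58, 0.54]
offset = [x + 0.10 for x in raw]   # same sample with a baseline shift
scaled = [x * 2.0 for x in raw]    # same sample with a multiplicative effect

print([round(v, 3) for v in first_derivative(raw)])
print([round(v, 3) for v in first_derivative(offset)])   # matches the line above
print([round(v, 3) for v in snv(raw)])
print([round(v, 3) for v in snv(scaled)])                # matches the line above
```

The derivative removes the additive offset and SNV removes the multiplicative scaling, so the downstream classification model sees chemical differences rather than measurement geometry.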

Applications in Research and Drug Development

Spectroscopy leverages the fundamental principles of light-matter interactions to provide critical analytical capabilities across scientific disciplines:

Chemical and Pharmaceutical Applications

- Compound Identification: Specific absorption patterns serve as molecular fingerprints for identifying chemical structures [6]

- Reaction Monitoring: Real-time tracking of chemical reactions by observing changes in spectral features [6]

- Quality Control: Verification of raw materials and finished products in pharmaceutical manufacturing [7]

- Kinetic Studies: Determination of reaction rates and mechanisms through time-resolved measurements [7]

Biomedical and Diagnostic Applications

- Medical Imaging: Techniques like MRI (based on NMR principles) and optical imaging for non-invasive diagnostics [6] [5]

- Disease Biomarker Detection: Identification of molecular signatures associated with pathological conditions [7]

- Therapeutic Drug Monitoring: Quantitative measurement of drug concentrations in biological fluids [6]

- Cellular and Tissue Analysis: Investigation of biological samples using fluorescence, infrared, and Raman spectroscopy [7]

The extensive applications of spectroscopic methods stem from their ability to provide both qualitative identification and quantitative measurement of substances across various states of matter (solids, liquids, and gases) through generally non-destructive analytical techniques [6].

Spectroscopy, fundamentally, is the study of physical systems by the electromagnetic radiation with which they interact or that they produce [1]. This interaction provides a window into the atomic and molecular structure of matter. When light—electromagnetic radiation—encounters matter, it can be absorbed, reflected, or transmitted. The specific wavelengths absorbed or emitted serve as a unique fingerprint, revealing the energy level structure of the atoms or molecules under investigation [4]. This principle is universal, applying from the analysis of complex drug molecules in the lab to the determination of elemental abundances in distant stars [8] [1].

The energy of light is directly linked to its wavelength; shorter wavelengths correspond to higher-energy photons [4]. This relationship is key to spectroscopy because a photon is absorbed only when its energy matches the energy difference between two quantized states of an atom or molecule. The subsequent sections detail these atomic and molecular energy states, the experimental methods used to probe them, and how this knowledge is applied in cutting-edge research and industry, such as pharmaceutical development.

Atomic Structure: The Quantum Mechanical Model

The modern understanding of atomic structure is governed by quantum mechanics, which superseded earlier models like the Bohr model due to its ability to accurately describe multi-electron atoms and incorporate wave-particle duality [9].

Core Principles

The quantum mechanical model is founded on several key principles:

- Wave-Particle Duality: Electrons exhibit both wave-like and particle-like properties [9].

- The Schrödinger Equation: This is the central equation of quantum mechanics, describing the behavior of electrons as wave functions (ψ). The time-independent form is Hψ = Eψ, where H is the Hamiltonian operator (representing the total energy of the system) and E is the energy eigenvalue [9].

- Atomic Orbitals: Electrons do not orbit the nucleus in fixed paths. Instead, they occupy three-dimensional probability clouds called orbitals, which define the regions where an electron is most likely to be found [9].

- Heisenberg Uncertainty Principle: It is impossible to simultaneously know both the exact position and exact momentum of an electron. This principle sets a fundamental limit on measurement precision [9].

Quantum Numbers and Electron Configuration

Every electron in an atom is uniquely described by a set of four quantum numbers. The first three arise from solutions to the Schrödinger equation and define the electron's energy and spatial distribution, while the fourth accounts for the electron's intrinsic spin [9].

Table 1: The Four Quantum Numbers Defining Atomic Orbitals

| Quantum Number | Symbol | Allowed Values | Description |
|---|---|---|---|
| Principal | n | 1, 2, 3, ... | Defines the main energy level or shell (n=1 is the lowest energy). |
| Azimuthal | l | 0, 1, 2, ... , n-1 | Defines the subshell or orbital shape (s, p, d, f for l=0,1,2,3). |
| Magnetic | mₗ | -l, ..., 0, ..., +l | Specifies the orientation of the orbital in space. |
| Spin | mₛ | +½ or -½ | Specifies the intrinsic spin direction of the electron. |

The arrangement of electrons in an atom, known as the electron configuration, is determined by the sequential filling of orbitals according to the Aufbau principle, the Pauli exclusion principle (no two electrons can have the same set of four quantum numbers), and Hund's rule (electrons fill degenerate orbitals singly before pairing up) [9]. This configuration dictates an element's chemical properties and its spectroscopic behavior.
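The allowed-value rules for the four quantum numbers, together with the Pauli exclusion principle, fix how many electrons each shell can hold. The sketch below simply enumerates every permitted combination for a given n; the function name `shell_states` is an illustrative choice.

```python
from fractions import Fraction

def shell_states(n):
    """List every allowed (n, l, m_l, m_s) combination for principal quantum number n."""
    half = Fraction(1, 2)
    return [
        (n, l, ml, ms)
        for l in range(n)              # l = 0 .. n-1
        for ml in range(-l, l + 1)     # m_l = -l .. +l
        for ms in (half, -half)        # m_s = +1/2 or -1/2
    ]

for n in (1, 2, 3, 4):
    states = shell_states(n)
    # Each combination is unique, so the count is the shell's electron capacity
    print(f"n = {n}: {len(states)} states")
```

The counts follow the familiar 2n² pattern (2, 8, 18, 32), which is the shell capacity that underlies the layout of the periodic table.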

Molecular Energy Levels and Electronic Transitions

While atoms have discrete electronic energy levels, molecules possess a more complex energy structure due to the combination of atomic orbitals and the addition of vibrational and rotational degrees of freedom.

Molecular Orbitals and Chromophores

When atoms bond to form molecules, their atomic orbitals combine to form molecular orbitals. Electrons in molecules can occupy bonding, non-bonding, or antibonding orbitals [10]. The highest-energy molecular orbital that contains electrons is the Highest Occupied Molecular Orbital (HOMO), and the lowest-energy unoccupied orbital is the Lowest Unoccupied Molecular Orbital (LUMO). The energy difference between the HOMO and LUMO is a critical parameter in electronic transitions [10] [11].

Sections of molecules that undergo detectable electron transitions are called chromophores. In conjugated systems, where single and double bonds alternate, the π-electrons are delocalized across the molecule. This delocalization lowers the energy required for a π→π* transition, shifting the absorption of light from the ultraviolet to the visible region [10] [1]. For instance, the chromophore lycopene, which gives tomatoes their red color, has a conjugated structure that absorbs blue and green light, allowing red light to be transmitted [1].

Types of Molecular Electronic Transitions

The primary electronic transitions in molecules, particularly organic molecules, are categorized based on the orbitals involved [11].

Table 2: Common Types of Molecular Electronic Transitions

| Transition Type | Orbitals Involved | Typical Energy (Wavelength) | Example |
|---|---|---|---|
| σ → σ* | Bonding sigma to antibonding sigma | High (short λ, e.g., <150 nm) | Ethane (135 nm) [11] |
| n → σ* | Non-bonding to antibonding sigma | High (short λ, ~150-250 nm) | Water (167 nm) [11] |
| π → π* | Bonding pi to antibonding pi | Variable; lower in conjugated systems | Ethene (165 nm); 1,3-butadiene (conjugated) [10] |
| n → π* | Non-bonding to antibonding pi | Low (long λ, ~270-300 nm) | Compounds with C=O and lone pairs |
| Aromatic π → π* | Aromatic pi system to antibonding pi | Characteristic bands | Benzene B-band (255 nm) [11] |

These transitions are not observed as infinitely sharp lines but as broad bands in solution. This broadening occurs because electronic transitions are superimposed on a backdrop of more closely spaced vibrational and rotational energy levels. When a molecule is excited electronically, it is also excited to higher vibrational states, leading to a band of absorption rather than a single line [11]. The solvent can also significantly influence the observed transition, causing bathochromic (red) or hypsochromic (blue) shifts [11].

Experimental Protocols and Methodologies

Quantitative Proton Nuclear Magnetic Resonance (qHNMR) Protocol

Quantitative ¹H NMR (qHNMR) is a powerful method for structure analysis, purity determination, and mixture analysis, especially relevant for bioactive molecules and natural products in drug development [12]. The following provides a detailed protocol for a routine ¹³C-decoupled qHNMR experiment.

1. Principle: qHNMR leverages the direct proportionality between the integrated signal intensity in a ¹H NMR spectrum and the number of nuclei giving rise to that signal. This allows for the simultaneous acquisition of qualitative (structural) and quantitative (purity/composition) data [12].

2. Experimental Setup and "Cookbook" Parameters:

- Pulse Sequence: Use a ¹³C GARP (Globally-optimized Alternating-phase Rectangular Pulses) broadband decoupled proton acquisition sequence. This removes ¹³C satellite signals from the ¹H spectrum, reducing complexity and increasing accuracy for minor impurities [12].

- Sample Spinning: Acquire data in non-spinning mode. This eliminates spinning sidebands, which are artifacts that can be mistaken for or obscure low-level impurities [12].

- Shimming: Proper shimming of the sample is critical to achieve a homogeneous magnetic field and a good lineshape. Gradient shimming is recommended for best results in the shortest time [12].

- Relaxation Delay (D1): Set a sufficiently long relaxation delay (typically >5 times the longitudinal relaxation time T1 of the slowest-relaxing proton of interest) to ensure complete relaxation of all nuclei between pulses. This is essential for accurate integral quantification [12].

- Acquisition Time: A standard acquisition time is sufficient.

- Number of Scans: The number of scans should be increased to achieve a high signal-to-noise ratio (S/N), typically targeting a dynamic range of 300:1 (0.3%) or better [12].

3. Data Processing:

- Apply a mild line-broadening function (e.g., 0.3 Hz) to the Free Induction Decay (FID) to improve the S/N ratio before Fourier transformation.

- Manually integrate the peaks of interest. The purity or composition is calculated based on the relative ratios of the integrals, often using the 100% method when impurity molecular weights are unknown [12].
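The reasoning behind several of these parameters reduces to simple arithmetic. The sketch below is a rough illustration, not the cited protocol's code: it assumes mono-exponential T1 recovery after a 90° pulse (ignoring the acquisition time), S/N growing with the square root of the number of scans, and a molar-basis reading of the 100% method in which each integral is divided by the number of protons it represents. All function names and numbers are hypothetical.

```python
import math

def recovered_fraction(delay_s, t1_s):
    """Fraction of equilibrium magnetization recovered after a 90-degree pulse."""
    return 1.0 - math.exp(-delay_s / t1_s)

def scans_for_target_snr(snr_single_scan, snr_target):
    """Scans needed when S/N improves as the square root of the scan count."""
    return math.ceil((snr_target / snr_single_scan) ** 2)

def mole_percent(integrals, proton_counts):
    """Relative molar composition: integrals per proton, normalized to 100%."""
    per_proton = {name: integrals[name] / proton_counts[name] for name in integrals}
    total = sum(per_proton.values())
    return {name: 100.0 * value / total for name, value in per_proton.items()}

# Hypothetical slowest-relaxing proton with T1 = 2 s and D1 = 5 x T1
print(f"Recovered magnetization: {recovered_fraction(10.0, 2.0):.4f}")

# Hypothetical single-scan S/N of 50 with a target of 300
print(f"Scans required: {scans_for_target_snr(50.0, 300.0)}")

# Hypothetical integrals and the number of protons behind each integrated signal
integrals = {"analyte": 297.0, "impurity_A": 2.0, "impurity_B": 3.0}
protons = {"analyte": 3, "impurity_A": 2, "impurity_B": 3}
for name, pct in mole_percent(integrals, protons).items():
    print(f"{name:11s} {pct:6.2f} mol%")
```

With a delay of five T1 periods roughly 99.3% of the magnetization has recovered, which is why shorter delays bias the integrals of slowly relaxing protons. Converting mole percent to mass percent would additionally require the molecular weight of every component.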

High-Resolution Fourier Transform Spectroscopy (FTS) for Atomic Data

This methodology is used for obtaining highly accurate atomic wavelengths and energy levels, which are critical for astrophysics and testing fundamental physics [8] [13].

1. Principle: High-resolution FTS measures the interference pattern of light from a source, and a Fourier transform converts this pattern into a spectrum of intensity versus wavelength with very high accuracy [8].

2. Experimental Workflow:

- Light Source: An emission source (e.g., a hollow cathode lamp) containing the element of interest is used to produce atomic spectral lines.

- Interferometer: Light from the source is passed through a Michelson interferometer. A moving mirror creates a path difference, generating an interferogram.

- Detection: A detector records the interferogram, which encodes the entire spectrum.

- Fourier Transformation: The interferogram is digitized and computationally transformed using a Fast Fourier Transform (FFT) algorithm to produce the final spectrum.

3. Data Analysis:

- Line Identification: Spectral lines in the measured spectrum are identified and their precise wavelengths are determined.

- Energy Level Optimization: The observed wavelengths, which correspond to transitions between energy levels, are used to compute a self-consistent set of atomic energy levels. Recent advances involve using artificial intelligence, specifically graph reinforcement learning, to accelerate this traditionally laborious "term analysis" from months to overnight, though human checking is still required for reference-quality data [8].

- Uncertainty Quantification: The high resolution of FTS allows for wavelength uncertainties at least ten times lower than previous methods, enabling a major refinement of atomic structures [8].
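The core idea of the workflow, an interferogram that encodes the whole spectrum and a Fourier transform that recovers it, can be shown with synthetic data. The sketch below is a toy model under stated assumptions: two ideal emission lines, a noiseless two-sided interferogram, and frequency-bin indices standing in for wavenumbers. A direct discrete Fourier transform is used for readability, whereas real instruments rely on an FFT.

```python
import cmath
import math

def dft_magnitude(samples):
    """Magnitude of the discrete Fourier transform (direct O(N^2) sum, for illustration)."""
    n = len(samples)
    return [
        abs(sum(x * cmath.exp(-2j * math.pi * k * i / n) for i, x in enumerate(samples)))
        for k in range(n // 2)
    ]

N = 128                      # number of path-difference samples
LINES = {10: 1.0, 25: 0.5}   # hypothetical lines: frequency bin -> relative intensity

# Each spectral line contributes a cosine to the detector signal as the mirror moves
interferogram = [
    sum(a * math.cos(2 * math.pi * k * i / N) for k, a in LINES.items())
    for i in range(N)
]

spectrum = dft_magnitude(interferogram)
peaks = sorted(sorted(range(len(spectrum)), key=spectrum.__getitem__, reverse=True)[:2])
print("Recovered line positions (bins):", peaks)
print("Relative intensity of weaker line:", round(spectrum[25] / spectrum[10], 3))
```

The transform returns the two line positions and their intensity ratio. In an actual measurement, resolution improves with the maximum path difference scanned by the moving mirror.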

The Scientist's Toolkit: Key Research Reagents and Materials

Table 3: Essential Materials and Tools for Spectroscopic Analysis

| Item / Reagent | Function / Application |
|---|---|
| Fourier Transform Spectrometer | An instrument for measuring high-resolution atomic emission or absorption spectra over a wide wavelength range (UV to IR) [8]. |
| NMR Spectrometer | A core instrument for determining molecular structure and quantitative composition via qHNMR, particularly for complex natural products and pharmaceuticals [12]. |
| Deuterated Solvents (e.g., CDCl₃) | Used for NMR spectroscopy to provide a lock signal for the magnetic field and to dissolve samples without adding interfering ¹H signals [12]. |
| Internal Quantitative Standards (e.g., TMS) | A reference compound with a known concentration and well-defined NMR signal used for precise quantitation in qHNMR [12]. |
| High-Purity Elemental Lamps (e.g., Mn, Co, Nd) | Emission sources used in FTS to produce the sharp atomic spectral lines needed for precise wavelength and energy level measurements [8]. |
| Prisms & Diffraction Gratings | Dispersive elements used in spectrometers to separate light into its constituent wavelengths for measurement [1]. |
| AI-Assisted Term Analysis Software | Software utilizing graph reinforcement learning (e.g., adapted from DeepMind's Rainbow DQN) to rapidly identify atomic energy levels from thousands of spectral lines [8]. |
| Relativistic Coupled Cluster Code | High-accuracy computational software (e.g., Fock-space coupled cluster) used to predict atomic spectra and properties, especially for heavy elements where relativistic effects are significant [13]. |

Current Research and Applications

The precise measurement of atomic structure and molecular energy levels is a dynamically advancing field with profound implications across science and technology.

Supporting Astrophysics and Stellar Nucleosynthesis: High-resolution laboratory spectroscopy provides the fundamental atomic data needed to interpret astronomical observations. For example, a recent large-scale, high-accuracy analysis of neutral manganese (Mn I) allows researchers to use manganese as a more reliable tracer for supernova yields and galactic chemical evolution [8]. Similarly, new data on doubly-ionized neodymium (Nd III) helps interpret light from colliding neutron stars detected via gravitational waves [8].

AI and Automation in Spectral Analysis: A major recent innovation is the application of artificial intelligence to the complex task of "term analysis"—the reconstruction of an atomic energy level system from observed spectral lines. A new system using graph reinforcement learning can achieve hundreds of energy level identifications overnight, a task that traditionally took PhD students years, thereby boosting efficiency tremendously [8].

Testing Fundamental Physics and the Standard Model: Precision spectroscopy of heavy atoms and molecules provides a pathway to search for physics beyond the Standard Model, such as charge-parity violation and an electron electric dipole moment. The sensitivity to these effects scales rapidly with proton number (Z² to Z⁵), making heavy elements like radium, thorium, and nobelium ideal candidates. Theory plays a crucial role in identifying promising systems and interpreting the results of these ultra-sensitive experiments [13].

Drug Development and Natural Products Analysis: qHNMR has become an indispensable tool in the natural product and pharmaceutical research workflow. It allows for the confirmation of chemical structure, provides insight into structural equilibria (e.g., tautomerism), determines the purity of bioactive isolates, and explores the composition of complex metabolomic mixtures. This is critical for establishing reliable structure-activity relationships, as the biological activity of a compound is closely related to its purity and impurity profile [12].

The interaction of light with matter constitutes the fundamental basis of spectroscopic analysis, providing critical insights into molecular structure, dynamics, and composition. Three primary mechanisms (absorption, emission, and scattering) govern how energy is exchanged between photons and materials. These processes enable researchers to decode the intricate energy-level structures of atoms and molecules, facilitating advances across scientific disciplines from drug development to materials science [14]. Understanding these core mechanisms is indispensable for interpreting spectroscopic data and developing innovative analytical methodologies for research applications.

This technical guide examines the fundamental principles, theoretical frameworks, and experimental manifestations of absorption, emission, and scattering processes. By establishing a coherent foundation of these interaction mechanisms, scientists can better leverage spectroscopic techniques to address complex analytical challenges in chemical research and pharmaceutical development.

Theoretical Foundations

Fundamental Principles

The interaction between light and matter occurs through quantized energy exchanges, wherein molecules transition between discrete energy states. When a molecule interacts with electromagnetic radiation, it may undergo changes in its electronic, vibrational, or rotational states through the absorption or emission of photons [15]. The specific energy transitions are dictated by the quantum mechanical properties of the system, with each transition corresponding to a precise energy difference between initial and final states [16].

The energy of electromagnetic radiation is inversely proportional to its wavelength, making different spectral regions sensitive to distinct molecular processes. Ultraviolet and visible radiation typically induce electronic transitions, infrared radiation corresponds to vibrational changes, and microwave radiation activates rotational modifications [16]. These energy-dependent interactions form the basis for various spectroscopic techniques that probe different molecular properties and characteristics.
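As a rough illustration of the inverse relationship between photon energy and wavelength, the sketch below evaluates E = hc/λ for one representative wavelength per spectral region. The function name `photon_energy_ev` and the example wavelengths are assumptions chosen for illustration; they are not taken from the cited sources.

```python
# Physical constants (exact SI values)
PLANCK_J_S = 6.62607015e-34       # Planck constant h, in J·s
LIGHT_SPEED_M_S = 2.99792458e8    # speed of light c, in m/s
JOULE_PER_EV = 1.602176634e-19    # one electronvolt expressed in joules


def photon_energy_ev(wavelength_m: float) -> float:
    """Return the photon energy in eV for a vacuum wavelength in metres (E = hc/λ)."""
    if wavelength_m <= 0:
        raise ValueError("wavelength must be positive")
    return PLANCK_J_S * LIGHT_SPEED_M_S / wavelength_m / JOULE_PER_EV


# Representative wavelengths, picked only to span the regions named in the text
examples = {
    "UV (250 nm)": 250e-9,
    "visible (500 nm)": 500e-9,
    "mid-IR (10 um)": 10e-6,
    "microwave (1 cm)": 1e-2,
}
for label, wavelength in examples.items():
    print(f"{label}: {photon_energy_ev(wavelength):.3e} eV")
```

The printed energies fall by several orders of magnitude from the UV to the microwave region, which is consistent with electronic, vibrational, and rotational transitions being probed in different parts of the spectrum.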

Energy Transfer and Spectral Characteristics

The distinct nature of absorption, emission, and scattering processes produces characteristically different spectral signatures that convey specific molecular information. Table 1 summarizes the key spectral characteristics of each interaction mechanism.

Table 1: Spectral Characteristics of Light-Matter Interaction Mechanisms

| Interaction Mechanism | Spectral Pattern | Energy Transfer | Intensity Dependence |
|---|---|---|---|
| Absorption | Discrete peaks corresponding to specific molecular transitions [15] | Involves energy transfer from radiation to molecule [15] | Proportional to population of lower energy state [15] |
| Emission | Discrete peaks corresponding to specific molecular transitions [15] | Involves energy transfer from molecule to radiation [15] | Proportional to population of higher energy state [15] |
| Scattering | Generally continuous and less structured [15] | No net energy transfer (elastic) or modified energy transfer (inelastic) [15] | Depends on molecular polarizability and concentration [15] |

Absorption and emission spectra typically display discrete, well-defined peaks that correspond to specific quantum mechanical transitions between molecular energy states. In contrast, scattering spectra are generally described as more continuous and less structured, reflecting the distribution of energy modifications during photon-molecule interactions [15], although inelastic (Raman) scattering also yields discrete, shifted lines. The intensity of an absorption or emission line depends on the population of molecules in the initial state of the transition, which at thermal equilibrium follows the Boltzmann distribution.

Absorption Processes

Quantum Mechanical Basis

Absorption occurs when a molecule takes up energy from incident electromagnetic radiation and is promoted from a lower to a higher energy state. The transition takes place when the photon energy matches the energy difference between two quantum states of the molecule [15]. The probability of absorption is governed by the transition dipole moment, a quantum mechanical quantity that depends on how the electronic, vibrational, or rotational configuration of the molecule changes during the transition [15].

The absorption process follows the Beer-Lambert law, which quantitatively relates the absorption of light to the properties of the material through which the light is passing. This fundamental relationship enables the determination of substance concentration in analytical applications, making absorption spectroscopy an indispensable quantitative tool in chemical analysis [16].

Absorption Spectroscopy Techniques

Absorption spectroscopy encompasses diverse techniques across the electromagnetic spectrum, each targeting specific molecular transitions and providing unique analytical capabilities. Table 2 outlines the primary absorption spectroscopy methods and their respective applications.

Table 2: Absorption Spectroscopy Techniques and Applications

| Technique | Spectral Region | Transition Type | Primary Applications |
|---|---|---|---|
| UV-Vis Spectroscopy | Ultraviolet-Visible (200-800 nm) | Electronic transitions [16] | Determination of conjugated systems, aromatic compounds, and chromophores [14] |
| IR Spectroscopy | Infrared (0.8-1000 μm) | Vibrational transitions [16] | Functional group identification, molecular structure determination [17] |
| X-ray Absorption Spectroscopy | X-ray (0.01-10 nm) | Inner shell electron excitation [16] | Elemental analysis, oxidation state determination |
| Microwave Spectroscopy | Microwave (1 mm-1 m) | Rotational transitions [16] | Molecular geometry determination, bond length precision |

The absorption spectrum of a material reveals its electronic and molecular composition, as absorption lines occur at frequencies that match energy differences between quantum states [16]. The positions, intensities, and widths of these absorption lines provide detailed information about the molecular structure, including functional groups, chemical environment, and intermolecular interactions.

Emission Processes

Emission Mechanisms

Emission processes involve the release of electromagnetic radiation from molecules transitioning from higher energy states to lower energy states. Two distinct emission mechanisms occur in molecular systems: spontaneous emission and stimulated emission.

Spontaneous emission occurs when a molecule in an excited state decays to a lower energy state without any external stimulus, releasing a photon whose energy equals the difference between the two states [15]. The emitted photon has random phase and direction.

Stimulated emission takes place when an incident photon interacts with a molecule already in an excited state, inducing the emission of a second photon identical in energy, phase, and direction to the incident photon [15]. This process forms the fundamental basis of laser operation, enabling the amplification of coherent light.

The following diagram illustrates the fundamental emission mechanisms and their relationship to molecular energy states:

Emission Spectroscopy Applications

Emission spectroscopy leverages the characteristic radiation emitted by excited molecules to determine chemical composition and quantify substances. When molecules are excited by thermal, electrical, or optical energy, they emit radiation at specific wavelengths that form unique spectral fingerprints, enabling precise identification of elements and compounds.

In analytical chemistry, emission techniques such as fluorescence spectroscopy and laser-induced breakdown spectroscopy (LIBS) provide exceptional sensitivity for trace analysis and elemental characterization [18]. These methods are particularly valuable in pharmaceutical research for studying drug-receptor interactions, monitoring metabolic processes, and detecting minute quantities of biomarkers in complex biological matrices.

Scattering Processes

Elastic Scattering

Elastic scattering occurs when incident light interacts with a molecule and is re-emitted at the same frequency, with no net energy exchange between the photon and the molecule. The most prevalent form of elastic scattering is Rayleigh scattering, where incident electromagnetic radiation causes molecular oscillation and re-emission at the identical frequency [15].

The intensity of Rayleigh scattering exhibits a strong dependence on wavelength, proportional to the inverse fourth power of the wavelength (I ∝ 1/λ⁴) [15]. This wavelength dependency explains why shorter wavelengths (blue/violet light) are scattered more efficiently in the atmosphere, creating the blue appearance of the sky. Rayleigh scattering represents the dominant scattering mechanism for particles significantly smaller than the wavelength of incident light.
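To make the 1/λ⁴ dependence concrete, the short sketch below compares the relative scattering strength at two wavelengths. The helper name `rayleigh_relative_intensity` and the choice of 450 nm (blue) and 700 nm (red) are illustrative assumptions.

```python
def rayleigh_relative_intensity(wavelength_nm: float, reference_nm: float) -> float:
    """Scattered intensity at wavelength_nm relative to reference_nm, using I ∝ 1/λ⁴."""
    if wavelength_nm <= 0 or reference_nm <= 0:
        raise ValueError("wavelengths must be positive")
    return (reference_nm / wavelength_nm) ** 4


# Blue light (450 nm) compared with red light (700 nm)
ratio = rayleigh_relative_intensity(450.0, 700.0)
print(f"450 nm light is scattered about {ratio:.1f}x more strongly than 700 nm light")
```

Under this idealized scaling, blue light is scattered several times more strongly than red light, before any correction for the solar spectrum or for detector and eye sensitivity.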

Inelastic Scattering

Inelastic scattering processes involve energy exchange between incident photons and molecules, resulting in scattered radiation with modified frequency. The primary inelastic scattering phenomenon is Raman scattering, which occurs when incident light interacts with a molecule, inducing a transition to a different vibrational or rotational state and re-emitting radiation at a shifted frequency [15] [19].

Raman scattering encompasses two distinct processes:

- Stokes Raman scattering: The scattered radiation has lower frequency (longer wavelength) than the incident radiation, corresponding to the molecule transitioning to a higher vibrational or rotational state [15] [19].

- Anti-Stokes Raman scattering: The scattered radiation has higher frequency (shorter wavelength) than the incident radiation, corresponding to the molecule transitioning from a higher to a lower vibrational or rotational state [15] [19].

Stokes Raman scattering is significantly more intense than anti-Stokes scattering at standard temperatures because most molecules initially reside in the ground vibrational state, as described by Boltzmann distribution statistics [19].
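The Boltzmann argument can be put into numbers with a small sketch. It assumes the common textbook expression for the anti-Stokes to Stokes intensity ratio, a fourth-power frequency factor multiplied by exp(-hcν̃/kT), and ignores resonance effects and instrument response. The function names, the 1000 cm⁻¹ mode, and the 532 nm excitation are illustrative assumptions.

```python
import math

HC_OVER_K_CM_K = 1.438776877  # second radiation constant hc/k, in cm·K


def boltzmann_factor(shift_cm1: float, temperature_k: float) -> float:
    """Population of the first excited vibrational level relative to the ground level."""
    return math.exp(-HC_OVER_K_CM_K * shift_cm1 / temperature_k)


def anti_stokes_to_stokes_ratio(shift_cm1: float, excitation_nm: float,
                                temperature_k: float) -> float:
    """Textbook estimate of I(anti-Stokes) / I(Stokes) for one vibrational mode."""
    excitation_cm1 = 1e7 / excitation_nm  # convert nm to wavenumber (cm^-1)
    if shift_cm1 >= excitation_cm1:
        raise ValueError("Raman shift must be smaller than the excitation wavenumber")
    frequency_factor = ((excitation_cm1 + shift_cm1) /
                        (excitation_cm1 - shift_cm1)) ** 4
    return frequency_factor * boltzmann_factor(shift_cm1, temperature_k)


ratio = anti_stokes_to_stokes_ratio(1000.0, 532.0, 298.0)
print(f"anti-Stokes / Stokes at 298 K for a 1000 cm^-1 mode: {ratio:.4f}")
```

For a mode near 1000 cm⁻¹ at room temperature the estimated ratio is on the order of one percent, which is why Stokes lines are normally the ones recorded.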

The following workflow diagram illustrates the experimental process for Raman spectroscopy, highlighting key scattering mechanisms:

Advanced Scattering Techniques

Brillouin scattering represents another inelastic scattering process involving the interaction of electromagnetic radiation with acoustic phonons (collective vibrational modes) in materials [15]. This interaction produces small frequency shifts determined by the velocity of acoustic phonons and the incident radiation wavelength, providing valuable information about elastic properties and sound velocities in materials.
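The dependence of the Brillouin shift on sound velocity and wavelength can be sketched with the commonly used relation ν_B = (2nv/λ)·sin(θ/2), which is not stated in the text above and is introduced here as an assumption. The refractive index and sound velocity are approximate, illustrative values for water, and `brillouin_shift_hz` is a hypothetical helper name.

```python
import math


def brillouin_shift_hz(refractive_index: float, sound_velocity_m_s: float,
                       wavelength_m: float, scattering_angle_deg: float = 180.0) -> float:
    """Brillouin frequency shift from nu_B = (2 n v / lambda) * sin(theta / 2)."""
    if wavelength_m <= 0:
        raise ValueError("wavelength must be positive")
    half_angle = math.radians(scattering_angle_deg) / 2.0
    return 2.0 * refractive_index * sound_velocity_m_s / wavelength_m * math.sin(half_angle)


# Approximate values for water probed at 532 nm in backscattering geometry
shift = brillouin_shift_hz(1.33, 1480.0, 532e-9)
print(f"Estimated Brillouin shift: {shift / 1e9:.2f} GHz")
```

The result is in the gigahertz range, far smaller than typical Raman shifts, which is why Brillouin measurements generally need very high spectral resolution.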

Surface-Enhanced Raman Spectroscopy (SERS) utilizes metallic nanostructures to amplify local electromagnetic fields, dramatically increasing Raman scattering signals by several orders of magnitude [19]. This enhancement enables single-molecule detection and expands Raman applications to trace analysis, surface science, and biological sensing where conventional Raman signals would be undetectable.

Experimental Methodologies

Absorption Spectroscopy Protocol

Objective: Determine the absorption spectrum of a sample to identify chemical composition and quantify concentration.

Materials and Methods:

- Radiation Source: Select appropriate broadband source (e.g., deuterium lamp for UV, tungsten lamp for visible, globar for IR) [16]

- Monochromator/Spectrometer: Disperse light into component wavelengths

- Sample Chamber: Hold sample in appropriate container (cuvette, ATR crystal, gas cell)

- Detector: Measure transmitted light intensity (photodiode, PMT, bolometer)

- Reference Standard: Use for background correction

Procedure:

- Collect reference spectrum (I₀) without sample or with blank solvent

- Introduce sample into the light path

- Measure transmitted light intensity (I) across wavelength range

- Calculate absorbance: A = -log₁₀(I/I₀)

- Plot absorbance versus wavelength to generate absorption spectrum

- Apply Beer-Lambert law for quantitative analysis: A = εlc, where ε is molar absorptivity, l is path length, and c is concentration

Data Analysis: Identify characteristic absorption peaks, correlate with known transitions, determine sample composition, and calculate concentrations using established calibration curves.
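The calculation steps of this protocol can be sketched as follows: convert measured intensities to absorbance, fit a linear calibration line to standards, and read off an unknown concentration. The function names and all numerical values are hypothetical and serve only as an illustration; a validated method would also check linearity and residuals.

```python
import math


def absorbance(transmitted: float, incident: float) -> float:
    """A = -log10(I / I0) from transmitted and reference intensities."""
    if transmitted <= 0 or incident <= 0:
        raise ValueError("intensities must be positive")
    return -math.log10(transmitted / incident)


def fit_calibration(concentrations, absorbances):
    """Ordinary least-squares line A = slope * c + intercept."""
    n = len(concentrations)
    if n < 2 or n != len(absorbances):
        raise ValueError("need at least two matched calibration points")
    mean_c = sum(concentrations) / n
    mean_a = sum(absorbances) / n
    sxx = sum((c - mean_c) ** 2 for c in concentrations)
    sxy = sum((c - mean_c) * (a - mean_a)
              for c, a in zip(concentrations, absorbances))
    slope = sxy / sxx
    return slope, mean_a - slope * mean_c


def concentration_from_absorbance(a: float, slope: float, intercept: float) -> float:
    """Invert the calibration line to estimate concentration."""
    return (a - intercept) / slope


# Hypothetical standards (mM) and their measured absorbances
standards_mM = [0.0, 0.1, 0.2, 0.4]
measured_A = [0.002, 0.101, 0.198, 0.402]
slope, intercept = fit_calibration(standards_mM, measured_A)

# Hypothetical unknown: half of the reference intensity is transmitted
unknown_A = absorbance(transmitted=50.0, incident=100.0)
estimate = concentration_from_absorbance(unknown_A, slope, intercept)
print(f"A = {unknown_A:.3f}, estimated concentration = {estimate:.3f} mM")
```

For a 1 cm cell, the fitted slope plays the role of εl in A = εlc, expressed in the concentration units of the standards.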

Raman Spectroscopy Protocol

Objective: Obtain Raman spectrum to determine molecular structure and identify chemical compounds based on vibrational fingerprints.

Materials and Methods:

- Laser Source: Monochromatic light source (e.g., 532 nm, 785 nm lasers) [19]

- Sample Platform: Stable mounting for solid, liquid, or gaseous samples

- Filter System: Bandpass filter for laser line cleaning, longpass filter for Rayleigh rejection [19]

- Spectrometer: High-resolution instrument with diffraction grating

- Detector: CCD for visible region, InGaAs for NIR region [19]

Procedure:

- Align laser excitation source to optimally illuminate sample

- Collect scattered light at 90° or 180° geometry relative to excitation

- Filter collected light to remove elastically scattered Rayleigh component

- Disperse inelastically scattered light using spectrometer

- Detect Raman signal with appropriate detector

- Record spectrum of intensity versus Raman shift (cm⁻¹)

Data Analysis: Identify characteristic Raman shifts, assign vibrational modes, compare with reference spectra for compound identification, and determine molecular symmetry and structure.
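Because the final spectrum is plotted against Raman shift rather than wavelength, a conversion step is needed. The sketch below assumes wavelengths in nanometres and uses the standard relation Δν̃ = 10⁷/λ_excitation − 10⁷/λ_scattered; the helper names are hypothetical.

```python
def raman_shift_cm1(excitation_nm: float, scattered_nm: float) -> float:
    """Raman shift in cm^-1; positive values correspond to Stokes scattering."""
    if excitation_nm <= 0 or scattered_nm <= 0:
        raise ValueError("wavelengths must be positive")
    return 1e7 / excitation_nm - 1e7 / scattered_nm


def scattered_wavelength_nm(excitation_nm: float, shift_cm1: float) -> float:
    """Wavelength at which a band with the given Raman shift appears."""
    scattered_cm1 = 1e7 / excitation_nm - shift_cm1
    if scattered_cm1 <= 0:
        raise ValueError("shift exceeds the excitation wavenumber")
    return 1e7 / scattered_cm1


# Where does a 1000 cm^-1 Stokes band appear for the two lasers named above?
for laser_nm in (532.0, 785.0):
    print(f"{laser_nm:.0f} nm excitation: {scattered_wavelength_nm(laser_nm, 1000.0):.1f} nm")
```

The same Raman shift lands at different absolute wavelengths for different lasers, which is one reason the detector choice depends on the excitation wavelength.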

Research Reagent Solutions

Successful spectroscopic analysis requires specific materials and reagents tailored to each technique. Table 3 details essential research reagents and their functions in spectroscopic experiments.

Table 3: Essential Research Reagents for Spectroscopy Experiments

| Reagent/Material | Function | Application Examples |
|---|---|---|
| Monochromator | Wavelength selection and dispersion [16] | Isolation of specific wavelengths in absorption spectroscopy |
| ATR Crystals (ZnSe, Diamond) | Internal reflection element for sample contact | FTIR sampling of solids, liquids, and gels without preparation |
| Spectroscopic Solvents (CDCl₃, DMSO-d₆) | Deuterated solvents for NMR, in which hydrogen is replaced by deuterium | Dissolving samples for NMR analysis without interfering solvent signals |
| Bandpass Filters | Laser line cleaning [19] | Removal of unwanted wavelengths from laser source in Raman spectroscopy |
| Longpass Filters | Rayleigh line rejection [19] | Blocking of elastically scattered light in Raman detection |
| Reference Standards | Instrument calibration and quantification | Creation of calibration curves for quantitative analysis |
| Metallic Nanoparticles (Au, Ag) | Signal enhancement substrates | Surface-enhanced Raman spectroscopy (SERS) for trace detection |

Comparative Analysis of Interaction Mechanisms

Selection Guidelines for Analytical Applications

Choosing the appropriate spectroscopic technique depends on the analytical requirements, sample characteristics, and information objectives. Table 4 provides a comparative framework for selecting interaction mechanisms based on analytical needs.

Table 4: Technique Selection Guide for Analytical Applications

| Analytical Requirement | Recommended Technique | Key Advantages | Limitations |
|---|---|---|---|
| Quantitative Concentration Measurement | UV-Vis Absorption Spectroscopy | Simple operation, high precision, wide linear dynamic range | Limited structural information, potential spectral overlap |
| Functional Group Identification | Infrared Absorption Spectroscopy | Extensive spectral libraries, non-destructive, minimal sample prep | Limited sensitivity for trace analysis, water interference |
| Molecular Structure Elucidation | NMR Spectroscopy | Detailed atomic-level structural information, quantitative capability | Expensive instrumentation, relatively low sensitivity |
| Chemical Fingerprinting | Raman Scattering Spectroscopy | Minimal sample preparation, aqueous compatibility, spatial mapping | Fluorescence interference, inherently weak signal |
| Trace Analysis | Surface-Enhanced Raman Spectroscopy | Exceptional sensitivity, single-molecule detection, multiplex capability | Complex substrate preparation, potential reproducibility issues |

Complementary Information from Multiple Techniques

Integrating multiple spectroscopic approaches often provides comprehensive molecular understanding that surpasses the capabilities of individual techniques. For example, combining IR and Raman spectroscopy offers complementary vibrational information, as IR absorption requires a change in dipole moment while Raman scattering depends on polarizability changes during molecular vibrations [15] [17]. This complementary nature allows complete assignment of vibrational modes and enhanced molecular structure determination.

Similarly, correlation of UV-Vis electronic transition data with NMR structural information enables researchers to establish structure-property relationships for novel compounds. Such multi-technique approaches are particularly valuable in pharmaceutical research for characterizing active pharmaceutical ingredients (APIs), studying drug-polymer interactions in formulations, and monitoring chemical reactions in real time.

Emerging Applications in Drug Development

Spectroscopic techniques leveraging absorption, emission, and scattering mechanisms play increasingly vital roles in modern pharmaceutical research and development. Confocal Raman microscopy provides label-free chemical imaging of pharmaceutical formulations, enabling visualization of active ingredient distribution within solid dosage forms without destructive sample preparation [19]. This capability is invaluable for optimizing manufacturing processes and ensuring product quality.

UV-Vis absorption spectroscopy remains fundamental for solubility studies, dissolution testing, and pharmacokinetic analysis, while fluorescence spectroscopy offers exceptional sensitivity for monitoring protein-ligand interactions and conformational changes in biopharmaceutical characterization. Additionally, the non-destructive nature of Raman spectroscopy enables counterfeit drug identification through packaging, supporting regulatory compliance and patient safety initiatives [19].

These applications demonstrate how fundamental light-matter interaction mechanisms translate into practical analytical solutions that accelerate drug development, enhance quality control, and advance therapeutic innovation. As spectroscopic technologies continue evolving with improved sensitivity, miniaturization, and computational integration, their impact on pharmaceutical research will undoubtedly expand, enabling new approaches to complex analytical challenges.

Quantum transitions between discrete energy states are the fundamental mechanism by which matter interacts with light, creating unique spectral fingerprints that form the basis of spectroscopy. This whitepaper delineates the quantum mechanical principles governing these transitions, presents a contemporary experimental breakthrough in detecting weak transitions, and provides detailed methodologies for their study. Framed within the broader context of light-matter interaction, this guide serves as a technical resource for researchers and drug development professionals in deploying spectroscopic techniques for material and molecular analysis.

Spectroscopy, the scientific study of the interaction between light and matter, is predicated on the quantum mechanical principle that atoms and molecules can only exist in specific, discrete energy states [6]. A quantum transition occurs when a particle absorbs or emits a photon, causing it to move between these energy levels. The energy of the photon must exactly match the energy difference between the two states, as described by the Bohr frequency condition: ΔE = hν, where ΔE is the energy difference, h is Planck's constant, and ν is the frequency of the photon [6].

Every element and molecule possesses a unique set of allowed energy levels, dictated by its chemical structure, electronic configuration, and nuclear properties. When photons are absorbed or emitted at the characteristic frequencies corresponding to these energy differences, they produce a pattern of lines or bands—a spectral fingerprint—that serves as a unique identifier for the substance [6]. The strength of a transition is governed by its transition matrix element, a quantum mechanical parameter that determines the probability of the transition occurring. Transitions are categorized as "allowed" (strong) or "forbidden" (weak) based on selection rules derived from quantum mechanics.

Breaking the Scaling Law: Enhancing Weak Transitions

A significant challenge in spectroscopy is the detection of weak transitions, which have small cross-sections due to their small transition matrix elements. These transitions are often buried in noise or obscured by stronger signals. Recent research has demonstrated a method to break the traditional scaling law, which states that the absorption cross-section (σ) is proportional to the absolute square of the transition matrix element (∣T∣²) [20].

Theoretical Concept of Enhancement

The conventional approach is described by the optical theorem: σ ∝ Im(A), where for a resonance in the linear regime, the forward scattering amplitude A is proportional to ∣T∣² [20]. The new concept introduces an additional, stronger laser-coupled pathway to the same excited state. In the presence of this intense light, the response function is modified to Ã ∝ T*(T + T′), where T′ represents the contribution from the additional pathway [20]. For a weakly coupled state, if T′ can be made much larger than T, the spectral visibility of the weak transition can be significantly enhanced, effectively boosting its transition probability [20].

Experimental Demonstration in Helium

This enhancement concept was experimentally validated using attosecond transient absorption spectroscopy in helium atoms [20]. The experiment targeted the quasi-forbidden weak transitions from the ground state (1s²) to the doubly excited 2p3d and sp²,4⁻ states. The transition probability for these states is orders of magnitude lower than for the strongly coupled 2s2p state [20].

The methodology involved:

- Light Sources: A weak, broadband extreme-ultraviolet (XUV) pulse generated via high-harmonic generation in neon, and a stronger, few-cycle visible (VIS) pulse centered at 700 nm [20].

- Beam Path: The VIS and XUV pulses propagated collinearly and were focused into a helium-filled gas cell. A variable time delay (τ) was introduced between the two pulses [20].

- Detection: The transmitted XUV radiation was dispersed by a grating and detected by a CCD camera. The absorption spectrum was characterized by the optical density, OD(ω, τ) = -log₁₀[ I(ω, τ) / I₀(ω) ], where I and I₀ are the transmitted and incident XUV spectra, respectively [20].

In the absence of the VIS pulse, the weak 2p3d and sp²,4⁻ transitions were barely visible. When the VIS pulse was applied, it strongly coupled the 2s2p state (populated by the XUV) to the target 2p3d and sp²,4⁻ states via a two-VIS-photon pathway through the 2p² intermediate state. This coupling transferred quantum-state amplitude, boosting the spectral signal of the weak transitions by an order of magnitude and making their relative spectral amplitude comparable to neighboring strong lines [20].

Quantitative Data on Helium Doubly Excited States

The table below summarizes key quantitative data for the doubly excited states in helium discussed in the experimental demonstration, illustrating the relative strengths of different transitions [20].

Table 1: Transition Strengths of Selected Helium Doubly Excited States

| State Series | Example State | Energy (eV) | Relative Transition Strength from Ground State |
|---|---|---|---|
| sp²,n⁺ | sp²,3⁺ | ~63.7 | Strong |
| sp²,n⁻ | sp²,4⁻ | ~64.1 | Weak (Quasi-forbidden) |
| 2pnd | 2p3d | ~64.1 | Weak (Quasi-forbidden) |

Note: The 2p3d and sp²,4⁻ states are nearly degenerate, with an energy spacing of less than 20 meV, making them indistinguishable in the reported measurement [20].

Detailed Experimental Protocol: Attosecond Transient Absorption

This protocol details the methodology for enhancing and measuring weak quantum transitions, as demonstrated in [20].

Experimental Workflow

Step-by-Step Methodology

Beam Preparation and Delay

- Generate a broadband XUV pulse via high-harmonic generation (HHG) in a noble gas target (e.g., Neon) driven by an intense infrared laser [20].

- Split the fundamental laser beam to generate a few-cycle visible (VIS) or near-infrared pulse.

- Use a high-precision, piezo-driven split mirror or delay stage to introduce a variable time delay (τ) between the XUV and VIS pulses. The convention is that a positive τ means the XUV pulse arrives first [20].

Sample Interaction

- Recombine the XUV and VIS pulses collinearly and focus them into a gas cell or jet containing the sample atoms or molecules (e.g., Helium at ~200 mbar backing pressure) [20].

- The weak XUV pulse preferentially excites the system from the ground state to various excited states, including both strongly and weakly coupled states.

- The delayed, intense VIS pulse couples these excited states, transferring population from the strongly coupled state (e.g., 2s2p in He) to the weakly coupled state (e.g., 2p3d) via a resonant multi-photon pathway [20].

Spectral Detection

- The transmitted XUV spectrum, I(ω, τ), is dispersed using a high-resolution grating spectrometer [20].

- The dispersed light is recorded by a CCD camera as a function of photon energy (ω) and time delay (τ) [20].

- A reference spectrum, I₀(ω), is recorded without the sample or without the VIS pulse to calculate the optical density: OD(ω, τ) = -log₁₀[ I(ω, τ) / I₀(ω) ] [20].

Data Analysis

- Analyze the OD(ω, τ) map to identify spectral features that emerge or are enhanced when the XUV and VIS pulses overlap in time (τ ≈ 0).

- The enhancement of a weak transition manifests as a pronounced absorption peak or a window-type resonance (a spectral dip) that emerges once the pulses overlap in time and persists over delays comparable to the lifetime of the involved states [20].
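The detection and analysis steps above can be sketched as a small calculation: build the OD(ω, τ) map from transmitted and reference spectra, then locate the delay at which one energy bin shows the strongest absorption. The function names and the synthetic intensities are assumptions made for illustration and do not reproduce data from [20].

```python
import math


def optical_density(transmitted: float, incident: float) -> float:
    """OD = -log10(I / I0) for a single spectral bin."""
    if transmitted <= 0 or incident <= 0:
        raise ValueError("intensities must be positive")
    return -math.log10(transmitted / incident)


def od_map(transmitted_by_delay, incident_spectrum):
    """Return OD[delay_index][energy_index] from I(ω, τ) and I0(ω)."""
    return [
        [optical_density(i, i0) for i, i0 in zip(row, incident_spectrum)]
        for row in transmitted_by_delay
    ]


def delay_of_max_od(od, delays_fs, energy_index):
    """Return (delay, OD) at which the OD in one energy bin is largest."""
    column = [row[energy_index] for row in od]
    best = max(range(len(column)), key=lambda k: column[k])
    return delays_fs[best], column[best]


# Synthetic, made-up counts: three energy bins (columns), four delays (rows)
incident = [1000.0, 1000.0, 1000.0]
delays_fs = [-10.0, 0.0, 10.0, 20.0]
transmitted = [
    [900.0, 990.0, 500.0],
    [900.0, 800.0, 520.0],
    [900.0, 850.0, 510.0],
    [900.0, 950.0, 500.0],
]
od = od_map(transmitted, incident)
print(delay_of_max_od(od, delays_fs, energy_index=1))
```

In this toy data set the middle energy bin stands in for a weak line that gains visibility around temporal overlap, while the outer bins stay nearly constant with delay.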

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Key Reagents and Materials for Advanced Spectroscopic Experiments

| Item | Function / Role in Experiment |
|---|---|
| Ultrafast Laser System | Primary source for generating both the pump and probe pulses. Typically a Ti:Sapphire laser producing femtosecond pulses at near-infrared wavelengths (e.g., ~800 nm). |
| High-Harmonic Generation (HHG) Source | A gas target (e.g., Neon, Argon) where the intense laser is converted to coherent XUV pulses through non-linear interaction. |
| Gas Cell / Jet | Contains the atomic or molecular sample under study (e.g., Helium gas). Ensures a uniform density of target particles in the interaction region. |
| Monochromator / Spectrometer | Disperses the broad-band, transmitted light after interaction with the sample, allowing wavelength-resolved detection. |
| CCD Camera | Detects the dispersed light, recording the intensity as a function of photon energy to construct the absorption spectrum. |
| High-Precision Delay Stage | A piezo-actuated optical stage that controls the path length of one beam, enabling sub-femtosecond precision in the time delay between pump and probe pulses. |
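To give a sense of the mechanical precision that sub-femtosecond delay control implies, the sketch below converts mirror displacement into time delay. It assumes a retro-reflecting geometry in vacuum, where the optical path changes by twice the mirror displacement; the `passes` parameter and the function names are hypothetical.

```python
LIGHT_SPEED_M_S = 2.99792458e8  # speed of light in vacuum, m/s


def delay_from_displacement_fs(displacement_nm: float, passes: int = 2) -> float:
    """Time delay in fs for a mirror displacement in nm.

    passes=2 assumes retro-reflection, so the path changes by 2 x displacement.
    """
    path_m = passes * displacement_nm * 1e-9
    return path_m / LIGHT_SPEED_M_S * 1e15


def displacement_for_delay_nm(delay_fs: float, passes: int = 2) -> float:
    """Mirror displacement in nm needed to produce a given delay in fs."""
    path_m = delay_fs * 1e-15 * LIGHT_SPEED_M_S
    return path_m / passes * 1e9


print(f"1 fs delay needs about {displacement_for_delay_nm(1.0):.0f} nm of mirror travel")
print(f"10 nm of travel gives about {delay_from_displacement_fs(10.0) * 1000:.0f} as of delay")
```

Under these assumptions a delay step of a few tens of attoseconds corresponds to only a few nanometres of travel, which is why piezo actuation is used.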

Implications for Research and Industry

The ability to enhance weak transitions has profound implications. In fundamental physics, it enables precision tests of quantum mechanics and the study of exotic, correlated electron states [20]. For drug development and the life sciences, this methodology can be applied to boost the spectral visibility of weak but functionally critical transitions in complex biomolecules, such as proteins and nucleic acids, improving diagnostic capabilities and the understanding of molecular interactions [20]. Furthermore, the general principle of controlling quantum pathways opens avenues for manipulating chemical reactions and energy transfer processes at the quantum level.

The Beer-Lambert Law is a fundamental principle in optical spectroscopy that provides a quantitative relationship between the attenuation of light through a substance and the properties of that substance [21]. Often regarded as the most important law in optical spectroscopy, it is indispensable for the qualitative and quantitative interpretation of spectroscopic data [22]. Its development spans centuries, beginning with the work of Pierre Bouguer in 1729, who discovered the exponential decay of light intensity during his astronomical observations of the atmosphere [23] [22]. Johann Heinrich Lambert later formalized this mathematical relationship in his 1760 work Photometria, establishing that the loss of light intensity when propagating through a medium is directly proportional to both the intensity and the path length [23]. The law was completed in 1852 when August Beer extended the concept to include the concentration of colored solutions, noting that transmittance remained constant provided the product of concentration and path length stayed constant [23] [22].

The modern formulation of the law, which merges these contributions into the absorbance equation we use today, was first presented by Robert Luther in 1913 [22]. This law serves as a cornerstone in the broader thesis of light-matter interaction, providing researchers across chemistry, biology, pharmaceutical development, and environmental science with a critical tool for quantifying molecular species in solution [24]. Despite its widespread utility, modern research continues to explore the boundaries of this fundamental law, particularly through the lens of electromagnetic theory, which reveals significant limitations and opportunities for refinement in advanced spectroscopic applications [25] [22] [24].

Theoretical Foundation

Fundamental Concepts of Light Attenuation

When monochromatic light passes through a solution-containing cuvette, several physical processes occur that reduce the intensity of the transmitted light. The incident light with intensity \(I_0\) undergoes attenuation primarily through absorption by the solute molecules, though scattering and reflection at interfaces also contribute to reduced transmission [21] [26]. The fundamental quantities describing this attenuation are:

- Transmittance (T): The fraction of incident light that passes through the sample, defined as \(T = I/I_0\), where \(I\) is the transmitted intensity [21] [27]. It is often expressed as a percentage: \(\%T = (I/I_0) \times 100\%\) [21].

- Absorbance (A): A dimensionless quantity defined as the negative logarithm of transmittance: \(A = -\log_{10} T = \log_{10}(I_0/I)\) [21] [27]. This logarithmic relationship means that each unit increase in absorbance corresponds to a tenfold decrease in transmittance [21].

Table 1: Relationship Between Absorbance and Transmittance

| Absorbance (A) | Transmittance (T) | % Transmittance |
|---|---|---|
| 0 | 1 | 100% |
| 0.3 | 0.5 | 50% |
| 1 | 0.1 | 10% |
| 2 | 0.01 | 1% |
| 3 | 0.001 | 0.1% |

Mathematical Formulation

The Beer-Lambert Law establishes a linear relationship between absorbance and both the concentration of the absorbing species and the path length through the solution [21] [27] [23]. The standard mathematical form is:

\[A = \varepsilon \cdot c \cdot l\]

Where:

- \(A\) is the measured absorbance (dimensionless)

- \(\varepsilon\) is the molar absorptivity or molar extinction coefficient (typically in L·mol⁻¹·cm⁻¹)

- \(c\) is the concentration of the absorbing species (in mol·L⁻¹ or M)

- \(l\) is the optical path length through the sample (in cm) [21] [27] [28]

The molar absorptivity (\(\varepsilon\)) is a substance-specific property that measures how strongly a chemical species absorbs light at a particular wavelength [28] [29]. A higher \(\varepsilon\) value indicates a greater probability of electronic transitions and thus stronger absorption [26].

For systems with multiple absorbing species, the law becomes:

\[A = l \sum_i \varepsilon_i c_i\]

where the total absorbance equals the sum of contributions from all absorbing components [23].
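The additivity of absorbance is what makes multicomponent analysis possible. As a minimal sketch, assuming two absorbing species measured at two wavelengths, the concentrations follow from a 2×2 linear system solved here with Cramer's rule. The molar absorptivities, concentrations, and function names are hypothetical values chosen for illustration.

```python
def mixture_absorbance(epsilons, concentrations, path_cm: float = 1.0) -> float:
    """A = l * sum_i(eps_i * c_i) at a single wavelength."""
    return path_cm * sum(e * c for e, c in zip(epsilons, concentrations))


def solve_two_component(a1: float, a2: float, eps_at_l1, eps_at_l2,
                        path_cm: float = 1.0):
    """Recover (c_a, c_b) from absorbances at two wavelengths.

    eps_at_l1 and eps_at_l2 are (eps_a, eps_b) pairs at wavelength 1 and 2.
    """
    (e1a, e1b), (e2a, e2b) = eps_at_l1, eps_at_l2
    det = e1a * e2b - e1b * e2a
    if det == 0:
        raise ValueError("the two wavelengths do not discriminate the species")
    c_a = (a1 * e2b - a2 * e1b) / (det * path_cm)
    c_b = (e1a * a2 - e2a * a1) / (det * path_cm)
    return c_a, c_b


# Hypothetical molar absorptivities (L·mol^-1·cm^-1) at two wavelengths
eps_l1 = (10000.0, 2000.0)
eps_l2 = (1500.0, 8000.0)
true_conc = (2.0e-5, 5.0e-5)  # mol/L, used to simulate the measurement

a1 = mixture_absorbance(eps_l1, true_conc)
a2 = mixture_absorbance(eps_l2, true_conc)
print(solve_two_component(a1, a2, eps_l1, eps_l2))
```

The approach works best when each species dominates at one of the chosen wavelengths; nearly proportional absorptivity pairs make the determinant small and the result sensitive to noise.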

Diagram 1: Fundamental relationships in the Beer-Lambert Law showing how sample properties affect light attenuation.

Derivation from First Principles

The Beer-Lambert Law can be derived by considering the differential attenuation of light passing through an infinitesimally thin layer of absorbing medium [30] [23] [28]. For a monochromatic light beam traversing a thickness \(dx\) of a solution containing concentration \(c\) of absorbing species, the decrease in intensity \(dI\) is proportional to the incident intensity \(I\), the path length \(dx\), and the concentration \(c\):

\[ -\frac{dI}{dx} = \alpha \cdot I \cdot c \]

Where \(\alpha\) is the proportionality constant representing the absorption characteristics [30] [26]. Rearranging and integrating both sides:

\[ \int_{I_0}^{I} \frac{dI}{I} = -\alpha c \int_{0}^{l} dx \]

\[ \ln\left(\frac{I}{I_0}\right) = -\alpha c l \]

Converting from natural logarithm to base-10 logarithm:

\[ \log_{10}\left(\frac{I_0}{I}\right) = \frac{\alpha}{2.303} c l \]

Substituting \(A = \log_{10}(I_0/I)\) and \(\varepsilon = \alpha/2.303\) yields the familiar form:

\[ A = \varepsilon c l \]

This derivation assumes that: (1) the light is monochromatic, (2) the absorbing species act independently, (3) the solution is homogeneous, (4) the incident radiation is parallel and perpendicular to the surface, and (5) the concentration is sufficiently low to avoid molecular interactions [30] [26].
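The derivation can be checked numerically. The sketch below steps the differential equation through many thin layers with a simple forward-Euler scheme and compares the resulting absorbance with εcl, using ε = α/ln(10), where ln(10) ≈ 2.303. The parameter values and function names are illustrative assumptions.

```python
import math

LN10 = math.log(10.0)  # approximately 2.303, the factor between ln and log10


def integrate_intensity(i0: float, alpha: float, conc: float,
                        path_cm: float, steps: int = 100_000) -> float:
    """Propagate dI/dx = -alpha * c * I through thin layers (forward Euler)."""
    dx = path_cm / steps
    intensity = i0
    for _ in range(steps):
        intensity -= alpha * conc * intensity * dx
    return intensity


def absorbance_from_integration(alpha: float, conc: float, path_cm: float,
                                steps: int = 100_000) -> float:
    """Absorbance obtained from the numerically attenuated intensity."""
    transmitted = integrate_intensity(1.0, alpha, conc, path_cm, steps)
    return -math.log10(transmitted)


epsilon = 1.0e4          # hypothetical molar absorptivity, L·mol^-1·cm^-1
alpha = epsilon * LN10   # corresponding natural-log (Napierian) coefficient
conc = 5.0e-5            # mol/L
path_cm = 1.0

numeric = absorbance_from_integration(alpha, conc, path_cm)
analytic = epsilon * conc * path_cm
print(f"numerical A = {numeric:.5f}, Beer-Lambert A = {analytic:.5f}")
```

The two values agree to within the discretization error of the layer-by-layer integration, which shrinks as the number of steps increases.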

Practical Implementation in Research

Essential Equipment and Reagents

Table 2: Essential Research Reagents and Equipment for Beer-Lambert Law Applications

| Item | Function/Description | Typical Specifications |
|---|---|---|
| Spectrophotometer | Instrument for measuring light intensity before and after sample interaction [21] [28] | UV-Vis range (190-1100 nm); monochromator or diode array detector |
| Cuvettes | Containers for holding liquid samples during measurement [21] | Path length: 1 cm (standard); material: quartz (UV), glass (Vis) |
| Molar Extinction Coefficient Reference Materials | Substances with known ε values for calibration and verification | Holmium oxide filters (wavelength accuracy) [24]; potassium dichromate (absorbance standards) |
| Chemical Standards | High-purity compounds for preparing calibration solutions | Potassium permanganate, methyl orange, copper sulfate [24] |
| Solvent Systems | Chemically inert media for dissolving analytes | Distilled water, spectral-grade organic solvents [24] |

Experimental Protocol for Concentration Determination

The primary application of the Beer-Lambert Law in research involves determining unknown concentrations of solutions through spectrophotometric measurement [21]. The following protocol provides a detailed methodology:

Solution Preparation: Prepare a series of standard solutions with known concentrations of the analyte, typically using serial dilution techniques. Ensure the concentration range produces absorbance values between 0.1 and 1.0 AU for optimal accuracy [21] [27].

Spectrophotometer Calibration:

- Turn on the instrument and allow it to warm up for 15-30 minutes.

- Perform wavelength accuracy verification using a holmium oxide filter with known absorption peaks at 361 nm, 445 nm, and 460 nm [24].

- Set the desired analytical wavelength based on the analyte's absorption maximum.

Blank Measurement:

- Fill a cuvette with the pure solvent (without analyte).

- Place in the sample compartment and set the instrument to 100% transmittance (zero absorbance).

Standard Curve Generation:

- Measure the absorbance of each standard solution at the predetermined wavelength.

- Record both concentration and absorbance values in tabular form.

- Plot absorbance versus concentration and perform linear regression to obtain the calibration curve [21].

Unknown Sample Measurement:

- Measure the absorbance of the unknown solution under identical conditions.

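- Calculate the concentration from the regression equation. For a calibration line through the origin this reduces to (c = A/(\varepsilon l)), where the fitted slope equals (\varepsilon l); if the fit has a non-zero intercept (b), use (c = (A - b)/(\varepsilon l)).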

Quality Control:

- Verify measurement precision through replicate analyses (typically n=3).

- Analyze a certified reference material if available to assess accuracy.

Diagram 2: Experimental workflow for quantitative analysis using the Beer-Lambert Law.
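The calibration and unknown-sample steps of the protocol can be sketched in a few lines of code. The example below is a minimal illustration that fits an ordinary least-squares line to a set of standards and inverts it for an unknown. The concentrations, absorbance readings, and function names are hypothetical and do not come from any data set in the cited references.

```python
import statistics


def fit_line(x, y):
    """Ordinary least-squares fit y = slope * x + intercept."""
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def r_squared(x, y, slope, intercept):
    """Coefficient of determination of the fitted line."""
    mean_y = sum(y) / len(y)
    ss_res = sum((yi - (slope * xi + intercept)) ** 2 for xi, yi in zip(x, y))
    ss_tot = sum((yi - mean_y) ** 2 for yi in y)
    return 1.0 - ss_res / ss_tot


# Hypothetical standards (mol/L) and their absorbances, all within 0.1-1.0 AU.
conc_std = [1.0e-5, 2.0e-5, 4.0e-5, 6.0e-5, 8.0e-5]
abs_std = [0.121, 0.243, 0.482, 0.719, 0.962]

slope, intercept = fit_line(conc_std, abs_std)   # slope estimates epsilon * l
r2 = r_squared(conc_std, abs_std, slope, intercept)

# Hypothetical triplicate readings of the unknown (n = 3, as in the protocol).
replicates = [0.598, 0.602, 0.600]
mean_abs = statistics.mean(replicates)
spread = statistics.stdev(replicates)

# Only interpolate: the unknown must fall inside the calibrated absorbance range.
if not min(abs_std) <= mean_abs <= max(abs_std):
    raise ValueError("unknown lies outside the calibrated range; dilute and re-measure")

conc_unknown = (mean_abs - intercept) / slope

print(f"slope = {slope:.1f} L/mol, intercept = {intercept:.4f}, R^2 = {r2:.5f}")
print(f"unknown: A = {mean_abs:.3f} (s = {spread:.3f}), c = {conc_unknown:.3e} mol/L")
```

Inverting the full regression line, rather than dividing by the slope alone, keeps a small non-zero intercept from biasing the result.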

Advanced Application: Multi-Component Analysis

For systems containing multiple absorbing species with overlapping absorption bands, the Beer-Lambert Law can be extended through matrix algebra [23]. The total absorbance at wavelength (i) is:

[A_i = l \sum_{j=1}^{n} \varepsilon_{ij} c_j]

Where (\varepsilon_{ij}) is the molar absorptivity of component (j) at wavelength (i). Measurements at multiple wavelengths ((i = 1, 2, ..., m)) yield a system of equations:

[\begin{bmatrix}A_1 \\ A_2 \\ \vdots \\ A_m\end{bmatrix} = l \begin{bmatrix}\varepsilon_{11} & \varepsilon_{12} & \cdots & \varepsilon_{1n} \\ \varepsilon_{21} & \varepsilon_{22} & \cdots & \varepsilon_{2n} \\ \vdots & \vdots & \ddots & \vdots \\ \varepsilon_{m1} & \varepsilon_{m2} & \cdots & \varepsilon_{mn}\end{bmatrix} \begin{bmatrix}c_1 \\ c_2 \\ \vdots \\ c_n\end{bmatrix}]

This approach requires prior knowledge of the molar absorptivity matrix, typically determined by measuring pure standards of each component at all analytical wavelengths.
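As an illustration of the matrix formulation, the sketch below resolves a two-component mixture measured at three wavelengths by solving the least-squares normal equations. It is a minimal example restricted to two components; the molar absorptivities, concentrations, and function name are hypothetical, and a general implementation would normally rely on a linear algebra library.

```python
def solve_two_components(eps, absorbances, path_cm=1.0):
    """Least-squares concentrations of two species from m >= 2 wavelengths.

    eps[i] = (eps_i1, eps_i2) holds the molar absorptivities at wavelength i.
    Solves the normal equations (E^T E) c = E^T A / l with Cramer's rule.
    """
    s11 = sum(e1 * e1 for e1, _ in eps)
    s12 = sum(e1 * e2 for e1, e2 in eps)
    s22 = sum(e2 * e2 for _, e2 in eps)
    b1 = sum(e1 * a for (e1, _), a in zip(eps, absorbances)) / path_cm
    b2 = sum(e2 * a for (_, e2), a in zip(eps, absorbances)) / path_cm
    det = s11 * s22 - s12 * s12
    if abs(det) < 1e-12 * s11 * s22:
        # Nearly proportional spectra cannot be separated.
        raise ValueError("component spectra are too similar to resolve")
    c1 = (b1 * s22 - b2 * s12) / det
    c2 = (s11 * b2 - s12 * b1) / det
    return c1, c2


# Hypothetical molar absorptivities (L·mol^-1·cm^-1) at three wavelengths.
eps = [(12000.0, 1500.0), (4000.0, 9000.0), (800.0, 6000.0)]
true_conc = (3.0e-5, 5.0e-5)   # mol/L, used only to simulate the measurement
path_cm = 1.0

# Simulated mixture absorbances: A_i = l * sum_j eps_ij * c_j
mixture_abs = [path_cm * (e1 * true_conc[0] + e2 * true_conc[1]) for e1, e2 in eps]

c1, c2 = solve_two_components(eps, mixture_abs, path_cm)
print(f"recovered c1 = {c1:.3e} mol/L, c2 = {c2:.3e} mol/L")
```

Measuring at more wavelengths than components (m > n) over-determines the system, so measurement noise is averaged rather than passed directly into the concentrations.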

Limitations and Modern Theoretical Refinements

Fundamental Limitations of the Classical Law

Despite its widespread utility, the Beer-Lambert Law has several significant limitations that researchers must recognize:

High Concentration Effects: At elevated concentrations (typically >0.01 M), the average distance between absorbing molecules decreases, leading to electrostatic interactions that alter absorption characteristics [30] [25] [24]. The refractive index of the solution may also change significantly with concentration, violating one of the law's fundamental assumptions [25] [24].

Chemical Deviations: Molecular associations such as dimerization or complex formation at higher concentrations change the absorption spectrum [25]. Shifts in chemical equilibrium due to changes in pH, temperature, or solvent composition can also cause deviations [24].

Instrumental Deviations: The use of polychromatic light sources causes deviations because molar absorptivity varies with wavelength [25] [29]. Stray light reaching the detector without passing through the sample similarly violates the law's assumptions [25].

Electromagnetic Effects: The classical derivation neglects the wave nature of light, including interference effects that become significant in thin films or at interfaces between media with different refractive indices [25] [22]. Multiple reflections in cuvettes with parallel windows can create etalon effects that distort measurements [25].

Table 3: Common Limitations and Practical Solutions

| Limitation Type | Underlying Cause | Practical Mitigation Strategies |
|---|---|---|
| Fundamental Deviations | High concentration effects; refractive index changes | Dilute samples to <0.01 M; use shorter path length cuvettes |
| Chemical Deviations | Molecular interactions; equilibrium shifts | Control pH, temperature; use chemical buffers |
| Instrumental Deviations | Polychromatic light; stray light; detector nonlinearity | Use narrow bandwidth; double-beam instruments; regular calibration |
| Electromagnetic Effects | Interference; scattering; reflection losses | Use non-parallel cuvettes; index-matching techniques |
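To illustrate why stray light matters most at high absorbance, the sketch below uses the common textbook model in which a constant fraction (s) of the incident intensity reaches the detector without passing through the sample, giving an apparent absorbance of (-\log_{10}[(T + s)/(1 + s)]). This model and the stray-light level used are assumptions introduced for illustration; they are not taken from the cited references.

```python
import math


def apparent_absorbance(true_abs, stray_fraction):
    """Absorbance reported when a fraction of I0 bypasses the sample as stray light."""
    transmittance = 10.0 ** (-true_abs)
    return -math.log10((transmittance + stray_fraction) / (1.0 + stray_fraction))


stray = 0.001   # hypothetical stray-light level: 0.1 % of the incident intensity

for true_abs in (0.5, 1.0, 2.0, 3.0):
    observed = apparent_absorbance(true_abs, stray)
    error_pct = 100.0 * (observed - true_abs) / true_abs
    print(f"true A = {true_abs:.1f}  observed A = {observed:.3f}  error = {error_pct:.1f} %")
```

Under this model the negative bias grows rapidly once the transmitted intensity approaches the stray-light level, which is one reason calibration ranges are usually kept to moderate absorbance values.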

Electromagnetic Theory Refinements

Recent research has addressed the limitations of the classical Beer-Lambert Law through electromagnetic theory, which provides a more rigorous foundation for light-matter interactions [22] [24]. The classical law assumes light propagates as rays rather than waves, neglecting polarization effects and the complex refractive index.

The electromagnetic approach begins with the complex refractive index:

[\hat{n} = n + ik]

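Where (n) is the real part (governing refraction) and (k) is the imaginary part (governing absorption). The absorption coefficient of the medium, (\alpha) (here a Napierian coefficient per unit length, not the per-concentration constant used in the derivation above), relates to (k) through: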

[k = \frac{\alpha}{4\pi\nu}]

Where (\nu) is the wavenumber. For dilute solutions, the refractive index can be approximated as:

[n \approx 1 + c\frac{N_A\alpha'}{2\epsilon_0}]

Where (\alpha') is the polarizability and (N_A) is Avogadro's number. This leads to:

[k \approx \beta c]

Which recovers the classical Beer-Lambert Law at low concentrations [24]. However, at higher concentrations, higher-order terms become significant:

[k = \beta c + \gamma c^2 + \delta c^3]

This results in a modified absorbance expression:

[A = \frac{4\pi\nu}{\ln 10}(\beta c + \gamma c^2 + \delta c^3)l]

This electromagnetic extension successfully models the non-linear behavior observed at high concentrations, where molecular interactions and local field effects become significant [24]. Experimental validation with potassium permanganate, potassium dichromate, methyl orange, copper sulfate, and iron chloride solutions demonstrates superior performance compared to the classical law, with root mean square errors below 0.06 for all tested materials [24].
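The size of the non-linear correction can be explored with a short calculation. The sketch below evaluates the modified absorbance expression alongside its linear (classical) limit. The coefficients (\beta) and (\gamma), the wavenumber, and the function name are hypothetical values chosen for illustration and are not the fitted parameters reported in [24].

```python
import math


def absorbance_extended(conc, path_cm, wavenumber_cm, beta, gamma=0.0, delta=0.0):
    """A = (4*pi*nu / ln 10) * (beta*c + gamma*c^2 + delta*c^3) * l."""
    k = beta * conc + gamma * conc**2 + delta * conc**3
    return 4.0 * math.pi * wavenumber_cm * k * path_cm / math.log(10)


# Hypothetical coefficients for illustration only; not fitted to any data set.
wavenumber_cm = 19000.0   # cm^-1 (about 526 nm)
beta = 1.0e-3             # L/mol
gamma = -0.02             # L^2/mol^2
path_cm = 1.0             # cm

for conc in (1e-3, 5e-3, 1e-2, 2e-2):
    linear = absorbance_extended(conc, path_cm, wavenumber_cm, beta)
    full = absorbance_extended(conc, path_cm, wavenumber_cm, beta, gamma)
    deviation_pct = 100.0 * (full - linear) / linear
    print(f"c = {conc:.0e} M: linear A = {linear:.3f}, "
          f"extended A = {full:.3f}, deviation = {deviation_pct:.1f} %")
```

With the higher-order coefficients set to zero the function reduces to (A = \varepsilon c l) with (\varepsilon = 4\pi\nu\beta/\ln 10), so the same routine covers both the classical and the extended model.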

Applications in Pharmaceutical Research and Development

The Beer-Lambert Law finds extensive application in drug development, where precise quantification of chemical compounds is essential throughout the research, development, and manufacturing processes:

API Quantification: Determination of active pharmaceutical ingredient (API) concentration in bulk solutions and formulated products using validated spectrophotometric methods [28].

Dissolution Testing: Monitoring the release profile of APIs from solid dosage forms by measuring concentration in dissolution media at specific time points [28].

Impurity Profiling: Detection and quantification of trace impurities and degradation products through differential spectrophotometry, often employing multi-wavelength analysis [28].

Biomolecule Analysis: Quantification of proteins, nucleic acids, and other biomolecules using their characteristic absorption bands (e.g., proteins at 280 nm, DNA at 260 nm).

Pharmacokinetic Studies: Measuring drug concentrations in biological fluids during ADME (absorption, distribution, metabolism, excretion) studies, often after appropriate sample preparation to eliminate matrix effects.

The robustness of Beer-Lambert-based methods makes them indispensable for quality control in pharmaceutical manufacturing, where they are incorporated into numerous pharmacopeial monographs and regulatory submission documents.

The Beer-Lambert Law remains a cornerstone of analytical spectroscopy, providing an essential link between absorbance measurements and chemical concentration. Its mathematical elegance and practical utility have ensured its continued relevance across diverse scientific disciplines for nearly three centuries. However, modern research has clearly delineated its limitations, particularly at high concentrations where electromagnetic effects and molecular interactions become significant.

The ongoing refinement of this fundamental law through electromagnetic theory represents an important advancement in spectroscopic science, offering more accurate models for quantitative analysis under non-ideal conditions. For researchers in drug development and other applied fields, understanding both the classical formulation and its modern extensions is crucial for designing robust analytical methods and correctly interpreting spectroscopic data.

As spectroscopic techniques continue to evolve, the Beer-Lambert Law will undoubtedly remain central to quantitative analysis while simultaneously serving as a foundation for developing more sophisticated models of light-matter interaction that push the boundaries of analytical science.

A Practical Guide to Spectroscopic Techniques in Drug Discovery and Development

Ultraviolet-Visible (UV-Vis) spectroscopy operates on the fundamental principle of light-matter interaction, specifically the absorption of electromagnetic radiation in the ultraviolet (190-400 nm) and visible (400-800 nm) regions by molecules [31] [32]. This absorption occurs when photons carrying specific amounts of energy interact with molecular electrons, promoting them from their ground state to higher energy excited states [32]. The energy of a photon is inversely proportional to its wavelength, meaning shorter ultraviolet wavelengths carry more energy than longer visible wavelengths, which directly influences which electronic transitions can be excited [33].

The technique measures this interaction quantitatively, providing data that can be used for both identification and concentration determination of analytes [31]. The specific wavelengths absorbed, and the extent of absorption, create a characteristic absorption spectrum that serves as a molecular fingerprint, influenced by the molecular structure, particularly the presence of chromophores—functional groups with electrons that can be excited at these energy levels [32]. When a compound absorbs light in the visible region, it appears to the human eye as the complementary color to the wavelength absorbed; for instance, absorption around 500-520 nm (green) makes a substance appear red [32].
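The inverse relationship between photon energy and wavelength follows from (E = hc/\lambda). As a brief illustration, the sketch below evaluates this relation at the edges of the UV and visible regions quoted above, using the exact SI values of the physical constants; the function names are introduced here for illustration only.

```python
PLANCK = 6.62607015e-34          # J·s
LIGHT_SPEED = 2.99792458e8       # m/s
ELECTRON_VOLT = 1.602176634e-19  # J per eV
AVOGADRO = 6.02214076e23         # mol^-1


def photon_energy_joule(wavelength_nm):
    """Energy of a single photon, E = h*c/lambda."""
    return PLANCK * LIGHT_SPEED / (wavelength_nm * 1e-9)


def photon_energy_ev(wavelength_nm):
    """Photon energy in electronvolts."""
    return photon_energy_joule(wavelength_nm) / ELECTRON_VOLT


def photon_energy_kj_per_mol(wavelength_nm):
    """Energy of one mole of photons in kJ/mol."""
    return photon_energy_joule(wavelength_nm) * AVOGADRO / 1000.0


for wavelength_nm in (190, 400, 800):
    print(f"{wavelength_nm} nm: {photon_energy_ev(wavelength_nm):.2f} eV, "
          f"{photon_energy_kj_per_mol(wavelength_nm):.0f} kJ/mol")
```

Halving the wavelength doubles the photon energy, which is why far-UV light can drive transitions that visible light cannot.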

Electronic Transitions and Spectral Interpretation

Types of Electronic Transitions

The absorption of UV or visible light energy promotes electrons in a molecule from the highest occupied molecular orbital (HOMO) to the lowest unoccupied molecular orbital (LUMO) [32]. The primary transitions involved for organic molecules are summarized in the table below.

Table 1: Common Electronic Transitions in UV-Vis Spectroscopy

| Transition Type | Electron Origin | Typical Wavelength Range | Example Chromophores | Molar Absorptivity (ε, L·mol⁻¹·cm⁻¹) |
|---|---|---|---|---|
| σ → σ* | Sigma bonding orbital | < 200 nm (Far UV) | C-C, C-H | High |
| n → σ* | Non-bonding orbital | 150 - 250 nm | H₂O, CH₃OH, CH₃Cl | Medium (100-3000) |
| π → π* | Pi bonding orbital | 200 - 700 nm (Conjugated) | Alkenes, Carbonyls, Aromatics | High (10,000-250,000) |
| n → π* | Non-bonding orbital | 250 - 400 nm | C=O, NO₂ | Low (10-100) |