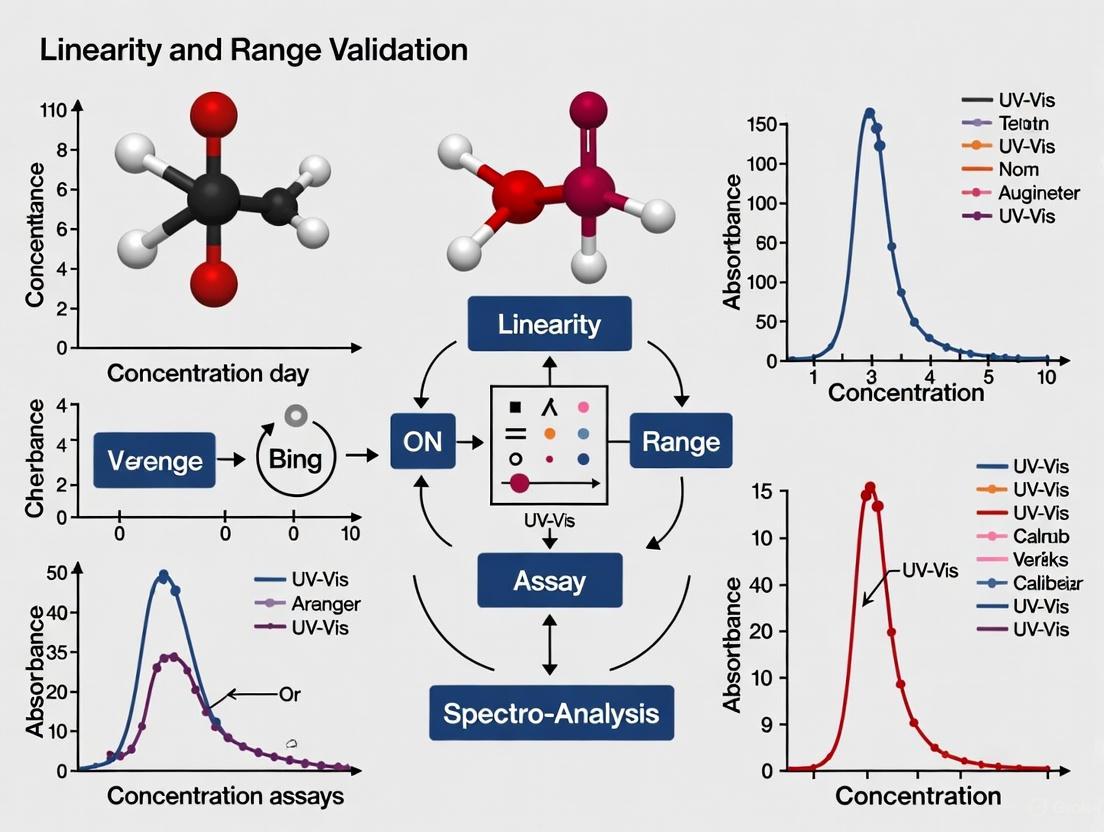

Linearity and Range Validation for UV-Vis Concentration Assays: A Comprehensive Guide for Robust Method Development

Abstract

This article provides a complete framework for validating the linearity and range of UV-Vis spectrophotometric assays, essential for reliable concentration determination in pharmaceutical and biomedical research. It bridges foundational theory with practical application, guiding professionals from core concepts like regression analysis and acceptance criteria through method implementation and optimization. The content further addresses advanced troubleshooting for heteroscedasticity and non-linearity, culminating in rigorous validation protocols and comparative analysis with techniques like HPLC. Aligned with ICH, FDA, and CLIA guidelines, this guide equips scientists to develop accurate, precise, and compliant analytical methods for drug development and quality control.

Core Principles: Defining Linearity, Range, and Regulatory Expectations for UV-Vis Assays

The Critical Role of the Calibration Curve in Bioanalytical Methods

In the realm of bioanalytical chemistry, the calibration curve serves as the fundamental bridge between instrumental response and analyte concentration, enabling researchers to transform raw data into meaningful quantitative results. This relationship is particularly crucial in UV-Vis concentration assays, where the accurate determination of analyte levels in complex matrices directly impacts decisions in pharmaceutical development and clinical research. A calibration curve, also known as a standard curve, represents a deterministic model that predicts unknown sample concentrations based on the instrument's response to known standards [1].

The theoretical foundation of UV-Vis spectrophotometry rests on the Beer-Lambert Law (A = εbc), which establishes a linear relationship between absorbance (A) and analyte concentration (c), with ε representing the molar absorptivity and b the path length [2]. In practice, analysts rely on an empirically constructed calibration curve rather than on the law alone, because the curve captures matrix effects and instrument-specific variation that a tabulated ε cannot. This makes concentration estimates for unknown samples more reliable. The linearity and range of this relationship are therefore critical validation parameters that determine whether an analytical method is suitable for its intended purpose [1].
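A minimal Python sketch of the rearrangement c = A/(εb) follows. The NADH molar absorptivity at 340 nm (about 6220 L·mol⁻¹·cm⁻¹) is an illustrative assumption not taken from this article, and `concentration_from_absorbance` is a hypothetical helper name. In a validated assay, the fitted calibration slope takes the place of εb.

```python
def concentration_from_absorbance(absorbance: float,
                                  molar_absorptivity: float,
                                  path_length_cm: float = 1.0) -> float:
    """Rearrange the Beer-Lambert law A = epsilon * b * c to solve for c (mol/L)."""
    if molar_absorptivity <= 0 or path_length_cm <= 0:
        raise ValueError("epsilon and path length must be positive")
    return absorbance / (molar_absorptivity * path_length_cm)


# Illustrative values: NADH at 340 nm (epsilon ~ 6220 L/(mol*cm)), 1 cm cuvette.
EPSILON_NADH_340 = 6220.0
c = concentration_from_absorbance(0.311, EPSILON_NADH_340, 1.0)
print(f"{c * 1e6:.1f} umol/L")  # prints 50.0 umol/L
```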

Calibration Approaches: A Comparative Analysis

Bioanalytical methods employ different calibration approaches depending on the required dynamic range, precision needs, and regulatory considerations. The selection of an appropriate calibration strategy significantly impacts the accuracy, precision, and efficiency of quantitative analysis.

Table 1: Comparison of Calibration Approaches in Bioanalysis

| Approach | Description | Concentration Levels | Typical Applications | Advantages | Limitations |
|---|---|---|---|---|---|
| Single-Point | Uses one calibrator concentration | 1 level | Content uniformity testing, narrow range samples | Simple, fast, minimal standards | Assumes linearity through origin, limited dynamic range |
| Two-Point | Uses calibrators at two concentrations | 2 levels | Methods with narrow range (<1 order of magnitude) | Simple, brackets expected concentrations | Limited dynamic range, may not detect non-linearity |
| Multi-Point Linear | Multiple concentrations across range | 5-8 levels (regulated) | Wide dynamic range methods, regulatory studies | Demonstrates linearity, robust statistics | Time-consuming, resource-intensive |
| Weighted Regression | Applies statistical weighting | 5-8 levels | Wide concentration ranges with heteroscedasticity | Improves accuracy at range extremes | More complex data processing |

Scientific Evidence: Two vs. Multi-Point Calibration

Recent scientific investigations have challenged conventional calibration practices. A comprehensive study comparing different linear calibration approaches for LC-MS bioanalysis demonstrated that two-concentration linear calibration can provide accuracy equivalent to or better than traditional multi-concentration approaches while offering significant time and cost savings [3]. This research revealed that reducing the number of concentration levels while increasing replicates at each level (5-6 replicates per concentration) improved reliability and independence from weighting factors.
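To make the two-concentration idea concrete, the sketch below fits a straight line through the replicate means at two levels and back-calculates an unknown. It is a hypothetical Python illustration, not the procedure of the cited LC-MS study [3]; the function name, concentrations, and absorbances are all invented for the example.

```python
from statistics import mean


def two_point_calibration(low_conc, low_responses, high_conc, high_responses):
    """Straight line through the replicate means at two calibration levels.

    Returns (slope, intercept). Averaging replicates at each level reduces
    random error, which is the motivation for trading levels for replicates.
    """
    y_low, y_high = mean(low_responses), mean(high_responses)
    slope = (y_high - y_low) / (high_conc - low_conc)
    intercept = y_low - slope * low_conc
    return slope, intercept


# Hypothetical absorbance readings, five replicates per level.
low = [0.101, 0.099, 0.100, 0.102, 0.098]   # 1.0 ug/mL standard
high = [1.005, 0.995, 1.000, 0.998, 1.002]  # 10.0 ug/mL standard
m, b = two_point_calibration(1.0, low, 10.0, high)
unknown = (0.550 - b) / m                   # back-calculate a sample reading
print(f"slope={m:.4f}, intercept={b:.4f}, unknown={unknown:.2f} ug/mL")
```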

However, regulatory guidelines typically recommend a minimum of five to six concentration levels for linear calibration curves in validated bioanalytical methods [1] [3]. This apparent conflict highlights the need for method-specific validation to determine the optimal calibration approach based on precision requirements, dynamic range, and analytical instrumentation.

Experimental Protocols for Calibration Curve Development

Standard Solution Preparation and Serial Dilution

The foundation of a reliable calibration curve lies in the careful preparation of standard solutions. The following protocol outlines the critical steps for generating calibration standards for UV-Vis assays:

Stock Solution Preparation: Accurately weigh the reference standard and transfer it to an appropriate volumetric flask. Dissolve it in a compatible solvent (e.g., water, methanol, or mobile phase) and dilute to volume to obtain a concentrated stock solution of known concentration [4] [5].

Serial Dilution Scheme: Prepare a series of working standards through serial dilution. A minimum of five standards is recommended, with concentrations spaced relatively equally across the expected range [2] [4]. For wide dynamic ranges, exponential dilution schemes (e.g., 1, 2, 5, 10, 20, 50, 100 μg/mL) often provide better distribution than linear schemes [6].
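The arithmetic behind a serial dilution is C₁V₁ = C₂V₂. The following sketch assumes a hypothetical 1000 μg/mL stock and 10 mL volumetric flasks, and plans the 1, 2, 5, 10, 20, 50, 100 μg/mL series by preparing each standard from the next-higher one. `serial_dilution_plan` is an illustrative name, not a library function.

```python
def serial_dilution_plan(stock_conc, targets, final_volume_ml):
    """Plan a serial dilution: each standard is made from the next-higher one.

    Uses C1*V1 = C2*V2, so the aliquot V1 = C2 * V2 / C1 is pipetted from the
    parent solution and diluted to final_volume_ml in a volumetric flask.
    Returns (target, parent_conc, aliquot_ml) tuples, highest target first.
    """
    plan = []
    parent = stock_conc
    for target in sorted(targets, reverse=True):
        if target > parent:
            raise ValueError("each target must not exceed its parent concentration")
        aliquot = target * final_volume_ml / parent
        plan.append((target, parent, aliquot))
        parent = target
    return plan


# Hypothetical: 1000 ug/mL stock, 10 mL Class A flasks.
series = [1, 2, 5, 10, 20, 50, 100]
for target, parent, aliquot in serial_dilution_plan(1000, series, 10.0):
    print(f"{target:>4} ug/mL: {aliquot:.1f} mL of {parent} ug/mL, make up to 10 mL")
```

Because each step draws from the previous standard, a pipetting error propagates to every lower standard. Preparing each level directly from the stock avoids this, at the cost of very small aliquots at the low end.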

Quality Control: Include system suitability tests and quality control samples prepared independently from the calibration standards to verify method performance [1].

Instrumental Analysis and Data Collection

The experimental workflow for generating and validating a calibration curve involves systematic data collection followed by statistical evaluation.

For UV-Vis spectrophotometric analysis:

Instrument Setup: Configure the UV-Vis spectrophotometer according to validated parameters, including wavelength selection, slit width, and detector settings [7] [8]. Perform necessary instrument validation checks for wavelength accuracy, photometric accuracy, and stray light [8].

Blank Correction: Measure the absorbance of the blank solution (containing all components except the analyte) and use this for baseline correction [2].

Standard Measurement: Measure each calibration standard in replicate (typically n=3) to assess precision [4]. The order of analysis should be randomized to minimize systematic errors.

Data Recording: Record absorbance values for each standard concentration. For HPLC-UV methods, peak areas are typically used rather than peak heights [6].
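The measurement steps above (randomized order, blank correction, triplicate readings) can be sketched as follows. These are hypothetical Python helpers that assume a single blank reading is subtracted from every raw absorbance. Many instruments instead zero on the blank directly, which achieves the same correction.

```python
import random
from statistics import mean, stdev


def randomized_run_order(levels, replicates, seed=None):
    """Return a shuffled list of (level, replicate_index) readings."""
    runs = [(level, r) for level in levels for r in range(replicates)]
    random.Random(seed).shuffle(runs)
    return runs


def summarize(readings, blank):
    """Blank-correct each reading, then report mean and %RSD per level."""
    summary = {}
    for level, values in readings.items():
        corrected = [v - blank for v in values]
        m = mean(corrected)
        summary[level] = (m, 100 * stdev(corrected) / m)
    return summary


order = randomized_run_order([2, 4, 6, 8, 10], replicates=3, seed=1)
# Hypothetical raw absorbances (n = 3) and a blank reading of 0.012.
raw = {2: [0.112, 0.114, 0.110], 10: [0.512, 0.508, 0.516]}
s = summarize(raw, blank=0.012)
for level, (m, rsd) in s.items():
    print(f"{level} ug/mL: mean A = {m:.3f}, RSD = {rsd:.2f}%")
```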

Statistical Evaluation of Linearity and Range

Regression Analysis and Model Selection

The mathematical treatment of calibration data requires careful selection of appropriate regression models based on statistical evaluation of the response-concentration relationship:

Linear Regression: For most UV-Vis assays, ordinary least squares (OLS) regression with the model y = mx + b is applied, where y represents instrument response, x is concentration, m is slope, and b is the y-intercept [7] [4].

Weighted Regression: When heteroscedasticity exists (variance changes with concentration), weighted least squares regression (WLSLR) should be employed. Common weighting factors include 1/x, 1/x², or 1/y [1]. Neglecting weighting for heteroscedastic data can cause precision loss of up to one order of magnitude in the low concentration region [1].
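A weighted straight-line fit with the common empirical weighting factors can be written in a few lines. In this sketch, `weighted_linear_fit` is a hypothetical helper and the data are synthetic: an ideal response y = x from 0.1 to 1000, with the top standard reading 0.5% high. Comparing the back-calculated lowest standard with no weighting and with 1/x² weighting shows how the high-concentration points dominate an unweighted fit.

```python
def weighted_linear_fit(x, y, scheme="1/x^2"):
    """Weighted least-squares straight line y = m*x + b.

    Minimizes sum(w_i * (y_i - m*x_i - b)^2) with empirical weights:
    "1/x", "1/x^2", "1/y", or "none" (ordinary least squares).
    """
    weight_fns = {
        "none": lambda xi, yi: 1.0,
        "1/x": lambda xi, yi: 1.0 / xi,
        "1/x^2": lambda xi, yi: 1.0 / xi ** 2,
        "1/y": lambda xi, yi: 1.0 / yi,
    }
    w = [weight_fns[scheme](xi, yi) for xi, yi in zip(x, y)]
    sw = sum(w)
    xw = sum(wi * xi for wi, xi in zip(w, x)) / sw   # weighted mean of x
    yw = sum(wi * yi for wi, yi in zip(w, y)) / sw   # weighted mean of y
    sxx = sum(wi * (xi - xw) ** 2 for wi, xi in zip(w, x))
    sxy = sum(wi * (xi - xw) * (yi - yw) for wi, xi, yi in zip(w, x, y))
    slope = sxy / sxx
    return slope, yw - slope * xw


# Synthetic wide-range data: exact response y = x except a 0.5% high top standard.
x = [0.1, 1.0, 10.0, 100.0, 1000.0]
y = [0.1, 1.0, 10.0, 100.0, 1005.0]
for scheme in ("none", "1/x^2"):
    m, b = weighted_linear_fit(x, y, scheme)
    back_calc = (y[0] - b) / m
    print(f"{scheme:>6}: lowest standard reads {back_calc:.4f} (nominal 0.1)")
```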

Through-Origin Consideration: The decision to force the curve through the origin (b=0) should be based on statistical testing. If the calculated y-intercept is less than one standard error away from zero, the curve can be forced through the origin [6].
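The sketch below fits y = mx + b by ordinary least squares, computes the standard error of the intercept, s·√(1/n + x̄²/Sxx), and applies the one-standard-error rule described above before refitting through the origin. The data and function names are hypothetical. A formal t-test on the intercept is a common, stricter alternative to this rule of thumb.

```python
from math import sqrt


def ols_with_intercept_se(x, y):
    """Ordinary least squares y = m*x + b plus the standard error of b."""
    n = len(x)
    x_bar, y_bar = sum(x) / n, sum(y) / n
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    m = sxy / sxx
    b = y_bar - m * x_bar
    sse = sum((yi - (m * xi + b)) ** 2 for xi, yi in zip(x, y))
    s = sqrt(sse / (n - 2))                       # residual standard deviation
    se_b = s * sqrt(1 / n + x_bar ** 2 / sxx)     # standard error of intercept
    return m, b, se_b


def slope_through_origin(x, y):
    """Least-squares slope of the model y = m*x (intercept fixed at zero)."""
    return sum(xi * yi for xi, yi in zip(x, y)) / sum(xi * xi for xi in x)


# Hypothetical five-level calibration (ug/mL vs absorbance).
conc = [1, 2, 3, 4, 5]
absorb = [0.10, 0.21, 0.29, 0.41, 0.49]
m, b, se_b = ols_with_intercept_se(conc, absorb)
force_origin = abs(b) < se_b                      # one-standard-error rule of thumb
m0 = slope_through_origin(conc, absorb) if force_origin else None
print(f"b = {b:.4f}, SE(b) = {se_b:.4f}, force through origin: {force_origin}")
```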

Assessment of Calibration Curve Quality

The reliability of a calibration curve is evaluated using multiple statistical parameters that collectively demonstrate method suitability:

Table 2: Statistical Criteria for Calibration Curve Acceptance

| Parameter | Calculation Method | Acceptance Criteria | Practical Significance |
|---|---|---|---|
| Coefficient of Determination (R²) | Square of correlation coefficient between actual and predicted Y values | Typically >0.99 for linear methods | Measures goodness of fit but insufficient alone for linearity assessment |
| Correlation Coefficient (r) | Measure of strength of relationship between X and Y | Close to 1.0 | Limited value for linearity demonstration; can be high for curved relationships |
| Back-Calculated Accuracy | (Calculated concentration/Nominal concentration) × 100% | ±15% bias (±20% at LLOQ) | Confirms practical accuracy across calibration range |
| Residual Analysis | Difference between observed and predicted values | Random distribution around zero | Identifies systematic errors and non-linearity |
| Lack-of-Fit Test | Statistical test for model adequacy | p > 0.05 | Confirms linear model is appropriate for the data |
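The lack-of-fit test in Table 2 needs replicate measurements. It splits the residual sum of squares into pure error (scatter of replicates about their level mean) and lack of fit (distance of the level means from the line), then compares the two with an F-statistic. Below is a minimal Python sketch using hypothetical duplicate data. If SciPy is available, `scipy.stats.f.sf(f_lof, df1, df2)` gives the p-value to compare against 0.05.

```python
from collections import defaultdict


def lack_of_fit_f(x, y):
    """Lack-of-fit F-test for a straight line; requires replicates at some x.

    SSE is split into pure error (scatter of replicates about their level mean)
    and lack of fit (distance of level means from the fitted line).
    Returns (F, df_lack_of_fit, df_pure_error).
    """
    n = len(x)
    x_bar, y_bar = sum(x) / n, sum(y) / n
    slope = (sum((a - x_bar) * (b - y_bar) for a, b in zip(x, y))
             / sum((a - x_bar) ** 2 for a in x))
    intercept = y_bar - slope * x_bar
    groups = defaultdict(list)
    for xi, yi in zip(x, y):
        groups[xi].append(yi)
    ss_pe = ss_lof = 0.0
    for xi, ys in groups.items():
        mean_j = sum(ys) / len(ys)
        ss_pe += sum((v - mean_j) ** 2 for v in ys)
        ss_lof += len(ys) * (mean_j - (intercept + slope * xi)) ** 2
    c = len(groups)                               # number of distinct levels
    df_lof, df_pe = c - 2, n - c
    return (ss_lof / df_lof) / (ss_pe / df_pe), df_lof, df_pe


# Hypothetical duplicates at three levels.
conc = [1, 1, 2, 2, 3, 3]
resp = [1.0, 1.2, 2.1, 2.3, 2.9, 3.1]
f_lof, df1, df2 = lack_of_fit_f(conc, resp)
print(f"F_LOF({df1}, {df2}) = {f_lof:.2f}")
```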

The linear range of an assay is determined as the concentration interval over which the response-concentration relationship remains linear with acceptable accuracy and precision [1]. This is established by analyzing successively higher standards until the recovery falls outside acceptable limits (typically ±10% of true value) [9].
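Back-calculated recovery and the search for the upper end of the linear range can be sketched as follows. The sketch assumes an already-fitted calibration (hypothetical slope 0.1 AU per μg/mL, zero intercept) and the ±10% recovery window mentioned above. The probe standards and function names are invented for illustration.

```python
def back_calculated_recovery(response, nominal, slope, intercept):
    """Recovery (%) = back-calculated concentration / nominal x 100."""
    return 100 * ((response - intercept) / slope) / nominal


def upper_linear_limit(standards, slope, intercept, tolerance_pct=10.0):
    """Walk up through (nominal, response) pairs in ascending order and return
    the highest nominal concentration before recovery first leaves
    100 +/- tolerance_pct. Returns None if the lowest standard already fails."""
    upper = None
    for nominal, response in sorted(standards):
        rec = back_calculated_recovery(response, nominal, slope, intercept)
        if abs(rec - 100) > tolerance_pct:
            break
        upper = nominal
    return upper


# Hypothetical successively higher standards probing the top of the range.
probe = [(10, 1.00), (20, 2.00), (40, 3.90), (80, 6.90)]
for nominal, response in probe:
    print(nominal, round(back_calculated_recovery(response, nominal, 0.1, 0.0), 1))
print("upper limit of linear range:", upper_linear_limit(probe, 0.1, 0.0))
```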

The Scientist's Toolkit: Essential Materials and Reagents

Successful implementation of calibration curves requires specific laboratory materials and reagents selected for their compatibility with the analytical method:

Table 3: Essential Research Reagent Solutions and Materials

| Item | Specification | Function | Application Notes |
|---|---|---|---|
| Reference Standard | Certified purity (>95%) with documentation | Provides known analyte for calibration | Should be identical to analyte of interest in samples |
| Volumetric Flasks | Class A, appropriate volumes (e.g., 10, 25, 50, 100 mL) | Precise preparation of standard solutions | Critical for accuracy in serial dilution |
| HPLC-Grade Solvents | Low UV absorbance, high purity | Dissolution and dilution of standards | Minimizes background interference in UV detection |
| Pipettes and Tips | Calibrated, appropriate volume range | Accurate liquid transfer | Regular calibration ensures volumetric accuracy |
| UV-Vis Spectrophotometer | Validated performance, cuvette holder | Absorbance measurement of standards and samples | Requires periodic validation of wavelength accuracy, photometric accuracy, and stray light [8] |
| Cuvettes | Material compatible with wavelength (quartz for UV) | Sample holders for spectrophotometric measurement | Pathlength consistency critical for accurate measurements |
| Mobile Phase Components | HPLC grade, filtered and degassed | Creates elution environment for HPLC-UV | Composition affects retention and peak shape |

Impact of Calibration Design on Data Quality

Calibration Curve Design and Low-End Accuracy

The design of calibration curves significantly impacts data quality, particularly at concentration extremes. Research demonstrates that calibrating with low-level standards provides superior accuracy for samples with low analyte concentrations compared to wide-range calibrations [9]. This occurs because the error associated with high-concentration standards dominates the regression line in wide-range calibrations, potentially compromising accuracy at the lower end of the curve.

For example, in ICP-MS analysis, a zinc calibration curve spanning 0.01-1000 ppb exhibited an excellent coefficient of determination (R² = 0.999905) but produced a 4000% error when reading a 0.1 ppb standard [9]. This highlights the limitation of relying solely on correlation-based statistics as indicators of calibration curve suitability, particularly for methods requiring accurate quantification at low concentrations.
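The same failure mode can be reproduced with synthetic numbers. In the sketch below (illustrative data, not the zinc calibration cited above), an ideal response y = x from 0.1 to 1000 is perturbed only by the top standard reading 0.5% low. R² stays essentially at 1, while the back-calculated lowest standard comes out negative.

```python
def ols_fit_r2(x, y):
    """Unweighted straight-line fit; returns slope, intercept and R^2."""
    n = len(x)
    x_bar, y_bar = sum(x) / n, sum(y) / n
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    syy = sum((yi - y_bar) ** 2 for yi in y)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, y_bar - slope * x_bar, sxy ** 2 / (sxx * syy)


# Synthetic data: ideal response y = x over 0.1-1000, except the top
# standard reads 0.5% low.
x = [0.1, 1.0, 10.0, 100.0, 1000.0]
y = [0.1, 1.0, 10.0, 100.0, 995.0]
slope, intercept, r2 = ols_fit_r2(x, y)
print(f"R^2 = {r2:.6f}")
for xi, yi in zip(x, y):
    found = (yi - intercept) / slope
    print(f"nominal {xi:>7}: back-calculated {found:9.4f} "
          f"({100 * (found - xi) / xi:+.0f}% error)")
```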

Matrix Effects and Specificity Considerations

In bioanalytical methods, calibration standards must account for potential matrix effects that can alter instrumental response. For biological samples (e.g., plasma, urine), calibration standards are typically prepared by spiking the reference standard into the same matrix as the unknown samples [1]. This approach compensates for matrix-induced suppression or enhancement of analytical response.

Method specificity must be demonstrated by showing that excipients or endogenous matrix components do not interfere with analyte quantification [5]. In HPLC-UV methods, this is typically verified by comparing chromatograms of blank matrix with those of spiked standards to confirm the absence of interfering peaks at the retention time of the analyte [5].

The calibration curve remains the cornerstone of reliable quantitative analysis in UV-Vis assays and other bioanalytical methods. Its critical role in transforming instrumental response into meaningful concentration data necessitates careful design, implementation, and validation. The optimal calibration approach depends on multiple factors, including required dynamic range, precision requirements, and matrix complexity.

Current research indicates that traditional multi-point calibration may not always be necessary, with two-point calibration offering potential advantages in certain applications [3]. However, regulatory expectations and method validation requirements must be considered when selecting calibration strategies. Ultimately, the demonstration of adequate linearity and range through appropriate calibration practices remains essential for generating reliable data in pharmaceutical development and clinical research.

Regression analysis is a foundational statistical technique in analytical chemistry and pharmaceutical development, serving as the primary tool for quantifying relationships between variables. In the specific context of developing ultraviolet-visible (UV-Vis) spectrophotometric concentration assays, regression models transform measured absorbance data into reliable quantitative results. These models enable researchers to establish calibration curves that predict unknown analyte concentrations based on their absorbance readings, a fundamental requirement for method validation in pharmaceutical quality control and research settings.

The journey from simple least squares to weighted regression represents an evolution in handling real-world analytical data. While ordinary least squares (OLS) provides a straightforward starting point for calibration, weighted least squares (WLS) addresses specific data quality challenges commonly encountered in spectroscopic analysis. This comparison guide examines both methodologies objectively within the framework of UV-Vis assay development, presenting experimental data and performance comparisons to guide researchers in selecting appropriate regression techniques for their specific analytical challenges.

Theoretical Foundations of Regression Models

Ordinary Least Squares (OLS) Regression

Ordinary least squares regression operates on the principle of minimizing the sum of squared vertical distances between observed data points and the regression line. The model assumes a linear relationship between the independent variable (typically concentration in UV-Vis assays) and dependent variable (absorbance), expressed as:

\[y = \beta_0 + \beta_1 x_1 + \ldots + \beta_p x_p + \epsilon\]

where y is the measured absorbance, β₀ is the y-intercept, β₁, …, βₚ are the coefficients of the predictors x₁, …, xₚ, and ε is the random error term [10]. For a single-analyte calibration curve, p = 1, so x₁ is the concentration and β₁ the slope. The OLS solution finds the parameter values that minimize the sum of squared residuals (SSE):

\[\hat{\boldsymbol{\beta}} = \arg\!\min_{\beta_0, \ldots, \beta_p} \sum_{i=1}^{n} \left( y^{(i)} - \left( \beta_0 + \sum_{j=1}^{p} \beta_j x_j^{(i)} \right) \right)^{2}\] [10]
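In matrix form, this argmin is a linear least-squares problem on a design matrix whose first column is all ones. Below is a minimal NumPy sketch that assumes noise-free hypothetical calibration data, so the recovered coefficients can be checked by eye. `ols_coefficients` is an illustrative name.

```python
import numpy as np


def ols_coefficients(X: np.ndarray, y: np.ndarray) -> np.ndarray:
    """Solve argmin_beta sum_i (y_i - (beta_0 + sum_j beta_j x_ij))^2.

    X has shape (n, p) without an intercept column; a column of ones is
    prepended so the returned vector is [beta_0, beta_1, ..., beta_p].
    """
    design = np.column_stack([np.ones(len(X)), X])
    beta, *_ = np.linalg.lstsq(design, y, rcond=None)
    return beta


# Single-predictor calibration (p = 1): hypothetical concentrations and absorbances.
conc = np.array([[2.0], [4.0], [6.0], [8.0], [10.0]])
absorbance = 0.005 + 0.048 * conc[:, 0]           # noise-free for illustration
print(ols_coefficients(conc, absorbance))         # approximately [0.005, 0.048]
```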

For UV-Vis spectrometry, this translates to creating a calibration curve where concentration serves as the independent variable and absorbance measurements as the dependent variable, following the Beer-Lambert law principle that absorbance is proportional to concentration [11].

Weighted Least Squares (WLS) Regression

Weighted linear regression represents an extension of OLS that incorporates the covariance matrix of observation errors into the model fitting process [12]. The solution for WLS is given by:

\[\hat{\boldsymbol{\beta}}_{WLS} = (X^T C^{-1} X)^{-1} X^T C^{-1} y\]

where C is a diagonal matrix containing the variance of each observation [12]. In practical terms, WLS assigns different weights to each data point based on the reliability of the measurement, with points having higher variance receiving less influence on the final model. This approach is particularly valuable when dealing with heteroscedastic data – where the variability of errors changes across concentration levels – a common phenomenon in spectroscopic analysis where higher concentrations often demonstrate greater absorbance variability [12].
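The WLS estimator can be computed directly from the formula above. This NumPy sketch assumes the error standard deviation is 5% of concentration (an invented variance model). It uses noise-free data generated with intercept 2 and slope 5, so the solve should return those values. Solving the normal equations with `np.linalg.solve` avoids forming an explicit inverse.

```python
import numpy as np


def wls_coefficients(X, y, variances):
    """beta_WLS = (X^T C^-1 X)^-1 X^T C^-1 y with C = diag(variances).

    X must already contain an intercept column if one is wanted.
    """
    c_inv = np.diag(1.0 / np.asarray(variances, dtype=float))
    lhs = X.T @ c_inv @ X
    rhs = X.T @ c_inv @ y
    return np.linalg.solve(lhs, rhs)


# Hypothetical heteroscedastic calibration: variance grows with concentration.
conc = np.array([1.0, 5.0, 10.0, 20.0, 40.0])
X = np.column_stack([np.ones_like(conc), conc])
y = 2.0 + 5.0 * conc                              # noise-free, intercept 2, slope 5
var = (0.05 * conc) ** 2                          # assumed sd proportional to conc
print(wls_coefficients(X, y, var))                # approximately [2.0, 5.0]
```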

Table 1: Fundamental Characteristics of Regression Approaches

| Feature | Ordinary Least Squares (OLS) | Weighted Least Squares (WLS) |
|---|---|---|
| Core Objective | Minimize sum of squared residuals | Minimize weighted sum of squared residuals |
| Error Handling | Assumes constant variance (homoscedasticity) | Accounts for variable variance (heteroscedasticity) |
| Data Point Influence | Equal weight for all observations | Different weights based on measurement reliability |
| Complexity | Simpler implementation | Requires estimation of covariance matrix |
| Optimal Use Case | Data with consistent error variance | Data with non-constant error variance |

Experimental Comparison: Performance Evaluation

Methodology for Regression Model Assessment

To objectively evaluate the performance of OLS versus WLS regression in UV-Vis concentration assays, we designed an experimental protocol based on established analytical chemistry practices. The study utilized a HIGHTOP UV-Visible-NIR spectrophotometer with 1 cm quartz cuvettes, following procedures consistent with published spectroscopic methodology [13]. Double-distilled water served as the blank for calibration, with absorbance spectra recorded from 200 to 1100 nm at 1 nm resolution [13].

Glucose solutions at concentrations of 0.1, 0.2, 10, 20, and 40 g/mL were prepared using analytical-grade D-glucose (≥ 99% purity) dissolved in double-distilled water [13]. Each sample was measured in triplicate to assess measurement variability, with mean values used for regression analysis. To simulate common analytical scenarios, we introduced controlled heteroscedasticity by ensuring the variance of observation errors was a function of the feature (concentration), reflecting real-world conditions where higher concentrations often exhibit greater variability in spectroscopic measurements [12].

The experimental workflow included spectral preprocessing with Savitzky-Golay smoothing (window size = 7 points, polynomial order = 2) to reduce noise while preserving subtle spectral features [13]. For the WLS implementation, the covariance matrix was estimated iteratively: the model was first solved with OLS, the residuals were calculated and used to estimate the covariance, and WLS was then solved using the estimated covariance matrix [12].
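The iterative procedure just described (OLS, residuals, covariance estimate, WLS) is often called feasible weighted least squares. The sketch below is one possible implementation under a stated assumption: the error standard deviation is modeled as a straight line in concentration, fitted to the absolute OLS residuals, with a floor to keep weights finite. The synthetic data use slope 5 and intercept 2, matching the synthetic parameters cited from [12]. `iterative_wls` is a hypothetical name.

```python
import numpy as np


def iterative_wls(x, y, n_iter=2, floor_frac=0.1):
    """OLS -> model residual spread vs. concentration -> WLS, repeated n_iter times.

    Assumption: the error standard deviation is modeled as a linear function
    a + b*x fitted to the absolute residuals (other variance models exist).
    Returns [intercept, slope].
    """
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    X = np.column_stack([np.ones_like(x), x])
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)        # step 1: OLS start
    for _ in range(n_iter):
        abs_res = np.abs(y - X @ beta)                  # step 2: residuals
        b, a = np.polyfit(x, abs_res, 1)                # step 3: sd model a + b*x
        sd = a + b * x
        sd = np.maximum(sd, floor_frac * np.median(np.abs(sd)))  # keep weights finite
        w = 1.0 / sd ** 2                               # weights = inverse variance
        Xw = X * w[:, None]
        beta = np.linalg.solve(X.T @ Xw, Xw.T @ y)      # step 4: WLS solve
    return beta


# Synthetic check: true intercept 2, slope 5, noise sd = 0.1 * x.
rng = np.random.default_rng(0)
x = np.linspace(1, 40, 200)
y = 2.0 + 5.0 * x + rng.normal(0.0, 0.1 * x)
print(iterative_wls(x, y))
```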

Quantitative Results and Performance Metrics

The performance of OLS and WLS regression models was evaluated using multiple metrics, including mean squared error (MSE), correlation coefficient (R), and accuracy of concentration predictions across the calibration range. The WLS approach demonstrated superior coefficient estimation in the presence of heteroscedasticity, more accurately recovering the known slope and intercept (5 and 2, respectively, in synthetic data) than OLS [12].

Table 2: Performance Comparison of OLS vs. WLS in UV-Vis Calibration

| Performance Metric | Ordinary Least Squares (OLS) | Weighted Least Squares (WLS) |
|---|---|---|
| Mean Squared Error (MSE) | Higher in heteroscedastic data | Lower across concentration range |
| Coefficient Accuracy | Suboptimal with heteroscedasticity | More accurate parameter estimates |
| Prediction Intervals | Inaccurate when variance changes with concentration | Better representation of uncertainty |
| Handling of Outliers | Sensitive to extreme values | Down-weights high-variance points, but is not an outlier-robust method in itself |
| R-squared | Potentially misleading | More reliable representation of fit |

In a separate study focused on surrogate endpoint modeling in oncology research – a field with similar statistical challenges to analytical method development – WLS provided reasonable predictions in cases of moderate association between variables [14]. The research found that prediction intervals from WLS represented 95% of variance in the data, making it a useful reference method, though Bayesian approaches demonstrated advantages in certain specialized scenarios [14].

Application in UV-Vis Spectrophotometric Analysis

Implementation in Analytical Method Validation

UV-Vis spectrophotometry serves as a critical analytical tool across pharmaceutical development, from active pharmaceutical ingredient (API) quantification to cleaning validation [11]. The selection of appropriate regression models directly impacts the accuracy and reliability of these analytical methods. For example, in-line UV spectrometry has been successfully implemented for cleaning validation in biopharmaceutical manufacturing, where continuous monitoring at 220 nm enables real-time detection of residual cleaning agents and biopharmaceutical products, including their degraded forms [11].

In one application, researchers developed and validated a UV-Vis spectrophotometric method for estimating the total content of chalcone, utilizing regression approaches to establish the quantitative relationship between concentration and absorbance [15]. Similarly, in the analysis of conjugated molecules in solution, fitting experimental UV-Vis spectra with appropriate functions like the modified Pekarian function requires robust regression approaches to extract accurate parameters describing band shapes and electronic transitions [16].

The linear regression model particularly excels in interpretability – a valuable feature in regulated environments. As noted in interpretable machine learning literature, "The biggest advantage of linear regression models is linearity: It makes the estimation procedure simple, and most importantly, these linear equations have an easy-to-understand interpretation on a modular level (i.e., the weights)" [10].

Decision Framework for Model Selection

The choice between OLS and WLS depends on specific data characteristics and analytical requirements. In practice, the decision usually rests on whether residuals or replicate standard deviations show variance that changes systematically across the calibration range.
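As one concrete, hedged version of such a decision rule, the sketch below compares replicate variances at the lowest and highest standards with a one-sided F-test and recommends weighted regression when the ratio is significant. This heuristic, the data, and the `choose_regression` name are assumptions for illustration, not a procedure taken from the sources cited here.

```python
from statistics import variance


def choose_regression(low_replicates, high_replicates, f_critical):
    """Compare replicate variances at the lowest and highest calibration levels.

    If F = s^2_high / s^2_low exceeds the one-sided critical value for
    (n_high - 1, n_low - 1) degrees of freedom, treat the data as
    heteroscedastic and recommend weighted least squares.
    """
    f_stat = variance(high_replicates) / variance(low_replicates)
    model = "weighted" if f_stat > f_critical else "ordinary"
    return model, f_stat


# Hypothetical triplicate absorbances at the lowest and highest standards.
low = [0.100, 0.102, 0.098]
high = [1.00, 1.04, 0.96]
# For (2, 2) degrees of freedom the 99th-percentile F value is exactly 99,
# because the F(2, 2) CDF is f / (1 + f). scipy.stats.f.ppf(0.99, 2, 2)
# gives the same number and handles other degrees of freedom.
model, f_stat = choose_regression(low, high, f_critical=99.0)
print(model, round(f_stat))
```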

Essential Research Reagent Solutions

The experimental protocols referenced in this comparison utilize specific materials and instrumentation that represent essential tools for researchers implementing these regression approaches in UV-Vis assay development.

Table 3: Essential Research Materials for UV-Vis Assay Development

| Material/Instrument | Specification | Research Function |
|---|---|---|
| UV-Vis Spectrophotometer | HIGHTOP UV-Visible-NIR with 1 nm resolution [13] | Absorbance measurement across UV-Vis spectrum |
| Quartz Cuvettes | 1 cm pathlength [13] | Sample containment with minimal light scattering |
| D-Glucose | Analytical grade (≥ 99% purity) [13] | Model analyte for method development |
| Double-Distilled Water | Type 1 purity [11] | Blank reference and solvent preparation |
| Savitzky-Golay Filter | Window size 7 points, polynomial order 2 [13] | Spectral smoothing and noise reduction |
| Bovine Serum Albumin (BSA) | EMD Millipore standard [11] | Model protein for bioanalytical method development |

The comparison between ordinary and weighted least squares regression reveals distinct advantages and limitations for each approach in the context of UV-Vis concentration assays. Ordinary least squares provides a straightforward, easily interpretable modeling approach suitable for data with consistent variance across the concentration range. Its simplicity and transparency make it an excellent choice for initial method development and when working with high-precision instrumentation that produces homoscedastic data.

In contrast, weighted least squares regression offers superior performance when dealing with the heteroscedastic data commonly encountered in real-world analytical applications. By appropriately weighting measurements according to their reliability, WLS provides more accurate parameter estimates, better prediction intervals, and more robust results in the presence of unequal variance. The choice between these approaches should be guided by careful residual analysis and understanding of the underlying measurement error structure, ensuring that UV-Vis spectrophotometric methods produce reliable, accurate quantitative results for pharmaceutical research and development.

Correlation Coefficient (r) vs. Statistical Linearity Tests

In the development and validation of UV-Vis concentration assays for pharmaceutical applications, demonstrating linearity is a fundamental regulatory requirement. Linearity is the ability of an analytical procedure, within a given range, to obtain test results that are directly proportional to the concentration of the analyte. Two statistical approaches are commonly used to evaluate it: the traditional correlation coefficient (r), usually reported as its square, the coefficient of determination (R²), and formal statistical linearity tests, notably the F-test. R² measures the strength of the linear relationship between absorbance and concentration. The F-test, in contrast, formally assesses whether a linear model explains significantly more variance than a simpler, reduced model. For researchers and drug development professionals, understanding the distinction, appropriate application, and limitations of these methods is crucial for both regulatory compliance and scientific rigor in analytical method validation. [17] [18]

The European Pharmacopoeia and the ICH Q2(R2) guideline on method validation explicitly require the demonstration of linearity within the reportable range. While R² > 0.999 is often cited as a benchmark for photometric linearity in the European Pharmacopoeia, the ICH guideline emphasizes that the linear regression model calculated by the method of least squares must be appropriate for the data, and warns against forcing a linear fit onto fundamentally non-linear data. This regulatory landscape frames the critical comparison between these two validation parameters. [17] [19]

Theoretical Foundation and Regulatory Context

The Correlation Coefficient (r and R²)

Pearson's correlation coefficient (r) and its square, the coefficient of determination (R²), are the most widely used measures of linear association in analytical chemistry. R² quantifies the proportion of the total variance in absorbance (the y-values) that is explained by the linear regression on concentration. Its value ranges from 0 to 1, with values closer to 1 indicating that a greater proportion of the variance is accounted for by the linear model. [17] [20]

- Calculation: For a calibration curve with n points, R² is calculated as the square of the correlation coefficient (r). A value of 0.999, as often required, implies that 99.9% of the variation in absorbance is explained by the change in concentration. [17]

- Primary Limitation: A key shortcoming of R² is that it is a measure of correlation, not a test for linearity. It reliably detects only linear relationships and can be misleading in the presence of consistent curvature or non-linearity in the data. A high R² value can sometimes be obtained even for clearly curved data, providing a false sense of security about the linearity of the method. [19] [21]
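A short synthetic example makes this limitation tangible. The sketch below fits a straight line to the deliberately curved response y = x - 0.01x² over x = 1 to 10. R² still clears a 0.999 threshold, yet the residual signs form an arch that exposes the curvature. The data are invented for illustration.

```python
def fit_and_r2(x, y):
    """OLS straight line; returns (slope, intercept, R^2, residuals)."""
    n = len(x)
    x_bar, y_bar = sum(x) / n, sum(y) / n
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    syy = sum((yi - y_bar) ** 2 for yi in y)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    residuals = [yi - (slope * xi + intercept) for xi, yi in zip(x, y)]
    return slope, intercept, sxy ** 2 / (sxx * syy), residuals


# Synthetic, deliberately curved response: y = x - 0.01 x^2 (10% low at x = 10).
x = list(range(1, 11))
y = [xi - 0.01 * xi ** 2 for xi in x]
slope, intercept, r2, res = fit_and_r2(x, y)
signs = "".join("+" if r > 0 else "-" for r in res)
print(f"R^2 = {r2:.5f}")          # above 0.999 despite the curvature
print("residual signs:", signs)   # --++++++-- : an arch, the signature of curvature
```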

Statistical Linearity Tests (The F-Test)

Statistical linearity tests, particularly the general linear F-test, provide a more rigorous and statistically sound framework for evaluating linearity. This test operates by comparing two competing models to determine which better describes the data. [18]

- The Full Model: This is the linear model, represented as \(y_i = \beta_0 + \beta_1 x_{i1} + \epsilon_i\), which uses the concentration (x) to predict the absorbance (y).

- The Reduced Model: This is a simpler model that represents the null hypothesis of no linear relationship, \(y_i = \beta_0 + \epsilon_i\), which essentially fits the overall mean absorbance for all concentrations. [18]

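The F-test evaluates whether the more complex full model (linear model) provides a significantly better fit to the data than the simple reduced model (mean model). It does this by comparing the error sum of squares of the full model, SSE(F), with that of the reduced model, SSE(R):

\[F = \frac{\left(SSE(R) - SSE(F)\right) / (df_R - df_F)}{SSE(F) / df_F}\]

where, for a straight-line calibration with n points, df_R = n - 1 and df_F = n - 2. A significant F-test (typically p < 0.05) leads to rejection of the reduced model in favor of the full linear model, providing evidence that the linear relationship is real and not due to random chance. This comparison establishes a significant slope; it does not by itself show that a straight line is the correct shape, which is the role of a lack-of-fit F-test on replicate data. [18] [20]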
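The full-versus-reduced comparison translates directly into code. This pure-Python sketch uses hypothetical five-level data. For a p-value, it points to `scipy.stats.f.sf`, assuming SciPy is installed.

```python
def regression_f_test(x, y):
    """General linear F-test of the full model y = b0 + b1*x against the
    reduced model y = b0 (overall mean). Returns (F, df_num, df_den)."""
    n = len(x)
    x_bar, y_bar = sum(x) / n, sum(y) / n
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    sse_reduced = sum((yi - y_bar) ** 2 for yi in y)            # SSE(R) = SSTO
    sse_full = sum((yi - (intercept + slope * xi)) ** 2 for xi, yi in zip(x, y))
    df_reduced, df_full = n - 1, n - 2
    f_stat = ((sse_reduced - sse_full) / (df_reduced - df_full)) / (sse_full / df_full)
    return f_stat, df_reduced - df_full, df_full


conc = [1, 2, 3, 4, 5]                           # hypothetical calibration data
absorb = [0.10, 0.21, 0.29, 0.41, 0.49]
f_stat, df1, df2 = regression_f_test(conc, absorb)
# With SciPy installed, the p-value is scipy.stats.f.sf(f_stat, df1, df2).
print(f"F({df1}, {df2}) = {f_stat:.1f}")
```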

Diagram 1: A workflow for combined linearity assessment using R² and the F-test.

Direct Comparison: Correlation Coefficient vs. F-Test

The table below provides a structured, side-by-side comparison of these two key validation parameters.

Table 1: Feature-by-feature comparison of the Correlation Coefficient and Statistical F-Test for linearity assessment.

| Feature | Correlation Coefficient (R²) | Statistical Linearity Test (F-Test) |
|---|---|---|
| Core Function | Measures the proportion of variance explained by the linear model. [20] | Tests if the linear model fits significantly better than a simple mean model. [18] |
| Output Value | A value between 0 and 1 (often reported as a percentage). [17] | An F-statistic and an associated p-value. [18] [20] |
| Decision Criterion | Threshold-based (e.g., R² > 0.999). [17] | Significance-based (e.g., p-value < 0.05). [20] |
| Sensitivity to Curvature | Low; can produce high values for mildly curved data. [19] | Low for the full-versus-mean-model comparison, which tests only for a significant slope; curvature is detected by a lack-of-fit F-test on replicate data. [18] |
| Statistical Power | Does not directly account for sample size or random error. | Explicitly considers degrees of freedom (sample size and model complexity). [18] |
| Regulatory Mention | Explicitly mentioned in pharmacopoeias as a target value. [17] | Implied or required by ICH Q2(R2) through the use of least squares and model appropriateness. [19] |
| Primary Advantage | Simple, intuitive, and universally recognized. | Provides a probabilistic basis for decision-making; more rigorous. |
| Key Limitation | Does not formally test the null hypothesis of no linear relationship. | Less intuitive for non-statisticians; requires understanding of p-values. |

Experimental Data and Performance Analysis

Case Study: UV-Vis Spectrophotometry for DNA Quantification

To illustrate the practical performance of these parameters, we can analyze data from a reproducibility study of a UV-Visible spectrophotometer using a one-drop accessory for DNA quantification. The following table summarizes the absorbance data for a series of DNA concentrations, which can be used to construct a calibration curve. [22]

Table 2: Experimental absorbance data for calf thymus DNA at different concentrations (1 mm pathlength). Data used to construct a calibration curve and calculate validation parameters. [22]

| Concentration (ng/µL) | Absorbance at 260 nm (Average of n=10) | Standard Deviation | Coefficient of Variation (%) |
|---|---|---|---|
| 0 | 0.0004 | 0.0012 | N/A |
| 2.4 | 0.0053 | 0.0010 | 17.9 |
| 4.8 | 0.0082 | 0.0008 | 10.3 |
| 9.6 | 0.0171 | 0.0016 | 9.6 |
| 19.3 | 0.0332 | 0.0011 | 3.3 |
| 38.6 | 0.0683 | 0.0011 | 1.6 |
| 77.2 | 0.131 | 0.0015 | 1.2 |
| 154.4 | 0.261 | 0.0026 | 1.0 |
| 308.8 | 0.514 | 0.0047 | 0.9 |
| 617.5 | 1.001 | 0.0089 | 0.9 |

Calculation and Interpretation of Parameters

Using the data from Table 2, the two linearity assessment methods yield the following results:

- Correlation Coefficient (R²): An ordinary least-squares regression of mean absorbance on concentration gives an R² of about 0.9998, easily meeting the >0.999 criterion and indicating a very strong linear relationship across the tested range. [17] [22]

- Statistical F-Test: An F-test comparing the full linear model to the reduced mean-only model yields a very large F-statistic, because the error sum of squares of the reduced model, SSE(R), which equals the total sum of squares (SSTO), is dramatically larger than the error sum of squares of the full model, SSE(F). The resulting p-value is exceedingly small (p < 0.001), leading to rejection of the null hypothesis of zero slope and confirming that the linear model provides a statistically significant fit to the data. [18] [22]

In this case, both parameters support a strong linear relationship across the tested range. The F-test adds a formal significance level (p-value) to that conclusion, whereas R² provides a descriptive measure of fit. Neither the overall regression F-test nor R², however, is designed to detect mild curvature; that requires residual inspection or a lack-of-fit test.
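Both metrics can be reproduced with a short script. The sketch below is an illustration rather than the cited study's analysis: it fits the ten mean absorbances from Table 2 (not the individual replicate readings) by unweighted least squares, which assumes roughly constant variance. Converting the F-statistic to a p-value requires the F distribution, for example `scipy.stats.f.sf(f_stat, 1, n - 2)` if SciPy is available.

```python
# Minimal sketch (standard library only): fit the Table 2 mean absorbances by
# ordinary least squares and report R² together with the overall regression
# F-statistic, i.e. the full linear model vs. the reduced mean-only model.

conc = [0, 2.4, 4.8, 9.6, 19.3, 38.6, 77.2, 154.4, 308.8, 617.5]  # ng/µL
absorb = [0.0004, 0.0053, 0.0082, 0.0171, 0.0332,
          0.0683, 0.131, 0.261, 0.514, 1.001]                      # mean A260, 1 mm


def linear_fit_stats(x, y):
    """Return slope, intercept, R² and the overall F-statistic (df = 1, n - 2)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = my - slope * mx
    # SSE(R): error sum of squares of the mean-only model, equal to SSTO
    ssto = sum((yi - my) ** 2 for yi in y)
    # SSE(F): error sum of squares of the straight-line model
    sse = sum((yi - (intercept + slope * xi)) ** 2 for xi, yi in zip(x, y))
    r2 = 1 - sse / ssto
    f_stat = (ssto - sse) / (sse / (n - 2))  # numerator df = 1
    return slope, intercept, r2, f_stat


slope, intercept, r2, f_stat = linear_fit_stats(conc, absorb)
print(f"slope = {slope:.6f} AU per ng/µL, intercept = {intercept:.5f} AU")
print(f"R² = {r2:.5f}, F(1, {len(conc) - 2}) = {f_stat:.0f}")
```

Note that a huge F here only says the slope is non-zero; it does not by itself show that a straight line is the right shape.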

Advanced Considerations: Addressing Non-Linearity

A critical challenge in linearity assessment arises when data exhibit non-linear patterns. Pearson's R² is reliable only as a measure of linear association. [19] [21] In such cases, more advanced strategies are required, as outlined in the diagram below.

Diagram 2: Strategic approaches for handling non-linear data in calibration.

- Data Transformation: The ICH Q2(R2) guideline suggests that a mathematical transformation of the data may be applied to linearize a non-linear relationship. This can involve using functions like logarithms or square roots (the "ladder of powers") on the x or y variables to achieve linearity before applying standard linear regression and R² calculation. [19]

- Non-Linear Regression: For consistent curvature, a polynomial regression model (e.g., a quadratic model) can be fitted. The significance of the higher-order terms can be tested with an F-test, comparing the quadratic model to the linear model to objectively determine if the added complexity is justified. [19] [18]

- Beyond Pearson's R: For detecting and quantifying non-monotonic or non-linear relationships, metrics like Distance Correlation or the Association Factor (AF) have been developed. These metrics can identify any form of dependency, linear or non-linear, and return a value between 0 and 1, offering a more robust tool for comprehensive association analysis. [23] [21]
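The model comparison in the non-linear regression strategy above can be sketched as an extra-sum-of-squares F-test of a quadratic term. Assumptions: the Table 2 means serve as the response; the fit is unweighted even though the standard deviations in Table 2 increase several-fold toward the top of the range, so a weighted fit may be more appropriate in practice; and the p-value, which would come from an F(1, n - 3) distribution, is not computed here.

```python
# Sketch: extra-sum-of-squares F-test of a quadratic term, fitted to the
# Table 2 means (unweighted least squares, standard library only).

conc = [0, 2.4, 4.8, 9.6, 19.3, 38.6, 77.2, 154.4, 308.8, 617.5]  # ng/µL
absorb = [0.0004, 0.0053, 0.0082, 0.0171, 0.0332,
          0.0683, 0.131, 0.261, 0.514, 1.001]                      # mean A260, 1 mm


def polyfit_sse(x, y, degree):
    """Least-squares polynomial fit via the normal equations; returns (coef, SSE).

    x is divided by max(|x|) first to keep the normal equations well conditioned,
    so the coefficients refer to the scaled variable x / max(|x|).
    """
    scale = max(abs(v) for v in x)
    xs = [v / scale for v in x]
    m = degree + 1
    # Normal equations A c = b with A[i][j] = sum x^(i+j) and b[i] = sum y * x^i
    a = [[sum(v ** (i + j) for v in xs) for j in range(m)] for i in range(m)]
    b = [sum(yv * v ** i for v, yv in zip(xs, y)) for i in range(m)]
    # Forward elimination with partial pivoting
    for col in range(m):
        pivot = max(range(col, m), key=lambda r: abs(a[r][col]))
        a[col], a[pivot] = a[pivot], a[col]
        b[col], b[pivot] = b[pivot], b[col]
        for r in range(col + 1, m):
            factor = a[r][col] / a[col][col]
            for c in range(col, m):
                a[r][c] -= factor * a[col][c]
            b[r] -= factor * b[col]
    # Back substitution
    coef = [0.0] * m
    for r in range(m - 1, -1, -1):
        coef[r] = (b[r] - sum(a[r][c] * coef[c] for c in range(r + 1, m))) / a[r][r]
    sse = sum((yv - sum(coef[k] * v ** k for k in range(m))) ** 2
              for v, yv in zip(xs, y))
    return coef, sse


n = len(conc)
_, sse_lin = polyfit_sse(conc, absorb, 1)
_, sse_quad = polyfit_sse(conc, absorb, 2)
# One extra parameter (the x² term): F = (SSE_lin - SSE_quad) / [SSE_quad / (n - 3)]
f_quad = (sse_lin - sse_quad) / (sse_quad / (n - 3))
print(f"SSE linear = {sse_lin:.3e}, SSE quadratic = {sse_quad:.3e}")
print(f"F(1, {n - 3}) for the quadratic term = {f_quad:.1f}")
```

A large F for the quadratic term suggests the added curvature is justified; a small one supports keeping the straight line.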

The Scientist's Toolkit: Essential Reagents and Materials

The following table lists key materials and solutions required for conducting robust linearity studies for UV-Vis concentration assays.

Table 3: Essential research reagents and materials for UV-Vis linearity validation.

| Item | Function / Purpose | Example from Literature |
|---|---|---|
| Certified Reference Materials | To prepare calibration standards with exact, traceable concentrations, ensuring accuracy of the calibration curve. | High-purity humic acid or DNA for creating a validation set. [24] |
| Absorption Filters | To independently check the photometric linearity of the spectrophotometer itself across UV and Vis ranges. | Hellma Analytics calibration filters for UV and Vis ranges. [17] |
| Volumetric Equipment | To ensure precise and accurate dilution and preparation of standard solutions for the calibration curve. | Digital pipettes and volumetric flasks (preferred over graduated cylinders). [2] |
| Optically Matched Cuvettes | To hold liquid samples, ensuring consistent path length and minimizing light scattering and reflection errors. | Standard 1 cm pathlength cuvettes; micro-sampling accessories like the JASCO SAH-769 for small volumes. [22] |
| Appropriate Solvent/Buffer | To dissolve the analyte and maintain a consistent chemical matrix (pH, ionic strength), serving as the blank. | A blank reference of the solvent is essential to zero the instrument at the beginning of analysis. [2] |

For researchers and professionals validating UV-Vis concentration assays, both the correlation coefficient (R²) and statistical linearity tests (F-test) play important, complementary roles. The R² value serves as an excellent initial, high-level check and a simple benchmark against regulatory thresholds. Its simplicity and wide recognition make it indispensable for routine checks and reporting.

However, for a thorough, statistically defensible validation that aligns with the principles of ICH Q2(R2), F-tests are the more rigorous tool and should be considered an essential component of a robust linearity assessment. When data show slight deviations from perfect linearity, or when the method is pushed to the limits of its range, the appropriate check is a lack-of-fit or model-comparison F-test rather than only the overall regression F-test.

The most prudent strategy is a two-pronged approach:

- Confirm that the R² value meets the required regulatory or internal benchmark (e.g., > 0.999).

- Perform an F-test to obtain statistical significance (p-value) for the linear model, ensuring that the observed linear relationship is not a product of random chance.

This combined methodology leverages the intuitive strength of R² with the statistical power of the F-test, providing a comprehensive and defensible demonstration of linearity for drug development and regulatory submissions.

In the validation of UV-Vis concentration assays, establishing the reportable range is a fundamental requirement to ensure that analytical methods provide reliable quantitative results. This range is bounded by two critical parameters: the Lower Limit of Quantification (LLOQ) and the Upper Limit of Quantification (ULOQ). The LLOQ represents the lowest analyte concentration that can be quantitatively determined with acceptable precision and accuracy, while the ULOQ is the highest concentration at which the analyte response remains quantitatively reliable [25]. Between these limits, the relationship between analyte concentration and instrument response must demonstrate linearity, typically described by the Beer-Lambert Law (A = εbc), where A is absorbance, ε is the molar absorptivity, b is the path length, and c is concentration [26] [2] [27].

For researchers and drug development professionals, accurate determination of these parameters is not merely a regulatory formality but a practical necessity. The International Council for Harmonisation (ICH) guidelines, along with standards from pharmacopoeial organizations like the USP and EP, provide the framework for method validation [28] [29]. Properly established quantification limits ensure that data generated during pharmaceutical analysis, therapeutic drug monitoring, and bioanalytical studies are scientifically sound and fit for purpose, ultimately supporting critical decisions in drug development and quality control.

Foundational Concepts and Regulatory Framework

Distinguishing Between Detection and Quantification Limits

A clear understanding of the hierarchy of method limits is essential for proper assay validation. The Limit of Blank (LoB) represents the highest apparent analyte concentration expected when replicates of a blank sample containing no analyte are tested [30]. The Limit of Detection (LOD) is the lowest analyte concentration likely to be reliably distinguished from the LoB, while the LLOQ is the lowest concentration at which the analyte can not only be reliably detected but also quantified with stated acceptance criteria for bias and imprecision [30]. The LLOQ cannot be lower than the LOD and is often found at a significantly higher concentration to meet quantitative performance requirements [30].

The Clinical and Laboratory Standards Institute (CLSI) guideline EP17 provides standardized methods for determining these parameters, emphasizing that functional sensitivity—the concentration resulting in a specific CV (e.g., 20%)—relates closely to the LLOQ concept [30]. For the ULOQ, the defining principle is the upper concentration beyond which the relationship between analyte concentration and detector response deviates unacceptably from linearity, potentially due to detector saturation or deviations from the Beer-Lambert Law [31].
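The limit hierarchy above is often computed with the classical parametric formulas associated with CLSI EP17: LoB = mean(blank) + 1.645 × SD(blank) and LoD = LoB + 1.645 × SD(low-concentration sample), where 1.645 is the one-sided 95th percentile of the normal distribution. The sketch applies them, in absorbance units, to the Table 2 blank and lowest standard purely for illustration; the guideline itself should be consulted for replicate numbers and non-parametric alternatives, and the results must be converted to concentration through the calibration function before reporting.

```python
# Sketch of the parametric LoB/LoD estimates associated with CLSI EP17
# (assumes approximately Gaussian results).
Z_95 = 1.645  # one-sided 95th-percentile z-value


def limit_of_blank(mean_blank, sd_blank):
    """LoB = mean of blank replicates + 1.645 x SD of blank replicates."""
    return mean_blank + Z_95 * sd_blank


def limit_of_detection(lob, sd_low_sample):
    """LoD = LoB + 1.645 x SD of replicates of a low-concentration sample."""
    return lob + Z_95 * sd_low_sample


# Illustration in absorbance units with Table 2 summary values:
# blank (0 ng/µL): mean 0.0004, SD 0.0012; lowest standard (2.4 ng/µL): SD 0.0010
lob = limit_of_blank(0.0004, 0.0012)
lod = limit_of_detection(lob, 0.0010)
print(f"LoB = {lob:.4f} AU, LoD = {lod:.4f} AU")
```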

Key Performance Criteria for LLOQ and ULOQ

Table 1: Acceptance Criteria for LLOQ and ULOQ According to Regulatory Guidelines

| Parameter | LLOQ Acceptance Criteria | ULOQ Acceptance Criteria |
|---|---|---|
| Precision | CV ≤ 20% typically required [25] | CV ≤ 15% typically required [25] |
| Accuracy | Within ±20% of nominal concentration [25] | Within ±15% of nominal concentration [25] |
| Signal Response | At least 5 times the response of the blank [25] | Reproducible response without detector saturation [31] |
| Linearity | Response should be discrete and identifiable [25] | Calibration curve remains linear without deviation [31] |

For the ULOQ, practical considerations often dictate more conservative approaches than theoretical instrument capabilities. Although modern UV detectors may maintain linearity up to 1500-2500 mAU, many chromatographers recommend working below approximately 1000 mAU as a safety margin to account for potential high background absorbance and to avoid analogue-to-digital conversion issues when only a small fraction of light reaches the detector [31].

Methodological Approaches for Determining LLOQ

Signal-to-Noise Ratio Method

The signal-to-noise (S/N) ratio approach determines LLOQ by comparing the magnitude of the analyte signal to the background noise level. ICH guidelines suggest an S/N ratio of 10:1 for the LOQ, though the exact method of calculating S/N varies between traditional approaches and those used by USP and EP [28]. This method is particularly applicable to chromatographic systems and spectrophotometers where baseline noise can be readily measured.

A significant limitation of the S/N approach is the subjectivity in noise measurement, as different methods (e.g., using core noise versus total noise) can yield substantially different ratios [28]. Consequently, this method is often recommended as a confirmatory technique alongside more statistically rigorous approaches rather than as a standalone determination.
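The subjectivity of S/N can be made concrete. In the sketch below, the baseline readings and peak height are hypothetical; the three conventions are peak-to-peak noise (H/h), the European Pharmacopoeia form 2H/h, and RMS noise (H/σ). The same signal gives S/N values of 5, 10 and roughly 14, so it fails, just meets, or comfortably passes the 10:1 LOQ criterion depending on the definition. The exact rules for measuring H and h (baseline window, width) should be taken from the current pharmacopoeial texts.

```python
import statistics

# Hypothetical baseline readings (AU) from a blank region and a hypothetical
# analyte signal height H measured from the baseline.
baseline = [0.0010, -0.0008, 0.0005, -0.0012, 0.0007, -0.0002]
peak_height = 0.011


def sn_peak_to_peak(h, noise):
    """S/N = H / h, with h the peak-to-peak noise."""
    return h / (max(noise) - min(noise))


def sn_pharmacopoeial(h, noise):
    """European Pharmacopoeia form: S/N = 2H / h, with h the peak-to-peak noise."""
    return 2 * h / (max(noise) - min(noise))


def sn_rms(h, noise):
    """S/N = H / sigma, with sigma the RMS (population SD) of the baseline."""
    return h / statistics.pstdev(noise)


for name, fn in [("H/h", sn_peak_to_peak), ("2H/h", sn_pharmacopoeial), ("H/RMS", sn_rms)]:
    print(f"{name:6s} S/N = {fn(peak_height, baseline):.1f}")
```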

Standard Deviation and Slope Method

This statistically based approach utilizes the standard deviation of the response and the slope of the calibration curve to calculate LLOQ. According to ICH guidelines, the LLOQ can be determined using the formula: LLOQ = 10 × (SD/S), where SD is the standard deviation of the response and S is the slope of the calibration curve [25] [29]. Similarly, the LOD is calculated as LOD = 3.3 × (SD/S) [32] [29].

The standard deviation (SD) can be determined through several approaches:

- Standard deviation of the blank measured from multiple replicates of a blank sample

- Residual standard deviation of the calibration curve regression line

- Standard deviation of the y-intercept of the calibration curve [25]

This method requires a calibration curve constructed with concentration levels close to the expected LLOQ, with sufficient replication to obtain a reliable estimate of standard deviation [25].
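A minimal sketch of the SD/slope calculation follows. As an assumption for illustration, the five lowest Table 2 levels (0 to 19.3 ng/µL) stand in for a near-LLOQ calibration set, and two of the SD options listed above are compared: the residual SD of the regression and the SD of the blank. The two choices give different answers, so the option used should be stated with the result.

```python
import math

# Assumed near-LLOQ calibration set: the five lowest Table 2 levels
conc = [0, 2.4, 4.8, 9.6, 19.3]                    # ng/µL
absorb = [0.0004, 0.0053, 0.0082, 0.0171, 0.0332]  # mean A260, 1 mm
SD_BLANK = 0.0012                                  # SD of blank replicates, Table 2


def fit_line(x, y):
    """Return slope, intercept and residual SD (n - 2 degrees of freedom)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    slope = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y)) / sxx
    intercept = my - slope * mx
    sse = sum((yi - intercept - slope * xi) ** 2 for xi, yi in zip(x, y))
    return slope, intercept, math.sqrt(sse / (n - 2))


def lod_loq(sd, slope):
    """ICH-style estimates: LOD = 3.3 * SD / S and LOQ = 10 * SD / S."""
    return 3.3 * sd / slope, 10 * sd / slope


slope, intercept, s_res = fit_line(conc, absorb)
for label, sd in [("residual SD", s_res), ("blank SD", SD_BLANK)]:
    lod, loq = lod_loq(sd, slope)
    print(f"{label:12s}: LOD = {lod:.1f} ng/µL, LOQ = {loq:.1f} ng/µL")
```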

Accuracy Profile and Total Error Approach

The accuracy profile approach represents an advanced methodology that integrates both bias and precision to determine the LLOQ based on total error principles. This method uses tolerance intervals for the measurement error and provides a visual tool for evaluating the capability of an analytical method [25]. The LLOQ is established as the concentration that fulfills predefined acceptability limits for total error, providing a more comprehensive assessment of method performance at low concentrations compared to single-parameter approaches.

This approach is particularly valuable in bioanalytical method validation as it simultaneously addresses both systematic and random errors, offering greater confidence that the method will perform appropriately for its intended use [25].
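A full accuracy profile uses β-expectation tolerance intervals built from intermediate-precision variance components, which is beyond a short example. The sketch below is a deliberately simplified total-error screen, not that procedure: for each level it checks whether bias ± k·CV stays within ±λ, and takes the lowest level for which that level and all higher levels pass. The replicate results, the coverage factor k = 2 and the ±20% limit are all hypothetical choices.

```python
import statistics


def total_error_check(nominal, results, k=2.0, limit_pct=20.0):
    """Return (bias %, CV %, passes): passes if bias ± k*CV lies within ±limit_pct."""
    mean = statistics.mean(results)
    bias_pct = 100 * (mean - nominal) / nominal
    cv_pct = 100 * statistics.stdev(results) / mean
    return bias_pct, cv_pct, abs(bias_pct) + k * cv_pct <= limit_pct


def lowest_acceptable_level(levels, **kwargs):
    """Walk down from the highest level; return the lowest nominal concentration
    for which it and every higher level pass (None if the highest level fails)."""
    lowest = None
    for nominal in sorted(levels, reverse=True):
        if not total_error_check(nominal, levels[nominal], **kwargs)[2]:
            break
        lowest = nominal
    return lowest


# Hypothetical back-calculated results (same units as nominal), 5 replicates per level
levels = {
    1.0: [0.82, 1.21, 0.95, 1.15, 0.88],
    2.0: [1.90, 2.12, 2.05, 1.96, 2.08],
    5.0: [4.95, 5.08, 5.02, 4.97, 5.05],
}
for nominal in sorted(levels):
    bias, cv, ok = total_error_check(nominal, levels[nominal])
    print(f"{nominal}: bias {bias:+.1f}%, CV {cv:.1f}%, {'pass' if ok else 'fail'}")
print("Lowest acceptable level:", lowest_acceptable_level(levels))
```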

Methodological Approaches for Determining ULOQ

Calibration Curve Linearity Assessment

The primary method for establishing ULOQ involves comprehensive evaluation of calibration curve linearity across an extended concentration range. This process involves preparing and analyzing calibration standards at progressively higher concentrations and statistically assessing the relationship between concentration and response [31]. The ULOQ is identified as the highest concentration where the method demonstrates acceptable linearity, precision, and accuracy according to predefined criteria.

Key statistical tools for this assessment include:

- Evaluation of response factors (RF = area/amount), which should remain constant over the linear range and will begin to decrease as detector saturation occurs [31]

- Analysis of residuals from linear regression to detect systematic patterns indicating deviation from linearity

- Calculation of the correlation coefficient, with values of 0.999 or better typically expected for a linear response [32] [29]
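The response-factor check in the list above can be sketched as follows. Assumptions: a single mid-range standard serves as the reference RF (here the 77.2 ng/µL level of Table 2, chosen because its CV is low), and a drop of more than 5%, echoing the figure in the ULOQ protocol table, is flagged. Low-concentration RFs in Table 2 are noisy, so they make poor references.

```python
def response_factor_check(conc, response, ref_conc, max_drop_pct=5.0):
    """List (concentration, RF, % change vs. reference RF, flagged) for each
    non-zero standard; flagged means RF fell by more than max_drop_pct."""
    rf = {c: r / c for c, r in zip(conc, response) if c > 0}
    ref = rf[ref_conc]
    rows = []
    for c in sorted(rf):
        change = 100 * (rf[c] - ref) / ref
        rows.append((c, rf[c], change, change < -max_drop_pct))
    return rows


# Applied to the Table 2 means, with 77.2 ng/µL as the assumed reference level
conc = [0, 2.4, 4.8, 9.6, 19.3, 38.6, 77.2, 154.4, 308.8, 617.5]
absorb = [0.0004, 0.0053, 0.0082, 0.0171, 0.0332,
          0.0683, 0.131, 0.261, 0.514, 1.001]
for c, rf, change, flagged in response_factor_check(conc, absorb, ref_conc=77.2):
    print(f"{c:7.1f} ng/µL  RF = {rf:.6f}  {change:+5.1f}%  {'FLAG' if flagged else ''}")
```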

Diagram 1: ULOQ Determination via Calibration Curve

Detector Saturation Testing

UV-Vis detectors can experience saturation effects at high absorbance values, leading to non-linearity between actual and measured analyte concentration. Although modern UV detectors may maintain linearity up to 1500-2500 mAU for single wavelength detectors and 1500-1800 mAU for PDA detectors, practical work often remains below 1000 mAU to ensure reliable quantification [31].

Signs of detector saturation include:

- Flattened or clipped peak tops in chromatographic systems

- Spectral anomalies or spikes in PDA detection when running at high absorbance [31]

- Decreased response factors at higher concentrations despite increased analyte mass [31]

A practical approach to detector saturation testing involves injecting different concentration/volume combinations of a compound and examining the response height-to-concentration ratio, which remains constant in the linear range but decreases when saturation occurs [31].

Experimental Protocols and Research Toolkit

Protocol for LLOQ Determination Using Statistical Methods

Table 2: Experimental Protocol for LLOQ Determination

| Step | Procedure | Critical Parameters |
|---|---|---|
| 1. Solution Preparation | Prepare a minimum of 5 calibration standards at concentrations near the expected LLOQ using serial dilution. | Use high-purity solvents; verify concentrations analytically; prepare fresh daily. |
| 2. Sample Analysis | Analyze each concentration level with a minimum of 5 replicates using the validated UV-Vis method. | Maintain consistent instrument parameters; randomize injection order; include blank samples. |
| 3. Data Collection | Record absorbance values for all replicates at the analytical wavelength (e.g., 283 nm for terbinafine HCl) [32]. | Ensure absorbance values within instrument linear dynamic range; document environmental conditions. |
| 4. Statistical Analysis | Calculate mean response, standard deviation, and slope of the calibration curve at low concentrations. | Use appropriate regression models; verify homoscedasticity; document all calculations. |
| 5. LLOQ Calculation | Apply formula: LLOQ = 10 × (SD/S) where SD is standard deviation and S is slope of calibration curve. | Verify that precision (CV ≤ 20%) and accuracy (80-120%) meet acceptance criteria at calculated LLOQ. |
| 6. Verification | Prepare and analyze 6 independent samples at the calculated LLOQ concentration to verify performance. | Ensure verification samples prepared from different stock solution than calibration standards. |

A comparable approach was applied in a study validating a UV-spectrophotometric method for terbinafine hydrochloride, where the LOD and LOQ were found to be 0.42 μg and 1.30 μg, respectively, demonstrating the method's sensitivity [32].
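Steps 5 and 6 of the LLOQ protocol reduce to a simple check on the verification replicates. The sketch below applies the CV ≤ 20% and 80-120% recovery criteria from the table to six hypothetical results at an assumed calculated LLOQ of 2.0 (arbitrary units). Checking only the mean recovery, rather than each individual result, is a simplifying assumption; some guidelines also set criteria for individual samples.

```python
import statistics


def verify_lloq(nominal, results, max_cv=20.0, recovery_range=(80.0, 120.0)):
    """Return (CV %, mean recovery %, passes) for replicate results at the LLOQ."""
    mean = statistics.mean(results)
    cv_pct = 100 * statistics.stdev(results) / mean
    recovery_pct = 100 * mean / nominal
    passes = cv_pct <= max_cv and recovery_range[0] <= recovery_pct <= recovery_range[1]
    return cv_pct, recovery_pct, passes


# Hypothetical results for six independently prepared samples at a calculated LLOQ of 2.0
cv, recovery, passes = verify_lloq(2.0, [1.88, 2.15, 1.97, 2.09, 1.82, 2.06])
print(f"CV = {cv:.1f}%, recovery = {recovery:.1f}%, {'pass' if passes else 'fail'}")
```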

Protocol for ULOQ Determination via Linearity Evaluation

Table 3: Experimental Protocol for ULOQ Determination

| Step | Procedure | Critical Parameters |
|---|---|---|
| 1. Calibration Curve | Prepare 8-10 calibration standards spanning from below expected LLOQ to above expected ULOQ. | Cover at least 2 orders of magnitude; verify standard concentrations independently. |
| 2. Instrument Analysis | Analyze all standards using the validated UV-Vis method with appropriate path length selection. | Use consistent sample cells/path lengths; monitor for baseline drift at high concentrations. |
| 3. Response Factor Calc. | Calculate response factors (RF = absorbance/concentration) for each standard. | RF should remain constant in the linear range; a decrease of more than about 5% typically indicates that the ULOQ has been exceeded. |
| 4. Statistical Evaluation | Perform linear regression analysis and examine residuals for systematic patterns. | R² > 0.99 typically expected; residuals should be randomly distributed. |
| 5. Accuracy Assessment | Prepare and analyze QCs at 3 concentrations near the tentative ULOQ (e.g., 70%, 90%, 110% of ULOQ). | Accuracy should be within ±15% of nominal value at ULOQ [25]. |
| 6. ULOQ Confirmation | Establish ULOQ as the highest concentration meeting precision, accuracy, and linearity criteria. | Document all data; consider safety margin below theoretical saturation point. |

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 4: Essential Research Reagent Solutions and Materials

| Item | Function/Application | Specification Considerations |
|---|---|---|
| High-Purity Analytical Standards | Calibration curve preparation; method validation | Certified purity >95%; appropriate chemical stability; well-characterized properties. |
| UV-Transparent Solvents | Sample and standard preparation; blank matrix | Spectrophotometric grade; low UV absorbance; minimal particulate matter. |
| Quartz Cuvettes/Cells | Contain samples for UV-Vis analysis | Multiple path lengths (e.g., 1 cm, 1 mm); matched sets for sample/reference; proper cleaning protocols. |
| Buffer Components | Maintain physiological pH for biological samples | Analytical grade salts; control of ionic strength; minimal UV absorbance. |
| Reference Materials | System suitability testing; method verification | Certified reference materials when available; traceable to primary standards. |
| Degradation Reagents | Forced degradation studies for specificity | ACS grade acids, bases, oxidants; controlled concentration (e.g., 0.1N HCl, 3% H₂O₂) [29]. |

Comparative Analysis of Method Performance

Advantages and Limitations of Different Approaches

Table 5: Comparison of LLOQ/ULOQ Determination Methods

| Method | Advantages | Limitations | Best Applications |
|---|---|---|---|
| Signal-to-Noise Ratio | Simple implementation; intuitive interpretation; requires minimal samples. | Subject to measurement variability; different calculation methods yield different results. | Initial method scouting; hyphenated techniques (LC-UV); confirmation of other methods. |
| Standard Deviation/Slope | Statistical basis; regulatory acceptance; uses standard validation data. | Assumes homoscedasticity; requires sufficient replication; sensitive to outliers. | Regulatory submissions; quantitative bioanalytical methods; pharmaceutical quality control. |
| Accuracy Profile | Comprehensive error assessment; visual result interpretation; robust statistical basis. | Computational complexity; requires extensive data collection; less familiar to some analysts. | Methods requiring high reliability; biomarker assays; novel analytical techniques. |
| Calibration Linearity | Direct assessment of working range; identifies deviations from linearity; uses familiar regression statistics. | May miss subtle non-linearity; requires many concentration levels; resource-intensive. | Establishing reportable range; methods with wide dynamic range; technology transfer. |

Diagram 2: Method Selection Guide for Different Applications

Practical Implementation and Troubleshooting

Common Challenges and Solutions

Matrix Effects on LLOQ: Complex sample matrices can elevate background noise and interfere with LLOQ determination. In a UV-vis method for quantifying oxytetracycline in veterinary formulations, careful matrix matching between calibration standards and samples was essential for accurate quantification [33]. Solution: Use matrix-matched calibration standards and evaluate specificity through forced degradation studies [29].

Solvent Selection Impact: The choice of solvent system significantly affects UV-vis spectral characteristics and method sensitivity. For diazepam analysis, a methanol:water (1:1) system provided optimal solubility and detection at 231 nm [29]. Solution: Systematically evaluate different solvent compositions during method development to maximize analyte detection while minimizing background absorbance.

Pathlength Optimization: According to the Beer-Lambert law, absorbance is directly proportional to pathlength. Variable pathlength technology, as implemented in systems like the Solo VPE, enables analysis of highly concentrated samples without dilution by using short path lengths, thereby extending the effective ULOQ [27]. Solution: Employ variable pathlength cells or dilution to maintain absorbance readings within the validated linear range (typically 0.1-1.0 AU for conventional systems) [31].
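Because A = εbc, the absorbance a sample would show in a 1 cm cell tells you how far the path length must be shortened, or the sample diluted, to land in the 0.1-1.0 AU window quoted above. In the sketch below, the molar absorptivity, concentration and list of available path lengths are hypothetical; variable-pathlength instruments adjust the path more finely. Preferring the longest path that stays in the window is a simple heuristic that keeps the signal as large as possible.

```python
# Working absorbance window quoted in the text for conventional systems (AU)
WINDOW = (0.1, 1.0)


def absorbance(epsilon, path_cm, conc_mol_l):
    """Beer-Lambert law: A = epsilon * b * c."""
    return epsilon * path_cm * conc_mol_l


def longest_path_in_window(a_per_cm, paths_cm=(1.0, 0.5, 0.2, 0.1, 0.01), window=WINDOW):
    """Return (path, A) for the longest available path keeping A in the window, else None."""
    for b in sorted(paths_cm, reverse=True):
        a = a_per_cm * b
        if window[0] <= a <= window[1]:
            return b, a
    return None


def dilution_factor(a_per_cm, path_cm=1.0, target=0.5):
    """Dilution factor (>= 1) bringing A in a fixed cell to about `target` AU."""
    return max(1.0, a_per_cm * path_cm / target)


# Hypothetical sample: epsilon = 15,000 L/(mol·cm), c = 2.0 mmol/L
a1 = absorbance(15000, 1.0, 2.0e-3)  # absorbance the sample would show in a 1 cm cell
print(f"A(1 cm) = {a1:.1f}")
print("Path choice:", longest_path_in_window(a1))
print(f"Or dilute about {dilution_factor(a1):.0f}-fold in a 1 cm cell")
```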

Best Practices for Method Validation

- Demonstrate Specificity: Conduct forced degradation studies under various stress conditions (hydrolytic, oxidative, photolytic, thermal) to ensure the method can distinguish the analyte from degradation products [29].

- Establish System Suitability: Define criteria for instrument performance verification before each analytical run, particularly when working near method limits.

- Implement QC Procedures: Include quality control samples at low, medium, and high concentrations (including LLOQ and ULOQ levels) during routine analysis to continuously monitor method performance.

- Document Thoroughly: Maintain comprehensive records of all validation experiments, including raw data, statistical calculations, and any deviations from protocols.

By systematically applying these methodologies and addressing potential challenges, researchers can establish robust reportable ranges for UV-Vis concentration assays that generate reliable, defensible data throughout the drug development process.

In the global pharmaceutical and clinical landscape, the reliability of analytical data is the cornerstone of product quality and patient safety. For researchers and scientists developing UV-Vis concentration assays, navigating the complex web of international guidelines is not merely a regulatory obligation but a critical scientific endeavor. The International Council for Harmonisation (ICH), the U.S. Food and Drug Administration (FDA), and the Clinical Laboratory Improvement Amendments (CLIA) provide foundational frameworks that govern analytical method validation, each with distinct yet sometimes overlapping requirements. The recent modernization of ICH guidelines, with the simultaneous issuance of ICH Q2(R2) on validation and ICH Q14 on analytical procedure development, marks a significant shift from a prescriptive, "check-the-box" approach to a more scientific, risk-based lifecycle model [34].

This evolution underscores the importance of a deep, principled understanding of validation parameters, particularly linearity and range, which are essential for demonstrating that an analytical method can elicit results directly proportional to the analyte concentration within a specified range. For a UV-Vis concentration assay, which is often used for quantifying active ingredients or critical biomarkers, proving linearity and defining the applicable range are fundamental to establishing the method's fitness for purpose. This guide provides a detailed comparison of the ICH, FDA, and CLIA requirements, offering a structured framework for professionals to ensure compliance, robustness, and scientific validity in their analytical practices.

The following table summarizes the core focus, scope, and foundational documents of the three major regulatory frameworks.

| Guideline/Agency | Core Focus and Scope | Key Documents/Standards |
|---|---|---|
| ICH | Achieving global harmonization for pharmaceutical product registration. Provides a science- and risk-based framework for the entire analytical procedure lifecycle [34]. | ICH Q2(R2) - Validation of Analytical Procedures; ICH Q14 - Analytical Procedure Development [34] |
| FDA | Protecting public health in the United States. Adopts and enforces ICH guidelines while providing additional, specific guidance on risk management and documentation [35] [34]. | FDA Analytical Procedures and Methods Validation Guidance; ICH Q2(R2) & Q14 (Adopted) [34] |
| CLIA | Ensuring the accuracy and reliability of patient test results in US clinical diagnostic laboratories. Focuses on proficiency testing (PT) and quality control [35] [36]. | CLIA Proficiency Testing Regulations (Updated 2025) [36] |

The Modernized ICH Framework: Q2(R2) and Q14

The ICH provides a harmonized framework that, once adopted by member regions, becomes the global gold standard. The recent update introduces critical concepts for modern analytical science:

- Lifecycle Management: Validation is no longer a one-time event but a continuous process that begins with method development and continues through commercial production [34].

- Analytical Target Profile (ATP): Defined in ICH Q14, the ATP is a prospective summary of the method's intended purpose and its required performance criteria. It sets the target for development and validation from the very beginning [34].

- Enhanced Approach: This approach encourages a more systematic understanding of the method, which in turn allows for more flexible and science-based management of post-approval changes [34].

FDA's Adoption and Enforcement

As a key member of the ICH, the FDA works closely with the council and subsequently adopts its guidelines. For laboratory professionals in the U.S., complying with ICH Q2(R2) and Q14 is a direct path to meeting FDA requirements for submissions such as New Drug Applications (NDAs) and Abbreviated New Drug Applications (ANDAs) [34]. The FDA emphasizes a risk-based approach, and its Warning Letters have cited the lack of scientifically sound validation, failure to demonstrate method suitability, and significant data integrity problems, such as the absence of audit trails on laboratory instruments [37].

CLIA's Proficiency Testing Focus

CLIA regulations are primarily concerned with the quality of clinical laboratory testing. Unlike ICH and FDA, which focus on the lifecycle of a drug product and its associated methods, CLIA establishes performance criteria for proficiency testing (PT). These criteria, presented as acceptance limits for various analytes, are used to evaluate whether a laboratory can produce accurate and reliable patient results. The requirements for many common chemistry, toxicology, and immunology tests were updated and fully implemented in 2025 [36].

Core Validation Parameters: A Detailed Analysis

Adherence to guidelines is demonstrated through the evaluation of specific validation parameters. The following table compares the core parameters as outlined by ICH/FDA, with contextual notes on CLIA's performance-based approach.

| Validation Parameter | ICH / FDA Guideline Definition & Requirements | Context for CLIA & Application |
|---|---|---|
| Linearity | The ability of the method to elicit test results that are directly, or through a well-defined mathematical transformation, proportional to the concentration of the analyte in samples within a given range [34]. | While CLIA does not define linearity per se, its PT acceptance limits for accuracy implicitly validate a method's calibration and linearity over the reportable range. |
| Range | The interval between the upper and lower concentrations (including these concentrations) of the analyte for which the method has demonstrated suitable levels of linearity, accuracy, and precision [34]. | The CLIA PT acceptance criteria apply across the assay's defined range, ensuring reliable clinical reporting. |
| Accuracy | The closeness of agreement between the accepted reference value and the value found. Expressed as % recovery of a known, spiked amount [35] [34]. | CLIA defines performance via PT acceptance limits (e.g., Glucose: ± 6 mg/dL or ± 8%, whichever is greater), which serve as a direct measure of a method's accuracy in a clinical setting [36]. |
| Precision | The degree of agreement among individual test results when the procedure is applied repeatedly to multiple samplings of a homogeneous sample. Includes repeatability, intermediate precision, and reproducibility [35] [34]. | CLIA's PT criteria, which must be met across multiple testing events, inherently verify a method's precision and reproducibility. |
| Specificity | The ability to assess the analyte unequivocally in the presence of components that may be expected to be present, such as impurities, degradants, or matrix components [34]. | In clinical testing, specificity is crucial to ensure no cross-reactivity or interference affects patient results, aligning with the goal of meeting CLIA PT criteria. |
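The "whichever is greater" structure of many CLIA limits is easy to misapply at low target values: for glucose, the absolute ±6 mg/dL term governs below a target of 75 mg/dL and the ±8% term governs above it. A minimal sketch follows, using the glucose criterion quoted in the table; the helper names are hypothetical, and the current regulatory text should be checked before applying the pattern to other analytes.

```python
def within_pt_limit(result, target, abs_limit, rel_limit):
    """True if |result - target| <= max(abs_limit, rel_limit * |target|)."""
    return abs(result - target) <= max(abs_limit, rel_limit * abs(target))


def glucose_pt_ok(result_mg_dl, target_mg_dl):
    # Glucose criterion quoted in the text: TV ± 6 mg/dL or ± 8%, whichever is greater
    return within_pt_limit(result_mg_dl, target_mg_dl, abs_limit=6.0, rel_limit=0.08)


for tv, res in [(50, 55), (50, 57), (100, 107), (100, 109)]:
    verdict = "acceptable" if glucose_pt_ok(res, tv) else "unacceptable"
    print(f"TV {tv} mg/dL, result {res} mg/dL -> {verdict}")
```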

Application to UV-Vis Concentration Assays

For UV-Vis methods used for the quantitative assay of active ingredients, ICH Q2(R2) requires validation of all the parameters listed in the table above [34]. Linearity and range are particularly critical. A typical experimental workflow for establishing these parameters is outlined below.

UV-Vis Linearity and Range Workflow

Experimental Protocols for Key Validation Experiments

Protocol for Linearity and Range Validation of a UV-Vis Assay

This protocol provides a detailed methodology for establishing the linearity and range of a UV-Vis concentration assay for a pharmaceutical active ingredient, in alignment with ICH Q2(R2) and FDA expectations [34].

1. Objective: To demonstrate that the UV-Vis analytical procedure provides test results that are directly proportional to the concentration of the analyte (API X) in the range of 0.5 mg/L to 5.0 mg/L.

2. Experimental Materials and Reagents

- Analyte: High-purity reference standard of API X.

- Solvent: Appropriate spectroscopic-grade solvent.

- Equipment: Validated UV-Vis spectrophotometer with 1 cm matched quartz cuvettes.

- Volumetric Glassware: Class A pipettes, volumetric flasks.

3. Procedure

- Standard Solution Preparation: Prepare a minimum of five standard solutions spanning the intended range (e.g., 0.5, 1.0, 2.0, 3.5, and 5.0 mg/L) from independent weighings/dilutions.

- Measurement: Measure the absorbance of each standard solution in triplicate against a solvent blank at the validated wavelength (e.g., 274 nm).

- Data Analysis: Calculate the mean absorbance for each concentration level. Plot the mean absorbance (y-axis) versus the corresponding concentration (x-axis). Perform a least-squares linear regression analysis on the data to determine the slope, y-intercept, and coefficient of determination (R²).

- Residual Analysis: Plot the residuals (difference between observed and predicted absorbance) against the concentration to check for non-random patterns.

4. Acceptance Criteria

- The coefficient of determination (R²) should be not less than 0.995.

- A visual inspection of the residual plot should show random scatter around zero, indicating no systematic bias.

- The y-intercept, expressed as a percentage of the response at the target concentration, should be scientifically justified (e.g., not significantly different from zero).
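The numerical criteria above can be checked with a short function. This is a sketch under stated assumptions: the intercept is tested against zero with a two-sided t-test at the 95% level (one common way to show it is "not significantly different from zero"), the t critical values are hard-coded for 3 to 12 levels, and the target concentration is a hypothetical input because the protocol does not specify one. The residual plot still needs visual inspection.

```python
# Two-sided 95% Student t critical values keyed by degrees of freedom (n_levels - 2)
T_CRIT_95 = {1: 12.706, 2: 4.303, 3: 3.182, 4: 2.776, 5: 2.571,
             6: 2.447, 7: 2.365, 8: 2.306, 9: 2.262, 10: 2.228}


def check_linearity_criteria(r_squared, slope, intercept, intercept_se,
                             n_levels, target_conc, r2_limit=0.995):
    """Evaluate the numerical acceptance criteria for a linear calibration.

    target_conc is a user-supplied (hypothetical) target concentration.
    """
    df = n_levels - 2
    if df not in T_CRIT_95:
        raise ValueError("t table here only covers 3 to 12 calibration levels")
    if intercept_se <= 0:
        raise ValueError("intercept standard error must be positive")
    t_stat = abs(intercept) / intercept_se
    response_at_target = slope * target_conc + intercept
    return {
        "r2_pass": r_squared >= r2_limit,
        "intercept_t": t_stat,
        # True when the intercept is not significantly different from zero (alpha = 0.05)
        "intercept_not_significant": t_stat < T_CRIT_95[df],
        # Intercept as a percentage of the predicted response at the target level
        "intercept_pct_of_target": 100.0 * intercept / response_at_target,
    }


# Hypothetical fit statistics for five levels and an assumed target of 2.5 mg/L
print(check_linearity_criteria(0.9991, 0.0998, 0.0012, 0.0015, 5, 2.5))
```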

The Scientist's Toolkit: Research Reagent Solutions

The following table details key materials and their functions essential for conducting a robust UV-Vis method validation.

| Item / Reagent | Critical Function in Validation |
|---|---|
| High-Purity Reference Standard | Serves as the primary benchmark for accuracy, linearity, and range determination. Its known purity is the basis for the concentrations assigned to all standards and therefore for every quantitative measurement. |
| Spectroscopic-Grade Solvent | Minimizes background UV absorbance that can interfere with accurate detection and quantification of the analyte, directly affecting LOD, LOQ, and linearity. |
| Matched Quartz Cuvettes | Ensure that absorbance differences arise from the sample rather than from differences between the cells, which is critical for precise and comparable absorbance readings. |
| Validated UV-Vis Spectrophotometer | The core instrument must be qualified (IQ/OQ/PQ) and calibrated so that its performance (wavelength accuracy, photometric accuracy, stray light) is suitable for the validation study. |

Quantitative Data and Acceptance Criteria

While ICH Q2(R2) does not prescribe universal numerical targets for parameters like R², it requires that acceptance criteria be pre-defined and scientifically justified [34]. In contrast, CLIA establishes fixed, legal acceptance limits for proficiency testing. The table below excerpts some 2025 CLIA criteria for common chemistry analytes, which can serve as a benchmark for the required accuracy of clinical methods [36].

| Analyte | 2025 CLIA Acceptance Limits (Acceptable Performance, AP) |
|---|---|
| Glucose | Target Value (TV) ± 6 mg/dL or ± 8% (whichever is greater) [36] |
| Total Cholesterol | TV ± 10% [36] |
| Creatinine | TV ± 0.2 mg/dL or ± 10% (whichever is greater) [36] |
| Total Protein | TV ± 8% [36] |
| Sodium | TV ± 4 mmol/L [36] |
| Potassium | TV ± 0.3 mmol/L [36] |
| Albumin | TV ± 8% [36] |
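The "whichever is greater" rule means the allowable window depends on the target value. The sketch below encodes the table above and checks a proficiency-testing result against it; the dictionary keys and function names are hypothetical, and only the analytes listed in the table are included.

```python
# CLIA limits from the table above as (absolute limit, percent limit).
# Units: glucose and creatinine in mg/dL; sodium and potassium in mmol/L.
CLIA_2025 = {
    "glucose": (6.0, 8.0),
    "total_cholesterol": (None, 10.0),
    "creatinine": (0.2, 10.0),
    "total_protein": (None, 8.0),
    "sodium": (4.0, None),
    "potassium": (0.3, None),
    "albumin": (None, 8.0),
}


def allowable_error(analyte, target):
    """Half-width of the acceptance window; the greater limit applies when both exist."""
    abs_limit, pct_limit = CLIA_2025[analyte]
    limits = []
    if abs_limit is not None:
        limits.append(abs_limit)
    if pct_limit is not None:
        limits.append(abs(target) * pct_limit / 100.0)
    return max(limits)


def within_limits(analyte, target, result):
    """True if a result falls inside TV +/- allowable error."""
    return abs(result - target) <= allowable_error(analyte, target)


print(allowable_error("glucose", 50))   # 6.0 (6 mg/dL exceeds 8% of 50)
print(allowable_error("glucose", 200))  # 16.0 (8% of 200 exceeds 6 mg/dL)
```

At low glucose targets the fixed 6 mg/dL limit governs; above 75 mg/dL the 8% limit becomes the wider of the two.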

Meeting the requirements of ICH, FDA, and CLIA is essential for the acceptance of pharmaceutical products and clinical data. Modern compliance rests on the lifecycle approach set out in ICH Q2(R2) and Q14. For scientists working on UV-Vis concentration assays, this means starting with a clear Analytical Target Profile, designing a validation protocol grounded in sound science and risk management, and recognizing that linearity and range are not isolated parameters but are intrinsically linked to the method's accuracy, precision, and specificity. Integrating these principles into daily practice helps ensure that methods are not only compliant but also robust, reliable, and fit for their ultimate purpose of protecting patient health and safety.

From Theory to Practice: A Step-by-Step Guide to Developing Your UV-Vis Assay

Ultraviolet-Visible (UV-Vis) spectrophotometry is a fundamental analytical technique for concentration determination across the pharmaceutical, environmental, and materials sciences. It rests on the Beer-Lambert law, which establishes a linear relationship between a substance's concentration and its absorbance of light at a specific wavelength [38]. This relationship is the theoretical foundation for developing quantitative assays, where accuracy, precision, and reliability are paramount in research and drug development.

The validity of any UV-Vis concentration assay hinges critically on the proper construction of a calibration curve using standard solutions of known concentrations. This process constitutes the linearity and range validation phase, demonstrating that the method provides results directly proportional to the concentration of the analyte within a specified range [32]. The strategic selection of the number of standard concentrations, their appropriate spacing across the analytical range, and sufficient replication at each level directly determines the statistical power, reliability, and accuracy of the resulting calibration model. Poor experimental design at this stage introduces significant uncertainty, potentially invalidating subsequent sample measurements and compromising research outcomes.

Theoretical Framework for Linearity and Range

Core Principles of the Beer-Lambert Law

The Beer-Lambert law relates absorbance (A) to molar absorptivity (ε), path length (l), and analyte concentration (c): A = εlc. In practical assay development, this relationship is exploited by measuring the absorbance of standard solutions to build a calibration curve of absorbance versus concentration. The linear dynamic range of this curve defines the method's operational range, within which the analyte concentration can be reliably determined [38]. At high concentrations, molecular interactions or instrumental limitations (such as stray light) cause deviations from linearity, and these deviations set the upper limit of the range.
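A = εlc can be applied in both directions, and it also gives the physical meaning of a calibration slope: when concentration is expressed in mol/L, the fitted slope equals εl. The sketch below is illustrative only; the molar absorptivity of 15000 L·mol⁻¹·cm⁻¹ is a hypothetical value, not one taken from the cited work.

```python
def absorbance(molar_absorptivity, path_length_cm, conc_mol_per_l):
    """Beer-Lambert law: A = epsilon * l * c (A is dimensionless)."""
    return molar_absorptivity * path_length_cm * conc_mol_per_l


def concentration(absorbance_value, molar_absorptivity, path_length_cm=1.0):
    """Invert A = epsilon * l * c to obtain c in mol/L."""
    if molar_absorptivity <= 0 or path_length_cm <= 0:
        raise ValueError("epsilon and path length must be positive")
    return absorbance_value / (molar_absorptivity * path_length_cm)


def epsilon_from_slope(slope_per_molar, path_length_cm=1.0):
    """For a calibration in mol/L, the fitted slope equals epsilon * l."""
    return slope_per_molar / path_length_cm


# Hypothetical epsilon (L mol^-1 cm^-1) with a 1 cm cuvette
eps = 15000.0
print(concentration(0.45, eps))  # approximately 3.0e-05 mol/L
```

This inversion is only valid inside the linear dynamic range; outside it, the calibration no longer follows A = εlc.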

The linearity of an assay is its ability to elicit test results that are directly, or through a well-defined mathematical transformation, proportional to the analyte concentration within a given range [32]. It is typically summarized by the correlation coefficient (r) or the coefficient of determination (r²); a value of 0.999 or higher is often the target for a robust quantitative method in pharmaceutical analysis, as in the validation of a terbinafine hydrochloride assay [32]. A high r² does not by itself prove linearity, however, which is why residual plots are examined alongside it.

The Critical Role of Standard Concentration Design

A well-designed set of standard concentrations serves two primary functions: it accurately defines the central, linear portion of the calibration curve, and it reliably detects the upper and lower limits where linearity deviates. An insufficient number of concentration levels fails to adequately characterize the curve's behavior, potentially missing minor deviations from linearity. Conversely, inadequate replication at each concentration level fails to provide a robust estimate of the method's inherent variability (precision), weakening the statistical confidence in the calibration model.

Advanced applications, such as surrogate monitoring of water quality parameters using UV-Vis spectroscopy, further rely on robust calibration designs. These methods employ machine learning models (e.g., ridge regression) trained on spectral data from standards to predict concentrations of complex indicators like Chemical Oxygen Demand (COD) and Total Organic Carbon (TOC) [38]. The accuracy of these surrogate models is fundamentally dependent on the quality and design of the initial calibration data.

Experimental Design Framework

Determining the Number of Standard Concentrations

The number of standard concentration levels must be sufficient to establish a statistically sound calibration model. A minimum of five to six concentration levels is generally recommended to reliably assess linearity across the analytical range, consistent with the ICH Q2 recommendation of at least five concentrations [34]. Published methods follow the same practice, validating linearity with multiple levels spread across the range [32].

Table 1: Recommended Number of Standard Concentrations Based on Analytical Range

| Analytical Range Scope | Minimum Number of Levels | Recommended Number of Levels | Justification |
|---|---|---|---|
| Narrow Range (e.g., one order of magnitude) | 5 | 6-8 | Ensures sufficient density to confirm linearity with high confidence. |
| Wide Range (e.g., two or more orders of magnitude) | 6 | 8-10 | Captures potential subtle deviations from linearity over a broader interval. |
| Preliminary Range-Finding | 3 | 4-5 | Provides an initial estimate of the linear dynamic range before a full validation. |
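Table 1 can be encoded as a small lookup keyed on how many orders of magnitude the range spans. This is a sketch with one loud assumption: the table gives only "one order" and "two or more orders" as examples, so here any range spanning more than one decade is treated as wide. The function name is hypothetical.

```python
import math


def recommended_levels(low, high, preliminary=False):
    """Return (minimum levels, (recommended low, recommended high)) per Table 1.

    Assumption: ranges spanning more than one order of magnitude count as 'wide'.
    """
    if low <= 0 or high <= low:
        raise ValueError("need 0 < low < high")
    if preliminary:
        return 3, (4, 5)
    decades = math.log10(high / low)
    if decades <= 1.0:
        return 5, (6, 8)
    return 6, (8, 10)


# The 5-30 ug/ml terbinafine range spans less than one decade (narrow)
print(recommended_levels(5, 30))
```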

For instance, in the development of a UV-spectrophotometric method for terbinafine hydrochloride, linearity was validated using six concentration levels: 5, 10, 15, 20, 25, and 30 μg/ml. This number provided enough data points to construct a reliable calibration curve and calculate a regression equation with a high correlation coefficient (r² = 0.999) [32].

Strategic Concentration Spacing and Replication

The spacing of concentration levels and the number of replicate measurements at each level are critical for defining the curve's characteristics and quantifying uncertainty.

- Concentration Spacing: Non-uniform spacing is often advantageous; placing more levels near the anticipated lower limit of quantification (LOQ) and upper limit of quantification (ULOQ) helps define these boundaries precisely. Even spacing across the range is also acceptable and commonly practiced.

- Replication Strategy: Replication is essential for estimating the random error (noise) associated with the measurement process at each concentration level. A minimum of two to three replicates per concentration level is standard practice. This allows for the calculation of a mean absorbance value with an associated standard deviation, providing a measure of repeatability precision.
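Both spacing strategies described above can be generated programmatically. The even spacing is straightforward; for the non-uniform case, the sketch uses Chebyshev-Lobatto spacing, which is one possible way (an assumption, not a method from the cited studies) to keep both endpoints while clustering levels toward the LOQ and ULOQ. In practice the generated values would be rounded to convenient concentrations before preparing standards.

```python
import math


def even_levels(low, high, n):
    """n equally spaced concentrations from low to high inclusive."""
    if n < 2:
        raise ValueError("need at least two levels")
    step = (high - low) / (n - 1)
    return [low + i * step for i in range(n)]


def end_weighted_levels(low, high, n):
    """n concentrations clustered toward both ends (Chebyshev-Lobatto spacing).

    Both endpoints are included; the gaps shrink near low and high.
    """
    if n < 2:
        raise ValueError("need at least two levels")
    mid, half = (low + high) / 2, (high - low) / 2
    return [mid - half * math.cos(math.pi * k / (n - 1)) for k in range(n)]


print(even_levels(5, 30, 6))  # [5.0, 10.0, 15.0, 20.0, 25.0, 30.0]
print([round(c, 2) for c in end_weighted_levels(5, 30, 6)])
```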

Table 2: Replication Strategy and Its Impact on Data Quality

| Replication Level | Precision Assessment | Recommended Use Case |
|---|---|---|
| Duplicates (n=2) | Basic | Preliminary studies, or when sample or material is extremely limited. |
| Triplicates (n=3) | Good | Standard for full method validation; balances robustness with resource use. |
| Quadruplicates or more (n≥4) | Excellent | Crucial for defining the limits of detection and quantification (LOD/LOQ) or when high method precision is required. |

The precision of a method, reported as % Relative Standard Deviation (%RSD), is a direct outcome of replication studies. In the terbinafine hydrochloride method, intra-day and inter-day precision were demonstrated with %RSD values less than 2%, a result achievable only through sufficient replication [32].
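%RSD is the sample standard deviation of the replicates divided by their mean, expressed as a percentage. A minimal sketch, assuming the 2% limit reported for the terbinafine method as the default threshold; the function names and replicate values are hypothetical.

```python
from statistics import fmean, stdev


def percent_rsd(values):
    """%RSD = 100 * sample standard deviation (n - 1) / mean."""
    if len(values) < 2:
        raise ValueError("need at least two replicate values")
    return 100.0 * stdev(values) / fmean(values)


def meets_precision(values, limit_pct=2.0):
    """True when %RSD is below the limit (2% by default)."""
    return percent_rsd(values) < limit_pct


# Hypothetical triplicate absorbances at one level
reps = [0.500, 0.502, 0.498]
print(f"%RSD = {percent_rsd(reps):.2f}", meets_precision(reps))
```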

Diagram 1: Workflow for Designing Standard Concentration Experiments. The process involves defining the range, selecting concentration levels and replication, and statistically assessing the resulting data for linearity and precision.

Comparative Experimental Protocols

Protocol 1: Pharmaceutical Compound Assay (Terbinafine Hydrochloride)

This protocol follows a classic validation approach for a single analyte in a relatively pure system, as detailed in the research by Verma et al. [32].

- Stock Solution Preparation: Accurately weigh 10 mg of the reference standard (terbinafine hydrochloride). Transfer to a 100 ml volumetric flask, dissolve in approximately 20 ml of distilled water, and dilute to the mark to yield a 100 μg/ml stock solution.

- Standard Dilution Series: From the stock solution, prepare a series of dilutions in 10 ml volumetric flasks. The studied protocol used aliquots of 0.5, 1.0, 1.5, 2.0, 2.5, and 3.0 ml, diluted to volume with distilled water, to produce standard concentrations of 5, 10, 15, 20, 25, and 30 μg/ml, respectively [32].

- Instrumental Analysis: Scan each standard solution across the UV-Vis range (200-400 nm) to identify the wavelength of maximum absorption (λmax). For terbinafine hydrochloride, this was found to be 283 nm. Measure the absorbance of each standard solution at this λmax.

- Calibration and Validation: Construct a calibration curve by plotting the mean absorbance (from replicates) against the known concentration. Perform linear regression to obtain the equation (y = mx + c) and the correlation coefficient (r²). Validate the method by assessing its accuracy (recovery studies), precision (intra-day and inter-day %RSD), and sensitivity (LOD and LOQ) [32].
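The arithmetic behind this protocol can be checked in a few lines: the stock concentration from the weighed mass, the aliquot volumes from C1V1 = C2V2, and the ICH Q2 estimates LOD = 3.3σ/S and LOQ = 10σ/S, where σ is the standard deviation of the response (for example, the residual standard deviation of the calibration line) and S is the slope. The function names are hypothetical, and the σ and slope values in the example are placeholders rather than figures from the cited study.

```python
def stock_concentration_ug_per_ml(mass_mg, volume_ml):
    """Stock concentration in ug/ml from a weighed mass (mg) and flask volume (ml)."""
    return mass_mg * 1000.0 / volume_ml


def aliquot_volumes(stock_conc, final_volume_ml, targets):
    """Solve C1*V1 = C2*V2 for V1, the aliquot diluted to final_volume_ml."""
    if any(t > stock_conc for t in targets):
        raise ValueError("a target exceeds the stock concentration")
    return [t * final_volume_ml / stock_conc for t in targets]


def lod_loq(sigma, slope):
    """ICH Q2 estimates: LOD = 3.3 * sigma / S and LOQ = 10 * sigma / S."""
    return 3.3 * sigma / slope, 10.0 * sigma / slope


stock = stock_concentration_ug_per_ml(10, 100)  # 100.0 ug/ml
print(aliquot_volumes(stock, 10.0, [5, 10, 15, 20, 25, 30]))
# [0.5, 1.0, 1.5, 2.0, 2.5, 3.0] ml, matching the protocol
print(lod_loq(sigma=0.01, slope=0.05))  # placeholder sigma and slope
```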

Protocol 2: Multi-Parameter Water Quality Monitoring

This protocol represents a more complex application for natural matrices with multiple interfering substances, utilizing advanced wavelength selection and machine learning [38].

- System Calibration: Calibrate the UV-Vis spectrometer by first acquiring a dark spectrum (with the light source off), followed by a reference spectrum of deionized water [38].

- Data Collection from Field Samples: Collect a set of environmental water samples (e.g., 29 samples from a river). For each sample, record the full UV-Vis absorption spectrum (e.g., 200-750 nm). In parallel, determine the actual concentrations of the target water quality indicators (TOC, BOD₅, COD, TN, NO₃-N) using standard reference methods [38].

- Characteristic Wavelength Selection: Instead of using a single wavelength or the full spectrum, apply advanced algorithms (e.g., Competitive Adaptive Reweighted Sampling - CARS) to identify the most informative characteristic wavelengths for each water quality parameter. This step reduces model complexity and improves accuracy [38].

- Surrogate Model Development: Use machine learning models (e.g., Ridge Regression) to build a predictive relationship between the absorbance at the selected characteristic wavelengths and the concentrations measured by reference methods. The model's performance is evaluated using metrics like the coefficient of determination (R²) [38].
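Two computational steps in this protocol can be sketched with NumPy: converting raw intensities to absorbance using the dark and reference spectra, and fitting a ridge regression by its closed form with an unpenalized intercept. This is a minimal sketch under stated assumptions: the CARS wavelength selection is not shown (the columns of `X` are assumed to be already-selected wavelengths), and all function names are hypothetical. Libraries such as scikit-learn provide an equivalent `Ridge` estimator.

```python
import numpy as np


def absorbance_spectrum(sample, reference, dark):
    """Dark-corrected absorbance: A = -log10((I_sample - I_dark) / (I_ref - I_dark))."""
    sample, reference, dark = (np.asarray(a, dtype=float) for a in (sample, reference, dark))
    return -np.log10((sample - dark) / (reference - dark))


def fit_ridge(X, y, alpha):
    """Closed-form ridge regression with an unpenalized intercept.

    X: (n_samples, n_wavelengths) absorbances at the selected wavelengths.
    y: reference-method values for one indicator (e.g., COD).
    """
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float)
    x_mean, y_mean = X.mean(axis=0), y.mean()
    Xc, yc = X - x_mean, y - y_mean
    # Solve (Xc^T Xc + alpha * I) b = Xc^T yc on centered data
    coef = np.linalg.solve(Xc.T @ Xc + alpha * np.eye(X.shape[1]), Xc.T @ yc)
    intercept = y_mean - x_mean @ coef
    return coef, intercept


def predict(X, coef, intercept):
    return np.asarray(X, dtype=float) @ coef + intercept


def r2_score(y_true, y_pred):
    """Coefficient of determination used to evaluate the surrogate model."""
    y_true, y_pred = np.asarray(y_true, float), np.asarray(y_pred, float)
    ss_res = np.sum((y_true - y_pred) ** 2)
    ss_tot = np.sum((y_true - y_true.mean()) ** 2)
    return 1.0 - ss_res / ss_tot
```

Centering before solving keeps the intercept out of the penalty, so `alpha` shrinks only the wavelength coefficients; larger `alpha` trades some bias for stability when neighboring wavelengths are strongly correlated.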

Table 3: Comparison of Experimental Protocols for Standard Concentration Calibration

| Aspect | Protocol 1: Pharmaceutical Assay | Protocol 2: Water Quality Monitoring |
|---|---|---|
| Analytical Goal | Quantify a single, pure active compound. | Simultaneously predict multiple indicators in a complex matrix. |
| Linearity Model | Simple linear regression (Beer-Lambert). | Multivariate machine learning (e.g., Ridge Regression). |