Mastering Wavelength Selection: A Comprehensive Guide for Accurate Quantitative Spectrophotometer Analysis

This article provides a definitive guide for researchers, scientists, and drug development professionals on selecting the proper wavelength for quantitative spectrophotometer analysis.

Abstract

This article provides a definitive guide for researchers, scientists, and drug development professionals on selecting the proper wavelength for quantitative spectrophotometer analysis. It covers the foundational principles of light absorption and the Beer-Lambert law, explores systematic methodologies and application-specific techniques, addresses common troubleshooting and optimization challenges, and outlines rigorous validation and comparative analysis protocols. The content synthesizes current best practices to empower professionals in achieving highly accurate, reproducible, and reliable results in biomedical and clinical research applications.

The Science of Light and Matter: Core Principles of Spectrophotometric Analysis

Understanding Absorbance, Transmittance, and the Beer-Lambert Law

Fundamental Concepts

What are Transmittance and Absorbance?

When monochromatic light passes through a sample solution, the transmittance (T) is the fraction of incident light that emerges from it. It is defined as the ratio of the transmitted intensity (I) to the incident intensity (I₀), T = I/I₀, and is often expressed as a percentage [1]. Absorbance (A) is the negative base-10 logarithm of transmittance: A = −log₁₀(T) = log₁₀(I₀/I) [1] [2]. An absorbance of 0 corresponds to 100% transmittance, while an absorbance of 1 corresponds to 10% transmittance [1].

Table 1: Absorbance and Transmittance Relationship

| Absorbance | % Transmittance |

|---|---|

| 0 | 100% |

| 1 | 10% |

| 2 | 1% |

| 3 | 0.1% |

| 4 | 0.01% |

| 5 | 0.001% |
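
The relationship in Table 1 can be reproduced with a few lines of Python. This is a minimal sketch rather than instrument code; the function names are illustrative and not taken from any library.

```python
import math


def absorbance_from_percent_t(percent_t: float) -> float:
    """Return A = -log10(T), with T supplied as a percentage."""
    if not 0.0 < percent_t <= 100.0:
        raise ValueError("percent transmittance must be in (0, 100]")
    return -math.log10(percent_t / 100.0)


def percent_t_from_absorbance(absorbance: float) -> float:
    """Return %T = 100 * 10**(-A)."""
    return 100.0 * 10.0 ** (-absorbance)


# Print the %T value for each absorbance listed in Table 1.
for a in range(6):
    print(f"A = {a}: {percent_t_from_absorbance(a):g} %T")
```

Each additional absorbance unit cuts the transmitted light by a factor of ten, which is why very little light reaches the detector at high absorbance.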

What is the Beer-Lambert Law?

The Beer-Lambert Law (or Beer's Law) states a linear relationship between the absorbance and the concentration of a solution, its molar absorption coefficient, and the optical path length [1]. The common form of the law is expressed as A = εlc, where A is the absorbance, ε is the molar absorptivity (M⁻¹cm⁻¹), l is the path length of light through the solution (cm), and c is the concentration of the absorbing species (M) [2] [3]. This law enables the concentration of a solution to be determined by measuring its absorbance [1].
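
As a small worked example, the law can be rearranged to c = A / (ε · l). The sketch below assumes a 1 cm cuvette and uses the commonly quoted molar absorptivity of NADH at 340 nm (about 6220 M⁻¹cm⁻¹) purely as an illustrative input; the absorbance reading is hypothetical.

```python
def concentration_molar(absorbance: float, epsilon_m_cm: float, path_cm: float = 1.0) -> float:
    """Rearranged Beer-Lambert law: c = A / (epsilon * l), in mol/L."""
    if epsilon_m_cm <= 0 or path_cm <= 0:
        raise ValueError("epsilon and path length must be positive")
    return absorbance / (epsilon_m_cm * path_cm)


# Assumed inputs: literature-style epsilon for NADH at 340 nm, hypothetical reading.
EPSILON_NADH_340 = 6220.0  # M^-1 cm^-1 (illustrative value)
measured_absorbance = 0.311

c = concentration_molar(measured_absorbance, EPSILON_NADH_340, path_cm=1.0)
print(f"c = {c * 1e6:.1f} micromolar")
```

In practice ε depends on wavelength, solvent, and temperature, so a calibration curve measured on your own instrument is usually preferred over a tabulated value.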

Figure 1: Logical relationship between light transmission, absorbance, and the Beer-Lambert Law.

Troubleshooting Guides and FAQs

Frequently Asked Questions

Q1: Why are my absorbance readings unstable or drifting? A: This common issue has several potential causes and solutions [4] [5]:

- Insufficient warm-up time: Allow the instrument to warm up for 15-30 minutes before use to let the light source stabilize.

- Air bubbles in sample: Gently tap the cuvette to dislodge bubbles or prepare a new sample.

- Sample too concentrated: Dilute your sample to bring its absorbance into the optimal range of 0.1-1.0 AU.

- Environmental factors: Ensure the spectrophotometer is on a stable surface away from vibrations and temperature fluctuations.

Q2: Why does my instrument fail to set to 100% transmittance (blank)? A: This problem prevents proper instrument calibration [5]:

- Aging light source: Check the lamp usage hours; replace old lamps as needed.

- Dirty or misaligned optics: Clean the cuvette thoroughly; if internal optics are dirty, professional servicing may be required.

- Improper cuvette placement: Ensure the cuvette holder is properly seated in the instrument.

- Incorrect blank solution: Use the exact same solvent or buffer that your sample is dissolved in.

Q3: What does a negative absorbance reading indicate? A: Negative absorbance occurs when [5]:

- The blank solution was "dirtier" or absorbed more light than the sample.

- Different cuvettes were used for blank and sample measurements.

- The sample is extremely dilute, with absorbance near the instrument's baseline noise.

- Solution: Use the same cuvette for both blank and sample measurements, ensure the cuvette is clean, and concentrate dilute samples if possible.

Q4: How do I select the proper wavelength for quantitative analysis? A: Optimal wavelength selection is critical for accurate results [6] [7]:

- Perform an absorbance spectrum scan to identify the wavelength of maximum absorption (λmax).

- Using λmax typically gives the best results: sensitivity is highest there, and because the spectrum is nearly flat at the top of a peak, small wavelength-setting errors or drifts change the measured absorbance very little.

- For mixtures, advanced algorithms based on minimum mean square error can select optimal wavelength sets for quantitative analysis [6].

Q5: Why are my replicate readings inconsistent? A: Inconsistent replicates can stem from several sources [5]:

- Cuvette orientation variation: Always place the cuvette in the holder with the same orientation.

- Sample degradation: Light-sensitive samples may degrade or photobleach with repeated measurements.

- Evaporation or reaction: Sample concentration may change over time due to evaporation or chemical reactions.

- Solution: Work quickly with unstable samples, keep cuvettes covered, and maintain consistent cuvette orientation.

Troubleshooting Quick Reference Table

Table 2: Common Spectrophotometer Issues and Solutions

| Problem | Possible Causes | Solutions |

|---|---|---|

| Drifting Readings | Insufficient warm-up, air bubbles, high concentration, environmental factors | Warm up for 15-30 min, remove bubbles, dilute sample, stabilize environment |

| Cannot Zero Instrument | Sample compartment open, high humidity, hardware/software issue | Close lid securely, reduce humidity, restart instrument |

| Negative Absorbance | Blank dirtier than sample, different cuvettes, very dilute sample | Use same cuvette for blank/sample, clean cuvette, concentrate sample |

| Inconsistent Replicates | Varying cuvette orientation, sample degradation, evaporation | Standardize orientation, work quickly with light-sensitive samples, cover cuvette |

Wavelength Selection for Quantitative Analysis

Theoretical Foundation

Selecting the proper wavelength is fundamental to accurate quantitative analysis in spectrophotometry. The Beer-Lambert Law forms the basis for determining concentrations of absorbing species in solution [1] [3]. For quantitative work, the wavelength is typically chosen at or near the absorption maximum (λmax) because this provides the greatest sensitivity and minimizes the effect of instrumental uncertainties on the results [7].

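For complex mixtures containing multiple absorbers, the additive property of the Beer-Lambert Law applies [8]: A(λ) = Σᵢ εᵢ(λ) · cᵢ · l + G, where εᵢ(λ) and cᵢ are the molar absorptivity and concentration of component i, l is the path length, and G is an offset for losses not caused by absorption, such as scattering (G ≈ 0 for clear, non-scattering solutions).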

Where multiple components contribute to the total absorbance at a given wavelength, advanced computational methods may be employed to select optimal wavelength sets that minimize error in concentration estimates [6].
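
For the simplest case of two absorbers measured at two wavelengths, the additive law becomes a 2×2 linear system that can be solved directly. The sketch below assumes G = 0 (a clear solution) and uses made-up molar absorptivities; it illustrates the idea only and is not the optimal-wavelength algorithm of [6].

```python
def solve_two_component(a1: float, a2: float, eps, path_cm: float = 1.0):
    """Solve A(l1) = l*(e11*cx + e12*cy), A(l2) = l*(e21*cx + e22*cy) for cx, cy.

    eps = ((e11, e12), (e21, e22)): rows are wavelengths, columns are components.
    """
    (e11, e12), (e21, e22) = eps
    det = e11 * e22 - e12 * e21
    if det == 0.0:
        raise ValueError("absorptivity matrix is singular; choose different wavelengths")
    # Cramer's rule for the 2x2 system.
    cx = (a1 * e22 - a2 * e12) / (det * path_cm)
    cy = (e11 * a2 - e21 * a1) / (det * path_cm)
    return cx, cy


# Hypothetical molar absorptivities (M^-1 cm^-1) at two wavelengths.
eps = ((10000.0, 2000.0),
       (3000.0, 8000.0))
cx, cy = solve_two_component(0.30, 0.46, eps)
print(f"cx = {cx:.2e} M, cy = {cy:.2e} M")
```

The closer the determinant is to zero (that is, the more similar the two components' spectra are at the chosen wavelengths), the more measurement noise is amplified in the result, which is the motivation for choosing wavelength sets carefully.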

Experimental Protocol: Creating a Calibration Curve

Objective: To determine the concentration of an unknown solution using Beer's Law and a series of standard solutions.

Materials and Equipment:

- Spectrophotometer

- Matched cuvettes

- Stock solution of analyte

- Solvent for blanks and dilutions

- Volumetric flasks and pipettes

Procedure:

- Wavelength Selection: If the absorption spectrum is unknown, first scan the solution to identify λmax [7].

- Prepare Standard Solutions: Create a series of diluted standards from the stock solution, covering a concentration range expected to give absorbances between 0.1 and 1.0 AU.

- Prepare Blank: Use the pure solvent in which the samples are dissolved.

- Measure Absorbance:

  - Allow the spectrophotometer to warm up for 15-30 minutes [5].

  - Blank the instrument with the pure solvent.

  - Measure and record the absorbance of each standard solution at the selected wavelength.

- Create Calibration Curve: Plot absorbance versus concentration for the standard solutions.

- Determine Unknown Concentration: Measure the absorbance of the unknown solution and use the calibration curve to determine its concentration.

Table 3: Example Calibration Data for Red Dye at 505 nm [9]

| Solution | Concentration (M) | Absorbance |

|---|---|---|

| Blank | 0.00 | 0.00 |

| Standard 1 | 0.15 | 0.24 |

| Standard 2 | 0.30 | 0.50 |

| Standard 3 | 0.45 | 0.72 |

| Standard 4 | 0.60 | 0.99 |

| Unknown | ????? | 0.39 |

For the example data above, the best-fit line equation is y = 1.64x - 0.002, where y is absorbance and x is concentration. Substituting the unknown's absorbance (0.39) gives a concentration of 0.24 M [9].
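
The fit quoted above can be checked with an ordinary least-squares calculation on the Table 3 data. The sketch below uses only the Python standard library; the helper name `fit_line` is illustrative.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Blank and standards from Table 3 (concentration in M, absorbance at 505 nm).
concentrations = [0.00, 0.15, 0.30, 0.45, 0.60]
absorbances = [0.00, 0.24, 0.50, 0.72, 0.99]

slope, intercept = fit_line(concentrations, absorbances)

# Invert the calibration line for the unknown's absorbance.
unknown_absorbance = 0.39
unknown_concentration = (unknown_absorbance - intercept) / slope

print(f"y = {slope:.2f}x + ({intercept:.3f})")
print(f"unknown = {unknown_concentration:.2f} M")
```

The unknown's absorbance falls inside the range spanned by the standards, so the concentration is interpolated rather than extrapolated.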

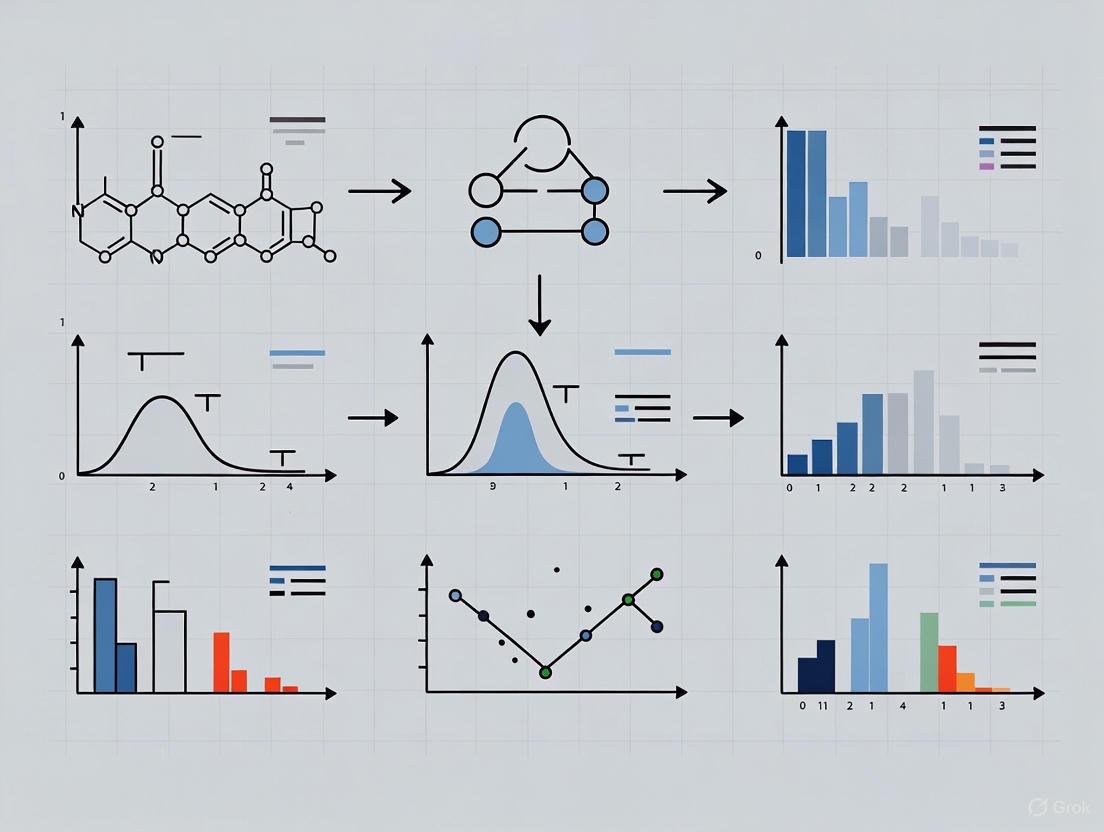

Figure 2: Workflow for quantitative spectrophotometric analysis using the Beer-Lambert Law.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 4: Key Research Reagent Solutions and Materials

| Item | Function/Brief Explanation |

|---|---|

| Spectrophotometer | Instrument that supplies light at specific wavelengths and measures light intensity after it passes through a sample [7]. |

| Quartz Cuvettes | Required for measurements in the ultraviolet range (typically below 340 nm) as they transmit UV light without significant absorption [5]. |

| Glass/Plastic Cuvettes | Suitable for visible wavelength measurements; more affordable but not UV-transparent [5]. |

| Matched Cuvettes | Cuvettes with nearly identical optical properties; essential for high-precision work when using different cuvettes for blank and samples [5]. |

| Certified Reference Standards | Solutions with known concentrations and properties; used for instrument calibration and verification [4]. |

| Blank Solution | The pure solvent or buffer in which samples are dissolved; used to zero the instrument and account for solvent absorbance [3] [5]. |

| Standard Solutions | Solutions with precisely known concentrations of the analyte; used to create the calibration curve [3] [9]. |

Advanced Considerations and Modifications

Modified Beer-Lambert Law

In complex biological applications like near-infrared spectroscopy (NIRS) of tissues, the traditional Beer-Lambert Law is modified to account for light scattering [8]: A(λ) = [ε_HHb(λ) · c_HHb + ε_HbO₂(λ) · c_HbO₂] · d · DPF + G

Where d is the distance between light emitter and detector, DPF is the differential pathlength factor representing increased light pathlength due to scattering, and G accounts for tissue scattering properties [8]. This modification is particularly relevant for drug development researchers working with biological samples.

Best Practices for Reliable Results

- Cuvette Handling: Always handle cuvettes by the frosted or ribbed sides to avoid fingerprints on optical surfaces [5].

- Consistent Technique: Use the same cuvette and orientation for both blank and sample measurements [5].

- Concentration Range: Maintain absorbances between 0.1-1.0 AU for optimal results; dilute samples with higher absorbances [5].

- Sample Clarity: Ensure samples are well-mixed and free of particulates that could scatter light [5].

- Proper Blanking: Always use the appropriate blank solution that matches the sample matrix [3] [5].

Troubleshooting Guide: Common UV-Vis-NIR Spectrophotometer Issues

This guide addresses frequent problems encountered during quantitative analysis, helping you ensure data reliability and instrument performance.

| Problem Symptom | Potential Cause | Diagnostic Steps | Solution |

|---|---|---|---|

| High noise, unstable baseline, or fluctuating readings [10] [11] | 1. Light source not warmed up. 2. Sample contamination or dirty cuvette. 3. Voltage instability or environmental factors. | 1. Check instrument warm-up time (20+ minutes for halogen/arc lamps). [11] 2. Inspect cuvette for dirt or fingerprints; try a clean blank. [11] 3. Monitor line voltage; check for high humidity. [10] | 1. Allow lamp to warm up fully. 2. Thoroughly clean cuvettes with compatible solvents. [11] 3. Install a voltage stabilizer; control lab environment. [10] |

| Inaccurate absorbance values (e.g., double expected values) [10] | 1. Error in sample preparation (most common). 2. Stray light or photometric linearity error. [12] | 1. Verify solution preparation procedure and concentrations. 2. Check instrument performance with certified reference materials. [12] | 1. Carefully re-prepare sample and standard solutions. 2. Perform instrument calibration for photometric accuracy. [12] |

| Wavelength accuracy failure [12] | 1. Wavelength scale miscalibration. 2. Mechanical failure in monochromator. | 1. Measure a standard with known absorption peaks (e.g., holmium oxide solution). [12] 2. Listen for unusual noises from monochromator mechanism. | 1. Recalibrate wavelength using emission or absorption standards. [12] 2. Contact service technician for mechanical repair. |

| "Energy Error" or "L0" displayed, calibration fails [10] | 1. Faulty or aged light source (D₂ or tungsten lamp). 2. Blocked light path or open sample compartment. | 1. Check lamp hours; visually inspect if lamps are lit. [10] 2. Ensure compartment is empty and lid is closed during initialization. [10] | 1. Replace expired deuterium or tungsten lamp. [10] 2. Remove any obstruction from the light path. |

| Absorbance readings are nonlinear above 1.0 [13] | 1. Sample concentration is too high. 2. Instrument limitation or stray light effects. [12] | 1. Check sample concentration and dilution factor. 2. Verify performance with standards of known absorbance. | 1. Dilute sample to bring absorbance below 1.0. [13] 2. Use a cuvette with a shorter path length. [11] |

Frequently Asked Questions (FAQs)

General Instrument Operation

Q: My spectrophotometer fails its self-test, showing "NG9" or "D2-failure." What should I do? A: This typically indicates a problem with the deuterium lamp, which is common as lamps age and lose energy output, particularly in the UV region. [10] First, confirm the lamp has been allowed to warm up sufficiently. If the error persists, the lamp is likely near the end of its life and requires replacement. If you are working exclusively in the visible range, you may temporarily proceed, but UV measurements will be unreliable. [10]

Q: Why is it crucial to let the light source warm up before measurements? A: Tungsten halogen and deuterium arc lamps require time (typically 20-30 minutes) after ignition to achieve stable light output. [11] Taking measurements before the instrument has stabilized can lead to signal drift (a fluctuating baseline) and inaccurate absorbance readings, compromising quantitative data.

Q: How do I know if my sample concentration is too high? A: A key indicator is when your absorbance values exceed 1.0, as readings can become unstable and non-linear due to the effects of stray light. [12] [13] For reliable quantitative analysis, absorbance should ideally be between 0.1 and 1.0. If the value is too high, dilute your sample or use a cuvette with a shorter path length. [11] [13]

Sample Preparation and Methodology

Q: I see unexpected peaks in my spectrum. What is the most likely cause? A: Unexpected peaks often stem from contamination. [11] Thoroughly inspect and clean your cuvettes with an appropriate solvent. Always handle cuvettes with gloves to avoid fingerprints, which can also introduce spectral features. Ensure your solvents are pure and that sample preparation tools are clean.

Q: For quantitative analysis in the UV range, what type of cuvette should I use? A: You must use quartz or silica cuvettes. [14] [13] Standard glass or plastic cuvettes absorb UV light and are only suitable for measurements in the visible range. Quartz provides high transmission from the UV through the near-infrared region, ensuring accurate results.

Data Interpretation and Wavelength Selection

Q: Why is selecting the correct wavelength so critical for quantitative analysis? A: Wavelength optimization is foundational for building dependable quantitative models. [15] [16] The accuracy of a measurement, especially in complex applications like non-invasive blood analysis, depends heavily on selecting wavelengths where the analyte of interest has significant absorption while minimizing interference from other components. [16] Advanced methods like Moving Window Partial Least Squares (MWPLS) are used for this purpose. [16]

Q: When analyzing absorption bands, is it correct to perform Gaussian fitting on a wavelength scale? A: No, this is a common misconception. The origin of spectral features is the transition between energy levels. Therefore, decomposing complex bands into individual components (like Gaussians) must be performed on an energy scale (e.g., eV, cm⁻¹), not a wavelength scale. Performing this analysis on a wavelength scale leads to incorrect interpretation of the data. [17]
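
To illustrate the point, the sketch below converts a wavelength axis to photon energy (E = hc/λ) and wavenumber before any band fitting is attempted. The spectrum values are hypothetical and the function names are illustrative; the fitting step itself is not shown.

```python
HC_EV_NM = 1239.841984  # Planck constant times speed of light, in eV*nm


def nm_to_ev(wavelength_nm: float) -> float:
    """Photon energy in eV for a wavelength in nm."""
    return HC_EV_NM / wavelength_nm


def nm_to_wavenumber(wavelength_nm: float) -> float:
    """Wavenumber in cm^-1 for a wavelength in nm."""
    return 1.0e7 / wavelength_nm


def to_energy_axis(wavelengths_nm, absorbances):
    """Return (energies_eV, absorbances), sorted by increasing energy."""
    pairs = sorted((nm_to_ev(w), a) for w, a in zip(wavelengths_nm, absorbances))
    return [e for e, _ in pairs], [a for _, a in pairs]


wavelengths = [400.0, 500.0, 600.0]  # evenly spaced in wavelength
absorbances = [0.20, 0.80, 0.35]     # hypothetical readings
energies, values = to_energy_axis(wavelengths, absorbances)

for e, a in zip(energies, values):
    print(f"{e:.3f} eV  A = {a:.2f}")
```

Points that are evenly spaced in wavelength are not evenly spaced in energy, so a band that looks symmetric on one axis is skewed on the other. This is why the choice of axis changes the outcome of a Gaussian decomposition.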

Essential Protocols for Reliable Analysis

Protocol: Verifying Wavelength Accuracy

Principle: Regular verification ensures your instrument's wavelength scale is correctly aligned, which is critical for method development and proper peak identification. [12]

Materials:

- Holmium Oxide (Ho₂O₃) Filter or Solution: A certified standard with sharp, known absorption peaks. [12]

- Spectrophotometer with scanning capability.

Methodology:

- Follow the manufacturer's instructions to initiate a spectrum scan.

- Place the holmium oxide standard in the sample compartment.

- Scan the spectrum across the recommended range (e.g., 250-650 nm).

- Identify the recorded absorption peaks and compare them to the certified values provided with the standard. Common peak wavelengths for holmium are near 360 nm, 418 nm, 453 nm, and 536 nm, but always refer to your standard's certificate.

- The measured peak maxima should fall within the tolerance specified by your quality procedure (e.g., ±0.5 nm). Any significant deviation requires instrument service and recalibration. [12]
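
The comparison in the last two steps can be automated. The sketch below assumes a ±0.5 nm tolerance as in the example above; the certified and measured peak positions are hypothetical placeholders, and the real values must come from your standard's certificate and your own scan.

```python
def check_wavelength_accuracy(certified_nm, measured_nm, tolerance_nm=0.5):
    """Return a list of (certified, measured, deviation, within_tolerance)."""
    if len(certified_nm) != len(measured_nm):
        raise ValueError("each certified peak needs exactly one measured peak")
    report = []
    for certified, measured in zip(certified_nm, measured_nm):
        deviation = measured - certified
        report.append((certified, measured, deviation, abs(deviation) <= tolerance_nm))
    return report


# Hypothetical certificate values and scan results (nm).
certified = [360.8, 418.5, 453.4, 536.4]
measured = [360.9, 418.3, 453.4, 537.1]

report = check_wavelength_accuracy(certified, measured)
for cert, meas, dev, ok in report:
    print(f"{cert:6.1f} nm  measured {meas:6.1f}  dev {dev:+.1f}  {'PASS' if ok else 'FAIL'}")

all_passed = all(ok for *_, ok in report)
```

In this made-up data set the last peak deviates by more than the tolerance, so the overall check fails and the instrument would be flagged for recalibration.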

Protocol: Assessing Stray Light

Principle: Stray light—light outside the intended bandwidth that reaches the detector—can cause significant photometric errors, particularly at high absorbance values where measurements become non-linear. [12]

Materials:

- Stray Light Cut-Off Filter: A solution or solid filter that absorbs virtually all light below a specific wavelength. Common standards are a 1 cm path length of a 10 g/L potassium iodide (KI) solution for checking 240 nm, or a 50 g/L sodium nitrite (NaNO₂) solution for 340 nm; confirm the concentration and test wavelength against the pharmacopoeial or ASTM procedure your laboratory follows. [12]

Methodology:

- Set the spectrophotometer to the wavelength of interest (e.g., 240 nm for KI).

- Zero the instrument with a distilled water blank.

- Replace the blank with the stray light filter (e.g., the KI solution).

- Measure the apparent %Transmittance (%T) of the filter.

- The reading is the stray light ratio. For quantitative work, this value should be very low (e.g., <0.1% T). A high value indicates a problem with the instrument's monochromator or optics that needs addressing. [12]
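
To see why a small stray light ratio matters, the sketch below uses a simple textbook model in which a fraction s of unabsorbed stray light is added to both the sample and reference signals, so the observed transmittance is (T + s) / (1 + s). This model is an assumption for illustration; real instruments can deviate from it.

```python
import math


def apparent_absorbance(true_absorbance: float, stray_fraction: float) -> float:
    """Absorbance the instrument would report under the simple stray light model."""
    t_true = 10.0 ** (-true_absorbance)
    t_observed = (t_true + stray_fraction) / (1.0 + stray_fraction)
    return -math.log10(t_observed)


stray = 0.001  # 0.1 %T stray light, the example limit mentioned above
for a_true in (0.5, 1.0, 2.0, 3.0):
    a_obs = apparent_absorbance(a_true, stray)
    print(f"true A = {a_true:.1f}  apparent A = {a_obs:.3f}")
```

Under this model the error is negligible at low absorbance but grows quickly above about 2 AU, which is consistent with the advice to keep quantitative readings in the lower absorbance range.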

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function & Importance in Spectrophotometry |

|---|---|

| Quartz Cuvettes | Essential for UV range measurements due to high transmittance down to ~190 nm. Reusable and chemically resistant, but require careful cleaning. [14] [13] |

| Holmium Oxide Filter | A solid-state wavelength verification standard with sharp, stable absorption peaks. Used for routine performance validation of the instrument's wavelength scale. [12] |

| Potassium Iodide (KI) Solution | A liquid chemical filter used to assess stray light levels at a critical wavelength (240 nm), a key parameter for photometric accuracy. [12] |

| Neutral Density Filters | Certified filters of known absorbance used to check the photometric linearity and accuracy of the instrument across its absorbance range. [12] |

| Certified Reference Materials | Stable, well-characterized materials (e.g., potassium dichromate solutions) used in inter-laboratory comparisons to validate entire analytical methods. [12] |

Workflow and Conceptual Diagrams

Systematic Troubleshooting Workflow

Wavelength Optimization Logic

This guide provides technical support for researchers conducting quantitative spectrophotometer analysis, with a specific focus on how atomic and molecular energy levels and electronic transitions determine absorption properties. Correct interpretation of these principles is fundamental to selecting proper wavelengths for robust, reproducible analytical methods.

Theoretical Foundation: Electronic Transitions and Absorption

Electronic transitions occur when electrons in a molecule absorb energy and are excited from a lower energy level to a higher one [18]. The energy change associated with this transition is quantized, and the relationship between the energy involved and the frequency of the absorbed radiation is given by Planck's relation [18]. The specific wavelengths at which a molecule absorbs light are diagnostic of its structure and composition [18].

The table below summarizes the primary types of electronic transitions in molecules:

| Transition Type | Description | Typical Energy (Wavelength) | Example |

|---|---|---|---|

| σ → σ* | Excitation of electrons in a single sigma bond [18] | High Energy (short λ, e.g., <200 nm) [18] | Ethane (135 nm) [18] |

| n → σ* | Excitation of a non-bonding electron to a sigma antibonding orbital [18] | High Energy (short λ) [18] | Water (167 nm) [18] |

| π → π* | Excitation of electrons in a pi bond to a pi antibonding orbital [18] | Variable | Organic alkenes, Aromatic compounds [18] |

| n → π* | Excitation of a non-bonding electron to a pi antibonding orbital [18] | Lower Energy (longer λ) | Compounds with lone pairs and carbonyls [18] |

| Aromatic π → Aromatic π* | Transitions within aromatic ring systems [18] | Distinct bands | Benzene (B-band at 255 nm, E-bands at 180 & 200 nm) [18] |

The absorption spectrum is further complicated by the fact that electronic energy levels contain embedded vibrational and rotational sub-levels [19]. This can lead to vibrational fine structure in the absorption spectrum, which is often clarified by conducting measurements at lower temperatures [19].

Diagram 1: Electronic and Vibrational Energy Levels. Electronic states (n=0,1) contain vibrational sub-levels (v=0,1,2...), leading to multiple possible absorption transitions.

Wavelength Selection for Quantitative Analysis

Selecting the correct analytical wavelength is critical for method robustness. The optimal wavelength provides high absorbance for the analyte while minimizing interference from other sample components or the solvent [20] [6].

Experimental Protocol: Initial Wavelength Identification

- Instrument Calibration: Calibrate the UV-Vis spectrophotometer in absorbance mode using a matched cuvette containing only the solvent (blank) [21].

- Spectral Scanning: Obtain a full absorbance spectrum of the pure analyte solution across the available UV-Vis range (e.g., 190-1100 nm, depending on the instrument and solvent cut-off) [22].

- Peak Selection: Identify the wavelength(s) corresponding to local absorbance maxima (peaks) on the spectrum [18]. A peak with a high extinction coefficient is generally preferred for sensitivity [19].
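
The peak selection step can be sketched as a simple search over the scanned spectrum. The scan data below are hypothetical, and the local-maximum test is deliberately naive: real spectra usually need smoothing or a noise threshold first.

```python
def lambda_max(wavelengths_nm, absorbances):
    """Wavelength of the global absorbance maximum."""
    index = max(range(len(absorbances)), key=absorbances.__getitem__)
    return wavelengths_nm[index]


def local_maxima(wavelengths_nm, absorbances):
    """(wavelength, absorbance) pairs that are higher than both neighbours."""
    peaks = []
    for i in range(1, len(absorbances) - 1):
        if absorbances[i] > absorbances[i - 1] and absorbances[i] > absorbances[i + 1]:
            peaks.append((wavelengths_nm[i], absorbances[i]))
    return peaks


# Hypothetical scan in 10 nm steps.
scan_nm = [480, 490, 500, 510, 520, 530]
scan_abs = [0.31, 0.52, 0.68, 0.71, 0.60, 0.42]

best = lambda_max(scan_nm, scan_abs)
peaks = local_maxima(scan_nm, scan_abs)
print(f"lambda max = {best} nm, peaks = {peaks}")
```

A coarse scan like this only locates the peak to within the step size; a finer scan around the candidate wavelength narrows it down.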

Advanced Methodologies for Complex Mixtures

In complex matrices like biological samples or multi-component reactions, simple peak identification is insufficient. Advanced feature selection (FS) frameworks are used to discover optimal wavelengths with high discriminative power [20].

Diagram 2: Wavelength Selection Workflow. Multiple FS frameworks can process full spectral data to find a minimal, optimal wavelength set.

The table below compares common computational frameworks for wavelength selection, as demonstrated for orthopedic tissue differentiation via diffuse reflectance spectroscopy (DRS) [20].

| Framework | Principle | Key Advantage |

|---|---|---|

| Principal Component Analysis (PCA) | Transforms data to a new set of uncorrelated variables (principal components) [20]. | Effective dimensionality reduction; removes multicollinearity [20]. |

| Linear Discriminant Analysis (LDA) | Finds linear combinations of features that best separate two or more classes [20]. | Maximizes class separability; directly aims for optimal discrimination [20]. |

| Backward Interval PLS (biPLS) | Iteratively removes the least important intervals of wavelengths in a PLS model [20]. | Maintains strong performance for quantitative concentration prediction [20]. |

| Ensemble Framework | Combines multiple selection algorithms to make a more robust decision [20]. | Improved interpretability, preserves physical meaning, and robust performance [20]. |

Troubleshooting Guides & FAQs

Poor Signal-to-Noise Ratio or Noisy Baseline

- Q: My absorbance spectrum is unusually noisy, making peak identification difficult. What should I check?

- A: Follow this systematic checklist:

- Light Source: Check the age of the spectrophotometer's lamp. An aging or failing lamp is a common cause of fluctuations and low light intensity. Replace the lamp if it is near or beyond its rated lifetime [22].

- Warm-up Time: Ensure the instrument has been allowed to stabilize and warm up for the manufacturer's recommended time before use [22].

- Cuvette and Optics: Inspect the sample cuvette for scratches, residue, or fingerprints. Clean it thoroughly with an appropriate solvent. Ensure it is correctly aligned in the cuvette holder. Check for any debris obstructing the light path [22].

- Sample Concentration: Verify that the sample absorbance is within the ideal linear range of the instrument (typically 0.1 to 1.0 Absorbance Units). Overly concentrated samples (Abs >> 1) can lead to unstable, non-linear readings [21].

Inconsistent Readings Between Replicates

- Q: I am getting inconsistent absorbance values for replicates of the same sample. How can I improve reproducibility?

- A: This often points to sample handling or instrument stability issues.

- Calibration: Re-calibrate the spectrometer with a fresh blank solution. Calibration must be performed every time you use Absorbance or % Transmittance mode [21].

- Cuvette Consistency: Use the same matched cuvette for both blank and sample measurements, or use a set of high-quality cuvettes that are verified to be identical. Slight differences in pathlength can cause significant variation.

- Drift Check: For dual-beam instruments, ensure the reference beam path is clear and stable. For single-beam instruments, periodically re-check the blank to account for any instrument drift [22].

Blank Measurement Errors or Unexpected Baseline

- Q: The instrument is throwing a blank error, or the baseline correction seems incorrect.

- A:

- Reference Solution: Ensure you are using the correct solvent for the blank and that the reference cuvette is clean and properly filled [22].

- Baseline Correction: Perform a full baseline correction or recalibration if the software allows. This accounts for any inherent absorbance from the solvent or cuvette itself across the wavelength range [22].

- Solvent Cut-Off: Be aware of the "UV cut-off" of your solvent. Solvents themselves absorb strongly at shorter wavelengths (e.g., water below ~190 nm). Ensure your selected analytical wavelength is above the cut-off for your solvent [19].

The Scientist's Toolkit: Key Research Reagent Solutions

The table below lists essential materials and their functions for experiments involving electronic absorption spectroscopy and wavelength selection.

| Item | Function | Technical Notes |

|---|---|---|

| UV-Transparent Cuvettes | Holds liquid sample in the spectrophotometer's light path. | Quartz for UV range (190-400 nm); certain plastics or glass for visible range only [21]. |

| Certified Reflectance Standard | Calibrates the intensity response of a reflectance spectrophotometer [20]. | Critical for quantitative Diffuse Reflectance Spectroscopy (DRS) measurements [20]. |

| High-Purity Solvents | Dissolves analyte to create a homogeneous sample for measurement. | Must be spectroscopically pure and have a UV cut-off wavelength below the analyte's absorption band [19]. |

| Standard Reference Materials | Provides a known absorbance profile to validate instrument wavelength accuracy and photometric linearity. | e.g., Holmium oxide filter for wavelength calibration; neutral density filters for absorbance verification. |

This guide details the operation of monochromators and detectors, core components in spectrophotometers essential for quantitative analysis. Proper wavelength selection is foundational for achieving accurate and reproducible results in research and drug development.

Monochromator Fundamentals and Wavelength Selection

What is a Monochromator and What is Its Basic Principle?

A monochromator is an optical device that separates polychromatic light (like light from a lamp) into its constituent wavelengths and selects a narrow band of these wavelengths to produce monochromatic light. This name comes from the Greek roots "mono-" (single) and "chroma-" (color) [23].

The fundamental principle involves five key steps [23]:

- Light Source: Light enters the device from a source such as a lamp or laser.

- Collimation: The incoming light is made into a parallel (collimated) beam using lenses or mirrors. This is crucial for accurate wavelength selection.

- Dispersion: The collimated beam strikes a dispersive element, such as a prism or diffraction grating, which separates the light into its component wavelengths by bending each wavelength at a different angle.

- Selection: A slit is used to select the desired wavelength. By rotating the dispersive element, different wavelengths are directed toward the slit.

- Output: The selected, nearly monochromatic light exits the device through an output slit and is directed toward the sample or detector.

The following diagram illustrates the workflow and logical relationship of these components in a common Czerny-Turner configuration:

How Does a Diffraction Grating Work?

A diffraction grating is the most common dispersive element in modern monochromators. It consists of a surface with many regularly spaced, parallel grooves [23]. The working principle is defined by the grating equation [23]:

mλ = d(sinα - sinβ)

Where:

- m is the diffraction order (an integer)

- λ is the wavelength of light

- d is the spacing between grooves on the grating

- α is the angle of incident light

- β is the angle of diffracted light

When light hits the grating, each groove acts as a source of light. The reflected light rays interfere with each other, constructively reinforcing at angles where the path difference equals a multiple of the wavelength [24]. Rotating the grating changes the angle of incidence, thereby directing different wavelengths through the exit slit as described by this equation [23].
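
The grating equation can be evaluated numerically to see which wavelength leaves the grating at a given angle. The sketch below follows the sign convention of the equation as written above and assumes both angles are measured from the grating normal; the groove density and angles are illustrative values, not the specification of any particular instrument.

```python
import math


def diffracted_wavelength_nm(grooves_per_mm: float, alpha_deg: float,
                             beta_deg: float, order: int = 1) -> float:
    """Solve m * lambda = d * (sin(alpha) - sin(beta)) for lambda, in nm."""
    if order == 0:
        raise ValueError("order must be non-zero")
    d_nm = 1.0e6 / grooves_per_mm  # groove spacing; 1 mm = 1e6 nm
    sin_alpha = math.sin(math.radians(alpha_deg))
    sin_beta = math.sin(math.radians(beta_deg))
    return d_nm * (sin_alpha - sin_beta) / order


# Illustrative case: 1200 grooves/mm, 30 degree incidence, exit along the normal.
first_order = diffracted_wavelength_nm(1200, alpha_deg=30.0, beta_deg=0.0, order=1)
second_order = diffracted_wavelength_nm(1200, alpha_deg=30.0, beta_deg=0.0, order=2)
print(f"m = 1: {first_order:.1f} nm, m = 2: {second_order:.1f} nm")
```

Note that the same geometry passes light of half the wavelength in second order. Practical instruments use order-sorting filters to block this overlap.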

What is the Role of the Detector?

The detector captures the light that has interacted with the sample and converts its intensity into an electrical signal. A common detector in array-based optical spectrometers is the Charge-Coupled Device (CCD) [25]; scanning spectrophotometers often use a photodiode or photomultiplier tube instead.

A CCD is an array of light-sensitive pixels. Each pixel corresponds to a specific wavelength and generates an electrical signal proportional to the intensity of light falling on it. These signals are then processed to generate a spectrum. To reduce electronic noise, CCDs in spectrometers are often cooled [25].

Experimental Protocols for Quantitative Analysis

Protocol: Systematic Wavelength Selection for Absorption Spectroscopy

Selecting the optimal wavelength is critical for the accuracy of quantitative analysis, such as determining analyte concentration via the Beer-Lambert law.

1. Define Analytical Goal and Preliminary Scan:

- Identify the analyte and its expected absorption range (e.g., nucleic acids ~260 nm, proteins ~280 nm) [26].

- Perform a full spectrum scan (e.g., from 200 nm to 800 nm) of the analyte in solution using a spectrophotometer. This identifies the peak absorption wavelength (λ_max).

2. Optimize for Specificity and Sensitivity:

- Confirm Specificity: Scan the solvent and any other chemicals in the buffer (blank) to ensure λ_max is unique to the analyte and not masked by background absorption.

- Check for Isosbestic Points (for mixtures): If quantifying a component in a mixture that undergoes equilibrium (like HbO₂ and Hb), use an isosbestic point, a wavelength where the absorptivity of all species is equal. This ensures the absorbance depends only on the total concentration, not on the ratio of the species [27].

- Consider Wavelength Selection Algorithms: For complex mixtures, advanced algorithms can select optimal wavelengths by maximizing the product of the singular values of the scattering-modulated absorption matrix. This method has been shown to improve the accuracy of concentration estimates for absorbers like oxyhemoglobin, deoxyhemoglobin, and water [28].

3. Validate the Selected Wavelength:

- Create a calibration curve with standard solutions of known concentration at the chosen wavelength.

- The curve should be linear, confirming that the wavelength is suitable for quantitative analysis across the desired concentration range.
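
The isosbestic-point check in step 2 can be sketched numerically: given absorptivity spectra for two interconverting species, look for wavelengths where the curves cross. The Gaussian spectra below are synthetic placeholders (not real hemoglobin data), and the function name `isosbestic_points` is introduced here only for illustration.

```python
import numpy as np

def isosbestic_points(wavelengths, eps_a, eps_b):
    """Return wavelengths where two absorptivity spectra cross (linear interpolation)."""
    diff = np.asarray(eps_a) - np.asarray(eps_b)
    # Indices where the difference changes sign between neighbouring points
    idx = np.where(np.sign(diff[:-1]) * np.sign(diff[1:]) < 0)[0]
    return [
        float(wavelengths[i] - diff[i] * (wavelengths[i + 1] - wavelengths[i]) / (diff[i + 1] - diff[i]))
        for i in idx
    ]

# Synthetic, illustrative spectra for two species
wl = np.linspace(500, 600, 1001)
eps_a = 1.0 * np.exp(-((wl - 540) / 15) ** 2)
eps_b = 0.8 * np.exp(-((wl - 565) / 20) ** 2)
crossings = isosbestic_points(wl, eps_a, eps_b)
print(crossings)
```

With real data, a crossing is only a candidate isosbestic point; it should be confirmed by overlaying spectra recorded at several species ratios and checking that they all pass through the same point.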

Protocol: Troubleshooting Fluorescence Measurements with Monochromators

Fluorescence measurements are highly sensitive but susceptible to issues like low signal-to-noise ratio.

1. Problem: Low Signal or High Background Noise.

- Potential Cause and Solution: Stray light from the excitation beam overwhelming the weak emission signal.

- Action: Use a double monochromator system. A single monochromator has a typical "blocking efficiency" of 10⁻³, meaning one-thousandth of the unwanted light is not blocked. In fluorescence, this stray excitation light can be as intense as the emission signal, leading to unreliable data. A double monochromator, where two monochromators are arranged in series, improves blocking efficiency to 10⁻⁶, drastically reducing stray light [29]. For high-resolution studies, a quadruple monochromator (a pair of double monochromators) may be used [24].

2. Problem: Low Light Throughput and Poor Resolution.

- Potential Cause and Solution: Incorrect slit width configuration.

- Action: Adjust the entrance and exit slit widths. A wider slit allows more light to pass, improving signal intensity for faint samples but reducing spectral resolution. A narrower slit provides higher resolution but reduces signal strength. Balance this trade-off based on your application [23] [25].

Frequently Asked Questions (FAQs)

Q: What are the main types of monochromators, and how do I choose?

- Prism Monochromators: Use a prism to disperse light. They have high light efficiency and low stray light but have non-linear dispersion (better for UV, worse for IR) and can be temperature-sensitive [23] [29].

- Grating Monochromators: Use a diffraction grating. They provide linear dispersion across all wavelengths, which is advantageous for wavelength calibration, and have low temperature dependence. However, they can produce more stray light and require filters to block higher-order diffraction peaks [23] [24] [29]. Grating monochromators, particularly the Czerny-Turner design, are most common in modern instruments [29].

Q: My spectrophotometer is giving inconsistent readings. What should I check?

- Light Source: Check and replace an aging lamp, as its output can fluctuate [26].

- Warm-up Time: Ensure the instrument has been allowed to stabilize (typically 15-30 minutes) before use [26].

- Cuvettes: Inspect the sample cuvette for scratches, residue, or improper alignment. Ensure it is clean and correctly positioned in the holder [26].

- Calibration: Perform a full recalibration and blank measurement with the correct reference solution [26].

Q: What is the difference between a single beam and a dual beam spectrophotometer?

- Single Beam: Uses a single light path. It measures the reference and the sample sequentially. It is more compact and affordable but can be prone to drift over time [26].

- Dual Beam: Splits the light into two paths; one passes through the sample and the other through a reference. This simultaneous measurement corrects for source drift and electronic fluctuations, providing better stability for longer or more precise measurements [26].

Q: How does resolution relate to a monochromator's slits and grating?

Resolution is the ability to distinguish between two closely spaced wavelengths. Key factors are [23]:

- Slit Width: Narrower slits provide higher resolution but reduce light throughput.

- Dispersion: Higher dispersion (achieved with gratings that have more grooves per millimeter) improves resolution.

- Grating Quality: High-quality, holographically made gratings generate less stray light and provide better resolution than ruled gratings [24].
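
To make the slit and dispersion factors concrete, the spectral bandpass can be estimated as slit width multiplied by the reciprocal linear dispersion, which for a grating is approximately d·cosβ/(m·f). The sketch below uses that textbook approximation. The 250 mm focal length, 0.5 mm slit, and groove densities are assumed values chosen only for illustration.

```python
import math

def reciprocal_linear_dispersion(grooves_per_mm, focal_length_mm, diffraction_deg=0.0, order=1):
    """Approximate d(lambda)/dx in nm per mm: d * cos(beta) / (m * f)."""
    d_nm = 1e6 / grooves_per_mm  # groove spacing in nm
    return d_nm * math.cos(math.radians(diffraction_deg)) / (order * focal_length_mm)

def bandpass_nm(slit_width_mm, grooves_per_mm, focal_length_mm):
    """Spectral bandpass ~ slit width x reciprocal linear dispersion."""
    return slit_width_mm * reciprocal_linear_dispersion(grooves_per_mm, focal_length_mm)

# Hypothetical monochromator: 250 mm focal length, 0.5 mm slit
bandpass_1200 = bandpass_nm(0.5, 1200, 250)
bandpass_2400 = bandpass_nm(0.5, 2400, 250)
print(bandpass_1200, bandpass_2400)
```

Doubling the groove density halves the estimated bandpass at a fixed slit width, which matches the qualitative statement that higher dispersion improves resolution.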

Q: What are the alternatives to monochromators for wavelength selection?

- Filters: Affordable, with high light transmission and good blocking of unwanted wavelengths. They lack flexibility, as each wavelength requires a separate, physical filter [29].

- LEDs: Provide monochromatic light directly. Inexpensive but limited to specific, fixed wavelengths [29].

- Lasers: Produce intense, highly monochromatic light. Often expensive and not easily tunable, making them suitable for specific assays only [29].

The Scientist's Toolkit: Research Reagent Solutions

The following table lists essential components and their functions in spectrophotometric instrumentation.

| Component | Function & Key Characteristics |

|---|---|

| Czerny-Turner Monochromator | Common optical design using two spherical mirrors and a diffraction grating for collimating, dispersing, and focusing light. Offers a good balance of performance and size [23] [24]. |

| Diffraction Grating | Dispersive element with parallel grooves that separates light by wavelength. Groove density (lines/mm) determines dispersion and resolution [23] [25]. |

| CCD Detector | Array of light-sensitive pixels that records light intensity as a function of wavelength. Cooled versions reduce dark current noise for sensitive measurements [25]. |

| Cuvette | Container for holding liquid samples in the light path. Must be made of material transparent to the wavelength range used (e.g., quartz for UV, glass/plastic for visible) [26]. |

| Order-Sorting Filter | A filter used in grating-based systems to block higher-order diffraction wavelengths (e.g., second-order 300 nm light, which leaves the grating at the same angle as first-order 600 nm light) from reaching the detector [24] [29]. |

Technical Specifications and Comparison

The table below summarizes the core trade-offs in monochromator configuration, which are vital for method development.

| Parameter | Impact on Performance | Application Consideration |

|---|---|---|

| Slit Width | Narrow: Higher resolution, lower signal. Wide: Higher signal, lower resolution. | Use narrow slits for sharp peaks; wide slits for low-light or high-speed analysis [23] [25]. |

| Grating Groove Density | High density: Higher dispersion/resolution, narrower wavelength range. Low density: Wider wavelength range, lower resolution. | Select a grating matched to the spectral range and resolution needed for your analyte [25]. |

| Single vs. Double Monochromator | Single: Simpler, higher throughput. Double: Greatly reduced stray light (10⁻⁶ vs 10⁻³), weaker signal. | Essential for fluorescence applications; often unnecessary for routine absorption measurements [24] [29]. |

Understanding these fundamentals of monochromators and detectors, along with systematic protocols for wavelength selection and troubleshooting, will enhance the reliability and accuracy of your spectrophotometric analyses.

FAQs: Fundamental Principles of λmax

Q1: What is λmax and why is it critical for quantitative analysis?

A1: λmax (maximum absorption wavelength) is the specific wavelength at which a chemical substance absorbs the most light. It is critically important for quantitative analysis because it provides the highest sensitivity and greatest accuracy for concentration measurements [7] [30]. Using λmax ensures that even small changes in concentration result in measurable changes in absorbance, making your detection more robust. Furthermore, at the peak of the absorption band, the absorbance curve is often flattest (a region sometimes called the "peak plateau"), which makes the measurement less sensitive to minor, inevitable variations in the instrument's wavelength calibration [30].

Q2: How does using λmax improve adherence to the Beer-Lambert Law?

A2: The Beer-Lambert Law establishes a direct, linear relationship between absorbance and concentration [1]. This linearity is most reliable and covers the widest concentration range when measurements are taken at λmax. Using a wavelength on the slope of the absorption peak can lead to negative deviations from the Beer-Lambert Law. This is because the instrument uses a narrow band of light (bandwidth) rather than a single wavelength. On a steep slope, this small range of wavelengths corresponds to a range of different absorption coefficients, distorting the measurement and causing non-linearity [30].
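
The bandwidth argument in A2 can be illustrated with a small simulation. It assumes the detector averages the transmitted light equally over three wavelengths inside the band, which is a simplification. All absorptivity and concentration values are made up for the example.

```python
import numpy as np

def apparent_absorbance(conc, epsilons, path_cm=1.0):
    """Absorbance seen by a detector that averages transmitted light over a band."""
    transmittances = 10.0 ** (-np.asarray(epsilons, dtype=float) * path_cm * conc)
    return float(-np.log10(transmittances.mean()))

conc = [1e-5, 5e-5, 1e-4]            # mol/L, illustrative
flat_band = [10000, 10000, 10000]    # peak plateau: absorptivity nearly constant
sloped_band = [5000, 10000, 15000]   # steep slope: absorptivity varies across the band

a_flat = [apparent_absorbance(c, flat_band) for c in conc]
a_slope = [apparent_absorbance(c, sloped_band) for c in conc]
for c, af, asl in zip(conc, a_flat, a_slope):
    print(f"c={c:.0e}  plateau A={af:.3f}  slope A={asl:.3f}")
```

On the plateau the absorbance scales linearly with concentration. On the slope the apparent absorbance falls progressively below the linear value as concentration rises, which is the negative deviation described above.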

Q3: When should I consider not using λmax for my analysis?

A3: While λmax is the default choice, there are valid experimental reasons to select an alternative wavelength. The most common reason is to avoid interference. If another substance in your sample (e.g., the solvent, buffer, or an impurity) absorbs light at or too close to your analyte's λmax, moving to a wavelength with less interference will improve accuracy, even if it slightly reduces sensitivity [30] [31]. Another reason is when the absorbance at λmax is too high (e.g., above 1.5) to be in the instrument's optimal linear range. In this case, selecting a different, less absorbing peak or a wavelength on the shoulder of the peak can bring the measurement back into a more reliable absorbance range (typically 0.1-1.0) [30] [5].

Experimental Protocol: Determining λmax and Establishing a Calibration Curve

This section provides a detailed methodology for identifying the analytical wavelength and using it for quantitative analysis.

Protocol: Determination of λmax and Quantitative Calibration

Objective: To identify the λmax of a target analyte and use it to create a calibration curve for determining the concentration of an unknown sample.

Research Reagent Solutions & Essential Materials

| Item | Function & Specification |

|---|---|

| Spectrophotometer | A UV-Vis instrument capable of scanning across the UV and visible wavelength range (e.g., 200-800 nm) [7] [32]. |

| Cuvettes | Precision optical cells for holding samples. Material is critical: Use quartz for UV measurements (<340 nm) and glass for visible range measurements. Always use a matched pair [30] [5]. |

| Stock Standard Solution | A solution of the pure analyte with a known, high concentration. |

| Solvent/Buffer | High-purity solvent that does not absorb significantly at the wavelengths of interest. It must be the same as the solution used to prepare the standard and unknown samples [30]. |

| Reference (Blank) Solution | Pure solvent/buffer without the analyte, used to zero the instrument and establish the 100% transmittance baseline [30]. |

Step-by-Step Workflow:

The following diagram illustrates the logical workflow for this experiment:

Methodology Details:

- Preparation of Standard Solutions: Prepare a series of standard solutions from the stock solution using precise serial dilution. The concentrations should bracket the expected concentration of your unknown sample. A minimum of five standards is recommended for a reliable calibration curve [1].

- Initial Spectral Scan: Fill a cuvette with the most concentrated standard solution. Place the solvent blank in another matched cuvette. Perform an absorbance scan over a wide wavelength range to generate the sample's absorption spectrum [31].

- Identification of λmax: From the generated absorption spectrum, identify the wavelength that corresponds to the highest absorbance peak. This is your λmax. An example is shown in the calibration figure below, where the peak for Rhodamine B is evident [1].

- Absorbance Measurement at λmax: Set the spectrophotometer to the fixed λmax wavelength. Measure and record the absorbance of all your standard solutions and the unknown sample against the solvent blank [1].

- Calibration and Analysis: Plot the absorbance values of the standard solutions against their known concentrations. Apply a linear regression fit to the data points to create the calibration curve. Finally, use the equation of this line to calculate the concentration of your unknown sample based on its measured absorbance [1].
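
The calibration and analysis step can be sketched with an ordinary least-squares fit. The concentrations and absorbance readings below are hypothetical, and NumPy's `polyfit` is used here as one convenient option, not as the method prescribed by the protocol.

```python
import numpy as np

# Hypothetical standards measured at lambda_max against the solvent blank
conc_standards = np.array([2.0, 4.0, 6.0, 8.0, 10.0])          # µM
abs_standards = np.array([0.101, 0.198, 0.305, 0.402, 0.499])  # absorbance units

# Linear fit: A = slope * c + intercept
slope, intercept = np.polyfit(conc_standards, abs_standards, 1)

# Coefficient of determination as a quick linearity check
predicted = slope * conc_standards + intercept
ss_res = np.sum((abs_standards - predicted) ** 2)
ss_tot = np.sum((abs_standards - abs_standards.mean()) ** 2)
r_squared = 1 - ss_res / ss_tot

# Invert the calibration line for an unknown sample (hypothetical reading)
abs_unknown = 0.350
conc_unknown = (abs_unknown - intercept) / slope
print(f"slope={slope:.4f}, intercept={intercept:.4f}, R^2={r_squared:.4f}, c={conc_unknown:.2f} µM")
```

The unknown's absorbance should fall inside the range spanned by the standards; extrapolating beyond the highest or lowest standard is not supported by the fit.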

Troubleshooting Guide: Wavelength Selection and λmax Issues

This table addresses common problems researchers encounter related to analytical wavelength and absorbance measurements.

| Problem | Possible Causes | Recommended Solutions |

|---|---|---|

| Non-linear Calibration Curve | 1. Polychromatic Light Deviation: Excessive instrumental bandwidth at a sharply sloping part of the absorption spectrum [30]. 2. Stray Light: Light outside the intended bandwidth reaches the detector, causing negative deviation at high absorbance [30] [12]. 3. Chemical Effects: Association or dissociation of the analyte at different concentrations. | 1. Ensure you are measuring at the true λmax (peak plateau). Verify and/or narrow the instrument's spectral bandwidth [30]. 2. Use a spectrometer with lower stray light. Keep optics clean and avoid measuring at the extreme ends of the instrument's wavelength range [30]. 3. Investigate the chemical stability of your analyte in the chosen solvent. |

| Inconsistent λmax Values Between Replicates | 1. Sample Degradation: The analyte may be photosensitive or chemically unstable, degrading between measurements [5]. 2. Solvent Effects: Changes in pH, temperature, or solvent composition can cause shifts in λmax (solvatochromism) [30]. 3. Instrument Wavelength Inaccuracy: The spectrometer's wavelength calibration is out of alignment [30] [12]. | 1. Protect light-sensitive samples from ambient light and perform measurements quickly after preparation [5]. 2. Control the chemical environment strictly. Ensure all samples are in the identical solvent matrix. 3. Calibrate the instrument's wavelength scale using certified wavelength standards (e.g., holmium oxide filters or solution) [12]. |

| Low Sensitivity at Verified λmax | 1. Incorrect Blank: The blank solution may contain an absorbing substance, reducing the available light and compressing the absorbance scale [30] [5]. 2. Wavelength Drift: The instrument's actual wavelength may have drifted from its set value, placing you on the side of the peak [12]. 3. Sample Too Dilute: The absorbance value is too low (e.g., <0.1) to be distinguished from instrument noise [30]. | 1. Re-prepare the blank solution using high-purity solvents and ensure it is perfectly clear [30]. 2. Perform wavelength calibration. Allow the instrument to warm up for the recommended time (15-30 mins) to stabilize [5]. 3. Concentrate the sample or use a cuvette with a longer path length to increase absorbance [30]. |

| Unexpected or Broad Peaks | 1. Aggregation or Complex Formation: Molecules may form H- or J-aggregates in solution, leading to new, redshifted or blueshifted peaks [31]. 2. Excessive Bandwidth: An instrumental bandwidth that is too wide can obscure fine spectral features and make peaks appear broader [30]. 3. Sample Impurities: Contaminants in the sample have their own absorption, which overlaps with the analyte's spectrum [31]. | 1. Vary the sample concentration and monitor spectral changes. Consult literature on the analyte's behavior in solution [31]. 2. Reduce the spectrometer's slit width to decrease the bandwidth, thereby improving resolution [30]. 3. Purify the sample. Compare the spectrum against a known pure standard. |
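
The stray-light entry in the table can be quantified with a commonly used simple model, in which a constant stray fraction s adds to both the sample and reference beams: A_obs = -log₁₀((T + s)/(1 + s)). The sketch below applies that model. The stray fractions are illustrative, and real instruments may deviate from this idealization.

```python
import math

def observed_absorbance(true_absorbance, stray_fraction):
    """Apparent absorbance when a fixed fraction of stray light reaches the detector."""
    t = 10 ** (-true_absorbance)  # true transmittance
    return -math.log10((t + stray_fraction) / (1 + stray_fraction))

# Compare two illustrative stray-light levels across a range of true absorbances
for a_true in (0.5, 1.0, 2.0, 3.0):
    a_1e3 = observed_absorbance(a_true, 1e-3)
    a_1e6 = observed_absorbance(a_true, 1e-6)
    print(f"true A={a_true:.1f}  s=1e-3 -> {a_1e3:.3f}  s=1e-6 -> {a_1e6:.3f}")
```

Under this model the error is negligible near an absorbance of 1 but grows quickly at higher absorbance, consistent with negative deviation at the top of a calibration curve.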

Visualization of the Calibration Principle

The fundamental principle of using a calibration curve for quantitative analysis at λmax is summarized in the figure below, which combines the absorption profile and the resulting linear plot.

This process, as shown in the workflow, begins with scanning standard solutions to find λmax [1]. Once identified, this fixed wavelength is used to measure all standards and unknowns. The resulting calibration curve provides the linear relationship (A = εlc) required for accurate quantification, demonstrating the core utility of the Beer-Lambert Law in analytical research [1].

Strategic Wavelength Selection: A Step-by-Step Framework for Reliable Quantification

Leveraging Standardized Methods and Software-Recommended Wavelengths

FAQs on Wavelength Selection and Instrument Calibration

How do I select the optimal wavelength for quantitative analysis?

The fundamental principle for selecting the optimal wavelength for quantitative analysis is to use the wavelength at maximum absorption (λmax) for your compound of interest [7]. This approach provides the highest sensitivity and minimizes the impact of minor instrumental errors, such as slight inaccuracies in wavelength calibration [7].

To implement this, you should first obtain an absorbance spectrum of your standard solution by scanning across a range of wavelengths [7]. This spectrum will reveal the peak absorbance value, or λmax. Keep in mind that a colored compound absorbs the complement of the color it appears: a blue solution typically absorbs orange light (roughly 580 to 620 nm), while a red solution typically absorbs green to blue-green light (roughly 490 to 560 nm).

While other wavelengths on the slope of the absorption peak can be used, this is generally less desirable and can lead to reduced sensitivity and precision [12]. The use of a fixed wavelength like 254 nm, common in HPLC, is often a historical holdover and may not be optimal for your specific compound; using the compound's λmax typically provides better specificity [33].

What is the difference between using a single wavelength and a wavelength scan for reaction monitoring?

Using a single fixed wavelength is sufficient for monitoring the progression of a known reaction, where the product's absorbance becomes constant upon reaction completion [33].

However, for assessing purity or detecting unknown impurities, a wavelength scan (or using a photodiode array (PDA) detector) is superior [33]. This is because different compounds absorb optimally at different wavelengths. An impurity may not be detectable at your product's λmax but could be prominent at another wavelength. Relying on a single wavelength can therefore give a false impression of purity [33]. For a true purity assessment, it is best to use the λmax of your target compound and compare its quantity to a known pure standard [33].

How do I verify the wavelength accuracy of my spectrophotometer?

Verifying the wavelength accuracy is a critical calibration step to ensure your measurements are reliable. The most precise method involves using emission line sources [12].

For instruments with a deuterium lamp, you can use the sharp emission lines of deuterium (e.g., at 656.100 nm or 485.999 nm) to check the accuracy of the wavelength scale [12]. Simply scan the region around these known lines and confirm that the instrument records the peak at the correct wavelength.

If your instrument lacks a suitable emission source, you can use standardized absorption filters or solutions. Holmium oxide solution or glass filters have sharp, well-characterized absorption bands suitable for this purpose [12]. It is recommended to perform this check at multiple wavelengths across the instrument's range to ensure uniform accuracy [12].
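
A wavelength-accuracy check of this kind reduces to comparing observed band positions with certified ones. In the sketch below, the certified positions, the observed readings, and the ±1.0 nm tolerance are all placeholders. Actual values must come from the reference material's certificate and the instrument's specification.

```python
def wavelength_errors(certified_nm, observed_nm, tolerance_nm):
    """Return (certified, error, within_tolerance) for each reference band."""
    return [
        (c, round(o - c, 3), abs(o - c) <= tolerance_nm)
        for c, o in zip(certified_nm, observed_nm)
    ]

# Placeholder band positions; use the values on your reference material's certificate
certified = [361.3, 451.4, 536.6]
observed = [361.5, 451.3, 537.9]  # hypothetical instrument readings

report = wavelength_errors(certified, observed, tolerance_nm=1.0)
for band, error, ok in report:
    print(f"{band:.1f} nm: error {error:+.1f} nm, {'pass' if ok else 'FAIL'}")
```

A failure at only one end of the range, as in this made-up example, suggests a wavelength-dependent error, which is why checking several bands is recommended.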

My spectrophotometer is giving noisy data or failing to calibrate. What should I check?

Noisy data or calibration failures often indicate insufficient light is reaching the detector [34]. Follow this systematic checklist to troubleshoot:

- Check Sample Concentration: Absorbance values are most accurate and linear between 0.1 and 1.0 absorbance units [34]. If your sample is too concentrated (absorbance too high), the signal can become noisy or non-linear. Dilute your sample and try again.

- Inspect the Light Source: A weak or failing lamp can cause low light intensity. Check the lamp's operational hours and look for a flat or abnormal spectrum in uncalibrated mode [34] [35].

- Verify the Light Path is Clear:

- Ensure cuvettes are clean, free of scratches, and properly aligned in the holder [34] [35].

- For UV measurements, use UV-compatible cuvettes (e.g., quartz). Standard plastic cuvettes block UV light [34].

- Confirm that the solvent does not absorb strongly at your analysis wavelength. If it does, consider changing or diluting the solvent [34].

- Perform a Power Reset: For persistent issues with connected systems, perform a full power reset of the spectrometer and interface (e.g., LabQuest) [34].

Troubleshooting Guide: Common Spectrophotometer Errors

The following table outlines common errors, their potential causes, and corrective actions based on standardized methods.

| Error Symptom | Possible Cause | Recommended Corrective Action |

|---|---|---|

| Inconsistent readings or baseline drift [35] | Aging lamp; insufficient warm-up time; environmental fluctuations | Replace lamp if near end of lifespan [35]; allow instrument to warm up for 15-30 minutes before use [35]; perform a full baseline correction and recalibrate [35] |

| High absorbance & noisy data (e.g., >1.5 AU) [34] | Sample too concentrated; stray light at low wavelengths [12] | Dilute sample to bring absorbance below 1.0 [34]; use a validated method to check and correct for stray light [12] |

| Blank measurement error [35] | Contaminated or improper reference; dirty reference cuvette | Re-blank with correct reference solution [35]; thoroughly clean or replace the reference cuvette [35] |

| Unexpected low signal or "Low Light" error [34] [35] | Blocked light path; wrong cuvette type (e.g., plastic for UV); failing light source | Check for cuvette misalignment or debris in the path [34] [35]; use quartz cuvettes for UV analysis [34]; test and replace lamp if necessary [34] [35] |

| Poor photometric accuracy (concentration off) [12] | Photometric scale error; lack of calibration | Calibrate using certified neutral-density absorbance filters [12]; ensure instrument has been professionally validated |

Experimental Protocol: Determining the Optimal Wavelength (λmax)

This protocol provides a detailed methodology for establishing the optimal analysis wavelength for a novel compound, a foundational step in quantitative research.

Objective: To identify the wavelength of maximum absorption (λmax) for a target compound in solution.

Principle: A spectrophotometer scans a range of wavelengths, measuring the absorbance at each point. The resulting spectrum identifies the wavelength where the compound's electron transition is most efficient, yielding the highest analytical sensitivity [7].

Research Reagent Solutions

| Item | Function |

|---|---|

| High-Purity Standard | The purified target compound of known structure and concentration for establishing baseline spectral properties. |

| Appropriate Solvent | A chemical solvent that dissolves the standard and does not absorb significantly in the wavelength range of interest (e.g., water, methanol, acetonitrile) [34]. |

| Matched Cuvettes | A pair of high-quality cuvettes (e.g., quartz for UV, glass/plastic for VIS) that hold the sample and blank solvent. They must have identical pathlengths and optical properties. |

| Certified Reference Materials | Holmium oxide solution or filters for verifying the wavelength accuracy of the spectrophotometer prior to analysis [12]. |

Procedure:

- Instrument Preparation: Turn on the spectrophotometer and allow the lamp to warm up for at least 15 minutes to stabilize [35].

- Wavelength Calibration (Quality Control): Using a holmium oxide filter or solution, scan the appropriate region and verify that the observed absorption peaks align with certified wavelengths (e.g., ~360 nm, 450 nm, etc.). Adjust calibration if necessary [12].

- Prepare Blank: Fill a cuvette with the pure solvent to be used for the standard solution. This is your blank.

- Prepare Standard Solution: Dilute the high-purity standard in the same solvent to a concentration that is expected to yield an absorbance between 0.5 and 1.0 at its peak. This ensures the signal is within the ideal range for the detector [34].

- Perform Baseline Correction: Place the blank cuvette in the sample holder and execute a baseline correction or "auto-zero" command. This instructs the instrument to define this reading as 0 Absorbance across the scanned range.

- Acquire Absorbance Spectrum:

- Replace the blank with the standard solution cuvette.

- Set the spectrophotometer to scan mode.

- Select a wavelength range that covers the expected spectral region (e.g., 200-400 nm for UV, 400-800 nm for VIS).

- Initiate the scan.

- Identify λmax: Once the spectrum is displayed, use the software's peak-picking function to identify the wavelength(s) that correspond to the highest absorbance value(s). This is the λmax for your quantitative method.
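
Where the instrument software exports the scan as wavelength and absorbance arrays, the peak-picking step can be reproduced offline. The sketch below subtracts the blank, locates the maximum, and checks it against the 0.5 to 1.0 target window from the procedure. The spectrum is synthetic, and `find_lambda_max` is a hypothetical helper, not a vendor function.

```python
import numpy as np

def find_lambda_max(wavelengths, sample_abs, blank_abs):
    """Return (lambda_max, blank-corrected absorbance at lambda_max)."""
    corrected = np.asarray(sample_abs) - np.asarray(blank_abs)
    i = int(np.argmax(corrected))
    return float(wavelengths[i]), float(corrected[i])

# Synthetic scan for illustration: a single Gaussian band on a flat blank
wl = np.arange(400.0, 701.0, 1.0)
blank = np.full_like(wl, 0.02)
sample = blank + 0.8 * np.exp(-((wl - 554.0) / 20.0) ** 2)

lambda_max, peak_abs = find_lambda_max(wl, sample, blank)
in_target_range = 0.5 <= peak_abs <= 1.0
print(lambda_max, round(peak_abs, 3), in_target_range)
```

A simple maximum is adequate for a single clean band. Noisy scans or spectra with several bands would need smoothing or a dedicated peak-finding routine.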

Workflow Diagram

FAQs: Understanding and Addressing Matrix Effects

What are matrix effects and why are they a critical concern in quantitative analysis?

Matrix effects refer to the combined influence of all components in a sample other than the analyte on the measurement of quantity. When a specific component can be identified as causing an effect, it is termed an interference [36]. In techniques like LC-MS, these effects occur when compounds co-eluting with the analyte interfere with the ionization process, causing ionization suppression or enhancement [37] [36]. This detrimentally affects the fundamental parameters of method validation: accuracy, reproducibility, sensitivity, and linearity [37] [36]. For spectrophotometric methods, matrix components can cause similar interferences through unwanted light absorption or scattering.

How can I quickly check if my sample has significant matrix effects?

A simple, fast, and reliable method to detect matrix effects without additional hardware is the recovery-based method [37]. It involves comparing the signal response of an analyte in a neat solvent (like mobile phase) to the signal response of an equivalent amount of the analyte spiked into a blank sample matrix after extraction. The difference in response indicates the extent of the matrix effect [37]. This method can be applied to any analyte, including endogenous compounds, and to any matrix.

What is the best internal standard to correct for matrix effects in LC-MS?

The most well-recognized and effective technique to correct for matrix effects is internal standardization using stable isotope-labeled (SIL) versions of the analytes [37] [36]. These standards have nearly identical chemical and physical properties to the analyte, ensuring they co-elute and experience the same ionization suppression/enhancement. However, this method can be expensive, and standards are not always commercially available [37]. As an alternative, a co-eluting structural analogue of the analyte can sometimes be used [37].

My sample matrix is complex and a blank is unavailable. How can I calibrate?

The standard addition method is particularly suitable when a blank matrix is unavailable [37] [36]. This method involves adding known amounts of the analyte standard to the sample itself. It does not require a blank matrix and is therefore appropriate for compensating for matrix effects for any analyte, including endogenous metabolites in biological fluids [37].
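
A minimal sketch of the standard addition calculation follows. It assumes the response is linear in concentration, that the signal would be zero without analyte, and that all concentrations are expressed in the final measured solution so dilution can be ignored. The data are hypothetical.

```python
import numpy as np

# Hypothetical standard-addition series (same sample aliquot, increasing spikes)
added_conc = np.array([0.0, 1.0, 2.0, 3.0])   # added analyte concentration, µM
signal = np.array([0.20, 0.30, 0.40, 0.50])   # measured response

# Fit signal = slope * added_conc + intercept
slope, intercept = np.polyfit(added_conc, signal, 1)

# The line crosses zero signal at -intercept/slope; its magnitude is the sample concentration
sample_conc = intercept / slope
print(f"estimated analyte concentration: {sample_conc:.2f} µM")
```

Because the calibration slope is measured in the sample's own matrix, proportional matrix effects are built into the fit instead of being corrected afterwards.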

Troubleshooting Guides

Problem 1: Inaccurate Quantification Despite Strong Analyte Signal

This problem often manifests as inconsistent results between different sample types or an inability to achieve a linear calibration curve.

- Potential Cause: Ionization suppression or enhancement from co-eluting matrix components.

- Investigation Protocol:

- Perform a Post-Column Infusion Analysis: This qualitative test helps identify regions of ion suppression/enhancement in your chromatogram.

- Connect a syringe pump containing your analyte standard to a T-piece between the HPLC column and the MS detector.

- Infuse the analyte at a constant rate while injecting a blank sample extract.

- Observe the analyte signal. Any dip or peak in the signal indicates a region where matrix components are causing suppression or enhancement [36].

- Quantify the Matrix Effect: Use the post-extraction spike method to calculate the Matrix Effect (ME_ionization).

- Analyze a pure standard in solvent (Area_standard).

- Spike the same concentration of analyte into a blank, extracted matrix and analyze it (Area_sample).

- Calculate ME_ionization = (Area_sample / Area_standard) × 100% [38].

- An ME value of 100% indicates no effect; <100% indicates suppression; >100% indicates enhancement [38].

- Resolution Strategies:

- Improve Chromatography: Modify the chromatographic method (e.g., gradient, column) to shift the analyte's retention time away from the suppression zone identified by the post-column infusion [37] [36].

- Optimize Sample Clean-up: Use a more selective extraction technique (e.g., Liquid-Liquid Extraction instead of protein precipitation) to remove interfering compounds [38].

- Dilute the Sample: Simple sample dilution can reduce matrix effects, but this is only feasible if the assay sensitivity is high enough [37] [36].

- Change Ionization Source: If possible, switch from Electrospray Ionization (ESI) to Atmospheric Pressure Chemical Ionization (APCI), as APCI is generally less prone to matrix effects [36] [38].

Problem 2: Overcoming Spectral Interference in UV-Vis Analysis

This occurs when the sample matrix absorbs light at the same wavelength as your target analyte, leading to inflated and inaccurate concentration readings.

- Potential Cause: Overlapping absorption spectra between the analyte and matrix components.

- Investigation Protocol:

- Obtain Full Spectra: Record the UV-Vis absorption spectrum of your processed sample, a standard of your pure analyte, and a blank matrix sample if available.

- Identify Wavelengths: Visually inspect the spectra to find a wavelength where the analyte has strong absorption but the matrix interference is minimal. Advanced factorized response techniques can mathematically resolve overlapping spectra [39].

- Resolution Strategies:

- Wavelength Selection: Choose an alternative characteristic wavelength for quantification where the analyte absorbs strongly, but the matrix does not [40] [7].

- Advanced Spectral Processing: Employ advanced spectrophotometric methods that use mathematical processing to separate the analyte's signal from the matrix contribution.

- Build a Surrogate Model: For complex, multi-analyte systems like water quality monitoring, use machine learning (e.g., Ridge Regression) on selected characteristic wavelengths to predict analyte concentration despite matrix interference [40].
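
As a rough illustration of the surrogate-model idea, the sketch below fits a ridge regression to absorbances at three selected wavelengths and predicts analyte concentration in the presence of a varying background. Everything here is an assumption made for the example: the data are synthetic, the absorptivities are invented, and the closed-form NumPy solution stands in for whatever implementation a given study actually used.

```python
import numpy as np

def ridge_fit(X, y, alpha=1.0):
    """Closed-form ridge regression on centered data; returns (weights, intercept)."""
    x_mean, y_mean = X.mean(axis=0), y.mean()
    Xc, yc = X - x_mean, y - y_mean
    w = np.linalg.solve(Xc.T @ Xc + alpha * np.eye(X.shape[1]), Xc.T @ yc)
    return w, y_mean - x_mean @ w

rng = np.random.default_rng(0)
conc = rng.uniform(1.0, 10.0, size=40)          # synthetic analyte concentrations
matrix_level = rng.uniform(0.0, 1.0, size=40)   # synthetic interfering background

# Absorbance at three hypothetical characteristic wavelengths, plus measurement noise
analyte_eps = np.array([0.08, 0.05, 0.01])
matrix_eps = np.array([0.10, 0.30, 0.50])
X = np.outer(conc, analyte_eps) + np.outer(matrix_level, matrix_eps)
X += rng.normal(0.0, 0.002, size=X.shape)

# Train on 30 samples, evaluate on the 10 held out
w, b = ridge_fit(X[:30], conc[:30], alpha=1e-3)
pred = X[30:] @ w + b
rmse = float(np.sqrt(np.mean((pred - conc[30:]) ** 2)))
print(f"hold-out RMSE: {rmse:.3f}")
```

The regularization strength `alpha` is a tuning parameter; in practice it would be chosen by cross-validation on real calibration samples.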

Experimental Protocols for Matrix Effect Assessment

Protocol 1: Post-Extraction Spike Method for Quantitative ME Assessment

This method provides a quantitative measure of the matrix effect [37] [36] [38].

- Purpose: To calculate the extent of ionization suppression or enhancement for an analyte in a specific matrix.

- Materials:

- Certified analyte standard.

- Blank matrix (e.g., drug-free plasma, purified water).

- Appropriate solvents and mobile phases.

- LC-MS or spectrophotometry system.

- Procedure:

- Prepare a standard solution of the analyte in a neat solvent (e.g., mobile phase) at a known concentration. Analyze this solution to obtain the peak area (Area_standard).

- Take several aliquots of the blank matrix through the entire sample preparation and extraction process.

- Spike the same known concentration of the analyte standard into the processed blank matrix extracts.

- Analyze these post-extraction spiked samples to obtain the peak area (Area_sample).

- Calculate the matrix effect for each sample using the formula:

- ME_ionization = (Area_sample / Area_standard) × 100% [38]

- Interpretation:

- ~100%: No significant matrix effect.

- <100%: Ionization suppression.

- >100%: Ionization enhancement.
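The matrix effect calculation in Protocol 1 can be sketched as follows. The peak areas are hypothetical, and the `tolerance` band used to decide what counts as "~100%" is an illustrative assumption rather than a value from the protocol; substitute your own method's acceptance criteria.

```python
from statistics import mean


def matrix_effect_percent(area_sample, area_standard):
    """Matrix effect (%) = (post-extraction spike area / neat standard area) x 100."""
    if area_standard <= 0:
        raise ValueError("area_standard must be positive")
    return 100.0 * area_sample / area_standard


def interpret_matrix_effect(me_percent, tolerance=10.0):
    """Label a matrix effect value.

    `tolerance` (percentage points around 100%) is an illustrative
    placeholder, not a value taken from the protocol.
    """
    if me_percent < 100.0 - tolerance:
        return "suppression"
    if me_percent > 100.0 + tolerance:
        return "enhancement"
    return "no significant matrix effect"


# Hypothetical peak areas: one neat standard, three spiked matrix aliquots.
area_standard = 10000.0
spiked_areas = [8200.0, 8450.0, 8100.0]

me_values = [matrix_effect_percent(a, area_standard) for a in spiked_areas]
mean_me = mean(me_values)
print(f"Mean ME = {mean_me:.1f}% -> {interpret_matrix_effect(mean_me)}")
```

Computing the value per aliquot before averaging, as the procedure describes, also makes the spread between aliquots visible.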

Protocol 2: Slope Ratio Analysis for Calibration Standards

This semi-quantitative method is useful when a blank matrix is unavailable and allows assessment over a range of concentrations [36].

- Purpose: To compare the calibration curve slope in solvent to the slope in a matrix to assess ME.

- Materials: Same as Protocol 1.

- Procedure:

- Prepare a calibration curve by spiking the analyte standard at different concentration levels into a neat solvent. Analyze and obtain the slope (Slope_solvent).

- Prepare a matrix-matched calibration curve by spiking the analyte standard at the same concentration levels into the sample matrix (e.g., a pooled sample). Analyze and obtain the slope (Slope_matrix).

- Calculate the slope ratio:

- Slope Ratio = Slope_matrix / Slope_solvent

- Interpretation: A slope ratio significantly different from 1.0 indicates the presence of a matrix effect.
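As a rough sketch of the slope ratio calculation, the snippet below fits each calibration curve by ordinary least squares and divides the two slopes. The helper names `ols_slope` and `slope_ratio` and the calibration data are hypothetical, chosen only to illustrate the arithmetic.

```python
def ols_slope(x, y):
    """Ordinary least-squares slope of y against x."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    if sxx == 0:
        raise ValueError("need at least two distinct concentration levels")
    return sxy / sxx


def slope_ratio(conc, signal_solvent, signal_matrix):
    """Slope Ratio = Slope_matrix / Slope_solvent."""
    return ols_slope(conc, signal_matrix) / ols_slope(conc, signal_solvent)


# Hypothetical calibration data at the same four concentration levels.
conc = [1.0, 2.0, 4.0, 8.0]
signal_solvent = [0.11, 0.21, 0.41, 0.81]  # standards in neat solvent
signal_matrix = [0.11, 0.19, 0.35, 0.67]   # matrix-matched standards

ratio = slope_ratio(conc, signal_solvent, signal_matrix)
print(f"Slope ratio = {ratio:.2f}")
```

Because only slopes are compared, a constant background contribution from the matrix (a shifted intercept) does not affect the ratio.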

Summarized Data on Matrix Effect Evaluation Methods

The table below compares the primary methods for evaluating matrix effects.

Table 1: Comparison of Matrix Effect Evaluation Methods

| Method Name | Description | Type of Output | Key Limitations |

|---|---|---|---|

| Post-Column Infusion [36] | Infuses analyte post-column during injection of a blank extract to identify problematic retention times. | Qualitative | Does not provide a numerical value for ME; time-consuming [36]. |

| Post-Extraction Spike [37] [38] | Compares analyte signal in solvent vs. signal when spiked into a blank matrix extract. | Quantitative | Requires a blank matrix, which is not available for endogenous analytes [37]. |

| Slope Ratio Analysis [36] | Compares the slope of a calibration curve in solvent to the slope in a matrix. | Semi-Quantitative | Requires multiple concentration levels and may not be suitable for all analytes [36]. |

The Scientist's Toolkit: Key Reagent Solutions

Table 2: Essential Materials for Matrix Effect Investigation

| Item | Function | Example Use Case |

|---|---|---|

| Stable Isotope-Labeled Internal Standard (SIL-IS) | The gold standard for correcting matrix effects in MS; co-elutes with the analyte and experiences identical ionization effects [37] [36]. | Quantifying drugs in plasma where phospholipids cause ion suppression. |

| Structural Analogue Internal Standard | A less expensive alternative to SIL-IS; a chemically similar compound that co-elutes with the analyte [37]. | Used when a SIL-IS is not commercially available or is too costly. |

| Certified Reference Material (CRM) | A substance with one or more property values that are certified by a validated procedure, traceable to an international standard. Used for calibrating instruments and methods [41]. | Verifying photometric and wavelength accuracy during spectrophotometer calibration to ensure data integrity [41]. |

| Blank Matrix | A sample of the matrix free of the target analyte. Essential for developing and validating methods via post-extraction spike and standard addition [37] [36]. | Creating matrix-matched calibration standards to compensate for matrix effects. |

Workflow and Strategy Diagrams

[Diagrams not reproduced: Matrix Effect Investigation Workflow; Matrix Effect Calculation Guide; Post-Column Infusion Experimental Setup]

Using Single-Element Standards to Predict and Test for Interferences

In quantitative spectrophotometric analysis, spectral interferences occur when other components in your sample matrix contribute to the signal at your analyte's target wavelength. This can lead to falsely elevated results, poor accuracy, and compromised data. Using single-element standards is a foundational technique to proactively identify and correct for these interferences, ensuring the integrity of your analytical results.

FAQs on Interference Testing

Q1: Why should I use single-element standards instead of just relying on my calibration curve? A calibration curve can confirm the relationship between concentration and signal for a pure analyte, but it cannot reveal which specific components in a complex sample are causing interference. Single-element standards allow you to simulate a high-concentration matrix component in isolation. By analyzing this standard using your method, you can observe whether this component produces any signal at your analyte's wavelength, thereby confirming or ruling it out as a source of interference [42].

Q2: My sample matrix is complex and not fully known. How can I possibly test for all interferences? For completely unknown samples, begin with a semiquantitative analysis [43]. This rapid screening technique helps identify the major and minor elements present. The results provide a "fingerprint" of the sample composition, allowing you to make an informed decision about which single-element standards (e.g., for the most abundant elements) are most critical to test for potential interferences [42] [43].

Q3: After identifying an interference, what are my options? Once an interference is confirmed, you have several paths forward:

- Select an Alternative Wavelength: Choose a different emission or absorption line for your analyte where the interfering element does not produce a signal [42].

- Employ Interference Correction: Use mathematical corrections within the instrument software, if available, to subtract the contribution of the interference [44].

- Utilize Advanced Chemometrics: In techniques like LIBS, machine learning algorithms (e.g., Light Gradient Boosting Machine) can be highly effective in selecting interference-free spectral lines or building robust multivariate calibration models [45].

Troubleshooting Guide: Using Single-Element Standards

| Problem Scenario | Diagnostic Steps | Potential Solutions |

|---|---|---|

| Unexpectedly high analyte concentration in a sample [43]. | 1. Overlay the sample spectrum with a pure analyte standard spectrum. Check for peak shape differences [42]. 2. Perform semiquantitative analysis to identify unexpected high-concentration elements [43]. 3. Test single-element standards for the identified matrix elements. | Select a new analytical wavelength where the interference is absent [42]. |

| Poor recovery of a spiked analyte in a complex matrix. | 1. Run a single-element standard for the major matrix component(s) at the analyte wavelength. 2. Check if the instrument's background correction points are placed on a sloping or noisy baseline [42]. | Use the major matrix component's standard to apply an interference correction [44] or find a cleaner wavelength. |

| Disagreement between results from different analyte wavelengths. | 1. Test single-element standards for all major matrix components at each of the conflicting wavelengths. 2. Verify that single-element standards have not been contaminated over time [42]. | Use the wavelength with the least interference. Use at least 2-3 wavelengths during method development for comparison [42]. |

Experimental Protocol: Systematic Interference Check

This protocol provides a step-by-step methodology for using single-element standards to predict and correct for spectral interferences, using the example of determining phosphorus in a nickel alloy [42].

[Figure: logical workflow for the experimental protocol]

Step-by-Step Procedure

- Identify Major Matrix Components: For a digested nickel alloy sample, the major components were identified as Ni, Cr, Mo, W, and others, totaling 14 elements [42].

- Prepare Single-Element Standards: Acquire high-purity (e.g., >99.9999%) single-element standards [46]. Prepare solutions that approximate the expected concentration of each major matrix component in the final diluted sample solution. For a component expected at ~1%, a 10,000 mg/L standard is appropriate [42].

- Analyze Standards at Analyte Wavelengths: Using your ICP-OES or other spectrophotometric method, analyze the single-element standard solutions at all potential wavelengths for your analyte.

- Example: For phosphorus (P), analyze the Mo and W single-element standards at all four main P wavelengths: 177.434, 178.221, 213.617, and 214.914 nm [42].

- Overlay and Compare Spectra: In the instrument software, overlay the spectrum from the single-element standard (e.g., Mo) over the spectrum of a pure P standard at the same wavelength (e.g., P 214.914 nm).

- Interpret Results and Select Wavelength:

- If the matrix standard produces a peak directly on top of the analyte peak, that wavelength is compromised.

- In our example, at P 214.914 nm, Mo and W produced large peaks over the tiny P peak, making it unusable.

- In contrast, at P 178.221 nm, the P peak was large and none of the major matrix components interfered, making it the optimal choice [42].
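The comparison in the last two steps can also be done numerically once net signals at each candidate line have been exported. The sketch below is only an illustration: the function `screen_wavelengths`, the `max_ratio` acceptance threshold, and every signal value are assumptions made up for this example, not data from the case study. It flags a wavelength as unusable when any matrix standard produces a signal exceeding a chosen fraction of the analyte signal.

```python
def screen_wavelengths(analyte_signal, matrix_signals, max_ratio=0.05):
    """Flag analyte wavelengths compromised by matrix-element signals.

    analyte_signal: {wavelength_nm: net signal of the pure analyte standard}
    matrix_signals: {element: {wavelength_nm: net signal of that element's standard}}
    max_ratio: largest tolerated matrix/analyte signal ratio (illustrative assumption)
    """
    report = {}
    for wl, a_sig in analyte_signal.items():
        worst_element, worst_signal = None, 0.0
        for element, signals in matrix_signals.items():
            s = signals.get(wl, 0.0)
            if s > worst_signal:
                worst_element, worst_signal = element, s
        ratio = worst_signal / a_sig if a_sig > 0 else float("inf")
        report[wl] = {
            "worst_element": worst_element,
            "ratio": ratio,
            "usable": ratio <= max_ratio,
        }
    return report


# Hypothetical net signals (arbitrary counts) at the four P lines.
p_standard = {214.914: 50.0, 213.617: 400.0, 178.221: 1500.0, 177.434: 1400.0}
matrix_standards = {
    "Mo": {214.914: 9000.0, 213.617: 300.0, 178.221: 10.0, 177.434: 12.0},
    "W": {214.914: 7000.0, 213.617: 250.0, 178.221: 8.0, 177.434: 15.0},
}

report = screen_wavelengths(p_standard, matrix_standards)
usable = sorted(wl for wl, r in report.items() if r["usable"])
print("Usable P wavelengths (nm):", usable)
```

A numeric screen like this complements, rather than replaces, the spectral overlay, since a ratio alone says nothing about peak shape or baseline slope.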

Data Interpretation from Case Study

The table below summarizes the hypothetical data and conclusion from the phosphorus determination example [42].

| Phosphorus Wavelength (nm) | Signal from 0.1 ppm P Standard | Signal from Mo/W Matrix Standards | Interference? | Recommended for Use? |

|---|---|---|---|---|

| 214.914 | Very small | Large peaks directly on P peak | Yes, severe | No |

| 213.617 | Moderate | Peaks present near P peak | Yes, likely | No |

| 178.221 | Large and clear | No significant peaks | No | Yes |

| 177.434 | Large and clear | No significant peaks | No | Yes |

Research Reagent Solutions

The following table details key materials required for performing effective interference checks as described in the experimental protocols.

| Reagent / Material | Function and Importance |

|---|---|

| High-Purity Single-Element Standards | Certified Reference Materials (CRMs) with purities of 99.9999% (six 9s) or higher are essential to avoid introducing unknown contaminants that could lead to false positive interferences [46]. |

| Interference Check Standards | Commercial multi-element solutions (Mixes) specifically designed for this purpose. They contain elements known to cause common spectral overlaps, allowing for a rapid, consolidated check of your method's susceptibility [47] [46]. |

| High-Purity Acids & Solvents | The acids (e.g., HNO₃, HCl) and solvents used for sample and standard preparation must be of ultra-high purity (e.g., Optima Grade) to prevent background contamination that can obscure results or create false interferences. |

| Comparative Element Solution | In some interference correction methods, a known element like Lutetium (Lu) is added to all samples and standards. Its consistent behavior is used to mathematically correct for non-spectral interferences [44]. |

Advanced Wavelength Selection Algorithms (GA, PCA, VIP, biPLS) for Complex Samples

In the multivariate analysis of near-infrared (NIR) and other spectra, wavelength selection is not merely an optimization step but a fundamental prerequisite for developing robust, interpretable, and reliable calibration models. Spectral data often contain a large number of variables (wavelengths), many of which may be non-informative, redundant, or represent noise. The primary goal of advanced wavelength selection algorithms is to identify a lean subset of variables that carry information pertinent to the chemical or physical property of interest, thereby improving model performance and providing a more straightforward interpretation [48]. For researchers in drug development and other fields, selecting the correct wavelength is crucial for precise, reproducible results [49].

Core Wavelength Selection Algorithms: Principles and Methodologies

Variable Importance in Projection (VIP)

Variable Importance in Projection (VIP) scores are a primary method for variable screening, particularly in the context of Partial Least Squares Regression (PLSR). They are computed from a fitted PLS model and measure the influence of each variable on that model, considering both its contribution to explaining the predictor matrix (X) and its correlation with the response variable (Y). A variable is generally considered significant if its mean VIP value and one standard deviation of its bootstrap distribution are greater than 1.0 [48].
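To make the VIP idea concrete, here is a minimal sketch that fits a single-response PLS model with the NIPALS algorithm and computes the commonly used VIP formula, VIP_j = sqrt(p · Σ_a SS_a·w_ja² / Σ_a SS_a), where SS_a is the response variance explained by component a. Several things here are assumptions: the function name `pls1_vip`, the toy data, and the choice to mean-center without scaling. The bootstrap step described above is not included.

```python
import math


def pls1_vip(X, y, n_components):
    """VIP scores from a PLS1 (single response) model fitted by NIPALS.

    X: list of rows (samples x wavelengths); y: list of responses.
    Data are mean-centered only; unit-variance scaling is left to the caller.
    """
    n, n_vars = len(X), len(X[0])
    x_mean = [sum(row[j] for row in X) / n for j in range(n_vars)]
    y_mean = sum(y) / n
    E = [[row[j] - x_mean[j] for j in range(n_vars)] for row in X]  # X residuals
    f = [v - y_mean for v in y]                                     # y residuals
    weights, explained = [], []
    for _ in range(n_components):
        # Weight vector: direction in X most covariant with the y residuals.
        w = [sum(E[i][j] * f[i] for i in range(n)) for j in range(n_vars)]
        norm = math.sqrt(sum(v * v for v in w))
        if norm == 0:
            break
        w = [v / norm for v in w]
        t = [sum(E[i][j] * w[j] for j in range(n_vars)) for i in range(n)]  # scores
        tt = sum(v * v for v in t)
        if tt == 0:
            break
        q = sum(t[i] * f[i] for i in range(n)) / tt                         # y loading
        load = [sum(E[i][j] * t[i] for i in range(n)) / tt for j in range(n_vars)]
        # Deflate X and y before extracting the next component.
        E = [[E[i][j] - t[i] * load[j] for j in range(n_vars)] for i in range(n)]
        f = [f[i] - q * t[i] for i in range(n)]
        weights.append(w)
        explained.append(q * q * tt)  # SS_a: y variance explained by this component
    total = sum(explained)
    if total == 0:
        raise ValueError("no component explains any variance in y")
    return [
        math.sqrt(n_vars * sum(ss * w[j] ** 2 for ss, w in zip(explained, weights)) / total)
        for j in range(n_vars)
    ]


# Toy data: column 0 tracks y; columns 1 and 2 are small unrelated fluctuations.
y = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]
X = [
    [0.1, 0.5, 0.2], [0.2, 0.4, 0.2], [0.3, 0.6, 0.3],
    [0.4, 0.5, 0.2], [0.5, 0.4, 0.3], [0.6, 0.6, 0.2],
]
vip = pls1_vip(X, y, n_components=2)
print([round(v, 2) for v in vip])
```

Because each weight vector has unit length, the squared VIP scores average to 1 across variables, which is why 1.0 is the usual reference point for "more important than average".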

Backward Interval Partial Least Squares (biPLS)

The biPLS algorithm is an advanced interval-based method that has been shown to be more precise and reliable than conventional full-spectrum PLS [48]. Its operational workflow involves:

- Spectral Division: The entire spectrum is divided into a number of equal-width intervals.

- Model Evaluation: A PLS model is developed with each interval systematically excluded.