Molecular and Elemental Analysis: Techniques, Applications, and Best Practices for Pharmaceutical Research

Abstract

This article provides a comprehensive overview of modern molecular and elemental analysis techniques, with a specific focus on applications in pharmaceutical research and drug development. It explores foundational principles, key methodologies like ICP-MS, OES, and CHNOS analysis, and their critical role in ensuring drug safety and compliance with global pharmacopeial standards such as ICH Q3D and USP <232>/<233>. The content further addresses common analytical challenges, including interference correction and sample preparation, and offers best practices for method optimization, validation, and transfer between laboratories. Designed for researchers and scientists, this guide synthesizes current trends and practical insights to enhance the accuracy and efficiency of elemental impurity analysis in quality control workflows.

Core Principles and Regulatory Drivers in Modern Elemental Analysis

Elemental analysis is the identification and quantification of the chemical elements within a sample [1]. It determines both the type and amount of each element present, providing critical insight into the material's overall composition [1]. The resulting data underpin the assessment of key material properties, including weight, strength, and corrosion resistance, and are indispensable for research, quality control, and regulatory compliance across many industries [1]. In molecular and elemental analysis research, elemental composition is the foundational layer upon which molecular structure, functional group analysis, and other complex chemical characteristics are built.

The scope of elemental analysis extends from determining the major constituents that define a material's bulk properties to detecting trace-level impurities that can critically impact performance or safety [1]. For instance, in pharmaceutical development, the same analytical principles ensure both the correct bulk formulation of an active ingredient and the absence of toxic elemental impurities in the final drug product [2]. This guide details the core concepts, techniques, and applications that define modern elemental analysis, providing a scientific framework for researchers and drug development professionals.

The Scope of Elemental Analysis: From Bulk to Trace

Elemental analysis can be categorized based on the abundance and role of the elements being measured. The distinctions between these categories dictate the required sensitivity and the choice of analytical technique.

Major Content Analysis

Major content analysis focuses on determining the primary elements that constitute the bulk of a material, typically at concentrations exceeding 1% by weight [1]. This type of analysis is essential for verifying product integrity, ensuring a material meets compositional standards, and optimizing manufacturing processes [1]. For example, in metallurgy, quantifying the percentages of iron, carbon, and other alloying elements is fundamental to achieving the desired metal properties. Techniques such as X-ray Fluorescence (XRF) and combustion-based CHNOS analyzers are commonly employed for this purpose due to their robustness and ability to handle a wide range of sample types [1] [3].

Trace and Ultra-Trace Analysis

Trace and ultra-trace analysis detects and quantifies impurities and minor elemental components at very low levels, such as parts per million (ppm), parts per billion (ppb), or even lower [1]. Even minimal contamination at these levels can have detrimental effects in fields like semiconductor manufacturing, pharmaceuticals, and high-purity materials production [1]. In pharmaceuticals, trace elemental analysis is critical for complying with regulations like ICH Q3D, which limits elemental impurities in drug products due to their potential toxicity [2]. Techniques such as Inductively Coupled Plasma Mass Spectrometry (ICP-MS) are the gold standard for this application, offering the necessary high sensitivity and broad dynamic range [3] [2].

Fingerprint Analysis

Fingerprint analysis identifies a material's unique elemental signature, which helps determine its composition, structure, and distinguishing characteristics [1]. It is often a qualitative or semi-quantitative process used to verify raw materials, ensure batch-to-batch consistency, detect contamination, or identify the origin of a material in forensic investigations [1]. Techniques such as XRF or optical emission spectroscopy (OES) can generate spectral or elemental profiles for material authentication and counterfeit detection [1].

Table 1: Scopes of Elemental Analysis and Their Characteristics

| Analysis Scope | Typical Concentration Range | Primary Purpose | Common Techniques |
|---|---|---|---|
| Major Content | > 1% by weight | Determine bulk composition, verify product integrity | XRF, CHNOS Analyzers, ICP-OES |
| Trace/Ultra-Trace | ppm to ppt levels | Ensure purity, identify contaminants, safety compliance | ICP-MS, ICP-OES (high-sensitivity) |
| Fingerprint | ppm to percent levels | Material authentication, defect detection, forensic identification | XRF, ICP-OES, GDOES |

Essential Techniques in Elemental Analysis

A wide array of instrumental techniques is available for elemental analysis. The choice of method depends on factors such as the required sensitivity, the elements of interest, sample type, and whether bulk or spatial information is needed.

Plasma-Based Techniques

Inductively Coupled Plasma Optical Emission Spectroscopy (ICP-OES) and Inductively Coupled Plasma Mass Spectrometry (ICP-MS) are powerful, versatile techniques for determining elemental concentrations in liquid samples and, with specialized accessories, some solids [1] [3].

- ICP-OES works by using a high-temperature argon plasma to atomize and excite the elements in a sample. The excited atoms emit light at characteristic wavelengths, which is measured to identify and quantify the elements present. It is ideal for detecting trace elements across complex matrices, offering high precision and a broad dynamic range [1].

- ICP-MS also uses an argon plasma to atomize and ionize the sample. However, instead of measuring emitted light, it uses a mass spectrometer to separate and detect ions based on their mass-to-charge ratio. This provides extremely high sensitivity, enabling detection at ultra-trace (ppb and ppt) levels [3] [2].

A key advantage of ICP methods is their ability to perform simultaneous multi-element analysis. A primary limitation is that samples typically require dissolution, which can involve hazardous acids and therefore poses an occupational safety risk [3].

X-Ray Based Techniques

X-Ray Fluorescence (XRF) is a common, non-destructive method for determining elemental composition for solids, powders, and liquids [1]. When a sample is irradiated with high-energy X-rays, the elements emit characteristic secondary (or "fluorescent") X-rays. The energy of these emitted X-rays identifies the element, and their intensity quantifies its concentration [1]. XRF is well-suited for detecting elements from fluorine to uranium and is widely used in industries like mining, electronics, and recycling for its speed and cost-effectiveness [1] [3]. While generally used for bulk analysis, modern XRF systems can also perform detailed elemental mapping over surface areas [1].

Scanning Electron Microscopy with Energy Dispersive X-ray Spectroscopy (SEM-EDX or SEM-EDS) combines high-resolution imaging with elemental analysis. The electron beam scans the sample surface, generating secondary electrons for imaging and characteristic X-rays for elemental identification via an energy-dispersive spectrometer [4]. This technique is valuable for providing spatial information about elemental distribution and is commonly used for microstructural examination and failure analysis [4] [3]. Its detection limits are typically higher (0.1-1 atomic %) than plasma-based techniques [3].

Other Key Techniques

- Combustion Analyzers (CHNOS): These instruments are designed to determine the amounts of carbon, hydrogen, nitrogen, oxygen, and sulfur in organic sample materials through combustion and subsequent gas analysis [3] [2]. They are highly effective for bulk composition analysis but lack the sensitivity for trace-level work [3].

- Glow Discharge Optical Emission Spectroscopy (GDOES): This technique enables depth-resolved analysis of solid materials, measuring elemental concentrations as a function of depth. It is extensively used in the development and quality control of coated materials, thin films, and semiconductors [1].

- Atomic Absorption Spectroscopy (AAS): AAS is a technique for detecting specific metals in low concentrations. However, it is generally limited to sequential single-element analysis, and as such, has been largely supplanted by faster, multi-element techniques like ICP-OES and ICP-MS for most applications [3].
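For combustion (CHNOS) analysis, results are usually judged by comparing measured mass percentages with the values calculated from the expected molecular formula. The sketch below illustrates that comparison under stated assumptions: the function names are hypothetical, the parser handles only simple formulas without brackets or hydrates, and the 0.4 percentage-point tolerance is an illustrative default rather than a requirement taken from this article.

```python
import re

# Standard atomic weights (g/mol), rounded; sufficient for a CHNOS comparison.
ATOMIC_MASS = {"C": 12.011, "H": 1.008, "N": 14.007, "O": 15.999, "S": 32.06}


def parse_formula(formula: str) -> dict:
    """Parse a simple formula such as 'C8H10N4O2' (no brackets, no hydrates)."""
    counts = {}
    for symbol, number in re.findall(r"([A-Z][a-z]?)(\d*)", formula):
        if symbol not in ATOMIC_MASS:
            raise ValueError(f"unsupported element: {symbol}")
        counts[symbol] = counts.get(symbol, 0) + (int(number) if number else 1)
    return counts


def mass_percent(formula: str) -> dict:
    """Theoretical mass percentage of each element in the formula."""
    counts = parse_formula(formula)
    total = sum(ATOMIC_MASS[el] * n for el, n in counts.items())
    return {el: 100.0 * ATOMIC_MASS[el] * n / total for el, n in counts.items()}


def within_tolerance(measured: dict, formula: str, tol: float = 0.4) -> bool:
    """True if every measured element is within `tol` percentage points of theory.

    `tol` is an assumed, illustrative acceptance window.
    """
    theory = mass_percent(formula)
    return all(abs(measured[el] - theory[el]) <= tol for el in measured)


# Example: caffeine, C8H10N4O2, with made-up measured values.
theory = mass_percent("C8H10N4O2")
ok = within_tolerance({"C": 49.3, "H": 5.3, "N": 28.7}, "C8H10N4O2")
```

A mismatch larger than the chosen tolerance typically points to residual solvent, moisture, or an incorrect assumed formula, which is why this check is a bulk-composition tool rather than a trace-impurity test.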

Table 2: Comparison of Popular Elemental Analysis Techniques

| Method | Detectable Elements | Sensitivity (Approx.) | Key Applications & Notes |
|---|---|---|---|
| ICP-MS | Li to U | ppm to ppt | Ultra-trace analysis; high sensitivity; multi-element [3] |
| ICP-OES | Li to U | ppm | Trace element analysis; broad dynamic range; multi-element [1] [3] |
| AAS | Up to ~70 metallic elements | ppm | Mainly metallic elements; sequential single-element analysis [3] |
| CHNOS | C, H, N, O, S | 0.05–0.1 wt% | Bulk organic composition; cannot detect trace impurities [3] |
| XRF | Be to U | 10 ppm–1 at% | Non-destructive; solid/liquid samples; bulk and mapping [1] [3] |
| SEM-EDX | All except H, He, Li | 0.1–1 at% | Surface analysis; provides spatial/imaging data [4] [3] |

Experimental Protocols and Workflows

General Workflow for Liquid Sample Analysis via ICP-MS/OES

The analysis of a sample via plasma-based techniques follows a systematic workflow to ensure accuracy and reliability.

Step 1: Sample Preparation. For solid samples, this begins with homogenization (e.g., grinding to a fine powder) to ensure a representative aliquot is taken. The sample is then dissolved using a suitable acid or mixture of acids (e.g., nitric acid, aqua regia, or hydrofluoric acid), often with the aid of heat in a process called digestion [3] [2]. Correct sample preparation is essential for achieving reliable quantitative data [2].

Step 2: Dilution and Introduction of Internal Standards. The digested sample is diluted to a volume suitable for analysis so that analyte concentrations fall within the linear calibration range of the instrument. At this stage, internal standards (elements not present in the original sample, such as indium or rhodium) are often added to correct for instrument drift and matrix effects [3].

Step 3: Instrumental Analysis. The liquid sample is introduced into the instrument via a peristaltic pump, nebulized into a fine aerosol, and transported into the high-temperature argon plasma. In ICP-OES, the emitted light is separated by a grating and measured by a detector [1]. In ICP-MS, the generated ions are extracted into a mass spectrometer (commonly a quadrupole) and separated by their mass-to-charge ratio before detection [3].

Step 4: Calibration and Quantification. The instrument is calibrated using a series of standard solutions with known concentrations. The calibration curve is then used to convert the measured signal (intensity of light for OES, ion counts for MS) in the unknown sample into an elemental concentration [3].

Step 5: Quality Control. Quality assurance/quality control (QA/QC) measures are critical. These include the analysis of certified reference materials (CRMs), method blanks, and duplicate samples to validate the accuracy and precision of the entire analytical process [2].
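The calibration, internal-standard, and QC steps above can be sketched in a few lines. This is a minimal illustration, not a validated procedure: all function names are hypothetical, the counts and concentrations are made-up numbers, and a simple unweighted linear fit on the analyte-to-internal-standard signal ratio is assumed.

```python
from statistics import mean


def fit_line(x, y):
    """Unweighted least-squares fit of y = slope * x + intercept."""
    x_bar, y_bar = mean(x), mean(y)
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, y_bar - slope * x_bar


def quantify(analyte_counts, istd_counts, slope, intercept, dilution_factor=1.0):
    """Convert raw counts to a concentration in the undiluted sample.

    Dividing by the internal-standard counts compensates for drift and
    matrix suppression that affect both signals similarly.
    """
    ratio = analyte_counts / istd_counts
    return (ratio - intercept) / slope * dilution_factor


def percent_recovery(measured, certified):
    """Recovery of a certified reference material or spike, in percent."""
    return 100.0 * measured / certified


# Made-up calibration standards (ug/L) with analyte and internal-standard counts.
std_conc = [0.0, 1.0, 5.0, 10.0]
std_analyte = [50.0, 1050.0, 5050.0, 10050.0]
std_istd = [100000.0, 100000.0, 100000.0, 100000.0]

ratios = [a / i for a, i in zip(std_analyte, std_istd)]
slope, intercept = fit_line(std_conc, ratios)

# A sample diluted 100-fold whose internal-standard signal is suppressed by 5%.
sample_conc = quantify(2850.0, 95000.0, slope, intercept, dilution_factor=100.0)
crm_recovery = percent_recovery(measured=9.6, certified=10.0)
```

With these illustrative numbers the sample works out to roughly 295 ug/L in the undiluted digest. Fitting on the signal ratio rather than raw counts is the design choice that lets the internal standard absorb the 5% suppression.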

Method Selection Workflow

Choosing the most appropriate analytical technique is a critical first step in any elemental analysis project. The following decision logic can guide researchers.
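One way to express such decision logic is as a small rule-based function. The sketch below is an assumption-laden simplification built only from the technique comparison in this section; the `Request` fields and the order of the rules are hypothetical, and real method selection also weighs matrix, throughput, cost, and regulatory requirements.

```python
from dataclasses import dataclass


@dataclass
class Request:
    level: str                      # "major" (>1 wt%), "trace" (ppm), "ultra_trace" (ppb/ppt)
    organic_chnos: bool = False     # bulk C/H/N/O/S content of an organic sample
    spatial_map: bool = False       # microscale elemental distribution required
    depth_profile: bool = False     # composition versus depth in a solid
    non_destructive: bool = False   # sample must not be dissolved


def suggest_technique(req: Request) -> str:
    """Return a first-pass technique suggestion for an analysis request."""
    if req.level not in {"major", "trace", "ultra_trace"}:
        raise ValueError(f"unknown concentration level: {req.level}")
    if req.depth_profile:
        return "GDOES"              # depth-resolved analysis of solids
    if req.spatial_map:
        return "SEM-EDX"            # imaging plus elemental distribution
    if req.organic_chnos and req.level == "major":
        return "CHNOS analyzer"     # bulk organic composition
    if req.level == "ultra_trace":
        return "ICP-MS"             # sensitivity takes priority at ppb/ppt
    if req.non_destructive:
        return "XRF"                # no dissolution required
    if req.level == "trace":
        return "ICP-OES"            # ppm-level multi-element work
    return "XRF"                    # default for bulk inorganic composition


suggestion = suggest_technique(Request(level="ultra_trace"))
```

The rules are ordered so that special information needs (depth or spatial data) are resolved before sensitivity, since only a few techniques can provide them at all.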

The Scientist's Toolkit: Key Reagents and Materials

Successful elemental analysis relies on high-purity reagents and specialized materials to prevent contamination and ensure accurate results.

Table 3: Essential Research Reagent Solutions for Elemental Analysis

| Reagent/Material | Function | Application Notes |
|---|---|---|
| High-Purity Acids (e.g., Nitric, Hydrochloric) | Sample digestion and dissolution to release elements into solution. | Essential for ICP and AAS. Must be ultra-pure (e.g., TraceMetal grade) to minimize background contamination [3] [2]. |
| Certified Reference Materials (CRMs) | Calibration and verification of method accuracy. | Materials with a certified composition that are chemically and physically similar to the unknown samples [2]. |
| Internal Standard Solutions | Correction for instrument drift and matrix effects during analysis. | Added to all samples, blanks, and standards; common elements include Sc, Y, In, Rh, Bi [3]. |
| Multi-Element Standard Solutions | Instrument calibration for quantitative analysis. | Used to create a calibration curve covering the elements and concentration range of interest [3]. |
| Ultrapure Water (18.2 MΩ·cm) | Dilution and preparation of all aqueous solutions. | Prevents introduction of interfering ions from water impurities [2]. |
| Specialized Gases (e.g., High-Purity Argon) | Sustain the plasma in ICP techniques. | Impurities in gas can cause spectral interferences and instability [3]. |

Applications in Research and Industry

Elemental analysis serves as a critical tool in numerous fields, each with specific requirements and challenges.

- Pharmaceutical Development and Production: Elemental analysis supports R&D and Good Manufacturing Practice (GMP) by ensuring the identity of raw materials and quantifying catalyst residues. Crucially, it is used to test for elemental impurities per ICH Q3D and USP <232>/<233> guidelines, which classify elements like Pb, Cd, As, and Hg based on their toxicity [2].

- Environmental Monitoring: Detecting trace elements and pollutants in air, water, soil, and waste is essential for assessing environmental impact and for demonstrating compliance with applicable limits, such as those in Commission Regulation (EU) 2023/915 on food contaminants [1] [3].

- Material Science and Metallurgy: Characterizing coatings, layered materials, and bulk metal composition is key for resource exploration, product development, and quality control. Techniques like GDOES are used to monitor photovoltaic device manufacturing and improve Li batteries [1].

- Forensic Science and Anthropology: Elemental analysis provides "elemental fingerprints" for material identification in criminal investigations. Stable isotopic profiling of human tissues (bone, teeth, hair) can reveal an individual's dietary patterns or geographical history, aiding in identification [4].

- Consumer Product Safety: Independent testing labs perform elemental analysis to ensure products like cosmetics, packaging, and toys comply with heavy metal restrictions under regulations such as REACH [3] [2].

Elemental analysis, spanning from bulk composition to trace impurities, is a dynamic and essential field in analytical chemistry. For researchers and drug development professionals, a deep understanding of the available techniques—their principles, capabilities, and limitations—is fundamental to designing robust analytical strategies. The continuous advancement of instrumentation, coupled with rigorous methodological protocols and QA/QC, ensures that elemental analysis remains a powerful tool for driving innovation, ensuring safety, and maintaining quality across the scientific and industrial landscape. As computational methods and automation continue to evolve, the speed, sensitivity, and accessibility of these techniques are poised to expand further, opening new frontiers in material characterization and trace-level detection.

The Critical Role in Pharmaceutical Quality Control and Drug Safety

Pharmaceutical quality control and drug safety form a continuum of scientific processes that ensure medicinal products meet rigorous standards of identity, purity, quality, and safety from development through patient administration. This section examines the integrated framework of modern analytical techniques, computational methodologies, and regulatory protocols that underpin this field. Within the context of molecular and elemental analysis research, it explores how advanced technologies, from Process Analytical Technology (PAT) and mass spectrometry to Artificial Intelligence (AI) in pharmacovigilance, are transforming pharmaceutical manufacturing and post-market surveillance. The convergence of these disciplines enables a proactive, data-driven approach to safeguarding patient health, ensuring product efficacy, and maintaining regulatory compliance across the global pharmaceutical landscape.

Foundations of Pharmaceutical Quality Control

Quality Control (QC) in the pharmaceutical industry is a systematic set of activities and techniques designed to monitor and verify that pharmaceutical products meet predefined standards of identity, strength, quality, and purity [5]. It operates within a broader Quality Management System (QMS) that encompasses Good Manufacturing Practices (GMP), comprehensive documentation, and skilled personnel [6]. The core functions of QC are multifaceted, focusing primarily on patient safety by ensuring medications are free from contamination and impurities, and product efficacy by verifying that drugs deliver their intended therapeutic benefits [7].

The Quality Control Process Workflow

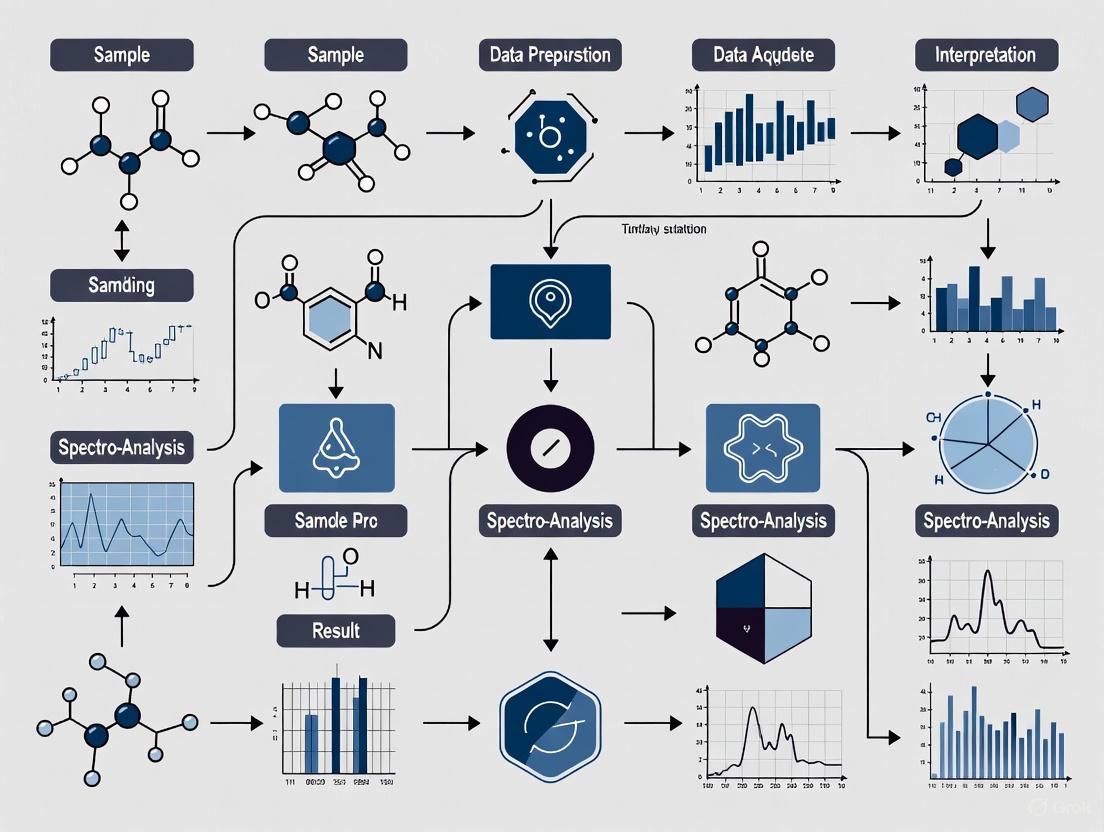

The pharmaceutical QC process is a sequence of verification stages that span the entire manufacturing lifecycle. The following diagram illustrates the core workflow:

Figure 1: Pharmaceutical Quality Control Workflow

- Raw Material Testing: All incoming primary raw materials, including Active Pharmaceutical Ingredients (APIs), excipients, and packaging components, are tested for identity, purity, and safety using sophisticated instrumental techniques like chromatography and spectroscopy [7]. This initial gate prevents contaminants from entering the production process.

- In-Process Monitoring: During manufacturing, critical parameters such as temperature, pressure, pH, and mixing time are continuously monitored. Process Analytical Technology (PAT) is a framework for real-time monitoring of Critical Quality Attributes (CQAs) and Critical Process Parameters (CPPs), enabling immediate adjustments and reducing reliance on end-product testing [7].

- Finished Product Testing: Before release, the final drug product undergoes rigorous testing, including assays, dissolution tests, stability checks, and leak tests. This confirms the product's purity, potency, and shelf life [7].

- Environmental Monitoring: Manufacturing areas, particularly those with installed HVAC systems, are constantly monitored for air quality, humidity, and microbial contamination to maintain aseptic conditions and prevent product spoilage [7].

- Quality Assurance Audits: Regular GMP audits of processes, training, equipment, and documentation ensure ongoing compliance, identify gaps, and maintain high standards [7].

Analytical Methodologies in Quality Control

The laboratory is the cornerstone of pharmaceutical QC, employing a suite of advanced analytical techniques to interrogate materials at the molecular and elemental level.

Key Analytical Techniques and Applications

Table 1: Core Analytical Techniques in Pharmaceutical QC

| Technique | Primary Application in QC | Measured Parameters | Regulatory References |
|---|---|---|---|
| Liquid Chromatography-Mass Spectrometry (LC/MS) | Identification and quantification of complex semi-volatile organic impurities, leachables, and extractables [8]. | Molecular weight, structural information, concentration. | ICH Q3B (R2) |
| Gas Chromatography-Mass Spectrometry (GC/MS) | Analysis of organic volatile impurities and residual solvents [8]. | Volatile compound identity and concentration. | USP <467>, ICH Q3C |
| Inductively Coupled Plasma Mass Spectrometry (ICP/MS) | Detection of heavy metal impurities and elemental contaminants [8]. | Elemental composition, trace metal concentration. | USP <232>, <233>, ICH Q3D |
| Ion Chromatography (IC) | Quantification of drug counterions and ionic impurities [8]. | Anion and cation concentration. | - |
| High-Resolution Accurate Mass (HRAM) Spectrometry | Unbiased screening and identification of unknown impurities with high selectivity and sensitivity [8]. | Exact mass, elemental composition. | - |

The Scientist's Toolkit: Essential Research Reagent Solutions

The execution of these analytical methods relies on a foundation of high-quality materials and reagents.

Table 2: Essential Reagents and Materials for Pharmaceutical Analysis

| Item | Function in QC Testing |
|---|---|
| Certified Reference Standards | Provides the benchmark for calibrating instruments and verifying the accuracy and precision of analytical methods for both APIs and impurities. |
| High-Purity Mobile Phases & Solvents | Ensures baseline stability in chromatographic separations (HPLC, GC) and prevents introduction of interfering artifacts or background noise. |
| Stable Isotope-Labeled Internal Standards | Used in mass spectrometry to correct for matrix effects and variability in sample preparation, improving quantitative accuracy. |
| Characterized Impurity Standards | Allows for positive identification and accurate quantification of specific known and potential impurities in a drug substance or product. |
| Sample Preparation Kits (e.g., for Leachables) | Provides standardized materials and protocols for efficient extraction, concentration, and clean-up of samples prior to instrumental analysis. |

Drug Safety Evaluation and Pharmacovigilance

Drug safety, or pharmacovigilance (PV), is the science and activities relating to the detection, assessment, understanding, and prevention of adverse effects or any other drug-related problem [9]. It is a lifecycle process, beginning with non-clinical studies and extending through a drug's entire market life.

Pre-clinical Safety Evaluation

Before human trials, therapeutic agents undergo rigorous non-clinical safety evaluation through laboratory studies in in vitro systems and in animals. This assessment is designed to identify potential toxic effects and establish a preliminary safety profile [10]. Key study types include:

- General Toxicity Studies: Acute to chronic studies to determine the relationship between dose, exposure, and adverse effects.

- Safety Pharmacology Studies: Assessment of effects on vital organ systems (e.g., cardiovascular, central nervous system).

- Reproductive and Genotoxicity Studies: Evaluation of effects on reproduction and genetic material.

- Investigative Toxicology and Biomarker Studies: Mechanistic studies to understand the basis of observed toxicities.

Post-Market Safety Surveillance

After a drug is approved, its safety profile is continuously monitored in much larger and more diverse patient populations under real-world use conditions. Traditional post-market surveillance has relied on:

- Spontaneous Adverse Event (AE) Reporting: Unsolicited communications of suspected adverse drug reactions from healthcare professionals or patients. While essential for identifying new signals, this system is limited by significant under-reporting [9].

- Aggregate Signal Detection: Using statistical methods like disproportionality analysis on large databases (e.g., FDA's FAERS) to detect patterns that may indicate a safety concern [9] [11].
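Disproportionality analysis is commonly based on a 2x2 table of report counts. The sketch below computes two widely used measures, the proportional reporting ratio (PRR) and the reporting odds ratio (ROR) with an approximate 95% confidence interval. The function names and the counts are hypothetical, the code does not query FAERS or any real database, and signal thresholds are left out because they vary between organizations.

```python
import math

# 2x2 contingency table of spontaneous reports:
#   a = drug of interest, event of interest
#   b = drug of interest, all other events
#   c = all other drugs,  event of interest
#   d = all other drugs,  all other events


def prr(a: int, b: int, c: int, d: int) -> float:
    """Proportional reporting ratio: [a / (a + b)] / [c / (c + d)]."""
    return (a / (a + b)) / (c / (c + d))


def ror_with_ci(a: int, b: int, c: int, d: int, z: float = 1.96):
    """Reporting odds ratio with a normal-approximation confidence interval.

    Requires all four cells to be non-zero.
    """
    ror = (a * d) / (b * c)
    se_log = math.sqrt(1 / a + 1 / b + 1 / c + 1 / d)
    lower = math.exp(math.log(ror) - z * se_log)
    upper = math.exp(math.log(ror) + z * se_log)
    return ror, lower, upper


# Made-up counts for illustration only.
a, b, c, d = 20, 980, 100, 98900
signal_prr = prr(a, b, c, d)
signal_ror, ci_low, ci_high = ror_with_ci(a, b, c, d)
```

A lower confidence bound above 1 suggests the event is reported disproportionately often for the drug, but it is a prompt for clinical review rather than evidence of causation, in part because of the under-reporting noted above.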

The AI Revolution in Pharmacovigilance

The volume and complexity of modern safety data have rendered purely manual methods insufficient. Artificial Intelligence (AI) is now revolutionizing pharmacovigilance by enabling proactive, data-driven safety monitoring [11] [12]. The following diagram illustrates the architecture of an AI-enhanced safety monitoring system:

Figure 2: AI-Enhanced Drug Safety Monitoring Architecture

Key AI technologies transforming PV include:

- Natural Language Processing (NLP): Acts as a "universal translator" to transform unstructured text from Electronic Health Records (EHRs), social media, and call centers into structured, analyzable safety data. It uses Named Entity Recognition and Relation Extraction to identify drug-event relationships with reported accuracy reaching F-scores of 0.89 on social media data [11] [12].

- Machine Learning (ML): Serves as a "pattern-finding powerhouse," analyzing millions of data points to detect safety signals months earlier than human experts. Methods range from knowledge graphs (achieving AUCs up to 0.92) to deep neural networks, evolving toward predictive analytics for personalized risk management [11] [12].

- Robotic Process Automation (RPA): Functions as an "efficiency engine," automating repetitive tasks like data entry and initial case processing. This can free up to 40% of manual labor, allowing human experts to focus on high-value strategic analysis [12].

Leveraging Real-World Data (RWD)

The regulatory landscape has increasingly recognized the value of Real-World Data (RWD)—data relating to patient health status and/or the delivery of healthcare routinely collected from sources like EHRs and claims data [9]. Enabled by frameworks from the FDA and other international bodies, RWD can be used for more comprehensive safety surveillance, filling gaps left by pre-market clinical trials. Privacy-Preserving Record Linkage (PPRL) methods, such as tokenization, enable the creation of longitudinal patient records from disparate data sources while protecting patient privacy, offering more robust insights into long-term and rare risks [9].

Regulatory Compliance and Validation

Adherence to global regulatory standards is non-negotiable in pharmaceutical QC and drug safety. This requires a foundation of rigorous validation and qualified systems.

Equipment and Process Validation: IQ, OQ, PQ

A cornerstone of GMP is the qualification of equipment and validation of processes through a tripartite protocol [13]:

- Installation Qualification (IQ): Verifies that equipment is delivered and installed correctly according to the manufacturer's specifications.

- Operational Qualification (OQ): Demonstrates that the equipment functions as intended within its specified operating ranges.

- Performance Qualification (PQ): Confirms that the equipment can consistently perform its intended function with the actual manufacturing materials over a prolonged period.

These protocols ensure that manufacturing processes are reliable, reproducible, and capable of consistently producing a product that meets its quality attributes.

AI Validation and Regulatory Frameworks

The use of AI, particularly in safety-critical areas like pharmacovigilance, demands robust validation and governance. Regulatory expectations are crystallizing around four pillars [12]:

- Validation & Robustness: Continuous performance monitoring and validation against reference standards to detect and address "model drift" over time.

- Transparency & Explainability: Moving away from "black box" algorithms by using Explainable AI (XAI) techniques like SHAP or LIME to document decision-making processes.

- Data Integrity & Governance: Ensuring high-quality, representative data following ALCOA+ principles (Attributable, Legible, Contemporaneous, Original, Accurate, plus Complete, Consistent, Enduring, and Available) with cross-functional governance.

- Human Oversight: Maintaining a "human-in-the-loop" approach where AI augments, but does not replace, human expertise and clinical judgment.

Regulatory bodies such as the FDA and EMA are developing specific guidance. One example is the FDA's January 2025 draft guidance "Considerations for the Use of Artificial Intelligence to Support Regulatory Decision-Making for Drug and Biological Products," which proposes a risk-based framework for AI validation [12].

The fields of pharmaceutical quality control and drug safety are undergoing a profound transformation, driven by technological innovation and a shift toward more proactive, intelligence-driven paradigms.

Emerging Trends

- Hyper-Personalized Safety (2025-2026): AI is expected to integrate genomic data, wearables, and patient-reported outcomes to predict individual patient risks [12].

- Advanced PAT and Continuous Manufacturing: Real-time monitoring and control will become standard, moving away from traditional batch testing toward more efficient continuous manufacturing [7].

- Quantum-Enhanced AI: Quantum computing principles are being explored to simulate molecular interactions, predicting adverse events based on biophysics with reported high accuracy, potentially revolutionizing early risk identification [12].

- Regulatory Harmonization: Efforts like the International Data Exchange Protocol (IDEP) aim to enable seamless safety data sharing across international borders [12].

Pharmaceutical quality control and drug safety are inextricably linked disciplines that form the bedrock of patient trust and therapeutic efficacy. The integration of sophisticated molecular and elemental analysis techniques in QC laboratories with AI-powered, data-driven approaches in pharmacovigilance creates a powerful, synergistic system for risk management. As the industry advances, the role of the scientist and safety professional will evolve toward that of a strategic partner, overseeing intelligent systems and applying irreplaceable clinical judgment. The ongoing revolution, grounded in rigorous molecular and elemental research, promises not only to enhance patient safety but also to accelerate the development of new, life-saving medicines.

The implementation of ICH Q3D, alongside supporting USP general chapters <232> and <233>, represents a fundamental shift in the control of elemental impurities in pharmaceutical products. This modernized, risk-based approach replaces outdated methods like the heavy metals test (USP <231>) with sophisticated analytical techniques to ensure patient safety. This guide provides drug development professionals with a comprehensive framework for navigating these harmonized guidelines, from fundamental principles to practical implementation strategies for compliance and robust quality control.

The global regulatory landscape for elemental impurities has evolved significantly, moving from a general, non-specific test to a targeted, risk-based approach grounded in modern toxicological science. The ICH Q3D Guideline for Elemental Impurities provides a comprehensive process for assessing and controlling elemental impurities in drug products using quality risk management principles [14]. This guideline classifies elements based on their toxicity and likelihood of occurrence, establishing Permitted Daily Exposure (PDE) limits for different routes of administration [15].

In alignment with ICH Q3D, the United States Pharmacopeia (USP) introduced new general chapters: <232> on limits and <233> on analytical procedures, effectively replacing the outdated heavy metals test (USP <231>) [16] [15]. This harmonization provides a consistent global framework for pharmaceutical manufacturers. The U.S. Food and Drug Administration (FDA) expects compliance with these standards, requiring risk assessments for both new and legacy products to be documented in regulatory submissions [15]. For medical devices, a parallel framework exists, with recent FDA draft guidance on chemical characterization aligning with the principles of ISO 10993-18 [17] [18].

Elemental Impurities: Classification and Toxicity

Elemental impurities in drug products provide no therapeutic benefit and can pose significant patient risks, including direct toxic effects or interference with drug efficacy [16]. These impurities can originate from various sources, including catalysts, excipients, process equipment, or the drug substance itself.

ICH Q3D categorizes 24 elemental impurities into four classes based on their toxicity and likelihood of occurrence in drug products [16] [15]:

- Class 1: Known human toxicants with limited or no use in pharmaceutical manufacturing (As, Cd, Hg, Pb)

- Class 2: Elements typically considered route-dependent toxicants

  - Class 2A: Relatively high probability of occurrence in drug products (Co, Ni, V)

  - Class 2B: Reduced probability of occurrence, primarily of concern when deliberately added (e.g., catalysts like Ir, Os, Pd, Rh, Ru)

- Class 3: Elements with relatively low toxicity by the oral route but requiring consideration for parenteral and inhalation routes (Ba, Cr, Cu, Li, Mo, Sb, Sn)

- Other Elements: Elements with low inherent toxicity that are generally excluded from risk assessment (e.g., K, Ca, Na)

The foundation of the ICH Q3D control strategy is the Permitted Daily Exposure (PDE), defined as the maximum acceptable intake of an elemental impurity per day that poses no significant risk to human health [16]. PDEs are established based on comprehensive toxicological assessments and vary according to the route of administration, reflecting differences in bioavailability and toxicity across exposure pathways.

Table 1: Permitted Daily Exposures (PDEs) for Elemental Impurities (μg/day) [16]

| Element | Class | Oral PDE | Parenteral PDE | Inhalation PDE |
|---|---|---|---|---|
| Cadmium (Cd) | 1 | 5 | 2 | 2 |
| Lead (Pb) | 1 | 5 | 5 | 5 |
| Arsenic (As) | 1 | 15 | 15 | 2 |
| Mercury (Hg) | 1 | 30 | 3 | 1 |
| Cobalt (Co) | 2A | 50 | 5 | 3 |
| Vanadium (V) | 2A | 100 | 10 | 1 |
| Nickel (Ni) | 2A | 200 | 20 | 5 |
| Palladium (Pd) | 2B | 100 | 10 | 1 |
| Lithium (Li) | 3 | 550 | 250 | 25 |
| Copper (Cu) | 3 | 3000 | 300 | 30 |

Risk Assessment and Control Strategies

A scientifically sound risk assessment forms the cornerstone of the ICH Q3D implementation process [14] [15]. This assessment evaluates the potential for elemental impurities to be present in the final drug product at levels exceeding established PDEs. The process involves three key stages: identification, analysis, and control strategy implementation.

Risk Assessment Options

ICH Q3D describes four options for converting PDEs into permitted concentration limits, which are commonly used to frame the risk assessment [15]:

- Option 1: Apply common permitted concentration limits to all components of the drug product, assuming a maximum daily dose of no more than 10 g.

- Option 2A: Apply common permitted concentration limits to all components, calculated from the actual maximum daily dose of the drug product.

- Option 2B: Set permitted concentrations for individual components using a summation approach, where the contribution of each component is considered relative to its mass proportion in the product.

- Option 3: Measure elemental impurity levels in the final drug product.
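The summation approach in Option 2B can be sketched in a few lines of Python. This is a minimal illustration, not a validated calculation: the function name `total_daily_intake`, the tablet composition, and the lead concentrations are all hypothetical, and only the oral lead PDE is taken from Table 1.

```python
def total_daily_intake(components, daily_dose_g):
    """Sum each component's contribution to the daily intake of one element.

    components: list of (mass_fraction, concentration_ug_per_g) tuples
    daily_dose_g: maximum daily dose of the drug product in grams
    Returns the estimated intake in micrograms per day.
    """
    return sum(frac * daily_dose_g * conc for frac, conc in components)


# Hypothetical 2.5 g/day product: API 20 %, filler 75 %, lubricant 5 %.
# Each tuple is (mass fraction, ug of Pb per g of that component).
lead_levels = [(0.20, 0.5), (0.75, 0.1), (0.05, 2.0)]

ORAL_PDE_PB = 5.0  # ug/day, oral PDE for lead from Table 1

intake = total_daily_intake(lead_levels, daily_dose_g=2.5)
print(f"Estimated Pb intake: {intake:.4f} ug/day (oral PDE: {ORAL_PDE_PB} ug/day)")
print("Within PDE" if intake <= ORAL_PDE_PB else "Exceeds PDE")
```

Weighting each component by its mass fraction lets a higher impurity level in a minor excipient be offset by cleaner major components, which is the practical appeal of this option.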

The control threshold is set at 30% of the PDE [15]. Elements detected below this level generally do not require routine testing, while those above the threshold but below the PDE must be included in the control strategy, typically through specification limits.
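As a rough illustration of how the 30% control threshold is applied, the sketch below converts a PDE into a concentration limit by dividing by the maximum daily dose, then classifies a measured level. The function names, the 10 g daily dose, and the measured values are assumptions made for the example; the PDE values come from Table 1.

```python
# Oral PDEs in ug/day, copied from Table 1.
ORAL_PDE = {"Cd": 5, "Pb": 5, "As": 15, "Hg": 30, "Co": 50, "V": 100, "Ni": 200}
CONTROL_THRESHOLD_FRACTION = 0.30  # 30 % of the PDE


def concentration_limit(pde_ug_per_day, daily_dose_g):
    """Permitted concentration (ug/g) for a given maximum daily dose."""
    return pde_ug_per_day / daily_dose_g


def classify(measured_ug_per_g, pde_ug_per_day, daily_dose_g):
    """Place a measured level relative to the control threshold and the PDE."""
    limit = concentration_limit(pde_ug_per_day, daily_dose_g)
    threshold = CONTROL_THRESHOLD_FRACTION * limit
    if measured_ug_per_g < threshold:
        return "below control threshold"
    if measured_ug_per_g <= limit:
        return "include in control strategy"
    return "exceeds PDE-based limit"


# Hypothetical results for a product with a 10 g maximum daily dose.
DAILY_DOSE_G = 10.0
for element, measured in [("Cd", 0.10), ("Pb", 0.20), ("As", 2.00)]:
    outcome = classify(measured, ORAL_PDE[element], DAILY_DOSE_G)
    print(f"{element}: {measured} ug/g -> {outcome}")
```

How a result that falls exactly on the threshold is treated is a choice made here for simplicity; a real procedure would define that in its specification.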

The Risk Assessment Workflow

The following diagram illustrates the systematic workflow for elemental impurity risk assessment:

Analytical Methodologies and Protocols

Robust analytical methods are essential for accurate elemental impurity determination. USP general chapter <233> describes two principal procedures: Inductively Coupled Plasma-Mass Spectrometry (ICP-MS) and Inductively Coupled Plasma-Optical Emission Spectrometry (ICP-OES) [16] [15].

Sample Preparation

Proper sample preparation is critical for accurate results:

- Solubilization: The sample must be completely dissolved. Water or organic solvents may be used for intrinsically soluble materials [15].

- Acid Digestion: For insoluble materials, digestion with strong acids (typically nitric acid) using closed-vessel microwave-assisted systems is recommended to ensure complete dissolution and prevent loss of volatile elements like mercury [15].

- Recovery Studies: Sample processing steps such as solvent evaporation must be qualified with recovery rates recommended to be within 80-120% [18].
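A recovery study of the kind described above reduces to simple arithmetic. The following sketch assumes a single spiked and unspiked pair expressed in the same units; the function names and the example values are hypothetical, and the 80-120% window is the range quoted in the text.

```python
def spike_recovery_percent(spiked_result, unspiked_result, spike_added):
    """Percent of the added spike that was recovered after sample processing."""
    return 100.0 * (spiked_result - unspiked_result) / spike_added


def recovery_acceptable(recovery_percent, low=80.0, high=120.0):
    """Check a recovery against an acceptance window (default 80-120 %)."""
    return low <= recovery_percent <= high


# Hypothetical mercury results in ug/L for one digested sample.
recovery = spike_recovery_percent(spiked_result=1.05, unspiked_result=0.12, spike_added=1.00)
print(f"Recovery: {recovery:.1f} % -> {'pass' if recovery_acceptable(recovery) else 'fail'}")
```

A low recovery for a volatile element such as mercury would point to losses during digestion or evaporation rather than an instrument problem.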

Instrumental Analysis

Table 2: Comparison of ICP-MS and ICP-OES Techniques

| Parameter | ICP-MS | ICP-OES |
|---|---|---|
| Detection Limits | Excellent (sub-ppb) | Good (low-ppb) |
| Linear Dynamic Range | 8-9 orders of magnitude | 4-6 orders of magnitude |
| Isotopic Capability | Yes | No |
| Interferences | Polyatomic, isobaric | Spectral, matrix |
| Elemental Coverage | Comprehensive | Comprehensive |
| Operational Cost | Higher | Lower |
| Sample Throughput | High | High |

The selection between ICP-MS and ICP-OES depends on the required detection limits, the elements of interest, and the sample matrix. ICP-MS generally provides superior sensitivity, particularly for elements requiring low detection limits such as Class 1 elements in inhalation products [16] [15].

Analytical Workflow

The following diagram illustrates the complete analytical workflow for elemental impurity testing:

Method Validation

According to USP <233>, analytical methods must be validated for specificity, range, accuracy, repeatability, intermediate precision, and limit of quantification (LOQ) [15]. The LOQ should be sufficiently low to detect elements at the control threshold (30% of PDE). For limit tests, only specificity and limit of detection (LOD) are required, with the LOD not exceeding 50% of the specification limit [15].
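Two of the checks mentioned above, repeatability and LOQ adequacy, can be sketched as follows. The function names, the six replicate values, and the 10 g daily dose are assumptions for illustration; any numerical acceptance limit for the RSD should be taken from the current USP <233> text rather than from this example.

```python
from statistics import mean, stdev


def percent_rsd(values):
    """Relative standard deviation (sample standard deviation / mean) in percent."""
    return 100.0 * stdev(values) / mean(values)


def loq_is_adequate(loq_ug_per_g, pde_ug_per_day, daily_dose_g, fraction=0.30):
    """True if the LOQ reaches the control threshold (30 % of the PDE-based limit)."""
    control_threshold = fraction * pde_ug_per_day / daily_dose_g
    return loq_ug_per_g <= control_threshold


# Hypothetical repeatability data: six independent preparations, ug/g.
replicates = [0.98, 1.02, 1.01, 0.97, 1.03, 0.99]
rsd = percent_rsd(replicates)
print(f"Repeatability RSD: {rsd:.2f} %")

# Lead, oral PDE 5 ug/day (Table 1), hypothetical 10 g/day dose -> threshold 0.15 ug/g.
print("LOQ 0.05 ug/g adequate:", loq_is_adequate(0.05, 5, 10.0))
print("LOQ 0.20 ug/g adequate:", loq_is_adequate(0.20, 5, 10.0))
```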

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful implementation of elemental impurity controls requires specific high-quality materials and reference standards.

Table 3: Essential Research Reagents and Materials for Elemental Impurity Analysis

| Item | Function | Application Notes |
|---|---|---|
| USP Reference Standards [19] [20] | Highly characterized specimens for instrument calibration and method validation | Over 3,500 available standards; essential for compliance with USP methods |
| Single-Element Stock Solutions | Primary standards for calibration curve preparation | High-purity solutions with certified concentrations |
| Internal Standard Mix | Correction for matrix effects and instrument drift | Elements not present in samples (e.g., Sc, Y, In, Bi, Ho, Lu) |
| High-Purity Acids | Sample digestion and dilution | Trace metal grade nitric acid, hydrochloric acid |
| Tuning Solutions | ICP-MS performance optimization | Contains elements covering mass range (e.g., Li, Y, Ce, Tl, Co) |
| Quality Control Materials | Verification of method accuracy and precision | Certified reference materials with known elemental concentrations |

USP Reference Standards are particularly critical as they are explicitly required in many pharmacopeial assays and tests. These standards are established through a rigorous process involving collaborative testing and are released under the authority of the USP Board of Trustees [20]. The USP currently offers more than 3,500 Reference Standards, which are highly characterized specimens of drug substances, excipients, impurities, degradation products, and performance calibrators [19] [21].

Implementation and Compliance Strategies

Successful implementation of ICH Q3D requires a systematic approach across the product lifecycle.

Regulatory Submission Requirements

- New Products: A summary of the risk assessment should be included in CTD Section P.2 (Pharmaceutical Development) with analytical procedures detailed in Section 3.2.S.4.3/S.4.4 [15].

- Legacy Products: FDA recommends submitting risk assessments as part of the applicant's Annual Report, even if no changes are proposed [15].

- Health Canada: Requires a statement of ICH Q3D-compliance in every drug product specification [15].

- EMA: Expects a summary of risk assessments in CTD Modules 2 and 3, with full documentation available for inspection [15].

Control Strategies

Based on the risk assessment outcome, appropriate control strategies must be established:

- Supplier Qualification: Implementing strict quality agreements with API and excipient suppliers regarding elemental impurity levels.

- Specification Limits: Setting appropriate limits for the drug product or components based on PDE values and maximum daily dose.

- Routine Testing: Implementing targeted testing for elements identified as potential risks in the assessment.

- Periodic Review: Re-assessing the control strategy as part of change control procedures and periodically to address unplanned changes in components or manufacturing processes [15].

The harmonized framework of ICH Q3D, USP chapters <232>/<233>, and FDA guidance provides a scientifically rigorous, risk-based approach to controlling elemental impurities in pharmaceutical products. Successful implementation requires a thorough understanding of the regulatory requirements, robust risk assessment methodologies, and state-of-the-art analytical capabilities. By adopting this systematic approach, pharmaceutical scientists can ensure patient safety while navigating the complex global regulatory landscape efficiently. The principles of quality risk management embedded in these guidelines not only enhance product quality but also encourage continuous improvement in pharmaceutical development and manufacturing practices.

Key Market and Technology Trends Shaping Analytical Science

Analytical science is undergoing a transformative evolution driven by technological convergence across multiple domains. The integration of artificial intelligence (AI) and machine learning (ML) with traditional analytical techniques is accelerating method development and enhancing data interpretation capabilities. Concurrently, a strong emphasis on sustainability is pushing the field toward greener analytical practices, while demands for real-time, on-site analysis are fueling innovations in miniaturized and portable instrumentation. The global analytical instrumentation market, estimated at $55.29 billion in 2025, reflects this dynamism, projected to grow at a CAGR of 6.86% to reach $77.04 billion by 2030 [22]. In specialized sectors, the pharmaceutical analytical testing market demonstrates even more vigorous growth, expected to expand at a CAGR of 8.41%, from $9.74 billion in 2025 to $14.58 billion by 2030 [22]. This whitepaper examines the core technological and market trends shaping the future of molecular and elemental analysis, providing researchers and drug development professionals with a strategic overview of the evolving analytical landscape.

The analytical science market is characterized by robust growth fueled by escalating demands from the pharmaceutical, biotechnology, environmental monitoring, and materials science sectors. Rising R&D investments, coupled with stringent regulatory requirements for quality control and safety, are primary drivers of this expansion [22].

Table 1: Global Market Size and Projections for Analytical Science Segments

| Market Segment | 2025 Market Size (USD Billion) | 2030/2035 Projected Size (USD Billion) | Projected CAGR |
|---|---|---|---|
| Analytical Instrumentation [22] | 55.29 | 77.04 (by 2030) | 6.86% |
| Pharmaceutical Analytical Testing [22] | 9.74 | 14.58 (by 2030) | 8.41% |
| Life Science Analytics [23] | ~12.0 | 36.3 (by 2035) | ~11.8% |
| Data Science & Predictive Analytics [24] | 25.24 | 141.34 (by 2035) | 18.8% |

Geographically, North America currently holds the largest market share, particularly in pharmaceuticals, due to a high concentration of clinical trials and contract research organizations (CROs) [22]. However, the Asia-Pacific region is anticipated to experience the most rapid growth, driven by expanding pharmaceutical manufacturing capabilities and increasing attention to environmental concerns [22].

Key Technology Trends

AI, Machine Learning, and Automation in Analytics

The integration of AI and ML is fundamentally altering analytical workflows. These technologies enhance data analysis by automating complex processes and identifying patterns within large datasets that may elude human analysts [22].

- AI-Powered Method Development: Algorithms are increasingly used to optimize experimental parameters, such as chromatographic conditions, drastically reducing the time required for method development in techniques like HPLC [22].

- Automated Machine Learning (AutoML): AutoML platforms are democratizing analytics by automating key steps in the machine learning pipeline, such as model selection and hyperparameter tuning. This empowers non-experts to leverage predictive capabilities, accelerating research and development cycles [25].

- Predictive Maintenance: AI and ML models are being deployed to forecast instrument failures by analyzing operational data, thereby minimizing downtime and ensuring analytical consistency [26].

Table 2: AI and Data Analytics Technologies and Their Research Applications

| Technology | Core Function | Application in Analytical Science |
|---|---|---|
| Artificial Intelligence & Machine Learning [22] | Pattern recognition, process optimization, and predictive modeling. | Optimizing chromatographic separation; predicting molecular properties in silico. |
| Automated Machine Learning (AutoML) [25] | Automates the process of applying ML models. | Enables rapid development of predictive models for compound activity or toxicity. |
| Natural Language Processing (NLP) [26] | Extracts insights from textual data. | Mining scientific literature and patents for novel research insights and connections. |
| Data Democratization [27] | Makes data and tools accessible to non-experts. | User-friendly platforms for scientists to perform complex analyses without coding expertise. |

Green Analytical Chemistry and Sustainability

A significant trend is the shift toward Green Analytical Chemistry, which focuses on developing environmentally friendly procedures. Key advancements include [22]:

- Miniaturized Processes: Techniques like microextraction significantly reduce solvent consumption.

- Alternative Solvents: Employing less hazardous solvents, such as ionic liquids or supercritical fluids.

- Sustainable Techniques: Adoption of Supercritical Fluid Chromatography (SFC), which uses CO2 as the primary mobile phase, reducing reliance on organic solvents.

Miniaturization and Portable Analytical Devices

The demand for on-site, real-time analysis in fields like environmental monitoring, food safety, and forensic science is driving the development of portable and miniaturized devices [22]. Examples include:

- Portable Gas Chromatographs and hand-held XRF analyzers that provide immediate data in the field [22].

- TinyML, which involves deploying machine learning models on low-power, tiny devices, enabling intelligent data processing at the source (edge computing) for instant insights [25].

Advanced Instrumentation and Multi-dimensional Analysis

Instrumentation continues to advance, providing greater sensitivity, resolution, and throughput.

- Tandem Techniques: The combination of separation and detection techniques, such as tandem mass spectrometry (MS/MS) and LC-MS, is critical for analyzing complex mixtures in pharmaceutical and life science applications [22].

- Multidimensional Separations: Techniques like multidimensional chromatography offer superior separation power compared to one-dimensional methods, which is essential for complex samples like proteomic digests [22].

- Multi-omics Integration: Analytical chemistry is integral to multi-omics approaches (genomics, proteomics, metabolomics), which provide a holistic view of biological systems. Mass spectrometry is increasingly involved in single-cell multimodal studies, enabling early disease detection and biomarker discovery [22].

Experimental Protocols for Advanced Material Analysis

Protocol: Depth Profile Analysis of Organic/Inorganic Multilayer Coatings using Coupled GDOES and Raman Spectroscopy

This protocol details a methodology for characterizing complex multilayer systems, common in advanced materials and coatings research [28].

1. Principle: Glow Discharge Optical Emission Spectroscopy (GDOES) provides rapid, depth-resolved elemental analysis. When coupled with Raman spectroscopy, it delivers simultaneous elemental and molecular information, which is crucial for analyzing layers containing both organic and inorganic components [28].

2. Materials and Equipment:

- GDOES instrument equipped with a patented oxygen-argon (Ar/O₂) plasma source [28].

- Raman spectrometer with a microscope attachment.

- Micro-X-ray Fluorescence (µXRF) spectrometer (for complementary, non-destructive analysis).

- Solid-state multilayer sample (e.g., a polymer-metal stack from automotive coatings).

3. Procedure:

Step 1: GDOES Depth Profiling with Ar/O₂ Plasma.

- Mount the sample in the GDOES chamber.

- Introduce the optimized Ar/O₂ gas mixture into the plasma. The oxygen enhances the sputtering efficiency and uniformity for organic materials.

- Initiate the plasma and begin the depth profile analysis. The instrument records the intensity of specific elemental emission lines as a function of time, which is converted to depth.

- This step generates a quantitative elemental depth profile, showing the distribution of elements (e.g., C, O, Fe, Zn) across the coating layers.

Step 2: In-situ Raman Analysis within the GDOES Crater.

- After GDOES profiling, transfer the sample to the Raman microscope.

- Focus the laser precisely inside the crater created by the GDOES sputtering process.

- Acquire Raman spectra at various depths within the crater. The Raman spectra provide molecular fingerprinting (e.g., identifying specific polymers, oxides, or corrosion products) at different layers.

Step 3: Validation via µXRF.

- Perform µXRF analysis on the same crater to obtain a non-destructive elemental map.

- Correlate the XRF results with the GDOES elemental data to validate the profile and ensure no chemical alterations occurred during analysis that were not detected by Raman.

4. Data Interpretation: Correlate the GDOES elemental depth profile with the molecular fingerprints from Raman spectroscopy to build a comprehensive picture of the multilayer structure. Agreement between the Raman and µXRF results confirms the integrity of the analyzed layers [28].
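The time-to-depth conversion mentioned in Step 1 can be illustrated with a simple integration of the sputter rate over the acquisition time. This is only a sketch under strong assumptions: the function `time_to_depth` is hypothetical, the sputter rates are invented, and each rate is treated as constant within its interval. In practice the rates would come from calibration or from measuring the crater depth.

```python
def time_to_depth(times_s, sputter_rates_nm_per_s):
    """Convert sputtering time to depth by summing rate * elapsed time.

    times_s: increasing acquisition times in seconds, starting at 0
    sputter_rates_nm_per_s: rate assumed for the interval ending at each time
    Returns the cumulative depth (nm) at each time point.
    """
    depths = []
    depth = 0.0
    previous_t = 0.0
    for t, rate in zip(times_s, sputter_rates_nm_per_s):
        depth += rate * (t - previous_t)
        depths.append(depth)
        previous_t = t
    return depths


# Hypothetical stack: a polymer layer sputtering at 50 nm/s for 20 s,
# followed by a metal layer sputtering at 20 nm/s.
times = [0, 10, 20, 30, 40]
rates = [0, 50, 50, 20, 20]
depth_profile = time_to_depth(times, rates)
print(depth_profile)
```

Because organic and metallic layers sputter at different rates, a single global rate would distort the layer thicknesses, which is why the conversion is done interval by interval.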

Diagram 1: Coupled GDOES-Raman analysis workflow.

Protocol: Elemental Analysis of Trace Metals in Biological Samples using ICP-MS

This protocol describes a highly sensitive method for quantifying trace metal concentrations, applicable in pharmaceutical quality control and toxicology studies [29].

1. Principle: Inductively Coupled Plasma Mass Spectrometry (ICP-MS) atomizes and ionizes a sample in a high-temperature argon plasma. The resulting ions are separated by their mass-to-charge ratio and detected, providing exceptional sensitivity for trace element analysis [29].

2. Materials and Equipment:

- ICP-MS instrument.

- Automated sample introduction system (e.g., autosampler with peristaltic pump).

- High-purity nitric acid and hydrogen peroxide.

- Certified reference materials (CRMs) for calibration and quality control.

- Ultra-pure water (18 MΩ·cm).

- Biological sample (e.g., tissue, blood).

3. Procedure:

Step 1: Sample Digestion.

- Accurately weigh ~0.5 g of the biological sample into a clean microwave digestion vessel.

- Add 5 mL of high-purity nitric acid and 1 mL of hydrogen peroxide.

- Perform microwave-assisted digestion according to a validated temperature and pressure program (e.g., ramp to 180°C over 20 minutes, hold for 15 minutes).

- After cooling, quantitatively transfer the digest to a 50 mL volumetric flask and dilute to volume with ultra-pure water. Include method blanks and CRMs processed identically.

Step 2: ICP-MS Instrument Tuning and Calibration.

- Tune the ICP-MS instrument daily for optimal sensitivity (e.g., using a tuning solution containing Li, Y, Ce, Tl) while minimizing oxide and doubly charged ion formation.

- Prepare a multi-element calibration curve (e.g., standards at 0, 1, 10, 100, and 500 ppt or ppb, depending on the expected analyte levels) covering the analytes of interest.

Step 3: Sample Analysis and Data Acquisition.

- Introduce the digested samples, blanks, and CRMs into the ICP-MS via the autosampler.

- Acquire data for the target isotopes. Use an internal standard (e.g., Sc, Ge, Rh, Bi) added online to all solutions to correct for instrument drift and matrix suppression/enhancement.

Step 4: Data Processing and Validation.

- Calculate analyte concentrations in the sample based on the calibration curve.

- Verify the accuracy of the analysis by comparing the measured values of the CRMs to their certified values. Results should fall within the certified uncertainty ranges.
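The data-processing steps above can be sketched as a short calculation: fit a calibration line to internal-standard-normalized signals, read back the solution concentration, and convert it to a concentration in the original sample using the digestion mass and final volume. All function names and numerical values below are hypothetical, and a plain least-squares fit stands in for whatever regression the instrument software actually uses.

```python
def fit_line(x, y):
    """Ordinary least-squares fit; returns (slope, intercept)."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def solution_concentration(analyte_counts, istd_counts, slope, intercept):
    """Concentration in the measured solution from the analyte/internal-standard ratio."""
    ratio = analyte_counts / istd_counts
    return (ratio - intercept) / slope


def sample_concentration_ug_per_g(solution_ug_per_l, final_volume_ml, sample_mass_g):
    """Back-calculate the concentration in the original sample."""
    return solution_ug_per_l * (final_volume_ml / 1000.0) / sample_mass_g


def within_certified_range(measured, certified, uncertainty):
    """True if a CRM result lies inside certified value +/- uncertainty."""
    return abs(measured - certified) <= uncertainty


# Hypothetical calibration: standards in ug/L and their analyte/ISTD count ratios.
std_conc = [0.0, 1.0, 10.0, 100.0]
std_ratio = [0.0, 0.02, 0.2, 2.0]
slope, intercept = fit_line(std_conc, std_ratio)

# Hypothetical sample: 0.5 g digested and diluted to 50 mL, as in Step 1.
in_solution = solution_concentration(5000, 100000, slope, intercept)
in_sample = sample_concentration_ug_per_g(in_solution, final_volume_ml=50.0, sample_mass_g=0.5)
print(f"Solution: {in_solution:.2f} ug/L, sample: {in_sample:.3f} ug/g")
```

Normalizing to the internal standard before fitting means that a drop in signal affecting both analyte and internal standard cancels out in the ratio.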

Diagram 2: ICP-MS trace metal analysis workflow.

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Reagents and Materials for Advanced Analytical Research

| Reagent/Material | Function/Application | Technical Notes |
|---|---|---|
| Ionic Liquids [22] | Green alternative solvents for extraction and chromatography. | Low volatility, high thermal stability, tunable properties. |
| Certified Reference Materials (CRMs) [29] | Calibration and quality control to ensure analytical accuracy and traceability. | Essential for method validation and regulatory compliance (e.g., FDA, ICH). |
| ICP-MS Tuning Solution [29] | Optimization of instrument performance (sensitivity, resolution, oxide levels). | Typically contains elements like Li, Y, Ce, Tl at known concentrations. |
| Specialty Gases (Ar/O₂) [28] | Plasma and sputtering gas for elemental analysis techniques (ICP, GDOES). | Ar/O₂ mixture critical for uniform sputtering of organic layers in GDOES. |
| Stable Isotope-Labeled Standards | Internal standards for mass spectrometry-based quantitative proteomics and metabolomics. | Enables precise and accurate quantification of biomolecules in complex samples. |

Future Outlook

The trajectory of analytical science points toward increasingly intelligent, integrated, and automated systems. Key future trends include:

- Agentic AI: The emergence of AI systems capable of autonomous decision-making will further transform workflows. These systems can set goals, plan tasks, and execute analytical sequences with minimal human intervention, boosting forecast accuracy and operational efficiency [27].

- Quantum Computing: Though still nascent, quantum computing holds potential for revolutionizing analytical science by performing computations on quantum bits (qubits). This could enable the analysis of intricate datasets and identification of complex molecular patterns on a scale impossible for classical computers, with profound implications for drug discovery and materials science [30].

- Enhanced Human-Machine Collaboration: The role of the analytical scientist will evolve from performing routine analyses to overseeing automated systems, designing experimental strategies, and interpreting complex, multi-modal data. The synergy between human expertise and advanced computational tools will define the next frontier of discovery [25].

A Practical Guide to Key Analytical Techniques and Their Applications

Inductively Coupled Plasma Mass Spectrometry (ICP-MS) has established itself as the undisputed gold standard for ultra-trace multielement analysis across scientific disciplines. This technique combines a high-temperature inductively coupled plasma source with a mass spectrometer to detect and quantify elements at exceptionally low concentrations [31]. The fundamental strength of ICP-MS lies in its ability to provide simultaneous multi-element detection with unparalleled sensitivity, wide dynamic range, and high sample throughput, making it indispensable for researchers requiring precise elemental characterization [32] [33].

In the broader context of molecular and elemental analysis research, ICP-MS fills a critical niche by enabling the detection of metals and several non-metals at trace and ultra-trace levels that are often inaccessible to other analytical techniques. The capability to measure isotopic ratios further expands its utility in tracking elemental pathways and sources in complex biological and environmental systems [31]. As instrumental advancements continue to address analytical challenges such as spectral interference and matrix effects, ICP-MS remains at the forefront of elemental analysis, supporting innovations from drug development to environmental monitoring and clinical diagnostics.

Fundamental Principles and Instrumentation

Core Working Principle

The analytical power of ICP-MS stems from its sophisticated integration of plasma ionization and mass separation technologies. The process begins when liquid samples are nebulized into a fine aerosol and introduced into the argon plasma, which operates at temperatures ranging from 6,000 to 10,000 K [34]. At these extreme temperatures, sample particles are completely desolvated, vaporized, atomized, and ionized, forming predominantly singly charged positive ions [33] [31]. These ions are then extracted through a series of interface cones into the high-vacuum mass spectrometer region, where they are separated based on their mass-to-charge ratio (m/z) before being detected and quantified [34].

The inductively coupled plasma itself is generated within a quartz torch consisting of three concentric tubes. Argon gas flows between the outer tubes while a radio-frequency electric current (typically 27.12 MHz) is applied through an induction coil surrounding the torch [31]. This configuration creates an oscillating magnetic field that accelerates free electrons, initiating a chain reaction of ionization events that sustains the stable plasma. The sample aerosol is channeled through the center of this plasma, where efficient ionization occurs for most elements in the periodic table except carbon, hydrogen, oxygen, nitrogen, and noble gases [33].

Key Instrumentation Components

Sample Introduction System: Conventional pneumatic nebulizers convert liquid samples into aerosol, though specialized introduction systems like microdroplet generators have been developed for fragile mammalian cells to preserve structural integrity [35]. Additional options include desolvating and ultrasonic nebulizers for improved sensitivity [32].

ICP Torch and RF Generator: The quartz torch assembly maintains the argon plasma through efficient coupling with the radio-frequency energy, typically operating at 27.12 MHz with argon flow rates of 13-18 L/min [31].

Interface Region: Features sampling and skimmer cones that extract ions from the high-temperature plasma (at atmospheric pressure) into the high-vacuum mass spectrometer region through differential pumping [31].

Mass Analyzer: Most commonly a quadrupole mass filter that rapidly separates ions by m/z, though magnetic sector and time-of-flight analyzers are used for higher resolution applications [31] [34].

Detection System: Typically employs electron multipliers or Faraday cups that measure ion counts, providing detection limits reaching parts per trillion (pg/mL) for many elements [33] [34].

Analytical Capabilities and Performance

Key Analytical Figures of Merit

ICP-MS delivers exceptional analytical performance that surpasses other elemental techniques in several critical parameters. The technique offers detection limits typically in the parts per trillion (ppt) range, a linear dynamic range spanning 8-9 orders of magnitude, and the capability for rapid multi-element analysis in a single run [33] [36]. This combination of sensitivity and wide concentration range allows researchers to quantify both major and trace elements simultaneously without sample dilution or reanalysis. The high sample throughput possible with ICP-MS—processing hundreds of samples per day—has revolutionized environmental monitoring and clinical testing workflows while reducing operational costs [31].

Comparison with Other Elemental Techniques

Table 1: Comparison of ICP-MS with Other Elemental Analysis Techniques

| Technique | Multi-element Capability | Detection Limits | Linear Dynamic Range | Sample Throughput | Key Limitations |
|---|---|---|---|---|---|
| ICP-MS | Full simultaneous multi-element | ppt (pg/mL) range | 8-9 orders of magnitude | High | Equipment cost, spectral interferences, requires skilled operators [32] |
| ICP-OES | Full simultaneous multi-element | ppb (ng/mL) range | 4-6 orders of magnitude | High | Higher detection limits compared to ICP-MS [32] |
| Graphite Furnace AAS | Single element | ppt-ppb range | 2-3 orders of magnitude | Low | Low sample throughput, single element capability [32] |
| Flame AAS | Single element | ppb range | 2-3 orders of magnitude | Medium | High detection limits, single element capability [32] |

Isotopic Analysis Capability

A distinctive advantage of ICP-MS over other elemental techniques is its ability to perform isotopic analysis. This capability enables applications in radiometric dating for geochemistry, isotope dilution quantification for superior accuracy, and tracking of isotopically labeled compounds in biological systems [31]. For pharmaceutical research, stable isotope labeling combined with ICP-MS detection allows precise pharmacokinetic studies of metal-containing drugs by distinguishing administered compounds from endogenous elements [33].

Methodologies and Experimental Protocols

Sample Preparation Strategies

Proper sample preparation is critical for accurate ICP-MS analysis, particularly for complex biological matrices. For biological fluids like serum, plasma, or urine, samples typically undergo dilution (10-50 fold) with acidic or alkaline diluents to reduce total dissolved solids below 0.2%, minimizing matrix effects and nebulizer clogging [32]. Common diluents include dilute nitric acid, ammonium hydroxide, or tetramethylammonium hydroxide, often supplemented with surfactants like Triton-X-100 to solubilize lipids and membrane proteins [32]. For tissues, hair, nails, or other solid samples, more extensive digestion using strong acids with heating in hot blocks or microwave digestion systems is required to completely dissolve the sample matrix [32] [33].

Quantitative Analysis Methods

External Calibration: Uses a series of separate standard solutions to establish a relationship between instrument response and analyte concentration. While straightforward, this method doesn't account for matrix-induced effects or instrument drift [37].

Internal Standardization: The most widely used quantification approach where one or more internal standard elements (e.g., Be, Co, Ga, Y, Rh, In, Te, Tl, Bi) are added to all samples, standards, and blanks. The internal standard corrects for matrix effects and instrument drift by normalizing analyte response based on elements with similar mass and ionization potential [38] [37].

Standard Addition Method: Most effective for complex matrices with high total dissolved solids (>0.3%), this approach involves spiking aliquots of the sample with known concentrations of analyte. The calibration curve is generated directly in the sample matrix, providing superior accuracy despite being more time-consuming [37].

Isotope Dilution: Considered the gold standard for quantification, this method uses enriched stable isotopes as internal standards, providing exceptional accuracy and precision by accounting for all losses during sample preparation and analysis [31].
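Of the approaches above, standard addition is the easiest to show numerically: the signal is regressed against the added concentration, and the original concentration is the magnitude of the x-intercept (intercept divided by slope). The sketch below uses invented signals and a hypothetical function name, and it assumes a linear response with no blank contribution.

```python
def standard_addition(added_conc, signals):
    """Estimate the analyte concentration in the unspiked aliquot.

    Fits signal = slope * added + intercept by least squares and returns
    intercept / slope, i.e. the magnitude of the x-axis intercept.
    """
    n = len(added_conc)
    mean_x = sum(added_conc) / n
    mean_y = sum(signals) / n
    sxx = sum((x - mean_x) ** 2 for x in added_conc)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(added_conc, signals))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return intercept / slope


# Hypothetical spikes (ug/L added) and measured signals (counts per second).
added = [0.0, 5.0, 10.0, 15.0]
signal = [200.0, 450.0, 700.0, 950.0]
original = standard_addition(added, signal)
print(f"Concentration in the measured aliquot: {original:.2f} ug/L")
```

Because every calibration point is measured in the sample matrix itself, matrix suppression or enhancement changes the slope but not the extrapolated result, at the cost of several measurements per sample.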

Protocol for Whole Blood Analysis

A validated protocol for multi-element analysis in whole blood demonstrates the application of ICP-MS in complex biological matrices [36]:

Sample Collection and Preparation: Collect whole blood using trace element-free collection tubes. Dilute samples 50-fold with a solution containing 0.5% nitric acid, 0.1% Triton X-100, and internal standards (Sc, Ge, Y, Rh, Ir).

Instrument Parameters: Use a triple quadrupole ICP-MS system with oxygen reaction gas mode (TQ-O₂) for interference removal. Employ helium kinetic energy discrimination (He KED) mode for additional interference suppression.

Calibration: Prepare multi-element calibration standards covering 43 elements (Li to U) in a matched matrix. Include quality control samples at low, medium, and high concentrations.

Analysis: Introduce samples using an autosampler with matrix handling capability. Monitor internal standard intensities throughout the run to correct for signal drift.

Data Processing: Quantify elements using internal-standard-corrected calibration curves. For elements such as As and Se, remove polyatomic interferences using mass-shift reactions (e.g., monitoring ⁷⁵As¹⁶O⁺ at m/z 91 instead of ⁷⁵As⁺ at m/z 75).
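
Two routine calculations implied by the protocol above are checking internal standard recovery during the run and scaling measured values back by the 50-fold dilution. The sketch below is illustrative only: the 80–120 % acceptance window, the function names, and the count rates are assumptions, not values from the cited protocol.

```python
def internal_standard_recovery(sample_cps, reference_cps):
    """Recovery (%) of each internal standard relative to a reference
    measurement, for example the calibration blank."""
    return {el: 100.0 * sample_cps[el] / reference_cps[el] for el in reference_cps}


def flag_drift(recoveries, low=80.0, high=120.0):
    """Internal standards outside the acceptance window.
    The 80-120 % default is a hypothetical limit; use the laboratory's own."""
    return [el for el, rec in recoveries.items() if not (low <= rec <= high)]


def undiluted_concentration(measured_conc, dilution_factor=50.0):
    """Scale a concentration measured in the diluted solution back to whole blood."""
    return measured_conc * dilution_factor


# Hypothetical count rates for three of the protocol's internal standards.
reference = {"Sc": 100000, "Y": 200000, "Ir": 150000}
sample = {"Sc": 95000, "Y": 150000, "Ir": 148500}

recoveries = internal_standard_recovery(sample, reference)
flagged = flag_drift(recoveries)
blood_conc = undiluted_concentration(0.8)   # 0.8 ug/L measured after dilution

print(recoveries, flagged, blood_conc)
```

In this made-up run, yttrium falls to 75 % recovery and is flagged, which would prompt a check for matrix suppression or drift before reporting results corrected with that internal standard.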

Table 2: Clinical Concentration Ranges for Selected Elements in Biological Samples

| Element | Clinical Application | Approximate Concentration Range |
|---|---|---|
| Aluminium | Toxic | 0.1–10 μmol/L [32] |
| Arsenic | Toxic | 0.01–80 μmol/L [32] |
| Cadmium | Toxic | 1–100 nmol/L [32] |
| Copper | Nutritional, Metabolic | 1–50 μmol/L [32] |
| Lead | Toxic | 0.01–10 μmol/L [32] |
| Manganese | Nutritional | 1–400 nmol/L [32] |
| Selenium | Toxic, Nutritional | 0.1–10 μmol/L [32] |
| Zinc | Nutritional | 1–40 μmol/L [32] |
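
Because ICP-MS calibration standards are usually specified in mass units (µg/L), the molar ranges in Table 2 often need converting. The following sketch shows the conversion using standard atomic masses; the function names are illustrative.

```python
# Standard atomic masses in g/mol (equivalently ug/umol).
ATOMIC_MASS = {
    "Al": 26.982, "As": 74.922, "Cd": 112.414, "Cu": 63.546,
    "Pb": 207.2, "Mn": 54.938, "Se": 78.971, "Zn": 65.38,
}


def umol_per_l_to_ug_per_l(element, conc_umol_per_l):
    """umol/L x g/mol = ug/L."""
    return conc_umol_per_l * ATOMIC_MASS[element]


def nmol_per_l_to_ug_per_l(element, conc_nmol_per_l):
    """nmol/L x g/mol = ng/L; divide by 1000 for ug/L."""
    return conc_nmol_per_l * ATOMIC_MASS[element] / 1000.0


# Upper ends of the lead and cadmium ranges in Table 2.
pb_upper = umol_per_l_to_ug_per_l("Pb", 10)     # about 2072 ug/L
cd_upper = nmol_per_l_to_ug_per_l("Cd", 100)    # about 11.2 ug/L
print(pb_upper, cd_upper)
```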

Advanced Interference Removal

Modern ICP-MS systems employ sophisticated interference removal techniques, particularly in triple quadrupole instruments [36]. The first quadrupole serves as a mass filter that selects ions of a specific m/z, which then enter a collision/reaction cell where gas-phase reactions (with oxygen or ammonia) or collisions (with helium) remove polyatomic interferences. The final analyzer quadrupole then filters the reaction products before detection. For example, arsenic determination in blood uses oxygen reaction gas to convert ⁷⁵As⁺ to ⁷⁵As¹⁶O⁺ (m/z 91), moving the measured signal away from the ⁴⁰Ar³⁵Cl⁺ interference at m/z 75 [36].
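
The mass-shift logic described above reduces to simple nominal-mass arithmetic. The sketch below computes the two mass-filter settings for an oxide mass shift; the function name and the returned dictionary keys are illustrative, and whether a given interferent also reacts with oxygen must still be checked experimentally.

```python
OXYGEN_16 = 16  # nominal mass of the 16-O atom added in the reaction cell


def oxide_mass_shift(analyte_mz):
    """Return mass-filter settings for an O2 mass-shift measurement:
    the first filter passes the analyte ion, the final filter passes
    its oxide product ion."""
    return {"first_filter_mz": analyte_mz,
            "final_filter_mz": analyte_mz + OXYGEN_16}


# 75-As+ is measured as 75-As-16-O+; the 40-Ar-35-Cl+ interference
# has nominal mass 40 + 35 = 75 and stays at the original m/z.
arsenic = oxide_mass_shift(75)
interference_mz = 40 + 35

print(arsenic, interference_mz)
```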

Research Reagent Solutions and Essential Materials

Table 3: Essential Research Reagents and Materials for ICP-MS Analysis

| Reagent/Material | Function | Application Notes |
|---|---|---|
| High-Purity Acids (Nitric, Hydrochloric) | Sample digestion and dilution | Essential for minimizing background contamination; trace metal grade recommended [32] |
| Internal Standard Mix (Sc, Ge, Y, Rh, In, Ir, Bi) | Correction for matrix effects and instrument drift | Added to all samples, standards, and blanks at consistent concentration [38] [36] |
| Multi-Element Calibration Standards | Quantitative calibration | Certified reference materials covering analyte elements of interest [38] |
| Surfactants (Triton X-100) | Solubilization of biological matrices | Helps disperse lipids and membrane proteins in biological samples [32] |
| Matrix Modifiers (Ammonia, EDTA) | Stabilization of elements in solution | Ammonia stabilizes proteinaceous samples; EDTA chelates elements at alkaline pH [32] |
| Collision/Reaction Gases (He, O₂, H₂, NH₃) | Interference removal in collision cell | Helium for kinetic energy discrimination; oxygen for mass shift reactions [36] |

Applications in Pharmaceutical and Clinical Research

Drug Development and Quality Control

ICP-MS plays multiple essential roles in pharmaceutical development, particularly in ensuring drug safety and regulatory compliance. According to current regulations (USP <232> and USP <233>), pharmaceutical products must be monitored for elemental impurities including arsenic, lead, mercury, and cadmium, which can be toxic even at minimal concentrations [39]. ICP-MS provides the required sensitivity and precision to detect these contaminants at regulatory limits, making it the preferred technique for quality control in active pharmaceutical ingredients (APIs), excipients, and final drug formulations [39]. Additionally, the technique is indispensable for monitoring residual metal catalysts (platinum, palladium, rhodium) used in drug synthesis, ensuring they remain within permitted limits in the final product [39].
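
In practice, regulatory limits for elemental impurities are expressed as permitted daily exposures (PDEs) that must be converted into a concentration limit for the product being tested. The sketch below shows that conversion for the four elements named above. The oral PDE values are given for illustration and should be verified against the current ICH Q3D and USP <232> texts before use; the function name and the 10 g/day default (the conservative maximum daily intake commonly assumed in such calculations) are likewise assumptions of this example.

```python
# Illustrative oral PDEs in ug/day; verify against the current guideline.
ORAL_PDE_UG_PER_DAY = {"Cd": 5.0, "Pb": 5.0, "As": 15.0, "Hg": 30.0}


def concentration_limit_ug_per_g(element, max_daily_dose_g=10.0):
    """Permitted concentration (ug/g) = PDE (ug/day) / daily dose (g/day)."""
    if max_daily_dose_g <= 0:
        raise ValueError("daily dose must be positive")
    return ORAL_PDE_UG_PER_DAY[element] / max_daily_dose_g


limits_10g = {el: concentration_limit_ug_per_g(el) for el in ORAL_PDE_UG_PER_DAY}
lead_limit_2g = concentration_limit_ug_per_g("Pb", max_daily_dose_g=2.0)

print(limits_10g, lead_limit_2g)
```

A product taken at a lower daily dose tolerates a proportionally higher concentration, which is why the same PDE gives 0.5 µg/g of lead at 10 g/day but 2.5 µg/g at 2 g/day.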

Analysis of Metal-Based Pharmaceuticals

Metal-containing therapeutics represent an important drug class where ICP-MS provides distinct analytical advantages. Platinum-based chemotherapeutic agents (cisplatin, carboplatin, oxaliplatin) can be quantified directly via their platinum content without needing to detect the intact drug molecule [33]. This element-specific detection enables lower limits of quantification (LLOQ of 1 ng/mL for carboplatin) compared to LC-MS/MS methods (LLOQ of 10 ng/mL), while avoiding challenges with low ionization efficiency and weak chromatographic retention of the parent drug [33]. Similarly, ICP-MS supports the development of radionuclide drug conjugates (RDCs) like Pluvicto (¹⁷⁷Lu-PSMA-617) by enabling quantification of non-radioactive stable isotopes during preclinical studies, reducing costs and improving efficiency [33].
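
Quantifying a platinum drug through its metal content relies on a fixed stoichiometric factor: each drug molecule contains one platinum atom. The sketch below converts between drug and platinum concentrations using molar masses. It assumes that all measured platinum originates from the named drug, and the function names are illustrative.

```python
PT_ATOMIC_MASS = 195.084  # g/mol

# Molar masses (g/mol) of drugs containing one Pt atom per molecule.
DRUG_MOLAR_MASS = {
    "cisplatin": 300.05,
    "carboplatin": 371.25,
    "oxaliplatin": 397.29,
}


def drug_to_platinum(drug_conc, drug):
    """Platinum concentration equivalent to a drug concentration (same units)."""
    return drug_conc * PT_ATOMIC_MASS / DRUG_MOLAR_MASS[drug]


def platinum_to_drug(pt_conc, drug):
    """Drug concentration equivalent to a measured total-Pt concentration."""
    return pt_conc * DRUG_MOLAR_MASS[drug] / PT_ATOMIC_MASS


# A carboplatin LLOQ of 1 ng/mL corresponds to roughly 0.53 ng/mL of Pt.
pt_at_lloq = drug_to_platinum(1.0, "carboplatin")
print(round(pt_at_lloq, 3))
```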

Single-Cell Analysis for Diagnostic Applications

Recent advancements in sample introduction systems have expanded ICP-MS applications to single-cell analysis, particularly for disease diagnosis and prognosis. Conventional pneumatic nebulizers often damage fragile mammalian cells during analysis, but microdroplet generators (μDG) now enable efficient introduction of intact cells [35]. This approach maintains cellular structure while allowing quantitative measurement of elemental content in individual cells, opening new avenues for health assessment through analysis of easily collectible blood cells [35]. Researchers have successfully applied this methodology to quantify magnesium, iron, phosphorus, sulfur, and zinc in human leukemia K562 cells, demonstrating potential for tracking metabolic changes at the single-cell level [35].

Biomolecule Quantification Through Elemental Tagging

ICP-MS enables highly sensitive protein and peptide quantification through elemental tagging. By labeling antibodies or other biological probes with distinct lanthanide elements rather than fluorochromes, researchers can perform highly multiplexed assays using specialized ICP-MS flow cytometers [31]. This approach theoretically allows hundreds of different biological probes to be analyzed in individual cells at rates of approximately 1,000 cells per second, effectively eliminating the compensation challenges encountered in conventional fluorescence-based flow cytometry [31]. The exceptional sensitivity of ICP-MS detection also facilitates measurement of low-abundance proteins or peptides that would be challenging to quantify using conventional techniques [33].

ICP-MS has firmly established itself as the gold standard for ultra-trace multielement analysis, offering unmatched sensitivity, wide dynamic range, and versatile multi-element capability. The continuing evolution of ICP-MS technology—from advanced collision/reaction cell designs to innovative sample introduction systems—ensures its ongoing relevance for addressing emerging analytical challenges in pharmaceutical research, clinical diagnostics, and environmental monitoring. As researchers increasingly recognize the importance of elemental distributions in biological systems and pharmaceutical products, ICP-MS will continue to provide critical analytical capabilities that support drug development, safety assessment, and fundamental scientific discovery.

Combustion analysis, specifically CHNOS/O analysis, is a foundational analytical method in organic chemistry and related fields for determining the elemental composition of a substance. The acronym represents the core elements it quantifies: Carbon (C), Hydrogen (H), Nitrogen (N), Oxygen (O), and Sulfur (S) [40]. This technique provides a quantitative measure of these elements in an organic sample, which is crucial for deriving its empirical formula, assessing purity, and characterizing unknown compounds [40] [41]. The analysis is based on the principle of complete and instantaneous oxidation of the sample via "flash combustion," which converts all organic and inorganic substances into volatile combustion products that can be separated and quantified [41]. Its applications are broad, spanning pharmaceutical development, materials science, food analysis, and environmental research, making it an indispensable tool in the molecular and elemental analysis research toolkit [40] [42].
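
As a worked illustration of how elemental percentages lead to an empirical formula, the sketch below converts weight percentages to mole ratios and searches for the smallest whole-number multiple. The tolerance, the multiplier limit, and the function name are assumptions of this example; the input values are the theoretical composition of acetanilide (C₈H₉NO), a compound commonly used as a CHN standard.

```python
ATOMIC_MASS = {"C": 12.011, "H": 1.008, "N": 14.007, "O": 15.999, "S": 32.06}


def empirical_formula(wt_percent, tol=0.1, max_multiplier=6):
    """Derive whole-number atom ratios from element weight percentages.

    tol is the allowed deviation from an integer after scaling; it is an
    arbitrary choice for this sketch, not an instrument specification.
    """
    # Moles of each element per 100 g of sample.
    moles = {el: pct / ATOMIC_MASS[el] for el, pct in wt_percent.items() if pct > 0}
    smallest = min(moles.values())
    ratios = {el: n / smallest for el, n in moles.items()}
    # Try small multipliers so ratios such as 1.5 resolve to integers.
    for k in range(1, max_multiplier + 1):
        scaled = {el: r * k for el, r in ratios.items()}
        if all(abs(v - round(v)) <= tol for v in scaled.values()):
            return {el: int(round(v)) for el, v in scaled.items()}
    raise ValueError("no whole-number ratio found within tolerance")


acetanilide = empirical_formula({"C": 71.09, "H": 6.71, "N": 10.36, "O": 11.84})
print(acetanilide)
```

The result is an empirical formula only; a molar mass from an independent measurement is needed to decide whether the molecular formula is a multiple of it.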

Core Principles and Instrumentation

Fundamental Working Principle

The CHNOS/O analyzer operates on the principle of dynamic flash combustion. The solid or liquid organic sample is introduced into a combustion chamber and subjected to extremely high temperatures exceeding 1000 °C in a pure oxygen environment (≥99.9995%) [40] [42]. This process instantly and completely oxidizes the sample, converting its constituent elements into specific gaseous compounds [40]:

- Carbon (C) is oxidized to carbon dioxide (CO₂).

- Hydrogen (H) is oxidized to water vapor (H₂O).

- Nitrogen (N) is initially converted to nitrogen oxides (NOₓ) before being reduced to nitrogen gas (N₂).

- Sulfur (S) is oxidized to sulfur dioxide (SO₂).

For oxygen analysis, the approach is different. The sample undergoes high-temperature pyrolysis in an inert atmosphere, often at temperatures around 1480 °C, causing oxygen in the sample to convert into carbon monoxide (CO) before measurement [42].
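
The conversions listed above fix the mass fraction of each element in its measured gas, so an element's weight percentage follows from the amount of gas produced and the sample mass. The sketch below shows this stoichiometry. It is a simplification: real analyzers derive gas amounts from detector responses calibrated against standards rather than from weighed gases, and the function name and example masses are hypothetical.

```python
ATOMIC_MASS = {"C": 12.011, "H": 1.008, "N": 14.007, "O": 15.999, "S": 32.06}

# Mass fraction of the target element in the gas it is measured as.
ELEMENT_FRACTION_IN_GAS = {
    "C": ATOMIC_MASS["C"] / (ATOMIC_MASS["C"] + 2 * ATOMIC_MASS["O"]),      # CO2
    "H": 2 * ATOMIC_MASS["H"] / (2 * ATOMIC_MASS["H"] + ATOMIC_MASS["O"]),  # H2O
    "N": 1.0,                                                               # N2
    "S": ATOMIC_MASS["S"] / (ATOMIC_MASS["S"] + 2 * ATOMIC_MASS["O"]),      # SO2
    "O": ATOMIC_MASS["O"] / (ATOMIC_MASS["C"] + ATOMIC_MASS["O"]),          # CO (pyrolysis)
}


def element_wt_percent(element, gas_mass_mg, sample_mass_mg):
    """Weight percent of an element from the mass of its product gas."""
    if sample_mass_mg <= 0:
        raise ValueError("sample mass must be positive")
    return 100.0 * gas_mass_mg * ELEMENT_FRACTION_IN_GAS[element] / sample_mass_mg


# Hypothetical run: a 2.000 mg sample yields 5.209 mg of CO2.
carbon_pct = element_wt_percent("C", gas_mass_mg=5.209, sample_mass_mg=2.000)
print(round(carbon_pct, 2))
```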

Key Instrument Components

A CHNOS/O analyzer is a sophisticated system composed of several integral components, each fulfilling a critical role in the analytical process [40]:

- Sample Chamber: The port of entry for the prepared organic sample. Proper sample introduction is vital for analytical accuracy.

- Combustion Unit: The core of the instrument, consisting of a combustion tube or chamber where the flash combustion occurs. It is responsible for the quantitative breakdown of the sample into its elemental gases.

- Reduction Unit: Following combustion, the gas stream passes over a heated reductant, typically high-purity copper at approximately 600 °C. This copper removes any excess oxygen and converts nitrogen oxides into pure N₂ gas, which is essential for accurate nitrogen measurement [40].

- Gas Separation System: The mixture of resultant gases is separated, often using a gas chromatography (GC) column, to allow for individual element detection [42].

- Specialized Detectors: The separated gases are routed to specific detectors:

  - Non-Dispersive Infrared (NDIR) Detectors: Typically used for quantifying CO₂ (for Carbon) and SO₂ (for Sulfur) [40].

  - Thermal Conductivity Detector (TCD): Used for measuring N₂ (for Nitrogen) and H₂O (for Hydrogen) [40] [42]. The TCD detects these gases based on changes in the thermal conductivity of the carrier gas stream.

Analytical Performance and Specifications

The performance of CHNOS/O analysis is characterized by its sensitivity, sample requirements, and throughput. The following table summarizes key quantitative specifications for this analytical method, compiled from commercial laboratory data and technical descriptions [42].

Table 1: Typical Analytical Specifications for CHNOS/O Combustion Analysis

| Parameter | Specification | Notes |
|---|---|---|
| Sample Mass | ~2–20 mg | Higher masses (e.g., 300 mg) may be required for additional analyses like ash content [42] [41]. |
| Detection Limits | C, H, N: 0.05 wt-% (500 ppm); S: 0.100 wt-%; O: 0.050 wt-% | |
| Combustion Temperature | > 1000 °C (furnace); up to 1800 °C (flash) | Temperatures can vary based on the specific instrument and method [40] [42]. |
| Oxygen Pyrolysis Temp. | 1480 °C | For conversion of oxygen to CO [42]. |
| Typical Turnaround Time | 3 weeks (commercial service) | Applies to external laboratory analysis [42]. |
| Price (Commercial Service) | 190 € per sample (excl. VAT) | Applies to the full CHNOS package; discounts are often available for large sample batches [42]. |

Detailed Experimental Workflow

The process of conducting a CHNOS/O analysis involves a meticulous sequence of steps to ensure precise and accurate results. The workflow below details the protocol from sample preparation to final quantification.

- Sample State: The sample can be a solid or liquid. Aqueous samples require drying prior to analysis, as water content would interfere with the hydrogen measurement and skew results [42].

- Homogenization: Solid samples must be dried and finely ground into a consistent powder. This is a critical step to ensure uniform combustion and the complete release of all elemental components during the flash combustion process [40].

- Weighing: A small, precisely measured quantity of the prepared sample (typically 2-20 mg) is weighed into a small tin or silver capsule. The high-precision weighing is essential for all subsequent calculations.

- Introduction: The sealed capsule containing the sample is then dropped by an auto-sampler into the high-temperature combustion chamber of the analyzer, which is continuously purged with an inert carrier gas like helium [40].

Combustion, Reduction, and Separation

- Flash Combustion: Upon entry into the combustion chamber, the sample capsule is exposed to a pure oxygen atmosphere and instantaneously heated to over 1000 °C. The tin capsule itself ignites, creating a temporary temperature surge up to 1800 °C that ensures complete and rapid oxidation of the sample [40] [42].