Optimizing Sample Throughput with Green Metrics: A Strategic Framework for Sustainable Drug Development


Abstract

This article provides researchers, scientists, and drug development professionals with a comprehensive guide to integrating green chemistry principles into analytical methodologies to enhance sample throughput without compromising data quality or environmental responsibility. It explores the foundational principles of Green Analytical Chemistry (GAC), presents actionable methodological approaches for sustainable sample preparation and analysis, addresses common troubleshooting and optimization challenges, and offers a framework for the validation and comparative assessment of method greenness. By balancing analytical efficiency with sustainability goals, this framework supports the development of greener, faster, and more cost-effective processes in biomedical and clinical research.

The Pillars of Green Analytical Chemistry: Principles and Assessment Tools

Core Principles of Green Analytical Chemistry (GAC) for High-Throughput Labs

Green Analytical Chemistry (GAC) is an environmentally conscious methodology that aims to mitigate the detrimental effects of analytical techniques on the natural environment and human health [1]. For high-throughput laboratories, which must process large numbers of samples efficiently, integrating GAC principles is essential for achieving sustainable operations without compromising analytical performance. The core challenge lies in reducing environmental impact while maintaining or improving the quality of analytical results [2]. This technical support center provides actionable guidance and troubleshooting for implementing GAC in high-throughput environments, framed within the context of optimizing sample throughput for green metrics research.

Foundational Principles and Green Metrics

The Twelve Principles of Green Analytical Chemistry

The 12 principles of green chemistry provide a foundational framework for designing chemical processes and products that prioritize environmental and human health [3]. When applied to analytical techniques, these principles drive the development of methodologies that are safer, more efficient, and environmentally benign. Key principles highly relevant to high-throughput labs include:

- Waste prevention: Designing analytical processes that avoid generating waste rather than managing it after the fact, a critical consideration in high-throughput laboratories [3].

- Safer solvents and auxiliaries: Encouraging the use of non-toxic, biodegradable, or less harmful solvents, such as water, ionic liquids, or supercritical carbon dioxide, reducing reliance on hazardous organic solvents [3].

- Energy efficiency: Urging the development of techniques that operate under milder conditions to lower energy consumption [3].

- Real-time analysis for pollution prevention: Advocating for methodologies that monitor and control processes in real-time to prevent hazardous by-products before they form [3].

Essential Green Assessment Metrics

Proper GAC tools should be developed and employed to assess the greenness of different analytical assays [2]. The table below summarizes key metrics used in evaluating analytical methods:

Table 1: Green Analytical Chemistry Assessment Metrics

| Metric Name | Type | Key Parameters Assessed | Best For |
|---|---|---|---|
| NEMI (National Environmental Methods Index) [2] | Qualitative | PBT chemicals, hazardous solvents, pH, waste amount | Quick initial screening |
| Analytical Eco-Scale [2] | Semi-quantitative | Reagents, energy, hazards, waste | Ranking methods with penalty points |
| GAPI (Green Analytical Procedure Index) [2] | Semi-quantitative | Multiple aspects across entire analytical process | Comprehensive single-pictogram assessment |
| AGREE (Analytical GREEnness) [2] | Quantitative | Comprehensive 0-1 score based on 12 GAC principles | Detailed comparative analysis |
| BAGI (Blue Applicability Grade Index) [2] | Quantitative | Applicability and practicality alongside greenness | Balancing practical constraints with green goals |

Methodologies and Experimental Protocols

Core Strategies for High-Throughput Green Analysis

Implementing GAC in high-throughput environments requires specific methodologies that maintain efficiency while reducing environmental impact:

Miniaturization and Automation

Miniaturization is the cornerstone of eco-friendly analysis, dramatically cutting down on sample and reagent consumption [4]. This not only minimizes waste but also lowers costs and speeds up analysis times. Automation aligns with green sample preparation (GSP) principles by saving time and lowering the consumption of reagents and solvents, which in turn reduces waste generation [5].

Alternative Solvent Systems

When solvents are necessary, green analytical chemistry champions the use of benign alternatives. Water is the ultimate green solvent, and its use is increasing with the development of water-compatible chromatography columns [4]. Bio-based solvents derived from renewable feedstocks, and non-volatile ionic liquids, which can often be reused, are also gaining popularity [4].

Energy-Efficient Sample Preparation

Adapting traditional sample preparation techniques to the principles of green sample preparation involves optimizing energy efficiency while maintaining analytical quality [5]. Effective approaches include:

- Applying vortex mixing or assisting fields such as ultrasound and microwaves to enhance extraction efficiency and speed up mass transfer while consuming less energy [5].

- Parallel processing of multiple samples through miniaturized systems to increase overall throughput and reduce energy consumed per sample [5].

- Integrating multiple preparation steps into a single, continuous workflow to simplify operations while cutting down on resource use and waste production [5].
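The effect of parallel processing on energy consumed per sample can be illustrated with simple arithmetic. The sketch below assumes a constant average power draw for the whole run; the function name and the figures (200 W, 30 minutes, 96 wells) are hypothetical and chosen only for illustration.

```python
def energy_per_sample_kwh(power_watts: float, run_minutes: float, samples_per_run: int) -> float:
    """Energy attributed to each sample when one run handles several samples at once.

    Assumes a constant average power draw over the run (a simplification).
    """
    if samples_per_run < 1:
        raise ValueError("samples_per_run must be at least 1")
    run_energy_kwh = (power_watts / 1000) * (run_minutes / 60)
    return run_energy_kwh / samples_per_run


# Hypothetical device: 200 W for 30 minutes, one sample versus a 96-well plate.
single = energy_per_sample_kwh(power_watts=200, run_minutes=30, samples_per_run=1)
plate = energy_per_sample_kwh(power_watts=200, run_minutes=30, samples_per_run=96)
print(f"single sample: {single:.4f} kWh, 96-well plate: {plate:.6f} kWh per sample")
```

In practice the power draw of a plate-based device may differ from that of a single-sample device, so measured values should replace these placeholders.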

Detailed Experimental Protocol: Green Sample Preparation for High-Throughput Analysis

Table 2: Step-by-Step Green Sample Preparation Protocol

| Step | Procedure | Green Principles Applied | Troubleshooting Tips |
|---|---|---|---|
| 1. Sample Intake | Use automated micro-samplers for precise aliquoting (1-10 µL instead of 1-10 mL) | Source reduction, waste prevention | For viscous samples, use positive displacement pipettes to maintain accuracy |
| 2. Extraction | Employ parallel solid-phase microextraction (SPME) for 96-well plates | Solventless extraction, miniaturization, energy efficiency | Condition fibers properly; check for carryover with high-concentration samples |
| 3. Pre-concentration | Utilize integrated vacuum manifolds for simultaneous processing | Energy efficiency, reduced processing time | Ensure proper sealing of plates to prevent channel cross-talk |
| 4. Analysis Ready | Direct transfer to miniaturized chromatographic systems | Reduced derivatives, waste prevention | Maintain temperature control to prevent analyte degradation |

Essential Research Reagent Solutions

Table 3: Green Research Reagents and Materials for High-Throughput Labs

| Reagent/Material | Traditional Substance | Function | Environmental Benefit |
|---|---|---|---|
| Ionic Liquids | Volatile organic compounds (VOCs) | Extraction solvents | Non-volatile, recyclable, low toxicity |
| Bio-based Solvents (e.g., ethyl lactate) | Hexane, chloroform | Sample preparation | Biodegradable, from renewable resources |
| Solid-Phase Microextraction (SPME) Fibers | Liquid-liquid extraction | Sample preparation | Solventless, reusable |
| Water-based Mobile Phases | Acetonitrile, methanol | Chromatography | Non-toxic, biodegradable |
| Supercritical CO₂ | Organic solvents | Extraction | Non-flammable, non-toxic, easily removed |

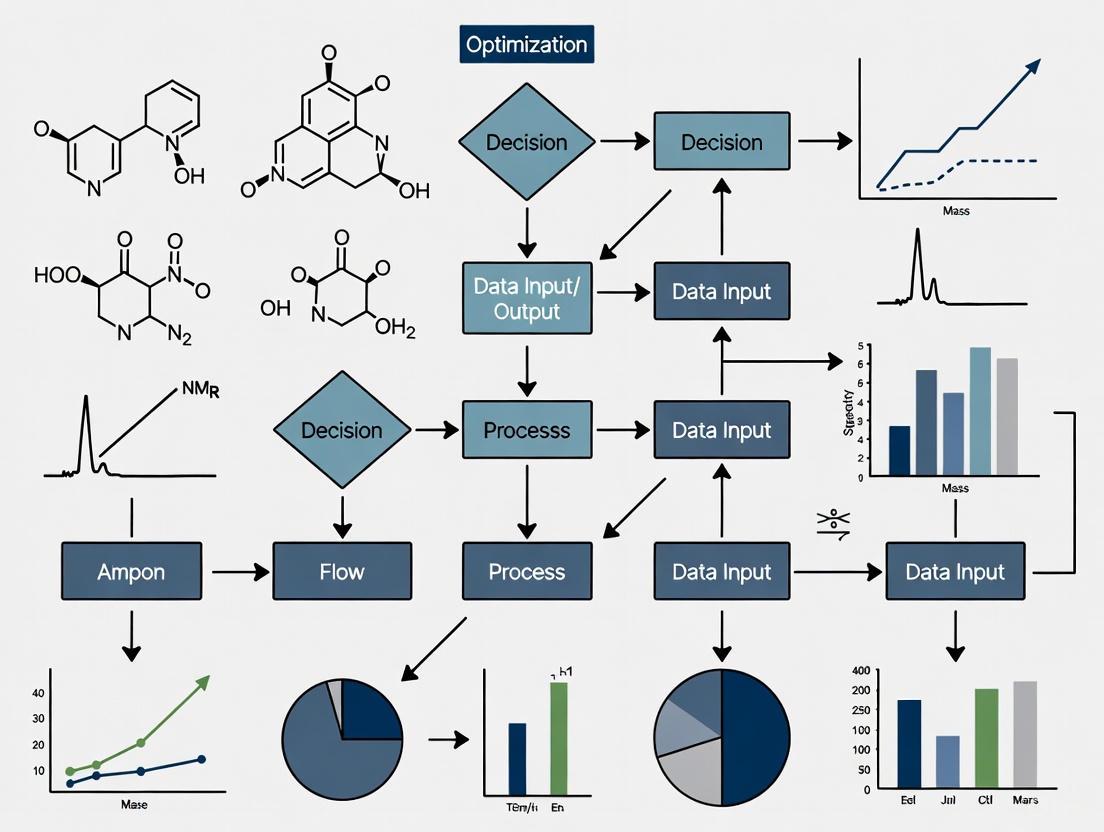

Workflow Visualization

Diagram 1: High-Throughput Green Analysis Workflow

Troubleshooting Guides and FAQs

FAQ 1: How can we validate that new green methods maintain accuracy and precision in high-throughput settings?

Challenge: Method validation for green alternatives against established traditional techniques can be time-consuming and requires careful documentation [4].

Solution:

- Implement parallel validation where traditional and green methods run simultaneously on split samples for statistical comparison

- Use standard reference materials with certified values to verify accuracy

- Apply chemometric tools for robust data analysis while minimizing resource use [3]

- Establish continuous monitoring with control charts to track method performance over time
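A parallel validation on split samples can be summarized with a paired comparison. The sketch below is one possible approach, not a prescribed validation procedure: it computes the mean bias and a paired t statistic using only the Python standard library. The function name and the concentration values are hypothetical.

```python
from math import sqrt
from statistics import mean, stdev


def paired_t_statistic(reference, candidate):
    """Return (mean bias, paired t statistic) for split-sample results.

    reference: results from the established method
    candidate: results from the green method on the same split samples
    """
    if len(reference) != len(candidate) or len(reference) < 2:
        raise ValueError("need two equally long series with at least 2 pairs")
    diffs = [c - r for r, c in zip(reference, candidate)]
    bias = mean(diffs)
    sd = stdev(diffs)  # sample standard deviation of the differences
    if sd == 0:
        raise ValueError("all differences are identical; t is undefined")
    t = bias / (sd / sqrt(len(diffs)))
    return bias, t


# Hypothetical concentrations (same units) measured on four split samples.
traditional = [10.0, 12.0, 9.0, 11.0]
green = [10.5, 12.5, 9.0, 11.0]
bias, t = paired_t_statistic(traditional, green)
print(f"mean bias = {bias:.3f}, t = {t:.3f} with {len(green) - 1} degrees of freedom")
```

The t statistic would then be compared with the critical value for n - 1 degrees of freedom at the chosen significance level; a real validation would use many more samples than this toy data set.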

Troubleshooting Tips:

- If precision decreases, check solvent compatibility with detection systems

- If carryover increases, examine cleaning protocols for reusable components

- If throughput drops, optimize automated systems for the new method parameters

FAQ 2: How can we prevent the rebound effect from offsetting the gains of greener methods?

Challenge: The rebound effect in green analytical chemistry refers to situations where efforts to reduce environmental impact lead to unintended consequences that offset or even negate the intended benefits [5]. For example, a novel, low-cost microextraction method might lead laboratories to perform significantly more extractions than before, increasing the total volume of chemicals used and waste generated [5].

Mitigation Strategies:

- Implement testing protocols to avoid redundant analyses

- Use predictive analytics to identify when tests are truly necessary

- Employ smart data management systems to ensure only necessary data is collected and analyzed

- Establish sustainability checkpoints in standard operating procedures

- Train laboratory personnel on the implications of the rebound effect and encourage a mindful laboratory culture where resource consumption is actively monitored [5]
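The rebound effect can be made concrete with a short calculation that tracks total waste rather than waste per extraction. All figures below are hypothetical and serve only to show how a tenfold per-extraction reduction can still increase the annual total.

```python
def total_waste_ml(waste_per_extraction_ml: float, extractions_per_year: int) -> float:
    """Annual waste volume: waste per extraction multiplied by number of extractions."""
    return waste_per_extraction_ml * extractions_per_year


# Hypothetical scenario: the new method cuts waste per extraction tenfold,
# but its low cost leads the lab to run fifteen times as many extractions.
before = total_waste_ml(waste_per_extraction_ml=10.0, extractions_per_year=1_000)
after = total_waste_ml(waste_per_extraction_ml=1.0, extractions_per_year=15_000)

rebound = after > before
print(f"before: {before:.0f} mL/year, after: {after:.0f} mL/year, rebound: {rebound}")
```

Monitoring the annual total alongside the per-sample figure is one simple way to implement the sustainability checkpoints mentioned above.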

FAQ 3: How can we overcome resistance to adopting green methods in established high-throughput workflows?

Challenge: Implementing green methodologies often requires significant investment in infrastructure and training, as well as overcoming resistance to change in established practices [3].

Solution Framework:

- Demonstrate economic benefits: Calculate and present cost savings from reduced solvent consumption, waste disposal fees, and energy usage [4]

- Phased implementation: Introduce one green method at a time to minimize disruption

- Staff training programs: Develop comprehensive training on new techniques and instruments, emphasizing both environmental and practical benefits [4]

- Performance metrics: Include green metrics alongside traditional performance indicators in laboratory assessments

FAQ 4: How do we select the most appropriate green metrics for our specific high-throughput applications?

Challenge: With numerous available GAC metrics (NEMI, Eco-Scale, GAPI, AGREE, BAGI, etc.), selecting the most appropriate one for specific applications can be challenging [2].

Selection Guidelines:

- For quick screening: Use NEMI for a simple pass/fail assessment [2]

- For comprehensive evaluation: Employ AGREE for detailed 0-1 scoring based on all 12 GAC principles [2]

- For method development: Apply GAPI to identify environmental hotspots in analytical procedures [2]

- For balancing practicality: Utilize BAGI when applicability and practical constraints are major concerns [2]

FAQ 5: What are the most effective ways to reduce solvent waste in high-throughput chromatographic applications?

Challenge: Traditional analytical methods rely on large volumes of toxic solvents, generating hazardous waste [4].

Proven Solutions:

- Switch to green solvents: Replace acetonitrile with ethanol or water-based mobile phases where possible [4]

- Miniaturize chromatographic systems: Use UHPLC and microfluidic chips instead of conventional HPLC [6]

- Implement solvent recycling: Install closed-loop systems for solvent recovery and reuse

- Optimize method parameters: Reduce flow rates, use gradient methods, and extend column lifetime through proper maintenance
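The solvent saving from lower flow rates and shorter runs follows from the fact that mobile-phase volume per run is flow rate multiplied by run time. The sketch below uses hypothetical method parameters (they are not taken from the cited sources) to compare a conventional HPLC run with a miniaturized one.

```python
def solvent_per_run_ml(flow_rate_ml_min: float, run_time_min: float) -> float:
    """Mobile-phase volume consumed in one chromatographic run."""
    return flow_rate_ml_min * run_time_min


# Hypothetical method parameters, for illustration only.
conventional = solvent_per_run_ml(flow_rate_ml_min=1.0, run_time_min=10.0)
miniaturized = solvent_per_run_ml(flow_rate_ml_min=0.4, run_time_min=3.0)

reduction = 1 - miniaturized / conventional
print(f"{conventional:.1f} mL vs {miniaturized:.1f} mL per run ({reduction:.0%} less solvent)")
```

Multiplying the per-run difference by the number of runs per year gives the annual solvent and disposal volume to use in a cost comparison.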

Implementing Green Analytical Chemistry in high-throughput laboratories requires a systematic approach that balances analytical performance with environmental responsibility. By leveraging miniaturization, alternative solvents, automation, and comprehensive green metrics, laboratories can significantly reduce their environmental footprint while maintaining or even enhancing analytical throughput and quality. The troubleshooting guides and FAQs provided here address common implementation challenges, offering practical pathways for researchers and drug development professionals to optimize their workflows for both efficiency and sustainability. Continuous innovation, staff training, and appropriate metric selection are key success factors in the journey toward greener high-throughput analysis.

In the pharmaceutical industry and analytical chemistry laboratories, the principles of Green Analytical Chemistry (GAC) and White Analytical Chemistry (WAC) have become increasingly significant for reducing environmental impact while maintaining analytical efficiency. The release of any product to the consumer market requires rigorous quality control analysis, typically employing techniques such as high performance liquid chromatography (HPLC), spectrophotometry in the ultraviolet and visible regions (UV-Vis), infrared spectroscopy (IR), or thin layer chromatography (TLC). Most conventional analytical methods currently in use still employ toxic reagents, generate significant waste, involve multi-step sample preparation, and require extensive instrumentation and consumables - all contributing to greater environmental impact and cost compared to methods developed under GAC and WAC principles [7].

To address these concerns, several specialized assessment tools have been developed to provide objective, quantitative evaluations of analytical method environmental performance. The four primary tools - NEMI, ESA, GAPI, and AGREE - enable researchers to move beyond subjective assessments to obtain semi-quantitative or quantitative data that facilitates informed decision-making regarding eco-efficiency [7]. These tools are particularly valuable within the context of optimizing sample throughput for green metrics research, as they provide standardized frameworks for comparing the environmental footprint of different analytical approaches while maintaining data quality and throughput requirements.

Tool Specifications and Comparative Analysis

Detailed Tool Characteristics

Table 1: Comprehensive Comparison of Greenness Assessment Tools

| Assessment Tool | Full Name | Output Format | Scoring System | Key Advantages | Reported Limitations |
|---|---|---|---|---|---|
| NEMI | National Environmental Methods Index | Pictogram (4 quadrants) | Binary (pass/fail per criterion) | Simple, quick visualization | Limited differentiation; 14 of 16 methods had same pictogram in study [8] |
| ESA | Eco-Scale Assessment | Numerical score | Penalty points (0-100) | Reliable numerical assessment; intuitive scoring | Less detailed than AGREE or GAPI [8] |
| GAPI | Green Analytical Procedure Index | Three-colored pictogram (5 sections) | Qualitative (green/yellow/red) | Fully descriptive; covers entire method lifecycle | Complex compared to NEMI and ESA [8] |
| AGREE | Analytical GREEnness Metric | Circular pictogram (12 segments) | Numerical (0-1) + color code | Automated calculation; highlights weakest points | Requires specialized software [8] |

Technical Specifications and Methodological Frameworks

The National Environmental Methods Index (NEMI) employs a simple pictogram approach that evaluates four key criteria: whether the method uses persistent, bioaccumulative, and toxic chemicals; whether it uses corrosive reagents with pH ≤2 or ≥12; whether it uses hazardous reagents; and whether the waste generated exceeds specified limits. The major limitation identified in comparative studies is that NEMI provides limited differentiation between methods, with one study finding that 14 out of 16 methods for hyoscine N-butyl bromide assay received identical NEMI pictograms [8].
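The four NEMI criteria described above map naturally onto four pass/fail flags. The sketch below is an illustrative encoding, not an official NEMI implementation: the class and field names are hypothetical, and the waste criterion is passed in as a boolean because the text does not state the limit.

```python
from dataclasses import dataclass


@dataclass
class NemiInputs:
    """Hypothetical container for the four NEMI criteria of one method."""
    uses_pbt_chemicals: bool       # persistent, bioaccumulative, toxic chemicals
    uses_hazardous_reagents: bool
    ph: float                      # pH of the reagents or sample solution
    waste_exceeds_limit: bool      # True if waste exceeds the specified limit


def nemi_quadrants(method: NemiInputs) -> dict:
    """Return one flag per quadrant; True means the quadrant is filled green."""
    return {
        "PBT": not method.uses_pbt_chemicals,
        "Hazardous": not method.uses_hazardous_reagents,
        "Corrosive": 2 < method.ph < 12,  # fails at pH <= 2 or pH >= 12
        "Waste": not method.waste_exceeds_limit,
    }


example = NemiInputs(uses_pbt_chemicals=False, uses_hazardous_reagents=True,
                     ph=7.0, waste_exceeds_limit=False)
quadrants = nemi_quadrants(example)
print(quadrants, "green quadrants:", sum(quadrants.values()))
```

Because each quadrant is binary, only sixteen distinct pictograms are possible, which helps explain why many methods end up with the same result.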

The Eco-Scale Assessment (ESA) operates on a penalty point system where analysts subtract points from a baseline of 100 for each environmental or safety deficiency. Points are deducted for excessive reagent use, energy consumption, toxicity, occupational hazards, and waste generation. This approach provides a reliable numerical assessment that facilitates comparison between methods, though it offers less granular detail than AGREE or GAPI [8].
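The penalty-point arithmetic can be sketched in a few lines. The penalty values below are hypothetical placeholders; actual values must be assigned from the published Eco-Scale criteria for each reagent, instrument, and waste stream.

```python
def eco_scale_score(penalty_points: dict) -> int:
    """Subtract the sum of all penalty points from the baseline of 100."""
    if any(points < 0 for points in penalty_points.values()):
        raise ValueError("penalty points cannot be negative")
    return 100 - sum(penalty_points.values())


# Hypothetical penalties for one method, grouped by the categories named above.
penalties = {
    "reagents": 8,
    "energy": 1,
    "occupational_hazard": 3,
    "waste": 5,
}
score = eco_scale_score(penalties)
print(f"Eco-Scale score: {score}")
```

Keeping the penalties in a labeled dictionary makes it easy to see which category contributes most to the deduction when comparing methods.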

The Green Analytical Procedure Index (GAPI) expands upon NEMI by evaluating multiple stages of the analytical process across five major categories: sample collection, preservation, transportation, and preparation; sample treatment and analysis; reagents and compounds used; instrumentation; and quantification method. Each category is color-coded (green, yellow, red) based on environmental impact, providing a comprehensive visual representation of method greenness across its entire lifecycle [7] [1].

The Analytical GREEnness (AGREE) metric represents the most advanced approach, incorporating the twelve principles of green analytical chemistry, one per evaluation segment. Each segment is scored from 0 to 1, and the overall score is the average across all twelve principles. AGREE has the distinct advantage of automation through dedicated software and effectively highlights the weakest points in analytical techniques that require improvement. The tool provides both a numerical score and a color-coded circular diagram for intuitive interpretation [8].

Troubleshooting Guides and FAQs

Tool Selection and Implementation Guidance

How do I select the most appropriate greenness assessment tool for my specific application? Research indicates that using multiple assessment tools provides the most comprehensive evaluation of method greenness [8]. For preliminary screening, NEMI offers quick assessment despite its limitations. For publication-quality analysis or method optimization, AGREE and GAPI provide more detailed insights. ESA serves as an excellent intermediate option when numerical scoring is preferred but resource constraints limit more complex evaluations. Consider starting with AGREE or GAPI for method development, as these tools highlight specific areas for improvement more effectively [8].

What is the relationship between Green Analytical Chemistry (GAC) and White Analytical Chemistry (WAC)? GAC focuses primarily on environmental impact reduction, while WAC adopts a more holistic perspective that balances environmental concerns with methodological functionality and practical applicability [1]. WAC aims to avoid unconditional increases in greenness at the expense of analytical performance, instead seeking an optimal balance that aligns with sustainable development goals. Whiteness assessment criteria have been developed specifically to quantify this balance [1].

Technical Issues and Resolution Strategies

Why do different assessment tools sometimes provide conflicting greenness evaluations for the same method? Different tools employ distinct evaluation criteria and weighting systems, which can lead to varying conclusions about method greenness [8]. For example, a method might score well on NEMI's basic criteria but perform poorly on AGREE's more comprehensive principles. This discrepancy highlights the importance of using multiple tools and understanding their specific evaluation frameworks. Research consistently shows that AGREE, GAPI, and ESA provide more reliable and precise assessments than NEMI [8].

How can I resolve ambiguity when assigning scores for reagent toxicity or energy consumption? Consult the original literature for each assessment tool to identify specific classification criteria. For AGREE, utilize the dedicated software to standardize scoring. When uncertainty persists, apply the precautionary principle by selecting the more conservative (less green) assessment to avoid overstating environmental benefits. Document all assumptions explicitly in methodology sections to ensure transparency and reproducibility [7] [8].

What should I do if my analytical method receives poor greenness scores but modification is constrained by analytical requirements? Focus on incremental improvements rather than complete method overhaul. Identify specific segments with the poorest scores (particularly in AGREE) and target these for optimization. Consider solvent substitution, waste minimization through micro-extraction techniques, energy reduction via lower temperature operation, or automation to reduce reagent consumption. Even modest improvements can significantly enhance overall greenness scores while maintaining analytical performance [8].

Integration with Method Validation and Quality Systems

How should greenness assessment be incorporated into analytical method validation protocols? Leading researchers strongly recommend including greenness evaluation as a standard component of method validation protocols [8]. This integration ensures environmental considerations are addressed during method development rather than as an afterthought. The assessment should be conducted before practical laboratory trials to reduce chemical hazards released into the environment during method optimization [8].

What documentation standards should be applied for greenness assessments in regulatory submissions? While formal regulatory requirements for greenness assessment are still emerging, comprehensive documentation should include: the specific tools employed, complete scoring calculations or algorithms, all underlying assumptions, comparative data against alternative methods, and verification of analytical performance metrics. Visual outputs (pictograms) from each tool should be included alongside numerical scores to facilitate review by diverse stakeholders [7] [8].

Experimental Protocols and Methodologies

Standardized Assessment Protocol

Figure 1: Standardized workflow for comprehensive greenness assessment integrating all four evaluation tools.

Implementation Protocol for AGREE Assessment

The AGREE assessment protocol represents the most advanced approach to greenness evaluation. Implementation follows this specific methodology:

Data Collection: Compile complete methodological details including sample preparation steps, reagent types and quantities, instrumentation specifications, energy consumption parameters, waste generation volumes, and operator safety requirements.

Software Utilization: Access the dedicated AGREE assessment software, which is available through referenced scientific literature [8]. The automation provided by this tool ensures standardized application of evaluation criteria.

Principle Evaluation: Score each of the twelve principles on a 0-1 scale based on specific criteria:

- Principle 1: Direct analysis without sample preparation (preferred)

- Principle 2: Minimal sample quantity requirements

- Principle 3: Minimal sample transportation

- Principle 4: Low energy consumption (<0.1 kWh per sample)

- Principle 5: Waste minimization (<1 mL per sample)

- Principle 6: Avoidance of derivatization

- Principle 7: Implementation of automated methods

- Principle 8: Miniaturization and integration

- Principle 9: Reagent toxicity reduction

- Principle 10: Degradable reagents and waste

- Principle 11: Operator safety

- Principle 12: Energy-efficient instrumentation

Result Interpretation: Analyze the circular output diagram to identify the lowest-scoring segments, which represent the most significant opportunities for greenness improvement. Focus optimization efforts on these critical areas [8].
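The interpretation step can be sketched as follows. This is not the AGREE software: it simply averages twelve segment scores, as described in the text, and lists the lowest-scoring segments. The function name and the example scores are hypothetical, and the real tool may weight principles differently.

```python
from statistics import mean


def summarize_segments(scores: dict, n_weakest: int = 3):
    """Return (overall score, weakest segment ids) for twelve 0-1 segment scores."""
    if len(scores) != 12:
        raise ValueError("expected exactly 12 segment scores")
    if any(not 0 <= s <= 1 for s in scores.values()):
        raise ValueError("each segment score must lie between 0 and 1")
    overall = mean(scores.values())
    weakest = sorted(scores, key=scores.get)[:n_weakest]  # lowest scores first
    return overall, weakest


# Hypothetical segment scores keyed by principle number.
segment_scores = {1: 0.3, 2: 0.8, 3: 0.6, 4: 0.9, 5: 0.2, 6: 1.0,
                  7: 0.7, 8: 0.8, 9: 0.5, 10: 0.6, 11: 0.9, 12: 0.7}
overall, weakest = summarize_segments(segment_scores)
print(f"overall = {overall:.2f}, weakest principles = {weakest}")
```

The list of weakest principles gives a starting order for optimization work, with the lowest-scoring segment addressed first.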

Case Study Methodology: Hyoscine N-Butyl Bromide Assay

A comprehensive comparative study evaluated 16 chromatographic methods for hyoscine N-butyl bromide assay using all four assessment tools [8]. The experimental methodology provides a template for systematic greenness evaluation:

Method Selection: Identify 16 published chromatographic methods from scientific literature with complete methodological details.

Parallel Assessment: Apply each assessment tool (NEMI, ESA, GAPI, AGREE) to all methods using standardized criteria.

Comparative Analysis: Evaluate tool performance based on:

- Discrimination capability (ability to differentiate between methods)

- Ease of application

- Comprehensiveness of assessment

- Actionability of results

Validation: Verify that greenness rankings align with practical environmental impact considerations.

This methodology demonstrated that NEMI provided the least discrimination, while AGREE and GAPI offered the most detailed insights for method optimization [8].
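Discrimination capability can be approximated by counting how many distinct results a tool produces across a set of methods. The sketch below is one simple way to do this. The encoded pictograms are placeholders, not the data from the study [8]: only the 14-of-16 tie comes from the text, and the two remaining results are assumed to differ from each other.

```python
from collections import Counter


def discrimination(results):
    """Return (number of distinct results, size of the largest group of ties)."""
    counts = Counter(results)
    return len(counts), max(counts.values())


# Placeholder NEMI pictograms encoded as four pass/fail flags per method.
# 14 of the 16 methods share one pictogram, as reported in the text;
# the two others are invented here purely for illustration.
common = (True, False, True, True)
nemi_results = [common] * 14 + [(True, True, True, True), (True, False, True, False)]

distinct, largest_tie = discrimination(nemi_results)
print(f"{distinct} distinct pictograms across {len(nemi_results)} methods; "
      f"largest tie: {largest_tie}")
```

A tool that assigns a different value to nearly every method, such as a continuous 0-1 score, would show a much smaller largest tie under the same measure.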

Essential Research Reagents and Materials

Table 2: Research Reagent Solutions for Green Analytical Chemistry

| Reagent/Material Category | Specific Examples | Green Function | Application Context |
|---|---|---|---|
| Alternative Solvents | Water, ethanol, ethyl acetate, acetone | Replace toxic organic solvents | HPLC mobile phases, extraction solvents |
| Miniaturized Equipment | Micro-extraction devices, capillary columns, microfluidic chips | Reduce reagent consumption and waste generation | Sample preparation, separation techniques |
| Benign Sorbents | Biodegradable polymers, silica-based materials | Enable greener sample preparation | Solid-phase extraction, chromatography |
| Energy-Efficient Instruments | UHPLC, capillary electrophoresis, modern spectrophotometers | Reduce energy consumption | All analytical techniques |
| Digital Tools | AGREE software, method assessment databases | Facilitate greenness evaluation | Method development and optimization |

Advanced Implementation Framework

Figure 2: Integration framework showing relationship between GAC, WAC, assessment tools, and method optimization.

The advanced implementation of greenness assessment tools requires understanding their complementary relationships within the broader contexts of Green Analytical Chemistry and White Analytical Chemistry. GAC focuses primarily on reducing environmental impact, while WAC adopts a more holistic approach that balances environmental concerns with analytical functionality [1]. The four assessment tools serve as bridges between these philosophical approaches and practical method selection.

For researchers focused on optimizing sample throughput while maintaining green principles, the framework recommends:

- Strategic Tool Selection: Employ NEMI for rapid screening of multiple methods, then apply AGREE or GAPI for detailed analysis of promising candidates.

- Throughput Considerations: Recognize that greener methods often align with higher throughput capabilities through reduced sample preparation, faster analysis times, and automated processes.

- Iterative Optimization: Use assessment tool outputs to identify specific modifications that enhance both greenness and throughput simultaneously.

Based on comprehensive evaluation of the four primary greenness assessment tools, the following recommendations support optimal tool selection and implementation:

For Method Development: Prioritize AGREE and GAPI assessments during method development to identify and address environmental weaknesses before validation [8].

For Comparative Studies: Employ multiple assessment tools to obtain complementary perspectives on method greenness, as each tool provides unique insights [8].

For High-Throughput Environments: Focus on AGREE assessments, as this tool specifically highlights aspects that impact throughput (automation, energy consumption, waste generation) while providing actionable improvement guidance [8].

For Regulatory Compliance: Integrate greenness assessment formally into method validation protocols, with particular emphasis on GAPI or AGREE for comprehensive documentation [7] [8].

The strategic implementation of these assessment tools directly supports the optimization of sample throughput in green metrics research by enabling data-driven method selection that balances analytical performance, environmental impact, and operational efficiency.

The Role of Whiteness Assessment (WAC) in Balancing Sustainability and Functionality

White Analytical Chemistry (WAC) represents a holistic paradigm in modern analytical science, emerging as an extension and complement to Green Analytical Chemistry (GAC). [9] While GAC primarily focuses on environmental impact, WAC integrates three critical dimensions: Green (ecological aspects), Red (analytical performance), and Blue (practical/economic considerations). [10] [9] This integrated approach strives for a sustainable compromise that avoids unconditionally increasing greenness at the expense of functionality, thereby aligning more closely with the principles of sustainable development. [9] The term "white" symbolizes purity and the balanced combination of method quality, sensitivity, and selectivity with an eco-friendly and safe approach for analysts. [10]

Core Concepts and the RGB Model

The foundational framework of WAC is the RGB model, which functions as a unified system for evaluating analytical methods. [10] [9] According to this model, when the three primary "colors" or aspects are balanced and mixed, the resulting perception is one of "whiteness," indicating a coherent and synergistic method. [10] [9]

The three independent dimensions of the RGB model are:

- Green Dimension: Encompasses the principles of GAC, focusing on minimizing environmental impact, waste generation, energy consumption, and ensuring operator safety. [10]

- Red Dimension: Addresses analytical performance parameters, including sensitivity, selectivity, accuracy, precision, and trueness. [10]

- Blue Dimension: Covers practical and economic aspects, such as cost, speed of analysis, simplicity of use, and potential for automation. [10]

A method is considered "white" when it demonstrates a high level of performance across all three dimensions simultaneously. [9]
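The balance between the three dimensions can be illustrated numerically. The sketch below assumes each dimension has already been scored on a 0-100 scale and takes whiteness as the arithmetic mean of the three scores. That aggregation is an assumption made for illustration and should be checked against the published RGB model before use; the function name and scores are hypothetical.

```python
def whiteness(red: float, green: float, blue: float) -> float:
    """Mean of the three dimension scores, each on a 0-100 scale (assumed aggregation)."""
    for name, value in (("red", red), ("green", green), ("blue", blue)):
        if not 0 <= value <= 100:
            raise ValueError(f"{name} score must lie between 0 and 100")
    return (red + green + blue) / 3


# Hypothetical method: very green, practical, but weak analytical performance.
scores = {"red": 45.0, "green": 90.0, "blue": 75.0}
overall = whiteness(**scores)
weakest_dimension = min(scores, key=scores.get)
print(f"whiteness = {overall:.1f}, weakest dimension: {weakest_dimension}")
```

Reporting the weakest dimension alongside the mean guards against a high greenness score masking poor analytical performance.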

Troubleshooting Guide: Common WAC Implementation Issues

FAQ 1: My analytical method scores high on greenness metrics but fails to meet required performance standards for my application. How can I improve its "whiteness"?

Answer: This common issue indicates an imbalance in the RGB model, where greenness is prioritized at the expense of analytical performance (the Red dimension). To address this:

- Review Sample Preparation: Consider miniaturized techniques like Fabric Phase Sorptive Extraction (FPSE), magnetic SPE, or capsule phase microextraction (CPME). These can enhance pre-concentration of analytes and improve sensitivity without significantly increasing solvent consumption. [10]

- Optimize Instrument Parameters: For chromatographic methods, use shorter columns to decrease separation time and reduce waste generation while potentially improving detection limits. [10]

- Apply the RGB 12 Algorithm: Systematically score your method against the 12 principles of WAC (covering Green, Red, and Blue aspects) to identify specific areas where analytical performance can be enhanced without drastically compromising environmental benefits. [9] [2]

FAQ 2: How can I quantitatively assess and compare the "whiteness" of different analytical methods?

Answer: You can quantify whiteness using several established tools and algorithms:

- RGB 12 Algorithm: This simple-in-use algorithm allows you to assess analytical methods based on the 12 principles of WAC. The final whiteness score provides a convenient parameter for comparing and selecting the optimal method. [9] [2]

- AGREE Metric: The Analytical GREEnness metric is based on the 12 principles of green analytical chemistry and generates a pictogram with a score from 0 to 1. While focused on greenness, it can be part of a broader WAC assessment. [10] [2]

- Combined Tool Approach: Use specialized tools for each dimension alongside the overall WAC assessment. For example, pair BAGI (Blue Applicability Grade Index) for practicality, RAPI (Red Analytical Performance Index) for performance, and AGREE for greenness to get a comprehensive view. [10]

FAQ 3: I am developing a new method and want to ensure it aligns with WAC principles from the start. What workflow should I follow?

Answer: Implementing a structured workflow from the beginning is key to developing a method with high whiteness. The following diagram outlines a logical development process centered on the RGB model:

Diagram: WAC Method Development Workflow

FAQ 4: The sample preparation step in my workflow is the least green component. What sustainable alternatives exist?

Answer: Sample preparation is often the least green step in analytical procedures, but several greener techniques have been developed: [11]

- Micro-extraction Techniques: These significantly reduce solvent consumption. Options include FPSE, magnetic SPE using magnetic nanoparticles, CPME, and ultrasound-assisted microextraction. [10]

- Dilute-and-Shoot: For suitable matrices, this approach eliminates extensive sample preparation, aligning well with WAC principles. [10]

- Evaluate with SPMS: Use the Sample Preparation Metric of Sustainability tool, an open-source metric that exclusively evaluates the sustainability of sample preparation steps using a clock-like diagram to display key parameters and a total score. [11]

Essential Tools for Whiteness Assessment

A variety of metrics have been developed to assess the greenness, performance, and practicality of analytical methods. The table below summarizes key assessment tools relevant to WAC implementation:

Table 1: Key Assessment Tools for White Analytical Chemistry

| Tool Name | Acronym | Primary Focus | Output Format | Key Principles Assessed |
|---|---|---|---|---|
| White Analytical Chemistry | WAC [10] [9] | Holistic (RGB) | Whiteness Score | 12 principles covering green, red, and blue aspects |
| Analytical GREEnness | AGREE [10] [2] | Greenness | Pictogram (0-1 score) & Color | 12 principles of green analytical chemistry |
| Green Analytical Procedure Index | GAPI [10] [2] | Greenness | Pictogram | Multiple stages of analytical process |
| Blue Applicability Grade Index | BAGI [10] | Practicality (Blue) | Blue-shaded Pictogram | Cost, time, simplicity, automation |
| Red Analytical Performance Index | RAPI [10] | Performance (Red) | Numerical Score | Sensitivity, accuracy, precision, matrix effects |
| Analytical Eco-Scale | AES [2] | Greenness | Numerical Score (100-point scale) | Reagent hazards, energy, waste |
| Sample Preparation Metric of Sustainability | SPMS [11] | Sample Preparation Greenness | Clock-like Diagram | Extractant, time, energy, waste |

Experimental Protocols for WAC Implementation

Protocol 1: Comprehensive Method Whiteness Assessment Using the RGB 12 Algorithm

Principle: This protocol provides a systematic approach to evaluate analytical methods against the 12 principles of White Analytical Chemistry, resulting in a quantifiable "whiteness" score. [9]

Materials and Reagents:

- Detailed description of the analytical method to be assessed

- RGB 12 scoring sheet (digital or paper)

- Data on solvent consumption, energy use, waste generation

- Analytical performance data (sensitivity, accuracy, precision, etc.)

- Practical implementation data (cost per analysis, time requirements, ease of use)

Procedure:

- Define Assessment Scope: Clearly outline the analytical method's objectives, including target analytes, matrices, and required performance characteristics.

- Gather Method Data: Collect comprehensive data on all aspects of the method, including:

- Reagent types and volumes

- Energy consumption for each step

- Waste generation and disposal requirements

- Analytical performance metrics (LOQ, LOD, recovery, precision)

- Practical parameters (cost, time, required expertise)

- Score Each Principle: Evaluate the method against each of the 12 WAC principles, assigning a score from 0 (does not meet principle) to 10 (fully meets principle).

- Calculate Dimension Scores: Compute average scores for each RGB dimension:

- Green Dimension (Principles 1-4)

- Red Dimension (Principles 5-8)

- Blue Dimension (Principles 9-12)

- Compute Whiteness Percentage: Calculate the overall whiteness percentage using the formula:

Whiteness (%) = (Green Score + Red Score + Blue Score) / 30 × 100

- Interpret Results: Methods with whiteness >80% are considered excellent, 60-80% good, and <60% require improvement.

Troubleshooting:

- If one dimension scores significantly lower than others, focus improvement efforts on that specific area.

- If overall whiteness is low but individual dimensions are acceptable, consider whether the method is appropriately balanced for its intended application.
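The scoring arithmetic in Protocol 1 can be sketched in a few lines of Python. This is a minimal illustration, not the published RGB 12 spreadsheet: the function name `whiteness` and the example scores are hypothetical, and the grouping of principles (1-4 green, 5-8 red, 9-12 blue) simply follows the procedure above. Each dimension score is an average on a 0-10 scale, so the maximum sum is 30.

```python
from statistics import mean

def whiteness(scores):
    """Return dimension averages and whiteness (%) for 12 principle scores (0-10)."""
    if len(scores) != 12 or any(not 0 <= s <= 10 for s in scores):
        raise ValueError("expected 12 scores between 0 and 10")
    green = mean(scores[0:4])    # Principles 1-4
    red = mean(scores[4:8])      # Principles 5-8
    blue = mean(scores[8:12])    # Principles 9-12
    pct = (green + red + blue) / 30 * 100
    # Thresholds as stated in the protocol: >80 excellent, 60-80 good, <60 improve.
    if pct > 80:
        rating = "excellent"
    elif pct >= 60:
        rating = "good"
    else:
        rating = "requires improvement"
    return {"green": green, "red": red, "blue": blue,
            "whiteness_pct": pct, "rating": rating}

# Hypothetical scores for a method that is green but analytically weaker.
example_scores = [9, 8, 9, 8, 5, 6, 5, 6, 7, 8, 7, 8]
result = whiteness(example_scores)
print(result)
```

In this made-up example the red dimension (5.5) is clearly the lowest, which is the situation the first troubleshooting point addresses.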

Protocol 2: Comparative Assessment of Multiple Methods Using Combined Metrics

Principle: This protocol enables direct comparison of multiple analytical methods for the same application using a combination of specialized metrics to provide comprehensive RGB assessment. [10] [2]

Materials and Reagents:

- Descriptions of all analytical methods to be compared

- AGREE calculator software

- BAGI assessment criteria

- RAPI assessment criteria

- Spreadsheet software for data compilation and visualization

Procedure:

- Method Characterization: Fully document each method's procedural details, including sample preparation, instrumentation, and data analysis.

- Greenness Assessment (AGREE):

- Input method parameters into AGREE calculator

- Record overall score (0-1) and pictorial output

- Note specific areas of environmental concern

- Practicality Assessment (BAGI):

- Evaluate each method against BAGI criteria focusing on applicability aspects

- Record score and note practical limitations

- Performance Assessment (RAPI):

- Assess analytical performance parameters using RAPI

- Record numerical score and performance strengths/weaknesses

- Integrated Analysis:

- Create a comparative table of all scores across metrics

- Identify methods with the most balanced RGB profile

- Select optimal method based on application requirements and sustainability goals

Troubleshooting:

- If metrics provide conflicting recommendations, prioritize based on the specific needs of your application (e.g., regulatory requirements may emphasize certain performance parameters).

- For methods with similar overall scores, examine sub-scores to identify which best meets specific laboratory constraints or sustainability targets.
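The integrated analysis step of Protocol 2 could be organized as in the sketch below. Several things here are assumptions made for illustration: the method names and all scores are invented, BAGI and RAPI are assumed to be reported on a 0-100 scale (AGREE on 0-1, as stated above), and ranking by the weakest dimension is only one possible way to express a "balanced RGB profile".

```python
def rgb_profile(methods):
    """Normalise AGREE/BAGI/RAPI scores to 0-1 and rank by the weakest dimension."""
    rows = []
    for name, s in methods.items():
        dims = {
            "green": s["AGREE"],        # already 0-1
            "blue": s["BAGI"] / 100,    # assumed 0-100 scale
            "red": s["RAPI"] / 100,     # assumed 0-100 scale
        }
        rows.append({
            "method": name,
            **dims,
            "weakest": min(dims, key=dims.get),
            "floor": min(dims.values()),
            "spread": max(dims.values()) - min(dims.values()),
        })
    # A method is only as "white" as its weakest dimension (illustrative rule).
    return sorted(rows, key=lambda r: r["floor"], reverse=True)

# Hypothetical scores for three candidate methods.
candidates = {
    "LLE-HPLC": {"AGREE": 0.45, "BAGI": 70, "RAPI": 85},
    "FPSE-UHPLC": {"AGREE": 0.72, "BAGI": 75, "RAPI": 80},
    "Dilute-and-shoot LC-MS": {"AGREE": 0.85, "BAGI": 90, "RAPI": 55},
}
ranked = rgb_profile(candidates)
for row in ranked:
    print(row["method"], "weakest:", row["weakest"], "floor:", row["floor"])
```

The `weakest` column points to the sub-score worth examining first when two methods have similar overall results.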

Research Reagent Solutions for WAC-Optimized Analytics

Table 2: Essential Materials and Reagents for WAC Implementation

| Item | Function in WAC | Green & Practical Benefits |
|---|---|---|
| Fabric Phase Sorptive Extraction (FPSE) | Sample preparation and pre-concentration | Minimal solvent consumption, reusable phases, compatible with various matrices [10] |
| Magnetic Nanoparticles | SPE sorbents for sample preparation | Enable magnetic separation without centrifugation, reduce processing time and energy [10] |
| Capsule Phase Microextraction (CPME) | Sample preparation and pre-concentration | Minimal solvent use, high extraction efficiency, suitable for automation [10] |
| Short Chromatographic Columns | Rapid separation | Reduce analysis time, mobile phase consumption, and waste generation [10] |
| Low-Toxicity Solvents | Replacement for hazardous solvents | Reduce environmental impact, improve operator safety, simplify waste disposal [10] |
| Direct Analysis Probes | Sample introduction | Enable "dilute-and-shoot" approaches, eliminate extensive sample preparation [10] |
| Automated Microextraction Systems | Sample preparation robotics | Improve reproducibility, reduce manual labor, enable high-throughput analysis [10] |

Advanced WAC Assessment Framework

For comprehensive method evaluation, the relationship between different assessment tools and the RGB dimensions can be visualized as follows:

Diagram: WAC Assessment Tools Framework

This framework illustrates how specialized assessment tools contribute to the comprehensive evaluation of each WAC dimension, enabling researchers to identify specific areas for method improvement and optimization.

Connecting Sample Throughput with Environmental and Economic Impact

Frequently Asked Questions (FAQs)

General Principles and Methodology

1. What is the connection between sample throughput and Green Metrics? Improving sample throughput (the number of samples processed per unit of time) generally improves the greenness of your research. Faster, more efficient methods typically consume less energy, generate less waste, and use smaller quantities of solvents and reagents per sample. This aligns with the principles of Green Sample Preparation (GSP), which advocate for miniaturized, automated, and low-energy methods that minimize waste generation and the use of hazardous materials [12]. In practice, a more efficient process is usually a more sustainable and often more cost-effective one.

2. How can I quantitatively assess the environmental impact of my lab work? A comprehensive assessment should consider the entire lifecycle of the materials used. You can use a multi-objective framework that quantifies environmental impact in terms of greenhouse gas (GHG) emissions (measured in kg CO₂ equivalent) and life-cycle costs [13]. The formula below is a simplified way to model the total environmental impact (EI) of a process or portfolio of methods over a given time frame, helping to compare alternatives [13]:

minimize EIp(t) = ∑(Initial EI + (t × Daily EI))

Where:

- EIp(t) = Total environmental impact of the process portfolio over time t

- Initial EI = One-time environmental impact from equipment production and transport

- Daily EI = Ongoing environmental impact from daily energy use, solvent consumption, and waste generation
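As a rough illustration of how the formula above can be used to compare alternatives, the sketch below evaluates it for two options and finds the point at which a higher-investment option starts to pay back environmentally. The function names and all numbers (in kg CO₂e) are hypothetical placeholders, not measured data.

```python
def total_ei(options, days):
    """EIp(t): sum of initial impact plus days x daily impact over a portfolio."""
    return sum(o["initial"] + days * o["daily"] for o in options)

def break_even_days(a, b):
    """Days after which option b's cumulative impact falls below option a's.

    Returns None if b never catches up (its daily impact is not lower).
    """
    daily_saving = a["daily"] - b["daily"]
    if daily_saving <= 0:
        return None
    extra_initial = b["initial"] - a["initial"]
    return max(0.0, extra_initial / daily_saving)

# Hypothetical values in kg CO2e.
manual = {"initial": 50.0, "daily": 4.0}       # low embodied impact, high daily use
automated = {"initial": 400.0, "daily": 1.5}   # high embodied impact, low daily use

days = break_even_days(manual, automated)
print("break-even after", days, "days")
print(total_ei([manual], 365), "vs", total_ei([automated], 365), "kg CO2e per year")
```

With these placeholder values the automated option has the lower cumulative impact for any time frame longer than the break-even point, which is why the assessment horizon t matters when comparing options.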

3. Why should I consider economic factors alongside environmental ones? Environmental and economic optima are often different but interconnected [14]. A method that reduces solvent use (an environmental benefit) also lowers purchasing and waste disposal costs (an economic benefit). However, sometimes greener technologies have a higher upfront cost. A complete analysis requires trade-off optimization between these two objectives to find a sustainable balance that is viable for your lab [13]. For example, investing in an automated system may have a high initial cost but can reduce long-term operational expenses and environmental footprint.

Troubleshooting Common Experimental Issues

4. My high-throughput method is generating too much plastic waste. What are my options? This is a common challenge. Consider these strategies based on the principles of GSP [12]:

- Strategy 1: Miniaturization. Scale down your reactions or assays to use smaller tubes, plates, and reduced reagent volumes. This directly cuts waste at the source.

- Strategy 2: Solvent/Reagent Evaluation. Actively seek out and use safer, biodegradable solvents or reagents. The GSP principles emphasize the use of safer, renewable, and recycled materials [12].

- Strategy 3: Process Re-engineering. Explore if your workflow can be adapted to reuse or recycle certain solvents or materials, moving from a linear "use-and-dispose" model to a more circular one.

5. How can I increase my sample throughput without compromising data quality? The key is to leverage technology and simplified protocols:

- Solution 1: Automation. Implement automated liquid handlers and sample processors. They not only increase speed and throughput but also improve reproducibility and minimize human error.

- Solution 2: Procedure Simplification. Critically review your workflow. Can any steps be combined or eliminated? The GSP principles highlight "procedure simplification" as a path to greener and more efficient methods [12].

- Solution 3: In-line Analysis. Where possible, use in-line or at-line analytics to avoid time-consuming sample preparation and transfer steps.

6. My lab wants to be more sustainable, but new equipment is too expensive. Where do I start? Focus on process improvements that have low or no cost:

- Action 1: Audit High-Impact Areas. Identify processes that use the largest volumes of solvents or energy. Even small efficiency gains here can have a significant impact.

- Action 2: Prioritize Low-Cost GSP Principles. Implement miniaturization, waste reduction, and energy conservation measures first. These often pay for themselves quickly through reduced consumable costs [12].

- Action 3: Leverage Predictive Tools. Use software and predictive tools early in your method development to simulate and identify greener and more cost-effective protocols before committing resources to the lab [15] [16].

The following tables summarize key data for comparing conventional and green methodologies.

Table 1: Environmental and Economic Impact of Common Lab Process Alternatives

| Process Category | Conventional Method Impact | Green Alternative Impact | Key Green Metric Improved |
|---|---|---|---|
| Sample Preparation | High solvent use, high energy demand, significant waste [12] | Miniaturized & automated methods [12] | >90% reduction in solvent use & waste generation [12] |
| Drug Development (environmental risk assessment, ERA) | Unknown ecological risk for legacy drugs (>60% lack data) [15] | Early-stage assessment using predictive tools & non-animal methods [15] | Enables prediction of unintended effects on non-target organisms [15] |
| Biopharmaceutical Manufacturing | Batch processing: Large footprint, high capital cost [17] | Continuous processing: Small footprint, consistent quality [17] | Increased productivity, reduced operating cost [17] |

Table 2: Sustainability Trade-off Analysis for Infrastructure Decisions

| Objective | Optimal Strategy | Potential Trade-off |
|---|---|---|
| Minimize Environmental Footprint | Select technologies with lowest lifetime GHG emissions [13]. | Often higher upfront procurement cost and mobilization investment [13]. |
| Minimize Life-Cycle Cost | Select technologies with lowest combined procurement and operational cost [13]. | May result in higher long-term resource consumption and emissions [13]. |
| Multi-Objective Optimization | Use a genetic algorithm to find a "Pareto-optimal" portfolio that balances cost and environmental impact [14]. | Requires computational modeling and does not yield a single "perfect" solution, but a set of optimal compromises [14]. |
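To make the idea of a "set of optimal compromises" concrete, the sketch below filters a handful of options down to the Pareto-optimal ones. Note that this is a simple brute-force dominance check, not the genetic algorithm mentioned in the table; a genetic algorithm becomes useful when the number of possible portfolios is too large to enumerate. The option names, costs, and emissions are hypothetical.

```python
def pareto_front(options):
    """Return options that no other option beats on both cost and GHG (lower is better)."""
    front = []
    for a in options:
        dominated = any(
            b["cost"] <= a["cost"] and b["ghg"] <= a["ghg"]
            and (b["cost"] < a["cost"] or b["ghg"] < a["ghg"])
            for b in options
        )
        if not dominated:
            front.append(a)
    return sorted(front, key=lambda o: o["cost"])

# Hypothetical life-cycle cost (arbitrary currency units) and emissions (kg CO2e).
options = [
    {"name": "A", "cost": 100, "ghg": 900},
    {"name": "B", "cost": 150, "ghg": 600},
    {"name": "C", "cost": 160, "ghg": 700},  # worse than B on both objectives
    {"name": "D", "cost": 250, "ghg": 300},
    {"name": "E", "cost": 300, "ghg": 350},  # worse than D on both objectives
]
front = pareto_front(options)
print([o["name"] for o in front])
```

Every option left on the front is a defensible choice; picking among them depends on how your lab weighs cost against emissions.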

Experimental Protocols for Key Experiments

Protocol 1: Life-Cycle Environmental Impact Assessment for a Lab Workflow

This protocol provides a methodology to quantify the environmental footprint of a standard laboratory procedure.

1. Goal and Scope Definition:

- Define the unit of analysis (e.g., "per sample prepared" or "per assay run").

- Set the system boundaries (e.g., from reagent retrieval to waste disposal).

2. Life-Cycle Inventory (LCI) Analysis:

- Material Inputs: Record all consumables (plastics, reagents, solvents) and their masses.

- Energy Inputs: Measure the energy consumption (kWh) of all equipment used (e.g., centrifuges, heaters, HPLC systems).

- Outputs: Quantify all waste streams (solid, liquid, hazardous) by mass.

3. Life-Cycle Impact Assessment (LCIA):

- Convert LCI data into environmental impact indicators. The most common is Global Warming Potential (GWP) in kg CO₂e.

- Use emission factors (e.g., from the Product Environmental Footprint (PEF) guide [14]) to calculate GWP from energy use and material production.

- Total GWP = (Energy use × Electricity emission factor) + Σ(Mass of material × Production emission factor)

4. Interpretation:

- Analyze which steps or materials are the largest contributors to the total impact.

- Use this analysis to target and optimize the most damaging parts of your workflow.
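The GWP formula in step 3 and the hotspot analysis in step 4 can be sketched as follows. All emission factors and quantities below are placeholder values chosen for illustration only; in a real assessment they would come from a recognized database or guide for your region and materials.

```python
def total_gwp(energy_kwh, grid_factor, materials):
    """Return (total GWP in kg CO2e, per-item contributions).

    materials: list of (name, mass_kg, production_factor_kg_co2e_per_kg).
    """
    contributions = {"electricity": energy_kwh * grid_factor}
    for name, mass_kg, factor in materials:
        contributions[name] = mass_kg * factor
    return sum(contributions.values()), contributions

# Placeholder inventory for one sample (not real emission factors).
gwp, parts = total_gwp(
    energy_kwh=0.5,
    grid_factor=0.4,                           # kg CO2e per kWh, assumed
    materials=[
        ("acetonitrile", 0.02, 3.0),           # kg used, kg CO2e per kg (assumed)
        ("polypropylene tips", 0.005, 2.0),
    ],
)
hotspot = max(parts, key=parts.get)
print(f"Total GWP: {gwp:.3f} kg CO2e per sample; largest contributor: {hotspot}")
```

Ranking the entries in `parts` gives the interpretation step a starting point: the largest contributor is usually the first candidate for optimization.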

Protocol 2: Implementing a Miniaturized and Automated Sample Preparation Method

This protocol outlines the steps to transition from a manual, macro-scale method to a greener, high-throughput alternative.

1. Feasibility and Scoping:

- Identify a candidate manual protocol that is frequently used, resource-intensive, and has a high potential for miniaturization (e.g., solid-phase extraction, liquid-liquid extraction).

- Determine the availability of suitable automated equipment (e.g., a liquid handling robot) and miniaturized labware (e.g., 96-well plates).

2. Method Translation and Optimization:

- Scale down reagent and sample volumes proportionally to the new format (e.g., from 1 mL to 100 µL in a 96-well plate).

- Program the automated liquid handler to execute the liquid transfers, mixing, and incubation steps.

- Optimize key parameters (e.g., mixing speed, incubation time) for the smaller scale to ensure recovery and accuracy are maintained.

3. Validation and Green Metrics Calculation:

- Validate the new method against the old one for key performance indicators (accuracy, precision, detection limit).

- Calculate and compare the green metrics for both methods using the data from Table 1. Key metrics to report include:

- Solvent volume used per sample

- Plastic waste generated per sample

- Total energy consumption per sample run

- Throughput (samples per hour)
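One way to report the four metrics listed above is to normalize each batch to per-sample values and then express the change between methods as a percentage. The sketch below does this with a small dataclass; the class name `Run` and the batch figures are hypothetical and only show the bookkeeping.

```python
from dataclasses import dataclass

@dataclass
class Run:
    """Totals recorded for one batch of samples."""
    samples: int
    solvent_ml: float
    plastic_g: float
    energy_kwh: float
    hours: float

    def per_sample(self):
        return {
            "solvent_ml": self.solvent_ml / self.samples,
            "plastic_g": self.plastic_g / self.samples,
            "energy_kwh": self.energy_kwh / self.samples,
            "throughput_per_h": self.samples / self.hours,
        }

def percent_change(old, new):
    """Percentage change of each metric relative to the old method."""
    return {k: (new[k] - old[k]) / old[k] * 100 for k in old}

# Hypothetical batch records: manual tubes vs. automated 96-well plate.
manual = Run(samples=24, solvent_ml=240.0, plastic_g=120.0, energy_kwh=1.2, hours=4.0)
automated = Run(samples=96, solvent_ml=96.0, plastic_g=192.0, energy_kwh=1.92, hours=2.0)

change = percent_change(manual.per_sample(), automated.per_sample())
for metric, pct in change.items():
    print(f"{metric}: {pct:+.0f}%")
```

Note that the automated batch in this example uses more plastic and energy in total, yet less per sample; reporting per-sample values avoids drawing the wrong conclusion from batch totals.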

Workflow and Relationship Diagrams

Diagram: High-Level Sustainable Workflow

Diagram: Throughput Optimization Logic

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for Green, High-Throughput Research

| Item | Function & Application | Sustainability & Throughput Benefit |
|---|---|---|
| Automated Liquid Handlers | Precise, high-speed dispensing of samples and reagents in microplates. | Enables massive parallel processing, reduces human error, and ensures highly reproducible miniaturization [12]. |
| Multi-well Plates (e.g., 96, 384-well) | Platform for running dozens to hundreds of experiments simultaneously. | The foundation of miniaturization, drastically reducing per-sample consumable and reagent use [12]. |
| Safer Solvent Alternatives | Bio-based or less hazardous solvents replacing toxic options (e.g., cyclopentyl methyl ether vs. dichloromethane). | Reduces environmental toxicity and waste hazard, aligning with GSP principles for safer reagents [12]. |
| Predictive Software Tools (e.g., GMT) | Tools to measure and optimize software/computational resource consumption. | Allows for "virtual" optimization of methods to reduce energy use and carbon emissions before lab work begins [16]. |
| High-Efficiency Chromatography Columns (e.g., UHPLC) | Separation of complex mixtures using smaller particle sizes and higher pressures. | Allows for faster run times (higher throughput) and lower solvent consumption per analysis compared to conventional HPLC. |

Implementing Green Sample Preparation and High-Throughput Analysis

Frequently Asked Questions (FAQs)

FAQ 1: What is the most significant source of environmental impact in traditional sample preparation? The most significant sources are the use of large volumes of hazardous organic solvents and the generation of associated hazardous waste. [18] Traditional solvents like benzene and chloroform are volatile, toxic, and persistent in the environment, creating occupational hazards and disposal challenges. [18] Furthermore, sample preparation methods that are not optimized contribute to excessive energy consumption and plastic waste, with research labs generating an estimated 5.5 million metric tons of single-use plastic waste annually. [19] [20]

FAQ 2: Are green solvents as effective as traditional solvents for analytical methods? In many cases, yes. Many green solvents are designed to offer equivalent, and sometimes superior, performance while reducing environmental and health impacts. [18] Solvents like bio-based ethanol, supercritical CO₂, and certain ionic liquids can be effectively used in various extraction and separation techniques. [18] [4] The key is to select a green solvent with the correct properties (e.g., polarity, solubility) for your specific application and analytical technique to ensure compatibility and reliable results. [18]

FAQ 3: What is the simplest first step I can take to make my sample prep greener? The simplest first step is to miniaturize your methods. [4] Reducing sample sizes from milliliters to microliters or milligrams directly reduces the consumption of samples, solvents, and reagents, thereby minimizing waste generation. [21] [22] This approach can often be implemented with existing equipment through careful method optimization and does not necessarily require a capital investment.

FAQ 4: How can I objectively assess and compare the 'greenness' of my sample preparation method? You can use established green assessment tools. The following table summarizes key metrics:

| Assessment Tool | Primary Focus | Key Metrics Evaluated |
|---|---|---|
| AGREE (Analytical Greenness Calculator) [22] | Overall analytical method | Uses 12 principles of GAC to provide a comprehensive score. |
| AGREEprep [22] | Sample preparation stage | Specifically evaluates the sample preparation step. |
| ComplexGAPI [22] | Complex analytical procedures | Provides a visual profile of the method's environmental impact. |
| GreenSOL [23] | Solvent selection | Employs a lifecycle approach to evaluate solvents from production to waste. |

FAQ 5: What are the common trade-offs when implementing green sample prep? The most common trade-off is between analytical performance and sustainability. [22] For instance, a highly sensitive and specific method might require energy-intensive instrumentation like a GC-QTOF-MS, which can consume over 1.5 kWh per sample. [22] Other challenges include the initial time investment for method validation and optimization, and potential costs for new equipment. However, these are often offset by long-term savings in reagent costs and waste disposal. [4]

Troubleshooting Common Challenges

Challenge 1: High Solvent Usage and Waste

Problem: My current liquid-liquid extraction method uses over 100 mL of chlorinated solvent per sample, generating significant hazardous waste.

Solution: Transition to solvent-minimized or solvent-free extraction techniques.

- Recommended Protocol: Solid-Phase Microextraction (SPME)

- Principle: A fiber coated with a stationary phase is exposed to the sample (or its headspace) to adsorb analytes. The analytes are then thermally desorbed directly into a GC inlet, eliminating the need for solvent. [4] [22]

- Steps:

- Condition the SPME fiber according to the manufacturer's instructions in the GC injection port.

- Expose the fiber to the sample vial. For headspace-SPME (HS-SPME), incubate the sample at an optimized temperature and time to allow volatiles to partition into the headspace. [22]

- Retract the fiber and transfer it to the GC injector.

- Desorb the analytes in the hot GC injector for a set time to transfer them to the analytical column.

- Optimization Tips: Fiber coating selection is critical (e.g., DVB/CAR/PDMS for VOCs). [22] Systematically optimize exposure time, temperature, and sample agitation to maximize recovery.

Challenge 2: Excessive Sample and Reagent Consumption

Problem: I need to analyze a rare or limited sample and cannot use the standard method requiring large volumes.

Solution: Implement method miniaturization and micro-extraction techniques.

- Recommended Protocol: Miniaturized Headspace Extraction

- Principle: The scale of the entire sample preparation process is reduced. As demonstrated in a study on tree emissions, effective profiling was achieved using only 0.20 grams of plant material. [22]

- Steps:

- Weigh a small, representative sample (e.g., 0.2 g) into a headspace vial.

- Seal the vial properly to maintain integrity.

- Apply a miniaturized extraction technique like HS-SPME or use a low-volume insert for liquid injection.

- Optimization Tips: Ensure sample homogenization is excellent. Use chemometric tools like Principal Component Analysis (PCA) to help validate that the miniaturized method retains the ability to differentiate samples reliably. [22]

Challenge 3: Selecting the Right Green Solvent

Problem: I want to replace a hazardous solvent but don't know which green alternative is suitable.

Solution: Use a structured solvent selection guide based on the principles of Green Chemistry.

- Recommended Protocol: Using the GreenSOL Guide

- Principle: This guide evaluates solvents across their entire lifecycle (production, use, waste) against multiple impact categories, providing a score from 1 (least favorable) to 10 (most recommended). [23]

- Steps:

- Identify the properties (e.g., polarity, boiling point) your application requires.

- Consult the GreenSOL guide (available online) to compare solvents within the same chemical group. [23]

- Select a solvent with a high GreenSOL score that also meets your technical needs.

- Common Green Solvent Categories:

- Bio-based solvents: Derived from renewable resources (e.g., ethanol from sugarcane, ethyl lactate, limonene from citrus peels). [18]

- Supercritical Fluids: Such as CO₂, which is non-toxic and whose solvent strength can be tuned with pressure and temperature. [18]

- Deep Eutectic Solvents (DES): Low-cost, tunable, and often biodegradable mixtures. [18]
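The selection steps above (filter by technical requirements, then prefer the highest-scoring candidate) can be sketched as below. The `score` values are placeholders invented for this example and are not actual GreenSOL ratings; look up the real scores in the guide itself. Boiling points are approximate, and the single `polarity` label is a deliberate simplification of real solvent properties.

```python
def pick_solvent(candidates, max_bp_c, polarity):
    """Return the highest-scoring candidate meeting the technical needs, or None."""
    suitable = [
        c for c in candidates
        if c["bp_c"] <= max_bp_c and c["polarity"] == polarity
    ]
    return max(suitable, key=lambda c: c["score"], default=None)

# Approximate boiling points; 'score' is a PLACEHOLDER on a 1-10 scale.
candidates = [
    {"name": "ethanol", "bp_c": 78, "polarity": "polar", "score": 8},
    {"name": "ethyl lactate", "bp_c": 154, "polarity": "polar", "score": 7},
    {"name": "limonene", "bp_c": 176, "polarity": "nonpolar", "score": 6},
    {"name": "heptane", "bp_c": 98, "polarity": "nonpolar", "score": 5},
]

choice = pick_solvent(candidates, max_bp_c=100, polarity="polar")
print(choice["name"] if choice else "no suitable solvent; relax the constraints")
```

Filtering on technical requirements first ensures that a high greenness score never overrides compatibility with the application.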

Diagram: Green Solvent Selection Workflow

Research Reagent Solutions

The following table details key materials and tools essential for implementing greener sample preparation.

| Reagent/Material | Function & Green Rationale |
|---|---|
| SPME Fibers (e.g., DVB/CAR/PDMS) | Enables solvent-free extraction and pre-concentration of analytes from liquid or gaseous samples, drastically reducing hazardous waste. [4] [22] |
| Bio-based Solvents (e.g., Ethyl Lactate, Limonene) | Renewable, often less toxic alternatives to petroleum-derived solvents. Effective for extraction and cleaning. [18] |
| Microfluidic/Lab-on-a-Chip Devices | Miniaturizes entire analytical processes, leading to massive reductions in sample and reagent consumption (down to nanoliters). [4] |
| Supercritical CO₂ | A non-toxic, non-flammable solvent for extraction (SFE). It avoids petroleum derivatives, and the extract is easily recovered by depressurization. [18] |
| Green Assessment Software (e.g., AGREE, GreenSOL) | Provides a quantitative and structured framework for evaluating and comparing the environmental footprint of analytical methods, guiding better choices. [23] [22] |

Advanced Experimental Protocol: A Green Workflow for VOC Analysis

This detailed protocol is adapted from a published method for analyzing biogenic volatile organic compounds (BVOCs) using HS-SPME-GC–MS, showcasing a practical integration of multiple green strategies. [22]

Aim: To determine the profile of volatile compounds from plant material using a miniaturized, solvent-free approach.

Principles: This method replaces traditional solvent-based extraction with headspace solid-phase microextraction (HS-SPME), eliminating solvent waste. Miniaturization reduces sample requirement to only 0.20 g, and automation improves reproducibility and throughput. [22]

Materials:

- Gas Chromatograph coupled to a Mass Spectrometer (GC-MS)

- Automated SPME sampler

- SPME fiber (e.g., DVB/CAR/PDMS 50/30 μm)

- Headspace vials and caps

- Analytical balance

- Cryogenic mill (optional, for homogenization)

- Liquid nitrogen for sample preservation

Procedure:

- Sample Collection and Preparation:

- Collect plant material in the field using standardized procedures (e.g., consistent time of day, canopy zone). [22]

- Immediately freeze samples in liquid nitrogen or on dry ice to preserve the volatile profile.

- Lyophilize (freeze-dry) the samples if necessary for storage.

- Gently homogenize the frozen material using a cryogenic mill. Avoid letting the sample thaw.

- Sample Weighing and Loading:

- Precisely weigh 0.20 g of homogenized plant material into a 20 mL headspace vial.

- Immediately seal the vial with a crimp cap equipped with a PTFE/silicone septum.

- HS-SPME Extraction:

- Place the vial in the autosampler tray.

- The automated method should include:

- Incubation: Heating the vial at a defined temperature (e.g., 60°C) for a set time (e.g., 10-15 minutes) with agitation to allow volatiles to equilibrate in the headspace.

- Extraction: Exposing the SPME fiber to the sample headspace for a defined time (e.g., 30-45 minutes) while maintaining temperature.

- GC-MS Analysis:

- Desorption: Retract the fiber and transfer it to the GC injector for thermal desorption (e.g., 250°C for 5 minutes).

- Chromatography: Use a suitable temperature program on a non-polar or mid-polar capillary column to separate the compounds.

- Detection: Acquire data in full-scan mode (e.g., m/z 40-350) for untargeted profiling.

- Data Analysis:

- Use chemometric tools like Principal Component Analysis (PCA) and Hierarchical Cluster Analysis (HCA) to interpret complex datasets, validate method performance, and identify discriminant compounds. [22]

Green Metric Assessment: The developers of this method used AGREE, AGREEprep, and ComplexGAPI tools, which highlighted its strengths in waste minimization and safety, while also transparently identifying energy consumption as a trade-off. [22]

Diagram: Miniaturized Green Sample Prep Workflow

Applying the Sample Preparation Metric of Sustainability for Method Design

The Sample Preparation Metric of Sustainability (SPMS) is an open-source tool designed to explicitly and exclusively evaluate the environmental impact of your sample preparation procedures [24]. Traditional green metrics often assess the entire analytical method, making it difficult to isolate and improve the sustainability of the sample preparation step, which is typically the least green part of the process [24]. Using SPMS allows you to quantitatively compare different sample preparation techniques and make informed decisions that optimize your method for both performance and environmental impact.

Frequently Asked Questions (FAQs)

Q1: What is the advantage of SPMS over other green metrics like AGREE or GAPI? SPMS focuses solely on the sample preparation step, whereas other metrics evaluate the entire analytical procedure. This exclusive focus allows for a more precise and meaningful assessment of the sustainability of your sample preparation techniques, making it easier to identify specific areas for improvement [24].

Q2: How does the SPMS tool present its results? The metric is simple and reports its result with a clock-like diagram. This visual display shows the greenness outcome of the main sample preparation parameters and provides a total sustainability score [24].

Q3: Can SPMS differentiate between similar microextraction approaches? Yes, a key strength of this metric is its ability to differentiate between closely related microextraction approaches in terms of their sustainability, helping you select the greenest option for your specific needs [24].

Q4: Where can I find the SPMS tool to use in my lab? The metric is open-source. You can download the provided Excel sheet to begin assessing your own sample preparation procedures [24].

Troubleshooting Guide: Common Sample Prep Issues & Green Solutions

| Problem Category | Specific Failure Signs | Root Cause | Corrective Action for Recovery & Greenness |
|---|---|---|---|
| Sample Input & Quality [25] | Low yield; smear on analysis; low complexity. | Sample degradation; contaminants (phenol, salts); inaccurate quantification [25]. | Re-purify input; use fluorometric quantification (e.g., Qubit) over UV absorbance to reduce reagent waste from repeated attempts [25]. |
| Fragmentation & Ligation [25] | Unexpected fragment size; high adapter-dimer peaks. | Over-/under-shearing; improper adapter-to-insert ratio; poor ligase efficiency [25]. | Titrate adapter ratios to minimize waste; optimize fragmentation parameters to avoid repetition and save energy [25]. |
| Amplification & PCR [25] | High duplicate rate; amplification bias; artifacts. | Too many PCR cycles; enzyme inhibitors; mispriming [25]. | Reduce PCR cycles to save energy and reagents; ensure efficient polymerase to prevent need for re-amplification [25]. |
| Purification & Cleanup [25] | High adapter-dimer carryover; significant sample loss. | Incorrect bead-to-sample ratio; over-dried beads; pipetting errors [25]. | Precisely calibrate pipettes and master bead ratios to minimize sample loss and material waste [25]. |
| General Sample Management [26] | Mislabeled or lost samples; compromised integrity. | Human error; lack of standardized procedures; poor tracking [26]. | Implement barcoding or digital tracking systems (e.g., LIMS) to reduce errors and prevent the waste of resources on misplaced samples [26] [27]. |

SPMS Integrated Troubleshooting Workflow

Experimental Protocol: Implementing SPMS in Method Design

Objective: To integrate the Sample Preparation Metric of Sustainability (SPMS) into the development of a new sample preparation method, aiming to optimize its environmental performance.

1. Define Method Parameters:

- Clearly outline each step of your proposed sample preparation procedure, including the types and volumes of solvents, materials (e.g., sorbents, filters), and energy consumption (e.g., incubation time, centrifugation speed) [24].

2. Download and Input Data into SPMS Tool:

- Acquire the open-source SPMS Excel sheet [24].

- Input the defined parameters from Step 1 into the corresponding fields in the spreadsheet.

3. Run the Assessment and Interpret the Results:

- The tool will generate a clock-like diagram (radar chart) and a total score.

- Interpretation: The diagram visually highlights which parameters have high or low greenness scores. Use this to identify the least sustainable aspects of your method. A higher total score indicates a greener procedure [24].

4. Iterate and Optimize:

- Based on the SPMS output, modify your method to improve weak areas. For example:

- If solvent waste is high: Investigate switching to a micro-extraction technique or a less hazardous solvent.

- If energy use is high: Reduce incubation times or temperatures if possible.

- Re-run the SPMS assessment on the modified method to quantify the improvement in greenness.

5. Validate Method Performance:

- After optimization, ensure the sustainable method still meets all analytical performance criteria (e.g., recovery, reproducibility, sensitivity) as outlined by the "whiteness" concept in Green Analytical Chemistry, which balances environmental impact with functionality [1].
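The iterate-and-re-assess loop in Step 4 can be tracked with a small script once the spreadsheet results are in hand. The sketch below is only a bookkeeping aid: it assumes per-parameter scores on a 0 to 1 scale that you have transcribed by hand from two SPMS runs, and the parameter names and values are hypothetical placeholders, not the tool's actual fields or scoring algorithm.

```python
# Hypothetical per-parameter greenness scores (0 = worst, 1 = best), transcribed
# by hand from two runs of the SPMS spreadsheet. Names and values are
# illustrative placeholders, not the tool's real fields or output.
baseline = {"solvent": 0.40, "waste": 0.35, "energy": 0.70, "sample_amount": 0.80}
modified = {"solvent": 0.75, "waste": 0.70, "energy": 0.70, "sample_amount": 0.80}


def compare_runs(before, after):
    """Return the change in each parameter score between two assessments."""
    return {name: round(after[name] - before[name], 2) for name in before}


def weakest_parameter(scores):
    """Return the lowest-scoring parameter (the next optimization target)."""
    return min(scores, key=scores.get)


changes = compare_runs(baseline, modified)
print("Change per parameter:", changes)
print("Weakest parameter before:", weakest_parameter(baseline))
print("Weakest parameter after:", weakest_parameter(modified))
```

Keeping the two assessments side by side makes it easier to confirm that a change aimed at one parameter (for example, solvent volume) did not quietly worsen another.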

SPMS Method Development Cycle

The Scientist's Toolkit: Essential Research Reagent Solutions

| Item or Reagent | Primary Function in Sample Prep | Green Considerations |
|---|---|---|
| Solid Phase Extraction (SPE) Sorbents | Selectively bind and concentrate analytes from a liquid sample [28]. | Choose sorbents that enable high analyte recovery to minimize solvent use for elution. Reusable sorbents are preferable. |
| Micro-extraction Devices | Extract analytes using very small volumes of solvent (e.g., SPME, SBSE) [24]. | Dramatically reduce hazardous solvent waste. SPMS is particularly effective for comparing these techniques [24]. |
| Bio-Based or Green Solvents | Replace traditional, hazardous solvents (e.g., hexane, chlorinated solvents). | Less toxic, biodegradable, and often from renewable resources. Their use directly improves SPMS scores related to waste and hazard. |
| Laboratory Information Management System (LIMS) | Digitally track samples, procedures, and data [26] [27]. | Prevents loss of samples and need for re-preparation, saving materials and energy. Improves data integrity for compliance [26]. |
| Concentrated Master Mixes | Pre-mixed, optimized solutions for steps like PCR [25]. | Reduces pipetting steps and volumetric errors, leading to less reagent waste and more reproducible results [25]. |

The following table summarizes the core characteristics of UFLC-DAD and Spectrophotometry to aid in initial technique selection [29].

| Feature | UFLC-DAD | Spectrophotometry |
|---|---|---|
| Overall Speed | Faster analysis times due to high-resolution separation [29] | Rapid analysis, but can be limited by sample preparation for complex mixtures [29] |
| Analysis Throughput | High | Very High (for simple mixtures) [29] |
| Key Advantage | High selectivity and sensitivity; can analyze complex mixtures and multiple components simultaneously [29] | Simplicity, low cost, precision, and operational ease [29] |
| Primary Greenness Consideration | Higher solvent consumption and waste generation [30] | Generally lower solvent use and energy consumption [29] |
| Sample Concentration Limits | Can analyze a wide range of concentrations (e.g., 50 mg and 100 mg tablets) [29] | Limited by Beer-Lambert law; may not detect higher concentrations without dilution (e.g., only 50 mg tablets in one study) [29] |
| Best Suited For | Complex matrices, multi-analyte determination, and situations requiring high specificity. | Routine quality control of simple formulations, single-analyte determination, and resource-limited settings. |
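The "Best Suited For" row can be read as a simple first-pass decision rule. The sketch below encodes only that row; the function name and its three inputs are hypothetical, and a real selection would also weigh cost, instrument availability, and the greenness considerations listed in the table.

```python
def suggest_technique(complex_matrix, n_analytes, needs_high_specificity):
    """First-pass suggestion based only on the 'Best Suited For' table row.

    Hypothetical helper: the inputs are simplifications chosen for illustration.
    """
    if complex_matrix or n_analytes > 1 or needs_high_specificity:
        return "UFLC-DAD"
    return "Spectrophotometry"


# Routine QC of a simple, single-analyte formulation
print(suggest_technique(complex_matrix=False, n_analytes=1, needs_high_specificity=False))

# Multi-analyte determination in a complex matrix
print(suggest_technique(complex_matrix=True, n_analytes=3, needs_high_specificity=True))
```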

Experimental Protocols for Method Validation

To ensure reliable and reproducible results, analytical methods must be properly validated. The following protocols outline key experiments for both techniques, based on the determination of metoprolol tartrate (MET) in tablets [29].

Protocol 1: Validating a Spectrophotometric Method

This protocol is designed for the quantification of an active component in pharmaceuticals using a UV spectrophotometer.

1. Instrument Calibration: Ensure the spectrophotometer is calibrated according to the manufacturer's specifications. Use ultrapure water or the chosen solvent as a blank.

2. Preparation of Standard Solutions:

- Prepare a stock solution of the reference standard (e.g., MET with certified purity ≥98%) in a suitable solvent like ultrapure water [29].

- From the stock solution, prepare a series of standard solutions covering a defined concentration range (e.g., for MET, the range was validated for 50 mg tablets) [29].

3. Specificity/Selectivity Check:

- Scan the standard solution and a sample solution extracted from the placebo (tablet excipients without the active ingredient) over the relevant wavelength range (e.g., 200-400 nm).

- Confirm that the excipients do not produce any interfering absorbance at the analytical wavelength (e.g., λ = 223 nm for MET) [29].

4. Linearity and Range:

- Measure the absorbance of the standard solutions at the specified wavelength.

- Plot absorbance versus concentration and determine the correlation coefficient, y-intercept, and slope of the regression line. The method is linear if the correlation coefficient (r) is ≥ 0.999 [29].

5. Accuracy (Recovery):

- Perform a standard addition procedure by spiking a pre-analyzed sample with known quantities of the reference standard.

- Calculate the percentage recovery of the added standard. The method is accurate if recovery is close to 100% [29].

6. Precision:

- Repeatability: Analyze multiple preparations (n=6) of the same sample solution on the same day.

- Intermediate Precision: Analyze the same sample on different days or by different analysts.

- Calculate the relative standard deviation (RSD%) of the results. An RSD of less than 2% is typically acceptable [29].
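The linearity, recovery, and precision calculations in the steps above can be sketched in a few lines of Python. This is a minimal illustration using ordinary least squares and the sample standard deviation; the function names are hypothetical and all numbers are made-up example data, not values from the cited study.

```python
import math


def linear_fit(x, y):
    """Least-squares slope, intercept, and Pearson r for a calibration line."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    syy = sum((yi - mean_y) ** 2 for yi in y)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    r = sxy / math.sqrt(sxx * syy)
    return slope, intercept, r


def percent_recovery(found_spiked, found_unspiked, amount_added):
    """Recovery (%) of a known spike added to a pre-analyzed sample."""
    return 100.0 * (found_spiked - found_unspiked) / amount_added


def rsd_percent(values):
    """Relative standard deviation (%) using the sample standard deviation."""
    n = len(values)
    mean = sum(values) / n
    sd = math.sqrt(sum((v - mean) ** 2 for v in values) / (n - 1))
    return 100.0 * sd / mean


# Illustrative data only (not from the cited study).
conc = [2.0, 4.0, 6.0, 8.0, 10.0]                 # standard concentrations
absorbance = [0.101, 0.199, 0.302, 0.398, 0.501]  # measured absorbance
slope, intercept, r = linear_fit(conc, absorbance)
print(f"slope={slope:.5f}, intercept={intercept:.5f}, r={r:.5f}, linear={r >= 0.999}")

print(f"recovery={percent_recovery(14.9, 10.0, 5.0):.1f}%")

replicates = [49.8, 50.1, 50.3, 49.9, 50.0, 50.2]  # n=6 preparations
rsd = rsd_percent(replicates)
print(f"RSD={rsd:.2f}%, acceptable={rsd < 2.0}")
```

The acceptance checks mirror the thresholds stated in the protocol (r ≥ 0.999 and RSD below 2%); adjust them if your own validation plan specifies different limits.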

Protocol 2: Validating a UFLC-DAD Method

This protocol outlines the critical steps for validating a chromatographic method, which includes an initial method optimization phase.

A. Method Optimization (Prior to Validation):

- Column Selection: Choose an appropriate column (e.g., C18).

- Mobile Phase Optimization: Experiment with different compositions of the aqueous and organic phases (e.g., water with 0.1% formic acid and methanol or acetonitrile) to achieve optimal peak shape and resolution [29].

- Flow Rate and Gradient: Adjust the flow rate and gradient program to shorten the runtime while maintaining a clear separation of the analyte peak from any impurities or excipients [29].

B. Method Validation:

1. Specificity: Inject the standard, placebo extract, and sample extract. Confirm that the analyte peak is pure (using the DAD peak purity function) and has no interference from other components at its retention time [29].

2. Linearity: Prepare and inject a series of standard solutions. Plot the peak area versus concentration and assess the linearity of the calibration curve [29].

3. Accuracy: Perform a recovery study by spiking the placebo with known amounts of the standard at different concentration levels (e.g., 80%, 100%, 120%) and calculate the recovery percentage [29].

4. Precision: Evaluate repeatability and intermediate precision as described in the spectrophotometry protocol, but using peak areas or heights from the chromatograms [29].

5. Limit of Detection (LOD) and Limit of Quantification (LOQ): Estimate either from the signal-to-noise ratio (typically 3:1 for LOD and 10:1 for LOQ) or from the calibration curve using the formulae LOD = 3.3 × SD/Slope and LOQ = 10 × SD/Slope, where SD is the standard deviation of the response and Slope is the slope of the calibration curve [29].
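The slope-based formulae for LOD and LOQ can be sketched as follows. This example assumes that SD is taken as the residual standard deviation of the calibration regression, which is one common choice; the standard deviation of blank responses or of y-intercepts are alternatives. The function name and the data are hypothetical.

```python
import math


def lod_loq(conc, response):
    """Estimate LOD and LOQ as 3.3*SD/slope and 10*SD/slope.

    SD is taken here as the residual standard deviation of the regression
    (an assumption; other definitions of SD are also in use).
    """
    n = len(conc)
    mean_x, mean_y = sum(conc) / n, sum(response) / n
    sxx = sum((x - mean_x) ** 2 for x in conc)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(conc, response))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    residual_ss = sum((y - (intercept + slope * x)) ** 2
                      for x, y in zip(conc, response))
    sd = math.sqrt(residual_ss / (n - 2))  # n - 2 degrees of freedom
    return 3.3 * sd / slope, 10.0 * sd / slope


# Illustrative calibration data only (not from the cited study).
conc = [1.0, 2.0, 4.0, 6.0, 8.0, 10.0]              # standard concentrations
area = [1020.0, 1985.0, 4010.0, 6030.0, 7960.0, 10015.0]  # peak areas

lod, loq = lod_loq(conc, area)
print(f"LOD ≈ {lod:.3f}, LOQ ≈ {loq:.3f} (same units as conc)")
```

Results from this approach should be confirmed experimentally by analyzing samples prepared near the estimated LOD and LOQ.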

The Scientist's Toolkit: Research Reagent Solutions

| Reagent / Material | Function in Analysis |
|---|---|
| Ultrapure Water (UPW) | Primary solvent for preparing aqueous standard and sample solutions; minimizes background interference [29]. |
| Methanol / Acetonitrile (HPLC Grade) | Organic modifiers in the mobile phase for UFLC-DAD; used to elute analytes from the column and adjust separation [29]. |
| Reference Standard (e.g., Metoprolol ≥98%) | Provides a highly pure substance to create the calibration curve, ensuring accurate quantification of the analyte in the sample [29]. |
| Formic Acid / Phosphoric Acid | Mobile phase additives in UFLC-DAD to improve peak shape and ionization, particularly for basic compounds [29]. |
| Human Liver Microsomes (HLMs) | Used in advanced pharmacological studies (e.g., metabolic stability via UHPLC-MS/MS) to simulate in vitro drug metabolism [31]. |

Technique Selection Workflow

[Diagram: a logical pathway for selecting the most appropriate analytical technique based on your project's goals and constraints.]

Frequently Asked Questions (FAQs)

Q1: My spectrophotometric results show poor recovery when analyzing tablets. What could be wrong? A: This is often due to incomplete extraction of the active component from the tablet matrix or interference from excipients.

- Troubleshooting: Ensure a thorough and optimized extraction process (e.g., sufficient sonication time, correct solvent). Re-check the method's specificity by scanning a placebo solution. If interference is confirmed, you may need to switch to a more selective technique like UFLC-DAD or employ a more advanced spectrophotometric resolution method (e.g., ratio derivative spectra) to mathematically resolve the overlap [29] [32].

Q2: How can I make my UFLC-DAD method more environmentally friendly (greener)? A: The primary environmental impact of HPLC/UFLC methods comes from solvent consumption.

- Troubleshooting: Adopt miniaturization strategies. Use columns with smaller dimensions (e.g., 2.1 mm ID instead of 4.6 mm) which drastically reduce mobile phase flow rates and waste [30]. Where possible, replace toxic solvents like acetonitrile with greener alternatives (e.g., ethanol) or use them in lower proportions [30]. Also, employ fast gradients to shorten run times, saving both solvent and energy.
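To see why a narrower column reduces solvent use, note that keeping the same linear velocity means scaling the flow rate by the ratio of the column cross-sectional areas, that is, by the square of the internal-diameter ratio. The sketch below works through the 4.6 mm to 2.1 mm example; the starting flow rate, run time, and daily run count are assumed values for illustration only.

```python
def scaled_flow_rate(flow_ml_min, id_from_mm, id_to_mm):
    """Scale flow rate by the cross-sectional area ratio (constant linear velocity)."""
    return flow_ml_min * (id_to_mm / id_from_mm) ** 2


original_flow = 1.0   # mL/min on a 4.6 mm ID column (assumed)
run_time_min = 10.0   # assumed run time per injection
runs_per_day = 100    # assumed daily workload

new_flow = scaled_flow_rate(original_flow, 4.6, 2.1)
saved_ml = (original_flow - new_flow) * run_time_min * runs_per_day

print(f"Scaled flow rate: {new_flow:.3f} mL/min")
print(f"Mobile phase saved per day: {saved_ml:.0f} mL")
```

Under these assumptions the narrower column needs roughly one fifth of the original flow, so most of the mobile phase (and the corresponding waste) is avoided for the same number of injections.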

Q3: My UFLC-DAD analysis is taking too long, reducing my lab's throughput. How can I speed it up? A: Several parameters can be optimized to increase throughput.

- Troubleshooting:

- Mobile Phase: Increase the percentage of the organic modifier in the gradient.

- Flow Rate: Consider increasing the flow rate within the column's pressure limits.

- Column Technology: Use columns packed with smaller particles or with core-shell (superficially porous) particles, which maintain high efficiency at higher flow rates without losing resolution [29] [30].

- Method Conversion: Explore transferring your method to an Ultra-High-Performance Liquid Chromatography (UHPLC) system if available, which is designed for faster separations [31].
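The throughput gain from a shorter method can be estimated from the total cycle time per injection. The sketch below is a rough planning aid; it assumes the cycle is the sum of run time, column re-equilibration, and autosampler overhead, and all timings are hypothetical.

```python
def samples_per_day(run_min, equilibration_min, overhead_min, hours_available=24.0):
    """Whole injections that fit in the available time, given the cycle time."""
    cycle_min = run_min + equilibration_min + overhead_min
    return int(hours_available * 60 // cycle_min)


# Hypothetical timings before and after optimization (minutes).
before = samples_per_day(run_min=10.0, equilibration_min=3.0, overhead_min=1.0)
after = samples_per_day(run_min=4.0, equilibration_min=1.5, overhead_min=1.0)

print(f"Before optimization: {before} injections/day")
print(f"After optimization:  {after} injections/day")
```

Because re-equilibration and injection overhead are part of every cycle, shortening the gradient alone gives a smaller gain than the change in run time suggests; the fixed steps are worth optimizing too.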

Q4: What is the simplest way to formally compare the greenness of my method vs. a published one? A: Use a standardized greenness assessment tool.

- Recommendation: The Analytical GREEnness (AGREE) metric is a popular and comprehensive tool. It produces a pictogram with an overall score between 0 and 1, based on the 12 principles of Green Analytical Chemistry, providing a quick, visual comparison between methods [29] [1] [33]. A score closer to 1 indicates a greener method. For example, one study found a UFLC-DAD method for metoprolol had a high greenness score, while another developed a UHPLC-MS/MS method with an AGREE score of 0.76 [29] [31].

Leveraging Automation and Miniaturization to Boost Throughput and Reduce Resource Consumption

Troubleshooting Guides