Pharmaceutical Quantification Spectroscopy: A 2025 Guide to Robust Method Validation & Lifecycle Management

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on validating spectroscopic methods for pharmaceutical quantification. It covers foundational principles rooted in ICH Q2(R2) and Q14 guidelines, explores practical methodological applications for small and large molecules, addresses common troubleshooting and optimization challenges, and details modern validation and comparative analysis strategies. Emphasizing a lifecycle approach, the content synthesizes current regulatory expectations, technological advancements like AI and Real-Time Release Testing (RTRT), and risk-based methodologies to ensure data integrity, regulatory compliance, and robust analytical performance throughout a method's lifetime.

Foundations of Spectroscopic Method Validation: Principles, Guidelines, and Regulatory Frameworks

In the highly regulated world of pharmaceutical manufacturing, ensuring product quality, consistency, and patient safety is paramount [1]. Analytical method validation serves as a foundational process in pharmaceutical quality assurance, providing documented evidence that laboratory analytical procedures consistently yield reliable and accurate results for their intended purposes [2] [3]. This process verifies that a method's performance characteristics meet predefined standards, ensuring that every batch of a pharmaceutical product meets the same rigorous quality, safety, and efficacy standards [1] [2]. From drug formulation to final packaging, validated methods underpin trustworthy measurements of critical quality attributes like potency, purity, stability, and impurity profiles [1] [2]. Regulatory agencies globally, including the FDA and EMA, mandate validation to safeguard public health, making it an indispensable component of pharmaceutical development, manufacturing, and control [1] [4].

Core Principles: Understanding Validation Parameters

The validation of analytical methods is governed by harmonized international guidelines, primarily the International Council for Harmonisation (ICH) Q2(R2) guideline, which outlines fundamental performance characteristics that must be evaluated to demonstrate a method is fit-for-purpose [4]. The specific parameters required depend on the type of method: an identification test, a quantitative test for impurities, or an assay for active ingredients [5]. The core validation parameters are detailed below.

Table 1: Key Analytical Method Validation Parameters and Their Definitions

| Parameter | Definition | Typical Assessment Method |

|---|---|---|

| Accuracy | The closeness of test results to the true value [2] [4]. | Spiking a known amount of analyte into the sample matrix and measuring recovery [5]. |

| Precision | The degree of agreement among individual test results from repeated samplings [2] [4]. Includes repeatability and intermediate precision [3]. | Multiple determinations by different analysts, on different days, or with different instruments [5]. |

| Specificity | The ability to assess the analyte unequivocally in the presence of other components [4] [5]. | Analyzing samples with and without potential interferents like impurities or matrix components [5]. |

| Linearity | The ability of the method to obtain test results proportional to the analyte concentration [2] [4]. | Analyzing a series of samples at different concentrations and performing linear regression [3]. |

| Range | The interval between upper and lower analyte concentrations for which suitable levels of linearity, accuracy, and precision are demonstrated [4]. | Derived from linearity studies; must bracket the product specifications [5]. |

| Limit of Detection (LOD) | The lowest amount of analyte that can be detected, but not necessarily quantified [4]. | Based on signal-to-noise ratio or standard deviation of the response [3]. |

| Limit of Quantitation (LOQ) | The lowest amount of analyte that can be quantified with acceptable accuracy and precision [4]. | The lowest point of the assay range, determined with acceptable accuracy and precision [5]. |

| Robustness | A measure of the method's capacity to remain unaffected by small, deliberate variations in method parameters [2] [4]. | Deliberately varying parameters like pH, temperature, or flow rate [3]. |

The validation process follows a lifecycle approach, beginning with systematic method development and continuing through to post-approval changes, as emphasized in the modernized ICH Q2(R2) and ICH Q14 guidelines [4]. This lifecycle management ensures methods remain robust and reliable throughout their use in quality control.

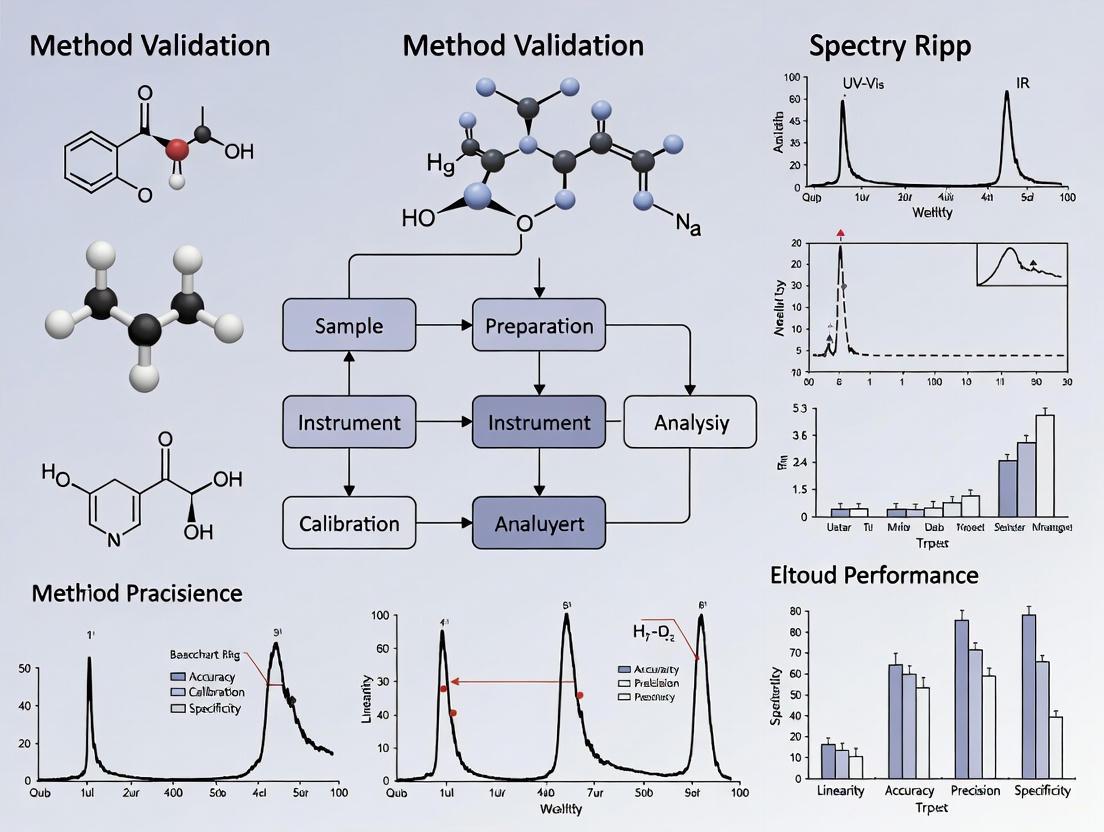

Diagram 1: The Analytical Procedure Lifecycle per ICH Q2(R2) & Q14.

Comparing Analytical Techniques: Performance and Applications

Pharmaceutical analysis employs a diverse array of spectroscopic and chromatographic techniques, each with distinct strengths, limitations, and applications. The choice of technique depends on the analyte's properties, the required sensitivity, and the complexity of the sample matrix [2]. The following section compares key analytical platforms, highlighting their performance in quantifying pharmaceutical compounds.

Table 2: Comparison of Analytical Techniques for Pharmaceutical Quantification

| Technique | Typical Applications | Key Performance Data (from cited studies) | Relative Advantages | Relative Limitations |

|---|---|---|---|---|

| UHPLC-MS/MS [6] | Trace analysis of pharmaceuticals in complex matrices (e.g., water). | LOD: 100-300 ng/L [6]; LOQ: 300-1000 ng/L [6]; Linearity: R² ≥ 0.999 [6]; Precision: RSD < 5.0% [6] | Exceptional sensitivity and selectivity; short analysis time; no derivatization needed [6]. | High instrument cost; requires skilled operators. |

| LC-HRMS [7] | Quantifying peptide-related impurities in biopharmaceuticals. | LOQ: 0.02-0.03% of API [7]; Validation: specificity, accuracy, repeatability, robustness demonstrated [7] | High specificity and sensitivity; can simultaneously quantify numerous impurities [7]. | Complex data analysis; high instrument cost. |

| ICP-OES [8] | Quality assessment of radiometals (e.g., ⁶⁷Cu); trace metal analysis. | Validation: Accuracy, precision, specificity, linearity met for most elements [8] | Effective for trace metal impurities; high sensitivity and precision [8]. | Can suffer from matrix effects for some elements [8]. |

| HPGe γ-Spectrometry [8] | Assessing radionuclidic purity of radiopharmaceuticals. | Performance: Accurate discrimination of co-produced radionuclides at 99.5% purity [8] | High sensitivity and precision for radioactive traces; enables unambiguous quantification [8]. | Requires advanced spectral deconvolution for overlapping peaks [8]. |

| FT-IR Spectroscopy [9] | Protein characterization, contaminant identification, polymer analysis. | Technology: QCL-based microscopy enables imaging at 4.5 mm²/s [9] | Provides structural and chemical information; non-destructive. | Relatively low sensitivity for trace analysis in complex mixtures [6]. |

Focused Comparison: UHPLC-MS/MS vs. ICP-OES

To illustrate a practical comparison, consider two advanced techniques used for different but critical tasks in pharmaceutical quality control. UHPLC-MS/MS excels in separating and quantifying organic molecules at ultra-trace levels, as demonstrated by a validated method for environmental pharmaceutical contaminants with a remarkably low LOD of 100 ng/L for carbamazepine [6]. In contrast, ICP-OES is specialized for elemental analysis, playing a vital role in ensuring the chemical purity of radiometals like ⁶⁷Cu for targeted radionuclide therapy. Its validation involves confirming the absence of metallic contaminants that could compromise drug safety or efficacy [8]. While UHPLC-MS/MS identifies and quantifies specific molecular entities, ICP-OES measures the total concentration of specific elements, showcasing how technique selection is driven by the nature of the analytical question.

Experimental Deep Dive: Protocols and Reagents

To ground the principles of method validation in practice, this section details a specific experimental protocol from recent research.

Case Study: Validating a UHPLC-MS/MS Method for Trace Pharmaceuticals

A 2025 study developed and validated a green UHPLC-MS/MS method for simultaneously determining carbamazepine, caffeine, and ibuprofen in water samples [6]. The workflow and logical progression of the validation process are outlined below.

Diagram 2: UHPLC-MS/MS Method Workflow for Trace Pharmaceutical Analysis.

Table 3: Research Reagent Solutions for UHPLC-MS/MS Experiment

| Item | Function in the Experiment |

|---|---|

| Certified Pharmaceutical Standards (Carbamazepine, Caffeine, Ibuprofen) | Serve as the analytes of interest; used to prepare calibration standards and spiked samples for assessing accuracy, linearity, and range [6]. |

| Solid-Phase Extraction (SPE) Cartridges | To isolate, concentrate, and clean up the target pharmaceuticals from the complex water matrix before instrumental analysis [6]. |

| High-Purity Solvents (e.g., Traceselect Methanol, Acetonitrile) | Used as the mobile phase in UHPLC to achieve efficient separation of analytes; purity is critical to minimize background noise [6] [8]. |

| Ultra-Pure Water (Milli-Q Grade or equivalent) | Used for preparing all aqueous solutions and mobile phases; high purity prevents contamination and interference [6] [8]. |

| Internal Standards (e.g., Isotopically-labeled analogs) | Added to samples to correct for variability in sample preparation and instrument response, improving accuracy and precision [6]. |

Validation Protocol and Results

Following the ICH Q2(R2) guideline, the method was rigorously validated [6] [4]. The protocol involved:

- Specificity: The method could unequivocally distinguish each pharmaceutical in the presence of the others and matrix components, confirmed using MRM mode [6].

- Linearity and Range: Demonstrated by external calibration curves spanning the expected concentration range, yielding correlation coefficients (R²) of ≥ 0.999 [6].

- Accuracy: Assessed via recovery studies, where known amounts of analytes were spiked into the matrix. Recovery rates ranged from 77% to 160%, meeting pre-defined acceptance criteria [6].

- Precision: Evaluated as repeatability, expressed as the relative standard deviation (RSD) of repeated measurements. The method showed an RSD of less than 5.0%, confirming acceptable precision [6].

- Sensitivity: The Limits of Quantitation (LOQ) were established at 1000 ng/L for caffeine, 600 ng/L for ibuprofen, and 300 ng/L for carbamazepine, which are suitable for monitoring these contaminants in the environment [6].

Analytical method validation is not merely a regulatory hurdle but a fundamental pillar of pharmaceutical quality assurance [1] [3]. It provides the scientific and documented evidence that the data used to release a drug product—data confirming its identity, strength, quality, and purity—are trustworthy. The evolution of guidelines like ICH Q2(R2) and ICH Q14 towards a lifecycle approach underscores that validation is a continuous process, integral from early development through commercial production [4]. As analytical technologies advance, the principles of validation ensure that these new methods are implemented robustly, maintaining the integrity of the pharmaceutical industry's most crucial mandate: to deliver safe, effective, and high-quality medicines to patients. By systematically comparing techniques, understanding their performance parameters, and adhering to rigorous experimental protocols, researchers and scientists uphold this mandate, making method validation a critical, non-negotiable component of modern pharmaceutical science.

The framework governing pharmaceutical analytical methods is undergoing a significant transformation with the recent adoption of ICH Q2(R2) and ICH Q14 guidelines, which provide updated and harmonized approaches to analytical procedure validation and development. These documents, finalized in late 2023 and supplemented in 2025 with comprehensive training materials by the ICH Implementation Working Group, represent a paradigm shift toward a more holistic, science- and risk-based lifecycle management of analytical procedures [10] [11]. Concurrently, the start of 2025 has seen the FDA issue new guidance on Bioanalytical Method Validation for Biomarkers, creating both opportunities and challenges for the bioanalytical community [12].

For researchers and drug development professionals, navigating this interplay between global ICH standards and specific FDA expectations is crucial for successful regulatory submissions. This guide provides a comparative analysis of these guidelines, supported by experimental data and practical protocols, to facilitate robust analytical method development and validation within the context of modern pharmaceutical quantification.

Comparative Analysis of ICH Q2(R2), ICH Q14, and FDA Guidance

The following table summarizes the core focus, regulatory scope, and key emphases of each guideline to highlight their distinct roles and interconnected relationships in the analytical procedure lifecycle.

Table 1: Core Principles and Scope of Key Regulatory Guidelines

| Guideline | Core Focus | Regulatory Scope | Key Emphasis |

|---|---|---|---|

| ICH Q2(R2) | Validation of analytical procedures [13] | New/revised procedures for release/stability testing of commercial drug substances/products [13] | Validation parameters (Accuracy, Precision, Specificity, etc.); Analytical Procedure Validation strategy [13] [11] |

| ICH Q14 | Analytical procedure development [14] | New/revised procedures for release/stability testing of commercial drug substances/products [14] | Science/Risk-based development; Analytical Target Profile (ATP); Robustness/Parameter Ranges; Analytical Procedure Control Strategy; Lifecycle management [14] [10] |

| FDA BMV for Biomarkers (2025) | Validation for biomarker bioanalysis [12] | Biomarker assays used in drug development (Non-binding recommendations) [12] | Application of ICH M10 principles; Acknowledges biomarkers differ from drug analytes; Lack of specific criteria for Context of Use (COU) [12] |

The Integrated Framework of ICH Q2(R2) and ICH Q14

ICH Q14 fundamentally shifts analytical development from a linear process to an integrated, knowledge-driven framework. Its central element is the Analytical Target Profile (ATP), a predefined objective that explicitly states the intended purpose of the procedure and the required performance criteria [10]. The guideline introduces both minimal and enhanced approaches to development. The enhanced approach is particularly significant, as it encourages greater understanding of procedural variables, establishes defined parameter ranges, and implements an analytical procedure control strategy, facilitating more flexible and risk-based lifecycle management [14] [11].

ICH Q2(R2) is the direct companion to Q14, detailing how to demonstrate through validation that the procedure developed meets the criteria defined in its ATP. It provides updated discussions on validation tests and terminology for classic parameters like accuracy, precision, specificity, and linearity [13] [10]. Together, Q14 and Q2(R2) create a seamless lifecycle from development through validation and continual improvement, ensuring methods are not only validated at a point in time but remain robust and fit-for-purpose throughout their commercial application [10].

FDA's 2025 Biomarker Guidance and Its Interaction with ICH Standards

The FDA's 2025 guidance on Bioanalytical Method Validation for Biomarkers introduces specific expectations for a particularly challenging area of bioanalysis. A critical point of discussion in the bioanalytical community is that this guidance directs practitioners to ICH M10, a guideline which itself explicitly states that it does not apply to biomarkers [12]. This creates a complex situation for scientists, who must then rely on the relevant principles within ICH M10, such as those in Section 7.1 covering "Methods for Analytes that are also Endogenous Molecules," while adapting them appropriately for the biomarker context [12].

A significant critique from the European Bioanalytical Forum (EBF), echoed by many in the field, is the guidance's lack of explicit reference to Context of Use (COU) [12] [15]. Unlike drug assays, the validation criteria for a biomarker method are highly dependent on how the data will be used to make decisions in drug development. The guidance's brevity and lack of specific criteria mean that the responsibility falls on applicants to justify their validation protocols based on sound scientific rationale and the specific COU, potentially leading to inconsistencies and regulatory risk [15].

Experimental Protocols for Analytical Procedure Lifecycle Management

Implementing the ICH Q14 and Q2(R2) framework requires a structured, experimental approach. The following workflow and detailed protocols outline the key stages from defining the ATP to establishing a control strategy.

Diagram 1: Analytical Procedure Lifecycle Workflow

Protocol 1: Defining the Analytical Target Profile (ATP)

The ATP is the foundational document that drives the entire analytical procedure lifecycle [10].

- Objective: To create a predefined, quality-based directive that outlines the required quality of the analytical reportable result (e.g., impurity content in %) and links the procedure's performance to its intended use.

- Methodology:

  - Identify the Attribute: Clearly define the analyte and the attribute to be measured (e.g., assay of active ingredient, related substance A).

  - Define the Performance Requirement: Specify the maximum allowable uncertainty for the reportable value. This is often expressed as target total error (e.g., ± X% of the stated value for an assay) or as an acceptable relative standard deviation for impurities near the quantitation limit.

  - Document the Context: Reference the intended use of the procedure (e.g., release testing, stability study).

- Data to be Collected: The finalized ATP document, which typically includes the analytical attribute, performance criteria, and the conditions under which the procedure will be used.
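As a rough illustration, the three ATP elements above can be captured in a small data structure so that later validation results can be checked against them. This is only a sketch: the class name `AnalyticalTargetProfile`, its fields, and the ±2% total-error limit are hypothetical choices made for the example, not values prescribed by ICH Q14.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AnalyticalTargetProfile:
    """Hypothetical container for the ATP elements (names are assumptions)."""
    attribute: str               # what is measured, e.g. "assay of active ingredient"
    intended_use: str            # e.g. "release testing"
    max_total_error_pct: float   # allowed deviation, in % of the stated value

    def is_met(self, reported: float, stated: float = 100.0) -> bool:
        """Return True if the reportable value lies within the allowed total error."""
        deviation_pct = abs(reported - stated) / stated * 100.0
        return deviation_pct <= self.max_total_error_pct


# Illustrative ATP: assay result must be within ±2% of the stated value (assumed limit).
atp = AnalyticalTargetProfile(
    attribute="assay of active ingredient",
    intended_use="release testing",
    max_total_error_pct=2.0,
)

print(atp.is_met(99.1))    # deviation 0.9%, inside the assumed limit
print(atp.is_met(103.5))   # deviation 3.5%, outside the assumed limit
```

Making the profile immutable (`frozen=True`) mirrors the idea that the ATP is fixed before development starts and is not adjusted to fit the results.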

Protocol 2: Systematic Procedure Development & Robustness Testing

This protocol focuses on building procedural understanding and establishing working parameter ranges as advocated in ICH Q14's enhanced approach [14] [10].

- Objective: To identify critical procedure parameters and determine their robust ranges to ensure consistent performance.

- Methodology:

  - Risk Assessment: Use tools (e.g., Fishbone diagram, FMEA) to identify potential input variables (e.g., mobile phase pH, column temperature, flow rate) that may impact the ATP's critical performance criteria (e.g., resolution, tailing factor).

  - Design of Experiments (DoE): Employ a structured DoE (e.g., Full Factorial, Central Composite) to systematically evaluate the impact of the identified critical parameters.

  - Parameter Range Determination: Based on the DoE results, establish verified, wider working ranges for each critical parameter. The operating value is then set within this verified range.

- Data to be Collected:

  - Experimental data from the DoE runs (e.g., chromatographic data for each combination of factor levels).

  - Statistical models and contour plots showing the relationship between input parameters and output responses.

  - A final report documenting the verified parameter ranges and the justification for the set-points.
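To make the DoE step concrete, the sketch below enumerates the run list for a three-level full factorial design over the three example parameters named above. The factor names and level values are illustrative assumptions, and a real study would normally use dedicated DoE software and randomize the run order.

```python
from itertools import product

# Illustrative factor levels (low, nominal, high); all values are assumptions.
factors = {
    "mobile_phase_pH": [2.8, 3.0, 3.2],
    "column_temp_C": [28, 30, 32],
    "flow_rate_mL_min": [0.9, 1.0, 1.1],
}


def full_factorial(levels):
    """Return every combination of factor levels as a list of run dictionaries."""
    names = list(levels)
    combos = product(*(levels[name] for name in names))
    return [dict(zip(names, combo)) for combo in combos]


runs = full_factorial(factors)
print(len(runs))   # 3 levels ** 3 factors = 27 runs
print(runs[0])     # first run: all factors at their low level
```

A full factorial grows quickly with the number of factors, which is one reason fractional or central composite designs are often preferred once more than a handful of parameters are under study.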

Protocol 3: Validation as Per ICH Q2(R2)

This protocol translates the performance criteria from the ATP into a formal validation study.

- Objective: To provide documented evidence that the analytical procedure, when operating within its defined parameter ranges, consistently meets the performance criteria outlined in the ATP for its intended use [13].

- Methodology: The specific tests are dictated by the type of procedure (e.g., identification, assay, impurity testing). Key validation parameters and their typical experimental approach are summarized in the table below.

- Data to be Collected: A comprehensive validation report containing all raw data, calculated results for each validation parameter, and a conclusion on the procedure's fitness for purpose.

Table 2: Key Validation Parameters and Experimental Approaches per ICH Q2(R2)

| Validation Parameter | Experimental Protocol Summary | Acceptance Criteria Example (Assay) |

|---|---|---|

| Accuracy (Recovery) | Analyze a minimum of 9 determinations across a specified range (e.g., 3 concentrations/3 replicates each) and compare measured value to a known reference (e.g., spiked placebo) [16]. | Mean Recovery: 98.0-102.0% |

| Precision | Repeatability: 6 injections of a 100% test concentration [16]. Intermediate Precision: different days, analysts, or equipment [16]. | RSD ≤ 1.0% for Repeatability; No significant difference between series (p > 0.05) |

| Specificity | Chromatographic separation: Analyze samples spiked with potential interferents (placebo, degradants) to demonstrate resolution and peak purity [16]. | Resolution > 1.5; Peak purity index match |

| Linearity | Prepare and analyze a minimum of 5 concentration levels from, for example, 50-150% of the target concentration. Plot response vs. concentration [16]. | Correlation Coefficient (r) > 0.999 |

| Range | Established from the linearity data, confirmed to provide acceptable accuracy, precision, and linearity [16]. | e.g., 80-120% of test concentration |

The Scientist's Toolkit: Essential Reagents and Materials

The following table details key reagents and materials critical for successfully executing the development and validation protocols for spectroscopic quantification methods.

Table 3: Essential Research Reagent Solutions for Analytical Development & Validation

| Item / Reagent | Function & Rationale |

|---|---|

| Certified Reference Standard | Provides the known, high-purity analyte essential for method development (e.g., linearity, accuracy) and system suitability testing. Its certified purity and identity are foundational for data integrity. |

| Chromatographic Mobile Phase Solvents | High-purity solvents (e.g., HPLC-grade methanol, acetonitrile, and water) are used to prepare the mobile phase. Their quality is critical for achieving low baseline noise, consistent retention times, and avoiding ghost peaks. |

| System Suitability Test (SST) Solution | A prepared mixture containing the analyte and the components of a critical separation (e.g., a resolution solution), used to verify that the performance of the chromatographic system meets predefined criteria (e.g., plate count, tailing factor, resolution) before analysis begins [16]. |

| Surrogate Matrix (for Biomarkers) | A matrix devoid of the endogenous biomarker (e.g., stripped serum, artificial cerebrospinal fluid) used to prepare calibration standards for quantifying the biomarker in its native biological matrix, as described in ICH M10 Section 7.1 [12]. |

The successful navigation of modern regulatory guidelines requires a deep understanding of the synergistic relationship between ICH Q14's development principles, ICH Q2(R2)'s validation requirements, and specific regional expectations like those in the FDA's 2025 Biomarker Guidance. The paradigm has shifted from a one-time validation exercise to a holistic, risk-based lifecycle approach anchored by the ATP. For biomarker analysis, while the path is less prescriptive, the principles of ICH M10 and a rigorous, scientifically justified approach tailored to the Context of Use are paramount. By adopting these structured protocols for development, validation, and control, scientists can ensure their analytical procedures are not only compliant but also robust, reliable, and ultimately capable of safeguarding patient safety and product quality throughout the product lifecycle.

In pharmaceutical quantification, the reliability of spectroscopic data is paramount. Analytical method validation provides the documented evidence required to assure that an analytical procedure is fit for its intended purpose, ensuring the identity, purity, potency, and safety of drug substances and products [17] [18]. This process is not merely a regulatory hurdle but a fundamental scientific activity that underpins product quality and patient safety. Regulatory agencies, including the U.S. Food and Drug Administration (FDA) and the European Medicines Agency (EMA), require fully validated methods for New Drug Applications (NDAs) and other submissions [17] [19].

The International Council for Harmonisation (ICH) provides the primary global framework for validation through its Q-series guidelines. ICH Q2(R1) has long been the cornerstone, defining the core validation parameters discussed in this guide [17] [4]. The landscape is evolving with the recent introduction of ICH Q2(R2) and ICH Q14, which modernize the approach by incorporating a lifecycle management model and emphasizing science- and risk-based development [20] [4]. For spectroscopic methods, demonstrating control over these parameters is critical for generating defensible data that stands up to regulatory scrutiny.

Core Validation Parameters: Definitions and Regulatory Expectations

The following table summarizes the fundamental validation characteristics as defined by ICH guidelines, their definitions, and typical acceptance criteria for quantitative spectroscopic assays.

Table 1: Core Analytical Method Validation Parameters Based on ICH Guidelines

| Parameter | Definition | Typical Acceptance Criteria (Quantitative Assays) | Primary Regulatory Reference |

|---|---|---|---|

| Specificity | The ability to assess the analyte unequivocally in the presence of components that may be expected to be present (e.g., impurities, degradants, matrix) [17]. | Analyte peak is well-resolved from interfering peaks; peak purity tests pass [18]. | ICH Q2(R1/R2) |

| Accuracy | The closeness of agreement between the test result and the accepted reference value (true value) [21]. | Recovery of 98–102% for both drug substance and drug product (can vary by matrix) [17] [21]. | ICH Q2(R1/R2) |

| Precision | The degree of agreement among individual test results when the procedure is applied repeatedly to multiple samplings of a homogeneous sample [4]. | Repeatability: RSD < 2% for assay of drug substance [21]. Intermediate Precision: RSD < 2% for assay (varies with complexity) [21]. | ICH Q2(R1/R2) |

| Linearity | The ability of the method to elicit test results that are directly proportional to the concentration of the analyte [17]. | Coefficient of determination (R²) ≥ 0.999 [17] [21]. | ICH Q2(R1/R2) |

| Range | The interval between the upper and lower concentrations of analyte for which linearity, accuracy, and precision have been demonstrated [4]. | Typically 80-120% of the test concentration for assay [21]. | ICH Q2(R1/R2) |

| Limit of Detection (LOD) | The lowest amount of analyte in a sample that can be detected, but not necessarily quantitated [4]. | Signal-to-noise ratio of 3:1 is typical [21]. | ICH Q2(R1/R2) |

| Limit of Quantitation (LOQ) | The lowest amount of analyte in a sample that can be quantitatively determined with acceptable accuracy and precision [4]. | Signal-to-noise ratio of 10:1; accuracy and precision of 80-120% recovery and RSD < 20% at LOQ [21]. | ICH Q2(R1/R2) |

| Robustness | A measure of the method's capacity to remain unaffected by small, deliberate variations in method parameters [17]. | System suitability criteria are met despite variations (e.g., in pH, flow rate, temperature) [17]. | ICH Q2(R1/R2) |

Experimental Protocols for Key Validation Experiments

Protocol for Specificity and Selectivity

Purpose: To demonstrate that the spectroscopic method can distinguish the analyte from other components, proving that the measured signal is unique to the analyte of interest [17] [18].

Methodology:

- Sample Analysis: Analyze the following samples using the proposed spectroscopic procedure:

  - Analyte Standard: Pure analyte at the target concentration.

  - Placebo/Blank Matrix: Sample matrix without the analyte to identify interfering signals.

  - Stressed Samples (Forced Degradation): Subject the analyte and drug product to stress conditions (e.g., acid/base, heat, light, oxidation) to generate degradation products. Analyze these samples to ensure the analyte peak is resolved from degradant peaks and that the method is "stability-indicating" [18].

  - Samples with Added Impurities: Spike the sample with known and potential impurities to confirm separation.

Data Analysis: For spectroscopic methods like UV-Vis, overlay the spectra of the analyte, placebo, and degradants. The analyte spectrum should be clearly distinct, with no significant interference at the wavelength used for quantification. For chromatographic-spectroscopic hyphenated techniques (e.g., LC-UV, LC-MS), peak purity assessment using a photodiode array (PDA) detector is often required to confirm a single component within a peak [18].

Protocol for Accuracy (Recovery Study)

Purpose: To determine the closeness of the measured value to the true value of the analyte [21].

Methodology:

- Preparation: Prepare a placebo or blank matrix equivalent to the sample.

- Spiking: Spike the placebo with the analyte at a minimum of three concentration levels (e.g., 80%, 100%, 120% of the target concentration), with a minimum of three replicates per level (total of 9 determinations) [21].

- Analysis: Analyze the spiked samples using the validated method.

- Calculation: Calculate the percent recovery for each sample.

Data Analysis:

- % Recovery = (Measured Concentration / Theoretical Concentration) × 100

- Report the mean recovery and relative standard deviation (RSD) for each concentration level. The results should fall within pre-defined acceptance criteria, typically 98-102% for the drug substance [17] [21].
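The recovery calculation above can be sketched in a few lines. The spiked-sample values below are invented for illustration, the function names are hypothetical, and the 98-102% window is the typical criterion quoted in this section rather than a universal limit.

```python
from statistics import mean, stdev


def percent_recovery(measured, theoretical):
    """% Recovery = (measured concentration / theoretical concentration) x 100."""
    return measured / theoretical * 100.0


def summarize_recovery(spiked, low=98.0, high=102.0):
    """Per spike level: (mean recovery %, RSD % of recoveries, within limits?)."""
    summary = {}
    for theoretical, measured_values in spiked.items():
        recoveries = [percent_recovery(m, theoretical) for m in measured_values]
        mean_rec = mean(recoveries)
        rsd = stdev(recoveries) / mean_rec * 100.0
        summary[theoretical] = (mean_rec, rsd, low <= mean_rec <= high)
    return summary


# Made-up data: theoretical spike level -> three measured replicates (same units).
spiked_samples = {
    80.0: [79.6, 80.3, 79.9],
    100.0: [99.5, 100.4, 100.1],
    120.0: [119.2, 120.5, 120.1],
}

summary = summarize_recovery(spiked_samples)
for level, (mean_rec, rsd, ok) in summary.items():
    print(f"{level:.0f}: mean recovery {mean_rec:.2f}%, RSD {rsd:.2f}%, pass={ok}")
```

Three levels with three replicates each gives the nine determinations called for in the methodology.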

Protocol for Precision (Repeatability and Intermediate Precision)

Purpose: To demonstrate the consistency of the method under normal operating conditions [17].

Methodology:

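- Repeatability (Intra-assay Precision): Analyze replicate preparations of a homogeneous sample under the same operating conditions, for example six determinations at 100% of the test concentration.

- Intermediate Precision: Repeat the repeatability study under varied within-laboratory conditions, such as different days, analysts, or equipment.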

Data Analysis: The %RSD for repeatability of an assay is typically expected to be less than 2% [21]. The acceptance criteria for intermediate precision are often similar, acknowledging that variability may be slightly higher.
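A minimal sketch of the %RSD calculation follows. The assay results are invented. Pooling both series into one overall RSD is a simplification assumed here for brevity; a variance-component (ANOVA) analysis would be the more rigorous way to estimate intermediate precision.

```python
from statistics import mean, stdev


def rsd_percent(values):
    """Relative standard deviation: sample SD divided by the mean, in percent."""
    return stdev(values) / mean(values) * 100.0


# Made-up assay results (% of label claim), six determinations per series.
day1_analyst_a = [99.8, 100.2, 100.1, 99.7, 100.4, 99.9]
day2_analyst_b = [100.6, 100.1, 100.9, 100.3, 100.5, 100.8]

repeatability = rsd_percent(day1_analyst_a)
# Simplified intermediate precision: overall RSD of the combined series.
intermediate = rsd_percent(day1_analyst_a + day2_analyst_b)

print(f"Repeatability RSD: {repeatability:.2f}%")
print(f"Intermediate precision RSD (pooled): {intermediate:.2f}%")
print("Meets < 2% criterion:", repeatability < 2.0 and intermediate < 2.0)
```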

Protocol for Linearity and Range

Purpose: To demonstrate a proportional relationship between the analyte concentration and the spectroscopic response, and to define the concentration range over which this relationship holds with suitable accuracy and precision [17].

Methodology:

- Preparation: Prepare a series of standard solutions at a minimum of five concentration levels, spanning the intended range (e.g., 50%, 75%, 100%, 125%, 150% of the target concentration) [21].

- Analysis: Analyze each standard solution in a randomized order.

- Plotting: Plot the instrumental response (e.g., absorbance, peak area) against the known concentration of the standard.

Data Analysis: Perform linear regression analysis on the data. Calculate the coefficient of determination (R²), slope, and y-intercept. For a precise assay method, an R² value of ≥ 0.999 is typically expected [17] [21]. The range is established as the interval over which linearity, as well as acceptable accuracy and precision, is demonstrated [4].
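The regression step can be illustrated with an ordinary least-squares fit written out by hand. The concentration and absorbance values are invented, and `linear_fit` is a hypothetical helper; in practice a validated statistics package would be used.

```python
def linear_fit(x, y):
    """Ordinary least-squares fit of y = intercept + slope * x.

    Returns (slope, intercept, r_squared).
    """
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    ss_res = sum((yi - (intercept + slope * xi)) ** 2 for xi, yi in zip(x, y))
    ss_tot = sum((yi - mean_y) ** 2 for yi in y)
    r_squared = 1.0 - ss_res / ss_tot
    return slope, intercept, r_squared


# Made-up calibration data: five levels from 50% to 150% of target concentration.
concentration_pct = [50, 75, 100, 125, 150]
absorbance = [0.251, 0.374, 0.502, 0.626, 0.749]

slope, intercept, r_squared = linear_fit(concentration_pct, absorbance)
print(f"slope={slope:.5f}, intercept={intercept:.4f}, R^2={r_squared:.5f}")
print("Meets R^2 >= 0.999:", r_squared >= 0.999)
```

Reporting the slope and intercept alongside R² matters because a high R² alone does not reveal a significant non-zero intercept or curvature; a residual plot is a useful complement.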

Protocol for LOD and LOQ Determination

Purpose: To establish the lowest concentrations of analyte that can be reliably detected and quantified [21].

Methodology (Signal-to-Noise Ratio): This approach is commonly used for spectroscopic and chromatographic methods.

- Preparation: Prepare a sample with a known, low concentration of the analyte.

- Analysis: Analyze the sample and measure the signal (S) of the analyte and the noise (N) from the baseline.

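- Calculation: Calculate the signal-to-noise ratio (S/N). An S/N of about 3:1 is typically used to estimate the LOD and about 10:1 to estimate the LOQ.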

Alternative Method (Standard Deviation of the Response): LOD can be calculated as 3.3σ/S, and LOQ as 10σ/S, where σ is the standard deviation of the response (for example, the standard deviation of the y-intercept or the residual standard deviation of the regression line) and S is the slope of the calibration curve [21].

Data Analysis: For LOQ, the determined concentration level should also be analyzed to confirm that it can be quantified with acceptable accuracy (e.g., 80-120% recovery) and precision (e.g., RSD < 20%) [21].
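The standard-deviation-of-the-response approach can be sketched as follows. Here σ is taken as the residual standard deviation of a low-level calibration line, which is an assumption made for this example; the data and function names are likewise hypothetical.

```python
from math import sqrt


def fit_line(x, y):
    """Ordinary least-squares slope and intercept."""
    n = len(x)
    x_bar, y_bar = sum(x) / n, sum(y) / n
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    slope = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y)) / sxx
    return slope, y_bar - slope * x_bar


def residual_sd(x, y, slope, intercept):
    """Residual standard deviation of the regression (n - 2 degrees of freedom)."""
    ss_res = sum((yi - (intercept + slope * xi)) ** 2 for xi, yi in zip(x, y))
    return sqrt(ss_res / (len(x) - 2))


def lod_loq(sigma, slope):
    """LOD = 3.3 * sigma / S and LOQ = 10 * sigma / S."""
    return 3.3 * sigma / slope, 10.0 * sigma / slope


# Hypothetical low-level calibration (ug/mL vs. absorbance)
conc = [0.5, 1.0, 2.0, 4.0, 8.0]
resp = [0.0052, 0.0101, 0.0198, 0.0405, 0.0799]

slope, intercept = fit_line(conc, resp)
sigma = residual_sd(conc, resp, slope, intercept)
lod, loq = lod_loq(sigma, slope)
print(f"LOD ~ {lod:.3f} ug/mL, LOQ ~ {loq:.3f} ug/mL")
```

The estimated LOQ is only a starting point; it still needs to be confirmed experimentally for accuracy and precision as described above.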

Protocol for Robustness

Purpose: To evaluate the method's resilience to small, deliberate changes in operational parameters, identifying critical factors that must be tightly controlled [17].

Methodology:

- Identify Parameters: Select key method parameters that could vary, such as pH of the buffer, detection wavelength, flow rate (for LC), mobile phase composition, and temperature.

- Design Experiment: Systematically vary one parameter at a time (OFAT) or, more efficiently, use a structured Design of Experiments (DoE) approach to study interactions [20] [19].

- Analysis: Analyze a system suitability sample or a standard under each varied condition.

- Evaluation: Monitor key performance indicators like resolution, tailing factor, theoretical plates, and %RSD to see if they remain within acceptance criteria despite the variations.

Data Analysis: The method is considered robust if the system suitability criteria are met and the quantitative results remain unaffected by the small, deliberate changes [17].
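To illustrate the DoE option, the sketch below builds a two-level full factorial design for three parameters and estimates each main effect as the difference between the mean response at the high and low settings. The factor names, levels, and assay results are all hypothetical, and real studies often use fractional or Plackett-Burman designs to reduce the number of runs.

```python
from itertools import product
from statistics import mean

# Hypothetical factors with (low, high) settings around the nominal condition
factors = {
    "wavelength_nm": (253, 255),
    "temperature_c": (23, 27),
    "buffer_ph": (6.8, 7.2),
}
names = list(factors)

# Coded design: -1 = low level, +1 = high level; 2^3 = 8 runs
design = list(product((-1, 1), repeat=len(names)))

# Hypothetical assay results (% label claim), one per run, in design order
responses = [99.8, 100.1, 99.7, 100.0, 99.9, 100.3, 99.6, 100.2]


def main_effects(design, responses, names):
    """Main effect of each factor = mean(high runs) - mean(low runs)."""
    effects = {}
    for index, name in enumerate(names):
        high = [r for run, r in zip(design, responses) if run[index] == 1]
        low = [r for run, r in zip(design, responses) if run[index] == -1]
        effects[name] = mean(high) - mean(low)
    return effects


effects = main_effects(design, responses, names)
for name, effect in sorted(effects.items(), key=lambda kv: abs(kv[1]), reverse=True):
    low, high = factors[name]
    print(f"{name} ({low} -> {high}): effect {effect:+.3f} % label claim")
```

Factors with effects that are large relative to the method's repeatability are candidates for tighter control in the written procedure.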

Visualization of the Method Validation Lifecycle

The following workflow diagram illustrates the modern, integrated approach to analytical procedure development and validation, as emphasized by recent ICH guidelines (Q2(R2) and Q14).

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table details key materials and solutions required for successful method validation experiments.

Table 2: Essential Research Reagent Solutions and Materials for Validation Studies

| Item | Function / Purpose in Validation |

|---|---|

| High-Purity Reference Standard | Serves as the benchmark for accuracy and linearity studies; its certified purity and concentration are essential for calculating recovery and preparing calibration curves [18]. |

| Placebo Formulation | The drug product matrix without the active ingredient; used in specificity testing to rule out matrix interference and in accuracy (recovery) studies by spiking with the analyte [18]. |

| Qualified Impurities and Degradants | Chemically characterized impurities and forced degradation products; critical for challenging the method's specificity and proving its stability-indicating capabilities [18]. |

| High-Quality Solvents & Reagents | Essential for preparing mobile phases, buffers, and sample solutions; their quality and consistency directly impact baseline noise, detection sensitivity (LOD/LOQ), and method robustness [22]. |

| Stable, Traceable Certified Reference Materials (CRMs) | Used for ultimate method verification and cross-laboratory comparison; provides a definitive link to a recognized standard, supporting claims of accuracy and reproducibility [23]. |

A rigorous understanding and application of the core validation parameters—specificity, LOD/LOQ, accuracy, precision, linearity, and robustness—are non-negotiable for generating reliable spectroscopic data in pharmaceutical research. The experimental protocols outlined provide a roadmap for systematically demonstrating that an analytical procedure is fit for its purpose, whether for release testing, stability studies, or regulatory submission. The evolving regulatory landscape, with its shift towards a lifecycle approach as seen in ICH Q2(R2) and Q14, makes this deep, science-based understanding more critical than ever. By adhering to these principles and employing a well-planned validation strategy, scientists can ensure the quality and integrity of their analytical methods, ultimately contributing to the development of safe and effective medicines.

The field of pharmaceutical analysis is undergoing a significant transformation, moving from a static, event-based model of analytical method validation to a dynamic, integrated lifecycle approach. This paradigm shift is formally recognized in recent regulatory guidelines, including ICH Q2(R2) and ICH Q14, which redefine method validation as a continuous process rather than a one-time milestone [24]. The lifecycle framework ensures that analytical procedures remain scientifically sound and fit for their intended purpose throughout the entire clinical development and commercial lifecycle of a pharmaceutical product [25].

For researchers and scientists involved in pharmaceutical quantification spectroscopy, this approach provides a structured yet flexible framework for managing analytical procedures. It emphasizes continuous verification and method performance monitoring, aligning analytical activities with stage-appropriate regulatory requirements while managing project risks and development costs [25]. This article explores the key stages of the analytical lifecycle, compares traditional versus modern approaches, and provides practical experimental protocols for implementation within spectroscopic quantification research.

The Analytical Procedure Lifecycle: Core Components

Defining the Lifecycle Framework

The analytical procedure lifecycle encompasses all activities related to analytical method development, qualification, validation, transfer, and ongoing monitoring [25]. At the heart of this approach is the Analytical Target Profile (ATP), which clearly defines what the method must measure and the required performance levels for accuracy, precision, and robustness [24]. The ATP serves as the foundational benchmark throughout the method's lifecycle, guiding development decisions and serving as the reference point for any future modifications.
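One way to make an ATP operational is to record its performance requirements as data and check results against them at each lifecycle stage. The sketch below is illustrative only: the class, its fields, and the numeric limits are assumptions for this example and are not taken from any guideline.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AnalyticalTargetProfile:
    """Hypothetical, simplified ATP covering accuracy and precision only."""
    analyte: str
    recovery_low: float   # lower limit for mean recovery (%)
    recovery_high: float  # upper limit for mean recovery (%)
    max_rsd: float        # maximum acceptable %RSD

    def is_met(self, mean_recovery, rsd):
        """True when both the accuracy and precision requirements are satisfied."""
        in_window = self.recovery_low <= mean_recovery <= self.recovery_high
        return in_window and rsd <= self.max_rsd


# Assumed limits for an assay of a hypothetical API
atp = AnalyticalTargetProfile("API X (hypothetical)", 98.0, 102.0, 2.0)

print(atp.is_met(mean_recovery=99.6, rsd=0.8))   # within both limits
print(atp.is_met(mean_recovery=97.2, rsd=0.8))   # recovery below the window
```

Keeping the ATP as a fixed, versioned object gives later method changes a single reference point to be judged against.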

The lifecycle concept recognizes that analytical methods, like manufacturing processes, can drift over time due to changes in equipment, reagents, operators, or even subtle changes in product attributes [24]. Rather than treating validation as a discrete event preceding commercial manufacturing, the lifecycle framework emphasizes continuous assurance of method performance through planned activities at each stage of drug development [25].

Stage-Appropriate Implementation

A fundamental principle of the lifecycle approach is implementing stage-appropriate validation activities throughout clinical development. The level of method understanding and validation rigor should align with the phase of clinical development and associated regulatory expectations [25].

- Early Phase (Phase I): Regulatory agencies require confirmation that methods are "scientifically sound, suitable, and reliable" for their intended purpose [25]. An initial performance assessment is strongly encouraged, keeping ICH Q2 parameters in mind while providing authorities with preliminary data on method performance [25].

- Late Phase (Phase II/III): Method refinement and robustness assessment are typically performed during Phase II [25]. Method validation should be completed before pivotal studies, following the clinical proof-of-concept establishment for the drug candidate [25].

- Commercial Phase: Continuous monitoring through system suitability trending, control charts, and tracking of out-of-specification (OOS) or out-of-trend (OOT) results provides evidence that methods continue to meet ATP expectations [24].

Traditional vs. Modern Lifecycle Approach: A Comparative Analysis

The shift from traditional validation to a modern lifecycle approach represents more than just procedural updates—it constitutes a fundamental change in philosophy and practice. The table below summarizes the key differences between these paradigms.

Table 1: Comparison of Traditional Validation vs. Modern Lifecycle Approaches

| Aspect | Traditional "Event-Based" Validation | Modern Lifecycle Approach |

|---|---|---|

| Core Philosophy | One-time demonstration of suitability; static process | Continuous verification; dynamic, ongoing process [24] |

| Regulatory Focus | Prerequisites for commercial manufacturing only [25] | Stage-appropriate activities throughout clinical development [25] |

| Primary Guidance | ICH Q2A/Q2B (focused mainly on validation) [26] [18] | ICH Q2(R2) & Q14 (integrated lifecycle view) [24] |

| Method Design | Often empirical; parameters optimized sequentially | Risk-based with robustness built into design using DOE [24] |

| Change Management | Often requires full revalidation | Risk-based; modifications possible without full revalidation if ATP criteria are met [24] |

| Performance Monitoring | Limited or reactive (post-OOS) | Proactive through system suitability trending and control charts [24] |

| Documentation Strategy | Fixed validation report | Living knowledge management throughout method lifespan |

The modern approach offers significant advantages in terms of scientific robustness and operational flexibility. Methods designed and monitored following a lifecycle model are more reliable, reducing the risk of batch rejections, failed audits, and costly investigations [24]. The framework also supports efficient change control, enabling organizations to adapt methods without revalidating from scratch—a critical capability for innovation and scale-up in fast-paced development environments [24].

Key Stages of the Analytical Lifecycle

Stage 1: Method Development and the Analytical Target Profile

Method development establishes the scientific foundation for the entire analytical lifecycle. During this phase, researchers define the ATP based on the target product profile, critical quality attributes, and the intended use of the method [25]. For spectroscopic quantification methods, this includes selecting appropriate instrumentation, establishing sample preparation procedures, and identifying critical method parameters.

The development phase should incorporate quality by design (QbD) principles, using risk-based tools such as Ishikawa diagrams or control, noise, and experimental (CNX) methods for identifying critical factors [25]. Design of experiments (DOE) approaches are particularly valuable for understanding method robustness early in development, creating a more resilient foundation for the subsequent lifecycle stages [24].

Stage 2: Method Qualification and Prevalidation

Qualification serves as the bridge between method development and formal validation. While not an official term in regulatory guidelines, qualification typically refers to activities starting after method development and ending with the assessment of method validation readiness [25].

Table 2: Analytical Method Qualification Activities

| Qualification Activity | Typical Timing | Key Objectives |

|---|---|---|

| Initial Performance Assessment | Phase I (for IMP dossier) [25] | Provide first results on method performance; set preliminary acceptance criteria |

| Method Refinement | Phase II [25] | Optimize testing processes; improve performance or throughput |

| Robustness Assessment | Phase II [25] | Identify critical parameters affecting method performance |

| Stability-Indicating Studies | During development or early qualification [25] | Demonstrate method can detect changes in product quality over time |

| Validation Readiness Assessment | Before formal validation [25] | Compile all development/qualification data to confirm method readiness |

For spectroscopic methods, demonstrating stability-indicating properties is particularly crucial. This involves testing the method with representative materials and their intentionally degraded forms to confirm the method can detect relevant product quality changes [25].

Stage 3: Formal Method Validation

Formal validation provides comprehensive evidence that the analytical method is suitable for its intended use. The ICH Q2(R2) guideline outlines key validation parameters that should be evaluated based on the type of analytical procedure [18]. For spectroscopic quantification methods, the following parameters are typically assessed:

Table 3: Validation Parameters for Spectroscopic Quantification Methods

| Validation Parameter | Definition | Typical Experimental Protocol |

|---|---|---|

| Accuracy | Closeness between measured value and true value [18] | Spike recovery experiments at multiple concentration levels (e.g., 50%, 100%, 150% of target) using certified reference materials [18] |

| Precision | Degree of agreement among individual test results [18] | Multiple measurements of homogeneous samples; includes repeatability (same conditions) and intermediate precision (different days, analysts, equipment) [18] |

| Specificity | Ability to measure analyte accurately in presence of interfering components [18] | Compare analyte response in presence of placebos, impurities, degradation products; use orthogonal detection (e.g., photodiode array, mass spectrometry) for peak purity [18] |

| Linearity | Ability to obtain results proportional to analyte concentration | Prepare and analyze standard solutions at 5+ concentration levels across specified range |

| Range | Interval between upper and lower concentration with suitable precision, accuracy, and linearity [18] | Established from linearity studies; must encompass all intended application concentrations |

| Quantitation Limit | Lowest amount of analyte that can be quantitatively determined [18] | Signal-to-noise approach (typically 10:1) or based on standard deviation of response and slope |

| Detection Limit | Lowest amount of analyte that can be detected [18] | Signal-to-noise approach (typically 3:1) or based on standard deviation of response and slope |

| Robustness | Capacity to remain unaffected by small, deliberate variations in method parameters [18] | DOE approaches evaluating impact of slight changes in critical parameters (e.g., wavelength, path length, temperature) |

The experimental design for validation studies should be statistically sound, with the number of replicates determined based on initial performance data and acceptance criteria [25]. For accuracy and precision studies, the minimal number of runs is best defined using statistical t-test considerations from initial performance assessment data [25].

Stage 4: Continuous Verification and Lifecycle Management

The post-validation phase represents a significant departure from traditional approaches. Rather than considering the method "fixed" after validation, the lifecycle approach emphasizes ongoing performance verification [24]. This includes:

- System Suitability Testing: Establishing and trending system suitability parameters to ensure continued method performance [24]

- Control Charts: Monitoring method performance over time using statistical process control principles [24]

- Change Control: Implementing a risk-based approach to method changes, where modifications can be justified without full revalidation as long as ATP criteria continue to be met [24]

- Knowledge Management: Maintaining comprehensive documentation of method performance throughout its lifecycle to support investigations and future improvements

This continuous verification mindset ensures that methods remain validated and fit for purpose throughout their entire operational lifespan, providing confidence not just at the point of validation, but continuously during routine use [24].
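The control-chart idea can be sketched with an individuals chart, where limits are set from a baseline period as the mean ± 2.66 times the average moving range. The system suitability values below are invented, and the choice of an individuals chart (rather than another chart type) is an assumption for this example.

```python
from statistics import mean


def individuals_limits(baseline):
    """Return (LCL, center line, UCL) for an individuals control chart."""
    center = mean(baseline)
    moving_ranges = [abs(b - a) for a, b in zip(baseline, baseline[1:])]
    mr_bar = mean(moving_ranges)
    # 2.66 = 3 / d2, with d2 = 1.128 for moving ranges of two points
    return center - 2.66 * mr_bar, center, center + 2.66 * mr_bar


def out_of_control(values, lcl, ucl):
    """Indices of points falling outside the control limits."""
    return [i for i, v in enumerate(values) if v < lcl or v > ucl]


# Hypothetical recoveries (%) of a check standard during a baseline period
baseline = [100.1, 99.8, 100.3, 99.9, 100.0, 100.2, 99.7, 100.1]
lcl, center, ucl = individuals_limits(baseline)
print(f"LCL={lcl:.2f}, center={center:.2f}, UCL={ucl:.2f}")

# Hypothetical routine results checked against the baseline limits
routine = [100.0, 99.9, 101.6, 100.2]
print("Out-of-control points at indices:", out_of_control(routine, lcl, ucl))
```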

Experimental Protocols for Key Validation Parameters

Accuracy and Precision Protocol for Spectroscopic Quantification

Objective: To establish the accuracy and precision of a spectroscopic quantification method for active pharmaceutical ingredient (API) determination in drug product.

Materials and Reagents:

- Certified reference standard of API

- Pharmaceutical formulation (placebo and finished product)

- Appropriate solvents (HPLC grade or equivalent)

- Volumetric glassware (Class A)

Procedure:

- Prepare a stock solution of certified reference standard at the target concentration

- Prepare nine separate samples at three concentration levels (80%, 100%, 120% of target) in triplicate by spiking API into placebo matrix

- Analyze all samples using the validated spectroscopic method

- Calculate percent recovery for each sample: (Measured Concentration / Theoretical Concentration) × 100

- Calculate mean recovery (accuracy) and relative standard deviation (precision) at each concentration level

Acceptance Criteria: Mean recovery should be 98.0-102.0% with RSD ≤2.0% for repeatability [18]

Specificity Protocol for Stability-Indicating Methods

Objective: To demonstrate the method can specifically quantify the analyte without interference from degradation products or matrix components.

Materials and Reagents:

- API reference standard

- Stressed samples (acid, base, oxidative, thermal, photolytic degradation)

- Placebo formulation

- Finished product

Procedure:

- Prepare samples of API, placebo, and forced degradation materials

- Analyze all samples using the spectroscopic method with orthogonal detection (e.g., photodiode array)

- Examine spectral purity of analyte peaks in all samples

- Verify absence of interference at the retention time/maximum absorbance of the analyte

Acceptance Criteria: The analyte peak should be spectrally pure (purity angle < purity threshold), and any interference from placebo or degradation products at the analyte retention time should not exceed 2% of the analyte response [18]
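The two acceptance checks can be expressed as a small function. This is a sketch under assumptions: the purity angle and threshold are taken as values already reported by the instrument software, the 2% limit follows the criterion above, and all names and numbers are hypothetical.

```python
def peak_is_pure(purity_angle, purity_threshold):
    """Spectral purity criterion: purity angle must be below the threshold."""
    return purity_angle < purity_threshold


def interference_percent(interferent_response, analyte_response):
    """Interfering response expressed as a percentage of the analyte response."""
    return interferent_response / analyte_response * 100.0


def specificity_passes(purity_angle, purity_threshold,
                       interferent_responses, analyte_response,
                       limit_percent=2.0):
    """True when the peak is pure and no interferent exceeds the limit."""
    clean = all(
        interference_percent(r, analyte_response) <= limit_percent
        for r in interferent_responses.values()
    )
    return peak_is_pure(purity_angle, purity_threshold) and clean


# Hypothetical responses measured at the analyte retention time or wavelength
interferents = {"placebo": 0.004, "acid-stressed": 0.009, "oxidative": 0.006}
print(specificity_passes(0.21, 0.35, interferents, analyte_response=0.800))
```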

Essential Research Reagent Solutions

Table 4: Key Research Reagent Solutions for Analytical Lifecycle Management

| Reagent/Material | Function in Analytical Lifecycle | Critical Quality Attributes |

|---|---|---|

| Certified Reference Standards | Accuracy determination; method calibration [18] | Certified purity, documentation of traceability, stability data |

| System Suitability Standards | Verify method performance before sample analysis [24] | Well-characterized composition, appropriate stability |

| Placebo Formulations | Specificity testing; method development [18] | Representative of final product composition without API |

| Forced Degradation Materials | Specificity validation for stability-indicating methods [18] | Controlled degradation conditions (5-20% API loss) |

| Quality Control Samples | Ongoing method performance verification [24] | Established target values with acceptable ranges |

The analytical lifecycle approach represents the future of method validation in pharmaceutical quantification spectroscopy. By adopting this framework, organizations can ensure their analytical methods remain scientifically robust, regulatory compliant, and fit for purpose throughout the entire product lifecycle. The shift from event-based validation to continuous verification requires new ways of thinking, but offers significant rewards in terms of operational flexibility, reduced investigation costs, and enhanced regulatory readiness [24].

For researchers and scientists, implementing the analytical lifecycle approach means embracing the ATP as a guiding principle, building quality into method design rather than testing it in validation, and establishing systems for continuous performance monitoring. As regulatory authorities increasingly expect this mindset, companies that adopt lifecycle management will be better positioned for successful product development and sustainable commercial manufacturing.

Implementing Quality by Design (QbD) and Risk Management (ICH Q9) in Method Development

The pharmaceutical industry is undergoing a fundamental transformation in quality management, moving from traditional, reactive testing approaches to proactive, science-based methodologies. This shift is embodied by the implementation of Quality by Design (QbD) and Quality Risk Management (QRM) principles in analytical method development [27]. Rooted in the International Council for Harmonisation (ICH) guidelines Q8, Q9, and Q10, this integrated framework ensures that quality is built into methods from their inception, rather than merely tested at the end [28] [29].

For researchers and scientists developing spectroscopic and chromatographic methods for pharmaceutical quantification, this paradigm shift offers significant advantages: enhanced method robustness, reduced operational failures, and greater regulatory flexibility. The European Medicines Agency (EMA) describes QbD as "a systematic approach to development that begins with predefined objectives and emphasizes product and process understanding and process control, based on sound science and quality risk management" [27] [30]. When coupled with ICH Q9's systematic risk management principles, this approach provides a powerful foundation for developing analytical methods that remain reliable throughout their lifecycle [31].

Theoretical Framework: QbD and QRM Fundamentals

Core Principles of Quality by Design

QbD in analytical method development (often termed AQbD - Analytical Quality by Design) represents a systematic approach to method development that begins with predefined objectives. The core principles include:

- Establishing the Analytical Target Profile (ATP): A prospective description of the method's required performance characteristics that defines its purpose throughout the lifecycle [27].

- Identifying Critical Method Attributes (CMAs): The key performance characteristics (e.g., specificity, accuracy, precision) that must be achieved to ensure the method fulfills its intended purpose [27].

- Systematic Risk Assessment: Application of structured approaches to identify and prioritize method parameters that may impact CMAs [27].

- Design of Experiments (DoE): Using statistical experimental design to understand parameter interactions and establish a robust method operable design region [27].

- Continuous Monitoring and Improvement: Implementing controls and monitoring strategies to ensure method performance throughout its lifecycle [27].

Quality Risk Management According to ICH Q9

ICH Q9 provides a framework for quality risk management that can be applied to pharmaceutical and analytical method development. The guideline offers principles and examples of tools for quality risk management that can be applied throughout the lifecycle of drug substances and drug products [31]. Key elements include:

- Risk Assessment: Initiation of risk management process involving risk identification, risk analysis, and risk evaluation [31] [28].

- Risk Control: Implementing decisions to reduce or accept risks, including establishing a method control strategy [28].

- Risk Review: Monitoring and reviewing the method performance to ensure risk controls remain effective [31].

- Risk Communication: Sharing risk management activities and outcomes across stakeholders [31].

The 2023 revision of ICH Q9 (R1) explicitly applies these principles to development, manufacturing, distribution, and post-approval processes, reinforcing its relevance for analytical methods [28].
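As one possible illustration of risk analysis and evaluation, method parameters can be scored and ranked with an FMEA-style risk priority number (severity × occurrence × detectability). FMEA is only one of several tools that could be used, and the parameters, the 1-5 scoring scale, and the scores below are all hypothetical.

```python
def rank_by_rpn(entries):
    """Return (parameter, RPN) pairs sorted from highest to lowest risk.

    Each entry is (parameter, severity, occurrence, detectability);
    RPN = severity * occurrence * detectability.
    """
    scored = [(name, s * o * d) for name, s, o, d in entries]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Hypothetical scores on a 1-5 scale (5 = worst) for a UV-Vis assay
risk_register = [
    ("detection wavelength", 4, 2, 2),
    ("sample dilution step", 5, 3, 3),
    ("cuvette path length", 3, 1, 2),
    ("solvent lot", 2, 3, 4),
]

for parameter, rpn in rank_by_rpn(risk_register):
    print(f"{parameter:22s} RPN = {rpn}")
```

The highest-ranked parameters would then be natural candidates for DoE studies and for explicit controls in the method.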

Implementation Workflow: From Theory to Practice

Structured Approach to QbD Implementation

Implementing QbD for analytical method development follows a logical, phased approach that integrates risk management at each stage. The workflow below visualizes this systematic process:

Systematic QbD Implementation Workflow: This diagram illustrates the structured approach to implementing Quality by Design in analytical method development, highlighting the integration of risk assessment throughout the process.

Comparative Analysis: Traditional vs. QbD Approach

The table below summarizes the fundamental differences between traditional and QbD-based approaches to analytical method development:

Table 1: Traditional vs. QbD Approach to Analytical Method Development

| Aspect | Traditional Approach | QbD Approach |

|---|---|---|

| Development Philosophy | Empirical, trial-and-error | Systematic, science-based |

| Quality Focus | Quality by testing (QbT) | Quality by design (QbD) |

| Parameter Understanding | One-factor-at-a-time (OFAT) | Multivariate (DoE) |

| Method Robustness | Limited understanding | Comprehensive understanding via design space |

| Regulatory Flexibility | Fixed conditions | Flexible within design space |

| Lifecycle Management | Reactive changes | Continuous improvement |

| Risk Management | Informal, experience-based | Formalized, ICH Q9-based |

Industry data demonstrates the significant advantages of QbD implementation. One AAPS Open case study reported a 30% reduction in development and validation time when a generic tablet product was developed under a QbD framework compared to conventional methods [28]. Furthermore, QbD implementation has been shown to reduce batch failures by 40% and enhance process robustness through real-time monitoring [27].

Case Studies and Experimental Evidence

Pharmaceutical Contaminant Analysis in Aquatic Environments

A 2025 study developed a UHPLC-MS/MS method for quantifying pharmaceutical contaminants in water and wastewater, explicitly following ICH Q2(R2) validation guidelines [6]. The method demonstrates key QbD principles:

- ATP Definition: Sensitivity to detect trace contaminants at ng/L levels with minimal environmental impact

- DoE Application: Optimization of extraction and chromatographic conditions through experimental design

- Green Chemistry Alignment: Omission of energy-intensive evaporation steps, reducing solvent consumption and waste

The validated method achieved correlation coefficients ≥0.999, precision of RSD <5.0%, and recoveries of 77-160% (matrix-dependent) across target analytes including carbamazepine, caffeine, and ibuprofen [6]. This case exemplifies how QbD principles can be applied to environmental pharmaceutical analysis while maintaining sustainability goals.

Antineoplastic Agent Monitoring in Biological Matrices

A 2025 study addressing occupational exposure to antineoplastic agents developed two validated UPLC-ESI-MS/MS methods for quantifying five high-risk compounds in urine [32]. The QbD approach included:

- Risk-Based Parameter Selection: Identification of critical parameters affecting sensitivity and selectivity

- Design Space Establishment: Defining robust operating ranges for extraction efficiency and matrix effects

- Control Strategy: Implementing system suitability criteria and quality controls

The methods achieved exceptional sensitivity with lower limits of quantification ranging from 0.1 ng/mL for cyclophosphamide to 10 ng/mL for imatinib, demonstrating the effectiveness of QbD in developing highly sensitive bioanalytical methods [32].

Comparative Performance Data

The table below summarizes quantitative performance data from recent studies implementing QbD in analytical method development:

Table 2: Experimental Performance Data from QbD-Implemented Methods

| Application Area | Analytical Technique | Key Performance Metrics | QbD Elements Applied |

|---|---|---|---|

| Pharmaceutical contaminants in water [6] | UHPLC-MS/MS | LOD: 100-300 ng/L; LOQ: 300-1000 ng/L; Precision: RSD <5.0%; Linearity: R² ≥0.999 | ATP definition, DoE, Green chemistry principles |

| Antineoplastic drugs in urine [32] | UPLC-ESI-MS/MS | LLOQ: 0.1-10 ng/mL; Extraction efficiency: Validated; Matrix effect: Characterized | Risk-based parameter selection, Design space, Control strategy |

| CFTR modulators in plasma [33] | LC-MS/MS | Linear range: 0.1-20 µg/mL; Accuracy: ≤15% bias; Precision: ≤15% RSD | ICH Q2(R2) validation, Robust sample preparation |

| Clozapine and metabolites in plasma [34] | UHPLC-MS/MS | Selectivity: No interference; Linearity: R² >0.99; LLOQ: Sub-therapeutic levels | Risk assessment, DoE, SPE optimization |

Essential Research Reagent Solutions

Successful implementation of QbD in analytical method development requires specific reagents, materials, and instrumentation. The table below details key research solutions referenced in the case studies:

Table 3: Essential Research Reagent Solutions for QbD Method Development

| Reagent/Material | Function in Method Development | Application Examples |

|---|---|---|

| UHPLC-MS/MS Systems | High-resolution separation with sensitive detection | Pharmaceutical contaminants [6], Antineoplastic drugs [32] |

| Solid-Phase Extraction (SPE) Cartridges | Sample clean-up and analyte concentration | Oasis HLB for alpha-fluoro-beta-alanine [32] |

| Stable Isotope-Labeled Internal Standards | Compensation for matrix effects and recovery variations | Clozapine-D8, NDC-D8 for metabolite quantification [34] |

| Design of Experiments (DoE) Software | Statistical optimization of multiple parameters | Multivariate analysis for parameter interactions [27] |

| Chromatography Columns | Stationary phases for specific separation needs | Various UHPLC columns for small molecules [32] [6] |

| Mass Spectrometry Reference Standards | Method development and qualification | Certified reference materials for all target analytes [32] [34] |

Regulatory Considerations and Future Directions

The implementation of QbD and QRM in analytical method development aligns with evolving regulatory expectations. Regulatory agencies, including the FDA and EMA, have demonstrated "strong alignment" on QbD concepts through joint initiatives such as the FDA–EMA QbD pilot program [28] [27]. The 2023 revision of ICH Q9 (R1) further emphasizes the application of risk management principles throughout development and manufacturing [28].

Emerging trends in the field include:

- AI-Integrated QbD: Machine learning algorithms for design space exploration and predictive modeling [27]

- Green Analytical Chemistry: Combining QbD principles with environmentally sustainable methodologies [6]

- Continuous Method Verification: Real-time performance monitoring using modern process analytical technology [27]

- Harmonized Standards: Efforts to address regulatory disparities between agencies and regions [27]

Adoption data indicates that approximately 38% of full marketing authorization submissions in the U.S. and EU now incorporate QbD elements, reflecting the growing acceptance of this approach [28]. As the pharmaceutical industry continues to evolve toward more sophisticated analytical technologies and complex molecules, the implementation of QbD and QRM provides a structured framework for ensuring method robustness, regulatory compliance, and ultimately, patient safety.

Applied Spectroscopy: Method Development, Transfer, and Real-World Case Studies

The accurate quantification of active pharmaceutical ingredients (APIs), impurities, and biomarkers is a cornerstone of drug development and quality control. Selecting an appropriate analytical technique is paramount to meeting the specific sensitivity, selectivity, and throughput requirements of a given application. This guide provides an objective comparison of three foundational spectroscopic and chromatographic methods—UV-Vis spectrophotometry, High-Performance Liquid Chromatography/Ultra-Fast Liquid Chromatography with Diode Array Detection (HPLC/UFLC-DAD), and Liquid Chromatography-Tandem Mass Spectrometry (LC-MS/MS). By presenting validated experimental data and detailed protocols, this article serves as a decision-making framework for researchers and scientists in the pharmaceutical industry.

In pharmaceutical analysis, techniques are selected based on the complexity of the sample matrix, the concentration of the analyte, and the required level of specificity. UV-Vis spectrophotometry is a simple, cost-effective technique based on the measurement of light absorption by molecules in solution. While straightforward, it lacks the ability to separate mixtures, making it suitable only for pure substances or simple formulations. HPLC/UFLC-DAD couples the powerful separation capabilities of liquid chromatography with a detector that can record full UV-Vis spectra. This allows for the resolution of complex mixtures and provides spectral confirmation of peak identity and purity. LC-MS/MS represents the highest tier of specificity and sensitivity. It combines chromatographic separation with mass detection, using two mass analyzers in series to fragment the analyte and detect unique product ions, enabling unambiguous identification and quantification even in the most complex biological matrices at trace levels.

Technical Comparison and Experimental Data

The following table summarizes the key performance characteristics of the three techniques, based on validation data from recent studies.

Table 1: Comparative Performance of UV-Vis, HPLC-DAD, and LC-MS/MS Techniques

| Parameter | UV-Vis Spectrophotometry | HPLC/UFLC-DAD | LC-MS/MS |
|---|---|---|---|
| Typical Linear Range | Wide | Wide | Wide (over several orders of magnitude) |
| Limit of Detection (LOD) | µg/mL range (e.g., ~µg/mL for Voriconazole) [35] | ng/mL range (e.g., 16.5 ng/mL for Vitamin B1) [36] | pg/mL to ng/mL range (e.g., 100-300 ng/L for pharmaceuticals in water) [6] |
| Limit of Quantification (LOQ) | µg/mL range (e.g., ~µg/mL for Voriconazole) [35] | ng/mL range (e.g., 60 ng/mL for Andrographolide) [37] | pg/mL to ng/mL range (e.g., 300-1000 ng/L for pharmaceuticals in water) [6] |
| Precision (%RSD) | < 2% [35] | < 3.23% [36] | < 5.0% [6] |
| Accuracy (% Recovery) | 90-110% [35] | 100 ± 3% [36] | 77-160% (matrix-dependent) [6] |
| Key Advantage | Simplicity, speed, low cost | Separation and spectral identity confirmation | Unmatched specificity and sensitivity for trace analysis |
| Primary Limitation | No separation; prone to interference | Lower sensitivity and specificity than MS | High cost, complex operation, matrix effects |
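The LOD and LOQ values in the table are taken from the cited studies. As a minimal sketch of one common way such limits are estimated, the snippet below applies the calibration-curve approach described in ICH Q2 (LOD = 3.3σ/S and LOQ = 10σ/S, where σ is the residual standard deviation of the regression and S is the slope). The function names and the calibration data are hypothetical and chosen only for illustration; they do not come from the cited studies.

```python
from math import sqrt

def linear_fit(x, y):
    """Ordinary least-squares slope and intercept for y = slope * x + intercept."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x

def lod_loq_from_calibration(conc, response):
    """Estimate LOD and LOQ as 3.3*sigma/slope and 10*sigma/slope."""
    slope, intercept = linear_fit(conc, response)
    residuals = [yi - (slope * xi + intercept) for xi, yi in zip(conc, response)]
    # Two fitted parameters (slope, intercept) leave n - 2 degrees of freedom
    sigma = sqrt(sum(r ** 2 for r in residuals) / (len(conc) - 2))
    return 3.3 * sigma / slope, 10.0 * sigma / slope

# Hypothetical calibration data: concentration in ng/mL, response in arbitrary units
conc = [10, 20, 40, 80, 160]
resp = [52, 101, 198, 405, 799]

lod, loq = lod_loq_from_calibration(conc, resp)
print(f"LOD ~ {lod:.2f} ng/mL, LOQ ~ {loq:.2f} ng/mL")
```

Other estimation routes (for example, signal-to-noise ratios) can give different values, so reported limits should always state which approach was used.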

Detailed Experimental Protocols

Protocol 1: HPLC-DAD for Vitamin Analysis in Gummies

A validated method for simultaneous analysis of Vitamins B1, B2, and B6 in pharmaceutical gummies and gastrointestinal fluids demonstrates the application of HPLC-DAD/FLD [36].

- Sample Preparation: For gummies, a liquid/solid extraction is used, achieving recoveries >99.8%. For complex GI fluids, Solid Phase Extraction (SPE) is employed, with recoveries of 100 ± 5%. Vitamin B1 requires a pre-column oxidation/derivatization step to convert it into a fluorescent thiochrome derivative for detection with a Fluorescence Detector (FLD).

- Chromatography:
  - Column: Aqua C18 (250 mm × 4.6 mm, 5 µm)
  - Mobile Phase: Isocratic elution with 70% NaH₂PO₄ buffer (pH 4.95) and 30% methanol.
  - Flow Rate: 0.9 mL/min
  - Temperature: 40 °C
  - Detection: DAD for Vitamins B2 and B6; FLD for derivatized B1.

- Validation: The method was linear (R² > 0.999), precise (%RSD < 3.23), and accurate (% Mean Recovery 100 ± 3%), complying with ICH guidelines [36].
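The acceptance checks reported for this protocol (R² > 0.999, %RSD < 3.23, mean recovery of 100 ± 3%) can be illustrated with a short calculation. This is a sketch under stated assumptions: the helper names and all numerical data are hypothetical, and only the three thresholds are taken from the text above.

```python
from statistics import mean, stdev

def r_squared(x, y):
    """Coefficient of determination for a least-squares straight-line fit."""
    mx, my = mean(x), mean(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = my - slope * mx
    ss_res = sum((yi - (slope * xi + intercept)) ** 2 for xi, yi in zip(x, y))
    ss_tot = sum((yi - my) ** 2 for yi in y)
    return 1.0 - ss_res / ss_tot

def percent_rsd(values):
    """Relative standard deviation (sample SD / mean) in percent."""
    return 100.0 * stdev(values) / mean(values)

def percent_recovery(measured, nominal):
    """Measured amount as a percentage of the nominal (spiked) amount."""
    return 100.0 * measured / nominal

# Hypothetical data for illustration only
levels = [5, 10, 20, 40, 80]                       # calibration levels
areas = [50.2, 99.5, 201.0, 399.0, 801.5]          # peak areas
replicates = [99.1, 100.4, 98.7, 101.2, 100.0, 99.6]  # repeat assay results

linearity_ok = r_squared(levels, areas) > 0.999
precision_ok = percent_rsd(replicates) < 3.23
accuracy_ok = abs(percent_recovery(49.2, 50.0) - 100.0) <= 3.0

print(linearity_ok, precision_ok, accuracy_ok)
```

In practice each characteristic would be evaluated over the replicate and concentration-level design specified in the validation protocol rather than on a single data set.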

Protocol 2: LC-MS/MS for Trace Pharmaceutical Monitoring

A green UHPLC-MS/MS method for detecting carbamazepine, caffeine, and ibuprofen in water at ng/L levels highlights the extreme sensitivity of this technique [6].

- Sample Preparation: Solid-phase extraction (SPE) without an evaporation step, reducing solvent consumption and analysis time.

- Chromatography:
  - Technique: UHPLC for fast separation.
  - Run Time: 10 minutes.

- Mass Spectrometry:
  - Ionization: Electrospray Ionization (ESI)
  - Acquisition Mode: Multiple Reaction Monitoring (MRM) for high selectivity.

- Validation: The method was specific, linear (R² ≥ 0.999), precise (RSD < 5.0%), and achieved LODs of 100-300 ng/L [6].

Protocol 3: UV-Vis for API in Tablet Dosage Form

A simple UV-Vis method for estimating Voriconazole in bulk and tablet dosage forms underscores the technique's utility for routine analysis of simple mixtures [35].

- Sample Preparation: Tablets are dissolved in isopropyl alcohol.

- Analysis:
  - Solvent: Isopropyl alcohol
  - Wavelength (λmax): 256 nm

- Validation: The method was linear, precise (RSD < 2%), accurate (% Recovery within 90-110%), and robust according to ICH guidelines [35].

Workflow and Decision Pathway

The following diagram illustrates the typical analytical workflow for the quantification of a pharmaceutical compound, from sample preparation to data analysis.

Figure 1: Analytical Workflow for Pharmaceutical Quantification.

The decision-making process for technique selection is critical for efficient resource allocation. The following pathway provides a logical framework based on key analytical questions.

Figure 2: Technique Selection Decision Pathway.

Essential Research Reagent Solutions

The following table lists key reagents and materials commonly used in these analytical methods, with their specific functions.

Table 2: Key Reagents and Materials for Pharmaceutical Quantification

| Reagent/Material | Function/Application | Example from Literature |
|---|---|---|
| Ammonium Formate with Formic Acid | Mobile phase additive for LC-MS; improves ionization efficiency and peak shape. | Optimized mobile phase for detecting illegal dyes in olive oil by LC-MS/MS [38]. |
| Solid Phase Extraction (SPE) Cartridges | Sample clean-up and pre-concentration of analytes from complex matrices. | Used for extracting vitamins from gastrointestinal fluids [36] and pharmaceuticals from water [6]. |
| Derivatization Reagents | Chemically modify non-UV-absorbing or non-fluorescent analytes for detection. | Pre-column oxidation of Vitamin B1 to fluorescent thiochrome for FLD detection [36]. |
| Salting-Out Agents (e.g., MgSO₄) | Salt-assisted liquid-liquid extraction (SALLE) to enhance partitioning of analytes into the organic phase. | Used for efficient extraction of andrographolide from plasma with >90% recovery [37]. |
| PFP (Pentafluorophenyl) Columns | Specialized LC stationary phase offering unique selectivity for complex mixtures of analytes with diverse polarities. | Used for separating 40 hydrophilic and lipophilic contaminants in a single run [39]. |

The choice between UV-Vis, HPLC/UFLC-DAD, and LC-MS/MS is a strategic decision that balances analytical needs with practical constraints. UV-Vis remains a robust and efficient tool for the analysis of pure substances in quality control. HPLC-DAD is the workhorse for resolving and quantifying components in complex formulations without the need for extreme sensitivity. For the most demanding applications involving trace-level quantification in complex biological or environmental matrices, LC-MS/MS is the unequivocal gold standard due to its superior specificity and sensitivity. By understanding the capabilities and limitations of each technique, as demonstrated through validated experimental data, pharmaceutical professionals can make informed decisions to ensure the accuracy, efficiency, and regulatory compliance of their analytical methods.
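The selection logic summarized in this section can be expressed as a small rule-based function. This is a deliberately simplified sketch of the prose above, not a reproduction of the decision pathway figure; the function name, its three yes/no inputs, and the ordering of the rules are assumptions made for illustration.

```python
def select_technique(needs_separation: bool,
                     trace_level: bool,
                     complex_matrix: bool) -> str:
    """Suggest a technique from three yes/no questions about the analysis.

    Simplified rule of thumb:
    - trace-level quantification          -> LC-MS/MS
    - mixtures or complex matrices        -> HPLC/UFLC-DAD
    - pure substance, simple formulation  -> UV-Vis
    """
    if trace_level:
        return "LC-MS/MS"
    if needs_separation or complex_matrix:
        return "HPLC/UFLC-DAD"
    return "UV-Vis"

# Example scenarios (hypothetical)
print(select_technique(False, False, False))  # bulk API assay
print(select_technique(True, False, False))   # multi-component formulation
print(select_technique(True, True, True))     # trace analyte in plasma or water
```

A real selection would also weigh cost, throughput, instrument availability, and regulatory expectations, none of which are captured by these three flags.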

In pharmaceutical development, the Analytical Target Profile (ATP) is a foundational concept that shifts the paradigm from simply executing analytical methods to strategically designing them to be fit-for-purpose. An ATP is defined as a prospective summary of the required quality characteristics of an analytical procedure, stating the quality of the reportable value it must produce in terms of target measurement uncertainty (TMU) [40] [41]. Within the framework of Analytical Procedure Lifecycle Management and guided by ICH Q14, the ATP establishes the predefined objectives for an analytical procedure, ensuring it delivers reliable data to support critical decisions about product quality, safety, and efficacy [42] [43].

This systematic approach mirrors the Quality by Design (QbD) principles applied to manufacturing processes. Just as the Quality Target Product Profile (QTPP) guides drug product development, the ATP serves as an analogous tool for analytical method development, creating a direct link between the measurement requirements and the Critical Quality Attributes (CQAs) of the drug substance or product [44] [43]. By defining what the method needs to achieve before determining how it will be achieved, the ATP provides a clear roadmap for development, validation, and ongoing performance monitoring throughout the method's lifecycle [41].

Core Components of an Effective ATP

Essential Elements and Structure

A well-constructed ATP explicitly defines the criteria for success for an analytical procedure. The key components that form a comprehensive ATP are detailed below.

Table 1: Essential Components of an Analytical Target Profile (ATP)

| ATP Component | Description | Example |
|---|---|---|
| Intended Purpose | Clearly defines what the procedure measures and its decision-making context [41] [43]. | "Quantification of active ingredient in drug product for release testing." |
| Link to CQAs | Summarizes how the procedure provides reliable results about the specific CQA being assessed [43]. | Link to potency or impurity profile CQA. |
| Reportable Result | Defines the format and units of the final value delivered by the procedure [40]. | Percentage (%) of label claim. |
| Performance Characteristics | Specifies the required levels for accuracy, precision, specificity, etc. [41]. | Accuracy within ±2.0%; Precision RSD ≤2.0%. |
| Acceptance Criteria | Sets the minimum acceptable performance levels for each characteristic [41]. | See performance characteristics. |
| Rationale | Provides justification for the set acceptance criteria [43]. | Based on product specification limits and TMU calculations. |
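One way to make these components concrete is to record the ATP as a small, immutable data structure and check observed performance against it. The sketch below is illustrative only: the class name, field names, and `is_met` method are hypothetical, and the example values simply reuse the illustrative entries from the table (accuracy within ±2.0%, RSD ≤ 2.0%).

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class AnalyticalTargetProfile:
    """Hypothetical container for the ATP components listed in the table."""
    intended_purpose: str
    linked_cqa: str
    reportable_result: str
    max_abs_bias_pct: float  # accuracy criterion, as +/- percent
    max_rsd_pct: float       # precision criterion, as %RSD
    rationale: str

    def is_met(self, observed_bias_pct: float, observed_rsd_pct: float) -> bool:
        """True when both accuracy and precision meet the acceptance criteria."""
        return (abs(observed_bias_pct) <= self.max_abs_bias_pct
                and observed_rsd_pct <= self.max_rsd_pct)

atp = AnalyticalTargetProfile(
    intended_purpose="Quantification of active ingredient in drug product for release testing",
    linked_cqa="Potency",
    reportable_result="Percentage (%) of label claim",
    max_abs_bias_pct=2.0,
    max_rsd_pct=2.0,
    rationale="Based on product specification limits and TMU calculations",
)

# Hypothetical validation outcomes
print(atp.is_met(observed_bias_pct=0.8, observed_rsd_pct=1.1))
print(atp.is_met(observed_bias_pct=-2.5, observed_rsd_pct=1.1))
```

Keeping the criteria separate from any particular technique reflects the idea that the ATP defines what the method must achieve before how it is achieved.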

Establishing Precision Requirements: Target Measurement Uncertainty (TMU)

A central function of the ATP is to establish the required precision, expressed as the Target Measurement Uncertainty (TMU). This should be derived objectively from the specification limits (SL) of the product attribute, not merely from the capability of the analytical technique [40].

For a drug substance assay with specification limits of 98.0-102.0%, the TMU can be calculated from normal distribution probabilities. Assuming the manufacturing process controls the true assay value between 99.5% and 100.0%, the one-sided range available for analytical error is 0.5%. To keep the probability of an out-of-specification result due to analytical error alone low (e.g., 0.27%, the fraction of a normal distribution lying outside ±3 standard deviations), the TMU is set at one third of that range: 0.5% / 3 ≈ 0.17% (absolute standard deviation) [40]. The general relationship is defined by the formula for the % Tolerance of Measurement Error:

% Tolerance of Measurement Error = (Standard Deviation of Measurement Error × 5.15) / (USL - LSL) × 100 [44]

Where USL is the upper specification limit and LSL is the lower specification limit. The factor 5.15 corresponds to the width, in standard deviations, of the central 99% of a normal distribution. A % tolerance of less than 20% is generally considered acceptable [44].
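The two calculations in this subsection can be worked through numerically. The sketch below is a minimal illustration: the function names are hypothetical, the coverage factor of 3 is the assumption behind the 0.27% example, the result of the tolerance formula is multiplied by 100 to express it as a percentage, and the standard deviation of 0.15% used in the second call is an invented value.

```python
def target_measurement_uncertainty(one_sided_margin: float,
                                   coverage_factor: float = 3.0) -> float:
    """TMU as the margin available for analytical error divided by a coverage factor."""
    return one_sided_margin / coverage_factor

def percent_tolerance(sd_measurement_error: float, usl: float, lsl: float) -> float:
    """% tolerance of measurement error: 5.15 * SD relative to the specification width."""
    return 100.0 * (sd_measurement_error * 5.15) / (usl - lsl)

# Worked example from the text: 0.5% one-sided margin
tmu = target_measurement_uncertainty(0.5)
print(f"TMU = {tmu:.2f}% (absolute standard deviation)")

# Hypothetical method SD of 0.15% against 98.0-102.0% specification limits
pct_tol = percent_tolerance(0.15, usl=102.0, lsl=98.0)
print(f"% tolerance = {pct_tol:.1f}% (acceptable if below 20%)")
```

Because the two criteria come from different sources, a standard deviation that satisfies one does not automatically satisfy the other; both should be checked against the rationale recorded in the ATP.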

The ATP Within the Analytical Procedure Lifecycle

The ATP is the cornerstone of the analytical procedure lifecycle, connecting its three main stages as defined in USP <1220> [42]. The diagram below illustrates this integrated relationship.

ATP in the Analytical Procedure Lifecycle

- Stage 1: Procedure Design and Development – The ATP provides the predefined objectives. Development activities, including risk assessment and design of experiments (DOE), are conducted to create a procedure capable of meeting ATP requirements [44] [42].

- Stage 2: Procedure Performance Qualification – The final analytical procedure is validated against the performance characteristics and acceptance criteria defined in the ATP to demonstrate it is fit-for-purpose [42].

- Stage 3: Continued Procedure Performance Verification – During routine use, the procedure's performance is continuously monitored against the ATP to ensure it remains in a state of control [42].
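Stage 3 monitoring is often implemented with some form of control charting. The sketch below shows one simple possibility, assuming limits set at the mean ± 3 standard deviations of results gathered during qualification; this charting rule, the function names, and all data are assumptions for illustration and are not prescribed by the sources cited above.

```python
from statistics import mean, stdev

def control_limits(baseline, k=3.0):
    """Lower and upper limits as mean +/- k sample standard deviations of baseline data."""
    center = mean(baseline)
    spread = stdev(baseline)
    return center - k * spread, center + k * spread

def out_of_control(results, limits):
    """Return (index, value) pairs for results falling outside the limits."""
    lower, upper = limits
    return [(i, r) for i, r in enumerate(results) if not (lower <= r <= upper)]

# Hypothetical control-sample assay results (% of label claim)
qualification_runs = [99.8, 100.1, 100.3, 99.9, 100.0, 100.2, 99.7, 100.0]
routine_runs = [100.1, 99.9, 100.9, 100.2]

limits = control_limits(qualification_runs)
flagged = out_of_control(routine_runs, limits)
print(f"Limits: {limits[0]:.2f} to {limits[1]:.2f}; flagged: {flagged}")
```

A flagged result would typically trigger an investigation rather than an automatic conclusion that the procedure no longer meets its ATP.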

A Comparative Framework for Spectroscopy Based on the ATP

The ATP provides an objective basis for selecting and optimizing analytical techniques. The table below compares common spectroscopic techniques used for pharmaceutical analysis, with their performance evaluated against typical ATP criteria.

Table 2: Comparison of Spectroscopic Techniques for Pharmaceutical Quantification

| Technique | Typical Application in Pharma | Key Performance Characteristics | Considerations for ATP Design |
|---|---|---|---|
| Ultraviolet-Visible (UV-Vis) | Assay of active ingredient in dissolution testing; Content uniformity [45]. | High precision and accuracy for specific chromophores; Limited specificity in complex matrices. | Well-suited for APIs with strong chromophores; Specificity must be verified against interferents. |
| Near-Infrared (NIR) | Raw material identification; Polymorph screening; Process Analytical Technology (PAT) [45] [46]. | Rapid, non-destructive; Requires chemometrics for multivariate calibration. | Ideal for rapid identity and physical property tests; Model robustness is a critical performance parameter. |
| Mid-Infrared (IR) | Compound identification; Functional group analysis [45]. | Rich in structural information; Intense, isolated absorption bands. | Excellent for qualitative identity tests; Quantitative use may require careful sample preparation. |
| Raman | Polymorph characterization; High-concentration API assay [45] [42]. | Minimal sample prep; Weak interference from water and glass. | Complementary to IR; Suitability for quantitative analysis depends on signal-to-noise and laser stability. |

Case Study: Spectroscopy for Structural Similarity Assessment