Qualitative vs Quantitative Spectroscopic Methods: A Researcher's Guide to Advantages, Disadvantages, and Applications in Drug Development

Abstract

This article provides a comprehensive guide for researchers, scientists, and drug development professionals on the strategic selection and application of qualitative and quantitative spectroscopic methods. It explores the foundational principles, core differences, and philosophical paradigms of both approaches, detailing their specific advantages and disadvantages. The content covers practical methodological applications across biomedical research, addresses common troubleshooting and optimization challenges, and offers a comparative framework for method validation. By synthesizing insights from both research paradigms, this guide aims to equip professionals with the knowledge to make informed decisions, optimize spectroscopic analyses, and effectively integrate these techniques to advance drug discovery and development.

Understanding the Spectrum: Core Principles of Qualitative and Quantitative Spectroscopic Analysis

In scientific research, particularly in fields like spectroscopy and drug development, the choice of research methodology is foundational to inquiry. The two primary paradigms that guide this choice are qualitative research, which deals with words, meanings, and experiences, and quantitative research, which deals with numbers and statistics [1]. These approaches are not merely different techniques for data collection; they represent fundamentally different worldviews on the nature of reality (ontology) and knowledge (epistemology) [2]. A precise understanding of this divide helps researchers and scientists design robust studies, select appropriate analytical techniques, and draw valid conclusions from their data.

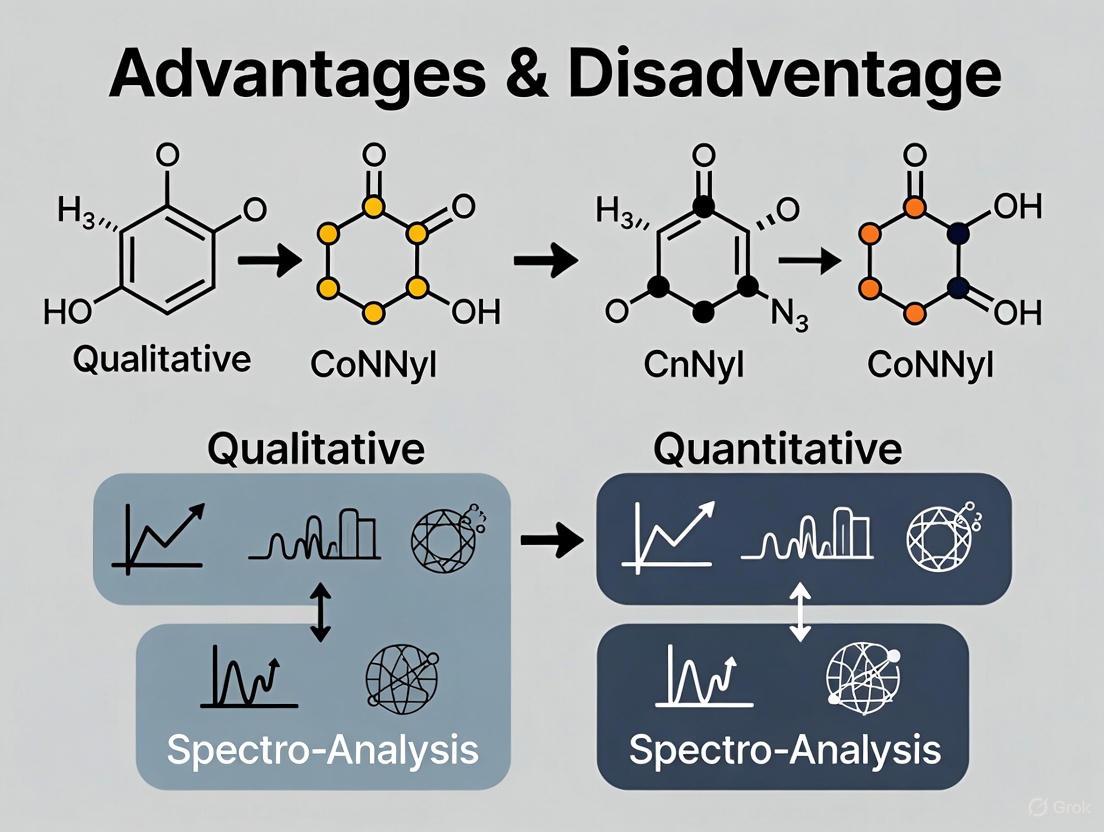

Qualitative research is primarily exploratory, seeking to explain the "how" and "why" behind a phenomenon, correlation, or behavior [3]. It is concerned with subjective information that cannot be numerically measured, focusing instead on understanding concepts, thoughts, and experiences [4]. In contrast, quantitative research is designed to test hypotheses or theories by examining the relationships among variables [1]. These variables are measured numerically and analyzed using statistical methods to answer questions of "how many," "how much," or "to what extent" [4]. The following diagram illustrates the fundamental workflow and logical relationship between these two paradigms.

Core Philosophical and Methodological Differences

The divergence between qualitative and quantitative research extends beyond methodology to their foundational philosophical assumptions. Quantitative research is typically rooted in a positivist philosophy, which asserts that there is a single objective reality that can be measured and explained using scientific methods [2]. This worldview values hypothesis-testing and seeks to establish general laws of behavior and phenomena. In contrast, qualitative research often aligns with constructivist or interpretivist philosophies, which contend that reality is socially constructed and dynamic, with multiple perspectives shaped by individual experiences and contexts [2].

These philosophical differences manifest in distinct methodological approaches. Quantitative research employs a structured, controlled process, often conducted in laboratory settings to minimize external influences [1]. It follows a predefined research design established before data collection begins, aiming for objectivity by maintaining researcher distance from the data [1]. Qualitative research, however, embraces a flexible, adaptive design that evolves during the research process as new findings emerge [1]. Researchers actively participate in the research environment, often immersing themselves in the participants' natural settings to understand phenomena from an insider's perspective [1].

Key Distinctions in Approach and Execution

The table below summarizes the fundamental differences between qualitative and quantitative research paradigms across multiple dimensions, providing researchers with a clear framework for understanding their distinct characteristics.

Table 1: Core Differences Between Qualitative and Quantitative Research

| Dimension | Qualitative Research | Quantitative Research |
|---|---|---|
| Nature of Data | Words, images, sounds, observations [1] | Numbers, statistics, metrics [1] |
| Research Questions | Explores "why" and "how" [3] | Asks "how many," "how much," "what relationship" [1] |
| Sample Characteristics | Small, purposively selected samples [4] [5] | Large, often randomized samples [4] |
| Data Collection Methods | Interviews, focus groups, observations, document analysis [4] [6] | Surveys, experiments, polls, structured observations [4] [7] |
| Researcher Role | Active participant, immersed in data [1] | Objective observer, detached from data [1] |
| Analysis Approach | Thematic analysis, coding, interpretation [4] [1] | Statistical analysis, trend identification [4] [1] |
| Result Presentation | Narratives, themes, theories [1] | Statistics, figures, quantified relationships [1] |
| Philosophical Foundation | Constructivism, interpretivism [2] | Positivism, objectivism [2] |

Advantages and Disadvantages: A Comparative Analysis

Each research paradigm offers distinct strengths and faces particular limitations. Understanding these trade-offs is essential for researchers to select the most appropriate approach for their specific investigation, particularly in technical fields like spectroscopic analysis where both qualitative identification and quantitative measurement are often required.

Advantages and Disadvantages of Qualitative Research

Qualitative research provides deep, nuanced insights into complex phenomena, making it particularly valuable for exploring understudied areas or understanding processes and meanings.

Table 2: Advantages and Disadvantages of Qualitative Research

| Advantages of Qualitative Research | Disadvantages of Qualitative Research |
|---|---|
| Provides rich, detailed data that captures complexities and contradictions of real-life contexts [1] [6] | Small sample sizes limit generalizability to broader populations [4] [1] |
| Flexible approach allows researchers to adapt questions and explore emerging topics during the research process [6] [5] | Subjectivity and potential bias in data collection and interpretation due to close researcher involvement [4] [3] |
| Explores attitudes and behaviors in-depth on a personal level, providing context rather than just numbers [5] | Time-consuming data collection and analysis processes (e.g., transcribing interviews) [8] [1] |
| Identifies new relationships and theories through discovery of previously unknown dynamics [1] | Limited replicability due to context-specific nature of findings [1] |
| Gives voice to participant perspectives using their own words and experiences [8] | Artificiality of data capture in some settings (e.g., focus groups) may influence participant responses [6] |

Advantages and Disadvantages of Quantitative Research

Quantitative research offers precision, measurability, and generalizability, making it indispensable for establishing patterns, testing theories, and making predictions.

Table 3: Advantages and Disadvantages of Quantitative Research

| Advantages of Quantitative Research | Disadvantages of Quantitative Research |
|---|---|
| Objective, measurable results that reduce subjective bias through structured data collection [7] [3] | Lacks depth and context behind the numerical data, potentially overlooking subtleties [8] [7] |
| Efficient analysis of large datasets using statistical software and visualization tools [7] [3] | Limited by predefined questions that may restrict participants' ability to share nuanced perspectives [7] [5] |
| Generalizable findings when based on large, random samples that represent the target population [4] [5] | Risk of misleading results if questions are biased, samples are inadequate, or analysis is improper [7] |
| Fast data collection from large groups, especially using modern digital survey tools [5] [9] | Cannot capture decision-making processes or the reasons behind behaviors and attitudes [7] |
| Supports predictive decision-making by identifying patterns and trends over time [7] | Requires large samples for reliable statistical analysis, increasing costs and logistical challenges [4] [7] |

Experimental Protocols and Data Analysis Procedures

The implementation of qualitative and quantitative research follows distinct protocols for data collection, analysis, and validation. These procedures ensure the reliability and validity of findings within their respective paradigms.

Qualitative Research Protocols

Qualitative research employs various approaches tailored to the research question. Key methodologies include:

- Ethnography: The researcher immerses themselves in the participant's environment to produce a comprehensive account of social phenomena from the perspective of someone within the population [2].

- Grounded Theory: A theoretical model is developed through observation of a study population and comparative analysis of their speech and behavior, explaining how and why people behave a certain way [2].

- Phenomenology: This approach investigates "lived experiences" from the participants' perspective, examining how and why participants behaved a certain way based on their own viewpoints [2].

- Narrative Research: Researchers weave together a sequence of events from one or two individuals to create a cohesive story, understanding the influences that helped shape that narrative [2].

Data collection in qualitative research typically involves purposive sampling, where participants are selected based on the researcher's rationale for who can provide the most informative perspectives [2]. Specific techniques include unstructured or semi-structured interviews, focus groups, and participant observation [2] [6]. The analysis process generally follows these steps:

- Data compilation and organization

- Transcription of interviews and field notes

- Coding of data (manually or using CAQDAS like NVivo or ATLAS.ti)

- Thematic analysis to identify patterns and relationships

- Interpretation and theory development based on emergent themes [4] [1] [2]

Quantitative Research Protocols

Quantitative research follows a more structured, predetermined protocol:

- Experimental Designs: Researchers manipulate independent variables to observe their effect on dependent variables while controlling for extraneous factors.

- Survey Research: Structured questionnaires with closed-ended questions are administered to large samples to gather countable answers that can be transformed into quantifiable data [3].

- Longitudinal Studies: Data is collected at multiple time points to track changes and establish temporal sequences.

- Correlational Research: Researchers measure the relationship between variables without manipulating them.

The quantitative data analysis workflow typically involves:

- Connecting measurement scales to study variables

- Linking data with descriptive statistics (mean, median, mode, frequency)

- Organizing data into tables and visualizations

- Conducting statistical analyses (cross-tabulation, trend analysis, TURF analysis)

- Testing hypotheses using inferential statistics [4]
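As a rough illustration of the descriptive and cross-tabulation steps in the workflow above, the following minimal Python sketch uses only the standard library. The response data, the group labels, and the helper names `describe` and `cross_tabulate` are hypothetical and chosen purely for illustration; they do not come from any cited study.

```python
from collections import Counter
from statistics import mean, median, multimode

# Hypothetical 1-5 Likert responses paired with a respondent group label.
responses = [
    ("clinician", 4), ("clinician", 5), ("clinician", 4),
    ("patient", 2), ("patient", 3), ("patient", 4), ("patient", 2),
]


def describe(scores):
    """Return the descriptive statistics named in the workflow above."""
    return {
        "mean": mean(scores),
        "median": median(scores),
        "mode": multimode(scores),  # a list, because ties are possible
        "frequency": dict(Counter(scores)),
    }


def cross_tabulate(pairs):
    """Count how often each (group, score) combination occurs."""
    table = {}
    for group, score in pairs:
        table.setdefault(group, Counter())[score] += 1
    return table


scores = [score for _, score in responses]
summary = describe(scores)
crosstab = cross_tabulate(responses)

print(summary)
print({group: dict(counts) for group, counts in crosstab.items()})
```

In practice these steps are usually run in dedicated statistical software; the sketch only makes the sequence of operations concrete.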

Research Reagent Solutions: Essential Methodological Tools

Both qualitative and quantitative research require specific "reagent solutions" – the essential methodological components that facilitate the research process. The table below details these fundamental tools and their functions in the research workflow.

Table 4: Essential Research Reagent Solutions and Their Functions

| Research Reagent | Function in Research Process |
|---|---|
| Semi-structured Interview Guides | Provides flexible framework for qualitative data collection while allowing exploration of emergent topics [6] [2] |
| Focus Group Protocols | Facilitates group discussions to explore shared views and interactions on specific topics [6] [5] |
| CAQDAS Software (Computer-Assisted Qualitative Data Analysis) | Supports organization, coding, and analysis of non-numerical data using platforms like NVivo or ATLAS.ti [4] [2] |
| Standardized Surveys with Closed-ended Questions | Enables collection of countable answers from large samples that can be transformed into quantifiable data [3] |
| Statistical Analysis Software (e.g., SPSS, R) | Facilitates organization and statistical analysis of numerical data to identify patterns and test hypotheses [1] |
| Validated Scales and Instruments | Provides reliable and consistent measurement tools for quantifying attitudes, opinions, and behaviors [1] |

Integration of Methods: Mixed-Methods Approach

Recognizing the complementary strengths and limitations of qualitative and quantitative research, many researchers in spectroscopy and pharmaceutical development adopt a mixed-methods approach. This integration provides a more comprehensive understanding of research problems than either method could achieve alone [10]. The mixed-methods paradigm avoids many criticisms directed at each approach individually by combining their strengths [4] [6].

Mixed-methods research can be implemented in several configurations:

- Exploratory Sequential Design: Qualitative methods explore a phenomenon and develop hypotheses, which are then tested using quantitative methods [10].

- Explanatory Sequential Design: Quantitative methods identify patterns or relationships, followed by qualitative methods to explain the mechanisms behind these findings [10].

- Convergent Parallel Design: Both qualitative and quantitative data are collected simultaneously and integrated during interpretation to provide complementary insights [4].

This integrated approach is particularly valuable in health and pharmaceutical research, where understanding both the statistical outcomes and the human experiences is essential. For example, quantitative methods can establish the efficacy of a new drug, while qualitative methods can reveal patient experiences with treatment side effects and adherence [10]. The following diagram illustrates how these methodologies can be integrated throughout the research process.

The divide between qualitative and quantitative research paradigms represents not a schism to be reconciled but a spectrum of complementary approaches to scientific inquiry. Qualitative research excels in exploring complex phenomena, understanding meanings, and generating theoretical frameworks, while quantitative research provides precision, generalizability, and statistical verification. For researchers in spectroscopy, drug development, and related scientific fields, the strategic selection of appropriate methodology – or the intentional integration of both – should be guided by the specific research questions, the nature of the phenomena under investigation, and the intended applications of the findings. By understanding the philosophical foundations, methodological requirements, and practical implications of each paradigm, scientists can design more robust, informative research programs that advance knowledge and innovation in their respective domains.

The Strategic Dichotomy in Research

In scientific research, particularly in drug development, two fundamental approaches frame our inquiry: qualitative methods that explore the 'why' and 'how' behind phenomena, and quantitative methods that precisely measure the 'how much'. Qualitative research seeks to understand underlying reasons, opinions, and motivations, providing rich, contextual insights [6] [8]. Quantitative research, in contrast, focuses on quantifying attitudes, opinions, and behaviors by generating numerical data that can be transformed into usable statistics to identify patterns and test hypotheses [9] [7].

This guide objectively compares these methodologies, providing supporting experimental data and protocols to help researchers, scientists, and drug development professionals select and combine these approaches effectively within their projects.

Theoretical Foundations: Core Objectives and Applications

The choice between qualitative and quantitative methods is not merely one of data type, but of fundamental objective. Each approach serves a distinct purpose and answers different types of research questions.

Qualitative Research: Exploring 'Why' and 'How'

This approach is exploratory and seeks to explain "how" and "why" a particular phenomenon or behavior operates as it does in a particular context [6]. It sits at the "touchy-feely" end of the spectrum, concerned with capturing people's opinions and emotions rather than "bean-counting" [6].

- Primary Objective: To gain an in-depth understanding of human behavior, experience, and the underlying reasons, motivations, and emotions that govern them [11] [8].

- Context in Drug Development: Ideal for exploring patient experiences with a disease or treatment, understanding barriers to medication adherence, and gathering deep expert insights from healthcare professionals during early-stage discovery [12].

Quantitative Research: Measuring 'How Much'

This is the "bean-counting" end of the research spectrum, now often encompassed by the term "People Analytics" [6]. It is primarily designed to capture numerical data to study a fact or phenomenon within a population [9].

- Primary Objective: To quantify data and generalize results from a sample to the population of interest. It answers questions about "how many," "how much," or "how often" [7] [8].

- Context in Drug Development: Critical for measuring pharmacokinetics, determining optimal dosage (how much), calculating incidence of side effects, analyzing clinical trial outcomes, and tracking productivity in R&D operations [6] [13].

The following workflow illustrates the interconnected relationship between these two approaches within a typical research and development cycle, showing how they can be integrated for a more complete understanding.

Diagram 1: Research Methodology Workflow showing the cyclical relationship between qualitative and quantitative approaches.

Comparative Analysis: Advantages and Disadvantages

A clear understanding of the strengths and limitations of each methodology is crucial for robust research design. The following tables summarize the key advantages and disadvantages of each approach.

Qualitative Research: Pros and Cons

| Advantage | Description | Context in Drug Development |
|---|---|---|
| In-Depth Understanding | Provides rich, detailed insights into participants' thoughts, feelings, and motivations, capturing complexities that numbers alone cannot [11] [8]. | Exploring nuanced reasons behind patient non-adherence to a medication regimen. |
| Flexibility & Adaptability | Researchers can adapt questions and methods in real-time based on participant responses, fostering organic discovery [6] [11]. | An interview guide can evolve as new, unexpected themes emerge from conversations with clinicians. |
| Exploration of New Areas | Ideal for investigating previously unexplored phenomena where variables are unknown [6]. | Early-stage investigation into a disease area with limited existing research. |

| Disadvantage | Description | Mitigation Strategy |
|---|---|---|
| Subjectivity & Bias | Interpretation is heavily influenced by the researcher's perspective, and participant selection can skew results [6] [11]. | Use triangulation (multiple data sources), and maintain reflexivity about one's own biases [6]. |
| Limited Generalizability | Findings from small, specific samples may not represent the broader population [11]. | Use qualitative findings to inform quantitative studies that test the applicability of insights on a larger scale. |
| Time-Consuming Analysis | Collecting, transcribing, and interpreting non-numerical data requires significant effort and resources [11] [8]. | Leverage AI-powered tools for transcription and initial thematic analysis to accelerate the process [12]. |

Quantitative Research: Pros and Cons

| Advantage | Description | Context in Drug Development |
|---|---|---|
| Measurable & Reliable Results | Provides structured, repeatable data that reduces guesswork and allows for precise measurement of improvements [7]. | Objectively measuring the reduction in tumor size or the change in a biomarker level in a clinical trial. |
| Scalability | Can gather structured data from a wide audience, providing confidence that results reflect broader user needs [9] [7]. | Deploying a patient satisfaction survey to thousands of participants to validate a finding from a small focus group. |
| Reduces Subjective Bias | The structured nature and numerical output remove personal opinions from the equation, focusing on measurable outcomes [7]. | Using a standardized assay to measure drug potency, eliminating individual researcher interpretation. |

| Disadvantage | Description | Mitigation Strategy |
|---|---|---|
| Lacks Depth and Context | Shows what is happening but not why. A survey may show low satisfaction but not the reasons behind it [7]. | Complement quantitative findings with qualitative follow-ups (e.g., open-ended survey questions, interviews). |
| Limited by Predefined Questions | Surveys force respondents into set answers, potentially missing critical, unanticipated feedback [7]. | Include open-ended response options and use qualitative pre-testing to improve survey design. |
| Risk of Misleading Data | Biased questions, small samples, or improper analysis can skew findings and lead to incorrect conclusions [9] [7]. | Ensure rigorous experimental design, use appropriate statistical tests, and validate with complementary methods. |

Experimental Protocols and Supporting Data

To illustrate the application of these methods, below are detailed protocols for representative qualitative and quantitative experiments relevant to drug development.

Protocol 1: Qualitative Focus Group on Patient Medication Adherence

1. Core Objective: To explore the 'why' and 'how' behind patient non-adherence to a new oral anticoagulant medication.
2. Methodology:
   - Recruitment: A purposive sample of 8-10 patients diagnosed with atrial fibrillation and prescribed the medication within the last 6 months.
   - Moderation: A trained facilitator uses a semi-structured discussion guide with open-ended questions (e.g., "Can you describe your experience with remembering to take this medication?" "What, if anything, makes it difficult to take it as prescribed?").
   - Setting: A neutral, comfortable focus group room with audio and video recording.
   - Duration: 90 minutes.
3. Data Collection: Audio-video recordings, transcribed verbatim. Observer notes on non-verbal cues and group dynamics.
4. Analysis:
   - Coding: Transcripts are analyzed using thematic analysis. Initial codes (e.g., "cost concerns," "fear of side effects," "complex routine") are assigned to relevant text segments.
   - Theming: Codes are grouped into broader themes (e.g., "Logistical Barriers," "Emotional and Psychological Factors").
   - Validation: Themes are reviewed and refined by a second researcher to ensure consistency and reduce individual bias [6] [11].
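The coding, theming, and validation steps above can be made concrete with a small Python sketch. The protocol does not say which code belongs to which theme, so the `CODE_TO_THEME` mapping below is an assumption. The protocol also describes validation only as review by a second researcher; Cohen's kappa is introduced here as one common way to quantify agreement between two coders, not as the method the protocol prescribes. All segment labels are hypothetical.

```python
from collections import Counter

# Assumed mapping of the example codes to the example themes.
CODE_TO_THEME = {
    "cost concerns": "Logistical Barriers",
    "complex routine": "Logistical Barriers",
    "fear of side effects": "Emotional and Psychological Factors",
}


def tally_themes(coded_segments):
    """Count transcript segments per theme from their assigned codes."""
    return Counter(CODE_TO_THEME[code] for code in coded_segments)


def cohens_kappa(coder_a, coder_b):
    """Chance-corrected agreement between two coders on the same segments."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("both coders must label the same non-empty segments")
    n = len(coder_a)
    observed = sum(a == b for a, b in zip(coder_a, coder_b)) / n
    freq_a, freq_b = Counter(coder_a), Counter(coder_b)
    # Agreement expected by chance from each coder's label frequencies.
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both coders used one identical label throughout
    return (observed - expected) / (1 - expected)


# Hypothetical codes assigned by two researchers to the same six segments.
researcher_1 = ["cost concerns", "fear of side effects", "complex routine",
                "cost concerns", "fear of side effects", "complex routine"]
researcher_2 = ["cost concerns", "fear of side effects", "complex routine",
                "complex routine", "fear of side effects", "complex routine"]

theme_counts = tally_themes(researcher_1)
kappa = cohens_kappa(researcher_1, researcher_2)

print(dict(theme_counts))
print(round(kappa, 2))
```

A tally like this does not replace interpretive analysis; it only summarizes how often each theme was coded and how closely two coders agreed.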

Protocol 2: Quantitative Analysis of Drug Efficacy in a Preclinical Model

1. Core Objective: To measure 'how much' a novel drug candidate reduces tumor growth compared to a control.
2. Methodology:
   - Design: Randomized, controlled experiment.
   - Subjects: 50 laboratory mice with implanted xenograft tumors.
   - Groups: Randomly assigned to:
     - Treatment Group (n=25): Receives novel drug candidate (e.g., 50 mg/kg, daily, oral gavage).
     - Control Group (n=25): Receives vehicle control (daily, oral gavage).
   - Blinding: Researchers measuring tumors are blinded to group assignment.
3. Data Collection:
   - Primary Endpoint: Tumor volume, measured by digital calipers every three days for 30 days. Volume calculated as (length × width²)/2.
   - Secondary Endpoints: Animal body weight (as a proxy for toxicity), and survival rate.
4. Analysis:
   - Statistical Test: A two-way repeated-measures (mixed-design) ANOVA, with treatment group as the between-subjects factor and study day as the within-subjects factor, is used to compare tumor volume trajectories between the two groups.
   - Significance Threshold: p < 0.05.
   - Software: Data are analyzed using software such as GraphPad Prism or R. The results are presented as mean tumor volume ± standard error of the mean (SEM) [9] [7].
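The volume formula and the mean ± SEM summary named in the protocol above can be sketched in a few lines of Python. The caliper readings and the helper names `tumor_volume` and `mean_and_sem` are hypothetical; the sketch only shows how the reported quantities are derived from raw measurements.

```python
from math import sqrt
from statistics import mean, stdev


def tumor_volume(length_mm, width_mm):
    """Caliper-based tumor volume in mm^3: (length x width^2) / 2."""
    if length_mm < 0 or width_mm < 0:
        raise ValueError("caliper measurements must be non-negative")
    return (length_mm * width_mm ** 2) / 2


def mean_and_sem(values):
    """Return the mean and the standard error of the mean (sample SD / sqrt(n))."""
    if len(values) < 2:
        raise ValueError("at least two values are needed to estimate the SEM")
    return mean(values), stdev(values) / sqrt(len(values))


# Hypothetical caliper readings (length, width) in mm for three animals.
readings = [(8.0, 5.0), (9.0, 5.0), (10.0, 5.0)]
volumes = [tumor_volume(length, width) for length, width in readings]
group_mean, group_sem = mean_and_sem(volumes)

print(volumes)
print(f"{group_mean:.1f} ± {group_sem:.1f} mm³")
```

The SEM here uses the sample standard deviation (n - 1 denominator), which is the usual convention when summarizing a treatment group.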

Table: Simulated Quantitative Results from Preclinical Efficacy Study

| Study Day | Control Group Tumor Volume (mm³, mean ± SEM) | Treatment Group Tumor Volume (mm³, mean ± SEM) | P-Value |
|---|---|---|---|
| 0 | 100 ± 5 | 102 ± 6 | 0.78 |
| 9 | 250 ± 15 | 180 ± 12 | 0.001 |
| 18 | 550 ± 25 | 210 ± 18 | < 0.001 |
| 27 | 980 ± 45 | 190 ± 20 | < 0.001 |
| 30 | 1250 ± 60 | 175 ± 15 | < 0.001 |

Simulated data demonstrating a statistically significant reduction in tumor growth in the treatment group.
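To show how a between-group comparison at a single time point relates to the summary values in the simulated table, the sketch below computes Welch's t statistic and its approximate degrees of freedom from two means and their SEMs. This is a deliberately simpler per-day comparison, not the repeated-measures ANOVA the protocol specifies, and it stops short of deriving a p-value. The function names are hypothetical; the inputs are the day 9 row of the table, with n = 25 per group taken from Protocol 2.

```python
from math import sqrt


def welch_t_from_summary(mean_a, sem_a, mean_b, sem_b):
    """Welch's t statistic from two group means and their SEMs."""
    standard_error = sqrt(sem_a ** 2 + sem_b ** 2)
    if standard_error == 0:
        raise ValueError("at least one SEM must be non-zero")
    return (mean_a - mean_b) / standard_error


def welch_degrees_of_freedom(sem_a, n_a, sem_b, n_b):
    """Welch-Satterthwaite approximation, written in terms of SEMs."""
    numerator = (sem_a ** 2 + sem_b ** 2) ** 2
    denominator = sem_a ** 4 / (n_a - 1) + sem_b ** 4 / (n_b - 1)
    return numerator / denominator


# Day 9 of the simulated table: control 250 ± 15, treatment 180 ± 12.
t_day9 = welch_t_from_summary(250, 15, 180, 12)
df_day9 = welch_degrees_of_freedom(15, 25, 12, 25)

print(f"t = {t_day9:.2f}, df = {df_day9:.1f}")
```

A full analysis would model all time points together, as the protocol describes, because repeated measurements on the same animals are not independent.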

The Scientist's Toolkit: Essential Research Reagent Solutions

The following table details key materials and tools used across qualitative and quantitative research paradigms in a drug development context.

| Item | Function | Applicable Context |
|---|---|---|
| Semi-Structured Interview Guide | A flexible protocol of open-ended questions to explore a topic in-depth while allowing for spontaneous probing. | Qualitative research (e.g., interviewing key opinion leaders or patients) [6]. |
| Digital Recorder & Transcription Software | To accurately capture and transcribe verbal interactions for detailed analysis. | Qualitative research (focus groups, in-depth interviews) [11]. |
| AI-Powered Qualitative Data Analysis Platform | Software that uses natural language processing to assist researchers in coding transcripts and identifying themes at scale [12]. | Qualitative research (analyzing large volumes of interview or open-ended survey data). |
| Standardized Survey with Likert Scales | A research instrument with closed-ended questions and scaled responses (e.g., 1-5) to generate numerical data. | Quantitative research (measuring patient-reported outcomes, satisfaction) [7]. |
| Cell-Based Assay Kit (e.g., ELISA, MTS) | A standardized biochemical test to quantitatively measure a substance (e.g., protein concentration) or cell viability. | Quantitative research (high-throughput drug screening, toxicity testing). |
| Statistical Analysis Software (e.g., R, SAS) | Software for performing complex statistical analyses on numerical datasets to test hypotheses and determine significance. | Quantitative research (analyzing clinical trial data, pharmacokinetic parameters) [9]. |

The dichotomy between exploring 'why/how' and measuring 'how much' is not a choice between superior and inferior methods. Instead, it represents a powerful strategic spectrum. Qualitative research provides the depth and context—the patient narrative, the clinician's intuition, the unexpected insight—that breathes life into data. Quantitative research provides the breadth and validation—the statistical power, the measurable outcomes, the generalizable truths—that ground insights in reality.

The future of innovative drug development lies in the purposeful integration of both. A quantitative finding that a drug is effective is incomplete without the qualitative understanding of a patient's experience taking it. Conversely, a qualitative observation from a handful of clinicians becomes far more powerful when validated across a large, diverse population through quantitative means. By mastering both toolkits and understanding their complementary advantages and disadvantages, researchers and scientists can build a more complete, robust, and ultimately successful path from discovery to patient cure.

In the realm of research, particularly within the sciences and drug development, the approach to inquiry is fundamentally guided by the researcher's underlying beliefs about reality and knowledge. These belief systems, known as research paradigms, form the philosophical foundation upon which studies are built, influencing everything from the formulation of research questions to the selection of methods and the interpretation of results [14]. The two predominant paradigms that often frame scientific discourse are positivism and constructivism [15]. For researchers, scientists, and drug development professionals, understanding the distinctions between these paradigms is not merely an academic exercise; it is crucial for designing rigorous, valid, and meaningful studies. This guide provides an objective comparison of the positivist and constructivist paradigms, detailing their philosophical underpinnings, methodological applications, and relative strengths and weaknesses.

Core Philosophical Pillars: A Comparative Framework

A research paradigm is structured upon three foundational pillars: ontology (the nature of reality), epistemology (the nature of knowledge and how it is acquired), and methodology (the process of research) [14] [16]. Some frameworks also include axiology (the role of values) as a fourth key component [15]. The core differences between positivism and constructivism emerge from their divergent answers to these philosophical questions.

The table below summarizes the fundamental distinctions between these two paradigms across these key dimensions.

Table 1: Philosophical Foundations of Positivist and Constructivist Paradigms

| Dimension | Positivist Paradigm | Constructivist Paradigm |
|---|---|---|
| Ontology (Nature of Reality) | A single, tangible, objective reality that exists independently of the researcher. "Truth" is out there to be discovered and measured [15] [17]. | Multiple, subjective, and socially constructed realities. Reality is relative to the individual or group [15] [16]. |
| Epistemology (Nature of Knowledge) | The knower and the known are independent. The researcher must remain objective and detached to discover the truth [15] [1]. | The knower and the known are interactively linked. The researcher's values and participants jointly create findings [15] [18]. |
| Axiology (Role of Values) | Inquiry is objective and value-free. Researcher biases can and should be eliminated through rigorous, controlled procedures [15]. | Inquiry is value-bound. The researcher's values, along with those of the participants, are inherent in the study and cannot be eliminated [15]. |
| Aim of Inquiry | Explanation, prediction, and control. The researcher acts as an "expert" [15]. | Understanding and reconstruction of meanings. The researcher acts as a "participant and facilitator" [15]. |

Methodological Implications: From Philosophy to Practice

These philosophical foundations directly inform the research methodologies typically associated with each paradigm. The positivist pursuit of a single, measurable reality leads to methods that generate quantitative data. In contrast, the constructivist focus on multiple, subjective realities necessitates methods that generate rich, qualitative data [1] [14].

The Positivist (Quantitative) Approach

Positivist research is characterized by a structured and controlled process [1]. It often begins with a specific hypothesis that is tested through empirical observation and measurement [14]. The goal is to produce objective, generalizable data that can be statistically analyzed to confirm or refute the hypothesis [1].

Common Methodologies and Data Sources:

- Experiments: Especially randomized controlled trials, which are the gold standard in clinical drug development for establishing cause-and-effect relationships [19].

- Structured Surveys and Questionnaires: Utilizing closed-ended questions and rating scales (e.g., Likert scales) to generate countable, numerical data [1] [3].

- Systematic Observations: Where behaviors are coded and quantified.

- Analysis of Numerical Records: Such as sales data or standardized test scores [1].

The Constructivist (Qualitative) Approach

Constructivist research is flexible and exploratory, seeking depth and context over breadth and generalization [1]. The research design often evolves as the study proceeds, allowing new insights to emerge directly from the data [15] [1].

Common Methodologies and Data Sources:

- In-depth Interviews: Open-ended conversations that allow participants to share their experiences and perspectives in their own words [5] [1].

- Focus Groups: Facilitated group discussions that explore shared views and interactions on a specific topic [5] [1].

- Ethnography: Detailed, long-term observation of a group or culture in their natural environment to understand their social dynamics and meanings [1].

- Case Studies: An in-depth exploration of a single individual, group, or event within its real-life context [1].

The following workflow diagram illustrates the logical progression from the core research paradigm to the final research outcome.

Comparative Analysis: Strengths, Weaknesses, and Applications

Each paradigm, with its associated methods, offers distinct advantages and suffers from particular limitations. A sophisticated researcher selects the paradigm based on the nature of the research question.

Table 2: Strengths, Weaknesses, and Applications of Positivist and Constructivist Paradigms

| Aspect | Positivist/Quantitative Approach | Constructivist/Qualitative Approach |

|---|---|---|

| Strengths | Produces objective, empirical data that can be clearly communicated through statistics [3]. Allows for efficient analysis of large sample sizes, often with the aid of software [5] [3]. Findings can be generalized to the wider population if the sample is representative [1]. Establishes cause-and-effect relationships through controlled experiments [19]. | Provides rich, detailed, in-depth understanding of human behavior and social phenomena [5] [1]. Offers flexibility to adapt the research process as new insights emerge [5]. Ideal for exploring new or complex areas where little is known [1]. Captures the voice and perspective of participants [8]. |

| Weaknesses | May oversimplify complex human experiences by reducing them to numbers [8] [3]. The structured nature can be restrictive, preventing exploration of unanticipated topics [8]. Requires a large sample size for robust statistical analysis [5] [3]. Lacks the contextual and narrative detail found in qualitative data [8]. | Small sample sizes limit the generalizability of findings [5] [1]. Interpretation can be highly subjective and influenced by researcher bias [8] [3]. Data collection and analysis are time-consuming and labor-intensive [5] [1]. Findings are difficult to aggregate and use for broad predictions [1]. |

| Typical Applications | Market measurements (e.g., prevalence of a behavior) [5]. Testing hypotheses and establishing causal relationships (e.g., clinical trials for drug efficacy) [1] [18]. Identifying patterns and correlations across large populations [1]. | Exploring attitudes, behaviors, and experiences in depth [5]. Testing concepts and advertisements, or informing new product development [5]. Understanding the "why" and "how" behind phenomena [3]. |

The Researcher's Toolkit: Essential Methodological Components

While a philosophical paradigm does not use "reagents" in the traditional laboratory sense, each approach relies on a distinct set of core components or tools for conducting research. The following table details these essential elements for both paradigms.

Table 3: Key Components of the Positivist and Constructivist Research Toolkit

| Paradigm | Tool Category | Tool Name | Function in Research |

|---|---|---|---|

| Positivist | Data Collection | Structured Questionnaire | Gathers standardized, quantifiable data from a large sample using closed-ended questions [1]. |

| | Measurement Instrument | Standardized Scale/Test (e.g., Beck Depression Inventory, BDI) | Produces numerical scores to objectively measure constructs like psychological states or performance [1]. |

| | Research Design | Randomized Controlled Trial (RCT) | Isolates cause-and-effect by randomly assigning participants to control and experimental groups [19]. |

| | Data Analysis | Statistical Software (e.g., SPSS, R) | Analyzes numerical datasets to identify statistical patterns, relationships, and significance [1]. |

| Constructivist | Data Collection | Semi-structured Interview Guide | Provides a flexible framework for open-ended conversations to explore participant experiences [1] [3]. |

| | Data Generation | Audio/Video Recorder | Captures raw, nuanced data (conversations, behaviors) for detailed, verbatim analysis [1]. |

| | Data Source | Interview/Focus Group Transcripts | Serves as the primary textual data for analysis, containing the exact words of participants [1]. |

| Data Analysis | Qualitative Analysis Software (e.g., NVivo) | Helps organize, code, and manage non-numerical data to identify recurring themes and patterns [1]. |

The choice between a positivist and a constructivist paradigm is not a matter of which is universally "better," but rather which is appropriate for the research question at hand [16]. Positivism, with its quantitative methods, is powerful for measuring, predicting, and establishing generalizable facts. It is indispensable in fields like drug development, where proving the efficacy and safety of a new treatment requires controlled, objective, and statistically verifiable evidence. Constructivism, with its qualitative methods, is essential for understanding complex human experiences, motivations, and social processes. It can provide critical insights in early-stage drug development, for example, by exploring patient adherence to medication regimes or understanding the lived experience of a disease.

In practice, many of the most robust research programs in science and medicine employ a mixed-methods approach, leveraging the strengths of both paradigms to gain a more comprehensive understanding [1] [16]. For instance, a quantitative study might identify that a drug is effective, while a follow-up qualitative study could explain why patients are or are not complying with the treatment regimen. By understanding the philosophical foundations and practical applications of both constructivist and positivist paradigms, researchers are equipped to design more nuanced, effective, and impactful studies.

In scientific research, particularly spectroscopic analysis and drug development, data manifests in two primary forms: quantitative and qualitative. Quantitative data captures numerical and statistical information, answering "how much" or "how many," while qualitative data deals with words, themes, and narratives, exploring "how" and "why." [6] [2] This guide objectively compares these approaches, focusing on their applications, advantages, and disadvantages within spectroscopic methods and research. Understanding the interplay between these data forms is crucial for researchers and scientists aiming to design robust, insightful studies.

Defining the Approaches: Core Concepts and Methodologies

Quantitative Research: The Realm of Numbers and Statistics

Quantitative research is a methodological approach that collects and analyzes numerical data to identify patterns, correlations, and causal relationships across a large sample size. [7] [9] It is rooted in positivist philosophy, which asserts that an objective reality exists and can be measured. [2] This approach is deductive, often beginning with a hypothesis that is tested through structured instrumentation.

Common Methodologies:

- Surveys and Questionnaires: Standardized tools with closed-ended questions to gather measurable data from a large population. [7] [9]

- Experiments: Controlled studies where variables are manipulated to observe their effect on outcomes. [6]

- Non-compartmental Analysis (NCA): A model-independent approach to estimate drug exposure directly from concentration-time data. [20]

- Statistical Analysis: The use of mathematical models to interpret numerical datasets, test hypotheses, and make predictions. [7]
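The non-compartmental analysis mentioned above can be illustrated with a minimal sketch. It assumes a single concentration-time profile and the linear trapezoidal rule. The function name `nca_summary`, the units, and the sample values are hypothetical and chosen only for illustration; validated pharmacokinetic software typically adds terminal-slope extrapolation and other refinements that are not shown here.

```python
from typing import Sequence


def nca_summary(times_h: Sequence[float], conc_ng_ml: Sequence[float]) -> dict:
    """Estimate Cmax, Tmax and AUC(0-tlast) from one concentration-time profile."""
    if len(times_h) != len(conc_ng_ml) or len(times_h) < 2:
        raise ValueError("need at least two paired time/concentration points")
    if any(t2 <= t1 for t1, t2 in zip(times_h, times_h[1:])):
        raise ValueError("times must be strictly increasing")

    # Linear trapezoidal rule: add the area of each trapezoid between samples.
    auc = 0.0
    for i in range(1, len(times_h)):
        dt = times_h[i] - times_h[i - 1]
        auc += dt * (conc_ng_ml[i] + conc_ng_ml[i - 1]) / 2.0

    cmax = max(conc_ng_ml)
    tmax = times_h[list(conc_ng_ml).index(cmax)]  # time of first maximum
    return {"cmax": cmax, "tmax": tmax, "auc_0_tlast": auc}


# Hypothetical profile (illustrative numbers, not measured data).
times = [0.0, 0.5, 1.0, 2.0, 4.0, 8.0]      # hours after dosing
conc = [0.0, 40.0, 60.0, 50.0, 30.0, 10.0]  # ng/mL
result = nca_summary(times, conc)
print(result)
```

No model is fitted in this sketch: the exposure metrics come directly from the observed points, which is why the approach is described as model-independent.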

Qualitative Research: The World of Words, Themes, and Narratives

Qualitative research explores and provides deeper insights into real-world problems by gathering participants' experiences, perceptions, and behaviors. [2] It seeks to understand the meaning and context behind social or human phenomena. This approach is often associated with constructivist philosophy, which posits that reality is socially constructed and dynamic. [2] It is inherently inductive, aiming to generate theories from collected data.

Common Methodologies:

- Interviews: Conversation-based inquiries, which can be unstructured or semi-structured, to obtain in-depth information from participants. [6] [2]

- Focus Groups: Group discussions where participants share their thoughts, opinions, and attitudes, allowing researchers to observe group dynamics. [6]

- Observation: A systematic method where researchers observe subjects in their typical environment to capture real-time data and behaviors. [6]

- Narrative Research: An approach that weaves together a sequence of events from one or two individuals to create a cohesive story. [2]

Comparative Analysis: Advantages and Disadvantages

The choice between qualitative and quantitative research depends on the research question, goals, and context. The table below summarizes their core strengths and weaknesses.

Table 1: Core Advantages and Disadvantages of Quantitative and Qualitative Research

| Aspect | Quantitative Research | Qualitative Research |

|---|---|---|

| Data Nature | Numerical, statistical, measurable. [7] [9] | Textual, descriptive, based on words, themes, and narratives. [6] [2] |

| Primary Advantage | Provides measurable, reliable, and generalizable results from large samples; reduces subjective bias. [7] [9] | Offers rich, deep context and explains the "how" and "why" behind human behavior and complex phenomena. [6] [2] |

| Primary Disadvantage | Lacks depth and context; cannot capture underlying motivations or decision-making processes. [7] | Findings are not easily generalizable; data collection and analysis can be time-consuming and susceptible to researcher bias. [6] |

| Typical Question | "How many?", "How much?", "What is the relationship between variables?" | "Why?", "How?", "What is the experience like?" [2] |

| Sample Size | Large, aiming for statistical significance. [7] | Small, focused on in-depth understanding. [6] |

| Analysis Approach | Statistical models and mathematical analysis. [7] [9] | Grouping data into categories and themes; identifying patterns. [6] [2] |

Application in Spectroscopic Methods and Drug Development

The principles of qualitative and quantitative analysis are directly applicable to analytical techniques like spectroscopy, which are fundamental to modern drug discovery.

A Spectroscopic Example: NMR vs. Mass Spectrometry

In metabolomics research, both Nuclear Magnetic Resonance (NMR) spectroscopy and Mass Spectrometry (MS) are employed, but they embody different aspects of the qualitative-quantitative spectrum. [21]

- NMR Spectroscopy is inherently quantitative; it does not require separation or derivatization and provides direct, reproducible measurements of metabolite concentrations. [21]

- Mass Spectrometry offers superior sensitivity and selectivity. While it can provide quantitative data, its strength in detecting a vast number of metabolites and elucidating structures also gives it a strong qualitative character, helping to identify "what" is present in a sample. [21]

Table 2: Qualitative and Quantitative Characteristics in Spectroscopy

| Method | Primary Strengths | Common Applications in Drug Development |

|---|---|---|

| NMR Spectroscopy | Quantitative analysis, minimal sample preparation, high reproducibility. [21] | Determining purity and concentration of a lead compound (Quantitative). [21] |

| Mass Spectrometry (MS) | High sensitivity and selectivity, identification of unknown compounds (Qualitative). [21] | Identifying drug metabolites in complex biological samples (Qualitative); Pharmacokinetic (PK) exposure analysis (Quantitative). [20] [21] |
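To make the "inherently quantitative" character of NMR concrete, the sketch below applies the commonly used internal-standard relation for quantitative NMR (qNMR), in which the integral per contributing nucleus is proportional to the molar amount. The function name `qnmr_purity`, its parameters, and the example values are assumptions made for illustration and do not come from the cited study.

```python
def qnmr_purity(
    integral_analyte: float,
    integral_standard: float,
    protons_analyte: int,
    protons_standard: int,
    molar_mass_analyte: float,
    molar_mass_standard: float,
    mass_analyte_mg: float,
    mass_standard_mg: float,
    purity_standard: float = 1.0,
) -> float:
    """Mass-fraction purity of an analyte from internal-standard qNMR.

    P_a = (I_a / I_s) * (N_s / N_a) * (M_a / M_s) * (m_s / m_a) * P_s
    """
    # Integral per nucleus is proportional to the number of moles in the tube.
    signal_ratio = (integral_analyte / protons_analyte) / (
        integral_standard / protons_standard
    )
    molar_mass_ratio = molar_mass_analyte / molar_mass_standard
    mass_ratio = mass_standard_mg / mass_analyte_mg
    return signal_ratio * molar_mass_ratio * mass_ratio * purity_standard


# Hypothetical weighing and integration values (illustrative only).
purity = qnmr_purity(
    integral_analyte=2.0, integral_standard=1.0,
    protons_analyte=2, protons_standard=1,
    molar_mass_analyte=300.0, molar_mass_standard=150.0,
    mass_analyte_mg=10.0, mass_standard_mg=4.9,
    purity_standard=1.0,
)
print(f"Estimated purity: {purity:.1%}")
```

The calculation needs no compound-specific response factor, only a weighed standard of known purity. This is one reason NMR is often preferred for purity and concentration work.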

The Drug Development Workflow: A Mixed-Methods Approach

Drug development follows a structured process from discovery to post-market surveillance. [20] A successful strategy, known as Model-Informed Drug Development (MIDD), integrates both data types. [20] The following workflow diagram illustrates how qualitative and quantitative methods complement each other throughout this process.

Experimental Protocols and Research Reagent Solutions

Detailed Methodologies for Key Experiments

To ensure reproducibility, here are detailed protocols for common qualitative and quantitative experiments cited in this field.

Protocol 1: Conducting a Focus Group for Qualitative Data Capture (e.g., gathering clinician feedback on a drug's administration) [6] [2]

- Participant Recruitment: Use purposive or criterion sampling to select 8-12 participants who represent the target group (e.g., cardiologists with 5+ years of experience). [2]

- Moderator Guide: Develop a semi-structured discussion guide with open-ended questions (e.g., "Can you describe your experience with the injectable formulation?").

- Environment: Conduct the session in a neutral, quiet location. Record audio and video with consent.

- Execution: The moderator facilitates the discussion, encourages participation from all members, and probes for deeper insights without leading the participants.

- Data Analysis: Transcribe recordings verbatim. Use computer-assisted qualitative data analysis software (CAQDAS) such as NVivo or ATLAS.ti to code the text and identify emergent themes. [2]

Protocol 2: A Quantitative Population Pharmacokinetic (PPK) Study [20]

- Study Design: A clinical trial design where sparse blood samples are collected from a large and diverse patient population at various time points after drug administration.

- Bioanalytical Method: Use a validated quantitative technique, such as Liquid Chromatography with Tandem Mass Spectrometry (LC-MS/MS), to measure drug concentrations in each plasma sample. [21]

- Data Collection: Record precise dosing history, sampling times, and patient covariates (e.g., weight, renal function, concomitant medications).

- Modeling and Simulation: Input concentration-time data and patient covariates into specialized software (e.g., NONMEM) to build a PPK model. This model describes the typical population pharmacokinetics and identifies sources of variability.

- Output: The model can simulate exposure under different dosing regimens to support optimal, individualized dosing recommendations.
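The modeling and simulation steps above can be sketched in a highly simplified form. The example assumes a one-compartment intravenous bolus model with an allometric weight effect on clearance. The typical values (`cl_typical`, `volume_l`), the exponent of 0.75, and the function names are illustrative assumptions. The sketch does not estimate parameters or between-subject variability as a real population model in software such as NONMEM would; it only simulates from assumed values.

```python
import math


def clearance_l_per_h(weight_kg: float, cl_typical: float = 5.0,
                      ref_weight_kg: float = 70.0, exponent: float = 0.75) -> float:
    """Assumed covariate model: CL = CL_typical * (WT / ref_weight) ** exponent."""
    return cl_typical * (weight_kg / ref_weight_kg) ** exponent


def concentration_mg_per_l(dose_mg: float, time_h: float,
                           cl: float, volume_l: float) -> float:
    """One-compartment IV bolus: C(t) = Dose / V * exp(-(CL / V) * t)."""
    return dose_mg / volume_l * math.exp(-(cl / volume_l) * time_h)


def auc_inf(dose_mg: float, cl: float) -> float:
    """Total exposure after a single dose under linear kinetics: AUC = Dose / CL."""
    return dose_mg / cl


# Simulate exposure for three hypothetical patients receiving the same dose.
dose_mg, volume_l = 100.0, 40.0
for weight in (50.0, 70.0, 90.0):
    cl = clearance_l_per_h(weight)
    c4 = concentration_mg_per_l(dose_mg, 4.0, cl, volume_l)
    print(f"{weight:.0f} kg: CL={cl:.2f} L/h, C(4 h)={c4:.2f} mg/L, "
          f"AUC={auc_inf(dose_mg, cl):.1f} mg*h/L")
```

Even in this reduced form, the sketch shows how a covariate such as body weight changes simulated exposure. This is the kind of relationship a PPK model uses to support individualized dosing.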

The Scientist's Toolkit: Essential Research Reagent Solutions

The following table details key materials and tools used in the featured research fields.

Table 3: Essential Research Reagents and Tools for Qualitative and Quantitative Analysis

| Item | Function | Application Context |

|---|---|---|

| NVivo / ATLAS.ti | Computer-Assisted Qualitative Data Analysis Software (CAQDAS) for organizing, coding, and analyzing textual, audio, and video data. [2] | Qualitative Research: Thematic analysis of interview transcripts, focus group discussions, and open-ended survey responses. [2] |

| LC-MS/MS System | An analytical instrument that separates compounds (Chromatography) and provides highly sensitive and selective quantitative detection (Tandem Mass Spectrometry). [21] | Quantitative Research: Measuring drug and metabolite concentrations in biological fluids for pharmacokinetic studies. [20] [21] |

| NMR Spectrometer | An instrument that uses a strong magnetic field and radio-frequency pulses to probe atomic nuclei in a molecule, providing quantitative and structural information. [21] | Drug Development: Quantifying compound purity, determining molecular structure, and studying biomolecular interactions. [21] |

| Structured Survey | A research instrument with a predefined set of closed-ended questions (e.g., multiple-choice, Likert scale) to collect standardized numerical data. [7] | Quantitative Research: Gathering data from a large sample to measure attitudes, behaviors, or characteristics in a statistically analyzable format. [7] [9] |

| Semi-Structured Interview Guide | A flexible protocol containing open-ended questions and prompts that allow the researcher to adapt the conversation based on participant responses. [2] | Qualitative Research: Conducting in-depth interviews to explore complex experiences, perceptions, and motivations in rich detail. [6] [2] |

In spectroscopic methods and drug development, the dichotomy between words and numbers is a false one. Quantitative research provides the essential, measurable "what"—the statistical trends, the pharmacokinetic parameters, the concentration levels. [7] [21] Qualitative research provides the crucial "why" and "how"—the contextual understanding of a drug's real-world use, the mechanistic hypotheses, and the patient experience. [6] [2] The most powerful research strategies, such as MIDD, do not choose one over the other but rather integrate them. [20] By leveraging the objectivity and generalizability of numbers alongside the depth and nuance of narratives, researchers and scientists can drive more informed, effective, and innovative discoveries.

In scientific research, particularly within fields employing spectroscopic methods, the role of the researcher exists on a continuum from complete passive observer to fully embedded active participant. This spectrum fundamentally shapes how data is collected, interpreted, and validated. Observational research is non-experimental and involves systematically observing and recording behavior to describe variables or obtain a snapshot of specific characteristics [22]. The chosen role affects everything from the depth of contextual understanding to the potential for bias, making this distinction critical for research design, especially when investigating complex phenomena using sophisticated analytical techniques like spectroscopy.

The positioning of the researcher is not merely a methodological detail; it is a core component of the research framework that influences the very nature of the knowledge produced. In spectroscopic analysis of natural products or drug compounds, for instance, the choice between a highly objective, detached role versus a more engaged, participatory role can determine whether the research uncovers quantifiable molecular patterns or generates rich, contextual insights into experimental processes and anomalies.

Defining the Observer Roles

The involvement level of a researcher can be categorized into several distinct roles, primarily defined by their physical and psychological proximity to the subject of study. These roles form a continuum from complete detachment to full immersion.

The Complete Observer

In this role, the researcher is entirely detached and unobtrusive, neither seen nor noticed by participants. This approach minimizes the Hawthorne Effect, where participants may alter their behavior because they know they are being studied, thus increasing the likelihood of observing natural behavior [23]. For example, a spectroscopic analysis of compound interactions might be fully automated and observed remotely to prevent any human influence on the process. However, this method raises ethical questions about deception and privacy, particularly in human subjects research, though it may be justified in public settings or fully automated experimental contexts [23].

The Observer as Participant

Here, the researcher is known to the participants, who are often aware of the research goals. Interaction is present but limited, with the researcher aiming to maintain a neutral role as much as possible [23]. This is common in studies where researchers "follow a customer home" to understand product use, or in scientific contexts where a researcher observes an experimental procedure with the full knowledge of the technicians involved, interacting only for clarification.

The Participant as Observer

In this role, the researcher becomes fully engaged with participants, acting more like a friend or colleague than a neutral third party, while still being known as a researcher [23]. This method is often employed when studying specialized populations or cultures, such as remote indigenous populations or inner-city cultures. In a laboratory setting, this might involve a senior scientist fully participating in the daily work of a research team while simultaneously conducting observational research on their methodologies.

The Complete Participant

This represents the fully embedded researcher, who actively partakes in participants' activities without disclosing their research role [23]. Participants are unaware that observation and research are being conducted, despite fully interacting with the researcher. This approach, which carries the risk of "going native" (losing analytic distance from the group being studied), is exemplified by undercover operations or secret shopper scenarios. The rationale is that the most authentic understanding of a role, people, or culture comes from firsthand experience. In scientific contexts, this might involve a researcher taking an undisclosed position in a commercial laboratory to understand proprietary techniques.

Table 1: Comparison of Observer Roles in Research

| Observer Role | Researcher Visibility | Level of Participation | Key Advantage | Primary Ethical Concern |

|---|---|---|---|---|

| Complete Observer | Hidden/Unnoticed | None | Minimizes Hawthorne Effect; natural behavior | Deception; privacy violation |

| Observer as Participant | Known, recognized | Limited interaction | Maintains neutrality with transparency | Some reactivity, since participants know they are being observed |

| Participant as Observer | Known as researcher | Full interaction | Deep engagement while maintaining honesty | Relationship bias; objectivity concerns |

| Complete Participant | Hidden/Unnoticed | Full immersion | Firsthand authentic experience | Full deception; informed consent |

Qualitative vs. Quantitative Research Approaches

The researcher's role is intimately connected to the type of research methodology employed—qualitative or quantitative—each with distinct purposes, data types, and analytical approaches.

Fundamental Distinctions

Qualitative research deals with words, meanings, and experiences, collecting non-numerical data to understand concepts, opinions, or experiences [8] [1]. It focuses on the 'why' and 'how' of human behavior and social phenomena, providing insights into the depth and complexity of the subject under study [8]. In contrast, quantitative research involves collecting and analyzing numerical data to describe, predict, or control variables of interest [1]. It aims to produce objective, empirical data that can be measured and expressed numerically, often used to test hypotheses, identify patterns, and make predictions [1].

Data Collection and Analysis Methods

Qualitative research employs methods such as in-depth interviews, focus groups, observations, and diary accounts to gather rich, descriptive data [5] [1]. The data analysis is interpretive, using techniques like thematic analysis, content analysis, and grounded theory to identify patterns and themes [1]. This approach is flexible and adaptive, allowing the research focus to evolve as new information emerges [1].

Quantitative research typically uses experiments, surveys with closed-ended questions, and structured observations to collect measurable data [1]. The analysis employs statistical methods, including descriptive statistics (e.g., means, percentages) and inferential statistics, to identify relationships, make predictions, and generalize findings to larger populations [1] [3]. The research design is predetermined and structured, seeking to maintain objectivity and control throughout the process [1].

Table 2: Qualitative vs. Quantitative Research Characteristics

| Characteristic | Qualitative Research | Quantitative Research |

|---|---|---|

| Data Type | Words, images, sounds (descriptive) | Numbers and statistics (measurable) |

| Research Purpose | Explore ideas, understand experiences | Test hypotheses, identify patterns |

| Sample Size | Small, in-depth samples | Large, representative samples |

| Data Collection | Interviews, observations, focus groups | Surveys, experiments, structured observations |

| Analysis Approach | Identify themes, interpretations | Statistical analysis |

| Researcher Role | Often participatory, engaged | Typically objective, detached |

| Question Answered | "Why?" and "How?" | "How many?" and "How much?" |

| Context | Natural settings | Controlled environments |

Advantages and Disadvantages

Qualitative research offers several advantages, including the ability to explore attitudes and behavior in-depth, flexibility to adapt to emerging findings, and capacity to capture complexity and nuance often missed by quantitative methods [5] [1]. However, it also has limitations: small sample sizes may limit generalizability, interpretation can be subjective and biased by researcher perspective, and data collection and analysis are often time-intensive [8] [5] [1].

Quantitative research provides benefits such as objective data analysis, ability to study large populations and generalize findings, precise measurement and comparison of variables, and efficient data collection and analysis, especially with standardized tools and statistical software [8] [5] [3]. Its limitations include potential lack of depth and contextual detail, restrictive structured approaches that may miss unanticipated phenomena, and risk of misinterpreting numerical data without understanding underlying contexts [8] [5].

Application to Spectroscopic Methods in Research

Spectroscopic analytical techniques are crucial across numerous scientific domains, including environmental analysis, natural product characterization, and drug development [24] [25]. The researcher's role and methodological approach significantly influence how these techniques are applied and interpreted.

Common Spectroscopic Techniques

Advanced spectroscopic methods include:

- Atomic Spectroscopies: Inductively coupled plasma mass spectrometry (ICP-MS) and inductively coupled plasma optical emission spectroscopy (ICP-OES) for trace elemental analysis with high sensitivity and precision [25].

- Vibrational Spectroscopies: Fourier-transform infrared (FT-IR) spectroscopy for identifying chemical bonds and functional groups, and Raman spectroscopy (including surface-enhanced Raman spectroscopy or SERS) for molecular imaging and pollutant detection [25].

- Electronic Spectroscopies: Ultraviolet-visible (UV-vis) spectroscopy for measuring absorbance and concentration of analytes [25].

- X-ray Techniques: X-ray fluorescence (XRF) for elemental analysis and X-ray diffraction (XRD) for assessing crystalline structures [25].

- Magnetic Resonance: Nuclear magnetic resonance (NMR) spectroscopy for detailed molecular structure information [25].
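For the UV-vis entry in the list above, the link between absorbance and concentration is usually expressed through the Beer-Lambert law, A = ε·l·c. The following minimal sketch assumes the law holds (dilute solution, single absorbing species). The function name and the molar absorptivity value are hypothetical.

```python
def concentration_from_absorbance(absorbance: float,
                                  molar_absorptivity: float,
                                  path_length_cm: float = 1.0) -> float:
    """Solve the Beer-Lambert law A = epsilon * l * c for c (mol/L).

    molar_absorptivity is epsilon in L mol^-1 cm^-1; path_length_cm is l in cm.
    """
    if molar_absorptivity <= 0 or path_length_cm <= 0:
        raise ValueError("molar absorptivity and path length must be positive")
    return absorbance / (molar_absorptivity * path_length_cm)


# Hypothetical analyte with epsilon = 12,500 L mol^-1 cm^-1 in a 1 cm cuvette.
c = concentration_from_absorbance(0.50, 12500.0)
print(f"Concentration: {c:.2e} mol/L")
```

In practice the linear range should be confirmed with calibration standards, because deviations from linearity tend to appear at high absorbance.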

Researcher Roles in Spectroscopic Analysis

In spectroscopic research, the complete observer role is often embodied by highly automated instrumentation that collects data with minimal human intervention. For instance, ICP-MS technology for analyzing tire-wear particle emissions or monitoring potentially toxic elements in environmental samples typically operates with the researcher as a remote observer [25]. This approach prioritizes objectivity and standardization, generating quantitative data on elemental concentrations.

The participant observer role emerges when researchers are more directly engaged in sample preparation, method development, and data interpretation. For example, in developing novel SERS substrates like gold clusters anchored on reduced graphene oxide (Au clusters@rGO), researchers actively participate in both the synthesis and optimization processes, bringing subjective expertise and contextual understanding to the experimental process [25]. This approach combines technical execution with qualitative assessment of methodological challenges and opportunities.

Qualitative vs. Quantitative Approaches in Spectroscopy

Quantitative spectroscopic research focuses on measurable outcomes: concentrations, detection limits, signal intensities, and statistical correlations. One example is determining the levels of potentially toxic elements (Al, Cr, Mn, Fe, Co, Ni, Cu, Zn, Cd, and Pb) in tea leaves and infusions using ICP-OES, followed by multivariate data analysis to identify contamination sources [25]. This approach provides precise, generalizable data but may miss contextual factors affecting results.
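Detection limits, one of the measurable outcomes named above, are often estimated from the variability of blank measurements and the slope of the calibration curve. The sketch below uses the widely cited conventions LOD = 3.3σ/S and LOQ = 10σ/S. The blank readings and the slope are invented for illustration, and the cited study may have used a different procedure.

```python
from statistics import stdev


def detection_limits(blank_signals, calibration_slope):
    """Return (LOD, LOQ) in concentration units.

    sigma is the sample standard deviation of repeated blank readings;
    calibration_slope is signal per unit concentration.
    """
    if calibration_slope <= 0:
        raise ValueError("calibration slope must be positive")
    sigma = stdev(blank_signals)
    lod = 3.3 * sigma / calibration_slope   # limit of detection
    loq = 10.0 * sigma / calibration_slope  # limit of quantification
    return lod, loq


# Hypothetical blank intensities (counts) and slope (counts per ug/L).
blanks = [10.0, 12.0, 11.0, 9.0, 13.0]
lod, loq = detection_limits(blanks, calibration_slope=50.0)
print(f"LOD = {lod:.3f} ug/L, LOQ = {loq:.3f} ug/L")
```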

Qualitative spectroscopic research explores the underlying characteristics, behaviors, and interpretations of spectroscopic data. This might include investigating how natural organic matter affects SERS performance, understanding the interactions within analyte-NOM-nanoparticle systems, or developing theoretical models to explain observed spectral phenomena [25]. This approach provides deeper insights into mechanisms and relationships but may lack statistical generalizability.

Diagram 1: Research Approach Selection for Spectroscopic Methods

Experimental Protocols and Methodologies

The design of spectroscopic experiments varies significantly based on the researcher's role and methodological approach, affecting protocols, data collection, and interpretation.

Quantitative Spectroscopic Protocol: ICP-OES Analysis of Potentially Toxic Elements in Tea

Objective: To quantitatively determine the levels of potentially toxic elements (Al, Cr, Mn, Fe, Co, Ni, Cu, Zn, Cd, and Pb) in tea leaves and infusions using ICP-OES [25].

Methodology:

- Sample Preparation: Tea leaves are dried, homogenized, and digested using microwave-assisted acid digestion with nitric acid and hydrogen peroxide.

- Instrumental Analysis: Analysis is performed using ICP-OES with appropriate wavelength selection for each element, calibration standards, and quality control samples.

- Data Collection: Intensity measurements are converted to concentrations using calibration curves. Each sample is analyzed in triplicate to ensure precision.

- Statistical Analysis: Multivariate data analysis methods, including principal component analysis (PCA) and hierarchical cluster analysis (HCA), are used to identify potential contamination sources. Pearson's correlation coefficient (PCC) assesses relationships between variables [25].
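The data collection and statistical steps of this protocol can be sketched with standard-library Python. The example fits a linear calibration curve, converts triplicate intensities to concentrations with a relative standard deviation, and computes a Pearson correlation between two elements. All numbers are hypothetical. PCA and HCA are omitted because they would normally rely on a numerical library rather than hand-written code.

```python
from statistics import mean, stdev


def fit_line(x, y):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    mx, my = mean(x), mean(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, my - slope * mx


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length series."""
    mx, my = mean(x), mean(y)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    sxx = sum((xi - mx) ** 2 for xi in x)
    syy = sum((yi - my) ** 2 for yi in y)
    return sxy / (sxx * syy) ** 0.5


# Hypothetical calibration standards: concentration (mg/L) vs. emission intensity.
std_conc = [0, 1, 2, 5, 10]
std_intensity = [5, 105, 205, 505, 1005]
slope, intercept = fit_line(std_conc, std_intensity)

# Triplicate readings of one sample, converted through the calibration curve.
triplicate = [298.0, 305.0, 312.0]
concs = [(i - intercept) / slope for i in triplicate]
mean_conc = mean(concs)
rsd_percent = 100.0 * stdev(concs) / mean_conc

# Correlation between two elements across four hypothetical samples.
fe = [1.0, 2.0, 3.0, 4.0]
mn = [2.1, 3.9, 6.2, 7.8]
r = pearson_r(fe, mn)

print(f"slope={slope:.1f}, intercept={intercept:.1f}")
print(f"mean={mean_conc:.2f} mg/L, RSD={rsd_percent:.1f}%, r(Fe, Mn)={r:.3f}")
```

A high correlation between two elements is only a hint of a shared source. In the protocol above it is interpreted together with the multivariate results rather than on its own.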

Researcher Role: In this protocol, the researcher acts primarily as a complete observer, following standardized procedures to minimize personal influence on results. The focus is on objective measurement, precision, and statistical validity.

Qualitative Spectroscopic Protocol: Investigating Matrix Effects in SERS Analysis

Objective: To understand how natural water matrices affect SERS analysis using silver nanoparticles (AgNPs) as a substrate [25].

Methodology:

- Substrate Preparation: Synthesis and characterization of AgNPs or specialized SERS substrates like gold clusters on reduced graphene oxide (Au clusters@rGO).

- Experimental Design: Exposure of SERS substrates to analytes in different natural water matrices with varying compositions of natural organic matter (NOM), inorganic ions, and other potential interferents.

- Data Collection: Collection of SERS spectra under different conditions, with careful observation and documentation of spectral changes, artefacts, and performance variations.

- Interaction Analysis: Investigation of interactions within the ternary system of analyte, NOM, and nanoparticles to understand the mechanisms behind observed matrix effects [25].

Researcher Role: Here, the researcher adopts a participant observer role, actively engaging with the experimental process, making real-time decisions about conditions to test, and interpreting complex spectral data based on expertise and contextual understanding.

Research Reagent Solutions for Spectroscopic Analysis

Table 3: Essential Research Reagents and Materials in Spectroscopic Analysis

| Reagent/Material | Function/Application | Example Uses |
|---|---|---|
| Silver Nanoparticles (AgNPs) | SERS substrate for enhanced signal detection | Environmental pollutant detection in water matrices [25] |
| Gold Clusters on rGO | High-enhancement SERS substrate combining electromagnetic and chemical enhancement | Ultra-sensitive molecular detection with enhancement factor of 3.5×10⁷ [25] |
| Nitric Acid (HNO₃) | Sample digestion and preparation for elemental analysis | Microwave-assisted digestion of tea leaves for ICP-OES analysis [25] |
| Certified Reference Materials | Quality control and method validation | Verification of analytical accuracy for environmental samples [25] |
| Magnetic Nanoparticles | Preconcentration and separation of analytes | Direct introduction into FAAS to enhance sensitivity [25] |
| Deuterated Solvents | NMR spectroscopy for molecular structure analysis | Solvent for natural product characterization in drug development |

Comparative Analysis: Advantages and Disadvantages in Spectroscopic Research

The choice between active participant and objective observer roles in spectroscopic research involves trade-offs that significantly impact research outcomes, validity, and applicability.

Impact on Data Quality and Validity

The objective observer role, typically associated with quantitative approaches, enhances reliability and reproducibility through standardized protocols and minimized human intervention [23] [1]. This is particularly valuable in applications requiring precise measurements, such as regulatory compliance monitoring or quality control in pharmaceutical development. However, this approach may miss important contextual factors or subtle anomalies that could indicate methodological issues or unexpected phenomena.

The active participant role, often aligned with qualitative approaches, allows researchers to identify and investigate complex interactions and unexpected results that automated protocols might overlook [5] [1]. For example, a researcher actively engaged in SERS substrate development might notice subtle performance variations related to environmental conditions or sample matrix effects that would not be captured in standardized quantitative protocols. The trade-off is potentially reduced objectivity and increased susceptibility to researcher bias.

Ethical Considerations

Ethical implications vary significantly across the observer spectrum. Complete observation raises questions about deception and privacy when human subjects are involved, though these concerns are less prominent in instrumental analysis [23] [22]. Participant observation requires careful consideration of informed consent and potential conflicts between research goals and participant relationships [22] [26]. In spectroscopic research, ethical considerations typically focus on data integrity, accurate representation of findings, and appropriate use of resources rather than human subjects protection.

Resource Requirements and Practical Considerations

Quantitative approaches with objective observer roles often require significant investment in instrumentation, automation, and data processing infrastructure but may be more efficient for large sample volumes [8] [5]. Qualitative approaches with active participant roles are typically more time-intensive and require specialized researcher expertise but may be more resource-efficient for exploratory studies or method development [8] [5].

Diagram 2: Advantages and Disadvantages of Observer Roles in Spectroscopy

The choice between active participant and objective observer roles in spectroscopic research is not a matter of identifying a superior approach but rather selecting the most appropriate strategy for the specific research context and objectives.

For method validation, routine analysis, and large-scale monitoring studies, the objective observer role with quantitative methodologies provides the standardization, statistical power, and reproducibility required for definitive conclusions and regulatory acceptance. The automated, standardized nature of techniques like ICP-OES and ICP-MS for elemental analysis makes them well-suited to this approach [25].

For method development, exploratory research, and investigating complex interactions, the active participant role with qualitative approaches offers the flexibility, depth of understanding, and adaptive capability needed to advance methodological frontiers and understand nuanced phenomena. The development of novel SERS substrates or investigation of matrix effects exemplifies research domains where this approach is particularly valuable [25].

In practice, many sophisticated spectroscopic research programs benefit from a mixed-methods approach that strategically employs both roles at different stages of the research process. For example, qualitative participant observation might guide initial method development and optimization, followed by quantitative objective observation for validation and large-scale application. This integrated approach leverages the strengths of both perspectives while mitigating their respective limitations, ultimately advancing spectroscopic science through both depth of understanding and breadth of application.

From Theory to Practice: Applying Spectroscopic Methods in Biomedical Research

Qualitative spectroscopic techniques are the cornerstone of molecular analysis, giving researchers the tools to identify chemical structures and compositions. Unlike quantitative methods that focus on "how much," qualitative analysis seeks to answer "what is present" and "what is its nature," serving as the first critical step in material identification, drug development, and diagnostic applications. In the broader context of spectroscopic research, understanding the advantages and disadvantages of both qualitative and quantitative methods is essential for selecting the appropriate analytical strategy. This guide compares the performance of various spectroscopic techniques, focusing on their qualitative applications across different research scenarios, from pharmaceutical development to environmental analysis.

The fundamental principle underlying qualitative spectroscopy involves probing molecular interactions with electromagnetic radiation to generate unique spectral fingerprints. These fingerprints—whether arising from vibrational transitions, electronic excitations, or nuclear spin orientations—provide characteristic patterns that reveal molecular identity, functional groups, structural conformations, and intermolecular interactions. As technological advancements continue to enhance the sensitivity, resolution, and accessibility of these techniques, their applications in research and industry have expanded significantly, making comparative analysis of their capabilities more valuable than ever for scientists and drug development professionals.

Comparative Performance of Spectroscopic Techniques

Different spectroscopic techniques offer distinct advantages for qualitative analysis, with variations in sensitivity, resolution, sample requirements, and the type of structural information they provide. The selection of an appropriate method depends on the specific research question, sample characteristics, and available resources. The table below provides a structured comparison of major spectroscopic techniques based on their qualitative analysis capabilities, helping researchers identify the most suitable approach for their specific applications.

Table 1: Comparative Analysis of Qualitative Spectroscopic Techniques

| Technique | Principle | Key Qualitative Applications | Information Obtained | Sample Requirements |
|---|---|---|---|---|
| FTIR [27] | Molecular bond vibrations in infrared region | Identification of functional groups, molecular structure analysis, phase identification | Vibrational frequencies of chemical bonds, molecular fingerprints | Solids, liquids, gases; minimal preparation often required |
| Raman [28] | Inelastic scattering of light | Molecular fingerprinting, identification of polymorphs, spatial mapping | Molecular vibrations, crystal structure, chemical composition | Minimal preparation; suitable for solids, liquids, gases; through-container analysis possible |
| SERS [28] | Enhanced Raman scattering on metallic surfaces | Trace analysis, single-molecule detection, food contaminant screening | Amplified vibrational signals for low-concentration analytes | Requires plasmonic substrates (Au, Ag nanoparticles); minimal sample volume |
| NMR [28] | Nuclear spin transitions in magnetic field | Molecular structure determination, conformational analysis, metabolite identification | Atomic connectivity, molecular conformation, dynamics | Typically requires soluble samples; moderate to high sample purity |
| UV-Vis [29] | Electronic transitions | Identification of chromophores, conjugation analysis, compound classification | Electronic energy levels, conjugation length | Requires UV-Vis active compounds; solution typically needed |
| ICP-MS/OES [30] | Plasma atomization and ionization with mass (MS) or optical emission (OES) detection | Elemental composition, trace metal identification, contamination screening | Elemental identity; isotopic patterns (ICP-MS only) | Typically requires liquid samples; acid digestion often necessary |

Table 2: Strengths and Limitations for Qualitative Analysis

| Technique | Key Advantages | Major Limitations | Ideal Use Cases |
|---|---|---|---|
| FTIR [27] | Rapid analysis, broad applicability to organic/inorganic materials, non-destructive | Limited spatial resolution, water interference, weak signals for non-polar bonds | Polymer characterization, inorganic material analysis, quality control of raw materials |
| Raman [28] | Minimal sample preparation, non-destructive, water compatibility, spatial resolution | Fluorescence interference, weak signals, potential sample heating | Pharmaceutical polymorph identification, in situ reaction monitoring, cultural heritage analysis |
| SERS [28] | Extreme sensitivity, single-molecule detection, aqueous compatibility | Reproducibility challenges, substrate dependency, complex optimization | Trace contaminant detection, forensic analysis, biomarker discovery |
| NMR [28] | Atomic-level structural information, quantitative capabilities, non-destructive | Low sensitivity, expensive instrumentation, requires expert interpretation | Structural elucidation of unknown compounds, protein-ligand interactions, metabolic profiling |
| UV-Vis [29] | Simple operation, rapid analysis, inexpensive equipment | Limited structural information, overlapping bands, solvent effects | Compound classification, reaction monitoring, teaching laboratories |
| ICP-MS/OES [30] | Exceptional sensitivity for metals, multi-element capability, wide dynamic range | Destructive analysis, requires sample digestion, high instrumentation cost | Trace metal analysis in pharmaceuticals, environmental monitoring, forensic toxicology |

Experimental Protocols and Methodologies

Sample Preparation and Analysis Workflows

Proper sample preparation is critical for obtaining high-quality spectroscopic data. The following experimental protocols outline standardized methodologies for different spectroscopic techniques, ensuring reproducible and reliable qualitative analysis:

FTIR Spectroscopy Protocol for Inorganic Materials [27]:

- Sample Preparation: For solid inorganic materials, grind 1-2 mg of sample with 100-200 mg of dried potassium bromide (KBr) using an agate mortar and pestle until homogeneous, then press the mixture into a transparent pellet. For fragile materials, use the attenuated total reflectance (ATR) accessory, which needs minimal preparation: place a representative sample on the crystal surface and apply consistent pressure.

- Instrument Setup: Purge the instrument with dry air or nitrogen for at least 15 minutes to minimize atmospheric CO₂ and water vapor interference. Set resolution to 4 cm⁻¹ and accumulate 32 scans per spectrum to ensure adequate signal-to-noise ratio.

- Data Collection: Collect a background spectrum with a clean KBr pellet or the empty ATR crystal. Place the prepared sample in the beam path and collect the sample spectrum over the range of 4000-400 cm⁻¹.

- Qualitative Analysis: Identify characteristic absorption bands: metal-oxygen bonds (1000-400 cm⁻¹), carbonate ions (1450-1400 cm⁻¹), sulfate groups (1100 cm⁻¹), and silicate networks (1200-900 cm⁻¹). Compare with reference spectra libraries for material identification.
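The band-assignment step can be illustrated with a small lookup in Python. This is a sketch only: the wavenumber ranges are those listed in the Qualitative Analysis step above, the ±10 cm⁻¹ window around the sulfate band at 1100 cm⁻¹ is an assumption made here for illustration, and the observed peak positions are hypothetical.

```python
# Candidate band assignments (name, lower bound, upper bound in cm^-1),
# using the ranges given in the protocol text
BAND_ASSIGNMENTS = [
    ("metal-oxygen bonds", 400.0, 1000.0),
    ("carbonate ions", 1400.0, 1450.0),
    # The text gives a single value (1100 cm^-1); the +/-10 window is an assumption
    ("sulfate groups", 1090.0, 1110.0),
    ("silicate networks", 900.0, 1200.0),
]


def assign_bands(peak_cm1):
    """Return every candidate assignment whose range contains the peak."""
    return [name for name, low, high in BAND_ASSIGNMENTS if low <= peak_cm1 <= high]


# Hypothetical peak positions picked from a measured spectrum
observed_peaks = [1430.0, 1100.0, 560.0, 2950.0]

for peak in observed_peaks:
    candidates = assign_bands(peak)
    label = ", ".join(candidates) if candidates else "no match in this table"
    print(f"{peak:7.1f} cm^-1: {label}")
```

Because several ranges overlap (a peak at 1100 cm⁻¹ falls in both the sulfate and silicate regions), a lookup like this only narrows the candidates; comparison with reference spectra is still needed for identification.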

Raman Spectroscopy Protocol for Non-Invasive Analysis [28]:

- Sample Preparation: For "through the container" analysis, ensure that the container material (typically glass or plastic) does not produce interfering Raman signals. Position the container securely to maintain consistent focus. For solid samples, place them directly on a microscope slide with minimal handling.

- Instrument Calibration: Calibrate the instrument using a silicon standard (peak at 520.7 cm⁻¹) before analysis. Set the laser power low enough to prevent sample degradation: typically start with low power (1-10 mW) and increase only if necessary.