Sample Homogeneity in Spectroscopy: A Complete Guide to Accurate Analysis for Biomedical Research

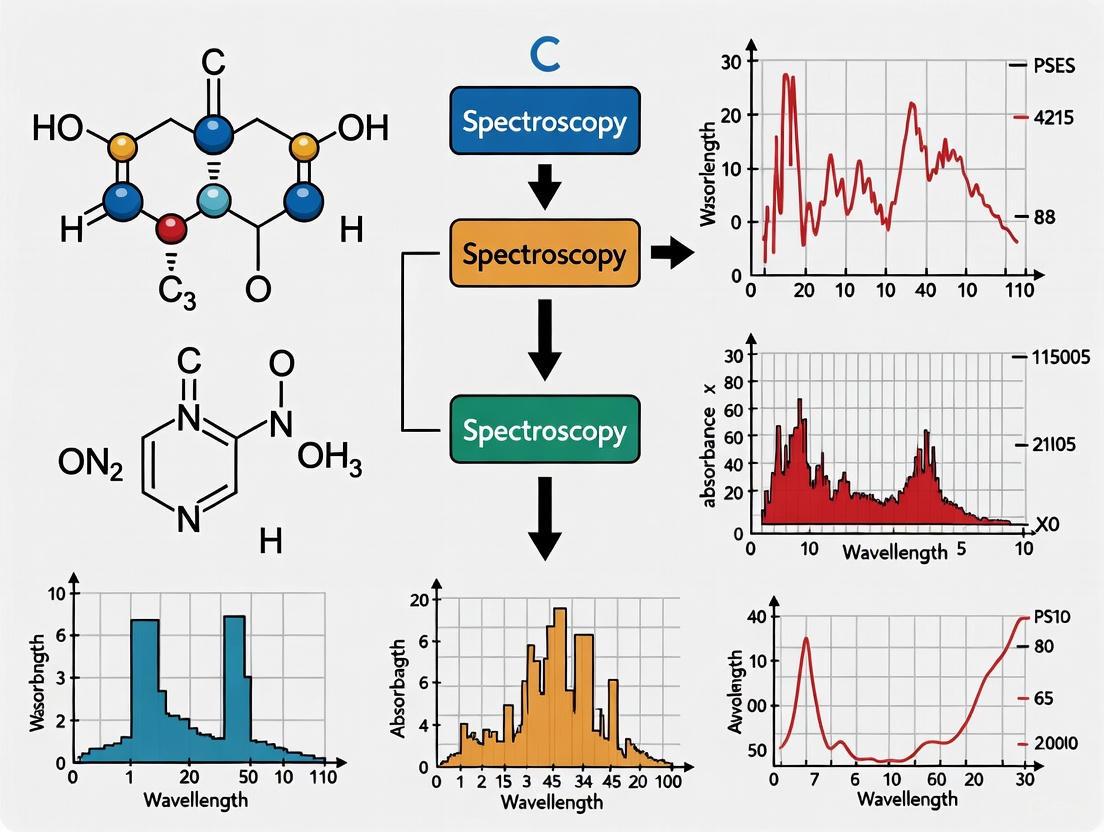


Abstract

This comprehensive article explores the critical yet often overlooked role of sample homogeneity in ensuring accurate and reproducible spectroscopic analysis. Tailored for researchers, scientists, and drug development professionals, we dissect the fundamental challenges posed by chemical and physical heterogeneity, detail advanced preparation and analysis methodologies, provide practical troubleshooting strategies, and outline validation frameworks. By integrating foundational theory with cutting-edge applications in techniques like MALDI-MS, ICP-MS, and hyperspectral imaging, this guide serves as an essential resource for overcoming one of the most persistent obstacles in quantitative spectroscopic analysis, ultimately supporting robust method development and reliable data interpretation in biomedical and clinical research.

Why Homogeneity Matters: The Foundation of Reliable Spectroscopic Data

Sample heterogeneity is a fundamental and persistent obstacle in quantitative and qualitative spectroscopic analysis. Understanding the distinct nature of chemical and physical heterogeneity is critical for developing robust analytical methods. This guide provides a technical framework for differentiating the two types, describing their specific spectral impacts, and outlining methodologies to mitigate the associated distortions.

Chemical heterogeneity refers to the uneven spatial distribution of molecular or elemental species throughout a sample, arising from factors such as incomplete mixing, uneven crystallization, or natural variations in raw materials [1]. Physical heterogeneity encompasses differences in a sample's physical morphology and structure—including particle size, shape, surface roughness, and packing density—without necessarily involving chemical composition changes [1].

Chemical Heterogeneity

In chemically heterogeneous samples, the measured spectrum represents a composite signal from unevenly distributed molecular constituents. A widely used mathematical approach for describing this scenario is the Linear Mixing Model (LMM), where each measured spectrum is considered a linear combination of endmember spectra [1]. However, this model assumes linearity and non-interaction, which may not hold in real systems where chemical interactions, band overlaps, or matrix effects can produce nonlinearities or violate additivity [1].
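As a rough illustration of the Linear Mixing Model described above, the following sketch builds a mixed spectrum from two synthetic endmembers and recovers the abundances by ordinary least squares. The Gaussian band shapes, the 50-point axis, and the function names `mix` and `unmix` are assumptions made for this example. Practical unmixing usually adds non-negativity and sum-to-one constraints, which are omitted here.

```python
import numpy as np

# Hypothetical endmember spectra (rows) on a shared 50-point wavelength axis.
wavelengths = np.linspace(0.0, 1.0, 50)
endmembers = np.vstack([
    np.exp(-((wavelengths - 0.3) ** 2) / 0.005),  # component A: one Gaussian band
    np.exp(-((wavelengths - 0.7) ** 2) / 0.005),  # component B: a second band
])

def mix(abundances, endmembers):
    """Linear mixing model: the measured spectrum is a weighted sum of endmembers."""
    return np.asarray(abundances, dtype=float) @ endmembers

def unmix(spectrum, endmembers):
    """Estimate abundances by ordinary least squares (no non-negativity constraint)."""
    coeffs, *_ = np.linalg.lstsq(endmembers.T, spectrum, rcond=None)
    return coeffs

true_abundances = np.array([0.25, 0.75])
measured = mix(true_abundances, endmembers)
estimated = unmix(measured, endmembers)
```

Because the synthetic mixture is exactly linear and noise-free, the least-squares estimate matches the true abundances. The nonlinear effects mentioned in the text (interactions, matrix effects) are precisely what would break this recovery on real data.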

The primary challenge emerges when chemical heterogeneity occurs on spatial scales smaller than the spectrometer's measurement spot, causing subpixel mixing in imaging applications or averaging effects in point measurements [1]. This leads to inaccurate concentration estimates, particularly problematic in high-stakes environments like pharmaceutical quality control.

Physical Heterogeneity

Physical heterogeneity introduces spectral distortions through light-matter interactions dependent on structural properties rather than chemical composition. Key sources include:

- Particle size and shape: Larger particles scatter light more than smaller particles, altering pathlength and intensity according to Mie scattering and Kubelka-Munk relationships [1].

- Surface roughness: Irregular surfaces cause variations in diffuse or specular reflection, affecting absorbance values [1].

- Packing density: Voids or compressibility differences influence optical density and scattering light paths [1].

- Sample orientation: In anisotropic materials, the angle of illumination and detection alters spectral intensity [1].

These physical attributes primarily introduce additive and multiplicative distortions in spectra, commonly modeled through techniques like multiplicative scatter correction (MSC) [1]. Physical heterogeneity proves particularly challenging to control as it involves complex interactions between light and material structure that are highly dependent on optical geometry, sample preparation, and environmental factors [1].
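To make the Kubelka-Munk relationship mentioned in the list above concrete, here is a minimal sketch of the remission function F(R) = (1 - R)^2 / (2R) = K/S and its inverse. It assumes an optically thick, diffusely reflecting layer, and the absorption and scattering coefficients are arbitrary illustrative values, not measured data.

```python
import numpy as np

def kubelka_munk(reflectance):
    """Kubelka-Munk remission function F(R) = (1 - R)^2 / (2 R) = K / S."""
    r = np.asarray(reflectance, dtype=float)
    if np.any(r <= 0) or np.any(r > 1):
        raise ValueError("reflectance must lie in (0, 1]")
    return (1.0 - r) ** 2 / (2.0 * r)

def reflectance_from_ks(k_over_s):
    """Inverse relation: diffuse reflectance of a thick layer for a given K/S."""
    f = np.asarray(k_over_s, dtype=float)
    return 1.0 + f - np.sqrt(f ** 2 + 2.0 * f)

# Same absorption coefficient K, two hypothetical scattering coefficients S.
k = 1.0
s_values = np.array([2.0, 10.0])
r = reflectance_from_ks(k / s_values)
```

The two reflectance values differ although the chemistry (K) is identical. That is the sense in which scattering differences alone can masquerade as a change in absorbance.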

Table 1: Comparative Analysis of Heterogeneity Types

| Aspect | Chemical Heterogeneity | Physical Heterogeneity |
|---|---|---|
| Primary Cause | Uneven distribution of molecular/elemental species | Variations in morphology, surface properties, packing density |
| Spectral Impact | Composite spectra from multiple constituents; band overlap | Additive/multiplicative distortions; baseline shifts |
| Mathematical Models | Linear Mixing Model (LMM) | Multiplicative Scatter Correction (MSC) |
| Key Challenges | Subpixel mixing; nonlinear interactions | Sensitivity to preparation, geometry, environment |
| Common Correction Approaches | Spectral unmixing; hyperspectral imaging | SNV; MSC; derivative spectroscopy |

Spectral Impacts and Distortion Mechanisms

Manifestation in Spectral Data

Heterogeneity-induced spectral distortions arise from multiple instrumental and sample-specific factors:

- Baseline drift caused by light scattering variations due to particle size differences, shape, surface roughness, and radiation penetration ability [2].

- Signal saturation in specific pixels or regions due to element shape, chemical nature, and incident light angle [2].

- Spectral noise inherent to recording instruments, light source fluctuations, and sample characteristics [2].

- Dead pixels containing spiked signals, saturated information, or no data due to sensor malfunction or improper light exposure [2].

In dispersive spectrographs, minor alignment and astigmatism distortions can significantly affect reproducibility and apparent fluorescence background complexity [3]. These seemingly slight distortions have substantial impacts on analytical accuracy, increasing the complexity of fluorescence backgrounds in techniques like Raman spectroscopy and complicating quantitative analysis [3].

Impact on Analytical Results

The consequences of unaddressed heterogeneity extend throughout the analytical workflow:

- Reduced calibration model performance with decreased prediction precision, accuracy, and transferability between instruments or sample batches [1].

- Increased apparent background complexity requiring higher-order polynomials for background correction, potentially introducing artifacts [3].

- Compositional misinterpretation when physical effects are incorrectly attributed to chemical signatures, particularly problematic in quantitative applications [1] [4].

In gold particle analysis, for example, natural gold is rarely homogeneous, with alloy heterogeneity present as microfabrics formed during primary mineralization or modified by subsequent chemical and physical processes [4]. This heterogeneity necessitates analyzing a minimum of 150 particles for adequate characterization using a two-stage approach involving spatial characterization of compositional heterogeneity plus crystallographic orientation mapping [4].

Methodologies for Assessment and Correction

Spectral Preprocessing Techniques

Spectral preprocessing represents the first line of defense against heterogeneity-induced variations:

- Standard Normal Variate (SNV): Centers and scales individual spectra to remove multiplicative and additive effects, particularly useful for diffuse reflectance spectra from powdery or granular samples [1].

- Multiplicative Scatter Correction (MSC): Adjusts spectra using linear regression against a reference spectrum to remove baseline offsets and multiplicative scatter [1].

- Derivative Spectroscopy (Savitzky-Golay): Reduces broad baseline trends and constant offsets through first or second derivatives, though it amplifies high-frequency noise requiring smoothing filters [1].

These techniques are empirically based, correcting data according to statistical patterns rather than explicit physical modeling, which may limit their effectiveness for complex, nonlinear scattering behaviors [1].
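The SNV and MSC corrections listed above can be sketched in a few lines of NumPy. This is a minimal illustration, not a reference implementation. It assumes spectra are stored as rows of a 2-D array, and the synthetic test spectra (one band with different offsets and gains) are invented for the example.

```python
import numpy as np

def snv(spectra):
    """Standard Normal Variate: centre and scale each spectrum (row) on its own."""
    spectra = np.asarray(spectra, dtype=float)
    mean = spectra.mean(axis=1, keepdims=True)
    std = spectra.std(axis=1, ddof=1, keepdims=True)
    return (spectra - mean) / std

def msc(spectra, reference=None):
    """Multiplicative Scatter Correction against a reference (default: mean spectrum)."""
    spectra = np.asarray(spectra, dtype=float)
    ref = spectra.mean(axis=0) if reference is None else np.asarray(reference, dtype=float)
    corrected = np.empty_like(spectra)
    for i, s in enumerate(spectra):
        # Fit s ~ intercept + slope * ref, then undo the offset and the gain.
        slope, intercept = np.polyfit(ref, s, deg=1)
        corrected[i] = (s - intercept) / slope
    return corrected

# Synthetic example: one band shape seen with three different offsets and gains.
base = np.exp(-((np.linspace(0, 1, 40) - 0.5) ** 2) / 0.02)
raw = np.vstack([0.1 + 1.0 * base, 0.3 + 1.5 * base, -0.05 + 0.7 * base])
snv_out = snv(raw)
msc_out = msc(raw)
```

In this idealised case both corrections collapse the three rows onto a single spectrum, because the distortion is purely additive plus multiplicative. Real scattering is wavelength dependent, which is one reason these empirical corrections are only partial.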

Advanced Sampling and Imaging Strategies

Localized Sampling and Adaptive Averaging

Localized sampling collects spectra from multiple points across the sample surface, with the average spectrum reducing local variation impact [1]. Adaptive sampling extends this concept by dynamically guiding measurement locations based on real-time spectral variance or predefined heuristics, focusing on regions of high spectral contrast to minimize uncertainty with minimal measurements [1].

Hyperspectral Imaging (HSI)

Hyperspectral imaging combines spatial resolution with chemical sensitivity, generating a three-dimensional data cube (x, y, λ) analyzed using chemometric techniques [1]:

- Principal Component Analysis (PCA): Reduces dimensionality and visualizes major variation sources.

- Independent Component Analysis (ICA): Separates mixed signals into statistically independent sources.

- Spectral Unmixing/Endmember Extraction: Identifies pure component spectra and their fractional abundances at each pixel [1].

HSI successfully models endmember variability—the spectral variability of the same chemical component under different physical or environmental conditions [1].
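As a sketch of how PCA is typically applied to a hyperspectral cube, the example below unfolds an (x, y, λ) array into a pixels-by-bands matrix, mean-centres it, and takes a singular value decomposition. The synthetic two-region cube, the noise level, and the function name `pca_scores` are assumptions made for illustration only.

```python
import numpy as np

def pca_scores(cube, n_components=2):
    """Unfold an (x, y, lambda) cube to pixels x bands and return PCA score images."""
    nx, ny, nb = cube.shape
    pixels = cube.reshape(nx * ny, nb)
    centred = pixels - pixels.mean(axis=0)
    # SVD of the mean-centred matrix yields loadings (vt) and scores (u * s).
    u, s, vt = np.linalg.svd(centred, full_matrices=False)
    scores = u[:, :n_components] * s[:n_components]
    explained = (s ** 2) / np.sum(s ** 2)
    return scores.reshape(nx, ny, n_components), vt[:n_components], explained[:n_components]

# Synthetic cube: left half is component A, right half is component B, plus noise.
rng = np.random.default_rng(0)
bands = np.linspace(0.0, 1.0, 30)
comp_a = np.exp(-((bands - 0.3) ** 2) / 0.01)
comp_b = np.exp(-((bands - 0.7) ** 2) / 0.01)
cube = np.zeros((8, 8, 30))
cube[:, :4] = comp_a
cube[:, 4:] = comp_b
cube += 0.01 * rng.standard_normal(cube.shape)

score_images, loadings, explained = pca_scores(cube)
```

The first score image separates the two halves of the synthetic sample, which is the kind of spatial map of major variation sources that the text refers to.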

Optical Trapping-Raman Spectroscopy (OT-RS)

OT-RS systems enable single-particle analysis in gaseous environments, characterizing physical properties and heterogeneous chemistry without substrate interference [5]. This approach traps particles ranging from sub-micrometers to tens of microns, accommodating diverse materials from carbon nanotubes to bioaerosols, while monitoring temporal behavior with resolution from 10 ms to 5 minutes [5].

Distortion Correction Using Projective Transformation

Projective transformation, adapted from remote sensing, corrects inherent spectrograph distortions through polynomial functions that map distorted images to ideal positions [3]. The process involves:

- Control Point Identification: Using patterned white-light images and emission spectra to establish registration points [3].

- Polynomial Transformation: Calculating forward and inverse transforms using second-order polynomials:

\[ x' = a_0 + a_1 x + a_2 x^2 + a_3 y + a_4 y^2 + a_5 xy \]

\[ y' = b_0 + b_1 x + b_2 x^2 + b_3 y + b_4 y^2 + b_5 xy \]

where \((x, y)\) and \((x', y')\) represent positions in the initial and transformed images [3].

- Intensity Interpolation: Assigning interpolated intensities from the measured image to appropriate positions in the output image [3].

This correction reduces apparent fluorescence background complexity and improves spectral reproducibility, providing advantages even in instruments where slit-image distortions and camera rotation were minimized manually [3].
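The polynomial-fitting step of this procedure can be sketched as a least-squares problem over the control points. The example uses the same term order as the second-order polynomials in the text (1, x, x², y, y², xy). The control-point grid and the "true" coefficients are invented for the demonstration, and the intensity interpolation step is not shown.

```python
import numpy as np

def design_matrix(x, y):
    """Second-order terms in the order 1, x, x^2, y, y^2, xy."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    return np.column_stack([np.ones_like(x), x, x ** 2, y, y ** 2, x * y])

def fit_transform(src_xy, dst_xy):
    """Least-squares fit of coefficients a (for x') and b (for y') from control points."""
    A = design_matrix(src_xy[:, 0], src_xy[:, 1])
    a, *_ = np.linalg.lstsq(A, dst_xy[:, 0], rcond=None)
    b, *_ = np.linalg.lstsq(A, dst_xy[:, 1], rcond=None)
    return a, b

def apply_transform(a, b, xy):
    """Map (x, y) positions to (x', y') with the fitted polynomials."""
    A = design_matrix(xy[:, 0], xy[:, 1])
    return np.column_stack([A @ a, A @ b])

# Hypothetical 5 x 5 grid of control points and an assumed mild distortion.
xs, ys = np.meshgrid(np.linspace(0, 1, 5), np.linspace(0, 1, 5))
src = np.column_stack([xs.ravel(), ys.ravel()])
a_true = np.array([0.5, 1.02, 0.01, -0.03, 0.002, 0.004])
b_true = np.array([-0.2, 0.01, -0.005, 0.98, 0.02, 0.003])
dst = apply_transform(a_true, b_true, src)

a_fit, b_fit = fit_transform(src, dst)
```

At least six well-spread control points are needed to determine the six coefficients per axis. Using more, as here, makes the fit less sensitive to errors in any single point.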

[Figure: Heterogeneity Assessment Workflow]

Table 2: Research Reagent Solutions for Heterogeneity Analysis

| Reagent/Technique | Primary Function | Application Context |
|---|---|---|
| Hyperspectral Imaging (HSI) | Spatially-resolved chemical imaging | Mapping chemical distribution in heterogeneous solids |
| Nuclear Magnetic Resonance (NMR) Spectroscopy | Non-destructive spatial distribution analysis | Assessing water content and fabric in soil samples [6] [7] |
| Optical Trapping-Raman System | Single-particle analysis in gaseous environments | Studying heterogeneous chemistry of airborne solids [5] |
| Projective Transformation Algorithms | Software correction of spectral image distortions | Correcting inherent aberrations in dispersive spectrographs [3] |
| Multiplicative Scatter Correction (MSC) | Physical distortion correction in spectra | Compensating for particle size and packing effects [1] |

Distinguishing between chemical and physical heterogeneity remains fundamental to advancing spectroscopic accuracy and reliability. While chemical heterogeneity introduces composite spectral signatures from molecular unevenness, physical heterogeneity creates complex distortions through light-matter interactions dependent on structural properties. The methodologies outlined—from advanced sampling strategies and hyperspectral imaging to mathematical corrections like projective transformation—provide powerful tools for mitigating these challenges. As spectroscopic applications expand into increasingly complex materials and systems, rigorously addressing both forms of heterogeneity will remain essential for generating meaningful analytical results across research and industrial applications.

Sample heterogeneity represents a fundamental and persistent obstacle in quantitative spectroscopic analysis. In the context of spectroscopy research, sample heterogeneity refers to the spatial non-uniformity of a sample's chemical composition or physical structure, which introduces significant spectral distortions that compromise analytical accuracy and precision. This phenomenon manifests in two primary forms: chemical heterogeneity, involving uneven distribution of molecular species, and physical heterogeneity, encompassing variations in particle size, shape, packing density, and surface topography. The pervasive nature of heterogeneity across nearly all solid-state and particulate samples makes it a critical consideration for researchers, scientists, and drug development professionals seeking reliable quantitative results from spectroscopic techniques including near-infrared (NIR), mid-infrared (MIR), Raman, and NMR spectroscopy [1].

The core challenge presented by heterogeneous samples stems from an inherent disconnect between the measurement scale of spectroscopic instruments and the spatial complexity of real-world materials. When heterogeneity exists on scales smaller than the spectrometer's measurement spot, the resulting spectrum represents a composite signal that may not accurately represent the true sample composition. This effect is particularly problematic in quantitative applications such as pharmaceutical quality control, process analytical technology (PAT), and predictive modeling using chemometrics, where even minor spectral variations can significantly degrade calibration model performance, reduce prediction accuracy, and limit model transferability between instruments or sample batches [1]. Despite decades of research and numerous proposed correction strategies, sample heterogeneity remains a largely unsolved problem in analytical spectroscopy, necessitating continued development of advanced management approaches.

The Technical Challenges: How Heterogeneity Compromises Analysis

Fundamental Mechanisms of Spectral Distortion

The detrimental effects of sample heterogeneity on quantitative analysis operate through multiple interconnected mechanisms that distort spectral information. Chemically heterogeneous samples produce composite spectra resulting from the superposition of individual constituent spectra, which can violate the linearity assumptions fundamental to many quantitative models. The widely used Linear Mixing Model (LMM) mathematically represents this scenario, where each measured spectrum is considered a linear combination of endmember spectra. However, this model assumes linearity and non-interaction between components, assumptions that frequently break down in real systems due to chemical interactions, band overlaps, and matrix effects that produce nonlinearities or violate additivity principles [1].

Physical heterogeneity introduces equally problematic distortions through light-matter interactions dependent on sample morphology rather than chemical composition. Key mechanisms include:

- Particle size effects: Large particles scatter light more significantly than small particles, altering effective path length and spectral intensity according to Mie scattering and Kubelka-Munk relationships

- Surface roughness variations: Irregular surfaces cause fluctuations in diffuse or specular reflection characteristics, directly affecting absorbance measurements

- Packing density inconsistencies: Voids or compressibility differences in powdered samples influence optical density and scattering light paths

- Orientation effects: In anisotropic materials, the angle of illumination and detection can substantially alter spectral intensity [1]

These physical attributes primarily introduce additive and multiplicative distortions in spectra, commonly modeled through approaches like multiplicative scatter correction (MSC). Unfortunately, while preprocessing methods like MSC, standard normal variate (SNV), and derivatives can partially correct these effects, they rely on statistical assumptions rather than explicit physical modeling, limiting their effectiveness in strongly scattering or optically complex sample systems [1].

Impact on Quantitative Analytical Performance

The spectral distortions introduced by heterogeneity directly impair key performance metrics in quantitative spectroscopy. Calibration models developed using heterogeneous training sets typically demonstrate reduced predictive precision and accuracy, along with limited transferability between instruments or sample batches. The inherent variability in heterogeneous samples increases model uncertainty and expands confidence intervals for concentration predictions, potentially rendering analytical results unsuitable for decision-making in critical applications like pharmaceutical dosage form verification or clinical diagnostics [1].

In spectroscopic imaging techniques, heterogeneity creates additional challenges through subpixel mixing effects, where multiple chemical components occupy a single imaging pixel, complicating both identification and quantification. This effect is particularly problematic in hyperspectral imaging (HSI) applications, where the assumption of pure spectral signatures for each pixel frequently fails for real-world samples with complex microstructures. The resulting analytical inaccuracies can propagate through subsequent processing steps, potentially leading to incorrect conclusions about sample composition or distribution [1].

Table 1: Quantitative Impacts of Heterogeneity on Spectroscopic Analysis

| Performance Metric | Effect of Heterogeneity | Typical Magnitude of Impact |
|---|---|---|
| Calibration Accuracy | Increased prediction bias | 10-30% relative error increase |
| Measurement Precision | Expanded confidence intervals | 15-40% RSD deterioration |
| Model Transferability | Reduced robustness across instruments | 25-50% increased prediction error |
| Detection Limits | Elevated baseline noise | 2-5x degradation in LOD |
| Spectral Reproducibility | Increased inter-spectrum variance | 20-60% RSD increase |

The quantitative impact of inhomogeneity is notably evident in magnetic resonance spectroscopy, where magnetic field inhomogeneity directly affects spectral quality. One quantitative assessment found that adding higher-order shim corrections to linear shims increased the volume of brain tissue that could be effectively shimmed within acceptable line-broadening constraints by approximately 30% [8]. This improvement translated directly to enhanced spectral quality for chemical shift imaging (CSI) studies, highlighting the critical relationship between homogeneity and analytical performance.

Quantitative Evidence: Documenting the Costs

Experimental Measurements of Heterogeneity Effects

Rigorous quantitative assessments have documented the specific performance costs associated with sample heterogeneity across multiple spectroscopic techniques. In NMR spectroscopy, the implementation of higher-order shim corrections demonstrated measurable improvements in field homogeneity essential for reliable quantitative analysis. A volunteer study (n=15) evaluating both intervoxel B0 uniformity and intravoxel T2* line broadening found that using higher-order shims compared to linear terms alone yielded approximately 30% greater volume of brain tissue that could be effectively shimmed within typical constraints for spectroscopic imaging [8]. Regional analysis revealed particularly significant improvements in homogeneity near tissue-air interfaces such as the skull base, areas traditionally problematic for magnetic field uniformity.

In optical spectroscopy, methodological comparisons have quantified the performance variations between different analytical approaches when handling heterogeneous samples. Studies evaluating metrics for Spectroscopic Optical Coherence Tomography (S-OCT) have demonstrated that the choice of processing algorithm significantly influences the ability to extract reliable information from heterogeneous samples. Phantom studies utilizing microsphere scatterers of different sizes (1.00μm and 3.00μm diameters) embedded in silicone foils confirmed that particles below the system's resolution limit could not be differentiated using standard intensity-based OCT images, but became distinguishable through specialized spectroscopic analysis of the spectral features arising from scattering property differences [9].

Table 2: Documented Performance Improvements from Homogeneity-Enhancing Techniques

| Technique | Homogeneity Challenge | Solution Implemented | Quantitative Improvement |
|---|---|---|---|
| Brain MRS | Magnetic field inhomogeneity | Higher-order shim corrections | 30% greater tissue volume with acceptable shimming [8] |
| NIR Coal Analysis | Natural variability in composition | NIR-XRF fusion with machine learning | R² values up to 0.9997 for quality indicators [10] |
| Polymer IR | Crystalline/amorphous structure | Spectral interpretation of splitting patterns | Identification of HDPE, LDPE, LLDPE structural variations [10] |
| S-OCT Phantom | Sub-resolution scatterers | Dual-window spectral analysis | Clear differentiation of 1 μm vs 3 μm microspheres [9] |

The Economic and Temporal Costs

Beyond technical performance metrics, heterogeneity imposes significant economic and temporal costs throughout the analytical workflow. The need for extensive sample preparation to improve homogeneity—through grinding, mixing, compression, or other homogenization techniques—adds substantial time to analytical procedures. In quality control environments where rapid analysis is essential, these additional steps can create bottlenecks that reduce overall throughput and increase operational costs. Furthermore, the development of robust calibration models capable of accommodating sample heterogeneity requires larger training sets with comprehensive representation of expected variations, increasing method development time and resource requirements.

The consequences of undetected or unaccounted-for heterogeneity can be particularly severe in regulated industries like pharmaceutical manufacturing. Inaccurate potency measurements due to heterogeneity-induced spectral distortions could lead to batch rejection, product recalls, or regulatory compliance issues, with potential financial impacts extending into millions of dollars. Similarly, in research environments, heterogeneity-related artifacts could compromise experimental conclusions, potentially invalidating months of work and requiring costly repetition of studies. These hidden costs underscore the economic imperative for effectively addressing heterogeneity in quantitative spectroscopic applications.

Methodologies for Characterizing and Quantifying Heterogeneity

Hyperspectral Imaging and Spatial-Spectral Analysis

Hyperspectral imaging (HSI) represents one of the most powerful approaches for characterizing sample heterogeneity, as it combines spatial resolving capability with chemical specificity through spectroscopy. An HSI system generates a three-dimensional data cube (x, y, λ) containing complete spectral information at each spatial position within the field of view. This rich dataset enables the application of sophisticated chemometric techniques specifically designed to unravel heterogeneous samples, including:

- Principal Component Analysis (PCA): Reduces dimensionality and visualizes major sources of spatial-spectral variation

- Independent Component Analysis (ICA): Separates mixed spectral signals into statistically independent sources

- Spectral Unmixing/Endmember Extraction: Identifies pure component spectra and their fractional abundances at each image pixel [1]

The application of HSI has demonstrated particular success in modeling endmember variability—the spectral variability exhibited by the same chemical component under different physical or environmental conditions. This capability makes HSI invaluable for characterizing complex heterogeneous systems ranging from pharmaceutical blends to biological tissues. Modern HSI systems combined with multivariate algorithms have been successfully deployed in real-time quality control applications, identifying physical heterogeneities that would remain undetected using conventional single-point spectrometers [1].

Statistical Approaches and Heterogeneity Metrics

Statistical methods provide complementary approaches for quantifying heterogeneity without full spatial characterization. Variogram analysis examines the relationship between spectral variance and spatial separation distance, providing quantitative parameters describing the scale and intensity of heterogeneity. Similarly, distance-based metrics such as Mahalanobis distance or spectral angle mapper can quantify differences between spectra collected from different sample positions, with increasing variance indicating greater heterogeneity.
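Two of the metrics named above can be sketched compactly: the spectral angle between two spectra, and an empirical semivariogram for a 1-D line scan of some spectral feature (for example, a band intensity). The block-patterned line scan is synthetic, and the function names are assumptions made for this illustration.

```python
import numpy as np

def spectral_angle(s1, s2):
    """Spectral angle (radians) between two spectra; 0 means identical shape."""
    s1 = np.asarray(s1, dtype=float)
    s2 = np.asarray(s2, dtype=float)
    cos = np.dot(s1, s2) / (np.linalg.norm(s1) * np.linalg.norm(s2))
    return float(np.arccos(np.clip(cos, -1.0, 1.0)))

def empirical_variogram(values, max_lag):
    """Semivariance gamma(h) = mean((z[i+h] - z[i])^2) / 2 along a 1-D line scan."""
    values = np.asarray(values, dtype=float)
    lags = np.arange(1, max_lag + 1)
    gamma = np.array([0.5 * np.mean((values[h:] - values[:-h]) ** 2) for h in lags])
    return lags, gamma

# Synthetic line scan: alternating domains, each 10 sampling steps wide.
line_scan = np.repeat([0.0, 1.0, 0.0, 1.0], 10)
lags, gamma = empirical_variogram(line_scan, max_lag=10)

# The spectral angle ignores overall intensity scaling.
angle_scaled = spectral_angle([1.0, 2.0, 3.0], [2.0, 4.0, 6.0])
angle_orthogonal = spectral_angle([1.0, 0.0], [0.0, 1.0])
```

In this toy scan the semivariance rises with lag and peaks at the domain width, which is how a variogram exposes the spatial scale of heterogeneity. The spectral angle is insensitive to a pure gain change, so it responds to shape differences rather than overall intensity.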

In spectroscopic imaging applications, established and novel metrics facilitate the visualization and interpretation of heterogeneous features. For Spectroscopic Optical Coherence Tomography (S-OCT), these include:

- Center of Mass (COM) calculation: Determines the central wavelength of each spectrum, highlighting spectral shifts

- Autocorrelation Function (ACF) bandwidth: Characterizes spectral width variations

- Sub-band (SUB) metrics: Directly maps specific spectral regions into visualization channels [9]

Phantom studies utilizing microsphere scatterers of different sizes (1.00μm and 3.00μm diameters) have demonstrated that these metrics can clearly separate areas with different scattering properties in multi-layer phantoms, even when the features are below the system's resolution limit for standard intensity-based imaging [9]. This capability is particularly valuable for analyzing heterogeneous biological tissues, as demonstrated by contrast enhancement in bovine articular cartilage.
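The center-of-mass metric listed above can be illustrated with a generic intensity-weighted mean wavelength. This is a sketch of the general idea only. The exact definition and windowing used in the cited S-OCT work may differ, and the Gaussian test spectra and wavelength axis are invented.

```python
import numpy as np

def center_of_mass(wavelengths, spectra):
    """Intensity-weighted mean wavelength of each spectrum (last axis = wavelength)."""
    spectra = np.asarray(spectra, dtype=float)
    return (spectra * wavelengths).sum(axis=-1) / spectra.sum(axis=-1)

# Two hypothetical spectra with the same shape but shifted centres (in nm).
wavelengths = np.linspace(700.0, 950.0, 251)
spectra = np.vstack([
    np.exp(-((wavelengths - c) ** 2) / (2 * 20.0 ** 2)) for c in (800.0, 850.0)
])
com = center_of_mass(wavelengths, spectra)
```

Mapping the resulting value to a colour channel at each pixel highlights regions whose spectra are shifted relative to their surroundings, even when their total intensity is the same.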

[Figure: Heterogeneity Characterization Methods]

Mitigation Strategies and Experimental Protocols

Sample Preparation and Presentation Protocols

Effective management of sample heterogeneity begins with optimized preparation and presentation protocols designed to minimize unnecessary variability. For powdered samples, carefully controlled grinding and milling procedures can reduce particle size variations, with specific protocols depending on sample material properties. Sieving through standardized mesh sizes (e.g., 100-200 mesh) following grinding provides additional control over particle size distribution. For compacted samples, standardized compression protocols using calibrated hydraulic presses with controlled force application (typically 1-10 tons for KBr pellets) improve packing density uniformity.

Liquid and suspension samples benefit from homogenization procedures including vortex mixing, sonication, or mechanical stirring with specified duration and intensity parameters. For particularly challenging samples, sample rotation or averaging techniques during spectral acquisition can effectively integrate over heterogeneity, providing more representative measurements. These approaches are particularly valuable when complete homogenization is impractical due to sample requirements or preservation needs.

Table 3: Research Reagent Solutions for Heterogeneity Management

| Reagent/Material | Function in Heterogeneity Management | Typical Application Protocol |
|---|---|---|
| KBr for Pellet Preparation | Creates uniform matrix for transmission analysis | 1:100 sample-to-KBr ratio; 8-ton compression for 2 minutes |
| Integrating Spheres | Reduces scattering artifacts from physical heterogeneity | Diffuse reflectance measurement with 150 mm sphere diameter |
| Polybead Microspheres | Calibration standards for scattering characterization | 1.00 μm and 3.00 μm diameters in silicone matrix at 0.6-0.8% w/w |
| Reference Standards | Validation of homogeneity and method performance | NIST-traceable polymers or certified reference materials |
| Specialized Solvents | Matrix matching for chemical heterogeneity reduction | Deuterated solvents for NMR; spectral grade for UV-Vis |

Spectroscopic Techniques and Data Acquisition Strategies

Advanced spectroscopic techniques incorporate specific acquisition strategies to mitigate heterogeneity effects. Localized sampling approaches collect spectra from multiple spatially distributed points across the sample surface, with subsequent averaging to better represent global composition. The average spectrum \( \bar{S} = \frac{1}{N} \sum_{i=1}^{N} S_i \) from N sampling locations reduces the impact of local variations, especially when heterogeneity exists at scales smaller than the measurement beam size. Studies have demonstrated that increasing the number of sampling points significantly reduces calibration errors and improves reproducibility for NIR and Raman measurements of solid dosage forms and polymer films [1].
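A small simulation can illustrate why averaging over N sampling locations helps. The sketch assumes each point spectrum is the bulk spectrum multiplied by an independent random local gain, a deliberately simple and hypothetical noise model. The spectrum shape, the 20% local variation, and the helper names are all invented for the example.

```python
import numpy as np

rng = np.random.default_rng(0)
true_spectrum = np.linspace(1.0, 2.0, 100)  # hypothetical bulk spectrum

def measure(n_points, local_sd=0.2):
    """Simulate N point spectra: bulk spectrum scaled by a random local factor."""
    gains = 1.0 + local_sd * rng.standard_normal((n_points, 1))
    return gains * true_spectrum

def mean_spectrum(spectra):
    """Average spectrum: S_bar = (1/N) * sum_i S_i."""
    return np.asarray(spectra, dtype=float).mean(axis=0)

def rms_error(n_points, repeats=200):
    """Average RMS deviation of the mean spectrum from the bulk spectrum."""
    errs = [
        np.sqrt(np.mean((mean_spectrum(measure(n_points)) - true_spectrum) ** 2))
        for _ in range(repeats)
    ]
    return float(np.mean(errs))

err_1 = rms_error(1)
err_16 = rms_error(16)
```

Under this independence assumption the error of the averaged spectrum shrinks roughly as 1/sqrt(N), so sixteen points give about a fourfold improvement over one. Spatially correlated heterogeneity would reduce that gain.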

Adaptive sampling extends this concept by dynamically guiding measurement locations based on real-time spectral variance or predefined heuristics. Variance-based selection may focus on regions of high spectral contrast, while machine-learning-guided adaptive sampling uses active learning models to minimize uncertainty with the fewest measurements. This approach is particularly valuable for layered materials, nonuniform blends, and process-line applications where sample presentation cannot be easily controlled [1].
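A very simple variance-guided heuristic can convey the idea of adaptive sampling along a 1-D scan line: repeatedly bisect the interval whose two end spectra differ most. This is an illustrative greedy rule, not the active-learning approach mentioned in the text. The `measure` function stands in for a hypothetical instrument call, and the boundary position is invented.

```python
import numpy as np

def measure(position):
    """Hypothetical instrument call: spectrum at a 1-D position in [0, 1]."""
    bands = np.linspace(0.0, 1.0, 20)
    # Two materials with a sharp boundary at position 0.62 (assumed for the demo).
    centre = 0.3 if position < 0.62 else 0.7
    return np.exp(-((bands - centre) ** 2) / 0.01)

def adaptive_sample(n_seed=3, n_extra=8):
    """Greedy refinement: bisect the interval whose end spectra differ most."""
    positions = list(np.linspace(0.0, 1.0, n_seed))
    spectra = [measure(p) for p in positions]
    for _ in range(n_extra):
        # Spectral contrast between neighbouring measured positions.
        contrast = [
            np.linalg.norm(spectra[i + 1] - spectra[i])
            for i in range(len(positions) - 1)
        ]
        i = int(np.argmax(contrast))
        new_pos = 0.5 * (positions[i] + positions[i + 1])
        positions.insert(i + 1, new_pos)
        spectra.insert(i + 1, measure(new_pos))
    return np.array(positions), np.array(spectra)

positions, spectra = adaptive_sample()
```

With eleven measurements in total, the rule concentrates points around the material boundary and brackets it far more tightly than an evenly spaced scan with the same number of points would.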

[Figure: Sampling Strategy Workflow]

Computational and Chemometric Approaches

Computational methods complement sample preparation and acquisition strategies in limiting heterogeneity-induced analytical errors. Spectral preprocessing is typically the first computational step against physical heterogeneity effects, with common approaches including:

- Standard Normal Variate (SNV): Centers and scales each spectrum individually to remove multiplicative and additive effects, particularly effective for diffuse reflectance spectra from powdery or granular samples

- Multiplicative Scatter Correction (MSC): Adjusts each spectrum using linear regression against a reference spectrum (typically the dataset mean) to remove baseline offsets and multiplicative scatter

- Derivative Spectroscopy (Savitzky-Golay): Computes first or second derivatives of spectra to reduce broad baseline trends and constant offsets, though this approach amplifies high-frequency noise requiring careful smoothing parameter optimization [1]
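The Savitzky-Golay derivative in the list above can be sketched directly in NumPy as a local polynomial least-squares fit applied by convolution. The window length, polynomial order, and the quadratic test signal are illustrative choices. For routine work, an established implementation such as `scipy.signal.savgol_filter` is likely preferable to this hand-rolled version.

```python
import numpy as np

def savgol_coeffs(window, polyorder, deriv=0, delta=1.0):
    """Savitzky-Golay weights for the centre point of an odd-length window."""
    if window % 2 == 0 or polyorder >= window:
        raise ValueError("window must be odd and larger than polyorder")
    half = window // 2
    offsets = np.arange(-half, half + 1)
    # Columns are offsets**0, offsets**1, ...: a local polynomial least-squares fit.
    vander = np.vander(offsets, polyorder + 1, increasing=True)
    pinv = np.linalg.pinv(vander)
    # Row `deriv` gives the fitted coefficient c_deriv; the derivative is deriv! * c_deriv.
    factorial = float(np.prod(np.arange(1, deriv + 1)))  # empty product equals 1
    return pinv[deriv] * factorial / delta ** deriv

def savgol_derivative(spectrum, window=11, polyorder=2, deriv=1, delta=1.0):
    """Apply the filter; only the interior ('valid') points are returned."""
    weights = savgol_coeffs(window, polyorder, deriv, delta)
    # np.convolve flips its kernel, so reverse the weights to get a correlation.
    return np.convolve(spectrum, weights[::-1], mode="valid")

# Quadratic test signal with a constant baseline offset of 3.
x = np.linspace(0.0, 5.0, 51)
y = 3.0 + 0.5 * x + 0.2 * x ** 2
dy = savgol_derivative(y, window=11, polyorder=2, deriv=1, delta=x[1] - x[0])
dy_shifted = savgol_derivative(y + 10.0, window=11, polyorder=2, deriv=1, delta=x[1] - x[0])
```

The first derivative is unchanged when a constant offset is added to the spectrum, which is the baseline-removal property described in the text. Wider windows smooth more but blur narrow bands, so the window and polynomial order need tuning per dataset.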

Beyond preprocessing, multivariate classification and regression models incorporating heterogeneity information directly into their architecture provide more robust quantification. Methods such as partial least squares (PLS) regression with specially designed training sets that encompass expected heterogeneity variations can create more adaptable calibration models. Emerging approaches include multi-modal data fusion combining multiple spectroscopic techniques (e.g., NIR and XRF) with machine learning to compensate for limitations of individual methods, as demonstrated in coal classification achieving R² values as high as 0.9997 for key quality indicators [10].

The frontier of heterogeneity management includes physics-informed machine learning where algorithms incorporate known physical constraints and relationships governing light-matter interactions, potentially offering more generalized solutions to heterogeneity challenges. Similarly, real-time feedback-controlled sampling systems that adjust measurement parameters based on immediate spectral assessment represent promising directions for next-generation spectroscopic systems capable of autonomous heterogeneity compensation [10] [1].

Future Directions and Research Opportunities

The evolving landscape of heterogeneity management points toward increasingly integrated, intelligent approaches that combine advanced instrumentation, computational power, and fundamental physical understanding. Multi-modal spectroscopy represents a promising direction, where complementary techniques (e.g., NIR with XRF or Raman with LIBS) provide overlapping information that can be fused to compensate for individual method limitations. The successful application of NIR-XRF fusion with machine learning for coal classification demonstrates the potential of this approach, achieving exceptional accuracy (R² up to 0.9997) for predicting ash, volatile matter, and sulfur content despite natural coal variability [10].

Artificial intelligence and machine learning applications for heterogeneity management are advancing beyond traditional chemometrics. Deep learning architectures capable of automatically learning relevant features from complex spectral datasets show particular promise for handling heterogeneous samples without explicit preprocessing. The integration of physical models directly into neural network structures—creating physics-informed machine learning—represents an especially promising direction that could yield more generalizable solutions to heterogeneity challenges [10].

Miniaturized and embedded spectroscopic sensors paired with machine learning create new opportunities for heterogeneity management through distributed sensing. Research demonstrating compact spectroscopic sensors (AS7265x) achieving over 96% classification accuracy for beverage identification using just four wavelengths suggests potential applications where multiple inexpensive sensors could characterize heterogeneity through spatial distribution rather than sophisticated instrumentation [10]. As these technologies mature, they may enable new paradigms for heterogeneity management that fundamentally reshape approaches to quantitative spectroscopic analysis.

The continuing fundamental research into light-matter interactions in complex, heterogeneous systems remains essential for developing next-generation solutions. Bridging optical physics with data-centric approaches may ultimately provide comprehensive solutions to the persistent challenges posed by heterogeneous samples in spectroscopy [10]. Through continued interdisciplinary collaboration across spectroscopy, chemometrics, materials science, and data analytics, the field appears poised to gradually transform heterogeneity from a debilitating limitation to a manageable—and potentially informative—aspect of spectroscopic analysis.

In spectroscopic analysis, achieving accurate and reproducible results is fundamentally linked to the physical and chemical properties of the sample itself. The core principles of particle size, spatial distribution, and matrix effects are critical, often interrelated factors that can dominate the measurement uncertainty, particularly in quantitative applications. Within the context of a broader thesis on the role of sample homogeneity in spectroscopy research, this guide examines how these principles manifest as significant challenges. Sample heterogeneity—both chemical and physical—represents a pervasive, unsolved problem that interferes with model building, reduces predictive accuracy, and complicates the transferability of methods across instruments and sample batches [1]. This guide provides an in-depth examination of these core principles, supported by quantitative data, detailed experimental protocols, and visual workflows, to equip researchers with the knowledge to mitigate their effects.

Particle Size and Its Spectroscopic Impact

The size of particles in a sample directly influences how light interacts with the material, affecting both absorption and scattering properties. For particulate samples analyzed using infrared spectroscopy, the particle size in relation to the analytical wavelength is a primary source of artifact in quantitative analysis [11].

Fundamental Mechanisms: Mie Scattering and the Size Regime

When the particle diameter approaches or exceeds the wavelength of the incident light (a regime governed by Mie scattering), scattering becomes a significant contributor to the total measured extinction (the sum of absorption and scattering) [11]. This scattering manifests as a slanted baseline in non-absorbing spectral regions, which can distort absorption bands and lead to inaccurate quantification [11].

- Size Parameter: The critical transition is defined by the size parameter, ( x = \pi d_p / \lambda ), where ( d_p ) is the particle diameter and ( \lambda ) is the wavelength. Significant scattering artifacts are typically observed for size parameters greater than 1 [11].

- Quantification Bias: Larger particles scatter more light, leading to an increase in extinction that is not related to analyte absorption. If the particle size distribution of a sample differs from that of the standard reference material (SRM) used for calibration, it can result in significant underestimation or overestimation of the true analyte mass concentration [11]. This is particularly critical for measurements near permissible exposure limits, such as for respirable crystalline silica.
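
As a quick illustration of the size parameter defined above, the following sketch computes ( x ) for a given particle diameter and wavelength. The function names and the example values (2 μm and 5 μm particles at a 10 μm wavelength) are hypothetical and serve only to show the arithmetic.

```python
import math


def size_parameter(diameter_um, wavelength_um):
    """Size parameter x = pi * d_p / lambda (both lengths in the same unit)."""
    return math.pi * diameter_um / wavelength_um


def scattering_flag(x):
    """Flag the regime in which the text expects significant scattering artifacts."""
    return "x > 1: scattering artifacts expected" if x > 1 else "x <= 1"


# Hypothetical example values, not taken from the cited study.
for d_um in (2.0, 5.0):
    x = size_parameter(d_um, wavelength_um=10.0)
    print(f"d_p = {d_um} um, x = {x:.3f}, {scattering_flag(x)}")
```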

Experimental Investigation of Particle Size Effects

Protocol: Investigating Particle Size-Related Artifacts in IR Spectroscopy [11]

- Objective: To quantify the bias in analyte quantification due to differences in particle size between samples and SRMs.

- Materials:

- Model systems: NIST-traceable spherical polystyrene microspheres (0.4 μm to 10 μm diameter).

- Real-world samples: Quartz SRM 1878 and SRM 1878b powders.

- Equipment: Infrared spectrometer, Andersen cascade impactor (ACI), micro-orifice uniform deposit impactor (MOUDI).

- Methodology:

- Theoretical Calculation: Calculate the theoretical extinction efficiency, ( Q_{ext} ), using Lorenz-Mie theory for spherical particles. This requires input of the complex refractive index (( m = n + ik )) of the material and the size parameter [11].

- Sample Preparation:

- For polystyrene microspheres, deposit aqueous suspensions onto filters.

- For quartz, aerosolize the powder and size-fractionate using the ACI or MOUDI to collect specific particle size ranges.

- Data Collection: Acquire IR transmittance spectra for all samples.

- Data Analysis:

- Convert transmittance to experimental extinction, ( \varepsilon_{exp} = -\ln(T) ).

- Compare ( \varepsilon_{exp} ) with the theoretical ( \varepsilon_{Mie} ) calculated by integrating ( Q_{ext} ) over the particle size distribution.

- Key Findings:

- For model polystyrene particles, measured and calculated extinction agreed within ±20% for a size parameter ( x < 1 ) (( d_p < 4.6 \mu m )) and at low packing densities (< 500 μg/cm²).

- For ( x > 1 ), scattering dominated absorption, and at high packing densities, "dependent scattering" from particle agglomeration became significant, leading to larger deviations from theory [11].

- For non-spherical, polydisperse quartz particles, deviations from Lorenz-Mie theory were observed, highlighting the limitations of the model for real-world, complex materials.
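
The data analysis step of this protocol can be sketched as follows. The conversion ( \varepsilon_{exp} = -\ln(T) ) comes from the protocol itself; the helper names, the example numbers, and the use of a relative deviation to check the ±20% agreement are assumptions for illustration. The theoretical values are taken as given inputs, since the Lorenz-Mie calculation is outside the scope of this sketch.

```python
import numpy as np


def extinction_from_transmittance(transmittance):
    """Experimental extinction, eps_exp = -ln(T), with T as a fraction in (0, 1]."""
    t = np.asarray(transmittance, dtype=float)
    return -np.log(t)


def relative_deviation(eps_exp, eps_theory):
    """Signed relative deviation of measured extinction from the theoretical value."""
    eps_exp = np.asarray(eps_exp, dtype=float)
    eps_theory = np.asarray(eps_theory, dtype=float)
    return (eps_exp - eps_theory) / eps_theory


# Hypothetical transmittance readings and theoretical extinctions.
T = np.array([0.90, 0.50])
eps_exp = extinction_from_transmittance(T)
eps_mie = np.array([0.10, 0.90])          # assumed precomputed from Lorenz-Mie theory
dev = relative_deviation(eps_exp, eps_mie)
within_20_percent = np.abs(dev) <= 0.20   # agreement criterion used in the key findings
```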

Table 1: Quantitative Impact of Particle Size on IR Extinction for Quartz

| Particle Size Regime | Impact on IR Extinction (ε) | Dominant Mechanism | Implication for Quantification |
|---|---|---|---|
| > 1 μm (approx.) | ε decreases with increasing particle size [11] | Increased Mie scattering | Overestimation if calibration SRM has smaller particles |
| Sub-micron (< 1 μm) | ε may decrease with decreasing size [11] | Reduced particle crystallinity from comminution | Underestimation if calibration SRM has larger particles |

Spatial Distribution and Homogeneity Assessment

Spatial distribution refers to the arrangement of chemical components within a sample. Chemical heterogeneity—the uneven distribution of analytes—is a common issue that can render a single point measurement non-representative of the whole sample [1].

The Challenge of Sub-Sampling

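In point-based spectroscopy, the relationship between the measurement spot size and the scale of heterogeneity determines the type of error. If the spot is larger than the scale of heterogeneity, the collected spectrum is a composite signal from the various chemical components within that spot. This "sub-pixel mixing" violates the assumptions of linear calibration models and leads to inaccurate concentration estimates [1]. If the spot is smaller than the scale of heterogeneity, a single point measurement may not be representative of the whole sample.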

Hyperspectral Imaging and the Distributional Homogeneity Index (DHI)

Hyperspectral imaging (HSI) combines spatial and spectroscopic information, creating a data cube (X, Y, λ) that allows for the visualization of component distribution [12] [1]. A common method to assess homogeneity from such images is to analyze the histogram of pixel concentrations; however, this "constitutional homogeneity" lacks spatial context.

The Distributional Homogeneity Index (DHI) was developed to provide an objective, quantitative measure of spatial homogeneity [12] [13]. Its methodology is as follows:

Protocol: Assessing Distributional Homogeneity using DHI [12]

- Objective: To obtain an objective value of distributional homogeneity from a hyperspectral image.

- Materials: Hyperspectral image data cube of a solid dosage form (e.g., pharmaceutical tablet).

- Methodology:

- Macropixel Creation: The distribution map is progressively subdivided into smaller, non-overlapping macropixels. At each step, the entire map is partitioned into N macropixels of equal size.

- Concentration Calculation: The mean intensity (concentration) is calculated for each macropixel.

- Homogeneity Curve: The relative standard deviation (RSD) of the macropixel concentrations is calculated for each value of N and plotted against the macropixel size (or 1/N).

- DHI Calculation: The DHI is defined as the ratio of the area under the curve (AUC) of the homogeneity curve for the raw map to the AUC for a randomized version of the same map. A perfectly homogeneous map will have a DHI close to 1, as randomizing the pixels will not change the constitutional homogeneity.

- Key Findings:

- DHI provides a single, objective metric that effectively captures spatial information, overcoming the limitations of histogram analysis alone [12].

- Studies have shown a linear relationship between content uniformity values of pharmaceutical tablets and their DHI values, validating its use in formulation development [12] [13].
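
The DHI procedure described above can be sketched with NumPy. This is a simplified reading of the protocol, not the reference implementation from [12]: it uses square, non-overlapping macropixels, takes the macropixel edge length (in pixels) as the x-axis of the homogeneity curve, and averages the AUC over several random shuffles. All function names and the synthetic maps are assumptions.

```python
import numpy as np


def _auc(x, y):
    """Trapezoidal area under y(x)."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    return float(np.sum((y[1:] + y[:-1]) / 2.0 * np.diff(x)))


def homogeneity_curve(conc_map, sizes):
    """RSD of macropixel means for each macropixel edge length in `sizes`."""
    conc_map = np.asarray(conc_map, dtype=float)
    h, w = conc_map.shape
    rsd = []
    for s in sizes:
        hh, ww = (h // s) * s, (w // s) * s            # crop so the map tiles exactly
        blocks = conc_map[:hh, :ww].reshape(hh // s, s, ww // s, s)
        block_means = blocks.mean(axis=(1, 3))          # one mean per macropixel
        rsd.append(block_means.std() / block_means.mean())
    return np.array(rsd)


def dhi(conc_map, sizes, n_shuffles=20, seed=0):
    """Ratio of the AUC for the raw map to the mean AUC for shuffled maps."""
    conc_map = np.asarray(conc_map, dtype=float)
    rng = np.random.default_rng(seed)
    auc_raw = _auc(sizes, homogeneity_curve(conc_map, sizes))
    auc_random = []
    for _ in range(n_shuffles):
        shuffled = rng.permutation(conc_map.ravel()).reshape(conc_map.shape)
        auc_random.append(_auc(sizes, homogeneity_curve(shuffled, sizes)))
    return auc_raw / float(np.mean(auc_random))


# Synthetic demo: a segregated map versus a shuffled map with the same histogram.
sizes = [1, 2, 4, 8, 16]
segregated = np.ones((32, 32))
segregated[:, 16:] = 3.0
well_mixed = np.random.default_rng(1).permutation(segregated.ravel()).reshape(32, 32)

dhi_segregated = dhi(segregated, sizes)   # well above 1
dhi_mixed = dhi(well_mixed, sizes)        # close to 1
```

Both maps share an identical pixel histogram, so histogram analysis alone cannot separate them. The DHI does, because it compares each map against its own randomized version.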

Table 2: Key Research Reagents and Materials for Homogeneity Studies

| Item | Function in Research |
|---|---|
| Hyperspectral Imaging System | Combines spatial and spectroscopic data to create chemical distribution maps [12]. |
| Solid Pharmaceutical Dosage Forms | Model systems for testing blend homogeneity and API distribution [12]. |
| Powder Blends | Used to study the effect of blending conditions and excipients on homogeneity [12]. |
| Distributional Homogeneity Index (DHI) | A quantitative criterion for assessing spatial homogeneity from imaging data [12] [13]. |

Matrix Effects

Matrix effects describe the alteration of an analyte's signal response due to the influence of other components in the sample. The "matrix" is the entirety of the sample other than the analyte of interest. Co-eluting matrix components can cause signal suppression or enhancement, leading to major issues in analytical accuracy [14].

Manifestations Across Techniques

While often discussed in the context of chromatography with mass spectrometry detection, matrix effects are a universal concern in spectroscopy.

- Mass Spectrometry: Phospholipids from clinical samples like plasma or serum are a classic example. They can co-elute with analytes and suppress or enhance ionization, leading to inaccurate quantification. Simple protein precipitation does not remove these phospholipids, requiring more selective clean-up techniques [14].

- Optical Spectroscopy: The sample matrix can cause light scattering, absorption band overlaps, or fluorescence, which alter the spectral baseline and analyte peak intensities. Physical matrix properties like packing density and surface roughness act as a source of physical heterogeneity, introducing multiplicative and additive spectral effects that can be difficult to distinguish from chemical information [1].

Mitigation Strategies

The primary strategy for managing matrix effects is to remove the interfering components through sample preparation.

Protocol: Evaluating and Mitigating Matrix Effects [14]

- Objective: To determine the presence and extent of matrix effects and to eliminate them for accurate quantification.

- Materials: Sample, appropriate analytical instrument (e.g., LC-MS), sample preparation materials (e.g., solid phase extraction sorbents).

- Methodology:

- Detection: Compare the analyte response in a pure standard solution to its response in a spiked, extracted sample matrix. A significant difference indicates a matrix effect.

- Sample Preparation Selection: Choose a sample preparation technique based on the required selectivity.

- Simple methods: Protein precipitation, filtration. These are less selective and may not fully remove matrix interferences.

- Advanced methods: Solid phase extraction (SPE), liquid-liquid extraction, immunoaffinity capture. These offer higher selectivity for removing specific interferents.

- Key Findings:

- As demonstrated in one study, a specialized polymeric SPE sorbent (Strata-X PRO) reduced the signal from interfering phospholipids in human serum by ten-fold compared to protein precipitation alone [14].

- While LC method development (e.g., changing gradients or columns) can sometimes help, sample preparation is often the most effective and robust approach, with the added benefit of protecting the analytical column from damage [14].
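
The detection step above compares the analyte response in a pure standard with its response in a spiked, extracted matrix. One common way to express that comparison is as a percentage, sketched below. The formula, the function name, and the peak areas are assumptions for illustration and are not taken from [14].

```python
def matrix_effect_percent(response_in_matrix, response_in_standard):
    """Percent change in analyte response caused by the matrix.

    Negative values indicate signal suppression, positive values enhancement.
    This convention is an assumption of the sketch.
    """
    return (response_in_matrix / response_in_standard - 1.0) * 100.0


# Hypothetical peak areas for the same spiked concentration.
suppressed = matrix_effect_percent(response_in_matrix=8.0e4, response_in_standard=1.0e5)
enhanced = matrix_effect_percent(response_in_matrix=1.15e5, response_in_standard=1.0e5)
print(f"suppression case: {suppressed:.1f} %, enhancement case: {enhanced:.1f} %")
```

What counts as a significant difference depends on the method's acceptance criteria, so no threshold is hard-coded here.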

Table 3: Common Sample Preparation Techniques for Matrix Removal

| Technique | Principle | Effectiveness in Matrix Removal |
|---|---|---|
| Protein Precipitation | Denatures and removes proteins via organic solvents. | Low; does not remove small molecules, salts, or phospholipids [14]. |
| Liquid-Liquid Extraction | Partitioning of analytes between two immiscible liquids. | Medium; effectiveness depends on the partition coefficients of analytes vs. interferents. |
| Solid Phase Extraction (SPE) | Selective adsorption and elution from a solid sorbent. | High; can be tailored for selective removal of specific interferents like phospholipids [14]. |

The principles of particle size, spatial distribution, and matrix effects are not merely academic considerations but are fundamental drivers of accuracy in spectroscopic analysis. They collectively represent the multifaceted challenge of sample heterogeneity. Particle size dictates light-scattering artifacts, spatial distribution determines the representativeness of a measurement, and matrix effects introduce chemical interferences that skew the analytical signal. Ignoring these factors inevitably leads to models with poor predictive power and limited transferability. A comprehensive understanding and systematic investigation of these core principles, as outlined in this guide, are therefore essential for any rigorous spectroscopy research program, particularly those aimed at developing robust, real-world analytical methods. The ongoing research into advanced imaging, objective homogeneity metrics, and selective sample preparation continues to provide scientists with a growing toolkit to meet this persistent challenge.

Sampling Theory and Statistical Considerations for Representative Analysis

In spectroscopic analysis, the reliability of any quantitative or qualitative result is fundamentally dependent on the quality of the sample presented to the instrument. Sample heterogeneity—the spatial non-uniformity of a sample's chemical composition or physical structure—represents a persistent and foundational obstacle in analytical spectroscopy [1]. For researchers and drug development professionals, failing to account for heterogeneity introduces spectral distortions that compromise calibration model performance, reduce prediction accuracy, and limit model transferability between instruments or sample batches [1]. This guide examines the core theories, statistical frameworks, and practical methodologies for ensuring representative analysis, providing a structured approach to one of the remaining unsolved problems in spectroscopy.

Core Concepts of Sample Heterogeneity

Defining Heterogeneity in Analytical Samples

Sample heterogeneity manifests in multiple dimensions, each introducing distinct challenges for spectroscopic measurement:

Chemical Heterogeneity: Refers to the uneven distribution of molecular or elemental species throughout a sample. This arises from incomplete mixing, uneven crystallization, layering during manufacturing, or natural variation in raw materials [1]. In spectroscopy, the detected signal from a chemically heterogeneous sample is typically a composite spectrum representing a superposition of its constituents' individual spectra.

Physical Heterogeneity: Encompasses differences in a sample's morphology, surface properties, packing density, and internal structure that alter measured spectra without necessarily changing chemical composition [1]. Key sources include variations in particle size and shape, surface roughness, packing density, and sample orientation, which primarily introduce additive and multiplicative distortions in spectral data.

Theoretical Foundation: The Impact of Heterogeneity on Spectral Data

The mathematical formulation of spectral measurements must account for heterogeneity through models that describe the observed signals:

Linear Mixing Model (LMM): A widely used approach where each measured spectrum ( r ) is considered a linear combination of ( n ) endmember spectra ( e_i ), weighted by their abundances ( a_i ) [1]:

( r = a_1 e_1 + a_2 e_2 + \cdots + a_n e_n )

This model assumes linearity and non-interaction between components, which may not hold in real systems where chemical interactions, band overlaps, or matrix effects can produce nonlinearities.
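
When the endmember spectra are known, the abundances in the linear mixing model can be estimated by least squares. The sketch below uses unconstrained least squares via `numpy.linalg.lstsq`; it does not enforce non-negativity or a sum-to-one constraint, which practical unmixing often requires. The Gaussian endmembers and the function name are assumptions.

```python
import numpy as np


def unmix(spectrum, endmembers):
    """Estimate abundances a_i such that spectrum ~ sum_i a_i * e_i.

    endmembers: array of shape (n_endmembers, n_wavelengths).
    """
    E = np.asarray(endmembers, dtype=float).T           # columns are endmember spectra
    r = np.asarray(spectrum, dtype=float)
    abundances, *_ = np.linalg.lstsq(E, r, rcond=None)
    return abundances


# Synthetic demo: two overlapping Gaussian bands mixed 30:70.
wavelengths = np.linspace(0.0, 1.0, 100)
e1 = np.exp(-((wavelengths - 0.4) ** 2) / 0.01)
e2 = np.exp(-((wavelengths - 0.6) ** 2) / 0.01)
endmembers = np.vstack([e1, e2])
abundances_true = np.array([0.3, 0.7])
r = abundances_true @ endmembers                        # r = a_1 e_1 + a_2 e_2

abundances_est = unmix(r, endmembers)
```

With noise-free, strictly linear data the abundances are recovered exactly. The nonlinearities mentioned above would appear as structured residuals between the measured and reconstructed spectra.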

Multiplicative Scatter Correction (MSC): A common approach to model physical distortions, where each spectrum is adjusted using linear regression against a reference spectrum to remove baseline offsets and multiplicative scatter effects [1].

The fundamental challenge arises when heterogeneity occurs on spatial scales smaller than the spectrometer's measurement spot, causing subpixel mixing in imaging applications or averaging effects in point measurements [1].

Statistical Framework for Representative Sampling

Foundational Statistical Concepts

Quantitative data analysis provides the statistical engine for evaluating and ensuring representative sampling through two main branches, descriptive and inferential statistics, complemented by advanced multivariate techniques [15] [16]:

Table 1: Key Statistical Measures for Sampling Analysis

| Statistical Category | Specific Measures | Application in Sampling |
|---|---|---|
| Descriptive Statistics | Mean, Median, Mode | Describe central tendency of sample composition |
| | Standard Deviation, Variance, Range | Quantify dispersion and variability within samples |
| | Skewness | Assess symmetry of component distribution |
| Inferential Statistics | T-tests, ANOVA | Test for significant differences between sample batches |
| | Correlation Analysis | Measure relationships between component distributions |
| | Regression Analysis | Model dependencies between sampling parameters and analytical results |
| Advanced Techniques | Principal Component Analysis (PCA) | Reduce dimensionality and visualize major variation sources |
| | Hyperspectral Unmixing | Identify pure component spectra and their fractional abundances |

Sampling Theory and Population Inference

The relationship between sample and population is fundamental to representative analysis:

Population and Sample: In statistical terms, the population is the entire group of material you're interested in analyzing, while the sample is the subset you actually measure [15]. For example, when analyzing a batch of pharmaceutical powder, the population would be the entire batch, while your sample consists of the specific aliquots selected for measurement.

Sampling Methods: Various probability sampling methods ensure representative selection [17]:

- Simple Random Sampling: Most straightforward approach with random selection without specific criteria

- Stratified Random Sampling: Divides population into subgroups (strata) and samples from each

- Cluster Sampling: Divides population into clusters, randomly selects clusters to sample entirely

- Systematic Sampling: Selects samples at regular intervals (every nth member) after a random start
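
The four sampling schemes listed above can be illustrated on a hypothetical lot of 100 numbered aliquot positions. The function names, the lot size, and the way strata and clusters are assigned are all assumptions made for this sketch.

```python
import numpy as np


def simple_random(units, k, rng):
    """Draw k units at random without replacement."""
    return rng.choice(units, size=k, replace=False)


def systematic(units, k, rng):
    """Take every n-th unit after a random start within the first interval."""
    step = len(units) // k
    start = rng.integers(step)
    return units[start::step][:k]


def stratified(units, strata, k_per_stratum, rng):
    """Draw k units at random from every stratum."""
    picks = [rng.choice(units[strata == label], size=k_per_stratum, replace=False)
             for label in np.unique(strata)]
    return np.concatenate(picks)


def cluster(units, clusters, n_clusters, rng):
    """Randomly select whole clusters and keep every unit inside them."""
    chosen = rng.choice(np.unique(clusters), size=n_clusters, replace=False)
    return units[np.isin(clusters, chosen)]


rng = np.random.default_rng(42)
units = np.arange(100)        # hypothetical aliquot positions in a batch
strata = units // 25          # e.g. four layers of the container
clusters = units // 10        # e.g. ten sub-containers of ten aliquots each

srs_sample = simple_random(units, 10, rng)
sys_sample = systematic(units, 10, rng)
strat_sample = stratified(units, strata, 3, rng)
clus_sample = cluster(units, clusters, 2, rng)
```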

The core principle is that descriptive statistics focus on characterizing the measured sample, while inferential statistics use these findings to make predictions about the entire population [15].

Experimental Protocols for Homogeneity Assessment

Methodologies for Homogeneity Investigation

Rigorous experimental design is essential for proper homogeneity assessment. The following protocols provide frameworks for systematic investigation:

Table 2: Standardized Sampling Protocols for Homogeneity Studies

| Protocol Name | Primary Application | Core Methodology | Statistical Analysis |
|---|---|---|---|
| Incremental Sampling | Bulk solids, powders | Collection of numerous small increments from throughout the lot | Descriptive statistics, variance component analysis |
| Spatial Mapping | Surfaces, films, tablets | Systematic measurement grid across sample surface | Spatial statistics, variogram analysis, PCA |
| Subsampling Hierarchy | Particulate materials | Sequential reduction from bulk to test portion | Nested ANOVA, variance component analysis |
| Time-series Sampling | Process streams, dynamic systems | Collection at predetermined time intervals | Time-series analysis, control charts |
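
Variance component analysis, listed in the table above, separates between-subsample variability (heterogeneity) from within-subsample variability (measurement repeatability). The sketch below covers only the simplest balanced one-way case, a single nesting level with equal replicates, using the classical ANOVA estimators. The function name and the simulated data are assumptions.

```python
import numpy as np


def variance_components(data):
    """One-way random-effects variance components for balanced data.

    data: array of shape (n_subsamples, n_replicates).
    Returns (between-subsample variance, within-subsample variance).
    """
    data = np.asarray(data, dtype=float)
    g, n = data.shape
    group_means = data.mean(axis=1)
    grand_mean = data.mean()
    ms_between = n * np.sum((group_means - grand_mean) ** 2) / (g - 1)
    ms_within = np.sum((data - group_means[:, None]) ** 2) / (g * (n - 1))
    var_between = max((ms_between - ms_within) / n, 0.0)   # truncate negative estimates
    return float(var_between), float(ms_within)


# Simulated demo: heterogeneity SD = 2, measurement SD = 1 (assumed values).
rng = np.random.default_rng(0)
true_levels = rng.normal(50.0, 2.0, size=(1000, 1))
measurements = true_levels + rng.normal(0.0, 1.0, size=(1000, 5))
var_between, var_within = variance_components(measurements)
```

A large between-subsample component relative to the within-subsample component indicates that sampling, not the measurement itself, dominates the uncertainty.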

Practical Implementation in Spectroscopic Analysis

For spectroscopic applications, several specialized approaches have been developed:

Localized Sampling and Adaptive Averaging: This strategy involves collecting spectra from multiple points across the sample surface, with the average spectrum ( \bar{r} ) calculated from ( n ) spatial positions [1]:

( \bar{r} = \frac{1}{n} \sum_{i=1}^{n} r_i )

Studies demonstrate that increasing sampling points significantly reduces calibration errors and increases reproducibility for NIR and Raman measurements of solid dosage forms and polymer films [1].
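
The averaging formula above can be illustrated with simulated spectra. In this sketch, position-to-position variation is modeled as independent random perturbations around a common spectrum. That is a simplifying assumption, and the band shape, noise level, and number of positions are arbitrary.

```python
import numpy as np


def mean_spectrum(spectra):
    """Average spectrum over n spatial positions (rows)."""
    return np.asarray(spectra, dtype=float).mean(axis=0)


rng = np.random.default_rng(0)
wavelengths = np.linspace(0.0, 1.0, 200)
true_spectrum = np.exp(-((wavelengths - 0.5) ** 2) / 0.01)

n_positions = 25
spectra = true_spectrum + rng.normal(0.0, 0.1, size=(n_positions, wavelengths.size))
r_bar = mean_spectrum(spectra)

# Root-mean-square error of single-point spectra versus the averaged spectrum.
rmse_single = float(np.sqrt(np.mean((spectra - true_spectrum) ** 2)))
rmse_mean = float(np.sqrt(np.mean((r_bar - true_spectrum) ** 2)))
print(f"single-point RMSE: {rmse_single:.3f}, averaged RMSE: {rmse_mean:.3f}")
```

Under this independence assumption the error of the average shrinks roughly with the square root of the number of positions. Spatially correlated heterogeneity will reduce that benefit.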

Hyperspectral Imaging (HSI): One of the most powerful tools for analyzing heterogeneous samples, HSI combines spatial resolving power with chemical sensitivity to produce a three-dimensional data cube ( D(x,y,\lambda) ) with two spatial dimensions ( (x,y) ) and one spectral dimension ( (\lambda) ) [1]. This dataset can be analyzed using chemometric techniques like PCA, Independent Component Analysis (ICA), and spectral unmixing to identify pure component spectra and their fractional abundances at each pixel.
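
A common first step with such a data cube is to unfold it into a pixel-by-wavelength matrix and apply PCA. The sketch below does this with a plain SVD in NumPy. The function name, the synthetic two-region cube, and the noise level are assumptions for illustration.

```python
import numpy as np


def pca_from_cube(cube, n_components=2):
    """PCA of a hyperspectral cube D(x, y, lambda) via SVD of the unfolded matrix."""
    nx, ny, nl = cube.shape
    X = cube.reshape(nx * ny, nl)                 # one row per pixel
    Xc = X - X.mean(axis=0)                       # mean-center each wavelength
    U, S, Vt = np.linalg.svd(Xc, full_matrices=False)
    scores = (U[:, :n_components] * S[:n_components]).reshape(nx, ny, n_components)
    loadings = Vt[:n_components]
    explained = (S ** 2 / np.sum(S ** 2))[:n_components]
    return scores, loadings, explained


# Synthetic cube: two regions with different pure spectra plus small noise.
rng = np.random.default_rng(0)
wavelengths = np.linspace(0.0, 1.0, 50)
e1 = np.exp(-((wavelengths - 0.3) ** 2) / 0.01)
e2 = np.exp(-((wavelengths - 0.7) ** 2) / 0.01)
cube = np.empty((20, 20, 50))
cube[:, :10, :] = e1
cube[:, 10:, :] = e2
cube += rng.normal(0.0, 0.01, size=cube.shape)

scores, loadings, explained = pca_from_cube(cube)
pc1_map = scores[:, :, 0]   # score image that separates the two regions
```

Each score map can be displayed as an image, so the dominant sources of spectral variation are visualized in their spatial context.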

Visualization of Sampling Strategies and Data Analysis Workflows

Sampling Decision Pathway

The following diagram outlines the systematic decision process for selecting appropriate sampling strategies based on material characteristics and analytical goals:

Hyperspectral Imaging Data Analysis Workflow

For complex heterogeneous materials, hyperspectral imaging provides a comprehensive approach to characterization, with a defined workflow for data processing:

The Scientist's Toolkit: Essential Research Reagent Solutions

Implementing robust sampling protocols requires specific materials and computational tools. The following table details essential resources for effective representative analysis:

Table 3: Essential Research Toolkit for Representative Sampling and Analysis

| Tool Category | Specific Tool/Reagent | Function in Sampling & Analysis |
|---|---|---|
| Spectral Preprocessing Tools | SNV (Standard Normal Variate) | Removes multiplicative and additive effects in diffuse reflectance spectra |
| | MSC (Multiplicative Scatter Correction) | Corrects for baseline offsets and scatter effects using reference spectrum |
| | Derivative Spectroscopy (Savitzky-Golay) | Reduces broad baseline trends and constant offsets through differentiation |
| Statistical Analysis Software | R Programming Language | Open-source environment for statistical computing and specialized chemometrics |
| | Python with scikit-learn | Machine learning library for predictive modeling and pattern recognition |
| | SPSS, SAS, Stata | Commercial statistical packages for advanced inferential analysis |
| Sampling Accessories | Automated Sample Changers | Enable high-throughput localized sampling across multiple sample positions |
| | Fiber Optic Probes | Facilitate spatially-resolved measurements in process environments |
| | Microspectroscopy Accessories | Enable measurement of small sample areas for heterogeneity assessment |
| Reference Materials | Certified Homogeneous Materials | Provide validation standards for sampling protocol performance |
| | Matrix-Matched Calibrants | Ensure accurate calibration in complex sample matrices |

Sample heterogeneity remains a central and unresolved challenge in analytical spectroscopy, introducing spectral complexity that interferes with model building, reduces predictive accuracy, and complicates method transferability [1]. The persistent nature of this problem stems from the inherent disconnect between the scale of spectroscopic measurements and the spatial complexity of real-world materials. By adopting the systematic sampling theories, statistical considerations, and experimental protocols outlined in this guide, researchers and drug development professionals can significantly improve the representativeness of their analyses. Future advancements will likely focus on adaptive sampling algorithms, enhanced uncertainty quantification, and the integration of machine learning approaches to further mitigate the effects of heterogeneity in spectroscopic analysis.

Achieving Homogeneity: Advanced Sample Preparation and Analytical Strategies

The Critical Role of Sample Homogeneity in Spectroscopic Analysis

In spectroscopic research, the foundation of accurate and reproducible data lies in the often-overlooked step of sample preparation. Inadequate sample preparation is responsible for as much as 60% of all spectroscopic analytical errors [18]. Sample homogeneity—the uniform distribution of chemical and physical properties throughout a specimen—is not merely a best practice but a fundamental prerequisite for valid analytical outcomes. Heterogeneity introduces significant spectral distortions that can compromise research findings, quality control procedures, and analytical conclusions, regardless of the sophistication of the instrumentation used [18] [1].

The challenges of heterogeneity manifest in two primary forms: chemical heterogeneity, referring to the uneven spatial distribution of molecular or elemental species, and physical heterogeneity, which encompasses variations in particle size, shape, surface roughness, and packing density [1]. Physical heterogeneity can cause additive and multiplicative distortions in spectra through phenomena like light scattering, which alters the effective path length and intensity [1]. Without proper preparation techniques such as grinding and milling, even a chemically uniform sample can yield non-reproducible results because the analyzed portion may not represent the whole, leading to inaccurate quantitative analysis [18].

Solid Sample Preparation Fundamentals

Overarching Principles for All Techniques

The primary goal of solid sample preparation is to create a homogeneous specimen that interacts uniformly with the analytical probe, whether X-rays or plasma. Several universal factors must be controlled to achieve this, each directly impacting analytical accuracy:

- Particle Size: Controlling particle size is crucial as larger particles can scatter radiation excessively, while a broad size distribution creates sampling error. For techniques like XRF, particles are typically reduced to below 75 μm to ensure uniform exposure to the X-ray beam [18] [19] [20].

- Contamination Control: Cross-contamination between samples or from equipment can introduce spurious signals. Using clean equipment, proper cleaning protocols between samples, and selecting appropriate grinding media materials are essential to maintain sample integrity [18] [19].

- Homogeneity: The sample must be uniform in both composition and physical properties to ensure the analyzed portion is representative of the whole. Proper grinding, milling, and mixing techniques are employed to achieve this essential characteristic [18].

Technique-Specific Requirements: XRF vs. ICP-MS

While sharing some common principles, preparation for XRF and ICP-MS differs significantly due to their fundamental operating mechanisms.

X-Ray Fluorescence (XRF) requires a solid, stable sample with a flat, homogeneous surface. The preparation focuses on creating a uniform density and particle distribution to ensure consistent X-ray absorption and fluorescence characteristics across the analysis area [18] [19]. Common approaches include pressing powders into pellets or creating fused glass disks, which eliminate mineralogical and particle size effects [20].

Inductively Coupled Plasma Mass Spectrometry (ICP-MS) demands complete dissolution of solid samples into a liquid form. The preparation process must achieve total digestion to ensure all elements are introduced into the plasma in a consistent, reproducible manner. This requires aggressive acid digestion at elevated temperatures and pressures, followed by accurate dilution to appropriate concentration ranges and filtration to remove any particulate matter that could clog the nebulizer [18] [21].

Grinding and Milling: The First Critical Steps

Equipment Selection and Operation

Grinding and milling serve as the foundational steps for achieving sample homogeneity. The choice between grinding and milling depends on material properties and analytical requirements.

- Grinding reduces particle size through mechanical friction and is ideal for hard, brittle materials. Swing grinding machines use an oscillating motion rather than direct pressure, minimizing heat generation that could alter sample chemistry—a crucial consideration for thermally sensitive materials [18].

- Milling provides greater control over particle size reduction and creates superior surface quality, particularly for metallic samples. Modern spectroscopic milling machines offer programmable parameters (rotational speed, feed rate, cutting depth) and dedicated cooling systems to prevent thermal degradation [18] [22].

When selecting grinding or milling equipment, consider material hardness, required final particle size, and contamination risks. The grinding surfaces should be selected to minimize introducing interfering elements; common materials include agate, tungsten carbide, or hardened steel, chosen based on sample hardness and analytical concerns [18] [20].

Quantitative Impact on Analytical Results

The effectiveness of grinding directly influences analytical performance. Experimental data demonstrates that optimizing particle size distribution significantly enhances signal quality. The table below summarizes findings from a study on plant material preparation for Laser-Induced Breakdown Spectrometry (LIBS), illustrating the substantial signal improvements achievable through proper grinding.

Table 1: Signal Enhancement in LIBS Analysis Through Optimized Grinding [23]

| Plant Material | Optimal Grinding Method | Achieved Particle Size | Observed Signal Enhancement |
|---|---|---|---|
| Sugarcane Leaves | Ball Milling (60 min) | Generally <75 μm | Up to 50% for most elements |
| Orange Tree Leaves | Cryogenic Grinding (10 min) | Generally <75 μm | Up to 50% for most elements |
| Soy Leaves | Ball Milling (20 min) | Generally <75 μm | Up to 50% for most elements |

These findings underscore that the optimal grinding method varies by sample type, emphasizing the need for method development specific to the analyzed material.
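One way to compare candidate grinding protocols during method development is to compute the relative signal enhancement and the replicate precision (relative standard deviation, RSD) from repeated measurements. The sketch below assumes replicate emission intensities are already available as plain lists. The function names and all numbers are illustrative placeholders, not values from the cited study [23].

```python
from statistics import mean, stdev


def signal_enhancement_percent(reference, optimized):
    """Percent change in the mean signal of `optimized` relative to `reference`."""
    ref_mean = mean(reference)
    if ref_mean == 0:
        raise ValueError("reference mean must be non-zero")
    return (mean(optimized) - ref_mean) / ref_mean * 100.0


def rsd_percent(values):
    """Relative standard deviation (sample stdev / mean) in percent."""
    if len(values) < 2:
        raise ValueError("need at least two replicates")
    m = mean(values)
    if m == 0:
        raise ValueError("mean must be non-zero")
    return stdev(values) / m * 100.0


# Hypothetical replicate intensities (arbitrary units), for illustration only.
coarse = [100.0, 104.0, 96.0, 100.0]   # e.g. before optimizing grinding
fine = [150.0, 152.0, 148.0, 150.0]    # e.g. after optimizing grinding

print(f"enhancement: {signal_enhancement_percent(coarse, fine):.1f} %")
print(f"RSD coarse: {rsd_percent(coarse):.2f} %, RSD fine: {rsd_percent(fine):.2f} %")
```

A lower RSD across replicates from different pellet positions is one common indication that the ground material is more homogeneous. The acceptance threshold is application-specific and is not defined here.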

Pelletizing for XRF Analysis

Process and Protocols

Pelletizing transforms powdered samples into solid disks with uniform surface properties and density, making it particularly suitable for XRF analysis. The standardized process involves several key steps:

- Grinding: Reduce the sample to a fine powder with consistent particle size, typically <75 μm [19] [20].

- Mixing with Binder: Combine the ground powder with a binding agent (e.g., cellulose, wax, or boric acid) to ensure cohesion during pressing. Typical sample-to-binder ratios range from 5:1 to 10:1 [18] [19].

- Pressing: Compress the mixture using a hydraulic or pneumatic press at 15-30 tons of pressure to form a stable, solid pellet with a flat, smooth surface [18] [20].

The pelletizing process creates samples with consistent X-ray absorption properties, which is essential for quantitative analysis. Binder selection must consider the analytical objectives, as binders dilute the sample, potentially affecting detection limits for trace elements.
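The dilution introduced by the binder can be estimated with simple arithmetic. The following is a minimal sketch that assumes the sample-to-binder ratio is expressed by mass (the text does not say whether the ratio is by mass or volume) and that no material is lost during mixing. The function name is hypothetical.

```python
def pellet_dilution(sample_parts, binder_parts):
    """Return the sample mass fraction and dilution factor of a pressed pellet.

    Assumes the ratio is by mass and that mixing is loss-free.
    """
    if sample_parts <= 0 or binder_parts < 0:
        raise ValueError("sample_parts must be > 0 and binder_parts >= 0")
    total = sample_parts + binder_parts
    return {
        "sample_fraction": sample_parts / total,
        "dilution_factor": total / sample_parts,
    }


# The two ends of the 5:1 to 10:1 range quoted above.
for sample, binder in [(5, 1), (10, 1)]:
    result = pellet_dilution(sample, binder)
    print(
        f"{sample}:{binder} -> sample fraction {result['sample_fraction']:.3f}, "
        f"dilution factor {result['dilution_factor']:.2f}"
    )
```

Under these assumptions, a 5:1 pellet contains about 83% sample by mass, so an element near the detection limit in the undiluted powder gives a correspondingly weaker signal in the pellet.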

Advantages and Limitations

Pressed pellets offer a fast, cost-effective preparation method suitable for screening, process monitoring, and semi-quantitative analysis [20]. The primary advantages include limited sample dilution compared with fusion, a relatively simple preparation workflow, and compatibility with a wide range of sample types.

However, pressed pellets may not completely eliminate mineralogical effects or particle heterogeneity, which can limit accuracy for demanding applications. Variables such as binder distribution and surface texture can affect results, though these can be controlled through standardized procedures and careful handling [20].

Advanced Preparation: Fusion for XRF and Digestion for ICP-MS

Fusion Techniques for High-Accuracy XRF

Fusion represents the most rigorous preparation technique for XRF analysis, providing unparalleled accuracy for challenging materials. The process involves:

- Flux Addition: Mix the ground sample with a flux (typically lithium tetraborate or lithium metaborate) at sample-to-flux ratios between 1:5 and 1:10 [18] [20].

- High-Temperature Melting: Heat the mixture to 950-1200°C in platinum crucibles until fully molten [18] [20].

- Casting: Pour the molten material into a preheated mold to form a homogeneous glass disk (bead) [20].

Fusion completely destroys the original crystal structure of the sample, creating a homogeneous glass disk that eliminates mineralogical and particle-size effects and greatly reduces matrix effects. This method is particularly valuable for refractory materials, silicates, minerals, and ceramics that resist other preparation methods [18]. While more time-consuming and expensive than pelletizing, fusion provides superior accuracy for quantitative analysis of complex matrices like cement, slag, and geological samples [20].
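For planning a fusion, the weigh-out follows directly from the chosen sample-to-flux ratio. The sketch below assumes the ratio is by mass, ignores loss on ignition and any additives such as releasing agents, and uses a hypothetical target bead mass. None of these specifics come from the cited sources.

```python
def fusion_weighout(total_mass_g, flux_parts_per_sample_part):
    """Split a target mixture mass into sample and flux masses.

    A ratio of 1:10 (sample:flux) is passed as flux_parts_per_sample_part=10.
    Loss on ignition is ignored in this simplified sketch.
    """
    if total_mass_g <= 0 or flux_parts_per_sample_part <= 0:
        raise ValueError("masses and ratio must be positive")
    sample_g = total_mass_g / (1.0 + flux_parts_per_sample_part)
    flux_g = total_mass_g - sample_g
    return {
        "sample_g": sample_g,
        "flux_g": flux_g,
        "dilution_factor": total_mass_g / sample_g,
    }


# Hypothetical 6.6 g mixture at the two ends of the 1:5 to 1:10 range.
for ratio in (5, 10):
    w = fusion_weighout(6.6, ratio)
    print(
        f"1:{ratio} -> sample {w['sample_g']:.2f} g, flux {w['flux_g']:.2f} g, "
        f"dilution factor {w['dilution_factor']:.0f}"
    )
```

The resulting dilution factors (6 and 11 under these assumptions) show the trade-off behind fusion: the bead is far more homogeneous than a pressed pellet, but the analyte signal is diluted several-fold.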

Microwave Digestion for ICP-MS Analysis

For ICP-MS analysis, solid samples must undergo complete digestion to transform them into a solution suitable for nebulization. Microwave-assisted digestion has become the standard approach, offering controlled, efficient sample preparation.

Table 2: Key Parameters for Microwave Digestion in ICP-MS Sample Preparation [21]

| Parameter | Considerations | Impact on Analysis |
|---|---|---|
| Temperature | Must be sufficient to completely dissolve refractory phases | Incomplete digestion causes inaccurate quantitation and instrument drift |
| Pressure | Sealed vessels allow higher temperatures without evaporative loss | Prevents loss of volatile analytes; enables complete digestion |
| Acid Selection | Matrix-specific (e.g., HNO₃, HCl, HF) | Must ensure complete dissolution while maintaining analyte compatibility |
| Sample Size | Balance between representative sampling and digestion efficiency | Too large: incomplete digestion; Too small: poor detection limits |

Optimal digestion requires method development specific to the sample matrix. High-purity acids are essential to prevent contamination, and post-digestion filtration may be necessary to remove any undissolved particles that could interfere with instrument operation [18] [21].
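After digestion, the concentration measured in solution has to be converted back to a concentration in the original solid. The sketch below shows the usual mass-balance arithmetic, assuming complete digestion, a known final volume, and an optional reagent-blank correction. The function name, parameter names, and example values are hypothetical.

```python
def solid_concentration_mg_per_kg(
    solution_ug_per_l,
    final_volume_ml,
    sample_mass_g,
    dilution_factor=1.0,
    blank_ug_per_l=0.0,
):
    """Back-calculate the analyte content of the solid (mg/kg, i.e. ug/g).

    solution_ug_per_l : concentration measured in the analyzed solution
    final_volume_ml   : volume the digest was made up to
    sample_mass_g     : mass of solid weighed into the vessel
    dilution_factor   : any further dilution applied before measurement
    blank_ug_per_l    : reagent blank measured under the same conditions
    """
    if sample_mass_g <= 0 or final_volume_ml <= 0 or dilution_factor < 1:
        raise ValueError("check mass, volume, and dilution factor")
    net_ug_per_l = solution_ug_per_l - blank_ug_per_l
    # ug/L * L gives ug in the measured solution; scale up by the dilution.
    analyte_ug = net_ug_per_l * (final_volume_ml / 1000.0) * dilution_factor
    return analyte_ug / sample_mass_g


# Illustrative values: 0.25 g digested, made up to 50 mL, diluted 10x, 12.5 ug/L read.
print(f"{solid_concentration_mg_per_kg(12.5, 50.0, 0.25, dilution_factor=10):.1f} mg/kg")
```

The same arithmetic makes the sample-size trade-off in Table 2 concrete: halving the sample mass halves the solution concentration for a given solid content, which pushes low-level analytes toward the detection limit.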

The Researcher's Toolkit: Essential Equipment and Reagents

Successful implementation of sample preparation protocols requires access to appropriate laboratory equipment and consumables. The selection of specific items depends on the sample type, analytical technique, and required precision.

Table 3: Essential Equipment and Reagents for Solid Sample Preparation

| Item | Function | Application Notes |
|---|---|---|
| Jaw Crusher | Initial size reduction of bulk samples | Produces fragments of 2-12 mm; foundation for subsequent steps [20] |
| Grinding/Milling Mills | Fine particle size reduction | Various types (swing, planetary ball) for different materials [18] [23] |
| Hydraulic Press | Compressing powders into pellets | 15-30 ton capacity for XRF pellet preparation [18] [19] |
| Fusion Furnace | Producing homogeneous glass beads | High-temperature (1000-1200°C) heating with flux [18] [20] |
| Microwave Digestion System | Complete dissolution of solids for ICP-MS | Controlled temperature/pressure vessels for acid digestion [21] |
| Binding Agents (Cellulose, Wax) | Providing cohesion for pressed pellets | Chemically inert; minimal spectral interference [18] [19] |
| Flux Agents (Lithium Tetraborate) | Creating homogeneous glass beads for fusion | High-purity grades prevent contamination [18] [20] |
| High-Purity Acids | Digesting samples for ICP-MS | Minimal trace metal background; matrix-specific selection [21] |

Integrated Workflows and Visual Guides

XRF Sample Preparation Workflow

The preparation of solid samples for XRF analysis follows a systematic progression from bulk material to analyzable specimen. The workflow incorporates multiple preparation paths depending on analytical requirements and sample properties.

ICP-MS Sample Preparation Workflow

Sample preparation for ICP-MS requires complete dissolution of solid samples, followed by additional steps to ensure compatibility with the instrument's introduction system.

Proper solid sample preparation through grinding, milling, and pelletizing is not merely a procedural prerequisite but a fundamental determinant of analytical success in both XRF and ICP-MS spectroscopy. The selection of appropriate preparation methods—whether pressing pellets, creating fused beads, or performing complete microwave digestion—must be guided by the sample characteristics, analytical technique, and required precision. As the spectroscopic field advances with new imaging strategies and adaptive sampling algorithms [1], the foundational importance of proper sample preparation remains constant. By implementing the systematic approaches and protocols outlined in this guide, researchers can ensure their spectroscopic analyses yield accurate, reproducible data that faithfully represents the material under investigation.

Sample preparation is a foundational step in spectroscopic analysis, with inadequate preparation accounting for approximately 60% of all analytical errors [18]. In the context of spectroscopic research, sample homogeneity—the uniform distribution of a sample's chemical and physical properties—is paramount for obtaining valid, accurate, and reproducible results [1]. Heterogeneous samples introduce spectral distortions that compromise both qualitative identification and quantitative analysis, ultimately undermining the integrity of research findings [1] [18].

This technical guide provides an in-depth examination of optimized protocols for liquid and gas sample preparation, focusing on the core techniques of dilution, filtration, and solvent selection. These procedures are essential for achieving the sample homogeneity required for reliable spectroscopic data across various analytical platforms, including ICP-MS, FT-IR, and optical emission spectrometry [18]. By implementing these standardized methodologies, researchers and drug development professionals can significantly enhance data quality, improve measurement precision, and ensure regulatory compliance in spectroscopic applications.

Fundamental Principles of Sample Preparation for Spectroscopy

The Impact of Preparation on Analytical Results

Sample preparation directly governs the quality and integrity of spectroscopic data through several fundamental mechanisms [18]. The interaction between electromagnetic radiation and sample material is heavily influenced by surface characteristics and particle properties. Rough surfaces scatter light randomly, while uniform particle size distribution ensures consistent radiation interaction, which is crucial for quantitative accuracy.

Matrix effects represent another critical consideration, where sample matrix components can absorb or enhance spectral signals, potentially obscuring or distorting analyte responses. Proper preparation techniques mitigate these interferences through strategic dilution, extraction, or matrix matching [18]. Furthermore, homogeneity is indispensable for representative sampling, as heterogeneous samples yield non-reproducible results where the analyzed portion may not accurately represent the whole specimen.

Sample Homogeneity: An Unsolved Challenge in Spectroscopy

Sample heterogeneity presents a persistent obstacle in both quantitative and qualitative spectroscopic analysis [1]. This variability manifests in multiple dimensions—chemically through uneven distribution of molecular species, and physically through differences in morphology, surface properties, and packing density [1]. In real-world samples, particularly solids and powders, these forms of heterogeneity represent the norm rather than the exception.

The challenge is particularly acute in quantitative spectroscopic applications such as process analytical technology (PAT), quality control, and predictive modeling using chemometrics [1]. Even minor deviations in sample presentation or composition can generate significant spectral variations that degrade calibration model performance, reducing both prediction precision and accuracy while limiting model transferability between instruments or sample batches [1].

Liquid Sample Preparation Protocols

Dilution and Filtration for ICP-MS Analysis

Inductively Coupled Plasma Mass Spectrometry (ICP-MS) demands stringent liquid sample preparation due to its exceptional sensitivity, where minor preparation errors can substantially skew analytical results [18]. The dilution process serves multiple essential functions: positioning analyte concentrations within the instrument's optimal detection range, reducing matrix effects that disrupt accurate measurement, and preventing damage to sensitive instrument components from elevated salt levels [18]. Samples with high dissolved solid content often require significant dilution—sometimes exceeding 1:1000 for highly concentrated solutions [18].

Filtration subsequently removes suspended particulates that could contaminate nebulizers or interfere with ionization efficiency. For most ICP-MS applications, filtration through 0.45 μm membrane filters suffices, though ultratrace analysis may necessitate 0.2 μm filtration [18]. Filter material selection is crucial to avoid introducing contamination or adsorbing analytes; PTFE membranes generally provide the optimal balance of chemical resistance and low background interference [18]. Additionally, high-purity acidification with nitric acid (typically to 2% v/v) maintains metal ions in solution by preventing precipitation and adsorption to container walls [18].
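The dilution and acidification volumes described above can be worked out before pipetting. The sketch below is a simplified calculation that reads "1:1000" as one part sample in 1000 parts final solution and reads "2% v/v" as 2 mL of concentrated acid per 100 mL of final solution. Both readings are assumptions, as is the function name.

```python
def plan_dilution(final_volume_ml, dilution_factor, acid_percent_vv=2.0):
    """Return aliquot, acid, and diluent volumes (mL) for a single-step dilution."""
    if final_volume_ml <= 0 or dilution_factor < 1:
        raise ValueError("final volume must be > 0 and dilution factor >= 1")
    if not 0 <= acid_percent_vv < 100:
        raise ValueError("acid percentage must be in [0, 100)")
    aliquot_ml = final_volume_ml / dilution_factor
    acid_ml = final_volume_ml * acid_percent_vv / 100.0
    water_ml = final_volume_ml - aliquot_ml - acid_ml
    if water_ml < 0:
        raise ValueError("aliquot plus acid exceeds the final volume")
    return {"aliquot_ml": aliquot_ml, "acid_ml": acid_ml, "water_ml": water_ml}


# Example: 1:1000 dilution into 50 mL with 2% v/v nitric acid.
plan = plan_dilution(50.0, 1000)
print(
    f"aliquot {plan['aliquot_ml'] * 1000:.0f} uL, "
    f"acid {plan['acid_ml']:.2f} mL, water {plan['water_ml']:.2f} mL"
)
```

The example yields a 50 µL aliquot, which is small enough that pipetting error becomes significant. For large factors, two or more serial steps (for example 1:10 followed by 1:100) are often preferred, and the same function can be applied to each step.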

Table 1: Optimization Parameters for ICP-MS Sample Preparation

| Parameter | Optimal Specification | Function | Considerations |
|---|---|---|---|
| Dilution Factor | Variable (up to 1:1000) | Brings analytes to detectable range; reduces matrix effects | Sample-specific based on dissolved solid content |
| Filtration Size | 0.45 μm (standard); 0.2 μm (ultratrace) | Removes suspended particles | Use PTFE membranes to minimize contamination |
| Acidification | 2% v/v high-purity HNO₃ | Prevents precipitation; keeps metals in solution | Use ultra-high purity acids to avoid contamination |
| Internal Standard | Appropriate element (e.g., Sc, Y, In, Bi) | Compensates for matrix effects and instrument drift | Select elements not present in original sample |
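The internal-standard row in the table can be illustrated with a short calculation. The sketch below applies a simple recovery-based correction, assuming the analyte and the internal standard are suppressed or enhanced to the same degree. That assumption holds only approximately in practice, and instrument software typically applies an equivalent ratio-based correction automatically. Names and values are hypothetical.

```python
def internal_standard_correct(analyte_conc, is_measured_counts, is_expected_counts):
    """Correct an analyte result using internal-standard recovery.

    is_expected_counts is the internal-standard signal in the calibration
    blank or standard; is_measured_counts is its signal in the sample.
    Returns (corrected concentration, recovery as a fraction).
    """
    if is_measured_counts <= 0 or is_expected_counts <= 0:
        raise ValueError("internal-standard counts must be positive")
    recovery = is_measured_counts / is_expected_counts
    return analyte_conc / recovery, recovery


# Illustrative case: the internal-standard signal drops to 90% in a heavy matrix.
corrected, recovery = internal_standard_correct(9.0, 90_000, 100_000)
print(f"recovery {recovery:.0%}, corrected result {corrected:.2f} ug/L")
```

A recovery far from 100% is itself diagnostic: it suggests that the sample needs further dilution or closer matrix matching instead of relying on the correction alone.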

Modern approaches to ICP-MS sample preparation also leverage automation to enhance throughput and reproducibility. Automated high-pressure ion chromatography (HPIC) systems can process 40-50 samples within 24 hours with minimal human intervention, reducing the potential for error and keeping pace with the analytical capacity of current MC-ICP-MS instruments [24]. These systems can directly introduce filtered and acidified water samples, separating target analytes like strontium from interfering cations and collecting purified isolates for direct analysis [24].

Solvent Selection for UV-Vis and FT-IR Spectroscopy