Solving Signal Intensity Problems: A Scientist's Guide to Troubleshooting Spectroscopic Measurements

This article provides a comprehensive framework for researchers and drug development professionals to diagnose, troubleshoot, and resolve low signal intensity in spectroscopic measurements.

Abstract

This article provides a comprehensive framework for researchers and drug development professionals to diagnose, troubleshoot, and resolve low signal intensity in spectroscopic measurements. Covering foundational principles, advanced methodological approaches, practical troubleshooting protocols, and validation techniques, it synthesizes current best practices from diverse fields including biomedical analysis, nuclear safeguards, and materials science. The guidance is designed to help scientists optimize instrument performance, improve detection limits, and ensure the reliability of spectroscopic data in research and development.

Understanding the Fundamentals: What Low Signal Intensity Reveals About Your System

What are SNR and LOD, and why are they critical for my spectroscopic measurements?

Signal-to-Noise Ratio (SNR) is a measure that compares the level of a desired signal to the level of background noise. It quantifies how clearly a signal can be distinguished from the inherent fluctuations in your measurement system [1]. In analytical chemistry, it is often calculated by comparing the signal height of an analyte to the baseline noise [2].

Limit of Detection (LOD) is the lowest concentration or quantity of an analyte that can be reliably detected—but not necessarily quantified—with a stated level of confidence. It represents the point at which a measurement signal emerges with statistical significance from the background noise [3] [4].

These two metrics are intrinsically linked. The LOD is fundamentally determined by the SNR of your method [2]. A low SNR makes it impossible to distinguish weak analyte signals from random baseline fluctuations, directly raising your method's LOD and potentially causing you to miss critical data, such as low-level impurities in a pharmaceutical product [2].

The table below summarizes the standard and practical interpretations of SNR values in relation to detection and quantification.

Table: Interpreting Signal-to-Noise Ratios for LOD and LOQ

| SNR Value | Standard Interpretation | Practical Reality (as noted in industry) | Key Implication |

|---|---|---|---|

| 2:1 to 3:1 | Acceptable for estimating the Limit of Detection (LOD) according to ICH Q2(R1). A 3:1 ratio is the sole benchmark in the ICH Q2(R2) revision [2]. | SNR between 3:1 and 10:1 is often required for LOD with real-life samples and challenging conditions [2]. | The analyte is presumed present, but quantification is unreliable. |

| 10:1 | Standard for the Limit of Quantification (LOQ), the level at which an analyte can be accurately quantified [2] [3]. | SNR from 10:1 to 20:1 may be needed for LOQ to ensure robust quantification in practice [2]. | The analyte concentration can be measured with stated accuracy and precision. |

How do I quantitatively determine the LOD from my data?

The LOD can be determined using several established methodologies. The following workflow outlines the primary approaches, with statistical methods being the most rigorous.

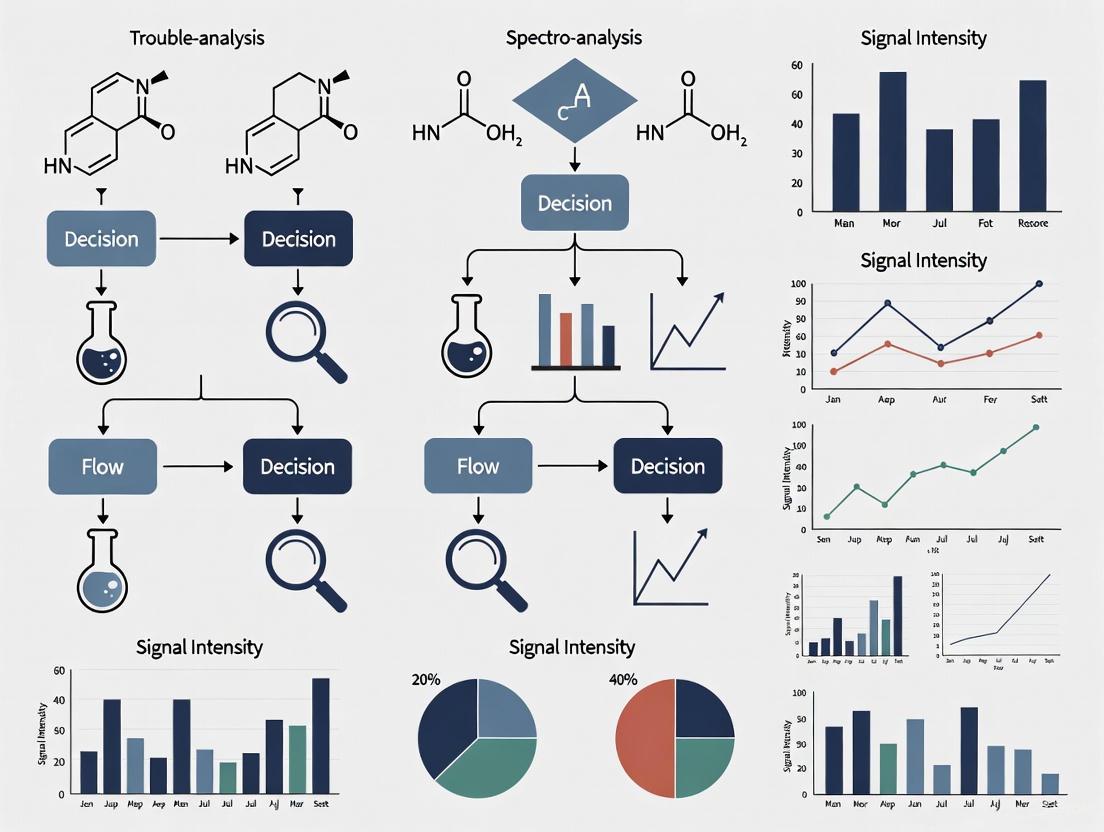

Diagram: Workflow for Determining the Limit of Detection (LOD). The statistical method is the most rigorous, while the signal-to-noise method is commonly used in chromatographic techniques.

Method 1: Statistical Determination (Most Rigorous)

This method is based on the standard deviation of the blank signal and is considered the most scientifically sound [4].

- Procedure: Analyze a minimum of 10 blank samples or a test sample with a very low analyte concentration, following the complete analytical procedure [4].

- Calculation: Calculate the standard deviation (s) of the concentration responses from these replicates. The LOD is then derived using the formula: LOD = 3.3 × s. The multiplier 3.3 reflects a 5% risk of both false positives (α) and false negatives (β): it is approximately twice the one-sided 95% value of the Student's t-distribution, which approaches 1.645 for large numbers of replicates [4].
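As a minimal sketch of the statistical calculation, assuming the replicate responses are already expressed in concentration units, the LOD can be computed as follows. The function name `lod_from_blanks` and the replicate values are hypothetical and only illustrate the arithmetic.

```python
import statistics


def lod_from_blanks(responses, k=3.3):
    """Return k * s, where s is the sample standard deviation of the replicates.

    responses: concentration responses of blank (or very low level) replicates.
    k: multiplier; 3.3 corresponds to roughly 5% alpha and beta risk.
    """
    if len(responses) < 10:
        # The procedure calls for a minimum of 10 replicates.
        raise ValueError("at least 10 replicates are required")
    s = statistics.stdev(responses)  # sample standard deviation (n - 1)
    return k * s


# Hypothetical blank responses, in the same units as the reported LOD.
blanks = [0.012, 0.015, 0.010, 0.013, 0.011, 0.014, 0.012, 0.016, 0.009, 0.013]
print(f"s = {statistics.stdev(blanks):.4f}, LOD = {lod_from_blanks(blanks):.4f}")
```

The sample standard deviation (n − 1 denominator) is used here because the replicates are a small sample of the blank population; if your validation guideline specifies a different estimator, substitute it.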

Method 2: Signal-to-Noise Ratio

This common approach is frequently used in chromatographic techniques like HPLC [2] [4].

- Procedure: Analyze a sample with the analyte at a concentration that produces a small but discernible peak. The signal-to-noise ratio (S/N) is calculated by dividing the height of the analyte peak (H) by the amplitude of the baseline noise (h), measured from a peak-free region of the chromatogram [4].

- Calculation: The LOD is the concentration that yields an S/N of 3. If you have a sample of known concentration, the LOD can be estimated as: LOD = (3 × Concentration) / (H/h)
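The estimate above is a simple proportional scaling, which the following sketch reproduces. It assumes that the response is linear down to the LOD; the function name and the example numbers are hypothetical.

```python
def lod_from_snr(concentration, peak_height, noise_amplitude, target_snr=3.0):
    """Estimate the LOD as (target_snr * concentration) / (H / h).

    concentration: known analyte concentration of the measured sample.
    peak_height: height H of the analyte peak.
    noise_amplitude: baseline noise amplitude h from a peak-free region.
    """
    if peak_height <= 0 or noise_amplitude <= 0:
        raise ValueError("peak height and noise amplitude must be positive")
    snr = peak_height / noise_amplitude
    return target_snr * concentration / snr


# Hypothetical example: a 0.5 ug/mL standard gives H = 12 and h = 1.5 (S/N = 8).
print(lod_from_snr(0.5, 12.0, 1.5))  # 3 * 0.5 / 8 = 0.1875 ug/mL
```

Because the scaling assumes linearity, the estimate is most trustworthy when the measured S/N is already close to 3; confirming it with a sample prepared at the estimated LOD is a sensible follow-up.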

Method 3: Visual Evaluation

This less formal method involves analyzing samples with known, decreasing concentrations of the analyte and identifying the lowest level at which the signal can be reliably observed [2].

What are the most effective strategies to improve SNR and lower the LOD in my experiments?

Improving SNR is a two-pronged approach: increasing the signal and reducing the noise. The following table categorizes common strategies.

Table: Troubleshooting Guide: Strategies to Improve SNR and Lower LOD

| Strategy Category | Specific Action | Brief Rationale & Implementation Tip |

|---|---|---|

| Increase Signal | Optimize Wavelength | Operate at the analyte's absorbance maximum. Use software to change wavelengths during a run for multiple components [5]. |

| | Inject More Sample | Increase the mass of analyte on-column. Use weak injection solvents for on-column focusing to inject larger volumes without peak broadening [5]. |

| | Use a More Sensitive Detector | Switch to fluorescence, electrochemical, or mass spectrometric detection for select compounds that offer higher selectivity and signal for specific analytes [5]. |

| Reduce Noise | Signal Averaging & Smoothing | Adjust the detector time constant and data sampling rate. Caution: Over-smoothing (excessive time constants) can distort or eliminate small peaks [2]. |

| | Temperature Control | Use a column heater and insulate tubing to the detector. Prevents baseline drifts and noise from temperature fluctuations [5]. |

| | Improve Reagent/Solvent Purity | Use HPLC-grade solvents and high-purity reagents to reduce chemical background noise [5]. |

| | Sample Cleanup | Incorporate sample preparation steps (e.g., filtration, extraction) to remove interfering matrix components that contribute to noise [5]. |

| Advanced Data Processing | Apply Mathematical Filters | Use post-acquisition processing like Savitzky-Golay smoothing, Fourier transform, or wavelet transforms (e.g., NERD method) to reduce noise without altering raw data. Wavelet transforms can retrieve signals from extremely noisy data (SNR ~1) [2] [6]. |

What common mistakes should I avoid when measuring and interpreting SNR and LOD?

- Over-smoothing the Signal: Applying excessive electronic filtering (time constant) or mathematical smoothing can flatten small peaks near the baseline, making them undetectable and artificially raising your LOD. Always check if SNR is sufficient with minimal filtering [2].

- Incorrect Baseline Noise Measurement: Noise should be measured on a blank sample or a peak-free section of the chromatogram. The European Pharmacopoeia recommends measuring the maximum amplitude of the noise over a distance equal to 20 times the peak width at half-height [4].

- Ignoring Statistical Principles: Defining LOD solely as a concentration that gives S/N=3 without verifying the false negative rate (β-error) can be misleading. The modern LOD definition requires accepting a specific probability for both false positives and false negatives [3] [4].

- Misapplying Data Processing: In spectroscopy, performing spectral normalization before background correction can bias the results, as the fluorescence background intensity becomes encoded in the normalization constant [7].
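The over-smoothing pitfall can be illustrated with a small synthetic example. The sketch below uses a plain boxcar (moving-average) filter as a stand-in for a detector time constant or a mathematical smoother; this is an assumption for illustration, not the filter any particular data system applies, and the peak is synthetic.

```python
import math


def moving_average(values, window):
    """Centered boxcar average; the window shrinks at the edges of the trace."""
    half = window // 2
    smoothed = []
    for i in range(len(values)):
        lo = max(0, i - half)
        hi = min(len(values), i + half + 1)
        smoothed.append(sum(values[lo:hi]) / (hi - lo))
    return smoothed


# Synthetic, noise-free narrow Gaussian peak (sigma = 2 points, height 1.0).
peak = [math.exp(-0.5 * ((i - 50) / 2.0) ** 2) for i in range(101)]

# Compare the surviving peak height for increasingly aggressive smoothing.
for window in (1, 5, 21):
    print(f"window = {window:2d} points, peak height = {max(moving_average(peak, window)):.3f}")
```

The wider the window relative to the peak width, the more of the peak height is averaged away, which is why a small peak near the baseline can disappear entirely after heavy filtering.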

Frequently Asked Questions (FAQs)

Q: Can I use software to improve a poor SNR after I've already collected the data? A: Yes, but with caution. Mathematical techniques like Savitzky-Golay smoothing, Fourier transform, and wavelet transforms can be applied post-acquisition to reduce noise. The key advantage is that the raw data is preserved, allowing you to test different levels of smoothing. However, over-processing can lead to data distortion. The most reliable approach is always to optimize the experimental conditions to collect high-quality data first [2] [6].

Q: How does the instrument detection limit (IDL) differ from the method detection limit (MDL)? A: The Instrument Detection Limit (IDL) is the analyte concentration that produces a signal greater than three times the standard deviation of the noise level when a neat standard is introduced directly into the instrument. The Method Detection Limit (MDL) includes all sample preparation steps (e.g., digestion, extraction, concentration) and is therefore typically higher than the IDL, as these additional steps introduce more sources of error and uncertainty [3].

Q: My data system calculates SNR automatically. Should I trust it? A: While data system calculations are convenient, it is good practice to understand the algorithm being used. Some systems use root-mean-square (RMS) noise, while others use peak-to-peak noise. Manually verifying the SNR for critical methods, especially those near the detection limit, ensures the accuracy of the automated report [5].
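To see why the noise definition matters, the sketch below computes both peak-to-peak and RMS noise for the same baseline segment and compares the resulting SNR values. The baseline values and peak height are hypothetical, and your data system may define either quantity slightly differently.

```python
import math


def peak_to_peak_noise(baseline):
    """Maximum minus minimum of a peak-free baseline segment."""
    return max(baseline) - min(baseline)


def rms_noise(baseline):
    """Root-mean-square deviation of the baseline about its mean."""
    mean = sum(baseline) / len(baseline)
    return math.sqrt(sum((v - mean) ** 2 for v in baseline) / len(baseline))


# Hypothetical peak-free baseline segment and analyte peak height (same units).
baseline = [0.2, -0.1, 0.3, -0.3, 0.1, -0.2, 0.0, 0.25, -0.25, 0.0]
peak_height = 3.0

snr_pp = peak_height / peak_to_peak_noise(baseline)
snr_rms = peak_height / rms_noise(baseline)
print(f"SNR (peak-to-peak noise) = {snr_pp:.1f}")
print(f"SNR (RMS noise)          = {snr_rms:.1f}")
```

For the same trace, the RMS-based SNR is considerably larger than the peak-to-peak value, so a reported SNR is only meaningful together with the noise definition used.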

Research Reagent and Material Solutions

Table: Essential Materials for Signal and Noise Optimization

| Item | Function |

|---|---|

| HPLC-Grade Solvents | High-purity solvents minimize chemical background noise and baseline drift in chromatographic analyses [5]. |

| Low-Noise UV/Vis Lamps | A stable, high-intensity light source is crucial for spectroscopic signal strength and baseline stability. |

| Shielded Cables | Reduce the pickup of external electromagnetic interference, a common source of high-frequency noise [8]. |

| Wavelet Transform Software | Advanced software enables the application of powerful denoising algorithms like the NERD method, which can extract weak signals from very noisy data (SNR ~1) [6]. |

| Certified Reference Materials (CRMs) | Materials with a known, traceable analyte concentration are essential for the accurate calibration and validation of LOD/LOQ determinations. |

Frequently Asked Questions: FT-IR Spectral Artifacts

Q1: Why does my spectrum have a noisy baseline or strange, sharp peaks? This is often caused by physical vibrations or electronic interference affecting the highly sensitive interferometer. Ensure the instrument is on a stable, vibration-damping table, and keep it away from sources of disturbance like pumps, fans, or heavy foot traffic [9] [10]. Electronic noise or a failing laser can also cause a noisy interferogram [11] [12].

Q2: What causes sharp, negative peaks to appear in my absorbance spectrum? When using Attenuated Total Reflection (ATR), negative absorbance peaks almost always indicate that the ATR crystal was dirty when the background measurement was collected [9] [10]. The solution is to clean the crystal thoroughly with an appropriate solvent, collect a new background spectrum, and then re-measure your sample.

Q3: Why is my signal weak or absent, even with a good sample? Weak signal can stem from multiple instrumental issues. Common causes include an aging IR source that has lost intensity, dirty or misaligned optics, a saturated or failing detector, or a misaligned interferometer [11] [13]. A systematic check of these components, starting with a visual inspection of the source and a review of the interferogram signal strength, is recommended.

Q4: What are the common peaks from the environment, and how do I remove them? The most common atmospheric interferences are sharp peaks from water vapor (approx. 3400 cm⁻¹ and 1650 cm⁻¹) and carbon dioxide (approx. 2350 cm⁻¹ and 667 cm⁻¹) [14] [13]. To minimize them, ensure the instrument's sample compartment is properly purged with dry, CO₂-free air or nitrogen and that background scans are collected frequently under the same conditions as sample scans [13].

Q5: Why do I see distorted peaks in my diffuse reflectance spectrum? This is a data processing error. Spectra collected in diffuse reflection should be processed and displayed in Kubelka-Munk units, not absorbance [9] [10]. Processing in absorbance units distorts the peak shapes and intensities, making the data unreliable for analysis.
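For reference, the Kubelka-Munk function is F(R) = (1 − R)² / (2R), where R is the diffuse reflectance relative to a non-absorbing reference. The following is a minimal sketch of that conversion; the function name and the reflectance values are hypothetical, and instrument software normally performs this step for you.

```python
def kubelka_munk(reflectance):
    """Kubelka-Munk function F(R) = (1 - R)^2 / (2 R) for 0 < R <= 1."""
    if not 0.0 < reflectance <= 1.0:
        raise ValueError("reflectance must be in the interval (0, 1]")
    return (1.0 - reflectance) ** 2 / (2.0 * reflectance)


# Hypothetical diffuse reflectance values (sample relative to reference).
for r in (1.0, 0.8, 0.5, 0.2):
    print(f"R = {r:.1f} -> F(R) = {kubelka_munk(r):.4f}")
```

A reflectance of 1.0 (no absorption) maps to zero, and F(R) grows as the reflectance drops, which is the behavior expected of a quantity intended to scale with absorber concentration.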

Troubleshooting Guide: From Symptom to Solution

The table below summarizes common spectral symptoms, their likely instrumental causes, and corrective actions.

| Symptom | Possible Instrumental Pitfall | Corrective Action |

|---|---|---|

| Noisy Baseline / Spurious Peaks | Physical vibrations; Electronic interference; Failing laser [9] [11] [12] | Place instrument on vibration-damping table; Eliminate interference sources; Check/replace reference laser [11]. |

| Negative Absorbance Peaks (ATR) | Dirty ATR crystal during background measurement [9] [10] | Clean ATR crystal with suitable solvent; Collect fresh background spectrum [9]. |

| Weak or No Signal | Aging IR source; Dirty/misaligned optics; Failing detector; Misaligned interferometer [11] [13] | Check and replace source if needed; Clean and align optics; Verify detector performance and alignment [11]. |

| Peaks at ~2350 cm⁻¹ & ~3400 cm⁻¹ | Inadequate purging of atmospheric CO₂ and water vapor [14] [13] | Purge instrument thoroughly with dry air/N₂; Collect new background; Ensure sample compartment is sealed. |

| Distorted Peaks (Diffuse Reflectance) | Incorrect data processing in absorbance units [9] [10] | Reprocess spectral data using Kubelka-Munk units [9]. |

| Poor Spectral Resolution | Reduced mirror travel; Damaged interferometer bearings; Inadequate apodization [11] [12] | Run instrument alignment routine; Service or replace interferometer; Check data processing settings [11]. |

| Unusual Baseline Drift | Detector saturation; Moisture in sample compartment; Source/Detector instability [11] [12] | Reduce aperture size; Ensure sample compartment and cells are dry; Allow instrument to warm up and stabilize [11]. |

Experimental Protocol: Diagnosing Low Signal Intensity

Low signal intensity is a critical problem that directly impacts data quality. The following workflow provides a systematic method for diagnosing its root cause. This protocol is adapted from best practices for instrument maintenance and troubleshooting [11] [13].

Objective: To methodically identify and resolve the causes of low signal-to-noise ratios or weak peak intensities in FT-IR measurements.

Materials and Reagents:

- FT-IR spectrometer

- Appropriate, known standard sample (e.g., polystyrene film)

- Lint-free wipes and optical-grade solvents (e.g., methanol)

- Manufacturer's service software or diagnostic tools

Procedure:

Instrument Warm-up: Ensure the FT-IR instrument has been powered on and allowed to stabilize for at least 30 minutes to minimize electronic and thermal drift [11].

Visual Inspection:

- Open the sample compartment and visually inspect for any obvious obstructions, debris, or heavy condensation on optical surfaces.

- Check the color indicator of the desiccant and regenerate or replace it if necessary to ensure a dry purge environment [11].

Background Measurement:

- With a clean accessory (e.g., empty ATR crystal) in place, collect a new background spectrum using your standard measurement parameters.

- Diagnostic Check: Examine the single-beam background spectrum. A healthy instrument should show a smooth, intense curve from 4000 to 400 cm⁻¹ without sharp, deep dips (aside from normal CO₂ and water vapor if purging is incomplete). A low-intensity background points to a source, optic, or detector issue.

Standard Sample Measurement:

- Measure a known standard, such as a polystyrene film, under identical conditions.

- Compare the obtained spectrum to a reference spectrum. Pay attention to both the signal-to-noise ratio and the absolute intensity of key peaks.

Interferogram and Diagnostic Data Analysis:

- Access the instrument's diagnostic data to view the interferogram. A strong, centered interferogram burst indicates good alignment and signal. A weak or poorly centered burst suggests problems [11].

- Check the instrument's internal diagnostics for laser intensity and modulation amplitude. Values outside the manufacturer's specified tolerance indicate a potential need for laser replacement or optical realignment [11].

Systematic Component Checking:

- If the signal remains low, the issue likely lies with a core component. Follow the logic in the diagram below to isolate the problem.

The following workflow visualizes the systematic diagnostic process:

The Scientist's Toolkit: Essential Research Reagents & Materials

The table below lists key materials and reagents essential for the maintenance, calibration, and troubleshooting of FT-IR instrumentation, particularly in a research environment focused on signal integrity.

| Item | Function / Application |

|---|---|

| Polystyrene Film | A standard reference material for verifying wavenumber accuracy and spectral resolution of the instrument. |

| Known Organic Compound (e.g., Methyl Thiocyanate) | Used as a model compound in protocols for extracting weak signals from a strong solvent background, crucial for method development [15]. |

| Optical-Grade Solvents (Methanol, Acetone) | For safely cleaning optical surfaces like ATR crystals (diamond, ZnSe) and mirrors without causing damage or residue [11] [16]. |

| Spectroscopic Grade KBr | For preparing solid samples via the pelleting method for transmission analysis. Must be stored in a desiccator to prevent moisture absorption [16] [13]. |

| Desiccant | Essential for maintaining a dry purge environment inside the instrument to minimize spectral interference from water vapor [11]. |

| Bandpass Filter | A physical filter placed in the optical path to limit the light reaching the detector to a specific range. This increases dynamic range and sensitivity for measuring weak vibrational probes in solution [15]. |

| ATR Calibration Standard | A material with a known, stable spectrum used to validate the performance of ATR accessories, ensuring data reproducibility. |

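Frequently Asked Questions (FAQs)

1. Q: Why is my spectral signal weak and my data highly variable? A: Several factors can contribute. Sample inhomogeneity, ambient electromagnetic interference, or power fluctuations in the instrument's excitation source can all cause frequent data fluctuations [17]. A weak signal is usually linked to an obstructed optical path; for example, dust on the spectrometer's lens blocks part of the light from reaching the detector [17]. For biological fluid samples, a very low concentration of the target analyte or a high concentration of interfering components (such as proteins and cholesterol in blood) can also significantly weaken the useful signal [18].

2. Q: How can I reduce background interference when measuring whole-blood samples? A: You can try the following:

- Sample pretreatment: Remove red blood cells by centrifugation or a similar method, and measure serum or plasma to reduce the effect of particulate scattering [18].

- Build an accurate basis set: For spectral analysis, assemble a basis set containing the spectra of the major serum components (such as water, proteins, glucose, urea, and cholesterol), then separate and subtract the background interference mathematically [18].

- Check with standards: In routine use, measure standard samples of known composition and confirm that the results fall within a reasonable range to verify the instrument's condition [17].

3. Q: What should I do about a strong fluorescence background when measuring biological fluids by Raman spectroscopy? A: Strong fluorescence can swamp the Raman peaks. You can try the following:

- Change the excitation wavelength: If the instrument supports it, switching to a longer-wavelength laser may effectively reduce fluorescence interference.

- Quench the fluorescence: Try to find a solvent that dissolves the sample and quenches the fluorescence background [19].

- Surface-enhanced Raman scattering (SERS): SERS can greatly enhance the Raman signal while effectively suppressing fluorescence [20]. Alternatively, dilute the sample in a suitable matrix such as KBr before measurement [19].

4. Q: How do I ensure my spectrometer gives accurate data when measuring complex samples? A: Regular instrument calibration and maintenance are essential.

- Regular calibration: At the prescribed intervals, calibrate the instrument with reference materials matched to the sample matrix [17]. For example, when measuring serum glucose, the basis set should contain reference spectra of all major serum components [18].

- Environmental control: Keep the instrument in an environment with relatively stable temperature and humidity (for example, (20 ± 5) °C and 40%-60% relative humidity), and away from sources of strong electromagnetic fields [17].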

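Troubleshooting Guide: Low Signal Intensity

Low signal intensity is a common challenge in spectroscopic analysis, especially in complex biological matrices. The following guide systematically analyzes the causes and provides solutions.

Diagnostic Workflow for Low Signal Intensity

The diagram below outlines the logical path for diagnosing and resolving low signal intensity.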

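Key Experimental Protocol: Correcting Spectral Interference in Serum Samples with the Basis Set Method

1. Background and Principle: Complex biological matrices (such as whole blood and serum) contain many interfering components (water, proteins, urea, cholesterol, and others) whose spectra overlap with and mask the signal of the target analyte (such as glucose). The basis set method measures reference spectra of all major interfering components in advance, builds a mathematical model, and mathematically extracts the target analyte's signal from the mixture spectrum [18].

2. Experimental Steps

- Step 1: Basis set preparation

- Prepare high-purity major serum components: water, albumin, globulin, glucose, urea, cholesterol, and so on [18].

- Use a buffer (such as phosphate buffer) to prepare a series of standard solutions of these components at different concentrations.

- Measure the reference spectrum of each component under the same conditions as the control samples.

- Step 2: Sample measurement

- Centrifuge the blood sample to obtain serum.

- Place the serum sample in the spectrometer and acquire the raw spectral data.

- Step 3: Mathematical analysis and correction

- Express the mixture spectrum as a linear combination of the basis set reference spectra.

- Use an algorithm such as multiple linear regression to calculate the contribution of each component.

- Through the fitting process, subtract the spectra of the interfering components from the total spectrum to isolate the pure spectrum of the target analyte (such as glucose) and its concentration [18].

3. Expected Results: With this method, the sensitivity and accuracy of detecting small molecules such as glucose can be significantly improved even in complex matrices such as whole blood or serum, effectively addressing the low signal intensity and measurement bias caused by matrix effects [18].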
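The linear-combination and regression steps can be sketched with an ordinary least-squares fit. The example below is a minimal illustration under simplifying assumptions: the five-point "spectra" are invented, noise-free, and linearly independent, and the solver is a plain normal-equations implementation rather than the method used in the cited work [18]. All names are hypothetical.

```python
def fit_basis_set(basis_spectra, mixture):
    """Least-squares coefficients c minimizing ||sum_k c_k * basis_k - mixture||."""
    n = len(basis_spectra)
    # Normal equations (B B^T) c = B y, with one basis spectrum per row of B.
    gram = [[sum(a * b for a, b in zip(basis_spectra[i], basis_spectra[j]))
             for j in range(n)] for i in range(n)]
    rhs = [sum(a * y for a, y in zip(basis_spectra[i], mixture)) for i in range(n)]
    # Gaussian elimination with partial pivoting.
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(gram[r][col]))
        if abs(gram[pivot][col]) < 1e-12:
            raise ValueError("basis spectra are linearly dependent")
        gram[col], gram[pivot] = gram[pivot], gram[col]
        rhs[col], rhs[pivot] = rhs[pivot], rhs[col]
        for row in range(col + 1, n):
            factor = gram[row][col] / gram[col][col]
            for k in range(col, n):
                gram[row][k] -= factor * gram[col][k]
            rhs[row] -= factor * rhs[col]
    # Back substitution.
    coeffs = [0.0] * n
    for row in range(n - 1, -1, -1):
        tail = sum(gram[row][k] * coeffs[k] for k in range(row + 1, n))
        coeffs[row] = (rhs[row] - tail) / gram[row][row]
    return coeffs


# Invented five-point reference spectra (arbitrary units per unit concentration).
water = [1.0, 0.8, 0.3, 0.1, 0.0]
protein = [0.0, 0.2, 0.6, 0.3, 0.1]
glucose = [0.0, 0.0, 0.1, 0.5, 0.9]
true_c = [0.9, 0.07, 0.005]
mixture = [true_c[0] * w + true_c[1] * p + true_c[2] * g
           for w, p, g in zip(water, protein, glucose)]

coeffs = fit_basis_set([water, protein, glucose], mixture)
print([round(c, 6) for c in coeffs])

# Subtract the fitted interferents to leave the analyte contribution.
residual = [m - coeffs[0] * w - coeffs[1] * p for m, w, p in zip(mixture, water, protein)]
print([round(v, 6) for v in residual])
```

With real spectra the fit is never exact, so the residual after subtracting all fitted components is a useful check: structured residuals suggest a component is missing from the basis set.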

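Interfering Factors and Correction Data for the Spectroscopic Determination of Glucose in Serum

The table below summarizes the main interfering components encountered when determining glucose in a serum matrix by spectroscopy (such as near-infrared spectroscopy), together with their quantitative impact and solutions.

Table: Interfering Components and Handling Strategies in Spectroscopic Serum Glucose Analysis

| Interfering Component | Mechanism of Effect on the Spectral Signal | Typical Concentration Range (Serum) | Recommended Correction Method |

|---|---|---|---|

| Water | Strong absorption bands in the near-infrared region that heavily overlap the characteristic glucose signal [18] | ~55 M | Include the water reference spectrum in the basis set and subtract it mathematically [18] |

| Proteins (albumin, globulin) | Cause strong light scattering, leading to baseline drift and masking of the analyte signal [18] | 60-80 g/L | Sample pretreatment (ultrafiltration, precipitation) or scatter-correction algorithms [18] |

| Urea | Absorption bands near the characteristic glucose peaks, causing spectral overlap [18] | 2.5-7.5 mM | Include it in the basis set and separate the signals with a multivariate calibration model [18] |

| Cholesterol | Its C-H stretching absorption overlaps the glucose region [18] | < 5.2 mM | Include the cholesterol spectrum in the basis set to remove its effect [18] |

| Red blood cells | Cause severe light scattering, increasing background noise and lowering the signal-to-noise ratio [18] | - | Centrifuge before measurement and use serum or plasma samples [18] |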

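The Researcher's Toolkit: Key Reagents and Materials

Table: Common Reagents and Materials for Spectroscopic Analysis of Complex Biological Samples

| Reagent/Material | Function | Typical Application Example |

|---|---|---|

| Reference standards | Pure substances of accurately known concentration, used for instrument calibration and method validation [17] | Glucose, albumin, and cholesterol standards for building quantitative calibration curves [18] |

| Phosphate-buffered saline (PBS) | Provides a stable pH environment, dilutes samples, and maintains the stability of biomolecules [18] | Used when preparing serum samples or standard solutions to prevent pH fluctuations from affecting the spectra [18] |

| Triacetin (glyceryl triacetate) | Used in experimental studies as a solvent or model compound that mimics a biological matrix [18] | Used in research work to test the reliability of a method [18] |

| Purified water | Serves as a solvent and blank control; its spectrum is also an important part of the basis set [18] | Used to dissolve standards, dilute samples, and measure the water reference spectrum [18] |

| Solid-phase extraction cartridges | Pretreat complex samples to enrich the target analyte or remove interfering substances [20] | Extracting specific small-molecule metabolites from serum to reduce interference from proteins and other macromolecules |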

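Sample Pretreatment and Signal Optimization Workflow

The diagram below details the complete experimental workflow for processing complex biological samples (using blood as an example) to obtain the best signal intensity.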

FAQ: How do vibration and temperature affect my spectroscopic signal?

Q: What are the common symptoms of environmental interference in my spectra? A: Environmental interference often manifests as increased baseline noise and instability, reduced signal-to-noise ratio (SNR), and in severe cases, peak broadening or shifting. Temperature fluctuations can cause baseline drift, while vibrations often introduce random high-frequency noise that obscures weak signals, directly impacting detection limits [21] [22].

Q: I'm troubleshooting low signal intensity. How can I tell if vibrations are the culprit? A: A key indicator is noise that correlates with the operation of nearby equipment (e.g., pumps, compressors, HVAC systems) or building vibrations. To confirm, try taking measurements during off-hours when such equipment is inactive. If the signal-to-noise ratio improves, vibration is likely a contributing factor [21].

Q: My FT-IR baseline is unstable. Could this be related to temperature? A: Yes. The interferometer in an FT-IR spectrometer is highly sensitive to thermal expansion or contraction. Even small, gradual temperature changes in the lab (such as from air conditioning cycles) can misalign the optical path, leading to a drifting baseline [21]. Similarly, research on thin films has shown that their optical characteristics exhibit marked variations with temperature shifts [23].

Troubleshooting Guide: Diagnosis and Mitigation

Step 1: Initial Diagnostic Assessment

Begin by systematically evaluating your instrument and environment. The table below outlines common symptoms and their potential environmental causes.

Table: Diagnosing Environmental Interference in Spectra

| Observed Symptom | Potential Environmental Cause | Quick Diagnostic Check |

|---|---|---|

| High-Frequency Noise [21] | Mechanical vibration from building, pumps, or nearby equipment. | Record a spectrum with no sample. Note if noise correlates with equipment cycles. |

| Baseline Drift [21] | Temperature fluctuations causing thermal expansion/contraction in optics. | Monitor laboratory temperature stability; record a blank spectrum over time. |

| Reduced Signal-to-Noise Ratio (SNR) [22] | Combination of vibration and temperature, increasing system noise. | Compare SNR with historical data from the same instrument and method. |

| Peak Shifting [23] | Significant temperature changes affecting sample or detector properties. | Use a stable standard reference material to check for peak position changes. |

Step 2: Systematic Isolation and Mitigation Protocols

Follow these detailed experimental protocols to identify and address the root cause.

Protocol A: Investigating Vibration Interference

- Instrument Preparation: Ensure your spectrometer is on a stable, level surface. If an active or pneumatic vibration isolation table is available, ensure it is powered and functioning.

- Environmental Baseline Measurement: During a period of relative quiet (e.g., overnight, weekend), prepare a standard sample and acquire a reference spectrum. Note the signal-to-noise ratio for a key peak.

- Controlled Vibration Test: Systematically observe the laboratory environment during normal working hours. Document the operation times of potential vibration sources such as HVAC systems, chillers, centrifuges, and pumps.

- Data Analysis: Acquire new spectra of the same standard while suspected sources are active. Compare the SNR and baseline noise to your baseline measurement. A reproducible degradation confirms vibration interference.

- Mitigation Strategies:

- Relocation: Move the spectrometer away from the identified vibration sources.

- Isolation: Invest in a high-quality vibration isolation table or optical breadboard.

- Scheduling: For highly sensitive measurements, schedule them during periods of low environmental vibration.

Protocol B: Investigating Temperature Interference

- Instrument Preparation: Initiate the spectrometer and allow it to warm up for the manufacturer's recommended time to reach thermal equilibrium.

- Environmental Monitoring: Place a high-precision data-logging thermometer near the spectrometer's optical path. Log ambient temperature for at least one hour before and during measurements to establish stability.

- Stability Test: Use a stable reference standard. Acquire sequential spectra over 60-90 minutes, documenting the time for each.

- Data Analysis: Plot the baseline value or a key peak position against both time and the logged temperature. A correlation between spectral drift and temperature fluctuations confirms thermal interference.

- Mitigation Strategies:

- Environmental Control: Work with facilities to improve laboratory temperature stability. Ensure air conditioning vents are not blowing directly on the instrument.

- Enclosure: Use an instrument cover or enclosure to minimize drafts.

- Purging: For FT-IR, ensure the purge gas is stable and dry, as fluctuations can cause thermal and spectral interference [21].
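The data-analysis step of Protocol B can be made quantitative with a correlation coefficient. The sketch below computes the Pearson correlation between logged temperature and a baseline reading; the paired values are hypothetical, and what counts as a "strong" correlation is a judgment call rather than a fixed rule.

```python
import math


def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equally long series."""
    n = len(xs)
    if n != len(ys) or n < 3:
        raise ValueError("need two series of equal length with at least 3 points")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    if sxx == 0 or syy == 0:
        raise ValueError("a constant series has no defined correlation")
    return sxy / math.sqrt(sxx * syy)


# Hypothetical log: ambient temperature (deg C) and baseline absorbance,
# one pair per sequential spectrum of the stability test.
temperature_c = [21.0, 21.2, 21.5, 21.9, 22.3, 22.1, 21.7, 21.3]
baseline_abs = [0.0010, 0.0013, 0.0019, 0.0026, 0.0034, 0.0030, 0.0022, 0.0015]

r = pearson_r(temperature_c, baseline_abs)
print(f"Pearson r between temperature and baseline: {r:.3f}")
```

A coefficient near +1 or −1 supports thermal interference, but a drift that merely increases with time will also correlate with a steadily warming room, so plotting both series against time remains worthwhile.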

The following diagram illustrates this logical troubleshooting workflow.

The Scientist's Toolkit: Key Reagents and Materials

Table: Essential Materials for Investigating Environmental Interference

| Item | Function/Benefit |

|---|---|

| Stable Reference Standard | A material with well-characterized, sharp spectral peaks (e.g., a polystyrene standard for Raman) is essential for quantifying signal-to-noise ratio and detecting peak shifts. |

| High-Precision Data Logger | Allows for continuous monitoring of ambient temperature and humidity at the instrument during measurements to correlate environmental and spectral changes. |

| Vibration Isolation Table | Provides passive or active damping of floor-borne vibrations, protecting sensitive optical components. A foundational mitigation tool. |

| FT-IR Purge Gas Generator | Provides a stable, dry, CO₂-free purge gas for FT-IR, reducing spectral interference from atmospheric water vapor and improving thermal stability [21]. |

| Non-Glass LC-MS Vials/Containers | For mass spectrometry, using plastic containers eliminates alkali metal ion leaching from glass, which can form adducts and suppress the target signal [24]. |

Advanced Methods to Boost Signal: From Multi-Pixel Analysis to Machine Learning

Frequently Asked Questions (FAQs)

What is the fundamental difference between single-pixel and multi-pixel SNR calculations?

The core difference lies in how much spectral data is used to compute the signal.

- Single-Pixel Method: This approach uses only the intensity from the center pixel of a Raman band to represent the signal. The noise is typically the standard deviation of the background near this peak [22].

- Multi-Pixel Method: This approach utilizes information from multiple pixels across the entire bandwidth of the Raman band to calculate the signal. This can be done by summing the area under the peak (multi-pixel area method) or by using the intensity of a fitted function, like a Gaussian curve, over the band (multi-pixel fitting method) [22].

Why would using a multi-pixel method improve my detection limits?

Multi-pixel methods improve detection limits because they incorporate more of the genuine Raman signal from the entire spectral feature into the calculation. A single-pixel approach ignores the signal present in the bandwidth outside the center pixel. Research has demonstrated that multi-pixel methods can report approximately 1.2 to over 2 times larger SNR values for the same Raman feature compared to single-pixel methods. A higher SNR directly translates to a lower Limit of Detection (LOD), allowing you to detect fainter spectral features with statistical significance [22] [25].

Can you provide a real-world example of this difference?

Yes. A case study on data from the SHERLOC instrument on Mars analyzed a potential organic carbon feature. The analysis found that:

- The single-pixel method calculated an SNR of 2.93, which is below the standard LOD threshold of SNR ≥ 3.

- The multi-pixel methods calculated an SNR between 4.00 and 4.50, well above the LOD threshold [22].

This shows that relying on a single-pixel calculation could lead to a false negative, causing you to miss a statistically significant signal, while a multi-pixel method correctly identifies it.

Are there any drawbacks or considerations when switching to multi-pixel SNR?

The primary consideration is that SNR values from different calculation methods are not directly comparable [22] [25]. If you compare your results with literature that used a single-pixel method, the difference in SNR might be due to the calculation methodology and not just the sample itself. It is crucial to clearly report which SNR calculation method you used in your publications.

Besides SNR calculations, what are other common mistakes that affect data quality?

Several common experimental errors can inflate apparent model performance or distort spectra:

- Skipping Calibration: Failure to perform wavelength and intensity calibration using standards can lead to systematic drifts that obscure sample-related changes [7].

- Incorrect Preprocessing Order: Performing spectral normalization before background correction can bias your results, as the fluorescence background intensity becomes coded into the normalization constant [7].

- Cosmic Rays: High-energy particles can create sharp, intense spikes in spectra. These must be identified and removed using software algorithms to prevent misinterpretation [26].

- Laser-Induced Damage: Exceeding the laser power density threshold of your sample can cause structural or chemical changes. Use lower power or defocus the beam to spread the energy if needed [26].
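The preprocessing-order issue can be demonstrated with a toy example. The sketch below measures the same Raman band on two different flat fluorescence backgrounds and applies area normalization and a crude minimum-subtraction baseline in both orders. The spectra, the flat background, and the baseline method are all simplifying assumptions chosen to keep the arithmetic visible.

```python
def subtract_flat_baseline(spectrum):
    """Crude background correction: subtract the minimum (assumes a flat background)."""
    floor = min(spectrum)
    return [v - floor for v in spectrum]


def normalize_area(spectrum):
    """Scale the spectrum so that its summed intensity equals 1."""
    total = sum(spectrum)
    if total == 0:
        raise ValueError("cannot normalize a spectrum with zero total intensity")
    return [v / total for v in spectrum]


raman_band = [0.0, 1.0, 4.0, 1.0, 0.0]        # identical Raman signal in both cases
low_fluor = [v + 10.0 for v in raman_band]    # weak fluorescence background
high_fluor = [v + 30.0 for v in raman_band]   # strong fluorescence background

# Recommended order: background correction first, then normalization.
right_low = normalize_area(subtract_flat_baseline(low_fluor))
right_high = normalize_area(subtract_flat_baseline(high_fluor))

# Problematic order: normalization first, then background correction.
wrong_low = subtract_flat_baseline(normalize_area(low_fluor))
wrong_high = subtract_flat_baseline(normalize_area(high_fluor))

print("background first:   ", round(right_low[2], 4), round(right_high[2], 4))
print("normalization first:", round(wrong_low[2], 4), round(wrong_high[2], 4))
```

With background correction first, the two band heights agree; with normalization first, the band on the stronger background comes out smaller even though the underlying Raman signal is the same, because the background was folded into the normalization constant.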

Troubleshooting Guide: Low Signal-to-Noise Ratio

Problem: My Raman signals are too weak, leading to a poor SNR and high detection limits.

Here is a structured workflow to diagnose and solve low SNR issues, starting with data analysis before moving to more complex experimental changes.

Quantitative Comparison of SNR Calculation Methods

The table below summarizes key differences between the SNR calculation methods, based on a standardized study [22].

| Method | Signal Calculation Basis | Relative SNR Performance | Impact on Limit of Detection (LOD) | Best Use Cases |

|---|---|---|---|---|

| Single-Pixel | Intensity of the center pixel in the Raman band [22] | Baseline | Higher LOD | Quick, initial assessments; legacy data comparison |

| Multi-Pixel Area | Sum of the area under the Raman band across multiple pixels [22] | ~1.2 - 2+ times higher than single-pixel [22] | Lower LOD | General purpose; maximizing signal use from clear, defined peaks |

| Multi-Pixel Fitting | Intensity of a fitted function (e.g., Gaussian) to the Raman band [22] | ~1.2 - 2+ times higher than single-pixel [22] | Lower LOD | Noisy data; overlapping peaks where fitting can isolate signals |

Detailed Experimental Protocols

Protocol 1: Implementing a Multi-Pixel Area SNR Calculation

This protocol provides a step-by-step methodology to calculate SNR using the multi-pixel area method, as applied in research on SHERLOC instrument data [22].

- Identify the Raman Band: Select the Raman spectral feature (peak) of interest for analysis.

- Define the Signal Region (S): Determine the pixel range that covers the entire full-width-at-half-maximum (FWHM) of the peak.

- Define the Background Regions (σ_S): Select two regions on either side of the peak, ensuring they are free from other spectral features, to represent the noise.

- Calculate the Net Signal:

- Sum the intensity values (counts) for all pixels within the signal region defined in Step 2.

- Calculate the average intensity per pixel in each of the two background regions.

- Multiply the average background intensity by the number of pixels in the signal region to get the total estimated background under the peak.

- Subtract this total estimated background from the summed signal intensity to obtain the net signal (S).

- Calculate the Noise (σ_S):

- Use the standard deviation of the pixel intensities in the background regions as the basis for noise. The IUPAC standard method involves calculating the standard deviation using the formula:

σ_S = √[ Σ (y_i - y_a)² / n ], where y_i is the intensity of a background pixel, y_a is the average intensity of the background, and n is the number of background pixels [22].


- Compute the SNR: Divide the net signal (S) from Step 4 by the standard deviation of the noise (σ_S) from Step 5.
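The steps of Protocol 1 can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the implementation used in [22]: the function name, argument names, and toy spectrum are hypothetical, and the two background regions are pooled into one list of pixels before the mean and the standard deviation are computed.

```python
import statistics


def multi_pixel_area_snr(counts, signal_px, background_px):
    """SNR of a Raman band from summed, background-corrected counts.

    counts        : list of intensities, one per detector pixel
    signal_px     : indices of the pixels spanning the band (Step 2)
    background_px : indices of feature-free pixels on both sides (Step 3)
    """
    background = [counts[i] for i in background_px]
    mean_bg = statistics.fmean(background)
    # Step 4: summed signal minus the background expected under the band
    net_signal = sum(counts[i] for i in signal_px) - mean_bg * len(signal_px)
    # Step 5: population standard deviation, sqrt(sum((y_i - y_a)^2) / n)
    noise = statistics.pstdev(background)
    # Step 6: net signal divided by the noise
    return net_signal / noise


# Toy spectrum (illustrative numbers): background alternates around 10 counts
counts = [9, 11, 9, 11, 20, 30, 20, 11, 9, 11, 9]
snr = multi_pixel_area_snr(counts,
                           signal_px=[4, 5, 6],
                           background_px=[0, 1, 2, 3, 7, 8, 9, 10])
print(snr)  # 40.0
```

For the same toy spectrum, a single-pixel estimate that uses only the center pixel would give (30 - 10) / 1 = 20, so the area method doubles the SNR in this constructed case.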

Protocol 2: Transitioning from Single-Pixel to Multi-Pixel Analysis

This protocol helps researchers validate their transition to multi-pixel methods.

- Re-analyze Historical Data: Apply both single-pixel and multi-pixel SNR calculations to existing datasets where the presence of a signal is ambiguous or borderline (SNR ~3).

- Comparative Analysis: Create a table comparing the SNR values and the subsequent conclusion (detected/not detected) for both methods for each spectrum.

- Validate with Standards: Use samples with known, low concentrations of an analyte to empirically verify the improved LOD. Measure the lowest concentration that consistently gives an SNR ≥ 3 with each method.
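The standards-based check in the last step can be expressed as a small helper. The sketch below assumes that "consistently" means every replicate at a given concentration reaches the threshold; the function name and all SNR values are hypothetical and serve only to show the bookkeeping.

```python
def empirical_lod(snr_by_concentration, threshold=3.0):
    """Lowest concentration whose replicate SNRs all reach the threshold.

    snr_by_concentration maps concentration -> list of replicate SNR values.
    Returns None if no concentration qualifies.
    """
    passing = [conc for conc, snrs in snr_by_concentration.items()
               if snrs and min(snrs) >= threshold]
    return min(passing) if passing else None


# Hypothetical replicate SNRs per concentration (illustrative, not measured)
single_pixel = {0.5: [1.4, 1.9, 1.6], 1.0: [2.6, 3.1, 2.8], 2.0: [5.2, 5.9, 5.5]}
multi_pixel = {0.5: [2.4, 3.2, 2.7], 1.0: [4.4, 5.3, 4.8], 2.0: [8.8, 10.0, 9.4]}

print("single-pixel LOD:", empirical_lod(single_pixel))
print("multi-pixel LOD: ", empirical_lod(multi_pixel))
```

In this made-up data set the multi-pixel method reaches SNR ≥ 3 at a lower concentration, which is the kind of outcome the protocol is designed to confirm or refute with real standards.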

The Scientist's Toolkit: Research Reagent Solutions

The following table details key materials and techniques used to enhance Raman signals and improve SNR.

| Item / Technique | Function / Explanation | Key Considerations |

|---|---|---|

| SERS Substrates (Gold, silver, or aluminum nanoparticles) | Enhances Raman signal intensity by up to 10¹⁰ times via plasmonic effects when molecules are adsorbed onto the metal surface [27] [26]. | Reproducibility can be an issue; requires optimization of nanoparticle size and material for the specific analyte [28]. |

| TERS Tips | Provides extreme signal enhancement and nanoscale spatial resolution by using a metallic-coated AFM tip as a plasmonic antenna [26] [28]. | Not a push-button technique; requires significant expertise in both Raman spectroscopy and scanning probe microscopy [28]. |

| FT-Raman with 1064 nm Laser | Uses a longer excitation wavelength to virtually eliminate fluorescence interference, a common source of overwhelming background noise [27]. | Requires a modified spectrometer (interferometer) and a different detector (e.g., Germanium), as standard CCDs are less sensitive at this wavelength [27]. |

| Coherent Raman Scattering (SRS/CARS) | Optical method that uses multiple lasers to coherently excite molecular vibrations, boosting signals by 5-6 orders of magnitude for label-free bioimaging [29]. | Requires complex, expensive laser systems and expertise. SRS avoids the non-resonant background that can complicate CARS [29]. |

| Wavenumber Standard (e.g., 4-acetamidophenol) | A material with many well-defined peaks used to calibrate the wavenumber axis of the spectrometer, ensuring spectral accuracy and reproducibility [7]. | Critical for comparing data across different measurement days or instruments; skipping this step can lead to misinterpretation of spectral shifts [7]. |

This technical support center is designed for researchers and scientists facing the challenge of low signal intensity in gamma spectrometric measurements using Cadmium Zinc Telluride (CdZnTe) detectors. A frequent and critical issue encountered in this field is the trade-off between detector efficiency and spectral quality, which can manifest as poor peak resolution, increased systematic errors, and ultimately, unreliable quantification of radionuclides. The guides and FAQs herein are framed within a broader research thesis on troubleshooting low signal intensity. They provide targeted, data-driven solutions leveraging modern machine learning (ML) techniques to optimize detector performance without compromising efficiency.

Core Concepts & FAQ

Frequently Asked Questions (FAQ)

Q1: My CdZnTe detector has high efficiency, but the spectral peaks are broad and show pronounced tailing. What is the root cause?

The root cause is often significant spectroscopic performance variation across the detector's crystal volume. CdZnTe crystals can suffer from inherent material defects and compositional inhomogeneity arising from the crystal growth process [30] [31]. This results in some regions of the detector having excellent energy resolution while others exhibit poor charge collection, leading to broadened and tailed photopeaks in the combined "bulk" spectrum [32]. Using data from all voxels, including these poor-performance regions, muddies the spectral signal and increases systematic errors in quantitative analysis [30].

Q2: How can I improve my signal-to-noise ratio without purchasing a new detector or resorting to impractically long measurement times?

Machine learning-driven voxel clustering and selection provides a powerful software-based solution. Instead of using the entire detector volume or sacrificing efficiency by using only a tiny "best" region, ML algorithms can automatically identify and group voxels with similar spectroscopic performance [30] [32]. You can then choose to accumulate counts only from the best-performing clusters. This approach selectively "mutes" the noisy or poorly-resolving parts of your detector, enhancing the signal quality (e.g., sharper peaks) from the material you are measuring without a significant loss of useable counts [30].

Q3: What is the primary computational challenge in optimizing a pixelated detector, and how does ML solve it?

For a modern pixelated detector like the H3D M400, brute-force optimization is computationally infeasible. With over 24,000 voxels, there are \(2^{24,200}\) possible voxel combinations to test, far more than could be evaluated within the age of the universe [30]. Machine learning overcomes this by identifying spatial correlations. It groups the 24,000+ voxels into a small number of clusters (e.g., 2-7) with similar performance. The optimization search is then performed over combinations of these few clusters, making the problem computationally feasible [32].
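The scale of this reduction is easy to check numerically. The short sketch below takes the voxel count from the exponent quoted above and counts non-empty cluster combinations for the smallest and largest cluster numbers mentioned; it deliberately ignores the additional hyperparameter sweep, which multiplies the number of evaluations by a modest factor.

```python
import math

n_voxels = 24_200  # voxel count taken from the exponent quoted above

# Number of decimal digits in 2**n_voxels, the count of possible voxel subsets
digits = math.floor(n_voxels * math.log10(2)) + 1
print(f"2^{n_voxels} has {digits} decimal digits")

for n_clusters in (2, 7):
    # Every non-empty combination of clusters is one candidate voxel mask
    n_masks = 2 ** n_clusters - 1
    print(f"{n_clusters} clusters -> {n_masks} candidate masks")
```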

Q4: Are these ML optimization methods specific to nuclear safeguards, or can I use them for environmental monitoring or drug development?

The ML framework is general and application-agnostic. While initially demonstrated for uranium enrichment measurements in nuclear safeguards [30], the underlying algorithm is designed to optimize any user-defined spectroscopic performance metric. Whether your goal is to improve the resolution of a specific gamma peak for isotope identification in environmental samples [33] or to achieve better quantification in a complex mixture, the software can be tailored to your needs. The core principle of trading off efficiency for quality via intelligent voxel selection is universally applicable to highly-segmented detectors [32].

Troubleshooting Guides & Experimental Protocols

Guide 1: Diagnosing Low Signal-to-Noise Ratio using ML-Based Voxel Clustering

This guide outlines the primary ML-based optimization pipeline developed at LBNL, which uses non-negative matrix factorization (NMF) and clustering to identify the best-performing regions of a CdZnTe detector.

- Objective: To significantly improve a specific spectral performance metric (e.g., photopeak amplitude uncertainty, energy resolution) by finding an optimal subset of detector voxels, thereby addressing low signal-to-noise and high systematic error.

- Prerequisites: A collected gamma-ray dataset from a pixelated CdZnTe detector where interaction positions (voxels) are known.

The following workflow details the sequence of data processing and analysis steps involved in this method:

Experimental Protocol:

- Data Preparation: Format your data into a matrix \(\mathbf{X}^{[n_{\text{vox}},\,n_{\text{bins}}]} \geq 0\), where each row is the gamma spectrum from a single detector voxel [32].

- Dimensionality Reduction with NMF:

- Use Non-Negative Matrix Factorization (NMF) to decompose \(\mathbf{X}\) into lower-dimensional matrices: \(\mathbf{X} \simeq \mathbf{W}\mathbf{H}\).

- \(\mathbf{W}^{[n_{\text{vox}},\,n_{\text{comp}}]}\) contains the weights for each voxel, and \(\mathbf{H}^{[n_{\text{comp}},\,n_{\text{bins}}]}\) contains the spectral components [32].

- Key Parameter: The number of components \(n_{\text{comp}}\) and a regularizer \(\alpha_W\) to promote sparsity in \(\mathbf{W}\).

- Voxel Clustering:

- Using the weight matrix (\mathbf{W}) as the feature set for each voxel, apply a clustering algorithm to group voxels with similar spectral responses.

- Algorithm Options: The pipeline supports several algorithms, including Gaussian Mixture Models, Agglomerative Clustering, and BIRCH [32].

- Key Parameter: The number of clusters \(n_{\text{clus}}\) is a critical hyperparameter to sweep over.

- Performance Evaluation & Optimization:

- For a given cluster configuration, accumulate the gamma spectra from all voxels within one or multiple clusters.

- Calculate your target performance metric on this accumulated spectrum. Example metrics include the relative uncertainty in the amplitude of a specific photopeak (e.g., the 186 keV peak for U-235) or the overall energy resolution at a key energy [30] [32].

- Automate a parameter sweep over \(n_{\text{comp}}\), \(n_{\text{clus}}\), and the clustering algorithm type. The pipeline will select the combination that yields the best value for your performance metric.

- Application:

- The output is an optimal binary mask of which voxels to include in future analyses.

- Apply this mask to new measurements to acquire data with enhanced spectral quality.
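The protocol above can be sketched end to end on synthetic data. This is not the spectre-ml implementation: a hand-written multiplicative-update NMF and a minimal k-means stand in for the library implementations (such as the scikit-learn clustering classes) that the pipeline relies on, the sparsity regularizer and the hyperparameter sweep are omitted, the spectra are simulated, and the "number of bins above half maximum" metric is a crude, hypothetical stand-in for a real resolution or peak-fit metric.

```python
import itertools
import numpy as np

rng = np.random.default_rng(0)

# Synthetic stand-in for X[n_vox, n_bins]: "good" voxels have a narrow peak,
# "poor" voxels a broad one (simulated data, not detector output).
bins = np.arange(64)
n_good, n_poor = 60, 40


def peak(width):
    return np.exp(-0.5 * ((bins - 32) / width) ** 2)


shapes = np.vstack([np.tile(peak(2.0), (n_good, 1)),
                    np.tile(peak(8.0), (n_poor, 1))])
X = rng.poisson(200 * shapes + 1).astype(float)


def nmf(X, n_comp, n_iter=300, eps=1e-9):
    """Plain multiplicative-update NMF (Lee-Seung), X ~ W @ H."""
    W = rng.random((X.shape[0], n_comp)) + eps
    H = rng.random((n_comp, X.shape[1])) + eps
    for _ in range(n_iter):
        H *= (W.T @ X) / (W.T @ W @ H + eps)
        W *= (X @ H.T) / (W @ H @ H.T + eps)
    return W, H


def kmeans(F, n_clus, n_iter=50):
    """Minimal k-means with farthest-point initialisation."""
    centers = [F[0]]
    for _ in range(n_clus - 1):
        d = np.min([np.sum((F - c) ** 2, axis=1) for c in centers], axis=0)
        centers.append(F[np.argmax(d)])
    centers = np.array(centers)
    for _ in range(n_iter):
        dist = ((F[:, None, :] - centers[None]) ** 2).sum(-1)
        labels = np.argmin(dist, axis=1)
        for k in range(n_clus):
            if np.any(labels == k):
                centers[k] = F[labels == k].mean(axis=0)
    return labels


def fwhm_bins(spectrum):
    """Crude resolution metric: number of bins at or above half maximum."""
    s = spectrum - spectrum.min()
    return int(np.sum(s >= 0.5 * s.max()))


# Steps 1-3: factorize, then cluster voxels on their (normalized) weights
W, H = nmf(X, n_comp=2)
features = W / W.sum(axis=1, keepdims=True)
n_clus = 2
labels = kmeans(features, n_clus)

# Step 4: score every non-empty combination of clusters
best_score, best_mask = None, None
for r in range(1, n_clus + 1):
    for combo in itertools.combinations(range(n_clus), r):
        mask = np.isin(labels, combo)
        if not mask.any():
            continue
        score = fwhm_bins(X[mask].sum(axis=0))
        if best_score is None or score < best_score:
            best_score, best_mask = score, mask

# Step 5: best_mask is the binary voxel mask to reuse on new measurements
bulk_score = fwhm_bins(X.sum(axis=0))
print("bulk FWHM (bins):", bulk_score, "| selected:", best_score,
      "| voxels kept:", int(best_mask.sum()), "of", len(labels))
```

Because the combination of all clusters is always among the candidates, the selected mask can never score worse than the bulk spectrum on the chosen metric. A realistic metric would also penalize lost counts, so that the search trades efficiency against spectral quality rather than optimizing resolution alone.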

Guide 2: Addressing Specific Spectral Features and Computational Constraints

This guide helps you choose an algorithm based on your specific spectral problem and available computational resources.

Table 1: Algorithm Selection Guide for Specific Scenarios

| Symptom / Constraint | Recommended Algorithm | Key Advantage | Performance Example |

|---|---|---|---|

| Poor resolution in a specific photopeak (e.g., U-235 186 keV) | NMF + Clustering Pipeline (Guide 1) | Data-driven; automatically learns to discard voxels that contribute to systematic errors like peak tailing [30]. | Reduced systematic fit error by a factor of ~3 for the 186 keV peak [30]. |

| Need for rapid, near-real-time processing | Greedy Depth-Based Algorithm | Significantly faster computation time, suitable for applications where a quick, good-enough solution is needed [32]. | Achieved performance improvements comparable to those of the full ML pipeline in a fraction of the computation time for some metrics [32]. |

| Spectral distortions (tailing) in standard CZT | Genetic Algorithm for Spectral Unfolding | Directly deconvolves the measured spectrum to restore the true energy distribution, producing δ-like peaks [34]. | Effectively restored ideal peak shapes from distorted spectra of common radionuclides (e.g., ¹³⁷Cs, ⁶⁰Co) [34]. |

| Limited training data availability | Traditional ML (e.g., SVMs) | Remains effective and robust with smaller datasets, unlike deep learning models which require large data volumes [35] [36]. | Over 95% accuracy in radionuclide identification under low-count or shielded conditions [35]. |

The Scientist's Toolkit

This section details the essential software and materials referenced in the troubleshooting guides.

Table 2: Key Research Reagent Solutions for ML-Optimized Gamma Spectrometry

| Item Name | Function / Description | Relevance to Troubleshooting |

|---|---|---|

| spectre-ml Software | Software package (Spectral Peak Enhancement by Combining Trusted Response Elements via Machine Learning) implementing the NMF+Clustering pipeline [32]. | The primary tool for implementing the core ML optimization workflow described in Guide 1. Available for license from LBNL. |

| H3D M400 Detector | A commercial, large-volume, pixelated CdZnTe spectrometer system from H3D, Inc. [30] [32]. | The primary detector system used in the development of these methods. Its high voxel count (~24,000) makes it an ideal candidate for this optimization. |

| CdZnTeSe (CZTS) Crystals | A next-generation quaternary detector material. The addition of Selenium (Se) improves compositional homogeneity and reduces crystal defects [31]. | Addressing the root cause of performance variation. CZTS detectors offer more uniform performance from the outset, potentially simplifying the ML optimization task. |

| Genetic Algorithm (GA) Code | Custom code for spectral unfolding, which searches for the incident energy distribution that best matches the measured spectrum when convolved with the detector response [34]. | An alternative post-processing solution for correcting spectral distortions like peak tailing, as outlined in Table 1. |

| Scikit-learn Library | A popular open-source Python library for machine learning [32]. | Provides the implementations of clustering algorithms (Gaussian Mixture, Agglomerative, etc.) used within the spectre-ml pipeline. |

Welcome to the Technical Support Center for advanced biosensing research. This resource is designed for researchers and scientists encountering the common yet critical challenge of low signal intensity in spectroscopic measurements. Leveraging the synergistic combination of quantum dots (QDs) and plasmonic nanostructures can dramatically enhance optical signals, improving detection sensitivity for applications from medical diagnostics to drug discovery. The following guides and FAQs provide targeted, practical solutions for your experimental work.

Core Enhancement Mechanisms: FAQ

Q1: What are the fundamental physical mechanisms by which plasmonic nanostructures enhance the signal from quantum dots?

Plasmonic nanostructures enhance QD signals primarily through two mechanisms, depending on the distance between the QD and the nanostructure [37]:

- The Purcell Effect: This mechanism dominates at longer separation distances (typically beyond 5-10 nm). The plasmonic nanostructure acts as a nanoantenna, altering the local density of optical states (LDOS). This increases the spontaneous emission rate of the QD, leading to brighter fluorescence, reduced lifetime, and improved photostability. The enhancement scales with 1/r³, where r is the separation distance [37].

- Förster Resonance Energy Transfer (FRET): This mechanism is dominant at very short distances (typically under 5 nm). It involves a non-radiative, dipole-dipole energy transfer from the QD (donor) to the plasmonic nanoparticle (acceptor). For enhancement to occur via FRET, the LSPR band of the nanoparticle must overlap with the absorption spectrum of the QD. Incorrect spectral alignment or excessively short distances will lead to fluorescence quenching instead of enhancement [37].

Q2: My QD-plasmonic hybrid system is yielding lower signal than expected. What are the primary culprits?

Low signal can be attributed to several factors related to the nanoscale interaction:

- Insufficient Spectral Overlap: The LSPR peak of your plasmonic nanostructure must overlap with the QD's emission band for enhancement via the Purcell effect, or with its absorption for FRET. Mismatch results in weak coupling [37] [38].

- Sub-Optimal Separation Distance: If the QD is too close to the metal surface (especially within ~5 nm), non-radiative energy transfer can quench the fluorescence. If it is too far, the enhancement effect diminishes rapidly [37].

- Inhomogeneous Field Enhancement: The enhanced electric field ("hot spots") around a plasmonic nanoparticle is highly localized. If the QDs are not positioned within these hot spots (e.g., at the tips of nanorods or within nanogaps), the average enhancement will be low [39].

- Poor QD Quality: The starting quantum efficiency (QE) of the QDs matters. Plasmonic enhancement multiplies the intrinsic radiative decay rate. QDs with low initial QE (e.g., due to surface defects) will show less absolute improvement [39].

Troubleshooting Guides & Protocols

Guide 1: Optimizing QD-Plasmonic Nanostructure Coupling

Problem: Weak or quenched photoluminescence from QDs coupled to plasmonic nanoarrays.

Objective: Achieve maximum emission enhancement by controlling the separation distance and spectral overlap.

Materials & Protocols:

This protocol is adapted from studies on coupling silica-coated QDs to silver nanorod arrays [38].

- Fabrication of Plasmonic Array: Create a periodic array of silver nanorods on a fused silica substrate using electron-beam lithography, PMMA resist, and metal evaporation (e.g., 30 nm Ag).

- Surface Functionalization:

- Immerse the substrate with the silver array in a 40 mM solution of (3-Mercaptopropyl)trimethoxysilane (3-MPTS) in ethanol for 72 hours to form a self-assembled monolayer (SAM). The thiol group binds covalently to the silver.

- Hydrolyze the SAM by immersing the substrate in a 10 mM NaOH aqueous solution for 4 hours. This creates silanetriols.

- QD Deposition: Immerse the functionalized substrate into a dilute suspension (e.g., 0.25 µM) of silica-coated QDs for 48 hours. The silanetriols on the SAM condense with the silica shell of the QDs, immobilizing them. The ~10 nm silica shell acts as a well-defined spacer to prevent quenching [38].

- Lift-Off: Perform a final lift-off step with acetone to remove excess metal, leaving QDs predominantly on the nanorods.
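The solution concentrations in the protocol translate into weighed amounts with simple arithmetic. The sketch below assumes a hypothetical 50 mL batch volume and uses molar masses of 196.34 g/mol for 3-MPTS and 40.00 g/mol for NaOH; check both values against your supplier's datasheet before use. The function name is illustrative.

```python
def solute_mass_mg(conc_mM, volume_mL, molar_mass_g_per_mol):
    """Mass of solute (mg) needed for a given concentration and volume."""
    micromoles = conc_mM * volume_mL                    # mmol/L * mL = umol
    return micromoles * molar_mass_g_per_mol / 1000.0   # ug -> mg


BATCH_ML = 50.0  # hypothetical batch volume, adjust to your container

mpts_mg = solute_mass_mg(40.0, BATCH_ML, 196.34)  # 40 mM 3-MPTS in ethanol
naoh_mg = solute_mass_mg(10.0, BATCH_ML, 40.00)   # 10 mM NaOH in water

print(f"3-MPTS: {mpts_mg:.1f} mg per {BATCH_ML:.0f} mL")
print(f"NaOH:   {naoh_mg:.1f} mg per {BATCH_ML:.0f} mL")
```

The QD suspension is not included because converting 0.25 µM to a mass requires the particle molar mass, which depends on the specific QD batch.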

Troubleshooting Steps:

- Check Spectral Overlap: Measure the extinction spectrum of your plasmonic array and the photoluminescence (PL) spectrum of your QDs. Ensure the surface lattice resonance (SLR) or LSPR peak overlaps with the QD emission band [38].

- Verify Coupling: Use angle-resolved spectroscopy to acquire the emission spectrum. If coupling is successful, the QD emission will follow the narrow dispersion of the SLR, and directionality will be observed [38].

- Confirm Distance Control: Use atomic force microscopy (AFM) to verify an increase in height (~30 nm) of the functionalized nanorods, confirming the presence of the QDs [38].

- Measure Enhancement: Conduct fluorescence lifetime measurements using time-correlated single-photon counting (TCSPC). A reduction in fluorescence lifetime indicates an enhancement of the spontaneous emission rate due to the Purcell effect [38] [39].

Guide 2: Enhancing Multiexciton Emission in Single QDs

Problem: Biexciton emission from single QDs is quenched by fast Auger recombination, limiting brightness.

Objective: Use a plasmonic nanoantenna to enhance the radiative decay rate of both monoexcitons and biexcitons, counteracting non-radiative decay.

Materials & Protocols:

This protocol is based on the controlled coupling of a single "giant" QD to a gold nanocone antenna [39].

- Nanoantenna Fabrication: Fabricate gold nanocones on a glass substrate using focused ion beam milling.

- QD Selection: Use photostable "giant" QDs (e.g., CdSe/CdS core/shell with multiple shells) to suppress blinking and photodegradation during long measurements [39].

- Nanopositioning: Use a shear-force microscope with a glass fiber tip to pick up a single QD and position it with nanoscale precision in the near-field of the gold nanocone. Distance stabilization of a few nanometers is critical.

- Optical Measurement: Use a total internal reflection fluorescence (TIRF) microscope with a high-NA objective (e.g., NA=1.4) and a picosecond pulsed laser (e.g., 532 nm) for excitation.

- Data Analysis:

- Perform fluorescence lifetime decay measurements on the same QD both on a bare glass substrate and in the near-field of the antenna.

- Fit the decay curves with a bi-exponential model to extract lifetimes and relative weights for the monoexciton (long lifetime) and biexciton (short lifetime) components.

- Calculate the radiative (γr) and non-radiative (γnr) decay rates from the lifetime and quantum efficiency data.
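The last analysis step follows from two standard relations: the measured lifetime is the inverse of the total decay rate, τ = 1/(γr + γnr), and the quantum efficiency is QE = γr/(γr + γnr). The sketch below applies them to one emitter state on glass and on the antenna. The function name and all numerical inputs are hypothetical and are not results from [39].

```python
def decay_rates(lifetime_ns, quantum_efficiency):
    """Split the total decay rate 1/tau into radiative and non-radiative parts.

    Uses QE = gamma_r / (gamma_r + gamma_nr) and tau = 1 / (gamma_r + gamma_nr).
    Both rates are returned in ns^-1.
    """
    total = 1.0 / lifetime_ns
    gamma_r = quantum_efficiency * total
    return gamma_r, total - gamma_r


# Hypothetical inputs for one emitter state (illustrative only)
g_r_glass, g_nr_glass = decay_rates(lifetime_ns=20.0, quantum_efficiency=0.5)
g_r_ant, g_nr_ant = decay_rates(lifetime_ns=0.4, quantum_efficiency=0.8)

radiative_enhancement = g_r_ant / g_r_glass
print(f"gamma_r on glass:   {g_r_glass:.3f} ns^-1")
print(f"gamma_r on antenna: {g_r_ant:.3f} ns^-1")
print(f"radiative rate enhancement: {radiative_enhancement:.0f}x")
```

A shorter lifetime alone does not prove radiative enhancement, since an increase in γnr shortens τ as well. Combining the lifetime with the quantum efficiency, as done here, is what separates the two contributions.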

Troubleshooting Steps:

- Ensure Single QD Measurement: Work at low dilution concentrations and confirm single-emitter behavior through photon antibunching measurements if necessary.

- Maximize Positioning Accuracy: The enhancement is extremely sensitive to position and orientation. Perform a lateral scan to find the location of maximum fluorescence enhancement, which should coincide with the antenna's hot spot [39].

- Decouple Excitation Enhancement: To isolate the pure spontaneous emission enhancement (Purcell factor), design your nanoantenna so its plasmon resonance coincides with the QD emission, not the excitation laser wavelength. This keeps the excitation enhancement factor (Kexc) close to 1 [39].

- Quantify Biexciton Enhancement: Compare the biexciton lifetime and quantum efficiency with and without the antenna. A successful experiment should show a significant reduction in lifetime and an increase in quantum efficiency for the biexciton state [39].

Quantitative Data & Reagent Solutions

Table 1: Reported Enhancement Factors for QD-Plasmonic Systems

| Plasmonic Structure | QD Type | Enhancement Factor & Key Metric | Experimental Conditions | Key Requirement |

|---|---|---|---|---|

| Gold Nanocone Antenna [39] | Single "Giant" CdSe/CdS QD | 109x (Monoexciton γr); 100x (Biexciton γr) | QD positioned with nm-accuracy; Plasmon resonance at ~625 nm | Nanoscale positioning is critical |

| Silver Nanoparticle Array [38] | Silica-coated QDs | Enhanced directionality & reduced lifetime | Coupling to Surface Lattice Resonance (SLR) | Periodicity of array must be tuned to QD emission |

| Film-Coupled Ag Nanocube [37] | Organic Dyes / QDs | >2000x (Total Fluorescence) | Emitters in vertical dielectric gap <10 nm | Control of sub-10nm vertical gap |

| DNA Origami Nanoantenna [37] | Single Emitter | 5000x (Fluorescence) | Emitter placed in 6nm gap of Au sphere dimer | Precise self-assembly using DNA template |

The Scientist's Toolkit: Essential Research Reagents

| Reagent / Material | Function in Experiment | Troubleshooting Tip |

|---|---|---|

| (3-Mercaptopropyl)trimethoxysilane (3-MPTS) [38] | Bifunctional linker for covalent immobilization of silica-coated QDs on silver surfaces. | Ensure prolonged immersion (e.g., 72 hrs) for complete SAM formation. Hydrolysis in NaOH is crucial for QD binding. |

| Silica-Coated QDs [38] | Fluorescent emitters with a protective, functionalizable shell. | The silica shell (e.g., ~10 nm) acts as a precise spacer to prevent quenching while allowing strong near-field interaction. |

| "Giant" Core/Shell QDs [39] | Highly photostable QDs with suppressed blinking and high initial quantum efficiency. | Essential for single-QD measurements requiring high photostability for quantitative rate analysis. |

| DNA Origami Structures [37] | Scaffold for bottom-up assembly of plasmonic nanostructures with precise nanogaps. | Allows placement of a single QD in a designed hot spot (e.g., between two Au nanoparticles) with ~5 nm accuracy. |

Advanced FAQ

Q3: How can I specifically enhance biexciton emission, which is normally quenched?

Biexciton emission is typically quenched by fast Auger recombination. Plasmonic nanoantennas can outcompete this non-radiative decay by drastically enhancing the biexciton's radiative decay rate. Experimental work has shown that with a gold nanocone antenna, the radiative rate of biexcitons in a single QD can be enhanced 100-fold, raising its quantum efficiency from a low value to over 70% [39]. This requires precise spectral overlap of the antenna's resonance with the biexciton emission and exact nanoscale positioning.

Q4: What are the best practices for characterizing the enhancement in my system?

Beyond measuring total intensity increase, perform these quantitative characterizations:

- Fluorescence Lifetime Imaging Microscopy (FLIM): A reduction in fluorescence lifetime (τ) is a direct signature of an increased radiative decay rate (γr ∝ 1/τ) due to the Purcell effect [38] [39].

- Time-Correlated Single Photon Counting (TCSPC): This technique can resolve multi-exponential decays, allowing you to separately analyze the enhancement of monoexciton and biexciton states [39].

- Angle-Resolved Spectroscopy: If using periodic arrays, this method confirms coupling to collective modes like SLRs, which manifest as narrow, directional features in the emission spectrum [38].

- Single Particle Studies: For the most unambiguous results, conduct measurements on single QD-plasmonic nanostructure pairs to avoid ensemble averaging [39] [40].

A technical guide for researchers troubleshooting low signal intensity in spectroscopic measurements.

FAQs on Ground vs. Whole Sample Analysis

Q1: Why does sample homogeneity significantly impact my spectroscopic signal intensity?

Sample homogeneity directly influences how radiation interacts with your material. Inhomogeneous samples lead to inconsistent light scattering and absorption, causing significant variations in the collected signal. This not only reduces the overall signal-to-noise ratio but also compromises the reproducibility of your measurements. Proper preparation, such as grinding, creates a uniform matrix, ensuring that the analyzed portion is representative of the entire sample and that light-matter interactions are consistent [41].

Q2: For rapid, on-site NIR analysis, should I use whole or ground samples?

The choice depends on a trade-off between analytical speed and predictive accuracy. Using whole, unprocessed samples is faster and ideal for initial, high-throughput screening. However, for most compositional traits beyond dry matter, grinding improves predictive accuracy. The interference from water and the inherent heterogeneity of whole samples can obscure the spectral signatures of other nutrients. The performance loss is more pronounced in high-moisture samples, where errors can increase by 60-70% compared to 10-15% in drier samples [42].

Q3: My whole lentil samples are yielding inconsistent protein readings. Will grinding help?

Yes, grinding is highly recommended for consistent results. Research on lentils using Near-Infrared Reflectance Spectroscopy (NIRS) has shown that while some modern spectrometers can achieve similar accuracy for whole and ground samples for certain components, grinding generally improves homogeneity and model performance. For ingredients and raw materials like lentils, grinding reduces particle size variation, which is a primary source of sampling error and light scattering, leading to more reliable and intense signals for protein and amino acid content [43].

Q4: What are the primary disadvantages of the KBr pellet technique for FT-IR?

While the KBr pellet technique is a standard method for creating homogeneous solid samples for FT-IR, it has several drawbacks:

- Hygroscopic Nature: KBr readily absorbs moisture from the air, which can lead to fogged pellets and introduce interfering infrared absorption bands [44].

- Time-Consuming: The process of grinding, mixing, and pressing pellets can be slow, taking up to five minutes per sample [44].

- Brittleness: The resulting pellets are fragile and can easily crack or break, requiring careful handling [44].

- Potential for Polymorphic Changes: The high pressure used in pellet formation can sometimes induce changes in the crystallinity of the sample, altering its spectral properties [44].

Q5: How can I improve the signal-to-noise ratio (SNR) in my Raman measurements?

Beyond sample preparation, the method of calculating the signal itself can enhance your effective SNR. Multi-pixel calculation methods, which use information from multiple pixels across a Raman band (e.g., calculating band area or fitting a function), can provide a 1.2 to over 2-fold increase in SNR compared to single-pixel methods. This effectively lowers your limit of detection (LOD) and can make the difference between a feature being statistically significant or not [22].

Troubleshooting Low Signal Intensity

Low signal intensity often stems from poor sample preparation, which introduces physical and chemical heterogeneities. The following workflow and table address common symptoms and solutions.

Symptom and Solution Guide

| Symptom | Primary Cause | Corrective Action | Application Note |

|---|---|---|---|

| Signal Fluctuations during replicate measurements. | Sample Heterogeneity: The analyzed portion is not representative. | Grind or mill the sample to a consistent, small particle size (<75 μm for techniques like XRF) [41]. | For powdered samples, use a swing grinding machine for tough materials to avoid heat-induced chemical changes [41]. |

| Broad or Weak Absorption Peaks. | Large Particle Size or Inappropriate Sample Form: Causes excessive light scattering. | For FT-IR, use the KBr pellet technique or mull technique to create a homogeneous, transparent sample [44]. For liquids, ensure proper solvent selection [41]. | The KBr pellet technique offers better resolution and fewer spectral interferences than the Nujol mull technique [44]. |

| High Background Noise or Unidentified Peaks. | Contamination or Matrix Effects: From equipment or impurities. | Thoroughly clean all apparatus between samples. Use high-purity solvents and binders. For XRF pelletizing, use pure cellulose or wax binders [41]. | Inadequate preparation causes up to 60% of all spectroscopic analytical errors [41]. |

| Poor Predictive Model Performance in NIR for whole samples. | Water Interference & Nutrient Obscuring: Water bands dominate the NIR spectrum. | Dry and grind the sample. Calibrations from undried samples show significantly lower predictive accuracy for most traits except Dry Matter [42]. | The performance loss is most acute in high-moisture samples (e.g., errors up by 60-70% for wet forage) [42]. |

Experimental Protocols for Homogeneity Comparison

Protocol 1: Verifying Homogeneity via NIR Predictive Performance

This protocol is adapted from studies on agricultural commodities to quantitatively compare the effect of grinding on analytical accuracy [42].

1. Objective: To determine the impact of sample grinding (whole vs. ground) on the predictive accuracy of a key nutritional component (e.g., protein content) using NIR spectroscopy.

2. Materials:

- Near-Infrared Spectrometer (e.g., benchtop NIR instrument)

- Laboratory grinder or mill

- Representative set of samples (e.g., 50+ units of a grain, powder, or ingredient)

- Reference method equipment (e.g., Kjeldahl apparatus for protein analysis)

3. Methodology:

- Sample Division: For each sample unit, split the material into two identical subsamples.

- Sample Processing:

- Whole Group: Keep one subsample whole and unprocessed.

- Ground Group: Grind the second subsample to a fine, consistent particle size.

- Spectral Acquisition: Acquire NIR spectra from all samples (both whole and ground) using the same instrument and settings.

- Reference Analysis: Determine the actual concentration of the analyte of interest (e.g., protein) for all samples using the standard reference method.

- Model Development & Comparison:

- Develop two separate Partial Least Squares (PLS) regression calibration models: one using spectra from whole samples and another using spectra from ground samples.

- Use a common set of validation samples not included in the model building.

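- Compare key performance metrics: Coefficient of Determination (R²) and Standard Error of Cross-Validation (SECV); for the independent validation set, the analogous metric is the Standard Error of Prediction (SEP).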

4. Expected Outcome: The model built from ground samples will typically show a higher R² and a lower SECV, demonstrating superior predictive accuracy due to enhanced sample homogeneity.
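
The metric comparison in step 3 can be sketched in a few lines of Python. This is a minimal illustration, not the cited study's code: the function names and the protein values are hypothetical, the predictions are assumed to come from PLS models fitted elsewhere, and the error is computed as a plain root-mean-square error, which is only one of several conventions used for SECV.

```python
import math

def r_squared(reference, predicted):
    """Coefficient of determination between reference and predicted values."""
    mean_ref = sum(reference) / len(reference)
    ss_res = sum((r - p) ** 2 for r, p in zip(reference, predicted))
    ss_tot = sum((r - mean_ref) ** 2 for r in reference)
    return 1.0 - ss_res / ss_tot

def rms_error(reference, predicted):
    """Root-mean-square error, used here as a simple SECV-style figure."""
    n = len(reference)
    return math.sqrt(sum((r - p) ** 2 for r, p in zip(reference, predicted)) / n)

# Hypothetical protein contents (%): reference method vs. the two models.
reference = [10.0, 12.0, 14.0, 16.0, 18.0]
pred_whole = [11.0, 11.0, 15.0, 15.0, 19.0]    # model built on whole samples
pred_ground = [10.5, 11.5, 14.0, 16.5, 17.5]   # model built on ground samples

for label, pred in (("whole", pred_whole), ("ground", pred_ground)):
    print(f"{label}: R2={r_squared(reference, pred):.3f}, "
          f"error={rms_error(reference, pred):.3f}")
```

With these illustrative numbers the ground-sample model has the higher R² and the lower error, which is the pattern the protocol expects.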

Protocol 2: FT-IR Solid Sampling Technique Comparison

This protocol outlines a direct comparison of common solid sample preparation methods for FT-IR spectroscopy, highlighting trade-offs between ease of use and spectral quality [44].

1. Objective: To evaluate different solid sampling techniques for FT-IR based on signal quality, ease of preparation, and risk of interference.

2. Materials:

- FT-IR Spectrometer

- Hydraulic Pellet Press

- KBr powder (spectroscopic grade)

- Nujol (mineral oil)

- Mortar and pestle

- Agate ball mill (optional)

3. Methodology:

- KBr Pellet Method:

- Grind ~1 mg of sample with 200 mg of dry KBr powder.

- Press the mixture in a hydraulic press at high pressure (e.g., 10-15 tons) for 1-2 minutes to form a transparent pellet.

- Mount the pellet in the spectrometer and acquire the spectrum.

- Nujol Mull Method:

- Grind a small amount of sample to a fine powder.

- Mix with a few drops of Nujol to create a thick paste.

- Sandwich the paste between two salt plates (e.g., NaCl) and acquire the spectrum.

- Solid Film/Solution Cast Film:

- Dissolve the solid in a volatile, non-aqueous solvent (e.g., chloroform, acetone).

- Place a few drops of the solution onto a salt plate.

- Allow the solvent to evaporate completely, leaving a thin film of the solute for analysis.

4. Data Interpretation: Compare the acquired spectra for resolution of sharp peaks, baseline flatness, and the presence of interfering absorption bands (e.g., from Nujol or solvent residues).

The Scientist's Toolkit: Essential Research Reagents & Materials

| Item | Function | Application Note |

|---|---|---|

| KBr (Potassium Bromide) | A transparent matrix for creating pellets for FT-IR analysis. It is hygroscopic and must be kept dry [44]. | FT-IR Spectroscopy. |

| Nujol (Mineral Oil) | A mulling agent used to suspend fine powder samples for FT-IR analysis. It exhibits C-H absorption bands that can interfere with analyte signals [44]. | FT-IR Spectroscopy (Mull Technique). |

| m-Nitrobenzyl Alcohol (NBA) | A common matrix for solid samples in Fast Atom Bombardment (FAB) Mass Spectrometry [45]. | Mass Spectrometry (FAB). |

| Cellulose/Wax Binders | Binders used to provide structural integrity to powdered samples during pelletizing for XRF analysis [41]. | X-Ray Fluorescence (XRF). |

| Lithium Tetraborate | A common flux used in fusion techniques to fully dissolve refractory materials for homogeneous glass disk formation [41]. | XRF for silicates, minerals, ceramics. |

| Volatile Modifiers (Formic Acid, Ammonium Acetate) | Additives used in liquid carrier streams to promote ionization and stabilize the analyte for ESI-MS analysis [45]. | Electrospray Ionization Mass Spectrometry (ESI-MS). |

| Sinapinic Acid / α-Cyano-4-hydroxycinnamic acid (CHCA) | Organic acids that act as matrices for desorption/ionization in Matrix-Assisted Laser Desorption/Ionization (MALDI) MS [45]. | MALDI Mass Spectrometry. |

Practical Troubleshooting Protocol: Systematic Diagnosis and Resolution

FAQs on Low Signal Intensity

What are the most common causes of low signal intensity in spectroscopy? Low signal intensity often stems from instrumental issues, accessory problems, or sample preparation errors. Common causes include a contaminated ATR crystal, misaligned optics, degraded light sources, improper detector settings, and environmental interference from vibrations or temperature fluctuations [10] [21].

How can I distinguish between an accessory problem and a detector problem? A systematic approach is required. First, run a background or blank measurement with the accessory in place but no sample. If the blank spectrum shows high noise, negative peaks, or an unstable baseline, the issue is likely with the accessory or environment. If the blank is stable but sample signals are weak, the problem may lie with the sample, the detector, or the source. Testing with a known standard can help isolate the detector's performance [10] [21].
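
The isolation logic described above can be written as a small decision function. This is a rough sketch: the function name, its three boolean inputs, and the returned labels are assumptions made for illustration, and the ordering of checks is one reading of the guidance rather than a prescribed procedure.

```python
def triage_low_signal(blank_is_stable, standard_signal_ok, sample_signal_ok):
    """Suggest where to look first, given three simple observations.

    blank_is_stable:    blank/background shows no high noise, negative peaks,
                        or drifting baseline
    standard_signal_ok: a known standard gives its expected intensity
    sample_signal_ok:   the sample of interest gives adequate signal
    """
    if not blank_is_stable:
        return "accessory or environment"
    if not standard_signal_ok:
        return "detector or source"
    if not sample_signal_ok:
        return "sample or sample preparation"
    return "no fault indicated"

# Stable blank, good standard, weak sample: look at the sample first.
print(triage_low_signal(True, True, False))
```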

What specific steps can I take to verify my ATR accessory is functioning properly? For ATR accessories, the most common issue is a dirty element [10].

- Inspect the ATR crystal visually for scratches or residue.

- Clean the crystal thoroughly with a recommended solvent and a soft cloth.

- Collect a new background spectrum on the clean crystal.

- Measure a well-understood standard (like a polymer film) and check for expected peak intensities and positions. Negative peaks in your sample spectrum indicate the background was collected on a dirty crystal [10].

My signal-to-noise ratio is poor. Is this a detector problem? A poor signal-to-noise ratio (SNR) can indicate detector issues, but it can also be caused by a weak source, insufficient purging, or electronic interference [22] [21]. To diagnose:

- Ensure adequate integration time and detector gain settings.

- Verify the instrument is properly purged to reduce atmospheric interference.

- Check for sources of electronic noise.

- Consult manufacturer specifications for the detector's typical SNR performance with a standard sample. Multi-pixel SNR calculations can also improve the limit of detection and provide a more robust assessment of signal quality [22].

Diagnostic Protocols and Procedures

Initial Assessment and Visual Inspection

Before advanced diagnostics, perform a basic inspection [21]:

- Accessory Check: Look for obvious damage, contamination, or misalignment of accessories like ATR crystals or fiber optic probes.

- Cables and Connections: Ensure all cables are secure and undamaged.

- Source Status: Check instrument indicators for source life or errors.

- Environment: Note any potential sources of vibration, temperature swings, or drafts.

Quantitative Detector Performance Verification

This procedure uses a stable luminescence or Raman standard to assess detector health quantitatively. The table below outlines key parameters to track.

Table 1: Key Parameters for Detector Performance Tracking

| Parameter | Description | Acceptance Criterion |

|---|---|---|

| Signal-to-Noise (SNR) | Ratio of peak signal intensity to baseline noise [22]. | ≥3:1 for detection limit; should meet or exceed the historical baseline for a standard. |

| Peak Intensity | Absolute signal count for a specific peak of the standard. | Within 10-15% of the historical average for the standard. |

| Spectral Noise | Standard deviation of the signal in a flat, featureless baseline region [22]. | Should be low and stable; significant increase indicates detector degradation or electronic interference [21]. |

| Dark Noise | Noise measured with the source off and detector active. | Should be low and stable per manufacturer's spec. |

Experimental Protocol:

- Select Standard: Choose a stable, solid or liquid standard with a well-characterized spectrum (e.g., a Raman standard like naphthalene, a luminescent standard, or a stable solvent for IR).

- Establish Baseline: Measure the standard using the exact same parameters (integration time, laser power, resolution) each time. Record the SNR, peak intensity, and baseline noise. This initial data set serves as your performance baseline.

- Routine Monitoring: Perform this measurement weekly or monthly, and whenever performance is suspect.

- Data Analysis: Compare current results to your baseline. A consistent drop in peak intensity or SNR suggests detector aging or source degradation. A sharp increase in noise suggests electronic issues or contamination [21].
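
The tracking calculations in this protocol can be sketched as follows. The function names, the toy spectrum, and the choice to subtract the mean baseline before dividing by the noise are assumptions for illustration. The 15% tolerance is the upper end of the range in Table 1 and should be replaced by your own acceptance criterion.

```python
import math
import statistics

def spectral_noise(intensities, baseline_region):
    """Standard deviation of the signal in a flat, featureless region."""
    return statistics.stdev(intensities[baseline_region])

def signal_to_noise(intensities, peak_index, baseline_region):
    """Baseline-corrected peak height divided by the baseline noise."""
    baseline_mean = statistics.mean(intensities[baseline_region])
    noise = spectral_noise(intensities, baseline_region)
    return (intensities[peak_index] - baseline_mean) / noise

def within_history(current_peak, historical_peak, tolerance=0.15):
    """True if the peak intensity is within the tolerance of its history."""
    return abs(current_peak - historical_peak) / historical_peak <= tolerance

# Toy spectrum of a standard: six baseline points, one peak, one tail point.
spectrum = [10, 12, 10, 12, 10, 12, 110, 11]
baseline = slice(0, 6)   # hypothetical featureless region
peak = 6                 # hypothetical index of the tracked peak

print("noise:", round(spectral_noise(spectrum, baseline), 3))
print("SNR:", round(signal_to_noise(spectrum, peak, baseline), 1))
print("peak within 15% of history:", within_history(spectrum[peak], 120))
```

Logging these three numbers at each routine check gives the trend data needed to separate a gradual intensity loss from a sudden rise in noise.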

Workflow for Systematic Diagnostics

The following diagram outlines a logical troubleshooting path for diagnosing low signal intensity.

Accessory-Specific Troubleshooting: ATR

ATR is a common sampling technique with specific failure modes. This workflow details the diagnostic steps.

The Scientist's Toolkit: Essential Research Reagent Solutions

The following table lists key materials required for the performance verification experiments described in this guide.

Table 2: Essential Materials for Performance Verification

| Item | Function | Example Materials |

|---|---|---|

| Stable Spectral Standard | Provides a consistent, known signal to verify detector response, SNR, and intensity over time. | Naphthalene (Raman), Polystyrene (IR/ATR), Luminescent standards (UV-Vis), Stable solvents (e.g., CCl4). |

| ATR Cleaning Solvents | Removes contamination from ATR crystals without damaging the crystal surface. | HPLC-grade methanol, acetone, isopropanol; suitable solvents vary by crystal material (ZnSe, diamond, etc.). |

| Certified Reference Material | Used for formal method validation and ensuring analytical accuracy against a traceable standard [46]. | NIST-traceable standards for your specific analyte. |

| Purging Gas | Reduces spectral interference from atmospheric water vapor and CO2 in the optical path. | Dry, compressed nitrogen or zero air. |

Technical FAQs: Solving Common ATR Problems

FAQ 1: Why does my ATR spectrum show strange negative peaks? This is a classic indicator of a contaminated ATR crystal. Negative absorbance peaks occur when a substance present during the background measurement (e.g., residue on a dirty crystal) is absent or different during the sample measurement [10]. The spectrometer interprets this change as a negative absorption. Common contaminants include residual sample from a previous run, oils from skin, or adhesive residues [9] [47].

- Solution: Clean the ATR crystal thoroughly with a soft cloth and an appropriate solvent (e.g., ethanol or methanol), then collect a new background spectrum with the clean, empty crystal. Always perform this step before analyzing a new sample [10] [48].
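
A short calculation shows why a dirty background produces negative peaks. Absorbance is computed from the ratio of the sample and background single-beam intensities, so a contaminant that lowers the background intensity at a band pushes the absorbance below zero. The intensity values below are made up for illustration.

```python
import math

def absorbance(sample_intensity, background_intensity):
    """Absorbance from single-beam intensities: A = -log10(I_sample / I_background)."""
    return -math.log10(sample_intensity / background_intensity)

# Background collected on a dirty crystal: residue absorbs at this band,
# so the background intensity is lower than it would be on a clean crystal.
dirty_background = 80.0
# Sample measured after the residue is gone and with no absorption at this band.
sample_on_clean_crystal = 100.0

print(absorbance(sample_on_clean_crystal, dirty_background))  # negative value
print(absorbance(50.0, 100.0))                                # ordinary positive band
```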

FAQ 2: My sample spectrum looks different from the reference standard. Could the problem be my sample? Yes, this is often due to a discrepancy between surface and bulk chemistry. Attenuated Total Reflection (ATR) is a surface-sensitive technique, typically probing only the first 0.5 to 2 microns of the sample [49] [50] [48]. The surface chemistry can be unrepresentative of the bulk material for several reasons:

- Surface Migration: Additives like plasticizers can migrate to or away from the surface over time [10].

- Surface Oxidation: The outer layer may be oxidized due to exposure to air, while the bulk remains unaffected [10] [51].

- Processing Effects: Sample preparation (e.g., molding, extrusion) can alter the surface composition.

- Solution: To analyze the bulk material, cut the sample to expose a fresh, interior surface and collect a new spectrum from this area. The spectrum from the fresh cut is often more representative of the true bulk composition [10].
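
The shallow sampling depth quoted above follows from the standard expression for ATR penetration depth, d_p = λ / (2π n1 √(sin²θ − (n2/n1)²)). The sketch below evaluates it under assumed conditions: a diamond crystal (n1 ≈ 2.4), a typical organic sample (n2 ≈ 1.5), and a 45° angle of incidence. These values are illustrative and are not taken from the cited sources.

```python
import math

def penetration_depth_um(wavenumber_cm1, n_crystal=2.4, n_sample=1.5, angle_deg=45.0):
    """ATR penetration depth in micrometres at a given wavenumber (cm^-1)."""
    wavelength_um = 1e4 / wavenumber_cm1          # convert cm^-1 to micrometres
    theta = math.radians(angle_deg)
    term = math.sin(theta) ** 2 - (n_sample / n_crystal) ** 2
    if term <= 0:
        raise ValueError("Angle is below the critical angle; no total reflection.")
    return wavelength_um / (2 * math.pi * n_crystal * math.sqrt(term))

for wavenumber in (4000, 1000):
    depth = penetration_depth_um(wavenumber)
    print(f"{wavenumber} cm^-1: about {depth:.2f} micrometres")
```

Under these assumptions the depth runs from roughly 0.5 µm at 4000 cm⁻¹ to roughly 2 µm at 1000 cm⁻¹, so bands at low wavenumber sample a thicker layer than bands at high wavenumber.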

FAQ 3: How can I verify that my ATR crystal is clean? You can perform a manual "clean check" procedure [47]:

- Set your FT-IR instrument to ATR mode and measure a background spectrum.

- Place a non-absorbing, hard item (e.g., an eggshell business card) on the crystal and apply the standard contact pressure. Measure its spectrum.

- Release the pressure, remove the item, and measure another spectrum on the bare crystal.

- Examine this final spectrum. If it is essentially flat, the crystal is clean. If distinct peaks are present, it indicates contamination from the sample, and the crystal requires cleaning [47].
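
The "essentially flat" judgment in the last step can be made repeatable with a simple numerical check. This is a sketch only: the function name and the 0.005 absorbance threshold are hypothetical, and a suitable threshold depends on your instrument's noise level.

```python
import statistics

def is_crystal_clean(absorbance_values, threshold=0.005):
    """Return True if no point deviates from the median by more than threshold."""
    baseline = statistics.median(absorbance_values)
    largest_deviation = max(abs(a - baseline) for a in absorbance_values)
    return largest_deviation <= threshold

flat_spectrum = [0.001, -0.001, 0.000, 0.002]       # noise only
residue_spectrum = [0.000, 0.001, 0.040, 0.000]     # one distinct band

print(is_crystal_clean(flat_spectrum))
print(is_crystal_clean(residue_spectrum))
```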

FAQ 4: What should I do if I suspect poor contact between my hard, rigid sample and the ATR crystal? Poor contact is a known challenge for hard solids and can lead to distorted or low-intensity spectra [52]. To ensure good contact:

- Apply Pressure: Use the instrument's pressure applicator to press the sample firmly against the crystal. Ensure the sample has a flat surface for even contact [47] [48].

- Use a Durable Crystal: Diamond ATR crystals are ideal for this, as their high hardness allows for the application of significant pressure without damaging the crystal [48].

Troubleshooting Guide: Low Signal Intensity

Low signal intensity is a common symptom in ATR-FTIR experiments. The following flowchart outlines a systematic diagnostic approach.

Detailed Corrective Actions

- Ensuring Good Sample-Crystal Contact: For rigid solids, the contact problem can create a microscopic air gap that severely attenuates the signal. Use accessories that apply controlled pressure to flatten the sample against the crystal. Diamond crystals are recommended for this, as they can withstand high pressure without damage [52] [48].