Specificity and Selectivity in Spectroscopic Analysis: Validation Strategies for Drug Development and Biomedical Research

Abstract

This article provides a comprehensive guide to validating the specificity and selectivity of spectroscopic methods, crucial for ensuring data integrity in drug development and biomedical research. It covers foundational principles, advanced methodological applications, troubleshooting for complex matrices, and rigorous validation protocols aligned with ICH/FDA guidelines. By integrating traditional chemometrics with emerging AI techniques, the content offers scientists a strategic framework for developing robust analytical procedures that accelerate regulatory approval and enhance research reliability.

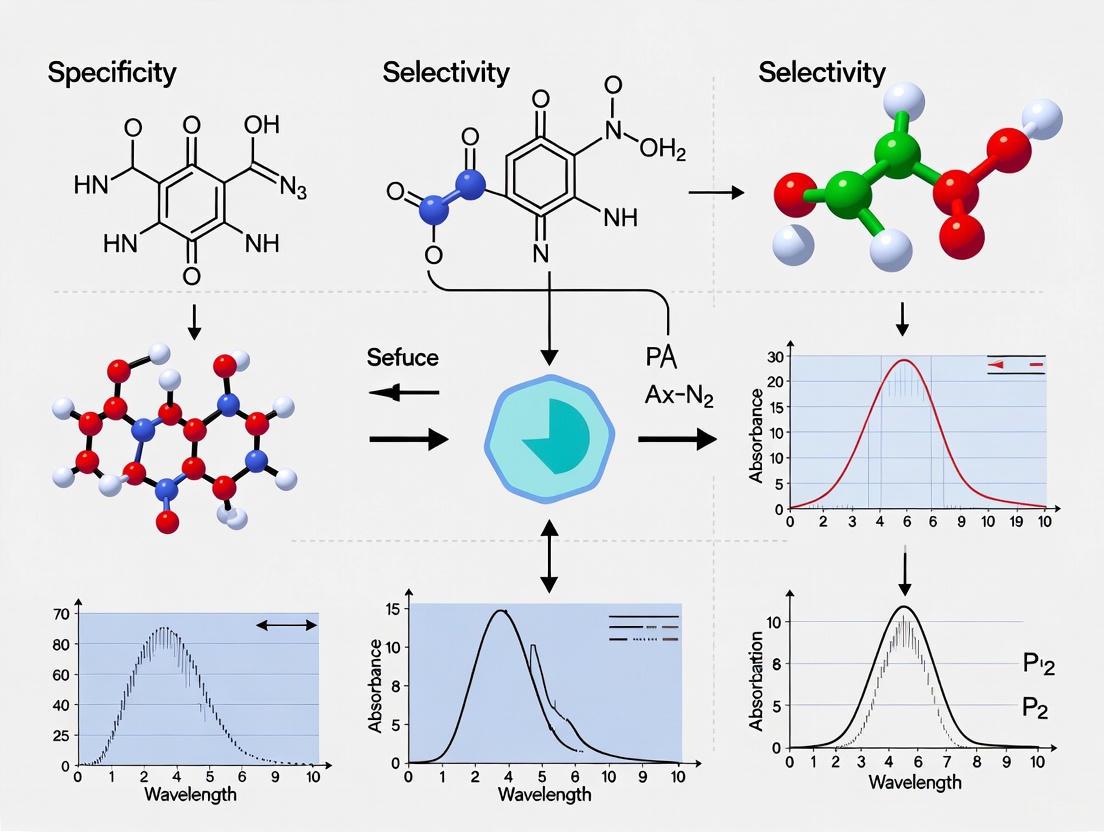

Core Principles: Defining Specificity and Selectivity in Spectroscopic Methods

In analytical science, and particularly in spectroscopic analysis and drug development, specificity and selectivity are foundational validation parameters. Although the two terms are often used interchangeably, they have distinct scientific meanings with significant implications for method reliability and regulatory acceptance. According to International Union of Pure and Applied Chemistry (IUPAC) recommendations, specificity represents the ultimate degree of selectivity, describing methods that respond exclusively to a single analyte in the presence of other components. Selectivity, in contrast, refers to the extent to which a method can determine particular analytes in a mixture or matrix without interference from other components, without implying exclusivity [1]. This distinction is not merely semantic; it underpins dependable analytical methods in environmental monitoring, pharmaceutical development, and clinical diagnostics, ensuring that measurements reflect true analyte presence and concentration without interference from complex sample matrices.

Defining the Spectrum: From Selectivity to Specificity

IUPAC Terminology and Practical Interpretation

The relationship between selectivity and specificity is best visualized as a spectrum, with poorly selective methods at one end and truly specific methods at the other. IUPAC describes specificity as the "ultimate of selectivity" [1], establishing a hierarchy in which all specific methods are inherently selective, but not all selective methods reach full specificity. This distinction becomes critically important when validating methods for regulated environments such as pharmaceutical quality control or environmental pollutant monitoring, where the claimed performance characteristics directly affect data integrity and decision-making.

Regulatory Context and Application Challenges

The January 2025 FDA guidance on biomarker method validation acknowledges this distinction, suggesting that traditional pharmacokinetic (PK) validation approaches serve only as a starting point [2]. For drug assays, specificity and selectivity are typically demonstrated through straightforward spike recovery experiments using the well-characterized drug product. Biomarker assays are more complex because they measure endogenous molecules that are present in the biological matrix from the outset. This difference calls for distinct validation approaches:

- Specificity Assessment: The central question shifts from simple spike recovery to demonstrating that critical reagents recognize both the standard calibrator material and the endogenous analyte in a similar manner, typically confirmed through careful parallelism studies [2].

- Selectivity Evaluation: Rather than focusing solely on spike recovery, selectivity for biomarker assays requires demonstrating parallelism across a range of dilutions in individual samples containing the endogenous analyte, verifying consistent method performance across biological diversity [2].

Experimental Protocols for Demonstrating Specificity and Selectivity

Case Study: Raman Spectroscopy for Heavy Metal Detection in Rice

A recent 2025 study investigating heavy metal stress in rice provides a robust experimental model for demonstrating selectivity in vibrational spectroscopy [3]. The protocol highlights how spectroscopic techniques can distinguish between different stressors based on their unique biochemical signatures.

Experimental Workflow:

Diagram 1: Experimental workflow for detecting heavy metal stress in rice using Raman spectroscopy and ICP-MS validation [3].

Detailed Methodology:

- Plant Cultivation and Treatment: Rice plants (Oryza sativa) were cultivated in a controlled hydroponic system for two weeks before being exposed to varying concentrations of arsenic (As), cadmium (Cd), and lead (Pb) in a dose-response experimental design [3].

- Spectral Acquisition: An Agilent Resolve hand-held Raman spectrophotometer with an 830 nm laser was used to collect spectra from rice leaves. Each spectrum was acquired for 1 s at 495 mW laser power, and 24 Raman spectra were collected for each treatment group weekly for six weeks [3].

- Reference Analysis: Inductively coupled plasma mass spectrometry (ICP-MS) using a PerkinElmer NexION 300D with a Cetac ASX-520 autosampler was performed on digested plant tissue to quantitatively determine heavy metal accumulation, establishing ground truth data [3].

- Data Processing and Chemometrics: Collected spectra were baselined and normalized. Advanced statistical analyses including analysis of variance (ANOVA), partial least squares discriminant analysis (PLS-DA), and two-dimensional correlation spectroscopy (2D-COS) were applied to identify significant spectral patterns and build predictive models [3].
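The baseline and normalization step can be illustrated with a short Python sketch. It is not the study's pipeline: the source does not state which baseline or normalization algorithm was used, so the straight-line baseline, the reference-band normalization, the function names `subtract_linear_baseline` and `normalize_to_band`, and the five-point spectrum are all assumptions made for illustration.

```python
from statistics import mean


def subtract_linear_baseline(intensities):
    """Subtract a straight line joining the first and last points (assumed baseline model)."""
    n = len(intensities)
    if n < 2:
        raise ValueError("need at least two points")
    start, end = intensities[0], intensities[-1]
    return [y - (start + (end - start) * i / (n - 1)) for i, y in enumerate(intensities)]


def normalize_to_band(shifts, intensities, band_cm1, half_width=5.0):
    """Scale a spectrum so the mean intensity around a reference band equals 1."""
    band = [y for x, y in zip(shifts, intensities) if abs(x - band_cm1) <= half_width]
    if not band or mean(band) == 0:
        raise ValueError("reference band missing or has zero intensity")
    scale = mean(band)
    return [y / scale for y in intensities]


# Invented spectrum: Raman shift in cm^-1, intensity in arbitrary units.
shifts = [1400.0, 1420.0, 1440.0, 1460.0, 1480.0]
raw = [10.0, 14.0, 30.0, 18.0, 14.0]

corrected = subtract_linear_baseline(raw)
# The 1440 cm^-1 reference position is an illustrative choice.
normalized = normalize_to_band(shifts, corrected, band_cm1=1440.0)
print(corrected)
print(normalized)
```

Real spectra usually need a curved baseline model (for example a polynomial or asymmetric least squares fit), but the order of operations stays the same: remove the background first, then scale to a stable reference so that spectra from different leaves and weeks are comparable.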

Key Research Reagent Solutions

Table 1: Essential research reagents and instrumentation for spectroscopic specificity/selectivity studies.

| Item/Reagent | Function in Experiment | Technical Specifications |
|---|---|---|
| Agilent Resolve Raman Spectrophotometer | Spectral data acquisition from plant samples | 830 nm laser wavelength, 495 mW power, 1 s acquisition time [3] |
| PerkinElmer NexION 300D ICP-MS | Quantitative elemental analysis for validation | Quadrupole ICP-MS with rhodium internal standard [3] |
| Yoshida Nutrient Solution | Standardized plant growth medium | Contains macronutrients (NH₄NO₃, NaH₂PO₄, etc.) and micronutrients (MnCl₂, H₃BO₃, etc.) [3] |
| Certified Reference Materials | ICP-MS calibration and method validation | Certified arsenic reference material in 2% nitric acid for generating 1–200 ng/mL calibration curve [3] |
| Chemometric Software (R, PLS_Toolbox) | Spectral data processing and pattern recognition | For ANOVA, PLS-DA, and 2D-COS analysis [3] |

Comparative Performance in Spectroscopic Techniques

Quantitative Analysis of Selectivity Performance

Table 2: Selectivity and specificity performance across analytical techniques.

| Analytical Technique | Demonstrated Capability | Experimental Evidence | Key Performance Metrics |
|---|---|---|---|
| Raman Spectroscopy (RS) | High selectivity for heavy metal stress | Distinguished As, Cd, Pb via unique carotenoid/phenylpropanoid signatures [3] | 84.5% classification accuracy with PLS-DA; dose-dependent spectral changes [3] |
| Surface-Enhanced Raman Spectroscopy (SERS) | High sensitivity but matrix susceptibility | Au clusters@rGO substrate achieved EF of 3.5×10⁷; NOM causes spectral artefacts [4] [5] | 10× sensitivity increase vs conventional SERS; microheterogeneous analyte distribution [4] [5] |
| Inductively Coupled Plasma Mass Spectrometry (ICP-MS) | High specificity for elemental analysis | Gold standard for heavy metal detection in plant tissue [3] | Low limit of detection; multi-analyte capability [4] [3] |
| Portable XRF/XRD (ID2B) | Moderate selectivity for field mineralogy | Combined XRD-XRF for in situ chemical/mineralogical characterization [4] | Rapid screening but light element detection limitations [4] |

Signaling Pathways and Molecular Mechanisms

The biochemical basis for Raman spectroscopy's selectivity lies in the distinct stress response pathways activated by different heavy metals in plants. These pathways produce unique molecular fingerprints detectable through vibrational spectroscopy.

Diagram 2: Heavy metal stress signaling pathways and detectable Raman spectral responses in plants [3].

Regulatory Importance and Industry Applications

Implications for Method Validation in Pharmaceutical Development

The specificity/selectivity distinction carries profound regulatory importance in drug development and biomarker validation. The recent FDA guidance emphasizes context-specific approaches, where:

- Drug Assay Validation relies on spike recovery experiments of the well-characterized drug product in biological matrices [2].

- Biomarker Assay Validation requires parallelism studies demonstrating consistent recognition of endogenous analyte across biological diversity [2].

This framework ensures that analytical methods are properly validated for their intended use, whether for pharmacokinetic studies, diagnostic applications, or environmental monitoring. Regulatory agencies increasingly require explicit demonstration of how methods distinguish target analytes from potential interferents in complex matrices.

Advanced Applications in Environmental and Food Analysis

The principles of specificity and selectivity find critical applications in environmental and food safety monitoring:

- Nanoplastic Detection: Advanced Raman techniques including SERS address challenges in detecting nanoplastics with required sensitivity and selectivity, though matrix effects remain problematic [4].

- Food Contaminant Screening: Wide Line SERS (WL-SERS) enables tenfold sensitivity increases for detecting contaminants like melamine in raw milk, while machine learning models achieve 99.85% accuracy in identifying adulterants [5].

- Single-Cell Analysis: ICP-MS/MS advancements enable high-resolution elemental analysis at the single-cell level, demonstrating exceptional selectivity for evaluating nanoparticle toxicity and cellular elemental composition [4].

The distinction between specificity and selectivity is far more than terminological pedantry; it represents a fundamental principle in analytical science with direct implications for method validation, regulatory compliance, and measurement reliability. As spectroscopic techniques continue to evolve with enhancements like SERS substrates, portable XRD-XRF instruments, and AI-powered spectral analysis [4] [5], the rigorous application of these concepts becomes increasingly critical. For researchers and drug development professionals, a precise understanding of specificity as the ultimate expression of selectivity provides a crucial framework for developing methods that generate trustworthy data, ensure public safety, and meet the exacting standards of regulatory scrutiny across pharmaceutical, environmental, and clinical domains.

The Role of Specificity in Biomarker Validation and Drug Development Pipelines

In the landscape of modern drug development, the concepts of specificity and selectivity are foundational to generating reliable and actionable data. While often used interchangeably, they address distinct analytical challenges. Specificity is the ability of a method to measure the analyte accurately and exclusively in the presence of other components in the sample, such as metabolites, degradants, or matrix interferences. Selectivity is the ability of the method to differentiate and quantify the analyte amidst other analytes that may produce similar signals [2] [6]. For biomarker validation, demonstrating that critical reagents recognize both the standard calibrator material and the endogenous analyte in a similar fashion is paramount; this is typically confirmed through careful parallelism studies rather than simple spike recovery experiments used for traditional drug assays [2].

The January 2025 FDA guidance on Bioanalytical Method Validation for Biomarkers has intensified the focus on these parameters, suggesting the use of pharmacokinetic (PK) validation approaches as a starting point but acknowledging that biomarkers demand fundamentally different scientific approaches due to their endogenous nature and the complexity of their biological context [2] [7]. This guide will objectively compare the performance of various analytical techniques and experimental protocols used to establish specificity and selectivity, providing a framework for researchers to select the most appropriate methods for their specific needs in spectroscopic analysis and drug development.

Comparative Analysis of Specificity Assessment Techniques

A "one-size-fits-all" approach is not applicable for specificity validation. The choice of technique is driven by the context of use (COU), the biological matrix, and the required sensitivity. The following sections compare key methodologies, from spectroscopic techniques to cellular profiling assays.

Spectroscopic Techniques for Elemental Analysis

The selection of a spectroscopic method depends heavily on the analytical need, such as the elements targeted, required sensitivity, and sample preparation tolerance. The table below compares the performance of four common techniques for multielemental analysis of biological tissues like hair and nails [8].

Table 1: Comparison of Spectroscopic Techniques for Multielemental Analysis

| Technique | Suitable Elements | Key Strengths | Sample Preparation | Primary Applications |
|---|---|---|---|---|
| Energy Dispersive X-ray Fluorescence (EDXRF) | Light elements at high concentrations (S, Cl, K, Ca) | Rapid, non-destructive | Minimal | Disease diagnostics, environmental monitoring |
| Total Reflection X-ray Fluorescence (TXRF) | Broad range, including Bromine (Br) | Information on most elements present | Moderate | Forensic investigations, material science |
| Inductively Coupled Plasma Optical Emission Spectroscopy (ICP-OES) | Major, minor, and trace elements (except Cl) | Wide dynamic range, good sensitivity | Extensive (digestion) | Research requiring broad elemental quantification |
| Inductively Coupled Plasma Mass Spectrometry (ICP-MS) | Major, minor, and trace elements (except Cl) | Excellent sensitivity, very low detection limits | Extensive (digestion) | Trace element analysis, exposure monitoring |

Advanced Cellular Selectivity Profiling Methods

For characterizing small molecule interactions in a physiologically relevant environment, cellular selectivity profiling is indispensable. Biochemical assays, while quantitative, often fail to predict true cellular selectivity. The table below compares three advanced live-cell profiling methods [9].

Table 2: Comparison of Cellular Selectivity Profiling Methods

| Method | Principle | Throughput | Target Coverage | Key Advantage |
|---|---|---|---|---|
| Chemical Proteomics | Probe-based enrichment of bound proteins for MS analysis | Low to Medium | Proteome-wide | Unbiased identification of novel off-targets |
| CETSA-MS (Cellular Thermal Shift Assay - Mass Spectrometry) | Measure protein stabilization upon compound binding (probe-free) | Low to Medium | Proteome-wide | Probe-free; detects ligand-induced stability changes |
| NanoBRET Target Engagement | BRET-based probe displacement using NanoLuc-tagged proteins | High (adaptable to HTS) | Defined panels (e.g., 192 kinases) | Direct, quantitative affinity measurement in live cells |

The performance differences between these methods can lead to distinct biological insights. For instance, profiling the kinase inhibitor Sorafenib against a panel of 192 kinases revealed an improved selectivity profile in live cells compared to cell-free biochemical analysis. Crucially, the cellular NanoBRET assay uncovered two novel off-targets, NTRK2 and RIPK2, which were missed by biochemical profiling, highlighting the potential of cellular methods for de-risking drug candidates [9].

Experimental Protocols for Specificity and Selectivity Assessment

Protocol 1: Biomarker Assay Parallelism for Specificity

Demonstrating specificity in biomarker assays requires parallelism experiments to confirm consistent recognition of the endogenous analyte by critical reagents [2].

Workflow Overview: Biomarker Parallelism Testing

Detailed Methodology:

- Sample Preparation: Prepare a dilution series of the reference standard calibrator spiked into a surrogate matrix. In parallel, prepare a dilution series of individual, endogenous sample matrices (e.g., serum or plasma) from multiple donors [2] [7].

- Analysis: Analyze all dilution series using the validated biomarker assay.

- Data Analysis: Plot the measured response against the dilution factor for both the calibrator curve and the individual sample curves.

- Interpretation: Assess the curves for superimposability or parallelism. Consistent, parallel curves between the calibrator and the endogenous samples demonstrate that the assay reagents recognize both entities similarly, thereby confirming assay specificity for the endogenous biomarker [2].
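One common way to put a number on parallelism is to back-calculate each diluted sample against the calibrator curve, multiply by the dilution factor, and check how tightly the dilution-corrected concentrations agree. The Python sketch below illustrates that idea under stated assumptions: the function name `parallelism_cv`, the donor data, and the 30% CV limit are illustrative and are not taken from the guidance or from the cited sources.

```python
from statistics import mean, stdev


def parallelism_cv(dilution_series):
    """Return dilution-corrected concentrations and their percent CV.

    dilution_series: list of (dilution_factor, back_calculated_concentration).
    """
    if len(dilution_series) < 2:
        raise ValueError("need at least two dilutions")
    corrected = [factor * conc for factor, conc in dilution_series]
    cv_pct = 100.0 * stdev(corrected) / mean(corrected)
    return corrected, cv_pct


MAX_CV_PCT = 30.0  # illustrative acceptance limit (assumption)

# Invented back-calculated concentrations for two donors at 2x, 4x, and 8x dilution.
donors = {
    "donor_A": [(2, 50.0), (4, 25.0), (8, 12.5)],
    "donor_B": [(2, 50.0), (4, 20.0), (8, 8.0)],
}

results = {}
for donor, series in donors.items():
    corrected, cv = parallelism_cv(series)
    results[donor] = cv
    verdict = "parallel" if cv <= MAX_CV_PCT else "non-parallel"
    print(donor, corrected, round(cv, 1), verdict)
```

A sample whose corrected concentration drifts steadily with dilution, as for the second donor here, suggests that the reagents do not treat the endogenous analyte and the calibrator identically, even when the CV still falls inside the chosen limit.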

Protocol 2: Cross-Signal Contribution in LC-MS/MS

For techniques like LC-MS/MS, which have intrinsic specificity, validation must rule out subtle interferences, especially for ultra-trace analysis of genotoxic impurities like nitrosamines [6].

Workflow Overview: LC-MS/MS Cross-Signal Testing

Detailed Methodology:

- Sample Preparation: Prepare solutions containing each potential interfering analyte (e.g., known impurities or degradants) individually at expected maximum concentrations. Prepare a separate solution where all analytes are spiked together [6].

- Chromatographic Analysis: Inject the individual and combined solutions into the LC-MS/MS system. Monitor all multiple reaction monitoring (MRM) transitions and retention times and, where a high-resolution instrument is used, accurate mass.

- Data Analysis: Compare the signal for each analyte in the individual injection to its signal in the spiked mixture.

- Interpretation: The method is specific if no significant signal alteration, cross-talk, or in-source fragmentation is observed that would impact the accurate quantification of any analyte. This experiment validates signal integrity in a complex mixture [6].
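The comparison in the data analysis step reduces to a percent change per analyte between the individual injection and the combined mixture. The following Python sketch shows one way to tabulate it; the function name `cross_signal_report`, the peak areas, and the 15% tolerance are assumptions chosen for illustration, and the acceptance limit for a real method should come from its validation plan.

```python
def cross_signal_report(individual, mixture, tolerance_pct=15.0):
    """Compare each analyte's peak area alone versus in the combined mixture.

    Returns {analyte: (percent_change, within_tolerance)}.
    """
    report = {}
    for analyte, area_alone in individual.items():
        if area_alone <= 0:
            raise ValueError(f"non-positive reference area for {analyte}")
        change_pct = 100.0 * (mixture[analyte] - area_alone) / area_alone
        report[analyte] = (change_pct, abs(change_pct) <= tolerance_pct)
    return report


# Invented peak areas for two nitrosamines, injected alone and together.
individual = {"NDMA": 1000.0, "NDEA": 800.0}
mixture = {"NDMA": 1020.0, "NDEA": 600.0}

report = cross_signal_report(individual, mixture)
for analyte, (change_pct, ok) in report.items():
    print(f"{analyte}: {change_pct:+.1f}% {'pass' if ok else 'investigate'}")
```

A large drop in the mixture, as for the second analyte here, would point toward suppression or cross-talk and would call for a closer look at chromatographic separation and transition selection.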

Protocol 3: Cellular Target Engagement via NanoBRET

This protocol quantitatively measures a compound's affinity for its target directly in live cells, providing a physiologically relevant selectivity profile [9].

Detailed Methodology:

- Cell Preparation: Seed cells expressing NanoLuc-tagged target proteins of interest in a multi-well plate.

- Compound Treatment: Add a titration series of the test compound to the cells.

- Probe Addition: Add a constant concentration of a cell-permeable, fluorescent tracer that binds to the target protein and produces a BRET signal with the NanoLuc tag.

- Signal Measurement: Measure the donor emission (from NanoLuc) and the acceptor emission (from the tracer); the ratio of acceptor to donor emission gives the BRET signal. The test compound displaces the tracer, decreasing the BRET signal in a dose-dependent manner.

- Data Analysis: Plot the normalized BRET ratio against the compound concentration to determine the apparent cellular IC₅₀ or Kd value. By profiling one compound against a panel of related targets (e.g., a kinome panel), a quantitative cellular selectivity index is generated [9].
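The arithmetic behind the final step can be sketched in Python. This is a simplified illustration, not the published workflow: a four-parameter logistic fit is the usual choice for dose-response data, whereas the sketch estimates the IC₅₀ by log-linear interpolation between the two bracketing concentrations so that it needs only the standard library. All function names and numerical values are hypothetical.

```python
import math


def normalized_bret(acceptor, donor, ratio_no_compound, ratio_full_displacement):
    """Convert raw emissions to percent of tracer signal remaining."""
    ratio = acceptor / donor
    span = ratio_no_compound - ratio_full_displacement
    return 100.0 * (ratio - ratio_full_displacement) / span


def ic50_by_interpolation(concentrations, responses_pct):
    """Estimate IC50 by interpolating on log10(concentration) around 50% response.

    concentrations must be ascending; returns None if 50% is never crossed.
    """
    points = list(zip(concentrations, responses_pct))
    for (c_lo, r_lo), (c_hi, r_hi) in zip(points, points[1:]):
        if r_lo >= 50.0 >= r_hi and r_lo != r_hi:
            frac = (r_lo - 50.0) / (r_lo - r_hi)
            log_ic50 = math.log10(c_lo) + frac * (math.log10(c_hi) - math.log10(c_lo))
            return 10 ** log_ic50
    return None


def selectivity_index(ic50_off_target, ic50_on_target):
    """Fold difference in apparent cellular potency between two targets."""
    return ic50_off_target / ic50_on_target


# Invented titration (nM) and normalized responses for two targets.
concs_nm = [1.0, 10.0, 100.0, 1000.0]
on_target = ic50_by_interpolation(concs_nm, [95.0, 75.0, 25.0, 5.0])
off_target = ic50_by_interpolation(concs_nm, [100.0, 98.0, 90.0, 50.0])
index = selectivity_index(off_target, on_target)
print(round(on_target, 1), round(off_target, 1), round(index, 1))
```

Repeating the same calculation across every target in a panel yields the per-target fold differences that make up a cellular selectivity profile.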

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful specificity validation relies on a suite of specialized reagents and tools. The following table details key solutions for the featured experiments.

Table 3: Key Research Reagent Solutions for Specificity Validation

| Item | Function / Description | Application Context |
|---|---|---|
| Certified Reference Materials (CRMs) | Materials with certified composition and purity for method calibration and accuracy assessment. | Spectroscopic analysis (e.g., ED-XRF, WD-XRF) to validate detection limits and elemental quantification [10]. |
| Surrogate Matrix | A matrix free of the endogenous analyte, used to prepare calibration standards for biomarker assays. | Ligand-binding assays (e.g., ELISA) where the native matrix contains the biomarker, enabling standard curve generation [7]. |
| NanoLuc-Fusion Constructs | Vectors for expressing target proteins (e.g., kinases) fused to a small, bright luciferase tag. | NanoBRET Target Engagement assays for live-cell, high-throughput selectivity profiling [9]. |
| Bioorthogonal Chemical Probes | Compound derivatives containing a small, live-cell compatible reactive handle (e.g., alkyne) for subsequent capture. | Chemical proteomics in intact cells for proteome-wide identification of compound off-targets [9]. |
| Stable Isotope-Labeled Internal Standards | Analytically identical molecules labeled with heavy isotopes (e.g., ¹³C, ¹⁵N) for mass spectrometric detection. | LC-MS/MS bioanalysis to correct for matrix effects and variability in sample preparation, improving accuracy and precision [6]. |

The rigorous demonstration of specificity and selectivity is not a mere regulatory checkbox but a scientific imperative that underpins the entire drug development pipeline. As evidenced by the comparative data and protocols, the choice of method—whether spectroscopic, chromatographic, or cell-based—must be driven by a fit-for-purpose strategy aligned with the biomarker's or drug's context of use [2] [11] [7]. The evolving regulatory landscape, exemplified by the 2025 FDA guidance, emphasizes that traditional drug assay approaches are insufficient for the complex reality of endogenous biomarkers. By leveraging advanced tools like cellular target engagement assays and cross-signal contribution experiments, researchers can generate more physiologically relevant and reliable data, ultimately de-risking drug candidates and accelerating the delivery of safe and effective therapies to patients.

The validation of specificity and selectivity forms the cornerstone of reliable spectroscopic analysis in research and development. For scientists and drug development professionals, choosing the appropriate analytical technique is paramount, as it directly impacts the accuracy, efficiency, and regulatory compliance of their work. This guide provides an objective comparison of four widely used spectroscopic techniques—X-Ray Fluorescence (XRF), Inductively Coupled Plasma Mass Spectrometry (ICP-MS), Fourier-Transform Infrared (FT-IR) Spectroscopy, and Raman Spectroscopy—framed within the critical context of specificity and selectivity validation. The ability of a technique to unambiguously identify an analyte (specificity) and distinguish it from other components in a mixture (selectivity) is a fundamental validation requirement in pharmaceutical methods and materials characterization. We explore how each technique meets these challenges, supported by experimental data and detailed protocols to inform method development and instrumental selection.

X-Ray Fluorescence (XRF)

XRF is an analytical technique used to determine the elemental composition of materials. It operates by exposing a sample to high-energy X-rays, causing the atoms to become excited and emit secondary (or fluorescent) X-rays that are characteristic of specific elements. By measuring the energies and intensities of these emitted X-rays, the instrument can identify and quantify the elements present [12] [13]. XRF is categorized into Energy Dispersive (EDXRF) and Wavelength Dispersive (WDXRF) systems, with the latter typically offering higher resolution and sensitivity, capable of detecting elements from beryllium to curium [13]. Its non-destructive nature and minimal sample preparation make it highly valuable for quality control and regulatory compliance across various industries.

Inductively Coupled Plasma Mass Spectrometry (ICP-MS)

ICP-MS is a powerful technique for trace element and isotopic analysis. In ICP-MS, a liquid sample is nebulized into an aerosol and transported into a high-temperature argon plasma (approximately 5500–6500 K), where it is atomized and ionized. The resulting ions are then separated and quantified based on their mass-to-charge ratio by a mass spectrometer [12] [14] [15]. This process provides exceptionally low detection limits, often in the parts per trillion (ppt) range, and the ability to measure almost all elements in the periodic table [12] [15]. The technique is known for its high sample throughput and wide dynamic range, making it a gold standard for ultratrace analysis in clinical, environmental, and pharmaceutical fields [14].

Fourier-Transform Infrared (FT-IR) Spectroscopy

FT-IR spectroscopy is a molecular analysis technique that probes the vibrational energy levels of chemical bonds. It measures the absorption of infrared light by a sample, producing a spectrum that serves as a molecular fingerprint. Attenuated Total Reflectance (ATR) is a prevalent sampling accessory for FT-IR that allows for the direct analysis of solids, liquids, and powders without extensive preparation [16]. ATR-FTIR works by pressing the sample against a high-refractive-index crystal. An infrared beam undergoes total internal reflection within the crystal, generating an evanescent wave that interacts with the sample, selectively absorbing energy at characteristic wavelengths [16]. This technique is particularly useful for identifying functional groups, characterizing molecular structure, and studying chemical changes in materials.

Raman Spectroscopy

Raman spectroscopy is based on the inelastic scattering of monochromatic light, typically from a laser. When light interacts with a molecule, a tiny fraction of the scattered light shifts in energy from the original laser frequency. These shifts correspond to the vibrational energies of the chemical bonds, providing a unique spectral fingerprint of the material [3] [17]. Because water is a weak Raman scatterer, Raman spectroscopy is far less affected by water than FT-IR, which makes it suitable for analyzing aqueous solutions. It is a non-destructive technique that requires minimal sample preparation and is highly effective for identifying polymorphs, studying carbon-based materials, and imaging the spatial distribution of components in a heterogeneous sample [3] [17].

Comparative Analysis of Performance Characteristics

The following tables summarize the key performance metrics, strengths, and limitations of each technique, providing a clear basis for comparative evaluation.

Table 1: Quantitative Performance Metrics for Spectroscopic Techniques

| Technique | Typical Detection Limits | Elemental/Molecular Range | Analytical Speed | Sample Throughput |
|---|---|---|---|---|
| XRF | ppm to ~100% [13]; High-power WDXRF can achieve sub-ppm [13] | Elements from Na (11) to Cm (96); WDXRF from Be (4) [13] | Rapid (seconds to minutes) [12] | High [12] |
| ICP-MS | ppt (ng/L) range [12] [15] | Most elements in the periodic table [15] | Rapid (multi-element analysis in a single run) [14] [15] | Very High [14] [15] |
| FT-IR (ATR) | ~1% (highly dependent on sample and mode) | Molecular; functional groups and molecular structure [16] [17] | Very Rapid (seconds) [16] | High [16] |
| Raman | ~0.1–1% (can be lower with enhanced techniques) | Molecular; vibrational fingerprints, symmetry [3] [17] | Rapid (seconds to minutes) [3] | Moderate to High [3] |

Table 2: Key Strengths and Limitations Governing Specificity and Selectivity

| Technique | Core Strengths | Key Limitations |
|---|---|---|
| XRF | Non-destructive [13]; Minimal sample preparation [12]; Direct analysis of solids, liquids, powders [13]; Quantitative and qualitative analysis | Cannot detect light elements (H-Li) easily [13]; Limited sensitivity vs. ICP-MS [13]; Generally cannot distinguish isotopes or oxidation states [13]; Matrix effects can be significant [13] |
| ICP-MS | Exceptionally low detection limits [15]; Wide dynamic range [15]; Multi-element and isotopic analysis capability [14] [15]; High sample throughput [14] | Destructive sample preparation [12] [3]; High equipment and operational cost [14]; Requires significant staff expertise [14] [15]; Susceptible to spectral interferences [14] [15] |
| FT-IR (ATR) | Non-destructive [16]; Rapid analysis with minimal preparation [16]; Versatile for solids, liquids, pastes [16]; High specificity for functional groups [17] | Primarily a surface technique (micron-scale penetration) [16]; Spectral artifacts from pressure/temperature changes [16]; Weak in detecting symmetric vibrations and metal bonds; Water absorption can interfere |
| Raman | Non-destructive [3] [17]; Minimal sample preparation; Excellent for aqueous solutions; High spatial resolution for mapping; Specificity for polymorphs and crystal forms [17] | Fluorescence interference can swamp signal; Generally less sensitive than FT-IR; Can cause thermal degradation of sensitive samples; Raman scattering is an inherently weak effect |

Experimental Protocols for Validation

Validating XRF for Pharmaceutical Elemental Impurities

Objective: To validate the specificity and quantitative performance of XRF for screening elemental impurities in Active Pharmaceutical Ingredients (APIs) according to guidelines like ICH Q3D [12].

Methodology:

- Sample Preparation: APIs and drug products are prepared as finely powdered solids. For quantitative analysis, powders are compressed into pellets using a hydraulic press to ensure a flat, uniform surface. Minimal preparation is a key advantage [12] [13].

- Calibration: Instrument calibration uses certified reference materials (CRMs) that closely match the sample matrix (e.g., powder pellets with known concentrations of target elements). A blank and at least three standard concentrations are used to build a calibration curve [13].

- Analysis: The pellet is placed in the spectrometer. The X-ray tube excites the sample, and the fluorescent X-rays are measured. Acquisition times typically range from 30 seconds to several minutes per sample [12].

- Specificity Validation: Specificity is demonstrated by analyzing the API and excipients individually to confirm the absence of spectral overlaps at the emission lines of the target elements. The technique's inherent specificity comes from the characteristic X-ray energies emitted by each element [13].

- Data Analysis: The instrument software quantifies element concentrations based on the calibration curve. Results are compared against the strict limits defined in ICH Q3D [12].
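The calibration and quantification steps above amount to fitting a straight line through the blank and standards and inverting it for unknowns. The Python sketch below illustrates this with a blank plus three standards, matching the minimum described in the protocol. The intensities, the element, and the `limit_ppm` value are invented; actual concentration limits must be derived from ICH Q3D for the specific product and dose, and instrument software typically applies additional matrix corrections that are not modelled here.

```python
def fit_line(x, y):
    """Ordinary least-squares fit; returns (slope, intercept)."""
    n = len(x)
    if n < 2 or n != len(y):
        raise ValueError("x and y must have equal length >= 2")
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def quantify(intensity, slope, intercept):
    """Invert the calibration line to get concentration from intensity."""
    return (intensity - intercept) / slope


# Invented calibration: blank plus three matrix-matched standards (ppm vs counts/s).
standards_ppm = [0.0, 10.0, 20.0, 40.0]
intensities = [5.0, 105.0, 205.0, 405.0]
slope, intercept = fit_line(standards_ppm, intensities)

limit_ppm = 20.0  # hypothetical limit for illustration only
sample_ppm = quantify(155.0, slope, intercept)
print(round(sample_ppm, 2), "pass" if sample_ppm <= limit_ppm else "fail")
```

Checking that the blank sits close to the fitted intercept is a quick sanity test for the spectral-overlap assessment described in the specificity step.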

Establishing ICP-MS as a Reference Method

Objective: To achieve ultratrace quantification of heavy metals in biological tissues with high specificity and selectivity, serving as a reference method for validating other techniques [14] [3].

Methodology:

- Sample Digestion: A precisely weighed tissue sample (e.g., ~0.5 g of rice plant tissue from a dose-response study [3]) is subjected to microwave-assisted acid digestion with high-purity nitric acid. This process dissolves the organic matrix and liberates the target metals into solution [14] [3].

- Dilution: The digested sample is diluted with ultrapure water to achieve a total dissolved solid content of <0.2%, a critical step to prevent matrix effects and instrumental drift [14].

- ICP-MS Analysis: The diluted solution is introduced via a peristaltic pump to a pneumatic nebulizer, creating an aerosol for the plasma. Key instrumental parameters (nebulizer gas flow, torch alignment, ion lens voltages) are optimized for sensitivity.

- Interference Management (Selectivity Enhancement): To ensure selectivity, a collision/reaction cell (e.g., with He or H2 gas) is used to eliminate polyatomic interferences. For example, the interference of 40Ar16O+ on 56Fe+ is mitigated by kinetic energy discrimination or chemical reaction, allowing for accurate iron quantification [15].

- Quantification: Quantification is performed using external calibration, with rhodium added as an internal standard to correct for signal drift. For the highest accuracy, isotope dilution can be employed, in which an enriched stable isotope of the analyte (e.g., ⁵⁷Fe) is added to the sample as an internal standard [15].
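The quantification step can be illustrated with a short sketch of external calibration on the analyte-to-internal-standard signal ratio. It assumes a straight-line response and that drift affects the analyte and the internal standard equally, so the ratio cancels it. The function names and the use of raw counts per second (cps) are illustrative choices, not details from the cited methods.

```python
import numpy as np


def calibrate_with_internal_standard(std_conc, std_analyte_cps, std_is_cps):
    """Fit a line to (concentration, analyte/IS ratio) for the calibration standards."""
    conc = np.asarray(std_conc, dtype=float)
    ratios = np.asarray(std_analyte_cps, dtype=float) / np.asarray(std_is_cps, dtype=float)
    slope, intercept = np.polyfit(conc, ratios, 1)
    return slope, intercept


def quantify(sample_analyte_cps, sample_is_cps, slope, intercept):
    """Convert a sample's analyte/IS ratio into a concentration via the fitted line."""
    ratio = sample_analyte_cps / sample_is_cps
    return (ratio - intercept) / slope
```

Because only the ratio enters the calculation, a sample measured after a 20% loss in overall sensitivity returns the same concentration as one measured without drift, provided both signals dropped by the same factor.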

Correlating Raman Spectroscopy with ICP-MS for Heavy Metal Stress

Objective: To validate the specificity of Raman spectroscopy for detecting and discriminating between different types of heavy metal stress (e.g., Arsenic, Cadmium, Lead) in rice plants by correlating spectral changes with ICP-MS metal quantification data [3].

Methodology:

- Plant Treatment: Rice plants are cultivated hydroponically and exposed to varying, environmentally relevant concentrations of As, Cd, and Pb in a dose-response design for up to 6 weeks [3].

- Raman Spectral Acquisition: A handheld or benchtop Raman spectrometer with an 830 nm laser is used to collect spectra from the leaves weekly. Using a longer wavelength laser helps minimize fluorescence. Acquisition parameters are set to 1 second integration at 495 mW laser power, with multiple spectra averaged per plant [3].

- Spectral Pre-processing: Collected spectra are baselined and normalized to a consistent internal standard peak (e.g., the 1440 cm⁻¹ band attributed to CH₂ deformation) to correct for minor intensity fluctuations [3].

- Specificity and Selectivity Analysis: Statistical analysis, including Analysis of Variance (ANOVA) and Partial Least Squares - Discriminant Analysis (PLS-DA), is applied to the spectral data. This identifies specific, dose-dependent changes in Raman peaks (e.g., carotenoid and phenylpropanoid bands) that are unique to each heavy metal, demonstrating the technique's specificity and selectivity in diagnosing the type of stress [3].

- Validation with ICP-MS: Parallel plant tissues are harvested, digested with nitric acid, and analyzed by ICP-MS to precisely determine the internal concentration of each heavy metal [3]. Raman peak intensities are then plotted against the ICP-MS-derived metal concentrations to create calibration models, validating Raman's predictive capability for heavy metal uptake.
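The normalization and calibration steps above can be sketched in a few lines. This is an illustration only: it assumes spectra are stored as rows of a NumPy array on a shared wavenumber axis, that baseline correction has already been applied, and that a straight-line model is adequate. The cited study may have used a different model form, and the function names are hypothetical.

```python
import numpy as np


def normalize_to_band(wavenumbers, spectra, band=1440.0):
    """Divide each spectrum (row) by its intensity at the channel nearest `band`."""
    idx = int(np.argmin(np.abs(np.asarray(wavenumbers, dtype=float) - band)))
    spectra = np.asarray(spectra, dtype=float)
    return spectra / spectra[:, [idx]]


def fit_calibration(peak_intensity, icpms_conc):
    """Least-squares line of Raman peak intensity vs. ICP-MS concentration.

    Returns (slope, intercept, r_squared).
    """
    x = np.asarray(icpms_conc, dtype=float)
    y = np.asarray(peak_intensity, dtype=float)
    slope, intercept = np.polyfit(x, y, 1)
    residual = y - (slope * x + intercept)
    r_squared = 1.0 - np.sum(residual**2) / np.sum((y - y.mean()) ** 2)
    return slope, intercept, r_squared
```

A low coefficient of determination from `fit_calibration` would suggest that the chosen Raman band does not track metal uptake well enough to support a specificity claim for that metal.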

The workflow for this correlative study is outlined below:

Diagram 1: Workflow for validating Raman spectroscopy against ICP-MS for heavy metal stress detection.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Reagents and Materials for Spectroscopic Analysis

| Item | Primary Function | Application Notes |

|---|---|---|

| High-Purity Acids (HNO₃, HCl) | Sample digestion and dilution for ICP-MS [14]. | Essential to minimize background contamination in trace analysis. Must be trace metal grade. |

| Certified Reference Materials (CRMs) | Instrument calibration and method validation [13]. | Should closely match the sample matrix (e.g., soil, plant tissue, API) for accurate results. |

| ATR Crystals (Diamond, ZnSe) | Internal reflection element for ATR-FTIR [16]. | Diamond is rugged and chemical-resistant; ZnSe offers a broader spectral range but is softer. |

| Hydraulic Pellet Press | Preparing uniform solid pellets for XRF and FT-IR analysis [13]. | Ensures reproducible sample presentation, critical for quantitative accuracy. |

| Collision/Reaction Cell Gases (He, H₂) | Mitigating spectral interferences in ICP-MS [15]. | He is used for kinetic energy discrimination; H₂ can react with and remove interfering ions. |

| Internal Standards (e.g., Rh, Sc, In) | Correcting for signal drift and matrix effects in ICP-MS [14] [15]. | An element not present in the sample is added to all standards and unknowns. |

| Laser Sources (e.g., 785 nm, 830 nm) | Excitation source for Raman spectroscopy [3]. | Longer wavelengths (NIR) are preferred for biological samples to reduce fluorescence. |

The selection of an appropriate spectroscopic technique is a critical decision that hinges on the analytical question, the required level of specificity and selectivity, and practical constraints. ICP-MS stands out for its unrivalled sensitivity and capability for isotopic analysis, making it the benchmark for quantitative elemental impurity testing, albeit with higher costs and operational complexity. XRF offers a rapid, non-destructive alternative for elemental screening, ideal for quality control where ultratrace detection is not required. For molecular analysis, FT-IR and Raman spectroscopy provide complementary information: FT-IR excels in identifying functional groups and is highly versatile, while Raman is superior for analyzing aqueous samples, detecting symmetric vibrations, and characterizing polymorphic forms. The ongoing integration of these techniques with advanced chemometric tools and their validation through correlative studies, as demonstrated in the Raman/ICP-MS workflow, continues to push the boundaries of specificity and selectivity, empowering researchers to solve complex analytical challenges with greater confidence and efficiency.

Understanding Matrix Effects and Spectral Interferences in Biological Samples

The quantitative analysis of target analytes in biological samples using advanced spectroscopic and spectrometric techniques is a cornerstone of modern bioanalytical research, drug development, and biomonitoring studies. However, the accuracy and reliability of these analyses are consistently challenged by two significant phenomena: matrix effects and spectral interferences. These issues can profoundly impact method validation, data integrity, and ultimately, scientific conclusions drawn from analytical data.

Matrix effects refer to the suppression or enhancement of a target analyte's signal caused by co-eluting compounds present in the biological sample matrix [18]. These effects are particularly problematic in liquid chromatography-mass spectrometry (LC-MS) and tandem mass spectrometry (MS/MS) applications, where they can alter ionization efficiency and compromise quantitative accuracy [18] [19]. Spectral interferences, more common in atomic spectroscopy techniques such as ICP-MS and ICP-OES, occur when overlapping signals from different elements or polyatomic ions impede the accurate detection and quantification of target analytes [20] [21] [22].

Understanding the distinct mechanisms, sources, and mitigation strategies for both matrix effects and spectral interferences is essential for researchers and drug development professionals seeking to validate robust analytical methods. This guide provides a comprehensive comparison of how these phenomena manifest across different analytical techniques and presents experimental approaches for their identification and control.

Fundamental Concepts and Mechanisms

Matrix Effects in Mass Spectrometry

In biological analysis using LC-MS/MS, matrix effects predominantly manifest as ion suppression or, less commonly, ion enhancement [18]. This occurs when co-eluting matrix components interfere with the ionization of target analytes in the instrument source. The biological matrix contains numerous endogenous compounds (including salts, carbohydrates, lipids, peptides, and metabolites) that can compete for available charges or affect droplet formation and desorption processes [18].

The mechanisms of matrix effects differ between ionization techniques. In electrospray ionization (ESI), which is particularly susceptible, interference occurs through several pathways: competition for charge in the liquid phase, reduced efficiency of analyte transfer to the gas phase due to increased surface tension, co-precipitation with non-volatile compounds, and gas-phase neutralization of analyte ions [18]. In contrast, atmospheric pressure chemical ionization (APCI) is generally less susceptible to matrix effects because ionization occurs primarily in the gas phase rather than in charged droplets [18].

Spectral Interferences in Atomic Spectroscopy

Interferences in atomic spectroscopy techniques such as ICP-MS and ICP-OES present different challenges. They can be grouped into three main types, of which only the last is spectral in the strict sense [20]:

- Physical interferences: Affect sample transport and introduction into the plasma.

- Matrix-based effects: Alter plasma conditions and excitation efficiency.

- Spectral overlaps: Occur when emission lines or mass-to-charge ratios of interfering species overlap with those of target analytes.

In ICP-MS, spectral interferences predominantly arise from polyatomic ions formed from plasma gases and matrix components, isobaric overlaps between isotopes of different elements that share the same nominal mass, and doubly charged ions [21] [22]. For example, in biological matrices containing calcium, chlorine, phosphorus, potassium, carbon, sodium, and sulfur, numerous polyatomic ions can form that interfere with the detection of key elements [22].

The following diagram illustrates the fundamental mechanisms of matrix effects in Electrospray Ionization (ESI) mass spectrometry:

Figure 1: Mechanisms of Matrix Effects in Electrospray Ionization Mass Spectrometry

Comparative Analysis of Techniques

Technique-Specific Vulnerabilities and Manifestations

Different analytical techniques exhibit distinct susceptibility profiles to matrix effects and spectral interferences. Understanding these technique-specific vulnerabilities is crucial for selecting appropriate methodology and implementing effective countermeasures.

Table 1: Comparison of Matrix Effects and Spectral Interferences Across Analytical Techniques

| Analytical Technique | Primary Interference Type | Main Sources | Key Manifestations | Susceptibility Level |

|---|---|---|---|---|

| LC-ESI-MS/MS | Matrix effects (Ion suppression) | Phospholipids, salts, lipids, metabolites | Reduced/enhanced analyte signal; Impacted accuracy & precision [18] | High (ESI more susceptible than APCI) [18] |

| ICP-MS | Spectral interferences | Polyatomic ions, isobaric overlaps, doubly charged ions [21] | False positives/negatives; Inaccurate quantification [21] [22] | High (especially with biological matrices) [22] |

| ICP-OES | Spectral interferences | Matrix elements with overlapping emission lines [20] | Inaccurate results despite good spike recovery [20] | Medium-High (wavelength-dependent) |

| ETAAS | Spectral & matrix effects | Complex sample matrices (sediments, soils) [23] | Background absorption, structured background [23] | Medium (depends on matrix complexity) |

| Raman Spectroscopy | Minimal spectral interference | Fluorescent compounds (can mask signals) | Indirect detection via stress biomarkers [3] | Low (detects biochemical changes) |

| LIBS | Matrix effects | Sample physical properties (ablation differences) [24] | Inconsistent spectral response [24] | Medium (sample form dependent) |

Experimental Protocols for Interference Assessment

Post-column Infusion for LC-MS Matrix Effects

A robust experimental approach for visualizing matrix effects in LC-MS methods involves post-column infusion [18]. The protocol consists of:

- Sample Preparation: Extract blank biological matrix (plasma, urine, tissue) using the intended sample preparation protocol.

- Analyte Infusion: Connect a syringe pump containing the target analyte solution to the LC system via a T-connector between the column outlet and the MS source.

- Chromatographic Separation: Inject the blank matrix extract onto the LC column and run the separation method while continuously infusing the analyte.

- Signal Monitoring: Monitor the analyte signal throughout the chromatographic run. Regions where the signal deviates from the baseline indicate the presence of matrix effects from co-eluting compounds.

This method provides a comprehensive profile of matrix effects across the entire chromatogram, identifying regions where ion suppression or enhancement occurs.
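The signal-monitoring step lends itself to a simple automated check. The sketch below assumes the infused-analyte trace is available as arrays of time and intensity, takes the median of the trace as the undisturbed baseline, and uses a 15% relative deviation as the flagging threshold. Both choices are illustrative assumptions rather than values from the cited protocol, and the function name is hypothetical.

```python
import numpy as np


def flag_matrix_effect_regions(time_min, signal, tolerance=0.15):
    """Return (start_time, end_time, kind) for each contiguous run of points
    whose signal deviates from the median baseline by more than `tolerance`."""
    t = np.asarray(time_min, dtype=float)
    s = np.asarray(signal, dtype=float)
    relative = s / np.median(s) - 1.0          # fractional deviation from baseline
    labels = np.where(relative < -tolerance, "suppression",
                      np.where(relative > tolerance, "enhancement", "none"))
    regions = []
    start = 0
    for i in range(1, len(labels) + 1):
        # close the current run at the end of the trace or when the label changes
        if i == len(labels) or labels[i] != labels[start]:
            if labels[start] != "none":
                regions.append((float(t[start]), float(t[i - 1]), str(labels[start])))
            start = i
    return regions
```

Comparing the flagged windows with the analyte's retention time indicates whether the chromatographic method needs adjusting to move the analyte out of a suppression zone.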

Interference Check Solutions for ICP-MS/OES

For atomic spectroscopy techniques, systematic assessment of spectral interferences requires:

- Preparation of Interference Check Solutions: Create solutions containing potential interfering elements at concentrations representative of typical samples [20].

- Multi-wavelength/Multi-isotope Monitoring: Analyze these solutions while monitoring all analytical wavelengths (ICP-OES) or isotopes (ICP-MS) of interest.

- Signal Deviation Analysis: Compare signals obtained from interference check solutions with those from pure standard solutions to identify significant spectral overlaps.

- Interference Factor Calculation: Quantify the magnitude of interference using interference factors (IF), calculated as IF = 10⁶ × apparent analyte concentration / concentration of interfering element [22].

This protocol enables the identification of problematic wavelengths or isotopes and guides the selection of alternative, interference-free analytical lines.
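The interference factor defined above is straightforward to compute. The sketch below implements the stated formula directly and, as an additional assumption not taken from the source, shows how an IF might be used for a first-order correction if the interference scales linearly with interferent concentration. Both concentrations must be expressed in the same units; the function names are hypothetical.

```python
def interference_factor(apparent_analyte_conc, interferent_conc):
    """IF = 1e6 * apparent analyte concentration / interferent concentration."""
    if interferent_conc <= 0:
        raise ValueError("interferent_conc must be positive")
    return 1e6 * apparent_analyte_conc / interferent_conc


def corrected_concentration(measured_conc, interferent_conc, factor):
    """Subtract the interferent's apparent contribution (assumes linear scaling)."""
    return measured_conc - factor * interferent_conc / 1e6
```

For instance, an interference check solution containing 100 000 µg/L of an interferent that produces an apparent analyte reading of 0.5 µg/L corresponds to an IF of 5.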

Mitigation Strategies and Method Validation

Approaches for Minimizing Interferences

Multiple strategies have been developed to address matrix effects and spectral interferences across different analytical platforms. The effectiveness of these approaches varies by technique and matrix complexity.

Table 2: Comparison of Interference Mitigation Strategies Across Techniques

| Mitigation Strategy | LC-MS/MS | ICP-MS | ICP-OES | ETAAS |

|---|---|---|---|---|

| Sample Cleanup | Effective (SPE, LLE) [19] | Limited effectiveness | Limited effectiveness | Helpful (slurry sampling) [23] |

| Chromatographic/Separation Optimization | Highly effective [18] | Not applicable | Not applicable | Partially effective |

| Isotope Dilution | Gold standard (costly) [19] | Effective | Not applicable | Not applicable |

| Mathematical Correction | Limited use | Effective (with uncertainty increase) [21] | Effective (IEC) [20] | Effective (background correction) [23] |

| Standard Addition Method | Possible | Effective for non-spectral effects [21] | Does not correct spectral interferences [20] | Effective |

| Alternative Ionization Source | APCI less susceptible [18] | Not applicable | Not applicable | Not applicable |

| Dilution | Possible (sensitivity loss) | Effective | Effective | Possible |

| Collision/Reaction Cells | Not applicable | Highly effective | Not applicable | Not applicable |

Advanced Chemometric Approaches

Recent advances in chemometrics and machine learning provide powerful tools for addressing interference challenges. As recognized in the 2025 EAS Award for Outstanding Achievements in Chemometrics, these approaches are particularly valuable for handling complex spectral data [25].

In Raman spectroscopy applications, for example, partial least squares discriminant analysis (PLS-DA) has successfully diagnosed specific heavy metal toxicity in rice with 84.5% accuracy by interpreting subtle spectral changes in biochemical profiles [3]. Similarly, orthogonal PLS-DA (OPLS-DA) has been employed to distinguish variations in matrix effects (ME) induced by different matrix species in multi-pesticide residue analysis, enabling the identification of pesticides that contribute most significantly to the observed variations [26].

These multivariate statistical approaches can disentangle complex overlapping signals and identify patterns indicative of specific interferences, providing powerful alternatives to traditional univariate correction methods.

Essential Research Reagents and Materials

Successful management of matrix effects and spectral interferences requires appropriate selection of research reagents and analytical materials. The following toolkit outlines essential items for method development and validation.

Table 3: Research Reagent Solutions for Interference Management

| Reagent/Material | Function | Application Examples |

|---|---|---|

| Isotopically Labeled Internal Standards | Compensate for matrix effects by experiencing same suppression/enhancement as analytes [19] | LC-MS/MS quantitative methods |

| Chemical Modifiers | Modify sample matrix to stabilize analytes or reduce interferences during atomization [23] | ETAAS analysis of complex matrices |

| QuEChERS Kits | Efficient sample cleanup to remove phospholipids and other interfering compounds [26] | Multi-pesticide residue analysis in food |

| Certified Reference Materials | Method validation and accuracy verification despite interferences [20] | All techniques (quality control) |

| Matrix-Matched Standards | Calibration standards prepared in similar matrix to samples to compensate for effects [26] | LC-MS/MS, ICP-MS when IS not available |

| Interference Check Solutions | Identify and quantify specific spectral interferences [20] [22] | ICP-MS, ICP-OES method development |

| Collision/Reaction Gases | Selectively remove polyatomic interferences through chemical reactions [21] | ICP-MS with reaction cell |

Experimental Workflow for Comprehensive Method Validation

The following diagram outlines a systematic workflow for assessing and controlling matrix effects and spectral interferences during analytical method validation:

Figure 2: Comprehensive Workflow for Interference Assessment and Control

Matrix effects and spectral interferences present significant but manageable challenges in spectroscopic analysis of biological samples. The susceptibility to these phenomena varies considerably across analytical techniques, with LC-ESI-MS/MS being particularly vulnerable to matrix effects and ICP-MS facing substantial spectral interference challenges.

Successful management requires technique-specific strategies: improved sample preparation and chromatographic separation for LC-MS; mathematical corrections, reaction cells, and isotope dilution for ICP-MS; and advanced background correction systems for ETAAS. Across all platforms, method validation must include comprehensive assessment of these effects using post-column infusion, interference check solutions, spike recovery tests, and matrix-matched calibration.

Emerging approaches incorporating chemometrics and machine learning show significant promise for addressing these challenges, particularly for complex multi-analyte applications. By implementing systematic assessment protocols and appropriate mitigation strategies, researchers can develop robust analytical methods that deliver accurate and reliable data for biomonitoring studies and drug development programs.

Spectroscopic techniques are fundamental tools for material characterization across pharmaceutical, environmental, and biological research. However, the effective interpretation of spectral data presents significant challenges due to inherent complexities including weak signals prone to environmental noise, instrumental artifacts, sample impurities, and scattering effects [27]. These perturbations can substantially degrade measurement accuracy and impair analytical outcomes. Furthermore, spectral differences between sample groups—such as healthy versus diseased tissues or authentic versus adulterated botanical products—are often minimal and visually indistinguishable, requiring sophisticated analytical approaches to detect meaningful patterns [28].

Chemometrics addresses these challenges by applying multivariate statistical methods to chemical data, enabling researchers to extract meaningful information from complex spectral measurements. These mathematical approaches are essential for transforming spectral data into actionable biological and chemical insights. Within this domain, Principal Component Analysis (PCA) and Partial Least Squares (PLS) regression, including its discriminant analysis variant (PLS-DA), have emerged as two cornerstone techniques for dimensionality reduction, pattern recognition, and classification [29] [28]. This guide provides a comprehensive comparison of these methods, focusing on their theoretical foundations, practical applications, and implementation protocols within spectroscopic analysis, particularly framed within the context of validating method specificity and selectivity.

Theoretical Foundations: PCA vs. PLS

Core Principles and Algorithmic Differences

Although both PCA and PLS are multivariate techniques that reduce data dimensionality, they operate under fundamentally different principles and objectives, which determines their appropriate application scenarios.

Principal Component Analysis (PCA) is an unsupervised technique, meaning it analyzes spectral data without using prior knowledge about sample class memberships. Its primary objective is to explain the maximum possible variance within the predictor variable matrix (X), which typically consists of spectral intensities at various wavelengths [29] [30]. PCA achieves this by identifying new, orthogonal axes called Principal Components (PCs). These PCs are linear combinations of the original spectral variables, with the first PC capturing the greatest variance, the second PC capturing the next greatest variance while being orthogonal to the first, and so on [30]. The resulting scores and loadings plots facilitate the visualization of data structure, identification of trends, and detection of outliers.

Partial Least Squares (PLS) and its discriminant analysis variant (PLS-DA) are supervised methods. These techniques incorporate prior knowledge about sample classes (the Y-response variable) to guide the dimensionality reduction process. Instead of maximizing only the variance in X, PLS aims to maximize the covariance between the predictor variables (X, the spectra) and the response variable (Y, such as class labels or analyte concentrations) [29] [30]. PLS-DA is a specific adaptation used for classification tasks, where the Y-variable is categorical (e.g., "healthy" vs. "diseased"). It works by transforming the original spectral variables into a set of latent variables (LVs) that are most predictive of the class membership [28].
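The contrast between the two objectives can be written compactly for the first component of each method. This is a sketch only, assuming column-centered X and y and a unit-length weight vector w; the notation is introduced here for illustration and does not come from the cited sources.

```latex
\begin{aligned}
\text{PCA:}\quad & \mathbf{w}_1 = \arg\max_{\lVert \mathbf{w} \rVert = 1} \operatorname{Var}(\mathbf{X}\mathbf{w})
  = \arg\max_{\lVert \mathbf{w} \rVert = 1} \mathbf{w}^{\top}\mathbf{X}^{\top}\mathbf{X}\,\mathbf{w} \\
\text{PLS:}\quad & \mathbf{w}_1 = \arg\max_{\lVert \mathbf{w} \rVert = 1} \operatorname{Cov}(\mathbf{X}\mathbf{w}, \mathbf{y})^{2}
  = \arg\max_{\lVert \mathbf{w} \rVert = 1} \left(\mathbf{w}^{\top}\mathbf{X}^{\top}\mathbf{y}\right)^{2}
\end{aligned}
```

The PCA direction is the leading eigenvector of the matrix product XᵀX and ignores y entirely, whereas the first PLS direction is proportional to Xᵀy, which is why it is pulled toward the spectral variables that co-vary with the class labels or concentrations.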

The following diagram illustrates the core operational difference between these two algorithms:

Key Functional Distinctions

Table 1: Fundamental Differences Between PCA and PLS/PLS-DA

| Feature | PCA | PLS/PLS-DA |

|---|---|---|

| Supervision Type | Unsupervised [29] | Supervised [29] |

| Use of Group Information | No [29] | Yes [29] |

| Primary Objective | Capture overall variance in X [29] [30] | Maximize covariance between X and Y [29] [30] |

| Model Outputs | Scores, Loadings, Variance Explained [28] | Scores, Loadings, VIP Scores, Regression Coefficients [29] [28] |

| Risk of Overfitting | Low [29] | Moderate to High (requires validation) [29] |

| Primary Application in Spectroscopy | Exploratory analysis, outlier detection, data structure visualization [29] | Classification, quantitative prediction, biomarker identification [29] |

Experimental Protocols and Methodologies

Standardized Workflow for Spectral Analysis

Implementing PCA and PLS-DA follows a systematic workflow from sample preparation through model validation. The following diagram outlines the key stages, highlighting both shared steps and method-specific processes:

Detailed Methodological Protocols

Protocol for Principal Component Analysis (PCA)

- Sample Preparation and Spectral Acquisition: Collect vibrational spectra (e.g., Raman or FTIR) from all samples under consistent conditions. For a study comparing healthy and diseased cells, this would involve preparing cell pellets or tissue sections and acquiring multiple spectra per sample to ensure statistical robustness [28].

- Data Preprocessing: Apply necessary preprocessing techniques to mitigate analytical artifacts:

- Cosmic Ray Removal: Use methods like Moving Average Filters or Nearest Neighbor Comparison to remove sharp spikes [27].

- Baseline Correction: Apply techniques such as Piecewise Polynomial Fitting or Morphological Operations to correct for fluorescence background and instrumental drift [27].

- Normalization: Standardize spectral intensities using methods like Standard Normal Variate (SNV) to minimize path-length effects and concentration variations [27].

- Smoothing: Apply Savitzky-Golay filters or similar approaches to reduce high-frequency noise without significantly distorting spectral features [27].

- Data Matrix Construction: Assemble all preprocessed spectra into a data matrix X of dimensions n × m, where n is the number of measured spectra and m is the number of wavelength/wavenumber variables [28].

- Data Scaling: Center the data by subtracting the mean of each variable (wavelength), and often scale each variable to unit variance to prevent high-intensity signals from dominating the model [31].

- PCA Decomposition: Perform PCA on the scaled data matrix to compute principal components. The number of components to retain is typically determined by evaluating the cumulative proportion of variance explained, often aiming for >90-95% of total variance [31]. For example, an analysis of 460 tablets using 650 wavelengths showed that the first three principal components explained 94.2% of all spectral variation [31].

- Interpretation: Visualize the results using scores plots (to observe sample clustering and outliers) and loadings plots (to identify which spectral regions contribute most to the observed separation) [28].
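The normalization, centering, and decomposition steps of this protocol can be sketched with NumPy alone. The example assumes spectra are stored as rows of the matrix, applies mean-centering without unit-variance scaling, and retains components up to a 95% cumulative variance target. The function names and the default target are illustrative, and dedicated chemometrics packages offer more complete implementations.

```python
import numpy as np


def snv(spectra):
    """Standard Normal Variate: center and scale each spectrum (row) individually."""
    X = np.asarray(spectra, dtype=float)
    mean = X.mean(axis=1, keepdims=True)
    std = X.std(axis=1, ddof=1, keepdims=True)
    return (X - mean) / std


def pca(X, variance_target=0.95):
    """PCA via SVD of the column-centered matrix X (n spectra x m variables).

    Returns (scores, loadings, explained_variance_fraction) for the smallest
    number of components whose cumulative variance reaches `variance_target`.
    """
    X = np.asarray(X, dtype=float)
    Xc = X - X.mean(axis=0)                      # mean-center each variable
    U, S, Vt = np.linalg.svd(Xc, full_matrices=False)
    explained = S**2 / np.sum(S**2)              # variance fraction per component
    cumulative = np.cumsum(explained)
    k = min(int(np.searchsorted(cumulative, variance_target)) + 1, len(S))
    scores = U[:, :k] * S[:k]                    # sample coordinates for scores plots
    loadings = Vt[:k].T                          # variable weights for loadings plots
    return scores, loadings, explained[:k]
```

Plotting the first two columns of `scores` against each other gives the scores plot described in the interpretation step, and each column of `loadings` plotted against wavenumber shows which spectral regions drive that component.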

Protocol for Partial Least Squares Discriminant Analysis (PLS-DA)

- Initial Steps (Shared with PCA): Follow identical procedures for sample preparation, spectral acquisition, preprocessing, and data matrix construction as described in the PCA protocol [28].

- Response Matrix Construction: Create a categorical response matrix Y that encodes the predefined class membership for each spectrum. For a two-class system (e.g., Class A vs. Class B), this is typically done using dummy variables (e.g., -1 for Class A and +1 for Class B) [28].

- Model Training: Build the PLS-DA model using both the spectral data (X) and the response matrix (Y). The algorithm identifies Latent Variables (LVs) that maximize the covariance between X and Y [29] [28].

- Model Validation: Implement rigorous validation to prevent overfitting, which is a common risk with supervised methods:

- Cross-Validation: Use techniques such as Venetian blinds or leave-one-out cross-validation to compute model performance metrics like R²Y (goodness-of-fit) and Q² (predictive ability) [29]. A Q² value > 0.5 is generally considered indicative of a valid model, while Q² > 0.9 signifies an outstanding model [29].

- Permutation Testing: Randomly permute the class labels multiple times (e.g., 200 permutations) and rebuild the model for each permutation. Compare the original model's performance metrics with the distribution from permuted models to assess statistical significance [29].

- Feature Selection: Utilize Variable Importance in Projection (VIP) scores to identify which spectral variables (wavelengths) contribute most significantly to class separation. Features with VIP scores > 1.0 are typically considered most relevant for further investigation as potential biomarkers [29].

- Classification: Apply the validated model to classify unknown test spectra and report performance metrics including accuracy, sensitivity, and specificity [28].
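The training and validation steps above can be sketched for the two-class case as follows. This is a minimal illustration, assuming classes coded as -1 and +1, a single-response PLS model fitted with the NIPALS algorithm, leave-one-out cross-validation for Q², and a permutation test on Q². The function names are hypothetical, and the cited studies may have used different software and validation schemes.

```python
import numpy as np


def pls1_fit(X, y, n_lv):
    """Fit a single-response PLS model with n_lv latent variables (NIPALS)."""
    X, y = np.asarray(X, dtype=float), np.asarray(y, dtype=float)
    x_mean, y_mean = X.mean(axis=0), y.mean()
    Xr, yr = X - x_mean, y - y_mean
    W, P, q = [], [], []
    for _ in range(n_lv):
        w = Xr.T @ yr                  # direction of maximum covariance with y
        w /= np.linalg.norm(w)
        t = Xr @ w                     # latent variable scores
        tt = t @ t
        p = Xr.T @ t / tt              # X loadings
        c = (yr @ t) / tt              # y loading
        Xr = Xr - np.outer(t, p)       # deflate X and y
        yr = yr - c * t
        W.append(w); P.append(p); q.append(c)
    W, P, q = np.array(W).T, np.array(P).T, np.array(q)
    beta = W @ np.linalg.solve(P.T @ W, q)   # regression coefficients
    return x_mean, y_mean, beta


def pls1_predict(model, X):
    """Predict the response; the sign gives the class for -1/+1 coding."""
    x_mean, y_mean, beta = model
    return (np.asarray(X, dtype=float) - x_mean) @ beta + y_mean


def q2_leave_one_out(X, y, n_lv):
    """Q2 = 1 - PRESS / TSS using leave-one-out cross-validation."""
    X, y = np.asarray(X, dtype=float), np.asarray(y, dtype=float)
    press = 0.0
    for i in range(len(y)):
        keep = np.arange(len(y)) != i
        model = pls1_fit(X[keep], y[keep], n_lv)
        press += (y[i] - pls1_predict(model, X[i:i + 1])[0]) ** 2
    return 1.0 - press / np.sum((y - y.mean()) ** 2)


def permutation_p_value(X, y, n_lv, n_perm=200, seed=0):
    """Compare the observed Q2 with Q2 values obtained from shuffled labels."""
    rng = np.random.default_rng(seed)
    observed = q2_leave_one_out(X, y, n_lv)
    null = [q2_leave_one_out(X, rng.permutation(y), n_lv) for _ in range(n_perm)]
    p_value = (1 + sum(q >= observed for q in null)) / (n_perm + 1)
    return observed, p_value
```

With this coding, `np.sign(pls1_predict(model, X_new))` assigns each new spectrum to a class. A small p-value from `permutation_p_value` indicates that the observed Q² is unlikely to arise from chance structure in the data.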

Performance Comparison and Experimental Data

Quantitative Performance Metrics

Empirical studies directly comparing PCA-LDA (a hybrid approach) and PLS-DA demonstrate the capabilities of these methods in real-world classification tasks. The table below summarizes performance metrics from a study analyzing vibrational spectra of breast cells:

Table 2: Performance Comparison of PCA-LDA and PLS-DA in Classifying Vibrational Spectra of Breast Cells [28]

| Dataset Description | Method | Accuracy (%) | Sensitivity (%) | Specificity (%) |

|---|---|---|---|---|

| Simulated Dataset (Control vs. Exposed) | PCA-LDA | 98 | 96 | 100 |

| Simulated Dataset (Control vs. Exposed) | PLS-DA | 100 | 100 | 100 |

| Raman Spectra (Control vs. Proton-Beam Exposed MCF10A Cells) | PCA-LDA | 93 | 86 | 100 |

| Raman Spectra (Control vs. Proton-Beam Exposed MCF10A Cells) | PLS-DA | 96 | 91 | 100 |

| FTIR Spectra (MCF7 vs. MDA-MB-231 Breast Cancer Cells) | PCA-LDA | 95 | 90 | 100 |

| FTIR Spectra (MCF7 vs. MDA-MB-231 Breast Cancer Cells) | PLS-DA | 97 | 95 | 100 |

Interpretation of Comparative Data

The experimental data reveals several key patterns relevant for spectroscopic method selection:

- Both methods offer high performance: Across all datasets, both PCA-LDA and PLS-DA achieved high classification accuracy (93-100%), sensitivity (86-100%), and specificity (100%) [28], confirming their utility in spectral discrimination tasks.

- PLS-DA demonstrates marginally superior performance: In all three experimental scenarios, PLS-DA equaled or exceeded the performance of PCA-LDA across all metrics [28]. This performance advantage stems from PLS-DA's supervised nature, which directly optimizes components for class separation rather than merely for variance explanation.

- Context-dependent selection is crucial: Despite its slightly lower performance metrics in these classification tasks, PCA-LDA remains highly valuable, particularly for initial exploratory analysis where the goal is understanding data structure rather than prediction [29].
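For reference, the three metrics reported in the table can be computed from true and predicted class labels as sketched below. The function name is hypothetical, and the choice of which class counts as "positive" is left to the analyst.

```python
def classification_metrics(y_true, y_pred, positive=1):
    """Accuracy, sensitivity, and specificity for a two-class problem.

    Raises ZeroDivisionError if either class is absent from y_true.
    """
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)  # true positives
    tn = sum(t != positive and p != positive for t, p in pairs)  # true negatives
    fp = sum(t != positive and p == positive for t, p in pairs)  # false positives
    fn = sum(t == positive and p != positive for t, p in pairs)  # false negatives
    return {
        "accuracy": (tp + tn) / len(pairs),
        "sensitivity": tp / (tp + fn),   # fraction of positives correctly identified
        "specificity": tn / (tn + fp),   # fraction of negatives correctly identified
    }
```

A specificity of 100% alongside a lower sensitivity, as seen for PCA-LDA in the table, means that no control samples were misclassified while some samples of the other class were missed.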

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Materials and Computational Tools for Chemometric Analysis of Spectral Data

| Item/Category | Specification/Example | Primary Function in Analysis |

|---|---|---|

| Spectrometer | FTIR, Raman, or NIR Spectrometer | Generates raw spectral data from samples through radiation-matter interaction [28]. |

| Reference Standards | Pure chemical compounds (e.g., quercetin, kaempferol for botanicals) [32] | Provides validated benchmarks for targeted analysis and method validation. |

| Preprocessing Software | MATLAB, Python (SciPy, NumPy), R | Implements algorithms for baseline correction, normalization, and smoothing [27] [31]. |

| Multivariate Analysis Software | SIMCA, PLS_Toolbox, JMP, custom scripts in R/Python | Performs PCA, PLS-DA, and related chemometric calculations and visualization [31]. |

| Validation Tools | Cross-validation routines, permutation testing algorithms | Assesses model robustness and prevents overfitting, especially crucial for PLS-DA [29]. |

| Data Visualization Tools | Score and loading plot generators, VIP score calculators | Enables interpretation of model results and identification of discriminatory features [29] [28]. |

The comparative analysis of PCA and PLS-DA reveals a clear, application-dependent pathway for method selection in spectroscopic interpretation. PCA serves as an indispensable tool for initial, unbiased data exploration, providing insights into natural clustering, outlier detection, and overall data structure without the influence of prior assumptions [29]. Its unsupervised nature makes it ideal for quality control, detecting batch effects, and formulating initial hypotheses.

Conversely, PLS-DA excels in supervised classification and biomarker discovery contexts where the research objective is to maximize separation between predefined sample classes or to predict categorical outcomes [29] [28]. The requirement for rigorous validation through cross-validation and permutation testing is paramount for PLS-DA to ensure model reliability and avoid overfitting [29].

For research focused on validating specificity and selectivity in spectroscopic methods, a sequential approach is often most effective: begin with PCA to understand the fundamental structure of the spectral data and identify potential confounders, then progress to PLS-DA to develop a robust, validated classification model that leverages prior knowledge of sample classes to maximize discriminatory power.

Advanced Applications: Implementing Specificity in Spectroscopic Workflows

In spectroscopic analysis, sample preparation is not merely a preliminary step but a critical determinant of data quality and reliability. Inadequate sample preparation accounts for approximately 60% of all spectroscopic analytical errors, overshadowing even the most advanced instrumental capabilities [33]. The pursuit of specificity and selectivity—core tenets of analytical validation—begins at the sample preparation stage, where material homogeneity, contamination control, and matrix effects are initially managed. This comprehensive guide objectively compares preparation methodologies across three foundational techniques: X-Ray Fluorescence (XRF), Inductively Coupled Plasma Mass Spectrometry (ICP-MS), and Fourier Transform Infrared (FT-IR) spectroscopy. By examining experimental data and protocols, we establish a rigorous framework for minimizing analytical errors through optimized sample preparation, directly supporting valid specificity and selectivity claims in spectroscopic research.

The distinct physical principles underlying XRF, ICP-MS, and FT-IR spectroscopy dictate their specific sample preparation requirements and vulnerability to different error types. XRF spectroscopy measures secondary X-ray emission from irradiated samples, requiring careful control of particle size, homogeneity, and surface characteristics to minimize matrix and mineralogical effects [34]. ICP-MS ionizes samples in high-temperature plasma before mass separation, demanding complete dissolution, precise dilution, and stringent contamination control to achieve its exceptional sensitivity [35]. FT-IR spectroscopy probes molecular vibrations through infrared absorption, necessitating optimal sample thickness, appropriate solvent selection, and uniform particle distribution to avoid spectral artifacts [36]. Understanding these fundamental interactions illuminates why standardized preparation protocols are indispensable for method validation.

Table 1: Fundamental Requirements and Dominant Error Sources by Technique

| Technique | Primary Analytical Signal | Critical Preparation Factors | Dominant Error Sources |
|---|---|---|---|
| XRF | Secondary X-ray fluorescence | Particle size (<75 μm ideal), homogeneity, surface flatness, infinite thickness | Mineralogical effects, particle heterogeneity, surface imperfections, moisture content [37] [34] |
| ICP-MS | Mass-to-charge ratio of ions | Complete dissolution, accurate dilution, contamination control, internal standardization | Contaminated reagents/labware, incomplete digestion, inaccurate dilution, polyatomic interferences [38] [33] |
| FT-IR | Infrared absorption | Sample thickness, particle uniformity, solvent transparency, appropriate concentration | Moisture contamination, poor particle dispersion, solvent interference, saturated peaks [39] [36] |

XRF Sample Preparation: Techniques and Experimental Data

Preparation Methodologies: Pressed Powder vs. Fusion

XRF sample preparation predominantly employs two established techniques: pressed powder pellets and fused beads. The pressed powder method involves drying, crushing, and pressing the sample into a uniform tablet with or without binders [37]. This approach offers operational simplicity and rapid execution, making it suitable for high-throughput environments. However, it does not eliminate mineral effects or particle size variations, limiting its accuracy for precise composition determination [37]. Alternatively, the fusion method incorporates flux addition and high-temperature melting (950-1200°C) to create homogeneous glass discs, effectively eliminating composition, density, and particle size inconsistencies [37] [33]. While more time-consuming and technically demanding, fusion significantly reduces matrix effects and enables highly accurate quantitative analysis, particularly for complex mineral samples [34].

Experimental Protocol: Pressed Pellet Preparation

- Sample Drying: Dry samples at 105°C for 2 hours to remove moisture [37].

- Particle Size Reduction: Grind samples to ≤75 μm using a spectroscopic grinding machine with appropriate surfaces to prevent contamination [33].

- Binder Addition: Mix ground powder with binder (cellulose wax or boric acid) at typical 5:1 sample-to-binder ratio [33].

- Pressing: Transfer mixture to die set and press at 10-30 tons pressure for 30-60 seconds using hydraulic press [33].

- Storage: Store pellets in desiccator to prevent moisture absorption before analysis.

Experimental Protocol: Fusion Preparation

- Flux Mixing: Accurately weigh 1.00 g sample and mix with 10.00 g lithium tetraborate flux [33].

- Fusion: Transfer mixture to platinum crucible and melt at 1050°C for 15 minutes in fusion furnace, swirling periodically [37].

- Casting: Pour molten mixture into pre-heated platinum mold and allow to cool [33].

- Annealing: Anneal glass disc at 500°C for 5 minutes to relieve internal stresses [37].
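The weighing steps in the two protocols above reduce to simple mass ratios. The sketch below computes the binder mass for a 5:1 sample-to-binder pellet and the sample mass fraction in a 1.00 g : 10.00 g flux mixture. The function names and the 8 g pellet charge are illustrative assumptions, and loss on ignition during fusion is ignored.

```python
def binder_mass(sample_mass_g, sample_to_binder_ratio=5.0):
    """Binder mass (g) for a pressed pellet at a given sample:binder mass ratio."""
    return sample_mass_g / sample_to_binder_ratio

def fusion_sample_fraction(sample_mass_g=1.00, flux_mass_g=10.00):
    """Mass fraction of sample in the sample + flux mixture (loss on ignition ignored)."""
    return sample_mass_g / (sample_mass_g + flux_mass_g)

# Hypothetical 8 g of ground sample for one pellet
print(binder_mass(8.0))                    # 1.6
print(round(fusion_sample_fraction(), 4))  # 0.0909
```

The roughly 11-fold dilution of the sample in flux is part of why fusion suppresses matrix effects, and it also lowers the signal available for trace elements.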

Comparative Performance Data

Table 2: XRF Preparation Method Comparison Based on Cement Standard Reference Materials

| Preparation Method | Analytical Precision (RSD%) | Accuracy Deviation (%) | Typical Processing Time | Relative Cost |
|---|---|---|---|---|
| Pressed Powder | 0.5-2.0% for major elements | 2-10% (matrix dependent) | 15-30 minutes | Low |
| Fusion | 0.1-0.5% for major elements | 0.5-2% (matrix independent) | 45-60 minutes | High |

Experimental data demonstrates that fusion methods yield superior accuracy and precision compared to pressed powder techniques, particularly for complex mineral matrices where mineralogical effects significantly impact XRF intensities [34]. The pressed powder method shows acceptable precision but potentially poor accuracy when standard and unknown samples differ mineralogically [34].
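The two figures of merit in Table 2 can be computed from replicate measurements of a reference material as sketched below. The replicate values and certified value are hypothetical numbers chosen only for illustration; they are not data from the cited studies.

```python
import statistics

def rsd_percent(values):
    """Relative standard deviation (%) using the sample standard deviation."""
    return 100.0 * statistics.stdev(values) / statistics.mean(values)

def accuracy_deviation_percent(values, certified_value):
    """Absolute deviation of the mean from the certified value, in percent."""
    return 100.0 * abs(statistics.mean(values) - certified_value) / certified_value

# Hypothetical CaO results (wt%) for five replicate preparations of one reference material
replicates = [64.1, 64.3, 63.9, 64.2, 64.0]
certified = 64.5  # hypothetical certified value (wt%)

print(round(rsd_percent(replicates), 2))                            # 0.25
print(round(accuracy_deviation_percent(replicates, certified), 2))  # 0.62
```

Precision and accuracy are independent here: a method can return a low RSD while still being biased, which is the situation described for pressed powders when standards and unknowns differ mineralogically.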

Figure: XRF Sample Preparation Workflow

ICP-MS Sample Preparation: Techniques and Contamination Control

Specialized Preparation Methodologies

ICP-MS sample preparation demands exceptional rigor because the technique is sensitive enough to detect elements at parts-per-trillion levels. Complete sample dissolution is paramount, typically achieved through acid digestion in open or closed vessels [38]. Microwave-assisted digestion provides superior recovery for refractory materials through controlled temperature and pressure conditions. For nanoparticle analysis, single-particle ICP-MS (spICP-MS) employs highly diluted suspensions to ensure individual nanoparticle introduction, generating transient signals proportional to particle mass [35]. Advanced approaches like laser ablation spICP-MS enable direct solid sampling without liquid introduction, eliminating dissolution-related errors [35].

Experimental Protocol: Acid Digestion for Solid Samples

- Weighing: Accurately weigh 0.1-0.5 g sample into digestion vessel.

- Acid Addition: Add 5 mL high-purity nitric acid (trace metal grade) and 1 mL hydrochloric acid as needed [38].

- Digestion: Heat at 95°C for 2 hours or use microwave digestion system (180°C, 30 minutes).

- Dilution: Cool and dilute to 50 mL with ultrapure water (18.2 MΩ·cm) [38].

- Filtration: Filter through 0.45 μm PTFE membrane, with 0.2 μm filtration for ultratrace analysis [33].

- Internal Standardization: Add 1 mL rhodium or indium internal standard (1 ppm) to all samples and standards [35].
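The dilution steps above determine how a measured solution concentration maps back to the solid sample. The following sketch shows the arithmetic under two stated assumptions: 1 ppm is treated as 1 mg/L (reasonable for dilute aqueous solutions), and the 1 mL internal standard spike is added after the digest has been made up to 50 mL, giving 51 mL in total. Function names and the example reading of 12.0 µg/L are hypothetical.

```python
def solid_concentration_mg_per_kg(solution_ug_per_l, final_volume_ml, sample_mass_g):
    """Convert a measured solution concentration (ug/L) to the original solid (mg/kg)."""
    analyte_ug = solution_ug_per_l * (final_volume_ml / 1000.0)  # ug/L * L = ug
    return analyte_ug / sample_mass_g                            # ug/g equals mg/kg

def internal_standard_ug_per_l(stock_mg_per_l, spike_ml, sample_ml):
    """Final internal standard concentration (ug/L) after spiking into a sample volume."""
    return stock_mg_per_l * 1000.0 * spike_ml / (spike_ml + sample_ml)

# Hypothetical reading: 12.0 ug/L measured in a 50 mL digest of 0.25 g sample
print(round(solid_concentration_mg_per_kg(12.0, 50.0, 0.25), 3))  # 2.4

# 1 mL of 1 mg/L Rh or In stock added to 50 mL of solution
print(round(internal_standard_ug_per_l(1.0, 1.0, 50.0), 1))       # 19.6
```

Because the spike changes the total volume slightly, adding the same spike volume to every sample and standard keeps the internal standard concentration, and therefore the correction, consistent across the run.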

Contamination Control Experimental Data

Contamination control represents the most significant challenge in ICP-MS sample preparation. Experimental data demonstrates dramatic contamination reduction through optimized practices:

Table 3: Contamination Reduction Through Optimized ICP-MS Preparation (Values in ppb)

| Element | Manual Cleaning | Automated Pipette Washer | Reduction Factor |
|---|---|---|---|
| Sodium | 18.5 ppb | <0.01 ppb | >1850x |
| Calcium | 19.2 ppb | <0.01 ppb | >1920x |
| Aluminum | 3.8 ppb | 0.05 ppb | 76x |
| Iron | 2.1 ppb | 0.03 ppb | 70x |

Studies comparing manual versus automated cleaning of laboratory pipettes revealed orders of magnitude reduction in contamination for key elements when implementing automated cleaning systems [38]. Similarly, distilled nitric acid prepared in HEPA-filtered clean rooms showed significantly lower contamination levels compared to regular laboratory environments, with aluminum contamination reduced from 12.3 ppb to 0.2 ppb and iron from 8.7 ppb to 0.1 ppb [38].
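The reduction factors in Table 3 are ratios of the contamination level before and after the optimized procedure. The short sketch below reproduces them from the tabulated values; results reported as "<0.01 ppb" are treated as upper bounds, so the resulting factor is a lower bound. The function and variable names are illustrative.

```python
def reduction_factor(before_ppb, after_ppb):
    """Ratio of contamination before to contamination after the improved procedure."""
    return before_ppb / after_ppb

# (manual cleaning, automated washer, True if the "after" value is a "<" upper bound)
table3 = {
    "Sodium": (18.5, 0.01, True),
    "Calcium": (19.2, 0.01, True),
    "Aluminum": (3.8, 0.05, False),
    "Iron": (2.1, 0.03, False),
}

for element, (before, after, is_upper_bound) in table3.items():
    prefix = ">" if is_upper_bound else ""
    print(f"{element}: {prefix}{reduction_factor(before, after):.0f}x")
```

Applied to the clean-room acid figures quoted above, the same ratio gives about 61.5-fold for aluminum (12.3 to 0.2 ppb) and 87-fold for iron (8.7 to 0.1 ppb).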

FT-IR Sample Preparation: Techniques and Spectral Quality

Preparation Methodologies by Sample Type

FT-IR sample preparation techniques vary significantly based on sample physical state and analytical objectives. For solid samples, the KBr pellet method remains prevalent, involving grinding 1-2 mg sample with 200-400 mg potassium bromide followed by pressing under vacuum [36]. Attenuated Total Reflection (ATR) enables direct analysis of solids and liquids without extensive preparation by measuring surface interactions with an internal reflection element [39]. For liquids, transmission cells with precisely spaced infrared-transparent windows control path length from 0.1-1.0 mm, while diffuse reflectance techniques analyze powdered samples without pressing [36].

Experimental Protocol: KBr Pellet Preparation

- Drying: Dry KBr powder at 110°C for 2 hours and store in desiccator.

- Grinding: Gently grind 1-2 mg sample with 200 mg KBr in agate mortar to uniform particle size (<5 μm).

- Pressing: Transfer mixture to die set and press under vacuum at 8-12 tons for 2-5 minutes.

- Analysis: Immediately analyze transparent pellet to minimize moisture absorption.

Experimental Protocol: ATR Analysis

- Background Collection: Clean ATR crystal with appropriate solvent and collect background spectrum [39].

- Sample Application: Place sample in direct contact with ATR crystal, applying uniform pressure.

- Data Collection: Acquire spectrum with 4 cm⁻¹ resolution and 32 scans.

- Cleaning: Thoroughly clean crystal between samples to prevent cross-contamination.
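The choice of 32 scans in the data collection step reflects a general trade-off between acquisition time and noise. Assuming the noise is random and uncorrelated between scans, co-adding N scans improves the signal-to-noise ratio by a factor of the square root of N. The sketch below illustrates this scaling; the function name is hypothetical, and real instruments may deviate from the ideal behaviour.

```python
import math

def snr_gain_from_coadding(n_scans):
    """Ideal SNR improvement relative to one scan, assuming uncorrelated random noise."""
    return math.sqrt(n_scans)

print(round(snr_gain_from_coadding(32), 2))   # 5.66
print(round(snr_gain_from_coadding(128), 2))  # 11.31
```

Doubling the signal-to-noise ratio therefore requires four times as many scans, which is why scan counts beyond a few dozen give diminishing returns for routine ATR work.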

Spectral Quality Assessment Data

Proper FT-IR sample preparation dramatically impacts spectral quality and interpretability:

Table 4: Impact of Preparation Techniques on FT-IR Spectral Quality

| Preparation Issue | Spectral Manifestation | Corrective Action | Result Improvement |
|---|---|---|---|
| Moisture in KBr | Broad O-H stretch ~3300 cm⁻¹, variable baseline | Dry KBr at 110°C, use desiccator | Eliminates interfering broad bands |
| Poor ATR Contact | Weak signal, distorted band ratios | Apply uniform pressure, use flat samples | Improves signal-to-noise 5-10x |
| Particle Size Too Large | Increased scattering, skewed baseline | Grind to <5 μm, mix thoroughly | Restores band intensity ratios |
| Dirty ATR Crystal | Negative peaks, spectral artifacts | Clean crystal before background | Eliminates false negative peaks [39] |

Research demonstrates that processing diffuse reflection spectra in Kubelka-Munk units instead of absorbance corrects peak distortion and apparent saturation, recovering interpretable spectral information [39]. Similarly, ATR analysis of plastic materials reveals significant differences between surface and bulk composition caused by plasticizer migration, highlighting the importance of understanding preparation limitations when interpreting results [39].
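For reference, the Kubelka-Munk function converts diffuse reflectance R (measured relative to a non-absorbing reference, for an effectively infinitely thick sample) into a quantity proportional to the ratio of absorption to scattering: f(R) = (1 - R)² / (2R). The sketch below compares it with the apparent absorbance log10(1/R) for a few hypothetical reflectance values; the function names are illustrative.

```python
import math

def kubelka_munk(reflectance):
    """Kubelka-Munk function f(R) = (1 - R)^2 / (2R) for 0 < R <= 1."""
    if not 0.0 < reflectance <= 1.0:
        raise ValueError("reflectance must be in the interval (0, 1]")
    return (1.0 - reflectance) ** 2 / (2.0 * reflectance)

def apparent_absorbance(reflectance):
    """log10(1/R), the absorbance-like unit often applied to reflectance data."""
    return math.log10(1.0 / reflectance)

# Hypothetical reflectance values from weak to strong absorption
for r in (0.9, 0.5, 0.1):
    print(r, round(kubelka_munk(r), 3), round(apparent_absorbance(r), 3))
```

Strongly absorbing bands (low R) are expanded in Kubelka-Munk units relative to log10(1/R), which compresses them; this is consistent with the recovery of band shapes that appear saturated in absorbance units.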

The Scientist's Toolkit: Essential Research Reagents and Equipment

Successful spectroscopic analysis requires carefully selected materials and equipment to minimize introduction of errors during sample preparation. The following research reagent solutions represent essential components for reliable results across XRF, ICP-MS, and FT-IR techniques.

Table 5: Essential Research Reagent Solutions for Spectroscopic Sample Preparation

| Item | Technical Function | Application Specifics | Quality Requirements |
|---|---|---|---|
| High-Purity Water | Sample dilution, rinsing, reagent preparation | ICP-MS dilutions, final rinsing of labware | Type I (18.2 MΩ·cm), <5 ppb TOC [38] |
| Ultrapure Acids | Sample digestion, dissolution, dilution | ICP-MS digestions, vessel cleaning | Trace metal grade, certified <50 ppt contaminants [38] |
| Potassium Bromide | IR-transparent matrix for pellet preparation | FT-IR pellet method | FT-IR grade, dry, spectroscopic purity |
| Lithium Tetraborate | Flux for XRF fusion methods | Glass bead preparation for XRF | High purity, minimal elemental contamination [33] |
| PTFE Filters | Particulate removal from liquid samples | ICP-MS sample clarification | 0.45 μm standard, 0.2 μm for ultratrace analysis [33] |
| Internal Standards | Correction for instrument drift, matrix effects | ICP-MS quantification | Non-interfering isotopes, high purity (Rh, In, Re) [35] |
| Cellulose Binders | Binding agent for powder pellets | XRF pressed pellets | High purity, minimal elemental contamination |

The experimental data and methodological comparisons presented demonstrate that the choice of sample preparation technique directly determines analytical accuracy, precision, and reliability. The pressed powder method in XRF provides rapid analysis with acceptable precision for quality control but potentially compromised accuracy for complex mineral matrices. Fusion techniques deliver superior accuracy by completely destroying the original mineral structure but require greater technical investment. ICP-MS achieves unmatched sensitivity only when coupled with scrupulous contamination control and complete sample dissolution. FT-IR spectral quality depends fundamentally on appropriate technique selection and meticulous execution to avoid artifacts and misinterpretation. Within validation frameworks, specificity and selectivity claims must account for preparation-induced artifacts that can compromise these analytical attributes. By aligning preparation methodologies with analytical objectives and sample characteristics, researchers can minimize errors at their source, establishing a solid foundation for reliable spectroscopic analysis and valid scientific conclusions.