Spectral Interference in Spectrophotometry: A Comprehensive Guide for Biomedical Researchers

Abstract

This article provides a complete resource for researchers and drug development professionals on spectral interference in spectrophotometry. It covers the fundamental principles of how and why interference occurs, explores advanced methodological and chemometric approaches for accurate analysis in complex matrices like serum and pharmaceuticals, details practical troubleshooting and optimization strategies for instrument calibration and error reduction, and discusses validation protocols to ensure data reliability. By synthesizing foundational knowledge with applied techniques, this guide aims to enhance the accuracy and precision of spectroscopic analysis in biomedical and clinical research.

What is Spectral Interference? Defining the Core Challenge in Molecular Analysis

Spectral interference occurs when the absorbance spectra of multiple components in a mixture overlap, compromising the accuracy of quantitative analysis. This phenomenon is a fundamental challenge in spectrophotometry, particularly in pharmaceutical analysis, where multi-component formulations are common. The core problem is that a conventional spectrophotometer cannot tell which species absorbed the photons at a given wavelength, so the measured absorbance is the summed contribution of all absorbing species. When absorption bands overlap, a reading at a single wavelength gives one equation with several unknown concentrations. Readings at additional wavelengths make the system solvable, but the more similar the component spectra, the more strongly measurement noise is amplified in the calculated concentrations. The result is systematic error that can affect assessments of drug quality, safety, and efficacy.

The clinical significance of this problem is substantial. Comparative tests have revealed alarming variations in spectrophotometric measurements across different laboratories, with coefficients of variation in absorbance reaching up to 22% in one extensive study [1]. Even after excluding laboratories with instruments exhibiting significant stray light, coefficients of variation remained as high as 15% [1]. This level of inaccuracy is unacceptable in pharmaceutical development and quality control, where precise quantification of active ingredients is critical for ensuring proper dosing and therapeutic effect.

The Fundamental Mechanism of Overlapping Absorbance

Core Principles of Absorbance Summation

At its core, the Beer-Lambert law states that absorbance (A) at a given wavelength is proportional to the concentration (c) of the absorbing species, the path length (b), and a molecular-specific absorptivity coefficient (ε): A = εbc. For mixtures containing multiple absorbing components, the total measured absorbance at any wavelength becomes the sum of individual absorbances:

A_total = A₁ + A₂ + A₃ + ... + Aₙ

This additive property becomes problematic when different compounds have significant absorptivity at the same wavelength, as their individual contributions become indistinguishable in the combined measurement. The degree of interference correlates directly with the extent of spectral overlap and the relative concentrations of the interfering species. In severe cases, the absorption spectrum of a minor component can be completely obscured by a major component, making accurate quantification impossible without specialized analytical approaches.
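The additivity described above can be illustrated numerically. The sketch below is a minimal example assuming two hypothetical Gaussian absorption bands (centers, widths, absorptivities and concentrations are illustrative choices, not data for any real compound). It builds each component's spectrum from A = εbc, sums them, and reports how much of the absorbance at the analyte's own maximum actually comes from the interferent.

```python
import numpy as np

# Wavelength grid in nm (hypothetical measurement range)
wavelengths = np.arange(200.0, 351.0, 0.5)

def gaussian_band(center_nm, width_nm, peak_absorptivity):
    """Molar absorptivity (L mol^-1 cm^-1) of a single Gaussian band; illustrative only."""
    return peak_absorptivity * np.exp(-0.5 * ((wavelengths - center_nm) / width_nm) ** 2)

eps_analyte = gaussian_band(240.0, 15.0, 12000.0)      # hypothetical analyte
eps_interferent = gaussian_band(255.0, 20.0, 9000.0)   # hypothetical, overlapping band

b = 1.0                  # path length, cm
c_analyte = 4.0e-5       # mol/L
c_interferent = 6.0e-5   # mol/L

# Beer-Lambert law for each component, then additivity of absorbance
a_analyte = eps_analyte * b * c_analyte
a_interferent = eps_interferent * b * c_interferent
a_total = a_analyte + a_interferent

# At the analyte's band maximum, what share of the measured signal is the interferent?
i_max = int(np.argmax(eps_analyte))
share = a_interferent[i_max] / a_total[i_max]
print(f"{wavelengths[i_max]:.1f} nm: A_total = {a_total[i_max]:.3f}, interferent share = {share:.1%}")
```

With these assumed bands, close to half of the absorbance read at the analyte's maximum belongs to the interferent, and a single reading there cannot reveal how it is split between the two components.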

Visualizing the Interference Mechanism

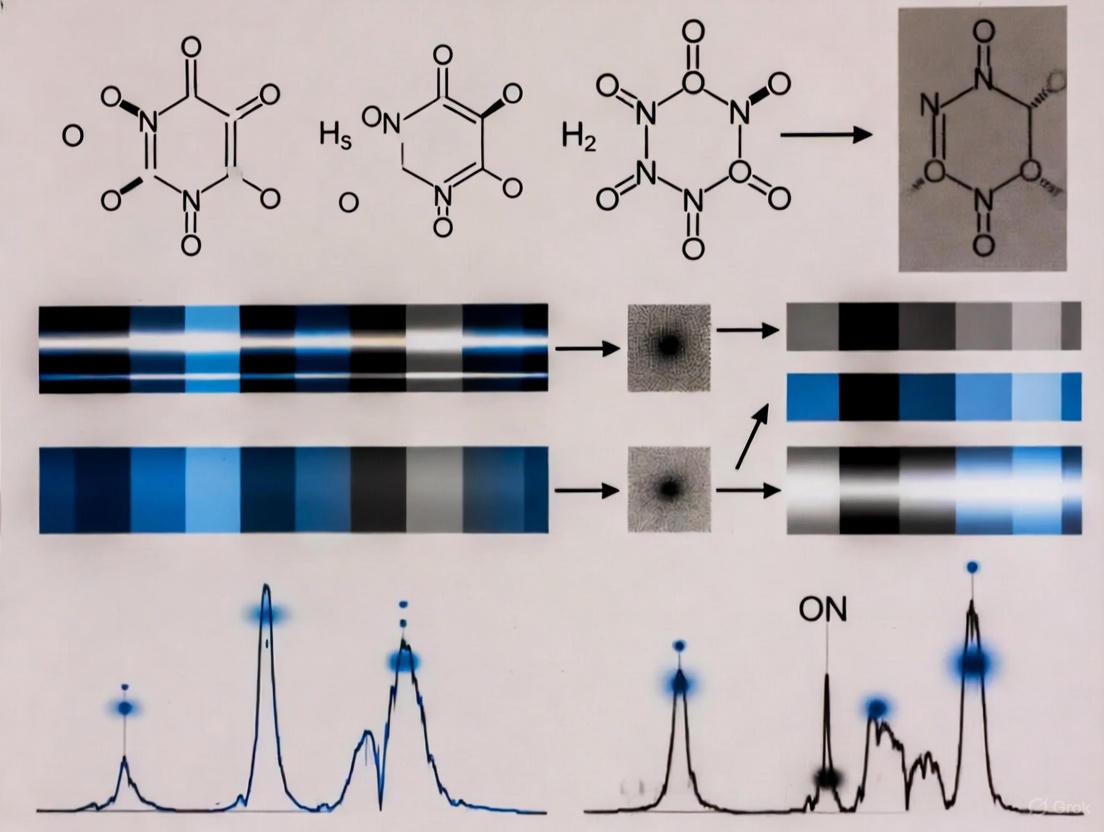

The following diagram illustrates the fundamental relationship between overlapping spectra and the resulting inaccurate data in spectrophotometric analysis:

Experimental Evidence and Impact Assessment

Quantitative Evidence of Measurement Errors

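Interlaboratory comparisons show how large photometric errors can be even before a second absorber is present. The following table summarizes findings from tests on acid potassium dichromate and alkaline potassium chromate reference solutions. Because these are single-solute solutions, the spread reflects instrumental sources of error such as stray light rather than spectral overlap, and any spectral interference in a real sample adds to it: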

Table 1: Quantitative Evidence of Spectrophotometric Measurement Errors

| Solution Composition | Wavelength (nm) | Absorbance (A) | ΔA/A CV (%) | Transmittance (%) | ΔT/T CV (%) |
|---|---|---|---|---|---|
| Acid potassium dichromate | 380 | 0.109 | 11.1 | 77.8 | 2.79 |
| Alkaline potassium chromate | 300 | 0.151 | 15.1 | 70.9 | 5.25 |
| Alkaline potassium chromate | 340 | 0.318 | 9.2 | 48.3 | 6.74 |
| Acid potassium dichromate | 328 | 0.432 | 5.0 | 38.0 | 4.97 |
| Acid potassium dichromate | 366 | 0.855 | 5.8 | 14.0 | 11.42 |
| Acid potassium dichromate | 240 | 1.262 | 2.8 | 5.47 | 8.14 |

Data adapted from the Beeler and Lancaster study on spectrophotometric errors [1].

The data demonstrate that the relative error depends strongly on the absorbance level. The coefficient of variation in absorbance (ΔA/A) is largest at low absorbance (15.1% at A = 0.151 and 11.1% at A = 0.109) and falls to 2.8% at A = 1.262, while the coefficient of variation in transmittance (ΔT/T) reaches 11.42% [1]. Errors of this size propagate directly into analytical results in pharmaceutical quality control and research applications.
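The two coefficient-of-variation columns in Table 1 are linked by first-order error propagation: since A = -log₁₀T, a relative transmittance error ΔT/T produces an absolute absorbance error ΔA ≈ 0.4343·ΔT/T, and therefore ΔA/A ≈ 0.4343·(ΔT/T)/A. The short check below is a sketch that only re-uses the tabulated values. It recomputes the ΔA/A column from the ΔT/T column and reproduces it to within rounding, which also explains why the relative absorbance error is largest at low absorbance.

```python
import math

# (absorbance A, CV of transmittance in %, reported CV of absorbance in %) from Table 1
TABLE_1 = [
    (0.109, 2.79, 11.1),
    (0.151, 5.25, 15.1),
    (0.318, 6.74, 9.2),
    (0.432, 4.97, 5.0),
    (0.855, 11.42, 5.8),
    (1.262, 8.14, 2.8),
]

LOG10_E = 1.0 / math.log(10.0)  # 0.4343..., from dA = -(1/ln 10) dT/T

def absorbance_cv(absorbance, transmittance_cv_percent):
    """Relative absorbance error (%) implied by a relative transmittance error (%)."""
    return LOG10_E * transmittance_cv_percent / absorbance

for a, cv_t, cv_a_reported in TABLE_1:
    print(f"A = {a:.3f}: predicted ΔA/A = {absorbance_cv(a, cv_t):5.2f}%  (reported {cv_a_reported}%)")
```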

Classification of Spectral Interferences

Spectral interferences in analytical spectroscopy can be categorized into three main types:

- Direct Overlap: Occurs when an interfering species absorbs at the exact analytical wavelength of the target compound, causing positive errors in quantification [2].

- Partial Overlap: Happens when the absorption band of an interfering species partially overlaps with the target analyte's peak, affecting both the baseline and peak intensity [2].

- Stray Light Effects: A different category of interference where light outside the intended wavelength band reaches the detector, particularly problematic at high absorbance values and at the spectral extremes of an instrument [1].

Methodologies for Resolving Overlapping Spectra

Mathematical Resolution Techniques

Researchers have developed numerous mathematical approaches to deconvolve overlapping spectra without physical separation. The following table summarizes key techniques employed in modern spectrophotometric analysis:

Table 2: Mathematical Techniques for Resolving Overlapping Spectra

| Method Category | Specific Technique | Principle of Operation | Application Example |
|---|---|---|---|
| Zero Order Methods | Dual Wavelength [3] | Measures at two wavelengths where interferent has equal absorbance | HCQ and PAR determination [3] |
| | Zero Crossing [3] | Measures at wavelength where interferent shows zero absorbance | HCQ at 329 nm where PAR absorbance is zero [3] |
| | Advanced Absorbance Subtraction [4] | Uses isoabsorptive point and selective wavelengths | CIP and MET determination [4] |
| Derivative Methods | First Derivative Zero Crossing [3] | Utilizes zero-crossing points in derivative spectra | Resolving overlapping peaks through derivative transformation |
| Ratio Methods | Ratio Difference [3] [4] | Measures difference in ratios at selected wavelengths | Binary mixture analysis with reduced excipient interference |
| | Ratio Derivative [3] | Applies derivative transformation to ratio spectra | Enhancing spectral resolution in complex mixtures |
| Mathematical Modeling | Bivariate Method [3] [4] | Solves simultaneous equations at two wavelengths | CIP and MET combination drugs [4] |
| | Simultaneous Equation [3] | Uses absorptivity data at multiple wavelengths | HCQ and PAR using 220 nm and 242.5 nm [3] |
| | Q-Absorbance Method [3] | Ratio-based method at isoabsorptive points | Multi-component analysis with high precision |
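To make the first-derivative zero-crossing entry in Table 2 concrete, the sketch below uses two hypothetical Gaussian spectra (illustrative shapes and absorptivities, not HCQ or PAR data). The first derivative of the interferent's band is zero at its maximum, so at that wavelength the derivative of the mixture spectrum depends only on the analyte and does not change with the interferent concentration. It assumes a uniform 0.5 nm wavelength grid and uses `numpy.gradient` for the derivative; measured spectra are often smoothed first (for example with a Savitzky-Golay filter).

```python
import numpy as np

wl = np.arange(200.0, 400.5, 0.5)  # nm, uniform grid

def gaussian(center_nm, width_nm):
    return np.exp(-0.5 * ((wl - center_nm) / width_nm) ** 2)

# Hypothetical spectra for 1 µg/mL in a 1 cm cell (illustrative only)
analyte_unit = 0.05 * gaussian(250.0, 18.0)
interferent_unit = 0.04 * gaussian(270.0, 22.0)

def first_derivative(spectrum):
    """dA/dλ by central differences on the wavelength grid."""
    return np.gradient(spectrum, wl)

def mixture(c_analyte, c_interferent):
    """Additive absorbance of the two components (Beer-Lambert, 1 cm)."""
    return c_analyte * analyte_unit + c_interferent * interferent_unit

# The interferent's first derivative crosses zero at its band maximum
zc = int(np.argmax(interferent_unit))

def d1_at_zero_crossing(c_analyte, c_interferent):
    return first_derivative(mixture(c_analyte, c_interferent))[zc]

for c_int in (0.0, 10.0, 20.0):
    print(f"interferent {c_int:4.1f} µg/mL -> dA/dλ at {wl[zc]:.1f} nm = {d1_at_zero_crossing(10.0, c_int):.6f}")
```

Because differentiation is linear, the derivative amplitude at the zero-crossing wavelength is proportional to the analyte concentration alone and can be calibrated like an ordinary absorbance.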

Detailed Experimental Protocols

Simultaneous Equation Method for Hydroxychloroquine and Paracetamol

The simultaneous equation method provides a straightforward approach for quantifying two-component mixtures with overlapping spectra [3]:

Standard Solution Preparation: Prepare stock solutions of Hydroxychloroquine (HCQ) and Paracetamol (PAR) at 1000 μg/mL concentration in distilled water. Dilute to working concentrations of 3-25 μg/mL for HCQ and 2-35 μg/mL for PAR.

Wavelength Selection: Identify two analytical wavelengths—220 nm (λmax of HCQ) and 242.5 nm (λmax of PAR)—from the overlain spectra.

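Absorptivity Determination: Determine the absorptivity of each drug at both selected wavelengths. The values below are absorbances per μg/mL in a 1 cm cell (multiply by 10⁴ to express them as A(1%, 1 cm)), so concentrations calculated from them are in μg/mL: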

- HCQ: ax₁ = 0.0881 (220 nm), ax₂ = 0.0339 (242.5 nm)

- PAR: ay₁ = 0.0419 (220 nm), ay₂ = 0.0521 (242.5 nm)

Equation Application: The mixture absorbances obey A₁ = ax₁Cx + ay₁Cy and A₂ = ax₂Cx + ay₂Cy. Solving this pair of equations gives:

- Cx = (A₂ay₁ - A₁ay₂)/(ax₂ay₁ - ax₁ay₂)

- Cy = (A₁ax₂ - A₂ax₁)/(ax₂ay₁ - ax₁ay₂)

where Cx and Cy are the concentrations of HCQ and PAR, and A₁ and A₂ are the absorbances of the sample at 220 nm and 242.5 nm.
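The simultaneous-equation (Vierordt) calculation above is easy to script. The sketch below is a minimal Python version, assuming the absorptivities are in absorbance per μg/mL for a 1 cm cell so that concentrations come out in μg/mL. The round-trip check simulates a hypothetical mixture of 5 μg/mL HCQ and 10 μg/mL PAR and recovers it. The determinant check guards against wavelength pairs at which the two drugs have proportional absorptivities, where the equations cannot be separated.

```python
# Absorptivities from the protocol (absorbance per µg/mL, 1 cm path)
AX1, AX2 = 0.0881, 0.0339   # HCQ at 220 nm and 242.5 nm
AY1, AY2 = 0.0419, 0.0521   # PAR at 220 nm and 242.5 nm

def vierordt(a1, a2, ax1=AX1, ax2=AX2, ay1=AY1, ay2=AY2):
    """Solve A1 = ax1*Cx + ay1*Cy and A2 = ax2*Cx + ay2*Cy for (Cx, Cy)."""
    det = ax2 * ay1 - ax1 * ay2
    if abs(det) < 1e-12:
        raise ValueError("Absorptivity ratios are equal; the two components cannot be resolved.")
    cx = (a2 * ay1 - a1 * ay2) / det
    cy = (a1 * ax2 - a2 * ax1) / det
    return cx, cy

# Round trip: hypothetical mixture of 5 µg/mL HCQ and 10 µg/mL PAR in a 1 cm cell
cx_true, cy_true = 5.0, 10.0
a1 = AX1 * cx_true + AY1 * cy_true   # absorbance at 220 nm
a2 = AX2 * cx_true + AY2 * cy_true   # absorbance at 242.5 nm
cx, cy = vierordt(a1, a2)
print(f"HCQ = {cx:.3f} µg/mL, PAR = {cy:.3f} µg/mL")
```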

Advanced Absorbance Subtraction for Ciprofloxacin and Metronidazole

The advanced absorbance subtraction (AAS) method effectively resolves overlapping spectra using isoabsorptive points [4]:

Standard Preparation: Prepare stock solutions of Ciprofloxacin (CIP) and Metronidazole (MET) at 50 μg/mL concentration.

Spectra Recording: Record absorption spectra in the 200-400 nm range using 1 cm quartz cells.

MET Determination in Presence of CIP:

- Measure absorbance at 291.5 nm (isoabsorptive point) and 250 nm

- CIP shows equal absorbance at both wavelengths, yielding zero difference

- MET concentration is calculated using the regression equation from the difference in absorbance values

CIP Determination in Presence of MET:

- Measure absorbance at 291.5 nm (isoabsorptive point) and 345 nm

- MET shows equal absorbance at both wavelengths, yielding zero difference

- CIP concentration is calculated using the regression equation from the difference in absorbance values
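The logic of these difference measurements can be checked with a small numerical sketch. The absorptivities below are hypothetical values chosen only to satisfy the stated conditions (equal CIP absorbance at 291.5 and 250 nm, equal MET absorbance at 291.5 and 345 nm, and equal absorbance of both drugs at the 291.5 nm isoabsorptive point); they are not literature values, and the calibration uses noise-free synthetic standards. Each ΔA is free of the other drug's contribution, so a straight-line calibration of ΔA against concentration recovers each drug from the mixture.

```python
import numpy as np

# Hypothetical absorptivities (absorbance per µg/mL, 1 cm) satisfying the equal-absorbance conditions
EPS = {
    "CIP": {250.0: 0.060, 291.5: 0.060, 345.0: 0.030},
    "MET": {250.0: 0.020, 291.5: 0.060, 345.0: 0.060},
}

def absorbance(wavelength, c_cip, c_met):
    return EPS["CIP"][wavelength] * c_cip + EPS["MET"][wavelength] * c_met

def delta_a(lam_1, lam_2, c_cip, c_met):
    """Absorbance difference between two wavelengths for a CIP/MET mixture."""
    return absorbance(lam_1, c_cip, c_met) - absorbance(lam_2, c_cip, c_met)

standards = np.array([2.0, 4.0, 6.0, 8.0, 10.0])  # µg/mL

# MET calibration: ΔA(291.5 - 250) of pure MET standards (CIP contribution cancels)
met_signal = np.array([delta_a(291.5, 250.0, 0.0, c) for c in standards])
slope_met, intercept_met = np.polyfit(standards, met_signal, 1)

# CIP calibration: ΔA(291.5 - 345) of pure CIP standards (MET contribution cancels)
cip_signal = np.array([delta_a(291.5, 345.0, c, 0.0) for c in standards])
slope_cip, intercept_cip = np.polyfit(standards, cip_signal, 1)

# Hypothetical mixture: 7 µg/mL CIP + 3 µg/mL MET
met_found = (delta_a(291.5, 250.0, 7.0, 3.0) - intercept_met) / slope_met
cip_found = (delta_a(291.5, 345.0, 7.0, 3.0) - intercept_cip) / slope_cip
print(f"CIP found: {cip_found:.3f} µg/mL, MET found: {met_found:.3f} µg/mL")
```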

Workflow for Spectral Resolution Method Selection

The following diagram outlines a systematic approach for selecting appropriate methodologies to address overlapping spectra in analytical practice:

Essential Research Reagents and Materials

Successful resolution of overlapping spectra requires specific reagents and materials optimized for spectrophotometric analysis:

Table 3: Essential Research Reagents and Materials for Spectrophotometric Analysis of Overlapping Spectra

| Reagent/Material | Specifications | Function in Analysis |
|---|---|---|
| Double Beam UV/Visible Spectrophotometer | Jenway Model 6800 or equivalent with Flight Deck Software [3] [4] | Provides accurate absorbance measurements across UV-VIS range |
| Quartz Cuvettes | 1 cm path length, high transparency down to 200 nm [3] [4] | Sample holder with consistent optical characteristics |
| Reference Standards | High purity (>99%) drug standards [3] [4] | Ensures accurate calibration and method validation |
| Deuterium Lamp | Wavelength range 190-400 nm [1] | UV light source for spectral measurements |
| Holmium Oxide Filters | Certified wavelength standards [1] | Validates wavelength accuracy of spectrophotometer |
| Neutral Density Filters | Certified transmittance standards [1] | Checks photometric linearity across absorbance range |
| Distilled Water | HPLC grade or better [3] [4] | Solvent for aqueous preparations and dilutions |

Overlapping absorbance spectra present a fundamental challenge in pharmaceutical spectrophotometry, directly leading to inaccurate concentration data with potential impacts on drug quality and safety. The phenomenon arises from the additive nature of absorbance measurements, where multiple components contribute to the total signal at any given wavelength. Through systematic approaches including mathematical resolution techniques, careful wavelength selection, and robust calibration procedures, researchers can effectively mitigate these errors. The development of advanced spectral processing methods continues to enhance our ability to extract accurate quantitative information from complex mixtures, ensuring reliability in pharmaceutical analysis and quality control. As spectroscopic technologies evolve, the integration of intelligent preprocessing algorithms and multi-wavelength analysis approaches promises further improvements in resolving power and accuracy for complex multi-component systems.

Distinguishing Spectral Interference from Other Matrix Effects

In analytical chemistry, the sample matrix (all components other than the analyte of interest) can significantly influence measurement accuracy. The International Union of Pure and Applied Chemistry (IUPAC) defines the matrix effect as the "combined effect of all components of the sample other than the analyte on the measurement of the quantity" [5]. Within this broad domain, spectral interference represents a distinct and pervasive challenge that analysts must identify and correct to ensure data integrity. This section provides a technical guide for researchers and drug development professionals, placing spectral interference within the systematic taxonomy of matrix effects and providing robust experimental protocols for its diagnosis and correction.

Defining Matrix Effects and Spectral Interference

Matrix effects arise from two primary sources: (a) Chemical and Physical Interactions, where matrix components chemically interact with the analyte or alter its physical environment, and (b) Instrumental and Environmental Effects, where variations in instrumental conditions create artifacts in the analytical signal [5]. These broad categories manifest as several specific interference types.

Table 1: Classification of Major Matrix Effects in Spectroscopic Techniques

| Interference Type | Definition | Primary Cause | Resulting Error |
|---|---|---|---|
| Spectral Interference | Overlap of an analyte's emission line/peak with signals from other elements or molecular species [2] [6]. | Lack of specificity in the measured spectral window. | False positives/negatives; over/under-estimation of concentration [2]. |
| Chemical Interference | Alteration of atomization or ionization efficiency of the analyte due to the sample matrix [5] [2]. | Formation of stable compounds (e.g., refractory oxides) in the atomization/ionization source. | Signal suppression or enhancement, dependent on matrix composition. |
| Physical Interference | Modification of the sample's physical transport or nebulization efficiency into the instrument [2]. | Variations in viscosity, surface tension, or dissolved solid content. | Signal drift and variability, affecting precision and accuracy [2]. |
| Ionization Interference | Perturbation of the ionization equilibrium of the analyte in the plasma [7]. | Presence of Easily Ionizable Elements (EIEs) that change electron density. | Enhancement or suppression of ionic vs. atomic spectral lines [7]. |

Spectral interference is particularly problematic in spectroscopic imaging, as any unidentified interference in the chosen spectral range will generate a biased distribution image, potentially showing over-concentrations or false presence of the element of interest [6].

Figure 1: A taxonomy of matrix effects, positioning spectral interference alongside other primary mechanisms.

Diagnostic Methods for Spectral Interference

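Diagnosing spectral interference is a critical first step before accurate quantification can be achieved. Several established experimental protocols can be employed. The first two below detect matrix effects in general (chiefly ionization suppression or enhancement in LC-MS), which helps separate non-spectral effects from spectral overlap; the chemometric approach targets spectral interference directly.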

Post-Column Infusion Analysis

This method is predominantly used in Liquid Chromatography-Mass Spectrometry (LC-MS) to qualitatively assess ionization suppression or enhancement [8].

Experimental Protocol:

- Setup: A solution of the analyte is infused post-column into the HPLC eluent at a constant flow rate using a syringe pump, creating a steady signal.

- Injection: A blank sample extract (the matrix without the analyte) is injected into the chromatographic system.

- Detection: The signal of the infused analyte is monitored over time. A depression in the signal indicates ionization suppression caused by matrix components eluting at that specific retention time, while a signal increase indicates enhancement.

- Analysis: The chromatogram is examined for regions of signal variation. The analytical method can then be optimized to shift the analyte's retention time away from these interference regions [8].

Limitations: The process is time-consuming, requires additional hardware, and can be challenging to interpret for multi-analyte methods [8].
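Reading a post-column infusion trace amounts to locating stretches where the infused-analyte signal departs from its steady level. The sketch below is a hypothetical helper, not part of any instrument software: it takes the median of the trace as the steady level and flags contiguous regions that deviate by more than a chosen fraction (20% here, an arbitrary assumption). The example trace is synthetic, with an invented suppression zone at 2.0 to 2.8 min and an invented enhancement zone at 6.0 to 6.3 min.

```python
import numpy as np

def interference_regions(time_min, signal, threshold=0.2):
    """Hypothetical helper: return (start, end, kind) for contiguous stretches where the
    infused-analyte signal deviates from its median level by more than `threshold`
    (as a fraction). kind is "suppression" for dips and "enhancement" for rises."""
    baseline = np.median(signal)
    relative = (signal - baseline) / baseline
    regions = []
    for kind, mask in (("suppression", relative < -threshold),
                       ("enhancement", relative > threshold)):
        # Pad with False so every run has a rising and a falling edge
        padded = np.concatenate(([False], mask, [False])).astype(int)
        edges = np.flatnonzero(np.diff(padded))
        for start, stop in zip(edges[::2], edges[1::2]):
            regions.append((float(time_min[start]), float(time_min[stop - 1]), kind))
    return sorted(regions)

# Synthetic trace: steady infusion with 2% noise, one suppression and one enhancement zone
rng = np.random.default_rng(0)
t = np.linspace(0.0, 10.0, 1001)                            # retention time, min
trace = 1.0e5 * (1.0 + 0.02 * rng.standard_normal(t.size))
trace[(t >= 2.0) & (t <= 2.8)] *= 0.5                       # invented co-eluting suppressor
trace[(t >= 6.0) & (t <= 6.3)] *= 1.6                       # invented enhancer
for start, end, kind in interference_regions(t, trace):
    print(f"{kind}: {start:.2f} to {end:.2f} min")
```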

Post-Extraction Spike Method

This quantitative method compares the signal response of an analyte in a pure solution to its response in a matrix sample.

Experimental Protocol:

- Preparation: Prepare a set of calibration standards in a pure, solvent-based mobile phase. Simultaneously, perform an extraction on a blank matrix sample.

- Spiking: Spike the extracted blank matrix with the analyte at the same known concentration as one of the pure standards.

- Measurement: Analyze both the pure standard and the post-extraction spiked sample.

- Calculation: Calculate the matrix effect (ME) as ME (%) = (response of post-extraction spike / response of pure standard) × 100. A value of 100% indicates no matrix effect, <100% indicates suppression, and >100% indicates enhancement [8].

Limitations: This method requires a true blank matrix, which is unavailable for endogenous analytes like metabolites [8].
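The matrix-effect calculation is a simple ratio; the sketch below averages replicate responses before taking it. The peak areas are invented for illustration, and the ±15% band used to call a result "no significant matrix effect" is an assumption for this example, not a limit taken from the source.

```python
from statistics import mean

def matrix_effect_percent(spiked_responses, neat_responses):
    """ME (%) = mean post-extraction spike response / mean neat-standard response x 100."""
    neat = mean(neat_responses)
    if neat <= 0:
        raise ValueError("Neat-standard response must be positive.")
    return 100.0 * mean(spiked_responses) / neat

def interpret(me_percent, tolerance=15.0):
    """Classify ME; the ±tolerance band (in percentage points) is an assumption for this sketch."""
    if me_percent < 100.0 - tolerance:
        return "suppression"
    if me_percent > 100.0 + tolerance:
        return "enhancement"
    return "no significant matrix effect"

# Invented peak areas from triplicate injections at one concentration level
neat_areas = [10450, 10320, 10510]
spiked_areas = [7810, 7950, 7700]
me = matrix_effect_percent(spiked_areas, neat_areas)
print(f"ME = {me:.1f}% -> {interpret(me)}")
```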

Chemometric Diagnosis using PCA and MCR-ALS

For complex samples, such as those analyzed by Laser-Induced Breakdown Spectroscopy (LIBS) imaging, advanced chemometric tools offer a powerful diagnostic approach [6].

Experimental Protocol:

- Data Collection: Acquire a full hyperspectral dataset from the sample.

- Spectral Domain Isolation: Restrict the analysis to a narrow spectral range centered on the analyte's characteristic emission line.

- Principal Component Analysis (PCA): Perform PCA on this restricted dataset. The emergence of multiple significant principal components suggests the presence of several independent sources of variation, indicating potential spectral interferences.

- Validation with MCR-ALS: Apply Multivariate Curve Resolution - Alternating Least Squares (MCR-ALS) to resolve the mixed signals into pure component spectra and their concentration profiles. The identification of more than one component in the restricted window confirms spectral interference [6].
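The PCA step can be sketched with a singular value decomposition of the mean-centered pixel-by-channel matrix. In this hypothetical example, synthetic spectra in a narrow window contain either the analyte line alone or the analyte line plus a partially overlapping interferent line 0.15 nm away, whose spatial distribution is independent of the analyte's. Counting components that explain more than 1% of the variance (an arbitrary threshold chosen for this sketch) gives one component for the clean data and two for the interfered data.

```python
import numpy as np

rng = np.random.default_rng(1)
channels = np.linspace(-1.0, 1.0, 81)   # offset from the analyte line, nm (hypothetical window)

def emission_line(center, width=0.08):
    return np.exp(-0.5 * ((channels - center) / width) ** 2)

def simulate_window(n_pixels, with_interferent):
    """Synthetic pixel-by-channel data in the restricted spectral window."""
    data = np.outer(rng.uniform(0.0, 1.0, n_pixels), emission_line(0.0))
    if with_interferent:
        # Partially overlapping line with an independent spatial distribution
        data += np.outer(rng.uniform(0.0, 1.0, n_pixels), emission_line(0.15))
    return data + 0.005 * rng.standard_normal((n_pixels, channels.size))

def significant_components(data, min_fraction=0.01):
    """Number of principal components explaining more than `min_fraction` of the variance."""
    centered = data - data.mean(axis=0)
    singular_values = np.linalg.svd(centered, compute_uv=False)
    explained = singular_values**2 / np.sum(singular_values**2)
    return int(np.sum(explained > min_fraction))

n_clean = significant_components(simulate_window(500, with_interferent=False))
n_mixed = significant_components(simulate_window(500, with_interferent=True))
print(f"significant components: clean = {n_clean}, with interferent = {n_mixed}")
```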

Table 2: Comparison of Spectral Interference Diagnostic Methods

| Method | Principle | Technique | Key Advantage | Key Limitation |
|---|---|---|---|---|
| Post-Column Infusion | Qualitative visualization of ionization suppression/enhancement regions. | LC-MS [8] | Identifies chromatographic regions of interference. | Qualitative, time-consuming, requires extra hardware. |
| Post-Extraction Spike | Quantitative comparison of analyte response in neat solvent vs. matrix. | LC-MS, ICP-OES/MS [8] | Provides a quantitative measure (%). | Requires a blank matrix. |
| Chemometric (PCA/MCR) | Multivariate decomposition of spectral signals into pure components. | LIBS, OES [6] | Does not require a priori knowledge of all interferents. | Requires a multi-spectral dataset; complex data analysis. |

Figure 2: Experimental workflow for diagnosing spectral interference across different analytical techniques.

Correction Strategies and Experimental Protocols

Once diagnosed, spectral interference must be corrected through instrumental, methodological, or mathematical means.

Instrumental and Methodological Corrections

- High-Resolution Spectrometry: Using instruments with superior spectral resolution can physically separate overlapping emission lines, thereby reducing spectral interferences [6].

- Chromatographic Separation: In LC-MS, optimizing the chromatographic method to increase the separation between the analyte and interfering compounds is a fundamental strategy to minimize co-elution and subsequent ionization effects [8].

- Careful Spectral Line Selection: The most straightforward correction is to select an alternative, interference-free analytical line for the element of interest. This requires a thorough investigation of the sample's spectral background [6] [9].

Mathematical and Chemometric Corrections

When instrumental separation is insufficient, mathematical corrections are essential.

- Background Correction: A classical approach involves measuring the background signal adjacent to the analyte's peak and subtracting it from the gross peak intensity. This method is effective for simple, unstructured background [6].

- Multivariate Curve Resolution (MCR-ALS): This powerful chemometric technique can mathematically "unmix" the measured signal in a spectral window into the contributions from the pure analyte and the pure interferent. The protocol involves:

  - Input: Building a data matrix D from the hyperspectral image crop around the analyte line.

  - Decomposition: Resolving D into the product of concentration profiles C and spectral profiles Sᵀ, D = CSᵀ + E, where E holds the residuals not explained by the model.

  - Application: Using the resolved spectral profiles to identify the pure analyte component, whose concentration profile then gives an interference-free distribution map [5] [6].

- Multivariate Regression and Matrix Matching: For non-spectral matrix effects that complicate calibration, using a matrix-matching strategy is highly effective. This involves preparing calibration standards in a matrix that closely mimics the composition of the unknown samples, thereby preemptively correcting for physical and chemical effects [5] [9]. A study on battery materials using MICAP OES successfully employed matrix-matched calibration to correct for effects caused by high lithium concentrations [9].
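A minimal MCR-ALS loop for the decomposition D = CSᵀ + E is sketched below. It is a simplified illustration, not a reference implementation: non-negativity is imposed by clipping after each unconstrained least-squares step (dedicated MCR-ALS software uses properly constrained solvers and convergence criteria), and it runs a fixed number of iterations. The data are synthetic (an analyte line and an overlapping interferent line with random, independent spatial maps), and the starting spectra are rough guesses with the right centers but wrong widths.

```python
import numpy as np

def mcr_als(D, S_init, n_iter=200):
    """Minimal MCR-ALS sketch: D (pixels x channels) ~ C @ S.T + E.

    Non-negativity is imposed by clipping after each least-squares step, a
    simplification of the constrained solvers used by dedicated MCR-ALS tools.
    """
    S = S_init.copy()
    for _ in range(n_iter):
        # C step: least-squares solution of S @ C.T = D.T, then clip negatives
        C = np.clip(np.linalg.lstsq(S, D.T, rcond=None)[0].T, 0.0, None)
        # S step: least-squares solution of C @ S.T = D, then clip negatives
        S = np.clip(np.linalg.lstsq(C, D, rcond=None)[0].T, 0.0, None)
        S /= np.linalg.norm(S, axis=0)          # remove the scale ambiguity
    C = np.clip(np.linalg.lstsq(S, D.T, rcond=None)[0].T, 0.0, None)
    E = D - C @ S.T
    lack_of_fit = 100.0 * np.sqrt(np.sum(E**2) / np.sum(D**2))
    return C, S, lack_of_fit

# Synthetic data: analyte line plus an overlapping interferent line
rng = np.random.default_rng(2)
channels = np.linspace(-1.0, 1.0, 81)

def line(center, width):
    return np.exp(-0.5 * ((channels - center) / width) ** 2)

S_true = np.column_stack([line(0.0, 0.08), line(0.15, 0.08)])   # analyte, interferent
C_true = rng.uniform(0.0, 1.0, size=(500, 2))                    # independent maps
D = C_true @ S_true.T + 0.005 * rng.standard_normal((500, channels.size))

# Rough starting spectra: correct centers, deliberately wrong widths
S_start = np.column_stack([line(0.0, 0.10), line(0.15, 0.10)])
C_hat, S_hat, lof = mcr_als(D, S_start)
r = np.corrcoef(C_hat[:, 0], C_true[:, 0])[0, 1]
print(f"lack of fit = {lof:.2f}%, analyte map correlation = {r:.4f}")
```

The first column of `C_hat`, refolded to the image grid, would be the interference-corrected analyte map in an imaging application.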

The Scientist's Toolkit: Essential Reagents and Materials

Table 3: Key Research Reagent Solutions for Matrix Effect Studies

| Reagent/Material | Function | Application Example |
|---|---|---|
| Stable Isotope-Labeled Internal Standards (SIL-IS) | Co-elutes with analyte, correcting for ionization suppression/enhancement by mirroring the analyte's behavior. | Gold standard for LC-MS quantitative analysis [8]. |
| High-Purity Metal Salts & Nitrates | Used to prepare synthetic matrix-matched calibration standards and for post-extraction spiking experiments. | Preparing Co, Ni, Mn solutions for battery cathode analysis by OES/MS [9]. |
| Blank Matrix | A real sample containing the matrix but not the analyte, essential for post-extraction spike and matrix-matched calibration. | Blank urine for clinical LC-MS assays; pure powder diluent for pressed pellets in LIBS [8] [10]. |
| Stearic Acid Binder | Inert binder for homogenizing and pressing powder samples into solid pellets for direct solid analysis techniques like LIBS. | Preparing pressed pellets of WC-Co alloys or powdered rock samples [7] [10]. |
| Post-Column Infusion T-Union | Hardware required to merge a constant infusion of analyte with the HPLC eluent for post-column infusion experiments. | Diagnosing ionization suppression regions in LC-MS method development [8]. |

Spectral interference is a specific, identifiable mechanism within the broader spectrum of matrix effects, characterized by the direct overlap of signals in the spectral domain. Distinguishing it from chemical or physical interferences is a critical diagnostic step, achievable through targeted experimental protocols like post-column infusion and chemometric analysis. Effective correction leverages a hierarchy of strategies, from optimal line selection and chromatographic separation to advanced mathematical resolution techniques like MCR-ALS. For all matrix effects, a comprehensive approach that includes robust sample preparation, matrix-matched calibration, and the use of appropriate internal standards remains foundational for achieving accurate and reliable quantitative results in pharmaceutical research and development.

Spectral interference is a fundamental challenge in spectrophotometry that occurs when unwanted signals impede the accurate measurement of the target analyte's absorbance. These interferences can lead to positive or negative errors in concentration measurements, directly impacting the reliability of analytical results in research and drug development [11]. The core principle of absorption spectroscopy, governed by the Beer-Lambert law (A = εbc), relies on measuring the specific light absorption by ground-state atoms or molecules. However, this measurement is compromised when other phenomena attenuate the light beam, or when light outside the selected band reaches the detector [11] [12]. Understanding these sources is essential for developing robust analytical methods. This guide details three common sources (molecular absorption, scattering, and stray light) and provides methodologies for their identification and correction to ensure data integrity.

Molecular Absorption Bands

Mechanism and Impact

Molecular absorption bands arise when molecular species or radicals within the sample absorb radiation at or near the wavelength of the analyte. Unlike the sharp absorption lines of atoms, molecules produce broad absorption bands due to rotational and vibrational energy transitions [13]. In atomic absorption spectroscopy (AAS), this is often called "background interference," which can be caused by components from the sample matrix or combustion reactions of the flame itself [11]. A specific example is interference from phosphorus monoxide (PO) molecules, which form from phosphate during atomization and produce a broad-band spectrum that can overlap the narrow copper (Cu) line at 324.75 nm, leading to inaccurate concentration measurements [11].

Experimental Characterization Protocol

Objective: To identify and quantify molecular absorption interference from a phosphate matrix on copper analysis.

Materials and Reagents:

- Atomic Absorption Spectrometer (e.g., Perkin Elmer 2380) equipped with deuterium background correction.

- Copper standard solutions (concentration range: 50–1000 µg/L).

- Phosphoric acid (H₃PO₄) solutions (2% and 4% v/v) as a chemical modifier.

- An air-acetylene flame atomizer.

Methodology:

- Calibration Curve without Interferent: Prepare and analyze a series of pure copper standard solutions (e.g., 50, 200, 500, 1000 µg/L) to establish a baseline analytical curve.

- Introduction of Interferent: Add 2% and 4% H₃PO₄ to the copper standard solutions, ensuring the copper concentration range remains identical.

- Measurement: Under identical instrumental conditions (e.g., wavelength 324.75 nm, slit width, gas flow rates), aspirate the copper-phosphoric acid solutions and record the absorbance values.

- Data Analysis: Plot the analytical curves (absorbance vs. concentration) for copper both with and without phosphoric acid. The presence of molecular absorption from PO species will manifest as a positive deviation or shift in the calibration curve for the solutions containing H₃PO₄ compared to the pure copper standards [11].
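The comparison in the data-analysis step amounts to fitting straight lines with and without phosphoric acid. The sketch below shows it on noise-free synthetic numbers: the copper sensitivity and the PO background absorbances are hypothetical values chosen for illustration, and the background is modelled as a constant offset because the acid content is the same in every standard of a series. Reading an acid-containing sample against the acid-free calibration turns that offset into an apparent positive copper bias.

```python
import numpy as np

CU_STANDARDS = np.array([50.0, 200.0, 500.0, 1000.0])  # µg/L, as in the protocol
SENSITIVITY = 1.0e-4   # hypothetical Cu absorbance per µg/L at 324.75 nm
PO_BACKGROUND = {"0% H3PO4": 0.000, "2% H3PO4": 0.015, "4% H3PO4": 0.030}  # hypothetical, A

def calibration(background_absorbance):
    """Fit A = slope * c + intercept to synthetic standards carrying a constant background."""
    absorbance = SENSITIVITY * CU_STANDARDS + background_absorbance
    slope, intercept = np.polyfit(CU_STANDARDS, absorbance, 1)
    return slope, intercept

ref_slope, ref_intercept = calibration(0.0)
for label, background in PO_BACKGROUND.items():
    slope, intercept = calibration(background)
    # Apparent Cu excess if an acid-containing sample is read on the acid-free curve
    bias = (intercept - ref_intercept) / ref_slope
    print(f"{label}: slope = {slope:.2e} A per µg/L, intercept = {intercept:+.4f} A, bias ≈ {bias:+.0f} µg/L")
```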

Scattering

Mechanism and Impact

Scattering occurs when small particles or undissolved solids in the sample matrix cause the incident light to be deflected from its original path, thereby reducing the intensity of transmitted light detected. This is often observed in flame AAS due to the presence of refractory particles or in liquid samples with suspended solids [11] [12]. The attenuation caused by scattering is wavelength-dependent, being more pronounced at shorter wavelengths (below 300 nm) [13]. Since the instrument's detector interprets any reduction in light intensity as absorbance, scattering leads to a positive bias in the measured analyte concentration, falsely indicating a higher analyte presence.

Experimental Protocol for Scattering Cavity-Enhanced Pathlength

Objective: To demonstrate how induced multiple scattering can be harnessed to increase effective optical pathlength and enhance sensitivity for dilute solutions.

Materials and Reagents:

- Halogen lamp light source and a spectrometer (e.g., Ocean Optics HR4000).

- A custom-made scattering cavity (e.g., machined from hexagonal boron nitride, h-BN, due to its high diffuse reflectance and low absorption).

- Standard cuvette.

- Malachite green or crystal violet aqueous solutions at various low concentrations (e.g., 0.004 µM to 1 µM).

Methodology:

- Conventional Measurement (Control): Place the sample cuvette in the standard holder. Measure the reference intensity (I₀) with deionized water and the sample intensity (I) with the analyte solution.

- Cavity-Enhanced Measurement: Enclose the same sample cuvette within the h-BN scattering cavity. The cavity should have offset entrance and exit holes to prevent direct light transmission and ensure multiple scattering events.

- Data Collection: Record I and I₀ using the cavity setup for the same series of analyte concentrations.

- Data Analysis: Calculate absorbance (A = -log(I/I₀)) for both methods. Plot absorbance against concentration for both the conventional and cavity-enhanced methods. The enhancement factor can be quantified as the ratio of the slopes of the two calibration curves. Studies have demonstrated that this setup can provide more than a tenfold enhancement in sensitivity, significantly lowering the limit of detection (LOD) [14].
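The data-analysis step reduces to two straight-line fits. The sketch below computes absorbance from raw intensities with A = -log(I/I₀) and takes the ratio of calibration slopes as the enhancement factor. The intensities are synthetic, generated from assumed sensitivities of 0.08 A/µM (conventional) and 1.0 A/µM (cavity); they are not results from the cited study.

```python
import numpy as np

def absorbance(i_sample, i_reference):
    """A = -log10(I / I0), element-wise."""
    return -np.log10(np.asarray(i_sample, dtype=float) / np.asarray(i_reference, dtype=float))

def enhancement_factor(conc, a_conventional, a_cavity):
    """Ratio of calibration slopes (cavity / conventional) from straight-line fits."""
    slope_conventional = np.polyfit(conc, a_conventional, 1)[0]
    slope_cavity = np.polyfit(conc, a_cavity, 1)[0]
    return slope_cavity / slope_conventional

# Synthetic intensities; I0 is the deionized-water reference in each configuration
conc_um = np.array([0.0, 0.25, 0.5, 0.75, 1.0])
i0 = np.full(conc_um.size, 40000.0)
i_conventional = i0 * 10.0 ** (-0.08 * conc_um)   # assumed 0.08 A per µM
i_cavity = i0 * 10.0 ** (-1.0 * conc_um)          # assumed 1.0 A per µM

factor = enhancement_factor(conc_um, absorbance(i_conventional, i0), absorbance(i_cavity, i0))
print(f"enhancement factor ≈ {factor:.1f}")
```

If the blank noise is similar in both configurations, a limit of detection estimated as 3.3σ/slope (the ICH Q2 convention) falls by the same factor as the slope rises.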

Stray Light

Mechanism and Impact

Stray light, or "Falschlicht," is defined as detected light that falls outside the nominal bandwidth of the monochromator [1]. It is typically caused by scattering from optical surfaces, imperfections in gratings, or higher-order diffraction. Stray light constitutes a fundamental limitation in a spectrometer, as it is present even when no sample is in the beam path [1]. The severe effect of stray light becomes apparent when measuring high-absorbance samples. It causes a deviation from the Beer-Lambert law, flattening the calibration curve at high absorbances and leading to significant negative errors in concentration determination because the measured absorbance is lower than the true absorbance [1].
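The flattening follows from a simple model: if a fraction s of the reference intensity I₀ is stray light that reaches the detector without being absorbed, the observed transmittance is (T + s)/(1 + s) instead of T, so the apparent absorbance can never exceed about -log₁₀ s. The sketch below tabulates that effect for a few assumed stray-light fractions; it ignores any wavelength dependence of the stray light.

```python
import numpy as np

def apparent_absorbance(true_absorbance, stray_fraction):
    """Absorbance reported when stray light equal to `stray_fraction` x I0 reaches
    the detector, unattenuated, in both the sample and reference measurements."""
    transmittance = 10.0 ** (-np.asarray(true_absorbance, dtype=float))
    return -np.log10((transmittance + stray_fraction) / (1.0 + stray_fraction))

true_a = np.array([0.5, 1.0, 2.0, 3.0, 4.0])
for s in (1e-4, 1e-3, 1e-2):
    print(f"s = {s:.0e}:", np.round(apparent_absorbance(true_a, s), 3))
```

With s = 10⁻³, for example, a sample whose true absorbance is 3 reads as about 2.70, which is the negative error described above.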

Stray Light Analysis and Suppression Protocol

Objective: To quantify the stray light level in a spectrophotometer and evaluate the effectiveness of suppression measures.

Materials and Reagents:

- Spectrophotometer system under test.

- Sharp-cut-off filter solutions or solid filters (e.g., potassium chloride or sodium nitrite solutions). Holmium oxide glass is a wavelength-accuracy standard rather than a cut-off filter.

- High-power monochromatic light source (e.g., laser) for grating analysis.

Methodology:

- Identification with Filters:

  - Select a sharp-cut-off filter that transmits minimally at wavelengths below its cut-off and fully above it.

  - Measure the transmittance of this filter at a wavelength where it should block all light (e.g., below 200 nm for KCl). Because no in-band light should pass, the transmittance recorded at this wavelength is taken as the stray light ratio [1].

- Grating-Specific Stray Light: In instruments using gratings, stray light can be identified by directing a high-power, monochromatic light source (e.g., a laser) into the monochromator and observing the output signal while scanning wavelengths. The presence of signal at non-target wavelengths indicates stray light generated from grating imperfections or multi-order diffraction [15].

- Suppression and Validation: Stray light is suppressed through improved optical design, such as using double monochromators, incorporating baffles, and applying anti-reflective coatings. The success of these measures is validated by repeating the filter test; high-performance systems can suppress stray light to levels of 10⁻⁴ or lower, thereby improving the signal-to-noise ratio and weak light detection capability [16].

Data Presentation and Analysis

Table 1: Characteristics of Common Spectral Interferences

| Interference Type | Primary Cause | Effect on Measured Absorbance | Typical Wavelength Dependence |
|---|---|---|---|
| Molecular Absorption | Absorption by molecular species (e.g., PO, OH) | Positive or Negative Error [11] | Broad bands [13] |
| Scattering | Particulates or refractory compounds in light path | Positive Error [11] [13] | Stronger at shorter λ [13]; ∝ λ⁻⁴ for Rayleigh scattering by particles much smaller than λ |
| Stray Light | Imperfections in monochromator and optical components | Negative Error at high absorbance [1] | Dependent on source and grating [1] |

Reagent and Material Toolkit

Table 2: Essential Research Reagents and Materials for Interference Management

| Item | Function/Application | Example Use Case |
|---|---|---|
| Phosphoric Acid (H₃PO₄) | Chemical modifier to study molecular absorption | Modeling PO interference on metal analysis (e.g., Cu) [11] |
| Holmium Oxide Solution/Glass | Wavelength accuracy standard for validation | Checking spectrometer wavelength calibration [1] |
| Sharp-Cut-Off Filters (e.g., KCl) | Stray light quantification | Measuring stray light ratio at blocking wavelengths [1] |
| Hexagonal Boron Nitride (h-BN) | Material for constructing scattering cavities | Enhancing pathlength and sensitivity in dilute solution analysis [14] |
| Deuterium Lamp | Continuum source for background correction | Correcting for broad-band molecular absorption and scattering in AAS [13] [12] |

Visualization of Interference Mechanisms and Workflows

Mechanisms of Spectral Interference

The following diagram illustrates how molecular absorption, scattering, and stray light interfere with the intended measurement path of analytical light.

Background Correction with a Deuterium Lamp

This workflow outlines the standard procedure for correcting for broad-band molecular absorption and scattering using a deuterium lamp in Atomic Absorption Spectroscopy.

Molecular absorption bands, scattering, and stray light represent three critical sources of spectral interference that can systematically compromise quantitative analysis in spectrophotometry. Accurately diagnosing these interferences is the first step, which can be achieved through the experimental protocols outlined, such as using chemical modifiers, scattering cavities, and sharp-cut-off filters. Effective correction leverages both hardware solutions, like deuterium lamps and improved optical design to suppress stray light, and software algorithms. For researchers in drug development and other fields requiring precise quantification, a deep understanding of these common interference sources is not merely a technical detail but a fundamental prerequisite for generating reliable, high-quality data.

In spectrophotometric analysis, the accurate determination of analyte concentration relies on the fundamental principle of the Beer-Lambert law, which establishes a direct proportionality between absorbance and concentration [17] [18]. However, this relationship can be significantly compromised by various instrumental and sample-related factors, leading to erroneous apparent increases in absorbance and, consequently, calculated concentrations. Within the context of a broader thesis on spectral interferences, this whitepaper examines the phenomena that cause such inaccuracies, with particular emphasis on spectral interference—a prevalent issue in drug development and complex matrix analysis.

Spectral interference occurs when an absorbing species other than the analyte, or other optical phenomena, contributes to the total measured absorbance at the target wavelength [13]. This results in a positive deviation from the true value, directly impacting quantitative accuracy. The 1974 College of American Pathologists comparative test underscored this reality, revealing coefficients of variation in absorbance as high as 15% among laboratories, translating to an 11% variation in transmittance measurements [1]. This guide details the sources, experimental identification, and mitigation strategies for these critical inaccuracies.

Fundamental Principles and Definitions

The Beer-Lambert Law

The Beer-Lambert law forms the cornerstone of absorption spectroscopy for quantitative analysis. It states that the absorbance (A) of a solution is directly proportional to the concentration (c) of the absorbing species and the path length (l) of the light through the solution [17] [19]. The law is expressed mathematically as:

A = εcl

Here, ε is the molar absorptivity (or extinction coefficient), a substance-specific constant at a given wavelength [18]. This linear relationship enables the construction of calibration curves for determining unknown concentrations. However, this relationship is valid only for monochromatic light, dilute solutions, and in the absence of interacting chemical equilibria or instrumental artifacts [18].
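As a worked illustration of the law, and of why an unaccounted absorber produces a positive bias, the following sketch inverts A = εcl and adds a second absorber at the same wavelength. The molar absorptivity, concentration, and interferent absorbance are hypothetical values chosen for arithmetic convenience, and absorbances of independent species are assumed to be additive.

```python
def concentration_from_absorbance(absorbance: float, molar_absorptivity: float,
                                  path_length_cm: float = 1.0) -> float:
    """Invert A = epsilon * c * l; returns c in mol/L."""
    return absorbance / (molar_absorptivity * path_length_cm)


# Hypothetical values chosen for illustration only.
EPSILON_ANALYTE = 15000.0    # L mol^-1 cm^-1 at the analytical wavelength
TRUE_CONCENTRATION = 4.0e-5  # mol/L
PATH_LENGTH_CM = 1.0

a_analyte = EPSILON_ANALYTE * TRUE_CONCENTRATION * PATH_LENGTH_CM
a_interferent = 0.05                    # unaccounted absorber at the same wavelength
a_measured = a_analyte + a_interferent  # absorbances of independent absorbers add

apparent_c = concentration_from_absorbance(a_measured, EPSILON_ANALYTE, PATH_LENGTH_CM)
bias_percent = 100.0 * (apparent_c - TRUE_CONCENTRATION) / TRUE_CONCENTRATION
print(f"A_measured = {a_measured:.3f}, apparent c = {apparent_c:.3e} mol/L, "
      f"bias = {bias_percent:+.1f} %")
```

With these numbers the true absorbance is 0.600, the measured absorbance 0.650, and the calculated concentration is about 8.3% too high.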

Transmittance and Absorbance

Absorbance is a dimensionless quantity defined as the base-10 logarithm of the ratio of incident (I₀) to transmitted (I) light intensity [17] [19]:

A = log₁₀(I₀/I)

Transmittance (T), defined as T = I/I₀, is related to absorbance by A = −log₁₀ T, so each unit increase in absorbance corresponds to a tenfold decrease in transmittance [17]. The following table shows this core relationship:

Table 1: The Relationship Between Absorbance and Transmittance

| Absorbance (A) | Transmittance (T) | % Transmittance |
|---|---|---|
| 0 | 1 | 100% |
| 1 | 0.1 | 10% |
| 2 | 0.01 | 1% |
| 3 | 0.001 | 0.1% |
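The logarithmic relationship in the table can be checked numerically. A minimal sketch; the helper names are illustrative and not taken from any library:

```python
import math


def absorbance_from_transmittance(transmittance: float) -> float:
    """A = log10(I0 / I) = -log10(T), with T = I / I0 expressed as a fraction."""
    if transmittance <= 0.0:
        raise ValueError("transmittance must be positive")
    return -math.log10(transmittance)


def transmittance_from_absorbance(absorbance: float) -> float:
    """T = 10^(-A)."""
    return 10.0 ** (-absorbance)


# Reproduce the rows of the absorbance/transmittance table.
for a in (0, 1, 2, 3):
    t = transmittance_from_absorbance(a)
    print(f"A = {a}: T = {t:g}, %T = {100 * t:g} %")
```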

It is critical to note that the term "optical density" (OD) has been used synonymously with absorbance, but its use is discouraged by IUPAC for clarity, as OD is also used in contexts involving significant light scattering, such as in microbial growth measurements (OD₆₀₀) [19].

Deviations from the Beer-Lambert law leading to falsely elevated absorbance readings can be categorized into spectral and non-spectral sources.

Spectral Interferences

Spectral interferences are among the most significant contributors to inaccurately high absorbance readings.

- Background Absorption from Matrix Components: In atomic absorption spectroscopy, a spectral interference occurs when molecular species or particulates from the sample matrix, present in the flame or furnace, exhibit broad absorption bands that overlap with the analyte's narrow absorption line [13]. This is a common problem with complex samples such as steel, soil, or ores [20].

- Stray Light: Stray light, or "Falschlicht," is defined as light of wavelengths outside the intended bandpass that reaches the detector [1]. Unlike the other sources in this list, heterochromatic stray light causes an apparent decrease in absorbance. The error grows with sample absorbance, because the transmitted analytical light becomes comparable to the stray component; the calibration curve therefore bends toward the concentration axis and levels off (near −log₁₀ s for a stray light ratio s), giving a non-linear response [1] [18]. Its effect is particularly pronounced at the spectral extremes of an instrument.

- Scattering Effects: Light scattering by particulates, colloids, or microbial cells in a solution can artificially increase the measured signal attenuation. This is the principle behind optical density measurements at 600 nm (OD₆₀₀) used to monitor microbial growth, but it constitutes a serious interference if the goal is to measure a dissolved chromophore in a turbid solution [19].

- Self-Absorption: Primarily a concern in emission techniques like Laser-Induced Breakdown Spectroscopy (LIBS), self-absorption occurs when emitted radiation from the hot plasma core is re-absorbed by cooler atoms of the same element in the plasma periphery [20]. This leads to a distortion of the spectral line profile and a reduction in the measured emission intensity, which can complicate quantitative analysis.

Non-Spectral and Instrumental Interferences

- Photometric Non-Linearity: The detector response may not be linear across its entire dynamic range. If an instrument is not properly calibrated for photometric linearity, readings can be systematically inaccurate [1].

- Chemical Interferences: At high concentrations, solute molecules can interact electrostatically, altering the molar absorptivity (ε) and causing a deviation from the Beer-Lambert law [18]. Chemical reactions such as dissociation, association, or polymerization can also change the nature of the absorbing species [18].

- Bandwidth and Wavelength Inaccuracy: If the spectral bandwidth is wide relative to the absorption band, or an incorrect wavelength places the measurement on the steep slope of a band, the molar absorptivity varies across the light reaching the detector. The relationship between A and c then becomes non-linear, and small wavelength errors produce large changes in absorbance [1] [18]. The use of holmium oxide solutions or glasses with sharp absorption bands is recommended for verifying wavelength accuracy [1].
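The effect of a finite bandwidth can be illustrated with a deliberately simple model in which equal intensities at just two wavelengths reach the detector, with hypothetical molar absorptivities of 10,000 and 5,000 L mol⁻¹ cm⁻¹ (for example, one near the band maximum and one on its slope). Because the detector sums intensities, transmittances rather than absorbances are averaged, and the apparent absorbance per unit concentration falls as concentration rises.

```python
import math


def apparent_absorbance_two_wavelengths(conc: float, eps_1: float, eps_2: float,
                                        path_cm: float = 1.0) -> float:
    """Absorbance read when equal intensities at two wavelengths reach the detector.

    The detector sums intensities, so the transmittances (not the absorbances)
    are averaged before the logarithm is taken.
    """
    t_1 = 10.0 ** (-eps_1 * conc * path_cm)
    t_2 = 10.0 ** (-eps_2 * conc * path_cm)
    return -math.log10((t_1 + t_2) / 2.0)


# Hypothetical absorptivities (L mol^-1 cm^-1) at two wavelengths inside the bandpass.
EPS_PEAK, EPS_SLOPE = 10000.0, 5000.0

for conc in (2e-5, 1e-4, 2e-4):
    measured = apparent_absorbance_two_wavelengths(conc, EPS_PEAK, EPS_SLOPE)
    linear = 0.5 * (EPS_PEAK + EPS_SLOPE) * conc  # straight line using the mean epsilon
    print(f"c = {conc:.0e} M: A = {measured:.3f} (linear expectation {linear:.3f})")
```

At the highest concentration in this sketch the reading is about 1.26 against a straight-line expectation of 1.50, a negative deviation that grows with absorbance.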

Table 2: Summary of Interference Sources and Their Impact

| Interference Type | Cause | Effect on Measured Absorbance |
|---|---|---|
| Spectral Interference | Background absorption from matrix | Apparent increase |
| Stray Light | Light outside bandpass reaches detector | Apparent decrease (most severe at high true absorbance) |
| Light Scattering | Particulates/cells in solution | Apparent increase |
| Self-Absorption | Re-absorption of emitted light (LIBS) | Apparent decrease in emission line intensity |
| Chemical Interference | Molecular interactions at high concentration | Non-linearity (Deviation from Beer's Law) |
| Wavelength Inaccuracy | Incorrect wavelength setting | Unpredictable; often an apparent increase |

Experimental Protocols for Identification and Quantification

Protocol for Stray Light Verification

Principle: To measure the fraction of stray light at critical wavelengths, particularly in the UV region.

Method (Absorption Cut-Off Method):

- Obtain a set of certified solid cutoff filters or concentrated solutions (e.g., potassium chloride) that absorb essentially all incident light below a specific wavelength.

- Set the spectrophotometer to the test wavelength (e.g., about 198 nm for a 12 g/L KCl solution in a 1 cm cell).

- With an empty beam or a matched solvent blank, set the transmittance to 100%.

- Place the cutoff filter or solution in the light path. An ideal instrument would read 0 %T; the residual %T reading, expressed as a fraction, is taken as the stray light ratio [1].

- Repeat this procedure at various wavelengths, especially at the extremes of the instrument's operational range.
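Recording the readings from this protocol and comparing them with an acceptance limit is straightforward to script. In the sketch below, the %T readings, the wavelengths, and the 0.1% (10⁻³) limit are all hypothetical; an actual limit should come from the instrument specification or the applicable pharmacopoeial or laboratory procedure.

```python
def evaluate_stray_light(readings_percent_t: dict, limit: float) -> dict:
    """Convert %T readings through blocking filters into stray light ratios.

    readings_percent_t maps wavelength (nm) -> %T read with the cutoff filter
    in the beam, after setting 100 %T on the blank. Returns
    wavelength -> (stray light ratio, passes_limit).
    """
    results = {}
    for wavelength_nm, percent_t in sorted(readings_percent_t.items()):
        ratio = percent_t / 100.0
        results[wavelength_nm] = (ratio, ratio <= limit)
    return results


# Hypothetical readings (%T) through the appropriate cutoff filter at each wavelength.
readings = {198: 0.15, 220: 0.04, 340: 0.01}
for wl, (ratio, ok) in evaluate_stray_light(readings, limit=1e-3).items():
    print(f"{wl} nm: stray light ratio {ratio:.1e} -> {'pass' if ok else 'fail'}")
```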

Protocol for Background Correction using a D₂ Lamp

Principle: To correct for broad-band background absorption and scattering in atomic absorption spectrometry.

Method:

- The instrument is equipped with a deuterium (D₂) continuum lamp and the primary hollow cathode lamp (HCL).

- The HCL emission is absorbed by both the analyte (narrow line) and the background (broad band).

- The D₂ lamp emission is only absorbed by the background, as analyte absorption of the continuum is negligible.

- The instrument electronically subtracts the D₂ lamp absorbance signal from the HCL absorbance signal, yielding a background-corrected analyte absorbance [13].

- This method requires the background absorbance to be constant over the monochromator's bandwidth.
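The subtraction performed by the instrument can be sketched for a time-resolved graphite-furnace signal. The readings below are hypothetical, and the check for clearly negative corrected values is a heuristic added for illustration: over-correction of this kind can indicate that the background is not constant across the bandpass.

```python
def d2_corrected(a_hcl: float, a_d2: float) -> float:
    """Background-corrected atomic absorbance: (atomic + background) - background."""
    return a_hcl - a_d2


# Hypothetical time-resolved graphite-furnace readings (absorbance vs. time).
a_hcl_signal = [0.02, 0.15, 0.42, 0.38, 0.20, 0.06]  # HCL: atomic + background
a_d2_signal = [0.02, 0.12, 0.30, 0.27, 0.15, 0.05]   # D2: background only
corrected = [d2_corrected(h, d) for h, d in zip(a_hcl_signal, a_d2_signal)]

print("corrected signal:", [round(a, 3) for a in corrected])
print("peak corrected absorbance:", round(max(corrected), 3))
if min(corrected) < -0.005:
    # Heuristic: a clearly negative value suggests the background is not constant
    # across the bandpass (e.g., structured background), which D2 cannot handle.
    print("warning: possible over-correction; check for structured background")
```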

Protocol for Assessing Linearity and Calibration

Principle: To verify the linear dynamic range of the spectrophotometer and identify deviations.

Method:

- Prepare a series of at least five standard solutions of the analyte across the expected concentration range.

- Measure the absorbance of each standard at the recommended wavelength.

- Plot absorbance versus concentration. The curve should be linear.

- Deviations from linearity at high concentrations indicate potential chemical interferences or instrument non-linearity. For reliable quantitative work, maintain absorbance readings between 0.1 and 1.0, which corresponds to transmittances from about 80% down to 10% [19]. Measurements with an absorbance above 3-4 are subject to significant error [19].

- A fresh calibration curve should be generated for each analytical session.
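A least-squares fit with a residual check is one simple way to carry out the linearity assessment above; systematic curvature in the residuals can reveal non-linearity that a high R² hides. The calibration data are hypothetical, and the 0.1 to 1.0 window is the working range quoted in the protocol.

```python
def linear_fit(x, y):
    """Ordinary least-squares line y = slope * x + intercept, plus R^2."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    ss_res = sum((yi - (slope * xi + intercept)) ** 2 for xi, yi in zip(x, y))
    ss_tot = sum((yi - mean_y) ** 2 for yi in y)
    return slope, intercept, 1.0 - ss_res / ss_tot


# Hypothetical calibration data: five standards (mg/L) and their absorbances.
conc = [2.0, 4.0, 6.0, 8.0, 10.0]
absorbance = [0.118, 0.241, 0.359, 0.482, 0.598]

slope, intercept, r_squared = linear_fit(conc, absorbance)
print(f"A = {slope:.4f} * c + {intercept:.4f}, R^2 = {r_squared:.5f}")
for c, a in zip(conc, absorbance):
    residual = a - (slope * c + intercept)
    note = "" if 0.1 <= a <= 1.0 else "  <- outside 0.1-1.0 working range"
    print(f"c = {c:>4} mg/L  A = {a:.3f}  residual = {residual:+.4f}{note}")
```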

The following diagram illustrates the core workflow for troubleshooting apparent absorbance increases:

Advanced Mitigation Techniques

The Zeeman Effect for Background Correction

This is a sophisticated method used primarily in graphite furnace atomic absorption to correct for structured background.

- Principle: A magnetic field is applied to the atomizer, which splits the analyte's absorption line into several polarized components (Zeeman effect) [13].

- Implementation: A rotating polarizer is placed between the light source and the atomizer. The instrument alternates between measuring absorbance with and without the magnetic field's influence. The difference between these measurements yields a background-corrected absorbance value with high specificity, even in the presence of complex spectral overlaps [13].

Laser-Stimulated Absorption (LSA-LIBS)

For techniques like LIBS suffering from self-absorption and spectral interference, LSA-LIBS has shown promise.

- Principle: A secondary, wavelength-tunable laser (e.g., an Optical Parametric Oscillator) irradiates the laser-induced plasma. This stimulated absorption excites "cold" atoms in the plasma periphery, depopulating the lower energy level and thus reducing re-absorption of the emitted light [20].

- Efficacy: In one study on alloy steel, LSA-LIBS reduced the self-absorption factor of a Nickel line by 85% and decreased the average relative error of quantitative analysis by 83%, while simultaneously eliminating spectral interference from iron [20].

The Scientist's Toolkit: Essential Research Reagent Solutions

The following table lists key reagents and materials critical for validating spectrophotometric accuracy and mitigating interferences.

Table 3: Key Reagents and Materials for Spectrophotometric Analysis

| Item | Function/Brief Explanation |
|---|---|
| Holmium Oxide (Ho₂O₃) Filters/Solutions | Certified reference materials with sharp absorption peaks for verifying wavelength accuracy of the spectrophotometer [1]. |
| Neutral Density Filters | Solid, non-wavelength-specific attenuators used for checking the photometric linearity of the instrument across its range [1]. |
| Potassium Chloride (KCl) Solutions | Used at specific concentrations (e.g., 12 g/L) as a cutoff filter to quantify levels of stray light in the far-UV region (e.g., at about 198 nm) [1]. |
| Didymium Glass Filters | Filters containing rare earth oxides for a less precise, quick visual check of wavelength function, though holmium is preferred for accuracy [1]. |
| Certified Reference Materials (CRMs) | Samples with known analyte concentrations in a defined matrix, essential for method validation and assessing accuracy in the presence of interferences. |
| High-Purity Solvents | Essential for preparing blanks and standards to ensure that measured absorbance originates from the analyte, not from impurities. |
| Deuterium (D₂) Lamp | A continuum source integrated into AA spectrometers for the standard background correction method [13]. |
| Optical Parametric Oscillator (OPO) Laser | A wavelength-tunable laser used in advanced techniques like LSA-LIBS to reduce self-absorption effects in complex samples [20]. |

Apparent increases in absorbance and concentration present a significant challenge to the integrity of spectrophotometric data, particularly in regulated fields like drug development. These inaccuracies predominantly stem from spectral interferences—including background absorption, stray light, and scattering—as well as chemical and instrumental factors. A rigorous approach involving regular instrument calibration using certified standards, awareness of the Beer-Lambert law's limitations, and the application of specialized background correction techniques is paramount. By implementing the detailed experimental protocols and mitigation strategies outlined in this guide, researchers and scientists can significantly enhance the reliability of their analytical results, ensuring that reported concentrations reflect true chemical reality rather than analytical artifact.

Advanced Techniques and Chemometrics for Interference Correction

Spectral interference poses a significant challenge in analytical spectrophotometry, particularly in atomic absorption spectrometry (AAS) where it can severely compromise quantitative accuracy. This whitepaper examines two principal instrumental background correction techniques: the established deuterium (D2) lamp method and the more advanced Zeeman effect-based correction. Within the broader context of spectral interference management in spectrophotometric research, we provide a technical comparison of these methodologies, detailed experimental protocols, and visualization of their operational mechanisms. The focus remains on their application in pharmaceutical development and materials science, where accurate trace element analysis is paramount for drug safety and material characterization.

Spectral interference occurs when non-analyte components in a sample produce signals that overlap with or obscure the target analyte's signal. In atomic absorption spectrometry, this manifests primarily as background absorption, in which light at the analytical line is attenuated by something other than absorption by the target element's atoms [21]. This interference arises from various sources, including molecular absorption, light scattering by particulates, and overlapping spectral lines from other elements.

In pharmaceutical analysis, such as the simultaneous quantification of ophthalmic drugs like alcaftadine and ketorolac tromethamine, the presence of preservatives like benzalkonium chloride can cause significant spectral interference due to its strong UV absorbance [22]. Similarly, in geological analysis, overlapping fluorescence lines from elements like manganese, iron, and cobalt present substantial challenges for accurate quantification [23]. Without effective correction, these interferences lead to positively biased results, inaccurate quantification, and compromised data integrity, particularly concerning in regulated environments like drug development laboratories.

The Deuterium (D2) Lamp Background Correction Method

Fundamental Principles and Instrumentation

The D2 lamp correction method, the oldest and most common background correction technique (particularly in flame AAS), operates on a sequential measurement principle [24]. It employs two different light sources: a hollow cathode lamp (HCL) specific to the analyte element and a broad-spectrum deuterium lamp.

The underlying principle involves measuring total absorption (atomic vapor absorption plus background absorption) using the HCL, then measuring exclusively background absorption at the same wavelength using the D2 lamp, with the difference yielding the true atomic absorption [21]. The D2 lamp achieves this because its emission bandwidth, determined by the spectroscope's slit width, is much larger than the narrow atomic absorption lines. Consequently, the extremely narrow atomic absorption becomes negligible when measured against this broad emission profile [21].

Experimental Protocol and Considerations

Instrument Setup and Measurement Sequence:

- Optical Configuration: Align the HCL and D2 lamp sources to follow the identical optical path through the atomizer (graphite furnace or flame). A beamsplitter typically combines the paths [21].

- Sequential Measurement:

- Step 1: Activate the hollow cathode lamp and measure the combined absorbance (analyte atomic absorption + background absorption).

- Step 2: Activate the deuterium lamp and measure the background absorption at the same wavelength.

- Step 3: Electronically subtract the D2 lamp signal (background only) from the HCL signal (total absorption) to obtain the corrected atomic absorption [21].

- Wavelength Verification: Confirm the wavelength alignment between both light sources to ensure identical measurement positions.

Critical Limitations for Pharmaceutical Applications:

- The technique cannot correct for structured background (background with fine spectral features) because the D2 lamp measures a broad bandwidth [24].

- It is ineffective at wavelengths above 320 nm due to the weak emission intensity of the deuterium lamp in this region [24].

- As a "single-beam" system measuring two different beams under non-identical conditions, it is susceptible to baseline drift [25].
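The limitation on structured background can be illustrated numerically. The sketch below assumes a hypothetical background made of a flat component plus a narrow molecular band centred on the analytical line, a rectangular 0.7 nm bandpass for the D2 continuum, and a line source that samples only the line centre; the line position (283.3 nm, e.g., the Pb resonance line) and all other numbers are illustrative. Because the D2 reading averages the narrow band over the whole bandpass, most of the band survives the subtraction and appears as false atomic absorbance, whereas with a flat background the same code returns the true value. A band coinciding exactly with the line is a deliberately unfavourable case.

```python
import math

LINE_NM = 283.3           # analytical line position (illustrative)
BANDPASS_NM = 0.7         # hypothetical spectral bandpass seen by the D2 continuum
FEATURE_WIDTH_NM = 0.005  # hypothetical width of a narrow molecular band


def make_background(feature_height):
    """Hypothetical background: flat 0.10 plus a narrow band centred on the line."""
    def background(wavelength_nm):
        offset = (wavelength_nm - LINE_NM) / FEATURE_WIDTH_NM
        return 0.10 + feature_height * math.exp(-offset ** 2)
    return background


def d2_background(background, steps=2001):
    """Background absorbance seen by the continuum over a rectangular bandpass.

    The detector integrates intensity, so transmittances are averaged across
    the bandpass before converting back to absorbance.
    """
    start = LINE_NM - BANDPASS_NM / 2
    total_t = 0.0
    for i in range(steps):
        wavelength = start + BANDPASS_NM * i / (steps - 1)
        total_t += 10.0 ** (-background(wavelength))
    return -math.log10(total_t / steps)


def d2_corrected_structured(a_atomic, background):
    """HCL reading (line centre only) minus D2 reading (bandpass average)."""
    a_hcl = a_atomic + background(LINE_NM)
    return a_hcl - d2_background(background)


A_ATOMIC = 0.200  # hypothetical true atomic absorbance
for height in (0.0, 0.30):
    corrected = d2_corrected_structured(A_ATOMIC, make_background(height))
    print(f"feature height {height:.2f}: corrected A = {corrected:.3f} (true {A_ATOMIC:.3f})")
```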

Table 1: D2 Lamp Background Correction Specifications

| Parameter | Specification | Technical Implication |
|---|---|---|
| Application Range | Ultraviolet region (<320 nm) | Unsuitable for elements absorbing above 320 nm |
| Background Type | Continuous, non-structured | Limited efficacy against fine-structured background |
| Optical Path | Single-beam (different sources) | Potential for baseline drift due to source differences |
| Implementation Cost | Lower | Economical for routine flame AAS analysis |

Zeeman Effect Background Correction

Theoretical Foundation

The Zeeman effect describes the splitting of atomic spectral lines under the influence of an external magnetic field. For "weak" magnetic fields relevant to AAS, this splitting follows the anomalous Zeeman pattern where energy levels shift according to the formula:

ΔE = g · M · µB · B

where g is the Landé g-factor, M is the magnetic quantum number, µB is the Bohr magneton, and B is the magnetic flux density [26]. This results in the original absorption line splitting into multiple components: a π component that remains at the original wavelength and σ± components that are shifted to higher and lower wavelengths [24].
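To put the formula in perspective, the sketch below converts a level shift into a wavelength shift using Δλ ≈ λ²ΔE/(hc). It assumes a hypothetical case with g · M = 1, a field of 1 T, and a line at 283.3 nm; a real σ component is shifted by the difference between the upper- and lower-level terms, so this is an order-of-magnitude estimate only. The physical constants are CODATA 2018 values.

```python
BOHR_MAGNETON_J_PER_T = 9.2740100783e-24  # CODATA 2018
PLANCK_J_S = 6.62607015e-34               # exact (SI)
SPEED_OF_LIGHT_M_S = 299_792_458.0        # exact (SI)


def level_shift(g: float, m: float, field_t: float) -> float:
    """Zeeman energy shift of one level, delta E = g * M * mu_B * B (joules)."""
    return g * m * BOHR_MAGNETON_J_PER_T * field_t


def wavelength_shift(delta_e_j: float, wavelength_m: float) -> float:
    """Wavelength shift for a small energy shift: lambda^2 * dE / (h c), in metres."""
    return wavelength_m ** 2 * delta_e_j / (PLANCK_J_S * SPEED_OF_LIGHT_M_S)


# Hypothetical case: g * M = 1, a 1 T field, and a line at 283.3 nm.
delta_e = level_shift(g=1.0, m=1, field_t=1.0)
shift_m = wavelength_shift(delta_e, 283.3e-9)
print(f"delta E = {delta_e:.3e} J, wavelength shift = {shift_m * 1e12:.2f} pm")
```

The result is a few picometres, comparable in magnitude to typical atomic absorption line widths, which also lie in the picometre range; this suggests why fields of the order of 1 T are needed to move the σ components clear of the lamp emission.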

Instrumental Implementation

Zeeman correction systems apply an alternating magnetic field directly to the atomizer (graphite furnace), affecting the atoms in the vapor state. The key innovation is using a polarizer to exploit the polarization characteristics of the split components.

- The π component, which absorbs light polarized parallel to the magnetic field, remains at the central wavelength.

- The σ components, which absorb light polarized perpendicular to the field, are shifted away from the original wavelength [25].

When the magnetic field is OFF, the atomic energy levels are unsplit, and the system measures total absorption (atomic + background). When the magnetic field is ON and the polarizer is set to transmit only the perpendicular component, the σ components are shifted away from the emission line of the light source. Since the background absorption remains unaffected by the magnetic field and exhibits no polarization dependence, the system now measures only background absorption [24] [25]. The difference between these two measurements yields the background-corrected atomic absorption.

Experimental Protocol

Instrument Configuration and Measurement:

- Magnet Placement: Position an electromagnet to generate an alternating magnetic field perpendicular to the light path around the graphite furnace atomizer.

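- Polarizer Installation: Install a polarizer or equivalent optical component between the light source and the atomizer. With the alternating field described here, a fixed polarizer that passes only light polarized perpendicular to the field is sufficient; rotating polarizers are typically paired with constant-field magnets.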

- Measurement Cycle:

- Magnetic Field OFF: No Zeeman splitting occurs. Measure total absorption (A_total = A_atomic + A_background).

- Magnetic Field ON with perpendicular polarization: The π component is suppressed, and the shifted σ components do not overlap with the lamp's emission profile. Measure background absorption (A_background).

- Signal Processing: Subtract the background measurement from the total absorption measurement to obtain the corrected atomic absorption signal.
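The measurement cycle above reduces to a two-reading subtraction at the line centre. In this idealized sketch both readings use the same source and optical path, the hypothetical structured background is assumed to be independent of the field and of polarization, and `sigma_overlap` is a hypothetical parameter representing atomic absorption that the σ components still produce at the lamp wavelength when the field is ON.

```python
import math

LINE_NM = 283.3           # hypothetical analytical line position
FEATURE_WIDTH_NM = 0.005  # hypothetical width of a narrow background band


def background(wavelength_nm: float) -> float:
    """Hypothetical structured background: flat 0.10 plus a narrow band at the line."""
    offset = (wavelength_nm - LINE_NM) / FEATURE_WIDTH_NM
    return 0.10 + 0.30 * math.exp(-offset ** 2)


def zeeman_cycle(a_atomic: float, sigma_overlap: float = 0.0) -> float:
    """Idealized alternating-field Zeeman cycle evaluated at the line centre.

    Both readings use the same source and optical path. The background is assumed
    to be unaffected by the field and by polarization. sigma_overlap is a
    hypothetical fraction of the atomic absorption still seen at the lamp
    wavelength when the field is ON (0 means the sigma components are fully shifted).
    """
    a_field_off = a_atomic + background(LINE_NM)                 # total absorption
    a_field_on = sigma_overlap * a_atomic + background(LINE_NM)  # background (+ residual)
    return a_field_off - a_field_on


for overlap in (0.0, 0.1):
    corrected = zeeman_cycle(0.200, overlap)
    print(f"sigma overlap {overlap:.1f}: corrected A = {corrected:.3f} (true 0.200)")
```

In this simplified model the structured background cancels exactly because both readings sample it at the same wavelength; the trade-off is a loss of sensitivity whenever the σ components are not fully separated from the lamp line.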

Advantages in Pharmaceutical and Materials Research:

- Superior Accuracy: Measures total and background absorption with the same light source and identical optical path, correcting for all background types, including structured background [24] [25].

- Stable Baseline: Functions as a true double-beam system, minimizing baseline drift [25].

- Full Wavelength Coverage: Effective across the entire UV-Vis wavelength range [25].

Table 2: Zeeman Effect Background Correction Specifications

| Parameter | Specification | Technical Implication |
|---|---|---|
| Application Range | Full wavelength region | Universal for all elements |
| Background Type | All types (continuous & structured) | Superior correction capability |
| Optical Path | Double-beam (same source/path) | Enhanced stability, minimal drift |
| System Complexity | Higher | Requires powerful magnet and supply |

Comparative Analysis and Research Applications

Technical Comparison

The following diagram illustrates the core operational difference between the single-beam D2 method and the double-beam Zeeman method.

Diagram 1: D2 Single-Beam vs. Zeeman Double-Beam Correction.

Selection Guidelines for Research Scientists

The choice between D2 and Zeeman correction depends on analytical requirements and operational constraints:

- For routine flame AAS analysis of elements in the UV spectrum with simple matrices, D2 correction offers a cost-effective and reliable solution [24].

- For challenging applications such as:

- Graphite furnace AAS with complex biological or pharmaceutical samples (e.g., drug compounds with preservatives).

- Analysis requiring measurement at wavelengths >320 nm.

- Situations involving high, structured background (e.g., geological samples, environmental particulates).

- Zeeman correction is the unequivocal choice due to its superior accuracy and stability [24] [25].

The Researcher's Toolkit: Essential Components for Background Correction

Table 3: Key Research Reagents and Instrumental Components

| Component | Function in Background Correction | Application Notes |
|---|---|---|
| Deuterium (D2) Lamp | Continuous UV source for background measurement in D2 method. | Requires precise alignment with HCL path; limited to <320 nm [21] [24]. |
| Hollow Cathode Lamp (HCL) | Element-specific line source for total absorption measurement. | Standard light source for AAS; integrity critical for both methods [21]. |
| Electromagnet | Generates alternating magnetic field for Zeeman splitting. | High-power component; central to Zeeman systems [24]. |
| Polarizer | Selects specific polarization components of split lines in Zeeman systems. | Enables isolation of background signal when field is ON [25]. |
| Internal Standards | Corrects for sample matrix effects and signal drift. | e.g., Scandium or Yttrium; added to all samples and standards [24]. |
| Matrix Modifiers | Modifies sample matrix to reduce background during atomization. | e.g., Pd salts; used in graphite furnace to separate analyte from interferent volatilization. |

Advanced Applications and Future Directions

Advanced background correction is pivotal in modern spectroscopic techniques. In Laser-Induced Breakdown Spectroscopy (LIBS), novel methods using optical computation and artificial neural networks (ANNs) are being developed to screen interfering spectral lines, with one study showing a dramatic improvement in the coefficient of determination (R²) from 0.6378 to 0.9992 [27]. In Total Reflection X-Ray Fluorescence (TXRF), chemometric techniques like partial least squares (PLS) regression and novel spectral decomposition algorithms are employed to resolve overlapping elemental lines in complex samples like polymetallic nodules [23].

The integration of machine learning with instrumental background correction represents the future frontier, moving beyond hardware-based solutions to create intelligent, adaptive correction systems that can handle increasingly complex sample matrices encountered in pharmaceutical research and material science.

Effective management of spectral interference is a cornerstone of reliable spectrophotometric analysis. While the D2 lamp method remains a viable option for specific, routine applications, the Zeeman effect provides a more robust, versatile, and scientifically sound solution for demanding research environments. The choice between these technologies must be guided by the specific analytical problem, sample matrix, and required data integrity. As spectroscopic applications expand into more complex domains, from biopharmaceuticals to advanced materials, the role of sophisticated, instrument-led background correction will only grow in importance, ensuring the accuracy and validity of critical analytical data.

Leveraging Isosbestic Points for Analysis of Pharmaceutical Mixtures

Spectral interference, the overlapping of absorption spectra between different components in a mixture, represents a fundamental challenge in analytical spectrophotometry. This interference complicates the quantitative analysis of pharmaceutical compounds, particularly in multi-component formulations where active ingredients exhibit severely overlapping spectra, making direct measurement of individual components impossible without sophisticated resolution techniques [28] [29]. Within this challenging analytical landscape, isosbestic points—wavelengths where two or more chemical species exhibit identical molar absorptivity—emerge as powerful tools for simplifying complex analyses and enabling accurate determinations without prior separation [30] [31].

The presence of spectral interference directly compromises the fundamental principle of spectrophotometric analysis: the accurate correlation between absorbance and concentration for individual analytes. When absorption bands overlap significantly, as demonstrated in anti-Parkinson drugs levodopa and carbidopa [28] or COVID-19 therapeutics remdesivir and moxifloxacin [29], conventional univariate analysis becomes impossible, necessitating advanced mathematical or instrumental approaches. This technical limitation is particularly problematic in pharmaceutical quality control and therapeutic drug monitoring, where precise quantification of multiple components is essential for ensuring product safety and efficacy.

Theoretical Foundations: The Nature and Significance of Isosbestic Points

Fundamental Principles and Characteristics

An isosbestic point manifests as a specific wavelength in the absorption spectrum where two or more chemical species possess identical molar absorptivity coefficients [30]. This phenomenon occurs during chemical equilibria involving interconverting species, such as acid-base pairs, oxidation-reduction partners, or different conformational states. The theoretical foundation rests on the Beer-Lambert law, where at the isosbestic wavelength, the total absorbance of a mixture remains constant throughout the conversion process, provided the total analyte concentration remains unchanged.
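The constancy of absorbance at an isosbestic wavelength follows directly from the additivity of absorbances. The sketch below assumes a hypothetical interconverting pair A ⇌ B with equal molar absorptivities (8,000 L mol⁻¹ cm⁻¹) at the isosbestic wavelength and very different ones (2,000 and 12,000) at a second wavelength, with the total concentration held fixed.

```python
def pair_absorbance(total_conc: float, fraction_b: float,
                    eps_a: float, eps_b: float, path_cm: float = 1.0) -> float:
    """Absorbance of an interconverting pair A <-> B at one wavelength (additive Beer-Lambert)."""
    conc_b = fraction_b * total_conc
    conc_a = total_conc - conc_b
    return (eps_a * conc_a + eps_b * conc_b) * path_cm


# Hypothetical molar absorptivities (L mol^-1 cm^-1) of forms A and B.
ISOSBESTIC = (8000.0, 8000.0)  # equal absorptivities -> isosbestic wavelength
OTHER = (2000.0, 12000.0)      # a wavelength where B absorbs much more strongly
TOTAL = 5.0e-5                 # mol/L, held constant during the conversion

for fraction_b in (0.0, 0.25, 0.5, 0.75, 1.0):
    a_iso = pair_absorbance(TOTAL, fraction_b, *ISOSBESTIC)
    a_other = pair_absorbance(TOTAL, fraction_b, *OTHER)
    print(f"fraction B = {fraction_b:.2f}: A(isosbestic) = {a_iso:.3f}, A(other) = {a_other:.3f}")

# The total concentration follows from the isosbestic reading alone (1 cm path).
a_iso_sample = pair_absorbance(TOTAL, 0.6, *ISOSBESTIC)
print(f"recovered total = {a_iso_sample / ISOSBESTIC[0]:.2e} mol/L")
```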

The diagnostic significance of isosbestic points in spectroscopy is considerable. Their presence is consistent with, and is commonly taken as evidence for: (1) an equilibrium between two interconverting species, (2) the absence of intermediate forms or side reactions during the transformation, and (3) the validity of the analytical method for quantifying total analyte concentration regardless of the species' distribution [30]. In pharmaceutical analysis, these characteristics make isosbestic points particularly valuable for method validation and stability-indicating assays.

Isosbestic Points as Analytical Tools in Pharmaceutical Contexts

The practical utility of isosbestic points in resolving spectral interference stems from their unique properties. When analyzing binary mixtures, the isosbestic point allows quantification of the total concentration of both analytes, which can then be leveraged with additional mathematical manipulations to determine individual component concentrations [29] [32]. This principle extends to more complex mixtures; for ternary systems, the presence of two isosbestic points between two components can be exploited to determine a third component through techniques like Ratio Difference-Isoabsorptive Point (RD-ISO) methods [31].

The application of isosbestic points aligns with the growing emphasis on green analytical chemistry, as these methods typically require minimal solvent consumption, avoid expensive reagents, and generate little waste compared to chromatographic techniques [28] [29]. Furthermore, the simplicity and accessibility of spectrophotometric instrumentation make these methods particularly valuable for routine quality control in pharmaceutical manufacturing and clinical monitoring.

Current Methodologies and Advanced Applications

Established Spectrophotometric Techniques

Contemporary research has yielded several sophisticated spectrophotometric methods that leverage isosbestic points to resolve complex pharmaceutical mixtures:

Absorbance Subtraction (AS) Method: This technique applies when a binary mixture exhibits an isosbestic point and one component has a more extended spectrum. The method utilizes the extended region where only one component absorbs to calculate an "absorbance factor," which is then used to resolve contributions at the isosbestic point [28] [29]. This approach has been successfully applied to mixtures of remdesivir and moxifloxacin, where moxifloxacin's absorption extends into regions where remdesivir shows no absorption [29].
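The arithmetic of the AS approach can be sketched for a generic binary mixture in which X is the component with the extended spectrum (moxifloxacin in the remdesivir/moxifloxacin example) and Y absorbs only in the shorter-wavelength region. All slopes and concentrations below are hypothetical, and the absorbance factor is taken as the ratio of pure-X responses at the isosbestic and extended wavelengths.

```python
def absorbance_subtraction(a_mix_iso: float, a_mix_ext: float,
                           factor_x: float, slope_iso: float):
    """Resolve a binary mixture X + Y with the absorbance subtraction (AS) idea.

    a_mix_iso: mixture absorbance at the isosbestic wavelength
    a_mix_ext: mixture absorbance in the extended region where only X absorbs
    factor_x:  A(isosbestic) / A(extended) measured on pure X standards
    slope_iso: absorbance per unit concentration at the isosbestic wavelength,
               shared by X and Y (same cell and path length)
    Returns (conc_x, conc_y) in the units used for slope_iso.
    """
    a_x_iso = factor_x * a_mix_ext  # X's share of the isosbestic absorbance
    a_y_iso = a_mix_iso - a_x_iso   # the remainder belongs to Y
    return a_x_iso / slope_iso, a_y_iso / slope_iso


# Hypothetical calibration: at the isosbestic point both drugs give 0.050 per ug/mL;
# in the extended region pure X gives 0.025 per ug/mL and Y gives nothing.
SLOPE_ISO, SLOPE_X_EXT = 0.050, 0.025
FACTOR_X = SLOPE_ISO / SLOPE_X_EXT  # absorbance factor for X

# Simulated mixture containing 8 ug/mL X and 12 ug/mL Y.
a_iso = SLOPE_ISO * (8.0 + 12.0)
a_ext = SLOPE_X_EXT * 8.0
x, y = absorbance_subtraction(a_iso, a_ext, FACTOR_X, SLOPE_ISO)
print(f"X = {x:.2f} ug/mL, Y = {y:.2f} ug/mL")
```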

Advanced Absorbance Subtraction (AAS) and Advanced Amplitude Modulation (AAM): These methods represent an evolution of traditional subtraction methods, incorporating mathematical manipulations of ratio spectra to enhance selectivity in complex mixtures [31].

Ratio Difference-Isoabsorptive Point (RD-ISO) Method: For ternary mixtures where two components show two isosbestic points, this method enables determination of the third component by dividing the mixture spectrum by a normalized spectrum of one component and measuring amplitude differences at the isosbestic wavelengths [31].
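To make the cancellation step concrete, here is a minimal Python sketch of the ratio-difference principle at two isoabsorptive wavelengths. It assumes that the "normalized spectrum" is the unit-concentration spectrum of X, that X and Y are exactly isoabsorptive at the two hypothetical wavelengths `lam1` and `lam2`, and that Beer-Lambert additivity holds. All absorptivity values and function names are illustrative, not taken from [31].

```python
# Hypothetical absorptivities (absorbance per ug/mL, 1 cm path) at two wavelengths.
# X and Y are isoabsorptive at both lam1 and lam2; Z (the target) is not.
A_X = {"lam1": 0.040, "lam2": 0.025}
A_Y = {"lam1": 0.040, "lam2": 0.025}  # equal to X at both wavelengths
A_Z = {"lam1": 0.030, "lam2": 0.005}


def mixture_absorbance(cx, cy, cz):
    """Beer-Lambert additivity: mixture absorbance at each wavelength."""
    return {lam: A_X[lam] * cx + A_Y[lam] * cy + A_Z[lam] * cz for lam in A_X}


def ratio_difference(mix):
    """Divide by the unit-concentration X spectrum, then take lam1 - lam2.

    At both isoabsorptive wavelengths the X and Y terms reduce to (cx + cy),
    so they cancel in the difference, leaving a signal proportional to cz.
    """
    ratio = {lam: mix[lam] / A_X[lam] for lam in mix}
    return ratio["lam1"] - ratio["lam2"]


# Calibrate the difference with a pure Z standard (line through the origin).
slope_z = ratio_difference(mixture_absorbance(0.0, 0.0, 1.0))

mix = mixture_absorbance(cx=12.0, cy=7.0, cz=5.0)
cz_found = ratio_difference(mix) / slope_z
print(round(cz_found, 6))  # 5.0
```

Changing `cx` and `cy` leaves `cz_found` unchanged, which is the property that lets RD-ISO determine the third component without resolving the other two.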

Double Divisor-Ratio Difference-Dual Wavelength (DD-RD-DW) Method: This advanced approach addresses even more complex quaternary mixtures by combining double divisor methodology with dual wavelength principles to resolve severely overlapping spectra [31].

Comparative Analysis of Recent Pharmaceutical Applications

Table 1: Recent Applications of Isosbestic Points in Pharmaceutical Analysis

| Drug Combination | Analytical Challenge | Method Employed | Key Finding | Reference |
|---|---|---|---|---|
| Levodopa (LEV) & Carbidopa (CBD) (Anti-Parkinson) | Severe spectral overlap 200-296 nm | Absorbance Subtraction (AS) & Net Analyte Signal (NAS) | Successful determination in binary mixtures, tablets, and urine samples without separation | [28] |
| Remdesivir (RDV) & Moxifloxacin (MFX) (COVID-19 treatment) | Significant spectral overlap | Absorbance Subtraction (AS) using isosbestic point at 229 nm | Enabled quantification in formulations and plasma; green, cost-effective approach | [29] |
| Glimepiride & Linagliptin (Anti-diabetic) | Spectral interference in synthetic mixtures | Absorbance correction at isosbestic point (261 nm) | Validated method suitable for routine quality control of combined dosage forms | [32] |
| Drotaverine, Caffeine, Paracetamol & Para-aminophenol (Analgesic combination) | Quaternary mixture with severe overlap | Multiple methods including RD-ISO and DD-RD-DW | Successfully resolved four-component mixture without separation steps | [31] |
| Nebivolol & Valsartan (Antihypertensive) | Interference from valsartan impurity | Double Divisor-Ratio Spectra Derivative (DD-RS-DS) | Simultaneous determination of drugs in presence of synthetic precursor impurity | [33] |

Experimental Protocols: Methodologies for Isosbestic Point-Based Analysis

Standardized Experimental Workflow

The following workflow provides a generalized protocol for implementing isosbestic point-based methods, synthesizing common elements from recent applications [28] [29] [32]:

Diagram 1: Generalized workflow for isosbestic point-based analysis of pharmaceutical mixtures.

Detailed Experimental Procedure

Instrumentation and Reagent Preparation

Materials and Equipment:

- UV-Visible spectrophotometer with scanning capability (e.g., Varian Cary 100, Shimadzu UV-1800, JASCO V-630)

- Quartz cells (1 cm path length)

- Analytical balance

- Ultrasonic bath

- Volumetric flasks, pipettes

- HPLC-grade methanol, acetonitrile, or appropriate solvents

- Certified reference standards of target analytes

Standard Solution Preparation:

- Precisely weigh 10 mg of each reference standard drug

- Transfer to separate 100 mL volumetric flasks

- Dissolve in 60-70 mL of appropriate solvent (methanol commonly used)

- Sonicate for 15 minutes to ensure complete dissolution

- Dilute to volume with solvent to obtain 100 μg/mL stock solutions

- Prepare working solutions through appropriate dilution [28] [29] [32]
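The stock and working-solution arithmetic follows C1V1 = C2V2. The sketch below assumes a hypothetical working range (2 to 20 μg/mL) prepared in 10 mL volumetric flasks; the function name `aliquot_volume_ml` is illustrative only.

```python
def aliquot_volume_ml(stock_ug_per_ml, target_ug_per_ml, final_volume_ml):
    """Volume of stock needed for one working solution, from C1*V1 = C2*V2."""
    if target_ug_per_ml > stock_ug_per_ml:
        raise ValueError("target concentration exceeds stock concentration")
    return target_ug_per_ml * final_volume_ml / stock_ug_per_ml


# 10 mg of standard made up to 100 mL: 10 000 ug / 100 mL = 100 ug/mL.
stock = 10.0 * 1000 / 100.0

# Hypothetical working range, each made up to volume in a 10 mL flask.
for target in (2.0, 5.0, 10.0, 20.0):
    v = aliquot_volume_ml(stock, target, 10.0)
    print(f"{target:5.1f} ug/mL: pipette {v:.2f} mL of stock, dilute to 10 mL")
```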

Spectral Analysis and Isosbestic Point Identification

- Scan standard solutions of individual components across relevant UV range (typically 200-400 nm)

- Overlay spectra of equal concentrations of the individual components to identify the wavelength(s) where the spectra cross (isosbestic points)

- Verify isosbestic point by scanning mixtures at different concentration ratios

- Confirm that the absorbance at this wavelength remains constant when the component ratio is varied at a fixed total concentration
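Locating candidate crossings in digitized spectra can be automated. This is a minimal Python sketch (NumPy assumed available) that finds sign changes in the difference between two equal-concentration spectra and linearly interpolates each zero crossing. The Gaussian bands are synthetic stand-ins for real scans, and `crossing_wavelengths` and `band` are hypothetical helper names.

```python
import numpy as np


def crossing_wavelengths(wl, spec_a, spec_b):
    """Wavelengths where two equal-concentration spectra intersect.

    Looks for sign changes of (spec_a - spec_b) between adjacent points and
    linearly interpolates the zero crossing within each interval.
    """
    wl = np.asarray(wl, dtype=float)
    diff = np.asarray(spec_a, dtype=float) - np.asarray(spec_b, dtype=float)
    crossings = []
    for i in range(len(diff) - 1):
        d0, d1 = diff[i], diff[i + 1]
        if d0 == 0.0:
            crossings.append(wl[i])  # exact crossing on a sampled point
        elif d0 * d1 < 0.0:
            # Zero of the straight line joining the two difference values
            crossings.append(wl[i] - d0 * (wl[i + 1] - wl[i]) / (d1 - d0))
    if diff[-1] == 0.0:
        crossings.append(wl[-1])
    return crossings


# Synthetic absorption bands sampled every 1 nm from 200 to 400 nm.
wl = np.arange(200, 401, 1.0)


def band(centre, width, height):
    return height * np.exp(-(((wl - centre) / width) ** 2))


spec_x = band(240, 25, 0.9)
spec_y = band(290, 30, 0.7)
print([round(float(x), 1) for x in crossing_wavelengths(wl, spec_x, spec_y)])
```

A crossing found this way is only a candidate; it still has to be confirmed experimentally by scanning mixtures of varying ratio.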

Absorbance Subtraction Method Protocol

Based on the method successfully applied to remdesivir and moxifloxacin [29]:

Isoabsorptive Point Calibration:

- Prepare series of standard solutions for both analytes

- Measure absorbance at isosbestic point (λiso, e.g., 229 nm for RDV/MFX)

- Construct calibration curve of absorbance versus concentration for total analyte

Absorbance Factor Determination:

- For component with extended spectrum (Y, e.g., moxifloxacin)

- Measure absorbance at λiso and at wavelength where only Y absorbs (λY, e.g., 360 nm)

- Calculate absorbance factor (AF) = Aλiso / AλY

Sample Analysis:

- Record sample absorbance at λiso (Amλiso) and λY (AmλY)

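- Calculate the absorbance of Y at the isoabsorptive point, AYλiso = AmλY × AF, and from it the concentration of Y: CY = AYλiso / slopeiso, where slopeiso is the slope of the isoabsorptive calibration curve (shared by X and Y because their absorptivities are equal at λiso)

- Determine concentration of X from the remaining absorbance: CX = (Amλiso - AmλY × AF) / slopeiso, which equals the total concentration minus CY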

This method is particularly effective when one component exhibits no absorption at a specific wavelength while the other shows measurable absorption, enabling mathematical resolution of the mixture [28].
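A minimal Python sketch of the AS arithmetic follows. It assumes a zero-intercept isoabsorptive calibration (A = slope_iso × C) expressed in the concentration units in which X and Y are isoabsorptive. Y's contribution at λiso is reconstructed as AmλY × AF and subtracted from the mixture absorbance before dividing by the shared slope. The absorptivities and function names are hypothetical, not values from [29].

```python
def absorbance_factor(a_iso_pure_y, a_ly_pure_y):
    """AF = A(lambda_iso) / A(lambda_Y), both measured on a pure standard of Y."""
    return a_iso_pure_y / a_ly_pure_y


def absorbance_subtraction(am_iso, am_ly, af, slope_iso):
    """Resolve a binary X/Y mixture by absorbance subtraction.

    am_iso    -- mixture absorbance at the isoabsorptive point lambda_iso
    am_ly     -- mixture absorbance at lambda_Y, where only Y absorbs
    af        -- absorbance factor of Y
    slope_iso -- slope of the isoabsorptive calibration (zero intercept assumed),
                 shared by X and Y because their absorptivities are equal there
    """
    a_y_iso = am_ly * af          # Y's share of the absorbance at lambda_iso
    a_x_iso = am_iso - a_y_iso    # the remainder is attributed to X
    return a_x_iso / slope_iso, a_y_iso / slope_iso


# Hypothetical absorptivities (per ug/mL, 1 cm): 0.05 at lambda_iso for both
# X and Y; 0.02 for Y and 0 for X at lambda_Y.
af = absorbance_factor(0.05 * 10.0, 0.02 * 10.0)  # pure Y standard, 10 ug/mL
c_x, c_y = absorbance_subtraction(am_iso=0.05 * (8.0 + 4.0), am_ly=0.02 * 4.0,
                                  af=af, slope_iso=0.05)
print(f"C_X = {c_x:.2f} ug/mL, C_Y = {c_y:.2f} ug/mL")  # C_X = 8.00, C_Y = 4.00
```

Keeping a single shared slope makes explicit why the method needs a true isoabsorptive point: if X and Y had different absorptivities at λiso, one calibration line could not serve both.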

Method Validation Parameters

All developed methods should be validated according to ICH guidelines, assessing:

- Linearity: Coefficient of determination (r² > 0.998 typically achieved [32])

- Accuracy: Recovery studies (98-102% acceptable)

- Precision: Intra-day and inter-day RSD (<2% acceptable)

- Specificity: Confirmation of no interference from excipients

- LOD/LOQ: Adequate sensitivity for intended application [28] [29] [32]
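Most of these criteria reduce to a few standard formulas. This Python sketch (NumPy assumed available) fits the calibration line and reports r². It applies the ICH Q2 calibration-curve estimates LOD = 3.3σ/S and LOQ = 10σ/S, taking σ as the residual standard deviation of the regression (one of the options the guideline allows). It also computes percent recovery and %RSD. The calibration and replicate values in the usage lines are hypothetical.

```python
import numpy as np


def linearity(conc, absorb):
    """Least-squares line A = slope*C + intercept, plus r^2 and residual SD."""
    conc = np.asarray(conc, dtype=float)
    absorb = np.asarray(absorb, dtype=float)
    slope, intercept = np.polyfit(conc, absorb, 1)  # highest power first
    fitted = slope * conc + intercept
    ss_res = np.sum((absorb - fitted) ** 2)
    ss_tot = np.sum((absorb - absorb.mean()) ** 2)
    r2 = 1.0 - ss_res / ss_tot
    sd_resid = np.sqrt(ss_res / (len(conc) - 2))  # standard error of regression
    return slope, intercept, r2, sd_resid


def lod_loq(sd, slope):
    """ICH Q2 calibration-curve approach: LOD = 3.3*sd/S, LOQ = 10*sd/S."""
    return 3.3 * sd / slope, 10.0 * sd / slope


def percent_recovery(found, added):
    return 100.0 * np.asarray(found, dtype=float) / np.asarray(added, dtype=float)


def percent_rsd(values):
    values = np.asarray(values, dtype=float)
    return 100.0 * values.std(ddof=1) / values.mean()  # sample SD (n - 1)


# Hypothetical calibration data (ug/mL versus absorbance).
conc = [2, 4, 6, 8, 10]
absorb = [0.101, 0.198, 0.302, 0.399, 0.502]
slope, intercept, r2, sd = linearity(conc, absorb)
lod, loq = lod_loq(sd, slope)
print(f"slope={slope:.4f} r2={r2:.5f} LOD={lod:.3f} LOQ={loq:.3f} ug/mL")
print(percent_recovery([4.95, 6.04], [5.0, 6.0]), percent_rsd([0.300, 0.302, 0.299]))
```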

Essential Research Reagents and Materials

Table 2: Essential Research Reagent Solutions for Isosbestic Point-Based Analysis

| Reagent/Material | Specification | Function in Analysis | Example Application |
|---|---|---|---|
| Pharmaceutical Reference Standards | Certified purity >98% | Primary standards for calibration curve construction | Quantification of active ingredients in formulations [28] [32] |
| HPLC-Grade Methanol | Low UV cutoff, high purity | Solvent for standard and sample preparation | Extraction and dilution medium for spectral analysis [29] [33] |
| Acetonitrile (HPLC Grade) | Low UV absorbance | Alternative solvent for poorly methanol-soluble compounds | Solvent for glimepiride and linagliptin analysis [32] |
| Standard Tablet Formulations | Marketed pharmaceutical products | Method application and validation in real samples | Analysis of commercial levodopa-carbidopa tablets [28] |
| Synthetic Mixture Components | Laboratory-synthesized impurities/degradants | Specificity and interference studies | Valsartan Desvaleryl analysis in antihypertensive formulations [33] |

The strategic application of isosbestic points provides powerful solutions to the persistent challenge of spectral interference in pharmaceutical analysis. By enabling accurate quantification of individual components in complex mixtures without expensive instrumentation or extensive separation procedures, these methodologies represent both practical and sustainable approaches for pharmaceutical laboratories. As evidenced by recent applications across diverse therapeutic categories—from anti-Parkinson drugs to COVID-19 treatments—isosbestic point-based methods continue to evolve in sophistication while maintaining the simplicity and accessibility that make them invaluable for routine analysis and quality control in drug development and manufacturing.

In spectrophotometry, spectral interference is a fundamental challenge that compromises analytical accuracy. It occurs when the spectral signatures of non-target components or external environmental factors obscure or distort the signal of the target analyte. The 'M plus N' theory provides a comprehensive theoretical framework for this pervasive issue, shifting the analytical paradigm from isolated observation of the target to a holistic consideration of the entire measurement system [34].

Formally, the theory defines "M" factors as all measurable components within a complex solution, including both the target analyte and all non-target constituents. The "N" factors encompass the multitude of external interference variables inherent to the measurement process itself, such as instrumental fluctuations, environmental conditions, and operational inconsistencies [34] [35]. The core premise of the theory is that the ultimate accuracy of quantifying a target component is determined by the collective uncertainty introduced by all non-target components (M-1 factors) and all external interference factors (N factors) [34]. This systematic approach to identifying and managing error sources offers a robust methodology for enhancing the precision of spectroscopic analyses, particularly in complex matrices like biological fluids.

Core Principles and Mathematical Framework

Theoretical Foundation in Spectrophotometry

The 'M plus N' theory is grounded in a realistic adaptation of the Beer-Lambert law, acknowledging its limitations when applied to complex, real-world samples. While the Beer-Lambert law describes a linear relationship between absorbance and analyte concentration in an ideal scenario, complex solutions often contain scattering components, leading to significant deviations from this ideal behavior [34] [35]. The 'M plus N' theory explicitly accounts for these deviations, recognizing that the relationship between the measured spectrum and the concentration of any single component is often nonlinear due to the combined influences of other components and external variables [34].

The total analytical error ($\sigma^2_{\text{total}}$) can be conceptualized as a function of these contributing factors:

$$\sigma^2_{\text{total}} = f\left(\sigma^2_{M_1}, \sigma^2_{M_2}, \ldots, \sigma^2_{M_{m-1}}, \sigma^2_{N_1}, \sigma^2_{N_2}, \ldots, \sigma^2_{N_n}\right)$$

where $\sigma^2_{M_i}$ represents the variance contributed by the i-th non-target component, and $\sigma^2_{N_j}$ represents the variance from the j-th external interference factor [34] [35]. The theory provides strategies to minimize the composite effect of these variances.
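The theory leaves f general. Purely as an illustration, if the individual error sources are assumed independent and additive, f becomes a plain sum of variances. Ranking each term's share then shows where reducing uncertainty would pay off most. The factor names, the independence assumption, and the variance values below are hypothetical and are not taken from [34] or [35].

```python
from math import sqrt


def variance_budget(m_variances, n_variances):
    """Total variance and each factor's percentage share.

    ASSUMES independent, additive error sources, so f is a plain sum.
    m_variances: variances from non-target components (M-1 factors)
    n_variances: variances from external interference factors (N factors)
    Factor names must be distinct across the two dictionaries.
    """
    contributions = {**m_variances, **n_variances}
    total = sum(contributions.values())
    shares = {name: 100.0 * var / total for name, var in contributions.items()}
    return total, shares


# Hypothetical variance contributions, in squared concentration units.
m_vars = {"non-target 1": 4.0, "non-target 2": 2.5, "non-target 3": 1.5}
n_vars = {"source drift": 1.0, "temperature": 0.5, "cell positioning": 0.5}

total, shares = variance_budget(m_vars, n_vars)
print(f"total variance = {total:.2f}, total SD = {sqrt(total):.2f}")
for name, share in sorted(shares.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{name:16s} {share:5.1f} %")
```

Under this simplified model, halving the largest single term lowers the total more than eliminating the smallest ones, which mirrors the theory's emphasis on reducing the total uncertainty rather than any one source in isolation.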

System Classification: White, Grey, and Black Analysis

A critical conceptual contribution of the 'M plus N' theory is the classification of analytical systems based on prior knowledge of component composition:

- White Analysis System: A mixed system whose qualitative composition is completely known. In such a system, the concentration of every component can, in theory, be determined precisely from the absorption spectrum if the system is well-conditioned [35].

- Grey Analysis System: A mixed system whose qualitative composition is only partially known. This accurately describes most real-world analytical scenarios, such as blood analysis, where the major components are known, but unknown interferents or varying matrix effects may be present [35].

- Black Analysis System: A mixed system whose qualitative composition is completely unknown. In this case, one can only establish a model between component content and spectrum through empirical methods, with accuracy susceptible to many uncontrolled factors [35].

Most biological applications, including the analysis of serum creatinine, platelets, and blood glucose, are typical Grey Analysis Systems [34] [36] [37]. The total uncertainty of all non-target components determines the measurement accuracy of the target components; reducing this total uncertainty is the key to improving accuracy [35].

Experimental Methodologies and Validation

Strategic Workflow for Implementing the 'M plus N' Theory

Implementing the 'M plus N' theory involves a structured, multi-stage process designed to systematically address different categories of error sources. The following workflow synthesizes the common strategies employed across multiple studies:

Detailed Experimental Protocols

Protocol for Serum Creatinine Determination

This protocol is adapted from research aimed at improving the accuracy of spectrophotometric determination of serum creatinine, a crucial marker for evaluating glomerular filtration rate [34].

- Sample Preparation: Obtain human serum samples from a clinical setting (e.g., a hospital central lab); the source study used 248 samples. The creatinine concentration range should be broad (e.g., 3.85–1140.50 μmol/L) to ensure a robust model, covering both normal (53–104 μmol/L) and pathological levels [34].

- Multi-Mode Spectrum Acquisition:

- Utilize a spectrophotometer system with a high-stability light source (e.g., halogen lamp) and a sensitive detector (e.g., CCD spectrometer).

- Collect spectra at multiple optical pathlengths (e.g., 0.5 mm, 1 mm) and/or multiple integration times to increase the amount of information available about the solution's components [34].

- For each sample, collect spectral data across a wide range (e.g., UV-Vis-NIR) to capture overlapping absorption features of various components.

- Data Preprocessing and Wavelength Optimization:

- Employ the "one-by-one elimination method" for wavelength selection. This algorithm iteratively removes wavelengths that contribute least to the model's predictive power, reducing redundancy and minimizing the risk of overfitting [34].

- The goal is to retain wavelengths in high signal-to-noise ratio bands, as increasing the number of wavelengths in low SNR bands can degrade model performance [34].

- Modeling and Validation: