Spectrometer Optical Paths Demystified: From Core Components to Cutting-Edge Biomedical Applications

Abstract

This article provides a comprehensive exploration of spectrometer optical paths, bridging fundamental principles with modern advancements for researchers and drug development professionals. It begins by establishing the core components and classical designs that form the foundation of spectral analysis. The discussion then progresses to innovative computational and miniaturized systems, detailing their application in biopharmaceutical research for tasks like vaccine characterization and protein analysis. Practical guidance on troubleshooting common optical path issues and optimizing performance for sensitive measurements is provided. Finally, the article offers a comparative analysis of different spectrometer technologies, validating their performance to help scientists select the ideal configuration for specific biomedical applications, from high-throughput screening to trace gas detection.

The Building Blocks of Light Analysis: Core Components and Classical Optical Path Designs

Optical spectrometry is founded on the principle that matter interacts with light in predictable ways, revealing information about its composition, structure, and dynamics. When light traverses an optical path and encounters a material, several physical phenomena occur, including absorption, emission, fluorescence, and scattering. The specific interaction is governed by the relationship between the photon energy and the energy levels within the material's atoms or molecules. These interactions form the basis for analytical techniques that identify substances, quantify concentrations, and probe molecular environments. This guide examines the fundamental principles of these light-matter interactions within the context of spectrometer optical path components, providing researchers and drug development professionals with the theoretical and practical framework necessary for advanced spectroscopic analysis.

The optical path within a spectrometer is meticulously designed to maximize the information yield from these interactions. From the initial light source to the final detection, each component—including slits, collimators, gratings, and focusing mirrors—serves to prepare the light, disperse it into spectral components, and direct it to the detector with minimal aberration and maximum throughput. Understanding how this engineered path facilitates and optimizes light-matter interactions is crucial for developing new spectroscopic methods, improving instrument design, and correctly interpreting analytical data in fields ranging from pharmaceutical development to materials science.

Fundamental Interaction Mechanisms

Elastic and Inelastic Scattering

When photons encounter matter, they may be scattered with or without a change in energy. Elastic scattering, such as Rayleigh scattering, occurs when the scattered photon has the same energy as the incident photon. It redirects light without involving resonance with molecular transitions. In contrast, inelastic scattering, such as Raman scattering, involves an energy shift: the scattered photon has either lost (Stokes shift) or gained (anti-Stokes shift) energy corresponding to the vibrational or rotational energy levels of the molecule. Raman scattering is far less probable than elastic scattering, which makes it challenging to detect but extremely informative for molecular fingerprinting [1].

The Raman effect can be described by the equation:

ν_scattered = ν_incident ± ν_vib

where ν_incident is the frequency of the incident photon, ν_scattered is the frequency of the scattered photon, and ν_vib is the frequency of a molecular vibration. The minus sign gives the Stokes line and the plus sign the anti-Stokes line. The optical path of a Raman spectrometer must therefore collect this weak inelastically scattered light efficiently while rejecting the dominant elastically scattered component, typically with high-quality notch filters [1].
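A short sketch makes the Raman relation concrete. Raman shifts are conventionally quoted as wavenumbers (cm⁻¹), so the hypothetical helpers below apply ν_scattered = ν_incident ± ν_vib in wavenumber units and convert back to wavelength. The 785 nm excitation and the 1000 cm⁻¹ shift are illustrative values, not data from the cited work.

```python
NM_PER_CM = 1e7  # 1 cm = 1e7 nm, so wavenumber [cm^-1] = 1e7 / wavelength [nm]


def raman_wavelengths(laser_nm, shift_cm1):
    """Return (Stokes, anti-Stokes) scattered wavelengths in nm.

    Applies nu_scattered = nu_incident -/+ nu_vib with all
    frequencies expressed as wavenumbers in cm^-1.
    """
    laser_wavenumber = NM_PER_CM / laser_nm
    stokes_nm = NM_PER_CM / (laser_wavenumber - shift_cm1)
    anti_stokes_nm = NM_PER_CM / (laser_wavenumber + shift_cm1)
    return stokes_nm, anti_stokes_nm


def raman_shift_cm1(laser_nm, scattered_nm):
    """Raman shift in cm^-1 (positive for Stokes) from two wavelengths in nm."""
    return NM_PER_CM / laser_nm - NM_PER_CM / scattered_nm


if __name__ == "__main__":
    stokes, anti_stokes = raman_wavelengths(785.0, 1000.0)
    print(f"Stokes: {stokes:.2f} nm, anti-Stokes: {anti_stokes:.2f} nm")
    # Round trip: the Stokes wavelength maps back to the 1000 cm^-1 shift
    print(f"Recovered shift: {raman_shift_cm1(785.0, stokes):.1f} cm^-1")
```

With these inputs the Stokes line falls near 851.87 nm and the anti-Stokes line near 727.86 nm, so both lie within a few tens of nanometers of the much stronger Rayleigh line at 785 nm.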

Absorption and Emission

Absorption occurs when a photon's energy precisely matches the energy difference between two quantum states in a molecule (electronic, vibrational, or rotational), resulting in the photon's energy being transferred to the molecule. The resulting excited state has a finite lifetime and may return to the ground state through various pathways, including non-radiative relaxation or the emission of light. Photoluminescence, which includes fluorescence and phosphorescence, is the re-emission of light at longer wavelengths (lower energy) following absorption. The temporal characteristics and spectral distribution of emitted light provide insights into the molecular environment, energy transfer processes, and molecular conformations.

In fluorescence spectroscopy, instruments like spectrofluorometers are designed with specific optical paths to separate the excitation light from the emitted light, which is typically at a longer wavelength. Modern systems, such as the FS5 spectrofluorometer, are targeted at photochemistry and photophysics communities for studying these phenomena with high sensitivity [2]. The simultaneous collection of Absorbance, Transmittance, and Fluorescence Excitation Emission Matrix (A-TEEM), as implemented in the Veloci A-TEEM Biopharma Analyzer, provides a powerful multidimensional approach for analyzing complex biological systems like monoclonal antibodies and vaccines without traditional separation methods [2].

Nonlinear Optical Phenomena

When light intensities are sufficiently high, as with pulsed lasers, nonlinear optical effects become significant. These processes include phenomena like two-photon absorption, second harmonic generation, and four-wave mixing (FWM), where the material's response depends on the square or higher powers of the incident electric field. In nonlinear spectroscopy, a sequence of time-ordered light fields interacts with the sample, inducing a nonlinear polarization that emits coherent radiation in specific, phase-matched directions [3].

For a third-order nonlinear response (χ⁽³⁾), interaction with three light fields generates a fourth field via FWM. The amplitude and phase of this signal carry detailed information about excited-state dynamics and quantum correlations. The optical paths for nonlinear spectroscopy are complex, often requiring precise temporal and spatial overlap of multiple beams. Quantum metrology approaches using squeezed or entangled light states can enhance the sensitivity of these measurements beyond the classical shot-noise limit, enabling the detection of weaker signals or the use of lower light intensities that are less damaging to biological samples [3].

Spectrometer Optical Path Design and Components

Core Optical Components

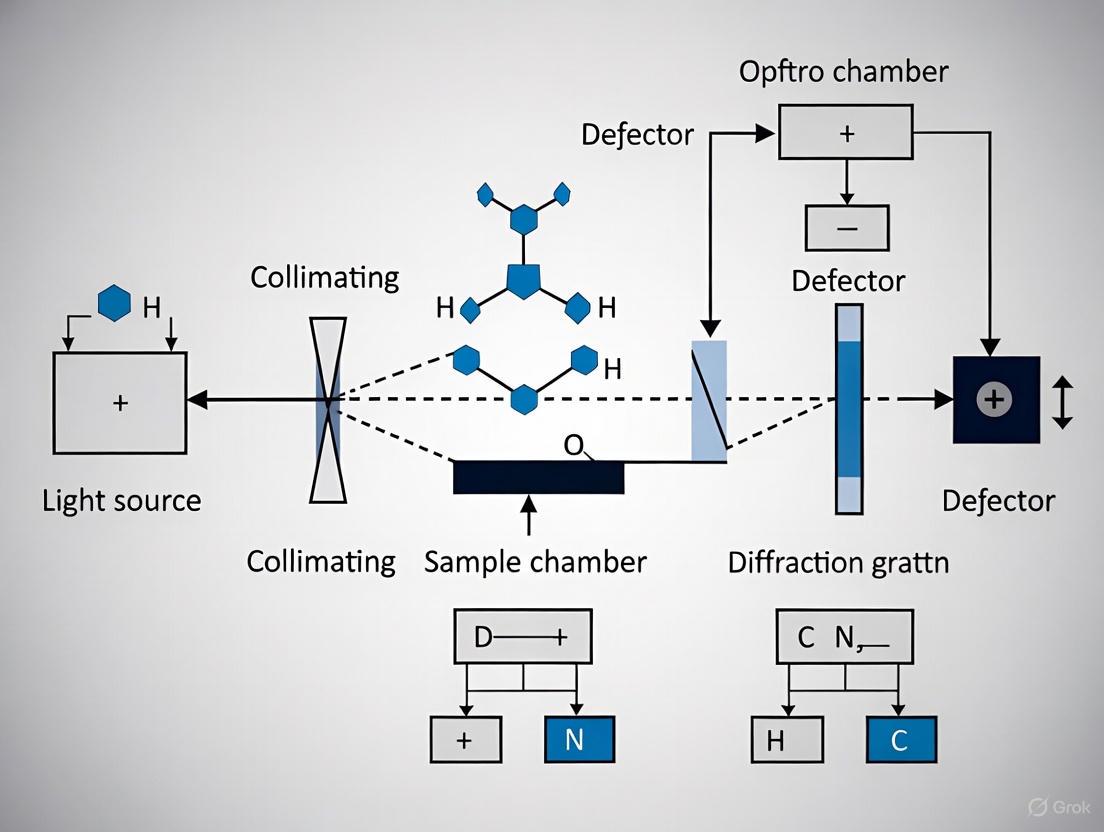

The optical path of a dispersive spectrometer consists of several key components, each serving a specific function in the process of generating and analyzing spectral data. The following diagram illustrates the fundamental layout and component relationships in a classic dispersive spectrometer.

The fundamental components of a dispersive spectrometer optical path include:

- Light Source: Provides illumination across the spectral range of interest. For Raman spectroscopy, semiconductor lasers at specific wavelengths (e.g., 785 nm) are commonly used [4] [1].

- Input Slit: Defines the entrance aperture and affects both optical resolution and signal intensity. A narrower slit provides better resolution but reduces throughput [4] [1].

- Collimation Mirror: Converts the divergent beam from the slit into a parallel (collimated) beam, ensuring uniform illumination of the dispersive element.

- Diffraction Grating: Angularly disperses different wavelengths of light according to the grating equation:

mλ = d(sin α + sin β)

where m is the diffraction order, λ is the wavelength, d is the groove spacing, and α and β are the angles of incidence and diffraction, respectively [4].

- Focus Mirror: Focuses the dispersed wavelengths onto different positions of the detector array.

- Detector Array: Captures the intensity of light at different wavelengths simultaneously. Common detectors include CCD and CMOS arrays with specific spectral response characteristics [4].
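The grating equation can be solved directly for the diffraction angle. The minimal sketch below assumes first-order diffraction by default, angles in degrees measured from the grating normal with the sign convention of the equation above, and a groove density G = 1/d given in lines/mm; the function names are hypothetical. It returns None when |sin β| would exceed 1, meaning that order does not propagate.

```python
import math


def diffraction_angle_deg(wavelength_nm, grooves_per_mm, incidence_deg, order=1):
    """Solve m*lambda = d*(sin(alpha) + sin(beta)) for beta, in degrees.

    Returns None when |sin(beta)| > 1, i.e. the requested order does not propagate.
    """
    d_nm = 1e6 / grooves_per_mm  # groove spacing d; 1 mm = 1e6 nm
    sin_beta = order * wavelength_nm / d_nm - math.sin(math.radians(incidence_deg))
    if abs(sin_beta) > 1.0:
        return None
    return math.degrees(math.asin(sin_beta))


def angular_dispersion_deg_per_nm(wavelength_nm, grooves_per_mm, incidence_deg, order=1):
    """Angular dispersion d(beta)/d(lambda) = m / (d cos(beta)), in degrees per nm."""
    beta_deg = diffraction_angle_deg(wavelength_nm, grooves_per_mm, incidence_deg, order)
    if beta_deg is None:
        raise ValueError("this diffraction order does not propagate")
    d_nm = 1e6 / grooves_per_mm
    return math.degrees(order / (d_nm * math.cos(math.radians(beta_deg))))


if __name__ == "__main__":
    beta = diffraction_angle_deg(800.0, 600.0, 10.0)
    disp = angular_dispersion_deg_per_nm(800.0, 600.0, 10.0)
    print(f"beta = {beta:.2f} deg, dispersion = {disp:.4f} deg/nm")
```

For a 600 lines/mm grating at α = 10°, 800 nm light leaves at β ≈ 17.84° with an angular dispersion of roughly 0.036°/nm.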

Design Trade-offs and Performance Parameters

The design of a spectrometer optical path involves balancing several competing performance parameters. The key relationships governing this balance are quantified in the following table.

Table 1: Key Performance Relationships in Spectrometer Optical Path Design

| Parameter | Mathematical Relationship | Design Impact | Application Consideration |
|---|---|---|---|
| Spectral Resolution (sOCT) | Δλ = λ²/(2Δz) for a Gaussian window (STFT) [5] | A shorter spatial window (Δz) improves spatial resolution but worsens spectral resolution | Higher resolution is needed to distinguish closely spaced spectral features |
| Focal Length | L_F ≈ L_D · cos β / [G · (λ₂ - λ₁)] (first order) [4] | Higher groove density (G) or a smaller detector (L_D) enables a shorter focal length | Compact designs favor high-groove-density gratings and smaller detectors |
| Numerical Aperture | NA = n · sin θ, where θ is the half-angle of the input cone (n = 1 in air) | Higher NA increases light throughput but requires larger optical elements | Critical for weak-signal applications such as Raman spectroscopy |
| Optical Resolution | Δλ_FWHM ≈ λ/(G · w_beam), where w_beam is the beam width on the grating [4] | Wider illumination of the grating improves resolution | Limited by the physical size constraints of the spectrometer |

The focal length of the focusing mirror (L_F) is a primary determinant of overall spectrometer size. As shown in Figure 2 of reference [4], the focal length can vary by nearly two orders of magnitude depending on the selected grating groove density and detector size. For a compact Raman spectrometer covering 800-1100 nm, using an 1800 lines/mm grating with a ¼-inch detector enables a focal length of approximately 30 mm, resulting in a footprint as small as 30×30 mm [4].

The numerical aperture (NA) determines the light-gathering ability of the spectrometer. A higher NA collects more light from the sample, which is particularly important for weak signals like Raman scattering, but requires larger optical elements to accommodate the wider light cone, creating a trade-off between compactness and sensitivity [4]. For battery-operated portable instruments, this trade-off often favors designs that maximize throughput while maintaining reasonable size constraints.
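The optical-resolution and numerical-aperture rows of Table 1 can be turned into quick estimates. The sketch below is a back-of-the-envelope calculation under stated assumptions: the grating-limited resolution uses Δλ ≈ λ/(m·G·w_beam), a lower bound that slit width and pixel size usually exceed in practice; light collection is compared through the solid angle of the acceptance cone, Ω = 2π(1 - cos θ), assuming the source fills both cones uniformly; and the f-number uses the paraxial approximation N ≈ 1/(2·NA). All function names and example values are hypothetical.

```python
import math


def grating_limited_resolution_nm(wavelength_nm, grooves_per_mm, beam_width_mm, order=1):
    """Delta-lambda ~ lambda / (m * N), where N = G * w_beam grooves are illuminated."""
    illuminated_grooves = grooves_per_mm * beam_width_mm
    return wavelength_nm / (order * illuminated_grooves)


def acceptance_solid_angle_sr(numerical_aperture, n_medium=1.0):
    """Solid angle of the input cone, Omega = 2*pi*(1 - cos(theta)), with NA = n*sin(theta)."""
    theta = math.asin(numerical_aperture / n_medium)
    return 2.0 * math.pi * (1.0 - math.cos(theta))


def paraxial_f_number(numerical_aperture):
    """N ~ 1 / (2 NA); a small-angle approximation that degrades at large NA."""
    return 1.0 / (2.0 * numerical_aperture)


if __name__ == "__main__":
    res = grating_limited_resolution_nm(850.0, 600.0, 10.0)
    gain = acceptance_solid_angle_sr(0.22) / acceptance_solid_angle_sr(0.10)
    print(f"grating-limited resolution: {res:.3f} nm")
    print(f"solid-angle ratio, NA 0.22 vs NA 0.10: {gain:.2f}")
    print(f"NA 0.22 corresponds to roughly f/{paraxial_f_number(0.22):.1f}")
```

The solid-angle ratio of about 4.9 between NA 0.22 and NA 0.10 illustrates why weak-signal Raman designs push toward higher NA despite the larger optics it demands.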

Experimental Methods and Protocols

Raman Spectrometer Implementation Protocol

The implementation of an experimental Raman spectrometer follows a systematic methodology with specific protocols for component selection, assembly, and characterization:

Light Source Selection and Characterization: Choose a laser diode with a wavelength appropriate for the sample (e.g., 785 nm for reduced fluorescence in biological samples). Characterize the optical power output versus drive current using a power meter. Above the lasing threshold, the output power rises approximately linearly with drive current:

P_optical = η · (I - I_th)

where P_optical is the output power, η is the slope efficiency, I is the drive current, and I_th is the threshold current [1].

Optical Path Configuration: Connect the laser to a multimode optical probe using a matching sleeve. Incorporate a notch filter centered at the pump laser wavelength to reject the elastically scattered Rayleigh light while transmitting the Raman-shifted signal. Align the filter precisely using micro-positioners [1].
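For the light-source characterization step, η and I_th can be estimated by fitting a straight line to power-meter readings taken above threshold; I_th is where the fitted line crosses zero power. The sketch below is a plain least-squares fit with hypothetical readings (not measurements from [1]). Points below threshold should be excluded, because the output there is dominated by spontaneous emission and is not linear in current.

```python
def fit_pi_curve(currents_ma, powers_mw):
    """Least-squares line through (I, P) points measured above threshold.

    Returns (slope_efficiency_mw_per_ma, threshold_current_ma) for
    P = eta * (I - I_th); I_th is where the fitted line crosses P = 0.
    """
    n = len(currents_ma)
    if n < 2:
        raise ValueError("need at least two points above threshold")
    mean_i = sum(currents_ma) / n
    mean_p = sum(powers_mw) / n
    sxx = sum((i - mean_i) ** 2 for i in currents_ma)
    sxy = sum((i - mean_i) * (p - mean_p) for i, p in zip(currents_ma, powers_mw))
    eta = sxy / sxx                   # slope = slope efficiency
    intercept = mean_p - eta * mean_i  # P-axis intercept of the fitted line
    threshold = -intercept / eta       # current at which fitted P = 0
    return eta, threshold


if __name__ == "__main__":
    # Hypothetical power-meter readings, all above threshold
    currents_ma = [100, 120, 140, 160, 180]
    powers_mw = [32.0, 48.0, 64.0, 80.0, 96.0]
    eta, i_th = fit_pi_curve(currents_ma, powers_mw)
    print(f"slope efficiency = {eta:.3f} mW/mA, threshold = {i_th:.1f} mA")
```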

Spectrometer Core Setup: Configure the spectrometer with appropriate slit width (e.g., 25 μm), grating groove density (e.g., 600 lines/mm for general purpose), and detector array. Calculate the expected spectral range using the grating equation and verify the optical resolution based on slit width and diffraction limitations [1].
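The step of calculating the expected spectral range can be sketched for a Czerny-Turner layout. This is a simplified geometric model, an assumption rather than the cited authors' procedure: the detector plane is taken as perpendicular to the central diffracted ray at the focal length L_F, the angle between incident and diffracted beams (the deviation angle D_v = α - β) is fixed, and focal-plane tilt and aberrations are ignored. The grating rotation follows from rewriting the grating equation as mλ = 2d·sin φ·cos(D_v/2) with φ = (α + β)/2. The function name and all parameter values are hypothetical.

```python
import math


def czerny_turner_wavelengths(center_nm, grooves_per_mm, deviation_deg,
                              focal_length_mm, pixel_pitch_um, n_pixels, order=1):
    """Approximate wavelength (nm) at each detector pixel of a Czerny-Turner layout.

    Simplified model (assumption): flat detector perpendicular to the central
    diffracted ray at focal_length_mm; deviation_deg = alpha - beta is fixed.
    """
    d_nm = 1e6 / grooves_per_mm
    half_dev = math.radians(deviation_deg) / 2.0
    # Grating rotation phi from m*lambda_c = 2 d sin(phi) cos(Dv/2)
    phi = math.asin(order * center_nm / (2.0 * d_nm * math.cos(half_dev)))
    alpha = phi + half_dev
    beta_center = phi - half_dev
    wavelengths = []
    for p in range(n_pixels):
        # Offset of this pixel centre from the middle of the array, in mm
        x_mm = (p - (n_pixels - 1) / 2.0) * pixel_pitch_um * 1e-3
        beta = beta_center + math.atan(x_mm / focal_length_mm)
        wavelengths.append(d_nm * (math.sin(alpha) + math.sin(beta)) / order)
    return wavelengths


if __name__ == "__main__":
    # Hypothetical layout: 600 lines/mm, 30 deg deviation, 100 mm focal length,
    # 2048-pixel array with 14 um pitch, centred on 850 nm
    wl = czerny_turner_wavelengths(850.0, 600.0, 30.0, 100.0, 14.0, 2048)
    print(f"coverage: {wl[0]:.1f} to {wl[-1]:.1f} nm")
```

With these placeholder values the array covers roughly 610 to 1090 nm, and the wavelength step per pixel is not constant across the array, which is one reason calibration usually relies on a polynomial rather than a single nm-per-pixel factor.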

Wavelength Calibration: Use a calibration source with known emission lines (e.g., argon lamp) to establish the relationship between detector pixel position and wavelength. Measure the full width at half maximum (FWHM) of narrow emission lines to determine experimental resolution [4] [1].
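The pixel-to-wavelength relationship established in the calibration step is often modeled as a low-order polynomial fitted by least squares. The sketch below is a generic approach, not the cited authors' code: it centers and scales pixel indices to keep the normal equations well conditioned and returns a callable mapping. The pixel positions and wavelengths in the example are synthetic placeholders; a real calibration would use tabulated reference wavelengths for the lamp's emission lines.

```python
def fit_polynomial(xs, ys, degree):
    """Least-squares polynomial fit via the normal equations.

    Returns [c0, c1, ..., c_degree] for y = sum(c_k * x**k). Suitable only for
    low degrees and well-scaled xs (here, pixels mapped to [-1, 1]).
    """
    size = degree + 1
    # Normal equations (V^T V) c = V^T y, V being the Vandermonde matrix of xs
    ata = [[sum(x ** (r + c) for x in xs) for c in range(size)] for r in range(size)]
    aty = [sum(y * x ** r for x, y in zip(xs, ys)) for r in range(size)]
    # Forward elimination with partial pivoting
    for col in range(size):
        pivot = max(range(col, size), key=lambda r: abs(ata[r][col]))
        ata[col], ata[pivot] = ata[pivot], ata[col]
        aty[col], aty[pivot] = aty[pivot], aty[col]
        for r in range(col + 1, size):
            factor = ata[r][col] / ata[col][col]
            for c in range(col, size):
                ata[r][c] -= factor * ata[col][c]
            aty[r] -= factor * aty[col]
    # Back substitution
    coeffs = [0.0] * size
    for r in reversed(range(size)):
        tail = sum(ata[r][c] * coeffs[c] for c in range(r + 1, size))
        coeffs[r] = (aty[r] - tail) / ata[r][r]
    return coeffs


def calibrate(pixels, wavelengths_nm, degree=2):
    """Return a function mapping pixel index to wavelength (nm)."""
    if len(pixels) <= degree:
        raise ValueError("need more calibration lines than the polynomial degree")
    center = sum(pixels) / len(pixels)
    scale = max(abs(p - center) for p in pixels) or 1.0
    coeffs = fit_polynomial([(p - center) / scale for p in pixels], wavelengths_nm, degree)

    def pixel_to_nm(pixel):
        x = (pixel - center) / scale
        return sum(c * x ** k for k, c in enumerate(coeffs))

    return pixel_to_nm


if __name__ == "__main__":
    # Synthetic placeholder data: pixel positions of lines and their wavelengths
    pixels = [150, 420, 800, 1210, 1650, 1900]
    wavelengths = [780 + 0.15 * p - 2e-6 * p ** 2 for p in pixels]
    to_nm = calibrate(pixels, wavelengths, degree=2)
    residuals = [to_nm(p) - w for p, w in zip(pixels, wavelengths)]
    print(f"pixel 1000 -> {to_nm(1000):.3f} nm; max |residual| = "
          f"{max(abs(r) for r in residuals):.2e} nm")
```

In practice the residuals at the calibration lines are a useful sanity check: systematic structure in them suggests the polynomial degree is too low.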

System Performance Validation: Record Raman spectra of standard materials with known spectra (e.g., silicon) and compare with reference databases to verify correct spectral acquisition and resolution. The RRUFF database provides reference spectra for mineral validation [1].

The following workflow diagram illustrates the key stages in implementing and validating a Raman spectrometer system.

Spectroscopic OCT Analysis Protocol

Spectroscopic Optical Coherence Tomography (sOCT) requires specialized analysis methods to extract depth-resolved spectral information. The following protocol outlines the key steps for sOCT analysis using the Short-Time Fourier Transform (STFT) method, which has been identified as optimal for hemoglobin concentration and oxygen saturation quantification [5]:

Data Acquisition: Acquire interferometric data either directly in the spatial domain (time-domain OCT) or through Fourier transformation of spectral domain data. Ensure proper sampling to satisfy the Nyquist criterion for the desired spectral range.

Spatial Windowing: Apply a spatial window w(z - z'; Δz), centered at depth z with width Δz, to the interferometric signal i_D(z'). Gaussian windows are commonly used for their optimal time-frequency localization properties [5].

Spectral Transformation: Compute the STFT using the equation:

STFT(k, z; w) = ∫_(-∞)^(∞) i_D(z') · w(z - z'; Δz) · e^(-ikz') dz'

This generates a complex-valued spectrogram with depth and wavenumber axes [5].

Spectral Analysis: Take the amplitude of the complex spectrogram to obtain the depth-resolved power spectrum. Analyze the spectral features at each depth to determine wavelength-dependent absorption and scattering properties.
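The windowing, transformation, and amplitude steps can be illustrated with a direct discretization of the STFT integral. This sketch is illustrative rather than the implementation used in [5]: it evaluates |STFT(k, z; w)| at one depth with a Gaussian window whose standard deviation σ stands in for the width parameter Δz (how Δz maps to σ is a convention, assumed here). The synthetic signal switches spatial frequency halfway through, so the dominant k should differ between a shallow and a deep window. Plain loops are used for clarity; a practical implementation would use FFTs.

```python
import cmath
import math


def stft_amplitude(signal, dz, z_center, sigma, k_values):
    """|STFT(k, z; w)| at one depth z_center using a Gaussian window.

    Riemann-sum discretisation of the integral over z' of
    i_D(z') * w(z - z'; sigma) * exp(-i k z') dz'.
    """
    amplitudes = []
    for k in k_values:
        acc = 0j
        for n, value in enumerate(signal):
            z_prime = n * dz
            window = math.exp(-0.5 * ((z_center - z_prime) / sigma) ** 2)
            acc += value * window * cmath.exp(-1j * k * z_prime) * dz
        amplitudes.append(abs(acc))
    return amplitudes


if __name__ == "__main__":
    # Synthetic signal (hypothetical): spatial frequency changes at z = 128
    dz = 1.0
    signal = [math.cos(0.5 * n * dz) if n < 128 else math.cos(1.2 * n * dz)
              for n in range(256)]
    ks = [0.01 * i for i in range(1, 315)]
    for depth in (64.0, 192.0):
        amp = stft_amplitude(signal, dz, depth, sigma=10.0, k_values=ks)
        k_peak = ks[amp.index(max(amp))]
        print(f"depth {depth:.0f}: dominant k = {k_peak:.2f}")
```

Shrinking sigma sharpens the depth localization but broadens each spectral peak, which is the Δz versus Δk trade-off described for the STFT.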

Chromophore Quantification: Fit the extracted absorption spectra to known chromophore extinction coefficients (e.g., oxy- and deoxyhemoglobin) using least-squares methods to determine concentration and oxygen saturation.

The performance of sOCT analysis methods can be quantitatively compared using the mean squared difference (χ²) between input and recovered absorption coefficient spectra, with particular attention to errors in derived hemoglobin concentration and oxygen saturation [5].
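The chromophore-quantification step and the χ² comparison can be sketched together for the two-chromophore case, μ_a(λ) = ε_HbO₂(λ)·c_HbO₂ + ε_Hb(λ)·c_Hb. The sketch solves the 2 × 2 least-squares normal equations directly and computes oxygen saturation as c_HbO₂/(c_HbO₂ + c_Hb). The extinction values are arbitrary placeholders, not tabulated hemoglobin spectra, and the solver is unconstrained, so it does not enforce non-negative concentrations (an assumption a real analysis may need to revisit).

```python
def fit_two_chromophores(mu_a, eps_hbo2, eps_hb):
    """Unconstrained least squares for mu_a = eps_hbo2*c_hbo2 + eps_hb*c_hb.

    Returns (c_hbo2, c_hb, total_hb, so2). Units follow the inputs.
    """
    a11 = sum(e * e for e in eps_hbo2)
    a22 = sum(e * e for e in eps_hb)
    a12 = sum(e1 * e2 for e1, e2 in zip(eps_hbo2, eps_hb))
    b1 = sum(e * m for e, m in zip(eps_hbo2, mu_a))
    b2 = sum(e * m for e, m in zip(eps_hb, mu_a))
    det = a11 * a22 - a12 * a12  # Cramer's rule on the 2x2 normal equations
    if abs(det) <= 1e-12 * a11 * a22:
        raise ValueError("extinction spectra are (nearly) collinear")
    c_hbo2 = (a22 * b1 - a12 * b2) / det
    c_hb = (a11 * b2 - a12 * b1) / det
    total = c_hbo2 + c_hb
    return c_hbo2, c_hb, total, c_hbo2 / total


def mean_squared_difference(reference, recovered):
    """chi^2 taken here as the mean squared difference between two spectra."""
    return sum((a - b) ** 2 for a, b in zip(reference, recovered)) / len(reference)


if __name__ == "__main__":
    # Placeholder extinction values at four wavelengths (arbitrary units)
    eps_hbo2 = [0.30, 0.25, 0.40, 0.60]
    eps_hb = [0.80, 0.55, 0.35, 0.30]
    mu_a = [2.0 * o + 1.0 * d for o, d in zip(eps_hbo2, eps_hb)]  # synthetic input
    c_o, c_d, total, so2 = fit_two_chromophores(mu_a, eps_hbo2, eps_hb)
    fitted = [c_o * o + c_d * d for o, d in zip(eps_hbo2, eps_hb)]
    print(f"total Hb = {total:.3f}, SO2 = {so2:.3f}, "
          f"chi^2 = {mean_squared_difference(mu_a, fitted):.2e}")
```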

Data Analysis and Interpretation Methods

Spectrum Reconstruction Techniques

The reconstruction of spectra from detector signals involves solving a linear system that describes how the spectrometer responds to different wavelengths. The generic model for a spectrometer can be represented as:

I_i = ∫ R_i(λ) T_i(λ) S(λ) dλ + η_i

where I_i is the signal intensity on the i-th detector, R_i(λ) is the detector responsivity, T_i(λ) is the optical transmittance for that detector path, S(λ) is the input power spectral density, and η_i is the measurement noise [6].

Discretizing this equation leads to the matrix formulation:

y = Gs + η

where y is the measurement vector, G is the system matrix representing the combined optical and detector response, s is the discretized spectrum vector, and η is the noise vector [6]. The spectrum reconstruction problem involves inverting this relationship to estimate s given the measurements y.

For well-conditioned square system matrices, direct inversion ŝ = G⁻¹y is possible. However, most practical systems require regularization to handle noise and ill-conditioning. Tikhonov regularization (ridge regression) solves:

ŝ = argmin ‖Gs - y‖₂² + α‖s‖₂²

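where α ≥ 0 is a regularization parameter that controls the trade-off between data fidelity and the size (norm) of the solution, suppressing the noise-amplified oscillations that ill-conditioning produces [6]. Penalizing a finite-difference term ‖Ls‖₂² instead of ‖s‖₂² would favor smooth spectra specifically. This approach is particularly valuable for miniaturized spectrometers and integrated photonic spectrometers, where the system matrix may be inherently ill-conditioned due to size constraints.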
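A dependency-free sketch of the Tikhonov estimate solves the normal equations (GᵀG + αI)ŝ = Gᵀy, the closed-form minimizer of the objective above, by Gaussian elimination. It is meant for small illustrative systems only; practical code would typically use a numerical library with an SVD- or Cholesky-based solver. The function names and the toy 3 × 2 system are hypothetical.

```python
def solve_linear(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting (inputs copied)."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-15:
            raise ValueError("matrix is singular; try alpha > 0")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for r in reversed(range(n)):
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x


def tikhonov_solve(G, y, alpha):
    """Minimise ||G s - y||^2 + alpha ||s||^2 via (G^T G + alpha I) s = G^T y."""
    n = len(G[0])
    gtg = [[sum(row[i] * row[j] for row in G) + (alpha if i == j else 0.0)
            for j in range(n)] for i in range(n)]
    gty = [sum(row[i] * yi for row, yi in zip(G, y)) for i in range(n)]
    return solve_linear(gtg, gty)


if __name__ == "__main__":
    # Toy system matrix and noise-free measurements of s = [2, 3]
    G = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
    y = [2.0, 3.0, 5.0]
    for alpha in (0.0, 0.1, 1.0):
        print(alpha, tikhonov_solve(G, y, alpha))
```

With α = 0 the toy system returns the exact spectrum; increasing α shrinks the estimate toward zero, trading bias for robustness to noise. In practice α is often chosen with methods such as the L-curve or cross-validation.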

Advanced Time-Frequency Analysis

In spectroscopic OCT and related techniques, advanced time-frequency analysis methods enable the extraction of depth-resolved spectral information. The key methods include:

Short-Time Fourier Transform (STFT): Applies a fixed window to the signal before Fourier transformation, providing constant time-frequency resolution throughout the frequency spectrum. The spatial resolution Δz and spectral resolution Δk are related by Δk = 1/(2Δz) for a Gaussian window [5].

Wavelet Transform: Uses variable window sizes adapted to frequency, providing better spatial resolution at high frequencies and better spectral resolution at low frequencies. This method maintains constant relative bandwidth across the spectrum [5].

Wigner-Ville Distribution: A bilinear distribution that provides high resolution in both time and frequency domains but suffers from interference terms between signal components, making interpretation challenging [5].

Dual Window Method: Combines two STFTs with different window sizes to partially overcome the resolution trade-off inherent in single-window methods [5].

For the specific application of quantifying hemoglobin concentration and oxygen saturation, studies have concluded that STFT provides the optimal balance of spectral/spatial resolution and accurate spectral recovery, minimizing errors in the derived physiological parameters [5].

Research Toolkit: Essential Components and Reagents

Table 2: Research Reagent Solutions for Spectrometer Development and Application

| Component/Reagent | Function | Example Specifications | Application Notes |
|---|---|---|---|
| NIR Diode Laser | Excitation source for Raman spectroscopy | 785 nm, 0-100 mW adjustable power (Thorlabs LP785SF-100) [1] | Reduced fluorescence in biological samples; power adjustable for different sample types |
| Notch Filter | Rejects Rayleigh-scattered laser light | Center wavelength matching the laser (e.g., 785 nm) [1] | Critical for detecting weak Raman signals; requires precise positioning |
| Diffraction Grating | Disperses light into spectral components | 600-1800 lines/mm, depending on application [4] | Higher groove density enables more compact designs; efficiency varies with wavelength |
| CCD/CMOS Detector | Captures the dispersed spectrum | 1024-3648 pixels, back-thinned for enhanced NIR response [4] [1] | Cooling reduces dark noise but increases power consumption; pixel size affects resolution |
| Calibration Source | Wavelength-scale and spectral-response calibration | Argon lamp with known emission lines for the wavelength scale [4]; white-light tungsten lamp for relative spectral response [1] | Essential for accurate wavelength assignment; should be traceable to standards |
| Optical Fibers | Light delivery and collection | Multimode fibers for higher light throughput [1] | Enable flexible sample presentation; numerical aperture affects light collection efficiency |
| ⁸⁵Rb Vapor Cell | Quantum light generation | Dense vapor for four-wave mixing [3] | Used in quantum spectroscopy for generating squeezed light with reduced noise |
| Nonlinear Crystals | Wavelength conversion and squeezing | χ⁽²⁾ materials for optical parametric amplification [3] | Enable frequency conversion and generation of non-classical light states |

The selection of appropriate components depends on the specific spectroscopic technique and application requirements. For medical diagnostics using Raman spectroscopy, the optimization of these components enables the development of systems capable of in vivo, real-time "optical biopsy" without the need for sample preparation or destructive processing [1]. For advanced research involving quantum-enhanced measurements, elements like ⁸⁵Rb vapor cells and nonlinear crystals enable the generation of squeezed light that can surpass the standard quantum limit, providing superior measurement precision [3].

The continuing miniaturization of spectroscopic components, including the development of integrated photonic spectrometers, promises to further expand applications in point-of-care diagnostics, environmental monitoring, and pharmaceutical development while reducing the size, weight, power, and cost of analytical systems [6].

This technical guide provides an in-depth analysis of the core components that constitute a modern optical spectrometer, tracing the optical path from illumination to detection. Aimed at researchers and scientists in drug development and related fields, this whitepaper synthesizes current instrumentation principles to support foundational research in spectrometer optical path design. The performance of a spectrometer is governed by the intricate interplay between its constituent parts, where optimization of one component often involves trade-offs with others. Understanding these relationships is crucial for selecting appropriate instrumentation for specific applications, from routine concentration assays to advanced research in photochemistry and biopharmaceutical analysis.

An optical spectrometer is an instrument used to measure the intensity of light as a function of its wavelength or frequency [7]. It is a foundational tool in scientific research, enabling the qualitative and quantitative analysis of materials by examining their interaction with electromagnetic radiation. In pharmaceutical and biotech research, spectrometry is indispensable for tasks ranging from characterizing protein stability and vaccine components to monitoring chemical reactions and ensuring product purity [2].

The fundamental operating principle of any spectrometer involves separating incoming polychromatic light into its constituent wavelengths and quantitatively measuring the intensity of each spectral component [8]. This process occurs within a structured optical path, where each component plays a critical role in defining the instrument's final performance characteristics, including its spectral range, resolution, sensitivity, and signal-to-noise ratio. The following sections dissect these key components in detail, from the entrance slit to the detector.

The Optical Path: Component Breakdown

Entrance Slit

The entrance slit is the gateway through which light enters the spectrometer, serving as the effective object that the rest of the optical system images onto the detector. Its primary functions are to control the amount of light entering the system and to define the theoretical resolution limit of the instrument [9].

Function and Trade-offs: The width of the entrance slit is one of the main parameters determining the resolution of the spectrometer and the amount of light that can enter for processing [9]. Because the spectrometer forms a separate image of the slit on the detector for each wavelength, a narrower slit produces narrower images that overlap less for neighboring wavelengths, giving higher spectral resolution. However, this comes at the cost of reduced optical throughput, which can increase the measurement time required to acquire a signal with an adequate signal-to-noise ratio. Conversely, a wider slit maximizes light intake, which benefits low-light applications, but decreases spectral resolution by allowing a broader range of wavelengths to reach each detector pixel [9] [8].
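A first-order estimate of the slit-limited bandpass multiplies the width of the slit image on the detector by the reciprocal linear dispersion, d·cos β/(m·L_F), of a Czerny-Turner-type layout. The sketch assumes unit magnification between slit and detector by default and ignores aberrations and pixel sampling; the function names and the 25 µm / 600 lines/mm / 100 mm example are hypothetical.

```python
import math


def reciprocal_linear_dispersion_nm_per_mm(grooves_per_mm, focal_length_mm,
                                           beta_deg, order=1):
    """RLD = d cos(beta) / (m L_F): nanometres of spectrum per mm of focal plane."""
    d_nm = 1e6 / grooves_per_mm
    return d_nm * math.cos(math.radians(beta_deg)) / (order * focal_length_mm)


def slit_limited_bandpass_nm(slit_um, grooves_per_mm, focal_length_mm,
                             beta_deg, order=1, magnification=1.0):
    """Slit-image width on the detector converted into wavelength units."""
    image_width_mm = slit_um * 1e-3 * magnification
    return image_width_mm * reciprocal_linear_dispersion_nm_per_mm(
        grooves_per_mm, focal_length_mm, beta_deg, order)


if __name__ == "__main__":
    rld = reciprocal_linear_dispersion_nm_per_mm(600.0, 100.0, 18.0)
    bandpass = slit_limited_bandpass_nm(25.0, 600.0, 100.0, 18.0)
    print(f"RLD = {rld:.2f} nm/mm, 25 um slit -> bandpass ~ {bandpass:.3f} nm")
```

For these values the estimate is about 0.40 nm. As a rule of thumb the slit image should also span at least two to three detector pixels, so the effective resolution is the larger of this estimate and the pixel-sampling limit.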

Technical Specifications: Slits are available in a wide range of widths, typically from 5 µm up to 800 µm, with heights generally standardized between 1 mm and 2 mm [9]. Due to its critical alignment requirements, the slit is often permanently mounted within the spectrometer, making the initial choice of slit width a significant decision that balances resolution and throughput needs for the intended application [9].

Collimating and Focusing Optics

Once light passes through the entrance slit, it diverges and must be collimated before reaching the dispersive element. This is typically accomplished by a collimating mirror, which creates a beam of parallel rays. After dispersion, a focusing mirror directs the separated wavelengths onto the detector plane.

Optical Configurations: The most common configuration for compact spectrometers is the Czerny-Turner design, which uses two concave mirrors—one for collimating and one for focusing [7] [10]. This design is favored for its flexibility, relatively low cost, and ability to produce a flat focal plane ideal for array detectors. Variations include the Crossed Czerny-Turner, which offers a more compact layout but may introduce more optical aberrations, and the Unfolded Czerny-Turner (or "W" configuration), which incorporates beam blocks to reduce stray light and improve the signal-to-noise ratio, particularly beneficial for low-light applications like Raman spectroscopy [7].

An alternative design is the concave holographic spectrograph, where a single concave grating performs both the dispersion and focusing functions, reducing the number of optical components and associated stray light [7].

Dispersive Element

The heart of the spectrometer is the dispersive element, which spatially separates light by its wavelength. While prisms can be used, diffraction gratings are the most common dispersive element in modern instruments [8].

Operating Principle: A diffraction grating operates on the principle of diffraction and interference. It consists of a surface with a large number of parallel, equally spaced grooves. For light at normal incidence, the grating equation that governs the dispersion reduces to:

d sin θ = mλ

where d is the grating spacing, θ is the diffraction angle, m is the diffraction order, and λ is the wavelength of light [7] [8]. This relationship shows how different wavelengths are diffracted at different angles.

Grating Types and Selection:

- Ruled Gratings: Manufactured by mechanically ruling (burnishing) grooves into a reflective coating with a diamond tool. They offer flexibility and can operate across a wide wavelength range (50 nm to 50 µm) with groove densities from 50 to 3600 grooves/mm [7].

- Holographic Gratings: Produced optically using laser-created interference patterns, resulting in more consistent groove spacing and form. This leads to superior performance in terms of lower stray light and higher fidelity, but they are typically optimized for a more fixed spectral range [7] [10].

The choice of grating involves a critical trade-off. Gratings with higher groove density (e.g., 1200-3600 lines/mm) provide higher spectral resolution but cover a narrower wavelength range. Those with lower groove density (e.g., 300-600 lines/mm) cover a broader range but with lower resolution [10].

Detector

The detector translates the optical signal at the focal plane into an electrical signal for quantitative analysis. It is the final critical component in the optical path. Detectors are broadly classified as single-channel or multichannel detectors [11].

Detector Technologies:

- Silicon CCD (Charge-Coupled Device): The most common detector for UV-Vis applications (∼200-1100 nm). Scientific-grade CCDs offer high dynamic range and uniform pixel response. They can be front-illuminated (often with a phosphor coating for UV enhancement) or back-thinned for higher quantum efficiency [11] [10].

- CMOS (Complementary Metal-Oxide-Semiconductor): Similar to CCDs but often favored for high-speed applications due to faster readout capabilities [10].

- InGaAs (Indium Gallium Arsenide): Used for near-infrared (NIR) measurements, typically from 900-2500 nm, bridging the gap where silicon becomes transparent [11] [10].

- Photomultiplier Tube (PMT): A classic single-channel detector known for high gain and excellent sensitivity for low-light-level detection, though it requires scanning to build a full spectrum [11].

Performance Enhancement: To reduce electronic noise (dark current), detectors, especially CCDs used in sensitive applications, are often thermoelectrically cooled [11] [8]. For instance, specialized spectrometers for Raman spectroscopy feature cooled detectors to maintain signal integrity during long integration times [10].

Table 3: Key Spectrometer Components and Their Performance Characteristics

| Component | Key Function | Design Trade-offs | Common Types/Specifications |
|---|---|---|---|
| Entrance Slit | Controls light input and resolution [9] | Narrow width → Higher resolution, Lower throughput [9] [8] | Width: 5-800 µm; Height: 1-2 mm [9] |
| Optics | Collimates and focuses light | Complex design → Lower stray light vs. size/complexity | Czerny-Turner, Concave Holographic [7] |
| Diffraction Grating | Spatially separates light by wavelength [7] [8] | High groove density → Higher resolution, Narrower range [10] | Ruled, Holographic; 300-3600 lines/mm [7] [10] |
| Detector | Converts light to electrical signal [11] | High sensitivity vs. speed vs. cost vs. spectral range | CCD, CMOS, InGaAs, PMT; Cooled/Uncooled [11] [10] |

The Integrated System: From Light to Data

The components described above function as an integrated system to convert incoming light into a usable spectrum. The process begins when light from a sample or source is delivered to the entrance slit, often via fiber optics [10]. The slit defines the source geometry, and the collimating mirror directs a parallel beam onto the grating. The grating then angularly disperses the light, sending different wavelengths in different directions. The focusing mirror converges these diverging beams, creating a series of images of the entrance slit—each at a different wavelength—across the focal plane where the detector is located [12].

In a multichannel spectrometer with a fixed grating, each pixel on the linear detector array corresponds to a specific narrow band of wavelengths [10]. The intensity recorded at each pixel is digitized and processed by software to generate a plot of intensity versus wavelength—the final spectrum. The calibration that maps pixel position to wavelength is a critical step, derived from the grating equation and verified using light sources with known emission lines [12].

Experimental Protocols for System Characterization

To ensure accurate and reliable data, characterizing the performance of a spectrometer system is essential. The following protocols outline key experiments.

Protocol for Spectral Resolution and Bandpass Measurement

Objective: To determine the minimum resolvable wavelength difference of the spectrometer system.

- Setup: Illuminate the entrance slit with a low-pressure spectral calibration lamp (e.g., Mercury-Argon) that emits sharp, known atomic emission lines.

- Data Acquisition: Acquire a spectrum of the calibration lamp with the spectrometer. Use integration times that avoid detector saturation.

- Analysis: Identify a well-isolated, narrow emission line in the captured spectrum and measure the Full Width at Half Maximum (FWHM) of this peak. Provided the lamp line is much narrower than the instrument's bandpass, as is usual for low-pressure lamps, the FWHM (in nanometers) is the instrumental bandpass, a direct measure of its resolution capability [10].
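The FWHM measurement in the analysis step can be automated by locating the half-maximum crossings on either side of the peak and interpolating linearly between samples. This is a minimal sketch that assumes a baseline-subtracted, well-isolated line; the function name is hypothetical, and the synthetic Gaussian in the example (σ = 0.1 nm, for which the exact FWHM is 2√(2 ln 2)·σ ≈ 0.2355 nm) stands in for real lamp data.

```python
import math


def fwhm(xs, ys):
    """FWHM of the dominant peak, linearly interpolating the half-maximum crossings.

    Assumes a baseline-subtracted, isolated peak that drops below half maximum
    on both sides within the data window.
    """
    i_peak = max(range(len(ys)), key=ys.__getitem__)
    half = ys[i_peak] / 2.0
    left = i_peak
    while left > 0 and ys[left] > half:
        left -= 1
    right = i_peak
    while right < len(ys) - 1 and ys[right] > half:
        right += 1
    if ys[left] > half or ys[right] > half:
        raise ValueError("peak does not drop below half maximum inside the window")

    def crossing(i0, i1):
        # x where the straight line between samples i0 and i1 reaches `half`
        return xs[i0] + (half - ys[i0]) * (xs[i1] - xs[i0]) / (ys[i1] - ys[i0])

    return crossing(right - 1, right) - crossing(left, left + 1)


if __name__ == "__main__":
    # Synthetic emission line: Gaussian with sigma = 0.1 nm sampled every 0.01 nm
    xs = [799.0 + 0.01 * i for i in range(201)]
    ys = [math.exp(-0.5 * ((x - 800.0) / 0.1) ** 2) for x in xs]
    exact = 2.0 * math.sqrt(2.0 * math.log(2.0)) * 0.1
    print(f"measured FWHM = {fwhm(xs, ys):.4f} nm (exact {exact:.4f} nm)")
```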

Protocol for Signal-to-Noise (S/N) Optimization

Objective: To maximize the S/N ratio for a given measurement, a critical parameter for detecting small absorbance differences (chemometric sensitivity) [10].

- Setup: Connect a stable light source (e.g., tungsten-halogen) to the spectrometer via fiber optics.

- Baseline Measurement: Block the light source and acquire a "dark" spectrum with the same integration time as the test measurement. This captures the detector's noise floor.

- Signal Measurement: Acquire a spectrum of the light source.

- Optimization: In the shot-noise-limited regime, the S/N ratio is proportional to the square root of the collected signal; averaging N spectra with uncorrelated noise improves it by a further factor of √N. To optimize:

  - Increase Integration Time: Lengthen the exposure until just before saturation occurs.

  - Software Averaging: Acquire and average multiple spectra. The S/N improves with the square root of the number of averages (e.g., 4 averages improve S/N by 2x) [10].

  - Slit Width: If adjustable, a wider slit will increase signal but decrease resolution.
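The √N benefit of software averaging can be checked numerically. The sketch below simulates a single pixel whose dark-subtracted reading carries independent Gaussian noise in every frame (an assumption; real detectors can show correlated or drifting noise that averaging does not remove) and estimates S/N as the mean divided by the standard deviation over many trials. The function name, signal level, and noise figure are hypothetical.

```python
import random
import statistics


def snr_of_averaged_readings(signal, noise_sigma, n_averages, n_trials, seed=0):
    """Estimate S/N = mean / std of a pixel reading after averaging n_averages frames.

    Noise model (assumption): independent Gaussian noise of fixed sigma per frame.
    """
    rng = random.Random(seed)
    averaged = [
        statistics.fmean(rng.gauss(signal, noise_sigma) for _ in range(n_averages))
        for _ in range(n_trials)
    ]
    return statistics.fmean(averaged) / statistics.stdev(averaged)


if __name__ == "__main__":
    for n in (1, 4, 16):
        snr = snr_of_averaged_readings(1000.0, 50.0, n, 4000)
        print(f"{n:2d} averages: S/N ~ {snr:.1f}")
```

With a 1000-count signal and 50-count noise per frame, the estimates come out near 20, 40, and 80 for 1, 4, and 16 averages, consistent with the √N rule.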

Research Reagent Solutions: Essential Materials

The following table details key components and materials essential for configuring and operating a spectrometer system for research applications.

Table 2: Essential Research Reagents and Materials for Spectrometry

| Item | Function/Description | Application Example |
|---|---|---|
| Spectral Calibration Lamp | A light source with known, sharp emission lines (e.g., Hg, Ne, Ar). | Wavelength accuracy verification and system calibration [5]. |
| Stable Broadband Light Source | A source emitting a continuous spectrum (e.g., Tungsten-Halogen, Deuterium). | As a reference for absorbance measurements and system alignment. |
| NIST-Traceable Standards | Filters or materials with certified optical properties. | Validating photometric accuracy (e.g., absorbance, transmittance). |
| Fiber Optic Probes | Flexible light guides for remote sampling. | Measuring samples in reactors, living tissues, or harsh environments [10]. |
| Integrating Sphere | A device producing a spatially uniform light source. | Measuring diffuse reflectance of scattering samples. |
| Ultrapure Water System | Provides water free of chemical and particulate contaminants. | Sample preparation, dilution, and cleaning of cuvettes to prevent stray light [2]. |

Visualizing Spectrometer Workflows

Optical Path and Signal Processing

The following diagram illustrates the physical path of light through a Czerny-Turner spectrometer and the subsequent electronic signal processing.

Resolution vs. Throughput Trade-off

This diagram visualizes the critical engineering trade-off governed by the entrance slit width.

The performance of a modern optical spectrometer is a direct consequence of the careful selection and integration of its core components: the entrance slit, collimating and focusing optics, diffraction grating, and detector. As evidenced by the latest instrumentation reviews, the field continues to evolve with trends toward miniaturization, higher sensitivity, and greater application-specific customization, such as systems dedicated to biopharmaceutical analysis [2]. A deep understanding of the optical path and the inherent trade-offs between resolution, sensitivity, and speed empowers researchers to make informed decisions when selecting or configuring a spectrometer. This foundational knowledge is crucial for leveraging this powerful analytical technology to its fullest potential in drug development and scientific research.

Spectrometers are indispensable instruments across numerous scientific and industrial fields, from chemical analysis and biomedical research to environmental monitoring and pharmaceutical development. Their fundamental purpose is to dissect light into its constituent wavelengths, providing a fingerprint of the matter with which it has interacted. The optical geometry at the heart of any spectrometer is the primary determinant of its performance characteristics, including spectral range, resolution, signal-to-noise ratio, and physical footprint.

This whitepaper provides an in-depth technical guide to three foundational spectrometer configurations: the Czerny-Turner, Fourier Transform Infrared (FT-IR), and Littrow geometries. Understanding the principles, advantages, and limitations of these classical optical paths is crucial for researchers, scientists, and drug development professionals seeking to select, optimize, or develop spectroscopic methods for their specific applications. The content is framed within the broader context of spectrometer optical path component research, emphasizing the design trade-offs inherent in achieving desired analytical performance.

Core Principles and Optical Geometries

Czerny-Turner Configuration

The Czerny-Turner (C-T) configuration is a workhorse in spectroscopy, renowned for its excellent performance over a broad spectral range [13]. It is a prime example of a design following the fixed geometry convention in the grating equation, denoted as Φ = α + β, where Φ is the fixed deviation angle and α and β are the angles of incidence and diffraction, respectively [13].

As illustrated in Diagram 1, a typical C-T system comprises an entrance slit, a spherical collimating mirror, a planar diffraction grating, a spherical focusing mirror, and a detector array [13] [14]. Light enters through the slit and is collimated by the first mirror. This collimated beam strikes the diffraction grating, where it is separated into its constituent wavelengths. The diffracted light is then focused by the second spherical mirror onto the detector array [13]. The two-mirror design allows for precise control of optical aberrations, leading to high-quality spectral data.

A significant challenge in C-T designs is managing off-axis aberrations such as coma, astigmatism, and field curvature, which worsen as the off-axis angle increases [14]. To meet the demand for portable, high-performance spectrometers, advanced aberration correction methods have been developed. These include using the Shafer equation to correct coma at a central wavelength, optimizing the grating position to correct field curvature, and introducing tailored optical elements like tilt and wedge cylindrical lenses to eliminate astigmatism across the entire spectral band [14].

FT-IR Configuration

Fourier Transform Infrared (FT-IR) spectroscopy operates on a fundamentally different principle than dispersive spectrometers. Instead of spatially separating wavelengths, it uses an interferometer to encode all spectral information simultaneously into an interference pattern, which is then converted into a spectrum using a Fourier Transform [15] [16].

The most common interferometer is the Michelson type, consisting of a beam splitter, a fixed mirror, and a moving mirror [16]. Broadband IR light from the source is split into two beams. These beams are reflected from the fixed and moving mirrors, respectively, and recombine at the beam splitter, creating an interference pattern known as an interferogram that is directed to the detector [15] [16]. The central peak of this interferogram, the centerburst, occurs at the Zero Path Difference (ZPD), the point where the optical paths of the two beams are equal and all wavelengths interfere constructively [16]. The spectral resolution of an FT-IR is inversely proportional to the maximum Optical Path Difference (OPD) achieved by the moving mirror [16].
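A small simulation can illustrate the inverse relation between resolution and maximum OPD. The sketch below builds an unapodized, one-sided interferogram for two monochromatic lines and evaluates its cosine transform. It rests on three simplifying assumptions: a "dip between the lines" resolution test, a fixed sampling step, and the convention Δν ≈ 1/OPD_max. The exact numerical factor depends on the resolution criterion and apodization function used.

```python
import math


def interferogram(lines_cm1, opd_max_cm, step_cm):
    """One-sided, unapodized interferogram: I(delta) = sum_k cos(2*pi*nu_k*delta), 0 <= delta < opd_max."""
    n_samples = int(round(opd_max_cm / step_cm))
    return [
        sum(math.cos(2 * math.pi * nu * i * step_cm) for nu in lines_cm1)
        for i in range(n_samples)
    ]


def spectrum_at(nu_cm1, igram, step_cm):
    """Cosine transform of the interferogram evaluated at a single wavenumber (arbitrary units)."""
    return step_cm * sum(v * math.cos(2 * math.pi * nu_cm1 * i * step_cm) for i, v in enumerate(igram))


def resolved(nu1_cm1, nu2_cm1, opd_max_cm, step_cm=1.25e-4):
    """Simplified criterion: two lines count as resolved if the spectrum dips between them.

    The default step samples the interferogram at the Nyquist rate for 4000 cm^-1.
    """
    igram = interferogram([nu1_cm1, nu2_cm1], opd_max_cm, step_cm)
    midpoint = spectrum_at((nu1_cm1 + nu2_cm1) / 2, igram, step_cm)
    at_lines = min(spectrum_at(nu1_cm1, igram, step_cm), spectrum_at(nu2_cm1, igram, step_cm))
    return midpoint < at_lines


if __name__ == "__main__":
    for opd in (0.4, 4.0):
        print(opd, resolved(1000.0, 1000.75, opd))
```

With OPD_max = 0.4 cm (1/OPD_max = 2.5 cm⁻¹), lines 5 cm⁻¹ apart are separated, while lines 0.75 cm⁻¹ apart merge into one peak. Extending OPD_max to 4 cm separates them. Under the same 1/OPD_max convention, a 0.25 cm⁻¹ resolution would correspond to an OPD of roughly 4 cm.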

Modern FT-IR designs often enhance stability and performance. For instance, some systems employ a compact, highly stable double-moving mirror swing interferometer with cube corner reflectors to generate OPD. Cube corner reflectors reduce alignment sensitivity and allow for a larger OPD in a smaller physical space, making the interferometer more robust and compact [17].

FT-IR spectrometers hold several inherent advantages over dispersive instruments: the Fellgett (multiplex) advantage, the Jacquinot (throughput) advantage, and the Connes (wavenumber accuracy) advantage [15]. These contribute to higher signal-to-noise ratios, faster acquisition times, better spectral resolution, and superior wavelength accuracy [15] [16].

Littrow Configuration

The Littrow configuration is a specific alignment for optical systems containing a reflective grating wherein the grating is oriented so that the diffracted light for a particular order (often the first order) travels back along the direction of the incident beam [18]. This configuration is useful in applications such as laser resonators, where the grating can act as one of the cavity mirrors, and in certain monochromators and spectrometers [18].

In the Littrow condition, the angles of incidence and diffraction are approximately equal in magnitude. In the sign convention of [13] this is written α ≈ -β, so the deviation angle Φ ≈ 0 and the light is diffracted directly back on itself [13] [18]. This leads to a very straight, compact optical path. A common spectrometer design that utilizes the Littrow condition is the Lens Grating Lens (LGL) configuration [13]. In an LGL system, light passes through a collimation lens, a transmission diffraction grating, and a focusing lens before reaching the detector. This transmission-based design is typically more compact and cost-effective than mirror-based systems like the C-T, though it may offer less control over certain aberrations [13].

Table 1: Key Characteristics of Classical Spectrometer Geometries

| Feature | Czerny-Turner | FT-IR | Littrow (LGL Example) |
|---|---|---|---|
| Dispersion Method | Diffraction Grating (Reflection) | Interferometry (Michelson) | Diffraction Grating (Transmission) |
| Typical Components | Entrance slit, two spherical mirrors, planar grating | Beam splitter, fixed & moving mirrors, detector | Entrance slit, two lenses, transmission grating |
| Optical Path | Folded (fixed deviation angle) | Interferometric | Straight, compact |
| Primary Advantage | Excellent aberration control, broad range | High speed, SNR, and throughput (Multiplex & Throughput advantages) | Compact size, simplicity |
| Common Spectral Range | UV-VIS-NIR | NIR-FIR (0.77–200 µm reported) [17] | VIS-NIR |
| Reported Resolution | 0.2 nm demonstrated, with 0.01 nm wavelength accuracy [14] | 0.25 cm⁻¹ demonstrated [17] | Varies with miniaturization |

Comparative Performance and Advanced Design

Quantitative Performance Metrics

The selection of an optical geometry directly impacts critical performance parameters. The following table summarizes key metrics as demonstrated in recent research.

Table 2: Reported Performance Metrics from Recent Spectrometer Designs

| Configuration | Spectral Range | Resolution | Signal-to-Noise Ratio (SNR) | Key Innovation |
|---|---|---|---|---|
| Ultra-Wide-Band FT-IR [17] | 0.770–200 µm (50–13,000 cm⁻¹) | 0.25 cm⁻¹ | > 50,000:1 | Double-moving mirror swing interferometer; switchable sources/detectors |
| Aberration-Corrected Crossed C-T [14] | 440–640 nm | 0.2 nm (@546.07 nm) | Not Specified | Tilt/wedge cylindrical lens for astigmatism correction; sine-constrained calibration |
| Portable Grating Spectrometer [19] | 3800 cm⁻¹ range | 1.4 cm⁻¹ | Low noise (no cooling required) | Based on fast F0.95/50 mm camera lens; volume < 2 L |
| Planar Waveguide C-T [20] | 450–750 nm | < 4 nm | Optimized via sagittal plane design | Hollow planar waveguide for miniaturization; separate tangential/sagittal design |

Advanced Design Considerations and Aberration Correction

Achieving high performance requires careful attention to optical design and the correction of aberrations. For Czerny-Turner systems, a key advancement is the move beyond simple spot diagram evaluation to criteria that balance luminous flux and aberration (LFAB), control the variation of the Airy disk at imaging points (ADVI), and ensure optical-detector resolution matching (ORDM) [21]. This holistic approach allows designers to increase the numerical aperture at the slit (e.g., to 0.11) to collect more light for weak signal detection while still maintaining controlled aberrations and a uniform performance across the spectral band [21].

For miniaturization, the hollow planar waveguide spectrometer (HPWS) based on the C-T structure represents a significant innovation. In this design, the light beam travels between two parallel mirrors, folding the optical path. The design is separated into the tangential plane (affecting resolution) and the sagittal plane (affecting energy throughput). The height of the waveguide is a critical parameter, as it determines the number of reflections and thus the energy loss, requiring careful optimization to ensure the detector receives sufficient optical flux [20].
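A simple ray model shows how waveguide height drives energy loss. A ray inclined at angle θ to the mirror planes advances h/tan θ between reflections. Over a length L it therefore makes roughly N = L·tan θ/h reflections and retains a fraction R^N of its energy. This geometric model, the mirror reflectivity R, and the function names are illustrative assumptions, not the design method of [20].

```python
import math


def reflection_count(length_mm, height_mm, ray_angle_rad):
    """Effective number of mirror reflections for a ray crossing a hollow planar waveguide.

    Assumed geometry: a ray inclined at ray_angle_rad to the mirror planes advances
    height/tan(angle) between successive reflections.
    """
    return length_mm * math.tan(ray_angle_rad) / height_mm


def guide_throughput(length_mm, height_mm, ray_angle_rad, reflectivity):
    """Fraction of energy retained after all reflections, each with mirror reflectivity R."""
    return reflectivity ** reflection_count(length_mm, height_mm, ray_angle_rad)


if __name__ == "__main__":
    angle = math.atan(0.1)  # hypothetical ray inclination
    for h in (0.5, 1.0, 2.0):
        print(h, round(guide_throughput(100.0, h, angle, 0.95), 3))
```

In this model, doubling the height halves the number of reflections, which is why height trades against compactness when the design must keep enough optical flux at the detector.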

In FT-IR systems, the design of the infrared light source and its collimation is crucial. One design uses a secondary imaging scheme with an ellipsoidal reflector to image the radiation source onto a variable diaphragm, which is then collimated by an off-axis parabolic mirror. This scheme tightly controls the beam divergence angle (e.g., to 4 mrad), which is essential for achieving high spectral resolution (e.g., 0.25 cm⁻¹) [17].
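The Jacquinot-stop criterion gives a common back-of-envelope check on the required collimation. An off-axis ray at half-angle θ sees its OPD reduced by a factor cos θ ≈ 1 - θ²/2. Keep this error below about half a fringe at the highest wavenumber, and take OPD_max ≈ 1/Δν; this gives θ_max ≈ √(Δν/ν_max). The sketch below evaluates this form only. Texts that use a slightly different resolution criterion obtain a result differing by a factor of order √2.

```python
import math


def max_divergence_half_angle(resolution_cm1, nu_max_cm1):
    """Largest beam half-angle (rad) keeping the off-axis OPD error below ~half a fringe.

    Off-axis rays at angle theta see an OPD of delta*cos(theta) ~ delta*(1 - theta**2/2).
    Requiring nu_max * opd_max * theta**2 / 2 <= 1/2 with opd_max = 1/resolution
    gives theta <= sqrt(resolution / nu_max).
    """
    return math.sqrt(resolution_cm1 / nu_max_cm1)


if __name__ == "__main__":
    print(round(1e3 * max_divergence_half_angle(0.25, 13000.0), 2), "mrad")
```

Take Δν = 0.25 cm⁻¹ and ν_max ≈ 13,000 cm⁻¹, the upper end of the range reported in [17]. The criterion then gives about 4.4 mrad, the same order as the 4 mrad divergence quoted above.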

Experimental Protocols and Methodologies

Protocol for Aberration Correction in a Czerny-Turner Spectrometer

This protocol is adapted from methods used to achieve high resolution and imaging quality in portable spectrometers [14].

Objective: To correct for coma, astigmatism, and field curvature in a crossed Czerny-Turner spectrometer to achieve a target resolution of 0.2 nm or better.

Materials and Reagents:

- Optical Bench or Breadboard: A stable, vibration-damped platform.

- Spectrometer Components: Entrance slit, spherical collimating mirror, planar diffraction grating (e.g., 1800 grooves/mm), spherical focusing mirror, and a CCD detector.

- Aberration Correction Element: A cylindrical lens with adjustable tilt and wedge angles.

- Light Sources for Calibration: Mercury-argon (Hg-Ar) lamp or other sources with known, sharp emission lines (e.g., 546.07 nm).

- Alignment Tools: He-Ne laser, pinholes, and power meter.

Procedure:

- Initial Assembly: Mount the optical components (slit, collimating mirror, grating, focusing mirror) in the crossed C-T layout according to the designed angles and distances.

- Coma Correction: Adjust the angles of the collimating and focusing mirrors to satisfy the Shafer coma-free condition for the central wavelength [14].

- Field Curvature Correction: Translate the diffraction grating along the optical axis to find the position that minimizes field curvature on the detector plane.

- Astigmatism Correction: a. Insert the cylindrical lens between the focusing mirror and the detector. b. Systematically adjust both the tilt and wedge angles of the cylindrical lens while illuminating the system with a known spectral line. c. Iterate until the spot size on the detector is minimized and symmetric across the spectral range, indicating eliminated astigmatism.

- Wavelength Calibration: a. Illuminate the slit with the Hg-Ar calibration lamp. b. Record the positions of known spectral lines on the CCD. c. Establish the relationship between pixel position and wavelength using a sine-constrained least squares fitting algorithm, which has been shown to achieve accuracy of 0.01 nm [14].

- Validation: Measure the full width at half maximum (FWHM) of a narrow emission line (e.g., 546.07 nm) to confirm the spectral resolution meets the 0.2 nm target.

Protocol for Characterizing an Ultra-Wide-Band FT-IR Spectrometer

This protocol outlines the key steps for verifying the performance of a wide-band FT-IR system, based on a design covering from the visible to the far-infrared [17].

Objective: To verify the spectral range, resolution, and signal-to-noise ratio of an ultra-wide-band FT-IR spectrometer.

Materials and Reagents:

- FT-IR Spectrometer: Equipped with a double-moving mirror interferometer, and switchable sources (e.g., air-cooled SiC for MIR/FIR, halogen tungsten for NIR) and detectors.

- Beam Splitter(s): Optimized for different spectral regions (e.g., NIR, MIR, FIR).

- Nitrogen Purge System: To remove atmospheric water vapor and CO₂.

- Reference Materials: Polystyrene film, known gas cells (e.g., CO), and a mid-infrared linearity standard.

- Software: Capable of performing Fast Fourier Transform (FFT), apodization, and phase correction.

Procedure:

- System Configuration: a. Select the appropriate combination of light source, beam splitter, and detector for the initial target spectral band (e.g., MIR). b. Allow the system to warm up and purge the optical compartment with dry nitrogen for at least 30 minutes.

- Background Measurement: Collect a high-SNR background interferogram with no sample in the beam path. This will be used to ratio against sample measurements.

- Spectral Range Verification: a. For NIR Verification: Switch to the halogen lamp source and a corresponding detector. Acquire a spectrum of a known NIR-reflective material. b. For FIR Verification: Switch to the SiC source and a dedicated FIR detector. Acquire a spectrum of a polyethylene window or another FIR-transparent material. c. Confirm that the measured spectra show meaningful signal levels at the extremes of the claimed range (e.g., 0.77 µm and 200 µm) [17].

- Resolution Measurement: a. Introduce a gas cell containing a low-pressure gas with sharp, well-defined rotational-vibrational lines (e.g., CO) into the sample compartment. b. Acquire an interferogram with a long optical path difference (e.g., corresponding to a resolution of 0.25 cm⁻¹). c. Transform the interferogram and measure the FWHM of an isolated gas line. The intrinsic width of a low-pressure line is much narrower than the instrumental line shape, so this FWHM (in cm⁻¹) approximates the instrument's resolution.

- Signal-to-Noise Measurement: a. Using the standard polystyrene film, acquire a high-quality reference transmission spectrum. b. Collect a series of rapid, single-scan spectra of the same sample under identical conditions. c. At a specific wavelength where the polystyrene has a strong peak (e.g., 1600 cm⁻¹), calculate the RMS noise in a nearby region with no spectral features. The SNR is the peak height divided by the RMS noise. A ratio of 50,000:1 is achievable [17].
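The SNR calculation in step c can be sketched as follows. The sketch assumes the noise region is supplied as a list of values from a featureless window near the polystyrene band. Removing a least-squares straight line before computing the RMS is an assumption that keeps baseline tilt from being counted as noise. Some laboratories instead use peak-to-peak noise or the RMS of a 100% line.

```python
import math


def rms_noise(y):
    """RMS of residuals after removing a least-squares straight line (detrended noise)."""
    n = len(y)
    x_mean = (n - 1) / 2.0
    y_mean = sum(y) / n
    sxx = sum((i - x_mean) ** 2 for i in range(n))
    slope = sum((i - x_mean) * (v - y_mean) for i, v in enumerate(y)) / sxx
    resid = [v - (y_mean + slope * (i - x_mean)) for i, v in enumerate(y)]
    return math.sqrt(sum(r * r for r in resid) / n)


def peak_snr(peak_height, noise_region):
    """SNR = peak height divided by the detrended RMS noise of a featureless region."""
    return peak_height / rms_noise(noise_region)


if __name__ == "__main__":
    # Hypothetical noise window: a sloping baseline with +/-0.001 alternating noise.
    window = [0.01 * i + (1e-3 if i % 2 == 0 else -1e-3) for i in range(100)]
    print(round(peak_snr(50.0, window)))
```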

Essential Research Reagent Solutions

The following table details key components and materials essential for the development and operation of high-performance spectrometers, as referenced in the experimental protocols and literature.

Table 3: Key Research Reagent Solutions for Spectrometer Development

| Item | Function / Application | Technical Notes |
|---|---|---|
| Holographic Reflection Grating | Dispersive element in C-T spectrometers; separates light by wavelength. | 1800 g/mm used in aberration-corrected design; line density and blaze angle determine efficiency and range [14]. |
| Air-Cooled Silicon Carbide (SiC) Source | High-intensity broadband infrared emitter for MIR/FIR regions. | Spectral range 50–9600 cm⁻¹; air-cooling offers lower cost/power consumption vs. water or Peltier cooling [17]. |
| Halogen Tungsten Lamp | Bright, continuous light source for NIR and Visible regions. | Spectral range 3000–25,000 cm⁻¹; used as switchable source in wide-band FT-IR [17]. |
| Gold-Coated Optics (Mirrors, Cube Corners) | High-reflectivity mirrors and retroreflectors for IR light. | Gold film reflectivity >90% across NIR, MIR, FIR; K9 glass is a common, low-cost substrate [17]. |
| Mercury-Argon (Hg-Ar) Calibration Lamp | Wavelength standard for accurate calibration of dispersive spectrometers. | Provides multiple, narrow emission lines at known wavelengths across a broad spectrum [14]. |
| Cylindrical Lens (with Tilt/Wedge Adjustment) | Active optical element for astigmatism correction in C-T systems. | Placed between focusing mirror and detector; tilt and wedge angles are optimized to eliminate astigmatic focus [14]. |
| Internal Reflection Element (IRE) | Core component of Attenuated Total Reflectance (ATR) sampling in FT-IR. | Diamond, ZnSe, or Ge crystals enable direct analysis of solids/liquids with minimal sample prep [15]. |
| Nitrogen Purge Gas | Inert gas for purging optical path to remove atmospheric absorbers. | Eliminates spectral interference from water vapor and CO₂, crucial for quantitative IR analysis [15]. |


The Role of Optical Path Length in Resolution and Sensitivity

In spectrometer design and application, the optical path length is a fundamental parameter that directly influences two critical performance metrics: resolution and sensitivity. Resolution defines an instrument's ability to distinguish between closely spaced spectral features, while sensitivity determines its capacity to detect weak signals. Within the context of optical path components research, understanding the role of path length is essential for optimizing spectrometer configurations for specific applications, from drug development to material characterization. This technical guide explores the underlying principles, quantitative relationships, and practical methodologies for leveraging optical path length to achieve desired analytical performance, providing researchers and scientists with a framework for informed instrument selection and experimental design.

Fundamental Principles of Optical Path in Spectrometry

The optical path in a spectrometer is the route light travels from the source, through various optical components, to the detector [22]. Its design lays out how light is collected, collimated, dispersed, and finally focused onto the detection system. The optical path length, specifically, can refer to the physical distance light travels within the instrument or, in sample analysis, the distance light travels through the sample itself. Both interpretations have a profound impact on the quality of the spectral data obtained.

A core principle governing light behavior in these systems is Fermat's principle, which states that light travels along a path of stationary (usually least) travel time [22]. Engineers use this principle to predict how light bends through lenses and at interfaces, ensuring consistent performance across wavelengths. The manipulation of light along the optical path involves three key stages:

- Collimation: Converting diverging light from the entrance slit into a parallel beam for even interaction with dispersive elements [22].

- Dispersion: Using prisms or diffraction gratings to split collimated light into its constituent wavelengths [22].

- Focusing: Using lenses or mirrors to focus the dispersed spectrum onto the detector plane [22].

The careful alignment of each stage is crucial for maintaining sharp wavelength resolution. Misalignment can introduce aberrations, leading to overlapping peaks or blurred spectra [22].

Optical Path Length and Spectral Resolution

Spectral resolution is commonly expressed through the resolving power, R = λ/Δλ, where λ is the wavelength and Δλ is the smallest resolvable wavelength difference [23]. The optical path length within the spectrometer's optical bench is a key determinant of resolution.

The Grating Equation and Resolving Power

The resolution in diffraction-based systems is governed by the grating equation [22] [4]:

$$d(\sin\alpha + \sin\beta) = m\lambda$$

where $d$ is the grating period, $\alpha$ is the angle of incidence, $\beta$ is the diffraction angle, and $m$ is the diffraction order. The resolving power of a grating is given by $R = mN$, where $N$ is the total number of grooves illuminated by the light beam [24]. A longer optical path, often associated with a longer focal length ($L_F$ in Figure 1), allows a wider beam ($w_{beam}$) to illuminate more grating grooves, so that $N = w_{beam} G$ for a grating of groove density $G$. Equation 3 of [4] shows how this beam width limits the theoretical resolution:

$$\Delta\lambda_{FWHM} = \frac{\lambda}{w_{beam}\, G\, m}$$

A wider beam, enabled by a longer focal length, therefore directly improves the spectral resolution.
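The resolving-power relation can be evaluated directly. The sketch below computes the number of illuminated grooves, the resolving power R = mN, and the diffraction-limited Δλ. It also solves the grating equation d(sin α + sin β) = mλ for the diffraction angle. Function names and the example numbers (a 10 mm beam on an 1800 grooves/mm grating at 546.07 nm) are illustrative assumptions.

```python
import math


def grating_resolution(wavelength_nm, beam_width_mm, grooves_per_mm, order=1):
    """Return (resolving power R = m*N, diffraction-limited delta-lambda in nm)."""
    n_grooves = beam_width_mm * grooves_per_mm  # N = w_beam * G
    resolving_power = order * n_grooves
    return resolving_power, wavelength_nm / resolving_power


def diffraction_angle_deg(wavelength_nm, grooves_per_mm, alpha_deg, order=1):
    """Solve d(sin(alpha) + sin(beta)) = m*lambda for beta, with d = 1/G."""
    wavelength_mm = wavelength_nm * 1e-6
    sin_beta = order * wavelength_mm * grooves_per_mm - math.sin(math.radians(alpha_deg))
    if abs(sin_beta) > 1.0:
        raise ValueError("this order does not propagate for the given geometry")
    return math.degrees(math.asin(sin_beta))


if __name__ == "__main__":
    r, dl = grating_resolution(546.07, beam_width_mm=10.0, grooves_per_mm=1800.0)
    print(r, round(dl, 4), "nm")
    print(round(diffraction_angle_deg(546.07, 1800.0, alpha_deg=30.0), 2), "deg")
```

This gives R = 18,000 and Δλ ≈ 0.03 nm, well below the 0.2 nm measured for the compact design in [14]. In practice, slit width, pixel size, and residual aberrations, rather than the grating alone, usually set the achieved resolution.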

Focal Length and Instrument Size

The focal length of the spectrometer's focusing mirror is a primary factor in both its physical size and its resolution. Differentiating the grating equation at fixed $\alpha$ gives the first-order linear dispersion at the detector plane, $dx/d\lambda = G L_F / \cos\beta$. Requiring the wavelength range $(\lambda_2 - \lambda_1)$ to span the detector length $L_D$ then relates the focal length $L_F$ to the grating groove density $G$ [4]:

$$L_F \approx \frac{L_D \cos\beta}{(\lambda_2 - \lambda_1)\, G}$$

This indicates that for a fixed wavelength range and detector, a higher groove density grating can achieve the same resolution with a shorter focal length, enabling more compact spectrometer designs [4]. Figure 2 illustrates the nearly two-order-of-magnitude difference in spectrometer size achievable through different combinations of detector size and grating density.
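Differentiating d(sin α + sin β) = mλ at fixed α gives the first-order linear dispersion dx/dλ = G·L_F/cos β. Requiring the range λ2 - λ1 to span the detector length L_D yields L_F ≈ L_D·cos β/((λ2 - λ1)·G). The sketch below evaluates that relation. The detector length, wavelength range, and groove densities are hypothetical example values.

```python
import math


def focal_length_mm(detector_length_mm, lambda1_nm, lambda2_nm, grooves_per_mm, beta_deg, order=1):
    """Focal length that spreads lambda1..lambda2 across the detector length.

    From the linear dispersion dx/dlambda = m * G * L_F / cos(beta).
    """
    span_mm = (lambda2_nm - lambda1_nm) * 1e-6  # nm -> mm
    return detector_length_mm * math.cos(math.radians(beta_deg)) / (order * grooves_per_mm * span_mm)


if __name__ == "__main__":
    for g in (300.0, 1200.0):
        print(g, round(focal_length_mm(25.0, 400.0, 800.0, g, beta_deg=0.0), 1), "mm")
```

Quadrupling the groove density shortens the required focal length by the same factor for the same detector and range.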

Optical Path Length and Sensitivity

Sensitivity describes a spectrometer's ability to detect weak signals. The relationship between optical path length and sensitivity is particularly critical in absorption spectroscopy of samples.

Path Length in Absorption Spectroscopy

According to the Beer-Lambert law, the absorbance $A$ of a sample is directly proportional to the concentration $c$ of the analyte and the optical path length $l$ through the sample: $A = \varepsilon c l$, where $\varepsilon$ is the molar absorptivity. A longer path length increases the measured absorbance, thereby improving the sensitivity for detecting low-concentration analytes [25]. This is especially valuable in Near-Infrared (NIR) spectroscopy of aqueous solutions, where analyte absorption is weak compared to the strong absorption bands of water [25].

The Signal-to-Noise Ratio and Detection Limits

The ultimate limit of detection (LOD) for an analyte is governed by the signal-to-noise (S/N) ratio [25]. A longer sample path length increases the analytical signal (absorbance), but research shows that its effect on the S/N ratio is complex and must be optimized. A study investigating the detection of potassium hydrogen phthalate (KHP) in water found that optical path length is a key factor affecting the S/N ratio and thus the LOD [25]. However, an excessively long path can attenuate the transmitted light so strongly, through solvent absorption, that the signal falls toward the bottom of the detector's dynamic range. Therefore, the optimal path length balances sufficient signal enhancement against potential noise amplification or signal loss.

Quantitative Relationships and Trade-offs

The interplay between resolution, sensitivity, and optical path involves inherent trade-offs that must be managed during spectrometer design and experimental configuration.

Table 1: Impact of Spectrometer Component Changes on Performance

| Component | Parameter Change | Effect on Resolution | Effect on Sensitivity |
|---|---|---|---|
| Entrance Slit | Narrower Width | Increases [24] [23] | Decreases (less light) [24] [23] |
| Diffraction Grating | Higher Groove Density | Increases [24] [10] | Decreases (more light dispersion) [24] |
| Optical Bench Focal Length | Longer Focal Length | Increases [4] | Decreases (lower throughput) |
| Sample Path Length | Longer Path Length | No Direct Effect | Increases (for absorption) [25] |

Table 2: Experimental Limit of Detection (LOD) for KHP at Different Path Lengths [25]

| Path Length (mm) | Aperture Type | Co-added Scans | Approximate LOD (ppm) |
|---|---|---|---|
| 1 | BRM2065 | 128 | ~300 |
| 2 | BRM2065 | 128 | ~200 |
| 5 | BRM2065 | 128 | ~150 |
| 10 | BRM2065 | 128 | >500 |

The data in Table 2, derived from a study on KHP detection, demonstrates that an optimal path length exists. While increasing the path from 1 mm to 5 mm improved the LOD, further increasing it to 10 mm was detrimental, likely due to excessive absorption by the solvent leading to a poor S/N ratio [25].
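A deliberately simplified univariate model shows why an interior optimum can exist. It is not the PLS-based, IUPAC-consistent LOD procedure used in [25]. The analyte absorbance grows linearly with path length, while the solvent absorbance k·l attenuates the transmitted light, T = 10^(-k·l). If noise is constant in transmitted intensity (detector-noise-limited), S/N ∝ l·T, which peaks where the solvent absorbance equals 1/ln 10 ≈ 0.434. If detection is shot-noise-limited, S/N ∝ l·√T, and the optimum doubles. The solvent absorbance per millimetre `k` is a hypothetical value.

```python
import math


def relative_snr(path_mm, solvent_abs_per_mm, shot_noise_limited=False):
    """Analyte S/N (arbitrary units) vs sample path length in an absorbing solvent.

    Detector-noise-limited: absorbance noise ~ 1/T       -> S/N ~ l * T
    Shot-noise-limited:     absorbance noise ~ 1/sqrt(T) -> S/N ~ l * sqrt(T)
    """
    t = 10.0 ** (-solvent_abs_per_mm * path_mm)  # solvent transmittance
    return path_mm * (math.sqrt(t) if shot_noise_limited else t)


def optimal_path_mm(solvent_abs_per_mm, shot_noise_limited=False):
    """Analytic optimum: solvent absorbance of 1/ln(10) (or 2/ln(10) if shot-noise-limited)."""
    factor = 2.0 if shot_noise_limited else 1.0
    return factor / (solvent_abs_per_mm * math.log(10.0))


if __name__ == "__main__":
    k = 0.1  # hypothetical solvent absorbance per mm
    grid = [0.01 * i for i in range(1, 2001)]
    best = max(grid, key=lambda p: relative_snr(p, k))
    print(round(best, 2), round(optimal_path_mm(k), 3))
```

The real optimum in an NIR measurement also depends on the spectral window, the detector, and the multivariate calibration, so this model illustrates the trend only.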

Experimental Protocols for Path Length Optimization

The following detailed methodology, adapted from a published study, provides a framework for empirically determining the optimal optical path length for a given application [25].

Determination of Optimal Sample Path Length for Aqueous Solutions

6.1.1 Research Objective: To determine the optimal optical path length that minimizes the Limit of Detection (LOD) for a specific analyte in an aqueous solution using transmission NIR spectroscopy and Partial Least Squares (PLS) calibration.

6.1.2 Materials and Reagents:

- Analyte: Potassium Hydrogen Phthalate (KHP).

- Solvent: Purified water.

- Stock Solution: 10,000 ppm KHP in purified water.

- Sample Set: 38 samples spanning 1 to 10,000 ppm, prepared via serial dilution from the stock solution.

- Cuvettes: Rectangular quartz cells with precisely defined path lengths (e.g., 1, 2, 5, and 10 mm).

- Spectrometer: FT-NIR spectrometer (e.g., Bruker Matrix-F) equipped with a fiber optic cable attachment and a TE-InGaAs detector.

- Software: For spectral acquisition (e.g., OPUS) and multivariate analysis (e.g., MATLAB).

6.1.3 Procedure:

- System Setup: Configure the FT-NIR spectrometer with a resolution of 8 cm⁻¹. Connect the fiber optic cable and install the temperature-controlled cuvette holder, setting it to 30.0 ± 0.1 °C.

- Baseline Collection: For each path length being tested, collect a reference spectrum using the corresponding empty quartz cell or a cell filled with purified water.

- Spectral Acquisition: For each KHP concentration and at each path length (e.g., 1, 2, 5, 10 mm): a. Place the sample in the temperature-controlled cuvette holder. b. Acquire transmission spectra over the relevant NIR range (e.g., 6300–5800 cm⁻¹, a spectral window between strong water absorptions). c. Collect multiple replicate spectra (e.g., 3 replicates). d. Repeat the measurements with different numbers of co-added scans (e.g., 8, 16, 32, 64, 128) and with different aperture sizes to vary the light intensity.

- Data Pre-processing: Apply necessary spectral pre-treatments to the absorbance data. The study compared no pre-treatment, linear baseline correction, Multiplicative Scattering Correction (MSC), and 2nd derivative (Savitzky-Golay) methods [25].

- Multivariate Calibration: Develop a PLS regression model for each path length condition, using the spectral data in the 6300–5800 cm⁻¹ range to predict KHP concentration. Use a method like leave-one-out cross-validation to determine the optimal number of latent variables and avoid overfitting [25].

- LOD Calculation: Calculate the LOD for each experimental condition according to IUPAC-consistent methods for PLS calibration, which account for types I and II errors and uncertainties in the slope and intercept of the calibration model [25].

- Optimal Path Identification: Compare the calculated LOD values across all path lengths. The path length yielding the lowest LOD represents the optimal compromise between signal enhancement and noise for that specific analyte-solvent system.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Materials and Reagents for Spectroscopic Path Length Experiments

| Item | Function / Rationale |
|---|---|
| Potassium Hydrogen Phthalate (KHP) | A common standard analyte for validating methods in aqueous solution; used for its well-defined C-H overtone bands in the NIR [25]. |
| Precision Quartz Cuvettes | Provide a range of exact optical path lengths (e.g., 1, 2, 5, 10 mm) for transmission measurements; quartz is transparent in UV-Vis-NIR ranges. |
| FT-NIR Spectrometer | Enables high-throughput, high-signal-to-noise spectral acquisition across a broad NIR range, suitable for detecting weak overtone and combination bands [25]. |
| TE-InGaAs Detector | Offers high sensitivity in the NIR region (e.g., 800-2500 nm), which is essential for detecting weak signals from aqueous solutions [25]. |
| Partial Least Squares (PLS) Software | Multivariate analysis tool essential for extracting quantitative analyte information from complex, overlapping spectra typical of NIR data [25]. |

Visualization of Concepts and Workflows

The following diagrams illustrate the core relationships and experimental process discussed in this guide.

Diagram 1: Optical Path Length Relationships. This diagram shows how various design parameters influence optical path length and how path length, in turn, differentially affects resolution and sensitivity, creating a fundamental engineering trade-off.

Diagram 2: Path Length Optimization Workflow. This flowchart outlines the experimental protocol for empirically determining the optical path length that provides the lowest Limit of Detection for a specific analytical application.

The optical path length is a pivotal parameter in spectrometer design and operation, exerting a direct and often competing influence on resolution and sensitivity. A longer path within the optical bench enhances resolution by enabling finer wavelength discrimination at the detector, while a longer path through a sample boosts sensitivity for absorption measurements. However, these benefits are subject to practical constraints and trade-offs, necessitating a careful balance tailored to the specific analytical goal. As demonstrated in experimental research, an optimal path length exists that minimizes the detection limit, beyond which performance degrades. For researchers and scientists, a deep understanding of these principles is not merely academic but is an essential component of designing robust experiments, selecting appropriate instrumentation, and pushing the boundaries of what is detectable in fields ranging from pharmaceutical development to environmental monitoring.

The paraboloid of revolution crystal spectrometer represents a significant advancement in high-resolution X-ray spectroscopy, particularly for diagnostic precision in demanding fields such as inertial confinement fusion (ICF) research [26]. This innovative design simultaneously addresses three critical challenges in spectroscopic instrumentation: achieving high spectral resolution, maintaining high photon collection efficiency, and ensuring strict equal optical path conditions across a broad operational energy range [26] [27]. The fundamental operating principle leverages a curved crystal geometry configured to a paraboloid of revolution surface, which effectively suppresses spherical aberrations and ensures that all diffracted rays from the source to the detector traverse identical path lengths [26]. This equal-path property is crucial for minimizing phase differences and intensity attenuation, thereby enhancing spectral fidelity and imaging clarity [26]. For researchers and drug development professionals, understanding this technology is essential for pushing the boundaries of analytical capabilities in material characterization and elemental analysis.

Theoretical Foundation and Operating Principle

Fundamental Optical Geometry

The optical configuration of the paraboloid of revolution spectrometer is built upon a specific geometrical relationship between the X-ray source, the curved crystal, and the detector plane, as shown in the diagram below:

Figure 1: Optical geometry of the paraboloid of revolution spectrometer showing the equal-path relationship between source, crystal, and detector.

In this configuration, the X-ray source (S) is positioned at the focus of the parabolic crystal surface [26]. Incident rays from S are diffracted at a point C on the crystal before reaching the detection point P. Because every point on a parabola is equidistant from its focus (here, the X-ray source) and its directrix (a fixed reference line), the arrangement satisfies the equal optical path condition |SC| + |CP| = D + p, where D is the source-detector separation distance and p is the parabolic parameter [26].
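The equal-path condition can be checked numerically in the meridional (dispersion) plane. The minimal sketch below assumes a parabola y² = 2px (so p is the focus-to-directrix distance), the source at the focus (p/2, 0), lattice planes parallel to the crystal surface so that Bragg diffraction acts like a mirror reflection, and a flat detector perpendicular to the axis at a distance D from the source. These coordinate conventions are illustrative assumptions chosen to match the stated relation, not the cited authors' exact definitions, and the sagittal curvature is ignored.

```python
import numpy as np

def parabola_paths(p, D, y_values):
    """Return (|SC| + |CP|, Bragg angle in degrees) for points C on y^2 = 2 p x.

    Assumed 2D meridional geometry: source S at the focus (p/2, 0); the detector
    plane is perpendicular to the axis at x = p/2 + D, a distance D from S.
    """
    S = np.array([p / 2.0, 0.0])
    totals, thetas = [], []
    for y in y_values:
        C = np.array([y**2 / (2.0 * p), y])
        d_in = (C - S) / np.linalg.norm(C - S)
        # Unit normal from the gradient of y^2 - 2 p x, i.e. (-2p, 2y)
        normal = np.array([-p, y]) / np.hypot(p, y)
        # Mirror-like Bragg reflection off lattice planes parallel to the surface
        d_out = d_in - 2.0 * np.dot(d_in, normal) * normal
        t = (p / 2.0 + D - C[0]) / d_out[0]  # distance along d_out to the detector plane
        P = C + t * d_out
        totals.append(np.linalg.norm(C - S) + np.linalg.norm(P - C))
        # Glancing (Bragg) angle between the incident ray and the surface
        thetas.append(np.degrees(np.arcsin(abs(np.dot(d_in, normal)))))
    return np.array(totals), np.array(thetas)

p, D = 10.0, 200.0  # hypothetical dimensions in mm
totals, thetas = parabola_paths(p, D, np.linspace(5.0, 30.0, 6))
print(totals)  # each entry is D + p = 210 mm up to rounding
print(thetas)  # the Bragg angle changes along the crystal: this is the dispersion
```

In this model every diffracted ray leaves parallel to the axis, so the total source-to-detector path is the same for every point on the crystal even though each point selects a different Bragg angle, and hence a different energy.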

Bragg Diffraction and Sagittal Focusing

The wavelength dispersion in this spectrometer follows Bragg's diffraction law, which governs X-ray interaction with crystalline materials [26]. The fundamental relationship is expressed as:

nλ = 2d sin θ

Where n is the diffraction order (typically n = 1 for first-order diffraction), λ is the X-ray wavelength, d is the crystal interplanar spacing, and θ is the glancing angle between the incident X-rays and the crystal planes [26]. Substituting λ = hc/E for first-order diffraction gives the photon energy as E = hc/(2d sin θ), where h is Planck's constant and c is the speed of light [26].
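As a worked example, the sketch below converts between photon energy and Bragg angle using the general form E = nhc/(2d sin θ), which reduces to the first-order relation above for n = 1. The text does not name the crystal used, so Si(111), with d ≈ 3.1356 Å, is a hypothetical choice made only to show how the 7.7-8.3 keV band maps onto a range of Bragg angles.

```python
import math

HC_KEV_ANGSTROM = 12.398419843320026  # h*c expressed in keV·Å

def bragg_angle_deg(energy_kev, d_angstrom, n=1):
    """Glancing angle theta solving n*lambda = 2 d sin(theta) for a photon energy."""
    wavelength = HC_KEV_ANGSTROM / energy_kev  # lambda = hc / E
    s = n * wavelength / (2.0 * d_angstrom)
    if not 0.0 < s <= 1.0:
        raise ValueError("no Bragg reflection: n * lambda exceeds 2d")
    return math.degrees(math.asin(s))

def bragg_energy_kev(theta_deg, d_angstrom, n=1):
    """Inverse relation: E = n h c / (2 d sin(theta))."""
    return n * HC_KEV_ANGSTROM / (2.0 * d_angstrom * math.sin(math.radians(theta_deg)))

D_SI111 = 3.1356  # Å; Si(111) is a hypothetical crystal choice for this example
for energy in (7.7, 8.0478, 8.3):  # band edges and Cu Kα1
    theta = bragg_angle_deg(energy, D_SI111)
    print(f"{energy:.4f} keV -> theta = {theta:.2f} deg")
```

Higher energies diffract at smaller glancing angles, so a crystal that presents a continuous range of angles to the source spreads the band across the detector.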

To enhance photon collection efficiency without compromising resolution, the design incorporates sagittal focusing in the direction perpendicular to the dispersion plane [26]. This is achieved by constructing the crystal as a paraboloid of revolution rather than a simple parabolic curve. In the sagittal direction, an arc is constructed where all points share the same Bragg angle, ensuring that incident rays from the source diffracted at any point on this arc converge precisely at the detection point P [26]. This sagittal focusing mechanism enables simultaneous high resolution and high photon collection efficiency, even for relatively large source sizes.

Performance Specifications and Quantitative Data

The performance of the paraboloid of revolution spectrometer has been evaluated in both simulation and experiment. The table below summarizes the key metrics:

Table 1: Performance Specifications of Paraboloid of Revolution Spectrometer

| Performance Parameter | Simulation Results | Experimental Results | Testing Conditions / Notes |
|---|---|---|---|
| Spectral Resolution (E/ΔE) | >6,600 | >2,800 at Cu Kα1 | Extended source (150 μm diameter) [26] |
| Sagittal Spot Diameter | <0.1 mm | Not specified | Tight focusing capability [26] |
| Energy Range | 7.7-8.3 keV | Validated in similar range | Broad operational bandwidth [26] |
| Key Advantage | Equal optical path maintained | High photon collection efficiency | For large source sizes [26] |

These performance metrics demonstrate the spectrometer's capability to maintain exceptional resolution while efficiently collecting photons from extended sources, addressing a fundamental limitation in traditional X-ray spectroscopy where spectral broadening typically occurs with larger source sizes [26].

Experimental Protocol and Validation Methodology

Experimental Setup and Workflow

The experimental validation of the paraboloid of revolution spectrometer follows a systematic workflow to verify both its theoretical performance predictions and practical utility:

Figure 2: Experimental workflow for validating paraboloid of revolution spectrometer performance.

Instrument Configuration: The spectrometer is configured with the X-ray source positioned at the focus of the parabolic crystal structure. Detectors are precisely aligned perpendicular to the incident beam in the meridional direction to optimize optical path alignment [26].

Source Preparation: The experimental validation employed a copper X-ray tube source with a controlled diameter of approximately 150 μm, representing the extended-source conditions relevant to practical applications [26].

Data Acquisition: X-ray spectra are collected across the operational energy range (7.7-8.3 keV), with careful measurement of spectral line profiles, particularly at characteristic emission lines such as Cu Kα1 [26].

Performance Quantification: The spectral resolving power is calculated as E/ΔE, where E is the energy of a characteristic emission line and ΔE is its measured full width at half maximum (FWHM) [26].
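The quantification step can be sketched as follows: locate the two half-maximum crossings of a baseline-subtracted line profile by linear interpolation, take their separation as ΔE, and divide the line energy by it. The profile below is a synthetic Gaussian with an arbitrarily chosen 3.0 eV width centred near Cu Kα1; it is not measured data. Note that a measured line width combines the instrument response with the natural width of the emission line, so E/ΔE obtained from a real line is a conservative estimate of the instrument's resolving power.

```python
import numpy as np

def fwhm(x, y):
    """Full width at half maximum of a single, baseline-subtracted peak.

    Half-maximum crossings are located by linear interpolation between samples.
    """
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    i_peak = int(np.argmax(y))
    half = y[i_peak] / 2.0
    below = np.flatnonzero(y < half)
    left = below[below < i_peak]
    right = below[below > i_peak]
    if left.size == 0 or right.size == 0:
        raise ValueError("profile does not fall below half maximum on both sides")
    i, j = left[-1], right[0]  # last sample below half on the left, first on the right
    x_left = np.interp(half, [y[i], y[i + 1]], [x[i], x[i + 1]])
    x_right = np.interp(half, [y[j], y[j - 1]], [x[j], x[j - 1]])
    return x_right - x_left

E0 = 8047.8      # eV, near Cu Kα1
true_fwhm = 3.0  # eV, synthetic width chosen for illustration
sigma = true_fwhm / (2.0 * np.sqrt(2.0 * np.log(2.0)))
energy = np.linspace(8030.0, 8065.0, 3501)
profile = np.exp(-0.5 * ((energy - E0) / sigma) ** 2)
dE = fwhm(energy, profile)
print(f"FWHM = {dE:.3f} eV, E/dE = {E0 / dE:.0f}")
```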

Research Reagent Solutions and Essential Materials

Table 2: Essential Research Materials for Paraboloid Spectrometer Implementation

| Component/Material | Technical Function | Application Context |
|---|---|---|
| Curved Crystal Element | Diffracts and disperses X-rays via Bragg reflection; paraboloid shape ensures equal optical path | Core dispersive element [26] |
| X-ray Source (Cu target) | Generates characteristic X-ray emissions (Cu Kα at ~8 keV) for system calibration | Experimental validation [26] |
| High-Precision Detectors | Measures position and intensity of diffracted X-rays; perpendicular to incident beam | Spectral data acquisition [26] |
| Alignment Fixtures | Maintains precise geometrical relationships between source, crystal, and detector | Critical for equal-path condition [26] |
| Computational Simulation Tools | Models performance and predicts resolution before physical implementation | Design optimization [26] |

Comparative Analysis with Alternative Spectrometer Designs

The paraboloid of revolution spectrometer addresses several limitations present in conventional curved-crystal spectrometer designs:

Cylindrically bent crystals enhance photon collection efficiency across broad spectral bands but are suitable mainly for small X-ray sources [26].

Spherically and toroidally bent crystals offer moderate spectral focusing and can achieve high resolution, but their performance is constrained to narrow energy ranges, limiting their utility for broad-spectrum applications [26].

Elliptical surface crystals provide point-to-point focusing across an extended range of Bragg angles while maintaining equifocal conditions. However, this configuration suffers from two fundamental limitations: (1) spectral lines become inseparable at the focal point due to complete spatial overlap, and (2) detector positions displaced from the focal plane deviate from strict equal optical path conditions [26].

Sinusoidal spiral-bent crystals enable high-resolution spectroscopy across a broad spectral range but introduce significant optical path differences for distinct photon energies. Additionally, their optical configuration (source-crystal-detector) is complex, and the positions of detector points relative to the crystal are constrained [26].

The paraboloid of revolution design effectively overcomes these limitations by maintaining strict equal optical path conditions across the entire operational energy range while simultaneously providing sagittal focusing for enhanced photon collection [26].
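The second limitation noted for elliptical surface crystals, that a detector displaced from the focal plane no longer sees equal optical paths, can be checked with a short calculation. The minimal sketch below assumes a 2D meridional section, a source at one focus of the ellipse, rays reflected through the second focus, and a flat detector perpendicular to the major axis; the dimensions and the crystal arc are hypothetical. At the focal plane every path equals the major axis 2a, but once the detector sits beyond the focus the remaining distance depends on each ray's angle.

```python
import numpy as np

def ellipse_paths(a, b, delta, phis):
    """Source-to-detector path lengths for rays reflected by an elliptical crystal.

    Assumed geometry: source S and focal point F at the foci (-c, 0) and (c, 0),
    c = sqrt(a^2 - b^2); each ray S -> C passes through F after reflection and then
    continues to a flat detector perpendicular to the major axis at x = c + delta.
    """
    c = np.sqrt(a**2 - b**2)
    S = np.array([-c, 0.0])
    F = np.array([c, 0.0])
    paths = []
    for phi in phis:
        C = np.array([a * np.cos(phi), b * np.sin(phi)])  # point on the ellipse
        u = (F - C) / np.linalg.norm(F - C)  # direction of the reflected ray
        if u[0] <= 0.0:
            raise ValueError("reflection point must lie on the source side of F")
        beyond_focus = delta / u[0]  # path from F to the detector plane
        paths.append(np.linalg.norm(C - S) + np.linalg.norm(F - C) + beyond_focus)
    return np.array(paths)

phis = np.radians(np.linspace(60.0, 120.0, 7))  # hypothetical crystal arc
at_focus = ellipse_paths(a=250.0, b=50.0, delta=0.0, phis=phis)
displaced = ellipse_paths(a=250.0, b=50.0, delta=20.0, phis=phis)
print("spread at focal plane (mm):", at_focus.max() - at_focus.min())
print("spread 20 mm beyond focus (mm):", displaced.max() - displaced.min())
```

Under these assumptions the spread is zero at the focal plane and grows once the detector is moved off it, which is the trade-off the paraboloid geometry is designed to avoid.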

Implications for Spectrometer Optical Path Components Research

The development of the paraboloid of revolution spectrometer represents a significant advancement in the broader field of spectrometer optical path components research, demonstrating several important principles:

Equal Optical Path Optimization: This design validates the importance of maintaining equal optical path lengths for all diffracted rays propagating from source to detector. Equal paths ensure that all rays reach the detector with the same phase and consistent intensity attenuation, which reduces the X-ray intensity loss caused by temporal broadening in the dispersive element [26].

Aberration Control: The parabolic surface effectively suppresses spherical aberrations and can eliminate other aberrations caused by optical path differences, such as coma, thereby enhancing imaging clarity and spectral fidelity [26].

Geometrical Configuration Advantages: The spectrometer's unique configuration, with detectors precisely perpendicular to the incident beam in the meridional direction, optimizes optical path alignment and simplifies the mechanical design compared to more complex configurations like the sinusoidal spiral-bent crystal [26].

These principles contribute valuable insights to ongoing research in spectrometer optical path components, particularly for applications requiring both high resolution and high collection efficiency from extended sources.
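To put the equal-path principle in perspective for time-resolved diagnostics such as ICF, a spread ΔL in optical path length becomes an arrival-time spread Δt = ΔL/c, roughly 3.3 ps per millimetre. The sketch below performs that conversion; the path lengths are hypothetical values, and propagation at c is an assumption that holds well for X-rays in vacuum.

```python
C_MM_PER_PS = 0.299792458  # speed of light: 299,792,458 m/s expressed in mm/ps

def arrival_time_spread_ps(path_lengths_mm):
    """Spread in arrival times (ps) for rays with the given optical path lengths (mm).

    Assumes propagation at c, a good approximation for X-rays in vacuum.
    """
    return (max(path_lengths_mm) - min(path_lengths_mm)) / C_MM_PER_PS

equal_paths = [210.0, 210.0, 210.0]    # hypothetical equal-path geometry
unequal_paths = [210.0, 210.4, 211.0]  # hypothetical 1 mm spread
print(arrival_time_spread_ps(equal_paths), arrival_time_spread_ps(unequal_paths))
```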

The paraboloid of revolution crystal spectrometer represents a sophisticated advancement in X-ray spectroscopic instrumentation, successfully addressing the competing demands of high resolution, high photon collection efficiency, and strict equal optical path conditions. Through its innovative geometrical configuration employing a paraboloid of revolution curved crystal, this design achieves exceptional spectral resolution (E/ΔE > 6600 in simulation, >2800 experimentally) while maintaining efficient performance with an extended source 150 μm in diameter [26]. The theoretical foundation, validated through both simulation and experimental protocols, demonstrates robust performance across the 7.7-8.3 keV energy range [26]. For researchers and drug development professionals, this technology offers enhanced capabilities for precise elemental analysis and material characterization, pushing the boundaries of what is achievable in X-ray spectroscopic diagnostics. Future developments will likely focus on extending this principle to wider energy ranges and adapting it for specialized applications across scientific and industrial domains.

Next-Generation Systems and Their Biopharmaceutical Applications

Spectrometers, the indispensable workhorses for analyzing light-matter interactions across scientific research and industry, are undergoing a revolutionary transformation. Traditional instruments, which disperse light into its constituent wavelengths using bulky components like prisms and gratings, have historically been constrained by large size, complexity, and high cost [28]. The emerging paradigm of computational spectrometry surmounts these limitations by synergistically combining miniaturized hardware encoders with advanced computational decoding algorithms [28] [29]. This revolution shifts the design philosophy from purely optical separation to a hardware-software co-design principle, enabling the reconstruction of high-fidelity spectra from compressed, encoded measurements [28]. This review explores the core principles, encoding strategies, and decoding methodologies that define computational spectrometers, framing them within the broader context of advanced spectrometer optical path component research. Their compact size, portability, and cost-effectiveness are expanding the reach of spectroscopic techniques into field-based, real-time applications in biomedicine, environmental monitoring, and consumer electronics [28] [29].

Fundamental Principles: The Encoding and Decoding Framework

The operational principle of a reconstructive spectrometer can be distilled into a concise mathematical model and a three-stage process: calibration, measurement, and reconstruction [28].

Mathematical Foundation

The fundamental encoding process is described by a linear model. The signal \(I\) generated at a detector upon light incidence is:

\[ I = \int_{\lambda_1}^{\lambda_2} R(\lambda) \cdot S(\lambda) \, d\lambda \]

where \(R(\lambda)\) is the spectral response function of the encoder at wavelength \(\lambda\), \(S(\lambda)\) is the input light intensity at \(\lambda\), and \([\lambda_1, \lambda_2]\) is the operating wavelength range [28]. This equation can be discretized into a matrix form, which is more practical for computational processing:

\[ \mathbf{I} = \mathbf{R} \cdot \mathbf{S} \]

Here, \(\mathbf{R}\) is the response matrix with dimensions \(m \times n\) (where \(m\) is the number of measurements and \(n\) is the number of discrete wavelengths), \(\mathbf{I}\) is the vector of \(m\) measured signals, and \(\mathbf{S}\) is the discrete representation of the target spectrum, a vector of dimension \(n\) [28]. Compressed sensing comes into play when \(m < n\), which makes the system underdetermined: infinitely many spectra are consistent with the same measurements. Successful reconstruction therefore relies on the sparsity of the spectral signal, meaning it can be represented by a few significant components in a transformed domain [28].
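The calibration, measurement, and reconstruction stages can be illustrated with a small simulation. The sketch below uses Orthogonal Matching Pursuit (OMP), one standard greedy compressed-sensing decoder; it is not necessarily the decoder used in the cited work. Two simplifying assumptions are made: a random Gaussian matrix stands in for a calibrated encoder response (a real device would measure each column with monochromatic light), and the spectrum is sparse directly in the wavelength basis, as for a few narrow emission lines. Broadband spectra usually need a transform basis instead.

```python
import numpy as np

def omp(R, I, k):
    """Orthogonal Matching Pursuit: recover a k-sparse S from I = R @ S (m < n)."""
    _, n = R.shape
    col_norms = np.linalg.norm(R, axis=0)
    support = []
    coef = np.zeros(0)
    residual = np.array(I, dtype=float)
    for _ in range(k):
        # Select the encoder column most correlated with what is still unexplained
        scores = np.abs(R.T @ residual) / col_norms
        j = int(np.argmax(scores))
        if j in support:
            break  # residual already explained; nothing new to add
        support.append(j)
        # Least-squares fit of the measurements on the selected columns
        coef, *_ = np.linalg.lstsq(R[:, support], I, rcond=None)
        residual = I - R[:, support] @ coef
    S_hat = np.zeros(n)
    S_hat[support] = coef
    return S_hat

rng = np.random.default_rng(0)
n, m, k = 128, 60, 3  # wavelengths, measurements (m < n), spectral lines
# "Calibration": a random matrix stands in for the measured encoder response (assumption)
R = rng.standard_normal((m, n))
S_true = np.zeros(n)
lines = rng.choice(n, size=k, replace=False)
S_true[lines] = rng.uniform(0.5, 1.0, size=k)
I = R @ S_true          # "measurement": m encoded detector signals
S_hat = omp(R, I, k)    # "reconstruction"
rel_err = np.linalg.norm(S_hat - S_true) / np.linalg.norm(S_true)
print(f"recovered lines at {np.flatnonzero(S_hat).tolist()}, relative error {rel_err:.2e}")
```

With 60 measurements for 128 wavelength bins the linear system alone cannot identify the spectrum, yet the sparsity prior lets OMP pick out the three occupied bins exactly in this noise-free case; with noisy data, regularized decoders and a stopping rule based on the residual would be needed.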

The Reconstructive Workflow