Spectrometry vs. Spectroscopy: A Clear-Cut Guide for Scientists and Drug Developers

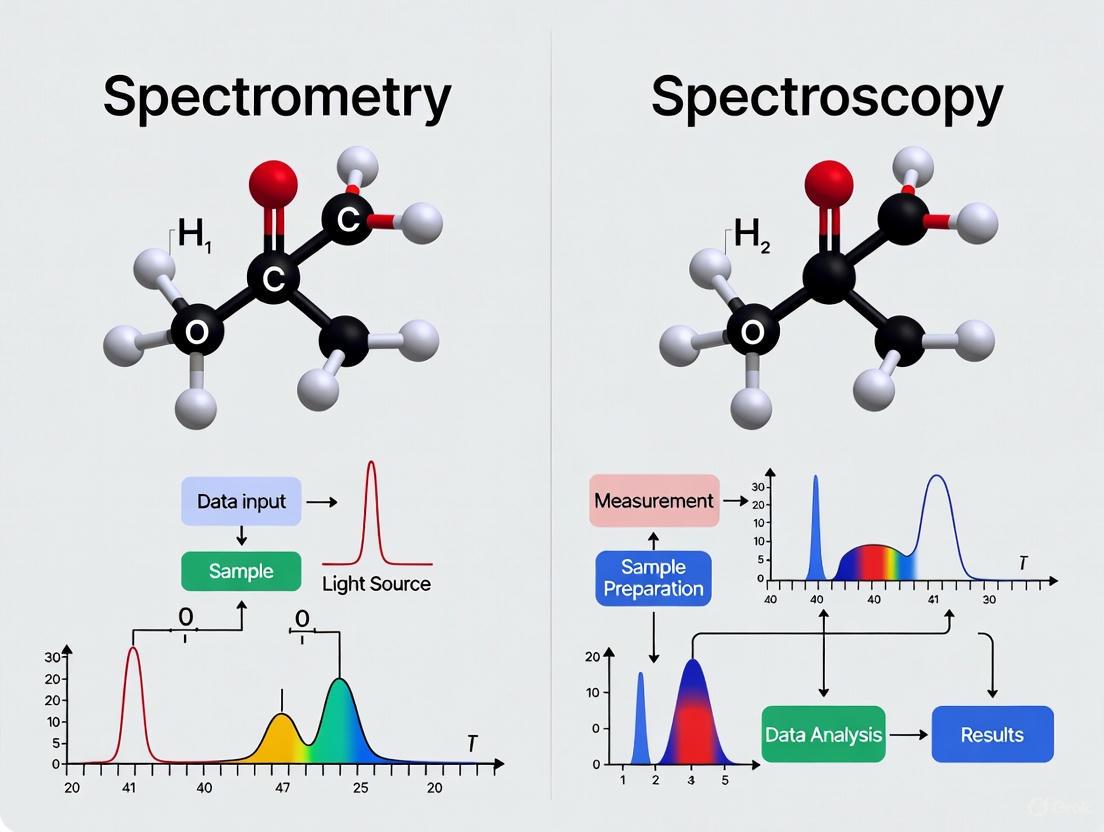

This article clarifies the critical distinction between spectroscopy, the theoretical study of light-matter interactions, and spectrometry, the practical measurement of spectra, a nuance essential for researchers and drug development professionals.

Abstract

This article clarifies the critical distinction between spectroscopy, the theoretical study of light-matter interactions, and spectrometry, the practical measurement of spectra, a nuance essential for researchers and drug development professionals. We explore foundational principles, delve into key methodological applications in biomedicine, address common operational challenges, and provide a framework for instrument selection and data validation. By synthesizing current trends, including the integration of AI and portable devices, this guide aims to enhance analytical precision and strategic decision-making in research and development.

Spectroscopy and Spectrometry Demystified: Core Concepts for Researchers

In the fields of analytical science and drug development, the terms "spectroscopy" and "spectrometry" are often used interchangeably, creating a persistent source of confusion. However, for researchers and scientists, understanding this distinction is crucial for selecting the appropriate technique, accurately interpreting data, and effectively communicating findings. This guide delineates the core differences by framing spectroscopy as the theoretical framework for understanding energy-matter interactions, and spectrometry as the practical application concerned with the measurement of spectra to obtain quantitative data [1] [2] [3].

Core Definitions: Theory Meets Practice

The International Union of Pure and Applied Chemistry (IUPAC) provides definitive distinctions that form the basis for a clear understanding of these concepts [2] [3].

Spectroscopy is defined as "the study of physical systems by the electromagnetic radiation with which they interact or that they produce" [4] [2] [3]. It is the science of observing what happens when light or other radiant energy interacts with matter. This field is deeply rooted in quantum mechanics, as the observed interactions—whether absorption, emission, or scattering of radiation—reveal fundamental information about the electronic, vibrational, and rotational energy levels of molecules and atoms [2]. In essence, spectroscopy provides the theoretical foundation and principles.

Spectrometry is defined as "the measurement of such radiations as a means of obtaining information about the systems and their components" [4] [2] [3]. If spectroscopy is the science, spectrometry is the practical methodology. It involves the actual process of acquiring and quantifying a spectrum—a plot of the intensity of radiation as a function of wavelength or mass [1]. The term is most accurately applied to techniques that do not rely solely on electromagnetic radiation for analysis, with mass spectrometry being the prime example [2].

The relationship between these concepts, along with their associated instruments, is summarized in the table below.

Table 1: Core Concepts and Their Relationships

| Term | Core Definition | Primary Focus | Example Instrument |

|---|---|---|---|

| Spectroscopy | The study of energy-matter interactions [2] [3]. | Theoretical understanding of quantum states and molecular structure. | N/A |

| Spectrometry | The measurement of a specific spectrum [1]. | Quantitative analysis and data acquisition. | Spectrometer |

| Spectrometer | The physical instrument used to measure spectra [1]. | Hardware for generating, dispersing, and detecting signals. | Mass Spectrometer, Optical Emission Spectrometer |

Technical Distinctions in Instrumentation and Data

The theoretical divide between spectroscopy and spectrometry manifests in tangible differences in laboratory instrumentation and the nature of the data produced.

Instrumentation and Measurement Principles

The design of instruments for spectroscopy versus spectrometry varies significantly based on the physical principles being harnessed.

Spectroscopic Instrumentation: Techniques like Ultraviolet-Visible (UV-Vis), Infrared (IR), Nuclear Magnetic Resonance (NMR), and Raman spectroscopy rely on a source of electromagnetic radiation (a lamp or laser for the optical methods, radio-frequency pulses for NMR) and a detector, often with additional components to manipulate the radiation [2]. In optical instruments, the detector, which could be a simple photodiode or an array detector like a CMOS camera, records changes in the radiation after its interaction with the sample [3].

Spectrometric Instrumentation: In a technique like mass spectrometry, the instrumentation is designed to handle charged particles. It typically includes an ionization source to ionize (and, depending on the method, fragment) the sample, electromagnetic fields to control ion trajectories, and a charged-particle detector such as a combination of microchannel plates and phosphor screens [2].

Table 2: Comparison of Technique Principles and Applications

| Technique | Classification | Fundamental Principle | Key Measured Output | Primary Application in Pharma |

|---|---|---|---|---|

| NMR Spectroscopy [5] | Spectroscopy | Absorption of radio waves by atomic nuclei in a magnetic field. | Chemical shift (ppm) | Molecular structure determination [6]. |

| Raman Spectroscopy [5] | Spectroscopy | Inelastic scattering of monochromatic light. | Wavenumber shift (cm⁻¹) | Molecular identification, impurity detection [7]. |

| Mass Spectrometry [8] | Spectrometry | Ionization and separation of ions by mass-to-charge ratio (m/z). | Mass-to-charge ratio (m/z) | Protein identification, metabolite screening [8]. |

| UV-Vis Spectroscopy [3] | Spectroscopy | Absorption of ultraviolet or visible light. | Absorbance | Concentration determination, HOMO-LUMO gap analysis [3]. |
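Table 2 lists absorbance as the UV-Vis output used for concentration determination. The usual route from one to the other is the Beer-Lambert law, A = ε·l·c, which the article itself does not spell out. The sketch below assumes that law holds (dilute solution, linear range). The function name and the numeric values are illustrative and are not taken from the cited sources.

```python
def concentration_from_absorbance(absorbance, molar_absorptivity, path_length_cm=1.0):
    """Return concentration in mol/L from the Beer-Lambert law A = epsilon * l * c."""
    if molar_absorptivity <= 0 or path_length_cm <= 0:
        raise ValueError("molar absorptivity and path length must be positive")
    return absorbance / (molar_absorptivity * path_length_cm)


# Hypothetical example: A = 0.50, epsilon = 10,000 L mol^-1 cm^-1, 1 cm cuvette.
concentration = concentration_from_absorbance(0.50, 10_000.0)
print(f"{concentration:.2e} mol/L")
```

In practice the molar absorptivity would come from a calibration curve measured at the same wavelength and in the same solvent.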

The Scientist's Toolkit: Essential Reagents and Materials

The following table details key reagents and materials used in the featured spectroscopic and spectrometric techniques within pharmaceutical research.

Table 3: Key Research Reagent Solutions in Pharmaceutical Analysis

| Item | Function | Example Technique |

|---|---|---|

| Deuterated Solvents | Provides a magnetically inert environment for NMR analysis without adding interfering signals. | NMR Spectroscopy [6] |

| Internal Standard | A compound of known concentration and properties used for quantitative calibration in spectroscopic analysis. | qNMR [6] |

| Reference Compound | A pure substance used as a benchmark for calculating the concentration of an analyte in quantitative measurements. | qNMR [6] |

| Matrix for MALDI | A compound that absorbs laser energy and facilitates the soft ionization of large, non-volatile molecules. | Mass Spectrometry [9] |

Experimental Protocols: From Theory to Quantification

The distinction between spectroscopy and spectrometry comes to life in experimental workflows. The following protocols illustrate how the theoretical principles of spectroscopy are applied through spectrometric measurement to yield quantitative, actionable data.

Protocol 1: Quantitative NMR (qNMR) for Drug Solubility and Purity

Quantitative NMR is a powerful application that transforms the qualitative, information-rich technique of NMR spectroscopy into a precise quantitative spectrometric method [6].

1. Sample Preparation:

- Dissolve a precisely weighed amount of the drug substance (analyte) in a suitable deuterated solvent (e.g., D₂O, CDCl₃) [6].

- Add a precise amount of a well-characterized internal standard. Ideal standards have a simple, non-overlapping NMR signal, are chemically stable, and do not interact with the analyte. Common examples include caffeine or 3-(trimethylsilyl)propionic acid [6].

2. Data Acquisition:

- Use an adequately powered NMR spectrometer (e.g., 400 MHz or higher).

- Employ a pulse sequence that allows for complete relaxation of nuclei between scans (long recycle delay) to ensure the integrated signal area is directly proportional to the number of nuclei [6].

- Acquire a sufficient number of scans to achieve a high signal-to-noise ratio.

3. Data Analysis and Quantification:

- Identify a characteristic, non-overlapping signal for the analyte and the internal standard in the acquired spectrum.

- Precisely integrate the area under each chosen peak (\(I_{\text{analyte}}\) and \(I_{\text{standard}}\)).

- Calculate the concentration or molar ratio using the inherent quantitative relationship of qNMR [6]:

- Molar Ratio: \( n_{\text{analyte}} / n_{\text{standard}} = (I_{\text{analyte}} / N_{\text{analyte}}) / (I_{\text{standard}} / N_{\text{standard}}) \)

- Absolute Content: \( m_{\text{analyte}} = (I_{\text{analyte}} / N_{\text{analyte}}) \times (N_{\text{standard}} / I_{\text{standard}}) \times (M_{\text{analyte}} / M_{\text{standard}}) \times m_{\text{standard}} \)

Where \(n\) is moles, \(I\) is integral area, \(N\) is the number of nuclei contributing to the signal, \(M\) is molar mass, and \(m\) is gravimetric mass [6].

Diagram: qNMR Experimental Workflow. This protocol transforms NMR spectroscopy into a quantitative spectrometric tool.
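The molar-ratio and absolute-content relationships in step 3 translate directly into code. The following is a minimal sketch: the function names and the example numbers are hypothetical, and, like the formulas in the protocol, it does not include a purity correction for the internal standard.

```python
def qnmr_molar_ratio(i_analyte, n_analyte, i_standard, n_standard):
    """n_analyte / n_standard from integral areas (I) and nuclei per signal (N)."""
    return (i_analyte / n_analyte) / (i_standard / n_standard)


def qnmr_analyte_mass(i_analyte, n_analyte, molar_mass_analyte,
                      i_standard, n_standard, molar_mass_standard, mass_standard):
    """Analyte mass, in the same unit as mass_standard."""
    ratio = qnmr_molar_ratio(i_analyte, n_analyte, i_standard, n_standard)
    return ratio * (molar_mass_analyte / molar_mass_standard) * mass_standard


# Hypothetical values: a 3-proton analyte signal with integral 1.5, a 9-proton
# standard signal with integral 9.0, molar masses of 300 and 150 g/mol,
# and 5.0 mg of internal standard weighed in.
mass_mg = qnmr_analyte_mass(1.5, 3, 300.0, 9.0, 9, 150.0, 5.0)
print(mass_mg)
```

Keeping the molar ratio as its own function mirrors the two-line structure of the protocol, where the absolute content is the molar ratio scaled by the molar-mass ratio and the weighed mass of standard.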

Protocol 2: AI-Enhanced Raman Spectroscopy for Impurity Detection

The integration of artificial intelligence with Raman spectroscopy exemplifies the evolution of a spectroscopic technique into a high-throughput, quantitative analysis platform [7].

1. Spectral Data Collection:

- Use a Raman spectrometer to analyze multiple batches of a pharmaceutical product, including both pure samples and those with known, controlled levels of impurities.

- For each sample, collect the full Raman spectrum, which serves as a molecular "fingerprint" [7].

2. AI Model Training:

- Pre-process the raw spectral data to reduce noise and correct for baseline drift.

- Annotate the spectra with their known impurity status or concentration to create a labeled training dataset.

- Train a deep learning model, such as a Convolutional Neural Network (CNN) or Transformer, to learn the complex patterns in the spectral data that are correlated with the presence of impurities [7]. The model learns to differentiate the subtle spectral changes caused by contaminants from the signal of the main API.

3. Prediction and Interpretation:

- Input the Raman spectrum of an unknown production batch into the trained AI model.

- The model outputs a prediction regarding impurity presence and can often provide a confidence score.

- To address the "black box" nature of AI, employ interpretability methods like attention mechanisms to highlight which regions of the Raman spectrum were most influential in the model's decision, thereby connecting the AI's output back to spectroscopic theory [7].

Diagram: AI-Enhanced Raman Workflow. AI adds a quantitative, predictive layer to spectroscopic data.
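The train, predict, and interpret loop of this protocol can be illustrated on toy data. In the sketch below a nearest-centroid classifier is a deliberately simple stand-in for the CNN or Transformer named in step 2, and the per-channel "evidence" is only a crude analogue of attention-based interpretability. The spectra are synthetic, and every name, peak position, and noise level is an assumption made for illustration.

```python
import math
import random


def gaussian_peak(x, center, width, height):
    return height * math.exp(-((x - center) ** 2) / (2 * width ** 2))


def synthetic_spectrum(rng, impurity_level):
    """Toy spectrum: one API band, an optional impurity band, and Gaussian noise."""
    spectrum = []
    for channel in range(100):
        signal = gaussian_peak(channel, 30, 3, 1.0)              # main API band
        signal += gaussian_peak(channel, 70, 3, impurity_level)  # impurity band
        spectrum.append(signal + rng.gauss(0, 0.01))
    return spectrum


def centroid(spectra):
    """Channel-wise mean of a list of spectra."""
    return [sum(column) / len(column) for column in zip(*spectra)]


def squared_terms(spectrum, reference):
    return [(s - r) ** 2 for s, r in zip(spectrum, reference)]


def classify(spectrum, centroids):
    """Return (label, per-channel evidence) for a two-class nearest-centroid model."""
    distances = {name: sum(squared_terms(spectrum, c)) for name, c in centroids.items()}
    winner = min(distances, key=distances.get)
    other = next(name for name in centroids if name != winner)
    # Positive evidence: the channel fits the winning class better than the other class.
    evidence = [o - w for o, w in zip(squared_terms(spectrum, centroids[other]),
                                      squared_terms(spectrum, centroids[winner]))]
    return winner, evidence


rng = random.Random(0)
training_set = {
    "pure": [synthetic_spectrum(rng, 0.0) for _ in range(20)],
    "impure": [synthetic_spectrum(rng, 0.2) for _ in range(20)],
}
centroids = {name: centroid(spectra) for name, spectra in training_set.items()}

label, evidence = classify(synthetic_spectrum(rng, 0.2), centroids)
top_channel = max(range(len(evidence)), key=evidence.__getitem__)
print(label, top_channel)
```

The most influential channel should fall on or near the simulated impurity band, which is the same sanity check the protocol asks of an attention map: the model's decision should trace back to a spectral feature that makes chemical sense.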

The divide between spectroscopy and spectrometry is not a barrier but a definition of roles. Spectroscopy provides the fundamental theoretical understanding of how matter behaves at the quantum level when probed with energy. Spectrometry provides the rigorous, quantitative measurement framework that turns those interactions into actionable data. In modern drug development, from using qNMR to determine API solubility to employing AI-powered Raman for quality control, these disciplines are not opposed but are synergistic partners [6] [7]. A clear comprehension of this distinction empowers scientists to better select techniques, interpret results, and drive innovation in pharmaceutical research and beyond.

The journey from a simple glass prism to modern mass spectrometers represents a cornerstone of analytical science, fundamentally shaping research across physics, chemistry, and biology. This evolution is framed by a critical conceptual distinction: spectroscopy is the theoretical science investigating the interaction between radiated energy and matter [10] [1], while spectrometry refers to the practical measurement and quantification of a spectrum to generate analytical results [10] [1]. This whitepaper traces the key historical milestones in this field, details the experimental methodologies that enabled these discoveries, and provides a toolkit for researchers, all within the context of this fundamental dichotomy between theory and practice. Understanding this history and its underlying principles is essential for today's scientists, particularly in drug development, where these techniques are pivotal for everything from target identification to clinical candidate analysis [11] [12].

Historical Timeline: Key Milestones

The development of spectroscopy and spectrometry was not a single event but a cumulative process spanning centuries, with each advance building upon the work of predecessors. The table below summarizes the pivotal discoveries and the key figures behind them.

Table 1: Key Historical Milestones in Spectroscopy and Spectrometry

| Date | Scientist(s) | Contribution | Impact on Spectroscopy/Spectrometry |

|---|---|---|---|

| 1666-1672 | Sir Isaac Newton [13] [14] | Used a prism to disperse white light into a continuous spectrum of colors, which he named the "spectrum" [15] [14]. | Foundational Spectroscopy: Established the composite nature of white light and provided the basic experimental model. |

| 1802 | William Hyde Wollaston [13] | Built an improved spectrometer with a lens to focus the spectrum onto a screen, noting dark gaps in the Sun's spectrum [13]. | Early Spectrometry: Developed the first true instrument for spectral measurement, leading to the discovery of absorption lines. |

| 1815 | Joseph von Fraunhofer [13] | Replaced the prism with a diffraction grating, greatly improving resolution; systematically mapped and quantified the dark lines in the solar spectrum (Fraunhofer lines) [13]. | Father of Spectroscopy: Transitioned from qualitative observation to precise, quantifiable spectrometry. |

| 1859 | Gustav Kirchhoff & Robert Bunsen [15] [13] | Demonstrated that each element has a unique spectral signature and that Fraunhofer lines correspond to absorption by elements in the Sun's atmosphere [15] [13]. | Theoretical Spectroscopy: Linked spectral lines to atomic composition, creating spectroscopy as a tool for chemical identification. |

| Early 1900s | Niels Bohr, Werner Heisenberg, Erwin Schrödinger [15] | Developed quantum theory, providing the theoretical explanation for why elements emit and absorb at specific wavelengths [15]. | Theoretical Foundation: Explained the mechanistic origin of spectral lines, completing the theoretical framework of spectroscopy. |

| 20th-21st Century | Continuous Innovations | Development of diverse techniques (UV, IR, Atomic, Mass Spectrometry) [15] and advanced instruments like ICP-MS [15] and hybrid mass spectrometers [12]. | Modern Spectrometry: Expansion into various energy-matter interactions and application across countless fields, from pharmaceuticals to materials science [15] [12]. |

The following diagram visualizes this progression of knowledge and technology from the initial observation of the spectrum to the modern theoretical understanding.

Experimental Methodologies: From Foundational to Modern

The historical progression was enabled by key experiments whose methodologies can be clearly delineated.

Newton's Prism Experiment (1666)

- Objective: To investigate the nature of white light.

- Protocol:

- A beam of sunlight was allowed into a dark room through a small slit.

- This beam was passed through a glass prism.

- The resulting output was observed on a screen [14].

- Outcome: The white light was dispersed into its constituent colors—red, orange, yellow, green, blue, indigo, violet—producing a continuous spectrum. This demonstrated that white light is composite, not a simple, pure entity [14].

Fraunhofer's Diffraction Grating Experiment (c. 1815)

- Objective: To achieve higher resolution spectral measurements and quantify wavelengths.

- Protocol:

- Newton's prism was replaced with a diffraction grating (a plate with many parallel, closely spaced slits) as the dispersive element [13].

- Light from a source (e.g., the sun) was passed through a single rectangular slit before hitting the diffraction grating.

- The resulting dispersed spectrum was observed and measured [13].

- Outcome: The spectral resolution was vastly improved, allowing for the precise mapping of the dark absorption lines (Fraunhofer lines) in the solar spectrum. This marked a shift from qualitative observation to quantitative spectrometry [13].

Kirchhoff and Bunsen's Spectral Analysis (1859)

- Objective: To determine the relationship between absorption/emission lines and chemical elements.

- Protocol:

- Purified chemical elements were heated in a flame (Bunsen burner) to produce emission spectra [15].

- The characteristic bright lines in the emission spectra of these heated elements were recorded [15].

- These laboratory emission lines were then compared to the dark absorption lines (Fraunhofer lines) in the solar spectrum [15] [13].

- Outcome: They established that the dark lines in the solar spectrum were due to the absorption of light by specific elements in the Sun's (and Earth's) atmosphere. This proved that spectral analysis could identify elements in distant stars and terrestrial samples, founding the science of spectroscopic chemical analysis [15] [13].

The Scientist's Toolkit: Instruments and Reagents

Modern spectrometry encompasses a wide array of techniques, each with specific instrumentation and applications, particularly in drug discovery and development.

Table 2: Key Spectrometry Techniques and Research Reagent Solutions

| Technique / Item | Category | Function & Application in Research |

|---|---|---|

| Prism | Optical Component | Disperses light via refraction. Foundational for early spectrometers and educational demonstrations [15]. |

| Diffraction Grating | Optical Component | Disperses light via diffraction and interference. Provides higher resolution than a prism and is standard in modern optical spectrometers [15] [10]. |

| Mass Spectrometer (MS) | Instrument | Measures mass-to-charge ratio of ions to identify and quantify molecules in a sample. Central to proteomics, metabolomics, and pharmacokinetics [10] [12]. |

| Liquid Chromatograph (LC) | Sample Prep / Separation | Often coupled with MS (LC-MS) to separate complex mixtures before mass analysis. Essential for analyzing biomolecules in biological fluids [12] [16]. |

| Inductively Coupled Plasma (ICP) Source | Ionization Source | Used in ICP-MS to detect minute quantities of trace elements, such as metals in biological samples (e.g., urine) [15]. |

| Triple Quadrupole Mass Analyzer | Mass Analyzer | A type of mass spectrometer known for high sensitivity and precision in quantitative analysis, commonly used in biomarker and drug metabolite quantification [12] [16]. |

| Infrared (IR) Spectrometer | Instrument | Measures molecular vibrations to identify functional groups and unknown compounds. Used for protein characterization and quality control [15] [10]. |

| Quantum Cascade Laser (QCL) | Light Source | A modern, tunable IR laser source that provides high intensity and precision for specific absorption bands (e.g., the Amide I band for proteins), improving IR sensitivity [10]. |

The workflow for a generic optical spectrometer, integrating these core components, is illustrated below.

The journey from Newton's prism to today's high-resolution mass spectrometers is a powerful narrative of scientific progress. It underscores the perpetual and critical interplay between theory (spectroscopy) and practice (spectrometry). The theoretical understanding of how energy interacts with matter, pioneered by Newton, Fraunhofer, Kirchhoff, and the quantum physicists, provided the essential framework. This framework, in turn, empowered the development of increasingly sophisticated tools of measurement—the spectrometers—that define modern analytical science.

This legacy is profoundly evident in today's biopharmaceutical industry, where spectrometry is indispensable. It accelerates drug discovery [11], enables the precise quantification of biomarkers [16], ensures drug safety [12], and drives the development of novel therapies through techniques like multi-omics research [11] [12]. As spectrometry continues to evolve with advancements in automation, AI, and quantum technologies [11], its role in empowering researchers and scientists to solve complex biological problems will only become more central, continuing a history of innovation that began with a simple beam of light and a prism.

In scientific research, particularly in fields like drug development and material science, the terms spectroscopy and spectrometry are often used interchangeably, yet they represent distinct concepts. Spectroscopy is the theoretical science that studies the interaction between radiated energy and matter [10]. It involves the principles behind how matter absorbs, emits, or scatters electromagnetic radiation to reveal its properties [1]. Spectrometry, in contrast, is the practical application; it is the method used to acquire a quantitative measurement of a spectrum [10]. Essentially, spectroscopy provides the theoretical framework for understanding energy-matter interactions, while spectrometry is the process of generating quantifiable results [1].

The spectrometer is the physical instrument that forms the crucial bridge between these concepts and empirical data. It is the device that measures the variation of a physical characteristic—such as light intensity, mass-to-charge ratio, or nuclear resonant frequencies—over a given range to produce a spectrum [10]. This guide explores how spectrometers translate theoretical interactions into actionable data, detailing the core technologies, methodologies, and applications that make them indispensable in modern research.

Spectrometer Technologies: The Core of Quantitative Analysis

Spectrometers are engineered to measure specific types of interactions, and their design is tailored to the physical principles they exploit. The most common types found in research laboratories are optical spectrometers, mass spectrometers, and Nuclear Magnetic Resonance (NMR) spectrometers [10]. Each type generates a different kind of spectrum, from which researchers can extract precise quantitative information about a sample's composition, structure, and dynamics.

Table 1: Key Spectrometer Technologies and Their Applications in Research

| Technology | Measured Property | Primary Research Applications | Key Strengths |

|---|---|---|---|

| Optical Spectrometer [17] | Variation in light absorption, emission, or scattering | UV-VIS: Protein, metabolite, and nucleic acid analysis [17]. IR: Vibrational analysis of molecular bonds [18]. | Non-destructive, highly accurate, applicable to solids, liquids, and gases [17]. |

| Mass Spectrometer (MS) [10] | Mass-to-charge ratio (m/z) of ions | Isotope dating, protein characterization, identification of unknown compounds [10] [18]. | High sensitivity for trace element detection, capable of identifying a wide range of compounds [19]. |

| NMR Spectrometer [10] | Nuclear resonant frequencies | Molecular structure determination, metabolic profiling (e.g., MRS for brain chemistry) [10]. | Provides detailed atomic-level structural information. |

| X-ray Fluorescence (XRF) [20] | Characteristic X-rays emitted after inner-shell electrons are ejected | Quantitative elemental analysis of soils, alloys, and materials [20]. | Non-destructive, requires minimal sample preparation. |

| Raman Spectrometer [17] | Inelastically scattered light | Identification of chemical structures and phases in solids, liquids, and gases. | Requires no sample preparation, non-destructive, can probe aqueous samples [17]. |

The fundamental operation of an optical spectrometer, the most common type, involves three core functions: producing a spectrum from a light source, dispersing it, and measuring the intensities of its spectral lines [17]. Light is passed from an incandescent source to a diffraction grating and then to a mirror, which projects the diffracted wavelengths onto a detector, such as a charge-coupled device (CCD) [10]. This process allows scientists to identify a substance by comparing its unique spectral pattern to known markers [17].
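How a grating spreads wavelengths across the detector follows from the grating equation, d·sin(θ) = m·λ. The sketch below assumes normal incidence on the grating, which is a simplification of the source-grating-mirror layout described above. The function name and the 600 lines/mm groove density are illustrative choices, not values from the cited sources.

```python
import math


def diffraction_angle_deg(wavelength_nm, lines_per_mm, order=1):
    """Diffraction angle in degrees at normal incidence, from d * sin(theta) = m * lambda."""
    spacing_nm = 1e6 / lines_per_mm            # groove spacing d in nanometres
    sine = order * wavelength_nm / spacing_nm
    if abs(sine) > 1:
        raise ValueError("this order does not propagate for the given wavelength")
    return math.degrees(math.asin(sine))


# Blue, green, and red light leave the grating at increasing angles,
# which is what maps wavelength onto position on the detector.
for wavelength in (450, 550, 650):
    print(wavelength, round(diffraction_angle_deg(wavelength, 600), 2))
```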

Table 2: Hybrid Chromatography-Spectrometry Systems

| System | Separation Method | Ionization Method | Ideal Application in Drug Development |

|---|---|---|---|

| Gas Chromatography-Mass Spectrometry (GC/MS) [19] | Gas mobile phase & heat | Electron impact ionization | Analysis of volatile, thermally stable compounds (e.g., residual solvents, metabolic screening) [19]. |

| Liquid Chromatography-Mass Spectrometry (LC/MS) [19] | Liquid mobile phase & high pressure | Electrospray ionization (ESI) | Analysis of non-volatile, thermally labile, or polar molecules (e.g., proteins, peptides, most pharmaceuticals) [19]. |

Experimental Methodologies: From Sample to Spectrum

Robust experimental protocols are essential for generating reliable data. The following sections detail methodologies for two critical techniques: XRF quantitative analysis and chromatographic separation coupled with mass spectrometry.

Protocol: Deep Learning-Enhanced Quantitative XRF Analysis

This protocol, based on a 2025 study, uses a Multi-energy State Attention Fusion Network (MSAF-Net) to achieve high-precision elemental analysis [20].

- Objective: To accurately quantify the concentration of elements (Si, Al, Fe, Mg, Ca, K, and heavy metals) in soil samples using multi-energy XRF spectra.

- Materials and Reagents:

- XRF Spectrometer with a multi-energy excitation source.

- 9855 simulated soil spectra for model training.

- 118 field-collected soil samples for validation.

- MSAF-Net Software: A custom deep learning environment (Python, TensorFlow/PyTorch).

- Procedure:

- Data Acquisition: Collect XRF spectral data from the sample at multiple energy states.

- Spectral Preprocessing:

- Model Application:

- Process the weighted spectra from each energy state through the Dynamic Fusion Scoring Module (DFSM). This module learns distinct weights for each energy state to ensure balanced information integration [20].

- The DFSM evaluates the fused output using a pre-training scoring mechanism.

- Model Training:

- Employ a two-stage optimization strategy to prevent the model from settling on a mediocre solution [20]:

- Stage 1 (Pre-training): Individually train each energy state branch of the neural network.

- Stage 2 (Joint Training): Conduct constrained joint training of all branches to promote comprehensive information sharing.

- Quantitative Prediction: Use the trained MSAF-Net model to predict elemental concentrations from the fused and processed spectral data.

- Validation: Assess the model on the field-collected samples. The cited study reports a coefficient of determination (R²) above 0.98 for major elements and a Ratio of Performance to Deviation (RPD) above 7.5 [20].
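The two validation metrics named in this protocol are straightforward to compute from paired reference and predicted concentrations. The sketch below assumes the common definition of RPD as the standard deviation of the reference values divided by the root-mean-square error of prediction; the cited study's exact convention is not stated here and may differ. The data are made up for illustration.

```python
import math


def r_squared(reference, predicted):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_ref = sum(reference) / len(reference)
    ss_res = sum((r - p) ** 2 for r, p in zip(reference, predicted))
    ss_tot = sum((r - mean_ref) ** 2 for r in reference)
    return 1 - ss_res / ss_tot


def rpd(reference, predicted):
    """Ratio of Performance to Deviation: sample SD of reference / RMSE of prediction."""
    n = len(reference)
    mean_ref = sum(reference) / n
    sd = math.sqrt(sum((r - mean_ref) ** 2 for r in reference) / (n - 1))
    rmse = math.sqrt(sum((r - p) ** 2 for r, p in zip(reference, predicted)) / n)
    return sd / rmse


# Hypothetical reference and predicted concentrations (arbitrary units).
reference_values = [10.0, 20.0, 30.0, 40.0, 50.0]
predicted_values = [11.0, 19.0, 31.0, 39.0, 51.0]
print(round(r_squared(reference_values, predicted_values), 3),
      round(rpd(reference_values, predicted_values), 2))
```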

Protocol: Compound Identification via GC/MS or LC/MS

This protocol outlines the general workflow for separating and identifying compounds in a complex mixture, common in pharmaceutical and biomedical analysis [19].

- Objective: To separate, identify, and quantify individual components within a complex chemical mixture (e.g., drug metabolites, forensic samples).

- Materials and Reagents:

- GC/MS or LC/MS System.

- GC Consumables: Inert gas carrier (e.g., Helium), fused-silica capillary column, liners, septa.

- LC Consumables: High-purity solvents (e.g., water, acetonitrile, methanol), analytical column (e.g., C18), buffers.

- Standard solutions of target analytes for calibration.

- Procedure:

- Sample Preparation:

- For GC/MS: The sample must be volatile and thermally stable. Derivatization may be necessary to increase volatility.

- For LC/MS: The sample is dissolved in a solvent compatible with the mobile phase. Filtration is often required to remove particulates.

- Chromatographic Separation:

- GC/MS: A small amount of sample is injected into a heated port, vaporized, and carried by an inert gas through a capillary column. The oven temperature is precisely controlled to separate compounds based on their volatility and affinity for the stationary phase [19].

- LC/MS: The sample is injected into a stream of liquid mobile phase and pumped at high pressure through a packed column. Compounds are separated based on their polarity, size, and affinity for the stationary phase [19].

- Ionization and Mass Analysis:

- As separated compounds elute from the chromatograph, they are ionized.

- GC/MS typically uses Electron Impact (EI) ionization.

- LC/MS typically uses softer ionization like Electrospray Ionization (ESI).

- The resulting ions are introduced into the mass spectrometer, where they are separated based on their mass-to-charge ratio (m/z) and detected [19].

- Data Analysis: The data system generates a chromatogram (intensity vs. time) and a mass spectrum for each detected peak. Compounds are identified by comparing their mass spectra and retention times to those of known standards in a library.

Diagram 1: GC/MS analysis workflow.
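The data-analysis step, matching a measured mass spectrum and retention time against library entries, can be sketched with a cosine-similarity score. Cosine similarity is one common scoring choice and is used here as an assumption; commercial library-search software applies its own weighting schemes. The library entries, compound names, and retention-time tolerance below are invented for illustration.

```python
import math


def cosine_similarity(spectrum_a, spectrum_b):
    """Cosine similarity between two mass spectra given as {m/z: intensity} dicts."""
    shared = set(spectrum_a) & set(spectrum_b)
    dot = sum(spectrum_a[mz] * spectrum_b[mz] for mz in shared)
    norm_a = math.sqrt(sum(v * v for v in spectrum_a.values()))
    norm_b = math.sqrt(sum(v * v for v in spectrum_b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def best_library_match(query, retention_time, library, rt_tolerance=0.2):
    """Return (name, score) of the best entry inside the retention-time window."""
    candidates = [
        (name, cosine_similarity(query, entry["spectrum"]))
        for name, entry in library.items()
        if abs(entry["rt"] - retention_time) <= rt_tolerance
    ]
    return max(candidates, key=lambda item: item[1], default=(None, 0.0))


# Hypothetical library: retention times in minutes, spectra as {m/z: intensity}.
library = {
    "compound_A": {"rt": 5.10, "spectrum": {43: 100, 58: 40, 71: 15}},
    "compound_B": {"rt": 5.15, "spectrum": {43: 20, 77: 100, 105: 60}},
    "compound_C": {"rt": 9.80, "spectrum": {43: 100, 58: 40, 71: 15}},
}
measured = {43: 95, 58: 45, 71: 10}
name, score = best_library_match(measured, 5.12, library)
print(name, round(score, 3))
```

Filtering on retention time before scoring is what separates compound_A from compound_C here: the two have identical spectra in this toy library and differ only in when they elute.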

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Essential Materials for Spectrometric Experiments

| Item | Function | Application Example |

|---|---|---|

| Quantum Cascade Laser (QCL) [10] | A tunable mid-infrared laser source that provides high intensity and precision for excitation. | Used in advanced IR spectrometers (e.g., RedShiftBio Aurora) for highly sensitive analysis of the Amide I band in proteins [10]. |

| Diffraction Grating [10] | Disperses incident light into its constituent wavelengths to create a spectrum for measurement. | A core component in optical spectrometers, replacing prisms in modern devices for higher resolution [10]. |

| Charge-Coupled Device (CCD) [10] | A highly sensitive light detector that captures the 2D spectral data projected by the diffraction grating. | Used in digital spectroscopy to capture spectra that are then extracted and manipulated into 1D spectral data [10]. |

| EDX Detector [10] | Identifies and quantifies elements present in a sample by measuring energy-dispersive X-rays. | Coupled with Scanning Electron Microscopes (SEM) for spatially-resolved elemental analysis at the nanoscale [10]. |

| Mobile Phases (GC & LC) [19] | The solvent or gas that carries the sample through the chromatographic column. | GC: Inert gases like Helium. LC: Solvent mixtures (e.g., water/acetonitrile) often with modifiers for optimal separation [19]. |

Data Processing and Analysis: From Raw Signal to Scientific Insight

The raw data captured by a spectrometer is rarely used directly. Spectral data is inherently prone to interference from environmental noise, instrumental artifacts, and sample impurities, which can significantly degrade measurement accuracy [21]. A robust preprocessing pipeline is therefore critical.

Diagram 2: Spectral data preprocessing workflow.

Key preprocessing steps include [21]:

- Cosmic Ray Removal: Filtering out sharp, high-intensity spikes caused by high-energy particles.

- Baseline Correction: Removing the non-linear background signal caused by instrumental artifacts or sample scattering.

- Normalization: Scaling spectra to a common standard to correct for variations in absolute intensity.

- Spectral Derivatives: Applying derivatives (e.g., Savitzky-Golay) to enhance spectral features and resolve overlapping peaks.
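The four steps listed above can be chained into a small pipeline. The sketch below uses deliberately simple versions of each step: a local-median spike filter, a straight-line baseline between the spectrum's endpoints (a stand-in for polynomial or asymmetric least-squares baselines), normalization to the maximum, and a 5-point quadratic Savitzky-Golay first derivative. All function names, thresholds, and the synthetic spectrum are assumptions for illustration.

```python
import math
from statistics import median


def remove_spikes(y, window=5, spike_height=0.5):
    """Replace points that rise more than spike_height above the local median."""
    half = window // 2
    cleaned = list(y)
    for i in range(len(y)):
        local = median(y[max(0, i - half): i + half + 1])
        if y[i] - local > spike_height:
            cleaned[i] = local
    return cleaned


def subtract_linear_baseline(y):
    """Subtract the straight line joining the first and last points."""
    slope = (y[-1] - y[0]) / (len(y) - 1)
    return [v - (y[0] + slope * i) for i, v in enumerate(y)]


def normalize_to_max(y):
    """Scale so the largest absolute value becomes 1."""
    peak = max(abs(v) for v in y)
    return [v / peak for v in y] if peak else list(y)


def savgol_first_derivative(y, spacing=1.0):
    """5-point quadratic Savitzky-Golay first derivative (interior points only)."""
    coefficients = (-2, -1, 0, 1, 2)
    return [
        sum(c * y[i + k - 2] for k, c in enumerate(coefficients)) / (10 * spacing)
        for i in range(2, len(y) - 2)
    ]


# Synthetic spectrum: sloping baseline, one Gaussian band, one simulated spike.
raw = [0.1 + 0.01 * i + math.exp(-((i - 25) ** 2) / 32) for i in range(50)]
raw[40] += 5.0

despiked = remove_spikes(raw)
flattened = subtract_linear_baseline(despiked)
normalized = normalize_to_max(flattened)
derivative = savgol_first_derivative(normalized)
print(len(normalized), len(derivative))
```

The order matters: spikes are removed first so that they cannot distort the baseline estimate or dominate the normalization.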

The field is undergoing a transformation with the integration of machine learning. As demonstrated by the MSAF-Net for XRF, deep learning models can adaptively weight spectral features, fuse data from multiple sources, and overcome traditional limitations in quantitative analysis, achieving classification accuracy greater than 99% [20] [21].

The spectrometer stands as the definitive instrumental link, transforming the theoretical concepts of spectroscopy into the quantitative data of spectrometry. This bridge enables researchers to decode the fundamental composition of matter, from characterizing a protein's structure to quantifying toxic elements in soil. As spectrometer technology continues to evolve—integrated with advanced computing, sophisticated data processing, and machine learning—its role as the cornerstone of empirical scientific discovery will only grow more profound, ensuring that the questions posed by theoretical science can be answered with precise, actionable data.

The study of how light and matter interact forms the cornerstone of analytical techniques indispensable to modern scientific research, particularly in pharmaceutical development. These interactions (absorption, emission, and scattering) provide the fundamental basis for spectroscopy, which is defined as the study of physical systems by the electromagnetic radiation with which they interact or that they produce [3]. The measurement of such radiations to obtain information about systems and their components is termed spectrometry [3] [2]. This distinction frames spectroscopy as the theoretical framework and spectrometry as the practical measurement process. In pharmaceutical sciences, these methodologies enable researchers to determine molecular structures, identify compounds, quantify concentrations, and monitor reactions in real time, forming a critical part of drug discovery, development, and quality control [22] [23].

The electromagnetic spectrum utilized in these investigations spans a broad range of energies, each interacting with matter in distinct ways. X-ray regimes (0.1 nm to 100 nm) involve high-energy photons that excite core electrons and can cause ionization, making them suitable for elemental analysis [22]. The ultraviolet and visible (UV-Vis) regime (100 nm to 1 μm) is dominated by electronic transitions in molecules, particularly those with chromophores, conjugated pi-systems, or aromatic rings [22]. The infrared regime (1 to 30 μm) probes molecular vibrations, with the near-infrared (NIR) revealing overtone and combination bands, while the mid-infrared exposes fundamental vibrational modes [22]. The terahertz regime (30 to 3000 μm) investigates intermolecular vibrations such as hydrogen bonding and dipole-dipole interactions, and the microwave regime (3 to 300 mm) studies molecular rotations [22]. Understanding how each spectral region interacts with matter provides researchers with a diverse analytical toolkit for addressing complex pharmaceutical challenges.

Fundamental Principles and Theoretical Framework

Absorption

Absorption occurs when the energy of an incident photon corresponds exactly to the energy difference between two quantum mechanical states in an atom or molecule, resulting in the photon's energy being transferred to the species [22]. This process promotes electrons from ground states to excited states or excites molecular vibrations/rotations, depending on the photon energy. The resulting attenuation of the transmitted light intensity follows the Beer-Lambert Law, which states that absorbance is proportional to the concentration of the absorbing species and the path length of light through the sample [22]. In the X-ray region, absorption involves the ejection of core-level electrons (e.g., from 1s, 2s, or 2p orbitals) when the incident photon energy equals or exceeds their binding energy [24]. This creates a characteristic sharp increase in absorption known as an "absorption edge," which is element-specific [24]. In UV-Vis spectroscopy, absorption corresponds to electronic transitions between molecular orbitals, providing information about chromophores and conjugated systems, with the HOMO-LUMO gap being particularly important for optoelectronic materials [3] [22].
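As a worked illustration of the Beer-Lambert relationship described above, the sketch below converts measured intensities to absorbance and then to concentration. The molar absorptivity and intensity values are hypothetical, chosen only to show the arithmetic.

```python
import math


def absorbance(i0, i_t):
    """Decadic absorbance A = log10(I0 / I) from incident and transmitted intensity."""
    return math.log10(i0 / i_t)


def concentration(a, epsilon, path_cm=1.0):
    """Invert A = epsilon * l * c to obtain concentration in mol/L."""
    return a / (epsilon * path_cm)


# Hypothetical reading: 25% of the incident light is transmitted.
a = absorbance(100.0, 25.0)
# Hypothetical molar absorptivity of 15000 L mol^-1 cm^-1 in a 1 cm cuvette.
c = concentration(a, epsilon=15000.0, path_cm=1.0)
print(f"A = {a:.3f}, c = {c:.2e} mol/L")
```

The linear relationship holds only over a limited absorbance range; at high absorbance, stray light and detector limits cause deviations.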

Emission

Emission processes occur when excited species return to lower energy states, releasing the excess energy as photons. This can happen through various pathways: photoluminescence (including fluorescence and phosphorescence) involves prior absorption of photons, chemiluminescence results from chemical reactions, and radioluminescence occurs following ionization [3]. In X-ray spectroscopy, emission accompanies the relaxation of atoms following the creation of a core hole. When an electron from a higher shell fills the core hole, the energy difference is emitted as a characteristic X-ray photon [24]. This emitted radiation is measured in techniques like X-ray Emission Spectroscopy (XES), providing information about the electronic structure and local chemical environment [24]. The intensity and spectral distribution of emission can reveal molecular concentrations, environmental conditions, and energy transfer efficiencies, with quantum yield being an important parameter for light-emitting applications [3].

Scattering

Scattering involves the redirection of photons by matter, occurring in two primary forms. Elastic scattering (Rayleigh or Mie scattering) happens when photons change direction without energy exchange, preserving the incident wavelength [25] [22]. Inelastic scattering involves energy transfer between the photon and the scattering material. The most significant inelastic process is Raman scattering, where the photon either loses energy to (Stokes shift) or gains energy from (anti-Stokes shift) molecular vibrations or rotations [25]. Raman scattering occurs due to temporary distortion of the electron cloud during photon interaction, depending on changes in molecular polarizability rather than dipole moments [25]. Unlike absorption-emission processes that occur on picosecond-to-microsecond timescales, scattering is virtually instantaneous, happening within femtoseconds [22]. Raman spectroscopy benefits from low interference from water molecules, making it particularly valuable for biomedical and pharmaceutical applications where aqueous environments are common [25] [22].
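The Stokes and anti-Stokes shifts mentioned above are usually reported in wavenumbers relative to the excitation line. A minimal sketch of that conversion follows; the 532 nm laser and the 1000 cm⁻¹ band are illustrative values, not taken from the cited sources.

```python
def raman_shift_cm1(excitation_nm, scattered_nm):
    """Raman shift in cm^-1; positive for Stokes, negative for anti-Stokes."""
    return 1e7 * (1.0 / excitation_nm - 1.0 / scattered_nm)


def scattered_wavelength_nm(excitation_nm, shift_cm1):
    """Wavelength at which a band with the given shift is observed."""
    return 1.0 / (1.0 / excitation_nm - shift_cm1 / 1e7)


# Illustrative case: a 1000 cm^-1 vibration excited at 532 nm.
stokes = scattered_wavelength_nm(532.0, 1000.0)        # longer wavelength
anti_stokes = scattered_wavelength_nm(532.0, -1000.0)  # shorter wavelength
print(f"Stokes: {stokes:.2f} nm, anti-Stokes: {anti_stokes:.2f} nm")
```

Because the shift is measured relative to the laser line, the same vibration appears at the same Raman shift regardless of the excitation wavelength.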

Table 1: Fundamental Light-Matter Interaction Processes

| Interaction Type | Energy Exchange | Key Governing Principle | Resulting Phenomena |
|---|---|---|---|
| Absorption | Photon energy transferred to matter | Energy matching between photon and quantum states | Electronic, vibrational, or rotational excitation |
| Emission | Excess energy released as photon | Relaxation from excited to ground state | Fluorescence, phosphorescence, X-ray emission |
| Elastic Scattering | No energy exchange | Electromagnetic interaction preserving photon energy | Rayleigh scattering, Mie scattering |
| Inelastic Scattering | Energy exchange between photon and matter | Temporary distortion of electron cloud | Raman scattering (Stokes, anti-Stokes) |

Quantum Mechanical Foundations

The interaction between light and matter is fundamentally governed by quantum mechanics, with spectroscopy often described as "applied quantum mechanics" [3] [2]. The energy of electromagnetic radiation is quantized into photons, with energy E = hν, where h is Planck's constant and ν is the frequency. Molecules possess discrete energy levels corresponding to electronic, vibrational, and rotational states, with electronic energies typically in the UV-Vis range, vibrational energies in the infrared, and rotational energies in the microwave region [22]. Transitions between these states obey selection rules based on quantum numbers and symmetry considerations. For IR absorption, the incident photon must match the energy difference between states and the vibration must change the molecular dipole moment; for Raman scattering, the vibration must change the polarizability [25] [22]. The quantum mechanical framework not only explains observed spectroscopic phenomena but also enables prediction of molecular behavior and design of materials with tailored optical properties [3].
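To make the relation E = hν concrete, the following sketch converts a wavelength into photon energy and wavenumber using the exact SI values of the constants. The example wavelengths are illustrative picks from the X-ray, visible, and mid-infrared regions.

```python
H = 6.62607015e-34     # Planck constant in J s (exact SI value)
C = 299_792_458.0      # speed of light in m/s (exact SI value)
EV = 1.602176634e-19   # joules per electronvolt (exact SI value)


def photon_energy_ev(wavelength_nm):
    """E = h * nu = h * c / lambda, returned in electronvolts."""
    return H * C / (wavelength_nm * 1e-9) / EV


def wavenumber_cm1(wavelength_nm):
    """Wavenumber in cm^-1, the unit commonly used for IR and Raman spectra."""
    return 1e7 / wavelength_nm


# Illustrative wavelengths: 1 nm (X-ray), 500 nm (visible), 10 000 nm (mid-IR).
for wl in (1.0, 500.0, 10_000.0):
    print(f"{wl:>8.1f} nm  {photon_energy_ev(wl):.4g} eV  {wavenumber_cm1(wl):.4g} cm^-1")
```

The several orders of magnitude between these energies are the reason each spectral region probes a different kind of transition.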

Spectroscopic Techniques Based on Light-Matter Interactions

Absorption-Based Techniques

Ultraviolet-Visible (UV-Vis) Spectroscopy measures electronic transitions between molecular orbitals, particularly in chromophores with conjugated π-systems [22]. This technique provides information about HOMO-LUMO gaps in optoelectronic materials and can monitor solute-solvent interactions [3]. Quantitative analysis follows the Beer-Lambert law, where absorbance is proportional to concentration, enabling determination of solute concentrations in solutions [22]. Infrared (IR) Spectroscopy probes molecular vibrations that involve changes in dipole moment, with the mid-IR region (400-4000 cm⁻¹) providing characteristic fingerprint patterns for molecular identification [22]. IR spectroscopy can be performed in transmission mode or using attenuated total reflection (ATR), which is particularly useful for analyzing solids, liquids, and pastes without extensive sample preparation [22]. X-ray Absorption Spectroscopy (XAS) measures the absorption coefficient of a material as a function of incident X-ray energy, providing element-specific information about unoccupied electronic states and local atomic structure [24]. XAS encompasses several sub-techniques: XANES (X-ray Absorption Near-Edge Structure) reveals oxidation states and coordination chemistry, while EXAFS (Extended X-ray Absorption Fine Structure) provides bond distances and coordination numbers [24].

Emission-Based Techniques

Fluorescence Spectroscopy measures the emission of photons following electronic excitation, providing information about molecular environment, conformational changes, and intermolecular interactions [3]. The technique offers high sensitivity and is widely used in pharmaceutical analysis for studying drug-biomolecule interactions [24]. X-ray Emission Spectroscopy (XES) analyzes the characteristic X-rays emitted when core holes are filled by higher-level electrons, offering complementary information to XAS about occupied electronic states and chemical bonding [24]. Resonant Inelastic X-ray Scattering (RIXS) is a more advanced emission technique that provides enhanced insights into electronic excitations by tuning the incident X-ray energy to specific absorption resonances [24]. In pharmaceutical applications, emission techniques are valuable for probing metal centers in proteins, studying drug-DNA interactions, and characterizing active pharmaceutical ingredients (APIs) in both crystalline and amorphous forms [24].

Scattering-Based Techniques

Raman Spectroscopy relies on inelastic scattering of light to probe molecular vibrations that involve changes in polarizability [25]. Unlike IR spectroscopy, Raman is particularly effective for studying aqueous systems and symmetric molecular vibrations [25]. The Raman effect is inherently weak, with only approximately 1 in 10⁷ photon-matter interactions resulting in inelastic scattering [25]. Surface-Enhanced Raman Spectroscopy (SERS) dramatically increases sensitivity by several orders of magnitude through adsorption of molecules on rough metal surfaces or colloidal nanoparticles, enabling single-molecule detection in some cases [25]. Resonance Raman Spectroscopy (RRS) provides signal enhancements of up to six orders of magnitude by tuning the excitation wavelength to coincide with electronic transitions of the analyte [25]. These enhanced Raman techniques have opened new possibilities for biomedical diagnostics, including cancer detection, neurosurgical guidance, and analysis of circulating tumor cells [25]. Elastic Scattering Techniques including Rayleigh and Mie scattering are used for particle size determination and structural characterization, though they provide less chemical information than inelastic methods [22].

Table 2: Major Spectroscopic Techniques and Their Applications in Pharmaceutical Research

| Technique | Primary Interaction | Information Obtained | Pharmaceutical Applications |
|---|---|---|---|
| UV-Vis Spectroscopy | Absorption | Electronic structure, concentration | API quantification, HOMO-LUMO gap determination |
| IR Spectroscopy | Absorption | Molecular vibrations, functional groups | Compound identification, polymorph characterization |
| XAS/XES | Absorption & Emission | Local atomic structure, oxidation states | Metal-drug complexes, protein-metal interactions |
| Fluorescence | Emission | Molecular environment, interactions | Drug-biomolecule binding studies, conformational changes |
| Raman/SERS | Inelastic scattering | Molecular vibrations, structural fingerprint | In vivo tissue analysis, cancer diagnosis, formulation testing |

Experimental Methodologies and Measurement Approaches

X-ray Absorption Spectroscopy Protocols

XAS experiments are typically performed at synchrotron facilities that provide intense, tunable X-ray sources [24]. Samples can be analyzed as solids, liquids, or gases without special preparation, though the measurement mode must be selected based on sample properties [24]. The transmission mode is preferred for concentrated samples (>10% element of interest) with uniform thickness, measuring intensity before (I₀) and after (Iₜ) the sample using ionization chambers [24]. The absorption is calculated as μx = ln(I₀/Iₜ), where μ is the absorption coefficient and x is the sample thickness [24]. For dilute samples or those with inhomogeneous distribution, fluorescence detection is employed, where the intensity of characteristic X-rays (Iƒ) emitted after absorption is measured at 90° to the incident beam using specialized detectors [24]. This approach significantly improves signal-to-noise for trace elements but may suffer from self-absorption effects that require mathematical correction [24]. Electron yield detection measures electrons emitted during the relaxation process and is particularly surface-sensitive [24]. Modern XAS experiments often employ in situ or operando setups to monitor dynamic processes in real time, with acquisition times ranging from milliseconds to minutes depending on concentration and beam intensity [24].
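The two detection modes described above reduce to simple ratios of detector readings. The sketch below assumes the readings are already dark-current corrected and that the logarithm in transmission mode yields the product of the absorption coefficient and the sample thickness. The function names and the synthetic numbers are illustrative.

```python
import numpy as np


def mu_transmission(i0, i_t):
    """Transmission mode: mu * x = ln(I0 / It), evaluated per energy point."""
    return np.log(np.asarray(i0, dtype=float) / np.asarray(i_t, dtype=float))


def mu_fluorescence(i0, i_f):
    """Fluorescence mode: signal taken as proportional to If / I0."""
    return np.asarray(i_f, dtype=float) / np.asarray(i0, dtype=float)


# Synthetic readings across an absorption edge (arbitrary units).
i0 = np.array([1000.0, 1000.0, 1000.0, 1000.0])
i_t = np.array([800.0, 790.0, 400.0, 410.0])
print(mu_transmission(i0, i_t))  # jumps where the edge is crossed
```

Normalizing by I₀ at every energy point removes fluctuations in the incident beam, which is why both modes record it alongside the sample signal.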

Raman and SERS Experimental Protocols

Conventional Raman spectroscopy requires minimal sample preparation, with solids, liquids, and gases all amenable to analysis [25]. The experimental setup involves a laser source (typically in UV, visible, or NIR regions), a spectrometer with wavelength dispersion capability, and a sensitive detector (commonly CCD cameras) [25]. For SERS measurements, substrate preparation is critical: roughened metal electrodes, metal nanoparticle colloids, or nanostructured metal films are used to create plasmonic hot spots that enhance Raman signals [25]. Optimal enhancement occurs when the laser wavelength overlaps with the surface plasmon resonance of the metal substrate [25]. Biological samples for SERS analysis often require specific preparation techniques to ensure proper interaction with the enhancing substrate while maintaining biological activity [25]. For in vivo applications, fiber-optic probes enable remote sensing and imaging in clinical settings [25]. Data acquisition parameters (laser power, integration time, spectral range) must be optimized to maximize signal while minimizing sample degradation, particularly for sensitive biological specimens [25].

Data Analysis and Interpretation Methods

Spectroscopic data analysis ranges from simple univariate approaches to complex multivariate techniques [22]. Qualitative analysis typically involves comparison of measured spectra with reference databases using cross-correlation algorithms [22]. Quantitative analysis may employ univariate methods based on the Beer-Lambert law (for absorption) or calibration curves when distinct spectral signatures can be assigned to specific analytes [22]. For complex mixtures with overlapping signals, multivariate analysis techniques are essential: Partial Least Squares Regression (PLSR) establishes relationships between spectral variables and concentration, Support Vector Machines (SVM) handle classification tasks, and Artificial Neural Networks (ANN) model nonlinear relationships in large datasets [22]. Raman spectral analysis often incorporates machine learning frameworks to identify disease-specific patterns from complex biological samples, though care must be taken to avoid overfitting with overly complex models [25]. XAS data processing involves background subtraction, normalization, and Fourier transformation of EXAFS oscillations to obtain radial distribution functions for bond distance and coordination number determination [24].
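The reference-database comparison mentioned above can be sketched as a correlation score between a query spectrum and each library entry. This is a simplified stand-in for commercial search algorithms: it assumes all spectra share the same axis, and the library entries here are synthetic Gaussian bands with made-up names.

```python
import numpy as np


def correlation_score(a, b):
    """Pearson correlation between two spectra sampled on the same axis."""
    a = (a - a.mean()) / a.std()
    b = (b - b.mean()) / b.std()
    return float(np.mean(a * b))


def best_match(query, library):
    """Return the best-scoring library name and all scores."""
    scores = {name: correlation_score(query, ref) for name, ref in library.items()}
    return max(scores, key=scores.get), scores


# Synthetic library: one Gaussian band per hypothetical compound.
x = np.linspace(400.0, 1800.0, 701)
band = lambda center: np.exp(-0.5 * ((x - center) / 20.0) ** 2)
library = {"compound_A": band(800.0), "compound_B": band(1000.0), "compound_C": band(1200.0)}

rng = np.random.default_rng(1)
query = band(1000.0) + rng.normal(0.0, 0.02, x.size)
name, scores = best_match(query, library)
print(name)
```

Correlation is insensitive to overall intensity scaling and offset, which makes it a reasonable first-pass metric. It does not handle mixtures, for which the multivariate methods named above are better suited.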

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagent Solutions for Spectroscopic Experiments

| Reagent/Material | Function/Purpose | Application Examples |
|---|---|---|
| Synchrotron Radiation Source | High-intensity, tunable X-rays for excitation | XAS, XES experiments requiring element-specific excitation [24] |
| Metal Nanoparticles (Au, Ag) | Plasmonic enhancement for signal amplification | SERS substrates for trace molecular detection [25] |
| Ionization Chambers | Measurement of X-ray intensity before/after sample | Transmission mode XAS experiments [24] |
| ATR Crystals (Diamond, ZnSe) | Internal reflection element for evanescent wave sampling | FTIR spectroscopy of solids, liquids without preparation [22] |
| Fluorescence Detectors | Measurement of characteristic emission signals | XES, fluorescence yield XAS for dilute samples [24] |
| Monochromators | Wavelength selection and dispersion | Scanning spectroscopy, Raman spectrometer systems [3] |
| CCD Detectors | High-sensitivity light detection for weak signals | Raman spectroscopy, dispersive spectrometer systems [3] [25] |
| UHPLC Systems | High-pressure separation for complex mixtures | LC-MS integration for proteomics, biopharmaceutical analysis [26] |
| Orbitrap Mass Analyzers | High-resolution accurate mass measurement | Proteomic research, biopharmaceutical characterization [27] [26] |

Pharmaceutical Applications and Research Implications

The application of spectroscopy and spectrometry in pharmaceutical research continues to expand with technological advancements. Drug Development and Quality Control increasingly relies on spectroscopic methods for both qualitative and quantitative analysis [22]. UV-Vis spectroscopy provides rapid concentration measurements, while IR and Raman techniques offer molecular fingerprinting for identity confirmation and polymorph characterization [22]. The non-destructive nature of many spectroscopic methods makes them ideal for analyzing precious compounds and for at-line or in-line process analytical technology (PAT) applications in manufacturing [22]. Biopharmaceutical Characterization has been revolutionized by advanced mass spectrometry techniques, particularly LC-MS systems incorporating Orbitrap technology that deliver increased speed, sensitivity, and multiplexing capabilities [27]. These systems enable researchers to quantify and validate proteins with greater precision, accelerating discoveries in precision medicine and complex diseases like Alzheimer's and cancer [27]. The Orbitrap Astral Zoom mass spectrometer, for example, enables 35% faster scan speeds and 40% higher throughput compared to previous generations, marking an important milestone in translating proteomics to clinical research applications [27].

Biomedical Diagnostics represents another growing application area, particularly for Raman and SERS techniques [25]. The integration of Raman spectroscopy with machine learning algorithms has demonstrated diagnostic accuracy exceeding 85% for conditions including brain disorders, various cancers, and infectious diseases like COVID-19 [25]. SERS-based biosensors can detect viral RNA and proteins from swab samples within minutes, offering extremely high sensitivity, rapid response, and convenient operation [25]. In neurosurgery, Raman techniques provide real-time guidance for tumor margin detection, helping surgeons maximize tumor resection while preserving healthy tissue [25]. Drug-Molecule Interaction Studies benefit greatly from X-ray absorption and emission spectroscopies, which can probe local atomic structure around metal centers in metallodrugs and investigate coordination environments in protein-metal complexes [24]. These element-specific techniques provide information about oxidation states, electronic configuration, and local geometry that complements structural data from other analytical methods [24]. The high penetration depth of X-rays enables studies of samples in various states (solid, liquid, gas) without special preparation, while the absence of long-range order requirements makes XAS suitable for both crystalline and amorphous materials [24].

The fundamental principles of light-matter interaction—absorption, emission, and scattering—provide the theoretical foundation for spectroscopic science, while their practical measurement constitutes spectrometry. These complementary approaches enable comprehensive characterization of pharmaceutical compounds from atomic to macroscopic scales. Absorption techniques reveal electronic and vibrational structures, emission methods provide insights into excited states and relaxation processes, and scattering approaches yield molecular fingerprint information even in challenging environments like aqueous solutions. The continuing evolution of spectroscopic instrumentation, including higher-resolution mass spectrometers, brighter X-ray sources, and enhanced Raman systems, promises to further expand pharmaceutical applications. Likewise, advances in data analysis through machine learning and multivariate algorithms are extracting increasingly sophisticated information from spectroscopic data. As these technologies mature and become more accessible, spectroscopy and spectrometry will remain indispensable tools for pharmaceutical researchers addressing complex challenges in drug discovery, development, and clinical application.

Techniques in Action: Applying Spectroscopic and Spectrometric Methods in Biomedicine

In the field of analytical science, the terms spectroscopy and spectrometry are often used interchangeably, but they represent distinct concepts. Spectroscopy is the theoretical science studying the interaction between radiated energy and matter. It focuses on the absorption, emission, or scattering of electromagnetic radiation to gather qualitative information about a sample's molecular structure and environment [10] [1]. In contrast, spectrometry refers to the practical measurement of spectra to obtain quantifiable results. It is the application of spectroscopic principles to acquire and analyze data, often involving instruments called spectrometers [10] [3]. This whitepaper explores three core spectroscopic techniques—NMR, UV-Vis, and IR spectroscopy—framed within this important distinction, focusing on their application in protein characterization and biomarker research for drug development.

Fundamental Principles and Techniques

Nuclear Magnetic Resonance (NMR) Spectroscopy

NMR spectroscopy exploits the magnetic properties of certain atomic nuclei, such as ¹H or ¹³C. When placed in a strong magnetic field, these nuclei absorb and re-emit electromagnetic radiation in the radio frequency range. The resulting NMR spectrum provides detailed information about the local electronic environment of each nucleus, allowing researchers to determine molecular structure, dynamics, and interaction states of proteins in solution at an atomic level [28].
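The radio frequency at which a nucleus resonates scales linearly with the applied field, via ν = (γ/2π)·B₀. The sketch below uses commonly tabulated, rounded gyromagnetic ratios; the 14.1 T field is an illustrative value corresponding to a typical "600 MHz" instrument.

```python
# Gyromagnetic ratios divided by 2*pi, in MHz per tesla (rounded literature values).
GAMMA_OVER_2PI = {"1H": 42.577, "13C": 10.708}


def larmor_mhz(nucleus, b0_tesla):
    """Resonance (Larmor) frequency in MHz for a nucleus in a field B0."""
    return GAMMA_OVER_2PI[nucleus] * b0_tesla


for nucleus in GAMMA_OVER_2PI:
    print(nucleus, f"{larmor_mhz(nucleus, 14.1):.1f} MHz")
```

This is why NMR instruments are conventionally named by their ¹H frequency rather than by field strength.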

Ultraviolet-Visible (UV-Vis) Spectroscopy

UV-Vis spectroscopy measures the absorption of ultraviolet and visible light by molecules, which causes electronic transitions from ground state to excited states. In proteins, the aromatic amino acids—tryptophan, tyrosine, and phenylalanine—act as intrinsic chromophores, absorbing light in the UV range (around 280 nm). Shifts in absorption maxima can indicate conformational changes, protein folding, unfolding, and ligand-binding events [28].

Infrared (IR) Spectroscopy

IR spectroscopy probes the vibrational motions of chemical bonds within a molecule. When infrared radiation is passed through a sample, bonds absorb energy at characteristic frequencies based on atom mass and bond strength. The amide I band (approximately 1600-1700 cm⁻¹), primarily from C=O stretching vibrations of the peptide backbone, is particularly valuable for determining protein secondary structure content (α-helices and β-sheets). Fourier Transform Infrared (FTIR) spectroscopy has largely replaced dispersive IR methods, offering higher signal-to-noise ratio, rapid scanning, and improved resolution through interferometry [28] [29].
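The dependence of absorption frequency on atom mass and bond strength can be illustrated with the harmonic oscillator approximation, ν̃ = (1/2πc)·√(k/μ), where k is the bond force constant and μ the reduced mass. The force constant of 1200 N/m used below is an assumed, order-of-magnitude value for a carbonyl bond, not a figure from the cited sources.

```python
import math

C_CM_PER_S = 2.99792458e10   # speed of light in cm/s
AMU_KG = 1.66053907e-27      # atomic mass unit in kg


def stretch_wavenumber(k_n_per_m, m1_amu, m2_amu):
    """Harmonic stretching wavenumber (cm^-1) of a diatomic oscillator."""
    reduced_mass = m1_amu * m2_amu / (m1_amu + m2_amu) * AMU_KG
    return math.sqrt(k_n_per_m / reduced_mass) / (2.0 * math.pi * C_CM_PER_S)


# Assumed force constant of 1200 N/m for a C=O bond (illustrative only).
print(f"12C=16O: {stretch_wavenumber(1200.0, 12.0, 16.0):.0f} cm^-1")
print(f"13C=16O: {stretch_wavenumber(1200.0, 13.0, 16.0):.0f} cm^-1")
```

The result lands near the carbonyl region of the spectrum, and the heavier isotope shifts the band to lower wavenumber, which is the basis of isotope-edited IR experiments.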

Comparative Analysis of Spectroscopic Techniques

Table 1: Key Characteristics of NMR, UV-Vis, and IR Spectroscopy for Protein Analysis

| Feature | NMR Spectroscopy | UV-Vis Spectroscopy | IR Spectroscopy |
|---|---|---|---|
| Physical Principle | Nuclear spin transitions in a magnetic field [28] | Electronic transitions in chromophores [28] | Molecular bond vibrations [28] [29] |
| Spectral Range | Radio frequency | 200-400 nm (UV), 400-800 nm (Visible) [28] | Mid-IR: ~4000-400 cm⁻¹ [28] |
| Sample Form | Solution, solid | Solution (liquid), solid films [28] | Solution, solid, powders, tissues [28] |
| Key Protein Information | Atomic-level 3D structure, dynamics, interactions [28] | Concentration, folding/unfolding, aromatic residue environment [28] | Secondary structure (via Amide I/II bands), functional groups [28] [29] |
| Typical Application | Protein 3D structure determination, ligand binding kinetics | Protein quantification (A280), stability studies, kinetic assays | Secondary structure quantification, conformational changes, post-translational modification analysis [28] |

Table 2: Advantages and Limitations for Biomarker Research

| Technique | Key Advantages | Major Limitations |
|---|---|---|
| NMR | Provides atomic-resolution structural data; can study proteins in near-native states; label-free quantification [28] | Low sensitivity requires high sample concentration; expensive instrumentation; complex data analysis [28] |
| UV-Vis | Simple, rapid, and inexpensive; requires small sample volumes; non-destructive [28] | Limited structural detail; interference from other chromophores; less specific [28] |
| IR | High structural sensitivity; can analyze complex samples (tissues, films); compatible with H₂O solutions [28] [29] | Water absorption can obscure signals; complex spectral interpretation; overlapping bands can be challenging to deconvolute [28] |

Experimental Protocols for Protein Analysis

Protein Secondary Structure Analysis via FTIR

This protocol uses the Amide I band to quantify secondary structure elements in proteins [28] [29].

Sample Preparation:

- Prepare protein solution in appropriate buffer. Note that phosphate buffers are preferred over those with strong IR absorption (e.g., Tris).

- For solution studies, use a demountable cell with CaF₂ or BaF₂ windows and a path length of 5-50 μm.

- Alternatively, create a solid film by air-drying or lyophilizing a small volume of protein solution directly onto an IR-transparent crystal.

Instrument Setup:

- Use an FTIR spectrometer equipped with a deuterated triglycine sulfate (DTGS) or mercury cadmium telluride (MCT) detector.

- Purge the instrument with dry, CO₂-free nitrogen for at least 15 minutes before and during data acquisition to minimize atmospheric water vapor interference.

- Set resolution to 4 cm⁻¹ and accumulate 64-256 scans to achieve an optimal signal-to-noise ratio.

Data Acquisition:

- Collect a background spectrum with the empty cell or clean crystal.

- Load the sample and collect the sample spectrum under identical instrument conditions.

- The spectrum should be measured across at least the 1800-1500 cm⁻¹ region to encompass the Amide I (~1700-1600 cm⁻¹) and Amide II (~1600-1500 cm⁻¹) bands.

Data Processing and Analysis:

- Subtract the buffer or background spectrum from the sample spectrum.

- Perform baseline correction to ensure the spectrum baseline is flat.

- Apply Fourier self-deconvolution or second-derivative analysis to resolve overlapping bands within the Amide I region.

- Use curve-fitting procedures (e.g., Gaussian or Lorentzian functions) to quantify the area of individual bands assigned to α-helices (~1650-1658 cm⁻¹), β-sheets (~1620-1640 cm⁻¹ & ~1670-1695 cm⁻¹), and random coils (~1640-1650 cm⁻¹).
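The curve-fitting step above can be sketched as follows. This is a deliberately simplified version: band centres and a common Gaussian width are held fixed so that the amplitudes follow from linear least squares, whereas a full analysis would also refine positions and widths with a nonlinear optimizer. The centres are illustrative picks within the ranges quoted in the protocol.

```python
import numpy as np

# Illustrative band centres (cm^-1), chosen inside the ranges given above.
BAND_CENTERS = {
    "beta-sheet (low)": 1630.0,
    "random coil": 1645.0,
    "alpha-helix": 1654.0,
    "beta-sheet (high)": 1680.0,
}


def gaussian(x, center, sigma):
    """Unit-height Gaussian band profile."""
    return np.exp(-0.5 * ((x - center) / sigma) ** 2)


def fit_band_fractions(x, y, centers, sigma=6.0):
    """Fit fixed-position Gaussians and return fractional band areas."""
    design = np.column_stack([gaussian(x, c, sigma) for c in centers.values()])
    amplitudes, *_ = np.linalg.lstsq(design, y, rcond=None)
    # Area of a Gaussian is amplitude * sigma * sqrt(2 * pi).
    areas = amplitudes * sigma * np.sqrt(2.0 * np.pi)
    return dict(zip(centers, areas / areas.sum()))


# Synthetic, noise-free Amide I envelope with known composition.
x = np.arange(1600.0, 1701.0)
true_amplitudes = [0.3, 0.2, 0.4, 0.1]
y = sum(a * gaussian(x, c, 6.0) for a, c in zip(true_amplitudes, BAND_CENTERS.values()))
for name, fraction in fit_band_fractions(x, y, BAND_CENTERS).items():
    print(f"{name}: {fraction:.2f}")
```

With real spectra, overlapping bands make the fit sensitive to noise and to the chosen centres, so the second-derivative or deconvolution step is normally used first to decide how many bands to include and where.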

Protein-Ligand Interaction Studied by UV-Vis Spectroscopy

This method detects binding-induced changes in a protein's UV absorption spectrum [28].

Sample Preparation:

- Prepare a highly purified protein solution in a suitable buffer. The buffer should not absorb significantly in the UV range; avoid buffers containing azides or high concentrations of amines.

- The optimal protein concentration is typically in the range of 0.1-1 mg/mL to ensure the absorbance at 280 nm is within the linear range of the instrument (0.1-1.0 AU).

- Prepare a concentrated stock solution of the ligand in the same buffer or a compatible solvent (e.g., DMSO, ensuring the final DMSO concentration is ≤1%).

Instrument Setup:

- Use a dual-beam UV-Vis spectrophotometer with matched quartz cuvettes (1 cm path length).

- Set the scanning speed to medium or slow (e.g., 60-120 nm/min) and the data interval to 0.5-1 nm for sufficient spectral detail.

- Set the wavelength range from 240 nm to 350 nm to capture protein absorption and potential ligand-related shifts.

Titration Experiment:

- Place the protein solution in the sample cuvette and the reference buffer in the reference cuvette.

- Acquire a baseline-corrected spectrum of the protein alone.

- Add small, incremental volumes of the ligand stock solution to the sample cuvette, mixing thoroughly after each addition. Add a corresponding volume of pure buffer to the reference cuvette to maintain matched conditions.

- After each addition and mixing step, allow the solution to equilibrate for 1-2 minutes, then record the spectrum.

- Continue the titration until no further spectral changes are observed, indicating binding saturation.

Data Analysis:

- Note the change in absorption intensity (hypochromicity or hyperchromicity) and/or the shift in the wavelength of maximum absorption (λmax).

- Plot the change in absorbance (ΔA) at a specific wavelength (e.g., 280 nm) against the ligand concentration.

- Fit the binding isotherm to an appropriate model (e.g., 1:1 binding) to calculate the dissociation constant (Kd).
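A minimal sketch of the 1:1 fit is shown below. It assumes the free ligand concentration is approximately equal to the total ligand added (ligand in excess over protein) and that absorbances have already been corrected for dilution. Under those assumptions ΔA = ΔAmax·[L]/(Kd + [L]). To stay dependency-light, the fit scans a grid of Kd values and solves for ΔAmax analytically at each one; a nonlinear least-squares routine would be the more usual choice. All numbers are synthetic.

```python
import numpy as np


def fit_one_site(ligand, delta_a, kd_grid=None):
    """Fit dA = dA_max * L / (Kd + L); returns (Kd, dA_max)."""
    ligand = np.asarray(ligand, dtype=float)
    delta_a = np.asarray(delta_a, dtype=float)
    if kd_grid is None:
        # Candidate Kd values in the same concentration units as `ligand`.
        kd_grid = np.logspace(-2, 3, 2001)
    best = None
    for kd in kd_grid:
        occupancy = ligand / (kd + ligand)
        # For a fixed Kd the best dA_max has a closed-form least-squares solution.
        da_max = occupancy @ delta_a / (occupancy @ occupancy)
        sse = float(np.sum((delta_a - da_max * occupancy) ** 2))
        if best is None or sse < best[0]:
            best = (sse, kd, da_max)
    return best[1], best[2]


# Synthetic titration: Kd = 8 (e.g. micromolar), dA_max = 0.12 absorbance units.
ligand = np.array([1.0, 2.0, 5.0, 10.0, 20.0, 50.0, 100.0])
delta_a = 0.12 * ligand / (8.0 + ligand)
kd, da_max = fit_one_site(ligand, delta_a)
print(f"Kd ~ {kd:.2f}, dA_max ~ {da_max:.3f}")
```

The titration should extend well beyond Kd, as the saturation criterion in the protocol implies; otherwise Kd and ΔAmax are strongly correlated and neither is well determined.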

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Reagents and Materials for Spectroscopic Protein Analysis

| Item | Function/Application | Technical Notes |
|---|---|---|
| Deuterated Solvents (e.g., D₂O) | NMR solvent; minimizes interfering ¹H signals, allows exchangeable proton studies [28] | High isotopic purity (>99.9%) is critical; store under inert atmosphere |
| ATR Crystals (ZnSe, Diamond, Ge) | FTIR sampling; internal reflection element for attenuated total reflectance measurement [29] | Diamond is most durable; ZnSe offers best general performance; clean thoroughly between uses |
| Quartz Cuvettes | UV-Vis sample holder; transparent down to 190 nm UV range [28] | Use for UV measurements; ensure pathlength is appropriate for sample concentration |
| Chaotropes & Stabilizers | Control protein folding state; induce unfolding for stability studies | Urea, guanidine HCl (chaotropes); Sucrose, trehalose (stabilizers) |
| Isotope-Labeled Nutrients (¹⁵N, ¹³C) | NMR; produce labeled proteins for structural studies, simplifying complex spectra [28] | Used in bacterial/yeast expression systems; significantly increases cost |
| Buffer Components | Maintain protein stability and pH; mimic physiological conditions | Phosphate, HEPES, Tris; avoid IR-absorbing buffers (e.g., citrate) for FTIR |

NMR, UV-Vis, and IR spectroscopy provide a complementary toolkit for protein and biomarker analysis, each operating on distinct physical principles and offering unique insights. NMR excels in providing atomic-resolution structural details, UV-Vis offers simplicity for quantification and interaction studies, and FTIR is powerful for probing secondary structure and conformational changes. The distinction between spectroscopy as the science of energy-matter interaction and spectrometry as the practice of spectral measurement underpins the application of these techniques. For drug development professionals, selecting the appropriate technique—or a combination thereof—depends on the specific research question, from initial biomarker discovery and protein characterization to detailed mechanistic studies of drug-target interactions.

To understand the power of mass spectrometry, it is essential to distinguish it from the broader field of spectroscopy. Spectroscopy is the theoretical science studying the absorption and emission of light and other radiation by matter, focusing on the interaction between energy and materials to deduce physical and chemical properties [10]. Spectrometry refers to the practical measurement of these interactions, generating quantitative data about a spectrum [10]. Mass spectrometry (MS) is a prime example of spectrometry, measuring the mass-to-charge ratio (m/z) of ions to identify and quantify molecules within a sample [10].
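To make the m/z quantity concrete, the following minimal sketch computes the m/z values expected for one molecule observed at several charge states. It assumes positive-mode ionization by protonation ([M + zH]ᶻ⁺); the 1000 Da neutral mass is a hypothetical example value.

```python
PROTON_MASS = 1.007276  # Da


def mz_from_mass(neutral_mass: float, charge: int) -> float:
    """m/z of an [M + zH]^z+ ion (assumes protonation in positive mode)."""
    if charge < 1:
        raise ValueError("charge must be a positive integer")
    return (neutral_mass + charge * PROTON_MASS) / charge


def mass_from_mz(mz: float, charge: int) -> float:
    """Invert the relationship: recover the neutral mass from an observed m/z."""
    if charge < 1:
        raise ValueError("charge must be a positive integer")
    return charge * (mz - PROTON_MASS)


# Hypothetical 1000 Da peptide observed at charge states 1 to 3.
for z in (1, 2, 3):
    print(z, round(mz_from_mass(1000.0, z), 4))
```

The same molecule therefore appears at different positions on the m/z axis depending on its charge, which is why charge-state assignment precedes mass determination for multiply charged ions.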

Mass spectrometry has revolutionized proteomics and metabolomics by offering unparalleled capabilities for both discovery and validation. The metabolome, representing the total complement of metabolites in a sample, is highly informative as it reflects genetics, diet, drug effects, disease status, and more [30]. Similarly, in proteomics, MS enables detailed analysis of protein expression, modifications, and interactions [31]. This guide explores how targeted and untargeted mass spectrometry strategies provide a comprehensive toolkit for deciphering biological systems, driving innovations in biomarker discovery, drug development, and clinical diagnostics.

Core Analytical Strategies: Targeted vs. Untargeted Workflows

Mass spectrometry-based 'omics' studies primarily follow two analytical strategies: targeted and untargeted. Each has distinct objectives, workflows, and applications.

Untargeted Metabolomics and Proteomics

Untargeted analysis is a global, hypothesis-generating approach designed to comprehensively measure all detectable analytes (metabolites, lipids, or peptides) in a sample [32].

- Objective: To achieve broad coverage for biomarker discovery and novel biological insight without bias toward specific metabolites or pathways [32].

- Workflow: Involves global metabolite extraction, often using biphasic solvents like methanol/chloroform/water to capture a chemically diverse range of molecules [30]. Data acquisition is typically via high-resolution mass spectrometry (HRMS) such as Quadrupole-Time-of-Flight (Q-TOF) or Orbitrap instruments, which provide accurate mass measurements for tentative compound identification [32] [33].

- Data Output: Results in relative quantification of thousands of features, enabling the detection of unexpected metabolic changes [32].
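Tentative identification from accurate mass usually comes down to comparing a measured m/z with theoretical values inside a parts-per-million (ppm) tolerance. The sketch below shows that comparison; the candidate names and m/z values are hypothetical, and the 5 ppm default tolerance is an assumption for illustration, not a setting taken from the cited workflows.

```python
def ppm_error(measured_mz: float, theoretical_mz: float) -> float:
    """Signed mass error in parts per million."""
    return (measured_mz - theoretical_mz) / theoretical_mz * 1e6


def tentative_matches(measured_mz, candidates, tol_ppm=5.0):
    """Return (name, ppm error) pairs within tolerance, best match first.

    `candidates` maps a compound name to its theoretical m/z.
    """
    hits = []
    for name, theoretical_mz in candidates.items():
        err = ppm_error(measured_mz, theoretical_mz)
        if abs(err) <= tol_ppm:
            hits.append((name, err))
    return sorted(hits, key=lambda hit: abs(hit[1]))


# Hypothetical candidate list and measured feature.
candidates = {"compound_A": 181.0707, "compound_B": 181.0860}
matches = tentative_matches(181.0712, candidates)
print(matches)  # only compound_A falls within 5 ppm
```

A mass match alone remains tentative, since isomers share the same exact mass; confirmation typically needs fragmentation spectra or retention-time evidence.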

Targeted Metabolomics and Proteomics

Targeted analysis is a hypothesis-driven approach focused on the precise measurement of a predefined set of analytes [32].

- Objective: To achieve highly accurate and reproducible absolute quantification of specific compounds, often for validation purposes [32].

- Workflow: Relies on optimized sample preparation for specific metabolites, frequently using isotopically labeled internal standards to correct for matrix effects and ionization efficiency variations [30] [32]. Data acquisition commonly employs triple quadrupole (QQQ) mass spectrometers operating in Selected/Multiple Reaction Monitoring (SRM/MRM) mode, which offers high sensitivity, specificity, and a broad dynamic range [33].

- Data Output: Provides absolute concentrations for a typically smaller set of analytes (e.g., ~20 in many protocols), facilitating robust statistical comparisons between sample groups [32].
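The role of the isotopically labeled internal standard can be illustrated with a single-point calculation: the analyte concentration is estimated from the ratio of analyte to standard peak areas. This is a simplified sketch, assuming the analyte and its labeled standard respond identically (response factor of 1) unless stated otherwise; the peak areas and concentrations are hypothetical.

```python
def quantify_with_internal_standard(analyte_area: float,
                                    istd_area: float,
                                    istd_conc: float,
                                    response_factor: float = 1.0) -> float:
    """Estimate analyte concentration from the analyte/standard area ratio.

    response_factor is the analyte response relative to the standard;
    1.0 assumes the labeled standard behaves identically to the analyte.
    """
    if istd_area <= 0 or response_factor <= 0:
        raise ValueError("istd_area and response_factor must be positive")
    return (analyte_area / istd_area) * istd_conc / response_factor


# Hypothetical peak areas with a 2.0 uM labeled standard spiked in.
clean = quantify_with_internal_standard(150_000, 100_000, 2.0)

# The same sample with 40% ion suppression affecting both species equally.
suppressed = quantify_with_internal_standard(150_000 * 0.6, 100_000 * 0.6, 2.0)

print(clean, suppressed)  # the ratio, and so the estimate, is unchanged
```

Because suppression scales both peak areas by the same factor, it cancels in the ratio, which is the reason co-eluting labeled standards correct for matrix effects.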

Strategic Comparison and Selection

The choice between targeted and untargeted approaches involves trade-offs, summarized in the table below.

Table 1: Comparison of Untargeted and Targeted Mass Spectrometry Approaches

| Feature | Untargeted Approach | Targeted Approach |
|---|---|---|
| Scope | Global, comprehensive analysis of all detectable analytes [32] | Focused analysis of a predefined set of characterized analytes [32] |
| Hypothesis | Hypothesis-generating [32] | Hypothesis-driven [32] |
| Identification | Qualitative identification and relative quantification of thousands of metabolites [32] | Absolute quantification of known metabolites [32] |
| Quantification | Relative quantification [32] | Absolute quantification using internal standards [30] [32] |
| Throughput | High-throughput for discovery [32] | High-throughput for validation [33] |
| Key Advantage | Unbiased discovery of novel biomarkers and pathways [32] | High sensitivity, specificity, and precision for validation [32] |
| Primary Limitation | Complex data processing, unknown metabolite identification challenges [32] | Limited to known metabolites, requiring a priori knowledge [32] |

The Mass Spectrometry Instrumentation Toolkit

A range of mass spectrometers, each with unique strengths, is deployed in proteomics and metabolomics.

Table 2: Comparison of Mass Spectrometry Instruments for Proteomics and Metabolomics

| Instrument | Mass Analyzer Type | Key Features | Strengths | Ideal Use Cases |
|---|---|---|---|---|
| TSQ Quantum Access MAX [33] | Triple Quadrupole | H-SRM, fast polarity switching | High sensitivity and selectivity for quantification; robust LC-MS/MS | Targeted quantification, clinical assays, environmental monitoring [33] |
| Orbitrap Fusion Lumos [33] | Quadrupole + Orbitrap + Linear Ion Trap | Ultrafast MSn, multiple fragmentation modes | Versatile; excellent for structural analysis; ultrahigh resolution | Advanced proteomics, post-translational modifications, metabolomics [33] |
| Agilent 6540 UHD Q-TOF [33] | Quadrupole + Time-of-Flight | Jet Stream ESI, high mass accuracy | Good resolution; accurate mass; fast MS/MS | Small molecule identification, metabolomics, fast screening [33] |
| Q Exactive Plus [33] | Quadrupole + Orbitrap | High resolution (up to 280,000), HCD fragmentation | Excellent for both quantification and identification; high resolution | Quantitative proteomics, lipidomics, complex mixture analysis [33] |
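The resolution figures in the table can be related to a practical question: can two ions of nearly equal m/z be told apart? A rough sketch using the definition R = m/Δm follows. The two ion masses are hypothetical, and the comparison ignores details such as how each vendor specifies resolution and how it varies across the m/z range.

```python
def required_resolving_power(mz_a: float, mz_b: float) -> float:
    """Approximate resolving power R = m / delta_m needed to separate two ions."""
    delta = abs(mz_a - mz_b)
    if delta == 0:
        raise ValueError("ions with identical m/z cannot be resolved by mass")
    return ((mz_a + mz_b) / 2) / delta


def can_resolve(instrument_resolution: float, mz_a: float, mz_b: float) -> bool:
    """True if the nominal instrument resolution meets the requirement."""
    return instrument_resolution >= required_resolving_power(mz_a, mz_b)


# Two hypothetical near-isobaric ions 5 mDa apart around m/z 400.
needed = required_resolving_power(400.2000, 400.2050)
print(round(needed))                          # roughly 80,000
print(can_resolve(280_000, 400.2000, 400.2050))
print(can_resolve(40_000, 400.2000, 400.2050))
```

Under these assumptions, a setting of 280,000 would separate the pair comfortably, while a nominal resolution of 40,000 would not.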

Ionization techniques are critical for generating ions from liquid or solid samples. Key methods include:

- Electrospray Ionization (ESI): Ideal for liquid chromatography (LC) coupling and analyzing large, non-volatile molecules like proteins and peptides [31].

- Nano-ESI: An enhancement of ESI using finer capillaries, offering improved sensitivity for limited samples [31].

- Matrix-Assisted Laser Desorption/Ionization (MALDI): Often used for surface analysis and imaging, allowing for spatial mapping of molecules in tissues [31].

Integrated and Advanced Methodologies

The SQUAD Workflow: Bridging Discovery and Validation

A significant innovation is the Simultaneous Quantitation and Discovery (SQUAD) approach, which combines targeted and untargeted workflows into a single experiment [34]. SQUAD leverages advanced mass spectrometers like the Orbitrap Exploris MS, which can perform full-scan MS1 profiling for untargeted discovery while simultaneously conducting targeted MS2 experiments for precise quantification in the same injection [35]. This hybrid method allows researchers to gain deep biological knowledge without compromising on quantitative rigor, saving time and resources [34] [35].

Sample Preparation and Experimental Design

Robust sample preparation is foundational to successful MS analysis. Key steps include:

- Sample Collection and Quenching: Rapid quenching of metabolism (e.g., flash-freezing in liquid nitrogen) is crucial for tissues and cells to immediately halt enzymatic activity and preserve the in vivo metabolic state [30].

- Metabolite Extraction: Liquid-liquid extraction is common. For broad coverage in untargeted studies, biphasic solvents like methanol/chloroform/water are used to extract both polar metabolites (into the methanol/water phase) and non-polar metabolites (into the chloroform phase) [30].

- Internal Standards: Adding known amounts of isotopically labeled standards prior to extraction is vital in targeted workflows. They correct for losses during preparation and variability in instrument response, enabling absolute quantification [30].
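When several calibrators are prepared with the same amount of labeled standard, absolute quantification is commonly done by fitting a calibration line of area ratio against concentration and reading unknowns off that line. The sketch below uses an ordinary least-squares fit written out by hand; the calibrator values are hypothetical, and real assays may need weighting or a non-linear model.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit y = slope * x + intercept."""
    n = len(xs)
    if n < 2 or n != len(ys):
        raise ValueError("need at least two paired calibration points")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def concentration_from_ratio(area_ratio, slope, intercept):
    """Invert the calibration line to get concentration from an area ratio."""
    return (area_ratio - intercept) / slope


# Hypothetical calibrators: concentration (uM) vs analyte/standard area ratio.
cal_conc = [1.0, 2.0, 5.0, 10.0]
cal_ratio = [0.5, 1.0, 2.5, 5.0]

slope, intercept = fit_line(cal_conc, cal_ratio)
unknown = concentration_from_ratio(1.75, slope, intercept)
print(round(unknown, 3))
```

Using the area ratio rather than the raw analyte area on the y-axis is what carries the internal-standard correction into the calibration.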

Essential Research Reagents and Materials

Successful MS experiments rely on a suite of high-quality reagents and materials.

Table 3: Key Research Reagent Solutions for Mass Spectrometry

| Reagent/Material | Function | Application Examples |
|---|---|---|
| Isotopically Labeled Internal Standards (e.g., ¹³C, ¹⁵N) [30] | Enables accurate absolute quantification by correcting for matrix effects and analytical variability. | Quantification of specific amino acids, lipids, or drugs in plasma [30]. |
| LC-MS Grade Solvents (e.g., methanol, acetonitrile, water) [30] | High-purity solvents for mobile phases and sample extraction to minimize background noise and ion suppression. | Reversed-phase liquid chromatography; metabolite extraction using methods like Bligh & Dyer [30]. |
| Chromatography Columns (e.g., C18, HILIC) | Separates complex mixtures of peptides or metabolites prior to MS analysis to reduce ion suppression and isobaric interferences. | C18 for proteomics and lipidomics; HILIC for polar metabolomics. |
| Derivatization Reagents (e.g., for GC-MS) | Chemically modifies analytes to enhance volatility, stability, or ionization efficiency. | Silylation of metabolites for Gas Chromatography-MS analysis. |

Workflow Visualization

[Diagram: integrated SQUAD workflow for mass spectrometry analysis]

Applications and Future Directions

Mass spectrometry has become indispensable in biomedical research and drug development. Key applications include:

- Biomarker Discovery: Untargeted metabolomics and proteomics identify novel diagnostic, prognostic, or predictive biomarkers for diseases like cancer, diabetes, and neurodegenerative disorders [36] [31].

- Drug Metabolism and Pharmacokinetics (DMPK): Targeted MS assays quantify drug compounds and their metabolites in biological fluids to study absorption, distribution, metabolism, and excretion (ADME) [31].

- Precision Medicine: FTIR spectroscopy, combined with MS, shows promise for rapid patient stratification, such as predicting outcomes in critically ill patients, facilitating faster clinical decision-making [36].

Future directions point toward deeper integration and higher throughput. The Orbitrap Astral MS, for example, demonstrates emerging capabilities by providing over 90% MS2 coverage of all compounds in a sample within a single run, nearly eliminating the trade-off between coverage and analytical depth [35]. The continued convergence of targeted and untargeted paradigms, along with advancements in ambient ionization and miniaturization, will further solidify the role of MS as a cornerstone of analytical science [31] [33].

The biopharmaceutical industry is navigating a period of unprecedented innovation, driven by an increasing understanding of disease biology and the rise of novel therapeutic modalities [37]. In this complex landscape, advanced analytical techniques are not merely supportive tools but critical enablers for drug discovery, development, and quality control. The distinction between spectroscopy—the theoretical study of the interaction between radiated energy and matter—and spectrometry—the practical measurement of a specific spectrum to obtain quantifiable results—is foundational [10] [1]. This whitepaper explores two powerful techniques embodying this principle: Hybrid Liquid Chromatography-Tandem Mass Spectrometry (LC-MS/MS), a cornerstone of quantitative spectrometry, and A-TEEM (Absorbance-Transmission and Excitation-Emission Matrix), an advanced form of fluorescence spectroscopy. Framed within the broader thesis of spectrometry and spectroscopy research, this guide details their operational principles, methodologies, and applications, providing drug development professionals with the insights needed to leverage these technologies for complex analytical challenges.

Core Principles: Spectrometry vs. Spectroscopy

While the terms are often used interchangeably, understanding their distinct roles is crucial for selecting the appropriate analytical strategy.

Spectroscopy is the science of studying how radiated energy and matter interact. It involves the splitting of light into its constituent wavelengths to create a spectrum, which can be analyzed to gather information about a sample's properties, such as its composition or structure [10]. It is primarily a theoretical and observational science.

Spectrometry is the methodological application that deals with the measurement of a specific spectrum. It uses instruments to produce quantifiable data, such as the intensity of radiation at different wavelengths or mass-to-charge ratios [10] [1].

In essence, spectroscopy provides the theoretical framework, while spectrometry provides the practical measurement tools and data [1]. Mass spectrometry is a prime example of a spectrometry technique, where the mass-to-charge ratio (m/z) of ions is measured to identify and quantify molecules in a sample [38].

Hybrid LC-MS/MS: A Workhorse for Bioanalysis