Spectroscopic Analysis in 2025: A Comprehensive Guide for Research and Drug Development

Abstract

This article provides a contemporary overview of spectroscopic analysis, tailored for researchers and drug development professionals. It covers foundational principles and explores the latest instrumental advances, including molecular rotational resonance (MRR) and quantum cascade laser (QCL) microscopy. The scope extends to practical methodologies for biomedical applications, strategies for troubleshooting and optimizing analyses with new machine learning tools, and frameworks for validating methods and comparing techniques. Designed as a holistic resource, it integrates the most recent developments from 2025 to equip scientists with the knowledge to leverage spectroscopy effectively in their work.

Core Principles and 2025's Frontier: Understanding the Spectrum of Modern Techniques

Light-matter interaction forms the foundational principle of spectroscopic analysis, a critical methodology for researchers and drug development professionals seeking to understand material composition, molecular structure, and dynamic processes at the most fundamental level. This interaction encompasses the ways in which electromagnetic radiation couples with matter, resulting in absorption, emission, or scattering phenomena that provide characteristic fingerprints for qualitative identification and quantitative measurement [1]. The field has evolved dramatically from early classical descriptions to sophisticated quantum mechanical models that account for the complex interplay between photons and materials at nanoscale dimensions [2]. For analytical scientists, understanding these fundamental mechanisms is not merely academic—it directly enables the development of more sensitive detection methods, enhances analytical precision, and unlocks new capabilities for characterizing complex biological systems and pharmaceutical compounds.

Recent theoretical advances have revealed that crystal symmetry and quantum confinement effects significantly influence light-matter interactions, with lower-symmetry crystals demonstrating enhanced capabilities for trapping light at nanometer scales [1]. Simultaneously, experimental breakthroughs have enabled unprecedented control over the magnetic component of light, which had traditionally been overlooked in favor of electric field interactions [3]. These developments are reshaping the analytical landscape, providing researchers with new tools to probe molecular systems with greater specificity and under previously inaccessible conditions. This technical guide examines both established and emerging concepts in light-matter interaction, with particular emphasis on their practical application in spectroscopic analysis across drug discovery and materials characterization.

Fundamental Concepts and Theoretical Framework

Classical and Quantum Descriptions

The theoretical description of light-matter interaction spans multiple scales, from classical electrodynamics governing macroscopic phenomena to quantum electrodynamics (QED) essential for understanding nanoscale and molecular interactions. In the classical framework, light propagates as electromagnetic waves characterized by oscillating electric and magnetic fields, while matter responds through its dielectric function. This description adequately explains many spectroscopic phenomena including refraction, reflection, and basic absorption processes. However, for interactions at the molecular level or when quantum effects become significant, a quantum mechanical treatment becomes necessary [2].

In the QED framework, both light and matter are quantized, with photons representing discrete energy packets and matter described by quantum states. When light and matter interact strongly, they form hybrid states known as polaritons, which inherit properties from both constituents [2]. These hybrid states enable the modification of material properties by engineering the electromagnetic environment, forming the basis of the emerging cavity-materials-engineering paradigm [2]. The formation of polaritons is particularly significant in analytical spectroscopy because it can alter energy transfer pathways, modify reaction rates, and enable new detection mechanisms not possible through conventional means.

The Overlooked Magnetic Component

Conventional spectroscopic analysis has predominantly focused on the interaction between matter and the electric field component of light, with the magnetic component largely neglected. However, recent research has demonstrated that magnetic light-matter interactions play pivotal roles in various optical processes including chiral light-matter interactions, photon-avalanching, and forbidden photochemistry [3]. The magnetic field of light can be strategically manipulated at nanoscale dimensions using specially designed plasmonic nano-antennas that spatially decouple electric and magnetic fields, creating isolated magnetic "hot spots" with minimal electric field [3].

This capability has profound implications for spectroscopic analysis, particularly for studying materials with magnetic dipole transitions. For example, trivalent europium ions (Eu³⁺) exhibit both purely electric and magnetic dipolar transitions, enabling selective excitation through either component depending on the experimental configuration [3]. This selectivity provides analytical chemists with an additional dimension for probing complex systems, potentially reducing interference and increasing specificity in the analysis of pharmaceutical compounds and biological macromolecules.

Symmetry Considerations in Light-Matter Interactions

Crystal symmetry fundamentally governs how materials interact with light, with lower-symmetry crystals often demonstrating enhanced light-matter interactions [1]. When light couples to lattice vibrations (phonons) in crystals with broken symmetry, the resulting phonon polaritons can trap light in extremely small spaces, on the order of a nanometer [1]. This confinement, roughly 80,000 times smaller than the width of a human hair, enables unprecedented control over light at the nanoscale.

For analytical applications, this symmetry dependence means that polymorphic forms of pharmaceutical compounds may exhibit dramatically different spectroscopic signatures and responses, providing opportunities for distinguishing between crystal forms that are otherwise challenging to differentiate. The relationship between symmetry and light confinement also enables the development of enhanced spectroscopic substrates that can boost signal intensity for trace analysis in drug development workflows.

Advanced Experimental Methodologies

Thermo-Optical Characterization for Process Analysis

The interaction between near-infrared laser radiation and nano-additivated polymers across different states of matter represents a significant methodological advancement for process analysis and quality control. Researchers have developed sophisticated approaches for simultaneous NIR measurement of reflection and transmission in polymer powders, enabling thermo-optical analysis under conditions that closely mimic actual manufacturing environments [4].

A key methodology employs a double integrating sphere setup to determine attenuation coefficients and penetration depths from the measured transmittance and reflectance across all relevant states of matter [4]. This approach allows researchers to quantitatively evaluate the effects of feedstock particle size, nano-additivation method, and nanoparticle quantity on optical properties. The measured optical parameters can be qualitatively correlated with the depth of fusion in processed materials, providing critical quality control metrics for pharmaceutical manufacturing and advanced materials development.

Table 1: Quantitative Parameters in Thermo-Optical Characterization

| Parameter | Analytical Significance | Measurement Technique |
|---|---|---|
| Attenuation Coefficient | Quantifies light absorption and scattering properties | Calculated from transmittance and reflectance measurements |
| Penetration Depth | Determines process depth in laser-based manufacturing | Derived from attenuation coefficients |
| NIR Reflectance | Indicates material interaction with specific laser wavelengths | Double integrating sphere measurement |
| NIR Transmittance | Complementary to reflectance for complete optical characterization | Simultaneous measurement with reflectance |

Nanoscale Magnetic Field Mapping

Advanced nanoscale mapping of magnetic light-matter interactions enables unprecedented analytical capabilities for characterizing materials with magnetic dipole transitions. The experimental methodology involves several sophisticated components:

Plasmonic Nano-antenna Design: Fabrication of an aluminum nanodisk (50 nm thickness, 550 nm diameter) optimized to spatially decouple electric and magnetic components of localized plasmonic fields under specific excitation wavelengths (527.5 nm for magnetic dipole transitions and 532 nm for electric dipole transitions) [3].

Near-Field Scanning Optical Microscopy (NSOM): Integration of the nano-antenna with an NSOM tip enables deterministic positioning within nanometers of the sample, typically europium-doped yttrium oxide nanoparticles approximately 150 nm in diameter [3].

Selective Excitation: Using a finely filtered supercontinuum laser source to selectively target either magnetic (⁷F₀→⁵D₁ at 527.5 nm) or electric (⁷F₁→⁵D₁ at 532 nm) dipolar transitions in Eu³⁺ ions [3].

Spatial Mapping: Scanning the plasmonic nano-antenna in the plane of the doped nanoparticle while collecting luminescence signals at specific emission wavelengths (593 nm for MD transitions and 611 nm for ED transitions) to construct two-dimensional images of electric and magnetic field distributions [3].

This methodology provides researchers with the capability to separately map electric and magnetic interactions at subwavelength scales, offering new dimensions for material characterization in pharmaceutical analysis and biomolecular research.

Computational Approaches to Light-Matter Interaction

Density functional theory (DFT) provides powerful computational methodologies for predicting and understanding light-matter interactions in complex materials. Recent studies have applied DFT to determine light-matter interaction and thermal conversion efficiency in K₂TlAsZ₆ double perovskites, reporting direct bandgaps ranging from 3.25 eV (Z = F) to 0.37 eV (Z = I) with significant UV absorption in the optical spectra [5].

The computational protocol involves:

Structural Optimization: Using the FP-LAPW+lo method to confirm structural and thermodynamic stability through formation energy calculations and Goldschmidt's tolerance factor [5].

Electronic Structure Calculation: Employing TB-mBJ+SOC potential to determine electronic band structures and density of states [5].

Optical Property Calculation: Computing dielectric functions, absorption coefficients, and other optical parameters from the electronic structure [5].

Thermoelectric Performance Evaluation: Applying Boltzmann transport theory to assess thermoelectric performance versus chemical potential, showing promising ZT values approaching 1.0 at 1000 K [5].

These computational methodologies enable researchers to predict material properties and optimize analytical approaches before undertaking experimental work, significantly accelerating the development of new spectroscopic methods and materials for pharmaceutical applications.
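
Two quantities named in this protocol have compact textbook definitions, written out here for reference. The averaging of the two B-site radii is a common convention for double perovskites of the form A₂BB′X₆ and is an assumption here; the text does not state which variant was used in [5].

```latex
% Goldschmidt tolerance factor for a double perovskite A_2 B B' X_6
% r_A, r_B, r_{B'}, r_X: ionic radii of the A-site, the two B-site, and the halide ions
t = \frac{r_A + r_X}{\sqrt{2}\,\left(\bar{r}_B + r_X\right)},
\qquad
\bar{r}_B = \frac{r_B + r_{B'}}{2}

% Dimensionless thermoelectric figure of merit
% S: Seebeck coefficient, \sigma: electrical conductivity,
% \kappa: total thermal conductivity, T: absolute temperature
ZT = \frac{S^{2}\,\sigma\,T}{\kappa}
```

For K₂TlAsZ₆ the A site is K, the B sites are Tl and As, and X is the halide Z. Values of t close to 1 are conventionally read as favoring a stable perovskite framework.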

Instrumentation and Technical Implementation

Advanced Spectroscopic Instrumentation

The field of spectroscopic instrumentation continues to evolve rapidly, with recent introductions demonstrating enhanced capabilities for both laboratory and field applications. The 2025 review of spectroscopic instrumentation reveals several key trends, including a clear division between laboratory and field/portable instruments and the increasing importance of miniaturization without compromising analytical performance [6].

Table 2: Recent Advances in Spectroscopic Instrumentation (2024-2025)

| Technique | Instrument | Key Features | Applications |
|---|---|---|---|
| Fluorescence Spectroscopy | FS5 v2 Spectrofluorometer (Edinburgh Instruments) | Increased performance and capabilities | Photochemistry and photophysics research |
| UV-Vis-NIR Spectroscopy | NaturaSpec Plus (Spectral Evolution) | Field-based with real-time video and GPS coordinates | Field documentation and environmental analysis |
| IR Microscopy | LUMOS II ILIM (Bruker) | QCL-based imaging from 1800-950 cm⁻¹ at 4.5 mm²/s | High-speed chemical imaging of pharmaceuticals |
| Raman Spectroscopy | TaticID-1064ST (Metrohm) | Handheld with onboard camera and note-taking | Hazardous materials response and quality control |
| Microwave Spectroscopy | Broadband chirped pulse spectrometer (BrightSpec) | First commercial instrument using this technique | Unambiguous determination of molecular structure |

Experimental Protocol: Double Integrating Sphere Measurement

For researchers implementing thermo-optical characterization of materials, the following detailed protocol enables accurate measurement of light-matter interaction parameters:

Objective: Simultaneously measure transmission and reflection of polymer powders and nanocomposites under conditions relevant to laser powder bed fusion processes.

Materials and Equipment:

- Double integrating sphere setup (e.g., Ulbricht spheres)

- NIR laser source (e.g., 808 nm diode laser)

- Spectrophotometer for validation measurements

- Polymer powders (e.g., Polyamide 12)

- Nano-additives (e.g., carbon black nanoparticles)

- Powder handling and containment fixtures

Procedure:

Sample Preparation:

- Prepare polymer powder samples with controlled particle size distributions

- Surface-coat powders with carbon black nanoparticles using standardized additivation methods

- Compact powders to reproducible densities matching process conditions

Instrument Calibration:

- Perform baseline correction with reference standards

- Validate sphere alignment and detector responses

- Confirm laser wavelength and power stability

Measurement Sequence:

- Place sample between integrating spheres

- Illuminate with NIR laser at predetermined power settings

- Simultaneously measure diffuse reflection (Rₑ) and total transmission (Tₑ)

- Calculate absorption as Aₑ = 100 - Rₑ - Tₑ, with Aₑ, Rₑ, and Tₑ all expressed as percentages of the incident power

- Derive attenuation coefficient using Kubelka-Munk theory

Data Validation:

- Compare results with commercial spectrophotometer measurements

- Correlate optical parameters with depth of fusion in processed specimens

- Perform statistical analysis on replicate measurements

This methodology provides researchers with quantifiable parameters for comparing material behavior under process-relevant conditions, enabling predictive modeling of laser-material interactions in pharmaceutical processing and additive manufacturing.
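
The evaluation steps in the measurement sequence can be sketched in a few lines. The example below is a minimal illustration, not the procedure of [4]: it applies the energy balance from the protocol and then inverts the standard two-flux Kubelka-Munk expressions for a free-standing layer to recover an absorption coefficient K and a scattering coefficient S. The function names, the synthetic layer (K = 2 mm⁻¹, S = 5 mm⁻¹, 0.3 mm thick), and the reading of penetration depth as the reciprocal of the effective attenuation are all assumptions made for illustration; surface reflections and sphere cross-talk are ignored.

```python
import math


def absorptance_percent(reflectance_pct: float, transmittance_pct: float) -> float:
    """Energy balance from the protocol: A = 100 - R - T (all in percent)."""
    absorptance = 100.0 - reflectance_pct - transmittance_pct
    if absorptance < 0.0:
        raise ValueError("R + T exceeds 100 %; check the calibration")
    return absorptance


def km_forward(k: float, s: float, thickness: float) -> tuple[float, float]:
    """Two-flux Kubelka-Munk reflectance and transmittance (fractions) of a free layer."""
    a = 1.0 + k / s
    b = math.sqrt(a * a - 1.0)
    sinh_term = math.sinh(b * s * thickness)
    cosh_term = math.cosh(b * s * thickness)
    denominator = a * sinh_term + b * cosh_term
    return sinh_term / denominator, b / denominator


def km_inverse(reflectance: float, transmittance: float, thickness: float) -> tuple[float, float]:
    """Recover K and S (per unit length) from R and T fractions of an absorbing layer."""
    a = (1.0 + reflectance**2 - transmittance**2) / (2.0 * reflectance)
    b = math.sqrt(a * a - 1.0)  # b == 0 for a non-absorbing layer, which this sketch does not handle
    coth_arg = (1.0 - a * reflectance) / (b * reflectance)  # equals coth(b * S * d)
    s = 0.5 * math.log((coth_arg + 1.0) / (coth_arg - 1.0)) / (b * thickness)
    return s * (a - 1.0), s


def effective_attenuation(k: float, s: float) -> float:
    """Decay constant of the diffuse flux inside the layer: sqrt(K * (K + 2S))."""
    return math.sqrt(k * (k + 2.0 * s))


# Round trip on a synthetic layer (hypothetical values, units of 1/mm and mm)
r, t = km_forward(k=2.0, s=5.0, thickness=0.3)
k_fit, s_fit = km_inverse(r, t, thickness=0.3)
mu_eff = effective_attenuation(k_fit, s_fit)
print(f"R = {100 * r:.1f} %, T = {100 * t:.1f} %, A = {absorptance_percent(100 * r, 100 * t):.1f} %")
print(f"K = {k_fit:.3f} 1/mm, S = {s_fit:.3f} 1/mm, 1/mu_eff = {1.0 / mu_eff:.3f} mm")
```

The round trip only confirms that the inversion is self-consistent. Real powder-bed data would typically also need corrections for the sample holder and for light lost at the sphere ports.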

Research Reagent Solutions and Materials

Successful investigation of light-matter interactions requires carefully selected materials and reagents optimized for specific analytical applications. The following table details essential research solutions for implementing the experimental methodologies discussed in this guide.

Table 3: Essential Research Reagent Solutions for Light-Matter Interaction Studies

| Material/Reagent | Function | Application Examples |
|---|---|---|
| Polyamide 12 Powders | Model polymer substrate for thermo-optical studies | Laser powder bed fusion process development [4] |
| Carbon Black Nanoparticles | Absorption-enhancing nano-additives | Tailoring optical properties in polymer nanocomposites [4] |
| Yttrium Oxide Nanoparticles | Host material for lanthanide ion dopants | Magnetic light-matter interaction studies [3] |
| Europium-doped Nanoparticles | Solid-state emitters with MD and ED transitions | Nanoscale mapping of electric and magnetic fields [3] |
| K₂TlAsZ₆ Double Perovskites | Model materials for computational validation | DFT studies of light-matter interaction and thermal conversion [5] |
| Aluminum Nanodisks | Plasmonic nano-antenna components | Spatial decoupling of electric and magnetic fields [3] |

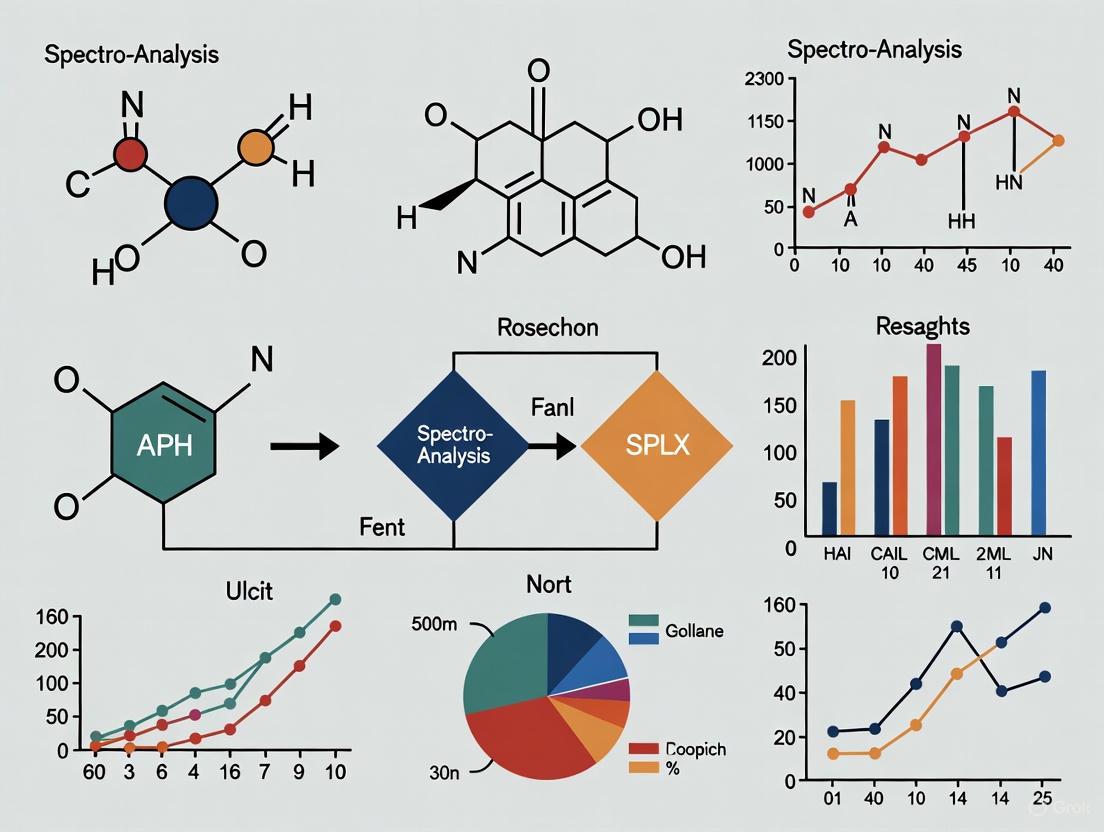

Visualizing Experimental Workflows

The following diagrams illustrate key experimental workflows and conceptual frameworks for studying light-matter interactions, providing researchers with visual references for implementing these methodologies.

Diagram 1: Double Integrating Sphere Measurement

Diagram 2: Plasmonic Nano-antenna Measurement

Applications in Drug Discovery and Pharmaceutical Research

The principles of light-matter interaction find critical applications throughout drug discovery and pharmaceutical development, enabling key analytical capabilities that accelerate research and improve outcomes. The global molecular spectroscopy market, valued at USD 7.15 billion in 2025 and projected to reach USD 9.04 billion by 2034, reflects the growing importance of these techniques in pharmaceutical applications [7].

Pharmaceutical applications dominate the molecular spectroscopy market, driven by increasing drug development activities and quality control requirements [7]. Spectroscopy provides essential capabilities for identification, stability testing, and purity verification of drug candidates. The growing development of biologics, vaccines, and other complex pharmaceutical products further increases reliance on advanced spectroscopic methods for characterization [7].

The magnetic component of light-matter interactions offers particular promise for pharmaceutical analysis, enabling selective excitation of specific transitions in complex molecules [3]. This selectivity can reduce interference in analytical methods and provide additional dimensions for characterizing complex biological systems and pharmaceutical formulations. Furthermore, the ability to spatially separate electric and magnetic interactions at nanoscale dimensions opens new possibilities for studying drug-target interactions and cellular uptake mechanisms.

Emerging trends in drug discovery for 2025 highlight the growing integration of artificial intelligence and computational approaches with experimental validation methods [8]. Light-matter interactions form the fundamental basis for many of these analytical techniques, including cellular thermal shift assays (CETSA) for target engagement studies and advanced spectroscopic methods for characterizing complex biologics [8]. The continued evolution of spectroscopic instrumentation ensures that these tools will remain essential for pharmaceutical researchers seeking to understand and optimize drug candidates throughout the development pipeline.

Light-matter interaction represents a dynamic and evolving field with profound implications for spectroscopic analysis across drug discovery, materials characterization, and fundamental research. Recent advances in understanding magnetic interactions, symmetry effects, and nanoscale confinement have expanded the analytical toolbox available to researchers, enabling more specific, sensitive, and informative measurements. The integration of theoretical, computational, and experimental approaches provides a comprehensive framework for designing and implementing spectroscopic methods tailored to specific analytical challenges.

For drug development professionals, these advances translate to enhanced capabilities for characterizing complex pharmaceutical compounds, understanding drug-target interactions, and ensuring product quality throughout development and manufacturing. As instrumentation continues to evolve toward more portable and accessible formats while maintaining laboratory-level performance, spectroscopic analysis based on fundamental light-matter interactions will continue to expand its applications across the drug discovery continuum, from initial target identification through manufacturing quality control.

The electromagnetic spectrum provides the fundamental foundation for spectroscopic analysis, enabling researchers to probe molecular and atomic structures through light-matter interactions. This entire spectrum represents a continuum of electromagnetic radiation, traveling in waves and spanning from very long radio waves to very short gamma rays [9]. For research scientists, particularly in drug development and analytical chemistry, understanding this spectrum is crucial for selecting appropriate spectroscopic techniques to solve specific analytical challenges, from determining protein structures to quantifying active pharmaceutical ingredients.

The fundamental principle uniting all spectroscopic methods is that electromagnetic radiation interacts with matter in distinct, measurable ways depending on its energy. These interactions—whether absorption, emission, or scattering—provide characteristic fingerprints of molecular composition, structure, and dynamics [10]. The relationship between wavelength (λ), frequency (f), and photon energy (E) is mathematically defined by: f = c/λ and E = hf, where c is the speed of light in vacuum and h is the Planck constant [10]. This direct relationship means that higher frequency radiation carries more energy, which determines the types of molecular transitions it can probe.
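
As a quick illustration of these two relations, the sketch below converts a wavelength into frequency and photon energy. The function names and the three example wavelengths are chosen here for illustration; the constants are the exact SI values.

```python
# Exact SI constants
C = 299_792_458.0        # speed of light in vacuum, m/s
H = 6.62607015e-34       # Planck constant, J*s
EV = 1.602176634e-19     # joules per electronvolt


def frequency_hz(wavelength_m: float) -> float:
    """f = c / lambda."""
    return C / wavelength_m


def photon_energy_ev(wavelength_m: float) -> float:
    """E = h * f, converted from joules to electronvolts."""
    return H * frequency_hz(wavelength_m) / EV


for label, wavelength in [("UV, 266 nm", 266e-9), ("green, 532 nm", 532e-9), ("mid-IR, 10 um", 10e-6)]:
    print(f"{label}: f = {frequency_hz(wavelength):.3e} Hz, E = {photon_energy_ev(wavelength):.3f} eV")
```

A handy shortcut that follows from the same constants is E (eV) ≈ 1239.84 / λ (nm).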

Our atmosphere creates critical "windows" that affect how we employ different spectral regions. While visible light largely passes through the atmosphere, other regions like X-rays and far-infrared are significantly absorbed, necessitating satellite-based instrumentation or specialized laboratory equipment for certain analyses [9]. This atmospheric filtering is particularly relevant for environmental monitoring and remote sensing applications in research.

The Electromagnetic Spectrum: Regions and Characteristics

The electromagnetic spectrum is systematically divided into regions based on frequency, wavelength, and photon energy, with each region enabling specific spectroscopic techniques. The following table provides a comprehensive overview of these regions and their research applications:

Table 1: Regions of the Electromagnetic Spectrum and Their Research Applications

| Class | Wavelength Range | Frequency Range | Photon Energy Range | Primary Research Applications |
|---|---|---|---|---|
| Gamma Rays | < 10 pm | > 30 EHz | > 124 keV | Nuclear chemistry, Mössbauer spectroscopy, radiation damage studies |
| X-Rays | 10 pm - 10 nm | 30 EHz - 30 PHz | 124 keV - 124 eV | X-ray crystallography, X-ray fluorescence, medical imaging |
| Ultraviolet (UV) | 10 nm - 400 nm | 30 PHz - 750 THz | 124 eV - 3.1 eV | UV-Vis spectroscopy, DNA analysis, protein characterization |
| Visible Light | 400 nm - 700 nm | 750 THz - 430 THz | 3.1 eV - 1.8 eV | Colorimetry, fluorescence spectroscopy, microscopy |
| Infrared (IR) | 700 nm - 1 mm | 430 THz - 300 GHz | 1.8 eV - 1.24 meV | FT-IR, vibrational spectroscopy, chemical fingerprinting |
| Microwaves | 1 mm - 1 m | 300 GHz - 300 MHz | 1.24 meV - 1.24 μeV | Electron paramagnetic resonance, rotational spectroscopy |
| Radio Waves | > 1 m | < 300 MHz | < 1.24 μeV | Nuclear Magnetic Resonance (NMR), MRI |

The energy characteristics of each spectral region determine its interaction with matter. Gamma rays, X-rays, and extreme ultraviolet radiation possess sufficient energy to ionize atoms by ejecting electrons—a property leveraged in analytical techniques like X-ray photoelectron spectroscopy [10] [11]. In contrast, lower-energy radiation such as infrared, microwaves, and radio waves causes non-destructive excitations, including molecular vibrations, rotations, and nuclear spin transitions, making them invaluable for analyzing molecular structure without damaging samples [10].

The boundaries between these regions are not sharply defined but rather represent transitions where properties gradually change. For instance, the distinction between X-rays and gamma rays is based on origin rather than energy: photons generated from nuclear decay are termed gamma rays, while those from electronic transitions involving inner atomic electrons are classified as X-rays [10]. This nuanced understanding is essential for researchers selecting appropriate spectroscopic methods for specific analytical problems.
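
For routine bookkeeping, such as tagging a laser line or a detector range, the conventional wavelength cut-offs can still be encoded as a simple lookup. The sketch below uses the region names and boundaries from the table above; the function name and the half-open treatment of each boundary are assumptions made for illustration, and the lookup cannot capture the origin-based distinction between X-rays and gamma rays.

```python
# (upper wavelength bound in metres, region name), ordered from short to long wavelength
REGIONS = [
    (10e-12, "Gamma Rays"),
    (10e-9, "X-Rays"),
    (400e-9, "Ultraviolet (UV)"),
    (700e-9, "Visible Light"),
    (1e-3, "Infrared (IR)"),
    (1.0, "Microwaves"),
]


def spectral_region(wavelength_m: float) -> str:
    """Return the conventional region for a vacuum wavelength given in metres."""
    if wavelength_m <= 0.0:
        raise ValueError("wavelength must be positive")
    for upper_bound, name in REGIONS:
        if wavelength_m < upper_bound:
            return name
    return "Radio Waves"


for wavelength in (266e-9, 532e-9, 1064e-9, 0.03, 5.0):
    print(f"{wavelength:.3e} m -> {spectral_region(wavelength)}")
```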

Spectroscopic Techniques Across the Spectrum

Atomic and Molecular Spectroscopy

Spectroscopic techniques exploit characteristic interactions between matter and specific regions of the electromagnetic spectrum. Atomic absorption and atomic emission spectroscopy primarily use the ultraviolet and visible regions to probe electronic transitions in atoms [6]. Inductively coupled plasma mass spectrometry (ICP-MS) is usually grouped with these atomic spectrometry methods, although it detects plasma-generated ions by their mass-to-charge ratio rather than by an optical transition. These techniques provide exceptional sensitivity for elemental analysis and metal quantification in pharmaceutical ingredients.

Molecular spectroscopy encompasses a broader range of techniques. UV-Vis spectroscopy measures electronic transitions in conjugated systems, while infrared spectroscopy probes vibrational modes of chemical bonds [6]. Nuclear Magnetic Resonance (NMR), operating in the radio frequency region, exploits the magnetic properties of atomic nuclei to determine molecular structure and dynamics [10]. The recent introduction of multi-collector ICP-MS instruments demonstrates ongoing advancements in atomic spectrometry, offering improved resolution for isotopic analysis [6].

Advanced Spectroscopic Technologies

Recent instrumentation advances have expanded capabilities across the spectral range. The 2025 review of spectroscopic instrumentation highlights several cutting-edge technologies [6]:

Fluorescence Innovations: The FS5 v2 spectrofluorometer targets photochemistry research with enhanced performance, while the Veloci A-TEEM Biopharma Analyzer simultaneously collects absorbance, transmittance, and fluorescence excitation-emission matrices for monoclonal antibodies and vaccine characterization.

IR Microscopy Advances: Quantum Cascade Laser (QCL)-based systems like the LUMOS II ILIM and Protein Mentor provide enhanced imaging capabilities in the 1800-950 cm⁻¹ range, enabling protein characterization and impurity identification in biopharmaceuticals.

Portable Field Instruments: Miniature and handheld devices, such as the NaturaSpec Plus UV-vis-NIR instrument, incorporate real-time video and GPS coordinates for field documentation, while the TaticID-1064ST handheld Raman spectrometer assists hazardous materials teams with analysis guidance and documentation features.

Novel Techniques: The first commercial broadband chirped pulse microwave spectrometer from BrightSpec enables unambiguous determination of molecular structure and configuration in the gas phase through rotational spectroscopy.

Table 2: Advanced Spectroscopic Instrumentation and Applications

| Technique | Spectral Region | Instrument Examples | Research Applications |
|---|---|---|---|
| Fluorescence Spectroscopy | UV-Vis | Edinburgh Instruments FS5 v2, Horiba Veloci A-TEEM | Photochemistry, biopharmaceutical analysis, vaccine characterization |
| Raman Spectroscopy | Visible-NIR | Horiba SignatureSPM, PoliSpectra, Metrohm TaticID-1064ST | Semiconductor analysis, high-throughput screening, hazardous material identification |
| Infrared Microscopy | Mid-IR | Bruker LUMOS II ILIM, Protein Mentor, PerkinElmer Spotlight Aurora | Contaminant analysis, protein stability, deamidation monitoring |
| UV-Vis-NIR Spectroscopy | UV-Vis-NIR | Shimadzu UV-vis, Avantes AvaSpec ULS2034XL+, Spectral Evolution NaturaSpec Plus | Quality control, agricultural testing, geochemical analysis |
| Microwave Spectroscopy | Microwave | BrightSpec broadband chirped pulse spectrometer | Molecular structure determination, gas-phase analysis |

Experimental Protocols: Combined Spectroscopic Analysis

Protocol for Catalytic Material Characterization

This detailed protocol exemplifies how multiple spectroscopic techniques can be integrated to characterize complex catalytic materials, specifically MoVOₓ catalysts for selective oxidation [12].

Sample Preparation

- Synthesize MoV oxide hydrothermally using ammonium heptamolybdate and vanadyl sulfate dissolved separately in water (230 mL and 30 mL respectively) [12].

- Mix solutions at 40°C under vigorous stirring, then transfer to a corrosion-resistant autoclave.

- Heat to 150°C at a rate of 1°C min⁻¹ and hold for 100 hours with continuous stirring at 100 rpm.

- Isolate resulting solid by filtration, wash with water, and dry at 80°C.

- Thermally treat dried solid at 400°C in flowing argon (100 mL min⁻¹) for 2 hours in a rotary tube furnace.

UV-Vis Spectroscopy Analysis

- Record UV-vis spectra in diffuse reflectance mode using an Agilent Cary 5000 instrument with Praying Mantis DRP-SAP attachment and HVC-VUV reaction chamber [12].

- Use Spectralon as white reference and present spectra in Kubelka-Munk function (F(R)).

- Use the in situ cell when measurements under reaction conditions are required.
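
The Kubelka-Munk presentation used for these spectra is a one-line transform of the reflectance measured against the white reference. A minimal sketch follows; the function names and the example intensities are hypothetical, and the transform assumes an optically thick, diffusely scattering sample.

```python
def relative_reflectance(sample_signal: float, reference_signal: float) -> float:
    """Reflectance of the sample relative to the white reference (e.g. Spectralon)."""
    if reference_signal <= 0.0:
        raise ValueError("reference signal must be positive")
    return sample_signal / reference_signal


def kubelka_munk(reflectance: float) -> float:
    """Kubelka-Munk function F(R) = (1 - R)^2 / (2 R) for 0 < R <= 1."""
    if not 0.0 < reflectance <= 1.0:
        raise ValueError("reflectance must lie in (0, 1]")
    return (1.0 - reflectance) ** 2 / (2.0 * reflectance)


# Hypothetical detector readings at three wavelengths: (sample, reference)
readings = [(0.90, 1.00), (0.50, 1.00), (0.25, 1.00)]
for sample, reference in readings:
    r = relative_reflectance(sample, reference)
    print(f"R = {r:.2f} -> F(R) = {kubelka_munk(r):.4f}")
```

F(R) is proportional to the ratio of absorption to scattering, so strongly absorbing bands appear as peaks, much as they would in an absorbance spectrum.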

Multi-wavelength Raman Spectroscopy

- Conduct Raman measurements using a confocal microscope system with 750 mm focal length monochromator and UV-enhanced CCD camera [12].

- Obtain spectra under resonance conditions using multiple laser excitations: 266 nm, 325 nm, 355 nm, 442 nm, 488 nm, and 532 nm.

- Minimize beam damage by adjusting energy density and using different irradiated spots for consecutive measurements.

- For 532 nm excitation: Use ×10 objective, 600 g mm⁻¹ grating, and ND filters (1.4-1.8) resulting in 0.2-0.5 mW power at sample, 300 s exposure.

- For 325 nm excitation: Use UVB objective, 2400 g mm⁻¹ grating, and ND filters (0.7, 1.1, 1.2) resulting in 0.14-0.44 mW power, 1800 s exposure.
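
The quoted filter and power ranges can be checked against each other if the ND values are read as optical densities, so that each filter transmits a fraction 10^(-OD) of the incident power. That reading, and the pairing of the weakest filter with the highest power, are assumptions; the sketch below only compares ratios and does not estimate the laser output itself.

```python
def transmitted_power_mw(incident_mw: float, optical_density: float) -> float:
    """Power after a neutral-density filter, assuming T = 10 ** (-OD)."""
    return incident_mw * 10.0 ** (-optical_density)


def power_ratio(od_low: float, od_high: float) -> float:
    """Ratio of transmitted powers between the weakest and strongest filter."""
    return 10.0 ** (od_high - od_low)


# 532 nm line: ND 1.4-1.8, quoted 0.2-0.5 mW at the sample
ratio_532 = power_ratio(1.4, 1.8)        # about 2.51
quoted_532 = 0.5 / 0.2                   # 2.5

# 325 nm line: ND 0.7-1.2, quoted 0.14-0.44 mW at the sample
ratio_325 = power_ratio(0.7, 1.2)        # about 3.16
quoted_325 = 0.44 / 0.14                 # about 3.14

print(f"532 nm: filter ratio {ratio_532:.2f} vs quoted {quoted_532:.2f}")
print(f"325 nm: filter ratio {ratio_325:.2f} vs quoted {quoted_325:.2f}")
```

Under this reading the filter ratios and the quoted power ranges agree to within about 1 % for both laser lines.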

Computational Analysis

- Perform calculations with ORCA suite version 3.0.3 using density functional theory (DFT) with B3LYP functional and Grimme's dispersion correction [12].

- Employ time-dependent DFT (TD-DFT) with independent mode displaced harmonic oscillator model (IMDHO) for spectral predictions.

- Use triple-ζ quality basis sets (TZV-DKH) for all atoms with relativistically determined contraction coefficients.

- Construct cluster models with up to 9 Mo and V metallic centers to represent bulk MoVOₓ structure, saturating cut bonds with hydrogen atoms.

The experimental workflow for this multi-technique characterization is visually summarized below:

Chemometric Analysis in Raman Spectroscopy

Modern spectroscopic analysis increasingly relies on advanced computational methods to extract meaningful information from complex spectral data. The following protocol outlines a standardized workflow for chemometric analysis of Raman spectral data [13]:

Experimental Design

- Ensure adequate sample size through power analysis to achieve statistical significance.

- Implement randomized measurement sequences to avoid systematic biases.

- Include appropriate control samples and calibration standards.

- Consider single-cell mode or raster scanning-based spectral imaging depending on research question.
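
Two of these design steps lend themselves to a short sketch: estimating a sample size and randomizing the measurement order. The example below uses the normal approximation for comparing two group means at a standardized effect size, which is a simplification chosen here for illustration and not a method prescribed in [13]; the function names, default error rates, and sample labels are likewise assumptions.

```python
import math
import random
from statistics import NormalDist


def samples_per_group(effect_size: float, alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate n per group for a two-sided, two-group comparison of means.

    effect_size is the expected mean difference divided by the common standard deviation.
    """
    z_alpha = NormalDist().inv_cdf(1.0 - alpha / 2.0)
    z_beta = NormalDist().inv_cdf(power)
    return math.ceil(2.0 * ((z_alpha + z_beta) / effect_size) ** 2)


def randomized_order(sample_ids: list[str], seed: int) -> list[str]:
    """Return a reproducible random measurement sequence without altering the input."""
    shuffled = list(sample_ids)
    random.Random(seed).shuffle(shuffled)
    return shuffled


n = samples_per_group(effect_size=1.0)
ids = [f"control_{i}" for i in range(n)] + [f"treated_{i}" for i in range(n)]
sequence = randomized_order(ids, seed=2025)
print(f"{n} samples per group; first five measurements: {sequence[:5]}")
```

Recording the seed alongside the data keeps the measurement sequence reproducible. In Raman studies spectra from the same cell or patient are correlated, so the count that matters is the number of independent biological replicates, not the number of spectra.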

Data Preprocessing

- Apply spectral calibration using known Raman shift standards.

- Remove cosmic ray spikes and correct for fluorescence background using techniques like shifted excitation Raman difference spectroscopy (SERDS).

- Perform vector normalization or standard normal variate (SNV) transformation to minimize scattering effects.

- Employ quality tests to identify and exclude outlier spectra.
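
The two normalization options in this list can be written compactly with NumPy. The sketch below is illustrative only: the function names and the toy spectra are assumptions, and calibration, spike removal, and background correction are not covered.

```python
import numpy as np


def snv(spectra: np.ndarray) -> np.ndarray:
    """Standard normal variate: centre and scale each spectrum (row) individually."""
    spectra = np.asarray(spectra, dtype=float)
    mean = spectra.mean(axis=1, keepdims=True)
    std = spectra.std(axis=1, ddof=1, keepdims=True)
    return (spectra - mean) / std


def vector_normalize(spectra: np.ndarray) -> np.ndarray:
    """Scale each spectrum (row) to unit Euclidean length."""
    spectra = np.asarray(spectra, dtype=float)
    norms = np.linalg.norm(spectra, axis=1, keepdims=True)
    return spectra / norms


# Toy data: the second spectrum is the first with a gain of 3 and an offset of 5
base = np.array([1.0, 2.0, 4.0, 8.0, 3.0, 1.5])
raw = np.vstack([base, 3.0 * base + 5.0])

snv_spectra = snv(raw)
unit_spectra = vector_normalize(raw)
print(np.allclose(snv_spectra[0], snv_spectra[1]))   # gain and offset are removed by SNV
print(np.linalg.norm(unit_spectra, axis=1))          # every row now has unit length
```

SNV removes both a multiplicative gain and an additive offset from each spectrum, whereas vector normalization removes only the gain, so the choice depends on whether an offset is expected in the data.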

Data Learning and Modeling

- Utilize principal component analysis (PCA) for unsupervised pattern recognition and dimensionality reduction.

- Apply supervised methods like linear discriminant analysis (LDA) or partial least squares discriminant analysis (PLS-DA) for classification.

- Implement machine learning algorithms (support vector machines, random forests) for complex spectral patterns.

- Validate models using cross-validation and independent test sets.
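As a rough sketch of the validation step, the following shows k-fold cross-validation in plain Python. A nearest-centroid classifier stands in for LDA, PLS-DA, or an SVM purely to keep the example dependency-free; it is not one of the methods listed above, and all names are illustrative.

```python
import math
import random

def nearest_centroid_fit(X, y):
    """Return {label: mean spectrum} computed from the training spectra."""
    sums, counts = {}, {}
    for row, label in zip(X, y):
        acc = sums.setdefault(label, [0.0] * len(row))
        for j, value in enumerate(row):
            acc[j] += value
        counts[label] = counts.get(label, 0) + 1
    return {label: [v / counts[label] for v in acc] for label, acc in sums.items()}

def nearest_centroid_predict(centroids, row):
    """Assign the label whose centroid is closest in Euclidean distance."""
    return min(centroids, key=lambda label: math.dist(row, centroids[label]))

def k_fold_accuracy(X, y, k=5, seed=0):
    """Mean accuracy over k folds; every spectrum is held out exactly once."""
    indices = list(range(len(X)))
    random.Random(seed).shuffle(indices)
    folds = [indices[i::k] for i in range(k)]
    accuracies = []
    for fold in folds:
        held_out = set(fold)
        train = [i for i in indices if i not in held_out]
        centroids = nearest_centroid_fit([X[i] for i in train], [y[i] for i in train])
        hits = sum(nearest_centroid_predict(centroids, X[i]) == y[i] for i in fold)
        accuracies.append(hits / len(fold))
    return sum(accuracies) / k
```

When several spectra come from the same sample, patient, or batch, the folds should be built from those groups rather than from individual spectra. Otherwise replicate spectra leak between training and test sets and the accuracy estimate becomes optimistic. An independent test set that is never touched during model selection remains a separate requirement.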

Model Transfer

- Standardize instrumentation and measurement conditions across laboratories.

- Apply calibration transfer techniques to maintain model performance between different instruments.

- Document all preprocessing and modeling parameters for reproducibility.
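One of the simplest calibration transfer approaches is slope and bias correction: the same transfer samples are measured on both instruments, and a straight line is fitted that maps the secondary instrument's predictions onto the primary instrument's scale. The sketch below assumes that setup; the function names and numbers are hypothetical, and the cited protocol does not prescribe a specific transfer method.

```python
def fit_slope_bias(secondary_pred, primary_pred):
    """Least-squares line mapping secondary-instrument predictions onto the primary scale."""
    n = len(secondary_pred)
    mean_x = sum(secondary_pred) / n
    mean_y = sum(primary_pred) / n
    sxx = sum((x - mean_x) ** 2 for x in secondary_pred)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(secondary_pred, primary_pred))
    slope = sxy / sxx
    bias = mean_y - slope * mean_x
    return slope, bias

def apply_slope_bias(predictions, slope, bias):
    """Correct new secondary-instrument predictions with the fitted line."""
    return [slope * p + bias for p in predictions]

# Hypothetical predictions for four transfer samples measured on both instruments.
secondary = [1.0, 2.0, 3.0, 4.0]
primary = [1.1 * x + 0.5 for x in secondary]
slope, bias = fit_slope_bias(secondary, primary)
corrected = apply_slope_bias([2.5], slope, bias)
```

Slope and bias correction only repairs a linear mismatch in the model output. When the spectra themselves differ between instruments (wavenumber shifts, different resolution), methods that operate on the spectra, such as direct standardization or piecewise direct standardization, are the usual alternatives.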

The Scientist's Toolkit: Research Reagent Solutions

Successful spectroscopic analysis requires carefully selected reagents and materials to ensure accurate, reproducible results. The following table details essential research reagents and their functions in spectroscopic experiments:

Table 3: Essential Research Reagents and Materials for Spectroscopic Analysis

| Reagent/Material | Function | Application Examples |
|---|---|---|
| Ultrapure Water (Milli-Q SQ2 series) | Sample preparation, dilution, mobile phase preparation | FT-IR sample suspension, UV-Vis blank measurements, buffer preparation |
| Spectralon | White reference standard | Diffuse reflectance measurements in UV-Vis-NIR spectroscopy |
| Calibration Standards | Instrument calibration and validation | Raman shift standards, wavelength accuracy verification |
| Deuterated Solvents | NMR solvent with minimal interference | Protein structure determination, organic compound characterization |
| ATR Crystals (Diamond, ZnSe) | Internal reflection element | FT-IR spectroscopy of solids, liquids, and semi-solids |
| Quantum Cascade Lasers | Mid-IR light source | IR microscopy, high-resolution spectral imaging |
| Focal Plane Array Detectors | Multichannel detection | Hyperspectral imaging, rapid spectral acquisition |
| Fluorescent Dyes | Molecular probes and markers | Fluorescence spectroscopy, cellular imaging, binding assays |

The relationships between these research tools and their applications in a complete spectroscopic workflow can be visualized as follows:

The strategic navigation of the electromagnetic spectrum provides researchers with a powerful toolkit for probing matter at molecular and atomic levels. From radio waves revealing molecular structure through NMR to gamma rays probing nuclear properties, each spectral region offers unique analytical capabilities. The continuing advancement of spectroscopic instrumentation—particularly portable field devices, enhanced sensitivity detectors, and specialized systems for pharmaceutical applications—ensures that electromagnetic spectroscopy remains at the forefront of analytical science. For research scientists in drug development and analytical chemistry, mastering these techniques and their underlying principles enables sophisticated material characterization, driving innovation in chemical analysis, pharmaceutical development, and materials science. The integration of computational methods with experimental spectroscopy further enhances our ability to extract maximum information from spectral data, solidifying spectroscopy's role as an indispensable tool in scientific research.

Molecular Rotational Resonance (MRR) spectroscopy, also known as rotational spectroscopy or microwave spectroscopy, is an analytical technique that characterizes polar molecules through their pure rotational transitions in the gas phase [14]. This technology has experienced a remarkable transformation from a specialized research tool to a commercially available analytical solution with groundbreaking potential for molecular analysis [15]. MRR provides unambiguous structural information on compounds and isomers within mixtures without requiring pre-analysis separation, making it particularly valuable for pharmaceutical, chemical, and environmental applications [14].

The fundamental principle underlying MRR spectroscopy is its exceptional sensitivity to a molecule's three-dimensional mass distribution [16]. When a molecule rotates in space, its moments of inertia create a unique rotational fingerprint in the microwave or millimeter-wave regions of the electromagnetic spectrum [17]. This fingerprint is exquisitely sensitive to the smallest structural changes, enabling definitive distinction between similar species, including isomers that are challenging to differentiate with other techniques [15]. The technology's high degree of spectral redundancy—where many spectral lines determine a few parameters—creates an extraordinary level of certainty in molecular identification [15].

Recent advancements, particularly the development of chirped-pulse Fourier transform microwave spectroscopy in 2006 by Brooks Pate at the University of Virginia, revolutionized the field by reducing acquisition times from hours or days to seconds [18] [15]. This breakthrough, coupled with commercial instrumentation now available from companies like BrightSpec, has positioned MRR as a transformative tool for modern analytical challenges [6] [15].

Technical Foundations and Advantages of MRR

Fundamental Principles and Instrumentation

MRR spectroscopy operates by measuring pure rotational energy transitions in the microwave (1-40 GHz) or millimeter-wave (approximately 30-1000 GHz) regions with extremely narrow linewidths (< 1 MHz) under low-pressure conditions [16]. The technique probes the quantized rotational energy levels of molecules, which are determined by their moments of inertia along three principal axes [17]. These moments of inertia (Iaa, Ibb, and Icc) are calculated based on atomic masses and their spatial coordinates relative to the molecule's center of mass, creating a direct mathematical relationship between molecular structure and spectral signature [16].

The rotational transitions measured by MRR provide direct information about the three principal moments of inertia, which serve as unique identifiers of molecular structure [15]. This relationship enables unprecedented structural specificity, as even subtle conformational changes alter these moments and produce distinct spectral patterns [17]. Once a molecule has been characterized by MRR, it becomes "forever recognizable" due to the uniqueness of its rotational signature [14].
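The link between structure and spectrum described above can be made concrete with a short calculation. The sketch below builds the inertia tensor from atomic masses and Cartesian coordinates, diagonalizes it with the closed-form solution for symmetric 3x3 matrices, and converts the principal moments to rigid-rotor rotational constants via B = h / (8π²I). The water geometry is an approximate textbook value used only as an illustration; function names are illustrative, and the rigid-rotor result ignores vibrational averaging and centrifugal distortion.

```python
import math

PLANCK = 6.62607015e-34      # J s
AMU = 1.66053906660e-27      # kg
# Conversion so that B [MHz] = CONV / I [amu * angstrom^2]
CONV = PLANCK / (8 * math.pi ** 2 * AMU * 1e-20) / 1e6

def inertia_tensor(masses, coords):
    """Inertia tensor (amu * angstrom^2) about the center of mass."""
    total = sum(masses)
    com = [sum(m * r[k] for m, r in zip(masses, coords)) / total for k in range(3)]
    t = [[0.0] * 3 for _ in range(3)]
    for m, r in zip(masses, coords):
        x, y, z = (r[k] - com[k] for k in range(3))
        t[0][0] += m * (y * y + z * z)
        t[1][1] += m * (x * x + z * z)
        t[2][2] += m * (x * x + y * y)
        t[0][1] -= m * x * y
        t[0][2] -= m * x * z
        t[1][2] -= m * y * z
    t[1][0], t[2][0], t[2][1] = t[0][1], t[0][2], t[1][2]
    return t

def symmetric_eigenvalues(a):
    """Eigenvalues of a symmetric 3x3 matrix in ascending order (trigonometric closed form)."""
    p1 = a[0][1] ** 2 + a[0][2] ** 2 + a[1][2] ** 2
    q = (a[0][0] + a[1][1] + a[2][2]) / 3.0
    p2 = sum((a[i][i] - q) ** 2 for i in range(3)) + 2.0 * p1
    if p2 == 0.0:
        return [q, q, q]  # matrix is a multiple of the identity
    p = math.sqrt(p2 / 6.0)
    b = [[(a[i][j] - (q if i == j else 0.0)) / p for j in range(3)] for i in range(3)]
    det = (b[0][0] * (b[1][1] * b[2][2] - b[1][2] * b[2][1])
           - b[0][1] * (b[1][0] * b[2][2] - b[1][2] * b[2][0])
           + b[0][2] * (b[1][0] * b[2][1] - b[1][1] * b[2][0]))
    phi = math.acos(max(-1.0, min(1.0, det / 2.0))) / 3.0
    largest = q + 2.0 * p * math.cos(phi)
    smallest = q + 2.0 * p * math.cos(phi + 2.0 * math.pi / 3.0)
    return [smallest, 3.0 * q - largest - smallest, largest]

def rotational_constants_mhz(masses, coords):
    """Rigid-rotor constants A >= B >= C in MHz for a nonlinear molecule."""
    i_a, i_b, i_c = symmetric_eigenvalues(inertia_tensor(masses, coords))
    return CONV / i_a, CONV / i_b, CONV / i_c

# Illustrative input: water with r(O-H) = 0.9572 angstrom and an H-O-H angle of 104.52 degrees.
half_angle = math.radians(104.52 / 2)
r_oh = 0.9572
masses = [15.9949, 1.00783, 1.00783]
coords = [
    (0.0, 0.0, 0.0),
    (r_oh * math.sin(half_angle), r_oh * math.cos(half_angle), 0.0),
    (-r_oh * math.sin(half_angle), r_oh * math.cos(half_angle), 0.0),
]
A, B, C = rotational_constants_mhz(masses, coords)
print(f"A = {A:.0f} MHz, B = {B:.0f} MHz, C = {C:.0f} MHz")
```

Replacing one hydrogen mass with that of deuterium, or moving a single atom by a fraction of an angstrom, changes all three constants, which is the sensitivity to mass distribution that the text describes. In practice the comparison runs in the other direction: constants fitted from the measured spectrum are matched against constants computed from candidate structures.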

Modern MRR instruments incorporate significant technological advancements that have enhanced their practical utility. The chirped-pulse Fourier transform methodology enables broadband spectral acquisition, while supersonic jet expansion cools analytes to approximately 2 Kelvin, simplifying spectral interpretation by reducing the number of populated rotational and vibrational states [16]. Commercial systems now feature more user-friendly interfaces and automated analysis capabilities, making the technology accessible to non-specialists [15].

Comparative Advantages Over Established Techniques

MRR spectroscopy offers a unique combination of capabilities that address limitations of established analytical techniques, positioning it as a valuable complement to traditional laboratory methods.

Table 1: Comparison of MRR with Other Analytical Techniques

| Technique | Key Strengths | Limitations | MRR Advantages |
|---|---|---|---|
| Mass Spectrometry (MS) | High sensitivity; works with LC/GC systems | Limited isomer differentiation; requires reference standards; expensive consumables [14] | Unambiguous isomer identification; reference-standard-free quantification [14] [15] |
| Nuclear Magnetic Resonance (NMR) | Gold standard for structural elucidation | Limited sensitivity for mixtures; requires expert interpretation [14] | Direct mixture analysis; minimal expert interpretation needed [14] |
| Fourier-Transform Infrared (FT-IR) | Functional group identification | Challenging for mixtures without references; limited structural specificity [14] | Clear structural information even in complex mixtures [14] |
| Chromatography (LC/GC) | Excellent separation capabilities | Extensive method development; consumables; limited structural information [14] | Minimal method development; no consumables; built-in structural information [14] |

MRR's distinctive value proposition lies in its ability to combine the structural specificity of NMR with the speed of MS and apply it directly to mixtures without separation [15]. This unique combination addresses critical unmet needs in analytical chemistry, particularly for isomer differentiation, reaction monitoring, and impurity profiling [14].

Applications in Pharmaceutical Research and Development

Residual Solvent Analysis

Solvents are extensively used in pharmaceutical manufacturing processes, and their incomplete removal can compromise drug product quality and safety [14]. Regulatory frameworks like the U.S. Pharmacopeia "<467> Residual Solvents" chapter establish strict limits for these potentially toxic impurities [14]. While headspace gas chromatography (GC) coupled with flame ionization detection or mass spectrometry represents the current standard methodology, this approach faces limitations for certain solvents, particularly those in the USP <467> Class 2 Mixture C group, where GC lacks the required sensitivity [14].

MRR spectroscopy offers a transformative solution for residual solvent analysis by dramatically simplifying method development, eliminating consumables and solvents, reducing analysis times, and providing equivalent sensitivity with superior quantitative performance compared to GC systems [14]. The technique's ability to directly analyze complex mixtures without pre-separation makes it particularly valuable for challenging solvents that traditionally require complex analytical procedures, potentially accelerating decision-making in pharmaceutical development [14].

Impurity Profiling and Raw Material Validation

Impurities in pharmaceutical raw materials can introduce significant variations in final drug product quality, making reliable identification and quantification of these impurities a critical challenge [14]. Unlike most process parameters, manufacturers have limited direct control over raw material impurities, which often exhibit similar reactivity to the desired compound and can introduce toxic by-products with structures analogous to the active pharmaceutical ingredient [14].

MRR spectroscopy has demonstrated exceptional capabilities in impurity profiling. A landmark study utilized MRR to quantify regioisomeric, dehalogenated, and enantiomeric impurities in raw materials for the synthesis of cabotegravir (an HIV integrase inhibitor) [14]. This application represented the first use of MRR for rapid quantitative monitoring of isomeric and dehalogenated impurities in pharmaceutical raw materials, leveraging the technique's high resolution and selectivity toward subtle molecular structural changes [14]. The study concluded that MRR offers "unique value in pharmaceutical process analytical technology (PAT) and quality by design (QbD) programs" [14].

Reaction Monitoring and Optimization

The optimization of synthetic routes represents a crucial aspect of pharmaceutical development, aligned with the U.S. Food and Drug Administration's Process Analytical Technology (PAT) initiative aimed at enhancing manufacturing processes through timely measurement of critical parameters [14]. MRR's ability to directly identify and quantify individual components in reaction mixtures without chromatography makes it particularly valuable for automated real-time reaction monitoring [15].

Research published in 2024 established MRR as "an emerging and extraordinarily selective spectroscopic technique to perform automated reaction monitoring measurements" [15]. Another study demonstrated the application of MRR for online reaction monitoring to rapidly characterize yield, specificity, and impurities in chemical reactions, representing the first demonstration of MRR for pharmaceutical synthetic process purity characterization [14]. The key advantage over alternative process monitoring techniques lies in MRR's high resolution and specificity, enabling unambiguous resolution and quantification of different species in complex reaction mixtures [14].

Table 2: Key Pharmaceutical Applications of MRR Spectroscopy

| Application Area | Specific Use Cases | Demonstrated Benefits |
|---|---|---|
| Residual Solvent Analysis | USP <467> Class 2 Mixture C solvents; volatile organic compounds | Simplified method development; reduced analysis time; equivalent sensitivity to GC [14] |
| Impurity Profiling | Regioisomeric, dehalogenated, and enantiomeric impurities in raw materials | Direct analysis without separation; high resolution for structurally similar compounds [14] |
| Reaction Monitoring | Real-time reaction optimization; yield determination; impurity characterization | High specificity in mixtures; automated measurements; no chromatographic separation [14] [15] |
| Chiral Analysis | Enantiomeric excess determination; absolute configuration establishment | Reference-standard-free analysis; high-throughput capability [14] |
| Deuterium Switching | Metabolic stability optimization; pharmacokinetic improvement | Specific identification of deuterated compounds [14] |

Chiral Analysis

The rapid analysis of chiral purity represents a significant unmet need in the pharmaceutical sector, where enantiomeric composition critically influences drug efficacy and safety [14]. MRR offers a novel approach to chiral analysis through chiral tag methodology, enabling determination of enantiomeric excess (EE) and absolute configuration without chromatography [14].

A recent study demonstrated MRR analysis of pantolactone, a chiral lactone intermediate in pantothenic acid (vitamin B5) synthesis, without requiring reference samples of known enantiopurity [14]. Complexes formed between pantolactone and small chiral tag molecules produced distinct rotational spectra resolved by MRR, enabling EE determination with a rapid 15-minute sample-to-sample cycle time [14]. The results demonstrated "quantitative agreement with chiral GC" while offering "significant reductions in measurement time and sample consumption" [14].
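The arithmetic behind a chiral tag measurement can be sketched as follows. This is a simplified model, not the procedure of the cited study: it assumes the signal of each analyte-tag complex is proportional to the product of the analyte and tag enantiomer fractions, and that a second measurement with racemic tag is used to cancel the unknown intensity factors of the homochiral and heterochiral complexes. Under those assumptions the normalized ratio R satisfies R = (1 + ee_analyte · ee_tag) / (1 - ee_analyte · ee_tag). All function and parameter names are illustrative.

```python
def enantiomeric_excess(major_amount, minor_amount):
    """Textbook definition: (major - minor) / (major + minor), as a fraction."""
    return (major_amount - minor_amount) / (major_amount + minor_amount)

def ee_from_chiral_tag(ratio_enantiopure_tag, ratio_racemic_tag, tag_ee=1.0):
    """Analyte ee from homochiral/heterochiral intensity ratios.

    ratio_enantiopure_tag: I(homochiral) / I(heterochiral) measured with the enriched tag
    ratio_racemic_tag:     the same ratio measured with racemic tag (normalization)
    tag_ee:                enantiomeric excess of the enriched tag, as a fraction
    """
    normalized = ratio_enantiopure_tag / ratio_racemic_tag
    return (normalized - 1.0) / (normalized + 1.0) / tag_ee

# Synthetic check: generate the two ratios from a known ee, then recover it.
true_ee, tag_ee, intensity_factor = 0.8, 0.99, 1.7
ratio_pure = intensity_factor * (1 + true_ee * tag_ee) / (1 - true_ee * tag_ee)
ratio_racemic = intensity_factor
recovered = ee_from_chiral_tag(ratio_pure, ratio_racemic, tag_ee=tag_ee)
```

The sign of the result indicates which analyte enantiomer is in excess relative to the major tag enantiomer, so the absolute configuration assignment still depends on knowing which spectrum belongs to the homochiral complex, typically from quantum chemical predictions.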

Experimental Methodologies and Protocols

Standard MRR Analysis Workflow

The standard experimental workflow for MRR analysis involves several key steps that ensure accurate and reproducible results. While specific protocols vary based on instrument configuration and analytical goals, the fundamental process remains consistent across applications.

The experimental process begins with sample preparation, where solid or liquid samples are introduced without extensive purification [17]. For solid samples with limited volatility, gentle heating may be applied to generate sufficient vapor pressure [17]. The vaporization step converts the sample to the gas phase, typically at low pressure (0.1-10 torr) to minimize molecular collisions [16].

A critical advancement in modern MRR is supersonic expansion, where the gas-phase sample undergoes adiabatic expansion through a small nozzle into a vacuum chamber [16]. This process cools internal molecular degrees of freedom to approximately 2 Kelvin, dramatically simplifying rotational spectra by collapsing population into the lowest vibrational and rotational states [16]. The subsequent microwave excitation step uses chirped pulses that probe a broad frequency range in a single acquisition (typically 2-8 GHz or 9-18 GHz in commercial instruments) [17] [16].

Following excitation, signal detection captures the molecular free induction decay, which is Fourier-transformed to produce a frequency-domain rotational spectrum [17]. The spectral analysis phase involves fitting experimental transitions to theoretical models to determine rotational constants, which are directly related to molecular moments of inertia [17]. Finally, structural identification compares these experimental parameters with quantum chemical calculations to unambiguously determine molecular structure and conformation [15].
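The detection step can be illustrated with a toy calculation: a simulated free induction decay (a sum of exponentially decaying cosines) is Fourier-transformed to recover the line positions. The frequencies, decay time, and sampling rate below are arbitrary illustrative values, not real rotational transitions, and the naive O(N²) transform is written out only to keep the sketch dependency-free; a library FFT would be used on real data.

```python
import cmath
import math

def simulate_fid(freqs_mhz, amplitudes, sample_rate_mhz, n_points, t2_us):
    """Sum of exponentially decaying cosines; time axis in microseconds."""
    dt = 1.0 / sample_rate_mhz
    return [
        sum(a * math.cos(2 * math.pi * f * i * dt) for f, a in zip(freqs_mhz, amplitudes))
        * math.exp(-i * dt / t2_us)
        for i in range(n_points)
    ]

def magnitude_spectrum(signal, sample_rate_mhz):
    """Naive discrete Fourier transform; returns (frequency_MHz, magnitude) pairs below Nyquist."""
    n = len(signal)
    spectrum = []
    for k in range(n // 2):
        acc = sum(x * cmath.exp(-2j * math.pi * k * i / n) for i, x in enumerate(signal))
        spectrum.append((k * sample_rate_mhz / n, abs(acc)))
    return spectrum

# Two hypothetical lines at 40 and 90 MHz, sampled at 256 MS/s for 1 microsecond.
fid = simulate_fid([40.0, 90.0], [1.0, 0.5], sample_rate_mhz=256.0, n_points=256, t2_us=0.5)
spectrum = magnitude_spectrum(fid, sample_rate_mhz=256.0)
strongest_line_mhz = max(spectrum, key=lambda point: point[1])[0]
```

The frequency resolution of the transform is the reciprocal of the record length (1 MHz here), and the decay time sets the linewidth. This is why the long-lived emission from cold, collision-free molecules translates into the narrow lines that the fitting step relies on.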

GC-MRR Hyphenated Technique

The coupling of gas chromatography with MRR spectroscopy creates a powerful analytical system that combines high-efficiency separation with unparalleled structural specificity [16]. This hyphenated technique addresses the challenge of analyzing complex mixtures where direct MRR analysis would produce overly congested spectra.

The GC-MRR workflow begins with conventional GC injection and separation using standard capillary columns [16]. As analytes elute from the column, makeup gas introduction optimizes flow conditions for subsequent MRR analysis [16]. The pulsed nozzle introduces discrete analyte packets into the MRR spectrometer, synchronized with GC elution profiles [16]. Supersonic expansion cools the molecules before targeted MRR analysis probes specific frequency ranges known to contain strong rotational transitions for expected compounds [16]. Finally, data correlation combines retention time information with structural data from MRR for comprehensive molecular characterization [16].

This hyphenated approach provides three significant advantages over conventional GC-MS: (1) unambiguous identification of isomeric compounds, (2) resolution and quantification of co-eluting compounds without accuracy loss, and (3) absolute quantification without reference standards [16]. A 2020 study demonstrated that GC-MRR could separate and unequivocally identify a microliter sample containing 24 isotopologues and isotopomers—a feat impossible with conventional GC columns alone [16].

Essential Research Reagent Solutions

MRR spectroscopy utilizes specialized reagents and materials to optimize analytical performance. The following table details key components and their functions in MRR experiments.

Table 3: Essential Research Reagents and Materials for MRR Spectroscopy

| Reagent/Material | Function | Application Notes |
|---|---|---|
| Chiral Tag Molecules | Form transient complexes with analytes for chiral distinction | Small, chiral molecules like propylene oxide; enable enantiomeric excess determination [14] |
| Supersonic Expansion Gases | Carrier for adiabatic cooling; rotational temperature reduction | Neon provides optimal cooling; helium offers broader application range; nitrogen enhances sensitivity for specific compounds [16] |
| Quantum Chemistry Software | Predict molecular structure and rotational constants | Programs like Gaussian, Molpro; guide experimental spectral assignment [17] [15] |
| Spectral Fitting Programs | Analyze experimental rotational spectra | Pickett's SPFIT/SPCAT suite; determine rotational parameters from transition frequencies [17] |
| Reference Compounds | Method validation and instrument calibration | Compounds with well-characterized rotational spectra [16] |

Current Market Landscape and Future Directions

Commercial Instrumentation and Adoption

The MRR spectroscopy market has transformed significantly with the introduction of the first commercial instruments, making this powerful technology accessible beyond academic research laboratories. BrightSpec debuted the first commercial broadband chirped-pulse microwave spectrometer, signaling the technique's transition from custom-built research instrumentation to routine analytical solution [6]. This commercialization follows two decades of development supported by the U.S. National Science Foundation, including foundational work on chirped-pulse Fourier transform microwave spectroscopy and its applications in physical chemistry [18].

The broader spectroscopy equipment market, estimated at $23.5 billion in 2024 and projected to reach $35.2 billion by 2033, provides context for MRR adoption [19]. Key growth drivers include increased pharmaceutical demand, environmental monitoring mandates, and R&D acceleration across biotechnology and materials science [19]. While MRR represents a specialized segment within this broader market, its unique capabilities position it for significant growth in coming years.

Commercial MRR platforms are being adopted across diverse sectors. Pharmaceutical companies utilize MRR for drug development and quality control, while environmental laboratories apply it to challenging analytical problems like persistent organic pollutant detection [17]. Chemical manufacturers employ MRR for reaction optimization and impurity profiling, leveraging its specificity for structurally similar compounds [14].

Emerging Applications and Research Directions

MRR spectroscopy continues to evolve with several promising research directions and emerging applications:

Environmental Analysis: MRR has demonstrated exceptional capability for analyzing persistent environmental contaminants like perfluorooctanoic acid (PFOA), where it identified six conformers and provided insights into their structural preferences and isomerization pathways [17]. This application highlights MRR's value for environmental matrices where traditional techniques struggle with interference elimination.

Reaction Optimization: The technology's ability to provide real-time, quantitative data on multiple reaction components simultaneously makes it ideal for kinetic studies and mechanism elucidation [14]. Recent work has demonstrated MRR for continuous monitoring of flow chemistry processes, enabling immediate parameter adjustment based on compositional data [14].

GC-MRR Advancements: Next-generation GC-MRR systems with improved sensitivity demonstrate limits of detection comparable to GC thermal conductivity detectors while providing unparalleled structural specificity [16]. These systems successfully analyzed diverse compound classes including alcohols, nitrogen heterocyclics, halogenated compounds, dioxins, and nitro compounds across a molecular mass range of 46-244 Da [16].

Hyphenated Technique Development: Research continues on coupling MRR with additional separation and detection methods. A prototype GC-MRR spectrometer has demonstrated performance exceeding high-resolution MS and NMR in selectivity, resolution, and compound identification for co-eluting compounds, including isotopes [15].

Future Outlook and Development Trends

The future trajectory of MRR spectroscopy includes several significant technological and application trends:

Artificial Intelligence Integration: AI and machine learning are transforming spectral interpretation, with algorithms enhancing pattern recognition and enabling predictive analytics for molecular identification [15] [19]. These capabilities may eventually enable complete structure elucidation from rotational spectra without reference databases.

Miniaturization and Portability: Following broader spectroscopy trends, MRR may see development of compact systems for field-based analysis [19]. While current instruments require specialized infrastructure, technological advances could enable more portable configurations for specific applications.

Hybrid Technology Development: Instrumentation combining multiple spectroscopic techniques in single platforms represents a growing trend [19]. The integration of MRR with complementary methods like IR or Raman spectroscopy could provide comprehensive molecular characterization capabilities.

Data Lake Integration: The uniqueness of MRR spectral signatures makes the technique ideal for data lake architectures and library matching approaches [15]. As spectral databases grow, MRR could become increasingly valuable for forensic analysis, unknown identification, and regulatory compliance.

Process Analytical Technology: MRR's suitability for real-time reaction monitoring positions it for increased adoption in pharmaceutical manufacturing as a Process Analytical Technology (PAT) tool [14]. This application aligns with industry trends toward continuous manufacturing and quality-by-design principles.

Molecular Rotational Resonance spectroscopy represents a significant advancement in analytical technology, offering unprecedented structural specificity for molecular identification and quantification. Its unique ability to differentiate isomers, analyze complex mixtures without separation, and provide absolute quantification without reference standards addresses critical limitations of established techniques like mass spectrometry and NMR [14] [15].

The technique's transformation from specialized research tool to commercial analytical solution, accelerated by chirped-pulse methodologies and quantum chemistry advances, has unlocked applications across pharmaceutical development, environmental analysis, and chemical manufacturing [14] [18] [15]. As instrumentation becomes more accessible and applications continue to expand, MRR is poised to become an indispensable tool for researchers tackling challenging analytical problems.

For the research community, MRR spectroscopy offers a powerful new approach to molecular characterization that complements existing techniques while providing unique capabilities unavailable through other methods. Its ongoing development, particularly through hyphenated techniques and artificial intelligence integration, ensures that MRR will remain at the forefront of analytical innovation for years to come [15] [19] [16].

Spectroscopic analysis, a fundamental technique in analytical chemistry that involves the interaction of light with matter to determine substance composition and structure, is undergoing a revolutionary transformation [20]. The year 2025 has been particularly significant for the field, with premier conferences like Pittcon and SciX serving as vital platforms for showcasing cutting-edge innovations. These gatherings have highlighted the convergence of artificial intelligence (AI) with traditional spectroscopic methods, accelerated the development of portable instrumentation, and recognized groundbreaking research from both established and emerging scientific leaders [21] [22] [23].

This technical guide examines the most significant trends and award-winning research presented at these 2025 conferences, providing researchers and drug development professionals with a comprehensive overview of the current state and future direction of spectroscopic analysis. By exploring these advancements within the broader context of spectroscopic fundamentals, this document aims to serve as a strategic resource for laboratories seeking to enhance their analytical capabilities.

Award-Winning Research and Emerging Leaders

The year 2025 has seen significant recognition for scientists who have made outstanding contributions to analytical chemistry and applied spectroscopy.

Major Award Recipients of 2025

Table 1: Key Award Recipients at Pittcon 2025 and SciX 2025

| Conference | Award Name | Recipient | Affiliation | Research Contribution |
|---|---|---|---|---|
| Pittcon 2025 | Pittsburgh Spectroscopy Award | Joseph S. Francisco | University of Pennsylvania | Revolutionary research in atmospheric chemistry spectroscopy, connecting molecular spectroscopy with interdisciplinary fields [24] |
| Pittcon 2025 | Pittsburgh Analytical Chemistry Award | Daniel W. Armstrong | University of Texas at Arlington | Fundamental studies in chiral recognition, enantiomeric separations, and development of ionic liquids for chemical investigations [24] |
| Pittcon 2025 | Pittcon Achievement Award | Long Luo | University of Utah | Significant independent impact in electrochemistry with applications in organic synthesis, sensing, and catalysis within ten years of PhD [24] |
| SciX 2025 | Emerging Leader in Molecular Spectroscopy | Lingyan Shi | University of California, San Diego | Development of a multimodal nanoscopy platform combining Raman and fluorescence for studying cellular metabolism [21] |

Ralph N. Adams Award in Bioanalytical Chemistry

- Recipient: Lloyd M. Smith, University of Wisconsin-Madison [24]

- Contributions: Prof. Smith was recognized for his extensive impacts across bioanalytical methods. He co-conceived and developed automated DNA sequencing with Leroy Hood, pioneered biomolecular array technology for lectins, DNA, and RNA, and made substantial innovations in mass spectrometry for protein analysis, including coining the term "proteoform" [24].

Emerging Trends in Spectroscopy

The 2025 conference season revealed several powerful trends shaping the future of spectroscopic analysis, largely driven by technological integration and evolving application demands.

AI and Chemometrics Integration

A dominant trend at both Pittcon and SciX was the deep integration of artificial intelligence into spectroscopic workflows [21]. Traditional chemometric methods like Principal Component Analysis (PCA) and Partial Least Squares (PLS) regression are now being enhanced by machine learning (ML), deep learning, and generative AI [21]. These tools automate complex feature extraction, handle nonlinear data relationships, and significantly improve prediction accuracy and interpretability [21]. Platforms such as SpectrumLab and SpectraML are emerging as crucial tools for standardizing and ensuring reproducibility in AI-driven chemometrics [21]. Future directions highlighted include the integration of large language models (LLMs) and physics-informed neural networks for automated spectral interpretation [21].

At Pittcon, LabVantage Solutions demonstrated this trend with the release of LabVantage 8.9, which incorporates "Lottie AI" for voice command functionality, enabling hands-free laboratory operations [22]. The company's vision involves building a "digital labor force" within their Laboratory Information Management System (LIMS) to reduce the administrative burden on laboratory personnel [22].

Miniaturization and Portability

The demand for on-site analysis is fueling a significant shift toward compact, portable analytical instruments [23]. This trend was prominently displayed at Pittcon 2025, where multiple manufacturers showcased systems designed for field deployment.

- Axcend: Introduced a compact chromatography system featuring a 40-vial/96-well plate autosampler and an in-line process analytical technology (PAT) monitoring system. This design supports low solvent usage while delivering precise sample examinations at the point of collection [23] [25].

- Exum Instruments: Unveiled the Massbox, the first commercial instrument using Laser Ablation Laser Ionization Time of Flight Mass Spectrometry (LALI-TOF-MS). The system occupies just two feet of desk space and can analyze solid samples in minutes, providing qualitative and quantitative analyses, elemental mapping, and depth profiling for nearly the entire periodic table [22].

Advancements in PFAS Analysis

The ongoing concern about per- and polyfluoroalkyl substances (PFAS) continues to drive analytical innovation [22] [23]. At Pittcon 2025, manufacturers responded with new instruments and workflows specifically designed for detecting these persistent environmental contaminants.

PerkinElmer launched the QSight 500 LC/MS/MS System, engineered for advanced detection of PFAS and other emerging contaminants in complex matrices such as sludge and grease [22] [25]. The system features a contamination-resistant design that prevents PFAS buildup and enables more than 25,000 continuous analyses without cleaning [22]. This robust design minimizes extensive sample preparation, with the company highlighting a simple "blend-and-inject" workflow that can reduce analysis time from sample to result to as little as five minutes [22] [25].

Experimental Protocols and Workflows

Multimodal Nanoscopy for Cellular Metabolism

Diagram 1: Multimodal nanoscopy workflow

This workflow, recognized with the Emerging Leader in Molecular Spectroscopy Award at SciX 2025, integrates Raman and fluorescence spectroscopy to study cellular metabolism [21].

- Sample Preparation: Culture cells on appropriate substrates compatible with high-resolution optical imaging. Maintain controlled environmental conditions (temperature, CO₂) throughout.

- Instrument Calibration: Calibrate the multimodal nanoscopy platform using standard reference materials for both Raman and fluorescence channels to ensure spectral accuracy and spatial alignment.

- Data Acquisition:

  - Perform Raman spectral mapping across the cellular region of interest. This provides molecular fingerprint information based on vibrational energies.

  - Acquire fluorescence images of the same region, leveraging specific fluorophores or autofluorescence to target metabolic co-factors (e.g., NADH, FAD).

- Data Integration: Co-register the hyperspectral Raman and fluorescence datasets using spatial alignment algorithms to create a unified data structure.

- Chemometric Analysis: Process the integrated data using multivariate analysis techniques (e.g., PCA) enhanced by machine learning algorithms to extract meaningful metabolic patterns and identify distinct cellular compartments.

- Metabolic Profiling: Interpret the analyzed data to map spatial distributions of metabolites and identify metabolic heterogeneity within the cellular sample.
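
The data integration and chemometric steps above can be sketched in a few lines. This is a minimal illustration rather than the award-winning workflow's actual pipeline: it assumes the Raman map and fluorescence images have already been co-registered onto the same pixel grid, and the function name `fuse_and_pca` and the synthetic arrays are hypothetical.

```python
import numpy as np

def fuse_and_pca(raman_cube, fluor_stack, n_components=3):
    """Fuse co-registered Raman and fluorescence data, then run PCA.

    raman_cube:  (rows, cols, n_wavenumbers) Raman map
    fluor_stack: (rows, cols, n_channels) fluorescence images, assumed
                 to be already resampled onto the same pixel grid
    """
    if raman_cube.shape[:2] != fluor_stack.shape[:2]:
        raise ValueError("Datasets must share the same pixel grid")
    rows, cols = raman_cube.shape[:2]

    # One row per pixel, one column per Raman or fluorescence variable.
    X = np.concatenate(
        [raman_cube.reshape(rows * cols, -1),
         fluor_stack.reshape(rows * cols, -1)],
        axis=1,
    ).astype(float)

    # Autoscale so neither modality dominates because of its units.
    X -= X.mean(axis=0)
    std = X.std(axis=0)
    X /= np.where(std > 0, std, 1.0)

    # PCA via singular value decomposition of the autoscaled matrix.
    U, S, Vt = np.linalg.svd(X, full_matrices=False)
    scores = U[:, :n_components] * S[:n_components]
    explained = (S ** 2) / np.sum(S ** 2)
    score_maps = scores.reshape(rows, cols, n_components)
    return score_maps, Vt[:n_components], explained[:n_components]

# Synthetic stand-in data: an 8 x 8 pixel map, 50 Raman bins, 2 fluorescence channels.
rng = np.random.default_rng(0)
raman = rng.normal(size=(8, 8, 50))
fluor = rng.normal(size=(8, 8, 2))
maps, loadings, explained = fuse_and_pca(raman, fluor)
```

Each slice `maps[:, :, i]` is a spatial image of one principal component, which is the kind of output that can then be inspected for distinct cellular compartments. Real data would typically need preprocessing and proper image registration before this step.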

High-Throughput Raman Screening of Reaction Kinetics

Diagram 2: High-throughput Raman screening process

This protocol utilizes the HORIBA PoliSpectra Rapid Raman Plate Reader (RPR) showcased at Pittcon 2025, which can scan a 96-well plate in approximately one minute [22].

- Experimental Setup: Prepare reaction mixtures directly in a 96-well microplate. For heterogeneous reactions, ensure adequate mixing prior to measurement or utilize agitation systems.

- Temperature Equilibration: Place the microplate in the instrument's integrated plate heater and allow temperatures to equilibrate to the desired reaction condition.

- Instrument Configuration: Set the Raman acquisition parameters (laser power, integration time, spectral range) via the dedicated software. The system's patent-pending technology enables high-speed reading.

- Kinetic Data Acquisition: Initiate the automated reading sequence. The system performs non-destructive Raman measurements across all wells at user-defined time intervals, enabling real-time monitoring of reaction progression.

- Spectral Processing: Use integrated software for automated preprocessing of spectral data, which may include cosmic ray removal, baseline correction, and normalization.

- Quantitative Analysis: Apply multivariate calibration models or monitor specific Raman peak intensities to quantify reactant consumption and product formation over time, generating kinetic profiles for each reaction condition.
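
As a rough sketch of the quantitative analysis step, the example below fits a rate constant to the intensity of a reactant peak in each well. It assumes first-order consumption of the reactant and a peak intensity proportional to concentration; neither assumption comes from the instrument vendor, and the well names, rate constants, and the helper `fit_first_order` are made up for illustration.

```python
import numpy as np

def fit_first_order(times_s, peak_intensity):
    """Fit I(t) = I0 * exp(-k t) by linear regression on ln(I)."""
    t = np.asarray(times_s, dtype=float)
    y = np.asarray(peak_intensity, dtype=float)
    if np.any(y <= 0):
        raise ValueError("Intensities must be positive for a log-linear fit")
    slope, intercept = np.polyfit(t, np.log(y), 1)
    return -slope, np.exp(intercept)  # rate constant k (s^-1), initial intensity I0

# Synthetic, noise-free plate: one reactant-peak trace per well.
times = np.arange(0, 600, 60)            # one read per minute, ten reads
true_k = {"A1": 0.002, "A2": 0.005}      # s^-1, made-up values
traces = {well: 1000.0 * np.exp(-k * times) for well, k in true_k.items()}

fitted = {well: fit_first_order(times, trace) for well, trace in traces.items()}
for well, (k, i0) in fitted.items():
    print(f"{well}: k = {k:.4f} s^-1, I0 = {i0:.1f}")
```

With noisy or baseline-affected spectra, a weighted or nonlinear fit would usually be more appropriate than the log-linear shortcut used here.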

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful implementation of advanced spectroscopic techniques requires specific materials and reagents. The following table details key components for the workflows described in this guide.

Table 2: Essential Research Reagent Solutions for Advanced Spectroscopy

| Item | Function | Application Examples |
|---|---|---|
| Ionic Liquids | Advanced solvents for chiral separations; enhance selectivity and sensitivity in mass spectrometry [24] | Enantiomeric separation of pharmaceuticals; MS analysis enhancement |
| Fluoropolymer-Free Fluidics | Minimize background contamination in trace-level PFAS analysis [23] | Environmental PFAS testing; compliant environmental monitoring |
| Metabolic Fluorophores (e.g., NADH, FAD) | Native or exogenous labels for fluorescence-based monitoring of cellular metabolism [21] | Live-cell metabolic imaging; multimodal nanoscopy |
| Raman-Stable Isotopes | Non-perturbing labels for tracking molecular pathways without altering chemical properties | Reaction kinetics studies; metabolic flux analysis |
| Chiral Selector Phases | Stationary phases for HPLC/GC that enable separation of enantiomers based on stereospecific interactions [24] | Pharmaceutical purity analysis; chiral drug development |
| Low-Adsorption Consumables | Vials, tips, and tubing that minimize sample loss and analyte adsorption | Trace analysis; PFAS testing; high-sensitivity workflows |

The landscape of spectroscopic analysis in 2025 is characterized by rapid, purposeful evolution. The trends emerging from Pittcon and SciX 2025—the fusion of AI and chemometrics, the push for portable and accessible instrumentation, and the focused response to analytical challenges like PFAS testing—collectively signal a broader shift toward more intelligent, adaptable, and application-driven science. For researchers and drug development professionals, embracing these trends means not only enhancing laboratory efficiency and data quality but also unlocking new capabilities for discovery and innovation. As spectroscopic techniques continue to evolve, guided by the pioneering work of award-winning scientists, their role as indispensable tools across the scientific spectrum appears more secure and promising than ever.

From Lab to Pipeline: Selecting and Applying Spectroscopic Methods in Biomedical Research

Spectroscopy, the study of the interaction between matter and electromagnetic radiation, is a cornerstone of modern analytical chemistry. For researchers and drug development professionals, selecting the appropriate spectroscopic technique is crucial for obtaining accurate, reliable, and meaningful data. This analytical decision primarily branches into two fundamental categories: atomic spectroscopy and molecular spectroscopy. While atomic spectroscopy concerns the analysis of elemental composition by examining light interactions with free atoms, molecular spectroscopy probes the structure, functional groups, and dynamics of molecules by observing their collective quantum transitions [26]. This guide provides an in-depth technical comparison of these fields, empowering you to make an informed choice for your specific research needs, from drug discovery to material characterization.

Core Principles and Energetic Foundations

The fundamental distinction between atomic and molecular spectroscopy lies in the nature of the energy transitions they measure.

Atomic Spectroscopy focuses on electronic transitions in the outer shells of free, gaseous atoms. When a sample is atomized in a flame or plasma, the electrons of its atoms can be promoted to higher energy levels by absorbing light of specific wavelengths. When these electrons return to the ground state, they emit light at characteristic wavelengths. The energy of these transitions corresponds to the ultraviolet (UV) and visible regions of the electromagnetic spectrum, providing a unique fingerprint for each element, independent of its chemical form [27] [26]. This principle is the basis for optical techniques such as Atomic Absorption Spectroscopy (AAS). Inductively Coupled Plasma Mass Spectrometry (ICP-MS), which is usually grouped with atomic spectroscopy, instead identifies elements by the mass-to-charge ratio of the ions formed in the plasma.

Molecular Spectroscopy, in contrast, investigates the quantized energy levels of entire molecules. These energy states are more complex than those of individual atoms due to additional modes of motion:

- Vibrational Transitions: Correspond to the stretching and bending of chemical bonds.

- Rotational Transitions: Correspond to the rotation of the entire molecule.

- Electronic Transitions: Involve the promotion of electrons in molecular orbitals.

These transitions are not independent; rotational fine structure is often superimposed on vibrational transitions, which are themselves superimposed on electronic transitions. The energy required for these transitions places molecular spectroscopy across a wider range of the electromagnetic spectrum, from the microwave (for rotation) to the infrared (IR, for vibration) and UV-visible (for electronic transitions) [26].
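
To make the energy ordering concrete, the short sketch below converts representative wavelengths and wavenumbers into photon energies using E = hc/λ. The three example values (a 1 cm microwave photon, a 1700 cm⁻¹ mid-IR band, and a 260 nm UV photon) are illustrative choices, not figures taken from the cited sources.

```python
H = 6.62607015e-34      # Planck constant, J s
C = 299_792_458.0       # speed of light, m/s
EV = 1.602176634e-19    # joules per electronvolt

def photon_energy_ev(wavelength_m):
    """Photon energy in eV for a wavelength given in metres (E = h c / lambda)."""
    return H * C / wavelength_m / EV

def wavenumber_to_ev(wavenumber_cm):
    """Photon energy in eV for a wavenumber given in cm^-1."""
    # A wavenumber of n cm^-1 corresponds to a wavelength of 0.01 / n metres.
    return photon_energy_ev(0.01 / wavenumber_cm)

examples = {
    "rotational (microwave, 1 cm)": photon_energy_ev(1e-2),
    "vibrational (mid-IR, 1700 cm^-1)": wavenumber_to_ev(1700.0),
    "electronic (UV, 260 nm)": photon_energy_ev(260e-9),
}
for label, energy in examples.items():
    print(f"{label}: {energy:.3g} eV")
```

The resulting energies span roughly four orders of magnitude, from about 10⁻⁴ eV for the rotational example to about 0.2 eV for the vibrational one and nearly 5 eV for the electronic one, which is why each class of transition is probed in a different spectral region.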

The following diagram illustrates the fundamental interaction processes measured by each technique.

Techniques, Applications, and Instrumentation

The core principles of atomic and molecular spectroscopy give rise to a diverse array of analytical techniques, each with specialized instrumentation and application domains.

Atomic Spectroscopy Techniques

Atomic spectroscopy is the benchmark for elemental analysis, offering exceptional sensitivity and selectivity for detecting metals and some non-metals.

Inductively Coupled Plasma Mass Spectrometry (ICP-MS): This technique ionizes a sample in a high-temperature argon plasma (~6000-10000 K), and the resulting ions are separated and quantified by a mass spectrometer. It is renowned for its ultra-trace detection limits (often parts-per-trillion), wide linear dynamic range, and capability for isotopic analysis. Recent advancements include high-resolution multi-collector designs that provide unparalleled precision for isotope ratio measurements, crucial in nuclear material characterization and geochemistry [28] [6].

Atomic Absorption Spectroscopy (AAS): AAS measures the absorption of light by free, ground-state atoms. The sample is atomized in a flame or graphite furnace, and a hollow cathode lamp emits element-specific light. The amount of light absorbed is proportional to the concentration of the element. Graphite Furnace AAS (GFAAS) offers exceptional absolute sensitivity for very small sample volumes [27].
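
Because absorbance is proportional to concentration over the linear range, quantification in AAS is typically done against a calibration curve. The sketch below shows the idea with an ordinary least-squares line; the standard concentrations and absorbance readings are invented for illustration.

```python
import numpy as np

# Hypothetical calibration standards (mg/L) and their measured absorbances.
std_conc = np.array([0.0, 1.0, 2.0, 4.0, 8.0])
std_abs = np.array([0.002, 0.051, 0.101, 0.199, 0.402])

# Fit absorbance = slope * concentration + intercept.
slope, intercept = np.polyfit(std_conc, std_abs, 1)

def concentration_from_absorbance(absorbance):
    """Invert the calibration line to estimate concentration in mg/L."""
    return (absorbance - intercept) / slope

sample_conc = concentration_from_absorbance(0.150)
print(f"Estimated concentration: {sample_conc:.2f} mg/L")
```

In practice the unknown should fall inside the calibrated range, and curvature at high absorbance may call for a narrower range or a nonlinear model.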

Laser-Induced Breakdown Spectroscopy (LIBS): A rapid, minimally destructive technique where a high-power laser pulse is focused on a sample, creating a microplasma. The emitted light from the plasma is collected and analyzed to determine elemental composition. LIBS is valued for its speed, minimal sample preparation, and ability to perform stand-off analysis, making it ideal for field applications and sorting materials in industrial processes [28] [29].

Molecular Spectroscopy Techniques

Molecular spectroscopy provides insights into chemical structure, identity, and intermolecular interactions, making it indispensable for pharmaceutical and materials research.

Raman Spectroscopy: This technique measures the inelastic scattering of monochromatic light, usually from a laser. The shifts in photon frequency correspond to vibrational modes of the molecule, providing a vibrational fingerprint. Advanced implementations like Stimulated Raman Scattering (SRS) microscopy, as pioneered by researchers like Lingyan Shi, allow for high-sensitivity, label-free chemical imaging of biological processes, such as tracking metabolic activity using deuterium-labeled compounds [30].
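
The frequency shift mentioned above is usually reported as a Raman shift in wavenumbers (cm⁻¹), computed from the excitation and scattered wavelengths. The helper functions below are a small illustrative sketch; the 532 nm laser line and the 2100 cm⁻¹ shift (a value in the region where carbon-deuterium stretches appear) are assumed example inputs.

```python
def raman_shift_cm1(excitation_nm, scattered_nm):
    """Raman shift in cm^-1 from excitation and scattered wavelengths in nm."""
    # 1e7 / wavelength_nm converts a wavelength in nm to a wavenumber in cm^-1.
    return 1e7 / excitation_nm - 1e7 / scattered_nm

def scattered_wavelength_nm(excitation_nm, shift_cm1):
    """Stokes-scattered wavelength in nm for a given Raman shift in cm^-1."""
    return 1e7 / (1e7 / excitation_nm - shift_cm1)

stokes_nm = scattered_wavelength_nm(532.0, 2100.0)
print(f"Stokes line: {stokes_nm:.1f} nm")
print(f"Round trip: {raman_shift_cm1(532.0, stokes_nm):.1f} cm^-1")
```

For these inputs the Stokes line lands near 599 nm, which shows how a detector range in nanometres maps onto the Raman shift axis for a given laser.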

Fourier-Transform Infrared (FT-IR) Spectroscopy: FT-IR measures the absorption of mid-infrared light by a sample, at wavelengths that correspond to the fundamental vibrational frequencies of its chemical bonds. It is a workhorse technique for identifying functional groups, quantifying components in mixtures, and studying polymer structure. Modern instrumentation includes vacuum systems to eliminate atmospheric interference and QCL-based microscopes for high-resolution chemical imaging [6].

UV-Visible (UV-Vis) Spectroscopy: This method probes electronic transitions in molecules, particularly those with conjugated systems. It is widely used for concentration determination, reaction kinetics monitoring, and protein quantification in biopharmaceuticals [6].
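
Concentration determination by UV-Vis rests on the Beer-Lambert law, A = εlc, where A is absorbance, ε the molar absorptivity, l the path length, and c the concentration. The sketch below applies it directly; the absorbance reading and the molar absorptivity of 50,000 M⁻¹ cm⁻¹ are placeholder values, not data for any specific analyte.

```python
def concentration_molar(absorbance, molar_absorptivity, path_length_cm=1.0):
    """Concentration in mol/L from the Beer-Lambert law, A = epsilon * l * c."""
    if molar_absorptivity <= 0 or path_length_cm <= 0:
        raise ValueError("Molar absorptivity and path length must be positive")
    return absorbance / (molar_absorptivity * path_length_cm)

# Placeholder inputs: A = 0.50 in a 1 cm cuvette, epsilon = 50,000 M^-1 cm^-1.
conc = concentration_molar(0.50, 50_000.0)
print(f"Concentration: {conc * 1e6:.1f} µM")
```

The relationship holds only in the linear range of the instrument, so strongly absorbing samples are normally diluted before measurement.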

Fluorescence Spectroscopy: This technique, which includes variants such as Fluorescence Lifetime Imaging (FLIM), measures the light emitted by molecules after they have been excited by a higher-energy photon. It is exceptionally sensitive and is used for studying biomolecular interactions, conformational changes, and the local environment of fluorophores [30].

Table 1: Comparative Analysis of Key Spectroscopic Techniques

| Technique | Core Principle | Primary Information Obtained | Common Applications | Detection Limits |
|---|---|---|---|---|
| ICP-MS [28] [31] | Ionization in plasma & mass analysis | Elemental concentration & isotope ratios | Trace metal analysis, nuclear forensics, clinical toxicology | ppt (ng/L) to ppb (µg/L) |
| AAS [27] | Absorption of light by free atoms | Elemental concentration | Food safety, environmental monitoring, pharmaceutical impurities | ppb (µg/L) to ppm (mg/L) |
| LIBS [28] [29] | Analysis of laser-induced plasma emission | Elemental composition (qualitative/semi-quant) | Material sorting, geochemistry, planetary exploration | ppm (mg/kg) to % |
| Raman/SRS [30] | Inelastic light scattering | Molecular vibrations, chemical structure, spatial distribution | Bioimaging, polymer characterization, pharmaceutical polymorphs | Varies (e.g., µM for SRS) |
| FT-IR [6] | Absorption of IR light | Functional groups, molecular identity | Quality control, contaminant ID, material science | ~0.1% concentration |
| Fluorescence/FLIM [30] | Emission from excited states | Molecular environment, interactions, localization | Drug discovery, cellular biology, protein folding | Single molecule (in ideal conditions) |

Decision Framework: Selecting the Right Tool

Choosing between atomic and molecular spectroscopy hinges on the fundamental question your research aims to answer. The following workflow provides a strategic path for this critical decision.

Key Selection Criteria

- Analytical Question: As the decision framework shows, the nature of your research question is the primary driver. To determine which elements are present and in what amounts, choose atomic spectroscopy. To determine which molecules are present and how they are structured, choose molecular spectroscopy [26].

- Detection Limits: For ultra-trace elemental analysis, ICP-MS offers the lowest detection limits of the techniques compared here, often at parts-per-trillion levels. For detecting specific molecular interactions, fluorescence offers extreme sensitivity [28] [30].

- Sample Throughput and Preparation: LIBS and some Raman configurations require minimal sample preparation, which makes them well suited to high-throughput screening or field analysis. In contrast, ICP-MS and GFAAS often involve extensive sample digestion and preparation [28] [29].

- Destructive vs. Non-Destructive: Most atomic techniques (ICP-MS, AAS) are destructive. Many molecular techniques (Raman, FT-IR microscopy) are non-destructive, allowing for further analysis of the same sample [6].

- Spatial Information: Techniques like Raman microscopy, SRS, and LIBS can provide spatial mapping of chemical or elemental distribution, whereas bulk AAS and ICP-MS provide an average composition of the digested sample [28] [30] [6].
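
The criteria above can be condensed into a simple rule-based helper. The sketch below is only one possible encoding of this section's guidance: the class `AnalysisNeed`, the function `suggest_techniques`, and especially the order in which the rules are checked are assumptions made for illustration, not an established selection algorithm.

```python
from dataclasses import dataclass

@dataclass
class AnalysisNeed:
    target: str                    # "elemental" or "molecular"
    ultra_trace: bool = False      # needs the lowest detection limits
    minimal_prep: bool = False     # little or no sample preparation
    non_destructive: bool = False  # sample must survive the measurement
    spatial_mapping: bool = False  # needs a map rather than a bulk average

def suggest_techniques(need):
    """Return candidate techniques; the rule priority is an assumed ordering."""
    if need.target == "elemental":
        if need.ultra_trace:
            return ["ICP-MS"]
        if need.minimal_prep or need.spatial_mapping:
            return ["LIBS"]
        return ["AAS", "ICP-MS"]
    if need.target == "molecular":
        if need.ultra_trace:
            return ["Fluorescence"]
        if need.spatial_mapping:
            return ["Raman microscopy", "SRS"]
        if need.minimal_prep or need.non_destructive:
            return ["Raman", "FT-IR"]
        return ["Raman", "FT-IR", "UV-Vis", "Fluorescence"]
    raise ValueError("target must be 'elemental' or 'molecular'")

print(suggest_techniques(AnalysisNeed("elemental", ultra_trace=True)))
print(suggest_techniques(AnalysisNeed("molecular", spatial_mapping=True)))
```

A helper like this is best treated as a first-pass filter. Matrix effects, available instrumentation, and regulatory requirements still need case-by-case judgment.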

The Synergetic Fusion Approach