Spectroscopic Method Validation According to ICH Guidelines: A Lifecycle Approach from Q2(R2) to Q14

Abstract

This article provides a comprehensive guide for researchers, scientists, and drug development professionals on validating spectroscopic methods using the modern International Council for Harmonisation (ICH) lifecycle framework. Covering the newly adopted ICH Q2(R2) and Q14 guidelines, it details a systematic approach from foundational principles and Quality by Design (QbD) to practical application, troubleshooting, and robust validation for techniques like NIR and Raman spectroscopy. The content synthesizes regulatory expectations, strategic method development, and advanced optimization to ensure reliable, compliant analytical procedures that enhance efficiency and data integrity in pharmaceutical development.

Understanding the ICH Regulatory Framework: From Q2(R1) to the Modern Lifecycle

The International Council for Harmonisation (ICH) has fundamentally transformed the landscape of analytical procedure validation and development with the simultaneous introduction of the Q2(R2) and Q14 guidelines. This evolution from the previous Q2(R1) standard, whose core text dates to 1994 and which was consolidated under the Q2(R1) designation in 2005, represents a significant shift from a primarily validation-focused approach to a more comprehensive lifecycle management system for analytical procedures [1] [2]. The update addresses critical gaps in the original guideline, which was primarily designed around traditional small molecule drugs and lacked specific guidance for the unique challenges posed by biologics and modern analytical technologies [1]. Driven by the increasing complexity of biopharmaceutical products and rapid advancements in analytical technologies, these revised guidelines aim to enhance the robustness, reliability, and reproducibility of analytical methods throughout their entire operational life [1] [3].

The close interrelationship between Q2(R2) and Q14 creates a cohesive framework where analytical development and validation are intrinsically linked rather than treated as separate activities [4] [2]. ICH Q14 introduces structured, science-based approaches to analytical procedure development, while Q2(R2) provides the updated validation requirements to ensure these procedures remain fit-for-purpose throughout their lifecycle [1] [4]. This harmonized approach aligns with the broader pharmaceutical quality system concepts described in ICH Q8-Q12, promoting better integration between method development, validation, and continual improvement [2]. For researchers and drug development professionals working with spectroscopic methods, this evolution supports more flexible, risk-based approaches that can adapt to emerging technologies while maintaining rigorous quality standards.

Comparative Analysis: Key Changes from Q2(R1) to Q2(R2) and Q14

Foundational Conceptual Shifts

The transition from ICH Q2(R1) to the new Q2(R2) and Q14 framework introduces several paradigm shifts that fundamentally change how analytical methods are developed, validated, and maintained [2]. The most significant change is the introduction of a comprehensive lifecycle approach that extends beyond initial validation to include continuous monitoring and improvement throughout the method's operational use [1]. This shift requires organizations to implement systems for ongoing method evaluation rather than treating validation as a one-time event [1]. Additionally, the new guidelines formally integrate Quality by Design (QbD) principles into analytical science, emphasizing proactive development based on predefined objectives rather than retrospective validation [1] [3]. The concept of an Analytical Target Profile (ATP) is introduced as a foundational element, providing a clear statement of the method's required performance characteristics before development begins [1] [3].

Another crucial advancement is the strengthened risk management framework that permeates both development and validation activities [1] [2]. The updated guidelines encourage systematic risk assessments using tools such as Failure Mode and Effects Analysis (FMEA) to identify and control potential method failures before they occur [1] [2]. Furthermore, the new framework offers enhanced support for modern technologies, including multivariate methods and advanced spectroscopic techniques that were not adequately addressed in Q2(R1) [4] [3]. This modernization allows for more appropriate validation approaches for complex analytical systems commonly used in pharmaceutical analysis today [4].

Detailed Parameter Comparison

The following table summarizes the key differences in validation parameters and conceptual approaches between the legacy and new guidelines:

Table 1: Comprehensive Comparison of ICH Q2(R1) vs. Q2(R2) and Q14 Frameworks

| Parameter/Concept | ICH Q2(R1) Approach | ICH Q2(R2) & Q14 Approach | Key Evolutionary Changes |
|---|---|---|---|
| Lifecycle Management | Not addressed | Central concept [2] | Promotes continuous method verification and improvement [1] |
| Method Development | Limited guidance | Structured approach with ATP [1] | Q14 introduces QbD principles; defines Analytical Target Profile [3] |
| Risk Assessment | Not formally included | Required element [2] | Systematic risk management integrated [1] |
| Specificity | Required | Required with expanded guidance [2] | More guidance on matrix effects and peak purity [2] |
| Linearity & Range | Required | Required with broader application [2] | Explicit linkage to ATP; modern statistical approaches [1] |
| Accuracy & Precision | Required | Required with enhanced requirements [1] | Intra- and inter-laboratory studies for reproducibility [1] |
| LOD & LOQ | Required for limit tests | Required with clearer guidance [2] | Additional approaches for estimation and reporting [2] |
| Robustness | Optional with limited detail | Recommended with lifecycle focus [2] | Evaluated during development and tied to continuous evaluation [1] |
| System Suitability | Implied | Explicitly emphasized [2] | Linked to ongoing performance monitoring [2] |
| Regulatory Documentation | Standard requirements | Enhanced documentation [1] | Increased focus on data integrity and transparency [1] |
| Technology Applicability | Limited to traditional methods | Expanded to modern techniques [4] | Includes multivariate, spectroscopic methods [3] |

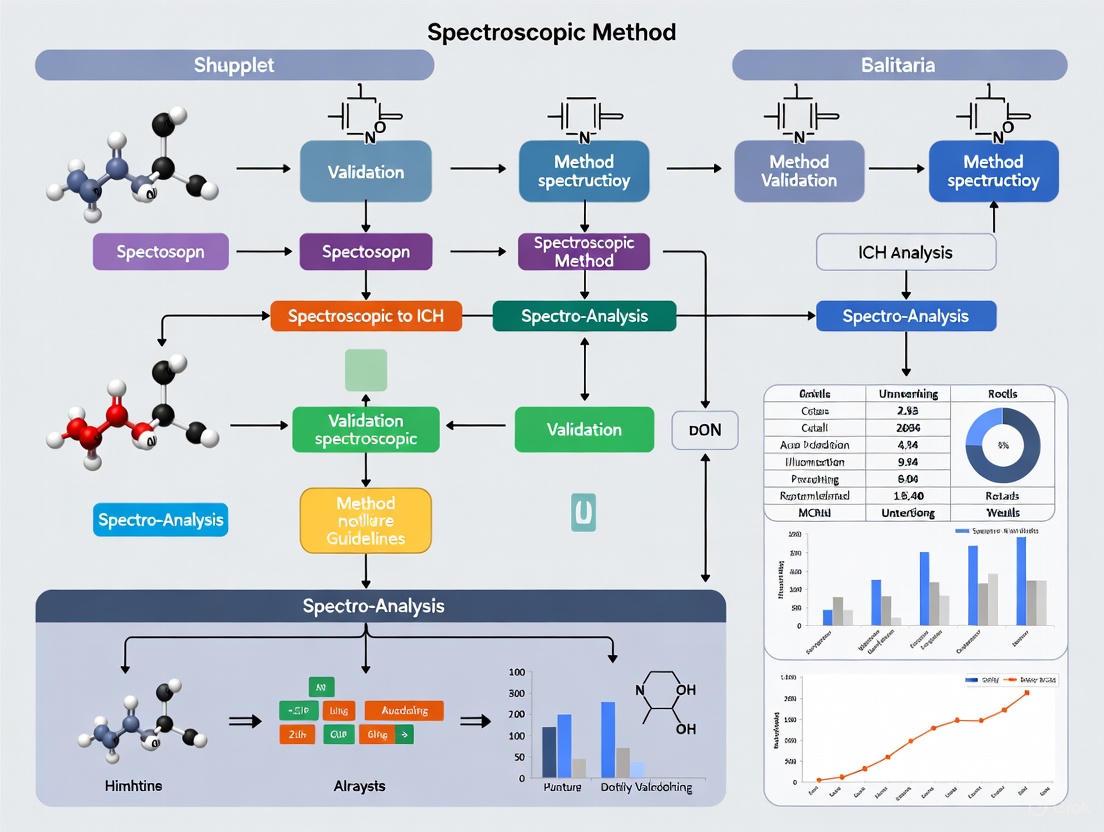

Analytical Procedure Lifecycle Workflow

The modernized approach to analytical procedures integrates development, validation, and ongoing verification into a seamless lifecycle management system. The following workflow illustrates this comprehensive framework:

Figure 1: Analytical Procedure Lifecycle Management. This workflow illustrates the integrated approach mandated by ICH Q2(R2) and Q14, emphasizing continuous method performance verification rather than treating validation as a one-time event.

The lifecycle begins with defining an Analytical Target Profile (ATP), which specifies the performance requirements for the method before development commences [1] [3]. This crucial first step establishes the foundation for all subsequent activities and ensures the method is designed to meet its intended purpose. The development phase then incorporates QbD principles and risk assessment activities to identify and address potential failure modes early in the process [1] [2]. Method validation under Q2(R2) confirms that the developed method meets all predefined performance characteristics, with particular attention to parameters such as specificity, accuracy, precision, and robustness [1] [5].
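One way to make an ATP concrete is to record its performance criteria as structured data and compare validation results against them. The sketch below is illustrative only: the class name `AnalyticalTargetProfile`, its fields, the helper `unmet_criteria`, and all numeric values are assumptions made for this example and are not prescribed by ICH Q14.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AnalyticalTargetProfile:
    """Hypothetical container for ATP performance criteria."""
    analyte: str
    range_low: float          # lower end of the required reportable range
    range_high: float         # upper end of the required reportable range
    recovery_low_pct: float   # lowest acceptable mean recovery
    recovery_high_pct: float  # highest acceptable mean recovery
    max_rsd_pct: float        # highest acceptable %RSD


def unmet_criteria(atp, mean_recovery_pct, rsd_pct, validated_low, validated_high):
    """Return the names of the ATP criteria that the validation data fail."""
    failures = []
    if not atp.recovery_low_pct <= mean_recovery_pct <= atp.recovery_high_pct:
        failures.append("accuracy")
    if rsd_pct > atp.max_rsd_pct:
        failures.append("precision")
    # The validated interval must cover the whole range required by the ATP.
    if validated_low > atp.range_low or validated_high < atp.range_high:
        failures.append("range")
    return failures


# Illustrative values only; the concentration unit is an assumption.
atp = AnalyticalTargetProfile("example API", 1.0, 15.0, 98.0, 102.0, 2.0)
print(unmet_criteria(atp, mean_recovery_pct=99.4, rsd_pct=1.1,
                     validated_low=0.5, validated_high=16.0))  # prints []
```

Keeping the criteria in one immutable object means the same definition can be reused at validation and during later performance reviews.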

During routine use, the method enters the ongoing performance verification stage, where system suitability testing and data trending provide continuous assurance of method performance [2]. The lifecycle approach incorporates regular performance monitoring and a structured change management process to manage modifications and ensure the method remains in a state of control [1]. When monitoring data indicates potential issues, the framework provides pathways for adjustments, continuous improvement, or when necessary, a return to development or revalidation activities [2]. This dynamic model represents a significant advancement over the static approach of Q2(R1), where validation was often considered complete after initial testing.

Experimental Protocols for Method Validation

Validation Experimental Design

Implementing the Q2(R2) guideline requires carefully designed experimental protocols that demonstrate method performance across all relevant parameters. The following diagram outlines a comprehensive validation workflow for a spectroscopic method, such as the UV-Vis spectrophotometric method for chalcone quantification [6] discussed in this section:

Figure 2: Method Validation Experimental Workflow. This protocol outlines the sequential testing approach for analytical method validation under ICH Q2(R2), culminating in system suitability test definition for ongoing verification.

For specificity testing, experiments must demonstrate the method's ability to unequivocally assess the analyte in the presence of potential interferents, such as impurities, excipients, or matrix components [3] [2]. For spectroscopic methods, this typically involves comparing samples containing the analyte alone versus samples with added interferents, confirming that the analytical signal originates specifically from the target analyte [6]. Linearity evaluation requires testing a minimum of five concentration levels across the specified range, with statistical analysis of the relationship between analyte concentration and instrument response [3] [6]. In the UV-Vis method for chalcone quantification, linearity was demonstrated across 0.3-17.6 μg/mL with R² of 0.9994, indicating excellent correlation [6].
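The linearity evaluation described above can be sketched as an ordinary least-squares fit of response against concentration. This is a minimal illustration under stated assumptions: the function name `linear_fit` is hypothetical, the concentration and absorbance values are invented for the example (they are not the chalcone data from [6]), and a real evaluation would also inspect residual plots.

```python
from math import sqrt


def linear_fit(conc, response):
    """Least-squares fit response = slope * conc + intercept.

    Returns (slope, intercept, r_squared, residual_sd).
    """
    n = len(conc)
    if n < 5:
        raise ValueError("at least five concentration levels are expected")
    mean_x = sum(conc) / n
    mean_y = sum(response) / n
    sxx = sum((x - mean_x) ** 2 for x in conc)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(conc, response))
    syy = sum((y - mean_y) ** 2 for y in response)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    # Residual sum of squares around the fitted line.
    ss_res = sum((y - (slope * x + intercept)) ** 2 for x, y in zip(conc, response))
    r_squared = 1.0 - ss_res / syy
    residual_sd = sqrt(ss_res / (n - 2))
    return slope, intercept, r_squared, residual_sd


# Hypothetical calibration data (concentration in ug/mL, absorbance units).
conc = [1.0, 2.0, 4.0, 8.0, 16.0]
absorbance = [0.051, 0.102, 0.199, 0.405, 0.798]
slope, intercept, r2, s_res = linear_fit(conc, absorbance)
print(f"slope={slope:.4f} intercept={intercept:.4f} R2={r2:.4f} s_res={s_res:.4f}")
```

The residual standard deviation returned here is one of the quantities that can feed an LOD/LOQ estimate based on the calibration curve.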

Accuracy studies typically involve spiking known amounts of analyte into placebo or sample matrix and calculating percentage recovery, which should ideally fall within 98-102% for API quantification as demonstrated in the chalcone method [3] [6]. Precision assessment includes both repeatability (same analyst, same conditions) and intermediate precision (different days, different analysts, different instruments) expressed as %RSD, with values ≤2% generally acceptable for assay methods [3] [2]. The LOD and LOQ determinations employ statistical approaches based on the standard deviation of the response and the slope of the calibration curve, with the chalcone method demonstrating appropriate sensitivity for its intended use [6].
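The calculations behind these acceptance criteria are simple enough to sketch directly. The helper names below are hypothetical, and the factors 3.3 and 10 are the conventional ICH multipliers for the approach based on the standard deviation of the response (sigma) and the calibration slope; the input values are invented for illustration.

```python
from statistics import mean, stdev


def percent_recovery(found, added):
    """Recovery of a spiked amount, in percent."""
    return 100.0 * found / added


def percent_rsd(values):
    """Relative standard deviation (sample SD / mean), in percent."""
    return 100.0 * stdev(values) / mean(values)


def lod_loq(sigma, slope):
    """LOD = 3.3 * sigma / slope and LOQ = 10 * sigma / slope."""
    return 3.3 * sigma / slope, 10.0 * sigma / slope


# Hypothetical replicate assay results (% of label claim).
replicates = [99.2, 100.4, 99.8, 100.1, 99.6, 100.3]
print(f"recovery = {percent_recovery(9.94, 10.0):.1f} %")
print(f"%RSD     = {percent_rsd(replicates):.2f} %")

# sigma: SD of the response (e.g. residual SD of the calibration line);
# slope: calibration slope. Both values here are assumptions.
lod, loq = lod_loq(sigma=0.004, slope=0.05)
print(f"LOD = {lod:.3f}, LOQ = {loq:.3f} (same units as concentration)")
```

Whether a given recovery or %RSD is acceptable depends on the criteria set for the procedure's intended purpose, not on the calculation itself.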

Robustness testing, which the updated framework expects to be evaluated (typically during development under Q14), involves deliberate variations of method parameters such as wavelength, pH, mobile phase composition, or temperature to evaluate the method's reliability [1] [2]. The experimental design should identify critical parameters through risk assessment and test their impact on method performance [1]. Finally, system suitability tests are established based on the validation data to ensure the ongoing reliability of the analytical system during routine use [2].
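A robustness study of this kind can be laid out as a small factorial design. The sketch below enumerates every combination of the chosen factor levels and summarizes the largest deviation from the nominal result. The factor names, levels, and results are assumptions for illustration; a full factorial is only one possible design, and fractional designs are often preferred when many factors are involved.

```python
from itertools import product


def full_factorial(factors):
    """Return one dict per run covering every combination of factor levels."""
    names = list(factors)
    return [dict(zip(names, combo))
            for combo in product(*(factors[name] for name in names))]


def max_relative_shift(results, nominal):
    """Largest absolute deviation from the nominal result, in percent."""
    return max(abs(r - nominal) / nominal * 100.0 for r in results)


# Hypothetical factors with low / nominal / high levels.
factors = {
    "wavelength_nm": [388, 390, 392],
    "temperature_c": [20, 25, 30],
}
runs = full_factorial(factors)
print(len(runs), "runs; first run:", runs[0])

# Hypothetical assay results (% of nominal content) from some of those runs.
results = [99.6, 100.2, 100.9, 99.1]
print(f"max shift = {max_relative_shift(results, nominal=100.0):.1f} %")
```

Parameters whose variation produces the largest shifts are natural candidates for tighter control or for inclusion in system suitability criteria.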

Application to Spectroscopic Methods

The implementation of Q2(R2) for spectroscopic method validation follows the same fundamental principles but requires technique-specific considerations. For UV-Vis spectrophotometry, particular attention should be paid to wavelength accuracy, stray light effects, and resolution requirements [6]. The validation of the chalcone quantification method demonstrates several key aspects: proper analytical wavelength determination (390 nm), specificity against structurally similar compounds (flavonoids), and appropriate precision with coefficients of variation below 2.1% [6].

For more complex spectroscopic techniques such as NMR or IR, additional validation parameters may include spectral resolution, chemical shift stability, or signal-to-noise ratio requirements. The expanded guidance in Q2(R2) accommodates these technique-specific needs while maintaining the core principles of method validation [4] [3]. The guideline also provides specific considerations for multivariate analytical procedures, which are increasingly common in modern spectroscopic applications [4].

Essential Research Reagents and Materials

The implementation of robust analytical methods according to Q2(R2) and Q14 requires specific high-quality materials and reagents. The following table outlines essential components for spectroscopic method development and validation:

Table 2: Essential Research Reagent Solutions for Spectroscopic Method Validation

| Reagent/Material | Function in Analytical Procedure | Quality Requirements |
|---|---|---|
| Reference Standards | Quantification and method calibration | Certified purity with documentation; traceable to primary standards [3] |
| Chromatographic Solvents | Mobile phase preparation; sample dilution | HPLC-grade with low UV absorbance; specified expiration dating [6] |
| Sample Preparation Reagents | Extraction, dilution, or derivatization | Appropriate grade for intended use; tested for interference [3] |
| System Suitability Solutions | Verification of analytical system performance | Well-characterized mixtures of target analytes and potential interferents [2] |
| Stability Testing Solutions | Forced degradation studies | Controlled concentration of degradation agents (acid, base, oxidant) [3] |

The selection of appropriate reference standards is particularly critical, as these materials form the basis for method calibration and quantification [3]. These standards must be of certified purity and properly characterized, with documentation supporting their identity and quality [3]. For spectroscopic methods, high-purity solvents are essential to minimize background interference and ensure accurate measurement of the target analyte [6]. In the chalcone quantification method, carbon tetrachloride was specifically selected as the dilution solvent based on its compatibility with the analytical technique and analyte [6].

System suitability solutions, now explicitly emphasized in Q2(R2), should be designed to challenge critical aspects of method performance, typically containing the target analyte at specified concentrations along with potential interferents to verify specificity [2]. For stability-indicating methods, forced degradation solutions containing appropriate concentrations of acid, base, oxidant, or other stress conditions are necessary to demonstrate the method's ability to detect degradation products without interference from the main analyte [3].

Regulatory Implementation Strategy

Transition Framework from Q2(R1) to Q2(R2)

Implementing the updated ICH guidelines requires a systematic approach to ensure compliance while maximizing the benefits of the enhanced framework. Research indicates that a successful transition involves multiple coordinated activities [1] [7]. The following strategic approach is recommended:

Comprehensive Gap Analysis: Conduct thorough assessments of existing methods and validation processes to identify gaps and areas for improvement in line with the new ICH guidelines [1] [7]. This should include evaluation of current method performance data, documentation practices, and change control systems against Q2(R2) requirements [7].

Structured Training Programs: Invest in education to familiarize staff with the new guidelines and their practical applications, ensuring a smooth transition to the updated standards [1]. Training should cover the changes between ICH Q2(R1) and Q2(R2), as well as education on the guidance provided in ICH Q14, focusing on the lifecycle approach, risk management, and the importance of defining the ATP [1].

Enhanced Documentation Systems: Upgrade documentation practices to meet the increased transparency requirements of Q2(R2), ensuring all phases of method development, validation, and changes are thoroughly recorded [1]. This includes maintaining detailed records of the method's performance over time and the rationale behind any methodological adjustments [1].

Risk-Based Method Management: Adopt proactive risk management strategies as recommended by ICH Q14, conducting thorough risk assessments during early method development stages [1]. Leveraging tools such as Failure Mode and Effects Analysis (FMEA) can systematically evaluate potential risks and their impacts on method performance [1].

Lifecycle Implementation Plan: Develop a structured approach for implementing lifecycle management across the analytical portfolio, including regular performance reviews and defined criteria for method improvement or revalidation [2].
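The FMEA-based risk assessment mentioned in this list is commonly scored with a risk priority number (RPN), the product of severity, occurrence, and detection ratings. The sketch below assumes the widely used 1 to 10 rating scales; the class name, the failure modes, and the scores are invented for illustration and do not come from the ICH guidelines.

```python
from dataclasses import dataclass


@dataclass
class FailureMode:
    """One row of a hypothetical FMEA worksheet (ratings on a 1-10 scale)."""
    description: str
    severity: int    # impact on the reportable result
    occurrence: int  # likelihood of the failure
    detection: int   # 10 = very hard to detect, 1 = almost always detected

    @property
    def rpn(self):
        """Risk priority number = severity * occurrence * detection."""
        return self.severity * self.occurrence * self.detection


def rank_failure_modes(modes):
    """Sort failure modes from highest to lowest RPN."""
    return sorted(modes, key=lambda m: m.rpn, reverse=True)


# Illustrative failure modes for a spectroscopic procedure (assumed scores).
modes = [
    FailureMode("wavelength drift", severity=7, occurrence=3, detection=4),
    FailureMode("cuvette contamination", severity=5, occurrence=2, detection=2),
    FailureMode("incomplete sample dissolution", severity=9, occurrence=4, detection=5),
]
for mode in rank_failure_modes(modes):
    print(f"{mode.rpn:4d}  {mode.description}")
```

The ranking only prioritizes attention; the threshold above which a failure mode requires mitigation is a decision for the organization's own risk management procedure.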

Global Regulatory Perspectives

The implementation of ICH Q2(R2) and Q14 has prompted regulatory agencies worldwide to re-evaluate their expectations for analytical method validation and lifecycle management [2]. Global authorities including the U.S. FDA, European Medicines Agency (EMA), and other ICH member regulatory authorities are increasingly promoting risk-based, science-driven validation strategies that go beyond static testing and emphasize ongoing method control [2]. Regulatory inspections across markets have increasingly focused on deficiencies related to incomplete method robustness data, lack of performance verification, and inadequate change control documentation [2].

The U.S. FDA has long supported principles now embedded in Q2(R2), as seen in its previous guidance encouraging integration of method development with performance qualification and system suitability [2]. The agency now expects pharmaceutical manufacturers to demonstrate not only that a method works initially, but also that it will continue to perform throughout the product lifecycle [2]. Similarly, the EMA has encouraged lifecycle-based validation through the adoption of ICH Q8-Q12 and now Q2(R2) and Q14, with particular emphasis on method robustness, data integrity, and adequate ongoing verification systems [2].

The evolution from ICH Q2(R1) to Q2(R2) and the introduction of ICH Q14 represent a fundamental transformation in the regulatory framework for analytical procedures. This shift from a validation-focused checklist to a comprehensive lifecycle management approach enables more robust, reliable, and reproducible analytical methods that can adapt to the increasing complexity of modern pharmaceuticals, particularly biologics [1] [2]. The integration of QbD principles, enhanced risk management, and emphasis on continuous method verification provide a scientific foundation for maintaining method performance throughout the product lifecycle [1] [3].

For researchers and pharmaceutical development professionals, these updated guidelines offer both challenges and opportunities. The initial investment required for implementation is significant, involving staff training, process modification, and enhanced documentation systems [1]. However, the long-term benefits include improved method reliability, reduced operational failures, and more efficient regulatory compliance [1] [4]. By embracing these guidelines, the pharmaceutical industry can enhance analytical quality while supporting the development of safer and more effective medicines for patients worldwide [1].

In the highly regulated field of pharmaceutical development, precise terminology is not just a matter of semantics—it is a fundamental requirement for ensuring quality, regulatory compliance, and patient safety. Within the framework of spectroscopic method validation according to International Council for Harmonisation (ICH) guidelines, a clear and consistent understanding of the terms "analytical procedure" and "analytical method" is critical. Confusing these terms can lead to gaps in validation protocols, inadequate control strategies, and potential regulatory deficiencies. This guide objectively compares these two concepts, delineating their distinct roles within the analytical lifecycle.

Core Definitions and Conceptual Comparison

The distinction between an analytical procedure and an analytical method is foundational. An analytical procedure encompasses the entire process from sample collection to the reporting of the final result [8]. In contrast, an analytical method typically refers only to the specific instrumental technique or analytical principle used for the measurement itself [8].

The following table summarizes the key differences.

| Feature | Analytical Procedure | Analytical Method |
|---|---|---|
| Scope | The complete process from sampling to result reporting [8]. | The instrumental portion or analytical technique (e.g., HPLC, spectroscopy) [8]. |
| Components | Includes sampling, sample preparation, reagents, instrumentation, analysis, and data processing [8]. | Focuses on the operational parameters of the analytical technique (e.g., wavelength, flow rate) [9]. |
| Context | A holistic, process-oriented view. | A subset of the overall analytical procedure. |

To visualize this hierarchical relationship, the following diagram maps the components of an analytical procedure, showing how the analytical method fits within the broader process.

The Regulatory and Lifecycle Context

Alignment with ICH Guidelines

The distinction between an analytical procedure and a method is reinforced and given practical significance through modern regulatory guidelines. The ICH Q2(R2) guideline on the validation of analytical procedures provides the framework for evaluating the performance characteristics of these controls [5] [3]. Importantly, this validation must demonstrate that the entire analytical procedure is suitable for its intended purpose, a fundamental Good Manufacturing Practice (GMP) requirement [8].

Complementing this, the ICH Q14 guideline on analytical procedure development introduces a systematic, science- and risk-based approach for designing these procedures [3] [10]. A cornerstone of this enhanced approach is the Analytical Target Profile (ATP), which is a prospective summary of the quality attribute's measurement requirements [11]. The ATP defines the performance criteria for the reportable result—the output of the analytical procedure—thereby guiding its development and validation [11] [12].

The Analytical Procedure Lifecycle

Modern regulatory science, as reflected in ICH Q14, USP general chapter <1220>, and the revised ICH Q2(R2), champions a lifecycle approach to analytical procedures [8] [10] [1]. This model consists of three stages:

- Procedure Design and Development: Establishing an ATP and developing a procedure, which includes selecting the appropriate analytical method, to meet the ATP criteria [8].

- Procedure Performance Qualification: Traditionally known as method validation, this stage qualifies the performance of the final procedure against the parameters defined in the ATP [8].

- Continued Procedure Performance Verification: Ongoing monitoring to ensure the procedure remains in a state of control during routine use [8].

This lifecycle management ensures that the analytical procedure, inclusive of the analytical method, remains robust and fit-for-purpose, facilitating more efficient investigation of out-of-specification (OOS) results and management of post-approval changes [11] [12].

Experimental Validation Workflow

The following diagram outlines a generalized experimental workflow for validating an analytical procedure, highlighting stages where the holistic "procedure" view is critical versus where the specific "method" is the focus.

Essential Research Reagent Solutions

The following table details key reagents and materials used in the development and validation of analytical procedures, with an emphasis on spectroscopic applications.

| Item | Function in Analytical Procedure |
|---|---|
| Chemical Reference Standards | Certified standards used to calibrate the analytical method and demonstrate accuracy and specificity of the overall procedure [9]. |
| High-Purity Solvents & Reagents | Ensure minimal interference during sample preparation and analysis, directly impacting precision and detection limits [9]. |
| System Suitability Test (SST) Materials | Reference mixtures or samples used to verify that the entire analytical system (from instrument to columns) is performing as required before analysis [11]. |

Within the context of ICH-guided spectroscopic method validation, the terms "analytical procedure" and "analytical method" are not interchangeable. An analytical method is the specific technical core of the measurement, while the analytical procedure is the comprehensive, regulated process that ensures the method's result is meaningful, reliable, and reportable. Adopting this precise terminology and the associated lifecycle approach, centered on a well-defined ATP, is fundamental to developing robust control strategies, ensuring regulatory compliance, and ultimately protecting patient health.

From Traditional Workflow to Modern Lifecycle Management

The Analytical Procedure Lifecycle (APL) represents a fundamental shift in how analytical methods are developed, validated, and maintained within the pharmaceutical industry. This modern framework moves beyond the traditional, linear approach of development, validation, and routine use toward an integrated lifecycle management model that emphasizes robustness, scientific understanding, and continuous improvement [13].

The traditional view emphasized rapid method development followed by validation and operational use, with changes requiring revalidation or redevelopment [13]. In contrast, the APL concept, championed by new guidances like USP <1220> and ICH Q14, adopts a Quality by Design (QbD) approach. This ensures procedures are more robust and scientifically sound by focusing on earlier lifecycle phases, such as defining procedure specifications in an Analytical Target Profile (ATP) [13].

Regulatory frameworks have evolved to support this holistic view. ICH Q2(R2) on analytical procedure validation and ICH Q14 on analytical procedure development are designed to complement each other, creating a unified framework for the entire lifecycle [3] [14]. This harmonized approach is applicable to both small-molecule drugs and biological products, supporting both traditional and enhanced, risk-based development approaches [3].

Core Stages of the Analytical Procedure Lifecycle

The APL framework consists of three interconnected stages:

- Stage 1: Procedure Design and Development: This initial stage is derived from the ATP and involves scientifically sound method development to understand critical method parameters and their controls [13].

- Stage 2: Procedure Performance Qualification: Corresponding to traditional method validation, this stage demonstrates that the procedure is suitable for its intended purpose [13].

- Stage 3: Procedure Performance Verification: This ongoing stage involves continuous monitoring of procedure performance during routine use to ensure maintained suitability [13].

These stages feature feedback loops for continual improvement, allowing knowledge gained during routine use to inform procedure refinements [13].

The Regulatory Framework: ICH Q2(R2) and Q14

The APL concept is supported by two key ICH guidelines that provide the regulatory foundation for modern analytical practices. ICH Q2(R2) focuses on validation principles, while ICH Q14 addresses analytical procedure development, together creating a comprehensive framework for managing the entire analytical procedure lifecycle [3].

Key Components of ICH Q2(R2)

ICH Q2(R2) builds upon the previous Q2(R1) guideline and provides guidance on validation tests for analytical procedures, with the objective of demonstrating that a procedure is "fit for the intended purpose" [15] [5]. The scope applies to analytical procedures used for release and stability testing of commercial drug substances and products, covering identity, potency, purity, and impurity testing [15] [5].

The guideline has been updated to include validation principles for advanced analytical techniques, including spectroscopic or spectrometry data (e.g., NIR, Raman, NMR, MS) that often require multivariate statistical analyses [15]. This expansion makes the guideline particularly relevant for modern spectroscopic method validation.

Key Components of ICH Q14

ICH Q14 complements Q2(R2) by providing a structured approach to analytical procedure development [3]. It emphasizes science- and risk-based approaches, encouraging the use of prior knowledge, robust method design, and clear definition of the Analytical Target Profile (ATP) [3]. The guideline also introduces important concepts such as control strategy, established conditions, and lifecycle management, ensuring analytical procedures remain robust and compliant throughout their use [3].

Table: Comparison of Key ICH Guidelines Governing the Analytical Procedure Lifecycle

| Guideline | Focus Area | Key Concepts | Applicability |
|---|---|---|---|
| ICH Q2(R2) [5] [3] | Validation of Analytical Procedures | Defines validation parameters (accuracy, precision, specificity); Demonstrates fitness for purpose | Release & stability testing; Chemical & biological drugs |
| ICH Q14 [3] | Analytical Procedure Development | ATP, Science- & risk-based approach, Control strategy, Lifecycle management | Procedure design & development; Enhanced approach for robust methods |
| USP <1220> [13] | Analytical Procedure Lifecycle Management | Three-stage lifecycle (Design, Qualification, Verification), QbD, Continuous improvement | Compendial procedures; General scientific principles for all procedures |

Implementing the APL: A Practical Workflow

Successful implementation of the Analytical Procedure Lifecycle begins with defining the Analytical Target Profile (ATP), which serves as the foundational specification for the procedure [13]. The ATP clearly defines the intended purpose of the analytical procedure and the required quality criteria, ensuring all subsequent activities are aligned with this target.

The following workflow diagram illustrates the logical relationship between the key stages, activities, and regulatory frameworks in the Analytical Procedure Lifecycle:

Stage 1: Procedure Design and Development

During this critical stage, developers build quality into the method rather than simply testing for it later. Key activities include:

- Analytical Understanding: Developing a thorough understanding of the analyte and its behavior in the specific matrix, including consideration of any prior analytical methods [3].

- Parameter Optimization: Optimizing the procedures and conditions involved with extracting and detecting the analyte [3].

- Robustness Testing: Evaluating the method's reliability under small, deliberate variations in conditions, which is assessed during development rather than validation [14].

This stage emphasizes thorough documentation and scientific understanding, contrasting with earlier approaches that considered method development as less documentation-intensive [13].

Stage 2: Procedure Performance Qualification (Validation)

This stage corresponds to traditional method validation but is now integrated into the broader lifecycle. The core parameters for validation according to ICH Q2(R2) include:

- Specificity/Selectivity: Ability to measure the analyte unequivocally in the presence of other components [3] [14].

- Accuracy: Closeness of agreement between the accepted reference value and the value found [3].

- Precision: Degree of agreement among individual test results under prescribed conditions, including repeatability and intermediate precision [3].

- Linearity: Ability to obtain test results directly proportional to analyte concentration [3].

- Range: Interval between upper and lower concentration with suitable precision, accuracy, and linearity [14].

- LOD and LOQ: The lowest amount of analyte that can be detected (LOD) and the lowest amount that can be quantified with suitable accuracy and precision (LOQ) [3].

Table: Validation Parameters and Requirements for Different Analytical Procedures

| Performance Characteristic | Identification | Testing for Impurities | Assay/Potency |

|---|---|---|---|

| Specificity/Selectivity [3] [14] | Required | Required (for impurities) | Required |

| Accuracy [3] | Not required | Required | Required |

| Precision [3] | Not required | Required | Required |

| Linearity [3] [14] | Not required | Required | Required |

| Range [14] | Not required | Required | Required |

| LOD/LOQ [3] | Not required | Required (LOD for limit test, LOQ for quantitative) | Not typically required |

Stage 3: Procedure Performance Verification

The lifecycle approach continues after validation through ongoing monitoring during routine use. This includes:

- System Suitability Testing: Routine checks to confirm the analytical system is performing as expected [3].

- Trend Analysis: Regular review of data to identify potential method performance issues before they cause failures.

- Control Strategy: Implementing measures to ensure the procedure remains in a state of control throughout its operational life [3].

This ongoing verification creates feedback loops that support continuous improvement of analytical procedures [13].
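
The trend analysis described above can be sketched as a simple control chart check. This is a minimal illustration rather than a prescribed procedure: the mean ± 3 SD limits, the function names, and the numerical results are all assumptions made for the example.

```python
import statistics

def control_limits(baseline, k=3.0):
    """Return (lower, center, upper) limits as mean +/- k sample SDs.

    The k = 3 default is a common control-chart convention, assumed here.
    """
    center = statistics.mean(baseline)
    sd = statistics.stdev(baseline)  # sample standard deviation (n - 1)
    return center - k * sd, center, center + k * sd

def flag_out_of_trend(values, lower, upper):
    """Return the indices of results that fall outside the limits."""
    return [i for i, v in enumerate(values) if v < lower or v > upper]

# Illustrative (made-up) control sample results, % of label claim
baseline = [99.8, 100.1, 100.3, 99.9, 100.0, 100.2, 99.7, 100.0]
routine = [100.1, 99.9, 100.4, 101.6, 100.0]

lower, center, upper = control_limits(baseline)
flagged = flag_out_of_trend(routine, lower, upper)
```

Here the limits come from results gathered while the procedure was known to be in control, and later routine results are compared against them. A flagged index would prompt a review, not an automatic rejection of the result.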

The Scientist's Toolkit: Essential Materials for Analytical Procedure Validation

Implementing the APL approach requires specific materials and reagents to ensure reliable results. The following table outlines key research reagent solutions and their functions in analytical procedure validation:

Table: Essential Research Reagent Solutions for Analytical Procedure Validation

| Reagent/Material | Function in Validation | Application Examples |

|---|---|---|

| Well-Characterized Reference Standards | Demonstrate accuracy by comparing results to known values; Establish calibration curves [3] | API quantification; Impurity identification and quantification |

| Placebo/Blank Matrix | Evaluate specificity/selectivity by demonstrating absence of interference [3] | Forced degradation studies; Excipient interference testing |

| System Suitability Solutions | Verify chromatographic system performance before or during analysis [3] | HPLC assay verification; Resolution check between critical pairs |

| Stressed Samples | Demonstrate specificity of stability-indicating methods [14] | Forced degradation studies (heat, light, acid, base, oxidation) |

Experimental Protocols for Key Validation Studies

Protocol for Specificity/Selectivity Testing

Specificity is the ability to assess unequivocally the analyte in the presence of components that may be expected to be present [3]. The experimental protocol typically involves:

Sample Preparation:

- Prepare analyte standard at target concentration

- Prepare placebo/excipient mixture without analyte

- Prepare synthetic mixture containing analyte and all potential interfering components

- For stability-indicating methods, prepare stressed samples (acid/base, thermal, oxidative, photolytic degradation)

Analysis:

- Analyze all samples using the proposed procedure

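- Compare chromatograms/spectra for interference at the analyte retention time (chromatographic methods) or at the analytical wavelength or spectral region (spectroscopic methods)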

- For chromatographic methods, resolution factors should be >1.5 between analyte and closest eluting potential interferent [3]

Data Interpretation:

- Demonstrate that interfering components do not contribute significantly to the analyte response

- For impurity methods, demonstrate accurate quantification of impurities in presence of each other and the main analyte
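
The two numerical checks in this protocol can be sketched as follows. The resolution function uses the widely used formula Rs = 2(tR2 − tR1)/(w1 + w2) with baseline peak widths. The interference helper, its function name, and all example values are hypothetical and only illustrate the comparison of a placebo response against the analyte response.

```python
def resolution(t_r1, t_r2, w1, w2):
    """Chromatographic resolution from retention times and baseline peak widths.

    Rs = 2 * (t_R2 - t_R1) / (w1 + w2); all inputs in the same time unit.
    """
    if w1 <= 0 or w2 <= 0:
        raise ValueError("peak widths must be positive")
    return 2.0 * abs(t_r2 - t_r1) / (w1 + w2)

def interference_percent(placebo_response, analyte_response):
    """Placebo (blank matrix) response as a percentage of the analyte response."""
    if analyte_response == 0:
        raise ValueError("analyte response must be non-zero")
    return 100.0 * placebo_response / analyte_response

# Illustrative (made-up) values
rs = resolution(t_r1=4.20, t_r2=5.00, w1=0.40, w2=0.45)
meets_resolution = rs > 1.5  # criterion quoted in the protocol above

# Placebo absorbance versus analyte standard absorbance at the same wavelength
placebo_pct = interference_percent(placebo_response=0.002, analyte_response=0.500)
```

What counts as a significant placebo contribution is method-specific and would be set in the validation protocol; no threshold is implied here.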

Protocol for Accuracy and Precision Evaluation

Sample Preparation:

- Prepare a minimum of three concentrations across the validated range (e.g., 50%, 100%, 150% of target)

- For each concentration, prepare a minimum of three replicates

- Use quality control samples with known concentrations when possible

Analysis:

- Analyze all samples in a randomized sequence to avoid bias

- For intermediate precision, repeat the study on different days, with different analysts, or different instruments [3]

Data Analysis:

- Calculate the mean percent recovery at each concentration level (accuracy)

- Calculate standard deviation and %RSD for each concentration level (precision)

- Acceptance criteria typically require accuracy within ±2% of theoretical value and precision with %RSD ≤2% for assay methods [3]
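
The data analysis steps above can be sketched in a few lines. This is a minimal example under stated assumptions: the replicate values are made up, the function name is illustrative, and the ±2% and ≤2% limits are the typical assay criteria quoted above, not universal requirements.

```python
import statistics

def recovery_stats(measured, nominal):
    """Return (mean % recovery, %RSD) for replicates at one concentration level."""
    recoveries = [100.0 * m / nominal for m in measured]
    mean_recovery = statistics.mean(recoveries)
    rsd = 100.0 * statistics.stdev(recoveries) / mean_recovery
    return mean_recovery, rsd

# Illustrative (made-up) results: nominal level (% of target) -> three replicates
results = {
    50.0: [49.6, 50.2, 50.1],
    100.0: [99.5, 100.4, 100.1],
    150.0: [149.2, 150.9, 150.3],
}

summary = {}
for nominal, replicates in results.items():
    mean_recovery, rsd = recovery_stats(replicates, nominal)
    summary[nominal] = {
        "mean_recovery": mean_recovery,
        "rsd": rsd,
        # Typical assay criteria from the text: recovery within 100 +/- 2 %, %RSD <= 2 %
        "passes": abs(mean_recovery - 100.0) <= 2.0 and rsd <= 2.0,
    }
```

For intermediate precision, the same calculation could be repeated on results pooled across days, analysts, or instruments.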

The Analytical Procedure Lifecycle concept represents a significant evolution in pharmaceutical analysis, moving from a discrete, linear process to an integrated, holistic framework. By incorporating principles of Quality by Design, science- and risk-based approaches, and continuous improvement, the APL provides a robust foundation for developing and maintaining reliable analytical procedures throughout their entire lifespan [13] [3].

The harmonized framework of ICH Q2(R2) and ICH Q14 supports this modern approach, ensuring analytical methods are not only validated for initial use but remain fit-for-purpose throughout their operational life. This is particularly important for complex analytical techniques, including advanced spectroscopic methods, which are explicitly addressed in the updated guidelines [15] [3].

For researchers and drug development professionals, adopting the APL concept means building quality into methods from the beginning, leading to more robust procedures, fewer out-of-specification investigations, and more reliable data throughout the product lifecycle.

The Role of ICH Q8 (Pharmaceutical Development) and Q9 (Quality Risk Management) in Method Validation

The International Council for Harmonisation (ICH) guidelines have fundamentally reshaped pharmaceutical development, moving the industry from a reactive, quality-by-testing model to a proactive, science- and risk-based approach. Within this framework, analytical method validation has transcended its traditional role as a mere compliance exercise. When framed by the principles of ICH Q8 (Pharmaceutical Development) and ICH Q9 (Quality Risk Management), method validation becomes an integral part of building quality into the product from the earliest development stages, ensuring robust and reliable analytical methods throughout the product lifecycle [16] [17]. This is particularly critical for spectroscopic methods, such as UV-Vis, NIR, and Raman spectroscopy, which are widely used for identity, assay, and dissolution testing. Their performance directly impacts critical quality decisions.

The synergy between ICH Q8 and Q9 creates a powerful paradigm for method validation. ICH Q8(R2) introduces Quality by Design (QbD) principles, advocating for a systematic approach to development that begins with predefined objectives [18]. For analytical methods, this means the method's requirements are defined by its intended use, leading to a method designed to consistently meet its performance criteria. ICH Q9(R1), in turn, provides the Quality Risk Management (QRM) framework that enables the identification and control of potential variables that could affect the method's performance [19] [20]. This integrated Q8-Q9 approach results in analytical methods that are not only validated but are inherently robust, well-understood, and adaptable, directly supporting the broader thesis of enhanced spectroscopic method validation.

Core Principles of ICH Q8 and Q9 as Applied to Analytical Method Validation

ICH Q8: Pharmaceutical Development and Quality by Design (QbD)

ICH Q8(R2) focuses on pharmaceutical development and formally defines the concept of Quality by Design (QbD) as "a systematic approach… that begins with predefined objectives and emphasizes product and process understanding and process control, based on sound science and quality risk management" [16]. When applied to analytical method development and validation, this systematic approach involves several key elements derived from Q8's core concepts.

The foundational step is defining the Analytical Target Profile (ATP), which is the analog to the Quality Target Product Profile (QTPP) for a drug product. The ATP is a prospective summary of the method's performance requirements—it defines what the method is intended to measure and the necessary performance characteristics for its intended use [18]. For a spectroscopic method, the ATP would specify the key performance criteria, as detailed in Table 1.

Subsequently, the Critical Method Attributes (CMAs) are identified. These are the physical, chemical, or biological properties or characteristics of the method that must be within an appropriate limit, range, or distribution to ensure the method fulfills its ATP [18]. Examples for a spectroscopic method could include parameters like wavelength accuracy, spectral resolution, or baseline noise. The goal of the QbD-based development process is to then understand the impact of Critical Method Parameters (CMPs)—the variables in the method procedure (e.g., sample preparation time, solvent ratio, temperature, instrumental settings)—on the CMAs. This understanding is achieved through structured experimentation, such as Design of Experiments (DOE), to establish a method's "design space" [18] [21]. The design space is the multidimensional combination and interaction of CMPs that have been demonstrated to provide assurance of the method meeting its ATP. Operating within the design space provides flexibility, as changes within this space are not considered a regulatory variation, fostering continuous improvement.

ICH Q9: Quality Risk Management

ICH Q9(R1) provides a systematic framework for Quality Risk Management (QRM)—a process for assessing, controlling, communicating, and reviewing risks to the quality of a product across its lifecycle [19] [20]. The 2023 revision (R1) places enhanced emphasis on reducing subjectivity in risk assessments and clarifying risk-based decision-making [20]. For analytical methods, QRM is the engine that drives a science-based understanding of what can go wrong and ensures controls are in place to prevent it.

The QRM process is structured into four key phases [19]:

- Risk Assessment: This initial phase involves identifying potential hazards (e.g., "What could cause the method precision to fail?"), analyzing those risks by estimating their probability, severity, and detectability, and finally evaluating them to determine their risk priority.

- Risk Control: This phase involves deciding whether to accept, reduce, or eliminate the identified risks. For high-priority risks, mitigation strategies are implemented, such as adding a filtration step to control particulate interference or tightening control over environmental conditions.

- Risk Communication: The outputs of the risk assessment and control activities are documented and shared with all relevant stakeholders, such as between development and quality control laboratories.

- Risk Review: The risks and the effectiveness of the controls are reviewed periodically, especially when new knowledge is gained (e.g., from method transfer or out-of-specification results), ensuring the method remains in a state of control.

ICH Q9 suggests various tools to facilitate this process, with Failure Mode and Effects Analysis (FMEA) being one of the most widely used for analytical methods [19]. FMEA provides a structured way to identify potential failure modes for each method step, their causes and effects, and to score them to prioritize mitigation efforts.

Table 1: Example Risk Assessment (FMEA) for a UV-Vis Spectrophotometric Assay Method

| Method Step | Potential Failure Mode | Potential Effect on CQA | Severity | Potential Cause | Occurrence | Current Controls | Detection | Risk Priority | Recommended Action |

|---|---|---|---|---|---|---|---|---|---|

| Sample Preparation | Incomplete dissolution of API | Low assay result, high impurity profile | 8 | Poor solubility, incorrect solvent | 3 | Sonication step | Visual inspection, system suitability | 72 | Define & validate sonication time/temp (CMP) |

| Instrument Operation | Wavelength drift | Incorrect identity/assay result | 9 | Poor instrument calibration | 2 | Periodic calibration with holmium oxide filter | System suitability check pre-run | 72 | Robust PQ schedule & vendor qualification |

| Data Analysis | Incorrect integration | Inaccurate potency calculation | 7 | Manual processing, poor peak separation | 4 | Defined integration parameters, analyst training | Second-person review | 112 | Automate integration; define CMP for threshold |

Scoring Scale: 1 (Low) to 10 (High). Risk Priority = Severity × Occurrence × Detection. A score above 100 typically requires mandatory action [16] [19].
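
The risk priority arithmetic can be checked with a short sketch. The table gives Severity, Occurrence, and Risk Priority as numbers but describes Detection only in words, so the detection scores below are back-calculated from Risk Priority = Severity × Occurrence × Detection and are an inference, not values stated in the table.

```python
# (method step, severity, occurrence, risk priority) taken from the table above
fmea_rows = [
    ("Sample Preparation", 8, 3, 72),
    ("Instrument Operation", 9, 2, 72),
    ("Data Analysis", 7, 4, 112),
]

ACTION_THRESHOLD = 100  # "a score above 100 typically requires mandatory action"

def implied_detection(severity, occurrence, risk_priority):
    """Back-calculate the detection score implied by RPN = S x O x D."""
    detection, remainder = divmod(risk_priority, severity * occurrence)
    if remainder:
        raise ValueError("risk priority is not a multiple of severity x occurrence")
    return detection

detection_scores = {
    step: implied_detection(s, o, rpn) for step, s, o, rpn in fmea_rows
}
needs_action = [step for step, _, _, rpn in fmea_rows if rpn > ACTION_THRESHOLD]
```

On these numbers only the Data Analysis step exceeds the threshold of 100.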

The Integrated Q8-Q9 Workflow for Spectroscopic Method Validation

The true power of ICH Q8 and Q9 is realized when their principles are integrated into a single, seamless workflow for spectroscopic method development and validation. This lifecycle approach ensures that quality and robustness are built into the method from its inception. The following diagram illustrates this logical, iterative process.

Diagram 1: Integrated Q8-Q9 Workflow for Analytical Method Lifecycle Management

Experimental Protocols for QbD-based Method Development

The workflow depicted above is operationalized through specific experimental protocols. The following are detailed methodologies for key experiments cited in the literature for developing robust spectroscopic methods.

Protocol 1: Risk Assessment and Screening with a Plackett-Burman Design

- Objective: To screen a large number of potential method parameters (CMPs) efficiently and identify the few that are truly critical and require further study.

- Application: Early-stage method development for a new NIR method for API assay in a tablet.

- Methodology:

- Identify Factors: List all potential variables (e.g., sample presentation pressure, grinding time, moisture content, instrument gain, scan number, environmental temperature).

- Design Experiment: Use a Plackett-Burman design, which is a highly fractionated design that allows for the screening of n-1 variables with n runs (where n is a multiple of 4). This is highly efficient for isolating major effects.

- Response Variables: The responses (CMAs) would include method precision (RSD), accuracy (% recovery), and signal-to-noise ratio.

- Execution: Prepare samples according to the experimental matrix. Acquire spectra and calculate the CMA responses for each run.

- Analysis: Use statistical software (e.g., JMP, Minitab) to perform an analysis of variance (ANOVA). Parameters showing a statistically significant effect (p-value < 0.05) on the CMAs are classified as CMPs and moved to the optimization stage [21].
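
As a rough sketch of this screening protocol, the code below builds a 12-run Plackett-Burman design from the published generator row by cyclic shifting and estimates main effects. The response is synthetic and noise-free, chosen only so that the recovered effects can be checked. The assignment of the six listed factors to the first six columns is an assumption, and the significance testing (ANOVA) mentioned above is not reproduced.

```python
import numpy as np

# Published generator row for the 12-run Plackett-Burman design
# (+1 = high level, -1 = low level)
PB12_GENERATOR = [1, 1, -1, 1, 1, 1, -1, -1, -1, 1, -1]

def plackett_burman_12():
    """Return the 12 x 11 design: 11 cyclic shifts plus a final all-low run."""
    gen = np.array(PB12_GENERATOR)
    rows = [np.roll(gen, shift) for shift in range(11)]
    rows.append(-np.ones(11, dtype=int))
    return np.array(rows)

def main_effects(design, response):
    """Main effect per column: mean(response at +1) - mean(response at -1)."""
    n_runs = design.shape[0]
    return 2.0 * (design.T @ response) / n_runs

design = plackett_burman_12()

# Hypothetical mapping of the six factors named in the protocol;
# the remaining five columns are left as unassigned (dummy) columns.
factors = ["pressure", "grinding_time", "moisture", "gain", "scans", "temperature"]

# Synthetic response (% recovery): only "pressure" and "gain" matter here
response = 98.0 + 0.6 * design[:, 0] - 0.4 * design[:, 3]

effects = main_effects(design, response)
ranked = sorted(zip(factors, effects[:6]), key=lambda fe: abs(fe[1]), reverse=True)
```

Because the columns are balanced and mutually orthogonal, each main effect is estimated independently of the others. With real, noisy data the effects on the dummy columns give a rough indication of experimental error.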

Protocol 2: Method Optimization and Design Space Definition with a Central Composite Design (CCD)

- Objective: To fully characterize the relationship between the Critical Method Parameters (CMPs) and Critical Method Attributes (CMAs) and to define the method design space.

- Application: Optimizing a UV-Vis method for dissolution testing where pH of the medium and wavelength are known CMPs.

- Methodology:

- Select Factors: Use the CMPs identified from the screening design (e.g., pH of dissolution medium, wavelength, and % organic solvent).

- Design Experiment: A Central Composite Design (CCD) is ideal, as it includes factorial points, center points, and axial points, allowing for the estimation of linear, interaction, and quadratic effects.

- Response Variables: CMAs such as accuracy, precision, linearity (R²), and robustness.

- Execution: Prepare dissolution samples according to the CCD matrix. Perform the analysis and record the CMA responses.

- Analysis & Modeling: Use multiple linear regression to build a mathematical model (e.g., Response Surface Methodology) for each CMA. The design space is defined by the overlapping region of the CMP ranges where all CMA predictions meet their ATP criteria [16] [18]. A case study noted that this approach could reduce development and validation time by up to 30% compared to conventional, one-factor-at-a-time approaches [16].
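
The optimization protocol can be sketched as below: a two-factor central composite design in coded units, a full quadratic model fitted by least squares, and a grid evaluation of where the prediction meets a criterion. The response values, the coefficient values, and the acceptance limit of 98.0 are synthetic assumptions used only to make the example checkable.

```python
import itertools
import numpy as np

def central_composite(k, n_center=3):
    """Rotatable CCD in coded units: factorial, axial, and center points."""
    alpha = (2 ** k) ** 0.25  # rotatable axial distance
    factorial = np.array(list(itertools.product([-1.0, 1.0], repeat=k)))
    axial = np.vstack(
        [sign * alpha * np.eye(k)[i] for i in range(k) for sign in (-1.0, 1.0)]
    )
    center = np.zeros((n_center, k))
    return np.vstack([factorial, axial, center])

def quadratic_terms(x):
    """Model matrix columns: 1, x_i, x_i * x_j (i < j), x_i ** 2."""
    k = x.shape[1]
    cols = [np.ones(len(x))]
    cols += [x[:, i] for i in range(k)]
    cols += [x[:, i] * x[:, j] for i in range(k) for j in range(i + 1, k)]
    cols += [x[:, i] ** 2 for i in range(k)]
    return np.column_stack(cols)

design = central_composite(2)  # e.g. coded pH and coded wavelength

# Synthetic, noise-free response generated from assumed coefficients
true_coef = np.array([99.0, 0.5, -0.3, 0.2, -0.8, -0.4])
response = quadratic_terms(design) @ true_coef

# Multiple linear regression on the quadratic model
coef, *_ = np.linalg.lstsq(quadratic_terms(design), response, rcond=None)

# Evaluate the fitted model on a grid and mark where the criterion is met
levels = np.linspace(-1.0, 1.0, 21)
g1, g2 = np.meshgrid(levels, levels, indexing="ij")
grid = np.column_stack([g1.ravel(), g2.ravel()])
predicted = (quadratic_terms(grid) @ coef).reshape(g1.shape)
in_design_space = predicted >= 98.0  # hypothetical ATP criterion
```

With several CMAs, one such mask would be computed per response and the masks combined with a logical AND to give the overlapping region described above.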

Comparative Analysis: QbD vs. Conventional Method Validation

The implementation of an integrated Q8-Q9 framework fundamentally changes the approach to analytical method validation compared to the conventional paradigm. The differences are not merely procedural but philosophical, impacting regulatory flexibility, operational efficiency, and long-term product quality. The table below summarizes a comparative analysis of the two approaches.

Table 2: Comparative Analysis of Conventional vs. QbD-based Analytical Method Validation

| Aspect | Conventional Approach | QbD-based (Q8/Q9) Approach | Supporting Data / Implication |

|---|---|---|---|

| Philosophy | Reactive; "Quality by Testing" | Proactive; "Quality by Design" | Shift from confirming quality to building it in [18] |

| Development Basis | One-factor-at-a-time (OFAT) | Systematic, multivariate (e.g., DOE) | DOE reveals parameter interactions missed by OFAT [21] |

| Risk Management | Informal, experience-based | Formal, systematic (ICH Q9) | Reduces subjectivity; prioritizes resources on high risks [19] [20] |

| Method Controls | Fixed operating ranges | Flexible Design Space | Regulatory flexibility: changes within design space not considered a variation [16] [22] |

| Validation Focus | Proof of validity at a single point | Demonstration of robustness across a design space | Ensures method performance despite reasonable input variation |

| Lifecycle Management | Limited post-validation change | Continued Method Verification | Ongoing data collection provides assurance of state of control [21] |

| Regulatory Impact | Standard review | Potential for streamlined assessment | EMA/FDA pilots show "strong alignment" on QbD concepts [16] |

| Development Efficiency | Potentially longer, unpredictable | Higher initial investment, faster validation & tech transfer | Case study: 30% reduction in development/validation time [16] |

| Industry Adoption | Historically dominant | Rising but variable (~31-38% of major submissions 2014-2019) [16] | Trend indicates steady growth as benefits are recognized |

The Scientist's Toolkit: Essential Reagents and Solutions for Spectroscopic Method Validation

The following table details key research reagent solutions and materials essential for conducting the experiments described in this guide, particularly for spectroscopic method development and validation under a QbD framework.

Table 3: Essential Research Reagents and Materials for Spectroscopic Method Development

| Item / Solution | Function in Method Development & Validation | Key Considerations / Specifications |

|---|---|---|

| Certified Reference Standard | Serves as the primary benchmark for establishing method accuracy, linearity, and specificity. | Purity and traceability are critical; must be obtained from a certified supplier (e.g., USP, EP). |

| System Suitability Solutions | Verifies the adequate performance of the chromatographic or spectroscopic system at the time of testing. | Must contain all analytes of interest at specified levels to test for resolution, precision, and signal-to-noise. |

| Placebo/Blank Matrix | Used to demonstrate method specificity by proving the absence of interference at the analyte retention time or spectral window. | Should be representative of the final drug product formulation, excluding only the Active Pharmaceutical Ingredient (API). |

| Forced Degradation Samples | Stressed samples (acid, base, oxidative, thermal, photolytic) used to validate method stability-indicating capability and specificity. | Must demonstrate separation of degradation products from the main analyte peak (or spectral signature). |

| Buffer Salts & pH Standards | Used to prepare mobile phases or dissolution media; critical for controlling a key CMP (pH) in spectroscopic methods. | pH meter calibration with NIST-traceable buffers is essential for robustness and reproducibility. |

| Holmium Oxide Filter / Wavelength Standards | Verifies the wavelength accuracy of UV-Vis and other spectrophotometers, a critical instrument qualification step. | A mandatory control for ensuring data integrity, especially after instrument maintenance or relocation. |

| HPLC-Grade Solvents | Used in sample preparation and as mobile phase components. High purity is essential to minimize baseline noise and ghost peaks. | Low UV absorbance, controlled viscosity, and minimal particulate matter are key specifications. |

The integration of ICH Q8's Pharmaceutical Development principles with ICH Q9's Quality Risk Management framework elevates analytical method validation from a regulatory checkbox to a cornerstone of a modern, robust pharmaceutical quality system. For spectroscopic methods, this paradigm shift means that methods are designed with their intended use in mind from the very beginning, developed with a deep scientific understanding of parameter interactions, and managed throughout their lifecycle with proactive risk controls. While the initial investment in DOE and risk assessment is greater than with conventional approaches, the return is substantial: more robust methods, fewer failures during tech transfer and routine use, and greater regulatory flexibility. As the industry continues to mature, this Q8-Q9 integrated approach is well positioned to become the standard for ensuring the reliability and quality of the analytical data that underpins every critical decision in drug development and manufacturing.

In the pharmaceutical industry, the quality, safety, and efficacy of drug products are underpinned by the reliability of the analytical methods used for their testing. These methods are governed by a robust regulatory framework designed to ensure data integrity and product quality. The US Food and Drug Administration (FDA) regulation 21 CFR 211.194(a) and the European Union Good Manufacturing Practice (EU GMP) requirements form the cornerstone of this framework, mandating that all testing methods used are scientifically sound and suitable for their intended purpose [23] [13]. Specifically, 21 CFR 211.194(a) states that laboratory records must include "a statement of each method used in the testing of the sample," and that "[t]he suitability of all testing methods used shall be verified under actual conditions of use" [24]. Similarly, EU GMP Chapter 6.15 dictates that "[t]esting methods should be validated," and that a laboratory using a method it did not originally validate must "verify the appropriateness of the testing method" [25] [13].

These regulations are operationalized through a harmonized international approach guided by the International Council for Harmonisation (ICH) guidelines, particularly ICH Q2(R2) on validation and the complementary ICH Q14 on analytical procedure development [5] [3]. This guide provides a detailed comparison of these regulatory drivers, illustrating their requirements and their practical application in ensuring spectroscopic and other analytical methods are fit for their intended use throughout the analytical procedure lifecycle.

Comparative Analysis: FDA 211.194(a) vs. EU GMP

The following table provides a structured comparison of the core regulatory requirements for analytical method validation and verification in the US and EU.

Table 1: Direct Comparison of FDA and EU GMP Requirements for Analytical Methods

| Aspect | FDA (21 CFR 211.194(a)) | EU GMP |

|---|---|---|

| Core Legal Citation | Title 21, Chapter I, Subchapter C, Part 211, Subpart J, Section 211.194 [24] | EudraLex, Volume 4, Part I, Chapter 6 (Quality Control) [13] |

| Primary Requirement | "The suitability of all testing methods used shall be verified under actual conditions of use." [24] | "Testing methods should be validated. A laboratory that is using a testing method and which did not perform the original validation, should verify the appropriateness of the testing method." [13] |

| Focus of the Text | Laboratory records and data integrity, requiring complete data from all tests [24]. | Overall quality control system, ensuring approved methods are used and are appropriate [13]. |

| Key Implication | Requires data proving method suitability to be readily available in laboratory records for inspector review [24] [13]. | Formally requires validation for original methods and verification for transferred or compendial methods [25]. |

| Guidance for Implementation | FDA guidance documents (e.g., on Analytical Procedures and Methods Validation) and adoption of ICH Q2(R2) [23] [3]. | ICH Q2(R2) and ICH Q14; detailed in EU GMP Annex 15 on Qualification and Validation [13]. |

Despite differences in phrasing and structure, both regulatory systems converge on the same fundamental principle: analytical procedures must be demonstrated to be suitable for their intended purpose through validation, verification, or qualification, backed by rigorous documentation [23] [13]. The terms "validation," "verification," and "qualification" have specific applications, summarized in the table below.

Table 2: Categories of Analytical Method Suitability

| Category | Definition | Typical Application Context |

|---|---|---|

| Validation | A comprehensive process to demonstrate, through specific laboratory studies, that a method's performance characteristics are suitable for its intended analytical application [23] [26]. | New analytical procedures; required for methods used to release commercial products and stability testing [23] [3]. |

| Verification | The demonstration that a compendial or previously validated method is suitable for use under actual conditions of use in a specific laboratory [24] [23]. | Methods published in pharmacopoeias (e.g., USP, Ph. Eur.) or methods transferred from another laboratory [23] [26]. |

| Qualification | A performance assessment with limited scope, where full validation knowledge is not yet available, but some data on reliability and variability control is required [23]. | Methods used during early-phase clinical development (e.g., Phase I and early Phase II) [23]. |

The ICH Framework: Harmonizing Global Requirements

The ICH guidelines provide the harmonized scientific and technical framework that fulfills the general requirements of both FDA 21 CFR 211.194(a) and EU GMP. ICH Q2(R2) "Validation of Analytical Procedures" offers a globally accepted standard for defining the validation characteristics required for different types of analytical procedures [5] [3].

The relationship between the overarching regulations and the detailed ICH guidelines, integrated with the modern Analytical Procedure Lifecycle (APL) approach, can be visualized as a cohesive workflow.

This lifecycle model, endorsed by the USP general chapter <1220>, emphasizes that a robust validation (Stage 2) is built upon a foundation of systematic procedure design and development (Stage 1), and is followed by ongoing monitoring during routine use (Stage 3) [25] [13]. This represents a shift from a one-time validation exercise to a holistic, science- and risk-based lifecycle management of analytical procedures [13].

Core Validation Parameters and Experimental Protocols

According to ICH Q2(R2), which details the expectations of both FDA and EU regulators, the validation of an analytical procedure requires the assessment of specific performance characteristics [5] [3]. The parameters required depend on the type of procedure (e.g., identification, assay, impurity testing). The following table summarizes the core validation parameters and their definitions, which are critical for spectroscopic methods.

Table 3: Core Analytical Procedure Validation Parameters per ICH Q2(R2)

| Validation Parameter | Experimental Protocol & Definition | Common Spectroscopic Application |

|---|---|---|

| Accuracy | The closeness of agreement between the value found and a reference value. Protocol: Spiking a known quantity of reference standard into the sample matrix and determining percent recovery. Multiple concentrations across the range should be tested [23] [26]. | Recovery studies in UV-Vis spectroscopy to determine active ingredient in a tablet formulation. |

| Precision | The closeness of agreement among a series of measurements. Includes repeatability and intermediate precision. Protocol: Multiple measurements of a homogenous sample under the same conditions (repeatability) and by different analysts/days/instruments (intermediate precision). Expressed as %RSD [23] [3]. | Measuring the relative standard deviation (%RSD) of multiple readings of the same sample in a near-infrared (NIR) assay. |

| Specificity | The ability to assess the analyte unequivocally in the presence of other components. Protocol: Compare analyte response in the presence of impurities, degradation products, or matrix components to the response of the pure analyte [23] [3]. | Using derivative UV spectroscopy to resolve and quantify two drugs with overlapping spectra in a binary mixture [27]. |

| Linearity | The ability of the procedure to obtain results directly proportional to analyte concentration. Protocol: Prepare and analyze a series of standard solutions across the claimed range. Plot response vs. concentration and perform linear regression analysis [23] [26]. | Creating a calibration curve for an API using a range of standard solutions in HPLC-UV. |

| Range | The interval between the upper and lower concentrations of analyte for which suitable levels of accuracy, precision, and linearity are demonstrated. Protocol: The range is confirmed by obtaining acceptable accuracy, precision, and linearity results across the specified interval [23]. | The validated range for a spectroscopic assay may be from 80% to 120% of the label claim. |

| LOD & LOQ | Detection Limit (LOD): The lowest concentration that can be detected. Quantitation Limit (LOQ): The lowest concentration that can be quantified with acceptable accuracy and precision. Protocol: Based on signal-to-noise ratio or standard deviation of the response [23] [26]. | Determining the lowest level of an impurity that can be reliably detected and quantified in a drug substance. |

| Robustness | The reliability of the method under small, deliberate variations in method parameters. Protocol: Varying parameters such as wavelength, pH of buffer, or extraction time and observing the impact on the results [23]. | Testing the effect of small wavelength variations in a UV-Vis spectroscopic method for content uniformity. |
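
The linearity and LOD/LOQ protocols in the table can be illustrated with a short calculation. The sketch fits a calibration line by least squares and applies the approach based on the standard deviation of the response, LOD = 3.3σ/S and LOQ = 10σ/S, where S is the calibration slope. The residual standard deviation of the regression is used as σ, which is one of several accepted choices. The concentrations and absorbance readings are made up for illustration.

```python
import numpy as np

def calibration_fit(conc, response):
    """Least-squares line; returns (slope, intercept, r_squared, residual_sd)."""
    conc = np.asarray(conc, dtype=float)
    response = np.asarray(response, dtype=float)
    slope, intercept = np.polyfit(conc, response, 1)
    residuals = response - (slope * conc + intercept)
    ss_res = float(np.sum(residuals ** 2))
    ss_tot = float(np.sum((response - response.mean()) ** 2))
    r_squared = 1.0 - ss_res / ss_tot
    residual_sd = (ss_res / (len(conc) - 2)) ** 0.5  # n - 2 degrees of freedom
    return float(slope), float(intercept), r_squared, residual_sd

def lod_loq(sigma, slope):
    """Detection and quantitation limits from response SD and calibration slope."""
    return 3.3 * sigma / slope, 10.0 * sigma / slope

# Illustrative (made-up) UV-Vis calibration: concentration (ug/mL) vs. absorbance
conc = [2.0, 4.0, 6.0, 8.0, 10.0]
absorbance = [0.101, 0.199, 0.302, 0.398, 0.500]

slope, intercept, r_squared, residual_sd = calibration_fit(conc, absorbance)
lod, loq = lod_loq(residual_sd, slope)
```

The resulting limits are in the same units as the concentration axis and would normally be confirmed experimentally with samples prepared near the estimated LOD and LOQ.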

The Scientist's Toolkit: Essential Reagents and Materials

The successful development and validation of a spectroscopic method relies on a suite of critical materials. The following table details these essential components and their functions.

Table 4: Essential Research Reagent Solutions for Analytical Method Development & Validation

| Reagent / Material | Critical Function & Justification |

|---|---|

| Well-Characterized Reference Standard | Serves as the benchmark for accuracy and calibration. The quality of the standard directly impacts the reliability of all quantitative results [26]. |

| High-Purity Solvents | Used for sample and standard preparation. Impurities can cause spectral interference, affecting specificity, baseline noise, and accuracy [27]. |

| Appropriate Biological or Placebo Matrix | Essential for specificity testing and accuracy/recovery studies. It proves the method can distinguish the analyte from other components in the sample [26]. |

| System Suitability Test Materials | Used to verify that the total analytical system (instrument, reagents, and procedure) is functioning correctly and provides adequate performance at the time of testing [26]. |

| Stable Control Samples | Homogeneous samples with known characteristics used to establish and monitor the precision (repeatability and intermediate precision) of the method over time [26]. |

The regulatory drivers FDA 21 CFR 211.194(a) and EU GMP Chapter 6, while originating from different jurisdictions, are effectively harmonized in their intent and implementation. Both mandate that analytical methods must be proven suitable for their intended purpose, a principle operationalized through the ICH Q2(R2) and ICH Q14 guidelines. The modern paradigm has shifted from a checklist approach to validation towards a holistic Analytical Procedure Lifecycle model. This model, encompassing procedure design, performance qualification, and ongoing verification, provides a scientifically sound framework for developing robust, reliable spectroscopic methods. For researchers and drug development professionals, a deep understanding of these converged requirements is not merely a regulatory obligation but a fundamental component of ensuring product quality and patient safety.

Implementing QbD and Developing Robust Spectroscopic Methods

Establishing the Analytical Target Profile (ATP) for Spectroscopic Methods

An Analytical Target Profile (ATP) is a foundational pre-defined objective that articulates the intended purpose of an analytical procedure. It specifies what the method is required to measure, on which material, and the required quality of the result [3]. Within the framework of ICH Q14, the ATP is the critical starting point for analytical procedure development, providing a clear goal for method design, validation, and lifecycle management. For spectroscopic methods, which are essential tools in pharmaceutical analysis due to their rapid, non-destructive nature [28], establishing a robust ATP is paramount. It ensures that the method is fit-for-purpose, providing reliable data for identity, assay, purity, or impurity testing, whether used in a quality control lab or as a Process Analytical Technology (PAT) tool.

The ATP shifts the focus from simply following a set of instructions to understanding the fundamental quality requirements of the analytical data. This strategic approach is perfectly aligned with the principles of ICH Q2(R2) and Q14, which advocate for a science- and risk-based framework for analytical procedures [3]. For spectroscopic techniques, which can range from UV-Vis and infrared to Raman and terahertz spectroscopy [28], the ATP defines the performance standards that the method must consistently meet, guiding the selection of the most appropriate technology and the subsequent validation activities.

Critical Components of an ATP for Spectroscopy

The core of an ATP is a clear statement specifying the analyte, the matrix, and the required quality of the reportable value. For spectroscopic methods, this translates into several key components:

- Analytical Technique and Principle: The ATP should specify the general technique (e.g., UV-Vis Spectrophotometry, NIR Spectroscopy, Raman Spectroscopy) and the principle of measurement (e.g., absorption, emission, scattering). This provides a boundary for the method development space.

- Reportable Value and Defined Measurement: The ATP must unambiguously state what is being measured. This could be the identity of a drug substance, the assay of an Active Pharmaceutical Ingredient (API) in a tablet, or the quantification of a specific impurity. The unit of the reportable value (e.g., % w/w, % area, pass/fail) must be defined.

- Performance Requirements: This is the quantitative heart of the ATP. It defines the maximum permissible uncertainty for the reportable value, which directly links to the validation parameters described in ICH Q2(R2). Key requirements include:

  - Accuracy: The required closeness of agreement between the measured value and an accepted reference value.

  - Precision: The allowed variability of the reportable value, encompassing repeatability and intermediate precision.

  - Specificity: The ability to unequivocally assess the analyte in the presence of other components like impurities, degradation products, or excipients [3].
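
The components listed above can be captured as a small structured record so that the same ATP is checked at every lifecycle stage. The following is a minimal sketch, not a format prescribed by ICH Q14: the class name, field names, example analyte, and numeric limits are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class AnalyticalTargetProfile:
    """Hypothetical container for the ATP components of a spectroscopic assay."""
    analyte: str
    matrix: str
    technique: str               # general technique and measurement principle
    reportable_unit: str         # unit of the reportable value
    recovery_limits: tuple       # (low, high) mean recovery, in percent
    max_rsd_percent: float       # allowed precision, as %RSD
    specificity_statement: str

    def is_met(self, mean_recovery, rsd_percent):
        """Check measured accuracy and precision against the ATP limits."""
        low, high = self.recovery_limits
        return low <= mean_recovery <= high and rsd_percent <= self.max_rsd_percent

# Illustrative ATP for a UV-Vis tablet assay (all values are assumptions)
atp = AnalyticalTargetProfile(
    analyte="API X (hypothetical)",
    matrix="film-coated tablet",
    technique="UV-Vis spectrophotometry (absorption)",
    reportable_unit="% of label claim",
    recovery_limits=(98.0, 102.0),
    max_rsd_percent=2.0,
    specificity_statement="No interference from excipients or known degradants",
)

print(atp.is_met(mean_recovery=99.5, rsd_percent=1.1))
```

Making the record immutable (`frozen=True`) reflects the idea that the ATP is fixed before development starts; the method may change over its lifecycle, while the target it must meet is revised only deliberately.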

The following workflow outlines the science- and risk-based process for developing a spectroscopic method from its ATP, in accordance with ICH Q14 and Q2(R2).

Comparative Analysis of Spectroscopic Techniques for Pharmaceutical Analysis

Selecting the correct spectroscopic technique is a critical decision following the definition of the ATP. Different techniques offer distinct advantages and limitations based on their underlying light-matter interactions [28]. The choice depends on the specific analytical need defined in the ATP, such as the nature of the analyte, required sensitivity, sample matrix, and whether the analysis is for qualitative identity, quantitative assay, or structural elucidation.

Table 1: Comparison of Key Spectroscopic Techniques for Pharmaceutical Analysis

| Technique | Spectral Region | Primary Interaction | Primary Applications | Key Advantages | Key Limitations |

|---|---|---|---|---|---|

| UV-Vis Spectrophotometry [29] [28] | Ultraviolet-Visible (approx. 190 – 800 nm) | Electronic transitions in chromophores | API quantification in formulations, dissolution testing | Cost-effective, simple, green solvent potential [29], high sensitivity for absorbing species | Lacks molecular specificity, difficult for multi-component analysis without chemometrics |

| Near-Infrared (NIR) Spectroscopy [28] | Near-Infrared (approx. 0.78 – 2.5 µm) | Overtone/combination vibrations | Raw material ID, PAT for blending, moisture analysis | Rapid, non-destructive, requires minimal/no sample prep, suitable for PAT | Complex spectra requiring multivariate calibration, less specific than Mid-IR |

| Mid-Infrared (Mid-IR) Spectroscopy [28] | Mid-Infrared (approx. 2.5 – 25 µm) | Fundamental molecular vibrations | Identity testing, polymorph screening, structural analysis | High molecular specificity, fingerprinting capability | Often requires sample preparation (e.g., KBr pellets) unless ATR sampling is used, can be affected by water |

| Raman Spectroscopy [28] | Visible/NIR lasers | Inelastic (Raman) scattering | Polymorph characterization, API distribution, identity | Low interference from water, high specificity, suitable for aqueous solutions | Can be affected by fluorescence, requires robust instrumentation |

| Terahertz Spectroscopy [28] | Terahertz (30 – 3000 µm) | Intermolecular vibrations | Solid-state form analysis, crystallinity | Probes crystalline structure and hydrates, penetrates packaging | Specialized equipment, less established in pharmacopoeias |
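
Spectral regions are quoted in wavelength, wavenumber, or frequency depending on the technique, which makes the ranges in a table like this one hard to compare at a glance. The helper functions below are a small sketch of the standard unit conversions; the function names are hypothetical, and the example wavelengths are chosen only to illustrate typical region boundaries.

```python
SPEED_OF_LIGHT_UM_THZ = 299.792458  # c expressed in µm·THz (2.99792458e8 m/s)

def wavelength_um_from_nm(wavelength_nm):
    """Convert a wavelength from nanometres to micrometres."""
    return wavelength_nm / 1000.0

def wavenumber_cm1(wavelength_um):
    """Convert a wavelength in µm to a wavenumber in cm^-1."""
    return 1.0e4 / wavelength_um

def frequency_thz(wavelength_um):
    """Convert a wavelength in µm to a frequency in THz."""
    return SPEED_OF_LIGHT_UM_THZ / wavelength_um

# Illustrative boundaries: infrared limits in cm^-1, terahertz limits in THz
for wavelength in (2.5, 25.0):
    print(f"{wavelength} µm = {wavenumber_cm1(wavelength):.0f} cm^-1")
for wavelength in (30.0, 3000.0):
    print(f"{wavelength} µm = {frequency_thz(wavelength):.2f} THz")
```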

Method Validation for Spectroscopic Techniques: Translating ATP to ICH Q2(R2) Parameters

Once a technique is selected and developed, the ATP's performance requirements must be verified through a formal validation study as per ICH Q2(R2). The validation parameters chosen and their acceptance criteria are a direct reflection of the ATP's requirements. The following table maps common spectroscopic applications to the core validation parameters and typical acceptance criteria.

Table 2: Method Validation Parameters and Typical Acceptance Criteria for Spectroscopic Methods per ICH Q2(R2)

| Validation Parameter | Definition (from ICH) | Quantitative Assay (e.g., UV-Vis) | Identity Test (e.g., IR) | Impurity Quantification (e.g., NIR/Chemometrics) |

|---|---|---|---|---|

| Accuracy [3] | Closeness of agreement to true value | Recovery: 98.0–102.0% | Not required | Recovery: 80–120% at specification level |

| Precision (Repeatability) [3] | Closeness of agreement under same conditions | %RSD ≤ 2.0% | Not required | %RSD ≤ 10.0% (at specification level) |

| Specificity [23] [3] | Ability to assess analyte in presence of other components | Resolve analyte from placebo, impurities, and degradants (e.g., via spectral resolution) | Must discriminate between analyte and similar compounds | Resolve impurity from API and other impurities |

| Linearity [3] | Direct proportionality of response to concentration | r² ≥ 0.998 (over specified range) | Not required | r² ≥ 0.990 (over reporting range) |

| Range [23] | Interval between upper and lower concentration | Typically 80–120% of test concentration | Not applicable | From LOQ to 120% of specification |

| LOD/LOQ [23] [3] | Detection/Quantitation Limit | Not typically required for assay | Not required | S/N ≥ 3 for LOD; S/N ≥ 10 for LOQ, with accuracy/precision |
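
As a worked illustration of the assay column in Table 2, the sketch below computes mean recovery and %RSD from replicate results and compares them with the 98.0–102.0% and ≤ 2.0% limits listed there. The replicate values and function names are invented for illustration; real acceptance criteria should come from the ATP and the validation protocol.

```python
from statistics import mean, stdev

def percent_recovery(measured, nominal):
    """Recovery of each result relative to the nominal (known) concentration."""
    return [100.0 * value / nominal for value in measured]

def percent_rsd(values):
    """Relative standard deviation (sample SD / mean), in percent."""
    return 100.0 * stdev(values) / mean(values)

def evaluate_assay(measured, nominal, recovery_limits=(98.0, 102.0), max_rsd=2.0):
    """Compare replicate assay results with accuracy and repeatability limits."""
    mean_recovery = mean(percent_recovery(measured, nominal))
    rsd = percent_rsd(measured)
    low, high = recovery_limits
    return {
        "mean_recovery": mean_recovery,
        "rsd": rsd,
        "accuracy_ok": low <= mean_recovery <= high,
        "precision_ok": rsd <= max_rsd,
    }

# Six hypothetical replicate determinations of a 20.0 µg/mL sample
replicates = [19.8, 20.1, 20.0, 19.9, 20.2, 20.0]
report = evaluate_assay(replicates, nominal=20.0)
print(report)
```

The same function can be reused for the impurity column by passing the wider limits, for example `recovery_limits=(80.0, 120.0)` and `max_rsd=10.0`.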

Experimental Protocol: Univariate UV-Vis Method Validation for an Antihypertensive Combination

A practical example of applying these principles is the analysis of a ternary antihypertensive mixture containing Telmisartan (TEL), Chlorthalidone (CHT), and Amlodipine (AML) [29]. The following protocol outlines the key steps for developing and validating a univariate method using Successive Ratio Subtraction coupled with Constant Multiplication (SRS-CM).

- Step 1: Standard Solution Preparation: Prepare individual stock solutions of TEL, CHT, and AML in ethanol at a concentration of 500 µg/mL. From these, prepare working solutions of 100 µg/mL. Store all solutions in light-protected containers at 2–8°C [29].

- Step 2: Linearity and Range: Using the working solutions, prepare calibration standards across the following ranges: 5.0–40.0 µg/mL for TEL, 10.0–100.0 µg/mL for CHT, and 5.0–25.0 µg/mL for AML. Scan the zero-order absorption spectrum of each standard from 200 to 400 nm using ethanol as a blank [29].

- Step 3: Determination of Wavelength Maxima: From the scanned spectra, determine the wavelength of maximum absorption (λmax) for each analyte: 295.7 nm for TEL, 275.0 nm for CHT, and 359.5 nm for AML [29].

- Step 4: SRS-CM Spectral Resolution: This mathematical procedure is applied to the overlapped spectra of the mixture to resolve the individual components without physical separation, allowing for their quantification at their respective λmax [29].

- Step 5: Method Validation: Construct calibration curves at the specified wavelengths and determine the regression equations. Validate the method by assessing its accuracy (recovery %), precision (%RSD), specificity (in the presence of tablet excipients), and robustness according to ICH Q2(R2) guidelines [29].
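
The dilution arithmetic behind Steps 1 and 2 follows C1·V1 = C2·V2. The sketch below works out the aliquot of 100 µg/mL working solution needed for each calibration standard. The concentration ranges come from the protocol above, while the 10 mL flask volume, the choice of five evenly spaced levels, and the function names are assumptions made for illustration.

```python
WORKING_CONC = 100.0   # µg/mL, working solution concentration (Step 1)
FLASK_VOLUME = 10.0    # mL, assumed volumetric flask size

# Calibration ranges from Step 2, in µg/mL
RANGES = {"TEL": (5.0, 40.0), "CHT": (10.0, 100.0), "AML": (5.0, 25.0)}

def calibration_levels(low, high, n_levels=5):
    """Evenly spaced concentration levels spanning the stated range."""
    step = (high - low) / (n_levels - 1)
    return [low + i * step for i in range(n_levels)]

def aliquot_volume(target_conc, stock_conc=WORKING_CONC, final_volume=FLASK_VOLUME):
    """Volume of stock (mL) to dilute to final_volume, from C1*V1 = C2*V2."""
    if target_conc > stock_conc:
        raise ValueError("target concentration exceeds the stock concentration")
    return target_conc * final_volume / stock_conc

for analyte, (low, high) in RANGES.items():
    for level in calibration_levels(low, high):
        volume = aliquot_volume(level)
        print(f"{analyte}: {level:6.2f} µg/mL -> {volume:5.2f} mL diluted to {FLASK_VOLUME} mL")
```

Note that the upper CHT level (100.0 µg/mL) equals the working solution concentration, so under these assumptions that standard is the undiluted working solution.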

The Scientist's Toolkit: Essential Reagents and Materials

The following table details key materials and reagents required for developing and validating spectroscopic methods, as exemplified in the cited research.

Table 3: Essential Research Reagent Solutions for Spectroscopic Analysis

| Item Name | Function / Purpose | Example from Literature |

|---|---|---|

| Pharmaceutical Reference Standards | Serves as the benchmark for identity, purity, and potency; essential for calibration and method validation. | Telmisartan (99.58%), Amlodipine Besylate (98.75%), Chlorthalidone (99.12%) [29] |

| Green Solvents | Used for dissolving samples and standards; green solvents like ethanol reduce environmental impact. | Ethanol (HPLC grade) was used as a green solvent for UV-Vis analysis [29] |

| UV/VIS Quartz Cuvettes | Holds the liquid sample for analysis in UV-Vis spectrophotometry; must be transparent in the UV-Vis range. | Absorption spectra were measured in a 1.0 cm quartz cell [29] |

| Calibration Standards | A series of solutions with known analyte concentrations used to establish the relationship between response and concentration (calibration curve). | Prepared from stock solutions to cover ranges of 5–40 µg/mL for TEL, 10–100 µg/mL for CHT, and 5–25 µg/mL for AML [29] |

| Chemometrics Software | Software for multivariate data analysis; essential for developing models for techniques like NIR and Raman. | MATLAB R2024a with PLS Toolbox was used for developing iPLS and GA-PLS models [29] |

Advanced Chemometric Models for Spectral Resolution

For complex mixtures with significant spectral overlap, univariate methods reach their limits. Here, multivariate chemometric models, as highlighted in ICH Q2(R2), become essential. These models can resolve the contributions of individual analytes from a complex composite signal.

- Interval Partial Least Squares (iPLS): This technique enhances the classical PLS model by dividing the full spectrum into smaller intervals and selecting only the most informative regions for regression. This reduces noise and the risk of overfitting, leading to a more precise and interpretable model [29].

- Genetic Algorithm-Partial Least Squares (GA-PLS): GA-PLS is an evolutionary optimization method that selects an optimal combination of wavelengths from the full spectrum. It works by evolving a population of potential solutions through selection, crossover, and mutation, ultimately finding the set of wavelengths that produces the most predictive PLS model [29].
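
The interval-selection idea behind iPLS can be sketched in a few lines. The example below is a simplified NumPy illustration, not the PLS Toolbox implementation used in the cited study: it fits a basic PLS1 (NIPALS) model on each equal-width interval of a synthetic spectrum and keeps the interval with the lowest cross-validated error. The data, the number of intervals and components, and all function names are assumptions; GA-PLS is not shown.

```python
import numpy as np

def pls1_fit(X, y, n_components):
    """Fit a PLS1 model with NIPALS; returns (x_mean, y_mean, coefficients)."""
    x_mean, y_mean = X.mean(axis=0), y.mean()
    Xk, yk = X - x_mean, y - y_mean
    W, P, q = [], [], []
    for _ in range(n_components):
        w = Xk.T @ yk
        norm = np.linalg.norm(w)
        if norm < 1e-12:          # no covariance left to model
            break
        w = w / norm
        t = Xk @ w                # scores
        tt = t @ t
        p = (Xk.T @ t) / tt       # X loadings
        qk = (yk @ t) / tt        # y loading
        Xk = Xk - np.outer(t, p)  # deflate X and y
        yk = yk - qk * t
        W.append(w); P.append(p); q.append(qk)
    if not W:
        return x_mean, y_mean, np.zeros(X.shape[1])
    W, P, q = np.array(W).T, np.array(P).T, np.array(q)
    coef = W @ np.linalg.solve(P.T @ W, q)
    return x_mean, y_mean, coef

def rmsecv(X, y, n_components, n_folds=5):
    """Root-mean-square error of k-fold cross-validation (interleaved folds)."""
    folds = np.arange(len(y)) % n_folds
    errors = np.empty(len(y))
    for k in range(n_folds):
        held_out = folds == k
        x_mean, y_mean, coef = pls1_fit(X[~held_out], y[~held_out], n_components)
        errors[held_out] = (X[held_out] - x_mean) @ coef + y_mean - y[held_out]
    return float(np.sqrt(np.mean(errors ** 2)))

def ipls(X, y, n_intervals, n_components=2):
    """Score each spectral interval separately and return the best one."""
    edges = np.linspace(0, X.shape[1], n_intervals + 1).astype(int)
    scores = [rmsecv(X[:, a:b], y, n_components)
              for a, b in zip(edges[:-1], edges[1:])]
    best = int(np.argmin(scores))
    return best, scores, (int(edges[best]), int(edges[best + 1]))

def band(channels, center, width):
    """Gaussian absorption band used to build the synthetic spectra."""
    return np.exp(-0.5 * ((channels - center) / width) ** 2)

# Synthetic mixture: analyte band near channel 30, interferent near channel 70
rng = np.random.default_rng(0)
channels = np.arange(100)
analyte = rng.uniform(5, 40, size=40)
interferent = rng.uniform(5, 40, size=40)
spectra = (0.02 * np.outer(analyte, band(channels, 30, 4))
           + 0.02 * np.outer(interferent, band(channels, 70, 6))
           + rng.normal(0, 0.005, size=(40, 100)))

best, scores, span = ipls(spectra, analyte, n_intervals=5)
print(f"best interval: {best}, channels {span}, RMSECV = {scores[best]:.3f}")
```

In this synthetic case the selected interval is the one containing the analyte band, which is the behavior iPLS is designed to exploit. For real data, a validated chemometrics package and an independent test set would be expected.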

The application of these models, as demonstrated for the TEL/CHT/AML mixture, shows that adding variable selection techniques like iPLS and GA-PLS significantly improves model performance compared to using full-spectrum PLS alone [29]. The following diagram illustrates the workflow for applying these chemometric models to a spectroscopic dataset.

Applying Quality by Design (QbD) Principles to Method Development

Quality by Design (QbD) is a systematic, proactive approach to development that begins with predefined objectives and emphasizes product and process understanding and control based on sound science and quality risk management [30]. Pioneered by Dr. Joseph M. Juran, QbD fundamentally shifts quality assurance from retrospective testing to building quality directly into the product or method from the outset [30] [31]. The pharmaceutical industry has successfully applied QbD to drug development and manufacturing, and now increasingly applies it to analytical method development [31].