Spectroscopic Sample Preparation: A Comprehensive Guide for Accurate Analysis in Biomedical Research

Abstract

This article provides a complete guide to sample preparation for spectroscopic methods, a critical step where up to 60% of analytical errors originate. Tailored for researchers and drug development professionals, it covers foundational principles, method-specific protocols for techniques like NMR, AAS, FT-IR, and ICP-MS, advanced troubleshooting strategies, and a comparative analysis to guide method selection. The content synthesizes current best practices and emerging technologies to empower scientists in achieving reproducible, high-quality data for biomedical and clinical applications.

The Critical Role of Sample Preparation: Foundations for Spectroscopic Success

In analytical science, data are only as good as the sample from which they are derived. As much as 60% of all spectroscopic analytical errors can be traced back to inadequate sample preparation [1]. This statistic underscores a fundamental truth in the laboratory: even the most advanced and sensitive instrumentation cannot compensate for a poorly prepared sample. For researchers in drug development and other fields relying on spectroscopic analysis, a rigorous, method-specific sample preparation protocol is not merely a best practice; it is the foundation for obtaining valid and reproducible results.

This guide details the critical role of sample preparation, providing a technical framework for scientists to minimize error and maximize data integrity in their spectroscopic analyses.

The Overwhelming Impact of Sample Preparation on Data Quality

The 60% error figure highlights sample preparation as the most significant source of uncertainty in the analytical workflow [1]. Recent surveys of laboratory professionals confirm that issues related to preparation persist as predominant problems, with poor sample recovery and a lack of reproducibility now ranking as the top challenges [2].

The following table summarizes the major sources of error in a typical analytical process, demonstrating how sample preparation-related issues constitute a major portion of the problem.

| Source of Analytical Error | Contribution to Overall Error | Relation to Sample Preparation |
|---|---|---|
| Sample Processing | ~22% (1991 survey) [2] | Directly encompasses all steps of sample preparation. |
| Operator Error | ~17% (1991 survey) [2] | Manual preparation is highly susceptible to technician technique and consistency. |
| Contamination | ~15% (1991 survey) [2] | Introduced from impure reagents, solvents, or equipment during preparation. |
| Calibration | Leading concern (2023 survey) [2] | Proper preparation ensures calibrants and samples have matched matrices. |
| Integration | Major concern (2023 survey) [2] | Poor preparation (e.g., incomplete extraction) can lead to difficult-to-interpret data. |

Errors introduced during preparation manifest in several specific ways that directly compromise spectroscopic data [1]:

- Particle Size and Surface Effects: Inhomogeneous or rough samples scatter light unpredictably, distorting spectral baselines and intensities.

- Matrix Effects: Co-existing elements or compounds in the sample can absorb radiation or enhance analyte signals, leading to inaccurate quantitative results.

- Sample Inhomogeneity: A non-representative sample yields non-reproducible results, as each measured aliquot has a different composition.

- Contamination: The introduction of external contaminants during grinding, dilution, or container transfer creates spurious spectral signals.

Sample Preparation Fundamentals for Core Spectroscopic Methods

Different spectroscopic techniques probe different sample properties, necessitating tailored preparation protocols. A one-size-fits-all approach is a common route to analytical failure.

Technique-Specific Preparation Requirements

| Spectroscopic Method | Primary Preparation Goals | Common Techniques | Critical Parameters to Control |
|---|---|---|---|
| X-Ray Fluorescence (XRF) | Flat, homogeneous surface; uniform density and particle size [1] | Grinding/milling, pressed pelletizing, fusion [1] [3] | Particle size (<75 µm), binder selection, pressing force [1] |
| Inductively Coupled Plasma Mass Spectrometry (ICP-MS) | Complete dissolution of solids; accurate dilution; removal of particulates [1] | Acid digestion, filtration, precise dilution, acidification [1] | Digestion temperature/time, final acid concentration, dilution factor [1] |
| Fourier Transform Infrared (FT-IR) | Optimal pathlength and concentration for measurement [1] | KBr pellets for solids, solution in IR-transparent solvents, ATR with flat contact [1] | Solvent transparency in spectral region, sample homogeneity, film thickness [1] |
| Liquid Chromatography-Mass Spectrometry (LC-MS) | Isolate analyte from matrix; concentrate; remove interfering species (e.g., proteins, salts) [4] [5] | Protein precipitation, solid-phase extraction (SPE), liquid-liquid extraction, filtration [4] [5] | Solvent/sorbent chemistry selection, pH adjustment, sample load and elution volume [5] |

Detailed Experimental Protocols

Pressed Pellet Preparation for XRF Analysis

This method is ideal for creating solid, homogeneous disks from powdered samples for quantitative XRF analysis [1].

Materials: Spectroscopic grinding mill (e.g., swing mill), hydraulic press (10-30 ton capacity), pellet die, cellulose or wax binder, sample powder.

Procedure:

- Grinding: Grind the representative sample to a fine powder, targeting a particle size of <75 µm to minimize particle size effects [1].

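- Mixing: Accurately weigh a portion of the ground sample and mix it uniformly with a binder (e.g., cellulose or wax). Where sample mass is limited, the powder can instead be pressed onto a boric acid backing for mechanical support (e.g., 0.5 g sample on 5 g boric acid) [1] [3].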

- Pressing: Transfer the mixture into a pellet die. Place the die in a hydraulic press and apply a load of 10-25 tons for 30-60 seconds to form a stable, flat pellet [1].

- Storage: Store the prepared pellet in a desiccator to prevent moisture absorption before analysis.
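Press ratings in tons describe a force, whereas compaction depends on pressure, so the same load acts very differently on dies of different size. The sketch below converts load to pressure, assuming metric tonnes-force and a round die; the 32 mm and 40 mm die diameters are illustrative values, not taken from the source.

```python
import math

STANDARD_GRAVITY = 9.80665  # m/s^2, converts tonnes-force to newtons


def pellet_pressure_mpa(load_tonnes: float, die_diameter_mm: float) -> float:
    """Pressure (MPa) on a round pellet die for a load given in metric tonnes-force."""
    force_n = load_tonnes * 1000.0 * STANDARD_GRAVITY   # tonnes-force -> N
    radius_m = die_diameter_mm / 2.0 / 1000.0            # diameter in mm -> radius in m
    area_m2 = math.pi * radius_m ** 2
    return force_n / area_m2 / 1e6                       # Pa -> MPa


# Illustrative die sizes (assumed) across the 10-25 ton load range quoted above.
for diameter in (32.0, 40.0):
    for load in (10.0, 25.0):
        p = pellet_pressure_mpa(load, diameter)
        print(f"{load:4.0f} t on a {diameter:.0f} mm die -> {p:6.1f} MPa")
```

Recording the die diameter alongside the load in an SOP is what makes a pressing step reproducible across different dies.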

Solid-Phase Extraction (SPE) for LC-MS Sample Clean-up

SPE is used for purifying and concentrating analytes from complex liquid matrices like biological fluids, removing proteins and phospholipids that cause ion suppression [5].

Materials: SPE cartridges (C18 for reversed-phase), vacuum manifold, high-purity solvents (methanol, water, acetonitrile), buffering salts.

Procedure:

- Conditioning: Pass 2-3 mL of methanol through the SPE sorbent, followed by 2-3 mL of water or a buffer matching the sample's pH. Do not let the sorbent run dry [5].

- Loading: Load the prepared liquid sample (e.g., plasma filtrate) onto the cartridge at a slow, drop-wise flow rate to maximize analyte-sorbent interaction.

- Washing: Wash with 1-2 mL of a weak wash solvent (e.g., 5% methanol in water or buffer) to remove weakly retained matrix interferences without eluting the analytes.

- Elution: Elute the purified analytes with 1-2 mL of a strong solvent (e.g., 90% methanol in water). Collect the eluate for direct analysis or concentration.
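SPE performance is usually judged by how much analyte survives the procedure (recovery) and how much it is concentrated (enrichment). A minimal sketch, assuming the eluate is evaporated and reconstituted in a smaller volume and that recovery is measured on a spiked sample; all volumes and concentrations are hypothetical.

```python
def spe_enrichment_factor(sample_volume_ml: float, final_volume_ml: float) -> float:
    """Theoretical concentration factor: loaded sample volume / final extract volume."""
    return sample_volume_ml / final_volume_ml


def spe_recovery_percent(measured_extract_conc: float, spiked_conc: float,
                         sample_volume_ml: float, final_volume_ml: float) -> float:
    """Percent recovery of a spiked analyte after SPE.

    The expected extract concentration is the spiked sample concentration
    multiplied by the theoretical enrichment factor.
    """
    expected = spiked_conc * spe_enrichment_factor(sample_volume_ml, final_volume_ml)
    return 100.0 * measured_extract_conc / expected


# Hypothetical run: 1.0 mL plasma spiked at 5 ng/mL, eluted, dried and
# reconstituted in 0.2 mL; the final extract measures 21.5 ng/mL.
ef = spe_enrichment_factor(1.0, 0.2)
rec = spe_recovery_percent(21.5, 5.0, 1.0, 0.2)
print(f"enrichment factor = {ef:.1f}, recovery = {rec:.1f}%")
```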

The Scientist's Toolkit: Essential Reagents & Materials

| Tool/Reagent | Function | Typical Application |
|---|---|---|
| Borate Flux (e.g., Lithium Tetraborate) | Flux for fusion; dissolves refractory materials to form a homogeneous glass disk [1] [3] | XRF fusion for minerals, ceramics, and other difficult-to-dissolve materials [1] |
| Platinum Crucibles | High-temperature, inert containers for fusion preparation [1] | Withstanding 950-1200°C temperatures during XRF fusion without contaminating the sample [1] |
| Solid-Phase Extraction (SPE) Cartridges | Selective isolation and concentration of analytes from liquid matrices [5] | Clean-up of biological samples (plasma, urine) for LC-MS analysis; environmental water testing [5] |
| ATR Crystal (Diamond) | Robust crystal for direct measurement of solids and liquids via attenuated total reflection [6] | FT-IR spectroscopy of bitumen, polymers, and other solids without extensive preparation [6] |
| Membrane Filters (0.45 µm, 0.2 µm) | Removal of suspended particles from liquid samples [1] | Preparation of aqueous samples for ICP-MS to prevent nebulizer clogging [1] |
| Deuterated Solvents (e.g., CDCl₃) | Spectroscopically transparent solvents for nuclear magnetic resonance (NMR) and FT-IR [1] | Dissolving samples for NMR; in transmission FT-IR, the C-D bands are shifted away from the C-H stretching region, minimizing interfering absorption [1] |

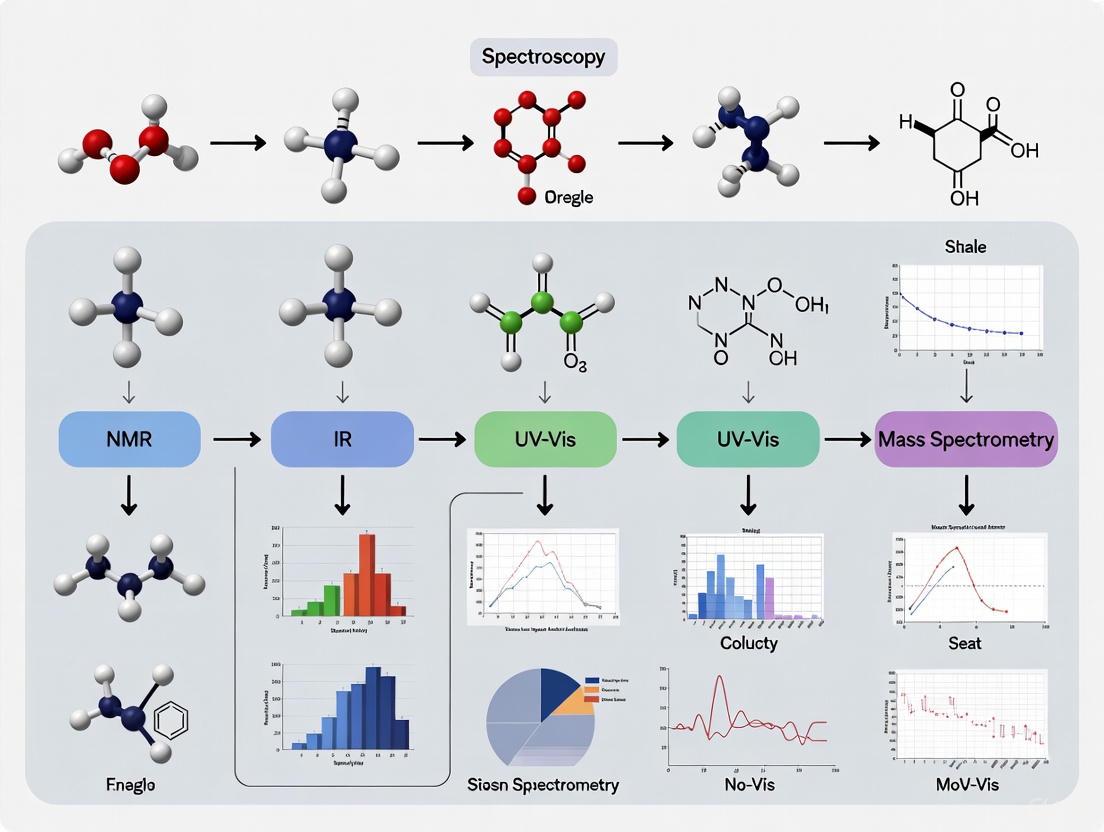

The following diagram maps the sample preparation journey for a solid sample, highlighting critical control points where errors are most likely to be introduced, leading to the 60% statistic.

Best Practices for Mitigating Error and Enhancing Reproducibility

To combat the high error rate associated with sample preparation, laboratories should adopt the following evidence-based practices:

- Prioritize Homogeneity and Contamination Control: The foundation of accurate analysis is a sample that is both uniform and pure. Invest in high-quality grinding equipment with non-contaminating surfaces and establish rigorous cleaning protocols between samples [1]. For solids, ensure particle size is reduced and consistent.

- Standardize and Document Protocols: Reproducibility is paramount. Develop and adhere to Standard Operating Procedures (SOPs) for each sample type and analytical technique. Automated systems excel here, as they log parameters (time, pressure, temperature) to create an audit trail, transforming preparation from an "art" to a traceable science [7].

- Select Methods Appropriate to Your Technique and Sample: Understand the fundamental requirements of your spectroscopic method. For instance, XRF demands a flat, homogeneous surface, while ICP-MS requires total dissolution [1]. Similarly, solvent selection for FT-IR must consider the solvent's own IR absorption profile [1].

- Embrace Automation Where Possible: Automated grinding, polishing, and liquid handling systems significantly reduce operator-dependent variability and human error [7]. These systems enhance throughput and provide the consistency needed for high-quality, reproducible results, directly addressing the top challenges of recovery and reproducibility [2] [7].

- Consider Green Chemistry Principles: With 88.6% of survey respondents concerned about the environmental, health, and safety effects of solvents, there is a push toward sustainable practices [2]. Explore solvent-free methods such as solid-phase microextraction (SPME), reduce solvent volumes, or investigate alternative solvents to reduce both this source of error and the associated hazards [2].

The 60% error statistic is a powerful reminder that the analytical process begins long before a sample is placed in an instrument. For researchers in drug development and spectroscopy, compromising on sample preparation means compromising the very data upon which critical decisions are based. By understanding the rigorous, method-specific requirements, implementing standardized and automated protocols, and maintaining a relentless focus on homogeneity and contamination control, scientists can turn this major source of error into a cornerstone of reliability. Excellent sample preparation is, and will remain, non-negotiable for excellent science.

In analytical sciences, the accuracy of spectroscopic data is not solely determined by the performance of the instrument but is profoundly influenced by the physical characteristics of the sample itself. Homogeneity, particle size, and surface quality constitute a critical triad of physical properties that directly govern how matter interacts with electromagnetic radiation. These parameters form the foundation of reliable spectral analysis, yet their systematic impact is often overlooked in spectroscopic practice.

Sample preparation represents the most significant source of analytical error in spectroscopy, accounting for as much as 60% of all analytical errors [1]. For researchers developing spectroscopic methods, understanding these core physical principles is therefore essential to building robust analytical protocols. This technical guide examines the fundamental relationships between sample physical properties and spectral data quality, providing a scientific basis for standardized sample preparation protocols across various spectroscopic techniques.

Fundamental Principles of Light-Matter Interaction

The interaction between light and matter provides the theoretical foundation for all spectroscopic techniques. When electromagnetic radiation strikes a sample, it may be absorbed, transmitted, reflected, or scattered, with the relative proportions of each interaction determined by both the material's chemical composition and its physical structure.

The Role of Surface Roughness in Light Scattering

Surface topography directly influences the fate of incident radiation through scattering phenomena. Rough surfaces disrupt the specular reflection of light, instead scattering it diffusely in random directions. This scattering effect is quantitatively described by the root mean square (RMS) roughness parameter (σ), which must be substantially less than the wavelength of incident light to maintain measurement integrity [8].

The relationship between surface roughness and scattered light can be modeled using a single-layer homogeneous model, which treats surface roughness as a thin layer with a refractive index intermediate between the ambient medium and the substrate material [8]. This approach allows researchers to quantify how rough surfaces impact both reflectance and transmittance measurements for s-polarized and p-polarized light, with implications for both qualitative identification and quantitative analysis.
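The requirement that σ be much smaller than λ can be made concrete with the textbook scalar (Davies-Bennett) approximation for a Gaussian rough surface, in which specular reflectance falls as R_s = R_0 exp[-(4πσ cos θ / λ)²]. This is a different, simpler model swapped in for illustration, not the single-layer model of [8], and the σ and λ values below are illustrative.

```python
import math


def specular_fraction(sigma_nm: float, wavelength_nm: float,
                      incidence_deg: float = 0.0) -> float:
    """Fraction of smooth-surface specular reflectance retained by a rough surface.

    Scalar (Davies-Bennett) approximation, valid for sigma << wavelength:
        R_s / R_0 = exp(-(4*pi*sigma*cos(theta) / wavelength)**2)
    The remainder is redirected into diffuse scatter (absorption changes ignored).
    """
    theta = math.radians(incidence_deg)
    g = (4.0 * math.pi * sigma_nm * math.cos(theta) / wavelength_nm) ** 2
    return math.exp(-g)


# Illustrative: a 1000 nm (NIR) probe at normal incidence on increasingly rough surfaces.
for sigma in (1.0, 10.0, 50.0, 100.0):
    kept = specular_fraction(sigma, 1000.0)
    print(f"sigma = {sigma:5.1f} nm -> specular retained {kept:.3f}, "
          f"lost to diffuse scatter {1.0 - kept:.3f}")
```

Because σ enters squared in the exponent, a tenfold increase in roughness changes the specular loss from a few percent to most of the signal, which is why surface finish matters so much for reflectance measurements.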

Particle Size Effects on Radiation Penetration and Scattering

Particle size dictates the optical path length and scattering cross-section within powdered samples. Larger particles create heterogeneous environments with inconsistent penetration depths, while excessively fine particles can promote agglomeration and increase scattering losses. A particle size below 75 μm is a common target, particularly for XRF, though specific applications may require different size distributions [1].

Laser diffraction studies have established that particle size distributions directly correlate with sample homogeneity, particularly for mycotoxin analysis in food and feed matrices [9]. When particle size measurements fall below 850 μm, mycotoxin concentrations become consistent across independent test portions, confirming the fundamental relationship between particle size reduction and analytical homogeneity [9].

Quantitative Effects on Spectral Data Quality

Homogeneity and Representativeness

Sample homogeneity ensures that a small test portion accurately represents the entire sample material. Heterogeneous distributions of analytes or matrix components introduce sampling errors that cannot be corrected through instrumental refinement alone. The variance associated with homogeneity can be isolated and quantified using approaches described in ISO Guide 35:2017, which provides statistical methods for homogeneity assessment [9].

Laser diffraction particle size analysis has emerged as a rapid, reliable technique for homogeneity characterization, correlating strongly with established ISO protocols [9]. This method quantifies within-subsample and between-subsample variances through multiple measurements, providing a practical homogeneity index for routine analysis.
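The within- and between-subsample variances described above are commonly separated with a one-way analysis of variance (ANOVA), the approach used in ISO Guide 35 homogeneity studies. A minimal sketch, assuming a balanced design of k subsamples each measured n times; the data are hypothetical, and a negative between-unit variance estimate is simply truncated to zero here.

```python
import math
from statistics import mean


def between_unit_sd(groups: list[list[float]]) -> tuple[float, float, float]:
    """One-way ANOVA homogeneity estimate for k units with n replicates each.

    Returns (MS_among, MS_within, s_bb), where s_bb is the between-unit
    standard deviation: s_bb**2 = (MS_among - MS_within) / n.
    """
    k = len(groups)
    n = len(groups[0])
    if any(len(g) != n for g in groups):
        raise ValueError("this sketch assumes equal replicates per unit")
    unit_means = [mean(g) for g in groups]
    grand_mean = mean(unit_means)
    ms_among = n * sum((m - grand_mean) ** 2 for m in unit_means) / (k - 1)
    ms_within = sum((y - m) ** 2
                    for g, m in zip(groups, unit_means) for y in g) / (k * (n - 1))
    s_bb_sq = (ms_among - ms_within) / n
    return ms_among, ms_within, math.sqrt(max(s_bb_sq, 0.0))


# Hypothetical duplicate results (mg/kg) on five subsamples.
data = [[10.1, 10.3], [10.2, 10.0], [10.4, 10.2], [9.9, 10.1], [10.2, 10.3]]
ms_a, ms_w, s_bb = between_unit_sd(data)
print(f"MS_among = {ms_a:.4f}, MS_within = {ms_w:.4f}, s_bb = {s_bb:.4f}")
```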

Particle Size and Spectral Reproducibility

Particle size directly influences spectral reproducibility through multiple mechanisms. The following table summarizes key quantitative relationships between particle size and spectral parameters:

Table 1: Quantitative Effects of Particle Size on Spectral Data

| Particle Size Parameter | Spectral Effect | Magnitude/Impact | Analytical Technique |
|---|---|---|---|
| Dv50 < 75 μm | Homogeneous X-ray interaction | Required for quantitative analysis | XRF [1] |
| Particles > 850 μm | Increased sampling error | Mycotoxin concentration variance | NIR, LC-MS [9] |
| Fine fraction increase | Elevated surface roughness | Adhered particles increase Sa, Sz | L-PBF manufacturing [10] |
| Optimal size range | Reduced light scattering | Improved signal-to-noise ratio | NIRS, FT-IR [1] |

The particle size distribution also affects final product properties in manufacturing processes. In laser powder bed fusion (L-PBF) for metal parts, the smallest particles in a powder batch disproportionately contribute to surface roughness by adhering to the manufactured part surface [10]. This relationship demonstrates how particle size effects transcend analytical spectroscopy and extend to materials processing applications.

Surface Quality and Signal Fidelity

Surface roughness parameters provide quantitative descriptors of surface quality and its impact on spectral measurements. The most commonly cited parameters include:

Table 2: Surface Roughness Parameters and Their Spectral Significance

| Roughness Parameter | Definition | Spectral Significance | Limitations |
|---|---|---|---|
| Ra/Sa | Arithmetical mean height | General surface quality indicator | Insufficient alone for complex surfaces [11] |
| Rq/Sq | Root mean square height | More sensitive to extreme values | Most meaningful for Gaussian height distributions |
| Rz/Sz | Maximum height of profile | Peak-to-valley distance | Limited reproducibility [11] |
| Multiparameter approach | Combined roughness assessment | Comprehensive surface characterization | Required for irregular surfaces [11] |

Research on shot-peened surfaces has demonstrated that relying on a single roughness parameter like Ra or Rz can yield misleading conclusions when comparing dissimilar surfaces [11]. Surfaces with identical Ra values may exhibit dramatically different spatial distributions of peaks and valleys, resulting in different light interaction behaviors. A comprehensive set of amplitude and spacing parameters provides more reliable surface characterization for spectroscopic applications [11].
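The point that surfaces with identical Ra can differ markedly is easy to demonstrate. The simplified sketch below computes Ra, Rq and Rz for two synthetic profiles; it skips the form removal, filtering and sampling-length rules of the roughness standards and treats Rz as the peak-to-valley height of a single mean-referenced profile, so it is illustrative only.

```python
import math


def roughness(profile: list[float]) -> dict[str, float]:
    """Simplified Ra, Rq and Rz over one sampling length (no filtering)."""
    n = len(profile)
    mean_line = sum(profile) / n
    z = [h - mean_line for h in profile]          # heights relative to the mean line
    ra = sum(abs(v) for v in z) / n               # arithmetical mean deviation
    rq = math.sqrt(sum(v * v for v in z) / n)     # root mean square deviation
    rz = max(z) - min(z)                          # highest peak + deepest valley
    return {"Ra": ra, "Rq": rq, "Rz": rz}


# Two synthetic profiles (µm): a regular texture and one with sparse, taller features.
regular = [1.0, -1.0] * 50
spiky = [2.0, -2.0, 0.0, 0.0] * 25
for name, p in (("regular", regular), ("spiky", spiky)):
    r = roughness(p)
    print(name, {k: round(v, 3) for k, v in r.items()})
```

Both profiles share the same Ra, yet Rq and Rz differ, which is the kind of ambiguity a multiparameter assessment is meant to resolve.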

Experimental Protocols for Characterization

Laser Diffraction Particle Size Analysis

Objective: To determine particle size distribution and assess sample homogeneity.

Materials: Laser diffraction particle size analyzer, appropriate dispersant (e.g., methanol), sample dividing (riffling) device.

- Sample Preparation: Comminute bulk samples using wet, cryogenic, or dry milling. Obtain representative subsamples using a sample dividing device [9].

- Dispersant Selection: Use methanol to wet particle surfaces and separate agglomerated particles. Verify stability of particle size distribution over sequential measurements [9].

- Stirring Optimization: Set the stirring rate to 3500 rpm to break up agglomerates and create a uniform suspension. Lower rates (e.g., 500 rpm) allow agglomeration and yield unrealistically large particle sizes [9].

- Optical Parameter Configuration: Set the refractive index to 1.6 and the absorption to 0.01 for organic materials, adjusting for specific matrix properties [9].

- Data Collection: Perform at least 60 sequential measurements to verify stability, then calculate Dv10, Dv50, and Dv90 values and their relative standard deviations (RSD) [9].
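The percentile and RSD calculations in the last step can be sketched as follows. This assumes a binned volume distribution with ascending bin upper edges and uses linear interpolation on the cumulative curve; instrument software may interpolate on a logarithmic size axis instead. The bin edges, volume fractions and repeat Dv50 values are hypothetical.

```python
from statistics import mean, stdev


def dv_percentile(sizes_um: list[float], volume_pct: list[float], p: float) -> float:
    """Size below which p% of the sample volume lies (p=50 gives Dv50).

    sizes_um are ascending bin upper edges; volume_pct is the volume in each bin.
    The lower edge of the first bin is taken as 0 for simplicity.
    """
    if not 0.0 < p < 100.0:
        raise ValueError("p must lie strictly between 0 and 100")
    total = sum(volume_pct)
    cumulative, prev_size, prev_cum = 0.0, 0.0, 0.0
    for size, vol in zip(sizes_um, volume_pct):
        cumulative += 100.0 * vol / total
        if cumulative >= p:
            frac = (p - prev_cum) / (cumulative - prev_cum)
            return prev_size + frac * (size - prev_size)
        prev_size, prev_cum = size, cumulative
    return sizes_um[-1]


def rsd_percent(values: list[float]) -> float:
    """Relative standard deviation of repeat measurements, in percent."""
    return 100.0 * stdev(values) / mean(values)


bins = [10.0, 20.0, 45.0, 75.0, 150.0]   # hypothetical bin upper edges, µm
vols = [10.0, 30.0, 40.0, 15.0, 5.0]     # hypothetical volume % per bin
print({f"Dv{p}": round(dv_percentile(bins, vols, p), 1) for p in (10, 50, 90)})

dv50_runs = [31.2, 30.8, 31.5, 31.0, 30.9]   # hypothetical repeat Dv50 values, µm
print(f"Dv50 RSD = {rsd_percent(dv50_runs):.2f}%")
```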

Surface Roughness Characterization

Objective: To quantitatively characterize surface topography relevant to light interaction.

Materials: Optical profilometer, surface roughness standard for calibration.

- Surface Scanning: Use a non-contact optical microscope (e.g., Alicona Infinite Focus) at an appropriate magnification. Scan a sufficiently large area (e.g., 10.2 × 5.6 mm²) to ensure representative sampling [11].

- Parameter Extraction: Extract both profile (Ra, Rq, Rz) and areal (Sa, Sq, Sz) roughness parameters. Areal parameters are defined in ISO 25178-2; profile parameters are defined in ISO 4287 (superseded by ISO 21920-2) [11] [10].

- Multiparameter Approach: Use a comprehensive set of amplitude and spacing parameters rather than relying solely on Ra or Rz [11].

- Statistical Analysis: Assess parameter reproducibility across multiple surface locations. For treated surfaces, track how the parameters evolve as a function of processing time or exposure [11].

Method-Specific Preparation Requirements

X-Ray Fluorescence (XRF) Spectrometry

XRF analysis requires flat, homogeneous surfaces with consistent density. Particle size must be reduced to <75 μm through grinding, followed by pelletizing with binding agents or fusion with fluxing materials to create uniform specimens [1]. The pelletizing process typically involves blending ground sample with a binder (e.g., wax or cellulose) and pressing at 10-30 tons to form stable disks with smooth surfaces [1].

Near-Infrared (NIR) Spectroscopy

NIR spectroscopy benefits from consistent particle size distribution to minimize light scattering variations. Effective spectral pre-processing methods, including simple ratio indices (SRI), normalized difference indices (NDI), and three-band index transformations (TBI), significantly enhance prediction accuracy for soil properties and other complex matrices [12]. Feature selection approaches like recursive feature elimination (RFE) and least absolute shrinkage and selection operator (LASSO) help manage the high dimensionality of transformed spectral data [12].
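The simple ratio and normalized difference transformations are per-pair formulas, and computing them for every band pair shows why the feature count grows so quickly. A minimal sketch, assuming the common definitions SRI = R_i / R_j and NDI = (R_i - R_j) / (R_i + R_j), which may differ in detail from those used in [12]; TBI is omitted because three-band formulations vary. The 5-band spectrum is hypothetical.

```python
import numpy as np


def band_pair_indices(reflectance: np.ndarray) -> tuple[np.ndarray, np.ndarray]:
    """All pairwise simple ratio (SRI) and normalized difference (NDI) indices.

    SRI[i, j] = R_i / R_j
    NDI[i, j] = (R_i - R_j) / (R_i + R_j)
    """
    r = np.asarray(reflectance, dtype=float)
    ri = r[:, None]   # column vector: band i
    rj = r[None, :]   # row vector: band j
    return ri / rj, (ri - rj) / (ri + rj)


# Hypothetical 5-band reflectance spectrum.
spectrum = np.array([0.21, 0.25, 0.32, 0.30, 0.28])
sri, ndi = band_pair_indices(spectrum)
iu = np.triu_indices(len(spectrum), k=1)        # unique band pairs with i < j
features = np.concatenate([sri[iu], ndi[iu]])   # candidate predictors for RFE or LASSO
print(features.shape)
```

For a full NIR spectrum with hundreds of bands, the number of pairs runs into the tens of thousands, which is the high dimensionality that RFE and LASSO are used to manage.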

Inductively Coupled Plasma Mass Spectrometry (ICP-MS)

ICP-MS demands complete sample dissolution to avoid nebulizer clogging and matrix effects. Samples require accurate dilution to appropriate concentration ranges, filtration (typically 0.45 μm) to remove particulates, and acidification with high-purity nitric acid to prevent precipitation [1]. Internal standardization compensates for matrix effects and instrument drift, improving quantitative accuracy.

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Essential Materials for Spectroscopic Sample Preparation

| Item | Function | Application Examples |
|---|---|---|
| Spectroscopic Grinding Mills | Particle size reduction to <75 μm | XRF, NIR, ICP-MS sample preparation [1] |
| Laser Diffraction Particle Analyzers | Particle size distribution measurement | Homogeneity assessment [9] |
| Hydraulic Pellet Presses | Fabrication of uniform sample disks | XRF pellet preparation [1] |
| Optical Profilometers | Non-contact surface roughness measurement | Surface quality verification [11] [10] |
| Enhanced Matrix Removal (EMR) Cartridges | Selective removal of matrix interferences | PFAS, mycotoxin analysis in food [13] |
| High-Purity Flux Agents | Sample fusion for refractory materials | XRF glass disk preparation [1] |
| Volatile Modifiers | Enhancement of ionization efficiency | ESI-LC/MS mobile phase preparation [14] |
| MALDI Matrices | Energy absorption and sample incorporation | MALDI-MS target preparation [14] |

The physical principles governing sample homogeneity, particle size, and surface quality represent fundamental determinants of spectroscopic data quality. These parameters directly control light-matter interactions through defined mechanisms that can be quantified and optimized. Standardized characterization protocols, including laser diffraction particle size analysis and multiparameter surface roughness assessment, provide researchers with robust tools for sample quality control.

Within the broader context of spectroscopic method development, acknowledging these physical principles as core analytical parameters rather than peripheral sample preparation concerns represents a paradigm shift toward more robust and reproducible spectroscopic analysis. Future research should focus on establishing quantitative relationships between specific physical parameters and spectral fidelity metrics across different material classes and spectroscopic techniques.

Contamination control is a foundational pillar of analytical integrity in trace analysis, where the accuracy of results is paramount. Inadequate sample preparation is responsible for up to 60% of all spectroscopic analytical errors, underscoring the critical need for rigorous contamination prevention protocols [1]. The process of transforming a raw sample into a form suitable for spectroscopic analysis introduces numerous potential contamination vectors, each capable of compromising data validity. This guide addresses the pervasive challenge of contamination within the context of sample preparation for spectroscopic methods such as ICP-MS, XRF, and FT-IR, providing a systematic framework for identifying contamination sources, assessing associated risks, and implementing effective mitigation strategies. The goal is to equip researchers and scientists with the knowledge to produce reliable, reproducible, and analytically sound results, thereby supporting robust scientific research and quality control in fields like drug development [1].

Contamination in trace analysis can originate from every stage of the analytical process, from sample collection to final analysis. Understanding these sources is the first step toward developing effective countermeasures.

- Sample Collection and Handling: The sample itself can be a primary source of contamination, particularly if it is heterogeneous or collected from a polluted environment. Cross-contamination between samples, contact with non-inert collection tools, and adsorption of contaminants from the atmosphere during handling are significant risks. For instance, trace metal pollution from industrial, agricultural, or mining activities can be introduced during sampling [15].

- Laboratory Environment: The ambient laboratory air can introduce particulate matter, aerosols, and volatile organic compounds. Dust, skin cells, and fibers from clothing are common contaminants. Activities in nearby lab areas can also contribute to cross-contamination.

- Reagents and Solvents: Acids, bases, solvents, and water used in digestion, dilution, and other preparation steps can contain impurity metals or organic compounds at levels significant for ultra-trace analysis. For example, the purity of water from a purification system is critical for techniques like ICP-MS [16].

- Labware and Equipment: Containers, volumetric flasks, pipettes, syringes, and filters can leach elements or absorb analytes. Grinding and milling equipment can contribute particulate wear debris to solid samples [1]. The choice of material (e.g., glass, quartz, plastics like PTFE, PP) is crucial as each has different leaching and adsorption properties.

- Sample Preparation Processes: Specific preparation techniques carry inherent contamination risks. Grinding and milling can cause cross-contamination from previous samples or from the grinding media itself [1]. In ICP-MS preparation, inaccurate dilution or the use of impure reagents can directly skew results [1].

Quantifying Contamination Risks in Spectroscopic Analysis

The impact of contamination is technique-dependent, influenced by the method's sensitivity and the specific analytical question. The table below summarizes the primary contamination effects and their impact on major spectroscopic techniques.

Table 1: Contamination Risks and Impacts on Common Spectroscopic Techniques

| Analytical Technique | Primary Contamination Concerns | Impact on Analysis |
|---|---|---|
| ICP-MS | Contamination from reagents, water, and labware; incomplete sample dissolution; particle introduction clogging nebulizer [1]. | Skews isotopic ratios, causes spectral interferences, elevates baseline, leads to inaccurate quantification, and damages instrument components. |
| XRF | Surface impurities, inconsistent particle size, and cross-contamination during grinding/pelletizing [1]. | Alters X-ray absorption and emission characteristics, leading to inaccurate elemental composition data and reduced precision. |
| FT-IR | Contamination from solvents, KBr pellets, or the atmosphere affecting the sample matrix [1] [17]. | Introduces extraneous absorption bands, obscuring the sample's molecular "fingerprint" and complicating spectral interpretation. |
| Non-Target Analysis (NTA) with HRMS | Contamination that co-elutes chromatographically with analytes of interest; contamination introduced during sample purification (e.g., SPE cartridges) [18]. | Generates spurious chemical signals, complicating the already complex dataset and leading to false positives in contaminant identification. |

Beyond the analytical instrument, contamination poses significant ecological and human health risks when data is used for environmental decision-making. Trace metals like lead, cadmium, and mercury can bioaccumulate, impacting biodiversity and entering the food chain [15]. Inaccurate risk assessments due to contaminated samples can therefore lead to flawed environmental management and policy decisions.

Experimental Protocols for Contamination Prevention and Control

Implementing robust, standardized protocols is essential for mitigating contamination. The following methodologies provide a framework for safeguarding sample integrity.

Protocol for Solid Sample Preparation (Grinding/Pelletizing)

This protocol is critical for techniques like XRF and FT-IR to ensure a homogeneous, contaminant-free sample [1].

- Equipment Cleaning: Prior to processing, clean grinding vessels and milling tools thoroughly. Use a multi-step wash with high-purity solvents (e.g., acetone) and acids (e.g., dilute nitric acid for metal analysis), followed by copious rinsing with high-purity water (e.g., from a system like the Milli-Q SQ2) and drying in a particle-free environment [1] [16].

- Sample Homogenization: For heterogeneous materials, pre-homogenize the bulk sample using a jaw crusher or similar device.

- Grinding/Milling: Select a grinding or milling machine with materials of construction that minimize contamination (e.g., zirconia or tungsten carbide for hard materials). Process the sample to a consistent, fine particle size (e.g., <75 μm for XRF). Clean all equipment meticulously between samples to prevent cross-contamination [1].

- Pelletizing (for XRF): Mix the ground powder with a suitable binder (e.g., cellulose or wax). Press the mixture into a pellet using a hydraulic press (typically 10-30 tons force) to create a flat, uniform-density disk for analysis [1].

- Fusion (for refractory materials): For complete dissolution and matrix normalization, fuse the sample with a flux (e.g., lithium tetraborate) at high temperatures (950-1200°C) in platinum crucibles to create a homogeneous glass disk [1].

Protocol for Liquid Sample Preparation (ICP-MS Focus)

ICP-MS is exceptionally sensitive, requiring ultra-clean preparation techniques [1].

- Sample Digestion: For solid samples, use total acid digestion in a closed-vessel microwave digestion system to ensure complete dissolution and prevent atmospheric contamination.

- Dilution and Acidification: Perform all dilutions with high-purity acids (e.g., nitric acid to 2% v/v) and ultrapure water. Use accurate, calibrated pipettes and volumetric glassware. Acidification helps keep metals in solution and prevents adsorption to container walls [1].

- Filtration: After digestion or dilution, filter the liquid sample through a membrane filter (e.g., 0.45 μm or 0.2 μm for ultratrace analysis) to remove any suspended particles that could clog the nebulizer. Use PTFE membranes to minimize contamination [1].

- Internal Standardization: Add internal standards to all samples, blanks, and calibration standards to correct for matrix effects and instrument drift, improving quantitative accuracy [1].
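Internal standardization and dilution both enter the final result. A minimal sketch, assuming a linear calibration on the analyte/internal-standard signal ratio and a known total dilution factor; all count rates, concentrations and the dilution factor of 10 are hypothetical.

```python
from statistics import mean


def fit_line(x: list[float], y: list[float]) -> tuple[float, float]:
    """Ordinary least-squares slope and intercept."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    slope = sxy / sxx
    return slope, my - slope * mx


# Hypothetical calibration standards (µg/L) with analyte and internal-standard (IS) count rates.
std_conc = [0.0, 1.0, 5.0, 10.0]
std_analyte_cps = [100.0, 2058.0, 9797.0, 19095.0]
std_is_cps = [100000.0, 98000.0, 97000.0, 95000.0]   # IS signal drifting down over the run
std_ratio = [a / i for a, i in zip(std_analyte_cps, std_is_cps)]
slope, intercept = fit_line(std_conc, std_ratio)


def sample_concentration(analyte_cps: float, is_cps: float, dilution_factor: float) -> float:
    """IS-normalized result, converted back to the undiluted sample."""
    ratio = analyte_cps / is_cps
    in_solution = (ratio - intercept) / slope          # concentration in the measured solution
    return in_solution * dilution_factor


# The sample matrix suppresses the IS relative to the standards; assuming the analyte
# is suppressed by the same fraction, the ratio compensates.
result = sample_concentration(45000.0, 90000.0, dilution_factor=10.0)
print(f"slope = {slope:.4f}, intercept = {intercept:.4f}, sample = {result:.1f} µg/L")
```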

General Quality Control Measures

- Laboratory Blanks: Process method blanks (all reagents without sample) alongside every batch of samples. The blank is used to identify and correct for background contamination originating from reagents or the preparation process.

- Replication: Analyze samples in duplicate or triplicate to assess the precision and reproducibility of the entire preparation and analytical method.

- Certified Reference Materials (CRMs): Include CRMs whose matrix and analyte composition match those of the samples. Recovery of the certified values verifies the accuracy of the entire analytical protocol [18].
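The three quality control measures above reduce to three numbers per batch: a blank-corrected result, a replicate relative standard deviation, and a CRM recovery. The sketch below computes them with the standard library. The replicate values, blanks and certified value are hypothetical. Acceptance limits for RSD and recovery are laboratory- and method-specific, so none are hard-coded.

```python
import statistics


def blank_corrected(values, blank_values):
    """Subtract the mean method blank from each replicate result."""
    blank = statistics.mean(blank_values)
    return [v - blank for v in values]


def rsd_percent(values):
    """Relative standard deviation (sample SD / mean), in percent."""
    return statistics.stdev(values) / statistics.mean(values) * 100.0


def crm_recovery_percent(measured_mean, certified_value):
    """Measured mean as a percentage of the certified value."""
    return measured_mean / certified_value * 100.0


# Hypothetical triplicate CRM results (mg/kg), two method blanks, certified value 10.0 mg/kg
crm_replicates = [10.4, 10.1, 10.3]
blanks = [0.20, 0.30]
corrected = blank_corrected(crm_replicates, blanks)
rsd = rsd_percent(corrected)
recovery = crm_recovery_percent(statistics.mean(corrected), 10.0)
print(f"blank-corrected mean: {statistics.mean(corrected):.2f} mg/kg")
print(f"RSD: {rsd:.1f} %, CRM recovery: {recovery:.1f} %")
```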

Advanced Techniques and Machine Learning for Contamination Tracking

Emerging technologies are enhancing the ability to identify and account for contamination.

Machine Learning (ML) for Non-Target Analysis (NTA): ML algorithms are powerful tools for interpreting complex datasets from techniques like high-resolution mass spectrometry (HRMS). They can be trained to distinguish source-specific chemical fingerprints, helping to identify whether a detected compound is a true analyte or an external contaminant introduced during sampling or preparation [18]. For example, classifiers like Random Forest (RF) and Support Vector Classifier (SVC) have been used to screen hundreds of features (chemical signals) across samples with high accuracy, identifying their contamination source [18].

Hyphenated and Advanced Spectroscopic Techniques: The integration of FT-IR with chemometric models is an advancement that improves the detection limits and specificity for identifying metal-binding functional groups in complex matrices like food [17]. Furthermore, the development of portable and handheld spectrometers, as noted in the 2025 instrumentation review, allows for on-site screening, reducing the number of handling and transportation steps where contamination can occur [16].

The workflow below illustrates how machine learning integrates with non-target analysis to improve the identification of contamination sources.

The Scientist's Toolkit: Essential Reagents and Materials

Selecting the right tools is fundamental to any contamination-control strategy. The following table details key reagents and materials used in trace analysis sample preparation.

Table 2: Essential Research Reagent Solutions for Trace Analysis

| Item | Function | Key Considerations |
|---|---|---|
| High-Purity Acids (e.g., HNO₃, HCl) | Sample digestion and dissolution; equipment cleaning. | Use ultra-pure trace metal grade to minimize introduction of elemental impurities, especially for ICP-MS. |
| Solid Phase Extraction (SPE) Cartridges | Purification and pre-concentration of analytes; removal of interfering matrix components [18]. | Select sorbent phase (e.g., Oasis HLB, Strata WAX) based on the target analytes' physicochemical properties to ensure optimal recovery and selectivity. |
| Ultrapure Water Purification System | Preparation of blanks, standards, and dilution of samples; final rinsing of labware. | Systems like the Milli-Q SQ2 series deliver Type 1 water (18.2 MΩ·cm) with low organic content, critical for sensitive techniques [16]. |
| Membrane Filters | Removal of particulate matter from liquid samples prior to analysis (e.g., ICP-MS). | Pore size (0.45 μm or 0.2 μm) and membrane material (e.g., PTFE, nylon) must be selected to avoid analyte adsorption and contamination. |
| Spectroscopic Grinding/Milling Equipment | Particle size reduction and homogenization of solid samples [1]. | Equipment should have hardened grinding surfaces (e.g., tungsten carbide) to minimize wear debris and be easy to clean to prevent cross-contamination. |
| Binders for XRF Pelletizing (e.g., cellulose, boric acid) | Create robust, uniform pellets for analysis by providing structural integrity [1]. | Must be free of the target analytes and produce a pellet with consistent density and surface properties. |

Vigilance against contamination is not a single step but a pervasive mindset that must be embedded throughout the entire analytical workflow. From the initial selection of sample collection tools to the final data validation, every action presents an opportunity to either introduce or prevent error. As analytical techniques like ICP-MS and non-target HRMS push detection limits ever lower, the margin for error shrinks accordingly, making rigorous contamination control protocols non-negotiable. The integration of advanced tools, including machine learning for data interpretation and high-purity reagent systems, provides a powerful defense. By systematically understanding contamination sources, quantifying their risks, and implementing the detailed prevention protocols and essential tools outlined in this guide, researchers can ensure the production of reliable, accurate, and defensible data. This commitment to analytical integrity is the cornerstone of valid scientific research, robust environmental monitoring, and safe drug development.

Matrix effects represent a fundamental challenge in analytical spectroscopy, detrimentally impacting the accuracy, sensitivity, and reproducibility of quantitative analyses [19]. In essence, a matrix effect occurs when components in a sample, other than the analyte of interest, interfere with the analytical measurement process [20]. These interfering components, collectively known as the "matrix," can co-elute or co-exist with the analyte and cause unintended signal suppression or enhancement [19] [21]. In techniques like liquid chromatography-mass spectrometry (LC-MS), this interference predominantly happens during the ionization process, leading to ionization suppression or enhancement [19]. The matrix can include a wide variety of substances, such as salts, proteins, lipids, metabolites, or humic acids, depending on the sample origin (e.g., biological fluids, environmental samples, or food) [20] [21].

The mechanisms behind matrix effects are diverse. In mass spectrometry, one theory suggests that co-eluting basic compounds may deprotonate and neutralize analyte ions, reducing the formation of protonated ions [19]. Other theories propose that less-volatile compounds can affect droplet formation efficiency in the electrospray ion source, or that high-viscosity interferents increase the surface tension of charged droplets, thereby reducing droplet evaporation efficiency [19]. In atomic spectroscopy, matrix effects can also manifest as flame noise, spectral interferences, and chemical interferences [22]. Understanding these mechanisms is the first step toward developing effective strategies to mitigate their impact, which is crucial for generating reliable data in research and drug development.

Detection and Quantification of Matrix Effects

Established Detection Methods

Several experimental methods are routinely employed to detect and assess the presence and extent of matrix effects.

Post-Extraction Spike Method: This method evaluates matrix effects by comparing the signal response of an analyte spiked into a blank matrix extract with the signal response of an equivalent amount of the analyte in a neat mobile phase or pure solvent [19]. The difference in response indicates the extent of the matrix effect. A significant drawback is the requirement for a blank matrix, which is not available for endogenous analytes like metabolites [19].

Post-Column Infusion Method: In this qualitative approach, a constant flow of analyte is infused into the HPLC eluent while a blank sample extract is injected [19]. Variations in the signal response of the infused analyte caused by co-eluting interfering compounds indicate regions of ionization suppression or enhancement in the chromatogram. While useful for method development, this process is time-consuming, requires additional hardware, and is less suitable for multi-analyte samples [19].

Simple Recovery-Based Method: To overcome the limitations of the above methods, a simple, fast, and reliable recovery-based method has been proposed. It can be applied to any analyte, including endogenous compounds, and to any matrix, without additional hardware [19].

Quantitative Evaluation

The matrix effect can be quantified to understand its practical impact on an analysis. The formula below is used to calculate the matrix effect (ME), expressed as a percentage:

ME (%) = (Signal in Matrix / Signal in Neat Standard) × 100% [21]

For example, if the signal for a pesticide in a strawberry matrix extract is only 70% of the signal for an identical concentration in a pure solvent, this indicates a 30% signal loss due to matrix effect, or an instrumental recovery of 70% [21]. A value of 100% indicates no matrix effect, values below 100% indicate signal suppression, and values above 100% indicate signal enhancement.

Table 1: Interpretation of Matrix Effect Quantification

| Matrix Effect Value | Interpretation |
|---|---|
| > 100% | Signal Enhancement |
| ≈ 100% | No Significant Matrix Effect |
| < 100% | Signal Suppression |
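Table 1 does not say how close to 100% a value must be to count as "≈ 100%". The sketch below computes ME (%) and maps it onto the three categories. It assumes a ±20% tolerance band for "no significant matrix effect". That band is a common convention in residue analysis, not part of Table 1, so adjust it to your own validation criteria.

```python
def matrix_effect_percent(signal_matrix: float, signal_neat: float) -> float:
    """ME (%) = signal in matrix / signal in neat standard x 100."""
    if signal_neat <= 0:
        raise ValueError("neat-standard signal must be positive")
    return signal_matrix / signal_neat * 100.0


def interpret(me_percent: float, tolerance: float = 20.0) -> str:
    """Classify an ME value using the categories of Table 1.

    The +/- tolerance around 100 % is an assumed threshold.
    """
    if me_percent > 100.0 + tolerance:
        return "Signal Enhancement"
    if me_percent < 100.0 - tolerance:
        return "Signal Suppression"
    return "No Significant Matrix Effect"


# Strawberry example from the text: matrix signal is 70 % of the neat signal
me = matrix_effect_percent(7000.0, 10000.0)
print(f"ME = {me:.0f} % ({me - 100:+.0f} % relative to neat), {interpret(me)}")
```

Some laboratories report the signed form, ME − 100, so the strawberry example appears as −30% rather than 70%. Both forms carry the same information.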

Strategies for Mitigating Matrix Effects

It is often impossible to completely eliminate matrix effects, so a combination of preventative techniques and data correction strategies is required to manage them [19] [20].

Sample Preparation and Cleanup

Optimizing sample preparation is a primary line of defense. The goal is to remove interfering compounds from the sample prior to analysis [19] [1].

- Technique Selection: Methods like liquid-liquid extraction, solid-phase extraction, and filtration can significantly reduce matrix components [1]. For ICP-MS, filtration through a 0.45 μm or 0.2 μm membrane is standard to remove particulates [1].

- Sample Dilution: Simply diluting the sample can lower the concentration of interfering compounds to a level where their impact is minimal [19]. This approach is feasible only when the method's sensitivity is high enough to tolerate the dilution [19].

Chromatographic and Instrumental Optimization

Modifying the analytical separation can prevent interferents from co-eluting with the analyte.

- Chromatographic Separation: Changing parameters such as the mobile phase composition, gradient, and column type can improve the separation, thereby avoiding the co-elution of analytes and interfering compounds [19] [23] [16].

- Sample Introduction: In atomic spectroscopy, standard addition is a general method used to account for unknown matrix effects [22].

Calibration and Data Correction Techniques

When matrix effects cannot be fully removed, data correction techniques are essential.

- Stable Isotope-Labeled Internal Standards (SIL-IS): This is considered the gold standard for correcting matrix effects in LC-MS [19]. The SIL-IS differs from the analyte only in its isotopic composition, so it co-elutes with the analyte and experiences the same matrix effects. The response of the analyte is normalized to the response of the SIL-IS, effectively canceling out the variability caused by the matrix [19]. The main limitations are high cost and the lack of commercial availability for all analytes [19].

- Standard Addition Method: This method involves adding known increments of the analyte to the sample itself [19] [22]. The signal for each increment is measured and plotted against the added concentration. The resulting line is extrapolated back to the x-axis to determine the original analyte concentration in the sample. The underlying assumption is that the added analyte experiences the same matrix effect as the native analyte [22]. This method is particularly useful for complex or unique matrices where a blank is unavailable [19].

- Structural Analogue as Internal Standard: A co-eluting structural analogue of the analyte can serve as a more affordable alternative to a SIL-IS [19]. While it may not perfectly mimic the analyte's behavior, it can provide a reasonable correction for matrix effects [19].

- Matrix-Matched Calibration: This technique involves preparing calibration standards in a matrix that is free of the analyte but otherwise similar to the sample matrix [20]. This can be challenging as it requires a suitable blank matrix and it is difficult to exactly match the matrix of every unknown sample [19].

The following workflow outlines a systematic approach to diagnosing and addressing matrix effects in the laboratory.

Experimental Protocols for Key Experiments

Protocol: Post-Extraction Spike for Matrix Effect Quantification

This protocol is used to quantify the matrix effect for an analyte in a given matrix [19] [21].

Sample Preparation:

- Obtain a blank matrix (e.g., organically grown strawberry extract for pesticide analysis).

- Prepare a post-extraction spiked sample: To 900 µL of the blank matrix extract, add 100 µL of an analyte spiking solution of known concentration (e.g., 50 ppb), giving a final concentration of 5 ppb (a 1:10 dilution) [21].

- Prepare a neat standard: To 900 µL of pure solvent, add 100 µL of the same analyte spiking solution to achieve the same final concentration.

Analysis:

- Analyze both the post-extraction spiked sample and the neat standard using the developed LC-MS/MS or other spectroscopic method.

- Record the peak areas (or other signal metrics) for the analyte in both samples.

Calculation:

- Calculate the Matrix Effect (ME) using the formula: ME (%) = (Peak Area in Post-Extraction Spike / Peak Area in Neat Standard) × 100% [21].
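The protocol involves two calculations: the spike dilution (C1V1 = C2V2) and the ME ratio. With replicate injections, the ratio is taken between mean peak areas. The sketch below uses the protocol's volumes. The triplicate peak areas are hypothetical.

```python
from statistics import mean


def final_concentration(stock_conc: float, spike_volume_ul: float,
                        total_volume_ul: float) -> float:
    """C1*V1 = C2*V2: concentration after adding a spike to a fixed total volume."""
    return stock_conc * spike_volume_ul / total_volume_ul


def matrix_effect_from_replicates(areas_spiked_extract, areas_neat_standard) -> float:
    """ME (%) from the mean peak areas of replicate injections."""
    return mean(areas_spiked_extract) / mean(areas_neat_standard) * 100.0


# Volumes from the protocol: 100 uL of a 50 ppb spike into 900 uL extract (or solvent)
c_final = final_concentration(50.0, 100.0, 100.0 + 900.0)
print(f"final concentration: {c_final:.1f} ppb")

# Hypothetical triplicate peak areas at that concentration
spiked = [14100, 13850, 14020]
neat = [20150, 19890, 20010]
print(f"ME = {matrix_effect_from_replicates(spiked, neat):.1f} %")
```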

Protocol: Standard Addition Method

This protocol is used to both detect a matrix effect and accurately quantify the analyte concentration in its presence [19] [22].

Sample Aliquots:

- Take several equal-volume aliquots of the sample containing the unknown concentration of analyte (X).

- To each aliquot, add a known and increasing volume of a standard analyte solution.

- Dilute all aliquots to the same final volume. This results in a series of samples with the same original matrix and original analyte concentration, but with known amounts of added standard (e.g., +0, +C, +2C, +3C).

Analysis:

- Analyze all samples in the standard addition series.

- Record the signal (e.g., peak area) for the analyte in each sample.

Data Plotting and Calculation:

- Plot the measured signal on the y-axis against the concentration of the added standard on the x-axis.

- Perform a linear regression and extrapolate the line to where it crosses the x-axis (i.e., where signal = 0).

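- The absolute value of the x-intercept is the analyte concentration in the diluted aliquots; multiply it by the dilution factor (final volume ÷ aliquot volume) to obtain the original concentration in the sample [22].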
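The plotting and extrapolation steps amount to an ordinary least-squares fit. The sketch below writes that fit out in plain Python. The x-intercept lies at −intercept/slope, so its magnitude is intercept/slope. The signal values, the added-standard increment C = 2.0 µg/L, and the 5 mL to 25 mL dilution are hypothetical.

```python
def standard_addition(added_conc, signals):
    """Fit signal = slope * added + intercept by least squares.

    Returns (slope, intercept, conc_in_aliquot). conc_in_aliquot is the
    magnitude of the x-intercept, i.e. intercept / slope.
    """
    n = len(added_conc)
    if n != len(signals) or n < 2:
        raise ValueError("need at least two paired points")
    x_bar = sum(added_conc) / n
    y_bar = sum(signals) / n
    sxx = sum((x - x_bar) ** 2 for x in added_conc)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(added_conc, signals))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    # The line crosses signal = 0 at x = -intercept / slope
    return slope, intercept, intercept / slope


# Hypothetical series +0, +C, +2C, +3C with C = 2.0 ug/L (in the final volume)
added = [0.0, 2.0, 4.0, 6.0]
signal = [1510.0, 2490.0, 3520.0, 4480.0]
slope, intercept, c0 = standard_addition(added, signal)

# Hypothetical dilution: 5.0 mL sample aliquots each made up to 25.0 mL
original = c0 * 25.0 / 5.0
print(f"slope = {slope:.1f}, intercept = {intercept:.1f}")
print(f"aliquot: {c0:.2f} ug/L, original sample: {original:.2f} ug/L")
```

Because the result is an extrapolation, its uncertainty grows with distance from the data. Spacing additions so the highest one roughly doubles or triples the native signal keeps the extrapolation short.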

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table details key materials and reagents used in the detection and mitigation of matrix effects.

Table 2: Key Research Reagent Solutions for Matrix Effect Management

| Reagent/Material | Function in Managing Matrix Effects |
|---|---|
| Stable Isotope-Labeled Internal Standard (SIL-IS) | Co-elutes with the analyte, undergoes identical ionization, and provides a reference signal to correct for suppression/enhancement; considered the most effective correction method [19]. |
| Structural Analogue Internal Standard | A chemically similar compound used as a more accessible alternative to SIL-IS to correct for matrix effects, though it may not be as precise [19]. |
| High-Purity Solvents & Acids | Used for sample dilution, preparation of mobile phases, and acidification (e.g., for ICP-MS) to minimize the introduction of contaminants that can cause or exacerbate matrix effects [1]. |
| Solid-Phase Extraction (SPE) Cartridges | Used for selective sample clean-up to remove interfering matrix components (e.g., proteins, lipids) before analysis [1]. |
| Filtration Membranes (e.g., 0.22 µm PTFE) | Used to remove particulate matter from samples, preventing clogging and reducing a source of physical interference, especially in ICP-MS and HPLC [19] [1]. |
| Matrix-Matched Blank Material | An analyte-free sample of the same or similar matrix used to prepare calibration standards for the matrix-matched calibration technique, compensating for consistent matrix effects [20]. |

Method-Specific Protocols: A Practical Guide to Preparing Samples for Major Spectroscopic Techniques

Within the broader context of spectroscopic methods research, Nuclear Magnetic Resonance (NMR) spectroscopy stands as a powerful technique for elucidating molecular structure, dynamics, and interaction. The critical differentiator between success and failure in NMR analysis often lies not in the spectrometer's capabilities but in the preparatory stages long before the experiment begins. Proper sample preparation is a foundational requirement that transcends all spectroscopic methods, and in NMR, it dictates the clarity, resolution, and ultimate interpretability of the data. This guide provides an in-depth examination of the three pillars of effective NMR sample preparation: the selection of appropriate deuterated solvents, the choice of a correctly specified NMR tube, and the formulation of a solution with an optimal concentration. These factors collectively determine the quality of the magnetic field homogeneity, the signal-to-noise ratio, and the accuracy of the resulting spectrum, forming a non-negotiable protocol for researchers and drug development professionals aiming to generate reliable, high-quality data.

Deuterated Solvent Selection

Deuterated solvents are a group of compounds in which one or more hydrogen atoms have been replaced with deuterium (²H). They serve as the backbone of NMR spectroscopy for two primary reasons: they provide a deuterium signal for the spectrometer to "lock" onto, compensating for magnetic field drifts, and they minimize the intense solvent background signals that would otherwise obscure the analyte's spectrum, particularly in ¹H NMR [24] [25].

Table 1: Common Deuterated Solvents and Their Properties

| Solvent | Common Applications | Boiling Point (°C) | Key Advantages & Considerations |
|---|---|---|---|
| Chloroform-D (CDCl₃) | Non-polar organic compounds [26] | 61.2 [25] | Relatively inexpensive, high isotopic purity, easy to evaporate for sample recovery [25] |
| Dimethyl Sulfoxide-D6 (DMSO-d6) | Compounds difficult to solubilize (e.g., polar organics, pharmaceuticals) [25] | 189 [25] | Excellent solubilizing power; high boiling point makes sample recovery difficult without specialized equipment [25] |
| Deuterium Oxide (D₂O) | Water-soluble molecules, biomolecules [26] | 101.4 | Essential for biological samples; pH is measured as pD (pD = pH meter reading + 0.4) [24] |
| Methanol-D4 (CD₃OD) | Medium-polarity compounds, reaction mixtures | 64.7 | Useful for a wide range of polarities |
| Acetonitrile-D3 (CD₃CN) | Versatile polar solvent | 81.6 | Low viscosity, sharp peaks |

When selecting a solvent, the primary consideration is whether it can dissolve your analyte completely to create a homogeneous solution [25]. One should also consult the solvent's NMR spectrum to ensure its residual proton signals do not overlap with critical peaks of the analyte. For ¹H NMR, it is recommended to dissolve between 2 and 10 mg in 0.6 to 1 mL of solvent [25]. Furthermore, for samples that need to be recovered post-analysis, the solvent's boiling point becomes a major factor, with low-boiling solvents like CDCl₃ being significantly easier to remove than high-boiling ones like DMSO-d6 [25].

NMR Tube Selection and Handling

The NMR tube is a deceptively simple piece of equipment whose quality directly impacts spectral resolution. NMR tubes are typically made from borosilicate glass or high-precision quartz and come in standard lengths, most commonly 7 inches (17.8 cm) [27] [28]. The most prevalent outer diameter is 5 mm, which fits the probes of most modern spectrometers [27] [28].

Tube Grades and Specifications

The quality of NMR tubes is categorized into several tiers, each suited for different applications and field strengths. Key specifications to understand are concentricity (how closely the centers of the inner and outer walls coincide, i.e., how uniform the wall thickness is around the tube, which affects wobble), camber (the tube's straightness), and wall thickness [29].

Table 2: NMR Tube Grades by Application and Field Strength

| Tube Grade | Typical Field Strength | General Application | Key Characteristics & Limitations |
|---|---|---|---|
| High-Throughput / Economy | 100 - 400 MHz [30] | Routine organic chemistry, educational applications [31] [30] | Least costly; thicker walls reduce S/N; longer shimming times; not for VT experiments [31] |
| Precision / Research | 400 - 700 MHz [30] | Routine synthetic chemistry research, metabolic mixture analysis [30] | Better dimensional control (concentricity, camber); improved S/N and resolution [31] |
| Ultra-Precision / Premium | 700 - 900+ MHz [30] | Structural biology, multi-purpose research [30] | Highest quality control; often thin-walled for maximum S/N; essential for high-field instruments [31] |

Sample Preparation and Tube Handling

Proper handling is crucial. The optimal solution height in a 5 mm NMR tube is 4 cm (approximately 0.55 mL) [31]. Shorter samples are difficult or impossible to shim properly, while longer samples can be wasteful and may also present shimming challenges [31]. The sample must be free of solid particles, as they distort the magnetic field homogeneity, causing broad, indistinct spectral lines [31]. If solids are present, the solution should be filtered using a Pasteur pipette with a tightly packed plug of glass wool (cotton wool should be avoided as it can leach impurities) [31]. After use, tubes should be rinsed with an appropriate solvent like acetone and dried lying flat, preferably in a vacuum oven at low temperature or with a blast of dry air to avoid distortion from high heat [31] [28].
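Solution height and volume are related through the tube's inner diameter, which varies with wall thickness and is not given in the text. The sketch below treats the liquid as a cylinder. It assumes an inner diameter of about 4.2 mm for a 5 mm tube; check the manufacturer's specification for your tubes.

```python
import math


def solution_volume_ml(height_cm: float, inner_diameter_mm: float) -> float:
    """Volume of a cylindrical liquid column (1 cm^3 = 1 mL)."""
    radius_cm = inner_diameter_mm / 10.0 / 2.0
    return math.pi * radius_cm ** 2 * height_cm


def height_for_volume_cm(volume_ml: float, inner_diameter_mm: float) -> float:
    """Column height (cm) produced by a given volume in the same tube."""
    radius_cm = inner_diameter_mm / 10.0 / 2.0
    return volume_ml / (math.pi * radius_cm ** 2)


ASSUMED_ID_MM = 4.2  # assumed inner diameter of a standard 5 mm tube
print(f"4 cm column: {solution_volume_ml(4.0, ASSUMED_ID_MM):.2f} mL")
print(f"0.6 mL fills: {height_for_volume_cm(0.6, ASSUMED_ID_MM):.1f} cm")
```

With this assumed diameter, a 4 cm column works out to about 0.55 mL, consistent with the figure quoted above. A thicker-walled tube (smaller inner diameter) needs less solvent to reach the same height.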

Sample Concentration Guidelines

The concentration of the analyte in the deuterated solvent is a critical determinant of data quality, directly influencing the signal-to-noise (S/N) ratio and the required acquisition time.

Standard Organic Molecules

For routine ¹H NMR analysis of organic compounds, a sample quantity of 5 to 25 mg dissolved in 0.5 - 0.6 mL of solvent is typically adequate [31] [28]. At very low concentrations, peaks from common contaminants like water and grease can dominate the spectrum, and achieving a satisfactory S/N will require significantly more spectrometer time (halving the concentration requires four times the acquisition time to achieve the same S/N) [31]. Conversely, over-concentration can lead to broad or asymmetric lines due to increased solution viscosity or if the sample concentration varies along the height of the solution [28].
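The four-fold time penalty for halving the concentration follows from two proportionalities. S/N scales linearly with concentration and with the square root of the number of co-added scans, so equal S/N requires a scan count (and hence time) proportional to 1/c². The sketch below encodes those two assumptions. It ignores concentration-dependent effects such as viscosity broadening.

```python
def relative_acquisition_time(conc_ratio: float) -> float:
    """Time needed, relative to a reference sample, to reach the same S/N.

    Assumes S/N ~ concentration * sqrt(scans), so time ~ 1 / conc_ratio**2.
    """
    if conc_ratio <= 0:
        raise ValueError("concentration ratio must be positive")
    return 1.0 / conc_ratio ** 2


def snr_after_scans(snr_one_scan: float, n_scans: int) -> float:
    """S/N after co-adding n scans, assuming random, uncorrelated noise."""
    return snr_one_scan * n_scans ** 0.5


for ratio in (1.0, 0.5, 0.25):
    print(f"concentration x{ratio}: {relative_acquisition_time(ratio):.0f}x the time")
```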

Proteins and Biomolecules

NMR studies of proteins and peptides have more specific concentration requirements, which are also influenced by the need for isotopic labeling.

Table 3: Sample Concentration Guidelines for NMR Experiments

| Analyte Type | Recommended Concentration | Typical Quantity Required (in ~0.5 mL) | Special Considerations |
|---|---|---|---|
| Small Organic Molecules (¹H NMR) | 5 - 25 mg [31] | 5 - 25 mg | Higher concentrations can cause viscosity broadening [31] [28] |
| Small Organic Molecules (¹³C NMR) | 20 - 50 mg [26] | 20 - 50 mg | ~6000x less sensitive than ¹H; high concentration is key [28] |
| Peptides | 1 - 5 mM [32] | 1.5 - 7.5 mg | Requires higher concentrations than larger proteins [32] |
| Proteins (< 30-50 kDa) | 0.3 - 0.5 mM [32] | 5 - 10 mg (for a 20 kDa protein) | High-resolution structure determination [32] |
| Protein Interaction Studies | ~0.1 mM [32] | Varies by system | Lower concentrations may suffice depending on the binding affinity [32] |
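Converting the millimolar targets in Table 3 into weighable masses needs only the molecular weight and the volume. The relation is mM × mL = µmol, and µmol × g/mol = µg. In the sketch below, the ~3 kDa peptide mass is an assumption; it happens to reproduce the table's 1.5-7.5 mg range. For a 20 kDa protein at 0.3-0.5 mM in 0.5 mL, the same arithmetic gives 3-5 mg in solution. The 5-10 mg figure in the table therefore reads most naturally as the amount to have on hand, allowing for handling losses; that reading is an interpretation, not something the source states.

```python
def mass_mg(conc_mm: float, volume_ml: float, mw_g_per_mol: float) -> float:
    """Mass of solute (mg) for a target millimolar concentration.

    mM x mL = umol; umol x g/mol = ug; divide by 1000 for mg.
    """
    return conc_mm * volume_ml * mw_g_per_mol / 1000.0


# 20 kDa protein at the Table 3 concentrations, 0.5 mL sample
for c in (0.3, 0.5):
    print(f"{c} mM, 20 kDa, 0.5 mL: {mass_mg(c, 0.5, 20_000):.1f} mg")

# Peptide of an assumed ~3 kDa, 0.5 mL sample
for c in (1.0, 5.0):
    print(f"{c} mM, 3 kDa, 0.5 mL: {mass_mg(c, 0.5, 3_000):.1f} mg")
```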

For biomolecules, labeling with ¹⁵N and/or ¹³C is often essential. For small proteins (≤40 residues), labeling may not be strictly necessary, but ¹⁵N labeling is beneficial for low-concentration samples or more precise data. For larger proteins, ¹⁵N and ¹³C labeling is required to reduce spectral overlap, and ²H labeling is necessary to enhance the S/N ratio for proteins larger than 20 kDa [32].

Experimental Workflow for NMR Sample Preparation

The following diagram outlines the logical workflow for preparing a standard NMR sample, from selection of materials to final quality checks before insertion into the spectrometer.

NMR Sample Preparation Workflow

Detailed Methodology for Key Steps

- Solvent and Tube Selection: The choice of solvent and tube should be made in tandem, driven by the analyte's properties and the experimental goals described in the solvent and tube sections above. For instance, a drug discovery professional working with a novel, poorly soluble active pharmaceutical ingredient might empirically determine that DMSO-d6 is the only viable solvent and would therefore select a standard precision-grade tube for a 500 MHz instrument [25] [30].

- Weighing and Dissolution: Accurately weigh the analyte, targeting the concentrations given in the concentration guidelines above. The compound should be dissolved in a clean vial or directly in the NMR tube using approximately 0.6 mL of the selected deuterated solvent. Gentle vortexing or sonication can be used to ensure complete dissolution and a homogeneous solution [26].

- Transfer and Filtration: Use a clean pipette to transfer the solution to the NMR tube, avoiding the introduction of air bubbles. If the solution is cloudy or contains solid particles, it must be filtered. This can be done by preparing a slightly larger volume of solution and filtering it through a Pasteur pipette containing a tight plug of glass wool (not cotton, which can leach impurities) into the NMR tube [31] [28].

- Final Preparation Steps:

- Solution Depth: Use a ruler or depth gauge to ensure the solution height is at least 4 cm from the bottom of the tube [31] [26].

- Capping: Push a polyethylene cap fully onto the tube to prevent solvent evaporation, particularly for volatile solvents like CDCl₃ [31] [28].

- Cleaning: Wipe the outside of the NMR tube thoroughly with a lint-free tissue and a solvent like ethanol or acetone to remove any fingerprints, dust, or residue. This is critical to prevent dirt from building up inside the NMR probe [26] [28].

- Labeling: Label the top of the tube or the cap with a permanent marker. Do not use paper labels, tape, or any other materials that could come loose inside the spectrometer [31] [28].

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 4: Essential Materials for NMR Sample Preparation

| Item | Function in NMR Sample Preparation |
|---|---|
| Deuterated Solvents (e.g., CDCl₃, DMSO-d6) | Dissolves the analyte, provides a deuterium lock signal, and minimizes solvent interference in the spectrum [24] [25]. |
| NMR Tubes (5 mm standard) | Holds the sample solution; high-quality tubes with good concentricity and camber are essential for high-resolution spectra [31] [29]. |
| Internal Reference (e.g., TMS, DSS) | Provides a standard peak (0 ppm) for chemical shift referencing in ¹H NMR [31]. |
| Micropipettes/Syringes | Allows for accurate and precise measurement and transfer of solvent and sample solutions [26]. |
| Pasteur Pipettes & Glass Wool | Used for filtering samples to remove solid particles that degrade spectral quality [31]. |
| Analytical Balance | Accurately weighs milligram quantities of the analyte for precise concentration preparation [26]. |
| Tube Depth Gauge | Ensures the solution is at the optimal height (~4 cm) in the NMR tube for proper shimming [26]. |
| Inert Atmosphere (N₂/Ar) | Used when handling air- or moisture-sensitive compounds to prevent sample decomposition [24]. |
| Molecular Sieves | Added to solvent bottles to absorb water and maintain anhydrous conditions, minimizing the water peak in the spectrum [28]. |

In the realm of material characterization, spectroscopic methods are indispensable, yet they often present a significant trade-off between information quality and analytical speed. Sample preparation requirements constitute a major bottleneck in analytical workflows, particularly for complex, multi-layered, or delicate materials. Traditional Fourier-Transform Infrared (FT-IR) spectroscopy, while powerful, typically demands extensive sample manipulation—including microtoming, embedding, polishing, or KBr pellet formation—to yield usable data. These procedures are not only time-consuming but also introduce risks of altering the sample's inherent properties or introducing contaminants [33] [34].

Attenuated Total Reflection (ATR) FT-IR spectroscopy has already simplified sample preparation by enabling direct measurement of solids and liquids. However, conventional ATR methods can still require substantial pressure to achieve adequate optical contact, which often destroys delicate samples or necessitates structural supports. This technical guide explores a transformative innovation: Live ATR-FT-IR imaging. This advancement enables truly preparation-free, high-resolution chemical analysis of polymers, revolutionizing workflows in pharmaceutical development, materials science, and industrial quality control [33].

Technical Foundations: From Conventional ATR to Live Imaging

Fundamental Principles of ATR-FT-IR

ATR-FT-IR spectroscopy operates on the principle of total internal reflection. Infrared light passes through an Internal Reflection Element (IRE) crystal with a high refractive index (e.g., diamond, germanium). When this light strikes the crystal-sample interface at an angle exceeding the critical angle, it generates an evanescent wave that penetrates approximately 0.5–2 µm into the sample in contact with the crystal. The sample absorbs specific wavelengths of this energy, creating an absorption spectrum that serves as a molecular "fingerprint" [34] [35].
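The 0.5–2 µm penetration range quoted above follows from Harrick's expression for the depth at which the evanescent field amplitude falls to 1/e:

d_p = λ / (2π n₁ √(sin²θ − (n₂/n₁)²))

Here n₁ is the crystal's refractive index, n₂ is the sample's refractive index, and θ is the angle of incidence. The sketch below evaluates it. The refractive indices (diamond ≈ 2.4, germanium ≈ 4.0, polymer ≈ 1.5), the 45° angle and the 1000 cm⁻¹ band position are assumed typical values, not taken from the cited study.

```python
import math


def critical_angle_deg(n_crystal: float, n_sample: float) -> float:
    """Minimum angle of incidence for total internal reflection."""
    return math.degrees(math.asin(n_sample / n_crystal))


def penetration_depth_um(wavenumber_cm1: float, n_crystal: float,
                         n_sample: float, angle_deg: float = 45.0) -> float:
    """Harrick 1/e penetration depth of the evanescent wave, in micrometres."""
    wavelength_um = 1e4 / wavenumber_cm1  # cm^-1 -> um
    theta = math.radians(angle_deg)
    term = math.sin(theta) ** 2 - (n_sample / n_crystal) ** 2
    if term <= 0:
        raise ValueError("angle is below the critical angle; no total internal reflection")
    return wavelength_um / (2 * math.pi * n_crystal * math.sqrt(term))


# Assumed: polymer n ~ 1.5, 45 degree incidence, band at 1000 cm^-1
for name, n1 in (("diamond", 2.4), ("germanium", 4.0)):
    dp = penetration_depth_um(1000.0, n1, 1.5)
    print(f"{name}: critical angle {critical_angle_deg(n1, 1.5):.1f} deg, d_p = {dp:.2f} um")
```

Under these assumptions, diamond probes about 2 µm and germanium about 0.66 µm. Because d_p is proportional to wavelength, low-wavenumber bands sample deeper than high-wavenumber bands. This is one reason ATR spectra show different relative band intensities from transmission spectra.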

Compared to transmission FT-IR, which requires samples thin enough to be IR-transparent (typically 5–20 µm), ATR imposes no such thickness limitation. ATR also improves spatial resolution by a factor of about four over transmission mode, because the high-refractive-index germanium crystal (n ≈ 4) raises the effective numerical aperture. This enables the identification and mapping of micron-scale features within complex samples [33].

The Evolution to Live Chemical Imaging

The critical innovation enabling preparation-free analysis is the integration of focal plane array (FPA) detectors with a "live ATR imaging" mode that provides real-time enhanced chemical contrast [33].

Unlike linear-array detectors that build images sequentially, a 64×64 FPA detector simultaneously captures 4,096 individual spectra, generating an instantaneous 2D chemical image. The live imaging mode processes this data in real-time, dramatically enhancing the visual contrast between different chemical species. This allows the operator to visually monitor the exact moment of sample-to-crystal contact and assess the quality of that contact across the entire field of view before collecting the final data. This visual feedback enables the application of extremely low pressure, eliminating the buckling or distortion that previously required rigid sample supports like resin embedding for delicate materials [33].

Table 1: Comparative Analysis of FT-IR Sampling Techniques

| Parameter | Transmission FT-IR | Conventional ATR-FT-IR | Live ATR-FT-IR Imaging |
|---|---|---|---|
| Sample Preparation | Extensive (thin sectioning, KBr pellets) | Moderate (flattening, often requires embedding) | Minimal to None ("as-is" samples) |
| Spatial Resolution | ~10-15 µm [33] | ~1.1 µm pixel size [33] | ~1.1 µm pixel size [33] |
| Typical Analysis Time | Hours to days (including prep) | Minutes to hours | Minutes (including prep) |
| Pressure Required | Not Applicable | High (risk of damage) | Ultra-low (non-destructive) |
| Suitability for Delicate Laminates | Poor | Poor without embedding | Excellent |
| Real-Time Contact Monitoring | No | No | Yes |

Experimental Protocol: Preparation-Free Analysis of Polymer Laminates

The following detailed methodology is adapted from an application study demonstrating the direct analysis of a commercial polymer laminate sausage wrapper (~55 µm thick) without any structural support [33].

Research Reagent Solutions and Essential Materials

Table 2: Essential Materials and Equipment for Live ATR-FT-IR Imaging

| Item | Specification/Function |
|---|---|
| FT-IR Imaging System | Agilent Cary 670-IR FT-IR spectrometer coupled to a Cary 620-IR FT-IR microscope [33] |
| Detector | 64×64 MCT Focal Plane Array (FPA) [33] |
| ATR Accessory | "Slide-on" micro Germanium (Ge) ATR accessory attached to a 15× IR objective [33] |
| Sample Holder | Micro-vice (e.g., from ST-Japan) for securing unsupported sample cross-sections [33] |
| Spectral Resolution | 4 cm⁻¹ [33] |
| Number of Scans | 64 co-added scans [33] |
| Field of View (FOV) | 70 µm × 70 µm [33] |
| Pixel Sampling Size | 1.1 µm [33] |
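The acquisition parameters in Table 2 are internally consistent, and a short calculation makes this explicit: dividing the 70 µm field of view across the 64 detector elements on each axis gives the per-pixel sampling size, and the product of the array dimensions gives the number of spectra per image. The sketch below is illustrative only; the function name is hypothetical, and it assumes a square field of view mapped evenly onto a square array.

```python
def fpa_sampling(fov_um: float, array_size: int) -> tuple[float, int]:
    """Return (pixel sampling size in µm, spectra per image) for a square FPA.

    Assumes a square field of view imaged evenly onto an
    array_size x array_size detector (an illustrative simplification).
    """
    if array_size <= 0:
        raise ValueError("array_size must be positive")
    pixel_um = fov_um / array_size        # µm of sample per detector element
    n_spectra = array_size * array_size   # one spectrum per detector element
    return pixel_um, n_spectra


pixel, spectra = fpa_sampling(70.0, 64)
print(f"{pixel:.2f} µm per pixel, {spectra} spectra")  # 1.09 µm per pixel, 4096 spectra
```

The result, about 1.09 µm, agrees with the ~1.1 µm pixel sampling size listed above. Pixel sampling size describes how finely the image is digitized; it is not the same as the diffraction-limited spatial resolution of the optics.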

Step-by-Step Workflow

- Sample Sectioning: A small piece of the polymer laminate is cut and secured vertically in a micro-vice. A clean razor blade is used to cross-section the sample, creating a fresh, flat edge for analysis [33].

- Mounting: The micro-vice holding the unsupported, cross-sectioned sample is placed on the motorized microscope stage [33].

- Live Contact Monitoring: The stage is raised incrementally toward the ATR crystal. The live ATR imaging mode with enhanced chemical contrast is activated, allowing the operator to observe the sample making initial contact with the crystal in real time. This visual feedback ensures that optimal, uniform contact is achieved with minimal applied force [33].

- Data Acquisition: Once optimal contact is confirmed, the final hyperspectral data cube is collected. For the referenced study, this involved collecting 64 scans at 4 cm⁻¹ resolution, taking approximately 2 minutes [33].

- Data Processing and Analysis: Software is used to generate chemical images based on the distribution of specific absorption bands (e.g., carbonyl stretches, methylene bends). Library searching and multivariate analysis identify components and their spatial distribution [33].
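The chemical-imaging step can be illustrated with a minimal band-integration sketch. It assumes the hyperspectral cube is stored as rows of per-pixel spectra sharing one wavenumber axis, subtracts a straight baseline across a chosen band window, and integrates the remainder with the trapezoid rule to give one value per pixel. The function names, band window, and synthetic data are hypothetical; commercial imaging software applies its own, often more elaborate, preprocessing, which is not reproduced here.

```python
def band_area(wavenumbers, spectrum, lo, hi):
    """Integrated absorbance of one band between lo and hi (cm⁻¹).

    A straight baseline joining the band-edge points is subtracted before
    trapezoid-rule integration, so a flat offset contributes nothing.
    """
    pts = sorted((w, a) for w, a in zip(wavenumbers, spectrum) if lo <= w <= hi)
    if len(pts) < 2:
        raise ValueError("band window must contain at least two spectral points")
    (w0, a0), (w1, a1) = pts[0], pts[-1]

    def baseline(w):
        return a0 + (a1 - a0) * (w - w0) / (w1 - w0)

    area = 0.0
    for (wa, aa), (wb, ab) in zip(pts, pts[1:]):
        ya, yb = aa - baseline(wa), ab - baseline(wb)
        area += 0.5 * (ya + yb) * (wb - wa)
    return area


def chemical_image(wavenumbers, cube, lo, hi):
    """Apply band_area to every pixel of a cube indexed as cube[row][col] -> spectrum."""
    return [[band_area(wavenumbers, spec, lo, hi) for spec in row] for row in cube]


# Hypothetical 2x2 cube: flat 0.1 AU spectra, one pixel with a sharp band at 1720 cm⁻¹.
wn = list(range(1600, 1804, 4))              # 1600-1800 cm⁻¹, assumed 4 cm⁻¹ spacing
flat = [0.1] * len(wn)
peaked = [1.1 if w == 1720 else 0.1 for w in wn]
cube = [[flat, peaked], [flat, flat]]
image = chemical_image(wn, cube, 1700, 1760)  # hypothetical carbonyl-region window
print(image)
```

Only the pixel carrying the band returns a non-zero area, which is the contrast a chemical map displays; repeating the calculation with a different window (for example, a methylene-bending region) yields a second map for another component.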

Results and Analysis: Uncovering Hidden Laminate Structures

Application of this protocol to a commercial 55 µm thick sausage wrapper successfully identified a five-layer structure, including three primary polymer layers and two sub-micron adhesive "tie" layers, all without resin embedding [33].

- Layer Identification: The three main layers were identified via spectral library search as polyethylene (outer left, 11 µm), nylon (middle, 16 µm), and polyethylene (outer right, 20 µm) [33].

- Visualizing Thin Tie Layers: Two distinct tie layers (adhesives) were chemically visualized between the main layers. Their extreme thinness resulted in some spectral mixing from the adjacent polymers in the chemical maps, but their presence and location were clearly confirmed [33].

Traditional transmission FT-IR would not have been feasible here because of the sample's thickness and opacity. Conventional ATR would likely have buckled the unsupported laminate, requiring an overnight resin-embedding step that risks contamination and can obscure the delicate tie layers [33].

Advanced Applications and Future Directions

Expanding into Biomedical and Pharmaceutical Fields

The principles of live ATR-FT-IR imaging are transferable beyond polymer laminates. In the biopharmaceutical industry, it is being developed for in-line monitoring of protein formulations during purification processes, such as protein A chromatography. Multi-channel microfluidic designs integrated with ATR-FT-IR imaging allow for high-throughput comparison of protein stability under different conditions, drastically reducing experimental variability and enhancing biopharmaceutical quality control [36].

Integration with Machine Learning and Automated Systems

The future of this technology points toward greater automation and intelligence. The integration of machine learning (ML) techniques with FT-IR spectroscopic imaging is poised to handle the vast, complex datasets generated, automatically identifying spectral patterns and predicting material properties [36]. Furthermore, the development of semi-automated systems like the MARS (Microplastic Analyzer using Reflectance-FTIR Semi-automatically) for large microplastics demonstrates a trend towards high-throughput, automated chemical identification and sizing, reducing analysis time by a factor of 6.6 compared to manual ATR-FT-IR [37].

Live ATR-FT-IR chemical imaging represents a significant advance in spectroscopic analysis, largely removing the long-standing sample-preparation bottleneck for polymers and other soft materials. Because real-time visual feedback enables ultralow-pressure contact, researchers can obtain high-resolution chemical maps from delicate, complex samples in their native state. The faster workflow, combined with preservation of the sample's intrinsic structure, gives researchers and product developers a more efficient tool whose results better reflect the sample as it actually exists. As the technology converges with machine learning and automated high-throughput systems, its role in materials science, pharmaceuticals, and industrial quality control is likely to grow.

Within the broader context of spectroscopic sample preparation research, the precision of any analytical result is fundamentally rooted in the initial steps of sample handling. In Ultraviolet-Visible (UV-Vis) spectroscopy, where molecular information is derived from light-matter interactions, proper technique is not merely beneficial but essential for data integrity. Inadequate sample preparation accounts for approximately 60% of all spectroscopic analytical errors [1], highlighting the critical importance of methodological rigor. This guide addresses two pivotal aspects of UV-Vis sample preparation: cuvette path length selection and solvent optimization. These parameters directly influence the Beer-Lambert law relationship, where absorbance (A) is proportional to path length (b) and concentration (c), making their proper configuration fundamental to quantitative accuracy. The choices researchers make in these domains determine whether their instruments measure true molecular properties or artifacts of poor preparation, ultimately affecting research validity in fields from pharmaceutical development to environmental monitoring.

Theoretical Framework: The Interplay of Light and Matter

The Beer-Lambert Law in Practical Applications

The Beer-Lambert law (A = εbc) establishes the theoretical foundation for UV-Vis spectroscopy, creating a direct mathematical relationship between absorbance (A) and the product of molar absorptivity (ε), path length (b), and concentration (c). In practical laboratory settings, this relationship dictates that for any given analyte, the measured absorbance can be modulated by either changing the concentration of the solution or altering the path length of the light traveling through the sample. This principle becomes particularly valuable when analyzing samples with very high or low absorbance values, where instrumental limitations can compromise data quality. Understanding this interplay allows researchers to strategically optimize their experimental parameters to maintain absorbance readings within the instrument's ideal detection range (typically 0.1-1.0 AU) [38], thereby maximizing signal-to-noise ratio and photometric linearity.
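A short worked example makes the Beer-Lambert trade-off concrete. The sketch assumes ε in L·mol⁻¹·cm⁻¹, b in cm, and c in mol/L, uses a hypothetical molar absorptivity, and treats 0.1–1.0 AU as the target window quoted above.

```python
def absorbance(epsilon: float, path_cm: float, conc_molar: float) -> float:
    """Beer-Lambert law, A = ε·b·c (ε in L·mol⁻¹·cm⁻¹, b in cm, c in mol/L)."""
    return epsilon * path_cm * conc_molar


def concentration(a: float, epsilon: float, path_cm: float) -> float:
    """Invert the Beer-Lambert law: c = A / (ε·b)."""
    return a / (epsilon * path_cm)


def in_target_range(a: float, lo: float = 0.1, hi: float = 1.0) -> bool:
    """True if A lies within the 0.1-1.0 AU window quoted in the text."""
    return lo <= a <= hi


eps = 12_000.0  # hypothetical molar absorptivity, L·mol⁻¹·cm⁻¹
c = 50e-6       # 50 µM
for b_cm in (1.0, 0.1):  # 10 mm and 1 mm cells, expressed in cm
    a = absorbance(eps, b_cm, c)
    print(f"{b_cm * 10:.0f} mm: A = {a:.2f} AU, in range: {in_target_range(a)}")
# 10 mm: A = 0.60 AU, in range: True
# 1 mm: A = 0.06 AU, in range: False
```

The same solution reads comfortably within range in a 10 mm cell but falls below it in a 1 mm cell, which is why path length and concentration have to be chosen together.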

Impact of Path Length on Sensitivity and Detection Limits

Path length manipulation provides a powerful tool for extending the dynamic range of UV-Vis measurements without modifying sample composition. Increasing the path length enhances sensitivity for dilute samples by lengthening the distance over which light interacts with analyte molecules, which increases the observed absorbance. Conversely, decreasing the path length is essential for highly concentrated samples that would otherwise produce absorbances beyond the instrument's photometric range (commonly above 3 AU) [38]. For concentrated samples this is often preferable to extensive dilution, which can introduce volumetric errors and alter matrix effects. Selecting an appropriate path length is therefore a primary means of optimizing analytical conditions across diverse scenarios, from trace analysis of environmental samples to concentrated stock solutions in pharmaceutical quality control.
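Because absorbance scales linearly with path length (as long as the Beer-Lambert law holds), an absorbance measured in one cell can be rescaled to predict the reading in another. The sketch below uses that relationship to pick, from a hypothetical inventory of cells, the path length whose predicted absorbance falls inside 0.1–1.0 AU and lies closest to an assumed target of 0.5 AU. The measured value should itself come from a cell in which it lies within the photometric range, since a saturated reading cannot be rescaled reliably.

```python
from typing import Iterable, Optional


def predicted_absorbance(a_measured: float, b_measured_mm: float, b_new_mm: float) -> float:
    """Rescale an absorbance to a new path length (A is proportional to b)."""
    return a_measured * b_new_mm / b_measured_mm


def choose_path_length(
    a_measured: float,
    b_measured_mm: float,
    available_mm: Iterable[float],
    lo: float = 0.1,
    hi: float = 1.0,
    target: float = 0.5,  # assumed target absorbance, not a published rule
) -> Optional[float]:
    """Return the available path length whose predicted A is in [lo, hi] and closest to target.

    Returns None if no available cell brings the absorbance into range.
    """
    candidates = []
    for b in available_mm:
        a = predicted_absorbance(a_measured, b_measured_mm, b)
        if lo <= a <= hi:
            candidates.append((abs(a - target), b))
    return min(candidates)[1] if candidates else None


CELLS_MM = (1, 2, 5, 10, 20, 50)  # hypothetical cuvette inventory

# Concentrated sample: A = 0.2 measured in a 1 mm cell (a 10 mm cell would read ~2.0 AU).
print(choose_path_length(0.2, 1, CELLS_MM))    # 2
# Dilute sample: A = 0.03 measured in a 10 mm cell.
print(choose_path_length(0.03, 10, CELLS_MM))  # 50
```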

Cuvette Selection: Material Compatibility and Path Length Optimization

Cuvette Material Properties and Analytical Requirements

The selection of cuvette material constitutes the first critical decision in UV-Vis sample preparation, as material properties determine the spectral range, chemical compatibility, and application suitability. Quartz cuvettes, fabricated from high-purity fused silica, provide exceptional transparency from approximately 190 nm to 2500 nm, encompassing the deep UV range essential for nucleic acid (260 nm) and protein (280 nm) analysis [39]. This material exhibits minimal autofluorescence, making it indispensable for sensitive fluorescence assays where background signal would otherwise obscure weak emissions. Chemically, quartz demonstrates robust resistance to most solvents and acids, though it is incompatible with hydrofluoric acid, and offers superior thermal stability, withstanding temperatures up to 1000°C for specific configurations [39].

Optical glass cuvettes are a cost-effective alternative with significant limitations: they transmit only above approximately 350 nm and exhibit moderate autofluorescence, which reduces the signal-to-noise ratio in fluorescence detection. Plastic disposables, while economical for high-throughput visible-light applications, are spectrally limited to approximately 400–800 nm and suffer from high autofluorescence and poor solvent resistance [39]. The material choice therefore follows a clear logic: quartz for UV transparency and chemical durability, glass for reusable visible-only applications, and plastic for disposable visible-range analyses where cost outweighs performance considerations.

Table 1: Performance Comparison of Cuvette Materials for UV-Vis Spectroscopy

| Feature | Quartz (Fused Silica) | Optical Glass | Plastic (PS/PMMA) |
|---|---|---|---|
| Transmission Range | 190–2500 nm | >320 nm | ~400–800 nm (no UV support) |
| Visible Transmission | Excellent | Excellent | Good |
| Autofluorescence | Low | Moderate | High |
| Chemical Resistance | High (except HF) | Moderate | Low |
| Max Temperature | 150–1200°C | ~90°C | ~60°C |
| Lifespan | Years (with proper care) | Months–years | Disposable |
| Best Applications | UV-Vis, fluorescence, solvents | Visible-only assays | Teaching labs, colorimetric assays |
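The material logic above can be expressed as a simple range check. This sketch encodes the approximate transmission windows quoted in this section (using the more conservative ~350 nm lower limit for optical glass, since Table 1 lists >320 nm) and returns the materials whose window covers the whole measurement range. The names are hypothetical, and the limits are rough guides rather than vendor specifications.

```python
from typing import Optional

# Approximate transmission windows (nm) quoted in this section; None = no upper limit given.
MATERIAL_WINDOW_NM: dict[str, tuple[float, Optional[float]]] = {
    "quartz": (190, 2500),
    "optical glass": (350, None),   # conservative; Table 1 lists >320 nm
    "plastic (PS/PMMA)": (400, 800),
}


def usable_materials(min_nm: float, max_nm: float) -> list[str]:
    """Return materials whose approximate window covers the whole [min_nm, max_nm] range."""
    if min_nm > max_nm:
        raise ValueError("min_nm must not exceed max_nm")
    usable = []
    for material, (lo, hi) in MATERIAL_WINDOW_NM.items():
        if min_nm >= lo and (hi is None or max_nm <= hi):
            usable.append(material)
    return usable


print(usable_materials(260, 280))  # nucleic acid / protein bands: ['quartz']
print(usable_materials(450, 650))  # visible colorimetric assay: all three materials
```

Transmission is only the first filter; among the materials that pass, the choice between reuse and disposability still follows the cost and autofluorescence considerations in Table 1.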

Path Length Optimization Strategies

Path length optimization requires understanding both theoretical principles and practical constraints. The 10 mm path length is the global calibration standard for UV-Vis, balancing sensitivity against sample volume requirements (typically 2.5–4 mL) [39]. The industry-standard tolerance for path length is ±0.05 mm [40], a specification crucial for quantitative comparative studies. For samples that are scarce or valuable, micro-volume cells (250–1000 μL) and sub-micro cells (10–250 μL) with tapered designs maintain the 10 mm path length while reducing volume requirements [38]. These cells use masking to prevent stray-light effects when the window is smaller than the beam diameter.
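To see why the ±0.05 mm tolerance matters, note that c = A/(εb): if the true path length differs from the nominal value used in the calculation, the computed concentration is off by the same fraction. The arithmetic below is a minimal sketch; the 1 mm case assumes, purely for illustration, that the same absolute tolerance applied to a short-path cell.

```python
def relative_conc_error(nominal_mm: float, actual_mm: float) -> float:
    """Fractional error in c = A/(ε·b) when the nominal path is used but the true path is actual_mm.

    c_calculated / c_true = actual_mm / nominal_mm, so the error is actual/nominal - 1.
    """
    return actual_mm / nominal_mm - 1.0


for nominal, actual in [(10.0, 9.95), (10.0, 10.05), (1.0, 1.05)]:
    err = relative_conc_error(nominal, actual)
    print(f"nominal {nominal} mm, actual {actual} mm: {err:+.2%}")
# nominal 10.0 mm, actual 9.95 mm: -0.50%
# nominal 10.0 mm, actual 10.05 mm: +0.50%
# nominal 1.0 mm, actual 1.05 mm: +5.00%
```

For a standard 10 mm cell the tolerance contributes at most about ±0.5% to the concentration, whereas the same absolute deviation on a much shorter path would weigh ten times more heavily.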

When absorbance must be brought into range without diluting the sample, variable-path-length cuvettes offer adaptable dimensions. The path length of any cuvette follows a simple geometric relation: Path Length = Outer Dimension − (2 × Wall Thickness) [40]. Dual-path-length cuvettes offer a practical way to analyze samples of varying concentration within a single experiment: they function as standard 10 mm cells when light traverses the long axis but provide shorter paths (1 mm, 2 mm, etc.) when rotated 90 degrees [40]. This is particularly valuable in screening applications where the analyte concentration may not be known beforehand, since researchers can quickly identify the best measurement conditions without manipulating the sample.
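The geometric relation for path length, together with the ±0.05 mm tolerance quoted earlier, can be checked in a few lines. The dimensions below are assumed example values: a 12.5 mm outer width with 1.25 mm walls is a common standard-cell geometry, while the short-axis depth of the dual-path cell is hypothetical.

```python
def internal_path_length(outer_mm: float, wall_mm: float) -> float:
    """Path Length = Outer Dimension - (2 x Wall Thickness)."""
    path = outer_mm - 2.0 * wall_mm
    if path <= 0:
        raise ValueError("wall thickness leaves no internal path")
    return path


def within_tolerance(path_mm: float, nominal_mm: float, tol_mm: float = 0.05) -> bool:
    """Check a path length against the +/-0.05 mm tolerance quoted in the text."""
    return abs(path_mm - nominal_mm) <= tol_mm


# Assumed geometry: 12.5 mm outer width with 1.25 mm walls on the long axis;
# hypothetical 4.5 mm outer depth on the short axis of a dual-path cell.
long_axis = internal_path_length(12.5, 1.25)   # 10.0 mm
short_axis = internal_path_length(4.5, 1.25)   # 2.0 mm
print(long_axis, short_axis, within_tolerance(long_axis, 10.0))  # 10.0 2.0 True

# A cell whose walls came out 1.20 mm thick would be out of specification.
print(within_tolerance(internal_path_length(12.5, 1.20), 10.0))  # False
```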

Table 2: Cuvette Path Length Selection Guide for Common Sample Scenarios

| Sample Scenario | Recommended Path Length | Typical Volume | Key Considerations |
|---|---|---|---|
| Standard Concentration Solutions | 10 mm | 3.0–3.5 mL | Global standard; ideal for absorbance 0.1–1.0 AU |
| High Concentration Samples | 1–5 mm | 0.5–2 mL | Prevents signal saturation; avoids excessive dilution |
| Trace Analysis | 20–50 mm | 4–10 mL | Enhances sensitivity for low-concentration analytes |
| Limited/Precious Samples | 10 mm (micro) | 250–1000 µL | Maintains standard path with reduced volume |
| Highly Scattering Samples | 2 mm | 1–2 mL | Reduces multiple-scattering artifacts |
| Screening Unknown Concentrations | Dual (e.g., 10×1 mm) | 1.5–3 mL | Enables quick optimization without dilution |

The following workflow diagram illustrates the decision process for selecting appropriate cuvette parameters based on experimental requirements:

Solvent Selection and Sample Preparation Protocols

Strategic Solvent Selection for Optimal Spectral Quality

Solvent selection represents a critical parameter that directly influences spectral quality through its effects on solubility, stability, and spectral interference. The primary consideration involves the solvent cutoff wavelength, defined as the point below which the solvent itself exhibits significant absorbance, thereby creating spectral backgrounds that obscure analyte signals. For UV-transparent measurements, high-purity solvents with low cutoff wavelengths are essential: water (~190 nm), acetonitrile (~190 nm), and hexane (~195 nm) provide the broadest UV transmission windows [38]. In contrast, common solvents like methanol (~205 nm) and chloroform (~245 nm) impose more significant limitations on the accessible spectral range.
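Screening solvents against their cutoff wavelengths lends itself to the same kind of check. The sketch lists the cutoff values quoted above and keeps solvents whose cutoff lies at least a safety margin below the shortest wavelength to be measured. The 10 nm margin is an assumption, chosen because a solvent can still absorb appreciably just above its cutoff, and should be adjusted to the solvent grade and path length in use.

```python
# Approximate UV cutoff wavelengths (nm) quoted in this section.
SOLVENT_CUTOFF_NM = {
    "water": 190,
    "acetonitrile": 190,
    "hexane": 195,
    "methanol": 205,
    "chloroform": 245,
}


def compatible_solvents(min_wavelength_nm: float, margin_nm: float = 10.0) -> list[str]:
    """Solvents whose cutoff lies at least margin_nm below the shortest wavelength measured.

    The default 10 nm margin is an illustrative assumption, not a published rule.
    """
    return sorted(
        name
        for name, cutoff in SOLVENT_CUTOFF_NM.items()
        if min_wavelength_nm >= cutoff + margin_nm
    )


print(compatible_solvents(210))  # ['acetonitrile', 'hexane', 'water']
print(compatible_solvents(260))  # all five solvents
```

Solubility and analyte stability still have to be checked separately; the cutoff test only rules out solvents that would obscure the spectral region of interest.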