Spectroscopic Sample Preparation Fundamentals: A Complete Guide to Accurate and Reproducible Results

Abstract

This comprehensive guide details the essential principles and techniques for spectroscopic sample preparation, a critical stage responsible for up to 60% of all analytical errors. Tailored for researchers, scientists, and drug development professionals, the article provides a methodical framework covering foundational theory, technique-specific protocols for methods like ICP-MS, FT-IR, and XRF, common troubleshooting strategies, and validation procedures. By systematizing this often-overlooked discipline, the content empowers professionals to enhance data accuracy, ensure reproducibility, and streamline analytical workflows in biomedical and clinical research.

Why Sample Preparation is the Cornerstone of Reliable Spectroscopy

The Critical Impact of Sample Preparation on Data Validity

In the realm of analytical science, the quality of data is only as robust as the foundation upon which it is built. Sample preparation constitutes this critical foundation, a stage so vital that inadequate preparation is the cause of approximately 60% of all spectroscopic analytical errors [1]. Despite significant investments in advanced analytical instrumentation, the importance of sample preparation equipment and procedures is frequently underestimated. Without proper preparation, researchers risk collecting misleading data that can compromise research projects, quality control practices, and analytical conclusions [1]. This technical guide examines the profound impact of sample preparation on data validity, focusing specifically on spectroscopic analysis within drug development and research contexts, and provides detailed methodologies for implementing robust preparation protocols.

The fundamental principle underlying effective sample preparation is that it directly controls key parameters affecting analytical outcomes: homogeneity, particle characteristics, and matrix effects [1]. Surface and particle characteristics determine how radiation interacts with the sample: rough surfaces scatter light randomly, whereas a uniform particle size ensures consistent interaction with radiation. Matrix effects occur when constituents of the sample matrix absorb radiation or contribute their own spectral signals, suppressing or enhancing the apparent analyte response. Proper preparation techniques mitigate these interferences through dilution, extraction, or matrix matching [1].

Key Sample Preparation Techniques and Their Analytical Consequences

Solid Sample Preparation Methods

The transformation of raw solid materials into analyzable specimens requires techniques specifically designed to achieve the homogeneity, particle size, and surface quality necessary for valid spectroscopic analysis.

Grinding and Milling: Grinding reduces particle size and generates homogeneous samples through mechanical friction. The method significantly impacts spectral quality by ensuring uniform interaction with radiation. Swing grinding machines are particularly effective for tough samples like ceramics and ferrous metals, using oscillating motion rather than direct pressure to reduce heat formation that might alter sample chemistry [1]. For optimal results, grind samples to a consistent particle size (typically <75 μm for XRF analysis), keep the grinding time constant across a sample set, and clean the equipment thoroughly between samples to prevent cross-contamination [1].

Pelletizing for XRF: Pelletizing transforms powdered samples into solid disks with uniform surface properties and density, essential for XRF analysis. The process involves blending the ground sample with a binder (e.g., wax or cellulose), pressing using hydraulic or pneumatic presses (typically 10-30 tons), and producing pellets with flat, smooth surfaces and equal thickness [1]. Proper pellet preparation directly affects analytical accuracy through improved sample stability and reduced matrix effects.

Fusion Techniques: Fusion represents the most stringent preparation technique for complete dissolution of refractory materials into homogeneous glass disks. The process involves blending the ground sample with a flux (typically lithium tetraborate), melting at temperatures between 950-1200°C in platinum crucibles, and casting the molten charge as a disk for analysis [1]. Fusion completely breaks down crystal structures in silicate materials, minerals, and ceramics, simultaneously standardizing the sample matrix to eliminate effects that hinder quantitative analysis.

Liquid and Gas Sample Preparation Methods

Liquid and gaseous samples present distinct analytical challenges that necessitate specialized preparation approaches to ensure data validity.

Dilution and Filtration for ICP-MS: Due to its high sensitivity, ICP-MS demands stringent liquid sample preparation where subtle errors can dramatically skew results. Dilution places analyte concentrations within optimal instrument detection ranges while reducing matrix effects. Filtration (typically using 0.45μm or 0.2μm membrane filters) removes suspended material that could contaminate nebulizers or hinder ionization [1]. High-purity acidification with nitric acid (typically to 2% v/v) maintains metal ions in solution by preventing precipitation and adsorption to vessel walls.

Solvent Selection for Molecular Spectroscopy: For techniques like UV-Vis and FT-IR, solvent choice significantly influences spectral quality. The optimal solvent completely dissolves the sample without being spectroscopically active in the analytical region of interest. For UV-Vis, key solvent properties include cutoff wavelength (below which the solvent absorbs strongly), polarity, and purity grade [1]. For FT-IR, deuterated solvents like deuterated chloroform (CDCl₃) provide excellent alternatives with minimal interfering absorption bands across most of the mid-IR spectrum.

Table 1: Common Preparation Errors and Their Impact on Spectroscopic Data Validity

| Preparation Error | Impact on Spectroscopic Data | Corrective Action |
|---|---|---|
| Inconsistent Particle Size (>75μm for XRF) | Increased scattering, reduced signal-to-noise ratio, sampling bias | Implement controlled grinding/milling with particle size verification |
| Incomplete Dissolution (ICP-MS) | Signal suppression, inaccurate quantification, instrument drift | Optimize digestion protocols; use high-purity acids with appropriate temperatures |
| Matrix Contamination | Spectral interference, false positives/negatives, baseline distortion | Use high-purity reagents; implement clean protocols; include preparation blanks |
| Improper Hydration State (FT-IR) | Spectral bands from water obscure analyte signals | Dry samples properly; use moisture-free atmosphere during preparation |
| Surface Irregularities (XRF) | Incorrect intensity measurements, quantification errors | Employ precision polishing; use binder for homogeneous pellet formation |

Advanced Materials and Strategies for Enhanced Preparation

The evolution of sample preparation has seen significant advances in functional materials and strategic approaches designed to enhance analytical performance across multiple parameters.

Functional Material-Based Strategies

The development of analytical chemistry has been significantly shaped by interdisciplinary demands from life sciences, environmental monitoring, medical diagnostics, and food safety. Functional materials represent a widely adopted strategy where these materials act as additional phases that disrupt the equilibrium of the sample preparation system, enabling efficient enrichment and selective separation of target analytes [2]. This approach enhances both sensitivity and selectivity of the analytical method, though it may increase operational complexity and extend overall analysis time [2].

Key advanced materials include:

Magnetic Nanocomposites: These materials combine the selectivity of functionalized surfaces with the convenience of magnetic separation, enabling rapid isolation of analytes from complex matrices without centrifugation or filtration [2].

Covalent Organic Frameworks (COFs): These porous crystalline materials offer designable structures and functionalities that can be tailored for specific extraction applications, providing exceptional selectivity for target compounds [2].

Deep Eutectic Solvents (DES): As green alternatives to traditional organic solvents, DES are formed by mixing hydrogen bond donors and acceptors, resulting in mixtures with significantly lower melting points than their individual components. These solvents offer advantages for extracting various analytes while aligning with green chemistry principles [3].

Energy Field-Assisted Strategies

External energy fields play a crucial role in enhancing sample preparation by significantly accelerating mass transfer and reducing the duration of phase separation processes [2]. Various energy fields—including thermal, ultrasonic, microwave, electric, and magnetic—have been investigated for their ability to improve extraction efficiency and separation performance. These techniques are now extensively applied across environmental, food, and biological analyses due to their strong acceleration effects [2].

Table 2: Performance Comparison of Sample Preparation Strategies

| Strategy | Selectivity | Sensitivity | Speed | Automation Potential | Sustainability |
|---|---|---|---|---|---|
| Functional Materials | High | High | Medium | Medium | Medium |
| Chemical/Biological Reactions | Very High | High | Low | Low | Low |
| Energy Field-Assisted | Medium | Medium | Very High | High | Medium |
| Device Integration | Medium | Medium | High | Very High | High |

Experimental Protocols for Specific Applications

Green FT-IR Quantitative Analysis of Pharmaceutical Formulations

The selection of IR spectroscopic approaches for drug quantification supports green analytical chemistry principles without compromising methodological performance. The following protocol, adapted from research on antihypertensive drugs, demonstrates a solvent-free approach for simultaneous drug quantification [4].

Methodology:

- Standard Preparation: Prepare standard mixtures of active pharmaceutical ingredients (APIs) in the range of 0.2-1.2% w/w by accurately weighing and mixing with potassium bromide (KBr).

- Pellet Formation: Use a hydraulic press to prepare transparent pellets from the standard mixtures (approximately 100-200 mg total weight) under controlled pressure.

- Spectral Acquisition: Collect FT-IR transmission spectra using an FT-IR spectrometer with specified parameters (resolution: 4 cm⁻¹, scans: 16).

- Data Processing: Convert transmittance spectra to absorbance spectra. Select characteristic absorption bands (e.g., 1206 cm⁻¹ for amlodipine besylate R-O-R stretching; 863 cm⁻¹ for telmisartan C-H out-of-plane bending).

- Quantification: Measure area under curve (AUC) for selected peaks and construct calibration curves by plotting AUC against concentration (%w/w).
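
The data-processing and quantification steps above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the procedure used in the cited study [4]: the function names, the trapezoidal integration, the ordinary least-squares fit, and the calibration numbers are all hypothetical, and no baseline correction is applied.

```python
import math

def transmittance_to_absorbance(percent_t):
    """Convert percent transmittance values to absorbance, A = -log10(T)."""
    return [-math.log10(t / 100.0) for t in percent_t]

def band_area(wavenumbers, absorbance, lo, hi):
    """Trapezoidal area under the absorbance curve between lo and hi (cm^-1)."""
    pts = sorted((w, a) for w, a in zip(wavenumbers, absorbance) if lo <= w <= hi)
    return sum((w2 - w1) * (a1 + a2) / 2.0
               for (w1, a1), (w2, a2) in zip(pts, pts[1:]))

def fit_line(x, y):
    """Ordinary least-squares slope and intercept for y = slope * x + intercept."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x

# Hypothetical calibration set: API concentration (% w/w) vs. integrated band area
conc_pct = [0.2, 0.4, 0.6, 0.8, 1.0, 1.2]
areas = [1.1, 2.0, 3.1, 4.0, 5.1, 6.0]
slope, intercept = fit_line(conc_pct, areas)

def predict_concentration(area):
    """Invert the calibration line to estimate concentration from a band area."""
    return (area - intercept) / slope
```

In practice the integration limits would bracket the chosen band (for example around 1206 cm⁻¹ for amlodipine besylate), and a baseline would normally be subtracted before integrating.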

Validation Parameters:

- Specificity: Confirm absence of interference from excipients or between selected bands.

- Linearity: Establish linear range (0.2-1.2% w/w for both APIs).

- Precision: Evaluate through intraday and interday studies (RSD < 2%).

- Accuracy: Determine via recovery studies (98-102%).

- LOD/LOQ: For demonstrated example: LOD of 0.009359% w/w and LOQ of 0.028359% w/w for amlodipine besylate [4].
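
The validation figures above reduce to a few simple calculations. The sketch below assumes the common ICH-style convention LOD = 3.3σ/S and LOQ = 10σ/S, where σ is the standard deviation of the response and S is the calibration slope. The source does not state how its LOD and LOQ were derived, although the reported values stand in roughly that 3.3:10 ratio. All function names are illustrative.

```python
import statistics

def percent_rsd(values):
    """Relative standard deviation in percent, using the sample standard deviation."""
    return 100.0 * statistics.stdev(values) / statistics.mean(values)

def percent_recovery(measured, nominal):
    """Recovery in percent of the nominal (spiked) amount."""
    return 100.0 * measured / nominal

def lod_loq(sigma, slope):
    """Assumed convention: LOD = 3.3 * sigma / slope, LOQ = 10 * sigma / slope."""
    return 3.3 * sigma / slope, 10.0 * sigma / slope

def passes_acceptance(replicates, nominal):
    """Check the criteria quoted above: RSD < 2% and recovery within 98-102%."""
    recovery = percent_recovery(statistics.mean(replicates), nominal)
    return percent_rsd(replicates) < 2.0 and 98.0 <= recovery <= 102.0
```

For example, `passes_acceptance([0.59, 0.60, 0.61], 0.60)` evaluates three hypothetical replicate results against a nominal 0.60% w/w standard.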

ICP-MS Sample Preparation for Trace Element Analysis

Inductively Coupled Plasma Mass Spectrometry (ICP-MS) provides exceptionally sensitive elemental analysis but demands meticulous sample preparation to avoid erroneous results.

Methodology:

- Sample Digestion: For solid samples, use high-purity nitric acid in closed-vessel microwave digestion systems to achieve complete dissolution.

- Dilution: Precisely dilute samples to bring analyte concentrations within the optimal instrument range (typically ppb to ppt levels), while considering matrix effects.

- Filtration: Pass samples through 0.45μm membrane filters (or 0.2μm for ultratrace analysis) to remove particulate matter.

- Acidification: Maintain samples in 2% v/v high-purity nitric acid to prevent adsorption and precipitation.

- Internal Standardization: Add appropriate internal standards (e.g., In, Re, Rh) to correct for matrix effects and instrument drift.

Critical Considerations:

- Use high-purity reagents (trace metal grade) and solvents to minimize contamination.

- Employ labware composed of perfluoroalkoxy (PFA) or polypropylene to reduce elemental leaching.

- Implement blank corrections throughout the preparation process to account for background contamination.
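
The dilution and blank-correction arithmetic implied by this protocol can be sketched as follows. It is a simplified illustration that assumes a single dilution step and a single preparation blank. The helper names are hypothetical, and concentrations may be in any unit as long as it is used consistently.

```python
def dilution_factor(expected_conc, target_conc):
    """Factor needed to bring an expected concentration down to the target."""
    return expected_conc / target_conc

def aliquot_volume(expected_conc, target_conc, final_volume):
    """Volume of sample to take, from C1 * V1 = C2 * V2."""
    return target_conc * final_volume / expected_conc

def original_concentration(measured_conc, blank_conc, factor):
    """Subtract the preparation blank, then scale back by the dilution factor."""
    return (measured_conc - blank_conc) * factor

# Hypothetical example: digest expected near 500 ppb, target 5 ppb in 50 mL
factor = dilution_factor(500.0, 5.0)          # 100-fold dilution
volume_ml = aliquot_volume(500.0, 5.0, 50.0)  # 0.5 mL of digest made up to 50 mL
```

The blank is subtracted before back-calculation here because it is assumed to have gone through the same dilution as the sample.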

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Essential Materials for Spectroscopic Sample Preparation

| Reagent/Material | Function | Application Examples |
|---|---|---|
| Potassium Bromide (KBr) | Matrix for FT-IR pellet preparation; transparent to IR radiation | FT-IR analysis of solid pharmaceuticals, polymers [4] |
| Lithium Tetraborate | Flux for fusion techniques; creates homogeneous glass disks | XRF analysis of minerals, ceramics, refractory materials [1] |
| Deep Eutectic Solvents (DES) | Green extraction media; tunable properties for specific analytes | Extraction of organic compounds from food, environmental samples [3] |
| Covalent Organic Frameworks (COFs) | Selective solid-phase extraction adsorbents; high surface area | Enrichment of trace analytes from complex biological matrices [2] |
| High-Purity Nitric Acid | Digestion reagent for elemental analysis; oxidizing agent | Sample digestion for ICP-MS, ICP-OES [1] [5] |

Sample preparation is not merely a preliminary step but a deterministic factor in analytical data validity. As spectroscopic technologies advance with innovations like QCL-based microscopy [6] and high-performance sample preparation strategies [2], the necessity for corresponding advances in preparation methodologies becomes increasingly critical. The fundamental relationship between preparation quality and data integrity remains unchanged: without proper attention to this critical stage, even the most sophisticated instrumentation cannot compensate for preparation deficiencies. By implementing the detailed protocols and strategies outlined in this guide, researchers can significantly enhance the reliability, accuracy, and validity of their spectroscopic data, ultimately strengthening scientific conclusions in drug development and related fields.

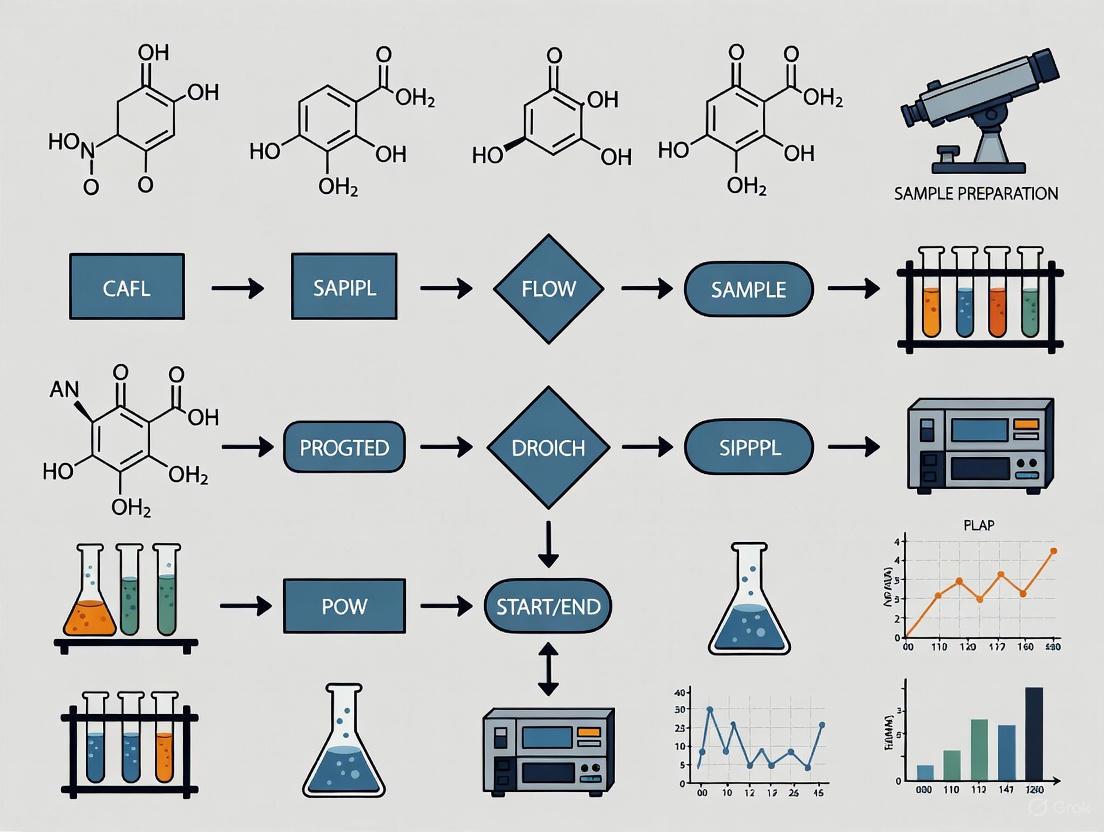

Workflow Diagrams

Sample Preparation Workflow: This diagram illustrates the comprehensive pathway for preparing various sample types for spectroscopic analysis, highlighting critical steps and strategic approaches that ensure data validity.

FT-IR Drug Quantification Protocol: This diagram details the specific step-by-step procedure for solvent-free quantitative pharmaceutical analysis using FT-IR spectroscopy with potassium bromide pellet preparation.

The fundamental principle of spectroscopy hinges on the interaction between light and matter. However, the physical and chemical form of a sample critically determines the nature of this interaction, thereby dictating the accuracy, sensitivity, and reproducibility of the analytical results [1]. Inadequate sample preparation is a significant source of error, accounting for an estimated 60% of all spectroscopic analytical errors [1]. This guide details the core physical principles by which sample form—encompassing characteristics such as physical state, surface topology, particle size, and homogeneity—modulates light-matter interactions. Framed within essential sample preparation research, this knowledge provides a foundational framework for developing robust analytical methods in drug development and scientific research.

Fundamental Light-Matter Interactions in Spectroscopy

The Dual Nature of Light and Matter

Light, or electromagnetic radiation, exhibits both wave-like and particle-like properties [7]. As a wave, it is characterized by its wavelength (λ), the distance between successive peaks, which determines its color and energy [7]. As a particle, light consists of photons, discrete packets of energy where each photon's energy is inversely related to its wavelength [7]. Matter, composed of atoms and molecules, exists in specific quantized energy states. Electrons occupy discrete energy levels, and molecules possess unique vibrational and rotational states [8] [7]. The interaction spectrum is probed by different techniques, from electronic transitions (UV-Vis) to vibrational modes (IR) and rotational changes (microwave) [8] [9].
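
The inverse relationship between photon energy and wavelength is E = hc/λ. The short sketch below converts a wavelength to photon energy and to wavenumber, the unit commonly used in IR work. The constants are the exact SI values; the function names are illustrative only.

```python
PLANCK_J_S = 6.62607015e-34      # Planck constant, J*s
LIGHT_SPEED_M_S = 299_792_458.0  # speed of light in vacuum, m/s
EV_IN_J = 1.602176634e-19        # one electronvolt in joules

def photon_energy_ev(wavelength_nm):
    """Photon energy in eV from E = h * c / lambda."""
    return PLANCK_J_S * LIGHT_SPEED_M_S / (wavelength_nm * 1e-9) / EV_IN_J

def wavenumber_cm1(wavelength_nm):
    """Wavenumber in cm^-1; 1 cm equals 1e7 nm."""
    return 1e7 / wavelength_nm
```

Halving the wavelength doubles the photon energy, which is why UV-Vis radiation can drive electronic transitions while mid-IR radiation only excites vibrations.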

Primary Interaction Phenomena

When light encounters matter, three primary phenomena can occur, each providing distinct analytical information:

- Absorption: A photon's energy is transferred to the atom or molecule, promoting it to a higher energy state [7] [10]. The pattern of absorbed wavelengths creates an absorption spectrum, which serves as a fingerprint for identification [10] [9].

- Emission: After absorbing energy, an excited species can return to a lower energy state by emitting a photon, producing an emission spectrum [10] [9].

- Scattering: The path of photons can be altered upon interaction with a sample. Raman spectroscopy, for instance, relies on inelastic scattering, where the scattered photon's energy differs from the incident photon's, providing information about molecular vibrations [10].
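
For inelastic (Raman) scattering, the energy difference between incident and scattered photons is usually expressed as a Raman shift in wavenumbers. The sketch below shows that conversion; the function names are hypothetical.

```python
def raman_shift_cm1(excitation_nm, scattered_nm):
    """Raman shift in cm^-1: difference of the two wavenumbers (1e7 / nm)."""
    return 1e7 / excitation_nm - 1e7 / scattered_nm

def scattered_wavelength_nm(excitation_nm, shift_cm1):
    """Wavelength at which a given Stokes shift appears for a given laser line."""
    return 1e7 / (1e7 / excitation_nm - shift_cm1)
```

A positive shift corresponds to Stokes scattering (the scattered photon has lost energy to a molecular vibration), and a shift of zero corresponds to elastic scattering.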

The following diagram illustrates the core decision-making workflow for selecting a sample preparation method based on the spectroscopic technique and the initial sample form.

The Impact of Sample Form on Spectral Data

The physical form of a sample directly influences the fundamental light-matter interactions, introducing physical artifacts that can obscure or distort the chemical information sought.

Surface Topology and Roughness

The surface of a sample is the primary interface for light interaction. Rough surfaces scatter light randomly, reducing the signal-to-noise ratio and leading to inaccurate intensity measurements [1]. For techniques like X-ray Fluorescence (XRF), a flat, homogeneous surface is critical to ensure consistent X-ray penetration and fluorescence emission, enabling precise quantitative analysis [1]. Milling machines are often used to create the even, flat surfaces required for high-quality data [1].

Particle Size and Homogeneity

Particle size is a critical parameter for solid samples, especially in diffuse reflectance or transmission measurements. Excessive variation in particle size creates sampling errors and compromises quantitative analysis because smaller particles pack more densely, potentially leading to shadowing effects and altering the effective path length of light [1]. For reproducible results, samples must be ground to a consistent particle size (typically <75 μm for XRF) to ensure a homogeneous matrix that interacts uniformly with radiation [1].

Matrix Effects

The sample matrix—all components other than the analyte—can cause matrix effects, where constituents absorb radiation or contribute spectral signals that obscure or enhance the analyte's response [1]. This can lead to severe inaccuracies in quantification. Proper preparation techniques, such as dilution, extraction, or matrix matching (e.g., fusion for XRF), are designed to remove these interferences [1].

Table 1: Quantitative Impact of Sample Form on Spectroscopic Analysis

| Sample Form Characteristic | Primary Spectral Impact | Affected Techniques | Typical Target for Preparation |
|---|---|---|---|
| Surface Roughness | Increased light scattering; reduced signal-to-noise ratio [1] | XRF, FT-IR, UV-Vis (solid samples) | Flat, polished surface [1] |
| Large/Variable Particle Size | Sampling error; non-uniform absorption/scattering; poor reproducibility [1] | XRF, NIR, FT-IR | Consistent particle size, often <75 μm [1] |
| Sample Heterogeneity | Non-representative spectra; poor quantitative accuracy [1] | All, especially micro-spectroscopy | Homogeneous distribution of analytes [1] |
| Matrix Composition | Absorption/enhancement of analyte signal (matrix effects) [1] | ICP-MS, XRF, UV-Vis | Matrix removal or matching (e.g., fused beads) [1] |

Sample Preparation Techniques by Physical Form

Solid Sample Preparation

The goal for solid samples is to create a homogeneous, representative specimen with controlled surface and particle properties.

- Grinding and Milling: These techniques reduce particle size and increase homogeneity. Swing grinding is ideal for tough samples like ceramics, using oscillating motion to minimize heat generation that could alter sample chemistry [1]. Milling provides greater control, producing a fine, flat surface that minimizes light scattering and ensures consistent density for analysis [1].

- Pelletizing for XRF: This method involves blending a ground powder with a binder (e.g., wax or cellulose) and pressing it under high pressure (10-30 tons) into a solid disk [1]. This process creates a pellet with uniform density and surface properties, standardizing X-ray absorption and enabling accurate quantitative analysis [1].

- Fusion Techniques: Used for refractory materials like minerals and ceramics, fusion involves dissolving a ground sample in a flux (e.g., lithium tetraborate) at high temperatures (950–1200 °C) to create a homogeneous glass disk [1]. This method completely destroys the original crystal structure, eliminating mineralogical and particle size effects that complicate analysis [1].

Table 2: Comparative Analysis of Solid Sample Preparation Methods

| Preparation Method | Underlying Physical Principle | Key Technical Parameters | Ideal Sample Types | Quantitative Performance |
|---|---|---|---|---|
| Grinding/Milling | Reduction of particle size to minimize scattering and ensure homogeneity [1] | Grinding time, material hardness, final particle size (<75 μm) [1] | Hard and brittle materials, alloys [1] | Good, dependent on particle size consistency [1] |
| Pelletizing (Pressed Powder) | Creation of a uniform density and surface for consistent radiation interaction [1] | Pressure (10-30 tons), binder type and ratio [1] | Powders, soils, sediments [1] | High, when surface and density are uniform [1] |
| Fusion (Glass Bead) | Total dissolution of crystal structures to eliminate mineralogical and particle effects [1] | Flux-to-sample ratio, temperature (950-1200°C), flux type [1] | Refractory materials, silicates, minerals [1] | Excellent, unparalleled for difficult materials [1] |

Liquid and Gas Sample Preparation

Liquid and gas samples require techniques that ensure stability, correct concentration, and freedom from interference.

- Dilution and Filtration for ICP-MS: Due to its high sensitivity, ICP-MS requires careful liquid preparation. Dilution brings analyte concentrations into the optimal detection range and reduces matrix effects [1]. Filtration (using 0.45 or 0.2 μm membranes) removes suspended particles that could clog the nebulizer or contribute to spectral interference [1]. High-purity acidification maintains metal ions in solution [1].

- Solvent Selection for UV-Vis and FT-IR: The solvent must dissolve the sample completely without interfering in the spectral region of interest. For UV-Vis, the solvent must have a cutoff wavelength below the analyte's absorption band (e.g., water at ~190 nm, acetonitrile at ~190 nm) [1]. For FT-IR, solvents like deuterated chloroform (CDCl₃) are preferred because they are largely transparent in the mid-IR region, avoiding overlapping absorption bands with the analyte [1].

Experimental Protocols for Key Preparative Methods

Protocol: Preparing a Pressed Pellet for XRF Analysis

This protocol is designed to create a uniform solid pellet for quantitative elemental analysis via XRF.

- Grinding: Use a spectroscopic grinding or milling machine to reduce the sample to a fine powder with a particle size of less than 75 μm.

- Mixing with Binder: Accurately weigh the ground sample and mix it with a binding agent (e.g., boric acid or cellulose) in a specified ratio (e.g., 10:1 sample-to-binder ratio) to ensure the pellet holds together.

- Pressing: Transfer the mixture into a pellet die. Place the die in a hydraulic or pneumatic press and apply a pressure of 10-30 tons for a specified duration (e.g., 30-60 seconds).

- Ejection and Storage: Carefully eject the resulting pellet from the die. The pellet should have a smooth, flat surface. Store in a desiccator if not analyzed immediately to prevent moisture absorption.
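
The weighing and pressing steps involve two small calculations: splitting a target pellet mass according to the sample-to-binder ratio, and converting a press load into the pressure on the pellet face. The sketch below is illustrative only. It assumes that "tons" means metric tonnes-force, and the 40 mm die diameter in the example is a hypothetical value that does not come from the protocol.

```python
import math

def pellet_masses(total_mass_g, sample_parts=10.0, binder_parts=1.0):
    """Split a target pellet mass into (sample, binder) for a given ratio."""
    total_parts = sample_parts + binder_parts
    return (total_mass_g * sample_parts / total_parts,
            total_mass_g * binder_parts / total_parts)

def die_pressure_mpa(load_tonnes, die_diameter_mm):
    """Pressure on the pellet face in MPa for a load in metric tonnes-force."""
    force_n = load_tonnes * 1000.0 * 9.80665               # tonne-force to newtons
    area_m2 = math.pi * (die_diameter_mm / 2000.0) ** 2    # radius in metres, squared
    return force_n / area_m2 / 1e6

# Hypothetical example: 11 g pellet at 10:1, pressed at 20 t in a 40 mm die
sample_g, binder_g = pellet_masses(11.0)
pressure_mpa = die_pressure_mpa(20.0, 40.0)
```

Because pressure scales with the inverse square of the die diameter, the same load on a smaller die compacts the powder much harder. This is one reason to record the die size alongside the load.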

Protocol: Liquid Sample Preparation for ICP-MS

This protocol ensures a liquid sample is free of particulates and within the optimal concentration range for sensitive ICP-MS analysis.

- Digestion/Dissolution: For solid samples, begin with a complete acid digestion to bring all analytes into solution.

- Dilution: Perform a serial dilution with high-purity acidified water (e.g., 2% v/v nitric acid) to bring the analyte concentration within the instrument's calibration curve. The dilution factor is determined by the expected analyte concentration and matrix complexity.

- Filtration: Pass the diluted sample through a 0.45 μm syringe filter (or 0.2 μm for ultratrace analysis) to remove any remaining suspended particles. Use PTFE membranes to minimize contamination.

- Internal Standard Addition: Add a known concentration of an internal standard (e.g., Indium or Bismuth) to the final solution. This corrects for instrument drift and matrix effects during analysis.
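
Internal-standard correction in the final step is, in its simplest form, a ratio calculation. The sketch below assumes that the analyte and the internal standard are suppressed or enhanced to the same degree, which is the usual working assumption and not something the protocol guarantees. The function names are hypothetical.

```python
def internal_standard_recovery(measured_is_counts, reference_is_counts):
    """Fraction of the internal-standard signal seen relative to the calibration blank."""
    return measured_is_counts / reference_is_counts

def corrected_signal(analyte_counts, measured_is_counts, reference_is_counts):
    """Scale the analyte signal by the inverse of the internal-standard recovery."""
    recovery = internal_standard_recovery(measured_is_counts, reference_is_counts)
    return analyte_counts / recovery
```

For instance, if the internal standard reads 80,000 counts in a sample against 100,000 counts in the calibration blank, an analyte signal of 8,000 counts is scaled up by 1/0.8.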

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Reagents and Materials for Spectroscopic Sample Preparation

| Item Name | Function/Application | Technical Specification |
|---|---|---|
| Lithium Tetraborate (Li₂B₄O₇) | Flux for fusion preparation of refractory samples [1] | High-purity grade for XRF fusion, melts at ~950°C [1] |
| Boric Acid / Cellulose | Binder for pressed powder pellets [1] | Serves as a binding matrix in XRF pellet preparation [1] |
| Deuterated Chloroform (CDCl₃) | Solvent for FT-IR spectroscopy [1] | IR-transparent solvent for mid-IR region; minimizes spectral interference [1] |
| PTFE Membrane Filter | Filtration of liquid samples for ICP-MS [1] | 0.45 μm or 0.2 μm pore size; low analyte adsorption [1] |
| Potassium Bromide (KBr) | Matrix for solid-sample analysis in FT-IR [1] | IR-transparent material for preparing KBr pellets [1] |

The path to reliable spectroscopic data is paved long before the sample is placed in the instrument. A deep understanding of the core physical principles—how surface topology, particle size, homogeneity, and matrix composition govern the fundamental interactions between light and matter—is not merely beneficial but essential. For researchers in drug development and other high-stakes fields, mastering these sample preparation techniques is a critical investment. It transforms spectroscopy from a simple tool for characterization into a powerful engine for generating precise, reproducible, and meaningful analytical results that drive discovery and ensure quality.

In the realm of analytical chemistry, spectroscopic analysis serves as a fundamental tool for deciphering the composition and structure of matter through its interaction with electromagnetic radiation. The validity of these analyses, however, is profoundly contingent upon the initial steps of sample preparation. Inadequate preparation is not a minor oversight but a primary source of error, responsible for as much as 60% of all spectroscopic analytical errors [1]. The physical and chemical characteristics of a sample—specifically its particle size, homogeneity, and matrix composition—directly govern how it interacts with spectroscopic radiation. These factors influence everything from light scattering and path length to ionization efficiency and spectral superposition, making their management a critical prerequisite for obtaining accurate, reproducible, and meaningful data [1] [11]. This guide details the core challenges these factors present and outlines robust, modern strategies to overcome them, providing a foundation for excellence in spectroscopic research and application.

Core Challenge 1: Particle Size Effects

Fundamental Principles and Spectral Impact

Particle size is a critical physical property that directly affects the interaction between a sample and incident radiation. The primary mechanism of interference is light scattering, where the path of photons is deviated by particles in the sample. The nature and extent of this scattering are governed by the relationship between the particle size and the wavelength of the light used [11]. With larger particles, scattering increases, leading to longer and more variable path lengths for the light. This results in non-linear absorption behavior and a phenomenon known as spectral dilation, where absorbance values are distorted and the linear relationship between concentration and signal, fundamental to quantitative analysis, is compromised [11]. For techniques like laser diffraction, which is used to measure particle size distributions from the sub-micron scale to several millimeters, the angular dependence of scattered light is the key measurement parameter [12].
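
One common way to express the relationship between particle size and wavelength is the dimensionless size parameter x = πd/λ. The sketch below computes it and assigns a rough scattering regime. The cut-off values of 0.1 and 100 are illustrative assumptions rather than sharp physical boundaries, and the function names are hypothetical.

```python
import math

def size_parameter(diameter_um, wavelength_um):
    """Dimensionless size parameter x = pi * d / lambda."""
    return math.pi * diameter_um / wavelength_um

def scattering_regime(diameter_um, wavelength_um, small=0.1, large=100.0):
    """Rough regime label; the thresholds are illustrative, not physical limits."""
    x = size_parameter(diameter_um, wavelength_um)
    if x < small:
        return "Rayleigh"
    if x > large:
        return "geometric"
    return "Mie"
```

With a 0.633 µm laser, for example, a 75 µm particle has a size parameter in the hundreds, whereas a 1 µm particle falls in the intermediate range where a full Mie treatment is generally needed.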

Consequences for Analytical Data

The spectral distortions caused by improper particle size can manifest in several ways:

- Increased Baseline Shift and Multiplicative Effects: Variations in particle size and packing density introduce additive and multiplicative noise, which can obscure true analyte absorption bands [11].

- Reduced Predictive Model Performance: In quantitative applications using chemometrics, particle size effects are a major source of variability that degrades the precision and accuracy of calibration models, such as those built with Partial Least Squares (PLS) regression [11].

- Sampling Bias: A wide distribution of particle sizes (polydispersity) can lead to segregation during handling, meaning the small portion analyzed may not be representative of the whole batch, causing non-reproducible results [1].
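
The additive and multiplicative distortions listed above are often reduced numerically as well as by better grinding. One widely used option, which is not named in the source and is shown here only as an illustration, is the standard normal variate (SNV) transform: each spectrum is centred on its own mean and scaled by its own standard deviation.

```python
import statistics

def snv(spectrum):
    """Standard normal variate: subtract the spectrum mean, divide by its std dev."""
    mean = statistics.mean(spectrum)
    std = statistics.stdev(spectrum)
    return [(value - mean) / std for value in spectrum]

# Hypothetical spectrum and a copy with an offset and a gain applied,
# mimicking additive and multiplicative scatter effects
base = [0.1, 0.4, 0.9, 0.4, 0.1]
distorted = [1.5 * value + 0.2 for value in base]
```

Applying `snv` to `base` and to `distorted` gives the same result, because a constant offset and a constant positive gain are both removed. SNV cannot correct wavelength-dependent scatter, so it complements rather than replaces particle size control.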

Experimental Protocol: Laser Diffraction for Particle Size Distribution

Objective: To determine the particle size distribution of a powdered sample using laser diffraction.

Principle: A beam of laser light passes through a dispersed sample. Particles scatter light at angles inversely proportional to their size. The angular intensity data is then analyzed using an appropriate optical model (e.g., Mie theory or Fraunhofer approximation) to calculate the particle size distribution [12].

- Instrument Calibration: Verify instrument performance using a certified standard reference material (e.g., NIST-traceable latex beads).

- Sample Dispersion:

  - Dry Powder: Use a dry powder disperser with compressed air to de-agglomerate particles without causing fragmentation.

  - Suspension: For wet dispersion, add the powder to a suitable solvent (e.g., water, isopropanol) in a recirculating bath. Application of ultrasound for 30-60 seconds may be necessary to break apart weak agglomerates.

- Measurement: Pass the dispersed sample through the measurement cell of the laser diffraction instrument (e.g., a Mastersizer series). Ensure the obscuration level falls within the manufacturer's recommended range.

- Data Analysis: The software automatically calculates the particle size distribution based on the scattered light pattern. Key results are typically reported as volume-based percentiles (D10, D50, D90).

- Replication: Perform at least three independent measurements to ensure reproducibility.
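
Instrument software reports D10, D50, and D90 directly, but the calculation behind them is simple to reproduce from a cumulative volume distribution. The sketch below uses linear interpolation between measured size classes. Commercial software may interpolate differently (for example on a logarithmic size axis), and the data shown are hypothetical.

```python
def percentile_size(sizes_um, cumulative_pct, target_pct):
    """Size below which target_pct of the sample volume lies (linear interpolation)."""
    points = list(zip(sizes_um, cumulative_pct))
    for (s1, c1), (s2, c2) in zip(points, points[1:]):
        if c1 <= target_pct <= c2:
            if c2 == c1:
                return float(s1)
            return s1 + (s2 - s1) * (target_pct - c1) / (c2 - c1)
    raise ValueError("target percentile lies outside the measured curve")

# Hypothetical cumulative undersize curve (volume %)
sizes_um = [1.0, 10.0, 20.0, 50.0, 100.0]
cumulative_pct = [0.0, 10.0, 50.0, 90.0, 100.0]

d10, d50, d90 = (percentile_size(sizes_um, cumulative_pct, p) for p in (10, 50, 90))
span = (d90 - d10) / d50  # width of the distribution relative to the median
```

Comparing D90 against the <75 µm target quoted elsewhere in this guide is a quick way to verify that grinding was sufficient, and a large span flags the polydispersity that leads to sampling bias.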

Table 1: Techniques for Particle Size Control and Their Applications

| Technique | Mechanism | Target Size Range | Typical Applications |
|---|---|---|---|
| Swing Grinding | Oscillating motion reduces heat generation | <75 µm (for XRF) [1] | Hard, brittle materials (ceramics, ferrous metals) |
| Milling | Controlled cutting for a flat, uniform surface | Varies with material | Creating uniform surfaces for XRF analysis [1] |
| Pelletizing | Pressing powder with a binder into a solid disk | Not applicable (produces a uniform surface) | XRF analysis of powdered samples [1] |

Core Challenge 2: Sample Homogeneity

Defining Chemical and Physical Heterogeneity

Sample heterogeneity refers to the spatial non-uniformity of a sample's composition or physical structure and is a pervasive challenge in the analysis of real-world materials [11]. It is useful to distinguish between two primary forms:

- Chemical Heterogeneity: This involves the uneven distribution of molecular or elemental species throughout a sample. Common in pharmaceuticals, food powders, and geological samples, it results in a measured spectrum that is a composite of the spectra of its individual constituents [11].

- Physical Heterogeneity: This encompasses differences in properties such as particle size, shape, surface roughness, and packing density. These factors alter the light-scattering properties and effective path length, introducing multiplicative and additive distortions into the spectral data [11].

Consequences of Heterogeneous Samples

The failure to achieve a homogeneous sample leads to a lack of representative sampling, where the small portion subjected to analysis does not reflect the true composition of the entire batch [1]. This directly causes non-reproducible results and severely compromises any quantitative calibration, as the spectral data no longer reliably correlates with analyte concentration [1] [11]. In imaging or microspectroscopy, heterogeneity at a scale smaller than the measurement spot leads to subpixel mixing, where the signal is an average of different components, complicating both identification and quantification [11].

Experimental Protocol: Hyperspectral Imaging (HSI) for Heterogeneity Assessment

Objective: To spatially resolve chemical and physical heterogeneity within a solid sample. Principle: HSI combines conventional imaging with spectroscopy to acquire a full spectrum at every pixel in a scene, generating a three-dimensional data cube (x, y, λ) [11].

- Sample Presentation: Place the solid sample (e.g., a pharmaceutical tablet or polymer film) on the motorized stage. Ensure the surface is level and in focus.

- Spatial and Spectral Calibration: Use a certified reflectance standard for spatial calibration and a wavelength standard (e.g., a rare-earth oxide) for spectral calibration.

- Data Acquisition:

- Set the acquisition parameters (spatial resolution, spectral range, integration time).

- The system scans the sample, collecting a full spectrum for each pixel. The result is a hypercube, denoted as \( \mathbf{H} \in \mathbb{R}^{M \times N \times L} \), where \( M \) and \( N \) are spatial dimensions and \( L \) is the number of wavelengths [11].

- Data Analysis:

- Exploratory Analysis: Use Principal Component Analysis (PCA) to visualize the major sources of spatial-spectral variance.

- Spectral Unmixing: Apply algorithms like Vertex Component Analysis (VCA) to extract "endmember" spectra (pure component spectra) and their corresponding "abundance" maps, which visually display the distribution of each component [11].

- Validation: Correlate HSI findings with a reference method, such as HPLC, if quantitative validation of distribution is required.
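The exploratory PCA step in this protocol can be sketched as follows. This is a minimal illustration under stated assumptions, not a specific vendor or library workflow: the hypercube is assumed to be a NumPy array of shape (M, N, L), PCA is computed from a singular value decomposition of the mean-centred, unfolded data, and the synthetic two-component "sample" is invented for demonstration. Spectral unmixing with VCA is not shown.

```python
import numpy as np

def pca_score_maps(hypercube, n_components=3):
    """Unfold an (M, N, L) hypercube, run PCA, and refold scores into maps."""
    M, N, L = hypercube.shape
    X = hypercube.reshape(M * N, L).astype(float)  # one row per pixel
    X -= X.mean(axis=0)                            # mean-centre each wavelength
    U, s, Vt = np.linalg.svd(X, full_matrices=False)
    scores = U[:, :n_components] * s[:n_components]
    explained = s ** 2 / np.sum(s ** 2)            # variance fraction per PC
    return (scores.reshape(M, N, n_components),
            Vt[:n_components],
            explained[:n_components])

# Synthetic sample: left half is component A, right half is component B.
rng = np.random.default_rng(0)
channels = np.arange(50)
spec_a = np.exp(-((channels - 15) ** 2) / 9.0)
spec_b = np.exp(-((channels - 35) ** 2) / 9.0)
cube = np.empty((8, 10, 50))
cube[:, :5, :] = spec_a
cube[:, 5:, :] = spec_b
cube += rng.normal(scale=0.01, size=cube.shape)

maps, loadings, explained = pca_score_maps(cube, n_components=2)
print(maps.shape, loadings.shape, explained)
```

In this toy case the first score map separates the two halves of the sample, which is the kind of spatial pattern the exploratory step is meant to reveal.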

Table 2: Strategies to Manage Sample Heterogeneity

| Strategy | Description | Primary Benefit | Limitations |
|---|---|---|---|
| Spectral Preprocessing | Mathematical treatment of spectra (e.g., SNV, MSC) to remove scatter effects [11]. | Simple, fast, no hardware changes. | Empirical; may not fix root cause. |
| Localized Sampling | Collecting and averaging spectra from multiple points on a sample [11]. | More representative of bulk composition. | Increases analysis time. |
| Hyperspectral Imaging (HSI) | Spatially resolves chemical distribution across a sample [11]. | Directly visualizes and quantifies heterogeneity. | High data load; complex analysis. |
| Fusion | Melting sample with a flux (e.g., Li₂B₄O₇) to form a homogeneous glass disk [1]. | Eliminates mineralogy and particle size effects. | High cost; potential for volatile loss. |
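As an illustration of the spectral preprocessing strategy, the sketch below implements Standard Normal Variate (SNV) correction, which centres and scales each spectrum individually to reduce additive and multiplicative scatter effects. It assumes spectra are stored row-wise in a NumPy array; the function name `snv` and the synthetic spectra are illustrative, not taken from the cited sources.

```python
import numpy as np

def snv(spectra):
    """Standard Normal Variate: centre and scale each spectrum (row) on its own."""
    spectra = np.asarray(spectra, dtype=float)
    mean = spectra.mean(axis=1, keepdims=True)
    std = spectra.std(axis=1, ddof=1, keepdims=True)
    return (spectra - mean) / std

# Synthetic example: one underlying band seen with different scatter.
x = np.linspace(0.0, 1.0, 100)
base = np.exp(-((x - 0.5) ** 2) / 0.01)
raw = np.vstack([
    1.0 * base + 0.00,   # reference presentation
    1.4 * base + 0.20,   # stronger scatter: multiplicative gain and offset
    0.7 * base - 0.05,   # weaker scatter
])

corrected = snv(raw)
# After SNV the three spectra coincide, because gain and offset are removed.
print(np.allclose(corrected[0], corrected[1]), np.allclose(corrected[0], corrected[2]))
```

Real spectra rarely differ by a pure gain and offset, so SNV reduces rather than eliminates scatter effects, consistent with the limitation noted in the table.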

Core Challenge 3: Matrix Effects

Understanding the Matrix Interference

Matrix effects describe the phenomenon where co-eluting or co-existing substances in a sample alter the analytical response of the target analyte. This is a particularly severe challenge in high-sensitivity techniques like ICP-MS and LC-MS/MS [1] [13]. In MS-based methods, the effect typically manifests as ion suppression or ion enhancement within the source, where matrix components compete with the analyte for charge or disrupt the droplet evaporation process, leading to inaccurate quantification [13]. In optical spectroscopy, the matrix can contribute to a background signal or cause absorption band overlaps, which obscure the analyte's spectral signature.

Impact on Analytical Performance

The most significant impact of matrix effects is on the accuracy and precision of an analytical method. It can lead to both false positives and false negatives, with serious implications in fields like drug development and environmental monitoring [13]. Furthermore, matrix effects undermine the sensitivity of an assay by increasing the background noise and can affect the linearity of the calibration curve [13]. The variability of matrix effects between different lots of a biological fluid (e.g., plasma from different individuals), known as relative matrix effects, is a critical parameter that must be assessed during method validation as it directly impacts precision [13].

Experimental Protocol: Assessing Matrix Effect in LC-MS/MS

Objective: To systematically evaluate the matrix effect, recovery, and process efficiency for a bioanalytical LC-MS/MS method. Principle: The method, pioneered by Matuszewski et al., involves comparing the analyte response in pre-extraction and post-extraction spiked samples to those in neat solvent [13].

- Sample Set Preparation: Prepare three sets of samples using at least six different lots of the biological matrix (e.g., human plasma) [13].

- Set 1 (Neat Solution): Analyte spiked into pure mobile phase.

- Set 2 (Post-extraction Spike): Blank matrix is extracted, then the analyte is spiked into the extracted supernatant.

- Set 3 (Pre-extraction Spike): Analyte is spiked into the blank matrix and then carried through the entire extraction process.

- LC-MS/MS Analysis: Analyze all samples in replicate.

- Calculation:

- Matrix Factor (MF): \( MF = \frac{\text{Peak Area}_{\text{Set 2}}}{\text{Peak Area}_{\text{Set 1}}} \). An MF ≠ 1 indicates a matrix effect (MF < 1 indicates ion suppression; MF > 1 indicates ion enhancement).

- Recovery (RE): \( RE = \frac{\text{Peak Area}_{\text{Set 3}}}{\text{Peak Area}_{\text{Set 2}}} \). This assesses the efficiency of the extraction process.

- Process Efficiency (PE): \( PE = \frac{\text{Peak Area}_{\text{Set 3}}}{\text{Peak Area}_{\text{Set 1}}} \). This reflects the overall method efficiency, combining both extraction and matrix effects (\( PE = MF \times RE \)).

- IS-Normalization: Repeat calculations using the peak area ratio (analyte/internal standard) to determine the degree of compensation provided by the IS [13].
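The three ratios in the calculation step can be worked through with a small script. This is a sketch under assumptions: each list holds one mean peak area per matrix lot, all peak areas are hypothetical, and the lot-to-lot coefficient of variation of the matrix factor is used here as a simple indicator of the relative matrix effect.

```python
from statistics import mean, stdev

def matrix_effect_summary(set1_neat, set2_post, set3_pre):
    """Compute MF, RE, and PE per matrix lot from mean peak areas.

    set1_neat : peak areas of the analyte in neat solution (Set 1)
    set2_post : post-extraction spike, one mean peak area per lot (Set 2)
    set3_pre  : pre-extraction spike, one mean peak area per lot (Set 3)
    """
    neat = mean(set1_neat)
    mf = [post / neat for post in set2_post]                     # matrix factor
    re = [pre / post for pre, post in zip(set3_pre, set2_post)]  # recovery
    pe = [pre / neat for pre in set3_pre]                        # process efficiency
    cv_mf = stdev(mf) / mean(mf) * 100.0                         # lot-to-lot CV, %
    return {"MF": mf, "RE": re, "PE": pe, "CV_MF_percent": cv_mf}

# Hypothetical peak areas for six plasma lots (illustrative only).
set1 = [100000, 101000, 99000]
set2 = [85000, 88000, 83000, 90000, 86000, 84000]
set3 = [72000, 75000, 70000, 77000, 73000, 71000]

result = matrix_effect_summary(set1, set2, set3)
for lot, (mf, re, pe) in enumerate(zip(result["MF"], result["RE"], result["PE"]), 1):
    print(f"lot {lot}: MF={mf:.2f} RE={re:.2f} PE={pe:.2f}")
print(f"CV of MF across lots: {result['CV_MF_percent']:.1f} %")
```

Passing analyte-to-internal-standard area ratios instead of raw peak areas yields the IS-normalized values with the same function.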

The Scientist's Toolkit: Key Research Reagent Solutions

The following table catalogues essential materials and reagents critical for addressing the challenges of particle size, homogeneity, and matrix effects in modern spectroscopic and bioanalytical sample preparation.

Table 3: Essential Reagents and Materials for Advanced Sample Preparation

| Reagent/Material | Function | Key Application Example |
|---|---|---|
| Lithium Tetraborate (Li₂B₄O₇) | Fluxing agent for fusion techniques | Creates homogeneous glass disks from refractory materials for XRF analysis, eliminating particle size and mineralogical effects [1]. |
| Diethylene Glycol (DEG) | Working fluid in condensation particle counters | Acts as the supersaturated vapor for activating and growing sub-10 nm particles in Particle Size Magnifier (PSM) instruments [14]. |
| Enhanced Matrix Removal (EMR) Cartridges | Solid-phase extraction for selective matrix cleanup | Pass-through cleanup for complex matrices (e.g., food) in PFAS and mycotoxin analysis, reducing ion suppression in LC-MS/MS [15]. |
| Graphitized Carbon Black (GCB) | Sorbent in solid-phase extraction | Removes organic interferences like pigments and planar molecules (e.g., in pesticide analysis for EPA Method 8081) [15]. |
| Weak Anion Exchange (WAX) Sorbent | Sorbent in solid-phase extraction | Selective retention of acidic compounds like PFAS in aqueous samples following EPA Method 1633 [15]. |
| Internal Standard (IS) | Reference compound added to samples | Corrects for variability in sample preparation and ionization efficiency in mass spectrometry, improving accuracy and precision [13]. |

The challenges posed by particle size, homogeneity, and matrix effects are intrinsic to spectroscopic analysis, but they are not insurmountable. A deep understanding of how these factors distort analytical signals is the first step toward mitigation. As demonstrated, a combination of robust mechanical preparation (grinding, fusion), advanced statistical and spatial sampling strategies (HSI, localized sampling), and sophisticated cleanup techniques (SPE, EMR) provides a powerful arsenal to ensure data integrity. The ongoing integration of automation, AI for data handling, and the development of new functional materials promise to further enhance the performance of sample preparation [12] [2]. By rigorously addressing these foundational challenges, researchers can unlock the full potential of spectroscopic techniques, driving reliability and innovation in drug development, material science, and beyond.

In the realm of analytical science, the integrity of spectroscopic analysis is fundamentally dependent on the quality of sample preparation. Inadequate sample preparation is the root cause of approximately 60% of all spectroscopic analytical errors [1]. Contamination, introduced during these preliminary stages, can compromise data validity, leading to misleading research conclusions, flawed quality control assessments, and incorrect analytical decisions [1]. Even the most advanced and sophisticated instrumentation cannot compensate for a poorly prepared sample [1]. This guide details the systematic approaches necessary to mitigate contamination risks across various spectroscopic methods, ensuring the accuracy and reliability essential for research and drug development.

The potential impacts of contamination are multifaceted. It can introduce foreign materials that produce spurious spectral signals, mask or alter the target analyte's signal, and ultimately lead to false positives or inaccurate quantitative results [1] [5]. For researchers and scientists in drug development, where results inform critical decisions, maintaining sample purity from collection to analysis is a non-negotiable prerequisite.

Contamination in a laboratory setting can originate from multiple vectors. A thorough understanding of these sources is the first step in developing effective countermeasures.

- Pipette-to-Sample Contamination: Occurs when aerosol particles or liquid residues from the pipette or tip come into contact with the sample, potentially transferring contaminants from previous uses [16].

- Sample-to-Pipette Contamination: Takes place when samples, particularly those with high viscosity or particulate matter, are drawn into the pipette shaft, contaminating the internal mechanism and subsequent samples [16].

- Sample-to-Sample Contamination (Carryover): This is the unintentional transfer of material from one sample to another, typically via reusable equipment, improperly cleaned labware, or aerosol generation [16] [5].

- Environmental Contamination: Includes airborne particulates, dust, and skin cells (such as keratin, a common contaminant in protein analysis) that can settle on samples or equipment [5].

- Reagent and Solvent Contamination: Involves impurities present in the water, chemicals, acids, or solvents used during sample preparation, digestion, or dilution [1] [5].

- Labware and Equipment Contamination: Arises from residues left on grinding surfaces, milling heads, containers, cuvettes, or filtration apparatus from previous use [1].

Contamination Control by Spectroscopic Technique

The specific strategies for contamination control are highly dependent on the spectroscopic technique being employed, as each has unique sample requirements and vulnerabilities.

X-Ray Fluorescence (XRF) Spectrometry

XRF analysis determines elemental composition and requires solid samples with uniform physical properties. Contamination control focuses on the preparation of solid surfaces.

Table 1: Contamination Control in XRF Sample Preparation

| Preparation Step | Contamination Risk | Mitigation Strategy |
|---|---|---|
| Grinding & Milling | Cross-contamination from equipment; Introduction of wear metals from grinding surfaces | Use dedicated grinding sets for sample types; Clean equipment thoroughly between samples with brushes and compressed air; Choose grinding surface material harder than the sample [1]. |
| Pelletizing | Contamination from binders (e.g., wax, cellulose); Residual matter from press dies | Use high-purity binders; Ensure press dies are meticulously cleaned between uses [1]. |
| Fusion | Contamination from flux (e.g., lithium tetraborate); Leaching from platinum crucibles at high temperatures (950-1200°C) | Use high-purity fluxes; Dedicate crucibles to specific sample matrices and condition them properly [1]. |

Inductively Coupled Plasma-Mass Spectrometry (ICP-MS)

ICP-MS offers extremely sensitive elemental analysis, making it highly susceptible to contamination from reagents and labware. It requires complete sample dissolution.

Table 2: Contamination Control in ICP-MS Sample Preparation

| Preparation Step | Contamination Risk | Mitigation Strategy |
|---|---|---|
| Digestion | Impurities in acids (e.g., nitric acid); Leaching of elements from digestion vessels | Use high-purity (e.g., trace metal grade) acids; Employ clean fluoropolymer labware (e.g., PTFE, PFA); Perform blank digestions to monitor background [5]. |
| Dilution | Contaminants in diluents; Impurities from pipette tips | Use high-purity water (e.g., from a system like Milli-Q); Use high-purity acids for acidification; Use filter tips to prevent aerosol contamination [1] [16] [5]. |
| Filtration | Leachates from filter membranes; Adsorption of analytes onto the filter | Use appropriate membrane materials (e.g., PTFE) that are pre-cleaned; Avoid filters for trace element analysis unless necessary [1] [5]. |

Fourier Transform-Infrared (FT-IR) Spectroscopy

FT-IR identifies molecular structures through infrared absorption. Contamination can introduce foreign organic compounds that obscure the sample's spectral fingerprint.

- Solid Sample Preparation (KBr Pellets): The potassium bromide (KBr) must be of spectroscopic grade purity to avoid introducing its own absorption bands. The pellet press must be scrupulously clean to prevent cross-contamination [1].

- Liquid Sample Preparation: The choice of solvent is critical. The solvent must not only dissolve the sample but also be spectroscopically pure and have minimal absorption in the infrared region of interest to avoid masking the analyte's signal. Deuterated solvents are sometimes used for this purpose, because their C–D absorption bands are shifted to lower wavenumbers than the corresponding C–H bands [1].

- Cleaning of Accessories: ATR (Attenuated Total Reflectance) crystals and other accessories must be cleaned with appropriate, high-purity solvents immediately after use to prevent residue buildup and cross-contamination.

Detailed Experimental Protocols for Contamination Control

The following protocols provide detailed methodologies designed to minimize contamination during sample preparation.

Protocol: Preparation of Solid Samples for XRF Analysis via Pressed Pellet Method

This protocol is designed to produce homogeneous solid pellets with minimal contamination risk.

Materials:

- Sample Grinder/Mill: With tungsten carbide or other hard, non-contaminating surfaces.

- Hydraulic Pellet Press: Capable of applying 15-30 tons of pressure.

- Pellet Die: Cleaned with ethanol and dried before use.

- High-Purity Binder: Boric acid or cellulose powder, spectrochemical grade.

- Labware: Non-powdering gloves, weighing boats.

Procedure:

- Grinding: Grind the sample to a consistent particle size (<75 µm) using a clean grinder. Between samples, disassemble and clean all contact surfaces with a brush and compressed air.

- Mixing: Weigh the ground sample and binder in a fixed, recorded ratio (e.g., 4 g sample to 0.9 g binder). Mix thoroughly in a clean vial to ensure homogeneity.

- Loading: Assemble the clean pellet die. Transfer the mixture into the die cavity, ensuring an even distribution.

- Pressing: Place the die in the hydraulic press. Apply pressure gradually to 15-25 tons and hold for 60 seconds to form a stable pellet.

- Ejection & Storage: Carefully eject the pellet. Place it in a clean, labeled Petri dish or bag and store in a desiccator to protect it from moisture and dust until analysis [1].

Protocol: Aseptic Pipetting for Liquid Sample Handling

This protocol is critical for preparing samples for sensitive techniques like ICP-MS and LC-MS, where even minor carryover can cause significant errors.

Materials:

- Calibrated Micropipettes: Regularly serviced and calibrated.

- Filter Pipette Tips: To prevent aerosol and liquid from entering the pipette shaft.

- High-Purity, Low-Binding Microcentrifuge Tubes.

Procedure:

- Tip Selection: Always use a new, sterile filter tip for each sample and each reagent to prevent sample-to-sample and sample-to-pipette contamination [16].

- Aspiration: Hold the pipette vertically when aspirating. Do not immerse the tip too deeply. For viscous samples, use reverse pipetting for better accuracy.

- Dispensing: Touch the tip to the side of the receiving vessel when dispensing. When dispensing into a liquid, pause briefly after the first stop before expelling any residual liquid.

- Tip Ejection: Eject the tip into a waste container using the ejector button without touching the tip by hand, minimizing the risk of contaminating subsequent samples [16] [5].

The Scientist's Toolkit: Essential Reagents and Materials

The selection of high-purity materials and reagents is fundamental to successful contamination control.

Table 3: Essential Research Reagent Solutions for Contamination Control

| Item | Function | Contamination Control Consideration |
|---|---|---|
| High-Purity Water (e.g., from Milli-Q system) | Sample dilution, reagent preparation, and equipment rinsing. | Removes ions and organics; Essential for preparing mobile phases in LC-MS and blanks for ICP-MS [6] [5]. |
| Spectroscopic Grinding Sets | Homogenization and particle size reduction of solid samples. | Sets made of materials harder than the sample (e.g., tungsten carbide for ceramics) prevent introduction of wear metals; Dedicated sets avoid cross-contamination [1]. |
| High-Purity Acids & Solvents (Trace metal grade, HPLC grade) | Sample digestion, dilution, and extraction. | Minimizes background elemental or organic signals; Critical for achieving low detection limits in ICP-MS and FT-IR [5]. |
| Filter Pipette Tips | Liquid handling and transfer. | Creates a physical barrier against aerosols, protecting the pipette shaft from sample-to-pipette contamination and protecting subsequent samples from carryover [16]. |
| Solid-Phase Extraction (SPE) Cartridges | Clean-up and concentration of analytes from complex matrices. | Removes interfering compounds that can cause signal suppression or overlap in MS and chromatography; Select sorbent phase based on target analytes [5]. |

Vigilance against contamination is not merely a procedural step but a fundamental principle underpinning the integrity of spectroscopic analysis. The consequences of neglect are quantifiable and severe, with the majority of analytical errors originating in the sample preparation phase. By understanding contamination vectors, adhering to technique-specific preparation protocols, and utilizing high-purity reagents and materials, researchers and drug development professionals can ensure their data is accurate, reliable, and meaningful. In a field where decisions are driven by data, a rigorous, contamination-aware sample preparation protocol is the cornerstone of scientific validity.

In the realm of analytical chemistry, sample preparation represents a pivotal stage that fundamentally determines the validity and accuracy of final analytical results. Despite its critical importance, this area has historically been characterized by a significant paradox: while advanced instrumentation like mass spectrometers and spectroscopic devices receive extensive scientific attention, the optimization of sample preparation parameters often relies on traditional trial-and-error approaches rather than systematic scientific methodologies [17]. This reliance on empirical methods persists even though inadequate sample preparation is responsible for as much as 60% of all spectroscopic analytical errors [1]. The consequences of poorly prepared samples extend across research and industrial applications, potentially compromising pharmaceutical development, quality control processes, and scientific conclusions.

The fundamental impediment to progress in sample preparation lies in the underdeveloped understanding of extraction principles, particularly when dealing with natural, complex samples where native analyte-matrix interactions differ significantly from spiked standards [17]. This stands in stark contrast to the physicochemically simpler systems employed in subsequent separation and quantification steps, such as chromatography and mass spectrometry. Consequently, the fundamentals of sample preparation are typically overlooked in analytical chemistry curricula, perpetuating a cycle of methodological underdevelopment [17]. This paper establishes a new paradigm that challenges the perception of sample preparation as merely an artistic endeavor and reframes it as a scientifically grounded discipline essential for analytical accuracy.

The Limitations of Trial-and-Error Approaches

The traditional trial-and-error approach to sample preparation optimization suffers from several fundamental limitations that impact both methodological robustness and operational efficiency. When optimization relies primarily on iterative testing without theoretical foundation, it creates a system vulnerable to unrecognized variables and uncontrolled parameters that compromise analytical outcomes.

A primary deficiency of the trial-and-error paradigm is its inadequate consideration of native analyte-matrix interactions. Research demonstrates that extraction methods providing good recovery of spiked standards may perform very differently with natural samples, where analytes are bound within complex matrix structures through various chemical interactions [17]. This discrepancy arises because spiked standards typically exhibit simpler physicochemical relationships with the sample matrix compared to native analytes, which have established equilibrium within their native environment.

Furthermore, trial-and-error methodologies typically fail to account for the multi-factorial nature of sample preparation parameters. These approaches often focus on one variable at a time while holding others constant, potentially missing important interactive effects between factors such as pH, solvent composition, temperature, and extraction time. Without a systematic framework for understanding how these variables interact, the optimization process becomes resource-intensive and may still yield suboptimal results.
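The point about interactive effects can be made concrete with a two-level, two-factor (2²) factorial calculation. The sketch below is illustrative only: the factors (pH and organic solvent fraction), the coded levels, and the recovery values are hypothetical, and the effect estimates follow the standard contrast formulas for a full factorial design.

```python
from itertools import product

def factorial_effects(responses):
    """Main and interaction effects for a 2x2 full factorial design.

    responses maps coded levels (a, b), each -1 or +1, to a measured response.
    """
    runs = list(product((-1, 1), repeat=2))
    half = len(runs) / 2
    effect_a = sum(a * responses[(a, b)] for a, b in runs) / half
    effect_b = sum(b * responses[(a, b)] for a, b in runs) / half
    interaction = sum(a * b * responses[(a, b)] for a, b in runs) / half
    return effect_a, effect_b, interaction

# Hypothetical recoveries (%): factor A = pH, factor B = solvent fraction.
recovery = {
    (-1, -1): 60.0,  # low pH, low solvent fraction (baseline)
    (+1, -1): 70.0,  # high pH only
    (-1, +1): 65.0,  # high solvent fraction only
    (+1, +1): 95.0,  # both raised together
}

effect_ph, effect_solvent, effect_interaction = factorial_effects(recovery)

# One-variable-at-a-time prediction from the baseline ignores the interaction.
ofat_prediction = (recovery[(-1, -1)]
                   + (recovery[(+1, -1)] - recovery[(-1, -1)])
                   + (recovery[(-1, +1)] - recovery[(-1, -1)]))
print(effect_ph, effect_solvent, effect_interaction, ofat_prediction)
```

In this invented example, varying one factor at a time predicts 75% recovery for the combined change, whereas the measured value is 95%; the difference is the interaction that a one-variable-at-a-time search cannot detect.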

Quantitative Impact of Preparation Errors

Table 1: Common Sample Preparation Errors and Their Analytical Consequences

| Error Type | Impact on Analysis | Common Techniques Affected |
|---|---|---|
| Insufficient Homogenization | Non-representative sampling, high variance | XRF, ICP-MS, FT-IR |
| Particle Size Inconsistency | Light scattering, inaccurate quantitation | XRF (<75 μm required), NIR |
| Surface Irregularities | Signal attenuation, noise introduction | XRF, FT-IR |
| Matrix Effects | Signal suppression/enhancement, accuracy errors | ICP-MS, LC-MS |
| Contamination | False positives/negatives, background interference | All techniques, especially trace analysis |
| Incomplete Dissolution | Low recovery, inaccurate concentration | ICP-MS, HPLC |

The reliance on trial-and-error approaches becomes particularly problematic when considering the specialized requirements of different analytical techniques. For instance, X-Ray Fluorescence (XRF) spectrometry requires flat, homogeneous surfaces with controlled particle size (typically <75 μm), while Inductively Coupled Plasma-Mass Spectrometry (ICP-MS) demands complete dissolution of solid samples and meticulous contamination control [1]. Fourier Transform Infrared Spectroscopy (FT-IR) has its own specific requirements for solid sample preparation, often involving grinding with KBr for pellet production [1]. Without systematic understanding of these technique-specific requirements, preparation errors inevitably introduce analytical inaccuracies.

Principles of Systematic Method Development

The transition from trial-and-error to systematic approaches requires establishing fundamental principles that govern sample preparation efficacy. Systematic method development begins with comprehensive understanding of extraction fundamentals applied to the specific analytical challenge, followed by methodical optimization and validation.

Foundational Theoretical Framework

At its core, systematic sample preparation recognizes that extraction efficiency depends on disrupting the equilibrium established between analytes and their native matrix environment. This requires understanding the physicochemical interactions responsible for analyte retention, including hydrophobic interactions, hydrogen bonding, ionic attractions, and van der Waals forces. Different sample types exhibit characteristic interaction profiles; for instance, biological tissues often present complex protein-binding scenarios, while environmental samples may involve strong adsorption to particulate matter [17].

A systematic approach further acknowledges that the fundamental purpose of sample preparation extends beyond mere extraction to include additional critical functions: (1) removal of potential interferences, (2) analyte enrichment or concentration, (3) medium exchange for compatibility with analytical instrumentation, and (4) chemical modification to enhance detection characteristics. Each function must be addressed through scientifically-principled methods rather than empirical testing.

Method Validation and Quality Assessment

Systematic approaches incorporate rigorous validation protocols to quantify method performance and reliability. The comparison of methods experiment provides a framework for assessing systematic error by analyzing patient samples using both new and established reference methods [18]. This approach should include a minimum of 40 different patient specimens selected to cover the entire working range of the method and represent the spectrum of expected sample matrices [18].

Statistical treatment of comparison data should include both graphical analysis and appropriate statistical calculations. Difference plots displaying the difference between test and comparative results versus the comparative result effectively visualize systematic errors, while linear regression statistics allow estimation of systematic error at medically important decision concentrations [18]. For results covering a narrow analytical range, calculation of the average difference between methods (bias) with standard deviation of differences provides critical performance metrics [18].
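The statistics described above can be sketched in a few lines. The example assumes paired results from the comparative and test methods; the data are synthetic and noise-free (40 specimens spanning the working range, with a built-in constant and proportional error) so that the arithmetic is easy to follow. The function name and the decision level of 200 are hypothetical.

```python
import numpy as np

def comparison_statistics(comparative, test, decision_level):
    """Bias, SD of differences, and regression-based systematic error."""
    x = np.asarray(comparative, dtype=float)
    y = np.asarray(test, dtype=float)
    diff = y - x                                # y-axis values of a difference plot
    slope, intercept = np.polyfit(x, y, 1)      # ordinary least-squares line
    # Systematic error at a decision concentration Xc: (a + b*Xc) - Xc
    systematic_error = (intercept + slope * decision_level) - decision_level
    return {
        "bias": float(diff.mean()),
        "sd_diff": float(diff.std(ddof=1)),
        "slope": float(slope),
        "intercept": float(intercept),
        "systematic_error": float(systematic_error),
    }

# Synthetic paired data: test method reads 3 % high plus a constant offset of 2.
reference = np.linspace(50.0, 400.0, 40)
candidate = 2.0 + 1.03 * reference

stats = comparison_statistics(reference, candidate, decision_level=200.0)
print(stats)
```

For real data with a wide analytical range, the regression estimate at the decision level is generally more informative than the average bias, which mixes constant and proportional error.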

Reliability studies should specifically assess how different sources of variation (raters, instruments, time) influence measurement results. The intraclass correlation coefficient (ICC) quantifies the proportion of total variance due to true differences between samples, while the standard error of measurement (SEM) indicates the precision of an individual measurement score [19]. These statistical approaches transform sample preparation from an art to a quantitatively-characterized scientific process.
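A minimal sketch of these two reliability statistics follows. It assumes the simplest design, a one-way random-effects model in which each sample is measured k times, and estimates the ICC from the between-sample and within-sample mean squares; the SEM is taken as the square root of the within-sample variance, which is equivalent to SD × √(1 − ICC) when SD² is the sum of the two variance components. Other ICC forms exist for designs with distinct raters or instruments, and the data below are hypothetical.

```python
import numpy as np

def icc_and_sem(measurements):
    """One-way random-effects ICC and SEM from an (n samples x k repeats) array."""
    x = np.asarray(measurements, dtype=float)
    n, k = x.shape
    row_means = x.mean(axis=1)
    grand_mean = x.mean()
    ms_between = k * np.sum((row_means - grand_mean) ** 2) / (n - 1)
    ms_within = np.sum((x - row_means[:, None]) ** 2) / (n * (k - 1))
    # Variance components; a negative between-sample estimate is truncated to 0.
    var_between = max((ms_between - ms_within) / k, 0.0)
    icc = var_between / (var_between + ms_within)
    sem = np.sqrt(ms_within)
    return float(icc), float(sem)

# Hypothetical duplicate measurements of three samples.
data = [[9.0, 11.0],
        [19.0, 21.0],
        [29.0, 31.0]]

icc, sem = icc_and_sem(data)
print(round(icc, 3), round(sem, 3))
```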

Technique-Specific Systematic Approaches

Systematic sample preparation requires technique-specific strategies that acknowledge the distinct physical and chemical requirements of different analytical platforms. The fundamental principle remains consistent—understanding and controlling variables through scientific principles—but the implementation varies significantly based on detection mechanism and sample form.

Solid Sample Preparation Techniques

Solid samples present particular challenges due to their inherent heterogeneity and complex physical structure. Systematic approaches to solid sample preparation employ sequential processing steps designed to achieve homogeneity, controlled particle size, and appropriate surface characteristics.

Grinding and Milling: Mechanical particle size reduction represents the foundational step for solid sample preparation. Systematic approaches select grinding equipment based on material hardness, required final particle size, and contamination risks. Swing grinding machines employ oscillating motion rather than direct pressure to reduce heat formation that might alter sample chemistry, making them ideal for tough samples like ceramics and ferrous metals [1]. For enhanced control over particle size reduction, spectroscopic milling machines provide programmable parameters for rotational speed, feed rate, and cutting depth, often incorporating cooling systems to prevent thermal degradation [1].

Pelletizing for XRF Analysis: For XRF spectrometry, powdered samples must be transformed into solid disks with uniform density and surface properties. The systematic pelletizing process involves: (1) blending the ground sample with an appropriate binder (e.g., wax or cellulose), (2) pressing using hydraulic or pneumatic presses at typically 10-30 tons pressure, and (3) producing pellets with flat, smooth surfaces of consistent thickness [1]. The choice of binder and pressure parameters depends on the powder characteristics, with poorly-binding powders requiring binders like boric acid or lithium tetraborate.

Fusion Techniques: For refractory materials that resist conventional dissolution, fusion provides complete dissolution into homogeneous glass disks. This systematic approach involves: (1) blending the ground sample with a flux (typically lithium tetraborate), (2) melting at temperatures between 950-1200°C in platinum crucibles, and (3) casting the molten material as a homogeneous disk for analysis [1]. Fusion completely breaks down crystal structures and standardizes the sample matrix, eliminating mineral effects that complicate other preparation techniques. Although more expensive than pressing methods, fusion offers unparalleled accuracy for challenging materials like cement, slag, and refractory oxides.

Liquid and Gas Sample Preparation

Liquid and gaseous samples present distinct challenges that require specialized systematic approaches focusing on dilution, filtration, and matrix modification.

ICP-MS Preparation: The exceptional sensitivity of ICP-MS demands stringent liquid sample preparation protocols. Systematic approaches include: (1) accurate dilution to appropriate concentration ranges, (2) filtration using 0.45 μm membrane filters (0.2 μm for ultratrace analysis) to remove suspended material, (3) high-purity acidification with nitric acid (typically to 2% v/v) to prevent precipitation and adsorption, and (4) internal standardization to compensate for matrix effects and instrument drift [1]. PTFE membranes typically provide the best balance of chemical resistance and low background contamination.
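The dilution, acidification, and internal-standard steps involve simple arithmetic that is easy to get wrong at the bench. The sketch below is illustrative: it assumes the common convention that "2% v/v" means 2 mL of concentrated acid per 100 mL of final solution, uses C₁V₁ = C₂V₂ for dilution, and applies a simple ratio correction for internal-standard response. All function names and numbers are hypothetical.

```python
def aliquot_volume_ml(stock_conc, target_conc, final_volume_ml):
    """Volume of stock needed for a dilution, from C1*V1 = C2*V2."""
    if target_conc > stock_conc:
        raise ValueError("target concentration exceeds stock concentration")
    return target_conc * final_volume_ml / stock_conc

def acid_volume_ml(final_volume_ml, percent_v_v=2.0):
    """Volume of concentrated acid for a given % v/v in the final solution."""
    return final_volume_ml * percent_v_v / 100.0

def is_corrected_signal(analyte_counts, is_counts_sample, is_counts_calibration):
    """Rescale the analyte signal by the internal-standard response ratio."""
    return analyte_counts * is_counts_calibration / is_counts_sample

# Example: dilute a 1000 ug/L stock to 10 ug/L in 50 mL with 2 % v/v HNO3.
stock_ml = aliquot_volume_ml(stock_conc=1000.0, target_conc=10.0, final_volume_ml=50.0)
acid_ml = acid_volume_ml(final_volume_ml=50.0)
diluent_ml = 50.0 - stock_ml - acid_ml

# Example: the internal standard reads 10 % low in the sample (suppression).
corrected = is_corrected_signal(analyte_counts=8000.0,
                                is_counts_sample=45000.0,
                                is_counts_calibration=50000.0)
print(stock_ml, acid_ml, diluent_ml, round(corrected, 1))
```

In practice the final volume is made up to the mark in a volumetric vessel rather than by adding a calculated diluent volume; the diluent figure here is only an approximate guide.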

Solvent Selection for Molecular Spectroscopy: For UV-Vis and FT-IR spectroscopy, systematic solvent selection requires matching solvent properties to both analytical technique and sample characteristics. For UV-Vis, key considerations include cutoff wavelength (below which the solvent absorbs strongly), polarity, and purity grade [1]. Common UV-Vis solvents include water (~190 nm cutoff), methanol (~205 nm cutoff), and acetonitrile (~190 nm cutoff). For FT-IR, solvent absorption bands must not overlap with significant analyte features, making deuterated solvents like CDCl₃ valuable alternatives with minimal interfering absorption bands [1].
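The UV-Vis cutoff rule can be expressed as a simple filter. This sketch uses the approximate cutoff values listed above; the 10 nm safety margin and the function name are assumptions added for illustration.

```python
# Approximate UV cutoff wavelengths (nm) quoted in the text above
UV_CUTOFF_NM = {"water": 190, "acetonitrile": 190, "methanol": 205}

def usable_solvents(measurement_nm, margin_nm=10.0):
    """Solvents whose cutoff lies at least margin_nm below the measurement wavelength."""
    return sorted(
        name
        for name, cutoff in UV_CUTOFF_NM.items()
        if measurement_nm >= cutoff + margin_nm
    )

print(usable_solvents(200))   # methanol is excluded this close to its cutoff
print(usable_solvents(220))
```

Polarity and purity grade still have to be checked separately; the filter only removes solvents that would absorb in the measurement window.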

Automation and High-Throughput Systems

Modern systematic approaches increasingly incorporate automation to enhance reproducibility and efficiency. Automated liquid-handling stations capable of processing 96- or 384-well plates in under an hour demonstrate a 1.8-fold reduction in sample-to-sample variation for proteomics workflows [20]. These systems not only improve analytical quality but also reallocate technician time from repetitive pipetting tasks to data interpretation, shortening report-generation timelines, which is a critical competitive advantage in the contract-research market [20].

Table 2: Systematic Sample Preparation Requirements by Analytical Technique

| Analytical Technique | Sample Form Requirements | Critical Parameters | Common Systematic Approaches |
|---|---|---|---|
| XRF Spectrometry | Flat, homogeneous surface | Particle size <75 μm, uniform density | Grinding, pelletizing, fusion |
| ICP-MS | Complete dissolution, particle-free | Accurate dilution, contamination control | Acid digestion, filtration, dilution schemes |
| FT-IR Spectroscopy | Appropriate optical characteristics | Consistent pathlength, solvent compatibility | KBr pellets, solvent selection, ATR |
| Raman Spectroscopy | Minimal fluorescence | Surface characteristics, laser compatibility | Surface enhancement, quenching approaches |
| UV-Vis Spectroscopy | Controlled concentration | Absorbance in linear range, solvent compatibility | Dilution series, solvent selection |

Case Study: Systematic LC-MS Analysis of Catecholamines

The analysis of catecholamines and their metabolites in biological samples provides an illustrative case study of systematic versus trial-and-error approaches to sample preparation. Catecholamines, including dopamine, norepinephrine, and epinephrine, present particular analytical challenges due to their low stability, spontaneous oxidation, and low concentrations in complex biological matrices [21] [22].

Matrix-Specific Systematic Protocols

A systematic approach recognizes that different biological matrices demand tailored preparation strategies based on their unique composition and analyte presentation:

Plasma/Serum Samples: Systematic preparation must address both protein content and analyte instability. The protocol includes: (1) addition of stabilizing agents (antioxidants like glutathione or metabisulfite), (2) protein precipitation with acids (perchloric or phosphoric acid) or organic solvents (acetonitrile or methanol), (3) sample purification using solid-phase extraction (SPE) with mixed-mode cation-exchange cartridges, and (4) concentration steps to achieve the required detection limits [21]. Critical parameters include maintaining pH at 2-4 to prevent oxidation and using isotopically labeled internal standards to correct for recovery variations.
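To see why an isotopically labeled internal standard corrects for recovery variation, consider a single-point response-ratio calculation. The sketch below is a simplification under stated assumptions: the labeled standard is spiked before extraction, it is lost in the same proportion as the analyte, and the relative response factor is 1 unless supplied. The function name is hypothetical.

```python
def quantify_with_labeled_is(analyte_area, is_area, is_conc_ng_ml, response_factor=1.0):
    """Analyte concentration (ng/mL) from the analyte-to-internal-standard area ratio."""
    return (analyte_area / is_area) * is_conc_ng_ml / response_factor

# Full recovery versus a 30% extraction loss affecting both species equally
full = quantify_with_labeled_is(1000.0, 2000.0, is_conc_ng_ml=10.0)
lossy = quantify_with_labeled_is(700.0, 1400.0, is_conc_ng_ml=10.0)
print(full, lossy)
```

Both calls return the same concentration because the loss cancels in the ratio. A full method would replace the single-point factor with a calibration curve of area ratio against concentration.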

Urine Samples: While urine contains fewer proteins, systematic preparation must address concentration variability and conjugated metabolites. The protocol includes: (1) specific gravity or creatinine normalization, (2) enzymatic deconjugation (with sulfatase/glucuronidase for phase II metabolites), (3) dilution with aqueous acid or buffer, and (4) SPE clean-up similar to plasma methods [21]. pH adjustment to 2-4 is critical but carries risks for MS instrumentation if not properly controlled.
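Creatinine normalization, the first step in the urine protocol, is a single division, but a small example shows what it achieves. The units and function name below are assumptions chosen for illustration.

```python
def creatinine_normalize(analyte_ug_per_l, creatinine_g_per_l):
    """Express a urinary analyte as micrograms per gram of creatinine."""
    if creatinine_g_per_l <= 0:
        raise ValueError("creatinine concentration must be positive")
    return analyte_ug_per_l / creatinine_g_per_l

# Two hypothetical spot samples from the same subject, one twice as dilute
concentrated = creatinine_normalize(analyte_ug_per_l=50.0, creatinine_g_per_l=1.0)
dilute = creatinine_normalize(analyte_ug_per_l=25.0, creatinine_g_per_l=0.5)
print(concentrated, dilute)
```

The raw concentrations differ by a factor of two, yet the normalized values agree, which is the variability in urine concentration that the protocol is meant to remove.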

Brain Tissue Samples: Systematic preparation for brain tissue focuses on complete homogenization and stabilization. The protocol includes: (1) rapid freezing after collection, (2) homogenization in ice-cold acidified solvents, (3) protein precipitation, and (4) extract purification using SPE or liquid-liquid extraction [21]. The critical consideration is minimizing degradation during processing through temperature control and the presence of antioxidants.

Quantitative Method Validation

Systematic approaches incorporate comprehensive validation parameters to quantify method performance. For catecholamine analysis, key metrics include: extraction recovery (typically 70-120% for reliable quantitation), matrix effects (assessed by post-column infusion experiments), limit of detection (LOD, dependent on sample volume and detector sensitivity), and limit of quantification (LOQ, sufficient for physiological concentrations) [21]. These validation parameters transform subjective assessment into objectively quantified method performance characteristics.
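The validation metrics above can be computed from a handful of peak areas. The sketch below makes several assumptions that are not in the source: matrix effect is estimated by comparing a post-extraction spike with a neat standard (a quantitative alternative to the post-column infusion experiment mentioned above), and LOD and LOQ use the common 3.3σ/S and 10σ/S conventions, where σ is the standard deviation of the response and S is the calibration slope. All function names are hypothetical.

```python
def extraction_recovery_pct(pre_spike_area, post_spike_area):
    """Recovery: analyte spiked before extraction vs. spiked into the final extract."""
    return 100.0 * pre_spike_area / post_spike_area

def matrix_effect_pct(post_spike_area, neat_standard_area):
    """Below 100% indicates ion suppression; above 100% indicates enhancement."""
    return 100.0 * post_spike_area / neat_standard_area

def recovery_acceptable(recovery_pct, low=70.0, high=120.0):
    """Check recovery against the 70-120% window quoted in the text."""
    return low <= recovery_pct <= high

def lod_loq(response_sd, slope):
    """LOD and LOQ from response standard deviation and calibration slope."""
    return 3.3 * response_sd / slope, 10.0 * response_sd / slope

recovery = extraction_recovery_pct(pre_spike_area=850.0, post_spike_area=1000.0)
effect = matrix_effect_pct(post_spike_area=1000.0, neat_standard_area=1250.0)
lod, loq = lod_loq(response_sd=0.5, slope=100.0)
print(recovery, recovery_acceptable(recovery), effect, lod, loq)
```

Keeping recovery and matrix effect as separate numbers shows whether a low signal comes from losses during extraction or from suppression in the ion source.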

The Scientist's Toolkit: Essential Research Reagent Solutions

Systematic sample preparation requires specific reagents and materials designed to address the technical challenges of different sample matrices and analytical techniques. The following toolkit represents essential solutions for implementing systematic approaches across various applications.

Table 3: Essential Research Reagent Solutions for Systematic Sample Preparation

| Reagent/Material | Function | Application Examples |
|---|---|---|
| Lithium Tetraborate | Flux for fusion techniques | XRF analysis of refractory materials [1] |
| Mixed-Mode Cation-Exchange Sorbents | Selective extraction of basic analytes | SPE cleanup of catecholamines from biological fluids [21] |
| Deuterated Internal Standards | Correction for recovery variations | Quantitative LC-MS of neurotransmitters [21] |
| PTFE Membrane Filters | Particle removal without contamination | ICP-MS sample preparation [1] |
| Antioxidant Cocktails | Stabilization of oxidizable analytes | Preservation of catecholamines in biological samples [21] |
| KBr for Spectroscopy | Pellet formation for FT-IR | Solid sample analysis by FT-IR [1] |
| High-Purity Acids | Sample digestion and preservation | Trace metal analysis, ICP-MS [1] |
| Cellulose/Boric Acid Binders | Powder binding for pellet formation | XRF pellet preparation [1] |

Future Trends and Implementation Framework

The evolution of sample preparation continues toward increasingly systematic approaches characterized by automation, miniaturization, and enhanced scientific foundation. Understanding these trends provides a roadmap for implementing systematic paradigms in research and quality control environments.

Emerging Technological Trends

Several technological developments are shaping the future of systematic sample preparation:

Automation and High-Throughput Systems: Laboratories are increasingly investing in automated liquid-handling stations capable of processing 96- or 384-well plates in under an hour, delivering a 1.8-fold reduction in sample-to-sample variation for proteomics workflows [20]. These systems enhance both quality and productivity while reallocating technician time from repetitive tasks to data interpretation.

Microextraction Techniques: Miniaturized extraction approaches are gaining prominence, particularly for biological and environmental applications. These techniques offer advantages including reduced solvent consumption, smaller sample requirements, and potential for on-line coupling with analytical instruments [21].

Integrated Platform Solutions: Vendors are increasingly offering comprehensive solutions that combine instrumentation with validated reagent kits and data pipelines. This approach reduces validation burdens for end users and ensures method consistency [20]. Procurement decisions increasingly favor suppliers offering both reagents and informatics support under single service level agreements.

Advanced Material Science: Novel sorbent materials with enhanced selectivity and capacity are expanding systematic preparation options. Molecularly imprinted polymers, restricted access media, and hybrid inorganic-organic materials provide improved cleanup and enrichment capabilities for challenging applications.

Implementation Strategy

Transitioning from trial-and-error to systematic approaches requires structured implementation:

Fundamental Education: Reincorporate sample preparation fundamentals into analytical chemistry curricula, emphasizing extraction principles and method validation protocols [17].

Method Validation Infrastructure: Implement standardized validation protocols including comparison studies, reliability assessments, and statistical treatment of results [18] [19].

Automation Strategy: Develop phased automation implementation plans based on sample volume, staffing expertise, and quality requirements [20].

Cross-Functional Collaboration: Foster collaboration between laboratory managers, analytical scientists, and IT departments to specify integrated solutions combining sample preparation with data management [20].

The transition from trial-and-error to systematic approaches in sample preparation represents a necessary evolution in analytical science. By embracing fundamental principles, implementing rigorous validation protocols, and leveraging technological advancements, researchers can overcome the limitations of empirical methods that currently contribute to the majority of analytical errors. The systematic paradigm reframes sample preparation from an ancillary consideration to a scientifically grounded discipline essential for analytical accuracy, reproducibility, and efficiency. As sample preparation technologies continue to advance toward greater automation, miniaturization, and integration, those who adopt systematic approaches will achieve not only improved analytical outcomes but also enhanced operational efficiency in both research and quality control environments.

Technique-Specific Protocols: From Grinding to Gas Sampling

Processing solid samples is a foundational step in spectroscopic sample preparation. The validity of all subsequent analytical data generated by techniques such as X-Ray Fluorescence (XRF), Inductively Coupled Plasma Mass Spectrometry (ICP-MS), and Fourier Transform Infrared (FT-IR) spectroscopy is contingent upon the initial preparation of the sample [1]. Inadequate sample preparation is a primary source of error, accounting for as much as 60% of all spectroscopic analytical errors [1]. The core objective of solid sample preparation is to transform a raw, often heterogeneous material into a homogeneous, analyzable specimen that is representative of the whole. This process directly influences analytical accuracy by controlling key parameters such as particle size, surface characteristics, and overall homogeneity, thereby ensuring that the sample interacts with radiation in a consistent and reproducible manner [1]. Without proper preparation, even the most advanced instrumentation can yield misleading data, compromising research integrity, quality control protocols, and scientific conclusions.

The Imperative of Proper Solid Sample Preparation

The physical characteristics of a solid sample have a profound impact on spectroscopic results. Proper grinding, milling, and homogenization mitigate several fundamental problems that can otherwise invalidate analytical data.

- Particle Size and Surface Effects: Radiation interacts with matter in ways highly dependent on surface and particle characteristics. Rough surfaces can scatter light randomly, while a consistent, monodisperse particle size ensures uniform interaction. Significant variation in particle size introduces sampling error that severely compromises quantitative analysis [1].

- Homogeneity and Representativeness: Heterogeneous samples yield non-reproducible results because the specific portion analyzed may not represent the entire sample bulk. Grinding and milling are therefore essential for creating homogeneous samples that yield reliable and reproducible data [1] [23].

- Matrix Effects: Constituents within the sample matrix can suppress or enhance spectral signals, obscuring the response of the target analyte. Proper preparation techniques, such as dilution or matrix matching via fusion, reduce or compensate for these interferences [1].
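One practical way to check the homogeneity point above is to prepare several subsamples from the same ground material and compare their results. The sketch below computes the relative standard deviation (RSD) of replicate measurements; the 5% acceptance limit and the example readings are illustrative assumptions, not values from the source.

```python
import statistics

def relative_std_dev_pct(values):
    """Sample relative standard deviation (%) of replicate measurements."""
    return 100.0 * statistics.stdev(values) / statistics.mean(values)

def is_homogeneous(values, max_rsd_pct=5.0):
    """Flag whether replicate subsamples agree within an assumed RSD limit."""
    return relative_std_dev_pct(values) <= max_rsd_pct

# Hypothetical replicate readings before and after additional grinding
coarse = [9.0, 10.0, 11.0]
fine = [9.9, 10.0, 10.1]
print(relative_std_dev_pct(coarse), is_homogeneous(coarse))
print(relative_std_dev_pct(fine), is_homogeneous(fine))
```

If the RSD stays above the chosen limit after further grinding, the spread is more likely to come from the measurement itself than from sample heterogeneity.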

The specific preparation requirements vary significantly by analytical technique. For instance, XRF spectrometry primarily requires flat, homogeneous surfaces with a controlled particle size (typically <75 μm), often achieved through pressed pellets or fused beads [1]. In contrast, ICP-MS demands the total dissolution of solid samples, a process for which initial fine grinding is often a critical first step to facilitate complete acid digestion [1]. FT-IR analysis of solids requires grinding with an infrared-transparent matrix like potassium bromide (KBr) to form pellets for transmission analysis [1]. Thus, the selection of a sample preparation protocol is directly dictated by the requirements of the subsequent spectroscopic method.

The Sample Preparation Workflow: A Step-by-Step Guide

A systematic approach to solid sample preparation is vital for achieving consistent results. The entire process, from initial sampling to final analysis, can be visualized in the following workflow. This workflow integrates the key steps of sampling, drying, size reduction, and homogenization, highlighting their cyclical nature until the desired analytical fineness is achieved.

Step 1: Sampling and Sample Division