Spectroscopy Data Analysis and Software Tools: A 2025 Guide for Biomedical Research and Drug Development


Abstract

This article provides a comprehensive guide to the rapidly evolving landscape of spectroscopy data analysis, tailored for researchers, scientists, and drug development professionals. It covers foundational principles and explores the latest software tools, including those enhanced with AI and machine learning. The scope includes practical methodologies for pharmaceutical applications, proven troubleshooting techniques for common instrumentation issues, and a critical framework for method validation and comparative analysis of techniques like ED-XRF and WD-XRF. By synthesizing current market trends and recent technological advancements, this guide aims to empower professionals to enhance data accuracy, accelerate research workflows, and meet stringent regulatory standards in biomedical and clinical research.

Mastering the Fundamentals: Core Concepts and Emerging Trends in Spectroscopy Software

Spectroscopy software has transitioned from a specialized tool for operating instruments to a critical platform for data intelligence and workflow automation. In modern laboratories, this software serves as the central nervous system, integrating with spectrometers to enable precise collection, analysis, and interpretation of spectral data across pharmaceutical development, food safety testing, and environmental monitoring [1]. Market estimates reflect this growing importance: depending on the source, the global market is valued at roughly $1.1 billion (2024) to $1.49 billion (2025), with projections ranging from $2.33 billion by 2029 to $2.5 billion by 2034 [2] [1] [3]. This growth, driven by technological innovation and stringent regulatory demands, has made understanding the software landscape essential for researchers and drug development professionals aiming to maintain analytical excellence and competitive advantage.

Current Market Size and Future Projections

The spectroscopy software market is experiencing robust global growth, fueled by advancements in artificial intelligence, cloud computing, and increasing application across regulated industries. The following table summarizes the key market size figures and growth projections from recent industry analyses:

| Source | 2024 Value | 2025 Value | Projected Value (Year) | Compound Annual Growth Rate (CAGR) |
|---|---|---|---|---|
| Business Research Company [2] | $1.33 billion | $1.49 billion | $2.33 billion (2029) | 12.1% (2024-2025), 11.9% (2025-2029) |
| 360iResearch [4] | $250.88 million | $277.44 million | $610.20 million (2032) | 11.75% (2024-2032) |
| Global Market Insights [1] [3] | $1.1 billion | - | $2.5 billion (2034) | 9.1% (2025-2034) |
| Marketsizeandtrends [5] | $1.2 billion | - | $2.30 billion (2033) | 7.5% (2026-2033) |

Note: Discrepancies in absolute values arise from different research methodologies and market definitions (e.g., inclusion or exclusion of related services and hardware). However, all sources consistently indicate strong, positive growth.
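
The growth rates quoted in the table can be sanity-checked from the start and end values. The sketch below assumes the standard definition CAGR = (end / start)^(1 / years) - 1; the function name `cagr` is illustrative and not taken from any of the cited reports.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.10 means 10% per year)."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# 360iResearch row: $250.88 million (2024) to $610.20 million (2032), 8 years
rate_360i = cagr(250.88, 610.20, 8)
print(f"360iResearch implied CAGR: {rate_360i:.2%}")

# Business Research Company row: $1.49 billion (2025) to $2.33 billion (2029), 4 years
rate_brc = cagr(1.49, 2.33, 4)
print(f"Business Research Company implied CAGR: {rate_brc:.2%}")
```

Both implied rates should land close to the figures the sources report; small differences are expected because the published market sizes are rounded.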

Market Growth Drivers and Trends

Several interconnected factors are propelling the expansion and transformation of the spectroscopy software market:

Pharmaceutical Industry Demand: The pharmaceutical sector accounted for over 28.9% of the market share in 2024 [1] [3]. The need for high-throughput screening in drug discovery, rigorous quality control, and compliance with regulatory standards is a primary driver. The FDA's approval of 55 new drugs in 2023 exemplifies the industry's pace, which relies heavily on advanced analytical tools [1].

Stringent Regulatory and Safety Requirements: Increasing global emphasis on food safety and environmental monitoring is boosting software adoption. For instance, food and beverage recalls in the U.S. rose by 8% in 2023, highlighting the need for robust contaminant detection and quality verification tools [2].

Technology Integration: The integration of Artificial Intelligence (AI) and Machine Learning (ML) is revolutionizing data analysis by enabling advanced pattern recognition, predictive analytics, and automated anomaly detection [2] [6] [1]. Furthermore, the shift toward cloud-based and hybrid deployment models offers scalability, remote access, and enhanced collaboration for geographically dispersed teams [2] [6] [4].

Rise of Portable and Connected Systems: The market is seeing growing demand for software compatible with portable and handheld spectrometers, enabling on-site analysis in fields like agriculture, forensics, and environmental monitoring [6] [7] [1]. The integration with Laboratory Information Management Systems (LIMS) and other lab platforms is also creating more efficient, connected workflows [2] [6].

Technical Support Center: Troubleshooting and FAQs

This section addresses common technical challenges researchers face when using spectroscopy software, providing practical guidance for resolving data analysis and operational issues.

Frequently Asked Questions (FAQs)

Q1: What are the primary considerations when choosing between cloud-based and on-premise spectroscopy software?

The choice depends on your data security, compliance, and collaboration needs. On-premise solutions, which dominated the market in 2024 with USD 549.5 million in revenue, offer direct control over sensitive data, which is crucial for meeting strict regulatory requirements in pharmaceuticals and healthcare [1] [3]. They also allow for deep customization and integration with existing lab systems. Cloud-based solutions provide superior scalability, remote access, and easier collaboration, and they reduce upfront capital expenditure. They are ideal for distributed teams and labs that need to process large, variable datasets flexibly [6] [4] [1].

Q2: How is AI transforming the analysis of spectroscopic data?

AI, particularly machine learning, is revolutionizing spectroscopy software by automating complex analytical tasks [6]. Key transformations include:

- Automated Pattern Recognition and Anomaly Detection: ML algorithms can rapidly identify spectral features and correlate them with specific compounds or materials, significantly reducing analysis time and increasing accuracy [6].

- Enhanced Data Processing: Deep learning models are employed for advanced spectral deconvolution, noise reduction, and baseline correction, resulting in cleaner and more reliable data [6].

- Predictive Modeling and Insights: AI-driven software can provide actionable recommendations for quality control and R&D by interpreting complex datasets, thereby supporting better decision-making [6] [1].

Q3: Our lab is implementing new spectroscopy software. What are the key steps for successful validation, particularly under GAMP 5?

For labs operating under GxP, validating software is critical. A risk-based approach, as outlined in GAMP 5, is the industry standard [8]. The process should be integrated into your project lifecycle, from planning to decommissioning. The diagram below outlines the core logical workflow for a GAMP 5 compliant validation process.

Q4: A common issue we face is poor signal-to-noise ratio in our spectral data, especially with low-concentration samples. What are the standard troubleshooting steps?

Poor signal-to-noise ratio is a frequent challenge. Follow this systematic troubleshooting workflow to identify and resolve the issue.
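
Whatever the root cause turns out to be, one standard lever for low-concentration samples is co-adding repeated scans: for uncorrelated random noise, averaging N scans reduces the noise by roughly a factor of the square root of N. The sketch below illustrates this on synthetic data only; the peak shape, noise level, and the choice of a signal-free baseline region are all assumptions made for the demonstration.

```python
import math
import random
import statistics


def simulate_scan(rng, n_points=400, peak_center=300, peak_height=1.0, noise_sd=1.0):
    """Return one synthetic scan: a Gaussian peak plus white noise."""
    return [
        peak_height * math.exp(-((i - peak_center) ** 2) / (2 * 5.0 ** 2))
        + rng.gauss(0.0, noise_sd)
        for i in range(n_points)
    ]


def coadd(scans):
    """Average a list of scans point by point."""
    n = len(scans)
    return [sum(values) / n for values in zip(*scans)]


def noise_sd_in_baseline(spectrum, baseline=slice(0, 200)):
    """Estimate noise as the standard deviation of a signal-free region."""
    return statistics.stdev(spectrum[baseline])


rng = random.Random(0)
single = simulate_scan(rng)
averaged = coadd([simulate_scan(rng) for _ in range(64)])

improvement = noise_sd_in_baseline(single) / noise_sd_in_baseline(averaged)
print(f"Noise reduction from 64 co-added scans: {improvement:.1f}x (theory: about 8x)")
```

The square-root relationship holds only for random noise; drift, vibration, or a contaminated optical path will not average away and needs to be fixed at the source.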

Q5: We are experiencing integration failures between our spectroscopy software and the Laboratory Information Management System (LIMS). What is the recommended protocol to resolve this?

Integration issues between software systems are common. The following protocol provides a detailed methodology for diagnosing and resolving these problems.

Experimental Protocol: Diagnosing Software-LIMS Integration Failures

Objective: To systematically identify and resolve communication and data transfer failures between spectroscopy software and a LIMS.

Materials and Reagents:

- Primary Systems: Spectroscopy software (e.g., Waters Connect, Genie 4.0), LIMS (e.g., LabVantage, LabWare) [2] [1] [8].

- Testing Tools: Network diagnostic tools (e.g., ping, telnet), API testing platform (e.g., Postman).

- Documentation: System configuration files, API documentation, and data mapping specifications.

Methodology:

Verification of Network Connectivity:

- Using a command-line interface, ping the LIMS server's IP address to confirm basic network reachability.

- Use the `telnet [LIMS_Server_IP] [Port]` command to verify that the specific port required for communication is open and accessible. A failed connection at this stage indicates a network firewall or configuration issue.
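
Where telnet is not installed, the same port check can be scripted. This is a minimal sketch using Python's standard `socket` module; the function name `port_is_open` and the default timeout are illustrative choices, not part of any vendor tooling.

```python
import socket


def port_is_open(host: str, port: int, timeout_s: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout_s):
            return True
    except OSError:
        # Covers refused connections, timeouts, and name-resolution failures.
        return False
```

A call such as `port_is_open("lims.example.internal", 443)` (placeholder host and port) returning `False` points to the same firewall or configuration problems as a failed telnet session. A `True` result only confirms that the port accepts TCP connections, not that the LIMS API behind it is healthy.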

Authentication and Credential Validation:

- Manually test the authentication process using an API tool like Postman. Send a login request to the LIMS API endpoint with the credentials used by the spectroscopy software.

- Confirm the credentials have not expired and have the necessary permissions for data writing and reading. Note any error codes related to "unauthorized" or "forbidden" access.

Data Format and Payload Inspection:

- Capture a sample of the data payload being sent from the spectroscopy software. Compare the structure (e.g., JSON, XML schema) and field names directly against the expected format defined in the LIMS API documentation.

- Pay specific attention to data types (e.g., text vs. numeric), mandatory fields, and character limits. Mismatches here are a common source of "silent" failures where the connection is successful but data is rejected.
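
The payload comparison can be automated once the expected format is written down. In the sketch below, the field names, types, and character limits in `EXPECTED_FIELDS` are hypothetical; in practice they would be transcribed from the LIMS API documentation.

```python
import json

# Hypothetical field specification; transcribe the real one from the LIMS API documentation.
EXPECTED_FIELDS = {
    "sample_id": {"type": str, "required": True, "max_length": 32},
    "analyte": {"type": str, "required": True, "max_length": 64},
    "result_value": {"type": (int, float), "required": True},
    "units": {"type": str, "required": False, "max_length": 16},
}


def validate_payload(raw_json: str, spec: dict = EXPECTED_FIELDS) -> list[str]:
    """Return a list of human-readable problems; an empty list means the payload matches."""
    try:
        payload = json.loads(raw_json)
    except json.JSONDecodeError as exc:
        return [f"payload is not valid JSON: {exc}"]
    if not isinstance(payload, dict):
        return ["payload must be a JSON object"]

    problems = []
    for name, rule in spec.items():
        if name not in payload:
            if rule["required"]:
                problems.append(f"missing mandatory field '{name}'")
            continue
        value = payload[name]
        # bool is a subclass of int in Python, so reject it explicitly (no field here is boolean).
        if isinstance(value, bool) or not isinstance(value, rule["type"]):
            problems.append(f"field '{name}' has unexpected type {type(value).__name__}")
        elif isinstance(value, str) and len(value) > rule.get("max_length", len(value)):
            problems.append(f"field '{name}' exceeds {rule['max_length']} characters")
    for name in payload.keys() - spec.keys():
        problems.append(f"unexpected field '{name}'")
    return problems


# A numeric result sent as text: the connection succeeds, but the record may be rejected.
captured = '{"sample_id": "S-0042", "analyte": "caffeine", "result_value": "12.7"}'
for problem in validate_payload(captured):
    print(problem)
```

Running the check against a captured payload before it reaches the LIMS makes the "silent" text-versus-numeric mismatch visible immediately.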

Log File Analysis:

- Examine the application log files of both the spectroscopy software and the LIMS. Filter for errors, warnings, or failed transactions that occurred at the time of the integration attempt.

- Cross-reference timestamps to correlate events between the two systems. The logs often provide specific error messages that are not displayed in the user interface.
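
Timestamp cross-referencing can also be scripted. The sketch below assumes both logs use a `YYYY-MM-DD HH:MM:SS LEVEL message` layout and that the two systems' clocks are synchronized; the log lines are invented, and real vendor formats will need their own parser.

```python
from datetime import datetime, timedelta

# Invented example lines; real log formats differ between vendors.
SPECTRO_LOG = [
    "2025-03-04 10:15:02 INFO  export started for batch B17",
    "2025-03-04 10:15:03 ERROR POST /results returned HTTP 400",
]
LIMS_LOG = [
    "2025-03-04 10:14:40 INFO  session opened",
    "2025-03-04 10:15:04 WARN  rejected record: field result_value not numeric",
]


def parse_line(line: str):
    """Split a 'YYYY-MM-DD HH:MM:SS LEVEL message' line into its parts."""
    stamp = datetime.strptime(line[:19], "%Y-%m-%d %H:%M:%S")
    level, _, message = line[19:].strip().partition(" ")
    return stamp, level, message.strip()


def correlate(log_a, log_b, window=timedelta(seconds=5)):
    """Pair each ERROR/WARN entry in log_a with log_b problems inside the time window."""
    flagged = {"ERROR", "WARN"}
    entries_b = [entry for entry in map(parse_line, log_b) if entry[1] in flagged]
    pairs = []
    for stamp_a, level_a, msg_a in map(parse_line, log_a):
        if level_a not in flagged:
            continue
        for stamp_b, _, msg_b in entries_b:
            if abs(stamp_a - stamp_b) <= window:
                pairs.append((msg_a, msg_b))
    return pairs


for spectro_msg, lims_msg in correlate(SPECTRO_LOG, LIMS_LOG):
    print(f"{spectro_msg}  <->  {lims_msg}")
```

Pairing the two sides this way often shows that a generic client-side error corresponds to a much more specific rejection message on the LIMS side.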

Expected Outcome: By following this protocol, the root cause of the integration failure will be identified, typically falling into one of the categories above. The resolution may involve network reconfiguration, credential updates, data mapping adjustments, or software patching.

Troubleshooting Note: If the issue persists after these steps, contact the technical support teams for both the spectroscopy software and LIMS vendors, providing them with the detailed findings from this diagnostic protocol.

The Scientist's Toolkit: Essential Research Reagent Solutions

The following table details key reagents and materials frequently used in spectroscopic experiments, particularly in pharmaceutical applications.

| Item Name | Function / Role in Experiment |
|---|---|
| Ultrapure Water | Used for sample preparation, dilution, and as a blank solvent; essential for achieving low background noise in UV-Vis and FT-IR spectroscopy [7]. |
| Deuterated Solvents (e.g., D₂O, CDCl₃) | Required for Nuclear Magnetic Resonance (NMR) spectroscopy to provide the deuterium lock signal and to avoid large solvent proton peaks that would otherwise obscure analyte signals [1]. |
| PCR Primers & Probes | Designed using specialized software (e.g., Primer3) for specific DNA amplification in genetics and molecular biology research, with subsequent analysis often verified by spectroscopic methods [9]. |
| Monoclonal Antibodies | Key analytes in biopharmaceutical characterization; analyzed using specialized fluorescence techniques like A-TEEM for stability, aggregation, and identity testing [7]. |
| Certified Reference Materials | Provide known spectral signatures for instrument calibration, method validation, and ensuring quantitative accuracy across all spectroscopic techniques [1]. |

Troubleshooting Guides

Common FT-IR Spectroscopy Problems and Solutions

Modern spectroscopy software integrates advanced capabilities for data collection, analysis, and interpretation, but users may still encounter technical issues. The following table outlines common problems in FT-IR spectroscopy and their recommended solutions [10].

| Problem | Symptoms | Likely Cause | Solution |
|---|---|---|---|
| Instrument Vibrations | Noisy spectra; strange, unexplained peaks | Physical disturbances from nearby equipment or lab activity | Relocate spectrometer to a vibration-free surface; isolate from pumps and heavy traffic [10]. |
| Dirty ATR Crystal | Negative absorbance peaks; distorted baselines | Contaminated or dirty crystal surface | Clean the ATR crystal thoroughly with appropriate solvent and acquire a fresh background scan [10]. |
| Incorrect Data Processing | Distorted spectral output in diffuse reflection | Data processed in absorbance units instead of Kubelka-Munk | Reprocess the data, converting to Kubelka-Munk units for a more accurate analytical representation [10]. |
| Surface vs. Bulk Effects | Inconsistent results from the same material sample | Surface chemistry (e.g., oxidation) differs from bulk material | Collect spectra from both the material's surface and a freshly cut interior section for comparison [10]. |
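
For the diffuse reflection case in the table, the Kubelka-Munk conversion is F(R) = (1 - R)² / (2R), where R is the sample's reflectance relative to a non-absorbing reference, expressed as a fraction. The sketch below applies it point by point; the function names are illustrative, and most vendor software offers this conversion as a built-in processing option.

```python
def kubelka_munk(reflectance: float) -> float:
    """Kubelka-Munk function F(R) = (1 - R)^2 / (2R) for fractional reflectance R."""
    if not 0.0 < reflectance <= 1.0:
        raise ValueError("reflectance must be a fraction in the interval (0, 1]")
    return (1.0 - reflectance) ** 2 / (2.0 * reflectance)


def to_kubelka_munk(reflectance_spectrum):
    """Convert a diffuse reflectance spectrum (fractions, not percent) point by point."""
    return [kubelka_munk(r) for r in reflectance_spectrum]


spectrum_r = [0.90, 0.50, 0.20]
print(to_kubelka_munk(spectrum_r))
```

A reflectance of 0.50 maps to 0.25 and a reflectance of 0.20 maps to 1.6, so strongly absorbing bands are stretched relative to weak ones. Spectra stored as percent reflectance must be divided by 100 first.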

Software and Data Integrity FAQs

Q: Our laboratory must adhere to strict data security and compliance protocols. What software deployment option is most suitable? [1]

A: The on-premises deployment model is often preferred in regulated environments like pharmaceuticals and healthcare. It provides organizations with direct control over sensitive spectral data, helps meet specific regulatory requirements (e.g., FDA compliance), and allows for deeper customization and integration with existing laboratory systems [1].

Q: How can our team quickly interrogate data to plot chromatograms, perform library searches, or annotate spectra without launching a full quantitative analysis? [11]

A: Many software suites, such as Thermo Scientific Xcalibur, include built-in applications for ad-hoc data review. The FreeStyle application, for example, allows users to qualitatively interrogate data by displaying chromatograms and spectra, integrating peaks, searching mass spectral libraries, and annotating plots with text and graphics [11].

Q: What are the key technological trends making spectroscopy software more powerful and accessible? [1]

A: The market is rapidly evolving with several key trends:

- Integration of AI and ML: Enhances data processing speed, pattern recognition, and predictive analytics.

- Cloud-Based Solutions: Enables remote access, facilitates collaboration among geographically dispersed teams, and offers scalable computing resources.

- Intuitive User Interfaces: Development of user-friendly dashboards, automated workflows, and customizable reporting makes the software accessible to non-specialists.

- Modular Software Design: Allows users to select and pay for only the features they need, providing flexibility for evolving research requirements [1].

Q: Where can I find resources for instrument maintenance, operation, and software support? [12]

A: Most instrument manufacturers provide comprehensive technical support centers. These typically offer:

- Expert Guidance: Remote or on-site help with installation, calibration, and advanced troubleshooting.

- Comprehensive Training: Sessions on basic operation and advanced software functionalities.

- Extensive Online Resources: Access to user manuals, FAQs, troubleshooting guides, and instructional videos [12].

For specific platforms, such as Thermo Fisher's Real-Time PCR systems, dedicated support centers provide technical notes, FAQs, and workflow walkthroughs [13].

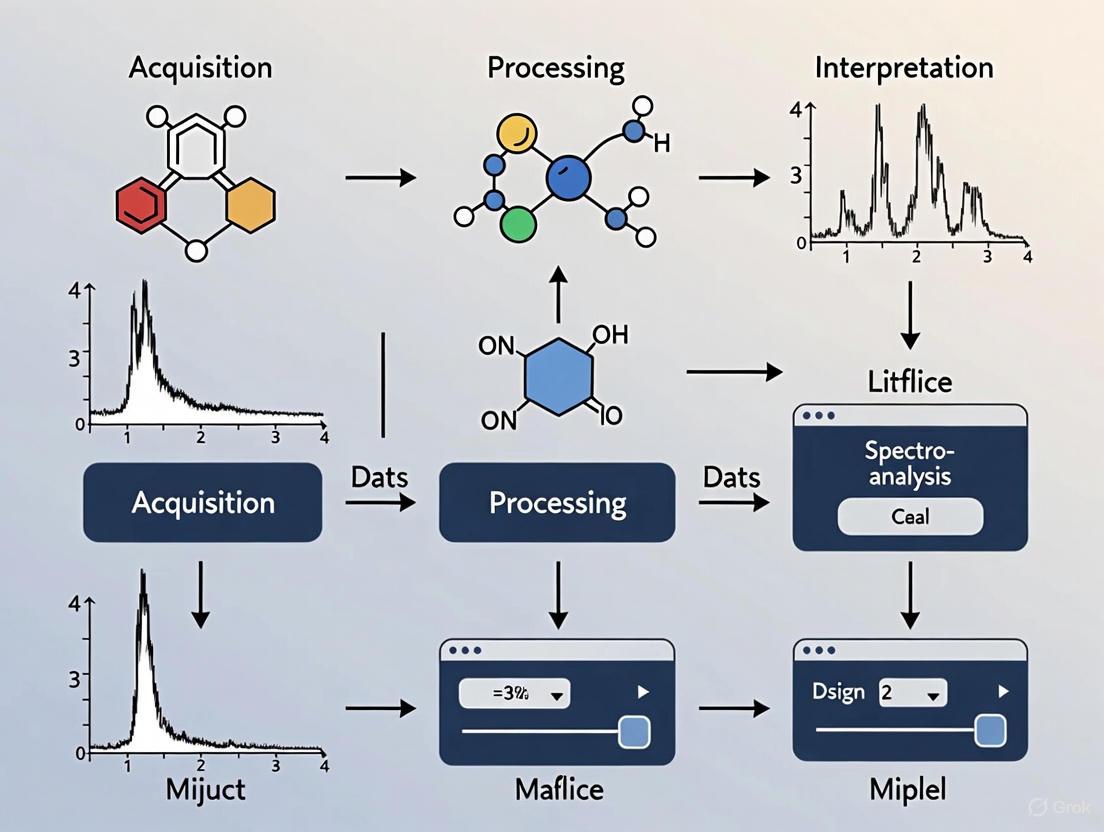

Workflow Visualization

Spectroscopy Data Analysis Workflow

The following diagram illustrates the core logical workflow of spectroscopy software, from data acquisition to final reporting, highlighting the key functions at each stage.

The Scientist's Toolkit: Essential Software Solutions

The following table details key software solutions and their primary functions in the spectroscopy workflow, crucial for ensuring data integrity and analytical efficiency [1] [11] [14].

| Software/Tool | Primary Function | Key Application in Research |
|---|---|---|
| Xcalibur Software [11] | Data acquisition, control, and interrogation for LC-MS systems. | Provides a centralized platform for method setup, data review, and integration with cloud-based tools for collaborative analysis. |
| SynerJY Software [14] | Integrated data acquisition and analysis for spectroscopic systems. | Offers intuitive control of spectrometers and detectors, with advanced data processing and presentation tools like 3-D plots and contour maps. |
| LabSpec 6 Software [14] | Dedicated data acquisition and analysis suite for Raman spectroscopy. | Delivers powerful, specialized capabilities for Raman analysis, including control of modular Raman systems. |
| Cary WinUV Color [15] | Color measurement and quality control software for UV-vis spectroscopy. | Automates generation of QA/QC reports using international color coordinate systems (e.g., chromaticity, CIELAB) for industries where color consistency is critical. |
| AI/ML Enhanced Platforms [1] | Advanced data analysis and pattern detection. | Improves speed and precision in processing spectral data, enabling predictive analytics and high-throughput screening in drug discovery. |

Technical Support Center

Frequently Asked Questions (FAQs)

Q1: What is the fundamental difference between traditional chemometrics and new AI methods for spectral analysis?

Traditional chemometrics relies on linear models like Principal Component Analysis (PCA) and Partial Least Squares (PLS) regression, which are vital for transforming multivariate datasets into actionable insights [16]. In contrast, modern Artificial Intelligence (AI) and Machine Learning (ML) frameworks automate feature extraction, handle nonlinear calibration, and facilitate data fusion. Key AI subfields include Machine Learning (ML), which develops models that learn from data without explicit programming, and Deep Learning (DL), which uses multi-layered neural networks for hierarchical feature extraction [16]. This enables AI to process unstructured data like hyperspectral images and high-throughput sensor arrays more effectively.

Q2: How can I quantify the uncertainty of predictions made by my machine learning model on spectroscopic data?

You can implement Quantile Regression Forest (QRF), a machine learning technique based on Random Forest. Unlike standard models that provide only a single prediction, QRF retains the distribution of responses within its decision trees. This allows it to calculate prediction intervals and provide a sample-specific uncertainty estimate alongside each prediction [17]. For example, values near the detection limit will naturally produce larger prediction intervals, clearly communicating greater uncertainty to the user [17].

Q3: Our team struggles with interpreting "black box" AI models. What are some strategies for improving model interpretability?

Interpretability is a common challenge. You can employ Explainable AI (XAI) frameworks to identify informative wavelength regions and preserve chemical insight. Techniques include:

- SHAP (SHapley Additive exPlanations): Explains the output of any ML model by quantifying the contribution of each feature.

- Grad-CAM (Gradient-weighted Class Activation Mapping): Highlights important regions in an input, often used with convolutional neural networks.

- Spectral Sensitivity Maps: Visualize which parts of the spectrum most influence the model's decision [16].

Using these tools helps reconcile powerful AI predictions with fundamental chemical principles.
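
One simple way to build a spectral sensitivity map is occlusion: mask one window of the spectrum at a time and record how much the model output changes. The sketch below uses that approach (it is not SHAP or Grad-CAM), and the `toy_model` is a stand-in invented for illustration; any callable that maps a spectrum to a number could take its place.

```python
def occlusion_sensitivity(predict, spectrum, window=5, fill_value=None):
    """Score each window by how much masking it changes the model output.

    predict: any callable mapping a spectrum (list of floats) to a float.
    fill_value: value written into the masked window (default: spectrum mean).
    """
    if fill_value is None:
        fill_value = sum(spectrum) / len(spectrum)
    baseline_prediction = predict(spectrum)
    scores = []
    for start in range(0, len(spectrum), window):
        masked = list(spectrum)
        for i in range(start, min(start + window, len(spectrum))):
            masked[i] = fill_value
        scores.append((start, abs(predict(masked) - baseline_prediction)))
    return scores


# Stand-in model for illustration only: it responds to the band at indices 10-14.
def toy_model(spectrum):
    return sum(spectrum[10:15])


toy_spectrum = [0.1] * 10 + [1.0] * 5 + [0.1] * 10
sensitivity = occlusion_sensitivity(toy_model, toy_spectrum)
most_influential = max(sensitivity, key=lambda item: item[1])
print(f"Most influential window starts at index {most_influential[0]}")
```

If the windows a real model depends on do not coincide with bands that have a plausible chemical assignment, that is a cue to inspect the calibration data before trusting the predictions.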

Q4: What are the best practices for visualizing magnetic resonance spectroscopy (MRS) data to ensure study validity?

A survey of the MRS literature revealed generally poor visualization standards [18]. To ensure robustness and interpretability:

- Voxel Location: Present the voxel location from all participants, not just a single example. Participant group membership (e.g., patients and controls) should be clearly indicated [18].

- Spectral Quality: Provide a visual representation of the MRS spectra from all participants, not just an average or a single spectrum. This allows readers to directly judge data quality and identify artefacts [18].

Presenting this information is essential for judging the validity and replicability of the experiment.

Q5: How can we use AI to analyze hyperspectral imaging (HSI) data cubes for pharmaceutical quality control?

Hyperspectral data cubes integrate spatial and chemical information [19]. The analysis workflow typically involves:

- Data Acquisition: Using HSI to gather spatial and spectroscopic data into a cube where the x- and y-axes are spatial, and the z-axis is spectral [19].

- Data Correction: Processing data to correct for baseline drifts, spectral overlap, and multiplicative scattering [20].

- Region of Interest (ROI) Identification: Applying unsupervised ML models like PCA to determine the ROI that best represents your analyte of interest [20].

- Classification/Quantification: Using supervised models like K-Nearest Neighbor (KNN) for classification or PLS for quantitative modeling based on the identified ROI [20].
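
The cube layout and the classification step can be sketched in a few lines. The example below unfolds a cube indexed as `cube[y][x][band]` into pixel spectra and labels each pixel with a plain K-Nearest Neighbor vote; it skips the correction and PCA steps, and the cube values, reference spectra, and labels are all invented.

```python
import math
from collections import Counter


def unfold_cube(cube):
    """Flatten a cube indexed as cube[y][x][band] into (y, x, spectrum) pixel records."""
    return [(y, x, spectrum) for y, row in enumerate(cube) for x, spectrum in enumerate(row)]


def knn_classify(spectrum, labelled_spectra, k=3):
    """Majority vote among the k labelled spectra nearest in Euclidean distance."""
    nearest = sorted(labelled_spectra, key=lambda item: math.dist(spectrum, item[0]))[:k]
    return Counter(label for _, label in nearest).most_common(1)[0][0]


# Invented 2 x 2 pixel cube with 3 spectral bands per pixel.
cube = [
    [[0.9, 0.1, 0.1], [0.8, 0.2, 0.1]],
    [[0.1, 0.9, 0.2], [0.2, 0.8, 0.1]],
]
# Invented reference spectra with known labels.
training = [
    ([1.0, 0.1, 0.1], "API"), ([0.9, 0.2, 0.1], "API"), ([0.8, 0.1, 0.2], "API"),
    ([0.1, 1.0, 0.1], "excipient"), ([0.2, 0.9, 0.1], "excipient"), ([0.1, 0.8, 0.2], "excipient"),
]

label_map = {(y, x): knn_classify(s, training) for y, x, s in unfold_cube(cube)}
print(label_map)
```

Keeping the `(y, x)` coordinates alongside each spectrum is what allows the predicted labels to be folded back into a spatial map of the sample.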

Troubleshooting Guides

Issue 1: Poor Generalization and Overfitting in Supervised ML Models

- Problem: Your model performs well on training data but poorly on new, unseen spectral data.

- Solution:

- Increase and Diversify Training Data: Ensure your training set is sufficiently large and comprehensively covers the chemical space of interest. The application of ML to experimental data is often limited by the amount of consistent data that can be produced [21].

- Apply Regularization: Use techniques that add a penalty to complex solutions in your model to prevent it from fitting to noise in the training data [21].

- Use Ensemble Methods: Algorithms like Random Forest (RF) build many decision trees and aggregate their results, offering strong generalization capability and reduced overfitting [16].

- Validate Rigorously: Always use a hold-out test set or cross-validation to assess real-world performance.
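
The cross-validation step can be sketched without any ML library. Below, `k_fold_indices` and `cross_validated_rmse` are illustrative helper names, and the model is a deliberately trivial stand-in that always predicts the training mean; a real calibration model would be passed in through the same `fit` interface.

```python
import random


def k_fold_indices(n_samples: int, k: int = 5, seed: int = 0):
    """Yield (train_indices, test_indices) pairs; every sample is tested exactly once."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    folds = [indices[i::k] for i in range(k)]
    for i, test_idx in enumerate(folds):
        train_idx = [j for m, fold in enumerate(folds) if m != i for j in fold]
        yield train_idx, test_idx


def cross_validated_rmse(fit, X, y, k=5):
    """Average hold-out RMSE over k folds; fit(X, y) must return a predict(x) callable."""
    fold_rmse = []
    for train_idx, test_idx in k_fold_indices(len(X), k):
        predict = fit([X[i] for i in train_idx], [y[i] for i in train_idx])
        squared = [(predict(X[i]) - y[i]) ** 2 for i in test_idx]
        fold_rmse.append((sum(squared) / len(squared)) ** 0.5)
    return sum(fold_rmse) / k


# Deliberately trivial stand-in model: always predicts the training mean.
def fit_mean_model(X_train, y_train):
    mean_y = sum(y_train) / len(y_train)
    return lambda x: mean_y


X = [[float(i)] for i in range(20)]
y = [2.0 * i for i in range(20)]
baseline_rmse = cross_validated_rmse(fit_mean_model, X, y)
print(f"Cross-validated RMSE of the mean-only baseline: {baseline_rmse:.2f}")
```

A mean-only baseline like this is a useful reference point: a calibration model whose cross-validated error is not clearly lower has learned little from the spectra. Replicate measurements of the same physical sample should be kept in the same fold, otherwise the estimate will be optimistic.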

Issue 2: Handling Nonlinear Relationships in Spectral Data

- Problem: Traditional linear models (PLS, PCR) fail to capture complex, nonlinear relationships in your dataset.

- Solution:

- Explore Nonlinear Algorithms: Implement models designed for nonlinearity, such as:

- Support Vector Machines (SVM) with nonlinear kernels (e.g., Radial Basis Function) [16].

- Artificial Neural Networks (ANNs) and Deep Neural Networks (DNNs), which can approximate complex calibration functions [16].

- Extreme Gradient Boosting (XGBoost), which sequentially corrects residual errors and excels at complex, nonlinear relationships [16].

- Preprocess Data: Apply scatter correction or normalization to mitigate physical nonlinearities like light scattering effects.


Issue 3: "Circumstantial" or Spurious Correlations in Chemometric Models

- Problem: The model shows a strong statistical correlation between a spectral feature and a sample property, but the correlation is not grounded in sound chemistry (e.g., predicting sulfur in gasoline with molecular spectroscopy) [22].

- Solution:

- Apply "Chemical Thinking": Ask whether the component responsible for the property can genuinely express itself spectrally in the technique you are using. Molecular spectroscopy responds to chemical bonds, so it may be unsuitable for quantifying the total content of an element irrespective of the molecular forms in which it occurs [22].

- Investigate Underlying Factors: Correlations can be rooted in the interconnected nature of process data. A change in one compound might be artificially correlated with another due to the process itself, not their spectral properties [22].

- Validate with First Principles: Ensure your model has a plausible basis in chemistry and physics, not just statistics.

Essential Research Reagent Solutions

The following table details key software tools and algorithms used in modern AI-driven spectroscopic analysis.

| Research Reagent / Tool | Type | Primary Function in Analysis |
|---|---|---|
| Partial Least Squares (PLS) [20] [16] | Algorithm | A foundational supervised method for linear regression and quantitative analysis, finding latent variables that relate spectral signals to response matrices. |
| Principal Component Analysis (PCA) [20] [16] | Algorithm | An unsupervised technique for exploratory data analysis, dimensionality reduction, and clustering; essential for identifying patterns and outliers in hyperspectral data. |
| Random Forest (RF) [16] [17] | Algorithm | An ensemble learning method used for classification and regression that offers strong generalization and robustness against spectral noise and collinearity. |
| Quantile Regression Forest (QRF) [17] | Algorithm | An extension of Random Forest that provides both accurate predictions and sample-specific uncertainty estimates, crucial for reliable analytical reporting. |
| Convolutional Neural Network (CNN) [16] | Algorithm | A deep learning architecture ideal for automatically extracting hierarchical features from raw spectral data or hyperspectral images. |
| Hyperspectral Imaging (HSI) [20] [19] | Technique & Data | A measurement method that simultaneously acquires spatial and spectroscopic data, creating a data cube for detailed material characterization and mapping. |
| Explainable AI (XAI) [16] | Framework | A set of tools (e.g., SHAP, Grad-CAM) used to interpret complex AI models, identifying which spectral features drive predictions to maintain chemical insight. |

Experimental Protocol: Developing a QRF Model for Quantitative Spectral Analysis

This protocol outlines the methodology for applying a Quantile Regression Forest to predict sample properties and estimate prediction uncertainty from infrared spectroscopic data, as demonstrated in soil and agricultural analysis [17].

1. Sample Preparation and Spectral Acquisition

- Collect a representative set of samples (e.g., soil, agricultural produce, pharmaceutical blends).

- For each sample, acquire the infrared spectrum (e.g., NIR, FTIR) using a calibrated spectrometer. Ensure consistent measurement conditions (e.g., pathlength, temperature) across all samples.

- In parallel, use a primary test method (PTM) to obtain reference values for the property of interest (e.g., cation exchange capacity, dry matter content) for each sample [22].

2. Dataset Construction and Preprocessing

- Construct a dataset where each entry is a paired spectrum and its corresponding reference value.

- Randomly split the dataset into a calibration (training) set (typically 70-80% of samples) and a validation (test) set (the remaining 20-30%).

- Apply necessary spectral pre-processing to the calibration set, such as smoothing, baseline correction, or standard normal variate (SNV) transformation. Critical: The parameters for these pre-processing steps must be derived from the calibration set only and later applied to the validation set to avoid data leakage.
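
The leakage rule can be made concrete with two kinds of pre-processing. SNV is computed per spectrum, so it has no parameters that could leak; mean-centering uses per-channel statistics, which must come from the calibration set alone. The sketch below shows both on an invented 8-sample dataset; the helper names and the 75/25 split are illustrative choices.

```python
import random
import statistics


def snv(spectrum):
    """Standard normal variate: centre and scale one spectrum by its own mean and SD."""
    mean = statistics.fmean(spectrum)
    sd = statistics.stdev(spectrum)
    return [(value - mean) / sd for value in spectrum]


def split_indices(n_samples, calibration_fraction=0.75, seed=0):
    """Randomly assign sample indices to calibration and validation sets."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    cut = round(calibration_fraction * n_samples)
    return indices[:cut], indices[cut:]


def column_means(spectra):
    """Mean of each wavelength channel across a set of spectra."""
    return [statistics.fmean(channel) for channel in zip(*spectra)]


def centre(spectra, means):
    """Subtract previously computed channel means from every spectrum."""
    return [[v - m for v, m in zip(s, means)] for s in spectra]


# Invented 8-sample, 4-channel dataset for illustration.
rng = random.Random(1)
spectra = [[rng.uniform(0.2, 1.0) for _ in range(4)] for _ in range(8)]

cal_idx, val_idx = split_indices(len(spectra))
cal = [snv(spectra[i]) for i in cal_idx]   # per-spectrum transform: no shared parameters
val = [snv(spectra[i]) for i in val_idx]

cal_means = column_means(cal)              # fitted on the calibration set only
cal_centred = centre(cal, cal_means)
val_centred = centre(val, cal_means)       # reused, never recomputed on validation data
```

The same pattern applies to any step with fitted parameters, such as scaling or a PCA projection: fit on the calibration set, then apply unchanged to the validation set.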

3. Model Training and Calibration

- Train the Quantile Regression Forest model on the pre-processed calibration set.

- The model will learn the relationship between the spectral features (X) and the reference values (Y) from the PTM.

- Unlike standard Random Forest, QRF retains the full distribution of the response variables in the leaves of its trees, which is essential for uncertainty estimation [17].

4. Model Validation and Uncertainty Estimation

- Use the trained QRF model to predict the property values for the hold-out validation set.

- For each prediction, the model will also output a prediction interval (e.g., a 90% interval). This interval provides a range within which the true value is likely to fall, offering a sample-specific measure of uncertainty [17].

- Assess the model's performance by comparing the predicted values to the known reference values from the PTM for the validation set. Calculate metrics like Root Mean Square Error (RMSE) and R².
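
The validation metrics can be computed directly from the predictions. The sketch below implements RMSE and R² from their standard definitions and adds an interval coverage check (the fraction of reference values falling inside their prediction intervals) as one way to assess the uncertainty estimates; the numbers are invented and do not come from the cited study.

```python
import math


def rmse(y_true, y_pred):
    """Root mean square error between reference and predicted values."""
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - SS_residual / SS_total."""
    mean_true = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot


def interval_coverage(y_true, lower, upper):
    """Fraction of reference values that fall inside their prediction interval."""
    hits = sum(lo <= t <= hi for t, lo, hi in zip(y_true, lower, upper))
    return hits / len(y_true)


# Invented validation results for illustration.
reference = [10.0, 12.0, 15.0, 20.0]
predicted = [11.0, 12.0, 14.0, 19.0]
lower_90 = [9.0, 10.5, 12.5, 17.0]
upper_90 = [13.0, 13.5, 15.5, 19.5]

print(f"RMSE = {rmse(reference, predicted):.3f}")
print(f"R^2 = {r_squared(reference, predicted):.3f}")
print(f"Interval coverage = {interval_coverage(reference, lower_90, upper_90):.2f}")
```

For a nominal 90% interval, coverage on a reasonably large validation set should sit near 0.90. Coverage well below that suggests the intervals are too narrow, and coverage near 1.0 suggests they are too wide to be informative.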

5. Interpretation and Operational Use

- Examine the validation results. Note that some predictions, especially for extreme values or samples near the model's detection limit, will have wider prediction intervals, indicating higher uncertainty [17].

- The model is ready for operational use once it demonstrates satisfactory predictive accuracy and reliable uncertainty quantification on the validation set.

The workflow for this protocol is summarized in the following diagram:

For researchers, scientists, and drug development professionals, the choice of software deployment model is a critical strategic decision that directly impacts the efficiency, security, and scalability of spectroscopy data analysis. The core of this decision often involves a fundamental trade-off: the extensive control and data security offered by on-premises solutions versus the unparalleled scalability and collaborative flexibility of cloud platforms. In the context of spectroscopy, where data integrity and regulatory compliance are paramount, understanding this balance is essential. This guide provides a technical support framework to help scientific teams navigate this complex landscape, troubleshoot common issues, and implement best practices tailored to analytical research environments.

Core Concepts and Definitions

- On-Premises Deployment: The software and all associated data are hosted on infrastructure located within the organization's own facilities (e.g., a local server room or data center). The organization is entirely responsible for all maintenance, security, updates, and physical access control [23] [24].

- Cloud Deployment: The software is hosted on the service provider's remote servers and accessed over the internet. The provider manages the infrastructure, and resources are typically delivered via a subscription-based, pay-as-you-go model [23] [25]. Common models include Software as a Service (SaaS), which is most prevalent for end-user applications.

- Spectroscopy Data Analysis: The process of using specialized software tools to collect, process, and interpret spectral data from instruments like spectrometers. This software is crucial for determining material composition and is widely used in drug discovery, quality assurance, and process control [1].

Comparative Analysis: On-Premises vs. Cloud

The following tables summarize the key differences between the two deployment models, with a focus on aspects critical to research and development environments.

Table 1: Strategic Comparison of Deployment Models

| Parameter | On-Premises Deployment | Cloud Deployment |
|---|---|---|
| Data Location & Control | Data resides on internal servers, providing complete physical and logical control over data and encryption keys [23]. | Data is stored in the vendor's remote data centers; users have less direct control because the infrastructure is managed by a third-party provider [23]. |
| Customization | Solutions can be highly customized to specific research workflows and integrated with existing laboratory systems [23]. | Offers limited customization, typically confined to the features and configurations provided by the vendor [23]. |
| Compliance | Often preferred for heavily regulated industries (e.g., pharma, healthcare) as it simplifies meeting strict data sovereignty and audit requirements under regulations such as HIPAA and GDPR [23] [1]. | Providers offer compliance certifications, but the shared responsibility model requires users to ensure their configuration and use meet regulatory standards [23] [26]. |
| Software Updates | The internal IT team has full control over the timing and implementation of upgrades and patches [24]. | The provider manages all software updates and patches automatically, ensuring access to the latest features without manual intervention [25]. |

Table 2: Quantitative Market Data for Spectroscopy Software (2024)

| Aspect | On-Premises Deployment | Cloud Deployment & Market Trends |

|---|---|---|

| Market Size (2024) | USD 549.5 Million [1] | Part of a global market valued at USD 1.1 Billion [1] |

| Growth Driver | Data security and compliance capabilities, particularly in pharmaceuticals and healthcare [1]. | Incorporation of AI and ML for data analysis, and the rise of remotely accessible solutions for collaboration [1]. |

| Key Advantage | Direct control over sensitive information and faster data processing for time-sensitive applications [1]. | Scalability of storage and computing resources to handle increasing volumes of spectral data [1]. |

Decision Framework and Workflow

Choosing the right deployment model requires a systematic assessment of your project's specific needs. The following diagram outlines the key decision-making workflow.

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Software and Hardware Components for Spectroscopy

| Item | Function in Spectroscopy |

|---|---|

| FT-IR Spectrometer | A core instrument for collecting molecular fingerprint data; new platforms (e.g., Bruker Vertex NEO) enhance performance by minimizing atmospheric interference [7]. |

| Spectroscopy Software Suite | Specialized tools (from vendors like Thermo Fisher, Agilent) for instrument control, spectral data acquisition, processing, and interpretation [1]. |

| Laboratory Information Management System (LIMS) | Software that tracks samples and associated data, ensuring workflow integrity and regulatory compliance [27]. |

| Quantum Cascade Laser (QCL) Microscope | An advanced tool (e.g., Bruker LUMOS II) for high-resolution infrared imaging of micro-samples, crucial for pharmaceutical analysis [7]. |

| Cloud-Native Data Analysis Platform | A platform that provides centralized, remotely accessible storage and built-in AI/ML tools for processing high volumes of spectral data [1]. |

Troubleshooting Guides and FAQs

Frequently Asked Questions

Q1: Our team is geographically dispersed. How can we securely collaborate on the same spectral data sets in real-time?

A1: Cloud platforms are inherently designed for this challenge. They provide a centralized repository for spectral data that authorized users can access from any location with an internet connection. This enables real-time collaboration on data analysis and shared projects. To ensure security, implement Role-Based Access Control (RBAC) to define precisely which data and functions each researcher can access, adhering to the principle of least privilege [28] [29]. All data in transit and at rest should be protected with enterprise-grade encryption [25].
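As an illustration of the least-privilege idea behind RBAC, the following minimal sketch maps roles to explicit permissions and denies everything else. The role names, permission names, and the `is_allowed` helper are hypothetical and do not correspond to any particular vendor's platform.

```python
from dataclasses import dataclass

# Hypothetical roles and permissions, for illustration only.
ROLE_PERMISSIONS = {
    "viewer": {"read_spectra"},
    "analyst": {"read_spectra", "run_analysis"},
    "admin": {"read_spectra", "run_analysis", "delete_spectra", "manage_users"},
}


@dataclass(frozen=True)
class User:
    name: str
    role: str


def is_allowed(user: User, action: str) -> bool:
    """Return True only if the user's role explicitly grants the action."""
    # Unknown roles map to an empty permission set, so access is denied by default.
    return action in ROLE_PERMISSIONS.get(user.role, set())


if __name__ == "__main__":
    alice = User("alice", "analyst")
    print(is_allowed(alice, "run_analysis"))    # True
    print(is_allowed(alice, "delete_spectra"))  # False
```

Denying by default means a newly added role or action grants nothing until someone deliberately assigns it, which is the behavior least privilege calls for.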

Q2: We have legacy instrumentation and custom analysis scripts. Which deployment model offers better integration?

A2: On-premises solutions typically excel here. They offer a higher degree of customization and direct system access, making it easier to integrate with specialized legacy equipment and execute custom scripts or workflows that may not be compatible with standardized cloud environments [23] [24]. The controlled local network can also provide the low-latency connection sometimes required for direct instrument control [30].

Q3: We are experiencing slow performance when analyzing large hyperspectral imaging datasets. How can we improve processing speed?

A3:

- If using an on-premises system: Investigate upgrading your local hardware, specifically the CPU, GPU, and RAM, as these directly impact processing speed for large datasets [30]. Ensure your IT team performs regular maintenance and that no other network-intensive processes are consuming resources.

- If using a cloud platform: Leverage its scalability. Most cloud services allow you to temporarily provision more powerful computing resources (e.g., high-memory or GPU-optimized instances) specifically for the duration of the intensive analysis task, scaling back down afterward to manage costs [28] [26].

Q4: How do we ensure our spectroscopy data management is compliant with regulations like FDA 21 CFR Part 11?

A4: Compliance is a shared responsibility. For on-premises deployments, the organization has full control to implement the required technical controls (e.g., audit trails, electronic signatures, access security) and can more easily maintain detailed records for audits, as all data resides internally [23] [1]. When using a cloud service, you must select a provider that explicitly offers compliance certifications relevant to your industry (e.g., HIPAA, GDPR). Crucially, you are responsible for configuring the cloud application and user access in a way that maintains compliance with these regulations [26].

Q5: Our internet connection is unstable. What is the impact on cloud-based spectroscopy software, and what are the alternatives?

A5: Unstable internet will render cloud software inaccessible during outages and can cause severe lag or data transfer failures, disrupting research activities [24] [26]. In such scenarios, the most robust alternative is a hybrid approach. In this model, primary data analysis workflows run on an on-premises server to ensure uninterrupted operation. The cloud can then be used for specific tasks that benefit from its power, such as long-term data archiving, running occasional large-scale AI-driven analyses, or sharing finalized results with external partners, which can be scheduled for periods of stable connectivity [30].

The field of spectroscopy is undergoing a significant transformation, characterized by two powerful, interconnected trends: the rapid adoption of portable and handheld spectrometers and the development of increasingly intelligent, user-friendly software dashboards. This shift is moving analytical power from centralized laboratories directly into the field and onto the production floor, enabling real-time, data-driven decision-making. For researchers and drug development professionals, this evolution is not merely about convenience; it enhances high-throughput screening, facilitates on-site quality assurance, and accelerates research and development cycles. The global spectroscopy software market, valued at approximately USD 1.1 billion in 2024 and projected to grow at a compound annual growth rate (CAGR) of 9.1% to reach USD 2.5 billion by 2034, underscores the critical role of advanced data analysis in this ecosystem [1].

This technical support center is designed to help scientists navigate this new paradigm. It provides immediate troubleshooting for common hardware and software issues and serves as a knowledge base framed within the context of broader research on spectroscopy data analysis and software tools. The following sections offer detailed guides, protocols, and visual aids to ensure you can leverage these emerging technologies effectively and confidently.

Technical Support & Troubleshooting Guides

Frequently Asked Questions (FAQs)

Q1: What are the key advantages of modern spectroscopy software dashboards? Modern software platforms, such as OMNIC Paradigm, are designed to streamline analysis through user-friendly dashboard screens that provide quick access to recent work and data processing tools. Key features include a visual, drag-and-drop workflow creator, one-click library creation, and pre-defined reporting templates, all aimed at simplifying data acquisition, processing, and interpretation [31].

Q2: My portable spectrometer is producing a weak or inconsistent signal. What should I check? A weak signal is a common issue. First, verify that the laser power (for Raman) or light source is set to an appropriate level for your sample. Second, inspect and clean the optics and sampling window, as dust or fingerprints can scatter light. Finally, ensure the laser is properly focused and that the optical components are aligned according to the manufacturer's manual [32].

Q3: How can cloud-based spectroscopy software enhance collaboration? Cloud-enabled software like OMNIC Anywhere allows researchers to view, analyze, and share spectral files from any device or location. It provides a centralized platform (with 10 GB of free starting storage) where team members can comment on results, which is invaluable for geographically dispersed teams in drug development and research [31].

Q4: My spectra show excessive noise or broad, fluorescent backgrounds. What can I do? This is often due to sample fluorescence or ambient light interference. To mitigate this, you can optimize the integration time to improve the signal-to-noise ratio, use the instrument's background subtraction feature, and perform measurements in a darkened environment to reduce ambient light [32]. For Raman systems, the choice of excitation wavelength may also be a factor [33].
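To make the background-subtraction and signal-to-noise ideas concrete, here is a minimal sketch in plain Python. It assumes the sample and background spectra share the same axis, and it uses one common definition of signal-to-noise (peak height above the mean of a signal-free region, divided by that region's standard deviation). Instrument software may define it differently, and the function names are illustrative.

```python
import statistics


def subtract_background(sample, background):
    """Subtract a background (dark/blank) spectrum point by point."""
    if len(sample) != len(background):
        raise ValueError("sample and background must have the same length")
    return [s - b for s, b in zip(sample, background)]


def signal_to_noise(spectrum, peak_region, noise_region):
    """Estimate SNR from a peak region and a signal-free noise region.

    Both regions are slice objects indexing into the spectrum.
    """
    noise = spectrum[noise_region]
    baseline = statistics.mean(noise)
    sigma = statistics.stdev(noise)  # needs at least two noise points
    if sigma == 0:
        raise ValueError("noise region has zero variance")
    peak_height = max(spectrum[peak_region]) - baseline
    return peak_height / sigma


if __name__ == "__main__":
    raw = [10, 11, 9, 10, 60, 10]
    dark = [10] * 6
    corrected = subtract_background(raw, dark)
    print(corrected)
    print(signal_to_noise(corrected, slice(4, 5), slice(0, 4)))
```

Comparing this figure across a few integration times is one simple way to see whether a longer acquisition is actually improving the measurement.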

Q5: What routine maintenance is critical for portable spectrometers? Regular maintenance is essential for consistent performance. Key tasks include:

- Cleaning optics and the sampling window regularly with a lint-free cloth and optical-grade solution.

- Regular calibration using certified reference materials.

- Inspecting and replacing consumables like filters and O-rings as needed.

- Storing the instrument in a clean, dry environment when not in use [32].

Troubleshooting Common Hardware Issues

Table 1: Troubleshooting Portable and Handheld Spectrometers

| Problem | Possible Explanation | Recommended Solution |

|---|---|---|

| Weak/No Signal | Laser is off; dirty optics; misalignment; computer communication error [33] [32]. | Check laser is on; clean sampling window/optics; verify focus/alignment; restart software/check USB [32]. |

| Spectral Noise/Artifacts | Fluorescence; CCD saturation; ambient light; insufficient integration time [33] [32]. | Adjust integration time; use background subtraction; perform measurement in dark; defocus beam if saturated [32]. |

| Inaccurate Calibration | Calibration drift over time; software requires update [32]. | Recalibrate with certified reference materials; update instrument firmware/software [32]. |

| Peak Locations Incorrect | System is not calibrated or requires verification [33]. | Perform system verification/calibration using a known standard (e.g., verification cap, isopropyl alcohol) [33]. |

| Software "Unable to Find Device" | Communication driver error; incorrect software settings [33]. | Shut down and restart software; check device manager for hardware; reinstall drivers if necessary [33]. |
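The peak-location check in the table above can be sketched as a comparison of measured peak positions against the known positions of a standard. The reference values and tolerance in the usage example are placeholders, not certified values; in practice they come from the standard's documentation and the manufacturer's verification procedure.

```python
def verify_calibration(measured_peaks, reference_peaks, tolerance):
    """Match each reference peak to the nearest measured peak.

    Returns a list of (reference, nearest_measured, deviation, within_tolerance).
    """
    if not measured_peaks:
        raise ValueError("no measured peaks supplied")
    results = []
    for ref in reference_peaks:
        nearest = min(measured_peaks, key=lambda m: abs(m - ref))
        deviation = nearest - ref
        results.append((ref, nearest, deviation, abs(deviation) <= tolerance))
    return results


def calibration_ok(results):
    """The check passes only if every reference peak is within tolerance."""
    return all(within for _, _, _, within in results)


if __name__ == "__main__":
    # Placeholder peak positions (cm^-1) and tolerance, for illustration only.
    reference = [820.0, 955.0, 1455.0]
    measured = [819.2, 956.1, 1453.0]
    report = verify_calibration(measured, reference, tolerance=1.5)
    for ref, found, dev, ok in report:
        print(f"ref={ref} found={found} deviation={dev:+.1f} ok={ok}")
    print("pass" if calibration_ok(report) else "recalibrate")
```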

Troubleshooting Common Software and Data Analysis Issues

Table 2: Troubleshooting Spectroscopy Software and Data

| Problem | Possible Explanation | Recommended Solution |

|---|---|---|

| Poor Model Performance | Unprocessed data with outliers; suboptimal algorithm [34]. | Preprocess data (smoothing, baseline correction); remove outliers; test multiple regression algorithms [34]. |

| Difficulty Identifying Unknowns | Sample is a mixture; not in library [31]. | Use the software's multi-component search (mixture analysis) function; leverage functional group info for classification [31]. |

| Data Collaboration Challenges | Using non-cloud, localized software [31]. | Utilize cloud-based platforms (e.g., OMNIC Anywhere) for centralized data sharing and project management [31]. |

| Complex Data Interpretation | Overlapping peaks in complex samples [32]. | Compare with spectral libraries; apply multivariate analysis (e.g., PCA, PLS) [31] [32]. |
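The preprocessing steps recommended for poor model performance (smoothing, baseline correction) can be sketched in plain Python as follows. This is a minimal illustration under simplifying assumptions: a moving average stands in for smoothing, and the baseline is approximated by a straight line through the first and last points. Production software typically offers more capable methods, and the function names are illustrative.

```python
def moving_average(values, window=5):
    """Smooth with a centered moving average; the window shrinks at the edges."""
    if window < 1 or window % 2 == 0:
        raise ValueError("window must be a positive odd integer")
    half = window // 2
    smoothed = []
    for i in range(len(values)):
        segment = values[max(0, i - half):min(len(values), i + half + 1)]
        smoothed.append(sum(segment) / len(segment))
    return smoothed


def subtract_linear_baseline(values):
    """Subtract the straight line joining the first and last points."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two points")
    slope = (values[-1] - values[0]) / (n - 1)
    return [v - (values[0] + slope * i) for i, v in enumerate(values)]


def min_max_normalize(values):
    """Rescale to the range 0..1; a flat spectrum maps to all zeros."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0] * len(values)
    return [(v - lo) / (hi - lo) for v in values]


if __name__ == "__main__":
    raw = [1.0, 1.4, 1.1, 3.9, 1.6, 1.9, 2.0]
    processed = min_max_normalize(subtract_linear_baseline(moving_average(raw, 3)))
    print(processed)
```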

Experimental Protocols & Methodologies

Protocol: Non-Destructive Chlorophyll Content Prediction in Leaves Using a Portable NIR System

This protocol, adapted from a 2023 study, details the use of a custom IoT-based portable NIR device for predicting chlorophyll content, demonstrating the application of portable spectroscopy in agricultural science [34].

1. Hypothesis: The spectral data collected from a portable near-infrared spectrometer can be used to build a reliable predictive model for the chlorophyll content in Hami melon leaves, providing a non-destructive alternative to traditional methods.

2. Research Reagent Solutions & Materials

Table 3: Essential Materials for Portable Leaf Analysis

| Item | Function/Description |

|---|---|

| Portable NIR Spectrometer | The core sensor (e.g., AS7341 used in the study) for collecting spectral data in the field [34]. |

| Chlorophyll Meter | A validated device (e.g., Top Cloud-agri TYS-4N) for measuring reference SPAD values [34]. |

| Leaf Fixing Plate | Ensures consistent and equidistant positioning of the leaf relative to the sensor for reproducible data [34]. |

| Cloud Server/Data Platform | For data reception, storage, and processing using services like EMQX, Node-RED, and InfluxDB [34]. |

| Lint-Free Cloths | For cleaning the spectrometer's window to prevent data drift caused by contaminants. |

3. Methodology:

- Step 1: System Setup. Deploy the cloud data server using containerization (e.g., Docker) to handle MQTT communication, data flow orchestration (Node-RED), and storage (InfluxDB). Configure the portable spectrometer with its microcontroller (e.g., ESP8266-12F) to transmit data via WiFi to this server [34].

- Step 2: Sample Preparation & Data Collection. Select 100 or more leaf samples from plants at different growth stages. For each sample, first measure the chlorophyll content using the chlorophyll meter, avoiding major veins. Immediately after, place the leaf on the fixing plate and collect the spectral data using the portable device, ensuring the hardware acquisition parameters (e.g., acquisition times) are set via the web interface [34].

- Step 3: Data Preprocessing. Apply preprocessing algorithms to the raw spectral data to reduce noise. Methods can include smoothing, baseline correction, and normalization. Use algorithms like the Isolation Forest to detect and remove spectral outliers from the dataset [34].

- Step 4: Model Building & Validation. Split the data into training and prediction sets. Test a suite of regression algorithms (e.g., Linear Regression, Decision Tree, Support Vector Regression, Random Forest) on the data to identify the best-performing model. Evaluate model performance using metrics like Root Mean Square Error (RMSE) and the Coefficient of Determination (R²) for both the training and prediction sets [34].
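The split and the two evaluation metrics named in Step 4 can be sketched in plain Python as shown below. The regression models themselves would normally come from a machine learning library and are omitted here. The 30% prediction fraction and the fixed seed are assumptions for illustration, not values from the study.

```python
import math
import random


def train_test_split(samples, test_fraction=0.3, seed=0):
    """Shuffle reproducibly and split into (training, prediction) sets."""
    shuffled = list(samples)
    random.Random(seed).shuffle(shuffled)
    n_test = max(1, round(len(shuffled) * test_fraction))
    return shuffled[n_test:], shuffled[:n_test]


def rmse(y_true, y_pred):
    """Root Mean Square Error between reference and predicted values."""
    squared = [(t - p) ** 2 for t, p in zip(y_true, y_pred)]
    return math.sqrt(sum(squared) / len(squared))


def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean) ** 2 for t in y_true)
    if ss_tot == 0:
        raise ValueError("reference values have zero variance")
    return 1 - ss_res / ss_tot


if __name__ == "__main__":
    reference = [1.0, 2.0, 3.0]   # e.g. measured SPAD values
    predicted = [1.0, 2.0, 4.0]   # e.g. model output
    print(rmse(reference, predicted))
    print(r_squared(reference, predicted))  # 0.5
```

Reporting both metrics for the training and prediction sets, as the protocol describes, helps reveal overfitting: a model that scores well on training data but poorly on the prediction set has likely memorized noise.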

The workflow for this experimental protocol is summarized in the diagram below:

Protocol: On-Site Material Verification with a Handheld Raman Spectrometer

This protocol outlines a standard operating procedure for verifying materials using a handheld Raman spectrometer, a common task in pharmaceutical and forensic fields.

1. Hypothesis: A handheld Raman spectrometer can quickly and accurately identify an unknown solid material by matching its spectral fingerprint against a built-in library.

2. Methodology:

- Step 1: Instrument Preparation. Ensure the spectrometer is fully charged. Turn on the device and allow it to initialize. Perform a quick verification check using a known standard (e.g., a verification cap or isopropyl alcohol) to confirm the wavelength calibration is accurate [33].

- Step 2: Sample Presentation. Place the solid sample on a clean, flat surface; for best results, the measured face of the sample should itself be flat and clean. If the sample is in a container, ensure the container is transparent at the laser wavelength and does not produce an interfering Raman signal.

- Step 3: Data Acquisition. Firmly press the spectrometer's sampling window against the sample. Ensure a good seal to block ambient light. Trigger a measurement. The integration time may be automatically set or may need to be manually optimized to obtain a spectrum with a good signal-to-noise ratio without saturating the detector [32].

- Step 4: Data Analysis and Reporting. The instrument's software will automatically compare the acquired spectrum against its spectral library and present a list of potential matches with confidence scores. Visually inspect the match between the sample spectrum and the top library hit to confirm the identification. Generate a report using the software's built-in template, which can include the sample spectrum, the matched library spectrum, and relevant sample information.
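The library comparison in Step 4 can be illustrated with a simple similarity ranking. Instrument vendors use their own (often proprietary) matching algorithms and confidence scores. The sketch below swaps in cosine similarity as a stand-in and assumes all spectra are sampled on the same axis. The library entries are made-up toy vectors.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two spectra treated as vectors."""
    if len(a) != len(b):
        raise ValueError("spectra must share the same axis")
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def rank_library_matches(sample, library, top_n=3):
    """Return the top_n (name, score) pairs, best match first."""
    scores = [(name, cosine_similarity(sample, ref)) for name, ref in library.items()]
    return sorted(scores, key=lambda item: item[1], reverse=True)[:top_n]


if __name__ == "__main__":
    # Toy library with hypothetical entries, for illustration only.
    library = {
        "compound_A": [0.0, 1.0, 0.0, 0.0],
        "compound_B": [0.0, 0.0, 1.0, 0.0],
        "compound_C": [0.0, 1.0, 1.0, 0.0],
    }
    sample = [0.0, 0.9, 0.1, 0.0]
    for name, score in rank_library_matches(sample, library):
        print(f"{name}: {score:.3f}")
```

A high score alone does not confirm identity, which is why the protocol also calls for visually inspecting the top hit against the sample spectrum.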

The Scientist's Toolkit: Key Technologies Driving the Trends

Portable Spectrometer Technologies

Table 4: Comparison of Portable Spectrometer Technologies

| Technology | Typical Applications | Example Products | Key Features |

|---|---|---|---|

| Handheld NIR | Pharmaceutical QA, agriculture, chemical ID [7]. | SciAps ReveNIR, Metrohm OMNIS NIRS [35] [7]. | Non-destructive; rapid material verification; minimal sample prep [35]. |

| Handheld Raman | Hazmat response, raw material ID, forensics [7]. | Metrohm TacticID-1064ST [7]. | Library-based ID; through-container testing; 1064 nm laser reduces fluorescence [7]. |

| Handheld LIBS | Alloy analysis, geochemistry, light elements (Li, Be) [35]. | SciAps Z-Series [35]. | Fast elemental analysis; particularly effective for light elements [35]. |

| Handheld XRF | Scrap metal sorting, mining, environmental monitoring [35]. | SciAps X-Series [35]. | Lab-quality elemental results in seconds; robust field design [35]. |

| Field-Portable Spectroradiometer | Environmental monitoring, geology, remote sensing [35]. | ASD Range [35]. | Full-range UV/Vis/NIR/SWIR (350-2500 nm); high signal-to-noise ratio [35]. |

Spectroscopy Software Platforms

Table 5: Comparison of Spectroscopy Software Platforms

| Software | Deployment | Key Features | Target Audience |

|---|---|---|---|

| OMNIC Paradigm (Thermo Fisher) | On-premises/Desktop [31]. | Drag-and-drop workflows; multi-component search; quantification tools; diagnostic tools [31]. | Lab managers, industrial scientists, educators [31]. |

| OMNIC Anywhere (Thermo Fisher) | Cloud-based [31]. | Cross-platform (PC, Mac, iOS, Android); data sharing & collaboration; 10GB+ free storage [31]. | Research teams, students, collaborative projects [31]. |

| Vernier Spectral Analysis | App-based (Windows, macOS, Chromebook) [36]. | Free app; simplified Beer's law & kinetics; designed for educational use [36]. | Students, educational institutions [36]. |

| WISER (Caltech) | On-premises/Desktop [37]. | Open-source; imaging spectroscopy analysis; modular plugin API; supports GEOTIFF/PDS [37]. | Researchers (Earth & planetary science) [37]. |

The integration of hardware and software, powered by AI, is creating a seamless workflow from measurement to insight, as shown below.

Applied Workflows: Advanced Techniques for Pharmaceutical and Biomedical Analysis

Troubleshooting Guides and FAQs

This section addresses common challenges researchers face when using spectroscopic techniques in pharmaceutical development.

Frequently Asked Questions

Q1: What is the first step when I obtain an Out-of-Specification (OOS) spectroscopic result? Your initial action must be to notify your supervisor and preserve the original data. A formal laboratory investigation must be initiated immediately. The first phase is an informal assessment where the analyst and supervisor review the testing procedure, calculations, instrumentation, and the notebooks containing the OOS result. A retest should not be performed until this initial investigation is complete [38].

Q2: Can I use an outlier test to invalidate an initial OOS result in chemical assays? The use of outlier tests is highly restricted. According to FDA guidance, outlier tests are inappropriate for chemical testing results and for statistically based tests like content uniformity and dissolution. An initial OOS result cannot be invalidated solely based on a statistical outlier test [38].

Q3: How many retests are permissible for an OOS result? The court ruling referenced in the FDA guidance places explicit limits on retesting. You cannot simply conduct two retests and base a release decision on the average of three tests. The investigation must determine if the original result was due to a laboratory error. The number of retests should be specified in a pre-defined, scientifically justified procedure, not determined ad hoc [38].

Q4: What are the key trends in spectroscopy software that can enhance our lab's capabilities? Key trends include the integration of Artificial Intelligence (AI) and Machine Learning (ML) for improved data processing and predictive analytics, a shift towards cloud-based and remotely accessible solutions for collaboration, and the development of more intuitive user interfaces and automated workflows. There is also a growing emphasis on software for portable and handheld spectrometers for on-site analysis [1].

Q5: Our team struggles with overlapping spectra in chromatography. What solutions are available? Deconvolution software is designed specifically for this challenge. Tools and tutorials, such as those offered by CHROMacademy, are available to help "demystify deconvolution" and make sense of overlapping spectral data. These software solutions use algorithms to separate co-eluting peaks for accurate identification and quantification [39].

Troubleshooting Common Spectral Data Issues

Issue: Poor Signal-to-Noise Ratio in FT-IR Analysis of Proteins

- Potential Cause: Inefficient detector or atmospheric interference (water vapor, CO₂).

- Solution: Utilize a vacuum optics system, like that found in the Bruker Vertex NEO platform, which removes atmospheric contributions. Ensure the detector is appropriate for the spectral range and that the instrument is properly purged [7].

Issue: Inconsistent Results in High-Throughput Screening with Raman

- Potential Cause: Poor calibration or plate positioning errors.

- Solution: Implement a fully automated system like the PoliSpectra Raman plate reader, which integrates liquid handling and dedicated software to ensure consistency across 96-well plates. Establish a rigorous and frequent calibration schedule [7].

Issue: Data Security Concerns with Spectral Data Management

- Potential Cause: Using cloud-based systems that may not meet corporate security policies.

- Solution: Many organizations, especially in pharmaceuticals, prefer on-premises deployment of spectroscopy software. This provides direct control over sensitive data and helps meet stringent regulatory requirements like FDA 21 CFR Part 11 [1].

Spectroscopy Software Market and Application Data

The following tables summarize quantitative data on the spectroscopy software market and its primary applications, highlighting its critical role in the pharmaceutical industry.

Table 1: Global Spectroscopy Software Market Overview [1]

| Metric | Value / Share | Timeframe / Context |

|---|---|---|

| Market Size in 2024 | USD 1.1 Billion | Base Year 2024 |

| Projected CAGR | 9.1% | Forecast Period 2025–2034 |

| Market Size in 2034 | USD 2.5 Billion | Projected Value |

| Pharmaceutical Segment Share | 28.9% | Share of market in 2024 |

| Leading Deployment Model | On-Premises (USD 549.5 Million Revenue) | 2024 Revenue |

| U.S. Market Revenue | USD 310.2 Million | 2024 Revenue |

Table 2: Key Techniques and Applications in Drug Discovery & Development [40] [7]

| Therapeutic Modality | Key Spectroscopic Techniques | Primary Application in Development |

|---|---|---|

| Small Molecule Pharmaceuticals | NMR, FT-IR, UV-Vis | Solid polymorph identification, purity analysis, chemical stability |

| Protein Biologics & Vaccines | Fluorescence (A-TEEM), Raman, QCL Microscopy | Higher Order Structure (HOS) analysis, aggregation kinetics, stability |

| mRNA & Lipid Nanoparticles (LNPs) | Small-Angle Scattering, Atomic Force Microscopy | Particle structure, component location in complex systems |

| All Modalities | LC-MS, GC-MS | Impurity identification, quantification, and fate mapping |

Experimental Protocols for Pharmaceutical Analysis

Protocol 1: Impurity Fate and Purge Analysis Using Hyphenated LC-MS and Data Visualization

This protocol is critical for ensuring drug product safety by tracking the formation and removal of process-related impurities [41].

1. Objective: To identify, quantify, and track the "fate" of synthetic impurities through various synthesis and purification steps to ensure they are purged to acceptable levels.

2. Materials and Software:

- Luminata Software (ACD/Labs) or equivalent for impurity fate mapping [41].

- Liquid Chromatograph coupled to a Mass Spectrometer (LC-MS).

- Samples from each stage of the chemical synthesis process (starting materials, intermediates, reaction mixtures, and purified active pharmaceutical ingredient (API)).

3. Methodology:

- Step 1: Sample Acquisition: Collect representative samples from every unit operation in the synthetic process.

- Step 2: LC-MS Analysis: Analyze all samples using a consistent, validated LC-MS method to separate and detect components.

- Step 3: Data Integration: Import all chromatographic and spectral data into the visualization software. Link each spectral data set to the corresponding chemical structure and process step.

- Step 4: Impurity Mapping: Use the software's interactive process map to visualize the presence and concentration of each impurity at each stage.

- Step 5: Purge Calculation: The software will quantitatively determine the purge factor for each impurity, calculating its reduction over several process steps.

4. Data Interpretation: The resulting fate map provides a visual confirmation of which impurities are effectively removed and which may require additional control strategies. This is a core component of a Quality by Design (QbD) approach to process development [41].
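The purge calculation in Step 5 can be illustrated with a short sketch. It assumes the common convention that a step's purge factor is the impurity level before the step divided by the level after it, and that the overall factor is the product of the step factors. Commercial software may compute or report this differently, and the levels in the usage example are made up.

```python
import math


def step_purge_factors(levels):
    """Purge factor for each step from successive impurity levels.

    `levels` lists the impurity level (e.g. in ppm) at the start and
    after each subsequent process step.
    """
    if len(levels) < 2:
        raise ValueError("need at least two measurements")
    factors = []
    for before, after in zip(levels, levels[1:]):
        if after <= 0:
            # Non-detects need an explicit value, such as the reporting limit.
            raise ValueError("levels must be positive")
        factors.append(before / after)
    return factors


def overall_purge_factor(levels):
    """Cumulative purge across all steps (product of the step factors)."""
    return math.prod(step_purge_factors(levels))


if __name__ == "__main__":
    # Hypothetical impurity levels in ppm across three process steps.
    levels_ppm = [1000.0, 100.0, 20.0, 2.0]
    print(step_purge_factors(levels_ppm))    # [10.0, 5.0, 10.0]
    print(overall_purge_factor(levels_ppm))  # 500.0
```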

Protocol 2: Protein Aggregation Study Using Quantum Cascade Laser (QCL) Microscopy

This protocol uses advanced infrared microscopy to monitor protein stability, a key concern for biologic therapeutics [7].

1. Objective: To identify and characterize protein aggregates within a formulated biologic drug product to assess its stability and shelf-life.

2. Materials:

- QCL-based Infrared Microscope (e.g., Bruker LUMOS II or ProteinMentor system).

- Attenuated Total Reflection (ATR) crystal.

- Sample of the biologic product (e.g., a monoclonal antibody formulation).

3. Methodology:

- Step 1: Sample Preparation: Place a small droplet (e.g., 2-10 µL) of the protein formulation onto the ATR crystal. Allow it to air-dry to form a thin film for analysis.

- Step 2: Spectral Acquisition: Using the microscope, acquire infrared spectra in the mid-infrared range (1800-1000 cm⁻¹). For imaging, define a region of interest and collect a hyperspectral image cube using the focal plane array detector.

- Step 3: Data Analysis: Analyze the amide I band (≈1650 cm⁻¹) for shifts in shape or position, which indicate changes in secondary structure. Use statistical analysis (e.g., principal component analysis) on the hyperspectral data to identify spatial regions with different spectral signatures, corresponding to aggregates.

4. Data Interpretation: The presence of aggregates will be indicated by distinct spectral features in the amide bands. The QCL microscope's speed and sensitivity allow for the detection of even small, localized aggregates that might be missed by bulk analysis techniques.
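The amide I analysis in Step 3 can be sketched as locating the band maximum and comparing it with a reference spectrum. This minimal example assumes an amide I window of 1600 to 1700 cm⁻¹ and uses only the position of the maximum. Real analyses usually also examine band shape (for example via second derivatives or curve fitting), and the spectra below are toy values.

```python
def amide_i_peak(wavenumbers, absorbance, low=1600.0, high=1700.0):
    """Return (wavenumber, absorbance) at the maximum inside the amide I window."""
    window = [(w, a) for w, a in zip(wavenumbers, absorbance) if low <= w <= high]
    if not window:
        raise ValueError("no data points inside the amide I window")
    return max(window, key=lambda point: point[1])


def amide_i_shift(wavenumbers, reference_abs, sample_abs):
    """Peak position of the sample minus that of the reference, in cm^-1."""
    ref_position, _ = amide_i_peak(wavenumbers, reference_abs)
    sample_position, _ = amide_i_peak(wavenumbers, sample_abs)
    return sample_position - ref_position


if __name__ == "__main__":
    # Toy spectra on a coarse axis, for illustration only.
    axis = [1600.0, 1620.0, 1640.0, 1660.0, 1680.0, 1700.0, 1750.0]
    native = [0.1, 0.2, 0.5, 0.9, 0.4, 0.1, 2.0]
    stressed = [0.2, 0.8, 0.6, 0.5, 0.2, 0.1, 2.0]
    print(amide_i_peak(axis, native))             # (1660.0, 0.9)
    print(amide_i_shift(axis, native, stressed))  # -40.0
```

Applied pixel by pixel to a hyperspectral image, a shift map of this kind is one simple way to flag regions whose secondary structure differs from the bulk before running a fuller multivariate analysis.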

Experimental Workflow Visualization

The following diagrams illustrate the logical workflow for key spectroscopic analyses in pharmaceutical quality control and drug development.

Diagram 1: FDA OOS Investigation Workflow

Diagram 2: Impurity Fate Mapping Workflow

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Reagents, Software, and Instrumentation for Spectroscopic Analysis

| Item / Solution | Function / Application | Example Products / Technologies |

|---|---|---|

| Ultrapure Water System | Preparation of mobile phases, buffers, and sample dilution to prevent interference. | Milli-Q SQ2 Series [7] |

| Spectrofluorometer | Protein stability analysis, vaccine characterization, and monitoring molecular interactions. | Edinburgh Instruments FS5 v2; HORIBA Veloci A-TEEM [7] |

| QCL Infrared Microscope | High-sensitivity detection of protein aggregates and chemical impurities in biopharmaceuticals. | Bruker LUMOS II; ProteinMentor system [7] |

| Data Visualization Software | Impurity fate mapping, linking chemical structures to analytical data for QbD. | ACD/Labs Luminata [41] |

| Cheminformatics Toolkit | Managing chemical libraries, virtual screening, and SAR analysis in drug discovery. | RDKit (Open-Source) [42] |

| Handheld Raman Spectrometer | On-site raw material identification and quality control in the warehouse or production line. | Metrohm TacticID-1064ST [7] |

Hybrid analytical techniques represent a powerful paradigm in modern instrumentation, combining the separation capabilities of chromatography with the identification and quantification powers of mass spectrometry (MS) and spectroscopy. These integrated systems, such as GC-MS, LC-MS, GC-IR, and LC-NMR, have revolutionized chemical analysis by enabling precise characterization of complex mixtures in fields ranging from pharmaceuticals and environmental monitoring to food safety and clinical diagnostics [43]. The core strength of these hybrid platforms lies in their synergistic operation: chromatography efficiently separates individual components in a mixture, while the coupled spectroscopic or spectrometric detector provides detailed structural information for each separated analyte [43].

The global market for spectroscopy software, valued at approximately $1.1 billion in 2024 and projected to grow at a compound annual growth rate (CAGR) of 9.1% through 2034, underscores the critical importance and expanding adoption of these technologies [1]. This growth is largely driven by technological advancements, including the integration of artificial intelligence (AI) and machine learning (ML) into spectroscopy software, which enhances data processing, pattern recognition, and predictive analytics capabilities [1]. Furthermore, the pharmaceutical industry represents a major end-user, accounting for 28.9% of the spectroscopy software market share in 2024, highlighting its essential role in drug discovery and quality control [1].

Technical Support Center: Troubleshooting Guides and FAQs

GC-MS Troubleshooting Guide

Common Problem: No Peaks or Loss of Sensitivity

- Potential Cause and Solution: Gas leaks can cause sensitivity loss and sample contamination. Systematically check for leaks at the gas supply filter, shutoff valves, EPC connections, weldment lines, and column connectors using a leak detector. Retighten connections or replace cracked components as needed [44].

- Potential Cause and Solution: A blocked injection needle or syringe can prevent the sample from reaching the detector. Flush the needle or replace it if necessary. Also, verify that the auto-sampler is functioning correctly and that the sample is properly prepared [44].

- Potential Cause and Solution: Check the column for cracks, which would prevent analytes from reaching the detector. If the system also uses a flame-based detector (e.g., an FID), ensure the flame is lit, and confirm that all gases are flowing at the correct rates [44].

Common Problem: High System Pressure

- Potential Cause and Solution: A blockage in the chromatographic column is a frequent culprit. Attempt to backflush the column with a strong organic solvent. If pressure remains high, replace the guard column and/or the analytical column [45].

- Potential Cause and Solution: The system's in-line filter may be blocked. Replace the filter to restore normal flow and pressure [45].

LC-MS Troubleshooting Guide

Common Problem: Baseline Noise or Drift

- Potential Cause and Solution: Check for loose fittings throughout the system and tighten them gently. Also, inspect pump seals and replace them if they appear worn out [45].

- Potential Cause and Solution: Air bubbles in the mobile phase or system can cause significant noise and drift. Degas the mobile phase thoroughly and purge the LC system to remove air [45].

- Potential Cause and Solution: A contaminated or aging detector flow cell can introduce noise. Clean the flow cell with a strong organic solvent. If the problem persists, the detector lamp may be low on energy and require replacement [45].

Common Problem: Peak Tailing or Broadening

- Potential Cause and Solution: Active sites or a blockage within the chromatographic column can cause peak tailing. Reverse-flush the column with a strong solvent or replace it. Using a different stationary phase chemistry may also help [45].

- Potential Cause and Solution: Inappropriate mobile phase pH or composition can lead to poor peak shape. Prepare a fresh mobile phase with the correct pH and buffer concentration [45].

- Potential Cause and Solution: Tubing between the column and the detector that is too long or has too wide an internal diameter can broaden peaks. Minimize this post-column volume by using shorter, narrower tubing [45].
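
To illustrate why tubing dimensions matter, the internal volume of a tube can be estimated from its geometry, V = π·(d/2)²·L. The sketch below is illustrative only: the function name and the example dimensions are assumptions, not values from the cited source.

```python
import math

def tubing_volume_ul(inner_diameter_mm: float, length_mm: float) -> float:
    """Internal volume of cylindrical tubing in microlitres (1 mm^3 = 1 uL)."""
    if inner_diameter_mm <= 0 or length_mm < 0:
        raise ValueError("diameter must be positive and length non-negative")
    radius_mm = inner_diameter_mm / 2.0
    return math.pi * radius_mm ** 2 * length_mm

# Hypothetical comparison: same length, two internal diameters.
wide = tubing_volume_ul(inner_diameter_mm=0.25, length_mm=500)
narrow = tubing_volume_ul(inner_diameter_mm=0.125, length_mm=500)
print(f"0.25 mm ID: {wide:.1f} uL, 0.125 mm ID: {narrow:.1f} uL")
```

Because volume scales with the square of the diameter, halving the internal diameter cuts the volume to one quarter, whereas halving the length only halves it.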

Common Problem: Fluctuating or Unstable Pressure

- Potential Cause and Solution: Air trapped in the pump or a malfunctioning check valve can cause pressure fluctuations. Degas all solvents thoroughly, purge the pump, and inspect or replace the check valves [45].

- Potential Cause and Solution: A partial blockage in the injector or a failing pump seal can cause pressure instability. Flush the injector and tubing. If needed, replace the pump seal [45].

FT-IR Spectroscopy Troubleshooting Guide

Common Problem: Noisy Spectra

- Potential Cause and Solution: Instrument vibration is a common source of noise. FT-IR spectrometers are highly sensitive to physical disturbances from nearby equipment like pumps or general lab activity. Ensure the instrument is placed on a stable, vibration-free surface [10].

Common Problem: Negative Absorbance Peaks

- Potential Cause and Solution: A dirty Attenuated Total Reflection (ATR) crystal is the most likely cause. If the background spectrum was collected while contaminants were on the crystal, their absorption bands appear as negative peaks in subsequent sample spectra. Clean the crystal carefully according to the manufacturer's instructions and collect a fresh background spectrum [10].
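
One way to see how a contaminated background yields negative peaks is through the usual absorbance calculation from single-beam intensities, A = -log10(I_sample / I_background). The following is a minimal sketch with made-up intensity values at a single wavenumber; the function and variable names are assumptions for illustration.

```python
import math

def absorbance(sample_intensity: float, background_intensity: float) -> float:
    """Absorbance from single-beam intensities: A = -log10(I_sample / I_background)."""
    if sample_intensity <= 0 or background_intensity <= 0:
        raise ValueError("intensities must be positive")
    return -math.log10(sample_intensity / background_intensity)

# Hypothetical intensities at one wavenumber where a contaminant absorbs.
# Background taken on a dirty crystal: the contaminant lowers the background signal.
dirty_background = 0.80
# Sample measured later with nothing absorbing at this band.
sample = 1.00

# The sample transmits more than the background did, so the result is negative.
print(absorbance(sample, dirty_background))
```

Whenever the background absorbed more than the sample at a given band, the ratio exceeds one and the computed absorbance drops below zero, which is why a fresh background on a clean crystal resolves the problem.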

Common Problem: Distorted or Inaccurate Spectral Features

- Potential Cause and Solution: The analyzed surface may not be representative of the bulk material (e.g., due to surface oxidation or additives). For reliable results, compare spectra from the material's surface with spectra collected from a freshly cut interior section [10].

- Potential Cause and Solution: Using incorrect data processing modes can distort spectral outputs. For example, when using diffuse reflection, data should be processed in Kubelka-Munk units rather than absorbance for an accurate representation [10].
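
The Kubelka-Munk conversion mentioned above is commonly written as F(R) = (1 - R)² / (2R), where R is the fractional diffuse reflectance of the sample relative to a non-absorbing reference. A minimal sketch, assuming reflectance is already expressed as a fraction between 0 and 1 (the example values are hypothetical):

```python
def kubelka_munk(reflectance: float) -> float:
    """Kubelka-Munk function F(R) = (1 - R)^2 / (2 R) for fractional reflectance R."""
    if not 0.0 < reflectance <= 1.0:
        raise ValueError("reflectance must be a fraction in (0, 1]")
    return (1.0 - reflectance) ** 2 / (2.0 * reflectance)

# Hypothetical diffuse-reflectance values (sample ratioed against a reference).
reflectance_spectrum = [0.90, 0.50, 0.25]
km_spectrum = [kubelka_munk(r) for r in reflectance_spectrum]
print(km_spectrum)
```

Most instrument software applies this transform as a built-in processing option; the sketch only shows what that option computes point by point.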

General Mass Spectrometry Troubleshooting

Common Problem: Loss of Sensitivity or Signal

- Potential Cause and Solution: Source contamination is a frequent issue in MS. Regularly clean the ion source according to the instrument manufacturer's scheduled maintenance protocol. Using high-quality, clean solvents and samples can prevent premature contamination [44].

- Potential Cause and Solution: Incorrect tuning or calibration can lead to sensitivity loss. Perform routine mass calibration and tuning using the recommended calibration standards to ensure the instrument is operating optimally [46].
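
A common way to judge whether mass calibration is still acceptable is to compute the mass error of a calibrant ion in parts per million, (measured - theoretical) / theoretical × 10⁶. The sketch below assumes a hypothetical 5 ppm acceptance limit and hypothetical m/z values; the appropriate tolerance depends on the instrument and method.

```python
def mass_error_ppm(measured_mz: float, theoretical_mz: float) -> float:
    """Signed mass error in parts per million."""
    if theoretical_mz <= 0:
        raise ValueError("theoretical m/z must be positive")
    return (measured_mz - theoretical_mz) / theoretical_mz * 1e6

def within_tolerance(measured_mz: float, theoretical_mz: float,
                     tolerance_ppm: float = 5.0) -> bool:
    """True if the absolute mass error is within the (assumed) tolerance."""
    return abs(mass_error_ppm(measured_mz, theoretical_mz)) <= tolerance_ppm

# Hypothetical calibrant: theoretical m/z 500.0000, measured 500.0005.
print(mass_error_ppm(500.0005, 500.0))
print(within_tolerance(500.0005, 500.0))
```

Tracking this value over time for the same calibrant gives an early indication that recalibration or tuning is due.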

Essential Research Reagent Solutions

The following table details key consumables and reagents critical for ensuring the reliability and reproducibility of experiments using hybrid analytical techniques.

Table 1: Essential Research Reagents and Consumables

| Item | Function in Hybrid Analysis |
|---|---|
| High-Purity Chromatography Vials and Caps | Designed for precision and reliability in demanding applications, they prevent sample contamination and evaporation, ensuring accuracy and repeatability in GC and LC analyses [43]. |
| Ultrapure Water (e.g., from Milli-Q SQ2 systems) | Essential for sample preparation, buffer and mobile phase preparation, and sample dilution in LC-MS. Guarantees a contamination-free baseline, which is critical for sensitive detection [7]. |
| Leak-Tight Column Connectors | A common source of gas leaks in GC-MS; using high-quality, properly installed connectors is vital for maintaining system integrity, sensitivity, and accurate quantification [44]. |
| Certified Standard Solutions | Used for instrument calibration, method development, and quantification. Their purity and certification are fundamental for achieving accurate and legally defensible results [47]. |
| SPME Fibers & HPLC-Grade Solvents | Solid-phase microextraction (SPME) fibers are used for sample extraction and concentration (e.g., in HS-SPME-GC-MS). HPLC-grade solvents ensure clean baselines and consistent chromatographic performance [47] [45]. |

Quantitative Data on Techniques and Applications

The following table summarizes quantitative data and key application areas for prominent hybrid techniques, highlighting their specific strengths.

Table 2: Hybrid Technique Quantitative Data and Applications

| Technique | Key Performance Metric | Primary Application Areas |
|---|---|---|
| GC-MS [43] | High separation efficiency for volatile compounds. | Environmental monitoring, forensic analysis, aroma compound profiling [43] [47]. |
| LC-MS [43] | Superior for analyzing thermally unstable compounds. | Pharmaceutical development, proteomics, metabolomics, food safety [43] [46]. |
| Orbitrap MS [46] | Mass resolution >100,000 at m/z 35,000. | Detailed molecular characterization in proteomics and structural biology [46]. |
| GC-IR [43] | Effective identification of functional groups. | Petrochemical analysis (e.g., gasoline components), environmental contaminant identification [43]. |
| LC-NMR [43] | Provides detailed molecular structural information. | Drug discovery, natural product research, metabolomics [43]. |
| Spectroscopy Software Market [1] | USD 1.1 Billion (2024), CAGR of 9.1% (2025-2034). | Ubiquitous across all sectors using spectroscopic analysis, especially pharmaceuticals [1]. |

Experimental Protocols for Hybrid Analysis

Protocol: Food Quality Assessment via UHPLC-MS/MS and GC-MS

This protocol is adapted from food analysis research for profiling compounds like amino acids and aroma volatiles [47].

1. Sample Preparation:

- For UHPLC-MS/MS (Amino Acids): Homogenize the food sample (e.g., honey or beef). Perform a solid-liquid extraction using a suitable solvent like methanol or water. Centrifuge the mixture and filter the supernatant through a 0.2 µm membrane prior to analysis.

- For GC-MS (Volatile Aroma Compounds): Use Headspace Solid-Phase Microextraction (HS-SPME). Place the sample in a sealed vial and incubate at a controlled temperature. Expose a coated SPME fiber to the sample headspace to adsorb volatile compounds, then desorb the fiber directly in the GC injector.

2. Instrumental Analysis:

- UHPLC-MS/MS Conditions:

  - Column: C18 reversed-phase column (e.g., 2.1 x 100 mm, 1.7 µm).

  - Mobile Phase: (A) Water with 0.1% formic acid; (B) Acetonitrile with 0.1% formic acid.

  - Gradient: Ramp from 5% B to 95% B over 10-15 minutes.

  - Mass Spectrometer: Triple quadrupole MS operated in Multiple Reaction Monitoring (MRM) mode for high sensitivity and selective quantification [47].

- GC-MS Conditions:

  - Column: Mid-polarity fused silica capillary column (e.g., 30 m x 0.25 mm ID, 0.25 µm film).

  - Temperature Program: Ramp from 40°C (hold 2 min) to 250°C at 10°C/min.

  - Mass Spectrometer: Electron ionization (EI) source at 70 eV; scan range m/z 40-450 [47].
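
The gradient and oven program above can be expressed as simple functions of time, which is handy when planning run lengths or checking method entries. This is a sketch under stated assumptions: the gradient is treated as a single linear ramp of 10 minutes (the lower end of the 10-15 minute range), no final hold or re-equilibration step is modeled because none is specified, and all function names are illustrative.

```python
def percent_b(t_min: float, start_b: float = 5.0, end_b: float = 95.0,
              gradient_min: float = 10.0) -> float:
    """%B at time t for one linear ramp; held at end_b once the ramp finishes."""
    if t_min <= 0:
        return start_b
    if t_min >= gradient_min:
        return end_b
    return start_b + (end_b - start_b) * t_min / gradient_min

def oven_temp_c(t_min: float, start_c: float = 40.0, hold_min: float = 2.0,
                rate_c_per_min: float = 10.0, final_c: float = 250.0) -> float:
    """GC oven temperature at time t: initial hold, then a linear ramp to final_c."""
    if t_min <= hold_min:
        return start_c
    return min(final_c, start_c + rate_c_per_min * (t_min - hold_min))

def oven_run_time_min(start_c: float = 40.0, hold_min: float = 2.0,
                      rate_c_per_min: float = 10.0, final_c: float = 250.0) -> float:
    """Time needed to reach final_c, including the initial hold."""
    return hold_min + (final_c - start_c) / rate_c_per_min

print(percent_b(5.0))        # mobile phase composition halfway through the ramp
print(oven_temp_c(12.0))     # oven temperature 10 minutes into the ramp
print(oven_run_time_min())   # minutes until the oven reaches 250 °C
```

With the stated program, the oven needs the 2-minute hold plus 21 minutes of ramping to reach 250°C; any final hold would add to that.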

3. Data Processing:

- Identify compounds by comparing mass spectra to reference libraries (NIST for GC-MS) and by matching retention times and fragmentation patterns with authentic standards.

- Use chemometric software tools (e.g., OPLS-DA) to build predictive models that correlate analyte profiles (e.g., amino acids) with qualities like geographic origin or aroma [47].
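
As a rough illustration of spectral library matching, the sketch below scores a query spectrum against reference spectra using plain cosine similarity. This is a simplified stand-in, not the scoring used by the NIST search software, and the spectra and compound names are hypothetical.

```python
import math

def cosine_similarity(spectrum_a: dict, spectrum_b: dict) -> float:
    """Cosine similarity between two spectra given as {nominal m/z: intensity}."""
    shared = set(spectrum_a) & set(spectrum_b)
    dot = sum(spectrum_a[mz] * spectrum_b[mz] for mz in shared)
    norm_a = math.sqrt(sum(i * i for i in spectrum_a.values()))
    norm_b = math.sqrt(sum(i * i for i in spectrum_b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)

def best_match(query: dict, library: dict) -> str:
    """Name of the library entry with the highest similarity to the query."""
    return max(library, key=lambda name: cosine_similarity(query, library[name]))

# Hypothetical EI spectra keyed by nominal m/z.
library = {
    "compound_A": {41: 28.0, 43: 100.0, 58: 50.0},
    "compound_B": {41: 10.0, 55: 100.0, 70: 60.0},
}
query = {41: 30.0, 43: 100.0, 58: 45.0}

print(best_match(query, library))
```

A score close to 1 indicates near-identical relative intensities; in practice a spectral match is only a candidate identification until retention time and an authentic standard confirm it, as described above.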

Protocol: Contaminant Screening in Food Matrices using HPLC-MS/MS

This method is designed for detecting trace-level contaminants like antimicrobials in complex food matrices [47].

1. Sample Extraction and Cleanup (QuEChERS):

- Homogenize the sample (e.g., lettuce).

- Extract with acetonitrile, then add partitioning salts (magnesium sulfate, sodium chloride) to induce phase separation.

- Perform a cleanup step using dispersive Solid-Phase Extraction (d-SPE) with sorbents like PSA and C18 to remove interfering matrix components.

2. Instrumental Analysis:

- HPLC-MS/MS Conditions (QTRAP MS):

  - Column: C18 reversed-phase column.

  - Mobile Phase: (A) Water and (B) Methanol, both with 5 mM ammonium formate.

  - Gradient: Optimize for the target analytes.

  - Mass Spectrometer: Triple quadrupole-linear ion trap hybrid system (QTRAP). Use MRM for quantification and enhanced product ion (EPI) scans for confirmatory library matching.

- Validation: The method should be validated for parameters including specificity, linearity, recovery, precision, and limits of detection (LOD) and quantification (LOQ), which can be as low as 0.8 µg·kg⁻¹ for certain analytes [47].
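
Several of the validation parameters listed above reduce to short calculations. The sketch below uses one widely used convention, in which LOD = 3.3·σ/S and LOQ = 10·σ/S, with σ the residual standard deviation of the calibration line and S its slope. This is an assumption for illustration: the cited method may have derived its limits differently (e.g., from signal-to-noise), and the calibration data are hypothetical.

```python
import math

def fit_line(x: list, y: list) -> tuple:
    """Ordinary least-squares line; returns (slope, intercept, residual_sd)."""
    n = len(x)
    if n < 3 or n != len(y):
        raise ValueError("need at least three paired calibration points")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    ss_res = sum((yi - (intercept + slope * xi)) ** 2 for xi, yi in zip(x, y))
    residual_sd = math.sqrt(ss_res / (n - 2))
    return slope, intercept, residual_sd

def lod_loq(residual_sd: float, slope: float) -> tuple:
    """LOD and LOQ from the 3.3*sigma/S and 10*sigma/S convention."""
    return 3.3 * residual_sd / slope, 10.0 * residual_sd / slope

def recovery_percent(measured: float, spiked: float) -> float:
    """Recovery of a spiked amount, in percent."""
    return measured / spiked * 100.0

# Hypothetical calibration: concentration (ug/kg) vs. peak area.
conc = [1.0, 2.0, 5.0, 10.0, 20.0]
area = [10.4, 19.7, 50.9, 99.2, 200.6]
slope, intercept, sd = fit_line(conc, area)
lod, loq = lod_loq(sd, slope)
print(f"slope={slope:.3f}, LOD={lod:.2f} ug/kg, LOQ={loq:.2f} ug/kg")
```

Whichever convention is chosen, it should be stated in the validation report so that reported LOD and LOQ values can be compared across methods.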

Workflow Diagram for Systematic Troubleshooting

The following diagram outlines a logical, step-by-step workflow for diagnosing common issues in hybrid analytical systems, integrating checks for both chromatographic and detection components.

[Figure: Systematic Troubleshooting Workflow]
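
Independently of the diagram, the symptom-to-check entries from the troubleshooting guides in this section can be arranged as a simple lookup so that the relevant checks are retrieved in order. The data structure and function name below are illustrative assumptions, and only a subset of the entries is included.

```python
# Ordered checks per (technique, symptom), condensed from the guides in this section.
TROUBLESHOOTING_GUIDE = {
    ("gc-ms", "no peaks or loss of sensitivity"): [
        "Check for gas leaks at filters, valves, EPC connections, and column connectors",
        "Flush or replace a blocked injection needle or syringe; verify the auto-sampler",
        "Inspect the column for cracks and confirm gas flow rates",
    ],
    ("lc-ms", "baseline noise or drift"): [
        "Tighten loose fittings and inspect pump seals",
        "Degas the mobile phase and purge the LC system",
        "Clean the detector flow cell",
    ],
    ("lc-ms", "fluctuating or unstable pressure"): [
        "Degas solvents, purge the pump, and inspect or replace check valves",
        "Flush the injector and tubing; replace the pump seal if needed",
    ],
    ("ft-ir", "noisy spectra"): [
        "Place the instrument on a stable, vibration-free surface",
    ],
}

def checks_for(technique: str, symptom: str) -> list:
    """Return the ordered checks for a technique/symptom pair, or an empty list."""
    key = (technique.strip().lower(), symptom.strip().lower())
    return TROUBLESHOOTING_GUIDE.get(key, [])

for step, check in enumerate(checks_for("LC-MS", "Baseline Noise or Drift"), start=1):
    print(f"{step}. {check}")
```

Keeping the checks in a fixed order encourages working through the quick, low-cost checks before replacing parts.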

Advancements in Software and Data Analysis

The integration of advanced software is paramount for leveraging the full potential of hybrid techniques. Key trends include the incorporation of Artificial Intelligence (AI) and Machine Learning (ML) to enhance data processing speed, enable sophisticated pattern detection, and provide predictive analytics for spectral interpretation [1]. There is also a significant shift towards cloud-based and remotely accessible software solutions, which facilitate collaboration among geographically dispersed research teams and provide scalable computing resources for handling large spectral datasets [1].

The development of open-source software platforms, such as the Workbench for Imaging Spectroscopy Exploration and Research (WISER), addresses the need for flexible, modifiable analysis tools that can be customized for specific research requirements in imaging spectroscopy [37]. Furthermore, software is increasingly focusing on user accessibility, featuring intuitive dashboards, automated workflows, and customizable reporting to make powerful analytical tools available to a broader range of users, including non-specialists [1]. These advancements collectively make data analysis more efficient, collaborative, and accessible, directly supporting the complex data interpretation needs of researchers using hybrid MS, chromatography, and spectroscopy systems.

Technical Support Center: Troubleshooting Guides and FAQs

This section provides practical solutions for common issues encountered in FT-IR and QCL microscopy, directly supporting research in spectroscopy data analysis.

Frequently Asked Questions (FAQs)

Q: What is the core difference between FT-IR and QCL microscopy?

- A: FT-IR spectroscopy uses a broadband thermal source to collect a full infrared spectrum at once, offering a wide spectral range but requiring sensitive, often cooled detectors. QCL microscopy uses a tunable laser source that emits at specific wavelengths, providing a much higher spectral power density that enables faster imaging speeds and the use of room-temperature detectors, but over a more limited spectral range [48].

Q: Can FT-IR and QCL technologies be combined?

- A: Yes, modern instrumentation platforms like the HYPERION II now seamlessly integrate both FT-IR and QCL (Infrared Laser Imaging) in a single instrument. This allows researchers to leverage the full spectral range of FT-IR for initial analysis and then use the high speed of QCL for targeted chemical imaging [49].

Q: What are "coherence artefacts" in QCL imaging and how can they be mitigated?

- A: Coherence artefacts, such as fringes and speckles in IR images, are physical interference patterns caused by the highly coherent nature of laser light used in QCL systems. They can obscure chemical information. Bruker's ILIM technology addresses this with a patented hardware-based spatial coherence reduction method to collect artifact-free chemical images [49] [48].

Q: My FT-IR spectrum has strange, sharp negative peaks. What is the likely cause?

- A: The most likely cause is a dirty ATR crystal. Clean the crystal according to the manufacturer's instructions and collect a fresh background spectrum [10].

Q: Why does my spectrum of a plastic sample look different when I analyze the surface versus a freshly cut interior section?

- A: ATR is a surface-sensitive technique. Differences between surface and bulk spectra can be due to surface oxidation, migration of additives (like plasticizers) to or from the surface, or other effects from sample processing. Analyzing both surfaces provides valuable chemical insight into these heterogeneities [50].

Troubleshooting Common Experimental Issues

Problem 1: Noisy or Low-Intensity Spectra in FT-IR

- Potential Cause: Inadequate purging or contamination of the optical path.

- Solution: Ensure the instrument is properly purged with dry, CO₂-free air to minimize spectral contributions from atmospheric water vapor and CO₂. For instruments like the Bruker Vertex NEO, utilizing its vacuum optical path can effectively eliminate these interferences [7].

Problem 2: Distorted Peaks in Diffuse Reflection Measurements

- Potential Cause: Incorrect data processing.