Type Standardization for OES Spectrometers: A Complete Guide to Enhanced Accuracy and Troubleshooting

Abstract

This article provides a comprehensive guide to Type Standardization, a critical calibration procedure for Optical Emission Spectrometry (OES) used to achieve superior analytical accuracy. Aimed at researchers and scientists, it explains why OES requires this specialized calibration to correct for matrix effects and instrumental drift. The guide details a step-by-step methodological approach for implementation, explores advanced troubleshooting for persistent deviations, and compares the procedure with other calibration methods. Taken together, these topics make the article a practical resource for professionals seeking to ensure data integrity and optimize spectrometer performance in demanding analytical environments.

Understanding Type Standardization: Why Basic OES Calibration Isn't Always Enough

The Principle of Relative Measurement in Spark OES

Optical Emission Spectrometry (OES) employing a spark source is a dominant technique for the direct elemental analysis of solid metals. A foundational concept that underpins this method is its reliance on relative measurement rather than absolute measurement. Unlike analytical techniques that measure absolute quantities, Spark OES instruments determine the concentration of an element in an unknown sample by comparing the intensity of its characteristic spectral lines to the intensities measured from reference materials of known composition [1]. This principle is crucial because the absolute intensity of light emitted from the spark-induced plasma is susceptible to numerous instrumental and environmental variables. The instrument's detector measures light intensities from the plasma at the sample surface, and the software then compares these spectral line intensities with those from known elemental concentrations to return useful quantitative information to the user [1]. The initial calibration, which establishes the relationship between light intensity and elemental concentration, is therefore not merely a preliminary step but an essential process that makes the instrument practically useful for analysis [1].

The reliance on relative measurement makes the traceability of the calibration to certified reference materials (CRMs) a non-negotiable aspect of quality assurance. As noted by industry experts, OES performance is directly tied to the quality of the standards used, as "results can ONLY be as good as certified standards that were used in the initial calibrations" [2]. This establishes a clear chain of comparability from the unknown sample back to national or international measurement standards through the CRMs.

The Spark OES Analytical Process

The process of relative measurement in Spark OES unfolds through a sequence of physical and electronic events, each critical to the final analytical result.

Physical and Electronic Workflow

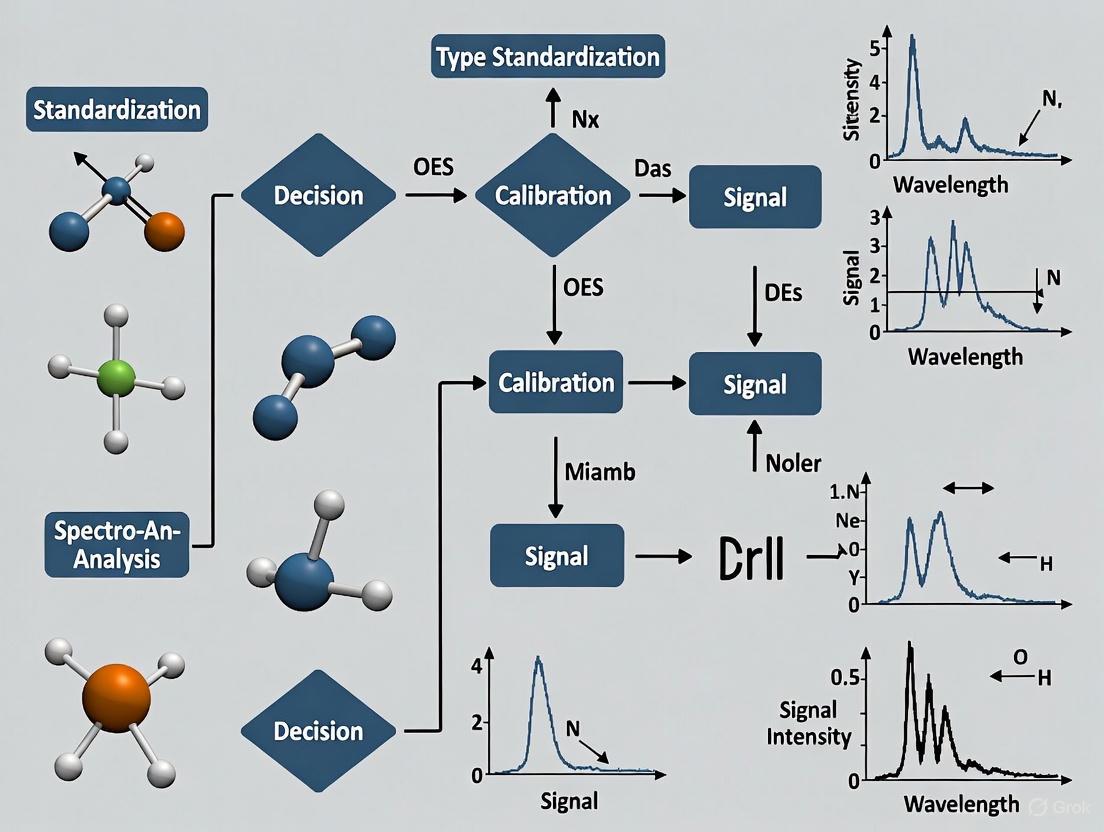

The analytical sequence begins when a high-energy spark discharge is applied to the surface of the metal sample, causing localized vaporization of the material. This vaporized material, consisting of atoms and ions, is then excited within the spark plasma [3]. As these excited atoms and ions return to lower energy states, they emit element-specific radiation. The collected light is passed to a spectrometer, where it is dispersed into its constituent wavelengths [3]. Within the spectrometer, the intensity of selected characteristic spectral lines for each element is measured using detectors such as CCDs or photomultiplier tubes (PMTs) [3]. Finally, the software converts these measured light intensities into elemental concentrations by comparing them to a stored set of calibration curves derived from reference materials [3]. The following workflow diagram illustrates this integrated process:

The Critical Role of Calibration Curves

The calibration curve represents the mathematical heart of the relative measurement principle. It transforms the raw analytical signal—photon intensity at a specific wavelength—into the required analytical result, which is elemental concentration. During initial calibration, a series of certified reference materials with known concentrations are sparked, and the intensities at specific wavelengths for each element are recorded. A curve is then plotted for each element, establishing the functional relationship between intensity and concentration [3]. This stored calibration model is subsequently used to convert the intensity measured from an unknown sample into a concentration value. The proportional relationship between radiation intensity and elemental concentration in the sample is fundamental to this process, though this relationship must be regularly verified against standards to maintain its validity over time [3] [1].
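As a minimal sketch of this principle, the Python snippet below fits a straight-line calibration curve to hypothetical CRM data for a single element and then inverts it to convert an unknown sample's intensity into a concentration. The linear model, the function names, and all data values are assumptions for illustration; commercial OES software typically uses more elaborate models (for example, higher-order polynomials and intensities ratioed to a matrix reference line), which are not shown here.

```python
from statistics import fmean


def fit_linear_calibration(concentrations, intensities):
    """Ordinary least-squares fit of intensity = slope * concentration + intercept."""
    x_bar = fmean(concentrations)
    y_bar = fmean(intensities)
    sxx = sum((x - x_bar) ** 2 for x in concentrations)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(concentrations, intensities))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    return slope, intercept


def intensity_to_concentration(intensity, slope, intercept):
    """Invert the stored calibration curve for an unknown sample."""
    return (intensity - intercept) / slope


# Hypothetical CRM data for one element: certified wt% and measured intensity (arbitrary units)
crm_conc = [0.10, 0.50, 1.00, 2.00]
crm_int = [210.0, 1010.0, 2010.0, 4010.0]

slope, intercept = fit_linear_calibration(crm_conc, crm_int)
unknown_conc = intensity_to_concentration(1510.0, slope, intercept)
print(f"slope={slope:.1f} intercept={intercept:.1f} unknown={unknown_conc:.3f} wt%")
```

The curve is fitted once from the CRMs and stored; each unknown then only needs the inexpensive inversion step.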

Calibration Methodologies and Protocols

Maintaining the accuracy of Spark OES measurements requires a structured approach to calibration, encompassing initial setup, routine verification, and matrix-specific corrections.

Calibration Hierarchy and Workflow

A systematic calibration strategy ensures that measurements remain traceable and accurate throughout the instrument's operational lifetime. The process involves multiple tiers of calibration activities, from fundamental setup to application-specific adjustments, as illustrated below:

Types of Calibration Procedures

Table 1: Summary of Spark OES Calibration Methods

| Method | Purpose | When Performed | Key Requirements |
|---|---|---|---|
| Initial Calibration | Establish fundamental relationship between intensity and concentration [1]. | New instrument installation; after major maintenance [1]. | Multiple Certified Reference Materials (CRMs) covering expected concentration ranges [2]. |
| Drift Correction | Correct for minor changes in instrumental response over time [1]. | Daily or before each use; typically automated [1]. | Stable, homogeneous drift correction standards provided by instrument manufacturer [2]. |
| Type Standardization | Improve accuracy for specific alloy types that deviate from the general calibration [1]. | When analyzing alloys with compositions differing from initial calibration standards [1]. | CRM or proven sample very similar in composition to the test sample [1]. |

Detailed Experimental Protocol: Type Standardization

Type standardization is an advanced calibration procedure used when the highest accuracy is required for specific alloy compositions. The following protocol provides step-by-step guidance:

Principle

Type standardization fine-tunes the existing calibration for a specific alloy composition by applying a correction factor based on a single, closely-matched reference material. It is not a replacement for fundamental calibration but rather a refinement applied on top of a valid existing calibration [1].

Prerequisites

- A valid and recently verified initial calibration must be established on the instrument [1].

- A certified reference material (CRM) or proven reliable sample whose composition is very close to the unknown sample to be analyzed must be available [1].

- The reference material should ideally match the unknown sample in both chemical composition and metallurgical structure.

Procedure

- Recalibration Verification: Ensure that a full recalibration has been performed immediately prior to beginning the type standardization procedure [1].

- Reference Material Analysis: Spark the selected type standardization reference material repeatedly (at least three to five repetitions) to establish a precise intensity measurement.

- Result Comparison: Compare the average measured concentration values for each element to the certified values of the reference material.

- Correction Factor Calculation: The instrument software automatically calculates the difference between measured and certified values, determining a correction factor (bias) for each element.

- Application of Correction: The correction factors are stored and applied to subsequent analyses of unknown samples that are similar in composition to the type standardization sample [1].
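The correction steps above can be sketched in Python as follows. This is a minimal, hypothetical illustration: the function names, the element values, and the choice between an additive bias and a multiplicative factor are assumptions, and instrument software performs this calculation internally using its own vendor-specific algorithm.

```python
from statistics import fmean

MODES = ("additive", "multiplicative")


def type_standardization_factors(replicates, certified, mode="additive"):
    """Derive one correction per element from repeated sparks of the type standard.

    replicates: element -> list of measured concentrations (wt%)
    certified:  element -> certified concentration (wt%)
    """
    if mode not in MODES:
        raise ValueError(f"unknown mode: {mode}")
    factors = {}
    for element, values in replicates.items():
        measured_mean = fmean(values)
        if mode == "additive":
            factors[element] = certified[element] - measured_mean  # bias to add later
        else:
            factors[element] = certified[element] / measured_mean  # ratio to apply later
    return factors


def apply_type_correction(result, factors, mode="additive"):
    """Correct an unknown of the same alloy type; mode must match the one used for the factors.

    Elements without a stored correction are passed through unchanged.
    """
    if mode not in MODES:
        raise ValueError(f"unknown mode: {mode}")
    corrected = {}
    for element, value in result.items():
        if element not in factors:
            corrected[element] = value
        elif mode == "additive":
            corrected[element] = value + factors[element]
        else:
            corrected[element] = value * factors[element]
    return corrected


# Hypothetical stainless-steel type standard, sparked three times
replicates = {"Cr": [18.00, 18.05, 17.95], "Ni": [8.00, 8.02, 7.98]}
certified = {"Cr": 18.20, "Ni": 8.10}

factors = type_standardization_factors(replicates, certified)
unknown = {"Cr": 17.50, "Ni": 9.00, "Mn": 1.20}
corrected = apply_type_correction(unknown, factors)
print(corrected)
```

An additive bias suits small offsets that are roughly constant across the narrow concentration range of one alloy type; a multiplicative factor assumes the error scales with concentration.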

Limitations and Considerations

- Specificity: A type standardization is valid only for unknown materials that are similar in composition to the standardization sample used [1].

- Scope: It is not an alternative to basic calibration and cannot correct for large systematic deviations across different material types [1].

- Management: Laboratories must maintain and apply different type standardizations for every different alloy or composition they need to measure [1].
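Because each correction is valid only for similar compositions, a laboratory managing several type standardizations needs a rule for deciding which one, if any, applies to a given sample. The sketch below is purely hypothetical: the closeness criterion (every shared element within a fixed absolute tolerance in wt%, then the smallest summed difference), the 1.0 wt% tolerance, and the stored compositions are illustrative assumptions rather than an established rule; a real laboratory would define element-specific limits.

```python
def select_type_standard(sample, type_standards, max_abs_diff=1.0):
    """Return the name of the closest stored type standardization, or None.

    sample:         element -> measured wt% of the unknown
    type_standards: name -> (element -> reference wt%) for each stored standard
    max_abs_diff:   hypothetical tolerance (wt%) that every shared element must meet
    """
    best_name, best_score = None, float("inf")
    for name, reference in type_standards.items():
        shared = set(sample) & set(reference)
        if not shared:
            continue
        diffs = [abs(sample[e] - reference[e]) for e in shared]
        if max(diffs) > max_abs_diff:
            continue  # too dissimilar: this correction would not be valid
        score = sum(diffs)
        if score < best_score:
            best_name, best_score = name, score
    return best_name


# Hypothetical stored type standards for two stainless-steel grades
stored = {
    "304-type": {"Cr": 18.2, "Ni": 8.1, "Mo": 0.3},
    "316-type": {"Cr": 16.8, "Ni": 10.2, "Mo": 2.1},
}
print(select_type_standard({"Cr": 17.0, "Ni": 10.0, "Mo": 2.0}, stored))
print(select_type_standard({"Cr": 25.0, "Ni": 20.0, "Mo": 0.1}, stored))
```

Returning None rather than the nearest standard reflects the point above: when no stored standardization is close enough, none should be applied.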

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Essential Materials for Spark OES Calibration and Analysis

| Material/Reagent | Function | Critical Specifications |
|---|---|---|
| Certified Reference Materials (CRMs) | Provide the known concentration values for establishing and verifying calibration curves; ensure traceability [2]. | Certified to national/international standards; matrix-matched to samples; documented uncertainty. |
| Drift Correction Standards | Monitor and correct for short-term instrumental drift in signal response [1] [2]. | Highly homogeneous; stable over time; provided or approved by instrument manufacturer. |
| Control Samples | Independently verify calibration stability and analytical accuracy between CRM measurements [1]. | Known composition; similar to production samples; homogeneous and stable. |
| Type Standardization Samples | Fine-tune calibration for specific alloy compositions to improve analytical accuracy [1]. | Composition very close to specific test samples; certified or proven reliability. |

Advanced Calibration Techniques: Multi-Energy Calibration

Recent research has explored innovative calibration strategies to address matrix effects in complex samples. Multi-Energy Calibration (MEC) is a promising approach that can be adapted for plasma-based spectrometry techniques [4] [5]. Unlike traditional external calibration that uses a single wavelength per element and multiple standards, MEC utilizes multiple emission lines (wavelengths) for each element but requires only two calibration solutions per sample [4]. In this method, one solution (S1) consists of 50% sample and 50% standard solution containing the analytes, while the second solution (S2) consists of 50% sample and 50% blank solution [4]. The calibration curve is constructed by plotting signals from multiple wavelengths of the analyte in S1 against signals from the same wavelengths in S2 [4].
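The MEC arithmetic can be made concrete with a short derivation. If a wavelength λ has sensitivity m_λ, the S1 signal is m_λ(0.5·C_sam + 0.5·C_std) and the S2 signal is m_λ(0.5·C_sam), so every wavelength point lies on a line through the origin. With S1 on the x-axis and S2 on the y-axis its slope is s = C_sam/(C_sam + C_std), which rearranges to C_sam = s·C_std/(1 − s); plotting the axes the other way round inverts the slope and gives C_sam = C_std/(s′ − 1). The sensitivity m_λ cancels because both solutions share the same sample matrix. The Python sketch below applies the first form, assuming blank-corrected signals (hence a fit through the origin) and hypothetical sensitivities; it also returns per-wavelength residuals, since interfered lines would appear as outliers.

```python
def mec_concentration(s1_signals, s2_signals, c_std):
    """Multi-Energy Calibration estimate of the analyte concentration in the sample.

    s1_signals: blank-corrected signals at each wavelength for S1 (sample + standard)
    s2_signals: blank-corrected signals at the same wavelengths for S2 (sample + blank)
    c_std:      analyte concentration of the standard solution mixed into S1
    Returns (concentration, residuals), one residual per wavelength.
    """
    if len(s1_signals) != len(s2_signals) or len(s1_signals) < 2:
        raise ValueError("need matched S1/S2 signals for at least two wavelengths")
    # Least-squares slope of S2 (y) against S1 (x), constrained through the origin
    sxy = sum(x * y for x, y in zip(s1_signals, s2_signals))
    sxx = sum(x * x for x in s1_signals)
    slope = sxy / sxx
    residuals = [y - slope * x for x, y in zip(s1_signals, s2_signals)]
    return slope * c_std / (1.0 - slope), residuals


# Hypothetical example: three wavelengths with different sensitivities (counts per mg/L),
# true sample concentration 2.0 mg/L, standard solution 5.0 mg/L
sensitivities = [100.0, 250.0, 40.0]
s1 = [m * (0.5 * 2.0 + 0.5 * 5.0) for m in sensitivities]
s2 = [m * (0.5 * 2.0) for m in sensitivities]
c_sam, res = mec_concentration(s1, s2, c_std=5.0)
print(round(c_sam, 6), [round(r, 6) for r in res])
```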

The significant advantage of MEC is its inherent ability to identify and mitigate spectral interferences, as affected wavelengths appear as outliers on the calibration plot [5]. This technique has demonstrated improved accuracy (with recoveries of 80-105%) for determining essential minerals in complex matrices like animal feeds when compared to traditional external calibration [5]. While MEC has been more frequently applied with ICP-OES due to the richer spectrum it generates, its use with Spark OES represents a potential avenue for future methodological development in metal analysis, particularly for challenging applications where matrix effects pose significant problems.

Identifying the Limits of Standard Calibration with CRMs

Optical Emission Spectrometry (OES) is an industry-standard technique for the elemental analysis of a wide range of metals and alloys, providing rapid elemental analysis of solid metallic samples with exceptional accuracy and precision [6]. The technique operates on the principle of relative rather than absolute measurements—the detector measures the intensity and wavelength of light emitted after a localized plasma is created on the sample by a spark, but these raw measurements alone cannot determine chemical composition without proper calibration [7].

Certified Reference Materials (CRMs) serve as the foundational link between these measured intensities and quantitative chemical analysis. A CRM is officially defined as "a material or substance of sufficient homogeneity for which one or more property values are sufficiently well established to be used for the calibration of measuring instruments, the assessment of measurement methods or for assigning property values" [7]. Measurements on a series of CRMs, whose certified values are established through extensive testing of numerous samples, are used to build the calibration curves. These curves enable OES instruments to convert detected intensities into accurate quantitative chemical analyses, making CRMs indispensable for ensuring the accuracy and reliability of measurement results [7].

Theoretical Limits of Standard CRM-Based Calibration

Fundamental Constraints in CRM Methodology

Despite their critical role in OES calibration, CRM-based approaches encounter several inherent limitations that constrain their effectiveness in certain analytical scenarios. These constraints originate from methodological, material, and instrumental factors that can compromise measurement accuracy if not properly addressed.

Statistical Reliability Boundaries: The calibration of a spectrometer should utilize as many CRMs as possible to reduce statistical variation, with the uncertainty of the calibration curve ideally not exceeding ± 2SR, where SR represents statistical reliability [7]. This statistical reliability can be calculated using the formula provided in established guidelines, but achieving optimal statistical performance requires extensive CRM resources that may not always be available in routine laboratory settings.

Matrix and Structural Limitations: A significant limitation arises from the synthetic manufacturing of most CRMs, which cannot guarantee correspondence to the composition or structure of actual production samples being analyzed [8]. This disparity becomes particularly problematic when analyzing exotic alloys that deviate strongly from the matrix material used in CRM production, leading to measurement inaccuracies that standard calibration cannot correct [8].

Instrumental Drift Effects: OES instruments are engineered for extreme sensitivity so that they can detect elements at very low concentrations, but this sensitivity makes them susceptible to environmental parameters over the medium to long term, causing results to 'drift' over time and reducing accuracy [1]. While modern OES systems include features to monitor and correct for drift, this fundamental instrumental characteristic remains a constraint for standard CRM-based calibration.

Quantitative Limitations of Standard Calibration

Table 1: Quantitative Limitations of Standard CRM-Based Calibration

| Limitation Factor | Impact on Measurement Accuracy | Typical Manifestation |
|---|---|---|
| CRM Uncertainty | Baseline inaccuracy in calibration | Variation based on testing labs involved in certification process [7] |
| Exotic Alloy Deviation | Strong deviation from actual values | Measured values significantly different from true composition [8] |
| Structural Mismatch | Inaccurate representation of sample | Discrepancy between synthetic CRM structure and actual sample structure [8] |
| Instrument Drift | Progressive accuracy degradation | Slow change in instrument sensitivity over time [8] [1] |

Experimental Protocols for Identifying Calibration Limits

Protocol 1: CRM Verification and Acceptance Testing

Objective: To establish statistically valid acceptance criteria for CRM measurements and identify when standard calibration exceeds operational limits.

Materials and Equipment:

- Certified Reference Materials (CRMs) with documented uncertainties

- Properly calibrated OES spectrometer

- Control samples of known composition

- Data recording and statistical analysis software

Methodology:

- Calibrate the OES spectrometer using multiple CRMs according to manufacturer specifications and documented procedures [7].

- Measure each CRM repeatedly (minimum 6 measurements) to establish baseline intensity values and statistical distributions.

- Calculate the statistical reliability (SR) using the established formula for uncertainty assessment.

- Determine acceptance limits as ± 2SR from the certified values based on the statistical reliability calculation.

- Compare measured values against the certified values using the defined acceptance criteria.

- Investigate any instances where CRM measurements fall outside acceptance limits to determine root causes (e.g., wrong sample loading, incorrect method application, instrumental issues) [7].

Interpretation: Consistent deviations beyond ± 2SR indicate fundamental limitations in the standard calibration approach for the specific material type being analyzed and signal the need for supplemental standardization procedures.
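The acceptance test in Protocol 1 reduces to a simple comparison once SR is known. Because the formula for SR is not reproduced here, the hypothetical Python helper below takes SR as an input supplied from the laboratory's own guideline; the function name and element values are invented for illustration.

```python
from statistics import fmean, stdev


def crm_acceptance(replicates, certified, sr, min_reps=6):
    """Check whether the mean of repeated CRM measurements lies within certified +/- 2*SR.

    sr is supplied by the caller (calculated per the laboratory's guideline).
    """
    if len(replicates) < min_reps:
        raise ValueError(f"at least {min_reps} measurements are required")
    mean = fmean(replicates)
    lower, upper = certified - 2 * sr, certified + 2 * sr
    return {
        "mean": mean,
        "sd": stdev(replicates),  # repeatability, useful when investigating a failure
        "limits": (lower, upper),
        "accepted": lower <= mean <= upper,
    }


# Hypothetical CRM results in wt%
mn = crm_acceptance([0.862, 0.858, 0.866, 0.860, 0.864, 0.862], certified=0.850, sr=0.010)
si = crm_acceptance([0.318, 0.321, 0.316, 0.320, 0.319, 0.318], certified=0.300, sr=0.005)
print(mn["accepted"], si["accepted"])
```

A single failure like the silicon result calls for the root-cause checks listed in the methodology; only a consistent pattern of failures for one material type points to a limitation of the calibration itself.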

Protocol 2: Control Sample Validation Procedure

Objective: To validate calibration stability using control samples and detect instrumental drift or matrix-related inaccuracies.

Materials and Equipment:

- Control samples comparable to production materials

- Certified Reference Materials for baseline establishment

- Sample preparation equipment (grinders, milling machines)

Methodology:

- Ensure the OES instrument is properly calibrated with CRMs before beginning control sample measurements.

- Prepare control samples using standardized preparation techniques (grinding or milling) to ensure consistent surface conditions [6].

- Measure control samples at least ten times to establish average values and standard deviations for key elements [1].

- Implement regular control sample measurements at predetermined intervals (e.g., after every 100 production samples) or when results are questionable.

- Record all control sample measurements for statistical process control and quality assurance purposes.

- Evaluate whether control sample measurements remain within established tolerance limits of the determined values [7].

Interpretation: Systematic deviations in control sample measurements from expected values indicate calibration drift or matrix-specific inaccuracies that standard CRM-based calibration cannot adequately address, necessitating type standardization.
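The baseline and tolerance steps of Protocol 2 can be sketched as follows. The ±2 standard deviation tolerance is an assumption chosen for illustration, the 100-sample interval mirrors the example in the methodology, and the function names and data are hypothetical; each laboratory should substitute its own established limits.

```python
from statistics import fmean, stdev


def establish_baseline(measurements, min_reps=10):
    """Mean and sample standard deviation from the initial control-sample measurements."""
    if len(measurements) < min_reps:
        raise ValueError(f"at least {min_reps} measurements are required")
    return fmean(measurements), stdev(measurements)


def within_tolerance(value, mean, sd, k=2.0):
    """True if a routine control measurement lies within mean +/- k*sd (k is an assumption)."""
    return abs(value - mean) <= k * sd


def control_check_due(samples_since_last_check, interval=100):
    """True once the predetermined number of production samples has been analyzed."""
    return samples_since_last_check >= interval


# Hypothetical carbon results (wt%) from ten initial measurements of a control sample
baseline = [0.452, 0.448, 0.450, 0.451, 0.449, 0.450, 0.452, 0.448, 0.450, 0.450]
mean, sd = establish_baseline(baseline)
print(control_check_due(100), within_tolerance(0.451, mean, sd), within_tolerance(0.456, mean, sd))
```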

Uncertainty Quantification Methods

For laboratories accredited to DIN EN ISO/IEC 17025, determining measurement uncertainty is mandatory for retaining accreditation [7]. Two established approaches exist for quantifying uncertainty in OES measurements:

Bottom-up Method (GUM): This method follows the Guide to the Expression of Uncertainty in Measurement and requires deep understanding of spark emission spectroscopy and statistical mathematics. While comprehensive, this approach is complex and resource-intensive to implement properly [7].

Top-down Method: This practical alternative relies on measurement results of CRMs and incorporates sample variables such as the method of extracting the sample from the melt and sample preparation. The method is described in the Nordtest report TR 537, "Handbook for calculation of measurement uncertainty in environmental laboratories", and provides a more accessible approach for routine laboratory implementation [7].
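As a rough illustration of the top-down idea, the Nordtest approach combines a within-laboratory reproducibility component, u(Rw), with a bias component derived from CRM measurements: u(bias) = √(RMS_bias² + u(Cref)²), u_c = √(u(Rw)² + u(bias)²), and the expanded uncertainty is U = k·u_c with k = 2. The Python sketch below works in relative terms (percent) and, as a simplifying assumption, takes u(Cref) as the mean of the CRMs' relative standard uncertainties; the function name and input numbers are hypothetical, and TR 537 itself should be consulted for the full procedure.

```python
from math import sqrt
from statistics import fmean


def nordtest_expanded_uncertainty(u_rw, crm_biases, crm_u_ref, k=2.0):
    """Top-down expanded uncertainty; all inputs are relative values in percent.

    u_rw:       within-lab reproducibility, e.g. RSD of a control sample over time
    crm_biases: relative bias (measured vs certified) found for each CRM
    crm_u_ref:  relative standard uncertainty of each CRM's certified value
    """
    rms_bias = sqrt(fmean([b * b for b in crm_biases]))
    u_cref = fmean(crm_u_ref)  # simplification: average CRM uncertainty
    u_bias = sqrt(rms_bias ** 2 + u_cref ** 2)
    u_c = sqrt(u_rw ** 2 + u_bias ** 2)
    return k * u_c


U = nordtest_expanded_uncertainty(u_rw=1.5, crm_biases=[1.0, -2.0, 2.0], crm_u_ref=[0.5, 0.5, 0.5])
print(f"U = {U:.2f} % (k = 2)")
```

Squaring the biases before averaging means that positive and negative CRM biases do not cancel, so a calibration that is wrong in both directions still contributes to the uncertainty.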

Visualization of Calibration Limits and Solutions

Diagram 1: OES Calibration Limits and Correction Workflow. This workflow illustrates the systematic process for identifying limitations in standard CRM-based calibration and implementing type standardization to address specific accuracy deviations.

Research Reagent Solutions for Calibration Studies

Table 2: Essential Research Materials for OES Calibration Studies

| Reagent/Material | Specification Requirements | Research Application |
|---|---|---|
| Certified Reference Materials (CRMs) | Documented uncertainty from certified providers (e.g., NIST, Brammer Standards) [2] | Establish primary calibration curves and reference values [7] |
| Control Samples | Composition similar to production materials; precise homogeneity [7] | Verify calibration stability and detect instrument drift between CRM measurements [1] |
| High-Purity Acids | Trace metal grade (e.g., Suprapur, Merck) [9] | Sample preparation and digestion without introducing elemental contaminants |
| Monoelemental Calibration Solutions | SI-traceable with minimal uncertainties (e.g., TraceCERT) [10] | Method validation and specific element verification |
| Type Standardization Samples | Proven reliable specimens with composition closely matching test materials [8] [1] | Correct matrix-specific deviations in standard calibration |

Standard calibration with CRMs provides the essential foundation for accurate OES analysis but encounters significant limitations when confronted with exotic alloys, structural mismatches between CRMs and production samples, and inevitable instrumental drift. These constraints manifest as measurable deviations beyond established statistical reliability boundaries (± 2SR), signaling the need for supplemental approaches.

The experimental protocols outlined provide systematic methodologies for identifying these calibration limits through CRM verification testing, control sample validation, and proper uncertainty quantification. When standard calibration reaches its operational boundaries, type standardization serves as a targeted correction—not replacement—for basic calibration, specifically addressing matrix-specific inaccuracies for similar materials while maintaining traceability to primary CRMs [8] [1]. This hierarchical approach ensures optimal analytical accuracy while acknowledging the inherent constraints of standardized reference materials in practical metallurgical analysis.

Optical Emission Spectrometry (OES) is a dominant analytical technique for determining the elemental composition of materials, particularly in metallurgy, pharmaceuticals, and material science. Despite its speed and accuracy, OES performance can degrade without proper maintenance, verification, and recalibration [8] [11]. Type Standardization is a critical calibration procedure applied after initial calibration with Certified Reference Materials (CRMs) to correct for specific inaccuracies, particularly when analyzing materials with compositions that deviate from the original calibration standards [8]. This application note details the key indicators that signal the necessity for Type Standardization, providing researchers with clear diagnostic protocols and methodologies for its implementation.

Key Indicators for Type Standardization

Regular monitoring of specific performance metrics can pre-empt analytical inaccuracies. The following table summarizes the primary indicators that necessitate Type Standardization.

Table 1: Key Indicators Requiring Type Standardization

| Indicator Category | Specific Manifestations | Impact on Analytical Accuracy |
|---|---|---|
| Persistent Deviations | Discrepancies between OES results and values from CRM or control samples, despite a valid initial calibration [8] [11]. | Leads to incorrect composition analysis, affecting product quality and compliance. |
| Analysis of Exotic Alloys | Analysis of alloys that deviate strongly from the matrix of the CRMs used for initial calibration [8]. | Standard calibration models fail, resulting in significant concentration errors for key elements. |
| Sample Matrix Mismatch | Synthetic CRMs do not correspond to the actual composition or metallurgical structure of the production samples being analyzed [8] [11]. | Causes systematic errors due to differences in how the sample and standard materials respond to excitation. |
| Instrument Drift | Slow, continuous change in instrument sensitivity over time, observed as a gradual shift in results for stable control samples [8]. | Results in a progressive loss of accuracy, potentially going unnoticed without rigorous control procedures. |
| Inadequate Calibration Accuracy | The standard calibration does not fulfill the required accuracy level for the specific application, even without obvious drift [11]. | The standard calibration alone cannot meet the required precision and accuracy specifications for that application. |

Experimental Protocol for Identifying Deviations and Drift

Objective: To systematically monitor OES performance and quantitatively determine the need for Type Standardization.

Materials and Reagents:

- Certified Reference Materials (CRMs)

- Well-characterized in-house control samples

- OES spectrometer with calibrated settings

Methodology:

- Daily Control Sample Analysis: Analyze a minimum of two control samples with known composition at the beginning and end of each analytical sequence [8].

- Data Recording: Record the measured concentrations for all elements of interest.

- Deviation Calculation: Calculate the percentage deviation of the measured values from the accepted reference values for each control sample using the formula:

% Deviation = [(Measured Value - Reference Value) / Reference Value] × 100

- Trend Analysis: Plot the results of the control samples over time on a control chart to visualize drift and identify persistent deviations that exceed pre-defined acceptance criteria (e.g., ±2σ from the historical mean) [8].

Interpretation: A consistent, statistically significant trend in the control data or repeated, uncorrectable deviations indicates that the standard calibration is no longer sufficient and Type Standardization is required.
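The deviation and trend-analysis steps above can be sketched in Python. The ±2σ limits follow the example in the methodology; the rule that flags a persistent shift when seven consecutive points fall on the same side of the historical mean is an illustrative assumption (published control-chart rule sets use various run lengths), and the function names and data are hypothetical.

```python
from statistics import fmean, stdev


def percent_deviation(measured, reference):
    """% Deviation = (measured - reference) / reference * 100."""
    return (measured - reference) / reference * 100.0


def control_chart_flags(history, new_points, k=2.0, run_length=7):
    """Return (indices outside mean +/- k*sigma, whether a persistent one-sided run occurred)."""
    mean, sigma = fmean(history), stdev(history)
    lower, upper = mean - k * sigma, mean + k * sigma
    outside = [i for i, x in enumerate(new_points) if not lower <= x <= upper]

    run, side, persistent = 0, 0, False
    for x in new_points:
        s = (x > mean) - (x < mean)  # +1 above the mean, -1 below, 0 exactly on it
        if s != 0 and s == side:
            run += 1
        else:
            run, side = (1 if s != 0 else 0), s
        persistent = persistent or run >= run_length
    return outside, persistent


# Hypothetical control-sample history and new results for one element (wt%)
history = [0.450, 0.452, 0.448, 0.451, 0.449, 0.450, 0.452, 0.448, 0.450, 0.450]
new = [0.451, 0.452, 0.451, 0.453, 0.452, 0.451, 0.452, 0.455]
outside, persistent = control_chart_flags(history, new)
print([round(percent_deviation(x, 0.450), 2) for x in new], outside, persistent)
```

The run rule catches the slow, one-sided shift typical of drift or a matrix bias even while most individual points remain inside the ±2σ limits.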

The Type Standardization Procedure

Type Standardization fine-tunes the existing calibration for a specific alloy type just before analyzing one or more samples of that type [8] [11]. It is not a global correction method and is only valid for correcting unknown materials that are similar in composition to the standardization sample used [8].

The following diagram illustrates the logical decision pathway for determining when and how to apply Type Standardization.

Experimental Protocol for Executing Type Standardization

Objective: To perform Type Standardization to correct for observed deviations and restore analytical accuracy for a specific alloy type.

Materials and Reagents:

- High-quality Type Standardization sample (preferably a CRM) with a composition very similar to the unknown production samples [8].

- Recently prepared and easy-to-measure control samples.

- Properly calibrated OES spectrometer.

Methodology:

- Verification: Confirm that the basic calibration with CRMs is at its optimal level of accuracy before beginning [8].

- Sample Preparation: Ensure the standardization sample is prepared according to the manufacturer's specifications (e.g., surfaced on a belt sander, lathe, or milling machine) to present a clean, homogeneous analysis surface [12].

- Instrument Setup: Access the Type Standardization function within the OES software.

- Sample Analysis: Run the Type Standardization sample. The instrument will measure the deviations between the expected values (certified concentrations) and the measured values.

- Calculation of Corrections: The instrument software automatically calculates and stores a set of correction factors (additive or multiplicative) for each elemental channel based on the analyzed sample [8].

- Validation: Immediately analyze one or more independent control samples of the same alloy type to verify that the standardization has successfully brought the results within acceptable limits.

Critical Note: Type Standardization should be performed just before running the unknown samples of that specific alloy type to ensure the correction factors are current and valid [11].

The Scientist's Toolkit: Essential Research Reagents & Materials

Successful implementation of Type Standardization and ongoing OES accuracy relies on several key materials.

Table 2: Essential Research Reagents and Materials for OES Calibration

| Item | Function & Importance |
|---|---|
| Certified Reference Materials (CRMs) | Standards with certified elemental concentrations; form the foundation of initial instrument calibration and validation of analytical methods [8] [11]. |
| Type Standardization Samples | Well-characterized samples, ideally CRMs, with a composition nearly identical to the production samples; used to fine-tune the calibration for a specific alloy matrix [8]. |
| In-House Control Samples | Homogeneous, stable samples of known composition analyzed regularly to monitor instrument stability, track performance over time, and identify drift [8] [11]. |
| Natural Deep Eutectic Solvents (NADES) | Emerging as green alternatives for sample preparation in elemental analysis; can be used to create homogeneous suspensions for calibration in techniques like XRF, minimizing matrix effects [13]. |

For researchers and scientists relying on OES for critical elemental analysis, recognizing the indicators for Type Standardization is paramount for data integrity. Persistent deviations, matrix mismatches, and instrument drift are clear signals that the standard calibration is no longer sufficient. By implementing the detailed monitoring and standardization protocols outlined in this document, laboratories can proactively maintain the highest levels of analytical accuracy, ensure product quality, and comply with stringent regulatory standards.

The Impact of Exotic Alloys and Sample Structure on Accuracy

Optical Emission Spectrometry (OES) is a fundamental tool for the precise determination of elemental composition in metals and alloys. However, the accuracy of this technique is highly dependent on the relationship between the calibration standards and the unknown samples being analyzed. This application note examines a critical challenge in OES analysis: the significant impact that exotic alloys and sample structure have on analytical accuracy. We explore the underlying causes of these inaccuracies and present Type Standardization as a targeted procedure to correct them, framing this discussion within broader research on advanced OES calibration protocols for scientific and industrial professionals [8] [11].

The Underlying Challenge: Matrix Effects and Structural Disparities

The core of the problem lies in the fact that OES is a comparative technique; it measures the intensity of spectral lines from an unknown sample and compares them to a calibration curve established using Certified Reference Materials (CRMs) [8] [1]. Accuracy degrades when the physical or chemical nature of the sample diverges from that of the CRMs.

The primary factors leading to these deviations are summarized in the table below.

Table 1: Factors Leading to Analytical Deviations in OES

| Factor | Description | Impact on Accuracy |
|---|---|---|
| Exotic/Complex Alloys [8] [1] | Alloys that deviate strongly from the common matrix material (e.g., high-alloy steels, specialty superalloys). | Causes significant deviations in the excitation characteristics within the plasma, leading to incorrect concentration calculations. |
| Synthetic CRM Structure [8] [11] | Most CRMs are manufactured synthetically, which does not guarantee correspondence to the actual composition or structure of a production sample. | The difference in metallurgical structure (e.g., grain size, phase distribution) between the CRM and the sample affects how material is sputtered and excited, altering emission intensities. |
| Sample Homogeneity & Surface Finish [14] [15] | Variations in the homogeneity of the elemental distribution or imperfections in the sample surface prepared for analysis. | Rough surfaces, swirl marks, or contaminants scatter light and cause unstable spark conditions, reducing the reproducibility and reliability of results [14]. |

These factors introduce systematic errors that a standard calibration, even with high-quality CRMs, cannot fully correct. When routine quality control using control samples indicates persistent deviations, a more specific correction procedure—Type Standardization—is required [1].

Quantitative Impacts and Diagnostic Signals

The deviations caused by matrix and structural mismatches are not merely theoretical but have quantifiable effects on analytical performance. The following table outlines common diagnostic indicators and their consequences.

Table 2: Quantitative Impacts and Diagnostic Signals of Accuracy Deviations

| Diagnostic Signal / Impact | Quantitative/Descriptive Measure | Notes & Implications |
|---|---|---|
| Drift in Instrument Sensitivity [8] [16] | Slow change over time (mid- to long-term). | Can be monitored via daily control samples. Hitachi High-Tech OES analyzers automate spectral position monitoring across 130-800 nm for early detection [8]. |
| Systematic Bias for Specific Elements | Consistent positive or negative deviation from expected values in control samples. | Indicates a matrix effect. The need for Type Standardization is often identified through this daily monitoring [11]. |
| Insufficient Calibration Accuracy [8] | Deviation remains despite calibration with multiple CRMs. | Suggests the required accuracy level is above what the standard calibration can deliver for that specific sample type. |
| Influence of High-Concentration Elements [17] | Elements at high concentrations (≥500 ppm / µg/mL) in the sample. | Can alter plasma conditions (electron temperature and electron density), changing the sensitivity for other analytes. |

Experimental Protocol for Type Standardization

Type Standardization is a post-calibration correction procedure designed to fine-tune the calibration for a specific, narrowly defined alloy type. The following protocol ensures optimal results.

Prerequisites and Initial Setup

- Verified Base Calibration: Before beginning, confirm that the instrument's basic calibration using CRMs is optimal and up-to-date [1].

- Reference Material Acquisition: Secure a Proven Reliable Specimen (PRS) or a CRM that is very close in composition and structure to the unknown samples you intend to analyze. This material must be homogeneous and certified for the elements of interest [1] [11].

- Sample Preparation: Prepare the PRS and subsequent test samples to a high surface finish.

  - Grinding/Milling: Use a spectroscopic grinding or milling machine to achieve a flat, homogeneous surface free of contaminants, oxides, and imperfections like swirl marks [15] [16].

  - Cooling Control (for cast materials): When analyzing cast iron for carbon, ensure rapid cooling (e.g., using copper moulds) to promote white solidification, which prevents carbon nodule formation and ensures homogeneity [16].

Step-by-Step Standardization Procedure

- Spark the Standardization Sample: Analyze the prepared PRS using the same analytical program and conditions used for the unknown samples.

- Input Certified Values: The OES software will display the measured concentrations for the PRS. Enter the certified concentration values for the PRS into the instrument.

- Calculate Correction Factors: The instrument software automatically calculates additive and/or multiplicative correction factors for each element channel. Additive factors are derived from the difference between the certified and measured values of the PRS; multiplicative factors are derived from their ratio.

- Apply the Standardization: Save and apply the calculated correction factors. This creates a "Type Standardization" curve for that specific alloy type.
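The factor calculation in these steps can be sketched as follows. This is a minimal illustration, not any vendor's implementation: the function names, the dictionary-based data layout, and the choice to leave an element uncorrected when its measured value is zero are assumptions made for the example. The additive factor is the certified value minus the measured value; the multiplicative factor is their ratio.

```python
from dataclasses import dataclass, field


@dataclass
class TypeStandardization:
    """Per-element corrections derived from one PRS measurement (hypothetical structure)."""
    additive: dict = field(default_factory=dict)        # element -> certified - measured
    multiplicative: dict = field(default_factory=dict)  # element -> certified / measured


def derive_factors(measured: dict, certified: dict) -> TypeStandardization:
    """Compare the measured PRS result with its certified values, element by element."""
    ts = TypeStandardization()
    for element, cert in certified.items():
        meas = measured[element]
        ts.additive[element] = cert - meas
        # A ratio is undefined for a zero reading, so that element keeps a neutral factor.
        ts.multiplicative[element] = cert / meas if meas > 0 else 1.0
    return ts


def apply_factors(result: dict, ts: TypeStandardization, mode: str = "multiplicative") -> dict:
    """Correct an unknown of the same alloy type; elements without a factor pass through."""
    if mode not in ("additive", "multiplicative"):
        raise ValueError(f"unknown mode: {mode}")
    corrected = {}
    for element, value in result.items():
        if mode == "additive":
            corrected[element] = value + ts.additive.get(element, 0.0)
        else:
            corrected[element] = value * ts.multiplicative.get(element, 1.0)
    return corrected


# Illustrative numbers only (mass %), not data from this article.
prs_measured = {"Cr": 18.40, "Ni": 8.20}
prs_certified = {"Cr": 18.10, "Ni": 8.36}
ts = derive_factors(prs_measured, prs_certified)
print(apply_factors({"Cr": 18.25, "Ni": 8.05}, ts))
```

Which factor type an instrument applies, or whether it combines them, is set in the vendor's software; the sketch simply exposes both so the difference is visible.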

Workflow Visualization

The following diagram illustrates the logical relationship between the problem of analytical deviations and the solution provided by the Type Standardization protocol.

Diagram 1: Type Standardization Diagnostic and Correction Workflow

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful implementation of the protocols described above requires specific high-quality materials and equipment.

Table 3: Essential Materials for High-Accuracy OES Analysis

| Item | Function & Importance | Specification & Best Practices |
|---|---|---|
| Certified Reference Materials (CRMs) [8] [18] | Establish the fundamental calibration curve. Provides the known elemental concentrations to which unknown samples are compared. | Must be traceable to national standards (e.g., NIST). Ideally, acquire 10-20 standards for common material grades [18]. |
| Proven Reliable Specimen (PRS) [1] | Serves as the standard for Type Standardization. Its composition and structure must be exceptionally close to the production samples. | Can be a well-characterized in-house sample or a specially certified CRM. Critical for correcting matrix-specific deviations. |
| Spectroscopic Grinding/Milling Machine [15] [16] | Produces a flat, homogeneous, and contamination-free surface on solid samples. | Ensures consistent spark conditions and light emission. Using a cup wheel grinder for cast iron is recommended for optimal carbon analysis [16]. |
| Correct Abrasive Media [16] | Removes surface oxides and contaminants to expose fresh, representative metal. | Use aluminium oxide for steel, nickel, cobalt, and titanium. Change paper every 5-10 samples to prevent cross-contamination. |
| Control Samples [1] [11] | Used for daily performance verification and drift monitoring. | Should be similar in composition to routine production samples. Measuring them repeatedly establishes a control chart for instrument stability. |

Critical Considerations and Limitations

Type Standardization is a powerful correction, but it is not a universal solution. Researchers must be aware of its boundaries:

- Narrow Applicability: The procedure is only valid for correcting unknown materials that are very similar in composition to the PRS used for the standardization. It is named "Type Standardization" for this reason [8] [11].

- Not a Replacement for Calibration: Type Standardization is an additional correction step applied on top of a valid and stable base calibration. It cannot compensate for a poor or outdated initial calibration [1].

- Corrects Systematic Bias, Not Random Error: This method is designed to correct for consistent, systematic deviations. It does not improve the precision (repeatability) of the analysis.

Within the broader context of OES calibration research, Type Standardization emerges as a vital, targeted procedure to achieve the highest levels of analytical accuracy. By understanding and addressing the specific challenges posed by exotic alloys and sample structure, researchers and drug development professionals can significantly enhance data quality. The successful application of this protocol hinges on a robust base calibration, the use of a well-matched Proven Reliable Specimen, and meticulous sample preparation. When applied correctly within its defined scope, Type Standardization is an indispensable tool for ensuring material quality and advancing research in fields requiring precise elemental analysis.

How Type Standardization Corrects for Matrix-Specific Biases

Optical Emission Spectrometry (OES) is an industry-standard analytical technique for determining the elemental composition of metals and alloys, playing a crucial role in quality and process control within metallurgical facilities [19]. However, its quantitative accuracy is frequently compromised by matrix effects, where the sample's overall composition influences the emission intensity of analyte elements, even when their concentrations are identical [20]. Type Standardization is an advanced calibration procedure designed to correct these matrix-specific biases, enabling the high-precision analysis essential for material specification and research integrity.

This matrix effect manifests as a change in the slope of the calibration curve, which can be either positive or negative depending on the sample's physical and chemical properties [20]. Traditional calibration using Certified Reference Materials (CRMs) alone is often insufficient, as synthetically manufactured CRMs may not perfectly correspond to the composition or microstructure of the actual samples being analyzed [8]. Type Standardization addresses this limitation by fine-tuning the instrument's calibration using a sample that is chemically similar to the test materials, thereby correcting for biases induced by specific matrix compositions.

The Problem of Matrix Effects in Spectrochemical Analysis

Origins and Types of Matrix Effects

Matrix effects in OES arise from complex interactions between the sample matrix and the excitation source. These effects can be categorized as follows:

- Spectral Interferences: Occur when emission lines from matrix elements overlap with analyte lines, leading to falsely elevated intensity readings [20]. For instance, in steel analysis, the aluminum line at Al II 193.1 nm can interfere with the important carbon line at C I 193.07 nm [20].

- Physical Matrix Effects: Result from variations in sample properties such as thermal conductivity, heat capacity, and absorption coefficient, which alter how energy from the excitation source (the spark, or the laser in laser-based techniques) couples into the sample and thereby change the resulting emission intensities [21].

- Chemical Matrix Effects: Stem from chemical interactions within the sample, including the formation of stable compounds or differences in ionization potentials that alter excitation and emission behavior of analytes [21].

Consequences for Quantitative Analysis

The primary consequence of uncorrected matrix effects is reduced analytical accuracy, where the same analyte concentration in different matrices produces different measured signals. This effect is particularly pronounced in "exotic alloys" that deviate significantly from the matrix material of available CRMs [8]. Furthermore, instrument drift—the slow change in instrumental sensitivity over time—can compound these matrix effects, further distorting results without appropriate correction protocols [8].

Theoretical Foundation of Type Standardization

Type Standardization operates on the principle that while general calibrations establish baseline relationships between intensity and concentration, matrix-specific deviations from this baseline can be quantified and corrected using a well-characterized standard of similar composition to the unknown samples.

The fundamental correction principle can be understood through the lens of influence coefficient algorithms commonly used in spectrochemistry. For a single interfering element, the matrix effect correction takes the form:

$$I_{i,\mathrm{corr}} = I_i \,(1 \pm k_{ij}\, C_j)$$

where $I_i$ is the uncorrected intensity of analyte $i$, $C_j$ is the concentration of the interfering element $j$, and $k_{ij}$ is the correction factor [20]. Type Standardization does not solve for each $k_{ij}$ explicitly; it captures their net effect empirically by measuring the instrument response to a standard of known composition that closely matches the test samples.

The mathematical foundation extends to scenarios with multiple interfering elements through the equation:

$$C_i = A_0 + A_1 \, I_i \left(1 \pm \sum_j k_{ij} \, C_j\right)$$

where the summation runs over the interfering elements $j$ [20]. Type Standardization provides a practical approach to implementing these corrections without requiring explicit knowledge of all interference coefficients.
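To show the multi-interferer equation evaluated for one analyte, the sketch below computes C_i directly. The coefficient values are hypothetical, and the ± of the equation is folded into the sign of each k_ij; that is a notational assumption for the example, not something the source specifies.

```python
def corrected_concentration(a0: float, a1: float, intensity: float,
                            k_row: dict, interferent_conc: dict) -> float:
    """Evaluate C_i = A0 + A1 * I_i * (1 + sum_j k_ij * C_j).

    k_row maps each interfering element j to a signed coefficient k_ij;
    interferent_conc maps the same elements to their concentrations C_j.
    """
    correction = 1.0 + sum(k * interferent_conc[j] for j, k in k_row.items())
    return a0 + a1 * intensity * correction


# Hypothetical base calibration and a single zinc interference term.
a0, a1 = 0.01, 0.5        # offset and slope of the base calibration (illustrative)
k_ni = {"Zn": -0.002}     # signed coefficient per % Zn; sign and size invented for the example
print(corrected_concentration(a0, a1, 24.0, {}, {}))              # interference ignored
print(corrected_concentration(a0, a1, 24.0, k_ni, {"Zn": 20.0}))  # with 20 % Zn
```

This also illustrates why Type Standardization can skip estimating each k_ij: for samples whose interferent concentrations are close to those of the standard, the bracketed term is nearly constant, so a single factor measured on a matched standard absorbs it.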

Protocol for Implementing Type Standardization

Prerequisites and Preparation

Before performing Type Standardization, ensure the following prerequisites are met:

- The OES instrument has a valid initial calibration performed with multiple CRMs.

- Type Standardization samples are available with known composition that closely matches the test materials.

- The instrument is in a stable condition, with the optical system thermally stabilized.

Step-by-Step Standardization Procedure

- Verification of Initial Calibration: Run a control sample to verify the current calibration status. Note any significant deviations from expected values [8].

- Selection of Standardization Samples: Obtain one or more samples of the same alloy type as your test materials with known chemical composition. These should be recently prepared and easy to measure [8].

- Instrument Preparation: Ensure the OES analyzer is properly configured with the correct analytical program for the specific alloy type.

- Sample Measurement: Run the standardization sample multiple times (typically 3-5 repetitions) to establish a precise measurement value.

- Calculation of Correction Factors: The instrument software compares the measured values to the known values of the standardization sample and calculates correction factors for each element.

- Application of Corrections: The correction factors are applied to subsequent measurements of unknown samples of similar composition.

- Verification: Run a control sample of known composition to verify the accuracy of the Type Standardization.
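The measurement, calculation, and verification steps above can be sketched for a single element. This is a hypothetical illustration: the 2% RSD ceiling on the replicate sparks and the verification tolerance are placeholder values chosen for the example, not limits from the source, and a real analytical program would use its own per-element limits.

```python
from statistics import mean, stdev


def replicate_summary(readings: list) -> tuple:
    """Mean and relative standard deviation (%) of repeated sparks on one sample."""
    if len(readings) < 2:
        raise ValueError("need at least two replicate sparks")
    m = mean(readings)
    if m == 0:
        raise ValueError("mean reading is zero; RSD undefined")
    return m, 100.0 * stdev(readings) / m


def standardize_element(readings: list, known: float, max_rsd: float = 2.0) -> float:
    """Multiplicative factor known / mean, refusing a replicate set that is too noisy.

    max_rsd is a placeholder acceptance limit chosen for this sketch.
    """
    m, rsd = replicate_summary(readings)
    if rsd > max_rsd:
        raise ValueError(f"replicate RSD {rsd:.2f}% exceeds {max_rsd}%; check sample preparation")
    return known / m


def verify(control_measured: float, control_known: float, factor: float, tolerance: float) -> bool:
    """True if the corrected control-sample result lies within the stated tolerance."""
    return abs(control_measured * factor - control_known) <= tolerance


# Illustrative values for one element (mass %).
factor = standardize_element([0.402, 0.398, 0.400], known=0.420)
print(factor, verify(0.380, 0.399, factor, tolerance=0.005))
```

Verification uses a separate control sample because the standardization sample reproduces its own known values by construction, so rechecking it says nothing about accuracy on other material.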

Table 1: Troubleshooting Guide for Type Standardization

| Problem | Potential Cause | Solution |
|---|---|---|
| Large corrections required | Initial calibration has drifted significantly | Recalibrate the instrument before standardization |
| Poor repeatability | Sample heterogeneity or surface preparation issues | Verify sample preparation method; use multiple measurements |
| Some elements show poor correction | Incorrect analytical line selection | Verify spectral line interferences and consider alternative lines |
| Standardization fails verification | Standardization sample does not match test material composition | Source a more appropriate standardization sample |

Post-Standardization Quality Control

After performing Type Standardization, implement these quality control measures:

- Daily Control Samples: Use control samples to monitor the spectrometer's performance daily [8].

- Recalibration Schedule: Establish a regular schedule for complete recalibration based on instrument usage and stability requirements.

- Documentation: Maintain detailed records of standardization procedures, including sample identities, measurement results, and correction factors applied.

Experimental Validation and Case Studies

Performance Metrics for Validation

The effectiveness of Type Standardization can be quantified using several performance metrics:

- Accuracy Improvement: Measure the reduction in bias between measured and known values of control samples.

- Precision: Assess the repeatability of measurements on homogeneous samples before and after standardization.

- Long-term Stability: Monitor how correction factors remain valid over time through regular control sample measurements.

Table 2: Typical Performance Improvement After Type Standardization

| Parameter | Before Standardization | After Standardization |
|---|---|---|
| Accuracy (relative to CRM) | 5-15% deviation | 0.5-3% deviation |
| Repeatability (RSD) | 2-8% | 0.5-2% |
| Long-term stability (drift per week) | 3-10% | 0.5-2% |

Case Study: Analysis of Complex Alloys

In the analysis of nickel silvers (copper-nickel-zinc alloys), the addition of zinc was found to significantly change the slope of the nickel calibration curve, a classic matrix effect [20]. Traditional calibration using copper-based CRMs showed deviations of up to 12% when analyzing these materials. After Type Standardization using a nickel silver standard with certified composition, the deviations were reduced to less than 2%, demonstrating the procedure's effectiveness for matrix-specific correction.

Advanced Applications and Method Integration

Integration with Other Calibration Techniques

Type Standardization can be effectively combined with other calibration methods to enhance analytical performance:

- Internal Standardization: The addition of internal standard elements to correct for instrumental fluctuations can complement Type Standardization's matrix effect correction [22].

- Standard Addition Method: Particularly useful for complex matrices where no analyte-free blank matrix is available for preparing matrix-matched standards, this method involves adding known quantities of analyte to the sample itself [23].

- Multi-Wavelength Methods: New approaches like Multi-Wavelength Internal Standardization (MWIS) use multiple emission wavelengths for analytes and internal standards to create numerous signal ratios for improved calibration [22].
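The standard addition method listed above can be illustrated with a short least-squares sketch. It assumes a solution-based measurement (as in ICP-OES) in which known amounts of analyte can be spiked into aliquots of the sample, a linear response over the spiked range, and a blank-corrected signal; the spike levels and signals are invented. The analyte concentration in the unspiked solution is the fitted intercept divided by the slope, i.e., the magnitude of the line's x-intercept.

```python
def fit_line(x: list, y: list) -> tuple:
    """Ordinary least-squares fit y = intercept + slope * x."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need at least two paired points")
    x_bar, y_bar = sum(x) / n, sum(y) / n
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    if sxx == 0:
        raise ValueError("spike levels must differ")
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return y_bar - slope * x_bar, slope


def standard_addition(added: list, signal: list) -> float:
    """Analyte concentration in the unspiked solution, from the x-intercept of the fit."""
    intercept, slope = fit_line(added, signal)
    return intercept / slope


# Hypothetical spikes (mg/L added) and blank-corrected signals for four aliquots.
print(standard_addition([0.0, 1.0, 2.0, 3.0], [3.0, 5.0, 7.0, 9.0]))
```

Because the calibration is built inside the sample's own matrix, matrix effects on the slope are included automatically; the trade-off is one set of spiked measurements per sample.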

The workflow below illustrates how Type Standardization integrates with other calibration methods in a comprehensive quality assurance system.

The Researcher's Toolkit for Type Standardization

Table 3: Essential Materials and Reagents for Type Standardization Protocols

| Item | Specification | Function in Protocol |
|---|---|---|
| Certified Reference Materials (CRMs) | NIST-traceable, matrix-matched | Establish initial calibration curves |
| Type Standardization Samples | Well-characterized composition, similar to test materials | Correct for matrix-specific biases |
| Internal Standard Elements | High purity, not present in samples | Correct for instrumental fluctuations [22] |
| Sample Preparation Equipment | Grinding machines, milling tools | Ensure consistent surface preparation [19] |
| Quality Control Samples | Stable, homogeneous reference materials | Verify standardization effectiveness |

Type Standardization represents a critical procedure in the OES analytical workflow, specifically designed to correct for matrix-specific biases that conventional calibration cannot address. By using well-characterized standards that closely match the composition of test materials, this method significantly improves analytical accuracy for precise material specification and research applications. The procedure is most effective when implemented as part of a comprehensive quality assurance system that includes regular instrument calibration, appropriate sample preparation, and ongoing verification through control samples. As OES technology continues to evolve, with developments in solid-state generators, reduced gas consumption, and faster analysis times [17], Type Standardization remains an essential tool for ensuring measurement accuracy in the face of complex matrix effects.

Executing Type Standardization: A Step-by-Step Protocol for Precision

In Optical Emission Spectrometry (OES), a valid and recent base calibration forms the essential foundation for all subsequent analytical measurements. Type Standardization, a procedure for fine-tuning the instrument's calibration using a sample of known composition, is entirely dependent on this initial baseline being accurate and stable [8]. Without this prerequisite, even a perfectly executed Type Standardization will yield incorrect results, leading to material misidentification and significant financial losses. This application note details the protocols for establishing, verifying, and maintaining the base calibration critical for advanced OES research and pharmaceutical development.

The Critical Role of Base Calibration in Type Standardization

Type Standardization is not a standalone calibration but a corrective procedure applied on top of an existing base calibration. It is designed to correct for minor, alloy-specific drifts and is only valid for materials similar in composition to the standardization sample [8] [7].

The relationship between base calibration and Type Standardization can be visualized as a hierarchical process, as shown in the workflow below.

If the base calibration is invalid, Type Standardization builds on its inaccuracies rather than removing them. Research indicates that attempting to use Type Standardization for initial charge or ladle correction when the instrument cannot reproduce Certified Reference Material (CRM) values can yield disastrous results [2].

Establishing a Valid Base Calibration

The Foundation: Certified Reference Materials (CRMs)

A primary base calibration requires CRMs. These are materials of sufficient homogeneity with property values that are sufficiently well established for the calibration of measuring instruments [7]. The calibration should be performed with as many CRMs as possible to reduce statistical variation and create a more accurate calibration curve [7].

Key Instrument Parameters for Robust Methods

The following table summarizes optimized ICP-OES parameters from pharmaceutical method development, demonstrating the level of detail required for a robust base calibration.

Table 1: Optimized ICP-OES Parameters for Elemental Impurity Analysis in Pharmaceuticals

| Parameter | Setting for Lead (Pb) | Setting for Palladium (Pd) | Setting for Zinc (Zn) |
|---|---|---|---|
| Wavelength (nm) | 220.3 | 340.4 | 213.8 |
| Plasma View | Axial | Axial | Radial |
| RF Power | 1150 W | 1150 W | 1150 W |
| Nebulizer Gas Flow | 0.40 L/min | 0.40 L/min | 0.40 L/min |
| Auxiliary Gas Flow | 0.5 L/min | 0.5 L/min | 0.5 L/min |
| Linearity (R²) | > 0.9990 | > 0.9990 | > 0.9990 |
| Sample Preparation | Dissolution in H₂O₂, HCl, H₂SO₄; Centrifugation [24] | Dissolution in H₂O₂, HCl, H₂SO₄; Centrifugation [24] | Dissolution in H₂O₂, HCl, H₂SO₄; Centrifugation [24] |
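The R² > 0.9990 linearity criterion in the table can be checked with a few lines of code. The sketch below assumes an unweighted straight-line fit of intensity against concentration; the calibration points are invented, and instrument software may apply weighting or a different regression model.

```python
def r_squared(conc: list, intensity: list) -> float:
    """Coefficient of determination for an unweighted straight-line fit."""
    n = len(conc)
    if n != len(intensity) or n < 3:
        raise ValueError("need at least three paired calibration points")
    x_bar, y_bar = sum(conc) / n, sum(intensity) / n
    sxx = sum((x - x_bar) ** 2 for x in conc)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(conc, intensity))
    syy = sum((y - y_bar) ** 2 for y in intensity)
    if sxx == 0 or syy == 0:
        raise ValueError("concentrations and intensities must both vary")
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    ss_res = sum((y - (intercept + slope * x)) ** 2 for x, y in zip(conc, intensity))
    return 1.0 - ss_res / syy


def passes_linearity(conc: list, intensity: list, threshold: float = 0.9990) -> bool:
    """Apply the R² acceptance limit listed for Pb, Pd and Zn."""
    return r_squared(conc, intensity) > threshold


# Invented calibration points: concentration (µg/mL) vs. net intensity (arbitrary units).
print(passes_linearity([0.0, 0.5, 1.0, 2.0], [0.001, 0.251, 0.499, 1.002]))
```

A high R² alone does not prove the absence of curvature at the ends of the range, so inspecting residuals alongside it is a sensible habit.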

Protocols for Verification and Maintenance of Base Calibration

Ongoing Verification Using Control Samples

Regular verification is essential to ensure the base calibration remains valid over time. This is done using control samples (also called drift control samples). These are stable, homogeneous samples whose composition has been linked to the original CRM-based calibration [7].

Procedure:

- Linking Control Samples: Immediately after a successful base calibration, measure the control sample at least six times to establish a precise reference value and acceptable tolerance limits [7].

- Routine Checks: Analyze the control sample at regular intervals (e.g., daily, every 100 samples, or at the start of each shift) [7].

- Evaluation: Compare the results against the established control limits. Consistent deviation indicates instrument drift and signals that the base calibration may no longer be valid, necessitating a recalibration.
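The linking and evaluation steps can be sketched as a simple control chart. The ±3 standard deviation limits and the rule that three consecutive out-of-limit checks count as a "consistent deviation" are assumptions borrowed from common control-chart practice, not values given in the source; laboratories set their own limits and run rules.

```python
from statistics import mean, stdev


def link_control_sample(readings: list, k: float = 3.0) -> tuple:
    """Reference value and (low, high) limits from the post-calibration linking runs."""
    if len(readings) < 6:
        raise ValueError("link the control sample with at least six measurements")
    m, s = mean(readings), stdev(readings)
    return m, (m - k * s, m + k * s)


def consistent_deviation(checks: list, limits: tuple, run_length: int = 3) -> bool:
    """True if the last run_length routine checks all fall outside the limits."""
    low, high = limits
    tail = checks[-run_length:]
    return len(tail) == run_length and all(c < low or c > high for c in tail)


# Illustrative carbon results (mass %) on one control sample.
ref, limits = link_control_sample([0.500, 0.502, 0.498, 0.501, 0.499, 0.500])
print(ref, limits)
print(consistent_deviation([0.501, 0.506, 0.507, 0.508], limits))  # drifting upward
```

Requiring a run of out-of-limit results, rather than reacting to a single one, reduces recalibrations triggered by an isolated bad spark.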

Internal Standardization for Enhanced Accuracy

The use of internal standards (IS) is a critical technique to correct for variations in sample matrices and analyte intensities, ensuring data accuracy, especially in complex pharmaceutical samples [25].

Protocol for Internal Standard Implementation:

- Selection: Choose IS elements not present in the samples and free from spectral interferences. Yttrium (Y) and Scandium (Sc) are common choices, but the selection must be method-specific [25].

- Concentration: The IS concentration must produce sufficient intensity for good precision (RSD < 2% in calibration solutions) while remaining within the linear range [25].

- Introduction: The IS must be added at the same concentration to all analytical solutions (blanks, standards, and samples), typically via an automated pump channel to ensure consistency [25].

- Data Evaluation: Monitor IS recoveries. Recoveries in samples should typically be within 70-120% of the average recovery in the calibration standards. Poor precision in IS replicates (>3% RSD) indicates issues with mixing or sample introduction [25].
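The internal-standard correction and the recovery and precision checks above can be sketched as follows. The ratio correction shown (scaling the analyte signal by the IS intensity expected from the standards divided by the IS intensity actually measured) is the usual form of internal standardization; the function names and example intensities are hypothetical, while the 70-120% recovery window and the 3% RSD flag are the values quoted in the protocol.

```python
from statistics import mean, stdev


def is_corrected(analyte_intensity: float, is_measured: float, is_reference: float) -> float:
    """Scale the analyte signal by how far the IS signal departed from its reference level."""
    return analyte_intensity * is_reference / is_measured


def check_internal_standard(is_replicates: list, is_in_standards: list,
                            low: float = 70.0, high: float = 120.0,
                            max_rsd: float = 3.0) -> tuple:
    """Return (recovery %, replicate RSD %, list of problems) for one sample."""
    m = mean(is_replicates)
    rsd = 100.0 * stdev(is_replicates) / m
    recovery = 100.0 * m / mean(is_in_standards)
    problems = []
    if not low <= recovery <= high:
        problems.append(f"IS recovery {recovery:.1f}% outside {low:.0f}-{high:.0f}%")
    if rsd > max_rsd:
        problems.append(f"IS replicate RSD {rsd:.1f}% > {max_rsd:.0f}%: check mixing or sample introduction")
    return recovery, rsd, problems


# Illustrative yttrium intensities (counts) in the calibration standards and one sample.
standards = [10000.0, 10200.0, 9800.0]
recovery, rsd, problems = check_internal_standard([8000.0, 8050.0, 7950.0], standards)
print(recovery, rsd, problems)
print(is_corrected(500.0, is_measured=8000.0, is_reference=mean(standards)))
```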

Frequency of Recalibration

The base calibration is not permanent. It is subject to drift over time due to changes in instrument sensitivity [8]. Recalibration is required when:

- Control sample results consistently fall outside pre-defined tolerance limits [7].

- After major instrument maintenance or component replacement [26].

- As stipulated by quality assurance protocols, such as regular six-monthly inspections, to ensure ongoing compliance with standards like ISO/IEC 17025 [2] [7].

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for OES Calibration and Analysis

| Reagent/Material | Function & Importance | Application Notes |
|---|---|---|
| Certified Reference Materials (CRMs) | Primary calibrators for establishing the fundamental calibration curve. Provide traceability to international standards. | Must be matrix-matched to the analyzed samples where possible. Require a sufficient number to reduce statistical uncertainty [7]. |
| Control Samples (Drift Standards) | Stable samples for daily verification of calibration stability. Monitor instrument drift between CRM-based recalibrations. | More cost-effective than CRMs for routine use. Must be "linked" to the initial calibration [7]. |
| Internal Standard Solutions | Elements added to all solutions to correct for physical and matrix-related interferences, improving accuracy and precision. | Yttrium (Y) and Scandium (Sc) are common, but selection is critical to avoid interferences [25]. |
| High-Purity Acids & Solvents | For sample preparation and digestion to introduce samples into the ICP-OES in a soluble form without introducing contaminants. | Includes HNO₃, HCl, H₂O₂ of trace metal grade. Purity is paramount to avoid elevating method blanks [24] [27]. |
| Multi-Element Standard Solutions | Used for preparing calibration standards for multiple analytes simultaneously, ensuring consistency and saving preparation time. | Commercially available with well-characterized concentrations (e.g., 1000 mg/L). Used for constructing the calibration curve [24] [27]. |

A valid and recent base calibration is the non-negotiable foundation for any reliable OES analysis, including the application of Type Standardization. This foundation, built on high-quality CRMs, verified regularly with control samples, and corrected using internal standards, ensures data integrity and result traceability. For researchers in drug development, adhering to these rigorous protocols is essential for complying with regulatory guidelines and guaranteeing the safety and efficacy of pharmaceutical products by accurately controlling elemental impurities.

Sourcing and Selecting Appropriate Reference Materials

Reference Materials (RMs) and Certified Reference Materials (CRMs) are fundamental to achieving accurate and reliable calibration for Optical Emission Spectrometers (OES). These materials, characterized by their homogeneity and well-established property values, form the basis for creating calibration curves that translate instrument signal intensity into quantitative chemical composition [7]. Within the context of Type Standardization—a procedure to correct for matrix-specific deviations and enhance measurement accuracy after a general calibration—the selection of appropriate RMs becomes even more critical [8]. Type Standardization is valid only for correcting analyses of unknown materials that are similar in composition to the standardization sample itself [8]. This application note details the protocols for sourcing, selecting, and verifying RMs to support robust Type Standardization procedures in OES calibration research.

Reference Material Classification and Properties

Types of Reference Materials

RMs used in OES calibration serve distinct purposes, from initial instrument calibration to ongoing quality control. Their roles in the calibration ecosystem are summarized in the table below.

Table 1: Types of Materials for OES Calibration and Control

| Material Type | Primary Function | Key Characteristics | Role in Type Standardization |
|---|---|---|---|
| Certified Reference Materials (CRMs) | Establish the primary calibration curve [7]. | Supplied with a certificate of analysis detailing element concentrations and associated uncertainties [28] [7]. | Serves as the foundational benchmark for accuracy. |
| Control Samples | Daily verification of spectrometer functionality and drift checking [7]. | Homogeneous, larger in size, and more cost-effective than CRMs; composition is linked to the CRM calibration [7]. | Used to monitor instrument stability before performing Type Standardization. |
| Type Standardization Samples | Correct for matrix effects and improve accuracy for specific alloy types post-initial calibration [8]. | Should closely match the production sample's composition and structure; often a well-characterized production sample. | The core material for the Type Standardization procedure. |

Essential Characteristics of High-Quality RMs

The effectiveness of any RM hinges on several key properties:

- Homogeneity: The composition must be uniform throughout the entire sample to ensure consistent spark excitation and reliable results. Homogeneity is evaluated according to standards such as ASTM E826-1996 [28].

- Certified Uncertainty: Each certified element must have a documented uncertainty, which quantifies the confidence in the certified value and is derived from inter-laboratory testing [28] [7].

- Matrix Matching: The RM should closely mirror the chemical composition and metallurgical structure of the samples being analyzed. Synthetically manufactured CRMs do not always correspond to the composition or structure of a production sample, which can lead to deviations [8].

Experimental Protocols for RM Evaluation and Application

Protocol 1: Sourcing and Procurement of RMs

Objective: To acquire CRMs and control samples that meet analytical requirements and quality standards.

- Identify Needs: Define the alloy types (e.g., iron-based, aluminum-based, copper-based) and concentration ranges of interest [28].

- Select Suppliers: Source CRMs from accredited producers (e.g., BAM in Germany, NIST) or specialized commercial manufacturers. For certain applications, internal laboratory reference materials may be calibrated against primary CRMs [29] [7].

- Verify Documentation: Ensure each CRM is supplied with a certificate detailing certified values, uncertainties, and recommended storage conditions.

- Procure Control Samples: Acquire homogeneous control samples that are chemically comparable to production samples for daily instrument verification [7].

Protocol 2: Verification of RM Suitability for Type Standardization

Objective: To experimentally confirm that a candidate Type Standardization sample is free from interferences and is fit for purpose.

- Analyze Major Components: Run single-element standards for all major matrix components at expected concentrations (e.g., 1% or 10,000 mg/L). Overlay the resulting spectra with the analyte peaks of the candidate RM [30].

- Check for Interferences: Examine the spectral regions around the analyte wavelengths in the candidate RM. Identify any spectral overlaps from major components. A wavelength is unsuitable if peaks from interferents sit directly over the analyte peak [30].

- Compare Peak Shapes: After analysis, compare the peak shapes of the candidate RM with those from a pure standard. Significantly different peak shapes indicate a potential interference, necessitating a different RM or analytical wavelength [30].

- Validate with CRM: Analyze the candidate Type Standardization sample as an unknown on a calibration that includes a CRM of similar but not identical composition. The results should agree with the candidate's known values within the certified uncertainty of that CRM to confirm the sample's suitability.
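"Significantly different peak shapes" can be made quantitative in several ways. One simple option, assumed here rather than taken from the source, is the Pearson correlation between the candidate's intensity profile and a pure standard's profile sampled at the same wavelength points. Correlation ignores overall scale and baseline offset, so a clean but stronger or weaker peak still scores close to 1, while a shoulder from an overlapping line lowers the score. The 0.99 threshold is a placeholder.

```python
from math import sqrt


def profile_correlation(candidate: list, pure: list) -> float:
    """Pearson correlation of two intensity profiles sampled at identical wavelengths."""
    n = len(candidate)
    if n != len(pure) or n < 3:
        raise ValueError("profiles must be the same length (at least three points)")
    mc, mp = sum(candidate) / n, sum(pure) / n
    cov = sum((c - mc) * (p - mp) for c, p in zip(candidate, pure))
    vc = sum((c - mc) ** 2 for c in candidate)
    vp = sum((p - mp) ** 2 for p in pure)
    if vc == 0 or vp == 0:
        raise ValueError("a flat profile has no shape to compare")
    return cov / sqrt(vc * vp)


def shape_differs(candidate: list, pure: list, threshold: float = 0.99) -> bool:
    """Flag a possible spectral interference when the shapes correlate poorly."""
    return profile_correlation(candidate, pure) < threshold


# Invented five-point profiles across an analyte line.
pure = [1.0, 3.0, 10.0, 3.0, 1.0]
print(shape_differs([2.0, 6.0, 20.0, 6.0, 2.0], pure))  # same shape, twice as intense
print(shape_differs([1.0, 3.0, 10.0, 8.0, 6.0], pure))  # shoulder on one side of the peak
```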

Protocol 3: Establishing Acceptance Limits for CRMs

Objective: To define statistical criteria for accepting a CRM into the calibration curve.

- Calibrate with Multiple CRMs: Perform an initial calibration using as many CRMs as possible to reduce statistical variation and build a robust calibration curve [7].

- Calculate Statistical Reliability (SR): Measure each CRM multiple times and calculate the Statistical Reliability (SR), defined as the standard deviation of the residuals about the calibration curve, to assess the scatter of the data points around that curve [7].

- Set Acceptance Criteria: The uncertainty of the calibration curve should not exceed ± 2SR [7]. A CRM measurement that deviates significantly beyond this limit should be investigated for issues such as incorrect sample loading or method application.
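The SR calculation and the ±2SR screen can be sketched as below. The source defines SR only as the standard deviation of the residuals; this sketch assumes a straight-line calibration of certified concentration against measured intensity and n − 2 degrees of freedom, a common convention that may differ from what a given vendor uses. The CRM data are invented, with one deliberately deviating point.

```python
from math import sqrt


def statistical_reliability(intensity: list, certified: list) -> tuple:
    """Fit certified = a + b * intensity; return (SR, residuals)."""
    n = len(intensity)
    if n != len(certified) or n < 3:
        raise ValueError("need at least three CRMs")
    x_bar, y_bar = sum(intensity) / n, sum(certified) / n
    sxx = sum((x - x_bar) ** 2 for x in intensity)
    if sxx == 0:
        raise ValueError("CRM intensities must differ")
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(intensity, certified))
    b = sxy / sxx
    a = y_bar - b * x_bar
    residuals = [y - (a + b * x) for x, y in zip(intensity, certified)]
    sr = sqrt(sum(r * r for r in residuals) / (n - 2))
    return sr, residuals


def crms_to_investigate(intensity: list, certified: list) -> list:
    """Indices of CRMs whose residual lies outside ±2 SR."""
    sr, residuals = statistical_reliability(intensity, certified)
    return [i for i, r in enumerate(residuals) if abs(r) > 2 * sr]


# Invented data: ten CRMs on a straight line, except the fifth point sits 0.1 % off it.
intensity = [float(i) for i in range(1, 11)]
certified = [0.1, 0.2, 0.3, 0.4, 0.6, 0.6, 0.7, 0.8, 0.9, 1.0]
print(crms_to_investigate(intensity, certified))
```

Note that an outlying CRM also inflates SR itself, so with only a few CRMs a genuine problem point can hide inside ±2SR; this is one practical reason the protocol recommends calibrating with as many CRMs as possible.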

Diagram 1: Workflow for Sourcing and Validating Reference Materials for Type Standardization.

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for OES Calibration and Type Standardization Research

| Reagent / Material | Function | Specific Application Notes |
|---|---|---|
| Certified Reference Materials (CRMs) | Provide the traceable foundation for all calibration activities, enabling quantitative analysis [7]. | Use a high number of CRMs to reduce statistical variation. Always check certificate validity and uncertainty. |
| Type Standardization Samples | Fine-tune calibration for a specific alloy type to correct matrix-induced deviations [8]. | Must be similar in composition and structure to production samples. Can be a well-characterized, homogeneous production sample. |
| Control Samples / Check Samples | Monitor spectrometer stability, perform statistical process control, and check for instrumental drift [7]. | A cost-effective alternative to frequent CRM use. Must be "linked to the calibration" by repeated measurement after initial calibration. |
| Single-Element Standards | Investigate and identify spectral interferences from major matrix components during method development [30]. | Run at high concentrations (e.g., 1–10%) to map potential interferences across analyte wavelengths. |
| Internal Standard Elements (e.g., Sc, Y) | Compensate for signal fluctuations caused by physical differences in samples, instrument drift, or matrix effects [31] [32]. | Applies to solution-based ICP-OES. Must be added consistently to all samples and standards, should not be present in the original sample, and must behave similarly to the analytes in the plasma. |
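"Linking" a control sample to the calibration can be pictured as deriving control-chart limits from repeated measurements made immediately after calibration. The sketch below assumes Shewhart-style limits: the mean ± 2s as warning limits and the mean ± 3s as action limits. These conventions are an assumption, not a rule stated in the source. The Mn values are hypothetical.

```python
import statistics


def link_control_sample(linking_results):
    """Derive Shewhart-style limits from repeat measurements of a control
    sample taken immediately after calibration (the 'linking' run)."""
    center = statistics.mean(linking_results)
    s = statistics.stdev(linking_results)
    return {
        "center": center,
        "warning": (center - 2 * s, center + 2 * s),
        "action": (center - 3 * s, center + 3 * s),
    }


def classify(value, limits):
    """Classify a routine control-sample result against the linked limits."""
    lo, hi = limits["action"]
    if not lo <= value <= hi:
        return "action"
    lo, hi = limits["warning"]
    if not lo <= value <= hi:
        return "warning"
    return "in control"


# Hypothetical Mn results (mass %) from ten linking measurements
linking = [0.412, 0.415, 0.410, 0.414, 0.413, 0.411, 0.416, 0.409, 0.413, 0.412]
limits = link_control_sample(linking)

status = classify(0.418, limits)  # a later routine check of the same control sample
```

A drift into the warning band would typically prompt a recheck. A result outside the action limits suggests the instrument needs restandardization before further analysis.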

The careful sourcing and selection of reference materials are essential for achieving top accuracy in OES analysis, particularly with advanced calibration procedures such as Type Standardization. By following the protocols outlined here (verifying candidate materials for spectral interferences, establishing statistical acceptance limits, and using the appropriate type of standard for each task), researchers can ensure that their OES spectrometers deliver highly reliable data. This systematic approach to reference materials forms the foundation for robust and accurate Type Standardization, ultimately enhancing quality control and research outcomes in metallurgical analysis.

In elemental analysis using Optical Emission Spectrometry (OES), the reliability of results is fundamentally dependent on procedures performed before the analysis itself begins. Inadequate sample preparation is a primary contributor to analytical errors, responsible for as much as 60% of all spectroscopic analytical inaccuracies [15]. Simultaneously, verifying instrument status through calibration and standardization is critical because OES spectrometers are highly sensitive instruments whose performance can deteriorate without frequent maintenance and verification [8]. This application note establishes a definitive pre-analysis checklist, framed within OES Type Standardization research, to ensure data integrity for researchers, scientists, and drug development professionals.

Sample Preparation Techniques for OES

Proper sample preparation is the first critical control point for data quality. The physical state and presentation of the sample directly influence its interaction with the spark source, plasma stability, and the resulting spectral emissions.

Solid Metallic Samples

OES is an industry-standard technique for the elemental analysis of a range of metals and alloys, typically using Arc/Spark excitation for solid metallic samples [33]. Preparation aims to create a homogeneous, representative surface for analysis.

- Grinding and Milling: Use spectroscopic grinding or milling machines to create a flat, homogeneous surface [15]. This minimizes light scattering and ensures consistent spark interaction. Select equipment based on material hardness to avoid contamination; harder materials require more powerful machinery with specialized grinding surfaces.

- Surface Finish: The final surface quality must be optimized for the specific analysis. For quantitative OES, a consistent surface finish across all samples and standards is vital as it affects spark penetration and plasma formation.

Liquid Samples

While direct analysis of solids is a key advantage of Arc/Spark OES, some applications require liquid analysis via related techniques like Inductively Coupled Plasma OES (ICP-OES). Sample preparation must ensure complete dissolution and compatibility with the introduction system.

- Dissolution and Digestion: Solid samples must undergo total dissolution using appropriate acids or mixtures. The dissolution method must be validated for complete recovery of all target analytes.

- Dilution and Filtration: Dilution places analyte concentrations within the instrument's optimal detection range and reduces matrix effects [15]. Filtration (e.g., using 0.45 μm membrane filters) removes suspended particles that could clog the nebulizer [15].

- Acidification: High-purity acidification with nitric acid (e.g., to 2% v/v) keeps metal ions in solution, preventing precipitation and adsorption to container walls [15].
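The dilution and acidification steps are simple arithmetic based on C1·V1 = C2·V2. The sketch below assumes a hypothetical "headroom" parameter, so that the diluted solution lands at or below 80% of the top calibration standard. It also treats "2% v/v" as the volume of concentrated acid as supplied, ignoring any acid carried over from the digest. Both are illustrative assumptions, and the digest concentration is invented.

```python
import math


def dilution_factor(estimated_mg_l, cal_top_mg_l, headroom=0.8):
    """Smallest whole-number dilution that brings the estimated analyte
    concentration to at most headroom * top calibration standard."""
    return max(1, math.ceil(estimated_mg_l / (headroom * cal_top_mg_l)))


def aliquot_ml(final_volume_ml, factor):
    """Sample aliquot volume from C1*V1 = C2*V2, with factor = C1/C2."""
    return final_volume_ml / factor


def acid_ml(final_volume_ml, percent_v_v=2.0):
    """Volume of concentrated HNO3 for the target % v/v in the final solution.
    Ignores acid already carried over from the digest (an assumption)."""
    return final_volume_ml * percent_v_v / 100.0


# Hypothetical digest: ~400 mg/L analyte, top calibration standard 10 mg/L, 50 mL flask
f = dilution_factor(400.0, 10.0)
v_sample = aliquot_ml(50.0, f)
v_acid = acid_ml(50.0)
```

With these numbers, a 1 mL aliquot and 1 mL of acid are made up to 50 mL, which gives a nominal 8 mg/L analyte concentration.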

Table 1: Common Sample Preparation Errors and Impacts on OES Analysis

| Preparation Error | Impact on OES Analysis |
|---|---|
| Irregular or Rough Surface | Inconsistent spark discharge, poor reproducibility, and light scattering [15] |
| Sample Contamination | Introduction of spurious spectral signals from foreign material [15] |
| Inhomogeneous Sample | Non-representative sampling, leading to incorrect composition reporting [15] |
| Improper Dilution | Matrix effects or instrument detection issues (over-range or poor detectability) [15] [34] |

Instrument Status Verification and Calibration

Verifying that the spectrometer is fit for its intended purpose is a mandatory step before any analytical run. This involves a multi-tiered approach from fundamental qualification to application-specific standardization.

Analytical Instrument Qualification (AIQ)

Regulated laboratories require a structured qualification process. From a regulatory perspective, Analytical Instrument Qualification (AIQ) and Computerized System Validation (CSV) should be integrated so that no gaps exist between them [35]. The foundational model is the 4Qs model:

- Design Qualification (DQ): For a spectrometer, a supplier selection report typically replaces DQ, confirming the chosen instrument meets user requirements [35].

- Installation Qualification (IQ): Verifies the instrument is correctly installed as per specifications.

- Operational Qualification (OQ): Demonstrates the instrument operates as intended in the selected environment.

- Performance Qualification (PQ): For CSV, this confirms the overall suitability of the system against the User Requirements Specification (URS) [35].

Routine Calibration and Type Standardization

OES spectrometers use relative measurements, making calibration with Certified Reference Materials (CRMs) essential. Type Standardization is a finer correction applied on top of the standard calibration to achieve the highest accuracy for specific alloy types [8].

Indications for Type Standardization:

- Observed deviations despite a calibration with numerous CRMs.

- Analysis of "exotic" alloys that deviate strongly from the matrix of the standard CRMs.

- A discrepancy between the synthetic composition of CRMs and the actual composition/structure of the production sample.

- The instrument's calibration is no longer sufficient for the required accuracy level, potentially due to long-term drift [8].

Benefits and Limitations:

- Benefit: Delivers a significant improvement in the accuracy of OES results for specific alloy types similar to the standardization sample [8].

- Limitation: It is not a global correction and is only valid for materials similar in composition to the standardization sample [8].
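Because the correction is valid only for materials similar to the standardization sample, a laboratory might screen each sample before applying a stored Type Standardization. The sketch below is one hypothetical way to do this. It flags elements that lie outside a window of ±max(20% of nominal, 0.05 mass %) around the type standard's composition. Both the window rule and its tolerances are placeholders chosen for illustration, not a vendor or standard requirement, and the compositions are invented.

```python
def outside_type_window(sample, type_standard, rel_tol=0.2, abs_floor=0.05):
    """Return elements whose content lies outside a window around the type
    standard's nominal value: +/- max(rel_tol * nominal, abs_floor) mass %.
    Both tolerances are hypothetical placeholders, not vendor rules."""
    flagged = []
    for element, nominal in type_standard.items():
        window = max(rel_tol * nominal, abs_floor)
        if abs(sample.get(element, 0.0) - nominal) > window:
            flagged.append(element)
    return flagged


# Hypothetical type standard for an austenitic stainless grade (mass %)
type_standard = {"Cr": 17.5, "Ni": 9.0, "Mo": 0.30, "Mn": 1.4}

similar = {"Cr": 18.1, "Ni": 8.3, "Mo": 0.25, "Mn": 1.6}
different = {"Cr": 16.8, "Ni": 10.5, "Mo": 2.1, "Mn": 1.5}

print(outside_type_window(similar, type_standard))    # []
print(outside_type_window(different, type_standard))  # ['Mo']
```

A non-empty result indicates that the sample belongs to a different alloy type. That sample should be evaluated with the base calibration or with its own type standard.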

Table 2: Key Materials for Instrument Calibration and Standardization

| Material / Reagent | Function in OES Analysis | Critical Specifications |
|---|---|---|
| Certified Reference Materials (CRMs) | Establishes the primary calibration curve; verifies instrument performance [8] | Matrix-matched to samples; certified concentrations traceable to national standards |
| Type Standardization Samples | Provides a finer, secondary correction for specific alloy types to enhance accuracy [8] | Well-characterized, homogeneous production sample with a known composition |
| High-Purity Gases | Maintains plasma (Argon) and provides a purged environment for optical path | High-purity Argon (e.g., 99.996% minimum) to ensure stable plasma formation |

Experimental Protocol: Type Standardization for OES

This protocol details the steps for performing a Type Standardization to correct for deviations in a calibrated OES spectrometer for a specific alloy type.

Principle

Type Standardization fine-tunes the instrument's existing calibration for a specific alloy family by analyzing one or more samples of that known composition. It corrects for minor deviations arising from differences between synthetic CRMs and actual production samples or from long-term instrumental drift [8].
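Two common ways to express such a per-element correction are a multiplicative factor and an additive offset. Each is chosen so that the reading obtained on the type standard maps onto its known value. The sketch below models both under that assumption. The class and function names are hypothetical, and the form a given instrument actually uses is defined by its own software.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TypeCorrection:
    """A per-element Type Standardization correction (illustrative model)."""
    mode: str      # "multiplicative" (factor) or "additive" (offset)
    value: float

    def apply(self, measured: float) -> float:
        if self.mode == "multiplicative":
            return measured * self.value
        return measured + self.value


def derive_correction(nominal: float, measured_on_standard: float,
                      mode: str = "multiplicative") -> TypeCorrection:
    """Compute the correction that maps the reading on the type standard
    onto its known (nominal) value."""
    if mode == "multiplicative":
        if measured_on_standard == 0:
            raise ValueError("multiplicative correction needs a non-zero reading")
        return TypeCorrection(mode, nominal / measured_on_standard)
    if mode == "additive":
        return TypeCorrection(mode, nominal - measured_on_standard)
    raise ValueError(f"unknown mode: {mode!r}")


# Hypothetical readings on a type standard (mass %)
mo = derive_correction(nominal=2.10, measured_on_standard=2.00)  # factor 1.05
c = derive_correction(nominal=0.045, measured_on_standard=0.050, mode="additive")

mo_corrected = mo.apply(2.20)  # production sample reading, corrected
c_corrected = c.apply(0.060)
```

The two forms agree exactly at the standard's own concentration and diverge away from it. This illustrates why the correction is only trustworthy for samples close to the standardization sample.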

Materials and Equipment

- OES Spectrometer with established, valid baseline calibration.

- Homogeneous, well-characterized Type Standardization sample(s) of the target alloy.

- Certified Reference Materials (CRMs) for verification.

- Appropriate sample preparation tools (grinder, cutter, etc.).

- Personal protective equipment (safety glasses, gloves).

Step-by-Step Procedure

- Pre-Standardization Check: Ensure the OES spectrometer has a current and optimal baseline calibration performed with the appropriate CRMs.

- Sample Preparation: Prepare the Type Standardization sample identically to production samples. For a metal, this involves grinding to create a fresh, flat, clean surface.

- Sample Analysis: Run the prepared Type Standardization sample on the OES. The instrument software will compare the measured values to the known, stored values for this standard.

- Apply Correction: The instrument's software calculates a correction factor (or set of factors) based on the measured deviation. Apply this correction as per the manufacturer's instructions.

- Verification: Immediately after standardization, analyze a different CRM or a control sample of the same alloy type to verify that the standardization has correctly improved the accuracy without over-correction.

- Documentation: Record the date, time, operator, standardization sample ID, correction factors applied, and verification results in the instrument logbook.
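The verification and documentation steps can be combined into a single record. In the sketch below, a standardization passes verification if the corrected result for the verification CRM lies within the certified expanded uncertainty, which catches over-correction past the certified value, and the absolute bias has not grown compared with the uncorrected result. These criteria, the `LogEntry` fields, and all IDs and values are hypothetical illustrations of the logbook items listed above.

```python
from dataclasses import dataclass, asdict
from datetime import datetime


def verify_standardization(certified, u_expanded, before, after):
    """Compare a verification CRM result before and after Type Standardization.
    'within_uncertainty' catches over-correction past the certified interval;
    'improved' checks that the absolute bias did not grow."""
    err_before = abs(before - certified)
    err_after = abs(after - certified)
    return {"within_uncertainty": err_after <= u_expanded,
            "improved": err_after <= err_before}


@dataclass
class LogEntry:
    """Hypothetical logbook record mirroring the documentation step."""
    timestamp: str
    operator: str
    standard_id: str
    corrections: dict
    verification: dict


# Hypothetical verification CRM: certified 2.12 +/- 0.03 mass % Mo
ok = verify_standardization(2.12, 0.03, before=2.03, after=2.11)
over = verify_standardization(2.12, 0.03, before=2.03, after=2.19)  # over-corrected

entry = LogEntry(
    timestamp=datetime.now().isoformat(timespec="seconds"),
    operator="J. Doe",                 # hypothetical operator
    standard_id="TS-316L-01",          # hypothetical sample ID
    corrections={"Mo": ("multiplicative", 1.05)},
    verification={"Mo": ok},
)
record = asdict(entry)  # plain dict, ready to write to a logbook file or LIMS
```

The `over` case shows why both checks matter: the bias shrank, yet the corrected result overshoots the certified interval.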

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagent Solutions for OES Analysis

| Item | Function / Application |
|---|---|
| High-Purity Acids (e.g., HNO₃) | For sample digestion of non-metallic samples and acidification of liquid samples to stabilize analytes [15] [34]. |
| Certified Reference Materials (CRMs) | To establish a traceable calibration, verify analytical method accuracy, and monitor instrument performance [8]. |
| Type Standardization Samples | To perform a secondary, fine correction on the standard calibration for specific alloy types to achieve top accuracy [8]. |
| Grinding Media & Belts | To prepare a flat, homogeneous, and contamination-free surface on solid metallic samples for Spark OES analysis [15]. |
| High-Purity Argon Gas | To create and maintain the stable plasma required for excitation in the OES source [36]. |

Adherence to a rigorous pre-analysis checklist for sample preparation and instrument status is non-negotiable for generating reliable OES data. Because inadequate sample preparation can account for up to 60% of spectroscopic analytical errors [15], careful preparation addresses a major source of error. A robust instrument qualification and calibration strategy, culminating in Type Standardization where needed, then ensures that the spectrometer itself is a reliable measuring tool. For researchers in drug development and other regulated environments, this integrated approach is the foundation of data integrity, ensuring that results for elemental composition are accurate, reproducible, and fit for their intended purpose.

Optical Emission Spectrometry (OES) is a powerful technique for determining the elemental composition of materials, particularly in metal analysis. However, the technique relies on relative rather than absolute measurements, making calibration a critical factor for analytical accuracy [1] [8]. Type Standardization represents an advanced calibration procedure that fine-tunes an OES instrument's calibration for specific alloy types or sample matrices. This specialized procedure addresses situations where even a well-calibrated instrument may yield deviations due to unique sample characteristics.

This protocol outlines the complete sequence for performing Type Standardization, giving researchers and analytical professionals a systematic approach to improving measurement accuracy in specialized applications. The procedure is particularly valuable when analyzing exotic alloys that deviate strongly from standard matrix materials, or when the physical structure of certified reference materials (CRMs) does not match that of production samples [8]. When implemented correctly, Type Standardization delivers the accuracy required for rigorous quality control and research applications.

Prerequisites and Preparatory Steps

Instrument Readiness Verification