Unlocking Spectral Data: A Comprehensive Guide to PCA Applications in Drug Discovery

This article provides a comprehensive exploration of Principal Component Analysis (PCA) for analyzing spectral data in pharmaceutical and biomedical research.

Abstract

This article provides a comprehensive exploration of Principal Component Analysis (PCA) for analyzing spectral data in pharmaceutical and biomedical research. Tailored for researchers and drug development professionals, it covers foundational principles, advanced methodological applications for drug screening and biomarker discovery, practical troubleshooting for real-world data challenges, and validation techniques against other chemometric methods. By synthesizing current research and case studies, this guide serves as a critical resource for leveraging PCA to accelerate drug discovery, enhance product quality control, and decipher complex biological systems from high-dimensional spectral profiles.

Demystifying PCA: Core Concepts and Its Power in Spectral Data Exploration

Principal Component Analysis (PCA) is an unsupervised multivariate technique fundamental to exploring high-dimensional biological datasets. By reducing data complexity while preserving essential information, PCA enables researchers to identify patterns, outliers, and natural groupings within data, serving as a powerful catalyst for hypothesis generation. This Application Note details standardized protocols for implementing PCA in biological research, from experimental design and data preprocessing through interpretation and downstream hypothesis formulation, with particular emphasis on applications in genomics, pharmacogenomics, and spectral analysis.

Theoretical Foundation of PCA in Biology

Core Principles and Mathematical Basis

PCA operates by transforming potentially correlated variables into a new set of uncorrelated variables called Principal Components (PCs). These PCs are linear combinations of the original variables and are ordered such that the first PC (PC1) captures the greatest possible variance in the data, the second PC (PC2) captures the next greatest variance while being orthogonal to the first, and so on. This transformation allows for a low-dimensional projection of high-dimensional data, typically in 2D or 3D scatter plots, making it possible to visualize the dominant structure of the data.

In biological contexts, where datasets often include measurements from thousands of genes, proteins, or metabolic features, this dimensionality reduction is invaluable. The technique reveals the intrinsic data structure without prior knowledge of sample classes, making it ideal for exploratory analysis. A key advancement in interpreting PCA outputs is informational rescaling, which transforms standard PCA maps—where distances can be challenging to interpret—into entropy-based maps where distances are based on mutual information. This rescaling quantifies relative distances into information units like "bits," enhancing cluster identification and the interpretation of statistical associations, particularly in genetics [1].

PCA as a Hypothesis-Generating Engine

The primary utility of PCA in biological discovery lies in its ability to generate testable hypotheses from untargeted data exploration. Key observations from PCA plots and their corresponding hypothetical implications are summarized in the table below.

Table 1: Hypothesis Generation from PCA Plot Observations

| PCA Observation | Potential Biological Implication | Example Testable Hypothesis |
|---|---|---|
| Clear separation of sample groups along PC1 | A major experimental factor or underlying biological state drives global differences. | Samples from 'Disease' and 'Control' cohorts have distinct molecular profiles. |
| Outliers isolated from main cluster | Potential sample contamination, technical artifact, or rare biological phenomenon. | The outlier sample represents a novel subtype or a failed experiment. |
| Continuous gradient of samples along a PC | A progressive biological process (e.g., development, disease progression). | Gene expression changes continuously along a pathological trajectory. |
| Clustering by batch rather than phenotype | Strong batch effect confounding biological signal. | Technical variability (e.g., processing date) must be corrected before analysis. |

Experimental Protocol: A Standard Workflow for PCA

This protocol provides a generalized workflow for performing PCA on biological data, such as gene expression or spectral data, using tools like MATLAB or R, with specific notes for web-based applications like SimpleViz [2].

Data Preprocessing and Normalization

The validity of a PCA result is critically dependent on proper data preprocessing, which mitigates technical artifacts and enhances biological signals.

- Step 1: Data Cleaning and Imputation: Handle missing values through imputation (e.g., k-nearest neighbors) or removal. Address cosmic rays and other random noise in spectral data using specialized algorithms [3].

- Step 2: Normalization: Normalize each sample to correct for systematic technical variation (e.g., sequencing depth, total ion current). Then standardize each feature, a common linear approach that transforms every feature to a mean of 0 and a standard deviation of 1 [4]. This ensures all features contribute equally to the variance and prevents features with larger native scales from dominating the PCs.

- Step 3: Filtering and Transformation: Filter out low-variance features, as they contribute little to the separation of samples. Apply log-transformations to right-skewed data (e.g., RNA-seq counts) to stabilize variance.
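A minimal Python sketch of Steps 1-3 for count-type data (e.g., RNA-seq), assuming scikit-learn is installed. The counts-per-million normalization, the `preprocess_counts` name and both thresholds are illustrative assumptions rather than settings from [2] or [4], and spectrum-specific cosmic-ray removal [3] is omitted. The log transform and variance filter run before z-scoring on purpose: after standardization every feature has variance 1, so a variance filter would no longer remove anything.

```python
import numpy as np
from sklearn.feature_selection import VarianceThreshold
from sklearn.impute import KNNImputer
from sklearn.preprocessing import StandardScaler


def preprocess_counts(counts, n_neighbors=5, min_variance=1e-3):
    """Clean, normalize, transform, filter and standardize a samples x features matrix."""
    # Step 1: fill missing values (NaN) from the k most similar samples
    imputed = KNNImputer(n_neighbors=n_neighbors).fit_transform(counts)
    # Step 2 (sample-wise): rescale each sample to counts per million (assumed depth correction)
    cpm = imputed / imputed.sum(axis=1, keepdims=True) * 1e6
    # Step 3: log-transform right-skewed counts, then drop near-constant features
    logged = np.log1p(cpm)
    filtered = VarianceThreshold(threshold=min_variance).fit_transform(logged)
    # Step 2 (feature-wise): z-score the remaining features (mean 0, SD 1)
    return StandardScaler().fit_transform(filtered)


# Hypothetical example: 30 samples x 50 features with one missing value
# and one all-zero feature that the variance filter should remove.
rng = np.random.default_rng(0)
counts = rng.poisson(lam=20, size=(30, 50)).astype(float)
counts[:, 0] = 0.0
counts[3, 5] = np.nan
X_ready = preprocess_counts(counts)
print(X_ready.shape)  # (30, 49)
```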

Execution and Visualization

- Step 4: PCA Calculation: Input the preprocessed data matrix into a PCA algorithm. Standard functions are available in scikit-learn (Python), stats (R), or MATLAB [4].

- Step 5: Visualization Generation: Create a 2D scatter plot using the first two principal components (PC1 vs. PC2). For tools like SimpleViz, a free, web-based platform, users can upload their data file (e.g., CSV format), select the "PCA plot" visualization type, and the tool will automatically generate the publication-ready figure without requiring programming skills [2].

- Step 6: Interpretation and Output: Examine the scatter plot for clusters, gradients, and outliers. Note the percentage of total variance explained by each PC to judge how much of the data structure the plot actually represents. Genes or features with large absolute loadings on the PCs that separate the groups can then be prioritized for further investigation.
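Steps 4-6 can be sketched with scikit-learn's `PCA`. The helper name `run_pca`, the synthetic two-group data and the choice to rank features by absolute PC1 loading are illustrative assumptions; other prioritization rules are equally valid. The returned `scores[:, 0]` and `scores[:, 1]` are what would go on the PC1 vs. PC2 scatter plot.

```python
import numpy as np
from sklearn.decomposition import PCA


def run_pca(X_ready, feature_names, n_components=2, n_top=5):
    """Steps 4-6: PCA scores for plotting, variance explained, top PC1 features."""
    pca = PCA(n_components=n_components)
    scores = pca.fit_transform(X_ready)          # samples x PCs (plot PC1 vs PC2)
    explained = pca.explained_variance_ratio_    # fraction of total variance per PC
    # components_ has shape (n_components, n_features); row 0 holds PC1 loadings
    top = np.argsort(np.abs(pca.components_[0]))[::-1][:n_top]
    return scores, explained, [feature_names[i] for i in top]


# Hypothetical data: 40 samples x 20 features; the first 20 samples are
# shifted upward in features f0-f4 to mimic a 'Disease' vs 'Control' split.
rng = np.random.default_rng(1)
X = rng.normal(size=(40, 20))
X[:20, :5] += 3.0
names = [f"f{i}" for i in range(20)]
scores, explained, top_features = run_pca(X, names)
print(np.round(explained * 100, 1))   # % variance explained by PC1 and PC2
print(sorted(top_features))
```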

The following diagram illustrates the logical workflow from raw data to hypothesis generation.

The Scientist's Toolkit: Research Reagent Solutions

Successful execution of a PCA-based study relies on a combination of biological reagents, data analysis tools, and computational resources.

Table 2: Essential Materials and Reagents for PCA-Driven Research

| Category / Item | Function / Application | Specific Examples / Notes |
|---|---|---|
| Biological Reagents | | |
| High-Throughput Assay Kits | Generate the primary high-dimensional data. | RNA-seq kits, Genotyping arrays, Metabolomics panels. |
| Reference Materials | Validate analytical workflows and ensure genotyping accuracy. | Genome-In-A-Bottle (GIAB), Genetic Testing Reference Material (GeT-RM) [5]. |
| Data Analysis Tools | | |
| Programming Environments | Provide flexibility for custom data preprocessing and PCA execution. | MATLAB [4], R (with factoextra), Python (with scikit-learn, scanpy). |
| Web-Based Platforms | Enable accessible, code-free analysis and visualization. | SimpleViz (for RNA-seq, PCA, volcano plots) [2]. |
| Specialized Algorithms | Perform critical preprocessing steps for specific data types. | Convolutional Neural Networks (CNN) for image-based data segmentation (e.g., ecDNA detection) [5]. Cosmic ray removal algorithms for spectral data [3]. |
| Computational Resources | | |
| High-Performance Computing | Handle large-scale data matrix computations. | University/cluster resources, cloud computing (AWS, Google Cloud). |

Case Study: ProstaMine and PCA in Prostate Cancer Subtyping

The application ProstaMine exemplifies PCA's role in a sophisticated systems biology tool for deciphering prostate cancer (PCa) complexity. This case study outlines the experimental workflow and resulting hypotheses.

- Objective: To systematically identify co-alterations of genes associated with aggressiveness in molecular subtypes of PCa, defined by high-fidelity alterations like NKX3-1-loss and RB1-loss [5].

- Methods: ProstaMine leverages multi-omics data (genomic, transcriptomic) integrated with clinical data from multiple PCa cohorts. PCA and other integrative genomic methods are applied to this data to prioritize co-alterations enriched in metastatic disease. The tool can mine any user-selected molecular subtype to identify high-confidence alteration hotspots.

- Results and Hypothesis Generation: Application of ProstaMine to RB1-loss PCa identified novel subtype-specific co-alterations in p53, STAT6, and MHC class I antigen presentation pathways, which are associated with tumor aggressiveness. These findings generate a direct testable hypothesis: that the co-alteration of RB1-loss with dysregulated MHC class I antigen presentation promotes immune evasion and drives disease progression in a defined PCa subtype [5].

The workflow for this integrative analysis is depicted below.

Advanced Applications and Future Directions

PCA continues to evolve, integrating with more complex AI frameworks to tackle disease complexity. Future directions include the development of context-aware adaptive processing and physics-constrained data fusion, aimed at improving detection sensitivity and classification accuracy [3]. A major frontier is the integration of generative AI and large language models (LLMs) with systems biology tools like PCA. This synergy promises to enhance multi-omics data integration and automate the formulation of mechanistic hypotheses regarding disease etiology and progression, ultimately accelerating discovery in pharmacological sciences and precision medicine [5].

Principal Component Analysis (PCA) is a powerful dimensionality reduction technique that simplifies complex, high-dimensional datasets into fewer dimensions while retaining the most significant patterns and trends. At its heart are the mathematical concepts of eigenvectors and eigenvalues, which respectively define the orientation of the new component axes and the amount of variance each component captures [6] [7]. This connection is fundamental: the eigenvalues of the covariance matrix directly represent the variance explained by each principal component [8].

In spectral data research, such as analyzing Raman or Near-Infrared (NIR) spectroscopy data in pharmaceutical development, PCA is invaluable. It transforms thousands of correlated spectral features into a smaller set of uncorrelated variables (principal components), preserving essential information for building robust predictive models [9] [10]. This process enhances computational efficiency and mitigates overfitting, making it a cornerstone of modern chemometric analysis.

Theoretical Foundations: Connecting Eigenproperties to Variance

The Covariance Matrix and Eigendecomposition

PCA begins by centering the data and, typically, standardizing it so that each feature contributes equally, followed by computing the covariance matrix [7] [11]. This symmetric matrix summarizes how every pair of variables in the dataset covaries. The entries on the main diagonal represent the variances of individual variables, while the off-diagonal elements represent the covariances between variables [7]. A positive covariance indicates that two variables increase or decrease together, whereas a negative value signifies an inverse relationship [11].

The core of PCA lies in the eigendecomposition of this covariance matrix. This process solves the equation:

$$\mathbf{A}\mathbf{v} = \lambda \mathbf{v}$$

where $\mathbf{A}$ is the covariance matrix, $\mathbf{v}$ is an eigenvector, and $\lambda$ is its corresponding eigenvalue [11]. The eigenvectors represent the directions of maximum variance in the data; they are the principal components themselves. The eigenvalues, being scalar coefficients, denote the magnitude of variance along each corresponding eigenvector direction [7] [8]. Ranking eigenvectors by their eigenvalues in descending order gives the principal components in order of significance [7].
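A short NumPy check of these two statements on hypothetical data: the top eigenvector satisfies $\mathbf{A}\mathbf{v} = \lambda \mathbf{v}$, and projecting the centered data onto the eigenvectors yields scores whose variances equal the eigenvalues. The data are only centered here; standardizing first would turn $\mathbf{A}$ into the correlation matrix without changing the logic.

```python
import numpy as np

rng = np.random.default_rng(42)
# Hypothetical data: 100 samples x 4 correlated variables driven by 2 latent factors
latent = rng.normal(size=(100, 2))
X = latent @ rng.normal(size=(2, 4)) + 0.1 * rng.normal(size=(100, 4))

Xc = X - X.mean(axis=0)                      # center each variable
A = np.cov(Xc, rowvar=False)                 # 4 x 4 covariance matrix (n - 1 denominator)
eigvals, eigvecs = np.linalg.eigh(A)         # eigh: symmetric input, ascending eigenvalues
order = np.argsort(eigvals)[::-1]            # re-rank in descending order of variance
eigvals, eigvecs = eigvals[order], eigvecs[:, order]

v1, lam1 = eigvecs[:, 0], eigvals[0]
print(np.allclose(A @ v1, lam1 * v1))                     # True: A v = lambda v
scores = Xc @ eigvecs                                     # project onto the eigenvectors
print(np.allclose(scores.var(axis=0, ddof=1), eigvals))   # True: PC variance = eigenvalue
```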

Variance Explanation and Geometric Interpretation

Geometrically, PCA can be visualized as fitting an ellipsoid to the data. Each axis of this ellipsoid represents a principal component. The eigenvectors define the directions of these axes, and the eigenvalues set their lengths: each eigenvalue is the variance along its axis, so the axis length (the standard deviation in that direction) scales with the square root of the eigenvalue [6]. A longer axis (higher eigenvalue) means greater variance and more information captured along that component.

The proportion of total variance explained by a single principal component is calculated by dividing its eigenvalue by the sum of all eigenvalues. The cumulative variance explained by the first $k$ components is the sum of their eigenvalues divided by the total sum of eigenvalues [6] [7]. This quantifies how well the reduced-dimensional representation approximates the original dataset.
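A worked example of both ratios, using hypothetical eigenvalues 6.0, 2.5, 1.0 and 0.5 (total 10): the per-component proportions are 0.60, 0.25, 0.10 and 0.05, and the cumulative proportions are 0.60, 0.85, 0.95 and 1.00. The helper names are illustrative. scikit-learn's `PCA(n_components=0.95)` applies a comparable "smallest $k$ reaching the threshold" rule internally.

```python
import numpy as np


def explained_variance(eigvals):
    """Per-component and cumulative fraction of total variance."""
    eigvals = np.sort(np.asarray(eigvals, dtype=float))[::-1]   # descending order
    ratio = eigvals / eigvals.sum()
    return ratio, np.cumsum(ratio)


def n_components_for(eigvals, threshold=0.95):
    """Smallest k whose cumulative explained variance reaches the threshold."""
    _, cumulative = explained_variance(eigvals)
    k = int(np.searchsorted(cumulative, threshold) + 1)
    return min(k, len(cumulative))   # guard against rounding just below 1.0


ratio, cumulative = explained_variance([6.0, 2.5, 1.0, 0.5])
print(ratio, cumulative)
print(n_components_for([6.0, 2.5, 1.0, 0.5], threshold=0.90))   # 3
```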

Table 1: Key Mathematical Objects in PCA and Their Interpretation

| Mathematical Object | Role in PCA | Statistical Interpretation |
|---|---|---|
| Covariance Matrix | A symmetric matrix with variances on the diagonal and covariances off-diagonal [7]. | Summarizes the structure and relationships between all variables in the data. |
| Eigenvector | Defines the direction of a principal component axis [7] [11]. | A linear combination of original variables that defines a new, uncorrelated feature. |
| Eigenvalue | The scalar associated with an eigenvector [11]. | The amount of variance captured by its corresponding principal component [8]. |
| Proportion of Variance Explained | Ratio of an eigenvalue to the sum of all eigenvalues [6]. | The fraction of the total dataset information carried by a specific component. |

Experimental Protocols for Spectral Data Analysis

Standard PCA Workflow for Spectral Preprocessing

The following protocol, adapted from a study on polysaccharide-coated drugs, details the application of PCA for preprocessing high-dimensional spectral data before machine learning modeling [10].

Objective: To reduce the dimensionality of a Raman spectral dataset and extract principal components for subsequent regression analysis of drug release profiles.

Materials: Spectral dataset (e.g., 155 samples with >1500 spectral features per sample) [10].

Data Normalization:

- Purpose: Ensure all spectral features are on a comparable scale to prevent variables with larger ranges from dominating the analysis [7] [10].

- Procedure: For each spectral feature, subtract its mean ($\mu$) and divide by its standard deviation ($\sigma$) across all samples to achieve a mean of 0 and a standard deviation of 1 [10]. The formula for a value $X$ is: $$X_{\text{standardized}} = \frac{X - \mu}{\sigma}$$

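Covariance Matrix Computation:

- Purpose: Summarize how every pair of standardized spectral features covaries.

- Procedure: Compute the covariance matrix of the standardized data, a symmetric matrix with one row and one column per spectral feature.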

Eigendecomposition:

- Purpose: Identify the principal components (eigenvectors) and their associated variances (eigenvalues) [10].

- Procedure: Perform eigendecomposition on the covariance matrix. This yields a set of eigenvalues and their corresponding eigenvectors.

Outlier Detection (Optional but Recommended):

- Purpose: Identify and remove influential outliers that could distort the PCA model and subsequent analysis.

- Procedure: Calculate Cook’s Distance for the data projected into the principal component space. Data points with a high Cook’s Distance are considered influential outliers and should be treated with caution or removed [10].
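Cook's Distance is a regression diagnostic, so the sketch below assumes, as a labeled assumption, that a response (e.g., measured drug release) is regressed on the PC scores by ordinary least squares with an intercept. The `cooks_distance` helper, the synthetic data and the 4/n cutoff (a common rule of thumb) are illustrative and not taken from [10].

```python
import numpy as np


def cooks_distance(scores, y):
    """Cook's distance for an OLS fit of y on PC scores (plus intercept)."""
    n = scores.shape[0]
    X = np.column_stack([np.ones(n), scores])      # design matrix with intercept
    p = X.shape[1]                                  # number of fitted parameters
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    resid = y - X @ beta
    # leverages: diagonal of the hat matrix H = X (X^T X)^-1 X^T
    h = np.einsum("ij,ji->i", X, np.linalg.pinv(X.T @ X) @ X.T)
    s2 = resid @ resid / (n - p)                    # residual mean square
    return resid**2 / (p * s2) * h / (1 - h) ** 2


rng = np.random.default_rng(0)
scores = rng.normal(size=(50, 3))                   # hypothetical PC scores
y = scores @ np.array([1.0, -0.5, 0.2]) + 0.1 * rng.normal(size=50)
scores[7] = [4.0, 4.0, 4.0]                         # planted high-leverage point...
y[7] = -10.0                                        # ...with an inconsistent response
D = cooks_distance(scores, y)
flagged = np.flatnonzero(D > 4 / len(y))            # rule-of-thumb cutoff
print(int(np.argmax(D)))  # 7
```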

Component Selection & Data Projection:

- Purpose: Create a lower-dimensional dataset for modeling.

- Procedure: Rank the eigenvectors by their eigenvalues in descending order. Select the top (k) eigenvectors that capture a sufficient amount of cumulative variance (e.g., >95%) to form a feature vector. Project the original standardized data onto this new subspace to obtain the final principal component scores [7] [10].

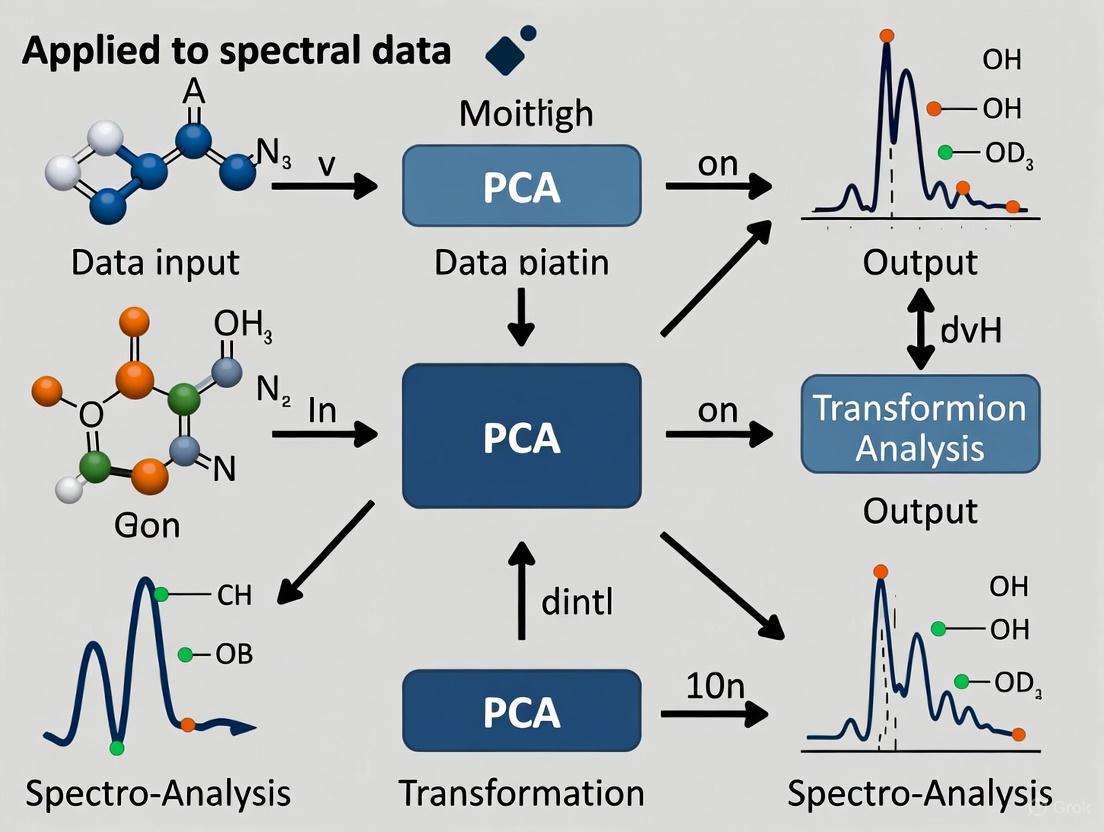

Figure 1: PCA Preprocessing Workflow for Spectral Data

Protocol for Calibration Transfer Between Spectrometers

This protocol describes an Improved PCA (IPCA) method for transferring calibration models between different types of NIR spectrometers, a common challenge in pharmaceutical spectroscopy [9].

Objective: To transfer a quantitative model from a source spectrometer to a target spectrometer with different spectral resolutions or wavelength ranges using IPCA.

Materials:

- Spectral datasets from source and target spectrometers.

- A set of standardized samples measured on both instruments (transfer set).

Source Model Establishment:

- Use the source spectrometer's spectra to establish a baseline quantitative model (e.g., PLS regression) for the analyte of interest (e.g., API content) [9].

Transfer Matrix Construction via IPCA:

- Perform PCA on the spectra from the transfer set acquired on the source instrument.

- Perform PCA on the spectra from the same transfer set acquired on the target instrument.

- Construct a transfer matrix that maps the principal component space of the target instrument to that of the source instrument, effectively associating their spectral data structures [9].

Spectrum Correction:

- For any new spectrum from the target spectrometer, use the transfer matrix to correct (or "transfer") it into the spectral space of the source instrument [9].

Prediction with Transferred Spectra:

- Use the transferred spectra with the original model built on the source instrument for prediction. Evaluate the model's performance on the transferred validation set using metrics like Root Mean Square Error of Prediction (RMSEP) [9].
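Reference [9] describes IPCA only at the level of the steps above, so its exact transfer-matrix construction is not reproduced here. As a hypothetical stand-in, the sketch fits a separate PCA on each instrument's transfer-set spectra, learns a least-squares $k \times k$ map from target scores to source scores, and reconstructs corrected spectra on the source wavelength grid. The function names and the least-squares mapping are assumptions, not the published IPCA method. Because the mapping works in score space, the two instruments may have different numbers of wavelengths.

```python
import numpy as np


def pca_basis(X, k):
    """Mean spectrum and top-k loadings (wavelengths x k) via SVD."""
    mu = X.mean(axis=0)
    Vt = np.linalg.svd(X - mu, full_matrices=False)[2]
    return mu, Vt[:k].T


def fit_transfer(X_src, X_tgt, k):
    """Hypothetical score-space map built from a transfer set measured on both instruments."""
    mu_s, P_s = pca_basis(X_src, k)
    mu_t, P_t = pca_basis(X_tgt, k)
    T_s = (X_src - mu_s) @ P_s                       # source scores
    T_t = (X_tgt - mu_t) @ P_t                       # target scores
    M, *_ = np.linalg.lstsq(T_t, T_s, rcond=None)    # k x k map: T_t @ M ~ T_s
    return mu_s, P_s, mu_t, P_t, M


def transfer_spectra(X_new_tgt, params):
    """Express new target-instrument spectra on the source instrument's wavelength grid."""
    mu_s, P_s, mu_t, P_t, M = params
    scores_src = ((X_new_tgt - mu_t) @ P_t) @ M
    return mu_s + scores_src @ P_s.T


# Synthetic check: 3 constituents; source has 120 wavelengths, target has 80.
rng = np.random.default_rng(0)
A_src, A_tgt = rng.normal(size=(3, 120)), rng.normal(size=(3, 80))
C_transfer = rng.uniform(size=(20, 3))               # transfer-set concentrations
params = fit_transfer(C_transfer @ A_src, C_transfer @ A_tgt, k=3)
C_new = rng.uniform(size=(5, 3))
X_corrected = transfer_spectra(C_new @ A_tgt, params)
print(np.abs(X_corrected - C_new @ A_src).max())    # ~0 for this noise-free example
```

The corrected spectra would then be passed to the source PLS model. With real, noisy spectra, $k$ would have to be chosen by validation (e.g., by RMSEP on the transferred validation set).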

Table 2: Research Reagent Solutions for Spectroscopic PCA

| Item | Function in Experiment |
|---|---|
| NIR/Raman Spectrometer | Generates high-dimensional spectral data from physical samples (e.g., pharmaceutical tablets) [9] [10]. |
| Standardized Samples (Transfer Set) | A set of samples measured on both source and target instruments; enables construction of the transfer function in calibration transfer [9]. |
| Computational Environment (e.g., Python/R) | Provides libraries for linear algebra operations (covariance matrix, eigendecomposition) and implementation of PCA [11]. |
| Spectral Database | A curated collection of historical spectral data used for model building and validation [10]. |

Application in Drug Development Research

Case Study: Predicting Drug Release from Raman Spectra

A 2025 study on colonic drug delivery showcases the practical application of this mathematical foundation [10]. Researchers used Raman spectroscopy to monitor the release of 5-aminosalicylic acid (5-ASA) from polysaccharide-coated formulations. The dataset consisted of 155 samples, each with over 1500 spectral features.

Methodology and Results: The spectral data underwent preprocessing, including standard normalization and PCA for dimensionality reduction. The principal components, derived from the eigenvectors and eigenvalues of the spectral covariance matrix, became the new input features for machine learning models. A Multilayer Perceptron (MLP) model trained on these components achieved exceptional predictive accuracy for drug release (R² = 0.9989), outperforming other models. This demonstrates how PCA effectively distills the essential information from complex spectral data, enabling highly accurate predictions critical for pharmaceutical development [10].
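The PCA-then-MLP idea can be sketched as a scikit-learn pipeline. Everything below is an assumption for illustration: the data are synthetic stand-ins shaped like the study's 155 × 1500 matrix, and the 95% variance threshold, `whiten=True` (which rescales the PC scores to unit variance for the network) and the MLP size are not the settings of [10]. It is not expected to reproduce R² = 0.9989.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.model_selection import train_test_split
from sklearn.neural_network import MLPRegressor
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)
# Hypothetical stand-in for 155 Raman spectra x 1500 features (NOT the data from [10])
n, p = 155, 1500
latent = rng.normal(size=(n, 3))
spectra = latent @ rng.normal(size=(3, p)) + 0.05 * rng.normal(size=(n, p))
release = latent @ np.array([0.5, -0.3, 0.2]) + 0.01 * rng.normal(size=n)

X_tr, X_te, y_tr, y_te = train_test_split(spectra, release, test_size=0.2, random_state=0)
model = make_pipeline(
    StandardScaler(),                              # z-score each spectral feature
    PCA(n_components=0.95, whiten=True),           # keep PCs covering 95% of the variance
    MLPRegressor(hidden_layer_sizes=(32,), max_iter=2000, random_state=0),
)
model.fit(X_tr, y_tr)
n_pcs = model.named_steps["pca"].n_components_
r2 = model.score(X_te, y_te)                       # R^2 on held-out spectra
print(n_pcs, round(r2, 3))
```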

Advanced Application: Calibration Transfer Between Instruments

In a novel application, the principles of PCA were extended to enable calibration transfer between different types of NIR spectrometers (e.g., benchtop vs. portable) [9]. This is a significant challenge because the instruments may have different wavelengths and absorbance readings. The proposed Improved PCA (IPCA) method successfully transformed spectra from a target instrument to align with the data structure of a source instrument. The results showed that IPCA could achieve a successful bi-transfer without degrading the prediction model's ability, providing a robust solution for the practical application of NIR spectroscopy across different hardware platforms [9].

Figure 2: Logical Flow from Data to Variance Explanation

Within the framework of a broader thesis on the application of Principal Component Analysis (PCA) in spectral data research, the preprocessing of data emerges as a foundational step. PCA is a linear dimensionality reduction technique that transforms data to a new coordinate system, highlighting the directions of maximum variance through principal components [6]. For spectral data, which is often high-dimensional and complex, the raw data must be preprocessed to ensure that the PCA model captures meaningful chemical or biological information rather than artifacts of measurement scales or baseline offsets [12] [13]. This document outlines detailed protocols and application notes for mean-centering and scaling, two critical preprocessing steps for spectral analysis within drug development and scientific research.

Theoretical Foundations of PCA and Preprocessing

The Geometry of PCA and the Need for Preprocessing

The geometric interpretation of PCA provides the clearest rationale for preprocessing. A PCA model is a latent variable model that finds a sequence of principal components, each oriented in the direction of maximum variance in the data, with the constraint that each subsequent component is orthogonal to the preceding ones [6] [12].

- Mean-Centering: The first step in PCA is to move the data to the center of the coordinate system. This process, called mean-centering, removes the arbitrary offset from measurements. Geometrically, it places the centroid of the data at the origin, so the best-fit line (the first principal component), which passes through the origin, also passes through the center of the data; the model can then focus on the variance around the mean rather than the mean itself [12]. Without centering, the principal components could be heavily influenced by the mean values of the variables, providing a suboptimal approximation of the data structure [13].

- Scaling (Unit-Variance): After centering, scaling the data to unit-variance is common, especially when variables are in different units of measurement. This step ensures that each variable contributes equally to the analysis. If variables are on different scales, those with larger magnitudes and variances would dominate the first principal components simply due to their scale, not their underlying informational importance [12] [13]. For spectral data, this is crucial, as intensity readings across different wavelengths or mass-to-charge ratios can vary by orders of magnitude.
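A small NumPy demonstration of why centering matters, on hypothetical two-variable data with a large mean offset (10, 10) and spread along the (1, −1) direction. Without centering, the first singular direction points at the mean; after centering it follows the actual spread. The helper name `first_direction` is illustrative.

```python
import numpy as np

rng = np.random.default_rng(0)
# Hypothetical data: mean offset (10, 10), variation along (1, -1), small noise
t = rng.normal(size=200)
X = np.array([10.0, 10.0]) + np.outer(t, [1.0, -1.0]) + 0.1 * rng.normal(size=(200, 2))


def first_direction(M):
    """First right singular vector: best-fit line through the origin."""
    return np.linalg.svd(M, full_matrices=False)[2][0]


v_raw = first_direction(X)                          # no centering
v_centered = first_direction(X - X.mean(axis=0))    # mean-centered

mean_dir = np.array([1.0, 1.0]) / np.sqrt(2)
spread_dir = np.array([1.0, -1.0]) / np.sqrt(2)
print(abs(v_raw @ mean_dir))         # close to 1: uncentered "PC1" points at the mean
print(abs(v_centered @ spread_dir))  # close to 1: centered PC1 follows the spread
```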

Mathematical Basis

Mathematically, PCA is computed via the Singular Value Decomposition (SVD) of the centered data matrix, which finds the linear subspaces that best represent the data in the least-squares sense [13]. The principal components are the eigenvectors of the data's covariance matrix, and the eigenvalues represent the amount of variance captured by each component [6] [14].
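The SVD and eigendecomposition routes agree, which a quick NumPy check on hypothetical correlated data confirms: for the centered matrix $X_c = U S V^{\mathsf{T}}$, the covariance eigenvalues equal $S^2/(n-1)$ and the scores $X_c V$ equal $U S$.

```python
import numpy as np

rng = np.random.default_rng(1)
X = rng.normal(size=(50, 6)) @ rng.normal(size=(6, 6))   # hypothetical correlated data
Xc = X - X.mean(axis=0)
n = Xc.shape[0]

# Route 1: eigenvalues of the covariance matrix, sorted in descending order
eigvals = np.sort(np.linalg.eigvalsh(np.cov(Xc, rowvar=False)))[::-1]

# Route 2: SVD of the centered data matrix, Xc = U S V^T (S is already descending)
U, S, Vt = np.linalg.svd(Xc, full_matrices=False)

print(np.allclose(S**2 / (n - 1), eigvals))   # True: eigenvalues = S^2 / (n - 1)
print(np.allclose(Xc @ Vt.T, U * S))          # True: scores T = Xc V = U S
```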

The process begins with a data matrix X. For mean-centering, the column mean is subtracted from each value in that column. For scaling to unit variance, each mean-centered value is divided by the column's standard deviation, producing a new matrix where every variable has a mean of 0 and a standard deviation of 1 [14]. The covariance matrix of this processed data is then computed, which forms the basis for eigendecomposition [14].

Figure 1: The sequential workflow for preprocessing spectral data prior to PCA. Both centering and scaling are critical prerequisite steps.

Quantitative Comparison of Preprocessing Methods

The choice of preprocessing technique can dramatically alter the results of a PCA, as it changes the input to the covariance matrix calculation [13]. The table below summarizes the core characteristics and implications of different preprocessing approaches.

Table 1: Comparison of Data Preprocessing Techniques for PCA

| Technique | Mathematical Operation | Primary Goal | Impact on PCA | Best Suited For |
|---|---|---|---|---|
| Mean-Centering | $X_{\text{centered}} = X - \mu$ | Remove baseline offset, center data at origin. | Ensures PC directions describe variance around the mean. [12] | All PCA applications, essential first step. |
| Standard Scaling (Z-Score) | $X_{\text{scaled}} = \frac{X - \mu}{\sigma}$ | Achieve unit variance for all variables. | Prevents variables with large scales from dominating PCs. [13] | Spectral data with variables of different units/intensities. |
| No Scaling | None | Use original data scales. | PCs reflect scale differences; often undesirable. [13] | All variables are already on a comparable scale. |
| Normalization (L2) | $X_{\text{norm}} = \frac{X}{\lVert X \rVert_2}$ | Scale each sample to have a unit norm. | Alters data structure; not standard for PCA. [13] | Specific use cases like spatial sign covariance. |

Failure to properly preprocess data can lead to misleading results. For example, a dataset containing a mix of binary variables (0/1) and continuous variables (0-5) will, if unscaled, produce principal components dominated by the continuous variable simply because it has a larger scale and variance. This can create illusory clusters that disappear after proper scaling [13].
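The binary-versus-continuous example can be reproduced with scikit-learn on hypothetical data: two independent variables, one 0/1 and one uniform on 0-5. Unscaled, PC1 explains roughly 90% of the variance and is essentially the continuous variable; after standardization the two variables contribute about equally, so the apparent dominant axis disappears.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)
# Hypothetical data: independent binary (0/1) and continuous (0-5) variables
binary = rng.integers(0, 2, size=500).astype(float)
continuous = rng.uniform(0, 5, size=500)
X = np.column_stack([binary, continuous])

raw = PCA().fit(X)
scaled = PCA().fit(StandardScaler().fit_transform(X))

print(raw.explained_variance_ratio_[0])     # about 0.9: PC1 is essentially the continuous variable
print(abs(raw.components_[0, 1]))           # about 1: PC1 loading on the continuous variable
print(scaled.explained_variance_ratio_[0])  # about 0.5: neither variable dominates after scaling
```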

Experimental Protocols for Spectral Data Preprocessing

This protocol provides a detailed, step-by-step methodology for preprocessing spectral data (e.g., from Imaging Mass Spectrometry, IMS) prior to PCA, ensuring reproducibility and robust analysis [15].

Protocol 1: Standardization of Spectral Data

Objective: To perform mean-centering and scaling on a raw spectral data matrix, preparing it for Principal Component Analysis.

Materials & Software:

- Raw Spectral Data File (e.g., Bruker .d files for IMS data) [15]

- Computing Environment: Python with NumPy, Pandas, and Scikit-learn libraries [14]

Procedure:

Data Import and Initialization:

- Import the raw spectral data into your analysis environment (e.g., a Python script).

- Structure the data into an $n \times p$ matrix, X, where $n$ is the number of spectra (observations) and $p$ is the number of variables (e.g., m/z bins, wavelengths).

Mean-Centering:

- Calculate the mean value for each variable (column) across all observations: $\mu_j = \frac{1}{n} \sum_{i=1}^{n} X_{ij}$.

- Subtract the respective column mean from each value in the data matrix: $X_{\text{centered},ij} = X_{ij} - \mu_j$. This results in a new matrix where every variable has a mean of zero [12].

Scaling to Unit Variance:

- Calculate the standard deviation for each mean-centered variable: $\sigma_j = \sqrt{\frac{1}{n-1} \sum_{i=1}^{n} X_{\text{centered},ij}^2}$.

- Divide each value in the mean-centered matrix by the standard deviation of its column: $X_{\text{scaled},ij} = \frac{X_{\text{centered},ij}}{\sigma_j}$. The resulting matrix has variables with a mean of 0 and a standard deviation of 1 [14] [13].

Output:

- The final output, $X_{\text{scaled}}$, is the preprocessed data matrix ready for input into a PCA algorithm.
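Protocol 1 translates directly into NumPy. One detail worth knowing: the protocol uses the sample standard deviation ($n-1$), whereas scikit-learn's `StandardScaler` divides by the population standard deviation (`ddof=0`), so the two outputs differ by a constant factor $\sqrt{(n-1)/n}$. That factor rescales all eigenvalues equally and leaves PC directions and explained-variance ratios unchanged. The `standardize` name and the data are hypothetical; note that a zero-variance bin (e.g., an empty m/z bin) would cause a division by zero here, whereas `StandardScaler` leaves such columns unscaled.

```python
import numpy as np
from sklearn.preprocessing import StandardScaler


def standardize(X):
    """Protocol 1: mean-center each column, then divide by its sample SD (n - 1)."""
    mu = X.mean(axis=0)                       # mu_j
    X_centered = X - mu                       # X_centered_ij = X_ij - mu_j
    sigma = X_centered.std(axis=0, ddof=1)    # sigma_j with the 1/(n - 1) factor
    return X_centered / sigma


rng = np.random.default_rng(0)
X = rng.normal(loc=5.0, scale=[1.0, 10.0, 100.0], size=(20, 3))  # hypothetical spectra, 3 bins
X_scaled = standardize(X)
n = X.shape[0]

# StandardScaler uses ddof=0, so rescale its output by sqrt((n - 1) / n) to compare
X_sk = StandardScaler().fit_transform(X)
print(np.allclose(X_scaled, X_sk * np.sqrt((n - 1) / n)))   # True
```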

Protocol 2: Integration with Principal Component Analysis

Objective: To apply PCA to the preprocessed spectral data and extract principal components for visualization and analysis.

Procedure:

Compute Covariance Matrix:

- Given the preprocessed data matrix $X_{\text{scaled}}$, compute the $p \times p$ covariance matrix: $C = \frac{1}{n-1} X_{\text{scaled}}^{\mathsf{T}} X_{\text{scaled}}$ [14]. This matrix describes how all pairs of variables covary.

Eigendecomposition:

- Decompose $C$ into its eigenvalues and corresponding eigenvectors; the eigenvectors are the principal component directions.

Select Principal Components:

- Rank the eigenvectors by their eigenvalues in descending order. The eigenvalue represents the amount of variance captured by that component.

- Choose the top $k$ eigenvectors (e.g., the first 2 or 3 for visualization) to form a $p \times k$ projection matrix, W [14].

Transform Data:

- Project the original preprocessed data onto the new principal component axes to obtain the scores, which are the coordinates of the data in the new subspace: $T = X_{\text{scaled}} W$ [6]. The matrix T contains the score vectors ($\mathbf{t}_1, \mathbf{t}_2, \ldots$) and is used for downstream visualization and analysis.
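Protocol 2 maps onto a few lines of NumPy. The sketch builds $C$, eigendecomposes it with `np.linalg.eigh`, keeps the top-$k$ eigenvectors as $W$ and returns $T = X_{\text{scaled}} W$. It then cross-checks against scikit-learn's `PCA`, which may flip the sign of any component, so scores are compared in absolute value. The function name `pca_scores` and the synthetic data are hypothetical.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler


def pca_scores(X_scaled, k):
    """Covariance -> eigendecomposition -> top-k projection matrix W -> scores T."""
    n = X_scaled.shape[0]
    C = X_scaled.T @ X_scaled / (n - 1)          # p x p covariance matrix
    eigvals, eigvecs = np.linalg.eigh(C)         # ascending order for symmetric C
    order = np.argsort(eigvals)[::-1][:k]        # indices of the k largest eigenvalues
    W = eigvecs[:, order]                        # p x k projection matrix
    return X_scaled @ W, eigvals[order]          # scores T = X_scaled W


rng = np.random.default_rng(3)
X = rng.normal(size=(40, 8)) @ rng.normal(size=(8, 8))   # hypothetical spectra, 8 bins
X_scaled = StandardScaler().fit_transform(X)
T, lam = pca_scores(X_scaled, k=2)

# Cross-check with scikit-learn (same scores up to the sign of each PC)
pca_sk = PCA(n_components=2)
T_sk = pca_sk.fit_transform(X_scaled)
print(np.allclose(np.abs(T), np.abs(T_sk)))   # True
```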

Figure 2: The logical relationship between data and model components in PCA after preprocessing. The scores matrix (T) is used for visualization like scatter plots.

The Scientist's Toolkit: Essential Research Reagent Solutions

The following table details key materials and computational tools essential for executing the preprocessing and PCA protocols described herein.

Table 2: Essential Research Reagents and Tools for Spectral Data Analysis

| Item Name | Function / Role in Analysis | Example / Specification |
|---|---|---|
| Spectral Data Source | Provides the raw, high-dimensional data for analysis. | Imaging Mass Spectrometry (IMS) raw files (e.g., Bruker .d format) [15]. |
| StandardScaler | A scikit-learn transformer that performs mean-centering and scaling to unit variance. | StandardScaler from the sklearn.preprocessing module in Python [14]. |
| PCA Algorithm | The core computational tool that performs the dimensionality reduction on preprocessed data. | PCA class from the sklearn.decomposition module in Python [14]. |
| Computational Library (Python) | Provides the environment and mathematical functions for data manipulation, linear algebra, and visualization. | Libraries: NumPy, Pandas, Scikit-learn, Matplotlib [14]. |

Principal Component Analysis (PCA) is a powerful linear dimensionality reduction technique with widespread applications in exploratory data analysis, visualization, and data preprocessing. Within spectral data research and drug development, PCA provides an indispensable mathematical framework for transforming complex, high-dimensional datasets into a simplified structure that retains essential patterns. The fundamental objective of PCA is to perform an orthogonal linear transformation that projects data onto a new coordinate system where the directions of maximum variance—the principal components—can be systematically identified and interpreted [6].

In biological and spectral contexts, where datasets often contain numerous correlated variables, PCA serves to compress information while minimizing information loss. This process identifies dominant trends within one dataset by transforming correlated spectral bands or biological measurements into uncorrelated synthetic variables called principal components [16]. The technique is particularly valuable for visualizing patterns such as clusters, clines, and outliers that might indicate significant biological phenomena or spectral signatures [17]. For researchers analyzing spectral patterns from various analytical platforms, PCA offers a robust methodology for separating biologically meaningful signals from technical noise and identifying underlying structures that correlate with physiological states, therapeutic effects, or molecular subtypes.

Theoretical Foundations of PCA

Mathematical Framework

The mathematical foundation of PCA rests on linear algebra operations applied to the data matrix. Given a data matrix X of dimensions $n \times p$, where $n$ represents the number of observations (samples) and $p$ represents the number of variables (spectral bands or biological measurements), PCA begins with data centering to ensure each variable has a mean of zero [6]. The core transformation in PCA can be expressed as:

$$T = XW$$

where T is the matrix of principal component scores, X is the centered data matrix, and W is the matrix of weights whose columns are the eigenvectors of XᵀX, which is proportional to the sample covariance matrix XᵀX/(n-1) once X has been centered [6] [16]. These eigenvectors, called loadings in PCA terminology, define the directions of maximum variance in the data, while the corresponding eigenvalues indicate the amount of variance explained by each principal component [16].

The first principal component is determined by the weight vector w₍₁₎ that satisfies:

$$w_{(1)} = \arg\max_{\lVert w \rVert = 1} \lVert X w \rVert^2 = \arg\max_{\lVert w \rVert = 1} w^\top X^\top X w$$

This maximizes the variance of the projected data [6]. Subsequent components are computed sequentially from the deflated data matrix after removing the variance explained by previous components, with each successive component capturing the next highest variance direction orthogonal to all previous ones.
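To make the sequential definition concrete, here is a minimal NumPy sketch, assuming a small dense data matrix, that finds each weight vector by power iteration on XᵀX and then deflates the centered data before searching for the next one. The helper names `leading_direction` and `pca_by_deflation` are illustrative, and power iteration is only one simple way to solve the maximization above; production code would normally call an SVD routine directly.

```python
import numpy as np


def leading_direction(X, n_iter=1000, tol=1e-12, seed=0):
    """Approximate argmax_{||w||=1} ||Xw||^2 by power iteration on X^T X."""
    rng = np.random.default_rng(seed)
    w = rng.normal(size=X.shape[1])
    w /= np.linalg.norm(w)
    for _ in range(n_iter):
        w_new = X.T @ (X @ w)              # apply X^T X without forming it explicitly
        w_new /= np.linalg.norm(w_new)
        converged = np.linalg.norm(w_new - w) < tol
        w = w_new
        if converged:
            break
    return w


def pca_by_deflation(X, k):
    """Extract k weight vectors one at a time, deflating the centered data after each."""
    X_def = X - X.mean(axis=0)             # center each variable (column)
    weights = []
    for _ in range(k):
        w = leading_direction(X_def)
        weights.append(w)
        X_def = X_def - np.outer(X_def @ w, w)   # remove the variance captured by w
    return np.column_stack(weights)


# Synthetic data with clearly separated variances along five variables
rng = np.random.default_rng(42)
X = rng.normal(size=(200, 5)) * np.array([5.0, 3.0, 2.0, 1.0, 0.5])
W = pca_by_deflation(X, k=3)

# Reference: eigenvectors of X^T X from a direct eigendecomposition (eigh sorts ascending)
Xc = X - X.mean(axis=0)
evals, evecs = np.linalg.eigh(Xc.T @ Xc)
W_ref = evecs[:, ::-1][:, :3]
agreement = np.abs(np.sum(W * W_ref, axis=0))   # |cosine| per component; signs are arbitrary
print(agreement)
```

Because eigenvectors are defined only up to sign, the comparison uses absolute cosines rather than the raw vectors.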

Geometric Interpretation

Geometrically, PCA can be conceptualized as fitting a p-dimensional ellipsoid to the data, where each axis represents a principal component. The principal components align with the axes of this ellipsoid, with the longest axis corresponding to the first principal component (direction of greatest variance), the next longest to the second component, and so forth [6]. When some axis of this ellipsoid is small, the variance along that axis is also small, indicating that the data can be effectively described without that dimension [6].

This geometric interpretation extends to the view of PCA as a rotation procedure that aligns the coordinate system with the directions of maximum variance [18]. In the context of spectral data, this rotation effectively identifies new composite variables (principal components) that are linear combinations of the original spectral features, often revealing underlying patterns that were obscured in the original high-dimensional space.

Practical Implementation Protocol

Data Preprocessing Workflow

Table 1: Data Preprocessing Steps for PCA on Spectral Data

| Step | Procedure | Rationale | Considerations |
|---|---|---|---|
| 1. Data Collection | Acquire raw spectral measurements | Foundation for analysis | Ensure proper instrument calibration and consistent measurement conditions |
| 2. Data Centering | Subtract mean from each variable | Ensures mean of each variable is zero | Essential for PCA on covariance matrix [18] |
| 3. Data Standardization | Divide by standard deviation (optional) | Normalizes variables to comparable scales | Use for PCA on correlation matrix; critical when variables have different units [18] |
| 4. Missing Data Imputation | Estimate missing values | Ensures a complete dataset for PCA | Use appropriate methods (mean, regression, KNN) based on data structure |
| 5. Data Validation | Check for outliers and inconsistencies | Ensures data quality before PCA | Use diagnostic plots and statistical tests |

The decision to center versus standardize data represents a critical choice in PCA implementation. Centering (subtracting the mean) is mandatory for PCA, while standardization (dividing by the standard deviation) is optional but recommended when variables have different units or scales, as is common in spectral datasets combining different measurement types [18]. PCA performed on standardized data (correlation matrix) gives equal weight to all variables, while PCA on centered data (covariance matrix) preserves the influence of variables with naturally larger variances [18].
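A small synthetic comparison shows why this choice matters. The NumPy sketch below, an illustration on made-up data rather than a recommended pipeline, runs PCA once on centered data (covariance matrix) and once on standardized data (correlation matrix). One variable is given a scale roughly a thousand times larger than the other two, which are small but strongly correlated with each other; the helper name `pca_eigen` is hypothetical.

```python
import numpy as np


def pca_eigen(X, standardize=False):
    """Eigenvalues (descending) and loadings from covariance- or correlation-based PCA."""
    Z = X - X.mean(axis=0)                       # centering is always applied
    if standardize:
        Z = Z / X.std(axis=0, ddof=1)            # unit variance: covariance of Z = correlation of X
    C = Z.T @ Z / (X.shape[0] - 1)
    evals, evecs = np.linalg.eigh(C)
    order = np.argsort(evals)[::-1]
    return evals[order], evecs[:, order]


rng = np.random.default_rng(0)
n = 2000
z = rng.normal(size=n)
X = np.column_stack([
    1000.0 * rng.normal(size=n),                 # large-scale, independent variable
    z + 0.1 * rng.normal(size=n),                # small-scale, correlated pair
    z + 0.1 * rng.normal(size=n),
])

evals_cov, load_cov = pca_eigen(X)                      # covariance matrix
evals_cor, load_cor = pca_eigen(X, standardize=True)    # correlation matrix
print("PC1 |loadings| (covariance): ", np.round(np.abs(load_cov[:, 0]), 3))
print("PC1 |loadings| (correlation):", np.round(np.abs(load_cor[:, 0]), 3))
```

On data like these, the covariance-based PC1 is expected to be dominated by the large-scale variable, while the correlation-based PC1 reflects the correlated pair instead.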

PCA Computation and Visualization

Table 2: Key Outputs from PCA and Their Interpretation

| PCA Output | Description | Interpretation in Biological Context | Visualization Methods |
|---|---|---|---|
| Eigenvalues | Variance explained by each PC | Indicates importance of each pattern | Scree plot (variance vs. component number) [19] |
| Loadings | Weight of original variables in each PC | Identifies which spectral features contribute to pattern | Biplot, loading plots [6] [16] |
| Scores | Coordinates of samples in PC space | Reveals sample clustering and patterns | 2D/3D scatter plots [19] |
| Explained Variance | Cumulative variance captured | Determines how many PCs to retain | Cumulative variance plot [19] |

The following workflow diagram illustrates the complete PCA process from data preparation to interpretation:

Implementation of PCA typically proceeds through eigenvalue decomposition of the covariance matrix or singular value decomposition (SVD) of the data matrix [6]. For a practical implementation, the following protocol is recommended:

- Compute the covariance matrix of the preprocessed data (C = XᵀX/(n-1))

- Perform eigenvalue decomposition of the covariance matrix to obtain eigenvectors (loadings) and eigenvalues

- Sort components by decreasing eigenvalues (variances)

- Select the number of components to retain based on scree plots, cumulative variance, or other criteria

- Project original data onto selected components to obtain scores (T = XW)

- Visualize and interpret the results using score plots, loading plots, and biplots
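The steps listed above translate almost line for line into NumPy. This is a minimal sketch on synthetic data (two latent factors mixed into 50 correlated channels, an assumption made purely for illustration), with a hypothetical helper name. It also checks the equivalence with the SVD route: the squared singular values of the centered data, divided by n - 1, equal the covariance eigenvalues.

```python
import numpy as np


def pca_protocol(X, k):
    """Covariance-eigendecomposition PCA following the listed steps."""
    n = X.shape[0]
    Xc = X - X.mean(axis=0)                  # preprocessed (centered) data
    C = Xc.T @ Xc / (n - 1)                  # covariance matrix C = X^T X / (n - 1)
    evals, evecs = np.linalg.eigh(C)         # eigh: C is symmetric
    order = np.argsort(evals)[::-1]          # sort by decreasing eigenvalue (variance)
    evals, evecs = evals[order], evecs[:, order]
    W = evecs[:, :k]                         # retain k loading vectors
    T = Xc @ W                               # scores T = XW
    return T, W, evals


rng = np.random.default_rng(1)
latent = rng.normal(size=(100, 2))
mixing = rng.normal(size=(2, 50))
X = latent @ mixing + 0.05 * rng.normal(size=(100, 50))   # 50 correlated "channels"

T, W, evals = pca_protocol(X, k=2)

# Cross-check against the SVD of the centered data matrix
Xc = X - X.mean(axis=0)
U, s, Vt = np.linalg.svd(Xc, full_matrices=False)
print(np.allclose(s**2 / (X.shape[0] - 1), evals))      # True
```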

The cumulative explained variance plot is particularly valuable for determining the optimal number of components to retain. A common threshold is 95% of total variance, though this may be adjusted based on specific research goals and data complexity [19].
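The threshold rule can be applied directly to the sorted eigenvalues. A minimal sketch, with an illustrative function name and made-up eigenvalues:

```python
import numpy as np


def n_components_for(evals, threshold=0.95):
    """Smallest number of leading components whose cumulative explained variance >= threshold."""
    evals = np.sort(np.asarray(evals, dtype=float))[::-1]   # ensure descending order
    cumulative = np.cumsum(evals) / evals.sum()
    k = int(np.searchsorted(cumulative, threshold) + 1)     # first index where cumulative >= threshold
    return min(k, evals.size)                               # guard against round-off near threshold = 1


evals = [5.0, 3.0, 1.2, 0.5, 0.3]     # cumulative ratios: 0.50, 0.80, 0.92, 0.97, 1.00
print(n_components_for(evals, 0.95))  # 4
print(n_components_for(evals, 0.90))  # 3
```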

Interpreting Principal Components in Biological Contexts

Extracting Biological Meaning from Loadings and Scores

The interpretation of PCA results represents the most critical phase for extracting biological insights from spectral data. This process involves simultaneous analysis of both loadings (which reveal how original variables contribute to components) and scores (which show how samples distribute along components) [16].

Loadings with large absolute values indicate variables that strongly influence a particular component. In spectral applications, these high-loading variables often correspond to specific spectral regions or biomarkers that drive the observed patterns. When these patterns correlate with sample groupings visible in score plots, researchers can infer biological relevance. For example, if a particular principal component separates drug-treated from control samples and has high loadings at specific spectral frequencies, those frequencies may represent spectral signatures of drug response.
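The drug-response example can be simulated to show how loadings and scores are read together. The sketch below is purely illustrative: it assumes 40 synthetic spectra of 200 channels, in which the 20 "treated" spectra carry an extra Gaussian band centered at channel 120, and it obtains PC1 from an SVD of the centered data.

```python
import numpy as np

rng = np.random.default_rng(7)
channels = np.arange(200)
peak = np.exp(-0.5 * ((channels - 120) / 3.0) ** 2)     # hypothetical drug-response band
control = rng.normal(scale=0.05, size=(20, 200))
treated = rng.normal(scale=0.05, size=(20, 200)) + peak
X = np.vstack([control, treated])
labels = np.array([0] * 20 + [1] * 20)

Xc = X - X.mean(axis=0)
_, _, Vt = np.linalg.svd(Xc, full_matrices=False)
loadings_pc1 = Vt[0]
scores_pc1 = Xc @ loadings_pc1

top = np.argsort(np.abs(loadings_pc1))[::-1][:5]        # channels with the largest |loading|
print("top channels on PC1:", np.sort(top))
print("mean PC1 score, control vs treated:",
      scores_pc1[labels == 0].mean(), scores_pc1[labels == 1].mean())
```

Because the sign of PC1 is arbitrary, which group ends up with positive scores can change between runs or programs; the separation between groups and the location of the large |loadings| are what carry the interpretation.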

Score plots reveal sample relationships, clustering patterns, and potential outliers. Samples positioned close together in the principal component space share similar spectral profiles and potentially similar biological characteristics, while distant samples differ substantially. The following diagram illustrates this interpretative process:

Advanced Interpretation: Contrastive PCA

Standard PCA identifies dominant patterns within a single dataset, but these patterns may reflect universal variations rather than dataset-specific phenomena of interest. Contrastive PCA (cPCA) addresses this limitation by utilizing a background dataset to enhance visualization and exploration of patterns enriched in a target dataset relative to comparison data [17].

The cPCA algorithm identifies low-dimensional structures that are enriched in a target dataset $\{x_i\}$ relative to background data $\{y_i\}$. This is achieved by finding directions that exhibit high variance in the target data but low variance in the background data, effectively highlighting patterns unique to the target dataset [17]. In biological applications, this enables researchers to visualize dataset-specific patterns that might be obscured by dominant but biologically irrelevant variations in standard PCA.

For example, when analyzing gene expression data from cancer patients, standard PCA might highlight variations due to demographic factors, while cPCA using healthy patients as background can reveal patterns specific to cancer subtypes [17]. Similarly, in spectral analysis of therapeutic responses, cPCA can help isolate spectral signatures specifically associated with treatment effects by using control samples as background.
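The idea can be expressed as taking the leading eigenvectors of a contrastive covariance matrix, C_target - alpha * C_background, where the contrast parameter alpha ≥ 0 trades target variance against background variance (alpha = 0 recovers standard PCA). The NumPy sketch below assumes a single fixed alpha = 1 and synthetic data in which background ("control") and target ("treated") share a strong nuisance axis, while only the target contains a two-group split; in practice alpha is usually scanned over a range of values, and the helper names are hypothetical.

```python
import numpy as np


def top_eigvecs(C, k):
    """Leading k eigenvectors (columns) and eigenvalues of a symmetric matrix."""
    evals, evecs = np.linalg.eigh(C)
    order = np.argsort(evals)[::-1][:k]
    return evecs[:, order], evals[order]


def contrastive_pca(target, background, alpha=1.0, k=2):
    """cPCA: leading eigenvectors of C_target - alpha * C_background."""
    Ct = np.cov(target, rowvar=False)        # np.cov centers the data internally
    Cb = np.cov(background, rowvar=False)
    return top_eigvecs(Ct - alpha * Cb, k)


rng = np.random.default_rng(9)
n, p = 2000, 10
background = rng.normal(size=(n, p))
background[:, 0] *= 5.0                      # strong nuisance axis shared by both datasets
target = rng.normal(size=(n, p))
target[:, 0] *= 5.0
target[:, 1] += rng.choice([-3.0, 3.0], size=n)   # two-group split present only in the target

pc_std, _ = top_eigvecs(np.cov(target, rowvar=False), k=1)
pc_con, _ = contrastive_pca(target, background, alpha=1.0, k=1)
print("standard PCA, |loading| on var 0:", abs(pc_std[0, 0]))
print("contrastive PCA, |loading| on var 1:", abs(pc_con[1, 0]))
```

Standard PCA on the target is pulled toward the shared nuisance axis, whereas the contrastive direction points at the target-specific split.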

Application Notes for Spectral Data in Drug Development

Essential Research Reagents and Computational Tools

Table 3: Essential Research Reagent Solutions for PCA-Based Spectral Analysis

| Reagent/Resource | Function in PCA Workflow | Application Context | Technical Considerations |
|---|---|---|---|
| Standardized Reference Materials | Instrument calibration and data validation | Ensures cross-experiment comparability | Use certified reference materials specific to analytical technique |
| Spectral Preprocessing Kits | Sample preparation for consistent spectral acquisition | Minimizes technical variance in spectral measurements | Follow standardized protocols for sample processing |
| Chemical Standards | Identification of spectral features | Links loadings to specific molecular entities | Use high-purity compounds relevant to biological system |
| Quality Control Samples | Monitoring analytical performance | Detects instrumental drift or batch effects | Include in every analytical batch |
| Statistical Software (R, Python) | PCA computation and visualization | Implementation of analytical algorithms | Use validated scripts and maintain version control |

Case Study: Soil Macronutrient Analysis Using PCA-Based Spectral Index

A practical example of PCA application to spectral data comes from agricultural research, where researchers developed a PCA-based standardized spectral reflectance index (SSRI) from Sentinel-2 satellite data for modeling soil macronutrients [20]. This approach demonstrates how PCA can transform raw spectral data into biologically meaningful information.

In this study, researchers first extracted six spectral bands from Sentinel-2 imagery (Blue, Green, Red, NIR, SWIR1, SWIR2) and applied PCA to these correlated spectral bands [20]. The first principal component captured the majority of spectral variance and was used to create a standardized spectral reflectance index (SSRI). This PCA-derived index showed superior performance for predicting total nitrogen (TN) compared to conventional spectral indices, achieving R² = 0.77 in linear regression models [20].

This case study illustrates key advantages of PCA for spectral analysis: (1) reduction of data dimensionality by transforming six correlated spectral bands into a single informative index, (2) minimization of noise and redundancy in spectral data, and (3) creation of a robust predictive variable that captures essential spectral patterns related to biological variables of interest (soil macronutrients) [20]. The methodology demonstrates a transferable approach for developing optimized spectral indices in various drug development contexts where spectral signatures correlate with biological outcomes.
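The published SSRI formulation is not reproduced here. As a hedged illustration of the general idea only, the sketch below standardizes six band reflectances, takes PC1 of their correlation matrix, orients it so that larger values mean higher overall reflectance, and rescales the PC1 scores to zero mean and unit variance. The orientation rule, the z-scoring step, the band gains, and the synthetic reflectance values are all assumptions, not details taken from [20].

```python
import numpy as np

BANDS = ["Blue", "Green", "Red", "NIR", "SWIR1", "SWIR2"]


def pc1_index(reflectance):
    """Hypothetical PC1-based index from an (n_pixels, 6) band-reflectance array."""
    Z = (reflectance - reflectance.mean(axis=0)) / reflectance.std(axis=0, ddof=1)
    R = np.cov(Z, rowvar=False)                  # equals the correlation matrix of the six bands
    evals, evecs = np.linalg.eigh(R)
    w = evecs[:, np.argmax(evals)]
    if w.sum() < 0:                              # orient: higher index = higher overall reflectance
        w = -w
    scores = Z @ w
    index = (scores - scores.mean()) / scores.std(ddof=1)   # zero mean, unit variance
    return index, w, evals.max() / evals.sum()


# Synthetic, strongly correlated bands driven by one "brightness" factor (illustration only)
rng = np.random.default_rng(12)
brightness = rng.normal(0.2, 0.05, size=1000)
gains = np.array([0.5, 0.7, 0.8, 1.5, 1.2, 0.9])     # arbitrary per-band gains
reflectance = brightness[:, None] * gains + rng.normal(scale=0.005, size=(1000, 6))

index, weights, explained = pc1_index(reflectance)
print(dict(zip(BANDS, np.round(weights, 3))), f"PC1 explains {explained:.1%}")
```

The resulting index could then serve as a single predictor in a regression against measured nutrient values, which is the role the SSRI plays in the study described above.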

Troubleshooting and Technical Considerations

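Successful application of PCA to spectral biological data requires attention to several technical considerations. A common challenge is sign ambiguity: each eigenvector is defined only up to its sign, so a component (together with its loadings and scores) can appear flipped between software packages, data subsets, or reruns, which is easily misread as a change in the underlying pattern. When variables are highly correlated, or when two components explain similar amounts of variance, the loadings themselves can also be unstable. These issues can be addressed by focusing on the magnitude and relative sign pattern of loadings rather than their absolute sign, by fixing an explicit sign convention, and by comparing loading patterns across multiple components.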
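One simple way to make signs reproducible is to impose an explicit convention after the decomposition. The sketch below uses a hypothetical helper (not a library function) that flips each component so that the variable with the largest absolute loading is positive, and flips the matching scores so that scores times loadings still reconstruct the centered data.

```python
import numpy as np


def fix_signs(loadings, scores):
    """Flip each component so that its largest-|loading| variable has a positive loading."""
    cols = np.arange(loadings.shape[1])
    anchor = np.argmax(np.abs(loadings), axis=0)      # row of the largest |loading| per component
    signs = np.sign(loadings[anchor, cols])
    signs[signs == 0] = 1.0
    return loadings * signs, scores * signs           # flip loadings and scores together


rng = np.random.default_rng(3)
X = rng.normal(size=(50, 6)) @ rng.normal(size=(6, 6))
Xc = X - X.mean(axis=0)
_, _, Vt = np.linalg.svd(Xc, full_matrices=False)
W = Vt.T
T = Xc @ W

flip = np.array([1, -1, 1, -1, -1, 1])                # simulate another program's arbitrary signs
W_a, T_a = fix_signs(W, T)
W_b, T_b = fix_signs(W * flip, T * flip)
print(np.allclose(W_a, W_b), np.allclose(T_a, T_b))   # True True
```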

Another consideration involves missing data, which must be addressed prior to PCA implementation. While simple imputation methods may suffice for small amounts of missing data, more sophisticated approaches such as multiple imputation or maximum likelihood estimation are preferable for datasets with substantial missingness.
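As a sketch of keeping imputation inside the PCA workflow, the scikit-learn pipeline below fills missing entries with `KNNImputer`, standardizes, and then runs `PCA`. KNN imputation is used only as a convenient stand-in; it is not multiple imputation or maximum-likelihood estimation, and the roughly 5% missing-at-random pattern is an assumption of the synthetic example.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.impute import KNNImputer
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(5)
X = rng.normal(size=(120, 8)) @ rng.normal(size=(8, 8))
mask = rng.random(X.shape) < 0.05             # knock out about 5% of entries at random
X_missing = X.copy()
X_missing[mask] = np.nan

pipe = make_pipeline(KNNImputer(n_neighbors=5), StandardScaler(), PCA(n_components=3))
scores = pipe.fit_transform(X_missing)
print(scores.shape, np.isnan(scores).any())   # (120, 3) False
```

Wrapping the imputer in the same pipeline means that, when PCA is later fitted on a training subset, the imputation statistics are learned from that subset only.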

The choice between covariance-based and correlation-based PCA warrants careful consideration based on research objectives. Covariance-based PCA preserves the natural variance structure of the data, giving more influence to variables with larger scales, while correlation-based PCA standardizes all variables to unit variance, giving equal weight to all variables regardless of their original measurement units [18]. In spectral applications where variables represent different types of measurements or scales, correlation-based PCA is generally preferred.

Finally, researchers should be cautious against overinterpreting minor components that may represent noise rather than biologically meaningful patterns. Validation through resampling methods such as bootstrapping or permutation testing can help distinguish robust patterns from random variations.
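A permutation check in the spirit of parallel analysis is sketched below. Each column is shuffled independently, which keeps every variable's distribution but destroys the correlations PCA relies on, and each component's explained-variance ratio is compared with its permuted counterpart. The number of permutations, the synthetic two-factor data, and the helper names are assumptions for illustration.

```python
import numpy as np


def explained_ratio(X):
    """Explained-variance ratio of every principal component of X."""
    Xc = X - X.mean(axis=0)
    s = np.linalg.svd(Xc, compute_uv=False)
    return s**2 / np.sum(s**2)


def permutation_test(X, n_perm=200, seed=0):
    """Compare each component's explained-variance ratio with a column-permuted null."""
    rng = np.random.default_rng(seed)
    observed = explained_ratio(X)
    null = np.empty((n_perm, observed.size))
    for i in range(n_perm):
        # Shuffle each column independently: marginals kept, correlations destroyed
        Xp = np.column_stack([rng.permutation(col) for col in X.T])
        null[i] = explained_ratio(Xp)
    p_values = (1 + np.sum(null >= observed, axis=0)) / (n_perm + 1)
    return observed, p_values


rng = np.random.default_rng(6)
loadings = np.zeros((2, 10))
loadings[0, :5] = 1.0                      # factor 1 drives variables 0-4
loadings[1, 5:] = 1.0                      # factor 2 drives variables 5-9
X = rng.normal(size=(100, 2)) @ loadings + 0.5 * rng.normal(size=(100, 10))

observed, p_values = permutation_test(X)
print(np.round(observed, 3))
print(np.round(p_values, 3))
```

Components whose observed ratio rarely exceeds the permuted ratios (large p-values) are candidates for noise rather than structure.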

The study of behavior involves analyzing complex, high-dimensional data to uncover the underlying structure and organization of actions. Spontaneous behavior is not a random sequence but is composed of modular elements or "syllables" that follow probabilistic, structured sequences [21]. These patterns are influenced by internal states such as motivation, arousal, and circadian rhythms, as well as external conditions [22]. The challenge for neuroscientists is to reduce the complexity of these rich behavioral datasets to identify meaningful patterns and their neural correlates.

Principal Component Analysis (PCA) serves as a powerful computational technique for addressing this challenge. By performing dimensionality reduction, PCA helps researchers identify the primary axes of variation—the principal components—that capture the most significant sources of structure in behavioral data. This case study explores the application of PCA and related spectral preprocessing techniques in neuroscience, with a specific focus on uncovering behavioral patterns. We provide detailed protocols and analytical frameworks that enable researchers to decompose complex behaviors into interpretable components, facilitating a deeper understanding of brain-behavior relationships.

Key Experiments and Quantitative Findings

Dimensionality Reduction in Spontaneous Behavior

The application of a Hierarchical Behavioral Analysis Framework (HBAF) combined with PCA in mice has revealed fundamental principles of behavioral organization. Researchers discovered that sniffing acts as a central hub node for transitions between different spontaneous behavior patterns, making the sniffing-to-grooming ratio a valuable quantitative metric for distinguishing behavioral states in a high-throughput manner [22]. These behavioral states and their transitions are systematically influenced by the animal's emotional status, circadian rhythms, and ambient lighting conditions.

Using three-dimensional motion capture combined with unsupervised machine learning, behavior can be decomposed into sub-second "syllables" that follow probabilistic rather than random sequences [21]. This hierarchical decomposition scales effectively across species and timescales, revealing conserved behavioral motifs from millisecond movements to extended action sequences like courtship or speech.

Cognitive Pattern Classification with Integrated PCA

A recent study introduced a PCA-ANFIS (Adaptive Neuro-Fuzzy Inference System) method for classifying cognitive patterns from multimodal brain signals. Using PCA for dimensionality reduction followed by neuro-fuzzy inference for pattern recognition, the authors reported a classification accuracy of 99.5% for EEG-based cognitive patterns [23].

According to the authors, the method addresses key challenges in brain signal analysis, including artifact contamination and non-stationarity, by extracting robust features from the dimensionality-reduced data. This classification performance has potential implications for diagnosing cognitive disorders and understanding the neural basis of behavior.

Table 1: Performance Comparison of Dimensionality Reduction Techniques in Behavioral Neuroscience

| Technique | Primary Application | Key Advantage | Reported Accuracy/Effectiveness |
|---|---|---|---|
| PCA + HBAF | Spontaneous behavior pattern analysis | Identifies hub transitions and behavioral states | Sniffing-to-grooming ratio effectively distinguishes states [22] |
| PCA-ANFIS | Multimodal brain signal classification | Combines dimensionality reduction with fuzzy inference | 99.5% classification accuracy for cognitive patterns [23] |
| Neural Manifold Visualization | Neural population dynamics | Reveals low-dimensional organization of neural activity | Captures dominant modes governing behavior [21] |
| Spectral Preprocessing + PCA | Spectral data analysis | Reduces instrumental artifacts and environmental noise | Enables >99% classification accuracy in complex spectra [3] |

Experimental Protocols

Comprehensive Protocol: Behavioral Pattern Analysis with PCA

Objective: To identify and characterize the principal components underlying spontaneous behavioral organization in rodent models.

Materials and Reagents:

- Experimental animals (e.g., C57BL/6 mice)

- High-speed video recording system (≥100 fps)

- Behavioral testing arena with controlled lighting

- Computational workstation with adequate processing power

- Data analysis software (Python with scikit-learn, SciPy, or MATLAB)

Procedure:

Video Acquisition and Preprocessing

- Record spontaneous behavior in the home cage or neutral arena for 60-minute sessions.

- Ensure consistent lighting conditions and minimal external disturbances.

- Extract pose information using markerless tracking software (e.g., DeepLabCut, SLEAP).

- Compile time-series data for all tracked body parts (e.g., snout, ears, paws, tail base).

Behavioral Feature Engineering

- Calculate derivative features: velocity, acceleration, and angular relationships between body parts.

- Compute dynamic features: distance between body points, relative angles, and movement trajectories.

- Generate ethological features: duration, frequency, and transitions between annotated behavioral states.
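The derivative and dynamic features listed above can be computed from tracked coordinates with a few NumPy calls. This sketch assumes a pose array shaped (frames, body parts, 2) in pixels, a hypothetical four-point layout (`snout`, two ears, `tail_base`), and finite-difference derivatives via `np.gradient`; real pipelines would usually smooth the trajectories and handle tracking dropouts first.

```python
import numpy as np

BODY_PARTS = ["snout", "left_ear", "right_ear", "tail_base"]   # hypothetical tracking layout


def pose_features(pose, fps):
    """pose: (n_frames, n_parts, 2) x/y pixel coordinates -> (n_frames, n_features) matrix."""
    dt = 1.0 / fps
    vel = np.gradient(pose, dt, axis=0)                   # (T, K, 2) velocity, px/s
    acc = np.gradient(vel, dt, axis=0)                    # (T, K, 2) acceleration, px/s^2
    speed = np.linalg.norm(vel, axis=2)                   # (T, K)
    accel = np.linalg.norm(acc, axis=2)                   # (T, K)
    snout = pose[:, BODY_PARTS.index("snout")]
    tail = pose[:, BODY_PARTS.index("tail_base")]
    body_vec = snout - tail
    body_length = np.linalg.norm(body_vec, axis=1)        # snout-to-tail-base distance, px
    heading = np.unwrap(np.arctan2(body_vec[:, 1], body_vec[:, 0]))
    turn_rate = np.gradient(heading, dt)                  # angular velocity of body axis, rad/s
    return np.column_stack([speed, accel, body_length, turn_rate])


# Synthetic check: a rigid "animal" translating 1 px per frame along x at 100 fps
offsets = np.array([[10.0, 0.0], [8.0, 1.0], [8.0, -1.0], [0.0, 0.0]])
frames = np.arange(50, dtype=float)
pose = offsets[None, :, :] + np.stack([frames, np.zeros_like(frames)], axis=1)[:, None, :]
features = pose_features(pose, fps=100)
print(features.shape)   # (50, 10)
```

Each row of the returned matrix is one timepoint, which matches the feature-matrix layout used in the PCA step of this protocol.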

Data Preprocessing for PCA

- Standardize features by removing the mean and scaling to unit variance.

- Address missing values using appropriate imputation methods.

- For spectral data, apply necessary preprocessing: smoothing, baseline correction, and scatter correction [3].

Principal Component Analysis Implementation

- Construct a feature matrix where rows correspond to timepoints and columns to behavioral features.

- Perform PCA on the covariance matrix of the standardized features (equivalent to PCA on the correlation matrix of the raw features) to identify principal components.

- Retain components explaining >90% of cumulative variance or based on the scree plot inflection point.

- Interpret component loadings to identify which behavioral features contribute most to each component.
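The preprocessing and PCA implementation steps above map onto a short scikit-learn pipeline. In this sketch the 12 feature columns (with placeholder names) and 3000 timepoints are synthetic, median imputation is an arbitrary choice, and passing a float `n_components=0.90` with `svd_solver="full"` asks scikit-learn to keep the smallest number of components whose cumulative explained variance exceeds 90%.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.impute import SimpleImputer
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

FEATURES = [f"feature_{i}" for i in range(12)]      # placeholder names for engineered features

rng = np.random.default_rng(11)
latent = rng.normal(size=(3000, 3))                 # rows = timepoints
X = latent @ rng.normal(size=(3, 12)) + 0.3 * rng.normal(size=(3000, 12))

pipe = Pipeline([
    ("impute", SimpleImputer(strategy="median")),
    ("scale", StandardScaler()),                    # zero mean, unit variance per feature
    ("pca", PCA(n_components=0.90, svd_solver="full")),
])
scores = pipe.fit_transform(X)
pca = pipe.named_steps["pca"]
print("components retained:", pca.n_components_)
print("cumulative variance:", np.round(np.cumsum(pca.explained_variance_ratio_), 3))

for k, comp in enumerate(pca.components_):
    top = np.argsort(np.abs(comp))[::-1][:3]        # features with the largest |loading|
    print(f"PC{k + 1}:", [FEATURES[i] for i in top])
```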

Validation and Interpretation

- Project behavioral data onto the principal component space for visualization.

- Cluster data points in the reduced-dimensionality space to identify behavioral states.

- Validate findings by correlating component scores with independent physiological measures.

- Perform statistical testing to determine how experimental manipulations affect component expression.
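Clustering in the reduced space can be sketched with k-means on the first two PC scores. Here the three "behavioral states" are simulated, so the clustering can be scored against them with the adjusted Rand index; real recordings offer no such ground truth, which is why the protocol validates clusters against independent measures. The number of clusters and all data are assumptions.

```python
import numpy as np
from sklearn.cluster import KMeans
from sklearn.decomposition import PCA
from sklearn.metrics import adjusted_rand_score
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(21)
centers = rng.normal(scale=4.0, size=(3, 10))          # three simulated behavioral states
true_state = rng.integers(0, 3, size=900)
X = centers[true_state] + rng.normal(size=(900, 10))   # 900 timepoints x 10 features

scores = PCA(n_components=2).fit_transform(StandardScaler().fit_transform(X))
km = KMeans(n_clusters=3, n_init=10, random_state=0).fit(scores)
ari = adjusted_rand_score(true_state, km.labels_)
print(f"ARI vs. simulated states: {ari:.3f}")
```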

Table 2: Research Reagent Solutions for Behavioral Neuroscience

| Reagent/Material | Function/Application | Specifications |
|---|---|---|
| High-speed camera system | Behavioral recording | ≥100 fps, high resolution for detailed movement capture |
| Markerless pose estimation software | Animal tracking | DeepLabCut, SLEAP for feature extraction |
| MATLAB/Python with toolboxes | Data analysis | Statistics, Machine Learning, Signal Processing toolboxes |
| Behavioral arena | Controlled testing environment | Standardized size, lighting, and sensory conditions |
| EEG/fNIRS equipment | Neural signal acquisition | Multimodal brain signal recording for correlation with behavior |
| Spectral preprocessing algorithms | Data quality enhancement | Cosmic ray removal, baseline correction, scattering correction [3] |

Supplemental Protocol: PCA-ANFIS for Cognitive Pattern Recognition

Objective: To implement a hybrid PCA-ANFIS system for classifying cognitive states from brain signals.

Procedure:

Multimodal Brain Signal Acquisition

Feature Extraction and Dimensionality Reduction

- Extract spectral, temporal, and nonlinear features (fractal dimension, entropy).

- Apply PCA to reduce feature dimensionality while retaining salient information.
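The spectral part of this feature set can be sketched as log band power per channel, estimated with Welch's method (`scipy.signal.welch`), followed by PCA. The sampling rate, band limits, epoch length, and simulated 10 Hz rhythm are assumptions; the temporal and nonlinear features named above (fractal dimension, entropy) are not covered, and the ANFIS stage is outside the scope of this sketch.

```python
import numpy as np
from scipy.signal import welch
from sklearn.decomposition import PCA

FS = 256.0   # assumed sampling rate in Hz
# Conventional EEG band limits in Hz (exact limits vary between studies)
BANDS = {"delta": (1.0, 4.0), "theta": (4.0, 8.0), "alpha": (8.0, 13.0), "beta": (13.0, 30.0)}


def band_power_features(epochs, fs=FS):
    """epochs: (n_epochs, n_channels, n_samples) -> (n_epochs, n_bands * n_channels) log band power."""
    freqs, psd = welch(epochs, fs=fs, nperseg=int(fs), axis=-1)   # psd: (epochs, channels, freqs)
    feats = []
    for lo, hi in BANDS.values():
        in_band = (freqs >= lo) & (freqs < hi)
        feats.append(np.log(psd[..., in_band].mean(axis=-1)))   # (n_epochs, n_channels)
    return np.concatenate(feats, axis=1)                        # band-major column order


rng = np.random.default_rng(2)
t = np.arange(512) / FS                                    # 2 s epochs
epochs = rng.normal(size=(60, 8, 512))
epochs[:30] += 3.0 * np.sin(2 * np.pi * 10.0 * t)         # first 30 epochs: added 10 Hz rhythm

features = band_power_features(epochs)
reduced = PCA(n_components=3).fit_transform(features)     # compact input for a downstream classifier
print(features.shape, reduced.shape)                      # (60, 32) (60, 3)
```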

ANFIS Model Development

- Design the fuzzy inference system with appropriate input membership functions.

- Train the hybrid network using backpropagation and least squares estimation.

- Validate model performance on held-out test datasets.

Cognitive State Classification

- Use the trained PCA-ANFIS model to classify cognitive states or patterns.

- Evaluate performance using accuracy, sensitivity, and specificity metrics.
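For a binary decision (for example, one cognitive state versus the rest), the three metrics follow directly from the confusion matrix. A minimal sketch with made-up labels and a hypothetical helper name:

```python
import numpy as np
from sklearn.metrics import accuracy_score, confusion_matrix


def binary_metrics(y_true, y_pred):
    """Accuracy, sensitivity and specificity for labels coded 0 (negative) and 1 (positive)."""
    tn, fp, fn, tp = confusion_matrix(y_true, y_pred, labels=[0, 1]).ravel()
    return {
        "accuracy": accuracy_score(y_true, y_pred),
        "sensitivity": tp / (tp + fn),   # true-positive rate (recall)
        "specificity": tn / (tn + fp),   # true-negative rate
    }


y_true = np.array([1, 1, 1, 1, 0, 0, 0, 0, 0, 0])
y_pred = np.array([1, 1, 1, 0, 0, 0, 0, 0, 1, 0])
metrics = binary_metrics(y_true, y_pred)
print(metrics)   # accuracy 0.8, sensitivity 0.75, specificity about 0.833
```

For more than two states, the same calculation is typically repeated one-versus-rest for each class.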

Technical Specifications and Data Presentation

Spectral Preprocessing for Enhanced PCA

The effectiveness of PCA in behavioral neuroscience depends heavily on proper data preprocessing, particularly when working with spectral data. Critical preprocessing steps include:

- Cosmic Ray Removal: Eliminates high-intensity spikes from radiation events

- Baseline Correction: Removes background drift and offset variations

- Scattering Correction: Compensates for light scattering effects in spectroscopic measurements

- Spectral Normalization: Standardizes signal intensity across measurements

- Filtering and Smoothing: Reduces high-frequency noise while preserving signal features
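A compact version of this preprocessing chain is sketched below with NumPy and SciPy. The specific choices are assumptions: spikes are detected as large deviations from a running median, the baseline is a low-order polynomial fitted to the whole spectrum (a crude stand-in for iterative or asymmetric least-squares methods), smoothing uses a Savitzky-Golay filter, and standard normal variate (SNV) scaling serves as both scatter correction and normalization. The order of steps and all parameter values would need tuning for real instruments.

```python
import numpy as np
from scipy.signal import medfilt, savgol_filter


def remove_spikes(spectrum, kernel=5, threshold=5.0):
    """Replace narrow spikes (e.g. cosmic-ray events) with the running-median value."""
    smooth = medfilt(spectrum, kernel_size=kernel)
    resid = spectrum - smooth
    sigma = 1.4826 * np.median(np.abs(resid - np.median(resid))) + 1e-12   # robust noise scale (MAD)
    spikes = np.abs(resid) > threshold * sigma
    cleaned = spectrum.copy()
    cleaned[spikes] = smooth[spikes]
    return cleaned


def subtract_baseline(x, spectrum, degree=2):
    """Subtract a low-order polynomial baseline (a crude stand-in for iterative methods)."""
    return spectrum - np.polyval(np.polyfit(x, spectrum, degree), x)


def snv(spectrum):
    """Standard normal variate: center and scale each spectrum individually."""
    return (spectrum - spectrum.mean()) / spectrum.std()


def preprocess(x, spectrum):
    s = remove_spikes(spectrum)
    s = subtract_baseline(x, s)
    s = savgol_filter(s, window_length=11, polyorder=3)   # Savitzky-Golay smoothing
    return snv(s)


# Synthetic spectrum: one Gaussian band, sloping baseline, noise, and one artificial spike
rng = np.random.default_rng(4)
x = np.linspace(0.0, 1.0, 500)
raw = np.exp(-0.5 * ((x - 0.5) / 0.02) ** 2) + 0.3 + 0.5 * x + rng.normal(scale=0.01, size=x.size)
raw[123] += 5.0
despiked = remove_spikes(raw)
processed = preprocess(x, raw)
```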

Reference [3] reports that these preprocessing techniques enable detection sensitivity at sub-ppm levels while maintaining >99% classification accuracy in spectral analysis.

Table 3: Quantitative Results from PCA Applications in Behavioral Neuroscience

| Study/Application | Data Type | Key Quantitative Finding | Variance Explained by Top Components |
|---|---|---|---|
| Spontaneous behavior patterning [22] | 3D pose tracking | Sniffing as hub for behavioral transitions | Not specified |
| Neural population dynamics [21] | Neural firing rates | Low-dimensional manifolds structure behavior | Typically 70-90% by first 5-10 components |
| Cognitive pattern classification [23] | Multimodal EEG | 99.5% classification accuracy with PCA-ANFIS | Not specified |
| Real-world cognitive prediction [24] | Resting-state fMRI | Significant prediction of academic test scores | Not specified |

Visualization of Analytical Workflows

PCA Workflow for Behavioral Analysis

Integrated PCA-ANFIS System Architecture

Behavioral State Transition Network

From Theory to Practice: Implementing PCA in Pharmaceutical Spectral Workflows

Principal Component Analysis (PCA) serves as a powerful multivariate technique for reducing the dimensionality of complex, correlated data while preserving essential information. Within spectral data research and drug development, PCA transforms high-dimensional datasets into a new set of uncorrelated variables—the principal components (PCs)—which often reveal underlying patterns and structures that are not immediately apparent in the original data [25]. This guide provides a detailed, practical workflow for acquiring data, performing necessary pre-processing, and executing a PCA transformation, with a specific focus on applications in spectroscopic analysis and pharmaceutical research.

The application of PCA is particularly valuable in fields dealing with high-dimensional data, such as hyperspectral imaging and quantitative structure-activity relationship (QSAR) studies. For hyperspectral data, which can comprise hundreds of correlated bands, PCA acts as a spectral rotation that outputs uncorrelated data, creating a more manageable dataset for subsequent analysis without significant loss of information [26]. In drug discovery, PCA provides a "hypothesis-generating" framework, allowing researchers to approach complex biological systems from a systemic perspective rather than relying solely on reductionist approaches, thus identifying latent factors within biomedical datasets [25] [27].

Foundational Concepts

What is Principal Component Analysis?

Principal Component Analysis is a multivariate statistical technique that identifies patterns in data and expresses the data in a way that highlights their similarities and differences. The core mathematical foundation of PCA involves:

- Covariance Matrix Calculation: PCA begins with computing the covariance matrix of the data, which captures how different variables change together.

- Eigenvalue Decomposition: The eigenvectors and eigenvalues of this covariance matrix are then computed. The eigenvectors (principal components) indicate the directions of maximum variance in the data, while the eigenvalues represent the magnitude of this variance.

- Dimensionality Reduction: By projecting the original data onto the first few principal components, researchers can reduce dimensionality while retaining most of the information.

The first principal component (PC1) accounts for the largest possible variance in the data, with each succeeding component accounting for the highest possible variance under the constraint that it is orthogonal to the preceding components [26] [25]. This transformation can be expressed as:

PC = aX₁ + bX₂ + cX₃ + … + kXₙ

where X₁, …, Xₙ are the original variables and the coefficients a, b, c, …, k are the elements of the corresponding eigenvector [25].

Key Applications in Spectral Data Research and Drug Development

PCA finds diverse applications across scientific domains:

- Hyperspectral Data Analysis: For airborne or satellite hyperspectral imagery (e.g., NEON AOP hyperspectral data with ~426 bands), PCA reduces data dimensionality, facilitates visualization, and enables identification of key spectral features related to vegetation health, mineral composition, or environmental monitoring [26] [28].

- Drug Discovery and Biomedical Research: PCA helps analyze molecular descriptors in QSAR studies, identifies patterns in 'omics' data, and aids in understanding complex biological systems without strong a priori theoretical assumptions [25] [27] [29]. For instance, PCA has been applied to identify quercetin analogues with improved blood-brain barrier permeability by analyzing molecular descriptors related to solubility and lipophilicity [29].

- Solar-Induced Fluorescence (SIF) Reconstruction: Recent research demonstrates PCA's utility in reconstructing full-spectrum SIF emission from limited band measurements, supporting environmental monitoring and mission preparation for satellite missions like FLEX [28].

Experimental Workflow and Protocols

This section provides a detailed, step-by-step protocol for designing a complete workflow from data acquisition through PCA transformation, with specific examples from spectral data analysis.

Data Acquisition and Ingestion

The initial phase involves gathering high-quality data from appropriate sources.

Table 1: Data Acquisition Methods for Different Research Applications

| Research Domain | Data Source Examples | Acquisition Method | Key Considerations |
|---|---|---|---|
| Hyperspectral Imaging | NEON AOP Hyperspectral Reflectance [26], HyPlant [28] | Airborne/satellite sensors, spectral libraries | Spatial and spectral resolution, atmospheric conditions, calibration |
| Drug Discovery | Molecular descriptors, chemical libraries [25] [29] | Laboratory measurements, computational chemistry, public databases | Data standardization, descriptor selection, domain relevance |
| Biomedical Research | Metabolomic profiles, genomic data [25] | High-throughput screening, genomic sequencing | Sample preparation, normalization, ethical compliance |

Protocol 1.1: Acquiring Hyperspectral Reflectance Data

- Identify Data Source: Select an appropriate hyperspectral dataset. For example, the NEON AOP Hyperspectral Reflectance data available in Google Earth Engine provides 426 bands at 1m resolution [26].

- Filter and Import: Apply spatial, temporal, and quality filters. Example code for Google Earth Engine:

- Visual Inspection: Create a natural color composite for initial quality assessment using appropriate bands (e.g., B053 ~660 nm red, B035 ~550 nm green, B019 ~450 nm blue) [26].

Protocol 1.2: Sourcing Molecular Data for Drug Discovery

- Define Molecular Set: Identify the compound series for analysis (e.g., quercetin and its analogues) [29].

- Compute Molecular Descriptors: Calculate relevant descriptors (e.g., logP, molecular weight, polar surface area) using computational tools like VolSurf+ or similar platforms.

- Compile Data Matrix: Create a structured dataset with compounds as rows and molecular descriptors as columns for subsequent analysis.

Data Pre-processing and Transformation

Raw data requires careful pre-processing before PCA to ensure meaningful results.

Table 2: Data Pre-processing Steps for Different Data Types

| Processing Step | Hyperspectral Data | Molecular Data | Rationale |
|---|---|---|---|
| Noise Removal | Exclude water vapor bands and noisy spectral regions [26] | Remove descriptors with near-zero variance | Enhances signal-to-noise ratio |
| Data Cleaning | Handle missing pixels or sensor errors | Address missing values, outliers | Ensures data integrity |
| Normalization | Standardize reflectance values | Scale descriptors to comparable ranges | Prevents dominance by high-variance variables |
| Data Centering | Subtract mean spectrum | Subtract mean for each descriptor | Essential for PCA covariance calculation |

Protocol 2.1: Pre-processing Hyperspectral Data

- Band Selection: Remove problematic bands (e.g., water vapor absorption regions). The NEON AOP data has approximately 380 valid bands after exclusion [26].

- Noise Reduction: Apply spectral smoothing if necessary (e.g., Savitzky-Golay filter).

- Data Transformation: Convert data to a suitable format for analysis. Example Earth Engine code:

- Mean Centering: Calculate and subtract the mean value for each band:
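The Earth Engine code referred to in this protocol is not reproduced here. As an offline alternative, the NumPy sketch below applies the band-selection and mean-centering steps to a reflectance cube held in memory. The excluded windows (roughly 1340-1445 nm and 1790-1955 nm) are assumed water-vapor ranges that should be checked against the sensor documentation, and the wavelength grid, cube dimensions, and random values are placeholders.

```python
import numpy as np

# Assumed water-vapor absorption windows in nm; verify the exact limits for your sensor
BAD_WINDOWS = [(1340.0, 1445.0), (1790.0, 1955.0)]


def good_band_mask(wavelengths, windows=BAD_WINDOWS):
    """Boolean mask that is False for bands inside any excluded window."""
    keep = np.ones(wavelengths.shape, dtype=bool)
    for lo, hi in windows:
        keep &= ~((wavelengths >= lo) & (wavelengths <= hi))
    return keep


def centered_pixels(cube, wavelengths):
    """(rows, cols, bands) cube -> mean-centered (n_pixels, n_kept_bands) matrix, band means, mask."""
    keep = good_band_mask(wavelengths)
    pixels = cube[..., keep].reshape(-1, int(keep.sum()))
    band_means = pixels.mean(axis=0)                  # mean spectrum over all pixels
    return pixels - band_means, band_means, keep


rng = np.random.default_rng(10)
wavelengths = np.linspace(380.0, 2510.0, 426)         # placeholder band centers
cube = rng.random((20, 30, wavelengths.size))         # placeholder reflectance values
centered, band_means, keep = centered_pixels(cube, wavelengths)
print(f"{keep.sum()} of {keep.size} bands kept; matrix shape {centered.shape}")
```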

Protocol 2.2: Preparing Molecular Data

- Descriptor Filtering: Remove redundant or highly correlated descriptors to reduce multicollinearity.

- Data Standardization: Scale all descriptors to have zero mean and unit variance to prevent variables with large scales from dominating the PCA.

- Data Validation: Check for normality and linear relationships, as PCA is most effective with linearly related variables.
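The three preparation steps can be sketched with pandas. The descriptor names and values below are synthetic placeholders, not measured properties of quercetin analogues, and the thresholds (variance below 1e-8 treated as constant, |r| > 0.95 treated as redundant) are illustrative defaults rather than recommendations from [29].

```python
import numpy as np
import pandas as pd


def prepare_descriptors(df, var_tol=1e-8, corr_cut=0.95):
    """Drop near-constant and highly correlated descriptors, then z-score the rest."""
    df = df.loc[:, df.var(ddof=1) > var_tol]                  # 1. near-zero variance filter
    corr = df.corr().abs()
    upper = corr.where(np.triu(np.ones(corr.shape, dtype=bool), k=1))
    to_drop = [c for c in upper.columns if (upper[c] > corr_cut).any()]
    df = df.drop(columns=to_drop)                             # 2. drop descriptors redundant with an earlier one
    return (df - df.mean()) / df.std(ddof=1)                  # 3. zero mean, unit variance


rng = np.random.default_rng(8)
n = 25                                                        # hypothetical number of compounds
mw = rng.normal(350.0, 60.0, n)
descriptors = pd.DataFrame({
    "logP": rng.normal(2.0, 1.0, n),
    "MW": mw,
    "TPSA": rng.normal(90.0, 25.0, n),
    "HBD": rng.integers(0, 6, n).astype(float),
    "constant_flag": np.ones(n),                              # carries no information
    "MW_copy": 1.01 * mw + rng.normal(0.0, 0.5, n),           # near-duplicate of MW
})
X = prepare_descriptors(descriptors)
print(list(X.columns))   # ['logP', 'MW', 'TPSA', 'HBD']
```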

PCA Execution and Transformation

This core phase involves performing the principal component analysis.

Protocol 3.1: Computing Principal Components

- Covariance Matrix Calculation: Compute the covariance matrix of the mean-centered data. Example implementation:

- Eigenanalysis: Perform eigenvalue decomposition of the covariance matrix:

- Project Data: Project the original data onto the eigenvectors to obtain principal component scores:

- Select Components: Choose the number of components to retain based on eigenvalues (variance explained) or scree plot analysis.
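For an image cube, the same covariance, eigenanalysis, and projection steps apply once the pixels are flattened into rows. The NumPy sketch below assumes the cube fits in memory as a (rows, cols, bands) array and uses synthetic data in which a per-pixel brightness factor dominates; Earth Engine workflows would instead rely on server-side reducers such as `ee.Reducer.centeredCovariance()`. The helper name `cube_pca` is hypothetical.

```python
import numpy as np


def cube_pca(cube, k=3):
    """PCA of a (rows, cols, bands) cube -> (rows, cols, k) PC images, all eigenvalues, top-k loadings."""
    rows, cols, bands = cube.shape
    pixels = cube.reshape(-1, bands)                        # one row per pixel
    centered = pixels - pixels.mean(axis=0)                 # mean-center each band
    cov = centered.T @ centered / (centered.shape[0] - 1)   # (bands, bands) covariance matrix
    evals, evecs = np.linalg.eigh(cov)
    order = np.argsort(evals)[::-1]                         # descending variance
    evals, evecs = evals[order], evecs[:, order]
    scores = centered @ evecs[:, :k]                        # project onto the top-k eigenvectors
    return scores.reshape(rows, cols, k), evals, evecs[:, :k]


rng = np.random.default_rng(13)
rows, cols, bands = 40, 50, 60
brightness = rng.uniform(0.5, 1.5, size=(rows, cols))
shape = np.linspace(0.1, 0.6, bands)                        # arbitrary smooth "spectrum"
cube = brightness[..., None] * shape + rng.normal(scale=0.01, size=(rows, cols, bands))

pc_images, evals, loadings = cube_pca(cube, k=3)
print(pc_images.shape, f"PC1 explains {evals[0] / evals.sum():.1%}")
```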

Protocol 3.2: Efficient PCA Sampling Strategy

For large datasets, compute PCA on a representative sample to reduce computational demands:

- Define Sample Strategy: Collect random samples from the dataset:

- Compute PCA on Sample: Apply the PCA algorithm to the sample rather than the full dataset.

- Project Full Dataset: Use the resulting eigenvectors to transform the entire dataset.
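A minimal NumPy version of this sampling strategy estimates the mean and loadings on a random subset of rows and then projects every row with them. The default sample size of 500 echoes the example in Table 4 but is an assumption here, as are the synthetic pixels and the helper name; whether 500 samples suffice depends on how well the sample represents rare spectral classes.

```python
import numpy as np


def sampled_pca(pixels, n_samples=500, k=3, seed=0):
    """Estimate loadings from a random subset of rows, then project every row."""
    rng = np.random.default_rng(seed)
    idx = rng.choice(pixels.shape[0], size=min(n_samples, pixels.shape[0]), replace=False)
    sample = pixels[idx]
    mean = sample.mean(axis=0)
    _, _, Vt = np.linalg.svd(sample - mean, full_matrices=False)
    W = Vt[:k].T                                 # (bands, k) loadings from the sample only
    return (pixels - mean) @ W, W                # scores for the full dataset


rng = np.random.default_rng(14)
N, bands = 20000, 50
basis, _ = np.linalg.qr(rng.normal(size=(bands, 2)))          # two orthonormal spectral patterns
pixels = (rng.normal(size=(N, 2)) * [3.0, 1.0]) @ basis.T + 0.1 * rng.normal(size=(N, bands))

scores, W = sampled_pca(pixels, n_samples=500, k=2)

# Reference: loadings estimated from the full dataset
_, _, Vt_full = np.linalg.svd(pixels - pixels.mean(axis=0), full_matrices=False)
agreement = np.abs(np.sum(W * Vt_full[:2].T, axis=0))         # |cosine| per component
print(scores.shape, np.round(agreement, 4))
```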

Results Interpretation and Validation

The final phase focuses on extracting meaningful insights from PCA results.

Protocol 4.1: Interpreting Principal Components

- Variance Explanation: Examine the proportion of total variance explained by each component. For highly correlated data such as spectra, the first two or three components often capture most of the variance.

- Component Loading Analysis: Identify which original variables contribute most to each component. High absolute loadings indicate important variables.

- Pattern Identification: Interpret the biological or physical meaning of components:

- In hyperspectral data: PC1 often represents overall brightness/albedo (typically 90%+ of variance), PC2 frequently highlights vegetation vs. non-vegetation contrasts, and PC3 may reveal subtle features not visible in original bands [26].

- In molecular data: Components might represent underlying physicochemical properties like lipophilicity, polarity, or molecular size [29].

Protocol 4.2: Validating and Exporting Results

- Visual Validation: Create scatter plots of observations in the space of the first few PCs to identify clusters, outliers, or patterns.

- Result Export: Save the PCA results (scores, loadings, and explained variance) for further analysis.

- Downstream Analysis: Use PCA results as input for subsequent analyses like clustering, classification, or regression modeling.
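Plain CSV is one simple format for saving results for downstream tools. The sketch below assumes scores, loadings, and explained-variance ratios are already available as NumPy arrays. The function name, file names, and column headers are illustrative choices, and the arrays in the usage lines are random placeholders.

```python
import os
import tempfile
import numpy as np

def export_pca(scores, W, explained, out_dir):
    """Write PCA scores, loadings and explained-variance ratios to CSV files."""
    os.makedirs(out_dir, exist_ok=True)
    header = ",".join(f"PC{i + 1}" for i in range(scores.shape[1]))
    paths = {name: os.path.join(out_dir, f"pca_{name}.csv")
             for name in ("scores", "loadings", "explained")}
    # comments="" stops NumPy from prefixing the header line with "# "
    np.savetxt(paths["scores"], scores, delimiter=",", header=header, comments="")
    np.savetxt(paths["loadings"], W, delimiter=",", header=header, comments="")
    np.savetxt(paths["explained"], np.atleast_2d(explained), delimiter=",",
               header=header, comments="")
    return paths

# Usage with placeholder arrays (100 observations, 20 variables, 3 PCs)
rng = np.random.default_rng(0)
scores, W, explained = rng.normal(size=(100, 3)), rng.normal(size=(20, 3)), np.array([0.6, 0.25, 0.1])
with tempfile.TemporaryDirectory() as out_dir:
    paths = export_pca(scores, W, explained, out_dir)
    reloaded = np.loadtxt(paths["scores"], delimiter=",", skiprows=1)
    print(np.allclose(reloaded, scores))   # True
```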

Workflow Visualization

The following diagram illustrates the complete workflow from data acquisition to PCA transformation:

PCA Workflow Diagram

The Scientist's Toolkit

Table 3: Essential Research Reagents and Computational Tools

| Tool/Resource | Function/Purpose | Example Applications |
|---|---|---|
| Google Earth Engine | Cloud-based geospatial analysis | Processing NEON AOP hyperspectral data [26] |
| VolSurf+ | Computation of molecular descriptors | Calculating physicochemical properties for drug discovery [29] |
| Python/R Libraries | Statistical computing and PCA implementation | scikit-learn (Python), prcomp (R) |
| Covariance Calculator | Matrix operations for PCA | Earth Engine Reducer.centeredCovariance() [26] |
| Molecular Docking Software | Binding affinity estimation | Assessing protein-ligand interactions (e.g., for IPMK) [29] |
| Data Visualization Tools | Results interpretation and presentation | Creating score plots, loading plots, biplots |

Troubleshooting and Optimization

Even well-designed workflows may encounter challenges. This section addresses common issues and optimization strategies.

Table 4: Common PCA Challenges and Solutions

| Challenge | Symptoms | Solution Approaches |
|---|---|---|
| High Computational Demand | Long processing times, memory errors | Use representative sampling (e.g., 500 pixels) rather than the full dataset [26] |
| Overfitting (too many components retained) | Retained components explain negligible variance | Retain components based on a scree plot or the Kaiser criterion (eigenvalue > 1, for standardized data) |
| Interpretation Difficulty | Unclear meaning of principal components | Analyze component loadings to identify contributing original variables |
| Insufficient Variance Captured | First PCs explain only a small percentage of the variance | Check data preprocessing; consider non-linear methods if appropriate |

Optimization Strategy 1: Sampling Parameters

Adjust sampling based on data characteristics:

- For homogeneous landscapes: Fewer samples may suffice (100-500)

- For heterogeneous areas: Increase sample size (500-1000)

- Always set a random seed for reproducibility [26]

Optimization Strategy 2: Component Selection

Use multiple criteria for determining how many components to retain:

- Kaiser criterion (eigenvalue > 1; meaningful when PCA is run on standardized variables, i.e. the correlation matrix)

- Scree plot elbow point

- Cumulative variance explained (e.g., >80%)

- Cross-validation techniques
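Two of these rules can be computed from the eigenvalues alone. The scree-plot elbow needs visual or heuristic judgment, and cross-validation needs the data itself, so both are left out here. The helper below is a hypothetical sketch, and the eigenvalues in the usage line are made up for illustration.

```python
import numpy as np

def n_components_by_criteria(eigvals, var_threshold=0.80):
    """Suggest how many PCs to retain under two common rules of thumb.

    eigvals: eigenvalues in decreasing order. The Kaiser rule (> 1) is only
    meaningful when PCA was run on standardized variables (correlation matrix).
    """
    eigvals = np.asarray(eigvals, dtype=float)
    kaiser = int(np.sum(eigvals > 1.0))
    cumulative = np.cumsum(eigvals) / eigvals.sum()
    # first index where cumulative variance reaches the threshold, as a count
    by_variance = int(np.searchsorted(cumulative, var_threshold) + 1)
    return {"kaiser": kaiser, "cumulative_variance": by_variance}

eigvals = [4.0, 2.5, 1.2, 0.9, 0.8, 0.6]   # illustrative values, sum = 10
print(n_components_by_criteria(eigvals))     # {'kaiser': 3, 'cumulative_variance': 4}
```

When the rules disagree, as they do here, that disagreement is itself a cue to inspect the scree plot and the loadings before fixing the number of components.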

This guide has presented a comprehensive workflow for designing and executing Principal Component Analysis, from data acquisition through transformation, with specific applications in spectral data research and drug development. Its structured approach (careful data collection, appropriate preprocessing, efficient PCA computation, and thoughtful interpretation) supports robust and meaningful dimensionality reduction across diverse scientific domains.

The protocols and troubleshooting guidance provided here offer researchers a practical foundation for implementing PCA in their own work, whether analyzing hyperspectral imagery with hundreds of bands or identifying key molecular descriptors in pharmaceutical research. By following this workflow, scientists can effectively uncover hidden patterns in complex datasets, reduce dimensionality for subsequent analyses, and generate valuable hypotheses for further investigation.

Vibrational Painting (VIBRANT) represents a significant advancement in high-content phenotypic screening by integrating vibrational imaging, multiplexed vibrational probes, and optimized data analysis pipelines for measuring single-cell drug responses. This method was developed to overcome the limitations of existing techniques, such as low throughput, high cost, and substantial batch effects, which often hinder large-scale drug discovery efforts. Unlike traditional bulk measurements that mask cell-to-cell heterogeneity, VIBRANT provides a robust platform for assessing drug efficacy, understanding mechanisms of action (MoAs), overcoming drug resistance, and optimizing therapy at the single-cell level. Its high sensitivity, rich metabolic information content, and minimal batch effects make it a promising tool for advancing phenotypic drug discovery [30] [31].

The core principle of VIBRANT involves the use of mid-infrared (MIR) metabolic imaging coupled with specially designed IR-active vibrational probes. This coupling drastically improves metabolic sensitivity and specificity compared to label-free approaches. An advantage of Fourier-transform infrared (FTIR) spectroscopic imaging in measuring single-cell drug responses is its minimal background, as it measures MIR absorbance of cells without significant interference from autofluorescence or the added drugs themselves, which typically are at much lower concentrations [30].

Principal Component Analysis of Spectral Data

The Role of PCA in Spectral Profiling

Principal Component Analysis (PCA) is a fundamental statistical technique for reducing the dimensionality of large datasets, increasing interpretability while minimizing information loss. It operates by creating new, uncorrelated variables (principal components) that successively maximize variance. Finding these components involves solving an eigenvalue/eigenvector problem, and the resulting new variables are defined by the dataset itself, making PCA an adaptive data analysis technique [32].

In the context of VIBRANT, the spectral data collected from single cells is inherently high-dimensional, with each wavelength representing a separate variable. PCA is applied as an exploratory tool to analyze the spectral fingerprints of cells under different drug perturbations. The "variance" preserved by the principal components in this context represents the statistical information or variability in the biochemical composition of cells as captured by their vibrational spectra. This process is crucial for mapping cell phenotypes from large-scale spectral data and serves as a foundational step before further machine learning analysis [30] [32].

PCA Workflow for Spectral Data

The standard PCA workflow begins with a dataset containing observations on p numerical variables (spectral wavelengths) for each of n entities (single cells). These data values define p n-dimensional vectors or, equivalently, an n×p data matrix X. PCA seeks linear combinations of the columns of X that exhibit maximum variance. In spectroscopic applications, the data matrix is first centered, meaning the mean spectrum is subtracted from each individual spectrum. The principal components (PCs) are then obtained from the eigendecomposition of the covariance matrix of this centered data matrix or, equivalently, from the singular value decomposition (SVD) of the centered matrix itself [32].
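The equivalence of the two routes can be checked numerically. If the centered matrix factors as Xc = U S Vᵀ, the covariance eigenvalues are the squared singular values divided by n - 1, and the scores are U S. The sketch uses random synthetic data in place of real spectra. The SVD route is often preferred in practice because it avoids explicitly forming the p × p covariance matrix when p (the number of spectral channels) is large.

```python
import numpy as np

rng = np.random.default_rng(0)
n, p = 30, 12                      # n cells x p spectral channels (synthetic)
X = rng.normal(size=(n, p))
Xc = X - X.mean(axis=0)            # subtract the mean spectrum

# Route 1: eigendecomposition of the covariance matrix (sorted descending)
evals = np.linalg.eigvalsh(np.cov(Xc, rowvar=False))[::-1]

# Route 2: SVD of the centered data matrix, Xc = U S V^T
U, s, Vt = np.linalg.svd(Xc, full_matrices=False)
evals_svd = s**2 / (n - 1)         # covariance eigenvalues from singular values
scores_svd = U * s                 # PC scores, equal to Xc @ Vt.T

print(np.allclose(evals, evals_svd))         # True
print(np.allclose(scores_svd, Xc @ Vt.T))    # True
```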

The following diagram illustrates the core data processing and analysis pipeline, from raw spectral data to machine learning classification, with PCA playing a central role in feature reduction.

Key Reagents and Research Solutions

The VIBRANT methodology relies on a specific set of vibrational probes designed to report on distinct metabolic activities within live cells. The table below details these essential reagents and their functions.

Table 1: Key Research Reagent Solutions for VIBRANT Profiling

| Reagent Name | Type/Function | Key Spectral Features | Biological Process Monitored |
|---|---|---|---|
| ¹³C-Amino Acids (¹³C-AA) | IR-active metabolic probe | Amide I band red-shifted from 1650 cm⁻¹ to 1616 cm⁻¹ | De novo protein synthesis [30] |
| Azido-Palmitic Acid (Azido-PA) | IR-active metabolic probe | Characteristic azide peak at 2096 cm⁻¹ | Saturated fatty acid metabolism [30] |
| Deuterated Oleic Acid (d34-OA) | IR-active metabolic probe (newly introduced) | Peaks at 2092 cm⁻¹ and 2196 cm⁻¹ (CD₂ vibrations) | Unsaturated fatty acid metabolism [30] |

Experimental Protocol for Single-Cell Drug Profiling

Cell Culture and Probe Loading

- Cell Line Selection: Begin with an appropriate cell model. The metastatic breast cancer cell line MDA-MB-231 has been used as a model for anticancer drug screening [30].

- Probe Co-culture: Culture cells in medium supplemented with the three vibrational probes (¹³C-AA, Azido-PA, and d34-OA) for 48 hours. This allows for metabolic incorporation of the probes into newly synthesized macromolecules [30].

- Drug Treatment: Before the main experiment, determine the half-maximal inhibitory concentration (IC₅₀) of each drug of interest using cell viability assays after 48 hours of treatment, and treat cells at that concentration. Dosing each drug at its IC₅₀ ensures that cells across different drug categories are measured in a comparable state [30].
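IC₅₀ values are commonly obtained by fitting a four-parameter logistic curve to viability data. As a simpler, dependency-free stand-in, the sketch below estimates IC₅₀ by linear interpolation on log concentration between the two doses that bracket 50% viability. The function name and the dose-response values are hypothetical, and this is not presented as the procedure used in [30].

```python
import numpy as np

def ic50_loglinear(concentrations, viability):
    """Estimate IC50 by linear interpolation on log10(concentration).

    concentrations: increasing, all > 0.
    viability: fraction of untreated control (1.0 = no effect).
    Returns None if the curve never crosses 50% viability.
    """
    c = np.asarray(concentrations, dtype=float)
    v = np.asarray(viability, dtype=float)
    logc = np.log10(c)
    for i in range(len(c) - 1):
        v0, v1 = v[i], v[i + 1]
        # bracket: 0.5 lies between consecutive viability readings
        if (v0 - 0.5) * (v1 - 0.5) <= 0 and v0 != v1:
            frac = (v0 - 0.5) / (v0 - v1)
            return float(10 ** (logc[i] + frac * (logc[i + 1] - logc[i])))
    return None

# Illustrative dose-response values (not measured data)
print(ic50_loglinear([0.1, 1, 10, 100], [0.95, 0.8, 0.4, 0.1]))  # about 5.62
```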

Spectral Image Acquisition and Preprocessing

- Image Acquisition: Perform large-area MIR metabolic imaging of the probe-loaded cells using an FTIR microscope. Ensure spatial resolution is sufficient to resolve single cells [30].

- Spectral Unmixing: Apply a linear unmixing algorithm to the acquired spectral data to separate the overlapping signals of Azido-PA and d34-OA at ~2090 cm⁻¹. The unique peak of d34-OA at 2196 cm⁻¹ is used for this purpose [30].

- Single-Cell Segmentation: Use computational methods to identify and segment individual cells within the spectral images [30].

- Spectral Profile Extraction: Extract the full IR spectrum or specific probe signals for each segmented cell. This constitutes the single-cell spectral profile used for downstream analysis [30].
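The linear unmixing step can be illustrated with ordinary least squares against reference spectra. Only the peak positions come from Table 1 (2096 cm⁻¹ for Azido-PA; 2092 and 2196 cm⁻¹ for d34-OA). The Gaussian band shapes, widths, relative heights, and mixing proportions are synthetic assumptions, and the source does not state which unmixing algorithm VIBRANT uses. Because only d34-OA has a band at 2196 cm⁻¹, the two columns of the reference matrix remain distinguishable despite their overlap near 2090 cm⁻¹.

```python
import numpy as np

wn = np.arange(2000.0, 2301.0)          # wavenumber grid in cm^-1

def band(center, width=8.0):
    """Hypothetical Gaussian band shape; real reference spectra would be measured."""
    return np.exp(-0.5 * ((wn - center) / width) ** 2)

# Reference spectra (peak positions from Table 1; shapes and heights assumed)
ref_azido_pa = band(2096)
ref_d34_oa = band(2092) + band(2196)
A = np.column_stack([ref_azido_pa, ref_d34_oa])   # (n_wavenumbers, n_probes)

# Synthetic single-cell spectrum: known mixture plus a little noise
rng = np.random.default_rng(0)
true_abundance = np.array([0.7, 0.3])
mixed = A @ true_abundance + 0.005 * rng.normal(size=wn.size)

# Ordinary least squares estimate of each probe's contribution
abundance, *_ = np.linalg.lstsq(A, mixed, rcond=None)
print(np.round(abundance, 2))
```

If the abundances must be non-negative, `scipy.optimize.nnls` can replace `np.linalg.lstsq`.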

Data Analysis and MoA Prediction

- PCA and Feature Reduction: Perform PCA on the preprocessed single-cell spectral data. This critical step reduces the dimensionality of the dataset, transforming the original spectral variables into a smaller set of principal components that capture the majority of the variance in the data. These components serve as the input features for subsequent machine learning models [30] [32].

- Classifier Training for MoA Identification: Train a supervised machine learning classifier (e.g., a support vector machine or random forest) using the principal components from cells treated with drugs of known MoA. The model learns to associate specific spectral phenotypes with known drug mechanisms [30].

- Novelty Detection for Drug Discovery: Implement a novelty detection algorithm. This unsupervised approach identifies drug candidates that induce spectral phenotypes distinct from those of known MoAs, flagging them as having potentially novel mechanisms of action [30].
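The PCA-then-classify step can be sketched end to end on synthetic data. To stay dependency-free, this example uses a nearest-centroid classifier in PC space as a stand-in for the support vector machine or random forest mentioned above. The class structure, dimensions, and choice of five components are all assumptions. PCA is fitted on the training cells only, and the same mean and projection are then applied to the held-out cells, so no test information leaks into the features.

```python
import numpy as np

def fit_pca(X, k):
    """Return the training mean and a (p, k) projection onto the top-k PCs (via SVD)."""
    mean = X.mean(axis=0)
    _, _, Vt = np.linalg.svd(X - mean, full_matrices=False)
    return mean, Vt[:k].T

def fit_centroids(Z, y):
    """One centroid per known-MoA label in PC space."""
    labels = np.unique(y)
    return labels, np.array([Z[y == c].mean(axis=0) for c in labels])

def predict(Z, labels, centroids):
    """Assign each cell to the nearest centroid (Euclidean distance)."""
    d = np.linalg.norm(Z[:, None, :] - centroids[None, :, :], axis=2)
    return labels[np.argmin(d, axis=1)]

# Synthetic single-cell "spectra": 3 MoA classes, 60 cells each, 100 channels
rng = np.random.default_rng(0)
n_per, p, n_classes = 60, 100, 3
class_means = rng.normal(size=(n_classes, p))
X = np.vstack([m + 0.5 * rng.normal(size=(n_per, p)) for m in class_means])
y = np.repeat(np.arange(n_classes), n_per)

perm = rng.permutation(len(y))
train, test = perm[:120], perm[120:]
mean, W = fit_pca(X[train], k=5)               # PCA fitted on training cells only
labels, centroids = fit_centroids((X[train] - mean) @ W, y[train])
y_pred = predict((X[test] - mean) @ W, labels, centroids)
accuracy = np.mean(y_pred == y[test])
print(f"held-out accuracy: {accuracy:.2f}")
```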

The following diagram details the flow of data and analytical steps from raw image acquisition to final pharmacological insights.

Quantitative Profiling and Performance Metrics

The VIBRANT platform has been rigorously validated through large-scale profiling studies. The table below summarizes quantitative data from a key study, demonstrating the scale and performance of the method.

Table 2: VIBRANT Profiling Scale and Classification Performance

| Profiling Metric | Result / Value | Context & Significance |
|---|---|---|
| Single-Cell Profiles Collected | > 20,000 | Corresponding to 23 different drug treatments [30] |
| MoA Prediction Accuracy | Extremely High | Successful prediction of 10-class drug MoAs at the single-cell level [30] [33] |
| Key Advantage | Minimal Batch Effects | Overcomes a major limitation of image-based profiling methods like Cell Painting [30] [31] |

Application Notes for Drug Discovery

Mechanism of Action Identification

The application of VIBRANT for MoA identification relies on the high sensitivity of the spectral profile to drug-perturbed cell phenotypes. The protocol involves treating cells with a panel of drugs with well-annotated MoAs to create a training set. A machine learning classifier, such as the one described in the Data Analysis and MoA Prediction protocol above, is then trained on the principal components derived from the spectral data of these cells. This model can subsequently predict the MoA of unknown compounds from the spectral phenotypes they induce. The rich metabolic information in the spectra allows the classifier to distinguish even closely related mechanisms with high accuracy, providing a powerful tool for deconvoluting the action of new drug candidates [30].

Novelty Detection for First-in-Class Drugs

A particularly innovative application of VIBRANT is its use in discovering drug candidates with novel MoAs, which is a primary goal of phenotypic screening. This is achieved through a novelty detection algorithm that operates on the principal component-reduced data. Instead of classifying into known categories, this algorithm identifies treated cells whose spectral profiles are outliers compared to the profiles induced by any known MoA in the training set. This approach is invaluable for identifying first-in-class therapeutics that act through previously untargeted biological pathways, thereby expanding the therapeutic landscape [30] [31].
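The source does not specify the novelty detection algorithm. One common PCA-based option, shown here purely as an illustration, scores each cell by its Q residual, the squared reconstruction error outside the retained PC subspace. Cells above a percentile threshold learned from known-MoA reference cells are flagged as novel. The synthetic data, k = 5, and the 99th-percentile cutoff are assumptions, not parameters from VIBRANT.

```python
import numpy as np

def fit_pca_subspace(X, k):
    """PCA on reference (known-MoA) cells: return mean and (p, k) basis."""
    mean = X.mean(axis=0)
    _, _, Vt = np.linalg.svd(X - mean, full_matrices=False)
    return mean, Vt[:k].T

def q_residual(X, mean, W):
    """Squared reconstruction error outside the retained PC subspace (Q / SPE)."""
    Xc = X - mean
    recon = (Xc @ W) @ W.T
    return np.sum((Xc - recon) ** 2, axis=1)

rng = np.random.default_rng(1)
p, k = 80, 5
known_means = rng.normal(size=(2, p))                  # two synthetic known MoAs
X_known = np.vstack([m + 0.5 * rng.normal(size=(100, p)) for m in known_means])
X_new_known = known_means[0] + 0.5 * rng.normal(size=(50, p))
X_novel = rng.normal(size=p) + 0.5 * rng.normal(size=(50, p))  # unseen phenotype

mean, W = fit_pca_subspace(X_known, k)
threshold = np.percentile(q_residual(X_known, mean, W), 99)   # 99th pct of reference cells

flag_known = q_residual(X_new_known, mean, W) > threshold
flag_novel = q_residual(X_novel, mean, W) > threshold
print(f"flagged: known-MoA cells {flag_known.mean():.2f}, novel cells {flag_novel.mean():.2f}")
```

A residual-based score suits this task because a genuinely new phenotype tends to vary in directions the known-MoA components do not span. Distance to known centroids within PC space can miss such cells when they happen to project near an existing class.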

Assessing Drug Combination Therapy