Validating Specificity and Selectivity in Spectrophotometric Methods: A Guide for Pharmaceutical and Biomedical Research

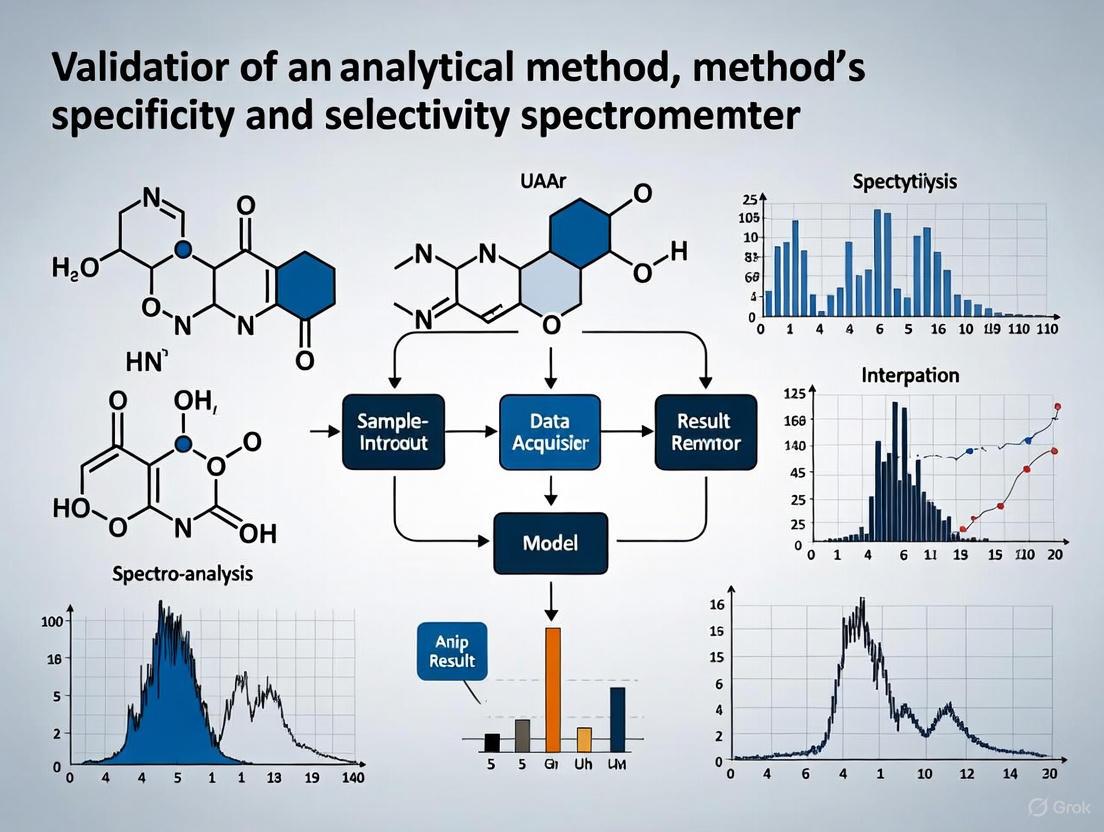

This article provides a comprehensive guide for researchers and drug development professionals on validating the specificity and selectivity of analytical methods using spectrophotometers.


Abstract

This article provides a comprehensive guide for researchers and drug development professionals on validating the specificity and selectivity of analytical methods using spectrophotometers. It covers foundational principles distinguishing specificity from selectivity, methodological approaches aligned with ICH Q2(R2) and USP <857> guidelines, troubleshooting for common instrument and method failures, and strategies for robust performance qualification to ensure regulatory compliance and data integrity in biomedical and clinical research.

Specificity vs. Selectivity: Core Concepts for Robust Spectrophotometric Analysis

In analytical chemistry, specificity refers to the ability of a method to measure the analyte of interest accurately in the presence of other components of a complex matrix. This parameter is fundamental in analytical method validation, ensuring that the measured signal can be unequivocally attributed to the target analyte, free from interference from the sample matrix. The challenge of achieving high specificity intensifies with the complexity of the matrix, such as in biological fluids (serum, urine), environmental samples (wastewater, sludge), and food products, where numerous other compounds can interfere with the detection and quantification of the analyte.

Matrix effects represent a significant challenge to specificity, as additional unknown components can alter the instrument's sensitivity to the analyte. This situation frequently arises in environmental and pharmaceutical analyses, where reliable calibration curves cannot be constructed because the composition of the matrix is complex and unknown. The ability to distinguish the target analyte from structurally similar compounds, metabolites, or matrix components is paramount for generating reliable data in research, drug development, and regulatory compliance.

Comparative Analysis of Analytical Techniques

The choice of analytical technique significantly impacts the ability to achieve the required specificity for an analysis. Modern separation techniques coupled with advanced detection systems provide various pathways to address the challenges posed by complex matrices.

High-Performance Liquid Chromatography vs. Gas Chromatography

High-Performance Liquid Chromatography (HPLC) and Gas Chromatography (GC) are two foundational chromatography techniques used for separation prior to detection. Their fundamental principles and applicability differ based on the nature of the analytes and the matrix.

- HPLC is tailored for analyzing non-volatile, thermally labile, and high-molecular-weight compounds, such as proteins, peptides, and many pharmaceuticals. It operates at ambient temperatures, making it suitable for compounds that would degrade at the high temperatures used in GC [1].

- GC excels in the separation of volatile and semi-volatile organic compounds. It requires samples to be vaporized, which limits its application to compounds that are thermally stable at the high temperatures (150–300°C) typically used [1]. For non-volatile or polar compounds, a chemical derivatization step is often necessary to improve volatility and thermal stability, adding complexity to sample preparation [2].

Table 1: Comparison of HPLC and GC Characteristics for Complex Matrix Analysis

| Feature | HPLC | GC |
|---|---|---|
| Analyte Type | Non-volatile, thermally labile, high molecular weight [1] | Volatile, semi-volatile, thermally stable [1] |
| Typical Matrices | Biological fluids, pharmaceuticals, polymers [1] | Environmental samples (air, water), fuels, essential oils [1] |
| Sample Preparation | Often involves dilution, protein precipitation, solid-phase extraction | May require derivatization for non-volatile compounds [2] |
| Key Strength for Specificity | Versatility in handling a wide range of polar and ionic compounds | Exceptional separation efficiency for volatile mixtures |

Detection Platforms: MS/MS, HRMS, and Emerging Biophysical Techniques

The coupling of chromatography to mass spectrometry dramatically enhances specificity by adding a second dimension of separation based on the mass-to-charge ratio of ions.

- Liquid Chromatography-Mass Spectrometry (LC-MS) and GC-MS: The tandem mass spectrometry (MS/MS) platform, particularly using Multiple Reaction Monitoring (MRM), is a gold standard for achieving high specificity. In MRM, a specific precursor ion is selected and fragmented, and a specific product ion is monitored. This two-stage selection process provides a high degree of confidence in the identity of the analyte [3] [4]. European Commission decision criteria, for instance, require the precursor ion and at least two transitions for confirming banned substances [3].

- Liquid Chromatography-High-Resolution Mass Spectrometry (LC-HRMS): HRMS instruments, such as Orbitrap and TOF, provide accurate mass measurement, which adds another powerful filter for specificity. It allows for the distinction of isobaric compounds (compounds with the same nominal mass but different exact masses) and is highly effective for non-targeted screening [5] [3].

- Multi-Stage Mass Spectrometry (MSⁿ): Techniques like LC-HR-MS³ provide an additional layer of confirmation by generating a second generation of product ions. This offers more in-depth structural information, which can be crucial for identifying compounds in complex matrices like serum and urine, and can improve detection limits for some analytes [5].

- Emerging Biophysical Techniques: Methods like Focal Molography (FM) offer a novel approach for characterizing biomolecular interactions directly in complex matrices like serum. FM uses a patterned sensor (mologram) to create a coherent signal from specific binding events, while intrinsically subtracting signals from non-specific binding. This makes it particularly robust for determining equilibrium and kinetic constants in biologically relevant conditions where techniques like Surface Plasmon Resonance (SPR) and Bio-Layer Interferometry (BLI) struggle with baseline instability due to non-specific binding [6].
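The accurate-mass filtering described for HRMS can be sketched numerically. The snippet below is a minimal illustration, assuming the usual definitions of mass error in ppm and of resolving power as m/Δm; the function names and the two m/z values are hypothetical and are not taken from the cited studies.

```python
def ppm_error(measured_mz, theoretical_mz):
    """Mass error in parts per million relative to the theoretical m/z."""
    return (measured_mz - theoretical_mz) / theoretical_mz * 1e6


def required_resolving_power(mz_a, mz_b):
    """Approximate resolving power (m / delta m) needed to separate two ions."""
    mean_mz = (mz_a + mz_b) / 2
    return mean_mz / abs(mz_a - mz_b)


# Hypothetical isobaric pair: same nominal mass (300), different exact masses.
mz_analyte = 300.1000
mz_interferent = 300.1200

needed = required_resolving_power(mz_analyte, mz_interferent)
error = ppm_error(300.1003, mz_analyte)

print(f"Resolving power needed: {needed:.0f}")
print(f"Mass error of a 300.1003 reading: {error:.2f} ppm")
```

Under these assumed values, an instrument operating above 20,000 FWHM would have enough resolving power to separate the pair, whereas a unit-resolution instrument would not.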

Table 2: Comparison of Detection Techniques for Specificity in Complex Matrices

| Technique | Principle | Advantages for Specificity | Example Performance Data |
|---|---|---|---|
| LC-MS/MS (MRM) | Monitoring precursor ion > product ion transition | High selectivity; widely used for targeted quantification; robust confirmation criteria [3] | LODs for pharmaceuticals in water: 100-300 ng/L [7] |
| LC-HRMS | Accurate mass measurement of analyte and fragments | Distinguishes isobaric compounds; enables retrospective data analysis | Resolution >20,000 FWHM for confident identification [3] |
| LC-HR-MS³ | MS² product ion further fragmented to yield MS³ spectrum | Provides deeper structural information; increases confidence for challenging IDs | Improved identification for 4-8% of analytes at lower concentrations in serum/urine [5] |
| Focal Molography | Coherent diffraction from a nano-patterned ligand surface | Intrinsic referencing minimizes non-specific binding; works in serum [6] | KD measurement in 50% bovine serum within 1.8-fold of buffer value [6] |

Experimental Protocols for Demonstrating Specificity

Standard Addition for Compensating Matrix Effects in High-Dimensional Data

Matrix effects can severely impact the accuracy of quantitative analysis, particularly in techniques like spectroscopy. The standard addition method is a classical approach to compensate for these effects.

Protocol Overview:

- Pure Analyte Training: A training set of the pure analyte (without matrix) is measured at various concentrations to establish the unit response, ε(xj), at all measurement points (e.g., wavelengths) [8].

- Model Building: A predictive model, such as Principal Component Regression (PCR), is built based on this pure analyte training set [8].

- Sample Measurement: The signals of the sample in the complex matrix are measured.

- Standard Additions: Known quantities of the pure analyte are successively added to the sample, and the signals are measured after each addition [8].

- Linear Regression per Variable: For each measurement point (j), a linear regression of the signal versus the added concentration is performed. The intercept (βj) and slope (αj) are recorded [8].

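- Signal Correction: A corrected signal is calculated for each point j: fcorr(xj) = ε(xj) × (βj / αj). The ratio βj / αj is the standard-addition estimate of the original analyte concentration at point j, so multiplying it by the pure-analyte unit response gives the signal the analyte would produce without the matrix. This step compensates for the matrix-induced sensitivity change [8].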

- Prediction: The built PCR model is applied to the corrected signal, fcorr, to predict the analyte concentration in the original sample [8].

Performance: This algorithm has been shown to dramatically improve prediction accuracy, reducing Root Mean Square Error (RMSE) by factors of over 1000 in the presence of strong matrix effects, outperforming the direct application of chemometric models [8].

Experimental workflow for standard addition method
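The protocol steps above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the implementation from [8]: the spectra are synthetic, the matrix is assumed to scale the sensitivity at each wavelength by a fixed factor, and a simple least-squares projection onto the unit response stands in for the PCR model. All names and numbers are hypothetical.

```python
def fit_line(x, y):
    """Ordinary least-squares fit of y = intercept + slope * x."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def corrected_signal(unit_response, added_conc, signals):
    """Compute fcorr(xj) = eps(xj) * (beta_j / alpha_j) for every point j.

    signals[i][j] is the signal at point j after the i-th standard addition.
    """
    result = []
    for j, eps in enumerate(unit_response):
        column = [row[j] for row in signals]
        alpha, beta = fit_line(added_conc, column)  # slope, intercept
        result.append(eps * beta / alpha)
    return result


def predict_concentration(unit_response, spectrum):
    """Least-squares projection onto the unit response (stand-in for PCR)."""
    numerator = sum(e * s for e, s in zip(unit_response, spectrum))
    denominator = sum(e * e for e in unit_response)
    return numerator / denominator


# Synthetic example: three wavelengths, matrix distorts each sensitivity.
unit_response = [0.10, 0.25, 0.40]   # eps(xj) from pure-analyte training
matrix_factor = [0.5, 0.8, 1.2]      # unknown to the analyst in practice
true_conc = 2.0
added_conc = [0.0, 1.0, 2.0, 3.0]
signals = [
    [m * e * (true_conc + a) for m, e in zip(matrix_factor, unit_response)]
    for a in added_conc
]

direct_estimate = predict_concentration(unit_response, signals[0])
corrected_estimate = predict_concentration(
    unit_response, corrected_signal(unit_response, added_conc, signals)
)
print(f"Direct model on raw signal: {direct_estimate:.3f}")
print(f"After per-point correction: {corrected_estimate:.3f}")
```

In this noise-free toy case the corrected estimate recovers the true concentration, while the direct estimate is biased by the matrix factors. Real spectra would include noise and possibly additive background, which this sketch does not model.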

Specificity Evaluation for Pharmaceutical Analysis in Water

The following protocol, adapted from the development of a UHPLC-MS/MS method for trace pharmaceuticals, outlines a comprehensive approach to validate method specificity in a complex aqueous matrix [7].

Protocol Overview:

- Sample Preparation:

  - Water/Wastewater Samples: Collect and filter samples. Perform solid-phase extraction (SPE). A key "green" innovation can be the omission of the solvent evaporation step after SPE, reconstituting the eluent directly in the mobile phase [7].

  - Biological Fluids (e.g., Serum): Mix 125 µL serum with 375 µL acetonitrile to precipitate proteins. Centrifuge, collect supernatant, dry under nitrogen, and reconstitute in a compatible solvent [5].

- Chromatographic Separation:

- Mass Spectrometric Detection:

  - Technique: Tandem Mass Spectrometry (MS/MS).

  - Ionization: Electrospray Ionization (ESI), positive or negative mode.

  - Acquisition: Multiple Reaction Monitoring (MRM). For each analyte, one precursor ion and at least two characteristic product ions are monitored.

  - Optimization: Optimize collision energies for each transition [7] [4].

- Specificity Assessment:

  - Analyze representative blank samples from the matrix (e.g., drug-free water, serum, urine) to demonstrate the absence of interfering signals at the retention times of the target analytes and their monitored transitions [5] [7].

  - Confirm that the ratio of the two monitored transitions for each analyte in the sample falls within a pre-defined range (e.g., ±20-30%) of the average ratio observed in the standard solutions [3].
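The ion-ratio criterion in the specificity assessment can be expressed as a short check. The sketch below assumes peak areas for a quantifier and a qualifier transition and a relative tolerance of 20%; the function names, the areas, and the chosen tolerance are illustrative assumptions, and the applicable tolerance should come from the governing guideline.

```python
def ion_ratio(quantifier_area, qualifier_area):
    """Ratio of the qualifier transition to the quantifier transition."""
    return qualifier_area / quantifier_area


def ratio_within_tolerance(sample_ratio, reference_ratio, tolerance=0.20):
    """True if the sample ratio deviates from the reference by no more
    than the given relative tolerance (0.20 means +/-20%)."""
    return abs(sample_ratio - reference_ratio) <= tolerance * reference_ratio


# Hypothetical (quantifier, qualifier) peak areas from standard solutions.
standards = [(1000, 520), (2000, 1010), (4000, 1980)]
reference = sum(ion_ratio(q, c) for q, c in standards) / len(standards)

clean_sample = ion_ratio(1500, 690)        # close to the reference ratio
interfered_sample = ion_ratio(1500, 1050)  # qualifier inflated by co-elution

clean_ok = ratio_within_tolerance(clean_sample, reference)
interfered_ok = ratio_within_tolerance(interfered_sample, reference)
print(f"Reference ratio: {reference:.3f}")
print(f"Clean sample passes: {clean_ok}")
print(f"Interfered sample passes: {interfered_ok}")
```

A failing ratio does not identify the interferent; it only signals that at least one transition carries a contribution the standards do not show.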

The Scientist's Toolkit: Essential Reagents and Materials

Table 3: Key Research Reagent Solutions for Specificity Analysis

| Item | Function in Analysis |
|---|---|
| C18 Chromatography Column | The workhorse stationary phase for reversed-phase LC-MS, separating analytes based on hydrophobicity [5] [7]. |
| Ammonium Formate / Formic Acid | Common mobile phase additives that promote ionization in ESI-MS and help control peak shape [5] [7]. |
| Solid-Phase Extraction (SPE) Cartridges | For sample clean-up and pre-concentration of analytes from complex matrices like water or serum, reducing matrix interference [5] [7]. |
| LC-MS Grade Solvents (MeOH, ACN, H₂O) | High-purity solvents minimize chemical noise and background interference, crucial for achieving low limits of detection [5]. |
| Stable Isotope-Labeled Internal Standards | Correct for variability in sample preparation and ionization suppression/enhancement, improving quantitative accuracy [5]. |
| Reference Standards (Target Analytes) | Essential for method development, calibration, and verifying retention time and fragmentation patterns to confirm specificity [5] [4]. |

Specificity assurance workflow in LC-MS/MS

For researchers and drug development professionals, the validation of an analytical method is a critical step in ensuring the reliability of data supporting product quality and safety. Within this framework, selectivity is a fundamental parameter. It is defined as the ability of a method to differentiate and quantify the analyte of interest accurately and reliably in the presence of other components in the sample, such as impurities, degradation products, matrix components, or other active pharmaceuticals [9]. This concept is distinct from specificity, which is often considered an absolute term indicating that a method responds only to a single analyte. In practice, most chromatographic methods are selective, as they can measure and report responses for multiple analytes independently, without interference [9]. Demonstrating selectivity is essential for generating credible pharmacokinetic, toxicokinetic, and stability data, as a lack of selectivity can lead to inaccurate quantification, potentially compromising patient safety and drug efficacy.

Theoretical Foundations and Regulatory Definitions

The terms "specificity" and "selectivity" are often used interchangeably, but regulatory guidelines provide distinct definitions. According to the ICH Q2(R1) guideline, specificity is "the ability to assess unequivocally the analyte in the presence of components which may be expected to be present" [9]. This is often illustrated as the ability to identify a single correct key from a bunch of keys. In contrast, selectivity, a term used in other guidelines like the European guideline on bioanalytical method validation, requires the identification of all components in a mixture [9]. For chromatographic methods, this translates to achieving clear resolution between the peaks of different analytes and interferences.

The foundation of selectivity in separation techniques like HPLC and GC is rooted in the differential interactions of various compounds with the chromatographic system. As explained by Colin Poole, selectivity in chromatography is measured by the separation factor (α), which is the ratio of the retention factors of two adjacent peaks [10]. This separation arises from differences in the free energy change as analytes partition between the mobile and stationary phases, driven by intermolecular interactions such as dispersion, dipole-dipole, orientation, and hydrogen bonding [10]. The following diagram illustrates the core concepts and workflow for establishing method selectivity.

Diagram 1: Conceptual foundation and workflow for establishing method selectivity and specificity.
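The separation factor mentioned above follows directly from retention times. The sketch below assumes the standard definition of the retention factor, k = (tR - t0) / t0, where t0 is the column hold-up time; the retention times and function names are hypothetical.

```python
def retention_factor(retention_time, holdup_time):
    """k = (tR - t0) / t0, with t0 the hold-up (dead) time of the column."""
    return (retention_time - holdup_time) / holdup_time


def separation_factor(t_first, t_second, holdup_time):
    """alpha = k2 / k1 for two adjacent peaks, later-eluting peak on top."""
    k1 = retention_factor(t_first, holdup_time)
    k2 = retention_factor(t_second, holdup_time)
    return k2 / k1


# Hypothetical retention times in minutes.
alpha = separation_factor(t_first=4.0, t_second=4.6, holdup_time=1.0)
print(f"Separation factor: {alpha:.2f}")
```

A value of α equal to 1 means the two compounds are not separated by the system at all; values above 1 indicate some selectivity, although baseline resolution also depends on column efficiency and retention.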

Experimental Protocols for Demonstrating Selectivity

Case Study: LC-MS/MS Method for TT-478

A recent clinical trial for the novel therapeutic TT-478 provides a robust example of selectivity validation. The researchers developed and validated an LC-MS/MS method for quantifying TT-478 in human plasma. To demonstrate selectivity, the method's ability to unequivocally quantify the drug in the presence of its prodrug (TT-702) and potential matrix interferences from plasma was assessed. The protocol involved a simple protein precipitation extraction with acetonitrile [11]. The validation confirmed that the assay was sensitive and selective for TT-478, with the prodrug rapidly and completely hydrolyzing to the active moiety post-administration, thus not interfering with quantification. The method showed excellent precision (coefficient of variation < 12%) and accuracy (96-107%) across the analytical range of 75-25,000 ng/mL, proving its suitability for a first-in-human pharmacokinetic study [11].
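Precision and accuracy figures of the kind reported for this assay are typically derived from replicate quality-control samples. The sketch below assumes precision is expressed as the coefficient of variation and accuracy as the mean recovery against the nominal concentration; the replicate values are invented for illustration and are not data from [11].

```python
from statistics import mean, stdev


def coefficient_of_variation(values):
    """Precision as CV% = sample standard deviation / mean * 100."""
    return stdev(values) / mean(values) * 100


def accuracy_percent(values, nominal):
    """Accuracy as mean measured concentration relative to nominal, in %."""
    return mean(values) / nominal * 100


# Hypothetical replicates (ng/mL) at a nominal concentration of 75 ng/mL.
replicates = [72.0, 78.0, 75.0, 80.0, 70.0]

cv = coefficient_of_variation(replicates)
accuracy = accuracy_percent(replicates, nominal=75.0)
print(f"CV: {cv:.1f}%  Accuracy: {accuracy:.1f}%")
```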

Case Study: GC-ECNI-MS for Complex Mixtures

The quantification of Short-Chain Chlorinated Paraffins (SCCPs) presents a significant selectivity challenge due to their complex nature as mixtures of numerous congeners. A novel approach using Gas Chromatography with Electron Capture Negative Ionization Mass Spectrometry (GC-ECNI-MS) was developed to address the interference from medium-chain chlorinated paraffins (MCCPs). Traditional linear quantification methods were inaccurate due to the influence of chlorine content on the response. The new protocol involved creating a nonlinear surface fitting quantitative method that simultaneously accounts for the two independent variables of concentration and chlorine content [12]. This three-dimensional calibration model greatly improved the accuracy of SCCPs quantification in complex matrices like footwear materials, overcoming the interference from structurally similar MCCPs [12].

Assessment of Matrix Effects

A critical part of validating selectivity in LC-MS is assessing matrix effects—the suppression or enhancement of analyte ionization caused by co-eluting compounds from the sample matrix. A practical protocol for this involves:

- Post-extraction Addition: Spiking the analyte into a blank, extracted matrix sample and comparing its response to the same amount of analyte in a pure solvent [13]. A difference in response indicates a matrix effect.

- Standard Addition Method: This technique can compensate for matrix effects without requiring a blank matrix. It involves adding known increments of the analyte to the sample and extrapolating to find the original concentration [13].

- Using Stable Isotope-Labeled Internal Standards (SIL-IS): This is considered the gold standard for correcting matrix effects, as the SIL-IS experiences nearly identical ionization suppression/enhancement as the analyte, effectively normalizing the signal [13].

The diagram below outlines the primary workflow for detecting and mitigating matrix effects in LC-MS analysis.

Diagram 2: Strategies for the detection and elimination of matrix effects in quantitative LC-MS analysis.
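The post-extraction addition comparison and the internal-standard correction can be illustrated with a small calculation. This sketch assumes the matrix factor is the ratio of the peak area in spiked blank extract to the peak area in neat solvent, and that the SIL-IS is evaluated the same way; the peak areas and function names are hypothetical.

```python
def matrix_factor(area_in_matrix, area_in_solvent):
    """Ratio of response in post-extraction spiked matrix to neat solvent.
    Below 1 suggests ion suppression, above 1 suggests enhancement."""
    return area_in_matrix / area_in_solvent


def is_normalized_matrix_factor(analyte_mf, internal_standard_mf):
    """Analyte matrix factor divided by that of the internal standard."""
    return analyte_mf / internal_standard_mf


# Hypothetical peak areas at the same spiked amount.
analyte_mf = matrix_factor(area_in_matrix=7000, area_in_solvent=10000)
sil_is_mf = matrix_factor(area_in_matrix=7200, area_in_solvent=10000)
normalized = is_normalized_matrix_factor(analyte_mf, sil_is_mf)

print(f"Analyte matrix factor: {analyte_mf:.2f}")
print(f"IS-normalized matrix factor: {normalized:.3f}")
```

In this invented case the analyte signal is suppressed by about 30%, but because the labeled standard is suppressed almost equally, the normalized factor stays close to 1, which is the behavior the SIL-IS approach relies on.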

Comparison of Analytical Techniques for Selectivity

The choice of analytical technique and its operational parameters profoundly impacts the ability to achieve the necessary selectivity for a given application. The table below summarizes key characteristics of different analytical approaches concerning selectivity.

Table 1: Comparison of Analytical Technique Selectivity and Application Context

| Analytical Technique | Key Selectivity Mechanism | Typical Application Context | Advantages for Selectivity | Limitations/Challenges |
|---|---|---|---|---|
| HPLC-UV [14] | Chromatographic retention time and UV spectrum | Quantification of drugs in pharmaceutical formulations (e.g., Meropenem) | Robust, reliable, simpler instrumentation; suitable for routine analysis | Limited for unresolved peaks; less specific for co-eluting compounds |
| LC-MS/MS [11] [7] | Retention time + molecular mass + specific fragmentation (MRM) | Bioanalysis (e.g., TT-478 in plasma), trace environmental contaminants | High specificity and sensitivity; unambiguous identification via MRM | Matrix effects can suppress/enhance ionization [15] [13] |
| GC-ECNI-MS [12] | Retention time + selective ion formation in ECNI mode | Complex mixtures (e.g., Short-Chain Chlorinated Paraffins) | High sensitivity for halogenated compounds; provides homologue patterns | Complex calibration; interference from similar compound classes (e.g., MCCPs) |
| GC×GC–MS [12] | Two independent separation mechanisms + MS | Highly complex mixtures | Superior separation power; reduces interferences | High cost; requires skilled operation; complex data handling |

The Scientist's Toolkit: Essential Reagents and Materials

The following table lists key reagents, materials, and instruments crucial for developing and validating selective analytical methods, as featured in the cited research.

Table 2: Key Research Reagent Solutions for Selective Bioanalytical Methods

| Item | Function in Selectivity/Validation | Example from Research |
|---|---|---|
| Stable Isotope-Labeled Internal Standard (SIL-IS) | Corrects for analyte loss during preparation and matrix effects during ionization; essential for high-quality LC-MS/MS quantification [13]. | Creatinine-d3 used in LC-MS/MS creatinine assay [13]. |
| Chromatography Columns (C18) | Provides the primary separation mechanism based on hydrophobic interactions; column chemistry is central to achieving resolution from interferences. | Kinetex C18, Hyperclone C18 used in Meropenem HPLC method [14]. |
| Sample Preparation Materials (SPE, Filters) | Removes interfering matrix components (proteins, phospholipids) prior to analysis, reducing matrix effects and column fouling. | Solid-Phase Extraction (SPE) used in green UHPLC-MS/MS method; 0.22 μm PTFE filters for mobile phase [7] [14]. |
| Mass Spectrometry & Detectors | Provides a second dimension of selectivity based on mass-to-charge ratio (m/z) and fragmentation patterns, crucial for confirming analyte identity. | API 3000 tandem mass spectrometer; GC-ECNI-MS for SCCPs [13] [12]. |
| Mobile Phase Modifiers | Improve peak shape and separation; additives can compete with analytes for adsorption sites, fine-tuning selectivity [16]. | Formic acid, ammonium acetate, triethylamine used in various LC methods [13] [14]. |

Selectivity is not merely a box-ticking exercise in method validation but is the cornerstone of generating reliable and meaningful analytical data. As demonstrated by the case studies, proving that a method can accurately quantify an analyte amidst a backdrop of similar compounds and complex matrix interferences requires a strategic combination of sophisticated instrumentation, well-designed experiments, and intelligent calibration techniques. Whether through advanced chromatographic separation, the power of mass spectrometry, or mathematical modeling to account for complex variables, a demonstrably selective method is non-negotiable for confident decision-making in drug development, environmental monitoring, and regulatory compliance.

The Critical Role in Method Validation for Regulatory Compliance (ICH Q2(R2), USP, EP)

In the realm of analytical chemistry, particularly for pharmaceutical analysis, the concepts of specificity and selectivity are foundational for ensuring the accuracy, reliability, and regulatory compliance of any method. Within the framework of guidelines like ICH Q2(R2), USP, and EP, validating these parameters is not merely a recommendation but a mandatory requirement for the approval of drug substances and products. The terms, while often used interchangeably in casual discourse, hold distinct meanings and implications for method development. Specificity refers to the ability of a method to assess unequivocally the analyte in the presence of components that may be expected to be present, such as impurities, degradants, or excipients [17]. It is the gold standard for identity tests. Selectivity, on the other hand, describes the ability of the method to distinguish and measure the analyte in a mixture containing other structurally similar compounds, such as isomers or analogs, without overlapping signals [17]. In essence, specificity can be considered the ultimate expression of selectivity—a fully selective method is specific. The validation of these parameters is critical for avoiding false results, ensuring product safety, and meeting the stringent requirements of regulatory agencies like the FDA and EMA [18] [17].

The recent adoption of the updated ICH Q2(R2) guideline further emphasizes a life-cycle approach to analytical procedures, integrating method development data into the validation process and encouraging enhanced risk management [19]. This evolution underscores the need for scientists not to treat validation as a checkbox exercise but to understand the capabilities and limitations of their methods. For researchers and drug development professionals, mastering specificity and selectivity is paramount for developing robust control strategies, whether for assay, purity, impurity profiling, or identity testing. This guide will compare these critical attributes, provide experimental data from spectroscopic applications, and detail the protocols necessary for successful validation.

Regulatory Definitions and Comparative Analysis

Conceptual Distinctions as per ICH Guidelines

According to ICH guidelines, the distinction between specificity and selectivity is a matter of scope and application. The following table summarizes the key differences between these two validation parameters.

Table 1: Key Differences Between Specificity and Selectivity in Analytical Method Validation

| Parameter | Specificity | Selectivity |
|---|---|---|
| Core Definition | The ability to unequivocally assess the analyte in the presence of other components [17]. | The ability to distinguish and measure the analyte among structurally similar substances [17]. |
| Primary Focus | Ensures no interference from impurities, degradants, or excipients [17]. | Prevents signal overlap from similar compounds (e.g., isomers, analogs) [17]. |
| Analogy | "Finding the right person in a crowd without being distracted by others." | "Distinguishing between identical twins in a group." |
| Common Applications | Identity tests, assay of active ingredients [17]. | Quantification of analytes in complex mixtures, impurity testing. |

Practical Implications for Spectroscopic Methods

For spectroscopic techniques like Raman spectroscopy, demonstrating specificity or selectivity is achieved by proving that the analytical signal (e.g., a specific Raman peak) is unique to the analyte of interest or can be deconvoluted from a complex mixture. For instance, a method using Raman spectroscopy to identify an active pharmaceutical ingredient (API) in a final tablet product must be specific: the spectrum of the API must be identifiable without interference from the signals of fillers, binders, or lubricants. If the method is instead used to quantify two isomers with overlapping spectra in a drug mixture, it must be selective enough to resolve their individual spectral signatures, perhaps through chemometric analysis [20] [17]. The regulatory expectation is that the method is "fit-for-purpose," and the choice of validating for specificity or selectivity depends on the intended use of the procedure as defined in the Analytical Target Profile (ATP) [18] [19].

Experimental Protocols for Demonstrating Specificity and Selectivity

Generalized Workflow for Method Validation

A standardized approach is crucial for the objective demonstration of specificity and selectivity. The following diagram outlines a general workflow for this validation process.

Case Study: Specificity in Raman Spectroscopy for Heavy Metal Stress Detection

A recent study investigating heavy metal (HM) uptake in rice provides a compelling example of validating specificity using Raman spectroscopy [20]. This case is directly relevant to demonstrating how a method can distinguish between different stressors acting on the same biological system.

1. Experimental Design and Reagents: The experiment employed a dose-response design. Rice plants were cultivated hydroponically and exposed to varying concentrations of three different heavy metals: arsenic (As), cadmium (Cd), and lead (Pb), with a control group for comparison [20]. The key research reagents and their functions are listed below.

Table 2: Research Reagent Solutions for Raman Spectroscopy Experiment

| Reagent / Material | Function in the Experiment |
|---|---|
| Yoshida Nutrient Solution | Standardized growth medium to ensure consistent plant development and isolate the effect of heavy metals [20]. |
| Arsenic, Cadmium, Lead Solutions | Prepared at specific concentrations to induce distinct, dose-dependent biochemical stress responses in the rice plants [20]. |
| Hand-held Raman Spectrophotometer (830 nm) | Instrument to collect non-destructive spectral data from rice leaves, monitoring biochemical changes [20]. |
| ICP-MS (Inductively Coupled Plasma Mass Spectrometry) | Gold standard method used to quantitatively validate the actual concentration of heavy metals in the plant tissue [20]. |

2. Detailed Methodology:

- Sample Preparation: Rice seeds were germinated and grown for two weeks in a controlled environment chamber before the introduction of HM treatments. The HMs were administered concurrently with the Yoshida nutrient solution, which was replaced every three days [20].

- Spectral Acquisition: Raman spectra were collected directly from rice leaves using an Agilent Resolve hand-held Raman spectrophotometer with an 830 nm laser. Acquisition time was 1 second at a laser power of 495 mW. A total of 24 spectra were acquired for each treatment group weekly [20].

- Data Analysis: The collected spectra were baseline-corrected and normalized. The data were then subjected to chemometric analysis, including Analysis of Variance (ANOVA) and Partial Least Squares Discriminant Analysis (PLS-DA), to identify statistically significant spectral changes attributable to each heavy metal [20].
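The preprocessing step can be illustrated with a deliberately simple sketch. The study does not state which baseline or normalization algorithm was used, so the code below assumes a straight-line baseline drawn between the first and last points and normalization to the maximum intensity; both choices, the function names, and the toy spectrum are assumptions made for illustration only.

```python
def subtract_linear_baseline(intensities):
    """Subtract a straight line joining the first and last data points.
    A crude stand-in for polynomial or asymmetric least-squares baselines."""
    n = len(intensities)
    first, last = intensities[0], intensities[-1]
    return [
        y - (first + (last - first) * i / (n - 1))
        for i, y in enumerate(intensities)
    ]


def normalize_to_max(intensities):
    """Scale the spectrum so that its most intense point equals 1."""
    peak = max(intensities)
    return [y / peak for y in intensities]


# Toy spectrum: one band sitting on a sloping fluorescence background.
raw = [10, 12, 30, 16, 18]
processed = normalize_to_max(subtract_linear_baseline(raw))
print(processed)
```

Whatever algorithm is chosen, applying the same preprocessing to every spectrum before ANOVA or PLS-DA keeps differences between treatment groups from being driven by background or intensity drift.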

3. Data Interpretation and Specificity Demonstration: The analysis revealed that each heavy metal induced specific, dose-dependent changes in the Raman peaks associated with different biomolecules. For example, arsenic stress led to changes in carotenoid and phenylpropanoid abundances. The PLS-DA machine learning algorithm could interpret the full Raman spectrum to diagnose the specific type of HM toxicity with an average accuracy of 84.5% after only one week of stress [20]. This high degree of accuracy in classifying the stressor demonstrates the specificity of the Raman spectroscopic method. It proved capable of not just detecting general stress, but of identifying the exact causative agent (As, Cd, or Pb) based on the unique biochemical "fingerprint" each one produced.

Comparative Performance Data of Analytical Techniques

The following table summarizes quantitative data from the featured Raman spectroscopy study and contrasts it with a traditional technique, illustrating the performance metrics relevant to validation.

Table 3: Performance Comparison for Heavy Metal Detection in Plant Tissue

| Analytical Technique | Key Performance Metrics | Result / Value | Inference on Specificity/Selectivity |
|---|---|---|---|
| Raman Spectroscopy | Diagnostic Accuracy (PLS-DA model) | 84.5% accuracy in classifying HM type [20]. | High specificity: Method distinguishes between different HM stressors based on unique biochemical responses. |
| Raman Spectroscopy | Detection Sensitivity | Detected HM stress at levels aligned with environmental contamination [20]. | Selective enough to detect relevant, low-level stressors. |
| ICP-MS | Detection Limit & Quantification | "Super low limit of detection"; considered the gold standard [20]. | Highly specific and selective for individual metal atoms via mass-to-charge ratio. |
| ICP-MS | Sample Throughput & Destructiveness | Destructive; labor-intensive; requires sample digestion [20]. | N/A (Inherent to technique, not a performance attribute) |

The rigorous validation of specificity and selectivity is not an academic formality but a critical component of ensuring data integrity and product quality in pharmaceutical development and beyond. As demonstrated by the experimental case study, techniques like Raman spectroscopy, when combined with robust chemometrics, can achieve a high degree of specificity, allowing researchers to distinguish between subtly different physiological states or contaminants. The ICH Q2(R2) guideline provides the structured framework for this validation, emphasizing a risk-based, life-cycle approach. For scientists and regulators, a clear understanding and demonstration of these parameters provide the confidence that an analytical procedure is truly "fit-for-purpose," delivering reliable results that protect public health and ensure regulatory compliance.

Consequences of Poor Specificity and Selectivity on Product Quality and Consumer Safety

In the rigorous fields of pharmaceutical development, food safety, and environmental monitoring, the precision of analytical methods is paramount. The specificity and selectivity of an analytical procedure are foundational performance characteristics, defining its ability to accurately measure the analyte of interest in the presence of other components that may be expected to be present [21]. A lack of these properties can directly lead to severe consequences, including the release of substandard or hazardous products, therapeutic failures, and significant risks to consumer health. This guide objectively compares the performance of various spectroscopic techniques against traditional chromatographic methods, framing the discussion within the critical context of analytical method validation. Supported by experimental data, we illustrate how the choice of technology impacts the accuracy and reliability of results, with a direct bearing on product quality and public safety.

Performance Comparison of Analytical Techniques

The capability of an analytical method to correctly identify and quantify a target substance is the first line of defense against quality failures. The following table summarizes key performance metrics from comparative studies, highlighting the real-world implications of methodological choice.

Table 1: Comparative Performance of Analytical Techniques in Various Applications

| Analytical Technique | Application Context | Reported Sensitivity | Reported Specificity | Consequence of Poor Performance |

|---|---|---|---|---|

| Handheld NIR Spectrometer [22] | Screening of substandard/falsified (SF) medicines (e.g., analgesics, antibiotics) | 11% (All medicines), 37% (Analgesics) | 74% (All medicines), 47% (Analgesics) | High false-negative rate; roughly 89% of SF medicines overall (63% of SF analgesics) pass undetected and can reach patients. |

| Raman Spectroscopy (RS) [20] | Detection of heavy metal stress (As, Cd, Pb) in rice crops | Machine learning algorithm achieved 84.5% accuracy in diagnosing specific heavy metal toxicity. | Demonstrated specificity to distinct heavy metal-induced biochemical changes. | Inability to distinguish between toxic metals delays intervention, allowing contaminated food into the supply chain. |

| LC-HR-MS3 [5] | Screening toxic natural products (e.g., alkaloids) in serum and urine | Improved identification for ~4-8% of analytes at lower concentrations vs. MS2. | Provided deeper structural information, enhancing confidence for specific compounds. | Failure to identify toxic compounds leads to misdiagnosis and improper medical treatment. |

| UV-Spectrophotometry [23] | Quantification of Terbinafine HCl in pharmaceutical formulation | LOD: 0.42 μg/mL, LOQ: 1.30 μg/mL (in water). | Specific for the drug in the tested formulation; limited inherent ability to distinguish the analyte from interferents that absorb at the same wavelength. | Overestimation of API content if interferents absorb at the analytical wavelength, risking release of sub-potent product. |

The data reveals a stark contrast between technologies. The study on NIR spectrometers for drug screening demonstrates a critical public safety risk: a device with 11% sensitivity would fail to detect 89% of poor-quality medicines, allowing them to reach consumers [22]. In contrast, Raman spectroscopy, when coupled with machine learning, shows high specificity in distinguishing between different heavy metals in crops, a crucial capability for identifying the exact source of contamination [20]. Furthermore, advanced mass spectrometry techniques like LC-HR-MS3 can provide a marginal but critical improvement in sensitivity for specific toxic compounds, which can be the difference between correct identification and a dangerous false negative [5].
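To make the arithmetic behind these figures explicit, the following Python sketch computes sensitivity and specificity from confusion-matrix counts. The counts are hypothetical, chosen only to reproduce the reported 11% and 74% values; they are not the study's raw data, and the function name `screening_metrics` is illustrative.

```python
def screening_metrics(tp, fn, tn, fp):
    """Return (sensitivity, specificity) from confusion-matrix counts.

    A 'positive' is a sample the reference method (e.g., HPLC) classifies
    as substandard or falsified.
    """
    sensitivity = tp / (tp + fn)   # true positive rate
    specificity = tn / (tn + fp)   # true negative rate
    return sensitivity, specificity


# Hypothetical counts (assumption): 100 truly poor-quality and 100 good samples.
sens, spec = screening_metrics(tp=11, fn=89, tn=74, fp=26)
missed_fraction = 1.0 - sens       # share of poor-quality samples not flagged

print(f"sensitivity={sens:.2f} specificity={spec:.2f} missed={missed_fraction:.2f}")
```

The missed fraction is simply one minus the sensitivity, which is why an 11% sensitivity implies that about 89% of poor-quality samples go unflagged.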

Experimental Protocols and Methodologies

The reliability of the data presented in Table 1 is underpinned by rigorous experimental designs. Below are the detailed methodologies from key cited studies.

Raman Spectroscopy for Heavy Metal Stress in Rice [20]

- Objective: To investigate the sensitivity and specificity of Raman spectroscopy (RS) for diagnosing arsenic, cadmium, and lead uptake in rice.

- Sample Preparation: Rice was cultivated hydroponically. After 2 weeks of growth, heavy metal (HM) treatments were introduced at varying concentrations using a dose-response design.

- Instrumentation & Data Acquisition: Raman spectra were collected weekly from rice leaves using a handheld Raman spectrophotometer (830 nm laser, 495 mW power, 1s acquisition time). A total of 24 spectra were acquired per plant group.

- Reference Analysis: Inductively Coupled Plasma Mass Spectrometry (ICP-MS) was performed on harvested tissue to quantify the actual heavy metal concentration.

- Data Analysis: Raman peaks associated with carotenoids and phenylpropanoids were analyzed. A machine-learning algorithm (Partial Least Squares Discriminant Analysis, PLS-DA) was built to interpret the spectra and diagnose the specific type of HM toxicity.

Handheld NIR Screening of Substandard and Falsified Medicines [22]

- Objective: To compare the sensitivity and specificity of a handheld NIR spectrometer against HPLC for detecting substandard and falsified (SF) medicines in Nigeria.

- Sample Collection: 246 drug samples (analgesics, antimalarials, antibiotics, antihypertensives) were purchased from retail pharmacies across six geopolitical regions.

- Testing with NIR: The handheld NIR spectrometer (750-1500 nm range) analyzed each pill's spectral signature, comparing it to a cloud-based AI reference library. Results were reported as a match or non-match in about 20 seconds.

- Reference Analysis (HPLC): The same drug samples were analyzed using an Agilent 1100 HPLC system with a validated method for each active pharmaceutical ingredient (API). System suitability was confirmed prior to each analysis.

- Data Comparison: The results from NIR and HPLC were compared to calculate sensitivity (true positive rate) and specificity (true negative rate).

LC-HR-MS3 Screening of Toxic Natural Products [5]

- Objective: To evaluate the potential of LC-HR-MS3 in enhancing the identification of toxic natural products compared to standard MS2.

- Library Construction: A spectral library of 85 natural product standards was constructed using a quadrupole-linear-ion-trap-Orbitrap mass spectrometer, containing both MS2 and MS3 mass spectra.

- Sample Preparation: The standards were spiked into drug-free human serum and urine to produce contrived clinical samples at a series of concentrations.

- Instrumentation & Data Acquisition: Analysis was performed using a UHPLC system coupled with an Orbitrap mass spectrometer. The data-dependent acquisition (DDA) method included a full scan, MS2 fragmentation of the top 10 precursors, and MS3 fragmentation of the top 3 MS2 product ions.

- Data Analysis: Results were searched against the spectral library in two ways: using only MS2 spectra, and using a combined MS2-MS3 tree. The match scores and identification rates at different concentrations were compared.

Diagram: Experimental Workflow for Method Validation and Consequence Analysis

Figure 1: This workflow illustrates the critical role of specificity/selectivity validation. Failure at the assessment stage leads directly to the deployment of an unreliable method and consequent risks.

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table details key materials and reagents essential for conducting experiments to validate the specificity and selectivity of analytical methods, particularly in spectroscopic applications.

Table 2: Key Research Reagent Solutions for Spectroscopic Method Validation

| Item | Function in Validation | Application Example |

|---|---|---|

| Certified Reference Materials (CRMs) | Provides an accepted reference value with known uncertainty to establish method accuracy and calibration [20] [24]. | Used in ICP-MS for quantifying heavy metals in plant tissue [20] and in XRF for calibrating alloy analysis [24]. |

| Drug-Free Biological Matrices | Used to prepare spiked samples for assessing specificity, accuracy, and detection limits in complex backgrounds like serum or urine [5]. | Essential for validating LC-HR-MS3 methods for toxic natural products in clinical toxicology [5]. |

| Authentic Drug Standards | Serves as the gold-standard reference for building spectral libraries and verifying the identity and purity of the target analyte [22]. | Required for training AI-powered NIR spectrometers and for HPLC method development to screen for SF medicines [22]. |

| Heavy Metal Standards | Used in dose-response studies to create calibration curves and evaluate the method's sensitivity to specific contaminants at environmentally relevant levels [20]. | Critical for correlating Raman spectral changes with ICP-MS data for heavy metal uptake in crops [20]. |

| Chromatographic Solvents & Mobile Phase Additives | High-purity solvents (MeOH, ACN) and additives (e.g., ammonium formate, formic acid) are vital for achieving optimal separation, peak shape, and ionization efficiency in LC-MS [5]. | Used in the LC-HR-MS3 method to separate and detect 85 natural products in a single run [5]. |

The consequences of poor specificity and selectivity in analytical methods are not merely statistical deviations but represent a direct threat to product integrity and consumer safety. Empirical evidence shows that while advanced and portable spectroscopic techniques like Raman and NIR offer tremendous benefits in speed and non-destructiveness, their performance must be rigorously validated against reference methods like HPLC and ICP-MS for each specific application. A method that fails to distinguish an analyte from an interferent, or one that lacks the sensitivity to detect a harmful contaminant at a regulated level, can erode trust in the pharmaceutical supply chain, compromise food safety, and ultimately endanger lives. Therefore, a thorough, well-documented validation process that explicitly demonstrates specificity and selectivity is an indispensable component of responsible research and development, ensuring that analytical results are a reliable foundation for quality and safety decisions.

In modern analytical laboratories, particularly in pharmaceutical and materials science research, the reliability of spectroscopic data is paramount. Method validation provides the foundation for scientific confidence, ensuring that analytical results are accurate, precise, and fit for their intended purpose [25]. This process is especially critical in regulated environments like drug development, where decisions affecting product quality and patient safety depend on analytical integrity [26]. Among the numerous validation parameters, three foundational requirements form the cornerstone of reliable spectroscopic measurements: wavelength accuracy, which verifies that the instrument measures at the correct spectral position; photometric linearity, which confirms that the instrument's response is proportional to analyte concentration; and effective spectral resolution, which determines the ability to distinguish between closely spaced spectral features [27] [24]. Together, these parameters underpin analytical specificity, the ability of a method to measure the analyte accurately in the presence of potential interferents [28] [26]. Without rigorous validation of these foundational requirements, the selectivity of an analytical method remains questionable, potentially compromising research conclusions, product quality assessments, and regulatory submissions.

Wavelength Accuracy: Principles and Experimental Assessment

Wavelength accuracy refers to the agreement between the measured wavelength value and its true or accepted reference value. This parameter is crucial for both qualitative identification and quantitative analysis, as peak misidentification or shifts in characteristic spectral features can lead to incorrect compound identification or concentration errors [29] [24].

Experimental Protocol for Wavelength Calibration

The most established method for verifying wavelength accuracy involves using reference materials with well-characterized, stable emission or absorption lines across the spectral range of interest.

- Light Source Preparation: Utilize calibration lamps containing elements with known atomic emission lines. Mercury-argon (Hg-Ar) lamps are particularly valuable as they provide numerous sharp, well-distributed lines spanning the ultraviolet to the near-infrared (for example, the lines between 360 and 600 nm) [29].

- Data Acquisition: Illuminate the spectrometer with the reference source and acquire spectral data. For integral field spectrographs (IFS) or similar instruments, this typically involves projecting the lamp spectrum through the entire optical system onto the detector [29].

- Spectral Peak Identification: Apply algorithms to identify the precise center of each characteristic peak in the detected spectrum. The 4FFT-LMG Gaussian fitting algorithm has demonstrated superior performance for this purpose compared to weighted centroid or simple polynomial fitting methods, achieving correlation coefficients >99.99% between pixel position and wavelength [29].

- Calibration Curve Construction: Establish a mathematical relationship (typically polynomial) between the known reference wavelengths and their corresponding measured positions on the detector. The residuals from this fit quantify the wavelength accuracy across the operational range [29].
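The steps above can be sketched in Python as follows. This is a minimal illustration under stated assumptions, not the 4FFT-LMG algorithm from the cited work: it swaps in a simple three-point Gaussian (log-parabola) interpolation for peak centering and a straight-line fit in place of a higher-order polynomial. The detector geometry (`TRUE_SLOPE`, `TRUE_OFFSET`, `SIGMA_PX`) is hypothetical; the three reference wavelengths are well-known mercury emission lines.

```python
import math


def find_peaks(y, threshold):
    """Indices of local maxima whose intensity exceeds the threshold."""
    return [i for i in range(1, len(y) - 1)
            if y[i] > threshold and y[i] >= y[i - 1] and y[i] > y[i + 1]]


def gaussian_center(y, i):
    """Sub-pixel peak center from the logs of the three points around index i.

    The log of a Gaussian is a parabola, so the parabola vertex through
    these three points recovers the center of a noise-free Gaussian peak.
    """
    lm, l0, lp = math.log(y[i - 1]), math.log(y[i]), math.log(y[i + 1])
    return i + 0.5 * (lm - lp) / (lm - 2.0 * l0 + lp)


def fit_line(x, y):
    """Ordinary least-squares line y = offset + slope * x."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    slope = (sum((a - mx) * (b - my) for a, b in zip(x, y))
             / sum((a - mx) ** 2 for a in x))
    return slope, my - slope * mx


REFERENCE_NM = [404.66, 435.83, 546.07]              # Hg emission lines (nm)
TRUE_SLOPE, TRUE_OFFSET, SIGMA_PX = 0.5, 400.0, 1.5  # hypothetical instrument

# Synthetic, noise-free detector frame: one Gaussian per reference line.
true_px = [(w - TRUE_OFFSET) / TRUE_SLOPE for w in REFERENCE_NM]
spectrum = [sum(math.exp(-0.5 * ((p - c) / SIGMA_PX) ** 2) for c in true_px)
            for p in range(300)]

# Peak identification, then the pixel-to-wavelength calibration fit.
centers = [gaussian_center(spectrum, i) for i in find_peaks(spectrum, 0.5)]
slope, offset = fit_line(centers, REFERENCE_NM)
residuals = [w - (offset + slope * c) for c, w in zip(centers, REFERENCE_NM)]

print(f"dispersion={slope:.4f} nm/px, max |residual|={max(map(abs, residuals)):.2e} nm")
```

On real data the residuals would reflect noise, line blending, and optical distortion, which is why a polynomial model and a more robust fitting routine are typically preferred.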

Performance Comparison of Wavelength Calibration Methods

Table 1: Comparison of wavelength calibration peak-finding algorithms

| Method | Principle | Reported Accuracy | Advantages | Limitations |

|---|---|---|---|---|

| Gaussian Fitting (4FFT-LMG) | Fits spectral peaks to Gaussian profile | 0.0067 nm | High precision, robust against noise | Computationally intensive |

| Weighted Centroid | Calculates intensity-weighted center of mass | Not specified | Simple computation, fast | Sensitive to background asymmetry |

| Polynomial Fitting | Fits peak region with polynomial function | Not specified | Moderate complexity | Less accurate for non-ideal peaks |

| Direct Peak Detection | Selects highest intensity point | Not specified | Extremely simple | Poor accuracy, noise-sensitive |

The experimental data demonstrates that proper wavelength calibration using reference lamps and advanced fitting algorithms can achieve exceptional accuracy down to 0.0067 nm, establishing a reliable foundation for subsequent analytical measurements [29].

Photometric Linearity: Validation Methodologies

Photometric linearity validates that the instrument's response (absorbance, transmittance, or reflectance) is directly proportional to analyte concentration throughout the specified measurement range. This relationship is fundamental to quantitative analysis, as deviations from linearity introduce concentration-dependent errors [27] [26].

Experimental Protocol for Linearity Assessment

- Reference Material Selection: Prepare a series of standard solutions at a minimum of five concentrations spanning the expected analytical range. Potassium dichromate solutions are well-established reference materials for ultraviolet verification, while neutral density filters (e.g., NIST SRM 930d) serve for transmittance validation in the visible region [27].

- Measurement Procedure: Measure each standard in triplicate across the operational wavelength range. For absorbance-based spectrophotometers, measure both sample (I) and reference (I₀) intensities, applying appropriate dark current corrections (D) using the equation [27]:

$$ A = -\log_{10}\left(\frac{I - D}{I_0 - D}\right) $$

- Data Analysis: Perform linear regression analysis of the measured response against concentration at each wavelength. Calculate the coefficient of determination (R²), y-intercept, slope, and residual sum of squares to quantify linearity [27] [30].

- Acceptance Criteria: According to method validation guidelines, an R² value ≥0.998 typically demonstrates acceptable linearity. Additionally, the response should be visually inspected for systematic deviations from the regression line [30].
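As a rough illustration of this protocol, the Python sketch below converts raw intensities to dark-corrected absorbance and then evaluates linearity by least-squares regression. The detector counts and concentrations are invented for the example (one reading per level rather than triplicates), and the helper names are illustrative rather than taken from any instrument software.

```python
import math


def absorbance(sample, reference, dark):
    """Dark-corrected absorbance: A = -log10((I - D) / (I0 - D))."""
    return -math.log10((sample - dark) / (reference - dark))


def linear_fit(x, y):
    """Return (slope, intercept, R^2) for an ordinary least-squares line."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((a - mx) ** 2 for a in x)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    slope = sxy / sxx
    intercept = my - slope * mx
    ss_res = sum((b - (intercept + slope * a)) ** 2 for a, b in zip(x, y))
    ss_tot = sum((b - my) ** 2 for b in y)
    return slope, intercept, 1.0 - ss_res / ss_tot


# Hypothetical data (assumption): five standards, raw detector counts.
conc_mg_per_l = [20, 60, 100, 140, 200]
sample_counts = [39819, 25309, 16153, 10377, 5450]
REFERENCE_COUNTS, DARK_COUNTS = 50000, 500

absorbances = [absorbance(i, REFERENCE_COUNTS, DARK_COUNTS) for i in sample_counts]
slope, intercept, r2 = linear_fit(conc_mg_per_l, absorbances)
passes = r2 >= 0.998   # acceptance threshold quoted in the text

print(f"slope={slope:.5f} AU per mg/L, intercept={intercept:.4f}, R2={r2:.5f}, pass={passes}")
```

A high R² alone can hide curvature, so plotting the residuals against concentration remains a useful complement to the numeric criterion.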

Performance Standards Across Spectral Regions

Table 2: Photometric reference materials and their applications

| Spectral Region | Reference Material | Concentration/Type | Key Wavelengths | Application |

|---|---|---|---|---|

| Ultraviolet (UV) | Potassium Dichromate | 20-200 mg/L | 235, 257, 313, 350 nm | Absorbance linearity verification |

| Visible (Vis) | NIST SRM 930d Filters | 3 neutral density filters | Across visible range | Transmittance accuracy |

| Near Infrared (NIR) | Fluorilon (PTFE) | R99 (~99% reflectance) | 780-2500 nm | Reflectance standard |

| Visible Reflectance | Russian Opal Glass | ~97% reflectance | 380-750 nm | Reflectance factor |

The photometric accuracy specification is typically expressed as ±0.002 absorbance units (AU) for the range 0.0-0.5 AU and ±0.004 AU for 0.5-1.0 AU, when verified against NIST-traceable standards [27]. Meeting this specification confirms that the instrument's photometric (absorbance) scale is accurate enough for reliable quantitative measurements.
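A tolerance check of this kind is straightforward to automate. The sketch below encodes the two tolerance bands quoted above; the certified and measured values are hypothetical, and the function names are illustrative.

```python
def photometric_tolerance(certified_abs):
    """Allowed deviation (AU) for a certified absorbance, per the bands in the text."""
    if 0.0 <= certified_abs <= 0.5:
        return 0.002
    if certified_abs <= 1.0:
        return 0.004
    raise ValueError("certified absorbance outside the 0.0-1.0 AU range covered here")


def within_spec(measured_abs, certified_abs):
    """True if the measured value lies within tolerance of the certified value."""
    return abs(measured_abs - certified_abs) <= photometric_tolerance(certified_abs)


# Hypothetical readings (assumption): (measured, certified) pairs in AU.
readings = [(0.3015, 0.300), (0.806, 0.800)]
results = [within_spec(m, c) for m, c in readings]
print(results)
```

Here the first reading deviates by 0.0015 AU and passes, while the second deviates by 0.006 AU and fails the ±0.004 AU band.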

Spectral Resolution and Selectivity Assessment

Spectral resolution defines a spectrometer's ability to distinguish between closely spaced spectral features, directly impacting method selectivity—the degree to which a method can quantify an analyte without interference from other components in the sample matrix [28].
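Instrumental bandwidth is commonly reported as the full width at half maximum (FWHM) of an isolated line. The following sketch estimates FWHM from sampled data by linearly interpolating the two half-maximum crossings. It assumes a single, baseline-subtracted peak with samples below half maximum on both sides; the synthetic Gaussian line (center 546.07 nm, σ = 1.0 nm) is illustrative only.

```python
import math


def fwhm(x, y):
    """Full width at half maximum of a single baseline-subtracted peak."""
    peak = max(y)
    half = peak / 2.0
    i_max = y.index(peak)

    # Walk left to the first sample at or below half maximum.
    i = i_max
    while i > 0 and y[i] > half:
        i -= 1
    left = x[i] + (half - y[i]) * (x[i + 1] - x[i]) / (y[i + 1] - y[i])

    # Walk right to the first sample at or below half maximum.
    j = i_max
    while j < len(y) - 1 and y[j] > half:
        j += 1
    right = x[j - 1] + (half - y[j - 1]) * (x[j] - x[j - 1]) / (y[j] - y[j - 1])

    return right - left


# Synthetic line sampled every 0.1 nm (assumption).
SIGMA_NM, CENTER_NM = 1.0, 546.07
wavelengths = [540.0 + 0.1 * k for k in range(121)]
intensities = [math.exp(-0.5 * ((w - CENTER_NM) / SIGMA_NM) ** 2) for w in wavelengths]

measured = fwhm(wavelengths, intensities)
expected = 2.0 * math.sqrt(2.0 * math.log(2.0)) * SIGMA_NM  # Gaussian FWHM
print(f"measured FWHM={measured:.3f} nm, theoretical={expected:.3f} nm")
```

Two features separated by less than roughly one FWHM will generally merge into a single band, which is the practical link between resolution and selectivity.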

Experimental Protocol for Resolution Verification

- Source Selection: For emission instruments, use low-pressure spectral lamps with narrow, well-defined atomic lines (e.g., mercury vapor lamps). For absorption instruments, utilize materials with characteristic fine spectral features [29] [24].

- Measurement and Analysis: Acquire the spectrum of the resolution standard and identify appropriate closely-spaced peaks. Measure the full width at half maximum (FWHM) of isolated peaks to determine instrumental bandwidth [24].

- Selectivity Assessment: For analytical methods, demonstrate selectivity by analyzing the target analyte in the presence of potentially interfering substances (e.g., sample matrix components, related compounds, or metabolites). The method should be able to quantify the analyte without significant interference (<20% deviation) [28] [26].

- Limit of Detection (LOD) Determination: The LOD, defined as the lowest analyte concentration detectable with 95% confidence, is determined from the background signal near the analyte peak using the formula [24] [30]:

$$ \mathrm{LOD} = \frac{3.3 \times \sigma}{S} $$

where σ is the standard deviation of the blank response and S is the calibration curve slope.
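As a worked illustration of this formula, the sketch below estimates σ from replicate blank readings and divides by a calibration slope. The blank values and the slope are hypothetical, and the use of the sample standard deviation (n − 1 denominator) is an assumption of this example.

```python
import statistics


def limit_of_detection(blank_responses, slope):
    """LOD = 3.3 * sigma / S, with sigma taken from replicate blank readings."""
    sigma = statistics.stdev(blank_responses)   # sample standard deviation
    return 3.3 * sigma / slope


# Hypothetical data (assumption): ten blank absorbance readings and a
# calibration slope expressed in AU per (ug/mL).
blanks = [0.0021, 0.0018, 0.0025, 0.0019, 0.0022,
          0.0020, 0.0023, 0.0017, 0.0024, 0.0021]
CAL_SLOPE = 0.005

lod = limit_of_detection(blanks, CAL_SLOPE)
print(f"LOD = {lod:.3f} ug/mL")
```

The result carries the concentration units of the calibration slope, so the slope and the blank responses must be expressed on the same response scale.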

Detection Capabilities in Complex Matrices

Research on silver-copper (Ag-Cu) alloys demonstrates how detection limits vary with analytical technique and sample matrix. In Ag₀.₇₅Cu₀.₂₅ alloys, Energy Dispersive XRF (ED-XRF) achieved detection limits of 0.039% for Ag and 0.029% for Cu, while Wavelength Dispersive XRF (WD-XRF), with superior spectral resolution, achieved even lower detection limits of 0.021% for Ag and 0.012% for Cu [24]. This highlights how enhanced spectral resolution directly improves sensitivity and selectivity in complex samples.

Integrated Validation Workflow

Validation Workflow Diagram

The interdependence of wavelength accuracy, photometric linearity, and spectral resolution creates a validation hierarchy where each parameter builds upon the previous one. As illustrated in the workflow diagram, these three requirements form the base upon which comprehensive method specificity is built [28] [26]. Without proper wavelength calibration, peak identification becomes unreliable, compromising selectivity. Without demonstrated photometric linearity, quantitative results lack proportionality, regardless of spectral resolution. And without sufficient resolution, overlapping or closely spaced spectral features cannot be distinguished, fundamentally limiting method specificity [28]. This integrated approach to validation ensures that analytical methods produce reliable data capable of withstanding scientific and regulatory scrutiny.

Essential Research Reagent Solutions

Table 3: Key reagents and materials for spectroscopic method validation

| Reagent/Material | Function in Validation | Application Scope |

|---|---|---|

| Mercury-Argon (Hg-Ar) Lamp | Wavelength calibration standard | Provides multiple sharp emission lines from UV to NIR |

| Potassium Dichromate (SRM 935a) | Photometric linearity verification | UV absorbance standard at specific concentrations |

| NIST SRM 930d Filters | Transmittance accuracy verification | Neutral density filters for visible region calibration |

| Fluorilon (PTFE) | Reflectance standard | ~99% reflectance reference for NIR measurements |

| White Dwarf Standard Stars | Absolute flux calibration | Astronomical spectrometer photometric calibration |

| Linagliptin Primary Standard | Method development analyte | Pharmaceutical compound for specific method validation |

These reference materials, when properly utilized and traceable to national measurement institutes, form the metrological foundation for reliable spectroscopic measurements across diverse applications from pharmaceutical analysis to astronomical observations [29] [27] [30].

The validation of wavelength accuracy, photometric linearity, and spectral resolution represents a non-negotiable foundation for any analytical method claiming to produce reliable spectroscopic data. Experimental evidence demonstrates that through careful implementation of standardized protocols using appropriate reference materials, laboratories can achieve exceptional measurement certainty—with wavelength accuracy below 0.01 nm, photometric linearity exceeding R² values of 0.998, and detection limits capable of quantifying trace components in complex matrices [29] [24] [30]. These three parameters are deeply interconnected, with deficiencies in any one component potentially compromising the entire analytical method. As regulatory expectations continue to evolve and analytical challenges grow more complex, adherence to these foundational validation principles remains essential for generating data that withstands scientific scrutiny and supports critical decisions in research, development, and quality control.

A Step-by-Step Methodology for Specificity and Selectivity Validation

The International Council for Harmonisation (ICH) Q2(R2) guideline provides a foundational framework for the validation of analytical procedures for drug substances and products. This guideline details the validation of various analytical procedure characteristics, serving as a critical resource for establishing standardized acceptance criteria in the pharmaceutical industry [18]. A well-designed validation plan is paramount for demonstrating that an analytical method is suitable for its intended purpose, ensuring the reliability, accuracy, and consistency of data generated to support drug development and quality control. Within this framework, the comparison of methods experiment is a critical activity for assessing the systematic error, or bias, between a new (test) method and a comparative method, providing essential data on the method's trueness [31] [32].

Core Validation Parameters and Acceptance Criteria

The ICH Q2(R2) guideline outlines key analytical performance parameters that must be validated. The table below summarizes the primary validation characteristics and typical acceptance criteria for a quantitative impurity assay.

Table 1: Key Validation Parameters and Example Acceptance Criteria based on ICH Q2(R2)

| Validation Parameter | Objective | Typical Acceptance Criteria Example (for Impurity Assay) |

|---|---|---|

| Accuracy/Trueness | Closeness between measured value and accepted reference value [18]. | Recovery of 98–102% for drug substance; 95–105% for drug product (depending on concentration). |

| Precision | | |

| - Repeatability | Precision under same operating conditions over a short time [18]. | Relative Standard Deviation (RSD) ≤ 2.0% for drug substance assay. |

| - Intermediate Precision | Within-laboratory variations (different days, analysts, equipment) [18]. | RSD of results from intermediate precision study ≤ 3.0%. |

| Specificity/Selectivity | Ability to assess analyte unequivocally in the presence of potential interferents [18]. | Chromatographic method: Peak purity of analyte is unaffected by interferents (e.g., placebo, degradation products). |

| Detection Limit (LOD) | Lowest amount of analyte that can be detected [18]. | Signal-to-Noise ratio ≥ 3 (for instrumental methods). |

| Quantitation Limit (LOQ) | Lowest amount of analyte that can be quantified [18]. | Signal-to-Noise ratio ≥ 10; Accuracy and Precision at LOQ level meet pre-defined criteria. |

| Linearity | Ability to obtain results directly proportional to analyte concentration [18]. | Correlation coefficient (r) ≥ 0.998. |

| Range | Interval between upper and lower concentration of analyte for which suitable levels of precision, accuracy, and linearity are demonstrated [18]. | Typically from LOQ level to 120% of specification level for assay. |
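Two of the criteria in this table, percent recovery and relative standard deviation, reduce to simple calculations. The sketch below applies them to six hypothetical replicate results at a nominal value of 100.0; the data and the chosen limits (98-102% recovery, RSD ≤ 2.0%) are used purely as an example of the drug substance criteria listed above.

```python
import statistics


def percent_recovery(measured, nominal):
    """Recovery (%) of a measured value relative to the accepted reference value."""
    return 100.0 * measured / nominal


def percent_rsd(values):
    """Relative standard deviation (%) using the sample standard deviation."""
    return 100.0 * statistics.stdev(values) / statistics.mean(values)


# Hypothetical replicate results (assumption), same units as NOMINAL.
NOMINAL = 100.0
replicates = [99.2, 100.4, 99.8, 100.9, 99.5, 100.2]

mean_recovery = percent_recovery(statistics.mean(replicates), NOMINAL)
rsd = percent_rsd(replicates)

accuracy_ok = 98.0 <= mean_recovery <= 102.0
repeatability_ok = rsd <= 2.0
print(f"recovery={mean_recovery:.1f}% RSD={rsd:.2f}% "
      f"accuracy_ok={accuracy_ok} repeatability_ok={repeatability_ok}")
```

In practice the limits would come from the method's validation protocol rather than being hard-coded.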

Experimental Protocols for Key Validation Studies

Protocol for the Comparison of Methods Experiment

The comparison of methods experiment is a critical design for estimating the systematic error (bias) of a new analytical method against a reference or comparative method [31].

- Purpose: To estimate the inaccuracy or systematic error of the test method by comparing it with a comparative method using real patient specimens [31].

- Experimental Design:

  - Sample Number: A minimum of 40 different patient specimens should be tested, with 100-200 recommended to better assess specificity and matrix effects [31] [32].

  - Sample Selection: Specimens must cover the entire clinically meaningful measurement range and represent the spectrum of diseases expected in routine application. Quality and range of specimens are more critical than sheer quantity [31].

  - Replication: Analyze specimens in duplicate for both methods, ideally in different runs or at least in different order, to identify transcription errors or sample-specific interferences [31].

  - Timeframe: Conduct the experiment over a minimum of 5 days, with multiple runs to minimize systematic errors from a single run and mimic real-world conditions [31] [32].

  - Sample Stability: Analyze test and comparative method specimens within two hours of each other to prevent stability-related differences from being misattributed as analytical error [31].

Data Analysis and Statistical Evaluation

Appropriate statistical analysis is crucial for interpreting comparison data. Correlation analysis and t-tests are commonly misapplied and are not adequate for assessing method comparability [32].

- Graphical Data Inspection:

  - Scatter Plots: Plot test method results (y-axis) against comparative method results (x-axis) to visualize the relationship, data range, and identify outliers [32].

  - Difference Plots (Bland-Altman): Plot the difference between methods (y-axis) against the average of the two methods (x-axis). This helps describe the agreement between methods and reveals any concentration-dependent bias [31] [32].

- Statistical Calculations:

  - Linear Regression: For data covering a wide analytical range, use linear regression to obtain the slope (estimates proportional error), y-intercept (estimates constant error), and standard deviation about the regression line ($s_{y/x}$). The systematic error (SE) at a critical medical decision concentration ($X_c$) is calculated as $SE = Y_c - X_c$, where $Y_c = a + bX_c$, with $a$ the y-intercept and $b$ the slope [31].

  - Bias Calculation: For a narrow analytical range, calculate the average difference (bias) between methods and the standard deviation of the differences [31].

  - Avoiding Inadequate Statistics: The correlation coefficient (r) only measures the strength of a linear relationship, not the agreement between methods. Two methods can be perfectly correlated yet have a large, unacceptable bias. t-tests can fail to detect clinically meaningful differences with small sample sizes or can detect statistically significant but clinically irrelevant differences with large sample sizes [32].
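The statistical calculations above can be sketched in a few lines of Python. The paired results below are hypothetical and far fewer than the 40 or more specimens recommended earlier; they are constructed so that the test method reads 5% high with a constant offset of 0.2, which makes the outputs easy to verify by hand. The function name and dictionary keys are illustrative.

```python
import math
import statistics


def compare_methods(x, y, x_c):
    """Regression and difference statistics for a comparison of methods.

    x: comparative method results, y: test method results,
    x_c: medical decision concentration at which systematic error is estimated.
    """
    n = len(x)
    mx, my = statistics.mean(x), statistics.mean(y)
    slope = (sum((a - mx) * (b - my) for a, b in zip(x, y))
             / sum((a - mx) ** 2 for a in x))
    intercept = my - slope * mx
    ss_res = sum((b - (intercept + slope * a)) ** 2 for a, b in zip(x, y))
    s_yx = math.sqrt(ss_res / (n - 2))          # SD about the regression line
    systematic_error = (intercept + slope * x_c) - x_c
    diffs = [b - a for a, b in zip(x, y)]       # Bland-Altman differences
    return {
        "slope": slope,
        "intercept": intercept,
        "s_yx": s_yx,
        "se_at_xc": systematic_error,
        "bias": statistics.mean(diffs),
        "sd_diff": statistics.stdev(diffs),
    }


# Hypothetical paired results (assumption): y = 0.2 + 1.05 * x exactly.
comparative = [2.0, 4.0, 6.0, 8.0, 10.0]
test_method = [2.3, 4.4, 6.5, 8.6, 10.7]

stats = compare_methods(comparative, test_method, x_c=5.0)
print({k: round(v, 3) for k, v in stats.items()})
```

Note that these two methods are perfectly correlated (r = 1) yet differ by 0.45 units at the decision level, which illustrates why the correlation coefficient alone says nothing about agreement.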

Workflow and Decision Pathways for Method Validation

The following diagram illustrates the logical workflow for designing and executing a method validation plan, culminating in the comparison of methods study.

Figure 1: Method validation design and execution workflow.

Statistical Analysis Decision Pathway

After data collection from a comparison of methods experiment, selecting the correct statistical approach is critical for a valid estimate of systematic error.

Figure 2: Decision pathway for statistical analysis of method comparison data.

The Scientist's Toolkit: Essential Reagents and Materials

A successful validation study requires high-quality materials and reagents. The following table details key solutions and their critical functions in conducting a robust comparison of methods experiment.

Table 2: Essential Research Reagent Solutions for Method Validation Studies

| Item | Function/Justification |

|---|---|

| Certified Reference Standards | Provides a traceable and well-characterized analyte of known purity and concentration, essential for establishing accuracy and calibrating both the test and comparative methods. |

| Characterized Patient Specimens | Real patient samples covering the full clinical measurement range and disease spectrum are crucial for assessing method performance under realistic conditions and detecting matrix effects [31] [32]. |

| Appropriate Calibrators | Standard solutions used to establish the relationship between instrument response and analyte concentration for both the test and comparative methods. |

| Quality Control (QC) Materials | Stable materials with known concentrations (low, mid, high) used to monitor the stability and performance of the analytical methods throughout the validation study. |

| Interference Check Solutions | Solutions containing potential interferents (e.g., bilirubin, hemoglobin, lipids, co-medications) are used to challenge the method and validate its specificity/selectivity. |

| Stability-Testing Samples | Aliquots of patient samples and QC materials stored under defined conditions (time, temperature) to verify analyte stability within the predefined testing window (e.g., 2 hours) [31]. |

Demonstrating the specificity of an analytical method is a fundamental requirement in bioanalytical method validation, proving that the method can accurately and reliably quantify the target analyte in the presence of other components that may be expected to be present in the sample matrix [33]. Matrix effects—the suppression or enhancement of an analyte's signal caused by co-eluting matrix components—represent a significant challenge to method specificity, potentially leading to erroneous concentration data, reduced precision, and in severe cases, incorrect scientific or dosing decisions [34] [35] [36].

The use of blank and spiked samples is a cornerstone practice for experimentally detecting and quantifying these interferences. This guide objectively compares the core experimental approaches for assessing specificity, providing researchers with validated protocols and performance data to ensure the robustness of their analytical methods, particularly those employing liquid chromatography-mass spectrometry (LC-MS) and related techniques.

Core Principles: Matrix Effect and Specificity

The sample matrix encompasses all components of a sample except for the analytes of interest, such as phospholipids, proteins, salts, and anticoagulants in plasma, or excipients in a drug product [34] [33]. The matrix effect describes the adverse impact of these components on the ionization efficiency of the analyte in techniques like LC-MS, primarily through ion suppression or enhancement [34] [36].

Regulatory guidelines from the International Council for Harmonisation (ICH), the United States Pharmacopeia (USP), and the Food and Drug Administration (FDA) all emphasize the necessity of demonstrating that a method is unaffected by the sample matrix [33]. The FDA's bioanalytical method validation guidance, for instance, mandates testing blank matrices from at least six sources to ensure selectivity [33]. Failure to adequately investigate and mitigate matrix effects can be detrimental to data quality and program success [34].

Experimental Protocols for Assessing Specificity

Three primary experimental methodologies are employed to assess matrix effects: post-column infusion, post-extraction spiking, and pre-extraction spiking. The workflows for these methods are summarized in the diagram below.

Post-Column Infusion

This technique provides a qualitative, visual assessment of matrix effects throughout the chromatographic run [34].

- Procedure: A neat solution of the analyte is continuously infused via a syringe pump into the mobile phase post-column. A blank matrix extract (e.g., extracted plasma) is then injected onto the chromatographic system. The MS signal for the analyte is monitored in real-time.

- Data Interpretation: A stable signal indicates no matrix effect. Any significant dip (suppression) or peak (enhancement) in the baseline of the ion chromatogram indicates the retention time windows where matrix components co-elute and interfere with the analyte's ionization.

- Application: This method is particularly valuable during initial method development and troubleshooting to optimize chromatographic separation and sample clean-up procedures [34].
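The data interpretation step above can be sketched in code. The example below flags retention-time windows where the infused-analyte signal departs from its baseline. The median baseline, the 20% tolerance, and the function name are assumptions chosen for illustration; in practice the trace is usually inspected visually.

```python
from statistics import median


def flag_matrix_effect_regions(times, signal, tolerance=0.20):
    """Return (start_time, end_time, kind) windows where the signal deviates
    from its median baseline by more than `tolerance` (as a fraction).

    `kind` is "suppression" for dips and "enhancement" for rises.
    Assumes a positive, mostly stable signal so the median is a fair baseline.
    """
    baseline = median(signal)
    regions = []
    current = None  # [start_time, end_time, kind] of the window being built
    for t, s in zip(times, signal):
        deviation = (s - baseline) / baseline
        if deviation < -tolerance:
            kind = "suppression"
        elif deviation > tolerance:
            kind = "enhancement"
        else:
            kind = None
        if current is not None and kind == current[2]:
            current[1] = t  # extend the open window
        else:
            if current is not None:
                regions.append(tuple(current))  # close the previous window
            current = [t, t, kind] if kind else None
    if current is not None:
        regions.append(tuple(current))
    return regions
```

If the analyte's retention time falls inside a flagged window, the gradient or the clean-up procedure is a natural place to start optimizing.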

Post-Extraction Spiking

Widely regarded as the "gold standard" for quantitative assessment, this method calculates the Matrix Factor (MF) to quantify the extent of the matrix effect [34].

- Procedure: A blank biological matrix is processed through the sample preparation procedure. The analyte is then spiked into the resulting blank extract at a known concentration. The LC-MS response (peak area) of this sample is compared to the response of a neat solution of the analyte at the same concentration, prepared in a solvent [34].

- Data Interpretation: The MF is calculated as follows:

- Absolute MF = Peak Area (Post-spiked extract) / Peak Area (Neat solution)

- An MF < 1 indicates signal suppression; an MF > 1 indicates signal enhancement. For a robust method, the absolute MF should ideally fall between 0.75 and 1.25 and be independent of analyte concentration [34].

- IS-normalized MF = MF (Analyte) / MF (Internal Standard). This value should be close to 1.0, demonstrating that the internal standard effectively compensates for the matrix effect [34].
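The two Matrix Factor formulas above translate directly into a few lines of code. This is a minimal sketch; the function names and the peak areas in the usage lines are hypothetical, and the 0.75 to 1.25 window is the ideal range quoted above.

```python
def matrix_factor(area_post_spiked, area_neat):
    """Absolute MF = peak area in post-spiked extract / peak area in neat solution."""
    if area_neat <= 0:
        raise ValueError("neat-solution peak area must be positive")
    return area_post_spiked / area_neat


def is_normalized_mf(mf_analyte, mf_internal_standard):
    """IS-normalized MF = MF (analyte) / MF (internal standard)."""
    if mf_internal_standard <= 0:
        raise ValueError("internal-standard MF must be positive")
    return mf_analyte / mf_internal_standard


def within_ideal_range(mf, low=0.75, high=1.25):
    """True when the absolute MF lies inside the ideal window."""
    return low <= mf <= high


# Hypothetical peak areas for illustration only.
mf_analyte = matrix_factor(area_post_spiked=8200, area_neat=10000)
mf_is = matrix_factor(area_post_spiked=8000, area_neat=10000)
normalized = is_normalized_mf(mf_analyte, mf_is)
```

In this made-up case both the analyte and the internal standard are suppressed by roughly the same amount, so the IS-normalized value lands close to 1.0 even though the absolute MF is below 1.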

Pre-Extraction Spiking (Spike-and-Recovery)

This method, referenced in guidelines like ICH M10, assesses the combined impact of the matrix effect and the efficiency of the sample preparation process (recovery) [34] [35] [37].

- Procedure: The analyte is spiked into a blank matrix at a known concentration before the sample extraction and clean-up steps. This sample is then processed through the entire analytical method. The measured concentration is compared to the theoretical spiked concentration [37].

- Data Interpretation: The result is expressed as percentage recovery.
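Percentage recovery is the measured concentration divided by the theoretical spiked concentration, multiplied by 100. A minimal sketch follows; averaging replicate measurements is an assumption of this example, and the function name is hypothetical.

```python
from statistics import mean


def percent_recovery(measured_concentrations, nominal_concentration):
    """Mean measured concentration as a percentage of the nominal spiked value."""
    if nominal_concentration <= 0:
        raise ValueError("nominal concentration must be positive")
    return mean(measured_concentrations) / nominal_concentration * 100.0
```

Because the spike is added before extraction, a low value can reflect extraction losses, signal suppression, or both.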

Performance Comparison of Assessment Methods

The table below summarizes the key characteristics, advantages, and limitations of the three core assessment methodologies.

Table 1: Comparative Performance of Blank and Spiked Sample Methods

| Method | Assessment Type | Key Measurable | Regulatory Citation | Primary Advantage | Key Limitation |
|---|---|---|---|---|---|
| Post-Column Infusion [34] | Qualitative | Signal disruption profile | – | Identifies chromatographic regions of interference | Does not provide quantitative data |
| Post-Extraction Spiking [34] | Quantitative | Matrix Factor (MF) | – | Quantifies absolute & IS-normalized matrix effect | Does not assess extraction recovery |
| Pre-Extraction Spiking [34] [37] | Quantitative | % Recovery | ICH M10 [34] | Assesses overall method performance (matrix effect + recovery) | Does not isolate the specific cause of inaccuracy |

The following table compiles example acceptance criteria for recovery experiments from environmental analytical protocols, illustrating the application-specific nature of these benchmarks.

Table 2: Example Acceptance Criteria for Recovery (%) from Regulatory Protocols [37]

| Analyte Category | Matrix | Acceptable Recovery Range |
|---|---|---|
| Metals and Inorganics | Water, Soil | 80% - 120% |
| Volatile Organic Compounds (VOCs) | Water, Soil | 60% - 130% |
| Dioxins & Furans | Water, Soil | 70% - 140% |
| Polycyclic Aromatic Hydrocarbons (PAHs) | Water, Soil | 50% - 140% |
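The ranges in Table 2 can be encoded as a lookup so that recovery results are checked against the limit for their analyte category. This is only a sketch; the dictionary keys and function name are hypothetical labels introduced here.

```python
# Recovery limits (%) copied from Table 2; the keys are illustrative labels.
RECOVERY_LIMITS = {
    "metals_inorganics": (80.0, 120.0),
    "vocs": (60.0, 130.0),
    "dioxins_furans": (70.0, 140.0),
    "pahs": (50.0, 140.0),
}


def recovery_acceptable(category, recovery_percent):
    """True when the recovery falls inside the Table 2 range for the category."""
    try:
        low, high = RECOVERY_LIMITS[category]
    except KeyError:
        raise ValueError(f"no recovery limits defined for {category!r}") from None
    return low <= recovery_percent <= high
```

The same 65% recovery would pass for VOCs but fail for metals, which is the application-specific behavior the table is meant to illustrate.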

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful assessment of specificity requires the use of well-characterized materials. The following table details key reagents and their functions in these experiments.

Table 3: Essential Research Reagent Solutions for Specificity Assessment

| Reagent / Material | Function & Importance in Specificity Assessment |
|---|---|
| Blank Matrix [33] | The foundation of all tests. Should be free of the target analyte and representative of the study samples (e.g., human plasma from at least 6 different lots). |
| Stable Isotope-Labeled Internal Standard [34] | The preferred IS (e.g., ¹³C-, ¹⁵N-labeled). It co-elutes with the analyte and experiences an identical matrix effect, allowing for optimal compensation. |
| Quality Control Samples [34] | Spiked at low and high concentrations in the blank matrix. Used in pre-extraction spiking to demonstrate accuracy and precision despite any matrix effect. |
| Neat Analyte Solutions [34] | Prepared in a pure solvent. Serves as the baseline for comparison in post-extraction spiking experiments to calculate the Matrix Factor. |
| Phospholipid Monitoring Solutions [34] | Used to identify if observed matrix effects are attributable to endogenous phospholipids, guiding method optimization. |

The rigorous assessment of specificity using blank and spiked samples is non-negotiable for validating robust analytical methods. Each technique—post-column infusion, post-extraction spiking, and pre-extraction spiking—provides complementary information, from pinpointing chromatographic interferences to quantifying the matrix effect and overall method recovery.

For researchers, the choice of method depends on the stage of development and the specific question being addressed. Post-column infusion is an excellent diagnostic tool, while post-extraction spiking offers the definitive quantitative measure of the matrix effect. The pre-extraction spike-and-recovery approach, as endorsed by regulatory guidelines, provides the ultimate check on the method's accuracy in the presence of the matrix. Employing a stable isotope-labeled internal standard is the most effective strategy to compensate for residual, consistent matrix effects, ensuring that the generated data is accurate, precise, and reliable for critical decision-making in drug development.

In analytical chemistry, selectivity refers to the ability of a method to determine a particular analyte accurately and specifically in the presence of other components that may be expected to be present in the sample matrix [28]. This term is often used interchangeably with "specificity," though a crucial distinction exists: selectivity is a graded property that can be quantified, whereas specificity implies an absolute ability to distinguish an analyte without any ambiguity [28]. Establishing selectivity is a fundamental requirement in method validation, particularly in regulated industries such as pharmaceutical development and clinical diagnostics, where interference from similar compounds, matrix components, or concomitant medications can compromise result accuracy and lead to incorrect decisions [38] [39].

The process of establishing selectivity involves systematic testing against potential interferents to demonstrate that the method can reliably quantify the analyte of interest without bias. For modern analytical techniques, particularly liquid chromatography coupled with tandem mass spectrometry (LC-MS/MS), this process leverages multiple dimensions of selectivity—including chromatographic separation, mass-resolved precursor ion selection, and fragment ion monitoring—to achieve the necessary discrimination power [38] [40]. This guide outlines the experimental strategies and comparison data necessary to establish selectivity against likely and worst-case interferences, providing a framework for researchers and scientists to validate their analytical methods with confidence.

Core Principles of Interference Testing

Analytical interferences can originate from various sources, and their identification is the first step in designing a robust selectivity experiment. These interferences can be broadly categorized as follows:

- Endogenous Interferences: Substances naturally present in the sample matrix, such as proteins, lipids, salts, and metabolites from the biological sample itself. Effects like hemolysis, icterus, and lipemia in blood-based samples are common examples [38].

- Exogenous Interferences: Substances introduced from external sources, including medications (prescription, over-the-counter, or illicit drugs), dietary supplements, parenteral nutrition, plasma expanders, and substances added during sample collection or preparation (e.g., anticoagulants, preservatives, stabilizers, or leachables from collection tubes or plasticware) [38] [41].

- Isobaric Interferences: Compounds that share the same nominal mass as the analyte or its internal standard, potentially causing direct overlap in mass spectrometric detection if not separated chromatographically [38] [42].

- Matrix Effects: A particular form of interference where co-eluting matrix components alter the ionization efficiency of the analyte, leading to either ion suppression or ion enhancement. This is a prevalent concern in LC-MS/MS, especially with electrospray ionization (ESI) [43] [41].

The Selectivity Hierarchy in LC-MS/MS

Liquid chromatography-tandem mass spectrometry offers multiple layers of selectivity, which can be optimized during method development to mitigate interferences.

The diagram above illustrates how selectivity is built incrementally in a well-developed LC-MS/MS method. Chromatographic separation serves as the first critical dimension, separating the analyte from potential interferents based on retention time [38]. The first mass analyzer (MS1) then selects the precursor ion based on its mass-to-charge ratio (m/z). Finally, the second mass analyzer (MS2) selects characteristic product ions after collision-induced dissociation. The combination of a specific precursor ion and one or more product ions—a technique known as selected reaction monitoring (SRM) or multiple reaction monitoring (MRM)—creates a highly specific analytical signal [38]. The consistency of the ion abundance ratio between different product ion transitions provides an additional quality control metric to flag potential interferences [40].
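The ion abundance ratio check mentioned above can be sketched as a comparison between a sample's qualifier-to-quantifier ratio and a reference ratio obtained from standards. The 20% relative tolerance and the function names are assumptions for illustration, not values from the cited sources.

```python
def ion_ratio(qualifier_area, quantifier_area):
    """Ratio of the qualifier transition peak area to the quantifier transition."""
    if quantifier_area <= 0:
        raise ValueError("quantifier peak area must be positive")
    return qualifier_area / quantifier_area


def ion_ratio_flag(sample_ratio, reference_ratio, relative_tolerance=0.20):
    """True when the sample ratio deviates from the reference ratio by more
    than `relative_tolerance`, which may indicate a co-eluting interference
    contributing to one of the transitions."""
    if reference_ratio <= 0:
        raise ValueError("reference ratio must be positive")
    return abs(sample_ratio - reference_ratio) / reference_ratio > relative_tolerance
```

A flagged sample is not proof of interference, but it is a reasonable trigger for reviewing the chromatogram at that retention time.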

Experimental Protocols for Establishing Selectivity

Testing for Specific Interferences

The protocol for testing specific, known interferents follows a systematic approach to quantify the bias introduced by the potential interfering substance.

Protocol: Spiked Interference Recovery Test

This method is adapted from CLSI guideline EP7-A2 and is designed to quantify the effect of a specific interferent on analyte measurement [38].

Sample Preparation:

- Prepare a pool of the sample matrix (e.g., pooled human plasma) with a known, quantified concentration of the target analyte. This is the base pool.

- Split the base pool into two aliquots.

- Test Pool: Spike the potential interferent into the first aliquot at the highest concentration expected to be encountered in real samples.

- Control Pool: Add an equal volume of the interferent's solvent to the second aliquot.

- Ensure both pools are processed identically through the entire analytical procedure.

Analysis and Calculation:

- Analyze both the test and control pools with adequate replication (e.g., n=5) within the same analytical run to minimize run-to-run variability.

- Calculate the mean measured concentration for the test pool (C~test~) and the control pool (C~control~).

- Determine the percentage bias using the formula:

- Bias (%) = [(C~test~ - C~control~) / C~control~] × 100

Interpretation:

- A bias that exceeds pre-defined acceptance criteria (e.g., ±15% for bioanalytical methods) indicates clinically significant interference.

- If interference is found, further testing at different concentrations of the interferent is recommended to determine the threshold for interference [38].
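The bias calculation and its interpretation can be sketched as follows. The replicate values in the usage lines are invented for illustration, the ±15% limit is the example criterion quoted above, and the function names are hypothetical.

```python
from statistics import mean


def interference_bias_percent(test_results, control_results):
    """Bias (%) = [(mean C_test - mean C_control) / mean C_control] x 100."""
    c_test = mean(test_results)
    c_control = mean(control_results)
    if c_control <= 0:
        raise ValueError("control pool mean must be positive")
    return (c_test - c_control) / c_control * 100.0


def interference_detected(bias_percent, limit_percent=15.0):
    """True when the absolute bias exceeds the acceptance limit."""
    return abs(bias_percent) > limit_percent


# Hypothetical n=5 replicates from a single analytical run.
test_pool = [10.9, 11.1, 11.0, 11.2, 10.8]
control_pool = [10.0, 10.1, 9.9, 10.0, 10.0]
bias = interference_bias_percent(test_pool, control_pool)
flagged = interference_detected(bias)
```

With these invented numbers the test pool reads about 10% higher than the control, which is inside the example ±15% limit, so the interferent would not be flagged at this concentration.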

Testing for Unidentified Interferences and Matrix Effects

For a comprehensive selectivity assessment, it is crucial to evaluate the potential for unknown interferences and matrix effects, particularly in LC-MS/MS.

Protocol: Post-Column Infusion for Ion Suppression/Enhancement

This qualitative experiment helps visualize regions of ion suppression or enhancement throughout the chromatographic run [38] [43].

Experimental Setup:

- Prepare a solution of the analyte (or its stable isotope-labeled internal standard) at a concentration that provides a steady signal.

- Use a syringe pump to continuously infuse this solution post-column into the MS interface.

- While the solution is being infused, inject a blank matrix extract (e.g., from plasma, urine) that has been processed through the sample preparation workflow.

Data Analysis:

- Monitor the MRM channel for the infused analyte. A stable baseline indicates no matrix effects.

- A drop in the signal (a negative peak) indicates ion suppression caused by matrix components eluting at that time.

- A rise in the signal indicates ion enhancement.

Outcome: