Validation of NIR Spectrometer Sensitivity and Specificity: A Comprehensive Guide for Biomedical Researchers

Abstract

This article provides a comprehensive analysis of Near-Infrared (NIR) spectrometer validation studies, addressing critical needs for researchers and drug development professionals. It explores the fundamental principles establishing NIR's analytical credibility and examines diverse methodological applications from pharmaceutical authentication to food quality control. The content details advanced troubleshooting and optimization strategies for enhancing model robustness, including novel algorithms like ECCARS. Finally, it presents rigorous comparative validation frameworks against reference methods like HPLC, offering evidence-based performance assessments across sectors. This synthesis of foundational knowledge and cutting-edge advancements serves as an essential resource for ensuring reliable NIR spectrometer implementation in research and quality control.

Establishing the Foundation: Core Principles and Importance of NIR Spectrometer Validation

Defining Sensitivity and Specificity in NIR Spectroscopy Context

Near-Infrared (NIR) spectroscopy has emerged as a powerful analytical tool across numerous fields, from pharmaceutical quality control to medical diagnostics. Its value proposition hinges on being a rapid, non-destructive, and cost-effective alternative to traditional laboratory methods like High-Performance Liquid Chromatography (HPLC) [1] [2]. However, to objectively assess its reliability and validate its use in critical applications, understanding its diagnostic accuracy through the lens of sensitivity and specificity is paramount. These metrics quantitatively answer two fundamental questions: Can NIR correctly identify positive cases (sensitivity)? And can it correctly rule out negative cases (specificity)? [2]. This guide provides a structured comparison of NIR spectrometer performance against established analytical techniques, supported by experimental data and detailed protocols, to inform decision-making for researchers and regulatory scientists.

Quantitative Performance Comparison Across Applications

The performance of NIR spectroscopy varies significantly depending on the application, sample matrix, and the specific analytical question being addressed. The following tables summarize its sensitivity and specificity across different fields, providing a clear, data-driven comparison.

Table 1: Diagnostic Sensitivity and Specificity of NIR in Medical and Pharmaceutical Applications

| Application Domain | Reference Method | Reported Sensitivity | Reported Specificity | Key Study Findings |
|---|---|---|---|---|
| Detection of Substandard and Falsified (SF) Medicines (All Categories) [2] | HPLC | 11% | 74% | NIR demonstrated poor sensitivity but moderate specificity for detecting poor-quality drugs across analgesics, antimalarials, antibiotics, and antihypertensives. |
| Detection of SF Medicines (Analgesics only) [2] | HPLC | 37% | 47% | Sensitivity was higher for analgesics than overall, but specificity was lower and both remained suboptimal, indicating that performance is highly dependent on the drug formulation. |
| HCV Detection in Serum Samples [3] | PCR | Not reported | Not reported | A model integrating NIR with clinical data achieved an accuracy of 72.2% and an AUC-ROC of 0.850, showing enhanced diagnostic capability. |

Table 2: Analytical Performance of NIR for Quality Control in Industry

| Application Domain | Analyte/Parameter | Performance vs. Reference Method | Key Study Findings |
|---|---|---|---|
| Pharmaceutical API Quantification [1] | Dexketoprofen content | Error of Prediction: 1.01% (granulate), 1.63% (coated tablets) | NIR proved to be a good, rapid alternative for monitoring API concentration in different production steps. |
| Soybean Quality Analysis [4] | Moisture, Protein, Lipids, Ashes | Consistent PLS models with good predictive ability | Both MIR and NIR were effective, reducing analysis time from 10–16 hours to under 5 minutes. |

Detailed Experimental Protocols for Performance Validation

Protocol 1: Detection of Substandard and Falsified Drugs

This protocol outlines the methodology for a comparative study that benchmarked a handheld NIR spectrometer against HPLC, a gold standard method for drug composition analysis [2].

- Objective: To determine the sensitivity and specificity of a proprietary, AI-powered handheld NIR spectrometer in detecting substandard and falsified medicines in a real-world setting.

- Sample Preparation: Researchers purchased 246 drug samples from retail pharmacies across Nigeria. The samples included analgesics, antimalarials, antibiotics, and antihypertensives. For the NIR analysis, intact tablets were scanned directly. For HPLC analysis, a sub-sample of the collected drugs was prepared according to validated methods for each molecule [2].

- NIR Analysis Procedure: Spectral data were acquired using a handheld NIR spectrometer with a dispersive range of 750–1500 nm. Each drug's spectral signature was compared with a cloud-based AI reference library of authentic products. The device returned a "match" or "non-match" result in approximately 20 seconds, based on the spectral signature (for falsified drugs) and the spectral intensity (for substandard drugs) [2].

- HPLC Reference Method: HPLC analysis was performed using an Agilent 1100 HPLC system equipped with a variable UV detector and quaternary pump. A validated method was employed for each active pharmaceutical ingredient (API). System suitability was confirmed prior to each analysis using a reference standard [2].

- Data Analysis: The results from the NIR device and HPLC were compared to create a classification matrix (true positives, false positives, true negatives, false negatives). Sensitivity was calculated as [True Positives / (True Positives + False Negatives)], and Specificity as [True Negatives / (True Negatives + False Positives)] [2].
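The classification-matrix step above can be sketched in a few lines. This is a minimal illustration, assuming each sample yields one NIR call ("non-match" treated as a positive, i.e. poor-quality, call) and one HPLC verdict; the names `ClassificationMatrix` and `tabulate` and the six toy samples are hypothetical and not taken from the study.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class ClassificationMatrix:
    """2x2 matrix of NIR calls versus the HPLC reference."""
    tp: int  # NIR "non-match" and HPLC failed (SF correctly flagged)
    fp: int  # NIR "non-match" but HPLC passed (false alarm)
    tn: int  # NIR "match" and HPLC passed
    fn: int  # NIR "match" but HPLC failed (SF missed)

    @property
    def sensitivity(self) -> float:
        # TP / (TP + FN); NaN when the reference found no poor-quality samples
        denom = self.tp + self.fn
        return self.tp / denom if denom else math.nan

    @property
    def specificity(self) -> float:
        # TN / (TN + FP); NaN when the reference found no good-quality samples
        denom = self.tn + self.fp
        return self.tn / denom if denom else math.nan


def tabulate(nir_flags: list[bool], hplc_fails: list[bool]) -> ClassificationMatrix:
    """Count paired results; True means 'poor quality' in both inputs."""
    if len(nir_flags) != len(hplc_fails):
        raise ValueError("NIR and HPLC result lists must be paired")
    pairs = list(zip(nir_flags, hplc_fails))
    tp = sum(n and h for n, h in pairs)
    fp = sum(n and not h for n, h in pairs)
    tn = sum(not n and not h for n, h in pairs)
    fn = sum(not n and h for n, h in pairs)
    return ClassificationMatrix(tp, fp, tn, fn)


# Hypothetical toy data (not from the study): six paired samples
nir = [True, False, False, True, False, False]
hplc = [True, True, False, False, False, False]
m = tabulate(nir, hplc)
print(m.sensitivity, m.specificity)  # 0.5 0.75
```

Returning NaN rather than raising keeps a per-category breakdown usable when a category happens to contain no HPLC failures.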

Protocol 2: Quantification of Active Pharmaceutical Ingredients (API)

This protocol describes the use of NIR for quantitative analysis, a common application in pharmaceutical process analytical technology (PAT) [1].

- Objective: To develop and validate NIR methods for quantifying the active ingredient (Dexketoprofen) in a solid dosage form at two different production steps: after granulation and after tablet coating.

- Sample Preparation: Calibration samples were created by milling production tablets and then underdosing them (adding excipients) or overdosing them (adding API) with known amounts to span a concentration range of 75–120 mg/g. This expanded the variability beyond the narrow range of production samples, which is crucial for building a robust calibration model [1].

- NIR Analysis Procedure: Spectra were recorded using a Foss NIRSystems 5000 spectrophotometer over the range 1100–2498 nm. Granulated samples were placed in a quartz cell, and coated tablets were placed directly on the quartz window of the rapid content analyzer. Each spectrum was the average of 32 scans [1].

- Chemometric Modeling: Partial Least Squares (PLS1) calibration models were developed. The spectra were preprocessed using the second derivative (Savitzky–Golay algorithm) to enhance spectral features and reduce scatter. The model's quality was assessed using the relative standard error of prediction (RSEP) [1].

- Validation: The quantitative methods were validated according to ICH and EMEA guidelines, demonstrating that NIR is a suitable alternative to time-consuming chromatographic methods for in-process control [1].

Experimental Workflows

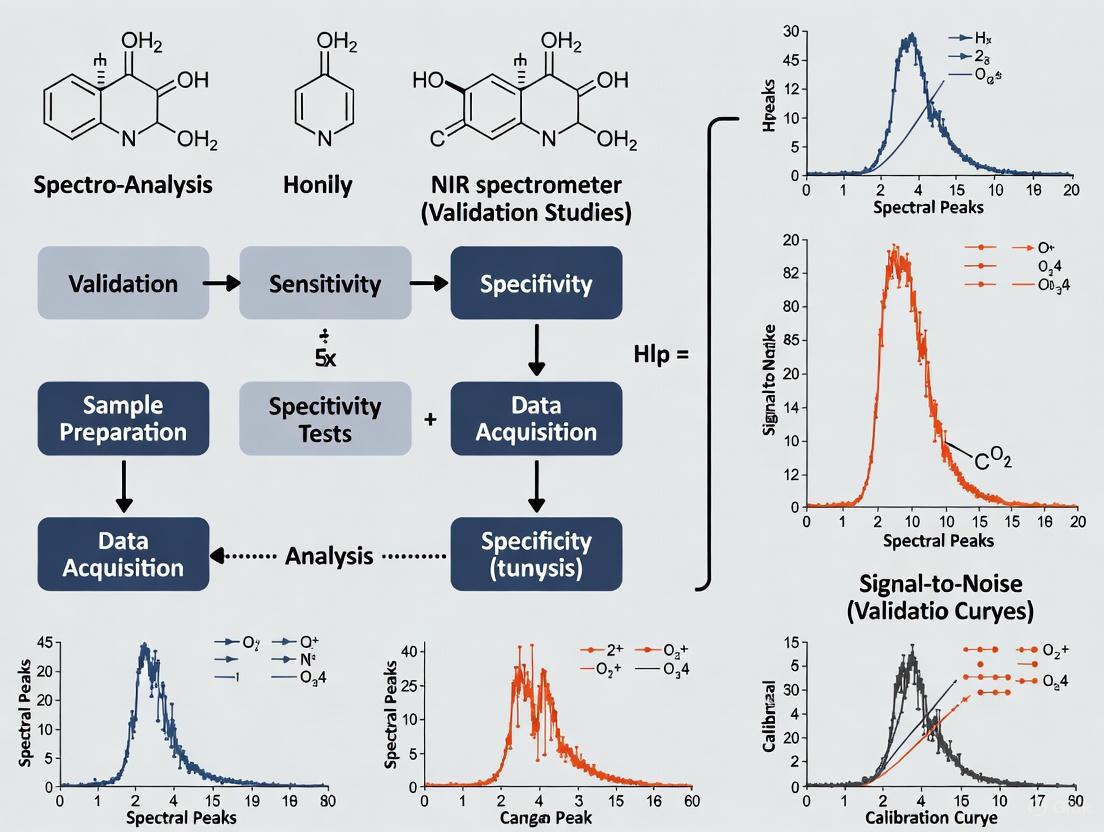

The following diagrams illustrate the logical workflow for validating NIR spectroscopy methods, based on the experimental protocols cited.

Drug Authentication Workflow

Quantitative API Analysis Workflow

The Scientist's Toolkit: Key Research Reagents and Materials

Successful implementation of NIR spectroscopy, particularly for method validation, relies on several key materials and solutions.

Table 3: Essential Research Reagents and Materials for NIR Validation Studies

| Item/Solution | Function in NIR Validation | Example from Literature |
|---|---|---|
| Reference Standards | Provides a known concentration baseline for developing and validating quantitative calibration models. | Pure Dexketoprofen API used to create overdosed samples for PLS model development [1]. |
| Authentic Drug Samples | Serves as the "ground truth" spectral library for comparative authentication of products. | Branded drugs sourced by the company to build a proprietary AI reference library for detecting SF medicines [2]. |
| Calibration Sample Set | A set of samples with a wide range of analyte concentrations, essential for building robust PLS models. | Laboratory-prepared samples spanning 75–120 mg/g API, created by milling production tablets and adding API/excipients [1]. |
| Chemometric Software | Software for multivariate data analysis, including preprocessing spectra and building predictive models (e.g., PLS). | Software like The Unscrambler used for PLS1 model calculation and spectral pretreatment (e.g., SNV, derivatives) [1] [3]. |
| Validated Reference Method | The gold-standard analytical method (e.g., HPLC) used to provide the true values for NIR model calibration and validation. | HPLC with UV detection was used as the reference method to determine the true API content in drug samples [2]. |
| Biobank Serum Samples | Well-characterized clinical samples used to train and test NIR models for medical diagnostic applications. | 137 serum samples from an HCV biobank with confirmed HCV status via PCR [3]. |

The sensitivity and specificity of NIR spectroscopy are not intrinsic, fixed values but are highly context-dependent. As the data show, performance can range from excellent for quantitative analysis of API in a controlled production environment to poor for screening a wide variety of substandard and falsified drugs with a single device [1] [2]. Key factors influencing these metrics include the complexity of the sample matrix, the robustness of the chemometric model, the quality and breadth of the reference library, and the rigor of the validation protocol [5]. Therefore, while NIR is a compelling rapid alternative to traditional methods, its adoption for critical applications must be preceded by rigorous, application-specific validation studies that transparently report both sensitivity and specificity against accepted reference methods.

The Critical Role of Validation in Regulatory Compliance and Quality Assurance

The global threat of substandard and falsified (SF) medicines represents a critical public health challenge, contributing to approximately 1 million deaths annually, with low- and middle-income countries (LMICs) disproportionately affected [6]. In the pharmaceutical sector, analytical validation forms the cornerstone of regulatory compliance and quality assurance, ensuring that medicines meet established standards for identity, strength, quality, and purity. As technological advancements introduce novel screening devices, rigorous performance validation becomes paramount—not merely for regulatory approval but for safeguarding patient safety and maintaining trust in healthcare systems. This comparative guide examines the validation of Near-Infrared (NIR) spectroscopy against the established reference standard of High-Performance Liquid Chromatography (HPLC), providing researchers and regulatory professionals with critical performance data essential for informed decision-making in pharmaceutical quality control.

Methodology: Comparative Validation Framework

Study Design and Sample Collection

The validation data presented herein stems from a rigorous comparative study conducted across six geopolitical regions of Nigeria—Abuja, Kano, Lagos, Onitsha, Port Harcourt, and Yola [6]. Researchers employed a systematic mystery-shopper approach, with twelve enumerators purchasing medicine samples from 1,296 randomly selected pharmacies in both rural and urban areas [2]. The study analyzed 246 drug samples across four critical therapeutic categories: analgesics, antimalarials, antibiotics, and antihypertensives, reflecting market distribution patterns [6].

Analytical Techniques Compared

Reference Method: High-Performance Liquid Chromatography (HPLC)

- Equipment: Agilent 1100 HPLC system with online degasser, variable UV detector, quaternary pump, autoliquid sampler, and thermostated column compartment [2]

- Data Processing: Chemstation Rev. B.04.03-SP1 software [2]

- Methodology: Validated methods employed for each molecule with confirmed system suitability using reference standards for each analyte [2]

- Laboratory: Hydrochrom Analytical Services Limited, Lagos [6]

Evaluated Technology: Handheld NIR Spectrometer

- Technology: Patented, AI-powered handheld spectrometer with proprietary machine-learning algorithm [6]

- Spectral Range: Dispersive NIR range of 750–1500 nm [6]

- Analysis Method: Compares each drug's spectral signature with a cloud-based AI reference library of authentic products [6]

- Measurement Time: Approximately 20 seconds per sample [6]

- Key Features: Non-destructive testing and real-time analysis, with results sent to a smartphone app [6]

Performance Metrics

The validation study employed standardized statistical measures for diagnostic test evaluation:

- Sensitivity: Proportion of SF medicines correctly identified by NIR out of all those identified as poor quality by HPLC (true positive rate) [2]

- Specificity: Proportion of authentic medicines correctly identified by NIR out of all those determined to be good quality by HPLC (true negative rate) [2]

- Additional Calculations: Positive predictive value and negative predictive value were also calculated [2]
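Predictive values depend on how common poor-quality medicines are, not only on sensitivity and specificity. The sketch below derives PPV and NPV from Bayes' rule, assuming the sample is representative; plugging in the rounded overall figures quoted in this guide (11% sensitivity, 74% specificity, 25% HPLC failure rate) gives an illustrative estimate only, not the PPV and NPV reported by the study. The function name `predictive_values` is hypothetical.

```python
def predictive_values(sensitivity: float, specificity: float, prevalence: float) -> tuple[float, float]:
    """Return (PPV, NPV) implied by sensitivity, specificity and prevalence."""
    tp = sensitivity * prevalence              # flagged and truly SF
    fn = (1 - sensitivity) * prevalence        # SF medicines that pass screening
    tn = specificity * (1 - prevalence)        # passed and truly good quality
    fp = (1 - specificity) * (1 - prevalence)  # good-quality medicines flagged
    return tp / (tp + fp), tn / (tn + fn)


# Rounded overall figures from this guide; illustrative only
ppv, npv = predictive_values(0.11, 0.74, 0.25)
print(f"PPV ~ {ppv:.2f}, NPV ~ {npv:.2f}")  # PPV ~ 0.12, NPV ~ 0.71
```

Under these assumptions, only about one in eight "non-match" results would correspond to a genuinely poor-quality sample, which is why confirmatory testing of flagged samples matters.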

Comparative Performance Analysis

The comparative analysis revealed significant disparities in performance between the handheld NIR spectrometer and the reference HPLC method:

Table 1: Overall Performance Comparison of NIR Spectrometer vs. HPLC

| Performance Metric | NIR Spectrometer Result | HPLC Reference Standard |
|---|---|---|
| SF Medicine Detection Rate | Limited subset (primarily analgesics) | 25% failure rate (overall samples) |
| Overall Sensitivity | 11% | Reference method (100%) |
| Overall Specificity | 74% | Reference method (100%) |
| Analysis Time | ~20 seconds | Hours to days (with sample preparation) |
| Sample Destruction | Non-destructive | Destructive |
| Operational Environment | Field-deployable | Laboratory setting required |

Performance Across Therapeutic Categories

The performance of the NIR spectrometer varied substantially across different drug categories, highlighting formulation-specific challenges:

Table 2: NIR Spectrometer Performance by Drug Category

| Drug Category | Sensitivity | Specificity | Sample Size (N) | Key Challenges |
|---|---|---|---|---|
| Analgesics | 37% | 47% | 110 | Spectral library completeness |
| Antibiotics | Not reported | Not reported | 38 | Complex API structures |
| Antihypertensives | Not reported | Not reported | 31 | Low dosage detection |
| Antimalarials | Not reported | Not reported | 67 | Combination therapies |

Technology Workflow Comparison

The fundamental differences in operational workflows between the two technologies illustrate the trade-offs between speed and analytical depth:

Diagram 1: Comparative analytical workflows for NIR spectrometry and HPLC methods.

Critical Factors Influencing NIR Spectrometer Performance

Spectral Library Completeness

The effectiveness of AI-powered NIR spectrometers is intrinsically tied to the comprehensiveness of their reference spectral libraries. In the validation study, only 3 of the 20 drug products tested were pre-existing in the library—May & Baker Para (Paracetamol) Tablets, Emzor Paracetamol Tablets (500mg), and Lonart-DS Artemether Lumefantrine Tablets (80mg/480mg) [6]. The remaining drug samples required sourcing and addition to the reference library by the company, with researchers noting that they were not provided details on training exercise results or specific detection thresholds [6]. This dependency creates significant limitations for widespread deployment, particularly for diverse drug formulations across multiple markets.

Analytical Capabilities and Limitations

The NIR spectrometer's analytical approach differs fundamentally from chromatographic methods, with distinct advantages and limitations:

Spectral Signature Analysis: The device captures the spectrum of the entire drug (both API and excipients) and stores it as the spectral signature of the medical product [6]. It also measures the spectral intensity, which is evaluated against that of the authentic product from the manufacturer [6].

Detection Capabilities:

- Counterfeit Detection: Achieved by matching the signature spectrum of the reference product against field-collected drug samples [6]

- Substandard Detection: Performed by matching the spectral intensity of the reference product against field-collected drug samples [6]
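The two-stage logic described above (shape match for falsified products, intensity match for substandard ones) can be illustrated with a deliberately simple stand-in. The device itself uses a proprietary machine-learning algorithm that is not described in the study; the function `screen_sample`, the Pearson-correlation shape test, the whole-spectrum intensity ratio and both thresholds are hypothetical assumptions chosen only to make the idea concrete.

```python
import numpy as np


def screen_sample(sample, reference, min_corr=0.98, intensity_range=(0.90, 1.10)):
    """Hypothetical two-stage check (not the device's algorithm).

    Stage 1 (signature): Pearson correlation of spectral shape.
    Stage 2 (intensity): ratio of integrated intensity to the reference.
    Thresholds are illustrative assumptions.
    """
    s = np.asarray(sample, dtype=float)
    r = np.asarray(reference, dtype=float)
    corr = np.corrcoef(s, r)[0, 1]
    if corr < min_corr:
        return "non-match (signature): possible falsified"
    ratio = s.sum() / r.sum()
    low, high = intensity_range
    if not low <= ratio <= high:
        return "non-match (intensity): possible substandard"
    return "match"


wl = np.linspace(750, 1500, 376)                  # nm, the device's stated range
ref = np.exp(-0.5 * ((wl - 1000) / 40) ** 2)      # synthetic "authentic" spectrum
print(screen_sample(ref, ref))                    # match
print(screen_sample(0.8 * ref, ref))              # non-match (intensity): possible substandard
print(screen_sample(np.roll(ref, 100), ref))      # non-match (signature): possible falsified
```

Because the whole-spectrum intensity mixes API and excipient contributions, a crude ratio like this can miss API deficits that excipients mask; a practical substandard check would need to isolate API-related bands.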

Technology Limitations: The study revealed that the device performed best with analgesics but showed markedly reduced sensitivity with more complex formulations, indicating potential challenges with specific API characteristics, excipient interference, or dosage form variations [6].

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Essential Materials and Methods for NIR Spectrometer Validation

| Component | Function in Validation | Technical Specifications | Critical Considerations |
|---|---|---|---|
| Reference Standards | HPLC calibration and method verification | Certified reference materials with documented purity | Must cover all APIs and potential degradants |
| NIR Spectral Library | Reference database for authentic products | Cloud-based AI library with spectral signatures | Requires continuous expansion for new formulations |
| HPLC System | Reference method for quantitative analysis | Agilent 1100 system with UV detection | System suitability testing prerequisite for validation |
| Chemometric Software | Spectral data processing and model development | Proprietary algorithms with machine learning | Validation requires transparency in algorithms and thresholds |
| Sample Preparation Supplies | HPLC sample processing and extraction | Solvents, filters, and extraction apparatus | Standardized protocols essential for reproducibility |

Regulatory Implications and Quality Assurance Framework

Validation in Regulatory Decision-Making

The validation data presented carries significant implications for regulatory science and quality assurance protocols. With 25% of samples failing HPLC testing in the Nigerian study context, the documented 11% sensitivity of the NIR spectrometer indicates that a substantial proportion of SF medicines would evade detection if relied upon as a primary screening method [6]. This performance gap necessitates a careful regulatory approach when considering the adoption of emerging technologies for quality surveillance.
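As a worked illustration using the rounded figures above and a hypothetical batch of N = 1,000 samples (prevalence p = 0.25 of poor-quality medicines, sensitivity Se = 0.11), the expected number of SF medicines that would pass NIR screening is:

$$
\mathbb{E}[\text{missed}] = N \cdot p \cdot (1 - \mathrm{Se}) = 1000 \times 0.25 \times (1 - 0.11) \approx 222.5
$$

In other words, about 89% of the poor-quality samples in such a batch would not be flagged.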

Regulatory agencies including Nigeria's National Agency for Food and Drug Administration and Control (NAFDAC) have implemented multiple technologies in combating SF medicines, including Raman Spectroscopy, GPHF Minilab, Mobile Authentication Service, and most recently a "Green Book" database of registered and approved drugs [6]. The study authors appropriately recommend that "regulators should require more independent evaluations of various drug formulations before implementing them in real-world settings" [6].

Strategic Implementation Framework

Based on the comparative performance data, a strategic implementation framework for NIR spectrometers in regulatory compliance would include:

Tiered Screening Approach:

- Level 1: Rapid NIR screening for high-volume, simple formulations (e.g., analgesics)

- Level 2: Targeted HPLC confirmation for suspicious samples, complex formulations, and random quality control

Technology Development Priorities:

- Immediate: Expand spectral libraries for essential medicines across therapeutic categories

- Medium-term: Enhance sensitivity through improved algorithms and hardware modifications

- Long-term: Develop integrated systems combining multiple complementary technologies

Quality Assurance Protocols:

- Regular proficiency testing with known standards

- Continuous performance monitoring against reference methods

- Clear escalation pathways for discrepant results

The comparative validation of NIR spectrometer technology against HPLC underscores a critical tension in pharmaceutical quality assurance: the compelling need for rapid, portable screening tools versus the non-negotiable requirement for accurate detection of substandard and falsified medicines. While AI-powered NIR spectrometers offer transformative potential for field-based screening with their speed, portability, and non-destructive capabilities, their current sensitivity limitations render them insufficient as standalone solutions for comprehensive quality control.

The validation data clearly indicate that the sensitivity of these devices must be improved before they can be reliably deployed as primary screening tools in regulatory compliance settings [6]. Pharmaceutical quality professionals and regulatory scientists should adopt a complementary technology approach, leveraging the strengths of both rapid screening methods and definitive quantitative analysis. Ultimately, the critical role of validation in regulatory compliance lies not in rejecting innovative technologies but in rigorously characterizing their performance boundaries, so that implementation strategies protect public health without impeding technological progress.

Molecular Basis of NIR Spectral Signatures for Material Characterization

Near-infrared (NIR) spectroscopy has emerged as a powerful analytical technique for material characterization, valued for its rapid, non-destructive analysis capabilities. This guide explores the fundamental molecular interactions that generate NIR spectral signatures and provides a comparative analysis of its performance against other analytical techniques across various applications.

Molecular Foundations of NIR Spectroscopy

NIR spectroscopy operates in the 780–2500 nm region of the electromagnetic spectrum, analyzing organic compounds through absorption of near-infrared radiation by functional groups containing hydrogen atoms, particularly O-H, C-H, and N-H bonds [7] [8]. The technique measures overtone and combination vibrations of these fundamental molecular bonds, creating a unique "global molecular fingerprint" for each material [3] [9].
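Why overtones land in the NIR window can be illustrated with an anharmonic (Morse) oscillator, for which the 0 → v transition lies at v·ωe − v(v+1)·ωe·xe (in cm⁻¹). The constants below (ωe = 3050 cm⁻¹, ωe·xe = 60 cm⁻¹) are assumed round values for a C-H stretch, not parameters from the cited sources, and real band positions also shift with molecular environment.

```python
def morse_transition_cm1(v: int, we: float, wexe: float) -> float:
    """Wavenumber (cm^-1) of the 0 -> v transition of a Morse oscillator."""
    return v * we - v * (v + 1) * wexe


def cm1_to_nm(wavenumber: float) -> float:
    """Convert a wavenumber in cm^-1 to a wavelength in nm."""
    return 1e7 / wavenumber


# Assumed illustrative constants for a C-H stretch (not from the cited studies)
WE, WEXE = 3050.0, 60.0
for v, label in [(1, "fundamental"), (2, "1st overtone"), (3, "2nd overtone")]:
    nu = morse_transition_cm1(v, WE, WEXE)
    print(f"{label:>13}: {nu:6.0f} cm^-1 -> {cm1_to_nm(nu):5.0f} nm")
```

With these values the fundamental falls near 3413 nm (mid-infrared), while the first and second overtones fall near 1742 nm and 1186 nm, inside the NIR region. Anharmonicity is what makes these overtone transitions allowed at all, and they are much weaker than the fundamental.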

The resulting spectrum represents complex interactions between a material's molecular composition and NIR radiation, with absorption patterns influenced by factors including molecular structure, hydrogen bonding, and the physical state of the material. These spectral signatures provide both qualitative and quantitative information about material composition [8].

Comparative Performance Analysis

NIR Versus Reference Analytical Techniques

The following table summarizes quantitative performance comparisons between NIR spectroscopy and established analytical methods across different applications:

Table 1: Performance comparison of NIR spectroscopy against reference analytical methods

| Application Domain | Comparison Technique | Key Performance Metrics | Experimental Findings |
|---|---|---|---|
| Pharmaceutical Quality Control | High-Performance Liquid Chromatography (HPLC) | Sensitivity: 11–37%; Specificity: 47–74% | NIR showed lower sensitivity but reasonable specificity for detecting substandard and falsified medicines; performance varied significantly by drug category [2] |
| Biofuel Feedstock Characterization | Conventional Chemical Analysis | R²: 0.66–0.84; RPD: 1.68–2.51 | NIR demonstrated fair to good predictive accuracy for key properties including acid value, density, and kinematic viscosity in used cooking oil [10] |
| Agricultural Nutrient Management | Nuclear Magnetic Resonance (NMR) | R²: 0.66–0.84; RPD: 1.68–2.51 | NIR showed fair predictive accuracy for manure properties, though factory-calibrated NMR demonstrated superior precision for chemical characterization [11] |
| Wood Property Analysis | Laboratory Benchtop NIR | R²: Comparable performance | Portable NIR hyperspectral imaging systems achieved performance equivalent to laboratory benchtop systems for specific gravity and stiffness prediction [12] |
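The R² and RPD figures in the table can be computed from paired reference and predicted values as sketched below. Conventions vary between studies (some divide by a bias-corrected SEP rather than RMSEP), so this sketch assumes RPD = SD(reference, ddof = 1) / RMSEP; the function name `regression_figures` and the five validation values are hypothetical.

```python
import numpy as np


def regression_figures(y_ref, y_pred):
    """Return (R^2, RMSEP, RPD) for a set of validation predictions."""
    y_ref = np.asarray(y_ref, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    residuals = y_pred - y_ref
    ss_res = np.sum(residuals ** 2)
    ss_tot = np.sum((y_ref - y_ref.mean()) ** 2)
    r2 = 1.0 - ss_res / ss_tot
    rmsep = np.sqrt(np.mean(residuals ** 2))
    # RPD: spread of the reference values relative to the prediction error
    rpd = np.std(y_ref, ddof=1) / rmsep
    return float(r2), float(rmsep), float(rpd)


# Hypothetical validation values (not from the cited studies)
r2, rmsep, rpd = regression_figures([1, 2, 3, 4, 5], [1.1, 1.9, 3.2, 3.8, 5.0])
print(f"R2={r2:.2f}  RMSEP={rmsep:.3f}  RPD={rpd:.2f}")  # R2=0.99  RMSEP=0.141  RPD=11.18
```

RPD is useful alongside R² because it expresses prediction error relative to the natural spread of the property being measured, which makes models for different analytes easier to compare.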

Technique Selection Guidelines

Table 2: Optimal application scenarios for NIR spectroscopy and alternative techniques

| Technique | Optimal Use Cases | Strengths | Limitations |
|---|---|---|---|
| NIR Spectroscopy | Rapid screening, field analysis, quality control | Non-destructive, rapid analysis (seconds), portable options, minimal sample preparation [8] | Limited sensitivity for trace analysis, requires robust calibration, overlapping spectral peaks [2] [7] |
| FTIR Spectroscopy | Molecular structure identification, detailed chemical analysis | Detailed molecular fingerprinting, broad spectral range, effective for unknown material identification [8] | Longer preparation time, less portable, typically requires laboratory setting [8] |
| HPLC | Quantitative pharmaceutical analysis, regulatory testing | High sensitivity and specificity, established regulatory acceptance [2] | Destructive, time-consuming, requires sample preparation, laboratory-bound [2] |
| NMR Spectroscopy | Precise molecular-level analysis, structural elucidation | High precision for chemical properties, detailed molecular information [11] | Expensive equipment, requires expert knowledge, less accessible for routine use [11] |

Experimental Methodologies

Standardized NIR Analysis Protocol

The following diagram illustrates a generalized workflow for NIR spectral analysis and model development:

Spectral Acquisition Parameters: Typical NIR analysis employs a wavelength range of 900-1700 nm for portable systems and 400-2500 nm for laboratory systems, with scan times ranging from 20 seconds to 1 minute per sample depending on instrumentation [2] [13]. Measurements can be performed in reflectance or transmission mode, with reflectance being more common for solid samples.

Data Preprocessing: Raw spectral data typically undergoes preprocessing to enhance signal quality and reduce noise. Common techniques include Standard Normal Variate (SNV) correction, derivative transformations, multiplicative scatter correction (MSC), and Savitzky-Golay smoothing [11] [3].
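Two of the scatter corrections named above, SNV and MSC, can be written directly in NumPy. This is a minimal sketch, assuming spectra are stored one per row; MSC uses the mean spectrum as its reference unless one is supplied. The three synthetic spectra differ only by multiplicative and additive offsets, so both corrections should make them coincide.

```python
from typing import Optional

import numpy as np


def snv(spectra: np.ndarray) -> np.ndarray:
    """Standard Normal Variate: centre and scale each spectrum (row) individually."""
    spectra = np.asarray(spectra, dtype=float)
    mean = spectra.mean(axis=1, keepdims=True)
    std = spectra.std(axis=1, ddof=1, keepdims=True)
    return (spectra - mean) / std


def msc(spectra: np.ndarray, reference: Optional[np.ndarray] = None) -> np.ndarray:
    """Multiplicative Scatter Correction against a reference (default: mean spectrum)."""
    spectra = np.asarray(spectra, dtype=float)
    ref = spectra.mean(axis=0) if reference is None else np.asarray(reference, dtype=float)
    corrected = np.empty_like(spectra)
    for i, s in enumerate(spectra):
        # Fit s ~ intercept + slope * ref, then undo the offset and the scaling
        slope, intercept = np.polyfit(ref, s, deg=1)
        corrected[i] = (s - intercept) / slope
    return corrected


# Synthetic spectra with simulated scatter (multiplicative + additive effects)
wl = np.linspace(900, 1700, 401)
band = np.exp(-0.5 * ((wl - 1200) / 50) ** 2)
raw = np.vstack([1.0 * band + 0.0, 1.5 * band + 0.2, 0.8 * band - 0.1])
print(np.allclose(msc(raw), raw.mean(axis=0)))  # True
```

SNV needs no reference and treats each spectrum on its own, whereas MSC depends on the chosen reference spectrum; that difference matters when new samples fall outside the calibration set.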

Pharmaceutical Quality Control Protocol

A specific implementation for pharmaceutical analysis involved purchasing 246 drug samples from retail pharmacies across six geopolitical regions of Nigeria. Samples included analgesics, antimalarials, antibiotics, and antihypertensives. Each sample was analyzed using both a handheld NIR spectrometer (750-1500 nm range) and HPLC as the reference method [2].

The NIR device employed a proprietary machine-learning algorithm to compare spectral signatures against a cloud-based AI reference library. The process took approximately 20 seconds per sample, with results transmitted to a smartphone application. Sensitivity and specificity were calculated by comparing NIR results with HPLC findings [2].

Advanced Applications and Methodological Innovations

Integration with Artificial Intelligence

Recent advancements have demonstrated the powerful synergy between NIR spectroscopy and artificial intelligence. Self-supervised learning (SSL) frameworks based on convolutional neural networks (CNNs) have shown remarkable classification accuracy (97-99%) even with limited labeled datasets [7].

This approach utilizes a two-stage process with pre-training on pseudo-labeled data followed by fine-tuning with a smaller set of labeled samples. This methodology significantly reduces the dependency on extensive labeled datasets and domain expertise while maintaining high classification accuracy across diverse sample types including pharmaceuticals, food products, and coal [7].

Wavelength Selection for Specific Applications

Research has identified optimal wavelength regions for specific analytical applications:

HCV Detection in Serum: Informative wavelengths were identified at 1150 nm, 1410 nm, and 1927 nm, associated with water molecular states and liver function biomarkers [3].

Wood Property Analysis: SWIR-HSI models heavily favored wavelengths greater than 1900 nm for predicting specific gravity and stiffness [12].

Used Cooking Oil Analysis: NIR demonstrated superior performance over Raman spectroscopy for determining acid value, density, and kinematic viscosity when combined with appropriate chemometric methods [10].

Essential Research Reagent Solutions

Table 3: Key research reagents and materials for NIR spectroscopic analysis

| Reagent/Material | Function in NIR Analysis | Application Examples |
|---|---|---|
| Reference Standards | Calibration and validation of spectral libraries | Pharmaceutical authentication [2], material verification [9] |
| White Reference Materials | Instrument calibration and background correction | Spectral normalization [13], signal correction [12] |
| Chemical Standards | Method development and validation | Quantification of specific analytes [10] [11] |
| Sample Containers | Hold samples during analysis without interfering with spectra | Borosilicate glass vials for liquids [3], quartz cells for solid samples [13] |

NIR spectroscopy provides a versatile, rapid, and non-destructive approach for material characterization across diverse fields from pharmaceuticals to agriculture. While it may not match the sensitivity of techniques like HPLC or the molecular-level precision of NMR for specific applications, its portability, speed, and minimal sample preparation requirements make it invaluable for screening and quality control applications. The integration of artificial intelligence with NIR spectroscopy represents a significant advancement, addressing previous limitations related to data interpretation and calibration stability. As instrumentation continues to evolve and computational methods advance, NIR spectroscopy is poised to expand its applications in both laboratory and field-based material characterization.

Market Growth and Adoption Trends Driving Validation Needs

The global Near-Infrared (NIR) Spectroscopy Market is experiencing significant growth, driven by applications in pharmaceuticals, food and beverage, agriculture, and biomedical diagnostics [14]. This expansion is fueling an urgent need for rigorous validation studies to establish instrument sensitivity and specificity across diverse use cases. As NIR technology evolves toward portability and artificial intelligence integration, the demand for standardized performance verification has become critical for research, regulatory compliance, and quality control professionals who rely on these instruments for critical decision-making.

Comparative Performance Analysis: NIR Spectrometers vs. Reference Methods

Independent validation studies reveal significant variability in NIR spectrometer performance across different applications and sample types. The following tables summarize key comparative data from recent scientific investigations.

Table 1: Performance Comparison of NIR Spectrometers in Pharmaceutical Analysis

| Study Focus | Reference Method | NIR Sensitivity | NIR Specificity | Key Findings | Citation |
|---|---|---|---|---|---|
| SF Medicines in Nigeria (246 samples) | HPLC | 11% (all medicines); 37% (analgesics) | 74% (all medicines); 47% (analgesics) | 25% of samples failed HPLC; NIR detected only a subset, primarily analgesics. | [6] [2] |
| Substance P in Saliva (102 subjects) | ELISA | Not explicitly stated | Not explicitly stated | Bland-Altman plots showed a strong agreement between NIR and ELISA (p > 0.05). | [15] |
| Pharmaceutical Tablets (Classification) | Chemical Assay | Not explicitly stated | Not explicitly stated | CNN-based self-supervised learning achieved 98.14% classification accuracy. | [7] |

Table 2: NIR Performance in Food Authenticity and Adulteration Detection

| Sample Matrix | Analysis Type | Performance Metric | Result | Citation |
|---|---|---|---|---|
| Hazelnuts (300+ samples) | Cultivar/Origin Authentication | Accuracy (Benchtop NIR) | ≥ 93% | [16] |
| Hazelnuts (300+ samples) | Cultivar/Origin Authentication | Accuracy (Handheld NIR) | Effective for cultivar, lower sensitivity for origin | [16] |
| Protein Powders (819 samples) | Adulterant Detection (Melamine, Urea) | Limit of Detection (LOD) | ~0.1% for best-performing benchtop grating instrument | [17] |
| Peanut Oil | Adulteration Identification | Coefficient of Determination (R²) | > 0.9311 | [18] |

Detailed Experimental Protocols for Key Validation Studies

Protocol 1: Validation of Handheld NIR for Pharmaceutical Quality Screening

A 2025 study in Nigeria provided a direct comparison between a proprietary AI-powered handheld NIR spectrometer and High-Performance Liquid Chromatography (HPLC) for detecting substandard and falsified (SF) medicines [6] [2].

- Device Specifications: The study utilized a patented handheld spectrometer with a NIR range of 750–1500 nm, leveraging a proprietary machine-learning algorithm and a cloud-based AI reference library of spectral signatures [6] [2].

- Sample Collection: Researchers purchased 246 drug samples (analgesics, antimalarials, antibiotics, antihypertensives) from retail pharmacies across six geopolitical regions of Nigeria using mystery shoppers [6] [2].

- Testing Methodology: Each sample was analyzed non-destructively with the NIR device, which compared the spectral signature and intensity against authenticated references in its library. A "non-match" result indicated a poor-quality medicine. The entire process took approximately 20 seconds per sample [6] [2].

- Reference Method Analysis: The same samples underwent compositional quality analysis using an Agilent 1100 HPLC system. A validated method was employed for each molecule, and system suitability was confirmed prior to each analysis using reference standards [2].

- Data Analysis: Sensitivity and specificity of the NIR device were calculated using HPLC results as the reference standard [2].
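The data-analysis step above reduces to a 2×2 table with HPLC as the reference standard. The Python sketch below shows that calculation. It assumes that a NIR "non-match" and an HPLC failure are both coded as positive (poor quality); the class and function names are illustrative, and the eight paired results are invented purely to exercise the code, not data from the study.

```python
from dataclasses import dataclass


@dataclass
class DiagnosticCounts:
    """2x2 confusion matrix with HPLC as the reference standard.

    "Positive" means poor quality: a NIR non-match or an HPLC failure.
    """
    tp: int  # NIR non-match, HPLC fail
    fp: int  # NIR non-match, HPLC pass
    fn: int  # NIR match, HPLC fail
    tn: int  # NIR match, HPLC pass


def tally(nir_flags, hplc_fails):
    """Build counts from paired per-sample booleans (True = poor quality)."""
    if len(nir_flags) != len(hplc_fails):
        raise ValueError("NIR and HPLC results must be paired per sample")
    tp = fp = fn = tn = 0
    for nir, ref in zip(nir_flags, hplc_fails):
        if nir and ref:
            tp += 1
        elif nir:
            fp += 1
        elif ref:
            fn += 1
        else:
            tn += 1
    return DiagnosticCounts(tp, fp, fn, tn)


def sensitivity(c):
    # Share of HPLC failures that the NIR device also flagged
    return c.tp / (c.tp + c.fn)


def specificity(c):
    # Share of HPLC passes that the NIR device also passed
    return c.tn / (c.tn + c.fp)


# Hypothetical paired results for eight samples (not study data)
nir = [True, False, False, True, False, False, True, False]
hplc = [True, True, False, False, False, False, False, True]
counts = tally(nir, hplc)
print(counts, sensitivity(counts), specificity(counts))
```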

Protocol 2: Validation of NIR for Biomarker Detection in Saliva

A 2025 study validated an NIR device for detecting Substance P, a neuropeptide biomarker, in saliva samples from patients with Chronic Obstructive Pulmonary Disease (COPD) [15].

- Sample Preparation: Saliva was collected from 102 subjects (44 with COPD, 58 controls) in Salivette tubes, immediately centrifuged, treated with a protease inhibitor, and stored at -80°C until analysis [15].

- NIR Measurement Procedure:

  - The sample was placed in a sterilized quartz cuvette for an absorbance measurement in the 900–1900 nm band.

  - An interdigitated electrode sensor measured the electrochemical impedance of the sample.

  - Pre-configured algorithms performed clustering classification.

  - The analyte was identified and its concentration determined in pg/ml. Each sample was measured 10 times; outlying readings were discarded and the remaining eight were averaged [15].

- Reference Method: The same saliva samples were analyzed using a commercial Enzyme-Linked Immunosorbent Assay (ELISA) kit following the manufacturer's instructions [15].

- Data Modeling & Validation: A Convolutional Neural Network (CNN) regression model was developed to predict Substance P concentration from NIR data. Agreement between NIR and ELISA results was assessed using paired t-tests and Bland-Altman plots [15].
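The replicate handling and agreement analysis in this protocol can be sketched in Python with only the standard library. Two details are assumptions rather than facts from the study: the rule for rejecting the two outlying replicates (here, the readings farthest from the median) and the conventional 1.96 × SD limits of agreement. The paired t statistic is returned without a p-value; obtaining one requires the t distribution with n - 1 degrees of freedom (for example via SciPy's `scipy.stats.ttest_rel`). All function names and values are hypothetical.

```python
import math
import statistics


def reduce_replicates(readings, n_keep=8):
    """Average replicate readings after dropping the most outlying ones.

    Assumption: the readings farthest from the median are treated as the
    outliers; the study does not state its rejection rule.
    """
    med = statistics.median(readings)
    kept = sorted(readings, key=lambda r: abs(r - med))[:n_keep]
    return statistics.mean(kept)


def bland_altman(method_a, method_b):
    """Return (bias, lower limit, upper limit) of agreement for paired data."""
    diffs = [a - b for a, b in zip(method_a, method_b)]
    bias = statistics.mean(diffs)
    sd = statistics.stdev(diffs)  # sample SD of the paired differences
    return bias, bias - 1.96 * sd, bias + 1.96 * sd


def paired_t_statistic(method_a, method_b):
    """Paired t statistic on the differences (n - 1 degrees of freedom)."""
    diffs = [a - b for a, b in zip(method_a, method_b)]
    se = statistics.stdev(diffs) / math.sqrt(len(diffs))
    return statistics.mean(diffs) / se


# Hypothetical values in pg/ml, not data from the study
replicates = [10.0] * 8 + [50.0, 1.0]
nir = [12.0, 15.0, 9.0, 20.0]
elisa = [11.0, 14.0, 10.0, 18.0]
print(reduce_replicates(replicates))  # 10.0
print(bland_altman(nir, elisa))
print(paired_t_statistic(nir, elisa))
```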

Essential Research Reagent Solutions for NIR Validation

Successful implementation and validation of NIR spectroscopy require specific reagents and materials. The following table details key components used in the featured studies.

Table 3: Essential Research Reagents and Materials for NIR Validation Studies

| Item Name | Function/Application | Example from Research |
|---|---|---|
| Authenticated Drug Samples | Serves as a reference standard to build spectral libraries for pharmaceutical verification. | The company sourced exact branded drug samples to build the reference library for the Nigeria study [6] [2]. |
| HPLC System & Reference Standards | Provides the primary, validated quantitative method against which NIR performance is benchmarked. | An Agilent 1100 HPLC system with validated methods and reference standards for each analyte was used [2]. |
| ELISA Kit | Provides a gold-standard reference for quantifying specific biomarkers in biological samples. | The Human Substance P kit (MBS3800193) was used to validate NIR readings in the COPD study [15]. |
| Protease Inhibitor | Preserves protein and peptide integrity in biological samples prior to analysis. | Added to saliva supernatant after centrifugation to prevent degradation of Substance P [15]. |
| Chemometric Software | Used for spectral preprocessing, data modeling, and generating predictive algorithms. | Used for techniques like Savitzky-Golay filtering, SNV, PLS Regression, and CNN modeling [15] [7] [18]. |
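Standard Normal Variate (SNV), one of the preprocessing steps listed in the table, centers each spectrum on its own mean and divides it by its own standard deviation, which removes much of the additive and multiplicative scatter between samples. A minimal NumPy sketch follows; the function name is illustrative, and using the sample standard deviation (`ddof=1`) is a choice on which implementations differ.

```python
import numpy as np


def snv(spectra):
    """Standard Normal Variate transform applied row-wise.

    spectra: (n_samples, n_wavelengths) array. Each spectrum is centered on
    its own mean and divided by its own sample standard deviation.
    """
    X = np.asarray(spectra, dtype=float)
    mean = X.mean(axis=1, keepdims=True)
    std = X.std(axis=1, ddof=1, keepdims=True)
    return (X - mean) / std


# Two hypothetical spectra differing only by offset and scale
base = np.array([0.20, 0.35, 0.50, 0.42, 0.30])
spectra = np.vstack([base, 1.5 * base + 0.1])
corrected = snv(spectra)
print(np.allclose(corrected[0], corrected[1]))  # True
```

Savitzky-Golay smoothing or derivatives (for example `scipy.signal.savgol_filter` with `deriv=1` and `axis=1`) are often combined with SNV; whichever order is chosen should be fixed as part of the documented method.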

Visualizing NIR Spectrometer Validation Workflows

The following diagrams illustrate the logical workflow for validating NIR spectrometers and the key factors influencing their performance in scientific studies.

NIR Validation Workflow

NIR Performance Factors

Technical Requirements for Effective NIR Implementation

The transition of NIR spectroscopy from a promising technology to a validated analytical tool depends on several critical factors, as identified in the research:

- Robust Chemometric Models: Effective NIR analysis relies on sophisticated data processing. Techniques such as Partial Least Squares (PLS) regression, Support Vector Machines (SVM), and Convolutional Neural Networks (CNN) are essential for extracting meaningful information from complex spectral data [7] [18]. The integration of self-supervised learning (SSL) frameworks is a key advancement, dramatically improving classification accuracy even with minimal labeled data, as demonstrated by an accuracy of 99.12% on a tea dataset using only 5% labeled data [7].

- Comprehensive Reference Libraries: The accuracy of any NIR system is contingent on the quality and breadth of its reference spectral library. Building these libraries requires sourcing and analyzing a wide range of authentic samples to capture natural and manufacturing variations [6] [2].

- Rigorous Handling of Data Variability: Research indicates that analytical flexibility in data processing pipelines can significantly impact NIR results and their reproducibility. Factors such as how poor-quality data are handled, the choice of preprocessing steps, and statistical modeling techniques are key sources of variability that must be controlled through standardized protocols [19].

Near-Infrared (NIR) spectroscopy has become a cornerstone analytical technique across pharmaceutical, agricultural, and food industries due to its non-destructive nature, rapid analysis capabilities, and minimal sample preparation requirements. However, the transition of NIR methods from controlled laboratory environments to robust industrial applications faces significant validation challenges. Two of the most critical hurdles are instrument variability and environmental factors, which can compromise data integrity and method transferability if not properly addressed. In pharmaceutical development, where precision and regulatory compliance are paramount, even minor inconsistencies in spectral data can lead to incorrect conclusions about drug product quality, potentially resulting in batch failures or regulatory issues [20].

The complexity of NIR validation stems from the fact that spectroscopic measurements are influenced by an interconnected web of factors, including the instrument itself, sample characteristics, and the environment where analysis occurs. While NIR technology offers substantial benefits as a green analytical tool that reduces solvent consumption and enables real-time monitoring, its full potential can only be realized when these validation challenges are systematically addressed through rigorous experimental design and comprehensive calibration strategies [21]. This guide examines the core challenges of instrument variability and environmental factors, providing researchers with comparative data, experimental protocols, and visualization tools to enhance the reliability of NIR validation studies.

The Instrument Variability Challenge

The "Identical Twins" Conundrum in NIR Spectroscopy

A fundamental challenge in NIR validation is that even instruments of the same make and model can demonstrate noticeable performance differences—a phenomenon often termed the "identical twins" conundrum. These variations originate from subtle differences in optical components, sensor alignment, and inherent manufacturing tolerances that collectively impact spectral responses [20]. In practice, this means one spectrometer might consistently report slightly higher moisture content readings than another identical unit, potentially leading to different conclusions about product quality.

The implications of instrument variability are particularly acute in regulated industries like pharmaceuticals. Consider a scenario where multiple NIR instruments are used to measure active pharmaceutical ingredient (API) content across different manufacturing sites. If one instrument consistently reads lower than others, a batch that appears compliant when tested on this instrument might fail specification when analyzed on a properly calibrated unit. Such discrepancies can trigger costly product recalls, regulatory non-compliance, and potential patient safety concerns [20]. The problem intensifies when instruments from different manufacturers are involved, as they may employ distinct optical designs, detection technologies, and performance characteristics that further complicate method transfer and data comparison.

Quantitative Performance Comparisons Across Instrument Platforms

Table 1: Performance Comparison of NIR Spectrometers in Pharmaceutical Blending Applications

| Instrument Type | API | Spectral Pre-processing | PLS Components | R² | RMSECV | Reference |
|---|---|---|---|---|---|---|
| Portable NIR (MicroNIR) | Ibuprofen | SNV | 4 | 0.957 | 1.118 | [22] |
| Portable NIR (MicroNIR) | Paracetamol | Second Derivative | 5 | 0.984 | 0.558 | [22] |
| Portable NIR (MicroNIR) | Caffeine | SNV | 6 | 0.911 | 0.319 | [22] |
| Benchtop FT-NIR | Ibuprofen | Not Specified | Not Specified | 0.99 | 0.85 | [22] |
| Benchtop FT-NIR | Paracetamol | Not Specified | Not Specified | 0.99 | 0.45 | [22] |
| Benchtop FT-NIR | Caffeine | Not Specified | Not Specified | 0.96 | 0.25 | [22] |

Table 2: Performance Comparison of NIR Spectrometers for Olive Quality Parameters

| Instrument Type | Parameter | R²pred | Bias | Spectral Range | Reference |
|---|---|---|---|---|---|
| FT-NIR with Integrating Sphere | Moisture | 0.84 | Comparable (p>0.05) | 12,500–3600 cm⁻¹ | [23] |
| FT-NIR with Fiber Optic Probe | Moisture | 0.82 | Comparable (p>0.05) | 12,500–3600 cm⁻¹ | [23] |
| Vis/NIR Handheld Device | Moisture | 0.64 | Slightly Higher | 500–1000 nm | [23] |
| FT-NIR with Integrating Sphere | Oil Content | 0.81 | Comparable (p>0.05) | 12,500–3600 cm⁻¹ | [23] |
| FT-NIR with Fiber Optic Probe | Oil Content | 0.79 | Comparable (p>0.05) | 12,500–3600 cm⁻¹ | [23] |

Performance comparisons between instrument platforms reveal distinct trade-offs between portability and analytical precision. As shown in Table 1, portable NIR systems like the MicroNIR demonstrate solid predictive capability for pharmaceutical blending applications (R² > 0.9 for all three APIs), though they consistently show higher RMSECV values than benchtop FT-NIR systems [22]. Similarly, Table 2 highlights that while FT-NIR spectrometers with different sampling accessories (integrating sphere vs. fiber optic probe) deliver comparable results for agricultural applications, handheld Vis/NIR devices show noticeably lower performance (R²pred = 0.64 for moisture) [23]. These findings underscore the importance of matching instrument selection to specific application requirements, with benchtop systems preferable for high-precision quantification and portable units offering adequate performance for screening applications.

Environmental and Sample-Related Factors

Impact of Environmental Conditions on NIR Measurements

Environmental factors represent another significant dimension of NIR validation challenges, with temperature, humidity, and ambient lighting conditions capable of altering instrument response and sample characteristics. These factors are particularly problematic for methods transferred between different geographic locations or manufacturing sites where environmental control may vary [20]. Temperature fluctuations can affect both the instrument's detector response and the sample's spectral properties, potentially shifting absorption bands or altering scattering behavior. Similarly, humidity variations can impact samples that are hygroscopic, leading to moisture uptake that masks or interferes with the measurement of target analytes.

The FRESH (fNIRS Reproducibility Study Hub) initiative, in which 38 research teams analyzed identical functional NIRS datasets, demonstrated that operational and analytical choices significantly influence outcomes [24]. Teams with higher self-reported analysis confidence, which correlated with years of experience, showed greater agreement in results, highlighting how methodological choices can affect reproducibility. The study identified the handling of poor-quality data, which can arise from suboptimal measurement conditions, as a major source of variability in reported outcomes [24].

Sample Variability: The "Every Sample's a Snowflake" Dilemma

Beyond environmental factors, sample-related variations present a persistent challenge for NIR validation. Different samples naturally exhibit variations in physical and chemical properties, including moisture content, particle size distribution, and matrix effects that collectively complicate spectral interpretation [20]. For example, in pharmaceutical blending, slight changes in particle size can alter light scattering properties, impacting NIR spectra and potentially leading to inaccurate conclusions about blend uniformity [22].

The impact of sample variability is particularly pronounced in agricultural and food applications where natural product heterogeneity is inherent. Research on olive quality assessment demonstrates that even with sophisticated instrumentation, variations in fruit ripeness, cultivar characteristics, and growing conditions introduce spectral variability that must be accounted for during method development [23]. Similarly, in barley malt analysis, factors including genetics, growth conditions, seasonal variation, and processing history contribute to spectral differences that challenge the development of robust calibration models [25]. These sources of variability necessitate comprehensive sampling strategies that encompass the full range of expected product heterogeneity to ensure methods remain accurate throughout their lifecycle.

Experimental Protocols for Validation Studies

Protocol for Multi-Instrument Validation Study

Objective: To evaluate and mitigate instrument-to-instrument variability across multiple NIR spectrometers of the same and different models.

Materials and Reagents:

- Primary reference standards (e.g., Spectralon for reflectance)

- Chemical standards relevant to the application (e.g., USP standards for pharmaceuticals)

- At least 3-5 instruments of the same model

- 2-3 instruments of different models from various manufacturers

- Controlled environment chamber (if available)

Procedure:

- System Suitability Testing: Daily, collect spectra from certified reference materials to verify instrument performance. Document signal-to-noise ratio, wavelength accuracy, and photometric stability [20].

- Cross-Instrument Comparison: Analyze a standardized set of samples (50-100 samples covering the expected concentration range) on all instruments within a narrow time window to minimize environmental drift [20].

- Spectral Data Collection: Acquire spectra using consistent parameters (number of scans, resolution, measurement mode) across all instruments. For reflectance measurements, maintain consistent packing density and presentation geometry [22].

- Chemometric Analysis: Develop Partial Least Squares (PLS) models for each instrument using the standardized sample set. Compare regression coefficients, latent variables, and prediction errors across instruments [22].

- Model Transfer: Apply calibration models developed on a "master" instrument to "slave" instruments using calibration transfer techniques such as Direct Standardization (DS) or Piecewise Direct Standardization (PDS) [20].

- Validation: Predict a separate validation set (20-30 samples) on all instruments using both instrument-specific and transferred models. Compare prediction errors and accuracy.

- Data Analysis: Calculate root mean square error of prediction (RMSEP), bias, and standard error of prediction (SEP) for each instrument. Perform statistical testing (e.g., t-tests, ANOVA) to identify significant differences between instruments.
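The model-transfer and data-analysis steps can be illustrated with a short NumPy sketch. Direct Standardization estimates a transformation matrix F from transfer samples measured on both instruments so that slave-instrument spectra multiplied by F approximate the master spectra; the master calibration is then applied to the transformed spectra. The function names are illustrative, the plain pseudo-inverse solution omits the mean-centering and regularization used in many implementations, and Piecewise Direct Standardization (which fits F within local wavelength windows) is not shown. SEP here is the bias-corrected standard deviation of the prediction errors.

```python
import numpy as np


def direct_standardization(master_transfer, slave_transfer):
    """Estimate F such that slave_transfer @ F approximates master_transfer.

    Both arrays are (n_transfer_samples, n_wavelengths) spectra of the same
    transfer samples. np.linalg.pinv gives the least-squares (minimum-norm)
    solution, including the usual case of fewer samples than wavelengths.
    """
    S = np.asarray(slave_transfer, dtype=float)
    M = np.asarray(master_transfer, dtype=float)
    return np.linalg.pinv(S) @ M


def transfer_spectra(slave_spectra, F):
    """Map new slave-instrument spectra into the master instrument's space."""
    return np.asarray(slave_spectra, dtype=float) @ F


def prediction_metrics(y_ref, y_pred):
    """Return (RMSEP, bias, SEP) for predictions against reference values."""
    errors = np.asarray(y_pred, dtype=float) - np.asarray(y_ref, dtype=float)
    n = errors.size
    rmsep = float(np.sqrt(np.mean(errors ** 2)))
    bias = float(errors.mean())
    # SEP: standard deviation of the errors after removing the bias
    sep = float(np.sqrt(np.sum((errors - bias) ** 2) / (n - 1)))
    return rmsep, bias, sep


# Simulated example: the slave instrument applies a wavelength-dependent gain
rng = np.random.default_rng(0)
master = rng.normal(size=(30, 10))
slave = master * np.linspace(0.9, 1.1, 10)
F = direct_standardization(master, slave)
print(np.allclose(transfer_spectra(slave, F), master))  # True
```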

Protocol for Environmental Robustness Testing

Objective: To assess the impact of environmental factors on NIR method performance and establish operational tolerances.

Materials and Reagents:

- Stable control samples representing the application matrix

- Environmental chamber for temperature and humidity control

- Multiple batches of samples with known variation in critical quality attributes

Procedure:

- Temperature Effects: Acquire spectra of control samples at different temperatures (e.g., 15°C, 20°C, 25°C, 30°C) using a single instrument. Allow sufficient equilibration time at each temperature [23].

- Humidity Effects: Under constant temperature, acquire spectra at different relative humidity levels (e.g., 30%, 50%, 70%) for hygroscopic samples.

- Sample Presentation Effects: Analyze samples with deliberate variations in particle size, packing density, and orientation to simulate real-world variability [20].

- Temporal Stability: Monitor control samples over an extended period (days to weeks) under consistent environmental conditions to assess method drift.

- Data Pre-processing: Apply various spectral pre-processing techniques (SNV, derivatives, MSC) to evaluate their effectiveness in minimizing environmental effects [22].

- Data Analysis: Use Principal Component Analysis (PCA) to visualize spectral clustering patterns related to environmental conditions. Develop PLS models with and without environmentally varied samples in the calibration set and compare prediction performance.
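Two of the steps in this protocol, scatter correction and PCA-based inspection of environmental effects, can be sketched in NumPy. Multiplicative Scatter Correction (MSC) regresses each spectrum on a reference (here the mean spectrum, a common default) and removes the fitted offset and slope; PCA is computed from the singular value decomposition of the mean-centered data. Plotting the first two score columns colored by acquisition temperature or humidity is one way to see whether environmental conditions form separate clusters. Function names and the simulated spectra are illustrative.

```python
import numpy as np


def msc(spectra, reference=None):
    """Multiplicative Scatter Correction.

    Each spectrum x is fitted as x ~ a + b * reference by least squares and
    replaced by (x - a) / b. The mean spectrum is the default reference.
    """
    X = np.asarray(spectra, dtype=float)
    ref = X.mean(axis=0) if reference is None else np.asarray(reference, dtype=float)
    corrected = np.empty_like(X)
    for i, x in enumerate(X):
        b, a = np.polyfit(ref, x, deg=1)  # polyfit returns slope, then intercept
        corrected[i] = (x - a) / b
    return corrected


def pca_scores(spectra, n_components=2):
    """PCA via SVD of mean-centered spectra.

    Returns the first n_components score columns and their explained
    variance ratios.
    """
    X = np.asarray(spectra, dtype=float)
    Xc = X - X.mean(axis=0)
    U, s, _ = np.linalg.svd(Xc, full_matrices=False)
    scores = U[:, :n_components] * s[:n_components]
    explained = s ** 2 / np.sum(s ** 2)
    return scores, explained[:n_components]


# Simulated spectra: one band shape with sample-specific offset and scaling
wavelengths = np.linspace(0.0, 3.0, 50)
base = np.sin(wavelengths) + 2.0
raw = np.vstack([base, 1.2 * base + 0.3, 0.8 * base - 0.1, 1.1 * base + 0.05])
corrected = msc(raw)
scores, explained = pca_scores(raw)
print(np.allclose(corrected, corrected[0]))  # True
print(scores.shape)                          # (4, 2)
```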

Visualization of NIR Validation Workflows

NIR Validation Workflow Diagram

The diagram above illustrates a comprehensive workflow for evaluating both instrument variability and environmental factors during NIR method validation. The parallel assessment pathways acknowledge that these challenges must be addressed simultaneously rather than sequentially to develop robust analytical methods. The workflow emphasizes the importance of chemometric model development and data pre-processing optimization as critical bridges between raw spectral data and validated methods suitable for regulatory submission.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Research Reagents and Materials for NIR Validation Studies

| Item | Function in NIR Validation | Application Examples | Critical Specifications |
|---|---|---|---|
| Spectralon Reference Standard | Provides consistent, high-reflectance surface for instrument calibration | Daily instrument qualification, wavelength verification | >99% reflectance, NIST-traceable |
| USP Analytical Standards | Certified reference materials for method validation | Accuracy determination, specificity testing | >95% purity, well-characterized |
| Controlled Environment Chamber | Maintains constant temperature and humidity during testing | Environmental robustness studies | ±0.5°C temperature control, ±5% RH control |
| Sample Cells with Precise Pathlength | Consistent sample presentation for transmission measurements | Liquid sample analysis, method transfer studies | UV-quartz or sapphire windows, precise spacing |
| Rotating Sample Cups | Minimizes sampling bias for heterogeneous powders | Solid dosage form analysis, agricultural products | Consistent rotation speed, diffuse reflectance geometry |
| Certified Microsphere Size Standards | Verification of instrument resolution and sensitivity | Performance qualification, troubleshooting | NIST-traceable, multiple size distributions |
| Stability-Indicating Reference Materials | Monitoring instrument performance over time | System suitability testing, longitudinal drift assessment | Documented stability, matrix-matched to samples |

The research reagents and materials detailed in Table 3 represent essential components for comprehensive NIR validation studies. These materials enable researchers to isolate specific sources of variability, distinguish instrument-related effects from sample-related effects, and demonstrate method robustness under varied conditions. Particularly critical are certified reference materials, which provide the anchor points for establishing method accuracy and facilitating instrument-to-instrument comparability. For regulatory submissions, documentation of these materials—including their certification, traceability, and storage conditions—is essential for demonstrating method validity to regulatory agencies.

Instrument variability and environmental factors present persistent, interconnected challenges in NIR validation that demand systematic assessment strategies. The comparative data presented in this guide show that performance differences between instrument platforms are measurable and can be practically significant, particularly for applications requiring high precision, and that even units of the same model can differ [20]. Environmental conditions further complicate method robustness, with temperature, humidity, and sample presentation factors capable of altering spectral responses and prediction accuracy.

Successful NIR validation requires a holistic approach that addresses these challenges through comprehensive experimental designs, appropriate chemometric tools, and strategic deployment of reference materials. The protocols and workflows outlined provide researchers with structured frameworks for quantifying and mitigating these variability sources, ultimately supporting the development of robust NIR methods suitable for regulatory submission. As NIR technology continues to evolve toward more portable and automated systems, the fundamental validation principles outlined in this guide will remain essential for ensuring data integrity and method reliability across the product lifecycle.

Methodological Approaches and Real-World Application Studies

Substandard and falsified (SF) medical products represent a critical global public health challenge. The World Health Organization (WHO) estimates that 1 in 10 medical products in low- and middle-income countries (LMICs) is substandard or falsified, leading to approximately 1 million deaths annually and an economic burden of around $30.5 billion per year [26]. These products range from those with incorrect active pharmaceutical ingredients (APIs) or dosages to those containing harmful contaminants or no therapeutic content at all [27] [26]. The consequences include treatment failure, increased antimicrobial resistance, direct patient harm, and erosion of trust in healthcare systems [6] [26].

The complexity of global pharmaceutical supply chains, coupled with sophisticated falsification techniques, necessitates robust detection technologies. As criminal networks become more advanced, the demand for sophisticated, portable, and cost-effective authentication methods has intensified, particularly in resource-limited settings with the highest SF medicine prevalence [27]. This guide provides an objective comparison of current pharmaceutical authentication technologies, with particular focus on validating the sensitivity and specificity of Near-Infrared (NIR) spectrometry against established laboratory methods.

Pharmaceutical authentication technologies span a wide spectrum, from simple visual inspection to sophisticated laboratory analysis. Each method offers distinct advantages and limitations in terms of cost, complexity, portability, and analytical capability [27].

Table 1: Pharmaceutical Authentication Technology Categories

| Technology Category | Examples | Primary Use | Skill Requirement | Relative Cost |
|---|---|---|---|---|
| Visual Inspection | Packaging analysis, physical inspection | Preliminary screening | Low | Low |
| Physical/Chemical Testing | Colorimetry, disintegration tests | Field screening | Low to Moderate | Low to Moderate |
| Portable Instrumental Methods | Handheld NIR, Raman spectrometers | Field screening and analysis | Moderate | Moderate |
| Laboratory Chromatography | HPLC, UPLC-MS | Confirmatory analysis | High | High |
| Advanced Laboratory Methods | Mass spectrometry, forensic chemistry | Forensic investigation | High | Very High |

Technology Selection Considerations

The choice of authentication method depends on multiple factors including the required sensitivity and specificity, regulatory requirements, available resources, and operational context. While simple colorimetric tests provide rapid, inexpensive screening for specific APIs, they offer limited capability against sophisticated fakes [27]. Chromatographic methods like High-Performance Liquid Chromatography (HPLC) provide definitive quantitative analysis but require laboratory settings and trained personnel, and they consume the sample [6] [2]. Portable technologies like NIR and Raman spectrometers bridge this gap by offering non-destructive, rapid analysis with minimal sample preparation, though their performance varies significantly based on implementation and reference libraries [28] [29].

Comparative Analysis: NIR Spectrometry Versus HPLC

A 2025 comparative study conducted in Nigeria provides critical performance data on a proprietary AI-powered handheld NIR spectrometer versus HPLC analysis. The research analyzed 246 drug samples across four therapeutic categories purchased from retail pharmacies [6] [2].

Experimental Protocol

- Sample Collection: Researchers purchased medicine samples from randomly selected pharmacies in urban and rural areas across six geopolitical zones of Nigeria using mystery shoppers [6] [2].

- Sample Composition: The final sub-sample included 110 analgesics (44.72%), 38 antibiotics (15.45%), 31 antihypertensives (12.60%), and 67 antimalarials (27.24%) [6].

- NIR Analysis: The handheld NIR spectrometer (750–1500 nm range) used a cloud-based AI reference library to compare spectral signatures of samples against authentic products, providing results in approximately 20 seconds [6] [2].

- HPLC Reference Method: HPLC analysis was performed at Hydrochrom Analytical Services Limited using an Agilent 1100 HPLC system with validated methods for each molecule, establishing the reference standard for quality assessment [2].

- Performance Metrics: Sensitivity and specificity were calculated by comparing NIR results against HPLC findings, with HPLC considered the reference standard [2].

Performance Results

The study revealed that 25% of samples failed HPLC quality testing, confirming the high prevalence of SF medicines in the region. When compared against HPLC results, the NIR spectrometer demonstrated variable performance across therapeutic categories [6] [2].

Table 2: Performance Metrics of NIR Spectrometry vs. HPLC by Drug Category [6] [2]

| Drug Category | Sensitivity (%) | Specificity (%) | HPLC Failure Rate (%) |
|---|---|---|---|
| All Medicines | 11 | 74 | 25 |
| Analgesics | 37 | 47 | Not specified |
| Antibiotics | Not specified | Not specified | Not specified |
| Antihypertensives | Not specified | Not specified | Not specified |
| Antimalarials | Not specified | Not specified | Not specified |

The markedly higher sensitivity for analgesics (37%) compared with the overall sensitivity (11%) suggests that NIR performance varies substantially based on drug formulation and the completeness of reference spectral libraries [6] [2]. The researchers noted that only 3 of the 20 drugs tested were previously included in the device's reference library, potentially impacting performance [6].

Figure 1: NIR vs. HPLC Validation Study Workflow

Alternative Authentication Technologies

Chromatographic Methods

HPLC and related chromatographic techniques represent the gold standard for pharmaceutical quality verification, providing both qualitative and quantitative information about active ingredients and impurities [27]. These methods separate drug components based on chemical and physical properties, allowing precise quantification of API content and detection of contaminants. The primary limitations include destructive sample analysis, requirement for laboratory settings, trained personnel, sample preparation, and significant operational costs [6] [27].

Vibrational Spectroscopy Techniques

Beyond NIR spectroscopy, several related spectroscopic methods offer complementary capabilities:

NIR Chemical Imaging (NIR-CI): Combines spectroscopy with imaging to provide spatial and chemical information, enabling detection of formulation heterogeneity and counterfeit products without sample preparation. One study demonstrated effective discrimination between genuine antimalarials and counterfeits containing substitute APIs like paracetamol [29].

Raman Spectroscopy: Provides molecular fingerprinting capabilities similar to NIR but is based on inelastic light scattering rather than absorption. Often deployed in handheld devices for field use [30].

Thermal Analysis

Differential Scanning Calorimetry (DSC) measures thermal properties of pharmaceuticals, detecting differences in melting points and decomposition profiles between authentic and counterfeit products. Research has demonstrated DSC's ability to distinguish authentic Viagra and Cialis from counterfeits through their distinct thermal signatures, providing a complementary technique to spectroscopic methods [30].

Technical Workflows and Implementation

NIR Spectrometer Operation

The AI-powered NIR spectrometer evaluated in the Nigerian study operates through a defined workflow that enables rapid field authentication [6] [2]:

Figure 2: NIR Pharmaceutical Authentication Workflow

The process requires customized chemometric models and a comprehensive reference library of authentic products for comparison. The device captures the spectral signature of the entire drug (both API and excipients) and compares it against reference spectra using proprietary machine-learning algorithms [6] [2]. For optimal performance, the reference library must contain spectral data for specific branded products and dosage forms, highlighting the importance of comprehensive library development [6].
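The device's matching algorithm is proprietary and is not described in the cited studies. As a generic illustration of library matching only, the sketch below compares a sample spectrum against each reference spectrum by Pearson correlation and reports a match when the best score reaches a threshold. The function name, the 0.95 threshold, and the synthetic single-band spectra are all hypothetical.

```python
import numpy as np


def correlation_match(sample, library, threshold=0.95):
    """Match a spectrum to a reference library by Pearson correlation.

    library: dict mapping product names to reference spectra on the same
    wavelength grid as `sample`. Returns (best_name, best_score, is_match).
    The default threshold is illustrative, not a validated acceptance limit.
    """
    x = np.asarray(sample, dtype=float)
    best_name, best_score = None, -np.inf
    for name, ref in library.items():
        score = np.corrcoef(x, np.asarray(ref, dtype=float))[0, 1]
        if score > best_score:
            best_name, best_score = name, float(score)
    return best_name, best_score, bool(best_score >= threshold)


# Synthetic single-band "spectra" for two hypothetical products
grid = np.linspace(0.0, 1.0, 200)
library = {
    "product_A": np.exp(-((grid - 0.3) / 0.05) ** 2),
    "product_B": np.exp(-((grid - 0.7) / 0.05) ** 2),
}
genuine = 2.0 * library["product_A"] + 0.1     # same shape, different intensity
unknown = np.exp(-((grid - 0.5) / 0.05) ** 2)  # band absent from the library
print(correlation_match(genuine, library))
print(correlation_match(unknown, library))
```

Pearson correlation ignores overall intensity and offset, whereas the device described in the Nigerian study also compared spectral intensity, so this is a simplification. Because a sample can only be matched against products present in the library, gaps in library coverage directly limit what such a screen can detect.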

Key Research Reagent Solutions

Successful implementation of pharmaceutical authentication technologies requires specific materials and reference standards:

Table 3: Essential Research Materials for Pharmaceutical Authentication

| Material/Reagent | Function | Application Context |
|---|---|---|
| Authentic Drug Standards | Reference materials for spectral libraries or calibration | NIR, HPLC, DSC |
| Chemical Reference Standards | Pure API for method validation | All quantitative methods |
| Validated HPLC Methods | Protocol for reference analysis | HPLC quality verification |
| Chemometric Software | Spectral data processing and pattern recognition | NIR spectroscopy |
| Sample Preparation Kits | Standardized extraction and preparation | HPLC, colorimetry |

Research Implications and Future Directions

The comparative performance data between NIR spectrometry and HPLC reveal several critical considerations for researchers and regulators. The low overall sensitivity (11%) and comparatively higher specificity (74%) suggest that current handheld NIR devices, in their present state of development, may function better as rule-in than as rule-out tools. With sensitivity this low, a "pass" result cannot be relied on to exclude a substandard or falsified product [6] [2]. The substantial variation in performance across drug categories (e.g., 37% sensitivity for analgesics) indicates that universal claims about NIR performance are inappropriate without category-specific validation [6].
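Sensitivity and specificity figures like these come from cross-tabulating each device verdict against the HPLC verdict for the same sample. The sketch below treats HPLC as the reference and an SF result as "positive". The paired results are hypothetical counts chosen only so that the output matches the 11% and 74% figures; they are not the study's data.

```python
def screening_metrics(nir_flagged, hplc_failed):
    """Sensitivity and specificity of a screening device against HPLC as the reference.

    nir_flagged[i]: True if the device flagged sample i as suspect.
    hplc_failed[i]: True if HPLC classified sample i as substandard/falsified (SF).
    """
    if len(nir_flagged) != len(hplc_failed):
        raise ValueError("paired results must have equal length")
    pairs = list(zip(nir_flagged, hplc_failed))
    tp = sum(1 for f, r in pairs if f and r)          # SF, correctly flagged
    fn = sum(1 for f, r in pairs if not f and r)      # SF, missed by the device
    tn = sum(1 for f, r in pairs if not f and not r)  # compliant, correctly passed
    fp = sum(1 for f, r in pairs if f and not r)      # compliant, wrongly flagged
    return {
        "sensitivity": tp / (tp + fn),
        "specificity": tn / (tn + fp),
        "counts": {"tp": tp, "fn": fn, "tn": tn, "fp": fp},
    }

# Hypothetical paired results, chosen only to reproduce 11% / 74%.
hplc_failed = [True] * 100 + [False] * 100
nir_flagged = [True] * 11 + [False] * 89 + [True] * 26 + [False] * 74
metrics = screening_metrics(nir_flagged, hplc_failed)
print(metrics["sensitivity"], metrics["specificity"])
```

Category-specific figures can be obtained the same way. Filter the paired results to one drug category (for example, analgesics) before calling the function. The function raises `ZeroDivisionError` if a subset contains no SF or no compliant samples, which is itself a sign that the subset is too small to validate.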

Future development should prioritize expanding reference spectral libraries, improving machine learning algorithms for better detection of substandard products with correct ingredients but wrong concentrations, and category-specific model optimization. The research findings suggest that regulators should require more independent evaluations across diverse drug formulations before implementing these technologies in real-world settings [6].

Emerging trends include the integration of multiple technologies (e.g., NIR with Raman), miniaturization of laboratory-grade capabilities for field use, and blockchain-supported authentication systems that combine physical verification with digital tracking [26] [31]. For researchers, comprehensive validation studies across diverse geographic contexts and drug formulations remain essential to establish performance benchmarks and implementation best practices.

Pharmaceutical authentication represents a critical frontier in global public health protection against substandard and falsified medicines. While traditional laboratory methods like HPLC provide definitive reference-quality analysis, practical field solutions like NIR spectrometry offer rapid, non-destructive screening capabilities, though with varying sensitivity and specificity across drug categories. The choice of authentication technology must balance operational constraints with necessary performance requirements, recognizing that current portable solutions show promise but require further refinement to achieve the sensitivity needed to ensure no SF medicines reach patients. For researchers and drug development professionals, rigorous validation against established reference methods remains essential when evaluating any authentication technology's real-world applicability.

In the global food industry, the economic value of agricultural commodities is intrinsically linked to their cultivar and geographic origin, making the sector particularly vulnerable to fraudulent practices such as mislabeling and counterfeiting. Hazelnuts, with a market value projected to reach approximately $554 million in 2025, exemplify this challenge, as their price fluctuates significantly based on these attributes [32]. Within this context, the validation of analytical methods for food authentication becomes paramount. Near-Infrared (NIR) spectroscopy has emerged as a powerful, non-destructive tool for rapid quality control. This guide objectively compares the performance of NIR spectroscopic methods with mid-infrared (MIR) and handheld NIR (hNIR) alternatives for hazelnut authentication, providing researchers and food development professionals with critical experimental data on sensitivity, specificity, and methodological protocols to inform analytical decisions.

Performance Comparison of Spectroscopic Methods

A study directly compared three spectroscopic techniques, namely benchtop NIR, handheld NIR (hNIR), and mid-infrared (MIR) spectroscopy, for authenticating hazelnut cultivar and geographic origin using over 300 samples from diverse origins, cultivars, and harvest years [16] [32]. Classification models were built from the spectroscopic fingerprints using Partial Least Squares-Discriminant Analysis (PLS-DA) and then validated.

Table 1: Overall Performance Metrics for Hazelnut Authentication

| Spectroscopic Method | Classification Accuracy (All Models) | Cultivar Classification Sensitivity | Cultivar Classification Specificity | Performance on Geographic Origin |
|---|---|---|---|---|
| Benchtop NIR | ≥ 93% [16] [32] | 0.92 [32] | 0.98 [32] | Slightly outperformed MIR [16] |
| Mid-Infrared (MIR) | ≥ 93% [16] | Data not specified | Data not specified | High accuracy, slightly lower than NIR [16] |
| Handheld NIR (hNIR) | Lower than benchtop methods [16] | Effective for cultivars [16] [32] | Effective for cultivars [16] [32] | Struggled due to lower sensitivity [16] [32] |
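Cultivar authentication is a multi-class problem, so a single sensitivity/specificity pair such as 0.92/0.98 is usually derived one-vs-rest. Each cultivar is treated in turn as "positive" and all others as "negative", and the per-class values may then be averaged. How the cited study aggregated its figures is not stated here. The sketch assumes simple averaging, and the 3-cultivar confusion matrix is hypothetical.

```python
import numpy as np

def one_vs_rest_rates(cm):
    """Per-class (sensitivity, specificity) from a square confusion matrix.

    cm[i, j] = number of validation samples of true class i predicted as class j.
    """
    cm = np.asarray(cm, dtype=float)
    total = cm.sum()
    rates = []
    for k in range(cm.shape[0]):
        tp = cm[k, k]
        fn = cm[k, :].sum() - tp  # class k predicted as another class
        fp = cm[:, k].sum() - tp  # other classes predicted as class k
        tn = total - tp - fn - fp
        rates.append((float(tp / (tp + fn)), float(tn / (tn + fp))))
    return rates

# Hypothetical validation result for cultivars A, B, C (rows: true, columns: predicted).
cm = [[18, 1, 1],
      [2, 17, 1],
      [0, 1, 19]]
rates = one_vs_rest_rates(cm)
mean_sensitivity = float(np.mean([s for s, _ in rates]))
mean_specificity = float(np.mean([p for _, p in rates]))
print(rates, mean_sensitivity, mean_specificity)
```

With many classes, the specificity values tend to be high because each "negative" group pools all other cultivars. This is worth keeping in mind when comparing specificity figures across studies with different numbers of classes.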

The study concluded that benchtop NIR spectroscopy demonstrated superior performance for hazelnut authentication, establishing it as a fast and highly reliable tool [16] [32]. The regression coefficients in the models indicated that discrimination was primarily based on variations in protein and lipid composition within the hazelnuts [16] [32]. Furthermore, the physical state of the sample significantly influenced results, with ground hazelnuts providing better outcomes than whole kernels due to greater sample homogeneity [16].

Detailed Experimental Protocols

The following workflow generalizes the key steps employed in validated hazelnut authentication studies [16] [33] [34].

Sample Preparation and Analysis

- Sample Collection and Preparation: Researchers analyzed over 300 hazelnut samples from different cultivars, geographic origins, and harvest years to ensure model robustness [16]. For optimal results, samples were ground to a homogeneous powder, which reduces scattering effects and improves spectral quality compared to whole kernels [16]. Studies have also successfully tracked samples throughout the industrial processing chain, from fresh in-shell nuts to roasted products [33].

- Instrumentation and Spectral Acquisition: The compared techniques were:

  - Benchtop NIR Spectroscopy [16] [32].

  - Handheld NIR (hNIR) Spectroscopy [16] [32].

  - Mid-Infrared (MIR) Spectroscopy [16].

  Spectra are typically collected using a diffuse reflectance module for ground solids. The study utilizing Fourier-Transform NIR (FT-NIR) recorded spectra in the range of 12,500–3800 cm⁻¹, where overtones and combinations of C-H, O-H, and N-H vibrations occur [35] [33].

- Reference Data and Chemometric Analysis: This is a critical two-step process:

  - Spectral Pre-processing: Raw spectra are cleaned and transformed using techniques like Standard Normal Variate (SNV) and Savitzky-Golay smoothing and derivatives to remove physical noise, correct baseline shifts, and enhance spectral features [35] [34].

  - Model Development and Validation: Pre-processed spectral data are linked to sample identities (cultivar/origin) using PLS-DA. The model is trained on one set of samples and then externally validated using a separate, unknown set to test its real-world predictive accuracy [16] [35]. This step is crucial for assessing generalizability beyond the calibration dataset.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Key Materials and Reagents for Hazelnut Authentication Studies

| Item Name | Function & Application in Research |
|---|---|
| Authentic Hazelnut Reference Samples | Crucial for building calibration models. Samples must be verified by genotype and geographic origin to serve as reliable benchmarks for authentication [16] [36]. |
| Chemometric Software | Essential for spectral data pre-processing, developing classification models (e.g., PLS-DA, SVM), and validating model performance [16] [35] [34]. |
| Standard Reference Materials | Used for instrument calibration and ensuring analytical accuracy across different sessions and laboratories [35]. |
| Grinding Apparatus | Used to homogenize hazelnut kernels into a fine powder, reducing particle size variation and improving spectral data consistency and model performance [16]. |

This comparison establishes benchtop NIR spectroscopy as a highly accurate and reliable method for authenticating hazelnut cultivar and geographic origin, with superior performance to MIR and handheld NIR alternatives. Its rapid analysis time, non-destructive measurement, and minimal sample preparation (grinding being the main step, and one that improved results) make it well suited to quality control in the food industry. For researchers, the critical path forward involves expanding reference libraries to include a wider range of cultivars and growing regions, and incorporating multi-year harvest data to enhance model robustness against climatic variability. Continued advancement in portable NIR technology and machine learning algorithms promises to further extend these capabilities, enabling more pervasive and sophisticated food authentication from the laboratory to the supply chain.

The valorization of used cooking oil (UCO) is central to advancing the circular economy, transforming a waste product into valuable feedstocks for biofuels such as biodiesel and hydrotreated vegetable oil (HVO), also known as hydrogenation-derived renewable diesel (HDRD) [10] [37]. The physicochemical properties of UCO directly determine its suitability for these applications, making rapid and accurate analysis critical. Traditional methods for determining key properties such as acid value, density, and kinematic viscosity are labor-intensive, time-consuming, and generate chemical waste [10] [38].

Near-Infrared (NIR) spectroscopy has emerged as a powerful, non-destructive analytical technique that addresses these limitations. This guide objectively compares the performance of NIR spectroscopy with Raman spectroscopy, another vibrational technique, for the rapid analysis of UCO properties, providing experimental data and protocols to validate its sensitivity and specificity for industrial and research applications [10].

Technical Comparison: NIR vs. Raman Spectroscopy

NIR and Raman spectroscopy are both rapid, non-destructive vibrational techniques that, when combined with chemometrics, can quantify multiple sample properties from a single spectral measurement [10]. However, their underlying physical principles differ, leading to distinct practical advantages and limitations.

NIR spectroscopy measures molecular absorption related to overtones and combinations of fundamental vibrations of C-H, O-H, and N-H bonds. This results in broad, overlapping spectral bands that require robust chemometric analysis for interpretation [35] [10]. Raman spectroscopy, in contrast, measures the inelastic scattering of light, providing information about molecular vibrations and symmetries, often yielding sharper, more distinct spectral features [10].

A recent direct comparison study quantified the performance of these two techniques for predicting three critical UCO properties: Acid Value (AV), Density, and Kinematic Viscosity. The following table summarizes the key experimental findings, demonstrating the comparative predictive performance of each spectroscopic method [10].

Table 1: Comparative Performance of NIR and Raman Spectroscopy for UCO Analysis [10]

| Physicochemical Property | Spectroscopic Technique | Optimal Spectral Range | Optimal Pretreatment Method | Performance (R²) | Best Technique |
|---|---|---|---|---|---|
| Acid Value (AV) | FT-NIR | 4500–9000 cm⁻¹ | First Derivative + Mean Centering | 0.993 | NIR |
| Acid Value (AV) | Raman | 200–3200 cm⁻¹ | Data Normalization | 0.983 | |
| Density | FT-NIR | 4500–9000 cm⁻¹ | First Derivative + Mean Centering | 0.998 | NIR |
| Density | Raman | 200–3200 cm⁻¹ | Data Normalization | 0.964 | |
| Kinematic Viscosity | FT-NIR | 4500–9000 cm⁻¹ | First Derivative + Mean Centering | 0.996 | NIR |
| Kinematic Viscosity | Raman | 200–3200 cm⁻¹ | Data Normalization | 0.991 | |

Experimental Protocols for UCO Analysis

Sample Preparation and Spectral Acquisition

The following workflow is adapted from a study that directly compared NIR and Raman spectroscopy for UCO analysis [10].

1. Sample Collection and Pretreatment:

- Collection: Gather UCO samples from diverse sources (e.g., households, restaurants) to ensure a representative dataset. Samples should be derived from different base oils like rapeseed, sunflower, and olive oil [10].

- Filtration: Filter all samples to remove solid food impurities larger than 400 μm [10].

- Mixing: Prepare mixed samples (e.g., 5–25 L volumes) to homogenize the collection and create a robust calibration set [10].

2. Reference Analysis:

- Determine the true values for Acid Value, Density, and Kinematic Viscosity using standardized reference methods before spectral measurement. This creates the essential dataset for building the chemometric model [10].

3. Spectral Measurement:

- For FT-NIR Spectroscopy:

  - Instrument: Use an FT-NIR spectrometer with a heatable sample compartment [39] [10].

  - Setup: Place the UCO sample in a disposable 8 mm glass vial. Maintain a consistent temperature during measurement, typically 75°C as per some standard methods [39] [10].

  - Acquisition: Collect spectra in the range of 4,500–9,000 cm⁻¹. Co-add multiple scans (e.g., 3 spectra per sample) at a resolution of 8 cm⁻¹ to improve the signal-to-noise ratio [39] [10].

- For Raman Spectroscopy:

  - Instrument: Use a standard Raman spectrometer.

  - Acquisition: Collect spectra in the range of 200–3,200 cm⁻¹ [10].
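Co-adding works by averaging repeated scans of the same sample. For random, uncorrelated noise, the noise standard deviation of the average falls as 1/√N, so the signal-to-noise ratio grows as √N. Under that assumption, averaging 3 spectra gives roughly a 1.7-fold improvement. The sketch below simulates this with white noise on an illustrative band. The noise level is hypothetical, and real instruments may also have correlated noise or drift that averaging does not remove.

```python
import numpy as np

rng = np.random.default_rng(7)
wavenumber = np.linspace(4500, 9000, 900)                # cm^-1
true_spectrum = np.exp(-((wavenumber - 5800) / 120) ** 2)  # illustrative band
noise_sd = 0.01                                           # hypothetical per-scan noise

def acquire(n_scans):
    """Average n_scans simulated scans of one sample, each with independent white noise."""
    scans = true_spectrum + rng.normal(0, noise_sd, (n_scans, true_spectrum.size))
    return scans.mean(axis=0)

for n in (1, 3, 16, 64):
    residual_sd = np.std(acquire(n) - true_spectrum)
    print(f"{n:3d} scans: noise SD {residual_sd:.4f} (1/sqrt(N) predicts {noise_sd / np.sqrt(n):.4f})")
```

Because the gain is only √N, going from 16 to 64 scans halves the noise but quadruples acquisition time. This trade-off matters for high-throughput screening.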

Chemometric Modeling and Data Processing

1. Data Preprocessing: